Deep Learning in Embryo Assessment: How Convolutional Neural Networks are Revolutionizing IVF Selection

Abstract

This article comprehensively examines the application of Convolutional Neural Networks (CNNs) for embryo quality assessment in clinical in vitro fertilization (IVF). Covering everything from foundational principles to clinical validation, we explore how deep learning models analyze time-lapse imaging and static embryo images to predict developmental potential, ploidy status, and clinical outcomes. The review synthesizes evidence from recent studies on model architectures, federated learning approaches for data privacy, explainable AI for clinical trust, and performance comparisons against manual embryologist assessment. For researchers and drug development professionals, this analysis identifies current methodological challenges, optimization strategies, and future directions for integrating AI-assisted embryo selection into precision reproductive medicine.

The Rise of AI in Embryology: Foundations of CNN-Based Embryo Assessment

Infertility affects an estimated 17.5% of the global adult population, with approximately one in six individuals experiencing infertility during their lifetime [1]. Despite advances in assisted reproductive technology, average live birth rates remain around 30% per embryo transfer [2] [3], highlighting a critical need for better embryo selection methods. This challenge is compounded by the subjectivity and inter-observer variability inherent in traditional morphological embryo assessment [1] [2].

Convolutional Neural Networks (CNNs) offer a transformative approach to embryo quality assessment by providing objective, data-driven evaluation that can identify subtle morphological patterns imperceptible to the human eye [1]. This protocol details the application of deep learning frameworks to enhance embryo selection, thereby addressing a pivotal bottleneck in IVF success.

Quantitative Performance of CNN-Based Embryo Assessment

Recent studies report that CNN-based models outperform traditional assessment methods and, in direct comparisons, experienced embryologists in predicting embryo viability and implantation potential.

Table 1: Performance Metrics of CNN Models for Embryo Assessment

| Model / Study Description | Accuracy | Sensitivity | Specificity | AUC | Comparison / Notes |
|---|---|---|---|---|---|
| Dual-Branch CNN (EfficientNet-based) [4] | 94.3% | - | - | - | Outperformed standard CNNs (VGG-16, ResNet-50) |
| CNN for Blastocyst Implantation Selection [2] | 90.97% | - | - | - | Accuracy in choosing the highest-quality embryo |
| CNN vs. Embryologists (Euploid Embryos) [2] | CNN: 75.26%; Embryologists: 67.35% | - | - | - | Difference significant (p<0.0001) |
| Meta-analysis of AI Embryo Selection [3] | - | 0.69 | 0.62 | 0.70 | Pooled diagnostic performance |
| Life Whisperer AI Model [3] | 64.3% | - | - | - | Prediction of clinical pregnancy |
| FiTTE System (Image + Clinical Data) [3] | 65.2% | - | - | 0.70 | - |

Table 2: Comparative Performance of CNN Architectures for Blastocyst Morphology Classification [5]

| CNN Architecture | Reported Performance |
|---|---|
| Xception | Best performance in morphology-based differentiation |
| Inception v3 | Evaluated for comparison |
| ResNet-50 | Evaluated for comparison |
| Inception-ResNet-v2 | Evaluated for comparison |
| NASNetLarge | Evaluated for comparison |
| ResNeXt-101 | Evaluated for comparison |
| ResNeXt-50 | Evaluated for comparison |

Experimental Protocols

Protocol 1: Development of a Dual-Branch CNN for Day 3 Embryo Assessment

This protocol outlines the methodology for creating a CNN that integrates spatial and morphological features for objective embryo quality evaluation on Day 3 of development [4].

Materials and Data Preparation

- Image Dataset: 220 embryo images from public datasets (e.g., Kaggle World Championship 2023 Embryo Classification).

- Hardware: Computer system with GPU support for deep learning model training.

- Software: Python with deep learning libraries (e.g., TensorFlow, Keras, PyTorch).

Methodology

Image Preprocessing and Segmentation:

- Standardize all input images to consistent dimensions and lighting conditions.

- Implement bounding box segmentation for individual embryos.

- Calculate morphological parameters: symmetry scores and fragmentation percentages from segmented images.
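
The morphological-parameter step can be illustrated with a short sketch that derives a fragmentation percentage and a symmetry score from a labeled segmentation mask. The mask encoding (0 = background, 1 = fragment, 2 and above = individual blastomeres) and both formulas are assumptions for illustration, not the definitions used in [4]. Fragmentation is taken as fragment area over total embryo area. Symmetry is taken as the ratio of the smallest to the largest blastomere area.

```python
from collections import Counter

# Hypothetical label convention for a segmented embryo mask:
# 0 = background, 1 = cytoplasmic fragment, 2, 3, ... = individual blastomeres.
BACKGROUND, FRAGMENT = 0, 1


def pixel_counts(mask):
    """Count pixels per label in a 2D mask given as a list of rows."""
    return Counter(label for row in mask for label in row)


def fragmentation_percentage(mask):
    """Fragment area as a percentage of total embryo area (cells + fragments)."""
    counts = pixel_counts(mask)
    embryo_area = sum(n for label, n in counts.items() if label != BACKGROUND)
    if embryo_area == 0:
        raise ValueError("mask contains no embryo pixels")
    return 100.0 * counts.get(FRAGMENT, 0) / embryo_area


def symmetry_score(mask):
    """Ratio of smallest to largest blastomere area (1.0 = equal-sized cells)."""
    counts = pixel_counts(mask)
    areas = [n for label, n in counts.items() if label > FRAGMENT]
    if not areas:
        raise ValueError("mask contains no blastomeres")
    return min(areas) / max(areas)


# Toy 4x6 mask: two blastomeres (labels 2 and 3) and two fragment pixels.
mask = [
    [0, 2, 2, 3, 3, 0],
    [0, 2, 2, 3, 3, 0],
    [0, 2, 2, 3, 1, 0],
    [0, 0, 0, 0, 1, 0],
]
print(f"fragmentation: {fragmentation_percentage(mask):.1f}%")
print(f"symmetry: {symmetry_score(mask):.3f}")
```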

Dual-Branch CNN Architecture:

- Branch 1 (Spatial Features): Implement a modified EfficientNet architecture to extract deep spatial features from preprocessed embryo images.

- Branch 2 (Morphological Features): Process calculated symmetry scores and fragmentation percentages through a dedicated neural network branch.

- Feature Integration: Concatenate feature outputs from both branches.

- Classification: Process integrated features through SoftMax-activated fully connected layers for final quality grade classification.
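
To make the feature-integration and classification steps concrete, the framework-free sketch below does three things. It concatenates a Branch 1 embedding with the Branch 2 morphological features, applies one fully connected layer, and finishes with a softmax. It assumes the EfficientNet branch has already produced a fixed-length vector. The feature sizes, random weights, and four output grades are placeholders rather than values from [4]. In practice these layers would be trained in TensorFlow/Keras or PyTorch.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dense(x, weights, bias):
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def fuse_and_classify(spatial_features, morph_features, weights, bias):
    """Concatenate both branch outputs, then classify with dense + softmax."""
    fused = list(spatial_features) + list(morph_features)
    if any(len(row) != len(fused) for row in weights):
        raise ValueError("weight rows must match the fused feature length")
    return softmax(dense(fused, weights, bias))


# Placeholder shapes: an 8-d spatial embedding (Branch 1) and
# [symmetry_score, fragmentation_fraction] (Branch 2), mapped to 4 hypothetical grades.
rng = random.Random(0)
spatial = [rng.uniform(-1, 1) for _ in range(8)]
morph = [0.83, 0.15]
n_classes, n_features = 4, len(spatial) + len(morph)
W = [[rng.gauss(0, 0.1) for _ in range(n_features)] for _ in range(n_classes)]
b = [0.0] * n_classes

probs = fuse_and_classify(spatial, morph, W, b)
print([round(p, 3) for p in probs], "predicted grade index:", probs.index(max(probs)))
```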

Model Training:

- Utilize labeled dataset with embryo quality grades.

- Employ a standard supervised training setup (e.g., a cross-entropy loss with an optimizer such as Adam).

- Training time: approximately 4.5 hours to achieve target performance.

Validation:

- Validate model performance on held-out test set.

- Compare results against traditional assessment methods and other CNN architectures.

Protocol 2: Static Image-Based Blastocyst Assessment at 113 hpi

This protocol describes the use of CNNs for embryo selection using single time-point static images captured at 113 hours post-insemination (hpi), enabling deployment in clinics without expensive time-lapse systems [2].

Materials and Data Preparation

- Image Dataset: 2,440 static human embryo images at 113 hpi.

- Data Source: Images captured using traditional microscopes or time-lapse systems with extracted single frames.

- Annotation: Embryos graded by senior embryologists using a hierarchical categorization system derived from Gardner grading.

Methodology

Data Organization and Hierarchical Structuring:

- Categorize embryos into training classes (1-5) based on developmental state at 113 hpi:

- Class 1: Degenerated/arrested embryos (no compaction)

- Class 2: Morula stage embryos

- Class 3: Early blastocysts (blastocoel cavity present, thick zona pellucida)

- Class 4: Blastocysts below cryopreservation quality

- Class 5: Blastocysts meeting cryopreservation criteria

- Group classes into inference categories: Non-blastocysts (Classes 1-2) and Blastocysts (Classes 3-5).

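
The two-level labeling scheme amounts to a fixed mapping from training classes to inference categories. The names in the sketch below are hypothetical. It also collapses the five-class output into a blastocyst probability by summing the softmax probabilities of Classes 3-5; this is one plausible approach, assumed here rather than taken from [2].

```python
# Training classes (1-5) at 113 hpi, as described above.
TRAINING_CLASSES = {
    1: "Degenerated/arrested (no compaction)",
    2: "Morula",
    3: "Early blastocyst",
    4: "Blastocyst below cryopreservation quality",
    5: "Blastocyst meeting cryopreservation criteria",
}


def inference_category(training_class):
    """Classes 1-2 -> non-blastocyst; Classes 3-5 -> blastocyst."""
    if training_class not in TRAINING_CLASSES:
        raise ValueError(f"unknown training class: {training_class}")
    return "blastocyst" if training_class >= 3 else "non-blastocyst"


def blastocyst_probability(class_probs):
    """Collapse 5-class probabilities (index 0 = Class 1) into P(blastocyst)."""
    if len(class_probs) != len(TRAINING_CLASSES):
        raise ValueError("expected one probability per training class")
    return sum(class_probs[2:])


print([inference_category(c) for c in range(1, 6)])
print(blastocyst_probability([0.05, 0.15, 0.30, 0.30, 0.20]))
```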

CNN Model Development:

- Employ transfer learning approach using a CNN pre-trained on ImageNet dataset (1.4 million images).

- Fine-tune the network (Xception architecture recommended) using the embryo image dataset.

- Implement a genetic algorithm scheme to generate unified scores for rank ordering embryos.
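
Rank ordering can be sketched as scoring each embryo with a weighted sum of its class probabilities and then sorting within the patient cohort. The weights below are hypothetical placeholders. They stand in for the values a genetic-algorithm search would produce; the genetic algorithm itself is not shown, and the cohort data are invented.

```python
# Hypothetical per-class weights (Class 1 ... Class 5). In the cited work these
# would come from a genetic-algorithm search; here they are placeholders.
CLASS_WEIGHTS = [0.0, 0.1, 0.4, 0.7, 1.0]


def unified_score(class_probs, weights=CLASS_WEIGHTS):
    """Weighted sum of class probabilities -> one scalar per embryo."""
    return sum(p * w for p, w in zip(class_probs, weights))


def rank_cohort(cohort):
    """Return embryo IDs ordered from highest to lowest unified score."""
    return sorted(cohort, key=lambda eid: unified_score(cohort[eid]), reverse=True)


# Invented cohort: per-embryo probabilities for Classes 1-5.
cohort = {
    "E1": [0.10, 0.60, 0.20, 0.05, 0.05],
    "E2": [0.00, 0.05, 0.15, 0.30, 0.50],
    "E3": [0.05, 0.10, 0.50, 0.25, 0.10],
}
print(rank_cohort(cohort))
```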

Model Evaluation:

- Test the model on an independent set of 97 clinical patient cohorts (742 embryos).

- For implantation potential assessment, use a separate test set of 97 euploid embryos with known implantation outcomes.

- Compare CNN performance against 15 trained embryologists from multiple fertility centers.

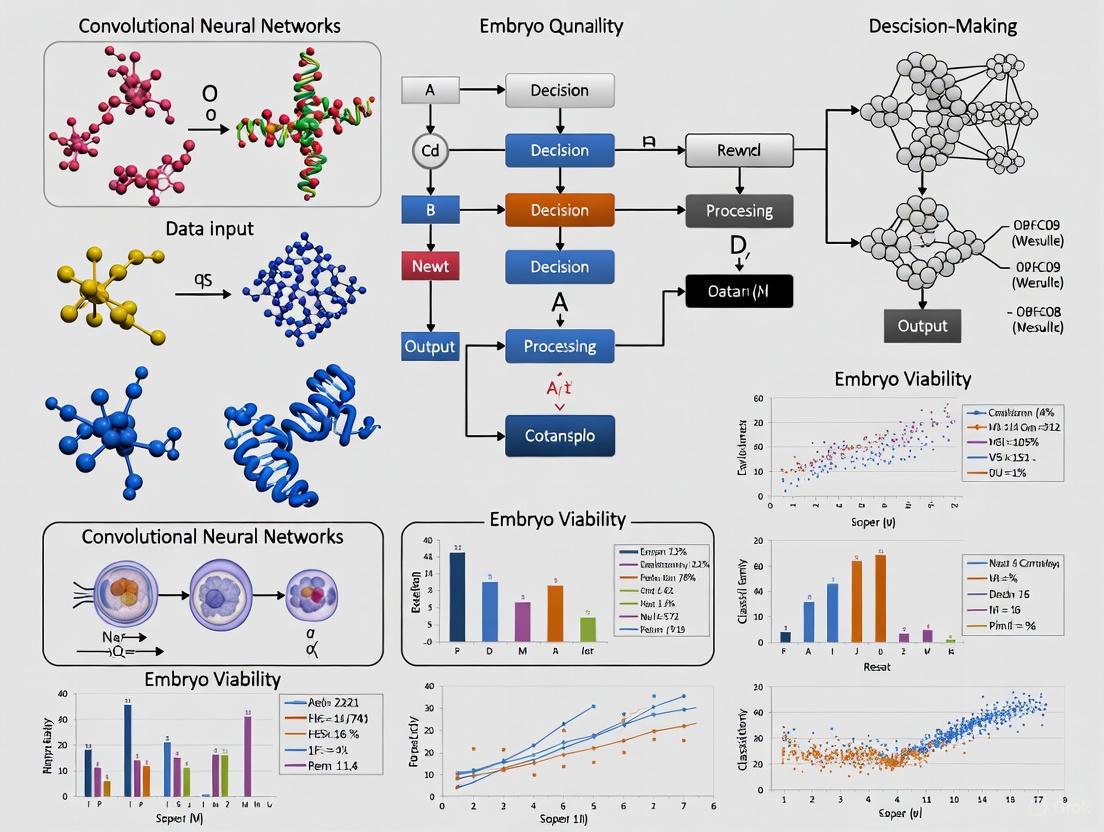

Workflow Visualization

[Figure: CNN Embryo Assessment Workflow]

[Figure: Dual-Branch CNN Architecture]

Research Reagent Solutions

Table 3: Essential Materials and Reagents for CNN Embryo Assessment Research

| Item | Function / Application | Specifications / Notes |
|---|---|---|
| Time-Lapse Imaging System (e.g., EmbryoScope) | Continuous embryo monitoring without culture disturbance; generates training data [5] [1] | Uses Hoffman modulated contrast optics; captures images at multiple focal planes |
| Traditional Microscope with Camera | Image acquisition for static image analysis; enables technology access in resource-constrained settings [2] | Enables use of static image-based CNNs without time-lapse hardware |
| GPU-Accelerated Computing System | Training and deployment of deep learning models | Significantly reduces model training time; enables real-time inference |
| Embryo Image Datasets | Training and validation of CNN models | Publicly available datasets (e.g., Kaggle) or institutional collections [4] |
| Python Deep Learning Frameworks (TensorFlow, PyTorch) | Implementation of CNN architectures | Provides pre-built components for efficient model development |
| Data Annotation Platform | Embryologist labeling of training images | Critical for supervised learning; requires senior embryologist input |

CNNs represent a paradigm shift in embryo selection; in the studies above, they outperformed traditional morphological assessment by embryologists. The protocols outlined support both sophisticated dual-branch architectures for detailed morphological analysis and static image-based systems for broader accessibility. As these technologies evolve, integration with complementary advances such as non-invasive genetic testing and intelligent incubator systems may further improve IVF success rates, helping to address the pressing global challenge of infertility. Future development should focus on more generalizable models trained on diverse, multi-center datasets to ensure robust clinical applicability across patient populations and clinic environments.

The selection of embryos with the highest developmental potential is a cornerstone of successful in vitro fertilization (IVF). For decades, this selection has relied on conventional methods: static morphological assessment and, more recently, manual morphokinetic analysis using time-lapse imaging (TLI) [6]. These methods, while foundational, are intrinsically limited by significant subjectivity and variability [7] [8]. Within research focused on Convolutional Neural Networks (CNNs) for embryo quality assessment, a precise understanding of these limitations is crucial. It not only justifies the development of automated systems but also informs the design of robust models and training datasets that directly address the shortcomings of human-based evaluation. This document details these limitations, supported by quantitative data and experimental protocols, to provide a clear rationale for the integration of artificial intelligence (AI) in embryology.

Limitations of Static Morphological Assessment

Static morphological assessment involves the visual evaluation of embryos at discrete, predetermined time points using a standard microscope. Embryos are removed from the incubator for these brief examinations, and their quality is graded based on established criteria.

Core Limitations and Underlying Causes

The primary limitations of this method stem from its inherent design:

- Subjectivity and Inter-Observer Variability: Visual grading is highly dependent on the embryologist's expertise and experience. Parameters such as cell symmetry, fragmentation degree, and trophectoderm (TE) structure are open to interpretation, leading to inconsistent scoring between different embryologists [8] [1].

- Disruption of Culture Conditions: Removing embryos from the stable incubator environment for assessment exposes them to fluctuations in temperature, pH, and gas levels. Repeated environmental stress of this kind can compromise embryo viability [6].

- Incomplete Developmental Data: As a "snapshot" in time, static assessment misses critical dynamic events in embryonic development. Abnormalities in cell division patterns or other transient morphokinetic phenomena that occur between observations are undetectable [6].

Quantitative Evidence of Limitations

Table 1: Performance Comparison of Embryologist Morphological Assessment vs. AI Models

| Evaluation Method | Predictive Task | Median Accuracy | Key References |
|---|---|---|---|
| Embryologist Morphological Assessment | Embryo Morphology Grade | 65.4% (Range: 47-75%) | [8] |
| AI Models (Image-Based) | Embryo Morphology Grade | 75.5% (Range: 59-94%) | [8] |
| Embryologist Morphological Assessment | Clinical Pregnancy | 64% (Range: 58-76%) | [8] |
| AI Models (Image-Based) | Clinical Pregnancy | 77.8% (Range: 68-90%) | [8] |

The data in Table 1, synthesized from a systematic review, show that AI models achieved higher median accuracy than trained embryologists in predicting both embryo morphology grade and clinical pregnancy from images. This highlights the limitations of human visual assessment [8].

Limitations of Manual Morphokinetic Analysis

Time-lapse imaging (TLI) systems represent a significant advancement by enabling continuous, non-invasive monitoring of embryo development within the incubator. They capture images at short, regular intervals, generating a video sequence that allows for manual morphokinetic analysis—the tracking of the timing of specific developmental milestones.

Core Limitations and Underlying Causes

Despite its advantages over static assessment, manual morphokinetic analysis retains several key limitations:

- Labor-Intensive and Time-Consuming: The review of extensive time-lapse videos for each embryo is a protracted process, making it difficult to scale in high-throughput clinical or research settings [1].

- Persistent Subjectivity: Although TLI provides more data, the interpretation of morphokinetic parameters (e.g., precise timing of cell divisions) remains prone to human subjectivity and inter-observer disagreement [7] [6].

- Algorithm Generalizability: Proprietary selection algorithms bundled with TLI systems are often trained on specific populations and may not perform optimally across diverse patient demographics or different laboratory protocols [6].

- Limited Predictive Scope for Ploidy: A critical limitation is the inability to accurately detect all types of aneuploidy. Embryos with trisomies (an extra chromosome), particularly of small to medium-sized chromosomes, display morphokinetic profiles nearly identical to euploid embryos, "flying under the radar" of manual and algorithm-based TLI analysis [9].

Quantitative Evidence of Limitations

Table 2: Diagnostic Performance of Manual and AI-Enhanced Embryo Assessment

| Method | Input Data Type | Pooled Sensitivity | Pooled Specificity | Area Under Curve (AUC) | Key References |
|---|---|---|---|---|---|
| AI-Based Methods (Pooled) | Images & Clinical Data | 0.69 | 0.62 | 0.70 | [3] |
| MAIA AI Platform (Prospective) | Blastocyst Images | - | - | 0.65 | [7] |
| Integrated Fusion Model (Image + Clinical) | Blastocyst Images & Clinical Data | - | - | 0.91 | [10] |
| Manual Embryologist Selection | Images & Clinical Data | - | - | - | [8] |

Table 2 indicates that, although AI models perform robustly, no model is perfect. The MAIA platform's AUC of 0.65 in a prospective clinical test leaves room for improvement [7]. Furthermore, a fusion model integrating images and clinical data (AUC 0.91) outperformed an image-only CNN model (AUC 0.73). This suggests that image analysis alone is insufficient for maximal predictive power [10].

Experimental Protocols for Validation

For researchers aiming to quantitatively evaluate these limitations or benchmark new CNN models, the following protocols provide a framework.

Protocol 1: Quantifying Inter-Observer Variability in Morphological Grading

Objective: To measure the consistency of embryo quality assessments between different embryologists.

Materials:

- Curated dataset of static embryo images (minimum n=200) at a specific developmental stage (e.g., Day 3 or Day 5).

- Panel of at least 3 trained embryologists.

- Standardized grading form based on Gardner criteria or the Istanbul consensus.

Procedure:

- Each embryologist independently grades the entire set of images, blinded to the assessments of others and to clinical outcomes.

- Record scores for key parameters: blastocyst expansion grade, inner cell mass (ICM) quality, and trophectoderm (TE) quality.

- For Day 3 embryos, record cell number, symmetry, and fragmentation percentage.

Data Analysis:

- Calculate the intraclass correlation coefficient (ICC) for continuous measures (e.g., fragmentation %).

- Compute Fleiss' kappa for categorical ratings (e.g., ICM grade A, B, C). As a rough guide, ICC or kappa values below about 0.7 are often read as indicating poor to moderate agreement, and therefore significant subjectivity. Published interpretation thresholds differ somewhat between the two statistics.
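
Both agreement statistics named above can be computed in a few lines of standard-library Python. This is a minimal sketch, and the function names and toy ratings are hypothetical. Fleiss' kappa follows its textbook definition. For continuous measures the sketch assumes the ICC(2,1) form (two-way random effects, absolute agreement, single rater, as defined by Shrout and Fleiss). This is one common choice; the protocol does not specify which ICC form to use.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for categorical grades.

    ratings[i] lists the grade each rater gave subject (embryo) i; every
    subject must be graded by the same number of raters (at least 2).
    """
    n_sub = len(ratings)
    n_rat = len(ratings[0])
    if n_rat < 2 or any(len(r) != n_rat for r in ratings):
        raise ValueError("every subject needs the same number (>= 2) of ratings")
    categories = {c for r in ratings for c in r}
    counts = [Counter(r) for r in ratings]
    # Observed agreement per subject, averaged over subjects.
    p_i = [(sum(n * n for n in c.values()) - n_rat) / (n_rat * (n_rat - 1)) for c in counts]
    p_bar = sum(p_i) / n_sub
    # Chance agreement from the overall proportion of each category.
    p_j = [sum(c[cat] for c in counts) / (n_sub * n_rat) for cat in categories]
    p_e = sum(p * p for p in p_j)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when every rating is the same category")
    return (p_bar - p_e) / (1 - p_e)


def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    scores[i][j] is rater j's value for subject i.
    """
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n)


# Hypothetical example: 3 embryologists grading ICM (categorical) and
# fragmentation % (continuous) for the same 5 embryos.
icm_grades = [["A", "A", "A"], ["B", "B", "A"], ["C", "C", "C"], ["B", "B", "B"], ["A", "B", "A"]]
frag_pct = [[10, 12, 11], [25, 20, 22], [5, 5, 8], [40, 35, 38], [15, 18, 15]]
print(f"Fleiss' kappa (ICM): {fleiss_kappa(icm_grades):.2f}")
print(f"ICC(2,1) (fragmentation): {icc_2_1(frag_pct):.2f}")
```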

Protocol 2: Benchmarking CNN Performance Against Manual Morphokinetic Analysis

Objective: To compare the accuracy of a CNN model versus embryologists in predicting a key clinical outcome (e.g., blastocyst formation or clinical pregnancy) from time-lapse data.

Materials:

- Time-lapse video dataset of embryos with known, unambiguous outcomes.

- Cohort of embryologists for manual analysis.

- Trained CNN model (e.g., based on EfficientNet or ResNet architectures).

Procedure:

- Embryologists review time-lapse videos and provide a ranking or binary prediction (e.g., high/low potential) for each embryo.

- The same dataset is processed by the CNN model to generate its predictions.

- Predictions from both methods are compared against the ground-truth outcomes.

Data Analysis:

- Construct ROC curves for both manual and CNN predictions.

- Compare accuracy, sensitivity, specificity, and AUC.

- A study with a comparable design found that a CNN achieved 75.26% accuracy in identifying euploid embryos with implantation potential. This outperformed 15 embryologists, whose average accuracy was 67.35% (p<0.0001) [11].
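
The analysis steps above can be sketched in standard-library Python; the outcome data and function names are hypothetical. AUC is computed with the rank (Mann-Whitney) formulation, which equals the area under the empirical ROC curve. scikit-learn's `sklearn.metrics.roc_auc_score` and `roc_curve` are the usual library equivalents. Binary embryologist calls yield only a single ROC operating point. Asking embryologists for rankings or confidence scores therefore gives a fuller curve.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via the rank (Mann-Whitney) formulation:
    the probability that a random positive outscores a random negative,
    counting ties as one half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative outcome")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def threshold_metrics(labels, scores, threshold=0.5):
    """Accuracy, sensitivity and specificity when predicting 1 for score >= threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    pairs = list(zip(labels, preds))
    tp, tn = pairs.count((1, 1)), pairs.count((0, 0))
    fp, fn = pairs.count((0, 1)), pairs.count((1, 0))
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }


# Hypothetical outcomes (1 = implantation) for four embryos.
outcome = [0, 0, 1, 1]
cnn_score = [0.10, 0.40, 0.35, 0.80]  # CNN probabilities
embryologist_call = [0, 1, 0, 1]      # binary high/low-potential calls

print("CNN AUC:", roc_auc(outcome, cnn_score))
print("Embryologist AUC:", roc_auc(outcome, embryologist_call))
print("CNN @0.5:", threshold_metrics(outcome, cnn_score))
```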

Visualization of Conventional Workflow and Limitations

The following diagram illustrates the standard workflow for conventional embryo assessment and pinpoints where its key limitations are introduced.

[Figure: Conventional Embryo Assessment Workflow & Limitations]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Embryo Assessment Research

| Item | Function in Research | Example Product/Brand |
|---|---|---|
| Time-Lapse Incubator | Provides continuous imaging in a stable culture environment. Enables collection of morphokinetic data for manual and AI analysis. | EmbryoScope® (Vitrolife), Geri® (Genea Biomedx) [7] |
| Early Embryo Viability Assessment System | Automated algorithm focusing on early cleavage-stage morphokinetic markers to generate a viability score. | Eeva® System (Merck KGaA) [6] |
| AI-Based Scoring Software | Provides an automated, objective embryo evaluation and ranking to compare against manual methods. | iDAScore (Vitrolife), Life Whisperer [7] [3] |
| Standardized Culture Media & Consumables | Ensures consistency in culture conditions, a critical factor for valid morphokinetic comparisons across studies. | Various IVF-specific media and culture dishes from companies such as Cook Medical, Vitrolife, and Irvine Scientific. |
| Publicly Available Datasets | Provides benchmark data for training and validating new CNN models. | Kaggle World Championship Embryo Classification [4] |

The subjectivity inherent in conventional morphological assessment and manual morphokinetic analysis is a clear and documented impediment to optimal embryo selection in IVF. Quantitative evidence shows that these methods are variable and labor-intensive, and that AI-driven approaches outperformed them in the studies reviewed here. For researchers developing CNNs for embryo assessment, these limitations define the problem space. The future of embryo evaluation lies in integrated systems that combine objective AI analysis of images with relevant clinical data. Such systems move beyond the constraints of human perception toward more reliable, scalable, and effective selection tools.

Convolutional Neural Networks (CNNs) are revolutionizing embryo quality assessment in Assisted Reproductive Technology (ART) by automating the extraction of relevant morphological features from embryo images. Traditional embryo evaluation relies on manual morphological assessment by embryologists, a process prone to subjectivity and inter-observer variability [12]. CNN-based deep learning models address these limitations by automatically learning to identify complex visual patterns directly from pixel data, enabling objective, standardized, and high-throughput embryo analysis [13] [1]. This capability is particularly valuable for analyzing time-lapse imaging (TLI) data, where CNNs can process vast amounts of visual information to identify subtle morphological features potentially overlooked by human observers [13].

CNN Architecture for Embryo Image Analysis

Fundamental Building Blocks

CNNs automate feature extraction through a hierarchical architecture of specialized layers:

- Convolutional Layers: Apply learnable filters across the input image to detect visual features like edges, textures, and patterns. Each filter convolves across the image, producing feature maps that highlight where specific features appear [14].

- Pooling Layers: Reduce the spatial dimensions of feature maps while retaining the strongest responses. This provides a degree of local translation invariance and reduces computation and parameters in later layers, which helps control overfitting.

- Fully Connected Layers: Integrate extracted features for final classification tasks, such as embryo quality grading or pregnancy outcome prediction [15].

This architecture enables CNNs to learn increasingly complex feature hierarchies - from simple edges in initial layers to sophisticated morphological structures in deeper layers - directly from embryo images without manual feature engineering [14].
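
The convolution and pooling operations described above can be demonstrated on a toy image. The sketch uses a hand-set 2×2 vertical-edge filter; in a trained CNN, the filter weights are learned. It then applies a ReLU activation (assumed here, since the text does not name an activation function) and 2×2 max pooling. As in deep learning libraries, the "convolution" is implemented as cross-correlation, without flipping the kernel.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution as used in CNNs (cross-correlation, no kernel flip)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(fmap):
    """Element-wise ReLU: negative responses are set to zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    """Non-overlapping max pooling (stride = size); leftover rows/cols are dropped."""
    return [
        [
            max(fmap[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(fmap[0]) - size + 1, size)
        ]
        for r in range(0, len(fmap) - size + 1, size)
    ]


# Toy 5x5 "image" with a dark-to-bright vertical boundary (e.g., a cell edge).
image = [[0, 0, 1, 1, 1] for _ in range(5)]
vertical_edge = [[-1, 1], [-1, 1]]  # hand-set filter; a CNN would learn its weights

features = relu(conv2d(image, vertical_edge))  # 4x4 feature map
pooled = max_pool(features)                    # 2x2 after pooling
print(features)
print(pooled)
```

The feature map responds only where the boundary lies, and pooling keeps that response while halving each spatial dimension.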

Specialized CNN Architectures for Embryology

Researchers have developed specialized CNN architectures optimized for embryo analysis:

- Dual-Branch CNN: Integrates spatial features from embryo images with morphological parameters (symmetry scores, fragmentation percentages) through parallel network branches [4].

- Modified EfficientNet: Balances model complexity and performance for clinical deployment, achieving 94.3% accuracy in embryo quality classification [4].

- EmbryoNet-VGG16: Combines Otsu segmentation preprocessing with a modified VGG16 architecture to extract boundary features and structural integrity indicators from embryo images [12].

- Siamese Networks: Enable comparative analysis of matched embryo pairs from the same stimulation cycle with different implantation outcomes [16].

Research Reagent Solutions

Table 1: Essential materials and computational resources for CNN-based embryo assessment

| Category | Specific Resource | Function/Application |
|---|---|---|
| Time-Lapse Imaging Systems | EmbryoScope/EmbryoScope+ (Vitrolife) [16] [17] | Continuous embryo monitoring with image capture every 10 minutes at multiple focal planes |
| Culture Media | G-TL (Vitrolife) [16], FertiCult IVF (FertiPro) [16] | Embryo culture in stable conditions during time-lapse monitoring |
| Image Annotation Software | EmbryoViewer (Vitrolife) [16] | Manual annotation of morphokinetic parameters and embryo quality grading |
| Deep Learning Frameworks | PyTorch [10], Python-based frameworks [14] | CNN model development, training, and implementation |
| Computational Resources | Ubuntu OS, 1080 Ti GPU, i7-8700 CPU [14] | Processing power for training and running complex CNN models |

Quantitative Performance of CNN Models

Table 2: Performance comparison of CNN architectures for embryo assessment tasks

| CNN Architecture | Application Task | Accuracy | Precision | Recall/Sensitivity | AUC |
|---|---|---|---|---|---|
| Dual-Branch CNN with EfficientNet [4] | Embryo quality grade classification | 94.3% | 0.849 | 0.900 | - |
| Fusion Model (Clinical + Image data) [10] | Clinical pregnancy prediction | 82.42% | 0.910 | - | 0.91 |
| EmbryoNet-VGG16 with Otsu segmentation [12] | Embryo quality classification | 88.1% | 0.90 | 0.86 | - |
| CNN (Images only) [10] | Clinical pregnancy prediction | 66.89% | 0.740 | - | 0.73 |
| Clinical MLP Model [10] | Clinical pregnancy prediction | 81.76% | 0.900 | - | 0.91 |
| DeepEmbryo (3 timepoints) [17] | Pregnancy outcome prediction | 75.0% | - | - | - |

Experimental Protocols

Protocol 1: Dual-Branch CNN for Embryo Quality Assessment

Sample Preparation

- Collect embryo images from time-lapse imaging systems (e.g., EmbryoScope) captured at 10-minute intervals [4] [16]

- Include day 3 embryos with known quality grades based on standard morphological assessment [4]

- Exclude embryos with blurry imaging, large obstructions, or degeneration affecting >50% of embryo area [14]

CNN Architecture Configuration

- Implement two parallel branches: spatial feature extraction and morphological parameter processing [4]

- Branch 1: Modified EfficientNet architecture for deep spatial feature extraction

- Branch 2: Processing of symmetry scores and fragmentation percentages from bounding box analysis

- Integration: Combine features from both branches through fully connected layers with SoftMax activation [4]

Training Procedure

- Input: 220 embryo images from Kaggle World Championship 2023 Embryo Classification competition [4]

- Augmentation: Apply rotation, scaling, and flipping to address limited dataset size [12]

- Optimization: Train for 4.5 hours with balanced batches to ensure even class distribution [4] [10]

- Validation: Use a hold-out validation set to detect overfitting and select the best-performing model [10]
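
Balanced batches can be produced by sampling training examples with probability inversely proportional to their class frequency. This is the same idea behind PyTorch's `torch.utils.data.WeightedRandomSampler`. The sketch below is a standard-library illustration with hypothetical labels; whether [4] or [10] used exactly this weighting is not stated.

```python
import random
from collections import Counter


def balanced_batches(labels, batch_size, n_batches, seed=0):
    """Draw index batches (with replacement), weighting each example by the
    inverse frequency of its class so every class is sampled about equally."""
    freq = Counter(labels)
    weights = [1.0 / freq[y] for y in labels]
    rng = random.Random(seed)
    indices = list(range(len(labels)))
    return [rng.choices(indices, weights=weights, k=batch_size) for _ in range(n_batches)]


# Imbalanced toy label set: 80 "good", 20 "fair" and 10 "poor" embryos.
labels = ["good"] * 80 + ["fair"] * 20 + ["poor"] * 10
batches = balanced_batches(labels, batch_size=30, n_batches=200)
drawn = Counter(labels[i] for batch in batches for i in batch)
total = sum(drawn.values())
print({cls: round(n / total, 3) for cls, n in drawn.items()})  # each close to 1/3
```

Because sampling is with replacement, minority-class images are repeated more often, which is one reason augmentation is usually applied alongside it.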

Performance Validation

- Evaluate using precision, recall, and F1-score in addition to accuracy [4]

- Compare against standard CNN architectures (VGG-16, ResNet-50, MobileNetV2) on same dataset [4]

- Validate segmentation methodology through bounding box accuracy (95.2%) [4]
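
Per-class precision, recall, and F1, with macro averages, can be computed as follows; `sklearn.metrics.classification_report` reports the same quantities. The grade labels and predictions are hypothetical. By convention, a class with no predictions is assigned a precision of 0.

```python
def per_class_prf(y_true, y_pred):
    """Per-class precision, recall and F1 plus their unweighted (macro) averages."""
    classes = sorted(set(y_true) | set(y_pred))
    report = {}
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[c] = {"precision": precision, "recall": recall, "f1": f1}
    report["macro"] = {
        m: sum(report[c][m] for c in classes) / len(classes) for m in ("precision", "recall", "f1")
    }
    return report


# Hypothetical grades for six test embryos.
y_true = ["good", "good", "fair", "poor", "fair", "good"]
y_pred = ["good", "fair", "fair", "poor", "good", "good"]
for cls, m in per_class_prf(y_true, y_pred).items():
    print(cls, {k: round(v, 3) for k, v in m.items()})
```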

Protocol 2: Multi-Timepoint Embryo Analysis with DeepEmbryo

Image Acquisition and Preprocessing

- Extract frames from time-lapse videos at 19±1, 44±1, and 68±1 hours post-insemination [17]

- Crop images to restrict the view to the area around the embryo and reduce computational requirements [16]

- Discard poor-quality frames with artifacts or visual defects [16]

- Resize images to 256×256 pixels and convert to grayscale if necessary [14] [17]
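
Selecting frames at 19±1, 44±1, and 68±1 hpi reduces to finding, for each target time, the closest frame inside the tolerance window. The sketch assumes frame timestamps have already been converted to hours post-insemination, and it uses a hypothetical 10-minute acquisition interval. When no frame falls in a window, it returns `None` so the embryo can be flagged or excluded. That is a design choice, not part of the cited protocol.

```python
# Target time points (hours post-insemination) and tolerance from the protocol above.
TARGET_HPI = (19.0, 44.0, 68.0)
TOLERANCE_H = 1.0


def select_frames(frame_times_h, targets=TARGET_HPI, tol=TOLERANCE_H):
    """For each target time, return the index of the closest frame within +/- tol
    hours, or None if no frame falls inside the window."""
    selected = []
    for t in targets:
        candidates = [(abs(ft - t), i) for i, ft in enumerate(frame_times_h) if abs(ft - t) <= tol]
        selected.append(min(candidates)[1] if candidates else None)
    return selected


# Hypothetical acquisition: one frame every 10 minutes from 0 to 120 h.
frame_times = [i / 6 for i in range(721)]
print(select_frames(frame_times))
```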

Transfer Learning Implementation

- Utilize pre-trained CNN architectures (AlexNet, ResNet-18, ResNet-34, Inception V3, DenseNet-121) [17]

- Replace final classification layers to adapt to embryo-specific tasks

- Fine-tune all layers on embryo dataset to specialize feature extraction [17]

Training with Limited Data

- Apply extensive data augmentation: rotation, horizontal flip, vertical flip [17]

- Use weighted batch sampling to ensure balanced learning across classes [10]

- Implement k-fold cross-validation to maximize use of available data [17]
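
A shuffled k-fold split can be sketched as below, using hypothetical names. Augmentation should be applied only to each training split. A grouped variant, which keeps all embryos from one patient or cycle in the same fold, would avoid leakage between training and validation data; it is not shown.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for shuffled k-fold cross-validation.

    Fold sizes differ by at most one; every sample is validated exactly once.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= n_samples")
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        val = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, val
        start += size


# Hypothetical: 22 embryos, 5 folds. Augment only the `train` indices of each fold.
for fold, (train, val) in enumerate(k_fold_indices(22, k=5)):
    print(f"fold {fold}: {len(train)} train / {len(val)} validation")
```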

Evaluation Against Human Experts

- Compare CNN predictions with assessments from five experienced embryologists [17]

- Use identical embryo images for both CNN and human evaluations

- Measure statistical significance of performance differences [17]
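
The text does not specify which significance test [17] used. For paired predictions on identical images, McNemar's test is one common choice. The sketch below implements its exact (binomial) form on per-embryo correctness, comparing the CNN with a single embryologist, and uses invented data. Comparing against each of the five embryologists would require one test per reader, or a correction for multiple comparisons. `math.comb` requires Python 3.8 or later.

```python
from math import comb


def mcnemar_exact(cnn_correct, human_correct):
    """Two-sided exact McNemar test on paired per-embryo correctness flags.

    Only discordant pairs carry information: b = CNN right and human wrong,
    c = human right and CNN wrong. Under H0, b ~ Binomial(b + c, 0.5).
    Returns (b, c, p_value).
    """
    if len(cnn_correct) != len(human_correct):
        raise ValueError("predictions must be paired on the same embryos")
    b = sum(1 for x, y in zip(cnn_correct, human_correct) if x and not y)
    c = sum(1 for x, y in zip(cnn_correct, human_correct) if y and not x)
    n = b + c
    if n == 0:
        return b, c, 1.0
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2 * tail)


# Invented example: 37 embryos scored by the CNN and one embryologist.
cnn = [True] * 10 + [False] * 2 + [True] * 20 + [False] * 5
human = [False] * 10 + [True] * 2 + [True] * 20 + [False] * 5
b, c, p = mcnemar_exact(cnn, human)
print(f"CNN-only correct: {b}, embryologist-only correct: {c}, p = {p:.4f}")
```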

Workflow Visualization

[Figure: CNN Feature Extraction Workflow for Embryo Assessment]

Advanced Architectures

[Figure: Dual-Branch CNN Architecture for Embryo Assessment]

Technical Considerations and Limitations

While CNNs show strong performance in embryo assessment, several technical challenges require consideration. Data limitations remain significant: studies often use small datasets (e.g., 84-220 images), which necessitates extensive augmentation [4] [12]. Clinical integration requires balancing model complexity against efficiency; the dual-branch CNN strikes this balance with 8.3 million parameters and a 4.5-hour training time [4]. Generalizability concerns persist, as models trained on specific imaging systems may not transfer well to clinics with different equipment and protocols [12]. Future directions include developing architectures that integrate clinical patient data with image features to improve prediction of clinical outcomes such as live birth [10].

The assessment of embryo quality is a critical determinant of success in in vitro fertilization (IVF). Traditional methods rely on manual morphological evaluation by embryologists, a process inherently limited by subjectivity and inter-observer variability [13] [18] [19]. Convolutional Neural Networks (CNNs) offer a promising solution by automating embryo analysis, providing objective, consistent, and high-throughput assessments [13] [20]. The performance and applicability of these CNN models are fundamentally shaped by the imaging modality used for training—either time-lapse imaging (TLI) systems or static image modalities. This document delineates the data landscapes of these two modalities, providing a structured comparison and detailed experimental protocols for researchers in the field of embryo quality assessment.

Comparative Data Landscape: Time-Lapse vs. Static Imaging

The choice between time-lapse and static imaging dictates the type of features a model can learn, the architecture required, and the ultimate predictive power of the CNN. The table below summarizes the core characteristics of each data modality.

Table 1: Quantitative and Qualitative Comparison of Imaging Modalities for CNN Training

| Characteristic | Time-Lapse Imaging (TLI) Systems | Static Image Modalities |
|---|---|---|
| Data Type | Video sequences (temporal series of images) [13] [16] | Single, two-dimensional images [4] [19] |
| Core Data Strength | Captures dynamic morphokinetic parameters (e.g., cell division timings) [13] [21] | Captures static morphological features at a specific time point [5] |
| Primary Applications | Predicting embryo development potential, clinical pregnancy, and live birth [13] [16] | Classifying embryo quality, stage (e.g., blastocyst), and morphological grade [4] [5] [19] |
| Typical CNN Architectures | CNNs + Recurrent Neural Networks (RNNs) or 3D CNNs for video processing [16] | Standard 2D CNNs (e.g., EfficientNet, ResNet, VGG) [4] [5] [19] |
| Reported Performance (Sample) | AUC of 0.64 for predicting implantation [16] | Up to 94.3% accuracy for embryo quality grading [4] |
| Key Advantages | Reveals dynamic patterns invisible to static analysis [13] [18]; reduces subjectivity [21]; maintains stable culture conditions [21] | Lower computational cost and complexity [5]; easier data acquisition and storage; well-established for specific classification tasks [19] |
| Inherent Limitations | High cost of equipment [21]; large, complex datasets require sophisticated processing [13]; potential lack of generalizability across labs [21] | Lacks crucial temporal developmental context [13]; assessment remains a snapshot, potentially missing key events [21]; highly dependent on the time point selected for image capture |

Experimental Protocols for CNN-Based Embryo Assessment

Protocol 1: CNN Training with Time-Lapse Imaging Data

This protocol is designed to leverage the dynamic information contained within TLI videos to predict developmental outcomes.

Objective: To train a deep learning model capable of predicting embryo implantation potential from time-lapse video sequences.

Materials and Reagents:

- Time-Lapse Incubator System: e.g., EmbryoScope+ (Vitrolife) or the Eeva system, which automatically capture images at defined intervals (e.g., every 5-20 minutes) across multiple focal planes [16] [21].

- Annotated TLI Dataset: Raw embryo videos linked to known implantation data (KID) or clinical pregnancy outcomes [13] [16].

Methodology:

Data Preprocessing:

- Video Export and Frame Extraction: Export raw videos from the TLI system and use a script (e.g., Python with OpenCV) to extract frames at all time points or specific developmental milestones [16] [5].

- Frame Cropping and Quality Control: Crop frames to focus on the embryo region and discard frames with significant artifacts or poor focus to reduce noise [16] [5].

- Data Augmentation: Apply techniques such as random rotation, horizontal flipping, and color jittering to the training set frames to improve model generalizability [19].
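
The augmentation step can be sketched for grayscale frames stored as lists of pixel rows; the function names are hypothetical. The sketch makes three assumptions. Rotations are restricted to multiples of 90° to avoid interpolation. Brightness scaling stands in for color jitter on monochrome Hoffman-contrast images. One transform is drawn per embryo and applied to every frame, so the sequence stays temporally consistent.

```python
import random


def rotate90(img):
    """Rotate a 2D frame (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def hflip(img):
    """Mirror a frame left-to-right."""
    return [row[::-1] for row in img]


def scale_intensity(img, factor):
    """Multiply 8-bit intensities by `factor`, clipping to [0, 255]."""
    return [[min(255, max(0, round(v * factor))) for v in row] for row in img]


def augment_sequence(frames, rng, max_delta=0.1):
    """Draw ONE random transform per embryo and apply it to every frame, so the
    time-lapse sequence stays temporally consistent."""
    n_rot = rng.randrange(4)                            # 0, 90, 180 or 270 degrees
    flip = rng.random() < 0.5                           # horizontal flip half the time
    factor = rng.uniform(1 - max_delta, 1 + max_delta)  # brightness jitter
    out = []
    for img in frames:
        for _ in range(n_rot):
            img = rotate90(img)
        if flip:
            img = hflip(img)
        out.append(scale_intensity(img, factor))
    return out


# Hypothetical 3-frame sequence of 4x4 grayscale crops (training split only).
rng = random.Random(42)
frame = [[10 * r + c for c in range(4)] for r in range(4)]
augmented = augment_sequence([frame, frame, frame], rng)
print(augmented[0])
```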

Model Architecture and Training:

- Architecture Selection: Employ a hybrid model architecture. A CNN (e.g., EfficientNet-B0, ResNet) first acts as a feature extractor on individual frames. The extracted features are then fed into a temporal model, such as a Recurrent Neural Network (RNN) or a Siamese network, to learn sequences and patterns across time [16].

- Training Loop: Train the model using an optimizer (e.g., Adam) with a low learning rate (e.g., 0.0001) and a loss function like Cross-Entropy Loss. Use a separate validation set to monitor for overfitting [16] [19].

Validation: Perform external validation on a held-out test set from a different clinic or patient cohort to assess the model's robustness and generalizability [18].

The following workflow diagram illustrates the complete experimental pipeline for Protocol 1:

Protocol 2: CNN Training with Static Image Modalities

This protocol outlines the procedure for training a CNN to perform embryo grading using single, static images, a more computationally straightforward approach.

Objective: To train a CNN for accurate classification of embryo quality or developmental stage from a single static image.

Materials and Reagents:

- Inverted Microscope: Equipped with digital camera and optics consistent across image captures (e.g., Hoffman modulated contrast) [5].

- Annotated Static Image Dataset: High-resolution embryo images captured at a specific time point (e.g., 113 hours post-insemination for blastocysts), graded by senior embryologists according to a standardized system like Gardner's [5] [19].

Methodology:

- Data Preprocessing:

- Image Standardization: Resize all images to a uniform input size required by the chosen CNN architecture (e.g., 224x224 or 299x299 pixels) [19].

- Normalization: Normalize pixel values using the mean and standard deviation of a reference dataset (e.g., ImageNet) to stabilize training [19].

- Data Augmentation: Apply extensive augmentation (random rotations, flips, color jitter, etc.) to increase the effective dataset size and combat overfitting, especially with class imbalance [19].
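The normalization step can be sketched without any framework, assuming images have already been resized and are stored as nested Python lists of 8-bit RGB tuples. The constants are the per-channel ImageNet mean and standard deviation commonly paired with ImageNet-pre-trained backbones; the function names are illustrative. In practice the same operation is usually a single transform in the training framework (for example, torchvision's `transforms.Normalize(mean, std)` applied after conversion to a [0, 1] tensor).

```python
# Per-channel ImageNet statistics on the [0, 1] intensity scale, as commonly
# used with ImageNet-pre-trained models.
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb, mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((v / 255.0 - m) / s for v, m, s in zip(rgb, mean, std))


def normalize_image(image):
    """Normalize an image stored as a list of rows of (R, G, B) tuples."""
    return [[normalize_pixel(px) for px in row] for row in image]


# Usage: a tiny 1x2 "image" with a black pixel and a white pixel.
example = normalize_image([[(0, 0, 0), (255, 255, 255)]])
print(example)
```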

Model Architecture and Training:

- Architecture Selection: Utilize standard 2D CNN architectures. In one blastocyst grading study, EfficientNet-B0 outperformed VGG16, ResNet50, and InceptionV3 [19]. Dual-branch CNNs that integrate raw images with manually extracted morphological features (e.g., symmetry score) have also shown high accuracy [4].

- Transfer Learning: Initialize the model with weights pre-trained on a large dataset like ImageNet to leverage learned feature detectors and accelerate convergence [19].

- Training Loop: Train the model using a balanced batch sampler to ensure all quality classes are represented, and monitor performance on a validation set [19] [10].
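A balanced batch sampler can be approximated by drawing samples with probability inversely proportional to their class frequency. The sketch below is a minimal, library-free illustration with hypothetical helper names and a made-up 9:1 class ratio; in PyTorch the equivalent is typically `torch.utils.data.WeightedRandomSampler` fed with the same per-sample weights.

```python
import random
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each sample by 1 / (size of its class), so every class carries
    the same total sampling weight regardless of how common it is."""
    counts = Counter(labels)
    return [1.0 / counts[y] for y in labels]


def balanced_indices(labels, n_samples, rng):
    """Draw sample indices with replacement in proportion to those weights."""
    weights = inverse_frequency_weights(labels)
    return rng.choices(range(len(labels)), weights=weights, k=n_samples)


# Hypothetical 9:1 imbalance between grade classes.
labels = ["good"] * 90 + ["poor"] * 10
drawn = balanced_indices(labels, n_samples=2000, rng=random.Random(0))
# Both classes now appear at about the same frequency.
print(Counter(labels[i] for i in drawn))
```

Because sampling is with replacement, minority-class images are repeated many times per epoch, which is one reason augmentation matters more under heavy imbalance.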

Model Interpretation: Apply visualization techniques like Gradient-weighted Class Activation Mapping (Grad-CAM) to highlight the image regions (e.g., Inner Cell Mass) that most influenced the model's decision, enhancing transparency and trust [19].
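For reference, the standard Grad-CAM computation can be written as follows, where $A^k$ is the $k$-th feature map of the chosen convolutional layer (typically the last one), $y^c$ is the model's score for class $c$ before the softmax, and $Z$ is the number of spatial positions in the feature map:

$$
\alpha_k^{c} = \frac{1}{Z}\sum_{i}\sum_{j}\frac{\partial y^{c}}{\partial A^{k}_{ij}}, \qquad
L^{c}_{\text{Grad-CAM}} = \mathrm{ReLU}\!\left(\sum_{k}\alpha_k^{c}A^{k}\right)
$$

The resulting coarse map is upsampled to the input resolution and overlaid on the embryo image. The ReLU keeps only regions whose activation increases the class score, so highlighted areas such as the inner cell mass can be read as regions that pushed the prediction toward that grade.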

The following workflow diagram illustrates the complete experimental pipeline for Protocol 2:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the aforementioned protocols requires specific tools and data. The following table catalogs key components for building CNN models in embryo assessment.

Table 2: Essential Materials and Tools for Embryo Assessment CNN Research

| Item Name | Function/Description | Example/Specification |
|---|---|---|
| Time-Lapse Incubator | Provides a stable culture environment while automatically capturing sequential embryo images. | EmbryoScope+ (Vitrolife) [16] [21] |
| Inverted Microscope | Enables high-resolution imaging of static embryos for morphological grading. | Microscope with Hoffman modulation contrast and a 20x objective [5] |
| Annotated Clinical Datasets | Serves as the ground-truth labeled data for supervised model training and validation. | Datasets with Known Implantation Data (KID) or Gardner blastocyst grades [13] [16] [5] |
| Pre-trained CNN Models | Provides a starting point for model development, improving performance and training speed via transfer learning. | Architectures like EfficientNet-B0, ResNet-50, pre-trained on ImageNet [4] [19] |
| Grad-CAM Visualization Tool | Interprets model predictions by generating heatmaps of decisive image regions, critical for clinical trust. | PyTorch or TensorFlow implementation of Grad-CAM [19] |

The data landscape for CNN training in embryo assessment is distinctly bifurcated by the choice of imaging modality. Time-lapse imaging provides a rich, dynamic data source ideal for predicting complex outcomes like implantation and live birth but demands sophisticated models and faces cost and generalizability challenges. Static imaging offers a pragmatic and effective path for standardized tasks like morphological grading and blastocyst classification, with lower computational overhead. The emerging trend of multi-modal fusion, which integrates static images with clinical patient data, demonstrates that the future of AI in IVF may not lie in a single data type, but in the intelligent synthesis of diverse information streams to empower more confident clinical decisions [10]. Researchers must therefore align their choice of data modality and experimental protocol with their specific clinical question and available resources.

The assessment of embryo quality represents a pivotal challenge in the field of assisted reproductive technology (ART). Traditional methods, which rely on visual morphological assessment by embryologists, are inherently subjective, leading to significant inter- and intra-observer variability that contributes to modest in vitro fertilization (IVF) success rates [1] [21]. Convolutional Neural Networks (CNNs), a class of deep learning algorithms, are revolutionizing this domain by providing objective, automated, and highly accurate analyses of embryo viability. These models leverage large datasets of embryo images and time-lapse videos to identify complex, non-linear patterns that are often imperceptible to the human eye. This document details the clinical applications of CNNs, spanning from early development prediction to the forecasting of critical clinical outcomes, and provides standardized protocols for their implementation in research settings. By translating embryonic visual data into quantitative, actionable predictions, CNNs are bridging the gap between embryonic morphology and reproductive potential, enabling a more refined and effective selection process in clinical embryology.

Clinical Applications Spectrum of CNNs in Embryology

The application of Convolutional Neural Networks in embryology covers a broad spectrum, from predicting basic developmental milestones to forecasting complex clinical outcomes like implantation and live birth. The following table summarizes the key application areas, the specific tasks performed by CNNs, and their demonstrated performance metrics as reported in recent literature.

Table 1: Spectrum of Clinical Applications for CNNs in Embryo Assessment

| Application Area | Specific CNN Task | Reported Performance | Key Citation(s) |
|---|---|---|---|
| Embryo Development & Quality Prediction | Forecasting future embryo morphology from time-lapse videos. | Successfully predicted subsequent 7 frames (2 hours) from an initial 7-frame input sequence. | [22] |
| | Classification of embryo quality (e.g., good vs. poor) on Day 3. | 94.3% accuracy, 0.849 precision, 0.900 recall, 0.874 F1-score. | [4] |
| | Automated embryo quality classification using a modified VGG16 architecture. | 88.1% accuracy, 0.90 precision, 0.86 recall. | [12] |
| Implantation & Clinical Pregnancy Prediction | Prediction of clinical pregnancy from blastocyst images. | 64.3% accuracy in predicting clinical pregnancy. | [3] |
| | Prediction of implantation potential from time-lapse videos using a self-supervised model. | AUC of 0.64 in predicting implantation. | [16] |
| | Implantation prediction from single static blastocyst images (113 hpi). | Outperformed 15 embryologists (75.26% vs. 67.35% accuracy). | [2] |
| Integrated Outcome Prediction | Prediction of clinical pregnancy by fusing blastocyst images with patient clinical data. | 82.42% accuracy, 91% average precision, 0.91 AUC. | [23] |
| | Prediction of clinical pregnancy using the FiTTE system (integrates images and clinical data). | 65.2% prediction accuracy with an AUC of 0.7. | [3] |
| Overall Diagnostic Performance | Meta-analysis of AI-based embryo selection for predicting implantation success. | Pooled sensitivity: 0.69, specificity: 0.62, AUC: 0.7. | [3] |

Experimental Protocols for Key Applications

Protocol 1: Early Embryo Development Forecasting with ConvLSTM

Application Objective: To predict future morphological changes in human embryo development by recursively forecasting frames in time-lapse videos, allowing for early assessment and potential reduction in culture time [22].

Materials and Reagents:

- Time-lapse incubator system (e.g., EmbryoScope+): Maintains stable culture conditions while capturing sequential images.

- Time-lapse video datasets: Retrospective data from fertility clinics, featuring embryos from transfer and "avoid" categories.

Methodological Steps:

- Data Preprocessing:

- Export raw time-lapse videos from the incubator system's software.

- Restrict the field of view by cropping images around the embryo to reduce computational load.

- Discard frames with poor quality or visual artifacts.

- Focus analysis on specific developmental intervals, such as 31-43 hours post-insemination (hpi) for day 2 and 90-113 hpi for day 4.
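Selecting frames by developmental interval reduces to converting each frame index into hours post-insemination. The sketch below assumes a constant acquisition interval and a known time for the first frame (both hypothetical inputs); when the export provides a timestamp per frame, filtering on those timestamps directly is more robust.

```python
def frames_in_window(start_hpi, interval_min, n_frames, window):
    """Return indices of frames acquired within [lo, hi] hours post-insemination.

    Assumes a constant acquisition interval (in minutes) and a known time for
    the first frame; both are hypothetical inputs taken from export metadata.
    """
    lo, hi = window
    selected = []
    for idx in range(n_frames):
        t_hpi = start_hpi + idx * interval_min / 60.0
        if lo <= t_hpi <= hi:
            selected.append(idx)
    return selected


# Hypothetical recording: first frame at 0 hpi, one frame every 10 minutes, 120 h.
n_frames = 120 * 6 + 1
day2 = frames_in_window(0.0, 10, n_frames, (31, 43))
day4 = frames_in_window(0.0, 10, n_frames, (90, 113))
print(len(day2), len(day4))  # 73 139
```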

Model Architecture and Training:

- Model: Employ a Convolutional Long Short-Term Memory (ConvLSTM) network, which is adept at spatiotemporal sequence prediction.

- Input: A sequence of seven consecutive frames from the time-lapse video, representing two hours of development.

- Task: The model is trained to forecast the subsequent seven frames in the sequence.

- Training Cycle: After predicting the last frame, the input sequence shifts by one frame (incorporating a new observation), and the forecasting process repeats, enabling a progressive analysis of development.
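The shifting-window scheme described above can be expressed as a function that turns one ordered frame sequence into (input, target) pairs of seven frames each, advancing by one frame per step. This is a minimal sketch with a hypothetical helper name; the ConvLSTM itself is not shown, and integers stand in for image arrays.

```python
def forecasting_windows(frames, n_in=7, n_out=7, stride=1):
    """Split an ordered frame sequence into (input, target) pairs.

    Each pair uses n_in consecutive frames as input and the next n_out frames
    as the forecasting target; the window then advances by `stride` frames.
    Sequences shorter than n_in + n_out yield no pairs.
    """
    pairs = []
    last_start = len(frames) - (n_in + n_out)
    for s in range(0, last_start + 1, stride):
        pairs.append((frames[s:s + n_in], frames[s + n_in:s + n_in + n_out]))
    return pairs


frames = list(range(20))  # stand-ins for 20 consecutive cropped frames
pairs = forecasting_windows(frames)
print(len(pairs))  # 7 windows: 20 - 14 + 1
print(pairs[0])    # ([0, 1, 2, 3, 4, 5, 6], [7, 8, 9, 10, 11, 12, 13])
```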

Output and Analysis:

- The model generates a forecasted video sequence visualizing the embryo's potential morphological progression over the subsequent hours.

- Embryologists can analyze these predicted frames to identify key biomarkers and assess developmental trajectories earlier than with traditional methods.

Protocol 2: Embryo Quality Classification Using a Dual-Branch CNN

Application Objective: To perform an objective, automated evaluation of Day 3 embryo quality by integrating deep spatial features with hand-crafted morphological parameters [4].

Materials and Reagents:

- Static embryo images: High-resolution images of Day 3 embryos.

- Annotation software: For manual labeling of embryo bounding boxes and grading.

Methodological Steps:

- Data Preprocessing and Feature Extraction:

- Spatial Feature Branch: Input full embryo images into a modified EfficientNet backbone to automatically extract deep spatial features.

- Morphological Parameter Branch:

- Perform bounding box segmentation to isolate the embryo.

- Calculate a symmetry score based on the spatial distribution and size uniformity of blastomeres.

- Calculate the fragmentation percentage by identifying and quantifying anucleate cytoplasmic fragments.
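The two hand-crafted parameters could be computed from segmented regions as in the sketch below. The exact formulas used in [4] are not given here, so both definitions are assumptions: symmetry is scored as one minus the coefficient of variation of blastomere areas, and fragmentation as the share of embryo area covered by fragments. Areas are assumed to come from an upstream segmentation step.

```python
from statistics import mean, pstdev


def symmetry_score(blastomere_areas):
    """Hypothetical size-uniformity score in [0, 1]: 1 minus the coefficient of
    variation of blastomere areas, floored at 0 (equal-sized cells score 1)."""
    cv = pstdev(blastomere_areas) / mean(blastomere_areas)
    return max(0.0, 1.0 - cv)


def fragmentation_percentage(fragment_areas, embryo_area):
    """Percentage of the embryo area occupied by anucleate fragments."""
    return 100.0 * sum(fragment_areas) / embryo_area


print(symmetry_score([400] * 8))                             # 1.0
print(symmetry_score([100, 300]))                            # 0.5
print(fragmentation_percentage([30, 20], embryo_area=1000))  # 5.0
```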

Model Architecture and Training:

- Architecture: Implement a dual-branch CNN.

- Branch 1 (Spatial): Uses EfficientNet to process raw pixel data and learn complex hierarchical features.

- Branch 2 (Morphological): Processes the calculated symmetry scores and fragmentation percentages.

- Fusion: The features from both branches are concatenated and passed through fully connected layers, with a SoftMax output layer producing the final classification (e.g., "Good" or "Poor" quality).
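The fusion head can be sketched as concatenation, a fully connected layer, and a softmax. The weights below are random and untrained, a single dense layer stands in for the stack of fully connected layers, and the feature sizes are hypothetical; the point is only the data flow from the two branches to class probabilities. In a real model the morphological inputs would usually be scaled to a range comparable to the deep features before concatenation.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dense(x, weights, bias):
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def fuse_and_classify(spatial, morphological, weights, bias):
    """Concatenate both branches, then map the fused vector to class probabilities."""
    fused = list(spatial) + list(morphological)
    return softmax(dense(fused, weights, bias))


rng = random.Random(0)
spatial = [rng.gauss(0.0, 1.0) for _ in range(16)]  # stand-in for EfficientNet features
morphological = [0.82, 0.075]                       # symmetry score, fragmentation as a fraction
W = [[rng.gauss(0.0, 0.1) for _ in range(18)] for _ in range(2)]  # classes: Good, Poor
b = [0.0, 0.0]
probs = fuse_and_classify(spatial, morphological, W, b)
print(dict(zip(["Good", "Poor"], probs)))
```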

Validation:

- Validate the model's performance against a ground truth dataset graded by expert embryologists according to standardized criteria (e.g., BLEFCO classification).

Protocol 3: Predicting Implantation from Static Blastocyst Images

Application Objective: To directly assess the implantation potential of blastocyst-stage embryos from a single static image captured at 113 hours post-insemination, providing a tool accessible to clinics without time-lapse systems [2].

Materials and Reagents:

- Static blastocyst images: Single images captured at a standardized time point (e.g., 113 hpi).

- Pre-trained CNN model: A model like Xception, pre-trained on a large dataset (e.g., ImageNet).

Methodological Steps:

- Data Curation:

- Collect a large dataset of static blastocyst images with known implantation outcomes (KID).

- For studies focusing on ploidy, use images from euploid embryos that underwent Preimplantation Genetic Testing for Aneuploidy (PGT-A).

Model Development:

- Transfer Learning: Utilize a pre-trained CNN and fine-tune it on the curated dataset of blastocyst images.

- Training Objective: Train the network to either rank embryos within a patient's cohort based on morphological quality or to directly classify them as having "High" or "Low" implantation potential.
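Both training objectives lead to simple post-processing of the model's scores: ranking embryos within each patient's cohort, or thresholding a score into "High" and "Low" potential. The sketch assumes one predicted implantation probability per embryo; the helper names, the example scores, and the 0.5 threshold are hypothetical.

```python
from collections import defaultdict


def rank_within_cohorts(predictions):
    """Group (patient_id, embryo_id, score) triples by patient and return, for
    each patient, embryo IDs ordered from highest to lowest predicted score."""
    cohorts = defaultdict(list)
    for patient_id, embryo_id, score in predictions:
        cohorts[patient_id].append((score, embryo_id))
    return {pid: [eid for _, eid in sorted(items, key=lambda t: t[0], reverse=True)]
            for pid, items in cohorts.items()}


def potential_label(score, threshold=0.5):
    """Hypothetical binary rule for the classification objective."""
    return "High" if score >= threshold else "Low"


preds = [("P1", "E1", 0.31), ("P1", "E2", 0.72), ("P1", "E3", 0.55),
         ("P2", "E4", 0.12), ("P2", "E5", 0.40)]
print(rank_within_cohorts(preds))  # {'P1': ['E2', 'E3', 'E1'], 'P2': ['E5', 'E4']}
print([potential_label(s) for _, _, s in preds])  # ['Low', 'High', 'High', 'Low', 'Low']
```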

Validation and Benchmarking:

- Blind Testing: Evaluate the model on a held-out test set of embryos not seen during training.

- Clinical Benchmarking: Compare the model's performance against assessments made by multiple embryologists from different fertility centers to demonstrate comparative efficacy.

Protocol 4: Multi-Modal Fusion for Enhanced Pregnancy Prediction

Application Objective: To improve the accuracy of clinical pregnancy prediction by integrating image-based features from blastocyst images with structured clinical data from the patients [23].

Materials and Reagents:

- Blastocyst still images from the day of transfer.

- Structured clinical data including female and male age, infertility diagnosis, BMI, treatment type (IVF/ICSI), and embryo transfer category (Fresh/Frozen).

Methodological Steps:

- Data Preprocessing:

- Clinical Data: Normalize and encode categorical variables from the patient's clinical records.

- Image Data: Preprocess blastocyst images (e.g., cropping, normalization).
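For the clinical branch, one minimal approach is to z-score numeric variables and one-hot encode categorical ones, fitting the statistics on the training split only to avoid leakage. The records and column names below are hypothetical examples drawn from the variables listed above; infertility diagnosis and other variables would be encoded the same way.

```python
from statistics import mean, pstdev


def fit_numeric_stats(rows, columns):
    """Mean and population SD per numeric column (fit on the training split only)."""
    stats = {}
    for col in columns:
        values = [r[col] for r in rows]
        stats[col] = (mean(values), pstdev(values) or 1.0)  # guard constant columns
    return stats


def encode_record(record, numeric_stats, categorical_levels):
    """Flatten one patient record into z-scored numerics followed by one-hot categories."""
    vec = [(record[col] - m) / s for col, (m, s) in numeric_stats.items()]
    for col, levels in categorical_levels.items():
        vec.extend(1.0 if record[col] == level else 0.0 for level in levels)
    return vec


# Hypothetical training records.
train = [
    {"female_age": 32, "bmi": 22.0, "treatment": "IVF", "transfer": "Fresh"},
    {"female_age": 38, "bmi": 27.5, "treatment": "ICSI", "transfer": "Frozen"},
    {"female_age": 35, "bmi": 24.0, "treatment": "ICSI", "transfer": "Fresh"},
]
stats = fit_numeric_stats(train, ["female_age", "bmi"])
levels = {"treatment": ["IVF", "ICSI"], "transfer": ["Fresh", "Frozen"]}
vectors = [encode_record(r, stats, levels) for r in train]
print(vectors[1])
```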

Model Architecture and Training:

- Clinical Model: Develop a Multi-Layer Perceptron (MLP) to process the structured clinical data.

- Image Model: Develop a Convolutional Neural Network (CNN) to extract features from blastocyst images.

- Fusion Model: Integrate the feature vectors from both the MLP and CNN models. This can be done via concatenation or more complex fusion mechanisms. The fused features are then processed by a final classifier.

- Training: Use a weighted batch sampling strategy during training to handle class imbalance (e.g., between pregnant and non-pregnant outcomes).

Interpretation and Analysis:

- Employ visualization techniques (e.g., Gradient-weighted Class Activation Mapping - Grad-CAM, or SHAP values) to identify which features in the embryo images and which clinical variables (e.g., trophectoderm quality, female age) were most influential in the model's prediction.

Workflow Visualization

The following diagram illustrates the logical workflow of a multi-modal AI system that integrates embryo images and clinical data for pregnancy prediction, as detailed in the experimental protocols.

Multi-Modal Pregnancy Prediction Workflow

This workflow demonstrates how image data and clinical data are processed in parallel by specialized neural networks. The extracted features are then fused to make a more informed and accurate prediction of clinical pregnancy than would be possible with either data type alone [23].

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogues the essential materials, algorithms, and data types that form the foundation of CNN-based research in embryo assessment.

Table 2: Essential Research Reagents and Materials for CNN-based Embryo Assessment

| Tool Category | Specific Item / Solution | Function / Application Note |
|---|---|---|
| Imaging Hardware | Time-Lapse Incubator (e.g., EmbryoScope+) | Provides continuous imaging under stable culture conditions; generates time-lapse videos for dynamic morphokinetic analysis [16] [21]. |
| | Conventional Microscope | Enables capture of static embryo images; allows CNN application in resource-constrained settings without time-lapse systems [2]. |
| Data & Annotations | Known Implantation Data (KID) | Provides ground truth labels for model training and validation; crucial for predicting clinical outcomes like implantation and pregnancy [16]. |
| | Preimplantation Genetic Testing (PGT-A) Data | Used as ground truth for models aiming to predict embryo ploidy status non-invasively [2]. |
| | Manual Embryo Grading Labels (e.g., Gardner, BLEFCO) | Provides standardized quality scores for training models on embryo quality classification [4] [16]. |
| Core AI Algorithms & Architectures | Convolutional Neural Network (CNN) | The core architecture for feature extraction from both static images and individual video frames [4] [1] [2]. |
| | ConvLSTM / Recurrent Neural Networks (RNNs) | Used for analyzing time-series data from time-lapse videos; capable of forecasting future developmental stages [22]. |
| | Transfer Learning (Pre-trained models e.g., on ImageNet) | Leverages features learned from large natural image datasets; improves model performance when embryo dataset size is limited [2] [12]. |
| | Siamese Networks & Contrastive Learning | Used for fine-grained comparison between embryos from the same cohort to identify subtle viability differences [16]. |
| Software & Libraries | Python with PyTorch/TensorFlow | Primary programming environment for developing, training, and testing deep learning models [23]. |
| | Image Preprocessing Libraries (e.g., OpenCV) | Used for cropping, normalization, and augmentation of embryo images to improve model robustness [4]. |

Architectures and Implementation: Technical Approaches to CNN-Based Embryo Analysis

Convolutional Neural Networks (CNNs) have emerged as the foundational technology for automating and enhancing the assessment of embryo quality in assisted reproductive technology (ART). Traditional embryo assessment relies on subjective visual grading by embryologists, leading to inconsistencies due to inter-observer variability [24] [1]. The application of CNNs addresses this critical challenge by providing objective, reproducible, and highly accurate evaluations. These models excel at analyzing complex image data, identifying subtle morphological and spatial patterns that may be imperceptible to the human eye, thus enabling more reliable selection of viable embryos for implantation [4] [1]. This document details the specific CNN architectures, experimental protocols, and reagent solutions that form the basis of this transformative technology in embryo research.

Quantitative Performance of CNN Architectures

Reported performance varies considerably across CNN architectures. The following table summarizes results from recent studies; a dash indicates that no value is listed for that metric.

Table 1: Performance comparison of deep learning models in embryo quality assessment

| Model Architecture | Reported Accuracy (%) | Precision | Recall | F1-Score | Primary Application |
|---|---|---|---|---|---|
| Dual-Branch CNN with EfficientNet [4] | 94.30 | 0.849 | 0.900 | 0.874 | Day-3 embryo quality classification |
| EfficientNetV2 [24] | 95.26 | 0.963 | 0.972 | - | Good/Not-Good embryo classification (Day-3 & Day-5) |
| VGG-19 [24] | - | - | - | - | Good/Not-Good embryo classification |
| ResNet-50 [4] [24] | 80.80 | - | - | - | Embryo quality classification |
| InceptionV3 [24] | - | - | - | - | Good/Not-Good embryo classification |
| MobileNetV2 [4] | 82.10 | - | - | - | Embryo quality classification |
| VGG-16 [4] | 79.20 | - | - | - | Embryo quality classification |

A scoping review of 77 studies confirmed that CNNs are the predominant deep learning architecture, accounting for 81% of the models used for embryo evaluation and selection using time-lapse imaging [1]. The primary applications include predicting embryo development and quality (61% of studies) and forecasting clinical outcomes such as pregnancy and implantation (35% of studies) [1].

Detailed Experimental Protocols

Protocol 1: Dual-Branch CNN for Day-3 Embryo Assessment

This protocol is based on a model that integrates spatial and morphological features [4].

1. Objective: To classify Day-3 embryo quality with high accuracy by combining deep spatial features and expert-derived morphological parameters.

2. Materials:

- Dataset: 220 embryo images from the Kaggle World Championship 2023 Embryo Classification competition.

- Hardware: GPU-enabled computing system.

- Software: Python deep learning frameworks (e.g., TensorFlow, PyTorch).

3. Methodology:

- Step 1 - Image Preprocessing: Resize all input embryo images to a uniform resolution. Apply normalization.

- Step 2 - Spatial Feature Extraction (Branch 1):

- Implement a modified EfficientNet architecture as the first branch.

- This branch processes the raw embryo image to extract deep, hierarchical spatial features.

- Step 3 - Morphological Feature Extraction (Branch 2):

- Perform bounding box segmentation to isolate individual blastomeres.

- Calculate key morphological parameters:

- Symmetry Score: Quantify the regularity of blastomere size and shape.

- Fragmentation Percentage: Calculate the proportion of anuclear cytoplasmic fragments.

- Step 4 - Feature Fusion and Classification:

- Concatenate the deep spatial feature vector from Branch 1 with the morphological parameters (symmetry score, fragmentation percentage) from Branch 2.

- Feed the integrated feature vector into fully connected (dense) layers.

- Use a SoftMax activation function in the final layer to output quality grade probabilities.

4. Training Specifications:

- Training Time: Approximately 4.5 hours.

- Model Size: 8.3 million parameters.

- Performance Validation: The model achieved a bounding box segmentation accuracy of 95.2%, ensuring reliable morphological feature extraction [4].

Protocol 2: Transfer Learning for Blastocyst-Stage Assessment

This protocol utilizes pre-trained models for efficient training on embryo image datasets [24].

1. Objective: To leverage transfer learning for classifying blastocyst-stage embryos as "good" or "not good" using established CNN architectures.

2. Materials:

- Dataset: Clinical embryo image dataset from a hospital institution (e.g., Hung Vuong Hospital).

- Models: Pre-trained versions of VGG-19, ResNet-50, InceptionV3, and EfficientNetV2.

3. Methodology:

- Step 1 - Data Preparation: Curate a dataset of embryo images labeled by embryologists. Split data into training, validation, and test sets.

- Step 2 - Model Adaptation:

- Remove the original classification heads of the pre-trained CNNs.

- Replace with new dense layers tailored for the binary classification task (Good/Not Good).

- Step 3 - Model Training:

- Employ transfer learning: initially freeze the weights of the pre-trained layers and train only the new head.

- Optionally, fine-tune the entire model by unfreezing some or all of the pre-trained layers for further training at a low learning rate.

- Step 4 - Evaluation: Use metrics such as accuracy, precision, and recall on a held-out test set to evaluate model performance. EfficientNetV2 has been shown to achieve state-of-the-art results with this approach [24].
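The evaluation metrics follow directly from the confusion matrix. The sketch uses hypothetical counts with the "good" class as positive; the final line checks that a reported precision of 0.849 and recall of 0.900 are consistent with an F1-score of 0.874, as listed for the dual-branch CNN in the performance table of this section.

```python
def binary_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 from binary confusion-matrix counts
    (positive class = 'good' embryo)."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def f1_from(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Hypothetical held-out counts, for illustration only.
print(binary_metrics(tp=40, fp=10, fn=10, tn=40))
# Consistency check against a reported row: P = 0.849, R = 0.900.
print(round(f1_from(0.849, 0.900), 3))  # 0.874
```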

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and reagents for deep learning-based embryo assessment research

| Item Name | Function/Application | Specifications/Notes |
|---|---|---|
| Time-Lapse Incubator System [1] | Provides a stable culture environment while capturing sequential images of developing embryos at multiple focal planes. | Generates the time-lapse video data used for training and validating deep learning models. |
| Embryo Image Dataset [4] [24] | Serves as the foundational data for model training, validation, and testing. | Datasets should be de-identified and annotated with quality grades by experienced embryologists. |
| GPU-Accelerated Workstation | Accelerates the computationally intensive processes of model training and inference. | Essential for handling complex architectures like EfficientNet and processing large datasets within feasible timeframes. |
| Image Annotation Software | Used by embryologists to label embryo images with quality grades, morphological parameters, and segmentation masks. | Critical for creating high-quality ground truth data for supervised learning. |
| Python Deep Learning Frameworks | Provides the programming environment for implementing, training, and evaluating CNN models. | Common frameworks include TensorFlow, Keras, and PyTorch. |

Workflow and Model Architecture Visualizations

The following diagrams, generated with Graphviz, illustrate the logical relationships and workflows central to CNN-based embryo assessment.

CNN Embryo Assessment Workflow

Data Processing Pipeline

The assessment of embryo quality represents a critical challenge in reproductive medicine, with conventional morphological evaluation being subjective and prone to inter-observer variability [13] [25] [16]. The integration of time-lapse imaging (TLI) systems in clinical in vitro fertilization (IVF) laboratories has enabled the continuous monitoring of embryonic development, generating rich spatiotemporal data that captures both morphological appearance and dynamic developmental patterns [13] [16]. This technological advancement has created a pressing need for analytical frameworks capable of extracting and interpreting complex spatiotemporal features to improve embryo selection.

Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks offer complementary strengths for this challenge. CNNs excel at extracting hierarchical spatial features from individual embryo images, while LSTMs specialize in modeling temporal dependencies across sequential data [26] [27]. The fusion of these architectures creates a powerful tool for analyzing embryo development videos, enabling simultaneous capture of spatial morphological details and temporal morphokinetic patterns that predict developmental potential [13] [28].

This protocol details the implementation of hybrid CNN-LSTM models for embryo quality assessment, providing researchers with practical frameworks for leveraging spatiotemporal information in embryo selection. By integrating these advanced architectural fusion techniques, IVF laboratories can move toward more objective, standardized, and predictive embryo evaluation systems.

Performance Comparison of Deep Learning Architectures in Embryo Assessment

Table 1: Comparative Performance of Deep Learning Architectures in Embryo Assessment

| Architecture | Primary Application | Key Advantages | Reported Performance | Reference |
|---|---|---|---|---|
| CNN-LSTM (Fused) | Embryo classification using time-lapse imaging | Captures both spatial features and temporal dependencies; ideal for video data | 97.7% accuracy (after augmentation) for good/poor embryo classification | [28] |
| CNN (Standalone) | Blastocyst image analysis | Strong spatial feature extraction; well-established architecture | 89.9% accuracy for blastocyst assessment | [28] |
| Dual-Branch CNN | Day 3 embryo quality assessment | Integrates spatial and morphological features simultaneously | 94.3% accuracy for embryo quality grading | [4] |
| Self-Supervised CNN with Contrastive Learning | Implantation prediction from time-lapse | Reduces annotation requirement; learns unbiased feature representations | AUC = 0.64 for implantation prediction | [16] |

Table 2: CNN-LSTM Performance Across Domains with Spatiotemporal Data

| Domain | Architecture Variant | Data Type | Performance | Key Innovation | Reference |
|---|---|---|---|---|---|
| Nuclear Power Plant Fault Diagnosis | Multi-scale CNN-LSTM | Sensor time-series | 98.88% accuracy under high noise | Robustness to extreme noise conditions (-100 dB) | [26] |
| Power Load Forecasting | GAT-CNN-LSTM | Grid sensor data | Significant error reduction vs. baselines | Dynamic spatial correlation capture | [29] |
| Embryo Quality Classification | CNN-LSTM with LIME | Time-lapse videos | 90%→97.7% accuracy (post-augmentation) | Enhanced interpretability via explainable AI | [28] |

Experimental Protocols

Data Acquisition and Preprocessing Protocol

Time-Lapse Imaging Data Collection

- Culture Conditions: Maintain embryos in integrated time-lapse incubators (e.g., EmbryoScope+) under stable conditions (5% O₂, 6% CO₂, 37°C) throughout the culture period [16].

- Image Acquisition: Capture images at 10-minute intervals across multiple focal planes (typically 11 planes) using minimal LED illumination (635 nm) to minimize embryo stress [16].

- Data Export: Export raw video sequences in their native format along with associated metadata using the manufacturer's software (e.g., EmbryoViewer for EmbryoScope systems).

- Ethical Considerations: Obtain appropriate institutional review board (IRB) approval and patient consent for the use of embryo imaging data in research.

Image Preprocessing Pipeline

- Frame Extraction and Selection: Convert time-lapse videos to individual frames, discarding poor-quality frames containing artifacts or extreme blur [16].

- Region of Interest (ROI) Extraction: Crop images to focus on the embryo region, reducing computational load and removing irrelevant background [16].

- Data Augmentation: Apply transformations to increase dataset diversity:

- Rotation (±10°)

- Horizontal and vertical flipping

- Brightness and contrast variation (±20%)

- Gaussian noise addition

- Frame Sequence Assembly: Organize preprocessed frames into ordered sequences representing complete embryonic development timelines.
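The flip, brightness/contrast, and noise transforms can be sketched on plain nested lists of grayscale values. Two choices here are assumptions: one set of parameters is drawn per sequence so that the embryo's orientation stays consistent across time, and the noise standard deviation of 5 intensity levels is arbitrary. Rotation (±10°) is omitted because it needs interpolation; an augmentation library such as Albumentations, listed in the toolkit table, handles it.

```python
import random


def clamp(v, lo=0.0, hi=255.0):
    return max(lo, min(hi, v))


def sample_params(rng, max_delta=0.2):
    """Draw one set of augmentation parameters (flips, ±20% contrast/brightness)."""
    return {
        "hflip": rng.random() < 0.5,
        "vflip": rng.random() < 0.5,
        "contrast": 1.0 + rng.uniform(-max_delta, max_delta),
        "brightness": 1.0 + rng.uniform(-max_delta, max_delta),
    }


def apply_params(img, p, rng, noise_sd=5.0):
    """Apply flips, contrast/brightness scaling and Gaussian noise to one
    grayscale frame stored as a list of rows of 0-255 values."""
    out = [list(row) for row in img]
    if p["hflip"]:
        out = [row[::-1] for row in out]
    if p["vflip"]:
        out = out[::-1]
    mean_val = sum(map(sum, out)) / (len(out) * len(out[0]))
    return [[clamp(((v - mean_val) * p["contrast"] + mean_val) * p["brightness"]
                   + rng.gauss(0.0, noise_sd))
             for v in row] for row in out]


def augment_sequence(frames, rng):
    """Share flips and intensity changes across the whole sequence;
    only the additive noise differs from frame to frame."""
    p = sample_params(rng)
    return [apply_params(frame, p, rng) for frame in frames]


# Usage: a hypothetical 3-frame sequence of 2x2 grayscale frames.
seq = [[[10, 20], [30, 40]], [[12, 22], [32, 42]], [[14, 24], [34, 44]]]
augmented = augment_sequence(seq, random.Random(0))
```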

CNN-LSTM Model Implementation Protocol

Architecture Configuration

Spatial Feature Extraction Branch:

- Implement CNN front-end using TimeDistributed wrappers to process each frame independently [27]

- Utilize pre-trained architectures (e.g., EfficientNet, VGG-16) with custom classifiers

- If building a custom convolutional front-end instead, increase the number of filters with depth (e.g., 32, 64, 128, 256)

- Apply batch normalization and ReLU activation after each convolutional layer

Temporal Modeling Branch:

Fusion and Classification Head:

- Concatenate spatial and temporal features

- Implement fully connected layers with decreasing dimensions (128→64→32)

- Apply softmax activation for final classification
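The temporal branch is not specified in the list above, so the following framework-free sketch assumes the most common CNN-LSTM arrangement: a shared per-frame feature extractor (the TimeDistributed idea) followed by a single LSTM layer whose final hidden state summarizes the sequence. A toy intensity-statistics function stands in for the CNN, and all weights are random and untrained; the sketch shows the data flow and the LSTM gate equations, not a usable model. In Keras this is usually built with `TimeDistributed` wrapping the CNN followed by an `LSTM` layer; in PyTorch, by running the CNN over frames folded into the batch dimension and feeding the result to `nn.LSTM`.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def frame_features(frame):
    """Toy stand-in for the per-frame CNN: mean and SD of pixel intensity."""
    px = [v for row in frame for v in row]
    m = sum(px) / len(px)
    sd = math.sqrt(sum((v - m) ** 2 for v in px) / len(px))
    return [m / 255.0, sd / 255.0]


def time_distributed(fn, sequence):
    """Apply the same feature extractor to every frame of a sequence."""
    return [fn(frame) for frame in sequence]


def lstm_last_hidden(xs, hidden, rng):
    """Run a randomly initialized single-layer LSTM over feature vectors xs
    and return the final hidden state (untrained; for illustration only)."""
    n_in = len(xs[0])

    def mat(rows, cols):
        return [[rng.gauss(0.0, 0.3) for _ in range(cols)] for _ in range(rows)]

    # One (W, U, b) set per gate: input (i), forget (f), candidate (g), output (o).
    params = {gate: (mat(hidden, n_in), mat(hidden, hidden), [0.0] * hidden) for gate in "ifgo"}
    h, c = [0.0] * hidden, [0.0] * hidden
    for x in xs:
        pre = {}
        for gate, (W, U, b) in params.items():
            pre[gate] = [sum(w * v for w, v in zip(W[k], x))
                         + sum(u * v for u, v in zip(U[k], h)) + b[k]
                         for k in range(hidden)]
        i = [sigmoid(z) for z in pre["i"]]
        f = [sigmoid(z) for z in pre["f"]]
        g = [math.tanh(z) for z in pre["g"]]
        o = [sigmoid(z) for z in pre["o"]]
        c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h


# Usage: a hypothetical 10-frame clip of 4x4 grayscale frames.
rng = random.Random(0)
clip = [[[rng.randint(0, 255) for _ in range(4)] for _ in range(4)] for _ in range(10)]
features = time_distributed(frame_features, clip)  # 10 time steps x 2 features
summary = lstm_last_hidden(features, hidden=8, rng=rng)
print(len(features), len(summary))
```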

Model Training Protocol

Data Partitioning:

- Split data into training (70%), validation (15%), and test (15%) sets

- Maintain patient-level separation to prevent data leakage

- Ensure balanced class distribution across splits
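Patient-level separation means splitting on patient identifiers rather than on individual embryos or frames, because sibling embryos share patient-specific characteristics that would otherwise leak between splits. A minimal sketch, assuming each sample is tagged with a patient ID (the data below is synthetic); it does not enforce class balance, for which scikit-learn's `StratifiedGroupKFold` or `GroupShuffleSplit` are common starting points.

```python
import random
from collections import defaultdict


def patient_level_split(samples, fractions=(0.70, 0.15, 0.15), seed=42):
    """Assign whole patients, not individual embryos, to train/val/test.

    samples: list of (patient_id, embryo_id) pairs. Returns three lists of
    samples such that no patient appears in more than one split. The test
    split receives the remaining patients after train and validation.
    """
    by_patient = defaultdict(list)
    for sample in samples:
        by_patient[sample[0]].append(sample)
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)
    n_train = round(fractions[0] * len(patients))
    n_val = round(fractions[1] * len(patients))
    groups = (patients[:n_train],
              patients[n_train:n_train + n_val],
              patients[n_train + n_val:])
    return [[s for p in group for s in by_patient[p]] for group in groups]


# Usage: 20 hypothetical patients with 1-4 embryos each.
rng = random.Random(1)
samples = [(f"P{p:02d}", f"P{p:02d}-E{e}") for p in range(20) for e in range(rng.randint(1, 4))]
train, val, test = patient_level_split(samples)
```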

Training Configuration:

- Initialize with Adam optimizer (learning rate: 1e-4)

- Utilize categorical cross-entropy loss for classification tasks

- Implement batch sizes of 8-16 sequences due to memory constraints

- Apply early stopping with patience of 15-20 epochs

- Employ reduce-on-plateau learning rate scheduling
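Early stopping and reduce-on-plateau scheduling both track the best validation loss seen so far. The framework-independent sketch below combines them; the halving factor and the 5-epoch scheduler patience are hypothetical choices, and the 15-epoch stopping patience is taken from the lower end of the range above. Keras provides the same behavior through the `EarlyStopping` and `ReduceLROnPlateau` callbacks, and PyTorch through `torch.optim.lr_scheduler.ReduceLROnPlateau` plus a manual stopping check.

```python
class PlateauController:
    """Track validation loss; multiply the learning rate by `factor` after
    `lr_patience` epochs without improvement and request a stop after
    `stop_patience` epochs without improvement."""

    def __init__(self, lr=1e-4, lr_patience=5, factor=0.5, stop_patience=15, min_delta=0.0):
        self.lr = lr
        self.lr_patience = lr_patience
        self.factor = factor
        self.stop_patience = stop_patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.epochs_since_best = 0
        self.epochs_since_lr_drop = 0

    def step(self, val_loss):
        """Update state after one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.epochs_since_best = 0
            self.epochs_since_lr_drop = 0
        else:
            self.epochs_since_best += 1
            self.epochs_since_lr_drop += 1
            if self.epochs_since_lr_drop >= self.lr_patience:
                self.lr *= self.factor
                self.epochs_since_lr_drop = 0
        return self.epochs_since_best >= self.stop_patience


# Usage with a synthetic validation-loss curve that plateaus after epoch 3.
losses = [1.0, 0.8, 0.7] + [0.72] * 20
ctrl = PlateauController()
stopped_at = None
for epoch, loss in enumerate(losses):
    if ctrl.step(loss):
        stopped_at = epoch
        break
print(stopped_at, ctrl.lr)
```

With this synthetic curve, the learning rate is halved three times before training stops at the 18th epoch (index 17).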

Validation and Testing:

- Monitor accuracy, precision, recall, and F1-score on validation set

- Perform final evaluation on held-out test set

- Generate confusion matrices and ROC curves for performance visualization
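The ROC curve and its area can be computed from predicted scores without a plotting library. The sketch below uses the rank interpretation of AUC: the probability that a randomly chosen positive embryo receives a higher score than a randomly chosen negative one (ties count one half). Read this way, an AUC of 0.64 for implantation prediction means the model orders a random implanted/non-implanted pair correctly about 64% of the time. The quadratic pairwise loop is for clarity only; `sklearn.metrics.roc_auc_score` and `sklearn.metrics.roc_curve` are the usual tools.

```python
def roc_auc(labels, scores):
    """AUC as P(score of random positive > score of random negative),
    counting ties as one half. labels are 1 (positive) or 0 (negative)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def roc_points(labels, scores):
    """(FPR, TPR) pairs obtained by sweeping the threshold over observed scores."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= t)
        fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= t)
        points.append((fp / n_neg, tp / n_pos))
    return points


labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
print(roc_auc(labels, scores))     # 0.75
print(roc_points(labels, scores))  # [(0.0, 0.0), (0.0, 0.5), (0.5, 0.5), (0.5, 1.0), (1.0, 1.0)]
```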

Model Interpretation Protocol

Explainable AI Implementation:

- Apply Local Interpretable Model-agnostic Explanations (LIME) to identify influential regions [28]

- Generate attention maps highlighting temporally significant developmental stages

- Visualize spatial features contributing to classification decisions

Clinical Validation:

- Compare model predictions with embryologist annotations

- Correlate feature importance with known embryological markers

- Assess model performance across patient subgroups (e.g., maternal age, infertility diagnosis)

Workflow Visualization

CNN-LSTM Embryo Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for CNN-LSTM Embryo Assessment

| Category | Item/Solution | Specification/Function | Application Context | Reference |
|---|---|---|---|---|
| Culture Media | G-TL Global Culture Medium | Single-step medium designed for uninterrupted time-lapse culture | Maintains embryo viability during extended imaging | [16] |
| Time-Lapse System | EmbryoScope+ Incubator | Integrated microscope with 11 focal planes, 10-min intervals | Automated image acquisition without culture disturbance | [16] |
| Image Processing | Python OpenCV Library | Computer vision algorithms for frame preprocessing | ROI detection, image enhancement, sequence assembly | [16] [28] |
| Deep Learning Framework | PyTorch/TensorFlow with Keras | Flexible neural network implementation | CNN-LSTM model development and training | [26] [27] |
| Data Augmentation | Albumentations Library | Fast image augmentation library widely used for medical images | Dataset expansion with rotation, flip, contrast variation | [28] |
| Model Interpretation | LIME (Local Interpretable Model-agnostic Explanations) | Explains predictions of any classifier | Visualizing decision rationale for clinical trust | [28] |
| Evaluation Metrics | Scikit-learn Library | Comprehensive model performance assessment | Accuracy, precision, recall, F1-score, AUC calculation | [30] [16] |

End-to-End Experimental Workflow

The application of Convolutional Neural Networks (CNNs) to embryo quality assessment represents a frontier in assisted reproductive technology (ART). However, developing robust, generalizable models is constrained by limited access to data. Centralizing large-scale, sensitive embryo datasets from multiple clinical sites raises significant privacy concerns and is often restricted by regulations such as the Health Insurance Portability and Accountability Act (HIPAA) and the General Data Protection Regulation (GDPR) [31] [32]. Federated Learning (FL) has emerged as a paradigm that enables collaborative model training across distributed institutions without sharing or centralizing raw patient data [33]. This section details application notes and protocols for implementing FL frameworks for CNN-based embryo research, enabling privacy-preserving multi-institutional collaboration.

Federated Learning Fundamentals and Relevance to Embryo Assessment

Federated Learning is a machine learning technique that trains an algorithm across multiple decentralized edge devices or servers holding local data samples, without exchanging them [31]. The canonical process involves a central server orchestrating a collaborative training cycle across multiple clients (e.g., hospitals).

A typical FL workflow, as illustrated below, is iterative. The global model is distributed to clients, who perform local training and send model updates back to a central server for aggregation into an improved global model. This process is repeated over multiple communication rounds [31].

Figure 1: The iterative federated learning workflow. Clients train on local embryo data, and only model updates are aggregated by the central server [31].

In the context of embryo assessment, FL allows clinical sites to collaboratively train a CNN model on their local collections of embryo time-lapse images and associated morphological data (e.g., cell symmetry, blastomere count) while keeping this sensitive information within their firewalls [34] [1]. This is crucial because embryo images and their linked clinical outcomes are highly sensitive health data.

Application Note: FedEmbryo for Personalized Embryo Selection

A state-of-the-art implementation of FL for embryo assessment is FedEmbryo, a distributed AI system designed for personalized embryo selection while preserving data privacy [34].

Core Innovation: Federated Task-Adaptive Learning (FTAL)

FedEmbryo introduces a Federated Task-Adaptive Learning (FTAL) approach to address key clinical challenges. Embryo evaluation is inherently a multi-task process, involving assessments at different developmental stages (pronuclear, cleavage, blastocyst) and prediction of clinical outcomes like live birth [34]. FTAL integrates Multi-Task Learning (MTL) with FL through a unified architecture containing:

- Shared Layers: Common feature extractors (e.g., CNN backbone) that learn generalized representations from all data across clients.

- Task-Specific Layers: Dedicated layers for individual tasks (e.g., blastocyst grading, live-birth prediction) that allow for personalization and accommodate varying task setups across different clinics [34].
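The shared/task-specific split changes what gets aggregated. Shared layers can be averaged across every client, while each task head is averaged only across the clients that train that task. The sketch below illustrates that idea under several assumptions. Parameters are flattened into plain lists, the client dictionary format is hypothetical, and the weights are simple sample counts (FedAvg-style) rather than FedEmbryo's HDWA weights.

```python
from typing import Dict, List

Vector = List[float]


def weighted_mean(vectors: List[Vector], weights: List[float]) -> Vector:
    """Element-wise weighted average of equal-length parameter vectors."""
    total = sum(weights)
    return [sum(w * v[i] for v, w in zip(vectors, weights)) / total
            for i in range(len(vectors[0]))]


def aggregate_multitask(clients: List[dict]) -> Dict[str, object]:
    """Aggregate the shared backbone across all clients and each task head
    only across the clients that train that task.

    Hypothetical client format: {"n": sample count, "shared": Vector,
    "heads": {task_name: Vector}}.
    """
    shared = weighted_mean([c["shared"] for c in clients], [c["n"] for c in clients])
    tasks = {t for c in clients for t in c["heads"]}
    heads = {}
    for t in sorted(tasks):
        owners = [c for c in clients if t in c["heads"]]  # clients with this task
        heads[t] = weighted_mean([c["heads"][t] for c in owners], [c["n"] for c in owners])
    return {"shared": shared, "heads": heads}
```

A client that only performs live-birth prediction therefore still benefits from morphology clients through the shared backbone, without its head being averaged with unrelated tasks.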

Hierarchical Dynamic Weighting Adaptation (HDWA)

A key challenge in FL is statistical heterogeneity (non-IID data) across clients. FedEmbryo tackles this with a Hierarchical Dynamic Weighting Adaptation (HDWA) mechanism. Instead of using a static aggregation scheme, HDWA dynamically adjusts the weight of each client's contribution and the attention given to each task based on learning feedback (loss ratios) during training [34]. This is designed to balance collaboration among clients with different data distributions and task complexities.
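Loss-ratio weighting can be illustrated with Dynamic Weight Average (DWA), a published multi-task weighting scheme from the MTAN work on multi-task learning with attention. In DWA, each task's weight depends on how quickly its loss fell over the last two epochs, passed through a temperature-scaled softmax. This is not FedEmbryo's HDWA formula, which is described in [34] and not reproduced here. It is a sketch of the general loss-ratio idea, and the default temperature of 2.0 is an assumption.

```python
import math
from typing import List, Sequence


def dynamic_weight_average(prev_losses: Sequence[float],
                           prev_prev_losses: Sequence[float],
                           temperature: float = 2.0) -> List[float]:
    """DWA task weights from the ratio of each task's last two losses.

    Tasks whose loss is shrinking slowly (ratio near or above 1) receive
    more weight. The weights sum to the number of tasks K.
    """
    ratios = [l1 / l2 for l1, l2 in zip(prev_losses, prev_prev_losses)]
    exps = [math.exp(r / temperature) for r in ratios]
    k = len(ratios)
    total = sum(exps)
    return [k * e / total for e in exps]
```

A higher temperature flattens the weights toward uniform. A lower one concentrates weight on the slowest-improving task.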

Performance and Validation

In extensive experiments, FedEmbryo outperformed both models trained on a single site's local data and other standard FL methods, in both morphological evaluation and live-birth prediction [34]. These results suggest that FL can effectively capture stage-specific morphological features of embryos from diverse, distributed datasets, yielding more accurate and generalizable models for clinical decision-making in IVF.

Experimental Protocol for Federated CNN Training on Embryo Images

This protocol provides a detailed methodology for setting up and executing a federated learning experiment for CNN-based embryo quality assessment across multiple clinical research sites.

Pre-experiment Setup and Governance

- Ethical and Legal Compliance: Secure approval from the Institutional Review Board (IRB) or Ethics Committee at all participating sites. Obtain written informed consent from patients for the use of their anonymized embryo data in research [34].

- Data Anonymization: Ensure all patient identifiers are removed from embryo images and associated metadata. Implement strict data access controls at each client site.

- Consortium Agreement: Establish a consortium agreement among all participating institutions covering intellectual property, roles, responsibilities, and data usage terms [35].

- Technical Infrastructure: Deploy the FL infrastructure. This can be built using open-source frameworks or a custom infrastructure like the Personal Health Train (PHT), which uses "stations" (data repositories), "trains" (containerized analysis apps), and "tracks" (secure communication channels) [35].

Data Preparation and CNN Model Configuration

Table 1: Example Dataset Division for Federated Training

| Client Site | Task | Number of Patients (Training) | Number of Embryo Images (Training) | Key Annotations |
|---|---|---|---|---|
| Client A | Morphology Assessment | 255 | 354 | Cell symmetry, fragmentation, blastocyst formation [34] |
| Client B | Morphology Assessment | 413 | 2191 | Cell symmetry, fragmentation, blastocyst formation [34] |
| Client C | Live-Birth Prediction | 547 | 1828 | Maternal age, endometrium, infertility duration [34] |
| Client D | Live-Birth Prediction | 457 | 1492 | Maternal age, endometrium, infertility duration [34] |

- Data Curation: At each client site, curate embryo image datasets according to the intended task (e.g., morphology classification, live-birth prediction). Follow standardized grading guidelines (e.g., Istanbul consensus) for annotations [34].

- Data Partitioning: Split the local data at each client into training, validation, and test sets (e.g., 70/20/10 ratio based on patient count) to ensure a fair evaluation [34].

- CNN Selection and Adaptation:

  - Select a pre-trained CNN architecture (e.g., EfficientNetV2, ResNet-50) as a backbone. These models have shown high performance in centralized embryo classification tasks [24].

  - Replace the final classification layer with task-specific output layers. For a multi-task client, this will involve multiple output heads.

  - Initialize the model weights with pre-trained values from ImageNet to benefit from transfer learning [33].

Federated Training Loop Execution

The following diagram and steps outline the core training procedure, which is repeated for a set number of communication rounds or until the global model converges.

Figure 2: Detailed protocol for the federated training loop, highlighting local training and server aggregation steps.

1. Server Initialization: The central server initializes the global CNN model with pre-trained weights.

2. Communication Round:

   - Broadcast: The server sends the current global model to all or a subset of participating clients.

   - Client-Side Local Training: Each client performs the following:

     - Train the model on its local training dataset for a predefined number of epochs.

     - Use standard deep learning optimizers (e.g., Adam, SGD) and loss functions appropriate for the task (e.g., cross-entropy for classification).

     - Validate locally on the client's hold-out validation set to monitor for overfitting.

   - Update Transmission: Clients send their updated model weights (or gradients) back to the server. The raw embryo data never leaves the client.

3. Server-Side Aggregation:

   - The server collects the model updates from all participating clients.

   - Apply the HDWA mechanism: Dynamically calculate aggregation weights for each client based on their data sample size and task performance feedback (loss ratios) [34].

   - Update the global model by computing a weighted average of all client models, as in Federated Averaging (FedAvg), using the HDWA weights [34] [33].

4. Repetition and Evaluation: Steps 2-3 are repeated for multiple communication rounds. The global model is evaluated on a held-out test set (potentially from each client) after selected rounds to assess performance and convergence.
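The training loop above can be made concrete with a toy example. A one-parameter linear model stands in for the CNN, each client runs local gradient descent on its private data, and the server averages the returned weights in proportion to local sample counts (plain FedAvg). This is an illustrative sketch only. It assumes a single scalar weight, full-batch gradient descent with a hypothetical learning rate and epoch count, and sample-count weighting rather than HDWA.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs held privately by one client


def local_train(w: float, data: Dataset, epochs: int = 5, lr: float = 0.05) -> float:
    """Client-side training: full-batch gradient descent on MSE for y ~ w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def fedavg(client_weights: List[float], sizes: List[int]) -> float:
    """Server-side aggregation: average client models weighted by sample count."""
    total = sum(sizes)
    return sum(w * n for w, n in zip(client_weights, sizes)) / total


def run_federated(clients: List[Dataset], rounds: int = 20) -> float:
    w_global = 0.0  # Step 1: server initialization
    for _ in range(rounds):
        # Step 2: broadcast w_global; each client trains locally and returns only its weight.
        updates = [local_train(w_global, data) for data in clients]
        # Step 3: aggregate the client weights into a new global model.
        w_global = fedavg(updates, [len(d) for d in clients])
    return w_global  # Step 4 corresponds to the repeated rounds above
```

Only the scalar weights cross the client boundary; the `(x, y)` lists never leave `local_train`. In a real deployment the scalar becomes the full set of CNN parameter tensors, and the FL framework handles transport and aggregation.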

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function/Description | Example/Specification |
|---|---|---|
| Embryo Time-Lapse Images | Raw input data for CNN training. Captured under optimal lighting at high magnification (e.g., ×200) [34]. | Inverted microscope (e.g., Nikon ECLIPSE Ti2-U) [34]. |
| Clinical & Morphological Annotations | Ground truth labels for supervised learning. | Metrics: Cell symmetry, blastomere count, fragmentation [34]. Outcomes: Implantation, live birth [34]. |
| Pre-trained CNN Models | Foundation for transfer learning, providing powerful feature extractors. | EfficientNetV2, ResNet-50, VGG-19 [33] [24]. |
| Federated Learning Framework | Software infrastructure to orchestrate the FL process. | Vantage6, LlmTornado SDK, or custom PHT infrastructure [36] [35]. |
| Secure Aggregation Server | A trusted, access-restricted environment where model averaging is performed to prevent data leakage from model updates [35]. | Deployed in a trusted cloud or on-premise environment with strict access controls. |

Federated Learning represents a paradigm shift for collaborative AI in reproductive medicine. It directly addresses the data privacy and regulatory barriers that have historically impeded the development of large-scale, robust CNN models for embryo assessment [32]. Frameworks like FedEmbryo demonstrate that distributed data can be leveraged effectively, achieving performance that surpasses locally trained models and competing FL methods [34].

Challenges and Future Directions

Despite its promise, FL implementation faces challenges. Data heterogeneity across clinics remains a significant hurdle, though adaptive aggregation methods like HDWA are mitigating this [34]. Communication overhead and computational resource disparity between sites are technical challenges that can be addressed through gradient compression and asynchronous update protocols [36]. Furthermore, ensuring robust security against model poisoning attacks requires continuous monitoring and anomaly detection [36] [32]. Future work will focus on refining dynamic aggregation algorithms, integrating explainable AI (XAI) to build trust in federated models, and establishing standardized, scalable FL infrastructures like the Personal Health Train for global collaboration in reproductive health research [35].