Addressing Class Imbalance in Sperm Morphology Datasets: Advanced Techniques for Robust AI in Male Fertility Research



Abstract

This article provides a comprehensive analysis of class imbalance, a critical challenge in developing AI models for sperm morphology classification. Tailored for researchers and drug development professionals, it explores the root causes of imbalance in specialized datasets like SMD/MSS and Hi-LabSpermMorpho, where rare morphological defects are inherently scarce. The content details a spectrum of solutions, from foundational data augmentation to advanced algorithmic strategies like hierarchical ensemble frameworks and bio-inspired optimization. It further establishes rigorous validation protocols and comparative performance metrics, synthesizing these into a cohesive guide for building generalizable, accurate, and clinically applicable diagnostic tools in reproductive medicine.

Understanding the Root Causes and Impact of Class Imbalance in Sperm Morphology Analysis

Sperm morphology analysis is a cornerstone of male fertility assessment, and a high percentage of abnormally shaped sperm is associated with decreased fertility [1]. The clinical examination determines the percentage of normally and abnormally shaped sperm in a fixed count of at least 200 spermatozoa, categorizing defects according to standardized systems such as those of the World Health Organization (WHO) or the more detailed modified David classification [2] [3]. While all men produce some abnormal sperm, the distribution of specific defect types is highly skewed: most sperm in a sample are normal or show common abnormalities, while certain rare morphological defects, such as specific head shape anomalies, midpiece defects, or tail abnormalities, occur with very low frequency [4] [2]. This skew creates a natural and challenging class imbalance problem for researchers and clinicians.

This inherent scarcity presents significant obstacles for both manual assessment and the development of automated artificial intelligence (AI) systems. For human morphologists, rare defects are difficult to learn and recognize consistently without extensive, standardized training [4] [5]. For machine learning models, the lack of sufficient examples of rare defects in training datasets leads to poor generalization and an inability to accurately identify these uncommon but potentially clinically significant anomalies [2] [3]. This technical support document addresses these challenges through troubleshooting guides, FAQs, and detailed protocols designed to help researchers manage class imbalance in sperm morphology datasets effectively.

FAQ: Understanding the Scarcity Problem

Q1: Why is class imbalance a particularly severe problem in sperm morphology research?

Class imbalance is especially problematic in this field due to the convergence of three key factors. First, there is the biological reality that many specific morphological defects are intrinsically rare. Second, the clinical standard requires the assessment of a limited number of sperm (typically 200-300) per sample, making it statistically unlikely to capture sufficient examples of rare defects from a single donor [3]. Third, there is the analytical challenge that both human experts and AI models require multiple examples to learn consistent classification patterns. Without specialized strategies, this imbalance biases assessment systems toward the majority classes (e.g., "normal" or common defects), reducing diagnostic sensitivity for rare but potentially critical morphological anomalies [6].
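
The statistical point can be made concrete by treating the number of cells showing a given defect, among those scored, as a binomial count. The sketch below assumes cells are independent and uses a hypothetical 0.5% prevalence chosen only for illustration; neither assumption comes from the cited studies.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p): chance of seeing at least k defect cells."""
    return 1.0 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))


n_cells = 200        # cells scored per sample (lower end of the 200-300 range)
prevalence = 0.005   # hypothetical: a defect present in 0.5% of cells

expected = n_cells * prevalence                  # mean number of defect examples
p_none = (1 - prevalence) ** n_cells             # chance the defect never appears
p_five_plus = prob_at_least(5, n_cells, prevalence)

print(f"expected examples per sample: {expected:.1f}")
print(f"P(no examples): {p_none:.2f}")
print(f"P(at least 5 examples): {p_five_plus:.4f}")
```

Under these assumptions, a 200-cell assessment contains about one example of the defect on average and has roughly a 37% chance of containing none, so rare classes have to be accumulated across many samples and donors.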

Q2: What is the practical impact of different classification systems on observed imbalance?

The complexity of the classification system directly influences the severity of the perceived imbalance and the accuracy of assessment. Research has demonstrated that as classification systems become more detailed, accuracy naturally decreases and variability increases. The table below summarizes the performance differences across classification systems of varying complexity, as observed in training studies [4].

Table: Classification System Complexity and Its Impact on Assessment Accuracy

| Number of Categories | Description | Untrained User Accuracy | Trained User Accuracy |
|---|---|---|---|
| 2 Categories | Normal vs. Abnormal | 81.0% ± 2.5% | 98.0% ± 0.4% |
| 5 Categories | Defects by location (head, midpiece, tail, etc.) | 68.0% ± 3.6% | 97.0% ± 0.6% |
| 8 Categories | Common specific defect types | 64.0% ± 3.5% | 96.0% ± 0.8% |
| 25+ Categories | Comprehensive individual defects | 53.0% ± 3.7% | 90.0% ± 1.4% |

Q3: What are the most significant data-related bottlenecks in developing robust AI models for rare defect detection?

The primary bottlenecks are the lack of standardized, high-quality annotated datasets and the inherent class imbalance [3]. Building an effective dataset is challenging because sperm images are often intertwined or only partially visible, and annotation requires expert knowledge across multiple defect categories [3]. Furthermore, establishing "ground truth" is complicated by significant inter-expert variability, where even experts may only agree on a normal/abnormal classification for about 73% of sperm images [5]. Without a large, well-balanced, and consistently annotated dataset, even advanced deep learning models will underperform on rare defect classes.

Troubleshooting Guides & Experimental Protocols

Guide: Implementing Resampling Strategies for Imbalanced Sperm Datasets

Resampling is a fundamental data-level approach to mitigate class imbalance. The choice between oversampling and undersampling depends on your dataset size and research goals.

Table: Comparison of Resampling Strategies for Sperm Morphology Data

| Strategy | Mechanism | Best For | Advantages | Limitations | Key Algorithms |
|---|---|---|---|---|---|
| Random Oversampling | Duplicates existing minority class examples | Small datasets with very few rare defect instances | Simple to implement; increases presence of rare classes | High risk of overfitting to repeated examples | RandomOverSampler [7] |
| Synthetic Oversampling | Generates new, synthetic minority class examples | Situations where dataset diversity is needed | Increases variety of minority class examples; reduces overfitting | Synthetic examples may not be biologically plausible | SMOTE, ADASYN [7] |
| Random Undersampling | Removes examples from the majority class | Large datasets where data can be sacrificed | Reduces dataset size and computational cost | Loss of potentially useful majority class information | RandomUnderSampler [7] |
| Hybrid Sampling | Combines oversampling and undersampling | Maximizing dataset quality and balance | Can produce a well-balanced dataset and, with cleaning steps such as Tomek links, remove ambiguous boundary examples | More complex to implement and tune | SMOTE-Tomek [7] |

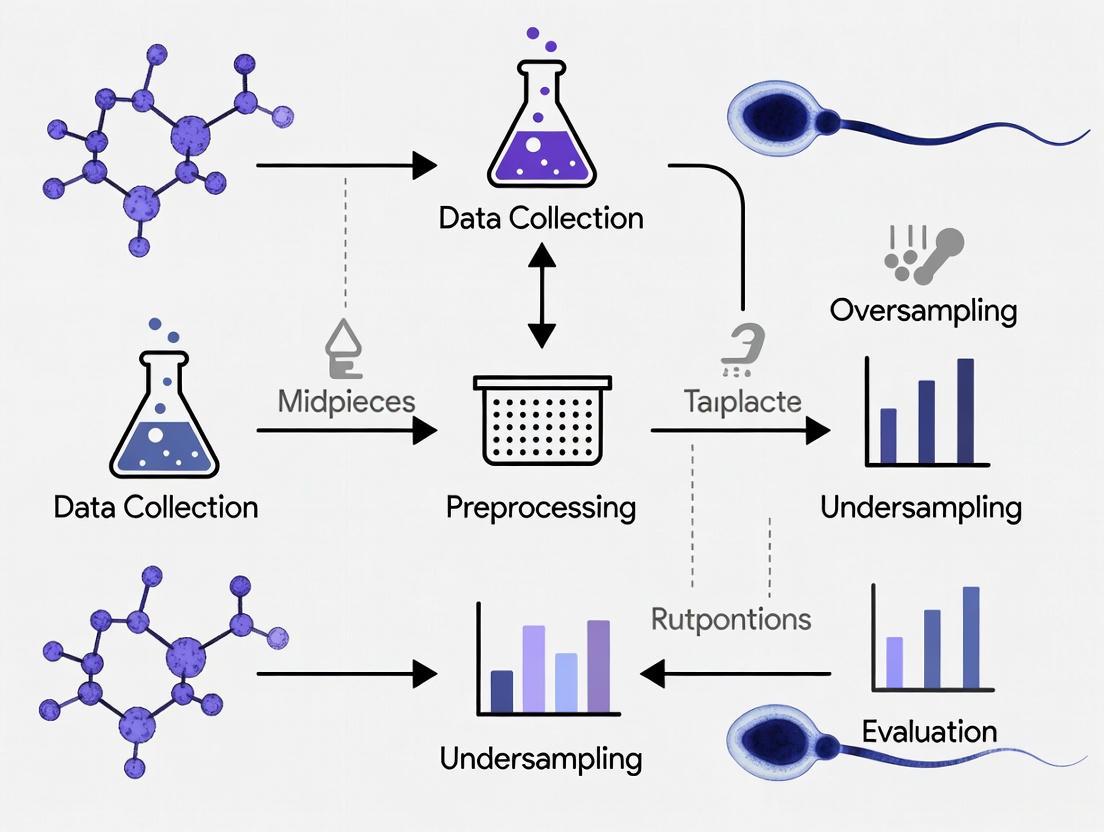

Workflow Diagram: Resampling Strategy Decision Process

Protocol: Establishing Expert Consensus for High-Quality Ground Truth

Creating a reliable dataset for training both humans and AI models requires establishing a robust "ground truth." This protocol is based on methods validated in recent studies [4] [5].

Objective: To create a validated dataset of sperm morphology images with minimal subjective bias, suitable for training and evaluating models on rare defects.

Materials & Reagents:

- Microscope: High-quality system (e.g., Olympus BX53) with DIC or phase contrast objectives (100x oil immersion) and high numerical aperture (≥0.75) [5].

- Camera: High-resolution CMOS sensor camera (e.g., Olympus DP28) [5].

- Staining: RAL Diagnostics staining kit or equivalent for clear morphological visualization [2].

- Software: Data management tool (e.g., Excel spreadsheet) for collecting independent classifications [2].

Procedure:

- Image Acquisition: Capture Field of View (FOV) images from semen smears prepared according to WHO guidelines. Aim for a large number of FOVs across multiple samples to maximize the chance of capturing rare defects.

- Image Cropping: Use a machine-learning algorithm or manual cropping to extract individual sperm images, ensuring each image contains a single spermatozoon to avoid confusion [5].

- Independent Expert Classification: Have at least three experienced morphologists classify each sperm image independently using a comprehensive classification system (e.g., the 30-category system from [5]).

- Consensus Analysis: Analyze the level of agreement among experts for each image. Categorize agreement as:

  - Total Agreement (TA): All experts assign identical labels.

  - Partial Agreement (PA): Two out of three experts agree.

  - No Agreement (NA): No consensus among experts.

- Ground Truth Assignment: Use only images with Total Agreement (TA) for your high-confidence "ground truth" dataset. Images with Partial Agreement (PA) may be used for intermediate training but should be treated with caution. Images with No Agreement (NA) should be excluded or re-reviewed.
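
The agreement categories and assignment rule above can be expressed as a small Python sketch. It assumes exactly three experts and uses hypothetical image IDs and class names; for Partial Agreement images it returns the majority label so they can be kept, with caution, for intermediate training.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def agreement_level(labels: Sequence[str]) -> str:
    """Classify three independent expert labels as TA, PA, or NA."""
    if len(labels) != 3:
        raise ValueError("this sketch assumes exactly three experts")
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == 3:
        return "TA"  # Total Agreement: all three labels identical
    if top_count == 2:
        return "PA"  # Partial Agreement: two of three agree
    return "NA"      # No Agreement: three different labels


def assign_ground_truth(labels: Sequence[str]) -> Tuple[Optional[str], str]:
    """Return (label, level); NA images get no label and go to exclusion or re-review."""
    level = agreement_level(labels)
    if level == "NA":
        return None, level
    return Counter(labels).most_common(1)[0][0], level


# Hypothetical labels from three morphologists.
expert_labels = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["tapered", "tapered", "pyriform"],
    "img_003": ["amorphous", "tapered", "pyriform"],
}
for image_id, labels in expert_labels.items():
    print(image_id, assign_ground_truth(labels))
```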

Troubleshooting:

- Low Total Agreement Rate: If the TA rate is too low, review the classification guidelines with experts, provide standardized training, or consider using a less complex classification system to build initial consensus.

- Insufficient Rare Defects: Even with large initial datasets, the final number of consensus-rated rare defects may be small. Collaborate with multiple laboratories to pool resources and datasets.

Protocol: Data Augmentation for Deep Learning on Imbalanced Data

For deep learning approaches, data augmentation is a crucial technique to artificially increase the size and diversity of the training set, particularly for rare classes [2] [8].

Objective: To expand the number of training examples for rare morphological defect classes through label-preserving image transformations.

Materials:

- A base dataset of sperm images (e.g., SMD/MSS, HuSHeM, or in-house dataset).

- Image processing libraries (e.g., in Python using TensorFlow/Keras or PyTorch).

Procedure:

- Identify Minority Classes: Calculate the frequency of each morphological class in your dataset. Select the classes with the lowest frequencies for targeted augmentation.

- Apply Transformations: For each image in the minority classes, generate new images using a combination of the following transformations:

  - Geometric: Rotation (±10°), horizontal and vertical flipping (if biologically valid), slight zooming (±5%), and shearing.

  - Pixel-level: Adjustments to brightness, contrast, and adding small amounts of noise to simulate imaging variations.

- Augmentation Volume: Apply augmentation aggressively to the rarest classes. The goal is to bring the number of examples for each rare defect close to the number of examples in your majority classes.

- Validation: Ensure that all transformations are "label-preserving." For example, a rotation should not change the classification of a "pyriform head."
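
The procedure above can be sketched with NumPy and SciPy. The sketch assumes grayscale images already scaled to [0, 1]; the helper names (augment, augmentation_budget, balance_by_augmentation) are hypothetical, zoom and shear are omitted for brevity, and the allow_flips switch stands in for the "if biologically valid" check, which a morphologist has to decide per class.

```python
from collections import Counter

import numpy as np
from scipy.ndimage import rotate

rng = np.random.default_rng(0)


def augment(img: np.ndarray, allow_flips: bool = True) -> np.ndarray:
    """Return one random, label-preserving variant of a grayscale image in [0, 1]."""
    # Geometric: small rotation (±10°), keeping the original image size.
    out = rotate(img, angle=rng.uniform(-10, 10), reshape=False, mode="nearest")
    # Geometric: horizontal/vertical flips, only if valid for this class.
    if allow_flips and rng.random() < 0.5:
        out = np.fliplr(out)
    if allow_flips and rng.random() < 0.5:
        out = np.flipud(out)
    # Pixel-level: contrast and brightness jitter around the image mean.
    mean = out.mean()
    out = (out - mean) * rng.uniform(0.9, 1.1) + mean + rng.uniform(-0.05, 0.05)
    # Pixel-level: small Gaussian noise to mimic imaging variation.
    out = out + rng.normal(0.0, 0.01, size=out.shape)
    return np.clip(out, 0.0, 1.0)


def augmentation_budget(labels) -> dict:
    """Number of extra images each class needs to match the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items()}


def balance_by_augmentation(images, labels):
    """Append augmented copies of minority-class images until classes are balanced."""
    by_class = {}
    for img, lab in zip(images, labels):
        by_class.setdefault(lab, []).append(img)
    out_images, out_labels = list(images), list(labels)
    for cls, extra in augmentation_budget(labels).items():
        pool = by_class[cls]
        for i in range(extra):
            # Cycle through the originals so each is augmented a similar number of times.
            out_images.append(augment(pool[i % len(pool)]))
            out_labels.append(cls)
    return out_images, out_labels


# Usage with random stand-in images and hypothetical class names.
images = [rng.random((64, 64)) for _ in range(15)]
labels = ["normal"] * 10 + ["tapered"] * 2 + ["amorphous"] * 3
aug_images, aug_labels = balance_by_augmentation(images, labels)
print(Counter(aug_labels))
```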

Example from Literature: One study successfully expanded an initial dataset of 1,000 sperm images to 6,035 images after augmentation, which significantly improved the performance of their Convolutional Neural Network (CNN) model, enabling it to achieve accuracies between 55% and 92% across different morphological classes [2].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Resources for Sperm Morphology and Class Imbalance Research

| Item / Resource | Function / Description | Example Use Case |
|---|---|---|
| Imbalanced-learn Library | Python package providing resampling algorithms | Implementing SMOTE, RandomUnderSampler, and hybrid methods [7] |
| RAL Diagnostics Staining Kit | Stains sperm smear for clear morphological visualization | Preparing semen samples for high-resolution imaging [2] |
| DIC/Phase Contrast Microscope | High-resolution imaging of sperm without distortion | Capturing detailed images of sperm head, midpiece, and tail for defect analysis [5] |
| Sperm Morphology Datasets (e.g., SMD/MSS, HuSHeM) | Publicly available benchmark datasets | Training and validating machine learning models [2] [8] |
| Consensus Classification Platform | Web-based tool for collecting expert labels | Establishing validated "ground truth" data for rare defects [4] [5] |
| Data Augmentation Pipelines | Automated image transformation workflows | Balancing class distribution in deep learning projects [2] |

Effectively managing the inherent scarcity of rare morphological defects requires a multi-faceted strategy that integrates data, methodology, and expert knowledge. The path forward involves a systematic approach, as visualized below.

Diagram: Integrated Strategy for Rare Defect Analysis

By building upon a foundation of robust ground truth established through expert consensus [4] [5], researchers can then expand their data through multi-center collaborations and targeted collection. The subsequent data balancing phase, using the resampling and augmentation protocols outlined in this guide, directly addresses the class imbalance [7] [2] [6]. Finally, advanced modeling techniques, such as custom Convolutional Neural Networks (CNNs) designed to be sensitive to class imbalance, can improve the detection of even the rarest morphological defects [3] [8]. Together, these steps give researchers a framework for overcoming the inherent scarcity problem, supporting more precise diagnostic tools and a deeper understanding of male fertility factors.

Frequently Asked Questions (FAQs)

FAQ 1: Why is high accuracy misleading when my model is trained on an imbalanced sperm morphology dataset? In imbalanced datasets, a model can achieve high accuracy by simply always predicting the majority class. For example, if 95% of sperm cells in a dataset are morphologically normal, a model that predicts "normal" for every cell will be 95% accurate but will completely fail to identify any abnormal cells. This phenomenon is often called the "accuracy paradox" and provides a false sense of model performance [9] [10]. The model's learning process becomes biased toward the majority class because minimizing errors on this class has a larger effect on reducing the overall loss function [9].
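
The accuracy paradox is easy to reproduce. The sketch below uses hypothetical labels (950 normal, 50 abnormal cells) and a degenerate model that always predicts "normal".

```python
import numpy as np

# Hypothetical labels: 0 = normal, 1 = abnormal; 95% of cells are normal.
y_true = np.array([0] * 950 + [1] * 50)
# A degenerate "model" that always predicts the majority class.
y_pred = np.zeros_like(y_true)

accuracy = np.mean(y_pred == y_true)
# Recall on the abnormal class: fraction of truly abnormal cells that were flagged.
abnormal_recall = np.mean(y_pred[y_true == 1] == 1)

print(f"accuracy={accuracy:.2f}, abnormal recall={abnormal_recall:.2f}")
# prints: accuracy=0.95, abnormal recall=0.00
```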

FAQ 2: Which evaluation metrics should I use instead of accuracy for my imbalanced dataset? For imbalanced classification tasks, such as distinguishing between rare sperm defects and normal morphology, you should use a set of metrics that provide a more complete picture of model performance. Key metrics include [11] [10]:

- F1 Score: The harmonic mean of precision and recall. It is particularly useful when you need to balance the trade-off between false positives and false negatives.

- Precision and Recall: Precision measures how many of the predicted positive cases are actually positive, while recall measures how many of the actual positive cases the model can find.

- Matthews Correlation Coefficient (MCC): A balanced metric that takes into account all four categories of the confusion matrix and is reliable even when the classes are of very different sizes.

- Area Under the Precision-Recall Curve (AUPRC): Often more informative than the area under the ROC curve for imbalanced data, because it focuses on the performance of the positive (minority) class and is not inflated by the large number of true negatives.

The confusion matrix is the foundation for calculating most of these metrics [10].
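
The snippet below computes these metrics with scikit-learn on a small, made-up set of labels, predictions, and scores (class 1 = a rare defect). It uses average_precision_score as the AUPRC summary; that function computes a step-wise estimate of the area rather than a trapezoidal one.

```python
import numpy as np
from sklearn.metrics import (
    average_precision_score,
    confusion_matrix,
    f1_score,
    matthews_corrcoef,
    precision_score,
    recall_score,
)

# Hypothetical labels: 1 = rare defect, 0 = normal.
y_true = np.array([0, 0, 0, 0, 0, 0, 0, 0, 1, 1])
y_pred = np.array([0, 0, 0, 0, 0, 0, 0, 1, 1, 0])
# Hypothetical model scores (probability of the defect class), used for AUPRC.
y_score = np.array([0.1, 0.2, 0.1, 0.3, 0.2, 0.1, 0.4, 0.6, 0.9, 0.45])

# For binary labels, ravel() yields the counts in the order TN, FP, FN, TP.
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()
metrics = {
    "precision": precision_score(y_true, y_pred),
    "recall": recall_score(y_true, y_pred),
    "f1": f1_score(y_true, y_pred),
    "mcc": matthews_corrcoef(y_true, y_pred),
    "auprc": average_precision_score(y_true, y_score),
}
print(f"TN={tn} FP={fp} FN={fn} TP={tp}")
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```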

FAQ 3: What are the core techniques to fix a class imbalance problem in my data? The main strategies can be categorized as follows [12] [13]:

- Data-Level Methods: These involve modifying the training dataset itself.

- Algorithm-Level Methods: These involve modifying the learning algorithm to make it more sensitive to the minority class.

FAQ 4: How does class imbalance affect the model's generalizability in a clinical setting? A model trained on an imbalanced dataset often fails to learn the true underlying patterns of the minority class. Instead, it learns to be biased toward the majority class. When deployed in a real-world clinical environment, where the model will encounter the natural, imbalanced distribution of sperm defects, its performance will likely degrade significantly. It will be unreliable for predicting the rare but crucial abnormal morphologies it was designed to detect, potentially leading to incorrect diagnostic support [12] [10].

Troubleshooting Guides

Problem: Model has high accuracy but fails to detect minority class instances

Description: Your model reports high accuracy (e.g., >90%), but a closer look at the confusion matrix reveals it is failing to identify most, or all, of the abnormal sperm cells.

Diagnosis Steps

- Check Class Distribution: Calculate the number of examples in each morphological class (e.g., normal, microcephalous, tapered head). A high imbalance ratio (e.g., 100:1) is a strong indicator of this problem [9].

- Analyze the Confusion Matrix: Generate a confusion matrix. If most true minority-class instances fall off the diagonal, typically predicted as the majority class (that is, they are false negatives for the minority class), your model is ignoring that class [10].

- Calculate Precision and Recall: You will likely find that the recall for your minority class is very low, confirming the model's inability to detect it [11].
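
The three diagnosis steps can be combined into one helper. This is a sketch using scikit-learn's confusion_matrix on a hypothetical test set; the class names and counts are illustrative. In this example overall accuracy is above 90% even though one defect class is never detected.

```python
from collections import Counter

from sklearn.metrics import confusion_matrix


def diagnose(y_true, y_pred, labels):
    """Return the imbalance ratio, confusion matrix, and per-class recall."""
    counts = Counter(y_true)
    imbalance_ratio = max(counts.values()) / min(counts.values())
    # Rows are true classes, columns are predicted classes (scikit-learn convention).
    cm = confusion_matrix(y_true, y_pred, labels=labels)
    recall = {lab: cm[i, i] / cm[i].sum() for i, lab in enumerate(labels)}
    return imbalance_ratio, cm, recall


# Hypothetical test set: 100 normal cells and two rare defect classes.
y_true = ["normal"] * 100 + ["microcephalous"] * 5 + ["tapered"] * 5
y_pred = ["normal"] * 105 + ["tapered"] + ["normal"] * 4
labels = ["normal", "microcephalous", "tapered"]

ratio, cm, recall = diagnose(y_true, y_pred, labels)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"accuracy={accuracy:.3f}, imbalance ratio={ratio:.0f}:1")
print(cm)
print(recall)
```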

Solution: Apply Data Resampling and Use Robust Metrics.

- Procedure:

  - Resample your training data only (never the validation or test sets). Choose one of:

    - Random Oversampling: Randomly duplicate examples from the minority class.

    - SMOTE: Generate synthetic examples for the minority class by interpolating between existing instances [7] [11].

    - Random Undersampling: Randomly remove examples from the majority class (use with caution to avoid losing important information) [9].

  - Re-train your model on the resampled, balanced dataset.

  - Evaluate with the correct metrics. Use F1-score, MCC, and the confusion matrix instead of relying on accuracy [10].

Table: Quantitative Impact of Resampling on a Model's Performance

| Metric | Before Resampling (Imbalanced) | After Random Oversampling | After SMOTE |
|---|---|---|---|
| Overall Accuracy | 98.2% | 91.5% | Varies |
| Minority Class Recall | 75.6% | Improved | Improved |
| Minority Class F1-Score | 78.3% | Improved | Improved |

Note: Example values are illustrative. The performance after SMOTE will depend on the specific dataset and parameters. Oversampling can lead to a drop in overall accuracy but a significant improvement in the detection of the minority class, which is the goal [9].

Problem: Model is overfitting to the noise in the minority class after oversampling

Description: After applying oversampling techniques like SMOTE, the model's performance on the training data is excellent, but it performs poorly on the validation or test set, indicating overfitting.

Diagnosis Steps

- Compare Performance: Check for a large gap between high training scores (e.g., F1-score) and low validation/test scores.

- Inspect Synthetic Samples: If using SMOTE, the generated samples might be unrealistic or noisy, especially if the original minority class examples are few and not representative.

Solution: Use Advanced Ensemble Methods and Algorithmic Adjustments.

- Procedure:

  - Try Ensemble Methods: Use a BalancedBaggingClassifier, which combines bagging with random undersampling so that each model in the ensemble is trained on a balanced subset of the data [11].

  - Apply Cost-Sensitive Learning: If your algorithm supports it (e.g., SVM, logistic regression), use built-in class weight parameters such as class_weight='balanced'. This increases the penalty for misclassifying the minority class without altering the data [12] [13].

  - Implement a Two-Stage Classification Framework: Inspired by recent sperm morphology research, you can use a hierarchical approach. A first-stage "splitter" model categorizes sperm into major groups (e.g., "Head/Neck Abnormalities" vs. "Normal/Tail Abnormalities"), and then dedicated second-stage models perform fine-grained classification within each group. This simplifies the task at each stage and can reduce misclassification between visually similar categories [14].

Problem: Model demonstrates significant prediction bias

Description: The model's predictions are skewed, consistently favoring the majority class (e.g., "normal" sperm), leading to poor performance on the minority classes.

Diagnosis Steps

- Analyze Prediction Distribution: Compare the distribution of predicted classes to the true distribution in your test set. A strong bias will show a much higher frequency of majority class predictions.

- Check per-class metrics: Low precision and recall scores specifically for the minority classes indicate bias.

Solution: Apply a Combination of Downsampling and Upweighting.

- Procedure:

  - Downsample (Undersample) the Majority Class: Train on a disproportionately low percentage of the majority class examples to create an artificially more balanced training set. For example, downsample by a factor of 25 to change a 99:1 imbalance to a more manageable 80:20 ratio [15].

  - Upweight the Downsampled Class: To correct for the bias introduced by downsampling, upweight the loss function for the majority class by the same factor you downsampled. This means if you downsampled by 25, you multiply the loss for each majority class example by 25. This teaches the model both the true data distribution and the correct feature-label connections [15].
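
The arithmetic in this procedure can be checked with a small NumPy sketch. It assumes a binary problem with a 99:1 imbalance (9,900 majority and 100 minority examples, hypothetical counts); downsample_and_upweight is a hypothetical helper, and the returned weights would be passed to training as per-example loss weights (for example, through the sample_weight argument that many scikit-learn estimators accept in fit).

```python
import numpy as np

rng = np.random.default_rng(0)


def downsample_and_upweight(X, y, majority_label, factor):
    """Keep 1/factor of the majority class and give each kept example weight = factor."""
    maj_idx = np.flatnonzero(y == majority_label)
    min_idx = np.flatnonzero(y != majority_label)
    kept_maj = rng.choice(maj_idx, size=len(maj_idx) // factor, replace=False)
    idx = np.concatenate([kept_maj, min_idx])
    rng.shuffle(idx)
    # Upweighting restores the majority class's total contribution to the loss.
    weights = np.where(y[idx] == majority_label, float(factor), 1.0)
    return X[idx], y[idx], weights


# Hypothetical 99:1 dataset: 9,900 majority (0) and 100 minority (1) examples.
y = np.array([0] * 9900 + [1] * 100)
X = rng.normal(size=(len(y), 8))

X_ds, y_ds, w = downsample_and_upweight(X, y, majority_label=0, factor=25)
share_majority = np.mean(y_ds == 0)
print(f"kept {np.sum(y_ds == 0)} majority / {np.sum(y_ds == 1)} minority "
      f"-> {share_majority:.1%} majority")
print("effective majority weight:", w[y_ds == 0].sum())
```

With a factor of 25, 396 majority examples remain next to 100 minority examples (about 80:20), while their summed weight of 9,900 matches the original majority count.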

Experimental Protocols & Workflows

Protocol: Two-Stage Ensemble Classification for Sperm Morphology

This protocol is based on a study that proposed a novel framework to improve accuracy and reduce misclassification in complex, multi-class sperm morphology datasets [14].

1. Objective: To accurately classify sperm images into multiple fine-grained morphological classes (e.g., 18 classes) by breaking down the problem into simpler, hierarchical stages, thereby handling class imbalance and high inter-class similarity.

2. Materials and Dataset

- Dataset: A labeled sperm morphology image dataset (e.g., Hi-LabSpermMorpho dataset) with multiple staining protocols (e.g., BesLab, Histoplus, GBL) [14].

- Models: Deep learning architectures such as NFNet-F4 and Vision Transformer (ViT) variants for building the ensemble.

- Computing Framework: Python with deep learning libraries (e.g., TensorFlow, PyTorch).

3. Methodology

Workflow Diagram: Two-Stage Classification

Step-by-Step Instructions:

First Stage - Splitting:

- Train a dedicated "splitter" model to categorize each sperm image into one of two broad categories:

  - Category 1: Head and neck region abnormalities.

  - Category 2: Normal morphology and tail-related abnormalities.
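
A sketch of how a trained splitter routes each image to the matching second-stage model. The group names, class names, and toy probability functions are hypothetical placeholders for trained NFNet/ViT ensembles. One consequence of this design is that a Stage-1 routing error cannot be corrected in Stage 2, so the splitter's own accuracy caps the accuracy of the whole framework.

```python
import numpy as np

# Hypothetical names for the two Stage-1 groups.
GROUPS = ("head_neck_abnormal", "normal_or_tail_abnormal")


def two_stage_predict(image, splitter, stage2):
    """Route an image through a Stage-1 splitter, then that group's Stage-2 classifier.

    splitter(image) -> probabilities over GROUPS
    stage2[group](image) -> (class_names, probabilities) for that group's fine classes
    """
    group = GROUPS[int(np.argmax(splitter(image)))]
    class_names, probs = stage2[group](image)
    return group, class_names[int(np.argmax(probs))]


# Toy stand-ins for trained models, returning fixed probabilities.
def toy_splitter(image):
    return np.array([0.8, 0.2])


def toy_head_neck_model(image):
    return ["tapered", "pyriform", "bent_neck"], np.array([0.2, 0.7, 0.1])


def toy_normal_tail_model(image):
    return ["normal", "coiled_tail"], np.array([0.6, 0.4])


stage2 = {
    "head_neck_abnormal": toy_head_neck_model,
    "normal_or_tail_abnormal": toy_normal_tail_model,
}
prediction = two_stage_predict(np.zeros((80, 80)), toy_splitter, stage2)
print(prediction)
```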

Second Stage - Fine-Grained Ensemble Classification:

- For each category from Stage 1, use a separate, customized ensemble of deep learning models (e.g., integrating NFNet-F4 and ViT).

- Instead of simple majority voting, employ a structured multi-stage voting strategy. In this approach, each model in the ensemble casts a primary vote. If a clear winner is not established, models can cast secondary votes based on their second-most-likely prediction, enhancing decision reliability [14].
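
The source does not spell out the exact voting rule, so the following is one plausible interpretation rather than the published algorithm: a class wins outright if it receives a strict majority of primary (top-1) votes; otherwise each model's second choice adds a secondary vote with a hypothetical weight of 0.5, and any remaining tie falls back to the mean predicted probability.

```python
from collections import Counter

import numpy as np


def multi_stage_vote(prob_rows, class_names, secondary_weight=0.5):
    """Primary top-1 voting with a secondary top-2 round when there is no clear winner."""
    probs = np.asarray(prob_rows, dtype=float)   # shape: (n_models, n_classes)
    order = np.argsort(-probs, axis=1)           # per-model ranking, best first
    primary = Counter(order[:, 0].tolist())
    top_cls, top_votes = primary.most_common(1)[0]
    if top_votes > len(probs) / 2:               # clear winner: strict majority
        return class_names[top_cls]
    scores = np.zeros(probs.shape[1])
    for cls, n in primary.items():
        scores[cls] += n                         # primary votes count fully
    for cls in order[:, 1]:
        scores[cls] += secondary_weight          # secondary votes from second choices
    best = np.flatnonzero(scores == scores.max())
    if len(best) > 1:                            # still tied: highest mean probability
        best = [best[np.argmax(probs[:, best].mean(axis=0))]]
    return class_names[int(best[0])]


names = ["A", "B", "C"]
clear = [[0.7, 0.2, 0.1], [0.6, 0.3, 0.1], [0.1, 0.2, 0.7]]
split = [[0.5, 0.4, 0.1], [0.1, 0.6, 0.3], [0.1, 0.35, 0.55]]
print(multi_stage_vote(clear, names))  # A: two of three primary votes
print(multi_stage_vote(split, names))  # B: decided by secondary votes
```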

Evaluation:

- Evaluate the overall framework's accuracy on a held-out test set and compare it against single-model baselines. The reported study showed a statistically significant 4.38% improvement over prior approaches [14].

Protocol: Handling Imbalance with Data Augmentation and CNN

This protocol is based on a study that developed a predictive model for sperm morphology using a Convolutional Neural Network (CNN) on an augmented dataset [2].

1. Objective: To create a deep learning model for automated sperm morphology classification that is robust to the limited number and imbalanced distribution of original sperm images.

2. Materials

- Images: 1000 original images of individual spermatozoa acquired via a CASA system [2].

- Staining: RAL Diagnostics staining kit [2].

- Software: Python 3.8 with deep learning libraries.

3. Methodology

Workflow Diagram: Data Augmentation & Training

Step-by-Step Instructions:

Data Acquisition and Labeling:

- Capture images of individual sperm cells using an optical microscope with a digital camera (e.g., MMC CASA system with a 100x oil immersion objective) [2].

- Have multiple experts classify each spermatozoon according to a standard classification system (e.g., modified David classification) to establish a ground truth [2].

Data Augmentation:

- To address limited data and class imbalance, apply data augmentation techniques to the original images. This can include rotations, flips, scaling, and changes in brightness and contrast.

- In the referenced study, this process expanded the dataset from 1,000 to 6,035 images, creating a more balanced representation across morphological classes [2].

Image Pre-processing:

- Clean and standardize the images. This typically involves:

  - Denoising: Reducing noise signals from the microscope or staining.

  - Grayscale Conversion: Converting images to grayscale.

  - Resizing: Standardizing the image size (e.g., 80x80 pixels).

  - Normalization: Scaling pixel values to a standard range (e.g., 0-1) [2].
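
The pre-processing steps can be sketched with NumPy and SciPy. This assumes 8-bit RGB input; the 3x3 median filter, ITU-R BT.601 grayscale weights, and nearest-neighbour resizing are illustrative choices, not necessarily those used in the cited study, and a production pipeline might use OpenCV or Pillow equivalents instead.

```python
import numpy as np
from scipy.ndimage import median_filter


def preprocess(rgb: np.ndarray, size: int = 80) -> np.ndarray:
    """Denoise, convert to grayscale, resize to size x size, and scale to [0, 1]."""
    img = rgb.astype(np.float64)
    # Denoising: 3x3 median filter applied to each colour channel separately.
    img = median_filter(img, size=(3, 3, 1))
    # Grayscale conversion with BT.601 luma weights (they sum to 1).
    gray = img @ np.array([0.299, 0.587, 0.114])
    # Resizing: nearest-neighbour sampling to a fixed size x size grid.
    rows = (np.arange(size) * gray.shape[0] / size).astype(int)
    cols = (np.arange(size) * gray.shape[1] / size).astype(int)
    small = gray[np.ix_(rows, cols)]
    # Normalization: map the 8-bit range [0, 255] onto [0, 1].
    return np.clip(small / 255.0, 0.0, 1.0)


rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)  # stand-in image
out = preprocess(raw)
print(out.shape, out.min(), out.max())
```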

Model Training and Evaluation:

- Develop a CNN architecture.

- Partition the augmented dataset into training (80%) and testing (20%) sets.

- Train the CNN on the training set and evaluate its performance on the test set. The cited study reported a final accuracy ranging from 55% to 92%, demonstrating the variability and challenge of the task while showing the promise of the approach [2].
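
The cited study partitioned the augmented dataset. A common precaution, sketched below with hypothetical class counts, is to split the original images first (stratified by class) and augment only the training portion, so that augmented copies of the same spermatozoon cannot appear in both training and test sets and inflate the test score.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical class counts for 1,000 original images across 12 classes.
class_counts = [500, 100, 100, 80, 60, 50, 40, 30, 20, 10, 6, 4]
labels = np.repeat(np.arange(len(class_counts)), class_counts)
image_ids = np.arange(len(labels))

# Stratified 80/20 split of the ORIGINAL images, preserving class proportions.
train_ids, test_ids = train_test_split(
    image_ids, test_size=0.2, stratify=labels, random_state=42)

# Augmentation would now be applied to train_ids only; test_ids stay untouched,
# so no augmented copy of a test cell can leak into training.
print(len(train_ids), len(test_ids))
print(np.bincount(labels[test_ids], minlength=len(class_counts)))
```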

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Sperm Morphology Analysis Experiments

| Item Name | Function / Application | Example from Literature |
|---|---|---|
| RAL Diagnostics Staining Kit | Stains semen smears to reveal fine morphological details of sperm cells (head, midpiece, tail) for microscopic evaluation and image acquisition. | Used to prepare smears for the SMD/MSS dataset [2]. |
| Diff-Quik Staining Kits | A Romanowsky-type stain used to enhance contrast and visualization of cellular structures in sperm morphology datasets. Different brands (e.g., BesLab, Histoplus, GBL) can be compared. | Used in the Hi-LabSpermMorpho dataset across three staining variants [14]. |
| MMC CASA System | A Computer-Assisted Semen Analysis (CASA) system used for automated image acquisition from sperm smears. It consists of an optical microscope with a digital camera. | Used for acquiring 1000 individual spermatozoa images for the SMD/MSS dataset [2]. |
| Imbalanced-Learn (Python library) | An open-source library providing a wide range of techniques (e.g., RandomUnderSampler, SMOTE, Tomek Links, BalancedBaggingClassifier) to handle imbalanced datasets. | Libraries like this are used to implement oversampling and undersampling [7] [11]. |
| Bright-Field Microscope with Mobile Camera | A customized imaging setup that uses a mobile phone camera attached to a bright-field microscope for a potentially lower-cost and accessible image acquisition method. | Used for acquiring images for the Hi-LabSpermMorpho dataset [14]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of class imbalance in sperm morphology datasets? Class imbalance in sperm morphology datasets primarily arises from biological and methodological factors. Biologically, the prevalence of normal sperm in fertile samples and the natural rarity of specific morphological defects (like certain head or tail anomalies) create a skewed distribution. Methodologically, inconsistent staining, subjective manual labeling by experts, and the high cost of data acquisition exacerbate the problem [2] [16].

FAQ 2: How does class imbalance negatively impact the training of a deep learning model? Class imbalance can cause a deep learning model to become biased toward the majority class (e.g., "normal" sperm). The model may achieve high overall accuracy by simply predicting the majority class most of the time, while failing to learn the distinguishing features of the underrepresented abnormal classes. This results in poor generalization and low sensitivity for detecting critical abnormalities, which is detrimental for clinical diagnostics [16] [17].

FAQ 3: What are the most effective techniques to mitigate class imbalance in this research field? The most effective techniques include data-level and algorithm-level approaches.

- Data-level: Data augmentation is widely used, involving geometric transformations to artificially increase the size of minority classes [2]. In one study, an initial set of 1,000 images was expanded to 6,035 using these techniques [2].

- Algorithm-level: Utilizing hybrid frameworks that combine neural networks with nature-inspired optimization algorithms (like Ant Colony Optimization) has shown promise. These frameworks enhance learning efficiency and improve predictive accuracy for minority classes [17].

FAQ 4: How can I assess the quality and potential bias of a public sperm morphology dataset before using it? Before using a public dataset, you should:

- Examine the class distribution statistics to identify the degree of imbalance.

- Review the labeling protocol, including the number of experts involved and the inter-expert agreement rate. Low agreement can indicate subjective bias [2].

- Check for the use of data augmentation and understand which techniques were applied.

- Look for performance metrics like sensitivity or F1-score per class in prior studies that used the dataset, not just overall accuracy [16].

Troubleshooting Guides

Problem 1: Model exhibits high overall accuracy but fails to identify abnormal sperm classes. This is a classic sign of a model biased by class imbalance.

- Step 1: Diagnose with Confusion Matrix. Do not rely on accuracy alone. Generate a confusion matrix to see which specific classes are being misclassified.

- Step 2: Implement Weighted Loss Function. Use a weighted cross-entropy loss function during training. Assign higher weights to the minority classes to penalize misclassifications more heavily.

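- Step 3: Apply Advanced Data Augmentation. Beyond simple rotations and flips, use more sophisticated techniques, such as synthetic sample generation (e.g., SMOTE, which interpolates between feature vectors and is therefore usually applied to extracted features rather than raw images) or style transfer, to create more diverse examples for the minority classes.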

- Step 4: Try a Different Model Architecture. Consider using architectures that incorporate attention mechanisms (like CBAM) or hybrid models that combine deep feature extraction with classical classifiers like SVM, which have been shown to achieve high accuracy on imbalanced sperm datasets [16] [17].
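
Step 2 can be sketched in NumPy. The class counts are hypothetical, the weights use the same inverse-frequency formula as scikit-learn's "balanced" option, and the loss is normalized by the total weight, which matches how PyTorch's torch.nn.CrossEntropyLoss averages when a weight tensor is passed with the default mean reduction.

```python
import numpy as np


def balanced_class_weights(counts):
    """Inverse-frequency weights: n_samples / (n_classes * count_per_class)."""
    counts = np.asarray(counts, dtype=float)
    return counts.sum() / (len(counts) * counts)


def weighted_cross_entropy(probs, targets, weights):
    """Mean cross-entropy with per-class weights, normalized by the total weight."""
    probs = np.clip(np.asarray(probs, dtype=float), 1e-12, 1.0)
    targets = np.asarray(targets)
    nll = -np.log(probs[np.arange(len(targets)), targets])  # per-sample loss
    w = np.asarray(weights, dtype=float)[targets]            # weight of each true class
    return float(np.sum(w * nll) / np.sum(w))


weights = balanced_class_weights([90, 10])   # hypothetical: 90 normal, 10 abnormal
probs = np.array([[0.9, 0.1],    # normal cell, predicted confidently and correctly
                  [0.8, 0.2]])   # abnormal cell, mostly missed
targets = np.array([0, 1])

plain = weighted_cross_entropy(probs, targets, np.ones(2))
weighted = weighted_cross_entropy(probs, targets, weights)
print(weights, round(plain, 4), round(weighted, 4))
```

Because the missed abnormal cell carries a weight of 5 rather than about 0.56, it dominates the weighted loss, which pushes training to fix minority-class errors first.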

Problem 2: Low inter-expert agreement in labeled data is causing noisy labels and poor model convergence. Inconsistent labels from experts confuse the model during training.

- Step 1: Quantify the Disagreement. Calculate an inter-expert agreement statistic (e.g., Fleiss' Kappa) for your dataset. In one study, images were categorized by whether experts reached partial or total agreement on their labels, which reflects the inherent difficulty of the task [2].

- Step 2: Establish a Consensus Protocol. Define a rule for determining the final label, such as using the label from the majority of experts or involving a senior embryologist as a tie-breaker.

- Step 3: Utilize Label-Smoothing. Apply label-smoothing techniques during training to reduce the model's overconfidence in any single, potentially noisy, label.

- Step 4: Adopt a Noise-Robust Training Strategy. Use training methods designed to be robust to label noise, such as co-teaching or robust loss functions.
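
Steps 1 and 3 can be sketched in NumPy: fleiss_kappa implements the standard Fleiss' kappa for a fixed number of raters per image, and smooth_labels implements uniform label smoothing. The ratings matrix and epsilon value are hypothetical; statsmodels also provides a fleiss_kappa function in statsmodels.stats.inter_rater if a maintained implementation is preferred.

```python
import numpy as np


def fleiss_kappa(counts):
    """Fleiss' kappa from an (n_items, n_categories) matrix of rating counts.

    counts[i, j] = number of experts who put image i in category j;
    every row must sum to the same number of raters n.
    """
    counts = np.asarray(counts, dtype=float)
    n_items = counts.shape[0]
    n = counts[0].sum()
    if not np.all(counts.sum(axis=1) == n):
        raise ValueError("every image needs the same number of ratings")
    p_j = counts.sum(axis=0) / (n_items * n)               # category proportions
    p_i = (np.sum(counts**2, axis=1) - n) / (n * (n - 1))   # per-image agreement
    p_bar, p_e = p_i.mean(), np.sum(p_j**2)                 # observed vs. chance
    if p_e == 1.0:
        raise ValueError("kappa is undefined when all ratings fall in one category")
    return (p_bar - p_e) / (1.0 - p_e)


def smooth_labels(targets, n_classes, epsilon=0.1):
    """Label smoothing: (1 - epsilon) on the labeled class plus epsilon spread uniformly."""
    one_hot = np.eye(n_classes)[np.asarray(targets)]
    return (1.0 - epsilon) * one_hot + epsilon / n_classes


# Three experts, two categories (normal, abnormal), four hypothetical images.
ratings = np.array([[3, 0], [0, 3], [2, 1], [1, 2]])
kappa = fleiss_kappa(ratings)
soft = smooth_labels([0, 1], n_classes=2, epsilon=0.1)
print("Fleiss' kappa:", round(kappa, 3))
print(soft)
```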

Dataset Analysis and Comparison

The following tables summarize the key characteristics and class distributions of the public sperm morphology datasets discussed in this case study.

Table 1: Key Characteristics of Public Sperm Morphology Datasets

| Dataset Name | Total Images | Number of Classes | Annotation Standard | Key Features |
|---|---|---|---|---|
| SMD/MSS [2] | 1,000 (extended to 6,035 via augmentation) | 12 (Normal + 11 anomalies) | Modified David classification | Covers head, midpiece, and tail defects; includes expert disagreement data. |
| HuSHeM [16] | 216 | 4 | Not specified | A smaller, established benchmark dataset. |
| SMIDS [16] | 3,000 | 3 | Not specified | A larger dataset for a simpler 3-class classification task. |

Table 2: Reported Class Distribution and Performance

| Dataset | Reported Class Distribution | Reported Baseline Performance | Performance with Imbalance Mitigation |
|---|---|---|---|
| SMD/MSS [2] | Not fully detailed; includes normal sperm and 11 anomaly types. | Deep learning model accuracy ranged from 55% to 92% [2]. | Data augmentation increased dataset size to 6,035 images, improving model robustness [2]. |
| HuSHeM [16] | Not explicitly stated in results. | Baseline CNN performance was approximately 86.36% [16]. | A CBAM-enhanced ResNet50 with feature engineering achieved 96.77% accuracy [16]. |
| SMIDS [16] | Not explicitly stated in results. | Baseline CNN performance was approximately 88.00% [16]. | A CBAM-enhanced ResNet50 with feature engineering achieved 96.08% accuracy [16]. |
| UCI Fertility Dataset [17] | 88 "Normal" vs. 12 "Altered" seminal quality. | Highlights inherent real-world clinical imbalance. | A hybrid MLFFN–ACO framework achieved 99% classification accuracy [17]. |

Experimental Protocols for Handling Class Imbalance

Protocol 1: Data Augmentation for Sperm Images

Purpose: To increase the size and diversity of training data for minority morphological classes.

Materials: Python 3.x; libraries: TensorFlow/Keras or PyTorch, OpenCV, NumPy.

Procedure:

- Isolate Minority Classes: Identify all images belonging to the underrepresented morphological classes (e.g., "microcephalous," "coiled tail").

- Apply Geometric Transformations: For each image in the minority class, generate new samples by applying:
  - Rotation (e.g., between -15 and +15 degrees)
  - Horizontal and vertical flipping
  - Zooming (e.g., 0.8x to 1.2x)
  - Shearing and width/height shifting

- Apply Photometric Transformations (Optional): To further increase diversity, adjust:
  - Brightness and contrast
  - Hue and saturation

- Integrate and Train: Add the newly generated images to the training set only, keeping validation and test images unaltered, so that the class distribution is more balanced before model training [2].
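A NumPy-only sketch of this protocol follows. To stay dependency-free it implements rotation and zoom with a simple nearest-neighbour inverse mapping instead of OpenCV or Keras, omits shearing and hue/saturation, and assumes grayscale (or channel-last) images scaled to [0, 1]. The function names, the brightness/contrast jitter ranges, and the "oversample every class up to the size of the largest class" target are assumptions for illustration; the rotation and zoom ranges follow the list above.

```python
import numpy as np

def rotate_zoom(img, angle_deg, scale):
    """Rotate about the image centre and zoom (scale > 1 zooms in), nearest-neighbour."""
    h, w = img.shape[:2]
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    theta = np.deg2rad(angle_deg)
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: for every output pixel, locate its source pixel.
    y0, x0 = (ys - cy) / scale, (xs - cx) / scale
    src_x = np.cos(theta) * x0 + np.sin(theta) * y0 + cx
    src_y = -np.sin(theta) * x0 + np.cos(theta) * y0 + cy
    # Out-of-range coordinates are clamped, i.e. border pixels are replicated.
    src_x = np.clip(np.rint(src_x), 0, w - 1).astype(int)
    src_y = np.clip(np.rint(src_y), 0, h - 1).astype(int)
    return img[src_y, src_x]

def augment_once(img, rng):
    """One random geometric + photometric variant of an image scaled to [0, 1]."""
    out = rotate_zoom(img, rng.uniform(-15, 15), rng.uniform(0.8, 1.2))
    if rng.random() < 0.5:
        out = out[:, ::-1]                      # horizontal flip
    if rng.random() < 0.5:
        out = out[::-1, :]                      # vertical flip
    gain, offset = rng.uniform(0.9, 1.1), rng.uniform(-0.05, 0.05)
    return np.clip(gain * out + offset, 0.0, 1.0)   # contrast/brightness jitter

def oversample_minority(images, labels, target_per_class=None, seed=0):
    """Append augmented copies until every class has target_per_class images."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    target = target_per_class or int(counts.max())
    new_images, new_labels = list(images), list(labels)
    for cls, n in zip(classes, counts):
        idx = np.flatnonzero(labels == cls)
        for _ in range(max(0, target - n)):
            new_images.append(augment_once(images[rng.choice(idx)], rng))
            new_labels.append(cls)
    return np.stack(new_images), np.array(new_labels)

# Toy training split: 25 "normal" (0) and 5 "coiled tail" (1) 32x32 images.
demo_rng = np.random.default_rng(1)
train_images = demo_rng.random((30, 32, 32))
train_labels = np.array([0] * 25 + [1] * 5)
X_bal, y_bal = oversample_minority(train_images, train_labels)
print(X_bal.shape, np.bincount(y_bal))
```

Production pipelines would normally use bilinear interpolation (e.g., via OpenCV or Albumentations); nearest-neighbour sampling is used here only to keep the sketch self-contained.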

Protocol 2: Hybrid Deep Feature Engineering with SVM

Purpose: To leverage deep feature representations and combine them with a powerful classifier that can handle imbalanced data effectively.

Materials: Pre-trained CNN (e.g., ResNet50), feature selection tools (e.g., PCA, Chi-square), SVM classifier (e.g., from scikit-learn).

Procedure:

- Feature Extraction: Use a pre-trained CNN (enhanced with an attention module like CBAM) as a feature extractor. Remove the final classification layer and extract deep features from a global average pooling (GAP) layer.

- Feature Selection: Apply dimensionality reduction (e.g., Principal Component Analysis, PCA) or feature selection (e.g., Chi-square tests) to the extracted deep features. This reduces noise and redundancy before classification.

- Train SVM Classifier: Train a Support Vector Machine (SVM) with a linear or RBF kernel on the selected features. SVMs often generalize well on the resulting feature space, even with class imbalance [16].

- Evaluate: Test the hybrid pipeline on the hold-out test set, paying close attention to per-class metrics like precision and recall.
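A scikit-learn sketch of the classification half of this protocol is shown here. It assumes deep features have already been extracted (random Gaussian vectors stand in for GAP embeddings), and it adds `class_weight="balanced"` to the SVM, an assumption beyond the protocol that reweights errors by inverse class frequency. Retaining 95% of the variance in PCA is likewise an illustrative choice.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.metrics import classification_report, recall_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
# Stand-in for GAP features from a (CBAM-)ResNet50; replace with real embeddings.
n_major, n_minor, dim = 400, 40, 64
X = np.vstack([rng.normal(0.0, 1.0, (n_major, dim)),
               rng.normal(1.5, 1.0, (n_minor, dim))])
y = np.array([0] * n_major + [1] * n_minor)

# Stratified split keeps the minority share identical in train and test.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

clf = make_pipeline(
    StandardScaler(),
    PCA(n_components=0.95, svd_solver="full"),   # keep 95% of the variance
    SVC(kernel="rbf", class_weight="balanced"),  # inverse-frequency class weights
)
clf.fit(X_train, y_train)
y_pred = clf.predict(X_test)
print(classification_report(y_test, y_pred, digits=3))   # per-class precision/recall
minority_recall = recall_score(y_test, y_pred, pos_label=1)
```

Wrapping the scaler, PCA, and SVM in one pipeline ensures all three are fitted on training data only, so no information from the hold-out set leaks into feature reduction.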

Workflow Visualization

Sperm Analysis Workflow

Data Augmentation Process

Research Reagent Solutions

Table 3: Essential Materials for Sperm Morphology Analysis

| Reagent / Material | Function in Experiment |
|---|---|
| RAL Diagnostics Staining Kit [2] | Provides differential staining for spermatozoa, allowing clear visualization of the head, midpiece, and tail for morphological assessment. |
| Formaldehyde Solution (4% in PBS) [2] | Used for sample fixation to preserve the structural integrity of sperm cells during smear preparation. |
| Cultrex Basement Membrane Extract | Used in 3D cell culture models, such as for growing organoids, which can be relevant for toxicological studies on spermatogenesis. |
| Primary and Secondary Antibodies | Used for immunohistochemistry (IHC) or immunocytochemistry (ICC) to detect specific protein markers in sperm or testicular tissue. |
| Ant Colony Optimization (ACO) Algorithm [17] | A bio-inspired optimization algorithm used in hybrid machine learning frameworks to enhance feature selection and model performance on imbalanced data. |
| Convolutional Block Attention Module (CBAM) [16] | A lightweight neural network module that enhances a CNN's ability to focus on diagnostically relevant regions of a sperm image. |

Expert Variability and Annotation Challenges as Contributing Factors to Data Scarcity

Frequently Asked Questions

Q1: Why is expert variability such a significant problem in creating sperm morphology datasets?

Expert variability introduces substantial inconsistency in dataset labels, which directly impacts the quality and reliability of datasets used for training machine learning models. Studies report diagnostic disagreement with kappa values as low as 0.05–0.15 among trained technicians, and up to 40% inter-observer variability even among expert evaluators [16] [3]. This inconsistency stems from the complexity of WHO standards, which classify sperm into head, neck, and tail abnormalities with 26 distinct abnormal morphology types [3]. When different experts annotate the same sperm images differently, the resulting noisy labels hamper model training and create effective data scarcity, because fewer consistently labeled examples remain for the model to learn from.

Q2: What specific annotation challenges lead to data scarcity in this field?

The annotation process for sperm morphology faces several technical hurdles that limit the creation of large, high-quality datasets. Key challenges include: (1) Structural Complexity: Simultaneous evaluation of head, vacuoles, midpiece, and tail abnormalities substantially increases annotation difficulty and time [3]. (2) Image Quality Issues: Sperm may appear intertwined in images, or only partial structures may be displayed at image edges, affecting annotation accuracy [3]. (3) Workload Intensity: Laboratories must examine at least 200 sperm per sample to obtain reliable morphology assessment, a tedious task requiring specialized expertise [16] [3]. These factors collectively constrain the production of standardized, high-quality annotated datasets necessary for robust deep learning applications.

Q3: How does poor dataset quality exacerbate class imbalance problems?

When dataset quality is compromised by annotation inconsistencies and variability, the resulting class imbalance problems become more severe and difficult to address. Inconsistent annotations can artificially inflate or deflate certain abnormality categories, creating misleading class distributions. For instance, if amorphous head defects (representing up to one-third of all head anomalies) are inconsistently annotated, it distorts the true prevalence of this important class [18]. This "hidden" imbalance problem persists even after applying technical solutions like SMOTE or class weighting, because the fundamental label quality remains compromised. Consequently, models may learn incorrect feature representations, undermining both majority and minority class performance [19] [20].

Q4: What strategies can mitigate expert variability during dataset creation?

Implementing structured annotation protocols can significantly reduce variability. The two-stage classification framework demonstrates one effective approach, where a splitter first routes images to major categories (head/neck abnormalities vs. tail abnormalities/normal sperm), then category-specific ensembles perform fine-grained classification [18]. This hierarchical approach reduces misclassification between visually similar categories. Additionally, employing consensus voting among multiple experts, rather than single-expert annotations, creates more reliable ground truth labels. Some studies also recommend using attention visualization tools like Grad-CAM to validate that models focus on morphologically relevant regions, providing a check on annotation quality [16].

Quantitative Impact of Annotation Challenges

Table 1: Documented Variability in Sperm Morphology Assessment

| Variability Metric | Reported Value | Impact on Data Quality |
|---|---|---|
| Inter-observer Disagreement | Up to 40% between experts [16] | Reduces label consistency across dataset |
| Kappa Statistic | As low as 0.05-0.15 among technicians [16] | Indicates near-random agreement levels |
| Classification Categories | 18-26 abnormality types [18] [3] | Increases annotation complexity and time |
| Minimum Sperm Count | 200+ per sample [16] [3] | Creates significant annotation workload |

Table 2: Technical Solutions to Address Annotation-Driven Data Scarcity

| Solution Approach | Implementation Method | Benefit |
|---|---|---|
| Two-Stage Classification | Hierarchical splitter + category-specific ensembles [18] | Reduces misclassification between similar categories |
| Attention Mechanisms | CBAM-enhanced architectures [16] | Helps models focus on clinically relevant features |
| Ensemble Voting | Multi-stage voting with primary/secondary votes [18] | Mitigates influence of individual expert bias |
| Deep Feature Engineering | Hybrid CNN + classical feature selection [16] | Improves performance on limited data |

Experimental Protocols for Quality Assurance

Protocol 1: Implementing Consensus Annotation for Ground Truth Establishment

Purpose: To establish reliable ground truth labels by mitigating individual expert variability through structured consensus.

Materials: Sperm images, multiple trained annotators, annotation platform with voting capability.

Procedure:

- Independent Annotation: Provide at least 3 trained experts with the same set of sperm images for independent classification according to WHO guidelines

- Initial Agreement Check: Calculate raw agreement percentage and Cohen's kappa between all annotator pairs

- Consensus Meeting: For images with disagreeing annotations, facilitate structured discussion among experts to reach consensus

- Tie-Breaking Mechanism: Employ a senior embryologist as the final arbiter for persistent disagreements

- Documentation: Record final labels along with initial disagreement rates to track dataset reliability

Validation: Use the consensus labels to train a baseline model and compare performance against models trained on individual expert labels [18] [16].
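The agreement check, the flagging of images for the consensus meeting, and the tie-breaking step can be sketched in plain Python. This is a simplified model of the protocol under stated assumptions: discussion among experts cannot be coded, so any image without unanimous agreement is only flagged, and the fallback label is the majority vote, with ties passed to `senior_embryologist`, a hypothetical stand-in for the human arbiter. The class names are illustrative.

```python
from collections import Counter
from itertools import combinations

def cohen_kappa(a, b):
    """Cohen's kappa for two annotators' label sequences (same image order)."""
    n = len(a)
    p_o = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    return float("nan") if p_e == 1 else (p_o - p_e) / (1 - p_e)

def pairwise_agreement(annotations):
    """Raw agreement and Cohen's kappa for every pair of annotators."""
    report = {}
    for e1, e2 in combinations(sorted(annotations), 2):
        a, b = annotations[e1], annotations[e2]
        raw = sum(x == y for x, y in zip(a, b)) / len(a)
        report[(e1, e2)] = (raw, cohen_kappa(a, b))
    return report

def consensus(annotations, senior):
    """Flag disagreements for discussion; resolve by majority, ties by a senior arbiter."""
    experts = sorted(annotations)
    final, flagged = [], []
    for i in range(len(annotations[experts[0]])):
        votes = Counter(annotations[e][i] for e in experts)
        (top, top_n), *rest = votes.most_common()
        if len(votes) > 1:
            flagged.append(i)                  # any disagreement goes to the meeting
        if rest and rest[0][1] == top_n:
            final.append(senior(i, votes))     # unresolved tie: senior embryologist
        else:
            final.append(top)
    return final, flagged

def senior_embryologist(image_index, votes):
    """Hypothetical stand-in for the arbiter's decision."""
    return "tapered"

annotations = {
    "A": ["normal", "tapered", "amorphous", "normal", "normal"],
    "B": ["normal", "amorphous", "amorphous", "normal", "tapered"],
    "C": ["normal", "tapered", "pyriform", "tapered", "pyriform"],
}
report = pairwise_agreement(annotations)
final, flagged = consensus(annotations, senior_embryologist)
disagreement_rate = len(flagged) / len(final)   # document alongside the final labels
print(report, final, disagreement_rate, sep="\n")
```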

Protocol 2: Hierarchical Annotation Workflow for Complex Morphology

Purpose: To reduce annotation complexity and improve consistency through a structured, two-tiered approach.

Materials: Sperm images, annotation platform with hierarchical classification capability.

Procedure:

- Stage 1 - Major Category Classification: Annotators first classify sperm into major categories: (a) head/neck abnormalities, (b) tail abnormalities/normal sperm

- Stage 2 - Fine-Grained Annotation: Within each major category, perform detailed abnormality classification using category-specific criteria

- Quality Check: Implement automatic consistency checks between major category and fine-grained labels

- Validation Subset: Re-annotate 10% of images by different experts to measure intra-protocol consistency

This approach mirrors the successful two-stage framework that achieved a 4.38% improvement over prior approaches [18].
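The automatic consistency check and the 10% validation subset can be sketched in plain Python. The mapping from fine-grained labels to the two Stage 1 categories below is a hypothetical example covering only a few classes; a real project would list every class in its annotation scheme.

```python
import random

# Hypothetical mapping from fine-grained labels to the two Stage 1 categories.
MAJOR_CATEGORY = {
    "tapered": "head_neck", "pyriform": "head_neck", "amorphous": "head_neck",
    "bent_neck": "head_neck",
    "coiled_tail": "tail_or_normal", "short_tail": "tail_or_normal",
    "normal": "tail_or_normal",
}

def check_consistency(records, major_of=MAJOR_CATEGORY):
    """records: (image_id, stage1_major, stage2_fine) tuples -> list of problems."""
    problems = []
    for image_id, major, fine in records:
        expected = major_of.get(fine)
        if expected is None:
            problems.append((image_id, f"unknown fine label {fine!r}"))
        elif expected != major:
            problems.append((image_id, f"{fine!r} belongs to {expected!r}, not {major!r}"))
    return problems

def validation_subset(image_ids, fraction=0.10, seed=0):
    """Randomly pick about 10% of images for re-annotation by different experts."""
    ids = list(image_ids)
    k = max(1, round(fraction * len(ids)))
    return random.Random(seed).sample(ids, k)

records = [
    ("img1", "head_neck", "tapered"),
    ("img2", "head_neck", "coiled_tail"),      # Stage 1 and Stage 2 disagree
    ("img3", "tail_or_normal", "normal"),
]
for image_id, message in check_consistency(records):
    print(image_id, message)
```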

Experimental Workflow Visualization

Hierarchical Annotation Workflow with Quality Control

Research Reagent Solutions

Table 3: Essential Materials for Sperm Morphology Analysis Research

| Reagent/Resource | Function/Purpose | Application Notes |
|---|---|---|
| Hi-LabSpermMorpho Dataset [18] | Benchmark dataset with 18-class morphology categories | Includes images from 3 staining protocols (BesLab, Histoplus, GBL) |
| Diff-Quick Staining Kits [18] | Enhances morphological features for classification | Three staining variants available for protocol comparison |
| SMOTE Algorithm [21] [20] | Synthetic minority over-sampling to address class imbalance | Generates synthetic samples for underrepresented abnormality classes |
| CBAM-enhanced ResNet50 [16] | Attention-based feature extraction with interpretability | Provides Grad-CAM visualization for model decision validation |
| Imbalanced-learn Python Library [21] [7] | Comprehensive resampling techniques implementation | Includes SMOTE, ADASYN, Tomek Links, and ensemble methods |

Strategic Solutions: From Data-Level Augmentation to Algorithmic-Level Approaches

Frequently Asked Questions

Q1: What are the most effective data augmentation techniques for addressing class imbalance in sperm morphology datasets?

Geometric and photometric transformations are foundational for tackling class imbalance. For sperm image analysis, key techniques include rotation (to account for random sperm orientation on slides), flipping (horizontal/vertical to increase variation), color jittering (adjusting brightness, contrast, and saturation to simulate different staining intensities), and adding noise (to improve model robustness against imaging artifacts) [22]. For severe class imbalances, advanced methods like Generative Adversarial Networks (GANs) can synthesize high-quality, photorealistic samples of under-represented morphological classes, such as specific head defects, which are often rare in clinical samples [22].

Q2: My deep learning model is overfitting to the majority classes (e.g., normal sperm). How can augmentation help?

Overfitting to majority classes is a classic sign of class imbalance. Augmentation provides a direct countermeasure. You can implement a class-specific augmentation strategy, where you apply more aggressive augmentation to the minority classes (e.g., amorphous heads, tail defects) than to the majority classes. This creates a more balanced training distribution. Furthermore, using GAN-based synthesis like CycleGAN can generate entirely new, high-fidelity images for rare defect classes, providing the model with more diverse examples to learn from and reducing its reliance on memorizing the common "normal" morphology [22].

Q3: After extensive augmentation, my model's performance on validation data is poor. What could be wrong?

This often indicates a domain shift introduced by inappropriate augmentation. If augmentations are too extreme or unrealistic, they can destroy biologically critical features. For instance, excessive rotation might alter the perceived head shape, or aggressive color shifting could mimic staining artifacts not present in real clinical images. To troubleshoot, visually inspect your augmented dataset. Ensure that the transformed images still represent plausible sperm morphologies. It's also crucial to preserve the original, unprocessed images for validation and to meticulously document all augmentation parameters to ensure reproducibility and facilitate debugging [23].

Q4: Are there standardized protocols for augmenting sperm image datasets?

While there is no single universal protocol, recent research provides strong methodological guidance. Successful studies often use a combination of basic and advanced techniques. The table below summarizes a typical workflow and its impact from a published study [2]:

Table: Experimental Data Augmentation Protocol from SMD/MSS Dataset Study

| Augmentation Step | Description | Purpose / Impact |
|---|---|---|
| Initial Dataset | 1,000 images of individual spermatozoa [2] | Baseline dataset before augmentation. |
| Augmentation Techniques Applied | Rotation, flipping, color/lighting adjustments, etc. [2] | Increase dataset size and diversity; combat overfitting. |
| Final Augmented Dataset | 6,035 images [2] | Creates a more balanced dataset across morphological classes. |
| Reported Model Accuracy | 55% to 92% [2] | Accuracy range achieved on the augmented dataset. |

Troubleshooting Guides

Problem: Model Performance is Highly Variable Across Different Sperm Morphology Classes

- Symptoms: Good accuracy on common classes (e.g., normal sperm) but poor performance on rare abnormality classes.

- Root Cause: The dataset suffers from significant class imbalance, and your augmentation strategy may not be sufficiently addressing it.

- Solution:

- Audit Your Class Distribution: Calculate the number of images per morphological class in your training set.

- Implement Strategic Oversampling: Use a library like imbalanced-learn to oversample the minority classes before applying augmentations.

- Apply Targeted Augmentation: For the undersampled classes, use a more aggressive augmentation pipeline. If basic transformations are insufficient, explore GAN-based methods like DAGAN or CycleGAN to generate high-quality synthetic images for the specific rare classes [22].

- Validate: Ensure the synthetic images are morphologically plausible by having an expert review them.
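The audit step can be sketched in plain Python. The minority threshold (half the size of the largest class) and the toy label counts are assumptions for illustration; the per-class deficit is one simple way to size the oversampling and augmentation that follow.

```python
from collections import Counter

def audit_class_distribution(labels, minority_threshold=0.5):
    """Print per-class counts; return imbalance ratio, minority classes, and deficits.

    A class is flagged as minority if it has fewer than
    minority_threshold * (size of the largest class) images.
    """
    counts = Counter(labels)
    total, largest = sum(counts.values()), max(counts.values())
    print(f"{'class':<16}{'n':>6}{'share':>9}")
    for cls, n in counts.most_common():
        print(f"{cls:<16}{n:>6}{n / total:>9.1%}")
    imbalance_ratio = largest / min(counts.values())
    minority = sorted(c for c, n in counts.items() if n < minority_threshold * largest)
    # Number of extra images each class needs to match the largest class.
    deficit = {c: largest - n for c, n in counts.items()}
    return imbalance_ratio, minority, deficit

labels = ["normal"] * 120 + ["tapered"] * 30 + ["coiled_tail"] * 8
ratio, minority, deficit = audit_class_distribution(labels)
```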

Problem: Augmentation Causes Loss of Critical Morphological Features

- Symptoms: The model fails to learn key diagnostic features (e.g., acrosome shape, tail integrity), leading to low overall accuracy.

- Root Cause: The augmentation parameters are too aggressive, distorting biologically relevant structures.

- Solution:

- Constrain Augmentation Parameters: Limit the range of geometric transformations. For example, restrict rotations to smaller angles (e.g., ±15-20 degrees) to prevent the sperm head from appearing rotated in an unrealistic way.

- Prioritize Photometric over Geometric Transforms: Focus on color, contrast, and noise variations, which are less likely to alter the fundamental shape of the sperm [22].

- Visual Quality Control: Always maintain a manual review process. After defining your augmentation pipeline, generate a batch of augmented images and visually inspect them to confirm that critical diagnostic features remain intact [23].

Experimental Protocols & Data

Table: Summary of Data Augmentation Impact in Sperm Morphology Studies

| Study / Model | Dataset(s) Used | Key Augmentation & Feature Engineering Methods | Reported Performance |
|---|---|---|---|
| Deep Feature Engineering (CBAM+ResNet50) [16] | SMIDS (3-class), HuSHeM (4-class) | Attention mechanisms (CBAM), deep feature extraction, PCA for feature selection [16]. | 96.08% accuracy (SMIDS), 96.77% accuracy (HuSHeM) [16]. |
| Two-Stage Ensemble Framework [14] | Hi-LabSpermMorpho (18-class) | Hierarchical classification, ensemble learning (NFNet, ViT), multi-stage voting [14]. | ~70% accuracy (across staining protocols), 4.38% improvement over baselines [14]. |
| CNN with Basic Augmentation [2] | SMD/MSS (12-class) | Geometric and photometric transformations (rotation, flipping, color shifts) [2]. | 55% - 92% accuracy range [2]. |

Detailed Methodology: A Standard Data Augmentation Pipeline for Sperm Images

The following protocol, inspired by recent studies, can be implemented in Python using libraries like TensorFlow/Keras ImageDataGenerator or Albumentations:

Image Preprocessing:

- Normalization: Rescale pixel intensities to a [0, 1] or [-1, 1] range.

- Resizing: Standardize all images to a fixed size (e.g., 80x80 or 224x224).

- Grayscale Conversion: Optionally convert RGB images to grayscale to reduce complexity, if color is not a critical feature [2].

Data Augmentation:

- Geometric Transformations:
  - Rotation: Random rotation within a constrained range (e.g., ±20 degrees).
  - Flipping: Horizontal and vertical flips.
  - Translation: Small random shifts in width and height.

- Photometric Transformations:
  - Brightness & Contrast: Random adjustments to simulate varying illumination.
  - Color Jitter: For color images, make small changes to hue and saturation.
  - Noise Injection: Add random Gaussian noise to improve model robustness [22].

Advanced Synthesis (for class imbalance):

- Utilize GAN architectures (e.g., CycleGAN) to generate synthetic images for underrepresented morphological classes, creating a more balanced training set [22].
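The preprocessing steps and the noise-injection transform can be sketched in NumPy. In practice Keras or Albumentations would handle these; this dependency-free version assumes 8-bit RGB input, uses ITU-R BT.601 luma weights for grayscale conversion, and resizes with nearest-neighbour sampling (real pipelines usually prefer bilinear or area interpolation). The function names and σ = 0.02 are illustrative.

```python
import numpy as np

def to_grayscale(rgb):
    """Luma conversion with ITU-R BT.601 weights; drops colour information."""
    return rgb[..., :3] @ np.array([0.299, 0.587, 0.114])

def resize_nearest(img, size):
    """Nearest-neighbour resize to size=(height, width)."""
    h, w = img.shape[:2]
    out_h, out_w = size
    rows = (np.arange(out_h) * h / out_h).astype(int)
    cols = (np.arange(out_w) * w / out_w).astype(int)
    return img[rows[:, None], cols]

def normalize(img, mode="0_1"):
    """Scale 8-bit intensities to [0, 1] or, with mode='-1_1', to [-1, 1]."""
    img = np.asarray(img, dtype=np.float32) / 255.0
    return img * 2.0 - 1.0 if mode == "-1_1" else img

def add_gaussian_noise(img, sigma, rng, low=0.0, high=1.0):
    """Noise injection, clipped back to the valid intensity range."""
    return np.clip(img + rng.normal(0.0, sigma, img.shape), low, high)

def preprocess(rgb_uint8, size=(80, 80), grayscale=True, mode="0_1"):
    img = to_grayscale(rgb_uint8) if grayscale else rgb_uint8
    return normalize(resize_nearest(img, size), mode)

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)   # stand-in microscope crop
x = preprocess(raw)                                              # 80x80 grayscale, [0, 1]
x_noisy = add_gaussian_noise(x, sigma=0.02, rng=rng)
```

If images are normalised to [-1, 1], pass `low=-1.0` to `add_gaussian_noise` so that clipping matches the new range.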

The Scientist's Toolkit

Table: Essential Research Reagents & Materials for Sperm Morphology Analysis

| Item | Function / Description | Example / Note |
|---|---|---|
| RAL Diagnostics Stain | Staining kit for semen smears to reveal morphological details [2]. | Used in the creation of the SMD/MSS dataset [2]. |
| Computer-Assisted Semen Analysis (CASA) System | Microscope with digital camera for automated image acquisition of sperm smears [2]. | MMC CASA system was used for the SMD/MSS dataset [2]. |
| Hi-LabSpermMorpho Dataset | A large-scale, expert-labeled dataset with 18 distinct sperm morphology classes [14]. | Used for training complex models like two-stage ensembles [14]. |
| Data Augmentation Tools (Software) | Libraries to programmatically augment image datasets. | Python libraries: Albumentations, TensorFlow/Keras ImageDataGenerator, torchvision transforms (PyTorch), TorchIO (a PyTorch-based medical imaging library). |
| Deep Learning Frameworks | Software for building and training predictive models. | Python with TensorFlow, PyTorch; pre-trained models like ResNet50, ViT [16] [14]. |

Workflow Visualization

The following diagram illustrates a hierarchical classification and augmentation strategy for handling class imbalance, as used in advanced sperm morphology research [14].

Diagram 1: Two-stage classification and augmentation workflow.

The next diagram shows the core architecture of a Generative Adversarial Network (GAN) used for data augmentation, a key technique for generating synthetic data to balance classes [22].

Diagram 2: GAN architecture for synthetic data generation.

Frequently Asked Questions (FAQs)

Q1: What are the fundamental differences between SMOTE and GANs for addressing class imbalance in sperm morphology datasets?

SMOTE and GANs are both synthetic data generation techniques but operate on different principles. SMOTE is an oversampling technique that creates synthetic samples for the minority class by linearly interpolating between existing minority class instances in the feature space. It finds the k-nearest neighbors (default k=5) for a minority sample and generates new points along the line segments connecting them [24] [25]. In contrast, Generative Adversarial Networks (GANs) use a generator-discriminator framework where the generator creates synthetic samples while the discriminator evaluates their authenticity against real data. Through this adversarial process, GANs learn the underlying data distribution to produce highly realistic synthetic samples [26] [27]. For sperm morphology analysis, GANs can capture complex morphological patterns that simple interpolation methods might miss.
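The interpolation at the core of SMOTE can be sketched in NumPy to make the mechanism concrete. This is a simplified version for a single minority class (brute-force neighbour search, no sampling strategy across classes); for real work, imbalanced-learn's `SMOTE(k_neighbors=5).fit_resample(X, y)` is the standard implementation. The function name and toy data are illustrative.

```python
import numpy as np

def smote(X_min, n_new, k=5, seed=0):
    """Generate n_new synthetic samples for ONE minority class by SMOTE-style interpolation.

    X_min: (n, d) feature vectors of the minority class (e.g. CNN embeddings), n >= 2.
    """
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    k = min(k, n - 1)
    # Pairwise squared Euclidean distances; a point is never its own neighbour.
    d2 = ((X_min[:, None, :] - X_min[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d2, np.inf)
    neighbours = np.argsort(d2, axis=1)[:, :k]
    base = rng.integers(0, n, n_new)                  # random minority seed points
    nb = neighbours[base, rng.integers(0, k, n_new)]  # one of each seed's k nearest neighbours
    gap = rng.random((n_new, 1))                      # position along the segment, in [0, 1)
    return X_min[base] + gap * (X_min[nb] - X_min[base])

X_minority = np.random.default_rng(1).normal(size=(12, 4))   # toy minority features
X_synthetic = smote(X_minority, n_new=40)
print(X_synthetic.shape)
```

Because every synthetic point lies on a segment between two real minority samples, SMOTE cannot create patterns outside the existing minority region, which is the limitation GAN-based generation tries to overcome.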

Q2: My GAN-generated sperm morphology images lack diversity and show repetitive patterns. How can I address this mode collapse issue?

Mode collapse occurs when the GAN generator produces limited varieties of samples. Several strategies can address this:

- Intra-class sparse point detection: Use algorithms like iForest to identify sparse regions within minority classes, then apply affine transformations to augment these underrepresented patterns before GAN training [28].

- Modified loss functions: Incorporate additional loss components that penalize repetitive outputs. For instance, Onto-CGAN integrates ontology embeddings with a custom loss function that penalizes deviations from expected disease characteristics [26].

- Boundary sample focusing: Assign higher weights to boundary samples during GAN training to ensure the model learns difficult edge cases [28].

- Architectural improvements: Implement models like StyleGAN3 with adaptive discriminator augmentation (ADA) to prevent overfitting, particularly crucial for small medical datasets [27].

Q3: When should I prefer SMOTE over GANs for sperm morphology data augmentation?

SMOTE is preferable when:

- Working with tabular clinical data or feature vectors rather than images [24] [25]

- Computational resources are limited, as SMOTE is less resource-intensive than GANs [25]

- Working with smaller datasets where GAN training would be challenging [24]

- Dealing with well-separated classes without significant overlap [25]

- Need for quick implementation without extensive hyperparameter tuning [24]

For image-based sperm morphology analysis (e.g., classifying head defects, midpiece anomalies), GANs typically produce superior results despite higher computational demands [2] [28].

Q4: How can I evaluate whether my synthetic sperm morphology data is of sufficient quality for downstream tasks?

Implement a multi-faceted evaluation strategy:

- Distribution similarity: Use Kolmogorov-Smirnov (KS) tests to compare variable distributions between real and synthetic data. Aim for KS similarity scores (where higher means more similar) above 0.7, comparable to Onto-CGAN's score of 0.797 [26].

- Correlation preservation: Calculate Pearson Correlation Coefficients (PCC) for variable pairs. Quality synthetic data should maintain correlation patterns, though possibly with reduced strength [26].

- Machine learning utility: Employ the Train on Synthetic, Test on Real (TSTR) framework. If classifiers trained on synthetic data perform comparably to those trained on real data (within 5-10% accuracy), the synthetic data has good utility [26].

- Expert validation: For sperm morphology, have embryologists assess whether synthetic images capture clinically relevant morphological features [2].
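The first two checks can be sketched in NumPy. The exact score definitions used in the Onto-CGAN study are not given here, so both are assumptions: the KS similarity is taken as 1 minus the two-sample KS statistic averaged over columns (similar in spirit to SDMetrics' KSComplement), and correlation similarity as 1 minus half the mean absolute difference between the two Pearson correlation matrices. TSTR is omitted because it requires training a classifier.

```python
import numpy as np

def ks_statistic(real, synth):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    real, synth = np.sort(real), np.sort(synth)
    grid = np.concatenate([real, synth])
    cdf_r = np.searchsorted(real, grid, side="right") / len(real)
    cdf_s = np.searchsorted(synth, grid, side="right") / len(synth)
    return float(np.max(np.abs(cdf_r - cdf_s)))

def ks_similarity(real, synth):
    """Mean over columns of 1 - KS statistic (1.0 = identical marginals)."""
    return float(np.mean([1.0 - ks_statistic(real[:, j], synth[:, j])
                          for j in range(real.shape[1])]))

def correlation_similarity(real, synth):
    """1 - mean |difference| of pairwise Pearson correlations, rescaled to [0, 1]."""
    c_r = np.corrcoef(real, rowvar=False)
    c_s = np.corrcoef(synth, rowvar=False)
    iu = np.triu_indices_from(c_r, k=1)
    return float(1.0 - np.mean(np.abs(c_r[iu] - c_s[iu])) / 2.0)

demo = np.random.default_rng(0)
real = demo.normal(size=(500, 3))                 # stand-in real feature table
synth = demo.normal(loc=0.2, size=(500, 3))       # stand-in synthetic table
print(ks_similarity(real, synth), correlation_similarity(real, synth))
```

For image data, these tests would be applied to extracted morphological measurements (e.g., head size or tail length) rather than to raw pixels.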

Q5: How can I integrate domain knowledge about rare sperm abnormalities into synthetic data generation?

The Onto-CGAN framework provides an excellent blueprint for incorporating domain knowledge:

- Ontology embeddings: Convert structured knowledge about sperm abnormalities (e.g., David classification of head, midpiece, and tail defects) into embedding vectors using tools like OWL2Vec* [26].

- Conditional generation: Use these embeddings as conditional inputs to GAN generators, ensuring synthetic samples align with known abnormality characteristics [26].

- Custom loss functions: Modify GAN loss functions to incorporate penalties when generated samples deviate from expected morphological patterns based on domain knowledge [26] [27].

Troubleshooting Guides

Problem: GAN-Generated Sperm Images Appear Blurry or Artifactual

Possible Causes and Solutions:

Insufficient Training Data

Inappropriate Model Architecture

Poor Image Preprocessing

Problem: Synthetic Data Improves Training Accuracy but Fails to Generalize to Real Test Data

Diagnosis and Solutions:

Domain Gap Between Synthetic and Real Data

Inadequate Capture of Rare Subtypes

- Solution: Focus on boundary samples and intra-class sparse regions. Use iForest to detect sparse samples within minority classes and oversample these regions specifically [28]. For sperm morphology, ensure all abnormality subtypes (tapered heads, coiled tails, etc.) are adequately represented in both real and synthetic data.

Correlation Mismatch

- Solution: Monitor correlation preservation during generation. If synthetic data shows significantly weaker correlations than real data (as observed in CTGAN vs Onto-CGAN), incorporate correlation constraints into the loss function [26].

Problem: SMOTE Implementation Degrades Performance for Sperm Morphology Classification

Identification and Resolution:

Irrelevant Synthetic Sample Generation

Categorical Variable Handling

- Solution: Use SMOTENC (SMOTE for Numerical and Categorical) when your dataset contains categorical features, as standard SMOTE only works with continuous variables [25].

Noise Amplification

Experimental Protocols from Literature

Protocol 1: Onto-CGAN for Rare Disease Data Generation

This protocol adapts the Onto-CGAN framework for sperm morphology applications [26]:

Data Preparation

- Collect and annotate sperm images according to modified David classification (12 abnormality classes) [2]

- Extract clinical features from electronic health records where available

- Develop ontology embeddings for each abnormality class using OWL2Vec*

Model Configuration

- Implement conditional GAN architecture with ontology embeddings as additional input

- Use a combined loss function: $\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{Discriminator}} + \alpha \cdot \mathcal{L}_{\text{Ontology}}$

- Set α to balance realism and biological plausibility (typically 0.5-1.0)

Training Procedure

- Train for 10,000-50,000 iterations depending on dataset size

- Monitor KS scores and correlation similarity every 1000 iterations

- Use early stopping if synthetic data quality plateaus

Validation

- Compare distributions of key morphological features (head size, tail length)

- Calculate correlation similarity for biologically related variables

- Implement TSTR evaluation with multiple classifier types

Protocol 2: Deep Feature Engineering with CBAM-Enhanced ResNet50

Based on state-of-the-art sperm morphology classification achieving 96.08% accuracy [16]:

Image Preprocessing

- Resize all images to consistent dimensions (80×80 or 256×256)

- Apply grayscale conversion and normalization

- Use Real-ESRGAN for super-resolution where needed [27]

Feature Extraction

- Implement ResNet50 backbone with Convolutional Block Attention Module (CBAM)

- Extract features from multiple layers: CBAM, Global Average Pooling (GAP), Global Max Pooling (GMP)

- Apply PCA for dimensionality reduction (retain 95% variance)

Classification

- Use Support Vector Machines with RBF kernel on deep features

- Alternative: k-Nearest Neighbors with k=5 for comparison

- Apply 5-fold cross-validation for robust performance estimation

Interpretation

- Generate Grad-CAM visualizations to identify discriminative regions

- Validate attention maps with embryologist annotations
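The feature-extraction step of this protocol can be sketched in NumPy: pooling backbone activations with GAP and GMP, concatenating them, and keeping the principal components that explain 95% of the variance. This is a simplification under stated assumptions: it pools a single feature map rather than several layers, random arrays stand in for CBAM-ResNet50 activations (whose last stage has 2048 channels), and the function names are hypothetical.

```python
import numpy as np

def pooled_features(feature_maps):
    """(n, C, H, W) activations -> (n, 2C) vector of GAP and GMP features."""
    gap = feature_maps.mean(axis=(2, 3))   # global average pooling
    gmp = feature_maps.max(axis=(2, 3))    # global max pooling
    return np.concatenate([gap, gmp], axis=1)

def fit_pca(X, variance=0.95):
    """Return the mean and the smallest set of principal axes reaching `variance`."""
    mean = X.mean(axis=0)
    _, s, vt = np.linalg.svd(X - mean, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    n_comp = min(int(np.searchsorted(np.cumsum(explained), variance)) + 1, len(s))
    return mean, vt[:n_comp]

def pca_transform(X, mean, components):
    return (X - mean) @ components.T

# Random stand-in for backbone activations (16 channels instead of 2048 to keep it small).
maps = np.random.default_rng(0).normal(size=(40, 16, 7, 7))
features = pooled_features(maps)
mean, components = fit_pca(features)
reduced = pca_transform(features, mean, components)
print(features.shape, reduced.shape)
```

Within 5-fold cross-validation, `fit_pca` should be called on each training fold only and the learned projection applied to the held-out fold, so that the reported accuracy is not inflated by leakage.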

Quantitative Performance Comparison

Table 1: Performance Metrics of Synthetic Data Generation Techniques

| Method | Dataset | KS Score | Correlation Similarity | TSTR Accuracy | Key Strengths |
|---|---|---|---|---|---|
| Onto-CGAN | MIMIC-III (AML) | 0.797 | 0.784 | 92.3% | Generates unseen diseases, preserves correlations [26] |
| CTGAN | MIMIC-III (AML) | 0.743 | 0.711 | 85.7% | Handles mixed data types, good for tabular data [26] |
| StyleGAN3 | GestaltMatcher | N/A | N/A | 94.1%* | Photorealistic images, preserves privacy [27] |
| SMOTE | Various Medical | N/A | N/A | 82.5%** | Simple implementation, fast computation [24] [25] |
| ADASYN | Various Medical | N/A | N/A | 84.2%** | Focuses on difficult samples, adaptive [24] |
| IBGAN | MedMNIST | N/A | N/A | 89.7% | Addresses intra-class imbalance, boundary focus [28] |

*Based on expert evaluation rather than TSTR [27]. **Average across multiple medical datasets [24].

Table 2: Sperm Morphology Classification Performance with Data Augmentation

| Classification Approach | Dataset | Baseline Accuracy | Improved Accuracy | Improvement (percentage points) |
|---|---|---|---|---|
| CBAM-ResNet50 + DFE | SMIDS | 88.0% | 96.08% | +8.08 [16] |
| CBAM-ResNet50 + DFE | HuSHeM | 86.36% | 96.77% | +10.41 [16] |
| CNN + Augmentation | SMD/MSS | 55% (lowest reported) | 92% (highest reported) | Not a before/after comparison: 55% to 92% is the accuracy range reported on the augmented dataset [2] |
| Ensemble CNN | HuSHeM | ~90% | 95.2% | +5.2 [16] |
| MobileNet | SMIDS | ~82% | 87% | +5 [16] |

Research Reagent Solutions

Table 3: Essential Tools and Resources for Sperm Morphology Research

| Resource | Type | Function | Example/Reference |
|---|---|---|---|
| GestaltMatcher Database | Dataset | 10,980 images of 581 disorders with facial dysmorphisms | [27] |
| SMD/MSS Dataset | Dataset | 1,000+ sperm images with David classification | [2] |
| SMIDS Dataset | Dataset | 3,000 sperm images across 3 classes | [16] |
| HuSHeM Dataset | Dataset | 216 sperm images across 4 classes | [16] |
| OWL2Vec* | Software | Generates ontology embeddings from disease ontologies | [26] |
| Real-ESRGAN | Software | Image super-resolution for low-quality inputs | [27] |
| DDColor | Software | Colorization of black-and-white images | [27] |
| StyleGAN3 | Algorithm | Photorealistic image generation with rotation invariance | [27] |
| CBAM-Enhanced ResNet50 | Architecture | Attention-based feature extraction with state-of-the-art performance | [16] |
| MedMNIST | Benchmark | Lightweight medical images for method validation | [28] |

Workflow Diagrams

[Figures not reproduced: Synthetic Data Generation Workflow; Onto-CGAN Architecture for Rare Abnormality Generation; SMOTE Implementation Process]
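The SMOTE implementation process can be sketched in a few lines. This is a minimal illustration, assuming the minority class is represented by feature vectors (for example, deep features extracted from sperm images) rather than raw pixels. Each synthetic sample is placed at a random point on the segment between a minority sample and one of its k nearest minority-class neighbours. The function name `smote` and its parameters are illustrative. In practice a maintained implementation such as imbalanced-learn's `SMOTE` class would normally be preferred.

```python
import math
import random

def smote(minority, n_synthetic, k=5, seed=0):
    """Create synthetic minority samples by SMOTE-style interpolation.

    minority: list of feature vectors (lists of floats) from one minority class.
    n_synthetic: number of new samples to generate.
    k: number of nearest minority-class neighbours considered per base point.
    """
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)

    # Precompute the k nearest minority neighbours (Euclidean) of every point.
    neighbours = []
    for i, x in enumerate(minority):
        dists = sorted((math.dist(x, z), j) for j, z in enumerate(minority) if j != i)
        neighbours.append([j for _, j in dists[:k]])

    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))       # random base sample
        j = rng.choice(neighbours[i])          # one of its nearest neighbours
        gap = rng.random()                     # position on the segment, in [0, 1)
        base, nb = minority[i], minority[j]
        synthetic.append([b + gap * (n - b) for b, n in zip(base, nb)])
    return synthetic

rare = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # toy 2-D features of a rare class
new = smote(rare, n_synthetic=4, k=2)
print(len(new))  # 4
```

Because interpolation only fills the space between existing minority samples, SMOTE cannot produce morphologies that are absent from the training set. Generative approaches such as Onto-CGAN, which the table above credits with generating unseen diseases [26], target that gap.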

In the field of male fertility research, the automated classification of sperm morphology presents a significant challenge due to the inherent class imbalance in biological datasets. Traditional machine learning algorithms optimize overall accuracy and therefore often fail on imbalanced data: the separating hyperplane becomes biased towards the majority class [29]. In sperm morphology analysis, this manifests as models that perform poorly on abnormal sperm classes, which are precisely the categories of greatest clinical interest. Manual sperm morphology assessment is time-intensive, subjective, and prone to significant inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators [16]. This diagnostic variability, combined with the data imbalance problem, calls for algorithmic solutions that pay greater attention to minority classes and hard-to-classify examples. Cost-sensitive learning (CSL) and advanced loss functions such as focal loss are two promising strategies: CSL assigns greater misclassification costs to minority classes, and focal loss concentrates learning on difficult samples [29] [30].

Core Concepts: Understanding the Algorithmic Solutions

Fundamentals of Cost-Sensitive Learning

Cost-sensitive learning operates on the principle of assigning distinct misclassification costs to different classes. Higher costs are typically assigned to errors on minority-class samples, and the objective is to minimize the total cost of errors rather than their number [31]. In medical applications like sperm morphology analysis, this approach is particularly valuable because certain misclassifications have more severe consequences than others. For instance, misclassifying a morphologically abnormal sperm as normal could lead to its selection for Intracytoplasmic Sperm Injection (ICSI), potentially affecting fertilization outcomes [32].
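To make the cost asymmetry concrete, the sketch below applies a minimum-expected-cost decision rule to a binary normal/abnormal prediction. The cost values (an abnormal sperm called normal costs 5, a normal sperm called abnormal costs 1) are illustrative assumptions, not figures from the cited studies, and `min_cost_label` is a hypothetical helper.

```python
# Hypothetical costs, indexed as COST[true_label][predicted_label]
COST = {
    "normal":   {"normal": 0.0, "abnormal": 1.0},  # discarding a usable sperm
    "abnormal": {"normal": 5.0, "abnormal": 0.0},  # passing an abnormal sperm as normal
}

def min_cost_label(p_abnormal, cost=COST):
    """Pick the label with the lowest expected misclassification cost."""
    probs = {"normal": 1.0 - p_abnormal, "abnormal": p_abnormal}
    expected = {
        pred: sum(probs[true] * cost[true][pred] for true in probs)
        for pred in ("normal", "abnormal")
    }
    return min(expected, key=expected.get)

print(min_cost_label(0.3))  # abnormal (an argmax rule would say "normal")
print(min_cost_label(0.1))  # normal
```

With these costs the decision threshold on the predicted abnormality probability falls from 0.5 to 1 / (1 + 5) = 1/6, so borderline cells are flagged as abnormal much earlier.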

The guidelines for developing a competitive cost-sensitive model can be summarized through four key properties:

- Property 1: The penalty parameter of the minority class is set to be greater than that of the majority class

- Property 2: Given a classification error ξ, the loss function L(ξ) is monotonically increasing

- Property 3: The loss for the minority class is greater than that for the majority class

- Property 4: As the classification error increases, the growth rate of the loss function L(ξ) gradually decreases [29]
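One simple function that satisfies all four properties is a class-weighted logarithmic loss \(L(\xi) = C_y \log(1+\xi)\) on the hinge slack \(\xi = \max(0, 1 - y f(x))\), with \(C_{\text{minority}} > C_{\text{majority}}\). This is an illustrative choice, not the modified Stein loss of [29], and the penalty values below are assumptions. The assertions check each property numerically.

```python
import math

C_MINORITY = 5.0  # hypothetical penalty for the minority (abnormal) class
C_MAJORITY = 1.0  # hypothetical penalty for the majority (normal) class

def slack(y, score):
    """Hinge slack xi = max(0, 1 - y * f(x)) for labels y in {-1, +1}."""
    return max(0.0, 1.0 - y * score)

def cs_log_loss(xi, minority):
    """Class-weighted concave loss L(xi) = C_y * log(1 + xi)."""
    c = C_MINORITY if minority else C_MAJORITY  # Property 1: larger minority penalty
    return c * math.log1p(xi)

xs = [0.0, 0.5, 1.0, 2.0, 4.0]
for minority in (True, False):
    losses = [cs_log_loss(x, minority) for x in xs]
    # Property 2: the loss increases monotonically with the error xi
    assert all(a < b for a, b in zip(losses, losses[1:]))
    # Property 4: the growth rate (slope between successive points) shrinks
    rates = [(b - a) / (x2 - x1)
             for a, b, x1, x2 in zip(losses, losses[1:], xs, xs[1:])]
    assert all(r1 > r2 for r1, r2 in zip(rates, rates[1:]))

# Property 3: the same error costs more for the minority class
assert cs_log_loss(1.0, True) > cs_log_loss(1.0, False)

# A minority sample scored on the wrong side of the margin
print(cs_log_loss(slack(+1, -0.5), minority=True))
```

The shrinking slope \(C_y/(1+\xi)\) means that samples with very large errors, such as outliers or mislabeled cells, pull on the decision boundary less than they would under a linear hinge loss.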

Focal Loss Mechanics

Focal loss is an algorithm-level strategy that modifies the standard cross-entropy loss to address class imbalance. It multiplies the cross-entropy term by a modulating factor \((1-\hat{p}_i)^\gamma\), where \(\hat{p}_i\) is the model's predicted probability for the ground-truth class [30]. Because this factor approaches zero for confidently correct predictions, it shifts emphasis toward incorrectly classified examples when the model's parameters are updated via backpropagation.

The mathematical formulation contrasts with standard cross-entropy:

- Standard cross-entropy loss: \(-\log(\hat{p}_i)\)

- Focal loss: \(-(1-\hat{p}_i)^\gamma \log(\hat{p}_i)\) [30]

The modulating parameter \(\gamma\) adjusts the rate at which easy examples are down-weighted. When γ = 0, focal loss is equivalent to cross-entropy loss. As γ increases, the effect of the modulating factor increases, further focusing learning on hard, misclassified examples [30]. Research has shown that the optimal γ value typically falls between 0.5 and 2.5, with γ = 2.0 providing strong performance across various applications [30].
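The two formulas above can be compared directly. This is a minimal per-sample sketch in plain Python, assuming the predicted class probabilities already come from a softmax; in PyTorch or TensorFlow the same expression would be written on tensors. The helper names are hypothetical.

```python
import math

def cross_entropy(p_true):
    """Standard cross-entropy, -log(p), for the probability of the true class."""
    return -math.log(p_true)

def focal_loss(p_true, gamma=2.0, eps=1e-12):
    """Focal loss -(1 - p)^gamma * log(p) for a single prediction."""
    p = min(max(p_true, eps), 1.0 - eps)  # clip so log() never sees 0
    return -((1.0 - p) ** gamma) * math.log(p)

def mean_focal_loss(probs, labels, gamma=2.0):
    """Average focal loss over a batch.

    probs: per-sample lists of class probabilities (e.g. softmax outputs).
    labels: integer index of the ground-truth class for each sample.
    """
    losses = [focal_loss(p[y], gamma) for p, y in zip(probs, labels)]
    return sum(losses) / len(losses)

# An easy example (p = 0.9) versus a hard one (p = 0.1) under gamma = 2
for p in (0.9, 0.1):
    print(p, round(cross_entropy(p), 4), round(focal_loss(p), 4))
```

With γ = 2, the easy example keeps only (0.1)² = 1% of its cross-entropy, whereas the hard example keeps (0.9)² = 81%, which is how the gradient budget is redirected toward rare, hard-to-classify cells.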

Hybrid Approaches

Recent research has demonstrated that combining data-level and algorithm-level strategies can yield superior results. The Batch-Balanced Focal Loss (BBFL) algorithm represents one such hybrid approach, integrating batch-balancing (a data-level strategy) with focal loss (an algorithm-level strategy) [30]. This combination ensures that each training batch contains balanced class representation while the loss function emphasizes hard examples, addressing both between-class and within-class imbalances simultaneously.
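Batch balancing can be sketched as a sampler that draws the same number of indices from every class for each mini-batch, sampling with replacement so that rare classes can fill their share. This is one common way to implement the data-level half of a batch-balanced scheme, assuming integer or string class labels; the exact batching procedure used in BBFL [30] may differ, and `balanced_batches` is a hypothetical helper. A focal loss term would then be computed on each such batch.

```python
import random
from collections import defaultdict

def balanced_batches(labels, batch_size, n_batches, seed=0):
    """Yield mini-batches of sample indices with equal counts per class.

    Each class contributes batch_size // n_classes indices, drawn with
    replacement, so rare classes appear in every batch. Any remainder of
    batch_size that does not divide evenly is dropped.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    classes = sorted(by_class)
    per_class = batch_size // len(classes)
    if per_class == 0:
        raise ValueError("batch_size must be at least the number of classes")
    for _ in range(n_batches):
        batch = []
        for c in classes:
            batch.extend(rng.choices(by_class[c], k=per_class))
        rng.shuffle(batch)
        yield batch

labels = [0] * 95 + [1] * 5  # 95 normal, 5 abnormal
for batch in balanced_batches(labels, batch_size=8, n_batches=3):
    print(sorted(labels[i] for i in batch))  # [0, 0, 0, 0, 1, 1, 1, 1]
```

Sampling with replacement means the same rare image can recur many times per epoch, which is one reason to pair batch balancing with augmentation or a loss that down-weights already-easy examples.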

Technical Support: Troubleshooting Common Implementation Issues

Frequently Asked Questions

Q1: My model achieves high overall accuracy but fails to detect abnormal sperm classes. What cost-sensitive strategies can improve minority class recall?

A: This common issue indicates strong bias toward the majority class. Implement these solutions:

- Set the "class_weight" parameter of scikit-learn estimators that support it to "balanced", which weights each class inversely proportional to its frequency [33]

- For deep learning models, replace standard cross-entropy with focal loss with γ = 2.0 to focus learning on hard, minority-class examples [30]

- For SVM architectures, assign different penalty parameters to different classes, as in different-error-costs (DEC) methods [29]

- Consider hybrid approaches like Batch-Balanced Focal Loss, which combines batch-balancing with focal loss [30]
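The "balanced" weighting in the first answer can be reproduced by hand. This sketch implements the formula n_samples / (n_classes × count(class)), which is what scikit-learn documents for class_weight="balanced". The label names and counts are a hypothetical example.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Per-class weights n_samples / (n_classes * count(class)).

    This mirrors the formula scikit-learn documents for class_weight="balanced".
    """
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in sorted(counts.items())}

# Hypothetical 100-image training set with two rare head-defect classes
labels = ["normal"] * 80 + ["tapered"] * 15 + ["amorphous"] * 5
weights = balanced_class_weights(labels)
print(weights)
```

Here the weights come out at roughly 6.67 (amorphous), 0.42 (normal) and 2.22 (tapered), so every class contributes the same total weight (100/3) to the loss. For deep models, the same per-class values can be supplied to a weighted cross-entropy, for example through the `weight` argument of PyTorch's `torch.nn.CrossEntropyLoss`.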

Q2: How do I determine the optimal class weights or focal loss parameters for my specific sperm morphology dataset?

A: Parameter optimization is dataset-dependent. Follow this methodology:

- For classical ML models: Use Bayesian optimization to search jointly over model hyperparameters and class-imbalance treatment settings [33]

- For focal loss γ: Conduct cross-validation testing using a range of values from 0.5 to 2.5, as research indicates optimal performance typically lies within this range [30]

- Implement k-fold cross-validation (5-fold is common) to evaluate parameter effectiveness across different data splits [2] [16]

- For sperm morphology datasets with multiple abnormality types, consider treating the problem as multi-class imbalance and adjust weights for each class separately
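Stratifying the folds is an addition to the 5-fold recommendation above. With purely random folds, a class with only a handful of images can be missing from some validation folds, leaving its per-class metrics undefined. The sketch below deals each class round-robin into k folds and lists the γ grid suggested above. `stratified_folds`, `cv_splits` and `GAMMAS` are hypothetical names; scikit-learn's `StratifiedKFold` provides equivalent splitting.

```python
import random
from collections import defaultdict

GAMMAS = [0.5, 1.0, 1.5, 2.0, 2.5]  # candidate focal-loss gamma values

def stratified_folds(labels, k=5, seed=0):
    """Split sample indices into k folds that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    folds = [[] for _ in range(k)]
    for c in sorted(by_class):
        idxs = by_class[c][:]
        rng.shuffle(idxs)
        # Deal each class round-robin so every fold receives its share.
        for pos, idx in enumerate(idxs):
            folds[pos % k].append(idx)
    return folds

def cv_splits(labels, k=5, seed=0):
    """Yield (train_indices, validation_indices) for each of the k folds."""
    folds = stratified_folds(labels, k, seed)
    for i, val in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val

labels = [0] * 90 + [1] * 10  # e.g. 10 images of a rare defect class
for train, val in cv_splits(labels):
    print(len(train), len(val), sum(labels[i] for i in val))  # 80 20 2 on every fold
```

Each γ in the grid would then be trained and scored on every split with a model-specific routine (not shown), keeping the value with the best mean per-class metric rather than the best raw accuracy.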

Q3: My model seems to overfit to the minority class after implementing cost-sensitive methods. How can I maintain balance?

A: Overfitting to minority classes indicates a need for regularization:

- Introduce dropout layers at a rate of 50% after fully connected layers in deep learning architectures [30]

- Apply data augmentation techniques specifically for minority classes, including random horizontal and vertical flipping, 90-degree rotations, shearing, and axis transformations [32] [2]

- For sperm images, employ elastic transformations to generate additional abnormal sperm examples [32]

- Implement learning rate scheduling, reducing the rate by a factor of 0.1 at 80% and 90% of training iterations [32]

- Regularize cost-sensitive SVM models using sparsity control parameters like ν in ν-CSSVM [29]
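Two of these remedies are simple enough to sketch: flip/rotation augmentation for minority-class images and the step learning-rate schedule. The code assumes a grayscale image stored as a list of rows; the eight variants cover horizontal and vertical flips and 90-degree rotations (a vertical flip equals a horizontal flip of the 180-degree rotation). Elastic transformations and shearing are not covered. All function names are hypothetical; in PyTorch, `torch.optim.lr_scheduler.MultiStepLR` implements the same kind of step schedule.

```python
def rot90(img):
    """Rotate a 2-D image (list of rows) by 90 degrees counter-clockwise."""
    return [list(row) for row in zip(*img)][::-1]

def hflip(img):
    """Mirror an image left-to-right."""
    return [row[::-1] for row in img]

def dihedral_variants(img):
    """Return the 8 combinations of 90-degree rotations with and without a flip."""
    variants, current = [], img
    for _ in range(4):
        variants.append(current)
        variants.append(hflip(current))
        current = rot90(current)
    return variants

def step_lr(base_lr, iteration, total_iters, factor=0.1, milestones=(0.8, 0.9)):
    """Multiply the base rate by `factor` at each milestone (fraction of total iterations)."""
    lr = base_lr
    for m in milestones:
        if iteration >= m * total_iters:
            lr *= factor
    return lr

img = [[1, 2], [3, 4]]  # stand-in for a minority-class sperm image
print(len(dihedral_variants(img)))  # 8
print(step_lr(0.01, 850, 1000))     # reduced once, after the 80% milestone
```

Applying these variants only to minority classes multiplies their effective sample count by up to eight without inventing new morphology, since flips and right-angle rotations preserve head shape and tail integrity.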

Q4: What evaluation metrics should I use to properly assess model performance on imbalanced sperm morphology data?

A: Accuracy alone is misleading for imbalanced datasets. Instead, employ:

- Sensitivity (recall) and Positive Predictive Value (PPV) for each class, particularly abnormal sperm classes [32]

- F1-score, which balances precision and recall [30]

- Area Under the Receiver Operating Characteristic Curve (AUC) for binary classification [30] [33]

- Mean accuracy and mean F1-score for multi-class classification [30]

- Confusion matrices to visualize specific misclassification patterns [32] [30]
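The gap between accuracy and per-class metrics is easiest to see on a degenerate model. This sketch computes a confusion matrix, per-class sensitivity, PPV and F1, and the unweighted (macro) mean F1, assuming "mean F1-score" above refers to the macro average. All names and the 95:5 split are hypothetical.

```python
def confusion_matrix(y_true, y_pred, classes):
    """Rows are true classes, columns are predicted classes."""
    index = {c: i for i, c in enumerate(classes)}
    cm = [[0] * len(classes) for _ in classes]
    for t, p in zip(y_true, y_pred):
        cm[index[t]][index[p]] += 1
    return cm

def per_class_metrics(cm, classes):
    """Sensitivity (recall), PPV (precision) and F1 for every class."""
    out = {}
    for i, c in enumerate(classes):
        tp = cm[i][i]
        fn = sum(cm[i]) - tp                  # true c, predicted something else
        fp = sum(row[i] for row in cm) - tp   # predicted c, truly something else
        sens = tp / (tp + fn) if tp + fn else 0.0
        ppv = tp / (tp + fp) if tp + fp else 0.0
        f1 = 2 * sens * ppv / (sens + ppv) if sens + ppv else 0.0
        out[c] = {"sensitivity": sens, "ppv": ppv, "f1": f1}
    return out

# A degenerate model that calls every sperm "normal" on a 95:5 split
classes = ["normal", "abnormal"]
y_true = ["normal"] * 95 + ["abnormal"] * 5
y_pred = ["normal"] * 100
cm = confusion_matrix(y_true, y_pred, classes)
metrics = per_class_metrics(cm, classes)
accuracy = sum(cm[i][i] for i in range(len(classes))) / len(y_true)
macro_f1 = sum(m["f1"] for m in metrics.values()) / len(metrics)
print(cm)                  # [[95, 0], [5, 0]]
print(accuracy)            # 0.95
print(round(macro_f1, 3))  # 0.487
```

The model reaches 95% accuracy while detecting none of the abnormal cells; the zero abnormal-class sensitivity and the macro F1 below 0.5 expose the failure immediately.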

Experimental Protocols for Sperm Morphology Analysis

Protocol 1: Implementing Cost-Sensitive Learning for SVM-Based Sperm Classification

Data Preparation:

Feature Extraction:

- Utilize deep feature engineering with ResNet50 backbone enhanced with Convolutional Block Attention Module (CBAM) [16]

- Extract features from multiple layers: CBAM, Global Average Pooling (GAP), Global Max Pooling (GMP) [16]

- Apply feature selection methods: Principal Component Analysis (PCA), Chi-square test, Random Forest importance [16]
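Protocol 1 pairs deep features with an SVM. The class-dependent penalty idea behind DEC-style cost-sensitive SVMs [29] can be sketched as a linear SVM whose hinge term is multiplied by a per-class cost and trained by stochastic subgradient descent. This is a simplified, swapped-in training procedure, not the solver used in [16] or [29]. It assumes labels in {-1, +1} with +1 for the minority (abnormal) class, and the function names and default hyperparameters are hypothetical. With scikit-learn, the same weighting is usually obtained through the `class_weight` argument of `SVC`.

```python
import random

def train_cs_linear_svm(X, y, c_pos, c_neg, lam=0.01, epochs=200, lr=0.01, seed=0):
    """Linear SVM with class-dependent hinge penalties, via subgradient descent.

    Per-sample objective: lam/2 * ||w||^2 + C_y * max(0, 1 - y * (w.x + b)),
    where C_y = c_pos for y = +1 (minority) and c_neg for y = -1 (majority).
    """
    rng = random.Random(seed)
    w, b = [0.0] * len(X[0]), 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            xi, yi = X[i], y[i]
            c = c_pos if yi == 1 else c_neg
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            grad_w = [lam * wj for wj in w]  # regulariser term always applies
            grad_b = 0.0
            if margin < 1:  # hinge is active: add the class-weighted term
                grad_w = [g - c * yi * xj for g, xj in zip(grad_w, xi)]
                grad_b = -c * yi
            w = [wj - lr * g for wj, g in zip(w, grad_w)]
            b -= lr * grad_b
    return w, b

def predict(w, b, x):
    """Return +1 (minority) or -1 (majority) from the sign of w.x + b."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Toy 2-D "features": four majority samples and one rare minority sample
X = [[-2, -2], [-2, -1], [-1, -2], [-1.5, -1.5], [2, 2]]
y = [-1, -1, -1, -1, 1]
w, b = train_cs_linear_svm(X, y, c_pos=4.0, c_neg=1.0)
print([predict(w, b, x) for x in X])
```

Setting c_pos above c_neg makes each minority violation move the boundary more, which counteracts the pull of the more numerous majority samples.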

Model Training:

Evaluation:

Protocol 2: Integrating Focal Loss for Deep Learning-Based Sperm Classification

Data Preparation & Augmentation:

Model Architecture:

Loss Implementation:

Training & Evaluation:

Performance Comparison Tables

Table 1: Comparative Performance of Different Class Imbalance Techniques on Medical Image Classification Tasks

| Technique | Dataset | Performance Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Batch-Balanced Focal Loss (BBFL) | Glaucoma Fundus Images (n=7,873) | Binary: 93.0% accuracy, 84.7% F1, 0.971 AUC [30] | Combines data-level and algorithm-level strategies | Requires careful parameter tuning |
| Modified Stein Loss (CSMS) | Class-imbalanced benchmark datasets | Improved robustness to noise [29] | Monotonic increasing, decreasing growth rate | Less explored in deep learning architectures |
| Focal Loss | RNFLD Dataset (n=7,258) | 92.6% accuracy, 83.7% F1, 0.964 AUC [30] | Focuses on hard examples, easy to implement | Fixed γ may limit adaptability |
| Cost-Sensitive Random Forest | Antibacterial Discovery Dataset (n=2,335) | ROC-AUC: 0.917 [33] | Highly interpretable, handles feature relationships | May underperform compared to deep learning on images |
| Deep Feature Engineering + SVM | SMIDS Sperm Dataset (n=3,000) | 96.08% accuracy [16] | High accuracy, combines deep and traditional ML | Complex multi-stage pipeline |

Table 2: Sperm Morphology Classification Performance Across Different Architectural Approaches

| Architecture | Dataset | Accuracy | Sensitivity/PPV (Abnormal) | Key Innovation |
|---|---|---|---|---|
| CBAM-ResNet50 + Deep Feature Engineering [16] | SMIDS (3-class) | 96.08% ± 1.2% | Not specified | Attention mechanisms + feature selection |
| YOLOv3 with Batch Balancing [32] | Jikei Sperm Dataset | Not specified | 0.881 / 0.853 | Simultaneous morphology assessment & tracking |
| Deep CNN with Data Augmentation [2] | SMD/MSS (12-class) | 55-92% (range across classes) | Varies by abnormality type | Comprehensive augmentation strategies |
| Stacked Ensemble CNNs [16] | HuSHeM (216 images) | 95.2% | Not specified | Combination of multiple architectures |


The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for Sperm Morphology Analysis

| Resource | Type | Function | Example Implementation |
|---|---|---|---|
| Sperm Morphology Datasets | Data | Model training and validation | JSD (4,625 images) [32], SMD/MSS (1,000+ images) [2], SMIDS (3,000 images) [16] |
| Deep Learning Frameworks | Software | Model architecture implementation | Python with TensorFlow/PyTorch [31], Darknet for YOLOv3 [32] |
| Data Augmentation Tools | Algorithm | Address data scarcity and imbalance | Elastic transform [32], geometric & intensity transformations [30] |
| Attention Mechanisms | Algorithm | Focus learning on relevant features | CBAM integrated with ResNet50 [16] |
| Bayesian Optimization | Algorithm | Hyperparameter tuning for imbalance | CILBO pipeline for automated parameter selection [33] |
| Interpretability Tools | Software | Model decision explanation | Grad-CAM visualization [16], SHAP values [34] |
| Evaluation Metrics | Framework | Performance assessment on imbalance | F1-score, AUC, sensitivity, PPV [32] [30] |

The integration of cost-sensitive learning and focal loss marks a shift in how class imbalance is addressed in sperm morphology analysis. Rather than relying only on data-level sampling strategies, these methods embed imbalance awareness directly into the learning objective [29] [30]. Their results across medical imaging domains, including sperm morphology classification, highlight their potential to standardize and improve male fertility diagnostics.

Future research directions should explore adaptive focal loss formulations that dynamically adjust parameters during training [34], multi-modal approaches that combine morphological with motility assessment [32], and explainable AI techniques that provide interpretable insights for clinical decision-making [16] [34]. As these algorithmic innovations continue to mature, they hold significant promise for delivering automated, objective, and clinically reliable sperm morphology analysis that can enhance patient care and treatment outcomes in reproductive medicine.

Frequently Asked Questions (FAQs)