Addressing Class Overlap in Fertility Datasets: Advanced Resampling and Machine Learning Strategies for Biomedical Research


Abstract

Class overlap, the phenomenon where examples from different classes share similar feature characteristics, significantly impairs the performance of machine learning models in fertility and reproductive medicine. This article provides a comprehensive analysis for researchers and scientists on mitigating this issue. We first explore the foundational theory of how class overlap synergizes with class imbalance to amplify classification complexity in datasets ranging from IVF outcomes to sperm morphology. The article then details methodological applications of adaptive resampling techniques and ensemble learning models specifically designed for fertility data. We further present troubleshooting and optimization protocols to enhance model robustness and conclude with validation frameworks and comparative performance analyses of state-of-the-art approaches, providing a complete guide for developing reliable predictive tools in drug development and clinical research.

Understanding Class Overlap: The Foundational Challenge in Fertility Data Complexity

Defining Class Overlap and Its Synergy with Class Imbalance in Medical Datasets

Frequently Asked Questions (FAQs)

Q1: What exactly is class overlap, and why is it particularly problematic when combined with class imbalance in medical datasets?

Class overlap occurs when samples from different classes share a common region in the feature space, meaning instances belonging to separate categories have similar feature values [1]. In medical datasets, this creates ambiguous regions where distinguishing between classes (e.g., diseased vs. healthy) becomes inherently difficult for classifiers [1] [2].

When combined with class imbalance—where the clinically important "positive" cases (like a rare disease) make up less than 30% of the dataset—the problem is critically exacerbated [3]. Standard classifiers are already biased toward the majority class. Overlap further "hides" the scarce minority instances among similar majority instances, leading to a significant deterioration in performance. Research indicates that in such scenarios, class overlap can be a more substantial obstacle to classification performance than the imbalance itself [1] [2]. The misclassification cost in medicine is high; for example, incorrectly predicting a COVID-19 patient as non-COVID due to overlap and imbalance could lead to severe outcomes [1].

Q2: How can I quantitatively measure the degree of class overlap in my imbalanced fertility dataset?

You can use specialized metrics designed for imbalanced distributions. The R value and its enhanced version, R_aug, are established metrics for this purpose [2].

- R value: This metric estimates the ratio of samples residing in the overlapping area. A sample is considered overlapped if at least θ+1 of its k nearest neighbors belong to a different class. The classic parameter setting is k=7 and θ=3 [2].

- R_aug value: The standard R value can be dominated by the majority class in imbalanced settings. The R_aug value addresses this by applying a higher weight to the overlap found in the minority class, providing a more accurate assessment for imbalanced datasets like those in fertility research [2].

The following table summarizes and compares these two key metrics:

Table 1: Metrics for Quantifying Class Overlap in Imbalanced Datasets

| Metric | Formula | Key Principle | Advantage for Imbalanced Data |
|---|---|---|---|
| R Value [2] | R = (IR * R(C_N) + R(C_P)) / (IR + 1) | Measures the ratio of overlapped samples in the entire dataset. | Simple and intuitive. |
| R_aug Value (Augmented R) [2] | R_aug = (R(C_N) + IR * R(C_P)) / (IR + 1) | Weights the minority class overlap more heavily. | More representative of the true challenge by focusing on the critical minority class. |

Legend: IR = Imbalance Ratio (size of C_N divided by size of C_P), C_N = Majority Class, C_P = Minority Class, R(C_N) = R value for the majority class, R(C_P) = R value for the minority class.
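As a rough illustration of the two formulas, the sketch below computes R and R_aug with a brute-force k-nearest-neighbour search in plain Python. The function names (`knn_overlap_ratio`, `r_and_r_aug`) and the toy data are assumptions made for this example and are not taken from [2]. Ties between equidistant neighbours are broken by sample index, which is also an assumption, and the toy call uses k=3 and θ=1 only because the dataset is tiny.

```python
import math

def knn_overlap_ratio(X, y, target_class, k=7, theta=3):
    """Fraction of `target_class` samples that have more than `theta`
    of their k nearest neighbours in a different class."""
    members = [i for i, label in enumerate(y) if label == target_class]
    overlapped = 0
    for i in members:
        # Brute-force distances from sample i to every other sample.
        dists = sorted(
            (math.dist(X[i], X[j]), j) for j in range(len(X)) if j != i
        )
        neighbours = [j for _, j in dists[:k]]
        n_other = sum(1 for j in neighbours if y[j] != target_class)
        if n_other > theta:  # i.e. at least theta + 1 foreign neighbours
            overlapped += 1
    return overlapped / len(members)

def r_and_r_aug(X, y, majority, minority, k=7, theta=3):
    """Return (IR, R, R_aug) following the formulas in Table 1."""
    n_maj = sum(1 for label in y if label == majority)
    n_min = sum(1 for label in y if label == minority)
    ir = n_maj / n_min
    r_maj = knn_overlap_ratio(X, y, majority, k, theta)
    r_min = knn_overlap_ratio(X, y, minority, k, theta)
    r = (ir * r_maj + r_min) / (ir + 1)
    r_aug = (r_maj + ir * r_min) / (ir + 1)
    return ir, r, r_aug

# Toy data (made up): 8 majority points on a line, 2 minority points.
X = [(float(v), 0.0) for v in range(8)] + [(3.5, 0.0), (100.0, 0.0)]
y = [0] * 8 + [1] * 2
ir, r, r_aug = r_and_r_aug(X, y, majority=0, minority=1, k=3, theta=1)
print(f"IR={ir:.2f}  R={r:.2f}  R_aug={r_aug:.2f}")
```

On this toy set no majority sample counts as overlapped, while both minority samples are surrounded by majority neighbours, so R_aug comes out much larger than R. That gap is the behaviour the augmented metric is meant to expose.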

Q3: What are the most effective data-level methods to handle co-occurring class imbalance and overlap?

Research shows that advanced under-sampling techniques, which strategically remove majority class instances, are often more effective than over-sampling for this combined problem [1] [4]. Over-sampling can lead to over-fitting and over-generalization of the minority class region [1]. The most promising methods include:

- Metaheuristic-Based Under-sampling: These methods frame instance selection as an optimization problem. They use evolutionary algorithms to select an optimal subset of majority class samples that mitigates both imbalance and overlap, without being forced to achieve a 1:1 ratio [1].

- Hesitation-Based Instance Selection: This novel method uses intuitionistic fuzzy sets to assign weights to instances based on their proximity to class borders (so-called "self-borderline" and "cross-borderline" instances). It focuses on removing ambiguous majority instances to improve class separability [4].

- Overlap-Based Undersampling (URNS): This method uses recursive neighborhood searching to identify and remove majority class instances located in the overlapping region that "surround" multiple minority instances, thereby maximizing the visibility of the minority class [5].

- Hybrid Techniques (SMOTEENN): Combining SMOTE (Synthetic Minority Over-sampling Technique) with an under-sampling method like Edited Nearest Neighbors (ENN) has also demonstrated strong performance. SMOTE generates synthetic minority instances, while ENN cleans the resulting dataset by removing any instances (majority or minority) that are misclassified by their neighbors, which often targets overlapping regions [6] [7].
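A minimal sketch of the SMOTE-then-ENN idea from the last bullet, written in plain Python so that both steps are visible. It is a simplified illustration and not the imbalanced-learn implementation: the function names, neighbour counts, and toy coordinates are assumptions, and the ENN step here drops any sample whose label disagrees with the majority of its k nearest neighbours.

```python
import math
import random

def smote(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        neighbours = sorted(
            (p for j, p in enumerate(minority) if j != i),
            key=lambda p: math.dist(base, p),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append(tuple(b + gap * (o - b) for b, o in zip(base, other)))
    return synthetic

def enn(X, y, k=3):
    """Keep only samples whose label matches most of their k nearest neighbours."""
    keep_X, keep_y = [], []
    for i, (xi, yi) in enumerate(zip(X, y)):
        nearest = sorted(
            (j for j in range(len(X)) if j != i),
            key=lambda j: math.dist(xi, X[j]),
        )[:k]
        agreeing = sum(1 for j in nearest if y[j] == yi)
        if agreeing * 2 > len(nearest):
            keep_X.append(xi)
            keep_y.append(yi)
    return keep_X, keep_y

# Toy data (made up): one majority point (5.5, 5.5) sits inside the minority cluster.
X = [(0, 0), (0, 1), (1, 0), (1, 1), (5.5, 5.5), (5, 5), (5, 6), (6, 5)]
y = [0, 0, 0, 0, 0, 1, 1, 1]

minority = [x for x, label in zip(X, y) if label == 1]
synthetic = smote(minority, n_new=2, k=2)
X_res, y_res = X + synthetic, y + [1] * len(synthetic)
X_clean, y_clean = enn(X_res, y_res, k=3)
print(len(X_res), "samples after SMOTE,", len(X_clean), "after ENN")
```

In this toy run the cleaning step removes the majority point embedded in the minority cluster and leaves the rest untouched. On real data ENN may also remove minority samples, so the class counts should be checked after resampling.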

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Low Sensitivity in Fertility Prediction Models

Problem: Your model achieves high overall accuracy but fails to identify true positive cases (e.g., fertility issues), resulting in unacceptably low sensitivity/recall.

Investigation Path:

Steps:

Confirm Class Imbalance:

- Calculate the Imbalance Ratio (IR). Imbalance is common in fertility data. For example, one public Fertility dataset has an IR of 7.33 (88 normal cases vs. 12 abnormal cases) [6].

Quantify Class Overlap:

- Compute the R_aug value for your dataset (see FAQ 2). A high value confirms that overlap is a contributing factor.

Apply a Targeted Resampling Method:

- If overlap is high, avoid simple random over-sampling. Instead, employ an overlap-informed method.

- Recommended Action: Apply a metaheuristic-based or hesitation-based under-sampling algorithm [1] [4]. These are specifically designed to selectively remove majority instances from overlapping regions, thereby reducing ambiguity and making minority class patterns more apparent to the classifier.

Validate the Solution:

- After resampling, re-train your classifier.

- Use metrics like Sensitivity, F1-Score, and AUC to evaluate improvement. The goal is to see a significant boost in sensitivity without a catastrophic drop in specificity.
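To make the "targeted resampling" step more concrete, here is a deliberately simple neighbourhood-based under-sampler in plain Python. It is only a sketch of the general idea of removing majority instances from the overlap region, and it is not the metaheuristic method of [1], the hesitation-based method of [4], or URNS [5]. The function name, the `min_foreign` threshold, and the toy values are assumptions.

```python
import math

def remove_overlapping_majority(X, y, majority, k=5, min_foreign=2):
    """Drop majority samples that have at least `min_foreign` non-majority
    samples among their k nearest neighbours. Minority samples are always kept."""
    keep = []
    for i, (xi, yi) in enumerate(zip(X, y)):
        if yi == majority:
            nearest = sorted(
                (j for j in range(len(X)) if j != i),
                key=lambda j: math.dist(xi, X[j]),
            )[:k]
            foreign = sum(1 for j in nearest if y[j] != majority)
            if foreign >= min_foreign:
                continue  # this majority sample sits in the overlap region
        keep.append(i)
    return [X[i] for i in keep], [y[i] for i in keep]

# Toy one-feature data (made up): two majority points lie among the minority points.
X = [(0.0,), (1.0,), (2.0,), (3.0,), (4.0,), (10.0,), (10.5,),
     (9.8,), (10.2,), (10.4,)]
y = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]

X_us, y_us = remove_overlapping_majority(X, y, majority=0, k=3, min_foreign=2)
print("majority kept:", y_us.count(0), "minority kept:", y_us.count(1))
```

Only the two majority points at 10.0 and 10.5 are removed here. Unlike random under-sampling, the result is not forced to a 1:1 ratio, so sensitivity and specificity still need to be re-checked after retraining.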

Guide 2: Selecting a Resampling Strategy for an Imbalanced Fertility Dataset with Suspected Overlap

Problem: You are unsure whether to use over-sampling, under-sampling, or a hybrid method for your pre-processing.

Decision Path:

Explanation of Paths:

- Path to Random Under-sampling: Choose this for a quick, computationally cheap baseline. Be aware that it may discard potentially useful information from the majority class [3] [8].

- Path to SMOTEENN (Hybrid): A robust choice when imbalance is the dominant issue. SMOTEENN combines SMOTE (oversampling) and ENN (undersampling) to both create new minority instances and clean the data by removing overlapping instances from both classes, which has proven effective in clinical datasets [6] [7].

- Path to Advanced Under-sampling: This is the recommended path when class overlap is a confirmed or suspected major factor. Methods like the metaheuristic-based approach or hesitation-based under-sampling are specifically engineered to handle the synergy of imbalance and overlap, often leading to superior performance [1] [4].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Handling Imbalance and Overlap

| Tool / Technique | Type | Primary Function | Key Consideration |
|---|---|---|---|
| imbalanced-learn (Python) [8] | Software Library | Provides implementations of ROS, RUS, SMOTE, SMOTEENN, Tomek Links, and more. | Ideal for quick prototyping and applying standard resampling techniques. |
| Metaheuristic Under-sampler [1] | Algorithm | Uses evolutionary algorithms to optimally select majority class instances for removal. | Best for complex datasets with high overlap; more computationally intensive. |
| Hesitation-Based Instance Selector [4] | Algorithm | Uses fuzzy logic to weight and select borderline instances for removal. | Highly effective for managing ambiguity in overlapping regions. |
| Overlap Metric (R_aug) [2] | Diagnostic Metric | Quantifies the severity of class overlap in an imbalanced dataset. | Essential for data characterization and informing method selection. |
| Cost-Sensitive Classifier [3] [4] | Algorithmic Approach | Directly assigns a higher misclassification cost to the minority class during model training. | An algorithm-level alternative to data resampling; requires cost matrix definition. |
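For the cost-sensitive row, one common starting point is to weight each class inversely to its frequency. The sketch below uses the heuristic weight = n_samples / (n_classes * class_count), which is the same rule scikit-learn applies for `class_weight="balanced"`. The function names and the all-"normal" prediction vector are assumptions for illustration, and the class counts reuse the 88 vs. 12 Fertility example.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}

def weighted_error(y_true, y_pred, weights):
    """Misclassification rate where each sample counts with its class weight."""
    total = sum(weights[t] for t in y_true)
    wrong = sum(weights[t] for t, p in zip(y_true, y_pred) if t != p)
    return wrong / total

y_true = ["normal"] * 88 + ["abnormal"] * 12
weights = balanced_class_weights(y_true)

# A classifier that always predicts the majority class.
y_pred = ["normal"] * 100
plain_error = sum(t != p for t, p in zip(y_true, y_pred)) / len(y_true)
cost_error = weighted_error(y_true, y_pred, weights)
print(weights, plain_error, cost_error)
```

With these weights the always-"normal" classifier is charged an error of 0.5 instead of 0.12, so a learner that minimises the weighted error can no longer ignore the minority class at little cost.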

Troubleshooting Guides

1. Issue: Model with High Overall Accuracy Fails to Detect Rare Fertility Events

- Problem Description: Your model achieves high accuracy (e.g., >95%) during training and validation, but in real-world deployment, it consistently fails to identify critical but rare fertility events, such as specific endocrine patterns or successful embryo implantation markers. This is a classic symptom of a class imbalance problem, where one class (the majority) vastly outnumbers another (the minority), causing the model to ignore the minority class [9].

- Diagnosis Steps:

- Check Class Distribution: Calculate the ratio between the majority (e.g., non-fertile cycles) and minority (e.g., fertile cycles) classes in your dataset. In fertility research, imbalanced ratios of 1:13 or worse are common [9].

- Review Performance Metrics: Do not rely on accuracy alone. Examine metrics sensitive to the minority class, such as Sensitivity (Recall), Precision, and the F1-Score. A high accuracy with a sensitivity of zero for the minority class confirms the issue [10].

- Run a Baseline Comparison: Compare your model's performance against a ZeroR classifier baseline. If the baseline accuracy (which simply predicts the majority class) is very high, it indicates that the data structure itself is not conducive to learning the minority class without intervention [9].

- Solutions:

- Data-Level: Apply resampling techniques to rebalance the training data.

- Oversampling: Use the Synthetic Minority Oversampling Technique (SMOTE) or its variants (Borderline-SMOTE, ADASYN) to generate synthetic examples of the minority class [9] [10].

- Undersampling: Use methods like random undersampling (RandUS) or Tomek's Links to reduce the majority class, but be cautious of losing important information [10].

- Algorithm-Level: Use cost-sensitive learning where a higher penalty is assigned to misclassifying the minority class during model training.

- Ensemble Methods: Combine resampling with ensemble classifiers like Random Forests to improve robustness [9].

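The baseline comparison from the diagnosis steps can be sketched in a few lines. The example assumes a label list with roughly the 1:13 ratio mentioned above; the label names, counts, and the helper `zero_r_report` are hypothetical.

```python
from collections import Counter

def zero_r_report(y_true, positive):
    """Accuracy and sensitivity of a ZeroR model that always predicts
    the most frequent class."""
    majority = Counter(y_true).most_common(1)[0][0]
    y_pred = [majority] * len(y_true)
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    return majority, accuracy, sensitivity

# Hypothetical labels with a 1:13 minority-to-majority ratio.
labels = ["fertile"] * 130 + ["non-fertile"] * 10
majority, accuracy, sensitivity = zero_r_report(labels, positive="non-fertile")
print(f"ZeroR predicts '{majority}': accuracy={accuracy:.3f}, "
      f"sensitivity={sensitivity:.3f}")
```

A trained model is only informative if it beats this accuracy while also achieving non-zero sensitivity on the minority class.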

2. Issue: Model Predictions are Unreliable and Inconsistent Across Patient Subgroups

- Problem Description: The model performs well for some patient profiles (e.g., women under 35) but fails for others (e.g., women over 40 or patients with specific endocrine profiles). This can be caused by small disjuncts, where the concept learned by the model is actually a collection of several smaller sub-concepts that are not well captured by the overall classification rules.

- Diagnosis Steps:

- Stratified Error Analysis: Break down your model's error rates by different patient demographics, lifestyle factors, or clinical subgroups. Consistent high error in a specific subgroup indicates small disjuncts.

- Analyze Rule Complexity: If using interpretable models like decision trees, look for long, specific rules that only apply to a very small number of instances.

- Solutions:

- Feature Engineering: Create new, more discriminative features that can better separate the subgroups. In fertility, this could involve creating composite features from hormonal time-series data.

- Cluster-Based Resampling: Apply resampling techniques (like SMOTE) not just globally, but within identified patient clusters to ensure each sub-concept is adequately represented [10].

- Model Selection: Consider using local learning algorithms or ensemble methods that can handle complex decision boundaries more effectively.
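A stratified error analysis needs little more than a group label per patient. The following sketch assumes predictions are already available; the age bands, labels, and the function name `error_rate_by_group` are made up for illustration.

```python
from collections import defaultdict

def error_rate_by_group(groups, y_true, y_pred):
    """Return the misclassification rate for each subgroup."""
    totals, errors = defaultdict(int), defaultdict(int)
    for group, truth, pred in zip(groups, y_true, y_pred):
        totals[group] += 1
        errors[group] += int(truth != pred)
    return {group: errors[group] / totals[group] for group in totals}

# Hypothetical subgroup labels and predictions.
groups = ["<35", "<35", "<35", "<35", ">40", ">40", ">40", ">40"]
y_true = [1, 0, 1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 0, 0, 1]

rates = error_rate_by_group(groups, y_true, y_pred)
for group, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{group}: error rate {rate:.2f}")
```

A subgroup whose error rate is consistently well above the others is a candidate small disjunct and a natural target for cluster-based resampling or additional features.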

3. Issue: Model Performance is Highly Sensitive to Small Variations in Input Data

- Problem Description: The model's performance degrades significantly with slight perturbations in the input features, or different training-test splits yield vastly different results. This is often due to class overlap, where feature values for different classes (e.g., fertile vs. non-fertile) are very similar, and noise, which refers to errors or anomalies in the data labels or feature values.

- Diagnosis Steps:

- Visualize Feature Space: Use dimensionality reduction techniques like PCA or t-SNE to project your data into 2D or 3D. Look for visual overlap between data points from different classes.

- Identify Noisy Labels: Manually audit a sample of data points that your model is most confident about but got wrong. These are likely mislabeled instances.

- Solutions:

- For Overlap:

- Feature Selection: Use methods to select the most discriminative features, removing redundant or irrelevant ones that contribute to overlap. This helps build a more parsimonious and robust model [9].

- Use Complex Models: Models like SVM with non-linear kernels or deep learning can sometimes learn more complex boundaries to separate overlapping classes.

- For Noise:

- Data Cleaning: Employ techniques like Edited Nearest Neighbors (ENN) which remove instances that are misclassified by their nearest neighbors [10].

- Hybrid Methods: Use hybrid resampling like SMOTE followed by ENN (SMOTEENN), which both generates new minority samples and cleans the resulting data [10].

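The label audit in the diagnosis steps can be prioritised by listing the errors the model was most confident about. This sketch assumes a binary problem with predicted probabilities for the positive class and a 0.5 decision threshold; the function name and the 0.9 confidence cut-off are arbitrary choices for illustration.

```python
def confident_errors(y_true, proba_positive, threshold=0.9):
    """Return (index, confidence) for misclassified samples whose predicted
    class had confidence >= threshold, most confident first."""
    flagged = []
    for i, (truth, p) in enumerate(zip(y_true, proba_positive)):
        pred = 1 if p >= 0.5 else 0
        confidence = p if pred == 1 else 1 - p
        if pred != truth and confidence >= threshold:
            flagged.append((i, confidence))
    return sorted(flagged, key=lambda item: -item[1])

# Hypothetical labels and model outputs.
y_true = [1, 0, 1, 0]
proba = [0.05, 0.97, 0.60, 0.40]
to_audit = confident_errors(y_true, proba)
print([index for index, _ in to_audit])
```

The flagged records are candidates for manual review, not proven label errors; in overlapping regions a confident mistake can also reflect a genuinely ambiguous case.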

Frequently Asked Questions

Q1: What performance metrics should I prioritize over accuracy when working with imbalanced fertility datasets?

Accuracy is misleading with imbalanced data. You should primarily use the F1-Score, Sensitivity (Recall), and Precision for the minority class. The Geometric Mean (G-Mean) of sensitivity and specificity is also useful because it is high only when both classes are classified well [9] [10]. Always report a confusion matrix for a complete picture.
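All of these metrics follow directly from the four confusion-matrix counts. The sketch below computes them for a hypothetical result on a dataset with 88 negative and 12 positive cases; the counts and the function name are invented for illustration.

```python
import math

def imbalance_metrics(tp, fp, tn, fn):
    """Compute accuracy plus minority-aware metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0   # recall
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if (precision + sensitivity) else 0.0)
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
        "g_mean": math.sqrt(sensitivity * specificity),
    }

# Hypothetical confusion matrix: 12 positives (3 found), 88 negatives (86 correct).
metrics = imbalance_metrics(tp=3, fp=2, tn=86, fn=9)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this example accuracy is 0.89 while sensitivity is only 0.25, and both F1 and G-Mean stay low, which is exactly the discrepancy that accuracy alone hides.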

Q2: My fertility dataset is both imbalanced and high-dimensional (many features). Where should I start?

Start with feature selection to reduce dimensionality and noise. Then, apply resampling techniques like SMOTE on the reduced feature space. Studies have shown that combining feature-space transformation (e.g., with PCA) with class rebalancing can provide better representations for the models to learn from [10]. This approach helps in building a more robust and parsimonious model [9].

Q3: Are complex oversampling techniques like ADASYN always better than simple random oversampling?

Not necessarily. Comparative studies have shown that no single method consistently outperforms all others; the best technique depends heavily on your specific dataset. One study on physiological signals found that simple Random Undersampling (RandUS) could improve sensitivity by up to 11%, while more sophisticated oversampling methods like ADASYN did not provide a straightforward benefit in the presence of subject dependencies [10]. It is crucial to empirically evaluate multiple methods.

Q4: How can I acquire more data for the rare class in fertility studies, given the cost and difficulty?

While acquiring more real data is ideal, it is often impractical. A viable alternative is to use data augmentation techniques to create new synthetic samples. For time-series fertility data (e.g., hormonal levels, PPG signals), this could involve adding small random shifts or jitters, or using generative models. Furthermore, leveraging transfer learning from models pre-trained on larger, related biomedical datasets can be effective.
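The jitter and shift augmentations mentioned for time-series data can be sketched with the standard library alone. The noise level, maximum shift, edge-padding rule, and the example curve are all assumptions; suitable values depend on the signal and should be checked with domain experts.

```python
import random

def jitter(series, sigma=0.05, rng=None):
    """Add zero-mean Gaussian noise to every sample."""
    rng = rng or random.Random()
    return [x + rng.gauss(0.0, sigma) for x in series]

def time_shift(series, max_shift=2, rng=None):
    """Shift the series by a random number of steps, padding with the edge value."""
    rng = rng or random.Random()
    shift = rng.randint(-max_shift, max_shift)
    if shift > 0:   # delay: repeat the first value at the start
        return [series[0]] * shift + list(series[:-shift])
    if shift < 0:   # advance: repeat the last value at the end
        return list(series[-shift:]) + [series[-1]] * (-shift)
    return list(series)

rng = random.Random(42)  # fixed seed so the augmentation is reproducible
hormone_curve = [1.0, 1.2, 1.9, 3.4, 2.8, 1.5, 1.1]  # made-up values
augmented = [time_shift(jitter(hormone_curve, rng=rng), rng=rng)
             for _ in range(3)]
for series in augmented:
    print([round(v, 2) for v in series])
```

Augmented copies should be generated from the training split only, so that near-duplicates of a test sample never leak into training.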

Experimental Protocols for Key Cited Studies

Protocol 1: Mitigating Class Imbalance in Apnoea Detection from PPG Signals [10]

- Objective: To compare the effectiveness of 10 data-level class rebalancing methods for detecting apnoea events from photoplethysmography (PPG) signals, a problem with clinical relevance to fertility and overall health.

- Data Acquisition:

- Sensor: Reflectance PPG sensor (MAX30102) placed on the neck.

- Participants: 8 healthy subjects.

- Protocol: Subjects simulated apnoea by holding their breath for 10-100 seconds, 3-10 times over 30 minutes. Start and end of events were hand-marked.

- Data Preprocessing:

- Downsample raw PPG (Red & IR channels) from 400 Hz to 100 Hz.

- Segment data using a 30-second overlapping sliding window (1-second shift).

- Filter signals with a median filter (5-sample window) and a 2nd-order Savitzky-Golay smoothing filter (0.25s window).

- Combine filtered Red and IR channels by time-wise addition, then standardize.

- Feature Extraction: Extract 49 features from each 30-second PPG segment, including:

- Time-domain: Features per PPG pulse (e.g., pulse rise time, amplitude, duration) and their statistics (mean, SD) across the window.

- Frequency-domain, Correlogram, and Envelope Features.

- Class Rebalancing & Modelling:

- Apply 10 rebalancing methods: RandUS, RandOS, CNNUS, ENNUS, TomekUS, SMOTE, BLSMOTE, ADASYN, SMOTETomek, SMOTEENN.

- Train a Random Forest classifier on the rebalanced datasets.

- Optionally, apply PCA before rebalancing to transform the feature space.

- Evaluation: Compare performance using sensitivity, accuracy, and other relevant metrics.
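The segmentation and median-filtering steps of this protocol can be illustrated in plain Python. This is a sketch under stated assumptions and not the code used in [10]: downsampling is done by naive decimation without an anti-aliasing filter, the median filter shortens its window at the signal edges, the Savitzky-Golay step is omitted, and the input is a synthetic ramp.

```python
from statistics import median

def downsample(signal, factor=4):
    """Naive decimation, e.g. 400 Hz -> 100 Hz with factor 4 (no anti-alias filter)."""
    return signal[::factor]

def median_filter(signal, width=5):
    """Sliding median; the window is truncated at the edges (an assumption)."""
    half = width // 2
    return [
        median(signal[max(0, i - half): i + half + 1])
        for i in range(len(signal))
    ]

def sliding_windows(signal, fs=100, window_s=30, shift_s=1):
    """Overlapping windows of window_s seconds, advanced by shift_s seconds."""
    size, step = window_s * fs, shift_s * fs
    return [signal[start:start + size]
            for start in range(0, len(signal) - size + 1, step)]

# Synthetic 60-second recording at 400 Hz (a simple ramp, for illustration only).
raw = [i / 400 for i in range(60 * 400)]
ppg_100hz = downsample(raw, factor=4)
segments = [median_filter(w) for w in sliding_windows(ppg_100hz)]
print(len(ppg_100hz), "samples ->", len(segments), "windows of", len(segments[0]))
```

Because consecutive windows overlap by 29 seconds, windows from the same recording are highly correlated, so train and test splits are better made per subject than per window.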

Protocol 2: Addressing Imbalance in Chicken Egg Fertility Classification [9]

- Objective: To build a robust predictive model for classifying fertile and non-fertile chicken eggs, where the natural data distribution is highly imbalanced (~ 10% non-fertile).

- Data Characteristics: A naturally imbalanced dataset with a ratio of approximately 1:13 (non-fertile:fertile).

- Modelling Challenge:

- Using 25 Partial Least Squares (PLS) components, the model achieved 100% true positive rate for the minority class but was non-parsimonious.

- Reducing to 5 PCs shifted accuracy in favor of the majority class, demonstrating the need to address imbalance for model optimization.

- Recommended Workflow:

- Initial Training: Train the model on the true, imbalanced distribution.

- Evaluate for Overfitting: If the model performs poorly or overfits, restructure the data.

- Data Restructuring: Apply resampling techniques (e.g., SMOTE) to create a more balanced training set.

- Feature Learning: Use feature selection to build a model with few, important discriminating features to avoid non-robustness due to noisy features [9].

The following table summarizes quantitative findings from research on class imbalance mitigation.

Table 1: Summary of Class Imbalance Mitigation Techniques in Biomedical Research

| Source Data / Application | Imbalance Ratio / Baseline | Mitigation Technique(s) Tested | Key Finding(s) | Performance Change (Example) |

|---|---|---|---|---|

| Network Intrusion Detection [9] | Up to 1:2,700,000; ZeroR Acc: 99.9% | SMOTE and variations | Clear optimization of outputs after tackling imbalance. Models without mitigation had accuracy below the useless baseline. | F1-Score, Recall, Precision shown in bold as optimized outputs post-SMOTE. |

| Apnoea Detection from PPG Signals [10] | N/A | RandUS, RandOS, SMOTE, ADASYN, ENN, etc. | RandUS was best for improving sensitivity (up to 11%). Oversampling (e.g., SMOTE) was non-trivial and needs development for subject-dependent data. | Sensitivity: ↑ up to 11% with RandUS. |

| Chicken Egg Fertility [9] | ~1:13 | Modelling with PLS components | Without addressing imbalance, a parsimonious model (5 PCs) failed. A complex model (25 PCs) worked but was non-robust. | Highlighted necessity of handling imbalance for a parsimonious and robust model. |

| Diabetes Diagnosis [10] | N/A | ENN, SMOTE, SMOTEENN, SMOTETomek | ENN (undersampling) resulted in superior improvements, especially in recall. Hybrid methods produced less but comparable improvements. | Recall: Superior improvement with ENN. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Fertility Data Science Research

| Item / Tool Name | Function / Application in Research |

|---|---|

| PPG Sensor (e.g., MAX30102) | Acquires photoplethysmography signals from the neck or other body parts; used for non-invasive monitoring of physiological events like apnoea, which can be relevant to fertility studies [10]. |

| SMOTE (Synthetic Minority Oversampling Technique) | A software algorithm to generate synthetic samples for the minority class to balance imbalanced datasets; crucial for improving model sensitivity to rare fertility events [9] [10]. |

| Pre-implantation Genetic Testing (PGT) | A laboratory technique used in IVF to screen embryos for chromosomal abnormalities and genetic disorders; a source of high-dimensional data for building predictive models of embryo viability [11]. |

| Random Forest Classifier | A robust, ensemble machine learning algorithm frequently used in biomedical research due to its good performance on complex datasets and ability to handle a mix of feature types [10]. |

| Embryoscope/Time-lapse Imaging System | An incubator with an integrated camera that takes frequent images of developing embryos without disturbing them; generates rich, time-series image data for AI-based embryo selection models [11]. |

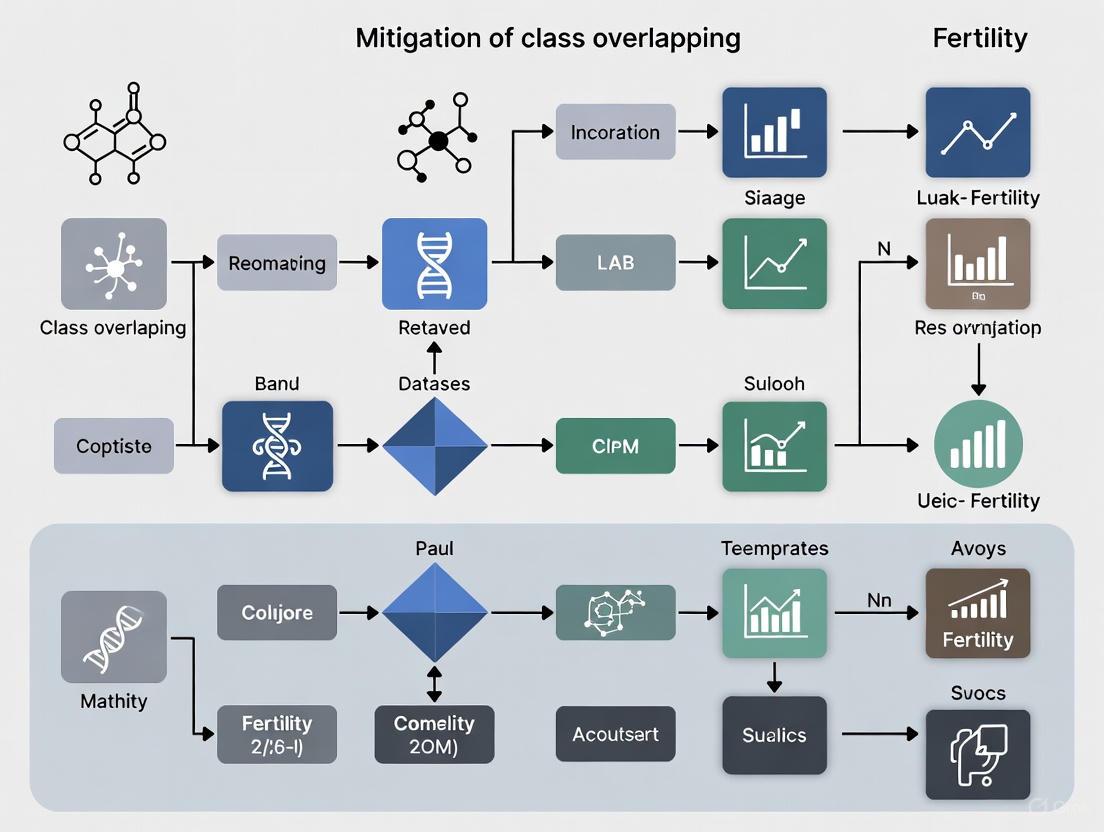

Experimental Workflow and Signaling Pathway Diagrams

Diagram 1: Fertility data analysis workflow.

Diagram 2: Apnoea detection from PPG signals.

FAQs on Class Overlap in Fertility Data Research

What is class overlap, and why is it a critical problem in fertility research datasets?

Class overlap occurs when examples from different outcome classes (e.g., "successful" vs. "failed" IVF cycles, or "normal" vs. "abnormal" sperm) share nearly identical feature values in a dataset. This is a critical problem in fertility research because the underlying biological processes are often complex and continuous, leading to ambiguous cases. For instance, the distinction between a normal sperm and an abnormal one can be subtle and subjective. When machine learning models encounter these overlapping regions, their ability to distinguish between classes is significantly compromised, leading to reduced accuracy and unreliable predictions. Mitigating this issue is therefore essential for developing robust clinical decision-support tools [12].

What are the primary sources of class overlap in sperm morphology datasets?

The main sources are inter-expert disagreement and inherent biological continuums:

- Inter-Expert Disagreement: In the SMD/MSS dataset study, three experts classifying the same sperm images reached total agreement (TA, all 3 experts assigning the same label) in only a portion of cases. The remaining cases showed partial agreement (PA, 2 of 3 experts) or no agreement (NA). This disagreement directly introduces class overlap in the labeled data, as the same sperm could be assigned to different morphological classes by equally qualified professionals [12].

- Biological Continuums: Morphological defects are not always discrete. A sperm cell can exhibit a spectrum of anomalies in the head, midpiece, and tail, creating a continuum of forms that do not fit neatly into strict categorical classes [12] [13].

How does class overlap manifest in IVF outcome prediction models?

Class overlap in IVF prediction arises from the multifactorial and heterogeneous nature of infertility. Key factors include:

- Similar Profiles, Different Outcomes: Two patients with nearly identical profiles (e.g., same age, AMH levels, and sperm parameters) can have completely different IVF outcomes due to unknown genetic, epigenetic, or environmental factors not captured in the dataset.

- Center-Specific Practices: A study comparing machine learning models found that a single, national-level model (SART) was outperformed by center-specific models (MLCS). This suggests that inter-center variations in patient populations and clinical practices create a form of dataset-wide overlap, which is mitigated by training models on local data [14].

What methodological strategies can help mitigate class overlap in sperm morphology analysis?

- Consensus Labeling: Utilizing multiple annotators and establishing a consensus label, such as using the majority vote from several experts, can create a more reliable ground truth [12].

- Data Augmentation: Techniques like image rotation, scaling, and flipping can artificially balance underrepresented morphological classes and improve model generalization. One study expanded its dataset from 1,000 to 6,035 images using these methods [12].

- Advanced Model Architecture: Employing Convolutional Neural Networks (CNNs) with robust preprocessing (e.g., image denoising, normalization) can help the model learn more discriminative features to separate overlapping classes [12].

What strategies are effective for handling class overlap in IVF outcome prediction?

- Center-Specific Modeling: Building machine learning models tailored to a specific fertility clinic's data can significantly improve prediction accuracy. One study showed that center-specific models provided more accurate live birth predictions (LBP) than a generalized national model, appropriately reassigning over 20% of patients to a more accurate prognosis category [14].

- Ensemble Methods: Using ensemble models like Logit Boost, Random Forest, and RUS Boost can enhance performance. These methods combine multiple weak learners to create a strong classifier that is more robust to noisy, overlapping data points. One study achieved an accuracy of 96.35% using the Logit Boost algorithm [15].

- Live Model Validation (LMV): Continuously validating models on new, out-of-time test sets ensures that they remain effective as patient populations and clinical practices evolve, preventing performance degradation due to "concept drift," a form of temporal overlap [14].

Troubleshooting Guides

Issue: High Model Accuracy on Training Data but Poor Performance on Validation Set in Sperm Morphology Classification

Potential Cause: Class overlap exacerbated by inconsistent labeling and insufficient data variety, leading to model overfitting.

Solution:

- Audit the Ground Truth: Re-examine the labeled data for inter-expert variability. Implement a consensus-driven labeling protocol.

- Augment the Dataset: Apply data augmentation techniques (e.g., rotation, flipping, brightness adjustment) to increase the diversity of the training examples for all morphological classes [12].

- Implement a CNN with Preprocessing: Use a pipeline that includes image denoising and normalization before classification to enhance feature clarity [12].

- Apply Model Regularization: Introduce dropout layers or L2 regularization within the neural network to prevent over-reliance on specific features and improve generalization.

Experimental Workflow for Sperm Morphology Analysis

Issue: IVF Prediction Model Fails to Generalize Across Multiple Fertility Clinics

Potential Cause: Class overlap and dataset shift caused by demographic and procedural differences between clinical centers.

Solution:

- Adopt a Center-Specific Approach: Instead of a one-size-fits-all model, train and validate separate machine learning models on data from each individual clinic (MLCS) [14].

- Feature Engineering: Collaborate with clinical experts to identify and incorporate center-specific predictive features that may not be present in national registries.

- Utilize Ensemble Learners: Employ boosting algorithms (e.g., Logit Boost, AdaBoost) that are particularly effective at learning from complex, imbalanced datasets with overlapping classes [15].

- Perform Live Model Validation (LMV): Regularly test the model on the most recent patient data from the clinic to monitor for performance decay and retrain as necessary [14].

Comparative Analysis of IVF Prediction Models

Experimental Protocols

Detailed Methodology: Deep-Learning for Sperm Morphology Classification

The following protocol is based on a study that developed a predictive model using the SMD/MSS dataset [12].

1. Data Acquisition and Preparation

- Samples: Collect semen samples from patients with a concentration of at least 5 million/mL. Exclude very high concentrations (>200 million/mL) to avoid image overlap.

- Staining and Smears: Prepare smears according to WHO guidelines and stain with a RAL Diagnostics kit.

- Imaging: Use an MMC CASA system with a x100 oil immersion objective in bright field mode to capture images of individual spermatozoa.

2. Expert Labeling and Analysis of Inter-Expert Agreement

- Classification Standard: Classify each spermatozoon according to the modified David classification (12 classes of defects).

- Multiple Annotators: Have three experienced experts classify each image independently.

- Quantify Agreement: Categorize agreement as Total Agreement (TA: 3/3), Partial Agreement (PA: 2/3), or No Agreement (NA). Use statistical software (e.g., IBM SPSS) and Fisher's exact test to assess agreement levels. This step is crucial for identifying and quantifying class overlap.
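The TA/PA/NA categorisation and a majority-vote consensus label can be derived together. The sketch below assumes exactly three annotations per image; the class names and the function `agreement_category` are placeholders and are not taken from the SMD/MSS study.

```python
from collections import Counter

def agreement_category(labels):
    """Classify three expert labels as TA (3/3), PA (2/3) or NA,
    and return the consensus label when one exists."""
    top_label, top_count = Counter(labels).most_common(1)[0]
    if top_count == 3:
        return "TA", top_label
    if top_count == 2:
        return "PA", top_label
    return "NA", None

# Hypothetical annotations: one tuple of three expert labels per image.
annotations = [
    ("normal", "normal", "normal"),
    ("tapered_head", "normal", "tapered_head"),
    ("coiled_tail", "bent_midpiece", "normal"),
    ("normal", "normal", "thin_head"),
]

results = [agreement_category(labels) for labels in annotations]
summary = Counter(category for category, _ in results)
print(results)
print({cat: summary[cat] / len(results) for cat in ("TA", "PA", "NA")})
```

The share of PA and NA images gives a direct, label-level estimate of how much overlap the annotation process itself introduces. Whether NA images are dropped or re-adjudicated is a design decision this sketch leaves open.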

3. Image Pre-processing and Augmentation

- Cleaning and Normalization: Resize images to a standard size (e.g., 80x80 pixels) and convert to grayscale. Normalize pixel values.

- Data Augmentation: To balance classes and mitigate overfitting, apply techniques including rotation, flipping, and scaling to expand the dataset.
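Flips and right-angle rotations are the simplest augmentations to reason about, because they only rearrange pixels. The sketch below represents a grayscale image as a list of rows; restricting rotation to 90-degree steps is an assumption of this example (the study may have used other angles), and the 2x3 "image" is a placeholder.

```python
def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def flip_vertical(img):
    """Mirror the image top to bottom."""
    return [row[:] for row in img[::-1]]

def rotate_90_clockwise(img):
    """Rotate the image by 90 degrees clockwise."""
    return [list(col) for col in zip(*img[::-1])]

def augment(img):
    """Return the original plus flipped and rotated variants."""
    variants = [img, flip_horizontal(img), flip_vertical(img)]
    rotated = img
    for _ in range(3):  # 90, 180 and 270 degrees
        rotated = rotate_90_clockwise(rotated)
        variants.append(rotated)
    return variants

# Placeholder 2x3 grayscale "image".
image = [[1, 2, 3],
         [4, 5, 6]]
variants = augment(image)
print(len(variants), "variants; 90-degree rotation:", variants[3])
```

Each original image yields six variants here. If augmentation is used to balance classes, applying more variants to the rare morphological classes than to the common ones is one straightforward option.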

4. Model Training and Evaluation

- Partitioning: Randomly split the augmented dataset into training (80%) and testing (20%) sets.

- CNN Architecture: Implement a Convolutional Neural Network in Python (v3.8) for classification.

- Evaluation: Report model accuracy across the different morphological classes.

Table 1: Sperm Morphology Dataset (SMD/MSS) Composition and Model Performance

| Metric | Value | Details |

|---|---|---|

| Initial Image Count | 1,000 | Individual spermatozoa images [12] |

| Final Image Count (Post-Augmentation) | 6,035 | Expanded via data augmentation techniques [12] |

| Classification Standard | Modified David Classification | 12 classes of defects (7 head, 2 midpiece, 3 tail) [12] |

| Expert Agreement Analysis | TA, PA, NA | Quantified levels of Total, Partial, and No Agreement among 3 experts [12] |

| Deep Learning Model | Convolutional Neural Network (CNN) | Implemented in Python 3.8 [12] |

| Reported Accuracy Range | 55% - 92% | Varies across morphological classes [12] |

Detailed Methodology: Machine Learning for IVF Outcome Prediction

This protocol synthesizes methodologies from recent studies on predicting IVF success [15] [14].

1. Data Collection and Preprocessing

- Data Source: Utilize historical IVF cycle data from a single center or a national registry (e.g., the Society for Assisted Reproductive Technology, SART). For machine learning center-specific (MLCS) models, use data from the target clinic.

- Key Features: Include patient demographics (female age, BMI), infertility factors (type, duration, AMH levels), treatment protocols (IVF/ICSI), and embryo details [15] [14].

- Data Cleaning: Handle missing values and outliers. Normalize or standardize numerical features.

2. Feature Engineering and Model Selection

- Algorithm Selection: Test a suite of machine learning models for baseline comparison. Common choices include:

- Logistic Regression

- Support Vector Machines (SVM)

- k-Nearest Neighbors (KNN)

- Multi-layer Perceptron (MLP)

- Ensemble Methods: Random Forest, AdaBoost, Logit Boost, RUS Boost [15].

- Hyperparameter Tuning: Optimize each model's parameters using cross-validation.

3. Model Training and Validation

- Center-Specific Training: For MLCS models, train on data exclusively from one fertility center.

- Validation Strategy: Perform internal validation using cross-validation. Crucially, perform external validation using a separate "out-of-time" test set (Live Model Validation) to ensure the model remains applicable to new patients [14].

- Comparison to Baseline: Compare the performance of complex models against a baseline model (e.g., a model based on female age alone).
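An "out-of-time" test set can be built by splitting on treatment date rather than at random. The sketch below assumes each cycle record has a `start_date` field; the field names, the records, and the cutoff date are hypothetical.

```python
from datetime import date

def out_of_time_split(cycles, cutoff):
    """Train on cycles started before the cutoff, test on cycles on/after it."""
    train = [c for c in cycles if c["start_date"] < cutoff]
    test = [c for c in cycles if c["start_date"] >= cutoff]
    return train, test

# Hypothetical IVF cycle records.
cycles = [
    {"id": 1, "start_date": date(2019, 3, 1), "live_birth": 0},
    {"id": 2, "start_date": date(2020, 7, 15), "live_birth": 1},
    {"id": 3, "start_date": date(2021, 2, 10), "live_birth": 0},
    {"id": 4, "start_date": date(2022, 5, 20), "live_birth": 1},
]
train, test = out_of_time_split(cycles, cutoff=date(2021, 1, 1))
print([c["id"] for c in train], [c["id"] for c in test])
```

Because every test cycle postdates every training cycle, the evaluation mimics how the model would be used on new patients.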

4. Performance Evaluation

- Metrics: Evaluate models using:

- ROC-AUC: For overall discrimination.

- F1 Score: For balancing precision and recall at specific thresholds.

- Precision-Recall AUC (PR-AUC): For assessing the trade-off between precision and recall across thresholds, i.e., how well false positives and false negatives are jointly minimized.

- Brier Score: For calibration accuracy.

- PLORA: To assess predictive power improvement over the Age model [14].
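Two of the metrics above are simple enough to compute by hand, which helps when checking library output. The following sketch uses the standard definitions of the Brier score (mean squared difference between predicted probability and outcome) and the F1 score at a chosen threshold; the outcome and probability lists are made-up values for illustration.

```python
def brier_score(y_true, y_prob):
    """Mean squared error between predicted probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

def f1_at_threshold(y_true, y_prob, threshold=0.5):
    """F1 score after converting probabilities to labels at the threshold."""
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    tp = sum(1 for y, yp in zip(y_true, y_pred) if y == 1 and yp == 1)
    fp = sum(1 for y, yp in zip(y_true, y_pred) if y == 0 and yp == 1)
    fn = sum(1 for y, yp in zip(y_true, y_pred) if y == 1 and yp == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Made-up outcomes (1 = live birth) and predicted probabilities.
y_true = [1, 0, 0, 1, 0, 0, 0, 1]
y_prob = [0.8, 0.3, 0.1, 0.4, 0.6, 0.2, 0.1, 0.7]

brier = brier_score(y_true, y_prob)
f1_default = f1_at_threshold(y_true, y_prob, threshold=0.5)
f1_lowered = f1_at_threshold(y_true, y_prob, threshold=0.35)
print(round(brier, 3), round(f1_default, 3), round(f1_lowered, 3))
```

Lowering the threshold recovers the positive case scored at 0.4, which is why F1 should always be reported together with the threshold used.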

Table 2: Key Machine Learning Models and Performance in IVF Prediction

| Model Type | Example Algorithms | Reported Performance | Advantages for Handling Overlap |

|---|---|---|---|

| Ensemble Methods | Logit Boost, Random Forest, AdaBoost | Logit Boost: 96.35% Accuracy [15] | Combines multiple learners to improve robustness on noisy, complex data. |

| Center-Specific Model (MLCS) | Custom ensemble or neural net | Higher F1 Score and PR-AUC vs. Generalized Model [14] | Reduces inter-center variation, a major source of overlap. |

| Neural Network | Deep Inception-Residual Network | 76% Accuracy, ROC-AUC 0.80 [15] | Can learn complex, non-linear relationships in the data. |

| Baseline Model | Age-based prediction | Lower ROC-AUC and PLORA [14] | Serves as a benchmark for model improvement. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Featured Fertility Informatics Experiments

| Item Name | Function/Application | Specific Example from Research |

|---|---|---|

| MMC CASA System | Automated sperm image acquisition and morphometric analysis (head dimensions, tail length). | Used for acquiring 1000 individual sperm images for the SMD/MSS dataset [12]. |

| RAL Diagnostics Stain | Staining kit for sperm smears to enhance visual contrast for morphological assessment. | Used to prepare semen smears according to WHO guidelines prior to imaging [12]. |

| Time-Lapse Imaging (TLI) System | Continuous monitoring of embryo development in a stable culture environment, generating large image datasets. | Source of ~2.4 million embryo images for training AI morphokinetic models [16]. |

| Open-Access Annotated Dataset | Publicly available dataset for training and benchmarking AI models, promoting reproducibility. | Gomez et al. TLI video dataset of 704 couples used to train a CNN for embryo stage classification [16]. |

| Preimplantation Genetic Testing (PGT) | Genetic screening of embryos to select those with the highest potential for successful implantation. | PGS/PGD cited as a method to improve IVF success by selecting genetically healthy embryos [17]. |

Frequently Asked Questions

FAQ 1: What metrics should I use to evaluate models on imbalanced fertility datasets? Traditional metrics like accuracy can be misleading. For fertility datasets, where correctly identifying the minority class (e.g., 'altered' fertility) is often critical, you should prioritize sensitivity (recall), specificity, and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). The Geometric Mean (G-mean), which is the square root of sensitivity times specificity, is also highly recommended as it provides a balanced view of model performance on both classes [6] [18].
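To see why accuracy misleads, consider a dataset with 88 majority and 12 minority samples. The sketch below compares accuracy with G-mean for two hypothetical confusion matrices; the counts are invented for illustration.

```python
import math

def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    return tp / (tp + fn), tn / (tn + fp)

def g_mean(tp, fn, tn, fp):
    """Geometric mean of sensitivity and specificity."""
    sens, spec = sensitivity_specificity(tp, fn, tn, fp)
    return math.sqrt(sens * spec)

def accuracy(tp, fn, tn, fp):
    return (tp + tn) / (tp + fn + tn + fp)

# Hypothetical model A: predicts the majority class for every sample.
model_a = dict(tp=0, fn=12, tn=88, fp=0)
# Hypothetical model B: finds 9 of 12 minority cases at the cost of 13 false alarms.
model_b = dict(tp=9, fn=3, tn=75, fp=13)

acc_a, g_a = accuracy(**model_a), g_mean(**model_a)
acc_b, g_b = accuracy(**model_b), g_mean(**model_b)
print(acc_a, g_a)
print(acc_b, round(g_b, 3))
```

Model A has the higher accuracy but a G-mean of zero, because it never detects the minority class; model B is the more useful classifier despite its lower accuracy.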

FAQ 2: My fertility dataset has a very low positive rate. What is a viable sample size for building a stable model? Empirical research on medical data suggests that logistic model performance stabilizes when the positive rate is at least 10-15% and the total sample size is above 1,200-1,500 [18]. For smaller or more severely imbalanced datasets, employing sampling techniques is crucial to achieve reliable results.

FAQ 3: How can I address class overlap in my fertility data? Class overlap, where samples from different classes share similar feature characteristics, is a major complexity. One effective method is the Overlap-Based Undersampling technique, which uses recursive neighborhood searching (URNS) to detect and remove majority class instances from the overlapping region, thereby improving class separability [5].

FAQ 4: What are the main technical challenges when working with imbalanced fertility data? You are likely to encounter three core challenges [19] [6]:

- Class Overlapping: The region where majority and minority class samples are intermixed, making them hard to distinguish.

- Small Sample Size: A limited number of total samples, and particularly of minority class samples, hinders the model's ability to learn generalizable patterns.

- Small Disjuncts: The minority class concept may be formed by several small sub-concepts, which are prone to overfitting.

FAQ 5: Are there specialized methods for image-based fertility analysis, like sperm morphology classification? Yes. For imbalanced image datasets, a common approach is data augmentation. This involves artificially expanding your dataset using techniques like rotation, flipping, and scaling to create more balanced morphological classes for training deep learning models like Convolutional Neural Networks (CNNs) [12].

Troubleshooting Guides

Issue 1: Poor Sensitivity (Recall) for the Minority Class

Problem: Your model has high overall accuracy but fails to identify most of the crucial minority class cases (e.g., it misses patients with fertility alterations).

Solution Steps:

- Diagnose: Confirm the issue by checking the confusion matrix. You will observe a high number of False Negatives.

- Apply Sampling: Use advanced sampling techniques to rebalance the training data. SMOTE and ADASYN are widely used oversampling methods that generate synthetic minority class samples [6] [18] [20]. The table below compares common techniques.

- Re-train and Re-evaluate: Train your model on the resampled dataset and evaluate using sensitivity and G-mean.

Issue 2: Model is Biased and Fails to Generalize

Problem: The model performs well on the training data but poorly on unseen test data, often due to small disjuncts or overfitting on the small minority class.

Solution Steps:

- Feature Selection: Use a robust feature selection method like BORUTA to identify the most relevant predictors. This reduces noise and dimensionality, helping the model focus on the most significant signals [20].

- Use Ensemble Methods: Implement ensemble algorithms like Random Forest or XGBoost, which are generally more robust to imbalance and noise than single classifiers [19] [21].

- Hybrid Sampling: Apply a hybrid sampling technique like SMOTEENN, which combines SMOTE with an undersampling step (Edited Nearest Neighbors) to clean the data. This method has been shown to outperform others across various clinical datasets [6].

Quantitative Data on Imbalance in Clinical Domains

The following table summarizes the class distribution and imbalance ratio (IR) for several clinical datasets, including fertility, illustrating the pervasiveness of this issue [6].

Table 1: Class Distribution in Various Clinical Datasets

| Dataset Name | # Instances | Class Distribution (Majority:Minority) | Imbalance Ratio (IR) |

|---|---|---|---|

| Fertility | 100 | 88 : 12 | 7.33 |

| Breast Cancer (Diagnostic) | 569 | 357 : 212 | 1.69 |

| Pima Indians Diabetes | 768 | 500 : 268 | 1.9 |

| Hepatitis | 155 | 133 : 32 | 4.15 |

| Lung Cancer | 32 | 23 : 9 | 2.55 |

Experimental Protocols for Handling Imbalance

Protocol 1: Implementing the URNS Undersampling Method

This protocol is designed to directly mitigate class overlap by removing majority class samples from the overlapping region [5].

1. Normalize Data: Begin by normalizing the feature space using a method like z-scores to ensure distance-based calculations are not skewed.

2. First-Stage Neighbor Search: For every minority class instance (query), find its k nearest neighbors. A common heuristic for k is the square root of the dataset size.

3. Identify Overlapped Instances: Mark any majority class instance that appears as a nearest neighbor for at least two different minority class queries.

4. Second-Stage (Recursive) Search: Use the marked majority class instances from step 3 as new queries. Again, find their k nearest neighbors and mark any majority class instance that is a neighbor of at least two of these new queries.

5. Remove Overlapped Instances: Remove all majority class instances identified in steps 3 and 4 from the training set.

6. Train Classifier: Proceed to train your chosen classifier on the newly undersampled, less overlapped training data.
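The six steps above can be sketched in plain Python. This is an illustrative reading of the protocol as described here, not the reference implementation from [5]; the function names, the brute-force neighbor search, and the toy data are assumptions.

```python
import math
from collections import Counter
from statistics import mean, pstdev

def zscore(X):
    """Standardize each feature column (constant columns are left unscaled)."""
    cols = list(zip(*X))
    mus = [mean(c) for c in cols]
    sds = [pstdev(c) or 1.0 for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, mus, sds)] for row in X]

def k_nearest(X, i, k):
    """Indices of the k instances closest to X[i], excluding i itself."""
    others = (j for j in range(len(X)) if j != i)
    return sorted(others, key=lambda j: math.dist(X[i], X[j]))[:k]

def common_majority_neighbours(X, y, queries, k, majority):
    """Majority instances that are neighbours of at least two queries."""
    hits = Counter()
    for q in queries:
        for j in k_nearest(X, q, k):
            if y[j] == majority:
                hits[j] += 1
    return {j for j, n in hits.items() if n >= 2}

def urns_undersample(X, y, minority=1, majority=0, k=None):
    Xn = zscore(X)
    if k is None:
        k = max(1, round(math.sqrt(len(X))))  # square-root heuristic
    minority_idx = [i for i, label in enumerate(y) if label == minority]
    first = common_majority_neighbours(Xn, y, minority_idx, k, majority)
    second = common_majority_neighbours(Xn, y, sorted(first), k, majority)
    removed = first | second
    keep = [i for i in range(len(X)) if i not in removed]
    return [X[i] for i in keep], [y[i] for i in keep], sorted(removed)

# Toy data: five majority points far away, two majority points inside
# the minority region (indices 5 and 6), and three minority points.
X = [[0, 0], [0, 1], [1, 0], [1, 1], [0.5, 0.5],
     [5, 5], [5.05, 5.05],
     [5, 5.3], [5.3, 5], [4.7, 4.7]]
y = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]

X_res, y_res, removed = urns_undersample(X, y)
print(removed, len(X_res), y_res.count(1))
```

On this toy set only the two majority points sitting among the minority samples are dropped; the distant majority cluster and all minority samples are retained.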

Protocol 2: A Hybrid Framework with Bio-Inspired Optimization

This protocol uses a nature-inspired algorithm to optimize a neural network for high sensitivity on imbalanced fertility data [22] [23].

- Data Preprocessing: Load the fertility dataset. Perform min-max normalization to scale all features to a [0,1] range.

- Feature Analysis: Run a feature importance analysis (e.g., using a Proximity Search Mechanism or Random Forest) to identify key contributory factors like sedentary hours or environmental exposures.

- Model Definition: Initialize a Multilayer Feedforward Neural Network (MLFFN).

- Ant Colony Optimization (ACO): Use the ACO algorithm to adaptively tune the hyperparameters (e.g., learning rate, number of hidden units) of the MLFFN. The ACO's foraging behavior helps in efficiently finding a high-performance parameter set.

- Train and Validate: Train the ACO-optimized MLFFN and validate it on held-out data. This approach has been reported to achieve very high sensitivity and accuracy on imbalanced fertility data [22] [23].
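The ACO step can be pictured as a pheromone-guided search over a discrete hyperparameter grid: settings that score well receive more pheromone and are sampled more often in later iterations. The sketch below is a generic ACO-style search, not the specific algorithm of [22] [23]. The grid, the parameter defaults, and `stand_in_sensitivity` (a placeholder for "train the MLFFN and return validation sensitivity") are all hypothetical.

```python
import math
import random

def aco_search(grid, objective, n_ants=8, n_iterations=15, evaporation=0.2, seed=0):
    """Pheromone-guided search over a discrete grid; objective must be >= 0."""
    rng = random.Random(seed)
    pheromone = {name: [1.0] * len(values) for name, values in grid.items()}
    best_config, best_score = None, float("-inf")
    for _ in range(n_iterations):
        trails = []
        for _ in range(n_ants):
            # Each ant picks one value per hyperparameter, weighted by pheromone.
            choice = {name: rng.choices(range(len(values)), weights=pheromone[name])[0]
                      for name, values in grid.items()}
            config = {name: grid[name][idx] for name, idx in choice.items()}
            score = objective(config)
            trails.append((choice, score))
            if score > best_score:
                best_config, best_score = config, score
        # Evaporate old pheromone, then deposit in proportion to each ant's score.
        for name in pheromone:
            pheromone[name] = [(1 - evaporation) * p for p in pheromone[name]]
        for choice, score in trails:
            for name, idx in choice.items():
                pheromone[name][idx] += score
    return best_config, best_score

def stand_in_sensitivity(config):
    """Hypothetical placeholder objective; replace with real model training."""
    lr_penalty = abs(math.log10(config["learning_rate"]) + 2)
    unit_penalty = abs(config["hidden_units"] - 16) / 32
    return max(0.0, 1.0 - 0.2 * lr_penalty - unit_penalty)

grid = {"learning_rate": [1e-4, 1e-3, 1e-2, 1e-1], "hidden_units": [4, 8, 16, 32]}
best_config, best_score = aco_search(grid, stand_in_sensitivity)
print(best_config, round(best_score, 3))
```

In practice the objective would train the network with the sampled settings and return a cross-validated sensitivity or G-mean, so each evaluation is far more expensive than in this toy example.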

Workflow Visualization

Diagram: Integrated Workflow for Imbalanced Fertility Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Imbalanced Fertility Research

| Tool / Technique | Type | Primary Function in Research |

|---|---|---|

| SMOTE | Software Algorithm | Generates synthetic samples for the minority class to balance the dataset [6] [20]. |

| ADASYN | Software Algorithm | Adaptively generates synthetic samples, focusing on harder-to-learn minority class examples [18] [20]. |

| URNS | Software Algorithm | An undersampling method that removes majority class instances from the class-overlap region [5]. |

| BORUTA | Software Algorithm | A feature selection wrapper method that identifies all relevant features for a model [20]. |

| Ant Colony Optimization (ACO) | Bio-inspired Algorithm | A metaheuristic used to optimize model parameters and improve convergence on imbalanced data [22] [23]. |

| Random Forest | Ensemble Classifier | Provides robust performance on imbalanced data and intrinsic feature importance analysis [19] [18]. |

| SHAP | Explainable AI Library | Explains the output of any ML model, crucial for understanding predictions on clinical data [19]. |

FAQs: Understanding Class Overlap in Fertility Datasets

1. What is class overlap, and why is it particularly problematic in reproductive medicine research?

Class overlap occurs when samples from different classes (e.g., PCOS vs. non-PCOS) share similar feature values in the dataset. In reproductive medicine, where datasets are often small and imbalanced, this ambiguity makes it difficult for models to find a clear separating boundary [5] [24]. The model tends to favor the majority class because, from its perspective, guessing the majority class yields a higher overall accuracy. This is critical in domains like PCOS diagnosis, where the minority class (those with the condition) is the class of primary interest [20].

2. Beyond overall accuracy, what metrics should I use to evaluate a model trained on an imbalanced, overlapped fertility dataset?

Overall accuracy is a misleading metric when class imbalance and overlap are present. You should prioritize metrics that focus on the model's performance on the minority class [5]. These include:

- Sensitivity (Recall): The ability of the model to correctly identify patients with the condition.

- Specificity: The ability of the model to correctly identify patients without the condition.

- AUC (Area Under the ROC Curve): A measure of the model's ability to distinguish between classes.

High sensitivity is often the primary goal in medical diagnosis, with a good trade-off against specificity [5].

3. My model for classifying sperm morphology abnormalities has high overall accuracy but fails to detect specific rare defects. Could class overlap be the cause?

Yes. In complex classification tasks like sperm morphology with multiple, visually similar categories (e.g., different head defects), class overlap is a common challenge [25]. The model may be learning to correctly classify the most frequent abnormalities and normal sperm but "giving up" on distinguishing between rare or highly similar classes because the overlapping feature space makes it difficult. A two-stage hierarchical classification framework, where a model first separates samples into major categories before fine-grained classification, has been shown to reduce this type of misclassification [25].

4. I've applied SMOTE to balance my dataset, but my model's performance on the minority class hasn't improved. What else should I consider?

SMOTE addresses imbalance but does not specifically target the overlapping region, which can be a primary source of classification error. If synthetic instances are generated in the already-ambiguous overlapping zone, they may not provide new, clear information to the model. You should consider methods that directly address the overlap, such as overlap-based undersampling. These methods identify and remove majority class instances from the overlapping region, thereby increasing the relative visibility of minority class instances and improving class separability for the learning algorithm [5].

Troubleshooting Guides & Experimental Protocols

Guide 1: Implementing an Overlap-Based Undersampling Method (URNS)

This protocol is based on the URNS (Undersampling based on Recursive Neighbourhood Search) method, designed to improve the visibility of minority class samples in the overlapping region [5].

Objective: To reduce classification bias by removing negative class (majority) instances from the region where the two classes overlap.

Materials:

- Normalized training dataset (e.g., using Z-scores).

- A computing environment with a programming language like Python.

Methodology:

1. Data Preprocessing: Normalize the training data (e.g., using Z-scores) to ensure the distance-based neighbor search is not biased by different feature scales [5].

2. First-Round Neighbour Search:

- For every instance in the minority class (the "query"), find its k nearest neighbours.

- Identify any majority class instances that are common neighbours to at least two different minority class queries. Mark these as "overlapped instances."

3. Second-Round (Recursive) Neighbour Search:

- Use the common majority class neighbours identified in Step 2 as the new queries.

- Again, find their k nearest neighbours and identify any majority class instances that are common to at least two of these new queries.

4. Remove Instances: Combine the list of overlapped instances from both rounds and remove them from the training set.

5. Train Model: Use the cleaned, less overlapped training set to train your chosen classifier.

Technical Notes:

- The parameter k (number of neighbours) can be set adaptively, often related to the square root of the dataset size [5].

- This method is sensitive to noise, which is why initial normalization and the requirement for an instance to be a common neighbour of multiple queries are important.

The following workflow diagram illustrates the URNS process:

Guide 2: Applying a Two-Stage Divide-and-Ensemble Framework

This protocol is inspired by a method developed for complex sperm morphology classification, which is effective for managing high inter-class similarity and imbalance [25].

Objective: To reduce misclassification between visually similar categories by breaking down a complex, multi-class problem into simpler, hierarchical decisions.

Materials:

- A dataset with labeled classes that can be logically grouped into broader categories (e.g., head/neck abnormalities vs. tail abnormalities/normal).

- Multiple deep learning or machine learning models to form an ensemble.

Methodology:

- Stage 1 - The Splitter Model:

- Train a dedicated classifier ("splitter") to categorize input images into two or more broad, high-level categories. For example, in sperm morphology, this could be "Head and Neck Abnormalities" vs. "Normal and Tail Abnormalities" [25].

- Stage 2 - Category-Specific Ensemble Models:

- For each high-level category defined in Stage 1, train a separate, specialized ensemble model. This ensemble is responsible for performing the fine-grained classification within its assigned category.

- The ensemble can integrate diverse architectures (e.g., CNNs, Vision Transformers) to leverage complementary strengths.

- Structured Voting:

- Instead of simple majority voting, implement a multi-stage voting strategy where models in the ensemble cast primary and secondary votes. This enhances decision reliability and mitigates the influence of any single dominant class [25].

Technical Notes:

- This framework delivers higher accuracy by constraining the decision space for each specialist model, forcing it to become an expert in distinguishing between a smaller set of similar classes.

- It has been shown to outperform conventional single-model classifiers and unstructured ensembles [25].
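The routing logic of the two-stage framework can be sketched with stand-in models. Everything below is hypothetical: the splitter rule, the specialist functions, the class names, and in particular the tie-breaking rule, which is only one plausible reading of "primary and secondary votes" and not necessarily the scheme used in [25].

```python
from collections import Counter

def structured_vote(ranked_predictions):
    """Each model supplies (primary, secondary) labels.

    Assumed rule: the label with the most primary votes wins; if several
    labels tie, secondary votes for the tied labels break the tie.
    """
    primary = Counter(p for p, _ in ranked_predictions)
    top_count = max(primary.values())
    leaders = [label for label, n in primary.items() if n == top_count]
    if len(leaders) == 1:
        return leaders[0]
    secondary = Counter(s for _, s in ranked_predictions if s in leaders)
    return max(leaders, key=lambda label: secondary[label])

def two_stage_predict(sample, splitter, specialists):
    """Stage 1 picks a broad category; Stage 2 lets that category's ensemble vote."""
    category = splitter(sample)
    votes = [model(sample) for model in specialists[category]]
    return category, structured_vote(votes)

# Stand-in models (hypothetical); real ones would be trained networks.
def splitter(sample):
    return "head_neck" if sample["head_score"] > 0.5 else "normal_tail"

specialists = {
    "head_neck": [
        lambda s: ("tapered", "thin"),
        lambda s: ("thin", "tapered"),
        lambda s: ("microcephalous", "thin"),
    ],
    "normal_tail": [
        lambda s: ("normal", "coiled_tail"),
        lambda s: ("normal", "short_tail"),
        lambda s: ("coiled_tail", "normal"),
    ],
}

result = two_stage_predict({"head_score": 0.9}, splitter, specialists)
print(result)
```

In the head/neck example all three primary votes differ, so the secondary votes decide the outcome; the specialists for the other category are never consulted, which is what constrains each ensemble's decision space.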

The logical flow of the two-stage framework is shown below:

Performance Data of Resampling Techniques

The following table summarizes quantitative results from various studies that tackled class imbalance and overlap, providing a comparison point for your own experiments.

| Resampling Method | Classifier Used | Dataset / Application | Key Performance Results | Source |

|---|---|---|---|---|

| URNS (Overlap-Based Undersampling) | Not Specified | Medical Diagnosis (Imbalanced) | Achieved high sensitivity (positive class accuracy) and good trade-offs between sensitivity and specificity. | [5] |

| DBMIST-US (DBSCAN + MST) | k-NN, J48 Decision Tree, SVM | Synthetic & Real-Life Imbalanced Datasets | Significantly outperformed 12 state-of-the-art undersampling methods across multiple classifiers and datasets. | [24] |

| ADASYN & SMOTE with Stacked Ensemble | Stacked Ensemble | PCOS Classification | Achieved 97% accuracy by addressing data imbalance and integrating feature selection (BORUTA). | [20] |

| Two-Stage Ensemble Framework | Custom Ensemble (NFNet, ViT) | Sperm Morphology (18-class) | Achieved ~70% accuracy, a significant 4.38% improvement over prior approaches, and reduced misclassification. | [25] |

The Scientist's Toolkit: Key Research Reagents & Solutions

This table lists essential computational and methodological "reagents" for designing experiments to mitigate class overlap.

| Item Name | Type | Function / Explanation |

|---|---|---|

| ADASYN | Algorithm | An oversampling algorithm that generates synthetic data for the minority class, with a focus on creating instances for difficult-to-learn examples, thereby reducing bias. [20] |

| BORUTA | Algorithm | A feature selection method that identifies all features which are statistically relevant for classification, helping to reduce dimensionality and noise that can exacerbate overlap. [20] |

| DBSCAN | Algorithm | A clustering algorithm used as a noise filter to identify and remove noisy majority class instances, cleaning the decision boundary between classes. [24] |

| k-Nearest Neighbours (kNN) | Algorithm | A foundational algorithm used in many resampling methods to analyze the local neighborhood of instances for identifying overlap, noise, and borderline points. [5] [24] |

| Minimum Spanning Tree (MST) | Algorithm | Used in undersampling to discover the core structure of the majority class, helping to remove redundant negative instances while preserving the underlying data topology. [24] |

| Structured Multi-Stage Voting | Method | An ensemble decision-making strategy that goes beyond majority voting, allowing models to cast primary and secondary votes to increase reliability for imbalanced classes. [25] |

| Z-score Normalization | Preprocessing | Standardizes features to have a mean of zero and a standard deviation of one, which is critical for any distance-based resampling method (e.g., URNS, kNN) to function correctly. [5] |

Methodological Arsenal: Adaptive Resampling and Ensemble Learning for Fertility Data

Frequently Asked Questions (FAQs)

Q1: Why do standard resampling methods like Random Oversampling often fail on my fertility dataset with significant class overlap? Standard Random Oversampling duplicates existing minority class instances, which introduces no new information and can lead to overfitting, especially in regions where majority and minority classes overlap [26]. In complex fertility datasets, where minority classes (e.g., specific fertility outcomes) are not only rare but also intermingled with majority classes, this simple duplication causes the classifier to learn noise rather than meaningful decision boundaries [27] [26].

Q2: What does it mean to "tailor resampling to problematic data regions," and how is it detected? Tailoring resampling involves identifying specific areas within your dataset where classification is most difficult, such as regions with high class overlap, small disjuncts (small, isolated sub-concepts within a class), or noise [27]. The core idea is to focus resampling efforts on these regions to make the classifier more robust. Detection is achieved through data complexity analysis, which quantifies these factors. For example, you can identify borderline majority samples that are nearest to minority samples or calculate the ratio of majority-to-minority samples in a local neighborhood to find areas of overlap [28] [27].

Q3: My model is biased towards the majority class even after applying SMOTE. What adaptive oversampling techniques can help? Standard SMOTE generates synthetic samples linearly, which can blur boundaries and create unrealistic samples in overlapping regions [29]. Adaptive techniques like ADASYN shift the importance to difficult-to-learn minority samples by generating more synthetic data for minority examples that are harder to classify, based on the density of majority class neighbors [30] [31]. Newer methods like Feature Information Aggregation Oversampling (FIAO) generate new minority samples by considering feature density, standard deviation, and feature importance per feature dimension, creating more meaningful synthetic data conducive to classification [32]. Another advanced method, Subspace Optimization with Bayesian Reinforcement (SOBER), generates synthetic samples within optimized feature subspaces that best distinguish the classes, avoiding the "curse of dimensionality" that plagues neighborhood-based methods in high-dimensional spaces [28].
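The linear generation step in SMOTE places each synthetic point on the line segment between a minority sample and one of its minority-class nearest neighbors. The sketch below shows only that interpolation step in plain Python; it is a teaching aid under simplified assumptions, not a replacement for a library implementation, and the sample values are invented.

```python
import math
import random

def smote_sample(minority, k=3, n_new=5, seed=0):
    """Generate n_new points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest minority neighbours of the base point (excluding itself).
        neighbours = sorted((p for j, p in enumerate(minority) if j != i),
                            key=lambda p: math.dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Invented minority samples with two features.
minority = [[1.0, 2.0], [1.5, 2.5], [2.0, 2.0], [1.2, 3.0]]
synthetic = smote_sample(minority, k=2, n_new=6)
for point in synthetic:
    print([round(v, 2) for v in point])
```

Because the interpolation ignores the majority class entirely, a segment that crosses an overlapping region will place synthetic minority points among majority samples, which is the boundary-blurring effect described above.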

Q4: When should I use undersampling instead of oversampling on my fertility data? Undersampling is a compelling choice when your fertility dataset is very large and the majority class contains many redundant or safe samples far from the decision boundary [26]. In such cases, you can use Tomek Links, which are pairs of opposite-class instances that are each other's nearest neighbor, and remove the majority class member of each pair, effectively cleaning the overlap region [30] [31]. However, undersampling risks losing potentially important information if not applied selectively. It is often best used in a hybrid approach, combined with oversampling, to simultaneously clean the majority class and reinforce the minority class [30] [27].
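A Tomek link is a pair of samples from different classes that are each other's nearest neighbor. The following brute-force sketch finds such pairs and drops the majority member of each; the helper names and toy data are illustrative, and a library implementation would be preferable for real datasets.

```python
import math

def nearest(X, i):
    """Index of the sample closest to X[i], excluding i itself."""
    return min((j for j in range(len(X)) if j != i),
               key=lambda j: math.dist(X[i], X[j]))

def tomek_links(X, y):
    """Pairs (i, j), i < j, of opposite-class mutual nearest neighbours."""
    links = []
    for i in range(len(X)):
        j = nearest(X, i)
        if i < j and y[i] != y[j] and nearest(X, j) == i:
            links.append((i, j))
    return links

def remove_majority_in_links(X, y, majority=0):
    """Drop the majority-class member of every Tomek link."""
    drop = {idx for pair in tomek_links(X, y) for idx in pair if y[idx] == majority}
    keep = [i for i in range(len(X)) if i not in drop]
    return [X[i] for i in keep], [y[i] for i in keep]

# Toy data: sample 3 (majority) sits right next to sample 4 (minority).
X = [[0, 0], [0, 1], [1, 0], [3, 3], [3.2, 3.1], [6, 6], [6, 6.5]]
y = [0, 0, 0, 0, 1, 1, 1]

links = tomek_links(X, y)
X_clean, y_clean = remove_majority_in_links(X, y)
print(links, y_clean)
```

Only the single majority sample that borders the minority class is removed, which illustrates why Tomek-link cleaning is conservative and is often paired with oversampling.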

Q5: How do I evaluate the success of an adaptive resampling strategy for a fertility classification problem? In imbalanced domains like fertility research, standard metrics like accuracy are misleading [9] [30]. You should use metrics that focus on the minority class, such as Recall (to ensure you capture as many true positives as possible), Precision, and the F1-score which balances the two [33]. The Geometric Mean (G-mean) of sensitivity and specificity is also widely recommended, as it provides a balanced view of the performance on both classes [27]. Ultimately, success should be measured by a significant improvement in these metrics on a hold-out test set with the original, unaltered class distribution, compared to a baseline model without adaptive resampling [26].

Detailed Experimental Protocols for Adaptive Resampling

Protocol 1: Implementing the FIAO (Feature Information Aggregation Oversampling) Method

FIAO generates high-quality synthetic minority samples by leveraging feature-level information instead of relying on Euclidean distance in the full feature space [32].

Methodology:

- Input: Original imbalanced fertility dataset.

- Feature Interval Partitioning: For each feature, calculate the size of feature intervals using the feature's standard deviation and its importance (e.g., derived from a Random Forest classifier).

- Interval Filtering: For each partitioned interval, calculate the feature density for both majority and minority classes. Select intervals where the minority class density is high relative to the majority class, indicating a potentially safe region for generation.

- Feature Value Generation: For each selected interval, generate new feature values by sampling from a Gaussian distribution defined by the characteristics of the minority class samples in that interval.

- Sample Synthesis: Randomly combine the newly generated feature values from all dimensions to form a complete new synthetic minority class sample.

- Output: A balanced dataset.

Table 1: Key Parameters for FIAO Implementation

| Parameter | Description | Suggested Consideration for Fertility Data |

|---|---|---|

| Feature Importance Metric | Determines the weight of each feature in interval sizing. | Use a tree-based classifier (e.g., Random Forest) to obtain robust importance scores from noisy biological data. |

| Interval Sizing Rule | How to calculate the partition size using std and importance. | A weighted formula (e.g., size = α * std + β * importance) can be tuned via cross-validation. |

| Density Threshold | The minimum minority-to-majority density ratio for an interval to be eligible. | Set a conservative threshold (e.g., >0.8) initially to avoid generating noise in overlapping regions. |
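The outline above can be turned into a rough, simplified sketch. This is not the published FIAO algorithm [32]: the interval width uses the illustrative weighted formula from the table, the density ratio and fallback rule are assumptions, the importance scores are supplied by hand, and the function names and data are hypothetical.

```python
import math
import random
from statistics import mean, pstdev

def eligible_intervals(min_vals, maj_vals, width, threshold):
    """Group minority values into intervals; keep those with a high density ratio."""
    lo = min(min_vals + maj_vals)
    bin_of = lambda v: int((v - lo) // width)
    bins = {}
    for v in min_vals:
        bins.setdefault(bin_of(v), []).append(v)
    selected = []
    for b, values in bins.items():
        min_density = len(values) / len(min_vals)
        maj_density = sum(1 for v in maj_vals if bin_of(v) == b) / len(maj_vals)
        ratio = math.inf if maj_density == 0 else min_density / maj_density
        if ratio >= threshold:
            selected.append(values)
    return selected

def fiao_like_oversample(X_min, X_maj, importance, n_new,
                         alpha=1.0, beta=1.0, threshold=0.8, seed=0):
    rng = random.Random(seed)
    per_feature = []
    for f, imp in enumerate(importance):
        min_vals = [row[f] for row in X_min]
        maj_vals = [row[f] for row in X_maj]
        # Assumed sizing rule: width = alpha * std + beta * importance.
        width = alpha * pstdev(min_vals + maj_vals) + beta * imp
        intervals = eligible_intervals(min_vals, maj_vals, width, threshold)
        # Assumed fallback: use all minority values if no interval qualifies.
        per_feature.append(intervals or [min_vals])
    synthetic = []
    for _ in range(n_new):
        row = []
        for intervals in per_feature:
            values = rng.choice(intervals)
            # Gaussian defined by the minority values in the chosen interval.
            row.append(rng.gauss(mean(values), pstdev(values)))
        synthetic.append(row)
    return synthetic

# Invented data: two features, four minority and six majority samples.
X_min = [[1.0, 10.0], [1.2, 11.0], [0.9, 10.5], [1.1, 12.0]]
X_maj = [[3.0, 10.2], [3.5, 11.5], [2.8, 10.8], [3.2, 11.1], [1.05, 10.9], [3.1, 11.7]]
synthetic = fiao_like_oversample(X_min, X_maj, importance=[0.7, 0.3], n_new=5)
print(len(synthetic), len(synthetic[0]))
```

Because each feature is generated independently and then combined, correlations between features are not preserved in this sketch; whether that matters for a given fertility dataset should be checked before use.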

Protocol 2: Implementing the SOBER (Subspace Optimization with Bayesian Reinforcement) Method

SOBER addresses the curse of dimensionality by generating samples within low-dimensional feature subspaces that are optimized for class discrimination [28].

Methodology:

- Subspace Sampling: Randomly select a low-cardinality (2-3 features) subspace from the entire feature space.

- Optimization & Evaluation: In this subspace, generate a candidate synthetic minority sample. Evaluate this sample using a class-sensitive objective function that captures the density and distribution of both classes to ensure effective discrimination.

- Bayesian Reinforcement: The objective value obtained from the evaluation step is used to update a Dirichlet distribution. This distribution reinforces the probability of selecting more effective subspaces for future sample generation.

- Iteration: Repeat steps 1-3 until the desired number of minority samples is generated.

- Output: A balanced dataset.

Table 2: Key Parameters for SOBER Implementation

| Parameter | Description | Suggested Consideration for Fertility Data |

|---|---|---|

| Subspace Cardinality | Number of features in each selected subspace. | Keep it low (2 or 3) to manage computational cost and avoid the curse of dimensionality. |

| Objective Function | Function to optimize for effective sample generation. | A function that rewards high local minority density and low local majority density in the subspace. |

| Dirichlet Prior | The initial parameters for the Bayesian reinforcement. | Start with a uniform prior to allow all subspaces to be explored initially. |
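The interplay between subspace selection and the Dirichlet update can be illustrated with a compact sketch. It follows only the outline given here and is not the published SOBER algorithm [28]: the reward function, the interpolation-based candidate generation, and all names and data are assumptions made for illustration.

```python
import itertools
import math
import random

def dirichlet(rng, alphas):
    """Draw one probability vector from a Dirichlet via gamma variates."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]

def subspace_reward(candidate, X_min, X_maj, dims):
    """Assumed objective in [0, 1]: high when the candidate is nearer to
    minority than to majority samples within the chosen subspace."""
    proj = lambda row: [row[d] for d in dims]
    d_min = min(math.dist(proj(candidate), proj(r)) for r in X_min)
    d_maj = min(math.dist(proj(candidate), proj(r)) for r in X_maj)
    return d_maj / (d_min + d_maj + 1e-12)

def sober_like_oversample(X_min, X_maj, n_new, cardinality=2, seed=0):
    rng = random.Random(seed)
    n_features = len(X_min[0])
    subspaces = list(itertools.combinations(range(n_features), cardinality))
    alphas = [1.0] * len(subspaces)  # uniform Dirichlet prior
    synthetic = []
    for _ in range(n_new):
        probs = dirichlet(rng, alphas)
        s = rng.choices(range(len(subspaces)), weights=probs)[0]
        dims = subspaces[s]
        # Candidate: interpolate two minority samples inside the subspace only.
        base, other = rng.sample(X_min, 2)
        gap = rng.random()
        candidate = list(base)
        for d in dims:
            candidate[d] = base[d] + gap * (other[d] - base[d])
        # Reinforce the chosen subspace in proportion to the reward.
        alphas[s] += subspace_reward(candidate, X_min, X_maj, dims)
        synthetic.append(candidate)
    return synthetic, dict(zip(subspaces, alphas))

# Invented data with three features.
X_min = [[1.0, 5.0, 0.2], [1.2, 5.5, 0.3], [0.9, 4.8, 0.1], [1.1, 5.2, 0.25]]
X_maj = [[3.0, 5.1, 0.2], [3.2, 5.4, 0.3], [2.9, 4.9, 0.15], [3.1, 5.0, 0.22]]
synthetic, learned_alphas = sober_like_oversample(X_min, X_maj, n_new=20)
print(len(synthetic), learned_alphas)
```

Subspaces that repeatedly yield well-separated candidates accumulate larger Dirichlet parameters and are therefore drawn more often in later iterations.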

Workflow Visualization

Diagram: Adaptive Resampling for Fertility Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Adaptive Resampling Experiments

| Tool / Reagent | Function in Experiment | Usage Notes |

|---|---|---|

| Imbalanced-Learn (imblearn) | Python library providing implementations of SMOTE, ADASYN, Tomek Links, and other sampling methods. | The primary library for implementing standard and hybrid resampling algorithms. Compatible with scikit-learn [30]. |

| SMOTE-Variants | Python package offering a vast collection (85+) of oversampling techniques. | Useful for benchmarking and finding the optimal oversampling method for a specific fertility dataset [31]. |

| Scikit-learn | Provides machine learning models (RF, SVM), feature importance calculators, and evaluation metrics. | Essential for the entire modeling pipeline, from data preprocessing to model evaluation [33]. |

| Complexity Metrics | Algorithms to quantify data difficulty factors like class overlap, small disjuncts, and noise. | Used in the initial analysis phase to objectively identify and quantify problematic regions in the fertility dataset [27]. |

| Custom Scripts for FIAO/SOBER | Implementation of the latest adaptive resampling methods from recent literature. | Required as these advanced methods may not yet be available in standard libraries. Crucial for pushing the state-of-the-art in fertility data analysis [32] [28]. |

Frequently Asked Questions

1. What is the primary problem that oversampling techniques solve in fertility datasets? Oversampling techniques address the issue of class imbalance, where one class (e.g., 'altered fertility' or 'treatment failure') has significantly fewer instances than another (e.g., 'normal fertility'). This imbalance can cause machine learning models to become biased toward the majority class, leading to poor predictive accuracy for the critical minority class that is often of primary research interest [34] [35] [26].

2. My fertility dataset is very small. Which oversampling method should I start with? For small fertility datasets, Random Oversampling is a straightforward initial approach because it effectively increases the number of minority class samples without requiring a large amount of data [26]. However, be cautious of potential overfitting. If your dataset has a more complex structure, SMOTE or ADASYN can generate synthetic samples that may provide better generalization [34] [35].

3. How do I choose between SMOTE and ADASYN for my project? The choice depends on the characteristics of your minority class. Use SMOTE to generate a uniform number of synthetic samples across all minority class instances. Choose ADASYN if your dataset has complex, hard-to-learn sub-regions within the minority class, as it adaptively generates more samples for minority examples that are harder to classify [35].
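The contrast between the two allocation strategies can be sketched in plain Python. This is a minimal illustration, not the imbalanced-learn implementation: the function names, the toy coordinates, and the choice of k = 3 with Euclidean distance are all assumptions made for the example.

```python
import math

def knn_indices(points, i, k):
    """Indices of the k nearest neighbours of points[i] (Euclidean), excluding i itself."""
    dists = sorted((math.dist(points[i], p), j) for j, p in enumerate(points) if j != i)
    return [j for _, j in dists[:k]]

def allocate_synthetic(X, y, minority_label, n_new, k=3, adaptive=True):
    """Return {minority index: number of synthetic samples to seed from it}."""
    minority = [i for i, label in enumerate(y) if label == minority_label]
    if adaptive:
        # ADASYN-style weight: share of majority-class points among the k neighbours.
        weights = [
            sum(y[j] != minority_label for j in knn_indices(X, i, k)) / k
            for i in minority
        ]
    else:
        # SMOTE-style: every minority point seeds the same number of samples.
        weights = [1.0] * len(minority)
    total = sum(weights)
    if total == 0:  # no minority point has majority neighbours: fall back to uniform
        weights, total = [1.0] * len(minority), float(len(minority))
    # Rounding means the counts may not sum exactly to n_new in general.
    return {i: round(n_new * w / total) for i, w in zip(minority, weights)}

# Hypothetical toy data: four minority points in a tight cluster and one
# minority point (index 4) surrounded by majority points.
X = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (1.0, 1.0),
     (1.1, 1.0), (1.0, 1.1), (0.9, 1.0), (1.0, 0.9), (2.0, 2.0),
     (2.1, 2.0), (2.0, 2.1), (1.9, 2.0)]
y = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]

uniform = allocate_synthetic(X, y, minority_label=1, n_new=10, adaptive=False)
adaptive = allocate_synthetic(X, y, minority_label=1, n_new=10, adaptive=True)
```

On this toy data the uniform allocation gives two samples per minority point, while the adaptive allocation sends all ten to the point sitting inside the majority region. In an overlapping fertility dataset that concentration can help with hard cases but can also amplify noisy borderline records.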

4. I've applied oversampling, but my model still performs poorly on the minority class. What should I check? First, verify that you applied oversampling only to the training set and not the validation or test sets. Second, consider combining oversampling with feature selection techniques to ensure your model focuses on the most predictive variables [23] [34]. Third, explore using ensemble methods or cost-sensitive learning in conjunction with oversampling [36].

5. Are there specific performance metrics I should use when evaluating models on oversampled fertility data? Yes, accuracy alone can be misleading. Prioritize metrics that are robust to class imbalance, such as:

- Sensitivity (Recall): The model's ability to correctly identify positive cases (e.g., infertile couples).

- Specificity: The model's ability to correctly identify negative cases.

- F1-Score: The harmonic mean of precision and recall.

- Area Under the ROC Curve (AUC): The model's overall discriminative ability [37] [34] [36].
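To see why accuracy misleads, the sketch below scores a model that always predicts the majority class on an 88/12 split, the same proportions as the male fertility dataset cited in this article. The function name and label encoding (1 = minority, positive class) are assumptions for illustration.

```python
def imbalance_metrics(y_true, y_pred, positive=1):
    """Accuracy, sensitivity, specificity, precision and F1 from raw labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    denom = precision + sensitivity
    f1 = 2 * precision * sensitivity / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }

# 88 majority cases (0) and 12 minority cases (1); the "model" predicts 0 for everyone.
y_true = [0] * 88 + [1] * 12
y_pred = [0] * 100
metrics = imbalance_metrics(y_true, y_pred)
# accuracy is 0.88, yet sensitivity and F1 are both 0.0
```

The 88% accuracy hides the fact that not a single minority case was detected, which is exactly the failure the metrics above are meant to expose.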

Troubleshooting Guides

Problem: Model Overfitting After Random Oversampling

Symptoms: The model achieves near-perfect training accuracy but performs poorly on the validation or test set, especially on the minority class.

Solutions:

- Switch to Advanced Oversampling: Replace Random Oversampling with SMOTE or ADASYN. These techniques generate synthetic, non-identical samples rather than simply duplicating existing ones, which helps the model learn generalizable patterns rather than memorizing specific data points [35] [26].

- Apply Random Oversampling with Noise: Introduce small random perturbations (noise) to the duplicated minority class samples. This technique, sometimes called a "smoothed bootstrap," creates slight variations in the data, reducing the risk of overfitting [35].

- Validate Feature Set: Use feature importance analysis (e.g., Permutation Feature Importance or SHAP) to ensure the model is relying on clinically relevant predictors and not on noise [23] [37].
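The "oversampling with noise" idea can be sketched as follows. This is a minimal plain-Python illustration under stated assumptions: the function name, the `noise_scale` parameter, and the example rows are hypothetical, and the noise is Gaussian with a standard deviation proportional to each feature's spread in the minority class. Recent versions of imbalanced-learn expose a comparable option through the `shrinkage` parameter of `RandomOverSampler`; check the documentation of your installed version before relying on it.

```python
import random

def noisy_oversample(minority_rows, n_new, noise_scale=0.05, seed=0):
    """Duplicate random minority rows and jitter each feature slightly."""
    rng = random.Random(seed)
    n_features = len(minority_rows[0])
    # Per-feature standard deviation of the minority class sets the noise size.
    stds = []
    for f in range(n_features):
        col = [row[f] for row in minority_rows]
        mean = sum(col) / len(col)
        stds.append((sum((v - mean) ** 2 for v in col) / len(col)) ** 0.5)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority_rows)
        synthetic.append(
            tuple(v + rng.gauss(0.0, noise_scale * s) for v, s in zip(base, stds))
        )
    return synthetic

# Hypothetical minority rows with two already-scaled numeric features.
minority_rows = [(0.34, 0.12), (0.36, 0.09), (0.41, 0.15)]
jittered = noisy_oversample(minority_rows, n_new=20)
```

Keep `noise_scale` small: large perturbations can push synthetic points into the majority region and worsen class overlap rather than reduce overfitting.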

Problem: Identifying the Optimal Degree of Oversampling

Symptoms: Uncertainty about how much to oversample the minority class to achieve optimal model performance without introducing artifacts.

Solutions:

- Aim for Balance: A common and effective strategy is to oversample until the minority and majority classes are perfectly balanced (a 1:1 ratio) [26].

- Follow Empirical Evidence: Research on medical data suggests that model performance stabilizes when the positive sample rate (for the minority class) reaches at least 10-15% of the dataset. Use this as a guideline, especially if a perfect 1:1 ratio is not feasible [34].

- Systematic Experimentation: Create multiple resampled datasets with varying degrees of imbalance (e.g., 1:4, 1:2, and 1:1 minority-to-majority ratios). Train your model on each and compare performance metrics like F1-Score and AUC to find the optimal balance for your specific dataset [34].
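The amount of oversampling implied by a target positive rate follows from simple arithmetic. With m minority and M majority samples, adding n synthetic minority samples gives a positive rate of (m + n) / (m + M + n); requiring this to be at least r yields n ≥ (r(m + M) − m) / (1 − r). The helper below is a small sketch of that calculation (the function name is an assumption), applied to the 88/12 split mentioned in this article.

```python
import math

def synthetic_needed(n_minority, n_majority, target_rate):
    """Smallest number of added minority samples so the minority share >= target_rate."""
    if not 0 < target_rate < 1:
        raise ValueError("target_rate must lie strictly between 0 and 1")
    total = n_minority + n_majority
    needed = (target_rate * total - n_minority) / (1 - target_rate)
    # The small epsilon guards against floating-point noise just above an integer.
    return max(0, math.ceil(needed - 1e-9))

to_15_percent = synthetic_needed(12, 88, 0.15)  # 4 samples: 16 / 104 is about 15.4%
to_balanced = synthetic_needed(12, 88, 0.50)    # 76 samples: 88 / 176 = 50%
already_met = synthetic_needed(12, 88, 0.10)    # 0 samples: 12% already exceeds 10%
```

The gap between 4 and 76 added samples shows how different the "at least 15%" guideline and the 1:1 strategy are in practice, which is why comparing several ratios empirically is worthwhile.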

Problem: Integrating Oversampling into a Complex Analysis Pipeline

Symptoms: Errors or data leakage when combining oversampling with feature selection, hyperparameter tuning, or complex models like deep learning.

Solutions:

- Correct Pipeline Order: Always perform oversampling after splitting your data into training and testing sets, and only on the training data. Applying it before the split allows information from the test set to leak into the training process, invalidating your results.

- Use a Pipeline Object: Implement your workflow using the `Pipeline` class from `imblearn`, which extends the `sklearn` pipeline to accept resampling steps (the standard `sklearn` pipeline does not support samplers). This ensures that all preprocessing steps, including oversampling, are applied only to the training folds during cross-validation and model training [35].

- Adopt a Hybrid Framework: For maximum performance, consider integrating oversampling with optimization algorithms. For instance, one study combined a neural network with an Ant Colony Optimization (ACO) algorithm for parameter tuning, achieving 99% accuracy on a fertility dataset [23]. Another successful pipeline used Particle Swarm Optimization (PSO) for feature selection alongside a deep learning model [38].
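The ordering rule (split first, then resample only the training portion) can be sketched without any library. The helper names and the toy 88/12 data are assumptions for illustration; in a real project the same guarantee is usually obtained by placing the sampler inside an imbalanced-learn pipeline.

```python
import random

def stratified_split(y, test_fraction=0.25, seed=0):
    """Return (train indices, test indices), preserving class proportions."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for label in sorted(set(y)):
        idx = [i for i, v in enumerate(y) if v == label]
        rng.shuffle(idx)
        n_test = max(1, round(len(idx) * test_fraction))
        test_idx += idx[:n_test]
        train_idx += idx[n_test:]
    return train_idx, test_idx

def random_oversample(X, y, minority_label, seed=0):
    """Duplicate random minority rows until both classes have equal size."""
    rng = random.Random(seed)
    minority = [i for i, v in enumerate(y) if v == minority_label]
    n_majority = len(y) - len(minority)
    extra = [rng.choice(minority) for _ in range(n_majority - len(minority))]
    keep = list(range(len(y))) + extra
    return [X[i] for i in keep], [y[i] for i in keep]

# Toy data: each row is unique so leakage would be easy to detect.
X = [(float(i),) for i in range(100)]
y = [0] * 88 + [1] * 12

# 1) Split first.
train_idx, test_idx = stratified_split(y)
X_train, y_train = [X[i] for i in train_idx], [y[i] for i in train_idx]
X_test, y_test = [X[i] for i in test_idx], [y[i] for i in test_idx]

# 2) Oversample the training portion only; the test set keeps its natural imbalance.
X_bal, y_bal = random_oversample(X_train, y_train, minority_label=1)
```

Because duplication happens after the split, no copy of a test row can appear in the training data, and the test set still reflects the class distribution the model will face in practice.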

The following table consolidates key quantitative results from research on oversampling and predictive modeling in reproductive health.

| Study Focus | Dataset Size & Imbalance | Key Techniques | Performance Outcomes |
|---|---|---|---|
| Male Fertility Diagnostics [23] | 100 cases (88 Normal, 12 Altered) | MLP + Ant Colony Optimization (ACO) | 99% Accuracy, 100% Sensitivity, 0.00006 sec computational time |
| Natural Conception Prediction [37] | 197 couples | XGBoost, Random Forest, Permutation Feature Importance | 62.5% Accuracy, ROC-AUC of 0.580 |
| Assisted Reproduction Data [34] | 17,860 medical records | Logistic Regression, SMOTE, ADASYN | Model performance stabilized with a >15% positive rate and >1500 sample size. SMOTE/ADASYN recommended for low positive rates. |
| IVF Outcome Prediction [38] | Not Specified | TabTransformer + Particle Swarm Optimization (PSO) | 97% Accuracy, 98.4% AUC |

Experimental Protocols for Cited Works

Protocol 1: Hybrid ML-ACO for Male Fertility [23]

- Data Acquisition: Obtain a clinically profiled male fertility dataset (e.g., the UCI Fertility Dataset).

- Preprocessing: Apply Min-Max normalization to scale all features to the [0, 1] range.

- Model Architecture: Construct a Multilayer Feedforward Neural Network (MLFFN).

- Optimization: Integrate the Ant Colony Optimization (ACO) algorithm to adaptively tune the neural network's parameters, mimicking ant foraging behavior for efficient search.

- Interpretability: Perform a feature-importance analysis (e.g., Proximity Search Mechanism) to identify key contributory factors like sedentary habits.

- Evaluation: Evaluate the model on a held-out test set using accuracy, sensitivity, and computational time.
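The Min-Max step in this protocol can be sketched as below. The function names and sample values are assumptions; the one design point worth stressing is that the minimum and maximum are learned from the training rows only and then reused for held-out rows, so the test set does not influence the scaling.

```python
def min_max_fit(rows):
    """Learn per-feature minimum and maximum from the training rows."""
    cols = list(zip(*rows))
    return [min(c) for c in cols], [max(c) for c in cols]

def min_max_transform(rows, mins, maxs):
    """Scale each feature to [0, 1] using previously learned bounds."""
    return [
        tuple((v - lo) / (hi - lo) if hi > lo else 0.0  # constant column -> 0.0
              for v, lo, hi in zip(row, mins, maxs))
        for row in rows
    ]

# Hypothetical training rows: (age in years, a 0-1 lifestyle score).
train_rows = [(18.0, 0.0), (27.0, 1.0), (36.0, 0.5)]
mins, maxs = min_max_fit(train_rows)
scaled_train = min_max_transform(train_rows, mins, maxs)
# scaled_train == [(0.0, 0.0), (0.5, 1.0), (1.0, 0.5)]

# Held-out rows reuse the training bounds and may fall slightly outside [0, 1].
scaled_test = min_max_transform([(40.0, 0.25)], mins, maxs)
```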

Protocol 2: Handling Highly Imbalanced Medical Data [34]

- Data Preparation: Retrospectively collect medical records (e.g., from an Assisted Reproductive Technology center). Define the outcome variable (e.g., cumulative live birth).

- Create Imbalanced Subsets: Systematically construct datasets with varying degrees of imbalance (e.g., positive rates from 1% to 15%) and sample sizes.

- Apply Resampling: On the training data only, apply different techniques:

  - Oversampling: SMOTE, ADASYN.

  - Undersampling: One-Sided Selection (OSS), Condensed Nearest Neighbor (CNN).

- Model Training and Evaluation: Train a logistic regression model on each resampled dataset. Evaluate performance using AUC, G-mean, F1-Score, and Recall to determine the most effective method for the given imbalance level.
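The "Create Imbalanced Subsets" step of this protocol can be sketched as a simple subsampling routine. The function name, the subset size of 1,000, and the synthetic label vector are assumptions for illustration and are not taken from the cited study.

```python
import random

def subset_with_positive_rate(y, positive_rate, n_total, positive=1, seed=0):
    """Return indices of a random subset of size n_total with the requested positive rate."""
    rng = random.Random(seed)
    pos = [i for i, v in enumerate(y) if v == positive]
    neg = [i for i, v in enumerate(y) if v != positive]
    n_pos = round(positive_rate * n_total)
    n_neg = n_total - n_pos
    if n_pos > len(pos) or n_neg > len(neg):
        raise ValueError("not enough samples to build the requested subset")
    return rng.sample(pos, n_pos) + rng.sample(neg, n_neg)

# Hypothetical label vector: 300 positive and 1,700 negative records.
y = [1] * 300 + [0] * 1700

subsets = {
    rate: subset_with_positive_rate(y, rate, n_total=1000)
    for rate in (0.01, 0.05, 0.10, 0.15)
}
positives_per_subset = {
    rate: sum(y[i] for i in idx) for rate, idx in subsets.items()
}
```

Each subset would then be split, resampled on its training portion, and scored with the same model, so that differences in AUC, G-mean, F1-Score, and Recall can be attributed to the imbalance level and the resampling method.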

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| Structured Data Collection Form [37] | A standardized tool to capture sociodemographic, lifestyle, and clinical history from both partners, ensuring consistent and comprehensive data. |
| Ant Colony Optimization (ACO) [23] | A nature-inspired optimization algorithm used to fine-tune machine learning model parameters, enhancing convergence and predictive accuracy. |
| Particle Swarm Optimization (PSO) [38] | An optimization technique for selecting the most relevant features from a high-dimensional dataset, improving model performance and interpretability. |
| Synthetic Minority Oversampling (SMOTE) [34] [35] | An algorithm that generates synthetic samples for the minority class by interpolating between existing instances, mitigating overfitting from simple duplication. |
| Permutation Feature Importance [37] | A model-agnostic method for evaluating the importance of each predictor variable by measuring the drop in model performance when a feature's values are randomly shuffled. |
| SHAP (SHapley Additive exPlanations) [38] | A unified approach to explain the output of any machine learning model, providing clarity on how each feature contributes to individual predictions. |
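The interpolation that the SMOTE entry refers to can be sketched in a few lines. This is a simplified illustration rather than a reference implementation: the function name, k = 2, and the example rows are assumptions, and features are assumed to be numeric and already scaled.

```python
import math
import random

def smote_sample(minority_rows, k=3, n_new=5, seed=0):
    """Create n_new synthetic rows on segments between minority rows and their neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority_rows)
        # k nearest minority neighbours of the base point (excluding the point itself).
        neighbours = sorted(
            (r for r in minority_rows if r is not base),
            key=lambda r: math.dist(base, r),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to neighbour
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic

# Hypothetical scaled minority rows with two features each.
minority_rows = [(0.10, 0.20), (0.15, 0.25), (0.30, 0.10), (0.25, 0.40)]
new_rows = smote_sample(minority_rows, k=2, n_new=5)
```

Every synthetic row lies on a straight line between two real minority rows. When minority and majority regions overlap, that line can cross majority territory, which is the motivation for the overlap-aware variants discussed elsewhere in this article.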

Workflow and Technique Selection Diagrams

[Diagram: Oversampling in ML Workflow]

[Diagram: Selecting an Oversampling Technique]

Frequently Asked Questions

Q1: What is the primary goal of strategic undersampling in fertility dataset research? The primary goal is to mitigate class imbalance without losing critical information. Unlike random undersampling, which removes majority class samples arbitrarily, strategic approaches aim to selectively remove redundant majority samples and de-cluster areas of high class overlap near the decision boundary. This process enhances the model's ability to learn from the minority class (e.g., rare fertility outcomes) and improves the generalizability of predictive models [18] [19].

Q2: My model is biased towards the majority class (e.g., 'No Live Birth') despite using undersampling. What strategic methods can I use? This common issue, which often stems from small disjuncts and class overlap, suggests that your current sampling method may be removing informative samples. We recommend moving beyond random undersampling to one of these more selective techniques [19]:

- One-Sided Selection (OSS): A hybrid method that combines Tomek Links for cleaning the decision boundary and Condensed Nearest Neighbor (CNN) for removing redundant majority samples far from the boundary [18].

- Condensed Nearest Neighbor (CNN) Undersampling: This method aims to find a consistent subset of the majority class, retaining only the samples that are necessary for defining the class boundary, thereby reducing dataset size and complexity [18].

Q3: How do I choose between undersampling and oversampling for my fertility dataset? The choice depends on your dataset size and the nature of the imbalance [18] [19].

- Use Undersampling when your dataset is sufficiently large, and you can afford to lose some samples from the majority class without harming the model's representativeness. It is computationally efficient.

- Use Oversampling (e.g., SMOTE, ADASYN) when your dataset is small, and preserving all majority class samples is crucial. Oversampling is often preferred for datasets with a very small number of minority-class samples [18]. A hybrid approach (both undersampling and oversampling) can also be highly effective.

Q4: Can you provide a protocol for implementing the One-Sided Selection (OSS) technique? Yes, the following protocol outlines the steps for implementing OSS on a fertility dataset.

- Experimental Protocol: Implementing One-Sided Selection

- Step 1 - Data Preprocessing: Handle missing values and encode categorical variables. Standardize or normalize numerical features (e.g., hormone levels, age) to ensure all features contribute equally to the distance calculations [18] [20].

- Step 2 - Initial Subset Selection (CNN): Start with all minority class samples and a single random sample from the majority class. Use a 1-Nearest Neighbor rule to iteratively add majority class samples that are misclassified by the current subset. This retains the "hard" majority samples that are critical for defining the boundary [18].

- Step 3 - Boundary Cleaning (Tomek Links): Identify Tomek Links in the subset obtained from Step 2. A Tomek Link exists between two samples of different classes if they are each other's nearest neighbors. Remove the majority class instance from each pair. This helps in cleaning the overlap between classes and clarifying the decision boundary [18].

- Step 4 - Model Training and Validation: Train your classifier (e.g., Random Forest, XGBoost) on the processed dataset. Use stratified cross-validation and prioritize metrics like G-mean and F1-score over plain accuracy, as they are more informative for imbalanced classes [18] [19].
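Steps 2 and 3 of this protocol can be sketched in plain Python as follows. It is a minimal illustration under several assumptions: a binary problem, Euclidean distance on already-scaled features, a condensing loop that repeats until no more majority samples are added, and hypothetical function names and toy data. imbalanced-learn ships a `OneSidedSelection` class in `imblearn.under_sampling` that is the better choice for real work; this sketch only shows the mechanics.

```python
import math
import random

def nearest_index(points, query, exclude=None):
    """Position in `points` of the point closest to `query`, skipping `exclude`."""
    best, best_d = None, float("inf")
    for j, p in enumerate(points):
        if j == exclude:
            continue
        d = math.dist(query, p)
        if d < best_d:
            best, best_d = j, d
    return best

def one_sided_selection(X, y, minority_label, seed=0):
    """Return sorted indices of the samples kept after CNN condensing and Tomek-link cleaning."""
    rng = random.Random(seed)
    minority = [i for i, v in enumerate(y) if v == minority_label]
    majority = [i for i, v in enumerate(y) if v != minority_label]

    # Step 2 (CNN): start from all minority samples plus one random majority sample,
    # then add every majority sample that the current store misclassifies with 1-NN.
    store = minority + [rng.choice(majority)]
    changed = True
    while changed:
        changed = False
        for i in majority:
            if i in store:
                continue
            nn = store[nearest_index([X[s] for s in store], X[i])]
            if y[nn] != y[i]:
                store.append(i)
                changed = True

    # Step 3 (Tomek links): a majority sample and a minority sample that are each
    # other's nearest neighbour form a link; drop the majority member.
    pts = [X[s] for s in store]
    drop = set()
    for a, s in enumerate(store):
        if y[s] == minority_label:
            continue
        b = nearest_index(pts, pts[a], exclude=a)
        if y[store[b]] == minority_label and nearest_index(pts, pts[b], exclude=b) == a:
            drop.add(s)
    return sorted(s for s in store if s not in drop)

# Toy single-feature data: index 4 is a majority sample sitting inside the minority region.
X = [(0.0,), (0.5,), (1.0,), (2.1,), (2.0,), (3.0,), (3.5,), (4.0,), (6.0,), (6.5,)]
y = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
kept = one_sided_selection(X, y, minority_label=1)
```

On this toy data all four minority samples survive, the overlapping majority sample at index 4 is removed as part of a Tomek link, and only one or two boundary majority samples remain, depending on which majority sample seeds the store.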

Q5: What are the key performance metrics to evaluate the success of these approaches on a fertility prediction task? After applying strategic undersampling, do not rely solely on accuracy. A comprehensive evaluation should include the following metrics, which are particularly meaningful for imbalanced datasets [18]:

- G-mean: The geometric mean of sensitivity and specificity. It ensures that the model performs well on both classes.

- F1-Score: The harmonic mean of precision and recall. It is a robust metric for the minority class performance.

- AUC (Area Under the ROC Curve): Measures the model's ability to distinguish between classes across all classification thresholds.

- Sensitivity/Recall: The true positive rate, crucial for correctly identifying rare positive outcomes like live birth or PCOS.

- Precision: The accuracy of positive predictions.
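G-mean and AUC are the two metrics in this list that are least often computed by hand, so a small sketch may help. The function names, the six example records, and the 0.5 decision threshold are assumptions for illustration. AUC is computed here through its rank interpretation: the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case.

```python
import math

def g_mean(y_true, y_pred, positive=1):
    """Geometric mean of sensitivity and specificity."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return math.sqrt(sensitivity * specificity)

def roc_auc(y_true, scores, positive=1):
    """Share of positive/negative pairs ranked correctly (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical predicted probabilities for two positive and four negative cases.
y_true = [1, 1, 0, 0, 0, 0]
scores = [0.9, 0.4, 0.5, 0.3, 0.2, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

gm = g_mean(y_true, y_pred)    # sqrt(0.5 * 0.75), about 0.612
auc = roc_auc(y_true, scores)  # 7 of 8 pairs ranked correctly = 0.875
```

Because G-mean multiplies the two class-wise rates, it collapses to zero whenever one class is entirely missed, which makes it a strict check on models trained after undersampling.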

The table below summarizes a comparative analysis of different sampling methods on a fertility-related dataset.

Table 1: Performance Comparison of Sampling Techniques on a PCOS Classification Task

| Sampling Method | Accuracy (%) | F1-Score | G-Mean | AUC |

|---|---|---|---|---|

| No Sampling (Baseline) | 90 | 0.85 | 0.84 | 0.93 |

| Random Undersampling | 88 | 0.87 | 0.86 | 0.94 |

| SMOTE (Oversampling) | 94 | 0.92 | 0.91 | 0.97 |