Advanced Segmentation Methods for Sperm Morphological Structures: From Deep Learning to Clinical Application

Abstract

This article provides a comprehensive review of the latest computational methods for segmenting sperm morphological structures, a critical task in male infertility diagnosis and reproductive research. We explore the evolution from traditional image processing to advanced deep learning models like U-Net, Mask R-CNN, and YOLO variants, addressing core challenges such as handling unstained samples, overlapping sperm, and subcellular part differentiation. The content systematically compares model performance across segmentation tasks, examines optimization strategies for complex clinical data, and validates methods against gold-standard benchmarks. Tailored for researchers, scientists, and drug development professionals, this review synthesizes current evidence to guide model selection, highlights emerging unsupervised techniques, and outlines future directions for integrating artificial intelligence into clinical andrology and assisted reproductive technologies.

The Foundation of Sperm Morphology Analysis: Clinical Significance and Segmentation Challenges

The Critical Role of Sperm Morphology in Male Infertility Assessment

Sperm morphology, which refers to the size, shape, and appearance of sperm, represents a fundamental parameter in the evaluation of male fertility potential [1]. According to the World Health Organization (WHO) guidelines, semen analysis serves as the cornerstone for evaluating male infertility, with morphology being one of several critical parameters assessed alongside sperm concentration, motility, vitality, and DNA fragmentation [2]. A normal sperm cell is characterized by a smooth, oval head with a well-defined acrosome, an intact midpiece, and a single uncoiled tail that enables progressive motility [1]. These structural components each serve essential functions: the head contains genetic material and enzymes for egg penetration, the midpiece provides energy through mitochondria, and the tail enables propulsion [3].

The clinical significance of sperm morphology lies in its correlation with fertilization potential. Abnormally shaped sperm often demonstrate reduced ability to penetrate and fertilize the oocyte [1]. These abnormalities can manifest in various forms, including macrocephaly (giant head), microcephaly (small head), globozoospermia (round head without acrosome), pinhead sperm, multiple heads or tails, coiled tails, and stump tails [1]. The prevalence of these abnormalities is remarkably high, with typically only 4% to 10% of sperm in most semen samples meeting strict morphological standards [4]. When a large percentage of sperm demonstrate abnormal morphology (a condition termed teratozoospermia), fertility potential may be significantly impaired, though the predictive value of morphology alone remains a subject of ongoing research and debate within the scientific community [5].

Traditional Assessment Methods and Limitations

Manual Morphology Assessment

Traditional sperm morphology assessment relies on visual examination of sperm cells under a microscope by experienced laboratory technicians. The most widely adopted methodology follows the Kruger Strict Criteria, which classifies sperm samples based on the percentage of normally shaped sperm: over 14% (high fertility probability), 4-14% (slightly decreased fertility), and 0-3% (extremely impaired fertility) [1]. This manual assessment requires technicians to evaluate at least 200 sperm per sample, annotating each part (acrosome, nucleus, midpiece, and tail) according to stringent WHO guidelines [6].
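The three bands quoted above can be written as a small lookup. This is a minimal sketch: the function names are hypothetical, and the handling of fractional values between 3% and 4% (assigned here to the lowest band) is an assumption, since the cited bands are given only as whole percentages.

```python
def percent_normal_forms(normal_count: int, total_count: int) -> float:
    """Percentage of normally shaped sperm in a manually assessed sample."""
    if total_count < 200:
        # The text above states that at least 200 sperm are evaluated per sample.
        raise ValueError("at least 200 sperm should be evaluated per sample")
    if not 0 <= normal_count <= total_count:
        raise ValueError("normal_count must lie between 0 and total_count")
    return 100.0 * normal_count / total_count


def kruger_category(percent_normal: float) -> str:
    """Map a percentage of normal forms to the bands quoted in the text."""
    if not 0 <= percent_normal <= 100:
        raise ValueError("percent_normal must be between 0 and 100")
    if percent_normal > 14:
        return "high fertility probability"
    if percent_normal >= 4:
        return "slightly decreased fertility"
    # Assumption: anything below 4% falls into the 0-3% band.
    return "extremely impaired fertility"
```

For example, 9 normal forms out of 200 evaluated cells gives 4.5%, which falls in the middle band.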

The manual process presents several significant challenges that impact its reliability and clinical utility. The assessment is inherently subjective, leading to substantial inter- and intra-laboratory variability in results [2]. This variability stems from differences in human interpretation, staining techniques, and preparation methods. Furthermore, the process is exceptionally labor-intensive: with at least 200 sperm per sample and 5 parts annotated per sperm, a single patient sample requires at least 1,000 contours, making it impractical for high-throughput clinical settings [6]. The dependence on operator expertise introduces additional bias and inconsistency, particularly for borderline cases where morphological features are ambiguous.

Limitations of Current Computer-Aided Systems

While Computer-Aided Sperm Analysis (CASA) systems have emerged to address some limitations of manual assessment, even state-of-the-art systems still require significant human operator intervention for morphology evaluation [2] [3]. Traditional image processing techniques employed in these systems, such as thresholding, clustering, and active contour methods, have proven inadequate for accurately segmenting all sperm components simultaneously [2]. These methods struggle particularly with the challenging characteristics of semen smear images, including non-uniform lighting, low contrast between sperm tails and surrounding regions, various artifacts such as stained spots and debris, high sperm concentration with overlapping cells, and the wide spectrum of abnormal sperm shapes [2].

Advanced Segmentation Methods for Sperm Morphology

Deep Learning Approaches

Recent advances in deep learning have revolutionized sperm morphology analysis by enabling automated, multi-part segmentation with unprecedented accuracy. The table below summarizes the performance of leading deep learning models across different sperm components based on comparative studies:

Table 1: Performance comparison of deep learning models for sperm part segmentation (IoU metrics)

| Sperm Component | Mask R-CNN | YOLOv8 | YOLO11 | U-Net |
|---|---|---|---|---|
| Head | 0.891 | 0.885 | 0.879 | 0.874 |
| Acrosome | 0.823 | 0.801 | 0.809 | 0.815 |
| Nucleus | 0.845 | 0.839 | 0.831 | 0.826 |
| Neck | 0.792 | 0.798 | 0.785 | 0.779 |
| Tail | 0.801 | 0.812 | 0.806 | 0.829 |

The data reveal that Mask R-CNN achieves the highest IoU for the smaller, more regular structures (head, acrosome, and nucleus), YOLOv8 holds a slight edge on the neck (0.798 vs. 0.792 for Mask R-CNN), and U-Net performs best on the morphologically complex tail region, which is attributed to its global perception and multi-scale feature extraction capabilities [3]. This performance differential highlights the importance of model selection based on the specific sperm component of interest.
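The per-component choice can be read directly off Table 1. The sketch below simply encodes the IoU values from the table and picks the top-scoring model for each component; the dictionary layout and the function name `best_model` are illustrative assumptions, not part of any cited study.

```python
# IoU values copied from Table 1 above.
IOU = {
    "Head":     {"Mask R-CNN": 0.891, "YOLOv8": 0.885, "YOLO11": 0.879, "U-Net": 0.874},
    "Acrosome": {"Mask R-CNN": 0.823, "YOLOv8": 0.801, "YOLO11": 0.809, "U-Net": 0.815},
    "Nucleus":  {"Mask R-CNN": 0.845, "YOLOv8": 0.839, "YOLO11": 0.831, "U-Net": 0.826},
    "Neck":     {"Mask R-CNN": 0.792, "YOLOv8": 0.798, "YOLO11": 0.785, "U-Net": 0.779},
    "Tail":     {"Mask R-CNN": 0.801, "YOLOv8": 0.812, "YOLO11": 0.806, "U-Net": 0.829},
}


def best_model(component: str) -> str:
    """Return the model with the highest tabulated IoU for one component."""
    scores = IOU[component]
    return max(scores, key=scores.get)


if __name__ == "__main__":
    for component in IOU:
        print(f"{component}: {best_model(component)}")
```

The margins are small for several components (for example, 0.006 between YOLOv8 and Mask R-CNN on the neck), so a ranking like this is only a starting point for model selection.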

Concatenated Learning Frameworks

Sophisticated frameworks that combine multiple computational approaches have demonstrated remarkable success in comprehensive sperm segmentation. Movahed et al. developed a concatenated learning approach that integrates convolutional neural networks (CNNs) with classical machine learning methods and specialized preprocessing [2]. This framework employs a multi-stage pipeline beginning with serialized preprocessing to enhance sperm cell appearance and suppress unwanted image distortions. Two dedicated CNN models then generate probability maps for the head and axial filament regions. The internal head components (acrosome and nucleus) are segmented using K-means clustering applied to the head regions, while the axial filament is classified into tail and mid-piece regions using a Support Vector Machine (SVM) classifier trained on pixels from dilated axial filament regions [2].

This approach addresses previous limitations by simultaneously segmenting all sperm components (head, axial filament, tail, mid-piece, acrosome, and nucleus), providing a complete foundation for automated morphology analysis [2]. The method significantly outperforms previous works in head, acrosome, and nucleus segmentation while additionally providing the first solution for axial filament segmentation [2].
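To illustrate the K-means stage of such a pipeline, the following sketch splits the pixels of an already segmented head into two clusters. It is not the published implementation: it assumes grayscale intensity is the only clustering feature, uses a plain two-cluster Lloyd iteration initialized at the minimum and maximum intensity, and leaves the decision of which cluster is the acrosome and which the nucleus to the caller, since that depends on the stain.

```python
def kmeans_two_clusters(values, max_iter=100):
    """Split 1D values (e.g. head pixel intensities) into two clusters.

    Returns (labels, centers); label 0 is the darker cluster, label 1 the brighter.
    """
    lo, hi = float(min(values)), float(max(values))
    if lo == hi:
        raise ValueError("all values are identical; nothing to split")
    centers = [lo, hi]  # deterministic initialization at the extremes
    labels = []
    for _ in range(max_iter):
        # Assignment step: each value goes to its nearest center.
        labels = [0 if abs(v - centers[0]) <= abs(v - centers[1]) else 1
                  for v in values]
        # Update step: each center becomes the mean of its members.
        new_centers = []
        for k in (0, 1):
            members = [v for v, lab in zip(values, labels) if lab == k]
            new_centers.append(sum(members) / len(members) if members else centers[k])
        if new_centers == centers:  # converged
            break
        centers = new_centers
    return labels, centers
```

In practice the input would be the intensities of the pixels inside the head probability map after thresholding, and the resulting labels would be written back to those pixel positions to form the two sub-masks.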

Instance-Aware Part Segmentation Networks

For quantitative morphology measurement, instance-aware part segmentation networks represent a significant advancement. These networks follow a "detect-then-segment" paradigm, first locating individual sperm within images using bounding boxes, then segmenting the parts for each located instance [6]. However, traditional top-down methods suffer from context loss and feature distortion due to bounding box cropping and resizing operations.

An attention-based instance-aware part segmentation network has been developed to address these limitations. This network incorporates a refinement module that uses preliminary segmented masks to provide spatial cues for each sperm instance, then merges these masks with features extracted by a Feature Pyramid Network (FPN) through an attention mechanism [6]. The merged features are subsequently refined by a CNN to produce improved segmentation results. This approach has demonstrated a 9.2% improvement in Average Precision compared to state-of-the-art top-down methods, achieving 57.2% \(AP^{p}_{vol}\) on sperm segmentation datasets [6].

Experimental Protocols

Protocol 1: Multi-Part Sperm Segmentation Using Deep Learning

Purpose: To accurately segment sperm components (head, acrosome, nucleus, neck, tail) from microscopic images for morphological analysis.

Materials and Reagents:

- Microscope with digital imaging capabilities (1000x magnification recommended)

- Staining solutions (Diff-Quik, Papanicolaou, or Hematoxylin and Eosin)

- Gold-standard dataset [2] or clinically labeled live, unstained human sperm dataset [3]

- Computational resources (GPU-enabled workstation with ≥8GB VRAM)

- Software frameworks (Python, PyTorch/TensorFlow, OpenCV)

Procedure:

Sample Preparation and Image Acquisition:

- Prepare semen smears on glass slides and apply appropriate staining.

- Capture microscopic images at 1000x magnification, ensuring consistent lighting.

- For unstained live sperm analysis, use phase-contrast microscopy.

Data Preprocessing:

- Apply serialized preprocessing to suppress distortions and enhance sperm appearance.

- Implement normalization and contrast enhancement techniques.

- Augment dataset through rotation, flipping, and brightness variations.

Model Selection and Training:

- Select appropriate model architecture based on target components.

- For comprehensive segmentation, implement Mask R-CNN with ResNet-50/101 backbone.

- Train models using annotated datasets with 80-10-10 train-validation-test split.

- Employ data augmentation strategies to prevent overfitting.

Segmentation and Validation:

- Generate segmentation masks for all sperm components.

- Apply post-processing using morphological operations and geometric constraints.

- Validate results against expert-annotated ground truths using IoU, Dice, Precision, Recall, and F1 Score metrics.
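The 80-10-10 split mentioned in the training step can be sketched as follows. The function name, the fixed seed, and the use of integer percentages are assumptions made for reproducibility; the items are assumed to be whole images (or slides) rather than individual sperm crops, so that crops from one image do not end up in different subsets.

```python
import random


def split_dataset(items, train_pct=80, val_pct=10, seed=0):
    """Shuffle items reproducibly and split them into train/validation/test lists.

    The test set receives whatever remains after the train and validation shares.
    """
    if train_pct < 0 or val_pct < 0 or train_pct + val_pct > 100:
        raise ValueError("percentages must be non-negative and sum to at most 100")
    items = list(items)
    random.Random(seed).shuffle(items)  # seeded so the split can be reproduced
    n_train = len(items) * train_pct // 100
    n_val = len(items) * val_pct // 100
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test
```

The test subset should be set aside before any augmentation is applied, so that augmented copies of a training image cannot appear in the evaluation data.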

Table 2: Research reagent solutions for sperm morphology analysis

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| Diff-Quik Stain | Provides contrast for sperm components | Standard for clinical morphology assessment |
| SCIAN-SpermSegGS Dataset | Gold-standard public dataset | 20 images (780×580) with normal/abnormal sperm [2] |
| Live Unstained Sperm Dataset | Clinical dataset for unstained analysis | 93 "Normal Fully Agree Sperms" images [3] |
| Feature Pyramid Network (FPN) | Multi-scale feature extraction | Enhances detection of small sperm parts |
| ROI Align | Preserves spatial accuracy | Avoids feature distortion in instance segmentation |

Protocol 2: Automated Morphology Parameter Measurement

Purpose: To quantitatively measure morphology parameters from segmented sperm components.

Materials: Segmented sperm part masks from Protocol 1.

Procedure:

Head Morphometry:

- Fit ellipse to segmented head region.

- Calculate length (major axis), width (minor axis), and ellipticity (length/width ratio).

- Compute head area and perimeter.

Acrosome and Nucleus Analysis:

- Determine acrosome-to-nucleus ratio.

- Assess acrosomal shape and positioning.

Midpiece Morphometry:

- Apply rectangle fitting to midpiece segment.

- Measure length, width, and orientation angle relative to head.

Tail Morphology Measurement:

- Implement centerline-based measurement using improved Steger-based methods.

- Apply outlier filtering and endpoint detection algorithms.

- Calculate tail length, average width, and curvature.

Statistical Analysis:

- Aggregate measurements across all analyzed sperm (minimum 200 cells).

- Compare against WHO reference values.

- Perform statistical analysis for clinical correlation.
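The head morphometry step can be sketched without any imaging library. Note the substitution: instead of a least-squares fit to the head contour, this example uses the ellipse that has the same second-order moments as the mask, which is a common and simpler stand-in. The function name and the returned dictionary keys are hypothetical, all values are in pixels, and perimeter is not computed here.

```python
import math


def head_morphometry(mask):
    """Estimate head length, width, ellipticity and area from a binary mask.

    mask is a 2D list of 0/1 values. For a filled ellipse, the variance along
    each principal axis equals (semi-axis)^2 / 4, so full axis = 4 * sqrt(variance).
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    n = len(pts)
    if n < 2:
        raise ValueError("mask must contain at least two foreground pixels")
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    sxx = sum((x - cx) ** 2 for x, _ in pts) / n
    syy = sum((y - cy) ** 2 for _, y in pts) / n
    sxy = sum((x - cx) * (y - cy) for x, y in pts) / n
    # Closed-form eigenvalues of the 2x2 covariance matrix.
    mean = (sxx + syy) / 2.0
    diff = math.hypot((sxx - syy) / 2.0, sxy)
    length = 4.0 * math.sqrt(mean + diff)           # major axis
    width = 4.0 * math.sqrt(max(mean - diff, 0.0))  # minor axis
    ellipticity = length / width if width > 0 else math.inf
    return {"length": length, "width": width, "ellipticity": ellipticity, "area": n}
```

Converting the pixel values to micrometres requires the calibration of the microscope and camera, which is outside the scope of this sketch.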

Visualization of Experimental Workflows

Deep Learning Segmentation Workflow

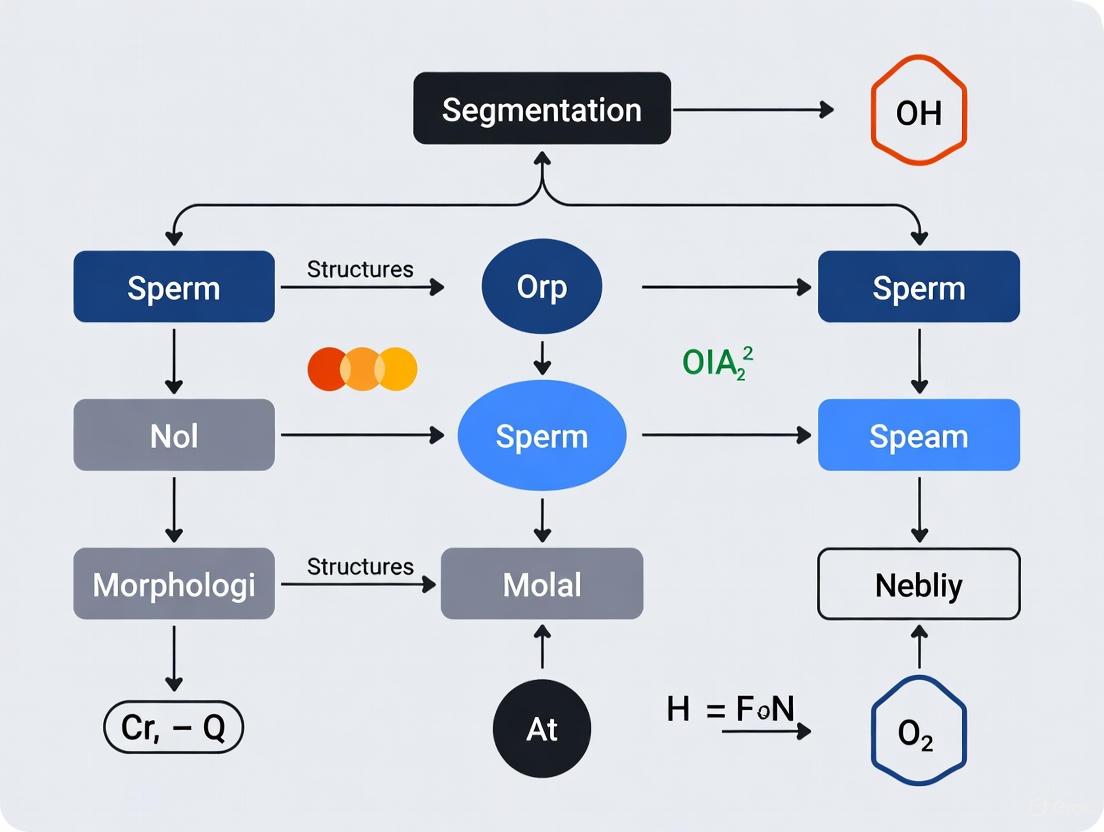

Diagram 1: Sperm segmentation workflow

Instance-Aware Segmentation Architecture

Diagram 2: Instance segmentation architecture

Clinical Applications and Implications

Diagnostic Applications

Automated sperm morphology analysis using advanced segmentation methods provides significant advantages for male infertility diagnosis. The quantitative nature of these techniques reduces subjectivity and enables more consistent assessment across different laboratories and technicians. These methods can detect specific morphological syndromes with high precision, including globozoospermia (round-headed sperm without acrosomes), macrocephalic spermatozoa syndrome, pinhead spermatozoa syndrome, and multiple flagellar abnormalities [5]. The French BLEFCO Group recommends that laboratories use qualitative or quantitative methods specifically for detecting these monomorphic abnormalities, with results reported as either interpretative commentary or numerical percentage of detailed abnormalities [5].

The clinical value of these automated approaches extends beyond basic diagnosis to treatment selection and planning. While the percentage of normal-form sperm alone may not reliably predict outcomes for assisted reproductive technologies like IUI, IVF, or ICSI [5], detailed morphological analysis of specific defects can inform clinical decisions. For instance, sperm with severe head abnormalities or DNA fragmentation may be less suitable for conventional IVF, directing clinicians toward ICSI as a more appropriate treatment option.

Research Applications

In research settings, automated sperm morphology analysis enables large-scale studies that would be impractical with manual methods. The ability to rapidly analyze thousands of sperm cells with consistent criteria facilitates investigations into:

- Genetic studies of hereditary morphological abnormalities

- Toxicology research assessing environmental impacts on spermatogenesis

- Pharmacological studies evaluating drug effects on sperm quality

- Evolutionary biology comparing sperm morphology across species

The high-throughput capabilities of these systems also support the development of new male contraceptive methods and fertility treatments by providing precise quantitative metrics for assessing intervention efficacy.

Future Directions

The field of automated sperm morphology analysis continues to evolve with several promising research directions. Integration of multi-modal data, including combining morphological assessment with motility analysis and DNA fragmentation testing, represents a significant opportunity for comprehensive sperm quality evaluation [3]. The development of explainable AI systems that provide transparent reasoning for morphological classifications would enhance clinical trust and adoption. Further validation studies across diverse patient populations and laboratory settings remain essential to establish standardized protocols and reference ranges. As these technologies mature, they hold the potential to transform male infertility assessment from a subjective art to an objective, quantitative science that delivers improved diagnostic accuracy and personalized treatment recommendations.

The male gamete, or spermatozoon, is a highly specialized and polarized cell, optimized for the single mission of delivering paternal DNA to the oocyte. Its design, or bauplan, is conserved around a core structure consisting of a head, midpiece (neck), and tail (flagellum), all enclosed by a single plasma membrane [7] [8]. The precise morphology of these components is critically linked to the sperm's functional competence, including its hydrodynamic efficiency, motility, and ability to penetrate the oocyte [7] [1]. Within the context of male infertility, which affects a significant proportion of couples globally, the morphological evaluation of sperm is a cornerstone of diagnostic assessment [9] [10]. Traditional manual analysis under a microscope is, however, fraught with subjectivity, substantial workload, and poor reproducibility, hindering accurate clinical diagnosis [9]. This application note details the key morphological components of the sperm cell and frames advanced, quantitative segmentation methods as essential protocols for objective and precise analysis in modern andrology and drug development research.

A mature sperm cell is a "stripped-down" cell, unencumbered by most cytoplasmic organelles to minimize size and weight for its journey [8]. The following table summarizes the core morphological components and their functions.

Table 1: Key Morphological Components of a Sperm Cell and Their Functions

| Component | Subcomponent | Key Anatomical Features | Primary Functions |
|---|---|---|---|
| Head | --- | Condensed haploid nucleus; Anterior cap-like structure (acrosome) [11] [8]. | Carries paternal genetic material; Penetrates oocyte vestments [8] [10]. |
| | Acrosome | Secretory vesicle containing hydrolytic enzymes [8]. | Facilitates penetration of the oocyte's outer layers during the acrosome reaction [8] [10]. |
| | Nucleus | Extremely compact chromatin due to protamine binding [8]. | Houses paternal DNA; Compact shape minimizes hydrodynamic drag [7] [8]. |
| Neck (Midpiece) | --- | Connects head to tail; Contains centrioles; Surrounded by mitochondria [11] [8]. | Connects structural units; Generates ATP for tail movement [11] [10]. |
| Tail (Flagellum) | --- | Long, whip-like structure with a central axoneme [11] [8]. | Propels the sperm cell through a corkscrew-like motion [11] [1]. |

The integrity of this structure is paramount for fertility. Abnormalities in any component can lead to dysfunctional sperm. Teratozoospermia, a condition characterized by a high percentage of misshapen sperm, can manifest as macrocephaly (giant head), microcephaly (small head), globozoospermia (round head without an acrosome), bent tail, coiled tail, or the presence of multiple heads or tails [11] [1]. According to the Kruger Strict Criteria, a sperm sample with less than 4% normal morphology is considered to have extremely impaired fertility potential [1].

Diagram 1: Sperm structure and function.

Segmentation Methods for Sperm Morphological Structures

The quantitative analysis of sperm morphology requires the precise segmentation of its constituent parts from microscopic images. The transition from traditional methods to deep learning-based approaches represents a paradigm shift in this field.

Evolution of Segmentation Methodologies

Traditional Image Processing & Conventional Machine Learning: Early approaches relied on handcrafted feature extraction. Techniques included K-means clustering for locating sperm heads [9] [10], edge-based active contour models for delineating boundaries, and classifiers like Support Vector Machines (SVM) to categorize sperm based on extracted features [9]. While these methods achieved notable success, with some reporting over 90% accuracy in head classification, they were fundamentally limited by their dependence on manually designed features and struggled with the variability and low contrast of unstained sperm images [9] [10].

Modern Deep Learning (DL) Approaches: Current state-of-the-art research has shifted toward deep learning algorithms, particularly Convolutional Neural Networks (CNNs), which can automatically learn hierarchical features directly from data [9] [10]. These models have demonstrated superior performance in segmenting the intricate and small structures of sperm. Commonly employed architectures include:

- Mask R-CNN: A two-stage instance segmentation model that excels at detecting objects and generating a pixel-wise mask for each.

- U-Net: A fully convolutional network with a symmetric encoder-decoder structure, renowned for its effectiveness in biomedical image segmentation with limited training data.

- YOLO Models (e.g., YOLOv8, YOLO11): Single-stage detectors that balance high speed with good accuracy, suitable for real-time applications.

Quantitative Performance Comparison of DL Models

A systematic evaluation of these models on a dataset of live, unstained human sperm provides a clear comparison of their efficacy in multi-part segmentation [10]. Performance is typically measured using metrics such as Intersection over Union (IoU), which measures the overlap between the predicted segmentation and the ground truth, and the F1 Score, which balances precision and recall.

Table 2: Performance Comparison of Deep Learning Models in Sperm Segmentation (Adapted from [10])

| Sperm Component | Best Performing Model | Key Performance Metric (IoU/F1) | Comparative Model Performance |
|---|---|---|---|
| Head | Mask R-CNN | High IoU | Excels in segmenting smaller, regular structures. |
| Acrosome | Mask R-CNN | Highest IoU | Robustness in segmenting this small anterior cap. |
| Nucleus | Mask R-CNN | Slightly Higher IoU | Slightly outperforms YOLOv8 for nuclear segmentation. |
| Neck | YOLOv8 | Comparable/Slightly Higher IoU | Single-stage models can rival two-stage models. |
| Tail | U-Net | Highest IoU | Advantage in handling long, thin, morphologically complex structures. |

Experimental Protocols for Sperm Segmentation

Protocol: A Standardized Workflow for Deep Learning-Based Sperm Segmentation

This protocol outlines the key steps for training and evaluating a deep learning model for multi-part sperm segmentation, based on current research methodologies [9] [10].

Diagram 2: DL segmentation workflow.

Step 1: Dataset Acquisition. Procure a high-quality, annotated dataset of sperm images. Publicly available datasets include the Sperm Videos and Images Analysis (SVIA) dataset [9] and the VISEM-Tracking dataset [9]. The SVIA dataset, for instance, contains over 125,000 annotated instances for object detection and 26,000 segmentation masks [9]. Alternatively, establish an in-house dataset from clinical samples.

Step 2: Image Annotation and Pre-processing. Annotate sperm images with pixel-wise masks for each target structure: head, acrosome, nucleus, neck, and tail. This is a critical and labor-intensive step that requires expertise to ensure annotation quality and consistency. Pre-processing steps may include image resizing, normalization of pixel values, and data augmentation (e.g., rotation, flipping) to increase the effective size and diversity of the training set and improve model generalization.

Step 3: Model Selection and Training. Select an appropriate deep learning architecture based on the segmentation task and performance requirements (refer to Table 2 for guidance). For instance, choose Mask R-CNN for superior head and acrosome segmentation or U-Net for superior tail segmentation. Initialize the model with pre-trained weights (transfer learning) to accelerate convergence. Train the model using the annotated dataset, typically with a loss function like Dice Loss suited for segmentation tasks.
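As a rough illustration of the Dice Loss mentioned in Step 3, the sketch below computes a soft Dice loss on flattened probability and label lists. It is written in plain Python to stay self-contained; in an actual training loop the same arithmetic would be applied to framework tensors so that gradients can flow. The smoothing constant `eps` and the function name are assumptions.

```python
def dice_loss(pred, target, eps=1e-6):
    """Soft Dice loss for one class.

    pred:   flat sequence of predicted foreground probabilities in [0, 1]
    target: flat sequence of ground-truth labels (0 or 1), same length
    Returns a value near 0 for perfect overlap and near 1 for no overlap.
    """
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    intersection = sum(p * t for p, t in zip(pred, target))
    denominator = sum(pred) + sum(target)
    # eps keeps the ratio defined when both masks are empty.
    return 1.0 - (2.0 * intersection + eps) / (denominator + eps)
```

Because the loss is normalized by the total foreground mass rather than by the number of pixels, it is less dominated by the background than a plain pixel-wise loss, which matters for thin structures such as tails that occupy very few pixels.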

Step 4: Model Evaluation and Validation. Evaluate the trained model on a separate, held-out test dataset. Use multiple quantitative metrics to assess performance comprehensively:

- Intersection over Union (IoU): Measures the area of overlap between the predicted and ground truth masks divided by the area of their union.

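- Dice Coefficient: Twice the area of overlap between the predicted and ground truth masks divided by the sum of their two areas. It is related to IoU by Dice = 2·IoU / (1 + IoU), so it is never lower than IoU for the same pair of masks.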

- Precision and Recall: Assess the model's ability to identify only relevant pixels (Precision) and find all relevant pixels (Recall).

- F1 Score: The harmonic mean of Precision and Recall.
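The metrics listed above can all be derived from the pixel-wise counts of true positives, false positives, and false negatives. The following sketch does this for a single pair of binary masks; the function name and the convention of returning 1.0 when a denominator is zero (for example, when both masks are empty) are assumptions.

```python
def segmentation_metrics(pred, truth):
    """Compute IoU, Dice, precision, recall and F1 for two binary masks.

    pred and truth are 2D lists of 0/1 values with identical shape.
    """
    tp = fp = fn = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            if p and t:
                tp += 1      # predicted foreground, truly foreground
            elif p:
                fp += 1      # predicted foreground, truly background
            elif t:
                fn += 1      # missed foreground pixel

    def ratio(num, den):
        return num / den if den else 1.0  # assumed convention for empty masks

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "iou": ratio(tp, tp + fp + fn),
        "dice": ratio(2 * tp, 2 * tp + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }
```

For a single binary mask, the pixel-wise F1 score and the Dice coefficient coincide, so reporting both adds information only when they are aggregated differently (for example, per image versus per dataset).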

Step 5: Deployment and Inference. Integrate the validated model into a Computer-Aided Sperm Analysis (CASA) system. The model can then be used to perform automated, high-throughput segmentation of new, unseen sperm images, providing quantitative morphological data for clinical or research purposes.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Sperm Morphology Segmentation Research

| Item / Resource | Type | Function / Application in Research |
|---|---|---|
| SVIA Dataset [9] | Dataset | A large-scale public resource with annotations for detection, segmentation, and classification of unstained sperm. |
| VISEM-Tracking Dataset [9] | Dataset | A multi-modal dataset containing videos and over 656,000 annotated objects with tracking details. |
| Mask R-CNN [10] | Algorithm | Deep learning model for instance segmentation; optimal for head, acrosome, and nucleus. |
| U-Net [10] | Algorithm | Deep learning model for semantic segmentation; superior for segmenting long, thin tails. |
| YOLOv8 / YOLO11 [10] | Algorithm | Deep learning models for real-time object detection and segmentation; good speed/accuracy balance. |
| Kruger Strict Criteria [1] | Clinical Standard | Reference guidelines for the clinical assessment of sperm morphology against which algorithm performance can be benchmarked. |

The precise segmentation of the key morphological components of sperm—the head, acrosome, nucleus, neck, and tail—is fundamental to advancing the scientific understanding of male fertility and improving clinical diagnostics. The move from subjective manual assessments to quantitative, AI-driven analyses represents a significant leap forward. As evidenced by recent research, deep learning models like Mask R-CNN, U-Net, and YOLOv8 offer robust and accurate solutions for this complex task, each with distinct strengths for different sperm structures. The continued development of standardized, high-quality datasets and optimized segmentation protocols will be crucial for translating these technological advancements into reliable tools for researchers, scientists, and drug development professionals working to address male infertility.

Sperm morphology analysis is a cornerstone of male fertility assessment, providing critical diagnostic information for infertility workups and assisted reproductive technology (ART) procedures such as intracytoplasmic sperm injection (ICSI) [9] [5]. The accurate segmentation of individual sperm components—including the head, acrosome, nucleus, neck, and tail—is fundamental to quantitative morphology analysis, enabling the measurement of crucial parameters that indicate sperm health and fertilization potential [3] [12]. However, the path to automated, high-fidelity sperm image segmentation is fraught with significant technical challenges that impact the accuracy, reliability, and clinical applicability of these analyses.

This application note delineates the three predominant challenges in sperm image segmentation: low contrast in unstained samples, overlapping sperm structures, and imaging artifacts. We provide a systematic analysis of these obstacles, present quantitative performance comparisons of current segmentation models, detail experimental protocols for addressing these issues, and catalog essential research reagents and computational tools. This framework is designed to equip researchers and clinicians with methodologies to enhance segmentation accuracy for more reliable sperm morphology analysis.

Major Segmentation Challenges and Technical Solutions

Challenge 1: Low Contrast in Unstained Sperm Images

Nature of the Challenge: The clinical preference for using unstained, live sperm for ART procedures to avoid potential cellular damage introduces a fundamental image analysis challenge: low contrast. Unlike stained specimens where chemical dyes enhance structural visibility, unstained sperm images exhibit low signal-to-noise ratios, indistinct structural boundaries, and minimal color differentiation between components [3] [12]. This problem is exacerbated when imaging is performed under lower magnification (e.g., 20×) to prevent sperm from swimming out of the field of view, resulting in further reduced resolution and blurred boundaries that obscure critical morphological details [12].

Technical Solutions: Advanced deep learning architectures with enhanced feature extraction capabilities have shown promising results in addressing low contrast. The Multi-Scale Part Parsing Network, which integrates semantic and instance segmentation branches, has demonstrated robust performance by leveraging complementary information from both global and local features [12]. Additionally, incorporating attention mechanisms, such as the Convolutional Block Attention Module (CBAM), into networks like ResNet50 helps the model focus on morphologically relevant regions while suppressing background noise, thereby mitigating the effects of low contrast [13]. For post-processing, measurement accuracy enhancement strategies employing statistical analysis (e.g., interquartile range filtering) and signal processing techniques (e.g., Gaussian filtering) can correct segmentation errors induced by low-resolution images [12].
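The two post-processing ideas named above can be sketched on a one-dimensional series of measurements. The example assumes the input is a list of width samples taken along a segmented tail; that choice, the 1.5×IQR fence, the kernel radius of three standard deviations, and both function names are illustrative assumptions rather than details of the cited method.

```python
import math
import statistics


def iqr_filter(values, k=1.5):
    """Drop values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


def gaussian_smooth(values, sigma=1.0):
    """Smooth a 1D series with a Gaussian kernel.

    Near the ends, the kernel is renormalized over the samples that exist,
    so a constant series stays constant.
    """
    radius = max(1, int(3 * sigma))
    offsets = range(-radius, radius + 1)
    kernel = [math.exp(-(d * d) / (2.0 * sigma * sigma)) for d in offsets]
    smoothed = []
    for i in range(len(values)):
        acc = weight_sum = 0.0
        for d, w in zip(offsets, kernel):
            j = i + d
            if 0 <= j < len(values):
                acc += w * values[j]
                weight_sum += w
        smoothed.append(acc / weight_sum)
    return smoothed
```

A typical order of operations would be to remove outliers first and smooth afterwards, so that a single spurious sample does not leak into its neighbours through the kernel.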

Challenge 2: Overlapping Sperm and Structural Complexity

Nature of the Challenge: Sperm cells in microscopic images frequently appear intertwined or in close proximity, leading to overlapping structures—particularly of the slender and complex tails. This overlapping presents a significant obstacle for instance-level parsing, which is essential for distinguishing individual sperm and performing accurate morphological measurements [14] [12]. The problem is geometrically complex, as overlapping tails can form intricate patterns that are difficult to disentangle using conventional segmentation approaches.

Technical Solutions: Novel clustering algorithms specifically designed for biological structures offer a promising direction. The Con2Dis algorithm, for instance, effectively segments overlapping tails by simultaneously considering three geometric factors: connectivity, conformity, and distance [14]. From an architectural perspective, bottom-up segmentation strategies that begin by segmenting pixels before aggregating them into object instances have demonstrated superior capability in capturing local details of small targets like sperm tails compared to top-down approaches [12]. For head segmentation in crowded environments, leveraging foundation models like the Segment Anything Model (SAM) with customized filtering mechanisms can effectively isolate individual sperm heads while ignoring dye impurities and other artifacts [14].

Challenge 3: Image Artifacts and Noise

Nature of the Challenge: Sperm microscopy images often contain various artifacts including noise from the imaging process, dye impurities, blur due to sperm motility, and irrelevant biological debris [14] [15]. These artifacts can be mistakenly identified as sperm structures by segmentation algorithms, leading to inaccurate morphology assessment and measurement errors.

Technical Solutions: Comprehensive data augmentation during model training significantly enhances robustness to artifacts. Effective augmentation techniques include random rotations, horizontal and vertical flips, brightness and contrast adjustments, Gaussian noise addition, and color variations [15]. These strategies simulate the imperfections encountered in real-world imaging conditions and train the model to distinguish between genuine sperm features and artifacts. Additionally, hybrid approaches that combine multiple segmentation and filtering methods, such as the SpeHeatal framework, demonstrate improved ability to discriminate between actual sperm structures and imaging artifacts [14].
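A minimal sketch of several of the augmentations listed above, written for 8-bit grayscale images stored as nested lists. The probability of each flip, the brightness range, the noise level, and all function names are assumptions. Geometric transforms are applied to the image and its mask together, while intensity changes touch only the image.

```python
import random


def hflip(img):
    return [row[::-1] for row in img]


def vflip(img):
    return [list(row) for row in img[::-1]]


def rot90_cw(img):
    """Rotate 90 degrees clockwise."""
    return [list(col) for col in zip(*img[::-1])]


def _clip(v):
    return max(0, min(255, int(round(v))))


def adjust_brightness(img, factor):
    return [[_clip(v * factor) for v in row] for row in img]


def add_gaussian_noise(img, sigma, rng):
    return [[_clip(v + rng.gauss(0.0, sigma)) for v in row] for row in img]


def augment_pair(image, mask, rng):
    """Return one randomly augmented (image, mask) pair."""
    if rng.random() < 0.5:
        image, mask = hflip(image), hflip(mask)
    if rng.random() < 0.5:
        image, mask = vflip(image), vflip(mask)
    for _ in range(rng.randrange(4)):  # 0 to 3 quarter turns
        image, mask = rot90_cw(image), rot90_cw(mask)
    image = adjust_brightness(image, rng.uniform(0.8, 1.2))
    image = add_gaussian_noise(image, sigma=5.0, rng=rng)
    return image, mask
```

Passing a seeded `random.Random` instance as `rng` makes an augmentation run repeatable, which helps when comparing models trained on the same augmented data.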

Quantitative Performance Analysis of Segmentation Models

The performance of deep learning models varies significantly across different sperm components, reflecting the distinct morphological challenges presented by each structure. The following table summarizes the quantitative performance of four state-of-the-art models evaluated using the Intersection over Union (IoU) metric on a dataset of live, unstained human sperm:

Table 1: Model Performance Comparison for Sperm Component Segmentation (IoU Metrics)

| Sperm Component | Mask R-CNN | YOLOv8 | YOLO11 | U-Net |

|---|---|---|---|---|

| Head | 0.89 | 0.87 | 0.86 | 0.85 |

| Acrosome | 0.84 | 0.81 | 0.82 | 0.80 |

| Nucleus | 0.86 | 0.85 | 0.83 | 0.82 |

| Neck | 0.79 | 0.80 | 0.78 | 0.77 |

| Tail | 0.75 | 0.76 | 0.74 | 0.78 |

Source: Adapted from [3]

Performance Insights: Mask R-CNN demonstrates superior performance for smaller, more regular structures like the head, acrosome, and nucleus, attributed to its two-stage architecture that enables refined feature extraction [3]. For the morphologically complex tail, U-Net achieves the highest IoU, benefiting from its encoder-decoder structure with skip connections that preserve spatial information across multiple scales [3]. YOLOv8 shows competitive performance for the neck region, indicating that single-stage models can match two-stage architectures for certain intermediate structures [3].
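
As a quick check of these insights, the IoU values from Table 1 can be placed in a dictionary and the best model per component picked programmatically. The variable names are illustrative; the numbers are copied from the table.

```python
# IoU values transcribed from Table 1 (adapted from [3]).
iou = {
    "Head":     {"Mask R-CNN": 0.89, "YOLOv8": 0.87, "YOLO11": 0.86, "U-Net": 0.85},
    "Acrosome": {"Mask R-CNN": 0.84, "YOLOv8": 0.81, "YOLO11": 0.82, "U-Net": 0.80},
    "Nucleus":  {"Mask R-CNN": 0.86, "YOLOv8": 0.85, "YOLO11": 0.83, "U-Net": 0.82},
    "Neck":     {"Mask R-CNN": 0.79, "YOLOv8": 0.80, "YOLO11": 0.78, "U-Net": 0.77},
    "Tail":     {"Mask R-CNN": 0.75, "YOLOv8": 0.76, "YOLO11": 0.74, "U-Net": 0.78},
}

# Pick the highest-scoring model for each component.
best = {part: max(scores, key=scores.get) for part, scores in iou.items()}

for part, model in best.items():
    print(f"{part:9s} -> {model} (IoU {iou[part][model]:.2f})")
```

Note that several of the margins are only 0.01 to 0.02 IoU, so the ranking alone does not establish that the differences are statistically meaningful.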

Experimental Protocols

Protocol 1: Multi-Part Sperm Segmentation Using Deep Learning

Application: This protocol provides a standardized methodology for segmenting all sperm components (head, acrosome, nucleus, neck, and tail) from unstained live human sperm images using deep learning models, suitable for both research and clinical applications in reproductive medicine.

Workflow Diagram: Sperm Segmentation Using Deep Learning

Step-by-Step Procedures:

Sample Preparation and Image Acquisition:

- Prepare semen samples according to WHO standards using unstained, live human sperm [3].

- Capture images using phase-contrast microscopy at 20×-40× magnification to balance field of view and resolution.

- Collect a minimum of 93 high-quality images of "Normal Fully Agree Sperms" as validated by multiple embryologists with ≥10 years of experience [3].

Data Annotation and Preprocessing:

- Annotate all sperm components (acrosome, nucleus, head, midpiece, and tail) using specialized annotation software by trained experts.

- Perform data augmentation including random rotations (±15°), horizontal and vertical flips, brightness/contrast adjustments (±10%), and Gaussian noise addition to enhance model robustness [15].

- Split dataset into training (70%), validation (15%), and test (15%) sets while maintaining stratification.
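
A stratified 70/15/15 split can be sketched with the standard library alone. The protocol does not say which attribute to stratify on, so the per-image strata below ("sparse" versus "crowded" fields) are purely hypothetical, as is the helper name `stratified_split`.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]   # remainder goes to the test set
    return train, val, test

# Hypothetical strata: 60 sparse-field images and 40 crowded-field images.
labels = ["sparse"] * 60 + ["crowded"] * 40
train_idx, val_idx, test_idx = stratified_split(labels)
```

When augmentation is used, the split should be made before augmenting so that variants of the same source image never appear in both the training and test sets.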

Model Selection and Training:

- Select appropriate models based on target components: Mask R-CNN for head/acrosome/nucleus, U-Net for tails, or YOLOv8 for neck segmentation [3].

- Implement transfer learning using pre-trained weights on ImageNet or similar datasets to improve convergence.

- Train models with Adam optimizer, initial learning rate of 0.001, batch size of 8-16 (depending on GPU memory), and early stopping with patience of 15 epochs.
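
The early-stopping rule in the last step is independent of any particular deep learning framework. The following sketch shows the logic with a patience of 15 epochs; the class name `EarlyStopping` and the simulated loss curve are assumptions made for illustration.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=15, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Simulated validation losses: five improving epochs, then a plateau.
losses = [1.0, 0.8, 0.6, 0.5, 0.45] + [0.46] * 30
stopper = EarlyStopping(patience=15)
stopped_at = None
for epoch, loss in enumerate(losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

In a real training loop the model weights from the best epoch would normally be saved and restored once the stop condition fires.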

Evaluation and Validation:

- Evaluate model performance using multiple metrics: IoU, Dice coefficient, Precision, Recall, and F1 Score [3].

- Perform statistical significance testing (e.g., McNemar's test) to compare model performance [13].

- Validate clinical utility through correlation with embryologist assessments and comparison with established CASA systems.
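
The pixel-level metrics listed above all derive from the same true-positive, false-positive, and false-negative counts. A minimal NumPy sketch follows; the function name and the toy masks are illustrative.

```python
import numpy as np

def segmentation_metrics(pred, truth):
    """Compute pixel-wise IoU, Dice, precision, recall and F1 for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = np.logical_and(pred, truth).sum()
    fp = np.logical_and(pred, ~truth).sum()
    fn = np.logical_and(~pred, truth).sum()
    eps = 1e-9                               # guards against division by zero
    precision = tp / (tp + fp + eps)
    recall = tp / (tp + fn + eps)
    return {
        "iou": tp / (tp + fp + fn + eps),
        "dice": 2 * tp / (2 * tp + fp + fn + eps),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall + eps),
    }

# Two 4x4 squares offset by one pixel: 9 overlapping pixels, 7 extra on each side.
truth = np.zeros((10, 10), dtype=bool)
truth[2:6, 2:6] = True
pred = np.zeros((10, 10), dtype=bool)
pred[3:7, 3:7] = True
metrics = segmentation_metrics(pred, truth)
```

For a single binary mask, the pixel-wise F1 score and the Dice coefficient are the same quantity, so reporting both adds information only when they are aggregated differently across images or instances.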

Protocol 2: Handling Overlapping Sperm Structures with SpeHeatal

Application: This protocol specifically addresses the challenge of overlapping sperm structures, particularly tails, using the SpeHeatal framework which combines the Segment Anything Model (SAM) with the Con2Dis clustering algorithm for robust instance segmentation in crowded sperm images.

Workflow Diagram: Handling Overlapping Sperm Structures

Step-by-Step Procedures:

SAM-Based Head Segmentation:

- Process input image with Segment Anything Model (SAM) to generate candidate masks for all visible objects.

- Filter out non-sperm artifacts and impurities based on morphological characteristics (size, shape, texture).

- Extract high-confidence sperm head masks using shape descriptors and size thresholds.
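
The filtering step can be illustrated without running SAM itself. The sketch below assumes the candidate masks are already available as boolean arrays and keeps only those whose area and elongation look head-like. The thresholds and the helper names `mask_stats` and `keep_head_like` are hypothetical, not the customized filter used in [14].

```python
import numpy as np

def mask_stats(mask):
    """Return (area in pixels, elongation) for a binary mask.

    Elongation is the ratio of the major to the minor standard deviation of
    the pixel coordinates, obtained from their covariance matrix.
    """
    ys, xs = np.nonzero(mask)
    area = ys.size
    if area < 3:
        return area, float("inf")
    cov = np.cov(np.stack([xs, ys]).astype(float))
    eigvals = np.sort(np.linalg.eigvalsh(cov))
    minor, major = np.sqrt(np.maximum(eigvals, 1e-12))
    return area, major / minor

def keep_head_like(masks, min_area=30, max_area=400, max_elongation=3.0):
    """Keep masks whose size and shape are plausible for a sperm head."""
    kept = []
    for mask in masks:
        area, elongation = mask_stats(mask)
        if min_area <= area <= max_area and elongation <= max_elongation:
            kept.append(mask)
    return kept

# Synthetic candidates: an oval "head", a tiny speck, and a long thin streak.
yy, xx = np.mgrid[0:64, 0:64]
head = ((xx - 32) / 8.0) ** 2 + ((yy - 32) / 5.0) ** 2 <= 1.0
speck = np.zeros((64, 64), dtype=bool)
speck[10:12, 10:12] = True
streak = np.zeros((64, 64), dtype=bool)
streak[40:42, 2:62] = True
kept = keep_head_like([head, speck, streak])
```

In practice the area bounds would be set from the pixel size of the imaging system, and a texture or intensity criterion could be added to reject dye impurities of head-like size.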

Con2Dis Clustering for Tail Separation:

- Identify tail regions using edge detection and texture analysis algorithms.

- Apply Con2Dis clustering algorithm to overlapping tail regions, which considers three factors:

- Connectivity: Pixel-level connection relationships between tail segments.

- Conformity: Geometric consistency with expected tail morphology.

- Distance: Spatial proximity to corresponding sperm heads.

- Separate intertwined tails by assigning pixels to individual sperm instances based on these criteria.

Mask Integration and Validation:

- Combine head masks from SAM with corresponding tail masks using customized mask splicing techniques.

- Validate complete sperm masks for morphological consistency and physiological plausibility.

- Perform quantitative evaluation using object-level metrics such as detection rate and false positive rate for overlapping scenarios.

Research Reagent Solutions and Computational Tools

Table 2: Essential Research Reagents and Computational Tools for Sperm Image Segmentation

| Category | Item/Resource | Specification/Function | Application Context |

|---|---|---|---|

| Datasets | SVIA Dataset [9] | 125,000 annotated instances; 26,000 segmentation masks; 125,880 classification images | Large-scale model training for detection, segmentation, and classification |

| Datasets | VISEM-Tracking [9] | 656,334 annotated objects with tracking details | Sperm motility analysis and segmentation in video sequences |

| Datasets | MHSMA Dataset [9] | 1,540 grayscale sperm head images | Sperm head morphology classification studies |

| Models | Mask R-CNN [3] | Two-stage instance segmentation model | Optimal for head, acrosome, and nucleus segmentation |

| Models | U-Net [3] [15] | Encoder-decoder with skip connections | Superior for tail segmentation and general medical imaging |

| Models | YOLOv8/YOLO11 [3] | Single-stage object detection and segmentation | Balanced speed and accuracy for various sperm components |

| Models | CBAM-enhanced ResNet50 [13] | Attention mechanism for feature refinement | Sperm morphology classification with improved focus on relevant features |

| Software Tools | Con2Dis Algorithm [14] | Specialized clustering for overlapping structures | Resolution of intertwined sperm tails |

| Software Tools | Multi-Scale Part Parsing Network [12] | Fusion of instance and semantic segmentation | Instance-level parsing for multiple sperm targets |

| Software Tools | Measurement Accuracy Enhancement [12] | Statistical analysis and signal processing | Correction of measurement errors from low-resolution images |

The segmentation of sperm images presents distinct challenges stemming from the inherent biological characteristics of sperm and technical limitations of imaging systems. Low contrast in unstained samples, overlapping sperm structures, and various image artifacts collectively impede accurate morphological analysis essential for clinical diagnostics and ART procedures. However, as demonstrated by the quantitative evaluations and experimental protocols presented herein, strategic implementation of advanced deep learning architectures—selected according to target sperm components—coupled with specialized algorithms for addressing specific challenges like overlapping tails, can significantly enhance segmentation accuracy and reliability.

The ongoing development of standardized, high-quality annotated datasets and the refinement of attention mechanisms and multi-scale parsing networks promise further advances in this field. By adopting the methodologies and frameworks outlined in this application note, researchers and clinicians can contribute to more objective, reproducible, and clinically meaningful sperm morphology assessments, ultimately improving diagnostic accuracy and treatment outcomes in reproductive medicine.

The Impact of Stained vs. Unstained Samples on Segmentation Difficulty

In the field of sperm morphological analysis, the choice between using stained or unstained samples presents a significant trade-off between segmentation accuracy and clinical viability. This distinction is paramount for developing robust Computer-Aided Sperm Analysis (CASA) systems, particularly for applications in intracytoplasmic sperm injection (ICSI) [3]. Staining procedures enhance image contrast, facilitating the distinction of sperm structures, whereas unstained images often exhibit low signal-to-noise ratios, indistinct structural boundaries, and minimal color differentiation between components [3]. This document, framed within a broader thesis on segmentation methodologies, details the quantitative impact of this choice, provides standardized protocols for both pathways, and outlines key computational tools to address the inherent challenges.

Quantitative Analysis of Segmentation Performance

The performance of deep learning models varies considerably between stained and unstained samples and across different sperm components. Table 1 summarizes the relative ranking of four models from a systematic evaluation on a dataset of live, unstained human sperm [3], and Table 2 contrasts the two sample preparation methods.

Table 1: Model Performance Comparison (IoU) for Unstained Sperm Segmentation

| Sperm Component | Mask R-CNN | YOLOv8 | YOLO11 | U-Net |

|---|---|---|---|---|

| Head | Slightly Higher | Comparable | Not Specified | Lower |

| Acrosome | Superior | Not Specified | Lower | Lower |

| Nucleus | Slightly Higher | Comparable | Not Specified | Lower |

| Neck | Comparable | Slightly Higher | Not Specified | Lower |

| Tail | Lower | Lower | Lower | Highest |

Table 2: Advantages and Disadvantages of Sample Preparation Methods

| Characteristic | Stained Samples | Unstained Samples |

|---|---|---|

| Image Contrast | High, facilitates structure distinction [3] | Low, with minimal color differentiation [3] |

| Structural Boundaries | Distinct | Indistinct [3] |

| Signal-to-Noise Ratio | High | Low [3] |

| Clinical Safety | Risk of morphology alteration [3] | Safe, no risk of damage [3] |

| Primary Challenge | Potential alteration of sperm morphology and structure, compromising diagnostic value [3] | Significant technical difficulty for accurate segmentation [3] |

| Best-Suited Model | Models requiring clear feature definition (e.g., Mask R-CNN for heads) [3] | U-Net for complex, elongated structures (e.g., tails) [3] |

Experimental Protocols

Protocol 1: Segmentation of Unstained Live Human Sperm Using Deep Learning

Application Note: This protocol is designed for the automated, multi-part segmentation of unstained, live human sperm images, which is critical for clinical ICSI procedures to avoid cellular damage [3].

Materials:

- Clinically labeled live, unstained human sperm dataset [citation:49-50 in 4].

- "Normal Fully Agree Sperms" image subset (93 images) [3].

- Computational hardware (GPU recommended).

Procedure:

- Dataset Curation: Select 93 images of normal sperm, as unanimously identified by multiple morphology experts, from the source dataset [3].

- Annotation Pairing: Ensure each sperm part (acrosome, nucleus, head, midpiece, and tail) is accurately annotated and paired with its corresponding source image [3].

- Data Partitioning: Split the annotated images into training and validation sets (e.g., 80-20 split).

- Model Selection & Training:

- Select models based on target components: Mask R-CNN for head, acrosome, and nucleus; YOLOv8 for the neck; U-Net for the tail [3].

- Train each model using standard deep learning frameworks.

- Performance Evaluation: Quantitatively evaluate model performance using Intersection over Union (IoU), Dice coefficient, Precision, Recall, and F1 Score [3].

Protocol 2: A Gold-Standard Framework for Sperm Head Segmentation

Application Note: This protocol provides a robust, illumination-invariant method for detecting and segmenting human sperm heads, which is the foundational step for all subsequent morphological classification [16].

Materials:

- Gold-standard dataset with hand-segmented sperm head masks.

- Image processing software (e.g., MATLAB, Python with OpenCV).

Procedure:

- Color Space Conversion: Convert the input RGB image to multiple color spaces, specifically L*a*b* and YCbCr [16].

- Clustering for Detection: Apply a clustering algorithm (e.g., k-means) on the combined color space data to identify and detect sperm heads [16].

- Directionality Analysis: Implement an ellipse-fitting algorithm to determine the orientation and front direction of the sperm head [16].

- Segmentation Refinement: Use image processing techniques (e.g., morphological operations) to refine the segmentation masks [16].

- Validation: Compare results against the gold-standard hand-segmented masks to evaluate detection and segmentation precision [16].
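
The color space conversion and clustering steps can be sketched with NumPy alone. The example below is a simplified stand-in for the method in [16]: it uses only the chroma channels of YCbCr (the L*a*b* conversion is omitted for brevity), a plain k-means with deterministic initialization, and the assumption that the smaller cluster corresponds to sperm heads. The synthetic image colors are invented for the demonstration.

```python
import numpy as np

def rgb_to_ycbcr(rgb):
    """Convert an (H, W, 3) uint8 RGB image to full-range YCbCr (ITU-R BT.601)."""
    rgb = rgb.astype(float)
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return np.stack([y, cb, cr], axis=-1)

def kmeans(features, k=2, iters=20):
    """Plain Lloyd's k-means; centres start at evenly spaced sorted samples."""
    order = np.argsort(features[:, 0])
    centres = features[order[np.linspace(0, len(order) - 1, k).astype(int)]]
    for _ in range(iters):
        dists = np.linalg.norm(features[:, None, :] - centres[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centres[j] = features[labels == j].mean(axis=0)
    return labels

# Synthetic stained field: pale background with one purple rectangular "head".
image = np.full((40, 40, 3), (230, 220, 225), dtype=np.uint8)
image[15:25, 12:28] = (90, 60, 160)

# Cluster on chroma only (Cb, Cr), which is less sensitive to illumination.
chroma = rgb_to_ycbcr(image)[..., 1:].reshape(-1, 2)
labels = kmeans(chroma, k=2).reshape(40, 40)
head_label = np.argmin(np.bincount(labels.ravel(), minlength=2))
head_mask = labels == head_label
```

Dropping the luma channel is one simple way to reduce sensitivity to uneven illumination. The resulting mask would then be passed to the ellipse-fitting and morphological refinement steps.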

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials for Sperm Morphology Segmentation Research

| Item Name | Function / Application Note |

|---|---|

| Live, Unstained Human Sperm Dataset | Provides clinically viable images for developing non-destructive CASA systems [3]. |

| Gold-Standard Annotations | Hand-segmented masks for sperm parts (acrosome, nucleus, etc.) used as ground truth for training and validating models [3] [16]. |

| Mask R-CNN Model | A two-stage deep learning model selected for segmenting smaller, regular structures like the sperm head, acrosome, and nucleus [3]. |

| U-Net Model | A convolutional neural network architecture that excels at segmenting morphologically complex structures like the sperm tail due to its global perception and multi-scale feature extraction [3]. |

| Color Space Transformation Tools | Software functions for converting images from RGB to L*a*b* and YCbCr, enabling illumination-invariant segmentation [16]. |

The application of artificial intelligence (AI) and deep learning (DL) in sperm morphology analysis (SMA) represents a transformative advancement for male infertility diagnosis and assisted reproductive technology (ART). However, the development of robust, automated sperm analysis systems is critically constrained by a fundamental challenge: the lack of standardized, high-quality annotated datasets [9]. This bottleneck impedes the training of reliable deep learning models capable of precise segmentation and classification of sperm morphological structures—the head, midpiece, and tail. Current datasets are often limited by small sample sizes, inconsistent annotation standards, low image resolution, and a lack of diversity in morphological abnormalities [9] [17]. This application note delineates the specific challenges of dataset development, presents a quantitative comparison of existing resources, provides detailed experimental protocols for dataset creation and model training, and proposes standardized solutions to accelerate research in this vital field.

The Dataset Landscape in Sperm Morphology Analysis

The field relies on several public datasets, each with specific strengths and limitations. The table below provides a structured comparison of key datasets available for sperm morphology research.

Table 1: Comparison of Publicly Available Sperm Morphology Datasets

| Dataset Name | Publication Year | Primary Content | Key Annotations | Notable Strengths | Inherent Limitations |

|---|---|---|---|---|---|

| VISEM-Tracking [17] | 2023 | 20 video recordings (29,196 frames); 656,334 annotated objects [9] [17] | Bounding boxes, tracking IDs, motility characteristics [17] | Large scale; multi-modal (videos + clinical data); tracking information | Does not focus on fine-grained morphological part segmentation |

| SVIA [9] [17] | 2022 | 101 short video clips; 125,000 object instances [9] [17] | Object detection, 26,000 segmentation masks, classification [9] | Diverse tasks: detection, segmentation, classification | Video clips are very short (1-3 seconds) |

| MHSMA [9] [17] | 2019 | 1,540 grayscale sperm head images [9] [17] | Classification of head morphology [9] | Useful for head-specific classification tasks | Cropped heads only; no midpiece or tail data; low resolution |

| HSMA-DS [9] [17] | 2015 | 1,457 sperm images from 235 patients [9] [17] | Vacuole, tail, midpiece, and head abnormality (binary notation) [9] | Provides multi-structure abnormality annotations | Non-stained, noisy, and low-resolution images [9] |

| SCIAN-MorphoSpermGS [9] | 2017 | 1,854 sperm images [9] | Classification into five classes (normal, tapered, pyriform, small, amorphous) [9] | Stained images with higher resolution | Focuses solely on head morphology classification |

| HuSHeM [9] [17] | 2017 | 725 sperm head images (216 publicly available) [9] [17] | Classification of head morphology [9] | Stained and high-resolution images | Very limited number of publicly available images |

Core Technical and Procedural Challenges

The path to creating high-quality datasets is fraught with several interconnected challenges:

- Annotation Complexity and Subjectivity: Sperm defect assessment requires simultaneous evaluation of the head, vacuoles, midpiece, and tail against the WHO's 26 types of abnormal morphology [9]. This process is inherently complex and subjective, requiring specialized expertise, which leads to high inter-annotator variability and inconsistencies in labeling [9].

- Image Acquisition Hurdles: Conventional staining methods, while enhancing contrast, can damage sperm cells and alter their natural morphology, making them unsuitable for clinical use in procedures like Intracytoplasmic Sperm Injection (ICSI) [12]. Conversely, non-stained live sperm images, crucial for clinical applications, often suffer from low resolution, blurred boundaries, and low signal-to-noise ratios, especially under lower magnifications used to keep sperm in the field of view [12]. Furthermore, sperm may appear intertwined or with only partial structures visible at image edges, complicating accurate annotation [9].

- Data Scarcity and Standardization: Many medical institutions continue to rely on conventional assessment methods that do not systematically save valuable image data, leading to significant data loss [9]. There is a pronounced lack of a unified, community-wide standard for slide preparation, staining, image acquisition, and annotation, resulting in datasets that are not interoperable and models with poor generalization capabilities [9].

Detailed Experimental Protocols

Protocol 1: Creating a Standardized Dataset for Sperm Morphology

This protocol outlines a comprehensive procedure for acquiring and annotating high-quality sperm image data suitable for training deep learning models for segmentation and classification.

Table 2: Research Reagent Solutions for Sperm Image Acquisition

| Item | Function/Description | Key Considerations |

|---|---|---|

| Phase-Contrast Microscope | Enables examination of unstained, live sperm preparations by enhancing contrast of transparent specimens [17]. | Essential for clinical, non-invasive analysis as per WHO recommendations [17]. |

| Heated Microscope Stage | Maintains samples at 37°C during recording [17]. | Critical for preserving sperm motility and vitality for realistic analysis. |

| Microscope-Mounted Camera (e.g., UEye UI-2210C) [17] | Captures video footage of sperm samples for dynamic analysis. | Should support recording at a minimum of 30 frames per second for accurate motility tracking. |

| Labeling Software (e.g., LabelBox) [17] | Platform for manual annotation of bounding boxes, segmentation masks, and class labels. | Should support multiple annotators and consensus mechanisms to reduce subjectivity. |

Procedure:

Sample Preparation and Video Acquisition:

- Prepare fresh semen samples on a heated microscope stage maintained at 37°C [17].

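- Record videos using a phase-contrast microscope at 400x magnification, as recommended by the WHO for unstained preparations [17]. Save videos in AVI format, ideally with a lossless or lightly compressed codec, since AVI is a container and does not by itself guarantee lossless storage.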

- For consistent processing, split long videos into 30-second clips [17].

Frame Extraction and Pre-processing:

- Extract all frames from the video clips. This results in a large set of images for annotation (e.g., ~1,500 frames per 30-second video, which corresponds to a frame rate of roughly 50 frames per second) [17].

- Apply minimal pre-processing to retain biological authenticity. Techniques like Gaussian filtering can be used in later analysis stages to smooth data and enhance measurement accuracy [12].

Multi-Level Annotation:

- Bounding Box Annotation: Use software like LabelBox to draw bounding boxes around every visible sperm in each frame [17]. Different classes should be assigned (e.g., 0 for normal sperm, 1 for sperm clusters, 2 for pinhead sperm) [17].

- Instance Segmentation: For a more precise analysis, annotate the exact pixel-level boundaries of each sperm. This is more labor-intensive but enables accurate morphological measurement.

- Part Segmentation: For each sperm instance, perform fine-grained segmentation to label the head, midpiece, and tail separately [12]. This is crucial for detailed morphology analysis.

- Tracking ID Assignment: For video data, assign a unique tracking identifier to the same spermatozoon across consecutive frames to enable motility and kinematics analysis [17].

Quality Control and Curation:

- Annotations must be performed by data scientists in close collaboration with experienced biologists or andrologists to ensure biological accuracy [17].

- Implement a robust review process. All annotations should be verified by multiple experts to ensure consistency and correctness [17].

- Exclude outliers and low-quality frames using statistical methods like the Interquartile Range (IQR) to ensure a clean, reliable dataset [12].

Protocol 2: Training a Multi-Scale Part Parsing Network for Sperm Instance Segmentation

This protocol describes a state-of-the-art deep learning methodology for parsing multiple sperm targets and their constituent parts, addressing the challenge of instance-level morphological analysis [12].


Procedure:

Network Architecture and Training:

- Model Design: Implement a multi-scale part parsing network that integrates both instance segmentation and semantic segmentation branches [12].

- Instance Branch: This branch generates masks for accurate localization of individual sperm instances, distinguishing one sperm from another in a crowded field [12].

- Semantic Branch: This branch performs pixel-level classification to delineate the head, midpiece, and tail components of all sperm simultaneously [12].

- Feature Fusion: Fuse the outputs from both branches. The instance masks from the first branch are used to isolate individual sperm, and within each isolated region, the semantic labels from the second branch provide the detailed part segmentation [12]. This fusion creates a comprehensive instance-level parsing map where each sperm is separated and its parts are clearly identified.
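
The fusion step can be illustrated on toy arrays. The sketch below assumes the instance branch yields one boolean mask per sperm and the semantic branch yields a single integer label map. The label encoding and the function name `fuse` are assumptions, and the actual network in [12] may fuse features rather than final outputs.

```python
import numpy as np

# Hypothetical label encoding for the semantic branch.
BACKGROUND, HEAD, MIDPIECE, TAIL = 0, 1, 2, 3

def fuse(instance_masks, semantic_map):
    """Return one part-label map per instance.

    Inside each instance mask the semantic labels are kept; everything
    outside the mask is set to background.
    """
    return [np.where(mask, semantic_map, BACKGROUND) for mask in instance_masks]

# Toy 4x8 field with two horizontal sperm, one on row 0 and one on row 2.
semantic = np.zeros((4, 8), dtype=int)
semantic[0, 0:2] = HEAD
semantic[0, 2] = MIDPIECE
semantic[0, 3:8] = TAIL
semantic[2, 6:8] = HEAD
semantic[2, 5] = MIDPIECE
semantic[2, 0:5] = TAIL

inst_a = np.zeros((4, 8), dtype=bool)
inst_a[0, :] = True
inst_b = np.zeros((4, 8), dtype=bool)
inst_b[2, :] = True

parsed = fuse([inst_a, inst_b], semantic)
```

Each element of `parsed` is an instance-level parsing map from which per-sperm measurements such as head length or tail pixel count can be taken directly.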

Measurement Accuracy Enhancement:

- After segmentation, extract morphological parameters (e.g., head length and width) from the parsed images.

- To counteract errors from low-resolution, non-stained images, employ a post-processing strategy based on statistical analysis and signal processing [12]:

- Outlier Filtering: Use the Interquartile Range (IQR) method to exclude biologically implausible measurement outliers [12].

- Data Smoothing: Apply a Gaussian filter to smooth the measured data, reducing noise-induced jitter [12].

- Robust Correction: Employ maximum value extraction or similar robust techniques to estimate the true maximum morphological dimensions, which are often underestimated due to blurred boundaries [12]. This step can reduce measurement errors by up to 35.0% for critical parameters like head length [12].
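
The three post-processing steps can be chained on a series of repeated measurements of the same sperm. The sketch below uses only the standard library. The measurement values, the 1.5 x IQR fence, and the Gaussian kernel width are illustrative assumptions, and taking the maximum of the smoothed series is one simple reading of "maximum value extraction", not necessarily the exact procedure in [12].

```python
import math
import statistics

def iqr_filter(values, k=1.5):
    """Drop values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [v for v in values if low <= v <= high]

def gaussian_smooth(values, sigma=1.0, radius=2):
    """Smooth a 1-D series with a truncated, renormalised Gaussian kernel."""
    offsets = range(-radius, radius + 1)
    kernel = [math.exp(-0.5 * (o / sigma) ** 2) for o in offsets]
    smoothed = []
    for i in range(len(values)):
        num = den = 0.0
        for o, w in zip(offsets, kernel):
            j = i + o
            if 0 <= j < len(values):         # ignore samples beyond the ends
                num += w * values[j]
                den += w
        smoothed.append(num / den)
    return smoothed

# Hypothetical per-frame head-length measurements (micrometres) for one sperm.
head_lengths = [4.1, 4.3, 4.2, 4.4, 9.8, 4.3, 4.5, 4.2, 0.4, 4.4]

cleaned = iqr_filter(head_lengths)           # removes 9.8 and 0.4
smoothed = gaussian_smooth(cleaned)          # damps frame-to-frame jitter
estimate = max(smoothed)                     # robust estimate of the true length
```

Smoothing before taking the maximum keeps a single noisy frame from dominating the estimate, which is the reason the order of the steps matters.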

Validation:

- Validate the model's performance using metrics such as Average Precision (AP), particularly the part-level volumetric variant (AP^p_vol). State-of-the-art models have achieved 59.3% AP^p_vol [12].

- Ensure clinical validity by having experienced sperm physicians confirm the morphological accuracy of a subset of the results. Target a morphological accuracy percentage of over 90% against expert manual analysis [18].

Emerging Solutions and Future Directions

Synthetic Data Generation

To overcome the limitations of real-world data collection, synthetic data generation presents a powerful alternative. Tools like AndroGen offer an open-source solution for generating customizable synthetic sperm images from different species without requiring real data or extensive training of generative models [19]. This approach significantly reduces the cost and annotation effort associated with creating large datasets. Researchers can use AndroGen's graphical interface to set parameters for creating task-specific datasets, tailoring the synthetic data to their specific research needs, such as emphasizing rare morphological abnormalities [19].

Data Tracking and Kinematics

Beyond static morphology, tracking sperm motility is crucial for a comprehensive assessment. The VISEM-Tracking dataset exemplifies this multi-modal approach by providing not only bounding boxes but also tracking identifiers that allow researchers to follow individual sperm across video frames [17]. This enables the analysis of movement patterns, kinematics, and the correlation between motility and morphology. Improved tracking algorithms, such as those incorporating sperm head movement distance and angle into the matching cost function, can further enhance the accuracy of these analyses [18].
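
To make the idea of a distance-and-angle matching cost concrete, the sketch below scores candidate detections for an existing track by combining displacement with the change in heading. It is an illustrative toy, not the cost function from [18]: the weights, the function name `match_cost`, and the coordinates are assumptions.

```python
import math

def match_cost(prev, cand, w_dist=1.0, w_angle=5.0):
    """Cost of linking a tracked head to a candidate detection.

    `prev` is (x, y, heading in radians) from the previous frames and
    `cand` is the (x, y) position of a detection in the current frame.
    """
    dx, dy = cand[0] - prev[0], cand[1] - prev[1]
    distance = math.hypot(dx, dy)
    if distance == 0.0:
        return 0.0
    turn = math.atan2(dy, dx) - prev[2]
    turn = abs(math.atan2(math.sin(turn), math.cos(turn)))  # wrap to [0, pi]
    return w_dist * distance + w_angle * turn

# A head at (10, 10) that has been swimming in the +x direction.
track = (10.0, 10.0, 0.0)
candidates = {"A": (14.0, 10.5), "B": (7.0, 10.0)}

nearest = min(candidates, key=lambda n: math.dist(track[:2], candidates[n]))
best = min(candidates, key=lambda n: match_cost(track, candidates[n]))
```

Here the nearest detection (B) lies directly behind the sperm, so a distance-only matcher would link to it, while the combined cost prefers the detection that continues the existing direction of travel (A).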

The critical bottleneck of standardized, high-quality annotated datasets is the primary impediment to the advancement of AI-based sperm morphology analysis. Addressing this challenge requires a concerted effort from the research community to adopt standardized protocols for data acquisition and annotation, leverage emerging technologies like synthetic data generation, and develop robust, multi-task deep learning models capable of detailed instance parsing. By systematically implementing the protocols and solutions outlined in this document, researchers and clinicians can build more powerful, reliable, and clinically applicable tools, ultimately improving diagnostic accuracy and success rates in the treatment of male infertility.

Accurate segmentation of sperm morphological structures—the head, acrosome, nucleus, neck, and tail—is foundational for assessing male fertility potential. Traditional manual semen analysis suffers from substantial subjectivity, inter-observer variability, and labor-intensive processes, hindering standardized diagnosis and research reproducibility [9] [20]. The evolution towards automated systems began with traditional image processing algorithms and has now transitioned to deep learning models, aiming to overcome these limitations. This progression has been driven by the need for precise, high-throughput analysis in clinical diagnostics and drug development. Segmentation provides the essential first step for quantitative morphometry, enabling researchers to extract objective measurements of critical parameters such as head size, acrosomal area, tail length, and neck integrity, which correlate strongly with fertilization potential [3]. The historical journey from manual thresholding to sophisticated convolutional neural networks reflects a broader paradigm shift in biomedical image analysis, emphasizing automation, objectivity, and integration with computer-assisted sperm analysis (CASA) systems.

From Traditional Image Processing to Modern Deep Learning

Traditional Image Processing Techniques

The initial automated approaches to sperm segmentation relied on classical image processing algorithms that required significant manual feature engineering and parameter tuning.

- Thresholding Methods: Early segmentation used global or adaptive thresholding to binarize images, separating sperm structures from the background based on pixel intensity values. This method converted grayscale images into binary maps, facilitating subsequent contour detection and shape analysis [21].

- Region-Based Segmentation: Algorithms like region-growing started from seed pixels and grouped adjacent pixels with similar properties. The watershed algorithm, another popular choice, treated image intensity as a topographic surface and simulated flooding from local minima to define boundaries [21].

- Edge Detection: Techniques such as the Canny edge detector identified sharp intensity changes in images to outline sperm boundaries. These detectors used gradient calculations and filtering to highlight structural edges [21].

- Clustering-Based Algorithms: Unsupervised learning methods like K-means clustering grouped pixels into a predefined number of clusters ('K') based on feature similarity (e.g., color, intensity, texture), effectively partitioning the image into segments corresponding to different sperm parts [9] [22].
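
As a concrete instance of the thresholding approach, Otsu's method chooses the threshold that maximizes the between-class variance of the intensity histogram. The NumPy sketch below is a minimal implementation applied to a synthetic image; the image values are invented for the demonstration.

```python
import numpy as np

def otsu_threshold(gray):
    """Return the Otsu threshold for a uint8 grayscale image."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(float)
    prob = hist / hist.sum()
    omega = np.cumsum(prob)                    # probability of class "<= t"
    mu = np.cumsum(prob * np.arange(256))      # cumulative mean up to t
    mu_total = mu[-1]
    denom = omega * (1.0 - omega)
    sigma_b = np.zeros(256)                    # between-class variance per t
    valid = denom > 1e-12
    sigma_b[valid] = (mu_total * omega[valid] - mu[valid]) ** 2 / denom[valid]
    return int(np.argmax(sigma_b))

# Synthetic field: bright background with one dark square object.
gray = np.full((40, 40), 200, dtype=np.uint8)
gray[10:20, 10:20] = 60

t = otsu_threshold(gray)
mask = gray <= t                               # dark pixels become foreground
```

On real unstained images the histogram is rarely this cleanly bimodal, which is one reason the thresholded result usually needed further processing.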

Table 1: Traditional Image Processing Techniques for Sperm Segmentation

| Technique | Underlying Principle | Commonly Used Algorithms | Reported Performance |

|---|---|---|---|

| Thresholding | Pixels classified based on intensity value relative to a set threshold | Otsu's method, Adaptive thresholding | Foundation for binarization; often required further processing [21] |

| Region-Based | Groups pixels with similar characteristics growing from seed points | Region-growing, Watershed | Prone to over-segmentation with noisy or low-contrast images [21] |

| Edge Detection | Identifies boundaries based on high-intensity gradients | Canny, Sobel, Laplacian of Gaussian (LoG) | Effective for clear boundaries but often produced discontinuous edges [21] |

| Clustering | Partitions pixels into clusters based on feature similarity | K-means, Mean-Shift | Achieved ~90% accuracy for head classification in some studies [22] |

The Shift to Deep Learning

Conventional machine learning algorithms, including Support Vector Machines (SVM) and decision trees, demonstrated success but were fundamentally limited by their dependence on handcrafted features (e.g., grayscale intensity, texture, contour shape) [9] [22]. These manually designed features were often inadequate for capturing the complex and variable morphology of sperm, particularly in low-resolution or unstained images, leading to issues like over-segmentation, under-segmentation, and poor generalization across different datasets [22].

Deep learning (DL) overcame these limitations by automatically learning hierarchical feature representations directly from raw pixel data. Convolutional Neural Networks (CNNs), typically arranged in an encoder-decoder architecture for segmentation, became the cornerstone of modern sperm segmentation. The encoder compresses the input image into a latent feature representation, while the decoder upsamples this representation into a detailed segmentation map [21]. Key architectural innovations like U-Net introduced skip connections between encoder and decoder layers, preserving spatial information lost during downsampling and proving highly effective for medical imaging tasks [21] [3]. Models like Mask R-CNN extended object detection frameworks to simultaneously generate bounding boxes and pixel-level masks for each object instance, which is crucial for analyzing individual sperm in dense samples [3].

Comparative Performance Analysis

Recent studies have systematically evaluated the performance of various deep learning models across different sperm components. The results indicate that no single model universally outperforms others on all structures; instead, performance is highly dependent on the size, shape, and complexity of the target morphology.

Table 2: Quantitative Performance Comparison of Deep Learning Models on Sperm Segmentation

| Sperm Structure | Best Performing Model | Key Metric (IoU) | Comparative Model Performance |
|---|---|---|---|
| Head | Mask R-CNN | Highest IoU | Robust for small, regular structures [3] |
| Nucleus | Mask R-CNN | Highest IoU | Slightly outperformed YOLOv8 [3] |
| Acrosome | Mask R-CNN | Highest IoU | Surpassed YOLO11 [3] |
| Neck | YOLOv8 | Comparable/IoU slightly > Mask R-CNN | Single-stage models can rival two-stage models [3] |
| Tail | U-Net | Highest IoU | Superior global perception for elongated structures [3] |

Quantitative metrics such as the Intersection over Union (IoU) and Dice coefficient are critical for these evaluations. The superior performance of Mask R-CNN on compact structures like the head and nucleus is attributed to its two-stage architecture and region-based refinement. In contrast, U-Net's strength in segmenting the long, thin tail is linked to its multi-scale feature extraction and ability to capture global contextual information [3].
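For readers less familiar with these two overlap metrics, the following sketch computes both on a pair of binary masks. It assumes masks are NumPy arrays of equal shape; the function name iou_and_dice and the convention of returning 1.0 when both masks are empty are illustrative choices, not taken from the cited studies.

```python
import numpy as np

def iou_and_dice(pred, target):
    """Return (IoU, Dice) for two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    total = pred.sum() + target.sum()
    # Assumed convention: two empty masks count as a perfect match.
    iou = intersection / union if union else 1.0
    dice = 2 * intersection / total if total else 1.0
    return float(iou), float(dice)

# Two 3x3 squares that overlap in a 2x2 region.
pred = np.zeros((6, 6), dtype=bool)
pred[1:4, 1:4] = True
target = np.zeros((6, 6), dtype=bool)
target[2:5, 2:5] = True

iou, dice = iou_and_dice(pred, target)
print(f"IoU = {iou:.3f}, Dice = {dice:.3f}")
```

For a single pair of masks the two metrics are monotonically related by Dice = 2·IoU / (1 + IoU), so they rank models identically on one image, although averages over a dataset can differ.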

Detailed Experimental Protocols

Protocol for a Comparative Multi-Model Segmentation Study

This protocol outlines the steps for training and evaluating different deep learning models to segment key sperm components, providing a standardized framework for reproducible research.

A. Sample Preparation and Image Acquisition

- Sample Collection and Smear Preparation: Collect semen samples from donors following ethical guidelines and informed consent. Prepare smears on glass slides according to WHO laboratory manual protocols. Use RAL Diagnostics staining kit or similar for staining, if working with stained samples. For unstained live sperm analysis, ensure minimal processing to preserve natural morphology [20] [3].

- Image Acquisition: Use a Computer-Assisted Semen Analysis (CASA) system or a microscope with a 100x oil immersion objective and a digital camera. Acquire images in bright-field mode. Ensure each captured image contains a single spermatozoon where possible to simplify initial analyses. The MMC CASA system is an example of a suitable platform [20].

B. Data Annotation and Pre-processing

- Expert Annotation: Have multiple experts (e.g., three with extensive experience in semen analysis) manually annotate each component of the sperm (head, acrosome, nucleus, neck, tail) using a standardized classification system (e.g., modified David classification or WHO criteria) [20] [3]. Use annotation software like ITK-SNAP [23].

- Inter-Expert Agreement Analysis: Calculate the level of agreement among experts (e.g., Total Agreement: 3/3 experts agree). Use statistical software like IBM SPSS to assess agreement with Fisher's exact test (p < 0.05 considered significant) [20].

- Image Pre-processing: Resize all images to consistent dimensions (e.g., 80x80 pixels). Convert images to grayscale. Apply normalization to scale pixel values to a standard range (e.g., 0-1). This step helps denoise the images and standardize the input for the model [20].
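The pre-processing step above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: input images are 8-bit RGB arrays, nearest-neighbour sampling stands in for whatever interpolation an imaging library such as OpenCV or Pillow would provide, and the helper names are hypothetical.

```python
import numpy as np

def to_grayscale(rgb):
    """Convert an (H, W, 3) RGB image to (H, W) using ITU-R BT.601 luma weights."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb.astype(np.float64) @ weights

def resize_nearest(img, size=(80, 80)):
    """Resize by nearest-neighbour sampling (a stand-in for library interpolation)."""
    out_h, out_w = size
    in_h, in_w = img.shape[:2]
    rows = np.arange(out_h) * in_h // out_h
    cols = np.arange(out_w) * in_w // out_w
    return img[rows[:, None], cols]

def normalize_01(img, max_value=255.0):
    """Scale pixel values to the range 0-1."""
    return np.clip(img / max_value, 0.0, 1.0)

def preprocess(rgb):
    """Grayscale, resize to 80x80, and normalize, in that order."""
    return normalize_01(resize_nearest(to_grayscale(rgb)))

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)  # synthetic image
prepared = preprocess(raw)
print(prepared.shape, prepared.min(), prepared.max())
```

When segmentation masks accompany the images, they would need the same resize (with nearest-neighbour sampling, so that label values are not blended).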

C. Model Training and Evaluation

- Data Partitioning: Randomly split the dataset into a training set (80%), a validation set (10%), and a test set (10%). The validation set is used for hyperparameter tuning during training, while the test set is reserved for the final, unbiased evaluation [20] [24].

- Data Augmentation: Augment the training dataset to increase its size and variability, improving model generalization. Apply techniques including random rotations, horizontal and vertical flips, slight brightness and contrast adjustments, and zoom transformations [20].

- Model Implementation and Training: Implement models such as U-Net, Mask R-CNN, YOLOv8, and YOLO11 in Python (e.g., version 3.8) using a deep learning framework such as TensorFlow or PyTorch. Train each model on the augmented training set and use the validation set to monitor for overfitting [20] [3].

- Quantitative Evaluation: Evaluate the trained models on the held-out test set using standard metrics: Intersection over Union (IoU), Dice coefficient, Precision, Recall, and F1-Score. Perform statistical analysis to determine significant performance differences between models [3].
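The partitioning and augmentation steps above can be sketched as follows. The code is illustrative rather than the procedure of the cited studies: rotations are restricted to multiples of 90 degrees, zoom is omitted, the brightness and contrast ranges are arbitrary, and the function names are hypothetical. The point it makes is that geometric transforms must be applied identically to the image and its mask, while photometric changes apply to the image only.

```python
import random
import numpy as np

def split_80_10_10(items, seed=42):
    """Shuffle once with a fixed seed, then cut into 80% / 10% / 10%."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    return (items[:n_train],
            items[n_train:n_train + n_val],
            items[n_train + n_val:])

def augment_pair(image, mask, rng):
    """Augment a normalized image (values in 0-1) together with its mask."""
    k = int(rng.integers(0, 4))               # rotate by k * 90 degrees
    image, mask = np.rot90(image, k), np.rot90(mask, k)
    if rng.random() < 0.5:                    # horizontal flip
        image, mask = np.fliplr(image), np.fliplr(mask)
    if rng.random() < 0.5:                    # vertical flip
        image, mask = np.flipud(image), np.flipud(mask)
    gain = rng.uniform(0.9, 1.1)              # slight contrast change
    bias = rng.uniform(-0.05, 0.05)           # slight brightness change
    image = np.clip(gain * image + bias, 0.0, 1.0)
    return image, mask.copy()

train, val, test = split_80_10_10(range(1000))
print(len(train), len(val), len(test))

rng = np.random.default_rng(0)
image = rng.random((80, 80))
mask = (image > 0.5).astype(np.uint8)
aug_image, aug_mask = augment_pair(image, mask, rng)
print(aug_image.shape, aug_mask.shape)
```

Augmentation is applied to the training split only, so the validation and test sets continue to reflect the unmodified image distribution.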

Diagram Title: Sperm Segmentation Study Workflow

Protocol for Sperm Detection and Dynamic Tracking

This protocol describes a methodology for detecting and tracking multiple sperm in video sequences, which is crucial for analyzing motility alongside morphology.

A. Sperm Detection with Deep Learning

- Dataset Construction: Use a public dataset like VISEM or create a custom dataset from sperm microscopic videos. Extract video frames to create a set of images for training. An example is the VISEM-1 dataset, constructed from 6000 randomly selected microscopic images [24].

- Model Development: Build a detection model based on an architecture like YOLOv8. To optimize for real-time performance, incorporate modules like GSConv for lightweight convolution and a Slim-neck structure to reduce computational complexity while maintaining accuracy [24].

- Model Training and Validation: Train the detection model on the training set. Use the Frames Per Second (FPS) metric and mean average precision at an IoU threshold of 0.5 (mAP@0.5) to evaluate both the speed and accuracy of the model. The DP-YOLOv8n model, for instance, achieved an mAP@0.5 of 86.8% and an FPS of 86.27 on a test dataset [24].
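The two evaluation quantities in the last step can be made concrete with a short sketch. It shows only the building blocks: the IoU-0.5 matching rule that decides whether a detection counts as correct, and a simple throughput measurement. A full average-precision computation would additionally integrate precision over recall across confidence thresholds. The function names and the example boxes are hypothetical.

```python
import time

def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def match_at_iou(detections, ground_truth, threshold=0.5):
    """Greedy matching in descending score order; returns (tp, fp, fn)."""
    unmatched = list(range(len(ground_truth)))
    tp = fp = 0
    for _score, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best, best_iou = None, threshold
        for gi in unmatched:
            iou = box_iou(box, ground_truth[gi])
            if iou >= best_iou:
                best, best_iou = gi, iou
        if best is None:
            fp += 1                 # no remaining ground truth overlaps enough
        else:
            tp += 1
            unmatched.remove(best)  # each ground-truth box is matched at most once
    return tp, fp, len(unmatched)

def frames_per_second(detect_fn, frames):
    """Average throughput of detect_fn over a list of frames."""
    start = time.perf_counter()
    for frame in frames:
        detect_fn(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")

ground_truth = [(10, 10, 30, 30), (50, 50, 70, 70)]
detections = [(0.9, (12, 12, 32, 32)),
              (0.8, (100, 100, 120, 120)),
              (0.6, (52, 50, 72, 70))]
tp, fp, fn = match_at_iou(detections, ground_truth)
print(f"precision = {tp / (tp + fp):.3f}, recall = {tp / (tp + fn):.3f}")
```

Reported FPS figures depend heavily on hardware and batch size, so speed comparisons between papers are only meaningful when those conditions are stated.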

B. Multi-Sperm Tracking with an Interacting Multiple Model (IMM) Filter

- Motion Model Integration: Implement an Interacting Multiple Model (IMM) filter. Integrate the Singer model (for uniform motion) and the Coordinated Turn (CT) model (for non-linear, maneuvering motion) to accurately predict sperm trajectories during complex movements [24].

- Trajectory Estimation and Association: Use a tracking algorithm like ByteTrack. Feed it with the position information from the detection model and the motion state predictions from the IMM filter to associate detections across frames and maintain consistent sperm identities, even through collisions and occlusions [24].
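The core bookkeeping of an IMM filter, namely how it shifts weight between motion models from frame to frame, can be sketched independently of the models themselves. The code below implements only the standard model-probability mixing and update equations; the per-model Kalman filters, the Singer and Coordinated Turn dynamics, and the ByteTrack association are not implemented. The transition matrix and likelihood values are illustrative assumptions, and the function names are hypothetical.

```python
def imm_mixing_weights(model_probs, transition):
    """Predicted model probabilities c_j and mixing weights mu_{i|j}.

    transition[i][j] is the assumed probability of switching from model i to j.
    Assumes every predicted probability is strictly positive.
    """
    n = len(model_probs)
    predicted = [sum(transition[i][j] * model_probs[i] for i in range(n))
                 for j in range(n)]
    mixing = [[transition[i][j] * model_probs[i] / predicted[j]
               for j in range(n)] for i in range(n)]
    return predicted, mixing

def imm_update_probs(predicted, likelihoods):
    """Reweight the predicted probabilities by each model's measurement likelihood."""
    weighted = [lik * c for lik, c in zip(likelihoods, predicted)]
    total = sum(weighted)
    return [w / total for w in weighted]

# Model 0: smooth motion; model 1: turning motion (labels are illustrative).
model_probs = [0.7, 0.3]
transition = [[0.95, 0.05],
              [0.05, 0.95]]
predicted, mixing = imm_mixing_weights(model_probs, transition)

# Suppose the newest detection is explained far better by the turning model.
likelihoods = [0.1, 0.9]
updated = imm_update_probs(predicted, likelihoods)
print([round(p, 3) for p in updated])
```

In a complete tracker, the mixing weights blend the previous state estimates before each model-specific filter runs, and the updated probabilities weight the filters' outputs into the single predicted position that is passed to the association step.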

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Sperm Morphology Segmentation

| Item Name | Specification / Example | Primary Function in Research |
|---|---|---|
| Staining Kit | RAL Diagnostics Staining Kit | Enhances contrast of sperm structures on slides for traditional and CASA analysis [20]. |
| Public Datasets | VISEM-Tracking, SVIA Dataset, SMD/MSS | Provides large-scale, annotated sperm images/videos for model training and benchmarking [9] [20] [24]. |
| Annotation Software | ITK-SNAP | Enables precise manual segmentation of sperm components to create ground truth data [23]. |
| CASA System | MMC CASA System | Automated platform for standardized image acquisition and initial morphometric analysis [20]. |
| Deep Learning Framework | Python 3.8 with TensorFlow/PyTorch | Provides the programming environment for building and training segmentation models like U-Net and YOLO [20] [24]. |

The historical progression from traditional image processing to deep learning has fundamentally transformed the segmentation of sperm morphological structures. While traditional algorithms provided the initial foundation for automation, they were constrained by their reliance on handcrafted features. Deep learning models, with their capacity for automatic feature learning, have demonstrated superior performance and robustness. Current research indicates a trend towards hybrid and specialized architectures, such as CP-Net for tiny subcellular structures and multi-model frameworks that combine tracking with segmentation for a holistic sperm quality assessment [3]. The future of this field lies in the development of large, high-quality, multi-center annotated datasets, the creation of more efficient and explainable models, and the full integration of these advanced segmentation tools into clinical CASA systems to standardize and improve male fertility diagnostics and the efficacy evaluation of novel pharmacological agents.

Deep Learning Architectures for Sperm Segmentation: Models, Mechanisms, and Workflows

Accurate segmentation of sperm morphological structures is a critical requirement in modern andrology and assisted reproductive technology (ART). Within this domain, instance segmentation models, particularly Mask R-CNN, have emerged as powerful tools for precisely delineating sperm components such as the head, acrosome, nucleus, neck, and tail [3]. This precision is fundamental for computer-aided sperm analysis (CASA) systems, which aim to automate and standardize sperm quality assessment, a process traditionally reliant on manual, subjective evaluation by embryologists [25]. The analysis of sperm morphology provides vital insights into male fertility potential, as any abnormalities in the shape or size of key structures can impair sperm function and reduce fertilization success [26]. The two-stage architecture of Mask R-CNN, which generates bounding boxes for each object instance in the first stage and precise segmentation masks in the second, is uniquely suited for this task, enabling researchers to perform detailed morphological analysis on a scale and with an accuracy that was previously unattainable [27] [3].

Quantitative Performance Analysis of Mask R-CNN in Sperm Segmentation

Systematic evaluations comparing deep learning models for multi-part sperm segmentation highlight the robust performance of Mask R-CNN. In a comprehensive 2025 study, Mask R-CNN was benchmarked against other state-of-the-art models including U-Net, YOLOv8, and YOLO11 for segmenting the head, acrosome, nucleus, neck, and tail of live, unstained human sperm [3].

Table 1: Performance Comparison of Segmentation Models for Sperm Structures (IoU Metric) [3]

| Sperm Structure | Mask R-CNN | YOLOv8 | YOLO11 | U-Net |
|---|---|---|---|---|
| Head | 0.901 | 0.892 | 0.885 | 0.878 |
| Nucleus | 0.883 | 0.875 | 0.861 | 0.852 |
| Acrosome | 0.867 | 0.849 | 0.838 | 0.841 |
| Neck | 0.798 | 0.802 | 0.791 | 0.776 |
| Tail | 0.812 | 0.819 | 0.806 | 0.827 |

The data show that Mask R-CNN outperforms the other models on the smaller, more regular structures, achieving the highest Intersection over Union (IoU) scores for the head (0.901), nucleus (0.883), and acrosome (0.867) [3]. This advantage is attributed to its two-stage architecture, which allows for refined feature extraction and mask generation for each detected object. Conversely, for the morphologically complex tail, U-Net achieved the highest IoU (0.827), capitalizing on its strong global perception and multi-scale feature extraction capabilities [3]. For the neck, YOLOv8 scored marginally higher than Mask R-CNN (0.802 vs. 0.798), suggesting that single-stage models can be competitive for certain structures [3].
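The per-structure ranking can be read directly off the IoU table with a few lines of Python. The values below are copied from the table; the variable names are illustrative.

```python
iou_table = {
    "Head":     {"Mask R-CNN": 0.901, "YOLOv8": 0.892, "YOLO11": 0.885, "U-Net": 0.878},
    "Nucleus":  {"Mask R-CNN": 0.883, "YOLOv8": 0.875, "YOLO11": 0.861, "U-Net": 0.852},
    "Acrosome": {"Mask R-CNN": 0.867, "YOLOv8": 0.849, "YOLO11": 0.838, "U-Net": 0.841},
    "Neck":     {"Mask R-CNN": 0.798, "YOLOv8": 0.802, "YOLO11": 0.791, "U-Net": 0.776},
    "Tail":     {"Mask R-CNN": 0.812, "YOLOv8": 0.819, "YOLO11": 0.806, "U-Net": 0.827},
}

# Best model per structure and its margin over the runner-up.
best_model = {}
margin = {}
for structure, scores in iou_table.items():
    ranked = sorted(scores, key=scores.get, reverse=True)
    best_model[structure] = ranked[0]
    margin[structure] = round(scores[ranked[0]] - scores[ranked[1]], 3)

for structure in iou_table:
    print(f"{structure}: {best_model[structure]} (+{margin[structure]:.3f})")
```

The margins are small, at most 0.018 IoU (acrosome) and only 0.004 for the neck, so the ranking alone does not indicate whether a difference is statistically meaningful.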

Table 2: Additional Performance Metrics for Mask R-CNN on Sperm Segmentation [3]

| Metric | Average Score |
|---|---|
| Dice Coefficient | 0.912 |
| Precision | 0.934 |
| Recall | 0.895 |
| F1-Score | 0.914 |

These quantitative results confirm that Mask R-CNN provides a balanced and high-performing approach, with strong precision and recall, making it a robust choice for a unified segmentation framework targeting multiple sperm components [3].
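As a quick consistency check, the F1-score is the harmonic mean of precision and recall, so it can be recomputed from the two reported averages. Because the table lists averages across structures, the identity need not hold exactly for averaged values; here the recomputed figure happens to agree to three decimals.

```python
precision, recall = 0.934, 0.895  # average scores reported in the table

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)
print(f"F1 = {f1:.3f}")
```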

Experimental Protocol for Sperm Morphology Segmentation

Dataset Preparation and Annotation

- Sample Collection & Preparation: Use clinically labeled live, unstained human sperm samples [3]. For animal studies, such as bovine sperm, collect semen via electroejaculation, dilute with an appropriate extender like Optixcell, and prepare slides with fixed samples for imaging [28].

- Image Acquisition: Capture images using a microscope equipped with a negative phase contrast objective (e.g., 40x) and a digital camera. Standardize conditions to minimize variability in contrast and illumination [28]. For field applications, use digital cameras (e.g., Sony ILCE-5100) with consistent settings for distance and lighting [29].

- Image Annotation: Annotate each sperm component (head, acrosome, nucleus, neck, tail) precisely using an interactive polygon tool in labeling software such as LabelMe [29]. Save annotations in JSON format. For instance segmentation, assign the same group ID to different parts of the same sperm instance if they are truncated or separated by occlusion [29].

- Dataset Splitting: Divide the annotated dataset into training, validation, and test sets. A typical ratio is 80:10:10. Ensure that images from the same source are not spread across different sets to prevent data leakage [26].
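Two of the steps above lend themselves to a short sketch: regrouping LabelMe polygons into sperm instances via group_id, and splitting by source so that no source contributes images to more than one set. The code assumes LabelMe's usual JSON layout (a "shapes" list whose entries carry "label", "points", "group_id", and "shape_type") and interprets "source" as a donor or slide identifier; both function names and the sample data are hypothetical.

```python
import json
import random
from collections import defaultdict

def group_parts_by_instance(labelme_json):
    """Map each group_id to {label: [polygons]} from a LabelMe annotation string."""
    data = json.loads(labelme_json)
    instances = defaultdict(lambda: defaultdict(list))
    for shape in data["shapes"]:
        if shape.get("shape_type", "polygon") != "polygon":
            continue  # only polygon annotations are used here
        instances[shape.get("group_id")][shape["label"]].append(shape["points"])
    return {gid: dict(parts) for gid, parts in instances.items()}

def split_by_source(image_to_source, ratios=(0.8, 0.1, 0.1), seed=0):
    """Assign whole sources (e.g. donors or slides) to train/val/test."""
    sources = sorted(set(image_to_source.values()))
    random.Random(seed).shuffle(sources)
    n_train = round(ratios[0] * len(sources))
    n_val = round(ratios[1] * len(sources))
    bucket = {}
    for idx, src in enumerate(sources):
        if idx < n_train:
            bucket[src] = "train"
        elif idx < n_train + n_val:
            bucket[src] = "val"
        else:
            bucket[src] = "test"
    split = {"train": [], "val": [], "test": []}
    for image, src in image_to_source.items():
        split[bucket[src]].append(image)
    return split

# A tail interrupted by an occlusion: two polygons sharing one group_id.
annotation = json.dumps({"shapes": [
    {"label": "head", "group_id": 1, "shape_type": "polygon",
     "points": [[10, 10], [20, 10], [15, 20]]},
    {"label": "tail", "group_id": 1, "shape_type": "polygon",
     "points": [[15, 20], [16, 40], [14, 40]]},
    {"label": "tail", "group_id": 1, "shape_type": "polygon",
     "points": [[15, 50], [16, 70], [14, 70]]},
]})
print(group_parts_by_instance(annotation)[1].keys())

images = {f"donor{d}_img{k}.png": f"donor{d}" for d in range(10) for k in range(3)}
split = split_by_source(images)
print({name: len(items) for name, items in split.items()})
```

Splitting at the source level means the image-level proportions only approximate 80:10:10 when sources contribute unequal numbers of images.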

Model Implementation and Training

- Model Configuration: Utilize the Matterport Mask R-CNN implementation on Python 3, Keras, and TensorFlow [27]. The model is built on a Feature Pyramid Network (FPN) and a ResNet101 backbone [27].

- Configuration Class: Subclass the Config class to set parameters specific to the sperm dataset. Key parameters include:
  - NAME: 'sperm_segmentation'
  - NUM_CLASSES: 2 (background + sperm) [27]
  - IMAGES_PER_GPU: 1 or 2 (depending on GPU memory)
  - NUM_WORKERS: 4
  - STEPS_PER_EPOCH: 100 (number of training images / IMAGES_PER_GPU)
  - VALIDATION_STEPS: 50
  - DETECTION_MIN_CONFIDENCE: 0.8
  - MAX_GT_INSTANCES: 20 (maximum number of sperm instances in an image)
  - RPN_ANCHOR_SCALES: (16, 32, 64, 128, 256) (anchor sizes for region proposal)
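A configuration subclass along these lines might look as follows. This is a sketch under stated assumptions: it imports Config from the Matterport mrcnn package when available and otherwise falls back to a minimal stand-in class so that the example runs on its own. NUM_CLASSES is written as 1 + 1 because the library counts the background as a class. NUM_WORKERS from the list is left out because it may not correspond to a Config attribute in every version of the library, and steps_per_epoch is a hypothetical helper, not part of the library.

```python
import math

try:
    from mrcnn.config import Config  # Matterport Mask R-CNN, if installed
except ImportError:
    class Config:
        """Minimal stand-in so the sketch runs without the library."""
        GPU_COUNT = 1
        IMAGES_PER_GPU = 2

        def __init__(self):
            # The effective batch size is images per GPU times number of GPUs.
            self.BATCH_SIZE = self.IMAGES_PER_GPU * self.GPU_COUNT

class SpermConfig(Config):
    NAME = "sperm_segmentation"
    NUM_CLASSES = 1 + 1              # background + sperm
    GPU_COUNT = 1
    IMAGES_PER_GPU = 2               # lower to 1 if GPU memory is limited
    STEPS_PER_EPOCH = 100
    VALIDATION_STEPS = 50
    DETECTION_MIN_CONFIDENCE = 0.8
    MAX_GT_INSTANCES = 20            # maximum sperm instances per image
    RPN_ANCHOR_SCALES = (16, 32, 64, 128, 256)

def steps_per_epoch(n_train_images, batch_size):
    """Steps needed for one pass over the training set (hypothetical helper)."""
    return math.ceil(n_train_images / batch_size)

config = SpermConfig()
print(config.BATCH_SIZE, steps_per_epoch(200, config.BATCH_SIZE))
```

With 200 training images and a batch size of 2, one pass over the data takes 100 steps, which matches the STEPS_PER_EPOCH value listed above. Segmenting the five sperm components as separate classes would require NUM_CLASSES = 1 + 5 instead.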