Advanced Sperm Morphology Classification Algorithms: From Traditional ML to Deep Learning and Clinical Translation

Abstract

This article provides a comprehensive overview of the evolution, current state, and future directions of sperm morphology classification algorithms for a specialized audience of researchers, scientists, and drug development professionals. It systematically explores the foundational challenges driving automation, including the high subjectivity and inter-observer variability of manual analysis. The review delves into the methodological shift from conventional machine learning, reliant on handcrafted features, to advanced deep learning architectures like CNNs, ResNet, and VGG, enhanced by attention mechanisms and transfer learning. It further examines critical troubleshooting and optimization strategies addressing dataset limitations and model performance, and concludes with a rigorous validation and comparative analysis of algorithmic performance against expert benchmarks and clinical standards, highlighting pathways for integration into biomedical research and clinical diagnostics.

The Drive for Automation: Overcoming the Limitations of Manual Sperm Morphology Analysis

Sperm morphology assessment, the analysis of sperm size and shape, is a cornerstone of male fertility evaluation. The "gold standard" for this assessment, as defined by the World Health Organization (WHO), is a manual evaluation by trained technicians using strict criteria [1]. This method classifies sperm as normal or abnormal based on the appearance of the head, midpiece, and tail, with the current threshold for a normal sample set at ≥4% typical forms [1].

Despite its established role, this gold standard is compromised by inherent subjectivity and high variability [2] [3]. These limitations pose significant challenges for clinical diagnostics and the development of automated classification systems. For researchers creating algorithms, the manual classification used as a training benchmark is itself unreliable, which can limit the accuracy and generalizability of computational models [2] [4]. This article deconstructs the flaws in the manual assessment protocol and examines their impact on fertility research and treatment decisions.

Deconstructing the Gold Standard: Methodology and Workflow

The manual assessment of sperm morphology is a multi-step process, with precision required at each stage to ensure a valid result. Deviations in protocol introduce major sources of error.

Standardized Staining and Sample Preparation

Proper preparation is critical for accurate visualization. The recommended method is Papanicolaou staining, which provides the best overall visibility of all sperm regions [1]. Alternative methods like Diff-Quik or Shorr can be used but must be rigorously validated against the standard technique [1]. The staining process differentiates cellular components; for instance, in a modified Hematoxylin/Eosin procedure, the nucleus is stained with Hematoxylin and the acrosome with Eosin [2].

Manual Microscopic Assessment Workflow

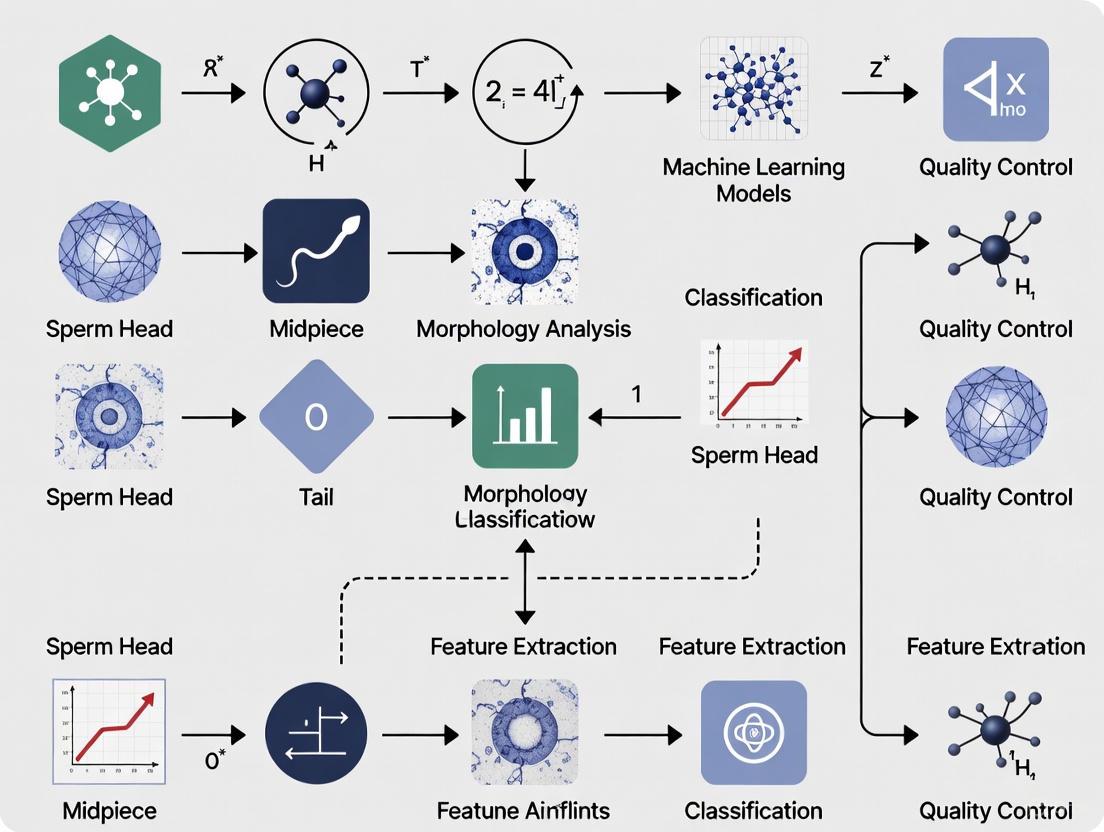

The core analysis follows a structured workflow, visualized below.

Classification Criteria and Systems

Classification systems differ in granularity, which affects both the difficulty of the task and the consistency of results. Technicians may use any of the following systems [5]:

- 2-category: Normal vs. Abnormal.

- 5-category: Normal, Head defect, Midpiece defect, Tail defect, Cytoplasmic droplet.

- 8-category and 25-category: More granular systems specifying individual defects like pyriform heads or knobbed acrosomes [5].

Table 1: Key Reagents and Materials for Manual Morphology Assessment

| Research Reagent/Material | Primary Function in Protocol | Technical Notes |
|---|---|---|
| Papanicolaou Stain | Cytological staining to differentiate sperm structures (acrosome, nucleus, midpiece) [1] | WHO-recommended for optimal visualization. |
| Hematoxylin | Nuclear stain; colors the sperm head core [2]. | Used in modified H/E protocols; requires precise immersion times. |
| Eosin | Counterstain; colors the acrosome and cytoplasmic components [2]. | Used in modified H/E protocols; helps distinguish acrosomal boundaries. |
| Ethanol (70%) | Slide fixation prior to staining [2]. | Preserves cell structure on the slide. |
| Phase Contrast Microscope | Visualization of unstained sperm for initial assessment. | Not suitable for strict-criteria assessment, which requires stained smears. |
| Brightfield Microscope | High-magnification assessment of stained sperm smears [1]. | Requires a 100x oil immersion objective for detailed evaluation. |

Quantifying Subjectivity and Variability

The theoretical protocol is sound, but in practice, its execution is plagued by subjectivity. Quantitative studies reveal the extent of this problem, showing that inconsistency is not an anomaly but a fundamental characteristic of manual assessment.

Inter- and Intra-Observer Variability

A primary source of error is disagreement between different experts (inter-observer variability) and even within the same expert at different times (intra-observer variability). Studies report up to 40% disagreement between expert evaluators examining the same sperm sample [4]. This degree of inter-expert variability is a major hurdle to standardizing diagnostics [2] [3].

The complexity of the classification system directly influences accuracy and agreement. A 2025 training study demonstrated that novice morphologists achieved significantly higher accuracy with simpler systems. When using a basic 2-category system (normal/abnormal), untrained users had an accuracy of 81.0%, which plummeted to 53.0% when using a complex 25-category system [5]. This finding underscores the inherent difficulty of consistent, fine-grained classification.

The Impact of Training on Variability

While training is essential, the lack of a universal, standardized training protocol is a critical flaw. Evidence shows that structured training can significantly improve performance. A study using a "Sperm Morphology Assessment Standardisation Training Tool" based on machine learning principles demonstrated remarkable improvements. Novice morphologists who underwent repeated training over four weeks saw their accuracy in the 25-category system jump from 82% to 90%, while the time taken to classify each image decreased from 7.0 to 4.9 seconds [5]. This confirms that variability can be reduced, but it also highlights that the standard of practice across laboratories is not uniform.

Table 2: Quantitative Evidence of Manual Assessment Limitations

| Study Focus | Key Metric | Performance/Outcome Data | Implication |
|---|---|---|---|
| Inter-Observer Agreement [4] | Disagreement between experts | Up to 40% | The gold standard is highly subjective and non-reproducible. |
| Classification System Complexity [5] | Untrained user accuracy | 2-category: 81.0%; 5-category: 68.0%; 25-category: 53.0% | More detailed classification systems are inherently less reliable. |
| Training Impact [5] | Accuracy improvement (25-category) | Pre-training: 82%; Post-training: 90% | Standardized training reduces, but does not eliminate, variability. |
| Diagnostic Speed [5] | Time per image classification | Pre-training: 7.0 seconds; Post-training: 4.9 seconds | Proficiency improves speed, but manual analysis remains time-consuming. |

Consequences for Clinical and Research Applications

The flaws in the gold standard have direct and serious consequences, affecting both patient care and the development of new technologies.

Compromised Clinical Utility and Guidelines

The high variability undermines the clinical value of sperm morphology as a standalone prognostic tool. The 2025 expert review from the French BLEFCO Group reflects this: it states that there is "insufficient evidence to demonstrate the clinical value of indexes of multiple sperm defects" and does not recommend using the percentage of normal sperm as a prognostic criterion for selecting an assisted reproductive technique such as IUI, IVF, or ICSI [6].

The clinical impact is nuanced. For polymorphic teratozoospermia (a mix of various abnormalities), the prognostic value for IUI or IVF outcomes is considered limited [1]. The primary clinical utility of morphology assessment may now lie in identifying specific monomorphic abnormalities (e.g., globozoospermia, macrocephalic sperm), which are rare but have clear diagnostic and genetic implications [6] [1].

The "Ground-Truth" Problem for Algorithm Development

For researchers developing computer-assisted sperm analysis (CASA) and deep learning models, the lack of a reliable gold standard is a major bottleneck. Machine learning algorithms require high-quality, accurately labeled training data, referred to as the "ground truth" [5]. As one study notes, "it is impossible to count with a ground-truth because of the subjectivity of the task" [2].

To circumvent this, researchers create "gold-standards" or "pseudo ground-truths" by using consensus labels from multiple experts [2] [5]. For instance, the SCIAN-MorphoSpermGS dataset was built from the classifications of three domain experts on 1,854 sperm head images [2]. However, any model trained on this consensus will inherit the biases and inconsistencies of the human experts who labeled the data, fundamentally limiting the model's potential accuracy and objectivity [3]. This creates a significant barrier to developing robust and generalizable AI solutions.

Emerging Solutions and Standardized Protocols

Addressing these flaws is an active area of research, with two parallel paths emerging: the refinement of human training and the adoption of automated technologies.

Standardized Training Tools

The development of structured training tools shows promise for reducing human error. These tools, based on supervised machine learning principles, provide trainees with immediate feedback by comparing their classifications against a dataset validated by expert consensus [5]. This creates a traceable and consistent training standard, allowing morphologists to achieve higher levels of accuracy and lower variability independently [5].
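The feedback step can be illustrated with a minimal sketch, assuming the expert-validated dataset is available as a mapping from image ID to consensus label. The function `score_trainee`, the `Feedback` alias, and the example labels are hypothetical; this is not the implementation of the published training tool.

```python
from typing import Dict, List, Tuple

Feedback = List[Tuple[str, str, str]]  # (image_id, trainee_label, consensus_label)


def score_trainee(responses: Dict[str, str],
                  ground_truth: Dict[str, str]) -> Tuple[float, Feedback]:
    """Score a trainee against consensus labels and list every disagreement.

    Only images present in both mappings are scored.
    """
    scored = [image_id for image_id in responses if image_id in ground_truth]
    if not scored:
        raise ValueError("no overlapping images to score")
    feedback = [
        (image_id, responses[image_id], ground_truth[image_id])
        for image_id in scored
        if responses[image_id] != ground_truth[image_id]
    ]
    accuracy = 1.0 - len(feedback) / len(scored)
    return accuracy, feedback


truth = {"img1": "normal", "img2": "abnormal", "img3": "abnormal", "img4": "normal"}
trainee = {"img1": "normal", "img2": "normal", "img3": "abnormal", "img4": "normal"}
accuracy, feedback = score_trainee(trainee, truth)
print(accuracy)  # 0.75
for image_id, given, expected in feedback:
    # Immediate, per-image feedback: what the trainee said vs. the consensus.
    print(f"{image_id}: classified as {given!r}; consensus label is {expected!r}")
```

Returning the list of disagreements, not just the score, is what makes the feedback immediate: the trainee sees exactly which images to revisit.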

Computer-Assisted and Deep Learning Systems

Automated systems represent the most promising path toward objective assessment. Computer-assisted sperm morphology (CASM) systems and deep learning models aim to remove human subjectivity from routine classification.

Recent deep learning frameworks have outperformed conventional methods. One 2025 model combining a ResNet50 architecture with advanced feature engineering achieved a test accuracy of 96.77% on a human sperm dataset, a significant improvement over baseline models [4]. Such systems can reduce analysis time from 30-45 minutes to under one minute per sample, offering both greater objectivity and large gains in efficiency [4].

Expert groups are beginning to endorse this shift. The French BLEFCO Group gives a "positive opinion on the use of automated systems based on cytological analysis after staining", provided that laboratories properly qualify the operators and validate the system's analytical performance internally [6]. The following diagram illustrates the contrasting workflows of manual and AI-assisted analysis, highlighting sources of human error versus computational consistency.

The manual assessment of sperm morphology, the current gold standard, is fundamentally compromised by subjectivity and high variability, which stem from its reliance on human visual perception and the lack of universal standardized training. These flaws undermine its clinical prognostic value and create a significant "ground-truth" problem that hinders the development of robust algorithmic solutions. The path forward lies in the adoption of two complementary strategies: the implementation of standardized, technology-based training tools to enhance human consistency, and the broader validation and integration of deep learning-based automated systems. These approaches are critical for transitioning from a subjective and variable gold standard to a new era of objective, reproducible, and clinically reliable sperm morphology analysis.

The morphological evaluation of sperm is a cornerstone of male fertility assessment, providing critical prognostic information about the functional potential of spermatozoa. Despite its clinical importance, sperm morphology analysis has historically been one of the most challenging and subjective parameters to standardize in routine semen analysis [7]. This challenge has led to the development of various classification systems, each with distinct philosophical approaches to defining "normal" sperm morphology and categorizing abnormalities. The three predominant systems used globally are the World Health Organization (WHO) guidelines, the Kruger (or Tygerberg) strict criteria, and David's modified classification. These systems form the foundational framework upon which clinical diagnoses, research methodologies, and increasingly, artificial intelligence algorithms are built. The evolution of these classifications reflects an ongoing effort to enhance the objectivity, reproducibility, and clinical predictive value of sperm morphology assessment in the diagnosis and treatment of male factor infertility.

Classification System Specifications and Comparison

World Health Organization (WHO) Criteria

The WHO system, as detailed in its laboratory manuals, provides a comprehensive framework for semen analysis. Particularly in its earlier editions, it has employed a more inclusive definition of normality than the strict criteria described below. The primary focus is on identifying defects in the sperm head, midpiece, and tail and reporting the percentage of normal forms. While specific quantitative thresholds for normality have evolved across editions, the system is characterized by its detailed categorization of anomalies and its use in establishing basic semen parameter reference ranges for fertile populations [7] [8].

Kruger (Tygerberg Strict) Criteria

The Kruger, or strict, criteria represent a more stringent approach to morphological assessment. This system defines normality within very narrow limits, classifying a spermatozoon as normal only if it displays an oval head with a well-defined acrosome covering 40-70% of the head area, no neck/midpiece or tail defects, and no cytoplasmic droplets [8]. The clinical utility of this system lies in its strong correlation with fertilization success in Assisted Reproductive Technologies (ART), particularly In Vitro Fertilization (IVF). Its prognostic value also extends to Intrauterine Insemination (IUI): in one study, pregnancy rates were significantly higher for couples in which the male partner had strict morphology values >4% than for those with ≤4% (15.0% vs. 2.7%) [8]. However, a phenomenon known as "classification drift" has been observed over time, in which the same strict criteria have been applied more stringently, increasing the diagnosis of teratozoospermia and potentially reducing the predictive value of the test [8].
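The strict definition is essentially a conjunction of conditions, so once the individual observations are available (from an assessor or an upstream model), the per-cell decision and the sample-level percentage are simple to compute. The sketch below assumes those observations are already recorded as booleans plus an acrosome-to-head area ratio; the class and field names are hypothetical, and the >4% cut-off is the one used in the IUI study cited above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SpermObservation:
    """Observations for one spermatozoon (field names are hypothetical)."""
    head_is_oval: bool
    acrosome_fraction: float  # acrosome area divided by head area
    neck_midpiece_defect: bool
    tail_defect: bool
    cytoplasmic_droplet: bool


def is_strict_normal(obs: SpermObservation) -> bool:
    """True only if every strict-criteria condition from the text is met."""
    return (
        obs.head_is_oval
        and 0.40 <= obs.acrosome_fraction <= 0.70
        and not obs.neck_midpiece_defect
        and not obs.tail_defect
        and not obs.cytoplasmic_droplet
    )


def percent_normal(sample: List[SpermObservation]) -> float:
    """Percentage of assessed spermatozoa that meet the strict criteria."""
    if not sample:
        raise ValueError("no spermatozoa assessed")
    return 100.0 * sum(is_strict_normal(o) for o in sample) / len(sample)


# One normal cell plus 19 cells with a tail defect.
sample = [SpermObservation(True, 0.55, False, False, False)] + [
    SpermObservation(True, 0.55, False, True, False) for _ in range(19)
]
pct = percent_normal(sample)
print(pct, pct > 4.0)  # 5.0 True
```

In an automated pipeline, the hard part is producing reliable inputs (head shape, acrosome area); the decision rule itself is deterministic.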

David’s Modified Classification

David's modified classification offers a highly granular system that details a wide spectrum of specific morphological defects. It catalogues anomalies into distinct classes, providing a detailed morphological profile of an ejaculate. As described in the development of the SMD/MSS dataset, this system includes 12 classes of morphological defects [7]:

- 7 head defects: tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, and abnormal acrosome.

- 2 midpiece defects: cytoplasmic droplet and bent midpiece.

- 3 tail defects: coiled, short, and multiple tails.

This system is used by a large number of laboratories worldwide and is particularly valuable for in-depth research into the etiology of sperm defects and their specific impact on function [7].
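For algorithm development, the 12 classes need a fixed label vocabulary. The sketch below assumes, as an illustrative design choice, that one spermatozoon can carry several anomalies at once, so each cell is encoded as a 12-element multi-hot vector rather than a single class index. The identifier strings are hypothetical spellings of the classes listed above.

```python
from typing import Dict, Iterable, List

# The 12 defect classes of David's modified classification, grouped by region
# as listed in the text. Identifier strings are illustrative.
DAVID_CLASSES: Dict[str, List[str]] = {
    "head": [
        "tapered", "thin", "microcephalous", "macrocephalous",
        "multiple_heads", "abnormal_post_acrosomal_region", "abnormal_acrosome",
    ],
    "midpiece": ["cytoplasmic_droplet", "bent_midpiece"],
    "tail": ["coiled_tail", "short_tail", "multiple_tails"],
}

# Flatten into a fixed, ordered vocabulary so each class has a stable index.
VOCAB: List[str] = [name for region in DAVID_CLASSES.values() for name in region]
INDEX: Dict[str, int] = {name: i for i, name in enumerate(VOCAB)}


def multi_hot(defects: Iterable[str]) -> List[int]:
    """Encode a spermatozoon's defects as a 12-element 0/1 vector.

    An all-zero vector means no defect was recorded; unknown labels raise KeyError.
    """
    vector = [0] * len(VOCAB)
    for defect in defects:
        vector[INDEX[defect]] = 1
    return vector


print(len(VOCAB))  # 12
print(multi_hot(["tapered", "coiled_tail"]))  # [1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0]
```

A multi-hot target pairs naturally with a per-class sigmoid output; if each cell is instead assigned a single dominant defect, a softmax over the same vocabulary would be used.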

Table 1: Comparative Analysis of Key Sperm Morphology Classification Systems

| Feature | WHO Criteria | Kruger (Strict) Criteria | David's Modified Criteria |
|---|---|---|---|
| Philosophical Approach | Inclusive, pragmatic | Stringent, prognostic for ART | Descriptive, granular |
| Definition of Normal | Broader, based on population references | Very narrow, perfect oval shape, etc. | Not a single "normal"; focus on defect typing |
| Primary Clinical Use | General diagnosis & reference ranges | Predicting success in IVF | Detailed morphological profiling & research |
| Key Quantitative Thresholds | Varies by WHO edition; lower threshold for normality | <4% normal forms indicates poor prognosis for IVF | N/A - focuses on defect categories |
| Classes of Defects | Broadly categorized (Head, Midpiece, Tail) | Implicit in strict "normal" definition | 12 specific defect classes [7] |

Experimental Protocols for Morphology Assessment

Standardized Smear Preparation and Staining

The foundational step for reliable morphology assessment is standardized preparation of semen smears, typically following protocols derived from WHO guidelines. As detailed in the development of the SMD/MSS dataset, semen smears are prepared, air-dried for at least two hours, and then fixed with 2% (v/v) glutaraldehyde in phosphate-buffered saline (PBS) for 3 minutes. After fixation, smears are washed thoroughly in distilled water and stained with an appropriate stain, such as the RAL Diagnostics kit or, for computer-assisted analysis, the fluorescent stain Hoechst 33342 [9] [7].

Microscopy and Image Acquisition for CASA and AI

For traditional manual assessment, smears are examined with a 100x oil immersion objective. For computer-assisted sperm morphometry analysis (CASA-Morph) and AI model training, high-resolution digital images are acquired. The MMC CASA system with a 100x oil immersion objective in bright field mode is one platform used for this purpose [7]. Alternatively, fluorescence-based CASA-Morph systems using dyes like Hoechst 33342 can be employed with an epifluorescence microscope (e.g., Leica DM4500B) equipped with a high-quality digital camera (e.g., Canon EOS 400D) to capture images of sperm nuclei for precise morphometric analysis [9]. For AI training datasets, it is critical to capture images of individual spermatozoa, which can be achieved by cropping field-of-view images using machine learning algorithms [10].
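Once individual spermatozoa have been located in a field-of-view image, cutting them into fixed-size patches is straightforward. The sketch below assumes a grayscale image and a list of (row, col) centre coordinates produced by some upstream detector, which is not shown; the function names and the 80-pixel patch size are illustrative choices. Zero-padding keeps the patch size constant for cells near the image border.

```python
import numpy as np
from typing import Sequence, Tuple


def crop_patch(image: np.ndarray, center: Tuple[int, int], size: int = 80) -> np.ndarray:
    """Cut a size x size patch centred on (row, col) from a 2-D image."""
    half = size // 2
    # Pad every side by `half` so windows near the border stay in bounds.
    padded = np.pad(image, ((half, half), (half, half)), mode="constant")
    row, col = center
    # In padded coordinates the centre sits at (row + half, col + half),
    # so the window starts exactly at (row, col).
    return padded[row : row + size, col : col + size]


def crop_all(image: np.ndarray, centers: Sequence[Tuple[int, int]],
             size: int = 80) -> np.ndarray:
    """Stack one patch per detected spermatozoon into an (n, size, size) array."""
    if len(centers) == 0:
        return np.empty((0, size, size), dtype=image.dtype)
    return np.stack([crop_patch(image, c, size) for c in centers])


field = np.zeros((480, 640), dtype=np.uint8)
field[100, 200] = 255  # stand-in for a detected sperm head
patches = crop_all(field, [(100, 200), (5, 5)])
print(patches.shape)  # (2, 80, 80)
```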

Expert Classification and Ground Truth Establishment

A critical protocol for research and database creation involves establishing a robust "ground truth" through expert consensus. In the SMD/MSS dataset, each spermatozoon was manually classified by three independent experts according to David's modified classification [7]. Similarly, for the ram sperm training tool, images were labelled by multiple experienced assessors, and only those with 100% consensus were integrated into the final "ground truth" dataset used for training and validation [10] [5]. This multi-expert consensus strategy is essential to mitigate the inherent subjectivity of the assessment and to create a reliable standard for both human training and AI algorithm development.
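The consensus filter reduces to a few lines of code. The sketch below assumes each image's labels from all assessors are available as a list. It grades agreement as total (all assessors agree), partial (a strict majority agrees), or none, and keeps only unanimous images, mirroring the 100% consensus rule. Function names and labels are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


def agreement_level(labels: Sequence[str]) -> str:
    """'total' if all assessors agree, 'partial' if a strict majority agrees, else 'none'."""
    if not labels:
        raise ValueError("no labels for this image")
    top = Counter(labels).most_common(1)[0][1]  # size of the largest label group
    if top == len(labels):
        return "total"
    if top > len(labels) / 2:
        return "partial"
    return "none"


def unanimous_subset(annotations: Dict[str, Sequence[str]]) -> Dict[str, str]:
    """Keep only images that every assessor labelled identically."""
    return {
        image_id: labels[0]
        for image_id, labels in annotations.items()
        if agreement_level(labels) == "total"
    }


annotations = {
    "img_01": ["normal", "normal", "normal"],
    "img_02": ["normal", "bent_midpiece", "normal"],
    "img_03": ["coiled_tail", "short_tail", "normal"],
}
print({k: agreement_level(v) for k, v in annotations.items()})
# {'img_01': 'total', 'img_02': 'partial', 'img_03': 'none'}
print(unanimous_subset(annotations))  # {'img_01': 'normal'}
```

The trade-off is dataset size: requiring unanimity discards every partially agreed image, which yields cleaner labels but fewer, and possibly easier, examples.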

Statistical and Computational Analysis

CASA-Morph Analysis: Fluorescence-based CASA-Morph systems analyze at least 200 sperm cells per sample. Primary morphometric parameters measured include Area (A, μm²), Perimeter (P, μm), Length (L, μm), and Width (W, μm). Derived shape parameters are calculated, such as Ellipticity (L/W), Rugosity (4πA/P²), Elongation ([L - W]/[L + W]), and Regularity (πLW/4A) [9]. To identify sperm morphometric subpopulations, multivariate statistical analyses like two-step cluster procedures (involving Principal Component Analysis followed by cluster analysis) or discriminant analyses are employed [9].
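The derived parameters follow directly from the four primary measurements, and the subpopulation step can be approximated as principal component analysis on standardized features followed by clustering. The sketch below is a simplified stand-in: it uses plain k-means rather than the two-step cluster procedure named in the text, the function names are hypothetical, and the synthetic measurements are invented for illustration only.

```python
import numpy as np


def derived_parameters(A, P, L, W):
    """Derived shape parameters (A in um^2; P, L, W in um); scalars or arrays."""
    A, P, L, W = (np.asarray(x, dtype=float) for x in (A, P, L, W))
    return {
        "ellipticity": L / W,
        "rugosity": 4 * np.pi * A / P**2,
        "elongation": (L - W) / (L + W),
        "regularity": np.pi * L * W / (4 * A),
    }


def pca_scores(X, n_components=2):
    """Scores on the leading principal components of column-standardized X."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0)  # assumes no constant columns
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)  # rows of Vt = principal axes
    return Z @ Vt[:n_components].T


def kmeans(X, k, n_iter=100):
    """Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [X[0]]
    for _ in range(1, k):
        # Next centre: the point farthest from all centres chosen so far.
        d = np.min([np.sum((X - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(X[np.argmax(d)])
    centers = np.array(centers)
    for _ in range(n_iter):
        dist = np.sum((X[:, None, :] - centers[None, :, :]) ** 2, axis=2)
        labels = np.argmin(dist, axis=1)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels


rng = np.random.default_rng(0)
# Synthetic nuclei: a short/round subpopulation and a long/narrow one (50 each).
L = np.concatenate([rng.normal(4.0, 0.1, 50), rng.normal(5.5, 0.1, 50)])
W = np.concatenate([rng.normal(3.0, 0.1, 50), rng.normal(2.8, 0.1, 50)])
A = np.pi * L * W / 4 * rng.normal(1.0, 0.02, 100)    # roughly elliptical area
P = np.pi * (L + W) / 2 * rng.normal(1.0, 0.02, 100)  # rough ellipse perimeter
features = np.column_stack([A, P, L, W, *derived_parameters(A, P, L, W).values()])
labels = kmeans(pca_scores(features), k=2)
```

For a perfect circle, ellipticity, rugosity, and regularity all equal 1 and elongation equals 0, which makes a convenient sanity check for the formulas.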

AI Model Training: For deep learning approaches, a dataset (e.g., 1,000 images extended to 6,035 via data augmentation) is partitioned into training (80%) and testing (20%) sets. The images undergo pre-processing, including normalization and resizing (e.g., to 80x80x1 grayscale). A Convolutional Neural Network (CNN) implemented in Python 3.8 is then trained and evaluated to classify sperm into morphological categories based on the established ground truth [7].
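The data-preparation steps can be sketched with NumPy alone. This is a minimal illustration with hypothetical function names, not the SMD/MSS authors' pipeline. Flips and 90-degree rotation stand in for whatever augmentations were actually used, and nearest-neighbour resizing is chosen only to avoid an imaging dependency. One design choice differs deliberately from the description above: the split is made on the original images *before* augmentation, so that augmented copies of a test image cannot leak into the training set.

```python
import numpy as np
from typing import List, Tuple


def split_indices(n: int, test_fraction: float = 0.2,
                  seed: int = 0) -> Tuple[np.ndarray, np.ndarray]:
    """Randomly split indices 0..n-1 into (train, test) index arrays."""
    order = np.random.default_rng(seed).permutation(n)
    n_test = int(round(n * test_fraction))
    return order[n_test:], order[:n_test]


def augment(image: np.ndarray) -> List[np.ndarray]:
    """Original plus horizontally flipped, vertically flipped and rotated copies."""
    return [image, np.fliplr(image), np.flipud(image), np.rot90(image)]


def resize_nearest(image: np.ndarray, out_h: int = 80, out_w: int = 80) -> np.ndarray:
    """Nearest-neighbour resize of a 2-D grayscale image."""
    h, w = image.shape
    rows = np.arange(out_h) * h // out_h
    cols = np.arange(out_w) * w // out_w
    return image[rows[:, None], cols[None, :]]


def preprocess(image: np.ndarray) -> np.ndarray:
    """Resize to 80x80, min-max scale to [0, 1], add a channel axis (80x80x1)."""
    small = resize_nearest(image).astype(np.float32)
    lo, hi = small.min(), small.max()
    scaled = (small - lo) / (hi - lo) if hi > lo else np.zeros_like(small)
    return scaled[..., None]


# Hypothetical stand-ins for 10 single-sperm grayscale crops.
rng = np.random.default_rng(1)
images = [rng.integers(0, 256, size=(120, 100), dtype=np.uint8) for _ in range(10)]

train_idx, test_idx = split_indices(len(images))
# Split first, then augment only the training images.
X_train = np.stack([preprocess(a) for i in train_idx for a in augment(images[i])])
X_test = np.stack([preprocess(images[i]) for i in test_idx])
print(X_train.shape, X_test.shape)  # (32, 80, 80, 1) (2, 80, 80, 1)
```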

Workflow Visualization of Morphology Classification Research

The following diagram illustrates the integrated experimental workflow for sperm morphology classification research, encompassing both traditional analysis and modern artificial intelligence approaches.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Sperm Morphology Research

| Item Name | Function/Application | Specific Example/Note |
|---|---|---|
| Glutaraldehyde (2% in PBS) | Fixation of semen smears; preserves sperm structure for accurate morphometric analysis. | Used in fluorescence-based CASA-Morph protocols [9]. |
| Hoechst 33342 | Fluorescent nuclear stain; used for precise computer-assisted sperm morphometry analysis (CASA-Morph) of the sperm head. | Allows for automatic measurement of nuclear parameters like Area and Perimeter [9]. |
| RAL Diagnostics Staining Kit | A Romanowsky-type stain for manual sperm morphology assessment; provides contrast to differentiate sperm components. | Used for staining smears in studies applying David's classification [7]. |
| Computer-Assisted Semen Analysis (CASA) System | Automated platform for acquiring and analyzing sperm images; increases objectivity of morphometry. | Systems like MMC CASA used for image acquisition for AI models [7]. |
| ImageJ with Custom Plug-in | Open-source image analysis software; used for automated measurement of primary and derived sperm morphometric parameters. | Plug-in modules can be created for specific CASA-Morph analyses [9]. |
| Convolutional Neural Network (CNN) | A class of deep learning algorithm designed for image recognition and classification tasks. | Used to develop predictive models for automated sperm morphology classification [7]. |

The automated analysis of sperm morphology is a critical component in the objective diagnosis of male infertility. While conventional semen analysis provides foundational data, the detailed classification of sperm shapes offers profound insights into male reproductive health and potential etiologies of infertility [3]. The development of robust classification algorithms, however, confronts two persistent and technically complex challenges: the reliable distinction of intact sperm from cellular debris and other artifacts in semen samples, and the precise capture of subtle morphological defects across the sperm's head, midpiece, and tail [11] [12]. These hurdles are compounded by the inherent limitations of manual assessment, including substantial inter-observer variability and the subjective interpretation of complex morphological criteria [5]. This technical guide examines the fundamental obstacles facing sperm morphology classification algorithms and explores advanced computational strategies that are being developed to overcome them, thereby paving the way for more reliable, automated diagnostic systems in clinical andrology.

Core Technical Challenges in Sperm Morphology Analysis

Distinguishing Sperm from Non-Sperm Elements

The accurate segmentation of individual spermatozoa from complex semen backgrounds represents the primary technical bottleneck in automated analysis pipelines. This challenge stems from several intrinsic and methodological factors:

- Low Resolution and Insufficient Semantic Information: Sperm are notably small cells, and their images often suffer from low resolution, which can cause detection failures in deep learning models. The low-level features extracted for these small targets typically lack rich semantic information, making it difficult for networks to learn discriminative features that reliably separate sperm from visually similar debris [12].

- Morphological Simplicity and Overfitting: The relatively simple and consistent morphology of sperm, compared to more complex cell types, paradoxically increases the risk of model overfitting. Without sufficient variation in training data, algorithms may fail to generalize, latching onto incidental visual patterns rather than biologically meaningful features [12].

- Image Acquisition Artifacts: Practical issues during sample preparation and imaging introduce significant noise. Sperm may appear intertwined or have only partial structures visible at image edges, while inconsistent staining, insufficient lighting in optical microscopes, and poorly prepared semen smears further degrade image quality and analytical accuracy [7] [3].

Capturing Subtle Morphological Defects

Beyond initial detection, the precise classification of specific morphological abnormalities presents a second layer of complexity characterized by:

- High Structural Complexity and Variability: The World Health Organization recognizes 26 distinct types of abnormal morphology across the head, neck, and tail regions [3]. This classification complexity is compounded by high inter-class similarity (where different defects appear visually similar) and significant intra-class variability (where the same defect manifests differently across samples) [13].

- Subjectivity in Ground Truth Annotation: Establishing reliable "ground truth" for model training is complicated by substantial disagreement even among expert morphologists. Studies reveal that experts may agree on only 73% of normal/abnormal classifications for the same sperm images, creating a fuzzy target for supervised learning algorithms [5].

- Data Scarcity and Imbalance: Large-scale, high-quality annotated datasets remain scarce. Existing public datasets often suffer from limited sample sizes, insufficient representation of rare defect categories, and inconsistent annotation standards, severely limiting model generalizability across different clinical settings and populations [11] [3].
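One common way to soften class imbalance during training, not specific to the works cited here, is to weight each class's loss by its inverse frequency. The sketch below uses the heuristic w_c = N / (K · n_c), the same formula scikit-learn applies for `class_weight="balanced"`. The function name is hypothetical and the label counts are invented.

```python
from collections import Counter
from typing import Dict, Sequence


def inverse_frequency_weights(labels: Sequence[str]) -> Dict[str, float]:
    """w_c = N / (K * n_c): N samples, K classes, n_c samples in class c.

    Rare classes get weights above 1; a perfectly balanced set gets 1.0 everywhere.
    """
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {label: n / (k * count) for label, count in counts.items()}


labels = ["normal"] * 80 + ["tapered"] * 15 + ["coiled_tail"] * 5
weights = inverse_frequency_weights(labels)
print({label: round(w, 2) for label, w in weights.items()})
# {'normal': 0.42, 'tapered': 2.22, 'coiled_tail': 6.67}
```

With these weights every class contributes the same total weight (N / K) to a weighted loss, so a rare defect class is no longer drowned out by the majority class. Weighting does not add new information, however, so it complements rather than replaces collecting more examples of rare defects.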

Table 1: Publicly Available Sperm Morphology Datasets and Their Key Characteristics

| Dataset Name | Image Count | Annotation Type | Key Characteristics | Noted Limitations |
|---|---|---|---|---|
| SMD/MSS [7] | 1,000 (extended to 6,035 with augmentation) | Classification (12 classes, David's modified classification) | Sperm from 37 patients; single sperm per image | Limited original sample size |
| MHSMA [3] | 1,540 | Classification | Focus on sperm head features (acrosome, shape, vacuoles) | Non-stained, noisy, low-resolution images |
| SVIA [3] | 125,000 instances | Detection, Segmentation, Classification | Includes videos and images; multiple annotation types | Low-resolution, unstained samples |
| VISEM-Tracking [3] | 656,334 annotated objects | Detection, Tracking, Regression | Multi-modal with videos and participant data | Low-resolution, unstained grayscale sperm |
| Hi-LabSpermMorpho [13] | Not specified | Classification | Expert-labeled; 18 morphological classes; 3 staining protocols | Class imbalance between abnormality types |

Advanced Algorithmic Approaches to Technical Hurdles

Enhanced Detection Architectures

Contemporary research has developed specialized neural network architectures to address the fundamental challenge of sperm detection amidst debris:

- Multi-Scale Feature Pyramid Networks (FPN): Advanced FPN architectures have been engineered to enhance semantic information flow across network layers. By incorporating contextual relationships through multi-scale feature fusion and attention mechanisms, these networks significantly improve detection accuracy for small sperm targets, achieving up to 98.37% Average Precision (AP) on benchmark datasets—surpassing mainstream detectors like YOLOv4, YOLOv7, and YOLOv8 [12].

- Keypoint Dropout Regularization: To counter overfitting caused by sperm's simple morphology, a novel Keypoint Dropout mechanism employs an adaptive threshold to selectively discard less informative features during training. This approach forces the network to learn more robust, generalized representations rather than relying on potentially misleading simple patterns [12].

- Copy-Paste Data Augmentation: This technique artificially increases the representation of small sperm targets in training datasets by strategically oversampling and pasting sperm instances across varied background contexts. This enhances model robustness to the heterogeneous conditions encountered in real clinical samples [12].
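The general copy-paste idea can be sketched for a single grayscale image, assuming the sperm instance has already been segmented into a patch and a binary mask. This is a minimal illustration with hypothetical function names. It does not reproduce the oversampling strategy of [12], and it ignores overlaps and photometric blending.

```python
import numpy as np
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def copy_paste(background: np.ndarray, patch: np.ndarray, mask: np.ndarray,
               top: int, left: int) -> Tuple[np.ndarray, Box]:
    """Paste the masked pixels of `patch` into a copy of `background`."""
    out = background.copy()
    h, w = patch.shape
    if top < 0 or left < 0 or top + h > out.shape[0] or left + w > out.shape[1]:
        raise ValueError("patch does not fit at this position")
    region = out[top : top + h, left : left + w]  # a view into `out`
    region[mask] = patch[mask]  # writes through the view into `out`
    return out, (top, left, top + h, left + w)


def copy_paste_random(background: np.ndarray, patch: np.ndarray, mask: np.ndarray,
                      n_copies: int, rng: np.random.Generator) -> Tuple[np.ndarray, List[Box]]:
    """Paste `n_copies` of one sperm instance at random positions (overlaps allowed)."""
    image, boxes = background, []
    H, W = background.shape
    h, w = patch.shape
    for _ in range(n_copies):
        top = int(rng.integers(0, H - h + 1))
        left = int(rng.integers(0, W - w + 1))
        image, box = copy_paste(image, patch, mask, top, left)
        boxes.append(box)  # each pasted instance becomes a new detection label
    return image, boxes


rng = np.random.default_rng(0)
background = np.full((128, 128), 200, dtype=np.uint8)  # bright, empty field
patch = np.full((6, 10), 60, dtype=np.uint8)           # dark stand-in for a sperm head
mask = np.ones(patch.shape, dtype=bool)                # segmentation mask of the instance
augmented, boxes = copy_paste_random(background, patch, mask, 3, rng)
print(len(boxes))  # 3
```

Returning bounding boxes alongside the image is what makes the technique useful for detection: every pasted instance arrives with a correct label at no annotation cost.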

Sophisticated Classification Frameworks

For the nuanced task of defect classification, hierarchical and ensemble approaches have demonstrated remarkable efficacy:

- Two-Stage Divide-and-Ensemble Framework: This sophisticated pipeline decomposes the complex classification task into manageable subtasks. In the first stage, a "splitter" model categorizes sperm into major groups (e.g., head/neck abnormalities versus tail abnormalities/normal). In the second stage, specialized ensemble models—incorporating multiple architectures like DeepMind's NFNet and Vision Transformers (ViT)—perform fine-grained classification within each category. This approach has achieved 69-71% accuracy across diverse staining protocols, representing a statistically significant 4.38% improvement over conventional single-model baselines [13].

- Structured Multi-Stage Voting: Moving beyond simple majority voting, this ensemble strategy allows constituent models to cast both primary and secondary votes. This nuanced decision fusion mechanism mitigates the influence of dominant classes and ensures more balanced performance across different sperm abnormality types, particularly valuable for addressing class imbalance [13].

- Contrastive Meta-Learning with Auxiliary Tasks: Emerging approaches leverage meta-learning frameworks that enable models to rapidly adapt to new data distributions with minimal examples. Combined with contrastive learning that maximizes separation between defect classes in feature space, these methods show promise for generalized classification across varied clinical settings and staining protocols [14].
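The routing and voting ideas can be illustrated together. The sketch below is hypothetical throughout: the vote weights (1.0 for a model's top class, 0.5 for its runner-up), the class names, and the stub models are assumptions made for illustration, since the exact voting rules of [13] are not given here. It demonstrates the general mechanism, not the published framework.

```python
import numpy as np
from typing import Callable, Dict, List, Sequence

Model = Callable[[np.ndarray], np.ndarray]  # maps an image to class probabilities


def primary_secondary_vote(outputs: Sequence[np.ndarray],
                           primary_weight: float = 1.0,
                           secondary_weight: float = 0.5) -> int:
    """Each model votes for its top class and, with less weight, its runner-up."""
    scores = np.zeros(len(outputs[0]))
    for probs in outputs:
        ranked = np.argsort(probs)[::-1]  # class indices, most probable first
        scores[ranked[0]] += primary_weight
        if len(ranked) > 1:
            scores[ranked[1]] += secondary_weight
    return int(np.argmax(scores))  # remaining ties go to the lower index


def two_stage_predict(image: np.ndarray, splitter: Model,
                      ensembles: Dict[int, List[Model]],
                      class_names: Dict[int, List[str]]) -> str:
    """Stage 1 routes the image to a group; stage 2 fuses that group's ensemble."""
    group = int(np.argmax(splitter(image)))
    outputs = [model(image) for model in ensembles[group]]
    return class_names[group][primary_secondary_vote(outputs)]


def stub(*probs: float) -> Model:
    """Hypothetical stand-in for a trained network: always returns `probs`."""
    return lambda image: np.array(probs)


class_names = {0: ["tapered", "thin", "bent_neck"],
               1: ["normal", "coiled_tail", "short_tail"]}
splitter = stub(0.2, 0.8)  # routes to group 1 (tail abnormality / normal)
ensembles = {
    0: [stub(0.7, 0.2, 0.1)],
    1: [stub(0.5, 0.4, 0.1), stub(0.1, 0.4, 0.5), stub(0.3, 0.6, 0.1)],
}
print(two_stage_predict(np.zeros((80, 80)), splitter, ensembles, class_names))
# coiled_tail
```

With plain majority voting, the three group-1 models would tie at one vote each. The secondary votes break the tie in favour of the class that all three models rank highly.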

Experimental Protocols for Model Validation

Rigorous experimental validation is essential for assessing algorithm performance under conditions mimicking real-world clinical challenges:

- Dataset Partitioning and Augmentation: Standard practice involves partitioning datasets with approximately 80% for training and 20% for testing. To address data scarcity, comprehensive augmentation techniques are applied, including rotation, scaling, color space adjustments, and copy-paste oversampling of rare abnormality classes [7] [12].

- Inter-Expert Agreement Analysis: Establishing reliable ground truth requires quantifying agreement between multiple expert morphologists. Overall agreement is summarized with statistics such as Fleiss' kappa or intraclass correlation coefficients, and each image is categorized by its level of expert agreement: total (3/3 experts), partial (2/3), or none. Typically, only images with consensus are included in high-quality training sets [7] [5].

- Cross-Staining Protocol Validation: To ensure model robustness across different clinical preparation methods, algorithms should be validated across multiple staining techniques (e.g., Diff-Quik variants like BesLab, Histoplus, and GBL). Performance consistency across these protocols indicates better generalizability to diverse laboratory settings [13].
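Fleiss' kappa corrects the observed proportion of agreeing rater pairs for the agreement expected by chance. Raw percent agreement, such as the 73% figure quoted earlier, does not make this correction. The sketch below implements the standard formula for a fixed number of raters per image. The helper names and example labels are hypothetical, and in practice a tested implementation such as `statsmodels.stats.inter_rater.fleiss_kappa` may be preferable.

```python
import numpy as np
from typing import Sequence


def count_matrix(ratings: Sequence[Sequence[str]], categories: Sequence[str]) -> np.ndarray:
    """counts[i, j] = number of raters who put image i in category j."""
    index = {c: j for j, c in enumerate(categories)}
    counts = np.zeros((len(ratings), len(categories)), dtype=int)
    for i, labels in enumerate(ratings):
        for label in labels:
            counts[i, index[label]] += 1
    return counts


def fleiss_kappa(counts: np.ndarray) -> float:
    """Fleiss' kappa from an (N images x k categories) count matrix.

    Every row must sum to the same number of raters n >= 2. The result is
    undefined (division by zero) if all ratings fall in a single category.
    """
    counts = np.asarray(counts, dtype=float)
    per_image = counts.sum(axis=1)
    if not np.allclose(per_image, per_image[0]) or per_image[0] < 2:
        raise ValueError("each image needs the same number (>= 2) of ratings")
    n, N = per_image[0], counts.shape[0]
    P_i = (np.sum(counts**2, axis=1) - n) / (n * (n - 1))  # per-image agreement
    p_j = counts.sum(axis=0) / (N * n)                     # category proportions
    P_bar, P_e = P_i.mean(), np.sum(p_j**2)                # observed vs. chance
    return float((P_bar - P_e) / (1 - P_e))


ratings = [
    ["normal", "normal", "normal"],
    ["abnormal", "abnormal", "abnormal"],
    ["normal", "normal", "abnormal"],
    ["abnormal", "abnormal", "normal"],
]
kappa = fleiss_kappa(count_matrix(ratings, ["normal", "abnormal"]))
print(round(kappa, 3))  # 0.333
```

In this toy example, the three experts agree on every pair in half the images and on one pair in three in the others (an observed agreement of 2/3), but because chance agreement is already 0.5 with two balanced categories, kappa is only 1/3.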

Table 2: Performance Comparison of Sperm Analysis Algorithms Across Technical Challenges

| Algorithm/Approach | Primary Technical Focus | Reported Performance | Key Advantages | Limitations |
|---|---|---|---|---|
| Multi-Scale FPN with Keypoint Dropout [12] | Small sperm detection; Overfitting reduction | 98.37% AP on EVISAN dataset | Superior small object detection; Adaptive regularization | Computational complexity; Training instability |
| Two-Stage Divide-and-Ensemble [13] | Fine-grained defect classification | 71.34% accuracy (4.38% improvement) | Reduces misclassification; Handles class imbalance | Complex training pipeline; High resource requirements |
| Convolutional Neural Network (CNN) [7] | Basic morphology classification | 55% to 92% accuracy range | Automated feature extraction; Standard architecture | Performance varies with image quality |
| Support Vector Machine (SVM) [3] | Head defect classification | 88.59% AUC-ROC; >90% precision | Strong with handcrafted features; Computationally efficient | Limited to pre-defined features; Poor generalization |
| Bayesian Density Estimation [3] | Head morphology classification | 90% accuracy on head types | Probabilistic classification; Handles uncertainty | Limited to head defects only |

Essential Research Reagents and Materials

The experimental workflows discussed require specific laboratory reagents and computational resources to implement effectively:

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Reagent/Material | Specification/Example | Primary Function in Research |
|---|---|---|
| Staining Kits | RAL Diagnostics; Diff-Quick variants (BesLab, Histoplus, GBL) [7] [13] | Enhances morphological features for microscopic analysis and algorithm training |
| Microscopy Systems | MMC CASA system; Phase-contrast microscopes [7] [5] | Image acquisition with appropriate magnification and resolution for analysis |
| Annotation Software | Custom Excel templates; specialized image labeling tools [7] | Facilitates ground truth labeling by multiple experts for dataset creation |
| Deep Learning Frameworks | Python 3.8 with TensorFlow/PyTorch [7] [13] | Implementation of CNN, ViT, and ensemble models for classification |
| Public Datasets | SMD/MSS, SVIA, VISEM-Tracking, Hi-LabSpermMorpho [7] [13] [3] | Benchmarking and training data for algorithm development and validation |

The journey toward fully automated, reliable sperm morphology analysis continues to grapple with fundamental technical hurdles in distinguishing sperm from debris and capturing subtle morphological defects. While traditional machine learning approaches remain limited by their dependence on handcrafted features and inability to generalize across diverse clinical samples, emerging deep learning strategies offer promising pathways forward. Through specialized architectures like multi-scale feature pyramids, hierarchical classification frameworks, and sophisticated ensemble methods, researchers are steadily overcoming these challenges. The continued development of standardized, high-quality datasets and rigorous validation protocols will be essential to translate these technological advances into clinically impactful tools that enhance diagnostic accuracy, reduce inter-observer variability, and ultimately improve patient care in the field of male reproductive medicine.

Infertility is a significant global health issue, affecting approximately 15% of couples, with male factors being a contributor in about 50% of cases [7] [15] [3]. Among the standard semen parameters assessed during male fertility investigation—concentration, motility, and morphology—sperm morphology requires special attention as it is considered of greatest clinical interest and most correlated with fertility potential [7]. Sperm morphology refers to the size, shape, and structural characteristics of sperm cells, including head shape, acrosome integrity, neck structure, and tail configuration [16]. According to World Health Organization (WHO) guidelines, normal sperm morphology is characterized by an oval head (length: 4.0–5.5 μm, width: 2.5–3.5 μm), an intact acrosome covering 40–70% of the head, and a single, uniform tail [16].

Despite its clinical importance, sperm morphology assessment represents one of the most challenging aspects of semen analysis due to its subjective nature, often reliant on the operator's expertise [7]. Manual sperm morphology assessment suffers from key limitations, including high inter-observer variability (with reported kappa values as low as 0.05–0.15), lengthy evaluation times (30–45 minutes per sample), inconsistent standards across laboratories, and the need for expert training [16]. This variability has led to ongoing debates about the prognostic value of sperm morphology in both natural and assisted reproduction, making the standardization of assessment methodologies a core clinical imperative [15].

Evolution of Morphological Assessment Criteria and Clinical Correlation

Historical Changes in Classification Standards

Sperm morphology criteria have evolved significantly since the introduction of the first WHO manual in 1980, with progressively stricter definitions of "normal" morphology [15] [17]. The reference value for normal sperm morphology has sharply decreased from ≥80.5% in the 1st edition to ≥4% in the most recent 5th and 6th editions [17]. This evolution reflects an increasing recognition that humans produce a high proportion of defective sperm compared to other animal species, and that stricter criteria may better correlate with fertility outcomes.

Table 1: Evolution of WHO Sperm Morphology Reference Values

| WHO Edition | Publication Year | Reference Value for Normal Forms | Classification Approach |
|---|---|---|---|
| 1st Edition | 1980 | ≥80.5% | Obvious, well-defined abnormalities |
| 2nd Edition | 1987 | ≥50% | Obvious, well-defined abnormalities |
| 3rd Edition | 1992 | ≥30% | Introduction of Kruger strict criteria |
| 4th Edition | 1999 | <15% may affect IVF | Strict criteria |
| 5th Edition | 2010 | ≥4% | Strict criteria, detailed defect classification |
| 6th Edition | 2021 | ≥4% | Increased emphasis on specific defect characterization |

The 6th edition handbook contains several notable recommendations that enhance clinical correlation. First, assessments of sperm morphology should be performed by trained personnel familiar with all criteria used to designate spermatozoa as abnormal. Second, frequent internal and external quality assessments should be utilized to minimize variability in results. Importantly, a major change in the 6th edition is an increased emphasis on characterizing specific defects in each region of the sperm—head, neck/midpiece, tail, and cytoplasm—rather than grouping all defects into a single "abnormal" category [15].

Clinical Evidence for Morphology-Fertility Correlation

The relationship between sperm morphology and fertility outcomes presents a complex picture with conflicting evidence across studies. Initial studies, particularly those using the Kruger (Tygerberg) strict criteria, found significantly diminished oocyte fertilization rates when sperm morphology dropped below 14% [15]. However, more recent investigations have questioned the independent predictive value of morphology.

A retrospective study of intrauterine insemination (IUI) outcomes across two eras revealed a dramatic shift in predictive value. In the earlier era (1996-97), pregnancy rates per cycle were 2.7% versus 15.0% for couples with strict morphology ≤4% or >4%, respectively. In the later era (2005-06), this relationship was no longer present, with pregnancy rates of 13.3% versus 14.7% for the same morphology thresholds [8]. The authors concluded that "classification drift increased the percentage of men diagnosed with teratozoospermia and resulted in a loss of predictive value."
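The loss of predictive value can be made concrete as a simple rate ratio: the pregnancy rate per cycle with >4% normal forms divided by the rate with ≤4%. This is plain arithmetic on the percentages reported in [8], not a statistical re-analysis:

$$
\mathrm{RR}_{1996\text{-}97} = \frac{15.0\%}{2.7\%} \approx 5.6,
\qquad
\mathrm{RR}_{2005\text{-}06} = \frac{14.7\%}{13.3\%} \approx 1.1
$$

A ratio close to 1 means that the ≤4% versus >4% cut-off no longer separates couples by their chance of conceiving per cycle.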

The LIFE study of 501 couples attempting natural conception found that percent abnormal morphology by both strict and traditional criteria was associated with a small but statistically significant increase in time to pregnancy [15]. However, after controlling for other semen parameters such as sperm count or concentration, this association was not retained, suggesting that sperm morphology is not an independent predictor of fecundity. Similarly, a retrospective analysis of patients with 0% normal forms found that 29% were able to conceive without assisted reproductive technologies compared with 56% of controls [15]. All men with 0% normal forms who conceived naturally went on to have another child also via natural conception, leading the authors to conclude that morphology alone should not be used to predict fertilization, pregnancy, or live birth potential.

Table 2: Clinical Correlation of Sperm Morphology with Fertility Outcomes

| Fertility Context | Correlation Strength | Key Evidence | Limitations |
|---|---|---|---|
| Natural Conception | Weak to Moderate | LIFE study: small increase in time to pregnancy [15] | Not independent of other parameters |
| Intrauterine Insemination | Inconsistent | Strong correlation in earlier studies lost in recent eras [8] | Classification drift over time |
| In Vitro Fertilization | Moderate | Initial Kruger studies showed <14% morphology affected rates [15] | Laboratory-specific variability |
| Intracytoplasmic Sperm Injection | Weak | Patients with 0% normal forms can achieve success [15] | Morphology bypassed by direct injection |

Technical Methodologies in Morphology Assessment

Conventional Manual Assessment and Limitations

Traditional manual sperm morphology assessment follows specific methodology outlined in the WHO manual for semen analysis [7]. The process involves examining stained semen smears under brightfield microscopy with an oil immersion 100x objective. According to guidelines, at least 200 spermatozoa should be evaluated and classified based on strict criteria into categories of normal or specific abnormal forms affecting the head, midpiece, or tail [3].

The fundamental limitations of manual assessment include substantial inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators [16]. This variability stems from several factors: the subjective interpretation of borderline forms, differences in staining techniques, variable microscope optics, and the inherent challenge of consistently applying complex classification criteria. One study analyzing inter-expert agreement found three distinct scenarios among three experts: no agreement (NA) among experts, partial agreement (PA) where 2/3 experts agreed on the same label, and total agreement (TA) where 3/3 experts agreed on the same label for all categories [7]. Statistical analysis using Fisher's exact test revealed significant differences between experts in each morphology class (p < 0.05), highlighting the inherent subjectivity.

Computer-Assisted Semen Analysis (CASA) Systems

Computer-Assisted Semen Analysis (CASA) systems were developed to address the limitations of manual assessment. These systems allow sequential acquisition of images using a microscope equipped with a camera [7]. However, routine use of CASA for automated sperm morphology analysis remains limited for several reasons: limited ability to accurately distinguish between spermatozoa and cellular debris, difficulty classifying midpiece and tail abnormalities, and unsatisfactory results due to limited quality of captured microscopic images [7].

Deep Learning and Artificial Intelligence Approaches

Recent advances in artificial intelligence have led to the development of sophisticated deep learning models for sperm morphology classification. Convolutional Neural Networks (CNNs) have shown remarkable promise for image-based classification tasks in reproductive medicine [7] [16]. These approaches typically involve multiple stages: image acquisition, pre-processing, data augmentation, model training, and evaluation.

One study developed a predictive model for sperm morphological evaluation utilizing artificial neural networks trained on the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) dataset enhanced through data augmentation techniques [7]. The methodology included:

- Sample Preparation: Smears prepared from semen samples obtained from 37 patients with sperm concentration of at least 5 million/mL and varying morphological profiles.

- Data Acquisition: MMC CASA system used for acquiring images from sperm smears with bright field mode using an oil immersion 100x objective.

- Expert Classification: Three experts with extensive experience in semen analysis classified each spermatozoon following modified David classification, which includes 12 classes of morphological defects (7 head defects, 2 midpiece defects, and 3 tail defects).

- Data Augmentation: The initial dataset of 1000 images was extended to 6035 images after applying data augmentation techniques to balance morphological classes.

- Algorithm Development: A CNN-based algorithm was implemented in Python 3.8 with five stages: image pre-processing, database partitioning, data augmentation, program training, and evaluation.

The deep learning model produced satisfactory results, with accuracy ranging from 55% to 92% across different morphological classes [7].
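The class-balancing augmentation step (1000 to 6035 images) can be illustrated with a minimal NumPy sketch. It assumes square grayscale images scaled to [0, 1]. The helper names, the choice of 90-degree rotations, horizontal flips, and a brightness factor in the range 0.8 to 1.2 are illustrative assumptions, and scaling is omitted. This is not the exact procedure used to build SMD/MSS.

```python
import numpy as np

def augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """One random variant of a square grayscale image with values in [0, 1]."""
    out = np.rot90(img, k=int(rng.integers(0, 4)))   # rotation by 0, 90, 180 or 270 degrees
    if rng.random() < 0.5:
        out = np.fliplr(out)                          # horizontal flip
    out = out * rng.uniform(0.8, 1.2)                 # brightness scaling
    return np.clip(out, 0.0, 1.0)

def balance_by_augmentation(images: np.ndarray, labels: np.ndarray, seed: int = 0):
    """Add augmented copies of minority-class images until every class matches the largest class."""
    rng = np.random.default_rng(seed)
    out_images, out_labels = list(images), list(labels)
    classes, counts = np.unique(labels, return_counts=True)
    for cls, count in zip(classes, counts):
        pool = images[labels == cls]                  # originals of this class only
        for _ in range(counts.max() - count):
            out_images.append(augment(pool[rng.integers(len(pool))], rng))
            out_labels.append(cls)
    return np.stack(out_images), np.array(out_labels)

# Hypothetical toy set: six class-0 and two class-1 images of 80 x 80 pixels
rng = np.random.default_rng(1)
X = rng.random((8, 80, 80))
y = np.array([0] * 6 + [1] * 2)
X_bal, y_bal = balance_by_augmentation(X, y)
print(X_bal.shape, np.bincount(y_bal))   # (12, 80, 80) [6 6]
```

In practice, augmentation is usually applied only to the training partition. This prevents transformed copies of a test image from leaking into training and inflating the reported accuracy.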

More advanced approaches have integrated attention mechanisms and feature engineering. One study presented a novel deep learning framework combining Convolutional Block Attention Module (CBAM) with ResNet50 architecture and advanced deep feature engineering techniques [16]. The proposed hybrid architecture integrated ResNet50 backbone with CBAM attention mechanisms, enhanced by a comprehensive deep feature engineering pipeline incorporating multiple feature extraction layers combined with 10 distinct feature selection methods including Principal Component Analysis, Chi-square test, Random Forest importance, and variance thresholding.

This framework achieved exceptional performance with test accuracies of 96.08% ± 1.2% on the SMIDS dataset (3000 images, 3-class) and 96.77% ± 0.8% on the HuSHeM dataset (216 images, 4-class) using deep feature engineering, representing significant improvements of 8.08% and 10.41% respectively over baseline CNN performance [16]. McNemar's test confirmed statistical significance (p < 0.05). The best configuration (GAP + PCA + SVM RBF) demonstrated superior performance compared to existing state-of-the-art approaches.
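The Convolutional Block Attention Module can be understood from a NumPy forward pass. Following its original design, CBAM applies channel attention first: a shared two-layer MLP scores the average- and max-pooled channel descriptors. It then applies spatial attention: a 7×7 filter runs over the channel-wise mean and max maps. This is a sketch on a single (C, H, W) feature map using untrained, randomly initialized weights. The toy sizes and reduction ratio r = 16 are assumptions, and the code is not the implementation used in [16].

```python
import numpy as np

def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))

def channel_attention(x, w1, w2):
    """x: (C, H, W). A shared two-layer MLP scores the average- and max-pooled channel descriptors."""
    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)             # C -> C/r -> C with ReLU in between
    weights = sigmoid(mlp(x.mean(axis=(1, 2))) + mlp(x.max(axis=(1, 2))))
    return x * weights[:, None, None]                   # rescale each channel by a weight in (0, 1)

def spatial_attention(x, kernel):
    """kernel: (2, k, k) filter applied to the stacked channel-mean and channel-max maps."""
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    padded = np.pad(pooled, ((0, 0), (k // 2, k // 2), (k // 2, k // 2)))
    H, W = x.shape[1:]
    logits = np.zeros((H, W))
    for i in range(k):                                  # 'same'-size cross-correlation, as in a CNN layer
        for j in range(k):
            logits += np.tensordot(kernel[:, i, j], padded[:, i:i + H, j:j + W], axes=1)
    return x * sigmoid(logits)[None, :, :]              # rescale each spatial location

def cbam(x, w1, w2, kernel):
    """CBAM ordering: channel attention first, then spatial attention."""
    return spatial_attention(channel_attention(x, w1, w2), kernel)

# Toy feature map and untrained, randomly initialised weights (r = 16, 7 x 7 kernel)
rng = np.random.default_rng(0)
C, H, W, r = 64, 10, 10, 16
x = rng.standard_normal((C, H, W))
w1 = 0.1 * rng.standard_normal((C // r, C))
w2 = 0.1 * rng.standard_normal((C, C // r))
kernel = 0.1 * rng.standard_normal((2, 7, 7))
y = cbam(x, w1, w2, kernel)
print(y.shape)   # (64, 10, 10)
```

Both attention maps take values in (0, 1), so CBAM only re-weights existing activations. In a trained network, the weights learn to emphasize channels and locations, such as the head outline or acrosome region, that are informative for the morphology label.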

Table 3: Performance Comparison of Sperm Morphology Assessment Methods

| Assessment Method | Reported Accuracy | Advantages | Limitations |
|---|---|---|---|
| Manual Assessment | Reference standard; up to 40% inter-expert disagreement [16] | Low equipment cost, direct observation | High inter-observer variability, time-consuming |
| Conventional CASA | 70-85% [3] | Semi-automated, reduced subjectivity | Limited defect classification, image quality issues |
| Basic CNN Models | 55-92% across classes [7]; 88% baseline [16] | Automated, reduced variability | Requires large datasets, computational resources |
| Advanced AI with Feature Engineering | 96-97% [16] | High accuracy, objective, rapid processing | Complex implementation, specialized expertise needed |

Experimental Protocols for Advanced Morphology Analysis

Protocol 1: Deep Learning-Based Morphology Classification

Objective: To develop and validate a deep learning model for automated sperm morphology classification using convolutional neural networks.

Materials and Reagents:

- Semen samples with varying morphological profiles

- RAL Diagnostics staining kit

- MMC CASA system for image acquisition

- Python 3.8 with deep learning libraries (TensorFlow, Keras, PyTorch)

- High-performance computing workstation with GPU acceleration

Methodology:

- Sample Preparation and Staining: Prepare semen smears following WHO guidelines [7]. Fix and stain using RAL Diagnostics staining kit according to manufacturer's instructions.

- Image Acquisition: Capture images of individual spermatozoa using MMC CASA system with bright field mode, oil immersion 100x objective. Ensure each image contains a single spermatozoon with head, midpiece, and tail visible.

- Expert Annotation and Ground Truth Establishment: Have three independent experts classify each spermatozoon according to modified David classification [7]. Establish ground truth through expert consensus for images with disagreement.

- Data Pre-processing: Resize images to 80×80×1 grayscale format with linear interpolation strategy. Apply normalization to bring pixel values to common scale [7].

- Data Augmentation: Apply transformation techniques including rotation, flipping, scaling, and brightness adjustment to extend dataset from 1000 to 6035 images and balance morphological classes [7].

- Dataset Partitioning: Split dataset into training (80%) and testing (20%) subsets randomly. Further extract 20% from training set for validation during model development [7].

- Model Architecture and Training: Implement CNN architecture with multiple convolutional and pooling layers followed by fully connected layers. Train model using augmented dataset with appropriate loss function and optimization algorithm.

- Model Evaluation: Assess model performance on test set using accuracy, precision, recall, F1-score, and confusion matrix analysis.
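The pre-processing and partitioning steps of Protocol 1 can be sketched as follows. This is a minimal sketch under stated assumptions. The BT.601 grayscale weights, the align-corners bilinear resize, and stratified splitting are choices made here for illustration; the protocol says only "linear interpolation" and a random split. The function names are hypothetical. The sketch uses NumPy and scikit-learn's train_test_split.

```python
import numpy as np
from sklearn.model_selection import train_test_split

def to_grayscale(rgb: np.ndarray) -> np.ndarray:
    """Luma conversion with ITU-R BT.601 weights (an assumed choice)."""
    return rgb[..., :3] @ np.array([0.299, 0.587, 0.114])

def resize_bilinear(img: np.ndarray, out_h: int = 80, out_w: int = 80) -> np.ndarray:
    """Bilinear (linear) interpolation of a 2-D image onto an out_h x out_w grid."""
    in_h, in_w = img.shape
    ys, xs = np.linspace(0, in_h - 1, out_h), np.linspace(0, in_w - 1, out_w)
    y0, x0 = np.floor(ys).astype(int), np.floor(xs).astype(int)
    y1, x1 = np.minimum(y0 + 1, in_h - 1), np.minimum(x0 + 1, in_w - 1)
    wy, wx = (ys - y0)[:, None], (xs - x0)[None, :]
    top = img[np.ix_(y0, x0)] * (1 - wx) + img[np.ix_(y0, x1)] * wx
    bottom = img[np.ix_(y1, x0)] * (1 - wx) + img[np.ix_(y1, x1)] * wx
    return top * (1 - wy) + bottom * wy

def preprocess(rgb_uint8: np.ndarray) -> np.ndarray:
    """RGB uint8 image -> 80 x 80 x 1 float32 array scaled to [0, 1]."""
    gray = to_grayscale(rgb_uint8.astype(np.float64))
    return (resize_bilinear(gray) / 255.0)[..., None].astype(np.float32)

def partition(X, y, seed: int = 42):
    """80/20 train/test split, then 20% of the training part held out for validation."""
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed)
    X_tr, X_val, y_tr, y_val = train_test_split(
        X_train, y_train, test_size=0.2, stratify=y_train, random_state=seed)
    return (X_tr, y_tr), (X_val, y_val), (X_test, y_test)

# Hypothetical input: 100 random RGB crops of 64 x 72 pixels in 4 balanced classes
rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(100, 64, 72, 3)).astype(np.uint8)
X = np.stack([preprocess(img) for img in raw])
y = np.repeat(np.arange(4), 25)
train, val, test = partition(X, y)
print(X.shape, len(train[0]), len(val[0]), len(test[0]))   # (100, 80, 80, 1) 64 16 20
```

Stratification keeps rare abnormality classes represented in every subset, which matters when some defect classes contain only a handful of images.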

Protocol 2: Advanced Feature Engineering with CBAM-Enhanced ResNet50

Objective: To implement a sophisticated deep feature engineering pipeline with attention mechanisms for high-accuracy sperm morphology classification.

Materials and Reagents:

- Publicly available sperm image datasets (SMIDS with 3000 images, HuSHeM with 216 images)

- Python with scikit-learn, OpenCV, and deep learning frameworks

- Workstation with significant GPU memory and processing capabilities

Methodology:

- Data Preparation: Obtain and pre-process images from benchmark datasets. Apply standardization to normalize image dimensions and color channels.

- Backbone Feature Extraction: Implement ResNet50 architecture integrated with Convolutional Block Attention Module (CBAM) [16]. CBAM sequentially applies channel-wise and spatial attention to intermediate feature maps, enabling the network to focus on the most relevant sperm features.

- Deep Feature Extraction: Extract features from multiple layers including CBAM, Global Average Pooling (GAP), Global Max Pooling (GMP), and pre-final layers [16].

- Feature Selection: Apply 10 distinct feature selection methods including Principal Component Analysis (PCA), Chi-square test, Random Forest importance, variance thresholding, and their intersections to identify most discriminative features.

- Classifier Training: Train Support Vector Machines with RBF/Linear kernels and k-Nearest Neighbors algorithms on selected feature sets [16].

- Model Validation: Evaluate using 5-fold cross-validation to ensure robustness. Calculate performance metrics including accuracy, sensitivity, specificity, and area under ROC curve.

- Visualization and Interpretation: Apply Grad-CAM attention visualization to generate clinically interpretable results highlighting morphological features contributing to classification decisions [16].
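The feature-selection and classifier stages of Protocol 2 can be sketched with scikit-learn. This sketch makes several assumptions. Synthetic 2048-dimensional vectors (the size of ResNet50's global-average-pooled output) stand in for real CBAM-ResNet50 GAP features. Only two of the ten selection strategies are shown: PCA and chi-square. The values n_components=50, k=100 and C=10 are arbitrary placeholders, not the settings reported in [16].

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.feature_selection import SelectKBest, chi2
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler, StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for 2048-D GAP features (not real sperm data), 3 classes as in SMIDS
X, y = make_classification(n_samples=300, n_features=2048, n_informative=30,
                           n_classes=3, random_state=0)

candidates = {
    # PCA reduction followed by an RBF-kernel SVM ("GAP + PCA + SVM RBF")
    "pca_svm_rbf": make_pipeline(StandardScaler(), PCA(n_components=50), SVC(kernel="rbf", C=10)),
    # Chi-square selection requires non-negative inputs, hence the MinMaxScaler
    "chi2_svm_rbf": make_pipeline(MinMaxScaler(), SelectKBest(chi2, k=100), SVC(kernel="rbf", C=10)),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)   # 5-fold cross-validation
scores = {name: cross_val_score(model, X, y, cv=cv, scoring="accuracy")
          for name, model in candidates.items()}
for name, s in scores.items():
    print(f"{name}: {s.mean():.3f} ± {s.std():.3f}")
```

The scalers and selectors sit inside each pipeline, so they are re-fitted on every training fold. Fitting PCA or chi-square on the full dataset before cross-validation would leak test-fold information into feature selection.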

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Reagents and Materials for Sperm Morphology Studies

| Reagent/Material | Specification/Function | Application Context |
|---|---|---|
| RAL Diagnostics Staining Kit | Standardized staining for sperm morphology | Differentiates sperm components for microscopic evaluation [7] |
| MMC CASA System | Microscope with camera for image acquisition | Standardized digital image capture for analysis [7] |
| Python 3.8 with DL Libraries | TensorFlow, Keras, PyTorch for algorithm development | Implementation of CNN and deep learning models [7] [16] |
| SMIDS & HuSHeM Datasets | Publicly available benchmark datasets with 3000+ images | Model training and validation [16] |
| ResNet50 Architecture | Pre-trained CNN model for feature extraction | Backbone network for transfer learning [16] |
| Convolutional Block Attention Module | Attention mechanism for feature emphasis | Enhances focus on morphologically relevant regions [16] |
| Feature Selection Algorithms | PCA, Chi-square, Random Forest importance | Dimensionality reduction and feature optimization [16] |
| GPU Workstation | High-performance computing with graphics processing unit | Accelerates model training and inference [16] |

Discussion and Future Directions

The relationship between sperm morphology and fertility outcomes remains complex, and its clinical significance continues to evolve. While traditional assessment methods have shown variable predictive value, emerging artificial intelligence approaches offer promising avenues for standardization and enhanced correlation with clinical outcomes.

The declining predictive value of sperm morphology across different eras, as demonstrated in IUI studies [8], highlights the impact of classification drift and changing laboratory practices. This underscores the need for standardized, objective assessment methods that can provide consistent prognostic information across different clinical settings. Advanced deep learning models, particularly those incorporating attention mechanisms and sophisticated feature engineering, have demonstrated remarkable accuracy exceeding 96% in research settings [16]. These approaches not only offer objective assessment but also significantly reduce analysis time from 30-45 minutes for manual assessment to less than one minute per sample [16].

Future research directions should focus on several key areas: (1) developing large, diverse, and well-annotated datasets that encompass the full spectrum of morphological variations across different patient populations; (2) validating AI models in prospective clinical trials to establish clear correlation with fertility outcomes; (3) integrating morphology assessment with other semen parameters and clinical factors for comprehensive fertility prediction; and (4) exploring the genetic and molecular basis of morphological defects to establish stronger links between phenotype and fertility potential.

The clinical application of automated morphology assessment systems holds significant promise for standardizing fertility evaluation, reducing diagnostic variability, improving reproducibility across laboratories, and potentially enabling real-time analysis during assisted reproductive procedures [16]. As these technologies mature and undergo rigorous clinical validation, they may fundamentally transform the role of sperm morphology assessment in the clinical evaluation and management of male infertility.

Algorithmic Evolution: From Handcrafted Features to Deep Neural Networks

The assessment of sperm morphology is a cornerstone of male fertility evaluation, providing critical diagnostic and prognostic information. According to the World Health Organization (WHO) standards, sperm morphology is categorized into head, neck, and tail compartments, with 26 distinct types of abnormal morphologies identified. The clinical procedure requires the analysis and classification of over 200 individual sperm cells, a process that is inherently labor-intensive, time-consuming, and subject to significant observer subjectivity and variability [11]. This lack of reproducibility poses a substantial challenge for clinical diagnosis. Conventional machine learning (ML) techniques have emerged as a powerful solution to these limitations, offering a pathway to automated, objective, and high-throughput sperm analysis. By leveraging handcrafted feature extraction and robust classification algorithms, these methods substantially reduce inter-observer variability and analytical workload, thereby enhancing the reliability of sperm quality assessment [11] [18].

This technical guide delves into the core components of a conventional ML pipeline for sperm morphology classification. We will explore the operational principles and implementation of two archetypal algorithms: k-Means for image segmentation and Support Vector Machines (SVM) for morphological classification. The efficacy of these models hinges on the quality of the features engineered from sperm images. Consequently, this paper provides an in-depth examination of critical handcrafted feature descriptors, notably Hu Moments and Zernike Moments, which are used to quantify the shape and texture of sperm heads. The content is framed within a broader research overview of sperm morphology classification algorithms, with a specific focus on providing detailed methodologies and protocols for researchers, scientists, and drug development professionals working at the intersection of andrology and artificial intelligence.

The Machine Learning Pipeline: From Images to Classification

A standardized machine learning pipeline for sperm morphology analysis involves a sequence of critical steps, from image acquisition to final classification. The workflow is designed to transform raw pixel data into a meaningful diagnostic output.

The following diagram illustrates the end-to-end experimental workflow for conventional machine learning-based sperm morphology analysis.

The Researcher's Toolkit: Essential Materials and Datasets

Successful implementation of an ML pipeline for sperm morphology analysis requires specific reagents, datasets, and computational tools. The table below catalogues the key resources referenced in this guide.

Table 1: Research Reagent Solutions and Essential Materials for Sperm Morphology Analysis

| Item Name | Type | Function/Description |
|---|---|---|
| SCIAN-MorphoSpermGS [2] | Gold-Standard Dataset | A public dataset of 1,854 stained sperm head images, expertly classified into five categories: normal, tapered, pyriform, small, and amorphous. |
| HuSHeM [11] | Public Dataset | The Human Sperm Head Morphology dataset contains 725 images, though only 216 sperm head images are publicly available. |
| Hematoxylin/Eosin Staining [2] | Staining Protocol | A chemical staining procedure used to distinguish different parts of the sperm cell. Hematoxylin stains the nucleus, while Eosin stains the acrosome, mid-piece, and tail. |
| Support Vector Machine (SVM) [11] [19] | Classification Algorithm | A supervised learning model that finds an optimal hyperplane to separate different classes of sperm morphology based on extracted features. |
| k-Means Clustering [11] [20] | Segmentation Algorithm | An unsupervised learning algorithm used to partition image pixels into clusters, effectively segmenting the sperm head from the background. |
| GridSearchCV [19] [21] | Hyperparameter Tuning Tool | A scikit-learn function that exhaustively searches over a specified parameter grid to find the optimal hyperparameters for an ML model using cross-validation. |

Core Technical Components and Experimental Protocols

Image Segmentation using k-Means Clustering

The first critical step in the pipeline is segmenting the sperm head from the background and other cellular components. k-Means Clustering is a widely used unsupervised algorithm for this task due to its simplicity and effectiveness, particularly with stained images where color and intensity provide clear separation [11].

Experimental Protocol for k-Means Segmentation:

- Input Image Pre-processing: Convert the original RGB image to a suitable color space (e.g., LAB or YCbCr). Experimental studies have shown that combining clustering with histogram statistical methods and exploring various color spaces can enhance segmentation accuracy for structures like the sperm acrosome and nucleus [11].

- Feature Vector Formation: For each pixel in the image, create a feature vector. This vector can include the pixel's color channel values and its spatial (x, y) coordinates.

- Cluster Initialization: Specify the number of clusters, k (e.g., k = 3 for background, sperm head, and acrosome), and initialize k cluster centroids randomly.

- Iterative Clustering:

- Assignment Step: Assign each pixel in the image to the cluster whose centroid is nearest (using a distance metric like Euclidean distance).

- Update Step: Recalculate the centroids of each cluster as the mean of all pixels assigned to that cluster.

- Convergence Check: Repeat the Assignment and Update steps until the centroid positions no longer change significantly or a maximum number of iterations is reached.

- Output: The algorithm yields a labeled image where each pixel is assigned a cluster ID. The cluster with properties matching the sperm head is selected as the Region of Interest (ROI) for subsequent analysis.
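The segmentation protocol can be sketched in NumPy, with the assignment, update and convergence steps written out explicitly. This is a minimal sketch with stated assumptions and departures from the protocol. The color-space conversion step is skipped; a function such as skimage.color.rgb2lab could be applied first. Centroids are seeded with k-means++ and the best of several restarts is kept, which replaces the purely random initialization described above. Pixel coordinates enter the feature vector through a hypothetical spatial_weight parameter. Treating the brightest cluster as background is also a hypothetical rule for light-background brightfield images.

```python
import numpy as np

def kmeans_pp_init(X, k, rng):
    """k-means++ seeding: each new centroid is drawn with probability proportional to squared distance."""
    centers = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        d2 = ((X[:, None, :] - np.array(centers)[None]) ** 2).sum(-1).min(axis=1)
        p = d2 / d2.sum() if d2.sum() > 0 else np.full(len(X), 1.0 / len(X))
        centers.append(X[rng.choice(len(X), p=p)])
    return np.array(centers)

def kmeans(X, k, n_init=5, max_iter=100, tol=1e-6, seed=0):
    """Lloyd's algorithm with restarts; returns the (labels, centers) pair with the lowest inertia."""
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_init):
        centers = kmeans_pp_init(X, k, rng)
        for _ in range(max_iter):
            # Assignment step: nearest centroid by Euclidean distance
            labels = ((X[:, None, :] - centers[None]) ** 2).sum(-1).argmin(axis=1)
            # Update step: mean of assigned pixels (an empty cluster keeps its previous centroid)
            new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                                    for j in range(k)])
            converged = np.abs(new_centers - centers).max() < tol
            centers = new_centers
            if converged:   # convergence check
                break
        labels = ((X[:, None, :] - centers[None]) ** 2).sum(-1).argmin(axis=1)
        inertia = ((X - centers[labels]) ** 2).sum()
        if best is None or inertia < best[0]:
            best = (inertia, labels, centers)
    return best[1], best[2]

def segment(img, k=3, spatial_weight=0.1, seed=0):
    """img: (H, W, C) float image. Per-pixel features: C colour values plus weighted, scaled (y, x)."""
    H, W, C = img.shape
    yy, xx = np.mgrid[0:H, 0:W]
    coords = spatial_weight * np.stack([yy / (H - 1), xx / (W - 1)], axis=-1)
    feats = np.concatenate([img, coords], axis=-1).reshape(-1, C + 2)
    labels, centers = kmeans(feats, k, seed=seed)
    return labels.reshape(H, W), centers[:, :C]

# Hypothetical synthetic smear: light background, darker head, mid-grey acrosome cap
H = W = 60
yy, xx = np.mgrid[0:H, 0:W]
head = ((yy - 30) / 12) ** 2 + ((xx - 30) / 8) ** 2 <= 1
acrosome = head & (yy < 26)
img = np.full((H, W, 3), 0.9)
img[head] = 0.3
img[acrosome] = 0.6
labels, centroids = segment(img, k=3, spatial_weight=0.0)
background = centroids.mean(axis=1).argmax()   # hypothetical rule: brightest cluster = background
roi = labels != background                      # head region of interest (nucleus + acrosome)
```

Setting spatial_weight above zero encourages spatially compact clusters. If it is set too high, however, k-means splits the large background region by position instead of by stain color.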

Handcrafted Feature Extraction: Hu and Zernike Moments

After segmentation, shape-based descriptors are critical for quantifying the morphology of the sperm head. These handcrafted features form the input for the classifier.

- Hu Moments (Invariant Moments): Hu Moments are a set of seven values derived from the central moments of an image. Their key advantage is invariance to translation, scale, and rotation, making them ideal for classifying sperm heads regardless of their position or orientation in the image [2]. They capture global shape characteristics.

- Zernike Moments: Zernike Moments are based on a set of complex orthogonal polynomials defined over the interior of the unit circle. Their magnitudes are rotation invariant, and they are highly effective at representing the fine-grained details and internal texture of a shape. This makes them suitable for distinguishing between subtle morphological differences, such as normal versus pyriform (pear-shaped) heads [2].
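Both descriptors can be computed directly from a segmented head mask. The sketch below uses only NumPy and the standard library. Hu's seven invariants are computed from scale-normalized central moments. A single Zernike magnitude |Z_nm| is computed by mapping a square image onto the unit disk. The function names and the test mask are hypothetical. The sketch assumes the head is roughly centred in a square crop for the Zernike step. Libraries such as OpenCV provide equivalent Hu-moment routines.

```python
import numpy as np
from math import factorial

def hu_moments(mask: np.ndarray) -> np.ndarray:
    """Seven Hu invariants of a 2-D image or binary mask, from normalised central moments."""
    img = mask.astype(float)
    y, x = np.mgrid[0:img.shape[0], 0:img.shape[1]]
    m00 = img.sum()
    xc, yc = (x * img).sum() / m00, (y * img).sum() / m00
    def eta(p, q):
        mu = ((x - xc) ** p * (y - yc) ** q * img).sum()   # central moment mu_pq
        return mu / m00 ** (1 + (p + q) / 2)               # scale normalisation
    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    n30, n03, n21, n12 = eta(3, 0), eta(0, 3), eta(2, 1), eta(1, 2)
    a, b = n30 + n12, n21 + n03
    return np.array([
        n20 + n02,
        (n20 - n02) ** 2 + 4 * n11 ** 2,
        (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2,
        a ** 2 + b ** 2,
        (n30 - 3 * n12) * a * (a ** 2 - 3 * b ** 2) + (3 * n21 - n03) * b * (3 * a ** 2 - b ** 2),
        (n20 - n02) * (a ** 2 - b ** 2) + 4 * n11 * a * b,
        (3 * n21 - n03) * a * (a ** 2 - 3 * b ** 2) - (n30 - 3 * n12) * b * (3 * a ** 2 - b ** 2),
    ])

def zernike_magnitude(img: np.ndarray, n: int, m: int) -> float:
    """|Z_nm| of a square image mapped onto the unit disk; needs |m| <= n and n - |m| even."""
    if abs(m) > n or (n - abs(m)) % 2:
        raise ValueError("invalid (n, m) pair")
    N = img.shape[0]
    c = (2 * np.arange(N) - N + 1) / N                 # pixel-centre coordinates in (-1, 1)
    x, y = np.meshgrid(c, -c)                          # rows run top to bottom, so flip y
    rho, theta = np.hypot(x, y), np.arctan2(y, x)
    inside = rho <= 1.0
    ma = abs(m)
    radial = sum((-1) ** s * factorial(n - s)
                 / (factorial(s) * factorial((n + ma) // 2 - s) * factorial((n - ma) // 2 - s))
                 * rho ** (n - 2 * s) for s in range((n - ma) // 2 + 1))
    basis_conj = radial * np.exp(-1j * m * theta)      # complex conjugate of V_nm
    z = (n + 1) / np.pi * np.sum(img[inside] * basis_conj[inside]) * (2.0 / N) ** 2
    return float(abs(z))

# Hypothetical asymmetric head-like mask on a 64 x 64 canvas
yy, xx = np.mgrid[0:64, 0:64]
mask = ((((yy - 30) / 14) ** 2 + ((xx - 28) / 9) ** 2 <= 1)
        | ((yy > 40) & (yy < 60) & (np.abs(xx - 40) < 2))).astype(float)
hu, hu_rot = hu_moments(mask), hu_moments(np.rot90(mask))
z, z_rot = zernike_magnitude(mask, 4, 2), zernike_magnitude(np.rot90(mask), 4, 2)
```

Rotating the mask by 90 degrees leaves both the Hu vector and |Z_42| unchanged up to floating-point error. Rotation only multiplies a Zernike moment by a unit-modulus phase factor. Hu values span many orders of magnitude, so they are often log-transformed before being fed to a classifier.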

Table 2: Quantitative Performance of Conventional ML Models on Sperm Morphology Classification

| Study (Reference) | Algorithm | Feature Descriptors | Dataset Used | Reported Accuracy / Performance |
|---|---|---|---|---|
| Bijar et al. [11] | Bayesian Density Model | Shape-based Descriptors | Not Specified | 90% accuracy in classifying sperm heads into four morphological categories. |
| Chang et al. [11] [2] | k-Means & other classifiers | Shape, Texture, Grayscale | SCIAN-MorphoSpermGS | Established a baseline for five-class classification using shape-based descriptors. |
| General Pipeline [11] | Support Vector Machine (SVM) | Hu Moments, Zernike Moments, etc. | Public Datasets (e.g., HuSHeM, SCIAN) | Achieves significant success in differentiating normal and abnormal morphological features. |

Classification using Support Vector Machines (SVM)

The final step is classification, where an SVM model is trained to categorize sperm heads based on the extracted feature vectors [11].

Experimental Protocol for SVM Classification:

- Dataset Preparation: Use a labeled dataset like SCIAN-MorphoSpermGS. Split the data into features (the vectors of Hu and Zernike moments) and labels (e.g., 'normal', 'tapered', 'pyriform').

- Data Splitting: Divide the dataset into a training set (e.g., 70%) and a hold-out test set (e.g., 30%) using train_test_split [22].

- Hyperparameter Tuning with GridSearchCV: The performance of an SVM is highly dependent on its hyperparameters. Use GridSearchCV to find the optimal combination [19] [21]. This process systematically tests all combinations of hyperparameters, using 5-fold cross-validation on the training data to evaluate each combination's performance [22] [19].

- Model Training & Evaluation: Train the final SVM model on the entire training set using the best-found hyperparameters. The model's generalization error is then estimated on the held-out test set to provide a realistic performance metric [23].
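The SVM protocol maps directly onto scikit-learn's train_test_split, Pipeline and GridSearchCV. This is a minimal sketch under stated assumptions. Synthetic 16-dimensional vectors stand in for real per-head descriptors (for example, 7 Hu values plus several Zernike magnitudes). The five SCIAN-MorphoSpermGS class names serve only as labels. The parameter grid is an illustrative choice rather than one taken from [19], [21] or [22].

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import classification_report
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

CLASSES = np.array(["normal", "tapered", "pyriform", "small", "amorphous"])

# Synthetic stand-in for per-head feature vectors; not real sperm data
X, y_idx = make_classification(n_samples=500, n_features=16, n_informative=10,
                               n_classes=5, random_state=0)
y = CLASSES[y_idx]

# 70/30 stratified hold-out split
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Scaling sits inside the pipeline so it is re-fitted on each CV training fold
pipe = Pipeline([("scale", StandardScaler()), ("svm", SVC())])
param_grid = [
    {"svm__kernel": ["linear"], "svm__C": [0.1, 1, 10]},
    {"svm__kernel": ["rbf"], "svm__C": [0.1, 1, 10, 100], "svm__gamma": ["scale", 0.01, 0.1]},
]
search = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy")
search.fit(X_train, y_train)   # refit=True (default) retrains the best setting on all training data

test_acc = search.score(X_test, y_test)
print("best params:", search.best_params_)
print(f"CV accuracy: {search.best_score_:.3f}  test accuracy: {test_acc:.3f}")
print(classification_report(y_test, search.predict(X_test)))
```

The cross-validated score (best_score_) guides model selection, while test_acc on the untouched 30% split is the figure that estimates generalization. Reporting only best_score_ would give an optimistically biased result.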

Strengths, Limitations, and Outlook

Conventional machine learning pipelines, built on SVM, k-Means, and handcrafted features, have demonstrated considerable success in automating sperm morphology analysis. Their primary strength lies in their ability to objectively and consistently analyze sperm cells, thereby alleviating the substantial workload and subjectivity associated with manual observation [11]. Techniques like Hu and Zernike moments provide powerful, mathematically grounded descriptors for shape quantification.

However, these methods are fundamentally limited by their reliance on manual feature engineering. The performance of the entire system is contingent on the expertise of the researcher in selecting and extracting relevant features. This approach may fail to capture more complex, abstract, or subtle patterns in the data that are not predefined by the feature set [11]. Furthermore, the quality of the underlying datasets is a persistent challenge; many public datasets suffer from limitations in sample size, resolution, and diversity of abnormality categories, which can hinder the development of robust, generalizable models [11] [2].

While recent clinical guidelines have questioned the prognostic value of traditional morphology assessment for certain ART procedures, they acknowledge a positive role for automated systems, provided they are properly validated within the laboratory [6]. The field is now witnessing a paradigm shift towards deep learning (DL) algorithms. DL models can automatically learn hierarchical feature representations directly from raw pixel data, overcoming the need for manual feature engineering and often achieving superior performance in segmentation and classification tasks [11] [18]. Despite this shift, the conventional ML pipeline detailed in this guide remains a foundational and well-understood methodology. It provides a critical benchmark against which newer approaches can be measured and continues to be a viable solution for laboratories embarking on the path of automated sperm morphology analysis.

The diagnostic evaluation of male infertility has long relied on semen analysis, with sperm morphology assessment being a cornerstone due to its significant correlation with fertility outcomes. Traditional manual morphology assessment, however, is plagued by substantial subjectivity, inter-observer variability, and time-intensive processes, with studies reporting disagreement rates of up to 40% between expert evaluators [16]. Conventional Computer-Assisted Semen Analysis (CASA) systems have attempted to address these limitations but often demonstrate inadequate performance in distinguishing subtle morphological defects and are frequently limited to analyzing only sperm heads [7] [3].

The advent of deep learning, particularly Convolutional Neural Networks (CNNs), has revolutionized this field by enabling end-to-end feature learning and classification directly from raw pixel data. This paradigm shift moves beyond the limitations of traditional machine learning approaches that relied on manually engineered features, instead allowing models to automatically discover and extract hierarchically complex features relevant to morphological classification [11] [16]. This technical guide explores the transformative impact of CNN-based approaches on sperm morphology classification, detailing architectural innovations, experimental methodologies, and performance benchmarks that are establishing new standards for objectivity, efficiency, and accuracy in male fertility assessment.

Technical Background: From Manual Feature Engineering to Deep Learning

Limitations of Conventional Machine Learning Approaches

Traditional machine learning approaches for sperm morphology analysis followed a multi-stage pipeline requiring significant manual intervention and domain expertise:

- Handcrafted Feature Extraction: Techniques involved shape-based descriptors (Hu moments, Zernike moments, Fourier descriptors), texture analysis, and grayscale intensity measurements [3].

- Classical Algorithms: Support Vector Machines (SVM), k-Nearest Neighbors (k-NN), and decision trees were commonly employed for classification [11] [3].

- Performance Limitations: These methods achieved moderate success, with accuracy ranging from 49% to 90% depending on the specific task and dataset, but struggled with generalization across different imaging conditions and laboratories [3].
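To make the handcrafted-feature step concrete, the seven Hu moment invariants can be computed from a binary sperm-head mask with NumPy alone. This is a minimal sketch that assumes a segmented mask is already available. The elliptical test shape is purely illustrative. Production code would more typically call an existing routine such as OpenCV's `cv2.HuMoments` or scikit-image's `moments_hu`.

```python
import numpy as np

def _normalized_central_moment(img, p, q):
    """eta_pq = mu_pq / mu_00^(1 + (p+q)/2): translation- and scale-normalized."""
    rows, cols = np.mgrid[:img.shape[0], :img.shape[1]]
    m00 = img.sum()  # equals mu_00
    xc, yc = (cols * img).sum() / m00, (rows * img).sum() / m00  # centroid
    mu_pq = ((cols - xc) ** p * (rows - yc) ** q * img).sum()
    return mu_pq / m00 ** (1 + (p + q) / 2)

def hu_moments(mask):
    """Seven Hu invariants of a 2-D (binary or grayscale) image."""
    img = np.asarray(mask, dtype=float)
    n20, n02, n11 = (_normalized_central_moment(img, p, q)
                     for p, q in [(2, 0), (0, 2), (1, 1)])
    n30, n03, n21, n12 = (_normalized_central_moment(img, p, q)
                          for p, q in [(3, 0), (0, 3), (2, 1), (1, 2)])
    a, b = n30 + n12, n21 + n03  # recurring third-order sums
    h1 = n20 + n02
    h2 = (n20 - n02) ** 2 + 4 * n11 ** 2
    h3 = (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2
    h4 = a ** 2 + b ** 2
    h5 = ((n30 - 3 * n12) * a * (a ** 2 - 3 * b ** 2)
          + (3 * n21 - n03) * b * (3 * a ** 2 - b ** 2))
    h6 = (n20 - n02) * (a ** 2 - b ** 2) + 4 * n11 * a * b
    h7 = ((3 * n21 - n03) * a * (a ** 2 - 3 * b ** 2)
          - (n30 - 3 * n12) * b * (3 * a ** 2 - b ** 2))
    return np.array([h1, h2, h3, h4, h5, h6, h7])

# Illustrative elliptical "head" with semi-axes of 20 and 10 pixels
yy, xx = np.mgrid[:40, :60]
head = (((xx - 30) / 20) ** 2 + ((yy - 20) / 10) ** 2 <= 1).astype(float)
features = hu_moments(head)
```

The resulting 7-element vector is unchanged by translating or rotating the mask. That invariance is what makes such descriptors usable as SVM inputs. It also illustrates the limitation noted below: the descriptors capture only what their formulas encode.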

A fundamental constraint of these conventional approaches was their inability to automatically adapt to the considerable morphological diversity and subtle defect patterns present in human spermatozoa, ultimately limiting their clinical adoption [3].

The CNN Advantage for Sperm Morphology

CNNs fundamentally transformed this paradigm through several key capabilities:

- Hierarchical Feature Learning: CNN architectures automatically learn relevant features directly from images through multiple layers of abstraction, from simple edges in initial layers to complex morphological patterns in deeper layers [16].

- Spatial Hierarchy Preservation: Convolutional operations maintain spatial relationships within the image, crucial for recognizing structural defects across sperm head, midpiece, and tail regions [7] [24].

- Translation Invariance: Pooling operations provide a degree of local translation invariance, making feature responses robust to small positional shifts of sperm within images [16].

- End-to-End Training: The entire system—from feature extraction to classification—is optimized jointly for the specific task, typically using backpropagation and gradient-based optimization [7] [16].
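The local robustness provided by pooling can be seen in a toy NumPy forward pass. It uses a hypothetical 3×3 all-ones filter, a ReLU, and 2×2 max pooling, not a trained network. A one-pixel diagonal shift of a bright spot changes the convolution map but not the pooled map. A two-pixel shift changes both. This is why pooling confers only limited, local translation invariance.

```python
import numpy as np

def conv2d_valid(img, kernel):
    """Plain 'valid' 2-D cross-correlation, as computed by CNN convolutional layers."""
    kh, kw = kernel.shape
    out_h, out_w = img.shape[0] - kh + 1, img.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(img[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    return np.maximum(x, 0.0)

def max_pool2x2(x):
    """Non-overlapping 2x2 max pooling (assumes even height and width)."""
    h, w = x.shape
    return x.reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))

def feature_map(row, col, size=10):
    img = np.zeros((size, size))
    img[row, col] = 1.0  # single bright spot standing in for a structure
    conv = relu(conv2d_valid(img, np.ones((3, 3))))
    return conv, max_pool2x2(conv)

conv_a, pool_a = feature_map(4, 4)
conv_b, pool_b = feature_map(5, 5)  # shifted by one pixel diagonally
conv_c, pool_c = feature_map(6, 6)  # shifted by two pixels diagonally
```

Here `pool_a` equals `pool_b`, although `conv_a` and `conv_b` differ. `pool_c` differs from both, because the spot crossed a pooling-cell boundary.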

Table 1: Comparison of Conventional ML versus Deep Learning Approaches for Sperm Morphology Analysis

| Feature | Conventional Machine Learning | Deep Learning (CNN) |
|---|---|---|
| Feature Extraction | Manual, requires domain expertise | Automatic, learned from data |
| Architecture | Separate feature extraction and classification | End-to-end integrated pipeline |
| Data Dependency | Works with smaller datasets | Requires larger, annotated datasets |
| Performance | Moderate (49%-90% accuracy) | High (up to 96.77% accuracy) |
| Generalization | Often limited to specific imaging conditions | Better generalization with diverse training data |
| Computational Demand | Lower | Higher, requires GPU acceleration |

CNN Architectures for Sperm Morphology Classification

Fundamental Architectural Components

Modern CNN architectures for sperm morphology classification typically incorporate several fundamental components, each serving a distinct purpose in the feature learning pipeline:

- Convolutional Layers: Apply learnable filters to extract spatial hierarchies of features, with initial layers capturing basic edges and textures, and deeper layers identifying complex morphological patterns [16].

- Activation Functions: Introduce non-linearity using ReLU (Rectified Linear Unit) or its variants, enabling the network to learn complex decision boundaries [16].

- Pooling Layers: Perform spatial dimensionality reduction while retaining the most salient features, providing robustness to small translations and reducing computational complexity [7].

- Fully Connected Layers: Integrate extracted features for final classification decisions, typically preceding the output layer [16].

- Attention Mechanisms: Modules such as Convolutional Block Attention Module (CBAM) enable the network to focus on morphologically relevant regions (e.g., head shape, acrosome integrity) while suppressing background noise [16].
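The components listed above can be assembled into a compact PyTorch model. This is a minimal sketch, not the architecture from [16]. Several choices are assumptions:

- the layer widths, batch normalization, and dropout;
- the 80×80 grayscale input;
- the 12-class output, chosen to match the modified David categories mentioned for SMD/MSS.

The CBAM block follows the common channel-then-spatial formulation, here with a reduction ratio of 8 and a 7×7 spatial kernel.

```python
import torch
import torch.nn as nn

class CBAM(nn.Module):
    """Channel attention followed by spatial attention."""
    def __init__(self, channels, reduction=8, spatial_kernel=7):
        super().__init__()
        # Shared MLP (as 1x1 convs) applied to avg- and max-pooled channel descriptors
        self.mlp = nn.Sequential(
            nn.Conv2d(channels, channels // reduction, 1, bias=False),
            nn.ReLU(inplace=True),
            nn.Conv2d(channels // reduction, channels, 1, bias=False),
        )
        self.spatial = nn.Conv2d(2, 1, spatial_kernel,
                                 padding=spatial_kernel // 2, bias=False)

    def forward(self, x):
        avg = self.mlp(torch.mean(x, dim=(2, 3), keepdim=True))
        mx = self.mlp(torch.amax(x, dim=(2, 3), keepdim=True))
        x = x * torch.sigmoid(avg + mx)  # reweight channels
        desc = torch.cat([x.mean(dim=1, keepdim=True),
                          x.amax(dim=1, keepdim=True)], dim=1)
        return x * torch.sigmoid(self.spatial(desc))  # reweight spatial locations

def conv_block(c_in, c_out):
    # convolution -> batch norm -> ReLU -> 2x2 max pooling (halves height and width)
    return nn.Sequential(
        nn.Conv2d(c_in, c_out, 3, padding=1),
        nn.BatchNorm2d(c_out),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(2),
    )

class SpermCNN(nn.Module):
    def __init__(self, num_classes=12):
        super().__init__()
        # 80x80 -> 40x40 -> 20x20 -> 10x10, then attention over 128 channels
        self.features = nn.Sequential(
            conv_block(1, 32), conv_block(32, 64), conv_block(64, 128), CBAM(128))
        self.classifier = nn.Sequential(
            nn.AdaptiveAvgPool2d(1), nn.Flatten(),
            nn.Linear(128, 64), nn.ReLU(inplace=True), nn.Dropout(0.3),
            nn.Linear(64, num_classes))  # raw logits; softmax is applied inside the loss

    def forward(self, x):
        return self.classifier(self.features(x))

model = SpermCNN(num_classes=12)
logits = model(torch.randn(2, 1, 80, 80))  # batch of two 80x80 grayscale crops
```

Placing CBAM after the last convolutional block is one common choice. Published variants, including ResNet50-based ones, often insert it inside every residual block instead.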

Advanced Architectural Innovations

Recent research has introduced sophisticated architectural enhancements to address the unique challenges of sperm morphology classification:

- ResNet50 with CBAM: Integration of Residual Networks with attention mechanisms, combined with deep feature engineering (DFE), has demonstrated exceptional performance, achieving 96.08% accuracy on the SMIDS dataset and 96.77% on the HuSHeM dataset [16].

- Multi-Task Learning Architectures: Joint learning frameworks simultaneously perform sperm head segmentation and morphological category prediction, leveraging shared feature representations for mutually beneficial tasks [25].

- Ensemble Approaches: Combining multiple CNN architectures (VGG16, DenseNet-161, ResNet-34) with meta-classifiers has achieved F1 scores of 98.2% on benchmark datasets [24].

- EfficientNetV2 Hybrids: Feature-level and decision-level fusion of multiple EfficientNetV2 variants with SVM, Random Forest, and MLP-Attention classifiers has been applied to fine-grained datasets with many morphological classes, reaching 67.70% accuracy on the 18-class Hi-LabSpermMorpho dataset [24].
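The multi-task idea can be sketched as one shared encoder feeding two heads: a per-pixel head-segmentation output and an image-level class output, trained with a summed loss. This PyTorch sketch is an illustration built on assumptions, not the architecture of [25]. The encoder depth, the 4-class output, the placeholder masks and labels, and the 0.5 segmentation-loss weight are all hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class MultiTaskSpermNet(nn.Module):
    def __init__(self, num_classes=4):
        super().__init__()
        # Shared encoder: two stride-2 convolutions (80x80 -> 40x40 -> 20x20)
        self.encoder = nn.Sequential(
            nn.Conv2d(1, 32, 3, stride=2, padding=1), nn.ReLU(inplace=True),
            nn.Conv2d(32, 64, 3, stride=2, padding=1), nn.ReLU(inplace=True),
        )
        # Segmentation head: upsample back to input size, one mask logit per pixel
        self.seg_head = nn.Sequential(
            nn.ConvTranspose2d(64, 32, 2, stride=2), nn.ReLU(inplace=True),
            nn.ConvTranspose2d(32, 1, 2, stride=2),
        )
        # Classification head: global pooling followed by a linear layer
        self.cls_head = nn.Sequential(
            nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(64, num_classes))

    def forward(self, x):
        shared = self.encoder(x)
        return self.seg_head(shared), self.cls_head(shared)

def multitask_loss(seg_logits, cls_logits, masks, labels, seg_weight=0.5):
    seg_loss = F.binary_cross_entropy_with_logits(seg_logits, masks)
    cls_loss = F.cross_entropy(cls_logits, labels)
    return cls_loss + seg_weight * seg_loss

model = MultiTaskSpermNet()
images = torch.randn(4, 1, 80, 80)
masks = (torch.rand(4, 1, 80, 80) > 0.5).float()  # placeholder head masks
labels = torch.tensor([0, 1, 2, 3])                # placeholder class labels
seg_logits, cls_logits = model(images)
loss = multitask_loss(seg_logits, cls_logits, masks, labels)
loss.backward()  # gradients from both tasks flow into the shared encoder
```

The shared encoder is where the "mutually beneficial" effect arises: segmentation supervision pushes its filters toward head boundaries, which the classification head then reuses.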

The following diagram illustrates a typical end-to-end CNN workflow for sperm morphology classification:

Experimental Protocols and Methodologies

Dataset Preparation and Augmentation

The development of robust CNN models requires carefully curated datasets with comprehensive morphological representations:

- Dataset Composition: The SMD/MSS dataset exemplifies proper composition, containing 1,000 individual sperm images extended to 6,035 through augmentation and classified according to the modified David classification, which encompasses 12 morphological defect categories [7].

- Expert Annotation: Ground truth is typically established by several experienced embryologists (usually three) who classify each image independently. Statistical analysis of inter-expert agreement (Total Agreement, Partial Agreement, No Agreement) then quantifies annotation consistency [7].

- Data Augmentation Protocols: To address class imbalance and limited dataset sizes, standard augmentation techniques include random rotations (±15°), horizontal and vertical flips, brightness and contrast variations (±20%), and Gaussian noise injection [7] [16].

- Pre-processing Pipelines: Standardized processing includes image resizing (typically 80×80 pixels for individual sperm), grayscale conversion, normalization (pixel values scaled to [0,1]), and denoising using Gaussian filters [7].
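A minimal NumPy/SciPy sketch of the pre-processing and augmentation steps listed above, assuming images are already cropped to 80×80 grayscale. The parameter interpretations are assumptions:

- "±20%" brightness is modeled as an additive offset of up to 0.2 on the [0,1] scale.
- Contrast is modeled as a multiplicative factor in [0.8, 1.2] about the image mean.
- The noise standard deviation of 0.02 is arbitrary.

The placeholder input images are random.

```python
import numpy as np
from scipy import ndimage

def preprocess(img, sigma=1.0):
    """Gaussian denoising followed by min-max normalization to [0, 1]."""
    img = ndimage.gaussian_filter(np.asarray(img, dtype=float), sigma=sigma)
    lo, hi = img.min(), img.max()
    return (img - lo) / (hi - lo) if hi > lo else np.zeros_like(img)

def augment(img, rng):
    """One random variant: rotation, flips, brightness/contrast, Gaussian noise."""
    out = ndimage.rotate(img, rng.uniform(-15, 15), reshape=False, mode="nearest")
    if rng.random() < 0.5:
        out = np.fliplr(out)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)  # vertical flip
    mean = out.mean()
    out = (out - mean) * rng.uniform(0.8, 1.2) + mean + rng.uniform(-0.2, 0.2)
    out = out + rng.normal(0.0, 0.02, size=out.shape)
    return np.clip(out, 0.0, 1.0)  # keep the [0, 1] range expected by the model

def expand(images, copies_per_image, seed=0):
    """Return each pre-processed original plus `copies_per_image` augmented variants."""
    rng = np.random.default_rng(seed)
    out = []
    for img in images:
        base = preprocess(img)
        out.append(base)
        out.extend(augment(base, rng) for _ in range(copies_per_image))
    return np.stack(out)

raw = np.random.default_rng(1).random((10, 80, 80)) * 255  # placeholder crops
augmented = expand(raw, copies_per_image=5)
```

Two design notes:

- Augmentation should be applied only after the train/test split, and only to the training portion. Otherwise augmented copies of a test image leak into training.
- To counter class imbalance, `copies_per_image` can be set higher for minority classes.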

Model Training Methodologies

Effective training protocols for sperm morphology classification incorporate several specialized techniques:

- Transfer Learning: Pre-training on large-scale natural image datasets (ImageNet) followed by domain-specific fine-tuning on sperm morphology datasets [16] [24].

- Cross-Validation: Rigorous evaluation using 5-fold cross-validation protocols to ensure reliable performance estimation and mitigate overfitting [16].

- Class Imbalance Mitigation: Strategic approaches including weighted loss functions, focal loss, and oversampling of minority classes during training [24].

- Optimization Configuration: Typical configurations use the Adam optimizer with an initial learning rate of 1e-4, batch sizes of 32-64, and categorical cross-entropy loss for multi-class problems [16].
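Two of the imbalance strategies above, class weighting and focal loss, can be combined in a single loss, FL = -w_y (1 - p_y)^γ log p_y. Here p_y is the predicted probability of the true class and w_y is its class weight. The NumPy sketch below is written for clarity rather than training. It uses inverse-frequency class weights, an assumed scheme among several in use. With γ = 0 and uniform weights it reduces to ordinary categorical cross-entropy.

```python
import numpy as np

def inverse_frequency_weights(labels, num_classes):
    """w_c = N / (K * n_c): rarer classes receive larger weights."""
    counts = np.bincount(labels, minlength=num_classes).astype(float)
    return len(labels) / (num_classes * np.maximum(counts, 1.0))

def softmax(logits):
    z = logits - logits.max(axis=1, keepdims=True)  # subtract row max for stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def weighted_focal_loss(logits, labels, class_weights, gamma=2.0):
    """Batch mean of -w_y * (1 - p_y)^gamma * log(p_y)."""
    probs = softmax(logits)
    p_true = probs[np.arange(len(labels)), labels]
    per_sample = (-class_weights[labels] * (1.0 - p_true) ** gamma
                  * np.log(p_true + 1e-12))
    return per_sample.mean()

labels = np.array([0, 0, 0, 0, 0, 0, 1, 1, 2])  # imbalanced toy batch
logits = np.random.default_rng(0).normal(size=(9, 3))
weights = inverse_frequency_weights(labels, num_classes=3)  # [0.5, 1.5, 3.0]
loss = weighted_focal_loss(logits, labels, weights, gamma=2.0)
```

In PyTorch, the class-weighting part is available through the `weight` argument of `torch.nn.CrossEntropyLoss`. The optimizer setting above corresponds to `torch.optim.Adam(model.parameters(), lr=1e-4)`. The focal term would need a small custom loss.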

Table 2: Performance Benchmarks of Recent CNN Architectures for Sperm Morphology Classification

| Architecture | Dataset | Classes | Accuracy (unless noted) | Key Innovations |
|---|---|---|---|---|
| CBAM-ResNet50 with DFE [16] | SMIDS | 3 | 96.08% ± 1.2% | Attention mechanisms + deep feature engineering |
| CBAM-ResNet50 with DFE [16] | HuSHeM | 4 | 96.77% ± 0.8% | Hybrid deep learning + feature selection |
| Multi-Level Ensemble [24] | Hi-LabSpermMorpho | 18 | 67.70% | Feature-level + decision-level fusion |
| Basic CNN [7] | SMD/MSS | 12 | 55-92% | Data augmentation strategies |
| Stacked Ensemble [24] | HuSHeM | - | 98.2% F1 | Multiple CNN architectures + meta-classifier |

Advanced Techniques and Fusion Strategies

Deep Feature Engineering

Deep Feature Engineering (DFE) represents a sophisticated hybrid approach that combines the representational power of CNNs with classical feature selection methods:

- Feature Extraction Layers: Multiple feature types are extracted from intermediate network layers, including Global Average Pooling (GAP), Global Max Pooling (GMP), and pre-final layer activations [16].

- Feature Selection Methods: DFE employs diverse selection techniques including Principal Component Analysis (PCA), Chi-square tests, Random Forest importance, and variance thresholding to identify the most discriminative features [16].

- Classifier Integration: Processed features are classified using SVM with RBF/linear kernels or k-Nearest Neighbors algorithms, often outperforming end-to-end CNN classifiers [16].

- Performance Impact: The DFE approach has demonstrated significant improvements, boosting baseline CNN performance by 8.08% on SMIDS and 10.41% on HuSHeM datasets [16].
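The DFE pipeline can be sketched with NumPy and scikit-learn in four steps:

1. Pool a CNN's final feature maps into GAP and GMP vectors.
2. Concatenate the two vectors.
3. Reduce the result with PCA.
4. Classify with an RBF-kernel SVM.

This is a sketch under stated assumptions, not the implementation of [16]. The feature maps are random placeholders shaped like a final convolutional stage at 7×7, with the channel count reduced from ResNet50's 2048 to 256 to keep memory small. PCA stands in for the several selection methods the text lists, and the three classes merely mirror SMIDS's class count.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def pooled_features(feature_maps):
    """Concatenate Global Average Pooling and Global Max Pooling vectors.

    feature_maps: array of shape (n_images, channels, height, width)
    returns: array of shape (n_images, 2 * channels)
    """
    gap = feature_maps.mean(axis=(2, 3))
    gmp = feature_maps.max(axis=(2, 3))
    return np.concatenate([gap, gmp], axis=1)

rng = np.random.default_rng(0)
n_images, channels = 120, 256                  # reduced channel count (assumption)
labels = np.repeat([0, 1, 2], n_images // 3)   # 3 hypothetical classes
# Placeholder activations with a weak class-dependent shift so there is signal to find
maps = rng.normal(size=(n_images, channels, 7, 7)) + labels[:, None, None, None] * 0.1

X = pooled_features(maps)
dfe = make_pipeline(StandardScaler(), PCA(n_components=32), SVC(kernel="rbf"))
scores = cross_val_score(dfe, X, labels, cv=5)  # 5-fold CV, as in the text
print(scores.mean())
```

Keeping PCA inside the pipeline means the projection is re-fitted within each fold, so the cross-validated score is not inflated by the test folds.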

Multi-Level Fusion Frameworks

Ensemble methods leveraging multi-level fusion have shown remarkable success in addressing the complexity of sperm morphology classification:

- Feature-Level Fusion: Combining features extracted from multiple CNN architectures (e.g., EfficientNetV2 variants) to leverage complementary representations [24].

- Decision-Level Fusion: Integrating predictions from multiple classifiers through soft voting or meta-classifier approaches to enhance robustness [24].

- Multi-Task Learning: Joint architectures that simultaneously perform sperm head segmentation and morphological classification, improving feature learning through shared representations [25].

- Contrastive Meta-Learning: Emerging approaches that combine contrastive learning with meta-learning for improved generalization across diverse morphological patterns [14].
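Feature-level and decision-level fusion differ in where the combination happens. The NumPy sketch below shows both on placeholder arrays:

- Feature-level fusion concatenates per-backbone embeddings before a single classifier.
- Decision-level soft voting averages per-model class probabilities and takes the argmax.

The backbone names in the comments, the embedding sizes, the probability values, and the optional voting weights are illustrative assumptions. A meta-classifier (stacking) would instead learn how to combine the probabilities.

```python
import numpy as np

def feature_level_fusion(*embeddings):
    """Concatenate per-image embeddings from several backbones along the feature axis."""
    return np.concatenate(embeddings, axis=1)

def soft_vote(prob_list, weights=None):
    """Average class-probability matrices (optionally weighted) and pick the top class."""
    probs = np.stack(prob_list)  # (n_models, n_images, n_classes)
    w = np.ones(len(prob_list)) if weights is None else np.asarray(weights, dtype=float)
    fused = np.tensordot(w / w.sum(), probs, axes=1)  # weighted mean over models
    return fused, fused.argmax(axis=1)

# Placeholder embeddings from two hypothetical EfficientNetV2 variants
emb_a, emb_b = np.zeros((3, 1280)), np.zeros((3, 1280))
fused_features = feature_level_fusion(emb_a, emb_b)

# Placeholder probabilities from three classifiers for 3 images x 3 classes
svm_p = np.array([[0.6, 0.3, 0.1], [0.2, 0.5, 0.3], [0.1, 0.2, 0.7]])
rf_p = np.array([[0.4, 0.4, 0.2], [0.1, 0.3, 0.6], [0.3, 0.3, 0.4]])
mlp_p = np.array([[0.5, 0.2, 0.3], [0.2, 0.6, 0.2], [0.2, 0.1, 0.7]])
fused_probs, predictions = soft_vote([svm_p, rf_p, mlp_p])
print(predictions)  # [0 1 2]
```

For the second image, the Random Forest alone would predict class 2. The averaged probabilities (about 0.17, 0.47, 0.37) favor class 1, showing how soft voting lets confident, agreeing models outweigh a single dissenting one.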

The following diagram illustrates a sophisticated multi-task learning architecture for simultaneous segmentation and classification:

Research Reagent Solutions and Computational Tools

Successful implementation of CNN-based sperm morphology classification requires both wet laboratory reagents and computational resources:

Table 3: Essential Research Reagents and Computational Tools for CNN-Based Sperm Morphology Analysis

| Category | Specific Product/Technology | Function/Purpose |
|---|---|---|
| Staining Kits | RAL Diagnostics Staining Kit [7] | Enhances contrast for morphological features in bright-field microscopy |
| Microscopy Systems | MMC CASA System [7] | Standardized image acquisition with 100× oil immersion objectives |
| Data Annotation Tools | Custom Excel Templates [7] | Systematic ground truth labeling by multiple experts |
| Deep Learning Frameworks | Python 3.8 with TensorFlow/PyTorch [7] [16] | CNN model implementation and training |
| Computational Hardware | GPU Acceleration (NVIDIA) [16] | Enables efficient training of deep CNN architectures |
| Attention Mechanisms | CBAM (Convolutional Block Attention Module) [16] | Focuses network on morphologically relevant regions |
| Pre-trained Models | ImageNet Pre-trained ResNet50 [16] | Transfer learning initialization for improved performance |