Advancing Sperm Morphology Classification: Integrating AI, Standardization, and Clinical Validation for Enhanced Male Fertility Assessment

Abstract

This article comprehensively reviews the latest strategies for improving accuracy in sperm morphology classification, a critical yet historically subjective component of male fertility evaluation. Tailored for researchers, scientists, and drug development professionals, we explore the foundational challenges driving innovation, including high inter-expert variability and the lack of standardized training. The content delves into cutting-edge methodological applications, particularly deep learning and convolutional neural networks, which are achieving accuracies of 55-92% and enabling the analysis of unstained, live sperm. We further address key troubleshooting areas such as dataset limitations and model generalizability, and critically evaluate validation frameworks and comparative performance against traditional techniques. Taken together, these themes provide a roadmap for developing robust, clinically applicable classification systems that can enhance diagnostic precision and personalize infertility treatments.

The Foundational Challenge: Why Sperm Morphology Accuracy Lags and What's at Stake

Sperm morphology, the study of the size, shape, and appearance of sperm, is a foundational component of male fertility assessment. For researchers and drug development professionals, it is critical to understand that its clinical utility is nuanced. While it is a key parameter in standard semen analysis, its value as an independent prognostic indicator is a subject of ongoing debate and refinement within the scientific community [1].

The assessment of sperm morphology has continuously evolved, with the World Health Organization (WHO) manuals providing standardized, albeit frequently changing, criteria over the past four decades. The most recent 6th edition has increased the emphasis on characterizing specific defects in each sperm region—head, neck/midpiece, tail, and cytoplasm—rather than grouping all defects into a single "abnormal" category [1]. A central challenge in the field is the inherent subjectivity of the test, which can lead to significant inter-laboratory and inter-observer variability, impacting the reliability of data for clinical trials and diagnostic test development [2]. This technical support document is designed to address these specific experimental and diagnostic challenges, providing standardized protocols and troubleshooting guides to enhance the accuracy and reproducibility of your research.

Frequently Asked Questions (FAQs)

Q1: What are the specific morphological criteria for a "normal" sperm cell according to current WHO standards?

A1: The WHO 6th edition manual provides precise, standardized criteria for a normal spermatozoon, focusing on specific regions [1]:

- Head: Smooth, regularly contoured, and oval in shape. The acrosomal region should constitute 40–70% of the head area. No large vacuoles should be present, and no more than two small vacuoles are permitted.

- Midpiece: Slender, about the same length as the sperm head, and aligned with the major axis of the head.

- Tail: A single, uncoiled tail of uniform caliber, approximately ten times the length of the head, and without sharp angulations.

- Cytoplasmic Residue: Cytoplasmic droplets should be less than one-third the size of a normal sperm head.
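The criteria above can be expressed as a rule check over per-cell measurements from an image-analysis step. What follows is a minimal Python sketch, not a WHO-defined algorithm. The `SpermMeasurements` fields are hypothetical. The numeric tolerances chosen for "about the same length" (0.8 to 1.2 times the head length) and "approximately ten times" (8 to 12 times) are illustrative assumptions. Qualitative criteria such as contour smoothness are treated as upstream yes/no judgments.

```python
from dataclasses import dataclass

# Illustrative tolerances only: the WHO wording is qualitative
# ("about the same length", "approximately ten times").
MIDPIECE_TO_HEAD_RANGE = (0.8, 1.2)
TAIL_TO_HEAD_RANGE = (8.0, 12.0)


@dataclass
class SpermMeasurements:
    """Hypothetical per-cell measurements from an upstream imaging step."""
    head_length_um: float
    head_area_um2: float
    acrosome_area_um2: float
    large_vacuoles: int
    small_vacuoles: int
    head_oval_and_smooth: bool
    midpiece_length_um: float
    midpiece_aligned: bool
    tail_length_um: float
    single_uncoiled_tail: bool
    droplet_area_um2: float


def failed_criteria(m: SpermMeasurements) -> list[str]:
    """Return the names of the criteria this cell fails (empty list = normal)."""
    fails = []
    if not m.head_oval_and_smooth:
        fails.append("head shape")
    # Acrosome must cover 40-70% of the head area.
    if not 0.40 <= m.acrosome_area_um2 / m.head_area_um2 <= 0.70:
        fails.append("acrosome fraction")
    # No large vacuoles, at most two small ones.
    if m.large_vacuoles > 0 or m.small_vacuoles > 2:
        fails.append("vacuoles")
    lo, hi = MIDPIECE_TO_HEAD_RANGE
    if not lo <= m.midpiece_length_um / m.head_length_um <= hi or not m.midpiece_aligned:
        fails.append("midpiece")
    lo, hi = TAIL_TO_HEAD_RANGE
    if not lo <= m.tail_length_um / m.head_length_um <= hi or not m.single_uncoiled_tail:
        fails.append("tail")
    # This cell's head area stands in for "a normal sperm head" (assumption).
    if m.droplet_area_um2 >= m.head_area_um2 / 3:
        fails.append("cytoplasmic residue")
    return fails
```

Returning the list of failed criteria, rather than a single normal/abnormal flag, keeps the region-level detail that the 6th edition emphasizes.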

Q2: What is the current clinical reference value for normal sperm morphology, and how is it applied?

A2: The current WHO 6th edition reference value for normal sperm morphology is 4% [3]. This means a semen sample is considered within the reference range if 4% or more of the evaluated sperm population is classified as normal using "strict" (Kruger) criteria. Clinically, results are often interpreted as follows [3]:

- >14% normal forms: High probability of fertility.

- 4-14% normal forms: Fertility slightly decreased.

- 0-3% normal forms: Fertility extremely impaired.
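The interpretation bands above map directly onto a small lookup. This minimal Python sketch assumes the result is reported as a percentage between 0 and 100. The source bands are integers, so the sketch also assumes that non-integer values below 4% (such as 3.5%) fall in the lowest band.

```python
def interpret_normal_forms(percent_normal: float) -> str:
    """Map % normal forms (strict criteria) to the clinical bands in A2."""
    if not 0 <= percent_normal <= 100:
        raise ValueError("percent_normal must be between 0 and 100")
    if percent_normal > 14:
        return "High probability of fertility"
    if percent_normal >= 4:  # at or above the WHO reference value
        return "Fertility slightly decreased"
    return "Fertility extremely impaired"
```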

Q3: My research involves correlating morphology with assisted reproductive technology (ART) outcomes. What is the evidence for its predictive value?

A3: The evidence is mixed, and researchers should be cautious. Initially, studies suggested a significant inverse association between teratozoospermia (high levels of abnormal sperm) and fertility outcomes. However, most recent studies fail to show a strong independent association between sperm morphology and outcomes in natural conception or assisted reproductive technologies [1]. Some studies have shown that even men with 0% normal forms can still achieve natural conception, indicating that morphology alone is a poor predictor of fertilization potential [1].

Q4: What are the most common environmental and anatomical factors that can confound sperm morphology data in a study cohort?

A4: Key confounding factors include [1]:

- Lifestyle Factors: Smoking has an inconsistent but potentially negative association with morphology. Alcohol use shows a more consistent dose-dependent negative effect on morphology.

- Environmental Exposures: Exposure to air pollution is significantly associated with teratozoospermia. Evidence for pesticides is inadequate but suggests potential toxicity.

- Anatomic & Health Factors: Varicocele repair has been shown to improve sperm morphology by a mean difference of 6.1%. Febrile illnesses can disrupt spermatogenesis and temporarily worsen morphology. Certain bacterial infections (e.g., Ureaplasma urealyticum) may also have a detrimental effect.

Troubleshooting Common Experimental & Diagnostic Challenges

| Challenge | Root Cause | Solution |
|---|---|---|
| High variability in morphology assessment results between technicians. | Lack of standardized training and the inherent subjectivity of the test [2]. | Implement a standardized training tool using expert-consensus "ground truth" image datasets. One study showed this improved novice accuracy from 53% to 90% for a complex 25-category system [2]. |
| Poor correlation between morphology results and fertility outcomes. | Morphology may not be an independent predictor of fertility; other factors like DNA fragmentation or motility may be dominant [1]. | Ensure concomitant assessment of other semen parameters (concentration, motility, DNA fragmentation). Use multi-parameter analysis instead of relying on morphology alone. |
| Slow diagnostic speed affecting laboratory throughput. | Inexperience and the use of overly complex classification systems [2]. | Structured, repeated training over several weeks. One study showed diagnostic speed improved from 7.0 seconds to 4.9 seconds per image after training. Start with simpler (2-category) systems before progressing to complex ones [2]. |
| Classifying specific sperm defects (e.g., head vs. midpiece anomalies). | Insufficient training on nuanced criteria for different abnormality categories [2]. | Use visual aids and training tools focused on multi-category classification. Training can improve accuracy in a 5-category system (head, midpiece, tail, cytoplasmic droplet, normal) from 68% to 97% [2]. |

Standardized Experimental Protocols for Morphology Assessment

Protocol: Sample Preparation and Staining for Morphology Analysis

- Sample Collection: Collect semen sample via masturbation into a sterile container after 2-5 days of sexual abstinence [4].

- Liquefaction: Allow the sample to liquefy at room temperature for 20-30 minutes.

- Slide Preparation: Create a thin smear of the semen sample on a clean, labeled microscope slide.

- Staining: Air-dry the smear and use a preferred staining method (e.g., Diff-Quik, Papanicolaou) to differentiate cell structures. The specific staining protocol will vary by kit manufacturer.

- Coverslipping: Once stained and dried, mount a coverslip using a compatible mounting medium.

Protocol: Implementing a Standardized Training Program for Morphologists

- Baseline Testing: Assess the initial accuracy and variation of novice morphologists using a validated image dataset across different classification systems (e.g., 2-category, 5-category, 8-category) [2].

- Intensive Training Day: Expose trainees to visual aids, instructional videos, and the "ground truth" dataset. Focus on the specific classification system to be used [2].

- Repeated Practice: Conduct repeated training and testing sessions over a period of at least four weeks. Studies show the greatest improvement occurs after the first day, with accuracy plateauing in subsequent weeks [2].

- Proficiency Assessment: Perform a final test to determine if the morphologist has reached a pre-defined accuracy threshold (e.g., >90% for a 2-category system) [2].
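The scoring logic of this protocol can be sketched in Python. The helper names are hypothetical. The plateau check (a three-session window varying by no more than 0.01) is an illustrative choice. Only the >90% threshold for a 2-category system comes from the protocol above.

```python
def session_accuracy(user_labels, truth_labels):
    """Fraction of images where the trainee's label matches the consensus label."""
    if len(user_labels) != len(truth_labels) or not truth_labels:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(u == t for u, t in zip(user_labels, truth_labels)) / len(truth_labels)


def first_proficient_session(accuracies, threshold=0.90):
    """0-based index of the first session with accuracy above threshold, else None."""
    for i, acc in enumerate(accuracies):
        if acc > threshold:
            return i
    return None


def has_plateaued(accuracies, window=3, tolerance=0.01):
    """True if the last `window` sessions differ by no more than `tolerance`."""
    if len(accuracies) < window:
        return False
    recent = accuracies[-window:]
    return max(recent) - min(recent) <= tolerance
```

A trainee whose per-session accuracies are [0.81, 0.88, 0.93, 0.95] would first pass the 2-category threshold at session index 2.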

Quantitative Data: Impact of Training on Classification Accuracy

The following data, derived from a 2025 validation study, demonstrates the efficacy of a standardized training tool in improving the accuracy and reducing the variation of sperm morphology assessment [2].

Table 1: Accuracy of Sperm Morphology Classification Before and After Standardized Training

| Classification System Complexity | Untrained User Accuracy (Mean ± SE) | Final Accuracy After Training (Mean ± SE) |
|---|---|---|
| 2-Category (Normal/Abnormal) | 81.0% ± 2.5% | 98.0% ± 0.43% |
| 5-Category (Head, Midpiece, Tail, etc.) | 68.0% ± 3.59% | 97.0% ± 0.58% |
| 8-Category (Pyriform, Vacuoles, etc.) | 64.0% ± 3.5% | 96.0% ± 0.81% |
| 25-Category (All Defects Individualized) | 53.0% ± 3.69% | 90.0% ± 1.38% |

Table 2: Impact of Training on Diagnostic Speed and Variation

| Metric | At Start of Training (Test 1) | At End of Training (Test 14) |
|---|---|---|
| Time Spent Classifying per Image | 7.0 ± 0.4 seconds | 4.9 ± 0.3 seconds |
| Coefficient of Variation (CV) Among Users | High (CV = 0.28) | Significantly Reduced (CV as low as 0.027) |
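The coefficient of variation in Table 2 summarizes how far users' results spread relative to their average: it is the standard deviation divided by the mean. This minimal Python sketch assumes the sample (n-1) standard deviation of per-user accuracy. The study may have used a different estimator or a different per-user quantity.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """CV = sample standard deviation / mean (unitless)."""
    if len(values) < 2:
        raise ValueError("need at least two users to compute a CV")
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return stdev(values) / m
```

For example, three users scoring 0.90, 1.00, and 0.80 on the same test give a CV of about 0.11.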

Research Reagent Solutions & Essential Materials

Table 3: Essential Materials for Sperm Morphology Research

| Item | Function in Experiment |
|---|---|
| Microscope with Oil Immersion Objective (100x) | Essential for high-magnification examination of sperm cell details, including head vacuoles and tail structure. |
| Phase Contrast Optics | Allows for detailed assessment of sperm morphology without the need for staining, useful for live sperm analysis. |
| Standardized Staining Kits (e.g., Diff-Quik) | Provides differential staining of sperm cell components (head, midpiece, tail) for clearer visualization under brightfield microscopy. |
| "Ground Truth" Image Dataset | A validated set of sperm images classified by expert consensus. This is critical for training new morphologists and validating the accuracy of new automated systems [2]. |
| Neubauer Hemocytometer or CASA System | For determining sperm concentration, a key correlative parameter in semen analysis. |
| Sperm Morphology Classification Training Tool | Software or a structured program that applies machine learning principles to provide unlimited, independent training for morphologists, significantly improving accuracy and reducing variation [2]. |

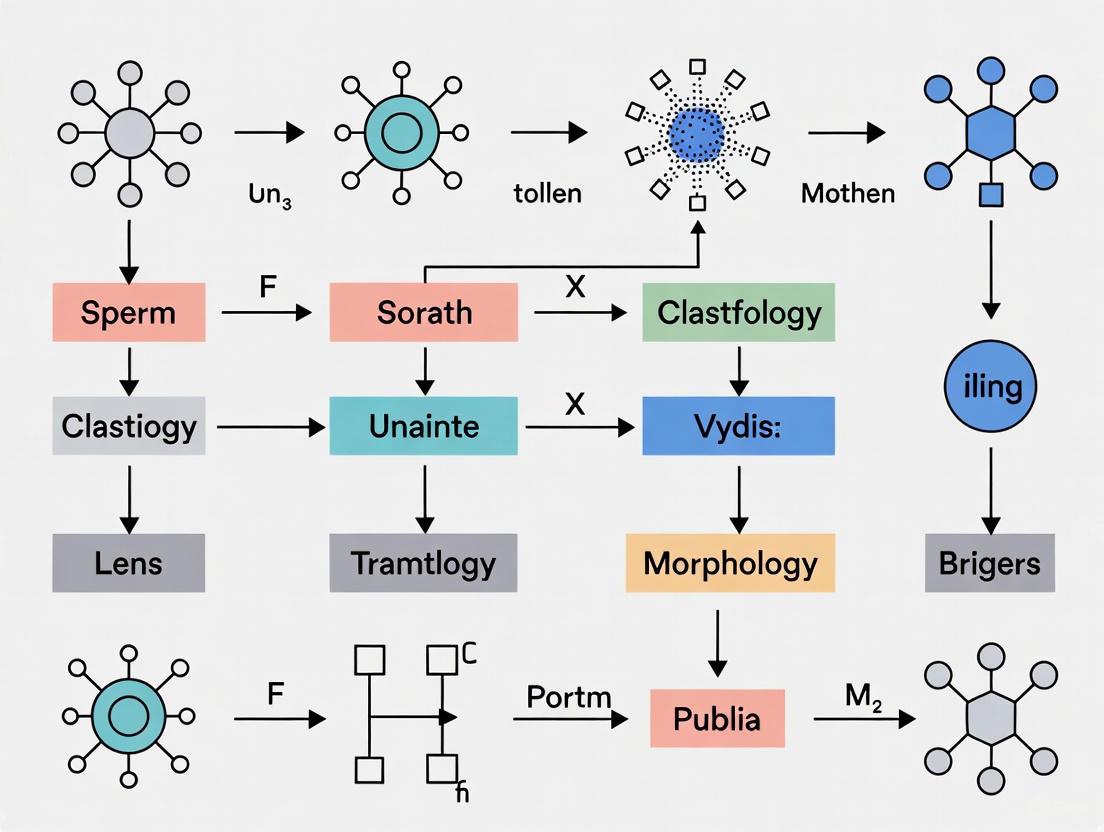

Workflow Visualization: Standardization and Classification

Sperm Morphologist Training Pathway

Morphology Defect Classification Tree

Inter-observer variability refers to the variation in test results when different experts perform the same test on the same sample or patient [5]. In diagnostic fields like sperm morphology assessment, this variability represents a significant challenge to standardization and reliability. Traditional manual sperm morphology assessment is recognized as particularly challenging to standardize due to its subjective nature, often relying heavily on the operator's expertise [6]. Studies report up to 40% disagreement between expert evaluators, highlighting the profound impact of human interpretation on diagnostic consistency [7].

This technical support center provides researchers with methodologies to quantify, troubleshoot, and minimize these variability sources in sperm morphology classification systems. By implementing standardized protocols and validation frameworks, research teams can improve the accuracy and reproducibility of their morphological assessments, ultimately advancing reproductive biology and drug development research.

Quantifying Variability: Key Metrics and Data

Understanding and measuring variability requires specific statistical approaches. The table below summarizes the primary metrics used in reliability studies.

Table 1: Statistical Measures for Quantifying Inter-Observer Variability

| Metric | Application | Interpretation Guidelines | Example from Literature |
|---|---|---|---|
| Intraclass Correlation Coefficient (ICC) | Assesses consistency for continuous or ordinal data [8]. | <0.5: Poor; 0.5-0.75: Moderate; 0.75-0.9: Good; >0.9: Excellent [8]. | Excellent agreement (ICC=0.95) was found for effective diameter measurements in CT scans [8]. |
| Kappa (κ) Statistic | Measures agreement for categorical data, correcting for chance [9]. | 0-0.20: Poor; 0.21-0.40: Fair; 0.41-0.60: Moderate; 0.61-0.80: Good; 0.81-1.00: Excellent [9]. | Diagnosis and classification tasks often show mean kappa values of 0.78-0.80, while complex outlining tasks can be lower (κ=0.45) [9]. |
| Percentage Agreement | Simple calculation of exact agreement between observers. | Highly influenced by chance; best used alongside other metrics [5]. | Reported in 19% of interobserver variability studies, though often insufficient alone [5]. |
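The three metrics in Table 1 can be computed directly. This minimal Python sketch implements percentage agreement and Cohen's kappa for two raters, where kappa = (p_o - p_e) / (1 - p_e). It also implements the two-way random-effects, absolute-agreement, single-rater ICC, often written ICC(2,1). Choosing that ICC form is an assumption: other forms exist, and the one reported should match the study design. With more than two raters on categorical labels, Fleiss' kappa would be needed instead of Cohen's.

```python
from collections import Counter


def percent_agreement(a, b):
    """Fraction of items on which two raters gave the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa for two raters: (p_o - p_e) / (1 - p_e)."""
    n = len(a)
    p_o = percent_agreement(a, b)
    ca, cb = Counter(a), Counter(b)
    # Chance agreement: product of each rater's marginal proportions, summed over labels.
    p_e = sum((ca[k] / n) * (cb[k] / n) for k in set(ca) | set(cb))
    if p_e == 1:
        return float("nan")  # both raters used one identical label; kappa undefined
    return (p_o - p_e) / (1 - p_e)


def icc_2_1(ratings):
    """ICC(2,1): ratings[i][j] is the score given to subject i by rater j."""
    n, k = len(ratings), len(ratings[0])
    if n < 2 or k < 2:
        raise ValueError("need at least two subjects and two raters")
    grand = sum(map(sum, ratings)) / (n * k)
    row_means = [sum(r) / k for r in ratings]
    col_means = [sum(r[j] for r in ratings) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for r in ratings for x in r)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)              # between-subject mean square
    msc = ss_cols / (k - 1)              # between-rater mean square
    mse = ss_err / ((n - 1) * (k - 1))   # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)
```

As a check, two raters labelling four sperm as N, N, A, A and N, A, A, A agree on 75% of cells. After correcting for chance, kappa is 0.5. A rater who scores every subject exactly one unit higher than a colleague lowers the absolute-agreement ICC, for example to 2/3 for subjects scored (1, 2), (2, 3), (3, 4).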

Recent methodological reviews of interobserver variability studies reveal common design shortcomings. A 2023 review found that the median number of observers in such studies was only 4 (IQR: 2-7), and the median number of patient samples was 47 (IQR: 23-88), with only 15% of studies providing justification for their sample size [5]. This lack of statistical power planning remains a significant limitation in the field.

Troubleshooting Guides for Common Experimental Challenges

FAQ: How can we establish reliable "ground truth" for a subjective classification task?

Answer: Establishing a robust ground truth is foundational. Relying on a single expert's classification is insufficient due to inherent individual bias.

Solution: Implement a multi-expert consensus model.

- Procedure: Have a minimum of three independent, experienced assessors classify each sample or image [10] [6].

- Data Inclusion: For your ground truth dataset, only include classifications where assessors achieve 100% consensus on all labels [10]. In one study, this yielded 4,821 images out of an initial 9,365, providing a high-confidence dataset for training and validation [10].

- Documentation: Maintain a ground truth file detailing the image name, all expert classifications, and the final consensus label [6].
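The consensus filter and the ground-truth file described above can be sketched with Python's standard `csv` module. The function names and CSV column names are illustrative assumptions, as is the fixed count of three experts per image. This is not the file format used in [10] or [6].

```python
import csv


def build_ground_truth(expert_labels, n_experts=3):
    """Keep only images on which all experts agree (100% consensus).

    expert_labels maps image name -> list of labels, one per expert.
    Returns (kept_rows, n_excluded); each row is
    [image, expert_1, ..., expert_n, consensus].
    """
    kept, excluded = [], 0
    for image, labels in expert_labels.items():
        if len(labels) != n_experts:
            raise ValueError(f"{image}: expected {n_experts} labels, got {len(labels)}")
        if len(set(labels)) == 1:
            kept.append([image, *labels, labels[0]])
        else:
            excluded += 1
    return kept, excluded


def write_ground_truth(rows, path, n_experts=3):
    """Write image name, every expert's label, and the consensus label to CSV."""
    header = ["image", *[f"expert_{i + 1}" for i in range(n_experts)], "consensus"]
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)
```

Reporting the excluded count alongside the kept rows documents how much data the consensus rule removed, as in the 4,821-of-9,365 figure above.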

FAQ: Our inter-observer reliability metrics (ICC/Kappa) are lower than expected. What are the first things to check?

Answer: Low agreement often stems from pre-analytical and analytical factors. Systematically investigate these areas.

Solution: Follow this troubleshooting guide to identify and resolve common issues.

Table 2: Troubleshooting Guide for Low Inter-Observer Reliability

| Problem Area | Specific Issue | Diagnostic Steps | Resolution & Best Practices |
|---|---|---|---|
| Training & Standardization | Inconsistent application of classification criteria. | Review records for initial joint training sessions. Check if reference images are available during scoring. | Re-train all observers using a validated, consensus-based training tool [10]. Ensure detailed written guidelines and reference images are always accessible. |
| Sample & Data Quality | Poor image quality or preparation leading to ambiguous morphology. | Audit sample preparation protocols. Check for staining inconsistencies, debris, or blurry images. | Standardize sample prep (e.g., follow WHO manual for semen smears) [6]. Use high-resolution microscopy with high numerical aperture objectives [10]. Exclude low-quality images. |
| Study Design | Inadequate sample size or poorly defined study protocol. | Check if a sample size calculation was performed. Verify if all observers assessed the exact same set of images. | Justify sample size via a priori calculation [5]. Use a "crossed design" where all observers interpret all images to reduce noise [5]. |

FAQ: How can we reduce variability when integrating new personnel into our morphology assessment team?

Answer: Variability often increases with new staff due to differences in training and experience.

Solution: Deploy a standardized, self-paced training tool with immediate feedback.

- Implementation: Develop or use a web-based training interface that presents users with individual sperm images [10].

- Process: The user classifies each sperm, and the system provides instant feedback on whether the label was correct or incorrect, based on the pre-established expert consensus [10].

- Outcome: This method allows new researchers to train independently, at their own pace, and provides an objective assessment of their proficiency before they analyze real experimental data [10].
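The per-image feedback loop described above can be sketched as a small Python class. This is a minimal illustration, not the interface of the tool in [10]. The `FeedbackSession` name and the in-memory `consensus` dictionary (image name to expert-consensus label) are hypothetical.

```python
class FeedbackSession:
    """Score a trainee image by image against expert-consensus labels."""

    def __init__(self, consensus):
        self.consensus = consensus  # image name -> consensus label
        self.attempts = 0
        self.correct = 0

    def submit(self, image, label):
        """Record one classification and return immediate feedback text."""
        truth = self.consensus[image]
        self.attempts += 1
        if label == truth:
            self.correct += 1
            return "correct"
        return f"incorrect: consensus label is {truth!r}"

    @property
    def accuracy(self):
        """Running accuracy over all submissions so far."""
        return self.correct / self.attempts if self.attempts else 0.0
```

Showing the consensus label on an incorrect answer is what turns scoring into training. The running accuracy doubles as the objective proficiency measure before the trainee analyzes real experimental data.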

Advanced Protocols for Minimizing Variability

Protocol: Implementing a Multi-Expert Consensus Framework

This protocol is designed to create a robust ground truth dataset for sperm morphology classification, as validated in recent studies [10] [6].

1. Expert Selection and Blinding:

- Select at least three experts with extensive experience in the specific classification system (e.g., WHO, David) [6].

- Provide each expert with the same set of randomly ordered, high-quality images. Ensure they perform classifications independently and are blinded to each other's assessments.

2. Data Collection and Agreement Analysis:

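- Collect classifications from all experts. Analyze the level of agreement for each image. Categorize agreement as:

  - Total Agreement (TA): all experts assign the same label.

  - Partial Agreement (PA): a majority of experts (e.g., 2 of 3) assign the same label.

  - No Agreement (NA): no label is shared by a majority of experts.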

- Use statistical tests (e.g., Fisher's exact test) to evaluate if differences between experts are significant [6].

3. Ground Truth Establishment:

- Use only images with Total Agreement (TA) for your high-confidence ground truth dataset [10].

- For images with partial or no agreement, organize a moderated session where experts discuss discrepant cases to reach a consensus, or exclude them from the final dataset.
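The agreement categories, and the routing of partial- or no-agreement images to a moderated session, can be sketched in Python. The labels are TA (all experts agree), PA (a strict majority agrees) and NA (no majority). The strict-majority rule that separates PA from NA is an assumption; for three experts it gives 2 of 3 versus all different.

```python
from collections import Counter


def agreement_level(labels):
    """Return "TA", "PA", or "NA" for one image's expert labels."""
    if not labels:
        raise ValueError("no labels supplied")
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == len(labels):
        return "TA"
    if top_count > len(labels) / 2:
        return "PA"
    return "NA"


def triage(expert_labels):
    """Split images into a TA ground-truth set and a PA/NA review queue."""
    ground_truth, review = {}, {}
    for image, labels in expert_labels.items():
        level = agreement_level(labels)
        if level == "TA":
            ground_truth[image] = labels[0]
        else:
            review[image] = level  # candidates for moderated discussion or exclusion
    return ground_truth, review
```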

The following workflow diagrams the multi-expert process for establishing a ground-truth dataset.

Protocol: Leveraging AI and Data Augmentation for Standardization

Artificial Intelligence (AI) models can help overcome human subjectivity. This protocol outlines steps for developing a deep-learning model for sperm morphology classification, based on state-of-the-art research [7] [6].

1. Dataset Curation and Augmentation:

- Start with a ground truth dataset established via multi-expert consensus.

- Address class imbalance by using data augmentation techniques. These computationally generate variations of existing images (e.g., rotations, flips, brightness adjustments) to create a larger, more balanced dataset [6]. One study augmented an initial set of 1,000 images to 6,035 images using these methods [6].

2. Model Development and Training:

- Use a Convolutional Neural Network (CNN) architecture, which is well-suited for image classification [6].

- Enhance the model with attention mechanisms like the Convolutional Block Attention Module (CBAM), which help the network focus on relevant morphological features (e.g., head shape, tail integrity) and achieve higher accuracy [7].

- Split the augmented dataset into a training set (e.g., 80%) and a testing set (e.g., 20%) [6].

3. Validation and Implementation:

- Rigorously validate the model using the held-out test set and report accuracy, precision, and recall.

- The final model can provide objective, rapid classifications, reducing analysis time from 30-45 minutes to under one minute per sample and significantly minimizing inter-observer variability [7].
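The curation and evaluation steps above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not the pipeline of [6] or [7]:

- Images are uint8 arrays.
- The rotation, flip, and brightness ranges are arbitrary choices.
- The CNN itself (including any CBAM layers) is omitted.

One deliberate difference from the protocol as written: the sketch is meant to be used by splitting first and augmenting only the training portion. Augmented copies of a test image that land in the training set would inflate test accuracy.

```python
from collections import defaultdict

import numpy as np


def adjust_brightness(img, factor):
    """Scale pixel intensities of a uint8 image and clip to 0-255."""
    return np.clip(img.astype(np.float32) * factor, 0, 255).astype(np.uint8)


def random_variant(img, rng):
    """Random 90-degree rotation, optional horizontal flip, brightness change."""
    out = np.rot90(img, k=int(rng.integers(4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    return adjust_brightness(out, rng.uniform(0.8, 1.2))


def augment_to_balance(images, labels, seed=0):
    """Grow every class to the size of the largest class with random variants
    of that class's own images; the originals are kept unchanged."""
    rng = np.random.default_rng(seed)
    by_class = defaultdict(list)
    for img, lab in zip(images, labels):
        by_class[lab].append(img)
    target = max(len(v) for v in by_class.values())
    out_x, out_y = [], []
    for lab, imgs in by_class.items():
        out_x.extend(imgs)
        out_y.extend([lab] * len(imgs))
        for _ in range(target - len(imgs)):
            src = imgs[int(rng.integers(len(imgs)))]
            out_x.append(random_variant(src, rng))
            out_y.append(lab)
    return out_x, out_y


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx), holding out about test_fraction of each class."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    train, test = [], []
    for lab in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == lab))
        n_test = int(round(len(idx) * test_fraction))
        test.extend(idx[:n_test].tolist())
        train.extend(idx[n_test:].tolist())
    return train, test


def per_class_precision_recall(y_true, y_pred):
    """{class: (precision, recall)} from paired true and predicted labels."""
    scores = {}
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        scores[c] = (precision, recall)
    return scores
```

A typical use:

1. Call `stratified_split(labels)`.
2. Run `augment_to_balance` on the training images only.
3. Train the model.
4. Pass the held-out labels and the model's predictions to `per_class_precision_recall`.

Per-class figures matter here because overall accuracy can hide poor recall on rare defect classes.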

The workflow below illustrates the AI model development process for objective analysis.

The Researcher's Toolkit

Table 3: Essential Research Reagents and Solutions for Sperm Morphology Studies

| Item / Solution | Function / Application | Key Specification / Standard |
|---|---|---|
| RAL Diagnostics Staining Kit | Staining semen smears for clear morphological visualization under a microscope. | Follows guidelines outlined in the WHO manual for semen analysis [6]. |
| MMC CASA System | Computer-Assisted Semen Analysis system for acquiring and storing images from sperm smears. | Typically used with bright field mode and an oil immersion 100x objective [6]. |
| High-NA Microscope Objectives | To maximize resolution for image capture, crucial for both manual and AI-based analysis. | Use objectives with high Numerical Aperture (e.g., NA 0.95 for DIC optics) [10]. |
| Validated Training Tool | A web interface for training and testing personnel on a sperm-by-sperm basis against expert consensus. | Provides instant feedback on classification accuracy to ensure standardization [10]. |
| Data Augmentation Algorithms | Software to generate additional training images from a limited dataset, balancing morphological classes. | Techniques include rotation, flipping, and scaling to create robust AI models [6]. |
| CNN with CBAM | A deep-learning model for automated, objective sperm morphology classification. | Enhanced ResNet50 architecture with Convolutional Block Attention Module for improved feature focus [7]. |

Troubleshooting Guides

Guide: Addressing High Variability in Morphology Assessment Results

Problem: Significant differences in normal morphology percentages between technicians or when comparing results to external quality control samples.

Explanation: Sperm morphology assessment is inherently subjective and relies heavily on technician expertise and training. [2] Without robust standardization, results are prone to human error and bias, leading to unreliable data.

Solution: Implement a standardized training tool using machine learning principles. [2]

- Steps:

- Utilize Consensus-Validated Images: Train technicians using image sets classified by multiple experts to establish "ground truth." [2]

- Start with Simple Categories: Begin training with a 2-category system (normal/abnormal), achieving >90% accuracy before progressing to more complex classifications. [2]

- Schedule Repeated Training: Conduct training sessions over at least four weeks. Studies show this improves accuracy from ~82% to ~90% and reduces classification time from 7.0s to 4.9s per image. [2]

- Cross-Validate with AI: Where available, use deep learning-based classification models to verify manual assessments and reduce subjectivity. [6]

Guide: Interpreting Discrepancies Between Different Classification Systems

Problem: Obtaining different clinical interpretations when using David's classification versus Kruger strict criteria.

Explanation: Different classification systems use varying measurement criteria and thresholds for "normal," leading to apparent discrepancies that can confuse clinical decision-making.

Solution: Understand the specific criteria and clinical predictive value of each system.

- Steps:

- Identify the System's Threshold: Know that Kruger strict criteria define normal forms as ≥4%, while older WHO4 criteria used ≥14%. [11] [12]

- Recognize System Correlation: Understand that despite different thresholds, WHO4 and Kruger (WHO5) morphology assessments show high correlation (Spearman coefficient = 0.94). [11]

- Check for Clinical Purpose: For predicting IVF fertilization success, strict criteria (cut-off ~16%) show better predictive value (AUC=0.735) compared to David's classification (AUC=0.572). [13]

- Standardize Your Lab's Practice: Adopt the WHO 6th edition guidelines, which recommend strict criteria, to align with international standards. [14]
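AUC values such as 0.735 and 0.572 summarize how well a morphology score separates cases with and without fertilization success. An AUC of 0.5 is chance, and 1.0 is perfect separation. This minimal Python sketch uses the rank (Mann-Whitney) formulation. It assumes each case has a % normal forms score and a binary fertilization outcome; the example values are hypothetical.

```python
def roc_auc(scores_positive, scores_negative):
    """AUC = probability that a random positive case scores higher than a random
    negative case, counting ties as 0.5 (Mann-Whitney U / (n_pos * n_neg))."""
    if not scores_positive or not scores_negative:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in scores_positive:
        for n in scores_negative:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_positive) * len(scores_negative))
```

For example, `roc_auc([10, 20, 30], [5, 15])` returns 5/6, about 0.83. Here the first list holds % normal forms for successful cases and the second for unsuccessful ones. The pairwise loop is quadratic in sample size, which is fine at typical clinical cohort sizes.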

Frequently Asked Questions (FAQs)

FAQ 1: What is the current clinical threshold for "normal" sperm morphology using strict Kruger criteria?

The WHO 6th edition manual (2021) maintains that the reference value for normal forms using strict Kruger criteria is ≥4%. [15] [12] [16] This means semen samples with 4% or more normally shaped sperm are considered to have normal morphology.

FAQ 2: How does David's classification differ from Kruger strict morphology criteria?

David's classification uses multiple specific defect categories (7 head defects, 2 midpiece defects, 3 tail defects), while Kruger strict criteria apply more rigorous measurement parameters for what qualifies as "normal." [6] [13] Clinically, Kruger strict criteria have demonstrated better prediction of fertilization success in IVF settings compared to David's classification. [13]

FAQ 3: Does abnormal sperm morphology correlate with increased DNA fragmentation?

A 2024 retrospective study found no statistically significant correlation between abnormal Kruger strict morphology and higher sperm DNA fragmentation rates. [17] This suggests these are independent parameters of sperm quality that should both be assessed in comprehensive male fertility evaluation.

FAQ 4: What are the key advancements in the WHO 6th Edition (2021) manual for sperm morphology assessment?

The WHO 6th edition introduced:

- New sperm tests for DNA fragmentation and seminal oxidative stress [12]

- Updated reference ranges from a more geographically diverse population [12]

- Stronger emphasis on quality control and standardized training protocols [14] [12]

- Clarification that reference values are not sole diagnostic tools for male infertility [12]

FAQ 5: How can artificial intelligence improve sperm morphology assessment?

Deep learning models using Convolutional Neural Networks (CNNs) can:

- Automate and standardize classification, reducing inter-technician variability [6]

- Achieve accuracy rates ranging from 55% to 92% compared to expert classification [6]

- Process large image datasets enhanced through data augmentation techniques [6]

Quantitative Data Comparison

Table 1: Comparison of Sperm Morphology Classification Systems

| Classification System | Normal Threshold | Key Characteristics | Clinical Predictive Value |
|---|---|---|---|
| Kruger Strict Criteria (WHO 6th Ed.) | ≥4% [15] [12] | Rigorous measurement of head, midpiece, and tail dimensions; global standard | Better predictor of IVF fertilization (AUC=0.735) [13] |
| WHO 4th Edition Criteria | ≥14% [11] | Less stringent morphology assessment | High correlation with Kruger (Spearman ρ = 0.94) but less clinical utility [11] |
| David's Classification | Not specified | 12 specific defect categories (7 head, 2 midpiece, 3 tail); commonly used in France | Lower predictive value for fertilization (AUC=0.572) [13] |

Table 2: Impact of Standardized Training on Morphology Assessment Accuracy

| Training Status | 2-Category Accuracy | 5-Category Accuracy | 8-Category Accuracy | 25-Category Accuracy | Classification Speed |
|---|---|---|---|---|---|
| Untrained Users | 81.0% [2] | 68.0% [2] | 64.0% [2] | 53.0% [2] | 9.5s/image [2] |
| After 4-Week Training | 98.0% [2] | 97.0% [2] | 96.0% [2] | 90.0% [2] | 4.9s/image [2] |

Experimental Protocols

Protocol: Strict Kruger Morphology Assessment (WHO 6th Edition)

Principle: Sperm are categorized based on strict measurements of head and tail sizes and shapes. Only sperm with ideal dimensions are classified as normal. [15]

Materials:

- Semen sample collected after 2-7 days of sexual abstinence [15]

- Semen Analysis Kit (T178) [15]

- Spermac Stain (FertiPro) or equivalent [17]

- Microscope with 100x oil immersion objective [17]

Procedure:

- Sample Preparation: Allow semen to liquefy for 30-60 minutes post-collection, at room temperature or at 37°C. [17]

- Smear Preparation: Prepare a thin smear using 10µL of well-mixed semen on a clean glass slide. [17]

- Staining: Fix and stain using Spermac Stain according to manufacturer specifications. [17]

- Microscopic Evaluation: Examine under 1000x magnification with oil immersion. [17]

- Assessment: Evaluate 200 spermatozoa systematically across the slide. [17]

- Classification: Categorize each sperm as normal or abnormal based on strict criteria for the head, midpiece, tail, and cytoplasmic residue (see the WHO 6th edition criteria under Q1).

- Calculation: Calculate percentage of normal forms. Report as normal if ≥4%. [15]
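The calculation step can be sketched in a few lines of Python. Two choices here are assumptions for illustration: the input format (one boolean per assessed spermatozoon) and refusing to report when fewer than the 200 cells called for by this protocol were assessed.

```python
def percent_normal_forms(classifications, required=200):
    """% normal forms; `classifications` holds True for each normal spermatozoon."""
    n = len(classifications)
    if n < required:
        raise ValueError(f"only {n} spermatozoa assessed; protocol calls for {required}")
    return 100.0 * sum(classifications) / n


def within_reference(percent_normal, reference=4.0):
    """True if the sample meets the >=4% strict-criteria reference value."""
    return percent_normal >= reference
```

For example, 9 normal cells out of 200 gives 4.5%, which is reported as normal.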

Protocol: Sperm DNA Fragmentation Index (DFI) Assessment

Principle: The Sperm Chromatin Dispersion (SCD) test distinguishes sperm with fragmented DNA (no halo) from those with intact DNA (with halo) after acid denaturation and protein removal. [17]

Materials:

- CANFrag Kit (CANDORE BIOSCIENCE) or equivalent [17]

- Water bath (37°C)

- Ethanol series (70%, 90%, 100%)

- Light microscope

Procedure:

- Sample Preparation: Dilute semen sample to 15-20 million/mL if necessary. [17]

- Agarose Embedding: Mix semen aliquot with agarose and place on pre-treated slide. [17]

- Denaturation: Treat with acid denaturant to expose DNA breaks. [17]

- Lysis: Immerse in lysis solution to remove nuclear proteins. [17]

- Washing & Dehydration: Wash in distilled water and dehydrate through ethanol series. [17]

- Staining: Apply appropriate staining solution. [17]

- Evaluation: Count 200 spermatozoa; calculate DFI as (number without halo/total counted) × 100. [17]

- Interpretation: DFI <25% considered normal; ≥25% indicates significant DNA fragmentation. [17]
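The DFI formula and cutoff above translate directly into code. This is a minimal Python sketch; the function names are hypothetical, and the 25% cutoff is the one given in this protocol [17].

```python
def dna_fragmentation_index(no_halo, total_counted):
    """DFI (%) = (spermatozoa without a halo / total counted) x 100."""
    if total_counted <= 0 or not 0 <= no_halo <= total_counted:
        raise ValueError("require total_counted > 0 and 0 <= no_halo <= total_counted")
    return 100.0 * no_halo / total_counted


def interpret_dfi(dfi_percent, cutoff=25.0):
    """Below the cutoff is normal; at or above it indicates significant fragmentation."""
    if dfi_percent < cutoff:
        return "normal"
    return "significant DNA fragmentation"
```

For example, 40 sperm without a halo out of 200 counted gives a DFI of 20.0%, which is interpreted as normal.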

Signaling Pathways and Workflows

Sperm Morphology Classification Evolution

Standardized Morphology Training Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sperm Morphology Research

| Reagent/Material | Function | Example Product/Specification |
|---|---|---|
| Spermac Stain | Differentiates sperm structures for morphology assessment | FertiPro (Belgium) [17] |
| CANFrag Kit | Detects sperm DNA fragmentation using SCD methodology | CANDORE BIOSCIENCE [17] |
| Semen Analysis Kit | Standardized sample collection and transport | T178 Container [15] |
| MMC CASA System | Computer-assisted semen analysis for image acquisition | Microscope with digital camera [6] |
| RAL Diagnostics Stain | Staining for David's classification methodology | RAL Diagnostics staining kit [6] |
| Standardized Training Tool | Trains morphologists using machine learning principles | Web interface with expert-validated images [2] |

Systematic Review of Sperm Morphological Defects

Sperm morphology is a critical parameter in male fertility assessment, with anomalies classified based on their location on the sperm cell: the head, midpiece, tail, and cytoplasmic components. The following table provides a structured overview of key defects, their characteristics, and clinical significance.

Table 1: Classification and Characteristics of Sperm Morphological Defects

| Anatomical Region | Specific Defect | Morphological Characteristics | Potential Functional Impact | Associated Clinical/Cellular Factors |
|---|---|---|---|---|
| Head | Macrocephaly (Large Head) | Giant head, often carrying extra chromosomes [3]. | Impaired egg fertilization [3]. | Homozygous mutation of the aurora kinase C gene [3]. |
| | Microcephaly (Small Head) | Smaller than normal head, defective acrosome, reduced genetic material [3]. | Reduced fertilization potential [3]. | Not specified in the cited sources. |
| | Globozoospermia (Round Head) | Round head with an absent acrosome or defective inner structures [3]. | Failure to activate the egg and initiate fertilization [3]. | Not specified in the cited sources. |
| | Tapered/Pyriform Head | "Cigar-shaped" or pear-shaped head [3] [6]. | Abnormal chromatin (DNA) packaging, aneuploidy [3]. | Varicocele, constant scrotal heat exposure [3]. |
| | Nuclear Vacuoles | Two or more large vacuoles or multiple small vacuoles in the head [3]. | May have low fertilization potential [3]. | Studies show conflicting evidence on functional impact [3]. |
| | Multiple Heads | Two or more heads [3]. | Impaired swimming and egg penetration [3]. | Exposure to toxic chemicals, heavy metals, smoke, or high prolactin [3]. |
| Midpiece | Excess Residual Cytoplasm (ERC) | Cytoplasm larger than one-third of the sperm head area [18] [19]. | Impaired motility, increased reactive oxygen species (ROS), oxidative stress [19]. | Arrest in spermiogenesis, incomplete cytoplasmic extrusion [19]. |
| | Cytoplasmic Droplet (CD) | Normal occurrence; cytoplasm at the neck of the midpiece [19]. | Considered normal; contains enzymes for energy metabolism and osmoregulation [19]. | A marker of normal sperm morphology [20]. |
| | Large Swollen Midpiece | Abnormally large midpiece/neck [3]. | Defective mitochondria, missing or broken centrioles [3]. | Not specified in the cited sources. |
| | Bent Midpiece | Misaligned midpiece [6]. | Potential impact on motility and force generation [6]. | Not specified in the cited sources. |
| Tail | Coiled Tail | Tail coiled upon itself [3]. | Non-motile sperm; cannot swim [3]. | Exposure to incorrect seminal fluid conditions, bacteria, heavy smoking [3]. |
| | Short Tail (Stump Tail) | Abnormally short tail, also known as Dysplasia of the Fibrous Sheath (DFS) [3]. | Low or no motility [3]. | Autosomal recessive genetic disease; associated with chronic respiratory disease (immotile cilia syndrome) [3]. |
| | Multiple Tails | Two or more tails [3] [6]. | Impaired swimming function [3]. | Not specified in the cited sources. |
| | Absent Tail | Tail-less sperm (acaudate) [3]. | Non-motile [3]. | Often seen during necrosis (cell death) [3]. |

Troubleshooting Guides & FAQs for Sperm Morphology Analysis

This section addresses common challenges researchers face during sperm morphology assessment and provides evidence-based guidance.

FAQ 1: How can we reduce high inter-laboratory variation in sperm morphology assessment results?

The Challenge: Sperm morphology assessment is highly subjective, relying on the technician's experience and perception, which leads to significant inter- and intra-laboratory variation and unreliable data [18].

Solution & Protocol:

- Implement Standardized Training Tools: Utilize a structured training tool based on machine learning principles. One study showed that using a "Sperm Morphology Assessment Standardisation Training Tool" significantly improved novice morphologists' accuracy from 53% to 90% in a complex 25-category system and reduced classification time [2].

- Establish Quality Control (QC): The laboratory must implement detailed step-by-step protocols, internal quality control (IQC), and external quality control (EQC) schemes [18]. Regular proficiency testing using standardized, pre-classified image sets is crucial.

- Use an Ocular Micrometer: A precise evaluation of sperm dimensions (head length 5-6 µm, width 2.5-3.5 µm) cannot be performed without the aid of an ocular micrometer [18].
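The dimension check above can be made explicit in a few lines. The sketch below is a minimal illustration, not a validated tool: it converts eyepiece-graticule readings to micrometres using a calibration factor obtained against a stage micrometer, then compares the result with the head ranges quoted above. The names `calibrate`, `HeadMeasurement`, and `head_within_range` are hypothetical, and the example calibration values are placeholders rather than recommendations.

```python
from dataclasses import dataclass

# Head-dimension ranges quoted in the text [18]; adjust to the criteria
# your laboratory actually applies.
HEAD_LENGTH_UM = (5.0, 6.0)
HEAD_WIDTH_UM = (2.5, 3.5)


@dataclass
class HeadMeasurement:
    """Raw ocular-micrometer readings for one sperm head (hypothetical type)."""
    length_divisions: float  # graticule divisions along the long axis
    width_divisions: float   # graticule divisions across the widest point


def calibrate(stage_length_um: float, ocular_divisions: float) -> float:
    """Micrometres per ocular division, from a stage-micrometer overlay."""
    if ocular_divisions <= 0:
        raise ValueError("ocular_divisions must be positive")
    return stage_length_um / ocular_divisions


def head_within_range(m: HeadMeasurement, um_per_division: float) -> bool:
    """True if both head length and width fall inside the quoted ranges."""
    length_um = m.length_divisions * um_per_division
    width_um = m.width_divisions * um_per_division
    return (HEAD_LENGTH_UM[0] <= length_um <= HEAD_LENGTH_UM[1]
            and HEAD_WIDTH_UM[0] <= width_um <= HEAD_WIDTH_UM[1])


# Placeholder calibration: 50 um of stage micrometer spans 40 ocular divisions.
factor = calibrate(50.0, 40.0)  # 1.25 um per division
print(head_within_range(HeadMeasurement(4.4, 2.4), factor))  # about 5.5 x 3.0 um
```

Keeping the calibration as a separate step makes it easy to re-run whenever the objective, eyepiece, or camera changes.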

FAQ 2: What is the critical distinction between a normal cytoplasmic droplet and pathological excess residual cytoplasm (ERC)?

The Challenge: Confusing a normal cytoplasmic droplet (CD) with pathological excess residual cytoplasm (ERC) can lead to misclassification of sperm and incorrect data interpretation [19].

Solution & Protocol:

- Differentiate by Size and Composition: A normal CD is a common feature of ejaculated human sperm and is not considered detrimental. Pathological ERC is defined as cytoplasm larger than one-third of the sperm head's area [18] [19].

- Understand the Functional Impact: ERC contains a surplus of cytoplasmic enzymes (e.g., Creatine Kinase), leading to increased production of Reactive Oxygen Species (ROS), which causes oxidative stress, impairs sperm motility, and reduces fertilization potential [19].

- Staining and Observation: ERC survives air-drying techniques used for seminal smears and often stains pink/red or reddish-orange, depending on the stain used [18] [19].
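The size criterion reduces to a simple ratio test. This sketch assumes the residual-cytoplasm and head areas have already been measured in the same units (for example with image-analysis software). `classify_cytoplasm` is a hypothetical helper, and it applies only the one-third-of-head-area rule quoted above, not staining or compositional criteria.

```python
def classify_cytoplasm(residual_area: float, head_area: float) -> str:
    """Label residual cytoplasm as 'none', 'CD' (droplet) or 'ERC'.

    Applies only the size rule quoted in the text: cytoplasm larger than
    one-third of the head area is treated as excess residual cytoplasm.
    Both areas must use the same units (e.g. square micrometres).
    """
    if head_area <= 0:
        raise ValueError("head_area must be positive")
    if residual_area <= 0:
        return "none"
    return "ERC" if residual_area / head_area > 1 / 3 else "CD"


print(classify_cytoplasm(3.0, 12.0))  # ratio 0.25 -> CD
print(classify_cytoplasm(5.0, 12.0))  # ratio ~0.42 -> ERC
```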

FAQ 3: How can we improve the accuracy and throughput of morphology classification in research?

The Challenge: Manual classification is slow, subject to fatigue, and difficult to standardize, especially with complex classification systems [6].

Solution & Protocol:

- Adopt Deep Learning Models: Develop or implement Convolutional Neural Network (CNN) models for automated classification. A recent study created the SMD/MSS dataset, augmented it from 1,000 to 6,035 images, and achieved classification accuracies ranging from 55% to 92% compared to expert judgment [6].

- Ensure High-Quality "Ground Truth" Data: The accuracy of any AI model depends on the quality of the training data. Use datasets where sperm images are labeled based on a consensus of multiple experts to establish a reliable "ground truth" [2] [6].

- Simplify Classification Systems: Where possible, use a less complex classification system. In one study, trained morphologists reached 98% accuracy with a 2-category (normal/abnormal) system compared with 90% in a 25-category system [2].
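One reason simpler systems score higher is that confusions between two abnormal subcategories stop counting as errors once labels are collapsed to normal/abnormal. The sketch below illustrates this by scoring the same predictions at both levels. The category names and the `collapse` mapping are hypothetical examples, not the 25-category scheme used in the cited study.

```python
from __future__ import annotations


def collapse(label: str, normal_labels: frozenset[str]) -> str:
    """Map a detailed category to the 2-category normal/abnormal system."""
    return "normal" if label in normal_labels else "abnormal"


def accuracy(pred: list[str], truth: list[str]) -> float:
    if len(pred) != len(truth) or not truth:
        raise ValueError("need equal-length, non-empty label lists")
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)


def accuracy_at_both_levels(pred, truth, normal_labels=frozenset({"normal"})):
    """Return (detailed-system accuracy, 2-category accuracy)."""
    coarse_pred = [collapse(p, normal_labels) for p in pred]
    coarse_truth = [collapse(t, normal_labels) for t in truth]
    return accuracy(pred, truth), accuracy(coarse_pred, coarse_truth)


truth = ["normal", "tapered", "coiled_tail", "macrocephaly"]
pred = ["normal", "pyriform", "coiled_tail", "microcephaly"]
print(accuracy_at_both_levels(pred, truth))  # (0.5, 1.0)
```

Here both disagreements are between abnormal subtypes, so the detailed score is 50% while the 2-category score is 100%.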

Detailed Experimental Protocols

Protocol for Standardized Sperm Smear Preparation and Staining (Diff-Quik)

This protocol is adapted from WHO guidelines and ensures consistent slide preparation for accurate morphology assessment [18].

Workflow Diagram: Sperm Smear Preparation and Staining

Steps:

- Sample Preparation: Collect semen in a sterile container. Incubate the sample at 37°C for 30 minutes to allow for liquefaction. If the sample is viscous, proteolytic enzymes like α-chymotrypsin can be added and incubated for an additional 10 minutes [18].

- Smear Preparation: Vortex the liquefied sample for 10 seconds. Place a 10 µL aliquot of well-mixed semen on one end of a clean, frosted slide. Use a second slide at a 45° angle to quickly and smoothly spread the drop, creating a thin smear. Prepare slides in duplicate and air-dry them completely [18].

- Staining (Diff-Quik Method):

  - Immerse the air-dried slide in the fixative solution five times and allow it to dry completely for 15 minutes.

  - Immerse the slide three times in solution I for 10 seconds. Drain the excess stain.

  - Immerse the slide five times in solution II for 10 seconds.

  - Quickly rinse the slide by immersing it in sterile water to remove excess stain.

  - Place the slide vertically on absorbent paper to air-dry [18].

- Mounting: Once dry, place a few drops of a mounting medium (e.g., Cytoseal) on the slide and carefully lower a coverslip onto it, avoiding air bubbles. Allow the slide to dry completely before examination [18].

- Microscopy: Examine the stained smear using a bright-field microscope with a 100x oil immersion objective and a 10x eyepiece. An ocular micrometer is essential for precise measurement of sperm dimensions [18].

Protocol for Implementing a Morphology Training and QC Program

This protocol is based on a study that successfully used a standardized training tool to improve morphologist accuracy [2].

Workflow Diagram: Morphology Training and QC Program

Steps:

- Establish Ground Truth: Create or acquire a validated dataset of sperm images where each image has been classified by a consensus of multiple expert morphologists. This serves as the objective standard [2] [6].

- Baseline Assessment: Have all morphologists (novice and experienced) complete an initial proficiency test using the ground truth dataset. This establishes a baseline for accuracy and speed and identifies variation [2].

- Structured Training Cycle: Conduct intensive training using the standardized tool. This should include:

  - Visual Aids: Provide clear diagrams and reference images for each defect category.

  - Video Tutorials: Use videos to demonstrate the classification process.

  - Repeated Practice: Trainees should undergo repeated testing and immediate feedback on their performance against the "ground truth." One effective study involved 14 tests over four weeks [2].

- Final Proficiency Test: After the training cycle, administer a final test to quantify improvement. The goal is to reach at least 90% accuracy in complex classification systems, the level achieved for the 25-category system in the cited study [2].

- Ongoing Quality Control: Integrate regular QC into laboratory routine. This includes periodic re-testing of personnel using the training tool and participation in external quality assurance programs [18].
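The scoring step behind the baseline, repeated-practice, and final tests can be sketched as follows. It assumes the ground truth is a mapping from image ID to consensus label and that unanswered images count as errors. `score_test`, `needs_retraining`, and the 0.90 default (taken from the accuracy goal above) are illustrative choices, not part of the cited training tool.

```python
from __future__ import annotations


def score_test(answers: dict[str, str],
               ground_truth: dict[str, str]) -> tuple[float, list[str]]:
    """Score one proficiency test against consensus labels.

    Returns (accuracy, missed_image_ids). Images the trainee skipped are
    counted as incorrect so that accuracy cannot be inflated by omission.
    The missed IDs support immediate feedback after each test.
    """
    if not ground_truth:
        raise ValueError("ground_truth must not be empty")
    missed = [img for img, label in ground_truth.items()
              if answers.get(img) != label]
    return 1 - len(missed) / len(ground_truth), missed


def needs_retraining(accuracy: float, threshold: float = 0.90) -> bool:
    """Flag a morphologist whose accuracy falls below the target."""
    return accuracy < threshold


truth = {"img1": "normal", "img2": "abnormal",
         "img3": "abnormal", "img4": "normal"}
answers = {"img1": "normal", "img2": "normal", "img3": "abnormal"}
acc, missed = score_test(answers, truth)
print(acc, missed, needs_retraining(acc))  # 0.5 ['img2', 'img4'] True
```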

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Sperm Morphology Research

| Item | Function/Application | Specific Example/Note |
|---|---|---|
| Diff-Quik Stain | A rapid, standardized staining kit for sperm morphology assessment. Provides contrast to differentiate head, midpiece, and tail [18]. | Consists of a fixative, solution I (eosin), and solution II (thiazine dye) [18]. |
| RAL Diagnostics Stain | A staining kit used for sperm morphology classification, particularly in studies building datasets for AI models [6]. | Used in the creation of the SMD/MSS dataset for deep learning [6]. |
| Papanicolaou Stain | Considered the "gold standard" stain for detailed morphological evaluation of the sperm head, including acrosomal status and vacuoles [18]. | A more complex staining procedure but offers high cellular detail [18]. |
| Ocular Micrometer | A calibrated graticule placed in the microscope eyepiece to accurately measure sperm dimensions (head length/width). | Critical for objective application of strict Kruger/WHO criteria [18]. |
| Sperm Morphology Standardisation Training Tool | Software or tool that uses expert-validated image sets to train and test morphologists, reducing subjectivity [2]. | One study used a tool that improved novice accuracy from 53% to 90% in a 25-category system [2]. |
| Convolutional Neural Network (CNN) Model | An artificial intelligence (AI) model for automated, high-throughput sperm classification, reducing human bias [6]. | A model trained on the SMD/MSS dataset achieved accuracies of 55-92% compared to experts [6]. |
| SYPL1 Antibody | A research reagent for investigating the role of the SYPL1 protein in cytoplasmic droplet formation and male fertility [20]. | SYPL1 is enriched in cytoplasmic droplets; its knockout in mice causes infertility [20]. |

Sperm morphology assessment is a cornerstone of male fertility evaluation, yet it remains one of the most challenging and subjective tests in the andrology laboratory. Despite its recommended inclusion in standard semen analysis by the World Health Organization, the clinical utility and prognostic value of morphology are frequently debated among researchers and clinicians [1]. This technical support document examines the fundamental limitations of both conventional manual assessment and Computer-Aided Sperm Analysis (CASA) systems, framing these challenges within the broader context of improving accuracy in sperm morphology classification systems research. The inherent variability in morphology assessment stems from multiple factors: the subjective nature of visual classification, differences in training and expertise, the complexity of classification systems, and technological limitations of automated systems. Understanding these constraints is essential for researchers developing improved classification methods and for laboratory professionals seeking to optimize their analytical protocols. This guide provides troubleshooting guidance and methodological insights to help address these persistent challenges in sperm morphology research and clinical practice.

FAQ: Understanding Methodological Limitations

Q1: What are the primary factors contributing to variability in manual sperm morphology assessment?

Manual sperm morphology assessment is susceptible to multiple sources of variability that can compromise result reliability and reproducibility:

Subjectivity of Visual Classification: The fundamental challenge lies in the subjective nature of visual assessment, where individual assessors may interpret borderline morphological features differently. Studies demonstrate that even expert morphologists show significant disagreement, with one study reporting only 73% consensus on normal/abnormal classification for the same sperm images [2]. This inter-assessor variability poses a substantial challenge for research requiring consistent classification across multiple evaluators or study sites.

Lack of Standardized Training: Currently, no universally adopted standardized training method exists for sperm morphology assessment. Traditional approaches like side-by-side training with an experienced assessor are time-consuming and rely heavily on the trainer's own (potentially unvalidated) expertise [10]. Without robust, standardized training tools, each laboratory develops its own assessment culture, leading to systematic differences between facilities.

Classification System Complexity: The choice of classification system significantly impacts accuracy and consistency. Research demonstrates that more complex systems naturally lead to lower accuracy and higher variability. One study found untrained users achieved 81% accuracy with a simple 2-category system (normal/abnormal) compared to only 53% accuracy with a detailed 25-category system [2]. This trade-off between diagnostic detail and reliability presents a fundamental methodological challenge for researchers.

Microscopic Technique Variations: Differences in microscope optics (phase contrast vs. DIC), magnification, sample preparation methods, and staining techniques can all influence the apparent morphology of spermatozoa, further adding to inter-laboratory variability [10].

Q2: How does CASA technology address these limitations, and what new challenges does it introduce?

Computer-Aided Sperm Analysis (CASA) systems were developed to reduce subjectivity and standardize semen analysis, but they introduce distinct technical considerations:

Concentration-Dependent Performance: CASA systems demonstrate varying accuracy depending on sample concentration. They show increased variability in both low-concentration (<15 million/mL) and high-concentration (>60 million/mL) specimens [21]. This non-linear performance characteristic requires researchers to understand the optimal concentration ranges for their specific CASA instruments and establish verification protocols for samples falling outside these ranges.

Susceptibility to Sample Contaminants: The presence of non-sperm cells, debris, or agglutinated sperm can significantly interfere with CASA's automated tracking and classification algorithms, leading to inaccurate motility measurements and morphological misclassification [21]. This necessitates rigorous sample preparation protocols and visual verification of problematic samples.

Morphology Assessment Limitations: While CASA shows reasonable correlation with manual methods for concentration and motility assessment, morphology analysis remains particularly challenging for automated systems. The multidimensional nature of morphological defects and the subtlety of some abnormalities exceed the capabilities of many current CASA platforms [21]. One systematic review found morphology results showed the highest level of difference between CASA and manual evaluation [21].

Technology-Specific Performance Characteristics: Different CASA systems employ varying methodologies (image processing vs. electro-optics) and algorithms, leading to system-specific performance characteristics. This complicates cross-study comparisons and requires researchers to thoroughly validate their specific instrumentation rather than relying on generalized CASA performance claims [21].

Q3: What methodological approaches can improve assessment accuracy?

Implementing rigorous methodological protocols can significantly enhance the reliability of sperm morphology assessment:

Standardized Training Tools: Emerging training tools that apply machine learning principles show promise for improving assessment accuracy. One study utilizing a "Sperm Morphology Assessment Standardisation Training Tool" demonstrated significant improvement in novice morphologist accuracy, from 81% to 98% for 2-category classification after training [2]. These tools provide instant feedback and objective assessment against expert-validated "ground truth" classifications.

Consensus-Based Ground Truth Establishment: Adopting the machine learning concept of "ground truth" through multi-expert consensus can substantially improve classification validity. One development study used three experienced assessors to classify images, retaining only those with 100% consensus (4,821 out of 9,365 images) for integration into their training tool [10]. This approach ensures trainees learn from definitively classified examples rather than potentially subjective individual assessments.

Protocols for Sample Preparation: Standardizing pre-analytical variables including staining methods, slide preparation, and imaging conditions reduces technical sources of variation. Establishing rigorous internal quality control procedures with regular proficiency testing helps maintain assessment consistency over time [1].

Hybrid Assessment Approaches: For complex research questions, combining CASA efficiency with manual verification of borderline cases may provide an optimal balance between throughput and accuracy. This approach leverages the strengths of both methodologies while mitigating their respective limitations.

Troubleshooting Guides

Guide 1: Addressing High Inter-Assessor Variability

Problem: Significant disagreement between different assessors evaluating the same samples, compromising data reliability.

Solutions:

- Implement Standardized Training: Utilize validated training tools that provide immediate feedback on classification accuracy. In one study, accuracy in a complex 25-category system rose from 82.7% after initial training to 90.0% after four weeks of structured training (from 53% before any training) [2].

- Establish Consensus Protocols: Develop procedures for regular consensus meetings where assessors review borderline cases together and establish standardized classification criteria.

- Simplify Classification Systems: When scientifically justified, use simpler classification systems. In one study, reducing the number of categories from 25 to 2 raised untrained users' initial accuracy from 53% to 81% [2].

- Implement Blind Verification: Incorporate periodic blind re-assessment of a subset of samples to monitor intra-assessor consistency over time.

Validation Check: After implementing these measures, re-assess a standardized set of images. The coefficient of variation between assessors should decrease significantly, with target accuracy above 90% for 2-category systems [2].
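The coefficient of variation (CV) mentioned in the validation check can be computed from each assessor's percentage of normal forms on the same standardized image set. A minimal sketch using Python's `statistics` module follows; the per-assessor values are made-up illustrations, `inter_assessor_cv` is a hypothetical name, and the sample standard deviation is one reasonable choice of spread.

```python
from __future__ import annotations

from statistics import mean, stdev


def inter_assessor_cv(percent_normal: list[float]) -> float:
    """CV (sample SD / mean) of %-normal results from several assessors
    who scored the same standardized image set."""
    if len(percent_normal) < 2:
        raise ValueError("need results from at least two assessors")
    m = mean(percent_normal)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(percent_normal) / m


before = [4.0, 8.0, 12.0]  # illustrative %-normal values before training
after = [7.0, 8.0, 9.0]    # illustrative values after training
print(inter_assessor_cv(before), inter_assessor_cv(after))  # 0.5 0.125
```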

Guide 2: Managing CASA System Limitations

Problem: Inaccurate results from CASA systems, particularly with challenging samples.

Solutions:

- Optimize Sample Concentration: For samples with concentrations <15 million/mL or >60 million/mL, implement manual verification protocols. Consider dilution or concentration adjustments to bring samples within the optimal range for your specific CASA system [21].

- Pre-Filter Problematic Samples: Visually screen samples for excessive debris, non-sperm cells, or agglutination before CASA analysis. For contaminated samples, consider additional washing steps or manual assessment.

- Validate Morphology Findings: For all CASA morphology assessments, implement random manual verification of a subset of classifications to identify systematic errors in the algorithm's performance.

- Regular System Calibration: Establish rigorous calibration schedules using quality control beads and standardized reference samples to ensure consistent performance over time [21].
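The routing rules above can be written down as an explicit triage step so they are applied the same way to every sample. In this sketch the 15 and 60 million/mL cut-offs come from the text. The `debris_flag` input (set during visual screening), the 10% verification fraction, and the function names are assumptions to adapt to your own CASA validation data.

```python
from __future__ import annotations

import random

LOW_CUTOFF = 15.0   # million/mL; below this CASA variability increases [21]
HIGH_CUTOFF = 60.0  # million/mL; above this CASA variability increases [21]


def casa_route(concentration_m_per_ml: float, debris_flag: bool = False) -> str:
    """Decide how a sample should be analysed.

    'manual'              : visual screening found debris, non-sperm cells
                            or agglutination
    'manual_verification' : concentration outside the 15-60 million/mL range
    'casa'                : routine CASA analysis
    """
    if debris_flag:
        return "manual"
    if concentration_m_per_ml < LOW_CUTOFF or concentration_m_per_ml > HIGH_CUTOFF:
        return "manual_verification"
    return "casa"


def verification_subset(cell_ids: list[str], fraction: float = 0.10,
                        seed: int = 0) -> list[str]:
    """Randomly pick CASA morphology calls for manual re-checking.

    A fixed seed keeps the audit reproducible; the 10% default is illustrative.
    """
    if not cell_ids:
        return []
    k = max(1, round(fraction * len(cell_ids)))
    return random.Random(seed).sample(cell_ids, k)


print([casa_route(c) for c in (10.0, 30.0, 75.0)])
# ['manual_verification', 'casa', 'manual_verification']
```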

Validation Check: Regularly compare CASA results with manual assessments for the same samples. Correlation coefficients should exceed 0.90 for concentration and 0.80 for motility when samples are within optimal parameters [21].

Guide 3: Improving Methodology for Research Applications

Problem: Inconsistent morphology data compromising research validity and reproducibility.

Solutions:

- Document Classification Criteria Exhaustively: Create detailed visual guides with example images for every morphological category, including borderline cases.

- Implement Multi-Level Verification: For key research findings, implement a tiered assessment protocol where a second independent assessor verifies a subset of classifications, with third-party adjudication for disputed cases.

- Control Pre-Analytical Variables: Standardize abstinence periods (recent research suggests 1 day may be optimal for some research questions [22]), sample processing time, staining protocols, and imaging parameters across all samples.

- Utilize High-Quality Imaging Equipment: Invest in microscopy with DIC optics and high numerical apertures (≥0.75) to maximize resolution and minimize classification ambiguity [10].
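The multi-level verification step can be captured in a small reconciliation function. This sketch assumes each assessor's results are stored as a mapping from cell ID to label, that the second assessor re-reads only a subset, and that a third assessor's label settles any disagreement. `tiered_labels` is a hypothetical helper; cells still awaiting adjudication are returned as `None`.

```python
from __future__ import annotations

from typing import Optional


def tiered_labels(primary: dict[str, str],
                  secondary: dict[str, str],
                  third_party: dict[str, str],
                  ) -> tuple[dict[str, Optional[str]], list[str]]:
    """Merge primary labels with independent verification and adjudication.

    - cells not re-read by the second assessor keep the primary label
    - cells where both assessors agree keep the shared label
    - disputed cells take the third-party label, or None if not yet adjudicated
    Returns (final_labels, disputed_cell_ids).
    """
    final: dict[str, Optional[str]] = {}
    disputed: list[str] = []
    for cell_id, label in primary.items():
        check = secondary.get(cell_id)
        if check is None or check == label:
            final[cell_id] = label
        else:
            disputed.append(cell_id)
            final[cell_id] = third_party.get(cell_id)
    return final, disputed


primary = {"c1": "normal", "c2": "tapered", "c3": "coiled_tail"}
secondary = {"c1": "normal", "c2": "pyriform"}
third = {"c2": "tapered"}
print(tiered_labels(primary, secondary, third))
```

The list of disputed IDs is also a useful input for the consensus meetings and visual guides described above.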

Validation Check: Implement a proficiency testing program where all assessors regularly evaluate standardized sets of images. Maintain accuracy records and provide refresher training when accuracy falls below established thresholds (e.g., <85% for 2-category systems) [2].

Experimental Protocols

Protocol 1: Ground Truth Establishment for Morphology Classification

This protocol outlines a method for creating validated image datasets essential for training and research standardization.

Materials:

- Fresh semen samples from appropriate species

- Microscope with DIC optics and high-resolution camera (≥8.9MP recommended)

- Standardized staining reagents (e.g., Diff-Quik, Papanicolaou)

- Image classification software or database

Methodology:

- Sample Preparation: Prepare slides using standardized staining protocols appropriate for your research model. Ensure even sperm distribution and minimal debris.

- Image Acquisition: Capture a minimum of 50 fields of view per sample at 40× magnification with DIC optics. Use consistent lighting and exposure settings across all images [10].

- Image Cropping: Isolate individual sperm cells using automated cropping algorithms or manual selection. A machine-learning approach can efficiently process large image sets [10].

- Independent Expert Classification: Have a minimum of three experienced morphologists classify each sperm image independently using your predefined classification system.

- Consensus Establishment: Retain only images with 100% consensus among all classifiers for your "ground truth" dataset. One study achieved 100% consensus on 4,821 of 9,365 images (51.5%) using this method [10].

- Dataset Organization: Structure the validated images in an accessible format (e.g., web interface) that allows for easy retrieval during training or verification procedures.

Validation: The resulting dataset should demonstrate high classification consistency when tested by independent experts not involved in the initial classification process.
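The independent-classification and consensus steps above can be expressed compactly. This is a minimal sketch that assumes each expert's classifications are stored as a mapping from image ID to label. `consensus_ground_truth` is a hypothetical name, and images that any expert did not classify are excluded rather than treated as agreement. The last line reproduces the retention figure from the cited study as a check on the arithmetic.

```python
from __future__ import annotations


def consensus_ground_truth(expert_labels: list[dict[str, str]],
                           min_experts: int = 3) -> dict[str, str]:
    """Keep only images that every expert classified identically.

    expert_labels holds one {image_id: label} mapping per expert.
    Images missing from any expert's mapping are dropped.
    """
    if len(expert_labels) < min_experts:
        raise ValueError(f"need at least {min_experts} independent experts")
    shared = set.intersection(*(set(labels) for labels in expert_labels))
    truth = {}
    for image_id in sorted(shared):
        votes = {labels[image_id] for labels in expert_labels}
        if len(votes) == 1:  # 100% consensus
            truth[image_id] = votes.pop()
    return truth


experts = [
    {"a": "normal", "b": "tapered", "c": "normal"},
    {"a": "normal", "b": "pyriform", "c": "normal"},
    {"a": "normal", "b": "tapered"},
]
print(consensus_ground_truth(experts))  # {'a': 'normal'}
print(f"{4821 / 9365:.1%}")             # retention in the cited study: 51.5%
```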

Protocol 2: Comparative Validation of CASA vs. Manual Morphology Assessment

This protocol provides a framework for objectively evaluating CASA system performance against manual assessment.

Materials:

- CASA system with latest software version

- Microscope with oil immersion objective (100×)

- Standardized semen samples spanning various concentration ranges

- Quality control beads for system calibration

- Data recording spreadsheet

Methodology:

- System Calibration: Calibrate the CASA system according to manufacturer instructions using quality control beads.

- Sample Selection: Select 50-100 semen samples representing a range of concentrations (<15, 15-60, >60 million/mL) and morphological profiles [21].

- Blinded Assessment:

  - For CASA analysis: Process each sample according to standardized protocols, recording concentration, motility, and morphology parameters.

  - For manual assessment: Have experienced morphologists evaluate the same samples using standardized criteria, blinded to the CASA results.

- Data Analysis:

  - Calculate correlation coefficients for each parameter between CASA and manual methods.

  - Assess agreement using Bland-Altman plots to identify any concentration-dependent biases.

  - Analyze specific morphological categories where discrepancies are most pronounced.

Expected Outcomes: Systematic reviews indicate high correlation for concentration (r=0.95-0.98) and motility (r=0.74-0.93), but lower agreement for morphology assessment, particularly in complex classification systems [21].
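The two analyses in the data-analysis step can be sketched without external libraries. `pearson_r` is the standard Pearson correlation, and `bland_altman` returns the mean difference (bias) and the 95% limits of agreement (bias ± 1.96 SD of the paired differences). `bias_by_concentration` is an illustrative addition that splits the bias by the manual concentration bands used for sample selection, as one way to look for concentration-dependent bias. Function names, the banding choice, and the example values are assumptions.

```python
from __future__ import annotations

import math
from statistics import mean, stdev


def pearson_r(x: list[float], y: list[float]) -> float:
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


def bland_altman(casa: list[float], manual: list[float]) -> tuple[float, float, float]:
    """Return (bias, lower 95% limit, upper 95% limit) of CASA - manual."""
    diffs = [c - m for c, m in zip(casa, manual)]
    bias = mean(diffs)
    sd = stdev(diffs)  # needs at least two paired samples
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def bias_by_concentration(casa: list[float], manual: list[float]) -> dict[str, float]:
    """Mean CASA - manual difference within each manual concentration band."""
    bands: dict[str, list[float]] = {"<15": [], "15-60": [], ">60": []}
    for c, m in zip(casa, manual):
        key = "<15" if m < 15 else (">60" if m > 60 else "15-60")
        bands[key].append(c - m)
    return {k: mean(v) for k, v in bands.items() if v}


manual = [10.0, 20.0, 30.0, 80.0]  # illustrative values, million/mL
casa = [13.0, 21.0, 31.0, 70.0]
print(pearson_r(casa, manual))
print(bland_altman(casa, manual))
print(bias_by_concentration(casa, manual))
```

In practice the Bland-Altman differences are usually plotted against the mean of the two methods; the banded summary is only a quick numeric complement to that plot.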

Quantitative Data Comparison

Table 1: Performance Comparison of Manual vs. CASA Sperm Assessment Methods

| Parameter | Manual Assessment | CASA Assessment | Correlation Coefficient | Key Limitations |
|---|---|---|---|---|
| Concentration | Standardized per WHO guidelines [22] | Variable accuracy: increased error at <15 million/mL and >60 million/mL [21] | 0.95-0.98 [21] | CASA shows non-linear performance across concentration ranges |
| Total Motility | Subjective visual estimation | Automated tracking, but impaired by debris/aggregates [21] | 0.74-0.93 [21] | CASA overestimates rapid motility in contaminated samples [21] |
| Morphology | High inter-assessor variation [2] | Limited accuracy for complex defects [21] | 0.36-0.77 [21] | Both methods struggle with subtle abnormalities and classification consistency |
| Time Efficiency | ~100 sperm per 5-10 minutes [2] | Rapid analysis of larger populations | N/A | Manual method provides more detailed morphological observation |

Table 2: Impact of Training and Classification System Complexity on Assessment Accuracy

| Training Status | 2-Category System Accuracy | 5-Category System Accuracy | 8-Category System Accuracy | 25-Category System Accuracy |
|---|---|---|---|---|
| Untrained Users | 81.0% ± 2.5% [2] | 68.0% ± 3.6% [2] | 64.0% ± 3.5% [2] | 53.0% ± 3.7% [2] |
| After Initial Training | 94.9% ± 0.7% [2] | 92.9% ± 0.8% [2] | 90.0% ± 0.9% [2] | 82.7% ± 1.1% [2] |
| After 4-Week Training | 98.0% ± 0.4% [2] | 97.0% ± 0.6% [2] | 96.0% ± 0.8% [2] | 90.0% ± 1.4% [2] |

Signaling Pathways and Workflow Diagrams

Research Reagent Solutions

Table 3: Essential Materials for Advanced Sperm Morphology Research

| Research Tool | Specific Product Examples | Research Application | Technical Considerations |
|---|---|---|---|
| High-Resolution Imaging Systems | Olympus BX53 with DIC optics, DP28 camera [10] | Capture detailed morphology for ground truth datasets | High numerical aperture (≥0.75) objectives essential for resolution |
| Standardized Staining Kits | Diff-Quik, Papanicolaou, Quick-Stain | Consistent morphological visualization | Staining protocol must be standardized across all samples |
| CASA Systems | SCA (Microptics), IVOS (Hamilton-Thorne), SQA-V Gold (Medical Electronic Systems) [21] | High-throughput analysis, objective motility assessment | Require validation against manual methods; performance varies by concentration |
| Quality Control Materials | Latex Accu-Beads, validated reference samples [21] | System calibration, proficiency testing | Essential for maintaining inter-laboratory consistency |
| Morphology Training Tools | Sperm Morphology Assessment Standardisation Training Tool [2] | Assessor training, reducing inter-individual variation | Based on expert consensus-classified images ("ground truth") |
| Sample Collection Materials | Standardized containers, temperature monitoring systems | Pre-analytical standardization | Maintain consistent abstinence periods (1 day recommended for some studies [22]) |

Methodological Breakthroughs: From Conventional ML to Deep Learning Architectures

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed for researchers and scientists working to improve accuracy in sperm morphology classification systems. It addresses common challenges encountered when implementing and using AI-driven Computer-Assisted Sperm Analysis (CASA) platforms.

Frequently Asked Questions (FAQs)

Q1: Our AI-CASA system's cell detection accuracy drops significantly with changes in microscope lighting. How can we mitigate this?

A1: Traditional CASA systems that rely on machine vision are highly susceptible to variations in illumination, as their detection is based on predefined area calculations [23]. For AI-driven systems, ensure you are using the platform's full capabilities:

- Utilize Raw Images: True AI-CASA systems are designed to use raw images from the microscope, eliminating the need for pre-processing filters that can be affected by lighting [23].

- Leverage the Neural Network: The AI model should be trained to recognize sperm cells across a variety of lighting conditions. If performance is poor, it may indicate that the neural network requires further training with a more diverse set of images that includes different lighting scenarios [23].

- Standardize Imaging Protocols: As a best practice, establish and adhere to consistent microscope setup procedures for condenser alignment, light intensity, and objective use to minimize extreme variability [23].
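If the network needs to see more lighting variation, one common option is photometric augmentation during training. The sketch below assumes grayscale 8-bit images held as NumPy arrays and randomly perturbs contrast, brightness, and gamma. `random_illumination` and its default ranges are illustrative assumptions, not settings from any cited AI-CASA platform, and augmentation complements rather than replaces collecting real images under varied illumination.

```python
import numpy as np


def random_illumination(image: np.ndarray,
                        rng: np.random.Generator,
                        contrast_range=(0.8, 1.2),
                        max_brightness_shift=0.15,
                        gamma_range=(0.8, 1.25)) -> np.ndarray:
    """Return a copy of an 8-bit grayscale image with randomized illumination."""
    img = image.astype(np.float32) / 255.0
    # Contrast: scale deviations around the image mean.
    mean = img.mean()
    img = (img - mean) * rng.uniform(*contrast_range) + mean
    # Brightness: add a global offset, then keep values in [0, 1].
    shift = rng.uniform(-max_brightness_shift, max_brightness_shift)
    img = np.clip(img + shift, 0.0, 1.0)
    # Gamma: non-linear change mimicking different exposure or lamp settings.
    img = img ** rng.uniform(*gamma_range)
    return np.clip(np.round(img * 255.0), 0, 255).astype(np.uint8)


rng = np.random.default_rng(seed=0)
field = np.full((64, 64), 128, dtype=np.uint8)  # stand-in for a microscope frame
augmented = [random_illumination(field, rng) for _ in range(4)]
```

Applying the perturbation on the fly during training, rather than storing augmented copies, keeps the dataset small while still exposing the model to a new lighting variant each epoch.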

Q2: What steps should we take when the AI model produces a high rate of false positives, misclassifying debris as sperm cells?

A2: Misclassification is often a training data issue. Follow this debugging protocol:

- Interrogate the Training Data: This error suggests the AI model's training data may have lacked sufficient examples of debris or may not have been properly labeled to distinguish between cells and dirt within the sample [23].

- Implement Confidence Thresholding: Most AI classification models output a confidence score. Review these scores for the misclassified debris and consider implementing a higher confidence threshold for a cell to be classified as a spermatozoon.

- Curate a Validation Set: Create a small, manually annotated dataset of images containing challenging debris. Use this set to validate the model post-training and identify specific failure modes [24].
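A threshold can be chosen from the curated debris set rather than by eye. This sketch assumes the model gives each candidate object a sperm-confidence score between 0 and 1 and that a detection is accepted only if its score exceeds the threshold. `choose_threshold`, `sperm_recall`, and the 1% default are hypothetical. Because raising the threshold also rejects genuine but low-scoring sperm, the sketch reports recall on annotated sperm as well.

```python
from __future__ import annotations

import math


def choose_threshold(debris_scores: list[float],
                     max_false_positive_rate: float = 0.01) -> float:
    """Lowest cut-off that lets at most the allowed fraction of known debris
    through, given the rule 'accept as sperm only if score > threshold'."""
    if not debris_scores:
        raise ValueError("need scores for annotated debris")
    ranked = sorted(debris_scores, reverse=True)
    allowed = math.floor(max_false_positive_rate * len(ranked))
    if allowed >= len(ranked):
        return 0.0
    return ranked[allowed]


def sperm_recall(sperm_scores: list[float], threshold: float) -> float:
    """Fraction of annotated sperm that would still be accepted."""
    return sum(s > threshold for s in sperm_scores) / len(sperm_scores)


debris = [0.95, 0.90, 0.60, 0.40, 0.20]  # illustrative validation scores
sperm = [0.99, 0.97, 0.85]
t = choose_threshold(debris, max_false_positive_rate=0.2)
print(t, sperm_recall(sperm, t))
```

With these made-up scores the chosen cut-off (0.9) admits one of five debris objects but also drops one of three sperm, which is exactly the trade-off to review before deployment.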

Q3: How can we validate the performance of a new AI-CASA morphology classification model against traditional methods?

A3: A rigorous validation experiment is key to establishing credibility for a new model. Below is a summarized protocol based on current research methodologies.

| Validation Metric | Experimental Protocol | Expected Outcome (from recent research) |
|---|---|---|
| Diagnostic Accuracy | Train model on a public dataset (e.g., SMIDS, HuSHeM). Compare its classifications against a panel of expert embryologists on a separate test set. Calculate accuracy, precision, recall, and F1-score [25]. | State-of-the-art models (e.g., CBAM-ResNet50 with feature engineering) achieve test accuracies of ~96% on benchmark datasets, significantly outperforming manual assessment [25]. |
| Inter-Observer Variability | Have multiple technicians and the AI model analyze the same set of samples. Compare the results using statistical measures like Cohen's Kappa [26] [25]. | AI systems provide a standardized, objective assessment, eliminating the high inter-observer variability (up to 40% disagreement) common in manual analysis [25]. |
| Processing Time | Time experienced morphologists and the AI system as they analyze the same batch of samples (e.g., 200 sperm cells per sample) [25]. | AI can reduce analysis time from 30-45 minutes per sample to less than 1 minute, enabling high-throughput evaluation [25]. |
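The metrics in the first two rows can be computed directly from paired labels. Below is a dependency-free sketch, assuming one label per sperm cell from the model and one from the expert panel's consensus (or from a second technician, for kappa). `classification_metrics` and `cohens_kappa` are illustrative names, and macro-averaging across classes is one of several reasonable conventions; libraries such as scikit-learn provide equivalent, well-tested functions.

```python
from __future__ import annotations

from collections import Counter


def classification_metrics(truth: list[str], pred: list[str]) -> dict[str, float]:
    """Accuracy plus macro-averaged precision, recall and F1."""
    if len(truth) != len(pred) or not truth:
        raise ValueError("need equal-length, non-empty label lists")
    labels = sorted(set(truth) | set(pred))
    precision, recall, f1 = [], [], []
    for lab in labels:
        tp = sum(t == lab and p == lab for t, p in zip(truth, pred))
        fp = sum(t != lab and p == lab for t, p in zip(truth, pred))
        fn = sum(t == lab and p != lab for t, p in zip(truth, pred))
        pr = tp / (tp + fp) if tp + fp else 0.0
        rc = tp / (tp + fn) if tp + fn else 0.0
        precision.append(pr)
        recall.append(rc)
        f1.append(2 * pr * rc / (pr + rc) if pr + rc else 0.0)
    n = len(labels)
    return {
        "accuracy": sum(t == p for t, p in zip(truth, pred)) / len(truth),
        "macro_precision": sum(precision) / n,
        "macro_recall": sum(recall) / n,
        "macro_f1": sum(f1) / n,
    }


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Chance-corrected agreement between two raters (or rater vs. model)."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    ca, cb = Counter(rater_a), Counter(rater_b)
    expected = sum(ca[k] * cb[k] for k in ca.keys() | cb.keys()) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (observed - expected) / (1 - expected)


experts = ["normal", "normal", "abnormal", "abnormal"]
model = ["normal", "abnormal", "abnormal", "abnormal"]
print(classification_metrics(experts, model))
print(cohens_kappa(experts, model))  # 0.5
```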

Q4: We are getting inconsistent results when sperm are viewed from different angles or are overlapping. Is this a limitation of 2D imaging?

A4: Yes, this is a recognized limitation of conventional 2D CASA systems. A flat view cannot fully capture the natural 3D motion patterns of sperm, and overlapping cells disrupt tracking and morphology analysis [23] [27].

- Short-term Mitigation: Ensure sample preparation creates a monolayer of sperm to minimize overlap. Some software may have algorithms to handle brief crossings.

- Long-term Research Solution: The field is moving toward 3D imaging techniques, such as digital holographic microscopy and light-sheet microscopy, which capture sperm motion under conditions closer to those of the female reproductive tract [27]. Consider evaluating these technologies for future system upgrades.

Q5: What does an "AI hallucination" mean in the context of sperm analysis, and how can we prevent it?

A5: In this context, "AI hallucination" would refer to the model generating a plausible but factually incorrect analysis, such as identifying a non-existent sperm defect or misclassifying a normal sperm based on a learned but irrelevant pattern [24].

- Prevention Strategies:

  - High-Quality, Diverse Data: Train the model on a large, comprehensive, and accurately labeled dataset that covers the full spectrum of morphological abnormalities and various sample qualities [24] [25].

  - Human-in-the-Loop (HITL) Validation: For critical diagnostics, implement a protocol where a human expert reviews a subset of the AI's results, especially those with low confidence scores or rare classifications [24].

  - Robust Feature Engineering: Advanced techniques like Deep Feature Engineering (DFE), which combines deep learning with classical feature selection (e.g., PCA, Random Forest importance), can improve model accuracy and robustness, reducing spurious correlations [25].
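The HITL triage rule above can be expressed as a simple filter over the classifier's outputs. In this minimal sketch, the 0.80 confidence threshold and the class names (including `double_tail` as a "rare" class) are hypothetical choices, not values taken from [24]; a lab would set them from its own validation data.

```python
def select_for_review(predictions, confidence_threshold=0.80, rare_classes=frozenset()):
    """Return indices of predictions that a human expert should re-check.

    predictions: list of (label, confidence) pairs from the classifier.
    An item is flagged if its confidence is below the (hypothetical) threshold
    or if its predicted label belongs to a designated rare class.
    """
    flagged = []
    for i, (label, confidence) in enumerate(predictions):
        if confidence < confidence_threshold or label in rare_classes:
            flagged.append(i)
    return flagged


preds = [("normal", 0.97), ("head_defect", 0.62), ("double_tail", 0.91), ("normal", 0.88)]
print(select_for_review(preds, rare_classes={"double_tail"}))  # [1, 2]
```

Item 1 is flagged for low confidence and item 2 because its class is rare, even though the model was confident about it.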

Experimental Protocols for Benchmarking

Protocol: Evaluating a Novel Deep Learning Architecture for Sperm Morphology Classification

This protocol outlines the methodology used in a recent state-of-the-art study, providing a template for your own experiments [25].

1. Hypothesis: Integrating an attention mechanism and deep feature engineering into a convolutional neural network will improve the accuracy of sperm morphology classification.

2. Materials and Computational Resources:

| Resource / Component | Function in the Experiment |
|---|---|
| Public Datasets (SMIDS, HuSHeM) | Provide standardized, annotated image sets for training and benchmarking the AI model, ensuring reproducibility and comparison to other work [25]. |
| Pre-trained CNN (ResNet50) | Serves as a robust backbone feature extractor, leveraging knowledge from large-scale image recognition tasks (transfer learning) [25]. |
| Convolutional Block Attention Module (CBAM) | A lightweight neural network module that directs the model's focus to the most relevant morphological features (e.g., head shape, tail defects) while suppressing background noise [25]. |
| Feature Selection and Dimensionality Reduction (PCA, Chi-square) | Classical machine learning techniques used to reduce noise and dimensionality in the high-dimensional features extracted by the CNN, improving classifier performance [25]. |
| Support Vector Machine (SVM) Classifier | A classical (shallow) classifier trained on the refined deep features to perform the final morphology classification (e.g., normal vs. abnormal) [25]. |
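To make CBAM's sequential channel-then-spatial attention concrete, here is a framework-free NumPy sketch of a single forward pass on one feature map. It is illustrative only: the weights are random rather than trained, the reduction ratio `r=2` is chosen because the toy tensor has just 8 channels, and the 7x7 spatial kernel follows the default of the original CBAM design. In the study, the module sits inside ResNet50 and is trained end to end, which this sketch does not attempt.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def shared_mlp(v, w1, w2):
    # Two-layer bottleneck MLP with ReLU, shared by both pooled descriptors.
    return w2 @ np.maximum(w1 @ v, 0.0)


def channel_attention(x, w1, w2):
    """x: (C, H, W); w1: (C // r, C); w2: (C, C // r)."""
    avg = x.mean(axis=(1, 2))  # (C,) average-pooled channel descriptor
    mx = x.max(axis=(1, 2))    # (C,) max-pooled channel descriptor
    weights = sigmoid(shared_mlp(avg, w1, w2) + shared_mlp(mx, w1, w2))
    return x * weights[:, None, None]


def spatial_attention(x, kernel):
    """kernel: (2, k, k) filter over the stacked channel-mean and channel-max maps."""
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))  # zero padding keeps H, W
    h, w = x.shape[1:]
    logits = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            logits[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return x * sigmoid(logits)[None, :, :]


def cbam(x, w1, w2, kernel):
    # Channel attention first, then spatial attention (sequential arrangement).
    return spatial_attention(channel_attention(x, w1, w2), kernel)


rng = np.random.default_rng(0)
C, H, W, r = 8, 16, 16, 2
features = rng.standard_normal((C, H, W))
out = cbam(
    features,
    w1=rng.standard_normal((C // r, C)) * 0.1,
    w2=rng.standard_normal((C, C // r)) * 0.1,
    kernel=rng.standard_normal((2, 7, 7)) * 0.1,
)
print(out.shape)  # (8, 16, 16)
```

Because both attention maps pass through a sigmoid, the module only rescales activations (every value shrinks toward zero by a learned factor); it never changes the feature-map shape, which is why it can be dropped between existing ResNet blocks.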

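3. Workflow: public dataset images → CBAM-enhanced ResNet50 → deep feature extraction (CBAM and Global Average Pooling layers) → feature selection → SVM classification → evaluation on the held-out test set.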

4. Methodology:

- Data Preparation: Partition the dataset with 5-fold cross-validation, so that each fold serves once as the held-out test set while the remaining folds provide the training and validation data [25].

- Model Architecture: Integrate the CBAM module into the ResNet50 architecture. The CBAM sequentially applies channel and spatial attention to feature maps [25].

- Feature Engineering Pipeline: Extract features from multiple layers of the network (CBAM, Global Average Pooling). Apply 10 distinct feature selection methods (e.g., PCA, Chi-square, Random Forest) and use their intersections to create an optimal feature set [25].

- Training & Evaluation: Train the hybrid model and the SVM classifier. Evaluate final performance on the held-out test set using accuracy and statistical tests like McNemar's test [25].
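The feature-engineering and evaluation steps can be sketched in NumPy. Here three simple per-feature scores (ANOVA F-score, absolute correlation with a binary label, and variance) stand in for the study's ten selection methods; only feature indices ranked in the top `k` by every score survive, mirroring the intersection idea. PCA is left out because it builds new components rather than ranking the original features. The `mcnemar` helper applies the continuity-corrected McNemar test to two classifiers evaluated on the same test set. All function names, `k`, and the toy data are assumptions for illustration, not the study's code.

```python
import math

import numpy as np


def anova_f(X, y):
    """One-way ANOVA F-score of each feature (column of X) against class labels y."""
    classes = np.unique(y)
    grand_mean = X.mean(axis=0)
    between = sum((y == c).sum() * (X[y == c].mean(axis=0) - grand_mean) ** 2 for c in classes)
    within = sum(((X[y == c] - X[y == c].mean(axis=0)) ** 2).sum(axis=0) for c in classes)
    df_between, df_within = len(classes) - 1, len(y) - len(classes)
    return (between / df_between) / (within / df_within + 1e-12)


def abs_correlation(X, y):
    """Absolute Pearson correlation of each feature with a binary (0/1) label."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    return np.abs(Xc.T @ yc) / (np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum()) + 1e-12)


def intersect_top_k(X, y, k):
    """Keep only the feature indices ranked in the top k by every scoring method."""
    rankings = [anova_f(X, y), abs_correlation(X, y), X.var(axis=0)]
    top_sets = [set(np.argsort(score)[::-1][:k].tolist()) for score in rankings]
    return sorted(set.intersection(*top_sets))


def mcnemar(y_true, pred_a, pred_b):
    """Continuity-corrected McNemar test comparing two classifiers on the same test set."""
    y_true = np.asarray(y_true)
    a_ok = np.asarray(pred_a) == y_true
    b_ok = np.asarray(pred_b) == y_true
    n01 = int(np.sum(a_ok & ~b_ok))  # A correct, B wrong
    n10 = int(np.sum(~a_ok & b_ok))  # A wrong, B correct
    if n01 + n10 == 0:
        return 0.0, 1.0
    stat = max(abs(n01 - n10) - 1, 0) ** 2 / (n01 + n10)
    p_value = math.erfc(math.sqrt(stat / 2))  # chi-square survival function, 1 dof
    return stat, p_value


rng = np.random.default_rng(1)
y = np.repeat([0, 1], 50)  # toy labels: normal (0) vs abnormal (1)
X = rng.normal(0.0, 0.1, size=(100, 6))
X[:, :2] += 3.0 * y[:, None]  # only features 0 and 1 carry class information
print(intersect_top_k(X, y, k=2))  # [0, 1]
```

In a full pipeline, the surviving column indices would select the deep features passed to the SVM, and `mcnemar` would compare the SVM's test-set predictions against a baseline model's.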

This technical support guide is designed for researchers and scientists developing automated sperm morphology classification systems. A robust and accurate classification system is a critical component of modern Computer-Aided Sperm Analysis (CASA), which aims to standardize semen evaluation and thereby improve the success rates of assisted reproductive technologies such as in vitro fertilization (IVF) and intracytoplasmic sperm injection (ICSI) [28]. This resource provides targeted troubleshooting advice and answers to frequently asked questions, framed within the context of a thesis focused on enhancing the predictive accuracy of these deep learning models. The guidance draws on recent research to help you overcome common hurdles in model design, training, and evaluation.

Troubleshooting Guides

Guide: Addressing Low Testing Accuracy Despite High Training Accuracy

Problem: Your model achieves near-perfect accuracy (e.g., 100%) on the training data but performs poorly on the testing dataset, a classic sign of overfitting [29].

Investigation & Resolution Steps:

- Diagnose Data Scarcity: Confirm the size of your training dataset. A very small dataset (e.g., 15 images per class) is a primary cause of overfitting, as the model memorizes the examples rather than learning generalizable patterns [29].

- Implement Data Augmentation: Artificially expand your training dataset by applying realistic transformations to the original images. For sperm images, these can include:

  - Horizontal Mirroring: A generally safe transformation that doubles the number of training images.

  - Rotation & Zooming: Small, realistic amounts of rotation and zoom.

  - Important: Avoid transformations that would not occur in real images, such as flipping a sperm image upside down or mirroring images that contain text labels [29].

- Apply Regularization Techniques:

  - Dropout: Introduce dropout layers, which randomly disable a fraction of neurons during training to prevent co-adaptation. Consider advanced methods like Probabilistic Feature Importance Dropout (PFID), which dynamically adjusts dropout rates based on feature significance, improving generalization [30].

  - Batch Normalization: Add batch normalization layers after convolutional layers to stabilize and accelerate training, which also has a minor regularizing effect [31] [29].

- Review Learning Rate: A learning rate that is too high can make training unstable and drive the model toward idiosyncrasies of the training data early on. Try reducing the learning rate to allow a more gradual and stable convergence, and monitor validation loss as you do [29].

- Simplify or Redesign Architecture: If your model is overly complex (too many layers or parameters) for the small dataset, consider simplifying the architecture. Alternatively, for small datasets, leverage transfer learning from pre-trained models, which can be more effective than building a network from scratch [29].
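The augmentation advice above can be sketched with NumPy alone: a random horizontal mirror, a small rotation, and a mild centre zoom, with no vertical flips. This is a minimal illustration under stated assumptions: the limits `max_rotation_deg=10.0` and `max_zoom=1.1` are hypothetical, nearest-neighbour sampling is used for simplicity, and uncovered corners after rotation are filled with the image mean. Production pipelines would typically use a library's interpolating transforms instead.

```python
import numpy as np


def rotate_nearest(img, degrees, fill=0.0):
    """Rotate a 2D image about its centre with nearest-neighbour sampling."""
    h, w = img.shape
    theta = np.deg2rad(degrees)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    yy, xx = np.mgrid[0:h, 0:w]
    # Inverse mapping: find the source pixel for every output pixel.
    src_y = np.rint(cy + (yy - cy) * np.cos(theta) - (xx - cx) * np.sin(theta)).astype(int)
    src_x = np.rint(cx + (yy - cy) * np.sin(theta) + (xx - cx) * np.cos(theta)).astype(int)
    inside = (src_y >= 0) & (src_y < h) & (src_x >= 0) & (src_x < w)
    out = np.full_like(img, fill)
    out[inside] = img[src_y[inside], src_x[inside]]
    return out


def zoom_center(img, factor):
    """Zoom in by `factor` (>= 1) about the centre, keeping the original size."""
    h, w = img.shape
    rows = np.clip(np.rint((np.arange(h) - (h - 1) / 2) / factor + (h - 1) / 2), 0, h - 1).astype(int)
    cols = np.clip(np.rint((np.arange(w) - (w - 1) / 2) / factor + (w - 1) / 2), 0, w - 1).astype(int)
    return img[np.ix_(rows, cols)]


def augment(img, rng, max_rotation_deg=10.0, max_zoom=1.1):
    """Random horizontal mirror, small rotation and mild zoom; no vertical flips."""
    out = np.fliplr(img) if rng.random() < 0.5 else img
    out = rotate_nearest(out, rng.uniform(-max_rotation_deg, max_rotation_deg), fill=float(img.mean()))
    return zoom_center(out, rng.uniform(1.0, max_zoom))


rng = np.random.default_rng(42)
image = rng.random((64, 64))  # stand-in for a grayscale sperm crop
augmented = [augment(image, rng) for _ in range(4)]
print([a.shape for a in augmented])  # [(64, 64), (64, 64), (64, 64), (64, 64)]
```

Keeping every output the same size as the input means augmented images can be mixed into the same training batches as the originals without further resizing.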

Guide: Selecting an Architecture for Multi-Part Sperm Segmentation

Problem: Choosing the right model architecture for segmenting different components of a sperm cell (head, acrosome, nucleus, neck, tail), which vary in size, shape, and morphological complexity.

Investigation & Resolution Steps:

- Define the Segmentation Target: Identify the primary structure you need to segment, as model performance varies significantly by target [28].

- Select a Model Based on Target Characteristics:

  - For small, regular structures such as the head, nucleus, and acrosome, the two-stage Mask R-CNN model has shown superior performance and robust, precise segmentation [28].

  - For the morphologically complex tail, which is long and thin, the U-Net architecture, with its strong global perception and multi-scale feature extraction, has achieved the highest Intersection over Union (IoU) scores [28].

  - For a balance between accuracy and computational speed, consider single-stage detectors such as YOLOv8, which have performed comparably to Mask R-CNN on certain parts such as the neck [28].

- Utilize a Hybrid Approach: Do not feel constrained to a single model. Consider an ensemble or pipeline approach that uses the best-performing architecture for each specific sperm component to create an overall superior segmentation system.
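A hybrid pipeline needs a rule for assigning each sperm component to a model. One simple option, sketched below, is to pick the model with the highest mean IoU on a validation set, part by part. The model names and the toy 8x8 masks are hypothetical and serve only to exercise the code; they are not results from [28].

```python
import numpy as np


def iou(pred, target):
    """Intersection over Union of two binary masks."""
    pred, target = pred.astype(bool), target.astype(bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        return 1.0  # both masks empty: count as perfect agreement
    return float(np.logical_and(pred, target).sum() / union)


def pick_model_per_part(predictions, ground_truth):
    """Choose, for each sperm part, the model with the highest mean validation IoU.

    predictions: {model_name: {part: [mask, ...]}}
    ground_truth: {part: [mask, ...]} aligned with each model's mask lists.
    Returns {part: (best_model, mean_iou)}.
    """
    best = {}
    for part, gt_masks in ground_truth.items():
        scores = {
            model: float(np.mean([iou(p, g) for p, g in zip(by_part[part], gt_masks)]))
            for model, by_part in predictions.items()
        }
        winner = max(scores, key=scores.get)
        best[part] = (winner, scores[winner])
    return best


# Toy 8x8 masks (hypothetical, for illustration only).
head = np.zeros((8, 8), dtype=bool)
head[2:4, 2:4] = True
big_head = np.zeros_like(head)
big_head[1:5, 1:5] = True
tail = np.zeros((8, 8), dtype=bool)
tail[6, :] = True
half_tail = np.zeros_like(tail)
half_tail[6, :4] = True

preds = {
    "mask_rcnn": {"head": [head], "tail": [half_tail]},
    "unet": {"head": [big_head], "tail": [tail]},
}
print(pick_model_per_part(preds, {"head": [head], "tail": [tail]}))
# {'head': ('mask_rcnn', 1.0), 'tail': ('unet', 1.0)}
```

At inference time, each part's mask would then come from its selected model, and the per-part masks could be combined into one labelled segmentation.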


Guide: Mitigating Data Imbalance and Heterogeneity

Problem: Your dataset is characterized by a low signal-to-noise ratio, unclear structural boundaries, and class imbalance, which is common in unstained live human sperm images [28] [32].

Investigation & Resolution Steps:

- Data Quality Assurance: Implement a quality control pipeline that includes inter-annotator agreement checks and expert (e.g., embryologist) review of diagnostic outliers to ensure label consistency [32].

- Advanced Preprocessing:

- Combat Data Scarcity and Imbalance:

  - Leverage Transfer Learning: Start with a model pre-trained on a large, general image dataset (like ImageNet). This provides a strong feature extraction foundation that can be fine-tuned on your specialized sperm dataset, reducing the need for an excessively large annotated dataset [33].
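The inter-annotator agreement check in the quality-assurance step can start as simply as a majority vote with an agreement threshold: images whose top label falls below the threshold are routed to an expert embryologist instead of entering the training set. The 2/3 threshold, image IDs, and label names below are hypothetical, and majority voting is only one of several possible consensus schemes.

```python
from collections import Counter


def consolidate_labels(annotations, min_agreement=2 / 3):
    """annotations: {image_id: [label from each annotator]}.

    Returns (consensus, needs_review): the majority label for every image whose
    top label reaches `min_agreement`, and the ids to send for expert review.
    """
    consensus, needs_review = {}, []
    for image_id, labels in annotations.items():
        label, votes = Counter(labels).most_common(1)[0]
        if votes / len(labels) >= min_agreement:
            consensus[image_id] = label
        else:
            needs_review.append(image_id)
    return consensus, needs_review


ann = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["head_defect", "normal", "head_defect"],
    "img_003": ["tail_defect", "normal", "head_defect"],
}
consensus, review = consolidate_labels(ann)
print(consensus, review)
```

With three annotators, two matching labels meet the 2/3 threshold, so `img_002` is accepted as `head_defect`, while `img_003`, where all three disagree, is routed for review.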

Frequently Asked Questions (FAQs)

Q1: Why does my model's training error decrease while its testing error increases from the very first epoch?

A: This phenomenon suggests a fundamental distribution shift between your training and testing datasets [34]. The assumptions that training and test data are drawn from the same distribution and are properly shuffled may be violated. You should verify the random splitting and shuffling procedures for your data. This can also occur if the test set contains more challenging or different types of images (e.g., more impurities, different staining) than the training set [34].
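One way to rule out a faulty split is to regenerate it with per-class shuffling and then check that class proportions match across splits. The NumPy sketch below does both. The function names, the 20% test fraction, and the toy labels are assumptions for illustration; note that matching class proportions does not rule out differences in staining or impurities, which need separate image-level checks.

```python
import numpy as np


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Shuffle indices within each class and hold out the same fraction of every class."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    train_idx, test_idx = [], []
    for c in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == c))
        n_test = int(round(len(idx) * test_fraction))
        test_idx.extend(idx[:n_test].tolist())
        train_idx.extend(idx[n_test:].tolist())
    # Shuffle again so classes are interleaved within each split.
    return rng.permutation(train_idx), rng.permutation(test_idx)


def max_proportion_gap(labels, train_idx, test_idx):
    """Largest absolute difference in class share between the two splits."""
    labels = np.asarray(labels)
    return max(
        abs(np.mean(labels[train_idx] == c) - np.mean(labels[test_idx] == c))
        for c in np.unique(labels)
    )


labels = ["normal"] * 60 + ["abnormal"] * 40  # toy labels
train_idx, test_idx = stratified_split(labels, test_fraction=0.2, seed=0)
print(len(train_idx), len(test_idx), max_proportion_gap(labels, train_idx, test_idx))
```

A large gap from `max_proportion_gap` on an existing split is a quick signal that the original splitting or shuffling step needs to be revisited.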

Q2: My dataset of sperm images is very small. What are the most effective strategies to prevent overfitting?

A: With a small dataset, your priority should be maximizing the utility of your existing data and constraining model complexity.

- Data Augmentation is Critical: Systematically apply transformations like mirroring, rotation, and zoom to artificially increase your dataset size [29].

- Use Regularization: Integrate Dropout and Batch Normalization layers into your CNN architecture [30] [29].

- Employ Transfer Learning: Rather than training from scratch, fine-tune a pre-trained model (e.g., VGG16, MobileNetV3) on your sperm dataset. This leverages features learned from millions of general images [33].

- Simplify the Model: A model with too many parameters for a small dataset will easily memorize the data. Reduce the number of layers or neurons if you are not using transfer learning [29].

Q3: For segmenting different parts of a sperm cell, which deep learning model should I choose?

A: The optimal model depends on the specific sperm structure, as no single model is best for all parts. Quantitative evaluations show:

- Mask R-CNN is superior for segmenting smaller, more regular structures like the head, nucleus, and acrosome [28].

- U-Net performs best for the long, thin, and complex tail structure due to its architecture that captures multi-scale contextual information [28].

- YOLOv8 offers a strong and fast alternative, achieving performance comparable to Mask R-CNN for parts like the neck [28].

Q4: What are some advanced regularization techniques I can use beyond traditional dropout?

A: Recent research has moved towards dynamic and context-aware dropout strategies. A notable advancement is Probabilistic Feature Importance Dropout (PFID), which assigns dropout rates to individual features based on their learned statistical importance, rather than using a static rate across the network [30]. This "feature-aware" design helps retain critical information while effectively regularizing less important activations, leading to improved generalization and training efficiency [30].
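The core idea of feature-aware dropout (a per-feature drop probability instead of one static rate) can be illustrated in a few lines of NumPy. This is a simplified, hypothetical scheme written for this guide, not the published PFID algorithm from [30]: here drop rates scale linearly and inversely with a given non-negative importance score, clipped between `min_rate` and `base_rate`, and how importance is learned is left out entirely.

```python
import numpy as np


def feature_aware_dropout(activations, importance, base_rate=0.5, min_rate=0.05,
                          rng=None, training=True):
    """Inverted dropout with a per-feature drop probability (hypothetical scheme).

    activations: (batch, features); importance: (features,) non-negative scores.
    More important features receive lower drop probabilities.
    """
    if not training:
        return activations  # dropout is disabled at inference time
    rng = np.random.default_rng() if rng is None else rng
    imp = np.asarray(importance, dtype=float)
    imp = imp / (imp.max() + 1e-12)  # normalise scores to [0, 1]
    rates = np.clip(base_rate * (1.0 - imp), min_rate, base_rate)  # (features,)
    keep_prob = 1.0 - rates
    mask = rng.random(activations.shape) < keep_prob  # broadcast over the batch
    # Scale kept units by 1 / keep_prob so the expected activation is unchanged.
    return activations * mask / keep_prob


rng = np.random.default_rng(0)
acts = np.ones((20000, 3))
out = feature_aware_dropout(acts, [1.0, 0.5, 0.0], rng=rng)
print((out == 0).mean(axis=0))  # roughly [0.05, 0.25, 0.5]
```

The most important feature is dropped about 5% of the time and the least important about half the time, while the inverted scaling keeps each feature's expected value at its input level.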

Experimental Protocols & Data

Quantitative Model Comparison for Sperm Segmentation

The following table summarizes the findings from a systematic 2025 evaluation of deep learning models on multi-part segmentation of unstained live human sperm. The performance is measured using Intersection over Union (IoU), a common metric for segmentation tasks [28].

Table: Recommended Models for Sperm Part Segmentation (Based on IoU)

| Sperm Component | Recommended Model | Key Reasoning |
|---|---|---|
| Head, Nucleus, Acrosome | Mask R-CNN | Excels at segmenting smaller, more regular structures with high precision [28]. |
| Tail | U-Net | Superior global perception handles long, thin, and complex morphological shapes best [28]. |
| Neck | YOLOv8 / Mask R-CNN | Single-stage models like YOLOv8 can rival two-stage models in this region [28]. |
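For reference, the IoU behind these rankings compares a predicted mask $P$ with the ground-truth mask $G$; in pixel counts it can equivalently be written with true positives ($TP$), false positives ($FP$), and false negatives ($FN$):

```latex
\mathrm{IoU}(P, G) = \frac{|P \cap G|}{|P \cup G|} = \frac{TP}{TP + FP + FN}
```

An IoU of 1 means the predicted and ground-truth masks coincide exactly; thin structures such as the tail are penalized heavily by small boundary errors, because a few misplaced pixels are large relative to the mask area.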

Detailed Methodology: Model Evaluation for Sperm Segmentation

This protocol outlines the methodology for a standardized comparison of segmentation models, as described in recent literature [28].