Beyond the Training Set: Strategies for Enhancing Generalization in Fertility Prediction Models

Abstract

This article addresses the critical challenge of generalizability in machine learning models for fertility prediction. While high accuracy on internal datasets is often reported, the performance of these models frequently degrades when applied to new, diverse populations or clinical settings. We explore the foundational causes of this limitation, including dataset bias and non-representative training data. The review then examines methodological innovations, from feature engineering to advanced deep learning architectures, that can improve model robustness. Furthermore, we analyze troubleshooting and optimization techniques to mitigate overfitting and discuss rigorous validation frameworks essential for assessing real-world applicability. This synthesis provides researchers and drug development professionals with a comprehensive roadmap for building fertility prediction tools that are not only accurate but also broadly generalizable and clinically reliable.

The Generalization Gap: Understanding Core Challenges in Fertility AI

Defining Generalization in the Context of Clinical Fertility Prediction

Frequently Asked Questions (FAQs)

1. What does "generalization" mean for a clinical fertility prediction model? Generalization refers to a model's ability to maintain accurate predictive performance when applied to new, unseen patient data from a different clinic or population than the one on which it was originally developed. A model with poor generalization might perform well at its original development site but fail when used elsewhere [1] [2].

2. Why do models developed on national registries (like SART) sometimes perform poorly at individual clinics? National registry models are trained on aggregated data from many centers, which can obscure the specific clinical, demographic, and laboratory characteristics unique to a single clinic. Performance drops due to data drift (differences in patient population characteristics) and concept drift (differences in the relationship between predictors and outcomes) across sites [2]. One study found that machine learning center-specific (MLCS) models significantly outperformed the SART model, more appropriately assigning 23% of all patients to a higher and more accurate live birth prediction (LBP) category [2].

3. What are the key steps to validate a model's generalizability? The recommended process involves external validation and live model validation (LMV). First, test the existing model on your local dataset to establish a performance baseline (e.g., AUC, calibration). Second, develop a center-specific model using your local data and compare its performance directly against the external model. Finally, implement "live model validation" by continuously testing the model on new, prospective patient data to ensure it remains applicable over time [1] [2].

4. Which machine learning algorithms are most effective for building generalizable fertility models? Studies consistently show that tree-based ensemble methods like Random Forest (RF), XGBoost, and LightGBM deliver superior performance for fertility prediction tasks. These algorithms can capture complex, non-linear relationships in clinical data. For example, one study found Random Forest achieved an AUC >0.8 for predicting live birth, outperforming other models [3]. Another reported LightGBM was optimal for predicting blastocyst yield, offering a good balance of accuracy and interpretability [4].

5. What are the most critical features for predicting live birth outcomes in IVF? While feature importance can vary by population, the most consistently powerful predictors across studies are:

- Female Age

- Embryo Quality Grades

- Number of Usable Embryos

- Endometrial Thickness [3]

For predicting blastocyst formation, embryo morphology parameters on Day 3 (such as mean cell number and proportion of 8-cell embryos) are highly important [4].

6. How can we improve a model's calibration when applying it to a new population? If an external model shows good discrimination (AUC) but poor calibration (under- or over-prediction), you can recalibrate it on your local data. This process adjusts the model's output probabilities to align with the observed outcomes in your population, often by refitting the model's intercept or scaling parameter. One study successfully rescaled the McLernon 2022 model, which significantly improved its calibration for a Chinese population [1].
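
The intercept-and-slope adjustment described above can be sketched as logistic recalibration: the external model's predicted probabilities are converted to log-odds, and a two-parameter logistic model is refit against local outcomes. This is a minimal illustration under stated assumptions, not the procedure used in [1]; the function names and the synthetic cohort are hypothetical.

```python
import math
import random

def logit(p, eps=1e-9):
    """Log-odds of a probability, clipped away from 0 and 1."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_recalibration(pred_probs, outcomes, n_iter=50, tol=1e-10):
    """Fit outcome ~ sigmoid(a + b * logit(p)) by Newton-Raphson.

    a is the recalibration intercept and b the calibration slope.
    """
    x = [logit(p) for p in pred_probs]
    a, b = 0.0, 1.0  # start from "no recalibration"
    for _ in range(n_iter):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for xi, yi in zip(x, outcomes):
            mu = sigmoid(a + b * xi)
            w = mu * (1.0 - mu)
            g_a += yi - mu
            g_b += (yi - mu) * xi
            h_aa += w
            h_ab += w * xi
            h_bb += w * xi * xi
        det = h_aa * h_bb - h_ab * h_ab
        if abs(det) < 1e-12:
            break
        da = (h_bb * g_a - h_ab * g_b) / det
        db = (h_aa * g_b - h_ab * g_a) / det
        a, b = a + da, b + db
        if abs(da) + abs(db) < tol:
            break
    return a, b

def recalibrate(p, a, b):
    """Map an external prediction onto the locally recalibrated scale."""
    return sigmoid(a + b * logit(p))

# Synthetic local cohort (made up): the external model under-predicts
# because its log-odds are shifted down by 0.7.
rng = random.Random(0)
true_probs = [rng.uniform(0.1, 0.7) for _ in range(400)]
outcomes = [1 if rng.random() < q else 0 for q in true_probs]
external_preds = [sigmoid(logit(q) - 0.7) for q in true_probs]

a, b = fit_recalibration(external_preds, outcomes)
recalibrated = [recalibrate(p, a, b) for p in external_preds]
```

After fitting, the mean recalibrated probability matches the observed local event rate, which is what the intercept correction is meant to achieve. In practice the recalibration parameters would be estimated on one local sample and checked on another.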

Experimental Protocols for Model Validation and Development

Protocol 1: External Validation of an Existing Prediction Model

Purpose: To evaluate the performance of a published fertility prediction model on your local patient population.

Methodology:

- Data Collection: Extract a dataset from your local clinic's records that matches the inclusion/exclusion criteria of the model you are validating (e.g., first IVF cycles, specific age ranges) [1] [2].

- Variable Mapping: Reformulate your local dataset variables to match the definitions and categories required by the external model. Continuous variables may need to be categorized or transformed as specified by the original authors [1].

- Outcome Definition: Align your local outcome definition with the model's target (e.g., "cumulative live birth per ovum pick-up over 2 years") [1].

- Missing Data: Implement a robust imputation strategy for missing values, such as multiple imputation by chained equations (MICE) or a non-parametric method like missForest [1] [5].

- Performance Calculation: Apply the model's published coefficients to your local data. Calculate key performance metrics:

- Discrimination: Area Under the Receiver Operating Characteristic Curve (AUC).

- Calibration: Calibration slope and intercept; visualized with a calibration plot [1].

- Interpretation: Compare the performance metrics obtained on your local data with those reported in the model's original publication.
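
The performance-calculation step of this protocol can be sketched in a few lines: apply a published logistic model to local records, then compute discrimination (AUC) and a simple calibration summary. The intercept, coefficients, variable names, and cohort below are invented for illustration and do not come from any of the cited models.

```python
import math

def predict_probability(patient, intercept, coefficients):
    """Apply a logistic model: p = sigmoid(intercept + sum(beta * x))."""
    z = intercept + sum(beta * patient[name] for name, beta in coefficients.items())
    return 1.0 / (1.0 + math.exp(-z))

def auc_score(y_true, y_score):
    """AUC via the rank-sum (Mann-Whitney) formula, with average ranks for ties."""
    pairs = sorted(zip(y_score, y_true))
    ranks = [0.0] * len(pairs)
    i = 0
    while i < len(pairs):
        j = i
        while j + 1 < len(pairs) and pairs[j + 1][0] == pairs[i][0]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[k] = average_rank
        i = j + 1
    n_pos = sum(y for _, y in pairs)
    n_neg = len(pairs) - n_pos
    rank_sum = sum(r for r, (_, y) in zip(ranks, pairs) if y == 1)
    return (rank_sum - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)

def calibration_in_the_large(y_true, y_prob):
    """Observed event rate minus mean predicted probability."""
    n = len(y_true)
    return sum(y_true) / n - sum(y_prob) / n

# Hypothetical coefficients and local cohort, for illustration only.
INTERCEPT = 1.5
COEFFICIENTS = {"female_age": -0.06, "infertility_years": -0.05, "tubal_factor": -0.2}
local_cohort = [
    {"female_age": 29, "infertility_years": 2, "tubal_factor": 0, "live_birth": 1},
    {"female_age": 41, "infertility_years": 5, "tubal_factor": 1, "live_birth": 0},
    {"female_age": 33, "infertility_years": 6, "tubal_factor": 1, "live_birth": 1},
    {"female_age": 31, "infertility_years": 1, "tubal_factor": 0, "live_birth": 0},
    {"female_age": 35, "infertility_years": 3, "tubal_factor": 0, "live_birth": 1},
    {"female_age": 43, "infertility_years": 4, "tubal_factor": 0, "live_birth": 0},
]

y = [p["live_birth"] for p in local_cohort]
probs = [predict_probability(p, INTERCEPT, COEFFICIENTS) for p in local_cohort]
local_auc = auc_score(y, probs)
local_citl = calibration_in_the_large(y, probs)
```

A positive calibration-in-the-large value means the model under-predicts on the local cohort, and a negative value means it over-predicts. A full validation would also estimate the calibration slope and draw a calibration plot, as the protocol states.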

Protocol 2: Development of a Center-Specific Prediction Model

Purpose: To build and validate a machine learning model tailored to your clinic's specific patient population for improved generalizability locally.

Methodology:

- Data Preprocessing:

- Split data chronologically (e.g., 2013-2019 for development, 2020 for validation) or randomly (e.g., 80/20 split) [1] [6].

- Perform feature selection by combining statistical significance (p < 0.05) and feature importance ranking (e.g., from Random Forest), followed by clinical expert review to eliminate biologically irrelevant variables [3].

- Model Training: Train multiple machine learning algorithms (e.g., RF, XGBoost, LightGBM, SVM) on the training set using hyperparameter tuning via grid search and 5-fold cross-validation to prevent overfitting [3] [5].

- Model Evaluation: Validate the final model on the held-out test set. Report AUC, accuracy, precision, recall, F1-score, and Brier score [3] [2].

- Model Interpretation: Use techniques like feature importance analysis, partial dependence plots (PDP), and individual conditional expectation (ICE) plots to understand how the model makes predictions and to gain biological insights [4] [3].
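
The data-splitting steps of this protocol can be sketched as follows, assuming each record carries a treatment year. The record layout and the helper names are hypothetical; the 2013-2019 / 2020 cut mirrors the example given in the protocol.

```python
import random

def chronological_split(records, year_key, last_development_year):
    """Earlier cycles go to development, later cycles to out-of-time validation."""
    development = [r for r in records if r[year_key] <= last_development_year]
    validation = [r for r in records if r[year_key] > last_development_year]
    return development, validation

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, validation_indices) for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        validation = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, validation

# Made-up records: three cycles per year from 2013 to 2020.
records = [{"cycle_id": n, "year": 2013 + n % 8} for n in range(24)]
development, validation = chronological_split(records, "year", 2019)

# Cross-validation folds are drawn from the development set only, so the
# 2020 cohort stays untouched until the final evaluation.
folds = list(k_fold_indices(len(development), k=5, seed=0))
```

Keeping hyperparameter tuning inside the development folds is the design choice that matters here: the held-out year then gives an estimate of performance that the tuning process never saw.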

Performance Data of Fertility Prediction Models

Table 1: Comparison of Model Performance Across Different Populations and Studies

| Model Name / Type | Study Population | Key Predictors | Performance (AUC) | Generalization Notes |

|---|---|---|---|---|

| McLernon 2016 (HFEA) [1] | Chinese Population (External Validation) | Female age, duration of infertility, tubal factor | 0.69 (95% CI 0.68–0.69) | Provided useful discrimination but showed underestimation of risk. |

| SART Model [2] | 6 US Fertility Centers (External Validation) | Not specified | Lower than MLCS | MLCS models assigned more appropriate LBP to 23% of patients. |

| Machine Learning Center-Specific (MLCS) [2] | 6 US Fertility Centers | Center-specific patient and treatment features | Superior to SART model (p < 0.05) | Improved minimization of false positives and negatives; externally validated. |

| Random Forest (Fresh ET) [3] | Chinese Single-Center | Female age, embryo grades, usable embryos, endometrial thickness | > 0.80 | Demonstrated high predictive power within the development center. |

| LightGBM (Blastocyst Yield) [4] | Single-Center Cohort | # of extended culture embryos, Day 3 mean cell number, proportion of 8-cell embryos | R²: 0.673–0.676 (Regression) | Selected as optimal for accuracy and interpretability with fewer features. |

Table 2: Key Feature Importance in Different Prediction Tasks

| Feature Category | Specific Feature | Prediction Context | Relative Importance |

|---|---|---|---|

| Patient Demographics | Female Age | Live Birth | Top predictor across multiple studies [1] [3] |

| Embryo Morphology | Number of Usable Embryos | Live Birth | Top predictor [3] |

| Embryo Morphology | Grades of Transferred Embryos | Live Birth | Top predictor [3] |

| Cycle Parameters | Number of Extended Culture Embryos | Blastocyst Yield | Most critical (61.5%) [4] |

| Embryo Morphology | Mean Cell Number on Day 3 | Blastocyst Yield | High (10.1%) [4] |

| Cycle Parameters | Endometrial Thickness | Live Birth | Significant predictor [3] |

| Patient History | Duration of Infertility | Live Birth | Key predictor [1] |

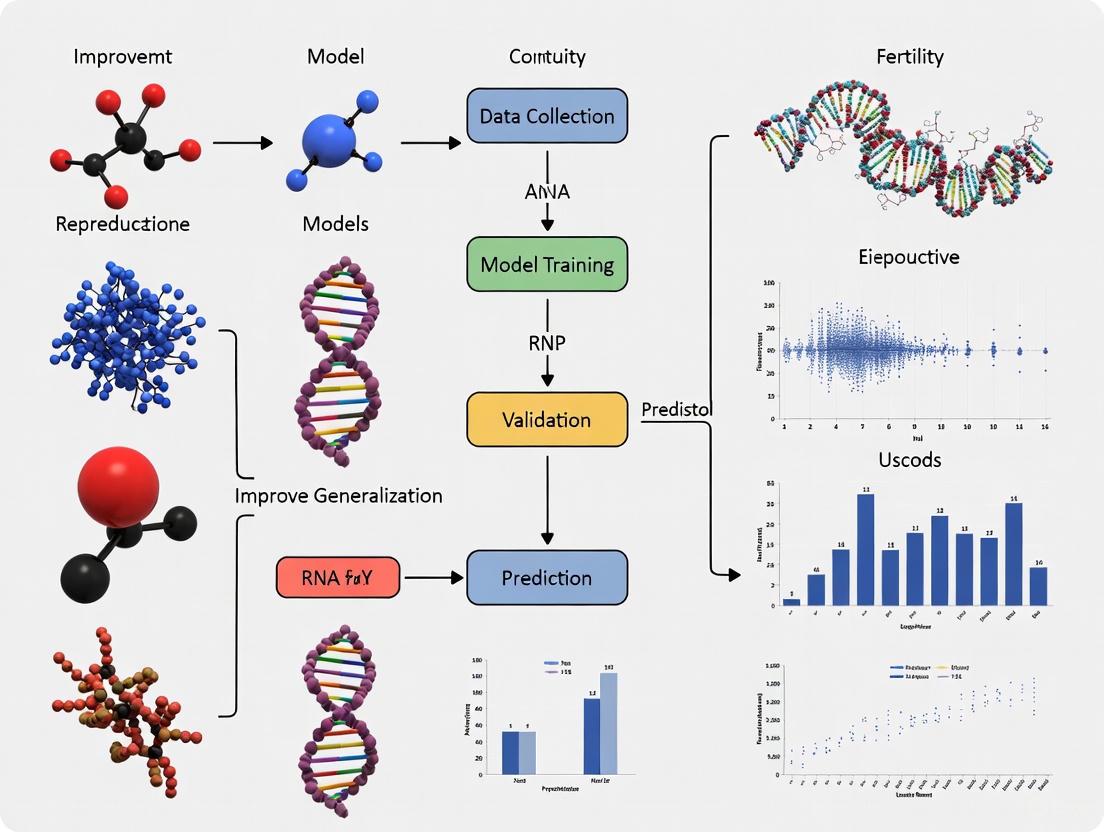

Workflow Diagrams

Model Generalization Assessment Workflow

Center-Specific Model Development

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Analytical Tools for Fertility Prediction Research

| Tool / Reagent | Function / Application | Example in Context |

|---|---|---|

| Machine Learning Algorithms (e.g., RF, XGBoost, LightGBM) | Building predictive models that capture complex, non-linear relationships in clinical data. | Used to develop center-specific live birth prediction models that outperformed national registry models [2] [6]. |

| Model Interpretation Libraries (e.g., SHAP, PDP, ICE) | Providing post-hoc interpretability for "black box" ML models, revealing feature importance and effects. | Identifying "number of extended culture embryos" as the top predictor for blastocyst yield (61.5% importance) [4]. |

| Data Imputation Software (e.g., missForest in R) | Handling missing data in clinical datasets using non-parametric, random forest-based imputation. | Used to impute missing values in ovarian stimulation protocols and other clinical variables prior to model development [3] [5]. |

| Hyperparameter Tuning Frameworks (e.g., Grid Search, Random Search) | Systematically optimizing model parameters to maximize predictive performance and prevent overfitting. | Implemented with 5-fold cross-validation to select the best hyperparameters for Random Forest and other algorithms [3]. |

| Clinical Data Variables (Female Age, Embryo Grade, etc.) | The fundamental predictors used to train and validate the fertility prediction models. | Consistently identified as top features across studies; the raw "reagents" for model building [1] [3]. |

Frequently Asked Questions

1. What are the main types of data bias that affect the generalizability of fertility prediction models? The three primary sources of bias are geographic, demographic, and clinical heterogeneity.

- Geographic Bias arises when training data is sourced from specific regions whose populations have unique characteristics. For instance, U.S. state-level personality traits (like agreeableness or neuroticism) are correlated with regional fertility patterns [7]. A model trained on such data may not work well in other countries or even other regions within the same country.

- Demographic Bias occurs when certain demographic groups are underrepresented. A model trained on data from Somalia identified age group, region, and parity as top predictors [8]. If such a model were applied to a population with a different age structure or wealth distribution, its predictions would be unreliable.

- Clinical Heterogeneity is introduced when data comes from specific clinical workflows, patient cohorts, or ART techniques. For example, a model trained on fresh embryo transfer cycles may not generalize well to frozen cycles, and center-specific models often outperform generalized national models [2] [3].

2. How can I quantify the impact of geographic bias in my model's training data? You can quantify geographic bias by analyzing how key predictive features and outcome rates vary across different regions. The table below summarizes empirical evidence of geographic variation in fertility-related factors:

Table 1: Documented Evidence of Geographic Variation in Fertility-Related Factors

| Factor Category | Specific Example | Impact on Fertility Patterns | Source Location |

|---|---|---|---|

| Personality Traits | Higher state-level agreeableness/conscientiousness | Associated with more traditional fertility patterns (higher fertility, earlier childbearing) [7] | United States [7] |

| Personality Traits | Higher state-level neuroticism/openness | Associated with more non-traditional fertility patterns [7] | United States [7] |

| Sociodemographics | Region (federal member state) | Identified as a top-2 predictor of fertility preferences [8] | Somalia [8] |

| Access to Healthcare | Distance to health facilities | A critical barrier and predictor of fertility desires [8] | Somalia [8] |

3. My model performs well internally but fails on external datasets. Could demographic bias be the cause? Yes, this is a classic symptom of demographic bias. Performance drops occur when the external dataset has a different distribution of key demographic features than your training set. To diagnose this:

- Identify Top Features: Use explainable AI (XAI) techniques like SHAP to identify the top demographic features your model relies on [8].

- Compare Distributions: Compare the distributions of these top features (e.g., age, parity, education level) between your training data and the external dataset.

- Stratified Analysis: Perform a stratified analysis on the external dataset to see if performance drops are concentrated in specific demographic subgroups.
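
The second and third diagnostic steps can be sketched with two small helpers: a standardized mean difference to compare a feature's distribution between cohorts, and a per-subgroup gap between observed and predicted rates. This is one possible way to run these checks, not a method taken from the cited studies; the field names and values are hypothetical.

```python
import math
from statistics import mean, variance

def standardized_mean_difference(train_values, external_values):
    """Difference in means divided by the pooled standard deviation."""
    pooled_sd = math.sqrt((variance(train_values) + variance(external_values)) / 2.0)
    if pooled_sd == 0:
        return 0.0
    return (mean(train_values) - mean(external_values)) / pooled_sd

def subgroup_calibration_gap(records, group_key):
    """Per subgroup: observed outcome rate minus mean predicted probability."""
    groups = {}
    for record in records:
        groups.setdefault(record[group_key], []).append(record)
    return {
        group: mean(r["outcome"] for r in rows) - mean(r["predicted"] for r in rows)
        for group, rows in groups.items()
    }

# Made-up ages for a training cohort and an older external cohort.
training_age = [30, 32, 34]
external_age = [36, 38, 40]
age_shift = standardized_mean_difference(training_age, external_age)

# Made-up external predictions, stratified by age group.
external_records = [
    {"age_group": "under_35", "outcome": 1, "predicted": 0.5},
    {"age_group": "under_35", "outcome": 0, "predicted": 0.5},
    {"age_group": "38_plus", "outcome": 0, "predicted": 0.4},
    {"age_group": "38_plus", "outcome": 0, "predicted": 0.2},
]
gaps = subgroup_calibration_gap(external_records, "age_group")
```

A large absolute standardized mean difference flags a feature whose distribution has shifted, and a subgroup with a gap far from zero is where the performance drop is concentrated.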

Table 2: Top Demographic Predictors of Fertility Preferences Identified via Machine Learning

| Predictor | Relative Importance (Example) | Effect on Fertility Preference | Study Context |

|---|---|---|---|

| Age Group | Top predictor [8] | Women aged 45-49 are significantly more likely to prefer no more children. | Somalia [8] |

| Region | Second most important predictor [8] | Preferences vary significantly by geographic region within a country. | Somalia [8] |

| Parity | Third most important predictor (e.g., number of births in last 5 years) [8] | Women with higher parity are more likely to prefer to cease childbearing. | Somalia [8] |

| Wealth & Education | High importance (wealth index, education level) [8] | Strongly influences desired family size and family planning use. | Somalia [8] |

4. What experimental protocols can mitigate clinical heterogeneity when building a model from multi-center data? A robust protocol for handling multi-center clinical data involves center-specific modeling and rigorous external validation, as demonstrated in recent studies [2] [9].

- Protocol: Center-Specific Model Development and Validation

- Objective: To determine whether machine learning center-specific (MLCS) models provide more accurate predictions than a single model built on aggregated national data.

- Dataset: A retrospective cohort of 4,635 patients from 6 unrelated fertility centers across the US [2].

- Method:

- For each center, train a dedicated ML model (e.g., Random Forest) using only that center's local patient data.

- Compare the performance of these MLCS models against the national SART prediction model on a held-out test set from each center.

- Use metrics that are relevant for clinical utility, including:

- Discrimination: Area Under the Receiver Operating Characteristic Curve (ROC-AUC).

- Calibration: Brier Score (closer to 0 is better).

- Overall Performance: Precision-Recall AUC (PR-AUC) and F1-score [2].

- Key Finding: MLCS models significantly improved the minimization of false positives and negatives and more appropriately assigned live birth probabilities to patients compared to the generalized national model [2].
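
The comparison step of this protocol can be sketched with plain implementations of the Brier score and F1-score, applied per center to two sets of predictions. All numbers below are invented for illustration; they are not results from [2], and the dictionary layout is an assumption.

```python
def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

def precision_recall_f1(y_true, y_prob, threshold=0.5):
    """Precision, recall and F1 after thresholding predicted probabilities."""
    predicted = [1 if p >= threshold else 0 for p in y_prob]
    tp = sum(1 for y, d in zip(y_true, predicted) if y == 1 and d == 1)
    fp = sum(1 for y, d in zip(y_true, predicted) if y == 0 and d == 1)
    fn = sum(1 for y, d in zip(y_true, predicted) if y == 1 and d == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def compare_models_by_center(test_sets):
    """Return {center: (Brier of center-specific model, Brier of national model)}."""
    return {
        center: (brier_score(d["y"], d["mlcs"]), brier_score(d["y"], d["national"]))
        for center, d in test_sets.items()
    }

# Hypothetical held-out test sets for two centers.
test_sets = {
    "center_A": {
        "y": [1, 0, 1, 0, 0],
        "mlcs": [0.7, 0.2, 0.6, 0.4, 0.1],
        "national": [0.5, 0.4, 0.4, 0.5, 0.3],
    },
    "center_B": {
        "y": [0, 1, 0, 1],
        "mlcs": [0.3, 0.8, 0.2, 0.6],
        "national": [0.4, 0.5, 0.5, 0.5],
    },
}
comparison = compare_models_by_center(test_sets)
```

Evaluating each center on its own held-out set, rather than pooling, is what exposes the per-site differences that the protocol is designed to measure.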

Center-Specific vs. Aggregated Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Mitigating Bias in Fertility Prediction Research

| Tool / Technique | Function | Application Example |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | Explains the output of any ML model by quantifying the contribution of each feature to the prediction [8]. | Identified age group and region as the top predictors of fertility preferences in a Somali population, revealing key demographic drivers [8]. |

| Machine Learning Center-Specific (MLCS) Models | A modeling approach where a unique model is trained for each clinical center or distinct subpopulation. | Outperformed a national, generalized model in predicting IVF live birth rates across 6 US fertility centers, mitigating clinical heterogeneity [2]. |

| Live Model Validation (LMV) | A validation technique using an "out-of-time" test set from a period contemporaneous with clinical model usage. | Tests for data and concept drift, ensuring a model remains applicable to current patient populations after deployment [2]. |

| Random Forest Algorithm | A robust, ensemble ML algorithm suitable for classification and regression tasks, often providing high accuracy. | Frequently used as the top-performing algorithm in fertility studies for predicting live birth [3] and oocyte yield [10]. |

| Sensitivity Analysis (Subgroup & Perturbation) | Assesses model stability and generalizability by testing its performance across different patient subgroups or with slightly altered data. | Recommended practice to ensure model robustness and identify subgroups where performance may degrade [3]. |

A significant performance gap exists between machine learning (ML) models predicting blastocyst formation and those predicting live birth outcomes in assisted reproductive technology (ART). Models for blastocyst development frequently demonstrate high accuracy by leveraging clear, early-stage morphological data [4] [11]. In contrast, live birth prediction models must account for a vastly more complex and extended sequence of biological events, leading to greater performance challenges and highlighting a critical generalization problem in fertility AI research [2] [3]. This case study analyzes the roots of this discrepancy and provides a technical troubleshooting guide to help researchers develop more robust and generalizable models.

Performance Data Comparison

The table below summarizes the performance metrics of recent models, illustrating the distinct performance tiers for different prediction tasks.

Table 1: Performance Comparison of Fertility Prediction Models

| Prediction Task | Model Type | Key Predictors | Performance (AUC) | Citation |

|---|---|---|---|---|

| Blastocyst Formation | XGBoost (Time-lapse images) | Cell stage annotations, Veeck grades, maternal age | 0.87 - 0.88 | [11] |

| Good Blastocyst Quality | XGBoost (Time-lapse images) | Cell stage annotations, Veeck grades, maternal age | 0.88 | [11] |

| Blastocyst Yield (Quantitative) | LightGBM (Cycle-level) | Number of extended culture embryos, Day 3 embryo morphology | R²: 0.673-0.676 | [4] |

| Live Birth (Fresh ET) | Random Forest (Clinical & lab data) | Female age, embryo grades, endometrial thickness, usable embryos | >0.80 | [3] |

| Live Birth (Pretreatment) | Machine Learning Center-Specific (MLCS) | Multiple clinical and patient factors | Superior to national registry model (SART) | [2] |

| Positive Pregnancy (IUI) | Linear SVM (Clinical & lab data) | Pre-wash sperm concentration, stimulation protocol, maternal age | 0.78 | [12] |

| Natural Conception | XGB Classifier (Sociodemographic/Lifestyle) | BMI, caffeine, endometriosis, chemical/heat exposure | 0.580 | [13] |

Experimental Protocols for Key Studies

Interpretable AI for Blastocyst Selection (RCT Protocol)

This randomized controlled trial (RCT) protocol outlines a method for transparent blastocyst selection [14].

- Objective: To verify the effectiveness of an interpretable AI-based method for blastocyst selection by comparing IVF outcomes between AI-selected and embryologist-selected blastocysts.

- Study Design: Single-centre, single-blind, parallel-group RCT with a 1:1 allocation ratio.

- Participants: 1100 women aged 20-35 years undergoing their first IVF/ICSI cycle.

- Intervention:

- Control Group: Blastocyst selection via traditional Gardner grading system (evaluates development stage, inner cell mass, and trophectoderm).

- AI Group: Blastocyst selection using a novel interpretable AI method that analyzes static blastocyst images based on quantitative, transparent features.

- Primary Outcome: Ongoing pregnancy rate (viable intrauterine pregnancy at ≥12 weeks gestation).

- Integration: The AI software is installed on a workstation in the IVF lab, retrieves blastocyst images, and provides evaluation and ranking results to embryologists.

Live Birth Prediction for Fresh Embryo Transfer

This study developed an ML model to predict live birth outcomes prior to fresh embryo transfer [3].

- Data Source: 51,047 ART records from a single hospital (2016-2023), preprocessed to 11,728 records with 55 pre-pregnancy features.

- Models Compared: Random Forest (RF), XGBoost, GBM, AdaBoost, LightGBM, and Artificial Neural Network (ANN).

- Model Training & Selection:

- A grid search approach with 5-fold cross-validation was used for hyperparameter tuning.

- The area under the curve (AUC) was the primary evaluation metric.

- Key Workflow Steps:

- Data filtering (fresh embryo transfer cycles, cleavage-stage transfers).

- Missing value imputation using the non-parametric missForest method.

- Tiered feature selection: data-driven criteria (p<0.05 or top-20 RF importance) followed by clinical expert validation.

- Model interpretation using partial dependence (PD), local dependence (LD), and accumulated local (AL) profiles.

Troubleshooting Guide: FAQs on Model Generalization

FAQ 1: Why does my model perform well on blastocyst prediction but poorly on live birth prediction?

Root Cause: This is primarily an outcome complexity and data scope issue.

- Blastocyst Prediction is largely dependent on embryonic factors observable in the lab (e.g., cell number, symmetry, fragmentation) [4] [11]. Your model likely excels because it uses high-quality, standardized data from a controlled environment.

- Live Birth Prediction depends on a cascade of additional, complex factors beyond the embryo itself:

- Maternal Environment: Endometrial receptivity, uterine anatomy, presence of medical conditions (e.g., endocrine disorders) [14] [3].

- Clinical Protocols: Type of endometrial preparation for frozen transfers (natural vs. artificial cycle) can impact live birth rates [15].

- Extended Timeline: The outcome is separated from the input data by a long and variable period, introducing uncontrolled variables.

Solution:

- Feature Expansion: Incorporate key maternal clinical features identified in high-performing models: female age, endometrial thickness, and number of usable embryos [3].

- Consider Transfer Strategy: Account for whether a transfer is fresh or frozen and the endometrial preparation protocol, as these can be significant confounding factors [15].

FAQ 2: My model validates internally but fails on external data from another clinic. How can I improve cross-center performance?

Root Cause: Data drift and population differences. Patient populations and clinical protocols vary significantly between fertility centers, leading to different underlying data distributions [2].

Solution:

- Adopt Center-Specific Modeling (MLCS): Research shows that machine learning center-specific (MLCS) models significantly outperform national, center-agnostic models (like the SART model) because they are tailored to local patient populations and practices [2].

- Federated Learning: If possible, train models across multiple centers without sharing raw data to learn robust, generalizable patterns while preserving data privacy.

- Continuous Validation: Implement "live model validation" (LMV) to test models on out-of-time data contemporaneous with clinical usage, monitoring for data and concept drift [2].

FAQ 3: How can I address the "black box" problem to make my model clinically acceptable?

Root Cause: Many complex ML models (e.g., deep learning) are not inherently interpretable, causing epistemic and ethical concerns that hinder clinical adoption [14].

Solution:

- Develop Interpretable AI: Prioritize models and methods where the reasoning process is transparent. One interpretable AI method for blastocyst selection uses quantitative features that are clear to embryologists [14].

- Utilize Explainability Tools: Apply techniques like SHapley Additive exPlanations (SHAP) to quantify feature importance and illustrate how individual features affect a specific prediction [11] [16]. This was used effectively in a time-lapse imaging model to ensure transparency [11].

- Feature Selection: Build more parsimonious models with fewer, clinically meaningful features. The LightGBM model for blastocyst yield was selected partly because it used only 8 key features, enhancing interpretability without sacrificing much accuracy [4].
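
To make the idea behind SHAP concrete, the sketch below computes exact Shapley values for a tiny model by enumerating every feature subset, with absent features set to a baseline value. It is a from-scratch illustration of the underlying attribution, not the SHAP library and not the method of [11] or [16]; the scoring function, feature order, and numbers are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley attribution of predict(x) - predict(baseline).

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2^n, so this is only practical for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = [x[j] if (j in subset or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in subset else baseline[j] for j in range(n)]
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi

def toy_score(features):
    """Hypothetical linear score on the log-odds scale (not a fitted model)."""
    female_age, usable_embryos, endometrial_thickness_mm = features
    return -0.1 * female_age + 0.5 * usable_embryos + 0.2 * endometrial_thickness_mm

patient = [38, 3, 9.5]          # the case being explained
reference = [33, 5, 10.0]       # assumed "average patient" baseline
contributions = shapley_values(toy_score, patient, reference)
```

The contributions always sum to the difference between the patient's score and the baseline score, which is the property that lets a clinician read them as a breakdown of one specific prediction.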

FAQ 4: What are the common pitfalls in dataset preparation that hurt model generalization?

Root Cause: Inadequate data preprocessing and feature engineering that does not account for clinical reality and data quality.

Solution:

- Robust Preprocessing:

- Clinically-Guided Feature Selection:

- Combine data-driven selection (e.g., p-values, feature importance ranking) with validation by clinical experts to eliminate biologically irrelevant variables and retain clinically critical features, even if they are less powerful statistically [3].

- Address Class Imbalance: Use techniques like SMOTE or adjusted class weights, as datasets often have many more negative outcomes (non-live-birth) than positive ones.
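
The class-weight option can be sketched as follows: weights inversely proportional to class frequency, plugged into a weighted log-loss. The weighting formula is the common "balanced" heuristic, n_samples / (n_classes * class_count); the labels and predictions are made up, and SMOTE is not shown.

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}

def weighted_log_loss(y_true, y_prob, weights, eps=1e-12):
    """Binary log-loss in which each sample counts according to its class weight."""
    total = weight_sum = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        w = weights[y]
        total += -w * (y * math.log(p) + (1 - y) * math.log(1.0 - p))
        weight_sum += w
    return total / weight_sum

# Made-up imbalanced cohort: 8 cycles without live birth, 2 with.
labels = [0] * 8 + [1] * 2
weights = balanced_class_weights(labels)

# A model that always predicts a low probability looks acceptable under the
# unweighted loss but is penalized once the minority class is up-weighted.
always_low = [0.1] * len(labels)
unweighted = weighted_log_loss(labels, always_low, {0: 1.0, 1: 1.0})
weighted = weighted_log_loss(labels, always_low, weights)
```

With these weights both classes contribute the same total weight to the loss. Note that re-weighting distorts predicted probabilities, so calibration should be re-checked afterwards.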

Workflow Visualization

Diagram 1: Fertility Model Development and Troubleshooting Workflow. This diagram outlines the key stages in developing and refining predictive models for fertility outcomes, highlighting common failure points and their solutions.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Materials for Fertility Prediction Studies

| Reagent / Material | Function in Experiment | Example in Context |

|---|---|---|

| Time-Lapse Incubators | Provides continuous, undisturbed embryo culture and generates rich morphokinetic data for image-based AI models. | Used to capture blastocyst images for interpretable AI model [14] [11]. |

| Vitrification Solutions & Carriers | Enables cryopreservation of blastocysts for frozen transfer cycles, a key variable in live birth outcome studies. | Kitazato solutions with Cryotop open system carrier used in FET study [15]. |

| Ovarian Stimulation Agents | Standardizes and controls superovulation; different protocols (e.g., recombinant FSH, clomiphene) are predictive features. | Recombinant FSH (Gonal-F), clomiphene citrate, letrozole used in IUI study [12]. |

| Sperm Preparation Media | Standardizes sperm processing; post-wash parameters (e.g., motile sperm count) are key predictors for IUI success. | Density gradient media (e.g., Gynotec Sperm filter) used for IUI cycles [12]. |

| Hormonal Assay Kits | Quantifies serum levels of hormones (e.g., hCG, LH, estradiol) for cycle monitoring and outcome confirmation. | hCG trigger (Ovidrel) and LH ovulation tests used for timing in NC-FET [15]. |

| Embryo Grading Software | Provides standardized, quantitative assessment of embryo quality (blastocyst stage, ICM, TE), crucial for model features. | Integrated into AI workstation for blastocyst evaluation and ranking [14]. |

The Impact of Limited and Non-Harmonized Feature Sets on Model Portability

Troubleshooting Guide & FAQs

Frequently Asked Questions

Q1: Why does my fertility prediction model's performance drop significantly when validated on data from a different clinic?

A: This is a classic symptom of limited model portability, primarily caused by using non-harmonized feature sets. The performance drop occurs because your model has learned patterns specific to your original dataset's "batch effects" (differences in patient demographics, clinical protocols, laboratory techniques, or equipment) rather than the true biological signals of infertility. For example, a model trained on UK/US populations showed systematic underestimation when applied to a Chinese population, and, outside fertility, acute kidney injury (AKI) prediction models exhibited cross-site performance deterioration due to population heterogeneity [1] [17]. Without harmonization, these site and population differences become confounding variables.

Q2: What are the most common sources of "non-biological variation" in multi-center fertility prediction research?

A: The common sources include:

- Patient Demographics and Clinical Protocols: Differences in inclusion/exclusion criteria, ovarian stimulation protocols, and embryo grading systems between clinics [1].

- Laboratory Techniques: Variation in hormone assay kits, semen analysis protocols, and culture conditions.

- Data Collection and Entry: Heterogeneity in electronic health record (EHR) systems and coding practices [17].

- Population Heterogeneity: Differences in genetic background, lifestyle, and underlying causes of infertility across geographic regions [1].

These factors create "batch effects" that can be mistakenly learned by models, reducing their portability [18] [19].

Q3: We have a small local dataset. Is data harmonization still feasible for us?

A: Yes, specific distributed learning approaches are designed for this scenario. The Traveling Model (TM) approach is particularly advantageous for centers with limited local datasets [18]. Unlike Federated Learning, which trains models in parallel and requires aggregation, the TM sequentially visits one center at a time for training. This method allows a model to be trained across multiple centers without sharing data and is effective even when some centers contribute very few data points (e.g., fewer than 10) [18].
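
The sequential visiting pattern can be illustrated with a toy example in which only the model parameters move between centers and each center trains on its own records. This is a simplified sketch of the general idea, not the implementation from [18]; the logistic model, learning rate, center names, and data are all assumptions.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_locally(weights, bias, local_data, lr=0.1):
    """One visit: stochastic gradient steps on a single center's records."""
    w = list(weights)
    for x, y in local_data:
        p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + bias)
        error = y - p
        w = [wi + lr * error * xi for wi, xi in zip(w, x)]
        bias += lr * error
    return w, bias

def traveling_model(centers, n_features, cycles=20, lr=0.1):
    """Visit the centers one at a time; raw data never leaves a center."""
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(cycles):
        for name in centers:
            weights, bias = train_locally(weights, bias, centers[name], lr)
    return weights, bias

def predict(weights, bias, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(weights, x)) + bias)

# Made-up centers with one standardized feature; center_C holds a single record.
centers = {
    "center_A": [([1.0], 1), ([-1.0], 0)],
    "center_B": [([2.0], 1), ([-1.5], 0), ([0.5], 1)],
    "center_C": [([-2.0], 0)],
}
weights, bias = traveling_model(centers, n_features=1)
```

Because every center updates the same traveling parameters, even the single-record center contributes to the final model, which is the property that makes the approach attractive for small sites.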

Q4: After harmonization, how can I verify that the biological information in my features has been preserved?

A: The gold standard is to test the harmonized features on a specific, biologically meaningful classification task. For instance, in one study, the effectiveness of ComBat harmonization was validated by using the harmonized radiomic features to classify different tissues (liver, spleen, bone marrow). The results showed that classification accuracy improved significantly after harmonization, demonstrating that the biological signal was not only preserved but also more accessible to the model [20]. You should apply a similar validation using a clinically relevant endpoint in your fertility research.

Troubleshooting Common Problems

Problem: Model Performance is Unstable in Distributed Learning

- Symptoms: The model performs well on some clinics' data but poorly on others; the model's performance fluctuates wildly during sequential training in a Traveling Model setup.

- Possible Cause: The model is learning scanner- or site-specific artifacts as shortcuts for prediction, a phenomenon known as "shortcut learning" [18].

- Solution: Implement HarmonyTM, a harmonization method tailored for the Traveling Model approach. It uses adversarial training to "unlearn" scanner-specific information from the model's feature representation while retaining disease-related information.

- Protocol: Integrate an adversarial network with your classifier. This network should include a "domain classification head" that tries to predict the scanner or site from the features, while your main classifier tries to predict the clinical outcome. The feature extraction process is trained to fool the domain classifier, thereby creating features invariant to the scanner [18].

- Outcome: In one study, this method improved disease classification accuracy from 72% to 76% while reducing the model's ability to identify the scanner from 53% to 30% [18].
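One common way to write down the "fool the domain classifier" idea in the protocol above is the domain-adversarial objective below. It is a sketch rather than the published formulation: the symbols $\theta_E$ (feature encoder), $\theta_C$ (main task classifier), $\theta_D$ (domain classifier), and the trade-off weight $\lambda$ are assumptions introduced here, and [18] may use a different loss to achieve the unlearning.

$$\min_{\theta_E,\,\theta_C}\;\max_{\theta_D}\;\Big[\mathcal{L}_{\text{task}}(\theta_E,\theta_C)\;-\;\lambda\,\mathcal{L}_{\text{domain}}(\theta_E,\theta_D)\Big]$$

The domain classifier minimizes $\mathcal{L}_{\text{domain}}$ (it tries to identify the site), while the encoder is pushed to increase it, so the shared features carry as little site information as possible without sacrificing the clinical task.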

Problem: Inconsistent Feature Distributions Across Multiple Sites

- Symptoms: Statistical analysis (e.g., t-tests, ANOVA) shows significant differences in the distributions of the same feature extracted from data from different scanners or sites.

- Possible Cause: Technical variability (e.g., scanner manufacturer, magnetic field strength, acquisition protocols) introduces non-biological variance, overshadowing the biological signal [19].

- Solution: Apply the ComBat harmonization method. This empirical Bayesian framework effectively adjusts for batch effects (e.g., scanner, site) while preserving the biological signal of interest [20] [19].

- Protocol:

- Feature Extraction: Extract your radiomic or deep learning features from the multi-center dataset.

- Model Batch Effects: ComBat models each feature as a combination of the overall mean, batch effects (additive and multiplicative), and biological covariates (e.g., age, patient sex).

- Adjust Data: It then removes the estimated batch effects to generate harmonized features. The method can be applied to both radiomic and deep features [19].

- Outcome: One study on abdominal MRI showed that before harmonization, over 75% of radiomic features differed significantly between manufacturers. After ComBat, no significant differences remained, and effect sizes (Cohen's f) were substantially reduced [19].
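To make the location-and-scale idea behind the protocol concrete, here is a minimal sketch in NumPy. It is deliberately simpler than ComBat: it ignores biological covariates and skips the empirical Bayes shrinkage of the batch-effect estimates, so treat it as an illustration of the adjustment step only. The function name `location_scale_harmonize` and the synthetic two-site data are assumptions made for this example.

```python
import numpy as np

def location_scale_harmonize(features, batch):
    """Align the per-batch mean and spread of each feature to pooled values.

    Simplified relative to ComBat: no covariates and no empirical Bayes
    shrinkage of the batch-effect estimates.
    """
    features = np.asarray(features, dtype=float)
    batch = np.asarray(batch)
    grand_mean = features.mean(axis=0)  # overall mean per feature

    # Standard deviation of the within-batch residuals, per feature.
    residuals = features.copy()
    for b in np.unique(batch):
        rows = batch == b
        residuals[rows] -= features[rows].mean(axis=0)
    pooled_sd = residuals.std(axis=0, ddof=1)

    harmonized = np.empty_like(features)
    for b in np.unique(batch):
        rows = batch == b
        gamma = features[rows].mean(axis=0) - grand_mean        # additive effect
        delta = features[rows].std(axis=0, ddof=1) / pooled_sd  # multiplicative effect
        harmonized[rows] = (features[rows] - grand_mean - gamma) / delta + grand_mean
    return harmonized

# Synthetic example: the second "site" is shifted and has a wider spread.
rng = np.random.default_rng(0)
site_a = rng.normal(loc=10.0, scale=1.0, size=(40, 3))
site_b = rng.normal(loc=14.0, scale=3.0, size=(60, 3))
X = np.vstack([site_a, site_b])
site = np.array([0] * 40 + [1] * 60)
X_h = location_scale_harmonize(X, site)
```

After the adjustment, both sites share the same per-feature mean and standard deviation. For real studies, a maintained ComBat implementation that also models covariates is the safer choice, because removing site differences without protecting covariates can erase genuine biological variation.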


Problem: External Model Performs Poorly on Local Population

- Symptoms: A published, high-performing pretreatment prediction model for IVF outcomes provides poorly calibrated predictions for your local patient population, often underestimating or overestimating success rates [1].

- Possible Cause: The model was developed on a population with different prevalence rates, demographic characteristics, or clinical practices.

- Solution: Perform model recalibration or develop a center-specific model.

- Protocol for Recalibration:

- External Validation: First, validate the external model on your local dataset. Calculate its AUC for discrimination and plot calibration curves to assess the degree of miscalibration [1].

- Rescale Predictions: Use a simple intercept and slope adjustment (e.g., via Platt scaling or logistic calibration) to align the model's predicted probabilities with the observed outcomes in your local data. For example, the McLernon 2022 model showed improved calibration after rescaling in a Chinese population [1].

- Protocol for Center-Specific Model: If relevant newer predictors are available in your local data but not in the external model, consider developing a local model using algorithms like XGBoost or logistic regression, which have shown good performance for IVF outcome prediction [9] [21].
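The intercept-and-slope recalibration step described above can be sketched as a two-parameter logistic regression on the logit of the external model's predictions. The code below is an illustration on simulated data, not the procedure used in [1]; the helper names (`fit_recalibration`, `recalibrate`) and the simulated underestimation are assumptions.

```python
import numpy as np

def logit(p):
    p = np.clip(p, 1e-6, 1 - 1e-6)
    return np.log(p / (1 - p))

def fit_recalibration(p_external, outcomes, n_iter=50):
    """Fit outcome ~ sigmoid(a + b * logit(p_external)) by Newton-Raphson."""
    X = np.column_stack([np.ones_like(p_external), logit(p_external)])
    w = np.array([0.0, 1.0])  # start from "no recalibration"
    for _ in range(n_iter):
        mu = 1.0 / (1.0 + np.exp(-X @ w))
        gradient = X.T @ (outcomes - mu)
        hessian = X.T @ (X * (mu * (1 - mu))[:, None])
        w = w + np.linalg.solve(hessian, gradient)
    return w  # (intercept a, slope b)

def recalibrate(p_external, w):
    return 1.0 / (1.0 + np.exp(-(w[0] + w[1] * logit(p_external))))

# Simulated local cohort in which the external model underestimates success.
rng = np.random.default_rng(0)
p_ext = rng.uniform(0.1, 0.6, size=2000)
p_true = 1.0 / (1.0 + np.exp(-(0.5 + logit(p_ext))))
y = (rng.random(2000) < p_true).astype(float)

w = fit_recalibration(p_ext, y)
p_local = recalibrate(p_ext, w)
```

A fitted intercept above zero indicates that the external model underestimates local success rates, and a slope below one would indicate predictions that are too extreme. Recalibration changes the probabilities but not their ranking, so the AUC stays the same; fit the two parameters on one local sample and check the calibration curve on another.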


Quantitative Data on Harmonization Impact

Table 1: Impact of ComBat Harmonization on Multi-Scanner Radiomics Classification Accuracy [20]

| Radiomic Feature Class | Accuracy (Unharmonized) | Accuracy (Harmonized) | Performance Increase |

|---|---|---|---|

| Gray-Level Histogram | 58.9% | 68.3% | +9.4% |

| Gray-Level Cooccurrence Matrix | 50.0% | 86.1% | +36.1% |

| Gray-Level Run-Length Matrix | 58.3% | 82.8% | +24.5% |

| Gray-Level Size-Zone Matrix | 52.8% | 85.6% | +32.8% |

| Neighborhood Gray-Tone Matrix | 53.9% | 77.2% | +23.3% |

| Multiclass Radiomic Signature | 58.3% | 84.4% | +26.1% |

Table 2: Performance of Generalized vs. Center-Specific IVF Prediction Models in a Chinese Population [1]

| Prediction Model | Area Under Curve (AUC) | Calibration Note |

|---|---|---|

| McLernon 2016 (UK-based) | 0.69 | Underestimation |

| Luke (US-based) | 0.67 | Underestimation |

| Dhillon (CARE-based) | 0.69 | Underestimation |

| McLernon 2022 (SART-based) | 0.67 | Underestimation (best after rescaling) |

| Center-Specific Model (XGBoost, Lasso, GLM) | 0.71 | Better calibration for local population |

Experimental Protocols for Key Methodologies

Protocol 1: Implementing ComBat Harmonization for Radiomic/Deep Features

This protocol is based on studies that successfully harmonized features from MRI and PET data [20] [19].

- Data Collection and Feature Extraction:

- Collect multi-center data, ensuring metadata for "batch" (e.g., scanner model, site) and relevant biological covariates (e.g., age, sex) are available.

- Extract features using a standardized pipeline (e.g., PyRadiomics for radiomic features or a pre-trained Swin Transformer for deep features).

- Apply ComBat Harmonization:

- Use the ComBat algorithm to model each feature value $Y_{ijf}$ (for feature $f$, subject $j$, batch $i$) as:

$$Y_{ijf} = \alpha_f + \gamma_{if} + X\beta_f + \delta_{if}\,\varepsilon_{ijf}$$

where $\alpha_f$ is the overall mean, $\gamma_{if}$ is the additive batch effect, $\delta_{if}$ is the multiplicative batch effect, $X$ is the matrix of covariates, $\beta_f$ is the corresponding vector of coefficients, and $\varepsilon_{ijf}$ is the error term.

- The harmonized feature $Y_{ijf}^{*}$ is then calculated by removing the estimated batch effects $\gamma_{if}$ and $\delta_{if}$.

- Validation:

Protocol 2: Setting up a Traveling Model with HarmonyTM

This protocol mitigates shortcut learning in distributed environments with limited data [18].

- Model Initialization: Initialize the model (e.g., a convolutional neural network for image analysis) at a central server or the first participating center.

- Sequential Training with Adversarial Loss:

- The model "travels" sequentially to each center. At each center k, the model is trained on the local dataset D_k.

- The key innovation is the adversarial component. The model's architecture includes:

- A feature encoder.

- A main task classifier (e.g., Parkinson's disease classification or fertility outcome prediction).

- A domain classifier (to predict the scanner or site).

- The training objective is a minimax game:

- The domain classifier is trained to correctly identify the source site from the features.

- The feature encoder is trained to excel at the main task while simultaneously "fooling" the domain classifier, making the features domain-invariant.


- Cycling: Multiple cycles through the participating centers can be performed to enhance performance.
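The sequential training and cycling steps above can be sketched as follows. This is a plain Traveling Model loop with a logistic regression stand-in; it omits the HarmonyTM adversarial domain classifier entirely, and the function names, learning rate, and synthetic centers are assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_locally(weights, X, y, lr=0.5, epochs=5):
    """A few gradient steps of logistic regression on one center's data."""
    for _ in range(epochs):
        gradient = X.T @ (sigmoid(X @ weights) - y) / len(y)
        weights = weights - lr * gradient
    return weights

def traveling_model(centers, n_features, cycles=20):
    """Pass a single model from center to center; only the weights move."""
    weights = np.zeros(n_features)
    for _ in range(cycles):
        for X_k, y_k in centers:  # the local dataset D_k never leaves center k
            weights = train_locally(weights, X_k, y_k)
    return weights

# Ten synthetic centers with only 8 patients each.
rng = np.random.default_rng(0)
true_w = np.array([1.5, -1.5])
centers = []
for _ in range(10):
    X_k = rng.normal(size=(8, 2))
    centers.append((X_k, (X_k @ true_w > 0).astype(float)))

weights = traveling_model(centers, n_features=2)
X_all = np.vstack([X_k for X_k, _ in centers])
y_all = np.concatenate([y_k for _, y_k in centers])
accuracy = float(((sigmoid(X_all @ weights) > 0.5) == y_all).mean())
```

Pooling the data at the end is done here only to score the toy example; in a real deployment each center would evaluate the model locally. Because the last center visited has the most recent influence on the weights, running several cycles helps even out that ordering effect.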

Workflow Visualization

Harmonized Traveling Model Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Portable Model Development

| Tool / Reagent | Function / Application | Example Use Case |

|---|---|---|

| ComBat | A statistical harmonization tool that removes center/scanner-specific batch effects from features using an empirical Bayesian framework. | Harmonizing radiomic features extracted from PET/CT and PET/MRI scanners from different vendors before building a classification model [20] [19]. |

| PyRadiomics | An open-source Python package for the extraction of a large set of hand-crafted radiomic features from medical images. | Standardized feature extraction from liver and spleen in abdominal MRI for a multi-center study [19]. |

| Traveling Model (TM) | A distributed learning paradigm where a single model is sequentially trained on data from one center at a time. | Enabling model training across 83 centers with very limited local data (some with <5 samples) for Parkinson's disease classification [18]. |

| HarmonyTM | An extension of the TM that uses adversarial training to "unlearn" scanner-specific information from the model's feature representation. | Improving disease classification accuracy while reducing the model's ability to identify the scanner source, preventing shortcut learning [18]. |

| Swin Transformer | A deep learning architecture that can be used as a feature extractor to generate high-dimensional deep features from image data. | Extracting 1024 deep features from each abdominal T2W MRI exam for subsequent analysis and harmonization [19]. |

Frequently Asked Questions & Troubleshooting Guides

This technical support center provides data-driven insights and methodologies to help researchers address common challenges in the field of AI for fertility prediction. The information is framed within the broader thesis of improving the generalization of fertility prediction models.

How widely adopted is AI in reproductive medicine, and what are the primary use cases?

Answer: Artificial Intelligence adoption in reproductive medicine has seen significant growth, moving from niche to mainstream use between 2022 and 2025. The primary application remains embryo selection, though usage has expanded to other areas [22].

Table: Evolution of AI Adoption and Applications (2022 vs. 2025)

| Aspect | 2022 Survey Findings | 2025 Survey Findings |

|---|---|---|

| Overall AI Usage | 24.8% of respondents used AI [22] | 53.22% (regular or occasional use); 21.64% regular use [22] |

| Primary Application | Embryo selection (86.3% of AI users) [22] | Embryo selection (32.75% of all respondents) [22] |

| Professional Familiarity | Indirect evidence of lower familiarity [22] | 60.82% reported at least moderate familiarity [22] |

| Key Emerging Benefit | Sperm selection (87.5% interest), embryo annotation (92.4% interest) [22] | Workflow optimization, medical education [22] |

Experimental Protocol for Tracking Adoption Trends: The comparative data is derived from two global, web-based questionnaires distributed through the IVF-Worldwide.com platform. The first survey was conducted from July to August 2022 (n=383), and the second from February to March 2025 (n=171). Participants included physicians, embryologists, and other professionals from six continents. Surveys were administered using Community Surveys Pro, and a verification system matched self-reported data with platform registration to eliminate duplicates. Descriptive statistics, including frequencies and percentages, were used to summarize responses. Comparative analyses used Chi-square or Fisher's exact tests to assess differences between the two survey periods [22].

What are the most significant barriers to AI adoption reported by fertility specialists?

Answer: The perceived barriers to adoption have shifted notably between 2022 and 2025. While early concerns questioned the fundamental value of AI, current challenges are more practical, focusing on implementation costs, training deficiencies, and ethical considerations [22].

Table: Key Barriers and Risks to AI Adoption in Reproductive Medicine

| Category | Specific Barrier/Risk | 2025 Survey Prevalence |

|---|---|---|

| Practical Barriers | High implementation cost | 38.01% [22] |

| | Lack of training | 33.92% [22] |

| Ethical & Legal Risks | Over-reliance on technology | 59.06% [22] |

| | Data privacy concerns | Significant concern [22] |

| Perception Shifts | Perceived value (Lack of proven utility) | Less dominant concern in 2025 [22] |

Troubleshooting Guide: Addressing Adoption Barriers

- Problem: High Implementation Cost

- Action Plan: Develop a phased implementation strategy. Begin with a pilot project focusing on a single, high-impact application like embryo selection to demonstrate ROI before expanding [22].

- Problem: Lack of Training

- Action Plan: Create a continuous education program utilizing multiple channels. Encourage team participation in specialized AI conferences (cited by 35.67% for familiarity) and subscription to key academic journals (cited by 32.75%) [22].

- Problem: Over-reliance on Technology (Ethical Risk)

Which AI models and tools are most relevant for fertility prediction research?

Answer: Research utilizes a diverse set of AI models, from time-series forecasting to complex ensemble methods, each suited to different prediction tasks such as live birth outcomes or demographic trends.

Table: AI Models and Applications in Fertility Research

| Model Name | Primary Application | Key Performance Metric | Research Context |

|---|---|---|---|

| XGBoost [24] [16] | Predicting clinical pregnancy from IVF clinical data [24] | AUC: 0.999 (95% CI: 0.999-1.000) for pregnancy prediction [24] | Trained on data from 2,625 women; uses clinical and hormonal factors [24] |

| LightGBM [24] | Predicting live birth from IVF clinical data [24] | AUC: 0.913 (95% CI: 0.895–0.930) for live birth prediction [24] | Trained on data from 2,625 women; uses clinical and hormonal factors [24] |

| Prophet [16] | Forecasting annual birth totals (Time-series) [16] | RMSE = 6,231.41 (CA), MAPE = 0.83% (CA) [16] | Used to project state-level births through 2030; outperformed linear regression [16] |

| BELA (AI System) [23] | Predicting embryo ploidy (euploidy/aneuploidy) [23] | Higher accuracy than predecessor (STORK-A); validated on external datasets [23] | Analyzes time-lapse video images and maternal age; allows for non-invasive assessment [23] |

| DeepEmbryo [23] | Predicting pregnancy outcomes from static embryo images [23] | 75.0% accuracy in predicting pregnancy outcomes [23] | Accessible tool for labs without time-lapse incubators [23] |

Experimental Protocol for Developing a Fertility Prediction Model: This protocol is based on a 2025 study that developed models to predict IVF pregnancy outcomes [24].

- Dataset Curation: Clinical data from 2,625 women who underwent fresh cycle IVF-ET between 2016 and 2022 was used. Inclusion criteria were age (20-40 years) and fresh cycle transfer. Exclusion criteria were systemic diseases (e.g., hypertension, diabetes), male-factor infertility, chromosomal abnormalities, and records with significant data gaps [24].

- Feature Collection: A wide range of features was collected, including patient age, infertility factors, BMI, basic female sex hormone levels (on day 2-3 of menstruation), karyotype analysis, and parameters from the IVF cycle (e.g., Gn dose, oocytes retrieved, number of high-quality embryos) [24].

- Model Training and Validation: The dataset was divided into a training set (80%) and a test set (20%). Multiple machine learning models (XGBoost, LightGBM, Random Forest, etc.) were constructed and evaluated. The model with the highest Area Under the Curve (AUC) for the specific outcome (pregnancy or live birth) was selected [24].
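Since model selection in this protocol rests on the test-set AUC and its confidence interval, it can help to see how both are computed. The sketch below uses the pairwise-ranking definition of AUC and a percentile bootstrap; it is not the code from [24], and the simulated scores are an assumption.

```python
import numpy as np

def auc_score(y_true, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    # Correctly ordered pairs count 1, tied pairs count 0.5.
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (len(pos) * len(neg)))

def bootstrap_auc_ci(y_true, scores, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the test-set AUC."""
    rng = np.random.default_rng(seed)
    y_true, scores = np.asarray(y_true), np.asarray(scores)
    stats = []
    while len(stats) < n_boot:
        idx = rng.integers(0, len(y_true), len(y_true))
        if y_true[idx].min() == y_true[idx].max():
            continue  # resample contains a single class; draw again
        stats.append(auc_score(y_true[idx], scores[idx]))
    low, high = np.quantile(stats, [alpha / 2, 1 - alpha / 2])
    return float(low), float(high)

toy_auc = auc_score([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])

# Simulated held-out test set: positives tend to receive higher scores.
rng = np.random.default_rng(1)
y_test = rng.integers(0, 2, size=200)
test_scores = rng.normal(loc=y_test * 1.0, scale=1.0)
test_auc = auc_score(y_test, test_scores)
ci_low, ci_high = bootstrap_auc_ci(y_test, test_scores)
```

In the toy call, three of the four positive-negative pairs are ordered correctly, so `toy_auc` is 0.75. A wide interval on the 20% test split is a signal that the ranking of candidate models may not be stable.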

How can we improve the generalization of fertility prediction models for diverse populations?

Answer: Improving model generalization is a critical, open challenge. Key strategies include employing explainable AI (XAI) techniques to understand model drivers, using federated learning to train on more diverse datasets without centralizing sensitive data, and conducting rigorous external validation [16] [25].

Methodology for an Explainable AI (XAI) Approach: This methodology explains the process used in a 2025 study that combined forecasting with interpretability to understand fertility trends [16].

- Data Preparation: Obtain state-level reproductive health statistics (e.g., annual births, abortions, miscarriages). Filter the data for the populations of interest (e.g., California and Texas from 1973-2020). Handle missing values via forward-filling or interpolation [16].

- Forecasting and Regression: Utilize the Prophet model for time-series forecasting of annual births. In parallel, apply the XGBoost regression model to understand the non-linear relationships between predictors (e.g., abortion totals, miscarriage totals) and birth outcomes [16].

- Interpretability with SHAP: Calculate SHapley Additive exPlanations (SHAP) values for each predictor in the XGBoost model. SHAP values quantify the contribution of each feature to the model's predictions for individual outcomes. Generate feature importance plots and dependence plots to interpret how predictors influence birth totals [16].

- Validation: Benchmark the performance of the advanced models (Prophet, XGBoost) against a baseline linear regression model using Root Mean Squared Error (RMSE) and Mean Absolute Percentage Error (MAPE) [16].
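For reference, the two benchmark metrics named in the validation step can be computed as below. The birth totals are made-up numbers used only to show the arithmetic; they are not taken from [16].

```python
import math

def rmse(actual, predicted):
    """Root Mean Squared Error, in the same units as the target."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

def mape(actual, predicted):
    """Mean Absolute Percentage Error, in percent (actual values must be non-zero)."""
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical annual totals and forecasts (illustrative values only).
births_actual = [100.0, 200.0, 400.0]
births_forecast = [110.0, 190.0, 400.0]

forecast_rmse = rmse(births_actual, births_forecast)
forecast_mape = mape(births_actual, births_forecast)
```

RMSE depends on the scale of the series, so it is not comparable between a large and a small state, whereas MAPE is scale-free. Reporting both, as the study does, covers the two views.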

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential AI Tools and Analytical Components for Fertility Research

| Tool / Component | Function in Research | Specific Example / Note |

|---|---|---|

| XGBoost / LightGBM | Powerful, gradient-boosting frameworks for building predictive models on structured clinical data [24] [16]. | Achieved high AUC (0.999) for pregnancy prediction in a 2025 study [24]. |

| Prophet | A time-series forecasting procedure for analyzing trends and making projections on temporal data [16]. | Used to forecast annual state-level births through 2030 [16]. |

| SHAP (SHapley Additive exPlanations) | An explainable AI (XAI) method to interpret the output of complex machine learning models [16]. | Identified miscarriage totals and abortion access as key drivers of fertility outcomes [16]. |

| Convolutional Neural Networks (CNNs) | Deep learning models ideal for analyzing image-based data, such as embryo micrographs or time-lapse videos [23]. | Core technology behind tools like BELA and DeepEmbryo for embryo selection [23]. |

| BELA System | An automated AI tool that predicts embryo ploidy status using time-lapse imaging and maternal age [23]. | Trained on nearly 2,000 embryos; offers a non-invasive alternative to PGT-A [23]. |

| DeepEmbryo | An AI tool that predicts pregnancy outcomes using only three static embryo images, increasing accessibility [23]. | Demonstrated 75.0% accuracy, useful for labs without time-lapse systems [23]. |

Architectural Innovations and Feature Engineering for Robust Models

FAQs: Model Selection and Troubleshooting for Fertility Prediction

FAQ 1: How do I choose between a CNN, a Tree-Based Ensemble, and a Transformer for my fertility prediction project?

The choice depends on your data type, dataset size, and the specific predictive task.

| Model Architecture | Best For | Data Requirements | Key Strengths | Common Pitfalls |

|---|---|---|---|---|

| Tree-Based Ensembles (e.g., Random Forest, XGBoost) | Tabular clinical data (age, hormone levels, embryo grade) [26]. | Low; performs well on small-to-midsize datasets [2]. | High interpretability; handles mixed data types; strong performance on tabular data [26] [27]. | May struggle with very complex, non-linear relationships compared to deep learning. |

| Convolutional Neural Networks (CNNs) | Image-based data (embryo micrographs, ultrasound images) [28]. | Moderate to High; requires many images for training [29]. | Automatic feature extraction from images; proven success in computer vision [30] [31]. | "Black box" nature; requires large, labeled image datasets; computationally intensive [30]. |

| Transformers | Complex, multi-modal data or very large datasets [32] [33]. | Very High; requires large datasets to avoid overfitting [29]. | Captures complex, long-range dependencies in data; highly scalable [33]. | Computationally expensive; requires significant expertise to implement and tune [34]. |

Troubleshooting Tip: If you have a small dataset (<100,000 samples), start with a tree-based model like Random Forest or XGBoost, which have shown strong performance in clinical settings [2] [26]. Reserve CNNs and Transformers for projects with access to very large, image-rich datasets.

FAQ 2: My model performs well on training data but poorly on new patient data. How can I improve generalization?

This is a classic case of overfitting. Here are several strategies to improve your model's generalization:

- Data Augmentation: If using image data, artificially expand your training set by applying random rotations, flips, and crops to your images. This teaches the model to recognize objects regardless of their orientation or position [30].

- Regularization Techniques:

- Dropout: Randomly "turn off" a percentage of neurons in the network during training. This prevents the model from becoming over-reliant on any single neuron and forces it to learn more robust features [30].

- SMOTE (Synthetic Minority Oversampling Technique): Strictly a resampling method rather than a regularizer. If your dataset has imbalanced outcomes (e.g., many more failed cycles than live births), use SMOTE to generate synthetic examples of the minority class, applying it to the training split only. This reduces the model's bias toward the majority class [27].

- Center-Specific Modeling: A model trained on national data may not generalize well to a specific clinic due to population differences. Research shows that building Machine Learning, Center-Specific (MLCS) models can significantly improve performance over a one-size-fits-all model by accounting for local patient demographics and practices [2].

- Feature Selection: Use optimization techniques like Particle Swarm Optimization (PSO) or tree-based feature importance to identify and use only the most clinically relevant features. This reduces noise and complexity, helping the model focus on what truly matters [32].
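To show what SMOTE does mechanically, here is a minimal NumPy sketch of its core step: each synthetic sample is placed on the line segment between a minority-class sample and one of its nearest minority-class neighbors. The function name `smote_sketch` and the toy data are assumptions; a maintained library implementation would normally be used in practice.

```python
import numpy as np

def smote_sketch(X_minority, n_new, k=5, seed=0):
    """Generate n_new synthetic minority samples by neighbor interpolation."""
    rng = np.random.default_rng(seed)
    X_minority = np.asarray(X_minority, dtype=float)
    n = len(X_minority)
    k = min(k, n - 1)

    # Pairwise distances within the minority class (a sample is not its own neighbor).
    dist = np.linalg.norm(X_minority[:, None, :] - X_minority[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)
    neighbors = np.argsort(dist, axis=1)[:, :k]

    base = rng.integers(0, n, size=n_new)                     # sample to start from
    chosen = neighbors[base, rng.integers(0, k, size=n_new)]  # one of its k neighbors
    gap = rng.random((n_new, 1))                              # position along the segment
    return X_minority[base] + gap * (X_minority[chosen] - X_minority[base])

# Toy minority class (e.g., live births) with two features.
rng = np.random.default_rng(42)
X_min = rng.normal(size=(12, 2))
X_synthetic = smote_sketch(X_min, n_new=30)
```

Apply the oversampling after the train/test split and only to the training portion. Synthetic points built from test-set patients would leak information and inflate the reported performance.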

FAQ 3: How can I make my "black box" model's predictions more interpretable for clinicians?

Interpretability is crucial for clinical adoption. For tree-based models, you can directly visualize feature importance. For all models, especially CNNs and Transformers, use SHapley Additive exPlanations (SHAP) analysis.

SHAP quantifies the contribution of each input feature to the final prediction for an individual patient [32] [27]. This allows you to generate explanations like: "The model predicted a 65% probability of live birth, primarily due to the patient's young age (28) and high embryo grade (4AA)." Providing this context builds trust and facilitates clinical decision-making.
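The quantity that SHAP approximates can be computed exactly for a handful of features. The sketch below enumerates all feature coalitions and replaces "absent" features with a baseline value; it is not the `shap` library, and the scoring function, its coefficients, and the patient values are hypothetical numbers with no clinical meaning.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                with_i = [x[j] if (j in coalition or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in coalition else baseline[j] for j in range(n)]
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi

# Hypothetical score: features are [age, embryo grade score, endometrial thickness].
def toy_model(v):
    return 0.9 - 0.02 * v[0] + 0.05 * v[1] + 0.01 * v[2]

patient = [28.0, 4.0, 10.0]
reference = [35.0, 3.0, 9.0]  # assumed "average patient" baseline
contributions = shapley_values(toy_model, patient, reference)
```

The contributions always sum to the difference between the patient's prediction and the baseline prediction, which is what makes statements such as "age added this much to the predicted probability" well defined. Exact enumeration scales exponentially with the number of features, which is why practical tools rely on approximations.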

Experimental Protocols for Fertility Prediction Models

Protocol 1: Developing a Tree-Based Ensemble for Live Birth Prediction

This protocol is based on a study that achieved an AUC > 0.8 using Random Forest [26].

1. Data Preprocessing:

- Source: 11,728 records of fresh embryo transfer cycles with 55 pre-pregnancy features [26].

- Key Features: Female age, grades of transferred embryos, number of usable embryos, endometrial thickness [26].

- Handling Missing Data: Use non-parametric imputation methods like missForest to handle missing values without introducing bias [26].

- Class Balancing: Apply SMOTE to the training data to balance the ratio of live birth vs. non-live birth outcomes [27].

2. Model Training & Evaluation:

- Algorithms: Train and compare multiple models including Random Forest (RF), XGBoost, and LightGBM [26].

- Hyperparameter Tuning: Use a grid search approach with 5-fold cross-validation to optimize hyperparameters. The area under the ROC curve (AUC) should be the primary evaluation metric [26].

- Validation: Strictly separate data into training, validation, and testing sets. Perform out-of-time validation on a hold-out dataset from a different time period to check real-world applicability (Live Model Validation) [2].

Protocol 2: Implementing a CNN or Transformer for Embryo Selection

This protocol is based on AI models for analyzing embryo images [28].

1. Data Preparation:

- Image Standardization: Collect a large dataset of time-lapse embryo images or blastocyst micrographs. Standardize image size and lighting conditions.

- Annotation: Each image must be labeled with the known outcome (e.g., implantation success, blastocyst formation).

2. Model Selection & Training:

- CNN Setup: Use a pre-trained architecture (e.g., EfficientNet). Replace the final classification layer and fine-tune the network on your embryo image dataset [29].

- Transformer Setup: For a multi-modal approach, a model like the TabTransformer can be used. It combines transformer-based processing for categorical clinical data with other numerical features [32].

- Feature Selection: Integrate Particle Swarm Optimization (PSO) into your pipeline to select a near-optimal subset of features from both images and clinical data before training the final model [32].

- Training: Use transfer learning to speed up training. Monitor for overfitting by tracking performance on a validation set.

Model Selection and Integration Workflow

Multi-Modal Data Integration for IVF Prediction

Research Reagent Solutions: Essential Materials for Fertility AI Research

| Item / Technique | Function / Purpose | Application Example |

|---|---|---|

| Tree-Based Ensembles (XGBoost, LightGBM) | Powerful machine learning algorithms for structured/tabular data. They often provide state-of-the-art results for classification and regression tasks. | Predicting live birth outcomes from patient clinical data (age, BMI, embryo grade) [26] [27]. |

| Convolutional Neural Network (CNN) | A class of deep neural networks most commonly applied to analyzing visual imagery. It automatically and adaptively learns spatial hierarchies of features. | Analyzing embryo or blastocyst images to assign a viability score for embryo selection [28]. |

| Transformer Architecture | A model architecture that uses self-attention mechanisms to weigh the importance of different parts of the input data, excelling at capturing long-range dependencies. | Building a unified model that integrates both clinical data and image-based features for a holistic prediction [32]. |

| SHAP (SHapley Additive exPlanations) | A game theoretic approach to explain the output of any machine learning model. It connects optimal credit allocation with local explanations. | Interpreting a model's prediction to understand which factors (e.g., female age, embryo quality) most influenced the outcome [32] [27]. |

| SMOTE | A synthetic data generation technique to balance class distribution in a dataset. It creates new, synthetic examples from the minority class. | Addressing class imbalance in a dataset where successful live births are less frequent than unsuccessful cycles [27]. |

| Particle Swarm Optimization (PSO) | A computational method that optimizes a problem by iteratively trying to improve a candidate solution with regard to a given measure of quality. | Selecting the most relevant combination of clinical and image-derived features to improve model accuracy and reduce overfitting [32]. |

Frequently Asked Questions (FAQs)

General Feature Selection Troubleshooting

Q1: My model is overfitting despite using feature selection. What could be wrong? A common issue is conducting feature selection on the entire dataset before splitting it into training and testing sets, which leaks information. Always perform feature selection within each fold of cross-validation or on the training set only. Using Permutation Feature Importance on training data can falsely highlight irrelevant features if the model has overfit [35] [36]. Ensure you are using a held-out test set for final evaluation.
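The leakage described in Q1 is easy to reproduce. The sketch below runs cross-validation on pure noise, once with features chosen on the full dataset and once with features chosen inside each training fold. The correlation filter and nearest-centroid classifier are simple stand-ins picked to keep the example short, and all names and sizes are assumptions.

```python
import numpy as np

def top_k_by_correlation(X, y, k):
    """Indices of the k features most correlated (in absolute value) with y."""
    Xc, yc = X - X.mean(axis=0), y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0)) * np.sqrt((yc ** 2).sum()) + 1e-12
    corr = (Xc * yc[:, None]).sum(axis=0) / denom
    return np.argsort(-np.abs(corr))[:k]

def centroid_accuracy(X_tr, y_tr, X_te, y_te):
    """Nearest-centroid classifier accuracy on the test rows."""
    c0, c1 = X_tr[y_tr == 0].mean(axis=0), X_tr[y_tr == 1].mean(axis=0)
    closer_to_1 = np.linalg.norm(X_te - c1, axis=1) < np.linalg.norm(X_te - c0, axis=1)
    return (closer_to_1.astype(int) == y_te).mean()

def cv_accuracy(X, y, k_features, select_inside_fold, n_folds=5, seed=0):
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    leaked_cols = top_k_by_correlation(X, y, k_features)  # sees every row, test rows included
    scores = []
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        if select_inside_fold:
            cols = top_k_by_correlation(X[train_idx], y[train_idx], k_features)
        else:
            cols = leaked_cols
        scores.append(centroid_accuracy(X[train_idx][:, cols], y[train_idx],
                                        X[test_idx][:, cols], y[test_idx]))
    return float(np.mean(scores))

# Pure noise: no feature is truly related to the outcome.
rng = np.random.default_rng(0)
X_noise = rng.normal(size=(60, 2000))
y_noise = np.tile([0, 1], 30)

leaky_acc = cv_accuracy(X_noise, y_noise, k_features=10, select_inside_fold=False)
honest_acc = cv_accuracy(X_noise, y_noise, k_features=10, select_inside_fold=True)
```

Because the data contain no signal, the honest estimate should sit near chance, while the leaky estimate is typically far higher even though nothing real was learned. The same pattern applies to any selection method, including PSO and importance-based filters.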

Q2: How do I handle highly correlated features in selection? Highly correlated features can skew the results of some selection methods. PCA inherently handles this by creating uncorrelated components [37] [38]. For Permutation Importance, consider using the conditional permutation approach, which accounts for feature dependencies, though it is more complex to implement [36]. Alternatively, a pre-processing step to remove highly correlated features based on a simple correlation matrix can be effective.
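The correlation-matrix pre-processing step mentioned at the end of Q2 can be sketched as a greedy filter. The threshold of 0.9 and the function name `drop_correlated` are assumptions; the appropriate cut-off depends on the dataset.

```python
import numpy as np

def drop_correlated(X, threshold=0.9):
    """Greedily keep features whose |correlation| with every kept feature is <= threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    keep = []
    for j in range(X.shape[1]):
        if all(corr[j, i] <= threshold for i in keep):
            keep.append(j)
    return keep

# Synthetic example: feature 1 is a near-duplicate of feature 0.
rng = np.random.default_rng(0)
x0 = rng.normal(size=500)
x1 = x0 + 0.01 * rng.normal(size=500)
x2 = rng.normal(size=500)
X_demo = np.column_stack([x0, x1, x2])

kept = drop_correlated(X_demo)
```

The filter keeps whichever member of a correlated pair comes first, so order the columns by clinical preference before running it. As with any selection step, compute the correlation matrix on the training data only.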

Q3: Which feature selection method is the best for fertility prediction models? There is no single best method; each has strengths. For high-dimensional data (many features), PCA is excellent for compression and noise reduction [38] [39]. For identifying the most predictive subset of original features, PSO is a powerful global search tool [40]. To understand which features your final model relies on most, Permutation Importance is model-agnostic and reliable [35] [36]. A hybrid approach, as demonstrated in infertility research, often yields the best results [40].

Q4: Why does PCA not directly give me a subset of my original features? PCA is a feature extraction technique, not a strict feature selection method. It creates new features (principal components) that are linear combinations of all original features [37] [38]. If your goal is to select a subset of the original features (e.g., for model interpretability), you should use the loadings from the first component(s) to identify and retain the original features with the highest absolute coefficients [37].

PCA-Specific Issues

Q5: Should I scale my data before applying PCA? Yes, it is critical to scale your data (e.g., standardization) before PCA. PCA is sensitive to the variance of features, and if features are on different scales, those with larger ranges will dominate the first principal components, regardless of their true importance [38].

Q6: How many principal components should I retain? The number of components is a trade-off between dimensionality reduction and information retention. A common approach is to choose the number of components that capture a high percentage (e.g., 95-99%) of the total variance in the data. You can use a scree plot to visually identify the "elbow," where the marginal gain in explained variance drops [37] [38].

PSO-Specific Issues

Q7: How do I choose parameters for PSO (inertia weight, cognitive/social coefficients)? Parameter selection significantly impacts PSO performance. The inertia weight (w) should be less than 1 to prevent divergence. Typical values for the cognitive (c1) and social (c2) coefficients are between 1 and 3. The constriction coefficient method is one approach for deriving balanced parameters [41]. For fertility prediction models, you may need to tune these parameters specifically for your dataset [40].

Q8: My PSO algorithm converges to a local optimum. How can I improve exploration? This indicates an imbalance between exploration and exploitation. Try increasing the inertia weight to encourage global search (a lower inertia weight favors local search), or adjust the cognitive and social coefficients. A higher cognitive coefficient favors personal best positions (exploration), while a higher social coefficient favors the swarm's best position (exploitation) [41] [42]. You can also implement adaptive PSO (APSO), where parameters like the inertia weight change during the run to transition from exploration to exploitation [41].

Permutation Importance Issues

Q9: The Permutation Importance for my two highly correlated features is low. Why? When features are correlated, permuting one feature alone may not significantly increase the model error because the model can still get similar information from the correlated feature. This is a known limitation of the standard (marginal) Permutation Importance. The conditional Permutation Importance method was developed to address this by accounting for feature dependencies [36].

Q10: What is a significant value for Permutation Importance? A good practice is to run the permutation process multiple times (e.g., 30 repeats) to get a distribution of importance scores [35]. A feature is generally considered important if the mean importance score is positive and its distribution (e.g., mean minus two standard deviations) is clearly above zero. This helps distinguish true importance from random noise [35].
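The repeat-and-compare rule in Q10 can be sketched as follows. The example fits a least-squares model as a stand-in for your own predictor and measures importance as the increase in mean squared error on held-out data; the function names and the synthetic data are assumptions.

```python
import numpy as np

def mse(y_true, y_pred):
    return float(np.mean((y_true - y_pred) ** 2))

def permutation_importance_sketch(predict, X, y, n_repeats=30, seed=0):
    """Mean and standard deviation of the error increase when each feature is shuffled."""
    rng = np.random.default_rng(seed)
    baseline_error = mse(y, predict(X))
    increases = np.zeros((X.shape[1], n_repeats))
    for j in range(X.shape[1]):
        for r in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X[:, j])  # break the link between feature j and y
            increases[j, r] = mse(y, predict(X_perm)) - baseline_error
    return increases.mean(axis=1), increases.std(axis=1)

# Synthetic data: only feature 0 drives the outcome.
rng = np.random.default_rng(0)
X_train, X_test = rng.normal(size=(200, 3)), rng.normal(size=(200, 3))
y_train = 3.0 * X_train[:, 0] + rng.normal(scale=0.1, size=200)
y_test = 3.0 * X_test[:, 0] + rng.normal(scale=0.1, size=200)

design = np.column_stack([np.ones(len(X_train)), X_train])
coef, *_ = np.linalg.lstsq(design, y_train, rcond=None)

def predict(X):
    return np.column_stack([np.ones(len(X)), X]) @ coef

imp_mean, imp_sd = permutation_importance_sketch(predict, X_test, y_test)
clearly_important = imp_mean - 2 * imp_sd > 0
```

Here the importance is computed on held-out rows, which avoids rewarding features the model has merely memorized. The "mean minus two standard deviations" rule is a heuristic, not a formal significance test.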

Experimental Protocols & Methodologies

Protocol for Principal Component Analysis (PCA)

Objective: To reduce dimensionality and noise in a high-dimensional fertility dataset prior to model training.

Materials:

- Dataset (e.g., patient hormone levels, ultrasound metrics, embryo morphology scores).

- StandardScaler from scikit-learn.

- PCA from sklearn.decomposition.

Methodology:

- Data Preprocessing: Handle missing values and scale all features to have zero mean and unit variance using StandardScaler() [38]. Fit the scaler on the training data only and reuse it for the test data.

- PCA Fitting: Fit the PCA model to the scaled training data. Do not fit on the entire dataset to avoid data leakage.

- Component Selection: Determine the optimal number of components (k) by analyzing the cumulative explained variance ratio, targeting ~95-99% variance retention [38].

- Transformation: Transform the scaled training and test features into the new k-dimensional subspace using the fitted PCA object.

- Model Training: Train your predictive model (e.g., Random Forest, Neural Network) on the transformed training features.
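
The protocol above can be sketched as a single scikit-learn pipeline, which guarantees that the imputer, scaler, and PCA are fitted on the training split only. This is a minimal sketch under stated assumptions: the data are synthetic, median imputation stands in for whatever missing-value strategy suits the real dataset, and the 95% variance target is one point in the range mentioned above.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a high-dimensional clinical dataset.
X, y = make_classification(
    n_samples=400, n_features=30, n_informative=8, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

pipe = make_pipeline(
    SimpleImputer(strategy="median"),           # handle missing values
    StandardScaler(),                           # zero mean, unit variance
    PCA(n_components=0.95, svd_solver="full"),  # keep enough components for 95% variance
    RandomForestClassifier(n_estimators=200, random_state=0),
)
pipe.fit(X_train, y_train)  # every step is fitted on the training split only

pca = pipe.named_steps["pca"]
k = pca.n_components_
retained = pca.explained_variance_ratio_.sum()
test_accuracy = pipe.score(X_test, y_test)
print(f"k = {k}, variance retained = {retained:.3f}, test accuracy = {test_accuracy:.3f}")
```

Passing a float to `n_components` lets PCA choose k from the cumulative explained variance, so the component-selection step does not need a separate loop.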

Protocol for Particle Swarm Optimization (PSO) for Feature Selection

Objective: To identify an optimal subset of features from the original set that maximizes model performance for fertility outcome prediction.

Materials:

- Dataset.

- A predefined predictive model (e.g., Random Forest Classifier).

- An objective function (e.g., maximization of cross-validation accuracy).

Methodology:

- Swarm Initialization: Initialize a population (swarm) of particles. Each particle's position (Xi) is a binary vector representing a feature subset (1=include, 0=exclude). Initialize velocities (Vi) randomly [41] [42].

- Fitness Evaluation: For each particle, train and evaluate a model using the feature subset it represents. The model's performance (e.g., accuracy from 5-fold CV on the training data) is the particle's fitness [40].

- Update Personal & Global Best: Compare each particle's current fitness to its personal best (pbest). Update pbest if the current fitness is better. Identify the swarm's global best position (gbest) [41] [42].

- Update Velocity and Position: For each particle and dimension, update its velocity and position using the standard PSO equations [41] [42], where w is the inertia weight, c1 and c2 are the cognitive and social coefficients, and r1 and r2 are random numbers drawn uniformly from [0, 1]:

- Velocity Update: V_i(t+1) = w * V_i(t) + c1 * r1 * (pbest_i - X_i(t)) + c2 * r2 * (gbest - X_i(t))

- Position Update: X_i(t+1) = X_i(t) + V_i(t+1)

- (For binary feature selection, a sigmoid function is often applied to the updated velocity to convert it to a probability of including each feature; sampling from that probability then replaces the additive position update.)

- Termination: Repeat steps 2-4 until a stopping criterion is met (e.g., max iterations, no improvement in gbest).

- Final Evaluation: Train a final model using the feature subset defined by the final gbest and evaluate it on the held-out test set.
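
The steps above can be sketched in a few dozen lines. This is a minimal illustration, not a reference implementation: it uses the binary PSO variant in which each bit is sampled with probability sigmoid(velocity), the data are synthetic, and the swarm size, iteration count, and coefficients (w=0.7, c1=c2=1.5) are small assumed values chosen so the example runs quickly. The function names `fitness` and `binary_pso` are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score, train_test_split


def fitness(mask, X, y):
    """Step 2: mean 5-fold CV accuracy on the training data for one feature subset."""
    if not mask.any():  # an empty subset cannot be evaluated
        return 0.0
    model = RandomForestClassifier(n_estimators=25, random_state=0)
    return cross_val_score(model, X[:, mask], y, cv=5).mean()


def binary_pso(X, y, n_particles=6, n_iter=4, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = np.random.default_rng(seed)
    shape = (n_particles, X.shape[1])
    pos = rng.random(shape) < 0.5        # step 1: binary positions (True = include)
    vel = rng.uniform(-1.0, 1.0, shape)  # step 1: random initial velocities
    pbest = pos.copy()
    pbest_fit = np.array([fitness(p, X, y) for p in pos])
    best = pbest_fit.argmax()
    gbest, gbest_fit = pbest[best].copy(), pbest_fit[best]

    for _ in range(n_iter):
        r1, r2 = rng.random(shape), rng.random(shape)
        x = pos.astype(float)
        # Step 4: standard velocity update.
        vel = w * vel + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        vel = np.clip(vel, -6.0, 6.0)    # keep sigmoid probabilities away from 0 and 1
        # Binary variant: sample each bit with probability sigmoid(velocity).
        pos = rng.random(shape) < 1.0 / (1.0 + np.exp(-vel))
        # Steps 2-3: evaluate, then update personal and global bests.
        fit = np.array([fitness(p, X, y) for p in pos])
        improved = fit > pbest_fit
        pbest[improved] = pos[improved]
        pbest_fit[improved] = fit[improved]
        best = pbest_fit.argmax()
        if pbest_fit[best] > gbest_fit:
            gbest, gbest_fit = pbest[best].copy(), pbest_fit[best]
    return gbest, gbest_fit


X, y = make_classification(n_samples=300, n_features=12, n_informative=4, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

gbest, cv_acc = binary_pso(X_train, y_train)

# Final evaluation: retrain on the selected features, score on the untouched test set.
final_model = RandomForestClassifier(n_estimators=25, random_state=0)
final_model.fit(X_train[:, gbest], y_train)
test_acc = final_model.score(X_test[:, gbest], y_test)
print("selected features:", np.flatnonzero(gbest))
print(f"CV accuracy: {cv_acc:.3f}, held-out accuracy: {test_acc:.3f}")
```

Because the fitness function only ever sees the training split, the held-out accuracy remains an unbiased check on the selected subset.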

Protocol for Permutation Feature Importance

Objective: To evaluate the contribution of each feature to the performance of a trained fertility prediction model.

Materials:

- A trained model.

- A held-out test set (X_test, y_test) not used during model training or feature selection.

- A performance metric (L) such as Mean Absolute Error or F1-Score.

- permutation_importance from sklearn.inspection.

Methodology:

- Baseline Score: Calculate the baseline error (e_orig) of the model on the unmodified test set [35] [36].

- Permutation: For each feature j:

- Randomly shuffle the values of feature j in the test set to create a corrupted dataset (X_perm,j). This breaks the relationship between feature j and the target.

- Calculate the model's error (e_perm,j) on this permuted dataset.

- Compute the importance score for feature j as the increase in error: FI_j = e_perm,j - e_orig [35]. (For a metric where higher is better, like accuracy, use the drop in score instead: FI_j = s_orig - s_perm,j.)

- Repetition & Aggregation: Repeat the permutation process (e.g., n_repeats=30) to get a distribution of importance scores for each feature. The final importance is the mean of these repetitions, and the standard deviation indicates its stability [35].

- Interpretation: Features with a high positive mean importance are the most critical. The results can be visualized with a boxplot or bar chart.
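
A minimal sketch of this protocol with scikit-learn follows. The data are synthetic (with `shuffle=False`, the three informative features are columns 0 to 2), and the Random Forest and the F1 scoring choice are assumptions made for illustration. Because F1 is a higher-is-better score, scikit-learn reports importance as the drop in score after permutation.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic stand-in data: columns 0-2 are informative, columns 3-7 are noise.
X, y = make_classification(
    n_samples=600, n_features=8, n_informative=3, n_redundant=0,
    shuffle=False, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)
model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X_train, y_train)

# Permute each feature 30 times on the held-out test set.
result = permutation_importance(
    model, X_test, y_test, scoring="f1", n_repeats=30, random_state=0
)

# Rank features by mean importance; the standard deviation indicates stability.
order = np.argsort(result.importances_mean)[::-1]
for j in order:
    print(f"feature {j}: {result.importances_mean[j]:.3f} "
          f"+/- {result.importances_std[j]:.3f}")
```

The per-repeat scores in `result.importances` can be passed directly to a boxplot for the visualisation mentioned in the interpretation step.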

Data Presentation

Table 1: Comparison of Feature Selection Techniques for Fertility Prediction

| Technique | Type | Key Hyperparameters | Computational Cost | Strengths | Limitations in Fertility Context |

|---|---|---|---|---|---|

| Principal Component Analysis (PCA) [37] [38] | Feature Extraction | Number of components (k), Scaler | Low | Handles multicollinearity; reduces noise; useful for visualization. | Loss of interpretability (new features are not original clinical variables). |

| Particle Swarm Optimization (PSO) [41] [40] [42] | Wrapper | Swarm size, iterations, inertia (w), c1, c2 | Very High | Powerful global search; can find complex, non-linear interactions; retains original features. | Computationally intensive; requires careful parameter tuning; risk of overfitting without proper CV. |

| Permutation Importance (PI) [35] [36] | Model-Agnostic (post hoc) | Number of repeats (n_repeats) | Medium (no retraining) | Model-agnostic; intuitive interpretation; accounts for all feature interactions. | Can be biased by correlated features (marginal version); requires a held-out test set. |

Table 2: Key Features Identified in Fertility Prediction Literature

The following table summarizes features identified as important in recent studies using advanced feature selection and modeling on IVF/ICSI data.

| Feature Category | Specific Feature | Description / Rationale | Citation |

|---|---|---|---|

| Ovarian Reserve & Stimulation | Follicle-Stimulating Hormone (FSH) | Indicator of ovarian reserve; higher levels can correlate with reduced success. | [40] |

| Ovarian Reserve & Stimulation | Number of Oocytes Retrieved | A key metric of response to ovarian stimulation. | [40] [39] |

| Embryo Quality | Embryo Quality (e.g., GIII) | Morphological grading of embryos before transfer. | [40] |

| Embryo Quality | Blastocyst Development Rate | Rate of embryos developing to blastocyst stage. | [39] |

| Embryo Quality | 16-Cell Stage | Presence of a 16-cell embryo is a positive predictor. | [40] |

| Patient Demographics | Female Age (FAge) | Single most important factor affecting egg quality and quantity. | [40] [43] [44] |

| Laboratory KPIs | Metaphase II (MII) Oocyte Rate | Proportion of mature eggs retrieved, a laboratory competency metric. | [39] |

| Laboratory KPIs | Fertilization Rate | Rate of successfully fertilized oocytes. | [39] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Feature Selection Experiments

| Item | Function in Context | Example / Note |

|---|---|---|

| Python scikit-learn Library | Provides implementations for PCA (decomposition.PCA) and Permutation Importance (inspection.permutation_importance). | Industry standard for machine learning prototyping. [35] [38] |

| PSO Python Library (e.g., pyswarm) | Provides a pre-built PSO optimizer for feature selection tasks. | Allows researchers to focus on the fitness function rather than algorithm implementation. |

| Medical Information System Database | Source of structured clinical and laboratory data for model training. | Should include cycle outcomes (clinical pregnancy) for supervised learning. [39] |

| Computational Resources (GPU) | Accelerates the training of multiple models required for wrapper methods like PSO and for cross-validation. | Essential for large-scale hyperparameter tuning and deep learning models. [39] |

| Key Performance Indicator (KPI) Framework | Standardized metrics (e.g., fertilization rate, blastulation rate) to be used as features. | Ensures consistent, comparable data across different clinics or studies. [39] |

The Role of Explainable AI (XAI) and SHAP Analysis in Identifying Clinically Relevant Predictors

Troubleshooting Guide: FAQs on XAI and SHAP in Fertility Research

FAQ 1: Why is my SHAP analysis failing to run after model training, and how can I fix it?

Answer: This is a common issue often related to library compatibility, model object type, or data shape mismatches.

- Root Cause & Solution:

- Library Version Conflict: Incompatibility between your SHAP library version and other packages (e.g., scikit-learn, XGBoost) is a frequent cause. SHAP is the most discussed XAI tool on developer forums and a top source for troubleshooting queries [45].

- Action: Create a fresh virtual environment and install compatible versions of shap, xgboost, and scikit-learn. Consistently use the same data type (e.g., NumPy arrays or Pandas DataFrames) for both model training and SHAP explanation generation.

- Incorrect Explainer Object: Using the wrong explainer class for your model type (e.g., TreeExplainer for a non-tree-based model) will cause failure.

- Action: Ensure you are using the appropriate SHAP explainer. TreeExplainer is for tree-based models like Random Forest and XGBoost, while KernelExplainer is model-agnostic but slower.

FAQ 2: My SHAP summary plot shows a feature as important, but it is not clinically plausible. What should I do?

Answer: This signals a potential issue with your model or data, not necessarily with SHAP itself. SHAP faithfully explains the model's logic, which may be based on spurious correlations.

- Root Cause & Solution:

- Data Leakage: The model might be accidentally trained on a feature that contains information from the future or the target variable itself.

- Action: Rigorously audit your data preprocessing pipeline. Ensure that training data is strictly separated from validation/test data and that no precomputed statistics from the test set influence the training process.

- Model Bias: The model may have learned biases present in the training data.

- Action: Use SHAP dependence plots to investigate the relationship between the questionable feature and the model's output. Collaborate with clinical domain experts to validate whether the identified relationship is biologically or clinically reasonable [46].

FAQ 3: How can I improve the trust of clinical stakeholders in my fertility prediction model?

Answer: Transition from a "black-box" model to an interpretable one using XAI, which can increase clinician trust in AI-driven diagnoses by up to 30% [47].

- Root Cause & Solution:

- Lack of Transparency: Complex models like deep learning are inherently difficult to understand, leading to mistrust.

- Action: Integrate XAI techniques like SHAP and LIME directly into your clinical reporting [46]. Don't just report the prediction; provide a visualization of the top features that drove the decision. For example, in a lead toxicity prediction model for pregnant women, SHAP can show that "environmental contamination" and "occupational exposure" were the key predictors for a high-risk classification, allowing clinicians to verify the logic [46].

FAQ 4: My model has high accuracy, but the SHAP plots are visually cluttered and hard to interpret. How can I improve them?

Answer: This is a prevalent visualisation challenge. Plot customisation and styling is one of the largest subtopics discussed by developers in the XAI community [45].

- Root Cause & Solution:

- Too Many Features: Displaying all features, including low-importance ones, creates clutter.

- Action: Use SHAP's built-in functionality to display only the top N features (e.g., max_display=20 in summary plots). Perform feature selection prior to modelling to reduce dimensionality.

- Inadequate Customisation: The default plot settings may not be optimal for your specific dataset.

- Action: Leverage the plotting library's customisation options (e.g., in matplotlib or seaborn) to adjust figure size, font size, and color schemes for better clarity. The shap library also offers various plot types (beeswarm, violin, bar) that might be more suitable for your data distribution.

Key Experimental Protocols and Performance Data

This protocol is based on a study that used machine learning to identify key predictors of fertility preferences among women in Somalia [8].

- Objective: To predict the desire for more children versus the preference to cease childbearing and identify the most influential sociodemographic predictors.

- Dataset: 8,951 women aged 15–49 from the 2020 Somalia Demographic and Health Survey (SDHS) [8].

- Preprocessing: The outcome variable (fertility preference) was dichotomized. Predictor variables included age, education, parity, wealth index, residence, and distance to health facilities.

- Model Training: Seven ML algorithms were evaluated. The Random Forest model was selected as optimal.

- XAI Interpretation: SHAP (SHapley Additive exPlanations) was employed to quantify the contribution of each feature to the model's predictions [8].

- Key Output: A ranked list of the most influential predictors of fertility preferences.

Table 1: Performance Metrics of the Random Forest Model for Fertility Preference Prediction

| Metric | Value |

|---|---|

| Accuracy | 81% |

| Precision | 78% |

| Recall | 85% |

| F1-Score | 82% |

| AUROC | 0.89 |

Table 2: Top Predictors of Fertility Preferences Identified by SHAP Analysis [8]

| Rank | Predictor | Clinical/Demographic Relevance |

|---|---|---|

| 1 | Age Group | Women aged 45-49 were significantly more likely to prefer no more children. |

| 2 | Region | Geographic location captured unobserved cultural and economic factors. |

| 3 | Number of Births (Last 5 Years) | A direct measure of recent fertility activity. |

| 4 | Number of Children Born (Parity) | Higher parity was strongly linked to a desire to stop childbearing. |

| 5 | Distance to Health Facilities | Emerged as a critical barrier, influencing reproductive intentions. |

Protocol: Forecasting Live Birth in IVF with XGBoost and SHAP