Breaking the Data Bottleneck: Strategies for Managing and Analyzing Reproductomics Data in Biomedical Research

Abstract

The emerging field of reproductomics, which applies multi-omics technologies to reproductive medicine, faces significant data management bottlenecks that hinder research progress and clinical translation. This article explores the foundational challenges of managing vast, complex reproductive datasets, examines methodological approaches for data integration and analysis, discusses optimization strategies to enhance data quality and reproducibility, and evaluates validation frameworks for predictive models. Targeting researchers, scientists, and drug development professionals, we provide a comprehensive roadmap for navigating data management challenges in reproductomics to accelerate discoveries in reproductive health and assisted reproductive technologies.

Understanding the Reproductomics Data Deluge: Sources, Scale, and Complexity

Reproductomics is a rapidly emerging field that utilizes computational tools and multi-omics technologies to analyze and interpret reproductive data with the aim of improving reproductive health outcomes [1]. This discipline investigates the complex interplay between hormonal regulation, environmental factors, genetic predisposition (including DNA composition and epigenome), and resulting biological responses [1]. By integrating data from genomics, transcriptomics, epigenomics, proteomics, metabolomics, and microbiomics, reproductomics provides a comprehensive framework for understanding the molecular mechanisms underlying various physiological and pathological processes in reproduction [1].

The field has significantly advanced our understanding of diverse reproductive conditions including infertility, polycystic ovary syndrome (PCOS), premature ovarian insufficiency (POI), uterine fibroids, and reproductive cancers [1]. Through the application of machine learning algorithms, gene editing technologies, and single-cell sequencing techniques, reproductomics enables researchers to predict fertility outcomes, correct genetic abnormalities, and analyze gene expression patterns at individual cell resolution [1].

Data Management Bottlenecks in Reproductomics Research

Core Data Challenges

The analysis and interpretation of vast omics data in reproductive research is complicated by the cyclic regulation of hormones and multiple other factors [1]. Researchers face several significant bottlenecks in data management:

Data Volume and Complexity: The advent of high-throughput omics technologies has led to a situation where data volumes vastly surpass our ability to thoroughly analyze and interpret them [1]. While millions of gene expression datasets are available in public repositories like the Gene Expression Omnibus (GEO) and ArrayExpress, this abundance can become an impediment, requiring powerful tools for distilling biologically significant conclusions [1].

Underutilization of Data: A substantial proportion of data generated by high-throughput techniques remains considerably underutilized. Many researchers tend to concentrate on a restricted subset of available data to draw comparisons with their own results rather than fully exploiting the wealth of available information [1].

Integrative Analysis Challenges: Reproductomics involves correlating data from multiple omics layers, which presents challenges in both execution and interpretation [1]. For instance, understanding the relationship between epigenomic modifications (such as DNA methylation) and transcriptomic fluctuations requires sophisticated analytical approaches that can account for non-linear associations [1].

Table 1: Common Data Management Bottlenecks in Reproductomics

| Bottleneck Category | Specific Challenge | Impact on Research |

|---|---|---|

| Data Heterogeneity | Variations in data types, scales, and distributions across omics modalities [2] [3] | Complicates integration and requires extensive normalization |

| High-Dimensionality | Significantly more variables (features) than samples (HDLSS problem) [3] | Increases risk of overfitting and reduces generalizability of models |

| Missing Values | Incomplete datasets across omics modalities [3] | Hampers downstream integrative bioinformatics analyses |

| Technical Variability | Batch effects and measurement inaccuracies [4] [5] | Reduces experimental reproducibility and introduces confounding noise |

Multi-Omics Integration Strategies for Reproductomics

Integration Approaches

The integration of heterogeneous multi-omics data presents a cascade of challenges involving unique data scaling, normalization, and transformation requirements for each individual dataset [3]. Effective integration strategies must account for the regulatory relationships between datasets from different omics layers to accurately reflect the nature of this multidimensional data [3].

Table 2: Multi-Omics Data Integration Strategies for Reproductomics

| Integration Strategy | Technical Approach | Advantages | Limitations |

|---|---|---|---|

| Early Integration | Concatenates all omics datasets into a single large matrix [6] [3] | Simple and easy to implement [3] | Creates complex, noisy, high-dimensional matrix; discounts dataset size differences and data distribution [3] |

| Mixed Integration | Separately transforms each omics dataset into new representation before combining [3] | Reduces noise, dimensionality, and dataset heterogeneities [3] | Requires careful weighting of different data modalities |

| Intermediate Integration | Simultaneously integrates multi-omics datasets to output multiple representations [3] | Captures both common and omics-specific patterns [6] | Requires robust pre-processing to handle data heterogeneity [3] |

| Late Integration | Analyzes each omics separately and combines final predictions [6] [3] | Adapts well to specificities of each source [6] | Does not capture inter-omics interactions [3] |

| Hierarchical Integration | Includes prior regulatory relationships between different omics layers [3] | Embodies intent of trans-omics analysis; reveals interactions across layers [3] | Limited generalizability; often focuses on specific omics types [3] |

Advanced Computational Frameworks

Several advanced computational frameworks have been developed specifically for multi-omics integration in biomedical research:

CustOmics: A versatile deep-learning based strategy for multi-omics integration that employs a two-phase approach. In the first phase, training is adapted to each data source independently before learning cross-modality interactions in the second phase. This approach succeeds at taking advantage of all sources more efficiently than other strategies and can provide interpretable results in a multi-source setting [6].

DeepMoIC: A framework utilizing deep Graph Convolutional Networks (GCN) for multi-omics data integration. This approach extracts compact representations from omics data using autoencoder modules and incorporates a patient similarity network through the similarity network fusion algorithm. The method handles non-Euclidean data and explores high-order omics information effectively [2].

INTRIGUE: A set of computational methods to evaluate and control reproducibility in high-throughput experiments. These approaches are built upon a novel definition of reproducibility that emphasizes directional consistency when experimental units are assessed with signed effect size estimates [4].

Troubleshooting Guides and FAQs for Reproductomics Experiments

Data Quality and Preprocessing Issues

FAQ: How can I handle missing values in my multi-omics dataset before integration?

- Challenge: Omics datasets often contain missing values, which can hamper downstream integrative bioinformatics analyses [3].

- Solution: Implement an imputation process to infer missing values in incomplete datasets before statistical analyses. The specific imputation method (e.g., k-nearest neighbors, matrix factorization, or model-based approaches) should be selected based on the nature of the missing data (missing completely at random, missing at random, or missing not at random) and the specific omics data type.

- Prevention: During experimental design, incorporate technical replicates and quality control measures to minimize missing data. Use standardized protocols for data generation to reduce technical variability.
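As a rough illustration of the k-nearest-neighbors option mentioned above, the following sketch fills each missing entry with the mean of that feature in the most similar samples. It is a minimal sketch under stated assumptions: the function name `knn_impute`, the toy matrix, and the choice of `k` are illustrative, and the approach is most defensible when values are missing at random rather than missing because of low abundance.

```python
import math

def knn_impute(matrix, k=2):
    """Fill None entries with the mean of that feature in the k nearest samples.

    Distance is the root-mean-square difference over features observed in
    both samples, so rows with different missing patterns stay comparable.
    """
    def distance(a, b):
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [row[:] for row in matrix]  # leave the input untouched
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors: other samples in which feature j was observed.
            donors = [
                (distance(row, other), other[j])
                for m, other in enumerate(matrix)
                if m != i and other[j] is not None
            ]
            donors = [d for d in donors if d[0] != math.inf]
            donors.sort(key=lambda d: d[0])
            nearest = [v for _, v in donors[:k]]
            if nearest:  # stays None when no donor exists
                imputed[i][j] = sum(nearest) / len(nearest)
    return imputed

# Toy data (rows = samples, columns = features); values are illustrative only.
data = [
    [1.0, 2.0, 3.0],
    [1.1, None, 3.1],
    [0.9, 2.2, 2.9],
    [5.0, 9.0, 7.0],
]
filled = knn_impute(data, k=2)
print(filled[1])
```

In practice, features are usually scaled before distances are computed, and imputation is fitted on training samples only so that it does not leak information into held-out data.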

FAQ: How can I address the high-dimensionality (many features, few samples) problem in my reproductomics study?

- Challenge: The high-dimension low sample size (HDLSS) problem occurs when variables significantly outnumber samples, leading machine learning algorithms to overfit these datasets and decreasing their generalizability on new data [3].

- Solution:

  - Apply dimensionality reduction techniques such as Principal Component Analysis (PCA), Non-negative Matrix Factorization (NMF), or autoencoders [6] [2].

  - Utilize regularization methods (L1/L2 regularization) in predictive models to prevent overfitting.

  - Implement feature selection approaches based on biological knowledge or statistical criteria to reduce the feature space.

  - Employ cross-validation strategies that account for the high-dimensionality setting, for example by repeating any feature selection inside each training fold so that held-out samples never influence it.

- Advanced Approach: Use deep learning architectures like autoencoders to extract compact representations from high-dimensional omics data [2].
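The interaction between feature selection and cross-validation is easy to get wrong when features outnumber samples. The sketch below shows one way to keep selection inside each leave-one-out fold. Everything in it is an assumption made for illustration: the scoring rule (absolute difference in class means), the nearest-centroid classifier, and the toy matrix are stand-ins, not methods taken from the cited studies.

```python
def select_features(X, y, top_k):
    """Rank features by absolute difference in class means (training data only)."""
    scores = []
    for j in range(len(X[0])):
        pos = [row[j] for row, label in zip(X, y) if label == 1]
        neg = [row[j] for row, label in zip(X, y) if label == 0]
        scores.append((abs(sum(pos) / len(pos) - sum(neg) / len(neg)), j))
    scores.sort(reverse=True)
    return sorted(j for _, j in scores[:top_k])

def centroid(rows, features):
    return [sum(r[j] for r in rows) / len(rows) for j in features]

def predict(sample, c0, c1, features):
    d0 = sum((sample[j] - c) ** 2 for j, c in zip(features, c0))
    d1 = sum((sample[j] - c) ** 2 for j, c in zip(features, c1))
    return 0 if d0 <= d1 else 1

def loocv_accuracy(X, y, top_k):
    """Leave-one-out CV with feature selection repeated inside every fold."""
    correct = 0
    for i in range(len(X)):
        X_train, y_train = X[:i] + X[i + 1:], y[:i] + y[i + 1:]
        feats = select_features(X_train, y_train, top_k)  # held-out sample not seen
        c0 = centroid([r for r, l in zip(X_train, y_train) if l == 0], feats)
        c1 = centroid([r for r, l in zip(X_train, y_train) if l == 1], feats)
        correct += predict(X[i], c0, c1, feats) == y[i]
    return correct / len(X)

# Toy matrix: feature 0 separates the classes, the rest is noise (illustrative).
X = [
    [0.1, 5.0, 3.0, 1.0],
    [0.2, 1.0, 4.0, 2.0],
    [0.0, 3.0, 3.0, 1.0],
    [10.0, 4.0, 3.0, 2.0],
    [9.9, 2.0, 4.0, 1.0],
    [10.1, 3.0, 3.0, 2.0],
]
y = [0, 0, 0, 1, 1, 1]
print(loocv_accuracy(X, y, top_k=1))
```

Selecting features once on the full dataset and then cross-validating would let the held-out samples shape the model, which tends to inflate accuracy estimates in HDLSS settings.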

Data Integration and Analysis Issues

FAQ: What integration strategy should I choose for my heterogeneous multi-omics reproductomics data?

- Decision Framework:

  - Choose Early Integration for simple, straightforward datasets with similar dimensions and distributions across omics types [3].

  - Select Mixed or Intermediate Integration when you need to balance source-specific characteristics with cross-omics interactions [6] [3].

  - Opt for Late Integration when omics sources have dramatically different characteristics or when analyzing each source independently is preferable [6].

  - Consider Hierarchical Integration when prior knowledge of regulatory relationships between omics layers is available and crucial for your analysis [3].

- Implementation Tip: For reproductomics studies investigating hormonal cycling effects, Mixed or Intermediate integration strategies are often a sensible starting point, because they transform or model each omics layer before combining them and can therefore accommodate cyclic variation while still capturing cross-omics interactions.
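To make the contrast between the two simplest strategies concrete, the following sketch implements early integration as per-block standardization followed by concatenation, and late integration as a weighted average of per-omics prediction scores. The function names, the equal default weights, and the toy values are assumptions for illustration; real pipelines would use fitted models for the per-omics scores.

```python
from statistics import mean, pstdev

def zscore_columns(block):
    """Standardize each feature (column) of one omics block."""
    scaled_cols = []
    for col in zip(*block):
        mu, sd = mean(col), pstdev(col)
        scaled_cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled_cols)]

def early_integration(blocks):
    """Concatenate standardized blocks sample by sample into one wide matrix."""
    scaled = [zscore_columns(b) for b in blocks]
    return [sum((blk[i] for blk in scaled), []) for i in range(len(blocks[0]))]

def late_integration(per_omics_scores, weights=None):
    """Combine per-omics prediction scores for each sample by weighted average."""
    n = len(per_omics_scores)
    weights = weights or [1.0 / n] * n
    return [
        sum(w * s for w, s in zip(weights, sample_scores))
        for sample_scores in zip(*per_omics_scores)
    ]

# Three samples measured on two platforms (values are illustrative only).
transcriptome = [[10, 200], [12, 220], [14, 240]]
metabolome = [[0.1], [0.2], [0.3]]

fused = early_integration([transcriptome, metabolome])       # 3 samples x 3 features
combined = late_integration([[0.9, 0.2, 0.6], [0.7, 0.4, 0.5]])  # one score per sample
print(len(fused), len(fused[0]), combined)
```

Standardizing each block before concatenation addresses only scale differences; it does not correct for one block contributing far more features than another, which is the limitation of early integration noted in Table 2.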

FAQ: How can I improve the reproducibility of my reproductomics data analysis?

- Fundamental Practice: Document methods so that the way each result was derived is fully transparent. This requires a detailed description of the methods used to obtain the data, and making the full dataset and the code used to calculate results easily accessible [7] [8].

- Technical Solutions:

  - Utilize version control systems (e.g., Git) for all code and analysis scripts.

  - Implement containerization (e.g., Docker, Singularity) to capture complete computational environments.

  - Adopt workflow management systems (e.g., Nextflow, Snakemake) for automated, reproducible analysis pipelines.

  - Maintain detailed electronic lab notebooks that document both wet-lab and computational procedures.

- Quality Assessment: Incorporate quality control (QC) and quality assessment (QA) approaches to spot analytical issues, reduce experimental variability, and increase confidence in analytical results [5].

Interpretation and Validation Issues

FAQ: Why do I get different biomarker signatures when analyzing similar reproductomics datasets?

- Challenge: Discrepancies in experimental design, sampling procedures, data processing pipelines, and data presentation standards can lead to inconsistent findings across studies [1].

- Solution:

  - Perform meta-analysis using robust rank aggregation methods to compare distinct gene lists and identify common overlapping genes [1].

  - Analyze raw expression datasets when possible, rather than processed results.

  - Utilize systems biology approaches that integrate multiple omics layers to generate more robust computational models [1].

- Case Example: In endometrial receptivity studies, a meta-analysis of nine datasets identified 57 potential biomarkers, with only a small subset (SPP1, PAEP, GPX3, etc.) showing consistent evidence across studies [1].
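The idea of comparing ranked gene lists across studies can be sketched with a simple mean-rank aggregation. This is deliberately not the robust rank aggregation algorithm referenced above, which additionally assigns significance to each gene; it is a simplified stand-in. The three lists are invented for illustration (they reuse gene names mentioned in the text plus placeholders) and are not results from any study.

```python
def mean_rank_aggregate(ranked_lists):
    """Aggregate ranked gene lists by average rank.

    A gene absent from a list is given rank len(list) + 1, i.e. it is
    treated as falling just below that list's last entry.
    """
    genes = set().union(*ranked_lists)
    totals = {}
    for gene in genes:
        ranks = [
            lst.index(gene) + 1 if gene in lst else len(lst) + 1
            for lst in ranked_lists
        ]
        totals[gene] = sum(ranks) / len(ranks)
    # Sort by mean rank, breaking ties alphabetically for a stable order.
    return sorted(totals.items(), key=lambda item: (item[1], item[0]))

# Hypothetical per-study rankings (most significant gene first).
study_a = ["PAEP", "SPP1", "GENE_X", "GPX3"]
study_b = ["SPP1", "PAEP", "GPX3"]
study_c = ["SPP1", "GPX3", "GENE_Y", "PAEP"]

consensus = mean_rank_aggregate([study_a, study_b, study_c])
for gene, score in consensus:
    print(f"{gene}\t{score:.2f}")
```

Genes that rank well in only one study drift toward the bottom of the consensus, which is the behavior that makes rank-based meta-analysis tolerant of platform differences.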

Experimental Workflows in Reproductomics

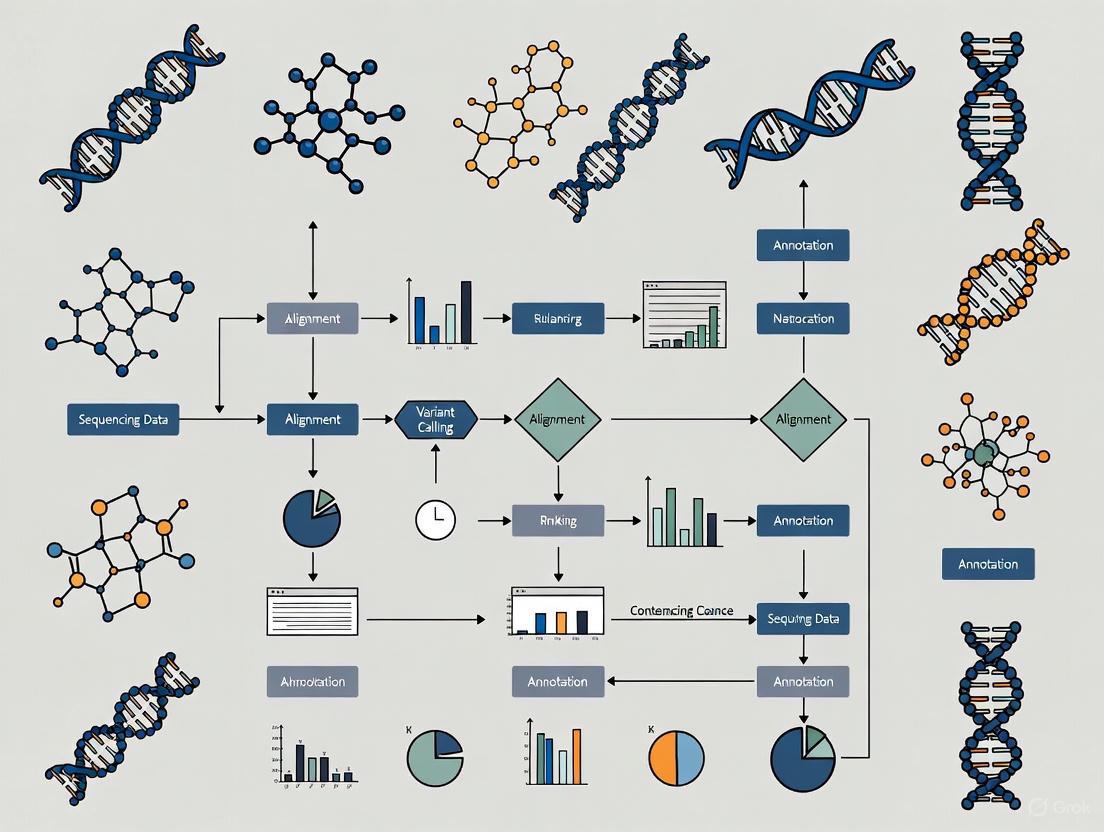

The typical workflow for reproductomics research involves multiple stages from experimental design through data integration and interpretation. The following diagram illustrates a generalized workflow for reproductomics studies:

Reproductomics Experimental Workflow

Essential Research Reagents and Computational Tools

Research Reagent Solutions for Reproductomics

Table 3: Essential Research Reagents and Platforms for Reproductomics Studies

| Reagent/Platform | Function | Application in Reproductomics |

|---|---|---|

| High-Throughput Sequencers | Comprehensive DNA/RNA sequencing | Genomic and transcriptomic profiling of reproductive tissues [1] |

| Mass Spectrometers | Protein and metabolite identification and quantification | Proteomic and metabolomic analysis of reproductive samples [1] |

| DNA Methylation Kits | Assessment of epigenetic modifications | Epigenomic studies of hormonal regulation in endometrial tissue [1] |

| Single-Cell RNA Seq Kits | Gene expression profiling at individual cell level | Analysis of cellular heterogeneity in ovarian follicles or testicular tissue [1] |

| Cell Culture Media | Maintenance of primary reproductive cells | In vitro models of endometrial receptivity or gametogenesis [1] |

Computational Tools for Reproductomics Data Analysis

Table 4: Key Computational Tools and Databases for Reproductomics

| Tool/Database | Function | Application Context |

|---|---|---|

| Gene Expression Omnibus (GEO) | Public repository of functional genomics data | Accessing endometrial transcriptome data for receptivity studies [1] |

| Human Gene Expression Endometrial Receptivity Database (HGEx-ERdb) | Specialized database of endometrial gene expression | Identifying genes associated with endometrial receptivity (contains 19,285 genes) [1] |

| INTRIGUE | Statistical framework for reproducibility assessment | Evaluating and controlling reproducibility in high-throughput reproductomics experiments [4] |

| CustOmics | Deep learning-based multi-omics integration | Integrating heterogeneous omics data for classification and survival prediction [6] |

| DeepMoIC | Graph convolutional network for multi-omics | Cancer subtype classification in reproductive cancers [2] |

FAQs on Omics Data Management

1. What are the most common bottlenecks in omics data analysis? The three most pressing challenges are data processing, inefficient bioinformatics infrastructure, and collaboration between different teams. Data pre-processing is a significant bottleneck, requiring improved infrastructure to make analysis accessible to those without advanced programming skills [9].

2. Where should I deposit my different types of omics data? Appropriate repositories depend on your data type. Here are the recommended destinations [10]:

Table: Recommended Repositories for Omics Data

| Data Type | Data Formats | Repository |

|---|---|---|

| DNA sequence data (amplicon, metagenomic) | Raw FASTQ | NCBI SRA |

| RNA sequence data (RNA-Seq) | Raw FASTQ | NCBI SRA |

| Functional genomics data (ChIP-Seq, methylation seq) | Metadata, processed data, raw FASTQ | NCBI GEO (raw data to SRA) |

| Genome assemblies | FASTA or SQN file | NCBI WGS |

| Mass spectrometry data (metabolomics, proteomics) | Raw mass spectra, mzML, mzIdentML | ProteomeXchange, Metabolomics Workbench |

| Feature observation tables | BIOM format (HDF5), tab-delimited text | NCEI, Zenodo, or Figshare |

3. How can I effectively integrate different types of omics data (e.g., transcriptomic and metabolomic)? Several data-driven approaches exist for integration without prior biological knowledge [11]:

- Statistical & Correlation-based: Use simple scatter plots, Pearson’s/Spearman’s correlation, or Procrustes analysis to relate features from different datasets.

- Correlation Networks: Construct networks where nodes represent biological entities and edges are based on correlation thresholds. Tools like WGCNA can identify clusters (modules) of highly correlated features across omics layers [11].

- Multivariate & Machine Learning Methods: Employ tools like the `mixOmics` R package for methods such as sparse PLS-Discriminant Analysis, or use other AI-driven integration frameworks [12] [11].
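For the correlation-based option, a rank correlation is often preferred when the relationship between two omics features is monotonic but not linear. The sketch below computes Spearman's coefficient as the Pearson correlation of average ranks, using only the standard library; the paired measurements are invented for illustration.

```python
def ranks(values):
    """Average ranks (1-based); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on ranks."""
    return pearson(ranks(x), ranks(y))

# One transcript and one metabolite measured in the same five samples
# (illustrative values with a monotonic but non-linear relationship).
transcript = [1, 2, 3, 4, 5]
metabolite = [2, 4, 8, 16, 32]

print(round(pearson(transcript, metabolite), 3))
print(round(spearman(transcript, metabolite), 3))
```

Because the metabolite doubles with each step, the rank correlation is perfect while the linear correlation is lower, which illustrates why the two measures can lead to different edges in a correlation network.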

4. What are the key considerations for creating accessible data visualizations? Color should not be the only way information is conveyed. Ensure sufficient contrast (a 3:1 ratio is recommended by WCAG 2.1) for all critical graphical elements against their background. Use additional cues like textures, shapes, divider lines, and accessible axes to assist in data interpretation [13].
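The contrast requirement can be checked programmatically. The sketch below follows the relative luminance and contrast ratio definitions given in WCAG 2.1 for sRGB colors; the function names and the example colors are illustrative choices.

```python
def relative_luminance(rgb):
    """Relative luminance of an sRGB color given as 0-255 integers (WCAG 2.1)."""
    def linearize(channel):
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, ranging from 1 (identical) to 21."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)

WHITE = (255, 255, 255)
light_gray_line = (200, 200, 200)   # example plot color, chosen for illustration
dark_blue_line = (0, 60, 130)       # example plot color, chosen for illustration

for name, color in [("light gray", light_gray_line), ("dark blue", dark_blue_line)]:
    ratio = contrast_ratio(color, WHITE)
    status = "meets" if ratio >= 3 else "fails"
    print(f"{name}: {ratio:.2f}:1 ({status} the 3:1 threshold)")
```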

Troubleshooting Guides

Issue: Managing the Sheer Volume and Storage of Omics Data

Problem: The enormous volume of FASTQ, BAM, and other omics files complicates storage, processing, and analysis [14].

Solution:

- Immediate Redundant Storage: Upon receipt, immediately store raw sequencing data (e.g., FASTQ files) in two separate locations (e.g., a local drive and an institutional cloud drive) [10].

- Systematic Tracking: Record file names, associated projects, and metadata in a centralized, backed-up spreadsheet [10].

- Scheduled Archiving: Differentiate between raw, intermediate, and processed data. Implement a regularly scheduled backup plan for storage locations. Archive intermediate files from computationally intensive analyses until project completion or public deposition [10].

- Leverage Repositories: Submit raw data and final data analysis products to relevant public repositories for long-term archiving, as detailed in the FAQ above [10].
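The redundant-storage and tracking steps above can be supported with checksums, so that both copies of a raw file are verifiably identical and the tracking sheet records a fingerprint for each file. The following is a minimal sketch using only the standard library; the column names and the function names are assumptions, not a prescribed format.

```python
import csv
import hashlib
from pathlib import Path

def sha256sum(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large FASTQ/BAM files fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def copies_match(primary, backup):
    """True when the two storage locations hold byte-identical files."""
    return sha256sum(primary) == sha256sum(backup)

def write_manifest(files, manifest_path, project):
    """Write a CSV tracking sheet with one row per raw data file."""
    with open(manifest_path, "w", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(["file_name", "project", "size_bytes", "sha256"])
        for f in map(Path, files):
            writer.writerow([f.name, project, f.stat().st_size, sha256sum(f)])

# Example usage (paths are hypothetical):
#   write_manifest(["run1_R1.fastq.gz", "run1_R2.fastq.gz"], "manifest.csv", "my_project")
#   copies_match("run1_R1.fastq.gz", "/backup/run1_R1.fastq.gz")
```

Re-running the checksum comparison as part of the scheduled backup is one way to detect silent corruption before the only good copy is lost.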

Issue: Integrating Multi-Omics Datasets with Missing Values

Problem: Integrating multiple omics views (e.g., genomics, proteomics) is challenging due to complex interactions and datasets with various view-missing patterns [15].

Solution:

- Advanced Computational Methods: Employ frameworks designed for incomplete multi-view observations. One effective approach is a deep variational information bottleneck method, which applies the information bottleneck framework to marginal and joint representations of the observed views. By modeling the joint representations as a product of marginal representations, it can efficiently learn from datasets with different missing-value patterns [15].
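The "product of marginal representations" idea can be illustrated in one dimension. The sketch below assumes each observed view yields a Gaussian belief (mean, variance) about a latent variable and combines the available views by multiplying the densities, which amounts to adding precisions. The Gaussian assumption, the one-dimensional latent, and the function name are simplifications made here for illustration and are not taken from the cited method's implementation.

```python
def product_of_gaussians(marginals):
    """Combine per-view Gaussian beliefs, skipping views that are missing.

    Each entry is a (mean, variance) tuple, or None when that view was not
    measured for the sample. The product of Gaussian densities is again
    Gaussian, with precision equal to the sum of the individual precisions
    and a precision-weighted mean.
    """
    observed = [m for m in marginals if m is not None]
    if not observed:
        raise ValueError("at least one view must be observed")
    precision = sum(1.0 / var for _, var in observed)
    mean = sum(mu / var for mu, var in observed) / precision
    return mean, 1.0 / precision

# Sample with both views observed, and a sample with the second view missing.
print(product_of_gaussians([(1.0, 1.0), (3.0, 1.0)]))
print(product_of_gaussians([(1.0, 2.0), None]))
```

Because missing views are simply left out of the product, the same function handles any missing-view pattern, which is the property that makes this factorization attractive for incomplete multi-omics data.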

Issue: Overcoming Data Quality and Collaboration Bottlenecks

Problem: Data quality issues and inefficient collaboration between bioinformaticians, biologists, and management staff can delay data interpretation [9] [14].

Solution:

- Data Quality Monitoring: Establish data quality monitoring standards to ensure decisions are based on reliable data. Recognize that "not all data is created equal" [14].

- Democratize Data Analysis: Utilize interactive data analysis and visualization platforms (e.g., Omics Playground) that allow biologists to explore data without advanced programming skills. This bridges the gap between technical and non-technical team members [9].

- Iterative Discussions: Facilitate iterative discussions between all stakeholders (biologists, bioinformaticians, project managers) to fully understand the underlying biological mechanisms, a process that can take weeks to months [9].

Standardized Experimental Protocols

Protocol 1: Integrated Transcriptomic and Metabolomic Profiling of Plant Tissues

This protocol is adapted from a study on Breynia androgyna and provides a framework for generating coupled transcriptome and metabolome datasets [16].

1. Sample Collection and Preparation

- Cultivate plants under controlled conditions.

- Harvest tissue samples (e.g., leaf, flower, fruit, stem) and immediately process them to preserve RNA integrity and metabolite stability.

- Grind tissues into a fine powder under liquid nitrogen.

2. Metabolite Extraction and LC-MS Profiling

- Extraction: For each tissue type, add acidified methanol (HPLC grade) to the powdered sample. Vortex and sonicate the mixture to enhance metabolite solubilization. Centrifuge, filter the supernatant, and transfer to LC-MS vials. Prepare triplicate biological replicates [16].

- LC-MS Instrumentation:

  - Chromatography: Use a UPLC system with a C18 column (e.g., Thermo Scientific Acclaim Polar Advantage II).

  - Mass Spectrometry: Perform detection using a mass spectrometer (e.g., MicrOTOF-Q III) in positive electrospray ionization (ESI+) mode, scanning an m/z range of 50-1000 [16].

- Data Processing: Process raw data with appropriate software (e.g., Compass DataAnalysis). Apply an internal standard (e.g., vanillic acid) for normalization. Export data for statistical analysis in CSV format [16].

3. RNA Extraction, Sequencing, and Transcriptome Assembly

- RNA Extraction: Isolate total RNA using a modified protocol. Assess RNA quality using an Agilent Bioanalyzer (RIN > 7.0) and quantify via Nanodrop [16].

- Library Prep and Sequencing: Construct strand-specific cDNA libraries (e.g., using SureSelect RNA Library Prep Kit). Sequence on an Illumina HiSeq platform (e.g., 150 bp paired-end) [16].

- De Novo Transcriptome Assembly: Trim raw reads for adapters and quality (Phred score > Q20). Perform de novo assembly using Trinity software. Predict coding sequences (CDS) with TransDecoder [16].

- Functional Annotation: Annotate assembled unigenes using BLASTx against public databases (Nr, Swiss-Prot, KEGG, GO) with an e-value cutoff of 1e-5 [16].
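The quality threshold used in the read-trimming step can be made concrete. A Phred score Q corresponds to an error probability of 10^(-Q/10), so Q20 means a 1% chance that the base call is wrong. The sketch below decodes a Phred+33 quality string and trims low-quality bases from the 3' end. It is a simplified illustration: dedicated trimming tools typically use sliding windows and also remove adapters, and whether the cutoff is inclusive depends on the tool.

```python
def phred_scores(quality_string, offset=33):
    """Decode a FASTQ quality string (Phred+33 encoding assumed)."""
    return [ord(ch) - offset for ch in quality_string]

def error_probability(q):
    """Probability that a base call with Phred score q is wrong."""
    return 10 ** (-q / 10)

def trim_3prime(sequence, quality_string, threshold=20):
    """Remove trailing bases whose Phred score is below the threshold."""
    scores = phred_scores(quality_string)
    end = len(scores)
    while end > 0 and scores[end - 1] < threshold:
        end -= 1
    return sequence[:end], quality_string[:end]

# Illustrative read: 'I' encodes Q40 and '#' encodes Q2 in Phred+33.
seq, qual = "ACGTACGT", "IIIII###"
trimmed_seq, trimmed_qual = trim_3prime(seq, qual)
print(trimmed_seq, trimmed_qual, error_probability(20))
```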

Table: Key Research Reagents and Materials

| Item | Function |

|---|---|

| Acidified Methanol (HPLC grade) | Extraction of secondary metabolites from plant tissue powder. |

| UPLC System with C18 Column | Chromatographic separation of complex metabolite mixtures. |

| Q-TOF Mass Spectrometer | High-resolution mass detection for accurate metabolite profiling. |

| RNA Isolation Kit | Extraction of high-quality, intact total RNA. |

| Agilent 2100 Bioanalyzer | Assessment of RNA Integrity Number (RIN) to ensure sequencing quality. |

| SureSelect RNA Library Prep Kit | Construction of strand-specific cDNA libraries for sequencing. |

| Illumina HiSeq Platform | High-throughput sequencing of transcriptome libraries. |

The following workflow diagram illustrates the integrated transcriptomic and metabolomic profiling protocol:

Protocol 2: A Strategy for Multi-Omics Data Integration

This protocol outlines a knowledge-free, data-driven integration strategy for correlating features from different omics platforms, as reviewed in recent literature [11].

1. Data Preprocessing

- Format all omics datasets into matrices where rows represent samples and columns represent features (e.g., gene counts, metabolite intensities).

- Perform platform-specific normalization, log-transformation, and scaling as required.

2. Correlation-Based Integration using xMWAS

- Tool: Use the `xMWAS` platform for pairwise association analysis [11].

- Analysis: The tool performs integration by combining Partial Least Squares (PLS) components and regression coefficients to determine association scores between features from different omics datasets [11].

- Network Construction: Generate an integrative network graph where nodes are omics features and edges are drawn if they meet a predefined threshold for association score and statistical significance [11].

- Community Detection: Identify highly interconnected communities within the network using a multilevel community detection algorithm that iteratively maximizes modularity [11].
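The network construction step can be sketched independently of any particular tool. The code below keeps an edge only when an association passes both a score threshold and a significance threshold, then groups features into connected components. This does not reproduce xMWAS: connected components are a much cruder grouping than modularity-based multilevel community detection, and the thresholds, feature names, and association values are hypothetical.

```python
from collections import defaultdict, deque

def build_network(associations, min_abs_score=0.4, max_p=0.05):
    """Keep edges whose association score and p-value pass both thresholds."""
    graph = defaultdict(set)
    for feat_a, feat_b, score, p_value in associations:
        if abs(score) >= min_abs_score and p_value <= max_p:
            graph[feat_a].add(feat_b)
            graph[feat_b].add(feat_a)
    return graph

def connected_components(graph):
    """Group nodes reachable from one another (breadth-first search)."""
    seen, components = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        queue, members = deque([start]), set()
        seen.add(start)
        while queue:
            node = queue.popleft()
            members.add(node)
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        components.append(sorted(members))
    return components

# Hypothetical (feature, feature, association score, p-value) tuples.
associations = [
    ("gene:G1", "met:M1", 0.8, 0.001),
    ("gene:G2", "met:M1", -0.6, 0.01),
    ("gene:G3", "met:M2", 0.7, 0.02),
    ("gene:G4", "met:M2", 0.2, 0.5),   # fails both thresholds, so no edge
]
network = build_network(associations)
print(connected_components(network))
```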

The following diagram illustrates the logical flow of the data integration process:

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of poor data quality in single-cell sequencing, and how can I identify them? Poor data quality in single-cell sequencing often arises from issues during library preparation or the sequencing run itself. Common problems include adapter contamination, an overabundance of reads from a few highly expressed genes (sequence duplication), and a high percentage of base calls with low confidence (per-base N content) [17].

You can identify these issues by running quality control (QC) tools like FastQC on your raw FASTQ files. Key modules in the FastQC report to examine are the "Adapter Content," which should be near zero; the "Per Base N Content," which should be consistently at or near zero across the entire read length; and the "Sequence Duplication Levels," where high duplication can be expected but should be interpreted with caution as FastQC is not UMI-aware [17]. For multiple samples, use MultiQC to aggregate reports into a single view [17].
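To show what the "Per Base N Content" metric measures, the following sketch computes the percentage of N calls at each read position from FASTQ text. It is an illustration of the metric, not a replacement for FastQC, and it assumes standard four-line FASTQ records without wrapped sequence lines.

```python
import io

def per_base_n_content(fastq_handle):
    """Return the percentage of 'N' base calls at each read position."""
    n_counts, totals = [], []
    for line_number, line in enumerate(fastq_handle):
        if line_number % 4 != 1:   # the sequence is line 2 of each 4-line record
            continue
        seq = line.strip().upper()
        if len(seq) > len(totals):  # grow the counters for longer reads
            extra = len(seq) - len(totals)
            n_counts.extend([0] * extra)
            totals.extend([0] * extra)
        for pos, base in enumerate(seq):
            totals[pos] += 1
            n_counts[pos] += base == "N"
    return [100.0 * n / t for n, t in zip(n_counts, totals)]

# Two toy reads; in practice the handle would come from open() or gzip.open().
example = io.StringIO("@r1\nACGN\n+\nIIII\n@r2\nANGT\n+\nIIII\n")
print(per_base_n_content(example))
```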

FAQ 2: My bioinformatics pipeline produces different results each time I run it, even with the same input data. Why does this happen, and how can I fix it? This irreproducibility is often caused by the inherent randomness (stochasticity) in some bioinformatics algorithms or by tools that are sensitive to the order in which input data is processed [18]. For instance, some structural variant callers can produce different variant call sets when the read order is shuffled [18].

To fix this, you should:

- Set Random Seeds: Ensure that any tool using a probabilistic algorithm allows you to set a fixed random seed. Record this seed in your workflow documentation [19].

- Use Containerized Workflows: Implement your pipeline using container technologies like Docker and workflow languages like the Common Workflow Language (CWL). This freezes the entire computational environment, including software versions, dependencies, and system libraries, so that every run uses the same software stack [20] [19]. Containers do not remove algorithmic randomness, so fixed seeds are still required.

- Demand Provenance: Use workflow features, like the `--provenance` flag in CWL, to generate a detailed record of all inputs, outputs, and computational steps [20].
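The seed and provenance recommendations can be combined in a few lines. The sketch below uses a local random number generator with a fixed seed and writes the seed, parameters, and interpreter details to a JSON record. The function names, the seed value, and the record fields are illustrative assumptions; `run_with_seed` stands in for whatever stochastic analysis step a pipeline contains.

```python
import json
import platform
import random
import sys

def run_with_seed(seed, n_draws=5):
    """Stand-in for a stochastic step; a local RNG avoids hidden global state."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n_draws)]

def provenance_record(seed, parameters):
    """Collect the details needed to repeat the run later."""
    return {
        "seed": seed,
        "parameters": parameters,
        "python_version": sys.version.split()[0],
        "platform": platform.platform(),
    }

SEED = 20240101  # hypothetical value; any fixed integer works

first = run_with_seed(SEED)
second = run_with_seed(SEED)
print(first == second)  # same seed, same draws

record = json.dumps(provenance_record(SEED, {"n_draws": 5}), indent=2)
print(record)
```

Storing this record next to the output files means the seed is never separated from the results it produced.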

FAQ 3: I have successfully identified biomarker candidates in my discovery cohort, but they fail in a follow-up study. Is this a validation or a replication problem? This is a classic challenge and hinges on the distinction between validation and replication. Replication tests the same association under nearly identical circumstances (similar population, identical lab procedures and analysis pipelines) in an independent sample. Validation, particularly external validation, tests the association in a different population, which may differ in ethnicity, data collection methods, or other systematic factors [21].

Your issue could be related to either or both:

- If the follow-up study used a different population or platform, it was a validation effort. The failure could be due to these systematic differences or because the initial discovery was a false positive [21].

- To ensure replication, the original and confirmatory study samples should be independent but similar, and the laboratory and computational methods must be identical [21].

To avoid this, pre-plan your confirmation strategy, use large enough sample sizes, and employ stringent statistical corrections in the discovery phase [21].
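One widely used correction for the discovery phase is the Benjamini-Hochberg procedure, which controls the false discovery rate across many simultaneous tests. The sketch below computes adjusted p-values in pure Python. It is offered as one example of a multiple-testing correction, not as the specific method recommended by the cited source, and the p-values are invented.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Illustrative p-values for four candidate biomarkers.
p_values = [0.01, 0.04, 0.03, 0.005]
q_values = benjamini_hochberg(p_values)
print([round(q, 3) for q in q_values])
```

With thousands of features and few samples, candidates that survive only an unadjusted threshold are the ones most likely to fail in a confirmatory cohort.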

FAQ 4: How can I integrate data from different 'omics studies that used different experimental designs? Integrating disparate 'omics studies is challenging due to differences in sample collection, platforms, and data processing. A powerful computational strategy is in-silico data mining and meta-analysis [1].

This involves:

- Data Mining: Collecting raw or processed data from public repositories like the Gene Expression Omnibus (GEO) with analogous research questions [1].

- Robust Integration: Using methods like the robust rank aggregation to identify common genes across studies that may have used different technologies. This approach was successfully used to define a meta-signature for endometrial receptivity by analyzing data from nine different studies [1].

- Systems Biology: A holistic, interdisciplinary approach that combines data from genomics, transcriptomics, and other 'omics to build computational models that can interpret complex cellular behavior beyond what isolated data can show [1].

Troubleshooting Guides

Issue: Inconsistent Alignment and Variant Calling Across Technical Replicates

Problem: Your bioinformatics tools (aligners, variant callers) produce inconsistent results when processing data from the same biological sample that was sequenced in multiple technical replicates (different library preps or sequencing runs).

Explanation: Technical variability is inherent in sequencing experiments. Bioinformatics tools should be robust enough to accommodate this and produce consistent results (achieving genomic reproducibility). However, some tools introduce deterministic biases (e.g., reference bias in aligners) or stochastic variations (e.g., from random algorithms), leading to irreproducible results [18].

Solution:

- Assess Genomic Reproducibility: Actively evaluate your tools using technical replicates. The focus is not on accuracy against a gold standard, but on consistency across replicates [18].

- Choose Reproducible Tools: Prefer tools that are known to be consistent. For example, one study found Bowtie2 produced consistent results irrespective of read order, while BWA-MEM showed variability with shuffled reads [18].

- Standardize the Pipeline: Implement your entire analysis, from read alignment to variant calling, in a reproducible workflow framework like CWL or Nextflow, and containerize it with Docker [20] [19]. This ensures that every component and its version are fixed.
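Consistency across technical replicates can be quantified without a gold standard. The sketch below scores each pair of replicates by the Jaccard index of their variant call sets. The choice of Jaccard as the concordance measure, the replicate names, and the variant tuples are assumptions for illustration.

```python
from itertools import combinations

def jaccard(calls_a, calls_b):
    """Shared calls divided by all calls made in either replicate."""
    a, b = set(calls_a), set(calls_b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def pairwise_concordance(replicates):
    """Jaccard index for every pair of technical replicates."""
    return {
        (name_a, name_b): jaccard(replicates[name_a], replicates[name_b])
        for name_a, name_b in combinations(sorted(replicates), 2)
    }

# Hypothetical variant calls as (chromosome, position, ref, alt) tuples.
replicates = {
    "rep1": {("chr1", 100, "A", "G"), ("chr2", 200, "C", "T"), ("chr3", 300, "G", "A")},
    "rep2": {("chr1", 100, "A", "G"), ("chr2", 200, "C", "T"), ("chr4", 400, "T", "C")},
}
scores = pairwise_concordance(replicates)
print(scores)
```

Running the same comparison on a single input with shuffled read order isolates variability introduced by the tool from variability introduced by library preparation or sequencing.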

Table 1: Key Metrics for Single-Cell RNA-seq Raw Data QC

| QC Metric | Tool/Method | Interpretation of a Good Result | Common Pitfalls |

|---|---|---|---|

| Per Base Sequence Quality | FastQC | Quality score boxes remain in the green area for all positions; a gradual drop at the end is common [17]. | Scores falling into red area indicate poor quality calls, suggesting the need for quality trimming [17]. |

| Adapter Content | FastQC | The cumulative percentage of adapter sequence is negligible across the read [17]. | High levels indicate incomplete adapter removal during library prep, requiring trimming [17]. |

| Per Base N Content | FastQC | Percentage of bases called as 'N' is consistently at or near 0% [17]. | Any noticeable non-zero N content indicates issues with sequencing quality or library prep [17]. |

| Sequence Duplication | FastQC | A diverse library where the majority of sequences show low duplication levels [17]. | High duplication can trigger a warning but is expected in single-cell data; tool is not UMI-aware [17]. |

Issue: Managing and Analyzing Large, Multimodal Reproductomics Datasets

Problem: You are generating large volumes of high-throughput multimodal data (e.g., genomic, transcriptomic, proteomic) on reproductive processes, but the data complexity creates a bottleneck. Conventional computational methods are inadequate for processing, integrating, and interpreting this data.

Explanation: The field of reproductomics investigates the interplay between hormonal regulation, genetics, and environmental factors. The vast amount of data generated by various 'omics technologies often surpasses our ability to thoroughly analyze it, leading to a data management bottleneck where biologically significant conclusions are difficult to distill [1].

Solution:

- Employ a Systems Biology Approach: Move beyond analyzing single 'omics layers in isolation. Use interdisciplinary approaches to combine genomics, transcriptomics, and other data types into unified computational models for a holistic view of reproductive systems [1].

- Utilize Integrated Computational Tools: Leverage machine learning algorithms for predicting outcomes (e.g., fertility), single-cell sequencing techniques for granular cell-level analysis, and R/Bioconductor packages like `PharmacoGx` for standardizing the analysis of large pharmacogenomic datasets [1] [20].

- Implement Robust Workflows: Create scalable, flexible, and reproducible bioinformatics pipelines using frameworks like the Common Workflow Language (CWL) to ensure your analysis is transparent and can be shared and validated by the community [20].

Experimental Protocols

Detailed Protocol: Creating a Reproducible Pharmacogenomic Analysis Pipeline

This protocol outlines the methodology for creating a portable and reproducible pipeline to process pharmacogenomic data, combining pharmacological (e.g., drug response) and molecular (e.g., gene expression) profiles into a single, shareable data object [20].

1. Workflow Specification:

- Define the pipeline using the Common Workflow Language (CWL). Each processing step must be a CWL-encoded tool with explicit definitions for inputs, outputs, base commands, and requirements (e.g., Docker container, computational resources) [20].

- Combine these steps into a CWL workflow that specifies the execution order and data flow between them.

2. Computational Environment:

- Containerization: Package each tool and its dependencies into a Docker image. This isolates the analysis environment, ensuring that operating system, library versions, and software versions remain consistent [20] [19].

- Resource Management: The CWL workflow handles resource allocation (compute and memory) for each step, a task that conventional scripting often handles poorly [20].

3. Data Processing and Object Creation:

- Data Curation: Curate cell line and drug annotation files. The pipeline should assign unique identifiers to each, which are used to validate data integrity in subsequent steps [20].

- Drug Response Computation: Within the R environment, use the `PharmacoGx` package to compute standard drug sensitivity metrics from raw dose-response data.

- Molecular Profile Integration: Incorporate processed molecular data (e.g., RNA-seq, SNP arrays) into the object.

- PharmacoSet (PSet) Assembly: The `PharmacoGx` package assembles all curated annotations, computed drug responses, and molecular profiles into a unified R object called a PharmacoSet (PSet) [20].

4. Provenance Tracking and Sharing:

- Execute the CWL workflow with the `--provenance` flag. This generates a Research Object, a bundled container with all input files, output files, and metadata, including checksums for granular data provenance [20].

- Assign a Digital Object Identifier (DOI) to the final PSet and workflow on a data repository (e.g., Harvard Dataverse) to ensure persistent access and sharing [20].
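The role checksums play in provenance tracking can be illustrated with a small sketch that builds a checksum manifest for a set of files. This is not how the CWL runner builds its Research Object; the choice of SHA-256, the helper names `sha256_of` and `build_manifest`, and the demo file name are all assumptions made for illustration.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(paths):
    """Map each file name to its checksum so later copies can be verified."""
    return {Path(p).name: sha256_of(p) for p in sorted(map(str, paths))}


# Demo with a temporary file standing in for a pipeline input (hypothetical name).
with tempfile.TemporaryDirectory() as tmp:
    demo_file = Path(tmp) / "dose_response.csv"
    demo_file.write_bytes(b"drug,dose,viability\n")
    manifest = build_manifest([demo_file])
    manifest_json = json.dumps(manifest, indent=2)

print(manifest_json)
```

Anyone who later receives the same files can recompute the digests and compare them with the manifest to confirm that nothing changed in transit or storage.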

Diagram: Reproducible Bioinformatics Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Reproductomics Data Management

| Tool / Resource | Function | Application in Reproductomics |

|---|---|---|

| Common Workflow Language (CWL) | A standard for describing data analysis workflows in a way that is portable and scalable across different software environments [20]. | Ensures pharmacogenomic and other reproductomics pipelines are reproducible, transparent, and can be executed identically by other researchers [20]. |

| Docker Containers | OS-level virtualization to package software and all its dependencies into a standardized unit, ensuring the software runs the same regardless of the host environment [19]. | Freezes the entire computational environment for a bioinformatics tool or pipeline, eliminating "works on my machine" problems and guaranteeing long-term reproducibility [19]. |

| PharmacoGx (R/Bioconductor) | An R package that provides computational methods to process, analyze, and integrate large-scale pharmacogenomic datasets [20]. | Creates unified PharmacoSet (PSet) objects from reproductive cell line screens, combining drug response and molecular data for easy sharing and secondary analysis [20]. |

| FastQC | A quality control tool that provides an overview of basic statistics and potential issues in raw high-throughput sequencing data [17]. | The first line of defense for identifying sequencing or library preparation artifacts in single-cell or bulk sequencing data from reproductive tissues [17]. |

| Robust Rank Aggregation | A computational meta-analysis method designed to compare distinct gene lists and identify common overlapping genes [1]. | Identifies consensus biomarker signatures (e.g., for endometrial receptivity) by integrating gene lists from multiple, disparate transcriptomics studies [1]. |

FAQs: Navigating Data and Methodological Hurdles

FAQ 1: What are the most common causes of inconsistent results in endometrial receptivity (ER) clinical trials?

Inconsistent results often stem from a combination of methodological and data-related bottlenecks:

- Lack of Standardized Protocols: Many studies use bespoke, in-house protocols for sample preparation and analysis. This makes it difficult to reproduce experiments or compare results across different laboratories [22]. For instance, preparations like Platelet-Rich Plasma (PRP) vary significantly in "platelet concentration, leukocyte and fibrin content," and administration methods, leading to heterogeneous findings on its efficacy [23].

- Heterogeneous Patient Populations and Definitions: A core challenge is the lack of a universal definition for conditions like Recurrent Implantation Failure (RIF). Studies use varying criteria for the number of failed cycles and embryo quality, creating non-uniform cohorts that compromise data comparability [24].

- Manual Data Handling Errors: Sample preparation stages in advanced analyses like proteomics are "highly manual and time-consuming," making them prone to human error. This can lead to mischaracterization and unreliable data, which is particularly problematic with sensitive clinical samples that are difficult to reacquire [22].

FAQ 2: Which data-driven technologies show the most promise for overcoming current ER assessment limitations?

Emerging technologies are focusing on integration and automation to improve objectivity and predictive power.

- Deep Learning (DL) and Multi-Modal Data Fusion: DL models, particularly convolutional neural networks (CNNs), can analyze routine ultrasound images to identify subtle features associated with ER states that are imperceptible to the human eye. One study achieved superior predictive accuracy for Recurrent Pregnancy Loss (RPL) risk by using a fusion model that integrated a CNN for image analysis (ResNet-50) and another network for clinical tabular data (TabNet) [25].

- Multi-Omics Integration: Advanced computational methods, such as variational information bottleneck approaches, are being developed to integrate complex data from multiple "omics" techniques (e.g., transcriptomics, proteomics). This is crucial for understanding the complex interactions within and across different biological systems, even when data is incomplete [15].

- Automated Sample Preparation: Laboratory automation, including automated liquid handlers, is a key solution for overcoming manual workflow bottlenecks. Automation reduces human error, increases throughput, and standardizes sample processing, which enhances the reproducibility and reliability of proteomic and other molecular data [22].

FAQ 3: What is the current clinical evidence for the efficacy of Endometrial Receptivity Analysis (ERA)?

The evidence for ERA is mixed and reflects the broader crisis in standardizing molecular diagnostics.

- Supporting Evidence: A large 2025 retrospective study of 3,605 patients with previous failed embryo transfers found that personalized embryo transfer (pET) guided by ERA significantly improved clinical pregnancy and live birth rates for both RIF and non-RIF patients. It also reduced the early abortion rate in the non-RIF group [26].

- Contradictory Evidence: Other research indicates that ERA may not be effective and "could even be detrimental to pregnancy rates" [23]. A 2019 systematic review and meta-analysis concluded that while results from modern molecular tests like ERA are promising, more data is needed before they can be universally recommended, as many conventional markers show poor predictive ability for clinical pregnancy [27].

Troubleshooting Common Experimental Scenarios

Scenario: Investigating a Novel ER Biomarker with Inconsistent Proteomics Data

Problem: Your mass spectrometry (LC-MS) results are inconsistent, with high technical variation between replicates.

Solution: Implement an automated and standardized sample preparation workflow.

- Step 1: Diagnose the Bottleneck. The primary issue is likely the reliance on manual, low-throughput sample preparation techniques (e.g., cell lysis, protein digestion, peptide purification). These repetitive, meticulous tasks are susceptible to user-derived errors and sample-to-sample variation [22].

- Step 2: Apply the Fix.

- Adopt Automation: Integrate an automated liquid handling system to execute critical pipetting and preparation steps. This minimizes human error and variation [22].

- Standardize the Protocol: Use a standardized, commercially available kit for sample preparation instead of an in-house protocol. This ensures consistency and improves reproducibility across your lab and others [22].

- Process Controls: Include a standardized control sample in every batch to monitor technical variability and batch effects introduced during preparation.

- Step 3: Validate the Data.

- After implementing automation, re-run a set of samples and controls.

- Compare the coefficient of variation for protein abundance measurements before and after the change. A significant decrease indicates improved data quality and reliability.
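The comparison in Step 3 can be sketched as follows. The replicate intensities are hypothetical numbers chosen only to illustrate the calculation, and `coefficient_of_variation` is an illustrative helper, not part of any proteomics package.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation divided by the mean."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical replicate intensities for one protein (illustrative values only).
manual_prep = [100.0, 130.0, 80.0, 115.0]
automated_prep = [100.0, 104.0, 98.0, 102.0]

cv_manual = coefficient_of_variation(manual_prep)
cv_automated = coefficient_of_variation(automated_prep)

print(f"CV manual:    {cv_manual:.1f}%")
print(f"CV automated: {cv_automated:.1f}%")
```

In practice the CV would be computed per protein across technical replicates and the distributions of CVs compared before and after the workflow change.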

Quantitative Data Synthesis

Table 1: Comparative Reproducibility of Key ER Research Methodologies

| Methodology | Key Measurable Output(s) | Common Sources of Data Variance | Evidence Level for Improving Live Birth Rate (LBR) |

|---|---|---|---|

| Endometrial Receptivity Analysis (ERA) | Personalized Window of Implantation (WOI) | Biopsy timing, RNA sequencing platform, algorithmic interpretation of transcriptomic signature | Mixed: Large studies show benefit in RIF [26], while others show no effect or detriment [23]. |

| Endometrial Scratching | Clinical Pregnancy Rate | Technique (instrument, depth), timing in relation to cycle, operator skill | Controversial: Recent well-designed RCTs found no beneficial effect [23]. |

| Platelet-Rich Plasma (PRP) for Thin Endometrium | Endometrial Thickness (mm), LBR | Preparation method (platelet/leukocyte concentration), number of infusions | Uncertain: Small randomized studies show conflicting results; Cochrane review finds overall evidence uncertain [23]. |

| Proteomic LC-MS/MS | Protein Identification & Quantification | Manual sample prep, trypsin digestion efficiency, LC column performance | Promising but not yet translational; dependent on standardized workflows [22]. |

| Deep Learning on Ultrasound | RPL Risk Probability Score | Image acquisition parameters, model architecture, clinical data integration | Emerging: Shows high accuracy for risk stratification in initial studies [25]. |

Table 2: Clinical Evidence for ERA from a Large-Scale Study (n=3,605) [26]

| Patient Group & Intervention | Clinical Pregnancy Rate | Live Birth Rate | Early Abortion Rate |

|---|---|---|---|

| Non-RIF with npET (n=1,744) | 58.3% | 48.3% | 13.0% |

| Non-RIF with pET (n=301) | 64.5% | 57.1% | 8.2% |

| RIF with npET (after PSM) | 49.3% | 40.4% | Not Specified |

| RIF with pET (after PSM) | 62.7% | 52.5% | Not Specified |

Experimental Protocols

Protocol: Endometrial Tissue Sampling for Transcriptomic Analysis (ERA)

This protocol is based on the hormone replacement therapy (HRT) cycle methodology used in recent clinical studies [26].

1. Objective: To obtain a standardized endometrial tissue sample for RNA extraction and subsequent transcriptomic analysis to determine the window of implantation (WOI).

2. Materials:

- Estrogen supplements (e.g., oral or transdermal)

- Progesterone (P) supplements (e.g., intramuscular injection)

- Pipelle endometrial suction catheter or similar device

- Speculum

- RNA preservation solution (e.g., RNAlater)

- Sterile gloves and antiseptic solution

3. Step-by-Step Workflow:

4. Key Considerations:

- Timing: The biopsy must be performed after a specific duration of progesterone exposure (e.g., 5 days in an HRT cycle) [26].

- Sample Handling: The tissue must be immediately placed in RNAlater to preserve RNA integrity.

- Clinical Data: Record the exact timing of the biopsy relative to the start of progesterone and the patient's hormone levels.

Protocol: Developing a Deep Learning Model for ER Assessment from Ultrasound

This protocol is adapted from a study on automated RPL risk assessment [25].

1. Objective: To develop a fusion deep learning model that integrates grayscale ultrasound images and clinical data to assess endometrial receptivity and stratify RPL risk.

2. Materials:

- Ultrasound Machine: With capability to export DICOM or high-resolution image files.

- Computing Environment: GPU-accelerated workstation (e.g., with NVIDIA CUDA support).

- Software: Python with deep learning libraries (PyTorch/TensorFlow), and scikit-learn.

3. Step-by-Step Workflow:

4. Key Considerations:

- Data Quality: Ultrasound images must be standardized (e.g., longitudinal section of the endometrium). Clinical data (e.g., age, BMI, hormone levels) must be curated.

- Pre-processing: Images are typically resized (e.g., to 224x224 pixels) and undergo data augmentation (random flips, rotations) to improve model generalizability [25].

- Validation: Use a strict train-test split or k-fold cross-validation, and report performance on a held-out test set that played no part in training or model selection, so that overfitting is detected rather than masked.
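The validation consideration can be sketched with plain index bookkeeping: a test set is set aside once, and k-fold cross-validation runs only on the remaining samples. This is a minimal sketch, not the cited study's procedure; the 20% test fraction, five folds, and the function name `split_indices` are assumptions.

```python
import random


def split_indices(n_samples, test_fraction=0.2, k=5, seed=0):
    """Return a held-out test set plus k (train, validation) index splits."""
    rng = random.Random(seed)
    indices = list(range(n_samples))
    rng.shuffle(indices)

    # The test indices are set aside first and never used for training or tuning.
    n_test = int(round(n_samples * test_fraction))
    test, development = indices[:n_test], indices[n_test:]

    # Partition the development set into k folds; each fold is validation once.
    folds = [development[i::k] for i in range(k)]
    cv_splits = []
    for i in range(k):
        validation = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        cv_splits.append((train, validation))
    return test, cv_splits


test_idx, cv_splits = split_indices(n_samples=100)
print(len(test_idx), [len(val) for _, val in cv_splits])
```

When several images come from the same patient, the indices should refer to patients rather than images, so that no patient contributes to both training and evaluation.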

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Advanced Endometrial Receptivity Research

| Item | Function in Research | Specific Example / Note |

|---|---|---|

| Endometrial Biopsy Catheter | To obtain endometrial tissue samples for histology, transcriptomics, or proteomics. | Pipelle de Cornier is commonly used for minimal discomfort sampling. |

| RNAlater Stabilization Solution | To immediately preserve RNA integrity in tissue samples for gene expression studies like ERA. | Critical for ensuring accurate transcriptomic profiles from biopsies [26]. |

| Automated Liquid Handler | To automate and standardize sample preparation steps for proteomics and molecular assays. | Systems like Beckman Coulter's Biomek series can overcome manual workflow bottlenecks [22]. |

| Pre-trained Deep Learning Models | As a starting point for developing custom image analysis models for ultrasound or histology images. | Models like ResNet-50 can be used with transfer learning for medical image analysis [25]. |

| Hormone Assay Kits | To quantitatively measure serum levels of estradiol (E2) and progesterone (P) for cycle monitoring. | An optimal E2/P ratio has been correlated with a lower rate of displaced WOI [26]. |

| Liquid Chromatography Tandem Mass Spectrometry (LC-MS/MS) | To identify and quantify protein expression and post-translational modifications in endometrial samples. | The quality of this analysis is entirely dependent on the preceding sample preparation [22]. |

Ethical and Regulatory Considerations in Reproductive Data Storage and Sharing

Frequently Asked Questions (FAQs) and Troubleshooting Guides

General Data Sharing Principles

Q1: What are the core ethical principles I should follow for managing reproductive data? The 5Cs of data ethics provide a foundational framework for managing sensitive data [28].

- Consent: Obtain informed and voluntary consent before data collection or use. Individuals must understand what data is collected, how it will be used, and by whom. Consent should be easy to withdraw [28].

- Collection: Only collect data that is necessary for a specific, stated purpose. Avoid gathering excessive or irrelevant information [28].

- Control: Give individuals control over their own data, including the rights to access, review, update, and know who has access to it [28].

- Confidentiality: Protect data from unauthorized access, breaches, or leaks using security measures like encryption and access controls [28].

- Compliance: Adhere to all relevant legal and regulatory requirements, such as GDPR or institutional policies [28].

Q2: What does "FAIR" mean in the context of data sharing, and why is it important? FAIR is a set of guiding principles to make data Findable, Accessible, Interoperable, and Reusable [29]. Adhering to FAIR principles helps maximize the impact and utility of your research data by ensuring others can easily locate, understand, and use it. This promotes transparency, reproducibility, and accelerates scientific discovery by enabling meta-analyses and method development [29].

Ethics, Privacy, and Human Subject Data

Q3: How should I handle data derived from human research participants? Sharing human data requires balancing participant privacy with the benefits of data sharing [30] [29]. Key steps include:

- De-identification: Adequately de-identify personal information prior to sharing to protect participant privacy and mitigate risk [30].

- Informed Consent: For studies generating genomic data, informed consent documents should state what data types will be shared, for what purposes, and whether sharing will be open or controlled-access [30].

- Controlled-Access: For data that is challenging to fully de-identify or still poses privacy risks (e.g., genomic, detailed phenotypic, or qualitative data), use a controlled-access repository where only qualified researchers can access the data [30] [29].
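As a rough illustration of the de-identification step, the sketch below drops direct identifiers and replaces the participant ID with a keyed hash. The field names, the list of direct identifiers, and the helper `pseudonymize` are hypothetical, and removing direct identifiers alone does not guarantee that a dataset is adequately de-identified.

```python
import hashlib
import hmac

# Hypothetical set of fields treated as direct identifiers in this example.
DIRECT_IDENTIFIERS = {"name", "date_of_birth", "address", "phone"}


def pseudonymize(record, secret_key, id_field="patient_id"):
    """Drop direct identifiers and replace the ID with a keyed-hash pseudonym."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    token = hmac.new(secret_key, str(record[id_field]).encode(), hashlib.sha256)
    # A truncated digest keeps the pseudonym short; the key must stay private.
    cleaned[id_field] = token.hexdigest()[:16]
    return cleaned


record = {
    "patient_id": "P-0042",
    "name": "Jane Doe",
    "date_of_birth": "1990-01-01",
    "amh_ng_ml": 2.1,
}
shared = pseudonymize(record, secret_key=b"replace-with-a-real-secret")
print(shared)
```

Using a keyed hash rather than a plain hash means the same participant always maps to the same pseudonym, while someone without the key cannot regenerate it from a guessed ID.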

Q4: My data is too sensitive for public release. What are my sharing options? Not all data can be shared publicly. Consider these strategies to make your data as accessible as possible [31] [29]:

- Controlled-Access Sharing: Submit individual-level raw data to a controlled-access repository like the European Genome-phenome Archive (EGA) or dbGaP [29].

- Share Processed Data: Make summary statistics (e.g., means, variant-level association statistics) available, even if raw data cannot be shared [29].

- Data Simulation: Generate and share simulated data that is statistically similar to the original data. This allows others to reproduce your analysis workflow without accessing the identifiable original data [29].
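The data simulation option can be illustrated with a deliberately simple sketch that draws values from a normal distribution matched to the mean and standard deviation of one variable. The measurements are hypothetical and `simulate_like` is an illustrative helper. Real synthetic-data methods also need to preserve correlations between variables, and simulated data should still be assessed for disclosure risk before release.

```python
import random
from statistics import mean, stdev


def simulate_like(values, n, seed=0):
    """Draw n values from a normal distribution fitted to the observed data."""
    rng = random.Random(seed)
    mu, sigma = mean(values), stdev(values)
    return [rng.gauss(mu, sigma) for _ in range(n)]


# Hypothetical measurements standing in for a sensitive variable.
original = [22.1, 25.4, 19.8, 30.2, 27.5, 24.0]
simulated = simulate_like(original, n=1000)

print(f"original:  mean={mean(original):.2f}, sd={stdev(original):.2f}")
print(f"simulated: mean={mean(simulated):.2f}, sd={stdev(simulated):.2f}")
```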

Q5: How long am I allowed to store research data? You should not store personal data for longer than necessary [32]. The GDPR states data should be stored for the shortest time possible, and you must be able to justify your retention period. Develop policies for standard retention periods and regularly review data to erase or anonymize it when it is no longer needed [32].

Practical Data Management

Q6: I'm overwhelmed by the amount of data my omics experiments generate. What is the bottleneck, and how can I address it? The field of reproductomics has reached a "data management bottleneck," where data volumes vastly surpass our ability to thoroughly analyze and interpret them [1]. To overcome this:

- Utilize Computational Tools: Employ robust bioinformatic applications and machine learning algorithms for processing and analysis [1].

- Adopt a Systems Biology Approach: Use an interdisciplinary approach that amalgamates genomics, transcriptomics, proteomics, etc., to generate comprehensive computational models [1].

- Use Public Repositories: Deposit your data in public repositories to leverage community resources and tools [1].

Q7: Where should I submit my reproductive genomics data?

- Primary Repository: The NHGRI expects researchers to use the AnVIL repository for a variety of data types, including genomic and non-genomic data from human participants [30].

- Registration: Large-scale human genomic studies must be registered in dbGaP [30].

- Alternatives: If you wish to use an alternative repository, you must propose and justify it in your Data Management and Sharing (DMS) Plan for assessment prior to funding [30].

Q8: What is the difference between raw, intermediate, and processed data? Understanding these categories helps in deciding what to share [31]:

- Raw Data: The initial output from an instrument (e.g., FASTQ files from an RNA-seq experiment) [31].

- Intermediate Data: Data products between raw data and final findings (e.g., gene expression estimates, VCF files from variant calling) [31].

- Findings: The final results, plots, and statistics produced by analyzing the data [31].

It is recommended to share as much raw and intermediate data as possible to ensure reproducibility and enable secondary analyses [31].

Data Sharing Tiers and Repository Comparison

The table below summarizes the primary methods for sharing genomic data, balancing accessibility with privacy protection [31].

| Sharing Method | Description | Ideal For | Examples |

|---|---|---|---|

| Public Sharing | Data is released without barriers for reuse. | Non-human data; fully anonymized human data with minimal re-identification risk. | Gene Expression Omnibus (GEO), AnVIL (open data) [30] [31] |

| Controlled-Access | Access is granted to qualified researchers who apply and agree to specific terms. | Individual-level human genomic, phenotypic, or other sensitive data where privacy risks exist. | dbGaP, European Genome-phenome Archive (EGA) [30] [29] |

| Upon-Request | Data is shared directly by the researcher upon receipt of a request. | Generally discouraged as it is inefficient and can lead to delays and inequitable access. | N/A |

Experimental Protocol: Implementing a Responsible Data Sharing Workflow

This protocol outlines the key steps for ethically sharing research data, particularly data derived from human participants in reproductive studies, in alignment with FAIR principles and regulatory requirements [30] [29].

1. Pre-Collection: Planning and Consent

- Develop a Data Management & Sharing (DMS) Plan: Outline the types of data and metadata you will generate, the standards you will use, and your chosen repositories [30] [29].

- Secure Informed Consent: For prospective studies, ensure consent documents explicitly state plans for data sharing, including the data types, purposes (e.g., general research use), and access mode (e.g., controlled-access) [30].

2. Pre-Publication: Data Preparation and Documentation

- De-identify Data: Remove all direct identifiers. For sensitive datasets, assess the risk of re-identification from quasi-identifiers or the data itself [30] [31].

- Organize and Document Metadata: Create detailed metadata using structured formats and community-standard ontologies (e.g., Experiment Factor Ontology) [30] [31]. Describe the sample information (e.g., tissue type, diagnosis) and handling protocols (e.g., library preparation method) thoroughly.
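One common heuristic for assessing re-identification risk from quasi-identifiers is to count how many records share each combination of values, an idea often described as k-anonymity. The sketch below is an assumption-laden illustration: the field names and records are hypothetical, and a small group size is a warning sign rather than a complete risk assessment.

```python
from collections import Counter


def smallest_group_size(records, quasi_identifiers):
    """Count records per quasi-identifier combination and return the minimum."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values()), groups


# Hypothetical de-identified records; only quasi-identifiers and a diagnosis remain.
records = [
    {"age_band": "30-34", "region": "North", "diagnosis": "PCOS"},
    {"age_band": "30-34", "region": "North", "diagnosis": "POI"},
    {"age_band": "35-39", "region": "South", "diagnosis": "PCOS"},
]

k, groups = smallest_group_size(records, ["age_band", "region"])
print(f"smallest group size k = {k}")
for combination, count in groups.items():
    print(combination, count)
```

Here the third record is unique on age band and region (k = 1), so those fields would need to be coarsened, or the dataset routed to controlled access.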

3. Submission and Release

- Select an Appropriate Repository: Choose a repository based on your data type and privacy needs (see Table above) [30] [29].

- Submit Data and Metadata: Follow the repository's submission guide. NHGRI expects data to be submitted to the selected repository before publication-related manuscript acceptance [30].

- Obtain a Persistent Identifier: Ensure the repository issues a unique, citable identifier (e.g., a DOI) for your dataset [29].

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key resources and tools essential for managing and analyzing reproductive omics data.

| Item | Function |

|---|---|

| AnVIL (NHGRI's Repository) | A primary cloud-based platform for storing, sharing, and analyzing genomic and related data, supporting both controlled and open access [30]. |

| dbGaP (Database of Genotypes and Phenotypes) | A controlled-access repository designed to archive and distribute the results of studies that investigate the interaction of genotype and phenotype [30]. |

| FASTQ File Format | The standard text-based format for storing both a biological sequence (usually nucleotide sequence) and its corresponding quality scores from sequencing instruments [31]. |

| VCF (Variant Call Format) | A standardized text file format used for storing gene sequence variations (e.g., SNPs, indels) called from sequencing experiments [31]. |

| Experiment Factor Ontology (EFO) | An ontology that provides systematic descriptions of experimental variables, such as disease, tissue, and treatment, enabling structured metadata annotation [31]. |

| Machine Learning Models (e.g., in Kipoi) | Pre-trained models that can be repurposed for new genomic analyses, such as predicting variant effects or gene expression [31]. |

Workflow Diagram: Data Classification for Ethical Sharing

The following diagram illustrates the decision process for classifying and selecting the appropriate sharing method for research data, with a focus on human subjects.
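As a rough companion to that decision process, the logic of the sharing-tier table can be written as a small function. This is a simplification under stated assumptions: the two inputs and the name `choose_sharing_method` are hypothetical, and real decisions also depend on consent terms and funder or institutional policy.

```python
def choose_sharing_method(is_human_data, reidentification_risk):
    """Pick a sharing tier from two coarse properties of a dataset.

    reidentification_risk is either "minimal" or "present".
    """
    if reidentification_risk not in {"minimal", "present"}:
        raise ValueError("reidentification_risk must be 'minimal' or 'present'")
    # Non-human data and fully anonymized human data can be shared publicly.
    if not is_human_data or reidentification_risk == "minimal":
        return "public"
    # Human data that still carries privacy risk goes to controlled access.
    return "controlled-access"


print(choose_sharing_method(is_human_data=False, reidentification_risk="minimal"))
print(choose_sharing_method(is_human_data=True, reidentification_risk="present"))
```

"Upon-request" sharing is intentionally never returned, since the table above describes it as generally discouraged.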

Troubleshooting Common Scenarios

Problem: A colleague requests data via email, but the data is sensitive.

- Solution: Avoid ad-hoc "upon-request" sharing for sensitive data. Direct your colleague to the controlled-access repository (e.g., dbGaP, EGA) where your data is housed and guide them through the standard application process. This ensures consistent, fair, and secure access for all researchers [31] [29].

Problem: My dataset is complex and includes multiple omics layers. I'm unsure how to make it FAIR.

- Solution: Utilize an integrative in-silico analysis approach. Employ systems biology frameworks to amalgamate data from genomics, transcriptomics, and other omics fields into a unified computational model. Deposit the entire dataset in a single, appropriate repository like AnVIL whenever possible, and use rich, structured metadata with controlled vocabularies to describe all data layers comprehensively [1] [30].

Problem: I am using legacy biospecimens that lack explicit consent for broad data sharing.

- Solution: NHGRI expects explicit consent for all human data generated by its funded research. If you propose to use specimens lacking such consent, you must document a request for an exception in your DMS Plan, providing scientific justification for using these specific data sources [30].

Computational Approaches and Analytical Frameworks for Reproductomics Data

Technical Troubleshooting Guides

Data Integration & Preprocessing Challenges

Q: How can I handle inconsistent data formats and structures from different studies? A: This is a common challenge in data integration. Solutions include:

- Use ETL/ELT Tools: Implement Extract, Transform, Load (ETL) or Extract, Load, Transform (ELT) tools to manage different data formats and structures. These tools streamline the process of data extraction, transformation into a standard format, and loading into a target system, which reduces manual errors [33].

- Automated Data Profiling: Use automated data profiling tools to identify data quality issues such as missing values, inconsistencies, and duplicates early in the process [34].

- Establish Data Governance: Create data governance frameworks with clear ownership and data standards to ensure a common understanding and consistent usage of data across projects [34] [33].
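The kind of report a data profiling step produces can be sketched in a few lines. This is a minimal illustration, not a specific profiling tool; the sample records, the field names, and the helper `profile` are hypothetical.

```python
from collections import Counter


def profile(rows, key_field):
    """Summarize missing values per field and duplicated keys in a list of dicts."""
    missing = Counter()
    for row in rows:
        for field, value in row.items():
            if value is None or value == "":
                missing[field] += 1
    key_counts = Counter(row[key_field] for row in rows)
    duplicates = sorted(key for key, count in key_counts.items() if count > 1)
    return {
        "n_rows": len(rows),
        "missing_by_field": dict(missing),
        "duplicate_keys": duplicates,
    }


# Hypothetical sample sheet merged from two studies.
rows = [
    {"sample_id": "S1", "tissue": "endometrium", "rin": 8.2},
    {"sample_id": "S2", "tissue": "", "rin": None},
    {"sample_id": "S1", "tissue": "endometrium", "rin": 8.2},
]

report = profile(rows, key_field="sample_id")
print(report)
```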

Q: My integrated dataset has poor quality (missing values, duplicates). How can I fix it? A: Data quality is paramount for reliable analysis.

- Implement Data Cleansing: Use data cleansing tools, especially those with AI capabilities for pattern recognition, to identify and correct complex data quality issues [34].

- Proactive Validation: Check for errors or inconsistencies soon after collecting data, before integrating it into your primary dataset. This prevents poor-quality data from corrupting your analysis [33].

- Set Validation Rules: Implement validation rules at data entry points to prevent bad data from entering the system in the first place [34].
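Validation rules at a data entry point can be expressed as a table of per-field checks that every incoming record must pass. The fields and acceptable ranges below are hypothetical placeholders and would need to be set by the study protocol; `validate` is an illustrative helper.

```python
# Hypothetical rules: each field maps to a predicate that returns True when valid.
VALIDATION_RULES = {
    "sample_id": lambda v: isinstance(v, str) and v.strip() != "",
    "age_years": lambda v: isinstance(v, (int, float)) and 18 <= v <= 55,
    "endometrial_thickness_mm": lambda v: isinstance(v, (int, float)) and 0 < v < 30,
}


def validate(record, rules=VALIDATION_RULES):
    """Return a list of human-readable errors; an empty list means the record passes."""
    errors = []
    for field, rule in rules.items():
        if field not in record:
            errors.append(f"{field}: missing")
        elif not rule(record[field]):
            errors.append(f"{field}: invalid value {record[field]!r}")
    return errors


good = {"sample_id": "S1", "age_years": 34, "endometrial_thickness_mm": 8.5}
bad = {"sample_id": "", "age_years": 34}

print(validate(good))
print(validate(bad))
```

Rejecting or quarantining records with a non-empty error list keeps bad values out of the integrated dataset instead of cleaning them up afterwards.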

Reproducibility & Workflow Management

Q: How can I ensure my in-silico analysis is reproducible? A: Reproducibility is a critical challenge in digital medicine and computational biology.

- Adopt Open Science Practices: Utilize frameworks like the Open Science Framework (OSF) to make data and workflows easily accessible to collaborators and for future replication. This includes pre-registering studies to state research questions and methods upfront [35].

- Use Workflow Management Systems (WMS): Automate in-silico data analysis processes through WMS. These systems provide flexible and extensible tools for creating, deploying, and documenting data integration and analysis pipelines, ensuring that the same steps can be followed to achieve consistent results [36].

- Thorough Documentation: Maintain detailed documentation of all data sources, transformation steps, software versions, and parameters used. This is a cornerstone of reproducible research [35].
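Part of this documentation can be captured automatically at run time. The sketch below records the interpreter version, platform, parameters, and data sources as JSON; the parameter names and the placeholder data source are assumptions, and `build_run_record` is an illustrative helper rather than part of any workflow system.

```python
import json
import platform
import sys
from datetime import datetime, timezone


def build_run_record(parameters, data_sources):
    """Collect the facts needed to rerun an analysis into one serializable dict."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "command": list(sys.argv),
        "parameters": parameters,
        "data_sources": data_sources,
    }


run_record = build_run_record(
    parameters={"normalization": "TPM", "min_counts": 10},  # hypothetical settings
    data_sources=["<repository accession goes here>"],      # placeholder, not a real ID
)
run_record_json = json.dumps(run_record, indent=2, sort_keys=True)
print(run_record_json)
```

Saving this record next to every set of results makes it possible to tell later which software and settings produced them.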

Q: My analysis workflow is becoming too complex and unmanageable. What can I do? A: As projects grow, complexity can hinder progress.

- Automate with WMS: As noted above, Workflow Management Systems are designed specifically to manage complex, multi-step computational workflows, reducing manual intervention and the potential for error [36].

- Implement Data Lineage Tools: Use tools that provide data lineage, giving you visibility into how data flows, where it starts, what changes it has gone through, and where it is delivered. This helps build trust, detect anomalies, and optimize performance [37].

Computational & Scalability Issues

Q: My data processing is too slow for large-scale multi-omics datasets. How can I improve performance? A: Large data volumes can overwhelm traditional methods.

- Leverage Modern Platforms: Adopt modern data management platforms that support massively parallel processing (MPP), Spark processing, or elastic processing. These can handle multiple terabytes of data simultaneously, saving time and money [37] [33].

- Utilize Serverless Architecture: To ease infrastructure management, use serverless data integration architectures, in which the provider provisions and maintains the underlying cloud instances. This allows researchers to focus on analysis rather than administrative work [37].

- Apply Incremental Data Loading: Break data into smaller segments and load them incrementally rather than attempting to load the entire dataset at once [33].
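Incremental loading can be sketched with a generator that hands rows to the analysis in fixed-size chunks, so that only one chunk is held in memory at a time. The in-memory CSV, the chunk size, and the helper `iter_chunks` are assumptions for illustration; in practice the reader would wrap a file on disk.

```python
import csv
import io
from itertools import islice


def iter_chunks(rows, chunk_size):
    """Yield successive lists of at most chunk_size rows from any iterable."""
    iterator = iter(rows)
    while True:
        chunk = list(islice(iterator, chunk_size))
        if not chunk:
            return
        yield chunk


# A small in-memory table standing in for a large expression matrix on disk.
csv_text = "gene,count\n" + "\n".join(f"GENE{i},{i}" for i in range(10))
reader = csv.DictReader(io.StringIO(csv_text))

chunk_sizes = []
total = 0
for chunk in iter_chunks(reader, chunk_size=4):
    chunk_sizes.append(len(chunk))
    # Each chunk is processed and discarded before the next one is read.
    total += sum(int(row["count"]) for row in chunk)

print(chunk_sizes, total)
```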

Biological Interpretation & Validation

Q: After integrating data, I struggle with the biological interpretation of the results. A: Moving from data to biological insight is a key challenge.

- Leverage Network-Based Methods: Use network-based multi-omics integration methods (e.g., network propagation, graph neural networks) that contextualize results within known biological networks (PPI, metabolic pathways). This provides a systems-level view and helps in identifying biologically relevant patterns [38].

- Consult Multi-Omics Resources: Platforms like InSilico DB provide access to a large number of curated public genomic datasets, which can be used for comparison and validation of your findings against existing data [39].
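Network propagation, mentioned above, can be illustrated with a random walk with restart on a toy graph. This is one common formulation, sketched under assumptions: the four-node network, the restart probability of 0.5, and the function name are hypothetical, and the nodes stand in for genes or proteins in a real interaction network.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.5, tol=1e-8, max_iter=1000):
    """Spread seed scores over an undirected network until they stabilize."""
    nodes = sorted(adjacency)
    if any(not adjacency[n] for n in nodes):
        raise ValueError("every node needs at least one neighbour in this sketch")

    # Normalize the seed scores so they form the restart distribution.
    total = sum(seeds.get(n, 0.0) for n in nodes)
    p0 = {n: seeds.get(n, 0.0) / total for n in nodes}
    p = dict(p0)

    for _ in range(max_iter):
        # With probability `restart` the walker jumps back to the seeds ...
        nxt = {n: restart * p0[n] for n in nodes}
        # ... otherwise it moves to a uniformly chosen neighbour.
        for node in nodes:
            share = (1.0 - restart) * p[node] / len(adjacency[node])
            for neighbour in adjacency[node]:
                nxt[neighbour] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


# Toy path network A - B - C - D, with a single seed at A (e.g., a significant gene).
network = {"A": ["B"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C"]}
scores = random_walk_with_restart(network, seeds={"A": 1.0})
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(node, round(score, 4))
```

Nodes close to the seed receive higher scores than distant ones, which is how propagation highlights network neighbourhoods around omics hits rather than isolated genes.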

Frequently Asked Questions (FAQs)

Q: What are the biggest data management bottlenecks in reproductomics research? A: The primary bottlenecks include:

- Data Quality and Consistency: Inconsistencies arising from combining data from various sources with different formats and accuracy levels [34].

- Data Security and Privacy Compliance: Ensuring integrated data, especially sensitive health information, adheres to regulations like GDPR and HIPAA [34].

- Legacy System Integration: Difficulties in connecting older, outdated systems with modern technologies due to proprietary formats and limited documentation [34].

- The Reproducibility Crisis: A significant portion of scientific findings cannot be replicated, often due to a lack of transparency, data sharing, and standardized workflows [40] [35].

Q: How can I securely integrate data while maintaining patient privacy? A: Build security into every stage of the integration process rather than adding it afterward.

- Implement Robust Security Measures: Protect sensitive data using encryption (both in transit and at rest), data masking, anonymization techniques, and granular role-based access controls [34] [33].

- Regular Audits: Conduct regular security audits and penetration testing to identify and address vulnerabilities [34].

- Choose Compliant Tools: Select data integration solutions that are designed with strong security features and compliance frameworks in mind [33].
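One of the measures above, data masking, can be sketched with a keyed hash that replaces the raw patient identifier. The field names, the `drop_fields` default, and the demo key are assumptions for illustration; note that keyed hashing is pseudonymization rather than full anonymization, and the key itself must be stored securely.

```python
import hashlib
import hmac


def pseudonymize(patient_id, secret_key):
    """Return a keyed SHA-256 digest that stands in for the raw identifier."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record, secret_key, drop_fields=("name", "date_of_birth")):
    """Drop direct identifiers and replace the patient ID with a pseudonym."""
    masked = {k: v for k, v in record.items() if k not in drop_fields}
    masked["patient_id"] = pseudonymize(str(record["patient_id"]), secret_key)
    return masked


# Hypothetical record and key; a real key would come from a secrets manager.
demo_key = b"replace-with-a-managed-secret"
demo_record = {"patient_id": "P-0001", "name": "Jane Doe", "date_of_birth": "1990-01-01", "amh": 2.1}
masked_record = mask_record(demo_record, demo_key)
```

The same identifier always maps to the same pseudonym under one key, so records from different sources can still be joined after masking.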

Q: What should I do if my data sources update at different rates? A: This "velocity" problem is common with heterogeneous sources.

- Thorough Documentation: Maintain detailed records of all source systems, including their data change protocols and rates [33].

- Create Asynchronous Processes: Design workflows with asynchronous processes for performance-intensive operations to prevent slower systems from bottlenecking the entire pipeline [34].
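The asynchronous approach can be illustrated with Python's `asyncio`: each source is fetched concurrently, so total wall time tracks the slowest source instead of the sum of all of them. The source names and simulated latencies below are made up for the example.

```python
import asyncio


async def fetch_source(name, delay_s):
    """Simulate pulling one source; `delay_s` stands in for its response time."""
    await asyncio.sleep(delay_s)
    return name, "ok"


async def gather_sources(sources):
    """Fetch all sources concurrently and collect their statuses by name."""
    results = await asyncio.gather(
        *(fetch_source(name, delay) for name, delay in sources.items())
    )
    return dict(results)


# Hypothetical sources with different simulated latencies in seconds.
demo_sources = {"ehr_export": 0.02, "sequencing_lims": 0.05, "lab_assays": 0.01}
fetched = asyncio.run(gather_sources(demo_sources))
```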

Experimental Protocols & Data Tables

Protocol: A Standard Workflow for Network-Based Multi-Omics Integration

This protocol is adapted from methodologies reviewed in network-based multi-omics studies [38].

- Data Collection & Curation: Gather omics data (e.g., genomics, transcriptomics) from disparate sources, such as public repositories (e.g., GEO, TCGA) via hubs like InSilico DB [39].

- Quality Control & Preprocessing: Perform automated data profiling and cleansing. Handle missing values, normalize data, and correct for batch effects.

- Network Construction/Selection: Obtain or construct a relevant biological network (e.g., a Protein-Protein Interaction network from STRING database or a gene co-expression network).

- Data Integration: Map the preprocessed multi-omics data onto the network. This can be done using methods such as network propagation/diffusion (to smooth data and identify active network regions) or graph neural networks (GNNs, to learn complex patterns from the network-structured data).

- Analysis & Model Training: Perform the core analysis, such as identifying differentially active subnetworks, predicting drug responses, or classifying disease subtypes.

- Validation: Validate findings using independent datasets or through experimental follow-up.

- Interpretation & Reporting: Interpret the results in the context of the underlying biology. Use data lineage tools to document the entire workflow for reproducibility.
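The network propagation step in this protocol can be sketched as a random walk with restart over a small graph. This is a toy illustration in pure Python, assuming a connected, undirected network and at least one positive seed score; the node names, the restart probability, and the function name are hypothetical, and real analyses would typically use sparse matrices on networks with thousands of nodes.

```python
def random_walk_with_restart(adjacency, seed_scores, restart=0.5, tol=1e-8, max_iter=1000):
    """Propagate seed scores over an undirected graph.

    adjacency: dict mapping node -> set of neighbouring nodes (symmetric).
    seed_scores: dict mapping node -> initial score (e.g. derived from omics data).
    """
    nodes = sorted(adjacency)
    total = sum(seed_scores.get(n, 0.0) for n in nodes)
    p0 = {n: seed_scores.get(n, 0.0) / total for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {}
        for n in nodes:
            # Each neighbour splits its current score evenly across its edges.
            inflow = sum(p[m] / len(adjacency[m]) for m in adjacency[n])
            nxt[n] = (1 - restart) * inflow + restart * p0[n]
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


# Hypothetical four-node path network g1 - g2 - g3 - g4, seeded at g1.
demo_network = {"g1": {"g2"}, "g2": {"g1", "g3"}, "g3": {"g2", "g4"}, "g4": {"g3"}}
propagated = random_walk_with_restart(demo_network, {"g1": 1.0})
```

Nodes closer to the seed receive higher scores, which is how propagation highlights network regions near genes flagged by the omics data.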

Table 1: Common Data Integration Challenges and Solutions in Reproductomics

| Challenge | Impact on Research | Recommended Solution |
|---|---|---|
| Data Quality & Consistency [34] | Undermines integrated view, leads to flawed analytics and decision-making. | Implement automated data profiling & cleansing tools; establish data governance [34] [33]. |
| Reproducibility Crisis [40] [35] | Shakes confidence in scientific validity; policies/treatments may be based on unverified findings. | Adopt Open Science practices; pre-register studies; use Workflow Management Systems (WMS) [35] [36]. |
| Computational Scalability [38] [33] | Overwhelms traditional methods, causing long processing times and inability to handle large data. | Use modern platforms with parallel processing (e.g., Spark); apply incremental data loading [37] [33]. |
| Semantic Heterogeneity [34] | Different data sources use varying formats, schemas, and languages, complicating integration. | Use ETL/ELT tools and managed integration solutions to transform data into a uniform format [33]. |
| Security & Privacy Compliance [34] | Risk of data breaches, reputational damage, and violation of regulations (GDPR, HIPAA). | Implement encryption, data masking, anonymization, and role-based access controls [34] [33]. |

Table 2: Key Software Tools and Platforms for In-Silico Data Mining

| Tool / Platform Category | Example(s) | Primary Function in Research |
|---|---|---|
| Workflow Management Systems (WMS) [36] | (e.g., Nextflow, Snakemake) | Automate and manage complex, multi-step computational data analysis pipelines. |
| Data Integration & ETL/ELT [37] [33] | Informatica Cloud Data Integration, Talend, Estuary Flow | Extract, transform, and load data from disparate sources into a unified format for analysis. |
| Genomic Datasets Hub [39] | InSilico DB | Provides curated, ready-to-analyze genomic datasets from public repositories like GEO and TCGA. |
| Network Analysis & Multi-Omics [38] | (Various specialized algorithms) | Integrate multi-omics data on biological networks (e.g., PPI) using methods such as network propagation and GNNs. |
| Open Science Framework [35] | Open Science Framework (OSF) | Facilitates collaborative project management, data sharing, and study pre-registration. |

Workflow and Pathway Visualizations

[Figures not reproduced in this text version: "In-Silico Data Mining Workflow"; "Network-Based Multi-Omics Integration"; "Data Management Bottlenecks in Reproductomics".]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential "Reagents" for In-Silico Data Mining

| Item / Resource | Type | Primary Function |
|---|---|---|
| InSilico DB [39] | Data Repository Hub | Provides efficient access to curated, normalized, and ready-to-analyze public genomic datasets, bridging the gap between data repositories and analysis tools. |
| Workflow Management System (WMS) [36] | Software Platform | Automates in-silico data analysis processes, enabling the creation of reproducible, scalable, and manageable computational pipelines. |
| ETL/ELT Tools [37] [33] | Data Integration Software | Automates the extraction of data from sources, its transformation into a unified format, and loading into a target system, solving heterogeneous data structure challenges. |
| Biological Networks (PPI, GRN) [38] | Knowledgebase / Data | Provides a structured framework (nodes and edges) for integrating and interpreting multi-omics data in a biologically meaningful context. |
| Open Science Framework (OSF) [35] | Collaborative Platform | Facilitates project management, data sharing, and study pre-registration to enhance transparency, collaboration, and ultimately, reproducibility. |

Machine Learning Algorithms for Predicting Fertility Outcomes and Treatment Success

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed for researchers and scientists encountering challenges in developing and deploying machine learning (ML) models within the field of reproductomics, with a specific focus on predicting fertility treatment outcomes. The guidance is framed within the critical context of overcoming data management bottlenecks that commonly hinder research progress in this domain.

Frequently Asked Questions (FAQs)

FAQ 1: My model's performance is inconsistent and unreliable. What are the most critical features to include to build a robust predictive model?

A primary challenge in predictive modeling is feature selection. Based on a systematic review of ML in Assisted Reproductive Technology (ART), the following features are most frequently reported as critical for model performance [41]:

- Female Age: Consistently the most important predictor across studies, largely due to its correlation with egg quality and quantity [42] [41].

- Ovarian Reserve Markers: Such as Anti-Müllerian Hormone (AMH) levels, which indicate egg quantity [42].

- Follicle Counts and Size Distribution: Particularly the number of follicles in the 16-20 mm and 11-15 mm diameter ranges, which are key for determining the optimal time for trigger injection [43].

- Body Mass Index (BMI): A BMI outside the normal range (19-24.9 kg/m²) is associated with lower pregnancy rates and higher miscarriage rates [42].

- Stimulation Parameters and Hormone Levels: Including estradiol levels during treatment [43].

Table 1: Key Predictive Features for Fertility Outcome Models

| Feature Category | Specific Features | Clinical/Technical Rationale |
|---|---|---|
| Patient Demographics | Female Age, BMI | Age is the strongest predictor of egg quality; BMI impacts pregnancy rates [42] [41]. |
| Ovarian Response | Follicle Count (11-15mm, 16-20mm), AMH, Estradiol Level | Directly measures response to stimulation and yield of mature oocytes [43]. |
| Treatment Protocol | Stimulation Drugs, Trigger Timing, Type of Treatment (e.g., ICSI) | Different protocols suit different patient profiles and impact egg retrieval success [43] [44]. |
| Historical Data | Total Previous Treatment Attempts, Previous Pregnancy History | Provides context on patient-specific treatment history and cumulative success chances [44]. |
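To make the feature definitions concrete, the sketch below derives a few of the listed features from raw measurements: BMI from weight and height, a flag for BMI outside the 19-24.9 range quoted above, and follicle counts in the two diameter bins. The class and function names, the AMH unit (ng/mL), and the handling of bin boundaries are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class CycleFeatures:
    female_age: float
    amh_ng_ml: float  # unit is an assumption; use whatever the source lab reports
    bmi: float
    bmi_outside_normal: bool
    follicles_11_15mm: int
    follicles_16_20mm: int


def build_features(age, amh_ng_ml, weight_kg, height_m, follicle_diameters_mm):
    """Derive model inputs from raw measurements for one stimulation cycle."""
    bmi = weight_kg / height_m ** 2
    return CycleFeatures(
        female_age=age,
        amh_ng_ml=amh_ng_ml,
        bmi=round(bmi, 1),
        bmi_outside_normal=not (19.0 <= bmi <= 24.9),
        # Diameters from 11 up to (but not including) 16 mm fall in the first bin.
        follicles_11_15mm=sum(11 <= d < 16 for d in follicle_diameters_mm),
        follicles_16_20mm=sum(16 <= d <= 20 for d in follicle_diameters_mm),
    )


# Hypothetical measurements for a single cycle.
demo_features = build_features(34, 2.1, 60, 1.65, [9, 12, 14, 16, 17, 19, 22])
```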

FAQ 2: What are the best-performing machine learning algorithms for predicting fertility outcomes?

The choice of algorithm depends on your data structure and the specific outcome you wish to predict. A systematic review identified that while many algorithms are used, several show strong performance [41]. Furthermore, advanced implementations often use ensemble methods to combine the strengths of multiple algorithms [45].

Table 2: Commonly Used ML Algorithms and Their Performance in Reproductomics

| Algorithm | Reported Performance (Examples) | Strengths and Common Use Cases |
|---|---|---|
| Support Vector Machine (SVM) | Frequently applied technique (44.44% of reviewed studies) [41]. | Effective in high-dimensional spaces [41]. |
| Random Forest (RF) | AUC: 0.72-0.83; Accuracy: ~65-77% [41]. | Handles mixed data types, provides feature importance, robust to overfitting [41]. |
| Gradient Boosting (XGBoost, LightGBM, CatBoost) | Used in top-performing ensembles; LightGBM offers superior performance on large-scale data and memory efficiency [45]. | High accuracy, native handling of categorical features (CatBoost), fast training [45]. |
| Bayesian Network Model | Accuracy: 91.3%; AUC: 0.997 [41]. | Models probabilistic relationships between variables. |
| Logistic Regression (LR) | Often used as a baseline model for comparison [41]. | Simple, interpretable, good for establishing a performance baseline. |
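Because the table reports AUC for several algorithms, it may help to see what that number measures. The sketch below computes AUC directly from its pairwise definition: the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The function name and the toy labels and scores are illustrative; this quadratic-time version is meant for understanding, not for large datasets.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Toy example: 1 = pregnancy achieved, 0 = not; scores are made-up model outputs.
demo_auc = roc_auc([1, 0, 1, 0, 1], [0.9, 0.4, 0.6, 0.7, 0.8])
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, which gives context for comparing the ranges quoted in the table.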

FAQ 3: I am facing significant data management bottlenecks, from siloed data to poor quality. How can I address this?

Data management challenges are a major bottleneck in reproductomics research. Here are specific solutions aligned with common problems [46] [14]:

- Challenge: Sheer Volume and Multiple Data Storages (Silos)

- Challenge: Data Quality

- Solution: Implement rigorous data quality monitoring standards. This includes automated checks for accuracy and completeness. Not all data is equally valuable; processes must prioritize retaining high-quality, accurate data critical for research [14].

- Challenge: Lack of Skilled Resources

- Solution: Invest in training for existing staff and leverage automation tools for data preprocessing, integration, and analysis to reduce the manual burden and dependency on a large number of highly specialized data scientists [14].
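The automated checks for completeness and accuracy mentioned above could look roughly like the following. The field names, the plausibility range for age, and the helper names are hypothetical; a production pipeline would draw these rules from its own data dictionary.

```python
def completeness_report(records, required_fields):
    """Return the fraction of non-missing values for each required field."""
    n = len(records)
    report = {}
    for field in required_fields:
        present = sum(1 for r in records if r.get(field) not in (None, ""))
        report[field] = present / n if n else 0.0
    return report


def range_violations(records, field, low, high):
    """Return indices of records whose value for `field` lies outside [low, high]."""
    return [
        i for i, r in enumerate(records)
        if r.get(field) is not None and not (low <= r[field] <= high)
    ]


# Hypothetical records with one missing age, one missing AMH, and one implausible age.
demo_records = [
    {"age": 34, "amh": 2.1},
    {"age": None, "amh": 1.4},
    {"age": 250, "amh": ""},
]
demo_completeness = completeness_report(demo_records, ["age", "amh"])
demo_bad_ages = range_violations(demo_records, "age", 18, 60)
```

Running such checks on every ingest, and failing the pipeline when completeness drops below an agreed threshold, is one way to keep low-quality records out of downstream models.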

FAQ 4: How can I validate that my model's predictions are clinically meaningful and not just statistically significant?

Beyond standard metrics like AUC and accuracy, clinical validation is key. This involves:

- Causal Inference for Treatment Optimization: Move beyond prediction to causal inference. One study used an ML model to optimize the day of trigger injection, framing the decision as "trigger today vs. wait another day." The model, which used a T-learner with bagged decision trees, recommended a different trigger day than the physician in nearly half the cases. The study estimated that following the model's recommendation would have yielded significantly more fertilized oocytes and usable blastocysts per stimulation cycle on average [43].

- Comparison to Established Benchmarks: Compare your model's performance against established clinical tools, such as the SART IVF Success Prediction Tool, which uses national data to give patients personalized success estimates [47]. Your model should offer a tangible improvement.

- Prospective Validation: The ultimate test is a prospective study where clinical decisions are guided by the model and outcomes are compared to a control group, as proposed in the trigger timing research [43].
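The T-learner idea referenced above can be sketched in a few lines: fit one outcome model on the "treated" cases and another on the "control" cases, then estimate the effect for a new case as the difference between the two predictions. This is a toy illustration, not the cited study's implementation: a per-bucket mean stands in for the bagged decision trees, and the buckets, outcomes, and names are synthetic.

```python
from collections import defaultdict
from statistics import mean


class BucketMeanRegressor:
    """Toy outcome model: predicts the mean training outcome of the same bucket."""

    def fit(self, xs, ys):
        grouped = defaultdict(list)
        for x, y in zip(xs, ys):
            grouped[x].append(y)
        self.means_ = {x: mean(v) for x, v in grouped.items()}
        self.default_ = mean(ys)  # fallback for buckets never seen in training
        return self

    def predict(self, x):
        return self.means_.get(x, self.default_)


def fit_t_learner(xs, treated, ys):
    """Fit one outcome model per arm; return a function estimating the effect at x."""
    model_treat = BucketMeanRegressor().fit(
        [x for x, t in zip(xs, treated) if t], [y for y, t in zip(ys, treated) if t]
    )
    model_ctrl = BucketMeanRegressor().fit(
        [x for x, t in zip(xs, treated) if not t], [y for y, t in zip(ys, treated) if not t]
    )
    return lambda x: model_treat.predict(x) - model_ctrl.predict(x)


# Synthetic data: bucket = follicle-size profile, treated = 1 means "trigger today",
# outcome = number of fertilized oocytes. All values are invented for illustration.
demo_x = ["large", "large", "large", "large", "small", "small", "small", "small"]
demo_t = [1, 1, 0, 0, 1, 1, 0, 0]
demo_y = [8, 10, 6, 8, 3, 5, 6, 8]
estimate_effect = fit_t_learner(demo_x, demo_t, demo_y)
```

In this synthetic example, `estimate_effect("large")` is positive (trigger today) and `estimate_effect("small")` is negative (wait), which mirrors the "trigger today vs. wait another day" framing; estimates from observational data remain valid only if confounding is adequately controlled.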

Detailed Experimental Protocols

Protocol 1: Developing an Ensemble Model for IVF Success Prediction

This protocol is based on a project that developed an advanced ensemble system using a large dataset of 346,418 fertility treatment records [45].

1. Data Preprocessing:

- Dropping Irrelevant Columns: Remove columns with high missingness or irrelevance to the prediction target (e.g., 'PGS 시술 여부', "whether PGS was performed," in the cited study) [45].

- Handling Boolean Columns: Convert binary categorical columns (e.g., '배란 자극 여부', "whether ovulation was stimulated," and '단일 배아 이식 여부', "whether a single embryo was transferred") to a boolean data type. Retain NaN values or address them explicitly based on the data model [45].

- Converting Categorical to Numeric: Clean ordinal columns by removing suffixes (e.g., '회', a counter meaning "times") and converting ranges (e.g., '6 이상', "6 or more," to 6). Map categorical codes (e.g., '시술 시기 코드', "treatment timing code") to integers using predefined dictionaries [45].
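The preprocessing steps above might be implemented along these lines. This is a sketch under stated assumptions: binary flags are assumed to be stored as 0/1, ordinal values are assumed to look like '3회' or '6회 이상', and the code dictionary is a hypothetical placeholder because the actual codes are not given here.

```python
import re


def to_bool(value):
    """Map a 0/1 flag to bool, keeping missing values (None or NaN) as None."""
    if value is None or value != value:  # NaN is the only value not equal to itself
        return None
    return bool(int(value))


def ordinal_to_int(value):
    """Extract the first integer from strings such as '3회' or '6회 이상'."""
    if value is None:
        return None
    match = re.search(r"\d+", str(value))
    return int(match.group()) if match else None


# Hypothetical code-to-integer dictionary; the real codes are not listed in the text.
TIMING_CODE_MAP = {"CODE_A": 0, "CODE_B": 1}


def encode_code(value, mapping=TIMING_CODE_MAP):
    """Look up a categorical code; unknown or missing codes map to None."""
    return mapping.get(value)


demo_cleaned = {
    "stimulated": to_bool("1"),
    "previous_attempts": ordinal_to_int("6회 이상"),
    "timing_code": encode_code("CODE_B"),
}
```

Returning `None` for missing or unrecognized values keeps the decision about imputation explicit, in line with the instruction to retain NaN values or address them deliberately.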

2. Model Architecture (Ensemble Blending):

- Base Models Selection: Select multiple complementary gradient boosting algorithms to build a robust ensemble. The cited project used four [45]: