Breaking the Reproducibility Barrier: A Roadmap for Standardizing Microbiome Protocols Across Research Sites

Abstract

The translation of microbiome research from bench to bedside is critically hindered by a lack of standardization, which undermines reproducibility and data comparability. This article provides a comprehensive framework for researchers, scientists, and drug development professionals aiming to implement robust, standardized microbiome protocols. Drawing on the latest international consensus, multi-laboratory ring trials, and new reference materials, we explore the foundational need for standardization, detail methodological best practices from sample collection to sequencing, offer troubleshooting strategies for common pitfalls, and present validation frameworks for comparative analysis. Together, these elements provide an actionable path toward enhanced data integrity, cross-site reproducibility, and accelerated clinical translation in microbiome science.

The Urgent Need for Standardization: Overcoming the Reproducibility Crisis in Microbiome Science

FAQs on Methodological Variability

Q1: Why do microbiome results vary so much between different laboratories?

Methodological choices at every stage, from sample collection to data analysis, significantly impact microbiome sequencing results and limit comparability between studies. An international interlaboratory study comparing experimental protocols found that these choices introduce both bias and variability in measurements, even when laboratories are analyzing the same reference samples [1].

Key Sources of Variability:

- Sample Collection & Handling: Differences in storage conditions, timing, and collection tools affect microbial DNA integrity [2].

- DNA Extraction Methods: Variations in lysis efficiency and kit chemistry can bias which microbial groups are recovered [1].

- Sequencing Technology: The choice between 16S rRNA gene amplicon sequencing and whole-genome shotgun metagenomics yields different taxonomic and functional profiles [1].

- Bioinformatics Pipelines: A lack of standardized software and parameters for processing raw sequence data leads to high variability in final results [1] [3].

Q2: How can our multi-site study minimize methodological variability?

Implementing a standard operating procedure (SOP) across all participating sites is the most effective strategy. The following table summarizes the critical control points based on interlaboratory studies and consensus recommendations [1] [2] [4].

Table: Key Elements of a Standardized Microbiome Protocol for Multi-Site Studies

| Protocol Stage | Standardization Goal | Recommended Practice |
|---|---|---|
| Sample Collection | Preserve sample integrity and prevent contamination. | Use sterile, DNA-free collection tools; fix collection timing relative to factors like food intake; use uniform preservation solutions and storage temperatures (e.g., immediate freezing at -80°C) [2] [4]. |
| DNA Extraction | Ensure reproducible and unbiased lysis of microbial cells. | Employ the same validated extraction kit across all sites; use In Vitro Diagnostic (IVD)-certified kits where possible to ensure performance standards [2]. |
| Sequencing | Generate consistent and comparable sequence data. | Utilize the same sequencing platform (e.g., Illumina); centralize sequencing at a single, dedicated facility if feasible [2]. |
| Bioinformatics | Convert raw data into reliable, comparable results. | Adopt a unified, validated bioinformatics pipeline for all samples, including set parameters for quality filtering, taxonomy assignment, and contamination removal [3]. |
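Keeping sites aligned with a shared SOP is easier when each site records its protocol settings in a machine-readable manifest that can be checked automatically. The Python sketch below compares a site manifest against a reference SOP and lists missing or deviating parameters. Every key and value in it (`storage_temp_c`, `KIT_A_LOT_001`, `pipeline_version`, `DB_2024_01`, and so on) is a hypothetical placeholder, not a setting recommended by the cited studies.

```python
from typing import Dict, List

# Hypothetical reference SOP shared by the coordinating site; keys and values
# are placeholders, not recommendations from the cited studies.
REFERENCE_SOP: Dict[str, str] = {
    "storage_temp_c": "-80",
    "extraction_kit": "KIT_A_LOT_001",
    "sequencing_platform": "Illumina",
    "pipeline_version": "v1.2.0",
    "taxonomy_db": "DB_2024_01",
}


def find_deviations(site_manifest: Dict[str, str],
                    reference: Dict[str, str] = REFERENCE_SOP) -> List[str]:
    """List parameters that are missing from, or differ from, the reference SOP."""
    deviations: List[str] = []
    for key, expected in reference.items():
        observed = site_manifest.get(key)
        if observed is None:
            deviations.append(f"{key}: missing (expected {expected!r})")
        elif observed != expected:
            deviations.append(f"{key}: {observed!r} differs from {expected!r}")
    return deviations


if __name__ == "__main__":
    # Example site that omitted one field and used an older database version.
    site_b = dict(REFERENCE_SOP, taxonomy_db="DB_2023_06")
    del site_b["storage_temp_c"]
    for problem in find_deviations(site_b):
        print(problem)
```

Run on the example site, the check reports the missing storage temperature and the outdated (hypothetical) taxonomy database. One design choice worth considering is versioning such a manifest alongside the SOP itself, so that any amendment reaches every site at the same time.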

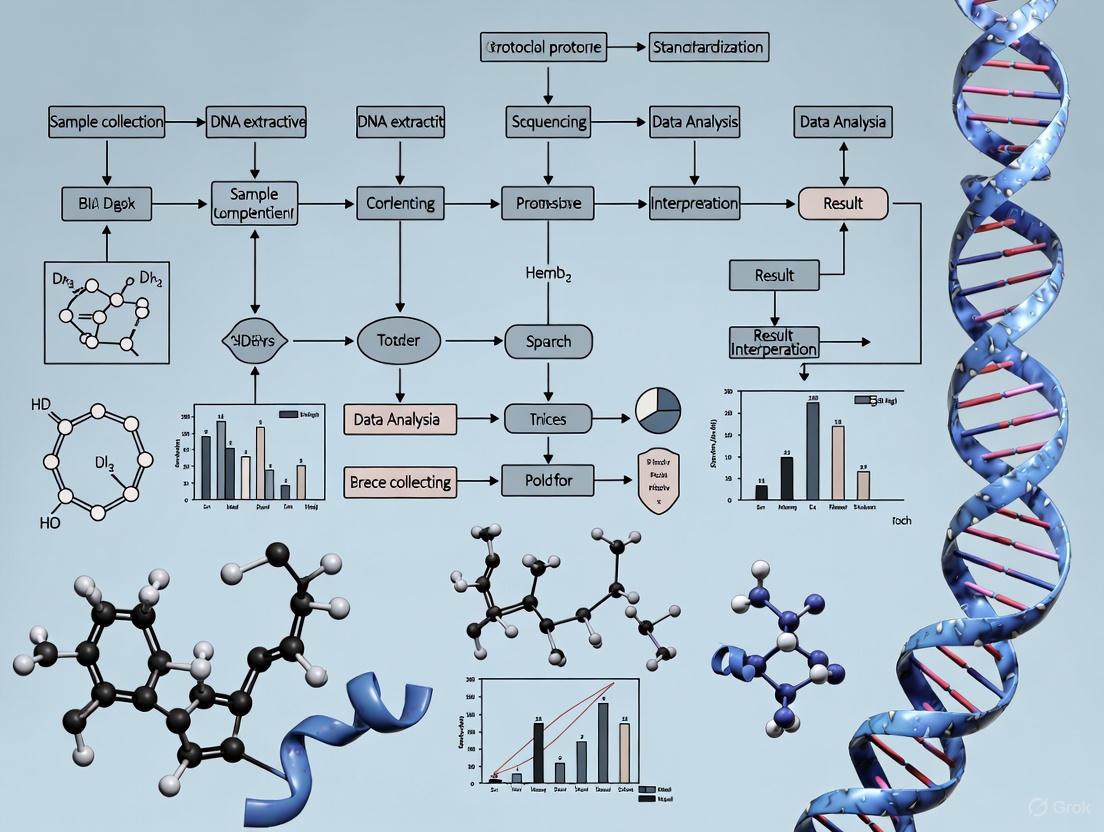

Diagram: Workflow for Standardizing Multi-Site Microbiome Research

FAQs on Bioinformatics Inconsistency

Q3: What causes bioinformatics inconsistency?

Bioinformatics inconsistency arises from the high degree of flexibility in how raw sequencing data are processed. Key sources include:

- Choice of Algorithms and Software: Different tools for quality filtering, for grouping sequences into operational taxonomic units (OTUs) or amplicon sequence variants (ASVs), and for taxonomic assignment can produce divergent results from the same raw data [2].

- Database and Parameter Selection: The reference database used for taxonomy and the specific parameters (e.g., similarity thresholds) dramatically influence the final microbial community profile [5].

- Lack of Clinical Validation: Many bioinformatics pipelines are developed for research and lack the rigorous validation required for clinical diagnostics, leading to potential inaccuracies that impact patient care [3].

Q4: What are the best practices for validating a bioinformatics pipeline?

For reliable and reproducible results, a pipeline must be properly validated. The Association for Molecular Pathology and the College of American Pathologists recommend a comprehensive approach to ensure accuracy, precision, and robustness [3].

- Accuracy: Demonstrate that the pipeline correctly identifies known organisms in a validated reference material, such as a mock community with a defined composition.

- Precision: Show that the pipeline produces the same results when the same sample is tested repeatedly (within-run and between-run precision).

- Robustness: Evaluate the pipeline's performance under varying conditions, such as changes in sequencing depth or input DNA quality.

- Sensitivity/Specificity: Determine the pipeline's ability to correctly detect true positives and reject false positives, especially at low levels of abundance.
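Within-run and between-run precision are often summarized as coefficients of variation (CV) of replicate measurements. The sketch below applies this to one taxon's relative abundance in a mock community measured in replicate across several runs. It is a simplified illustration (it averages per-run CVs and takes the CV of the run means), whereas a formal validation would typically estimate variance components from a nested design; the function names and data layout are assumptions, not part of the AMP/CAP recommendations.

```python
from statistics import mean, stdev
from typing import Dict, List


def cv_percent(values: List[float]) -> float:
    """Coefficient of variation: sample standard deviation / mean, in percent."""
    m = mean(values)
    return 100.0 * stdev(values) / m if m else float("nan")


def precision_summary(runs: Dict[str, List[float]]) -> Dict[str, float]:
    """Simplified precision estimate for one taxon of a mock community.

    runs maps a run ID to replicate relative abundances from that run
    (at least two replicates per run and at least two runs are needed).
    """
    # Within-run: average of the CVs computed inside each run.
    within = mean(cv_percent(reps) for reps in runs.values())
    # Between-run: CV of the per-run means.
    between = cv_percent([mean(reps) for reps in runs.values()])
    return {"within_run_cv_pct": within, "between_run_cv_pct": between}


if __name__ == "__main__":
    # Hypothetical triplicate measurements of one taxon in two runs.
    data = {"run1": [0.10, 0.11, 0.09], "run2": [0.12, 0.13, 0.11]}
    print(precision_summary(data))
```

With these illustrative numbers the within-run CV is about 9% and the between-run CV about 13%; acceptance limits for either would come from the laboratory's own validation plan.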

FAQs on Population Underrepresentation

Q5: How severe is the population representation gap in microbiome research?

The gap is profound and geographically systematic. An analysis of public human microbiome data revealed that 71% of all samples come from North America and Europe, which represent only about 14% of the global population [6]. In contrast, countries from Central and Southern Asia (26% of the global population) contribute only 2% of samples [6]. The United States alone, with 4% of the world's population, accounts for 40% of all microbiome samples [7].

Q6: What are the scientific consequences of this underrepresentation?

This bias restricts the universal applicability of microbiome science and has direct consequences for biology and medicine.

- Limited Definition of "Healthy": A "healthy" microbiome baseline defined solely by Western populations may not be applicable to other global populations with different diets, lifestyles, and environmental exposures [6].

- Missed Microbial Functions: Research focused solely on Western microbiomes can miss functions essential to other populations. For example, gut microbes in Japanese populations produce enzymes for digesting seaweed, which are absent in North American microbiomes [6].

- Inequitable Therapeutic Development: The promised wave of microbiome-based therapeutics may not work effectively in global populations that were not represented in the research and development phase [6].

Table: Quantitative Overview of Global Microbiome Sampling Bias

| Region | Global Population Share | Representation in Microbiome Samples |
|---|---|---|
| North America & Europe | ~14% | 71% (Highly overrepresented) |
| United States | ~4% | 40% (Highly overrepresented) |
| Central & Southern Asia | ~26% | 2% (Highly underrepresented) |
| UN-defined Least Developed Countries | ~14% | 3% (Highly underrepresented) |
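Dividing each region's share of samples by its share of the global population gives a single over- or under-representation ratio, where 1.0 would be proportional. The short Python sketch below applies this illustrative ratio (not a metric defined in the cited analyses) to the approximate figures in the table, so its results inherit their rounding.

```python
# Approximate percentages from the table above: (population share, sample share).
SHARES = {
    "North America & Europe": (14, 71),
    "United States": (4, 40),
    "Central & Southern Asia": (26, 2),
    "UN-defined Least Developed Countries": (14, 3),
}


def representation_ratio(population_pct: float, sample_pct: float) -> float:
    """Sample share divided by population share (1.0 means proportional)."""
    return sample_pct / population_pct


if __name__ == "__main__":
    for region, (pop, samples) in SHARES.items():
        print(f"{region}: {representation_ratio(pop, samples):.2f}x")
```

With these figures, North America and Europe appear at about 5 times their population share and the United States at 10 times, whereas Central and Southern Asia appear at under one tenth and the Least Developed Countries at about one fifth.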

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials and Controls for Robust Microbiome Research

| Item | Function & Importance | Application Example |
|---|---|---|
| Mock Microbial Communities | A defined mix of microbial strains with known genomic sequences. Serves as a positive control to assess accuracy, bias, and limit of detection in the entire wet-lab and bioinformatics pipeline [1]. | Included in every sequencing run to quantify technical variability and measurement bias between batches and sites [1]. |
| DNA/RNA-Free Water | Used as a negative control during DNA extraction. Essential for identifying contaminating DNA introduced from reagents, kits, or the laboratory environment [4]. | Processed alongside actual samples through DNA extraction and sequencing to create a "background contamination" profile for post-hoc filtering [4]. |
| Standardized DNA Extraction Kits | Validated kits ensure consistent and reproducible lysis of microbial cells. IVD-certified kits are recommended for diagnostic applications due to stricter quality control [2]. | Used uniformly across all samples in a multi-site study to minimize protocol-driven variability in microbial community profiles [1] [2]. |
| Sample Preservation Solution | A buffer that stabilizes microbial DNA/RNA at the point of collection, preventing shifts in microbial composition due to room-temperature storage or freeze-thaw cycles [2]. | Added to stool, saliva, or tissue samples immediately after collection to preserve a "snapshot" of the microbiome for later analysis. |

The Impact of Non-Standardization on Data Comparability and Clinical Translation

The translation of preclinical microbiome findings into viable clinical applications is remarkably low, with recent studies estimating that only 5-10% of promising preclinical studies successfully advance to clinical use [8]. This alarming failure rate represents a critical bottleneck in therapeutic development. A primary driver of this translational gap is the pervasive lack of standardization across microbiome research protocols, which introduces substantial variability and limits data comparability across research sites [9].

When laboratories employ different methodologies for sample collection, DNA extraction, sequencing, and bioinformatics analysis, they generate results that are often incompatible for meaningful comparison or meta-analysis [10] [9]. This non-standardization effectively masks true biological signals with methodological noise, undermining the collective progress of the entire field and delaying the development of microbiome-based diagnostics and therapies.

Troubleshooting Guides: Common Standardization Challenges and Solutions

Data Comparability and Integration Issues

Problem: Researchers cannot compare or integrate microbiome datasets generated from different laboratories or studies, despite investigating similar research questions.

Explanation: Microbiome data possesses several intrinsic characteristics that complicate analysis: it is compositional (relative abundance rather than absolute counts), over-dispersed, sparse (containing many zero values), and high-dimensional (many more measured features than samples) [11]. When different labs use custom protocols, these inherent challenges are exacerbated by technical variability.
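The compositional and sparse nature of the data can be seen in a toy example. In the hypothetical counts below, only taxon A changes in absolute load between two samples, yet after conversion to relative abundances taxa B and C appear to halve; the table also contains a taxon absent from every sample. All numbers and function names are illustrative assumptions.

```python
from typing import Dict

Counts = Dict[str, float]


def relative_abundance(counts: Counts) -> Counts:
    """Close counts to proportions summing to 1, as sequencing output does."""
    total = sum(counts.values())
    return {taxon: c / total for taxon, c in counts.items()}


def zero_fraction(samples: Dict[str, Counts]) -> float:
    """Fraction of zero entries across a sample -> taxon table (sparsity)."""
    cells = [c for profile in samples.values() for c in profile.values()]
    return sum(1 for c in cells if c == 0) / len(cells)


# Hypothetical absolute loads: only taxon A changes between the two samples.
before = {"A": 100, "B": 100, "C": 200, "D": 0}
after = {"A": 500, "B": 100, "C": 200, "D": 0}

if __name__ == "__main__":
    print(relative_abundance(before))
    print(relative_abundance(after))
    print(zero_fraction({"s1": before, "s2": after}))
```

Here B falls from 0.25 to 0.125 of the total although its absolute count is unchanged, and a quarter of the table entries are zero.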

Solution: Implement a multi-tiered standardization framework:

- Pre-analytical standardization: Adopt consistent sample collection, storage, and DNA extraction protocols across sites [10] [9].

- Reference materials: Incorporate DNA reference reagents with known compositions (e.g., NIBSC Gut-Mix-RR) in every sequencing run to control for technical variability [9].

- Data standards: Align experimental metadata and results with established frameworks like the Minimum Information about any (x) Sequence (MIxS) standards to improve data sharing and integration.

Preventive Measures:

- Establish Standard Operating Procedures (SOPs) for all laboratory processes and provide regular training.

- Use standardized reporting frameworks to evaluate pipeline performance, focusing on sensitivity, false positive relative abundance, diversity estimation, and composition similarity [9].

- Implement Data Transfer Specifications (DTS) that define how data not captured on case report forms (non-CRF data) should be collected and formatted, ensuring seamless information exchange between collaborators and vendors [12].
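Parts of a data transfer specification can be enforced automatically before data leave a site. The sketch below checks a single sample-metadata record for required fields and an ISO 8601 collection date. The field list is a hypothetical subset loosely modelled on MIxS-style descriptors; the official MIxS checklists and the study's own DTS should define the actual terms and formats.

```python
from datetime import date
from typing import Dict, List

# Hypothetical required fields, loosely modelled on MIxS-style descriptors;
# the official MIxS checklists define the real terms and value formats.
REQUIRED_FIELDS = (
    "sample_id", "collection_date", "geo_loc_name",
    "env_medium", "extraction_kit", "sequencing_platform",
)


def validate_record(record: Dict[str, str]) -> List[str]:
    """Return the problems found in one sample-metadata record."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS
                if not record.get(name, "").strip()]
    raw_date = record.get("collection_date", "").strip()
    if raw_date:
        try:
            date.fromisoformat(raw_date)  # expects an ISO 8601 date, e.g. 2024-03-05
        except ValueError:
            problems.append(f"collection_date is not ISO 8601: {raw_date!r}")
    return problems


if __name__ == "__main__":
    record = {"sample_id": "S001", "collection_date": "05/03/2024",
              "geo_loc_name": "Republic of Korea", "extraction_kit": "KIT_A",
              "sequencing_platform": "Illumina"}
    for problem in validate_record(record):
        print(problem)
```

The example record is flagged for its missing `env_medium` field and for an ambiguous day/month date, exactly the kind of inconsistency that complicates pooling data across sites.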

Bioinformatics Pipeline Variability

Problem: The same raw sequencing data processed through different bioinformatics pipelines yields significantly different biological conclusions.

Explanation: Bioinformatics tools for taxonomic profiling exhibit inherent biases and trade-offs. Some tools prioritize sensitivity (detecting true positives) at the cost of higher false positive relative abundance, while others demonstrate the opposite pattern [9]. These differences dramatically impact key metrics like alpha diversity and taxonomic composition.

Solution: Systematically evaluate and validate bioinformatics pipelines using benchmarked reference reagents.

- Pipeline Selection: Choose tools that demonstrate optimal performance for your specific microbiome niche (e.g., gut versus environmental).

- Parameter Consistency: Document and standardize all software parameters and database versions across collaborating sites.

- Reporting Framework: Apply a standardized reporting system to assess pipeline performance using four key measures [9]:

Table: Four-Measure Framework for Evaluating Bioinformatics Pipelines

| Reporting Measure | Definition | Impact on Results |
|---|---|---|
| Sensitivity | Percentage of known species correctly identified | Affects detection of low-abundance but potentially significant taxa |
| False Positive Relative Abundance (FPRA) | Total relative abundance of falsely reported species | Impacts accuracy of community composition and diversity measures |
| Diversity | Observed number of species compared to actual count | Influences core diversity metrics reported in most studies |
| Similarity | Bray-Curtis similarity between predicted and actual composition | Affects overall accuracy of community structure representation |

Validation Workflow:

- Sequence DNA reference reagents with known composition alongside experimental samples.

- Process the reference data through your standard bioinformatics pipeline.

- Calculate the four key measures to quantify pipeline bias.

- Use this information to interpret experimental results with appropriate caution, acknowledging methodological limitations.
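The calculation step of this workflow can be scripted once the reference reagent's expected composition is known. The Python sketch below computes the four measures for one sample. It assumes both profiles are species-level relative abundances summing to 1, treats a species as detected when its predicted abundance exceeds a hypothetical threshold, and defines similarity as 1 minus the Bray-Curtis dissimilarity; the published framework [9] may specify these details differently.

```python
from typing import Dict

Profile = Dict[str, float]  # species -> relative abundance (sums to ~1)


def four_measures(truth: Profile, predicted: Profile,
                  min_abundance: float = 0.0) -> Dict[str, float]:
    """Sensitivity, FPRA, diversity and similarity for one reference sample.

    min_abundance is a hypothetical detection threshold.
    """
    detected = {s for s, a in predicted.items() if a > min_abundance}
    expected = {s for s, a in truth.items() if a > 0}
    true_pos = detected & expected
    false_pos = detected - expected

    sensitivity = 100.0 * len(true_pos) / len(expected)
    fpra = 100.0 * sum(predicted[s] for s in false_pos)

    # Bray-Curtis similarity = 1 - sum|x - y| / sum(x + y) over all species.
    species = set(truth) | set(predicted)
    num = sum(abs(truth.get(s, 0.0) - predicted.get(s, 0.0)) for s in species)
    den = sum(truth.get(s, 0.0) + predicted.get(s, 0.0) for s in species)

    return {
        "sensitivity_pct": sensitivity,
        "fpra_pct": fpra,
        "observed_species": float(len(detected)),
        "expected_species": float(len(expected)),
        "bray_curtis_similarity": 1.0 - num / den,
    }


if __name__ == "__main__":
    # Hypothetical reagent of three species and a pipeline output that
    # misses C and falsely reports X.
    truth = {"A": 0.5, "B": 0.3, "C": 0.2}
    predicted = {"A": 0.55, "B": 0.35, "X": 0.10}
    print(four_measures(truth, predicted))
```

In this toy example the pipeline recovers two of three expected species (sensitivity about 67%), assigns 10% of the profile to a species absent from the reagent (FPRA 10%), and reaches a Bray-Curtis similarity of 0.8. The observed species count still equals the expected count of three, which is why the four measures are best read together rather than singly.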

Low Sequencing Library Yield and Quality

Problem: Sequencing libraries prepared from microbiome samples yield insufficient quantity or quality for robust analysis, creating roadblocks and introducing bias.

Explanation: Low library yield often stems from suboptimal input DNA quality, inefficient fragmentation or ligation during library preparation, or over-aggressive purification steps [13]. These issues reduce library complexity and compromise statistical power in downstream analyses.

Solution: Implement a systematic diagnostic approach:

- Input Quality Control: Quantify DNA with fluorometric methods (e.g., Qubit) rather than UV spectrophotometry alone, and use spectrophotometric absorbance ratios to check purity, targeting 260/230 ratios >1.8 and 260/280 ratios ~1.8 [13].

- Protocol Optimization: Titrate adapter-to-insert molar ratios to minimize adapter-dimer formation and optimize fragmentation parameters for your specific sample type [13].

- Purification Consistency: Standardize bead-based cleanup ratios across all samples and operators to minimize technical variability.
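Two of these checks reduce to simple arithmetic. The sketch below converts a double-stranded DNA mass to picomoles using an approximate 660 g/mol per base pair (some protocols use 650), derives the adapter amount for a chosen adapter:insert molar ratio, and flags absorbance ratios outside the targets given above. The 0.1 tolerance around 1.8 for 260/280 and all function names are hypothetical, and kit-specific instructions take precedence.

```python
from typing import List

BP_MASS_G_PER_MOL = 660.0  # approximate mass of one dsDNA base pair (some use 650)


def dsdna_pmol(mass_ng: float, length_bp: float) -> float:
    """Convert a dsDNA mass (ng) of a given mean fragment length (bp) to pmol."""
    return mass_ng * 1000.0 / (length_bp * BP_MASS_G_PER_MOL)


def adapter_pmol_for_ratio(insert_ng: float, insert_bp: float, ratio: float) -> float:
    """pmol of adapter needed for a chosen adapter:insert molar ratio."""
    return ratio * dsdna_pmol(insert_ng, insert_bp)


def purity_flags(a260_230: float, a260_280: float, tol_260_280: float = 0.1) -> List[str]:
    """Flag absorbance ratios outside the targets in the text.

    The tolerance around 1.8 for 260/280 is a hypothetical choice.
    """
    flags: List[str] = []
    if a260_230 <= 1.8:
        flags.append(f"260/230 = {a260_230:.2f} (target > 1.8)")
    if abs(a260_280 - 1.8) > tol_260_280:
        flags.append(f"260/280 = {a260_280:.2f} (target ~1.8)")
    return flags


if __name__ == "__main__":
    # Titrate a hypothetical 100 ng, 300 bp insert across several ratios.
    for ratio in (5, 10, 20):
        print(ratio, round(adapter_pmol_for_ratio(100, 300, ratio), 2))
    print(purity_flags(1.5, 1.6))
```

For example, 100 ng of a 300 bp insert is about 0.51 pmol, so a 10:1 adapter:insert ratio calls for about 5.05 pmol of adapter; titration then amounts to looping over candidate ratios.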

Table: Troubleshooting Common Sequencing Preparation Issues

| Problem Category | Typical Failure Signals | Root Causes | Corrective Actions |
|---|---|---|---|
| Sample Input/Quality | Low starting yield; smear in electropherogram | Degraded DNA; sample contaminants; inaccurate quantification | Re-purify input sample; use fluorometric quantification; assess DNA integrity |
| Fragmentation/Ligation | Unexpected fragment size; adapter-dimer peaks | Over/under-shearing; improper buffer conditions; suboptimal adapter ratio | Optimize fragmentation parameters; titrate adapter:insert ratio; ensure fresh enzymes |
| Amplification/PCR | Overamplification artifacts; high duplicate rate | Too many PCR cycles; polymerase inhibitors; primer exhaustion | Reduce cycle number; use high-fidelity polymerases; optimize primer concentrations |
| Purification/Cleanup | Incomplete removal of small fragments; sample loss | Wrong bead ratio; bead over-drying; inefficient washing | Standardize bead:sample ratios; avoid over-drying beads; use fresh wash buffers |

Frequently Asked Questions (FAQs)

Q1: Why can't we just use different protocols and normalize the data computationally later?

A: While computational normalization methods exist, they cannot fully correct for biases introduced during wet-lab procedures. Choices made during sample collection, DNA extraction, and primer selection create irreversible technical artifacts that distort the representation of certain microbial taxa [10] [11]. Post-hoc normalization can mitigate some differences in sequencing depth but cannot recover biological signals lost during earlier technical steps. Best practice in the field is therefore to standardize wet-lab protocols first and then apply appropriate computational normalization.

Q2: Our lab has limited resources. Which single standardization step would provide the biggest impact?

A: Incorporating DNA reference reagents with known microbial composition provides the most value for resource-limited laboratories. By including these reagents in your sequencing runs, you can:

- Quantify technical variability specific to your pipeline

- Detect batch effects and procedural drift over time

- Benchmark your bioinformatics pipeline's performance

- Provide evidence for data quality when collaborating or publishing [9]

This single investment offers a robust quality control mechanism that significantly enhances the interpretability and reliability of your data.
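To use reference reagents for detecting procedural drift, a per-run QC metric (for instance, the relative abundance of one reagent member, or the reagent's similarity to its expected composition) can be tracked on a simple control chart. The sketch below uses Shewhart-style limits of mean plus or minus 3 standard deviations estimated from baseline runs; this charting approach, the default k = 3, and the function names are assumptions chosen for illustration, not a procedure from the cited framework.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def control_limits(baseline: List[float], k: float = 3.0) -> Tuple[float, float]:
    """Lower/upper limits as mean +/- k standard deviations of baseline runs."""
    m, s = mean(baseline), stdev(baseline)
    return m - k * s, m + k * s


def flag_runs(metric_by_run: Dict[str, float], baseline_runs: List[str],
              k: float = 3.0) -> List[str]:
    """Return non-baseline runs whose QC metric falls outside the limits."""
    lo, hi = control_limits([metric_by_run[r] for r in baseline_runs], k)
    return [run for run, value in metric_by_run.items()
            if run not in baseline_runs and not lo <= value <= hi]


if __name__ == "__main__":
    # Hypothetical relative abundance (%) of one reagent member per run.
    metric = {"r1": 20.0, "r2": 21.0, "r3": 19.0, "r4": 20.5,
              "r5": 19.5, "r6": 20.8, "r7": 15.0}
    print(flag_runs(metric, ["r1", "r2", "r3", "r4", "r5"]))
```

With the hypothetical values above, run r7 falls well outside the limits derived from runs r1 to r5 and would prompt a review of that batch before its experimental samples are interpreted.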

Q3: How does non-standardization specifically impact drug development and clinical translation?

A: Non-standardization creates three major roadblocks in the drug development pipeline:

- Inconsistent Target Identification: Variability across studies makes it difficult to confidently identify microbial taxa or genes that are reproducibly associated with disease states [8].

- Poor Preclinical Reproducibility: Therapeutic effects observed in animal models cannot be reliably replicated across research sites, hindering the selection of lead candidates for clinical trials [8] [14].

- Regulatory Challenges: Regulatory agencies like the FDA require standardized data formats (e.g., CDISC SEND, SDTM, ADaM) for submission. Non-standardized microbiome data creates significant obstacles for regulatory review and approval [15].

Q4: Are there specific reagent solutions that can help standardize microbiome research?

A: Yes, several key reagents are critical for standardization efforts:

Table: Essential Research Reagent Solutions for Microbiome Standardization

| Reagent Type | Function | Examples | Application |
|---|---|---|---|
| DNA Reference Reagents | Controls for biases in library preparation, sequencing, and bioinformatics | NIBSC Gut-Mix-RR, Gut-HiLo-RR [9] | Pipeline benchmarking; inter-laboratory calibration |
| Whole Cell Reagents | Controls for biases in DNA extraction efficiency across different protocols | Defined microbial communities in cell form [9] | Extraction protocol optimization; quantitative assessment |
| Matrix-Spiked Reagents | Controls for biases from sample matrix inhibitors or storage conditions | Microbial cells spiked into specific sample matrices [9] | Protocol validation for specific sample types (e.g., stool, saliva) |

Standardization Workflows and Visualization

Microbiome Analysis Standardization Pathway

The following workflow outlines the critical standardization points throughout the microbiome analysis pipeline, from sample collection to data interpretation:

Reference Reagent Evaluation Framework

When using reference reagents to evaluate bioinformatics pipelines, the following four-measure framework provides a comprehensive assessment of pipeline performance:

The journey toward standardized microbiome research requires concerted effort across multiple domains, from wet-lab protocols to computational frameworks. By implementing the troubleshooting guides and standardization strategies outlined here, researchers can significantly enhance the comparability, reproducibility, and translational potential of their microbiome data.

The critical first steps include adopting reference reagents, establishing standardized operating procedures across collaborating sites, and implementing rigorous pipeline evaluation using the four-measure framework. Through these efforts, the field can overcome the current limitations of non-standardization and accelerate the development of reliable microbiome-based diagnostics and therapies.

Global initiatives play a pivotal role in harmonizing microbiome research methodologies across international borders. The International Human Microbiome Standards (IHMS) project, for instance, specifically coordinates the development of standard operating procedures (SOPs) designed to optimize data quality and comparability in the human microbiome field [16]. Similarly, the Strengthening The Organization and Reporting of Microbiome Studies (STORMS) initiative provides a comprehensive 17-item checklist that spans the typical sections of a scientific publication, offering guidance for concise and complete reporting of microbiome studies [17]. These frameworks address the critical need for standardization in this rapidly evolving field, where inconsistent methodologies can lead to irreproducible results and hinder scientific progress.

The Clinical-Based Human Microbiome Research and Development Project (cHMP) in the Republic of Korea exemplifies a national-level adoption of such standards, implementing protocols for clinical metadata collection, specimen handling, DNA extraction, and sequencing methods to ensure consistent data quality [18]. These coordinated efforts underscore a global recognition that methodological standardization is essential for enhancing data integrity, reproducibility, and advancing microbiome-based research with potential applications for improving human health outcomes.

Key International Initiatives and Their Contributions

The table below summarizes major international microbiome initiatives and their primary contributions to field standardization.

Table 1: Major International Microbiome Standardization Initiatives

| Initiative Name | Lead Organization/Region | Primary Focus Areas | Key Outputs |
|---|---|---|---|
| International Human Microbiome Standards (IHMS) | International Human Microbiome Consortium [16] | Sample collection, identification, extraction, sequencing, and data analysis [16] | Standard Operating Procedures (SOPs) for core methodologies [16] |
| STORMS | Multidisciplinary international consortium [17] | Comprehensive reporting guidelines for microbiome studies [17] | 17-item checklist for manuscript preparation and review [17] |
| Clinical-Based Human Microbiome R&D Project (cHMP) | Korea Disease Control and Prevention Agency (KDCA) [18] | Clinical metadata, specimen handling, DNA extraction, sequencing QC [18] | Standardized national protocols for various body sites [18] |
| Human Microbiome Project (HMP) | National Institutes of Health (NIH) [18] | Generating research resources to enable comprehensive characterization of the human microbiome [18] | Reference datasets, protocols, and technological development |

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What are the most critical confounding factors to control for in human microbiome study design?

Answer: The human microbiome is highly sensitive to its environment, and numerous factors can confound study results if not properly accounted for. Key confounders include:

- Medication Use: Antibiotic use significantly alters gut microbiota, and even non-antibiotic drugs like proton pump inhibitors can cause substantial shifts [19]. Document all medication use during at least the 6 months preceding specimen collection [18].

- Demographic and Lifestyle Factors: Age, sex, diet, geography, and pet ownership have all been demonstrated to influence microbiome composition and function [19]. For diet, record patterns like Western, Mediterranean, or vegan diets, and frequency of specific food consumption [18].

- Technical Variability: Different batches of DNA extraction kits can be a significant source of variation. To minimize this, purchase all the extraction kits needed at the start of the study, or store samples and extract them all at the same time [19].

Best Practice: Always enumerate possible confounders during experimental design, quantify each systematically using detailed case report forms, and treat them as independent variables in statistical analyses [18] [19].

FAQ 2: How should we handle low microbial biomass samples to avoid contamination artifacts?

Answer: Samples with low microbial biomass (e.g., skin, plasma, tissue biopsies) are particularly susceptible to contamination, where contaminating DNA can comprise most or all of the signal [19].

Troubleshooting Guide:

- Implement Rigorous Controls: Always run both positive controls (samples with known microbial composition) and negative controls (blank extractions processed with no sample input) alongside experimental samples [19].

- Analyze Control Samples: Sequence and analyze negative controls to identify contaminating sequences, which should then be removed from experimental samples. This is particularly crucial for low-biomass studies [19].

- Use Dedicated Reagents: Assign dedicated reagents for low-biomass work and use UV-irradiated and/or filtered tips and tubes to minimize background contamination.
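A minimal way to act on negative controls is to flag taxa that are both common in the blanks and at least as abundant there as in real samples, then remove them and renormalize. The sketch below implements this simple heuristic with hypothetical thresholds and taxon names; it is not an established method (dedicated tools such as the R package decontam use statistical models instead), and blanket removal can discard genuine taxa that reached the controls through cross-contamination, so flagged taxa deserve manual review.

```python
from statistics import mean
from typing import Dict, Set

Table = Dict[str, Dict[str, float]]  # sample ID -> {taxon: relative abundance}


def prevalence(table: Table, taxon: str) -> float:
    """Fraction of samples in which the taxon is detected (> 0)."""
    return sum(1 for s in table.values() if s.get(taxon, 0.0) > 0) / len(table)


def flag_contaminants(samples: Table, negatives: Table,
                      min_control_prevalence: float = 0.5) -> Set[str]:
    """Flag taxa common in negative controls and at least as abundant there
    as in real samples (simple heuristic; threshold is hypothetical)."""
    flagged: Set[str] = set()
    for taxon in {t for s in negatives.values() for t in s}:
        ctrl_mean = mean(s.get(taxon, 0.0) for s in negatives.values())
        samp_mean = mean(s.get(taxon, 0.0) for s in samples.values())
        if prevalence(negatives, taxon) >= min_control_prevalence and ctrl_mean >= samp_mean:
            flagged.add(taxon)
    return flagged


def remove_taxa(table: Table, taxa: Set[str]) -> Table:
    """Drop flagged taxa and re-close each sample to proportions summing to 1."""
    cleaned: Table = {}
    for sample_id, profile in table.items():
        kept = {t: a for t, a in profile.items() if t not in taxa}
        total = sum(kept.values())
        cleaned[sample_id] = {t: a / total for t, a in kept.items()} if total else kept
    return cleaned


if __name__ == "__main__":
    samples = {"s1": {"contam_1": 0.1, "gut_1": 0.9},
               "s2": {"contam_1": 0.05, "gut_2": 0.95}}
    negatives = {"n1": {"contam_1": 0.8, "gut_1": 0.2}, "n2": {"contam_1": 1.0}}
    flagged = flag_contaminants(samples, negatives)
    print(flagged, remove_taxa(samples, flagged))
```

In the example, `gut_1` also appears in one blank but is far more abundant in real samples, so only `contam_1` is flagged and removed.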

FAQ 3: What are the common pitfalls in reference sequence databases, and how can we mitigate them?

Answer: Reference sequence databases are foundational for metagenomic analysis but suffer from several pervasive issues that can compromise results [20].

Common Pitfalls and Mitigation Strategies:

- Taxonomic Mislabeling: An estimated 1-3.6% of prokaryotic genomes in RefSeq and GenBank are taxonomically mislabeled [20]. This can lead to false positive detections.

- Mitigation: Use databases that employ Average Nucleotide Identity (ANI) clustering to identify and correct outliers, or those that have undergone rigorous validation [20].

- Database Contamination: Millions of sequences in public databases are contaminated with foreign DNA [20].

- Mitigation: Leverage databases that have been systematically curated for contamination, or use tools designed to detect and filter contaminated sequences.

- Non-Specific Taxonomic Labeling: Sequences are sometimes annotated to a broad taxonomic group (e.g., "Bacteria") rather than the most specific leaf possible [20].

- Mitigation: Select databases that prioritize deep taxonomic annotation to ensure the highest resolution for your analysis.
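The ANI-based mitigation can be illustrated with precomputed pairwise ANI values (for example, from a tool such as FastANI). The sketch below flags genomes whose median ANI to other genomes carrying the same species label falls below the widely used ~95% species boundary. The data layout, function names, and genome IDs are hypothetical, and the median-based rule breaks down when a large fraction of a species' genomes are themselves mislabeled.

```python
from statistics import median
from typing import Dict, List, Optional, Tuple

SPECIES_ANI_BOUNDARY = 95.0  # widely used approximate species threshold (%)


def pair_ani(ani: Dict[Tuple[str, str], float], a: str, b: str) -> Optional[float]:
    """Look up a pairwise ANI value regardless of key order."""
    if (a, b) in ani:
        return ani[(a, b)]
    return ani.get((b, a))


def flag_mislabeled(labels: Dict[str, str], ani: Dict[Tuple[str, str], float],
                    boundary: float = SPECIES_ANI_BOUNDARY) -> List[str]:
    """Flag genomes whose median ANI to same-label genomes is below the boundary."""
    flagged: List[str] = []
    for genome, species in labels.items():
        raw = [pair_ani(ani, genome, other)
               for other, sp in labels.items() if sp == species and other != genome]
        found = [s for s in raw if s is not None]
        if found and median(found) < boundary:
            flagged.append(genome)
    return flagged


if __name__ == "__main__":
    labels = {"g1": "Species A", "g2": "Species A", "g3": "Species A", "g4": "Species A"}
    ani = {("g1", "g2"): 99.0, ("g1", "g3"): 98.5, ("g2", "g3"): 99.2,
           ("g4", "g1"): 80.0, ("g4", "g2"): 81.0, ("g4", "g3"): 79.5}
    print(flag_mislabeled(labels, ani))
```

Here g4 shares a label with g1 to g3 but sits around 80% ANI to all of them, so it is the only genome flagged for review.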

Standardized Experimental Workflows

The following diagram illustrates a consensus workflow for microbiome sample processing, from collection to data analysis, integrating steps from multiple international standards.

Essential Research Reagent Solutions

The table below details key reagents and kits commonly used in standardized microbiome research protocols.

Table 2: Essential Research Reagent Solutions for Microbiome Workflows

| Reagent/Kits | Primary Function | Application Notes |
|---|---|---|
| FastDNA SPIN Kit for Soil [21] | DNA extraction from complex samples | Provides thorough homogenization, lysis, and high DNA yield from diverse, difficult-to-lyse specimens [21]. |
| OMNIgene Gut Kit [19] | Fecal sample collection & stabilization | Allows stable transport and storage of fecal samples at ambient temperatures, crucial for field studies [19]. |
| 95% Ethanol [19] | Sample preservation | A low-cost alternative for preserving fecal samples when immediate freezing at -80°C is not possible [19]. |
| FTA Cards [19] | Sample collection & nucleic acid stabilization | Useful for stable room-temperature storage of various sample types for DNA analysis [19]. |
| Validated Primer Sets (e.g., for 16S V3-V4) [10] | Target amplification for sequencing | Hypervariable regions V3-V4 are commonly used for bacterial identification and cataloguing [10]. |

Regulatory Considerations for Microbiome-Based Products

The regulatory landscape for microbiome-based therapies is evolving rapidly in response to scientific advances. A key concept is that a product's intended use, defined by labeling claims and advertising, is a primary determinant of its regulatory status. Products intended for disease prevention or treatment are regulated as medicinal products [22].

Classification Spectrum: Microbiome-based therapies exist on a continuum:

- Microbiota Transplantation (MT): Minimally manipulated community transferred from a donor [22].

- Donor-Derived Medicinal Products: Highly complex ecosystems (e.g., from fecal or vaginal material) that are industrially manufactured [22].

- Live Biotherapeutic Products (LBPs): Defined live organisms (single strain or mixture) grown from clonal cell banks, classified as biological drugs [22] [23].

Regulatory Pathways: In the European Union, the Regulation on Substances of Human Origin (SoHO) now provides a framework for many microbiome-based therapies. In the United States, the FDA's Center for Biologics Evaluation and Research (CBER) oversees these products; the first microbiome-based medicinal products (MMPs), Rebyota and VOWST, were approved for recurrent C. difficile infection in 2022 and 2023, respectively [22]. For market approval, developers must submit comprehensive data covering Chemistry, Manufacturing, and Controls (CMC), preclinical safety, and clinical efficacy, adhering to Good Laboratory (GLP), Clinical (GCP), and Manufacturing (GMP) Practices throughout the product lifecycle [23].

From Theory to Practice: Implementing Standardized Protocols for Sample Collection, Storage, and Analysis

Low-biomass microbiome environments, such as certain human tissues, the atmosphere, and treated drinking water, present unique challenges for researchers. Working near the limits of detection means that contamination from external sources can disproportionately impact results and lead to spurious conclusions [4]. This guide provides standardized protocols for minimizing contamination throughout the specimen collection and processing workflow, supporting the broader goal of standardizing microbiome research across multiple sites.

FAQs on Contamination Prevention

What defines a low-biomass sample and why is it so vulnerable?

A low-biomass sample contains minimal microbial load, approaching the detection limits of standard DNA-based sequencing methods [4]. These samples are vulnerable because even tiny amounts of contaminating DNA from reagents, sampling equipment, or the environment can overwhelm the true biological signal. This makes distinguishing contaminants from true microbial residents particularly challenging [4].

What are the most critical steps for preventing contamination during sample collection?

The most critical steps involve rigorous decontamination and the use of physical barriers [4]:

- Equipment Decontamination: Use single-use, DNA-free collection tools where possible. For reusable equipment, decontaminate with 80% ethanol followed by a nucleic acid-degrading solution like sodium hypochlorite (bleach) [4].

- Personal Protective Equipment (PPE): Researchers should wear gloves, cleansuits, masks, and shoe covers to limit contamination from skin, hair, or aerosols [4].

- Environmental Controls: Collect samples using laminar flow hoods where feasible to create a sterile workspace with HEPA-filtered air [24].

What control samples should be included?

Including various control samples is non-negotiable for interpreting low-biomass studies [4]. Essential controls include:

- Negative Controls: Process empty collection vessels, swabs exposed to air, or aliquots of preservation solution alongside your samples [4].

- Process Controls: Swab PPE or laboratory surfaces to identify potential contamination sources [4].

- Reagent Controls: Include DNA extraction and PCR blank controls to detect contaminants from kits and reagents.

How can cross-contamination between samples be minimized during processing?

- Automate Processes: Automated liquid handlers significantly reduce human error and cross-contamination [24].

- Use Disposable Consumables: Opt for disposable plastic homogenizer probes to eliminate cleaning bottlenecks and contamination risks [25].

- Validate Cleaning: For reusable tools, run a blank solution after cleaning to verify no residual analytes remain [25].

Troubleshooting Common Contamination Issues

| Problem | Possible Cause | Solution |
|---|---|---|
| High background in negative controls | Contaminated reagents or lab surfaces | Test water and reagents; treat surfaces with DNA removal solutions [25] [24] |
| Inconsistent results between replicates | Well-to-well cross-contamination during plate setup | Centrifuge sealed plates before removing the seals; peel seals off slowly and carefully [25] |
| Unexpected microbial taxa in data | Contamination from sampling equipment or operator | Review and strengthen decontamination protocols; add more sampling controls [4] |
| Contamination in all samples, including controls | Systemic issue, potentially with the water supply or a shared reagent | Check and service water purification systems; test reagents systematically [24] |

Experimental Protocols and Workflows

Standardized Workflow for Low-Biomass Sample Collection

The following diagram outlines the critical steps for collecting low-biomass samples while minimizing contamination risks.

Contamination Prevention Checklist by Research Phase

This table summarizes key prevention actions and an associated performance indicator for each research phase.

| Research Phase | Prevention Method | Key Performance Indicator |
|---|---|---|
| Planning & Preparation | Validate sterility of all reagents and collection vessels [4]. | 100% of reagents tested for microbial DNA. |
| Sample Collection | Use extensive PPE and decontaminated equipment [4]. | Zero sample exposure to unscreened environments. |
| Laboratory Processing | Use disposable homogenizer probes and automated liquid handlers [25] [24]. | Cross-contamination events reduced by >95%. |
| Data Analysis & Reporting | Apply bioinformatic contamination removal tools [4]. | Minimal reads assigned to control samples. |
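Dedicated tools usually handle the bioinformatic contamination removal step in the last row. One example is the prevalence method of the R package decontam, which applies a statistical test. The Python sketch below only illustrates the underlying idea under simplifying assumptions: it flags a taxon as a likely contaminant when the taxon is detected in a larger fraction of negative controls than of true samples. The function names, the read counts, and the `ratio_cutoff` rule are hypothetical.

```python
from typing import Dict, List

def prevalence(counts: List[int]) -> float:
    """Fraction of libraries in which a taxon has at least one read."""
    return sum(c > 0 for c in counts) / len(counts) if counts else 0.0

def flag_contaminants(
    sample_counts: Dict[str, List[int]],
    control_counts: Dict[str, List[int]],
    ratio_cutoff: float = 1.0,
) -> List[str]:
    """Flag taxa whose prevalence in negative controls is at least
    `ratio_cutoff` times their prevalence in true samples (hypothetical rule)."""
    flagged = []
    for taxon in set(sample_counts) | set(control_counts):
        p_samples = prevalence(sample_counts.get(taxon, []))
        p_controls = prevalence(control_counts.get(taxon, []))
        if p_controls > 0 and p_controls >= ratio_cutoff * p_samples:
            flagged.append(taxon)
    return sorted(flagged)

# Hypothetical read counts: 4 true samples and 2 extraction blanks.
samples = {
    "Bacteroides": [120, 95, 210, 180],
    "Faecalibacterium": [40, 0, 60, 33],
    "Ralstonia": [3, 0, 5, 2],
}
blanks = {"Bacteroides": [0, 1], "Ralstonia": [15, 22]}
print(flag_contaminants(samples, blanks))  # ['Ralstonia']
```

Review flagged taxa before removing them, because abundant true residents can also appear in controls through well-to-well cross-contamination.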

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function | Application Notes |
|---|---|---|
| Sodium Hypochlorite (Bleach) | Degrades contaminating DNA on surfaces and equipment [4]. | Use fresh dilutions; easily inactivated by organic matter [4]. |
| DNA-Free Water | Serves as a negative control and reagent component [24]. | Regularly test with culture media or PCR to ensure sterility [24]. |
| UV-C Light Source | Sterilizes plasticware, glassware, and surfaces by damaging nucleic acids [4]. | Ensure adequate exposure time and distance for effectiveness. |
| Disposable Homogenizer Probes | Disrupts tissue and cells without cross-contamination risk [25]. | Ideal for high-throughput labs; less robust for very fibrous samples [25]. |
| HEPA Filter Laminar Flow Hood | Provides sterile workspace by removing airborne particles [24]. | Certify filters regularly; ensure proper airflow before use [24]. |

Frequently Asked Questions

1. What is the "gold standard" method for storing microbiome samples? Immediate freezing at -80 °C is widely considered the gold standard for preserving microbiome samples, as it most effectively halts microbial activity and preserves the original community structure [26]. However, this method is often logistically challenging in non-laboratory settings.

2. I cannot freeze samples immediately. What is the best alternative? When immediate freezing is not possible, chemical stabilization buffers are a reliable alternative. For DNA-based analyses (16S rRNA gene and shotgun metagenomic sequencing), buffers such as OMNIgene.GUT and RNAlater have been shown to produce results largely comparable to those from frozen samples, even after up to 72 hours at room temperature [27] [26]. RNAlater has, however, been associated with some compositional shifts [26]. RNAlater is also a suitable preservative for metaproteomics [28].

3. How long can stabilized samples be stored at room temperature? Studies have validated several preservation buffers for room-temperature storage for at least 72 hours without significant changes to microbial community composition as measured by DNA sequencing [26]. Some systems, like the GutAlive device, demonstrate maintenance of viable obligate anaerobes for 24-48 hours [29].

4. Does sample preservation affect the observed bacterial diversity? The preservation method can influence results. Compared with immediate freezing at -80 °C, refrigeration at 4 °C has negligible effects on alpha and beta diversity [26]. However, storage at ambient temperature without preservatives, or in certain buffers such as Tris-EDTA, can significantly shift the observed microbial composition and reduce diversity [26]. Ethanol preservation is not recommended for metaproteomics because it significantly alters protein abundance profiles [28].

5. Are there any special considerations for preserving viable bacteria (not just DNA)? Yes. If your research requires live bacteria (e.g., for fecal microbiota transplantation or culturomics), limiting oxygen exposure is critical. Standard containers expose samples to air, killing extremely oxygen-sensitive (EOS) bacteria like Faecalibacterium prausnitzii. Anaerobic collection systems (e.g., GutAlive) that create an oxygen-free atmosphere are designed specifically to maintain the viability of these delicate organisms during transport [29].

The following tables summarize the performance of various storage methods compared to the gold standard of immediate freezing at -80°C, based on 16S rRNA gene sequencing data.

Table 1: Impact of 72-Hour Storage on Alpha Diversity and Phylum-Level Abundance

| Storage Method | Temperature | Change in Alpha Diversity | Key Changes in Major Phyla (vs. -80°C) |
|---|---|---|---|
| Immediate Freezing (-80°C) | -80°C | (Baseline) | (Baseline) |
| Refrigeration | 4°C | No significant change [26] | No significant change [26] |
| OMNIgene.GUT | Room Temp | No significant change in Shannon index [26] | Slight but significant increase in Proteobacteria [26] |
| RNAlater | Room Temp | Lower evenness [26] | Significant changes in Firmicutes, Actinobacteria, Bacteroidetes, and Proteobacteria [26] |
| Tris-EDTA (TE) Buffer | Room Temp | Information Not Specified | Significant changes in Firmicutes, Actinobacteria, Bacteroidetes, and Proteobacteria [26] |
| Air-drying / Room Temp | Room Temp | Lower Shannon diversity and evenness [26] | Significant increase in Actinobacteria and Firmicutes [26] |

Table 2: Comparative Method Performance for Different Analytical Goals

| Storage Method | 16S / Shotgun Metagenomics | Metaproteomics | Maintenance of Bacterial Viability |
|---|---|---|---|
| Immediate Freezing (-80°C) | Optimal (Gold Standard) [27] | Optimal (Gold Standard) [28] | Not specified |
| OMNIgene.GUT | Recommended (Minor differences) [27] [26] | Information Not Specified | Information Not Specified |
| RNAlater | Recommended (Some compositional shifts) [26] | Recommended (Performs as well as freezing) [28] | Information Not Specified |
| Home-made NAP Buffer | Cost-effective alternative [30] | Information Not Specified | Information Not Specified |
| Ethanol (95%) | Acceptable with consistent use [28] | Not Recommended (Alters protein profiles) [28] | Information Not Specified |
| Anaerobic Collection System | Information Not Specified | Information Not Specified | Optimal for EOS bacteria [29] |

Experimental Protocols: Key Methodologies from Cited Studies

Protocol 1: Comparing Fresh-Frozen vs. Stabilized-Frozen Samples via 16S and Shotgun Sequencing

This protocol is adapted from a study on hospitalized patients [27].

- Sample Collection: A single stool specimen is collected from a donor.

- Sample Splitting: The specimen is divided into two aliquots:

- Fresh-Frozen (FF): One aliquot is immediately frozen at -80°C.

- Stabilized-Frozen (SF): The other aliquot is placed in a chemical preservative (e.g., OMNIgene.GUT) and stored at room temperature for a defined period (up to 16 days in the study) before transfer to -80°C.

- DNA Extraction: DNA is extracted from all samples using the same standardized kit and protocol.

- Sequencing & Analysis: Both 16S rRNA gene (e.g., V4 region) and shotgun metagenomic sequencing are performed. Data is analyzed for alpha-diversity (Shannon, Simpson indices), beta-diversity (Bray-Curtis dissimilarity), and taxonomic composition (relative abundance).
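The diversity metrics named in the analysis step can be computed directly from a taxon count table. The sketch below is illustrative only and makes three assumptions. It uses natural logarithms for the Shannon index. It reports Simpson in the Gini-Simpson form (1 - sum of p squared), although other studies report the sum of p squared or its inverse. It computes Bray-Curtis on raw counts, whereas pipelines typically apply it to relative abundances or rarefied counts. The example counts are invented.

```python
import math
from typing import Sequence

def shannon(counts: Sequence[int]) -> float:
    """Shannon index H' = -sum(p_i * ln(p_i)), skipping zero counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)

def gini_simpson(counts: Sequence[int]) -> float:
    """Gini-Simpson index 1 - sum(p_i^2): probability that two reads drawn
    at random (with replacement) belong to different taxa."""
    total = sum(counts)
    return 1.0 - sum((c / total) ** 2 for c in counts)

def bray_curtis(a: Sequence[int], b: Sequence[int]) -> float:
    """Bray-Curtis dissimilarity sum|a_i - b_i| / sum(a_i + b_i); 0 means identical."""
    if len(a) != len(b):
        raise ValueError("profiles must list the same taxa in the same order")
    return sum(abs(x - y) for x, y in zip(a, b)) / sum(x + y for x, y in zip(a, b))

fresh_frozen = [50, 30, 20]       # hypothetical counts for three taxa
stabilized_frozen = [45, 35, 20]  # same taxa, same order
print(shannon(fresh_frozen), gini_simpson(fresh_frozen),
      bray_curtis(fresh_frozen, stabilized_frozen))
```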

Protocol 2: Evaluating Preservation Buffers for Metaproteomics

This protocol is adapted from a mouse study evaluating preservation for metaproteomic analysis [28].

- Master Mix Preparation: Fecal samples from multiple donors are combined and homogenized to create a master mix, reducing individual variation.

- Aliquot Preservation: Aliquots of the master mix are preserved using different methods:

- Flash-freezing in liquid nitrogen

- Immersion in RNAlater

- Immersion in a home-made RNAlater-like buffer

- Immersion in 95% ethanol

- Storage: Preserved samples are stored for different durations (e.g., 1 week and 4 weeks).

- Protein Extraction & LC-MS/MS: Proteins are extracted, digested into peptides, and analyzed by Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS).

- Data Comparison: The identified proteins and their abundances are compared between preservation treatments to assess bias and variability.
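For the data-comparison step, one simple summary of how closely each preservation treatment reproduces the flash-frozen reference is a Pearson correlation of log-transformed protein abundances over the proteins quantified in both. This is a sketch under assumptions and not the statistical analysis of the cited study. The protein identifiers, the abundances, and the log2(x + 1) transform are illustrative.

```python
import math
from typing import Dict, List

def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def agreement_with_reference(
    profiles: Dict[str, Dict[str, float]], reference: str = "flash_frozen"
) -> Dict[str, float]:
    """Correlate each treatment's log2(abundance + 1) profile with the reference,
    using only proteins quantified under both conditions."""
    ref = profiles[reference]
    result = {}
    for method, prof in profiles.items():
        if method == reference:
            continue
        shared = sorted(set(ref) & set(prof))
        x = [math.log2(ref[p] + 1) for p in shared]
        y = [math.log2(prof[p] + 1) for p in shared]
        result[method] = pearson(x, y)
    return result

# Invented abundances for four proteins under three treatments.
profiles = {
    "flash_frozen": {"P1": 100, "P2": 50, "P3": 10, "P4": 1},
    "rnalater": {"P1": 90, "P2": 55, "P3": 12, "P4": 1},
    "ethanol_95": {"P1": 20, "P2": 60, "P3": 80, "P4": 2},
}
print(agreement_with_reference(profiles))
```

Correlation captures agreement in the shape of the profile but not systematic offsets. For that reason, it is usually reported together with per-protein measures of bias, such as fold changes.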

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Microbiome Sample Storage

| Reagent / Kit | Primary Function | Key Considerations |
|---|---|---|
| OMNIgene.GUT | Chemical stabilization of fecal DNA at room temperature. | Effective for DNA-based sequencing (16S, shotgun); shown to work in clinical/hospital settings [27] [26]. |
| RNAlater | Stabilizes and protects nucleic acids (RNA & DNA). | Widely used; effective for DNA and metaproteomics [28] [26]; may require a centrifugation step to remove the buffer before DNA extraction [30]. |
| DNA/RNA Shield | Inactivates nucleases and microbes to protect nucleic acids. | Can be used directly in many DNA purification kits without removal [30]. |
| Home-made NAP Buffer | Low-cost, home-made solution for nucleic acid preservation. | A cost-effective alternative to commercial buffers; performs well in comparative studies [30]. |
| GutAlive Device | Anaerobic collection system to maintain viability of obligate anaerobes. | Critical for studies requiring live bacteria (e.g., FMT, culturomics); generates an anaerobic atmosphere upon closing [29]. |

Troubleshooting Guide: Common Issues and Solutions

Problem: Inconsistent microbiome profiles between samples from the same study cohort.

- Potential Cause: Inconsistent storage methods or times across samples, especially if some were frozen immediately and others were held at room temperature without stabilization.

- Solution: Implement a Standard Operating Procedure (SOP) for the entire cohort. Choose a single, logistically feasible preservation method (e.g., OMNIgene.GUT for all participants) and standardize the time between collection and freezing or processing [26].
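A cohort-wide SOP is easier to enforce if deviations are checked automatically as samples arrive. The sketch below flags two kinds of deviation: samples stored with a method other than the one the SOP specifies, and samples held longer than 72 hours before freezing, which is the room-temperature window validated for several preservation buffers [26]. The record fields, the choice of OMNIgene.GUT as the SOP method, and the use of 72 hours as a hard limit are assumptions for illustration.

```python
from datetime import datetime, timedelta
from typing import List, NamedTuple

class SampleLog(NamedTuple):
    sample_id: str
    method: str          # preservation method actually used
    collected: datetime
    frozen: datetime     # time of transfer to -80 °C (or processing)

def sop_deviations(
    logs: List[SampleLog],
    sop_method: str = "OMNIgene.GUT",
    max_hold: timedelta = timedelta(hours=72),
) -> List[str]:
    """List human-readable deviations from the (hypothetical) cohort SOP."""
    issues: List[str] = []
    for s in logs:
        if s.method != sop_method:
            issues.append(f"{s.sample_id}: used {s.method}, SOP requires {sop_method}")
        held = s.frozen - s.collected
        if held > max_hold:
            issues.append(f"{s.sample_id}: held {held} before freezing (limit {max_hold})")
    return issues

t0 = datetime(2024, 3, 1, 8, 0)
logs = [
    SampleLog("S01", "OMNIgene.GUT", t0, t0 + timedelta(hours=30)),
    SampleLog("S02", "RNAlater", t0, t0 + timedelta(hours=20)),
    SampleLog("S03", "OMNIgene.GUT", t0, t0 + timedelta(hours=96)),
]
for issue in sop_deviations(logs):
    print(issue)
```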

Problem: Loss of obligate anaerobic bacteria in culture.

- Potential Cause: Exposure to atmospheric oxygen during sample collection and transport.

- Solution: Use an anaerobic collection device (e.g., GutAlive) that generates an oxygen-free environment immediately after sample submission [29].

Problem: Low DNA yield or quality from samples stored in preservation buffers.

- Potential Cause: Some buffers require removal prior to DNA extraction. Incomplete removal can inhibit downstream enzymatic reactions.

- Solution: Follow the manufacturer's protocol or literature methods for buffer removal. This often involves a dilution step with PBS followed by centrifugation to pellet the microbial material before proceeding with the standard DNA extraction protocol [30].

Problem: Discrepant results when the same samples are used for metaproteomics versus DNA sequencing.

- Potential Cause: The preservation method may not be optimal for all molecule types. For example, ethanol preservation is suitable for DNA analysis but is not recommended for metaproteomics [28].

- Solution: If multi-omics analysis is planned, select a preservation method validated for all intended analyses. RNAlater has shown good performance for both DNA sequencing and metaproteomics [27] [28]. Alternatively, split the sample and use different, optimized preservation methods for each analysis.

Decision Workflow for Selecting a Storage Method

The following diagram outlines a systematic approach to choosing the right storage method based on your research objectives and logistical constraints.
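The decision rules stated in the FAQs and tables above can also be written down explicitly, which makes each site's choice auditable. This is a simplified sketch; the function name and category labels are hypothetical. It encodes only the following rules from this section:

- Freeze immediately at -80 °C when feasible.
- Use RNAlater when metaproteomics is planned without freezing, because ethanol alters protein profiles.
- Use OMNIgene.GUT or RNAlater for DNA-only work.
- Use an anaerobic device when viable obligate anaerobes are needed.

```python
from typing import List, Set

def choose_storage(
    analyses: Set[str],
    can_freeze_immediately: bool,
    need_viable_anaerobes: bool = False,
) -> List[str]:
    """Return storage recommendations following the rules stated in this section.

    `analyses` may contain "16S", "shotgun", and/or "metaproteomics"."""
    recommendations: List[str] = []
    if need_viable_anaerobes:
        # Live obligate anaerobes (e.g., FMT, culturomics) need an oxygen-free device.
        recommendations.append("anaerobic collection system (e.g., GutAlive)")
    molecular = analyses & {"16S", "shotgun", "metaproteomics"}
    if molecular:
        if can_freeze_immediately:
            recommendations.append("immediate freezing at -80 °C")
        elif "metaproteomics" in molecular:
            # Reported to work for DNA and metaproteomics; ethanol alters protein profiles.
            recommendations.append("RNAlater")
        else:
            # DNA-only work: validated for at least 72 h at room temperature.
            recommendations.append("OMNIgene.GUT or RNAlater")
    return recommendations

print(choose_storage({"16S", "shotgun"}, can_freeze_immediately=False))
```

When the function returns two recommendations (live bacteria plus molecular analyses), split the sample and preserve each aliquot with its own method, as suggested in the troubleshooting guide.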

Frequently Asked Questions

1. What is the most important factor when choosing between 16S rRNA and shotgun metagenomic sequencing?

Your choice should primarily depend on your research questions, budget, and required taxonomic resolution. If your study focuses exclusively on bacterial and archaeal composition at the genus level and cost is a major constraint, 16S rRNA sequencing is suitable. If you require species- or strain-level resolution, need to profile fungi/viruses, or want to assess functional genetic potential, shotgun metagenomics is necessary despite higher costs [31] [32]. For stool samples with high microbial biomass, shotgun is often preferred, while for tissue samples or targeted aims, 16S can be more suitable [31].

2. How can we ensure reproducibility across multiple research sites?

Standardization across sites requires strict protocol harmonization for sample collection, storage, transportation, DNA extraction, and sequencing. The Clinical-Based Human Microbiome Research Project (cHMP) demonstrates that using controlled specimen collection, uniform storage conditions, identical DNA extraction kits, and centralized sequencing analysis ensures consistent data quality [18]. Furthermore, employing standardized bioinformatics pipelines and reference databases is crucial for reproducible data analysis [33].

3. Our shotgun sequencing results show high host DNA contamination. How can we mitigate this?

Host DNA contamination is particularly challenging for samples like skin swabs, biopsies, and buccal samples. To mitigate this, you can:

- Use laboratory methods to deplete host cells or DNA prior to extraction

- Increase sequencing depth to ensure sufficient microbial reads

- Consider 16S rRNA sequencing for high-host-DNA samples since it uses PCR to amplify specific microbial regions [32]

- For stool samples, which have a high microbial-to-host DNA ratio, shallow shotgun sequencing can be a cost-effective alternative [32]
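The benefit of extra sequencing depth for host-rich samples follows from simple arithmetic. If a fraction h of reads is host-derived, only (1 - h) of them are microbial, so the total depth needed for a given number of microbial reads scales as 1 / (1 - h). The sketch below uses integer percentages to avoid floating-point rounding. The read target and the host shares in the example are hypothetical.

```python
def required_total_reads(target_microbial_reads: int, host_percent: int) -> int:
    """Total reads needed so that at least `target_microbial_reads` remain after
    host reads are discarded. `host_percent` is the expected host share (0-99)."""
    if not 0 <= host_percent < 100:
        raise ValueError("host_percent must be in the range [0, 100)")
    microbial_percent = 100 - host_percent
    # Ceiling division in integers: ceil(a / b) == -(-a // b)
    return -(-target_microbial_reads * 100 // microbial_percent)

for host in (10, 90, 99):  # hypothetical host-DNA shares
    print(host, required_total_reads(2_000_000, host))
```

At 99% host reads, the same microbial yield requires 100 times the depth needed for a host-free sample. This illustrates why host depletion or 16S sequencing may be more practical than simply sequencing deeper for such samples.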

4. Which bioinformatics pipelines are recommended for 16S rRNA data analysis?

For 16S data, established pipelines include QIIME, mothur, and USEARCH-UPARSE [32] [34]. A recent benchmarking study found that ASV (Amplicon Sequence Variant) algorithms such as DADA2 and OTU (Operational Taxonomic Unit) algorithms such as UPARSE produced results most closely resembling the intended microbial communities [35]. DADA2 provides consistent output but may over-split sequences, whereas UPARSE produces clusters with fewer errors but more over-merging [35].
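The difference between exact sequence variants and identity-threshold OTUs can be seen in a toy example. This is a conceptual contrast only and not an implementation of DADA2 or UPARSE. Real tools model sequencing errors, handle indels and chimeras, and apply their own abundance and identity rules. Here, reads are assumed to be pre-aligned and of equal length, and the 97% threshold is the conventional OTU cutoff.

```python
from collections import Counter
from typing import Dict, List

def identity(a: str, b: str) -> float:
    """Fraction of matching positions between two equal-length, pre-aligned reads."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def exact_variants(reads: List[str]) -> Dict[str, int]:
    """Count each distinct sequence (no error model, unlike DADA2)."""
    return dict(Counter(reads))

def greedy_otus(reads: List[str], threshold: float = 0.97) -> Dict[str, int]:
    """Abundance-ordered greedy clustering: each distinct sequence joins the first
    existing centroid with identity >= threshold, otherwise it founds a new OTU."""
    otus: Dict[str, int] = {}
    for seq, n in Counter(reads).most_common():
        for centroid in otus:
            if identity(seq, centroid) >= threshold:
                otus[centroid] += n
                break
        else:
            otus[seq] = n
    return otus

# Two hypothetical 100-nt variants differing at one position (99% identity).
variant_a = "ACGT" * 25
variant_b = variant_a[:-1] + "A"
reads = [variant_a] * 60 + [variant_b] * 40
print(len(exact_variants(reads)), len(greedy_otus(reads)))  # 2 1
```

With these reads, exact variants keep the two sequences apart, while 97% clustering merges them into one OTU, which is the over-merging described above. Conversely, without an error model every sequencing error would become its own variant. DADA2's denoising is designed to address that problem.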

5. Why do our fungal profiles from shotgun data seem incomplete compared to bacterial data?

This is a common challenge due to limitations in fungal-specific bioinformatics tools and reference databases. A 2025 study evaluated six mycobiome analysis tools and found that FunOMIC, EukDetect, and MiCoP showed the highest accuracy [36]. The limited number of identified fungal species (only ~4% of estimated species) and inadequate database coverage significantly hamper mycobiome characterization from shotgun data [36].

6. How does DNA extraction method affect sequencing results?

DNA extraction methodology significantly impacts your results. Key considerations include:

- Extraction kit selection must be appropriate for your sample type (stool, soil, water, swabs)

- The lysis step should be optimized for different cell wall types (Gram-positive vs. Gram-negative bacteria, fungal cells)

- Inhibition removal is critical for PCR-based 16S sequencing

- Consistent use of the same extraction kit across all samples in a study is essential for comparability [34]

For vitamin-containing products, whose diverse formulations can inhibit standard extraction methods, researchers have developed optimized, formulation-specific DNA extraction protocols [37].

Method Comparison Table

Table 1: Technical comparison between 16S rRNA and shotgun metagenomic sequencing

| Factor | 16S rRNA Sequencing | Shotgun Metagenomic Sequencing |
|---|---|---|
| Cost per sample | ~$50 USD [32] | Starting at ~$150 USD [32] |
| Taxonomic resolution | Genus level (sometimes species) [32] | Species level (sometimes strains) [32] |
| Taxonomic coverage | Bacteria and Archaea only [32] | All domains: Bacteria, Archaea, Fungi, Viruses [32] |
| Functional profiling | No (only predicted) [32] | Yes (functional genes and pathways) [32] |
| Host DNA sensitivity | Low (PCR amplifies target) [32] | High (sequences all DNA) [32] |
| Bioinformatics requirements | Beginner to intermediate [32] | Intermediate to advanced [32] |
| Reference databases | Well-established (SILVA, Greengenes) [31] [34] | Growing, less curated (NCBI RefSeq, GTDB) [31] [32] |
| Method bias | Medium to High (primer and region-dependent) [31] [32] | Lower (untargeted, but analytical biases exist) [32] |

Research Reagent Solutions

Table 2: Essential materials and reagents for standardized microbiome research

| Reagent/Solution | Function | Application Notes |
|---|---|---|
| NucleoSpin Soil Kit | DNA extraction from complex samples | Optimized for shotgun analysis from fecal samples [31] |
| DNeasy PowerLyzer PowerSoil Kit | DNA extraction with mechanical lysis | Used for 16S rRNA sequencing from fecal samples [31] |
| SILVA Database | Taxonomic classification | Reference database for 16S rRNA gene sequences [31] [35] |
| NCBI RefSeq Database | Whole-genome reference | Primary database for shotgun metagenomic analysis [31] [36] |
| Universal 16S Primers | Amplification of target regions | Target hypervariable regions (e.g., V3-V4 for bacteria) [31] [34] |
| MetaPhlAn4 | Taxonomic profiling from shotgun data | Uses clade-specific marker genes [36] |
| Kraken2 | Taxonomic sequence classification | Can be used for both bacterial and fungal profiling [36] |

Experimental Workflows

Standardized DNA Extraction Protocol

For multi-site studies, use the same commercial extraction kits across all locations:

- Sample Preservation: Freeze samples immediately at -20°C or -80°C [34]. For temporary storage, use 4°C or preservation buffers.

- Lysis: Combine chemical (enzymes) and mechanical (bead beating) methods to ensure comprehensive cell wall disruption [34].

- Inhibition Removal: Use the kit-specific inhibitor-removal steps; this is critical for PCR-based methods.

- DNA Precipitation: Add salt solution and alcohol to separate DNA from other cellular components [34].

- Purification: Wash isolated DNA to remove impurities and resuspend in molecular grade water [34].

- Quality Control: Measure DNA concentration and purity (A260/280 ratio) using spectrophotometry.
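The QC step can be scripted so every site applies the same acceptance rule. The sketch below uses two standard conventions for double-stranded DNA. First, an A260 of 1.0 over a 1 cm path corresponds to about 50 ng/µL. Second, pure DNA is expected to have an A260/280 ratio of about 1.8, and lower values may indicate protein or phenol carry-over. The 1.7 to 2.0 acceptance window is an assumption; each study should define its own in the SOP.

```python
from typing import Dict, Tuple

def dsdna_qc(a260: float, a280: float, dilution_factor: float = 1.0,
             ratio_window: Tuple[float, float] = (1.7, 2.0)) -> Dict[str, object]:
    """Estimate dsDNA concentration and check purity from absorbance readings.

    Assumes blank-corrected absorbances normalized to a 1 cm path length."""
    if a280 <= 0:
        raise ValueError("A280 must be positive")
    conc_ng_per_ul = a260 * 50.0 * dilution_factor  # 1 A260 unit ~ 50 ng/uL dsDNA
    ratio = a260 / a280
    low, high = ratio_window  # hypothetical acceptance window
    return {"ng_per_ul": conc_ng_per_ul, "a260_280": round(ratio, 2),
            "pass": low <= ratio <= high}

print(dsdna_qc(0.50, 0.27))  # ratio ~1.85: within the window
print(dsdna_qc(0.50, 0.40))  # ratio 1.25: may indicate protein carry-over
```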

Decision Workflow for Method Selection

The following diagram outlines a systematic approach to selecting the appropriate sequencing method:
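The selection criteria from the FAQ, the comparison table, and the multi-site recommendations can be expressed as a small rule set. This is a simplified sketch: the function, its parameters, and the return labels are hypothetical. It also ignores budget, which in practice often decides between the options.

```python
def choose_sequencing(
    resolution: str,
    non_bacterial_targets: bool = False,
    functional_profiling: bool = False,
    high_host_dna: bool = False,
    large_stool_cohort: bool = False,
) -> str:
    """Suggest a sequencing approach from the criteria discussed in this section.

    resolution: "genus", "species", or "strain"."""
    if resolution not in {"genus", "species", "strain"}:
        raise ValueError(f"unknown resolution: {resolution!r}")
    if resolution == "genus" and not (non_bacterial_targets or functional_profiling):
        # Bacteria/archaea at genus level: 16S is cheaper and tolerant of host DNA.
        return "16S rRNA"
    if high_host_dna:
        # Shotgun sequences all DNA, so host-rich samples need depletion and depth.
        return "shotgun with host depletion and increased depth"
    if large_stool_cohort and resolution != "strain":
        # High-biomass stool at scale: shallow shotgun as a cost compromise.
        return "shallow shotgun"
    # Strain-level work and other cases default to deep shotgun.
    return "deep shotgun"

print(choose_sequencing("species", large_stool_cohort=True))
```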

Troubleshooting Common Problems

Problem: Inconsistent microbiome profiles across replicate samples

- Potential Cause: Variability in sample collection, storage, or DNA extraction

- Solution: Implement standardized collection protocols across all sites. Collect clinical metadata including antibiotic usage, dietary habits, and health history [18]. Use the same storage conditions (-80°C preferred) and limit freeze-thaw cycles [34]

Problem: Low taxonomic resolution in 16S rRNA sequencing

- Potential Cause: Limited variable region selection or shallow sequencing depth

- Solution: Use multiple hypervariable regions or switch to shotgun metagenomics if species-/strain-level resolution is essential [38] [32]

Problem: Discrepancies in microbial composition between 16S and shotgun

- Potential Cause: Different reference databases and methodological biases

- Solution: This is expected [31]. 16S detects dominant bacteria, while shotgun provides a broader snapshot of the community. When comparing studies, note the technique used and consider combined approaches [31]

Problem: Inability to detect fungi in shotgun metagenomic data

- Potential Cause: Limited fungal databases and tool limitations

- Solution: Use specialized mycobiome tools like FunOMIC, EukDetect, or MiCoP rather than general taxonomic classifiers [36]

Key Recommendations for Multi-Site Studies

- Establish Standard Operating Procedures (SOPs) for every step from sample collection to data analysis [18] [33]

- Use the same DNA extraction kits across all sites to minimize technical variability [18]

- Include control samples in each batch to monitor technical variation [34]

- Sequence deeply enough for your research questions; strain-level analysis requires greater depth [32]

- Consider shallow shotgun sequencing as a compromise between 16S and deep shotgun for large-scale studies [32]

- Document all metadata following standardized case report forms, including patient information, medication use, and dietary habits [18]

Standardization of DNA extraction and sequencing methods is fundamental for generating comparable, reproducible microbiome data across research sites. By carefully selecting the appropriate sequencing method based on research goals and implementing consistent protocols, researchers can overcome the challenges of microbiome variability and advance the field toward clinically meaningful applications.

Troubleshooting Guide: Common Issues with Gut Microbiome Reference Materials

Problem: Inconsistent results between laboratories

- Cause: Variations in DNA extraction methods, sequencing platforms, and bioinformatics pipelines can lead to irreproducible data [39] [40].

- Solution: Use NIST's Human Fecal Material (RM 8048) as a cross-laboratory benchmark. Run the reference material alongside your samples using the same processing pipeline and compare your results to NIST's provided data to identify methodological biases [39] [41].

Problem: Difficulty validating metabolomic findings

- Cause: Complex metabolite mixtures in stool and variations in mass spectrometry or NMR methodologies create analytical challenges [42] [43].

- Solution: Incorporate NIST's companion material, RGTM 10212 Fecal Metabolite Mixture (in development), for instrument calibration and method validation [43] [44].

Problem: Uncertain sample stability during storage

- Cause: Microbial composition can shift if samples are not properly preserved, especially when immediate freezing at -80°C isn't feasible [45].

- Solution: NIST RM 8048 has a documented 5-year shelf life and is homogeneous. For your own samples, when -80°C storage is impossible, use preservative buffers like OMNIgene·GUT or AssayAssure, noting their potential selective effects on certain taxa [39] [45].

Problem: Low DNA yield from samples

- Cause: Insufficient sample volume or inefficient cell lysis during DNA extraction, particularly problematic for low-biomass samples [45].

- Solution: For stool, ensure collection of at least 1 gram. Homogenize the entire sample before aliquoting to ensure uniformity. Validate your DNA extraction kit's efficiency against the NIST RM to ensure it effectively lyses tough-to-break microbial cells [18] [45].

Frequently Asked Questions (FAQs)

Q1: What exactly is NIST RM 8048, and what does it contain?

- A: NIST RM 8048 is a Human Fecal Material reference material developed by the National Institute of Standards and Technology. It consists of eight frozen vials of human feces from healthy donors (both vegetarians and omnivores) in an aqueous solution. Each purchase includes extensive data on the material's composition, identifying over 150 microbial species and 150 metabolites through metagenomic and metabolomic analyses [39].

Q2: How should I incorporate this reference material into my experimental workflow?

- A: The reference material should be processed alongside your experimental samples at every step, from DNA extraction and sequencing to metabolomic analysis. This allows you to use NIST's extensively characterized data as a benchmark to control for technical variability introduced by your specific methods and reagents [39] [40].

Q3: Can I use this material to standardize studies beyond the human gut?

- A: While specifically designed for human gut microbiome research, the principles of standardization it provides are broadly applicable. It can serve as a complex microbial community model for developing and validating methods in other areas, though findings should be confirmed with domain-specific controls [42] [46].

Q4: What are the specific storage and handling requirements?

- A: The material is shipped and should be stored frozen. It is designed to be stable for at least five years and is homogeneous, meaning every aliquot is consistent. Always thaw and handle the vials using the provided instructions to maintain integrity [39].

Q5: Where can I purchase RM 8048, and what documentation comes with it?

- A: NIST RM 8048 is available for purchase through the NIST Store. The material comes with a Certificate of Analysis and over 25 pages of detailed data characterizing the microbial and metabolite components [41] [43].

The table below summarizes key quantitative information about the NIST Human Fecal Material reference material and related guidelines.

Table 1: Reference Material Specifications and Collection Guidelines

| Parameter | Specification / Guideline | Source / Context |
|---|---|---|
| RM 8048 Contents | 8 x 100 mg vials (4 vegetarian, 4 omnivore) | [39] |
| Microbial Species | >150 species identified | [39] |
| Metabolites | >150 metabolites identified | [39] |
| Shelf Life | Minimum of 5 years | [39] |
| Minimum Stool Sample | 1 g solid or 5 mL liquid | Clinical-based HMP protocol [18] |
| Catheterized Urine Volume | 30-50 mL recommended | Best practice guidelines [45] |

Table 2: Essential Clinical Metadata for Gastrointestinal Studies

| Category | Specific Data to Collect |
|---|---|
| Demographics & History | Diet, medication use (last 6 months), BMI, smoking/alcohol history, surgical history [18] |
| Bowel Habits & Lifestyle | Bristol stool chart, exercise frequency, oral hygiene [18] |
| Dietary Habits | Breakfast consumption, Western/Mediterranean/vegetarian/ketogenic diet patterns, dairy/vegetable intake, eating out frequency [18] |
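Recording these variables in a fixed, typed schema helps every site capture them in the same format and makes missing fields easy to detect. The record below is a hypothetical, partial example mirroring the categories in Table 2. A real case report form would include the full field list defined by the study.

```python
from dataclasses import dataclass, fields
from typing import List, Optional

@dataclass
class GIMetadata:
    """Hypothetical, partial case-report-form record for one participant."""
    participant_id: str
    diet_pattern: Optional[str] = None  # e.g. "Western", "Mediterranean", "vegetarian"
    medications_last_6_months: Optional[List[str]] = None  # [] = none taken
    bmi: Optional[float] = None
    smoker: Optional[bool] = None
    surgical_history: Optional[str] = None
    bristol_stool_type: Optional[int] = None  # Bristol stool chart, types 1-7
    exercise_sessions_per_week: Optional[int] = None

    def __post_init__(self) -> None:
        if self.bristol_stool_type is not None and not 1 <= self.bristol_stool_type <= 7:
            raise ValueError("Bristol stool type must be between 1 and 7")

    def missing_fields(self) -> List[str]:
        """Names of fields not yet recorded, for completeness checks across sites."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

record = GIMetadata("SITE1-0001", diet_pattern="Mediterranean",
                    medications_last_6_months=[], bmi=23.4)
print(record.missing_fields())
```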

Experimental Protocols for Standardization

Protocol 1: Using RM 8048 for Metagenomic Sequencing Quality Control

This protocol ensures consistency in next-generation sequencing (NGS) workflows for microbiome analysis [39] [41].

- Sample Processing: Thaw one vial of NIST RM 8048 on ice alongside your experimental samples.

- DNA Extraction: Extract DNA from the RM and all samples using your standard kit. Note: The choice of DNA extraction kit significantly impacts yield and taxa representation [45].

- Library Preparation & Sequencing: Process the RM DNA and sample DNA in the same sequencing run to control for batch effects.

- Bioinformatics Analysis: Analyze the raw sequencing data from the RM using your standard bioinformatics pipeline.

- Data Comparison: Compare the microbial taxonomy and relative abundances you obtained for the RM to the benchmark data provided by NIST.

- Bias Assessment: Significant deviations from the NIST benchmark indicate potential biases or errors in your wet-lab or computational methods, allowing for protocol adjustment.
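The data-comparison and bias-assessment steps can be sketched as a comparison of your relative abundances for the RM against the benchmark profile. The sketch reports an overall Bray-Curtis dissimilarity and lists taxa that deviate more than two-fold. The taxa and abundances below are invented placeholders, not NIST data. The two-fold threshold, the pseudocount, and the function name are assumptions.

```python
import math
from typing import Dict, Tuple

def compare_to_benchmark(
    observed: Dict[str, float],
    benchmark: Dict[str, float],
    max_abs_log2: float = 1.0,
    pseudocount: float = 1e-6,
) -> Tuple[float, Dict[str, float]]:
    """Return (Bray-Curtis dissimilarity, taxa whose |log2 fold change| exceeds max_abs_log2).

    Both inputs are renormalized to proportions over the union of taxa."""
    taxa = sorted(set(observed) | set(benchmark))
    total_obs, total_ref = sum(observed.values()), sum(benchmark.values())
    obs = {t: observed.get(t, 0.0) / total_obs for t in taxa}
    ref = {t: benchmark.get(t, 0.0) / total_ref for t in taxa}
    # With proportions, sum(obs) + sum(ref) == 2, so Bray-Curtis = sum|diff| / 2.
    bray_curtis = sum(abs(obs[t] - ref[t]) for t in taxa) / 2.0
    deviations = {}
    for t in taxa:
        lfc = math.log2((obs[t] + pseudocount) / (ref[t] + pseudocount))
        if abs(lfc) > max_abs_log2:  # default: more than two-fold off
            deviations[t] = round(lfc, 2)
    return bray_curtis, deviations

# Placeholder values only; substitute the data supplied with the reference material.
benchmark = {"Bacteroides": 0.40, "Faecalibacterium": 0.20, "Bifidobacterium": 0.10, "Other": 0.30}
observed = {"Bacteroides": 0.50, "Faecalibacterium": 0.22, "Bifidobacterium": 0.03, "Other": 0.25}
print(compare_to_benchmark(observed, benchmark))
```

In this invented example, the under-recovered taxon is Bifidobacterium, a Gram-positive genus. A pattern like this would prompt a check of the lysis step, consistent with the advice to validate extraction efficiency against the RM.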

Protocol 2: Validating Metabolomic Methods with Fecal Reference Materials

This protocol uses NIST materials to validate mass spectrometry-based metabolomic analyses [42] [43].

- Sample Preparation: Reconstitute and dilute the RGTM 10212 Fecal Metabolite Mixture (or use RM 8048) as required by the experiment.

- Instrument Calibration: Use the mixture to tune and calibrate your mass spectrometer.

- Parallel Processing: Process RM 8048 alongside experimental samples for metabolite extraction.

- Data Acquisition: Run the extracted metabolites from RM 8048 on your LC-MS/MS or GC-MS platform.

- Performance Check: Identify the metabolites detected in your analysis of RM 8048 and check them against the NIST-assigned values for identity and quantity.

- Normalization: Use the performance data to correct for technical variation in your experimental sample data.
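One simple way to implement the normalization step is a per-metabolite correction factor: the assigned value divided by the value measured for the reference material in the same batch, applied to that batch's samples. This single-point ratio correction is an assumption for illustration. Many laboratories instead rely on internal standards or drift correction based on QC samples. All metabolite values below are invented and are not NIST-assigned values.

```python
from typing import Dict

def correction_factors(rm_measured: Dict[str, float],
                       rm_assigned: Dict[str, float]) -> Dict[str, float]:
    """Per-metabolite factor = assigned value / value measured in this batch."""
    return {m: rm_assigned[m] / rm_measured[m]
            for m in rm_assigned if rm_measured.get(m, 0.0) > 0}

def apply_correction(sample: Dict[str, float],
                     factors: Dict[str, float]) -> Dict[str, float]:
    """Scale each metabolite by its factor; metabolites without a factor are left as-is."""
    return {m: value * factors.get(m, 1.0) for m, value in sample.items()}

# Invented values (same units for measured and assigned concentrations).
assigned = {"butyrate": 10.0, "acetate": 40.0}
measured = {"butyrate": 8.0, "acetate": 40.0}
factors = correction_factors(measured, assigned)  # butyrate 1.25, acetate 1.0
print(apply_correction({"butyrate": 4.0, "acetate": 20.0, "propionate": 5.0}, factors))
```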

Workflow Visualization

The diagram below illustrates the integrated role of reference materials in a standardized microbiome research workflow.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Standardized Microbiome Research

| Reagent / Material | Function / Purpose |
|---|---|
| NIST RM 8048 (Human Fecal Material) | Gold-standard reference material for validating metagenomic and metabolomic measurements across labs [39] [41]. |
| RGTM 10212 (Fecal Metabolite Mixture) | Instrument calibration and validation for metabolomic studies of the gut microbiome [43] [44]. |
| NIST RM 8376 (Mixed Microbial Genomic DNA) | Genomic DNA standard for assessing performance of NGS-based pathogen detection methods [40] [46]. |
| Preservative Buffers (e.g., OMNIgene·GUT, AssayAssure) | Maintain microbial composition at room temperature or 4°C when immediate freezing is not possible [45]. |
| Standardized DNA Extraction Kits | Ensure consistent lysis of diverse microbial cells and high-quality DNA yield for sequencing [18] [45]. |

Navigating Common Pitfalls: Strategies for Optimizing Protocol Consistency and Data Quality

A practical guide for standardizing microbiome research protocols across multi-center studies.

FAQ: Navigating Common Pre-analytical Challenges

1. Why is the pre-analytical phase so critical in microbiome research? A large part of the failure to reproduce experiments in biomedical research has been attributed to errors in the pre-analytical phase, where the quality of biological samples is compromised [47]. The pre-analytical phase encompasses all steps from sample collection to analysis, and variables in this phase can introduce significant inaccuracies that do not reflect the real situation in the human body [47]. Standardizing this phase is essential for generating FAIR (Findable, Accessible, Interoperable, Reusable) data and is a prerequisite for reliable diagnostics and development of tests [47].

2. What is the most common oversight when controlling for diet in human studies? The most common oversight is failing to account for both long-term and short-term dietary influences. While long-term dietary patterns (e.g., high protein/animal fat vs. high carbohydrate) are linked to major community types, studies have shown that even extreme short-term dietary alterations can rapidly and reproducibly alter microbial community structure and gene expression [19]. Researchers should record not just habitual diet, but also acute dietary changes in the days immediately preceding sample collection.

3. How long should participants be advised to avoid antibiotics before providing a microbiome sample? The impact of antibiotics is profound and can be long-lasting. While some microbiomes may "bounce back," others can experience changes that last indefinitely [48]. The necessary washout period depends on the specific antibiotic, duration of use, and the individual's microbiome. A conservative approach is recommended, especially in studies of adults where the microbiome is relatively stable. The effect is most dramatic in infancy, where antibiotic treatment in the first 18 months results in greater disruption than subsequent administration [49].

4. Are there specific times of day that are optimal for sample collection? Yes, sample timing matters. The gut microbiome has been reported to display circadian behavior on a 24-hour cycle [19]. Therefore, for longitudinal studies or multi-center trials, it is crucial to standardize the time of day for sample collection across all participants and time points to minimize variability introduced by these daily rhythms.

5. What is a major pitfall in controlling for medications beyond antibiotics? A common pitfall is focusing solely on antibiotics and overlooking other prescription drugs that can significantly alter the gut microbiome. For example, proton pump inhibitors (PPIs), which reduce stomach acid, have been shown to allow microbes from the upper gastrointestinal tract to reach the lower gut and alter its microbial composition [19]. A comprehensive medication history, including over-the-counter drugs, is essential.

Troubleshooting Guides

Issue: High Inter-Subject Variability Masks Intervention Effects

Problem: The differences between study subjects are so large that it becomes difficult to detect the effect of the intervention itself.

Solutions:

- Implement a Crossover Design: Where ethically and practically feasible, use a design in which each subject serves as their own control. After a baseline period, each participant receives both the intervention and the control condition in sequence, typically separated by a washout period, which controls for the unique, relatively stable microbiome of each individual [50].

- Increase Sample Size: Power your study appropriately to account for the expected high baseline variability. Underpowered studies are a primary reason for inconclusive results in microbiome research [50].

- Stratify Participants: Recruit and randomize participants into groups based on key confounding factors such as age, BMI, or baseline microbiome features (e.g., enterotype) to ensure these variables are evenly distributed across study groups [19].
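The sample-size and stratification advice above can be made concrete with a minimal Python sketch. It assumes a two-arm parallel design (not a crossover) and an approximately normal outcome such as the Shannon index, and uses the standard normal-approximation formula for comparing two means. The effect size `delta`, the standard deviation `sd`, the participant fields `age_group` and `bmi_group`, and the block size are hypothetical placeholders that would have to come from pilot data and the study protocol.

```python
import math
import random
from collections import defaultdict
from statistics import NormalDist


def n_per_group(delta, sd, alpha=0.05, power=0.80):
    """Participants per arm for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})**2 * sd**2 / delta**2.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 * sd ** 2 / delta ** 2)


def stratified_block_randomize(participants, strata_keys,
                               arms=("intervention", "control"),
                               block_size=4, seed=2024):
    """Permuted-block randomization carried out separately within each stratum."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # fixed seed so the allocation list is reproducible

    # Group participant IDs by their combination of stratification variables.
    strata = defaultdict(list)
    for p in participants:
        strata[tuple(p[k] for k in strata_keys)].append(p["id"])

    assignment = {}
    for key in sorted(strata):
        ids = strata[key]
        sequence = []
        # Append shuffled, balanced blocks until every participant has a slot.
        while len(sequence) < len(ids):
            block = list(arms) * (block_size // len(arms))
            rng.shuffle(block)
            sequence.extend(block)
        assignment.update(zip(ids, sequence))
    return assignment


if __name__ == "__main__":
    # Hypothetical: detect a 0.5-unit Shannon difference when the SD is 1.0.
    print(n_per_group(delta=0.5, sd=1.0))  # 63 per arm under these assumptions
    people = [{"id": f"P{i:02d}",
               "age_group": "18-40" if i % 2 else "41-65",
               "bmi_group": "normal" if i < 8 else "high"}
              for i in range(16)]
    print(stratified_block_randomize(people, ["age_group", "bmi_group"]))
```

Stratifying by baseline enterotype would simply add another key to `strata_keys`. With many strata and few participants, individual strata become small, so the number of stratification variables is usually kept low.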

Issue: Inconsistent Sample Quality in Multi-Center Studies

Problem: Samples collected from different clinical sites show technical variations due to different collection and handling protocols.

Solutions:

- Adopt a Standardized Protocol: Implement a single, validated standard operating procedure (SOP) across all sites. The European pre-analytical standard CEN/TS 17626:2021 provides specifications for human specimens intended for microbiome DNA analysis and can serve as a foundational document [47].

- Use Standardized Collection Kits: Provide all sites with identical sample collection kits, including the same swabs, storage tubes, and preservation buffers to minimize kit-to-kit variability.

- Control Storage Conditions: Ensure all samples are stored at the same temperature immediately after collection and for the same duration before processing. For fecal samples that cannot be immediately frozen, evidence supports the use of 95% ethanol, FTA cards, or the OMNIgene Gut kit for preservation [19].
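One way to enforce a shared SOP is to check each site's sample metadata against it automatically. The sketch below assumes a hypothetical metadata layout (one dictionary per sample with `site`, `collection_kit`, `preservative`, and `storage_temp_c` fields) and an illustrative ±5 °C tolerance on storage temperature; the kit and preservative names are placeholders, not recommendations.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SOP:
    """Hypothetical study-wide specification that every site is expected to follow."""
    collection_kit: str
    preservative: str
    storage_temp_c: float
    temp_tolerance_c: float = 5.0  # illustrative tolerance, not a standard value


def sop_deviations(records, sop):
    """Return (sample_id, field, observed_value) for every departure from the SOP."""
    problems = []
    for r in records:
        if r["collection_kit"] != sop.collection_kit:
            problems.append((r["sample_id"], "collection_kit", r["collection_kit"]))
        if r["preservative"] != sop.preservative:
            problems.append((r["sample_id"], "preservative", r["preservative"]))
        if abs(r["storage_temp_c"] - sop.storage_temp_c) > sop.temp_tolerance_c:
            problems.append((r["sample_id"], "storage_temp_c", r["storage_temp_c"]))
    return problems


def deviations_by_site(records, sop):
    """Count SOP deviations per site, to spot sites that may need retraining or new kits."""
    site_of = {r["sample_id"]: r["site"] for r in records}
    return Counter(site_of[sample_id] for sample_id, _, _ in sop_deviations(records, sop))


if __name__ == "__main__":
    sop = SOP(collection_kit="KIT-A", preservative="OMNIgene Gut", storage_temp_c=-80.0)
    records = [
        {"sample_id": "S1", "site": "A", "collection_kit": "KIT-A",
         "preservative": "OMNIgene Gut", "storage_temp_c": -80.0},
        {"sample_id": "S2", "site": "B", "collection_kit": "KIT-A",
         "preservative": "95% ethanol", "storage_temp_c": -80.0},
        {"sample_id": "S3", "site": "B", "collection_kit": "KIT-A",
         "preservative": "OMNIgene Gut", "storage_temp_c": -20.0},
    ]
    print(sop_deviations(records, sop))
    print(deviations_by_site(records, sop))
```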

Issue: Unexplained Shifts in Microbial Composition During a Longitudinal Study

Problem: Drifts in the microbiome data appear over time that cannot be attributed to the intervention.

Solutions:

- Audit Participant Diaries: Closely review participant diet, travel, and medication logs for unapproved changes or incidental antibiotic use.

- Check for Technical Batch Effects: A common source of variation in longitudinal studies is the use of different lots of DNA extraction kit reagents [19]. Purchase all necessary kits from a single lot at the start of the study, or extract DNA from all samples in a single, randomized batch.

- Include a Control Group: Always include a concurrent control group that does not receive the intervention. This allows you to distinguish true intervention effects from broader temporal shifts affecting all participants [50].
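When samples cannot all be extracted at once, the batch-effect advice can be turned into a processing plan. The sketch below keeps every sample from the same participant in one extraction batch, so that within-subject changes over time are not confounded with batch; it randomizes which subjects share a batch and the order of positions within each batch, and adds an extraction blank and a mock community to every batch. Keeping subjects together is one reasonable design choice under these assumptions, not the only one; the batch capacity, seed, and control names are hypothetical.

```python
import random
from collections import defaultdict


def assign_extraction_batches(samples, batch_capacity,
                              controls=("extraction_blank", "mock_community"),
                              seed=7):
    """Plan DNA extraction batches for a longitudinal study.

    samples: list of (sample_id, subject_id) tuples.
    batch_capacity: maximum positions per batch, including the control positions.
    Returns a list of batches, each a randomly ordered list of sample and control IDs.
    """
    rng = random.Random(seed)
    room = batch_capacity - len(controls)  # positions left for study samples

    # Collect each subject's samples so they can be kept together.
    by_subject = defaultdict(list)
    for sample_id, subject_id in samples:
        by_subject[subject_id].append(sample_id)

    subjects = sorted(by_subject)
    rng.shuffle(subjects)  # random subject-to-batch allocation

    batches, current = [], []
    for subject in subjects:
        group = by_subject[subject]
        if len(group) > room:
            raise ValueError(f"subject {subject} has more samples than fit in one batch")
        if len(current) + len(group) > room:
            batches.append(current)
            current = []
        current.extend(group)
    if current:
        batches.append(current)

    planned = []
    for i, batch in enumerate(batches, start=1):
        positions = batch + [f"{c}_batch{i}" for c in controls]
        rng.shuffle(positions)  # randomize processing order, controls included
        planned.append(positions)
    return planned
```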

Table 1: Impact of Common Pre-analytical Variables on the Gut Microbiome

| Variable Category | Specific Factor | Reported Impact | Recommended Control Measure |
|---|---|---|---|
| Diet | Long-term Patterns | Linked to dominance by specific genera (e.g., Bacteroides vs. Prevotella) [19] | Record habitual diet via a validated food frequency questionnaire (FFQ); stratify by enterotype. |
| | Short-term Changes | Rapid, reproducible alteration in community structure and gene expression [19] | Standardize diet 24-48 h prior to sampling; provide controlled meals. |
| | Dietary Diversity | Eating ≥30 different plant foods per week has been associated with greater microbial diversity [48] [51] | Use dietary diversity as a covariate in analyses. |
| Medications | Antibiotics | Most dramatic effect; can cause long-term or permanent changes [48] [49] | Define conservative washout periods (weeks to months); document historical use. |
| | Proton Pump Inhibitors (PPIs) | Alter GI tract biogeography, increasing risk of infections [19] | Record all prescription and OTC drug use; exclude or stratify users. |
| | Other Prescription Drugs | Various drugs (e.g., antipsychotics) shown to affect the microbiota [19] | Take a comprehensive medication history. |
| Sample Timing | Circadian Rhythms | Microbial communities exhibit 24-hour cyclical behavior [19] | Collect all samples at a standardized time of day (±1-2 h). |
| | Longitudinal Instability | Healthy adult gut is largely stable; other body sites (e.g., vagina) vary more [19] | Account for the natural variation of the body site being studied. |
| Sample Handling | Room-Temperature Storage | Significant changes can occur if samples are not frozen immediately [19] | Freeze immediately at -80°C where possible; use preservatives if freezing is delayed. |
| | DNA Extraction Kit Batch | Different kit batches can be a significant source of variation [19] | Use a single kit lot for the entire study; randomize sample processing. |

Experimental Protocols for Standardization

Protocol 1: Validating Sample Collection and Storage Methods

Aim: To establish the stability of microbial communities under different storage conditions that may be encountered during multi-site sampling.

Materials:

- Fresh fecal sample

- Standardized collection tubes (e.g., DNA/RNA Shield tubes)

- OMNIgene Gut kit

- 95% Ethanol

- FTA cards

- Freezers (-80°C) and refrigerators (4°C)

Method:

- Homogenize a fresh fecal sample.

- Aliquot the sample into multiple portions.

- Process each aliquot immediately with a different preservation method:

  - Gold Standard: Immediate freezing at -80°C.

  - Test Method 1: Store in 95% ethanol at room temperature for 24-48h, then freeze.

  - Test Method 2: Apply to FTA card and air dry, then store at room temperature.

  - Test Method 3: Mix with OMNIgene Gut solution and store at room temperature per manufacturer's instructions.

  - Test Method 4: Refrigerate at 4°C for 24h, then freeze.

- After the designated storage period, extract DNA from all samples simultaneously using the same kit and lot.

- Perform 16S rRNA gene sequencing on all samples.

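- Analysis: Compare the alpha diversity (e.g., Shannon index) of each test condition with that of the gold standard (immediate freezing), and assess beta diversity using ordination techniques (e.g., PCoA) and statistical tests such as PERMANOVA to determine whether the preservation method explains a significant share of community variation.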
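As an illustration of the comparison step, the sketch below computes the Shannon index (natural log) and the Bray-Curtis dissimilarity between each storage condition and the immediately frozen reference, using per-taxon read counts. It is a hypothetical, dependency-free example with one profile per condition; a formal PERMANOVA needs replicate aliquots per condition and is usually run with established implementations such as `permanova` in scikit-bio or `adonis2` in the R package vegan. Because Shannon values depend on sequencing depth, the profiles are assumed to have been rarefied or otherwise brought to comparable depth.

```python
import math


def shannon(counts):
    """Shannon index H' = -sum(p_i * ln p_i) over taxa with non-zero counts."""
    total = sum(counts.values())
    return -sum((c / total) * math.log(c / total) for c in counts.values() if c > 0)


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity on relative abundances (0 = identical, 1 = nothing shared)."""
    total_a, total_b = sum(a.values()), sum(b.values())
    taxa = set(a) | set(b)
    shared = sum(min(a.get(t, 0) / total_a, b.get(t, 0) / total_b) for t in taxa)
    return 1.0 - shared


def compare_to_gold_standard(profiles, reference="freeze_-80C"):
    """For each condition: (change in Shannon vs. reference, Bray-Curtis to reference)."""
    ref = profiles[reference]
    return {
        name: (shannon(counts) - shannon(ref), bray_curtis(counts, ref))
        for name, counts in profiles.items()
        if name != reference
    }


if __name__ == "__main__":
    # Hypothetical genus-level read counts for aliquots of one homogenized stool sample.
    profiles = {
        "freeze_-80C": {"Bacteroides": 500, "Prevotella": 300, "Faecalibacterium": 200},
        "ethanol_95pct": {"Bacteroides": 520, "Prevotella": 290, "Faecalibacterium": 190},
        "fridge_4C_24h": {"Bacteroides": 700, "Prevotella": 250, "Faecalibacterium": 50},
    }
    for condition, (d_shannon, bc) in compare_to_gold_standard(profiles).items():
        print(f"{condition}: dShannon={d_shannon:+.3f}, Bray-Curtis={bc:.3f}")
```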

Protocol 2: Monitoring the Impact of Antibiotic Perturbation and Recovery

Aim: To track the longitudinal effect of a defined antibiotic course on the gut microbiome and resistome in healthy adults.

Materials:

- Study participants (healthy adults)

- Defined antibiotic (e.g., a single broad-spectrum course)

- Stool collection kits

- DNA extraction kits

- Shotgun metagenomic sequencing services

Method:

- Collect baseline stool samples from participants on 3 separate days prior to antibiotic administration.

- Participants undergo a standardized course of antibiotics.

- Collect stool samples daily during the antibiotic course, then weekly for 4-8 weeks post-antibiotics.

- Extract DNA and perform shotgun metagenomic sequencing to assess both taxonomic composition and the abundance of antibiotic resistance genes (ARGs) [49].

- Analysis:

  - Calculate intra-individual (within-subject) similarity over time to assess stability and resilience.

  - Track the abundance of specific taxa known to be susceptible or resistant to the antibiotic.

  - Quantify the diversity and abundance of ARGs in the "resistome" before, during, and after perturbation [49].
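Two of these analysis steps can be sketched in plain Python. Here, within-subject stability is expressed as 1 minus the Bray-Curtis dissimilarity between each follow-up sample and the participant's averaged baseline profile (1 means identical composition), and ARG abundance is normalized as reads per kilobase of gene per million sequenced reads (RPKM). Both are common choices rather than prescribed ones; other normalizations (e.g., per 16S rRNA gene copy) are also used. Sample labels, taxa, and counts are hypothetical.

```python
def relative(counts):
    """Convert read counts to relative abundances."""
    total = sum(counts.values())
    return {taxon: c / total for taxon, c in counts.items()}


def mean_profile(samples):
    """Average the relative-abundance profiles of several samples (e.g. baseline days)."""
    rel = [relative(s) for s in samples]
    taxa = set().union(*rel)
    return {t: sum(r.get(t, 0.0) for r in rel) / len(rel) for t in taxa}


def similarity(p, q):
    """1 - Bray-Curtis dissimilarity for two relative-abundance profiles."""
    return sum(min(p.get(t, 0.0), q.get(t, 0.0)) for t in set(p) | set(q))


def similarity_to_baseline(baseline_samples, followups):
    """followups: list of (label, counts). Returns (label, similarity to mean baseline)."""
    base = mean_profile(baseline_samples)
    return [(label, similarity(base, relative(counts))) for label, counts in followups]


def rpkm(gene_reads, gene_length_bp, total_reads):
    """Reads per kilobase of gene per million sequenced reads."""
    return gene_reads / (gene_length_bp / 1_000) / (total_reads / 1_000_000)


if __name__ == "__main__":
    baseline = [{"Bacteroides": 60, "Blautia": 40}, {"Bacteroides": 40, "Blautia": 60}]
    followups = [("antibiotic_day3", {"Bacteroides": 95, "Enterococcus": 5}),
                 ("week4", {"Bacteroides": 52, "Blautia": 48})]
    print(similarity_to_baseline(baseline, followups))
    # Hypothetical ARG: 200 reads on a 1,000 bp gene in a 10-million-read library.
    print(rpkm(200, 1_000, 10_000_000))  # 20.0
```

A drop in similarity during the antibiotic course followed by a return toward 1 at later time points would indicate resilience; a persistently low value would suggest a lasting shift.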

Workflow Diagrams

Diagrams not reproduced: "Pre-analytical Variables Influence" and "Sample Standardization Workflow".

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Standardized Microbiome Sampling

| Item | Function | Considerations for Standardization |
|---|---|---|
| OMNIgene Gut Kit | Stabilizes microbial DNA in fecal samples at room temperature for several days [19]. | Ideal for multi-center studies where immediate freezing is logistically challenging. |
| DNA/RNA Shield Tubes | Preserve nucleic acids and inactivate microbes immediately upon sample collection. | Provide a standardized matrix for both DNA- and RNA-based analyses. |
| FTA Cards | A solid matrix for room-temperature storage of fecal samples for DNA analysis [19]. | Low-cost and easy to transport by regular mail; suitable for field studies. |
| Single-Lot DNA Extraction Kits | Isolate total genomic DNA from samples; using a single lot controls for a major source of technical variation [19]. | Purchase all kits needed for the entire study from a single manufacturing lot. |
| Mock Microbial Communities | Defined mixtures of known bacterial strains at specified abundances. | Serve as a positive control to assess the accuracy and bias of the entire wet-lab workflow. |
| Advanced DMEM/F12 with Antibiotics | A transport medium for tissue samples that maintains viability and prevents contamination [52]. | Critical for studies involving biopsies or other tissue-derived microbiomes. |

Frequently Asked Questions: Contamination Control

Q1: Why are my low-biomass sample results dominated by unexpected or common skin bacteria?

This is a classic sign of contamination, often from reagents, the sampling environment, or the researcher. Even minute amounts of exogenous DNA can overwhelm the true signal in low-biomass samples (e.g., from tissues like placenta or blood) [53]. To address this:

- Use Certified Reagents: Employ reagents and kits certified to be DNA-free.

- Include Rigorous Controls: Process negative control samples (e.g., empty collection tubes, pure water) alongside your patient samples through the entire workflow, from DNA extraction to sequencing. The taxa found in your negative controls are likely contaminants [53].

- Decontaminate Surfaces: Wipe down work surfaces with DNA degradation solutions and use UV-C irradiation in biosafety cabinets before use [53].
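The negative-control logic can be partly automated with a simple prevalence comparison. The sketch below flags a taxon as a likely contaminant when it appears in at least half of the negative controls and is at least as prevalent there as in the study samples. This is a deliberately simplified, hypothetical heuristic with an arbitrary 0.5 threshold; for real data, dedicated tools such as the R package decontam implement statistically grounded frequency- and prevalence-based methods.

```python
def prevalence(profiles, taxon):
    """Fraction of profiles in which the taxon has at least one read."""
    return sum(1 for p in profiles if p.get(taxon, 0) > 0) / len(profiles)


def flag_contaminants(samples, negative_controls, min_control_prevalence=0.5):
    """Return taxa that are common in negative controls and no less common there than in samples."""
    taxa = set().union(*samples, *negative_controls)
    flagged = []
    for taxon in sorted(taxa):
        in_controls = prevalence(negative_controls, taxon)
        in_samples = prevalence(samples, taxon)
        if in_controls >= min_control_prevalence and in_controls >= in_samples:
            flagged.append(taxon)
    return flagged


if __name__ == "__main__":
    # Hypothetical read counts; each dict is one sequenced sample or blank.
    samples = [{"Bacteroides": 500, "Cutibacterium": 3},
               {"Bacteroides": 400},
               {"Faecalibacterium": 200, "Cutibacterium": 2}]
    blanks = [{"Cutibacterium": 10}, {"Cutibacterium": 8, "Ralstonia": 4}]
    print(flag_contaminants(samples, blanks))  # ['Cutibacterium', 'Ralstonia']
```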

Q2: How can I prevent cross-contamination between samples during processing?

Cross-contamination, where DNA from one sample carries over to another, can occur via aerosolized droplets or contaminated equipment [53].

- Physical Separation: Establish a unidirectional workflow in the lab, physically separating pre-PCR (sample preparation, DNA extraction) and post-PCR areas (amplification, library building) [53].

- Use Filter Tips: Always use filtered pipette tips to prevent aerosol contamination.

- Validate with Controls: Include a cross-contamination monitoring control, such as a sample spiked with a unique synthetic oligonucleotide, to track any "tag jumping" or sample-to-sample leakage [53].
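If one sample is spiked with a unique synthetic sequence, its reads in other samples give a direct estimate of sample-to-sample leakage. The sketch assumes a hypothetical feature table (sample ID mapped to feature ID mapped to read count), a hypothetical spike-in identifier `SYN_SPIKE_01`, and an illustrative tolerance of 0.1% of reads; each study would need to set its own threshold.

```python
def spike_in_leakage(feature_table, spike_id, spiked_sample):
    """Fraction of each non-spiked sample's reads that match the spike-in sequence."""
    leakage = {}
    for sample, features in feature_table.items():
        if sample == spiked_sample:
            continue  # the spike-in is expected here
        total = sum(features.values())
        leakage[sample] = features.get(spike_id, 0) / total if total else 0.0
    return leakage


def samples_over_threshold(leakage, max_fraction=0.001):
    """Samples whose spike-in fraction exceeds the chosen tolerance."""
    return sorted(s for s, fraction in leakage.items() if fraction > max_fraction)


if __name__ == "__main__":
    table = {
        "SPIKED": {"SYN_SPIKE_01": 5000, "Bacteroides": 5000},
        "S2": {"Bacteroides": 9980, "SYN_SPIKE_01": 20},
        "S3": {"Prevotella": 10000},
    }
    leak = spike_in_leakage(table, "SYN_SPIKE_01", "SPIKED")
    print(leak)  # {'S2': 0.002, 'S3': 0.0}
    print(samples_over_threshold(leak))  # ['S2']
```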

Q3: Our multi-site study is showing high variability in microbiome profiles. How can we improve consistency?

Variability often stems from a lack of standardized protocols across sites. Key factors to standardize include:

- Sample Collection Time: The gut microbiome composition fluctuates significantly throughout the day. Collecting samples at a standardized time (e.g., always in the morning before breakfast) dramatically improves reproducibility [54].

- Sample Processing Time: Define and adhere to a maximum time between sample collection and processing/freezing. For oral samples, the KOBN mandates transfer to the lab within 4 hours, with temporary storage at 0°C–4°C [55].

- Uniform Kits and Protocols: Use the same collection kits, DNA extraction methods, and sequencing platforms across all sites. The international consensus on microbiome testing recommends stool collection kits with a genomic DNA preservative [56].
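These timing rules lend themselves to an automatic check at sample intake. The sketch below flags samples collected outside a target time-of-day window or processed after a maximum delay. The 4-hour limit mirrors the KOBN requirement for oral samples cited above; the 08:00 target and ±2-hour window are hypothetical values chosen to fall within the ±1-2 hour range suggested in Table 1.

```python
from datetime import datetime, time, timedelta


def timing_issues(collected_at, processed_at,
                  target=time(8, 0),
                  window=timedelta(hours=2),
                  max_delay=timedelta(hours=4)):
    """Return a list of timing problems for one sample (empty list = compliant)."""
    issues = []
    # Compare the collection time with the target time on the same calendar day.
    target_dt = datetime.combine(collected_at.date(), target)
    if abs(collected_at - target_dt) > window:
        issues.append("collected outside standard time-of-day window")
    if processed_at < collected_at:
        issues.append("processing time precedes collection time")
    elif processed_at - collected_at > max_delay:
        issues.append("exceeded maximum collection-to-processing delay")
    return issues


if __name__ == "__main__":
    print(timing_issues(datetime(2024, 3, 1, 7, 30), datetime(2024, 3, 1, 10, 0)))  # []
    print(timing_issues(datetime(2024, 3, 1, 11, 15), datetime(2024, 3, 1, 16, 0)))
```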

Q4: What is the minimum set of controls needed for a reliable low-biomass microbiome study?

A robust control framework is non-negotiable. For each batch of samples, you should include [53]:

- Field/Collection Blanks: Empty collection tubes opened and closed at the sampling site.

- Reagent Blanks: Preservation or lysis buffer alone, carried through the DNA extraction and sequencing process.

- Extraction Blanks: A no-sample control that undergoes the entire DNA extraction protocol.

- Positive Controls: A mock microbial community of known composition to assess the accuracy and sensitivity of your entire workflow.
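For the positive control, the observed mock community profile can be compared with its expected composition, which is typically taken from the supplier's specification. The sketch below reports a per-taxon log2 ratio of observed to expected relative abundance (0 = unbiased; negative = under-recovered) and the fraction of reads assigned to taxa that should not be present. The taxon names, expected fractions, and the small pseudocount (used so that a missing taxon does not produce the log of zero) are illustrative assumptions.

```python
import math


def mock_community_report(observed_counts, expected_fractions, pseudocount=1e-6):
    """Compare an observed mock-community profile with its expected composition.

    Returns (bias, unexpected_fraction): bias maps each expected taxon to
    log2(observed / expected); unexpected_fraction is the share of reads from
    taxa absent from the expected composition (contamination or misclassification).
    """
    total = sum(observed_counts.values())
    observed = {taxon: c / total for taxon, c in observed_counts.items()}
    bias = {
        taxon: math.log2((observed.get(taxon, 0.0) + pseudocount) / expected)
        for taxon, expected in expected_fractions.items()
    }
    unexpected = sum(f for taxon, f in observed.items() if taxon not in expected_fractions)
    return bias, unexpected


if __name__ == "__main__":
    expected = {"Escherichia": 0.25, "Staphylococcus": 0.25,
                "Lactobacillus": 0.25, "Bacillus": 0.25}
    observed = {"Escherichia": 3000, "Staphylococcus": 2000,
                "Lactobacillus": 1500, "Bacillus": 3000, "Ralstonia": 500}
    bias, unexpected = mock_community_report(observed, expected)
    for taxon, value in bias.items():
        print(f"{taxon}: log2 bias {value:+.2f}")
    print(f"reads from unexpected taxa: {unexpected:.1%}")
```

Tracking these two numbers across batches gives a simple way to see whether extraction or sequencing performance drifts over the course of a study.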

Troubleshooting Common Experimental Issues