Building Robust Bioinformatics Pipelines for POI NGS Data: From Foundational Concepts to AI-Driven Analysis


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on constructing and optimizing bioinformatics pipelines for Primary Ovarian Insufficiency (POI) Next-Generation Sequencing (NGS) data. It covers the entire workflow from foundational NGS principles and POI-specific genomic considerations to methodological implementation using modern tools like Snakemake and Nextflow. The content addresses critical troubleshooting strategies for data quality issues and computational bottlenecks, and establishes rigorous validation and benchmarking frameworks to ensure analytical accuracy. By integrating emerging trends such as AI-based variant calling and multi-omics integration, this guide aims to equip scientists with the knowledge to derive clinically actionable insights from POI genomic data, ultimately advancing personalized therapeutic development.

Understanding POI Genomics and NGS Fundamentals: Laying the Groundwork for Analysis

Primary Ovarian Insufficiency (POI) is a clinically heterogeneous disorder characterized by the loss of ovarian function before the age of 40, affecting approximately 1-3.7% of the female population [1] [2]. The condition is defined by a combination of oligomenorrhea or amenorrhea for at least four months, and elevated follicle-stimulating hormone (FSH) levels (>25 IU/L on two occasions) [3] [4]. POI represents a significant cause of female infertility, with profound implications for long-term health, including increased risks of osteoporosis, cardiovascular disease, and cognitive decline [1] [2]. The genetic architecture of POI has proven to be remarkably complex, with chromosomal abnormalities, single-gene mutations, and emerging oligogenic models all contributing to its pathogenesis. Next-generation sequencing (NGS) technologies have revolutionized our understanding of POI genetics, revealing numerous genes involved in key biological processes such as meiosis, DNA repair, folliculogenesis, and steroidogenesis [5] [3]. This application note provides a comprehensive overview of the current genetic landscape of POI and detailed protocols for implementing bioinformatics pipelines in POI research.

POI Phenotypic Spectrum and Diagnostic Criteria

The clinical presentation of POI spans a broad spectrum, ranging from primary amenorrhea (absence of menarche by age 15) to secondary amenorrhea (cessation of established menses) [5] [1]. Primary amenorrhea is often associated with more severe genetic abnormalities and is frequently diagnosed in individuals with delayed puberty and absent breast development. Secondary amenorrhea represents the more common phenotype, characterized by normal pubertal development followed by irregular menstrual cycles and eventual cessation of menstruation [5]. The prevalence of POI increases with advancing age, with estimates of 1:10,000 by age 20, 1:1,000 by age 30, and 1:100 by age 40 [5] [6]. Recent large-scale studies suggest the overall prevalence may be as high as 3.7% in women under 40 [1] [2].

Table 1: Clinical Classification and Prevalence of POI

| Parameter | Primary Amenorrhea | Secondary Amenorrhea |

|---|---|---|

| Definition | Absence of menarche by age 15 | Cessation of menses for ≥4 months after previously established menstruation |

| Typical Age at Diagnosis | Younger age (often adolescence) | <20 to 40 years |

| Pubertal Development | Often delayed or incomplete | Normal |

| Proportion among POI Patients | 16-20% | 80-84% |

| Common Genetic Findings | More severe chromosomal abnormalities, syndromic forms | Monogenic and oligogenic defects |

Genetic Architecture of POI

Etiological Spectrum

The causes of POI are highly heterogeneous, encompassing genetic, autoimmune, iatrogenic, infectious, and toxic factors, though a significant proportion remains idiopathic [2]. Historically, up to 50-70% of cases were classified as idiopathic, but advances in genetic testing have substantially reduced this percentage [1] [2]. A comparative analysis of historical and contemporary cohorts reveals a shifting etiological landscape, with iatrogenic causes (due to chemotherapy, radiotherapy, or surgery) showing a more than fourfold increase in recent years [2].

Table 2: Etiological Distribution of POI in Historical vs. Contemporary Cohorts

| Etiology | Historical Cohort (1978-2003) | Contemporary Cohort (2017-2024) | Change |

|---|---|---|---|

| Genetic | 11.6% | 9.9% | Stable |

| Autoimmune | 8.7% | 18.9% | 2.2x increase |

| Iatrogenic | 7.6% | 34.2% | 4.5x increase |

| Idiopathic | 72.1% | 36.9% | 49% decrease |
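
The "Change" column of Table 2 can be recomputed from the two cohort columns. The sketch below is a minimal illustration of that arithmetic; the percentages are copied from the table, while the function and variable names are chosen here for illustration.

```python
# Percentages copied from Table 2: (historical cohort, contemporary cohort).
etiology_pct = {
    "Genetic": (11.6, 9.9),
    "Autoimmune": (8.7, 18.9),
    "Iatrogenic": (7.6, 34.2),
    "Idiopathic": (72.1, 36.9),
}


def fold_change(old: float, new: float) -> float:
    """Ratio of the contemporary to the historical percentage."""
    return new / old


def relative_change_pct(old: float, new: float) -> float:
    """Signed relative change in percent (negative means a decrease)."""
    return (new - old) / old * 100.0


# For each etiology: (fold change rounded to 1 decimal, relative change rounded to an integer).
changes = {
    name: (round(fold_change(old, new), 1), round(relative_change_pct(old, new)))
    for name, (old, new) in etiology_pct.items()
}

for name, (fold, rel) in changes.items():
    print(f"{name}: {fold}x, {rel:+d}%")
```

Fold change suits the categories that grew, while relative change is the more natural way to express the drop in idiopathic cases.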

Chromosomal Abnormalities

Chromosomal abnormalities account for approximately 10-13% of POI cases, with a higher frequency in primary amenorrhea (21.4%) compared to secondary amenorrhea (10.6%) [2]. The most common abnormalities involve the X chromosome, including:

- Turner syndrome (45,X and mosaic variants)

- X-chromosome deletions and rearrangements

- X-autosome translocations

- Trisomy X (47,XXX)

The interval from Xq13.3 to Xq27, which contains the POI2 (Xq13.3-q21.1) and POI1 (Xq24-q27) loci, is critical for normal ovarian function, with genes such as COL4A6, DACH2, DIAPH2, PGRMC1, POF1B, and XPNPEP2 implicated in POI pathogenesis when disrupted [5].

Monogenic and Oligogenic Contributions

NGS studies have identified pathogenic variants in over 90 genes associated with POI, accounting for approximately 18.7-23.5% of cases [3] [7]. The genetic contribution is significantly higher in primary amenorrhea (25.8%) compared to secondary amenorrhea (17.8%) [3]. Recent evidence strongly supports an oligogenic model for POI, where multiple genetic variants in interacting genes collectively contribute to the phenotype [8] [7] [9].

Table 3: Major Gene Categories and Their Contributions to POI Pathogenesis

| Functional Category | Representative Genes | Approximate Contribution | Key Biological Processes |

|---|---|---|---|

| Meiosis & DNA Repair | MSH4, MSH5, HFM1, SPIDR, BRCA2, BLM, RECQL4 | 48.7% of cases with identified genetic defects [3] | Homologous recombination, meiotic progression, DNA damage repair |

| Transcription Factors | NOBOX, FIGLA, FOXL2, SOHLH1, NR5A1 | Varied | Regulation of oocyte-specific genes, folliculogenesis |

| Hormone Signaling & Receptors | FSHR, LHCGR, BMP15, GDF9, BMPR2 | Varied | Follicle development, ovulation, steroidogenesis |

| Metabolic & Mitochondrial | EIF2B2, GALT, AARS2, HARS2, POLG | 22.3% of cases with identified genetic defects [3] | Cellular metabolism, mitochondrial function |

| Extracellular Matrix & Signaling | HMMR, ALOX12, ZP3 | Varied | Follicle development, ovulation, cell communication |

A study of 500 Chinese Han POI patients revealed that 14.4% carried pathogenic or likely pathogenic variants, with 1.8% exhibiting digenic or multigenic inheritance patterns [7]. Similarly, targeted NGS of 295 genes in 64 early-onset POI patients identified 75% with at least one genetic variant, and many with multiple variants (17% with two variants, 14% with three variants, 14% with four variants) [9]. Patients with oligogenic variants often present with more severe phenotypes, including delayed menarche, earlier POI onset, and higher prevalence of primary amenorrhea [7].

Bioinformatics Pipeline for POI NGS Data Analysis

Sample Preparation and Library Construction

Protocol: Targeted Gene Panel Sequencing for POI

- DNA Extraction: Extract genomic DNA from peripheral blood using standardized kits (e.g., Qiagen DNeasy Blood & Tissue Kit).

- Library Preparation: Use multiplex PCR amplification with primer pools covering target genes.

- DNA input: 10-50 ng

- PCR conditions: 99°C for 2 min; 19 cycles of 99°C for 15s and 60°C for 4min

- Adapter Ligation: Partially digest primers and ligate sequencing adapters and barcodes.

- Library Purification: Use AMPure XP beads for purification.

- Quality Control: Quantify library using qPCR (Ion Library TaqMan Quantitation Kit).

- Template Preparation: Perform emulsion PCR using Ion 520 OT2 Kit on OneTouch 2 instrument.

- Enrichment: Enrich template-positive ion sphere particles using OneTouch ES.

- Sequencing: Load prepared particles onto Ion 520 chip and sequence using Ion S5 Sequencing Kit with 500 flows [6] [7].

Bioinformatics Analysis Workflow

Protocol: NGS Data Processing and Variant Calling

- Base Calling and Demultiplexing: Use platform-specific software (Torrent Suite v5.10 for Ion Torrent).

- Quality Control: Assess read quality using FastQC.

- Read Alignment: Map reads to reference genome (hg19/GRCh37) using TMAP or BWA-MEM.

- Variant Calling: Identify variants using GATK UnifiedGenotyper (a legacy caller; current GATK releases use HaplotypeCaller instead).

- Variant Annotation: Annotate variants using Ion Reporter and Varsome.

- Variant Filtering:

- Remove common variants (MAF > 0.01 in gnomAD/1000 Genomes)

- Filter by in silico pathogenicity predictions (Phred-scaled CADD > 20, MetaSVM, DANN)

- Pathogenicity Assessment: Classify variants according to ACMG guidelines.

- Validation: Confirm potentially pathogenic variants by Sanger sequencing or other orthogonal methods [6] [7] [10].
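
The filtering step of the protocol above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the cited studies' implementation: the `Variant` fields, the gene names, and the example records are invented, and letting loss-of-function variants bypass the CADD threshold is a design choice made here for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Variant:
    gene: str
    consequence: str             # e.g. "missense", "frameshift"
    max_pop_af: Optional[float]  # highest population allele frequency; None if absent from databases
    cadd_phred: Optional[float]  # Phred-scaled CADD score; None if not scored


LOSS_OF_FUNCTION = {"nonsense", "frameshift", "splice_site"}


def passes_filters(v: Variant, max_af: float = 0.01, min_cadd: float = 20.0) -> bool:
    """Keep rare variants that are loss-of-function or have a high CADD score."""
    if v.max_pop_af is not None and v.max_pop_af > max_af:
        return False  # common variant, removed
    if v.consequence in LOSS_OF_FUNCTION:
        return True
    return v.cadd_phred is not None and v.cadd_phred > min_cadd


# Hypothetical records for illustration only.
variants = [
    Variant("GENE_A", "missense", 0.15, 24.1),    # too common
    Variant("GENE_B", "frameshift", None, None),  # absent from databases, loss-of-function
    Variant("GENE_C", "missense", 0.0002, 27.3),  # rare, high CADD
    Variant("GENE_D", "missense", 0.0004, 8.9),   # rare, low CADD
]
kept = [v.gene for v in variants if passes_filters(v)]
print(kept)
```

Keeping the thresholds as function parameters makes it easy to apply the stricter 0.1% frequency cutoff at the prioritization stage without changing the logic.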

Pathogenicity Interpretation Framework

Protocol: Variant Classification and Validation

Variant Prioritization:

- Focus on rare (MAF < 0.1%), protein-altering variants

- Prioritize loss-of-function variants (nonsense, frameshift, splice-site)

- Consider missense variants with high CADD scores (>20)

Segregation Analysis: Perform haplotype analysis in families to confirm compound heterozygosity or digenic inheritance.

Functional Validation:

- For transcription factors (e.g., FOXL2), perform luciferase reporter assays

- Assess impact on protein function through in vitro studies

- Evaluate effects on known downstream targets (e.g., CYP17A1, CYP19A1 for FOXL2) [7]

Oligogenic Analysis: Investigate potential interactions between variants in different genes, particularly in pathways such as:

- Meiosis and DNA repair

- Folliculogenesis

- Hormone signaling and response
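
A first pass at oligogenic analysis is simply to flag individuals who carry candidate variants in more than one gene. The sketch below assumes that filtered calls are available as (sample, gene) pairs; the sample IDs and gene names are hypothetical, and any flagged sample would still need segregation and functional follow-up as described above.

```python
from collections import defaultdict

# Hypothetical (sample_id, gene) pairs for variants that survived filtering.
calls = [
    ("P01", "GENE_A"),
    ("P02", "GENE_B"),
    ("P02", "GENE_C"),
    ("P03", "GENE_A"),
    ("P03", "GENE_A"),  # two variants in one gene: possible compound heterozygote
]


def genes_per_sample(pairs):
    """Map each sample to the set of distinct genes carrying a candidate variant."""
    by_sample = defaultdict(set)
    for sample, gene in pairs:
        by_sample[sample].add(gene)
    return dict(by_sample)


def oligogenic_candidates(pairs, min_genes: int = 2):
    """Samples with candidate variants in at least `min_genes` different genes."""
    grouped = genes_per_sample(pairs)
    return sorted(s for s, genes in grouped.items() if len(genes) >= min_genes)


print(oligogenic_candidates(calls))
```

Counting distinct genes rather than variants keeps compound heterozygotes (two hits in one gene) separate from digenic or multigenic candidates.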

Key Signaling Pathways in POI Pathogenesis

The genetic basis of POI involves disruptions in several critical biological pathways essential for normal ovarian function. The diagram below illustrates the major pathways and their interactions:

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Reagents for POI Genetic Studies

| Reagent/Resource | Function/Application | Examples/Specifications |

|---|---|---|

| Targeted Gene Panels | Simultaneous screening of multiple POI-associated genes | Custom panels (28-295 genes) covering meiosis, folliculogenesis, hormone signaling [7] [9] |

| Whole Exome Sequencing | Hypothesis-free approach for novel gene discovery | Coverage of ~60Mb exonic regions; useful for familial cases and research [3] |

| NGS Platforms | High-throughput sequencing | Illumina NextSeq 500, Ion Torrent S5 [7] [9] |

| Variant Annotation Tools | Functional prediction of genetic variants | CADD, MetaSVM, DANN for pathogenicity prediction [7] |

| Functional Assay Systems | Validation of variant impact | Luciferase reporter assays (e.g., for FOXL2 transcriptional activity) [7] |

The genetic architecture of Primary Ovarian Insufficiency is highly complex, involving chromosomal abnormalities, monogenic defects, and an increasingly recognized oligogenic model. Next-generation sequencing has dramatically expanded our understanding of POI pathogenesis, revealing the importance of genes involved in meiosis, DNA repair, folliculogenesis, and ovarian signaling pathways. The bioinformatics pipelines and experimental protocols outlined here provide a framework for advancing POI genetic research, with implications for improved molecular diagnosis, genetic counseling, and the development of targeted therapeutic interventions. Future directions should focus on validating oligogenic interactions, functional characterization of novel variants, and integrating multi-omics approaches to fully elucidate the pathophysiological mechanisms underlying this heterogeneous disorder.

Next-generation sequencing (NGS) workflows are fundamental to modern genomics, enabling the high-throughput, parallel analysis of genetic material. In clinical and research settings, such as the study of Primary Ovarian Insufficiency (POI), a rigorously validated workflow is crucial for generating reliable data for downstream bioinformatics analysis [10] [6]. This document outlines the key steps and quantitative standards for implementing a robust NGS protocol.

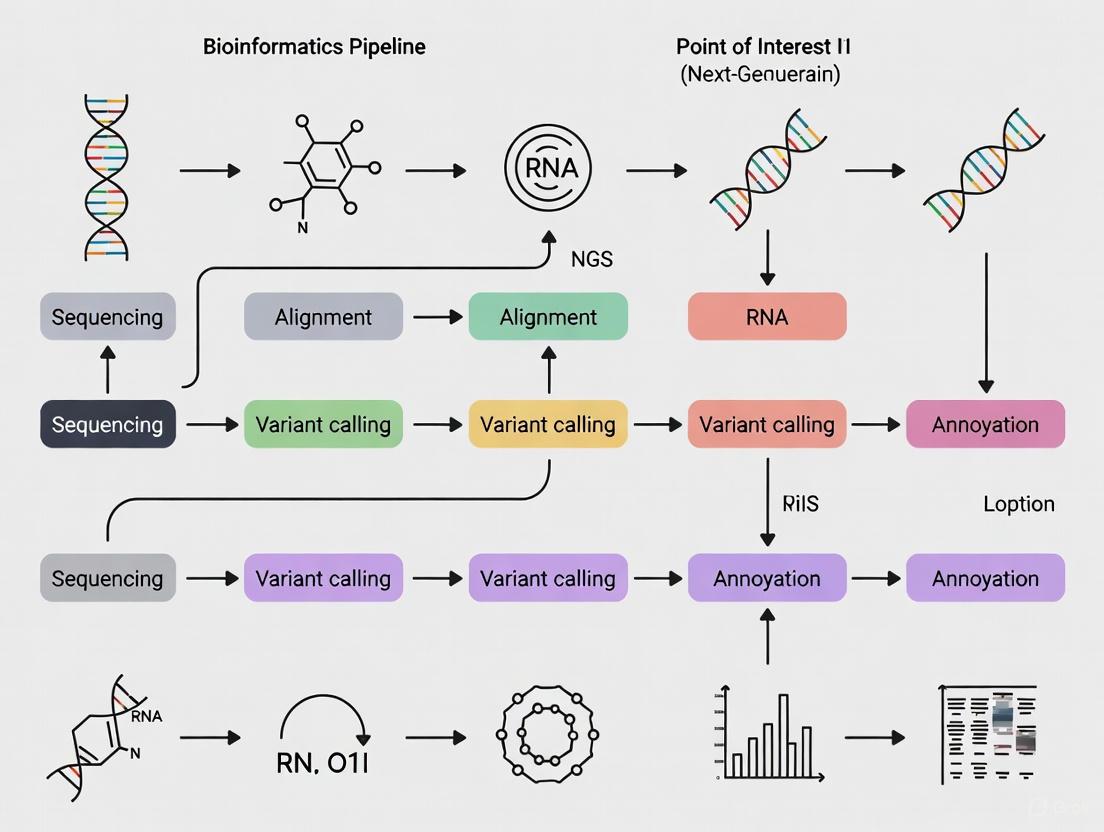

The standard NGS workflow consists of four sequential stages, each with critical quality control checkpoints. The following diagram illustrates the complete process and its key technical parameters.

Table 1: Key Performance Metrics for NGS Workflow Stages. WGS: Whole Genome Sequencing; WES: Whole Exome Sequencing.

| Workflow Step | Key Parameter | Typical Specification | Application Note |

|---|---|---|---|

| Nucleic Acid Extraction | Purity (A260/A280) | 1.8-2.0 [11] | UV spectrophotometry for purity; fluorometry for quantitation. |

| | Quantity | Varies by application | Input requirements depend on library prep method. |

| Library Preparation | Fragment Size | 100-800 bp [12] | Size selection is critical for even sequencing coverage. |

| | Library Concentration | Adequate for sequencing platform | Measured via qPCR or bioanalyzer. |

| Sequencing | Read Depth (Coverage) | WGS: 30x; WES: 100x; Panels: 500x+ [10] | Higher depth required for heterogeneous cancer or POI samples. |

| | Read Length | 75-300 bp (Short-Read) [13] | Balance between cost, accuracy, and application needs. |

| | Base Call Accuracy | ≥ Q30 (99.9% accuracy) [12] | Critical for confident variant calling. |
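
The Q30 threshold in the table follows from the standard Phred definition, Q = -10 · log10(P), where P is the probability that a base call is wrong. The short sketch below converts in both directions; the function names are illustrative.

```python
import math


def phred_to_error_prob(q: float) -> float:
    """Probability that a base call with Phred score q is wrong."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p: float) -> float:
    """Phred score for an error probability p (0 < p <= 1)."""
    return -10 * math.log10(p)


for q in (20, 30, 40):
    p = phred_to_error_prob(q)
    print(f"Q{q}: error probability {p:.4%}, accuracy {1 - p:.2%}")
```

Each 10-point increase in Q corresponds to a tenfold drop in the expected error rate, which is why Q30 (one error in 1,000 bases) is a common acceptance threshold.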

Detailed Experimental Protocols

Step 1: Nucleic Acid Extraction and QC

The process begins with the isolation of high-quality genetic material from various sample types.

- Objective: To isolate pure, high-integrity DNA or RNA from patient samples (e.g., blood, tissue, FFPE blocks) [11] [6].

- Materials: Commercial extraction kits, microspectrophotometer, fluorometer.

- Method: Use validated commercial kits for genomic DNA or total RNA extraction. For POI studies with Hungarian cohorts, 10 ng of genomic DNA was used as input [6].

- Quality Control: Assess purity via UV spectrophotometry (A260/A280 ratio of 1.8-2.0 is acceptable) and quantify yield using fluorometric methods, which are more accurate for NGS applications [11].

Step 2: Library Preparation

This process converts the extracted nucleic acids into a format compatible with the sequencer.

- Objective: To generate a library of DNA/cDNA fragments with adapters for sequencing [11] [12].

- Materials: Library preparation kit, thermocycler, magnetic bead-based purification system, bioanalyzer.

- Fragmentation & End-Prep: Fragment DNA via sonication or enzymatic shearing (e.g., using FuPa reagent) and repair ends [12] [6].

- Adapter Ligation: Ligate platform-specific sequencing adapters to fragment ends. Adapters contain sequences for binding to the flow cell and unique molecular barcodes (indexes) to allow sample multiplexing [12].

- Target Enrichment (for Panel/WES): Hybridize fragments to biotinylated probes (hybrid-capture) or use PCR to amplify regions of interest. A POI study used a customized targeted panel of 31 genes [6].

- Library Amplification & QC: Amplify the library via PCR and quantify final yield. Assess fragment size distribution using a bioanalyzer.

Step 3: Sequencing

The prepared library is loaded onto a sequencer for massively parallel sequencing.

- Objective: To determine the nucleotide sequence of millions to billions of DNA fragments simultaneously [11] [12].

- Materials: NGS sequencer (e.g., Illumina, Ion Torrent), sequencing reagents, flow cell.

- Cluster Amplification: On Illumina instruments, load the library onto a flow cell where individual fragments are clonally amplified into clusters via bridge amplification [12]. Ion Torrent workflows instead amplify templates on ion sphere particles by emulsion PCR [6].

- Sequencing by Synthesis (SBS): Sequence clusters by flowing fluorescently labeled reversible-terminator nucleotides across the flow cell. A camera detects the color signal as each base is incorporated into the growing DNA strand [11] [12]. The Ion Torrent platform detects a pH change upon nucleotide incorporation instead of a light signal [6].

- Base Calling: Software converts the detected signals into sequence data (reads) and assigns a quality score (Q-score) to each base.

Step 4: Data Analysis

Raw sequencing data is processed through a bioinformatics pipeline to identify clinically relevant variants.

- Primary Analysis: The sequencer's onboard software performs base calling, converting raw image data into sequence reads stored in FASTQ files, which contain the sequences and their quality scores [12] [13].

- Secondary Analysis: Reads are aligned to a reference genome (e.g., hg19/GRCh37 or hg38/GRCh38) to create BAM files. Variant calling algorithms then identify differences (SNPs, indels, CNVs) from the reference, outputting a VCF file [12] [13] [6].

- Tertiary Analysis & Interpretation: Identified variants are annotated using databases (e.g., dbSNP, gnomAD, ClinVar) and filtered. For POI, variants in a 31-gene panel were classified according to ACMG guidelines to determine pathogenicity [12] [6].
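
To make the FASTQ format from primary analysis concrete, the sketch below reads four-line records and computes a mean quality per read. It assumes uncompressed input with Phred+33 quality encoding and no line wrapping; the two example reads are invented.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, quality_string) from a 4-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of stream
        seq = handle.readline().rstrip("\n")
        handle.readline()  # '+' separator line, ignored
        qual = handle.readline().rstrip("\n")
        yield header[1:], seq, qual  # drop the leading '@'


def mean_quality(qual: str, offset: int = 33) -> float:
    """Mean Phred score of a read, assuming Phred+33 encoding."""
    return sum(ord(c) - offset for c in qual) / len(qual)


# Two invented reads: one with all Q40 bases ('I'), one with all Q20 bases ('5').
example = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nGGCA\n+\n5555\n")
records = list(read_fastq(example))
for read_id, seq, qual in records:
    print(read_id, seq, mean_quality(qual))
```

Production pipelines would normally rely on an established parser and a dedicated QC tool such as FastQC; the point here is only to show what the file contains.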

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for NGS Workflow Implementation.

| Item | Function | Application Note |

|---|---|---|

| Nucleic Acid Extraction Kits | Isolate DNA/RNA from various sample matrices (blood, FFPE). | Ensure compatibility with sample type. POI studies often use peripheral blood [6]. |

| Library Prep Kit (e.g., Ion AmpliSeq) | Facilitates fragmentation, adapter ligation, and amplification. | The POI study used Ion AmpliSeq Library Kit Plus with 19 PCR cycles [6]. |

| Target Enrichment Panels | Hybrid-capture or amplicon probes to select genomic regions. | Custom or pre-designed panels (e.g., 31-gene POI panel) focus sequencing power [6]. |

| Sequencing Chemistry & Flow Cells | Provides enzymes and nucleotides for the sequencing reaction. | Platform-specific (e.g., Ion S5 Sequencing Kit). Dictates read length and output [6]. |

| Bioinformatics Software (e.g., Ion Reporter) | For base calling, alignment, variant calling, and annotation. | Critical for converting raw data to actionable results. Requires rigorous validation [13] [6] [14]. |

Application to POI NGS Data Research

Applying this workflow to POI research requires specific considerations. A targeted gene panel is often the most efficient approach for this genetically heterogeneous condition. As demonstrated in a Hungarian cohort study, designing a panel covering known POI-associated genes (e.g., FMR1, GDF9, NOBOX, EIF2B) allows for the simultaneous screening of multiple etiologies [6]. The library preparation and sequencing depth must be optimized to ensure high sensitivity for detecting heterogeneous genetic causes, including monogenic defects, oligogenic combinations, and risk factors. Finally, variant interpretation must be integrated with patient clinical phenotypes, such as primary or secondary amenorrhea, to establish accurate genotype-phenotype correlations [6]. Adherence to these standardized protocols ensures the generation of high-quality, reproducible NGS data, forming a reliable foundation for bioinformatics analysis in POI and other complex genetic disorders.

Essential Components of a Bioinformatics Pipeline for Genomic Data

The emergence of high-throughput (HT) sequencing technologies has transformed biological research, allowing scientists to bridge the gap between genotype and phenotype on an unprecedented scale [15]. Next-Generation Sequencing (NGS) departs from traditional Sanger sequencing through massive parallelization, in which millions of DNA fragments are sequenced simultaneously [16]. This advance has democratized genomic research, making personalized genomics and precision medicine a modern reality [17]. In the specific context of Primary Ovarian Insufficiency (POI) research, NGS has proven invaluable for identifying genetic variations across large patient cohorts, with a targeted gene panel identifying pathogenic or likely pathogenic variants in 14.4% of POI patients [18]. The effective implementation of bioinformatics pipelines is crucial for transforming raw sequencing data into biologically meaningful insights, particularly for complex conditions like POI that exhibit substantial genetic heterogeneity.

Essential Workflow Components of an NGS Pipeline

Sample Processing and Library Preparation

The bioinformatics pipeline begins even before sequencing, with critical wet-lab procedures that fundamentally impact downstream analysis. Nucleic acid extraction and purification from tissue samples (e.g., blood, bulk tissue, or individual cells) must be performed to isolate DNA or RNA [11]. For research involving tumor tissues, as might be relevant in cancer-related fertility issues, pathological assessment of tumor cell purity is essential, as lower purity reduces somatic mutation prevalence and affects variant detection sensitivity [16]. The extracted DNA is then processed through library preparation, which involves fragmenting the nucleic acids into smaller pieces, ligating adapter oligonucleotides to each end, and performing PCR amplification to increase concentration [16]. For targeted sequencing approaches like those used in POI research [18], two main strategies are employed:

- Hybridization capture: Uses designed oligonucleotide probes (baits) that bind to complementary DNA sequences to enrich fragments of interest

- Amplicon-based sequencing: Relies on flanking PCR primers to amplify specific genomic regions

Table 1: Key Sample and Library Preparation Considerations

| Component | Description | Impact on Downstream Analysis |

|---|---|---|

| Sample Type | Fresh-frozen vs. FFPE tissue | FFPE tissue more prone to DNA damage; affects sequence quality [16] |

| DNA Input | 10-1000 ng depending on application | Insufficient input affects library complexity and coverage uniformity [16] |

| Tumor Purity | Percentage of tumor cells in sample | Lower purity reduces variant allele frequency for somatic mutations [16] |

| Fragment Size | Insert size between adapters | Affects sequencing efficiency and structural variant detection [16] |

| Multiplexing | Pooling multiple samples with barcodes | Enables cost-effective sequencing; requires demultiplexing step [16] |

Sequencing and Primary Data Generation

The prepared libraries are sequenced using platforms that employ different chemistries, with Illumina's sequencing-by-synthesis (SBS) being widely adopted [11]. During this phase, the sequencer generates raw data files in FASTQ format, which contain nucleotide sequences and corresponding quality scores [15]. Two critical parameters must be considered:

- Read Length: The length of DNA fragments that are read by the sequencer

- Depth: The number of sequencing reads overlapping a particular nucleotide position, often expressed as "fold" coverage (e.g., 10X) [16]
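
Expected mean depth can be estimated before a run as the number of on-target sequenced bases divided by the size of the target region. The sketch below is a back-of-the-envelope calculation; the 150 kb panel size and the 50% on-target fraction are hypothetical values, and real runs lose further reads to duplicates and uneven coverage.

```python
import math


def mean_depth(n_reads: int, read_length: int, target_bp: int, on_target: float = 1.0) -> float:
    """Expected mean depth: on-target sequenced bases divided by target size."""
    return n_reads * read_length * on_target / target_bp


def reads_needed(depth: float, read_length: int, target_bp: int, on_target: float = 1.0) -> int:
    """Smallest read count expected to reach `depth` mean coverage."""
    return math.ceil(depth * target_bp / (read_length * on_target))


panel_bp = 150_000  # hypothetical targeted panel size in base pairs
print(mean_depth(500_000, 150, panel_bp))               # depth from 500k reads of 150 bp
print(reads_needed(500, 150, panel_bp, on_target=0.5))  # reads for 500x at 50% on-target
```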

The choice of sequencing approach—whole genome sequencing (WGS), whole exome sequencing (WES), or targeted panels—significantly impacts the bioinformatics strategy. For POI research, targeted panels focusing on known causative genes (e.g., 28-gene panel) have proven effective for molecular diagnosis despite the condition's genetic heterogeneity [18].

Data Analysis: Alignment and Variant Calling

Sequence Alignment

The first computational step involves aligning or mapping sequencing reads to a reference genome (e.g., GRCh38/hg38) [16]. This process determines where each read originated in the genome, producing alignment files in BAM or SAM format. The accuracy of alignment is crucial for subsequent variant detection, particularly in regions with high sequence similarity or repetitive elements.

Variant Calling

Variant calling identifies genetic differences between the sequenced sample and the reference genome [16]. The specific approach varies by variant type:

- Single Nucleotide Variants (SNVs): Single-base changes

- Insertions/Deletions (INDELs): Small insertions or deletions (typically a few base pairs, and by common convention under 50 bp) that may cause frameshift mutations in protein-coding genes [16]

- Copy Number Variations (CNVs): Larger duplications or deletions

- Structural Variations (SVs): Major rearrangements like translocations and inversions [16]

In POI research, variant calling pipelines must be sensitive enough to detect heterogeneous genetic causes, including monogenic, oligogenic, and digenic inheritance patterns [18].

Table 2: Key Bioinformatics File Formats and Their Purposes

| File Format | Content | Pipeline Stage |

|---|---|---|

| FASTQ | Nucleotide sequences with quality scores | Raw data output from sequencer [15] |

| BAM/SAM | Aligned sequencing reads | Post-alignment; used for variant calling [16] |

| VCF | Identified genetic variants | Post-variant calling; used for annotation [16] |

| FASTA | Reference genome sequence | Used for read alignment [16] |
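
As an illustration of what a VCF data line carries, the sketch below splits one tab-delimited record into the eight fixed columns (CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO). The record is made up for this example, and the parser ignores headers and per-sample genotype columns; a maintained library is the better choice for real data.

```python
def parse_vcf_line(line: str) -> dict:
    """Split one tab-delimited VCF data line into its eight fixed columns."""
    chrom, pos, vid, ref, alt, qual, flt, info = line.rstrip("\n").split("\t")[:8]
    info_map = {}
    for entry in info.split(";"):
        if entry and entry != ".":
            key, _, value = entry.partition("=")
            info_map[key] = value if value else True  # flag entries carry no value
    return {
        "chrom": chrom,
        "pos": int(pos),
        "id": vid,
        "ref": ref,
        "alts": alt.split(","),  # multiple ALT alleles are comma-separated
        "qual": None if qual == "." else float(qual),
        "filter": flt,
        "info": info_map,
    }


# Made-up record for illustration only; not a real variant.
record = parse_vcf_line("chr1\t12345\t.\tA\tG\t812.4\tPASS\tDP=240;AF=0.48;DB\n")
print(record["chrom"], record["pos"], record["ref"], record["alts"], record["info"])
```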

Advanced Analytical Components

Variant Annotation and Prioritization

Following variant calling, annotation adds biological context to genetic variants, which is particularly important for prioritizing potentially causative mutations in POI research. This process involves:

- Functional Impact Prediction: Using tools like CADD, DANN, and MetaSVM to predict whether variants affect protein function [18]

- Population Frequency Filtering: Comparing against databases like gnomAD and 1000 Genomes to remove common polymorphisms [18]

- Inheritance Pattern Assessment: Evaluating variants against known inheritance models (autosomal dominant, autosomal recessive, X-linked) [18]

In the POI study cited above [18], this annotation and filtering process narrowed 772 initially identified variants down to 79 pathogenic or likely pathogenic variants across 19 genes.

Multi-Omics Integration and Functional Validation

While genomic data provides the foundation, integrating multiple data types offers a more comprehensive biological understanding. Multi-omics approaches combine:

- Transcriptomics: RNA expression levels from RNA-seq

- Proteomics: Protein abundance and interactions

- Metabolomics: Metabolic pathways and compounds

- Epigenomics: DNA methylation and chromatin modifications [17]

For functional validation in a POI context, approaches like luciferase reporter assays can test whether identified variants (e.g., in the FOXL2 gene) actually impair transcriptional regulatory function [18]. Pedigree haplotype analysis further supports pathogenicity assessment for compound heterozygous variants [18].

Implementation Protocols for POI Research

Experimental Protocol: Targeted Gene Panel Sequencing for POI

Objective: To identify pathogenic genetic variants in a cohort of POI patients using a targeted NGS panel.

Materials and Reagents:

- Nucleic acid extraction kit (appropriate for sample type)

- DNA quantification equipment (fluorometric methods recommended)

- Targeted gene panel (e.g., 28 POI-associated genes [18])

- Library preparation reagents

- Sequencing platforms (Illumina MiSeq/iSeq for smaller panels; NextSeq for larger panels) [11]

Methodology:

- DNA Extraction and QC: Extract DNA from patient samples (blood or tissue). Assess purity using UV spectrophotometry and quantify using fluorometric methods [11].

- Library Preparation: Fragment DNA, ligate adapters, and perform size selection. Amplify via PCR. For targeted sequencing, use either hybridization capture or amplicon-based approaches [16].

- Sequencing: Sequence libraries on appropriate platform. For POI panel, ensure minimum 100X coverage for reliable variant calling.

- Variant Calling and Annotation:

- Align reads to reference genome (GRCh38)

- Call variants using specialized tools

- Annotate variants using population databases and prediction algorithms

- Variant Prioritization:

- Filter based on population frequency (<0.1% in gnomAD)

- Retain rare, protein-altering variants

- Assess against inheritance patterns

- Evaluate potential oligogenic contributions [18]

Validation:

- Confirm pathogenic variants via Sanger sequencing

- Perform functional assays (e.g., luciferase reporter assays) for novel variants

- Conduct segregation analysis in families where possible [18]

Pipeline Implementation with Workflow Management

Implementing robust, reproducible pipelines requires workflow management systems. Snakemake provides a Python-based framework for creating scalable bioinformatics pipelines [19]. A basic Snakefile for RNA-seq analysis includes rules for:

- Defining sample names and patterns

- Quantifying gene expression

- Collating outputs into a master results file [19]
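
The core idea behind a workflow manager is that each step declares what it depends on, and the engine works out a valid execution order. The sketch below shows that idea in plain Python with the standard-library `graphlib` module. It is not Snakemake syntax (Snakemake infers dependencies by matching input and output file patterns), and the rule names are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical rules: each maps to the set of rules whose outputs it consumes.
rules = {
    "fastqc": set(),
    "align": set(),
    "call_variants": {"align"},
    "annotate": {"call_variants"},
    "report": {"fastqc", "annotate"},
}


def execution_order(rule_graph):
    """Return one valid order in which every rule runs after its prerequisites."""
    return list(TopologicalSorter(rule_graph).static_order())


order = execution_order(rules)
print(order)
```

Because the order is derived from declared dependencies rather than written by hand, adding or removing a step does not require re-sequencing the rest of the pipeline.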

Figure: NGS Bioinformatics Pipeline Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for POI NGS Research

| Category | Specific Examples | Function/Purpose |

|---|---|---|

| Sample Collection | PAXgene Blood RNA tubes, FFPE tissue blocks | Preserve biological samples for nucleic acid extraction [16] |

| Extraction Kits | Qiagen DNA/RNA kits, Magnetic bead-based kits | Isolate high-quality nucleic acids from samples [11] |

| Library Prep | Illumina Nextera, Kapa HyperPrep | Prepare sequencing libraries with appropriate adapters [16] |

| Target Enrichment | IDT xGen baits, Twist Panels | Enrich specific genomic regions (for targeted sequencing) [18] |

| QC Instruments | Bioanalyzer, Qubit fluorometer, Nanodrop | Assess nucleic acid quality, quantity, and fragment size [11] |

| Sequencing Platforms | Illumina NovaSeq, NextSeq, MiSeq | Generate sequence data with different throughput needs [11] |

| Alignment Tools | BWA, Bowtie2, STAR | Map sequencing reads to reference genome [16] |

| Variant Callers | GATK, DeepVariant, FreeBayes | Identify genetic variants from aligned reads [16] [17] |

| Annotation Resources | ANNOVAR, SnpEff, gnomAD, CADD | Add functional and population frequency information to variants [18] |

| Workflow Management | Snakemake, Nextflow | Create reproducible, scalable analysis pipelines [19] |

Quality Control and Data Management

Quality Control Metrics

Throughout the NGS pipeline, quality control is essential at multiple stages:

- Pre-sequencing: DNA/RNA quality (RIN/DIN), quantity, and purity (A260/280 ratio)

- Post-sequencing: Base quality scores (Phred scores), GC content, adapter contamination

- Post-alignment: Mapping rates, coverage uniformity, insert size distribution

- Post-variant calling: Transition/transversion ratios, strand bias, depth distribution [16]
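
Of the post-variant-calling metrics, the transition/transversion (Ti/Tv) ratio is simple to compute directly. Transitions swap a purine for a purine (A, G) or a pyrimidine for a pyrimidine (C, T); all other substitutions are transversions. The sketch below uses an invented list of single-base changes.

```python
PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}


def is_transition(ref: str, alt: str) -> bool:
    """True for purine-to-purine or pyrimidine-to-pyrimidine substitutions."""
    pair = {ref, alt}
    return pair <= PURINES or pair <= PYRIMIDINES


def ti_tv_ratio(snvs) -> float:
    """Transition/transversion ratio for an iterable of (ref, alt) single-base changes."""
    ti = tv = 0
    for ref, alt in snvs:
        if ref == alt:
            continue  # not a substitution
        if is_transition(ref, alt):
            ti += 1
        else:
            tv += 1
    return ti / tv if tv else float("inf")


# Invented example: four transitions and two transversions.
snvs = [("A", "G"), ("C", "T"), ("G", "A"), ("T", "C"), ("A", "C"), ("G", "T")]
print(ti_tv_ratio(snvs))
```

A ratio that falls well outside the range expected for the assay is a common sign of an excess of false-positive calls.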

For POI research specifically, special attention should be paid to coverage in known POI-associated genes and the ability to detect different variant types, including frameshift mutations that may disrupt open reading frames [16] [18].

Data Management Challenges

NGS workflows generate massive datasets with a 3-5× expansion from raw to processed data [15]. Effective data management requires:

- Storage Solutions: Scalable systems for FASTQ, BAM, and VCF files

- Provenance Tracking: Recording analysis parameters and software versions

- Retention Policies: Balancing accessibility with storage constraints [15]
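
Provenance tracking can start as a small structured record written alongside each pipeline output. The sketch below is one possible shape for such a record, not a standard schema: the field names, the step name, and the placeholder tool version are assumptions made for illustration.

```python
import hashlib
import json
import platform
from datetime import datetime, timezone


def sha256_of_bytes(data: bytes) -> str:
    """Hex digest used to fingerprint the contents of an input or output file."""
    return hashlib.sha256(data).hexdigest()


def provenance_record(step: str, params: dict, tool_versions: dict, input_digest: str) -> dict:
    """Collect the details needed to rerun or audit a pipeline step."""
    return {
        "step": step,
        "parameters": params,
        "tool_versions": tool_versions,
        "input_sha256": input_digest,
        "python_version": platform.python_version(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = provenance_record(
    step="variant_filtering",
    params={"max_af": 0.01, "min_cadd": 20},
    tool_versions={"example_caller": "x.y.z"},  # placeholder, not a real version
    input_digest=sha256_of_bytes(b"example input"),
)
print(json.dumps(record, indent=2, sort_keys=True))
```

Storing the record as JSON next to the output file keeps parameters, software versions, and input fingerprints together when results are revisited later.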

Cloud computing platforms (AWS, Google Cloud) offer scalable solutions for genomic data storage and analysis, and provide services that can be configured to support compliance with regulatory frameworks like HIPAA and GDPR [17].

Figure: POI Genetic Analysis Research Workflow

A well-constructed bioinformatics pipeline for genomic data analysis requires careful integration of multiple components, from sample processing to variant interpretation. In POI research, where genetic heterogeneity presents significant challenges, targeted sequencing approaches coupled with robust bioinformatics pipelines have successfully identified pathogenic variants in a substantial proportion of cases [18]. The future of genomic analysis lies in integrating artificial intelligence for variant calling [17], multi-omics data for comprehensive biological understanding [17], and cloud computing for scalable data analysis [17]. As these technologies evolve, they will further enhance our ability to unravel the genetic complexity of conditions like POI, ultimately enabling more precise molecular diagnoses and personalized therapeutic approaches.

POI-Specific Genetic Targets and Relevant Genomic Regions

Primary Ovarian Insufficiency (POI) is a complex clinical disorder characterized by the loss of ovarian function before age 40, affecting approximately 1-3.7% of women worldwide [20]. It represents a significant cause of female infertility, with genetic factors contributing to 20-25% of cases [3] [20]. The condition demonstrates remarkable heterogeneity, manifesting through primary or secondary amenorrhea, elevated gonadotropin levels, and estrogen deficiency [18]. Understanding the genetic architecture of POI is paramount for developing targeted diagnostic and therapeutic strategies. This application note synthesizes current knowledge on POI-specific genetic targets and genomic regions, providing a structured framework for researchers investigating this complex condition through next-generation sequencing (NGS) approaches.

Established Genetic Targets in POI

Chromosomal Abnormalities and Syndromic POI

Chromosomal abnormalities account for 10-13% of POI cases, with X-chromosome anomalies being particularly significant [20] [21]. Turner Syndrome (45,X) constitutes 4-5% of POI cases, while Trisomy X Syndrome (47,XXX) is also associated with diminished ovarian reserve [20]. Critical regions on the X chromosome include Xq13.3-q21.1 (POI2) and Xq24-q27 (POI1), where deletions or translocations frequently correlate with POI phenotypes [20] [21]. Structural variations such as isochromosomes (46,X,i(Xq)), deletions, and X-autosomal translocations can disrupt genes essential for ovarian function, with 80% of translocation breakpoints occurring in the Xq21 cytoband [20].

Table 1: Key Chromosomal Regions and Syndromic Associations in POI

| Genetic Abnormality | Prevalence in POI | Key Genes/Regions | Clinical Features |

|---|---|---|---|

| X Chromosome Aneuploidy (Turner Syndrome) | 4-5% [20] | Entire X chromosome | Streak ovaries, primary amenorrhea, short stature |

| X Chromosome Structural Abnormalities | 4.2-12% [20] | Xq13.3-q21.1 (POI2), Xq24-q27 (POI1) | Isolated POI or syndromic features |

| FMR1 Premutations | 3-15% [22] [21] | FMR1 5' UTR CGG repeats | Fragile X-associated tremor/ataxia syndrome |

| Autosomal Translocations | Rare [20] | Multiple autosomal regions | Variable, often isolated POI |

Monogenic Contributions to POI

Advanced sequencing technologies have identified numerous genes associated with POI pathogenesis. These genes participate in diverse biological processes including gonadal development, meiosis, DNA repair, folliculogenesis, and hormone signaling [3] [20]. A large-scale whole-exome sequencing study of 1,030 POI patients identified pathogenic or likely pathogenic variants in 59 known POI-causative genes, accounting for 18.7% of cases [3]. Among these, genes involved in meiosis and DNA repair constituted the largest proportion (48.7%) [3].

Table 2: Major Gene Categories and Their Representative Members in POI Pathogenesis

| Functional Category | Representative Genes | Primary Biological Role | Prevalence in POI Cohorts |

|---|---|---|---|

| Meiosis & DNA Repair | MSH4, MSH5, SPIDR, HFM1, SMC1B, FANCE [18] [3] | Chromosome pairing, recombination, DNA damage repair | 48.7% of genetically explained cases [3] |

| Transcription Regulation | NOBOX, FIGLA, SOHLH1, NR5A1, FOXL2 [18] | Ovarian development, folliculogenesis | ~3.2% for FOXL2 variants [18] |

| Hormone Signaling & Receptors | FSHR, BMP15, GDF9, AMH, AMHR2 [18] [21] | Follicle development, ovulation | Recurrent variants in Turkish cohort [21] |

| Mitochondrial Function | AARS2, HARS2, POLG, LARS2 [3] [20] | Cellular energy production, oxidative metabolism | 22.3% of genetically explained cases [3] |

| Immune Regulation | AIRE [3] | Autoimmune tolerance, prevents ovarian autoimmunity | Associated with APS-1 syndrome [20] |

Emerging Genetic Insights from Genomic Studies

Inflammation-Related Proteins as Causal Mediators

Mendelian randomization studies have revealed specific inflammation-related proteins with causal relationships to POI. A 2025 study analyzing 91 inflammation-related proteins identified CXCL10 and CX3CL1 as exerting protective effects against POI, while IL-18R1, IL-18, MCP-1/CCL2, and CCL28 increased POI risk [23]. Additional proteins including IL-17C, TRANCE, uPA, LAP TGF-β1, and CXCL9 demonstrated protective effects, while TNFSF14, CD40, IL-24, ARTN, LIF-R, and IL-2RB were identified as risk factors [23]. Experimental validation in POI models confirmed significant changes in MCP-1/CCL2, TGFB1, ARTN, and LIFR, which converge in the oncostatin M signaling pathway [23]. Gene-drug analysis further identified CCL2 and TGFB1 as potential therapeutic targets, with genistein and melatonin prioritized as potential treatments [23].

Novel Candidates from Integrated Genomic Analyses

Integrated genomic approaches combining expression quantitative trait loci (eQTL) data with genome-wide association studies (GWAS) have revealed novel POI-associated genes. A 2024 study identified four genes (HM13, FANCE, RAB2A, and MLLT10) significantly associated with reduced POI risk through Mendelian randomization analysis [22] [24]. Colocalization analyses provided strong evidence for FANCE (involved in DNA repair through the Fanconi anemia pathway) and RAB2A (regulating autophagy) as promising therapeutic targets [22] [24]. These findings highlight the potential of bioinformatics approaches to identify previously unrecognized genetic contributors to POI.

Figure 1: Bioinformatics workflow for POI genetic target identification, integrating NGS data with functional validation.

Oligogenic Architecture of POI

Emerging evidence supports an oligogenic model for POI, where combinations of variants across multiple genes contribute to disease pathogenesis. A targeted NGS study of 295 candidate genes in 64 POI patients revealed that 75% carried at least one genetic variant, with 39% carrying 2-6 variants [9]. Patients with more severe phenotypes tended to carry either a greater number of variants or variants with higher predicted pathogenicity [9]. Similarly, a study of 500 Chinese Han patients identified 9 individuals (1.8%) with digenic or multigenic pathogenic variants who presented with delayed menarche, early POI onset, and higher prevalence of primary amenorrhea compared to those with monogenic variants [18]. These findings underscore the genetic complexity of POI and suggest that comprehensive genetic profiling should encompass multiple candidate genes rather than focusing on single-gene analyses.
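To make the oligogenic idea concrete, the sketch below tallies how many distinct candidate genes carry retained variants in each patient. Patient IDs, the example calls, and the helper names are hypothetical; counting distinct genes is a simplifying assumption, since two variants in the same gene (for example, a compound heterozygote) are not evidence of an oligogenic model.

```python
from collections import Counter


def genes_with_variants(patient_ids, variant_calls):
    """Map each patient to the set of genes carrying a retained variant."""
    genes = {pid: set() for pid in patient_ids}
    for pid, gene in variant_calls:
        if pid not in genes:
            raise KeyError(f"unknown patient: {pid}")
        genes[pid].add(gene)
    return genes


def burden_label(n_genes):
    """Coarse label based on the number of distinct affected genes."""
    if n_genes == 0:
        return "no candidate variant"
    if n_genes == 1:
        return "single gene"
    return "multiple genes"


# Made-up cohort for illustration; gene symbols are taken from Table 2.
patients = ["P1", "P2", "P3", "P4"]
calls = [
    ("P1", "NOBOX"), ("P1", "FIGLA"),
    ("P2", "BMP15"),
    ("P4", "MSH4"), ("P4", "MSH4"), ("P4", "HFM1"),
]

per_patient = genes_with_variants(patients, calls)
labels = {pid: burden_label(len(genes)) for pid, genes in per_patient.items()}
summary = Counter(labels.values())
print(labels)
print(summary)
```

A tally like this only flags candidates for closer review; whether a multi-gene combination is actually pathogenic still requires segregation and functional evidence.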

Experimental Protocols for POI Genetic Research

Targeted Gene Panel Sequencing for POI

Purpose: To simultaneously screen multiple known POI-associated genes for pathogenic variants in a cost-effective and efficient manner.

Sample Preparation:

- Collect peripheral blood samples in EDTA tubes (2 mL minimum) [21]

- Extract genomic DNA using commercial kits (e.g., EZ1 DNA Investigator Kit, QIAGEN) [21]

- Quantify DNA using fluorometric methods (e.g., Quant-iT PicoGreen) [9]

- Ensure DNA integrity through agarose gel electrophoresis or equivalent methods

Library Preparation and Sequencing:

- Design custom capture panels targeting known POI genes (26-295 genes based on research focus) [18] [9] [21]

- Utilize target enrichment systems (e.g., QIAseq Targeted DNA Custom Panel, Illumina Nextera Rapid Capture) [9] [21]

- Prepare sequencing libraries following manufacturer protocols with appropriate quality controls

- Sequence on NGS platforms (e.g., Illumina MiSeq, NextSeq 500) with minimum 50x coverage across target regions [9]

Data Analysis Pipeline:

- Perform base calling and demultiplexing using platform-specific software

- Align reads to reference genome (GRCh37/hg19 or GRCh38/hg38) using BWA-MEM algorithm [9]

- Conduct variant calling with GATK UnifiedGenotyper (a legacy caller, superseded by HaplotypeCaller in GATK4) or similar tools [9] [21]

- Annotate variants using databases including gnomAD, ExAC, ClinVar, and in-house population frequencies

- Filter variants based on quality scores (Phred score ≥20), population frequency (<0.1% in control databases), and predicted functional impact [18] [9]

- Prioritize variants through in silico prediction tools (PolyPhen-2, SIFT, CADD, MutationTaster) [18] [21]

- Validate potentially pathogenic variants through Sanger sequencing
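The filtering step above can be sketched as a simple predicate over annotated variants. The thresholds follow the text (quality at least 20, population frequency below 0.1%); the `Variant` fields, the consequence labels, and the example records are illustrative assumptions rather than the output format of any particular annotator.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Variant:
    gene: str
    qual: float
    population_af: Optional[float]  # None when absent from control databases
    consequence: str                # hypothetical simplified label


# Assumed set of consequence labels treated as potentially damaging.
DAMAGING = {"frameshift", "stop_gained", "splice_site", "missense"}


def passes_filters(v: Variant, min_qual: float = 20.0, max_af: float = 0.001) -> bool:
    """Apply quality, population-frequency, and functional-impact filters."""
    if v.qual < min_qual:
        return False
    if v.population_af is not None and v.population_af >= max_af:
        return False
    return v.consequence in DAMAGING


def filter_variants(variants: List[Variant]) -> List[Variant]:
    return [v for v in variants if passes_filters(v)]


# Made-up records for illustration.
candidates = [
    Variant("MSH4", 55.0, None, "frameshift"),      # kept
    Variant("FIGLA", 12.0, 0.0001, "missense"),     # fails quality
    Variant("BMP15", 80.0, 0.02, "missense"),       # too common
    Variant("NOBOX", 40.0, 0.0005, "synonymous"),   # low predicted impact
    Variant("FSHR", 33.0, 0.0002, "stop_gained"),   # kept
]
kept_genes = [v.gene for v in filter_variants(candidates)]
print(kept_genes)
```

Treating a missing population frequency as "rare" is a deliberate choice here; a stricter pipeline might instead flag such variants for manual review.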

Whole Exome Sequencing for Novel Gene Discovery

Purpose: To identify novel POI-associated genes and variants beyond known candidates through unbiased sequencing of protein-coding regions.

Methodological Considerations:

- Library preparation using exome capture kits (e.g., Illumina Nextera, IDT xGen Exome Research Panel)

- Sequencing depth: Minimum 100x mean coverage with >95% of target bases covered at ≥20x [3]

- Include family-based designs (trios or multiplex families) to aid variant interpretation

- Implement appropriate case-control matching for association studies
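The sequencing-depth criterion above (mean coverage of at least 100x with more than 95% of target bases at 20x or higher) can be checked from per-base depths, for example as exported by a depth tool. The function names and the toy depth vectors below are assumptions for illustration.

```python
def coverage_summary(depths, threshold=20):
    """Return (mean depth, fraction of bases with depth >= threshold)."""
    depths = list(depths)
    if not depths:
        raise ValueError("no target bases supplied")
    mean_depth = sum(depths) / len(depths)
    fraction = sum(1 for d in depths if d >= threshold) / len(depths)
    return mean_depth, fraction


def meets_wes_targets(depths, min_mean=100, min_fraction=0.95, threshold=20):
    """Check the mean-depth and breadth-of-coverage targets together."""
    mean_depth, fraction = coverage_summary(depths, threshold)
    return mean_depth >= min_mean and fraction > min_fraction


# Toy per-base depths: 97 well-covered bases and 3 poorly covered ones.
good_sample = [120] * 97 + [10] * 3
# Toy sample where 10% of bases fall below the 20x threshold.
poor_sample = [120] * 90 + [5] * 10

mean_depth, fraction = coverage_summary(good_sample)
print(mean_depth, fraction, meets_wes_targets(good_sample))
print(meets_wes_targets(poor_sample))
```

For POI work it is usually worth running the same check restricted to known POI-associated genes, since a good exome-wide average can hide poorly covered exons.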

Analytical Framework:

- Perform quality control checks at sample and variant levels

- Conduct variant filtering based on inheritance patterns (de novo, recessive, compound heterozygous)

- Implement burden tests for gene-based association analyses comparing cases and controls [3]

- Integrate functional annotations including gene expression data, protein-protein interactions, and pathway enrichment

- Consider oligogenic effects by testing for multiple variants within biological pathways [9]
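Inheritance-based filtering in a trio can be illustrated with genotypes encoded as alternate-allele counts (0, 1, or 2). This is a simplified sketch for autosomal variants that ignores genotype quality, phasing uncertainty, and X-linked inheritance; the dictionary keys and example records are hypothetical.

```python
def is_de_novo(child, mother, father):
    """Variant present in the child and absent from both parents."""
    return child > 0 and mother == 0 and father == 0


def is_homozygous_recessive(child, mother, father):
    """Child homozygous for the alternate allele, both parents carriers."""
    return child == 2 and mother == 1 and father == 1


def compound_het_genes(variants):
    """Genes where the child has one maternal-only and one paternal-only het variant."""
    maternal, paternal = set(), set()
    for v in variants:
        if v["child"] != 1:
            continue
        if v["mother"] == 1 and v["father"] == 0:
            maternal.add(v["gene"])
        elif v["father"] == 1 and v["mother"] == 0:
            paternal.add(v["gene"])
    return maternal & paternal


# Made-up trio genotypes for illustration.
trio_variants = [
    {"gene": "HFM1", "child": 1, "mother": 1, "father": 0},
    {"gene": "HFM1", "child": 1, "mother": 0, "father": 1},
    {"gene": "NOBOX", "child": 1, "mother": 0, "father": 0},
    {"gene": "MSH5", "child": 2, "mother": 1, "father": 1},
]

de_novo = [v["gene"] for v in trio_variants
           if is_de_novo(v["child"], v["mother"], v["father"])]
recessive = [v["gene"] for v in trio_variants
             if is_homozygous_recessive(v["child"], v["mother"], v["father"])]
compound = compound_het_genes(trio_variants)
print(de_novo, recessive, compound)
```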

Functional Validation of Candidate Variants

Purpose: To establish biological relevance and pathogenicity of identified genetic variants through experimental assays.

In Vitro Models:

- Utilize human granulosa-like tumor cell lines (KGN) for functional studies [23]

- Establish POI models using cyclophosphamide (CTX) treatment (1 mg/mL for 48 hours) [23]

- Implement gene editing approaches (CRISPR-Cas9) to introduce specific variants

- Assess protein localization and expression through immunofluorescence and Western blotting [23]

- Evaluate transcriptional effects using luciferase reporter assays and RT-PCR [23] [18]

Functional Assays:

- Meiotic progression analysis in suitable model systems

- DNA repair capacity assessment following induced damage

- Apoptosis assays in ovarian somatic cells

- Hormone response profiling for receptor variants

Figure 2: Key signaling pathways in POI pathogenesis, highlighting potential therapeutic targets.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for POI Genetic Studies

| Reagent/Platform | Specific Example | Application in POI Research |

|---|---|---|

| NGS Library Prep Kits | QIAseq Targeted DNA Custom Panel [21] | Targeted sequencing of POI gene panels |

| NGS Library Prep Kits | Illumina Nextera Rapid Capture [9] | Whole exome and targeted sequencing |

| Sequencing Platforms | Illumina MiSeq/NextSeq 500 [9] [21] | Medium-to-high throughput NGS |

| Cell Culture Models | KGN human granulosa-like tumor cell line [23] | In vitro modeling of ovarian function |

| POI Modeling Reagents | Cyclophosphamide (CTX) [23] | Inducing ovarian insufficiency in models |

| Antibody Reagents | Anti-MCP-1, Anti-TGF-β1, Anti-LIF-R [23] | Protein validation through Western blot |

| Bioinformatics Tools | SMR software [22] | Mendelian randomization analysis |

| Bioinformatics Tools | coloc R package [22] [24] | Colocalization analysis for causal inference |

| Variant Prediction | PolyPhen-2, SIFT, CADD, MutationTaster [18] [21] | In silico assessment of variant pathogenicity |

The genetic landscape of POI encompasses diverse elements including chromosomal abnormalities, monogenic contributions, oligogenic interactions, and inflammatory mediators. This application note has synthesized current knowledge on POI-specific genetic targets and genomic regions, providing experimental frameworks for their investigation. The integration of NGS technologies with functional validation approaches offers powerful strategies for elucidating the molecular basis of POI, ultimately facilitating the development of targeted diagnostic and therapeutic interventions. As research progresses, the continued refinement of bioinformatics pipelines and analytical frameworks will be essential for deciphering the complex genetic architecture underlying this heterogeneous condition.

Premature Ovarian Insufficiency (POI) is a complex reproductive endocrine disorder affecting women under 40, characterized by infertility and perimenopausal symptoms. Its genetic etiology is highly heterogeneous, with approximately 90% of cases having an unknown cause [25]. Next-generation sequencing (NGS) technologies have become indispensable for unraveling the genetic underpinnings of such complex conditions. For POI research, selecting an appropriate sequencing platform is crucial for detecting the full spectrum of genetic variants, transcriptomic alterations, and epigenetic modifications that may contribute to pathogenesis.

The three dominant sequencing platforms—Illumina, Oxford Nanopore Technology (ONT), and Pacific Biosciences (PacBio)—each offer distinct advantages and limitations. This review provides a structured comparison of these technologies within the specific context of building a bioinformatics pipeline for POI research, offering application notes and detailed protocols to guide researchers and drug development professionals in platform selection and implementation.

Technology Comparison and Performance Metrics

Technical Specifications and Performance Characteristics

Table 1: Sequencing platform performance characteristics relevant to POI research

| Feature | Illumina | PacBio HiFi | Oxford Nanopore |

|---|---|---|---|

| Read Length | Short (up to 2x300 bp for MiSeq) [26] | Long (average ~16 kb) [27] | Very Long (theoretically unlimited) [25] |

| Single-Molecule Accuracy | >99.9% (inherent) | ~Q27 (99.8%) [26] | >99% with Q20+ chemistry [28] |

| Typical Output per Run | 0.12 Gb (MiSeq V3-V4) [26] | 0.55 Gb (16S sequencing) [26] | 0.89 Gb (16S sequencing) [26] |

| Primary Strengths | High throughput, low per-base cost, established workflows | High accuracy long reads, simultaneous epigenetic detection [27] | Ultra-long reads, real-time analysis, direct RNA/epigenetic detection [25] |

| Key Limitations | Limited to short fragments, cannot resolve complex regions | Lower throughput than Illumina, higher DNA input requirements | Higher raw error rate than competitors, though correctable [29] |

| Ideal POI Application | Targeted gene panels, variant validation, miRNA sequencing | Full-length isoform sequencing, haplotype phasing, imprinting disorders [27] | Novel transcript discovery, alternative splicing, base modification analysis [25] |

Taxonomic and Variant Resolution Performance

Table 2: Comparative taxonomic resolution across platforms (species-level classification performance)

| Platform | Target Region | Species-Level Classification Rate | Notes |

|---|---|---|---|

| Illumina MiSeq | V3-V4 (∼442 bp) [26] | 47% [26] | Limited by short read length; many sequences labeled "uncultured_bacterium" [26] |

| PacBio Sequel II | Full-length 16S (∼1,453 bp) [26] | 63% [26] | 16 percentage points higher than Illumina; better resolution but still limited by database annotations [26] |

| ONT MinION | Full-length 16S (∼1,412 bp) [26] | 76% [26] | 29 percentage points higher than Illumina; best resolution but database limitations persist [26] |

Long-read technologies (PacBio and ONT) demonstrate a clear advantage in species-level resolution, which is critical for microbiome studies associated with POI. However, a significant challenge across all platforms is the high proportion of sequences classified with ambiguous names such as "uncultured_bacterium," highlighting a limitation in current reference databases rather than sequencing technology itself [26].

For variant detection, a recent clinical study of pediatric rare diseases demonstrated that HiFi sequencing provided a diagnostic yield 10 percentage points higher (37% vs. 27%) than standard testing methods, highlighting its power for resolving complex genetic disorders [27]. ONT's accuracy for Single Nucleotide Polymorphism (SNP) calling is now comparable to state-of-the-art short-read methods, and its Q20+ chemistry makes systematic analysis of cancer mutations practical [28].

Application Notes for POI Research

Insights from a Nanopore Sequencing Study of POI

A 2025 study utilizing ONT's PromethION platform for full-length transcriptome sequencing of POI patient blood samples demonstrated the unique value of long-read sequencing for this condition. Key findings included [25]:

- Identification of 26,122 transcripts, including 7,724 novel gene loci and 13,593 novel transcripts not previously annotated.

- Detection of 382 differentially expressed transcripts (366 down-regulated, 16 up-regulated).

- Characterization of 8,834 alternative splicing events and 65,254 alternative polyadenylation sites, revealing major sources of transcript diversity.

- Pathway enrichment analysis linking ferroptosis to POI pathogenesis.

- Prediction of 494 high-confidence lncRNAs and 1,768 transcription factors from full-length sequences.

- Immune profiling revealing down-regulated CD8+ T cells and monocytes significantly correlated with Anti-Müllerian Hormone (AMH) levels.

This study exemplifies how ONT's long-read capability can uncover previously inaccessible layers of transcriptomic complexity in POI, providing new insights into pathogenesis and potential diagnostic biomarkers.

Platform-Specific Applications for POI

- Illumina: Most suitable for targeted gene panels of known POI-associated genes (e.g., FMR1, BMP15) and miRNA expression profiling. Its high accuracy is ideal for confirming suspected point mutations. Emerging 5-base chemistry enables simultaneous genetic and epigenetic profiling in a single run [30].

- PacBio HiFi: Optimal for resolving imprinting disorders relevant to POI. HiFi sequencing enables phasing of variants and methylation detection, having uncovered 60% previously unknown loci with parent-of-origin effects in a developmental study [27]. Excellent for full-length isoform sequencing of candidate genes.

- Oxford Nanopore: Superior for novel transcript discovery and characterizing post-transcriptional regulation (alternative splicing, fusion genes). Direct RNA sequencing captures nucleotide modifications without cDNA conversion. Portable models enable potential point-of-care applications [25].

Experimental Protocols

Full-Length Transcriptome Sequencing for POI (ONT Protocol)

Sample Collection and Preparation [25]:

- Patient Selection: Collect peripheral blood from POI patients (diagnosed by age <40, amenorrhea >4 months, FSH >25 IU/L on two occasions) and age/BMI-matched controls.

- RNA Preservation: Draw 2.5 ml blood into PAXgene Blood RNA Tubes and store at -80°C.

- RNA Extraction: Use PAXgene Blood miRNA Kit (QIAGEN) following manufacturer's protocol.

- Library Preparation:

  - Synthesize cDNA using Thermo Scientific Maxima H Minus Reverse Transcriptase.

  - Prepare sequencing libraries using ONT's ligation sequencing kit without fragmentation.

- Sequencing: Load library onto PromethION flow cell and run for up to 72 hours with real-time basecalling.

Bioinformatic Analysis [25]:

- Basecalling and Demultiplexing: Use Guppy or Dorado for basecalling followed by barcode removal.

- Read Filtering: Retain reads with >90% identity and >85% coverage to reference.

- Alignment: Map to human reference genome (GRCh38) using Minimap2.

- Transcript Assembly & Annotation: Identify novel transcripts with Gffcompare; functionally annotate using NR, SwissProt, GO, KEGG databases.

- Differential Expression: Use DESeq2 with |log2FC|>1.5 and FDR<0.05 thresholds.

- Isoform Analysis: Identify alternative splicing with AStalavista; polyadenylation sites with TAPIS pipeline.

- lncRNA Prediction: Use CPC, CPAT, and CNCI tools to identify non-coding RNAs.
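The differential-expression thresholds above (|log2FC| > 1.5 and FDR < 0.05) amount to a simple classification of each transcript once a results table is available. The sketch below assumes results have already been exported as (transcript ID, log2 fold change, adjusted p-value) tuples; the transcript IDs and values are made up, and missing adjusted p-values are treated as not significant.

```python
def classify_transcripts(results, lfc_cutoff=1.5, fdr_cutoff=0.05):
    """Split transcripts into up- and down-regulated lists.

    results: iterable of (transcript_id, log2_fold_change, fdr) tuples,
    where fdr may be None if no adjusted p-value was reported.
    """
    up, down = [], []
    for transcript_id, log2fc, fdr in results:
        if fdr is None or fdr >= fdr_cutoff:
            continue  # not significant after multiple-testing correction
        if log2fc > lfc_cutoff:
            up.append(transcript_id)
        elif log2fc < -lfc_cutoff:
            down.append(transcript_id)
    return up, down


# Hypothetical results for illustration.
de_results = [
    ("tx_A", 2.1, 0.001),    # up-regulated
    ("tx_B", -1.8, 0.01),    # down-regulated
    ("tx_C", 1.2, 0.0001),   # significant but below the fold-change cutoff
    ("tx_D", -3.0, 0.2),     # large change but not significant
    ("tx_E", 2.5, None),     # no adjusted p-value reported
]
up_regulated, down_regulated = classify_transcripts(de_results)
print(up_regulated, down_regulated)
```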

Figure 1: Full-length transcriptome analysis workflow for POI research using Oxford Nanopore technology.

Targeted Gene Panel Sequencing for POI (Illumina Protocol)

Library Preparation and Sequencing [10]:

- DNA Extraction: Use validated methods (e.g., QIAamp DNA Blood Mini Kit) with quality control (A260/280 ratio 1.8-2.0).

- Target Enrichment: Design probes to capture known POI-associated genes (e.g., FOXL2, NR5A1, FIGLA) and susceptibility loci.

- Library Preparation:

  - Fragment DNA to 200-300 bp (Covaris sonicator).

  - Perform end-repair, A-tailing, and adapter ligation (Illumina TruSeq Kit).

  - Amplify library with 8-10 PCR cycles.

- Quality Control: Validate library size distribution (Fragment Analyzer) and quantify (qPCR).

- Sequencing: Load pooled libraries on MiSeq or NextSeq system; aim for minimum 100x coverage.

Bioinformatic Analysis [10]:

- Base Calling and Demultiplexing: Use Illumina bcl2fastq.

- Quality Control: Assess with FastQC; trim adapters with Trimmomatic.

- Alignment: Map to reference genome (GRCh38) using BWA-MEM.

- Variant Calling: Use GATK HaplotypeCaller for SNPs/indels; Manta for SVs.

- Annotation: Annotate variants with ANNOVAR or SnpEff; prioritize based on population frequency (gnomAD), predicted impact (CADD), and POI association (ClinVar).

Figure 2: Targeted gene panel sequencing workflow for mutation screening in POI patients.

HiFi Sequencing for Imprinting Disorders (PacBio Protocol)

Library Preparation and Sequencing [27]:

- DNA Extraction: Use high-molecular-weight DNA extraction method (e.g., MagAttract HMW DNA Kit).

- Library Preparation:

  - Repair DNA and select >10 kb fragments (BluePippin system).

  - Prepare SMRTbell library without DNA fragmentation (SMRTbell Prep Kit 3.0).

  - Validate library quality (Fragment Analyzer).

- Sequencing: Load on Sequel IIe system with 10h movie time; use binding kit 3.0 and sequencing kit 2.0.

Bioinformatic Analysis [27]:

- CCS Read Generation: Use SMRT Link with minimum 3 full passes.

- Alignment: Map to reference genome (GRCh38) using pbmm2.

- Variant Calling: Use DeepVariant for SNPs/indels; pbsv for structural variants.

- Methylation Analysis: Use MoMI for base modification detection.

- Phasing: Use HapCUT2 or WhatsHap for haplotype phasing.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key reagents and kits for POI sequencing studies

| Reagent/Kits | Application | Function | Example Product |

|---|---|---|---|

| Blood RNA Collection Tube | Sample Collection | Stabilizes intracellular RNA at room temperature for transport and storage | PAXgene Blood RNA Tube [25] |

| Total RNA Extraction Kit | Nucleic Acid Extraction | Isolates high-quality, inhibitor-free total RNA from whole blood | PAXgene Blood miRNA Kit [25] |

| HMW DNA Extraction Kit | Nucleic Acid Extraction | Isolates high-molecular-weight DNA for long-read sequencing | MagAttract HMW DNA Kit |

| Reverse Transcriptase | Library Prep | Synthesizes cDNA from RNA templates for transcriptome sequencing | Maxima H Minus Reverse Transcriptase [25] |

| Ligation Sequencing Kit | Library Prep (ONT) | Prepares DNA libraries for nanopore sequencing with native barcoding | ONT Ligation Sequencing Kit V14 [28] |

| SMRTbell Prep Kit | Library Prep (PacBio) | Creates SMRTbell libraries for PacBio circular consensus sequencing | SMRTbell Prep Kit 3.0 [27] |

| TruSeq DNA PCR-Free | Library Prep (Illumina) | Prepares high-quality Illumina libraries without PCR amplification bias | Illumina TruSeq DNA PCR-Free Kit |

Platform Selection Guidelines for POI Research

Decision Framework

- Choose Illumina when: Your POI research requires high-throughput sequencing of known genomic regions, validation of candidate variants, or cost-effective profiling of large patient cohorts. Ideal for targeted panels and miRNA discovery.

- Choose PacBio HiFi when: Investigating genomic imprinting, haplotype-specific effects, or complex structural variations in POI families. Provides the best balance of long reads and high accuracy for de novo mutation discovery.

- Choose Oxford Nanopore when: Exploring novel transcriptome features, alternative splicing patterns, RNA modifications, or rapid analysis in POI. Superior for full-length isoform sequencing and real-time adaptive sampling.

Emerging Technologies and Future Directions

Recent advancements across all platforms are particularly relevant for POI research:

- Illumina's 5-base chemistry enables simultaneous genetic and epigenetic profiling in a single run, potentially revealing novel methylation markers in POI [30].

- PacBio's HiFi sequencing now enables comprehensive analysis of hemoglobin variants in population screening, suggesting applications for carrier screening in POI families [27].

- Nanopore's direct RNA sequencing and protein barcoding roadmap points toward truly integrated multiomic analysis of POI samples [31].

The convergence of these technologies with improved bioinformatic pipelines will likely enable more comprehensive POI diagnostics, potentially combining targeted sequencing for known variants with long-read approaches for novel discovery in a multi-platform strategy.

Next-Generation Sequencing (NGS) has revolutionized biomedical research, enabling comprehensive analysis of the genetic basis of diseases. In the context of Premature Ovarian Insufficiency (POI) research, a condition affecting 1-3.7% of women before age 40 and characterized by the cessation of ovarian function, NGS technologies are instrumental in identifying associated genetic variants [32] [6]. The analytical process relies on a structured pipeline that transforms raw sequencing data into interpretable genetic variants through three principal file formats: FASTQ, BAM, and VCF. This protocol outlines the characteristics, functionalities, and processing steps of these formats within a bioinformatics pipeline tailored for POI research, providing researchers with practical guidance for their genomic analyses.

Understanding Core NGS File Formats

FASTQ: Raw Sequence Data Storage

The FASTQ format serves as the primary output from high-throughput sequencing instruments and the starting point for most bioinformatics analyses [33]. This text-based format stores both nucleotide sequences and corresponding quality scores, providing the essential data required for downstream processing.

Key Characteristics:

- File Extension: .fastq or .fq (typically compressed as .fastq.gz or .fq.gz)

- Compression: Almost always gzipped to conserve storage space

- Distribution Pattern: Usually distributed in pairs (R1 and R2) for paired-end sequencing

- Human Readability: Not easily readable in raw form due to size and structure

Structural Components: Each read in a FASTQ file occupies exactly four lines:

- Sequence Identifier: Begins with '@' character and contains instrument data

- Nucleotide Sequence: Raw bases (A, C, G, T, N)

- Separator Line: Typically just a '+' character

- Quality Scores: ASCII-encoded Phred-scaled quality values for each base

Example FASTQ entry (an illustrative five-base read, not taken from a real run):

@read_1

ACGTN

+

II?5+

Quality Score Interpretation: The quality scores represent the probability of sequencing error for each base, encoded in ASCII format where each character corresponds to a Phred quality score (Q) calculated as Q = -10log₁₀(P), where P is the estimated probability of the base call being incorrect [34]. These scores enable quality control and filtering during processing.

Table 1: FASTQ Quality Score Interpretation

| Phred Quality Score (Q) | Probability of Incorrect Base Call | Typical ASCII Character (Sanger) |

|---|---|---|

| 10 | 1 in 10 | + |

| 20 | 1 in 100 | 5 |

| 30 | 1 in 1,000 | ? |

| 40 | 1 in 10,000 | I |
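The relationship in Table 1 can be reproduced in a few lines. The sketch below parses one four-line FASTQ record, decodes the quality string using the Sanger (Phred+33) offset, and converts a score back to an error probability with P = 10^(-Q/10). The function names and the example read are illustrative assumptions.

```python
def phred_scores(quality_line, offset=33):
    """Decode an ASCII quality string into Phred scores (Sanger offset by default)."""
    return [ord(ch) - offset for ch in quality_line]


def error_probability(q):
    """Invert Q = -10 * log10(P) to recover the error probability P."""
    return 10 ** (-q / 10)


def parse_fastq_record(lines):
    """Parse exactly four lines into (read_id, sequence, scores)."""
    header, sequence, separator, quality = (line.rstrip("\n") for line in lines)
    if not header.startswith("@") or not separator.startswith("+"):
        raise ValueError("malformed FASTQ record")
    if len(sequence) != len(quality):
        raise ValueError("sequence and quality lengths differ")
    return header[1:], sequence, phred_scores(quality)


# Illustrative record: the quality characters are the ones listed in Table 1.
fastq_lines = ["@read_1", "ACGTN", "+", "II?5+"]
read_id, sequence, scores = parse_fastq_record(fastq_lines)
print(read_id, sequence, scores)
print([error_probability(q) for q in scores])
```

In practice, dedicated parsers handle compressed input and very large files; this sketch is only meant to show how the encoding works.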

BAM: Aligned Sequence Data Storage

BAM (Binary Alignment Map) represents the compressed binary version of the SAM format, used to store sequencing reads aligned to a reference genome [33]. This format is essential for variant calling, coverage analysis, and visualization.

Key Characteristics:

- File Extension: .bam

- Companion File: Paired with .bai index file for rapid access

- Compression: Binary format with efficient compression

- Reference Dependency: Aligned to specific genome versions (e.g., GRCh38/hg38)

Data Content: BAM files contain comprehensive alignment information including:

- Read sequences aligned to a reference genome

- Quality scores for each base

- Mapping positions and alignment flags

- CIGAR strings representing alignment operations

- Optional metadata fields
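CIGAR strings are compact but easy to misread, so a short parsing sketch may help. Following the SAM specification, the operations M, D, N, = and X consume reference bases, while M, I, S, = and X consume read bases. The helper names and the example CIGAR string are assumptions for illustration.

```python
import re

CIGAR_PATTERN = re.compile(r"(\d+)([MIDNSHP=X])")
REFERENCE_CONSUMING = set("MDN=X")
QUERY_CONSUMING = set("MIS=X")


def parse_cigar(cigar):
    """Split a CIGAR string into (length, operation) pairs, validating the whole string."""
    operations = [(int(n), op) for n, op in CIGAR_PATTERN.findall(cigar)]
    if "".join(f"{n}{op}" for n, op in operations) != cigar:
        raise ValueError(f"invalid CIGAR string: {cigar!r}")
    return operations


def reference_span(cigar):
    """Number of reference bases covered by the alignment."""
    return sum(n for n, op in parse_cigar(cigar) if op in REFERENCE_CONSUMING)


def query_length(cigar):
    """Number of read bases described by the CIGAR (soft clips included)."""
    return sum(n for n, op in parse_cigar(cigar) if op in QUERY_CONSUMING)


# Illustrative alignment: soft clip, matches, a 2-bp insertion, and a 3-bp deletion.
example_cigar = "5S45M2I50M3D48M"
span = reference_span(example_cigar)
read_length = query_length(example_cigar)
print(span, read_length)
```

The insertion and deletion in this example are the kind of events that, in a coding region, may shift the reading frame, which is why indel-aware alignment matters for POI gene panels.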

CRAM Format Alternative: CRAM provides a more space-efficient alternative to BAM, typically reducing file sizes by 30-60% through reference-based compression [33]. However, it requires access to the reference sequence for decompression, making it ideal for archiving but less suitable for data distribution.

VCF: Variant Call Format Storage

VCF serves as the standard format for storing genetic variation data, including SNPs, insertions, deletions, and structural variants [33]. This format represents the final output of variant calling pipelines and the starting point for downstream interpretation.

Key Characteristics:

- File Extension: .vcf (often compressed as .vcf.gz with tabix index .vcf.gz.tbi)

- Human Readability: Semi-readable text format

- Structure: Header section with metadata and data section with variant information

Data Content: VCF files contain:

- Chromosome and position information

- Reference and alternative alleles

- Quality metrics and filtering flags

- Genotype information for multiple samples

- Functional annotations (when available)
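The fields listed above map onto the tab-separated columns of a VCF data line. The sketch below parses a single line into a dictionary; it is a simplified illustration that assumes well-formed input, and the coordinates, sample name, and INFO values in the example are made up. Established VCF libraries should be preferred for real analyses.

```python
def parse_vcf_line(line, sample_names):
    """Parse one VCF data line (not a header line) into a dictionary."""
    fields = line.rstrip("\n").split("\t")
    chrom, pos, variant_id, ref, alt, qual, filt, info = fields[:8]

    info_dict = {}
    for item in info.split(";"):
        if item in ("", "."):
            continue
        key, sep, value = item.partition("=")
        info_dict[key] = value if sep else True  # flags have no '=value' part

    record = {
        "chrom": chrom,
        "pos": int(pos),
        "id": None if variant_id == "." else variant_id,
        "ref": ref,
        "alt": alt.split(","),
        "qual": None if qual == "." else float(qual),
        "filter": filt,
        "info": info_dict,
        "samples": {},
    }
    if len(fields) > 9:
        format_keys = fields[8].split(":")
        for name, sample_field in zip(sample_names, fields[9:]):
            record["samples"][name] = dict(zip(format_keys, sample_field.split(":")))
    return record


# Made-up heterozygous SNV for a hypothetical sample called "proband".
vcf_line = "chr1\t12345\t.\tG\tA\t812.4\tPASS\tDP=64;AF=0.5;DB\tGT:DP\t0/1:64"
vcf_record = parse_vcf_line(vcf_line, ["proband"])
print(vcf_record)
```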

Experimental Protocols for NGS Data Processing

From FASTQ to BAM: Data Preparation Protocol

The initial processing of raw sequencing data involves multiple quality control and alignment steps to produce analysis-ready BAM files [35].

Materials and Equipment:

- High-performance computing cluster with Unix/Linux environment

- Minimum 16 GB RAM (32+ GB recommended for whole genomes)

- Multi-core processors (8+ cores recommended)

- Sufficient storage capacity (hundreds of GB to TB depending on sample count)

Procedure:

Step 1: Quality Control and Preprocessing

- Assess raw read quality using FastQC (v0.11.7 or newer)

- Perform adapter trimming and quality filtering with fastp (v0.20.0 or newer)

- Remove low-quality bases and artifacts

- For paired-end data, ensure proper matching of R1 and R2 files

Step 2: Read Alignment

- Align reads to reference genome (GRCh38 recommended) using BWA-MEM (v0.7.17 or newer)

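- Command (the -R read-group string records the sample information that GATK tools require downstream):

bwa mem -M -t <threads> -R '@RG\tID:<id>\tSM:<sample>\tPL:ILLUMINA' <reference.fa> <read1.fq> <read2.fq> > <aligned.sam>

- Convert SAM to BAM:

samtools view -b <aligned.sam> > <aligned.bam>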

Step 3: Post-Alignment Processing

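- Sort BAM files by coordinate:

samtools sort -o <sorted.bam> <aligned.bam>

- Mark duplicate reads (GATK MarkDuplicates accepts a coordinate-sorted BAM; samblaster instead expects read-name-grouped SAM streamed from the aligner):

gatk MarkDuplicates -I <sorted.bam> -O <deduplicated.bam> -M <duplicate_metrics.txt>

- Index final BAM file:

samtools index <deduplicated.bam>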

Step 4: Base Quality Score Recalibration (Optional but Recommended)

- Generate recalibration table with GATK BaseRecalibrator

- Apply recalibration with GATK ApplyBQSR
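Step 4 names the two GATK tools without showing their invocation. As a hedged sketch, the helper below assembles the two GATK4 command lines as argument lists so they can be logged or passed to a workflow manager; it only builds and prints the commands and does not run them. The function name and all file names are hypothetical placeholders.

```python
import shlex


def bqsr_commands(reference, bam, known_sites, recal_table, output_bam):
    """Return the BaseRecalibrator and ApplyBQSR commands as argument lists."""
    recalibrate = ["gatk", "BaseRecalibrator", "-R", reference, "-I", bam]
    for vcf in known_sites:
        # One --known-sites argument per resource of known polymorphic sites.
        recalibrate += ["--known-sites", vcf]
    recalibrate += ["-O", recal_table]

    apply_bqsr = [
        "gatk", "ApplyBQSR",
        "-R", reference,
        "-I", bam,
        "--bqsr-recal-file", recal_table,
        "-O", output_bam,
    ]
    return [recalibrate, apply_bqsr]


# Placeholder file names for illustration only.
commands = bqsr_commands(
    reference="reference.fa",
    bam="deduplicated.bam",
    known_sites=["known_snps.vcf.gz", "known_indels.vcf.gz"],
    recal_table="recal.table",
    output_bam="recalibrated.bam",
)
for command in commands:
    print(shlex.join(command))
```

Keeping commands as lists rather than strings avoids shell-quoting problems when sample names or paths contain spaces.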

From BAM to VCF: Variant Calling Protocol

Variant calling identifies genetic differences between the sample and reference genome, producing VCF files for downstream analysis [35].

Procedure:

Step 1: Variant Discovery

- Use GATK HaplotypeCaller, which calls SNPs and indels together through local reassembly of haplotypes

- Command:

gatk HaplotypeCaller -R <reference.fa> -I <input.bam> -O <raw_variants.vcf>

- For large sample sets, use GVCF approach:

gatk HaplotypeCaller -R <reference.fa> -I <input.bam> -O <sample.g.vcf> -ERC GVCF

Step 2: Joint Genotyping (Multiple Samples)

- Combine GVCFs (repeat -V once per sample):

gatk CombineGVCFs -R <reference.fa> -V <sample1.g.vcf> -V <sample2.g.vcf> -O <cohort.g.vcf>

- Perform joint genotyping:

gatk GenotypeGVCFs -R <reference.fa> -V <cohort.g.vcf> -O <raw_cohort_variants.vcf>
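For cohorts with many samples, the per-sample and joint steps can be generated programmatically. The sketch below builds argument lists suitable for `subprocess.run`; the function name and the output file naming scheme are assumptions made for illustration, and nothing is executed.

```python
def build_joint_calling_commands(reference, sample_bams, cohort="cohort"):
    """Build GATK argument lists for per-sample GVCF calling and joint genotyping."""
    per_sample = []
    gvcfs = []
    for sample, bam in sample_bams.items():
        gvcf = f"{sample}.g.vcf.gz"
        gvcfs.append(gvcf)
        per_sample.append(
            ["gatk", "HaplotypeCaller", "-R", reference,
             "-I", bam, "-O", gvcf, "-ERC", "GVCF"]
        )

    # CombineGVCFs takes one -V argument per input GVCF.
    combined = f"{cohort}.g.vcf.gz"
    combine = ["gatk", "CombineGVCFs", "-R", reference]
    for gvcf in gvcfs:
        combine += ["-V", gvcf]
    combine += ["-O", combined]

    genotype = ["gatk", "GenotypeGVCFs", "-R", reference,
                "-V", combined, "-O", f"{cohort}.raw.vcf.gz"]
    return per_sample + [combine, genotype]


steps = build_joint_calling_commands(
    "GRCh38.fa", {"P01": "P01.recal.bam", "P02": "P02.recal.bam"}
)
for argv in steps:
    print(" ".join(argv))
```

Keeping each command as a list avoids shell quoting issues when sample names or paths contain unusual characters.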

Step 3: Variant Filtering

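- Apply variant quality score recalibration (VQSR) when the callset is large enough to train the model; for small cohorts or targeted panels, GATK recommends hard filtering instead

- SNP recalibration (add one --resource per training set and one -an per annotation):

gatk VariantRecalibrator -R <reference.fa> -V <input.vcf> --resource:<name>,known=false,training=true,truth=true,prior=<prior> <known_sites.vcf> -an QD -an MQ -an FS -mode SNP -O <recal_file> --tranches-file <tranches_file>

- Apply recalibration:

gatk ApplyVQSR -R <reference.fa> -V <input.vcf> -O <filtered.vcf> --recal-file <recal_file> --tranches-file <tranches_file> --truth-sensitivity-filter-level 99.0 -mode SNP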

Step 4: Variant Annotation

- Add functional annotations using ANNOVAR, VEP, or similar tools

- Include population frequency databases (gnomAD, 1000 Genomes)

- Predict functional consequences on genes and proteins

Application to POI Research: Case Studies

Targeted Gene Panel Sequencing for POI

Recent studies have successfully implemented NGS approaches to identify genetic variants associated with POI. A 2021 study developed a custom NGS panel (OVO-Array) containing 295 genes associated with ovarian function and POI pathogenesis [32].

Experimental Design:

- Subjects: 64 patients with early-onset POI (age range: 10-25 years)

- Sequencing Method: Targeted NGS with Illumina NextSeq 500

- Coverage: 90% of target regions at 50x coverage

- Analysis Pipeline: BWA for alignment, GATK for variant calling

Results:

- 75% of patients carried at least one genetic variant in candidate genes

- 17% carried two variants, 14% carried three variants

- Oligogenic inheritance pattern observed in multiple cases

- Pathway analysis revealed variants in critical biological processes:

- Cell cycle, meiosis, and DNA repair

- Extracellular matrix remodeling

- NOTCH and WNT signaling pathways

- Folliculogenesis and oocyte development
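Per-patient variant burden of the kind summarized above can be tallied from a flat list of calls. The following sketch uses made-up patient IDs and calls purely for illustration; it does not reproduce the study data, and the function name is hypothetical.

```python
from collections import Counter


def burden_summary(calls, cohort_size):
    """Summarize how many patients carry one or several candidate variants.

    calls: iterable of (patient_id, gene) pairs, one pair per variant.
    """
    variants_per_patient = Counter(patient for patient, _gene in calls)
    with_any = len(variants_per_patient)
    with_multiple = sum(1 for n in variants_per_patient.values() if n >= 2)
    return {
        "with_variant": with_any / cohort_size,
        "two_or_more": with_multiple / cohort_size,
    }


# Toy cohort of four patients; P4 carries no candidate variant.
toy_calls = [
    ("P1", "GDF9"), ("P1", "MCM8"),
    ("P2", "FSHR"),
    ("P3", "NOBOX"), ("P3", "STAG3"), ("P3", "BMP15"),
]
summary = burden_summary(toy_calls, cohort_size=4)
print(summary)
```

Carrying two or more variants is only a starting point for an oligogenic hypothesis; segregation and functional evidence are still needed to support it.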

Table 2: POI Genetic Analysis Results from Targeted NGS Studies

| Study | Patient Cohort | Genes Analyzed | Variant Detection Rate | Key Findings |

|---|---|---|---|---|

| Rota et al. (2021) [32] | 64 early-onset POI | 295 genes | 75% | Oligogenic inheritance in 48% of patients |

| Hungarian Study (2024) [6] | 48 POI patients | 31 known POI genes | 16.7% monogenic defects | Additional 29.2% with potential risk factors |

Implementation Considerations for POI Research

Custom Panel Design: For POI research, targeted gene panels offer cost-effective deep sequencing of established candidate genes. Essential gene categories include:

- Folliculogenesis players (GDF9, BMP15, FIGLA, NOBOX)

- Meiosis and DNA repair genes (SYCE1, STAG3, MCM8, MCM9)

- Hormone signaling pathway genes (FSHR, LHCGR)

- Metabolic and autoimmune association genes
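One simple way to keep such a panel definition machine-readable is a category-to-genes mapping with a reverse lookup. The sketch below contains only the genes named in the list above; the variable and function names are illustrative, and the fourth category is left empty because no genes are named for it here.

```python
PANEL = {
    "Folliculogenesis": ["GDF9", "BMP15", "FIGLA", "NOBOX"],
    "Meiosis and DNA repair": ["SYCE1", "STAG3", "MCM8", "MCM9"],
    "Hormone signaling": ["FSHR", "LHCGR"],
    "Metabolic and autoimmune association": [],
}

# Reverse index: gene symbol -> category
GENE_TO_CATEGORY = {
    gene: category for category, genes in PANEL.items() for gene in genes
}


def category_of(gene):
    """Return the panel category for a gene symbol, or None if off-panel."""
    return GENE_TO_CATEGORY.get(gene.upper())


print(category_of("MCM8"))
print(category_of("BRCA1"))
```

The same mapping can later be used to group annotated variants by biological process when reporting results.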

Data Analysis Specifications:

- Reference Genome: GRCh38/hg38 recommended

- Variant Filtering: Focus on rare variants (MAF <0.01 in population databases)

- Annotation Priorities: Predicted deleteriousness, conservation scores, previous POI associations

- Validation: Sanger sequencing to confirm candidate pathogenic variants
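The rare-variant criterion can be expressed as a small predicate over annotated variants. This is a sketch: the field names `gnomad_af` and `kg_af` and the example records are hypothetical, and real annotation output (from ANNOVAR or VEP, for instance) uses its own column names.

```python
def is_rare(variant, max_maf=0.01, af_keys=("gnomad_af", "kg_af")):
    """Keep a variant only if every available population frequency is below max_maf.

    Variants absent from all databases (no frequency reported) count as rare.
    """
    freqs = [variant[key] for key in af_keys if variant.get(key) is not None]
    return all(freq < max_maf for freq in freqs)


# Made-up annotated variants for illustration.
variants = [
    {"id": "v1", "gene": "GDF9", "gnomad_af": 0.0002, "kg_af": None},
    {"id": "v2", "gene": "FSHR", "gnomad_af": 0.12, "kg_af": 0.10},
    {"id": "v3", "gene": "STAG3", "gnomad_af": None, "kg_af": None},
    {"id": "v4", "gene": "NOBOX", "gnomad_af": 0.004, "kg_af": 0.02},
]
rare = [v["id"] for v in variants if is_rare(v)]
print(rare)
```

Requiring every available frequency to fall below the threshold is the conservative choice; a variant that is common in any one database is dropped.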

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for POI NGS Analysis

| Category | Item/Software | Specification/Version | Function in Workflow |

|---|---|---|---|

| Wet Lab Reagents | TruSeq DNA PCR-Free Library Prep | Illumina HT protocol | Library preparation for WGS |

| Wet Lab Reagents | Ion AmpliSeq Library Kit Plus | Thermo Fisher Scientific | Targeted panel library preparation |

| Wet Lab Reagents | Agencourt AMPure XP Reagent | Beckman Coulter | Library purification |

| Reference Materials | Human Reference Genome | GRCh38/hg38 | Alignment reference |

| Reference Materials | High-Confidence Variant Sets | GIAB NA12878, NIST v3.3.2 | Benchmarking and validation |

| Bioinformatics Tools | BWA | v0.7.17 or newer | Read alignment |

| Bioinformatics Tools | SAMtools | v1.10 or newer | BAM file processing |

| Bioinformatics Tools | GATK | v4.1.2.0 or newer | Variant discovery and calling |

| Bioinformatics Tools | FastQC | v0.11.7 or newer | Quality control |

| Variant Databases | gnomAD | v2.1.1 or newer | Population frequency filtering |

| Variant Databases | ClinVar | Latest release | Pathogenic variant annotation |

| Variant Databases | OMIM | Latest release | Disease association data |

Advanced Considerations and Emerging Technologies

Data Compression and Storage Optimization

With WGS data requiring approximately 65 GB per sample for FASTQ files and 55 GB for BAM files at 35x coverage, efficient compression is essential for large-scale studies [36]. Recent benchmarks show specialized tools can achieve compression ratios up to 1:6 for FASTQ data.

Compression Options:

- DRAGEN ORA: 1:5.64 compression ratio, fastest processing

- Genozip: 1:5.99 compression ratio, versatile format support

- CRAM Format: 30-60% smaller than BAM, ideal for archiving
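The storage impact of these options follows directly from the figures quoted above. The sketch below is plain arithmetic on those numbers; the function names are illustrative, and a ratio written as 1:5.64 is interpreted as the compressed size being the original size divided by 5.64.

```python
FASTQ_GB = 65.0  # per-sample FASTQ size quoted above
BAM_GB = 55.0    # per-sample BAM size quoted above


def compressed_size(original_gb, ratio):
    """Size after compression for a ratio written as 1:ratio."""
    return original_gb / ratio


def cram_size_range(bam_gb, min_saving=0.30, max_saving=0.60):
    """Smallest and largest expected CRAM size for a 30-60% reduction."""
    return bam_gb * (1 - max_saving), bam_gb * (1 - min_saving)


ora_gb = compressed_size(FASTQ_GB, 5.64)
genozip_gb = compressed_size(FASTQ_GB, 5.99)
cram_low_gb, cram_high_gb = cram_size_range(BAM_GB)

print(f"DRAGEN ORA FASTQ: {ora_gb:.1f} GB")
print(f"Genozip FASTQ:    {genozip_gb:.1f} GB")
print(f"CRAM:             {cram_low_gb:.1f} to {cram_high_gb:.1f} GB")
```

Under these assumptions a 65 GB FASTQ set shrinks to roughly 11.5 GB with DRAGEN ORA or 10.9 GB with Genozip, and a 55 GB BAM corresponds to a CRAM of about 22 to 38.5 GB.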

Accelerated Analysis Pipelines

Emerging tools like the LUSH toolkit offer significant performance improvements, processing 30x WGS data in 1.6 hours compared to 27 hours for standard GATK, which is approximately 17x faster while maintaining accuracy [37]. These advancements enable rapid analysis for clinical applications and large cohort studies.
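The quoted speedup can be checked with a one-line calculation, and the same figures give a rough sense of cohort-level savings. The cohort size of 100 samples below is a hypothetical value chosen for illustration, and the estimate assumes samples are processed one after another on a single node.

```python
GATK_HOURS = 27.0  # per 30x WGS sample, as quoted above
LUSH_HOURS = 1.6   # per 30x WGS sample, as quoted above

speedup = GATK_HOURS / LUSH_HOURS
hours_saved_per_sample = GATK_HOURS - LUSH_HOURS

cohort_size = 100  # hypothetical cohort
cohort_hours_saved = hours_saved_per_sample * cohort_size

print(f"speedup: {speedup:.1f}x")
print(f"hours saved for {cohort_size} samples: {cohort_hours_saved:.0f}")
```

The ratio works out to about 16.9, consistent with the rounded 17x figure.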

The structured progression from FASTQ to BAM to VCF files forms the computational backbone of modern POI genetic research. By implementing robust, standardized processing protocols and leveraging specialized analysis tools, researchers can effectively identify and interpret genetic variants contributing to ovarian insufficiency. The integration of these bioinformatics approaches with targeted gene panels and functional validation enables comprehensive characterization of POI genetics, advancing our understanding of this complex disorder and paving the way for improved diagnostic and therapeutic strategies.

Reference Genomes and Annotations for Human Genomics (GRCh38/hg38)

The Genome Reference Consortium human build 38 (GRCh38), also commonly known as hg38, represents the current standard in human reference genomics since its initial release in December 2013. [38] This assembly marks a significant evolution from its predecessor, GRCh37/hg19, through substantial improvements in sequence accuracy, gap closure, and the representation of global human genetic diversity. [38] [39] The primary advancements in GRCh38 include a major expansion of alternate (ALT) contigs to better represent population-level variation, correction of thousands of sequencing artifacts present in GRCh37 that caused false SNP and indel calls, inclusion of synthetic centromeric sequences, and updates to non-nuclear genomic sequences. [38]

For researchers investigating complex genetic disorders such as primary ovarian insufficiency (POI), adopting GRCh38 is increasingly crucial. [32] [6] Its enhanced representation of genetic variation provides a more accurate framework for identifying and annotating clinically relevant variants, thereby improving the reliability of downstream analyses and clinical interpretations. [40] [39] The transition to GRCh38 enables laboratories to leverage growing genomic resources, including the Match Annotation from NCBI and EMBL-EBI (MANE) transcript set and the gnomAD v4.1 database, which contains approximately five times more genomes than previous versions mapped to GRCh37. [39]

Technical Improvements in GRCh38/hg38 Over Previous Assemblies

Key Enhancements and Features

GRCh38 introduced several foundational improvements that directly impact variant calling accuracy and comprehensive genomic analysis:

- Expanded Alternate Loci: The assembly includes 261 ALT contigs across 60 megabases, dramatically increasing the representation of common complex variation, particularly in major histocompatibility loci (MHC) and other highly polymorphic regions. [38] [39] This contrasts with GRCh37, which contained only 9 ALT contigs at 3 genomic loci. [39]

- Correction of Sequence Errors: Thousands of small sequencing artifacts present in GRCh37 have been resolved, eliminating numerous false positive SNPs and indels that previously complicated variant analysis. [38]

- Structural Corrections: Several clinically relevant regions affected by assembly errors in GRCh37 have been corrected, including internal inversions in genes such as PTPRQ and false intergenic gaps affecting TMEM236 and MRC1. [39]

- Analysis Set Masking: The GRCh38 analysis set includes hard-masked pseudo-autosomal regions (PAR) on chromosome Y to facilitate mapping to chromosome X, along with masking of homologous centromeric and genomic repeat arrays on chromosomes 5, 14, 19, 21, and 22. [38]
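When working with a GRCh38 analysis set, it often helps to separate primary chromosomes from ALT and other auxiliary contigs before computing coverage or restricting variant calls. The sketch below classifies contig names by pattern. It assumes the UCSC-style naming used in commonly distributed GRCh38 analysis sets (for example `chr6_GL000250v2_alt`); the function and category labels are illustrative.

```python
import re

# chr1-chr22, chrX, chrY, chrM
PRIMARY = re.compile(r"^chr([1-9]|1[0-9]|2[0-2]|X|Y|M)$")


def classify_contig(name):
    """Assign a GRCh38 contig name to a coarse category based on its pattern."""
    if PRIMARY.match(name):
        return "primary"
    if name.endswith("_alt"):
        return "alt"
    if name.endswith("_decoy"):
        return "decoy"
    if name.startswith("HLA-"):
        return "hla"
    if name.endswith("_random"):
        return "unlocalized"
    if name.startswith("chrUn_"):
        return "unplaced"
    return "other"


for contig in ["chr1", "chrX", "chr6_GL000250v2_alt",
               "chr1_KI270706v1_random", "chrUn_KI270302v1", "chrEBV"]:
    print(contig, classify_contig(contig))
```

Contig names can be read from the reference `.fai` index or from the `@SQ` lines of a BAM header and passed through this function to build an include list.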

Comparative Analysis of GRCh37 and GRCh38

Table 1: Key differences between GRCh37 and GRCh38 reference genomes

| Feature | GRCh37/hg19 | GRCh38/hg38 | Impact on Analysis |

|---|---|---|---|

| Release Date | 2009 | 2013 (ongoing patches) | GRCh38 incorporates more recent genomic data |

| ALT Contigs | 9 ALT contigs at 3 loci [39] | 261 ALT contigs across 60 Mb [39] | Improved representation of population variation |

| Sequence Errors | Contains thousands of artifacts causing false variants [38] | Corrected sequencing artifacts [38] | Reduced false positive SNPs and indels |

| Centromeric Regions | Largely undefined | Synthetic centromeric sequences added [38] | Better characterization of peri-centromeric regions |

| Clinical Variants as Reference | Few instances | Several clinically relevant variants now reference (e.g., F5 p.R534Q) [39] | Altered variant annotation requires careful interpretation |

| MHC Region Representation | Limited | Expanded with alternative haplotypes [38] | Improved immunogenomics studies |

Implementing GRCh38/hg38 in POI Research Pipelines

Wet-Lab Sequencing Considerations

For targeted next-generation sequencing studies in primary ovarian insufficiency, several laboratory protocols have successfully implemented GRCh38. The fundamental workflow begins with extraction of high-quality DNA, followed by library preparation, sequencing, and alignment of the reads to the GRCh38 reference.

DNA Quality and Quantity Requirements:

- Input DNA: 10-50 ng of genomic DNA is typically used for amplicon-based NGS panels. [6]

- Quality: High molecular weight DNA with chemical purity is essential, free from contaminants like polysaccharides, proteoglycans, or secondary metabolites that can impair library preparation efficacy. [41]

Library Preparation and Sequencing: A standardized protocol for POI gene panel sequencing involves:

- Amplicon Library Preparation: Using the Ion AmpliSeq Library Kit Plus with multiplexed primer pairs (typically 2 pools) [6]

- PCR Conditions: 99°C for 2 minutes; 19 cycles of 99°C for 15 seconds and 60°C for 4 minutes; hold at 10°C [6]

- Template Preparation: Using semi-automated Ion OneTouch 2 instrument with emulsion PCR [6]

- Sequencing: Performing runs on Ion S5 system with 500 flows [6]
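As a planning aid, the thermocycling time implied by the PCR conditions above can be computed directly. The sketch counts only the programmed hold times; ramp times between temperatures and the final 10°C hold are not included.

```python
INITIAL_DENATURATION_S = 2 * 60  # 99°C for 2 minutes
CYCLES = 19
DENATURE_S = 15                  # 99°C for 15 seconds
ANNEAL_EXTEND_S = 4 * 60         # 60°C for 4 minutes

total_seconds = INITIAL_DENATURATION_S + CYCLES * (DENATURE_S + ANNEAL_EXTEND_S)
total_minutes = total_seconds / 60

print(f"programmed cycling time: {total_minutes:.2f} minutes")
```

The programmed steps add up to a little under 83 minutes per amplification run.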

Bioinformatics Implementation

Alignment and Variant Calling: The basic workflow for processing NGS data against GRCh38 includes:

- Base Calling and Demultiplexing: Using platform-specific pipelines (e.g., Torrent Suite for Ion Torrent data) [6]

- Alignment to GRCh38: Using optimized aligners (TMAP, BWA-MEM, or DRAGEN) [6]

- Variant Calling: Using GATK UnifiedGenotyper (as in [32]; superseded by HaplotypeCaller in GATK4) or similar tools [32]

- Variant Annotation: Functional consequences using Annovar, VEP, or similar tools [32] [6]

Table 2: Essential bioinformatics tools for GRCh38-based analysis

| Tool Category | Specific Tools | Application in POI Research |

|---|---|---|

| Sequence Alignment | BWA-MEM, DRAGEN, DRAGMAP, TMAP | Mapping reads to GRCh38 reference [6] [42] |

| Variant Calling | GATK Unified Genotyper, Atlas V2 | Identifying SNVs and indels [32] |

| Variant Annotation | Annovar, Ensembl VEP, Ion Reporter | Predicting functional consequences [32] [6] |

| Variant Validation | VariantValidator, ClinGen Allele Registry | Harmonizing variant descriptions across builds [39] |

| Quality Control | FastQC, MultiQC | Assessing sequence data quality [32] |

Handling ALT Contigs in GRCh38: Two primary methods exist for managing the expanded ALT contigs in GRCh38:

- ALT-aware Alignment: Uses liftover approaches to map reads aligning to ALT contigs back to primary chromosomal locations (older method) [42]