Comparative Analysis of Sperm Morphology Classification Algorithms: From Conventional ML to Advanced Deep Learning

Abstract

This article provides a comprehensive comparison of computational algorithms for sperm morphology classification, a critical yet subjective component of male fertility diagnostics. We systematically evaluate the evolution from conventional machine learning techniques to advanced deep learning architectures, including Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs). The analysis covers foundational principles, methodological applications, optimization strategies to overcome data and model limitations, and rigorous performance validation. Targeted at researchers and drug development professionals, this review synthesizes current evidence to highlight state-of-the-art approaches, their clinical applicability, and future directions for integrating artificial intelligence into standardized reproductive diagnostics.

The Foundation of Automated Analysis: Challenges and Datasets in Sperm Morphology

Sperm morphology analysis represents a critical, yet notoriously variable, component of male fertility assessment. Despite its established role in infertility diagnostics and treatment planning, conventional manual analysis suffers from significant subjectivity, with studies reporting diagnostic disagreement of up to 40% between expert evaluators [1]. This variability stems from multiple factors: the inherent complexity of sperm morphological classification, differences in technician training and expertise, and the labor-intensive nature of analyzing hundreds of sperm per sample [2] [3]. The clinical imperative for standardization is clear—without consistent, reproducible assessment, accurate diagnosis, appropriate treatment selection, and reliable prognostic information for patients remain compromised.

The evolution of sperm morphology analysis has progressed through distinct phases: initial reliance on purely manual assessment, the introduction of computer-assisted sperm analysis (CASA) systems utilizing traditional image processing, and, most recently, the emergence of deep learning algorithms capable of automated, high-accuracy classification [1] [2] [4]. This article compares these morphological classification approaches, examining their technical methodologies, performance characteristics, and clinical applicability to inform researchers, scientists, and drug development professionals in the field of reproductive medicine.

Comparative Analysis of Classification Algorithms

Performance Metrics Across Algorithm Classes

Table 1: Comparative performance of sperm morphology classification algorithms

| Algorithm Class | Specific Method | Reported Accuracy | Dataset | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Deep Learning | CBAM-enhanced ResNet50 + Deep Feature Engineering | 96.08% ± 1.2% [1] | SMIDS (3-class) | High accuracy, attention mechanisms, reduced processing time | Complex implementation, requires substantial computational resources |
| Deep Learning | CBAM-enhanced ResNet50 + Deep Feature Engineering | 96.77% ± 0.8% [1] | HuSHeM (4-class) | Superior performance on complex classification | Complex implementation, requires substantial computational resources |
| Deep Learning | MobileNet | 87% [4] | Custom dataset | Suitable for mobile deployment, efficient | Lower accuracy compared to more complex architectures |
| Deep Learning | Stacked CNN Ensemble | 95.2% [1] | HuSHeM | Combines multiple architectures | Computationally intensive |
| Conventional Machine Learning | Wavelet + Descriptor features + SVM | 83.8% [4] | Custom dataset | Interpretable features | Limited by handcrafted features |
| Conventional Machine Learning | Wavelet features + SVM | 80.5% [4] | Custom dataset | Interpretable features | Limited by handcrafted features |
| Human Assessment | Novice morphologists (untrained) | 53-81% (varies by category system) [3] | Custom images | Clinical interpretability | High variability, time-intensive |
| Human Assessment | Novice morphologists (trained with tool) | 90-98% (varies by category system) [3] | Custom images | Improves with standardized training | Requires extensive training to maintain proficiency |

Clinical Applicability and Standardization Potential

Table 2: Clinical implementation characteristics of classification approaches

| Parameter | Manual Assessment | Traditional CASA | Deep Learning Algorithms |
|---|---|---|---|
| Analysis Time | 30-45 minutes per sample [1] | 5-10 minutes per sample | <1 minute per sample [1] |
| Inter-observer Variability | High (kappa values 0.05-0.15) [1] | Moderate | Minimal (algorithm-dependent) |
| Training Requirements | Extensive (months to years) | Moderate | Minimal after implementation |
| Standardization Potential | Low without rigorous training protocols [3] | Moderate (system-dependent) | High |
| Ability to Detect Rare Abnormalities | High (experts) | Limited | High (with sufficient training data) |
| Regulatory Approval Status | Established reference | Varies by system | Emerging |
| Initial Implementation Cost | Low | Moderate to high | High |

Detailed Experimental Protocols

Deep Learning with Enhanced Architectures

Protocol 1: CBAM-enhanced ResNet50 with Deep Feature Engineering [1]

Sample Preparation: Sperm samples are stained using the Papanicolaou method according to WHO laboratory manual standards. Smears are fixed in 95% ethanol, then rehydrated sequentially in 80% ethanol, 50% ethanol, and purified water. Nuclear staining employs Harris's hematoxylin for 4 minutes, with cytoplasmic staining using Orange G-6 (OG-6) and EA-50.

Image Acquisition: Utilize an Olympus CX43 upright microscope with 100× oil immersion objective lens, coupled with a CMOS-based microscope camera (1920 × 1200 resolution, ≥70 fps frame rate). The system captures a series of Z-axis images (≥40 fps) to calculate the optimal focal plane, typically analyzing 400 sperm or 100 fields per sample.

Algorithm Implementation: The framework integrates ResNet50 backbone with Convolutional Block Attention Module (CBAM) attention mechanisms. The architecture includes multiple feature extraction layers (CBAM, Global Average Pooling, Global Max Pooling) combined with 10 distinct feature selection methods including Principal Component Analysis, Chi-square test, and Random Forest importance. Classification is performed using Support Vector Machines with RBF/Linear kernels and k-Nearest Neighbors algorithms. The model is evaluated using 5-fold cross-validation on benchmark datasets (SMIDS with 3000 images, 3-class; HuSHeM with 216 images, 4-class).

Validation Methodology: Performance metrics include accuracy, precision, recall, F1-score, and McNemar's test for statistical significance. Grad-CAM attention visualization provides clinical interpretability by highlighting morphologically relevant regions (head shape, acrosome integrity, tail defects).
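As an illustration of the significance testing mentioned above, the following is a minimal sketch of McNemar's test for two classifiers scored on the same images. It assumes the chi-square form with continuity correction; the source does not state which variant was used, and for small discordant counts an exact binomial version is usually preferred. The function name `mcnemar_test` and the toy labels are hypothetical.

```python
import math


def mcnemar_test(y_true, pred_a, pred_b):
    """McNemar's chi-square test with continuity correction.

    Compares two classifiers scored on the same samples and returns
    (b, c, statistic, p_value), where b and c count the discordant pairs.
    """
    b = c = 0
    for truth, a, other in zip(y_true, pred_a, pred_b):
        if a == truth and other != truth:
            b += 1  # only classifier A is correct
        elif a != truth and other == truth:
            c += 1  # only classifier B is correct
    if b + c == 0:
        return b, c, 0.0, 1.0  # no discordant pairs, no evidence of a difference
    statistic = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(statistic / 2.0))
    return b, c, statistic, p_value


# Hypothetical toy data: 12 images, all truly class 1.
y_true = [1] * 12
baseline = [0] * 8 + [1] * 4        # baseline model misses the first 8
enhanced = [1] * 8 + [0] + [1] * 3  # enhanced model misses only one
b, c, stat, p = mcnemar_test(y_true, enhanced, baseline)
```

Only the discordant pairs (images that exactly one model classifies correctly) enter the statistic, which is why the test suits paired comparisons on a fixed benchmark split.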

Traditional Machine Learning Approaches

Protocol 2: Conventional Feature-Based Classification [4]

Image Processing: Apply wavelet denoising and directional masking to enhance sperm head contours. Implement group sparsity approaches for segmentation of possible sperm shapes. Extract domain-specific features using wavelet transform and descriptors (Hu moments, Zernike moments, Fourier descriptors).

Classification Pipeline: Utilize support vector machines (SVM) with manually engineered features. Compare performance with k-nearest neighbors and decision tree algorithms. Training employs 5-fold cross-validation with rigorous train-test splits to prevent data leakage.
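To make the shape descriptors in this protocol concrete, the sketch below computes the first two Hu invariant moments of a binary sperm-head mask in plain Python. It is an illustrative stand-in, not the cited study's code: the mask representation (a list of rows of 0/1 values) and the function name `hu_first_two` are assumptions, and a full pipeline would use all seven invariants alongside Zernike and Fourier descriptors.

```python
def hu_first_two(mask):
    """Return the first two Hu invariant moments of a binary mask.

    mask is a list of rows; any truthy pixel belongs to the shape.
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not pts:
        raise ValueError("mask contains no foreground pixels")
    m00 = float(len(pts))
    xc = sum(x for x, _ in pts) / m00  # centroid gives translation invariance
    yc = sum(y for _, y in pts) / m00

    def eta(p, q):
        # Normalized central moment: scale invariance comes from the m00 power.
        mu = sum((x - xc) ** p * (y - yc) ** q for x, y in pts)
        return mu / m00 ** (1 + (p + q) / 2.0)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    h1 = n20 + n02
    h2 = (n20 - n02) ** 2 + 4.0 * n11 ** 2
    return h1, h2


# Hypothetical masks: an elongated blob and the same blob rotated by 90 degrees.
wide = [[1] * 6 for _ in range(3)]
tall = [[1] * 3 for _ in range(6)]
features_wide = hu_first_two(wide)
features_tall = hu_first_two(tall)
```

The two masks yield the same feature pair, which is the property that lets such descriptors be fed to an SVM without first aligning every sperm head to a common orientation.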

Table 3: Analysis of handcrafted features for conventional ML

| Feature Category | Specific Descriptors | Morphological Correlation | Classification Performance |
|---|---|---|---|
| Shape-based | Hu moments, Zernike moments, Fourier descriptors | Head shape, ellipticity, acrosome coverage | Up to 90% accuracy for head defects [2] |
| Texture-based | Wavelet coefficients, gray-level co-occurrence | Chromatin condensation, vacuolization | Moderate performance for subtle defects |
| Contour-based | Boundary signatures, curvature features | Head contour regularity, midpiece attachment | Effective for gross abnormalities |

Research Reagent Solutions and Essential Materials

Table 4: Essential research reagents and materials for sperm morphology analysis

| Category | Specific Product/System | Application in Research | Performance Considerations |
|---|---|---|---|
| Staining Methods | Papanicolaou stain [5] | Standardized morphology assessment | Recommended by WHO manuals, provides differential staining of head vs. tail structures |
| Staining Methods | Shorr staining procedure [6] | Rapid morphology screening | Suitable for fertility clinics, faster than Papanicolaou |
| Analysis Systems | SSA-II Plus CASA System [5] | Automated sperm morphometry | Measures head length, width, area, perimeter, ellipticity, acrosome area |
| Analysis Systems | Hamilton Thorne CEROS [6] | Clinical semen analysis | Validated against WHO standards, measures concentration, motility, and morphology |
| Analysis Systems | SQA-V GOLD [6] | High-throughput screening | Based on electro-optical signals, high precision for concentration and motility |
| Microscopy | Olympus CX43 with 100× oil immersion [5] | High-resolution imaging | Essential for detailed morphological assessment, requires proper calibration |
| Classification Tools | Custom deep learning frameworks [1] | Algorithm development | Requires specialized programming expertise, offers highest accuracy potential |
| Training Resources | Sperm Morphology Assessment Standardisation Training Tool [3] | Technician proficiency | Based on machine learning principles, uses expert consensus "ground truth" labels |

Clinical Validation and Proficiency Assessment

Training and Standardization Protocols

The development of standardized training tools represents a critical advancement in addressing inter-observer variability. Recent research demonstrates that novice morphologists using a 'Sperm Morphology Assessment Standardisation Training Tool' achieved significant improvements in classification accuracy across multiple category systems [3]. Untrained users initially achieved accuracies between 53 ± 3.69% and 81 ± 2.5%, depending on the complexity of the classification system (which ranged from 2 to 25 categories). Following structured training, accuracy improved to between 90 ± 1.38% and 98 ± 0.43% across the same classification systems [3].

Reference Values and Quality Control

Establishing population-specific reference values remains essential for accurate clinical assessment. A recent study of 29,994 sperm from a fertile male population provided precise morphometric reference values using the SSA-II Plus system [5]. Key parameters included head length (mean 4.28µm), head width (mean 2.98µm), head area (mean 9.82µm²), perimeter (mean 12.13µm), and ellipticity (mean 1.45) [5]. These values provide critical benchmarks for both manual and automated classification systems.

Quality control programs such as the German QuaDeGA and UK NEQAS represent essential components of laboratory standardization, though their infrequency and expense limit their effectiveness [3]. Automated systems offer inherent advantages in continuous quality assurance through algorithm consistency and reduced drift in classification criteria over time.

The standardization of sperm morphology assessment represents an ongoing challenge with significant implications for clinical andrology and reproductive research. While manual assessment continues to serve as the historical reference standard, its limitations in reproducibility, throughput, and inter-observer variability necessitate complementary approaches. Traditional CASA systems offer improved standardization for basic parameters but remain limited in complex morphological classification. Deep learning algorithms demonstrate superior performance with accuracy exceeding 96% and processing times reduced from 30-45 minutes to under 1 minute per sample [1].

The optimal path forward likely integrates multiple approaches: standardized training tools to improve human proficiency, validated automated systems for high-throughput screening, and advanced deep learning algorithms for complex diagnostic challenges. Future developments should focus on expanding high-quality annotated datasets, validating algorithms across diverse populations, and establishing regulatory frameworks for clinical implementation. Through continued refinement and validation of these complementary technologies, the field can achieve the standardization necessary for reliable diagnosis, appropriate treatment selection, and improved patient outcomes in reproductive medicine.

Inherent Limitations of Manual Analysis and Inter-Expert Variability

Sperm morphology assessment is a cornerstone of male fertility evaluation, providing critical diagnostic and prognostic information for assisted reproductive outcomes [2] [7]. This analysis involves classifying sperm into normal and various abnormal categories based on strict structural criteria established by the World Health Organization (WHO) [1]. Traditionally, this assessment has been performed manually by trained embryologists and technicians who visually examine stained sperm samples under high magnification [2]. However, this manual approach suffers from fundamental limitations that compromise diagnostic consistency and clinical utility. The inherent subjectivity of visual assessment, combined with the complexity of morphological criteria, results in significant inter-expert and intra-laboratory variability [7]. This article examines the quantitative evidence of these limitations and compares traditional manual analysis with emerging computational approaches, focusing on their performance in standardizing sperm morphology classification for research and clinical applications.

Quantifying the Limitations of Manual Analysis

Inter-Expert Variability and Diagnostic Disagreement

Manual sperm morphology assessment demonstrates considerable variability between different evaluators, even among trained experts following standardized protocols. Quantitative studies have revealed startling levels of disagreement in morphological classifications:

Table 1: Documented Inter-Expert Variability in Manual Sperm Morphology Assessment

| Study Reference | Nature of Disagreement | Quantitative Measure | Context |
|---|---|---|---|
| Kılıç (2025) [1] | Overall diagnostic disagreement | Up to 40% disagreement between expert evaluators | General sperm morphology classification |
| Kılıç (2025) [1] | Reliability of manual assessment | Kappa values as low as 0.05–0.15 | Inter-observer agreement among trained technicians |
| SCIAN-MorphoSpermGS (2017) [8] | Classification consistency | High inter-expert variability confirmed | Gold-standard dataset creation |
| Biochemia Medica (2019) [7] | Intra-laboratory agreement | Kappa values of 0.700 (WHO) and 0.715 (Strict criteria) | Comparison of WHO vs. Strict criteria |

This variability stems from multiple factors, including differences in technical training, subjective interpretation of borderline morphological features, visual fatigue during extended analysis sessions, and inconsistencies in applying classification criteria to individual sperm cells [1] [2]. The diagnostic consequences are significant, as varying morphology assessments can lead to different clinical diagnoses and treatment pathways for infertile couples.
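The kappa values quoted above measure agreement beyond what chance alone would produce. As a minimal sketch of how Cohen's kappa is computed for two evaluators labeling the same cells, consider the following; the function name `cohen_kappa` and the example labels are hypothetical and not drawn from the cited studies.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("raters must label the same non-empty set of items")
    # Observed agreement: fraction of items given identical labels.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each rater labeled independently at their own rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical labels for ten sperm cells: N = normal, A = abnormal.
expert_1 = ["N", "N", "N", "N", "N", "A", "A", "A", "A", "A"]
expert_2 = ["N", "N", "N", "A", "A", "A", "A", "A", "N", "N"]
kappa = cohen_kappa(expert_1, expert_2)
```

In this toy case the raters agree on 6 of 10 cells, yet chance alone would yield 5 of 10, so kappa is only about 0.2. This illustrates how a seemingly moderate raw agreement can correspond to the low kappa values reported for manual assessment.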

Operational Inefficiencies and Workload Challenges

The manual morphology assessment process is notoriously time-intensive and laborious. Current standards require technicians to evaluate at least 200 sperm per sample to obtain a statistically reliable assessment, a process that typically takes 30-45 minutes per sample [1] [2]. This creates substantial bottlenecks in clinical laboratory workflows and limits patient throughput. Furthermore, the requirement for extensive expert training to achieve even moderate levels of inter-observer agreement creates resource constraints for laboratories, particularly in regions with limited access to specialized expertise in reproductive medicine.

Computational Algorithms: Performance Comparison

Conventional Machine Learning Approaches

Early computational approaches to sperm morphology analysis relied on traditional machine learning algorithms combined with handcrafted feature extraction. These methods typically employed shape-based descriptors to quantify morphological characteristics, which were then fed into classifiers for categorization.

Table 2: Performance of Conventional Machine Learning Algorithms

| Algorithm Combination | Reported Performance | Limitations | Study Reference |
|---|---|---|---|
| Fourier descriptor + SVM | 49% mean correct classification | Poor discrimination among non-normal sperm heads | SCIAN-MorphoSpermGS [8] |
| Bayesian Density Estimation + Shape Descriptors | 90% accuracy | Limited to head morphology only | Bijar et al. [2] |
| SVM Classifier | 88.59% AUC-ROC, >90% precision | Required manual feature engineering | Mirsky et al. [2] |
| K-means + Histogram Statistics | Variable segmentation accuracy | Struggled with overlapping sperm and impurities | Chang et al. [2] |

These conventional approaches demonstrated modest success in specific classification tasks but faced fundamental limitations. Their reliance on manually engineered features restricted their ability to capture the full spectrum of morphological subtleties that trained embryologists recognize. Additionally, they typically focused exclusively on sperm head morphology, neglecting other clinically relevant structures such as the neck, midpiece, and tail [2].

Deep Learning and Hybrid Approaches

Recent advances in deep learning have transformed sperm morphology analysis by enabling automated feature extraction from raw images. Hybrid architectures that combine deep learning with classical machine learning have demonstrated particularly impressive performance.

Table 3: Performance of Deep Learning and Hybrid Algorithms

| Algorithm / Framework | Dataset | Performance | Key Advantages |
|---|---|---|---|
| CBAM-enhanced ResNet50 + Deep Feature Engineering + SVM [1] | SMIDS (3-class) | 96.08 ± 1.2% accuracy | Attention mechanisms focus on relevant morphological features |
| CBAM-enhanced ResNet50 + Deep Feature Engineering + SVM [1] | HuSHeM (4-class) | 96.77 ± 0.8% accuracy | 10.41% improvement over baseline CNN |
| ResNet50 Transfer Learning [9] | Confocal Microscopy Images | 93% test accuracy, 0.91-0.95 precision/recall | Analyzes unstained live sperm for clinical use |
| Stacked CNN Ensemble [1] | HuSHeM | 95.2% accuracy | Combines multiple architectures (VGG16, ResNet-34, DenseNet) |
| In-house AI Model [9] | High-resolution CLSM | Correlation: r=0.88 with CASA, r=0.76 with conventional | Processes 25,000 images in ~140 seconds |

The most significant improvements have come from architectures that incorporate attention mechanisms and sophisticated feature engineering pipelines. For instance, the integration of Convolutional Block Attention Module (CBAM) with ResNet50 enables the model to focus on clinically relevant sperm features while suppressing background noise [1]. When enhanced with deep feature engineering involving multiple feature selection methods (Principal Component Analysis, Chi-square test, Random Forest importance) and classified using Support Vector Machines with RBF kernels, these frameworks achieve performance improvements of 8.08-10.41% over baseline CNN models [1].
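To illustrate what the attention module does to a feature map, here is a minimal NumPy sketch of a CBAM-style block: channel attention from a shared two-layer MLP over average- and max-pooled descriptors, followed by spatial attention from a k×k convolution over channel-wise mean and max maps. This is a forward-pass illustration only, with random weights, biases omitted, and a naive convolution loop. The weight shapes, the reduction ratio of 4, and all function names are assumptions rather than details taken from [1].

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(feat, w1, w2):
    """feat: (C, H, W); w1: (C // r, C); w2: (C, C // r). Returns (C,) weights."""
    def shared_mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)  # ReLU bottleneck shared by both paths

    avg_desc = feat.mean(axis=(1, 2))
    max_desc = feat.max(axis=(1, 2))
    return sigmoid(shared_mlp(avg_desc) + shared_mlp(max_desc))


def spatial_attention(feat, kernel):
    """kernel: (2, k, k), applied to stacked channel-mean and channel-max maps."""
    stacked = np.stack([feat.mean(axis=0), feat.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(stacked, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    h, w = feat.shape[1:]
    out = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            out[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return sigmoid(out)  # (H, W) weights


def cbam(feat, w1, w2, kernel):
    """Apply channel attention first, then spatial attention."""
    feat = feat * channel_attention(feat, w1, w2)[:, None, None]
    return feat * spatial_attention(feat, kernel)[None, :, :]


# Hypothetical feature map with 8 channels on a 6x6 grid, random weights.
rng = np.random.default_rng(0)
feature_map = rng.standard_normal((8, 6, 6))
w1 = 0.1 * rng.standard_normal((2, 8))   # reduction ratio r = 4 (assumed)
w2 = 0.1 * rng.standard_normal((8, 2))
kernel = 0.1 * rng.standard_normal((2, 7, 7))
refined = cbam(feature_map, w1, w2, kernel)
```

Because both attention maps lie between 0 and 1, the block can only rescale activations downward. In a trained network the learned weights keep responses near the sperm head, acrosome, and tail while attenuating background regions.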

Experimental Protocols and Methodologies

Protocol for Deep Feature Engineering with Attention Mechanisms

The top-performing approach from Kılıç (2025) employs a comprehensive experimental protocol that integrates modern deep learning with classical machine learning [1]:

- Dataset Preparation: Utilize benchmark datasets (SMIDS with 3,000 images/3-class or HuSHeM with 216 images/4-class) with expert-annotated morphological labels.

- Architecture Selection: Implement a hybrid architecture with ResNet50 backbone enhanced with Convolutional Block Attention Module (CBAM) to enable spatial and channel-wise attention.

- Feature Extraction: Extract multi-dimensional features from four distinct layers: CBAM attention weights, Global Average Pooling (GAP), Global Max Pooling (GMP), and pre-final classification layer.

- Feature Selection: Apply 10 distinct feature selection methods including Principal Component Analysis (PCA), Chi-square test, Random Forest importance, and variance thresholding, along with their intersections.

- Classification: Employ shallow classifiers (Support Vector Machines with RBF/Linear kernels and k-Nearest Neighbors) on the selected feature subsets.

- Validation: Perform rigorous 5-fold cross-validation with statistical significance testing (McNemar's test) and attention visualization (Grad-CAM) for clinical interpretability.
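The validation step can be sketched as follows: a stratified 5-fold split that keeps class proportions similar in every fold, plus a helper that summarizes per-fold accuracies as mean and standard deviation. Whether the cited study stratified its folds, and whether its "±" values denote the standard deviation across folds, are assumptions here. The function names and the synthetic labels are hypothetical.

```python
import random
import statistics
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs with class proportions roughly preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)  # deal each class round-robin across folds
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for fold in folds[:i] + folds[i + 1:] for j in fold)
        yield train, test


def summarize(fold_accuracies):
    """Return (mean, sample standard deviation) of per-fold accuracies."""
    return statistics.mean(fold_accuracies), statistics.stdev(fold_accuracies)


# Synthetic labels sized like HuSHeM (216 images, 4 classes); not the real data.
labels = [i % 4 for i in range(216)]
splits = list(stratified_kfold(labels, k=5))
```

Each image appears in exactly one test fold, so the feature selectors and classifiers described above should be fitted on the training indices only. Fitting them on the full dataset before splitting would leak test information into the reported accuracy.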

Diagram: Deep Feature Engineering Workflow

Protocol for Live Sperm Analysis with AI

A novel approach for analyzing unstained live sperm, enabling clinical use of analyzed specimens, follows this methodology [9]:

- Sample Preparation: Collect semen samples from donors (2-7 days abstinence) and aliquot for comparative analysis.

- Image Acquisition: Capture high-resolution images using confocal laser scanning microscopy at 40× magnification with Z-stack imaging (0.5μm interval, 2μm range).

- Dataset Creation: Manually annotate sperm images using LabelImg program with high inter-annotator agreement (correlation coefficient: 0.95-1.0).

- Model Training: Implement ResNet50 transfer learning model trained on 9,000 images (4,500 normal/4,500 abnormal) for 150 epochs.

- Validation: Compare AI assessment against Computer-Aided Semen Analysis (CASA) and Conventional Semen Analysis (CSA) using correlation analysis and statistical measures.
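The correlation analysis in the validation step can be illustrated with a plain-Python Pearson coefficient. The sketch assumes the reported r values are Pearson correlations, which the source does not state explicitly, and the paired percentages below are invented for illustration only.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical percent-normal-forms per sample from the AI model and from CASA.
ai_normal_pct = [4.0, 6.5, 3.0, 8.0, 5.5]
casa_normal_pct = [4.5, 6.0, 3.5, 7.5, 6.0]
r = pearson_r(ai_normal_pct, casa_normal_pct)
```

A high r indicates that the two methods rank samples similarly. It does not by itself show that they agree in absolute terms, so agreement analyses are often reported alongside correlation.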

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for Sperm Morphology Analysis

| Item | Function / Application | Specification / Notes |
|---|---|---|
| Giemsa Stain [7] | Conventional sperm staining for WHO criteria | Requires fixation, renders sperm unusable |
| Spermac Stain [7] | Specialized staining for strict criteria assessment | Requires fixation and washing steps |
| Diff-Quik Stain [9] | Romanowsky stain variant for CASA analysis | Used with computer-assisted systems |
| Quinn's Sperm Washing Medium [7] | Preparation for strict criteria assessment | Centrifugation at 300g for 10 minutes |
| Leja Slides [9] | Standardized chamber slides for CASA | 20μm preparation depth, 4-chamber design |
| Confocal Laser Scanning Microscope [9] | High-resolution imaging of live sperm | 40× magnification, Z-stack capability |
| Hamilton Thorne IVOS II [9] | Computer-Assisted Semen Analysis (CASA) | DIMENSIONS II Morphology Software |

Diagram: Manual vs Automated Analysis Comparison

The evidence demonstrates that manual sperm morphology analysis is fundamentally limited by inherent subjectivity, resulting in significant inter-expert variability that compromises diagnostic reliability. Quantitative studies report disagreement rates of up to 40% between expert evaluators and inter-observer kappa values as low as 0.05-0.15, although agreement within a single laboratory can be considerably higher (kappa of approximately 0.70) [1] [7] [8].

Advanced computational approaches, particularly deep learning frameworks enhanced with attention mechanisms and feature engineering, have demonstrated superior performance with accuracy exceeding 96% and minimal variance [1]. These automated systems not only outperform conventional manual analysis in accuracy but also provide dramatic improvements in efficiency, reducing analysis time from 30-45 minutes to under one minute per sample while eliminating inter-observer variability [1]. For research and clinical applications requiring standardized, reproducible sperm morphology assessment, automated algorithms represent a transformative advancement that addresses the critical limitations of manual analysis.

The accurate assessment of sperm morphology is a critical component of male fertility evaluation, with abnormal sperm shapes strongly correlated with reduced fertility rates and poor outcomes in assisted reproductive technology [1]. Traditional manual analysis performed by embryologists is notoriously subjective and time-intensive, suffering from significant inter-observer variability that can reach up to 40% disagreement between expert evaluators [1]. This diagnostic inconsistency, coupled with lengthy evaluation times of 30-45 minutes per sample, has created an urgent need for automated, objective sperm morphology classification systems [10].

Benchmark datasets serve as the foundational pillar for developing and validating these automated algorithms, providing standardized platforms for comparing performance across different methodologies. Within reproductive medicine, three datasets have emerged as critical benchmarks: the HuSHeM (Human Sperm Head Morphology) dataset, the SMIDS (Sperm Morphology Image Data Set), and the SMD (Sperm Morphology Dataset) or MSS (Maritime SMD) [11] [12] [13]. These carefully curated collections enable researchers to objectively evaluate algorithmic performance, ensure reproducible results, and accelerate the development of clinically viable solutions that can transform fertility diagnostics by providing standardized, objective assessments while significantly reducing analysis time from minutes to seconds [1].

Dataset Specifications and Technical Characteristics

Comprehensive Dataset Comparison

The three benchmark datasets each offer unique characteristics tailored to different research needs and algorithmic approaches, from detailed sperm head morphology to broader classification tasks and application-specific benchmarking.

Table 1: Technical Specifications of Sperm Morphology Benchmark Datasets

| Feature | HuSHeM | SMIDS | SMD/MSS |
|---|---|---|---|
| Primary Focus | Detailed sperm head morphology classification | General sperm morphology classification | Maritime object detection (non-medical) |
| Classes | Normal, Pyriform, Tapered, Amorphous [14] | Normal, Abnormal, Non-sperm [13] | Various maritime objects [12] |
| Total Images | 216 sperm head images [1] [14] | 3,000 images (1,021 normal, 1,005 abnormal, 974 non-sperm) [13] | Not specified for sperm morphology |
| Image Format | 131×131 pixels, RGB [14] | RGB color space [13] | Not applicable |
| Sample Preparation | Diff-Quik stained, manually cropped [14] | Modified hematoxylin eosin stained [13] | Not applicable |
| Key Strength | High-quality expert consensus on head morphology | Large dataset size with non-sperm category | Benchmark for deep learning in specialized environments |

Dataset-Specific Characteristics and Applications

HuSHeM was curated from semen samples collected from fifteen patients at the Isfahan Fertility and Infertility Center. The sperm samples were fixed and stained using the Diff-Quik method, then imaged using an Olympus CX21 microscope with a ×100 objective lens. A key strength of this dataset is the rigorous annotation process: sperm heads were initially classified into five classes by three specialists, and only samples on which the specialists reached consensus were retained, yielding the four classes listed above (Normal, Pyriform, Tapered, Amorphous). This approach ensures high-quality ground truth labels for reliable algorithm training and validation [14].

SMIDS distinguishes itself through its scale and practical acquisition methodology. The dataset was collected using a smartphone-based data acquisition approach, making it particularly valuable for developing accessible, cost-effective diagnostic solutions. Unlike HuSHeM, which focuses exclusively on carefully cropped sperm heads, SMIDS images may include noise, multiple sperm heads, and mixed tails, better reflecting real-world clinical imaging conditions and presenting additional challenges for preprocessing and segmentation algorithms [13].

SMD/MSS, while sharing a similar acronym, serves a completely different purpose. This benchmark was designed specifically for evaluating deep learning-based object detection algorithms in maritime environments, not for sperm morphology analysis. Its relevance to reproductive medicine is limited, though it exemplifies how specialized benchmarks accelerate algorithm development in their respective domains [12] [15].

Experimental Protocols and Methodological Approaches

State-of-the-Art Deep Learning Framework

Recent research has demonstrated exceptional performance using a hybrid deep learning framework combining Convolutional Block Attention Module (CBAM) with ResNet50 architecture and advanced deep feature engineering techniques. This approach was rigorously evaluated on both SMIDS and HuSHeM datasets using 5-fold cross-validation, achieving test accuracies of 96.08% ± 1.2% on SMIDS and 96.77% ± 0.8% on HuSHeM. These results represent significant improvements of 8.08% and 10.41% respectively over baseline CNN performance [1].

The methodology employs a comprehensive deep feature engineering pipeline that integrates multiple feature extraction layers (CBAM, Global Average Pooling, Global Max Pooling, pre-final layers) combined with 10 distinct feature selection methods including Principal Component Analysis, Chi-square test, Random Forest importance, and variance thresholding. Classification is then performed using Support Vector Machines with RBF/Linear kernels and k-Nearest Neighbors algorithms [1].
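A heavily simplified sketch of the pooling and selection stages is shown below: each channel of a feature map is reduced to one Global Average Pooling value and one Global Max Pooling value, the two are concatenated, and a variance threshold removes columns that do not vary across images. Only one of the ten selection methods is shown, the CBAM and pre-final layers are omitted, and the tiny nested-list "feature maps" and function names are hypothetical.

```python
import statistics


def pool_features(feature_maps):
    """feature_maps: list of C channels, each a 2D list of activations.

    Returns the concatenation [GAP_1..GAP_C, GMP_1..GMP_C].
    """
    gap = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature_maps]
    gmp = [max(map(max, ch)) for ch in feature_maps]
    return gap + gmp


def variance_threshold(rows, threshold=0.0):
    """Return indices of feature columns whose variance exceeds the threshold."""
    columns = list(zip(*rows))
    return [j for j, col in enumerate(columns)
            if statistics.pvariance(col) > threshold]


def select(rows, keep):
    """Keep only the chosen feature columns in every row."""
    return [[row[j] for j in keep] for row in rows]


# Two hypothetical images, each with two 2x2 channels.
image_a = [[[1, 2], [3, 4]], [[0, 0], [0, 5]]]
image_b = [[[1, 2], [3, 4]], [[0, 0], [0, 1]]]
rows = [pool_features(image_a), pool_features(image_b)]
keep = variance_threshold(rows)
reduced = select(rows, keep)
```

In this toy case the first channel is identical in both images, so its pooled features carry no discriminative information and are dropped. The remaining columns are what a shallow classifier such as an SVM or k-NN would then receive.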

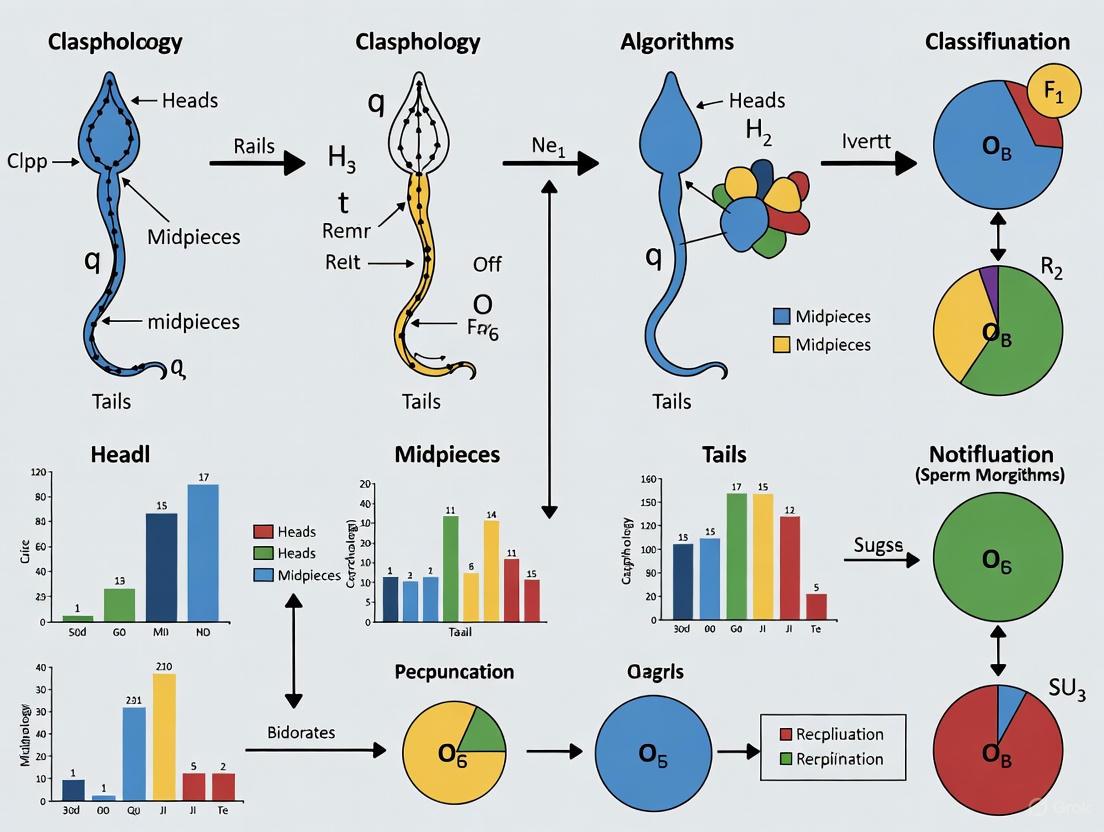

Diagram 1: Hybrid Deep Learning Workflow for Sperm Morphology Classification

Traditional Computer Vision Approach

For comparison, traditional computer vision approaches have employed multi-stage frameworks incorporating cascade-connected preprocessing techniques. One notable study implemented a comprehensive pipeline including wavelet-based local adaptive denoising, modified overlapping group shrinkage, image gradient analysis, and automatic directional masking. These preprocessing steps were combined with region-based descriptor features and non-linear kernel SVM classification [16].

This methodology demonstrated significant performance improvements, increasing classification accuracy by 10% on HuSHeM and 5% on SMIDS datasets compared to baseline approaches. A key advantage of this framework is its ability to eliminate exhaustive manual orientation and cropping operations while maintaining reasonable computational efficiency [16].

Performance Comparison Across Methods

Table 2: Experimental Results Across Methodologies and Datasets

| Methodology | HuSHeM Accuracy | SMIDS Accuracy | Key Advantages | Limitations |
|---|---|---|---|---|
| CBAM-ResNet50 + Deep Feature Engineering | 96.77% ± 0.8% [1] | 96.08% ± 1.2% [1] | State-of-the-art performance, attention visualization | Computational complexity, requires expertise |
| Traditional Computer Vision + SVM | ~92% (10% improvement) [16] | ~85% (5% improvement) [16] | Computational efficiency, interpretability | Lower performance on complex cases |
| MobileNet-based Approach | Not reported | 87% [1] | Mobile deployment capability | Limited representational capacity |
| Ensemble Methods | 95.2% [1] | Not reported | Combines multiple architectures | Complex training process |

Essential Research Reagents and Computational Tools

The development of robust sperm morphology classification systems requires both biological reagents for sample preparation and computational tools for algorithm development. This section details essential components for reproducing state-of-the-art experiments in this domain.

Table 3: Research Reagent Solutions for Sperm Morphology Analysis

| Category | Specific Resource | Function/Purpose | Example Usage |

|---|---|---|---|

| Staining Reagents | Diff-Quik stain [14] | Visual enhancement of sperm structures | HuSHeM dataset preparation |

| Staining Reagents | Modified hematoxylin eosin assay [13] | Staining for better visualization of sperm parts | SMIDS dataset preparation |

| Microscopy Equipment | Olympus CX21 microscope [14] | High-resolution sperm imaging | HuSHeM image acquisition |

| Imaging Accessories | Sony color camera (Model No SSC-DC58AP) [14] | Digital image capture | HuSHeM dataset |

| Computational Frameworks | CBAM-enhanced ResNet50 [1] | Attention-based feature extraction | State-of-the-art classification |

| Feature Selection | PCA, Chi-square, Random Forest [1] | Dimensionality reduction and feature optimization | Deep feature engineering pipeline |

| Classification Algorithms | SVM with RBF/Linear kernels [1] | Final morphology classification | Multiple methodologies |

The systematic comparison of HuSHeM, SMIDS, and SMD/MSS benchmarks reveals a rapidly evolving landscape in sperm morphology analysis. HuSHeM excels in detailed sperm head morphology classification with expert-validated annotations, while SMIDS offers larger scale and real-world imaging conditions valuable for robust algorithm development. The documented progression from traditional computer vision approaches to sophisticated deep learning frameworks highlights the critical role of standardized benchmarks in driving algorithmic innovation.

The most promising developments combine attention mechanisms with classical feature engineering, achieving unprecedented accuracy while providing clinically interpretable results through visualization techniques like Grad-CAM [1]. These approaches demonstrate the potential to transform clinical practice by reducing diagnostic variability, significantly shortening analysis time from 30-45 minutes to under one minute per sample, and improving reproducibility across laboratories [1]. Future research directions will likely focus on multi-center validation, integration with other semen parameters, and development of real-time analysis systems for assisted reproductive procedures, ultimately enhancing patient care and treatment outcomes in reproductive medicine.

The World Health Organization (WHO) laboratory manual provides the global standard for human semen examination, establishing standardized criteria for classifying sperm morphology as normal or abnormal. These criteria are essential for clinical diagnostics, treatment planning, and research consistency in male fertility assessment. The WHO system categorizes sperm abnormalities into defects of the head, neck, midpiece, and tail, and requires the evaluation of over 200 spermatozoa to determine the percentage of normal forms—a process that is both time-consuming and subject to inter-observer variability [2]. In response to these challenges, computational approaches have emerged to automate and objectivize sperm classification.

The Modified David classification system, while less explicitly detailed in the available literature, represents an adaptation of traditional morphological analysis that incorporates computational methodologies. This system and similar modified frameworks leverage machine learning (ML) and deep learning (DL) algorithms to enhance the accuracy, efficiency, and reproducibility of sperm morphology analysis. The transition from manual WHO criteria to automated, modified classification systems represents a significant paradigm shift in andrology, driven by advances in artificial intelligence and computer vision [2].

Methodologies: From Manual Assessment to Computational Analysis

WHO Manual Classification Protocol

The traditional WHO methodology relies on visual inspection of stained semen smears under a microscope. The detailed protocol involves:

- Sample Preparation: Semen samples are collected and prepared on microscope slides using staining techniques (e.g., Diff-Quik, Papanicolaou) to enhance cellular detail.

- Morphological Assessment: A trained technician systematically scans the slide and classifies each spermatozoon according to strict morphological criteria:

  - Normal Sperm: Possessing an oval-shaped head with a well-defined acrosome covering 40-70% of the head area, no neck/midpiece/tail defects, and no cytoplasmic droplets more than one-half the size of the sperm head.

  - Head Defects: Includes large/small heads, tapered/pyriform heads, double heads, and amorphous heads.

  - Neck/Midpiece Defects: Includes bent necks, asymmetrical insertion, thick or thin midpieces.

  - Tail Defects: Includes short, coiled, double, or broken tails.

- Quantification: At least 200 spermatozoa are evaluated across multiple microscopic fields to calculate the percentage of normal forms, with reference values provided by the WHO (currently >4% normal forms is considered normal) [2].
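The quantification step is simple arithmetic, and a short sketch makes the rule concrete. The function name, the label strings, and the example tally below are hypothetical; only the 200-cell minimum and the 4% reference value come from the protocol above.

```python
def percent_normal_forms(labels, min_count=200):
    """Return the percentage of assessed spermatozoa labelled 'normal'.

    labels: one string label per assessed spermatozoon (hypothetical label names).
    min_count: minimum number of cells to assess (200 in the protocol above).
    """
    if len(labels) < min_count:
        raise ValueError(f"need at least {min_count} cells, got {len(labels)}")
    normal = sum(1 for label in labels if label == "normal")
    return 100.0 * normal / len(labels)


# Hypothetical tally for one slide: 12 normal cells among 200 assessed.
example_labels = (
    ["normal"] * 12
    + ["head_defect"] * 120
    + ["midpiece_defect"] * 40
    + ["tail_defect"] * 28
)
pct = percent_normal_forms(example_labels)  # 6.0
meets_reference = pct > 4.0                 # reference value quoted in the text
```

With so few normal cells per slide, a handful of reclassified cells can move a sample across the 4% line, which is one reason inter-observer variability matters so much here.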

Computational Classification Methodologies

Modified classification systems employ a variety of computational workflows that can be categorized into conventional machine learning and deep learning approaches:

Conventional Machine Learning Pipeline

Conventional ML approaches follow a multi-stage process for sperm classification:

- Image Pre-processing: Enhancement of sperm images using techniques like noise reduction, contrast adjustment, and color space conversion to improve feature visibility [4] [2].

- Sperm Segmentation: Isolation of individual sperm cells and their components (head, midpiece, tail) using clustering algorithms like K-means or histogram-based methods [4] [2].

- Feature Extraction: Manual computation of morphological, texture, and shape descriptors from the segmented regions.

- Classification Algorithm: Use of classifiers such as Support Vector Machines (SVM), decision trees, or Bayesian models to categorize sperm based on the extracted features [4] [2].

Deep Learning Pipeline

DL approaches utilize neural networks to automate feature extraction and classification:

- Data Preparation: Curating large datasets of annotated sperm images (e.g., HSMA-DS, VISEM-Tracking, SVIA dataset) with segmentation masks and classification labels [2].

- Model Training: Training convolutional neural networks (CNNs) like MobileNet, U-Net, or custom architectures on the annotated data.

- End-to-End Classification: The trained model directly processes input images and outputs classification results, integrating feature extraction and classification into a single step [4] [2].

The following diagram illustrates the comparative workflows of these methodological approaches:

Performance Comparison: Quantitative Analysis of Classification Systems

The transition from manual WHO criteria to computational classification systems has demonstrated significant improvements in accuracy, efficiency, and reproducibility. The table below summarizes key performance metrics from experimental studies comparing these approaches:

Table 1: Performance comparison of sperm morphology classification systems

| Classification System | Reported Accuracy | Precision/Specificity | Key Advantages | Limitations |

|---|---|---|---|---|

| Manual WHO Criteria | Subject to inter-observer variability (5-20%) [2] | Highly dependent on technician expertise | Clinical gold standard, direct visual assessment | Time-consuming, subjective, high variability |

| Conventional ML (SVM with wavelet/descriptor features) | 80.5-83.8% [4] | Varies by feature set and dataset | Interpretable features, works with smaller datasets | Dependent on manual feature engineering |

| Conventional ML (Bayesian with shape descriptors) | Up to 90% (head defects only) [2] | Precision >90% reported for specific defects [2] | Effective for specific morphological classes | Limited to head morphology, poor generalization |

| Deep Learning (MobileNet) | 87% [4] | Superior feature learning capability | Automatic feature extraction, high generalization | Requires large annotated datasets |

| Tree-Based ML (Stochastic Gradient Boosting) | 85.7% (balanced accuracy) [17] | Effective with motility parameters | Robust with kinetic parameters, good interpretability | Limited to specific data types |

Beyond accuracy metrics, computational systems address fundamental limitations of manual classification. One study highlighted that conventional manual assessment exhibits significant inter-expert variability, which was substantially reduced through automated approaches [2]. Furthermore, while a trained technician working manually can process approximately 200-400 spermatozoa per hour, computational systems can analyze thousands of sperm cells in minutes once implemented, dramatically increasing throughput [2].

The performance of these systems varies significantly based on the morphological component being analyzed. Head defect classification generally achieves higher accuracy rates (up to 90% in some studies) compared to neck and tail abnormalities, regardless of the methodological approach [2]. This performance disparity highlights the continued challenges in comprehensive sperm morphology analysis, particularly for subtle structural defects.
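Table 1 mixes plain accuracy with one balanced-accuracy figure, and the two are not interchangeable when classes are uneven. The sketch below uses the standard definition of balanced accuracy (mean per-class recall) on a hypothetical two-class example; the labels and counts are illustrative and not taken from any cited study.

```python
from collections import defaultdict


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the reference label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so every class counts equally."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p in zip(y_true, y_pred):
        total[t] += 1
        if t == p:
            correct[t] += 1
    recalls = [correct[c] / total[c] for c in total]
    return sum(recalls) / len(recalls)


# Hypothetical sample: 90 abnormal and 10 normal cells, scored by a
# degenerate classifier that always answers "abnormal".
y_true = ["abnormal"] * 90 + ["normal"] * 10
y_pred = ["abnormal"] * 100

acc = accuracy(y_true, y_pred)           # 0.9
bal = balanced_accuracy(y_true, y_pred)  # 0.5
```

The degenerate classifier scores 90% accuracy while never finding a single normal cell, which is why figures reported under different metrics should be compared with care.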

Technical Implementation and Research Reagents

Successful implementation of computational sperm classification systems requires specific technical components and research reagents. The following table details essential solutions and their functions in the experimental workflow:

Table 2: Research reagent solutions for computational sperm morphology analysis

| Research Reagent | Function/Application | Implementation Notes |

|---|---|---|

| Standardized Staining Kits (Diff-Quik, Papanicolaou) | Cellular contrast enhancement for microscopy | Critical for consistent image quality across samples [2] |

| Public Annotated Datasets (HSMA-DS, VISEM-Tracking, SVIA) | Model training and validation | SVIA dataset contains 125,000 annotated instances [2] |

| Computer-Assisted Sperm Analysis (CASA) | Automated sperm motility and kinetic analysis | Provides parameters like VCL, VSL, ALH, BCF for tree-based classification [17] |

| MobileNet Architecture | Deep learning-based feature extraction and classification | Optimized for mobile deployment with 87% accuracy [4] |

| Clustering Algorithms (K-means with group sparsity) | Initial sperm segmentation in conventional ML | Enhances region of interest extraction [4] |

| Tree-Based Algorithms (Stochastic Gradient Boosting, Random Forest) | Classification based on motility parameters | 85.7% balanced accuracy for breed classification [17] |

The architecture of deep learning systems for sperm classification typically involves several interconnected components, as visualized in the following diagram:

Discussion and Future Directions

The evolution from WHO criteria to modified David classification systems represents a significant advancement in male fertility assessment. While manual WHO classification remains the clinical gold standard, its limitations in reproducibility, throughput, and objectivity have driven the development of computational alternatives. The experimental data demonstrate that both conventional machine learning and deep learning approaches can achieve classification accuracies exceeding 80%, with some implementations reaching 87% accuracy using MobileNet architectures [4].

The integration of these systems into clinical practice faces several challenges. There remains a critical need for larger, more diverse, and standardized annotated datasets to improve model generalization across different populations and laboratory protocols [2]. Furthermore, the black-box nature of some deep learning algorithms presents interpretability challenges in clinical settings where diagnostic justification is required.

Future research directions should focus on:

- Developing hybrid models that combine the interpretability of conventional ML with the performance of deep learning

- Creating multi-modal systems that integrate morphological, motility, and genetic parameters

- Establishing standardized validation protocols across institutions

- Implementing quality control frameworks for continuous model improvement

As these computational systems mature, they hold the potential to transform andrology laboratories through enhanced standardization, improved diagnostic accuracy, and more personalized treatment recommendations for male infertility. The continued refinement of modified classification systems will likely establish them as indispensable tools in both clinical and research settings, complementing rather than completely replacing the established WHO criteria that provide the foundational morphological framework for sperm assessment.

The application of artificial intelligence (AI) in sperm morphology analysis represents a paradigm shift in male fertility assessment, offering the potential to overcome the notorious subjectivity and variability of manual evaluation by embryologists [18] [2]. However, the development of robust, clinically applicable algorithms faces three fundamental data-related challenges: the scarcity of high-quality, annotated datasets; significant issues in annotation quality and consistency; and profound class imbalance within available data [2] [19]. These challenges directly impact model performance, generalizability, and ultimately, their translational value in clinical and research settings. This guide provides a comprehensive comparison of how different algorithmic approaches navigate these constraints, presenting experimental data and methodologies to inform researcher selection and implementation strategies.

The Core Data Challenges in Sperm Morphology Analysis

Data Scarcity and Standardization

The development of deep learning models requires extensive, well-annotated datasets, yet such resources for sperm morphology remain limited. Available public datasets vary dramatically in size, image characteristics, and annotation protocols [2]. For instance, the Modified Human Sperm Morphology Analysis (MHSMA) dataset contains only 1,540 sperm head images, while the HuSHeM dataset provides even fewer examples [2]. This scarcity forces researchers to employ data augmentation techniques or limit model complexity. More recently, the SVIA dataset has emerged with 125,000 annotated instances, representing a significant scale improvement [2]. The Hi-LabSpermMorpho dataset, containing 18,456 images across 18 morphological classes, was specifically designed to address these limitations with better class representation [20].

Annotation Quality and Subjectivity

The foundation of supervised learning—reliable ground truth labels—is particularly unstable in sperm morphology. Annotation quality suffers from significant inter-observer variability, even among seasoned experts. A stark demonstration of this issue comes from a secondary analysis of the Males, Antioxidants, and Infertility trial, where world-class laboratories showed no overall correlation in their assessments of the same semen samples, with extremely poor inter-observer agreement (κ = 0.05-0.15) [18]. This subjectivity permeates public datasets; the SCIAN dataset includes images with only partial expert agreement, introducing label noise that directly challenges model training [19].
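To make the agreement statistic concrete, here is a minimal sketch of Cohen's kappa for two raters. It assumes the two-rater Cohen variant; the cited analysis may have used a different formulation (for example a multi-rater kappa), and the ratings below are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical ratings of ten cells (N = normal, A = abnormal).
lab_1 = ["N", "N", "N", "N", "N", "A", "A", "A", "A", "A"]
lab_2 = ["N", "N", "N", "A", "A", "A", "A", "A", "N", "N"]
kappa = cohens_kappa(lab_1, lab_2)  # observed 0.6, chance 0.5 -> about 0.2
```

In this toy case the raters agree on 60% of cells, yet kappa is only about 0.2 because half of that agreement is expected by chance. Values in the 0.05-0.15 range quoted above therefore indicate agreement barely better than chance.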

Class Imbalance

Morphological class distribution in sperm images is inherently imbalanced, with normal sperm typically outnumbered by various abnormal types, which themselves occur at different frequencies [20] [19]. In the SCIAN dataset, for example, the Amorphous class contains ten times more examples than the Small class [19]. This imbalance biases models toward majority classes, reducing sensitivity for detecting rare but clinically significant abnormalities. Advanced sampling strategies and loss functions are often necessary to mitigate this effect.
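One common loss-side mitigation is to weight each class inversely to its frequency. The sketch below shows the widely used "balanced" heuristic, weight = n_samples / (n_classes * class_count). The class counts are hypothetical and only mirror the 10:1 ratio mentioned above; nothing here is taken from a specific cited pipeline.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Per-class weights so that rare classes contribute more to the loss.

    weight_c = n_samples / (n_classes * count_c)
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}


# Hypothetical label list with a 10:1 imbalance between two classes.
labels = ["amorphous"] * 100 + ["small"] * 10
weights = inverse_frequency_weights(labels)
# amorphous: 110 / (2 * 100) = 0.55
# small:     110 / (2 * 10)  = 5.5
```

These weights would typically be passed to a weighted loss or a classifier's class-weight option, so that each misclassified minority-class example costs ten times more than a majority-class one.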

Comparative Analysis of Algorithmic Performance

The table below summarizes the performance of various algorithms across different datasets, highlighting how architectural choices and learning strategies address the core data challenges.

Table 1: Performance Comparison of Sperm Morphology Classification Algorithms

| Algorithm | Dataset | Key Architecture/Strategy | Performance Metrics | Data Challenge Focus |

|---|---|---|---|---|

| In-house AI Model [9] | Confocal Microscopy Dataset (12,683 images) | ResNet50 transfer learning | Precision: 0.95 (abnormal), 0.91 (normal); Recall: 0.91 (abnormal), 0.95 (normal); Correlation with CASA: r=0.88 | Scarcity (via transfer learning), Annotation Quality (high inter-annotator agreement: CC=0.95-1.0) |

| Multi-Level Ensemble [20] | Hi-LabSpermMorpho (18,456 images, 18 classes) | Ensemble of EfficientNetV2 variants with feature/decision-level fusion | Accuracy: 67.70% (significantly outperforming individual classifiers) | Class Imbalance (18-class distribution), Scarcity (ensemble generalization) |

| Specialized CNN [19] | SCIAN (1,854 images) | Custom CNN with multiple filter sizes, fewer parameters | Recall: 88% (SCIAN), 95% (HuSHeM) | Scarcity (efficient architecture for small data), Annotation Quality (robust to label noise) |

| Mask R-CNN [21] | Combined Kaggle & Mendeley (1,300 images) | ResNet-101 backbone, instance segmentation | mAP: 89.1%; Inference accuracy: 98% (good), 98.8% (bad) | Scarcity (data augmentation), Annotation Quality (instance-level segmentation) |

| MobileNet [22] | Novel Dataset (size not specified) | Mobile-optimized CNN architecture | Accuracy: 87% | Scarcity (efficient architecture suitable for smaller datasets) |

| Multi-Scale Part Parsing [23] | Novel Dataset (size not specified) | Semantic + instance segmentation fusion, measurement enhancement | 59.3% AP^p_vol (surpassing AIParsing by 9.20%); Measurement error reduction up to 35.0% | Annotation Quality (precision measurement), Scarcity (multi-scale feature extraction) |

Quantitative Performance Insights

The comparative data reveals several important patterns. First, ensemble methods demonstrate superior performance on complex, multi-class datasets, with the multi-level ensemble approach achieving 67.70% accuracy across 18 morphological classes—a notable advancement given the class imbalance challenge [20]. Second, specialized architectures designed for computational efficiency (MobileNet) or specific morphological tasks (Multi-Scale Part Parsing) maintain strong performance while addressing data scarcity through architectural optimization [22] [23]. Third, transfer learning approaches using established architectures like ResNet50 demonstrate excellent generalization even on smaller datasets, achieving high precision (0.95) and recall (0.95) metrics [9] [21].

Experimental Protocols and Methodologies

Protocol 1: Ensemble Learning with Multi-Level Fusion

Table 2: Experimental Protocol for Ensemble Classification

| Protocol Component | Specification | Purpose |

|---|---|---|

| Dataset | Hi-LabSpermMorpho (18,456 images, 18 classes) | Address class imbalance with diverse morphological representation |

| Feature Extraction | Multiple EfficientNetV2 variants | Leverage complementary feature representations |

| Fusion Strategy | Feature-level + decision-level fusion (soft voting) | Enhance robustness and generalization |

| Classifiers | SVM, Random Forest, MLP with Attention | Combine diverse classification paradigms |

| Validation | Cross-validation with stratified sampling | Ensure representative performance across imbalanced classes |

| Evaluation Metrics | Accuracy, per-class precision/recall, F1-score | Comprehensive performance assessment beyond overall accuracy |

This methodology specifically addresses class imbalance through several mechanisms: the use of a large, diverse dataset; ensemble techniques that reduce variance; and stratified evaluation that ensures adequate representation of all classes [20].
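Decision-level fusion by soft voting can be sketched in a few lines: each model emits a class-probability vector, the vectors are averaged (optionally weighted), and the class with the highest fused probability wins. The probabilities and the equal weighting below are hypothetical; the cited study's exact fusion weights are not given in this review.

```python
def soft_vote(prob_lists, weights=None):
    """Fuse per-model class probabilities by (weighted) averaging.

    prob_lists: one probability vector per model, all of the same length.
    Returns (index of the winning class, fused probability vector).
    """
    n_models = len(prob_lists)
    if weights is None:
        weights = [1.0] * n_models  # assumption: equal trust in every model
    total_weight = sum(weights)
    n_classes = len(prob_lists[0])
    fused = [
        sum(w * probs[c] for w, probs in zip(weights, prob_lists)) / total_weight
        for c in range(n_classes)
    ]
    winner = max(range(n_classes), key=lambda c: fused[c])
    return winner, fused


# Three hypothetical models scoring one image over three classes.
model_probs = [
    [0.6, 0.3, 0.1],
    [0.2, 0.5, 0.3],
    [0.1, 0.6, 0.3],
]
winner, fused = soft_vote(model_probs)  # winner is class index 1
```

Unlike hard majority voting, soft voting keeps each model's confidence, so one very confident model can outweigh two uncertain ones.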

Protocol 2: Stain-Free Morphology Measurement

Table 3: Experimental Protocol for Stain-Free Analysis

| Protocol Component | Specification | Purpose |

|---|---|---|

| Microscopy | Confocal laser scanning at 40× magnification | Capture high-resolution images without staining |

| Image Processing | Multi-scale part parsing network (instance + semantic segmentation) | Enable precise sperm part identification and measurement |

| Measurement Enhancement | Interquartile Range (IQR) outlier exclusion, Gaussian filtering, robust correction | Counteract resolution limitations of unstained images |

| Annotation Protocol | Multiple embryologists with correlation validation (CC=0.95-1.0) | Ensure annotation quality and consistency |

| Validation | Comparison with CASA and conventional semen analysis | Establish method validity against existing standards |

This protocol specifically addresses annotation quality through rigorous inter-annotator agreement metrics and measurement enhancement strategies that compensate for the inherent limitations of unstained sperm imaging [9] [23].
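The IQR outlier exclusion step listed in Table 3 can be illustrated as follows. This is a sketch of the conventional Tukey fence with a multiplier of 1.5; that multiplier and the head-length values are assumptions, since the protocol summary does not state them.

```python
import statistics


def iqr_filter(values, k=1.5):
    """Drop measurements outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _median, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


# Hypothetical repeated head-length measurements (micrometres) for one cell;
# the last value imitates a segmentation failure.
head_lengths = [4.1, 4.3, 4.4, 4.5, 4.6, 4.7, 4.8, 9.9]
kept = iqr_filter(head_lengths)
robust_length = statistics.median(kept)
```

Filtering before averaging keeps a single bad segmentation mask from skewing the reported measurement, which matters more for unstained images where boundaries are fainter.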

Visualization of Experimental Workflows

Ensemble Classification for Imbalanced Data

Figure 1: Ensemble classification workflow for handling class imbalance.

Stain-Free Morphology Analysis Pipeline

Figure 2: Stain-free analysis pipeline preserving sperm viability.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Resources for Sperm Morphology Analysis

| Resource | Type | Key Features | Applications |

|---|---|---|---|

| Hi-LabSpermMorpho Dataset [20] | Data | 18,456 images, 18 morphological classes | Training/evaluating models for comprehensive morphology classification |

| SVIA Dataset [2] | Data | 125,000 annotated instances, segmentation masks | Large-scale model training, detection, and segmentation tasks |

| SCIAN-MorphoSpermGS [19] | Data | 1,854 images, expert-annotated, 5 classes | Benchmarking head morphology classification algorithms |

| Confocal Laser Scanning Microscopy [9] | Equipment | 40× magnification, Z-stack imaging, high-resolution | Capturing unstained live sperm images for analysis |

| Spermac Stain [24] | Reagent | Dichromatic staining, high contrast for acrosome | Detailed morphological assessment, acrosomal integrity evaluation |

| Eosin-Nigrosin Stain [24] | Reagent | Vitality assessment, morphological details | Simultaneous vitality and morphology evaluation |

| Diff-Quik Stain [24] | Reagent | Rapid, standardized staining protocol | Routine morphological analysis, clinical settings |

| ResNet50/101 Architectures [9] [21] | Algorithm | Transfer learning, proven backbone | Feature extraction, classification tasks |

| EfficientNetV2 Variants [20] | Algorithm | Scaling efficiency, balanced model size/performance | Ensemble learning, resource-constrained environments |

| Mask R-CNN Framework [21] | Algorithm | Instance segmentation, object detection | Detailed sperm part segmentation and classification |

The comparative analysis presented in this guide reveals that while significant progress has been made in addressing data challenges for sperm morphology classification, the optimal algorithmic approach remains context-dependent. Ensemble methods demonstrate superior performance for comprehensive multi-class classification but require substantial computational resources. Specialized CNNs offer an excellent balance of performance and efficiency for specific tasks like head morphology classification. Transfer learning approaches provide practical solutions for limited data scenarios, while stain-free analysis methods enable novel applications in clinical ART settings where sperm viability must be preserved.

The trajectory of the field points toward increased dataset standardization, more sophisticated data augmentation techniques, and hybrid approaches that combine the strengths of multiple algorithmic paradigms. As these trends continue, researchers should prioritize solutions that not only achieve high performance metrics but also address the fundamental data challenges of scarcity, annotation quality, and class imbalance that have long constrained progress in automated sperm morphology analysis.

Algorithmic Evolution: From Handcrafted Features to Deep Neural Networks

The diagnosis of male infertility traditionally relies on the microscopic evaluation of sperm morphology, a process that is inherently subjective, time-consuming, and prone to significant inter-observer variability [2] [25]. To address these challenges, conventional machine learning (ML) algorithms have been extensively applied to automate and standardize sperm morphology classification. Among these, Support Vector Machines (SVM) and k-Means clustering, coupled with meticulous feature engineering, have formed the cornerstone of early automated sperm analysis systems. This guide provides a comparative analysis of these conventional ML techniques, placing them in the context of modern deep learning alternatives and highlighting their enduring strengths and limitations within the specific domain of sperm morphology analysis [2] [26].

Performance Comparison: Conventional ML vs. Deep Learning

The evolution of sperm morphology analysis is marked by a clear transition from feature-engineered conventional ML to automated deep feature extraction. The table below summarizes quantitative performance data across key studies, illustrating this technological shift.

Table 1: Performance Comparison of Sperm Morphology Analysis Algorithms

| Algorithm Category | Specific Method | Dataset Used | Reported Performance Metric | Performance Value | Key Limitations / Notes |

|---|---|---|---|---|---|

| Conventional ML with Feature Engineering | SVM with contour & gray-level features [26] | Proprietary Database | Accuracy | High (Exact value not provided, but reported better than comparators) | Hand-crafted features (contour waveform) |

| Conventional ML with Feature Engineering | SVM on manual features [27] | 1,400 Sperm Cells | AUC-ROC | 88.59% | Focused on sperm head classification only |

| Conventional ML with Feature Engineering | Bayesian Density Estimation & Hu Moments [2] | Not Specified | Accuracy | 90% | Classified heads into 4 categories |

| Conventional ML with Feature Engineering | Fourier Descriptor + SVM (non-normal heads) [2] | Not Specified | Accuracy | 49% | Highlights variability and challenge of conventional methods |

| k-Means Clustering | k-Means for sperm head detection [25] | Gold-Standard (200+ cells) | Detection Success Rate | 98% | Used in combination with multiple color spaces (RGB, L*a*b*, YCbCr) |

| Deep Learning (Comparison) | VGG16 (Transfer Learning) [28] | HuSHeM & SCIAN | High Accuracy | High | Improvement over CE-SVM; similar performance to APDL |

| Deep Learning (Comparison) | CBAM-enhanced ResNet50 with Deep Feature Engineering + SVM [29] | SMIDS & HuSHeM | Accuracy | 96.08% & 96.77% | Represents a hybrid approach (deep feature extraction + SVM classification) |

| Deep Learning (Comparison) | Multi-Model CNN Fusion [30] | HuSHeM & SCIAN-Morpho | Accuracy | 94% & 62% | Performance varies significantly with dataset quality |

| Deep Learning (Comparison) | Ensemble CNN with MLP-Attention & SVM [20] | Hi-LabSpermMorpho (18 classes) | Accuracy | 67.70% | A significantly more complex classification task (18 classes) |

Experimental Protocols and Workflows

The application of conventional machine learning to sperm morphology analysis follows a standardized, multi-stage pipeline. The effectiveness of the final model is heavily dependent on each preparatory step.

Key Experimental Protocols

1. Data Preprocessing and Sperm Head Segmentation: The initial and critical step involves isolating the sperm head from the background and other semen components. A common and effective protocol uses the k-Means clustering algorithm for segmentation. The typical methodology is a two-stage framework [25]:

- Stage 1 - Detection: The microscopic image is processed, often by combining information from multiple color spaces (RGB, L*a*b*, YCbCr) to achieve illumination invariance. The k-Means algorithm (frequently with k=3 to cluster background, sperm head, and other components) is applied to identify and isolate sperm head regions [25].

- Stage 2 - Segmentation: Once the head region is detected, more precise segmentation of sub-parts like the acrosome and nucleus is performed using statistical techniques, such as histogram analysis of intensity values within the clustered region [30] [25]. This step may also involve ellipse-fitting algorithms to determine the head's orientation, which is crucial for subsequent alignment and feature extraction [25].
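The detection stage can be sketched with a small k-means on pixel intensities. This is a deliberate simplification: it clusters a single grayscale channel rather than the multi-color-space features described above, it assumes stained heads are the darkest cluster, and the pixel values are hypothetical.

```python
def kmeans_1d(values, k=3, iters=50):
    """Plain k-means on scalar values with a deterministic, evenly spread init."""
    lo, hi = min(values), max(values)
    centroids = [lo + (hi - lo) * (i + 0.5) / k for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iters):
        # Assignment step: nearest centroid for every value.
        labels = [
            min(range(k), key=lambda c: abs(v - centroids[c])) for v in values
        ]
        # Update step: mean of each cluster (keep old centroid if cluster is empty).
        new_centroids = []
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            new_centroids.append(
                sum(members) / len(members) if members else centroids[c]
            )
        if new_centroids == centroids:
            break  # converged
        centroids = new_centroids
    return labels, centroids


# Hypothetical flattened grayscale pixels: bright background (~205),
# dark stained head (~45), and mid-gray debris or midpiece (~125).
pixels = [205, 210, 200, 45, 50, 40, 125, 130, 120, 208]
labels, centroids = kmeans_1d(pixels, k=3)

# Assumption: the head is the cluster with the darkest centroid.
head_label = min(range(len(centroids)), key=lambda c: centroids[c])
head_mask = [lab == head_label for lab in labels]
```

In a real pipeline the mask would be reshaped to the image grid and cleaned up (for example with connected-component filtering) before the Stage 2 sub-part segmentation.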

2. Handcrafted Feature Engineering: Following segmentation, domain-specific features are manually engineered from the sperm head image. Traditional studies rely on several types of feature extractors [2] [26]:

- Shape and Contour Descriptors: The contour of the sperm head is transformed into a one-dimensional waveform. This can be achieved by calculating the distance between consecutive boundary points or the distance from the geometric center to each point on the edge. This waveform serves as a rotation-invariant feature [26].

- Morphometric Features: These include basic measurements like the length, width, area, and perimeter of the sperm head [25].

- Texture and Moment-Based Features: Algorithms like Hu moments, Zernike moments, and Fourier descriptors are used to capture texture and complex shape characteristics that are invariant to rotation and scale [2].
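The contour-waveform idea can be illustrated with a centroid-distance signature. This sketch uses a synthetic ellipse in place of a segmented head, and the half-axis values are hypothetical. Note one caveat: the distances themselves do not change under rotation, but the waveform still depends on which boundary point is visited first, so a practical system would normalize the start point or use a shift-invariant summary.

```python
import math


def centroid_distance_signature(contour):
    """Distance from the contour centroid to each boundary point, scaled to [0, 1].

    contour: ordered list of (x, y) boundary points.
    """
    cx = sum(x for x, _ in contour) / len(contour)
    cy = sum(y for _, y in contour) / len(contour)
    dists = [math.hypot(x - cx, y - cy) for x, y in contour]
    peak = max(dists)
    return [d / peak for d in dists]  # dividing by the peak removes scale


def ellipse_contour(a, b, n=36, angle_deg=0.0):
    """Synthetic head outline: ellipse with half-axes a and b, optionally rotated."""
    theta = math.radians(angle_deg)
    points = []
    for i in range(n):
        t = 2 * math.pi * i / n
        x, y = a * math.cos(t), b * math.sin(t)
        points.append((
            x * math.cos(theta) - y * math.sin(theta),
            x * math.sin(theta) + y * math.cos(theta),
        ))
    return points


# Hypothetical head with half-axes 2.5 and 1.5, upright and rotated by 30 degrees.
upright = centroid_distance_signature(ellipse_contour(2.5, 1.5))
rotated = centroid_distance_signature(ellipse_contour(2.5, 1.5, angle_deg=30.0))

# Simple morphometric summary derived from the waveform: width-to-length ratio.
aspect_ratio = min(upright)  # 1.5 / 2.5 = 0.6 for this ellipse
```

The resulting one-dimensional waveform, or summaries of it such as the aspect ratio, can then be concatenated with morphometric and moment-based features to form the input vector for the classifier.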

3. SVM Model Training and Classification: The extracted features are used to train a classifier. The Support Vector Machine (SVM) is a popular choice due to its effectiveness in high-dimensional spaces [26]. The standard protocol involves:

- Data Splitting: The dataset is split into training, validation, and test sets. A typical split is 70% for training, 15% for validation, and 15% for the final test [31].

- Hyperparameter Tuning: Critical SVM hyperparameters, such as the kernel type (e.g., Linear, RBF), the regularization parameter C, and the kernel coefficient gamma, are optimized using techniques like Grid Search or Random Search, often with k-fold cross-validation on the training set to ensure robustness [31].

- Classification: The optimized SVM model is used to classify sperm into categories such as "normal" vs. "abnormal" or into specific morphological classes like "pyriform," "tapered," or "small/amorphous" [2] [26].
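The splitting and tuning steps can be sketched without any ML library. The split below is stratified by class, which is an assumption (the protocol only states the 70/15/15 proportions), and the hyperparameter values in the grid are hypothetical placeholders rather than values from the cited work.

```python
import random
from collections import defaultdict
from itertools import product


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists, keeping class proportions in each."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical dataset: 40 normal and 160 abnormal head images.
labels = ["normal"] * 40 + ["abnormal"] * 160
train_idx, val_idx, test_idx = stratified_split(labels)

# Hypothetical search grid over the hyperparameters named above.
param_grid = {
    "kernel": ["linear", "rbf"],
    "C": [0.1, 1.0, 10.0],
    "gamma": [0.01, 0.1],  # only meaningful for the RBF kernel
}
candidates = [
    dict(zip(param_grid, combo)) for combo in product(*param_grid.values())
]
```

Each candidate would be scored with k-fold cross-validation on the training indices only; the validation set guides model selection and the test set is touched once, for the final reported figure.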

Workflow Visualization

The logical workflow for a conventional ML approach to sperm morphology analysis, from image acquisition to final classification, is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

The development and validation of conventional machine learning models for sperm morphology analysis rely on several key resources, including publicly available datasets and specific algorithmic tools.

Table 2: Essential Research Materials and Resources

| Resource Name | Type | Key Features / Function | Relevance to Conventional ML |
|---|---|---|---|
| HuSHeM Dataset [30] | Image Dataset | 216 images of sperm heads; 4-class morphology classification. | A standard benchmark for evaluating feature engineering and classification algorithms. |
| SCIAN-Morpho Dataset [30] | Image Dataset | Images of normal and abnormal sperm, with abnormal sub-classes (small, amorphous, etc.). | Used for testing algorithm robustness on a more challenging dataset with lower image resolution. |
| SMIDS Dataset [30] | Image Dataset | 3000 image patches for both detection and classification tasks. | Provides a larger dataset for training and validating traditional ML models. |
| VISEM-Tracking Dataset [2] | Video & Image Dataset | A multi-modal dataset with sperm videos and related data. | Useful for broader analysis, potentially for tracking and motility in addition to morphology. |
| k-Means Clustering [25] | Algorithm | Unsupervised clustering algorithm for image segmentation. | Critical for the initial stage of sperm head detection and isolation from the background. |
| Support Vector Machine (SVM) [26] | Algorithm | Supervised learning model for classification and regression. | The primary classifier for categorized feature vectors derived from sperm images. |
| Shape & Texture Descriptors [2] [26] | Feature Extraction | Algorithms (Hu moments, Fourier descriptors) to quantify shape and texture. | The core of conventional ML; transforms image data into a numerical feature set for the SVM. |

The comparative data reveals a clear narrative. Conventional ML models, particularly SVMs, can achieve high accuracy (up to 90% in controlled settings) when paired with sophisticated feature engineering [2] [26]. Their performance is highly dependent on the quality and relevance of the handcrafted features, such as contour waveforms and morphometric descriptors. The k-Means algorithm has proven to be a highly effective and reliable tool for the initial, critical task of sperm head segmentation, with success rates as high as 98% [25].
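To make the segmentation step concrete, the following is a minimal sketch of two-cluster k-means on pixel intensities. It assumes a grayscale image in which the stained head is darker than the background. The synthetic image, the function name `kmeans_segment`, and the choice of k = 2 are illustrative assumptions, not the pipeline used in [25].

```python
import numpy as np

def kmeans_segment(image, k=2, n_iter=20):
    """Cluster pixel intensities with Lloyd's k-means; return a label map and centers."""
    pixels = image.reshape(-1).astype(float)
    # Deterministic initialisation: spread centers evenly over the intensity range.
    centers = np.linspace(pixels.min(), pixels.max(), k)
    for _ in range(n_iter):
        # Assignment step: each pixel joins its nearest center.
        labels = np.argmin(np.abs(pixels[:, None] - centers[None, :]), axis=1)
        # Update step: each center moves to the mean of its assigned pixels.
        new_centers = np.array([
            pixels[labels == j].mean() if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels.reshape(image.shape), centers

# Synthetic 32x32 image: bright background (~200) with a dark elliptical "head" (~60).
rng = np.random.default_rng(0)
yy, xx = np.mgrid[0:32, 0:32]
head = ((yy - 16) / 6.0) ** 2 + ((xx - 16) / 9.0) ** 2 <= 1.0
image = np.where(head, 60.0, 200.0) + rng.normal(0, 5, size=(32, 32))

labels, centers = kmeans_segment(image, k=2)
head_mask = labels == np.argmin(centers)   # assumption: the darker cluster is the head
```

On real micrographs the clusters are rarely this well separated, so a practical pipeline would typically add color-space selection and morphological clean-up around this core step.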

However, the primary limitation of these conventional approaches is their reliance on manual feature extraction, which is not only laborious but also inherently limited by human design. These models often struggle with the vast morphological diversity and complexity of abnormal sperm, particularly when analyzing components beyond the head, such as the neck and tail [2]. This is evidenced by the starkly variable performance (e.g., accuracy ranging from 49% to 90%) across different datasets and abnormality subtypes [2].

In contrast, deep learning (DL) models consistently demonstrate superior performance, achieving accuracies exceeding 96% on standard benchmarks [29]. The key advantage of DL is its ability to automatically learn hierarchical feature representations directly from raw pixel data, bypassing the bottleneck and bias of manual feature engineering. Furthermore, hybrid approaches that use deep CNNs for feature extraction and then feed these deep features into an SVM classifier represent a powerful fusion, marrying the representational power of DL with the robust classification boundaries of conventional ML [29] [20].

In conclusion, while conventional machine learning with SVM and k-Means established the foundational framework for automated sperm morphology analysis, recent advancements are unequivocally driven by deep learning. For researchers, conventional methods remain a valuable benchmark and a potential component in hybrid systems. Yet, for state-of-the-art performance and comprehensive analysis of complex sperm morphology, deep learning-based approaches are the prevailing and most promising path forward.

The diagnosis of male infertility relies heavily on the accurate assessment of sperm morphology, a process traditionally performed through manual microscopic examination. This method, however, is notoriously subjective, time-consuming, and prone to inter-observer variability [2]. Over the past decade, deep learning architectures, particularly Convolutional Neural Networks (CNNs), have catalyzed a revolution towards fully automated, end-to-end sperm classification systems. These systems promise to deliver the objectivity, consistency, and high-throughput analysis essential for modern clinical diagnostics and reproductive research [32].

This guide provides a comparative analysis of the CNN architectures and emerging transformer-based models that are shaping the field of automated sperm morphology analysis. We objectively evaluate their performance against traditional methods and each other, supported by experimental data and detailed methodologies, to serve researchers, scientists, and drug development professionals in selecting and implementing these advanced computational tools.

Comparative Performance of Deep Learning Architectures

The evolution from traditional machine learning to deep learning has significantly boosted the performance of sperm morphology classification systems. CNNs excel at automatically learning hierarchical features from raw pixel data, eliminating the need for manual feature extraction and its inherent biases [32]. The table below summarizes the reported performance of various deep learning architectures on three public benchmark datasets (SMIDS, HuSHeM, and SCIAN-Morpho) and one custom clinical dataset.

Table 1: Performance Comparison of Sperm Morphology Classification Models

| Model Architecture | Dataset | Reported Accuracy | Key Advantages | Reference/Study |
|---|---|---|---|---|
| Multi-model CNN Fusion (6 CNNs) | SMIDS | 90.73% | Enhanced robustness via model averaging | [33] |
| Multi-model CNN Fusion (6 CNNs) | HuSHeM | 85.18% | Enhanced robustness via model averaging | [33] |
| Multi-model CNN Fusion (6 CNNs) | SCIAN-Morpho | 71.91% | Enhanced robustness via model averaging | [33] |
| DenseNet169 | HuSHeM | 97.78% | Addresses vanishing gradient, feature reuse | [34] |
| DenseNet169 | SCIAN-Morpho | 78.79% | Addresses vanishing gradient, feature reuse | [34] |
| Custom CNN (Iqbal et al.) | HuSHeM | 95% | Fewer parameters, optimized for sperm heads | [19] |
| Custom CNN (Iqbal et al.) | SCIAN-Morpho | 63% | Fewer parameters, optimized for sperm heads | [19] |
| InceptionV3 | SMIDS | 87.3% | Multi-scale feature processing | [30] |
| Vision Transformer (BEiT_Base) | SMIDS | 92.5% | Captures long-range dependencies, state-of-the-art | [35] |
| Vision Transformer (BEiT_Base) | HuSHeM | 93.52% | Captures long-range dependencies, state-of-the-art | [35] |
| Fine-tuned VGG16 | HuSHeM | 94% | Leverages transfer learning from ImageNet | [30] |
| Fine-tuned VGG16 | SCIAN-Morpho | 62% | Leverages transfer learning from ImageNet | [30] |
| ResNet50 (for live sperm) | Custom Clinical | 93% (Test Acc.) | Applied to unstained, live sperm analysis | [9] |

Key Dataset Challenges

The variation in model performance is closely tied to the characteristics of the benchmark datasets. The SCIAN-Morpho dataset presents particular challenges, with even the best models achieving lower accuracy (e.g., 78.79% with DenseNet169 [34]). This dataset contains low-resolution images (approximately 35x35 pixels) and suffers from high inter-class similarity and significant class imbalance, making classification inherently difficult [19]. In contrast, models trained on the HuSHeM and SMIDS datasets generally achieve higher accuracy, benefiting from better image resolution and quality [35].

Experimental Protocols and Methodologies

The Multi-CNN Fusion Framework

A prominent study [30] [33] detailed a robust methodology for end-to-end classification using an ensemble of CNNs. The workflow ensures comprehensive learning and objective assessment.

Key Experimental Steps:

- Data Preparation and Augmentation: The framework employed a five-fold cross-validation technique to ensure reliable performance estimation. To combat overfitting and increase effective dataset size, extensive data augmentation was applied, including random rotations, flips, and scaling [30] [33].

- Model Training: Six distinct CNN models were trained independently on the augmented data. This diversity in architecture ensures that the models learn complementary features from the sperm images.

- Decision-Level Fusion: Predictions from all six CNNs were aggregated using two fusion strategies:

  - Hard Voting: The final class is determined by a majority vote from the models.

  - Soft Voting: The final class is determined by averaging the predicted probabilities from each model, often leading to better performance as it accounts for each model's confidence [33]. This approach achieved 90.73% accuracy on the SMIDS dataset.
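The difference between the two fusion strategies is easiest to see on a small example. The sketch below assumes each model outputs a class-probability vector per image; the three models and their probabilities are invented for illustration and are not taken from [33].

```python
import numpy as np

def hard_vote(probs):
    """probs: (n_models, n_samples, n_classes). Majority vote over per-model argmax."""
    votes = probs.argmax(axis=2)                       # (n_models, n_samples)
    n_classes = probs.shape[2]
    counts = np.stack([(votes == c).sum(axis=0) for c in range(n_classes)], axis=1)
    return counts.argmax(axis=1)                       # ties go to the lowest class index

def soft_vote(probs):
    """Average class probabilities across models, then take the argmax."""
    return probs.mean(axis=0).argmax(axis=1)

# Three hypothetical models, one sample, classes 0 = normal, 1 = abnormal.
probs = np.array([
    [[0.55, 0.45]],   # model A: weakly favours class 0
    [[0.60, 0.40]],   # model B: weakly favours class 0
    [[0.05, 0.95]],   # model C: strongly favours class 1
])
print(hard_vote(probs))  # [0]: two of three models pick class 0
print(soft_vote(probs))  # [1]: mean probabilities are [0.40, 0.60]
```

Here one confident model outweighs two uncertain ones under soft voting, which is the behaviour described above: the averaged probabilities carry each model's confidence, while the majority vote discards it.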

DenseNet for Feature Propagation

A 2025 study [34] implemented the DenseNet169 architecture, which features dense connectivity between layers.

Key Experimental Steps:

- Architecture Configuration: The model was trained from scratch on the HuSHeM and SCIAN datasets using different data splits (e.g., 70:25:5 for training, validation, and test) to evaluate data efficiency.

- Feature Reuse: Within each dense block, every layer receives the feature maps of all preceding layers as input and passes its own output on to all subsequent layers. This encourages feature reuse, mitigates the vanishing gradient problem, and keeps the parameter count low, because each layer only needs to add a small number of new feature maps [34].

- Performance: This method achieved a top accuracy of 97.78% on the HuSHeM dataset, demonstrating the effectiveness of dense connectivity patterns for complex morphological feature learning.
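The dense connectivity pattern can be sketched in a few lines. This is a simplified illustration, not the DenseNet169 configuration from [34]: the BN-ReLU-Conv stack of a real dense layer is replaced by a random 1x1 convolution followed by ReLU, and the channel counts and the `dense_block` helper are assumptions chosen for readability.

```python
import numpy as np

def dense_block(x, n_layers, growth_rate, rng):
    """x: (channels, H, W). Each layer sees the concatenation of all earlier outputs."""
    features = [x]
    for _ in range(n_layers):
        inp = np.concatenate(features, axis=0)            # all previous feature maps
        # Stand-in for BN-ReLU-Conv: a random 1x1 convolution (channel mixing) + ReLU.
        w = rng.normal(0, 0.1, size=(growth_rate, inp.shape[0]))
        out = np.maximum(np.einsum("oc,chw->ohw", w, inp), 0.0)
        features.append(out)                              # kept for every later layer
    return np.concatenate(features, axis=0)

rng = np.random.default_rng(0)
x = rng.normal(size=(16, 8, 8))        # 16 input channels, 8x8 feature map
y = dense_block(x, n_layers=4, growth_rate=12, rng=rng)
print(y.shape)                         # (64, 8, 8): 16 + 4 * 12 channels
```

Each layer contributes only `growth_rate` new channels while reading everything produced before it, which is why the input features pass through the block unchanged and why the per-layer parameter count stays small.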

Vision Transformers for Global Context

A 2025 benchmark [35] introduced Vision Transformers (ViTs) as a powerful alternative to CNNs.

Key Experimental Steps:

- Patch Embedding: Input images were split into fixed-size patches, linearly embedded, and fed into a standard transformer encoder.

- Self-Attention Mechanism: The core of the ViT is the self-attention mechanism, which weighs the importance of different image patches relative to each other. This allows the model to capture long-range spatial dependencies across the entire image, a capability that is more limited in CNNs with small receptive fields.

- Hyperparameter Optimization: The study conducted extensive tuning of learning rates and optimizers. It found that data augmentation was critical for ViTs to generalize well, especially given the typically small size of medical image datasets.

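- Performance: The BEiT_Base model achieved 92.5% on SMIDS and 93.52% on HuSHeM, which the study reports as state-of-the-art and as outperforming the CNN-based approaches it was compared against [35]. The DenseNet169 result on HuSHeM listed in Table 1 (97.78% [34]) is higher, so this claim does not extend to every model reported here. Visualization with attention maps confirmed the model's ability to focus on discriminative morphological features like head shape and tail integrity [35].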
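The patch-embedding and self-attention steps above can be sketched with NumPy. This is a single-head illustration with random weights, omitting the class token, position embeddings, and multi-head structure of a full ViT; the image size, patch size, and `d_model` are assumptions and do not reflect the BEiT_Base configuration in [35].

```python
import numpy as np

def patchify(image, patch):
    """Split an (H, W) image into non-overlapping patch x patch tiles, flattened."""
    h, w = image.shape
    tiles = image.reshape(h // patch, patch, w // patch, patch).swapaxes(1, 2)
    return tiles.reshape(-1, patch * patch)               # (n_patches, patch*patch)

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)                 # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product attention over patch tokens."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]))     # (n_patches, n_patches)
    return weights @ v, weights

rng = np.random.default_rng(0)
image = rng.random((32, 32))                # stand-in for a grayscale sperm image
patches = patchify(image, patch=8)          # 16 patches of 64 pixels each
d_model = 24                                # hypothetical embedding width
w_embed = rng.normal(0, 0.1, size=(64, d_model))
tokens = patches @ w_embed                  # linear patch embedding
wq, wk, wv = (rng.normal(0, 0.1, size=(d_model, d_model)) for _ in range(3))
out, weights = self_attention(tokens, wq, wk, wv)
print(patches.shape, out.shape, weights.shape)   # (16, 64) (16, 24) (16, 16)
```

Every row of `weights` spans all 16 patches, so a patch covering the head can attend directly to one covering the tail in a single layer. Reshaping a row of `weights` back to the 4x4 patch grid gives the kind of attention map mentioned above.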

Successful development of a deep learning model for sperm classification relies on a foundation of key resources. The table below details these essential components.

Table 2: Key Research Reagents and Resources for Sperm Morphology Analysis

| Resource Name | Type | Key Features & Characteristics | Primary Function in Research |
|---|---|---|---|
| HuSHeM Dataset [19] [35] | Image Dataset | 216 images; 4 classes (Normal, Pyriform, Tapered, Amorphous); 131x131 px resolution. | Benchmarking for sperm head morphology classification. |
| SCIAN-MorphoSpermGS [19] | Image Dataset | 1,854 images; 5 classes; low-resolution (~35x35 px); expert-annotated. | Gold-standard dataset for challenging, low-res classification. |
| SMIDS [30] [35] | Image Dataset | ~3,000 images; 3 classes (Normal, Abnormal, Non-sperm); 190x170 px resolution. | Benchmarking for detection and multi-class classification. |
| SVIA Dataset [2] [9] | Video & Image Dataset | 125,000 detection instances; 26,000 segmentation masks; from unstained sperm. | Training models for live sperm analysis and motility tracking. |
| Confocal Laser Scanning Microscopy [9] | Imaging Equipment | High-resolution, Z-stack imaging at low magnification without staining. | Capturing high-quality, subcellular images of live, unstained sperm. |
| ResNet50 [9] | Deep Learning Model | A standard 50-layer CNN; often used with transfer learning. | A common baseline or backbone model for feature extraction. |
| DenseNet169 [34] | Deep Learning Model | Dense connectivity pattern promoting feature reuse and mitigating gradient loss. | Building efficient and high-accuracy classifiers for sperm images. |
| Vision Transformer (ViT) [35] | Deep Learning Model | Transformer-based architecture using self-attention for global context. | State-of-the-art classification by modeling relationships across the image. |

Architectural Comparison and Clinical Applicability

The following diagram synthesizes the logical relationships and decision pathways for selecting and implementing these deep learning architectures in a clinical research context.

Pathway Analysis

The clinical implementation pathway highlights key decision points:

- For analyzing stained sperm from public benchmarks, the choice depends on priorities: ViTs for top accuracy, multi-CNN fusion for robust, high-throughput analysis, and DenseNet or VGG for a balance of performance and complexity [34] [35].

- For the analysis of live, unstained sperm—a crucial requirement for Assisted Reproductive Technology (ART) where sperm must remain viable—models like ResNet50 trained on high-resolution confocal microscopy images have shown strong correlation (r=0.88) with CASA systems, offering a non-destructive assessment method [9].

Male infertility is a significant global health concern, contributing to 20–30% of all infertility cases among couples [27]. Traditional semen analysis, particularly the assessment of sperm morphology (the size, shape, and structural characteristics of sperm cells), remains a cornerstone of male fertility evaluation. According to World Health Organization (WHO) guidelines, normal sperm morphology is characterized by an oval head measuring 4.0–5.5 μm in length and 2.5–3.5 μm in width, with an intact acrosome covering 40–70% of the head area and a single, uniform tail [1]. These precise morphological parameters are clinically vital as abnormalities are strongly correlated with reduced fertilization rates and poor outcomes in assisted reproductive technologies [29].
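The head criteria quoted above translate directly into a rule-based check. The sketch below is a minimal illustration, assuming the measurements have already been extracted from a segmented head; the function name and the example values are hypothetical, and the tail criterion is not covered.

```python
def meets_head_criteria(length_um, width_um, acrosome_fraction):
    """Check head measurements against the ranges quoted in the text above."""
    return (
        4.0 <= length_um <= 5.5                 # head length in micrometres
        and 2.5 <= width_um <= 3.5              # head width in micrometres
        and 0.40 <= acrosome_fraction <= 0.70   # acrosome share of head area
    )

print(meets_head_criteria(4.8, 3.0, 0.55))  # True
print(meets_head_criteria(6.2, 3.0, 0.55))  # False: head too long
```

Hard thresholds like these are what conventional morphometric pipelines encode explicitly, whereas the deep learning models discussed in this article learn their decision boundaries from labelled images.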

Despite its clinical importance, conventional manual morphology assessment performed by embryologists suffers from substantial limitations. This labor-intensive process requires examining at least 200 sperm per sample and can take 30–45 minutes per case [1]. More critically, it demonstrates high inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators and kappa values as low as 0.05–0.15, indicating minimal diagnostic agreement even among trained specialists [1] [29]. This subjectivity and inconsistency in morphology assessment has created an urgent need for automated, objective classification systems that can standardize fertility diagnostics across laboratories and improve reproductive healthcare outcomes.
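To show what a kappa value in the quoted range means in practice, here is a small worked example of Cohen's kappa, computed as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The two raters and their labels are invented for illustration and are not data from [1] or [29].

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    return (p_o - p_e) / (1 - p_e)

# Two hypothetical raters labelling 20 sperm as normal (N) or abnormal (A).
rater_a = list("NNNNNNNNNNAAAAAAAAAA")
rater_b = list("NNNNNNAAAANNNNNAAAAA")

kappa = cohen_kappa(rater_a, rater_b)
print(round(kappa, 2))  # 0.1: 55% raw agreement, but 50% is expected by chance
```

The raters agree on 11 of 20 cells, yet kappa is only 0.1 because half of that agreement would be expected from chance alone. This is why kappa in the 0.05–0.15 range signals near-chance agreement even when the raw agreement rate looks moderate.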

Technical Foundation: From CNNs to Attention Mechanisms

Evolution of Deep Learning Architectures

The application of deep learning to medical image analysis has progressed through several generations of architectural innovations:

Standard Convolutional Neural Networks (CNNs): Early approaches demonstrated that CNNs could automatically learn discriminative features from sperm images, achieving notable but limited success. These networks typically consisted of sequential convolutional, pooling, and fully-connected layers that progressively extracted and transformed image features [28] [27].