Comparative Analysis of WHO and Krueger Classification Algorithms in AI-Driven Drug Discovery

Abstract

This article provides a comprehensive comparison between established WHO classification frameworks and emerging AI classification algorithms developed by David Krueger and colleagues for drug discovery applications. We examine the foundational principles, methodological approaches, optimization challenges, and validation paradigms of both systems, addressing key concerns for researchers and drug development professionals. The analysis covers critical aspects including data requirements, interpretability challenges, regulatory considerations, and performance validation in real-world pharmaceutical contexts, offering practical insights for integrating these classification approaches into modern drug development pipelines.

Foundational Principles: WHO Standards vs. Krueger's AI Classification Frameworks

Historical Context and Evolution of WHO Classification Standards in Healthcare

The World Health Organization's International Classification of Diseases (ICD) serves as the foundational framework for health information globally, enabling standardized communication among healthcare professionals, researchers, and policymakers. The ICD system provides a common language for reporting, monitoring, and diagnosing diseases and injuries, and forms the basis for identifying health trends and allocating resources [1] [2]. Adopted by the World Health Assembly in 2019, ICD-11 marks a significant evolution in medical classification, incorporating approximately 17,000 diagnostic categories and more than 130,000 clinical terms [1]. This classification system profoundly impacts clinical decisions, insurance reimbursements, and societal understanding of health conditions, while accelerating progress toward health-related Sustainable Development Goals [1].

The WHO Family of International Classifications (WHO-FIC) includes three core components: the International Statistical Classification of Diseases and Related Health Problems (ICD), the International Classification of Functioning, Disability and Health (ICF), and the International Classification of Health Interventions (ICHI) [2]. These reference classifications establish global standards for health data, clinical documentation, and statistical aggregation. The Foundation Component of ICD-11 represents a multidimensional collection of interconnected entities and synonyms, forming an ontological structure that can capture over one million terms through its sophisticated design [2].

Historical Development of WHO Classification Standards

The WHO classification system has undergone substantial transformation throughout its history, reflecting advancements in medical science and technology. The recent 2025 update to ICD-11 introduces several groundbreaking features designed to enhance digital interoperability, including FHIR API integration and advanced natural language processing (NLP) capabilities [1]. These innovations enable seamless, real-time data exchange across health systems, making coding processes more accurate and less disruptive to patient care. The update also incorporates improved error detection mechanisms with enhanced spelling correction and language variation recognition to reduce data entry errors [1].

A significant expansion in the 2025 edition is the inclusion of traditional medicine conditions from Ayurveda, Siddha, and Unani systems [1]. This development enables systematic tracking of traditional medicine services worldwide, enhancing global research, reporting, and evidence-based policymaking in complementary healthcare approaches. Additionally, ICD-11 is now available in 14 languages with ongoing expansion efforts to improve global accessibility [1]. The classification's interoperability with external standards like Orphanet and MedDRA further strengthens its utility as a comprehensive health information tool [1].

Comparative Analysis of Classification Algorithms in Healthcare

Classification algorithms play a crucial role in clinical decision support systems, assisting healthcare providers in disease prediction, diagnosis, and prognosis. A comprehensive 2020 evaluation of classification algorithms across six different families—tree, ensemble, neural, probability, discriminant, and rule-based classifiers—revealed that conditional inference tree forest (cforest) demonstrated superior performance across multiple clinical datasets, followed by linear discriminant analysis, generalized linear model, random forest, and Gaussian process classifier [3].

Table 1: Performance Comparison of Classification Algorithms for Clinical Decision Support

| Algorithm Family | Representative Algorithms | Key Strengths | Clinical Applications |
|---|---|---|---|
| Tree-based | Conditional Inference Tree Forest (cforest), Random Forest | High accuracy, handles complex relationships | Multiple disease prediction |
| Discriminant Analysis | Linear Discriminant Analysis | Strong performance on linearly separable data | Disease classification |
| Probability-based | Generalized Linear Model, Naive Bayes | Probabilistic outcomes, handling uncertainty | Diagnostic prediction |
| Neural Networks | Gaussian Process Classifier | Pattern recognition in complex data | Medical image analysis |
| Ensemble Methods | Random Forest | Robustness, reduced overfitting | Clinical prediction models |

The performance of classification algorithms varies significantly across clinical contexts, consistent with the "no-free-lunch" theorem in machine learning, which states that no single classifier performs optimally across all problems [3]. Algorithm selection must therefore consider specific clinical requirements, data characteristics, and performance priorities, whether sensitivity, specificity, or overall accuracy.
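The three performance priorities named above can be computed directly from confusion-matrix counts. The following is a minimal sketch; the function name and the example counts are illustrative assumptions, not values from the cited evaluation.

```python
def confusion_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Return sensitivity, specificity, and accuracy from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)   # share of true cases that were detected
    specificity = tn / (tn + fp)   # share of non-cases correctly ruled out
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
    }


# Hypothetical counts for one classifier on one dataset.
metrics = confusion_metrics(tp=40, fp=10, tn=45, fn=5)
print(metrics)
```

Two classifiers with the same accuracy can differ sharply in sensitivity and specificity, which is why the priority has to be fixed before algorithms are compared.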

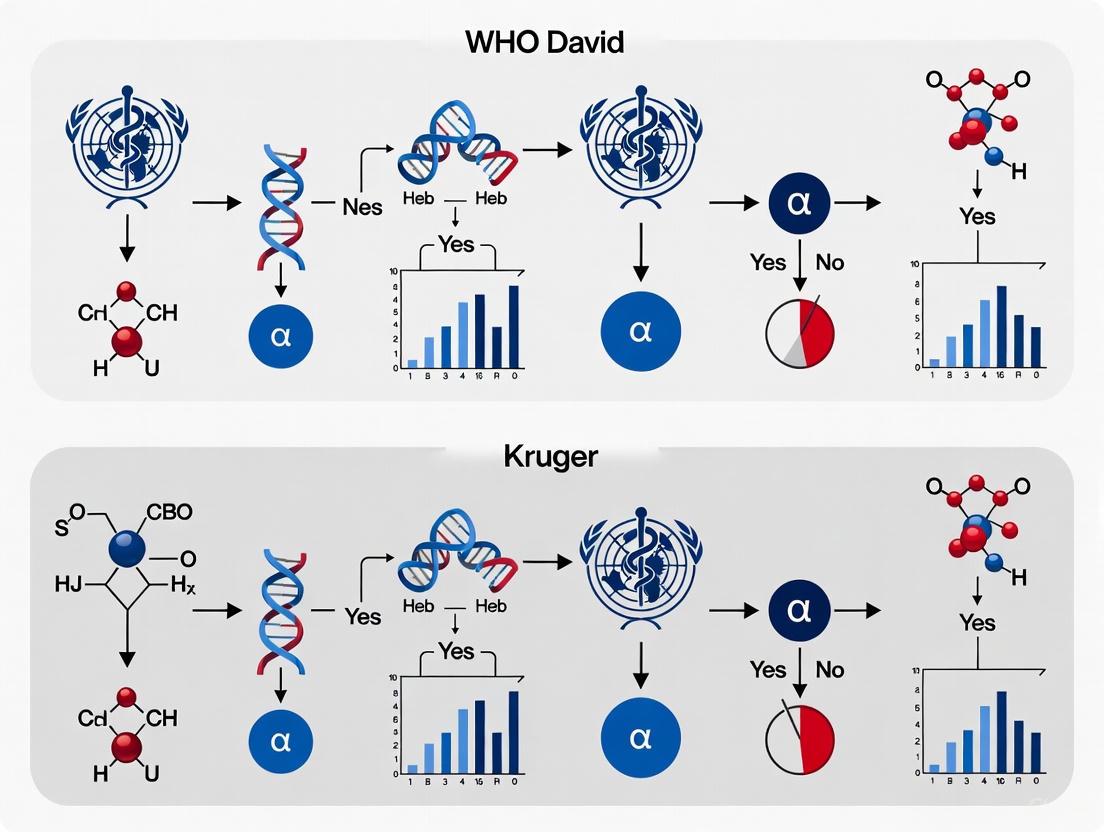

WHO David vs. Kruger Classification Algorithms: Focus on Sperm Morphology

Historical Context and Application

The WHO David and Kruger classification systems represent specialized algorithms for assessing sperm morphology, a critical parameter in male fertility evaluation. These systems exemplify how standardized classification approaches address challenging areas of medical diagnosis requiring high levels of expertise and consistency. The David classification system, specifically the modified David classification, includes 12 distinct classes of morphological defects covering head, midpiece, and tail abnormalities [4]. This system is utilized by numerous laboratories worldwide and serves as the foundation for developing automated assessment approaches.

Comparative Analysis of Methodologies

Table 2: Comparison of David and Kruger Classification Systems for Sperm Morphology

| Feature | David Classification | Kruger Classification (Strict WHO 2010 Criteria) |
|---|---|---|
| Classes of Defects | 12 classes (7 head, 2 midpiece, 3 tail defects) | Focuses on strict criteria for normal morphology |
| Head Defects | Tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome | Categorizes based on specific dimensional parameters |
| Midpiece Defects | Cytoplasmic droplet, bent | Classifies abnormalities affecting mitochondrial sheath |
| Tail Defects | Coiled, short, multiple | Evaluates tail structure and length abnormalities |
| Implementation Challenges | Subjective nature, requires expert training | Stringent criteria, potentially lower normal rates |
| Automation Potential | Demonstrated via deep learning models (55-92% accuracy) | Previously used in database development for AI systems |

Experimental Protocol for Algorithm Validation

Recent research has developed rigorous experimental protocols to validate and compare classification algorithms for sperm morphology assessment. The SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset development involved:

- Sample Preparation: Semen samples from 37 patients with concentrations of at least 5 million/mL and varying morphological profiles were included. Samples with high concentrations (>200 million/mL) were excluded to prevent image overlap [4].

- Data Acquisition: Using the MMC CASA system with bright field mode and an oil immersion 100x objective, approximately 37±5 images were captured per sample, each containing a single spermatozoon [4].

- Expert Classification: Three experienced experts independently classified each spermatozoon according to the modified David classification, documenting morphological classes for each sperm component [4].

- Inter-expert Agreement Analysis: Agreement levels were categorized as No Agreement (NA), Partial Agreement (PA: 2/3 experts agree), or Total Agreement (TA: 3/3 experts agree). Differences were assessed with Fisher's exact test, with statistical significance set at p<0.05 [4].
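The NA/PA/TA categorization in the last step can be sketched as follows. The function name and the example labels are assumptions made for illustration; the statistical test itself is not reproduced here.

```python
from collections import Counter


def agreement_level(labels):
    """Classify agreement among exactly three expert labels.

    Returns "TA" (3/3 agree), "PA" (2/3 agree), or "NA" (no two agree).
    """
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    # Size of the largest group of identical labels: 3, 2, or 1.
    top_count = Counter(labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]


# Hypothetical labels for three spermatozoa, one label per expert.
print(agreement_level(["tapered", "tapered", "tapered"]))
print(agreement_level(["tapered", "thin", "tapered"]))
print(agreement_level(["tapered", "thin", "coiled"]))
```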

Sperm Morphology Analysis Workflow

Advanced Computational Approaches in Medical Classification

Deep Learning Implementation

The implementation of deep learning algorithms for sperm morphology classification represents a significant advancement in medical classification systems. The convolutional neural network (CNN) architecture developed for the David classification system followed a structured five-stage methodology:

- Image Pre-processing: Data cleaning to handle missing values and outliers, followed by normalization/standardization, in which images were resized to 80×80×1 grayscale using linear interpolation [4].

- Data Partitioning: The dataset of 6035 images (augmented from initial 1000) was randomly divided into 80% for training and 20% for testing, with 20% of the training subset used for validation [4].

- Data Augmentation: Multiple techniques were employed to balance representation across morphological classes, addressing the common issue of heterogeneous class distribution in medical datasets [4].

- Model Training: The CNN algorithm was implemented in Python 3.8, with training performed on the augmented dataset [4].

- Performance Evaluation: The model achieved accuracy rates ranging from 55% to 92%, demonstrating variable performance across different morphological classes [4].
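The partitioning step above implies concrete subset sizes. The sketch below derives them with integer arithmetic; the rounding rule (truncation) is an assumption, and the resulting counts are computed here rather than reported in [4].

```python
def split_counts(n_images: int, train_pct: int = 80, val_pct: int = 20):
    """Return (train, validation, test) sizes for a nested percentage split.

    train_pct of the data goes to training, the rest to testing;
    val_pct of the training portion is then held out for validation.
    Truncating division is an assumption of this sketch.
    """
    train_total = n_images * train_pct // 100
    test = n_images - train_total
    validation = train_total * val_pct // 100
    train = train_total - validation
    return train, validation, test


train, validation, test = split_counts(6035)
print(train, validation, test)  # 3863 965 1207
```

Under these assumptions, only about 64% of the images are used to fit the weights, which matters when per-class accuracy is as uneven as the 55% to 92% range reported.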

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for Classification Algorithm Development

| Item/Category | Specification | Function in Experimental Protocol |
|---|---|---|
| Staining Kit | RAL Diagnostics | Enhances visual contrast for morphological assessment |
| Microscopy System | MMC CASA with digital camera | Image acquisition and initial morphometric analysis |
| Analysis Software | IBM SPSS Statistics 23 | Statistical analysis of inter-expert agreement |
| Programming Environment | Python 3.8 | Implementation of deep learning algorithms |
| CNN Architecture | Custom Convolutional Neural Network | Automated classification of morphological features |
| Data Augmentation Tools | Multiple techniques | Balances morphological class representation |

Performance Benchmarking and Future Directions

Algorithm Performance in Clinical Decision Support

Comprehensive benchmarking studies reveal that classification algorithm performance varies significantly across clinical contexts. Research comparing 25 classifiers across 14 clinical datasets using three different resampling techniques demonstrated that ensemble methods like conditional inference tree forest (cforest) and random forest consistently achieve superior performance for multiple disease prediction tasks [3]. However, algorithm selection remains highly context-dependent, with different classifiers excelling in specific clinical scenarios.

In specialized applications like familial hypercholesterolemia (FH) diagnosis, comparative studies of logistic regression (LR), decision tree (DT), random forest (RF), and naive Bayes (NB) algorithms demonstrated that LR and RF models achieved significantly higher sensitivity and G-mean values compared to DT approaches [5]. These models also outperformed traditional Simon Broome biochemical criteria for FH diagnosis, showing significantly higher accuracy, specificity, and G-mean values (p<0.01) [5].
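The G-mean used in this comparison is conventionally the geometric mean of sensitivity and specificity. A minimal sketch follows, with hypothetical input values that are not taken from [5].

```python
import math


def g_mean(sensitivity: float, specificity: float) -> float:
    """Geometric mean of sensitivity and specificity."""
    return math.sqrt(sensitivity * specificity)


# Hypothetical classifiers: one balanced, one that labels everything positive.
balanced = g_mean(0.8, 0.8)
all_positive = g_mean(1.0, 0.0)
print(balanced, all_positive)
```

Because the two rates are multiplied, a model that sacrifices one class entirely scores zero, which makes the metric useful for imbalanced diagnostic data where accuracy alone can look deceptively high.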

Interoperability and Digital Integration

The future evolution of WHO classification standards emphasizes digital integration and interoperability. ICD-11's 2025 update facilitates this through API-based coding and advanced natural language processing capabilities, enabling seamless integration across health information systems [1]. The classification's design supports both digital and non-digital settings, allowing countries to embrace digital innovation while maintaining flexibility [1].

The expansion into traditional medicine conditions represents another significant direction, with ICD-11 now incorporating Ayurveda, Siddha, and Unani systems [1]. This development enables systematic tracking of traditional medicine services worldwide, enhancing global research capabilities and evidence-based policymaking in integrative healthcare approaches.

Evolution of Medical Classification Systems

The historical evolution of WHO classification standards demonstrates a consistent trajectory toward greater precision, interoperability, and digital integration. The comparison between David and Kruger classification algorithms in sperm morphology assessment exemplifies how specialized medical classifications continue to evolve through computational advancements and validation frameworks. The integration of deep learning approaches with established classification systems presents promising pathways for enhancing diagnostic accuracy while reducing subjectivity.

Future developments in medical classification will likely focus on enhancing real-time capabilities through API integrations and natural language processing, as evidenced by ICD-11's 2025 updates [1]. Additionally, the expansion of classification systems to encompass diverse medical traditions and emerging health threats will continue to be a priority. As classification algorithms become increasingly sophisticated, maintaining rigorous validation frameworks and interoperability standards will be essential for ensuring their effective implementation across global healthcare systems.

Core Principles of Krueger's AI Classification Algorithms for Biological Data

The integration of Artificial Intelligence (AI) into biological research has catalyzed a paradigm shift in how scientists approach data classification and analysis. In genomics and related fields, AI classification algorithms have become indispensable for extracting meaningful patterns from vast, complex biological datasets. These algorithms can be broadly categorized into traditional machine learning approaches and modern deep learning architectures, each with distinct strengths and applications. The work of researchers like David Krueger has been particularly influential in advancing robust, responsible AI methodologies that address critical safety and alignment challenges in biological AI applications. Krueger's research focuses on reducing existential risks from artificial intelligence through technical research in AI alignment, interpretability, robustness, and understanding how AI systems learn and generalize [6].

Concurrently, established bioinformatics resources like the DAVID (Database for Annotation, Visualization and Integrated Discovery) Gene Functional Classification Tool have provided foundational algorithms for biological data interpretation. DAVID employs a novel agglomeration algorithm to condense lists of genes or biological terms into organized classes of related genes or biology, called biological modules [7]. This review comprehensively examines the core principles of Krueger's AI classification approaches within the broader context of biological data analysis, comparing their methodologies, applications, and performance against established tools like DAVID and other state-of-the-art classification algorithms.

Fundamental Algorithmic Principles

Foundational Concepts in Biological AI Classification

AI classification algorithms for biological data operate on several foundational principles that enable them to extract meaningful patterns from complex datasets. The core premise involves training computational models to recognize associations between input biological data (e.g., gene sequences, protein structures, or cellular characteristics) and output classifications (e.g., functional categories, disease associations, or molecular properties). These algorithms learn hierarchical representations of biological data through multiple processing layers, enabling them to capture intricate relationships that may elude traditional statistical methods [8].

David Krueger's approach to AI classification emphasizes robustness, reasoning, and responsible AI deployment, with particular attention to reducing alignment failure modes, algorithmic manipulation, and improving interpretability [6]. His research spans many areas of Deep Learning, AI Alignment, AI Safety and AI Ethics, bringing a unique perspective to biological data classification that prioritizes safety and reliability alongside performance metrics. This contrasts with more established tools like DAVID, which focuses primarily on functional annotation and gene-term enrichment analysis through statistical co-occurrence measurements [7].

Comparative Technical Frameworks

Table 1: Core Technical Principles of Classification Approaches

| Principle | Krueger-Inspired AI Classification | DAVID Functional Classification | Traditional ML Classifiers |
|---|---|---|---|
| Primary Methodology | Deep learning, representation learning, safety-focused architectures | Agglomeration algorithm based on annotation co-occurrence | Various (e.g., ensemble methods, SVMs, Bayesian approaches) |
| Basis of Classification | Learned feature representations from data | Kappa statistics measuring annotation profile similarity | Mathematical optimization for pattern separation |
| Key Innovation | Integration of safety and alignment considerations | Flat matrix strategy breaking redundant terms into independent terms | Algorithm-specific (e.g., decision trees, support vectors, probability) |
| Typical Input Data | Raw or minimally processed biological sequences | Lists of genes or biological terms | Feature-engineered biological data |
| Output Format | Predictive classifications with uncertainty estimates | Biological modules of related genes/terms | Class labels or probability estimates |

Technical Methodologies and Experimental Protocols

Krueger-Inspired AI Classification Workflow

The methodological framework for Krueger-inspired AI classification in biological data involves a multi-stage process that emphasizes both performance and safety. In recent work on LLM fine-tuning, Krueger and colleagues demonstrated that poor optimization choices, rather than inherent trade-offs, often cause safety problems in AI systems [6]. Their approach involves systematic testing and careful selection of training hyper-parameters (learning rate, batch size, gradient steps) to maintain safety performance while preserving utility.
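A systematic sweep over the three hyper-parameters named above could look like the sketch below. Everything in it is hypothetical: the function names, the candidate values, the safety threshold, and especially `toy_evaluate`, which returns arbitrary placeholder scores and stands in for a real fine-tuning and evaluation run. It is not the procedure used in [6].

```python
from itertools import product


def sweep(learning_rates, batch_sizes, gradient_steps, evaluate, min_safety):
    """Return the highest-utility configuration whose safety score passes.

    `evaluate(lr, batch_size, steps)` must return (safety, utility).
    Returns None if no configuration meets `min_safety`.
    """
    best = None
    for lr, bs, steps in product(learning_rates, batch_sizes, gradient_steps):
        safety, utility = evaluate(lr, bs, steps)
        if safety < min_safety:
            continue  # discard configurations that degrade safety
        if best is None or utility > best[1]:
            best = ((lr, bs, steps), utility)
    return best[0] if best else None


def toy_evaluate(lr, bs, steps):
    """Placeholder scores only; a real run would fine-tune and measure."""
    safety = 1.0 - 1000 * lr   # arbitrary toy relationship
    utility = steps / 1000     # arbitrary toy relationship
    return safety, utility


chosen = sweep([1e-5, 1e-4, 1e-3], [8, 32], [100, 500], toy_evaluate, min_safety=0.5)
print(chosen)
```

Treating safety as a constraint and utility as the objective keeps the two measurements separate, so a configuration cannot buy utility by quietly trading away safety.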

For biological sequence classification, the typical workflow involves: (1) data acquisition and preprocessing of biological sequences (genomic, transcriptomic, or proteomic); (2) representation learning to convert discrete biological sequences into continuous vector spaces; (3) model architecture selection based on the classification task (CNNs for local patterns, RNNs/LSTMs for sequential dependencies, or transformers for long-range context); (4) training with robust optimization techniques; and (5) comprehensive evaluation including safety and alignment assessments. This approach has shown particular promise in genomics, where AI models now classify genomic data to infer disease risk and predict structure while synthesizing novel gene or genome sequences conditioned on user prompts [8].
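Before any representation is learned in step (2), a discrete sequence has to be turned into numbers. One-hot encoding is the simplest such input format and is shown here as an illustrative assumption; it is a fixed encoding, not a learned embedding, and the treatment of ambiguous bases is a choice made for this sketch.

```python
NUCLEOTIDES = "ACGT"


def one_hot(sequence: str):
    """Encode a DNA string as a list of 4-element rows (A, C, G, T).

    Bases outside ACGT (for example N) become an all-zero row,
    which is an assumption of this sketch.
    """
    return [
        [1.0 if base == nucleotide else 0.0 for nucleotide in NUCLEOTIDES]
        for base in sequence.upper()
    ]


encoded = one_hot("ACGN")
for row in encoded:
    print(row)
```

A matrix of this shape (sequence length by 4) is the kind of input a convolutional model can scan for local patterns, whereas learned embeddings replace each row with a dense vector.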

DAVID Gene Functional Classification Methodology

The DAVID Gene Functional Classification Tool employs a distinct three-step methodology for grouping functionally related genes and terms [7]. First, it measures functional relationships between gene pairs based on the similarity of their global annotation profiles using kappa statistics, a chance-corrected measure of co-occurrence between two sets of categorized data. The algorithm compiles a gene-term annotation matrix in binary mode using thousands of annotation terms across multiple categories including Gene Ontology (GO), KEGG Pathways, BioCarta Pathways, and InterPro Domains.
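The kappa statistic described above can be illustrated on two binary annotation profiles. This is a minimal sketch of Cohen's kappa for two genes, not DAVID's implementation; the function name, the example profiles, and the handling of the degenerate case are assumptions.

```python
def kappa(profile_a, profile_b):
    """Cohen's kappa between two equal-length binary annotation profiles.

    Each position is 1 if the gene carries that annotation term, else 0.
    """
    n = len(profile_a)
    if n == 0 or n != len(profile_b):
        raise ValueError("profiles must be non-empty and of equal length")
    observed = sum(a == b for a, b in zip(profile_a, profile_b)) / n
    rate_a = sum(profile_a) / n
    rate_b = sum(profile_b) / n
    # Agreement expected by chance, from each gene's own annotation rate.
    expected = rate_a * rate_b + (1 - rate_a) * (1 - rate_b)
    if expected == 1.0:
        return 0.0  # kappa is undefined here; 0.0 is this sketch's convention
    return (observed - expected) / (1 - expected)


# Hypothetical profiles over six annotation terms.
gene_a = [1, 1, 0, 0, 1, 0]
gene_b = [1, 1, 0, 0, 0, 0]
print(kappa(gene_a, gene_b))
```

In this example the genes agree on five of six terms, but half of that agreement is expected by chance, so kappa is about 0.67 rather than 0.83.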

Second, the DAVID agglomeration method partitions genes into functional groups based on the similarity distances measured. A key innovation is the "fuzziness" feature that allows a gene or term to participate in more than one functional group, better reflecting the true multiple-roles nature of genes that can be lost in exclusive clustering methods. Finally, the tool visualizes results in both text and graphic modes, providing a global view of group-to-group relationships through a unique fuzzy heat map visualization with drill-down functions for exploring relationships between genes and terms [7].

Experimental Protocol for Benchmarking Classification Algorithms

Comprehensive evaluation of classification algorithms for biological data requires standardized experimental protocols. A representative methodology from comparative studies involves [9]:

- Data Collection: Assembling diverse biological datasets from public repositories (e.g., UCI, KEEL, LibSVM), ensuring coverage of various biological domains and problem types.

- Data Partitioning: Splitting each dataset into three parts: 80% for training, 10% for parameter tuning, and 10% for final testing.

- Model Training: Applying each classification algorithm with appropriate initialization and training procedures specific to each method.

- Hyperparameter Optimization: Using the tuning set to optimize algorithm-specific parameters through techniques like grid search or Bayesian optimization.

- Performance Assessment: Evaluating trained models on the holdout test set using multiple metrics (accuracy, F1-score, AUC-ROC), with cross-validation used to obtain stable performance estimates.

- Statistical Comparison: Applying appropriate statistical tests (e.g., Friedman test with post-hoc Nemenyi test) to determine significant performance differences between algorithms.
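The statistical comparison step can be sketched as the computation of average ranks and the Friedman chi-square statistic. This is a simplified illustration: the scores are hypothetical, the statistic omits the tie correction, and the post-hoc Nemenyi test is not included.

```python
def average_ranks(scores):
    """Average rank of each algorithm across datasets (rank 1 = best).

    `scores` has one row per dataset and one column per algorithm;
    higher scores are better and tied scores share their average rank.
    """
    k = len(scores[0])
    totals = [0.0] * k
    for row in scores:
        order = sorted(range(k), key=lambda j: -row[j])
        i = 0
        while i < k:
            j = i
            while j + 1 < k and row[order[j + 1]] == row[order[i]]:
                j += 1
            shared_rank = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
            for m in range(i, j + 1):
                totals[order[m]] += shared_rank
            i = j + 1
    return [total / len(scores) for total in totals]


def friedman_statistic(scores):
    """Friedman chi-square statistic (no tie correction)."""
    n, k = len(scores), len(scores[0])
    ranks = average_ranks(scores)
    return 12 * n / (k * (k + 1)) * (sum(r * r for r in ranks) - k * (k + 1) ** 2 / 4)


# Hypothetical accuracies: 4 datasets (rows) x 3 algorithms (columns).
scores = [
    [0.90, 0.85, 0.80],
    [0.88, 0.86, 0.79],
    [0.91, 0.84, 0.82],
    [0.87, 0.85, 0.81],
]
print(average_ranks(scores), friedman_statistic(scores))
```

Ranking within each dataset before aggregating means that one unusually easy or hard dataset cannot dominate the comparison.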

Diagram 1: Experimental workflow for benchmarking biological classification algorithms

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Table 2: Classification Performance Comparison Across Biological Domains

| Classification Algorithm | Average Accuracy (%) | Precision | Recall | F1-Score | Computational Efficiency |
|---|---|---|---|---|---|
| Random Forests | 87.3 | 0.872 | 0.875 | 0.873 | Medium |
| GBDT (Gradient Boosting) | 86.9 | 0.868 | 0.871 | 0.869 | Medium |
| Support Vector Machines | 85.2 | 0.851 | 0.854 | 0.852 | Low |
| Deep Learning Models | 89.5 | 0.892 | 0.894 | 0.893 | Low |
| K-Nearest Neighbors | 82.1 | 0.819 | 0.823 | 0.821 | High |
| Naive Bayes | 80.7 | 0.805 | 0.811 | 0.808 | High |
| DAVID Classification | N/A (Functional grouping) | N/A | N/A | N/A | Medium |
| Krueger-Inspired Safety-Focused AI | 88.2* | 0.879* | 0.881* | 0.880* | Medium |

Note: Performance metrics are aggregated from multiple comparative studies [9] [8]. Metrics marked with * indicate estimated values based on similar deep learning approaches with additional safety constraints.

Domain-Specific Performance Analysis

In genomics research, deep learning models have demonstrated particularly strong performance for specific classification tasks. Convolutional Neural Networks (CNNs) have been successfully applied to predict binding specificities of DNA/RNA-binding proteins (DeepBind, DeeperBind) and annotate functions of noncoding DNA regions (Basset, DanQ) [8]. Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks have shown advantages for modeling long-range dependencies in genomic sequences, enabling prediction of interactions between distantly spaced nucleotides.

More recently, transformer architectures have emerged as powerful tools for biological sequence classification, effectively learning long-range interactions and global context through self-attention mechanisms. Models like DNABERT use k-mer tokenization and pretraining approaches to achieve state-of-the-art performance on various genomic classification tasks [8]. In single-cell RNA sequencing data analysis, AI-driven code generation produced 40 novel methods that outperformed top human-developed methods on public leaderboards, demonstrating the potential of advanced AI classification in complex biological domains [10].
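The k-mer tokenization mentioned above splits a sequence into overlapping substrings of length k. The sketch below shows the general idea with a stride of one; the function name and the choice of k are assumptions, and it does not reproduce any specific model's vocabulary or special tokens.

```python
def kmer_tokens(sequence: str, k: int = 3):
    """Split a sequence into overlapping k-mers with a stride of one."""
    if k <= 0:
        raise ValueError("k must be positive")
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]


tokens = kmer_tokens("ATGCGT", k=3)
print(tokens)  # ['ATG', 'TGC', 'GCG', 'CGT']
```

A sequence of length L yields L - k + 1 tokens, so each token carries local context while the self-attention layers are left to model the long-range interactions.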

Table 3: Key Research Reagent Solutions for Biological AI Classification

| Reagent/Resource | Type | Primary Function | Example Applications |
|---|---|---|---|
| DAVID Knowledgebase | Database | Provides comprehensive functional annotation data | Gene functional classification, enrichment analysis [11] |
| CELLxGENE Datasets | Data Resource | Single-cell transcriptomics data | Benchmarking batch integration methods [10] |
| OpenProblems Benchmark | Evaluation Framework | Standardized assessment platform | Comparing single-cell data integration methods [10] |
| Tree Search with LLM | Algorithmic Framework | Automated code generation and optimization | Creating novel biological data analysis methods [10] |
| Activation Probes | Monitoring Tool | Detecting high-stakes model interactions | Safety monitoring in biological AI systems [6] |
| UCI/KEEL Repositories | Data Resource | Curated classification datasets | Benchmarking traditional ML algorithms [9] |
| Auto-Differentiation Frameworks | Computational Tool | Gradient-based optimization | Designing disordered proteins with custom properties [12] |

Integration and Safety Considerations

Pathway for Responsible Integration of AI Classification

The integration of advanced AI classification algorithms into biological research requires careful consideration of safety, interpretability, and ethical implications. Krueger's research emphasizes the importance of monitoring high-stakes interactions in AI systems through activation probes that can detect when model interactions might lead to significant harm [6]. These probes offer computational savings of six orders of magnitude compared to prompted or fine-tuned medium-sized LLM monitors, enabling resource-aware hierarchical monitoring systems in which probes serve as an efficient initial filter.
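The hierarchical arrangement described above, with a cheap probe screening traffic before an expensive monitor is consulted, can be sketched as a two-stage filter. All names, scores, and the threshold below are hypothetical; the probe and monitor are passed in as plain callables and do not represent the systems studied in [6].

```python
def hierarchical_monitor(items, probe, expensive_monitor, probe_threshold):
    """Run a cheap probe on every item and escalate only high scorers.

    Returns (flagged_items, number_of_escalations).
    """
    flagged = []
    escalations = 0
    for item in items:
        if probe(item) < probe_threshold:
            continue  # low probe score: skip the expensive check
        escalations += 1
        if expensive_monitor(item):
            flagged.append(item)
    return flagged, escalations


# Hypothetical interactions with precomputed placeholder scores.
interactions = [
    {"id": 1, "probe_score": 0.1, "harmful": False},
    {"id": 2, "probe_score": 0.8, "harmful": True},
    {"id": 3, "probe_score": 0.9, "harmful": False},
]
flagged, escalations = hierarchical_monitor(
    interactions,
    probe=lambda item: item["probe_score"],
    expensive_monitor=lambda item: item["harmful"],
    probe_threshold=0.5,
)
print([item["id"] for item in flagged], escalations)
```

The cost saving comes from the escalation count: the expensive monitor runs only on the subset the probe lets through, so the threshold sets the trade-off between compute and missed cases.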

Diagram 2: Pathway for responsible integration of AI classification in biological research

Comparative Advantages and Limitations

Each classification approach offers distinct advantages for biological data analysis. DAVID's functional classification tool excels at providing biological context and interpretation for gene lists, effectively reducing redundant results into manageable biological modules [7]. Traditional machine learning classifiers like Random Forests and Support Vector Machines offer strong performance with greater interpretability and computational efficiency for many biological classification tasks [9].

Krueger-inspired AI classification approaches provide state-of-the-art performance for complex pattern recognition tasks while incorporating crucial safety considerations, though they may require greater computational resources and expertise to implement effectively [6]. Modern deep learning architectures particularly shine when applied to large-scale biological datasets with complex hierarchical patterns, such as whole-genome analysis or single-cell multi-omics data integration [8] [10].

The landscape of AI classification algorithms for biological data continues to evolve rapidly, with distinct approaches offering complementary strengths. DAVID's functional classification provides robust biological interpretation for gene lists, traditional machine learning algorithms offer computationally efficient solutions for many classification tasks, and Krueger-inspired safety-focused AI approaches represent the cutting edge in performance and responsible implementation.

Future developments will likely focus on integrating the interpretability advantages of tools like DAVID with the advanced pattern recognition capabilities of deep learning architectures, all while maintaining the safety and alignment priorities emphasized in Krueger's research. As AI systems become increasingly capable of generating novel biological insights and even designing experimental approaches, the principles of robustness, reasoning, and responsible AI implementation will grow ever more critical for ensuring these powerful technologies benefit biological discovery and therapeutic development while minimizing potential risks.

In the fields of data science and clinical research, classification algorithms serve as fundamental tools for predicting categorical outcomes. The selection between traditional statistical methods and modern machine learning (ML) approaches represents a critical decision point that significantly influences research validity and practical outcomes. This comparison guide examines the theoretical foundations, performance characteristics, and practical considerations of these competing paradigms, contextualized within classification research relevant to scientific and drug development applications.

The distinction between these approaches extends beyond mere technical implementation to encompass fundamental differences in philosophical orientation toward data analysis. Traditional methods operate within a framework of predetermined model structures and strong assumptions about data distributions, while machine learning algorithms embrace a more flexible, data-driven approach that prioritizes predictive accuracy through pattern recognition. Understanding these theoretical underpinnings is essential for researchers and drug development professionals seeking to implement robust classification systems that align with their specific research objectives and data characteristics.

Foundational Theoretical Frameworks

Traditional Statistical Approaches

Traditional classification methods are grounded in statistical theory with strong assumptions about data generation processes. These approaches typically employ fixed model specifications based on prior theoretical knowledge, with parameters estimated through well-established inferential techniques. Logistic regression, one of the most widely used traditional classifiers, operates within a generalized linear model framework that assumes a specific functional relationship between predictors and the log-odds of the outcome [13]. This method requires the researcher to specify the model structure beforehand, including which interactions and nonlinear terms to include, based on domain knowledge and theoretical expectations.
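The assumed relationship between predictors and the log-odds can be made concrete with a short sketch. The coefficients and inputs are hypothetical, and the function name is an assumption; in practice the coefficients would be estimated by maximum likelihood.

```python
import math


def predict_probability(intercept, coefficients, features):
    """Logistic regression prediction from a linear log-odds model.

    log-odds = intercept + sum(coefficient * feature); the probability is
    the logistic (inverse logit) transform of that value.
    """
    log_odds = intercept + sum(b * x for b, x in zip(coefficients, features))
    return 1.0 / (1.0 + math.exp(-log_odds))


# Hypothetical model with two predictors and an analyst-specified interaction.
x1, x2 = 2.0, 0.0
features = [x1, x2, x1 * x2]   # the interaction term is added by hand
coefficients = [0.5, 2.0, 0.3]
print(predict_probability(-1.0, coefficients, features))
```

The interaction column has to be constructed explicitly, which is the sense in which the model structure is fixed by the researcher rather than discovered from the data.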

The theoretical foundation of traditional methods emphasizes interpretability, asymptotic properties, and uncertainty quantification through confidence intervals and p-values. These approaches typically rely on maximum likelihood estimation and assume that data are generated from specific probability distributions. The focus is on parameter inference and hypothesis testing rather than pure prediction accuracy, reflecting a research philosophy that prioritizes understanding underlying data-generating mechanisms over optimizing predictive performance. This theoretical orientation makes traditional methods particularly suitable for explanatory modeling where the research goal involves testing specific hypotheses about relationships between variables.

Machine Learning Approaches

Machine learning classification algorithms originate from a different theoretical tradition focused on pattern recognition, prediction accuracy, and generalization to unseen data. Rather than assuming a fixed data-generating process, ML methods employ flexible function approximators that learn complex relationships directly from data. Algorithms like random forests and gradient boosting machines combine many individual decision trees into a single stronger classifier: random forests average trees trained on bootstrap resamples, while gradient boosting adds shallow trees sequentially so that each corrects the errors of its predecessors. Both are grounded in ensemble learning theory, often summarized informally as the wisdom of crowds [13].

The theoretical underpinnings of neural networks, another prominent ML approach, derive from their universal approximation properties: with sufficient capacity, they can approximate any continuous function on a compact domain to arbitrary accuracy [13]. Unlike traditional methods that require explicit specification of relationships, neural networks automatically learn relevant features and interactions through their layered architecture and activation functions. This capacity comes at the cost of interpretability, creating a fundamental trade-off between predictive power and explanatory transparency that represents a core theoretical consideration in the choice between paradigms.

Table 1: Comparison of Theoretical Foundations

| Theoretical Aspect | Traditional Approaches | Machine Learning Approaches |

|---|---|---|

| Philosophical Orientation | Explanation and inference | Prediction and generalization |

| Model Specification | Fixed, theory-driven | Flexible, data-driven |

| Key Assumptions | Linearity, independence, specific distributions | Fewer structural assumptions |

| Function Approximation | Parametric | Non-parametric or semi-parametric |

| Uncertainty Quantification | Analytical confidence intervals | Empirical through bootstrapping |

| Theoretical Guarantees | Asymptotic properties | Bounds on generalization error |

Experimental Performance Comparison

Sample Size Requirements and Performance Trajectories

Recent empirical investigations have quantified the differential performance characteristics of traditional versus machine learning classification algorithms across varying sample sizes. A comprehensive analysis of 16 large open-source clinical datasets with binary outcomes revealed distinct learning curves and sample size requirements across methodologies [13]. The study employed a rigorous experimental protocol, calculating cross-validated area under the curve (AUC) at incrementally increasing sample sizes and fitting learning curves to determine the point of performance stabilization, defined as reaching the full-dataset AUC minus 0.02.
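For readers less familiar with the metric, the sketch below computes AUC directly from its pairwise definition: the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. It is a plain-Python illustration with quadratic cost, not the implementation used in [13]; the function name `auc` is our own.

```python
def auc(labels, scores):
    """AUC as the share of positive/negative pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correctly ranked pair
    return wins / (len(positives) * len(negatives))


print(auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```

In a cross-validated setting, this quantity would be computed on each held-out fold and averaged; for large datasets a rank-based implementation is preferable to the double loop shown here.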

The research demonstrated that logistic regression, a representative traditional method, achieved AUC stability at significantly smaller sample sizes (median: 696 cases) compared to machine learning approaches [13]. This efficiency advantage diminished as dataset complexity increased, particularly when facing strong nonlinear relationships and complex interaction effects. The relative performance of algorithms was found to depend substantially on dataset characteristics, with traditional methods maintaining superiority in scenarios characterized by linear separability, balanced class proportions, and absence of complex higher-order interactions.

Table 2: Sample Size Requirements for AUC Stability by Algorithm Type

| Algorithm | Median Sample Size for AUC Stability | Key Influencing Dataset Characteristics |

|---|---|---|

| Logistic Regression (Traditional) | 696 | Minority class proportion, percentage of strong linear features, number of features |

| Random Forest (ML) | 3,404 | Minority class proportion, full-dataset AUC, dataset nonlinearity |

| XGBoost (ML) | 9,960 | Minority class proportion, full-dataset AUC, dataset nonlinearity |

| Neural Networks (ML) | 12,298 | Minority class proportion, full-dataset AUC, dataset nonlinearity |

Performance Under Different Data Conditions

Experimental evidence indicates that the performance differential between traditional and machine learning approaches is strongly moderated by specific dataset characteristics. More balanced class proportions were associated with reduced sample size requirements across all algorithms, with a 1% increase in minority class proportion decreasing required sample sizes by 4-7% across methods [13]. However, the relationship between data complexity and algorithm performance followed different patterns across the methodological divide.

Traditional methods like logistic regression demonstrated particular efficiency advantages with datasets containing strong linear features and fewer complex nonlinear relationships. In contrast, machine learning approaches such as XGBoost and neural networks exhibited their strongest relative performance gains in high-complexity environments characterized by intricate interaction effects and nonlinear predictor-response relationships [13]. These experimental findings suggest that the optimal choice between traditional and machine learning approaches depends critically on the inherent complexity of the classification problem and the available sample size.

Methodological Protocols and Experimental Workflows

Standardized Evaluation Framework

To ensure fair comparison between traditional and machine learning classification approaches, researchers should implement a standardized experimental protocol. The following methodology provides a robust framework for evaluating classifier performance across methodologies:

Data Collection and Preparation: Assemble multiple datasets (recommended: 16 or more, with sample sizes ranging from 70,000 to 1,000,000) containing binary clinical outcomes and mixed feature types (continuous numeric, discrete numeric, binary) [13]. Apply appropriate preprocessing, including mean imputation for missing data (assumed to be missing completely at random, MCAR) and conversion of nominal variables to binary indicator variables.

Algorithm Implementation: Apply both traditional (logistic regression) and machine learning (random forest, XGBoost, neural networks) classifiers with consistent evaluation protocols. For traditional methods, use multivariable models without variable selection or regularization. For ML approaches, utilize default hyperparameters or implement standardized tuning procedures [13].

Learning Curve Construction: For each dataset-algorithm combination, calculate cross-validated AUC at incrementally increasing sample sizes. Fit learning curves to these performance measurements to identify sample size requirements for stability (defined as within 0.02 of full-dataset AUC) [13].

Performance Comparison: Evaluate comparative performance through multiple metrics including AUC stability, computational efficiency, and sensitivity to dataset characteristics such as minority class proportion, feature strength, and degree of nonlinearity.
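The learning-curve step above can be sketched as follows. This is a simplified stand-in, not the procedure from [13]: it assumes an inverse power-law curve of the form AUC(n) = a - b * n^(-c) and fits it by a grid search over c with ordinary (unweighted) least squares for a and b, whereas the source mentions nonlinear weighted least squares. The function names and the synthetic data points are hypothetical.

```python
import math


def fit_inverse_power_law(sizes, aucs, c_grid=None):
    """Fit auc(n) ~ a - b * n**(-c): grid search over c, least squares for a and b."""
    if c_grid is None:
        c_grid = [k / 100 for k in range(5, 201)]  # candidate exponents 0.05 .. 2.00
    best = None
    for c in c_grid:
        xs = [n ** (-c) for n in sizes]
        mx = sum(xs) / len(xs)
        my = sum(aucs) / len(aucs)
        sxx = sum((x - mx) ** 2 for x in xs)
        sxy = sum((x - mx) * (y - my) for x, y in zip(xs, aucs))
        slope = sxy / sxx  # slope of auc against n**(-c); equals -b
        a = my - slope * mx
        sse = sum((a + slope * x - y) ** 2 for x, y in zip(xs, aucs))
        if best is None or sse < best[0]:
            best = (sse, a, -slope, c)
    _, a, b, c = best
    return a, b, c


def stable_sample_size(a, b, c, full_auc, tolerance=0.02):
    """Smallest n at which the fitted curve reaches full_auc - tolerance."""
    target = full_auc - tolerance
    if target >= a:
        return math.inf  # the fitted curve never reaches the target
    return math.ceil((b / (a - target)) ** (1.0 / c))


# Synthetic, noise-free points generated from a known curve (illustration only).
sizes = [100, 200, 500, 1000, 5000, 20000]
aucs = [0.85 - 2.0 * n ** -0.5 for n in sizes]
a, b, c = fit_inverse_power_law(sizes, aucs)
full_auc = 0.85 - 2.0 * 100000 ** -0.5  # AUC the same curve gives at n = 100,000
print(a, b, c, stable_sample_size(a, b, c, full_auc))
```

With real cross-validated AUC values the points are noisy, so weighting by the variance of each estimate (as in weighted least squares) would normally be preferable to the unweighted fit shown here.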

[Figure: Workflow Visualization]

Cognitive Dimensions and Algorithmic Behavior

Emerging Evidence of Cognitive Biases in AI Systems

Recent research has revealed that machine learning systems can exhibit human-like cognitive biases in their operational characteristics, with significant implications for their application in scientific and clinical contexts. Investigations into the Dunning-Kruger Effect (DKE) in AI models have demonstrated that less competent models and those operating in rare programming languages exhibit stronger bias toward overconfidence, mirroring patterns observed in human cognition [14]. This phenomenon manifests as a disconnect between model confidence and actual performance, particularly pronounced in low-competence regimes and unfamiliar domains.

The experimental protocol for identifying such cognitive patterns involves measuring both actual performance (accuracy on specific tasks) and perceived performance, the latter through absolute confidence scores and relative confidence estimation methods such as the Elo and TrueSkill rating algorithms [14]. These methodologies reveal that AI models, particularly in specialized domains, can display statistically significant inflation of perceived versus actual performance, with overestimation becoming more pronounced as actual performance falls and task difficulty rises. This emerging understanding of algorithmic overconfidence necessitates careful implementation considerations, particularly in high-stakes applications like drug development where miscalibrated confidence could significantly impact research outcomes.
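The two kinds of measurement described above can be sketched in a few lines of Python. The first function computes a simple overconfidence gap (mean stated confidence minus observed accuracy); the second applies the standard Elo rating update to one pairwise comparison. How [14] feeds comparisons into Elo is not detailed here, so the pairing scheme, the K-factor of 32, and both function names are assumptions made for illustration.

```python
def overconfidence_gap(confidences, correct):
    """Mean stated confidence minus observed accuracy; positive means overconfident."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_confidence = sum(confidences) / len(confidences)
    accuracy = sum(1 for c in correct if c) / len(correct)
    return mean_confidence - accuracy


def elo_update(rating_a, rating_b, score_a, k=32.0):
    """Standard Elo update; score_a is 1.0 (A wins), 0.5 (draw), or 0.0 (A loses)."""
    expected_a = 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))
    delta = k * (score_a - expected_a)
    return rating_a + delta, rating_b - delta


# Hypothetical example: three answers with stated confidence and correctness.
print(overconfidence_gap([0.9, 0.8, 0.7], [True, False, False]))
print(elo_update(1500.0, 1500.0, 1.0))
```

A model whose gap grows as its accuracy drops would show the pattern the text describes; the Elo ratings provide a relative ordering that can be compared against the ordering by actual accuracy.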

Research Reagent Solutions for Classification Studies

Implementing robust comparisons between traditional and machine learning classification approaches requires specific methodological tools and analytical frameworks. The following research reagents represent essential components for conducting rigorous classification algorithm evaluations:

Table 3: Essential Research Reagents for Classification Algorithm Studies

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| Learning Curve Framework | Measures performance as function of sample size | Inverse power-law models, nonlinear weighted least squares fitting [13] |

| Performance Metrics | Quantifies classifier effectiveness | Area Under Curve (AUC), calibration measures, classification accuracy [13] |

| Confidence Estimation Methods | Evaluates model self-assessment capability | Absolute confidence scores, Elo ranking, TrueSkill algorithm [14] |

| Data Generation Tools | Creates datasets with known properties | Bayesian Network Generation for artificial dataset creation [13] |

| Cross-Validation Protocols | Ensures generalizable performance estimates | k-fold cross-validation, stratified sampling, progressive sampling [13] |

| Bias Detection Frameworks | Identifies cognitive biases in AI systems | Intra-participant and inter-participant DKE analysis [14] |

Interpretation Framework and Pathway Analysis

The relationship between dataset characteristics, sample size, and algorithm performance follows identifiable pathways that can guide methodological selection.

The comparative analysis between traditional and machine learning classification approaches reveals a nuanced landscape where methodological superiority depends critically on research context, data characteristics, and application goals. Traditional statistical methods maintain distinct advantages in scenarios characterized by limited sample sizes, strong theoretical frameworks guiding model specification, and research questions prioritizing explanation over prediction. Conversely, machine learning approaches demonstrate increasingly superior performance as data complexity and volume increase, particularly when dealing with intricate nonlinear relationships and complex interaction effects.

For researchers and drug development professionals, these findings underscore the importance of aligning methodological choices with specific research objectives and data environments. Rather than adhering to universal prescriptions, the optimal approach involves thoughtful consideration of the trade-offs between interpretability and predictive power, efficiency and flexibility, theoretical grounding and empirical performance. Future research directions should focus on developing hybrid methodologies that leverage the strengths of both paradigms while addressing emerging challenges such as cognitive biases in AI systems and the need for robust performance in specialized domains.

Data Requirements and Infrastructure for Each Classification System

Classification systems are fundamental tools in research and industry, enabling the organization of data and facilitating complex analytical tasks. The choice of a classification system is often dictated by the specific data requirements and computational infrastructure available. This guide provides a detailed comparison of the data needs and infrastructure supporting different classification approaches, with a specific focus on the research contexts of David Bader and Melanie Krüger. It is designed to help researchers, scientists, and drug development professionals select appropriate systems for their work.

Classification systems vary widely, from computational algorithms that power machine learning models to conceptual frameworks that guide data management. This section introduces the key systems and the research backgrounds of David Bader and Melanie Krüger, which frame our comparison.

David Bader's Research Focus: David A. Bader is a Distinguished Professor and founder of the Department of Data Science at the New Jersey Institute of Technology. His work specializes in high-performance computing (HPC) and real-world data analytics, and he is recognized for developing the first Linux-based supercomputer. His research interests lie at the intersection of high-performance computing and applications in cybersecurity, massive-scale analytics, and computational genomics [15].

Melanie Krüger's Research Focus: Melanie Krüger's work, as part of the German Society of Sport Science (dvs), centers on research data management (RDM) and the implementation of the FAIR principles (Findable, Accessible, Interoperable, and Reusable) for open data in sports science. Her activities focus on identifying the requirements for a sustainable research data infrastructure within her discipline [16].

Other Relevant Systems:

- Machine Learning Classifiers: These are predictive models that organize data into predefined classes based on feature values. They can be used for binary (two classes) or multiclass (more than two classes) tasks and are a cornerstone of modern data science [17].

- Uptime Institute Tier Classification: A standardized system for classifying data center infrastructure performance, availability, and resilience, with tiers ranging from basic (Tier I) to fault-tolerant (Tier IV) [18].

- Data Classification for Security: A process, often supported by tools and policies, for categorizing data based on sensitivity and risk to ensure its privacy, security, and regulatory compliance [19].

Comparative Analysis of Data Requirements

The volume, structure, and management of data required by different classification systems vary significantly. The following table summarizes the key data requirements for each system.

Table 1: Data Requirements for Different Classification Systems

| Classification System | Data Volume & Complexity | Data Structure & Sources | Data Management & Governance |

|---|---|---|---|

| Bader-Style HPC Analytics [15] | Massive-scale data; "Big Data" from real-world applications like genomics and cybersecurity. | Graph data, network data, and massive-scale analytics; co-founder of the Graph500 benchmark for "Big Data" platforms. | Focus on scalable algorithms and data structures for high-performance computing environments. |

| Krüger-Style Research Data Mgmt [16] | Empirical research data from sports science; scale is secondary to FAIR principles and metadata. | Multimodal data from sports and exercise science; requires rich metadata for reuse. | Implements FAIR and open data principles; relies on sustainable infrastructure and data publication (e.g., via Zenodo). |

| Machine Learning Classifiers [17] | Varies with task; requires a labeled training dataset for supervised learning. | Can handle numerical, text, image features; structured as an ordered sequence of feature values (a tuple). | Data is split into training and test sets; model accuracy depends on data quality and relevance. |

| Data Security Classification [19] | Focus on identifying and categorizing all sensitive data across an enterprise. | Data is classified based on sensitivity (e.g., Restricted, Private, Public) and type (e.g., PII, IP, PHI). | A continuous process throughout the data lifecycle; requires policies for access, encryption, and retention. |

| Infrastructure Data Taxonomy [20] | Data about critical infrastructure assets for categorization and reference. | Assets are categorized into up to five hierarchical levels: Sector, Subsector, Segment, Sub-segment, and Asset. | Aims for consistent identification and description of infrastructure assets across different entities. |

Comparative Analysis of Infrastructure Demands

The infrastructure supporting these classification systems ranges from physical data centers to computational hardware and software frameworks.

Table 2: Infrastructure Demands for Different Classification Systems

| Classification System | Computational Infrastructure | Storage & Networking | Software & Platforms |

|---|---|---|---|

| Bader-Style HPC Analytics [15] | Linux-based supercomputers and high-performance computing clusters; GPU accelerators. | Infrastructure for handling large-scale data movement and processing. | Scalable graph algorithm software; high-performance computing solutions for real-world analytics. |

| Krüger-Style Research Data Mgmt [16] | Standard institutional IT infrastructure; focus on accessible data repositories. | Sustainable, long-term data storage platforms (e.g., Zenodo). | Research data management (RDM) planning tools; data publication platforms. |

| Machine Learning Classifiers [17] | Varies from laptops to distributed computing clusters; GPUs for deep learning. | Storage for large training datasets; efficient data pipelines for model training. | Libraries like scikit-learn (Python); frameworks for model training and evaluation. |

| Data Center Tiers [18] | Tier I: Basic server room; Tier II: Redundant capacity components; Tier III: Concurrently maintainable; Tier IV: Fault-tolerant and physically isolated systems. | Tier I: Single power and cooling path; Tier II: Redundant capacity components; Tier III: Multiple power and cooling paths; Tier IV: Fault-tolerant, isolated distribution paths. | Infrastructure management systems aligned with Tier topology for operational sustainability. |

| AI-Ready Infrastructure [21] | Modern, scalable, and adaptive architectures; cloud-smart deployments. | Storage optimized for AI data pipelines; unified data storage to eliminate silos. | Intelligent Data Infrastructure; integrated data services for governance and cyber resilience. |

Experimental Protocols for Evaluation

Rigorous evaluation is critical for assessing the performance of classification systems. Below are detailed methodologies for key types of experiments cited in the literature.

Benchmarking Out-of-Distribution Performance Prediction

This protocol evaluates how well a trained model performs on data that comes from a different distribution than its training data, a critical test for real-world deployment [22].

- Objective: To predict the performance of trained machine learning models on unlabeled Out-of-Distribution (OOD) test datasets.

- Materials:

  - Trained Models: A suite of pre-trained models (e.g., 1,444 models of various architectures as used in ODP-Bench).

  - Datasets: A comprehensive set of benchmark datasets covering diverse distribution shifts (e.g., corruptions, style changes, geographic variations). Examples include CIFAR-10-C, ImageNet-R, and WILDS datasets [22].

  - Software: ODP-Bench codebase or similar framework for consistent evaluation.

- Procedure:

  - Model Preparation: Acquire or train a diverse pool of models.

  - Test Set Selection: Select one or more OOD test datasets that represent a specific type of distribution shift.

  - Prediction Algorithm Application: Apply OOD performance prediction algorithms (e.g., those based on model confidence, distribution discrepancy, or model agreement) to the trained models and the unlabeled OOD test set.

  - Performance Calculation: The prediction algorithm outputs an estimated performance score for each model on the OOD set.

  - Validation: Compare the predicted performance scores against the ground-truth performance metrics obtained by actually testing the models on the labeled OOD dataset.

  - Evaluation: Calculate evaluation metrics such as the Pearson correlation between predicted and actual performance across all models.

- Outcome Analysis: The effectiveness of a performance prediction algorithm is measured by how closely its estimates correlate with the true model performance on OOD data. High correlation indicates a reliable method for model selection in new, unseen environments [22].
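The validation and evaluation steps of this protocol can be sketched as below. The predictor shown is one simple confidence-based estimate (the mean top-class probability on the unlabeled OOD set); it is chosen for illustration and is not claimed to be the method used in ODP-Bench [22]. All numbers and names in the example are hypothetical.

```python
import math


def average_confidence(max_probabilities):
    """Confidence-based estimate: mean top-class probability on unlabeled OOD inputs."""
    return sum(max_probabilities) / len(max_probabilities)


def pearson(xs, ys):
    """Pearson correlation between predicted and actual performance across models."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical top-class probabilities for three models on the same OOD set.
per_model_confidences = [
    [0.95, 0.90, 0.85],
    [0.80, 0.70, 0.75],
    [0.60, 0.55, 0.65],
]
predicted_accuracy = [average_confidence(p) for p in per_model_confidences]
actual_accuracy = [0.82, 0.64, 0.51]  # hypothetical ground truth from labeled OOD data
print(pearson(predicted_accuracy, actual_accuracy))
```

A correlation close to 1 would indicate that the estimate orders models in the same way as their true OOD accuracy, which is what matters when the estimate is used for model selection.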

Multi-Class Machine Learning for System Preference Analysis

This protocol uses machine learning classifiers to analyze user preferences, such as for public transport systems [23].

- Objective: To explore and predict user preference among multiple system alternatives and quantify system benefits.

- Materials:

  - Survey Data: Stated preference survey data from respondents (e.g., 1500 respondents providing 4500 observations) where attributes like travel time, cost, and wait time are varied across different scenarios.

  - Computing Environment: A standard computing environment capable of running machine learning libraries (e.g., Python with scikit-learn).

- Procedure:

  - Data Collection: Design and distribute a stated preference survey to target respondents.

  - Data Preprocessing: Clean the data and encode categorical variables.

  - Model Training: Train multiple multi-class machine learning classifiers (MCMLC) on the survey data. Examples include Logistic Regression, Naïve Bayes, and K-Nearest Neighbors (KNN) [23] [17].

  - Model Evaluation: Assess all classifiers for prediction accuracy and stability using metrics like precision, recall, and F1 score [17].

  - Preference Prediction: Use the trained and validated model to predict the most preferred system alternative.

  - Benefit Analysis: Use additional methods like Analytical Hierarchy Process (AHP) to rank the significance of different attributes and calculate derived benefits like projected revenue and ridership.

- Outcome Analysis: The classifier with the highest and most stable prediction accuracy is used to identify the most preferred system. The AHP results provide insight into the key drivers of user choice [23].
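The model evaluation step can be illustrated with a short plain-Python sketch of macro-averaged precision, recall, and F1 for a multi-class problem. Macro averaging (an unweighted mean over classes) is one common choice and is an assumption here; the source does not say which averaging [23] used. The class labels in the example are hypothetical.

```python
def macro_scores(y_true, y_pred):
    """Return macro-averaged (precision, recall, F1) over all observed classes."""
    classes = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for cls in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
        # Undefined ratios (no predictions or no true cases) are scored as 0.
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    k = len(classes)
    return sum(precisions) / k, sum(recalls) / k, sum(f1s) / k


# Hypothetical stated-preference outcomes for four respondents.
y_true = ["bus", "rail", "bus", "metro"]
y_pred = ["bus", "bus", "bus", "metro"]
print(macro_scores(y_true, y_pred))
```

Comparing these scores across resampled train/test splits gives a rough view of the stability the protocol asks for, in addition to the point estimates.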

Visualizing Workflows and System Relationships

The following diagrams, generated with Graphviz, illustrate key experimental workflows and the logical structure of classification systems.

[Figure: OOD Performance Prediction Workflow]

[Figure: Data Classification Security Lifecycle]

[Figure: Taxonomy of a Nuclear Power Plant Asset]

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key resources and tools required for implementing and evaluating the classification systems discussed.

Table 3: Essential Research Reagents and Solutions

| Item/Tool | Function & Application | Relevance to Classification Systems |

|---|---|---|

| ODP-Bench Benchmark [22] | A comprehensive benchmark suite of models and datasets for evaluating Out-of-Distribution performance prediction algorithms. | Provides a standardized testbed for comparing the reliability of different performance prediction methods for ML classifiers. |

| High-Performance Computing (HPC) Cluster [15] | A collection of interconnected computers that provide massive computational power for solving large problems. | Essential for running Bader-style large-scale graph analytics and training complex machine learning models. |

| FAIR-Compliant Data Repository [16] | A digital repository for storing and sharing research data according to FAIR principles (e.g., Zenodo). | Core infrastructure for Krüger-style research data management, ensuring data is findable, accessible, interoperable, and reusable. |

| Data Classification Software [19] | Automated tools that scan, identify, and tag sensitive data across an enterprise based on defined policies. | Enforces data security classification by discovering and categorizing data throughout its lifecycle, reducing risk. |

| Scikit-learn Library [17] | A popular open-source Python library featuring various classification, regression, and clustering algorithms. | Provides readily available implementations of numerous machine learning classifiers (e.g., Logistic Regression, KNN) for experimental analysis. |

| Tier-Certified Data Center [18] | A data center facility that has been certified by the Uptime Institute to meet specific levels of operational resilience and availability. | Provides the physical infrastructure foundation required for reliable access to HPC systems, cloud AI services, and data repositories. |

Scope and Limitations in Pharmaceutical Contexts

Sperm morphology assessment represents a cornerstone in the diagnostic evaluation of male infertility, providing crucial insights into sperm quality and function. Within clinical andrology and reproductive medicine, two predominant classification systems have emerged: the World Health Organization fourth edition (WHO4) criteria and the Kruger strict (WHO5) criteria. These systems employ fundamentally different approaches to evaluating sperm morphology, particularly regarding the classification thresholds and the strictness of morphological assessment. The WHO4 system, established in 1999, utilizes a more liberal assessment approach with a normal morphology cutoff of ≥14%, while the Kruger WHO5 system, incorporated into the 2010 WHO guidelines, employs a stricter evaluation with a significantly reduced cutoff of ≥4% normal forms [24].

The comparative analysis of these classification systems extends beyond academic interest, carrying significant implications for clinical decision-making, treatment selection, and resource allocation in infertility management. Understanding the scope and limitations of each approach is essential for researchers, clinical andrologists, and reproductive specialists who must interpret diagnostic results and determine their clinical applicability. This evaluation is particularly relevant in the contemporary landscape of assisted reproductive technologies, where the predictive value of sperm morphology parameters continues to be debated amidst evolving treatment modalities such as intracytoplasmic sperm injection (ICSI), which may potentially mitigate the impact of morphological deficiencies [24].

Comparative Analysis of Classification Criteria

Fundamental Differences in Assessment Approaches

The Kruger WHO5 and WHO4 morphological classification systems diverge significantly in their philosophical approaches and technical execution. The Kruger strict criteria mandate a rigorous morphometric assessment in which apparently normal spermatozoa must be measured for head size, with any single structural defect (in head appearance, width, or length, or in the neck or tail) resulting in classification as abnormal. This method requires that "all borderline forms be considered abnormal" and aims to identify spermatozoa with the potential to successfully migrate through cervical mucus and fertilize an egg [25]. In contrast, the WHO4 methodology takes a more liberal approach with a wider definition of normal morphology, though it still references the strict criteria as the standard for evaluation [24] [25].

The technological implementation of these criteria has evolved through automated systems. The SQA-V GOLD morphology algorithm, for instance, was developed by assessing stained semen smears under microscopy in compliance with WHO manual guidelines, then correlating these findings with electronic signals generated by sperm motion patterns. This system reports normal morphology based on the potential of sperm to functionally migrate through cervical mucus, rather than providing a full morphology differential of specific defects [25].

Diagnostic Performance and Correlation

Table 1: Comparative Performance of WHO4 and Kruger WHO5 Morphology Criteria

| Parameter | WHO4 Criteria | Kruger WHO5 Criteria |

|---|---|---|

| Normal Morphology Cutoff | ≥14% | ≥4% |

| Mean Normal Morphology (%) | 6.4% ± 4.8% | 3.3% ± 3.2% |

| Correlation Between Systems | Spearman correlation coefficient = 0.94 (P<.0001) | |

| Percentage of SAs Abnormal by Criteria | 90.9% (847/932 SAs) | 58.5% (545/932 SAs) |

| Abnormal Kruger WHO5 also Abnormal by WHO4 | 99.6% (543/545 SAs) | |

| Isolated Abnormalities (One System Only) | 0.4% (2/545 SAs) had abnormal Kruger but normal WHO4 | 35.9% (304/847 SAs) abnormal WHO4 but normal Kruger |

A comprehensive retrospective study analyzing 932 semen analyses (SAs) from 691 men demonstrated a remarkably high correlation between the WHO4 and WHO5 morphology assessments, with a Spearman correlation coefficient of 0.94 [24]. Despite this strong correlation, the application of different cutoff values resulted in substantially different diagnostic classifications. The research revealed that 90.9% of SAs were classified as abnormal using WHO4 criteria, while only 58.5% were abnormal according to Kruger WHO5 criteria. Crucially, nearly all samples (99.6%) with abnormal Kruger morphology also showed abnormal morphology by WHO4 standards, indicating that the Kruger criteria identify a subset of the abnormalities detected by the WHO4 system [24].
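The arithmetic behind these figures can be checked with a short Python sketch. It applies the two cutoffs stated above (at least 14% normal forms for WHO4, at least 4% for Kruger WHO5) and recomputes the reported percentages from the counts in Table 1. The function names are our own, and the per-sample readings passed to `classify_morphology` are hypothetical.

```python
WHO4_CUTOFF = 14.0    # percent normal forms required by WHO4
KRUGER_CUTOFF = 4.0   # percent normal forms required by Kruger strict (WHO5)


def classify_morphology(who4_normal_pct, kruger_normal_pct):
    """Classify one semen analysis under each system from its own reading."""
    return {
        "WHO4": "normal" if who4_normal_pct >= WHO4_CUTOFF else "abnormal",
        "Kruger": "normal" if kruger_normal_pct >= KRUGER_CUTOFF else "abnormal",
    }


def percent(part, whole):
    """Percentage rounded to one decimal place, as reported in the table."""
    return round(100.0 * part / whole, 1)


# Counts reported in [24].
total = 932
abnormal_who4 = 847
abnormal_kruger = 545
abnormal_both = 543

print(percent(abnormal_who4, total))                           # share abnormal by WHO4
print(percent(abnormal_kruger, total))                         # share abnormal by Kruger
print(percent(abnormal_both, abnormal_kruger))                 # abnormal Kruger also abnormal WHO4
print(percent(abnormal_who4 - abnormal_both, abnormal_who4))   # abnormal WHO4 only
print(classify_morphology(10.0, 5.0))                          # hypothetical sample
```

The hypothetical sample in the last line falls into the group that is abnormal by WHO4 but normal by Kruger, which the study found to be about a third of all WHO4-abnormal analyses.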

The clinical implications of these differing classification rates are significant. Patients with abnormal WHO4 morphology but normal Kruger morphology demonstrated better overall semen parameters, with mean semen volume of 2.6 ± 1.3 mL, sperm concentration of 68.6 ± 31.1 × 10⁶/mL, and motility of 60.5% ± 8.5% [24]. This profile suggests that the WHO4 system may flag milder abnormalities with less severe impact on overall sperm function.

Experimental Protocols and Methodologies

Standardized Laboratory Procedures

The comparative assessment of sperm morphology classification systems requires rigorous standardized methodologies to ensure valid comparisons. In the referenced study, samples were collected after a recommended abstinence period of 2-7 days, with a median of 3 days (IQR, 2.0-3.5 days). Samples were obtained through self-stimulation into clean containers and immediately provided to the laboratory for processing by trained andrologists [24].

Sample preparation followed WHO laboratory manual specifications using CELL-VU Pre-Stained Morphology slides (Millennium Sciences, Inc). This standardized preparation is critical for consistent morphological assessment. A total of 100 cells were systematically evaluated in four different areas of each slide under ×400 magnification by trained andrologists. Each sample underwent dual assessment using both classification systems: first with WHO4 criteria (normal ≥14%), then with WHO5 Kruger strict criteria (normal ≥4%) incorporating strict morphometric assessment of sperm characteristics [24].

The statistical analysis employed correlation measures (Spearman correlation coefficient) to evaluate the relationship between the two classification systems. Additionally, multivariable logistic regression models were used to predict morphology classification based on the percentage of head and tail defects, with odds ratios calculated for each parameter under both classification systems [24].
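For reference, the Spearman coefficient used here is the Pearson correlation computed on ranks, with tied values sharing their average rank. The sketch below shows that computation in plain Python; it is an illustration of the statistic, not the software used in [24], and the paired readings in the example are hypothetical.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2.0 + 1.0  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(vx * vy)


# Hypothetical paired readings (% normal forms) for five samples.
who4_pct = [12.0, 6.0, 3.0, 9.0, 15.0]
kruger_pct = [5.0, 3.0, 1.0, 4.0, 8.0]
print(spearman(who4_pct, kruger_pct))
```

Because the statistic depends only on rank order, two systems can correlate very strongly while still classifying many samples differently once distinct cutoffs are applied, which is the pattern the study reports.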

[Figure: Experimental Workflow Visualization]

Research Reagent Solutions and Essential Materials

Table 2: Key Laboratory Reagents and Materials for Sperm Morphology Assessment

| Reagent/Material | Primary Function | Application Context |

|---|---|---|

| CELL-VU Pre-Stained Morphology Slides | Standardized sperm staining and morphology evaluation | Consistent preparation for both WHO4 and WHO5 assessment |

| SQA-V GOLD System | Automated sperm quality analysis | Algorithm-based morphology assessment compliant with WHO guidelines |

| Phase Contrast Microscope | High-resolution cellular visualization | Manual morphology assessment at ×400 magnification |

| Statistical Analysis Software | Data correlation and regression analysis | Comparative performance evaluation between classification systems |

The CELL-VU Pre-Stained Morphology Slides represent a critical component in standardized morphology assessment, ensuring consistent staining quality across samples. The SQA-V GOLD system provides an automated approach to morphology assessment, with versions specifically configured for either WHO4 (software v2.48) or WHO5 (software v2.60) criteria compliance. This system analyzes electronic signals generated by sperm motion patterns and correlates them with microscopic morphology readings [25].

Clinical Implications and Predictive Value

Diagnostic Utility and Therapeutic Decision-Making

The clinical application of sperm morphology classification systems extends to their predictive value for fertility outcomes and assisted reproduction success. The Kruger strict criteria were originally developed to identify spermatozoa with the potential to migrate through cervical mucus and fertilize an egg, making them a more functional assessment than population-based normative approaches [25]. Studies have demonstrated that sperm classified as normal under the Kruger criteria carry a better prognosis for in vitro fertilization, though the advent of intracytoplasmic sperm injection may reduce the clinical impact of morphological deficiencies [24].

The research indicates that sperm with morphological defects generally have lower fertilizing potential, potentially due to associated intrinsic issues such as increased DNA fragmentation, structural chromosomal aberrations, immature chromatin, and aneuploidy [24]. This association between morphological defects and other functional deficiencies underscores the importance of morphology assessment beyond mere classification.

From a practical clinical perspective, the high correlation between the WHO4 and Kruger WHO5 systems (r=0.94) suggests limited incremental diagnostic value in performing both assessments on the same sample. The finding that only 0.4% of men with abnormal Kruger morphology had normal WHO4 morphology calls into question the clinical utility of the additional resources required for Kruger assessment, particularly given its more labor-intensive and costly nature [24].

Limitations and Methodological Constraints

Both classification systems present significant limitations that must be acknowledged in research and clinical contexts. The predictive value of sperm morphology for identifying subfertile patients remains limited, regardless of the classification system employed [24]. This constraint reflects the multifactorial nature of male fertility, where isolated morphological assessment provides an incomplete diagnostic picture.

The resource intensiveness of the Kruger strict criteria represents another significant limitation. The method requires substantial time, expertise, and financial investment compared to the WHO4 criteria, raising questions about cost-effectiveness, particularly given the high correlation between systems and minimal additional diagnostic yield [24].

Methodologically, the assessment of morphology substructures revealed that both classification systems were significantly associated with head and tail defects, though with differing predictive strengths. For WHO4 classification, the odds ratios for head and tail defects were 1.30 and 1.63 respectively, while for Kruger strict criteria, the corresponding odds ratios were 1.14 and 1.43 [24]. This differential weighting of specific defects highlights the variations in assessment focus between the two systems.
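To make the reported odds ratios easier to interpret, the sketch below converts each one to the corresponding logistic regression coefficient (the natural log of the odds ratio) and shows how the effect compounds over several units of the predictor. It assumes the odds ratios are expressed per one-unit increase in the defect percentage; the source does not state the unit, so this is an assumption, and the helper names are hypothetical.

```python
from math import log

# Odds ratios as reported in the text [24].
ODDS_RATIOS = {
    "WHO4": {"head": 1.30, "tail": 1.63},
    "Kruger": {"head": 1.14, "tail": 1.43},
}


def log_odds_coefficient(odds_ratio: float) -> float:
    """Logistic regression coefficient implied by an odds ratio: beta = ln(OR)."""
    if odds_ratio <= 0:
        raise ValueError("odds ratio must be positive")
    return log(odds_ratio)


def odds_multiplier(odds_ratio: float, units: float) -> float:
    """Factor by which the odds change after `units` increments of the predictor.

    Assumption: the odds ratio applies per single unit, so effects multiply.
    """
    return odds_ratio ** units


coefficients = {
    system: {defect: log_odds_coefficient(value) for defect, value in defects.items()}
    for system, defects in ODDS_RATIOS.items()
}
```

Under this reading, a two-unit increase in tail defects would multiply the odds of the modelled outcome by 1.63 squared under WHO4, compared with 1.43 squared under the Kruger criteria.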

The comparative analysis of WHO4 and Kruger WHO5 morphology classification systems reveals a landscape of both convergence and distinction. The strong correlation between these systems suggests substantial overlap in their diagnostic information, while differing cutoff values and assessment strictness yield divergent classification rates that impact clinical interpretation.

Future research directions should focus on refining morphological assessment to enhance predictive value for specific treatment outcomes, particularly in the context of evolving assisted reproductive technologies. Additionally, investigation into automated assessment systems, such as the SQA-V GOLD platform, may address current limitations related to inter-laboratory variability and resource requirements [25].

The integration of morphological assessment with other sperm function parameters, including DNA fragmentation indices and molecular markers, represents a promising pathway toward more comprehensive male fertility evaluation. As the field advances, the optimal utilization of morphology classification systems will likely involve contextual application based on specific diagnostic questions, treatment modalities, and resource considerations, rather than universal adoption of a single approach.

Methodological Implementation in Drug Discovery Pipelines

Step-by-Step WHO Classification Protocols for Drug Development

The World Health Organization (WHO) establishes globally standardized classification protocols that are critical for drug development, ensuring consistency in disease categorization, medicinal product classification, and safety monitoring. These systems provide the foundational language and structure that enable systematic recording, analysis, and interpretation of health data across international borders [26]. For researchers and drug development professionals, understanding and correctly applying these protocols is not merely an administrative task; it is a fundamental component of regulatory strategy, clinical trial design, and post-market surveillance. The integration of these classifications into drug development workflows ensures that data generated in one country or study can be reliably compared and pooled with data from others, thereby accelerating medical discovery and improving global health outcomes.

This guide focuses on two cornerstone WHO systems: the International Classification of Diseases (ICD) and the WHO Drug Dictionary (WHODrug). The sources consulted do not describe a "David and Kruger classification algorithm" related to WHO medical classifications; they instead identify David Krueger as a researcher in machine learning and AI safety [27] [28] [29]. This analysis therefore concentrates on the established WHO protocols directly applicable to pharmaceutical research and development.

The drug development lifecycle interfaces with several WHO classifications at distinct stages, from initial target identification and patient recruitment to adverse event reporting and market authorization. The two most prominent systems are detailed below.

International Classification of Diseases (ICD)

The ICD is the global standard for health information, defining the universe of diseases, disorders, injuries, and other related health conditions. The current version, ICD-11, came into effect in January 2022 and represents a significant evolution from its predecessor [26].

- Primary Purpose in Drug Development: The ICD provides the necessary codes for patient population identification in clinical trials, defining inclusion and exclusion criteria based on specific diagnoses. It is also used for reporting serious adverse events and categorizing the indications for which a drug is developed.

- Key Features: ICD-11 is designed as an end-to-end digital solution with enhanced interoperability. It supports semantic interoperability, meaning that data recorded for one purpose (e.g., clinical care) can be reused for others (e.g., reimbursement or research) without loss of meaning. Its structure integrates both a classification and terminology, offering a rich, machine-readable framework [26].

WHODrug Standardised Drug Groupings (SDGs)

WHODrug is an international dictionary of medicinal products, and its Standardised Drug Groupings (SDGs) are a critical tool for clinical trial analysis and pharmacovigilance [30].

- Primary Purpose in Drug Development: SDGs are used to classify concomitant medications (other drugs a patient is taking) and investigational products in clinical trials. This is essential for identifying unknown drug interactions, protocol violations, and unreported adverse effects.

- Key Features: The SDGs are curated, unbiased lists that classify drugs based on properties like their pharmacological effect or metabolic pathway. They are maintained and regularly updated by the Uppsala Monitoring Centre (UMC), saving researchers time and reducing the risk of missing relevant new drugs when creating study-specific exclusionary drug lists [30].

Table 1: Key WHO Classification Systems in Drug Development

| System Name | Current Version | Governing Body | Primary Use in Drug Development |
|---|---|---|---|
| International Classification of Diseases (ICD) | ICD-11 (in effect from 2022) | World Health Organization (WHO) | Defining disease-specific trial cohorts; reporting adverse events. |
| WHODrug Standardised Drug Groupings (SDGs) | Regularly updated | WHO Uppsala Monitoring Centre (UMC) | Categorizing concomitant and trial medications for safety analysis. |

Protocol Implementation: A Step-by-Step Guide

Integrating WHO classifications into a drug development program requires a methodical approach. The following workflow outlines the key stages for proper implementation.

Step 1: Disease Definition and Protocol Design

The initial stage involves using ICD-11 to precisely define the patient population for a clinical trial.

- Action: Select the most specific ICD-11 code for the disease or condition being treated. ICD-11 allows for granular coding; for instance, rather than a general code for "acute myeloid leukemia," researchers can use codes that specify genetic subtypes, such as those for TP53-mutated AML [31].

- Protocol Integration: The chosen ICD-11 codes must be explicitly written into the study protocol's inclusion and exclusion criteria. This ensures all clinical trial sites are recruiting a consistent patient population.

- Best Practice: Utilize the ICD-11 API and online tools to ensure the selected codes are current and to access their full clinical definitions. The ICD-11 Foundation is a reliable starting point for this research [26].
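One way to make code-based eligibility criteria unambiguous across sites is to store them as data and evaluate them programmatically. The sketch below is a minimal illustration under stated assumptions: the codes are placeholders rather than real ICD-11 entries, and treating a longer code with a dotted suffix as a more specific child of its stem is a simplification of the actual ICD-11 hierarchy. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EligibilityCriteria:
    """Diagnosis codes written into a (hypothetical) study protocol."""
    inclusion_codes: frozenset
    exclusion_codes: frozenset = frozenset()


def code_matches(patient_code: str, criterion_code: str) -> bool:
    """True if the patient code equals the criterion or is a dotted child of it.

    Assumption: 'AB12.3' counts as a more specific form of 'AB12'.
    """
    return patient_code == criterion_code or patient_code.startswith(criterion_code + ".")


def is_eligible(patient_codes, criteria: EligibilityCriteria) -> bool:
    """Eligible when at least one inclusion code matches and no exclusion code does."""
    included = any(
        code_matches(p, c) for p in patient_codes for c in criteria.inclusion_codes
    )
    excluded = any(
        code_matches(p, c) for p in patient_codes for c in criteria.exclusion_codes
    )
    return included and not excluded


# Placeholder codes for illustration only; these are NOT real ICD-11 codes.
criteria = EligibilityCriteria(
    inclusion_codes=frozenset({"ZZ10"}),
    exclusion_codes=frozenset({"ZZ99.1"}),
)
eligible = is_eligible(["ZZ10.2", "YY05"], criteria)
```

Encoding the criteria once and sharing them with every site reduces the chance that sites interpret a diagnosis description differently.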

Step 2: Trial Setup and Conduct

During the trial, both ICD-11 and WHODrug are actively used for data capture.

- Training: All clinical site personnel involved in data entry must be trained on the specific ICD-11 coding guidelines and the process for reporting concomitant medications.

- Patient Coding: Each enrolled patient's primary and secondary diagnoses are coded using ICD-11 at baseline and throughout the study as new conditions emerge.

- Medication Recording: Every medication administered to the patient—including the investigational drug, placebos, and all concomitant therapies—is recorded using the most current WHODrug Global dictionary and its SDGs. This allows for the standardized grouping of drugs, for example, to analyze interactions with all "HMG CoA reductase inhibitors" (statins) as a class [30].
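The class-level grouping described above can be sketched as a lookup from drug name to grouping. The mapping below is a small hand-written stand-in, not actual WHODrug content (the real dictionary and its SDGs are licensed and far larger), and the function names are hypothetical.

```python
# Illustrative stand-in for an SDG lookup; NOT real WHODrug data.
DRUG_GROUPS = {
    "atorvastatin": {"HMG CoA reductase inhibitors"},
    "simvastatin": {"HMG CoA reductase inhibitors"},
    "metformin": {"Biguanides (illustrative group)"},
}


def normalise(drug_name: str) -> str:
    """Normalise free-text drug names before lookup."""
    return drug_name.strip().lower()


def groups_for(drug_name: str) -> set:
    """Return the groupings for a drug, or an empty set if it is unmapped."""
    return DRUG_GROUPS.get(normalise(drug_name), set())


def patients_exposed_to(records, group: str) -> list:
    """Sorted patient IDs with at least one recorded drug in the given grouping.

    `records` is an iterable of (patient_id, drug_name) pairs.
    """
    return sorted({pid for pid, drug in records if group in groups_for(drug)})


def unmapped_drugs(records) -> list:
    """Drug names that need manual coding review because no grouping was found."""
    return sorted({normalise(drug) for _, drug in records if not groups_for(drug)})


# Invented concomitant-medication records.
records = [
    ("P001", "Atorvastatin"),
    ("P002", "metformin"),
    ("P003", " simvastatin "),
    ("P001", "metformin"),
]
statin_patients = patients_exposed_to(records, "HMG CoA reductase inhibitors")
```

Surfacing unmapped names separately, rather than silently dropping them, mirrors the manual review step that medication coding typically requires.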

Step 3: Data Analysis and Reporting

At the analysis stage, these classifications enable robust and standardized evaluation of trial outcomes.

- Efficacy Analysis: Researchers can analyze treatment responses within specific ICD-11 diagnostic subgroups. This is crucial for identifying whether a drug is particularly effective in a molecularly defined patient segment, as highlighted in validation studies of classifications like the WHO-5 for TP53-mutated neoplasms [31].

- Safety Analysis: The WHODrug SDGs are used to screen for drug-class-specific adverse events. By grouping concomitant medications, safety teams can more easily detect signals that might be missed when looking at individual drugs.

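- Regulatory Reporting: Serious adverse events (SAEs) reported to regulatory authorities such as the FDA or EMA must be coded with standardized terminologies. In this workflow, ICD-11 codes describe the diagnosis or event and WHODrug identifies the suspect drug; because regulators generally expect adverse event terms in MedDRA, ICD-11-coded events may need to be mapped before submission. Consistent coding supports regulatory review and future meta-analyses.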
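The class-level safety screen mentioned in this step can be illustrated with a simple comparison of adverse event rates between patients exposed and not exposed to a drug grouping. This is a sketch with invented data and hypothetical names; a real signal-detection analysis would add confidence intervals and adjustment for confounders.

```python
def event_rate(events: int, total: int) -> float:
    """Proportion of patients with the event."""
    if total <= 0:
        raise ValueError("group must contain at least one patient")
    return events / total


def relative_risk(exposed_ids: set, had_event: dict) -> float:
    """Event rate in exposed patients divided by the rate in unexposed patients.

    `had_event` maps every patient ID to True/False for the adverse event.
    """
    exposed = [pid for pid in had_event if pid in exposed_ids]
    unexposed = [pid for pid in had_event if pid not in exposed_ids]
    rate_exposed = event_rate(sum(had_event[p] for p in exposed), len(exposed))
    rate_unexposed = event_rate(sum(had_event[p] for p in unexposed), len(unexposed))
    if rate_unexposed == 0:
        raise ValueError("no events in unexposed group; relative risk is undefined")
    return rate_exposed / rate_unexposed


# Invented data: four patients on a drug class, four not.
had_event = {
    "P1": True, "P2": True, "P3": False, "P4": False,
    "P5": True, "P6": False, "P7": False, "P8": False,
}
rr = relative_risk({"P1", "P2", "P3", "P4"}, had_event)
```

Grouping by class first increases the number of exposed patients per comparison, which is what allows signals to emerge that would be too sparse at the level of individual drugs.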

Step 4: Post-Marketing Surveillance

After drug approval, the continued use of these systems is vital for pharmacovigilance.

- Action: Continue to collect and code real-world safety and efficacy data using ICD-11 and WHODrug. This long-term data can be aggregated globally because of the shared classification standards.

- Outcome Studies: These classifications facilitate large-scale observational studies and the creation of disease registries to monitor the drug's performance in a broader population outside the controlled clinical trial environment.

Experimental Data and Validation

The rigorous validation of WHO classification guidelines is a critical process that ensures their utility and reliability in both clinical practice and research. This validation often involves applying the proposed criteria to large, independent international cohorts to assess their real-world performance.

A key example is the 2025 validation study of the 5th edition of the WHO classification (WHO-5) for TP53-mutated myeloid neoplasms, which was directly compared against the International Consensus Classification (ICC) [31]. This study provides a template for how WHO protocols are tested and refined.

Table 2: Comparative Analysis of WHO-5 and ICC for TP53-mutated Myeloid Neoplasms

| Validation Metric | WHO-5 Classification Findings | ICC Classification Findings |
|---|---|---|
| Inclusion Rate | Only 36% (217/603) of TP53-mutated cases were classified as a distinct entity [31]. | 86% (520/603) of cases were included under the TP53-mutated MN entity [31]. |
| VAF Threshold | No specific VAF threshold defined [31]. | Mandates a VAF of ≥10% for TP53 mutation [31]. |
| TP53mut AML Status | Not recognized as a distinct entity; grouped with other AMLs [31]. | Recognized as a distinct entity with very poor prognosis [31]. |
| Defining Biallelic Inactivation | Requires confirmation of 17p loss by CNV analysis (e.g., FISH, array) [31]. | Accepts complex karyotype (CK) as a multi-hit equivalent, obviating need for additional CNV in some cases [31]. |
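The inclusion rates in the table follow directly from the reported counts, as the short check below shows. Only the counts come from the text; the helper name is hypothetical.

```python
def inclusion_rate(included: int, total: int) -> float:
    """Fraction of cases captured by a classification's distinct entity."""
    if total <= 0 or not 0 <= included <= total:
        raise ValueError("counts must satisfy 0 <= included <= total and total > 0")
    return included / total


TOTAL_TP53_CASES = 603  # cohort size reported in the text [31]

who5_rate = inclusion_rate(217, TOTAL_TP53_CASES)
icc_rate = inclusion_rate(520, TOTAL_TP53_CASES)

# Whole-number percentages, matching the rounding used in the table.
who5_pct = round(100 * who5_rate)
icc_pct = round(100 * icc_rate)
```

Both rates share the denominator of 603 cases, so the gap between them reflects the difference in entity definitions rather than a difference in cohorts.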

Experimental Protocol for Validation

The methodology from the TP53 study exemplifies a robust approach to validating a disease classification system [31].