Comparative Performance of SVM, Random Forest, and ANN in Male Infertility Prediction: A Systematic Analysis for Biomedical Research

Abstract

This article systematically compares the performance of three prominent machine learning algorithms in predicting male infertility: Support Vector Machine (SVM), Random Forest (RF), and Artificial Neural Network (ANN). Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, methodological applications, and optimization strategies for these models. By synthesizing current evidence, including performance metrics such as accuracy and AUC, and by addressing challenges such as data standardization and model interpretability, this review provides a comparative framework to guide the development of robust, clinically relevant predictive tools in reproductive medicine.

The Critical Role of AI and Machine Learning in Modern Male Infertility Diagnosis

The Global Burden of Male Infertility and Diagnostic Challenges

Male infertility represents a significant and often underappreciated global health challenge, contributing to 20-30% of all infertility cases among couples worldwide, with some studies suggesting male factors may be present in up to 50% of cases [1] [2] [3]. This condition affects an estimated 30 million men globally, with the highest prevalence observed in Africa and Eastern Europe [1]. Traditionally, the diagnostic pathway for male infertility has relied heavily on conventional semen analysis, which assesses parameters such as sperm concentration, motility, and morphology [2]. However, these methods face significant limitations, including substantial inter-observer variability, subjectivity, and poor reproducibility, often complicating accurate diagnosis and treatment planning [1]. Furthermore, traditional tools frequently lack the precision to detect subtle causes of infertility, such as sperm DNA fragmentation or early-stage testicular dysfunction [1].

In response to these diagnostic challenges, artificial intelligence (AI) has emerged as a transformative tool in reproductive medicine. Machine learning (ML) algorithms, including Support Vector Machines (SVM), Random Forests (RF), and Artificial Neural Networks (ANN), are increasingly being applied to enhance diagnostic precision, predict treatment outcomes, and personalize therapeutic strategies [1] [2] [4]. These computational approaches can integrate and analyze complex, multidimensional data from clinical, lifestyle, genetic, and environmental factors, offering a more comprehensive assessment of male reproductive health than previously possible [4] [3]. This review systematically compares the performance of SVM, RF, and ANN algorithms within male infertility prediction research, providing researchers and clinicians with an evidence-based analysis of these rapidly advancing diagnostic technologies.

The Expanding Scope of Male Infertility

Male infertility extends beyond its clinical definitions to encompass profound psychological, social, and economic dimensions. The inability to conceive often induces significant emotional distress, relationship strain, and feelings of inadequacy, particularly in sociocultural contexts where fertility is closely tied to masculine identity [2] [3]. The etiology of male infertility is multifactorial, encompassing genetic abnormalities (such as karyotypic anomalies and Y-chromosome microdeletions), hormonal imbalances, anatomical issues like varicocele, and a spectrum of lifestyle and environmental factors [4] [3]. Prolonged sedentary behavior, exposure to environmental toxins, endocrine-disrupting chemicals, and psychosocial stress have been identified as exacerbating factors in reproductive health disorders [3].

Alarmingly, research indicates a declining trend in semen quality over time, particularly in parameters of sperm concentration and count, with young men in certain regions, including China, showing notable deterioration in sperm morphology, vitality, and quantity [5]. This trend underscores the growing public health significance of male infertility and the urgent need for advanced diagnostic methodologies. Importantly, reduced sperm quality may serve as a biomarker for broader systemic health issues, including metabolic syndrome, endocrine dysfunction, and cardiovascular disease, positioning male infertility within an integrated health continuum rather than as an isolated concern [3].

Conventional Diagnostics and the Imperative for Innovation

Limitations of Traditional Approaches

The cornerstone of male fertility assessment, conventional semen analysis, exhibits several critical limitations that hinder its diagnostic reliability. The process remains heavily reliant on manual assessment, which introduces substantial subjectivity and inter-observer variability [1] [5]. This variability complicates the accurate evaluation of critical sperm parameters such as morphology, motility, and concentration, ultimately affecting treatment planning and success [1]. Furthermore, traditional diagnostic tools often lack the sensitivity to detect subtle or multifactorial causes of infertility, such as sperm DNA fragmentation (SDF) or early-stage testicular dysfunction, limiting their ability to guide personalized interventions [1].

Sperm morphology analysis (SMA) exemplifies these challenges. According to World Health Organization (WHO) standards, SMA involves categorizing sperm into head, neck, and tail compartments with 26 distinct abnormality types, requiring the analysis of over 200 sperm per sample [5]. This process is not only labor-intensive but also highly susceptible to subjective interpretation, leading to inconsistencies in results across different laboratories and technicians [5]. Additionally, predictive models based on traditional statistical methods often struggle to integrate the complex interplay of clinical, environmental, and lifestyle factors that contribute to infertility, resulting in suboptimal accuracy for forecasting outcomes of assisted reproductive technologies (ART) such as in vitro fertilization (IVF) and intracytoplasmic sperm injection (ICSI) [1].

The Promise of Computational Diagnostics

Artificial intelligence, particularly machine learning, offers a paradigm shift in male infertility diagnostics by automating analytical processes, reducing variability, and identifying subtle patterns beyond human perception [1]. ML algorithms can enhance diagnostic accuracy by automating sperm evaluation across multiple parameters, including morphology, motility, and DNA integrity, with greater consistency than manual methods [1] [5]. AI-driven predictive tools integrate diverse data types—including clinical parameters, imaging data, genetic markers, and patient history—to improve prediction of sperm retrieval success, fertilization potential, and ART outcomes [1].

In severe conditions such as non-obstructive azoospermia (NOA), which affects approximately 1% of men and 10-15% of infertile men, AI models can assist in identifying viable sperm in testicular biopsies, a task that remains challenging with current histopathological techniques [1]. Beyond diagnostics, AI-powered approaches can optimize treatment selection by identifying patients most likely to benefit from specific interventions such as varicocele repair or hormonal therapy, thereby avoiding unnecessary procedures and improving resource allocation [1].

Comparative Analysis of Machine Learning Algorithms

Machine learning models for male infertility prediction are evaluated with several performance metrics. The most prominent is the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve. The ROC curve plots sensitivity against 1 - specificity as the classification threshold varies, and the AUC summarizes that trade-off in a single number, where 0.5 corresponds to chance-level and 1.0 to perfect discrimination [6]. Accuracy, sensitivity, specificity, and precision complement the AUC and together give a fuller view of model performance [2] [7]. Systematic reviews of ML applications in male infertility report a median accuracy of 88% across models, with ANN-based studies showing a somewhat lower median accuracy of 84% [2] [8].
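
The AUC has a convenient probabilistic reading: it equals the probability that a randomly chosen positive case (for example, an infertile patient) receives a higher model score than a randomly chosen negative case. The minimal sketch below, using hypothetical labels and scores rather than data from any cited study, computes the AUC that way and also as the trapezoidal area under the empirical ROC curve, to show that the two agree.

```python
from itertools import product


def roc_auc(labels, scores):
    """AUC as P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))


def roc_points(labels, scores):
    """(1 - specificity, sensitivity) pairs obtained by lowering the decision threshold."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= t and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_area(points):
    """Area under a piecewise-linear curve given as (x, y) points sorted by x."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical example: 1 = infertile (positive class), 0 = fertile.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
print(roc_auc(labels, scores))                     # 8/9, about 0.889
print(trapezoid_area(roc_points(labels, scores)))  # same value
```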

Table 1: Comparative Performance of Machine Learning Algorithms in Male Infertility Prediction

| Algorithm | Reported AUC | Reported Accuracy | Key Applications | Notable Performances |
|---|---|---|---|---|
| Support Vector Machine (SVM) | 0.8859 (sperm morphology) [1] | 89.9% (sperm motility) [1] | Sperm morphology classification, motility analysis, infertility risk prediction | AUC of 0.96 for infertility risk prediction [4] |
| Random Forest (RF) | 0.8423 (IVF success) [1] | High in ensemble methods [7] | IVF/ICSI success prediction, feature importance analysis, oocyte selection | AUC of 0.97 for ICSI success prediction [6] |
| Artificial Neural Networks (ANN) | High values reported [7] | 84% median accuracy [2] [8] | Sperm concentration prediction, clinical pregnancy prediction, non-linear pattern recognition | 99% accuracy in a hybrid ANN-ACO framework [3] |
| Gradient Boosting Trees (GBT) | 0.807 (NOA sperm retrieval) [1] | - | Non-obstructive azoospermia sperm retrieval prediction | 91% sensitivity [1] |

Support Vector Machines (SVM) in Male Infertility

Support Vector Machines are a robust approach to classification and are particularly effective when the classes are separated by a clear margin. The algorithm identifies the hyperplane that maximizes the margin between classes in the feature space [4]. For patterns that are not linearly separable, SVM uses kernel functions that implicitly map the data into a higher-dimensional space where linear separation becomes feasible, without ever computing that mapping explicitly; this is known as the "kernel trick" [4].
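
The kernel trick can be checked numerically. For 2-D inputs, the degree-2 polynomial kernel k(x, z) = (x . z)^2 equals an ordinary dot product after the explicit map phi(x) = (x1^2, sqrt(2)*x1*x2, x2^2), so an SVM can operate in the 3-D feature space while only computing dot products in the 2-D input space. The sketch below verifies this on toy numbers and evaluates a kernel SVM decision function; the "support vectors" and coefficients are hand-picked and hypothetical, not the output of training.

```python
import math


def phi(x):
    """Explicit degree-2 feature map for a 2-D input (x1, x2)."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def poly2_kernel(x, z):
    """Homogeneous polynomial kernel (x . z)^2, computed without the feature map."""
    return dot(x, z) ** 2


def svm_decision(x, support_vectors, coefs, bias, kernel):
    """Kernel SVM decision value f(x) = sum_i coef_i * k(sv_i, x) + b, where
    coef_i = alpha_i * y_i; the predicted class is the sign of f(x)."""
    return sum(c * kernel(sv, x) for sv, c in zip(support_vectors, coefs)) + bias


x, z = (2.0, 1.0), (1.0, 3.0)
print(poly2_kernel(x, z), dot(phi(x), phi(z)))  # both 25 (up to rounding)

# Hypothetical support vectors and coefficients chosen by hand for illustration:
# f(x) = x1^2 - x2^2, a boundary no single straight line in the input space reproduces.
svs, coefs = [(1.0, 0.0), (0.0, 1.0)], [1.0, -1.0]
print(svm_decision((2.0, 1.0), svs, coefs, 0.0, poly2_kernel))  # 3.0 -> class +1
print(svm_decision((1.0, 2.0), svs, coefs, 0.0, poly2_kernel))  # -3.0 -> class -1
```

In practice the coefficients and bias come from solving the SVM optimization problem, for example with scikit-learn or the R e1071 package.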

In male infertility applications, SVM has performed strongly across several tasks. One study developing a predictive model for male infertility risk factors reported that SVM achieved an AUC of 96%, outperforming the other algorithms compared except the SuperLearner ensemble method [4]. In sperm morphology analysis, SVM models have attained an AUC of 88.59% on 1,400 sperm images, and in motility assessment SVM achieved 89.9% accuracy on 2,817 sperm [1]. These results highlight SVM's capability in both structural sperm analysis and prediction from clinical parameters.

Random Forest (RF) in Male Infertility

Random Forest is an ensemble learning method that constructs multiple decision trees during training and, for classification, outputs the mode of the trees' predicted classes [4]. It uses bagging (bootstrap aggregating), training each tree on a bootstrap sample of the original data, and considers only a random subset of features at each split; both sources of randomness make the trees diverse, which enhances model stability and reduces overfitting [6]. A key advantage of RF is its feature importance rankings, which offer insight into the relative contribution of different clinical and lifestyle factors to infertility risk [4].
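
Bootstrap sampling, random feature subsets, and majority voting can be sketched in plain Python. To keep the sketch short, each "tree" is a depth-1 decision stump chosen by misclassification count, and feature importance is approximated by how often each feature is used for a split; full Random Forest implementations grow deeper trees and usually measure importance by impurity decrease or permutation. The data are synthetic: feature 0 separates the classes and features 1 and 2 are noise.

```python
import random
from collections import Counter


def fit_stump(X, y, feature):
    """Best single threshold on one feature; returns (errors, feature, threshold, left, right)."""
    best = None
    for t in sorted(set(row[feature] for row in X)):
        left = [lab for row, lab in zip(X, y) if row[feature] <= t]
        right = [lab for row, lab in zip(X, y) if row[feature] > t]
        left_lab = Counter(left).most_common(1)[0][0]
        right_lab = Counter(right).most_common(1)[0][0] if right else left_lab
        errors = sum(lab != left_lab for lab in left) + sum(lab != right_lab for lab in right)
        if best is None or errors < best[0]:
            best = (errors, feature, t, left_lab, right_lab)
    return best


def fit_forest(X, y, n_trees=25, max_features=1, seed=0):
    rng = random.Random(seed)
    n, d = len(X), len(X[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]          # bootstrap: n draws with replacement
        Xb, yb = [X[i] for i in idx], [y[i] for i in idx]
        features = rng.sample(range(d), max_features)       # random feature subset for this stump
        best = min(fit_stump(Xb, yb, f) for f in features)  # fewest errors; ties -> lower index
        forest.append(best[1:])
    return forest


def predict(forest, x):
    """Majority vote (the mode) of the individual stumps' predictions."""
    votes = Counter(left if x[f] <= t else right for f, t, left, right in forest)
    return votes.most_common(1)[0][0]


def split_frequency(forest, d):
    """Crude importance proxy: share of stumps that split on each feature."""
    counts = Counter(f for f, *_ in forest)
    return [counts[j] / len(forest) for j in range(d)]


X = [[i, (i * 7) % 5, (i * 3) % 4] for i in range(20)]  # synthetic data
y = [0] * 10 + [1] * 10
forest = fit_forest(X, y, n_trees=25, max_features=2)
print(predict(forest, [1, 3, 3]), predict(forest, [18, 1, 2]))
print(split_frequency(forest, 3))
```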

Research demonstrates RF's strong performance in predicting ART outcomes. One investigation that used 10,036 patient records with 46 clinical features to predict ICSI treatment success found that RF achieved the highest AUC among the compared algorithms (0.97), followed closely by neural networks (0.95) [6]. Another study reported an AUC of 84.23% for RF in predicting IVF success from 486 patient records [1]. RF's relative robustness to overfitting and its capacity to handle high-dimensional data make it particularly valuable for complex infertility prediction tasks involving numerous input variables.

Artificial Neural Networks (ANN) in Male Infertility

Artificial Neural Networks are computational models inspired by the biological neural networks of the human brain, characterized by interconnected nodes organized in layers [2]. These models excel at identifying complex, non-linear relationships in data, making them particularly suitable for the multifaceted nature of male infertility diagnostics [3]. ANN architectures can range from simple multilayer perceptrons to sophisticated deep learning networks with numerous hidden layers [1].
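
To make "interconnected nodes organized in layers" concrete, the sketch below runs the forward pass of a tiny multilayer perceptron in plain Python. The weights are set by hand rather than learned, so that the network computes XOR, a pattern no single linear boundary can separate; this is the kind of non-linear interaction a hidden layer makes possible. In practice the weights would be learned by backpropagation, for example with TensorFlow or PyTorch.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """Fully connected layer: node k outputs sigmoid(sum_j weights[k][j] * inputs[j] + biases[k])."""
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """Propagate an input vector through a list of (weights, biases) layers."""
    for weights, biases in layers:
        x = dense(x, weights, biases)
    return x


# Hand-set (not trained) weights: hidden node 1 acts like OR, hidden node 2 like NAND,
# and the output node combines them like AND, which yields XOR.
xor_net = [
    ([[20.0, 20.0], [-20.0, -20.0]], [-10.0, 30.0]),  # input (2) -> hidden (2)
    ([[20.0, 20.0]], [-30.0]),                         # hidden (2) -> output (1)
]
for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(x, round(forward(x, xor_net)[0]))
# prints "[0, 0] 0", "[0, 1] 1", "[1, 0] 1", "[1, 1] 0"
```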

In male fertility assessment, ANNs have been applied successfully across diverse tasks. A hybrid framework combining a multilayer feedforward neural network with a nature-inspired Ant Colony Optimization (ACO) algorithm achieved 99% classification accuracy and 100% sensitivity on a clinically profiled male fertility dataset of 100 cases from the UCI repository [3]. The model also reported a computational time of just 0.00006 seconds, suggesting potential for real-time clinical applications [3]. Beyond direct infertility diagnosis, ANNs have proven valuable in predicting sperm concentration, a crucial determinant of male fertility [2]. Systematic reviews indicate that ANN models show a somewhat lower median accuracy (84%) than the overall ML median (88%), but their capacity to model complex interactions keeps them a promising approach in the field [2] [8].

Experimental Protocols and Methodologies

Data Sourcing and Preprocessing

Robust experimental design in male infertility ML research begins with comprehensive data collection from diverse sources, including clinical records, semen analysis parameters, hormone levels, genetic markers, lifestyle factors, and environmental exposures [4] [3]. For instance, one study incorporated data from 587 infertile and 57 fertile patients, capturing attributes such as age, hormone analysis (FSH, LH, testosterone levels), routine semen parameters, sperm concentration, and genetic variations [4]. Similarly, research on ICSI success prediction utilized an extensive dataset of 10,036 patient records with 46 clinical features documented prior to treatment decisions [6].

Data preprocessing represents a critical step in ensuring model reliability. Common practices include addressing missing values through imputation or exclusion, normalizing numerical features using techniques like Z-score normalization or Min-Max scaling to [0,1] range, and encoding categorical variables [4] [3]. These procedures mitigate bias from heterogeneous measurement scales and enhance model convergence. For image-based sperm morphology analysis, additional preprocessing includes image enhancement, noise reduction, and segmentation of sperm components (head, neck, tail) [5].
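
The tabular preprocessing steps above can be sketched with Python's standard library alone. The FSH values and smoking labels below are hypothetical, and median imputation is only one of several possible imputation choices. In a real pipeline the median, mean, standard deviation, minimum, and maximum should be estimated on the training data only and then applied unchanged to validation and test data, to avoid information leakage.

```python
import statistics


def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    fill = statistics.median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


def z_score(values):
    """Centre on the mean and scale by the population standard deviation."""
    mu, sd = statistics.fmean(values), statistics.pstdev(values)
    return [0.0 if sd == 0 else (v - mu) / sd for v in values]


def min_max(values):
    """Rescale linearly to the [0, 1] range."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]


def one_hot(values):
    """Encode a categorical variable as 0/1 indicator columns (sorted category order)."""
    categories = sorted(set(values))
    return categories, [[1 if v == c else 0 for c in categories] for v in values]


fsh = impute_median([4.0, None, 8.0, 6.0, 12.0])  # hypothetical FSH values (IU/L)
print(fsh)           # [4.0, 7.0, 8.0, 6.0, 12.0]
print(min_max(fsh))  # [0.0, 0.375, 0.5, 0.25, 1.0]
print(one_hot(["smoker", "non-smoker", "smoker"]))
# (['non-smoker', 'smoker'], [[0, 1], [1, 0], [0, 1]])
```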

Model Training and Validation

Rigorous validation methodologies are essential for assessing how well a model generalizes beyond its training data. 10-fold cross-validation is widely employed: the dataset is partitioned into ten subsets, the model is trained on nine and validated on the remaining one, and the process is repeated ten times so that each subset serves once as the validation set [4]. This approach provides robust performance estimates while making full use of the available data. Studies also hold out a separate test set, using train-test splits at various ratios (e.g., 80-20%, 70-30%, 60-40%), to evaluate performance on unseen data [4].
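
A hold-out split followed by k-fold cross-validation on the training portion can be sketched as index bookkeeping in plain Python. The sample count of 644 reuses the 587 infertile plus 57 fertile patients mentioned above; everything else is illustrative. This plain version shuffles without stratification; with imbalanced classes, stratified folds that preserve the class ratio in every fold are usually preferable.

```python
import random


def train_test_indices(n, test_fraction=0.2, seed=0):
    """Shuffle 0..n-1 once and hold out a fraction of the samples as the test set."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = round(n * test_fraction)
    return idx[n_test:], idx[:n_test]


def k_fold_indices(indices, k=10, seed=0):
    """Yield (train, validation) index lists; each index is validated exactly once.
    Fold sizes differ by at most one."""
    idx = list(indices)
    if not 2 <= k <= len(idx):
        raise ValueError("k must be between 2 and the number of samples")
    random.Random(seed).shuffle(idx)
    start = 0
    for fold in range(k):
        size = len(idx) // k + (1 if fold < len(idx) % k else 0)
        yield idx[:start] + idx[start + size:], idx[start:start + size]
        start += size


train, test = train_test_indices(644, test_fraction=0.2)  # 80-20 split
print(len(train), len(test))                               # 515 129
print([len(val) for _, val in k_fold_indices(train, k=10)])
# [52, 52, 52, 52, 52, 51, 51, 51, 51, 51]
```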

To address the class imbalance common in medical datasets (where one outcome class, fertile or infertile, is much rarer than the other), researchers use techniques such as the Synthetic Minority Over-sampling Technique (SMOTE) or adjusted class weights in the algorithm configuration [3]. Hyperparameter optimization through grid search or random search fine-tunes model configurations for optimal performance. Increasingly, nature-inspired optimization algorithms such as Ant Colony Optimization (ACO) are being integrated with traditional ML methods to enhance learning efficiency, convergence, and predictive accuracy [3].
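
Both imbalance remedies are short to sketch. The first function computes "balanced" class weights, w_c = n / (K * n_c) for K classes, the formula scikit-learn uses for `class_weight="balanced"`. The second is a minimal version of SMOTE's core step: it creates a synthetic minority sample at a random point on the line segment between a minority case and one of its k nearest minority neighbours. The class counts reuse the 587 infertile / 57 fertile split mentioned earlier; the minority points are toy values. Oversampling should be applied to training folds only, never to validation or test data.

```python
import math
import random
from collections import Counter


def balanced_class_weights(labels):
    """w_c = n_samples / (n_classes * n_c): rarer classes receive larger weights."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}


def smote(minority, n_new, k=5, seed=0):
    """Generate n_new synthetic points by interpolating between a random minority
    sample and one of its k nearest minority neighbours (Euclidean distance)."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        x = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(x, p))[:k]
        nn = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(x, nn)])
    return synthetic


labels = [1] * 587 + [0] * 57           # 1 = infertile, 0 = fertile
print(balanced_class_weights(labels))  # {1: ~0.549, 0: ~5.649}
print(smote([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], n_new=3, k=2))
```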

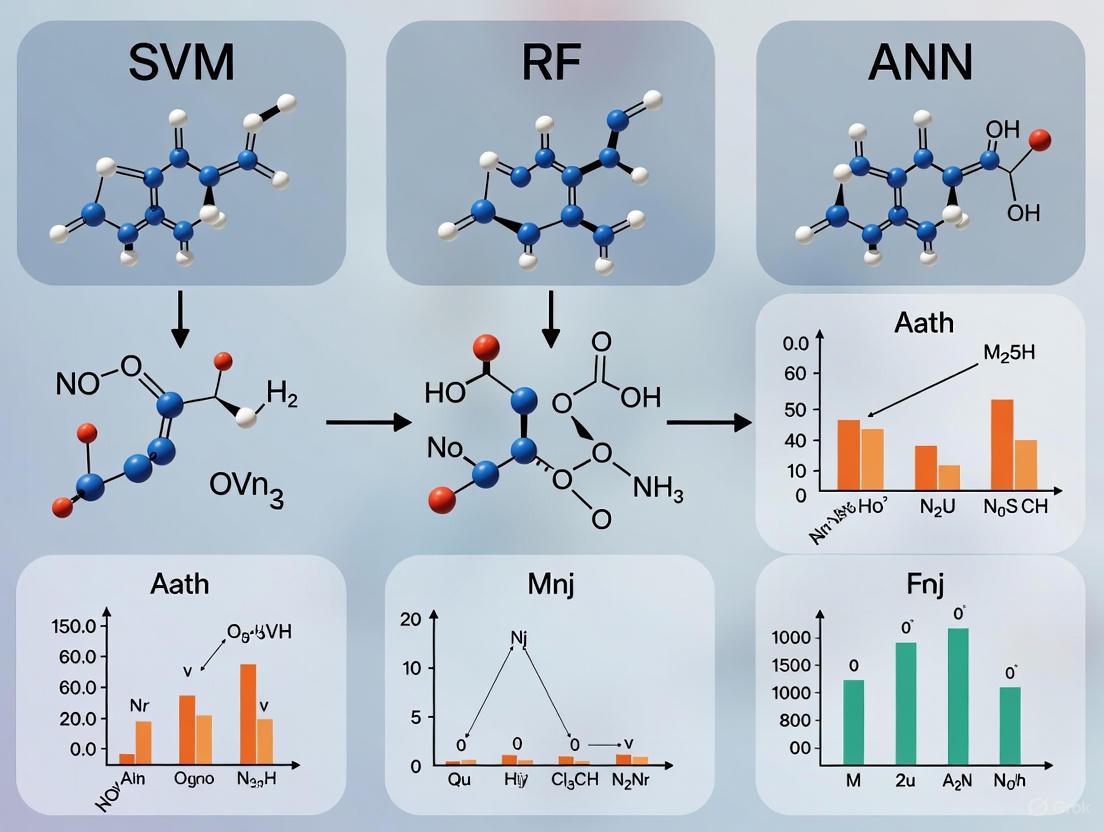

Diagram 1: Experimental workflow for ML model development in male infertility research, showing the progression from data preparation through model development to evaluation and implementation.

Emerging Hybrid Approaches

Recent research has explored hybrid frameworks that combine the strengths of multiple computational approaches to enhance predictive performance. One study integrated a multilayer feedforward neural network with an Ant Colony Optimization algorithm, using ACO's adaptive parameter tuning, inspired by ant foraging behavior, to overcome limitations of conventional gradient-based methods [3]. The authors reported improved reliability, generalizability, and efficiency compared with standalone models.
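
The cited study's exact ACO update rules are not given here, so the following is only a generic, hypothetical sketch of how ACO can tune discrete hyperparameters. Each "ant" samples one value per hyperparameter with probability proportional to its pheromone level, pheromone evaporates at rate `rho`, and each ant then deposits pheromone in proportion to the score of its configuration. The `toy_score` function is a stand-in for, say, the cross-validated accuracy of an ANN, and the parameter names and candidate values are illustrative.

```python
import random


def aco_search(options, score, n_ants=10, n_iter=30, rho=0.3, seed=0):
    """options: {name: [candidate values]}; score(config) must be non-negative
    (higher is better). Returns the best configuration seen and its score."""
    rng = random.Random(seed)
    pheromone = {name: [1.0] * len(vals) for name, vals in options.items()}
    best_cfg, best_score = None, float("-inf")
    for _ in range(n_iter):
        tours = []
        for _ in range(n_ants):
            # Each ant picks one index per hyperparameter, weighted by pheromone.
            picks = {name: rng.choices(range(len(vals)), weights=pheromone[name])[0]
                     for name, vals in options.items()}
            cfg = {name: options[name][i] for name, i in picks.items()}
            s = score(cfg)
            tours.append((picks, s))
            if s > best_score:
                best_cfg, best_score = cfg, s
        for name in pheromone:  # evaporation
            pheromone[name] = [(1 - rho) * tau for tau in pheromone[name]]
        for picks, s in tours:  # deposit proportional to configuration quality
            for name, i in picks.items():
                pheromone[name][i] += s
    return best_cfg, best_score


def toy_score(cfg):
    # Hypothetical objective peaking at 16 hidden units and a learning rate of 0.01.
    return 1.0 / (1.0 + abs(cfg["hidden_units"] - 16) / 8
                  + abs(cfg["learning_rate"] - 0.01) * 10)


options = {"hidden_units": [4, 8, 16, 32], "learning_rate": [0.001, 0.01, 0.1]}
print(aco_search(options, toy_score))
```

Because each evaluation would normally mean training a model, the number of ants and iterations is the main cost knob in this design.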

Another advancement involves the incorporation of Explainable AI (XAI) frameworks to enhance model interpretability, a critical factor for clinical adoption [3]. Techniques such as Proximity Search Mechanism (PSM) provide feature-level insights, enabling healthcare professionals to understand and trust model predictions [3]. Similarly, ensemble methods like SuperLearner have been employed to combine multiple algorithms with different weights determined through cross-validation, outperforming individual classifiers in infertility risk prediction [4].
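
The core idea behind SuperLearner, weighting base learners according to how well their cross-validated (out-of-fold) predictions fit the outcome, can be illustrated with a brute-force search over convex weights that minimizes squared error. This is a simplified, hypothetical sketch: the R SuperLearner package estimates the weights with an optimization routine rather than a grid, and the three "learners" below are toy prediction vectors, not outputs of the cited models.

```python
from itertools import product


def simplex_grid(n_learners, step=0.1):
    """All non-negative weight vectors on a grid of the given step that sum to 1."""
    units = round(1 / step)
    for head in product(range(units + 1), repeat=n_learners - 1):
        if sum(head) <= units:
            yield [h / units for h in head] + [(units - sum(head)) / units]


def fit_weights(cv_preds, y, step=0.1):
    """cv_preds[j][i]: out-of-fold predicted probability from learner j for sample i.
    Returns the convex weights with the lowest squared error against y."""
    def loss(weights):
        return sum((sum(w * p[i] for w, p in zip(weights, cv_preds)) - yi) ** 2
                   for i, yi in enumerate(y))
    return min(simplex_grid(len(cv_preds), step), key=loss)


def combine(weights, preds):
    """Weighted average of the learners' predictions for each sample."""
    return [sum(w * p[i] for w, p in zip(weights, preds)) for i in range(len(preds[0]))]


y = [1, 0, 1, 0, 1, 0]
cv_preds = [
    [0.9, 0.2, 0.8, 0.3, 0.7, 0.1],  # hypothetical learner A (e.g. an SVM)
    [0.6, 0.4, 0.9, 0.1, 0.4, 0.5],  # hypothetical learner B (e.g. an RF)
    [0.5, 0.5, 0.5, 0.5, 0.5, 0.5],  # uninformative baseline
]
weights = fit_weights(cv_preds, y)
print(weights)
print(combine(weights, cv_preds))
```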

Essential Research Toolkit

Table 2: Key Research Reagent Solutions and Computational Tools for Male Infertility ML Research

| Resource Category | Specific Examples | Function/Purpose | Relevance to Male Infertility Research |
|---|---|---|---|
| Public Datasets | HuSHeM [5], VISEM-Tracking [5], SVIA Dataset [5], UCI Fertility Dataset [3] | Benchmarking algorithm performance, training models | Provide standardized, annotated data for sperm morphology, motility, and clinical parameters |
| ML Algorithms | SVM, Random Forest, ANN (including CNN), Gradient Boosting Trees [1] [4] | Core predictive modeling, classification, regression | Enable infertility diagnosis, treatment outcome prediction, sperm characteristic analysis |
| Optimization Techniques | Ant Colony Optimization (ACO) [3], Genetic Algorithms [3] | Hyperparameter tuning, feature selection, model optimization | Enhance model accuracy, convergence, and efficiency through bio-inspired computation |
| Software/Libraries | R (caret, SL, e1071, rpart packages) [4], Python (scikit-learn, TensorFlow, PyTorch) | Model implementation, statistical analysis, visualization | Provide ecosystem for data preprocessing, model development, and performance evaluation |
| Explainability Tools | SHAP, LIME, Proximity Search Mechanism (PSM) [3] | Model interpretability, feature importance analysis | Bridge between algorithmic predictions and clinical understanding for trusted adoption |

Performance Interpretation and Clinical Translation

Algorithm Selection Guidelines

Choosing the appropriate machine learning algorithm depends on multiple factors, including dataset characteristics, computational resources, and specific clinical objectives. For high-dimensional datasets with complex nonlinear relationships, Artificial Neural Networks often demonstrate superior performance, particularly when integrated with optimization techniques like ACO [3]. When model interpretability and feature importance are priorities, Random Forest offers valuable insights while maintaining strong predictive accuracy [6] [4]. For tasks requiring clear margin maximization between classes, particularly with structured data, Support Vector Machines continue to deliver robust performance [1] [4].

Ensemble methods that combine multiple algorithms can outperform individual classifiers: in male infertility risk prediction, SuperLearner achieved a 97% AUC compared with 96% for SVM alone [4]. Similarly, hybrid approaches that integrate optimization algorithms with base classifiers have shown gains in accuracy and efficiency, as in the ANN-ACO framework that reached 99% classification accuracy [3]. These approaches represent the current frontier of ML applications in male infertility diagnostics.

Pathway to Clinical Implementation

The translation of ML models from research to clinical practice requires addressing several critical challenges. Model generalizability remains a significant concern, as algorithms trained on specific populations may perform poorly when applied to different demographic groups or clinical settings [9]. Multicenter validation trials using diverse datasets are essential to ensure broad applicability [1]. Additionally, data quality and standardization issues must be resolved, particularly for image-based sperm analysis where variations in staining protocols, microscopy techniques, and annotation standards can significantly impact model performance [5].

Ethical considerations, including data privacy, algorithmic bias, and transparency, require careful attention to ensure equitable and trustworthy implementation [1] [9]. The development of explainable AI systems that provide intuitive rationale for their predictions will be crucial for gaining clinician trust and facilitating adoption [3]. Future directions point toward multi-modal learning approaches that integrate diverse data types—including clinical records, imaging, and omics data—within unified frameworks to provide more comprehensive fertility assessments [9]. As these technologies mature, they hold the potential to transform male infertility from a subjectively diagnosed condition to one characterized by precise, personalized, and predictive diagnostics.

Diagram 2: Machine learning algorithm comparison for male infertility prediction, highlighting distinctive strengths and clinical applications of different approaches.

Male infertility is a significant public health problem, contributing to 20–30% of all infertility cases among couples. [1] The diagnosis and management of male infertility have traditionally relied on semen analysis, which can be subjective and variable. [1] Artificial Intelligence (AI) and Machine Learning (ML) are revolutionizing this field by providing powerful tools for predictive modeling, enhancing diagnostic accuracy, and personalizing treatment strategies. [8] [1] These technologies can analyze complex datasets—encompassing clinical, hormonal, genetic, and semen analysis parameters—to identify patterns that may elude conventional statistical methods. This article provides a focused comparison of three prominent ML algorithms—Support Vector Machine (SVM), Random Forest (RF), and Artificial Neural Network (ANN)—in the context of predicting male infertility, offering researchers a clear guide to their performance and application.

Algorithm Performance Comparison

Extensive research has evaluated the efficacy of different ML models for male infertility prediction. Performance is typically measured with accuracy (the proportion of all predictions that are correct), AUC (Area Under the ROC Curve, which measures the model's ability to distinguish between classes), sensitivity (the proportion of actual positive cases that are correctly identified), specificity (the proportion of actual negative cases that are correctly identified), and precision (the proportion of positive predictions that are actually correct).
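
These definitions follow directly from the four cells of a confusion matrix. The sketch below computes them in plain Python for hypothetical predictions, treating 1 (for example, "infertile") as the positive class; undefined ratios (zero denominators) are returned as NaN.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, TN, FN) for a binary problem."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p != positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    return tp, fp, tn, fn


def ratio(num, den):
    return num / den if den else float("nan")


def classification_metrics(y_true, y_pred, positive=1):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred, positive)
    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "sensitivity": ratio(tp, tp + fn),  # recall / true-positive rate
        "specificity": ratio(tn, tn + fp),  # true-negative rate
        "precision": ratio(tp, tp + fp),    # positive predictive value
    }


y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
# {'accuracy': 0.8, 'sensitivity': 0.75, 'specificity': 0.8333333333333334, 'precision': 0.75}
```

Accuracy alone can mislead on imbalanced data: with 587 infertile and 57 fertile patients, a model that labels everyone infertile scores 587/644, about 91.1% accuracy, yet has a specificity of zero.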

The table below summarizes the performance of SVM, RF, and ANN algorithms as reported in recent studies and systematic reviews.

Table 1: Performance Metrics of Key Machine Learning Algorithms in Male Infertility Prediction

| Algorithm | Reported Accuracy | Reported AUC | Key Strengths | Common Applications in Male Infertility |
|---|---|---|---|---|
| Support Vector Machine (SVM) | 89.9% (motility analysis) [1] | 0.96 [4] | High performance with limited data; effective in high-dimensional spaces [10] | Sperm motility & morphology classification [1]; predicting infertility risk from clinical & genetic data [4] |
| Random Forest (RF) | Up to 96% [7] (median across all ML models: ~88% [8]) | 0.8423 (IVF success prediction) [1] | High accuracy; robust to overfitting; provides feature importance rankings [11] [4] | Predicting IVF success [1]; general infertility prediction from diverse clinical data [8] |
| Artificial Neural Network (ANN) | Median 84% (in male infertility prediction) [8] [2] | Up to 0.97 in selected studies [7] | Superior for complex, non-linear problems; excels with large datasets & image data [10] | Sperm concentration prediction [8]; oocyte and embryo selection via image analysis [7] |

A broad systematic review of ML models for male infertility reported a median prediction accuracy of 88% across 43 studies, with ANN models specifically achieving a median accuracy of 84% [8] [2]. Another review found that models using methods such as SVM and RF achieved an average AUC of 0.91, with some reaching accuracies of 90-96% [7]. Together, these figures indicate consistently strong performance of ML in this domain.

Detailed Experimental Protocols

To ensure the reproducibility of ML models in male infertility research, a clear understanding of the standard experimental workflow is essential. The following diagram outlines the typical pipeline, from data collection to model deployment.

Figure 1: A generalized workflow for developing machine learning models in male infertility research.

Data Sourcing and Pre-processing

The foundation of any robust ML model is a high-quality dataset. In male infertility research, data typically includes:

- Clinical and Lifestyle Parameters: Patient age, hormone levels (FSH, LH, testosterone), sperm concentration, motility, morphology, and lifestyle factors. [4]

- Genetic Data: Information on karyotypic abnormalities, Y-chromosome microdeletions, and specific gene mutations. [4]

- Image Data: Microscopic images of sperm for morphology and motility analysis. [1]

A critical pre-processing step involves handling missing data and normalizing numerical features. For example, one study used Z-score normalization to scale clinical data before model training. [4] Feature selection algorithms, such as Particle Swarm Optimization (PSO), can be employed to identify the most predictive features, thereby improving model efficiency and performance. [12]
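
The PSO-based feature selection mentioned above can be sketched with the common binary PSO variant: each particle is a 0/1 mask over features, velocities follow the standard PSO update (inertia plus pulls towards the particle's own best and the swarm's best), and a sigmoid turns each velocity into the probability that a feature is selected. This does not reproduce the configuration of the cited work; the fitness function is a hypothetical toy (reward two "informative" features, penalize the rest) standing in for a cross-validated model score.

```python
import math
import random


def binary_pso(n_features, fitness, n_particles=15, n_iter=40,
               w=0.7, c1=1.5, c2=1.5, v_max=4.0, seed=0):
    """Maximise fitness(mask) over 0/1 feature masks; returns (best_mask, best_fitness)."""
    rng = random.Random(seed)
    pos = [[rng.randint(0, 1) for _ in range(n_features)] for _ in range(n_particles)]
    vel = [[0.0] * n_features for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = max(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(n_features):
                r1, r2 = rng.random(), rng.random()
                v = (w * vel[i][d]
                     + c1 * r1 * (pbest[i][d] - pos[i][d])  # pull towards own best
                     + c2 * r2 * (gbest[d] - pos[i][d]))    # pull towards swarm best
                vel[i][d] = max(-v_max, min(v_max, v))
                # Sigmoid transfer: probability that feature d is switched on.
                pos[i][d] = 1 if rng.random() < 1.0 / (1.0 + math.exp(-vel[i][d])) else 0
            f = fitness(pos[i])
            if f > pbest_fit[i]:
                pbest[i], pbest_fit[i] = pos[i][:], f
                if f > gbest_fit:
                    gbest, gbest_fit = pos[i][:], f
    return gbest, gbest_fit


INFORMATIVE = {0, 2}  # hypothetical: only features 0 and 2 carry signal


def toy_fitness(mask):
    chosen = {j for j, bit in enumerate(mask) if bit}
    return len(chosen & INFORMATIVE) - 0.5 * len(chosen - INFORMATIVE)


print(binary_pso(5, toy_fitness))
```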

Model Training and Validation

The core of the experimental protocol involves training and rigorously validating the models.

- Data Splitting: The dataset is typically split into a training set (e.g., 60-80%) for model building and a hold-out test set (e.g., 20-40%) for final performance evaluation. [4]

- Cross-Validation: k-fold cross-validation (e.g., 10-fold) is a standard technique to validate the model's performance and ensure its generalizability beyond the training data. [4] This process involves partitioning the training data into 'k' subsets, iteratively training the model on k-1 folds, and validating on the remaining fold.

- Performance Assessment: Models are evaluated on the test set using the metrics detailed in Table 1.

Building and validating ML models for male infertility requires a combination of data, software, and computational resources. The following table lists key components of the research toolkit.

Table 2: Essential Research Reagents and Resources for ML in Male Infertility

| Tool Category | Specific Tool / Technique | Function and Role in Research |
|---|---|---|
| Data Types | Clinical & Hormonal Data (FSH, LH, Testosterone) [4] | Provides foundational input features for predictive models based on standard patient workups. |
| | Semen Analysis Parameters (Concentration, Motility) [4] [1] | Core metrics for traditional diagnosis; used as both input features and prediction targets. |
| | Genetic Variation Data [4] | Enables models to uncover genetic risk factors and their impact on infertility. |
| | Sperm Microscopy Images [1] | The raw data for computer vision models (e.g., CNN, SVM) to assess morphology and motility. |
| Software & Libraries | R Programming Language (caret, e1071, rpart packages) [4] | A primary environment for statistical computing and implementing various ML algorithms. |
| | Python (with scikit-learn, TensorFlow, PyTorch) | A widely used platform for machine learning and deep learning model development. |
| | Image Feature Extraction Tools (e.g., pyFeats) [12] | Extracts handcrafted features (GLCM, LBP, Wavelets) from images for traditional ML models. |
| Methodologies | k-Fold Cross-Validation [4] | A fundamental validation technique to assess model generalizability and detect overfitting. |
| | Ensemble Methods (e.g., SuperLearner) [4] | Combines multiple base algorithms, aiming for better predictive performance than any single model. |
| | Feature Selection Algorithms (PSO, GA) [12] | Identifies the most relevant predictive variables, simplifying models and improving performance. |
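
The GLCM features listed in the table can be illustrated without an imaging library. A grey-level co-occurrence matrix counts how often a pixel with level i has a neighbour with level j at a fixed offset; texture descriptors such as contrast and homogeneity are weighted sums over that normalized matrix. The 4x4 image below is a toy example, not sperm microscopy data; tools such as pyFeats or scikit-image provide optimized implementations with more offsets and descriptors.

```python
def glcm(image, levels, dx=1, dy=0, symmetric=False):
    """Normalised co-occurrence matrix P[i][j] for pixel pairs separated by (dx, dy)."""
    counts = [[0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = image[r][c], image[r2][c2]
                counts[i][j] += 1
                if symmetric:
                    counts[j][i] += 1
    total = sum(map(sum, counts))
    return [[v / total for v in row] for row in counts]


def contrast(p):
    """Sum of P[i][j] * (i - j)^2: large when neighbouring levels differ strongly."""
    n = len(p)
    return sum(p[i][j] * (i - j) ** 2 for i in range(n) for j in range(n))


def homogeneity(p):
    """Sum of P[i][j] / (1 + |i - j|): large when neighbours have similar levels."""
    n = len(p)
    return sum(p[i][j] / (1 + abs(i - j)) for i in range(n) for j in range(n))


image = [[0, 0, 1, 1],
         [0, 0, 1, 1],
         [0, 2, 2, 2],
         [2, 2, 3, 3]]
p = glcm(image, levels=4)  # neighbour one pixel to the right
print(contrast(p))         # 7/12, about 0.583
print(homogeneity(p))
```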

The integration of SVM, RF, and ANN into male infertility research marks a significant shift towards data-driven, predictive medicine. While each algorithm has distinct strengths—SVM's high AUC with limited data, RF's robust accuracy and feature ranking, and ANN's power for complex image-based tasks—the choice depends on the specific research question, data type, and volume. The consistent high performance of these models, with accuracies frequently exceeding 85-90%, underscores their potential to become indispensable tools for clinicians and researchers.

Future progress in the field hinges on several key factors: large, multi-center datasets to enhance model generalizability, standardized protocols for data collection and model reporting, and a focused effort on explainable AI that provides transparent insight into model predictions. By addressing these challenges, ML algorithms can move closer to realizing their potential to transform the diagnosis and treatment of male infertility.

Male infertility is a significant global health issue, with male factors involved in up to about 50% of infertility cases among couples [3]. The diagnosis and treatment of male infertility increasingly leverage artificial intelligence (AI) and machine learning (ML) to enhance precision, objectivity, and predictive power. Traditional diagnostic methods, such as manual semen analysis, are often limited by subjectivity, inter-observer variability, and an inability to capture the complex interplay of biological, environmental, and lifestyle factors that contribute to infertility [13]. Machine learning algorithms address these limitations by automating the analysis of complex datasets, identifying subtle patterns, and providing robust predictive models for clinical decision-making.

Among the plethora of ML algorithms, Support Vector Machines (SVM), Random Forests (RF), and Artificial Neural Networks (ANN) have emerged as prominent tools in male infertility research. A 2024 systematic review investigating the use of ML for predicting male infertility found a median accuracy of 88% across various models, underscoring the potential of these computational approaches [2]. These algorithms are applied to diverse challenges, including sperm morphology classification, prediction of assisted reproductive technology (ART) success, and diagnosis of severe conditions like non-obstructive azoospermia (NOA). This guide provides a detailed, data-driven comparison of SVM, RF, and ANN, framing their performance within the specific context of male infertility prediction.

Performance Comparison of SVM, RF, and ANN

The performance of SVM, RF, and ANN can vary significantly depending on the specific clinical task, dataset characteristics, and the nature of the predictive features. The following table synthesizes quantitative performance data from recent studies to enable a direct comparison.

Table 1: Performance Metrics of Key Algorithms in Male Infertility Applications

| Algorithm | Application Context | Reported Performance | Dataset & Key Predictors | Citation |
|---|---|---|---|---|
| Support Vector Machine (SVM) | Predicting IUI pregnancy outcome | AUC = 0.78 | 9,501 IUI cycles; strong predictors: pre-wash sperm concentration, ovarian stimulation protocol, cycle length, maternal age; weak predictor: paternal age. | [14] |
| SVM | Sperm head morphology classification | AUC-ROC = 0.8859, precision > 90% | >1,400 human sperm cells from 8 donors; sperm heads classified as "good" or "bad". | [15] |
| Random Forest (RF) | Predicting ICSI treatment success | AUC = 0.97 | 10,036 patient records with 46 clinical features known prior to the treatment decision. | [6] |
| Multiple ML models (including RF) | General male infertility prediction | Median accuracy = 88% (across ML models) | Systematic review of 43 publications and 40 different ML models. | [2] |
| Artificial Neural Network (ANN) | General male infertility prediction | Median accuracy = 84% | Analysis of seven studies using ANN models. | [2] |
| ANN with ACO (hybrid) | Diagnosing altered seminal quality | Accuracy = 99%, sensitivity = 100% | 100 clinical cases from the UCI repository; lifestyle and environmental factors. | [3] |
| XGBoost (gradient boosting) | Predicting azoospermia | AUC = 0.987 | 2,334 subjects; top predictors: follicle-stimulating hormone, inhibin B, bitesticular volume. | [16] |

Comparative Analysis of Results

Predictive Power: The data indicate that all three algorithms can achieve high performance, but they excel in different tasks. Among the three core algorithms, the hybrid ANN model reported the highest accuracy (99%, on a dataset of only 100 cases) and RF the highest AUC (0.97), each on a specific, focused task [3] [6]; the gradient-boosting model for azoospermia reported a still higher AUC (0.987) [16]. SVM demonstrated strong, reliable performance in classification tasks such as morphology analysis and IUI outcome prediction [14] [15].

Context Dependence: Algorithm performance is highly context-dependent. For instance, ANN's median accuracy (84%) from the systematic review [2] is lower than the specific hybrid model's result (99%) [3], highlighting the impact of model architecture, optimization, and data quality. RF has shown exceptional performance in predicting the success of complex procedures like ICSI [6].

Feature Importance: The most influential predictors vary by clinical question. Hormonal profiles (FSH, inhibin B) and testicular volume are critical for diagnosing conditions like azoospermia [16] [17], while for IUI outcomes, sperm parameters and female factors are paramount [14]. This underscores the need for feature selection tailored to the predictive task.

Detailed Experimental Protocols

To support the reproducibility of the cited results, this section outlines the experimental methodologies and workflows common to high-quality studies in the field.

Common Workflow for Model Development

The following diagram illustrates a generalized experimental workflow for developing and validating predictive models in male infertility research.

Figure 1: Generic ML Workflow for Male Infertility Prediction

Protocol Breakdown and Key Methodologies

Data Sourcing and Ethical Approval: Research typically relies on retrospective clinical data from single or multiple tertiary centers. Data includes semen analysis parameters (volume, concentration, motility, morphology), serum hormone levels (FSH, LH, Testosterone, Inhibin B), patient demographics, lifestyle factors, and environmental data [14] [16] [17]. Studies must obtain institutional review board approval and, where applicable, written informed consent from participants [14].

Data Preprocessing: This critical step ensures data quality and consistency.

- Handling Missing Data: Cycles with excessive missing data are excluded. For records with one or two missing values, imputation using the median or mode is common [14].

- Normalization: Features on different scales are normalized so that variables with large numeric ranges do not dominate scale-sensitive models such as SVM and ANN. Studies often compare methods such as Min-Max scaling, standard (z-score) scaling, and power transformation (scikit-learn's MinMaxScaler, StandardScaler, and PowerTransformer), selecting the best-performing one for the final model [3] [14].

- Class Imbalance Management: Techniques such as resampling of the minority class or class weighting within the learning algorithm are used to address imbalanced datasets (e.g., few cases of azoospermia versus many normal samples) [3] [16].
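The preprocessing steps above can be sketched with scikit-learn as follows. This is a minimal illustration on synthetic data, not the pipeline of any cited study: the exclusion cut-off (more than two missing values per record), the 5% missingness rate, and the logistic-regression model used to compare scalers are all assumptions. Wrapping imputation and scaling in a pipeline means both are refit on each training fold, so test-fold statistics do not leak into preprocessing.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler, PowerTransformer, StandardScaler

# Synthetic stand-in for a numeric clinical table (no real patient data).
X, y = make_classification(n_samples=300, n_features=6, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.05] = np.nan  # knock out ~5% of values at random

# Exclude records with "excessive" missingness (assumed cut-off: more than 2 missing values).
keep = np.isnan(X).sum(axis=1) <= 2
X, y = X[keep], y[keep]

# Compare scalers; median imputation fills the remaining gaps inside each training fold.
# (For categorical columns, SimpleImputer(strategy="most_frequent") gives mode imputation.)
scalers = {
    "minmax": MinMaxScaler(),
    "standard": StandardScaler(),
    "power": PowerTransformer(),
}
results = {}
for name, scaler in scalers.items():
    pipe = make_pipeline(SimpleImputer(strategy="median"), scaler,
                         LogisticRegression(max_iter=1000))
    results[name] = cross_val_score(pipe, X, y, cv=5, scoring="roc_auc").mean()

best_scaler = max(results, key=results.get)
print(results, "->", best_scaler)
```

Choosing the scaler on the same folds used to report performance is slightly optimistic; a stricter design would select it on training data only and confirm the choice on a held-out test set.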

Feature Engineering and Selection: This step identifies the most predictive variables.

- Analysis Techniques: Bivariate correlation analysis and Principal Component Analysis (PCA) are used to understand data structure and reduce dimensionality [16].

- Automated Feature Importance: Algorithms like XGBoost provide F-scores to rank the importance of features (e.g., FSH, environmental pollutants like PM10) in the prediction [16] [17].
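The correlation and PCA steps can be illustrated on synthetic data as below. The dataset, the 90% variance threshold, and the use of Pearson correlation against a binary label are assumptions for illustration; XGBoost's F-score ranking requires the separate `xgboost` package and is not shown.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic data: 3 informative, 2 redundant (linear combinations), 3 pure-noise features.
X, y = make_classification(n_samples=300, n_features=8, n_informative=3,
                           n_redundant=2, random_state=1)

# Bivariate (Pearson) correlation of each feature with the binary outcome.
corr_with_y = np.array([np.corrcoef(X[:, j], y)[0, 1] for j in range(X.shape[1])])
ranked_features = np.argsort(-np.abs(corr_with_y))  # strongest association first

# PCA on standardized features: number of components needed for 90% of the variance.
pca = PCA().fit(StandardScaler().fit_transform(X))
cum_var = np.cumsum(pca.explained_variance_ratio_)
n_components_90 = int(np.searchsorted(cum_var, 0.90) + 1)
print(ranked_features, n_components_90)
```

In a full model the scaler and PCA would sit inside the cross-validated pipeline, so that components are estimated from training folds only.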

Model Training and Validation: A robust validation strategy is essential to avoid overfitting.

- Data Splitting: The dataset is split into training, validation, and test sets.

- Cross-Validation: Models are typically trained and tuned using k-fold cross-validation (e.g., 4-fold or 5-fold) [14] [16].

- Hyperparameter Tuning: Randomized or grid searches are conducted to find the optimal algorithm parameters [16].
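The splitting, cross-validation, and tuning steps can be combined as in the sketch below. It assumes a synthetic, imbalanced dataset and an arbitrary search space for a random forest; in this design the cross-validation folds play the role of the validation set, and the stratified hold-out is touched only once, after tuning.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, StratifiedKFold, train_test_split

# Synthetic, imbalanced stand-in for a clinical dataset (80% / 20% classes).
X, y = make_classification(n_samples=400, n_features=10, weights=[0.8, 0.2], random_state=0)

# Hold out a stratified test set that is never used during tuning.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# The 5 CV folds act as the validation sets for a randomized hyperparameter search.
search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions={
        "n_estimators": randint(50, 200),
        "max_depth": [None, 4, 8],
        "min_samples_leaf": randint(1, 6),
    },
    n_iter=6,
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="roc_auc",
    random_state=0,
)
search.fit(X_train, y_train)
test_auc = search.score(X_test, y_test)  # AUC of the refit best model on held-out data
print(search.best_params_, round(test_auc, 3))
```

Replacing `RandomizedSearchCV` with `GridSearchCV` and a `param_grid` gives the exhaustive grid-search variant.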

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials, datasets, and software tools frequently employed in this field of research.

Table 2: Key Research Reagents and Resources for Male Infertility AI Research

| Item Name | Type | Function/Application | Example Use in Cited Studies |
|---|---|---|---|
| UCI Fertility Dataset | Public Dataset | A benchmark dataset containing lifestyle and clinical data from 100 men, used to develop diagnostic models | Used to evaluate a hybrid ANN-ACO model [3] |
| SVIA Dataset | Public Image Dataset | A large, annotated dataset of sperm videos and images for object detection, segmentation, and classification tasks | Used for training deep learning models on sperm morphology [15] |
| WHO Laboratory Manual | Clinical Standard | Defines the standardized procedures for semen analysis, ensuring consistency and reliability of key input parameters | Referenced as the gold standard for semen analysis in multiple studies [16] [17] |
| Prediction One / AutoML Tables | Commercial AI Software | User-friendly platforms that automate the machine learning pipeline, enabling researchers without deep coding expertise to build models | Used to create AI models predicting male infertility risk from serum hormones [17] |
| Scikit-learn | Python Library | An open-source library providing efficient tools for data mining, analysis, and implementation of ML algorithms such as SVM and RF | Used for model implementation, normalization, and validation [14] |
| Hormonal Assay Kits | Laboratory Reagent | Used to measure serum levels of FSH, LH, testosterone, and inhibin B, which are critical predictive features for many models | Identified as top predictors in studies on azoospermia and general infertility [16] [17] |

The comparative analysis of SVM, RF, and ANN reveals that no single algorithm is universally superior for all male infertility prediction tasks. The choice of algorithm must be guided by the specific clinical question, data type, and available sample size. SVM offers robust performance in classification tasks like morphology analysis. Random Forest excels in handling high-dimensional clinical data and has shown top-tier performance in predicting complex outcomes like ICSI success. Artificial Neural Networks, particularly when enhanced with optimization techniques or structured as deep learning models, demonstrate the potential for exceptionally high accuracy in diagnostic classification.

Future work should focus on external validation of these models in diverse populations, standardization of data collection and annotation [15], and the development of explainable AI (XAI) frameworks to build clinical trust [3]. The integration of these algorithms into clinical decision-support systems holds the promise of more personalized, accurate, and efficient diagnosis and treatment pathways for male infertility.

Current Limitations of Traditional Diagnostic Methods

Male infertility is a significant public health problem, affecting approximately 8-12% of couples worldwide, with male factors contributing to 20-30% of infertility cases [2] [13]. The condition represents a highly heterogeneous disorder influenced by genetic abnormalities, hormonal imbalances, lifestyle factors, and environmental exposures [2]. Traditional diagnostic methods for male infertility have primarily relied on semen analysis, including assessment of sperm concentration, motility, morphology, and volume [2]. This conventional approach suffers from significant limitations including inter-observer variability, subjectivity, and poor reproducibility [13]. Furthermore, these methods often lack the precision to detect subtle or multifactorial causes of infertility, such as sperm DNA fragmentation or early-stage testicular dysfunction, limiting their ability to guide personalized interventions [13].

The inherent limitations of traditional diagnostic approaches have created a pressing need for more advanced, objective, and predictive assessment tools. Artificial intelligence (AI) and machine learning (ML) models have emerged as transformative technologies in healthcare, offering potential solutions to these diagnostic challenges. This review examines the current limitations of traditional diagnostic methods for male infertility within the broader context of comparing the performance of Support Vector Machines (SVM), Random Forest (RF), and Artificial Neural Networks (ANN) in predicting male infertility outcomes.

Key Limitations of Traditional Diagnostic Approaches

Subjectivity and Variability

Traditional semen analysis, the cornerstone of male infertility diagnosis, relies heavily on manual assessment by embryologists and technicians, leading to significant inter-observer variability [13]. This subjectivity complicates the accurate evaluation of critical sperm parameters such as morphology, motility, and concentration, which are essential for appropriate treatment planning [13]. The reliance on human expertise introduces inconsistency in results, making it difficult to establish standardized diagnostic criteria across different clinical settings. This variability is particularly problematic given the heterogeneity of male infertility manifestations, where symptoms and parameters can vary widely in terms of severity and presentation.

Inability to Detect Complex Underlying Factors

Conventional diagnostic tools often lack the precision to identify subtle or multifactorial causes of infertility. Conditions such as sperm DNA fragmentation (SDF) or early-stage testicular dysfunction frequently go undetected with standard semen analysis [13]. Additionally, traditional methods struggle to integrate the complex interplay of clinical, environmental, and lifestyle factors that contribute to infertility, resulting in suboptimal accuracy when forecasting treatment outcomes [13]. Genetic causes such as karyotypic abnormalities, CFTR gene mutations, and Y-chromosome microdeletions are well established in azoospermic or severely oligozoospermic men, yet detecting them requires specialized testing beyond routine semen analysis [4].

Limited Predictive Capability

Predictive models based on traditional statistical methods demonstrate limited accuracy in forecasting outcomes of assisted reproductive technologies (ART) such as in vitro fertilization (IVF) and intracytoplasmic sperm injection (ICSI) [13]. These limitations contribute to delayed diagnoses, inappropriate treatment selections, and reduced success rates in ART procedures [13]. The inability to accurately predict treatment success based on standard diagnostic parameters represents a significant clinical challenge, often leading to emotional distress and financial burden for couples undergoing fertility treatments.

Machine Learning Approaches as Diagnostic Alternatives

Machine learning algorithms offer powerful alternatives to traditional diagnostic methods by automating analysis, reducing variability, and identifying complex patterns in multidimensional data. Among various ML models, Support Vector Machines (SVM), Random Forest (RF), and Artificial Neural Networks (ANN) have demonstrated particular promise in male infertility applications.

Performance Comparison of SVM, RF, and ANN

Table 1: Performance Metrics of ML Algorithms in Male Infertility Prediction

| Algorithm | Reported Accuracy | AUC | Key Strengths | Study Details |
|---|---|---|---|---|
| Support Vector Machine (SVM) | 89.9% (sperm motility) [13] | 0.96 [4] | Effective for linear and non-linear classification; robust on small sample sets [4] | Sperm morphology analysis (AUC 88.59% on 1,400 sperm) [13] |
| Random Forest (RF) | Not reported | 0.97 [6] | Handles high-dimensional data; reduces overfitting through ensemble learning [4] | ICSI treatment prediction (10,036 patient records) [6] |
| Artificial Neural Networks (ANN) | Median 84% (male infertility prediction) [2] | 0.95 [6] | Captures complex non-linear relationships; pattern recognition in imaging data [2] | Seven studies specifically using ANN for male infertility [2] |
| SuperLearner (Ensemble) | Not reported | 0.97 [4] | Combines multiple algorithms; optimizes weights via cross-validation [4] | Integrated DT, KNN, NB, SVM, RF [4] |

Table 2: Comparative Performance Across Multiple Studies

| Study Focus | Best Performing Algorithm | Performance Metrics | Data Characteristics |
|---|---|---|---|
| General Male Infertility Prediction [2] | Multiple ML Models | Median accuracy: 88% | 43 relevant publications reviewed |
| ICSI Treatment Success Prediction [6] | Random Forest | AUC: 0.97 | 10,036 patient records, 46 clinical features |
| Risk Factor Classification [4] | SuperLearner (SVM close second) | AUC: 0.97 (SL), 0.96 (SVM) | 329 infertile, 56 fertile patients |
| Sperm Morphology Analysis [13] | Support Vector Machine | AUC: 88.59% | ~1,400 sperm cells |
| Sperm Motility Classification [13] | Support Vector Machine | Accuracy: 89.9% | 2,817 sperm samples |

Methodological Approaches in ML Research

Table 3: Experimental Protocols in Key Studies

| Study Component | SVM Protocols | RF Protocols | ANN Protocols |
|---|---|---|---|
| Data Preprocessing | Z-score normalization [4] | Handling missing values [4] | Feature scaling and normalization [2] |
| Feature Selection | Sperm concentration, FSH, LH, genetic factors [4] | Bootstrapped sampling with random feature subsets [4] | Automated feature extraction from complex data [2] |
| Model Validation | 10-fold cross-validation [4] | Out-of-bag error estimation [4] | Train-test split validation (70-30%, 80-20%) [4] |
| Performance Evaluation | AUC, accuracy, sensitivity, specificity [13] [4] | AUC, accuracy, variable importance [6] | Accuracy, ROC curves, precision-recall [2] |
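The three validation schemes in Table 3 can be contrasted in one short script. It is a sketch on synthetic data with arbitrary settings (tree count, hidden-layer size, iteration limit) and does not reproduce the pipelines of [4], [6], or [2].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=385, n_features=12, random_state=0)  # synthetic data

# SVM: z-score normalization + 10-fold cross-validation (scaler refit inside each fold).
svm = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
cv10 = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
svm_auc = cross_val_score(svm, X, y, cv=cv10, scoring="roc_auc").mean()

# RF: out-of-bag accuracy, estimated on the samples each bootstrap left out.
rf = RandomForestClassifier(n_estimators=300, oob_score=True, random_state=0).fit(X, y)
rf_oob_acc = rf.oob_score_

# ANN: a single 80/20 stratified train-test split.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, stratify=y, random_state=0)
ann = make_pipeline(StandardScaler(),
                    MLPClassifier(hidden_layer_sizes=(16,), max_iter=2000, random_state=0))
ann_acc = ann.fit(X_tr, y_tr).score(X_te, y_te)
print(round(svm_auc, 3), round(rf_oob_acc, 3), round(ann_acc, 3))
```

A single split gives a noisier estimate than 10-fold cross-validation or the out-of-bag score, which matters for cohorts of a few hundred patients.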

Analytical Workflow for ML-Based Infertility Assessment

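The typical analytical workflow for machine learning in male infertility diagnosis runs from data preprocessing through feature selection and model validation to performance evaluation, as summarized by the protocol components in Table 3.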

Essential Research Reagents and Computational Tools

Table 4: Research Reagent Solutions for Male Infertility Studies

| Reagent/Resource | Function/Application | Example Use in Studies |
|---|---|---|
| Semen Analysis Reagents | Standardized sperm assessment | Evaluation of concentration, motility, morphology [2] |
| Hormonal Assay Kits | FSH, LH, testosterone measurement | Identification of endocrine imbalances [4] |
| Genetic Screening Panels | Detection of Y chromosome microdeletions, karyotypic abnormalities | Assessment of genetic factors in infertility [4] |
| Computer-Assisted Semen Analysis (CASA) | Automated sperm parameter quantification | Objective measurement of sperm characteristics [2] [13] |
| R Statistical Software | Data analysis and ML implementation | Classification using the caret, SuperLearner, and e1071 packages [4] |
| Python ML Libraries (scikit-learn, TensorFlow) | Development of custom ML models | Deep learning implementation for complex pattern recognition [2] |
| Time-Lapse Imaging Systems | Continuous monitoring of embryo development | AI-assisted embryo selection in IVF [7] |

Traditional diagnostic methods for male infertility face significant limitations, including subjectivity, inability to detect complex underlying factors, and limited predictive capability. Machine learning approaches, particularly SVM, RF, and ANN, offer promising alternatives and have shown strong performance across a range of infertility prediction tasks. The integration of these computational methods with traditional diagnostic parameters can enhance objectivity, improve predictive accuracy, and ultimately lead to more personalized treatment strategies for male infertility. Future research should focus on multicenter validation trials, standardized implementation protocols, and the development of explainable AI systems to facilitate clinical adoption and improve patient outcomes in reproductive medicine.

How SVM, RF, and ANN are Applied in Male Infertility Prediction: Algorithms in Action

Support Vector Machine (SVM) represents a powerful supervised machine learning algorithm widely employed for classification and regression tasks in biomedical research. Its fundamental principle involves identifying the optimal hyperplane that maximizes the margin between different classes in a high-dimensional feature space. This characteristic makes SVM particularly effective for handling the complex, multidimensional data prevalent in biological and medical diagnostics. In sperm analysis, SVM algorithms process intricate morphological features extracted from sperm images, enabling automated classification with reduced subjectivity compared to manual assessments [5] [15].

The application of SVM in male infertility research addresses critical challenges in traditional semen analysis, which often suffers from inter-observer variability and limited reproducibility. By transforming input features using kernel functions, SVM can efficiently handle non-linearly separable data, such as the subtle morphological variations distinguishing normal from abnormal sperm. This capability is essential for analyzing sperm head shape, acrosome integrity, and vacuole presence—key parameters in clinical fertility assessment [13] [15]. The robustness of SVM against overfitting, especially in scenarios with limited training samples, further establishes its utility in reproductive medicine where annotated datasets remain challenging to compile.
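The kernel argument can be checked on a toy problem. In the sketch below (synthetic data; the `C`, `noise`, and `factor` values are arbitrary assumptions), one class forms a ring around the other, so no straight line separates them: a linear-kernel SVM stays well below an RBF-kernel SVM in cross-validated accuracy.

```python
from sklearn.datasets import make_circles
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC

# Synthetic, non-linearly separable data: one class forms a ring around the other.
X, y = make_circles(n_samples=300, noise=0.05, factor=0.5, random_state=0)

# Same regularization strength, different kernels.
linear_acc = cross_val_score(SVC(kernel="linear", C=1.0), X, y, cv=5).mean()
rbf_acc = cross_val_score(SVC(kernel="rbf", C=1.0, gamma="scale"), X, y, cv=5).mean()
print(f"linear={linear_acc:.2f} rbf={rbf_acc:.2f}")
```

Real morphometric features are rarely this cleanly structured, which is why kernel choice is usually settled by cross-validation rather than by inspection.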

Comparative Performance of SVM, RF, and ANN in Male Infertility Prediction

Quantitative Performance Metrics Across Algorithms

Extensive research has evaluated the predictive accuracy of SVM alongside other machine learning algorithms, including Random Forest (RF) and Artificial Neural Networks (ANN), across various sperm analysis tasks. These comparative studies provide critical insights for researchers selecting appropriate analytical tools for male infertility prediction.

Table 1: Performance of Machine Learning Algorithms Across Sperm Analysis and Clinical Outcome Tasks

| Algorithm | Application Context | Performance Metrics | Reference |
|---|---|---|---|
| SVM | Sperm head morphology classification (1,400 sperm cells) | AUC: 88.59%, Precision: >90% | [15] |
| RF | Clinical pregnancy prediction (IVF/ICSI) | Accuracy: 72%, AUC: 0.80 | [18] |
| ANN | Sperm concentration prediction | Accuracy: 90%, Sensitivity: 95.45%, Specificity: 50% | [19] |
| Bayesian Density Estimation | Sperm head classification | Accuracy: 90% | [15] |
| Ensemble Models (Bagging) | Clinical pregnancy prediction | Accuracy: 74%, AUC: 0.79 | [18] |

Table 2: Overall Predictive Performance for Male Infertility Across Study Types

| Algorithm Type | Reported Performance | Key Strengths | Common Applications |
|---|---|---|---|
| Conventional ML (including SVM) | Median accuracy 88% across studies [2] | Robust with limited samples, minimal overfitting | Sperm morphology classification, motility analysis |
| ANN | Median accuracy 84% across studies [2]; up to 99% in an ACO-optimized hybrid [3] | Automatic feature extraction, handles complex patterns | Sperm concentration prediction, IVF outcome forecasting |
| RF | No pooled median reported; AUC 0.97 for ICSI success [6], 72% accuracy for clinical pregnancy [18] | Handles non-linear relationships, feature importance ranking | Clinical pregnancy prediction, feature selection |

The performance variance among algorithms reflects their distinct operational characteristics. SVM is particularly proficient in sperm morphology classification, achieving an AUC of 88.59% and more than 90% precision in distinguishing normal from abnormal sperm heads [15]. This precision is clinically significant because morphological assessment remains a cornerstone of male fertility evaluation. RF, in contrast, has been applied to clinical outcome prediction, reaching 72% accuracy and an AUC of 0.80 for forecasting clinical pregnancy after IVF/ICSI [18]; these more modest figures likely reflect a harder, multifactorial prediction target. ANN models reached 90% accuracy in concentration prediction, but with 95.45% sensitivity and only 50% specificity [19]. Half of the negative cases were therefore misclassified, and solving 0.90 = 0.50 + w(0.9545 - 0.50) gives w ≈ 0.88, implying that roughly 88% of the evaluated cases belonged to the positive class, so the headline accuracy is driven largely by that majority class.
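To see how 90% accuracy can coexist with 50% specificity, consider the hypothetical counts below (illustrative only, not the data of [19]): 44 positive and 6 negative cases. A classifier that always predicted the majority class would already score 88% on this set.

```python
from sklearn.metrics import accuracy_score, confusion_matrix

# Hypothetical labels (1 = positive class, 0 = negative class); NOT data from [19].
y_true = [1] * 44 + [0] * 6
# 42 of 44 positives and 3 of 6 negatives predicted correctly.
y_pred = [1] * 42 + [0] * 2 + [0] * 3 + [1] * 3

tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
sensitivity = tp / (tp + fn)  # true positive rate
specificity = tn / (tn + fp)  # true negative rate
accuracy = accuracy_score(y_true, y_pred)
baseline = max(y_true.count(1), y_true.count(0)) / len(y_true)  # always predict the majority class

print(f"accuracy={accuracy:.2f} sensitivity={sensitivity:.4f} "
      f"specificity={specificity:.2f} baseline={baseline:.2f}")
# accuracy=0.90 sensitivity=0.9545 specificity=0.50 baseline=0.88
```

Reporting sensitivity, specificity, or balanced accuracy alongside accuracy avoids overstating performance on imbalanced data.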

A systematic review of 43 relevant publications encompassing 40 different ML models reported a median accuracy of 88% for conventional machine learning models in predicting male infertility, with ANN models specifically achieving a median accuracy of 84% [2]. Together with the task-specific results above, this suggests that SVM delivers competitive performance for morphological classification tasks, while ensemble methods like RF may offer advantages for integrating diverse clinical parameters in outcome prediction.

Relative Strengths and Limitations in Clinical Applications

Each algorithm class presents distinctive advantages and limitations within the male infertility domain. SVM's structural risk minimization principle enhances generalization capability with limited samples, a valuable trait when working with rare infertility conditions or constrained datasets. Furthermore, SVM's effectiveness in high-dimensional spaces enables robust analysis of the multiple morphological features essential for comprehensive sperm assessment [5] [15].

RF ensembles multiple decision trees to reduce overfitting and automatically rank feature importance, providing insights into which parameters (e.g., morphology, count, or motility) most significantly impact clinical outcomes. Studies utilizing SHapley Additive exPlanations (SHAP) value analysis with RF have revealed that sperm parameters differentially influence pregnancy success across treatment types, with morphology demonstrating consistent importance in both IUI and IVF/ICSI cycles [18].
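The feature-ranking idea can be illustrated with scikit-learn's impurity-based `feature_importances_` and the model-agnostic `permutation_importance`. SHAP values, as used in [18], require the separate `shap` package and are not shown; permutation importance is swapped in as a dependency-free alternative. The data and feature names below are synthetic and hypothetical.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# With shuffle=False, make_classification places the 3 informative columns first.
X, y = make_classification(n_samples=500, n_features=6, n_informative=3, n_redundant=0,
                           shuffle=False, random_state=0)
feature_names = ["informative_0", "informative_1", "informative_2",
                 "noise_0", "noise_1", "noise_2"]  # hypothetical labels

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)
rf = RandomForestClassifier(n_estimators=300, random_state=0).fit(X_tr, y_tr)

# Impurity-based importance: computed from the training data during tree growth.
impurity_rank = [feature_names[i] for i in rf.feature_importances_.argsort()[::-1]]

# Permutation importance: drop in held-out accuracy when one feature is shuffled.
perm = permutation_importance(rf, X_te, y_te, n_repeats=10, random_state=0)
perm_rank = [feature_names[i] for i in perm.importances_mean.argsort()[::-1]]
print(impurity_rank, perm_rank)
```

Permutation importance on held-out data is less biased toward high-cardinality features than the impurity measure, which is one reason to report both.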

ANN architectures, particularly deep learning networks, excel at automated feature extraction from raw image data, reducing reliance on manual annotation and potentially identifying subtle patterns beyond human perception. However, their "black box" nature complicates clinical interpretation, and they typically require larger training datasets than SVM to optimize performance and prevent overfitting [2] [19].

Experimental Protocols for SVM Implementation in Sperm Analysis

Standardized Workflow for Sperm Morphology Classification

Implementing SVM for sperm morphology analysis follows a structured pipeline encompassing image acquisition, preprocessing, feature extraction, model training, and validation. Adherence to standardized protocols ensures reproducible and clinically relevant outcomes.

Table 3: Essential Research Reagents and Computational Tools for SVM-Based Sperm Analysis

| Resource Category | Specific Examples | Function/Application | Key Characteristics |
|---|---|---|---|
| Public Datasets | HSMA-DS, MHSMA, VISEM-Tracking, SVIA | Algorithm training/validation | Annotated sperm images with morphological classifications |
| Staining Reagents | Diff-Quik, Papanicolaou stains | Sample preparation for morphology | Enhance contrast for morphological feature identification |
| Computational Frameworks | Scikit-learn, Python, R, MATLAB | Algorithm implementation | Libraries with optimized SVM implementations and kernels |
| Imaging Systems | Computer-assisted semen analysis (CASA) microscopy | Standardized image acquisition | Consistent magnification, resolution, and staining protocols |

The experimental workflow initiates with standardized sample preparation and image acquisition using established staining protocols (e.g., Diff-Quik or Papanicolaou stains) and consistent microscopy conditions [5] [15]. The acquired images undergo preprocessing to enhance quality, including noise reduction, contrast adjustment, and segmentation to isolate individual sperm cells from seminal debris. Subsequently, feature extraction focuses on morphometric parameters such as head area, perimeter, ellipticity, acrosome ratio, and vacuole presence, creating the multidimensional feature vectors for SVM training [15].
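A minimal NumPy sketch of morphometric feature extraction from an already segmented, binary sperm-head mask follows. The synthetic ellipse, the boundary-pixel perimeter, and the moment-based ellipticity (major/minor axis ratio) are simplifying assumptions; acrosome ratio and vacuole detection would need additional segmentation and are omitted.

```python
import numpy as np


def head_morphometrics(mask: np.ndarray) -> dict:
    """Simple shape descriptors for one segmented sperm head (boolean mask)."""
    mask = mask.astype(bool)
    ys, xs = np.nonzero(mask)
    area = int(xs.size)  # pixel count

    # Perimeter: foreground pixels with at least one 4-connected background neighbour.
    p = np.pad(mask, 1)
    interior = (p[1:-1, 1:-1] & p[:-2, 1:-1] & p[2:, 1:-1]
                & p[1:-1, :-2] & p[1:-1, 2:])
    perimeter = int(mask.sum() - interior.sum())

    # Ellipticity: major/minor axis ratio from the second moments of pixel coordinates.
    cov = np.cov(np.vstack([xs, ys]))
    minor_var, major_var = np.linalg.eigvalsh(cov)  # eigenvalues in ascending order
    ellipticity = float(np.sqrt(major_var / minor_var))
    return {"area": area, "perimeter": perimeter, "ellipticity": ellipticity}


# Hypothetical segmented head: a filled ellipse with semi-axes 15 px and 9 px.
yy, xx = np.mgrid[-20:21, -20:21]
head = (xx / 15.0) ** 2 + (yy / 9.0) ** 2 <= 1.0
features = head_morphometrics(head)
print(features)  # one cell's entries in the SVM feature vector
```

Stacking such dictionaries for many cells yields the feature matrix on which the SVM is trained.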

The SVM model training phase employs annotated datasets, such as the MHSMA dataset containing 1,540 sperm images or the SVIA dataset with 125,000 annotated instances [5] [15]. Critical implementation decisions include kernel selection (linear, polynomial, or radial basis function), regularization parameter tuning, and cross-validation strategy. Model performance validation typically follows k-fold cross-validation protocols against independent test sets, with metrics including accuracy, precision, recall, AUC-ROC, and AUC-PR providing comprehensive performance assessment [15].
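The evaluation step can be sketched with `cross_validate`, scoring several metrics at once. The synthetic 70/30 feature matrix stands in for per-cell morphometric vectors and is an assumption. Note that scikit-learn's `average_precision` scorer summarizes the precision-recall curve and is the usual proxy for AUC-PR, not a trapezoidal area.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for per-cell morphometric feature vectors (two classes, 70% / 30%).
X, y = make_classification(n_samples=600, n_features=8, weights=[0.7, 0.3], random_state=0)

model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0))
metrics = ["accuracy", "precision", "recall", "roc_auc", "average_precision"]
scores = cross_validate(
    model, X, y,
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring=metrics,
)
summary = {m: scores[f"test_{m}"].mean() for m in metrics}  # mean over the 5 folds
print({m: round(v, 3) for m, v in summary.items()})
```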

Methodological Considerations for Optimal Performance

Several methodological considerations significantly impact SVM performance in sperm analysis. Kernel selection should align with data characteristics, with linear kernels suitable for linearly separable morphological features and radial basis function kernels accommodating more complex decision boundaries. The regularization parameter (C) requires careful optimization to balance margin maximization with classification error, typically through grid search approaches with cross-validation [15].
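Kernel choice and the regularization parameter C can be tuned jointly with a grid search, as in the sketch below. The grid values and synthetic data are assumptions; the scaler sits inside the pipeline so it is refit on each training fold.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=400, n_features=8, random_state=2)  # synthetic data

pipe = Pipeline([("scale", StandardScaler()), ("svm", SVC())])
# Separate sub-grids: gamma only matters for the RBF kernel.
param_grid = [
    {"svm__kernel": ["linear"], "svm__C": [0.01, 0.1, 1, 10]},
    {"svm__kernel": ["rbf"], "svm__C": [0.1, 1, 10, 100], "svm__gamma": ["scale", 0.01, 0.1]},
]
search = GridSearchCV(pipe, param_grid,
                      cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
                      scoring="roc_auc")
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

`best_score_` is the cross-validated score of the chosen setting and is optimistic; an untouched test set or nested cross-validation gives an unbiased estimate.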

Addressing class imbalance is another critical consideration, as some morphological classes are underrepresented in clinical samples; normal forms, for example, often make up only a small fraction of sperm, and specific abnormality categories may be rare. Techniques such as the synthetic minority oversampling technique (SMOTE), class weighting, or stratified sampling help balance model training and prevent bias toward majority classes [20]. Furthermore, feature selection preceding SVM implementation enhances model interpretability and computational efficiency by eliminating redundant morphometric parameters. Studies employing hybrid frameworks that integrate nature-inspired optimization algorithms such as Ant Colony Optimization (ACO) with SVM have demonstrated improved feature selection and classification performance in biomedical applications [20].
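Class weighting and stratified folds can be illustrated as below, assuming hypothetical labels with 90 majority-class and 10 minority-class sperm heads (for example, a rare morphological category) and placeholder features. SMOTE is provided by the separate imbalanced-learn package (`imblearn.over_sampling.SMOTE`) and is not shown.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold
from sklearn.svm import SVC
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical labels: 90 majority-class (0) vs 10 minority-class (1) heads; features are placeholders.
y = np.array([0] * 90 + [1] * 10)
X = np.random.default_rng(0).normal(size=(100, 4))

# "balanced" weights = n_samples / (n_classes * class_count): minority errors cost 9x more here.
weights = compute_class_weight(class_weight="balanced", classes=np.array([0, 1]), y=y)
svm = SVC(class_weight="balanced").fit(X, y)  # scales the penalty C per class by these weights

# Stratified folds keep the 9:1 ratio, so every test fold contains minority cases.
minority_per_fold = [int(y[test].sum()) for _, test in StratifiedKFold(n_splits=5).split(X, y)]
print(weights, minority_per_fold)
```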

Validation rigor remains paramount, with recommended practices including external validation on completely independent datasets, comparison against manual assessments by multiple experienced embryologists, and clinical correlation with fertilization success or pregnancy outcomes [5] [18]. Such comprehensive validation establishes both the technical proficiency and clinical utility of SVM implementations in reproductive medicine.

Comparative Strengths and Application-Specific Recommendations

Algorithm Selection Guidelines for Research Objectives

The optimal algorithm selection for male infertility prediction depends significantly on specific research objectives, data characteristics, and clinical application requirements. SVM demonstrates distinct advantages for specific sperm analysis applications while showing limitations in others.

Table 4: Application-Specific Algorithm Recommendations for Male Infertility Research

| Research Focus | Recommended Algorithm | Rationale | Expected Performance Range |
|---|---|---|---|
| Sperm head morphology classification | SVM | High precision with limited samples, effective with morphological features | AUC: 88-91%, Precision: >90% |
| Clinical pregnancy prediction | RF | Handles mixed data types, provides feature importance rankings | Accuracy: 72-80%, AUC: 0.75-0.82 |
| Sperm concentration/count estimation | ANN | Effective for continuous value prediction from complex inputs | Accuracy: 86-93%, R²: 0.85-0.98 |
| Motility analysis and categorization | SVM or CNN | Effective for motion pattern classification from video data | Accuracy: 89-92% |
| Integrated fertility assessment (multiple parameters) | RF or Hybrid ACO-MLFFN | Robust with clinical, lifestyle, and environmental factors | Accuracy up to 99% in optimized frameworks |

SVM presents compelling advantages for image-based classification tasks, particularly sperm morphology analysis, where it achieves high precision (>90%) in distinguishing normal and abnormal sperm heads [15]. Its resilience with limited training samples makes it particularly valuable for analyzing rare morphological abnormalities or working with constrained datasets. Furthermore, a linear SVM's explicit decision boundary is easier to interpret than the "black box" of a deep neural network, although kernel SVMs are less transparent.

For clinical outcome prediction that incorporates diverse data types, including semen parameters, patient demographics, lifestyle factors, and treatment protocols, ensemble methods like RF are often preferred over SVM; RF reached 72% accuracy and an AUC of 0.80 for predicting clinical pregnancy after IVF/ICSI [18]. RF's built-in feature importance analysis also provides insight into parameter influence: SHAP analysis showed that sperm morphology consistently affects pregnancy success across treatment modalities, while the effect of motility differs between IUI and IVF/ICSI cycles [18].

Emerging hybrid approaches integrating bio-inspired optimization algorithms with machine learning models demonstrate remarkable performance, with one study reporting 99% classification accuracy for male fertility status using a multilayer feedforward neural network optimized with ant colony optimization [20]. While such approaches require further validation across diverse populations, they represent promising directions for enhancing predictive accuracy in male infertility assessment.
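The ant-colony idea behind such hybrids can be sketched as a simplified wrapper feature selector. This is not the ACO-MLFFN model of [20], whose exact use of ACO is not described here: it swaps in an SVM as the evaluated classifier and uses a basic pheromone update, and the colony size, iteration count, evaporation rate, and inclusion-probability rule are all assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC


def aco_feature_selection(X, y, n_ants=6, n_iter=5, evaporation=0.3, seed=0):
    """Simplified binary ant-colony feature selection wrapped around an SVM (illustrative)."""
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    pheromone = np.ones(n_features)
    best_mask, best_score = None, -np.inf
    for _ in range(n_iter):
        for _ in range(n_ants):
            # Each ant includes feature j with a probability that grows with its pheromone.
            prob = pheromone / (1.0 + pheromone)  # pheromone 1.0 -> 50% inclusion
            mask = rng.random(n_features) < prob
            if not mask.any():
                mask[rng.integers(n_features)] = True
            score = cross_val_score(SVC(), X[:, mask], y, cv=3).mean()
            if score > best_score:
                best_mask, best_score = mask.copy(), score
        # Evaporate everywhere, then deposit pheromone on the best subset found so far.
        pheromone *= 1.0 - evaporation
        pheromone[best_mask] += best_score
    return best_mask, best_score


# Synthetic data; with shuffle=False the 3 informative features are columns 0-2.
X, y = make_classification(n_samples=200, n_features=10, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
mask, score = aco_feature_selection(X, y)
print(np.flatnonzero(mask), round(score, 3))
```

Because the subset is chosen by the same cross-validation score it reports, `score` is optimistic; a nested or external validation is needed before comparing it with published accuracies such as 99%.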

Future Directions and Implementation Challenges

Despite promising results, several challenges persist in the widespread clinical implementation of SVM and other machine learning algorithms for sperm analysis. Dataset limitations represent a significant constraint, with issues including limited sample sizes, insufficient morphological categories, and variability in staining and imaging protocols across institutions [5] [15]. Recent initiatives like the SVIA dataset, containing 125,000 annotated instances and 26,000 segmentation masks, represent important steps toward addressing these limitations [5].

Model interpretability remains another critical consideration for clinical adoption. While SVM provides clearer decision boundaries than deep learning approaches, explaining specific classification decisions to clinicians and patients requires additional techniques such as local interpretable model-agnostic explanations (LIME) or SHAP analysis [18]. Integrating these explainable AI approaches with SVM implementations will be essential for building clinical trust and facilitating integration into diagnostic workflows.

The future trajectory of SVM in sperm analysis will likely involve increased integration with emerging technologies, including multi-modal learning approaches combining image analysis with clinical parameters, genetic markers, and proteomic data [13] [9]. Furthermore, federated learning frameworks enabling model training across multiple institutions without data sharing offer promising solutions to dataset limitations while maintaining patient privacy [9]. As these technological advances mature, SVM and complementary machine learning algorithms will play increasingly vital roles in objective, standardized, and predictive male infertility assessment.

Male infertility constitutes a significant clinical challenge, contributing to 20–30% of all infertility cases among couples [1]. The accurate prediction of male infertility and the success of subsequent treatments, such as In Vitro Fertilization (IVF) and Intracytoplasmic Sperm Injection (ICSI), is complicated by the multifactorial nature of the condition, where biological, physiological, lifestyle, environmental, and socio-demographic factors all play interconnected roles [1]. Traditional statistical models often struggle to capture the complex, non-linear relationships between these diverse clinical features and fertility outcomes. In this context, machine learning (ML) offers powerful alternatives by learning intricate patterns directly from data without relying on strict pre-specified assumptions [21].

Among the various ML algorithms being explored, three in particular have demonstrated significant promise: Support Vector Machines (SVM), Artificial Neural Networks (ANN), and Random Forest (RF). A systematic review of ML applications in male infertility found that ML models achieved a median accuracy of 88%, highlighting their potential for clinical decision support [2]. Each algorithm brings distinct strengths to the challenge. This guide provides an objective comparison of their performance, with particular focus on RF's ensemble approach for integrating the diverse clinical, hormonal, and genetic features characteristic of male infertility datasets.

Algorithm Comparison: Performance Evaluation in Male Infertility Research

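Published studies report how SVM, RF, and ANN perform on male infertility prediction tasks, typically using metrics such as the Area Under the ROC Curve (AUC). Where one study evaluates several algorithms on the same dataset, the results allow a direct comparison. Figures drawn from different studies and cohorts should be compared with caution.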

Table 1: Comparative Performance of ML Algorithms in Male Infertility Prediction

| Algorithm | Reported Performance | Key Strengths | Dataset Context |
|---|---|---|---|
| Random Forest (RF) | AUC 0.97 [6]; AUC 0.84 [1] | High discriminative power, handles mixed data types, provides feature importance | Prediction of ICSI success (n=10,036 patients, 46 clinical features) [6]; IVF success prediction (n=486 patients) [1] |
| Artificial Neural Networks (ANN) | AUC 0.95 [6]; median accuracy 84% [2] | Models complex non-linear relationships | Prediction of ICSI success [6]; general male infertility prediction [2] |
| Support Vector Machines (SVM) | AUC 0.96 [4]; 0.89 [1] | Effective in high-dimensional spaces | Diagnosis of male infertility from genetic and clinical factors (n=385 patients) [4]; sperm motility analysis [1] |

In the large-scale study predicting ICSI success, RF achieved a slightly higher AUC than ANN (0.97 versus 0.95) [6]. SVM reached an AUC of 0.96 on a smaller dataset of 385 patients assessed for infertility risk [4]. All three algorithms perform well. However, these figures come from different cohorts and tasks, so they are not strictly comparable. RF's ability to integrate diverse data types may nonetheless give it an advantage on heterogeneous clinical data.
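When a single study evaluates several algorithms, the comparison usually follows the pattern in the minimal sketch below. SVM, RF, and ANN are fitted on the same training split, and AUC is computed on the same held-out split. The sketch assumes scikit-learn and uses synthetic data from `make_classification`, so the printed AUCs say nothing about clinical performance. The hyperparameters are illustrative choices, not values from the cited studies.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for a tabular clinical dataset (binary outcome).
X, y = make_classification(n_samples=600, n_features=12, n_informative=6,
                           random_state=42)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=42)

# SVM and ANN get feature scaling; RF does not need it.
models = {
    "SVM": make_pipeline(StandardScaler(),
                         SVC(kernel="rbf", probability=True, random_state=42)),
    "RF": RandomForestClassifier(n_estimators=300, random_state=42),
    "ANN": make_pipeline(StandardScaler(),
                         MLPClassifier(hidden_layer_sizes=(32, 16),
                                       max_iter=2000, random_state=42)),
}

aucs = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores = model.predict_proba(X_test)[:, 1]  # probability of the positive class
    aucs[name] = roc_auc_score(y_test, scores)
    print(f"{name}: AUC = {aucs[name]:.3f}")
```

Because every model sees identical splits, differences in AUC here reflect the algorithms rather than the data. That is precisely what cross-study tables cannot guarantee.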

Experimental Protocols: Methodologies for Model Development and Evaluation

To ensure the reliability and validity of the performance metrics cited in comparisons, researchers adhere to rigorous experimental protocols encompassing data collection, preprocessing, model training, and evaluation.

Data Sourcing and Preprocessing

The foundation of any robust ML model is a high-quality dataset. In male infertility research, data are typically drawn from patient medical records and combine semen analysis parameters (volume, concentration, motility), serum hormone levels (FSH, LH, testosterone, estradiol), and genetic factors [17] [4]. For example, one study used data from 3,662 patients, incorporating age, LH, FSH, prolactin, testosterone, E2 (estradiol), and the testosterone-to-estradiol ratio (T/E2) as key predictive features [17]. Another study, based on 385 patients, included sperm concentration, FSH, LH, and specific genetic variations [4].

Before model training, numerical features are usually normalized. A common technique is Z-score normalization, which subtracts each feature's mean and divides by its standard deviation. This ensures that variables with larger inherent scales (e.g., hormone levels) do not disproportionately influence the model compared to other features [4]. The step matters most for distance- and gradient-based methods such as SVM and ANN, whereas tree-based methods such as RF are largely insensitive to monotonic feature scaling. Categorical data, such as specific genetic markers, are typically encoded numerically (e.g., with one-hot encoding) before algorithmic processing.
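Both preprocessing steps can be sketched with scikit-learn, assuming a `ColumnTransformer`. It applies Z-score normalization, z = (x - mean) / std, to the numeric hormone columns and one-hot encoding to a categorical genetic marker. The four records, their units, and the genotype labels are hypothetical.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical records: FSH (IU/L), testosterone (ng/dL), genotype label.
records = np.array([
    [4.0, 450.0, "wild-type"],
    [12.5, 300.0, "variant"],
    [7.1, 520.0, "wild-type"],
    [20.3, 210.0, "variant"],
], dtype=object)

# Manual Z-score for the FSH column; StandardScaler also uses the
# population standard deviation (ddof=0), which is numpy's default.
fsh = records[:, 0].astype(float)
fsh_z = (fsh - fsh.mean()) / fsh.std()

preprocess = ColumnTransformer(
    [
        ("zscore", StandardScaler(), [0, 1]),                     # numeric hormone columns
        ("onehot", OneHotEncoder(handle_unknown="ignore"), [2]),  # categorical marker
    ],
    sparse_threshold=0.0,  # always return a dense array
)
X = preprocess.fit_transform(records)
# Columns: FSH (z), testosterone (z), genotype=variant, genotype=wild-type
print(np.round(X, 3))
```

In a real study, the transformer should be fitted on the training split only and then applied to the test split, so that test-set statistics do not leak into training. Placing it in a `Pipeline` together with the classifier handles this automatically during cross-validation.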

Model Training and Validation Framework

A standard methodology for developing and comparing models involves splitting the dataset into distinct subsets.

- Training Set: Typically 60-80% of the data is used to train the ML algorithms.

- Testing Set: The remaining 20-40% is held back to provide an unbiased evaluation of the final model's performance [4].

To tune hyperparameters and reduce the risk of overfitting, k-fold cross-validation (e.g., 10-fold) is routinely applied to the training set [4]. Each observation serves in turn for both training and validation, which helps ensure that the estimated performance does not depend on one particular partition of the training data. The entire workflow, from data preparation to model validation, can be visualized as a sequential process.

Diagram 1: Experimental workflow for model development and evaluation
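The split-then-cross-validate workflow can be sketched with scikit-learn as follows. The sketch assumes an 80/20 split and stratified 10-fold cross-validation on the training set only. It also uses a small, arbitrary hyperparameter grid for RF and synthetic data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

X, y = make_classification(n_samples=500, n_features=10, n_informative=5,
                           random_state=1)

# 1. Hold out 20% as a test set that is never touched during tuning.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=1)

# 2. Tune hyperparameters with stratified 10-fold CV on the training set only.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=1)
search = GridSearchCV(
    RandomForestClassifier(random_state=1),
    param_grid={"n_estimators": [100, 200], "max_features": ["sqrt", 0.5]},
    scoring="roc_auc",
    cv=cv,
)
search.fit(X_train, y_train)  # refits the best configuration on all training data

# 3. Evaluate the refitted best model once on the held-out test set.
test_auc = roc_auc_score(y_test, search.predict_proba(X_test)[:, 1])
print("best params:", search.best_params_)
print(f"CV AUC = {search.best_score_:.3f}, test AUC = {test_auc:.3f}")
```

Reporting the CV score and the test score separately makes it visible when tuning has overfitted the training folds.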

The Random Forest Advantage: Integrating Diverse Data Structures

Random Forest's ensemble mechanism is particularly well-suited to the complex, multi-faceted nature of male infertility data. Its performance advantage stems from several key factors that align well with the challenges of the domain.

Handling Mixed Data Types and High-Dimensionality

Clinical datasets for infertility are inherently heterogeneous, containing a mix of continuous numerical values (e.g., hormone levels, age), binary indicators (e.g., presence of genetic markers), and categorical strings (e.g., patient categories) [6]. RF can work with these mixed data types with little transformation. R's randomForest package, for example, accepts factor variables directly, although some implementations, such as scikit-learn's, still require categorical features to be numerically encoded. Furthermore, RF robustly manages datasets with a large number of potential predictors (high dimensionality), a common scenario when integrating numerous clinical, hormonal, and genetic factors [21].

Robustness and Feature Importance

By building multiple decision trees on bootstrapped subsets of the data and aggregating their results, RF effectively averages out noise and reduces the risk of overfitting, a common pitfall with complex datasets [21]. A critical feature for clinical research is the model's ability to rank predictors by their importance. For instance, in a study predicting infertility risk from serum hormones alone, RF and other models consistently identified FSH as the most critical predictor, followed by the T/E2 ratio and LH [17]. This provides valuable biological insight, helping clinicians and researchers understand which factors drive the model's predictions.
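The sketch below shows how an RF importance ranking is obtained in scikit-learn through `feature_importances_` (mean decrease in impurity). The data are synthetic. The outcome is deliberately constructed to depend most strongly on the column labelled "FSH", then "T/E2", then "LH", so the correct ranking is known in advance. The coefficients are arbitrary, and the sketch does not reproduce the cited study [17].

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n = 1000

# Hypothetical predictor names on synthetic standard-normal columns.
FEATURES = ["age", "LH", "FSH", "prolactin", "testosterone", "estradiol", "T/E2"]
X = rng.normal(size=(n, len(FEATURES)))

# Planted signal: FSH matters most, then T/E2, then LH; the rest are noise.
logit = 2.5 * X[:, 2] + 1.0 * X[:, 6] + 0.5 * X[:, 1]
y = (logit + rng.normal(scale=0.5, size=n) > 0).astype(int)

rf = RandomForestClassifier(n_estimators=300, random_state=0).fit(X, y)

# Rank features from most to least important (importances sum to 1).
order = np.argsort(rf.feature_importances_)[::-1]
for idx in order:
    print(f"{FEATURES[idx]:>12}: {rf.feature_importances_[idx]:.3f}")
```

Impurity-based importances can favour continuous or high-cardinality features. Scikit-learn's `permutation_importance` on held-out data is a common cross-check before drawing biological conclusions from a ranking.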

Comparison of Algorithmic Approaches

The fundamental differences in how SVM, ANN, and RF process information and generate predictions explain their relative strengths and weaknesses in practical applications.

Table 2: Core Mechanisms of SVM, RF, and ANN

| Aspect | Support Vector Machines (SVM) | Random Forest (RF) | Artificial Neural Networks (ANN) |
|---|---|---|---|
| Core Mechanism | Finds optimal hyperplane to separate classes in high-dimensional space [4]. | Ensemble of decorrelated decision trees; prediction by majority vote [4] [21]. | Interconnected layers of nodes (like neurons) that learn hierarchical feature representations [2]. |
| Key Strength | Effective in high-dimensional spaces; strong theoretical foundations. | Handles mixed data, robust to noise, provides native feature importance. | High capacity to model complex, non-linear relationships. |
| Consideration | Performance can be sensitive to choice of kernel and tuning parameters [4]. | Can be computationally intensive with many trees; less interpretable than single trees. | Often acts as a "black box"; requires large datasets for optimal performance [2]. |

Diagram 2: Algorithmic structures of SVM, RF, and ANN
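For reference, the core mechanisms in Table 2 correspond to the standard textbook formulations below. They are not taken from the cited studies. For the SVM, labels are y_i in {-1, +1}; the penalty C and the RBF kernel width gamma are the tuning parameters to which SVM performance is sensitive. For RF, B is the number of trees h_b. For the one-hidden-layer ANN, g is a nonlinear activation (e.g., ReLU) and sigma is the logistic function. Some implementations, such as scikit-learn's `RandomForestClassifier`, average the trees' predicted class probabilities rather than taking a hard majority vote.

```latex
\begin{aligned}
\text{SVM (soft margin):}\quad
  & \min_{\mathbf{w},\,b,\,\boldsymbol{\xi}}\;
    \tfrac{1}{2}\lVert \mathbf{w} \rVert^{2} + C \sum_{i=1}^{n} \xi_i
    \quad \text{s.t.}\quad
    y_i\bigl(\mathbf{w}^{\top}\phi(\mathbf{x}_i) + b\bigr) \ge 1 - \xi_i,\;\; \xi_i \ge 0, \\
  & K(\mathbf{x}, \mathbf{x}') = \phi(\mathbf{x})^{\top}\phi(\mathbf{x}')
    = \exp\!\bigl(-\gamma \lVert \mathbf{x} - \mathbf{x}' \rVert^{2}\bigr)
    \quad \text{(RBF kernel)}, \\
\text{RF (majority vote):}\quad
  & \hat{y}(\mathbf{x}) = \operatorname{mode}\bigl\{ h_1(\mathbf{x}), \dots, h_B(\mathbf{x}) \bigr\}, \\
\text{ANN (one hidden layer):}\quad
  & \hat{p}(\mathbf{x}) = \sigma\bigl(\mathbf{v}^{\top} g(W\mathbf{x} + \mathbf{c}) + d\bigr).
\end{aligned}
```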

Research Reagent Solutions: Essential Tools for Implementation

Translating these algorithmic comparisons into practical research requires a suite of software tools and libraries. The table below details key resources that facilitate the implementation and testing of SVM, RF, and ANN models for male infertility prediction.

Table 3: Key Software Tools and Libraries for ML Research in Male Infertility

| Tool / Library | Primary Function | Application Example |
|---|---|---|
| R Programming Language | Open-source statistical computing environment. | Primary software for data analysis in several studies [4] [21]. |
| caret Package (R) | Streamlines the process of creating predictive models. | Used for classification, regression training, and tuning model parameters [4]. |
| randomForest or rpart (R) | randomForest implements Breiman's Random Forest algorithm; rpart implements classification and regression trees. | Used for building the RF model and determining variable importance [4] [21]. |
| e1071 Package (R) | Miscellaneous statistical functions, including an SVM interface to the LIBSVM library. | Used for implementing Support Vector Machine algorithms [4]. |
| SuperLearner Package (R) | Enables ensemble modeling by combining multiple ML algorithms. | Used to create a super learner algorithm that outperformed single models [4]. |
| Python Scikit-Learn | Comprehensive ML library for Python. | Offers implementations of SVM, RF, and ANN, commonly used for similar biomedical applications. |
| AutoML Platforms (e.g., Prediction One, AutoML Tables) | Automated machine learning for users with less coding expertise. | Used to generate and evaluate AI prediction models for male infertility risk [17]. |
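Scikit-learn has no SuperLearner package, but its `StackingClassifier` implements a closely related idea. Cross-validated predictions from several base learners become the inputs to a meta-learner. The minimal sketch below stacks SVM, RF, and ANN with a logistic-regression meta-learner on synthetic data. It is an analogue for illustration, not a port of the R SuperLearner package, and all settings are arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=600, n_features=15, n_informative=6,
                           random_state=7)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=7)

# The three base learners compared throughout this article.
base_learners = [
    ("svm", make_pipeline(StandardScaler(), SVC(probability=True, random_state=7))),
    ("rf", RandomForestClassifier(n_estimators=200, random_state=7)),
    ("ann", make_pipeline(StandardScaler(),
                          MLPClassifier(hidden_layer_sizes=(32,), max_iter=2000,
                                        random_state=7))),
]

# Meta-learner is trained on 5-fold out-of-fold probabilities from the base learners.
stack = StackingClassifier(
    estimators=base_learners,
    final_estimator=LogisticRegression(),
    cv=5,
    stack_method="predict_proba",
)
stack.fit(X_train, y_train)

proba = stack.predict_proba(X_test)[:, 1]
stacked_auc = roc_auc_score(y_test, proba)
print(f"stacked AUC = {stacked_auc:.3f}")
```

Using out-of-fold predictions to train the meta-learner keeps it from simply rewarding whichever base model overfits the training data most.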

Male infertility affects millions of couples worldwide, contributing to approximately 30% of all infertility cases [2]. The complex, multifactorial nature of male infertility—encompassing genetic, hormonal, lifestyle, and environmental factors—presents significant challenges for accurate diagnosis and prediction using traditional statistical methods [3]. Artificial intelligence (AI) approaches, particularly machine learning (ML), have emerged as transformative tools capable of integrating diverse data types to enhance diagnostic precision and treatment outcomes in reproductive medicine [1] [22].

Among various ML algorithms, Artificial Neural Networks (ANN), Support Vector Machines (SVM), and Random Forest (RF) have demonstrated particular promise in male infertility applications. These algorithms differ substantially in their architectural approaches and learning mechanisms, resulting in varied performance characteristics across different prediction tasks [2] [23]. This comparison guide provides an objective evaluation of these three prominent algorithms, presenting quantitative performance data, detailed experimental methodologies, and practical implementation considerations for researchers and clinicians in reproductive medicine.

Performance Comparison in Male Infertility Prediction

Quantitative Performance Metrics

Extensive research has evaluated the performance of ANN, SVM, and RF algorithms across various male infertility prediction tasks. The table below summarizes key performance metrics reported in recent studies:

Table 1: Performance comparison of ANN, SVM, and RF in male infertility prediction

| Algorithm | Reported Accuracy Range | AUC Range | Key Strengths | Optimal Use Cases |
|---|---|---|---|---|
| ANN | 84% (median) [2] [8] to 99% [3] | Up to 99.98% [23] | Complex pattern recognition; Handles high-dimensional data [3] | Large, complex datasets with non-linear relationships [3] |
| SVM | 86-96% [1] [4] [23] | Up to 96% [4] | Effective with limited samples; Robust to outliers [4] | Small to medium-sized datasets with clear margin separation [4] |
| RF | 88-90.47% [2] [23] | Up to 84.23% [1] | Handles missing data; Feature importance ranking [23] [24] | Datasets with multiple feature types; Requires interpretability [23] |

Table 2: Specialized application performance across sperm parameters

| Algorithm | Sperm Morphology | Sperm Motility | IVF Success Prediction | DNA Fragmentation |
|---|---|---|---|---|
| ANN | High accuracy in structural classification [3] [22] | 82% accuracy [23] | AUC up to 84.23% [1] | Emerging applications [1] |
| SVM | AUC 88.59% (1,400 sperm) [1] | 89.9% accuracy (2,817 sperm) [1] | Consistent high performance [4] | Pattern recognition in DNA integrity [22] |
| RF | Moderate morphology assessment | Feature importance for motility factors [24] | AUC 84.23% (486 patients) [1] | Association with lifestyle factors [23] |

Clinical Implementation Considerations

Beyond raw accuracy, algorithm selection must consider clinical implementation factors. ANN models, particularly deep learning architectures, excel in image-based sperm analysis tasks such as morphology classification and motility assessment, achieving performance comparable to expert embryologists [22]. However, this performance often requires substantial computational resources and large training datasets [3].

For predicting surgical sperm retrieval success in non-obstructive azoospermia (NOA), one study reported 91% sensitivity, although this result was obtained with gradient boosting trees rather than SVM [1]. SVM's resistance to overfitting with limited samples makes it valuable in clinical scenarios where data availability is restricted [4].
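Sensitivity, the metric quoted for the retrieval study, is TP / (TP + FN): the share of truly positive cases the model flags. The minimal sketch below computes it, together with specificity, TN / (TN + FP), from a confusion matrix using scikit-learn. The 15 labels are hypothetical.

```python
from sklearn.metrics import confusion_matrix

# Hypothetical outcomes: 1 = successful sperm retrieval, 0 = unsuccessful.
y_true = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0]

# With labels=[0, 1], ravel() returns the counts in the order tn, fp, fn, tp.
tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()

sensitivity = tp / (tp + fn)  # true-positive rate (recall)
specificity = tn / (tn + fp)  # true-negative rate
print(f"sensitivity = {sensitivity:.2f}, specificity = {specificity:.2f}")
# prints: sensitivity = 0.90, specificity = 0.80
```

Here 9 of 10 positives are detected and 4 of 5 negatives are correctly rejected. A high sensitivity alone can hide a poor specificity, so the two are best reported together.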

RF classifiers offer built-in feature importance analysis, identifying key predictors such as sperm concentration, follicle-stimulating hormone (FSH), and lifestyle factors including sedentary behavior [4] [24]. This interpretability provides clinical value beyond pure prediction accuracy [23].

Experimental Protocols and Methodologies

Data Acquisition and Preprocessing

Standardized experimental protocols are essential for valid algorithm comparisons. The following data sources and computational resources are commonly used in male infertility prediction research:

Table 3: Essential research reagents and computational resources

| Category | Item | Specification/Function | Example Sources |
|---|---|---|---|
| Data Sources | Clinical data | Patient demographics, hormone levels, genetic factors [4] | University hospital records [4] |
| | Lifestyle data | Smoking, alcohol consumption, sitting hours [24] | Structured questionnaires [24] |
| | Semen analysis | Concentration, motility, morphology [22] | CASA systems [22] |
| Computational Tools | Programming languages | R, Python for algorithm implementation [4] [24] | CRAN, PyPI |
| | ML libraries | caret, randomForest, e1071 (R) [4] | Comprehensive R Archive Network |