Contrastive Meta-Learning for Sperm Head Morphology: A Generalized AI Framework for Male Fertility Assessment

Abstract

This article explores contrastive meta-learning with auxiliary tasks, a novel deep learning paradigm for generalized classification of human sperm head morphology. Aimed at researchers and drug development professionals, it addresses critical challenges in male infertility diagnostics, including dataset limitations and model generalizability. The content systematically covers foundational principles, methodological implementation using contrastive meta-learning frameworks, optimization strategies for clinical deployment, and rigorous validation against current state-of-the-art approaches. By integrating the latest research, this comprehensive review demonstrates how this advanced AI technique achieves superior performance in sperm morphology analysis while providing clinically interpretable results that can enhance reproductive medicine and drug development pipelines.

Understanding Sperm Morphology Analysis and the Need for Advanced AI

The Clinical Significance of Sperm Head Morphology in Male Infertility

Sperm morphology, particularly the architecture of the sperm head, serves as a critical biomarker for male fertility potential. The sperm head houses the paternal genetic material and is equipped with enzymes essential for oocyte penetration, making its structural integrity paramount for successful fertilization and embryonic development [1] [2]. Assessment of sperm head morphology is a cornerstone of male infertility diagnostics, providing invaluable insights into testicular and epididymal function [3]. However, traditional manual analysis is plagued by subjectivity, poor reproducibility, and significant inter-observer variability, with reported disagreement rates among experts as high as 40% [4] [5]. This application note details standardized protocols for sperm head morphology evaluation and explores the integration of contrastive meta-learning frameworks to overcome these limitations, offering researchers and drug development professionals a pathway to more precise, automated, and clinically predictive analysis.

Quantitative Reference Data for Sperm Head Morphology

Establishing robust reference values is fundamental for distinguishing normal from pathological sperm heads. The following tables consolidate quantitative morphometric parameters from a fertile male population, providing a baseline for clinical and research applications.

Table 1: Core Sperm Head Morphometric Parameters from a Fertile Population (N=21) [6]

| Parameter | Description | Reference Range |
|---|---|---|
| Head Length (HL) | Distance between the two furthest points along the long axis | 4.0 - 5.5 µm |
| Head Width (HW) | Perpendicular distance between the two furthest points on the short axis | 2.5 - 3.5 µm |
| Head Area (HA) | Area calculated based on the head contour | Not Specified |
| Head Perimeter (HP) | Length of the boundary surrounding the head | Not Specified |
| Ellipticity (L/W) | Ratio of head length to width | Not Specified |
| Acrosome Area (AcA) | Area of the cap-like structure on the sperm head | Not Specified |
| Acrosome Ratio (AcR) | Ratio of acrosome area to head area | 40 - 70% |
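To illustrate how the derived parameters in Table 1 follow from the raw measurements, the Python sketch below computes ellipticity (L/W) and acrosome ratio and checks the three parameters that have a stated range. The class name, function names, and example measurements are hypothetical; only the reference ranges are taken from the table.

```python
from dataclasses import dataclass

# Reference ranges quoted in Table 1 (length/width in µm, acrosome ratio in %).
HEAD_LENGTH_RANGE = (4.0, 5.5)
HEAD_WIDTH_RANGE = (2.5, 3.5)
ACROSOME_RATIO_RANGE = (40.0, 70.0)


@dataclass
class HeadMeasurement:
    """Hypothetical container for one measured sperm head."""
    length_um: float
    width_um: float
    head_area_um2: float
    acrosome_area_um2: float

    @property
    def ellipticity(self) -> float:
        # Ellipticity (L/W): ratio of head length to head width.
        return self.length_um / self.width_um

    @property
    def acrosome_ratio_pct(self) -> float:
        # Acrosome ratio (AcR): acrosome area as a percentage of head area.
        return 100.0 * self.acrosome_area_um2 / self.head_area_um2


def in_range(value: float, bounds: tuple) -> bool:
    low, high = bounds
    return low <= value <= high


def within_reference(m: HeadMeasurement) -> dict:
    """Flag each parameter that has a reference range in Table 1."""
    return {
        "head_length": in_range(m.length_um, HEAD_LENGTH_RANGE),
        "head_width": in_range(m.width_um, HEAD_WIDTH_RANGE),
        "acrosome_ratio": in_range(m.acrosome_ratio_pct, ACROSOME_RATIO_RANGE),
    }


# Illustrative values only, not taken from the cited study.
example = HeadMeasurement(length_um=4.8, width_um=3.0,
                          head_area_um2=11.0, acrosome_area_um2=5.5)
print(round(example.ellipticity, 2), round(example.acrosome_ratio_pct, 1))
print(within_reference(example))
```

Parameters listed as "Not Specified" in the table are deliberately left unchecked rather than given invented limits.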

Table 2: Clinical Classification and Implications of Sperm Head Morphology

| Category | Morphological Definition | Clinical Significance & Reference Values |
|---|---|---|
| Normal Morphology | Smooth, oval head; well-defined acrosome covering 40-70% of head; no neck/midpiece/tail defects; no vacuoles >20% head area [1] [2]. | WHO 5th edition lower reference limit: ≥4% normal forms [1]. |
| Teratozoospermia | Percentage of morphologically normal sperm is below the reference value. | Associated with poor fertilization in IUI/IVF; indicates need for ICSI [1]. |
| Monomorphic Defects | All sperm exhibit the same specific abnormality (e.g., globozoospermia, macrocephalic sperm) [7]. | Requires specific detection and interpretative commentary; strong genetic basis [7]. |
| Abnormal Head Forms | Includes amorphous, tapered, pyriform, small, and vacuolated heads [1] [3]. | High percentages are associated with decreased fertilization rates in assisted reproduction [1]. |

Experimental Protocols for Sperm Morphology Analysis

Standardized Staining and Manual Assessment Protocol

This protocol, based on WHO guidelines, ensures consistent sample preparation and staining for accurate morphology evaluation [1].

Research Reagent Solutions:

- Fixative Solution: 95% Ethanol (v/v) for sample preservation.

- Papanicolaou Staining Reagents: Harris's Hematoxylin (nuclear stain), G-6 Orange, and EA-50 Green (cytoplasmic stains) for structural differentiation.

- Mounting Medium: Cytoseal or equivalent xylene-based medium for slide preservation.

Procedure:

- Sample Preparation: Collect semen in a sterile container and allow it to liquefy at 37°C for 30 minutes. For viscous samples, add proteolytic enzymes (e.g., α-chymotrypsin) and incubate for an additional 10 minutes at 37°C [1].

- Smear Preparation: Vortex the liquefied sample for 10 seconds. Place a 10 µL aliquot on a clean frosted slide. Use a second slide at a 45° angle to spread the drop, creating a thin, even smear. Air-dry the slide completely [1].

- Papanicolaou Staining:

- Fix the smear in 95% ethanol for at least 15 minutes.

- Rehydrate through graded ethanols: 80% (30 sec), 50% (30 sec), and purified water (30 sec).

- Stain nuclei in Harris's Hematoxylin for 4 minutes. Rinse in water and differentiate in acidic ethanol (4-8 dips). Rinse again and immerse in Scott's solution, followed by a 5-minute wash in cold tap water.

- Dehydrate in 50%, 80%, and 95% ethanol.

- Counterstain cytoplasm: Immerse in G-6 Orange for 1 minute, dehydrate in 95% ethanol, then immerse in EA-50 Green for 1 minute.

- Complete final dehydration in 95% and 100% ethanol. Clear in xylene and mount with a coverslip using Cytoseal [6].

- Microscopic Evaluation: Examine the slide under a bright-field microscope with a 100x oil immersion objective. Use an ocular micrometer to measure sperm head dimensions precisely. Score a minimum of 200 spermatozoa, classifying each as normal or abnormal based on strict Kruger criteria. All borderline forms should be considered abnormal [1].
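The scoring step above reduces to a simple tally. As a minimal sketch, the following Python function converts per-sperm scores into the percentage of normal forms and compares it with the WHO 5th edition lower reference limit of 4% cited in Table 2. The function name and the label strings are assumptions made for illustration.

```python
from collections import Counter

WHO5_LOWER_REFERENCE_PCT = 4.0  # lower reference limit for normal forms cited above
MIN_SPERM_SCORED = 200          # minimum count stated in the protocol


def summarize_morphology(labels):
    """Return percent normal forms and a below-reference flag for one slide.

    `labels` is a list of per-sperm scores, each "normal" or "abnormal";
    borderline forms are expected to have been scored as "abnormal" already.
    """
    if len(labels) < MIN_SPERM_SCORED:
        raise ValueError(
            f"score at least {MIN_SPERM_SCORED} spermatozoa, got {len(labels)}"
        )
    counts = Counter(labels)
    pct_normal = 100.0 * counts["normal"] / len(labels)
    return {
        "n_scored": len(labels),
        "pct_normal": pct_normal,
        "below_reference": pct_normal < WHO5_LOWER_REFERENCE_PCT,
    }


# Illustrative tally: 6 normal forms out of 200 scored.
scores = ["normal"] * 6 + ["abnormal"] * 194
print(summarize_morphology(scores))
```

Rejecting slides with fewer than 200 scored cells keeps the percentage from being reported on an undersized count.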

Protocol for Automated Analysis Using Deep Learning

This protocol leverages deep learning for high-throughput, objective sperm morphology classification, suitable for large-scale studies and drug efficacy testing.

Research Reagent Solutions:

- Annotation Software: Roboflow for labeling sperm images for model training.

- Deep Learning Framework: YOLOv7 or ResNet50-CBAM for object detection and classification.

- Staining Reagents: Diff-Quik rapid stain or Papanicolaou stain, depending on imaging requirements.

Procedure:

- Dataset Curation & Preprocessing: Collect a large set of sperm images (e.g., 1,000+ images) using a standardized microscopy setup. Annotate images using software like Roboflow, labeling sperm structures (head, midpiece, tail) and classifying abnormalities based on expert consensus to establish "ground truth" [8] [5].

- Model Selection & Training:

- Option 1 (YOLOv7): Ideal for real-time detection and classification of multiple sperm in a single image. Train the model on annotated datasets to detect and classify sperm into categories like normal, head defect, and vacuolated [8].

- Option 2 (ResNet50-CBAM): A Convolutional Neural Network (CNN) enhanced with a Convolutional Block Attention Module (CBAM). This architecture is particularly effective for focusing on subtle morphological features in the sperm head. Train the model using a hybrid approach, extracting deep features and classifying them with a Support Vector Machine (SVM) for optimal accuracy [4].

- Model Validation & Deployment: Rigorously validate the model's performance on a separate, unseen dataset. Key metrics include accuracy, precision, recall, and mean Average Precision (mAP). Deploy the validated model to automatically analyze new semen samples, generating reports on the percentage and types of morphological defects [8] [4].
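For the validation step, the sketch below shows how accuracy, precision, and recall can be computed for one morphology class from held-out predictions. It is a plain-Python illustration with made-up labels; mean Average Precision (mAP) for detectors such as YOLOv7 additionally depends on bounding-box matching and is not covered here.

```python
def per_class_metrics(y_true, y_pred, positive):
    """Accuracy plus precision and recall for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
    }


# Hypothetical expert labels and model predictions for six sperm images.
y_true = ["normal", "normal", "head_defect", "vacuolated", "head_defect", "normal"]
y_pred = ["normal", "head_defect", "head_defect", "vacuolated", "normal", "normal"]
print(per_class_metrics(y_true, y_pred, positive="head_defect"))
```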

Integration of Contrastive Meta-Learning Frameworks

Contrastive meta-learning represents a paradigm shift for sperm head morphology research, enabling models to learn robust feature representations from limited data by leveraging prior knowledge from related tasks.

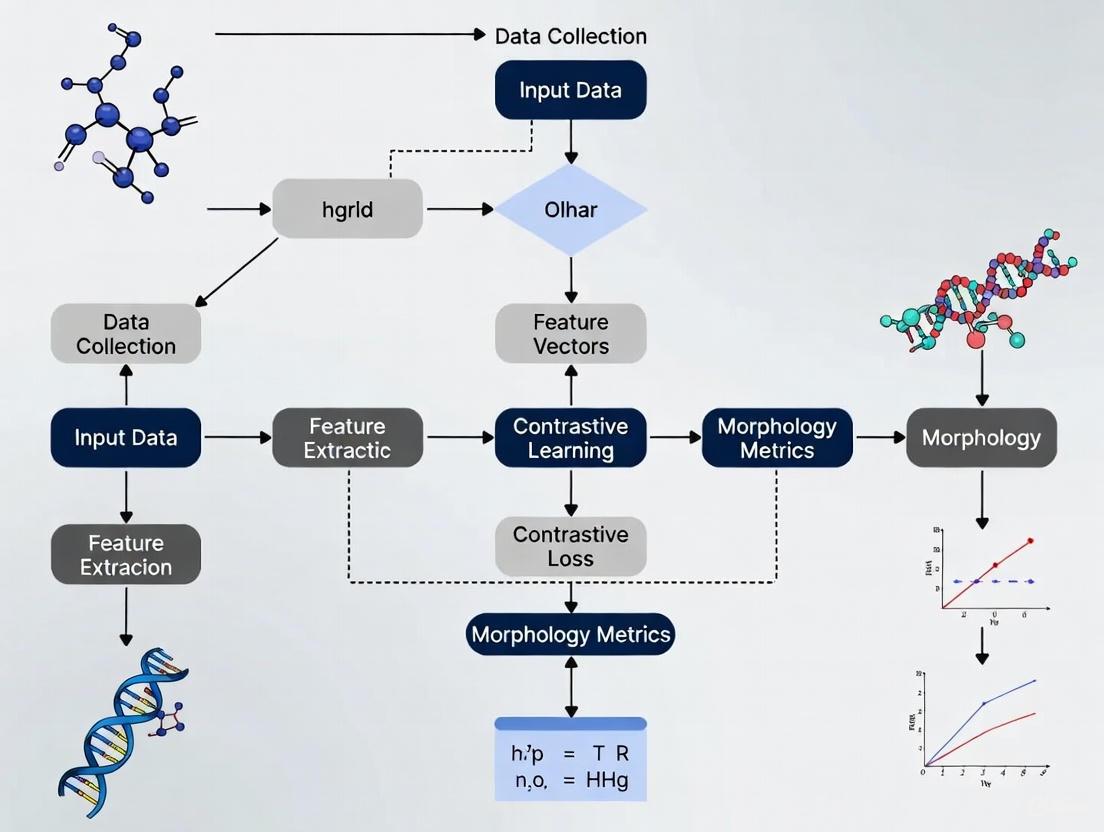

Diagram 1: Contrastive meta-learning for sperm morphology analysis. The model learns from multiple tasks to create a generalizable feature encoder, enabling rapid adaptation to new, unseen datasets with high accuracy.

Workflow and Logical Relationships:

- Multi-Task Training: The model is exposed to a variety of related tasks (e.g., vacuole detection, acrosome classification) from multiple datasets (e.g., SMIDS, HuSHeM) [3] [4]. This teaches the model to identify universally relevant features of sperm head morphology.

- Contrastive Learning: Within each task, the model learns to minimize the distance between embeddings of similar sperm heads (e.g., two normal heads) while maximizing the distance between dissimilar ones (e.g., normal vs. amorphous). This creates a well-structured feature space [4].

- Meta-Optimization: The optimizer adjusts the model's parameters so that it can quickly adapt to a new classification task after seeing only a few examples (the support set), significantly reducing the need for large, annotated datasets for each new clinical study [3].
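The contrastive step can be made concrete with a small example. The source does not specify which loss is used, so the sketch below assumes a classic margin-based pairwise contrastive loss: similar pairs are pulled together, and dissimilar pairs are pushed apart until they are at least `margin` away. The embeddings are toy values, not model outputs.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def pairwise_contrastive_loss(emb_a, emb_b, same_class, margin=1.0):
    """Margin-based contrastive loss for one pair of embeddings.

    Similar pairs are penalised by their squared distance; dissimilar pairs
    are penalised only while they are closer than `margin`.
    """
    d = euclidean(emb_a, emb_b)
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2


# Toy 2-D embeddings (hypothetical values).
normal_1, normal_2, amorphous = [0.1, 0.2], [0.1, 0.5], [0.4, 0.6]
print(pairwise_contrastive_loss(normal_1, normal_2, same_class=True))
print(pairwise_contrastive_loss(normal_1, amorphous, same_class=False))
```

In a real training loop this quantity would be averaged over many pairs and minimised by gradient descent on the encoder weights.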

Quality Control and Standardization

Ensuring accuracy and reproducibility in sperm morphology assessment requires rigorous quality control (QC) and standardized training.

Diagram 2: Standardized training and quality control workflow. Training tools using expert-validated images significantly improve novice morphologist accuracy and reduce inter-observer variability.

Implementing standardized training tools that use images with expert-validated "ground truth" labels can dramatically improve accuracy. Untrained novices show high variability (CV=0.28) and low accuracy (53-81%, depending on classification complexity). With standardized training, accuracy can exceed 90% and variability is significantly reduced [5]. For AI systems, continuous QC involves monitoring performance metrics (precision, recall) against a set of gold-standard images to ensure consistent analytical performance over time [1] [5].

The detailed analysis of sperm head morphology remains an indispensable tool in male infertility assessment. The integration of standardized manual protocols with emerging AI methodologies, particularly those leveraging contrastive meta-learning, is poised to revolutionize the field. These approaches mitigate the subjectivity of traditional analysis, enhance throughput, and improve diagnostic precision. For researchers and drug developers, these application notes provide a framework for implementing robust, reproducible, and clinically significant sperm head morphology analyses, paving the way for advanced diagnostic and therapeutic innovations.

Limitations of Traditional Manual Microscopy and Current CASA Systems

Semen analysis is a cornerstone of male fertility assessment, yet the methodologies for evaluating sperm parameters present significant challenges. Traditional manual microscopy, long considered the gold standard, is increasingly supplemented or replaced by Computer-Assisted Semen Analysis (CASA) systems. While CASA offers automation and objectivity, it introduces its own set of limitations. This application note details the specific constraints of both approaches, providing a framework for researchers developing advanced computational solutions like contrastive meta-learning for sperm head morphology analysis. Understanding these limitations is crucial for innovating beyond current technological boundaries and improving the accuracy and clinical value of semen analysis [9] [10].

Comparative Analysis of Limitations

The evaluation of semen parameters involves a complex trade-off between the subjectivity of manual assessment and the technical constraints of automation. The table below summarizes the core limitations of each method, providing a quantitative and qualitative comparison essential for methodological development.

Table 1: Key Limitations of Manual Microscopy and CASA Systems

| Parameter | Manual Microscopy Limitations | Current CASA System Limitations |
|---|---|---|
| General Principle | Subjective visual assessment by a technician [9] | Automated analysis via image analysis or electro-optical signals [9] [11] |
| Primary Drawbacks | High subjectivity, human error, and significant intra- and inter-operator variability [9] [10] | High cost, inflexible algorithms, limited access to raw images, and high result variability [12] [9] |
| Concentration Analysis | Prone to pipetting and dilution errors; uses standardized chambers (e.g., Neubauer) [10] | Overestimation in oligozoospermic samples reported in some systems [9] |
| Motility Analysis | Subjective classification of progressive, non-progressive, and immotile sperm [10] | Tendency for manual methods to overestimate progressive motility compared to automated counts [11] |
| Morphology Analysis | High variability; largest inter-operator variability (CV up to 29.9%); subjective visual assessment [11] [10] | Historically, ESHRE guidelines reported borderline usefulness; modern systems show improved but not perfect agreement [11] [10] |
| Key Evidence | Significant differences (p<0.0001) in concentration and progressive motility vs. CASA in a study of 230 samples [10] | Significant differences (p<0.0001) in concentration, progressive motility, and morphology vs. manual method [10] |
| Standard Deviation | Lower standard deviation for concentration and morphology compared to CASA in comparative studies [10] | Higher standard deviation for concentration and morphology compared to manual method [10] |

Experimental Protocols for Validation

To objectively assess the performance of semen analysis methods, controlled experiments comparing manual and CASA techniques are essential. The following protocols are derived from recent validation studies.

Protocol for Comparative Analysis of Sperm Concentration and Motility

This protocol is adapted from a 2022 study comparing CASA algorithms and a 2019 validation of a smartphone-based CASA system [13] [14].

- Sample Preparation: Collect semen samples via masturbation after 2-5 days of sexual abstinence. Allow samples to liquefy for 15-60 minutes at room temperature [14] [10].

- Manual Assessment (Reference Method):

- Concentration: Load a fixed volume (e.g., 10 µL) of liquefied semen into a Makler or Neubauer counting chamber. Assess sperm concentration manually under a microscope according to WHO 2010 guidelines [14] [10].

- Motility: Classify a minimum of 200 spermatozoa across five fields of view into progressively motile, non-progressively motile, and immotile categories [10].

- CASA Assessment:

- Load an identical volume of semen into a chamber compatible with the CASA system (e.g., Leja chamber).

- Acquire multiple video sequences (e.g., 1-second videos at 25 frames per second) using the CASA microscope and camera system [13].

- Analyze sperm concentration and motility using the manufacturer's software settings. Ensure a minimum of 200 spermatozoa are tracked for the analysis [10].

- Statistical Analysis: Compare results using Pearson correlation coefficients, Bland-Altman plots for agreement, and paired t-tests or Wilcoxon tests for significant differences (p < 0.05 considered significant) [14].
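Two of the statistics named in the final step are easy to reproduce by hand. The sketch below computes a Pearson correlation coefficient and the Bland-Altman bias with 95% limits of agreement (bias ± 1.96 SD of the paired differences) for manual versus CASA readings. The paired values are invented for illustration, and the paired t-test and Wilcoxon test are left to a statistics package.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def bland_altman(manual, casa):
    """Bias (mean CASA-minus-manual difference) and 95% limits of agreement."""
    diffs = [c - m for m, c in zip(manual, casa)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the paired differences
    return {
        "bias": bias,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
    }


# Hypothetical paired concentrations (million/mL) for five samples.
manual = [20.0, 35.0, 50.0, 65.0, 80.0]
casa = [22.0, 36.0, 53.0, 66.0, 84.0]
print(round(pearson_r(manual, casa), 4))
print(bland_altman(manual, casa))
```

A high correlation alone does not show agreement, which is why the bias and limits of agreement are reported alongside it.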

Protocol for Sperm Morphometry and Morphology (CASMA)

This protocol is based on a 2024 study optimizing Computer-Aided Sperm Morphology Analysis (CASMA) for a novel species, highlighting factors affecting morphometric accuracy [15].

- Sample Fixation: Divide a liquefied semen sample into aliquots and fix using different fixatives for comparison. Common fixatives include:

- 10% Formalin in Equine Semen Diluent

- 2.5% Glutaraldehyde in 0.1 M sodium cacodylate buffer

- 4% Paraformaldehyde

- Staining: Stain fixed sperm smears using one of several staining techniques per aliquot:

- SpermBlue

- Quick III

- Hemacolor

- Coomassie Blue

- CASMA Analysis: Use a CASA system with morphology module (e.g., Sperm Class Analyzer) to analyze at least 200 sperm per sample. Measure key head morphometric parameters [15].

- Data Analysis: Use multivariate analysis to determine the independent and interactive effects of fixation and staining techniques on sperm head size and shape (morphometry). Visually assess morphology for abnormalities using brightfield microscopy [15].

Visualization of Experimental Workflows

The following diagram illustrates the logical workflow for the comparative validation of semen analysis methods, integrating the protocols described above.

Figure 1: Workflow for Semen Analysis Method Validation

The Scientist's Toolkit: Research Reagent Solutions

Successful and reproducible semen analysis relies on a standardized set of materials and reagents. The following table details essential items and their functions for laboratory and research use.

Table 2: Essential Research Reagents and Materials for Semen Analysis

| Item | Function & Application |
|---|---|
| Leja Counting Chamber | Standardized chamber with 10 µm or 20 µm depth for consistent CASA or manual analysis of sperm concentration and motility [10]. |
| Neubauer Hemocytometer | Standard chamber for manual sperm concentration counting according to WHO guidelines; used as a reference method [10]. |
| SpermBlue Stain | Staining solution for sperm morphology assessment; used in CASMA protocols for clear nuclear definition [15]. |
| Quick III Stain | A rapid staining method for sperm morphology, used in comparative studies to evaluate staining effects on morphometry [15]. |
| Papanicolaou Stain | A complex staining procedure used for detailed assessment of sperm morphology in manual analysis [10]. |
| Glutaraldehyde Fixative | A fixative (e.g., 2.5% in cacodylate buffer) used to preserve sperm structure for subsequent morphological and morphometric analysis [15]. |
| Paraformaldehyde Fixative | A common cross-linking fixative (e.g., 4% solution) used to preserve sperm for staining and analysis [15]. |
| α-Chymotrypsin | Enzyme used to treat highly viscous semen samples to improve sperm recovery rate and total motile sperm count for ART [11]. |
| Quality Control Beads (Accu-Beads) | Latex beads used for training personnel and validating the precision and accuracy of both manual and CASA systems [9]. |

The application of contrastive meta-learning to human sperm head morphology (HSHM) classification represents a promising frontier in computational andrology. However, the development of robust, generalizable models is fundamentally constrained by three core dataset challenges: scarcity of high-quality, annotated samples; complexity of morphological annotation processes; and standardization issues across domains and classification systems. These challenges necessitate specialized protocols to ensure research reproducibility and clinical relevance. This document provides detailed application notes and experimental protocols to address these impediments within the context of contrastive meta-learning frameworks, specifically tailoring methodologies for research audiences in reproductive biology and AI-assisted drug development.

The challenges of scarcity, annotation complexity, and standardization are interconnected. The following tables summarize their quantitative impact on model development and the corresponding strategic solutions.

Table 1: Impact and Manifestation of Core Dataset Challenges

| Challenge | Key Manifestation | Impact on Model Generalizability |
|---|---|---|
| Scarcity | Limited number of high-quality, annotated samples; Class imbalance [16] | Models prone to overfitting; Reduced accuracy (55%-92% reported range) [16] |
| Annotation Complexity | Low inter-expert agreement; Subjective interpretation of criteria [16] | Introduces label noise; Compromises reliability of ground truth |
| Standardization | Use of different classification systems (e.g., David vs. WHO) [16]; Cross-domain variance | Limits model transferability between clinics and datasets |
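Inter-expert agreement, listed above as the core of the annotation challenge, can be quantified before labels are used as ground truth. The source does not name a statistic, so the sketch below assumes Cohen's kappa, a common chance-corrected agreement measure for two annotators. The label sequences are invented and use modified David abbreviations (NR, A, G) purely as examples.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two annotators on the same items."""
    n = len(rater_a)
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if both raters labelled at random with their own rates.
    expected = sum(
        freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)
    ) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical annotations of six sperm images by two experts.
expert_1 = ["NR", "NR", "A", "A", "G", "NR"]
expert_2 = ["NR", "A", "A", "A", "G", "NR"]
print(round(cohens_kappa(expert_1, expert_2), 3))
```

With more than two experts, a multi-rater statistic such as Fleiss' kappa would be the analogous choice.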

Table 2: HSHM Classification Systems and Defect Categories

| Classification System | Defect Categories (with Abbreviations) | Number of Classes | Key Reference |
|---|---|---|---|
| Modified David | Tapered (A), Thin (B), Microcephalous (C), Macrocephalous (D), Multiple (E), Abnormal post-acrosomal region (F), Abnormal acrosome (G), Cytoplasmic droplet (H), Bent (J), Coiled (N), Short (L), Multiple tails (O), Associated anomalies (CN), Normal (NR) [16] | 14 (7 head, 2 midpiece, 3 tail, CN, NR) | [16] |
| WHO | Focuses on strict criteria for head, midpiece, and tail defects [16] | Varies | [16] |

Experimental Protocols

Protocol for Dataset Curation and Augmentation

This protocol is designed to mitigate the challenge of data scarcity.

- Objective: To create a large, class-balanced dataset for training contrastive meta-learning models, using the SMD/MSS dataset as a base [16].

- Materials:

- Semen samples with a sperm concentration of ≥5 million/mL and varying morphological profiles.

- RAL Diagnostics staining kit.

- MMC CASA (Computer-Assisted Semen Analysis) system with an optical microscope and digital camera.

- Methods:

- Sample Preparation and Image Acquisition:

- Prepare smears according to WHO guidelines and stain with RAL Diagnostics kit [16].

- Using the MMC CASA system with a 100x oil immersion objective in bright field mode, acquire images. Capture approximately 37 ± 5 images per sample to avoid overlap [16].

- Ensure each image contains a single spermatozoon with a clear view of the head, midpiece, and tail.

- Expert Annotation and Ground Truth Compilation:

- Inter-Expert Agreement Analysis:

- Data Augmentation:

- Apply augmentation techniques to the base dataset (e.g., 1,000 images) to significantly increase its size and balance morphological classes (e.g., to 6,035 images) [16].

- Techniques should include geometric transformations (rotation, flipping), and photometric adjustments (brightness, contrast) to simulate real-world variance and improve model robustness.
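The geometric and photometric transformations listed in the augmentation step can be sketched without any imaging library. The example below operates on a grayscale image stored as a nested list of 8-bit pixel values; the function names are hypothetical, and a production pipeline would more likely use an image library with arbitrary-angle rotation.

```python
def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img):
    """Rotate by 90 degrees: reverse the row order, then transpose."""
    return [list(col) for col in zip(*img[::-1])]


def adjust_brightness_contrast(img, brightness=0, contrast=1.0):
    """Scale around mid-grey (128), shift, and clip to the 8-bit range."""
    def clip(v):
        return max(0, min(255, int(round(v))))
    return [
        [clip((p - 128) * contrast + 128 + brightness) for p in row]
        for row in img
    ]


def augment(img):
    """Return the original plus a few transformed copies."""
    return [
        img,
        flip_horizontal(img),
        rotate_90_clockwise(img),
        adjust_brightness_contrast(img, brightness=20),
        adjust_brightness_contrast(img, contrast=1.2),
    ]


tiny = [[0, 50], [100, 250]]  # toy 2x2 grayscale image
for variant in augment(tiny):
    print(variant)
```

To balance classes rather than only enlarge the dataset, more variants would be generated for under-represented categories than for common ones.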

Protocol for Contrastive Meta-learning with Auxiliary Tasks (HSHM-CMA)

This protocol directly addresses generalization across domains and tasks.

- Objective: To implement the HSHM-CMA algorithm, which integrates contrastive learning in the meta-learning outer loop to learn invariant features, enhancing performance on unseen tasks and datasets [17].

- Materials:

- The augmented SMD/MSS dataset (from the dataset curation and augmentation protocol above).

- Python 3.8 environment with deep learning libraries (e.g., PyTorch, TensorFlow).

- Methods:

- Image Pre-processing:

- Data Cleaning: Handle missing values and outliers.

- Normalization: Resize all images to a consistent size (e.g., 80x80 pixels) and convert to grayscale. Normalize pixel values to a common scale [16].

- Data Partitioning:

- Randomly split the dataset: 80% for training and 20% for testing.

- Further split the training set, using 80% for model training and 20% for validation [16].

- Meta-Training with Contrastive Objective:

- Task Construction: In each training episode, sample a batch of tasks. Each task is a few-shot learning problem simulating adaptation to a new HSHM category or dataset.

- Contrastive Meta-Objective: The core of HSHM-CMA. For models generated by the meta-learner, the objective is to minimize the distance (maximize similarity) between representations of models trained on different subsets of the same task (positive pairs) while maximizing the distance for models from different tasks (negative pairs) [18] [17]. This builds alignment and discrimination abilities into the meta-learner.

- Auxiliary Tasks: Separate meta-training tasks into primary and auxiliary tasks to prevent gradient conflict and further improve generalization [17].

- Model Evaluation:

- Evaluate the model's generalization under three objectives [17]:

- Same dataset, different HSHM categories.

- Different datasets, same HSHM categories.

- Different datasets, different HSHM categories.

- Report accuracy and other relevant metrics (e.g., F1-score) for each objective.
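The contrastive meta-objective described in the meta-training step compares representations of models rather than of images. As a rough illustration only, and not the published HSHM-CMA implementation, the sketch below assumes each adapted model is summarised by a small vector and scores one anchor with an InfoNCE-style loss using cosine similarity. The vectors, the temperature value, and the function names are all hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE-style loss for one anchor: low when the positive is closest."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))


# Hypothetical model representations after inner-loop adaptation.
task_a_subset_1 = [1.0, 0.1]   # model adapted on one subset of task A
task_a_subset_2 = [0.9, 0.2]   # model adapted on another subset of task A
task_b_model = [0.1, 1.0]      # model adapted on a different task

# Positive pair: same task, different subsets. Negative: different task.
loss = info_nce(task_a_subset_1, task_a_subset_2, [task_b_model])
print(round(loss, 4))
```

In the outer loop this loss would be combined with the usual query-set classification loss, so that the meta-learner is rewarded for producing aligned models within a task and distinct models across tasks.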

Visualization of Workflows

HSHM-CMA Experimental Workflow

This diagram outlines the end-to-end process for applying the HSHM-CMA algorithm.

Contrastive Meta-Objective Mechanism

This diagram details the core mechanism of the contrastive meta-objective within the HSHM-CMA framework.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for HSHM Research

| Item | Function/Application in HSHM Research | Key Consideration |
|---|---|---|
| MMC CASA System | Automated image acquisition from sperm smears; provides morphometric data (head dimensions, tail length) [16]. | Limited ability to classify midpiece/tail defects and distinguish sperm from debris can necessitate AI enhancement [16]. |
| RAL Diagnostics Staining Kit | Staining semen smears for morphological assessment, improving visual contrast for both manual and automated analysis [16]. | Must be applied according to WHO manual specifications to ensure standardization and reproducibility of staining quality [16]. |
| SMD/MSS Dataset | A foundational dataset of sperm images classified per modified David criteria, used for training and benchmarking models [16]. | Can be augmented to address class imbalance and increase dataset size for robust deep learning model training [16]. |
| HSHM-CMA Algorithm | A meta-learning algorithm that uses contrastive learning and auxiliary tasks to improve cross-domain generalization in sperm classification [17]. | Designed to be problem- and learner-agnostic, allowing for integration with various model architectures and task definitions [18] [17]. |

Evolution from Conventional Machine Learning to Deep Learning Approaches

The analysis of human sperm head morphology (HSHM) is a critical diagnostic procedure in male infertility assessments. Traditional methods have largely relied on manual evaluation by trained experts, a process that is often subjective, time-consuming, and prone to variability. The emergence of computational approaches has begun to transform this field, offering a path toward more standardized, rapid, and objective analysis. This evolution has progressed from using conventional machine learning algorithms, which require significant manual feature engineering, to modern deep learning techniques that can automatically learn relevant features from raw data. Most recently, advanced paradigms like contrastive meta-learning are being explored to address the significant challenge of generalizability across different clinical datasets and staining protocols [17]. This document outlines the key quantitative differences between these approaches and provides detailed experimental protocols for their application in HSHM research.

Comparative Analysis: Conventional Machine Learning vs. Deep Learning

The transition from conventional Machine Learning (ML) to Deep Learning (DL) represents a fundamental shift in how models learn from data. The table below summarizes the core distinctions between these two paradigms, which are critical for selecting the appropriate tool for a given research problem.

Table 1: A Comparison of Conventional Machine Learning and Deep Learning Characteristics.

| Characteristic | Conventional Machine Learning | Deep Learning |
|---|---|---|
| Data Representation | Relies on manually engineered features created by domain experts [19]. | Automatically learns hierarchical feature representations directly from raw data (e.g., images) [19]. |
| Model Complexity | Simpler models with fewer parameters (e.g., SVM, Decision Trees) [19]. | Complex models with many layers and parameters (e.g., Deep Neural Networks) [19]. |
| Data Volume | Performs well with relatively smaller, structured datasets [19]. | Requires large volumes of training data to effectively learn and avoid overfitting [20] [19]. |
| Interpretability | Generally more interpretable; decisions can often be traced through explicit features [19]. | Often acts as a "black box"; internal decision-making process can be difficult to interpret [19]. |
| Feature Engineering | Essential and time-consuming; requires domain expertise to create relevant input features [21]. | Not required; the model learns the optimal features during the training process [20]. |
| Computational Resource | Lower computational requirements for training and inference [19]. | High computational cost, often requiring powerful processors with parallel computing power like GPUs [20]. |

The performance impact of this paradigm shift is evident in quantitative studies from other domains. For instance, in a systematic comparison of models for predicting mental illness from clinical text, a novel deep learning architecture (CB-MH) achieved the best F1 score of 0.62, while another attention-based model was best for F2 (0.71) [22]. Similarly, in a supply chain cost prediction task, a Convolutional Neural Network (CNN) model demonstrated superior accuracy with a Root Mean Square Error (RMSE) of 0.528 and an R² value of 0.953, outperforming conventional models like Random Forest and Support Vector Machines [23].

Experimental Protocols for Sperm Head Morphology Analysis

Protocol 1: Conventional Machine Learning with Engineered Features

This protocol is suitable for smaller datasets where computational resources are limited and domain knowledge can be effectively encoded into hand-crafted features.

1. Sample Preparation and Image Acquisition:

- Staining: Prepare semen slides using a standardized staining protocol (e.g., Diff-Quik, Papanicolaou) to ensure consistent contrast and nuclear detail [7].

- Imaging: Capture digital images of spermatozoa using a high-resolution microscope with a 100x oil immersion objective. Ensure consistent lighting and focus across all images.

2. Image Pre-processing:

- Segmentation: Use image processing techniques (e.g., Otsu's thresholding, watershed algorithm) to isolate individual sperm heads from the background and other cells.

- Normalization: Apply normalization to adjust for variations in staining intensity and illumination. Scale all images to uniform pixel dimensions.

3. Feature Engineering:

- Morphometric Features: Extract quantitative descriptors of shape, including area, perimeter, width, length, aspect ratio, ellipticity, and rugosity.

- Texture Features: Calculate features that describe the internal pattern of the sperm head, such as Haralick features (from the Gray-Level Co-occurrence Matrix) and Local Binary Patterns (LBP).

4. Model Training and Validation:

- Data Splitting: Split the dataset with labeled sperm images (e.g., "normal," "tapered," "amorphous") into training (65%), validation (15%), and test (20%) sets. Ensure all images from a single patient are contained within one set to prevent data leakage [24].

- Algorithm Selection: Train a conventional ML model, such as a Support Vector Machine (SVM) or Random Forest (RF), using the engineered features.

- Validation: Use the validation set to tune hyperparameters. Evaluate the final model on the held-out test set and report performance metrics including sensitivity, specificity, and accuracy [24].
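The requirement that all images from one patient stay in a single set is easy to violate with a naive random split over images. The sketch below shows one way to split at the patient level instead, using the 65/15/20 proportions from the protocol. The record format, identifiers, and function name are assumptions made for this example.

```python
import random
from collections import defaultdict


def split_by_patient(records, fractions=(0.65, 0.15, 0.20), seed=0):
    """Assign whole patients to train/validation/test.

    `records` is a list of (patient_id, image_id) tuples. Fractions apply to
    the number of patients, so image-level proportions are only approximate
    when patients contribute different numbers of images.
    """
    by_patient = defaultdict(list)
    for patient_id, image_id in records:
        by_patient[patient_id].append(image_id)

    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)  # seeded for reproducibility

    n_train = round(fractions[0] * len(patients))
    n_val = round(fractions[1] * len(patients))
    groups = {
        "train": patients[:n_train],
        "val": patients[n_train:n_train + n_val],
        "test": patients[n_train + n_val:],  # remainder goes to the test set
    }
    return {
        name: [(p, i) for p in ids for i in by_patient[p]]
        for name, ids in groups.items()
    }


# Hypothetical dataset: 20 patients with 5 images each.
records = [(f"P{p:02d}", f"img_{p}_{k}") for p in range(20) for k in range(5)]
splits = split_by_patient(records)
print({name: len(items) for name, items in splits.items()})
```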

Protocol 2: Deep Learning-Based Classification

This protocol leverages deep learning for end-to-end learning and is ideal for larger datasets where it can automatically discover complex features.

1. Data Curation and Annotation:

- Dataset Assembly: Compile a large dataset of sperm images. Data augmentation techniques (e.g., rotation, flipping, slight color jittering) should be applied to increase dataset size and improve model robustness.

- Expert Annotation: Have trained embryologists annotate the images according to standardized WHO criteria or a specific laboratory schema. Establish inter-observer reliability scores to ensure label consistency [7].

2. Model Selection and Training:

- Architecture Choice: Select a pre-trained Convolutional Neural Network (CNN) architecture, such as ResNet or EfficientNet, for transfer learning.

- Transfer Learning: Fine-tune the pre-trained model on the curated HSHM dataset. Replace the final classification layer to match the number of morphology classes in your study.

- Training Loop: Train the model using a suitable optimizer (e.g., Adam) and a loss function like categorical cross-entropy. Monitor performance on the validation set to prevent overfitting.

3. Model Interpretation and Deployment:

- Explainability: Apply interpretability methods like Integrated Gradients or Grad-CAM to identify which image regions most influenced the model's decision [22].

- Performance Assessment: Evaluate the model on the test set, reporting metrics beyond accuracy, such as the F1-score (especially for imbalanced classes) and the area under the ROC curve (AUC) [24].
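To make the F1-score recommendation in step 3 concrete, the sketch below computes a macro-averaged F1 from predicted and true labels. It is a dependency-free illustration: the function name `macro_f1` and the example labels are assumptions, and AUC is omitted because it requires predicted scores rather than hard labels.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (macro averaging)."""
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Hypothetical labels for four sperm head images.
y_true = ["normal", "normal", "tapered", "amorphous"]
y_pred = ["normal", "tapered", "tapered", "amorphous"]
score = macro_f1(y_true, y_pred)
```

Macro averaging gives each morphology class equal weight, so a rare class that is classified poorly lowers the score as much as a common one would.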

Protocol 3: Contrastive Meta-Learning for Generalized Morphology Classification (HSHM-CMA)

This advanced protocol addresses the challenge of generalizing across different domains (e.g., labs, staining methods) by learning invariant features.

1. Task Formation for Meta-Learning:

- Construct a set of tasks from your source datasets. In the context of meta-learning, each task is a small classification problem (e.g., a "5-way, 5-shot" learning problem). This simulates the real-world scenario of learning new morphology categories from limited examples.

2. HSHM-CMA Algorithm Execution:

- The HSHM-CMA algorithm integrates contrastive learning into the outer loop of the meta-learning process [17].

- Inner Loop: For each task, the model performs a few steps of learning (adaptation) on the small support set.

- Outer Loop (with Contrastive Learning): The model is updated based on its performance across all tasks. The integration of localized contrastive learning in this phase helps the model learn to pull representations of similar morphologies closer together and push dissimilar ones apart, regardless of the domain-specific variations (e.g., stain color intensity) [17]. This enhances the model's ability to learn invariant features.

3. Evaluation of Generalization:

- The model's performance should be evaluated under three rigorous testing objectives [17]: (i) same dataset, different HSHM categories; (ii) different datasets, same HSHM categories; and (iii) different datasets, different HSHM categories.

- The HSHM-CMA model has been shown to achieve accuracies of 65.83%, 81.42%, and 60.13%, respectively, under these objectives, outperforming standard meta-learning approaches [17].
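The inner-loop and outer-loop structure described in step 2 can be illustrated with a deliberately small toy. The sketch below is not the HSHM-CMA implementation: it assumes a first-order MAML-style update, a scalar model y = w * x, and synthetic regression tasks, and it omits the contrastive term entirely. All names (`grad_mse`, `fomaml_step`) are hypothetical.

```python
def grad_mse(w, data):
    """Gradient of the mean squared error for the scalar model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)

def fomaml_step(w, tasks, inner_lr=0.05, outer_lr=0.1, inner_steps=1):
    """One first-order meta-update over a batch of (support, query) tasks."""
    meta_grad = 0.0
    for support, query in tasks:
        w_task = w
        # Inner loop: adapt a copy of the shared weight on the support set.
        for _ in range(inner_steps):
            w_task -= inner_lr * grad_mse(w_task, support)
        # First-order approximation: use the query gradient at the adapted weight.
        meta_grad += grad_mse(w_task, query)
    # Outer loop: move the shared initialization using the averaged gradient.
    return w - outer_lr * meta_grad / len(tasks)

# Two toy tasks with true slopes 1.0 and 3.0 (support and query coincide here).
xs = [1.0, 2.0]
tasks = [([(x, a * x) for x in xs], [(x, a * x) for x in xs]) for a in (1.0, 3.0)]
w = 0.0
for _ in range(100):
    w = fomaml_step(w, tasks)
```

In this symmetric toy the shared initialization settles midway between the two task slopes, which is the point from which one adaptation step reaches either task most easily.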

Visualization of Workflows

The following diagrams illustrate the logical relationships and experimental workflows for the key methodologies discussed.

Diagram 1: A high-level comparison of Conventional ML versus Deep Learning workflows for HSHM analysis.

Diagram 2: The workflow for Contrastive Meta-Learning (HSHM-CMA), designed for generalization.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Computational Sperm Morphology Research.

| Item Name | Function / Explanation |

|---|---|

| Standardized Staining Kits (e.g., Diff-Quik, Papanicolaou) | Provides consistent cytological staining for sperm head morphology, which is crucial for both manual assessment and creating uniform datasets for computational analysis [7]. |

| High-Resolution Microscope & Digital Camera | Enables the acquisition of high-quality digital images of spermatozoa, which serve as the primary input data for all computational models. |

| Annotated HSHM Datasets | Collections of sperm images labeled by expert embryologists. These are the fundamental resource for training supervised machine learning and deep learning models. |

| Pre-trained Deep Learning Models (e.g., on ImageNet) | Models like ResNet or EfficientNet provide a powerful starting point for transfer learning, significantly reducing the data and computational resources required to train an accurate HSHM classifier. |

| Contrastive Meta-Learning Framework (HSHM-CMA) | An advanced algorithmic solution that enhances model generalization across different clinical settings and datasets by learning invariant features [17]. |

| Integrated Gradients / Grad-CAM | Explainability tools that help researchers understand and trust model predictions by visualizing the image features that were most influential in the classification decision [22]. |

Theoretical Foundations

Contrastive Learning Principles

Contrastive learning is a machine learning paradigm in which unlabeled data points are compared against each other to teach a model which points are similar and which are different. The fundamental principle is to contrast samples so that those belonging to the same distribution are pulled toward each other in the embedding space, while those belonging to different distributions are pushed apart [25]. This approach has revolutionized computer vision by enabling models to learn rich representations from unlabeled data that generalize well to diverse vision tasks [26].

The basic framework consists of selecting a data sample called an "anchor," a data point belonging to the same distribution as the anchor called a "positive sample," and another data point belonging to a different distribution called a "negative sample." The model then tries to minimize the distance between the anchor and positive samples in the latent space while simultaneously maximizing the distance between the anchor and negative samples [25]. This process mimics how humans learn about the world by comparing and contrasting similar and different examples.
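The anchor, positive, and negative roles described above can be made concrete with a small triplet-loss sketch. The embeddings are made-up 2-D vectors, and the Euclidean distance and margin of 1.0 are illustrative assumptions rather than values taken from the cited work.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the negative is at least `margin` farther away than the positive."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)

anchor = [0.0, 0.0]
near = [0.0, 1.0]   # distance 1 from the anchor
far = [3.0, 4.0]    # distance 5 from the anchor

good = triplet_loss(anchor, positive=near, negative=far)  # well-separated triplet
bad = triplet_loss(anchor, positive=far, negative=near)   # roles swapped
```

The loss is zero for the well-separated triplet and positive when the roles are swapped, so gradient updates only act on triplets that still violate the margin.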

Meta-Learning Fundamentals

Meta-learning, often described as "learning to learn," enables learning systems to adapt quickly to new tasks with limited data, similar to human learning capabilities [27] [28]. Different meta-learning approaches operate under the mini-batch episodic training framework, which naturally provides information about task identity that can serve as additional supervision for meta-training to improve generalizability [27].

The core objective of meta-learning is to train models on a distribution of tasks such that they can rapidly adapt to new tasks from the same distribution with only a few examples. This paradigm is particularly valuable in domains where labeled data is scarce or expensive to obtain, such as medical imaging and computational biology [17].

Integration: Contrastive Meta-Learning

The integration of contrastive learning with meta-learning creates a powerful framework that enhances model generalization capabilities. Contrastive meta-learning extends contrastive learning from the representation space in unsupervised learning to the model space in meta-learning [28]. By leveraging task identity as an additional supervision signal during meta-training, this approach contrasts the outputs of the meta-learner in the model space, minimizing inner-task distance (between models trained on different subsets of the same task) and maximizing inter-task distance (between models from different tasks) [28].

This integration has demonstrated significant improvements across diverse few-shot learning tasks and can be applied to optimization-based, metric-based, and amortization-based meta-learning algorithms, as well as in-context learning [28].

Quantitative Performance Comparison

Table 1: Performance Comparison of Contrastive Meta-Learning Models in Sperm Morphology Classification

| Model/Approach | Testing Objective | Accuracy (%) | Key Innovation |

|---|---|---|---|

| HSHM-CMA | Same dataset, different HSHM categories | 65.83 | Separates meta-training tasks into primary and auxiliary tasks |

| HSHM-CMA | Different datasets, same HSHM categories | 81.42 | Integrates localized contrastive learning in outer loop of meta-learning |

| HSHM-CMA | Different datasets, different HSHM categories | 60.13 | Uses contrastive learning to exploit invariant features across domains |

| Traditional Computer-Assisted Analysis | Normal vs. abnormal sperm classification | 95.00 | Linear discriminant analysis with eight parameters [29] |

| Traditional Computer-Assisted Analysis | 10-shape classification | 86.00 | Jackknifed classification procedure [29] |

Table 2: Contrastive Learning Objective Functions and Their Applications

| Loss Function | Mathematical Formulation | Key Characteristics | Application Context |

|---|---|---|---|

| Max Margin Contrastive Loss | \( L = (1-y)\frac{1}{2}(d_\theta)^2 + y\frac{1}{2}\{\max(0, \epsilon - d_\theta)\}^2 \) | Maximizes distance between different distributions, minimizes between similar ones | One of the oldest loss functions in contrastive learning literature [25] |

| Triplet Loss | \( L = \max(0, d(s_a, s_+) - d(s_a, s_-) + \epsilon) \) | Uses anchor, positive, and negative samples simultaneously; requires difficult negative samples | Effective when negative samples are carefully chosen (e.g., raccoons vs. ringtails) [25] |

| N-pair Loss | \( L = -\log\frac{\exp(s_i^T s_+)}{\exp(s_i^T s_+) + \sum_{j=1}^{N-1} \exp(s_i^T s_j^-)} \) | Extends triplet loss with multiple negative samples | Creates more challenging comparison scenarios [25] |

| NT-Xent Loss | \( L = -\log\frac{\exp(\text{sim}(z_i,z_j)/\tau)}{\sum_{k=1}^{2N} 1_{[k\neq i]}\exp(\text{sim}(z_i,z_k)/\tau)} \) | Modification of N-pair loss with temperature parameter | Uses cosine similarity function [25] |
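As an illustration of the NT-Xent entry in the table, the sketch below evaluates the loss on a tiny batch using only the standard library. It assumes that embeddings are stored so that positions 2k and 2k+1 hold two augmented views of the same image; that layout, the temperature of 0.5, and the function names are assumptions made for the example.

```python
import math

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def nt_xent(z, tau=0.5):
    """Mean NT-Xent loss over all 2N embeddings in z."""
    n = len(z)
    total = 0.0
    for i in range(n):
        j = i ^ 1  # partner view: 0<->1, 2<->3, ...
        # The denominator runs over every embedding except i itself.
        denom = sum(math.exp(cosine(z[i], z[k]) / tau) for k in range(n) if k != i)
        total += -math.log(math.exp(cosine(z[i], z[j]) / tau) / denom)
    return total / n

# Views of the same image are adjacent and similar: low loss.
aligned = [[1.0, 0.0], [1.0, 0.01], [0.0, 1.0], [0.01, 1.0]]
# Same vectors, but each "pair" now mixes two different images: high loss.
misaligned = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.01], [0.01, 1.0]]
loss_aligned = nt_xent(aligned)
loss_misaligned = nt_xent(misaligned)
```

Lowering `tau` sharpens the softmax, so hard negatives dominate the denominator more strongly.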

Experimental Protocols in Sperm Morphology Research

Contrastive Meta-Learning with Auxiliary Tasks (HSHM-CMA) Protocol

Objective: To classify human sperm head morphology (HSHM) with improved cross-domain generalizability by learning invariant features across tasks [17].

Materials and Reagents:

- Stained semen smears (Feulgen reaction recommended) [29]

- Microscopy equipment with high numerical aperture (NA = 1.3 recommended) [29]

- Image analysis system capable of 0.125-μm sampling intervals [29]

Procedure:

Data Preparation:

- Collect semen samples from donors following ethical guidelines

- Prepare stained smears using standardized staining protocols

- Select prototypic examples of morphology classes for training

Feature Extraction:

- Acquire sperm head images through microscope

- Measure parameters including stain content, length, width, perimeter, area

- Calculate arithmetically derived combinations of measurements

- Perform optical sectioning at right angles to major axis for shape heterogeneity assessment

Model Architecture:

- Implement meta-learning framework with separate primary and auxiliary tasks

- Integrate localized contrastive learning in the outer loop of meta-learning

- Design network to learn invariant sperm morphology features across domains

Training Protocol:

- Train model using episodic training strategy

- Apply contrastive meta-objective to minimize inner-task distance and maximize inter-task distance

- Use task identity as additional supervision signal

Evaluation:

- Assess generalization performance using three testing objectives:

- Same dataset with different HSHM categories

- Different datasets with same HSHM categories

- Different datasets with different HSHM categories

- Compare against baseline meta-learning approaches

Stain-Free Sperm Morphology Measurement Protocol

Objective: To provide automated, accurate, and non-invasive multi-sperm morphology assessment without staining procedures [30].

Materials:

- Phase-contrast or differential interference contrast microscopy

- Computer vision system with multi-scale part parsing network

- Measurement accuracy enhancement algorithms

Procedure:

Sample Preparation:

- Use native semen samples without staining or fixation

- Ensure sperm remain motile for physiological assessment

Image Acquisition:

- Capture images under 20× magnification to prevent sperm swimming out of view

- Acquire multiple frames for potential fusion approaches

Multi-Target Instance Parsing:

- Implement multi-scale part parsing network integrating semantic and instance segmentation

- Create masks for accurate sperm localization (instance segmentation branch)

- Provide detailed segmentation of sperm parts (semantic segmentation branch)

- Fuse outputs from both branches for comprehensive parsing

Measurement Accuracy Enhancement:

- Apply interquartile range (IQR) method to exclude outliers

- Implement Gaussian filtering to smooth data

- Use robust correction techniques to extract maximum morphological features

- Address blurred boundaries and loss of details in low-resolution images
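The outlier-exclusion and smoothing steps listed above can be sketched in a few lines. The example assumes the input is a sequence of repeated measurements of one quantity (for instance, head length in micrometres across frames). That input format, the conventional 1.5 × IQR fence, and the kernel width are assumptions, not details taken from [30].

```python
import math
import statistics

def iqr_filter(values, k=1.5):
    """Keep values inside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lo, hi = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if lo <= v <= hi]

def gaussian_smooth(values, sigma=1.0):
    """1-D Gaussian smoothing with weights renormalized at the sequence edges."""
    radius = max(1, int(3 * sigma))
    offsets = range(-radius, radius + 1)
    kernel = [math.exp(-(o * o) / (2 * sigma * sigma)) for o in offsets]
    out = []
    for c in range(len(values)):
        num = den = 0.0
        for o, w in zip(offsets, kernel):
            j = c + o
            if 0 <= j < len(values):
                num += w * values[j]
                den += w
        out.append(num / den)
    return out

# Hypothetical head-length measurements with one segmentation failure (9.9).
lengths = [4.1, 4.3, 4.2, 4.4, 4.0, 4.2, 9.9, 4.3]
kept = iqr_filter(lengths)
smoothed = gaussian_smooth(kept)
```

Filtering before smoothing matters: a Gaussian kernel would otherwise spread the outlier's influence into its neighbours.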

Morphological Parameter Extraction:

- Measure head dimensions (length, width, area, perimeter)

- Assess midpiece characteristics

- Evaluate tail length and morphology

- Calculate derived parameters (ellipticity, elongation, etc.)

Visualization of Methodologies

Contrastive Meta-Learning Workflow

Sperm Morphology Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Research

| Item | Function | Application Context |

|---|---|---|

| Feulgen Stain | DNA-specific staining for sperm head visualization | Traditional stained sperm morphology analysis [29] |

| Phase-Contrast Microscopy | Enables observation of unstained sperm cells | Stain-free sperm morphology assessment [30] |

| Multi-Scale Part Parsing Network | Enables instance-level parsing of sperm components | Automated sperm morphology measurement [30] |

| Gaussian Filtering Algorithms | Reduces noise in morphological measurements | Measurement accuracy enhancement in stain-free approaches [30] |

| Interquartile Range (IQR) Method | Statistical approach for outlier exclusion | Data quality control in automated analysis [30] |

| Contrastive Meta-Learning Framework (HSHM-CMA) | Improves cross-domain generalization | Sperm head morphology classification across datasets [17] |

| Episodic Training Framework | Mimics few-shot learning scenario | Meta-learning for rapid adaptation to new morphology categories [27] |

Implementation Considerations

Data Augmentation Strategies for Contrastive Learning

Effective contrastive learning relies heavily on appropriate data augmentation techniques to generate positive and negative sample pairs. For sperm morphology analysis, recommended augmentations include [25]:

- Color Jittering: Modifying brightness, contrast, and saturation to ensure models focus on morphological features rather than color variations

- Image Rotation: Applying random rotations within 0-90 degrees to build rotation invariance

- Image Noising: Adding pixel-wise random noise to enhance model robustness to image quality variations

- Random Affine: Implementing geometric transformations that preserve lines and parallelism while altering perspectives
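A minimal sketch of how two augmented views of one image might be generated to form a positive pair is given below. It works on grayscale images stored as nested lists, restricts rotation to multiples of 90 degrees for simplicity, and omits affine transforms. These simplifications, the jitter range, and the noise level are assumptions chosen for illustration.

```python
import random

def rot90(img):
    """Rotate a 2-D list-of-lists image by 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def clip(p):
    """Round and clamp a pixel value to the 8-bit range."""
    return min(255, max(0, round(p)))

def jitter_brightness(img, factor):
    """Scale every pixel by a brightness factor."""
    return [[clip(p * factor) for p in row] for row in img]

def add_noise(img, sigma, rng):
    """Add pixel-wise Gaussian noise."""
    return [[clip(p + rng.gauss(0, sigma)) for p in row] for row in img]

def two_views(img, rng):
    """Return two independently augmented views of the same image."""
    views = []
    for _ in range(2):
        view = img
        for _k in range(rng.randrange(4)):  # 0 to 3 quarter turns
            view = rot90(view)
        view = jitter_brightness(view, rng.uniform(0.8, 1.2))
        view = add_noise(view, sigma=2.0, rng=rng)
        views.append(view)
    return views

image = [[10, 200, 30], [40, 50, 60], [70, 80, 250]]
view_a, view_b = two_views(image, random.Random(0))
```

Views derived from the same source image form a positive pair, while views of different images in the batch serve as negatives.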

Evaluation Metrics for Morphology Classification

Comprehensive evaluation of sperm morphology classification systems should incorporate multiple metrics beyond accuracy:

- Cross-Dataset Generalization: Performance consistency across different datasets and acquisition conditions

- Class Imbalance Handling: Effectiveness in dealing with rare morphology categories

- Clinical Correlation: Agreement with expert embryologist assessments and clinical outcomes

- Computational Efficiency: Inference speed for potential real-time clinical applications

The integration of contrastive learning with meta-learning paradigms represents a significant advancement in computational sperm morphology analysis, offering improved generalization capabilities and reduced dependency on large annotated datasets. These approaches hold particular promise for clinical applications where staining procedures may damage sperm viability and where expert annotations are scarce and expensive to obtain.

Implementing Contrastive Meta-Learning with Auxiliary Tasks for Sperm Classification

Contrastive Meta-Learning (ConML) represents an advanced machine learning paradigm that enhances the ability of learning systems to rapidly adapt to new tasks with limited data. This framework is particularly valuable in specialized biomedical domains, such as sperm head morphology research, where labeled data is scarce and classification tasks require robust, generalizable models [17]. By integrating principles from meta-learning and contrastive learning, ConML equips models with improved alignment and discrimination capabilities, mirroring human cognitive learning processes [18].

The core innovation of ConML lies in its extension of contrastive learning from traditional representation space to the model space of meta-learning. This approach leverages task identity as intrinsic supervisory information during meta-training, enabling the learning system to minimize intra-task variations while maximizing inter-task distinctions [18] [28]. This architecture overview details the fundamental components, experimental protocols, and practical implementations of ConML frameworks, with specific application to sperm head morphology classification challenges.

Theoretical Framework and Core Components

Foundation of Meta-Learning

Meta-learning, or "learning to learn," operates on the principle of training a model across a distribution of related tasks to acquire transferable knowledge that enables rapid adaptation to novel tasks. Formally, a meta-learner \( g(\mathcal{D}; \theta) \) maps a dataset \( \mathcal{D} \) to a model \( h \), with the objective of minimizing the expected loss on unseen tasks sampled from the task distribution \( p(\tau) \) [18]. The standard episodic training framework divides each task into support (training) and query (validation) sets, simulating the few-shot learning scenario encountered during meta-testing [18].
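Under this notation, the episodic meta-training objective can be written compactly as below. This is the standard formulation rather than a quotation from [18]. The per-task loss symbol \( \ell \) is an assumption introduced here, and \( \mathcal{D}^{tr}_{\tau} \) and \( \mathcal{D}^{val}_{\tau} \) denote the support and query sets of task \( \tau \).

\[
\min_{\theta} \; \mathbb{E}_{\tau \sim p(\tau)} \Big[ \ell\big( \mathcal{D}^{val}_{\tau};\; g(\mathcal{D}^{tr}_{\tau}; \theta) \big) \Big]
\]

The model \( h = g(\mathcal{D}^{tr}_{\tau}; \theta) \) is produced from the support set alone and is then scored on the query set, so the objective rewards meta-parameters \( \theta \) that adapt well, not ones that merely fit the support data.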

Integration of Contrastive Learning

The ConML framework introduces a contrastive meta-objective that operates alongside conventional meta-learning objectives. This component is designed to enhance the meta-learner's alignment and discrimination abilities:

- Alignment: Encourages the meta-learner to produce similar model representations when presented with different subsets of the same task, promoting robustness to data variations and noise [18].

- Discrimination: Encourages the meta-learner to produce dissimilar model representations for different tasks, even when input similarities exist, enhancing task-specific specialization [18].

This is achieved through a contrastive loss function that treats different subsets of the same task as positive pairs and datasets from different tasks as negative pairs, effectively minimizing within-task distance while maximizing between-task distance in the model representation space [18] [28].

Universal Applicability

A key advantage of the ConML framework is its learner-agnostic design, enabling integration with diverse meta-learning approaches:

- Optimization-based methods: Models like MAML that learn optimal parameter initializations [18]

- Metric-based methods: Approaches like Prototypical Networks that leverage learned similarity metrics [18]

- Amortization-based methods: Models that amortize the inference of task-specific parameters [18]

- In-context learning: Emerging capabilities in large language models [18]
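To illustrate the metric-based family listed above, the sketch below implements the core classification rule of a Prototypical Network: each class is represented by the mean of its support embeddings, and a query is assigned to the nearest prototype. The embeddings are hand-written 2-D vectors standing in for the output of a trained encoder, which is assumed and not shown.

```python
import math

def prototypes(support):
    """support: list of (embedding, label) pairs. Returns {label: mean embedding}."""
    sums, counts = {}, {}
    for emb, lab in support:
        acc = sums.setdefault(lab, [0.0] * len(emb))
        for i, x in enumerate(emb):
            acc[i] += x
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [x / counts[lab] for x in acc] for lab, acc in sums.items()}

def classify(query_emb, protos):
    """Assign the label of the nearest prototype (Euclidean distance)."""
    return min(protos, key=lambda lab: math.dist(query_emb, protos[lab]))

# Hypothetical 2-shot support set for two morphology classes.
support = [
    ([0.0, 0.0], "normal"), ([0.0, 2.0], "normal"),
    ([10.0, 10.0], "tapered"), ([12.0, 10.0], "tapered"),
]
protos = prototypes(support)
label_a = classify([1.0, 1.0], protos)
label_b = classify([9.0, 9.0], protos)
```

Because adaptation here is just averaging, no gradient steps are needed at test time, which is one reason metric-based learners are convenient in few-shot settings.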

ConML Implementation for Sperm Head Morphology Classification

Problem Context and Challenges

Human sperm head morphology (HSHM) classification presents significant challenges for conventional deep learning approaches due to limited annotated datasets, substantial biological variability, and critical requirements for cross-domain generalizability in clinical settings [17]. The Contrastive Meta-Learning with Auxiliary Tasks (HSHM-CMA) algorithm has been specifically developed to address these challenges by learning invariant features across tasks and efficiently transferring knowledge to new classification problems [17].

Architectural Framework

The HSHM-CMA framework incorporates several innovative components to enhance classification performance:

- Localized contrastive learning: Integrated into the outer loop of meta-learning to exploit invariant sperm morphology features across domains [17]

- Auxiliary task separation: Mitigates gradient conflicts in multi-task learning by separating meta-training tasks into primary and auxiliary categories [17]

- Multi-scale feature extraction: Captures both cellular and sub-cellular morphological characteristics

The following diagram illustrates the core workflow of the HSHM-CMA framework:

Diagram 1: HSHM-CMA workflow illustrating the interaction between task distribution, meta-learner, and contrastive module.

Experimental Validation and Performance Metrics

The HSHM-CMA framework has been rigorously evaluated across multiple testing objectives to assess generalization capabilities:

- Same dataset, different HSHM categories: Testing the model's ability to discriminate between morphological classes within a consistent data distribution [17]

- Different datasets, same HSHM categories: Evaluating cross-dataset robustness when classifying familiar morphological patterns [17]

- Different datasets, different HSHM categories: Assessing the model's capacity to generalize to entirely novel data distributions and classification tasks [17]

Table 1: Performance evaluation of HSHM-CMA across different testing objectives

| Testing Objective | Dataset Conditions | Morphology Categories | Accuracy (%) |

|---|---|---|---|

| Same dataset, different HSHM categories | Consistent dataset | Varied morphological classes | 65.83 |

| Different datasets, same HSHM categories | Multiple datasets | Consistent class definitions | 81.42 |

| Different datasets, different HSHM categories | Multiple datasets | Novel morphological classes | 60.13 |

Detailed Experimental Protocols

Meta-Training Procedure

The meta-training phase follows an episodic training paradigm with integrated contrastive learning:

- Task Sampling: For each episode, sample a batch of \( B \) tasks from the task distribution \( p(\tau) \) [18]

- Task Splitting: For each task \( \tau_i \), split the dataset into support set \( \mathcal{D}^{tr}_{\tau_i} \) and query set \( \mathcal{D}^{val}_{\tau_i} \) [18]

- Inner Loop Adaptation: For each task, compute adapted parameters using the support set through gradient descent or closed-form solution

- Contrastive Objective Calculation:

- Generate positive pairs from different subsets of the same task

- Generate negative pairs from different tasks

- Compute contrastive loss based on model representation distances [18]

- Outer Loop Optimization: Update meta-parameters by combining conventional meta-loss and contrastive meta-objective
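The contrastive objective and its combination with the conventional meta-loss can be sketched as follows. The sketch assumes each adapted model is summarized by a vector (its "model representation"), uses cosine distance, and forms the contrastive term as mean within-task distance minus mean between-task distance. The exact distance, representation, and weighting used in [18] may differ, and all names are hypothetical.

```python
import math
from itertools import combinations

def cosine_distance(u, v):
    """1 - cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm

def contrastive_meta_objective(reps_by_task):
    """reps_by_task: {task_id: [representation of a model trained on one subset, ...]}."""
    # Positive pairs: models from different subsets of the same task.
    inner = [cosine_distance(a, b)
             for reps in reps_by_task.values()
             for a, b in combinations(reps, 2)]
    # Negative pairs: models from different tasks.
    inter = [cosine_distance(a, b)
             for (_, r1), (_, r2) in combinations(reps_by_task.items(), 2)
             for a in r1 for b in r2]
    return sum(inner) / len(inner) - sum(inter) / len(inter)

def outer_loss(meta_loss, reps_by_task, weight=0.3):
    """Conventional meta-loss plus the weighted contrastive term."""
    return meta_loss + weight * contrastive_meta_objective(reps_by_task)

# Models from the same task agree; models from different tasks differ.
consistent = {"A": [[1.0, 0.0], [0.9, 0.1]], "B": [[0.0, 1.0], [0.1, 0.9]]}
# Models from the same task disagree: the term becomes positive (penalized).
inconsistent = {"A": [[1.0, 0.0], [0.0, 1.0]], "B": [[0.9, 0.1], [0.1, 0.9]]}
good = contrastive_meta_objective(consistent)
bad = contrastive_meta_objective(inconsistent)
```

Minimizing this term pushes the meta-learner toward producing similar models for subsets of one task (alignment) and distinct models for different tasks (discrimination).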

The following diagram details the contrastive meta-learning process:

Diagram 2: Contrastive meta-learning process showing parallel computation of task loss and contrastive loss.

Sperm Head Morphology Classification Protocol

For HSHM classification, the following specialized protocol should be implemented:

Data Preprocessing and Augmentation

- Image normalization and standardization

- Data augmentation techniques (rotation, flipping, color jittering)

- Semantic consistency preservation during augmentation

Task Construction for Meta-Learning

- Define each task as an N-way K-shot classification problem

- Balance task difficulty across episodes

- Ensure representative sampling of morphological classes
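Task construction along these lines can be sketched as an episode sampler. The function below draws one N-way K-shot episode from a list of class labels and relabels the chosen classes 0 to N-1 within the episode. The function name, the query-set size, and the integer class labels in the example are assumptions made for illustration.

```python
import random

def sample_episode(labels, n_way=5, k_shot=5, n_query=5, rng=None):
    """Return (support, query) lists of (image_index, episode_label) pairs."""
    rng = rng or random.Random()
    by_class = {}
    for idx, lab in enumerate(labels):
        by_class.setdefault(lab, []).append(idx)
    # Only classes with enough images for both support and query are eligible.
    eligible = sorted(c for c, idxs in by_class.items() if len(idxs) >= k_shot + n_query)
    if len(eligible) < n_way:
        raise ValueError("not enough classes with sufficient examples")
    support, query = [], []
    for episode_label, c in enumerate(rng.sample(eligible, n_way)):
        picked = rng.sample(by_class[c], k_shot + n_query)
        support += [(i, episode_label) for i in picked[:k_shot]]
        query += [(i, episode_label) for i in picked[k_shot:]]
    return support, query

# Hypothetical dataset: 8 morphology classes with 12 images each.
labels = [c for c in range(8) for _ in range(12)]
support, query = sample_episode(labels, rng=random.Random(0))
```

Drawing support and query images from a single sample without replacement guarantees the two sets never share an image within an episode.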

Model Training with HSHM-CMA

- Implement localized contrastive learning in outer loop

- Separate primary and auxiliary tasks to mitigate gradient conflicts

- Utilize diverse HSHM datasets for comprehensive meta-training [17]

Evaluation Protocol

- Assess generalization using the three testing objectives outlined in Table 1

- Compare against baseline meta-learning approaches

- Perform statistical significance testing on results

Implementation Details

Table 2: Key hyperparameters for ConML implementation in HSHM classification

| Hyperparameter | Recommended Value | Description |

|---|---|---|

| Meta-batch size | 4-8 tasks | Number of tasks per training episode |

| Inner loop learning rate | 0.01-0.1 | Learning rate for task-specific adaptation |

| Outer loop learning rate | 0.001-0.01 | Learning rate for meta-parameter updates |

| Contrastive loss weight | 0.1-0.5 | Weighting factor for contrastive objective |

| Support samples per class | 1-5 | Number of examples in support set (few-shot setting) |

| Query samples per class | 10-15 | Number of examples in query set |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents and computational resources for contrastive meta-learning experiments

| Resource | Type | Function/Purpose |

|---|---|---|

| HSHM Datasets | Data | Multiple datasets of human sperm head images for model training and evaluation [17] |

| ODAM Framework | Software Tool | Facilitates FAIR-compliant data management and structural metadata organization [31] |

| Contrastive Meta-Learning Code | Algorithm | Implements task-level contrastive learning for model space alignment and discrimination [18] |

| Computational Resources | Infrastructure | GPU clusters for efficient meta-training across multiple tasks and episodes |

| Data Augmentation Pipeline | Preprocessing | Generates varied task instances while preserving semantic content for contrastive learning |

The ConML framework represents a significant advancement in meta-learning methodology by incorporating task-level contrastive objectives to enhance model generalization capabilities. For sperm head morphology research, this approach enables the development of robust classification systems that maintain performance across diverse datasets and morphological categories. The HSHM-CMA algorithm demonstrates the practical efficacy of this framework, achieving state-of-the-art performance in cross-domain generalization tasks. As contrastive meta-learning continues to evolve, it holds substantial promise for addressing critical challenges in biomedical image analysis and other data-scarce scientific domains.

Auxiliary Task Integration for Enhanced Feature Representation

The morphological analysis of human sperm heads represents a critical diagnostic procedure in male infertility assessment. Traditional classification methods often suffer from limited generalizability across diverse clinical datasets and imaging conditions. This protocol details the integration of auxiliary tasks within a contrastive meta-learning framework to enhance feature representation for improved generalization in sperm head morphology classification. The HSHM-CMA (Human Sperm Head Morphology - Contrastive Meta-learning with Auxiliary Tasks) algorithm addresses gradient conflicts in multi-task learning by strategically separating meta-training tasks into primary and auxiliary objectives, enabling the learning of domain-invariant features that significantly improve cross-domain classification performance [17].

Key Concepts and Theoretical Framework

Auxiliary Tasks in Machine Learning

Auxiliary tasks are secondary learning objectives processed alongside a primary task to induce better data representations and improve data efficiency. These tasks provide additional learning signals that encourage models to develop more general and useful feature representations, which subsequently enhance performance on the primary objective. In medical imaging contexts, properly designed auxiliary tasks force the model to focus on biologically relevant features rather than dataset-specific artifacts [32].

Contrastive Meta-Learning Fundamentals

Meta-learning, or "learning to learn," creates models that can rapidly adapt to new tasks with minimal data. The HSHM-CMA framework enhances conventional meta-learning through localized contrastive learning in the outer loop of meta-optimization, exploiting invariant morphological features across domains to improve task convergence and adaptation to novel sperm morphology categories [17] [18].

Experimental Performance Data

The HSHM-CMA algorithm was rigorously evaluated under three testing scenarios representing realistic clinical challenges. The following table summarizes its performance compared to existing meta-learning approaches:

Table 1: Performance Evaluation of HSHM-CMA Algorithm Across Testing Scenarios

| Testing Objective | Description | HSHM-CMA Accuracy | Performance Advantage |

|---|---|---|---|

| Same dataset, different HSHM categories | Evaluates fine-grained discrimination within consistent data source | 65.83% | Significant improvement over baseline meta-learning methods |

| Different datasets, same HSHM categories | Tests cross-domain generalization with consistent classification schema | 81.42% | Enhanced domain invariance and representation learning |

| Different datasets, different HSHM categories | Most challenging scenario assessing full generalization capability | 60.13% | Superior adaptation to novel domains and categories |

The reported performance across these evaluation scenarios, particularly the 81.42% accuracy in cross-domain classification with consistent morphology categories, suggests that auxiliary task integration improves the robustness of the learned feature representation for sperm morphology analysis [17].

Implementation Protocols

HSHM-CMA Architecture Specification

The Contrastive Meta-Learning with Auxiliary Tasks algorithm implements a specialized bi-level optimization structure:

Primary Task Formulation:

- Input: Sperm head images with morphological classifications

- Output: Probability distribution over morphology categories

- Loss Function: Cross-entropy for classification tasks

Auxiliary Task Selection:

- Morphometric Prediction: Continuous regression of head dimensions (area, perimeter, ellipticity)

- Spatial Relationship Modeling: Relative positioning of acrosomal and post-acrosomal regions

- Data Augmentation Identification: Self-supervised task to recognize applied transformations

- Domain Discriminator: Adversarial task to learn domain-invariant features [17]

Table 2: Research Reagent Solutions for Sperm Morphology Analysis

| Reagent/Equipment | Specification | Function in Experimental Protocol |

|---|---|---|

| CASA-Morph System | Computer-Assisted Sperm Analysis | Automated morphometric analysis of sperm head parameters |

| Fluorescence Microscope | Epifluorescence with 63× plan apochromatic objective | High-resolution imaging of sperm nuclei |

| Nuclear Stain | Hoechst 33342 (20 μg ml⁻¹ in TRIS-based solution) | Fluorescent labeling of sperm DNA for consistent morphometry |

| Image Analysis Software | ImageJ with custom plugin | Automated measurement of primary and derived morphometric parameters |

| Fixative Solution | 2% (v/v) glutaraldehyde in PBS | Sample preservation and morphological stabilization |

Workflow Visualization

Auxiliary Task Integration Protocol

Step 1: Primary-Auxiliary Task Segregation

- Separate meta-training tasks into distinct primary and auxiliary objectives

- Implement gradient conflict mitigation through task-specific weighting

- Balance influence of auxiliary tasks using MetaBalance-inspired adaptation [32] [17]
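One way to picture the balancing in Step 1 is a magnitude-rescaling rule in which an auxiliary gradient is blended with a copy of itself rescaled to the primary gradient's norm. The sketch below is a simplified rule loosely in the spirit of MetaBalance. It is an assumption for illustration: it omits the moving averages of gradient magnitudes, and the exact rule used in [32] or in HSHM-CMA [17] may differ.

```python
import math

def l2_norm(g):
    """Euclidean norm of a gradient given as a flat list of floats."""
    return math.sqrt(sum(x * x for x in g))

def balance_auxiliary(primary_grad, aux_grad, relax=0.7):
    """Blend aux_grad with a copy rescaled to the primary gradient's magnitude.

    relax = 1.0 fully matches the primary magnitude; relax = 0.0 leaves the
    auxiliary gradient unchanged. The direction is never altered.
    """
    aux_norm = l2_norm(aux_grad)
    if aux_norm == 0.0:
        return list(aux_grad)
    scale = l2_norm(primary_grad) / aux_norm
    return [relax * scale * g + (1.0 - relax) * g for g in aux_grad]

primary = [3.0, 4.0]   # norm 5
aux = [30.0, 40.0]     # norm 50: would dominate a naive sum
balanced = balance_auxiliary(primary, aux)
combined = [p + a for p, a in zip(primary, balanced)]
```

With `relax=0.7` the auxiliary norm in this example falls from 50 to 18.5, so the auxiliary task still contributes but no longer swamps the primary gradient.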

Step 2: Contrastive Meta-Learning Loop

- Inner Loop: Rapid task-specific adaptation using both primary and auxiliary objectives

- Outer Loop: Localized contrastive learning across task representations

- Meta-Optimization: Learn parameters that maximize performance across task distribution [18]
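The inner/outer structure of Step 2 can be illustrated with a toy first-order meta-learning loop. This is a sketch under strong assumptions: it uses a linear regression model instead of an image network, a first-order approximation of the meta-gradient, and synthetic tasks from a hypothetical `make_toy_tasks` helper. The contrastive term across task representations is omitted here for brevity.

```python
import numpy as np

def loss_and_grad(w, X, y):
    """Mean squared error of a linear model and its gradient w.r.t. w."""
    residual = X @ w - y
    return float(np.mean(residual ** 2)), 2.0 * X.T @ residual / len(y)

def inner_adapt(w, X_support, y_support, inner_lr=0.05, steps=3):
    """Inner loop: a few gradient steps on one task's support set."""
    w_task = w.copy()
    for _ in range(steps):
        _, grad = loss_and_grad(w_task, X_support, y_support)
        w_task -= inner_lr * grad
    return w_task

def meta_train(tasks, dim, outer_lr=0.05, epochs=50):
    """Outer loop: update the shared initialization from query-set gradients."""
    w = np.zeros(dim)
    for _ in range(epochs):
        meta_grad = np.zeros(dim)
        for X_s, y_s, X_q, y_q in tasks:
            w_task = inner_adapt(w, X_s, y_s)
            # First-order approximation: use the query gradient at the
            # adapted weights and ignore second-order terms.
            _, grad_q = loss_and_grad(w_task, X_q, y_q)
            meta_grad += grad_q
        w -= outer_lr * meta_grad / len(tasks)
    return w

def make_toy_tasks(n_tasks=8, n_points=10, seed=0):
    """Hypothetical tasks: linear targets with weights clustered near [1, -2]."""
    rng = np.random.default_rng(seed)
    center = np.array([1.0, -2.0])
    tasks = []
    for _ in range(n_tasks):
        w_true = center + 0.1 * rng.normal(size=2)
        X_s = rng.normal(size=(n_points, 2))
        X_q = rng.normal(size=(n_points, 2))
        tasks.append((X_s, X_s @ w_true, X_q, X_q @ w_true))
    return tasks

w_meta = meta_train(make_toy_tasks(), dim=2)
print(w_meta)  # a shared initialization near the centre of the task family
```

The learned initialization sits close to all tasks at once, which is what allows a few inner-loop steps to suffice on a new task.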

Step 3: Representation Enhancement

- Apply contrastive objectives to minimize intra-class distance while maximizing inter-class separation

- Leverage task identity as supervisory signal for improved alignment and discrimination

- Regularize feature space to emphasize biologically relevant morphological characteristics [17] [18]
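The contrastive objective in Step 3 can be sketched as a supervised contrastive loss, in which embeddings that share a label are pulled together and all others are pushed apart. The following NumPy version is an illustrative assumption about the loss form, not the framework's confirmed implementation; the `labels` argument could hold morphology classes or task identities, and the temperature value is arbitrary.

```python
import numpy as np

def supervised_contrastive_loss(embeddings, labels, temperature=0.1):
    """Supervised contrastive loss over a batch of embeddings.

    Requires at least two samples; anchors with no same-label partner
    are skipped.
    """
    labels = np.asarray(labels)
    z = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sim = z @ z.T / temperature
    n = len(labels)
    self_mask = np.eye(n, dtype=bool)
    sim = np.where(self_mask, -np.inf, sim)   # exclude self-comparisons

    # Log-softmax of each anchor's similarities over all other samples.
    sim_max = sim.max(axis=1, keepdims=True)
    log_denominator = np.log(np.exp(sim - sim_max).sum(axis=1, keepdims=True))
    log_prob = sim - sim_max - log_denominator

    pos_mask = (labels[:, None] == labels[None, :]) & ~self_mask
    pos_counts = pos_mask.sum(axis=1)
    valid = pos_counts > 0
    sum_pos = np.where(pos_mask, log_prob, 0.0).sum(axis=1)
    return float(np.mean(-sum_pos[valid] / pos_counts[valid]))

# Two tight clusters: consistent labels give a much lower loss than
# labels that cut across the clusters.
emb = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
print(supervised_contrastive_loss(emb, [0, 0, 1, 1]))
print(supervised_contrastive_loss(emb, [0, 1, 0, 1]))
```

A lower value indicates smaller intra-class distance relative to inter-class distance, which is the property the representation enhancement step aims for.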

Advanced Technical Specifications

Morphometric Analysis Parameters

For comprehensive sperm head characterization, the following morphometric parameters must be extracted using CASA-Morph technology:

Table 3: Essential Morphometric Parameters for Sperm Head Analysis

| Parameter Category | Specific Measurements | Biological Significance |

|---|---|---|

| Primary Parameters | Area (μm²), Perimeter (μm), Length (μm), Width (μm) | Fundamental size descriptors of sperm head |

| Derived Shape Parameters | Ellipticity (L/W), Rugosity (4πA/P²), Elongation ([L-W]/[L+W]) | Quantification of head shape characteristics |

| Nuclear Classification | Small (<10.90 μm²), Intermediate (10.91-13.07 μm²), Large (>13.07 μm²) | Size-based categorization per established clinical standards |

| Shape Categories | Oval, Pyriform, Round, Elongated | Morphological typing based on canonical forms |

These parameters provide the quantitative foundation for both primary classification and auxiliary task formulation, with particular emphasis on derived shape parameters that capture clinically relevant morphological variations [33].
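The derived shape parameters in Table 3 follow directly from the four primary measurements. The sketch below applies the table's formulas as written; the function names are illustrative, and assigning an area of exactly 10.90 μm² to the intermediate class is an assumption, since the table leaves that boundary value unspecified.

```python
import math

def derived_shape_parameters(area, perimeter, length, width):
    """Compute Table 3's derived parameters from primary measurements (μm, μm²)."""
    return {
        "ellipticity": length / width,
        "rugosity": 4.0 * math.pi * area / perimeter ** 2,
        "elongation": (length - width) / (length + width),
    }

def nuclear_size_class(area):
    """Size category by nuclear area (μm²), using the thresholds in Table 3."""
    if area < 10.90:
        return "small"
    if area <= 13.07:
        return "intermediate"   # includes exactly 10.90 by assumption
    return "large"

# Example with hypothetical measurements for one sperm head.
params = derived_shape_parameters(area=12.0, perimeter=13.5, length=5.0, width=3.0)
print(params, nuclear_size_class(12.0))
```

Rugosity equals 1 for a perfect circle and decreases as the outline becomes more irregular or elongated, which makes it a convenient sanity check on extracted contours.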

Application Notes for Clinical Implementation

Cross-Domain Generalization Protocol

For optimal performance across varied clinical settings:

Data Preprocessing Standards:

- Implement consistent staining protocols using Hoechst 33342 for nuclear visualization

- Standardize image acquisition parameters (63× objective, consistent exposure settings)

- Apply identical augmentation strategies (random cropping, color distortion, rotation) across domains

- Establish morphometric calibration using control samples [33]

Validation Framework:

- Employ three-tier evaluation strategy matching the published testing objectives

- Implement confidence thresholding for clinical deployment (minimum 0.85 confidence score)

- Establish continuous performance monitoring with drift detection for sustained reliability [17]
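The confidence thresholding step can be sketched as a simple triage rule: predictions at or above the 0.85 threshold are accepted automatically, and the rest are routed to manual review. The function name, the return format, and the routing labels are illustrative assumptions; drift detection is not shown.

```python
import numpy as np

def triage_predictions(probabilities, class_names, threshold=0.85):
    """Split predictions into auto-accepted and manual-review cases.

    probabilities: array of shape (n_samples, n_classes), rows sum to 1.
    Returns a list of (predicted_class, confidence, route) tuples.
    """
    results = []
    for p in np.asarray(probabilities, dtype=float):
        top = int(np.argmax(p))
        confidence = float(p[top])
        route = "auto" if confidence >= threshold else "manual_review"
        results.append((class_names[top], confidence, route))
    return results

classes = ["oval", "pyriform", "round"]
probs = [[0.90, 0.05, 0.05],   # confident: accepted automatically
         [0.50, 0.30, 0.20]]   # uncertain: sent to an expert
for row in triage_predictions(probs, classes):
    print(row)
```

The fraction of samples routed to manual review is itself a useful monitoring signal, since a sustained rise may indicate a shift in staining or imaging conditions.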

Integration with Existing Clinical Workflows

The HSHM-CMA framework can be incorporated into standard infertility diagnostic pipelines with these adaptations:

Compatibility Requirements:

- CASA-Morph system with fluorescence imaging capability

- Export functionality for raw morphometric parameters

- Minimum dataset of 200 spermatozoa per sample for reliable classification

- Integration with laboratory information systems for patient data correlation [33]

Quality Assurance Measures:

- Regular validation against manual expert classification (minimum 95% concordance)

- Continuous calibration using standardized control samples

- Periodic retraining with institution-specific data to maintain performance

- Cross-validation against clinical outcomes for predictive value assessment [17]

Data Preprocessing and Augmentation Strategies for Limited Datasets

The application of deep learning to sperm head morphology research represents a paradigm shift in male fertility diagnostics, yet it is fundamentally constrained by the scarcity of high-quality, annotated datasets. This challenge is particularly acute in this domain, where manual expert classification is time-consuming, suffers from significant subjectivity, and yields high inter- and intra-laboratory variability [16] [34] [35]. These limitations directly impact the reliability and throughput of morphological analysis. This application note details robust data preprocessing and augmentation protocols, contextualized within a modern contrastive meta-learning framework, to maximize model performance and generalization when labeled data is severely limited. The strategies outlined herein are designed to enable researchers to build more accurate, reliable, and data-efficient diagnostic systems for sperm morphology analysis.

The Data Scarcity Challenge in Sperm Morphology Analysis

The development of automated sperm morphology analysis systems is hindered by several data-related challenges. Manual assessment, the current clinical standard, is laborious, poorly reproducible, and heavily dependent on technician expertise [35]. Furthermore, sperm defect assessment requires simultaneous evaluation of head, vacuole, midpiece, and tail abnormalities, which substantially increases annotation difficulty [34].

Available public datasets, such as the SCIAN and HuSHeM datasets, are often characterized by a limited number of images, high noise levels in low-magnification microscopy, and significant class imbalance [35]. For instance, the SMD/MSS dataset began with only 1,000 individual sperm images before augmentation [16]. These factors collectively contribute to the central problem of data scarcity, leading to model overfitting and poor generalization in real-world clinical settings. Preprocessing and augmentation are therefore not merely performance enhancements but essential prerequisites for developing robust deep learning models in this field.

Data Preprocessing Protocols

Effective preprocessing is critical for standardizing input data and enhancing feature visibility before model training. The following protocol outlines a sequential workflow for preparing sperm morphology images.

Experimental Preprocessing Workflow

The preprocessing pipeline runs as a sequence of stages, each described in the methodology that follows.

Detailed Preprocessing Methodology

Data Cleaning and Denoising: Sperm images acquired via optical microscopes often contain significant noise from insufficient lighting or poorly stained semen smears [16]. The primary goal of this stage is to accurately estimate the spermatozoon's signal by reducing these overlapping noise signals. Techniques include identifying and handling missing values, outliers, or any inconsistencies in the dataset to ensure the model is not influenced by noise that might hinder performance [16].

Normalization and Standardization: This step brings all images to a common size and intensity scale so that differences in acquisition magnitude do not dominate the learning process. In the SMD/MSS dataset study, images were resized with linear interpolation to a uniform 80 × 80 pixels in grayscale (80 × 80 × 1) [16]. Min-Max normalization can also be applied to rescale all pixel intensities to the [0, 1] range, which improves numerical stability during model training [36].
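The resizing and normalization steps can be sketched in NumPy as follows. This is an illustrative implementation under assumptions: grayscale conversion is done by averaging colour channels, the bilinear resize uses an align-corners sampling grid, and the function names are hypothetical. In practice a library routine such as OpenCV's `cv2.resize` with `cv2.INTER_LINEAR` would typically be used instead of a hand-written resize.

```python
import numpy as np

def resize_bilinear(image, out_h=80, out_w=80):
    """Resize a 2-D image with bilinear (linear) interpolation."""
    in_h, in_w = image.shape
    img = image.astype(np.float64)
    ys = np.linspace(0, in_h - 1, out_h)     # source row coordinates
    xs = np.linspace(0, in_w - 1, out_w)     # source column coordinates
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]                  # vertical blend weights
    wx = (xs - x0)[None, :]                  # horizontal blend weights
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

def min_max_normalize(image):
    """Rescale intensities to [0, 1]; a constant image maps to all zeros."""
    lo, hi = image.min(), image.max()
    if hi == lo:
        return np.zeros_like(image, dtype=np.float64)
    return (image - lo) / (hi - lo)

def preprocess(image, size=80):
    """Grayscale -> resize -> normalize -> add channel axis (size, size, 1)."""
    image = np.asarray(image, dtype=np.float64)
    if image.ndim == 3:
        image = image.mean(axis=2)           # simple channel average (assumed)
    resized = resize_bilinear(image, size, size)
    return min_max_normalize(resized)[..., None]

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 100, 3))   # stand-in for a micrograph
print(preprocess(raw).shape)
```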

Data Augmentation Strategies for Limited Datasets

Data augmentation artificially expands the training dataset by creating modified versions of existing images, which is crucial for preventing overfitting and improving model generalization when data is scarce [37] [38]. The following table summarizes core and advanced augmentation techniques relevant to sperm morphology images.

Table 1: Data Augmentation Techniques for Sperm Morphology Analysis

| Technique Category | Specific Method | Impact on Model Performance | Application Consideration for Sperm Images |

|---|---|---|---|

| Geometric/Orientation | Rotation & Flipping | Improves symmetry recognition, simulates different viewing angles [37] | Use small rotation angles to avoid unrealistic sperm orientations |

| Geometric/Orientation | Cropping & Scaling | Forces model to learn local features, simulates varying distances [39] | Ensure critical structures (head, tail) remain visible |

| Color & Lighting | Brightness/Contrast Adjustments | Simulates different microscope lighting conditions [38] | Vital for generalizing across lab equipment and staining variations |

| Color & Lighting | Color Jittering | Enhances adaptability to different cameras and staining kits [39] | Moderate changes to preserve biological relevance of color |

| Advanced/Mix-based | CutMix & MixUp | Blends images/labels; smooths decision boundaries, reduces overfitting [37] | Effective when basic methods plateau; requires careful label mixing |

| Advanced/Mix-based | Generative Methods (GANs) | Generates high-fidelity synthetic samples for rare classes [37] [38] | Computationally intensive but valuable for balancing imbalanced classes |

The quantitative benefits of these strategies can be substantial. One study on technology product photographs, a non-medical domain, reported that random cropping with varied aspect ratios yielded a 23% accuracy increase over flips and rotations alone [37]. In a study specific to sperm morphology, augmentation expanded the dataset from 1,000 to 6,035 images, which was instrumental in achieving deep learning accuracy ranging from 55% to 92% across morphological classes [16].
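Several of the techniques in Table 1 can be sketched with NumPy alone. The example below covers flipping, brightness/contrast adjustment, and MixUp on images already scaled to [0, 1]; the parameter ranges and function names are illustrative assumptions, and small-angle rotation is left out because it requires an interpolation routine from an image library.

```python
import numpy as np

def random_flip(image, rng):
    """Flip horizontally and/or vertically, each with probability 0.5."""
    if rng.random() < 0.5:
        image = image[:, ::-1]
    if rng.random() < 0.5:
        image = image[::-1, :]
    return image

def brightness_contrast(image, rng, max_brightness=0.1, max_contrast=0.2):
    """Moderate lighting change; output stays within [0, 1]."""
    alpha = 1.0 + rng.uniform(-max_contrast, max_contrast)   # contrast gain
    beta = rng.uniform(-max_brightness, max_brightness)      # brightness shift
    mean = image.mean()
    return np.clip((image - mean) * alpha + mean + beta, 0.0, 1.0)

def mixup(img_a, label_a, img_b, label_b, rng, alpha=0.2):
    """Blend two images and their one-hot labels with a Beta-sampled weight."""
    lam = rng.beta(alpha, alpha)
    return lam * img_a + (1 - lam) * img_b, lam * label_a + (1 - lam) * label_b

rng = np.random.default_rng(0)
img_a, img_b = rng.random((80, 80)), rng.random((80, 80))
augmented = brightness_contrast(random_flip(img_a, rng), rng)
mixed, soft_label = mixup(augmented, np.array([1.0, 0.0]),
                          img_b, np.array([0.0, 1.0]), rng)
print(augmented.shape, soft_label)
```

Passing the random generator explicitly keeps augmentation reproducible, which helps when comparing models trained on the same augmented stream.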

Integration with a Contrastive Meta-Learning Framework

Contrastive meta-learning offers a powerful synergy with the aforementioned strategies, specifically addressing the challenges of noisy labels and data-efficient learning.

Framework Architecture and Workflow

Data preprocessing, augmentation, and the CML framework are integrated through the protocols described below.

Core Experimental Protocols

Protocol 1: Confident Learning for Noisy Label Correction

A major challenge in sperm datasets is inter-expert disagreement. A contrastive meta-learning framework can be employed to mitigate this [40] [41].

- Objective: To assign confidence scores to expert annotations and down-weight the influence of noisy or uncertain labels during training.

- Methodology: Instead of traditional confident learning, which discards uncertain samples, a soft confident learning approach assigns confidence-based weights to all training data. This preserves boundary information while emphasizing prototypical normal patterns [40].

- Quantification: Data uncertainty is quantified through IQR-based thresholding, while model uncertainty is managed via covariance-based regularization within a Model-Agnostic Meta-Learning (MAML) loop [40]. This approach has been shown to outperform models trained solely on clean data in other domains with noisy labels [41].
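The soft weighting idea in Protocol 1 can be illustrated with an IQR-based rule that down-weights, rather than discards, samples whose loss is unusually high. The specific mapping below (full weight up to the third quartile, linear decay to a floor at Q3 + 1.5 × IQR) is an assumption made for illustration; the cited work may define its thresholds and weights differently.

```python
import numpy as np

def soft_confidence_weights(per_sample_loss, min_weight=0.1, k=1.5):
    """Map per-sample losses to training weights in [min_weight, 1].

    Losses at or below Q3 keep weight 1. Weights decay linearly between
    Q3 and the upper fence Q3 + k * IQR, then stay at min_weight.
    No sample is removed from training.
    """
    losses = np.asarray(per_sample_loss, dtype=float)
    q1, q3 = np.percentile(losses, [25, 75])
    iqr = q3 - q1
    if iqr == 0:
        return np.ones_like(losses)          # no spread: treat all as clean
    frac = np.clip((losses - q3) / (k * iqr), 0.0, 1.0)
    return 1.0 - frac * (1.0 - min_weight)

# Example: the last sample has a loss far above the rest, as might happen
# when experts disagreed on its label.
losses = [0.1, 0.2, 0.2, 0.3, 5.0]
print(soft_confidence_weights(losses))
```

These weights would multiply each sample's loss inside the inner loop, so that uncertain annotations still shape the decision boundary but contribute less than prototypical examples.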

Protocol 2: Data Augmentation for Meta-Learning Generalization

- Objective: To maximize the diversity of "tasks" presented during the meta-learning phase, enabling rapid adaptation to new, unseen sperm morphology profiles.

- Methodology: Within the meta-learning framework, each "task" is created by applying a unique combination of augmentation techniques (e.g., rotation + brightness change) to a subset of classes. This teaches the model how to quickly learn from limited data, a core tenet of meta-learning.

- Outcome: The framework learns a discriminative feature space where normal sperm patterns form compact clusters, distinct from various abnormality classes, thereby enabling rapid domain adaptation and improved few-shot learning capabilities [40].
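Task construction in Protocol 2 can be sketched as episodic sampling, where each task draws a subset of classes, a support and query split, and a combination of augmentations. The augmentation names, the choice of two augmentations per task, and the `sample_task` helper are hypothetical; the sketch records which augmentations to apply rather than applying them.

```python
import random

# Illustrative augmentation names; actual transforms would be looked up by name.
AUGMENTATIONS = ["rotation", "flip", "brightness", "contrast", "crop"]

def sample_task(labels_to_indices, n_way, k_shot, q_query, rng):
    """Sample one N-way K-shot task with its own augmentation combination.

    labels_to_indices: dict mapping class name -> list of image indices.
    Returns class names, chosen augmentations, and (index, class) pairs
    for the support and query sets.
    """
    classes = rng.sample(sorted(labels_to_indices), n_way)
    aug_combo = tuple(rng.sample(AUGMENTATIONS, 2))
    support, query = [], []
    for cls in classes:
        picked = rng.sample(labels_to_indices[cls], k_shot + q_query)
        support += [(i, cls) for i in picked[:k_shot]]
        query += [(i, cls) for i in picked[k_shot:]]
    return {"classes": classes, "augmentations": aug_combo,
            "support": support, "query": query}

# Example: four shape categories with ten image indices each.
dataset = {
    "oval": list(range(0, 10)),
    "pyriform": list(range(10, 20)),
    "round": list(range(20, 30)),
    "elongated": list(range(30, 40)),
}
task = sample_task(dataset, n_way=3, k_shot=2, q_query=3, rng=random.Random(0))
print(task["classes"], task["augmentations"], len(task["support"]), len(task["query"]))
```

Because support and query indices are drawn without replacement, no image appears in both sets of the same task, which keeps the query loss an honest measure of adaptation.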

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Sperm Morphology AI Research

| Item / Resource | Function / Description | Example / Note |

|---|---|---|

| MMC CASA System | Microscope-camera system for acquiring images from sperm smears. | Used with a 100x oil immersion objective in bright field mode [16]. |

| RAL Diagnostics Staining Kit | Stains sperm smears for better visual contrast and feature distinction. | Standard staining protocol as per WHO guidelines [16]. |

| SMD/MSS Dataset | A dataset of sperm images with 12 classes of morphological defects based on modified David classification. | Initially contained 1,000 images, expanded to 6,035 via augmentation [16]. |

| Albumentations Library | A Python library for fast and flexible image augmentations. | Ideal for implementing geometric and color transformations on-the-fly [37] [39]. |

| PyTorch / TensorFlow | Deep learning frameworks. | Provide built-in data loading and augmentation utilities (e.g., torchvision.transforms) [39]. |

| Contrastive Meta-Learning (CML) | A framework combining contrastive and meta learning. | Used to improve feature representations and assess label quality from noisy annotations [40] [41]. |

The integration of systematic data preprocessing, strategic data augmentation, and advanced contrastive meta-learning frameworks presents a powerful solution to the data scarcity problem in sperm head morphology research. By adhering to the detailed protocols and utilizing the toolkit outlined in this document, researchers and drug development professionals can significantly enhance the accuracy, robustness, and clinical applicability of AI-based diagnostic systems. This approach not only makes more efficient use of precious and limited annotated data but also directly addresses the critical issue of label noise inherent in subjective morphological assessments, paving the way for more reliable male fertility diagnostics.