Convolutional Neural Networks for Sperm Classification: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive exploration of Convolutional Neural Networks (CNNs) for automated sperm morphology analysis, tailored for researchers and drug development professionals. It covers the foundational need for AI in standardizing subjective manual assessments, details the methodological pipeline from dataset creation to model architecture, addresses critical troubleshooting aspects like data augmentation and fairness, and validates performance against expert benchmarks and conventional methods. By synthesizing current evidence and applications, this review serves as a technical resource for developing robust, clinically applicable deep learning tools in reproductive medicine.

The Critical Need for AI in Sperm Morphology Analysis

In the field of male fertility research, the morphological analysis of sperm is a cornerstone of diagnostic evaluation. For decades, this assessment has relied on manual examination by trained experts, a method governed by standardized guidelines from the World Health Organization (WHO). Despite its status as the historical gold standard, manual assessment is increasingly recognized as a significant bottleneck in the pipeline of reproductive biology research and clinical diagnostics. The inherent subjectivity of the human eye and the complex, time-consuming nature of the process introduce substantial challenges to reproducibility, thereby impacting the reliability of scientific findings and clinical decisions. This whitepaper delineates the core limitations of manual sperm assessment, with a specific focus on the issues of subjectivity and reproducibility. Furthermore, it frames these challenges within the context of a burgeoning solution: the application of Convolutional Neural Networks (CNNs) for automated, objective, and standardized sperm classification. The transition to deep learning-based methodologies is not merely a technical enhancement but a necessary evolution to bolster the scientific rigor in the field of reproductive medicine.

The Core Challenges of Manual Assessment

Subjectivity and Expert Disagreement

The manual evaluation of sperm morphology is fundamentally a subjective exercise, heavily reliant on the expertise and judgment of the individual analyst. This subjectivity directly leads to significant inter-expert variability, where different specialists may classify the same sperm cell differently.

A critical study highlighting this issue developed the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) and meticulously analyzed agreement between three experts. The results, summarized in Table 1, reveal a startling lack of consensus [1].

Table 1: Inter-Expert Agreement on Sperm Morphology Classification

| Agreement Scenario | Description | Findings from SMD/MSS Study |
|---|---|---|
| Total Agreement (TA) | All three experts assigned the same morphological label. | Only achieved for a fraction of the dataset. |
| Partial Agreement (PA) | Two out of three experts agreed on the same label. | A common outcome, indicating frequent disagreement. |
| No Agreement (NA) | All three experts provided different classifications. | Occurred with notable frequency. |

This inconsistency stems from the challenge of interpreting subtle morphological features. According to a recent review, "manual observation involves a substantial workload and is always influenced by the subjectivity of observers, thereby hindering clinical diagnosis" [2]. The problem is exacerbated by the complexity of the classification system itself, which involves assessing the head, neck, and tail for 26 different types of abnormalities based on WHO standards [2].
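The three agreement scenarios in Table 1 can be derived mechanically from the experts' labels. The following is a minimal sketch, not the SMD/MSS authors' code; the function names and the example labels are hypothetical and chosen only for illustration.

```python
from collections import Counter

def agreement_category(labels):
    """Return 'TA', 'PA', or 'NA' for the labels given by three experts."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    # Size of the largest group of identical labels: 3, 2, or 1.
    top_count = Counter(labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]

def summarize_agreement(annotations):
    """Count agreement categories over an iterable of 3-label tuples."""
    counts = Counter(agreement_category(a) for a in annotations)
    return {key: counts.get(key, 0) for key in ("TA", "PA", "NA")}

# Hypothetical annotations for four sperm images (illustrative labels only).
example = [
    ("normal", "normal", "normal"),
    ("tapered", "tapered", "amorphous"),
    ("normal", "tapered", "amorphous"),
    ("pyriform", "pyriform", "pyriform"),
]
summary = summarize_agreement(example)
print(summary)  # {'TA': 2, 'PA': 1, 'NA': 1}
```

Tabulating these counts per morphological class is one way to see which classes the experts disagree on most often.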

Reproducibility and the "Reproducibility Crisis"

Reproducibility, defined as the ability to obtain consistent results when an experiment is repeated under the same conditions, is a cornerstone of scientific validity. Manual sperm morphology assessment suffers from poor reproducibility, a manifestation of a broader "reproducibility crisis" in biomedical research [3].

The reproducibility problem in this context is two-fold:

- Intra-laboratory Reproducibility: The same technician may struggle to produce identical results when analyzing the same sample at different times due to fatigue or subtle changes in judgment.

- Inter-laboratory Reproducibility: Different laboratories, even when following the same WHO guidelines, can produce vastly different results for the same sample. This is powerfully illustrated by data from an External Quality Assessment (EQA) programme, where multiple reference laboratories assessed the same sperm motility videos. Considerable variation was observed between their manual assessments, which was attributed to the subjective nature of the task. This inter-laboratory variation is a critical challenge, as it directly impacts the training of deep learning models, which rely on consistent "ground truth" data [4].

The functional impact of this is a lack of standardization across clinics and research studies, making it difficult to compare results, validate findings, and establish universally applicable clinical thresholds.

Quantitative Data: Manual vs. Automated Performance

The limitations of earlier approaches become evident when performance is quantified on public benchmarks. As Table 2 shows, traditional machine learning pipelines built on handcrafted features achieve limited accuracy, which deep learning models now match or surpass.

Table 2: Performance Comparison of Sperm Classification Methods

| Study / Model | Dataset | Methodology | Key Performance Metric | Result |
|---|---|---|---|---|
| Chang et al. [5] | SCIAN-MorphoSpermGS | Cascade Ensemble of SVM (CE-SVM) with manual feature extraction. | Average True Positive Rate | 58% |
| Shaker et al. [5] | SCIAN-MorphoSpermGS | Adaptive Patch-based Dictionary Learning (APDL). | Average True Positive Rate | 62% |
| Deep CNN (VGG16) [5] | HuSHeM | Transfer learning with VGG16 architecture. | Average True Positive Rate | 94.1% |
| Deep CNN (VGG16) [5] | SCIAN-MorphoSpermGS | Transfer learning with VGG16 architecture. | Average True Positive Rate | 62% |
| DCNN (ResNet-50) [4] | EQA Motility Videos | Predicting WHO motility categories from video. | Mean Absolute Error (MAE) for progressive motility | 0.05 (Three-category model) |

The performance of deep learning models is intrinsically linked to the quality of the data they are trained on. The SMD/MSS study, which utilized a deep learning algorithm, reported a wide accuracy range from 55% to 92%, underscoring that model performance is highly dependent on the quality and consistency of the expert annotations used for training [1]. This further emphasizes how subjectivity in manual assessment propagates into and limits the potential of new technologies.
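The two metrics reported in Table 2 are straightforward to compute. The sketch below assumes that "average true positive rate" means the unweighted mean of per-class recall, and that MAE is taken over predicted versus reference motility fractions; the data values are hypothetical and not drawn from any cited study.

```python
def average_true_positive_rate(y_true, y_pred):
    """Unweighted mean of per-class recall (true positive rate)."""
    rates = []
    for cls in sorted(set(y_true)):
        total = sum(1 for t in y_true if t == cls)
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        rates.append(hits / total)
    return sum(rates) / len(rates)

def mean_absolute_error(reference, predicted):
    """Mean absolute difference between paired numeric values."""
    return sum(abs(r - p) for r, p in zip(reference, predicted)) / len(reference)

# Hypothetical class labels for six sperm head images.
y_true = ["normal", "normal", "tapered", "tapered", "amorphous", "amorphous"]
y_pred = ["normal", "tapered", "tapered", "tapered", "amorphous", "normal"]
avg_tpr = average_true_positive_rate(y_true, y_pred)

# Hypothetical progressive-motility fractions for three videos.
mae = mean_absolute_error([0.50, 0.30, 0.20], [0.45, 0.35, 0.20])
print(round(avg_tpr, 3), round(mae, 3))
```

Because every class contributes equally to the average, a model cannot score well on this metric by favoring the most common class.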

The Convolutional Neural Network Solution

Convolutional Neural Networks represent a paradigm shift in sperm image analysis. Unlike traditional machine learning that requires manual, and often subjective, feature engineering (e.g., measuring head area or perimeter), CNNs automatically learn hierarchical features directly from raw pixel data. This end-to-end learning approach bypasses human bias in feature selection.
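To make the idea of learned image features concrete, the sketch below applies a single convolution filter followed by a ReLU activation to a tiny image. It is an illustration only: in a real CNN the kernel weights are learned during training, whereas here a vertical-edge kernel is fixed by hand, and all names are hypothetical. As in most deep learning libraries, the operation is technically a cross-correlation (the kernel is not flipped).

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image without padding ('valid' mode)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(feature_map):
    """Set negative responses to zero."""
    return [[max(0, value) for value in row] for row in feature_map]

# A 4x4 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-set kernel that responds to a dark-to-bright transition from left to right.
kernel = [[-1, 1], [-1, 1]]

feature_map = relu(conv2d_valid(image, kernel))
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The response is nonzero only in the column where the edge lies. Stacking many such filters, with weights fitted to data, is what lets a CNN build up from edges to more complex shapes such as head contours.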

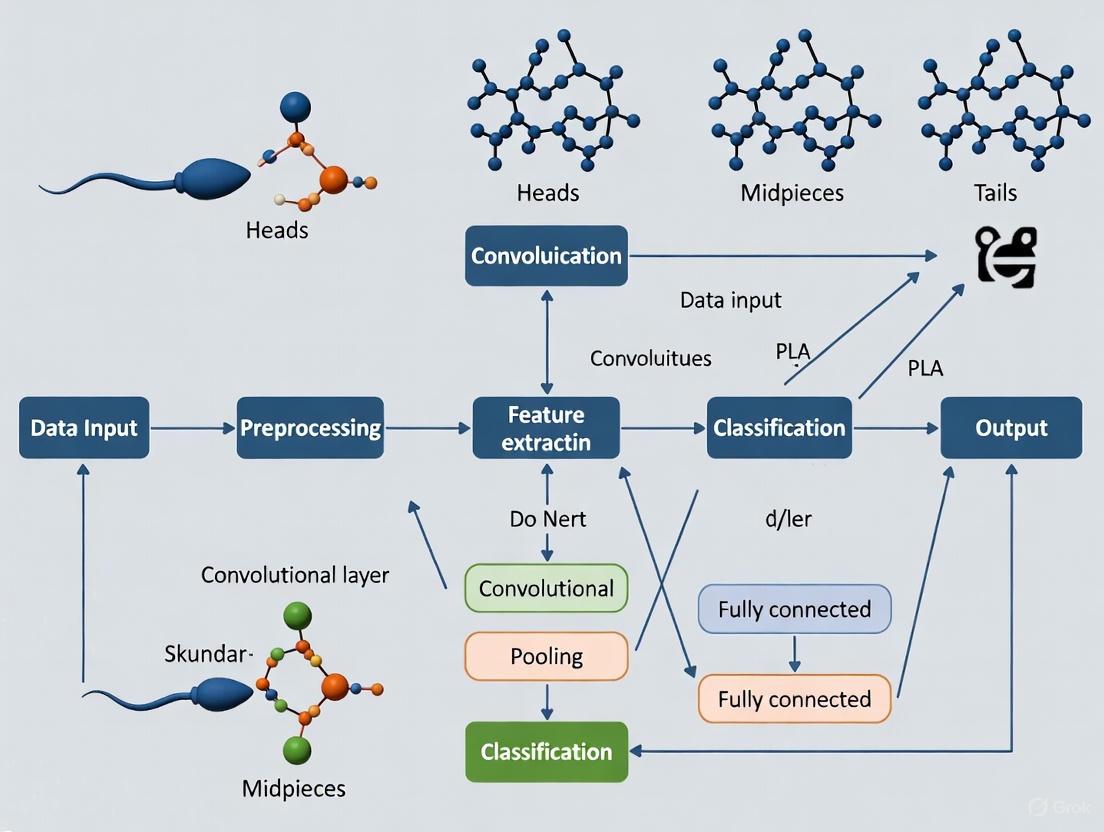

The typical workflow for a CNN-based sperm classification system, as detailed in several studies, involves a structured pipeline from data acquisition to model inference [1] [5] [4]. The following diagram illustrates this process, highlighting how it addresses the limitations of manual methods.

Experimental Protocols in Deep Learning for Sperm Analysis

The implementation of CNNs for sperm classification follows a rigorous, multi-stage experimental protocol. The methodology from the SMD/MSS study provides a clear example [1]:

- Sample Preparation & Data Acquisition: Semen smears are prepared from patient samples following WHO guidelines and stained. The MMC CASA system (an optical microscope with a digital camera) is then used to acquire images of individual spermatozoa at 100x magnification using bright-field mode.

- Expert Classification & Ground Truth Labeling: Each sperm image is independently classified by multiple experts based on a standardized classification system (e.g., modified David classification with 12 defect classes). A ground truth file is compiled, documenting the image name, expert classifications, and morphometric data.

- Image Pre-processing: This critical step aims to denoise images and standardize the input. It involves:

  - Data Cleaning: Identifying and handling inconsistencies.

  - Normalization/Standardization: Rescaling images to a common size (e.g., 80x80 pixels) and converting them to grayscale or a common intensity scale, so that no feature dominates the learning process because of differences in magnitude.

- Data Augmentation: To address the challenge of limited and imbalanced dataset sizes, techniques such as rotation, flipping, and scaling are used to artificially expand the database and improve model generalization. The SMD/MSS study, for instance, expanded its dataset from 1,000 to 6,035 images through augmentation [1].

- Model Training & Evaluation: A CNN architecture (e.g., VGG16, ResNet-50) is employed, often using transfer learning. The dataset is partitioned (e.g., 80% for training, 20% for testing). The model is trained to minimize the difference between its predictions and the expert-derived ground truth labels. Performance is then evaluated on the unseen test set using metrics like accuracy, true positive rate, or mean absolute error.
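The pre-processing, augmentation, and partitioning steps above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the SMD/MSS pipeline: images are represented as nested lists of 8-bit grayscale values, only flips and a 90-degree rotation are used, and all function names are hypothetical. The sketch splits the data before augmenting so that transformed copies of a test image never appear in the training set; the source does not state which order the study used.

```python
import random

def normalize(image):
    """Scale 8-bit grayscale pixel values to the range [0, 1]."""
    return [[pixel / 255 for pixel in row] for row in image]

def flip_horizontal(image):
    return [row[::-1] for row in image]

def flip_vertical(image):
    return [row[:] for row in image[::-1]]

def rotate_90(image):
    """Rotate the image clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]

def augment(image):
    """Return the original image plus three transformed copies."""
    return [image, flip_horizontal(image), flip_vertical(image), rotate_90(image)]

def train_test_split(samples, test_fraction=0.2, seed=0):
    """Shuffle reproducibly, then hold out a fraction for testing."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

# Ten hypothetical 2x2 "images", each paired with a label.
samples = [([[i, i + 1], [i + 2, i + 3]], "normal") for i in range(10)]
train, test = train_test_split(samples, test_fraction=0.2, seed=42)

# Augment only the training partition.
train_augmented = [
    (variant, label)
    for image, label in train
    for variant in augment(normalize(image))
]
print(len(train), len(test), len(train_augmented))  # 8 2 32
```

Real pipelines typically also apply small-angle rotations and scaling, which require interpolation and are usually delegated to an image library.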

Essential Research Reagent Solutions

The transition to robust, CNN-based sperm classification systems relies on a foundation of specific materials and data resources. The table below details key components essential for research in this field.

Table 3: Key Research Reagents and Resources for CNN-Based Sperm Analysis

| Item Name | Type | Function & Application in Research |
|---|---|---|
| MMC CASA System [1] | Hardware | An integrated system of microscope and camera for acquiring standardized digital images and videos of sperm for analysis. |
| RAL Diagnostics Staining Kit [1] | Chemical Reagent | Used to prepare semen smears, enhancing the contrast and visibility of sperm structures for morphological evaluation. |
| SMD/MSS Dataset [1] | Data | A dataset of 1,000+ sperm images classified by multiple experts according to the modified David classification, used for training and validating models. |
| VISEM-Tracking Dataset [6] | Data | A multi-modal dataset containing 20 annotated videos for sperm tracking and motility analysis, supporting supervised machine learning. |
| HuSHeM & SCIAN Datasets [5] | Data | Publicly available reference datasets of sperm head images classified into WHO categories, used for benchmarking classification algorithms. |
| Pre-trained CNN Models (e.g., VGG16, ResNet-50) [5] [4] | Software/Model | Established deep learning architectures used as a starting point for transfer learning, significantly reducing required data and training time. |

The limitations of manual sperm assessment, primarily its inherent subjectivity and consequent poor reproducibility, pose a significant challenge to the advancement of reproductive biology and the consistency of clinical diagnostics. Quantitative evidence demonstrates clear expert disagreement and performance ceilings for traditional methods. These limitations are not merely problems to be documented; they are the justification for moving to automated analysis. Deep learning approaches, particularly CNNs, offer a path toward automation, standardization, and enhanced accuracy by learning directly from data, thereby mitigating human bias in feature selection. The successful implementation of this technology hinges on the availability of high-quality, consistently annotated datasets and rigorous experimental protocols. As the field moves forward, the focus must be on creating larger, more standardized datasets and developing robust, transparent AI tools that overcome the long-standing challenges of manual assessment and support reproducible research and reliable male fertility diagnostics.

Shortcomings of Conventional CASA Systems and Machine Learning

The morphological assessment of sperm is a cornerstone of male fertility diagnosis, providing critical insights into a patient's reproductive health. For decades, this analysis has relied on conventional computer-assisted semen analysis (CASA) systems and traditional machine learning (ML) approaches. These methods aim to bring objectivity to a field historically plagued by subjectivity and inter-laboratory variability. Despite their initial promise, these conventional systems face fundamental limitations in their ability to accurately classify the complex and subtle morphological features of human spermatozoa. Within the broader context of research on convolutional neural networks (CNN) for sperm classification, understanding these shortcomings is essential for driving innovation toward more robust, automated, and accurate diagnostic solutions. This technical guide provides an in-depth analysis of the methodological and practical limitations of conventional CASA and ML systems, framing them as the critical problem statement that modern deep learning approaches seek to overcome.

Technical Limitations of Conventional CASA Systems

Conventional CASA systems were developed to automate semen analysis and reduce the subjectivity inherent in manual assessments. However, their technical architecture introduces several significant constraints that limit their diagnostic reliability and clinical utility.

Limited Morphological Discrimination: Traditional CASA systems demonstrate a limited ability to accurately distinguish spermatozoa from non-sperm cells and debris in a sample [1]. Furthermore, they show poor performance in classifying specific abnormalities, particularly those related to the midpiece and tail, which are crucial for sperm function and motility [1] [7]. Their analytical capabilities are often restricted to basic head morphometrics, failing to provide a comprehensive morphological assessment.

Dependence on Image Quality: The performance of these systems is heavily dependent on optimal sample preparation and staining [1]. Variations in staining intensity, smear thickness, or the presence of background artifacts can significantly degrade analysis accuracy. They often produce unsatisfactory results with low-quality microscopic images, a common challenge in routine clinical settings [1].

Inflexibility and High Cost: Conventional CASA systems represent closed, proprietary platforms that are not easily adaptable to new classification schemes or the detection of novel morphological defects [8]. This inflexibility, coupled with their high acquisition cost, has hindered their widespread adoption, particularly in smaller laboratories and in the livestock industry [8] [7].

Table 1: Core Technical Shortcomings of Conventional CASA Systems

| Shortcoming Category | Specific Technical Limitations | Impact on Clinical Diagnosis |
|---|---|---|
| Analytical Capability | Limited discrimination of sperm from debris; Poor detection of midpiece and tail defects [1] [7] | Incomplete morphological profile; Potential misdiagnosis of sperm dysfunctions |
| Image Processing | High sensitivity to staining quality and background noise [1] | Reduced reliability and reproducibility across different laboratories and technicians |
| System Flexibility & Access | Closed, proprietary architecture; High initial capital investment [8] | Inability to adapt to new clinical findings; Barriers to widespread implementation |

Methodological Flaws in Traditional Machine Learning Approaches

Before the ascendancy of deep learning, traditional machine learning approaches represented the state-of-the-art in automated sperm classification. These methods, however, are hampered by fundamental methodological flaws rooted in their reliance on manual feature engineering.

The Burden of Manual Feature Engineering

The primary limitation of conventional ML models is their dependence on handcrafted features for classification [2] [5]. This process requires domain experts to pre-define and extract specific quantitative descriptors from sperm images, such as:

- Shape-based descriptors: Head area, perimeter, aspect ratio, and eccentricity [5].

- Texture features: Measures of acrosomal and nuclear texture [2].

- Mathematical descriptors: Zernike moments, Fourier descriptors, and geometric Hu moments to capture complex shapes [5].

This manual feature extraction is not only time-consuming but also inherently biased and incomplete. The performance of the model is strictly limited by the human expert's ability to identify and quantify which features are relevant for classification. Subtle but clinically significant morphological patterns may be overlooked if they are not captured by the pre-defined feature set [2] [5].
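To illustrate what handcrafted shape features look like in practice, the sketch below computes four of the descriptors named above from a binary head mask. It is a simplified, hypothetical implementation: perimeter is approximated by counting pixel edges that border the background, aspect ratio is taken from the bounding box, and eccentricity is derived from second-order central moments. Published pipelines may define these quantities differently.

```python
import math

def shape_descriptors(mask):
    """Compute area, perimeter, aspect ratio, and eccentricity of a binary mask."""
    pixels = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not pixels:
        raise ValueError("mask contains no foreground pixels")
    filled = set(pixels)
    area = len(pixels)

    # Perimeter: number of pixel edges facing background (4-connectivity).
    neighbours = ((1, 0), (-1, 0), (0, 1), (0, -1))
    perimeter = sum(
        1 for r, c in pixels for dr, dc in neighbours if (r + dr, c + dc) not in filled
    )

    # Aspect ratio of the axis-aligned bounding box (width / height).
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1

    # Eccentricity from the eigenvalues of the pixel coordinate covariance.
    mean_r, mean_c = sum(rows) / area, sum(cols) / area
    mu_rr = sum((r - mean_r) ** 2 for r in rows) / area
    mu_cc = sum((c - mean_c) ** 2 for c in cols) / area
    mu_rc = sum((r - mean_r) * (c - mean_c) for r, c in pixels) / area
    spread = math.sqrt((mu_rr - mu_cc) ** 2 + 4 * mu_rc ** 2)
    major = (mu_rr + mu_cc + spread) / 2
    minor = (mu_rr + mu_cc - spread) / 2
    eccentricity = math.sqrt(max(0.0, 1 - minor / major)) if major > 0 else 0.0

    return {
        "area": area,
        "perimeter": perimeter,
        "aspect_ratio": width / height,
        "eccentricity": eccentricity,
    }

# Hypothetical mask: a 2x4 rectangle standing in for a segmented sperm head.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
features = shape_descriptors(mask)
print(features)
```

Each descriptor reduces the image to one number chosen in advance by a person, which is exactly why patterns outside the chosen set go unseen by the downstream classifier.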

Performance and Generalization Issues

The reliance on manual feature engineering directly limits model performance and generalizability. In experimental studies, traditional ML models exhibit limited classification accuracy. For instance, a Cascade Ensemble of Support Vector Machines (CE-SVM) achieved an average true positive rate of only 58% on the SCIAN-MorphoSpermGS dataset for classifying sperm heads into five categories [5]. A Bayesian Density Estimation model reached 90% accuracy but relied exclusively on shape-based features, ignoring other discriminative information such as texture and intensity [2].

Furthermore, these models often generalize poorly to new datasets. Features engineered from images captured under specific conditions (e.g., a particular microscope, stain, or lighting) may not be relevant or may manifest differently in images from other sources, leading to a significant drop in performance [2].

Table 2: Comparative Performance of Traditional ML versus Deep Learning for Sperm Head Classification

| Classification Method | Dataset | Key Methodology | Reported Performance |
|---|---|---|---|
| Cascade Ensemble SVM (CE-SVM) [5] | SCIAN-MorphoSpermGS | Manual extraction of shape descriptors (area, perimeter, Zernike moments) fed into a classifier | 58% average true positive rate |
| Adaptive Patch-based Dictionary Learning (APDL) [5] | HuSHeM | Class-specific dictionaries trained from image patches | 92.3% average true positive rate (for full expert agreement) |
| Bayesian Density Estimation [2] | Not Specified | Manual shape-based feature extraction and classification | 90% accuracy |
| Deep CNN (VGG-16 Transfer Learning) [5] | HuSHeM | Automated feature learning from raw image input | 94.1% average true positive rate |

The Foundational Challenge: Data Limitations

A critical bottleneck affecting both conventional CASA and traditional ML is the scarcity of high-quality, standardized data for model development and validation.

- Lack of Standardized Datasets: The development of robust models is hindered by the absence of large, diverse, and high-quality annotated datasets [2] [9]. Many existing public datasets, such as HSMA-DS and MHSMA, are characterized by low resolution, limited sample size, and insufficient representation of all morphological categories [2] [9]. This imbalance can bias models toward over-represented classes.

- Subjectivity in Ground Truth: The "ground truth" labels used for training are themselves derived from human experts, who often disagree due to the intrinsic subjectivity of morphological assessment [1]. This inter-observer variability introduces noise into the training data, limiting the maximum achievable performance of any model trained on it [1].
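One common way to reduce the bias toward over-represented classes is to weight each class inversely to its frequency during training. This technique is not described in the cited studies; it is shown here only as an assumed mitigation, using the widely used "balanced" heuristic (number of samples divided by the number of classes times the class count). The label counts are hypothetical.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}

# Hypothetical, imbalanced label distribution.
labels = ["normal"] * 6 + ["tapered"] * 3 + ["pyriform"] * 1
weights = balanced_class_weights(labels)
for label, weight in weights.items():
    print(f"{label}: {weight:.3f}")
```

Rare classes receive larger weights, so their misclassifications contribute more to the training loss. Weighting does not address noisy labels, which stem from expert disagreement rather than class frequency.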

Experimental Protocols for Benchmarking Sperm Classification Models

To quantitatively evaluate and compare the performance of different sperm classification algorithms, researchers employ standardized experimental protocols. Below is a detailed methodology for a typical comparative study, as referenced in the literature.

Protocol: Benchmarking ML vs. CNN for Sperm Head Morphology Classification

1. Objective: To compare the classification accuracy and efficiency of a traditional Machine Learning model (e.g., Support Vector Machine - SVM) against a Deep Learning model (e.g., CNN using Transfer Learning) on a public dataset of human sperm head images.

2. Datasets:

- Primary Dataset: HuSHeM (Human Sperm Head Morphology) or SCIAN-MorphoSpermGS [5].

- Preparation: Images are typically pre-processed, including resizing to a uniform dimension (e.g., 224x224 pixels for VGG16), and normalized. The dataset is split into training (~80%), validation (~10%), and test (~10%) sets.

3. Traditional ML Pipeline (Control Arm):

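- Feature Extraction: For each sperm head image, manually extract a set of features. This includes shape-based descriptors (e.g., head area, perimeter, aspect ratio, eccentricity) and mathematical descriptors (e.g., Zernike moments, Fourier descriptors, Hu moments) [5].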

- Model Training: Train a classifier, such as an SVM with a radial basis function (RBF) kernel, using the extracted feature vectors. Optimize hyperparameters (e.g., regularization parameter C, gamma) via cross-validation on the training set.

- Evaluation: The trained SVM model is used to predict classes on the held-out test set. Performance metrics (Accuracy, Precision, Recall, F1-score) are recorded.

4. Deep Learning Pipeline (Test Arm):

- Model Selection & Preparation: Use a pre-trained CNN architecture like VGG16 [5]. Remove the original classification head and replace it with a new one tailored to the number of sperm morphology classes (e.g., Normal, Tapered, Pyriform, Small, Amorphous).

- Training (Transfer Learning):

  - Stage 1: Freeze the convolutional base and only train the new classification head. Use a low learning rate.

  - Stage 2 (Fine-tuning): Unfreeze some of the higher-level layers in the convolutional base and continue training with a very low learning rate to adapt the pre-learned features to the sperm image domain [5].

- Evaluation: The fine-tuned CNN is evaluated on the same test set as the SVM. The same performance metrics are calculated for a direct comparison.

5. Outcome Measures:

- Primary: Average True Positive Rate (TPR) or F1-score across all classes.

- Secondary: Computational time for feature extraction and training, model robustness to image noise.
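The two-stage transfer learning arm of this protocol can be sketched with the Keras API in TensorFlow. This is a minimal sketch under stated assumptions, not the configuration of any cited study: the learning rates (1e-4 and 1e-5), the global-average-pooling head, and the choice to unfreeze only the layers whose names start with `block5` are all illustrative, and the helper names are hypothetical. TensorFlow is imported lazily inside the functions, and grayscale sperm images would need to be replicated to three channels to match the VGG16 input.

```python
def select_fine_tune_layers(layer_names, prefix="block5"):
    """Pick the convolutional-base layers to unfreeze in Stage 2."""
    return [name for name in layer_names if name.startswith(prefix)]

def build_frozen_model(num_classes=5, input_shape=(224, 224, 3), weights="imagenet"):
    """Stage 1: frozen VGG16 base with a new, trainable softmax head."""
    from tensorflow import keras  # requires TensorFlow to be installed

    base = keras.applications.VGG16(
        include_top=False, weights=weights, input_shape=input_shape
    )
    base.trainable = False  # freeze every convolutional layer

    inputs = keras.Input(shape=input_shape)
    x = base(inputs, training=False)
    x = keras.layers.GlobalAveragePooling2D()(x)
    outputs = keras.layers.Dense(num_classes, activation="softmax")(x)
    model = keras.Model(inputs, outputs)
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=1e-4),  # assumed value
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model, base

def unfreeze_top_block(model, base, prefix="block5"):
    """Stage 2: unfreeze the highest convolutional block and recompile."""
    from tensorflow import keras

    base.trainable = True
    keep = set(select_fine_tune_layers([layer.name for layer in base.layers], prefix))
    for layer in base.layers:
        layer.trainable = layer.name in keep
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=1e-5),  # assumed, lower than Stage 1
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model

# Layer selection can be checked without TensorFlow.
print(select_fine_tune_layers(["block4_conv3", "block5_conv1", "block5_pool"]))
```

A typical use would call `build_frozen_model()`, fit on the training set while monitoring the validation set, then call `unfreeze_top_block()` and continue fitting for a few more epochs before evaluating once on the held-out test set. Recompiling after changing `trainable` is needed for the change to take effect.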

The Scientist's Toolkit: Key Reagents and Materials

The experimental workflows for developing sperm classification models rely on a suite of essential reagents, instruments, and computational tools. The following table details these key resources.

Table 3: Essential Research Resources for Sperm Morphology Analysis Studies

| Category | Item / Solution | Specific Function in Research Context |
|---|---|---|
| Sample Preparation & Staining | RAL Diagnostics Stain / Diff-Quik [1] [9] | Provides contrast for visualizing sperm structures under a bright-field microscope for manual analysis and traditional CASA. |
| Sample Preparation & Staining | Formaldehyde (2%) [8] | Used for fixing sperm samples to preserve morphology during image acquisition in flow cytometry studies. |
| Image Acquisition | Bright-field Microscope (100x oil) [1] | The standard instrument for acquiring high-magnification images of stained sperm smears. |
| Image Acquisition | ImageStreamX Mark II (IBFC) [8] | Image-based flow cytometer that enables high-throughput, single-cell imaging of thousands of sperm, pairing with deep learning. |
| Image Acquisition | Confocal Laser Microscope [9] | Captures high-resolution, z-stack images of unstained, live sperm, facilitating label-free morphological analysis. |
| Datasets & Software | Public Datasets (HuSHeM, SCIAN) [5] [2] | Provide benchmark, human-annotated sperm images for training and validating new machine learning models. |
| Datasets & Software | Python with TensorFlow/Keras [1] [4] | The primary programming environment and libraries for building, training, and testing deep convolutional neural networks. |
| Computational Models | Pre-trained CNNs (VGG16, ResNet50) [5] [9] [4] | Established network architectures used as a starting point for transfer learning, significantly reducing required data and training time. |

Conventional CASA systems and traditional machine learning approaches are fundamentally constrained by their analytical inflexibility, dependence on error-prone manual feature engineering, and vulnerability to data quality issues. The shortcomings detailed in this document—from their limited discriminatory capabilities to their poor generalizability—create a clear imperative for a paradigm shift. This evidence-based analysis of their technical and methodological limitations establishes a strong foundational rationale for the adoption of end-to-end deep learning solutions, which promise to overcome these hurdles through automated feature learning and enhanced classification performance, thereby advancing the field of automated sperm morphology analysis.

Male infertility is a prevalent global health issue, contributing to approximately 50% of infertility cases among couples [1] [10] [11]. Within clinical andrology, sperm morphology—which assesses the size, shape, and structural integrity of sperm components (head, midpiece, and tail)—represents a fundamental parameter in male fertility assessment [11] [12]. Despite its established role, traditional morphology assessment faces significant challenges related to subjectivity, standardization, and reproducibility [1] [11]. The emergence of artificial intelligence (AI), particularly convolutional neural networks (CNNs), offers transformative potential for overcoming these limitations, enabling automated, precise, and high-throughput sperm classification systems that could revolutionize both infertility diagnosis and clinical decision-making for assisted reproductive technologies (ART) [1] [5] [11].

The Clinical Problem: Limitations of Conventional Morphology Assessment

Standardization Challenges and Subjectivity

The manual assessment of sperm morphology remains fraught with variability. Despite guidelines from the World Health Organization (WHO), the process is highly dependent on technician expertise and subjective interpretation [13] [1]. This assessment is particularly challenging as it requires classification based on stringent WHO criteria encompassing 26 distinct abnormality types across the head, neck, and tail structures [11]. A significant workload is involved, as analysts must evaluate over 200 sperm per sample, leading to inter-observer and intra-observer variability that compromises result reproducibility and clinical reliability [11]. Recent expert reviews have questioned the analytical reliability and clinical utility of conventional sperm morphology assessment, noting "huge variability in performance and interpretation" [13].

Clinical Relevance and Diagnostic Value

The clinical value of sperm morphology as a standalone prognostic marker is increasingly debated. The French BLEFCO Group's 2025 guidelines explicitly recommend against using the percentage of normal-form sperm as a prognostic criterion before intrauterine insemination (IUI), in vitro fertilization (IVF), or intracytoplasmic sperm injection (ICSI) [13]. The overall level of evidence supporting current practices is considered low, challenging the traditional reliance on morphology thresholds for ART selection [13]. Nevertheless, morphology retains clinical importance for detecting specific monomorphic syndromes like globozoospermia and macrocephalic sperm head syndrome, which have profound implications for fertility outcomes [13].

Table 1: Sperm Morphology Parameters by Age Group in Fertile and Subfertile Populations

| Age Range | Normal Morphology (%) - Fertile | Normal Morphology (%) - General | Sperm Concentration (million/mL) | Motility (%) |
|---|---|---|---|---|
| 18-29 Years | 11.5-20.5% [14] | ~20% [15] | 53.85-127.05 [14] | 52-66 [14] |
| 30-39 Years | 9-19% [14] | Not Reported | 54.51-117.68 [14] | 48-61 [14] |
| 40-49 Years | 9-16% [14] | <20% (declining) [15] | 47.44-100.8 [14] | 47-60 [14] |

Table 2: Temporal Trends in Semen Parameters (2000-2019, n=8,990 samples)

| Parameter | Trend Over Time | Statistical Significance |
|---|---|---|
| Sperm Morphology | Significant decrease [15] | p<0.001 [15] |
| Semen Volume | Significant decrease [15] | p<0.001 [15] |
| Sperm Motility | Significant decrease [15] | p<0.001 [15] |
| Sperm Concentration | Remained fairly constant [15] | p=0.100 [15] |

Experimental Paradigms in Sperm Morphology Research

Conventional Machine Learning Approaches

Traditional machine learning approaches for sperm classification have relied on manually engineered features extracted from sperm images. These include shape-based descriptors such as head area, perimeter, eccentricity, Zernike moments, and Fourier descriptors [5] [11]. Classifiers such as Support Vector Machines (SVM), k-nearest neighbors, and decision trees were then applied to these features. Representative studies include:

- Chang et al. (2017): Implemented a Cascade Ensemble of SVM (CE-SVM) classifiers achieving 58% average true positive rate on a dataset with expert consensus [5].

- Bijar et al. (2016): Developed a Bayesian Density Estimation model achieving 90% accuracy for classifying sperm heads into four morphological categories [11].

- Mirsky et al. (2017): Trained an SVM classifier on 1,400 human sperm cells, achieving 88.59% area under the ROC curve and precision rates above 90% [11].

These conventional methods, while foundational, face limitations including dependence on manual feature engineering, limited generalization across datasets, and focus primarily on sperm heads rather than complete sperm structures [11].

Deep Learning and CNN-Based Classification

Convolutional Neural Networks (CNNs) represent a significant advancement by automatically learning relevant features directly from raw pixel data, eliminating the need for manual feature engineering [5] [11]. Key methodological approaches include:

- Transfer Learning: Retraining pre-trained networks (e.g., VGG16) on sperm image datasets, achieving up to 94.1% true positive rate on benchmark datasets [5].

- Custom CNN Architectures: Developing specialized networks for sperm classification, such as the model trained on the SMD/MSS dataset which achieved accuracy ranging from 55% to 92% across morphological classes [1].

- Data Augmentation: Techniques to expand limited datasets, as demonstrated in the SMD/MSS study which increased the dataset from 1,000 to 6,035 images [1].

[Figure: CNN Workflow for Sperm Classification]

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Key Research Reagent Solutions for Sperm Morphology Analysis

| Reagent/Equipment | Function/Application | Specification/Example |
|---|---|---|
| Papanicolaou Stain | Differential staining of sperm structures (acrosome, nucleus, midpiece) | WHO-recommended staining method [16] |
| RAL Diagnostics Stain | Sperm staining for morphological analysis | Used in SMD/MSS dataset creation [1] |
| SSA-II Plus CASA System | Computer-Assisted Sperm Analysis for automated morphology measurement | Measures head length, width, area, perimeter, ellipticity, acrosome area [16] |
| MMC CASA System | Image acquisition for sperm morphology datasets | Used for SMD/MSS dataset with 100x oil immersion objective [1] |
| Harris's Hematoxylin | Nuclear staining in Papanicolaou method | Stains nuclei for 4 minutes in standardized protocol [16] |
| EA-50 Green | Cytoplasmic staining in Papanicolaou method | Stains cytoplasm and nucleoli in standardized protocol [16] |

Dataset Curation and Annotation Protocols

The development of robust CNN models requires high-quality, annotated datasets. Key methodological considerations include:

- Expert Annotation: The SMD/MSS dataset employed three independent experts with extensive experience in semen analysis to classify each spermatozoon according to modified David classification [1].

- Inter-Expert Agreement Analysis: Statistical evaluation of expert consensus (no agreement, partial agreement, total agreement) using Fisher's exact test with significance at p<0.05 [1].

- Standardized Staining: Following WHO manual standards for smear preparation and Papanicolaou staining to ensure consistency [16].

- Image Acquisition Specifications: Using 100x oil immersion objectives, bright field microscopy, and standardized camera systems [1] [16].

Diagram: Clinical Problem and AI Solution Framework

Current Research Gaps and Future Directions

Despite significant advances, several challenges remain in the application of CNNs for sperm morphology classification. The lack of standardized, high-quality annotated datasets continues to hinder model generalization [11]. Current public datasets (e.g., HuSHeM, SCIAN, SMD/MSS) often suffer from limitations in sample size, image quality, and diversity of morphological representations [1] [5] [11]. Future research priorities should include:

- Development of large-scale, multi-center datasets with standardized annotation protocols

- Creation of models capable of segmenting and classifying complete sperm structures (head, midpiece, tail) rather than focusing solely on head morphology

- Validation of clinical utility through prospective studies correlating CNN classifications with ART outcomes

- Exploration of explainable AI techniques to enhance clinical trust and adoption

The integration of CNN-based sperm morphology assessment into clinical workflows represents a promising frontier in reproductive medicine, with potential to standardize diagnostic criteria, improve ART success rates, and provide deeper insights into the complex relationship between sperm structure and function.

The manual assessment of sperm morphology is widely recognized as a challenging parameter to standardize due to its inherent subjectivity, which often relies heavily on the operator's expertise and experience [1]. This methodological variability presents significant obstacles in male infertility diagnostics, where accurate morphological evaluation serves as a crucial parameter for clinical decision-making. Within reproductive biology laboratories worldwide, the absence of automated, standardized systems for sperm classification has necessitated dependence on manual techniques that demonstrate considerable inter-laboratory and inter-technician variability despite established WHO guidelines [11].

Convolutional Neural Networks (CNNs), a specialized class of deep learning algorithms particularly suited for image processing and classification tasks, offer a promising technological solution to these standardization challenges [17]. These artificial neural networks automatically learn hierarchical feature representations directly from image data, eliminating the need for manual feature engineering that has limited conventional machine learning approaches in sperm morphology analysis [11]. The application of CNNs to sperm classification enables the development of objective, consistent analytical systems capable of operating without operator fatigue or subjective interpretation biases.

Quantitative Performance of CNN Models in Sperm Analysis

Research studies have demonstrated varying performance levels for CNN models applied to sperm classification tasks, with implementation details and architectural choices significantly influencing outcomes. The tables below summarize key quantitative findings from recent investigations.

Table 1: Performance Metrics of CNN Models in Sperm Morphology Classification

| Study | Dataset Size | Classes | Accuracy | Key Metrics |

|---|---|---|---|---|

| SMD/MSS Dataset [1] | 1,000 images (expanded to 6,035 via augmentation) | 12 morphological classes (David classification) | 55-92% | Accuracy range across morphological classes |

| ResNet-50 Motility Classification [4] | 65 video recordings | 3 motility categories (progressive, non-progressive, immotile) | N/A | MAE: 0.05, Pearson's r: 0.88-0.89 |

| ResNet-50 Motility Classification [4] | 65 video recordings | 4 motility categories (including rapid/slow progressive) | N/A | MAE: 0.07, Pearson's r: 0.673 (rapid progressive) |

Table 2: Comparison of Conventional ML versus Deep Learning Approaches

| Feature | Conventional ML | Deep Learning (CNN) |

|---|---|---|

| Feature Extraction | Manual (shape, texture, thresholds) [11] | Automatic (learned from data) [1] |

| Architecture | Non-hierarchical (SVM, K-means, decision trees) [11] | Hierarchical layered structure [17] |

| Performance | Variable (49-90% accuracy for head classification) [11] | Higher accuracy potential (up to 92%) [1] |

| Generalization | Limited, dataset-specific [11] | Enhanced with diverse training data [1] |

| Structural Coverage | Primarily head-only classification [11] | Complete sperm structure (head, midpiece, tail) [1] |

Experimental Protocols and Methodologies

Dataset Development and Preparation Protocol

The creation of the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset exemplifies a rigorous approach to dataset development for CNN training in sperm classification research [1]. The protocol encompasses several critical stages:

Sample Collection and Inclusion Criteria: Semen samples are collected from patients with a sperm concentration of at least 5 million/mL, excluding samples with concentrations exceeding 200 million/mL to prevent image overlap and facilitate capture of whole spermatozoa [1]. This selective approach ensures image quality while maintaining morphological diversity.

Slide Preparation and Staining: Smears are prepared according to WHO manual guidelines and stained with standardized staining kits (e.g., RAL Diagnostics staining kit) to enhance morphological feature visibility and consistency across samples [1].

Image Acquisition: Using an MMC CASA system with an optical microscope equipped with a digital camera, images are captured in bright field mode with an oil immersion ×100 objective. Each image contains a single spermatozoon comprising head, midpiece, and tail structures [1].

Expert Annotation and Ground Truth Establishment: Each spermatozoon undergoes manual classification by three independent experts with extensive experience in semen analysis. Classification follows the modified David classification system, which includes 12 classes of morphological defects: 7 head defects (tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome), 2 midpiece defects (cytoplasmic droplet, bent), and 3 tail defects (coiled, short, multiple) [1].

Inter-expert Agreement Analysis: The level of agreement among the three experts is statistically assessed using Fisher's exact test, with classifications categorized as no agreement (NA), partial agreement (PA: 2/3 experts agree), or total agreement (TA: 3/3 experts agree) [1].
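The three agreement categories can be derived mechanically from the expert labels. The following is a minimal sketch, assuming each spermatozoon carries exactly three labels; the function names and the majority-vote `consensus_label` helper are illustrative assumptions, not part of the SMD/MSS protocol.

```python
from collections import Counter


def agreement_level(labels):
    """Return 'TA', 'PA', or 'NA' for the labels given by three experts."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    # Size of the largest group of identical labels (3, 2, or 1).
    top_count = Counter(labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]


def consensus_label(labels):
    """Majority label when at least two experts agree, otherwise None.

    Majority voting is an assumption made for illustration.
    """
    label, count = Counter(labels).most_common(1)[0]
    return label if count >= 2 else None


annotations = [
    ("tapered", "tapered", "tapered"),
    ("tapered", "thin", "tapered"),
    ("coiled", "short", "bent"),
]
for labels in annotations:
    print(labels, agreement_level(labels), consensus_label(labels))
```

Stratifying images this way makes it straightforward to train or evaluate only on the PA and TA subsets.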

Data Augmentation Methodology

To address the common challenge of limited dataset size in medical imaging applications, researchers employ data augmentation techniques to artificially expand the training dataset [1]. The SMD/MSS dataset implementation demonstrates this approach, initially comprising 1,000 images but expanded to 6,035 images after applying augmentation [1]. This process enhances class balance across morphological categories and improves model robustness.

CNN Architecture and Training Protocol

The implementation of CNN models for sperm classification follows a standardized computational workflow:

Image Pre-processing: Raw images undergo cleaning to handle missing values, outliers, or inconsistencies. Pixel values are then normalized or standardized to a common scale, and images are resized to standardized dimensions (e.g., 80×80×1 grayscale, using linear interpolation) [1].

Data Partitioning: The entire image set is divided into two subsets through random splitting: 80% for model training and 20% for testing. From the training subset, 20% is typically extracted for validation during the training process [1].
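The split described above can be sketched in a few lines of standard-library Python. The function name, the fixed seed, and the use of integer indices in place of image files are assumptions made for illustration.

```python
import random


def split_dataset(items, test_frac=0.2, val_frac=0.2, seed=0):
    """Shuffle items, hold out test_frac for testing, then take
    val_frac of the remaining training items for validation."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_frac)
    test, train_full = shuffled[:n_test], shuffled[n_test:]
    n_val = round(len(train_full) * val_frac)
    val, train = train_full[:n_val], train_full[n_val:]
    return train, val, test


# Indices stand in for image files in this sketch.
train, val, test = split_dataset(range(1000))
print(len(train), len(val), len(test))  # 640 160 200
```

With these fractions, the effective proportions are 64% training, 16% validation, and 20% testing.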

Model Implementation: The algorithm is developed using a convolutional neural network architecture implemented in Python (version 3.8) [1]. For motility assessment, studies have successfully employed ResNet-50 architecture trained on optical flow-based images generated by Lucas-Kanade method to capture temporal movement information [4].

Training Configuration: Models are trained using the Adam optimizer with a learning rate of 0.0004, calculating loss via mean absolute error (MAE). Training typically employs a maximum of 1,000 epochs, with early stopping triggered if validation performance does not improve for 15 consecutive epochs [4].
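The early-stopping rule and the MAE loss can be illustrated without a deep learning framework. The sketch below is framework-agnostic: the `EarlyStopping` class and the validation-loss sequence are hypothetical, and in practice the learning rate would be passed to the Adam optimizer of the chosen library.

```python
class EarlyStopping:
    """Signal a stop when the loss has not improved for `patience` epochs."""

    def __init__(self, patience=15):
        self.patience = patience
        self.best = float("inf")
        self.stale_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True to stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.stale_epochs = 0
        else:
            self.stale_epochs += 1
        return self.stale_epochs >= self.patience


def mean_absolute_error(y_true, y_pred):
    """MAE: average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


MAX_EPOCHS = 1000
LEARNING_RATE = 0.0004  # reported value; would be given to the optimizer
PATIENCE = 15

# Hypothetical validation losses: five improving epochs, then a plateau.
val_losses = [0.10, 0.09, 0.08, 0.07, 0.06] + [0.06] * 20

stopper = EarlyStopping(patience=PATIENCE)
stopped_at = None
for epoch, val_loss in enumerate(val_losses[:MAX_EPOCHS]):
    if stopper.step(val_loss):
        stopped_at = epoch
        break
print("stopped at epoch", stopped_at)
```

Here the loss last improves at epoch 4, so the fifteenth epoch without improvement is epoch 19 (counting from zero).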

Validation Method: Ten-fold cross-validation is recommended to compensate for limited dataset sizes, where one-tenth of the data serves as an independent validation set excluded from training in each fold [4].
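A minimal sketch of how ten folds might be generated is shown below. It assumes the unit of splitting is the recording (65 in the cited study); the function name and seed are illustrative.

```python
import random


def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_idx, val_idx) pairs; every sample appears in
    exactly one validation fold."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k nearly equal folds.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx


for fold, (train_idx, val_idx) in enumerate(k_fold_indices(65, k=10)):
    print(fold, len(train_idx), len(val_idx))
```

Splitting at the level of recordings, rather than individual frames, keeps frames from the same recording out of both the training and validation sets within a fold.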

Visualization of CNN Workflow for Sperm Classification

The following diagram illustrates the complete experimental workflow for CNN-based sperm morphology analysis, from sample preparation to model validation:

Diagram: CNN Workflow for Sperm Analysis

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for CNN-Based Sperm Morphology Studies

| Item | Specification/Function |

|---|---|

| Semen Samples | Minimum concentration 5 million/mL; exclusion of samples >200 million/mL to prevent image overlap [1] |

| Staining Kit | RAL Diagnostics staining kit for enhanced morphological feature visibility [1] |

| Microscope System | MMC CASA system with optical microscope, digital camera, and oil immersion ×100 objective [1] |

| Image Annotation Software | Tools for expert classification and ground truth establishment [1] |

| Data Augmentation Tools | Software for image transformation and dataset expansion [1] |

| CNN Framework | Python 3.8 with deep learning libraries (TensorFlow, Keras, PyTorch) [1] [4] |

| Computational Resources | GPUs with sufficient memory for training deep neural networks [17] |

| Validation Tools | Statistical analysis software (IBM SPSS, GraphPad Prism) for performance evaluation [1] [4] |

Convolutional Neural Networks represent a transformative technology with significant potential to standardize and automate sperm morphology analysis, addressing long-standing challenges of subjectivity and variability in male infertility diagnostics. The methodologies and experimental protocols outlined provide researchers with a comprehensive framework for implementing CNN-based classification systems, while the performance metrics demonstrate the current capabilities and future potential of these approaches. As dataset quality continues to improve and model architectures advance, CNN-based systems are poised to become invaluable tools in reproductive medicine, offering unprecedented consistency, efficiency, and accuracy in sperm morphology assessment.

Building a CNN Pipeline for Sperm Classification: From Data to Diagnosis

The application of Convolutional Neural Networks (CNNs) for sperm classification represents a paradigm shift in male fertility diagnostics. This transition from manual, subjective assessment to automated, objective analysis is critically dependent on the foundational elements of high-quality public datasets and robust annotation standards [11]. The inherent subjectivity of sperm morphology evaluation, with reported inter-observer variability as high as 40%, underscores the necessity for standardized, data-driven approaches [18]. Within the broader context of CNN research for sperm classification, datasets serve not only as training resources but also as essential benchmarks for comparing algorithmic performance, validating new techniques, and ensuring clinical relevance [5] [19]. This technical guide examines the current landscape of public datasets, details the annotation methodologies that establish reliable ground truth, and outlines experimental protocols that leverage these resources to advance CNN model development for reproductive medicine.

Public Datasets for Sperm Morphology Analysis

The development of robust CNN models requires access to well-curated, public datasets. These datasets provide the essential raw material for training, validation, and benchmarking. Several key datasets have emerged as community standards, each with distinct characteristics and annotation schemes.

Table 1: Key Public Datasets for Sperm Morphology Analysis

| Dataset Name | Sample Size | Annotation Classes | Annotation Standard | Key Features |

|---|---|---|---|---|

| SCIAN-MorphoSpermGS [5] [19] | 1,854 sperm head images | 5 classes (Normal, Tapered, Pyriform, Small, Amorphous) | WHO criteria by 3 experts | Focuses exclusively on sperm heads; established gold-standard for comparison |

| HuSHeM [5] [18] | 216 sperm images | 4 morphological classes | Expert classifications | Publicly available reference database for algorithm baselining |

| SMD/MSS [1] | 1,000 original images (extended to 6,035 via augmentation) | 12 classes based on modified David classification | Classified by 3 experts | Covers head, midpiece, and tail anomalies; includes data augmentation |

| SVIA [11] | 125,000 annotated instances | Object detection, segmentation, classification | Not specified | Large-scale dataset with annotations for multiple computer vision tasks |

The selection of an appropriate dataset is a critical first step in CNN pipeline development. Researchers must consider the alignment between the dataset's annotation scheme (e.g., WHO vs. David classification) and their clinical or research objectives. Furthermore, dataset size and diversity significantly impact model generalizability. The SMD/MSS dataset demonstrates a common strategy to mitigate limited sample sizes: employing data augmentation techniques to artificially expand the dataset to 6,035 images, thereby enhancing model robustness [1]. For research focusing specifically on sperm head morphology, the SCIAN and HuSHeM datasets provide targeted benchmarks.

Annotation Standards and Ground Truth Establishment

The accuracy of a supervised CNN model is fundamentally bounded by the quality of its labels. Establishing reliable ground truth for sperm images is particularly challenging due to the intrinsic subjectivity of morphological assessment. The field has therefore developed consensus-based methodologies to create a reproducible "gold standard."

The Expert Consensus Model

The most prevalent strategy for annotating sperm morphology datasets involves aggregating classifications from multiple domain experts. This approach directly addresses the issue of inter-observer variability. Key datasets exemplify this method:

- SCIAN-MorphoSpermGS: Three referent domain experts classified each sperm head image according to WHO criteria [19].

- SMD/MSS: Three experts from the laboratory independently classified each spermatozoon based on the modified David classification [1].

The level of agreement among experts can be used to stratify data quality. The SMD/MSS study defined three agreement scenarios: No Agreement (NA), Partial Agreement (PA) where 2/3 experts agree, and Total Agreement (TA) where 3/3 experts concur [1]. Models can then be trained and evaluated on subsets with higher agreement rates to enhance reliability.

Quantifying Annotation Quality and Training Impact

The subjectivity of sperm morphology classification directly impacts the performance of both human morphologists and AI models. Studies have quantified the performance of novice morphologists across different levels of classification complexity, as shown in the table below. Furthermore, structured training has been proven to significantly improve assessment accuracy.

Table 2: Impact of Classification System Complexity and Training on Morphology Assessment Accuracy

| Classification System | Number of Categories | Untrained User Accuracy | Trained User Accuracy | Key Study |

|---|---|---|---|---|

| Normal/Abnormal | 2 | 81.0% | 98% | [20] |

| Defect Location | 5 | 68.0% | 97% | [20] |

| Detailed Defects | 8 | 64.0% | 96% | [20] |

| Granular Defects | 25 | 53.0% | 90% | [20] |

The data reveal a clear inverse relationship between the number of classification categories and initial annotation accuracy. However, structured training can dramatically improve performance across all system complexities, with one study showing accuracy improvements from 82% to 90% after four weeks of training, while also increasing diagnostic speed [20]. This underscores the importance of both expert-led standardization and comprehensive training for establishing reliable ground truth.

Experimental Protocols for CNN-Based Sperm Classification

Leveraging public datasets for CNN development involves a standardized experimental pipeline, from data pre-processing to model evaluation. The following protocols synthesize methodologies from recent seminal studies.

Data Pre-processing and Augmentation

- Image Cleaning and Denoising: Raw microscopic images often contain noise from insufficient lighting or poor staining. Initial processing aims to denoise images for accurate signal estimation [1]. This includes handling missing values, outliers, and inconsistencies.

- Normalization/Standardization: Pixel values are brought to a common scale to prevent any single feature from dominating the learning process. Images are also commonly resized to fixed dimensions (e.g., 80×80×1 grayscale) using linear interpolation [1].

- Data Augmentation: To address limited dataset sizes and class imbalance, techniques such as random rotations, flips, and color variations are used. The SMD/MSS dataset was expanded from 1,000 to 6,035 images through augmentation, which helps balance morphological classes and improve model generalizability [1].
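The augmentation step can be sketched with NumPy alone. The example below assumes 2-D grayscale images and restricts itself to flips and 90-degree rotations; the exact transformations used for SMD/MSS are not specified here, so this selection is an assumption.

```python
import numpy as np


def augment(image, rng):
    """Return a randomly flipped and rotated copy of a 2-D grayscale image."""
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)  # horizontal mirror
    if rng.random() < 0.5:
        out = np.flipud(out)  # vertical mirror
    # Rotate by a random multiple of 90 degrees (0, 90, 180, or 270).
    out = np.rot90(out, k=int(rng.integers(0, 4)))
    return out.copy()


rng = np.random.default_rng(0)
image = rng.random((80, 80))  # stand-in for one 80x80 sperm image
augmented = [augment(image, rng) for _ in range(5)]
print([a.shape for a in augmented])
```

These transformations only rearrange pixels, so they preserve morphology exactly. Arbitrary-angle rotations or intensity changes would need interpolation and careful checks that diagnostic features are not distorted.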

CNN Model Architectures and Training Strategies

- Transfer Learning: A prevalent strategy involves fine-tuning pre-trained CNNs (e.g., VGG16, ResNet50) initially trained on large-scale datasets like ImageNet [5]. This approach is computationally efficient and effective, especially with limited sperm image data.

- Custom Architectures with Attention Mechanisms: Advanced frameworks integrate attention modules like the Convolutional Block Attention Module (CBAM) with architectures such as ResNet50. This allows the network to focus on morphologically relevant regions (e.g., head shape, acrosome) while suppressing background noise [18].

- Hybrid Deep Feature Engineering (DFE): This paradigm combines deep CNN feature extraction with classical machine learning classifiers. One study extracted features using a CBAM-enhanced ResNet50, applied Principal Component Analysis (PCA) for dimensionality reduction, and used a Support Vector Machine (SVM) for classification, achieving a test accuracy of 96.08% on the SMIDS dataset—an 8% improvement over the baseline CNN [18].

Model Evaluation and Benchmarking

Rigorous evaluation is essential for validating model performance and ensuring clinical utility. Standard practices include:

- Data Partitioning: Splitting the dataset into training (e.g., 80%) and testing (e.g., 20%) subsets, with a portion of the training set used for validation [1].

- Performance Metrics: Reporting standard metrics such as accuracy, true positive rate, F1-score, and area under the receiver operating characteristic curve (AUC-ROC) [5] [18].

- Benchmarking Against State-of-the-Art: Comparing results with existing methods and human expert performance on the same public datasets (e.g., SCIAN, HuSHeM) is crucial for contextualizing advancements [5] [19].
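For a binary task such as normal versus abnormal, the threshold-based metrics listed above follow directly from the confusion counts. The sketch below is illustrative: the function name and toy labels are assumptions, and AUC-ROC is omitted because it requires predicted scores rather than hard labels.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, true positive rate, precision, and F1-score."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    accuracy = (tp + tn) / len(pairs)
    tpr = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = 2 * precision * tpr / (precision + tpr) if precision + tpr else 0.0
    return {"accuracy": accuracy, "tpr": tpr, "precision": precision, "f1": f1}


# Toy labels: 1 = abnormal, 0 = normal.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
print(binary_metrics(y_true, y_pred))
```

For multi-class schemes such as the modified David classification, the same counts would be computed per class and then averaged.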

The Researcher's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Sperm Morphology CNN Research

| Tool / Resource | Type | Function in the Research Pipeline |

|---|---|---|

| RAL Diagnostics Staining Kit [1] | Wet Lab Reagent | Prepares semen smears for morphological analysis by providing contrast. |

| Modified Hematoxylin/Eosin [19] | Wet Lab Reagent | Stains sperm cells to distinguish nuclei (hematoxylin) and acrosomes/midpieces/tails (eosin). |

| MMC CASA System [1] | Hardware | Computer-Assisted Semen Analysis system for automated image acquisition from sperm smears. |

| SCIAN & HuSHeM Datasets [5] [19] | Data Resource | Public gold-standard datasets for model training, validation, and benchmarking. |

| VGG16, ResNet50 [5] [18] | Software/Model | Pre-trained CNN architectures used as backbones for transfer learning. |

| Convolutional Block Attention Module (CBAM) [18] | Software/Model | Attention mechanism integrated into CNNs to focus learning on salient sperm features. |

| Python 3.8 & PyTorch/TensorFlow [1] [18] | Software | Core programming language and deep learning frameworks for implementing and training models. |

| Support Vector Machine (SVM) [18] | Software/Model | Classical classifier used in hybrid Deep Feature Engineering pipelines after deep feature extraction. |

The advancement of convolutional neural networks for sperm classification is inextricably linked to progress in dataset development and curation. Publicly available, gold-standard datasets like SCIAN-MorphoSpermGS and HuSHeM provide the foundational bedrock for training and benchmarking, while rigorous annotation protocols based on multi-expert consensus establish the reliable ground truth necessary for clinical relevance. Future efforts must focus on expanding the size, diversity, and granularity of these public resources, particularly with complete sperm structures (head, midpiece, tail) and across diverse patient populations. By adhering to standardized experimental protocols and leveraging emerging techniques like attention mechanisms and hybrid deep feature engineering, researchers can develop increasingly accurate, robust, and clinically impactful CNN models for male fertility assessment.

Image pre-processing is a critical prerequisite for building robust and accurate deep learning models in computer vision. In the specialized domain of sperm classification research, where model predictions can directly influence clinical diagnoses and treatment pathways, the consistency and quality of input data are paramount. Convolutional Neural Networks (CNNs) are highly sensitive to the input they receive; variations in image quality, noise, and color channels can significantly impact feature extraction and, consequently, classification performance [1] [4]. This technical guide provides an in-depth examination of three core pre-processing techniques—denoising, normalization, and grayscale conversion—framed within the context of developing reliable CNN models for sperm morphology analysis.

The challenges in sperm image analysis are distinct. Datasets are often characterized by a high degree of subjectivity in ground-truth labels, class imbalance, and images captured under varying conditions [1] [21]. Effective pre-processing mitigates these issues by standardizing input data, suppressing irrelevant noise, and highlighting salient morphological features, such as head shape and tail structure, which are crucial for accurate classification according to systems like the modified David classification [1]. This document outlines the theoretical underpinnings, practical methodologies, and experimental protocols for implementing these techniques, providing researchers with a structured framework to enhance their deep learning pipelines.

Denoising

Image denoising is the process of removing unwanted noise signals from a corrupted image, with the primary goal of enhancing image quality by removing noise while preserving important structural details such as textures, edges, and contours [22]. In the context of sperm image analysis, noise can arise from various sources during acquisition, including sensor limitations on microscopes, insufficient lighting, transmission errors, or poorly stained semen smears [1] [22]. This noise can obscure critical morphological features, leading to misclassification by a CNN. Denoising is therefore not merely an enhancement step but a vital one for ensuring the model focuses on biologically relevant features.

Real-world noise, such as that found in medical images, is often complex, non-Gaussian, spatially variant, and signal-dependent [22]. Additive White Gaussian Noise (AWGN) with a fixed noise level remains a common research benchmark, but it only approximates these conditions [23] [22]. The choice of denoising technique must carefully balance noise suppression with the preservation of fine, diagnostically significant details.

Key Methods and Experimental Protocols

Denoising methods can be broadly categorized into classical and deep learning-based approaches. The following table summarizes the characteristics of several key methods.

Table 1: Comparison of Image Denoising Methods

| Method | Domain | Noise Handling Capability | Edge Preservation | Computational Complexity |

|---|---|---|---|---|

| Gaussian Pyramid (GP) [22] | Multiscale | High for real-world noise | High | Low |

| Wavelet Transform [22] | Transform | High for Gaussian noise | Moderate | Moderate |

| Median Filter [22] | Spatial | Limited (mainly effective for salt-and-pepper noise) | Moderate | Low |

| Non-Local Means (NLM) [22] | Spatial | Moderate to High | Excellent | High |

| CNN-based Denoisers [23] [22] | Data-driven | High (with sufficient data) | High | Very High (Training) / Moderate (Inference) |

Gaussian Pyramid (GP) Workflow: A multi-scale GP approach has demonstrated strong performance for real-world images, offering a favorable balance between denoising quality and computational efficiency [22]. The typical workflow is as follows:

- Noise Estimation: Analyze the image to estimate the level and type of noise present.

- Pyramid Construction: Iteratively apply low-pass filtering (e.g., with a Gaussian kernel) and downsampling to create a series of images at progressively lower resolutions (the pyramid).

- Denoising at Each Level: Apply a denoising algorithm (which could be a simple filter or a more complex method) at each level of the pyramid. Noise is more easily attenuated at coarser levels.

- Feature Fusion & Reconstruction: Reconstruct the full-resolution denoised image by fusing the processed information from all pyramid levels, preserving fine details from higher resolutions [22].
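The pyramid construction step can be sketched with NumPy as follows. This covers only the low-pass filtering and downsampling; noise estimation, per-level denoising, and fusion are left out. The kernel radius, edge padding, and function names are assumptions for illustration.

```python
import numpy as np


def gaussian_kernel(sigma=1.0, radius=2):
    """1-D Gaussian kernel normalized to sum to 1."""
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()


def blur(image, sigma=1.0):
    """Separable Gaussian blur; edge padding keeps the input shape."""
    k = gaussian_kernel(sigma)
    pad = len(k) // 2
    padded = np.pad(image, pad, mode="edge")
    # Filter along rows first, then along columns.
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)


def build_pyramid(image, levels=5, sigma=1.0):
    """Return [full-res, 1/2, 1/4, ...] by repeated blur and 2x downsampling."""
    pyramid = [np.asarray(image, dtype=float)]
    for _ in range(levels - 1):
        pyramid.append(blur(pyramid[-1], sigma)[::2, ::2])
    return pyramid


rng = np.random.default_rng(0)
noisy = rng.random((80, 80))  # stand-in for a noisy 80x80 image
print([level.shape for level in build_pyramid(noisy)])
```

For an 80×80 input and five levels, the coarsest level is only 5×5 pixels, so fewer levels may be preferable for images this small.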

Deep Learning-Based Denoising: Recent trends involve using CNNs and hybrid architectures for denoising. For instance, the winning method in the NTIRE 2025 Image Denoising Challenge employed a hybrid transformer-convolutional architecture and was trained on datasets like DIV2K and LSDIR [23]. Key strategies from state-of-the-art models include:

- Hybrid Architectures: Combining transformer blocks (to capture global features) with convolutional layers (to capture local features) [23].

- Advanced Loss Functions: Utilizing losses like the Wavelet Transform loss to help the model escape local optima during training [23].

- Progressive Learning & Ensembling: Training models progressively and using self-ensemble techniques during inference to boost performance [23].

Diagram 1: Gaussian pyramid denoising

Application to Sperm Image Analysis

For sperm morphology classification, a practical approach is to start with computationally efficient methods like the Gaussian Pyramid, which has been validated on medical images [22]. The protocol below can be integrated into a CNN pipeline:

Experimental Protocol: Denoising Sperm Images with a Gaussian Pyramid

- Input: Raw grayscale sperm image (e.g., 80x80 pixels).

- Parameters: Set the number of pyramid levels (e.g., 5) and the standard deviation for the Gaussian kernel (e.g., σ=1.0).

- Process: Implement the GP workflow as described above.

- Output: Denoised image ready for subsequent pre-processing steps.

- Validation: Quantitatively, compare the PSNR (Peak Signal-to-Noise Ratio) and SSIM (Structural Similarity Index) of the denoised image against a clean reference, if available. Qualitatively, ensure that sperm head boundaries and tail structures remain sharp and are not smoothed over [22].
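The PSNR used in the validation step is defined as 10·log10(MAX² / MSE), where MAX is the largest possible pixel value. A minimal NumPy sketch is given below; the function name and the `max_value` default assume images scaled to [0, 1]. SSIM is more involved and is usually taken from a library such as scikit-image.

```python
import numpy as np


def psnr(reference, test, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB between a clean reference and a test image."""
    reference = np.asarray(reference, dtype=float)
    test = np.asarray(test, dtype=float)
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))


clean = np.zeros((80, 80))
denoised = clean + 0.1  # uniform error of 0.1 gives MSE = 0.01
print(round(psnr(clean, denoised), 2))  # 20.0
```

Higher values indicate that the denoised image is closer to the reference.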

Normalization

Normalization is a standardization technique that transforms pixel intensity values to a common scale. This step is crucial for stabilizing and accelerating the training of CNNs. Without normalization, features with inherently larger numerical ranges (like pixel intensities from 0-255) can disproportionately dominate the gradient updates, leading to an unstable and slow training process. By controlling the input distribution, normalization helps the optimizer converge faster and often to a better minimum [1].

In sperm image analysis, normalization mitigates variations caused by differences in staining intensity, slide thickness, and microscope lighting conditions. This ensures that the CNN's learning is focused on morphological differences between sperm classes, rather than being biased by technical artifacts.

Key Methods and Experimental Protocols

The core objective of normalization is to rescale pixel values. A common method is Min-Max Normalization, which maps the original pixel values to a [0, 1] range using the formula: I_normalized = (I - I_min) / (I_max - I_min), where I is the original image, and I_min and I_max are its minimum and maximum pixel values.

Beyond input normalization, normalization layers within the CNN architecture itself (e.g., Batch Normalization) are standard. A benchmark study evaluated four such methods for a CNN-based object detection task, with the following results [24]:

Table 2: Comparison of Normalization Methods within a CNN

| Normalization Method | Impact on Training Stability | Impact on Classification Accuracy | Impact on Convergence Speed |

|---|---|---|---|

| Batch Normalization (BN) | High | High | Fast |

| Layer Normalization (LN) | High | Moderate | Moderate |

| Instance Normalization (IN) | Moderate | Moderate | Moderate |

| Group Normalization (GN) | High | High | Fast |

Experimental Protocol: Normalizing Sperm Images for CNN Input

- Input: Denoised grayscale sperm image.

- Calculation: Compute the minimum (I_min) and maximum (I_max) pixel intensity values from the entire image.

- Transformation: Apply the Min-Max normalization formula to every pixel in the image.

- Output: A normalized image where all pixel values reside in the [0, 1] range.

- Integration: This pre-processed image is then fed into the CNN, which may also contain internal normalization layers like Batch Normalization to further stabilize training [24] [1].
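The protocol above maps directly onto a few lines of NumPy. In this sketch the function name is illustrative, and returning zeros for a constant image (where I_max equals I_min) is an assumption that avoids division by zero.

```python
import numpy as np


def min_max_normalize(image):
    """Rescale pixel values to [0, 1]: (I - I_min) / (I_max - I_min)."""
    image = np.asarray(image, dtype=float)
    i_min, i_max = image.min(), image.max()
    if i_max == i_min:
        # Constant image: the formula is undefined, so return zeros.
        return np.zeros_like(image)
    return (image - i_min) / (i_max - i_min)


pixels = np.array([[0, 128], [255, 64]], dtype=np.uint8)
print(min_max_normalize(pixels))
```

Because the minimum and maximum are taken per image, each image is stretched to the full [0, 1] range regardless of its original brightness.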

Diagram 2: Min-max normalization

Grayscale Conversion

Grayscale conversion simplifies an image by transforming it from a multi-channel color space (e.g., RGB) to a single-channel representation where each pixel value represents its perceived brightness or luminance. This reduces the computational load on the model, as the number of input values per image is cut by two-thirds [25] [26].

The decision to use grayscale is application-dependent. For sperm classification, where the diagnostic criteria are predominantly based on shape and structural morphology (head size, acrosome shape, tail coiling) rather than color, grayscale is often sufficient and can be beneficial [1]. It simplifies the input, forcing the model to prioritize structural features over potentially misleading color variations from staining. However, if color provides a meaningful signal—for instance, in distinguishing different stain types—RGB should be retained [25].

Key Methods and Experimental Protocols

The most common algorithms for grayscale conversion involve calculating a weighted average of the R, G, and B channels. The choice of weights impacts the perceived luminance.

Table 3: Grayscale Conversion Algorithms

| Algorithm | Formula (for each pixel) | Rationale | Suitability for Sperm Images |

|---|---|---|---|

| Luminosity | 0.299*R + 0.587*G + 0.114*B | Approximates human luminance perception. | High (Recommended) |

| Average | (R + G + B) / 3 | Simple, but can dull contrast. | Moderate |

| Desaturation | (max(R, G, B) + min(R, G, B)) / 2 | Creates a flat, less dynamic image. | Low |
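To make the differences between the three algorithms concrete, the sketch below applies each to the same RGB pixel. It assumes the standard ITU-R BT.601 luma weights (0.299, 0.587, 0.114) for the Luminosity method and an array with the channel axis last. The function names are illustrative.

```python
import numpy as np

BT601_WEIGHTS = np.array([0.299, 0.587, 0.114])  # R, G, B weights; they sum to 1

def to_gray_luminosity(rgb):
    """Weighted sum of the channels using the BT.601 luma weights."""
    rgb = np.asarray(rgb, dtype=np.float64)
    return rgb[..., :3] @ BT601_WEIGHTS

def to_gray_average(rgb):
    """Plain mean of the three channels."""
    rgb = np.asarray(rgb, dtype=np.float64)
    return rgb[..., :3].mean(axis=-1)

def to_gray_desaturation(rgb):
    """Midpoint between the brightest and darkest channel."""
    rgb = np.asarray(rgb, dtype=np.float64)
    return (rgb[..., :3].max(axis=-1) + rgb[..., :3].min(axis=-1)) / 2.0

# A single reddish pixel, stored as a 1x1 image.
pixel = np.array([[[200, 100, 50]]], dtype=np.uint8)
lum = to_gray_luminosity(pixel)    # 0.299*200 + 0.587*100 + 0.114*50 = 124.2
avg = to_gray_average(pixel)       # 350 / 3, about 116.67
des = to_gray_desaturation(pixel)  # (200 + 50) / 2 = 125.0
```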

Experimental Protocol: Converting Sperm Images to Grayscale

- Input: Raw RGB sperm image.

- Channel Separation: Split the image into its constituent Red, Green, and Blue channels.

- Weighted Combination: For each pixel, compute the grayscale value using the Luminosity method: Gray = 0.299*R + 0.587*G + 0.114*B. This method best preserves contrast relevant to human vision, which can aid in both manual and automated analysis [25] [26].

- Output: A single-channel grayscale image.

- Resizing: As reported in sperm morphology studies, the grayscale image is often resized to a standard dimension (e.g., 80x80 pixels) using linear interpolation before being fed into the CNN [1].
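The resizing step can be illustrated with a small bilinear (linear) interpolation routine written directly in NumPy. This is a sketch for clarity only: the half-pixel-centre coordinate mapping is an assumption, and in practice a library routine such as OpenCV's `cv2.resize` with `cv2.INTER_LINEAR` would normally be used instead.

```python
import numpy as np

def resize_bilinear(gray, out_h, out_w):
    """Resize a 2-D grayscale image using bilinear interpolation."""
    gray = np.asarray(gray, dtype=np.float64)
    in_h, in_w = gray.shape
    # Map each output pixel centre back to input coordinates.
    ys = np.clip((np.arange(out_h) + 0.5) * in_h / out_h - 0.5, 0, in_h - 1)
    xs = np.clip((np.arange(out_w) + 0.5) * in_w / out_w - 0.5, 0, in_w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]  # vertical blend weights, one per output row
    wx = (xs - x0)[None, :]  # horizontal blend weights, one per output column
    # Blend horizontally along the upper and lower neighbour rows, then vertically.
    top = gray[y0][:, x0] * (1 - wx) + gray[y0][:, x1] * wx
    bottom = gray[y1][:, x0] * (1 - wx) + gray[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

# Synthetic 120x160 horizontal gradient standing in for a grayscale sperm image.
gradient = np.tile(np.linspace(0.0, 1.0, 160), (120, 1))
resized = resize_bilinear(gradient, 80, 80)
```

Because bilinear interpolation only forms weighted averages of neighbouring pixels, the output never leaves the intensity range of the input.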

Diagram 3: Grayscale conversion

The Scientist's Toolkit

This section details essential reagents, datasets, and software tools as utilized in recent deep learning studies for sperm image analysis.

Table 4: Research Reagent Solutions for Sperm Image Analysis

| Item | Function / Description | Example / Citation |

|---|---|---|

| SMD/MSS Dataset | A published dataset of sperm images with annotations based on the modified David classification, used for training and validation. | [1] |

| RAL Diagnostics Stain | A staining kit used to prepare semen smears, enhancing the visibility of sperm structures under a microscope. | [1] |

| MMC CASA System | A Computer-Assisted Sperm Analysis system used for automated image acquisition and initial morphometric measurements. | [1] |

| DIV2K & LSDIR Datasets | High-resolution, general-image datasets often used for pre-training denoising models, which can be leveraged via transfer learning. | [23] |

| ResNet-50 Architecture | A deep CNN architecture that has been successfully applied to sperm motility and morphology classification tasks. | [4] |

| Python with Keras/TensorFlow | Primary programming language and deep learning libraries used for implementing and training CNN models. | [1] [4] |

Integrated Pre-processing Pipeline and Experimental Protocol

For a comprehensive sperm classification project, these techniques are combined into a sequential pipeline. The following workflow and protocol provide a template for a robust experiment.

Integrated Pre-processing Workflow for Sperm Classification:

Raw RGB Image → Grayscale Conversion → Denoising → Normalization → CNN for Classification

Detailed Experimental Protocol:

- Data Acquisition: Acquire sperm images using a standardized protocol (e.g., 100x oil immersion objective, bright-field mode, stained smears) [1]. Ensure a diverse dataset that represents all morphological classes of interest.

- Pre-processing Pipeline:

- Grayscale Conversion: Convert all RGB images to grayscale using the Luminosity method.

- Denoising: Apply a Gaussian Pyramid-based denoising method with optimized parameters.

- Normalization: Apply Min-Max normalization to rescale pixel values to [0, 1].

- Data Partitioning: Randomly split the processed dataset into three sets: 80% for training, 10% for validation, and 10% for testing [1].

- Model Training & Evaluation:

- Model Selection: Choose a suitable CNN architecture (e.g., ResNet-50).

- Training: Train the model on the pre-processed training set. Use the validation set for hyperparameter tuning and to avoid overfitting.

- Evaluation: Evaluate the final model's performance on the held-out test set. Report accuracy and, for imbalanced datasets, also ROC AUC, precision, and recall [21].

- Ablation Study: To quantify the impact of each pre-processing step, conduct an ablation study. Train and evaluate the model with different pre-processing configurations (e.g., with/without denoising, with grayscale vs. RGB) and compare the performance metrics.
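The data partitioning step of this protocol can be sketched with the standard library alone. The function name, the fixed seed, and the choice to assign any rounding remainder to the test set are assumptions made for illustration.

```python
import random

def split_indices(n_samples, train_frac=0.8, val_frac=0.1, seed=42):
    """Shuffle indices 0..n_samples-1 and cut them into train/val/test lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    n_train = round(train_frac * n_samples)
    n_val = round(val_frac * n_samples)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # whatever remains goes to the test set
    return train, val, test

train_idx, val_idx, test_idx = split_indices(1000)
```

If augmentation is applied before splitting, augmented copies of one source image could land in different partitions. Splitting on the indices of the original images, as here, and augmenting afterwards is one way to avoid that.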

Denoising, normalization, and grayscale conversion are not mere ancillary steps but foundational components of a successful deep learning pipeline for sperm image classification. By systematically implementing these techniques, researchers can significantly enhance the signal-to-noise ratio in their data, standardize inputs for stable model training, and focus computational resources on the most salient morphological features. The protocols and comparisons provided herein serve as a guide for developing more accurate, reliable, and robust CNN models, ultimately advancing the field of automated semen analysis and its application in clinical andrology.

Convolutional Neural Networks (CNNs) have emerged as a cornerstone technology for automating and standardizing sperm morphology analysis, a critical yet challenging component of male infertility diagnostics. Traditional manual assessment suffers from significant subjectivity, with reported inter-observer variability as high as 40% even among trained experts [11] [18]. This technical guide examines the evolution of CNN architectures within this specialized domain, tracing the pathway from custom-built models to sophisticated transfer learning and feature engineering approaches. The progression mirrors broader trends in medical image analysis while addressing unique challenges specific to sperm classification, including limited dataset availability, class imbalance, and the need for precise morphological feature extraction across head, midpiece, and tail structures [1] [11].

The Challenge of Sperm Morphology Analysis

Sperm morphology analysis represents a significant classification challenge within medical image analysis. According to World Health Organization standards, normal sperm morphology is characterized by an oval head measuring 4.0–5.5 μm in length and 2.5–3.5 μm in width, with an intact acrosome covering 40–70% of the head and a single, uniform tail [18]. However, the modified David classification system expands this into 12 distinct morphological defect classes: seven head defects (tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome), two midpiece defects (cytoplasmic droplet, bent), and three tail defects (coiled, short, multiple) [1].

The fundamental challenges in automating this analysis include substantial inter-expert variability (with kappa values as low as 0.05–0.15 reported between technicians), lengthy manual evaluation times (30–45 minutes per sample), and inconsistent standards across laboratories [18]. Furthermore, creating high-quality annotated datasets is particularly challenging due to sperm sometimes appearing intertwined in images, partial structures being displayed at image edges, and the simultaneous assessment required for head, vacuoles, midpiece, and tail abnormalities [11].
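For readers less familiar with the kappa statistic mentioned above, the sketch below computes Cohen's kappa for two raters: observed agreement corrected for the agreement expected by chance. The labels are invented for illustration and do not come from any cited study.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: (observed - expected agreement) / (1 - expected agreement)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    # Chance agreement: probability that both raters pick the same class independently.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical class")
    return (observed - expected) / (1.0 - expected)

# Hypothetical labels from two technicians for ten spermatozoa.
tech_a = ["normal"] * 5 + ["abnormal"] * 5
tech_b = ["normal"] * 3 + ["abnormal"] * 2 + ["normal"] * 2 + ["abnormal"] * 3
kappa = cohens_kappa(tech_a, tech_b)
```

In this example the technicians agree on 6 of 10 cells, but with balanced labels 5 of 10 agreements are expected by chance, so kappa is only 0.2. This shows how raw agreement can overstate consistency between raters.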

Custom CNN Architectures: Foundational Approaches

Early approaches to automated sperm morphology classification focused on developing custom CNN architectures trained from scratch on domain-specific datasets. These models typically employed fundamental convolutional building blocks to learn hierarchical feature representations directly from sperm images.

A representative study by researchers at the Medical School of Sfax developed a custom CNN algorithm implemented in Python 3.8 for spermatozoa classification [1]. Their methodology followed a structured pipeline:

- Image Acquisition: 1000 images of individual spermatozoa were acquired using the MMC CASA system, with each image containing a single spermatozoon comprising head, midpiece, and tail.

- Data Augmentation: The original dataset was expanded from 1000 to 6035 images using augmentation techniques to balance morphological classes.

- Pre-processing: Images underwent cleaning to handle missing values and outliers, followed by normalization and resizing to 80×80×1 grayscale using linear interpolation.

- Architecture: A sequential CNN model was designed with multiple convolutional layers for feature extraction, though specific architectural details were not elaborated in the available literature.

- Training: The augmented dataset was partitioned with 80% for training and 20% for testing, with 20% of the training subset used for validation.

This custom CNN approach achieved accuracy ranging from 55% to 92%, demonstrating feasibility but highlighting limitations in robustness and generalization [1]. The performance variability underscores the challenges of designing effective custom architectures with limited data.

Table 1: Performance Comparison of Custom CNN Architectures

| Study | Dataset | Classes | Pre-processing | Reported Accuracy |

|---|---|---|---|---|

| Sfax Medical School [1] | SMD/MSS (6035 images) | 12 (David classification) | 80×80×1 grayscale, data augmentation | 55%-92% |

| Custom CNN Baseline [18] | SMIDS (3000 images) | 3-class | Not specified | ~88% |

The following diagram illustrates the typical workflow for developing and training custom CNN architectures for sperm morphology analysis:

Transfer Learning: Leveraging Pre-trained Models

Transfer learning has emerged as a powerful alternative to custom CNNs, particularly valuable when dealing with limited medical imaging datasets. This approach utilizes architectures pre-trained on large-scale natural image datasets (e.g., ImageNet) and adapts them to the specialized domain of sperm morphology analysis.

A comprehensive study by Kılıç (2025) implemented a hybrid architecture integrating ResNet50 backbones with Convolutional Block Attention Module (CBAM) attention mechanisms [18]. The methodology incorporated:

- Backbone Selection: ResNet50 pre-trained on ImageNet served as the foundational feature extractor, leveraging learned hierarchical representations.

- Attention Mechanism Integration: CBAM sequentially applied channel-wise and spatial attention to feature maps, enabling the network to focus on morphologically relevant sperm structures.

- Deep Feature Engineering Pipeline: Multiple feature extraction layers (CBAM, Global Average Pooling, Global Max Pooling, pre-final) were combined with 10 distinct feature selection methods.

- Classification Head: Support Vector Machines with RBF/Linear kernels and k-Nearest Neighbors algorithms replaced traditional softmax layers for final classification.
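The pooling step of such a feature engineering pipeline can be sketched as follows. This is not the authors' pipeline: it only shows how Global Average Pooling and Global Max Pooling turn a stack of feature maps into fixed-length vectors that a classical classifier such as an SVM could consume. The random array stands in for backbone activations, and its 7×7×2048 shape matches what a ResNet50 final convolutional stage produces for a 224×224 input.

```python
import numpy as np

def pooled_features(feature_maps):
    """Collapse (N, H, W, C) feature maps into (N, 2*C) descriptor vectors."""
    gap = feature_maps.mean(axis=(1, 2))  # Global Average Pooling, shape (N, C)
    gmp = feature_maps.max(axis=(1, 2))   # Global Max Pooling, shape (N, C)
    return np.concatenate([gap, gmp], axis=1)

# Random stand-in for backbone activations of four images.
rng = np.random.default_rng(0)
maps = rng.random((4, 7, 7, 2048))
features = pooled_features(maps)
```

The resulting vectors could then be passed through a feature selection or dimensionality reduction step before classification, as described in the list above.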

This transfer learning approach demonstrated exceptional performance, achieving test accuracies of 96.08% ± 1.2% on the SMIDS dataset (3000 images, 3-class) and 96.77% ± 0.8% on the HuSHeM dataset (216 images, 4-class) [18]. These results represented improvements of 8.08 and 10.41 percentage points, respectively, over baseline CNN performance, with McNemar's test confirming statistical significance (p < 0.001).

Table 2: Performance of Transfer Learning Approaches with Feature Engineering

| Model Architecture | Feature Engineering | Classifier | SMIDS Accuracy | HuSHeM Accuracy |

|---|---|---|---|---|

| ResNet50 + CBAM [18] | Global Average Pooling + PCA | SVM RBF | 96.08% ± 1.2% | 96.77% ± 0.8% |

| ResNet50 Baseline [18] | None (End-to-End) | Softmax | ~88% | ~86% |

| Ensemble CNN (Spencer et al.) [18] | Stacked Generalization | Meta-Learner | 95.2% | Not reported |

The following diagram illustrates the architecture and workflow of the CBAM-enhanced ResNet50 model for sperm morphology classification:

Experimental Protocols and Methodologies

Dataset Preparation and Annotation

Robust dataset creation is fundamental for effective CNN model development. The SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) provides a representative example of systematic dataset development [1]:

- Sample Collection: Semen samples were obtained from 37 patients with sperm concentrations of at least 5 million/mL, excluding samples with high concentrations (>200 million/mL) to avoid image overlap.

- Smear Preparation and Staining: Smears were prepared following WHO manual guidelines and stained with RAL Diagnostics staining kit.

- Image Acquisition: Images were captured using an MMC CASA system with bright field mode and an oil immersion 100x objective, with each image containing a single spermatozoon.

- Expert Annotation: Three experts with extensive experience in semen analysis independently classified each spermatozoon according to the modified David classification, with disagreements resolved through consensus.

- Data Augmentation: Techniques included geometric transformations (rotation, scaling, flipping) and photometric adjustments (brightness, contrast) to expand the dataset from 1000 to 6035 images and address class imbalance.
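The augmentation step can be sketched as below for square grayscale images scaled to [0, 1]. This is a simplified illustration, not the procedure used to build SMD/MSS: rotations are restricted to multiples of 90°, scaling is omitted, and the contrast and brightness ranges are arbitrary assumptions.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly transformed copy of a square grayscale image in [0, 1]."""
    out = np.rot90(image, k=int(rng.integers(0, 4)))  # rotate by 0, 90, 180 or 270 degrees
    if rng.random() < 0.5:
        out = np.fliplr(out)  # horizontal flip
    contrast = rng.uniform(0.8, 1.2)
    brightness = rng.uniform(-0.1, 0.1)
    mean = out.mean()
    # Stretch intensities around the mean (contrast), then shift them (brightness).
    out = (out - mean) * contrast + mean + brightness
    return np.clip(out, 0.0, 1.0)

def balance_class(images, target_count, rng):
    """Add augmented copies of randomly chosen originals until the class is full."""
    balanced = list(images)
    while len(balanced) < target_count:
        source = images[int(rng.integers(0, len(images)))]
        balanced.append(augment(source, rng))
    return balanced

# Five random 80x80 arrays stand in for an under-represented morphological class.
rng = np.random.default_rng(0)
rare_class = [rng.random((80, 80)) for _ in range(5)]
balanced = balance_class(rare_class, target_count=20, rng=rng)
```

Oversampling minority classes up to a common target count is one way augmentation can address class imbalance as well as dataset size.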

Model Training and Evaluation

Standardized training protocols ensure reproducible model performance:

- Data Partitioning: Random split of the full dataset into training (80%) and test (20%) sets, with 20% of the training set held out for validation [1].

- Cross-Validation: Implementation of 5-fold cross-validation for robust performance estimation [18].

- Evaluation Metrics: Comprehensive assessment using accuracy, precision, recall, F1-score, and area under receiver operating characteristic curve (AUC-ROC).

- Statistical Testing: Application of McNemar's test for comparing model performance with statistical significance [18].
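Two of the items above, the per-class metrics and McNemar's test, can be sketched with the standard library. The McNemar variant shown is the chi-square form with continuity correction, which is an assumption, since the cited study's exact variant is not stated here. The p-value uses the identity that the survival function of a chi-square distribution with one degree of freedom equals erfc(sqrt(x/2)). All labels are invented for illustration.

```python
import math

def precision_recall_f1(y_true, y_pred, positive):
    """Precision, recall and F1-score for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def mcnemar_test(y_true, pred_a, pred_b):
    """McNemar's chi-square test (continuity-corrected) comparing two classifiers."""
    triples = list(zip(y_true, pred_a, pred_b))
    b = sum(a == t and m != t for t, a, m in triples)  # only model A correct
    c = sum(a != t and m == t for t, a, m in triples)  # only model B correct
    if b + c == 0:
        return 0.0, 1.0  # the models never disagree on correctness
    statistic = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    p_value = math.erfc(math.sqrt(statistic / 2))  # chi-square survival, 1 dof
    return statistic, p_value

y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred, positive=1)

# Twenty samples: both correct on 5, only A correct on 10, only B correct on 5.
truth = [1] * 20
model_a = [1] * 15 + [0] * 5
model_b = [1] * 5 + [0] * 10 + [1] * 5
statistic, p_value = mcnemar_test(truth, model_a, model_b)
```

McNemar's test uses only the samples on which the two models disagree, which is why it suits paired comparisons on the same test set.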

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Analysis Research

| Item | Function | Example/Specification |

|---|---|---|