Data Augmentation for Sperm Morphology Analysis: Techniques to Overcome Dataset Limitations and Enhance AI Performance


Abstract

This article provides a comprehensive guide to data augmentation techniques specifically for sperm morphology datasets, a critical frontier in male fertility research. Aimed at researchers and scientists, it details how these methods overcome the significant challenge of limited, high-quality annotated data needed to train robust deep learning models. The content explores foundational concepts like the SMD/MSS and VISEM-Tracking datasets, outlines practical implementation methodologies from basic image transformations to advanced deep feature engineering, and addresses common troubleshooting and optimization strategies. Finally, it covers rigorous validation frameworks and performance comparisons, synthesizing how effective data augmentation standardizes and automates sperm morphology assessment, thereby accelerating diagnostic innovation in reproductive medicine.

The Critical Need for Data Augmentation in Sperm Morphology Analysis

Sperm morphology analysis (SMA) is a cornerstone of male fertility assessment, providing crucial diagnostic information for predicting natural pregnancy outcomes and informing assisted reproductive technologies (ART) such as in vitro fertilization (IVF) [1]. The accurate classification of sperm into normal and abnormal categories—encompassing defects in the head, midpiece, and tail—is essential for clinical diagnosis [2] [1]. However, this field faces a significant and fundamental challenge: a severe scarcity of standardized, high-quality image datasets. This data bottleneck impedes the development and reliability of automated analysis systems based on deep learning (DL), which require large, diverse, and meticulously curated datasets to learn from and generalize effectively [1].

The inherent complexity of sperm morphology, coupled with the subjective nature of manual assessment, creates a pressing need for robust, AI-driven solutions. This application note details the specific challenges in sperm image data curation, quantifies the current landscape of available datasets, provides experimental protocols for dataset creation, and outlines data augmentation strategies to overcome the data scarcity bottleneck within the broader context of sperm morphology research.

The Core Challenges in Curating Sperm Image Datasets

Building high-quality sperm image datasets is a multi-faceted challenge. The primary obstacles researchers encounter are summarized in the table below.

Table 1: Key Challenges in Curating High-Quality Sperm Image Datasets

| Challenge Category | Specific Limitations | Impact on Model Development |
|---|---|---|
| Data Acquisition & Annotation | High inter-expert variability in manual classification [2]; Difficulty annotating overlapping sperm or partial structures [1]; Complexity of labeling multiple defect types (head, midpiece, tail) [1] | Introduces label noise and inconsistency, reducing model accuracy and reliability. |
| Dataset Scale & Diversity | Limited number of images in most public datasets [1]; Lack of diverse representation of all morphological defect classes [2]; Insufficient demographic and pathological diversity [3] | Leads to models that overfit to limited training data and perform poorly on new, unseen clinical data. |
| Technical & Standardization Hurdles | Lack of standardized protocols for slide preparation, staining, and imaging [1]; Variable image quality due to microscope settings and staining quality [2]; Class imbalance, with rare abnormalities being underrepresented [3] | Hinders model generalization and makes it difficult to compare algorithms across different studies and clinical settings. |

Current Landscape of Sperm Image Datasets

Several research groups have made efforts to create and publish sperm image datasets to fuel progress in the field. The table below provides a quantitative overview of some key datasets, highlighting their primary characteristics and limitations.

Table 2: Overview of Available Sperm Image Datasets for Morphology Analysis

| Dataset Name | Key Characteristics | Notable Limitations |
|---|---|---|
| SMD/MSS [2] | 1,000 original images extended to 6,035 via data augmentation; Annotated per modified David classification (12 defect classes). | Initial dataset size is small; Augmented data may lack realism. |
| MHSMA [1] | 1,540 cropped sperm images; Focus on features like acrosome, head shape, and vacuoles. | Limited sample size; Low image resolution (128x128 pixels). |
| VISEM-Tracking [4] | 20 videos (29,196 frames) with bounding boxes; Provides motility and kinematic data. | Focused on tracking and motility, not fine-grained morphology classification. |
| SVIA [1] | 125,000 annotated instances for detection; 26,000 segmentation masks; 125,880 images for classification. | A relatively new dataset; Broader community validation results are pending. |
| HSMA-DS [4] | 1,457 sperm images; Annotated for vacuole, tail, midpiece, and head abnormality. | Limited number of images; May not cover the full spectrum of morphological diversity. |

The Impact of Data Scarcity on Model Performance

The limitations of existing datasets have a direct and measurable impact on the performance of machine learning models. Conventional machine learning algorithms, which rely on handcrafted features (e.g., shape descriptors, texture analysis), have demonstrated limited performance, with one study reporting classification accuracy for non-normal sperm heads as low as 49% [1]. While deep learning models offer a promising alternative by automatically learning features, their performance is critically dependent on the data they are trained on. Models trained on small or imbalanced datasets often fail to generalize, exhibiting overfitting where they perform well on the training data but poorly on new clinical data [1] [5]. Furthermore, the subjectivity of manual annotation introduces "label noise," where the same sperm may be classified differently by multiple experts. One analysis found that achieving total agreement among three experts was challenging, with varying levels of agreement (no agreement, partial agreement, total agreement) across different morphological classes [2]. This inconsistency confuses the model during training, limiting its ultimate accuracy and clinical utility.

Experimental Protocols for Building High-Quality Datasets

To address the data bottleneck, researchers must adopt rigorous and standardized protocols for dataset creation. The following workflow, developed from recent studies, provides a detailed methodology.

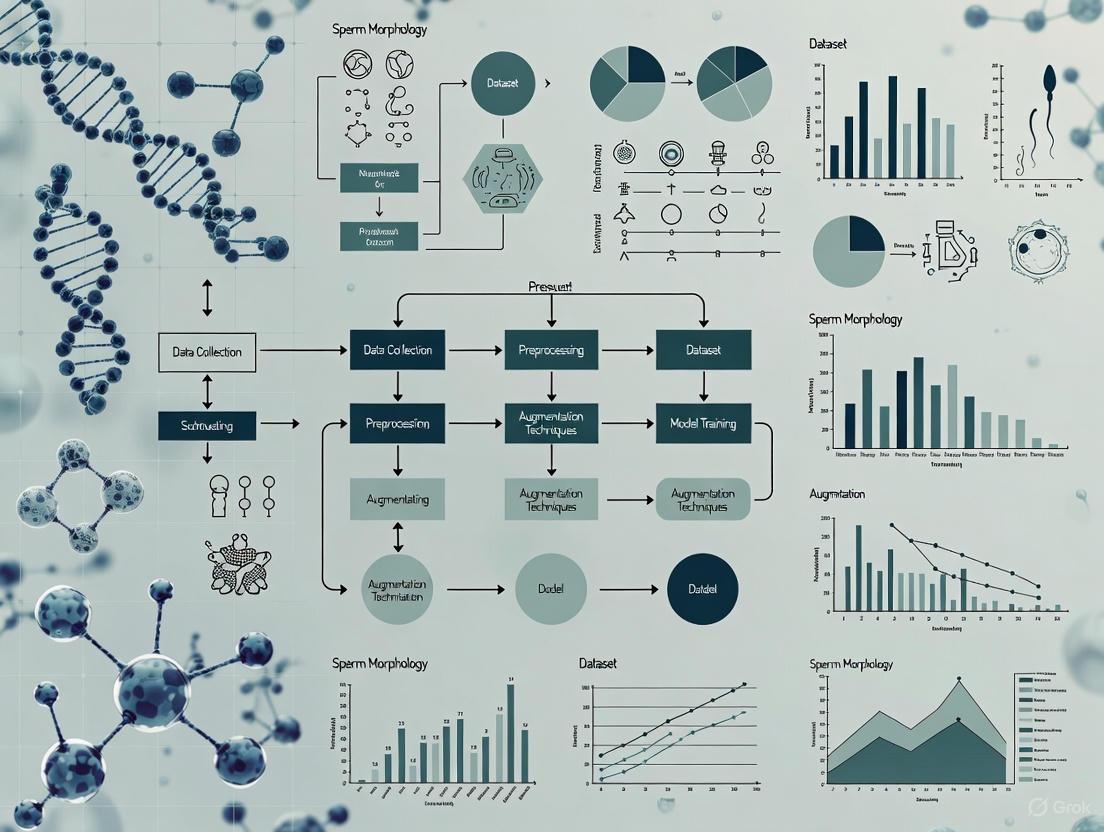

Diagram 1: Sperm Image Dataset Creation Workflow

Protocol: Sample Preparation and Image Acquisition

Objective: To consistently acquire high-resolution, standardized images of individual spermatozoa.

Materials:

- Fresh semen samples (with concentration ≥5 million/mL) [2].

- RAL Diagnostics staining kit or similar for contrast [2].

- Phase-contrast optical microscope with a 100x oil immersion objective [2] [4].

- Microscope-mounted digital camera (e.g., IDS UI-2210C) [4].

- Computer-Assisted Semen Analysis (CASA) system for sequential image capture (optional but recommended) [2].

Methodology:

- Sample Preparation: Prepare smears from fresh semen samples according to WHO guidelines and stain them using a standardized protocol to enhance cellular contrast [2].

- Microscope Setup: When imaging live, unstained preparations, keep the sample on a heated microscope stage maintained at 37°C to mimic physiological conditions [4]; a heated stage is not needed for fixed, stained smears. Use bright-field or phase-contrast mode with a 100x oil immersion objective [2].

- Image Capture: Systematically capture images, ensuring each frame contains a single, whole spermatozoon (head, midpiece, and tail). Avoid samples with high concentrations (>200 million/mL) to prevent image overlap [2]. Save images in a lossless format.

Protocol: Multi-Expert Annotation and Ground Truth Establishment

Objective: To create a reliable ground truth dataset by mitigating individual annotator subjectivity.

Materials:

- Collected sperm images.

- Annotation software (e.g., LabelBox, VGG Image Annotator) [4] [6].

- A panel of at least three experienced embryologists or andrologists [2].

Methodology:

- Develop Annotation Guidelines: Create detailed guidelines based on a recognized classification system (e.g., modified David classification or WHO strict criteria). Include clear definitions and visual examples of each defect class (e.g., tapered head, coiled tail, bent midpiece) [2] [6].

- Independent Annotation: Each expert independently classifies every spermatozoon in the dataset using the established guidelines. The annotation should cover all parts: head, midpiece, and tail [2].

- Compile Ground Truth File: For each image, create a record containing the image filename, the classifications from all experts, and metadata such as sperm head dimensions [2].

- Analyze Inter-Expert Agreement: Calculate agreement statistics (e.g., Fleiss' Kappa). Resolve discrepancies through a consensus meeting among experts to establish a final, high-confidence label for each sperm image [2].
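The agreement statistic named in the last step can be computed directly from the compiled ground truth file. The following is a minimal sketch of Fleiss' kappa in plain Python; the function name, the label strings, and the four example images are hypothetical and only illustrate the calculation.

```python
from collections import Counter

def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for a list of per-image label lists (one label per expert).

    Assumes every image was rated by the same number of experts and that
    more than one category is actually used (otherwise chance agreement is 1).
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    # counts[i][j] = number of experts who assigned category j to image i
    counts = [[Counter(item)[c] for c in categories] for item in ratings]
    # Observed agreement for each image
    p_i = [
        (sum(k * k for k in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_i) / n_items
    # Chance agreement from the overall proportion of each category
    p_j = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(len(categories))
    ]
    p_e = sum(p * p for p in p_j)
    return (p_bar - p_e) / (1 - p_e)

# Hypothetical labels from three experts for four images
example = [
    ["normal", "normal", "normal"],
    ["normal", "tapered", "tapered"],
    ["tapered", "tapered", "tapered"],
    ["normal", "normal", "tapered"],
]
kappa = fleiss_kappa(example, ["normal", "tapered"])
print(f"Fleiss' kappa: {kappa:.3f}")
```

Computing kappa per defect class as well as overall can show which categories drive the disagreement before the consensus meeting.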

Protocol: Data Pre-processing and Augmentation

Objective: To enhance dataset quality, balance morphological classes, and increase effective size for robust deep learning.

Materials:

- Python programming environment (version 3.8 or higher) [2].

- Image processing libraries (OpenCV, Pillow).

- Deep learning frameworks (TensorFlow, PyTorch).

Methodology:

- Image Pre-processing:

- Data Augmentation: Apply a hybrid of transformation techniques to the existing images to artificially expand the dataset, focusing particularly on underrepresented classes [7] [5].

- Affine Transformations: Apply rotations (e.g., ±15°), flips (horizontal/vertical), slight zooms, and translations [7].

- Pixel-Level Transformations: Adjust brightness, contrast, and saturation to simulate different staining and lighting conditions [7].

- Advanced Techniques: For complex scenarios, use Generative Adversarial Networks (GANs) to generate high-quality synthetic sperm images that are plausible but artificial, further increasing diversity [7] [5].
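The affine and pixel-level steps above can be sketched with NumPy alone. This is an illustrative sketch, not the pipeline used in the cited studies: the function names, the nearest-neighbour rotation, and the brightness and contrast ranges are assumptions, and in practice a library routine (for example OpenCV's `warpAffine`) would normally replace the hand-written rotation. The example works on a grayscale image, so saturation changes are not shown.

```python
import numpy as np

def rotate_nearest(img, angle_deg, fill=0):
    """Rotate a 2-D grayscale image about its centre (nearest neighbour, same size)."""
    h, w = img.shape
    theta = np.deg2rad(angle_deg)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: for each output pixel, locate the source pixel
    src_x = np.cos(theta) * (xs - cx) + np.sin(theta) * (ys - cy) + cx
    src_y = -np.sin(theta) * (xs - cx) + np.cos(theta) * (ys - cy) + cy
    sx = np.rint(src_x).astype(int)
    sy = np.rint(src_y).astype(int)
    valid = (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)
    out = np.full_like(img, fill)
    out[valid] = img[sy[valid], sx[valid]]
    return out

def adjust_brightness_contrast(img, brightness=0.0, contrast=1.0):
    """Scale contrast around the image mean, shift brightness, clip to uint8."""
    mean = img.mean()
    out = (img.astype(np.float32) - mean) * contrast + mean + brightness
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)

def augment(img, rng):
    """One random augmentation: rotation within +/-15 degrees, flips, pixel-level jitter."""
    out = rotate_nearest(img, rng.uniform(-15, 15))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    if rng.random() < 0.5:
        out = np.flipud(out)
    # Assumed jitter ranges, meant to mimic staining and lighting variation
    return adjust_brightness_contrast(out, rng.uniform(-20, 20), rng.uniform(0.8, 1.2))

# Synthetic stand-in for a cropped sperm image
rng = np.random.default_rng(0)
img = np.zeros((64, 64), dtype=np.uint8)
img[20:40, 30:34] = 200
aug = augment(img, rng)
print(aug.shape, aug.dtype)
```

Keeping the rotation range small, as the protocol suggests, limits how much of the tail is pushed out of the frame and replaced by fill pixels.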

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Dataset Research

| Item Name | Function/Application | Specification/Example |
|---|---|---|
| RAL Diagnostics Stain | Stains sperm cells on a smear to improve contrast and visibility of morphological structures under a microscope. | Standardized staining kit for semen smears [2]. |
| Phase-Contrast Microscope | Enables high-resolution imaging of unstained or live sperm cells by enhancing contrast of transparent specimens. | Olympus CX31 microscope with 100x oil immersion objective [4]. |
| CASA System | Automates the capture and initial morphometric analysis of sperm images (e.g., head length, tail length). | MMC CASA system for sequential image acquisition [2]. |
| Annotation Software Platform | Provides a user-friendly interface for experts to efficiently label and classify sperm images. | LabelBox, VGG Image Annotator (VIA) [4] [6]. |
| Deep Learning Framework | Provides the programming environment to build, train, and test convolutional neural network (CNN) models. | Python 3.8 with TensorFlow/PyTorch libraries [2]. |

Data Augmentation Pathways to Overcome Data Scarcity

Given the extreme difficulty of collecting vast clinical datasets, data augmentation is not just beneficial but essential. The following diagram illustrates a strategic hybrid augmentation pathway.

Diagram 2: Hybrid Data Augmentation Strategy

This hybrid approach, which combines simple transformations with more complex generative models, has been reported to be highly effective. One study on medical image classification found that a hybrid data augmentation method achieved a top accuracy of 99.54%, outperforming every individual technique used in isolation [5]. In sperm morphology research, applying these techniques allowed one group to expand their dataset from 1,000 to 6,035 images, which was crucial for training a CNN model that achieved accuracy results comparable to expert judgment [2]. Affine and pixel-level transformations often provide the best trade-off between performance gains and implementation complexity [7].

The scarcity of standardized, high-quality sperm image datasets remains a significant bottleneck in the development of reliable AI tools for male infertility diagnosis. This challenge is rooted in the complexities of data acquisition, annotation, and the natural class imbalance of morphological defects. However, by adopting systematic and rigorous protocols for dataset creation—encompassing standardized sample preparation, multi-expert annotation, and comprehensive quality assurance—researchers can build a solid foundation. Furthermore, strategically employing a hybrid of data augmentation techniques is a powerful and necessary method to amplify the value of collected data, balance classes, and ultimately train robust deep learning models. Addressing this data bottleneck is paramount for translating AI research into clinical tools that can offer objective, rapid, and accurate sperm morphology analysis to benefit patients worldwide.

The integration of artificial intelligence (AI) into reproductive medicine is transforming the assessment of sperm morphology, a critical parameter in male fertility diagnostics. Traditional manual analysis is inherently subjective, time-consuming, and prone to significant inter-observer variability, with reported disagreement rates as high as 40% among experts [8]. This lack of standardization hampers diagnostic consistency and reproducibility across laboratories.

Deep learning models, particularly Convolutional Neural Networks (CNNs), offer a pathway to automated, objective, and high-throughput analysis. However, the development of robust, generalizable models is critically dependent on access to large, high-quality, and well-annotated public datasets [9]. This application note provides a detailed overview of key public datasets—SMD/MSS, VISEM-Tracking, SVIA, and HuSHeM—framed within the essential context of data augmentation techniques. We summarize their core attributes, present standardized experimental protocols for their use, and visualize the typical AI workflow to serve as a resource for researchers and drug development professionals in the field of reproductive biology.

The following section details two of the key datasets, SMD/MSS and HuSHeM. Specific quantitative details for the VISEM-Tracking and SVIA datasets were not available from the sources consulted for this comparison, so the comparative analysis focuses on the datasets for which complete information could be sourced.

Table 1: Key Characteristics of SMD/MSS and HuSHeM Datasets

| Characteristic | SMD/MSS | HuSHeM |
|---|---|---|
| Primary Focus | Morphology Classification | Morphology Classification |
| Initial Image Count | 1,000 [2] | 216 [8] |
| Final Image Count (Post-Augmentation) | 6,035 [2] | Not reported |
| Morphology Classification Scheme | Modified David Classification (12 classes) [2] | WHO-based [8] |
| Key Anomalies Covered | Head (tapered, thin, microcephalous, etc.), Midpiece (cytoplasmic droplet, bent), Tail (coiled, short, multiple) [2] | Head shape, acrosome integrity, neck structure, tail configuration [8] |
| Annotation Process | Independent classification by three experts; detailed ground truth file [2] | Expert annotations [8] |
| Reported Model Performance | Accuracy: 55% - 92% [2] | Accuracy: 96.77% with advanced DL models [8] |

Experimental Protocols for Dataset Utilization

Protocol 1: SMD/MSS Dataset Construction and Preprocessing

The SMD/MSS dataset was developed to address the need for a dataset based on the modified David classification, which is widely used in laboratories globally [2].

- Sample Preparation: Semen samples were obtained from 37 patients. Smears were prepared following WHO manual guidelines and stained with a RAL Diagnostics staining kit. Samples with a concentration of at least 5 million/mL were included, while those exceeding 200 million/mL were excluded to prevent image overlap [2].

- Data Acquisition: Individual spermatozoa images were acquired using an MMC CASA system, which consists of an optical microscope with a digital camera. Images were captured in bright-field mode using an oil immersion 100x objective [2].

- Expert Annotation and Ground Truth: Each sperm image was independently classified by three experienced experts according to the 12 classes of the modified David classification. A comprehensive ground truth file was compiled for each image, containing the image name, classifications from all three experts, and morphometric dimensions of the sperm head and tail [2].

- Data Augmentation: To overcome the limitations of a small original dataset and heterogeneous class representation, data augmentation techniques were employed. The initial 1,000 images were expanded to 6,035 images, creating a more balanced and powerful dataset for training deep learning models [2].
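Because the augmentation step is meant to even out heterogeneous class representation, the number of augmented variants usually differs by class. The sketch below shows one simple way to plan this; the function name, the class names, and the counts are hypothetical and do not reproduce the actual SMD/MSS class distribution.

```python
import math

def copies_per_image(class_counts, target_per_class):
    """Return how many augmented variants to generate per original image, by class."""
    plan = {}
    for label, n in class_counts.items():
        deficit = max(0, target_per_class - n)
        # Spread the deficit evenly over the originals of that class
        plan[label] = math.ceil(deficit / n) if n else 0
    return plan

# Hypothetical class counts, not taken from the SMD/MSS paper
counts = {"normal": 400, "coiled_tail": 50}
plan = copies_per_image(counts, target_per_class=500)
print(plan)
```

Classes that already meet the target receive no extra copies, so rare defect classes take most of the augmentation budget.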

Protocol 2: A Deep Learning Workflow for Sperm Morphology Classification

This protocol outlines a state-of-the-art methodology for building a high-accuracy classifier, as demonstrated on datasets like HuSHeM [8].

Model Architecture Selection and Enhancement:

- Backbone Network: Select a deep CNN architecture such as ResNet50 as a feature extractor [8].

- Integration of Attention Mechanisms: Enhance the backbone network by integrating a Convolutional Block Attention Module (CBAM). This lightweight module sequentially applies channel and spatial attention to feature maps, forcing the model to focus on diagnostically relevant regions of the sperm (e.g., head shape, acrosome, tail) while suppressing irrelevant background noise [8].

Deep Feature Engineering (DFE) Pipeline:

- Feature Extraction: Extract high-dimensional feature maps from multiple layers of the CBAM-enhanced network, including the CBAM layer itself, Global Average Pooling (GAP), and Global Max Pooling (GMP) layers [8].

- Feature Selection: Apply feature selection algorithms such as Principal Component Analysis (PCA), Chi-square tests, or Random Forest importance to the extracted feature set. This step reduces dimensionality and noise, improving model performance [8].

- Classification: Instead of using the CNN's final classification layer, train a traditional machine learning classifier like a Support Vector Machine (SVM) with an RBF kernel on the selected feature set. This hybrid approach has been shown to yield higher accuracy than end-to-end CNN training [8].
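The selection and classification stages can be sketched with scikit-learn. This is a hedged illustration rather than the pipeline from [8]: the feature matrix is synthetic (random vectors standing in for pooled CNN features), and the 512 dimensions, 32 principal components, and SVM hyperparameters are assumptions chosen only to make the example run.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)

# Synthetic stand-in for deep features: 4 classes x 50 images x 512 dimensions
n_per_class, n_features, n_classes = 50, 512, 4
centers = rng.normal(0.0, 1.0, size=(n_classes, n_features))
X = np.vstack(
    [c + rng.normal(0.0, 2.0, size=(n_per_class, n_features)) for c in centers]
)
y = np.repeat(np.arange(n_classes), n_per_class)

# Scaling and PCA sit inside the pipeline so they are refit on each training fold
clf = make_pipeline(
    StandardScaler(),
    PCA(n_components=32, random_state=0),
    SVC(kernel="rbf", C=1.0, gamma="scale"),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(clf, X, y, cv=cv)
print(f"5-fold accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```

Fitting the scaler and PCA inside each fold keeps information from the held-out images out of the feature selection step, which would otherwise inflate the accuracy estimate.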

Model Training and Evaluation:

- Rigorous Validation: Employ 5-fold cross-validation to obtain a robust performance estimate and to detect overfitting [8].

- Statistical Testing: Use statistical tests like McNemar's test to validate that performance improvements are significant [8].

- Model Interpretation: Utilize visualization techniques like Grad-CAM to generate attention maps, providing clinicians with interpretable insights into the model's decision-making process [8].
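For the statistical testing step, McNemar's test compares two classifiers on the same test images using only the cases where they disagree. The following is a minimal sketch of the exact (binomial) form in plain Python; the function name and the toy predictions are hypothetical.

```python
from math import comb

def mcnemar_exact(y_true, pred_a, pred_b):
    """Two-sided exact McNemar test on paired predictions from two classifiers."""
    # b: model A correct and model B wrong; c: model A wrong and model B correct
    b = sum(a == t and p != t for t, a, p in zip(y_true, pred_a, pred_b))
    c = sum(a != t and p == t for t, a, p in zip(y_true, pred_a, pred_b))
    n = b + c
    if n == 0:
        return b, c, 1.0  # the models never disagree
    k = min(b, c)
    # Under the null hypothesis, discordant pairs split 50/50 (binomial tail)
    p_value = min(1.0, 2.0 * sum(comb(n, i) for i in range(k + 1)) / 2 ** n)
    return b, c, p_value

# Toy example: model A is right on all 10 images, model B is wrong on 8 of them
y_true = [1] * 10
pred_a = [1] * 10
pred_b = [0] * 8 + [1] * 2
b, c, p = mcnemar_exact(y_true, pred_a, pred_b)
print(b, c, p)
```

The exact form is a reasonable default for small test sets, where the chi-square approximation of McNemar's test can be unreliable.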

Figure 1: AI-Based Sperm Morphology Analysis Workflow. This diagram outlines the standard pipeline for automated sperm classification, from raw image input to final diagnosis.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Solutions for Sperm Morphology Analysis

| Item | Function/Application | Example/Specification |
|---|---|---|
| RAL Diagnostics Staining Kit | Staining of sperm smears for clear visualization of morphological details [2]. | Used in the preparation of the SMD/MSS dataset [2]. |
| Optixcell Extender | Semen extender used for diluting and preserving bull sperm samples during analysis [10]. | Used in bovine sperm morphology studies [10]. |
| Trumorph System | A dye-free system for sperm fixation using controlled pressure and temperature, preserving native morphology [10]. | Employed for fixation in veterinary sperm analysis [10]. |
| MMC CASA System | Computer-Assisted Semen Analysis system for automated image acquisition and initial morphometric analysis [2]. | Used for acquiring images for the SMD/MSS dataset [2]. |
| Optika B-383Phi Microscope | Optical microscope for high-resolution image capture of spermatozoa [10]. | Used with negative phase contrast objectives for bovine sperm imaging [10]. |

The move towards standardized, AI-driven sperm morphology analysis represents a significant advancement in reproductive medicine. Public datasets like SMD/MSS and HuSHeM are foundational resources that enable the development of robust deep-learning models. The application of structured data augmentation techniques is critical to mitigating the challenges of limited data and class imbalance, thereby enhancing model generalizability.

The experimental protocols and the integrated deep feature engineering pipeline outlined in this note provide a roadmap for researchers to build accurate, interpretable, and clinically valuable tools. As the field evolves, future work should focus on the creation of even larger, multi-center datasets, the development of standardized metadata reporting formats [11] [12], and the rigorous clinical validation of these systems to ensure their reliability in diagnostic settings. The ultimate goal is to provide consistent, objective, and efficient fertility assessments to improve patient care worldwide.

The accurate assessment of sperm morphology is a cornerstone of male fertility diagnosis and a critical parameter in assisted reproductive technology (ART) outcomes. However, the creation of reliable, high-quality datasets for research is fundamentally hampered by two inherent challenges: significant expert subjectivity in manual annotation and the profound structural complexity of the sperm cell itself. Manual sperm morphology assessment is recognized as a challenging parameter to standardize due to its subjective nature, which is often reliant on the operator's expertise [13] [2]. Even highly trained experts display substantial diagnostic disagreement, with reported kappa values as low as 0.05–0.15, highlighting inconsistent standards across laboratories [8]. This subjectivity directly impacts the "ground truth" labels essential for training robust machine learning models.

Compounding the issue of subjectivity is the intricate structural nature of the spermatozoon. A morphologically normal spermatozoon must exhibit an oval-shaped head (length: 4.0–5.5 µm, width: 2.5–3.5 µm), an intact acrosome covering 40–70% of the head, a regular midpiece about the same length as the head, and a single, uniform tail approximately 45 µm long [14] [8]. The process of spermiogenesis that generates this highly specialized cell is complex and inefficient in humans, producing a high percentage of spermatozoa with various abnormal and imperfect features [15]. Annotating this continuum of biometrics and the multitude of potential defects in the head, midpiece, and tail requires immense precision, a task that is complicated by limitations in imaging technology and the minute scale of the structures involved [15] [16]. This document details these challenges and provides standardized protocols to mitigate them, thereby enhancing the quality of datasets for computational research.
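The explicit head ranges quoted above translate into a simple reference check. The sketch below is illustrative only: the class and function names are hypothetical, the acrosome coverage is assumed to be supplied as a fraction of head area, and the midpiece and tail criteria are left out because the text gives them only as approximate values.

```python
from dataclasses import dataclass

@dataclass
class HeadMeasurement:
    length_um: float          # head length in micrometres
    width_um: float           # head width in micrometres
    acrosome_fraction: float  # acrosome area / head area, between 0 and 1

def head_within_reference(m):
    """Check a head measurement against the ranges quoted in the text."""
    checks = {
        "length": 4.0 <= m.length_um <= 5.5,
        "width": 2.5 <= m.width_um <= 3.5,
        "acrosome": 0.40 <= m.acrosome_fraction <= 0.70,
    }
    return all(checks.values()), checks

ok, detail = head_within_reference(HeadMeasurement(4.8, 3.0, 0.55))
bad, bad_detail = head_within_reference(HeadMeasurement(6.1, 3.0, 0.55))
print(ok, bad, bad_detail)
```

A rule like this can pre-screen measurements or flag annotations for review, but it does not replace expert classification of the many defect types that the ranges do not capture.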

Quantitative Analysis of Expert Subjectivity and Annotation Complexity

The variability in expert annotation can be systematically quantified, providing insights into the magnitude of the challenge and the factors that influence consensus.

Table 1: Quantifying Expert Annotation Subjectivity

| Metric of Subjectivity | Reported Value or Range | Context/Description |
|---|---|---|
| Inter-Expert Agreement (Kappa) | 0.05 - 0.15 [8] | Even among trained technicians; on the conventional kappa scale this corresponds to slight agreement only. |
| Expert Consensus on Normal/Abnormal | 73% [17] | Percentage of sheep sperm images where experts agreed on a binary normal/abnormal classification. |
| Deep Learning Model Accuracy Range | 55% - 92% [13] [2] | Range of accuracy achieved by a CNN model, reflecting inconsistency in the training labels provided by experts. |
| Untrained Novice Accuracy (2-category) | 81.0% ± 2.5% [17] | Initial accuracy of novices in a binary classification system (normal vs. abnormal). |
| Untrained Novice Accuracy (25-category) | 53% ± 3.69% [17] | Initial accuracy for a complex 25-category system, showing a significant drop with increased complexity. |

The complexity of the classification system itself is a major driver of annotation variability. Research has demonstrated that annotation accuracy decreases as the number of categories increases [17].

Table 2: Impact of Classification System Complexity on Annotation Accuracy

| Classification System | Final Trained User Accuracy | Key Annotated Defects |
|---|---|---|
| 2-Category System | 98% ± 0.43% [17] | Normal; Abnormal. |
| 5-Category System | 97% ± 0.58% [17] | Normal; Head defect; Midpiece defect; Tail defect; Cytoplasmic droplet. |
| 8-Category System | 96% ± 0.81% [17] | Normal; Cytoplasmic droplet; Midpiece defect; Loose heads & abnormal tails; Pyriform head; Knobbed acrosomes; Vacuoles & teratoids; Swollen acrosomes. |
| 25-Category System | 90% ± 1.38% [17] | Normal; all other defects defined individually with high specificity. |

Experimental Protocols for Establishing Annotation Ground Truth

To overcome the challenge of expert subjectivity, a rigorous, multi-stage protocol for establishing a reliable ground truth dataset is essential. The following methodology, inspired by machine learning data validation principles, provides a standardized approach.

Protocol: Expert Consensus for Ground Truth Labeling

Objective: To create a standardized and high-quality annotated sperm morphology dataset by mitigating individual expert bias through a structured consensus process.

Materials and Reagents:

- Sperm Smears: Prepared from semen samples according to WHO guidelines (liquefaction at 37°C for 30 minutes; use of proteolytic enzymes like α-chymotrypsin for viscous samples) [14].

- Staining Kit: RAL Diagnostics staining kit or Papanicolaou/Diff-Quik stain [14] [2].

- Imaging System: Microscope with 100x oil immersion objective and digital camera (e.g., MMC CASA system) [2].

- Software: Image annotation and data management software (e.g., spreadsheet for collating expert classifications).

Procedure:

Sample Preparation & Image Acquisition:

- Prepare thin smears on clean, frosted slides using 10 µL of well-mixed semen. Air-dry completely [14].

- Stain the smears using a standardized protocol (e.g., for Diff-Quik: immerse in fixative 5x, then solution I for 10s, then solution II for 10s, rinse in water, and air-dry) [14].

- Capture images of individual spermatozoa using a bright-field microscope with a 100x objective. Ensure each image contains a single, whole spermatozoon [2].

Independent Multi-Expert Classification:

- Engage a minimum of three independent experts, each with extensive experience in semen analysis.

- Provide each expert with the same set of images and a predefined classification guide (e.g., Modified David classification with 12 defect classes: tapered, thin, microcephalous, macrocephalous, multiple heads, abnormal post-acrosomal region, abnormal acrosome, cytoplasmic droplet, bent midpiece, coiled tail, short tail, multiple tails) [2].

- Each expert classifies every spermatozoon independently, annotating defects for the head, midpiece, and tail without consultation.

Data Collation and Consensus Analysis:

- Compile all expert classifications into a single ground truth file.

- Analyze the level of agreement for each sperm image. The scenarios are:

- Total Agreement (TA): All three experts assign identical labels.

- Partial Agreement (PA): Two out of three experts agree on the label.

- No Agreement (NA): All three experts provide different labels [2].

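- Use statistical software (e.g., IBM SPSS) to test whether the experts' classifications differ significantly, using Fisher's exact test (p < 0.05 considered significant) [2]. Because Fisher's exact test assesses association rather than agreement, report an agreement statistic such as Fleiss' kappa alongside it.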

Final Ground Truth Assignment:

- For images with TA, assign the unanimously agreed label as the ground truth.

- For images with PA, assign the label agreed upon by the two experts. Flag these images in the dataset for potential review during model training.

- For images with NA, exclude them from the final training dataset or subject them to a final arbitration round by a senior morphologist.
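The TA/PA/NA rules above can be expressed as a short majority-vote function. This is a minimal sketch assuming exactly three expert labels per image; the function names, filenames, and labels are hypothetical.

```python
from collections import Counter

def consensus(labels):
    """Return (agreement_level, ground_truth_label) for three expert labels."""
    top_label, top_count = Counter(labels).most_common(1)[0]
    if top_count == len(labels):
        return "TA", top_label  # total agreement
    if top_count >= 2:
        return "PA", top_label  # partial agreement: majority label
    return "NA", None           # no agreement: exclude or send to arbitration

def build_ground_truth(annotations):
    """annotations: dict mapping image filename -> list of three expert labels."""
    records = []
    for filename, labels in annotations.items():
        level, label = consensus(labels)
        records.append(
            {
                "image": filename,
                "agreement": level,
                "label": label,
                "needs_review": level != "TA",  # flag PA and NA images
            }
        )
    return records

# Hypothetical annotations for three images
records = build_ground_truth(
    {
        "img_001.png": ["normal", "normal", "normal"],
        "img_002.png": ["tapered", "normal", "tapered"],
        "img_003.png": ["tapered", "thin", "coiled_tail"],
    }
)
for r in records:
    print(r)
```

Keeping the agreement level in each record lets a later training step down-weight or exclude partially agreed images without re-running the collation.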

Protocol: Stain-Free Sperm Morphometry with Accuracy Enhancement

Objective: To perform precise, non-invasive morphometric analysis of sperm head, midpiece, and tail, minimizing errors induced by staining and low-resolution images.

Materials and Reagents:

- Non-Stained Sperm Sample.

- Fixation System: Trumorph system or equivalent for dye-free fixation using controlled pressure and temperature [18].

- Microscopy System: Microscope with 20x to 40x phase-contrast objectives (e.g., Optika B-383Phi) [16] [18].

- Computational Framework: Python-based environment with libraries for image processing (OpenCV) and deep learning (PyTorch/TensorFlow).

Procedure:

Sample Preparation and Image Capture:

- Dilute the semen sample to an appropriate concentration (e.g., 17.5–27.5 x 10⁶/mL) [18].

- Place 10 µL on a slide, cover with a coverslip, and fix using the Trumorph system (e.g., 60°C, 6 kp pressure) to immobilize sperm without staining [18].

- Capture images under 20x or 40x magnification. The lower magnification prevents sperm from swimming away but reduces resolution, necessitating the following enhancement steps [16].

Multi-Target Instance Parsing:

- Employ a multi-scale part parsing network that integrates both semantic segmentation and instance segmentation.

- The instance segmentation branch creates masks to accurately localize and separate individual sperm cells from each other and the background.

- The semantic segmentation branch performs fine-grained pixel-wise classification to delineate the head, midpiece, and tail for each sperm [16].

- Fuse the outputs of both branches to achieve instance-level parsing, where every pixel is assigned to a specific part of a specific sperm.
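The fusion step can be illustrated with two label maps of the same size. The sketch below is a simplified stand-in for the network's fusion stage, not the method of [16]: it assumes the instance branch yields an integer map of sperm IDs (0 = background) and the semantic branch yields an integer map of part labels, with the label IDs chosen here for illustration.

```python
import numpy as np

# Assumed part label ids; 0 is background
PARTS = {1: "head", 2: "midpiece", 3: "tail"}

def fuse(instance_map, part_map):
    """Combine an instance-id map and a part-label map into per-sperm part masks."""
    parsed = {}
    for inst_id in np.unique(instance_map):
        if inst_id == 0:
            continue  # skip background
        inst_mask = instance_map == inst_id
        # A pixel belongs to (sperm, part) only if both branches agree on it
        parsed[int(inst_id)] = {
            name: inst_mask & (part_map == pid) for pid, name in PARTS.items()
        }
    return parsed

# Tiny synthetic example with two sperm cells, one per row
instance_map = np.array([[1, 1, 1, 0, 0, 0],
                         [0, 0, 0, 2, 2, 2]])
part_map = np.array([[1, 2, 3, 0, 0, 0],
                     [0, 0, 0, 1, 2, 3]])
parsed = fuse(instance_map, part_map)
print({k: {p: int(m.sum()) for p, m in v.items()} for k, v in parsed.items()})
```

Each resulting boolean mask can then be passed to the measurement step to obtain the length or width of one part of one sperm.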

Morphological Measurement and Accuracy Enhancement:

- Extract raw morphological parameters (head length/width, midpiece and tail length) from the parsed segments.

- Apply a measurement accuracy enhancement strategy to correct for blurring and boundary errors in low-resolution images:

- Outlier Exclusion: Use the Interquartile Range (IQR) method to filter out biologically implausible measurements.

- Data Smoothing: Apply Gaussian filtering to smooth the measured parameter data and reduce noise.

- Robust Correction: Extract the maximum morphological features from the smoothed data to counteract the underestimation caused by blurred contours [16].
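The three enhancement steps can be sketched in a few lines of NumPy. This is an interpretation under stated assumptions, not the implementation from [16]: it treats the input as a sequence of repeated measurements of one parameter for one sperm, uses the common 1.5 x IQR fence, and the function name and Gaussian width are chosen for illustration.

```python
import numpy as np

def enhance_measurement(values, sigma=1.0, k=1.5):
    """IQR outlier exclusion, Gaussian smoothing, then maximum extraction."""
    x = np.asarray(values, dtype=float)
    # 1. Outlier exclusion with the interquartile range fence
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    kept = x[(x >= q1 - k * iqr) & (x <= q3 + k * iqr)]
    # 2. Gaussian smoothing with a normalised 1-D kernel (edge-padded)
    radius = max(1, int(3 * sigma))
    offsets = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (offsets / sigma) ** 2)
    kernel /= kernel.sum()
    padded = np.pad(kept, radius, mode="edge")
    smoothed = np.convolve(padded, kernel, mode="valid")
    # 3. Robust correction: take the maximum of the smoothed series
    return float(smoothed.max())

# Hypothetical repeated head-length measurements (micrometres) with one outlier
head_lengths = [4.1, 4.3, 4.2, 4.4, 9.8, 4.3, 4.2]
estimate = enhance_measurement(head_lengths)
print(f"Corrected head length: {estimate:.2f} um")
```

Taking the maximum after smoothing, rather than on the raw values, keeps a single noisy reading from dominating the corrected estimate.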

Visualization of Annotation Workflows and Challenges

The following diagram illustrates the multi-expert annotation workflow and the primary sources of subjectivity and complexity.

Diagram 1: Multi-expert annotation workflow and inherent challenges.

The subsequent diagram outlines the stain-free analysis protocol designed to address the challenges of structural complexity.

Diagram 2: Stain-free sperm morphology analysis with accuracy enhancement.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Annotation Research

| Item Name | Function/Application | Specific Example / Note |
|---|---|---|
| Diff-Quik Stain | Rapid staining of sperm smears for clear visualization of head, midpiece, and tail structures. | A Romanowsky-type stain; consists of fixative, solution I (eosin), and solution II (methylene blue) [14]. |
| RAL Diagnostics Stain | Staining kit used for sperm morphology assessment according to specific laboratory protocols. | Used in the creation of the SMD/MSS dataset for expert classification [2]. |
| Optixcell Extender | Semen extender used to dilute and preserve semen samples prior to smear preparation and analysis. | Used in bovine sperm morphology studies to maintain sperm viability during processing [18]. |
| Trumorph System | A dye-free fixation system that uses controlled pressure and temperature to immobilize sperm for analysis. | Prevents sperm damage from staining, enabling non-invasive morphology assessment [18]. |
| MMC CASA System | Computer-Assisted Semen Analysis system for automated image acquisition and morphometric analysis. | Used for acquiring images of individual spermatozoa with defined head and tail dimensions [2]. |
| Python with Deep Learning Libraries | Core programming environment for implementing data augmentation, CNN models, and instance parsing networks. | Used with libraries like TensorFlow/PyTorch for developing sperm classification algorithms [2] [16] [8]. |

Application Note: The Challenge of Generalizability in Clinical AI

The deployment of artificial intelligence (AI) in clinical settings represents a frontier in modern medicine, promising enhanced diagnostic accuracy, standardization, and workflow efficiency. However, a significant gap exists between developing high-performing models in research settings and achieving robust, generalizable AI tools that function reliably across diverse clinical environments. This challenge is particularly acute in specialized fields like reproductive medicine, where subjective assessments, such as sperm morphology evaluation, are the standard [13] [2].

A primary obstacle is data scarcity and variability. AI models, particularly deep learning models, require large, diverse datasets to learn effectively and avoid overfitting. In medicine, especially for rare diseases or specific diagnostic tasks like sperm classification, acquiring large datasets is difficult, expensive, and often constrained by patient privacy concerns [19] [20]. Furthermore, models trained on data from a single institution often experience a significant performance drop when validated externally. One study demonstrated that single-institution models for classifying medical procedures showed a mean accuracy of 92.5% on internal data but generalized poorly, with performance dropping by an average of 22.4% on external data [21].

Another critical challenge is dataset shift, where the statistical properties of the data used for training and the data encountered in real-world deployment differ. This can be due to changes in patient populations, medical equipment, clinical protocols, or even public health policies over time. For instance, a COVID-19 risk prediction model built during the first wave of the pandemic saw drastically reduced performance in later waves due to changes in testing policies and virus variants [22]. Therefore, achieving generalizability requires a holistic strategy that addresses not only model architecture but also data acquisition, validation, and continuous monitoring post-deployment.

Protocol for Data Augmentation in Sperm Morphology Analysis

This protocol outlines a detailed methodology for applying data augmentation to create a robust and generalizable deep-learning model for sperm morphology classification, based on established research [13] [2].

Background and Principle

Manual sperm morphology assessment is subjective, time-consuming, and prone to inter-observer variability. Deep learning offers a path to automation and standardization. The Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) exemplifies the initial data scarcity problem, starting with 1,000 individual sperm images [13] [2]. This protocol uses data augmentation to artificially expand the dataset, introducing variability that helps the model learn invariant features and generalize better to new images from different sources.

Materials and Equipment

- Sperm Image Data: Raw digital images of individual spermatozoa, acquired using a Computer-Assisted Semen Analysis (CASA) system like the MMC CASA system with a bright-field microscope and oil immersion 100x objective [2].

- Computing Hardware: A computer with a GPU (e.g., NVIDIA Titan RTX) is recommended for accelerated deep learning training [21].

- Software Environment: Python 3.8 or later, with deep learning libraries such as TensorFlow or PyTorch [2] [21].

Experimental Procedure

Step 1: Expert Annotation and Dataset Curation

- Acquire semen samples and prepare smears according to WHO guidelines [2].

- Capture images of individual spermatozoa.

- Establish a ground truth by having each sperm image classified by multiple experts (e.g., three) based on a standardized classification system like the modified David classification, which includes 12 classes of morphological defects (e.g., tapered head, microcephalous, coiled tail) [2].

- Analyze inter-expert agreement. Resolve discrepancies through consensus, and use only images with high agreement (e.g., total or partial agreement among experts) for training to ensure label quality [2].

Step 2: Data Pre-processing

- Clean Images: Identify and handle any corrupt or low-quality images.

- Normalize Images: Resize all images to consistent dimensions (e.g., 80x80 pixels) and convert them to grayscale to reduce computational complexity [2].

- Denoise: Apply techniques to remove noise signals from insufficient lighting or poor staining [2].
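A minimal NumPy sketch of the normalization step is shown below. It assumes 8-bit input images, uses the common luminance weights for grayscale conversion, and implements bilinear resizing by hand so that no imaging library is required; the function names are hypothetical and denoising is left out.

```python
import numpy as np

def to_grayscale(img):
    """Convert an HxWx3 image to HxW using standard luminance weights."""
    img = np.asarray(img, dtype=np.float32)
    if img.ndim == 3:
        img = img[..., :3] @ np.array([0.299, 0.587, 0.114], dtype=np.float32)
    return img

def resize_bilinear(img, out_h=80, out_w=80):
    """Resize a 2-D image with bilinear (linear) interpolation."""
    in_h, in_w = img.shape
    ys = np.linspace(0, in_h - 1, out_h)
    xs = np.linspace(0, in_w - 1, out_w)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]  # vertical interpolation weights
    wx = (xs - x0)[None, :]  # horizontal interpolation weights
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

def preprocess(img, size=80):
    """Grayscale, resize to size x size, scale to [0, 1], add a channel axis."""
    gray = to_grayscale(img)
    resized = resize_bilinear(gray, size, size)
    scaled = np.clip(resized / 255.0, 0.0, 1.0)  # assumes 8-bit input
    return scaled[..., None].astype(np.float32)
```

The output has shape (80, 80, 1), matching the grayscale input size described for the SMD/MSS model.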

Step 3: Data Augmentation Strategy

Apply a series of geometric and photometric transformations to the pre-processed training set images to generate new, synthetic variants. The table below summarizes the key transformations used to expand the SMD/MSS dataset from 1,000 to 6,035 images [2] [20].

Table 1: Data Augmentation Techniques for Sperm Morphology Images

| Augmentation Category | Specific Techniques | Purpose |
|---|---|---|
| Geometric Transformations | Rotation, Translation, Shearing, Horizontal/Vertical Flipping | Makes the model invariant to sperm orientation and position in the image. |
| Photometric Transformations | Adjusting Brightness, Contrast, Gamma, Adding Noise | Makes the model robust to variations in staining intensity and lighting conditions. |

Step 4: Model Training and Evaluation

- Data Partitioning: Randomly split the augmented dataset into a training set (80%) and a testing set (20%). Further, split the training set to use a portion (e.g., 20%) for validation during training [2].

- Model Selection: Implement a Convolutional Neural Network (CNN) architecture. For enhanced performance, consider a hybrid model like a CNN with a Convolutional Block Attention Module (CBAM) integrated with a ResNet50 backbone, which helps the model focus on salient features like the sperm head and tail [8].

- Training: Train the model on the augmented training set. Use the validation set to tune hyperparameters and monitor for overfitting.

- Evaluation: Evaluate the final model's performance on the held-out test set. Report standard metrics including Accuracy, Precision, Recall, and F1-Score [2] [8]. The expected outcome based on the SMD/MSS study is an accuracy ranging from 55% to 92% across different morphological classes [13] [2].
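Because accuracy can vary widely between morphological classes, it helps to report the metrics per class. The sketch below computes accuracy and per-class precision, recall, and F1 in plain Python; the function name and the toy labels are illustrative assumptions, not results from any study.

```python
from collections import Counter

def per_class_metrics(y_true, y_pred):
    """Return overall accuracy and a {class: {precision, recall, f1}} report."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    report = {}
    for c in labels:
        precision = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        recall = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[c] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(tp.values()) / len(y_true)
    return accuracy, report

# Toy labels for illustration only.
y_true = ["normal", "normal", "tapered", "coiled", "coiled", "tapered"]
y_pred = ["normal", "tapered", "tapered", "coiled", "normal", "tapered"]
accuracy, report = per_class_metrics(y_true, y_pred)
```

Classes with very few test images yield unstable estimates, so per-class support should be reported alongside these values.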

Roadmap for Clinical Deployment of AI Models

Successfully transitioning an AI model from a research prototype to a clinically deployed tool requires careful planning across three continuous phases: pre-implementation, peri-implementation, and post-implementation [22]. The following workflow visualizes this roadmap, highlighting critical actions and checks at each stage to ensure generalizability and safety.

Pre-Implementation Phase

Before any clinical integration, the model must be rigorously validated.

- Local Performance Validation: Conduct retrospective evaluation using local data from the deployment site to ensure the model generalizes to the target population and equipment [22].

- Bias and Fairness Audit: Evaluate model performance across different demographic groups (e.g., age, ethnicity) to ensure it does not introduce or perpetuate healthcare inequities [22].

- Infrastructure and Stakeholder Mapping: Plan the data flow with the IT team, often using standards like FHIR to connect with Electronic Health Record (EHR) systems. Crucially, align incentives with end-users (clinicians) to ensure adoption, following the "five rights" of clinical decision support: the right information, person, time, channel, and format [22].
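A bias and fairness audit can start from something as simple as per-group accuracy. The following sketch assumes each validation record carries a group attribute; the age bands, the 10-point gap threshold, and the function names are hypothetical choices for illustration.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        hits[group] += int(y_true == y_pred)
    return {g: hits[g] / totals[g] for g in totals}

def flag_gaps(acc, max_gap=0.10):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(acc.values())
    return sorted(g for g, a in acc.items() if best - a > max_gap)

# Hypothetical local validation records.
records = [
    ("<35", "normal", "normal"), ("<35", "abnormal", "abnormal"),
    ("<35", "normal", "normal"), ("<35", "abnormal", "normal"),
    (">=35", "normal", "abnormal"), (">=35", "abnormal", "abnormal"),
    (">=35", "normal", "abnormal"), (">=35", "abnormal", "normal"),
]
acc = accuracy_by_group(records)
flagged = flag_gaps(acc)
```

A flagged group is a prompt for closer investigation (sample size, acquisition differences), not proof of bias on its own.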

Peri-Implementation Phase

This phase involves the initial "go-live" and controlled testing.

- Define Clinical Success Metrics: The measure of success should not be model accuracy alone, but an improvement in clinical outcomes (e.g., reduced time to diagnosis, mortality reduction) compared to the standard of care [22].

- Silent Validation and Pilot Study: Run the model in "silent mode" where it generates predictions without displaying them to clinicians, to verify production data feeds and output stability. Follow this with a pilot study in a small patient subset to assess the user interface, education materials, and workflow integration [22].

Post-Implementation Phase

AI deployment is not a one-time event but requires ongoing maintenance.

- Continuous Monitoring and Surveillance: Proactively monitor for performance degradation caused by dataset shift or changes in clinical practice [22].

- Solution Performance Auditing: Log how clinicians interact with and potentially override the model's recommendations. This feedback is essential for understanding real-world utility and failure modes [22].

- Model Retraining and Decommissioning: Establish a clear protocol for model updating or retirement if it becomes obsolete or harmful [22].
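One lightweight way to combine monitoring with override logging is to track how often clinicians disagree with the model over a rolling window. The sketch below is an assumption-laden illustration: the class name, window size, baseline rate, and tolerance are hypothetical and would need to be set from local validation data.

```python
from collections import deque

class OverrideMonitor:
    """Track the clinician override rate over the most recent cases."""

    def __init__(self, baseline_rate, window=200, tolerance=0.10):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.events = deque(maxlen=window)  # True = clinician overrode the model

    def log(self, model_label, clinician_label):
        self.events.append(model_label != clinician_label)

    @property
    def override_rate(self):
        return sum(self.events) / len(self.events) if self.events else 0.0

    def needs_review(self):
        # Only raise a flag once the window is full, to avoid noisy early alerts.
        full = len(self.events) == self.events.maxlen
        return full and self.override_rate > self.baseline_rate + self.tolerance

# Toy run: 4 overrides in a 10-case window against a 10% baseline.
monitor = OverrideMonitor(baseline_rate=0.10, window=10, tolerance=0.10)
for i in range(10):
    monitor.log("normal", "abnormal" if i < 4 else "normal")
```

A sustained rise in the override rate is one possible symptom of dataset shift and can trigger the retraining or decommissioning protocol.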

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Developing a Sperm Morphology AI Model

| Item Name | Function / Rationale |
|---|---|
| MMC CASA System | An integrated system (microscope, camera, software) for standardized and sequential acquisition of high-quality digital sperm images, which is crucial for building a consistent dataset [2]. |
| RAL Diagnostics Staining Kit | Provides the reagents for staining sperm smears, creating the contrast necessary for visualizing morphological details under a microscope [2]. |
| SMD/MSS Dataset | A foundational dataset comprising 1,000+ expert-classified sperm images based on the modified David classification. It serves as a benchmark for training and validating new models [13] [2]. |
| Convolutional Neural Network (CNN) | The core deep learning algorithm for image recognition. It automatically learns hierarchical features from pixel data, eliminating the need for manual feature engineering [13] [8]. |
| Convolutional Block Attention Module (CBAM) | An advanced neural network component that can be added to CNNs (e.g., ResNet50). It directs the model's "attention" to the most relevant parts of the sperm image (e.g., head, midpiece), improving accuracy and interpretability [8]. |
| Data Augmentation Pipeline (Geometric/Photometric) | A software pipeline that programmatically applies transformations (rotation, contrast changes, etc.) to existing images. It is a cost-effective method to increase dataset size and diversity, directly combating overfitting and improving model generalizability [2] [20]. |

A Practical Toolkit: Data Augmentation Techniques for Sperm Images

The application of artificial intelligence (AI) for sperm morphology analysis represents a significant advancement in male infertility diagnostics. Deep learning models, particularly Convolutional Neural Networks (CNNs), have demonstrated potential for automating and standardizing the assessment of sperm head, midpiece, and tail defects [1]. However, the robustness of these AI technologies is fundamentally constrained by the need for large, diverse, and accurately annotated datasets [2] [1]. Manual sperm morphology analysis is inherently subjective, time-consuming, and suffers from significant inter-observer variability, making the creation of such datasets challenging [2]. This application note details core image manipulation techniques—rotation, flipping, scaling, and brightness/contrast adjustment—employed as data augmentation strategies to enhance the size and quality of sperm morphology datasets, thereby improving the performance and generalizability of deep learning models in reproductive biology research.

The Role of Data Augmentation in Sperm Morphology Analysis

Sperm morphology analysis (SMA) is a critical yet challenging component of male fertility assessment. The World Health Organization (WHO) recognizes 26 types of abnormal morphology, requiring the analysis of over 200 sperm cells per sample, which leads to a substantial workload and subjective results [1]. AI models offer a solution by automating this process, but their development faces two primary data-related challenges:

- Limited Dataset Size and Diversity: Collecting and annotating a large number of sperm images is costly and time-consuming. Many existing datasets, such as the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS), start with a limited number of base images (e.g., 1,000) and require augmentation to reach a viable size for model training (e.g., 6,035 images) [2].

- Class Imbalance: Certain morphological defects occur more rarely than others, leading to imbalanced datasets where a model becomes biased toward more frequent classes.

Data augmentation artificially expands the training dataset by creating modified versions of existing images. This practice mitigates overfitting, improves model generalization, and helps balance class distributions [2]. For sperm image analysis, augmentations must be chosen to realistically represent the biological variations and imaging artifacts encountered in clinical practice while preserving the critical morphological features used for classification.
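To see how augmentation can help balance class distributions, the sketch below computes how many synthetic variants each class would need to reach the size of the largest class. The class counts are invented for illustration and the function name is hypothetical.

```python
import math
from collections import Counter

def augmentation_plan(labels, target_per_class=None):
    """Return, per class, how many images to generate to reach the target size."""
    counts = Counter(labels)
    target = target_per_class or max(counts.values())
    plan = {}
    for cls, n in counts.items():
        missing = max(0, target - n)
        plan[cls] = {
            "original": n,
            "to_generate": missing,
            # Upper bound on variants needed from each original image.
            "variants_per_image": math.ceil(missing / n),
        }
    return plan

# Invented class counts, for illustration only.
labels = ["normal"] * 400 + ["tapered"] * 100 + ["coiled_tail"] * 50
plan = augmentation_plan(labels)
```

Classes that need many variants per image gain little real diversity from simple transformations, which is one reason to keep per-class evaluation on untouched images.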

Core Image Manipulation Protocols

This section provides detailed experimental protocols for implementing core image manipulations in the context of augmenting sperm morphology datasets.

Geometric Manipulations: Rotation and Flipping

Geometric transformations are foundational augmentation techniques that introduce viewpoint variance without altering the core morphological features of the sperm cell.

Rationale: Sperm cells can appear in any orientation on a microscope slide. Training a model to be invariant to rotation and reflection is crucial for robust real-world performance. These manipulations are label-preserving, meaning the class of the sperm (e.g., "normal head," "coiled tail") does not change after the transformation.

Experimental Protocol:

- Input: A single sperm image, preferably with a clean background, extracted from a semen smear. The image should be in a standard format (e.g., PNG, TIFF).

- Software Tools: Python libraries such as TensorFlow (tf.keras.preprocessing.image.random_rotation, tf.image.flip_left_right) or OpenCV (cv2.flip, cv2.rotate for 90-degree steps, and cv2.getRotationMatrix2D with cv2.warpAffine for arbitrary angles) are typically used.

- Parameterization:

- Rotation: Apply random rotations within a defined range. A common practice is to use rotations between -180 and +180 degrees to cover all possible orientations. The interpolation mode should be nearest-neighbor or bilinear (for example, cv2.INTER_NEAREST or cv2.INTER_LINEAR in OpenCV) to handle pixel values that fall between grid positions.

- Flipping: Implement horizontal (flip_left_right) and vertical (flip_up_down) flipping. Horizontal flipping is more biologically plausible than vertical flipping.

- Output: A set of new images demonstrating the same sperm cell in various orientations and reflections.

Considerations for Sperm Morphology: These transformations are generally safe for all parts of the sperm (head, midpiece, tail). However, researchers should validate that extreme rotations do not introduce artifacts at the image boundaries that could be misconstrued as morphological defects.
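As a library-agnostic illustration of the rotation and flipping protocol, the sketch below uses only NumPy. The function names, the 0.5 flip probability, and the `fill` parameter are assumptions made for this example. Nearest-neighbor sampling is used for brevity, and regions rotated in from outside the frame are filled with a constant that should match the slide background so that no artificial edges appear.

```python
import numpy as np

def rotate_nearest(img, angle_deg, fill=0):
    """Rotate an image about its centre using nearest-neighbour sampling."""
    h, w = img.shape[:2]
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    theta = np.deg2rad(angle_deg)
    cos_t, sin_t = np.cos(theta), np.sin(theta)
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: for every output pixel, locate its source pixel.
    src_x = cos_t * (xs - cx) + sin_t * (ys - cy) + cx
    src_y = -sin_t * (xs - cx) + cos_t * (ys - cy) + cy
    sx = np.rint(src_x).astype(int)
    sy = np.rint(src_y).astype(int)
    inside = (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)
    out = np.full_like(img, fill)  # pixels with no source keep the fill value
    out[inside] = img[sy[inside], sx[inside]]
    return out

def random_orientation(img, rng, fill=0):
    """Apply a random rotation in [-180, 180] degrees plus random flips."""
    out = rotate_nearest(img, rng.uniform(-180.0, 180.0), fill)
    if rng.random() < 0.5:
        out = np.fliplr(out)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)  # vertical flip
    return out
```

Usage would look like `random_orientation(image, np.random.default_rng(0), fill=background_value)`, where `background_value` is a hypothetical estimate of the slide background intensity.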

Spatial Manipulation: Scaling

Scaling, or zooming, alters the apparent size of the sperm cell within the image frame.

Rationale: Minor variations in the distance between the microscope objective and the sample can cause sperm cells to appear slightly larger or smaller. Augmentation with scaling makes the model invariant to these minor magnification differences.

Experimental Protocol:

- Input: A single sperm image.

- Software Tools: Python libraries like TensorFlow (tf.image.resize) or OpenCV (cv2.resize).

- Parameterization:

- Determine a scaling factor range. A conservative range of [0.9, 1.1] (i.e., up to 10% zoom in or out) is often used to avoid excessive distortion or the creation of unrealistic sizes.

- Apply the scaling factor to both the height and width of the image.

- After scaling, the image may need to be padded or cropped back to the original dimensions to maintain a consistent input size for the neural network.

- Output: Images of the same sperm cell at different apparent sizes.

Considerations for Sperm Morphology: Aggressive scaling outside a biologically plausible range (e.g., making a sperm head appear 50% larger) should be avoided, as it could lead the model to misclassify a normal sperm as macrocephalous or microcephalous [2].
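The scale-then-pad-or-crop step can be folded into a single resampling pass. The NumPy sketch below zooms a 2-D image about its centre while keeping the original shape: factors above 1 crop implicitly, and factors below 1 pad with `fill`. Function names and the nearest-neighbor sampling are assumptions chosen for brevity.

```python
import numpy as np

def zoom_keep_size(img, factor, fill=0):
    """Zoom a 2-D image about its centre by `factor`, keeping its shape."""
    h, w = img.shape
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: output pixel -> source pixel in the original image.
    sy = np.rint((ys - cy) / factor + cy).astype(int)
    sx = np.rint((xs - cx) / factor + cx).astype(int)
    inside = (sy >= 0) & (sy < h) & (sx >= 0) & (sx < w)
    out = np.full_like(img, fill)  # uncovered border acts as padding
    out[inside] = img[sy[inside], sx[inside]]
    return out

def random_zoom(img, rng, low=0.9, high=1.1, fill=0):
    """Zoom by a factor drawn uniformly from the conservative [low, high] range."""
    return zoom_keep_size(img, rng.uniform(low, high), fill)
```

Keeping `low` and `high` close to 1 is what prevents a normal head from being pushed into the size range of a macrocephalous or microcephalous one.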

Photometric Manipulations: Brightness and Contrast Adjustment

Adjusting brightness and contrast simulates variations in microscope lighting conditions, staining intensity, and sample preparation, which are common challenges in clinical settings [2] [23].

Rationale: Microscopy images can suffer from poor contrast due to uneven illumination or improper staining. Models trained on ideally lit images may fail under suboptimal conditions. Brightness and contrast augmentation enhances model robustness to these technical variabilities.

Experimental Protocol:

- Input: A single sperm image.

- Software Tools: TensorFlow (tf.image.adjust_brightness, tf.image.adjust_contrast) or custom algorithms based on Histogram Equalization (HE) and Contrast Limited Adaptive Histogram Equalization (CLAHE) [23] [24].

- Parameterization:

- Brightness: Add a random delta value to the pixel intensities. The delta is typically sampled from a small range (e.g., [-0.2, +0.2] multiplied by the maximum pixel value) to prevent saturation to pure black or white.

- Contrast: Scale the pixel intensities about the image mean by a random factor, which is how tf.image.adjust_contrast behaves. A factor of 1.0 leaves the image unchanged, while factors below and above 1.0 decrease and increase contrast, respectively (e.g., a range of [0.8, 1.5]).

- Advanced Methods: For more sophisticated enhancement, implement a two-stage technique involving global HE followed by a local enhancement method to address differences in average local contrast [23]. CLAHE can be particularly effective for improving local contrast without over-amplifying noise [23] [24].

- Output: A set of images of the same sperm cell under various simulated lighting and contrast conditions.

Considerations for Sperm Morphology: The primary risk is the creation of unrealistic artifacts or the obscuring of subtle morphological features. For instance, excessive contrast adjustment might artificially sharpen the boundaries of the sperm head or make a faint vacuole disappear. Augmentation parameters must be carefully tuned to stay within biologically and technically plausible limits.
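A minimal NumPy sketch of the brightness and contrast adjustments follows. It assumes images are floats normalized to [0, 1], scales contrast about the image mean, and clips the result to avoid out-of-range values; the function names and default ranges are illustrative assumptions.

```python
import numpy as np

def adjust_brightness(img, delta):
    """Add a constant offset to all pixels, clipped to [0, 1]."""
    return np.clip(img + delta, 0.0, 1.0)

def adjust_contrast(img, factor):
    """Scale pixel deviations from the image mean by `factor`, clipped to [0, 1]."""
    mean = img.mean()
    return np.clip((img - mean) * factor + mean, 0.0, 1.0)

def random_photometric(img, rng, max_delta=0.2, contrast_range=(0.8, 1.5)):
    """Apply a random brightness shift followed by a random contrast change."""
    out = adjust_brightness(img, rng.uniform(-max_delta, max_delta))
    return adjust_contrast(out, rng.uniform(*contrast_range))
```

Clipping silently discards detail in saturated regions, so it is worth inspecting a sample of augmented images to confirm that faint structures such as vacuoles remain visible.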

Quantitative Analysis of Augmentation Impact

The following tables summarize key quantitative data from relevant studies, illustrating the impact of data augmentation on model performance for sperm morphology analysis.

Table 1: Impact of Data Augmentation on Dataset Size and Model Performance

| Study / Dataset | Initial Image Count | Augmented Image Count | Augmentation Techniques Used | Model Performance (Accuracy) | Key Morphological Classes |
|---|---|---|---|---|---|
| SMD/MSS [2] | 1,000 | 6,035 | Data augmentation techniques (specifics not listed) | 55% to 92% | 12 classes (head, midpiece, tail defects) based on modified David classification |
| Deep Learning Review [1] | Varies (e.g., 1,540 in MHSMA) | N/A (discusses general need) | Implicit in DL pipelines | Improved performance and generalization | Head, neck, and tail compartments |

Table 2: Parameter Ranges for Core Image Manipulations in Sperm Analysis

| Image Manipulation | Core Parameters | Recommended Range for Sperm Analysis | Purpose & Rationale |
|---|---|---|---|
| Rotation | Angle | -180 to +180 degrees | Achieve full rotational invariance. |
| Flipping | Axis | Horizontal, Vertical | Introduce reflectional variance. |
| Scaling | Zoom Factor | 0.9 to 1.1 (10% variation) | Simulate minor magnification differences. |
| Brightness | Delta | -0.2 to +0.2 (normalized) | Simulate lighting variations during microscopy. |
| Contrast | Multiplier | 0.8 to 1.5 | Simulate staining differences and contrast settings. |
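The ranges in Table 2 can be kept in one configuration object and sampled per image, which makes the augmentation policy easy to audit and reproduce. The dictionary keys, the function name, and the 0.5 flip probability below are assumptions for illustration.

```python
import random

# Ranges copied from Table 2; the key names are hypothetical.
AUGMENTATION_RANGES = {
    "rotation_deg": (-180.0, 180.0),
    "zoom_factor": (0.9, 1.1),
    "brightness_delta": (-0.2, 0.2),
    "contrast_factor": (0.8, 1.5),
}

def sample_augmentation(rng):
    """Draw one set of augmentation parameters from the configured ranges."""
    params = {name: rng.uniform(lo, hi)
              for name, (lo, hi) in AUGMENTATION_RANGES.items()}
    params["flip_horizontal"] = rng.random() < 0.5
    params["flip_vertical"] = rng.random() < 0.5
    return params

rng = random.Random(42)  # fixed seed so the sampled policy is reproducible
params = sample_augmentation(rng)
```

Logging the sampled parameters next to each generated image makes it possible to trace any suspicious augmented sample back to the transformation that produced it.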

Research Reagent Solutions

The following table lists key computational "reagents" and resources essential for implementing the described data augmentation protocols.

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Function/Benefit | Application Note |
|---|---|---|
| TensorFlow / Keras | Open-source library providing high-level APIs for implementing data augmentation layers (e.g., RandomRotation, RandomFlip, RandomContrast). | Enables easy integration of real-time augmentation directly into the model training pipeline. |
| OpenCV | Library optimized for real-time computer vision. Provides core functions for image manipulation (rotation, flipping, scaling, histogram equalization). | Ideal for building custom, high-performance pre-processing and augmentation pipelines. |
| AndroGen [25] | Open-source software for generating synthetic sperm images from different species without relying on real data or generative training. | Complements traditional augmentation; useful when initial real datasets are very small or subject to privacy concerns. |
| SMD/MSS Dataset [2] | A dataset of 1,000 sperm images (extendable to 6,035) classified by experts according to the modified David classification. | Serves as a valuable benchmark for developing and testing augmentation and AI models for sperm morphology. |
| QUAREP-LiMi Guidelines [26] | Global checklists for publishing microscopy images, ensuring data is scientifically legible and reproducible. | Critical for maintaining quality and standardization when sharing augmented datasets and research findings. |

Workflow and Signaling Pathways

The following diagram illustrates the logical workflow for applying core image manipulations to create an augmented sperm morphology dataset for deep learning model training.

Diagram: Augmentation-to-analysis workflow, running from a limited original dataset, through a parallel augmentation pipeline applying geometric, spatial, and photometric manipulations, to a robust dataset used for training a deep learning model capable of automated sperm morphology analysis.

The systematic application of core image manipulations—rotation, flipping, scaling, and brightness/contrast adjustment—is a fundamental and powerful strategy for data augmentation in sperm morphology research. By artificially expanding and diversifying training datasets, these techniques directly address the critical limitations of small sample sizes and class imbalance that often hinder the development of robust AI models. When implemented within biologically plausible parameters, as outlined in the provided protocols, these augmentations enhance model generalizability, leading to more accurate, automated, and standardized sperm morphology analysis systems. This advancement holds significant promise for improving the diagnostic efficiency and consistency of male infertility assessments in clinical practice.

Infertility affects a significant proportion of couples globally, with male factors contributing to approximately half of all cases [2]. The analysis of sperm morphology—the size, shape, and structural characteristics of sperm cells—remains a critical component in male fertility assessment, as abnormal sperm morphology is strongly correlated with reduced fertility rates and poor outcomes in assisted reproductive technologies [2] [8]. Traditional manual sperm morphology assessment, while important, suffers from several limitations: it is time-intensive (requiring 30-45 minutes per sample), highly subjective, and prone to significant inter-observer variability, with studies reporting disagreement rates of up to 40% between expert evaluators [2] [8].

The Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) was developed to address the critical need for standardized, high-quality data in this field. This dataset emerged from recognition that the robustness of artificial intelligence (AI) technologies for medical image analysis depends primarily on the creation of large and diverse databases [2]. Prior to its development, researchers faced two major challenges: a limited number of available sperm images and heterogeneous representation of different morphological classes, which impeded the development of reliable automated analysis systems [2].

The SMD/MSS Dataset: Original Composition and Acquisition

Sample Collection and Preparation

The original SMD/MSS dataset was constructed through a prospective study conducted at the Laboratory of Reproductive Biology, Medical School of Sfax, Tunisia [2]. Semen samples were obtained from 37 patients after informed consent, with specific inclusion and exclusion criteria to ensure data quality. Samples with a sperm concentration of at least 5 million/mL were included, while those with high concentrations (>200 million/mL) were excluded to prevent image overlap and facilitate capture of whole spermatozoa [2]. Smears were prepared according to World Health Organization (WHO) guidelines and stained with RAL Diagnostics staining kit to enhance morphological visibility [2].

Image Acquisition and Expert Classification

Images were acquired using the MMC CASA (Computer-Assisted Semen Analysis) system, consisting of an optical microscope equipped with a digital camera [2]. The system operated in bright field mode with an oil immersion x100 objective, capturing images of individual spermatozoa that included the head, midpiece, and tail for comprehensive morphological assessment [2].

A rigorous classification process was implemented with three experts from the laboratory, each possessing extensive experience in semen analysis [2]. The classification followed the modified David classification system, which includes 12 classes of morphological defects across three primary regions [2]:

- Head defects (7 classes): Tapered, thin, microcephalous, macrocephalous, multiple heads, abnormal post-acrosomal region, and abnormal acrosome

- Midpiece defects (2 classes): Cytoplasmic droplet and bent

- Tail defects (3 classes): Coiled, short, and multiple tails

Additionally, categories were included for associated anomalies (CN) and normal sperm (NR) [2]. Each spermatozoon was independently classified by all three experts, with results documented in a shared Excel spreadsheet containing the image name, expert classifications, and dimensions of sperm head and tail [2].

Inter-Expert Agreement Analysis

The complexity of sperm morphological classification was quantified through analysis of inter-expert agreement distribution [2]. Three agreement scenarios were identified among the three experts: No Agreement (NA) among experts, Partial Agreement (PA) where 2/3 experts agreed on the same label, and Total Agreement (TA) where 3/3 experts agreed on the same label for all categories [2]. Statistical analysis using IBM SPSS Statistics 23 software with Fisher's exact test revealed significant differences between experts in each morphology class (p < 0.05), highlighting the inherent subjectivity of manual assessment and underscoring the need for automated standardization [2].
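The three agreement scenarios map directly onto a majority vote over the three expert labels. The sketch below is an illustration with a hypothetical function name; it returns the agreement level together with the consensus label, or None when no two experts agree.

```python
from collections import Counter

def agreement_level(labels):
    """Classify three expert labels as 'TA', 'PA', or 'NA'.

    Returns (level, consensus_label); consensus_label is None for 'NA'.
    """
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    label, votes = Counter(labels).most_common(1)[0]
    if votes == 3:
        return "TA", label  # total agreement: 3/3 experts
    if votes == 2:
        return "PA", label  # partial agreement: 2/3 experts
    return "NA", None       # no agreement

level, consensus = agreement_level(["tapered", "thin", "tapered"])
```

Images classified as NA have no defensible ground-truth label and are natural candidates for exclusion or for a consensus review session.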

Table 1: Original SMD/MSS Dataset Composition Before Augmentation

| Component | Specification |
|---|---|
| Original Image Count | 1,000 images |
| Source | 37 patient samples |
| Acquisition System | MMC CASA system |
| Microscopy | Bright field, oil immersion x100 objective |
| Classification Standard | Modified David classification (12 defect classes) |
| Expert Annotators | 3 independent experts |
| Annotation Method | Independent classification with agreement analysis |

Data Augmentation Methodology and Implementation

Rationale for Data Augmentation in Medical Imaging

Data augmentation has become an essential strategy in medical image analysis to address the perennial challenge of limited dataset sizes [27] [7]. Medical images are often scarce due to multiple factors: insufficient patients for some conditions, privacy concerns restricting data sharing, lack of medical equipment, inability to obtain images meeting desired criteria, and the time-consuming, expertise-dependent nature of medical image annotation [27]. These limitations frequently lead to biased datasets, overfitting of models, and ultimately inaccurate results when deploying deep learning systems in clinical practice [27].

The systematic application of data augmentation techniques enables researchers to expand training datasets artificially, improving model generalization without collecting new samples [7]. This approach is particularly valuable for balancing morphological classes in imbalanced datasets—a common issue in sperm morphology analysis where normal sperm typically outnumber specific defect categories [2] [8]. Data augmentation also promotes learning invariance with respect to transformations of input data that should not affect output, regularizing deep neural networks without requiring architectural modifications to enforce equivariance or invariance [7].

Augmentation Techniques for Sperm Morphology Images

The SMD/MSS dataset expansion employed multiple data augmentation techniques to transform the original 1,000 images into 6,035 enhanced samples [2] [13]. While the specific combination of techniques applied to SMD/MSS is not exhaustively detailed in the available literature, research in medical image augmentation more broadly categorizes these methods into several families:

Table 2: Common Data Augmentation Techniques in Medical Imaging

| Augmentation Category | Specific Techniques | Application Rationale |
|---|---|---|
| Affine Transformations | Rotation, translation, scaling, flipping, shearing | Learn spatial invariance, simulate viewing variations |
| Pixel-level Transformations | Brightness/contrast adjustment, noise addition, blurring, sharpening | Simulate different staining intensities, microscope settings |
| Elastic Deformations | Non-linear warp fields, elastic transformations | Account for biological shape variability |
| Generative Approaches | Generative Adversarial Networks (GANs), synthetic data generation | Create entirely new samples for rare morphological classes |

Based on broader medical imaging literature, the most effective augmentation approaches for classification tasks typically include affine and pixel-level transformations, which achieve the optimal trade-off between performance improvement and implementation complexity [7]. These techniques were likely applied to the SMD/MSS dataset, potentially including rotation, flipping, brightness/contrast adjustments, and noise addition to generate visually distinct but morphologically consistent variations of original sperm images [2].
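Noise addition, one of the pixel-level transformations mentioned above, can be sketched in a few lines of NumPy. This is a generic illustration and not the SMD/MSS procedure, whose details are not published; the function name and the default `sigma` are assumptions, and images are taken to be floats in [0, 1].

```python
import numpy as np

def add_gaussian_noise(img, rng, sigma=0.02):
    """Add zero-mean Gaussian noise to an image with values in [0, 1]."""
    noisy = img + rng.normal(0.0, sigma, size=img.shape)
    # Clip to the valid range and keep the input dtype.
    return np.clip(noisy, 0.0, 1.0).astype(img.dtype)
```

A small `sigma` keeps the noise comparable to sensor noise; larger values risk masking fine structures that distinguish morphological classes.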

Implementation Workflow

The implementation of data augmentation for the SMD/MSS dataset followed a structured pipeline within a Python-based deep learning framework [2]. The process involved several methodical stages from original image processing to expanded dataset generation, as visualized in the following workflow:

Diagram 1: Data Augmentation Workflow for SMD/MSS Dataset Expansion

The image pre-processing stage comprised data cleaning to handle inconsistencies, normalization/standardization of numerical features to a common scale, and resizing of each image to 80×80×1 grayscale using linear interpolation [2]. This standardization ensured that no particular feature dominated the learning process because of magnitude differences, and it prepared the images for subsequent deep learning processing [2].
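The pre-processing steps described above can be sketched as follows. This is a NumPy-only illustration under stated assumptions: the input is assumed to be an 8-bit RGB array, the grayscale weights are the common luma coefficients, and scaling to [0, 1] stands in for the unspecified normalization. In practice a library routine such as OpenCV's `cv2.resize` with `interpolation=cv2.INTER_LINEAR` would typically replace the hand-written resize.

```python
import numpy as np

def resize_bilinear(img, out_h, out_w):
    """Resize a 2-D array with bilinear (linear) interpolation."""
    in_h, in_w = img.shape
    # Map output pixel centres back to input coordinates.
    ys = np.clip((np.arange(out_h) + 0.5) * in_h / out_h - 0.5, 0, in_h - 1)
    xs = np.clip((np.arange(out_w) + 0.5) * in_w / out_w - 0.5, 0, in_w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]   # vertical interpolation weights
    wx = (xs - x0)[None, :]   # horizontal interpolation weights
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

def preprocess(rgb_uint8, size=80):
    """Convert an (H, W, 3) uint8 image to a (size, size, 1) float32 array in [0, 1]."""
    luma = np.array([0.299, 0.587, 0.114], dtype=np.float32)  # assumed grayscale weights
    gray = rgb_uint8.astype(np.float32) @ luma
    resized = resize_bilinear(gray, size, size)
    scaled = np.clip(resized / 255.0, 0.0, 1.0)               # assumed normalization
    return scaled[..., np.newaxis].astype(np.float32)

demo = np.random.default_rng(0).integers(0, 256, size=(120, 160, 3), dtype=np.uint8)
x = preprocess(demo)
print(x.shape, x.dtype)
```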

Following augmentation, the expanded dataset was partitioned with 80% allocated for model training and the remaining 20% reserved for testing [2]. From the training subset, an additional 20% was extracted for validation, which corresponds to an effective split of roughly 64% training, 16% validation, and 20% testing and provides a framework for model development and evaluation [2].
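The partitioning arithmetic can be checked with a short sketch. The function name, the seed, and the rounding rule are illustrative assumptions; the resulting counts are derived from the stated percentages and are not figures reported in [2].

```python
import random

def split_indices(n_samples, test_frac=0.20, val_frac=0.20, seed=42):
    """Shuffle sample indices, hold out a test set, then carve validation out of training."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)

    n_test = round(n_samples * test_frac)
    test, train_val = indices[:n_test], indices[n_test:]

    # Validation is taken as a fraction of the remaining training subset.
    n_val = round(len(train_val) * val_frac)
    val, train = train_val[:n_val], train_val[n_val:]
    return train, val, test

train, val, test = split_indices(6035)
print(len(train), len(val), len(test))  # 3862 966 1207
```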

Experimental Framework and Deep Learning Architecture

Convolutional Neural Network Design

The expanded SMD/MSS dataset served as the foundation for developing a predictive model for sperm morphological classification based on artificial neural networks [2]. The implemented algorithm utilized a Convolutional Neural Network (CNN) architecture, which has demonstrated remarkable performance in image classification tasks across medical domains [2] [27]. The complete experimental framework encompassed five distinct stages: image pre-processing, database partitioning, data augmentation, program training, and evaluation [2].

The CNN architecture was implemented in Python (version 3.8), leveraging its comprehensive ecosystem of deep learning libraries and tools for medical image analysis [2]. While the specific architectural details (number of layers, filter sizes, etc.) are not explicitly provided in the available literature, the model was designed to effectively process the pre-processed 80×80×1 grayscale sperm images and output classifications across the morphological categories defined by the modified David classification system [2].

Comparative Performance Analysis

The deep learning model trained on the augmented SMD/MSS dataset produced satisfactory results, with accuracy ranging from 55% to 92% across different morphological categories [2] [13]. This performance range reflects the varying complexity of distinguishing between specific abnormality classes, with some morphological features proving more challenging to classify than others.
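A per-class breakdown of this kind can be computed as in the sketch below. It assumes that "accuracy per category" means the fraction of images of each true class that were predicted correctly; the exact definition used in [2] is not stated, and the labels and predictions here are hypothetical.

```python
def per_class_accuracy(y_true, y_pred):
    """Fraction of correctly classified samples for each true class."""
    totals, correct = {}, {}
    for truth, pred in zip(y_true, y_pred):
        totals[truth] = totals.get(truth, 0) + 1
        correct[truth] = correct.get(truth, 0) + (truth == pred)
    return {cls: correct[cls] / totals[cls] for cls in totals}

# Hypothetical labels and predictions (illustrative only).
y_true = ["normal"] * 4 + ["tapered"] * 4 + ["coiled"] * 2
y_pred = ["normal", "normal", "normal", "tapered",
          "tapered", "tapered", "normal", "coiled",
          "coiled", "normal"]

scores = per_class_accuracy(y_true, y_pred)
print(scores)
print("range:", min(scores.values()), "to", max(scores.values()))
```

Reporting the minimum and maximum over classes gives a range of the same form as the 55% to 92% figure, and makes the hardest classes immediately visible.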

To contextualize these results, it is valuable to compare the SMD/MSS approach with other recent advances in sperm morphology classification:

Table 3: Performance Comparison of Sperm Morphology Classification Approaches

| Study/Method | Dataset | Architecture | Reported Performance |
|---|---|---|---|
| SMD/MSS Baseline [2] | Original (1,000 images) | CNN | Lower accuracy (specific values not provided) |
| SMD/MSS Augmented [2] | Expanded (6,035 images) | CNN | Accuracy: 55-92% (across classes) |
| CBAM+ResNet50+DFE [8] | SMIDS (3,000 images, 3-class) | ResNet50 + CBAM + Feature Engineering | Accuracy: 96.08 ± 1.2% |
| CBAM+ResNet50+DFE [8] | HuSHeM (216 images, 4-class) | ResNet50 + CBAM + Feature Engineering | Accuracy: 96.77 ± 0.8% |
| Bovine Sperm Analysis [18] | 277 annotated images | YOLOv7 | mAP@50: 0.73, Precision: 0.75, Recall: 0.71 |
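The detection metrics in the last row differ from classification accuracy. In mAP@50, a predicted box counts as a true positive when its intersection over union (IoU) with a ground-truth box is at least 0.5. The sketch below illustrates IoU, precision, and recall; the boxes and counts are hypothetical and are not taken from [18].

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0

def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    return tp / (tp + fp), tp / (tp + fn)

ground_truth = (0, 0, 10, 10)
good_pred = (2, 0, 12, 10)   # IoU = 80 / 120, counts as a match at the 0.5 threshold
poor_pred = (5, 0, 15, 10)   # IoU = 50 / 150, does not count as a match

print(iou(ground_truth, good_pred) >= 0.5, iou(ground_truth, poor_pred) >= 0.5)
print(precision_recall(tp=3, fp=1, fn=2))  # hypothetical counts
```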

The studies in Table 3 use different datasets, class definitions, and metrics, so their figures are not directly comparable; the variance nonetheless highlights several important considerations. The CBAM-enhanced ResNet50 with deep feature engineering demonstrated that combining attention mechanisms with traditional feature selection methods can substantially boost performance [8]. This approach pairs a ResNet50 backbone with the Convolutional Block Attention Module (CBAM), enabling the network to focus on the most relevant sperm features while suppressing background noise [8].

Notably, the SMD/MSS study's value extends beyond raw accuracy metrics. The development of a comprehensively annotated dataset according to the modified David classification—used by numerous laboratories worldwide—represents a significant contribution to the field, addressing a gap in available resources for this important classification standard [2].

Research Reagents and Computational Tools

Successful implementation of data augmentation and deep learning approaches for sperm morphology analysis requires specific research reagents and computational tools. The following table details essential components used across referenced studies:

Table 4: Essential Research Reagents and Computational Tools for Sperm Morphology Analysis

| Category | Specific Tool/Reagent | Function/Application |
|---|---|---|
| Microscopy Systems | MMC CASA System [2] | Automated sperm image acquisition and analysis |
| Microscopy Systems | Optika B-383Phi Microscope [18] | High-resolution sperm imaging for dataset creation |
| Staining Reagents | RAL Diagnostics Staining Kit [2] | Sperm staining for enhanced morphological visibility |
| Sample Preparation | Optixcell Extender [18] | Semen dilution and preservation for analysis |
| Deep Learning Frameworks | Python 3.8 [2] | Core programming environment for algorithm development |
| Annotation Tools | Roboflow [18] | Image annotation and dataset management platform |
| Object Detection | YOLOv7 Framework [18] | Real-time object detection for sperm localization and classification |
| Attention Mechanisms | CBAM (Convolutional Block Attention Module) [8] | Feature refinement in deep neural networks |
| Feature Engineering | PCA (Principal Component Analysis) [8] | Dimensionality reduction and feature selection |

These tools collectively enable the complete pipeline from sample preparation to automated analysis, forming an essential toolkit for researchers working in computational sperm morphology assessment. The integration of specialized laboratory equipment with advanced computational frameworks highlights the interdisciplinary nature of this research domain.
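As an illustration of the feature engineering entry in Table 4, the sketch below applies PCA to a matrix of deep features using a singular value decomposition. The feature matrix is random stand-in data, and the sample count, feature width, and number of components are assumptions for demonstration; the configuration used in [8] is not given here.

```python
import numpy as np

def pca_reduce(features, n_components):
    """Project row-wise feature vectors onto their top principal components."""
    centered = features - features.mean(axis=0, keepdims=True)
    # SVD of the centred data: the rows of `vt` are the principal axes.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    components = vt[:n_components]
    explained = singular_values ** 2 / np.sum(singular_values ** 2)
    return centered @ components.T, explained[:n_components]

rng = np.random.default_rng(0)
features = rng.normal(size=(216, 64))   # stand-in for CNN embeddings, one row per image
reduced, ratio = pca_reduce(features, n_components=16)
print(reduced.shape, round(float(ratio.sum()), 3))
```

The reduced features can then be passed to a conventional classifier, and the explained-variance ratios indicate how much information the retained components preserve.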

Discussion and Clinical Implications

Impact of Data Augmentation on Model Performance

The expansion of the SMD/MSS dataset from 1,000 to 6,035 images through data augmentation techniques represents a case study in addressing fundamental challenges in medical AI development. This approach directly counteracts the issues of limited data availability and class imbalance that frequently plague biomedical image analysis projects [27]. The achieved accuracy range of 55-92% demonstrates that while data augmentation significantly improves model performance, certain morphological classes remain challenging to classify accurately, potentially due to subtle visual features or inconsistent expert annotations on specific abnormality types [2].

The relationship between dataset size, augmentation strategies, and model performance can be visualized as follows:

Diagram 2: Impact of Data Augmentation on Model Development Challenges

This case study aligns with broader evidence in medical imaging literature, where data augmentation has demonstrated consistent benefits across all organs, modalities, and tasks [7]. Specifically, affine and pixel-level transformations have been shown to achieve the best trade-off between performance improvement and implementation complexity [7]. The SMD/MSS expansion project provides a focused illustration of these principles within the specific context of sperm morphology analysis.

Clinical Applications and Future Directions

The automation of sperm morphology analysis through deep learning approaches offers several transformative benefits for clinical practice:

Standardization and Objectivity: Automated systems reduce diagnostic variability between laboratories and technicians, addressing a fundamental limitation of manual assessment, which exhibits inter-observer disagreement rates as high as 40% [8].

Time Efficiency: Deep learning systems can reduce analysis time from the manual 30-45 minutes per sample to less than one minute, significantly increasing laboratory throughput [8].

Reproducibility: Automated systems provide consistent results across different time points and laboratory settings, enhancing the reliability of fertility assessment and treatment monitoring [2] [8].

Potential for Real-Time Analysis: The computational efficiency of certain architectures suggests potential for real-time analysis during assisted reproductive procedures, potentially guiding clinical decision-making in dynamic contexts [8] [18].

Future research directions should explore more sophisticated augmentation techniques, including generative adversarial networks (GANs) for synthetic sperm image generation [27] [7]. Additionally, the integration of multiple classification standards (WHO, David, Kruger) within unified models could enhance utility across different clinical contexts. The development of explainable AI approaches that provide visual explanations for classification decisions would also strengthen clinical adoption by maintaining transparency in automated assessments [8].

The expansion of the SMD/MSS dataset from 1,000 to 6,035 images through systematic data augmentation represents a significant methodological advancement in the field of computational sperm morphology analysis. This case study demonstrates how carefully designed augmentation strategies can address fundamental challenges of limited data availability and class imbalance in medical image analysis. The resulting dataset has enabled the development of deep learning models with promising performance (55-92% accuracy across morphological classes), providing a foundation for automated, standardized sperm morphology assessment.

This work underscores the critical importance of high-quality, comprehensively annotated datasets in advancing medical AI applications. By making the SMD/MSS dataset available to the research community, this project contributes to the broader effort to develop reliable, automated tools for male fertility assessment that can improve diagnostic consistency, reduce analysis time, and ultimately enhance patient care in reproductive medicine. The integration of data augmentation methodologies within deep learning frameworks for sperm morphology analysis represents an important step toward addressing the significant public health challenge of male infertility through technological innovation.

The analysis of sperm morphology is a cornerstone of male fertility assessment, yet traditional methods are plagued by subjectivity, inconsistency, and an inability to use the analyzed sperm for subsequent assisted reproductive technologies (ART) due to staining and fixation requirements [28] [1]. These limitations create a pressing need for automated, objective, and non-destructive evaluation techniques. Deep learning, particularly Convolutional Neural Networks (CNNs), offers a powerful solution by enabling the automated extraction of features and classification of sperm cells from images. However, the development of robust CNN models is critically dependent on large, high-quality, and diverse datasets [29] [1]. The field of sperm morphology analysis currently suffers from a lack of such standardized datasets, which are often characterized by low resolution, limited sample sizes, and insufficient categorical representation of abnormal morphologies [28] [1]. This application note details a comprehensive methodology that integrates a tailored data augmentation pipeline with a CNN architecture to overcome these data limitations, thereby enhancing the accuracy, generalizability, and clinical applicability of AI-driven sperm morphology analysis for researchers and drug development professionals.

The following tables summarize key quantitative findings from the reviewed literature, highlighting the performance of deep learning models and the impact of data enhancement techniques.

Table 1: Performance Metrics of Deep Learning Models in Biomedical Image Analysis

| Model/Application | Accuracy | Precision | Recall/Sensitivity | Key Findings |
|---|---|---|---|---|
| In-house AI (ResNet50) for Sperm Morphology [28] | 93% | 95% (Abnormal), 91% (Normal) | 91% (Abnormal), 95% (Normal) | Strong correlation with CASA (r=0.88) and CSA (r=0.76). Processes ~25,000 images in 139.7 seconds. |
| GONF (CNN with mRMR) for Cancer Classification [30] | 97% (TCGA), 95% (AHBA) | Not Specified | Not Specified | Integrated gene selection with CNN, reducing false positives and negatives. |
| VGG-16 with Data Enhancement for Colorectal Cancer [29] | 86% | Improved F1-score | Improved Recall (Cancer class) | Data augmentation, outlier handling, and class balancing significantly improved model generalizability and recall. |
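The correlation coefficients quoted in the first row are Pearson's r, which measures how closely two sets of paired measurements follow a linear relationship. The sketch below computes it from first principles; the paired values are hypothetical per-sample percentages of normal forms and are not data from [28].

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

# Hypothetical paired measurements: AI model vs. a reference method (illustrative only).
ai_normal_pct = [4.0, 6.5, 3.0, 8.0, 5.5]
reference_normal_pct = [5.0, 6.0, 2.5, 9.0, 5.0]

r = pearson_r(ai_normal_pct, reference_normal_pct)
print(round(r, 3))
```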

Table 2: Impact of Data Enhancement Techniques on Model Performance

| Technique | Application | Key Outcome Metrics |
|---|---|---|
| Outlier Handling (K-means) [29] | Colorectal Cancer Classification | Improved data quality and model robustness. |
| Data Augmentation [29] | Colorectal Cancer Classification | Increased dataset diversity, confirmed via Pearson correlation; enhanced accuracy and generalizability. |
| Class Balancing [29] | Colorectal Cancer Classification | Addressed class imbalance, leading to better performance on minority classes. |
| Deep Learning-Optimized CLAHE [31] | Suzhou Garden Images | SSIM increased by 24.69%, PSNR by 24.36%, LOE reduced by 36.62%. |
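Of the image-quality metrics in the last row, PSNR (peak signal-to-noise ratio) has the simplest definition: 10·log10(MAX² / MSE), where MSE is the mean squared error between a reference image and a processed image. The sketch below is a generic implementation on synthetic arrays and does not reproduce the evaluation in [31].

```python
import numpy as np

def psnr(reference, processed, max_value=1.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    diff = reference.astype(np.float64) - processed.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")   # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

reference = np.full((80, 80), 0.5)
processed = np.full((80, 80), 0.6)   # uniform error of 0.1, so MSE = 0.01

print(round(psnr(reference, processed), 2))  # 20.0
```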

Experimental Protocols