Decoding Biomarker Significance: A Comparative Analysis of Feature Importance in Machine Learning Models for Fertility Prediction

Abstract

This article synthesizes current research to provide a systematic comparison of feature importance across diverse machine learning models predicting fertility outcomes, including IVF, IUI, and natural conception. Tailored for researchers and drug development professionals, it explores the foundational biological drivers, evaluates methodological approaches in model construction, addresses challenges in feature selection and model interpretability, and validates findings through performance benchmarking. The analysis aims to inform the development of robust, clinically applicable predictive tools and highlight potential biomarkers for therapeutic intervention.

Core Biological Drivers: Identifying Universal and Context-Specific Predictors of Fertility

The Paramount Role of Female Age and Ovarian Reserve Markers

In the fields of reproductive medicine and drug development, predicting female fertility potential remains a significant challenge. The decline in reproductive capacity with age is a well-established phenomenon, driven primarily by the quantitative and qualitative deterioration of the ovarian follicular pool [1]. For researchers and clinicians, two categories of predictive factors are paramount: female chronological age and biomarkers of ovarian reserve, such as Anti-Müllerian Hormone (AMH) and Antral Follicle Count (AFC). While these parameters are intrinsically linked, a critical question persists regarding their relative importance and specific applications in forecasting treatment outcomes in assisted reproductive technology (ART).

This guide provides an objective, data-driven comparison of these key features, framing them within the context of predictive modeling for infertility treatments. It synthesizes current research, including histological validations and clinical outcome studies, to equip scientists and pharmaceutical professionals with evidence-based insights for developing and evaluating fertility prediction models and therapeutic interventions.

Feature Comparison: Age vs. Biomarkers in Prediction Models

Female age and ovarian reserve markers serve as proxies for the underlying biological status of the ovaries, yet they capture different aspects and have distinct predictive strengths.

The Fundamental Role of Female Age

Chronological age is the most robust and universal predictor of reproductive success. Its influence is rooted in two core biological processes:

- Quantitative Depletion: Women are born with a finite number of oocytes, which declines irreversibly from a peak of nearly 7 million in mid-gestation to approximately 1-2 million at birth, and further to about 400,000 by puberty. This depletion accelerates around age 35, culminating in menopause with fewer than 1,000 follicles [1].

- Qualitative Deterioration: With advancing age, oocytes accumulate DNA damage, experience mitochondrial dysfunction, and exhibit meiotic spindle disruptions. This leads to an increased rate of aneuploidy, reducing the chances of successful fertilization, implantation, and live birth [1].

The American Society for Reproductive Medicine (ASRM) emphasizes that while ovarian reserve markers predict oocyte quantity, they are poor predictors of reproductive potential independently of age [2]. Age encapsulates the cumulative effect of both diminishing quantity and deteriorating quality.

The Specific Value of Ovarian Reserve Markers

Ovarian reserve markers like AMH and AFC provide a snapshot of the remaining follicular pool. Table 1 summarizes the key characteristics of the primary markers used in clinical research and practice.

Table 1: Key Biomarkers of Ovarian Reserve

| Marker | Biological Source | Clinical Measurement | Primary Correlation |
|---|---|---|---|
| Anti-Müllerian Hormone (AMH) | Granulosa cells of preantral and small antral follicles [2] | Serum test (relative consistency across the cycle) [2] | Strongly correlated with histologically quantified primordial follicle count (ρ=0.75) [3] |
| Antral Follicle Count (AFC) | Follicles 2-10 mm in diameter visible on ultrasound [2] | Transvaginal ultrasonography during early follicular phase [2] | Strongly correlated with histologically quantified primordial follicle count (ρ=0.85) [3] |
| Basal FSH | Pituitary gland (indirect marker; rises as follicular pool declines) [2] | Serum test on cycle day 2-4 [2] | Specific but not sensitive for diminished ovarian reserve; significant inter-cycle variability [2] |

AMH and AFC are considered the most sensitive serum and sonographic markers, respectively, and are largely equivalent in predicting ovarian response to stimulation [2]. Their strong correlation with the true histological ovarian reserve validates their use as non-invasive surrogates in research and clinical protocols [3].

Predictive Performance in Clinical and Research Settings

The utility of age and ovarian reserve markers varies significantly depending on the clinical outcome being predicted.

Predicting Response to Ovarian Stimulation

For forecasting oocyte yield following controlled ovarian stimulation (OS), biomarkers like AMH and AFC are superior to age alone.

- High-Specificity AMH Assays: A 2025 prospective study in poor responders (AMH <1.1 ng/mL) found that high-specificity AMH assays, particularly the AL-196 assay (AnshLabs), showed the highest correlation with the number of cumulus-oocyte complexes (COCs) and metaphase II oocytes. A model combining AFC and this specific AMH assay offered the best predictive value for oocyte yield (Adjusted R² = 0.474 for COCs, p<0.001) [4]. This suggests that advanced assays can enhance prediction precision in challenging populations.

- General Predictive Power: Both AMH and AFC are strong predictors of oocyte yield following OS and oocyte retrieval, making them indispensable for personalizing stimulation protocols in ART [2].
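
The adjusted R² quoted above penalizes the raw R² for the number of predictors in the model. The following is a minimal sketch of that standard formula; the sample size, predictor count, and raw R² in the example call are hypothetical and are not taken from the cited study.

```python
def adjusted_r2(r2, n_samples, n_predictors):
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    if n_samples - n_predictors - 1 <= 0:
        raise ValueError("need n_samples > n_predictors + 1")
    return 1 - (1 - r2) * (n_samples - 1) / (n_samples - n_predictors - 1)


# Hypothetical illustration: two predictors (e.g. AFC and AMH),
# 60 cycles, raw R^2 of 0.50. None of these numbers come from [4].
example = adjusted_r2(0.50, n_samples=60, n_predictors=2)
print(round(example, 3))
```

With few predictors and a moderate sample, the adjustment is small; it grows as predictors are added without a matching gain in fit.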

Predicting Live Birth and Treatment Success

When the outcome of interest is live birth or clinical pregnancy, female age consistently emerges as the dominant feature.

- Machine Learning Models: A 2022 retrospective study of 2,485 treatment cycles comparing machine learning models found that age was the most essential feature for predicting clinical pregnancy in both IVF/ICSI and IUI treatments. Other important features included FSH, endometrial thickness, and infertility duration [5]. The Random Forest model, which identified these features, achieved an AUC of 0.73 for predicting clinical pregnancy in IVF/ICSI cycles.

- Hormonal Levels and Live Birth: A 2025 study on GnRH antagonist protocols identified that serum estradiol (E2) levels on the day of antagonist initiation have a non-linear relationship with Live Birth Rates (LBR). The optimal E2 range was 400-650 pg/mL, with levels below 400 pg/mL or between 650-800 pg/mL being independent factors that reduced the likelihood of a live birth after adjusting for age and other confounders [6]. This highlights that while specific hormone levels can fine-tune predictions, their effect is evaluated in the context of age.

- Limitations of Biomarkers for Natural Fertility: Large prospective cohort studies, such as the EAGER trial, have shown that women with low AMH levels have cumulative pregnancy rates similar to those of women with normal levels when attempting unassisted conception. This supports the view that ovarian reserve tests are poor predictors of reproductive potential in women with unproven fertility [2].
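
To make the notions of AUC and feature importance in the studies above concrete, here is a hedged sketch in plain Python. It does not reproduce the Random Forest analysis in [5]: it swaps in model-agnostic permutation importance, uses a hand-written scoring rule (`score`) as a stand-in for a trained model, and runs on a synthetic cohort whose outcome is assumed to depend mostly on age. All coefficients and ranges are illustrative assumptions.

```python
import math
import random


def auc(scores, labels):
    """AUC as the probability that a positive case outranks a negative one."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def score(row):
    """Hypothetical fixed scoring rule standing in for a trained model."""
    age, fsh, emt = row
    return -0.15 * age - 0.05 * fsh + 0.10 * emt


def permutation_importance(rows, labels, col, rng, repeats=10):
    """Mean drop in AUC after shuffling one feature column."""
    base = auc([score(r) for r in rows], labels)
    drops = []
    for _ in range(repeats):
        shuffled = [r[col] for r in rows]
        rng.shuffle(shuffled)
        permuted = [r[:col] + (v,) + r[col + 1:] for r, v in zip(rows, shuffled)]
        drops.append(base - auc([score(r) for r in permuted], labels))
    return sum(drops) / repeats


# Synthetic cohort (assumption): pregnancy probability driven mainly by age.
rng = random.Random(0)
rows, labels = [], []
for _ in range(400):
    age = rng.uniform(25, 44)   # years
    fsh = rng.uniform(3, 15)    # IU/L
    emt = rng.uniform(6, 14)    # mm
    logit = -0.25 * (age - 35) - 0.05 * (fsh - 8) + 0.05 * (emt - 10)
    rows.append((age, fsh, emt))
    labels.append(1 if rng.random() < 1 / (1 + math.exp(-logit)) else 0)

importances = {
    name: permutation_importance(rows, labels, col, rng)
    for col, name in enumerate(["age", "fsh", "endometrial_thickness"])
}
for name, value in sorted(importances.items(), key=lambda kv: -kv[1]):
    print(f"{name}: mean AUC drop {value:.3f}")
```

Permutation importance is used here because it only needs predictions, so the same loop works for any fitted model; impurity-based importances from a Random Forest can rank features differently, particularly when predictors are correlated.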

Table 2 provides a consolidated comparison of the predictive strengths of these features for different endpoints.

Table 2: Comparative Predictive Power of Age and Ovarian Reserve Markers

| Predictive Endpoint | Dominant Predictive Feature | Supporting Data and Performance |
|---|---|---|
| Oocyte Yield after Stimulation | AMH & AFC | Model with AFC + high-specificity AMH (AL-196): Adjusted R² = 0.474 for COCs [4]; AMH and AFC strongly correlate with primordial follicle count [3]. |
| Live Birth (LB) / Clinical Pregnancy (CP) in ART | Female Age | Random Forest model identified age as top feature for predicting CP (AUC: 0.73 for IVF/ICSI) [5]; ASRM states markers are poor predictors of reproductive potential independent of age [2]. |
| Success in Unassisted Conception | Female Age | Women with low AMH (<1 ng/mL) had similar cumulative pregnancy rates to those with normal AMH in prospective studies [2]. |
| Personalized Stimulation Response | AMH & AFC | Used to predict poor or hyper-response; aid in determining gonadotropin starting doses [2]. |

Experimental Insights and Novel Pathways

Beyond established markers, research is uncovering new biological mechanisms and potential therapeutic targets that influence ovarian function.

The Role of Ovarian Vascular Aging

Emerging evidence suggests that ovarian vascular aging is a hidden driver of mid-life fertility decline. Whereas vessel density in most tissues declines only in later life, the ovary shows a pronounced reduction in blood vessel density and angiogenesis intensity as early as middle age in mouse models. This impairs the transport of hormones and nutrients, disrupting follicle development even when the ovarian reserve is still sufficient.

- Experimental Workflow: Research using advanced 3D whole-mount imaging with subcellular resolution reconstructed the spatial and temporal patterns of angiogenesis in adult ovaries. Cell lineage tracing revealed that angiogenesis is primarily active in growing follicles, and these dynamic vascular networks are crucial for follicle development [7].

- Therapeutic Intervention: The natural compound salidroside, derived from Rhodiola rosea L., was found to reverse ovarian vascular aging by reducing oxidative stress and stimulating angiogenesis. In aged mice, salidroside treatment enhanced ovarian blood supply, improved follicle development and oocyte quality, and significantly increased natural pregnancy and birth rates [7].

The following diagram illustrates the mechanism of ovarian vascular aging and the proposed action of salidroside.

Histological Validation of Biomarkers

A 2025 prospective cross-sectional study provided crucial histological validation for AMH and AFC by directly correlating them with primordial follicle counts from excised ovarian tissue.

- Experimental Protocol:

- Participant Cohort: 89 healthy, menstruating women aged 35-48 years undergoing oophorectomy for benign conditions.

- Pre-operative Assessment: Serum AMH, FSH, and estradiol (E2) were measured, and AFC was assessed via transvaginal ultrasonography during the early follicular phase.

- Histological Analysis: Excised ovarian tissues were processed, serially sectioned, and stained with H&E. A blinded pathologist quantified primordial follicles, defined as oocytes surrounded by a single layer of flattened pre-granulosa cells.

- Statistical Analysis: Spearman's rank correlation was used to evaluate the relationship between biomarkers and follicle count [3].

- Key Result: Both AMH (ρ=0.75) and AFC (ρ=0.85) showed strong and statistically significant (p<0.001) positive correlations with the histologically determined primordial follicle count, confirming their accuracy as non-invasive surrogates for the true ovarian reserve [3].
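
The statistical step in the protocol above, Spearman's rank correlation, can be sketched in a few lines: rank both variables (sharing the mean rank among ties) and take the Pearson correlation of the ranks. The AMH values and follicle counts below are invented for illustration and are not data from the study in [3].

```python
from statistics import mean


def rank(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(rank(x), rank(y))


# Hypothetical values for illustration only.
amh_ng_ml = [0.4, 1.1, 0.8, 2.3, 1.7, 3.0]
primordial_count = [12, 30, 41, 80, 65, 95]
rho = spearman_rho(amh_ng_ml, primordial_count)
print(round(rho, 3))
```

Because it works on ranks, the statistic captures any monotonic relationship and is insensitive to the skewed distributions typical of hormone levels and follicle counts.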

The Scientist's Toolkit: Research Reagent Solutions

To investigate the pathways of ovarian aging and evaluate novel biomarkers, specific research tools and assays are essential. The following table details key reagents and their applications in this field.

Table 3: Essential Research Reagents for Ovarian Aging and Reserve Studies

| Reagent / Solution | Primary Function in Research | Example Application |
|---|---|---|
| High-Specificity AMH Assays | Quantify specific molecular isoforms of AMH with high precision. | Differentiating between ovarian reserve states in poor responders; AL-196 assay (AnshLabs) showed superior prediction of oocyte yield [4]. |
| ELISA Kits (AMH, FSH, E2) | Enable quantitative measurement of hormone levels in serum or culture media. | Standardized assessment of ovarian reserve biomarkers in clinical and research settings (e.g., Beckman Coulter, Roche Elecsys) [3]. |
| Pyrosequencing Reagents | Analyze DNA methylation levels at specific CpG sites for epigenetic age estimation. | Building models to calculate biological age using genes like ELOVL2, TRIM59, and KLF14 [8]. |
| Salidroside | A natural compound used to study rejuvenation of ovarian vascular function. | Investigating the reversal of ovarian vascular aging and its impact on follicle development and fertility in aged models [7]. |
| Primordial Follicle Staining (H&E) | Allows for the histological identification and manual quantification of the primordial follicle pool. | Providing the gold-standard validation for non-invasive ovarian reserve markers like AMH and AFC [3]. |
| Single-Cell RNA Sequencing Kits | Profile gene expression at single-cell resolution to map cellular heterogeneity and aging processes. | Identifying key regulators and changes in ovarian cell types (e.g., granulosa cells, stromal cells) during aging [1] [7]. |

The comparison between female age and ovarian reserve markers reveals a clear paradigm for their use in fertility prediction models. Female chronological age remains the undisputed, paramount feature for predicting live birth and cumulative pregnancy chances, as it is an irreversible summary of both oocyte quantity and quality. In contrast, biomarkers like AMH and AFC are more precise tools for forecasting the quantitative response to ovarian stimulation, such as oocyte yield, and are critical for personalizing ART protocols.

For researchers and drug developers, this hierarchy is essential. Models aiming to predict treatment success or population-level fertility trends must prioritize female age. Meanwhile, efforts to optimize stimulation protocols or manage patient expectations regarding egg retrieval outcomes should leverage the power of AMH and AFC. Emerging research on the ovarian microenvironment, particularly vascular aging, opens new avenues for therapeutic intervention beyond the follicular pool itself, suggesting that future models may incorporate these novel pathways to further refine our understanding and management of female fertility.

Sperm quality serves as a critical prognostic indicator for success in assisted reproductive technology (ART), with specific parameters carrying varying predictive weight across different treatment modalities. Within infertility practice, approximately 30-50% of cases are attributed to male factors, specifically abnormalities in sperm quality [9]. The evaluation of semen parameters, including concentration, motility, morphology, and DNA integrity, provides fundamental diagnostic and prognostic information for clinical decision-making. However, the interpretation of these parameters must be contextualized within the specific treatment modality employed, as the biological requirements for success differ significantly between intrauterine insemination (IUI) and in vitro fertilization (IVF).

This review systematically compares the prognostic value of sperm quality parameters in IUI versus IVF cycles, examining evidence-based threshold values, methodological approaches for sperm preparation, and the emerging role of artificial intelligence in enhancing predictive models. By synthesizing current research and clinical data, we aim to provide a comprehensive framework for evaluating sperm parameters across different ART contexts, facilitating more precise treatment selection and prognostic assessment for couples facing infertility.

Comparative Analysis of Sperm Parameter Thresholds

Prognostic Thresholds for Intrauterine Insemination

IUI success demonstrates a strong dependence on specific sperm quality thresholds, particularly regarding motility parameters. Evidence from large clinical studies reveals that pregnancy rates plateau when initial sperm values exceed certain critical thresholds: concentration of ≥5 × 10^6/mL, total count of ≥10 × 10^6, progressive motility of ≥30%, or total motile sperm count (TMSC) of ≥5 × 10^6 [10]. Notably, minimal increases in fecundity occur when initial values surpass these levels, establishing them as practical clinical benchmarks.

A separate study investigating sperm motility before and after preparation identified pre-processing motility as the most significant predictor of live birth, with an optimal threshold of ≥72.5% for predicting successful outcomes [11]. This research further demonstrated that initial sperm motility, rather than post-preparation motility or the degree of change during processing, served as the primary prognostic factor for IUI success. The clinical pregnancy rate was 14.5% and live birth rate was 10.4% across the studied cycles, with pre-wash sperm motility significantly higher in groups achieving clinical pregnancy and live birth (71.4%±10.9% vs. 67.2%±11.7%, p=0.020) [11].

Table 1: Sperm Parameter Thresholds for IUI Success

| Parameter | Threshold for ≥8.2% Pregnancy Rate | Lowest Reported Values Resulting in Pregnancy | Optimal Threshold for Live Birth Prediction |
|---|---|---|---|
| Concentration | ≥5 × 10^6/mL | 2 × 10^6/mL | - |
| Total Count | ≥10 × 10^6 | 5 × 10^6 | - |
| Progressive Motility | ≥30% | 17% | - |
| Total Motile Sperm Count | ≥5 × 10^6 | 1.6 × 10^6 | - |
| Pre-Preparation Motility | - | - | ≥72.5% |
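
As a worked illustration of the thresholds above, the sketch below derives total count and total motile sperm count (TMSC) from a basic semen analysis and checks each value against the plateau thresholds from [10]. It assumes the common definition TMSC = volume × concentration × fraction motile, applied here to progressive motility; laboratories differ on whether total or progressive motility is used. The function names and the sample values are hypothetical.

```python
# Plateau thresholds reported for IUI [10]; units: millions (or percent).
IUI_THRESHOLDS = {
    "concentration_million_per_ml": 5.0,
    "total_count_million": 10.0,
    "progressive_motility_pct": 30.0,
    "tmsc_million": 5.0,
}


def semen_summary(volume_ml, concentration_million_per_ml, progressive_motility_pct):
    """Derive total count and TMSC (assumed definition) from raw parameters."""
    total = volume_ml * concentration_million_per_ml
    return {
        "concentration_million_per_ml": concentration_million_per_ml,
        "total_count_million": total,
        "progressive_motility_pct": progressive_motility_pct,
        "tmsc_million": total * progressive_motility_pct / 100.0,
    }


def thresholds_met(summary):
    """Map each parameter to True if it reaches its IUI threshold."""
    return {name: summary[name] >= cutoff for name, cutoff in IUI_THRESHOLDS.items()}


# Hypothetical sample: 2.5 mL, 12 million/mL, 25% progressive motility.
sample = semen_summary(2.5, 12.0, 25.0)
print(sample["tmsc_million"])   # 7.5 million motile sperm
print(thresholds_met(sample))
```

In this example the sample clears the concentration, total count, and TMSC thresholds but falls short on progressive motility, which is the kind of mixed picture a single composite number would hide.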

Sperm Quality Requirements for IVF/ICSI

In contrast to IUI, IVF, particularly with intracytoplasmic sperm injection (ICSI), can succeed with substantially lower sperm parameters, because the procedure bypasses many natural selection barriers. The sources reviewed here do not report specific threshold values for IVF, but the biological requirements differ fundamentally from those of IUI. In conventional IVF, sperm are co-incubated with the oocyte in vitro and do not have to traverse the female reproductive tract, but they must still undergo capacitation, penetrate the cumulus complex, and fuse with the oocyte, all of which require adequate motility and normal morphology. With ICSI, a single sperm is injected directly into the oocyte, circumventing these barriers, so only minimal motility and concentration are needed for the procedure to be technically feasible.

The focus in IVF/ICSI shifts toward more subtle aspects of sperm quality, including DNA integrity, which can significantly impact embryo development and pregnancy outcomes even when conventional parameters appear adequate. Sperm processing techniques become particularly important in this context, as they influence not just motility but also DNA fragmentation levels and overall sperm functional competence [9].

Experimental Protocols in Sperm Quality Research

Semen Analysis and Preparation Methodologies

Standardized protocols for semen analysis and processing form the foundation of experimental research in male fertility assessment. The World Health Organization (WHO) guidelines establish the fundamental framework for manual semen evaluation, which includes assessment of volume, concentration, motility, and morphology after liquefaction [11]. In research settings, semen samples are typically collected after 2-3 days of ejaculatory abstinence and allowed to liquefy for 30-60 minutes at room temperature before processing.

The density gradient centrifugation (DGC) technique represents the most common processing method in contemporary ART research. The detailed methodology involves layering liquefied semen over a density gradient medium (e.g., SpermGrad, PureSperm), followed by centrifugation at 300-500 × g for 15-20 minutes [9] [11]. This process separates motile, morphologically normal sperm from leukocytes, cellular debris, and immotile sperm, with the highly motile sperm pellet subsequently washed and resuspended in culture medium. The conventional swim-up technique represents an alternative approach, where motile sperm migrate into an overlying culture medium during incubation, typically yielding a higher percentage of motile sperm but with potentially lower overall recovery [9].
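
Centrifugation speeds in protocols such as the one above are given as relative centrifugal force (× g), whereas many bench centrifuges are set in revolutions per minute. A minimal sketch of the standard conversion, RCF = 1.118 × 10⁻⁵ × r × rpm² with r in centimetres, follows; the 10 cm rotor radius is a hypothetical value and should be replaced by the radius of the rotor actually used.

```python
import math

# Standard conversion constant for radius in cm and speed in rpm.
RCF_CONSTANT = 1.118e-5


def rcf_for_rpm(rpm, rotor_radius_cm):
    """Relative centrifugal force (x g) produced at a given speed."""
    return RCF_CONSTANT * rotor_radius_cm * rpm ** 2


def rpm_for_rcf(rcf_g, rotor_radius_cm):
    """Speed (rpm) needed to reach a target relative centrifugal force."""
    return math.sqrt(rcf_g / (RCF_CONSTANT * rotor_radius_cm))


# Hypothetical rotor radius of 10 cm; the 300-500 x g range is from the text.
for target in (300, 500):
    print(f"{target} x g -> {rpm_for_rcf(target, 10.0):.0f} rpm")
```

Because force scales with the square of speed, copying an rpm setting between centrifuges with different rotors can expose sperm to a very different force, so reporting × g is the more reproducible convention.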

Table 2: Comparison of Sperm Processing Techniques

| Method | Principles | Advantages | Disadvantages | Impact on DNA Integrity |
|---|---|---|---|---|
| Density Gradient Centrifugation | Separation by density during centrifugation | High yield of motile sperm, effective debris removal | Potential for ROS generation, may collect DNA-damaged senescent sperm | Variable effects, potential increase in DNA fragments |
| Conventional Swim-Up | Active migration of motile sperm into medium | High purity of motile sperm recovery | Low yield, potential ROS damage from pellet | Reduced normally chromatin-condensed spermatozoa |
| Magnetic Activated Cell Sorting | Separation based on apoptotic markers | Maintains nuclear DNA integrity, selects non-apoptotic sperm | Uncertain improvement in pregnancy rates, technical complexity | Improved DNA integrity in selected sperm population |
| Hyaluronic Acid Binding | Binding to hyaluronic acid receptor on mature sperm | Selects mature sperm with normal morphology, lower DNA fragmentation | Requires experienced embryological skills, insufficient outcome studies | Lower DNA fragmentation and chromosomal aneuploidy rates |

Advanced Sperm Quality Assessment Techniques

Research into male infertility increasingly employs sophisticated genomic and molecular analyses to identify subtle sperm abnormalities. Whole-genome sequencing (WGS) of sperm DNA represents a powerful methodology for identifying genetic variants associated with sperm dysfunction. The experimental workflow involves collecting sperm samples from normozoospermic controls and men with defined sperm pathologies (oligozoospermia, asthenozoospermia, teratozoospermia), followed by purification using 45%-90% density gradients to remove somatic cells and debris [12].

DNA extraction employs modified protocols using kits such as the QIAamp DNA Mini Kit, with additional steps to improve DNA yield and purity, including comprehensive washing and centrifugation series at 500 × g [12]. The extracted DNA undergoes WGS, followed by variant identification and validation through Sanger sequencing. This approach has identified numerous potentially deleterious variants in genes critical for sperm flagellar function and motility, including DNAJB13, MNS1, DNAH6, and CATSPER1 [12]. These genetic findings provide insights into the molecular underpinnings of idiopathic male infertility and represent potential biomarkers for diagnostic development.

Signaling Pathways and Genetic Regulation of Sperm Function

(Sperm Motility Regulatory Pathways)

Integration of Artificial Intelligence in Sperm and Embryo Assessment

Artificial intelligence is transforming the assessment of gametes and embryos in ART, with machine learning (ML) algorithms increasingly applied to predict treatment outcomes. AI adoption in reproductive medicine has grown significantly, from 24.8% of fertility specialists in 2022 to 53.22% in 2025 (including both regular and occasional use) [13]. Embryo selection represents the primary application, with 86.3% of AI users in 2022 and 32.75% of all respondents in 2025 identifying it as the dominant use case.

ML models have demonstrated particular utility in predicting blastocyst formation, a critical determinant of IVF success. In comparative studies, machine learning approaches (SVM, LightGBM, XGBoost) significantly outperformed traditional linear regression models (R²: 0.673-0.676 vs. 0.587, MAE: 0.793-0.809 vs. 0.943) [14]. The LightGBM model emerged as optimal, utilizing fewer features (8 vs. 10-11) while maintaining comparable performance and offering superior interpretability. Feature importance analysis identified the number of extended culture embryos, mean cell number on Day 3, and proportion of 8-cell embryos as the most critical predictors of blastocyst yield [14].
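
The two metrics used in this comparison, R² and mean absolute error (MAE), can be computed as in the sketch below. The observed blastocyst counts and both sets of predictions are made up for illustration; they are not outputs of the models in [14].

```python
def mae(y_true, y_pred):
    """Mean absolute error: average size of the prediction errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Hypothetical blastocyst counts per cycle and two sets of predictions.
observed = [0, 1, 2, 2, 3, 4, 5, 6]
model_a = [0.5, 1.0, 1.5, 2.5, 3.0, 3.5, 4.5, 5.5]   # tracks the data closely
model_b = [1.5, 2.0, 2.0, 2.5, 3.0, 3.0, 3.5, 4.0]   # shrinks toward the mean

for name, pred in (("model_a", model_a), ("model_b", model_b)):
    print(f"{name}: R2={r2(observed, pred):.3f}, MAE={mae(observed, pred):.3f}")
```

Reporting both is useful because MAE is expressed in the outcome's own units (blastocysts per cycle), while R² describes the share of variance explained and is more sensitive to large individual errors.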

Beyond embryo selection, AI applications are expanding to sperm analysis, with algorithms capable of assessing sperm motility, morphology, and concentration with reduced inter-observer variability. These tools offer potential for standardizing semen analysis and identifying subtle patterns not discernible through conventional microscopy. However, implementation barriers persist, including cost concerns (38.01%), lack of training (33.92%), and ethical considerations regarding over-reliance on technology (59.06%) [13].

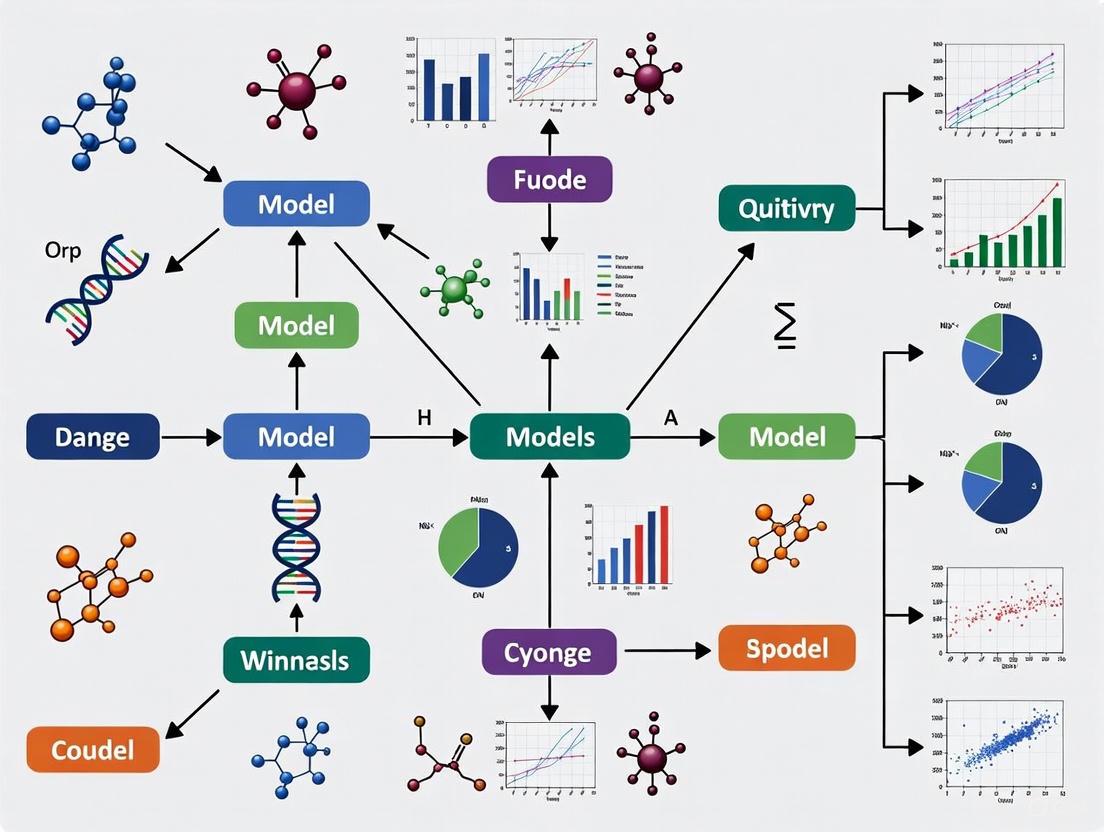

(AI Model Development Workflow)

Research Reagent Solutions for Sperm Quality Studies

Table 3: Essential Research Reagents and Materials for Sperm Quality Studies

| Reagent/Material | Application | Function | Examples/Specifications |
|---|---|---|---|
| Density Gradient Media | Sperm processing | Separation of motile sperm based on density | PureSperm, SpermGrad, Sil-Select |
| Sperm Washing Medium | Semen processing | Provides nutrients, maintains pH | Ham's F-10, Human Tubal Fluid (HTF) |
| Antibiotic Supplements | Culture media | Prevent microbial contamination | Penicillin-Streptomycin, Gentamicin |
| Protein Supplement | Culture media | Simulate reproductive tract fluids | Human Serum Albumin (HSA) |
| DNA Extraction Kits | Genetic analysis | Isolation of genomic DNA from sperm | QIAamp DNA Mini Kit |
| Hyaluronic Acid | Sperm selection | Binding mature sperm with intact acrosome | Medicult, PICSI plates |
| MACS Microbeads | Apoptotic sperm removal | Magnetic separation based on phosphatidylserine | Annexin V microbeads |
| Cryopreservation Media | Sperm vitrification | Cryoprotection during freezing | SpermFreeze, TEST-yolk buffer |

The comparative analysis of sperm quality parameters across ART modalities reveals distinct prognostic thresholds and technical requirements. IUI success demonstrates strong dependence on pre-processing motility and total motile sperm count, with clearly defined minimum thresholds below which success rates decline precipitously. In contrast, IVF/ICSI can technically proceed with substantially lower parameters while shifting prognostic emphasis toward genetic integrity and functional competence.

The integration of artificial intelligence and advanced genetic screening represents a paradigm shift in male fertility assessment, enabling more precise prediction of treatment outcomes and identification of subtle sperm dysfunction not apparent through conventional analysis. Future research directions should focus on validating these emerging technologies in diverse clinical settings, establishing standardized implementation protocols, and addressing ethical considerations surrounding their increasing role in clinical decision-making. As these technologies mature, they promise to advance the field toward truly personalized male fertility assessment and treatment selection.

In assisted reproductive technology (ART), the careful control of cycle characteristics—including endometrial thickness, hormonal levels, and the selection of stimulation protocols—is fundamental to optimizing treatment outcomes. These parameters are deeply interconnected, influencing endometrial receptivity, embryonic development, and ultimately, pregnancy success. Researchers and clinicians face the ongoing challenge of balancing these factors to achieve optimal results across diverse patient populations.

This guide provides a comparative analysis of key cycle characteristics and their impact on treatment efficacy. By synthesizing data from recent clinical studies and emerging artificial intelligence applications, we aim to offer a structured overview of how different parameters and protocols perform in controlled settings. The focus extends beyond pregnancy rates to include practical considerations such as treatment duration, medication requirements, and risk mitigation, providing a comprehensive framework for protocol selection in both research and clinical practice.

Comparative Analysis of Stimulation Protocols

Protocol Definitions and Workflows

Controlled ovarian hyperstimulation (COH) protocols are designed to induce multifollicular development while preventing premature ovulation. The protocols compared here are the GnRH agonist long protocol, the GnRH antagonist protocol, the minimal stimulation protocol, and the progestin-primed ovarian stimulation (PPOS) protocol [15] [16].

GnRH Agonist Long Protocol: Initiated in the mid-luteal phase (approximately cycle day 21) with daily administration of GnRH agonist (e.g., triptorelin 0.1 mg). Gonadotropin stimulation (150-225 IU/day) begins after pituitary downregulation is confirmed, typically on cycle day 2 or 3. Both medications continue until the day of trigger [15].

GnRH Antagonist Protocol: Gonadotropin stimulation starts on cycle day 2/3. The GnRH antagonist (e.g., cetrorelix) is introduced once the leading follicle reaches approximately 14 mm in diameter (typically around day 6 of stimulation) and continues until trigger [15].

Minimal Stimulation Protocol: Utilizes oral agents such as clomiphene citrate (CC) or letrozole, often in combination with low-dose gonadotropins. CC administration typically begins on day 3-5 of the menstrual cycle and continues until trigger [15].

PPOS Protocol: Uses oral progestins (medroxyprogesterone acetate, dydrogesterone, or micronized progesterone) alongside gonadotropins from cycle day 3. The progestin prevents premature LH surges through negative feedback on the pituitary, making this protocol suitable for freeze-all strategies [16].

Table 1: Key Characteristics of Major Ovarian Stimulation Protocols

| Protocol | Treatment Duration | Gonadotropin Dose | Cycle Cancellation Rate | Primary Advantages | Primary Disadvantages |
|---|---|---|---|---|---|
| GnRH Agonist Long | Longer duration [15] | Higher consumption [15] | Similar to antagonist [15] | Superior folliculogenesis, higher pregnancy rates [15] | Risk of ovarian cysts, menopausal symptoms [15] |
| GnRH Antagonist | Shorter duration [15] | Lower consumption [15] | Similar to agonist [15] | Lower OHSS risk, patient-friendly [15] | Possibly lower pregnancy rates [15] |
| Minimal Stimulation | Shortest duration [15] | Lowest consumption [15] | Not specified | Reduced medication burden, cost-effective [15] | Lower oocyte yield [15] |
| PPOS | Not specified | Not specified | Not specified | Prevents LH surge, suitable for various populations [16] | Requires frozen embryo transfer [16] |

Endometrial Preparation Protocols for Frozen-Thawed Embryo Transfer

With the increasing use of freeze-all strategies, endometrial preparation protocols have gained importance. The three main approaches are natural cycles (NC), hormone replacement therapy (HRT) cycles, and ovarian stimulation (OS) cycles [17].

Natural Cycle (NC): Suitable for ovulatory women with regular cycles. Involves monitoring spontaneous follicular development and timing transfer based on ovulation [17].

Hormone Replacement Therapy (HRT): Uses exogenous estrogen and progesterone to create an artificial cycle, ideal for women with irregular ovulation [17].

Ovarian Stimulation (OS): Employs mild stimulation (e.g., letrozole with or without gonadotropins) to induce follicular development and endogenous hormone production [17].

Table 2: Pregnancy Outcomes by Endometrial Preparation Protocol in High-OHSS-Risk Patients

| Outcome Measure | Natural Cycle (NC) | Hormone Replacement (HRT) | Ovarian Stimulation (OS) | Statistical Significance |
|---|---|---|---|---|
| Live Birth Rate | 1.50 (1.03-2.19)* | Reference | 2.53 (1.55-4.14)* | p<0.05 for both vs. HRT [17] |
| Clinical Pregnancy Rate | 1.57 (1.03-2.39)* | Reference | 2.14 (1.22-3.75)* | p<0.05 for both vs. HRT [17] |
| Miscarriage Rate | Not significant | Reference | 0.29 (0.12-0.71)* | p<0.05 for OS vs. HRT [17] |
| Cesarean Delivery Rate | 0.44 (0.26-0.74)* | Reference | Not significant | p<0.05 for NC vs. HRT [17] |

Values are adjusted odds ratios (95% confidence intervals) with HRT as the reference group; asterisks mark comparisons significant at p<0.05.

Endometrial Thickness as a Critical Parameter

Endometrial Thickness Measurement and Impact

Endometrial thickness (EMT) is routinely monitored via transvaginal ultrasonography during treatment cycles. Measurements are typically taken at the thickest point in the midsagittal plane, including both anterior and posterior layers [16]. The optimal timing for measurement is on the day of hCG administration in fresh cycles or on the day of progesterone initiation in frozen cycles [16].

Research consistently demonstrates that EMT significantly influences pregnancy outcomes. In PPOS protocols, an EMT ≥8 mm on hCG day is associated with significantly higher ongoing pregnancy rates (34.2% vs. 29.1%, p=0.039) compared to thinner endometria [16]. This effect is particularly pronounced in blastocyst transfers, where clinical pregnancy rates (49% vs. 40.2%, p=0.009) and ongoing pregnancy rates (39.6% vs. 30.6%, p=0.005) are substantially improved with thicker endometria [16].

Interestingly, the relationship between endometrial thickness and stimulation intensity appears complex. While conventional stimulated IVF cycles produce significantly thicker endometria compared to natural cycles (9.75±2.05 mm vs. 8.12±1.66 mm, p<0.001), this artificial thickening does not necessarily translate to improved implantation rates [18]. This suggests that endometrial quality and function may be more important than absolute thickness alone.

Endometrial Preparation Protocol Efficacy by Thickness Category

The optimal endometrial preparation protocol may vary depending on baseline endometrial characteristics. For suboptimal endometrium (EMT <8 mm), natural cycles show potentially better outcomes than HRT or OS protocols, with ongoing pregnancy rates of 34.1% versus 29.9% and 26.3%, respectively [16]. In contrast, for women with adequate EMT (≥8 mm), the GnRH agonist-plus-HRT protocol yields superior results, with ongoing pregnancy rates of 40.4% compared to 33.8% with HRT alone and 25.2% with natural cycles [16].

Hormonal Dynamics Across Protocols

Estradiol and Progesterone Patterns

Hormonal levels during stimulation cycles follow distinct patterns based on the protocol used. In conventional gonadotropin-stimulated cycles, estradiol (E2) concentrations rise significantly higher than in natural cycles due to multifollicular development [18]. However, the endometrial response to rising E2 levels is not linear; the increase in endometrial thickness slows with increasing E2 concentrations (time × estradiol concentration: -0.19, p=0.010) [18].

Progesterone elevation during the late follicular phase is a concern across all protocols, as it may adversely impact endometrial receptivity. The PPOS protocol turns progestin exposure to therapeutic use: progestins are administered from stimulation day 3 to prevent premature LH surges through pituitary suppression [16].

LH Suppression Strategies

Preventing premature LH surges is a cornerstone of successful COH. The GnRH agonist long protocol achieves this through pituitary downregulation, while the antagonist protocol provides competitive receptor blockade [15]. The PPOS protocol represents a paradigm shift, using progestins to suppress LH via progesterone-mediated negative feedback [16]. Each approach has distinct endocrine effects, with agonist protocols associated with more profound suppression and potentially better follicular synchronization [15].

Emerging AI Applications in Protocol Optimization

Machine Learning for Outcome Prediction

Artificial intelligence is increasingly applied to optimize cycle-specific parameters and predict treatment outcomes. Machine learning models now demonstrate strong performance in predicting live birth following fresh embryo transfer (AUC >0.8) [19], blastocyst yield (R²: 0.673-0.676) [14], and intrauterine insemination success (AUC=0.78) [20].

Feature importance analyses from these models provide data-driven insights into critical parameters. For blastocyst formation prediction, the number of embryos in extended culture emerges as the most significant predictor (61.5%), followed by Day 3 embryo morphology parameters [14]. For live birth prediction after fresh transfer, key features include female age, embryo grade, number of usable embryos, and endometrial thickness [19].

Comparative Feature Importance Across Prediction Models

Table 3: Key Predictors in Fertility Outcome Machine Learning Models

| Prediction Task | Top Performing Model | Most Important Features | Performance Metrics |

|---|---|---|---|

| Live Birth (Fresh ET) | Random Forest [19] | Female age, embryo grade, usable embryo count, endometrial thickness [19] | AUC >0.8 [19] |

| Blastocyst Yield | LightGBM [14] | Extended culture embryos (61.5%), Day 3 mean cell number (10.1%), 8-cell proportion (10.0%) [14] | R²: 0.676, MAE: 0.793 [14] |

| IUI Success | Linear SVM [20] | Pre-wash sperm concentration, stimulation protocol, cycle length, maternal age [20] | AUC: 0.78 [20] |

| Natural Conception | XGB Classifier [21] | BMI, caffeine consumption, endometriosis history, chemical/heat exposure [21] | Accuracy: 62.5%, AUC: 0.580 [21] |

Signaling Pathways and Physiological Mechanisms

Hormonal Regulation in Ovarian Stimulation

The following diagram illustrates the key signaling pathways involved in different stimulation protocols:

Hormonal Regulation Pathways in Stimulation Protocols

Endometrial Preparation Workflow

The following diagram outlines the methodological workflow for endometrial preparation in frozen-thawed embryo transfer cycles:

Endometrial Preparation Workflow for FET

Research Reagent Solutions

Table 4: Essential Research Reagents for Fertility Protocol Studies

| Reagent Category | Specific Examples | Research Applications | Key Functions |

|---|---|---|---|

| GnRH Agonists | Triptorelin, Leuprorelin, Goserelin [15] | Ovarian suppression studies | Pituitary downregulation, prevent LH surges [15] |

| GnRH Antagonists | Cetrorelix, Ganirelix [15] | Cycle flexibility research | Immediate LH suppression, OHSS risk reduction [15] |

| Gonadotropins | r-FSH (Gonal-F, Puregon), hMG, HCG [15] [16] | Stimulation efficacy trials | Follicular development, ovulation trigger [15] |

| Oral Ovulation Inducers | Clomiphene citrate, Letrozole [15] | Minimal stimulation protocols | Endogenous FSH release, aromatase inhibition [15] |

| Progestins | Medroxyprogesterone acetate, Dydrogesterone [16] | PPOS protocol development | LH surge prevention via negative feedback [16] |

| Estrogen Preparations | Estradiol valerate (Progynova) [16] [17] | Endometrial preparation studies | Endometrial proliferation, cycle control [16] |

| Progesterone Formulations | Micronized progesterone (Utrogestan), Crinone [16] [17] | Luteal phase support research | Endometrial transformation, implantation support [16] |

The comparative analysis of cycle characteristics reveals a complex interplay between endometrial parameters, hormonal dynamics, and stimulation protocols. While the GnRH agonist long protocol demonstrates advantages in folliculogenesis and pregnancy rates for normal responders, alternative protocols offer specific benefits for particular patient populations. The GnRH antagonist protocol reduces OHSS risk and treatment burden, while minimal stimulation and PPOS protocols provide valuable options for poor responders or those requiring freeze-all strategies.

Endometrial thickness remains a critical predictive parameter, with ≥8 mm generally associated with superior outcomes, particularly in blastocyst transfer cycles. However, the relationship between artificially thickened endometrium and implantation rates highlights that functional quality may outweigh absolute measurements.

Emerging machine learning applications are refining our understanding of feature importance across treatment modalities, offering data-driven insights for protocol personalization. As ART continues to evolve, the integration of traditional clinical parameters with advanced analytics promises more individualized, effective, and safer treatment paradigms for diverse patient populations.

The pursuit of effective fertility prediction models represents a critical frontier in reproductive medicine, where understanding the relative importance of various input features directly impacts clinical decision-making and therapeutic outcomes. This comparison guide objectively analyzes the performance of key lifestyle and demographic factors—specifically Body Mass Index (BMI), infertility duration, and sociodemographic characteristics—as predictive features across fertility research. As assisted reproductive technologies (ART) evolve, discerning which factors most significantly influence treatment success allows clinicians to prioritize interventions and manage patient expectations. The following analysis synthesizes current experimental data and methodologies, framing findings within the broader thesis that feature importance varies substantially across different fertility prediction models and patient populations, with body composition metrics often outperforming traditional demographic factors in predictive power.

Comparative Analysis of Predictive Factors in Fertility Outcomes

Body Mass Index (BMI) and Body Composition Metrics

Table 1: Impact of Elevated BMI on Assisted Reproductive Technology Outcomes

| BMI Category | Clinical Pregnancy Odds Ratio | Live Birth Odds Ratio | Oocyte Retrieval Impact | Gonadotropin Dose Requirements |

|---|---|---|---|---|

| Overweight (BMI ≥25) | 0.76 (95% CI: 0.62-0.93) [22] | Not consistently reported | Reduced oocyte yield [22] | Increased requirements [22] |

| Obese (BMI ≥30) | 0.61 (95% CI: 0.39-0.98) [22] | Limited reporting | Significantly reduced [22] | Significantly increased [22] |

Table 2: Comparative Performance of Obesity Indicators for Predicting Infertility

| Obesity Indicator | Adjusted Odds Ratio for Infertility | 95% Confidence Interval | Diagnostic Efficiency |

|---|---|---|---|

| Body Mass Index (BMI) | 2.10 | 1.40-3.18 [23] | Moderate |

| Waist Circumference (WC) | 2.28 | 1.52-3.47 [23] | High |

| Waist-to-Height Ratio (WHtR) | 2.09 | 1.39-3.19 [23] | High |

| Relative Fat Mass (RFM) | 2.09 | 1.39-3.19 [23] | High |

| Body Roundness Index (BRI) | 2.09 | 1.39-3.19 [23] | High |

Research suggests that body composition metrics can surpass BMI in predictive accuracy for infertility. Women in the highest RFM quartile show nearly three-fold higher odds of infertility history compared to those in the lowest quartile (OR: 2.87; 95% CI: 1.85-4.44) [24]. This association is particularly strong in women under 35 years, highlighting age-specific predictive patterns [24].

Infertility Duration and Type

Table 3: Association Between Infertility Duration/Type and BMI in Ghanaian Women

| Infertility Characteristic | Normal Weight (%) | Overweight (%) | Obese (%) | Statistical Significance |

|---|---|---|---|---|

| Primary Infertility | Not specified | 36.95 | 36.81 | p<0.001 [25] |

| Secondary Infertility | Not specified | 63.05 | 63.19 | p<0.001 [25] |

| Duration 2-5 years | 295 women | 457 women | 526 women | Significant [25] |

| Duration 6-10 years | Not specified | 464 women | 498 women | Significant [25] |

The Ghanaian study revealed that 76.83% of women seeking fertility treatment had elevated BMI, with the overweight (37.27%) and obese (39.56%) categories predominating [25]. Secondary infertility was more prevalent than primary infertility among both overweight (63.05% vs. 36.95%) and obese (63.19% vs. 36.81%) women [25]. Longer infertility duration (2-10 years) was associated with higher BMI categories, suggesting a complex relationship between body weight and protracted infertility [25].

Sociodemographic Factors

Table 4: Sociodemographic Correlates of Fertility Motivation and Outcomes

| Sociodemographic Factor | Correlation with Fertility Motivation | Impact on Treatment Outcomes | Population-Specific Findings |

|---|---|---|---|

| Age | Significant correlation with desire for children (p<0.05) [26] | Strong predictor in IUI cycles [20] | Advanced maternal age reduces blastocyst yield [14] |

| Education Level | Significant correlation with desire for children (p<0.05) [26] | Not directly reported | Higher education associated with elevated BMI in infertile Ghanaian women (p<0.003) [25] |

| Employment Status | Significant difference in motivation scores (p<0.05) [26] | Not directly reported | Unemployed women showed different childbearing motivations [26] |

| Income Level | Significant correlation with desire for children (p<0.05) [26] | Not directly reported | - |

| Marital Duration | Significant correlation with desire for children (p<0.05) [26] | Not directly reported | - |

Sociodemographic characteristics significantly influence childbearing motivations, with age, education level, income, social support, and marital duration all showing significant correlations with desire for children (p<0.05) [26]. Employment status and spousal compatibility also significantly affected motivation scores [26]. Notably, occupational patterns emerged in the Ghanaian study, where traders showed the highest prevalence of elevated BMI, potentially reflecting sedentary lifestyles [25].

Experimental Protocols and Methodologies

NHANES Analysis Protocol (RFM and Infertility)

Study Design: Cross-sectional analysis of National Health and Nutrition Examination Survey (NHANES) data (2013-2020) [24].

Population: 3,915 women aged 18-45 years with complete infertility, RFM, and covariate data [24].

Infertility Assessment: Self-reported, based on two criteria: (1) attempting conception for ≥12 months without success, or (2) seeking medical help for infertility [24].

RFM Calculation: RFM = 64 - (20 × height/waist circumference) + (12 × sex), where sex = 1 for women [24].

Covariate Adjustment: Three statistical models were employed: Crude (unadjusted), Model 1 (age, race), and Model 2 (comprehensive, including socioeconomic factors, health behaviors, and comorbidities) [24].

Statistical Analysis: Sampling weights applied for national representativeness; weighted t-tests, chi-square tests, and logistic regression with odds ratios and 95% confidence intervals [24].
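The RFM formula in this protocol can be written as a small function. This is a minimal sketch rather than the study's own code: the function name and the example measurements are illustrative assumptions, height and waist circumference only need to share a unit, and the sex = 0 coding for men comes from the general RFM definition rather than from this women-only analysis.

```python
def relative_fat_mass(height: float, waist: float, is_female: bool = True) -> float:
    """Relative Fat Mass: 64 - 20 * (height / waist) + 12 * sex.

    height and waist must be in the same unit; sex is 1 for women, 0 for men.
    """
    if height <= 0 or waist <= 0:
        raise ValueError("height and waist circumference must be positive")
    sex = 1 if is_female else 0
    return 64.0 - 20.0 * (height / waist) + 12.0 * sex


# Hypothetical participant: 160 cm tall with an 80 cm waist circumference.
rfm = relative_fat_mass(height=160.0, waist=80.0)
print(f"RFM = {rfm:.1f}")
```

Because the formula depends only on the height-to-waist ratio, a larger waist at a fixed height always raises RFM, which is what lets it track central adiposity more directly than BMI.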

Machine Learning Model Development (IUI Outcome Prediction)

Data Source: Retrospective analysis of 9,501 IUI cycles from 3,535 couples (2011-2015) [20].

Feature Set: 21 clinical parameters, including male/female age, sperm parameters, ovarian stimulation protocol, and cycle characteristics [20].

Data Preprocessing: Exclusion of cycles with >3 missing features; median/mode imputation for 1-2 missing features; PowerTransformer normalization; one-hot encoding for categorical variables [20].

Model Training: Multiple algorithms tested (Linear SVM, AdaBoost, Kernel SVM, Random Forest, Extreme Forest, Bagging, Voting); stratified 4-fold cross-validation for hyperparameter optimization [20].

Performance Metrics: Accuracy evaluated by Area Under Curve (AUC) analysis; feature importance ranking [20].

Key Findings: Linear SVM outperformed other models (AUC=0.78); pre-wash sperm concentration, ovarian stimulation protocol, cycle length, and maternal age were the strongest predictors [20].
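The exclusion, imputation, and stratified-fold steps of this protocol can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not the study's pipeline: the feature names and records are hypothetical, cycles with exactly three missing features are assumed to be kept and imputed (the protocol does not say), folds are assigned round-robin within each class without shuffling, and the PowerTransformer and one-hot encoding steps are omitted.

```python
from statistics import median, mode


def preprocess(cycles, numeric_keys, categorical_keys, max_missing=3):
    """Drop cycles with more than max_missing missing features, then impute the rest."""
    keys = list(numeric_keys) + list(categorical_keys)
    # Copy each record so the caller's data is not modified.
    kept = [dict(c) for c in cycles
            if sum(c.get(k) is None for k in keys) <= max_missing]
    fill = {}
    for k in numeric_keys:       # median for numeric features
        fill[k] = median(c[k] for c in kept if c.get(k) is not None)
    for k in categorical_keys:   # mode for categorical features
        fill[k] = mode(c[k] for c in kept if c.get(k) is not None)
    for c in kept:
        for k in keys:
            if c.get(k) is None:
                c[k] = fill[k]
    return kept


def stratified_folds(labels, k=4):
    """Assign sample indices to k folds so each class is spread evenly across folds."""
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        for position, i in enumerate(indices):
            folds[position % k].append(i)
    return folds


# Hypothetical IUI cycles (feature names are placeholders, not the study's variables).
cycles = [
    {"female_age": 30, "male_age": 32, "sperm_conc": 40.0, "cycle_length": 28, "protocol": "letrozole"},
    {"female_age": None, "male_age": 35, "sperm_conc": 20.0, "cycle_length": 30, "protocol": "letrozole"},
    {"female_age": 36, "male_age": None, "sperm_conc": None, "cycle_length": None, "protocol": None},
    {"female_age": 40, "male_age": 41, "sperm_conc": 60.0, "cycle_length": 27, "protocol": "FSH"},
]
clean = preprocess(cycles,
                   numeric_keys=["female_age", "male_age", "sperm_conc", "cycle_length"],
                   categorical_keys=["protocol"])
print(len(clean), "cycles kept")
print(stratified_folds([1, 0, 0, 1, 0, 0, 1, 0], k=4))
```

Stratifying the folds matters here because pregnancy is the minority outcome in IUI data; an unstratified split can leave a fold with very few positive cycles and make AUC estimates unstable.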

Systematic Review Protocol (BMI and Fertility Outcomes)

Search Strategy: Comprehensive search of EMBASE, MEDLINE, and the Cochrane Library (2000-2023) using MeSH terms related to female infertility and BMI [22].

Eligibility Criteria: Strict exclusion of comorbidities affecting fertility (PCOS, thyroid disease); English-language original research only [22].

Quality Assessment: Newcastle-Ottawa Scale for risk of bias; funnel plot analysis for publication bias [22].

Data Extraction: Independent extraction by two authors; disagreements resolved by a third senior author [22].

Statistical Analysis: RevMan software; Mantel-Haenszel method for dichotomous data (OR with 95% CI); inverse variance for continuous data (standardized mean differences) [22].
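The pooling step for dichotomous outcomes can be illustrated with the fixed-effect Mantel-Haenszel odds ratio. This is a sketch under assumptions: the review only names the Mantel-Haenszel method, so the fixed-effect form is assumed here, the 2×2 counts are invented for illustration, and the confidence interval calculation is left out.

```python
def mantel_haenszel_or(tables):
    """Pooled odds ratio across studies.

    Each table is (a, b, c, d):
      a = exposed with event,    b = exposed without event,
      c = unexposed with event,  d = unexposed without event.
    OR_MH = sum(a*d/n) / sum(b*c/n), with n the study's total sample size.
    """
    numerator = 0.0
    denominator = 0.0
    for a, b, c, d in tables:
        n = a + b + c + d
        numerator += a * d / n
        denominator += b * c / n
    if denominator == 0:
        raise ValueError("pooled odds ratio is undefined for these tables")
    return numerator / denominator


# Hypothetical studies: clinical pregnancy (event) in elevated-BMI vs. normal-BMI women.
studies = [
    (10, 90, 20, 80),
    (30, 70, 40, 60),
]
print(f"Pooled OR = {mantel_haenszel_or(studies):.3f}")
```

Weighting each study's cross-products by 1/n gives larger studies more influence while remaining stable when individual cells are small, which is one reason the method is common for sparse clinical data.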

Pathophysiological Pathways and Research Workflows

Obesity-Related Infertility Mechanisms

Machine Learning Research Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 5: Essential Research Materials for Fertility Prediction Studies

| Research Material | Specific Examples | Research Application | Key Function |

|---|---|---|---|

| Anthropometric Measurement Tools | Electronic scale with stadiometer (JENIX DS-103) [25] | Body composition assessment | Precise height, weight, and BMI measurement |

| Laboratory Assays | HbA1c, fasting plasma glucose [24] | Metabolic parameter assessment | Diabetes diagnosis and metabolic health evaluation |

| Sperm Analysis Systems | Makler Chamber [20] | Male fertility assessment | Sperm concentration, motility, and progression analysis |

| Sperm Processing Media | Density gradient media (Gynotec Sperm filter) [20] | IUI sperm preparation | Isolation of motile spermatozoa for insemination |

| Ovarian Stimulation Agents | Gonal-F, Puregon, Menopur [20] | Controlled ovarian stimulation | Follicle development and ovulation induction |

| Ovulation Trigger Agents | Ovidrel (recombinant hCG) [20] | Ovulation timing | Final oocyte maturation prior to retrieval/insemination |

| Luteal Phase Support | Prometrium (micronized progesterone) [20] | Endometrial preparation | Enhancement of endometrial receptivity |

| Laboratory Culture Media | SpermWash [20] | Sperm processing | Preparation of sperm samples for ART procedures |

This comparison guide demonstrates significant variability in predictive performance across lifestyle and demographic factors in fertility research. Body composition metrics—particularly RFM, WHtR, and waist circumference—consistently outperform traditional BMI in infertility prediction, with women in the highest RFM quartile facing nearly three-fold higher infertility odds [23] [24]. While sociodemographic factors like age, education, and income significantly correlate with fertility motivations [26], their predictive power for treatment outcomes appears secondary to direct physiological measures. Infertility duration and type show complex interactions with BMI, particularly in specific populations like Ghanaian women where secondary infertility predominates among overweight and obese patients [25]. Machine learning approaches further refine our understanding of feature importance, with models like Linear SVM and LightGBM identifying key predictors including ovarian stimulation protocols, embryo morphology parameters, and female age [14] [20]. These findings collectively underscore that effective fertility prediction requires multidimensional models incorporating both traditional demographic factors and more precise body composition metrics, with feature importance heavily dependent on specific patient populations and treatment modalities.

Algorithmic Approaches: How Model Selection Shapes Feature Importance Rankings

The accurate prediction of complex biological outcomes, such as those in fertility research, requires machine learning algorithms capable of capturing intricate, nonlinear relationships within datasets. Tree-based ensemble methods have emerged as particularly powerful tools in this domain, combining the predictive power of multiple decision trees to achieve superior accuracy and robustness. Among these ensembles, Random Forest, XGBoost, and LightGBM have gained significant traction in computational biology and reproductive medicine research due to their ability to handle diverse data types, manage missing values, and provide insights into feature importance [27]. These capabilities are especially valuable in fertility studies where researchers must identify key predictors from numerous sociodemographic, lifestyle, and clinical variables [28].

Within fertility prediction research, understanding which factors most significantly influence outcomes is paramount for both clinical decision-making and scientific discovery. Feature importance analysis provided by these ensemble methods helps researchers identify the most influential predictors—such as female age, embryo morphology, or lifestyle factors—thereby concentrating future research efforts and potentially revealing previously unrecognized biological relationships [28] [14]. This comparative analysis examines how Random Forest, XGBoost, and LightGBM address the challenge of modeling nonlinear relationships in fertility prediction contexts, focusing on their relative strengths, methodological differences, and implications for research applications.

Algorithmic Fundamentals and Structural Differences

Core Architectural Approaches

The three ensemble algorithms employ distinct architectural approaches to building predictive models from decision trees, with significant implications for their performance in fertility research applications:

Random Forest employs a technique known as bootstrap aggregating (bagging), which builds multiple decision trees independently on random subsets of the data and features, then combines their predictions through averaging or voting [27] [29]. This approach introduces diversity through both feature and data randomization, making the ensemble robust to noisy data and reducing overfitting. For fertility researchers, this robustness is particularly valuable when working with heterogeneous patient data containing measurement inconsistencies or missing values.

XGBoost utilizes gradient boosting, where trees are built sequentially with each new tree attempting to correct the errors of the previous ensemble [30] [27]. The algorithm incorporates advanced regularization techniques (L1 and L2) to control model complexity and prevent overfitting, making it particularly effective for datasets with high-dimensional feature spaces common in fertility research, where numerous patient variables must be considered simultaneously [30] [27].

LightGBM also employs a gradient boosting framework but introduces two key innovations: Gradient-Based One-Side Sampling (GOSS) and Exclusive Feature Bundling (EFB) [30]. These innovations allow it to handle large-scale data more efficiently than XGBoost, which is advantageous for fertility studies incorporating extensive patient records or time-series data from medical monitoring devices.
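The idea behind GOSS can be sketched in a few lines. The sketch assumes the commonly described form of the technique: keep the instances with the largest absolute gradients, randomly sample a fraction of the remainder, and up-weight the sampled instances by (1 - a) / b so the gradient statistics stay approximately unbiased. The function and parameter names are illustrative, and this is not LightGBM's internal implementation.

```python
import random


def goss_sample(gradients, top_rate=0.2, other_rate=0.1, seed=0):
    """Return {index: weight} for the instances retained by one GOSS step."""
    n = len(gradients)
    # Rank instances from largest to smallest absolute gradient.
    order = sorted(range(n), key=lambda i: abs(gradients[i]), reverse=True)
    top_n = int(top_rate * n)
    rand_n = int(other_rate * n)

    top_idx = order[:top_n]                            # always kept
    rng = random.Random(seed)
    sampled_idx = rng.sample(order[top_n:], rand_n)    # random subset of the rest

    amplify = (1.0 - top_rate) / other_rate            # compensates for the subsampling
    weights = {i: 1.0 for i in top_idx}
    weights.update({i: amplify for i in sampled_idx})
    return weights


# Hypothetical per-instance gradients for 100 training cycles.
gradients = list(range(100))
kept = goss_sample(gradients)
print(len(kept), "of", len(gradients), "instances used to find the next split")
```

With these illustrative rates only 30% of the instances are scanned per tree, which is where the speed advantage on large patient-record datasets comes from.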

Tree Growth Strategies

A fundamental structural difference between these algorithms lies in their approach to growing decision trees, which directly impacts their efficiency and effectiveness:

XGBoost uses a level-wise (horizontal) tree growth strategy, which expands the entire level of a tree simultaneously [30]. While this approach can be more computationally intensive, it often produces more robust models, particularly important in clinical fertility prediction where model reliability is paramount.

LightGBM employs a leaf-wise (vertical) tree growth strategy that expands the node with the maximum loss reduction, resulting in more complex trees with potentially higher accuracy [30]. This strategy can lead to faster training times and reduced memory usage, though it may increase the risk of overfitting on smaller fertility datasets without proper parameter tuning.

Random Forest trees are typically grown to maximum depth without pruning, with the ensemble nature of the algorithm providing regularization [29]. Each tree is built independently on bootstrap samples of the data, with a random subset of features considered for each split.

Table 1: Fundamental Algorithmic Characteristics

| Algorithm | Ensemble Strategy | Tree Growth Method | Key Innovation | Ideal Data Characteristics |

|---|---|---|---|---|

| Random Forest | Bagging | Grown to maximum depth, unpruned | Feature and data randomization | Smaller datasets, noisy data |

| XGBoost | Gradient Boosting | Level-wise | Regularization, parallel processing | Medium to large datasets requiring high accuracy |

| LightGBM | Gradient Boosting | Leaf-wise | GOSS and EFB for efficiency | Very large datasets, real-time applications |

Performance Comparison in Fertility Research Context

Predictive Performance Metrics

Recent studies in reproductive medicine provide empirical evidence of how these algorithms perform on fertility prediction tasks:

In a 2025 study predicting natural conception among couples using sociodemographic and sexual health data, researchers evaluated multiple machine learning models on a dataset of 197 couples [28]. The XGBoost Classifier demonstrated the highest performance among the models tested with an accuracy of 62.5% and a ROC-AUC of 0.580, though the authors noted limited predictive capacity overall, highlighting the challenges of fertility prediction [28].

A separate 2025 study on predicting blastocyst yield in IVF cycles provided a more comprehensive comparison, developing and validating models on over 9,000 IVF/ICSI cycles [14]. The researchers found that LightGBM, XGBoost, and SVM demonstrated comparable performance and significantly outperformed traditional linear regression models (R²: 0.673–0.676 vs. 0.587, mean absolute error: 0.793–0.809 vs. 0.943) [14]. Among these high-performing models, LightGBM emerged as the optimal choice because it used fewer features (8 vs. 10–11 in SVM/XGBoost) while offering superior interpretability [14].
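The metrics quoted in these two studies (ROC-AUC for the classification task, R² and mean absolute error for the blastocyst-yield regression) follow standard definitions, sketched here in plain Python. The example values are invented for illustration and are unrelated to either study's data.

```python
def roc_auc(labels, scores):
    """Probability that a random positive case scores above a random negative one.

    Ties between a positive and a negative score count as 0.5.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical conception outcomes with predicted probabilities.
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))

# Hypothetical blastocyst counts per cycle: observed vs. predicted.
observed = [0, 1, 2, 3, 4]
predicted = [0, 1, 2, 3, 3]
print(r_squared(observed, predicted), mean_absolute_error(observed, predicted))
```

An AUC of 0.5 corresponds to random ranking, which puts the 0.580 reported for natural conception in context: the model ranks couples only slightly better than chance.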

Computational Efficiency

Computational efficiency represents a critical consideration for fertility researchers working with large datasets or requiring rapid model iteration:

LightGBM generally demonstrates faster training speed and lower memory usage compared to XGBoost, particularly on larger datasets, due to its histogram-based algorithm and leaf-wise growth strategy [30] [31]. This efficiency advantage can significantly accelerate the research process when experimenting with different feature combinations or model architectures.

XGBoost implements a pre-sorting algorithm for split finding and supports parallel processing, making it highly efficient on datasets of small to medium size [30]. While potentially slower than LightGBM on very large datasets, XGBoost often achieves comparable predictive performance with potentially better robustness.

Random Forest can be efficiently parallelized as trees are built independently, though it may require more memory than gradient boosting methods since all trees are grown to maximum depth [29]. For fertility researchers with limited computational resources, this factor may influence algorithm selection.

Table 2: Performance Comparison in Fertility Prediction Studies

| Algorithm | Accuracy | Training Speed | Memory Usage | Robustness to Overfitting | Interpretability |

|---|---|---|---|---|---|

| Random Forest | Moderate | Fast (parallelizable) | Higher | High (via ensemble diversity) | High (native feature importance) |

| XGBoost | High | Moderate (depends on dataset size) | Moderate | High (regularization) | Moderate (multiple importance measures) |

| LightGBM | High | Very fast | Lower | Moderate (requires careful parameter tuning) | Moderate (multiple importance measures) |

Feature Importance Analysis in Fertility Prediction

Methodological Approaches to Feature Importance

Understanding how each algorithm calculates and reports feature importance is crucial for interpreting results in fertility research contexts:

Random Forest offers two primary importance measures: accuracy-based importance (the decrease in model accuracy when a feature's values are permuted) and Gini importance (the total reduction in Gini impurity achieved by splits using that feature) [32] [29]. The Gini-based method is computationally efficient as it's calculated during training, while accuracy-based importance provides a more direct measure of a feature's predictive contribution [29].

XGBoost provides three importance metrics: gain (the average improvement in the training objective from splits that use a feature), weight (the number of times a feature is used to split the data), and cover (the relative number of observations affected by splits on a feature) [33] [34]. Research suggests that "gain" typically provides the most reliable measure of a feature's true importance, though the three metrics can rank features inconsistently [34].

LightGBM offers two importance types: split (the number of times a feature is used in splits) and gain (the total improvement in the training objective from splits using the feature) [31] [35]. The "gain" metric is generally more informative as it accounts for both the frequency and quality of splits [31].
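Why count-based and gain-based importance can disagree is easiest to see on a toy example. The sketch below assumes a trained ensemble has been reduced to a list of (feature, gain) pairs, one per split; the feature names and gain values are hypothetical, and the code does not call XGBoost or LightGBM.

```python
from collections import defaultdict


def importance_from_splits(splits):
    """Aggregate per-split records into count-based and gain-based importances."""
    count = defaultdict(int)
    total_gain = defaultdict(float)
    for feature, gain in splits:
        count[feature] += 1
        total_gain[feature] += gain
    mean_gain = {f: total_gain[f] / count[f] for f in count}
    return {
        "split_count": dict(count),      # how often each feature is used
        "total_gain": dict(total_gain),  # summed objective improvement
        "mean_gain": mean_gain,          # average improvement per split
    }


# Hypothetical splits: one strong split on age, several weak splits on AMH.
splits = [("age", 5.0), ("amh", 1.0), ("amh", 1.0), ("amh", 1.0), ("bmi", 0.5)]
result = importance_from_splits(splits)
for metric, values in result.items():
    ranking = sorted(values, key=values.get, reverse=True)
    print(metric, ranking)
```

Here AMH ranks first by split count while age ranks first by gain, so a reported feature ranking is only interpretable when the importance type is stated alongside it.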

Application in Fertility Research

Feature importance analysis has yielded valuable biological insights in recent fertility studies:

In the blastocyst yield prediction study, LightGBM feature importance analysis identified the number of extended culture embryos as the most critical predictor (61.5% importance), followed by Day 3 embryo metrics: mean cell number (10.1%), the proportion of 8-cell embryos (10.0%), and the proportion of symmetry (4.4%) [14]. Demographic factors like female age demonstrated relatively lower importance (2.4%) in predicting blastocyst development [14].

The natural conception prediction study utilized Permutation Feature Importance to select 25 key predictors from 63 initial variables [28]. The selected predictors encompassed a balance of medical, lifestyle, and reproductive factors for both partners, including BMI, caffeine consumption, history of endometriosis, and exposure to chemical agents or heat, emphasizing the couple-based approach to fertility prediction [28].
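Permutation feature importance is model-agnostic: shuffle one column, re-score the model, and record how much performance drops. The following is a minimal sketch of that procedure, not the study's code; the toy model, the data, and the choice of accuracy as the score are all assumptions made for illustration.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the outcome
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Toy data: feature 0 determines the outcome, feature 1 is irrelevant noise.
X = [[i % 2, (i * 7) % 5] for i in range(20)]
y = [row[0] for row in X]


def model(row):
    """Stand-in for a fitted classifier that only looks at feature 0."""
    return row[0]


print(permutation_importance(model, X, y))
```

A feature the model never uses gets an importance of exactly zero, which is the property that makes the method usable as a filter when narrowing a large set of candidate predictors.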

Diagram 1: Experimental Workflow for Fertility Prediction Studies

Experimental Protocols and Implementation Guidelines

Data Preprocessing and Feature Engineering

Proper data preprocessing is essential for optimal performance of tree-based ensembles in fertility research:

Handling Missing Values: Both XGBoost and LightGBM can natively handle missing values without imputation by learning direction decisions during training [30] [27]. Random Forest implementations typically require missing value imputation before training. For fertility datasets with substantial missing clinical measurements, the native handling capabilities of XGBoost and LightGBM can be advantageous.

Categorical Feature Encoding: Random Forest and XGBoost typically require one-hot encoding or label encoding of categorical variables [30]. LightGBM provides native support for categorical features, which can significantly reduce preprocessing requirements for fertility datasets containing categorical clinical variables [27].

Feature Scaling: Tree-based models are generally insensitive to feature scaling, eliminating the need for normalization or standardization procedures required by many other machine learning algorithms [33]. This characteristic simplifies the preprocessing pipeline for fertility researchers.
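Two of these preprocessing points can be shown concretely: what one-hot encoding does to a categorical clinical variable, and why rescaling a numeric feature leaves a tree split unchanged. This is a small plain-Python sketch with hypothetical column names and values; libraries such as pandas or scikit-learn provide equivalent encoders.

```python
def one_hot(records, column):
    """Replace a categorical column with one 0/1 indicator column per category."""
    categories = sorted({r[column] for r in records})
    encoded = []
    for r in records:
        row = {k: v for k, v in r.items() if k != column}
        for cat in categories:
            row[f"{column}={cat}"] = 1 if r[column] == cat else 0
        encoded.append(row)
    return encoded


def split_partition(values, threshold):
    """Left/right assignment produced by a tree split of the form value <= threshold."""
    return [v <= threshold for v in values]


# Hypothetical patient records.
patients = [
    {"age": 30, "protocol": "agonist"},
    {"age": 35, "protocol": "antagonist"},
    {"age": 41, "protocol": "agonist"},
]
print(one_hot(patients, "protocol"))

# The same split expressed in years and in months sends every patient the same way.
ages_years = [p["age"] for p in patients]
ages_months = [a * 12 for a in ages_years]
print(split_partition(ages_years, 35) == split_partition(ages_months, 35 * 12))
```

Because a tree only asks whether a value falls above or below a threshold, any order-preserving rescaling moves the threshold along with the data and leaves the partition untouched.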

Hyperparameter Tuning Strategies

Each algorithm requires specific hyperparameter tuning to optimize performance for fertility prediction tasks:

XGBoost Critical Parameters: n_estimators (number of trees), max_depth (tree complexity), learning_rate (shrinkage factor), subsample (row sampling), colsample_bytree (column sampling), and regularization parameters (reg_alpha and reg_lambda) [30] [33].

LightGBM Critical Parameters: num_leaves (controls model complexity), max_depth (tree depth limit), learning_rate, min_data_in_leaf (prevents overfitting), feature_fraction (column sampling), and bagging_fraction (row sampling) [31] [35].

Random Forest Critical Parameters: n_estimators (number of trees), max_depth (tree complexity), max_features (number of features considered per split), and min_samples_split and min_samples_leaf (control overfitting) [32] [29].

For fertility datasets, which are often characterized by limited sample sizes relative to the number of features, careful tuning of regularization parameters and sampling rates is particularly important to prevent overfitting.
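One way to organize tuning is to write the search space down explicitly and draw candidate configurations from it. The sketch below uses the parameter names listed in this section; every candidate value is an illustrative assumption rather than a recommendation, and the sampler is a plain random search that does not train any model.

```python
import random

# Candidate values are placeholders chosen for illustration only.
SEARCH_SPACE = {
    "xgboost": {
        "n_estimators": [100, 300, 500],
        "max_depth": [3, 4, 6],
        "learning_rate": [0.01, 0.05, 0.1],
        "subsample": [0.6, 0.8, 1.0],
        "colsample_bytree": [0.6, 0.8, 1.0],
        "reg_alpha": [0.0, 0.1, 1.0],
        "reg_lambda": [1.0, 5.0, 10.0],
    },
    "lightgbm": {
        "num_leaves": [7, 15, 31],
        "max_depth": [3, 5, 7],
        "learning_rate": [0.01, 0.05, 0.1],
        "min_data_in_leaf": [10, 20, 50],
        "feature_fraction": [0.6, 0.8, 1.0],
        "bagging_fraction": [0.6, 0.8, 1.0],
    },
    "random_forest": {
        "n_estimators": [200, 500],
        "max_depth": [3, 5, None],
        "max_features": ["sqrt", 0.5],
        "min_samples_split": [2, 10],
        "min_samples_leaf": [1, 5, 10],
    },
}


def sample_config(model_name, rng):
    """Draw one random configuration for the named model from SEARCH_SPACE."""
    space = SEARCH_SPACE[model_name]
    return {name: rng.choice(options) for name, options in space.items()}


rng = random.Random(42)
for name in SEARCH_SPACE:
    print(name, sample_config(name, rng))
```

For small cohorts, it is usually sensible to bias the candidate values toward shallower trees, larger minimum leaf sizes, and stronger regularization, then score each sampled configuration with stratified cross-validation.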

Diagram 2: Algorithm Selection Guide for Fertility Research

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Fertility Prediction Research

| Tool Category | Specific Implementation | Research Application | Key Advantages |

|---|---|---|---|

| Algorithm Libraries | Scikit-learn (Random Forest), XGBoost package, LightGBM package | Model development and training | Standardized APIs, integration with Python data ecosystem |

| Feature Importance Analysis | SHAP, permutation importance, built-in importance methods | Biological insight generation, predictor identification | Model interpretability, hypothesis generation |

| Hyperparameter Optimization | GridSearchCV, RandomizedSearchCV, Bayesian optimization | Model performance optimization | Automated parameter tuning, reproducibility |

| Model Evaluation | Scikit-learn metrics, ROC analysis, calibration plots | Model validation and comparison | Comprehensive performance assessment |

| Data Processing | Pandas, NumPy, category_encoders | Dataset preparation for analysis | Efficient handling of clinical and demographic data |

Based on comparative performance analysis and recent applications in reproductive medicine, each algorithm offers distinct advantages for fertility prediction research:

For studies prioritizing model interpretability and robustness on small to medium-sized datasets, Random Forest provides an excellent choice with its straightforward feature importance measures and resistance to overfitting [29]. Its native feature importance calculations are particularly valuable for identifying key biological predictors in exploratory research.

When predictive accuracy is the primary concern, particularly on medium-sized datasets, XGBoost often delivers superior performance, as demonstrated in the natural conception prediction study [28]. Its regularization capabilities help prevent overfitting on the limited sample sizes common in clinical fertility studies.

For research involving large-scale datasets or requiring rapid model iteration, LightGBM offers significant advantages in computational efficiency while maintaining competitive accuracy, as evidenced by its optimal performance in the blastocyst yield prediction study [14]. Its ability to work effectively with fewer features can also enhance model interpretability.

Future fertility research would benefit from ensemble approaches that combine the strengths of multiple algorithms, as well as continued refinement of feature importance methodologies to better capture the complex, nonlinear relationships underlying reproductive outcomes. As these machine learning techniques become more sophisticated and accessible, their integration into reproductive medicine promises to enhance both scientific understanding and clinical decision-making for fertility treatment.

Support Vector Machines and Linear Models for High-Dimensional Data

In the field of fertility prediction, researchers are confronted with complex, high-dimensional datasets encompassing clinical, laboratory, and demographic variables. Within this context, selecting appropriate machine learning algorithms becomes paramount for developing robust predictive models. This guide provides an objective comparison between Support Vector Machines (SVM) and Linear Models, two prominent algorithmic approaches, focusing on their performance in fertility prediction research. The analysis is framed within a broader thesis on feature importance comparison, highlighting how different model architectures identify and prioritize predictive biomarkers, thereby influencing clinical interpretability and decision-making.

The table below summarizes quantitative performance metrics for SVM and Linear Models from recent fertility prediction studies, enabling a direct comparison of their predictive capabilities.

Table 1: Performance Comparison of SVM and Linear Models in Fertility Prediction

| Study & Prediction Task | Algorithm | Key Performance Metrics | Top-Ranked Predictive Features |

|---|---|---|---|

| ICSI Outcome Prediction [36] | Linear SVM | Accuracy: 75.7% | Couples' medical records, hormonal tests, cause of infertility (all pre-operative) |

| IUI Outcome Prediction [20] | Linear SVM | AUC: 0.78 | Pre-wash sperm concentration, ovarian stimulation protocol, cycle length, maternal age |

| Blastocyst Yield Prediction [14] | SVM | R²: 0.673-0.676, MAE: 0.793-0.809 | Number of extended culture embryos, mean cell number on Day 3, proportion of 8-cell embryos |

| Blastocyst Yield Prediction [14] | Linear Regression | R²: 0.587, MAE: 0.943 | (Same feature set as SVM) |

| General ART Success Prediction [37] | SVM | Most frequently applied technique (44.44% of studies) | Female age (most common feature across all studies) |

Detailed Experimental Protocols and Methodologies

Protocol: Predicting Blastocyst Yield in IVF Cycles

This study provides a direct, head-to-head comparison of SVM and Linear Regression, following a rigorous protocol for model development and validation [14].

- Objective: To quantitatively predict the number of blastocysts (blastocyst yield) obtained in an IVF cycle.

- Dataset: Analysis of 9,649 IVF/ICSI cycles, split into training and test sets. The outcome was categorized into 0, 1-2, or ≥3 usable blastocysts.

- Model Training: Three machine learning models (SVM, LightGBM, XGBoost) and a traditional Linear Regression model were trained.

- Feature Selection: Recursive feature elimination (RFE) was used to identify the optimal subset of features from an initial larger set.

- Performance Evaluation: Models were compared using the coefficient of determination (R²) and Mean Absolute Error (MAE) on the test set. The study also evaluated performance in a multi-class classification task for clinically relevant blastocyst yield categories.
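The two regression metrics used in this protocol, and the three yield categories, can be sketched as follows. The observed and predicted values are hypothetical toy numbers, not data from the study, and the function names are illustrative.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def yield_category(n_blastocysts):
    """Map a blastocyst count to the categories 0, 1-2, or >=3."""
    if n_blastocysts == 0:
        return "0"
    return "1-2" if n_blastocysts <= 2 else ">=3"


# Hypothetical observed and predicted blastocyst counts for six cycles.
observed = [0, 1, 2, 3, 5, 4]
predicted = [0.5, 1.0, 2.5, 2.0, 4.0, 4.0]

r2 = r_squared(observed, predicted)
mae = mean_absolute_error(observed, predicted)
categories = [yield_category(n) for n in observed]
print(round(r2, 3), mae, categories)
```

R² measures the share of outcome variance explained, while MAE is expressed in blastocysts per cycle, which makes it the easier of the two to interpret clinically.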

Protocol: Predicting Clinical Pregnancy after IUI

This study exemplifies the application of a Linear SVM model using a large, single-center dataset [20].

- Objective: To develop a robust machine learning model to predict a positive pregnancy outcome following Intrauterine Insemination (IUI).

- Dataset: 9,501 IUI cycles from 3,535 couples, described by 21 clinical and laboratory parameters.

- Data Pre-processing: Cycles with data missing from three or more features were excluded. Missing values for one or two features were imputed using the median or mode. The PowerTransformer method was used for data normalization.

- Model Training and Selection: Multiple classifiers were trained and compared, including Linear SVM, AdaBoost, Kernel SVM, Random Forest, and Extreme Forest.

- Feature Importance Analysis: The influence of each predictor was ranked post-model development to identify key factors for clinical implementation.
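The exclusion and imputation rules in the pre-processing step can be sketched as below. The field names and records are hypothetical, the imputation statistics are assumed to be computed after exclusion, and the power transformation step is omitted.

```python
from statistics import median, mode


def preprocess(cycles, numeric_keys, categorical_keys, max_missing=2):
    """Drop cycles with more than max_missing gaps, then impute the rest."""
    keys = numeric_keys + categorical_keys
    kept = [dict(cycle) for cycle in cycles
            if sum(cycle.get(key) is None for key in keys) <= max_missing]
    for key in keys:
        observed = [c[key] for c in kept if c.get(key) is not None]
        # Median for numeric features, mode for categorical ones.
        fill = median(observed) if key in numeric_keys else mode(observed)
        for cycle in kept:
            if cycle.get(key) is None:
                cycle[key] = fill
    return kept


# Hypothetical IUI cycle records; None marks a missing measurement.
cycles = [
    {"maternal_age": 31, "sperm_conc": 40.0, "cycle_length": 28, "protocol": "letrozole"},
    {"maternal_age": None, "sperm_conc": 55.0, "cycle_length": 30, "protocol": "clomiphene"},
    {"maternal_age": 37, "sperm_conc": None, "cycle_length": 27, "protocol": None},
    {"maternal_age": None, "sperm_conc": None, "cycle_length": None, "protocol": "letrozole"},
    {"maternal_age": 35, "sperm_conc": 20.0, "cycle_length": 29, "protocol": "letrozole"},
]

clean = preprocess(
    cycles,
    numeric_keys=["maternal_age", "sperm_conc", "cycle_length"],
    categorical_keys=["protocol"],
)
print(len(clean), clean[1]["maternal_age"], clean[2]["protocol"])
```

In a train/test workflow the fill values would normally be estimated on the training split only, to avoid leaking information from the test cycles.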

Protocol: A Broader Review of ML in ART Success Prediction

A systematic review offers a macro-level perspective on the adoption and performance of different algorithms in the field [37].

- Search Methodology: A systematic search was conducted in PubMed, Web of Science, Scopus, and Embase for papers published between 2000 and 2022.

- Study Selection: From 3,655 initial records, 27 papers meeting the inclusion criteria were selected for analysis.

- Data Extraction: Information on dataset characteristics, ML techniques, performance indicators, and features used was collected from each study.

- Synthesis: The review synthesized the most commonly used algorithms and performance metrics, reporting that SVM was the most frequently applied technique.

Visualizing Model Selection and Analysis Workflow

The following diagram illustrates a generalized experimental workflow for comparing SVM and linear models in fertility prediction research, integrating the key methodologies from the cited studies.

Fertility Prediction Model Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials and Computational Tools for Fertility Prediction Studies

| Item/Tool | Function in Research | Example from Cited Studies |

|---|---|---|

| Clinical Data from IVF/ICSI Cycles | Serves as the foundational dataset for training and validating prediction models. | 9,649 cycles for blastocyst prediction [14]; 10,036 records for ICSI success [38]. |

| Recursive Feature Elimination (RFE) | Identifies the most informative subset of variables, improving model simplicity and performance. | Used to select 8-11 key features from a larger set for blastocyst yield prediction [14]. |

| Scikit-learn Library | A comprehensive Python library providing implementations of SVM, linear models, and feature selection tools. | Implied standard for ML model implementation in Python, used for IUI prediction [20]. |

| Permutation Feature Importance | A model-agnostic method to evaluate the contribution of each feature to the model's predictive power. | Key technique for interpreting models and identifying top predictors like sperm concentration and maternal age [20]. |

| Performance Metrics Suite | Quantifies and compares model accuracy, discriminative power, and prediction errors. | Common metrics include AUC, Accuracy, R², MAE, Sensitivity, and Specificity [14] [37] [20]. |

The experimental data indicate that SVM can outperform traditional Linear Models in fertility prediction tasks. For instance, in blastocyst yield prediction, SVM achieved a higher R² (0.673-0.676 vs. 0.587) and a lower error (MAE: 0.793-0.809 vs. 0.943) than Linear Regression [14]. Furthermore, SVM's versatility is demonstrated by its strong performance across diverse prediction targets, from ICSI [36] to IUI outcomes [20], and one systematic review found it to be the most frequently applied ML technique in this domain [37].

From a feature importance perspective, a critical finding across studies is that while the best-performing model may be a complex algorithm, feature importance analysis consistently reveals a compact set of clinically interpretable biomarkers. Top-ranked features often include embryological variables (e.g., number of extended culture embryos, Day 3 embryo cell number [14]), patient demographics (e.g., female age [37] [20]), and sperm-related parameters (e.g., pre-wash concentration [20]). This suggests that a hybrid analytical approach—using a powerful model like SVM for prediction and then employing interpretability techniques to extract key features—may be most effective. Such an approach aligns clinical utility with model accuracy, providing both actionable predictions and insights into the biological drivers of fertility outcomes.
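The interpretability step of such a hybrid approach can be illustrated with permutation importance, which measures how much a score drops when one feature's values are shuffled. In the sketch below, the `predict` function is a hypothetical stand-in for a fitted model, and the records and labels are synthetic.

```python
import random


def predict(row):
    """Hypothetical stand-in for a fitted model; it ignores 'noise' entirely."""
    return 1 if row["female_age"] < 36 and row["sperm_conc"] >= 15 else 0


def accuracy(rows, labels):
    """Fraction of rows for which the prediction matches the label."""
    return sum(predict(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(rows, labels, feature, n_repeats=20, seed=0):
    """Mean drop in accuracy after shuffling one feature column."""
    rng = random.Random(seed)
    baseline = accuracy(rows, labels)
    drops = []
    for _ in range(n_repeats):
        shuffled = [r[feature] for r in rows]
        rng.shuffle(shuffled)
        permuted = [{**r, feature: v} for r, v in zip(rows, shuffled)]
        drops.append(baseline - accuracy(permuted, labels))
    return sum(drops) / n_repeats


# Synthetic records; labels are generated from the stand-in model itself.
rows = [
    {"female_age": 29, "sperm_conc": 40, "noise": 3},
    {"female_age": 41, "sperm_conc": 35, "noise": 7},
    {"female_age": 33, "sperm_conc": 10, "noise": 1},
    {"female_age": 38, "sperm_conc": 22, "noise": 9},
    {"female_age": 31, "sperm_conc": 18, "noise": 4},
    {"female_age": 35, "sperm_conc": 50, "noise": 6},
    {"female_age": 43, "sperm_conc": 8, "noise": 2},
    {"female_age": 27, "sperm_conc": 12, "noise": 8},
]
labels = [predict(r) for r in rows]

importances = {name: permutation_importance(rows, labels, name)
               for name in ("female_age", "sperm_conc", "noise")}
print(importances)
```

Because the method only needs predictions, it applies equally to an SVM, a tree ensemble, or a linear model. A feature the model never uses, such as `noise` here, receives an importance of zero.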

The application of deep learning in reproductive medicine represents a paradigm shift from traditional statistical methods to data-driven pattern recognition. Convolutional Neural Networks (CNNs) and Transformer-based models have emerged as particularly powerful architectures for analyzing complex biomedical data, from clinical records to high-resolution images. In fertility prediction, these models excel at identifying subtle, non-linear patterns across diverse data modalities, offering unprecedented accuracy for outcomes ranging from sperm morphology classification to live birth prediction. This comparison guide examines the architectural strengths, performance characteristics, and implementation considerations of CNNs versus Transformers within fertility prediction research, with particular emphasis on their divergent approaches to feature importance and representation learning.

Performance Comparison: Quantitative Metrics Across Fertility Applications

Extensive benchmarking across reproductive medicine applications reveals distinct performance patterns for CNN and Transformer architectures. The following table synthesizes quantitative results from recent studies, providing a comprehensive comparison of their capabilities across different prediction tasks.

Table 1: Performance Comparison of CNN and Transformer Models in Fertility Prediction Tasks

| Application Area | Model Architecture | Performance Metrics | Key Features Identified | Citation |

|---|---|---|---|---|

| Sperm Morphology Analysis | Vision Transformer (BEiT_Base) | 93.52% accuracy (HuSHeM), 92.5% accuracy (SMIDS) | Head shape, tail integrity, long-range spatial dependencies | [39] |

| Sperm Morphology Analysis | CNN (VGG-16/GoogleNet ensemble) | 90.87% accuracy (SMIDS), 92.1% accuracy (HuSHeM) | Local texture patterns, morphological contours | [39] |

| Live Birth Prediction | TabTransformer with PSO | 97% accuracy, 98.4% AUC | Optimized clinical feature subsets | [40] [41] |

| Live Birth Prediction | CNN (Structured EMR data) | 93.94% accuracy, 88.99% AUC | Maternal age, BMI, antral follicle count, gonadotropin dosage | [42] |

| Live Birth Prediction | Random Forest | 94.06% accuracy, 97.34% AUC | Female age, embryo grades, usable embryo count, endometrial thickness | [43] |

| Blastocyst Yield Prediction | LightGBM | R²: 0.673-0.676, MAE: 0.793-0.809 | Extended culture embryos, Day 3 cell number, 8-cell embryo proportion | [14] |

| Embryo Selection (AI-based) | Various AI Models | Pooled sensitivity: 0.69, specificity: 0.62, AUC: 0.7 | Morphokinetic parameters, morphological features | [44] |

The performance data indicate that, in the cited sperm morphology benchmarks, the Transformer architecture achieved higher accuracy than the comparable CNN ensemble, by 1.42-1.63 percentage points across the two datasets [39]. This advantage is attributed to the self-attention mechanism, which captures global contextual relationships across entire images. For structured electronic medical record (EMR) data, both architectures demonstrate robust performance, with the TabTransformer achieving 97% accuracy and 98.4% AUC when combined with particle swarm optimization for feature selection [40] [41].
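The global context captured by self-attention can be seen in a minimal single-head sketch. It assumes identity projections (queries, keys, and values all equal the input), whereas real Vision Transformers learn separate projection matrices; the patch embeddings are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens (a simplification)."""
    dim = len(tokens[0])
    outputs, weights = [], []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in tokens]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted average over ALL tokens, near or far.
        outputs.append([sum(w * value[i] for w, value in zip(row, tokens))
                        for i in range(dim)])
    return outputs, weights


# Hypothetical 2-D embeddings for four image patches (e.g. head, midpiece, tail, background).
patches = [[1.0, 0.0], [0.8, 0.3], [0.1, 0.9], [0.0, 0.1]]
outputs, weights = self_attention(patches)

for row in weights:
    print([round(w, 3) for w in row])
```

Every patch assigns a nonzero weight to every other patch in a single layer, whereas a convolution only mixes information within its local kernel window.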

Architectural Comparison: Feature Extraction Mechanisms

CNN Architecture for Local Feature Extraction