Deep Learning for Sperm Morphology Classification: A Comprehensive Review for Biomedical Research and Clinical Translation

Abstract

This article provides a comprehensive examination of deep learning (DL) applications in sperm morphology classification, a critical yet subjective component of male fertility assessment. We explore the foundational concepts driving the shift from conventional manual analysis and machine learning towards deep neural networks, primarily Convolutional Neural Networks (CNNs). The review details the complete methodological pipeline, from dataset creation and augmentation to model architecture and training. It further addresses key challenges such as data standardization, model interpretability, and performance optimization, synthesizing current troubleshooting strategies. Finally, we present a comparative analysis of model performance against expert evaluations and traditional methods, highlighting validated accuracy metrics. This synthesis is tailored for researchers, scientists, and drug development professionals seeking to understand and advance the integration of robust, automated AI solutions in reproductive biology and clinical andrology.

The Paradigm Shift: From Manual Microscopy to AI-Driven Sperm Morphology Analysis

Male infertility is a prevalent global health issue, contributing to approximately 50% of all infertility cases [1] [2]. Among the various diagnostic parameters, sperm morphology analysis (SMA) stands as a cornerstone evaluation, providing crucial insights into male reproductive potential and underlying testicular function [3] [1]. Traditional manual morphology assessment, however, faces significant challenges including substantial inter-observer variability, subjectivity, and poor reproducibility due to the complex nature of sperm morphology, which encompasses 26 different types of abnormalities across the head, neck, and tail compartments [1] [4].

The integration of artificial intelligence (AI) and deep learning (DL) technologies is now revolutionizing this diagnostic landscape. These advanced computational approaches offer the potential to overcome human limitations, enabling automated, precise, and high-throughput sperm morphology analysis [3] [1]. This document outlines the current evidence, quantitative performance metrics, and detailed experimental protocols for implementing AI-driven sperm morphology analysis within research and clinical settings, framed within the context of deep learning research for sperm classification.

Performance Metrics of AI Models in Sperm Analysis

Table 1: Performance of Various AI Models in Male Infertility and Sperm Analysis

| Application Area | AI Model/Technique | Reported Performance | Sample Size |
|---|---|---|---|
| Male Infertility Prediction (Overall) | Various ML Models (Median Accuracy) | Accuracy: 88% | 43 studies [5] |
| Male Infertility Prediction | Artificial Neural Networks (ANN) | Accuracy: 84% | 7 studies [5] |
| Male Fertility Diagnostics | Hybrid MLFFN-ACO Framework | Accuracy: 99%, Sensitivity: 100% | 100 clinical profiles [2] |
| Sperm Morphology Classification | Support Vector Machine (SVM) | AUC-ROC: 88.59%, Precision >90% | 1,400 sperm cells [1] [4] |
| Sperm Head Morphology Classification | Bayesian Density Estimation | Accuracy: 90% | Not specified [1] |
| Non-Obstructive Azoospermia Sperm Retrieval Prediction | Gradient Boosting Trees (GBT) | AUC: 0.807, Sensitivity: 91% | 119 patients [4] |
| Multi-Target Sperm Parsing | Multi-Scale Part Parsing Network | 59.3% $AP^{p}_{vol}$ | Not specified [6] |

Experimental Protocols for AI-Based Sperm Morphology Analysis

Protocol 1: Deep Learning-Based Sperm Morphology Classification

Principle: This protocol utilizes a deep neural network to automatically segment and classify sperm structures from stained or unstained images, significantly reducing analytical workload and inter-observer variability [1] [4].

Materials & Reagents:

- Sperm sample slides

- Staining solutions (if using stain-based methods)

- Microscope with digital imaging capabilities

- High-performance computing workstation with GPU

- Labeled sperm image dataset for model training

Procedure:

Sample Preparation & Image Acquisition:

- Prepare semen samples according to WHO-standardized protocols for smear preparation and staining [7] [1].

- Capture high-resolution digital images of sperm cells using a microscope with a consistent magnification factor (typically 100x oil immersion) [1].

- For unstained methods, use phase-contrast microscopy under 20x magnification to prevent sperm damage [6].

Data Annotation & Preprocessing:

- Annotate a minimum of 200 sperm cells per sample, labeling head, neck, and tail compartments along with specific abnormality types [1].

- Apply data augmentation techniques (rotation, flipping, brightness adjustment) to increase dataset diversity and size [1].

- Normalize pixel values and resize images to consistent dimensions for model input.
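
The augmentation and normalization steps above can be sketched with NumPy alone. This is a minimal illustration, not the pipeline used in [1]: the brightness range, the 64x64 crop size, and the function names `augment` and `normalize` are assumptions, and resizing is left to an image library such as OpenCV.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly rotated, flipped, brightness-scaled copy of a 2-D grayscale image."""
    out = np.rot90(image, k=int(rng.integers(0, 4)))   # rotate by 0, 90, 180, or 270 degrees
    if rng.random() < 0.5:
        out = np.fliplr(out)                           # horizontal flip
    factor = rng.uniform(0.8, 1.2)                     # assumed brightness range
    return np.clip(out.astype(np.float32) * factor, 0, 255)

def normalize(image):
    """Scale 8-bit pixel intensities to the range [0, 1]."""
    return image.astype(np.float32) / 255.0

rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(64, 64), dtype=np.uint8)   # stand-in for a sperm crop
batch = np.stack([normalize(augment(img, rng)) for _ in range(8)])
```

Square crops keep their shape under 90-degree rotations, which is why the augmented copies can be stacked directly into one batch.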

Model Training & Validation:

- Implement a convolutional neural network (CNN) architecture such as U-Net or Mask R-CNN for segmentation tasks [1] [6].

- Divide data into training (70%), validation (15%), and test sets (15%) using stratified sampling to maintain class balance.

- Train model with backpropagation and optimization algorithm (e.g., Adam) with appropriate learning rate scheduling.

- Validate model performance using cross-validation and compare against expert andrologist annotations.
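
As an illustration of the stratified 70/15/15 partition described above, the following sketch splits sample indices class by class so that each subset keeps roughly the original class proportions. The function name `stratified_split` and the toy label list are assumptions; libraries such as scikit-learn offer equivalent utilities.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]          # remainder goes to the test set
    return train, val, test

labels = ["normal"] * 40 + ["abnormal"] * 160      # toy, imbalanced label list
train, val, test = stratified_split(labels)
```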

Morphological Analysis & Reporting:

- Generate segmentation masks for individual sperm structures.

- Calculate morphological parameters (head length/width, midpiece length, tail length) from segmented structures.

- Classify sperm into normal/abnormal categories based on WHO criteria or laboratory-specific thresholds.

- Output quantitative report including percentage of normal forms and specific defect types.
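
The reporting step can be sketched as a simple tally over per-sperm labels. The label strings and the `morphology_report` helper are hypothetical; a real report would use the class names of whichever classification system the laboratory applies.

```python
from collections import Counter

def morphology_report(classifications):
    """Summarize per-sperm labels into percentage of normal forms and defect counts."""
    total = len(classifications)
    counts = Counter(classifications)
    normal = counts.pop("normal", 0)
    return {
        "n_evaluated": total,
        "percent_normal": 100.0 * normal / total if total else 0.0,
        "defects": dict(counts),
    }

labels = ["normal", "head_defect", "normal", "tail_defect", "head_defect"]  # toy labels
report = morphology_report(labels)
```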

Protocol 2: Stain-Free Sperm Morphology Measurement with Multi-Target Instance Parsing

Principle: This protocol employs a novel multi-scale part parsing network combining semantic and instance segmentation for non-invasive sperm morphology assessment, eliminating potential sperm damage from staining procedures [6].

Materials & Reagents:

- Fresh semen samples

- Makler counting chamber or similar

- Phase-contrast microscope

- Computer with dedicated parsing software

Procedure:

Sample Preparation & Imaging:

- Load fresh, unprocessed semen sample into counting chamber.

- Capture video sequences or multiple image frames using phase-contrast microscope at 20x magnification.

- Ensure adequate focus and illumination to maximize image clarity without staining.

Multi-Target Instance Parsing:

- Process images through multi-scale part parsing network integrating instance and semantic segmentation branches.

- The instance segmentation branch creates masks for accurate sperm localization.

- The semantic segmentation branch provides detailed segmentation of sperm parts (head, midpiece, tail).

- Fuse outputs from both branches for comprehensive instance-level parsing.
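
The fusion step can be pictured as intersecting each instance mask with the part-label map. The sketch below is only a post-processing illustration under assumed array encodings (integer instance IDs and part codes 1 to 3); it is not the network or the fusion module described in [6].

```python
import numpy as np

def fuse_branches(instance_map, semantic_map):
    """Combine an instance-ID map with a part-label map into per-instance part masks.

    instance_map: int array, 0 = background, k = k-th sperm (assumed encoding).
    semantic_map: int array, 0 = background, 1 = head, 2 = midpiece, 3 = tail (assumed).
    """
    parts = {1: "head", 2: "midpiece", 3: "tail"}
    fused = {}
    for inst_id in np.unique(instance_map):
        if inst_id == 0:
            continue                                   # skip background
        inside = instance_map == inst_id
        fused[int(inst_id)] = {
            name: inside & (semantic_map == code) for code, name in parts.items()
        }
    return fused

instance_map = np.array([[1, 1, 1, 0, 2, 2]])          # two toy sperm instances
semantic_map = np.array([[1, 2, 3, 0, 1, 3]])          # part labels for the same pixels
fused = fuse_branches(instance_map, semantic_map)
```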

Measurement Accuracy Enhancement:

- Apply interquartile range (IQR) method to exclude morphological measurement outliers.

- Implement Gaussian filtering to smooth measurement data while preserving essential features.

- Utilize robust correction techniques to extract maximum morphological features of sperm.

- Compare enhanced measurements against ground truth data to validate accuracy.
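
A minimal sketch of the first two enhancement steps, assuming the measurements form a one-dimensional series (for example, head length of one sperm across frames). The conventional 1.5 x IQR fence, the kernel width, and the toy values are assumptions; the exact settings used in [6] are not stated here.

```python
import numpy as np

def iqr_filter(values, k=1.5):
    """Drop values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    values = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    keep = (values >= q1 - k * iqr) & (values <= q3 + k * iqr)
    return values[keep]

def gaussian_smooth(values, sigma=1.0):
    """Smooth a 1-D series with a normalized Gaussian kernel (edges padded by repetition)."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (x / sigma) ** 2)
    kernel /= kernel.sum()
    padded = np.pad(np.asarray(values, dtype=float), radius, mode="edge")
    return np.convolve(padded, kernel, mode="valid")

head_lengths = [4.1, 4.3, 4.2, 4.4, 9.8, 4.0, 4.2]     # micrometres, toy values
cleaned = iqr_filter(head_lengths)                     # removes the 9.8 outlier
smoothed = gaussian_smooth(cleaned)
```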

Quality Control & Interpretation:

- Verify parsing accuracy by comparing automated results with manual assessment of subset of images.

- Calculate key morphological parameters for each sperm instance.

- Generate comprehensive report highlighting distribution of normal and abnormal forms.

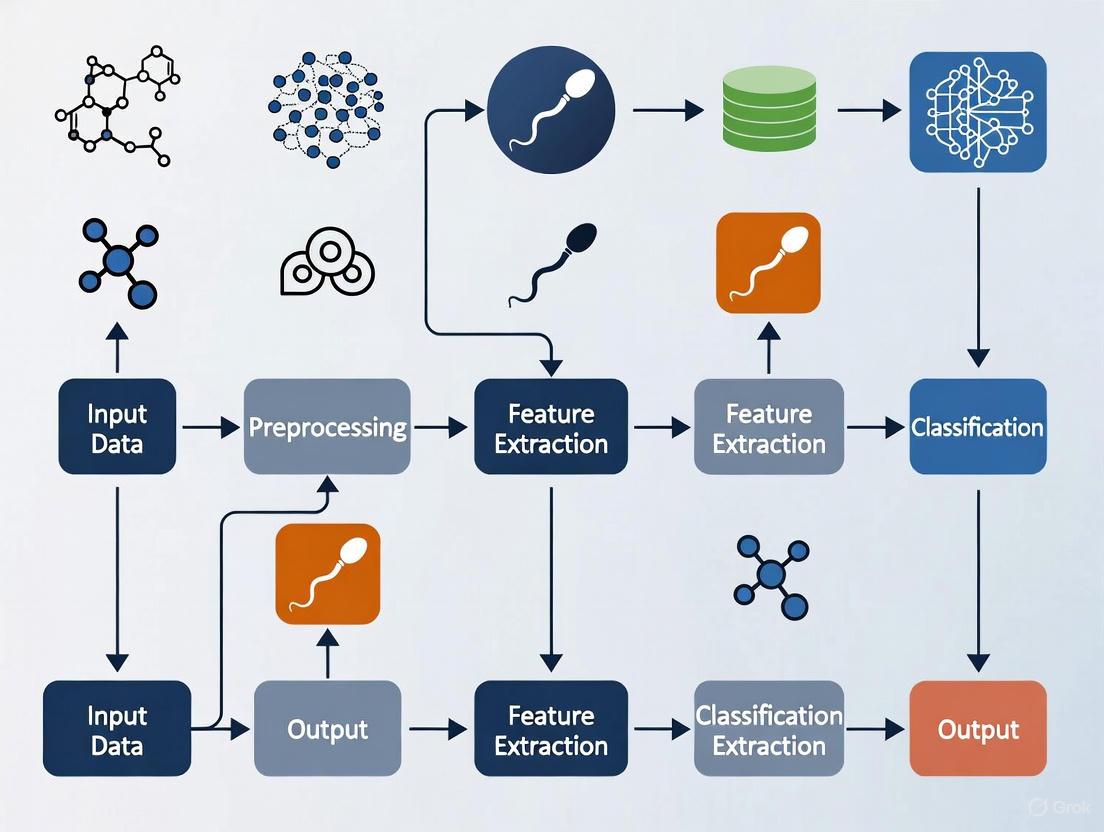

Workflow Visualization

Figure: AI-Based Sperm Morphology Analysis Workflow

Figure: Multi-Target Instance Parsing Network

Research Reagent Solutions & Essential Materials

Table 2: Essential Research Reagents and Materials for AI-Based Sperm Morphology Analysis

| Item | Function/Application | Implementation Notes |
|---|---|---|
| Staining Solutions (e.g., Diff-Quik, Papanicolaou) | Enhances contrast for traditional and automated morphology analysis | Required for stain-based methods; may cause sperm damage [1] |
| Phase-Contrast Microscope | Enables observation of unstained, live sperm | Essential for non-invasive methods; 20x magnification recommended [6] |
| Makler Counting Chamber | Standardized sperm concentration and motility assessment | Provides consistent imaging field for analysis [6] |
| Multi-Scale Part Parsing Network | Software for instance-level sperm parsing | Combines instance and semantic segmentation; key for unstained analysis [6] |
| Public Sperm Datasets (e.g., HSMA-DS, VISEM-Tracking, SVIA) | Training and validation of AI models | SVIA dataset contains 125,000 annotated instances [1] |
| Hybrid MLFFN-ACO Framework | Bio-inspired optimization for fertility diagnosis | Combines neural networks with ant colony optimization; reported 99% accuracy [2] |
| Measurement Accuracy Enhancement Algorithm | Reduces errors in low-resolution images | Uses IQR, Gaussian filtering, and robust correction techniques [6] |

The integration of artificial intelligence, particularly deep learning approaches, into sperm morphology analysis represents a paradigm shift in male infertility diagnostics. The quantitative evidence summarized above indicates that these technologies can achieve high accuracy (a median of 88% across the machine learning studies reviewed in [5]) while reducing the subjectivity and variability inherent in manual assessments [5] [4]. The presented protocols and methodologies provide researchers with standardized approaches for implementing these advanced analytical techniques, with particular emphasis on both stained and stain-free applications. As these technologies continue to evolve, future directions should focus on multicenter validation trials, development of standardized datasets, and enhanced model interpretability to facilitate broader clinical adoption and ultimately improve diagnostic precision in male infertility evaluation [4] [2].

The assessment of sperm morphology remains a cornerstone in the clinical evaluation of male infertility, providing critical diagnostic and prognostic information [8] [9]. Traditional analysis relies on manual examination by trained technicians using microscopy, a method outlined in the World Health Organization (WHO) laboratory manuals [10]. Despite efforts to standardize these procedures, conventional manual assessment is fraught with significant challenges that compromise its reliability and clinical utility. These limitations primarily manifest as excessive subjectivity, poor reproducibility, and substantial workload burden for technicians [8] [1]. This application note systematically details these constraints and their implications for both clinical practice and research, framing them within the broader context of advancing deep learning-based solutions for sperm morphology classification. The inherent variability in manual methods not only affects diagnostic accuracy but also hinders the development of consistent treatment pathways for infertility, underscoring the urgent need for automated, standardized approaches leveraging artificial intelligence technologies.

Core Limitations of Conventional Manual Assessment

Subjectivity and Inter-Expert Variability

The fundamental challenge in manual sperm morphology analysis stems from its inherent subjectivity, which permeates every stage of the assessment process. This subjectivity arises from multiple sources, including differences in technician training, experience, and individual interpretation of complex morphological criteria.

Complex Classification Standards: According to WHO standards, sperm morphology is divided into head, neck, and tail compartments, with up to 26 distinct types of abnormal morphology recognized [8] [1]. Technicians must simultaneously evaluate abnormalities across multiple structures—head, vacuoles, midpiece, and tail—which substantially increases annotation difficulty and introduces interpretive variability [8].

Quantitative Evidence of Disagreement: Studies quantifying inter-expert agreement reveal concerning levels of discrepancy. In the development of the SMD/MSS dataset, researchers documented three separate agreement scenarios among three experts: no agreement (NA) among experts, partial agreement (PA) where 2/3 experts concurred, and total agreement (TA) where all three experts shared the same classification [10]. Statistical analysis using Fisher's exact test confirmed significant differences between experts in each morphology class (p < 0.05) [10]. This variability directly impacts the reliability of clinical diagnoses and treatment decisions based on morphology assessments.

Table 1: Documented Inter-Expert Variability in Sperm Morphology Classification

| Study | Expert Agreement Scenario | Description | Impact on Classification |
|---|---|---|---|
| SMD/MSS Dataset Development [10] | No Agreement (NA) | 0/3 experts agree on classification | Complete diagnostic inconsistency |
| SMD/MSS Dataset Development [10] | Partial Agreement (PA) | 2/3 experts agree on the same label | Moderate reliability, potential misclassification |
| SMD/MSS Dataset Development [10] | Total Agreement (TA) | 3/3 experts agree on all categories | High reliability but rarely achieved |
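
The three agreement scenarios in the table above follow directly from how many of the three experts share the most common label. A small sketch, with hypothetical label strings and a hypothetical `agreement_level` helper:

```python
from collections import Counter

def agreement_level(labels):
    """Classify three expert labels as 'TA' (3/3), 'PA' (2/3), or 'NA' (no two agree)."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    top = Counter(labels).most_common(1)[0][1]     # size of the largest agreeing group
    return {3: "TA", 2: "PA", 1: "NA"}[top]

annotations = [                                    # toy annotations, one tuple per sperm
    ("normal", "normal", "normal"),
    ("tapered", "tapered", "thin"),
    ("tapered", "thin", "microcephalous"),
]
levels = [agreement_level(a) for a in annotations]
```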

Reproducibility Challenges

The reproducibility of sperm morphology analysis is compromised by both technical and human factors, creating substantial barriers to consistent clinical assessment and reliable research outcomes.

Inter-Laboratory Variability: Although standardized WHO protocols are available, laboratories frequently differ in sample preparation, staining technique, and interpretation of the classification criteria [9]. Because of this inconsistent implementation, results from one laboratory may not be directly comparable to those from another, which complicates longitudinal studies and multi-center research initiatives [8] [9].

Sample Preparation Inconsistencies: Variations in staining methods (e.g., RAL Diagnostics staining kit, Papanicolaou stain) and smear preparation techniques introduce pre-analytical variables that affect morphological appearance and subsequent classification [10]. These technical discrepancies compound the interpretive variation between technicians, so reproducibility suffers in both the sample preparation and analysis phases.

Substantial Workload and Operational Inefficiency

The operational burden of manual sperm morphology analysis creates practical constraints on laboratory throughput and introduces fatigue-related errors that further compromise accuracy.

Labor-Intensive Process: WHO guidelines recommend the analysis and counting of more than 200 spermatozoa per sample to obtain statistically meaningful morphology assessments [8] [1]. Given the need to evaluate each sperm across multiple morphological compartments (head, neck, and tail) against 26 potential abnormality types, this process demands considerable time and focused attention from skilled technicians [8].

Economic and Workflow Implications: The substantial time investment required for each analysis limits laboratory throughput and increases operational costs. Additionally, technician fatigue during extended evaluation sessions can introduce additional errors and inconsistencies, particularly in high-volume clinical settings [1]. This workload burden has direct implications for patient wait times and accessibility of comprehensive fertility testing services.

Quantitative Comparison of Methodological Limitations

Table 2: Comparative Analysis of Manual vs. Deep Learning Approaches in Sperm Morphology Assessment

| Parameter | Manual Assessment | Deep Learning Approaches | Clinical & Research Implications |
|---|---|---|---|
| Subjectivity | High inter-expert variability (significant differences at p<0.05) [10] | Eliminates human interpretive variation | DL enables standardized diagnosis across clinics |
| Reproducibility | Poor inter-laboratory consistency due to protocol variations [9] | High reproducibility with consistent algorithms | Enables multi-center research with comparable results |
| Workload | High: requires analysis of >200 sperm per sample by expert [8] | Automated processing of large image volumes | Increases laboratory throughput and reduces costs |
| Classification Accuracy | Variable (55-92% vs. expert consensus) [10] | Higher and more consistent (up to 94.1% TPR reported) [11] | More reliable fertility prognosis and treatment planning |
| Throughput | Limited by technician capacity and fatigue | Rapid batch processing capabilities | Scalable for high-volume screening applications |
| Standardization | Difficult to achieve across operators and centers | Inherently standardized once validated | Creates consistent diagnostic thresholds |

Experimental Protocols for Evaluating Methodological Limitations

Protocol for Quantifying Inter-Expert Variability

Purpose: To systematically evaluate and quantify the degree of subjectivity in manual sperm morphology assessment among different experts.

Materials:

- Sperm samples prepared according to WHO standard protocols

- RAL Diagnostics staining kit or equivalent

- Optical microscope with 100x oil immersion objective

- MMC CASA system or similar for image acquisition

- Data collection spreadsheet for expert annotations

Procedure:

- Sample Preparation: Prepare semen smears from samples with varying morphological profiles. Ensure sperm concentration is at least 5 million/mL but exclude samples >200 million/mL to avoid image overlap [10].

- Image Acquisition: Capture 37±5 images per sample using bright field mode with oil immersion 100x objective. Ensure each image contains a single spermatozoon with clear visualization of head, midpiece, and tail structures [10].

- Expert Classification: Engage multiple experienced technicians (minimum of 3) to independently classify each spermatozoon according to modified David classification (12 classes of morphological defects) [10].

- Data Collection: Create a shared spreadsheet where each expert documents morphological classifications for each sperm component without consultation.

- Statistical Analysis:

- Categorize agreement scenarios: No Agreement (NA), Partial Agreement (PA), and Total Agreement (TA) [10].

- Use Fisher's exact test to evaluate statistical differences between experts for each morphology class, with significance set at p<0.05 [10].

- Calculate intraclass correlation coefficients (ICC) to measure reliability of continuous measurements.
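
For a single morphology class and a pair of experts, Fisher's exact test can be computed from a 2x2 table (rows: the two experts; columns: sperm assigned to the class versus not). How the tables were built in [10] is not specified here, so that layout is an assumption, as is the helper name `fisher_exact_2x2`. The sketch uses only the standard library.

```python
from math import comb

def fisher_exact_2x2(a, b, c, d):
    """Two-sided Fisher's exact test p-value for the table [[a, b], [c, d]]."""
    row1, row2, col1, n = a + b, c + d, a + c, a + b + c + d

    def prob(x):
        # Hypergeometric probability of x in the top-left cell with margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum the probabilities of all tables at least as extreme as the observed one.
    return sum(p for p in (prob(x) for x in range(lo, hi + 1)) if p <= p_obs * (1 + 1e-9))

# Toy counts: expert A assigned 3 of 4 sperm to the class, expert B assigned 1 of 4.
p_value = fisher_exact_2x2(3, 1, 1, 3)
```

With counts this small the test has little power; the protocol's threshold of p<0.05 would only be reached with many more annotated sperm per expert.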

Protocol for Assessing Reproducibility Across Laboratories

Purpose: To evaluate the reproducibility of sperm morphology assessments across different laboratories and imaging conditions.

Materials:

- Standardized sperm sample aliquots

- Multiple microscope systems with different configurations

- Phase contrast, Hoffman modulation contrast, and bright field imaging capabilities [12]

- Sample preparation reagents consistent across sites

Procedure:

- Sample Distribution: Prepare identical aliquots from well-characterized semen samples and distribute to participating laboratories.

- Multi-Center Imaging: Acquire images using different microscope brands, imaging modes (bright field, phase contrast, Hoffman modulation contrast), and magnifications (10x, 20x, 40x, 60x, 100x) to simulate real-world clinical variation [12].

- Standardized Analysis: Implement the same classification criteria across all sites using the modified David classification or WHO standards [10].

- Data Integration and Comparison:

- Calculate coefficient of variation across laboratories for each morphological parameter.

- Assess intraclass correlation coefficients (ICC) for precision (0.97 reported in rigorous studies) and recall across sites [12].

- Perform ablation studies to determine how each imaging variable (magnification, mode, preprocessing) affects morphological classification consistency.
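
The coefficient of variation in the first step is the sample standard deviation divided by the mean, usually reported as a percentage. A minimal sketch with hypothetical laboratory names and toy values:

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Return the sample CV (standard deviation / mean) as a percentage."""
    return 100.0 * stdev(values) / mean(values)

# Toy data: percentage of normal forms reported by three laboratories for one aliquot.
percent_normal_by_lab = {"lab_a": 4.0, "lab_b": 6.0, "lab_c": 5.0}
cv = coefficient_of_variation(list(percent_normal_by_lab.values()))
```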

Experimental Workflow for Methodological Evaluation

Figure: Experimental Workflow for Evaluating Methodological Limitations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Sperm Morphology Studies

| Research Reagent/Material | Function/Application | Protocol Considerations |
|---|---|---|
| RAL Diagnostics Staining Kit | Provides differential staining of sperm structures for morphological assessment | Follow manufacturer instructions for consistent staining intensity and contrast [10] |
| VISEM-Tracking Dataset | Publicly available dataset containing 656,334 annotated objects with tracking details | Enables algorithm training and benchmarking without additional sample collection [8] |
| SVIA Dataset | Comprehensive dataset with 125,000 annotated instances, 26,000 segmentation masks | Supports detection, segmentation, and classification tasks in DL development [8] |
| HuSHeM Dataset | Human Sperm Head Morphology dataset with stained, higher resolution images | Useful for head-specific classification algorithms; limited to 216 publicly available images [8] [11] |
| SCIAN-MorphoSpermGS Dataset | Gold-standard dataset with 1,854 sperm images across five WHO classes | Provides expert-validated ground truth for training and validation [8] |
| MMC CASA System | Computer-Assisted Semen Analysis for standardized image acquisition | Ensures consistent magnification (100x oil immersion) and imaging conditions [10] |

The limitations of conventional manual sperm morphology assessment—subjectivity, poor reproducibility, and substantial workload—present significant barriers to accurate male infertility diagnosis and treatment. Quantitative evidence demonstrates concerning inter-expert variability with statistical significance, while operational constraints limit laboratory efficiency and consistency. These methodological challenges directly impact clinical decision-making and highlight the critical need for standardized, automated approaches. Deep learning-based classification systems represent a promising solution, offering the potential to overcome these limitations through automated feature extraction, consistent application of morphological criteria, and significantly reduced analytical workload. By addressing the fundamental constraints of conventional methods, deep learning approaches can enhance diagnostic reliability, enable multi-center research collaboration, and ultimately improve patient care in reproductive medicine.

Sperm morphology analysis is a cornerstone of male fertility assessment, with a demonstrated significant correlation between abnormal sperm forms and infertility [1]. For decades, the evaluation of sperm shape was a manual, subjective process, highly dependent on the technician's expertise and leading to significant inter-laboratory variability [10] [11]. The introduction of Computer-Assisted Semen Analysis (CASA) systems promised a new era of standardization and objectivity. However, traditional CASA often fell short, struggling with accurately distinguishing sperm from debris and classifying subtle midpiece and tail abnormalities [10] [13].

The emergence of deep learning represents a paradigm shift, moving from automated measurement to intelligent classification. This evolution leverages convolutional neural networks (CNNs) to automatically learn discriminative features from raw sperm images, eliminating the need for manual feature extraction and offering a path toward highly accurate, reproducible, and rapid sperm morphology classification [11] [1]. These Application Notes detail the experimental protocols and quantitative evidence driving this technological transition, providing a framework for researchers to implement and advance these methods.

From Manual Analysis to CASA: The First Steps in Automation

The Workflow and Limitations of Traditional CASA

Traditional CASA systems were designed to bring objectivity to semen analysis by automating the image acquisition and measurement processes. A typical CASA workflow involves loading a prepared semen sample onto a microscope stage equipped with a digital camera. The system then captures multiple images or video sequences, which are processed to identify sperm cells and quantify parameters like concentration and motility [13].

For morphology, CASA systems relied on extracting handcrafted morphometric features from sperm images. These features typically included:

- Head dimensions: Length, width, area, and perimeter.

- Head shape descriptors: Eccentricity, elongation, and shape factors.

- Complex descriptors: Fourier descriptors, Zernike moments, and Hu moments to capture contour and texture details [11].

These extracted features were then fed into conventional machine learning classifiers, such as Support Vector Machines (SVM) or k-nearest neighbors (k-NN), to categorize sperm into morphological classes [1].
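
To make the idea of handcrafted features concrete, the sketch below derives area, axis lengths, and eccentricity from the second-order moments of a binary head mask. It assumes a clean segmentation is already available and uses a synthetic ellipse as the mask; the function name and the moment-matching ellipse convention are choices made for this illustration, not details taken from [11].

```python
import numpy as np

def head_shape_features(mask):
    """Compute area, axis lengths, and eccentricity of a binary head mask."""
    ys, xs = np.nonzero(mask)
    coords = np.stack([xs, ys]).astype(float)
    cov = np.cov(coords)                              # 2x2 covariance of pixel coordinates
    minor_var, major_var = np.linalg.eigvalsh(cov)    # eigenvalues in ascending order
    return {
        "area": int(mask.sum()),
        # Axis lengths of the ellipse with the same second moments as the mask.
        "major_axis": float(4.0 * np.sqrt(major_var)),
        "minor_axis": float(4.0 * np.sqrt(minor_var)),
        "eccentricity": float(np.sqrt(1.0 - minor_var / major_var)),
    }

yy, xx = np.mgrid[0:41, 0:41]
ellipse = ((xx - 20) / 15.0) ** 2 + ((yy - 20) / 8.0) ** 2 <= 1.0   # toy head mask
features = head_shape_features(ellipse)
```

A feature vector of this kind is what a conventional classifier such as an SVM would receive in place of the raw image.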

Despite their contribution to standardization, these systems faced fundamental limitations. Their performance was heavily dependent on image quality and often failed in the presence of cellular debris or when sperm were agglutinated. Furthermore, their reliance on pre-defined features made them inflexible and unable to generalize well to the vast and subtle spectrum of sperm abnormalities, particularly in the midpiece and tail [10] [1]. This often resulted in unsatisfactory performance and limited their routine clinical adoption for robust morphological assessment.

A Research-Grade CASA Simulation Protocol

To objectively assess and validate CASA algorithms without the constraints of variable real-world image quality, researchers have developed simulation tools that generate life-like semen images with known, controllable ground-truth parameters [13].

Protocol: Simulating Semen Images for Algorithm Validation

Sperm Cell Modeling:

- Head Generation: Model the sperm head as a generally oval shape. The image is created by defining a center point and applying point spread functions to simulate the head's core and membrane, resulting in a final, realistic head image [13].

- Flagellum Generation: Model the tail as a thin, flexible curve. Define a series of points along the intended tail path and apply a different point spread function to generate a pixelated representation of the flagellum. The head and tail images are then merged to form a complete sperm cell [13].
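
A simplified version of this modeling step is sketched below. It assumes Gaussian point spread functions, uses a single oval spot for the head rather than separate core and membrane components, and traces the tail as a sinusoid; all sizes and the function names are illustrative and are not taken from [13].

```python
import numpy as np

def gaussian_spot(shape, center, sigma_x, sigma_y):
    """Render an axis-aligned Gaussian spot centred at (x, y)."""
    yy, xx = np.mgrid[0:shape[0], 0:shape[1]]
    return np.exp(-0.5 * (((xx - center[0]) / sigma_x) ** 2
                          + ((yy - center[1]) / sigma_y) ** 2))

def render_sperm(shape=(64, 128), head=(30, 32), tail_len=70):
    """Merge an oval head spot and a thin wavy tail into one image with values in [0, 1]."""
    image = gaussian_spot(shape, head, sigma_x=5.0, sigma_y=3.0)      # head: wide, oval PSF
    for t in np.linspace(0, tail_len, 60):                            # tail: chain of narrow PSFs
        point = (head[0] + 4 + t, head[1] + 3 * np.sin(t / 8.0))
        image = np.maximum(image, 0.5 * gaussian_spot(shape, point, 1.0, 1.0))
    return image

cell = render_sperm()
```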

Motion Path Modeling: Implement different swimming modes to create dynamic video sequences. The four primary modes are:

- Linear Mean: Progressive movement along a relatively straight path.

- Circular: Movement along a circular trajectory.

- Hyperactive: High-amplitude, non-progressive thrashing.

- Immotile: No movement, representing dead or non-motile sperm [13].
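
The four modes can be mimicked with simple parametric paths, as in the sketch below. The specific equations, amplitudes, and the `swim_path` helper are assumptions chosen to reproduce the qualitative behaviour of each mode; they are not the motion model of [13].

```python
import math

def swim_path(mode, steps=50, speed=2.0, start=(0.0, 0.0)):
    """Return a list of (x, y) positions for one of four simplified swimming modes."""
    x, y = start
    path = [(x, y)]
    for t in range(1, steps + 1):
        if mode == "linear":
            # Progressive motion along x with a small lateral wobble.
            x, y = start[0] + speed * t, start[1] + math.sin(t)
        elif mode == "circular":
            # One full circle over the sequence, with arc length `speed` per step.
            angle = 2 * math.pi * t / steps
            radius = speed * steps / (2 * math.pi)
            x = start[0] + radius * math.sin(angle)
            y = start[1] + radius * (1 - math.cos(angle))
        elif mode == "hyperactive":
            # High-amplitude thrashing around the start point, no net progression.
            x = start[0] + 5 * math.sin(1.7 * t)
            y = start[1] + 5 * math.sin(2.3 * t)
        elif mode != "immotile":
            raise ValueError(f"unknown mode: {mode}")
        path.append((x, y))                      # immotile cells keep their position
    return path

paths = {m: swim_path(m) for m in ("linear", "circular", "hyperactive", "immotile")}
```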

Multi-Cell Image Synthesis: Populate a simulated image frame by generating multiple sperm cells, each with a defined position and swimming mode. Add controlled levels of noise and background intensity variation to mimic real-world microscopy conditions [13].

Algorithm Testing: Use the simulated image sequences, where all parameters (positions, shapes, paths) are known, as a ground-truth benchmark to quantitatively evaluate the performance of segmentation, localization, and tracking algorithms using metrics like precision, recall, and Multi-Object Tracking Accuracy (MOTA) [13].
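
Because the simulator supplies exact ground truth, these metrics reduce to counting matches and errors. The sketch below uses the standard definitions, with MOTA computed as one minus the ratio of misses, false positives, and identity switches to the number of ground-truth objects; the counts are toy values.

```python
def detection_metrics(true_pos, false_pos, false_neg, id_switches, n_ground_truth):
    """Compute precision, recall, and MOTA from counts aggregated over all frames."""
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    mota = 1.0 - (false_neg + false_pos + id_switches) / n_ground_truth
    return precision, recall, mota

precision, recall, mota = detection_metrics(
    true_pos=90, false_pos=10, false_neg=10, id_switches=5, n_ground_truth=100
)
```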

The Deep Learning Revolution in Sperm Morphology

Core Architectural Principles

Deep learning, particularly Convolutional Neural Networks (CNNs), has overcome many limitations of traditional CASA by learning relevant features directly from the data. CNNs consist of multiple layers that automatically and hierarchically learn to detect patterns, from simple edges and gradients in early layers to complex morphological structures like acrosomes and tail bends in deeper layers [11]. Common approaches include:

- Transfer Learning: Fine-tuning pre-existing networks, such as VGG16, which were originally trained on large-scale datasets like ImageNet. This approach is computationally efficient and effective, especially with limited medical image data [11].

- End-to-End Object Detection: Employing architectures like YOLO (You Only Look Once) that perform both sperm detection and classification in a single pass, optimizing for speed and efficiency suitable for clinical workflows [14].

Quantitative Performance Comparison

The transition from conventional machine learning to deep learning has yielded measurable improvements in classification accuracy, as evidenced by studies on public datasets.

Table 1: Performance Comparison of Sperm Classification Methods on Public Datasets

| Dataset | Method | Key Features | Reported Performance | Reference |
|---|---|---|---|---|
| HuSHeM | Cascade Ensemble-SVM (CE-SVM) | Shape-based descriptors (Area, Eccentricity, Zernike moments) | 78.5% Average True Positive Rate | [11] |
| HuSHeM | Deep CNN (VGG16 Transfer Learning) | Automated feature extraction from raw images | 94.1% Average True Positive Rate | [11] |
| SCIAN (Partial Agreement) | CE-SVM | Manual feature engineering | 58% Average True Positive Rate | [11] |
| SCIAN (Partial Agreement) | Deep CNN (VGG16 Transfer Learning) | Automated feature extraction from raw images | 62% Average True Positive Rate | [11] |
| Bovine Sperm Dataset | YOLOv7 | Single-stage detection & classification | 0.73 mAP@50, 0.75 Precision, 0.71 Recall | [14] |
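
For readers unfamiliar with the metric in the table above, the sketch below computes an average true positive rate from a confusion matrix, assuming the term denotes the unweighted mean of per-class recall. The matrix values are invented for illustration and do not come from [11].

```python
def average_true_positive_rate(confusion):
    """Mean per-class recall from a square confusion matrix (rows = true class)."""
    rates = []
    for i, row in enumerate(confusion):
        total = sum(row)
        if total:                                # skip classes with no true samples
            rates.append(row[i] / total)
    return sum(rates) / len(rates)

confusion = [                                    # toy 3-class confusion matrix
    [45, 5, 0],
    [10, 30, 10],
    [0, 5, 20],
]
avg_tpr = average_true_positive_rate(confusion)
```

Unlike overall accuracy, this average gives every class equal weight, which matters when some morphology classes are rare.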

The following diagram illustrates the typical end-to-end workflow for developing a deep learning-based sperm morphology classification system.

Protocol for Implementing a Deep Learning Morphology Classifier

Protocol: Building a CNN-based Sperm Morphology Classifier

Dataset Curation and Augmentation:

- Image Acquisition: Acquire images of individual spermatozoa using a microscope with a 100x oil immersion objective, preferably under bright-field mode. Staining (e.g., RAL Diagnostics kit) is typically used for fixed smears [10].

- Expert Annotation: Have multiple experienced embryologists or andrologists classify each sperm image according to a standardized classification system (e.g., modified David classification or WHO criteria). Resolve discrepancies through consensus [10].

- Data Augmentation: To address class imbalance and prevent overfitting, artificially expand the dataset using techniques such as random rotation, flipping, scaling, brightness/contrast adjustment, and elastic deformations. For example, a base set of 1,000 images can be expanded to over 6,000 images [10].

Model Training:

- Pre-processing: Resize all images to uniform dimensions (e.g., 80x80 pixels). Convert to grayscale and normalize pixel values [10].

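- Data Partitioning: Split the augmented dataset randomly into training (80%) and hold-out test (20%) sets, reserving 10-20% of the training portion for validation [10]. Keep augmented copies of the same source image within a single partition so that near-duplicates do not leak into the test set.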

- Model Selection and Fine-Tuning:

- Option A (Transfer Learning): Use a pre-trained network like VGG16. Replace the final classification layer with a new one matching the number of sperm morphology classes. First, train only the new layer, then fine-tune all layers with a very low learning rate [11].

- Option B (Object Detection): For frameworks like YOLOv7, format the annotations accordingly and train the model to both locate and classify sperm in an image [14].

- Training: Train the model using the training set, monitoring loss and accuracy on the validation set to avoid overfitting.
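
For Option B, YOLO-family models typically read one text line per object in the form `class x_center y_center width height`, with coordinates normalized by the image size. The sketch below converts a pixel bounding box to that form; the class index, box, and image size are toy values, and the exact export used in [14] (for example via Roboflow) may differ.

```python
def to_yolo_label(class_id, box, image_width, image_height):
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO text-label line."""
    x_min, y_min, x_max, y_max = box
    x_center = (x_min + x_max) / 2 / image_width
    y_center = (y_min + y_max) / 2 / image_height
    width = (x_max - x_min) / image_width
    height = (y_max - y_min) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"

line = to_yolo_label(1, (100, 50, 300, 150), image_width=640, image_height=480)
```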

Evaluation and Deployment:

- Performance Metrics: Evaluate the final model on the held-out test set. Report standard metrics including accuracy, precision, recall, F1-score, and area under the ROC curve (AUC-ROC) [14].

- Inference: Deploy the trained model to classify new, unseen sperm images. The model outputs a probability distribution over the possible morphological classes for each input image.
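The per-class metrics named in this step can be computed directly from paired true and predicted labels. The following is a minimal sketch in plain Python, assuming one label per image; the class names and the six example labels are hypothetical and are not taken from any cited study.

```python
from collections import Counter

def per_class_metrics(y_true, y_pred, classes):
    """Return {class: (precision, recall, f1)} computed one-vs-rest."""
    pairs = Counter(zip(y_true, y_pred))  # confusion-matrix cells
    metrics = {}
    for c in classes:
        tp = pairs[(c, c)]
        fp = sum(n for (t, p), n in pairs.items() if p == c and t != c)
        fn = sum(n for (t, p), n in pairs.items() if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics[c] = (precision, recall, f1)
    return metrics

def accuracy(y_true, y_pred):
    """Fraction of images whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(metrics):
    """Unweighted mean of per-class F1, so rare classes count equally."""
    return sum(f1 for _, _, f1 in metrics.values()) / len(metrics)

# Hypothetical labels for six held-out test images
classes = ["normal", "tapered", "amorphous"]
y_true = ["normal", "normal", "tapered", "tapered", "amorphous", "amorphous"]
y_pred = ["normal", "tapered", "tapered", "tapered", "amorphous", "normal"]

metrics = per_class_metrics(y_true, y_pred, classes)
print(accuracy(y_true, y_pred), macro_f1(metrics))
```

Macro-averaging is used here because morphology classes are typically imbalanced; overall accuracy alone can hide poor recall on rare defect classes.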

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful implementation of a deep learning morphology system relies on a foundation of wet-lab and computational tools.

Table 2: Key Research Reagents and Solutions for Sperm Morphology Analysis

| Item | Function / Application | Example / Specification |

|---|---|---|

| RAL Staining Kit | Stains sperm cells on fixed smears for clear visualization of morphological details under bright-field microscopy. | RAL Diagnostics kit [10] |

| Optixcell Extender | Semen extender used to dilute and preserve bull sperm samples for morphological analysis. | IMV Technologies [14] |

| Trumorph System | A dye-free fixation system that uses controlled pressure and temperature to immobilize sperm for morphology evaluation. | Proiser R+D, S.L. [14] |

| MMC CASA System | An integrated system comprising an optical microscope and camera for automated image acquisition and initial analysis. | Used for acquiring individual sperm images [10] |

| B-383Phi Microscope | A microscope used for high-resolution imaging of sperm cells, often paired with image capture software. | Optika, with 40x negative phase contrast objective [14] |

| Python with Deep Learning Libraries | The primary programming environment for developing, training, and testing deep learning models (CNNs, YOLO). | Python 3.8, TensorFlow/PyTorch, OpenCV [10] [14] |

| Roboflow | Web-based tool for annotating images, managing datasets, and performing preprocessing and augmentation. | Used for labeling and preparing training data [14] |

The evolution from CASA to deep learning marks a significant maturation of automation in sperm morphology analysis. While CASA provided initial steps toward objectivity, its dependence on handcrafted features was a fundamental constraint. Deep learning, with its capacity for end-to-end learning from raw pixel data, has demonstrated superior performance and offers a robust framework for standardizing this critical diagnostic procedure. The detailed protocols and quantitative comparisons provided here equip researchers to contribute to this rapidly advancing field, pushing the boundaries of accuracy, efficiency, and accessibility in male fertility assessment.

Deep learning, a subset of artificial intelligence (AI), has emerged as a transformative technology for analyzing complex biological data. Its capacity to automatically learn hierarchical features from raw input data makes it particularly well-suited for medical image analysis tasks that have traditionally relied on manual, subjective assessment. In the field of reproductive biology, deep learning approaches are revolutionizing the analysis of sperm morphology—a key diagnostic parameter in male fertility assessment. Convolutional Neural Networks (CNNs), a specialized class of deep neural networks, have demonstrated remarkable success in processing image data by mimicking the hierarchical structure of biological visual processing systems.

The application of these technologies to sperm morphology classification addresses significant challenges in conventional analysis methods. Traditional manual assessment is notoriously subjective, time-consuming, and prone to inter-observer variability, while earlier computer-assisted semen analysis (CASA) systems have shown limited ability to accurately distinguish spermatozoa from cellular debris and classify specific morphological abnormalities [10] [1]. Deep learning models, particularly CNNs, offer the potential for automation, standardization, and acceleration of semen analysis while achieving accuracy levels comparable to human experts [15].

Neural Networks and CNNs: Architectural Foundations

Fundamental Neural Network Components

At their core, neural networks are computational models inspired by the structure and function of the human brain. The basic building block is the artificial neuron, which receives inputs, applies a mathematical transformation, and produces an output. These neurons are organized into layers—typically an input layer, one or more hidden layers, and an output layer—with connections between them having associated weights that are adjusted during training [16].

The fundamental components of a neural network include:

- Layers: Stacked sets of neurons that process information sequentially

- Weights and Biases: Parameters that determine the strength of connections between neurons

- Activation Functions: Mathematical functions that introduce non-linearity, enabling the network to learn complex patterns (e.g., ReLU, sigmoid, tanh)

- Loss Functions: Objectives that the network optimizes during training

- Optimizers: Algorithms that adjust weights and biases to minimize the loss function [16]

Convolutional Neural Networks (CNNs)

CNNs represent a specialized neural network architecture designed specifically for processing grid-like data such as images. Their structural properties make them well-suited for visual data analysis tasks, including biological image classification. Whereas traditional networks rely on fully-connected layers alone, CNNs combine three types of layers:

Convolutional Layers: These layers apply a series of filters (kernels) across the input image to detect spatial hierarchies of features, from simple edges and textures in early layers to complex morphological patterns in deeper layers. Each filter slides across the input, computing dot products to generate feature maps that highlight specific characteristics present in the image [11] [16].

Pooling Layers: Typically inserted between convolutional layers, pooling operations (e.g., max pooling, average pooling) reduce the spatial dimensions of feature maps while retaining the most salient information. This dimensionality reduction provides a degree of translation invariance, decreases computational complexity, and can help reduce overfitting [11].

Fully-Connected Layers: In the final stages of the network, these traditional neural network layers integrate the high-level features extracted by the convolutional and pooling layers to perform the final classification task, such as categorizing sperm into normal versus abnormal morphological classes [11].
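To make the convolution and pooling operations concrete, the sketch below applies a single hand-written filter and a 2×2 max pooling step to a toy image using NumPy. It is an illustration only: the 6×6 image and the edge-detecting kernel are hypothetical, and in a trained CNN the kernel values are learned rather than fixed. As in most deep learning frameworks, the "convolution" here is implemented as a cross-correlation (the kernel is not flipped).

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Dot product between the kernel and the image patch under it
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    h = feature_map.shape[0] // size * size
    w = feature_map.shape[1] // size * size
    blocks = feature_map[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))

# Toy 6x6 image: dark left half, bright right half (one vertical edge)
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hypothetical 3x3 filter that responds to dark-to-bright vertical edges
vertical_edge = np.array([[-1.0, 0.0, 1.0]] * 3)

features = np.maximum(conv2d_valid(image, vertical_edge), 0.0)  # ReLU activation
pooled = max_pool2d(features)
print(features.shape, pooled.shape)
```

The feature map is non-zero only in the columns that straddle the edge, and pooling halves each spatial dimension while keeping that response.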

The training process for both standard neural networks and CNNs involves forward propagation of input data, calculation of loss between predictions and ground truth, and backward propagation of errors to adjust weights using optimization algorithms like gradient descent. This iterative process enables the network to gradually improve its performance on the designated task [16].
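The forward pass, loss calculation, and gradient-based weight update described above can be sketched on a deliberately small model. The example below trains a single softmax layer with full-batch gradient descent in NumPy; the two-feature, three-class toy data, the learning rate, and the number of steps are all hypothetical choices for illustration, and a real CNN would backpropagate through many layers using a deep learning framework.

```python
import numpy as np

def softmax(z):
    """Row-wise softmax with the usual max-subtraction for numerical stability."""
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(probs, labels):
    """Mean negative log-probability assigned to the true class."""
    return -np.mean(np.log(probs[np.arange(len(labels)), labels] + 1e-12))

rng = np.random.default_rng(0)
# Hypothetical toy data: 3 well-separated classes, 30 points each, 2 features
centers = np.array([[0.0, 2.0], [2.0, -1.0], [-2.0, -1.0]])
labels = np.repeat(np.arange(3), 30)
X = centers[labels] + 0.3 * rng.standard_normal((90, 2))

W = np.zeros((2, 3))  # weights
b = np.zeros(3)       # biases
learning_rate = 0.5
losses = []
for step in range(100):
    probs = softmax(X @ W + b)                    # forward propagation
    losses.append(cross_entropy(probs, labels))   # loss vs. ground truth
    grad_logits = probs.copy()                    # dLoss/dlogits = probs - one_hot
    grad_logits[np.arange(len(labels)), labels] -= 1.0
    grad_logits /= len(labels)
    W -= learning_rate * (X.T @ grad_logits)      # gradient descent update
    b -= learning_rate * grad_logits.sum(axis=0)

train_accuracy = np.mean(softmax(X @ W + b).argmax(axis=1) == labels)
print(losses[0], losses[-1], train_accuracy)
```

With zero-initialized weights the first loss equals ln(3), the value for a uniform guess over three classes, and it falls as the weights are updated.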

CNN Basic Architecture for Image Classification

Application to Sperm Morphology Classification

Problem Formulation and Significance

Sperm morphology analysis represents a critical diagnostic procedure in male fertility assessment, with the proportion and types of morphologically abnormal spermatozoa providing valuable prognostic information for natural conception and assisted reproductive outcomes. According to World Health Organization (WHO) standards, sperm morphology is evaluated across three primary components: head, midpiece, and tail, with numerous specific abnormality patterns identified within each category [10] [1].

The clinical challenge stems from the subjective nature of manual assessment, which relies heavily on technician expertise and demonstrates significant inter-laboratory variability. Furthermore, the process is labor-intensive, requiring classification of 200 or more spermatozoa per sample—a time-consuming task that contributes to diagnostic inconsistency [1]. Deep learning approaches directly address these limitations by providing automated, standardized classification with reduced operator dependency and potentially higher throughput.

Comparative Performance of Deep Learning Approaches

Recent research has demonstrated the potential of deep learning models for sperm morphology classification, with some studies reporting performance approaching expert-level accuracy, although results vary widely with the dataset and class definitions. The following table summarizes quantitative results from key studies in the field:

Table 1: Performance Comparison of Deep Learning Models for Sperm Morphology Classification

| Study | Dataset | Model Architecture | Key Performance Metrics | Classification Categories |

|---|---|---|---|---|

| SMD/MSS Study (2025) [15] [10] | SMD/MSS (1,000 images augmented to 6,035) | Custom CNN | Accuracy: 55-92% (variation across morphological classes) | 12 classes based on modified David classification |

| Deep Learning for Classification (2019) [11] | HuSHeM Dataset | VGG16 (Transfer Learning) | Average True Positive Rate: 94.1% | 5 WHO categories: Normal, Tapered, Pyriform, Small, Amorphous |

| Deep Learning for Classification (2019) [11] | SCIAN Dataset | VGG16 (Transfer Learning) | Average True Positive Rate: 62% | 5 WHO categories |

| Current Literature Review (2025) [1] | Multiple Public Datasets | Various Deep Learning Models | Accuracy range: 59-92% across studies | Varies by study (typically 3-12 morphological classes) |

The variation in reported performance metrics highlights several important considerations for implementing deep learning solutions in this domain. Dataset characteristics—including size, quality, annotation consistency, and class balance—significantly influence model performance. Additionally, the specific architectural choices and training methodologies employed impact the resulting classification accuracy [1].

Experimental Protocols for Sperm Morphology Classification

Dataset Curation and Preparation Protocol

Purpose: To systematically collect, annotate, and preprocess sperm images for training and evaluating deep learning models.

Materials and Equipment:

- Microscope with 100x oil immersion objective

- Digital camera system

- Stained semen smears (RAL Diagnostics staining kit)

- Computer with image acquisition software

- Data annotation platform

Procedure:

- Sample Preparation: Prepare semen smears according to WHO guidelines [10]. Apply RAL Diagnostics staining to enhance cellular structure visualization.

- Image Acquisition: Capture individual sperm images using an MMC CASA system or equivalent. Use bright-field microscopy with 100x oil immersion objective. Ensure each image contains a single spermatozoon with clear visualization of head, midpiece, and tail structures [10].

- Expert Annotation: Engage multiple experienced embryologists (minimum 3) to independently classify each sperm image according to modified David classification or WHO criteria. The classification should encompass:

- Head defects: Tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome

- Midpiece defects: Cytoplasmic droplet, bent

- Tail defects: Coiled, short, multiple [10]

- Ground Truth Establishment: Resolve annotation discrepancies through consensus meetings or majority voting. Compile final classifications into a ground truth file containing image name, expert classifications, and morphological measurements [10].

- Data Augmentation: Address class imbalance and limited dataset size by applying augmentation techniques including:

- Rotation and flipping

- Brightness and contrast adjustment

- Scaling and translation

- Synthetic image generation (if applicable) [15]

- Data Partitioning: Split the dataset into training (80%), validation (10%), and test (10%) sets, ensuring representative distribution of all morphological classes across partitions [10].
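One way to keep all morphological classes represented in each partition is a stratified split, in which the 80/10/10 fractions are applied within each class. The sketch below uses only the Python standard library; the class names and counts are hypothetical. It assumes the split is performed on original (non-augmented) images, so that augmented copies of one source image cannot end up in different partitions.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical, imbalanced label list for 100 images
labels = ["normal"] * 50 + ["tapered"] * 30 + ["coiled_tail"] * 20
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```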

CNN Model Development and Training Protocol

Purpose: To design, implement, and train a convolutional neural network for automated sperm morphology classification.

Materials and Software:

- Python programming environment (version 3.8+)

- Deep learning frameworks (TensorFlow, PyTorch, or Keras)

- GPU-accelerated computing resources

- Preprocessed and annotated sperm image dataset

Procedure:

- Image Preprocessing:

- Resize all images to uniform dimensions (e.g., 80×80 pixels)

- Apply normalization to scale pixel values to [0,1] range

- Convert images to grayscale if color information is not diagnostically relevant

- Apply noise reduction algorithms to enhance image quality [10]

Model Architecture Design:

- Implement a CNN architecture with convolutional, pooling, and fully-connected layers

- Consider transfer learning using pre-trained networks (e.g., VGG16, ResNet) when training data is limited [11]

- Include appropriate regularization techniques (dropout, batch normalization) to prevent overfitting
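When designing the layer stack, it helps to track how the spatial size of the feature maps and the number of trainable parameters evolve. The sketch below does this arithmetic for an 80×80 grayscale input using the standard output-size formula, floor((n + 2p - k) / s) + 1. The three-block layer stack and the 12 output classes are hypothetical choices for illustration, not the architecture of any cited study.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer along one dimension."""
    return (n + 2 * padding - kernel) // stride + 1

def describe_cnn(input_hw=80, in_channels=1, n_classes=12):
    """Trace feature-map shapes and count parameters for a small example CNN."""
    # Hypothetical stack: ("conv", filters, kernel) or ("pool", window)
    layers = [("conv", 32, 3), ("pool", 2),
              ("conv", 64, 3), ("pool", 2),
              ("conv", 128, 3), ("pool", 2)]
    size, channels, params = input_hw, in_channels, 0
    report = []
    for layer in layers:
        if layer[0] == "conv":
            _, filters, k = layer
            params += (k * k * channels + 1) * filters  # weights + one bias per filter
            size = conv_output_size(size, k)
            channels = filters
        else:
            size = conv_output_size(size, layer[1], stride=layer[1])
        report.append((layer[0], size, channels))
    flat = size * size * channels              # length of the flattened feature vector
    params += (flat + 1) * n_classes           # final fully-connected classifier
    return report, flat, params

report, flat, params = describe_cnn()
for kind, size, channels in report:
    print(f"{kind}: {size}x{size}x{channels}")
print("flattened features:", flat, "trainable parameters:", params)
```

In this example, more than half of the parameters sit in the final fully-connected layer, which is one reason dropout is often applied there.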

Model Training:

- Initialize model parameters using established weight initialization strategies

- Define appropriate loss function (categorical cross-entropy for multi-class classification)

- Select optimization algorithm (Adam, SGD) with appropriate learning rate

- Implement batch training with batch size optimized for available computational resources

- Train model for sufficient epochs while monitoring validation loss to avoid overfitting [11]
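Monitoring validation loss is commonly turned into an explicit stopping rule: training halts once the validation loss has not improved for a set number of epochs, and the weights from the best epoch are kept. The sketch below shows only the decision logic in plain Python; the loss values, the `patience` setting, and the function name are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) as 0-based indices into `val_losses`.

    Training stops at the first epoch that is `patience` epochs past the
    best epoch without an improvement larger than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1  # never triggered: ran all epochs

# Hypothetical validation losses: improvement stalls after epoch 3
val_losses = [1.20, 0.95, 0.80, 0.74, 0.76, 0.75, 0.79, 0.83]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=3)
print(best_epoch, stop_epoch)
```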

Model Evaluation:

Sperm Morphology Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Deep Learning-Based Sperm Morphology Analysis

| Item | Specification/Example | Function/Purpose |

|---|---|---|

| Microscope System | MMC CASA system with 100x oil immersion objective | High-resolution image acquisition of individual spermatozoa |

| Staining Kit | RAL Diagnostics staining kit | Enhances contrast and visualization of sperm morphological structures |

| Annotation Software | Custom Excel templates or specialized annotation platforms | Systematic documentation of expert morphological classifications |

| Data Augmentation Tools | Python libraries (TensorFlow, Keras, PyTorch) | Expands dataset size and diversity through image transformations |

| Deep Learning Framework | TensorFlow, PyTorch, Keras with Python 3.8+ | Provides infrastructure for implementing and training CNN models |

| Computational Resources | GPU-accelerated workstations (NVIDIA CUDA-compatible) | Enables efficient training of computationally intensive deep learning models |

| Performance Metrics Package | Scikit-learn, custom evaluation scripts | Quantifies model performance through accuracy, precision, recall, F1-score |

| Public Datasets | HuSHeM, SCIAN, SVIA datasets [1] [11] | Provides benchmark data for model development and comparative performance assessment |

Implementation Considerations and Future Directions

The implementation of deep learning systems for sperm morphology classification presents several practical considerations. Dataset quality and annotation consistency remain paramount, as models are highly dependent on training data quality. The SMD/MSS study highlighted the importance of addressing inter-expert variability in annotations, reporting scenarios with no agreement (NA), partial agreement (PA), and total agreement (TA) among the three experts [10]. Future research directions include developing more sophisticated data augmentation techniques, integrating multiple classification frameworks (WHO, David, Kruger), and exploring explainable AI methods to enhance clinical trust and adoption [15] [1].

As the field advances, the integration of deep learning-based morphology assessment into comprehensive semen analysis systems offers the potential to transform male fertility diagnostics. By providing standardized, automated, and objective classification, these technologies can enhance diagnostic consistency across laboratories and improve patient care through more reliable fertility assessment and treatment planning.

Building the Model: A Technical Deep Dive into Deep Learning Pipelines for Sperm Classification

The development of robust deep learning models for sperm morphology classification is critically dependent on the availability of high-quality, well-annotated datasets. Within this field, three significant datasets have emerged: SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax), VISEM, and SVIA (Sperm Videos and Images Analysis dataset). These datasets address a pressing need in male infertility research, where manual sperm morphology analysis remains highly subjective, challenging to standardize, and dependent on technician experience [10] [1]. The SMD/MSS dataset provides meticulously classified individual sperm images focusing on detailed morphological defects according to the modified David classification [10]. In contrast, the VISEM dataset offers a multi-modal resource containing video recordings of spermatozoa alongside extensive clinical and biological data from participants [17] [18]. The SVIA dataset represents a large-scale collection with diverse annotations suitable for multiple computer vision tasks, including object detection, segmentation, and classification [19]. Together, these resources enable the training and validation of sophisticated deep learning algorithms, moving the field toward automated, standardized, and accurate sperm morphology analysis.

Dataset Comparison and Characteristics

The SMD/MSS, VISEM, and SVIA datasets vary significantly in scale, content type, and annotation focus, making them suitable for different research applications within sperm morphology analysis.

Table 1: Quantitative Comparison of Sperm Morphology Datasets

| Characteristic | SMD/MSS | VISEM | SVIA |

|---|---|---|---|

| Primary Content | 1,000 individual sperm images (extended to 6,035 with augmentation) [10] | 20 annotated videos (29,196 frames) + 166 unlabeled clips [17] | 101 video clips, 125,000 object locations, 26,000 segmentation masks [19] |

| Annotation Focus | Morphological defects (head, midpiece, tail) per modified David classification [10] | Bounding boxes, tracking IDs, sperm motility [17] | Bounding boxes, segmentation masks, object categories [19] |

| Data Modalities | Static images | Videos, clinical data, biological samples [18] | Videos, images |

| Key Strengths | Expert classification by multiple andrologists; CASA morphometrics [10] | Multi-modal; tracking annotations; clinical correlation potential [17] [18] | Large-scale; diverse annotations for multiple computer vision tasks [19] |

| Primary Use Cases | Sperm morphology classification; defect identification [10] | Sperm tracking; motility analysis; multi-modal prediction [17] | Object detection; segmentation; classification [19] |

Table 2: Detailed Annotation Specifications

| Dataset | Annotation Types | Class Labels/Details | Annotation Format |

|---|---|---|---|

| SMD/MSS | Morphological class per spermatozoon [10] | 12 defect classes: 7 head defects, 2 midpiece defects, 3 tail defects [10] | Image filename codes (A: Tapered, B: Thin, etc.); Ground truth file [10] |

| VISEM-Tracking | Bounding boxes; tracking IDs [17] | 0: normal sperm, 1: sperm clusters, 2: small/pinhead [17] | YOLO format text files; CSV with sperm counts [17] |

| SVIA | Bounding boxes; segmentation masks; object categories [19] | Normal, pin, amorphous, tapered, round, multi-nucleated head sperm, impurities [19] | Category information; segmentation masks; independent images [19] |

Dataset Curation and Annotation Protocols

SMD/MSS Dataset Curation

The SMD/MSS dataset was developed through a rigorous multi-step curation process designed to maximize quality and consistency for morphological classification tasks.

Sample Preparation and Acquisition: Smears were prepared from semen samples obtained from 37 patients following World Health Organization (WHO) guidelines and stained with RAL Diagnostics staining kit. Samples with sperm concentrations of at least 5 million/mL were included, while those exceeding 200 million/mL were excluded to prevent image overlap and facilitate capture of whole sperm. Images were acquired using an MMC CASA system comprising an optical microscope with a digital camera using bright field mode with an oil immersion 100x objective. The system captured morphometric data including head width and length, and tail length for each spermatozoon [10].

Expert Annotation and Quality Control: Each spermatozoon underwent manual classification by three experienced experts following the modified David classification, which includes 12 classes of morphological defects: 7 head defects (tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome), 2 midpiece defects (cytoplasmic droplet, bent), and 3 tail defects (coiled, short, multiple) [10]. The inter-expert agreement was systematically analyzed across three scenarios: no agreement (NA), partial agreement (PA) where 2/3 experts agreed, and total agreement (TA) where all three experts agreed on the same label for all categories. Statistical analysis using Fisher's exact test was performed to assess differences between experts for each morphological class [10].
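The three agreement scenarios can be expressed as a simple rule over the three expert labels for an image. The sketch below is a simplified illustration in plain Python: it assumes a single label per expert per image (the original annotation covers several categories per spermatozoon), and the function name and example labels are hypothetical.

```python
from collections import Counter

def agreement_level(expert_labels):
    """Classify three expert labels as 'TA', 'PA', or 'NA'.

    Returns (level, consensus_label); the consensus label is the majority
    label for TA and PA, and None when no two experts agree.
    """
    if len(expert_labels) != 3:
        raise ValueError("exactly three expert labels are expected")
    label, votes = Counter(expert_labels).most_common(1)[0]
    if votes == 3:
        return "TA", label
    if votes == 2:
        return "PA", label
    return "NA", None

# Hypothetical annotations for three images
examples = [
    ("tapered", "tapered", "tapered"),
    ("tapered", "thin", "tapered"),
    ("tapered", "thin", "microcephalous"),
]
results = [agreement_level(labels) for labels in examples]
print(results)
```

Images in the NA group have no majority label and would need a consensus discussion or exclusion before being used as ground truth.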

Data Augmentation: To address class imbalance and limited data issues, augmentation techniques were applied to expand the original 1,000 images to 6,035 images, creating a more balanced representation across morphological classes [10].

VISEM Dataset Curation

The VISEM dataset represents a unique multi-modal resource curated with an emphasis on video data and clinical correlations.

Multi-modal Data Collection: Data was originally collected for studies on overweight and obesity in relation to male reproductive function. The dataset includes 85 male participants aged 18 years or older, with video recordings of spermatozoa placed on a heated microscope stage (37°C) and examined under 400x magnification using an Olympus CX31 microscope. Videos were captured using a UEye UI-2210C camera and saved as AVI files [18]. In addition to video data, the dataset incorporates standard semen analysis results, sperm fatty acid profiles, fatty acid composition of serum phospholipids, demographic data, and sex hormone measurements [18].

Tracking Annotation Protocol: For the VISEM-Tracking subset, 20 video recordings of 30 seconds each (comprising 29,196 frames) were selected based on diversity to obtain as many varied tracking samples as possible [17]. Annotation was performed by data scientists using the LabelBox tool in close collaboration with male reproduction researchers. Biologists verified all annotations to ensure correctness [17]. Each annotated video folder contains extracted frames, bounding box labels for each frame, and bounding box labels with corresponding tracking identifiers. All bounding box coordinates are provided in YOLO format, with text files containing class labels and unique tracking IDs to identify individual spermatozoa throughout videos [17].
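A standard YOLO-format label line stores a class index followed by the box center, width, and height, all normalized to the frame size. The sketch below converts such a line into pixel corner coordinates. The 640×480 frame size and the example line are assumptions for illustration; the files that also carry tracking identifiers add an extra column whose position should be checked against the dataset documentation, so it is not parsed here.

```python
# Class indices as listed for VISEM-Tracking in this review
CLASS_NAMES = {0: "normal sperm", 1: "sperm cluster", 2: "small/pinhead"}

def yolo_to_pixel_box(line, frame_width, frame_height):
    """Convert 'class x_center y_center width height' (normalized) to pixel corners."""
    fields = line.split()
    class_id = int(fields[0])
    x_center, y_center, width, height = (float(v) for v in fields[1:5])
    x_min = (x_center - width / 2) * frame_width
    y_min = (y_center - height / 2) * frame_height
    x_max = (x_center + width / 2) * frame_width
    y_max = (y_center + height / 2) * frame_height
    return class_id, (x_min, y_min, x_max, y_max)

# Hypothetical label line and assumed frame size
class_id, box = yolo_to_pixel_box("0 0.5 0.5 0.1 0.2", frame_width=640, frame_height=480)
print(CLASS_NAMES[class_id], box)
```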

Data Structure and Organization: The dataset is organized with 20 sub-folders for annotated videos, each containing extracted frames, bounding box labels per frame, and labels with tracking identifiers. Additional CSV files contain participant-related data, semen analysis results, sex hormone levels, and sperm counts per frame [17].

SVIA Dataset Curation

The SVIA dataset was curated as a large-scale resource for computer-aided sperm analysis, with extensive annotations supporting multiple computer vision tasks.

Large-scale Data Collection and Annotation: The dataset preparation began in 2017 and involved approximately four years of work, resulting in more than 278,000 annotated objects [19]. Fourteen reproductive doctors and biomedical scientists performed annotations, with verification by six reproductive doctors and biomedical scientists. The dataset includes normal and abnormal sperm categories, including pin, amorphous, tapered, round, and multi-nucleated head sperm, as well as impurities [19].

Structured Data Organization: The SVIA dataset is organized into three distinct subsets supporting different research applications. Subset-A contains 125,000 object locations with bounding box annotations from 101 videos. Subset-B includes 26,000 segmentation masks from 10 videos. Subset-C provides 125,000 independent images of sperm and impurities for classification tasks [19].

Quality Assurance: The extensive annotation process involved multiple specialists to ensure accuracy and consistency across the large-scale dataset. The inclusion of various abnormality types and impurities enhances the dataset's utility for real-world applications where such distinctions are clinically relevant [19].

Experimental Protocols for Deep Learning Applications

Sperm Morphology Classification with SMD/MSS

Data Preprocessing: Images underwent cleaning to handle missing values, outliers, and inconsistencies. Pixel values were normalized or standardized to a common scale so that no particular feature dominated the learning process. Images were resized with a linear interpolation strategy to 80×80×1 (grayscale) to standardize input dimensions [10].

Dataset Partitioning: The entire image set was randomly divided into training (80%) and testing (20%) subsets. From the training subset, 20% was further extracted for validation during model development, ensuring robust evaluation on unseen data [10].

Deep Learning Architecture: A Convolutional Neural Network (CNN) was implemented in Python 3.8. The overall pipeline comprised five stages: image preprocessing, database partitioning, data augmentation, model training, and evaluation. The model was trained to classify sperm images into the various morphological categories defined in the annotation protocol [10].

Sperm Detection and Tracking with VISEM-Tracking

Baseline Detection Model: Researchers established baseline sperm detection performance using the YOLOv5 deep learning model trained on the VISEM-Tracking dataset [17]. This provided a benchmark for subsequent research and demonstrated the dataset's utility for training complex DL models to analyze spermatozoa.

Object Tracking Methodology: The tracking identifiers provided with bounding boxes enable development and evaluation of sperm tracking algorithms. These algorithms can analyze movement patterns, classify spermatozoa based on motility, and compute kinematic parameters essential for comprehensive sperm quality assessment [17].
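Given a track of per-frame centroids for one spermatozoon, common kinematic parameters follow from simple geometry: curvilinear velocity (VCL) is the path length per unit time, straight-line velocity (VSL) is the start-to-end distance per unit time, and linearity (LIN) is their ratio. The sketch below computes these in pixels per second; the example track is hypothetical, and converting to micrometers would require a pixel calibration that is not assumed here.

```python
import math

def kinematics(track, fps):
    """Compute (VCL, VSL, LIN) from a list of (x, y) centroids in consecutive frames."""
    if len(track) < 2:
        raise ValueError("a track needs at least two points")
    duration = (len(track) - 1) / fps                       # seconds covered by the track
    path_length = sum(math.dist(a, b) for a, b in zip(track, track[1:]))
    straight_length = math.dist(track[0], track[-1])
    vcl = path_length / duration
    vsl = straight_length / duration
    lin = vsl / vcl if vcl else 0.0
    return vcl, vsl, lin

# Hypothetical zig-zag track over five frames, recorded at 50 frames per second
track = [(0, 0), (3, 4), (6, 0), (9, 4), (12, 0)]
vcl, vsl, lin = kinematics(track, fps=50)
print(vcl, vsl, lin)
```

Bounding-box centers linked by a tracking identifier can serve as the centroids in such a calculation.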

Multi-task Learning with SVIA Dataset

Object Detection Experiments: For Subset-A, researchers evaluated five deep learning models for object detection: Single Shot MultiBox Detector (SSD), RetinaNet, Faster RCNN, and YOLO-v3/v4. Performance was assessed using evaluation metrics including accuracy, precision, recall, and F1-score calculated from confusion matrices [19].

Image Segmentation Benchmarking: For Subset-B, eight methods were compared for segmenting the original images: four traditional image segmentation methods (Markov Random Field, Watershed, Otsu thresholding, Region Growing), k-means clustering, and three deep learning-based methods (U-net, SegNet, and Mask RCNN) [19].

Image Denoising Evaluation: For Subset-C, 13 kinds of conventional noise were added to original images, followed by application of different denoising methods including DnCNN, U-net, and traditional filters to assess robustness and image enhancement capabilities [19].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials

| Item | Function/Application | Dataset Context |

|---|---|---|

| RAL Diagnostics Staining Kit | Sperm smear staining for morphological analysis | SMD/MSS sample preparation [10] |

| Olympus CX31 Microscope | Optical microscopy with 400x magnification for video recording | VISEM video acquisition [17] [18] |

| UEye UI-2210C Camera | Microscope-mounted camera for video capture (50 FPS) | VISEM video recording [17] [18] |

| MMC CASA System | Computer-assisted semen analysis for image acquisition and morphometrics | SMD/MSS data collection [10] |

| Heated Microscope Stage | Maintains samples at 37°C for physiological motility assessment | VISEM sample preparation [17] [18] |

| LabelBox Annotation Tool | Web-based platform for bounding box and tracking annotation | VISEM-Tracking annotation [17] |

The SMD/MSS, VISEM, and SVIA datasets represent significant advancements in resources for sperm morphology classification using deep learning. Each dataset offers unique strengths: SMD/MSS provides detailed morphological classification following standardized clinical guidelines, VISEM offers multi-modal data with clinical correlations, and SVIA delivers large-scale annotations for diverse computer vision tasks. The rigorous curation protocols, including multi-expert annotation, quality control measures, and comprehensive documentation, ensure these datasets meet the demanding requirements of deep learning research. By addressing critical challenges in data quality, annotation consistency, and clinical relevance, these resources facilitate the development of robust, standardized, and clinically applicable deep learning solutions for male infertility assessment. Future work should focus on expanding dataset diversity, developing standardized evaluation benchmarks, and exploring federated learning approaches to leverage these resources while addressing privacy concerns in medical data.

In the field of male fertility research, deep learning for sperm morphology classification has emerged as a powerful tool to overcome the subjectivity and variability of manual analysis by embryologists [1]. The performance and generalizability of these models are fundamentally constrained by the quality, quantity, and balance of the training data. This document outlines standardized protocols for data preprocessing and augmentation, specifically tailored for sperm image analysis, to enhance model robustness and clinical applicability. These procedures are critical for building reliable automated systems that can standardize fertility assessment, reduce diagnostic variability, and improve patient care outcomes in reproductive medicine [20].

Data Preprocessing Techniques

Proper preprocessing of raw sperm images is essential to mitigate confounding artifacts and prepare data for effective model training.

Image Denoising and Cleaning

Raw images acquired from optical microscopes often contain noise from insufficient lighting, uneven staining, or cellular debris [10] [21].

- Objective: Remove noise signals that overlap with sperm images while preserving morphological structures.

- Methods: Implement wavelet denoising or median filtering to reduce high-frequency noise without blurring critical edges defining sperm head contours, acrosome boundaries, and tail structures [22] [20].
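As an illustration of the median filtering option, the sketch below implements a 3×3 median filter with NumPy and applies it to a toy image containing a single bright noise pixel. It is a minimal sketch rather than a production routine; image-processing libraries provide faster equivalents. The toy images are hypothetical.

```python
import numpy as np

def median_filter3(image):
    """Apply a 3x3 median filter to a 2-D grayscale image (edges are replicated)."""
    padded = np.pad(image, 1, mode="edge")
    h, w = image.shape
    # Stack the nine shifted views so that axis 0 indexes the 3x3 neighbourhood
    windows = np.stack([padded[i:i + h, j:j + w] for i in range(3) for j in range(3)])
    return np.median(windows, axis=0)

# Uniform background with one isolated bright pixel (salt noise)
noisy = np.full((5, 5), 100.0)
noisy[2, 2] = 255.0
filtered = median_filter3(noisy)

# A sharp vertical step edge, as along a stained head contour
step = np.zeros((5, 5))
step[:, 3:] = 1.0
step_filtered = median_filter3(step)
print(filtered[2, 2], np.array_equal(step_filtered, step))
```

The isolated outlier is removed while the step edge is left intact, which is the property that makes median filtering attractive for preserving head and tail contours.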

Normalization and Standardization

Consistent pixel value scaling ensures stable model convergence by mitigating variations in staining intensity and illumination [10].

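- Procedure: Rescale pixel intensity values to a common range. Dividing by the maximum possible pixel value (255 for 8-bit images) maps them to [0, 1]; a further linear shift (2x - 1) maps them to [-1, 1]. For dataset-wide standardization, subtract the mean and divide by the standard deviation computed on the training set, so that inputs have approximately zero mean and unit variance [10].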

- Specifications: In the SMD/MSS dataset implementation, images were resized to 80×80 pixels using a linear interpolation strategy and converted to grayscale (1 channel) [10].
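The resizing, grayscale conversion, and scaling steps can be sketched with NumPy alone. This is an illustrative implementation under stated assumptions, not the code used for the SMD/MSS dataset: the grayscale weights are the common ITU-R BT.601 luma coefficients, the bilinear resize samples on a corner-aligned grid (library functions may use a different convention), and the random input stands in for a real stained RGB crop.

```python
import numpy as np

def to_grayscale(rgb):
    """Weighted sum of the R, G, B channels (BT.601 luma coefficients)."""
    return rgb @ np.array([0.299, 0.587, 0.114])

def resize_bilinear(image, out_h, out_w):
    """Resize a 2-D image by linear interpolation on a corner-aligned grid."""
    in_h, in_w = image.shape
    ys = np.linspace(0, in_h - 1, out_h)
    xs = np.linspace(0, in_w - 1, out_w)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]   # vertical interpolation weights
    wx = (xs - x0)[None, :]   # horizontal interpolation weights
    top = image[y0][:, x0] * (1 - wx) + image[y0][:, x1] * wx
    bottom = image[y1][:, x0] * (1 - wx) + image[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

def preprocess(rgb_uint8, size=80):
    """Return a (size, size, 1) float array scaled to [0, 1]."""
    gray = to_grayscale(rgb_uint8.astype(np.float64))
    resized = resize_bilinear(gray, size, size)
    return np.clip(resized / 255.0, 0.0, 1.0)[..., None]

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)  # stand-in for an RGB crop
x = preprocess(raw)

# Standardization with statistics that would come from the training set only
train_mean, train_std = x.mean(), x.std()
x_standardized = (x - train_mean) / train_std
print(x.shape, x.min(), x.max())
```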

Data Partitioning

A rigorous split of the dataset prevents data leakage and ensures unbiased evaluation.

- Standard Protocol: Randomly partition the entire dataset into three subsets:

- Training Set (80%): Used for model parameter learning.

- Validation Set (10%): Used for hyperparameter tuning and model selection.

- Test Set (10%): Used only once for the final evaluation of the model's generalization performance [10].

- Cross-Validation: For smaller datasets, employ k-fold cross-validation (e.g., 5-fold) to maximize data usage and obtain more reliable performance estimates [20].
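The k-fold procedure can be sketched as an index generator in which each sample serves as validation data exactly once. The example below uses only the standard library and a plain (non-stratified) split for brevity; for imbalanced morphology classes a stratified variant that folds each class separately would usually be preferable. The sample count is hypothetical.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, val_indices) for each of the k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k nearly equal-sized folds
    for i in range(k):
        val = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val

# Hypothetical dataset of 23 images
splits = list(k_fold_indices(23, k=5))
for fold_number, (train_idx, val_idx) in enumerate(splits):
    print(fold_number, len(train_idx), len(val_idx))
```

A model would be trained once per fold and the k validation scores averaged to give the performance estimate.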

Table 1: Standardized Data Preprocessing Pipeline for Sperm Morphology Analysis

| Processing Stage | Core Objective | Recommended Technique | Key Parameters |
|---|---|---|---|
| Denoising | Reduce imaging artifacts & noise | Wavelet Denoising, Median Filtering | Wavelet: 'db8'; median kernel size: 3×3 |
| Color Normalization | Standardize stain intensity & contrast | Grayscale conversion, Min-Max Scaling | Target range: [0,1], Output channels: 1 |
| Spatial Standardization | Uniform input dimensions for the network | Resizing with Linear Interpolation | Target size: 80×80 pixels [10] |
| Data Partitioning | Ensure unbiased model training & evaluation | Random Split, Stratified K-Fold | Train/Val/Test: 80/10/10, K=5 [10] [20] |

Data Augmentation Strategies

Data augmentation artificially expands the training dataset by creating modified versions of existing images, which is crucial for combating overfitting and improving model generalizability, especially given the limited size of many medical datasets [10] [1].

Geometric Transformations

These are fundamental augmentation techniques that alter the spatial orientation of sperm images, teaching the model to be invariant to these changes.

- Techniques: Include random rotations (e.g., ±15°), horizontal and vertical flips, slight translations (±10% of image width/height), and zooming (90-110% of original scale) [21].

- Application: In sperm morphology analysis, flipping and small rotations are particularly effective as they simulate different microscopic viewing angles without distorting critical morphological features [23].
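
A minimal sketch of the flip and small-rotation augmentations described above, written with NumPy only. The rotation uses nearest-neighbour inverse mapping for brevity (a production pipeline would more likely use a framework's interpolating transform), translation and zoom are omitted, and all function names are illustrative assumptions.

```python
import numpy as np

def rotate_nearest(image: np.ndarray, angle_deg: float, fill=0) -> np.ndarray:
    """Rotate a 2-D image about its centre using nearest-neighbour inverse mapping."""
    h, w = image.shape
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    theta = np.deg2rad(angle_deg)
    cos_t, sin_t = np.cos(theta), np.sin(theta)
    ys, xs = np.mgrid[0:h, 0:w]
    # For every output pixel, locate the source pixel it is sampled from.
    src_x = np.rint(cos_t * (xs - cx) + sin_t * (ys - cy) + cx).astype(int)
    src_y = np.rint(-sin_t * (xs - cx) + cos_t * (ys - cy) + cy).astype(int)
    inside = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.full_like(image, fill)  # pixels sampled from outside get the fill value
    out[inside] = image[src_y[inside], src_x[inside]]
    return out

def random_geometric(image: np.ndarray, rng, max_angle: float = 15.0) -> np.ndarray:
    """Randomly flip horizontally/vertically, then rotate within +/- max_angle degrees."""
    if rng.random() < 0.5:
        image = np.fliplr(image)
    if rng.random() < 0.5:
        image = np.flipud(image)
    return rotate_nearest(image, rng.uniform(-max_angle, max_angle))

# Hypothetical 80x80 grayscale image with random content.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(80, 80), dtype=np.uint8)
augmented = random_geometric(image, rng)
```

Keeping the rotation range small limits the number of corner pixels that fall outside the source image and must be filled with a constant.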

Photometric Transformations

These adjustments modify the pixel intensity values to make the model robust to variations in staining and lighting conditions.

- Techniques: Adjust image brightness (±20%), contrast (0.8-1.2 factor), and add Gaussian noise to simulate different staining intensities and acquisition conditions [21].

- Consideration: Transformations should be applied conservatively to avoid altering the diagnostic appearance of sperm structures, such as the acrosome or midpiece.
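
The photometric adjustments above might look like the following sketch for images already scaled to [0, 1]. Interpreting "±20% brightness" as an additive shift of up to 0.2 on that scale is an assumption, as are the noise standard deviation and the function name.

```python
import numpy as np

def random_photometric(image, rng, max_brightness=0.2,
                       contrast_range=(0.8, 1.2), noise_std=0.02):
    """Randomly perturb brightness, contrast, and noise of a [0, 1] float image."""
    brightness = rng.uniform(-max_brightness, max_brightness)
    contrast = rng.uniform(*contrast_range)
    mean = image.mean()
    # Contrast: stretch or compress intensities around the image mean.
    out = (image - mean) * contrast + mean
    # Brightness: uniform additive shift (assumed interpretation of +/-20%).
    out = out + brightness
    # Additive Gaussian noise to mimic acquisition variability (noise_std is assumed).
    out = out + rng.normal(0.0, noise_std, size=image.shape)
    # Clip so the result stays a valid [0, 1] image.
    return np.clip(out, 0.0, 1.0)

rng = np.random.default_rng(1)
image = np.linspace(0.0, 1.0, 80 * 80).reshape(80, 80)  # synthetic gradient image
augmented = random_photometric(image, rng)
```

Narrow parameter ranges keep the perturbation conservative, in line with the caution about not altering the diagnostic appearance of the acrosome or midpiece.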

Advanced and Synthetic Data Generation

For severe class imbalance or data scarcity, more advanced techniques are required.

- Mix-up: A data augmentation strategy that creates new samples by linearly combining pairs of existing images and their labels. This encourages the model to learn smoother decision boundaries [23].

- Synthetic Data Generation: Tools like AndroGen provide an open-source solution for generating highly customizable, morphologically diverse synthetic sperm images and video sequences without relying on extensive real image collections or training generative models [24].

- Mechanism: AndroGen uses a parameterized rendering algorithm based on multivariate normal distributions to model sperm morphometric parameters (e.g., head length/width, midpiece length/width, tail length/width) from published scientific literature, ensuring biological plausibility [24].

- Output: It can generate images with simultaneous bounding box annotations (for detection) and segmentation masks (for segmentation), supporting multiple species including human, horse, and boar [24].

Table 2: Quantitative Impact of Data Augmentation on Model Performance

| Dataset / Study | Initial Size | Augmented Size | Augmentation Methods Used | Reported Model Performance (Accuracy) |
|---|---|---|---|---|
| SMD/MSS Dataset [10] [15] | 1,000 images | 6,035 images | Geometric transformations, Photometric adjustments | 55% to 92% (across different morphological classes) |
| CBAM-ResNet50 (SMIDS) [20] | 3,000 images | - | Mix-up, Attention mechanisms, Deep Feature Engineering | 96.08% ± 1.2% |
| Lung Sounds (VGG-11) [23] | - | - | Spectrogram Flipping, Mix-up, SpecMix | F1-score: 75.4% (test phase) |

Diagram 1: Sperm image preprocessing pipeline.

Experimental Protocol: Application to Sperm Morphology Classification

This protocol details the application of preprocessing and augmentation for training a deep learning model to classify sperm morphology based on the modified David classification [10].

Materials and Dataset Preparation

- Source Dataset: Utilize the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) or similar, which includes 12 classes of morphological defects (e.g., tapered head, microcephalous, bent midpiece, coiled tail) [10].

- Expert Annotation: Each sperm image must be independently classified by multiple experts (e.g., three) to establish a reliable ground truth. Analyze inter-expert agreement (Total Agreement, Partial Agreement, No Agreement) to gauge task complexity [10].

- Initial Preprocessing:

- Clean the data: Identify and handle any corrupt or unreadable images.

- Denoise: Apply a wavelet denoising filter.

- Normalize: Rescale pixel values to the [0, 1] range.

- Standardize: Resize all images to a uniform 80×80 pixel resolution and convert to grayscale [10].

- Partition: Perform an 80/20 train/test split, followed by an 80/20 split of the training set into training and validation subsets (resulting in 64% train, 16% validation, 20% test) [10].
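
To make the inter-expert agreement analysis from the annotation step concrete, the sketch below assigns each image to one of the three agreement categories. It assumes that Total Agreement means all three experts chose the same class, Partial Agreement means exactly two agree, and No Agreement means all three differ; these definitions, the function names, and the toy labels are assumptions for illustration.

```python
from collections import Counter

def agreement_level(annotations):
    """Classify the agreement among the expert labels given for one image."""
    distinct = len(set(annotations))
    if distinct == 1:
        return "total"      # every expert chose the same class
    if distinct == len(annotations):
        return "none"       # every expert chose a different class
    return "partial"        # some, but not all, experts agree

def summarize_agreement(all_annotations):
    """Return the fraction of images falling into each agreement category."""
    counts = Counter(agreement_level(a) for a in all_annotations)
    total = len(all_annotations)
    return {level: counts.get(level, 0) / total
            for level in ("total", "partial", "none")}

# Toy annotations from three hypothetical experts for four images.
annotations = [
    ("normal", "normal", "normal"),
    ("tapered_head", "tapered_head", "thin_head"),
    ("coiled_tail", "bent_midpiece", "short_tail"),
    ("normal", "normal", "normal"),
]
summary = summarize_agreement(annotations)
```

A high share of partial or no agreement indicates classes whose boundaries are hard even for experts, which helps in interpreting per-class model accuracy.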

Data Augmentation Implementation

- Tool: Use a deep learning framework like TensorFlow or PyTorch.

- Augmentation Pipeline: On the training set only, apply a real-time augmentation pipeline that includes:

- Random horizontal and vertical flipping.

- Random rotation within a ±15-degree range.

- Random brightness and contrast adjustments (max delta=0.2).

- To address class imbalance, integrate the Mix-up technique with an alpha value of 0.2 [23].
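
The Mix-up step in the pipeline above can be sketched as follows, assuming the standard formulation in which the mixing coefficient is drawn from a Beta(alpha, alpha) distribution and labels are one-hot encoded. The function name and the random toy batch are hypothetical.

```python
import numpy as np

def mixup_batch(images, one_hot_labels, rng, alpha=0.2):
    """Blend each sample with a randomly chosen partner from the same batch."""
    lam = rng.beta(alpha, alpha)          # mixing coefficient in [0, 1]
    perm = rng.permutation(len(images))   # partner index for every sample
    mixed_images = lam * images + (1.0 - lam) * images[perm]
    mixed_labels = lam * one_hot_labels + (1.0 - lam) * one_hot_labels[perm]
    return mixed_images, mixed_labels, lam

# Toy batch: four 80x80 grayscale images and three classes (illustrative only).
rng = np.random.default_rng(0)
images = rng.random((4, 80, 80))
labels = np.eye(3)[[0, 1, 2, 0]]          # one-hot rows for classes 0, 1, 2, 0
mixed_images, mixed_labels, lam = mixup_batch(images, labels, rng)
```

With a small alpha such as 0.2, the Beta distribution concentrates near 0 and 1, so most mixed samples stay close to one of the two originals. The resulting soft labels require a loss that accepts probability targets, such as cross-entropy against a distribution.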

Model Training and Evaluation

- Model Architecture: Implement a Convolutional Neural Network (CNN), such as a ResNet50 backbone enhanced with a Convolutional Block Attention Module (CBAM) to help the network focus on morphologically relevant regions [20].

- Training: Train the model using the augmented training set. Monitor loss and accuracy on the validation set to avoid overfitting.

- Evaluation: Finally, evaluate the model on the held-out test set, which has not been used in any part of the training or validation process, to assess its true generalizability. Report standard metrics including accuracy, precision, recall, and F1-score [20].
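
The metrics named in the evaluation step can be computed from a confusion matrix, as in the sketch below. It reports per-class precision, recall, and F1 together with overall accuracy and macro-averaged F1; the function name and the toy predictions are illustrative assumptions, and integer class indices are assumed.

```python
import numpy as np

def classification_metrics(y_true, y_pred, n_classes):
    """Compute accuracy plus per-class precision, recall, and F1."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1                      # rows: true class, columns: predicted class
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0)             # samples predicted as each class
    actual = cm.sum(axis=1)                # samples truly in each class
    # Guard against division by zero for classes that never occur or are never predicted.
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return {
        "accuracy": tp.sum() / cm.sum(),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "macro_f1": f1.mean(),
    }

# Toy predictions for a three-class problem (illustrative only).
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
metrics = classification_metrics(y_true, y_pred, n_classes=3)
```

Per-class values matter for imbalanced morphology datasets, where a high overall accuracy can hide poor recall on rare defect classes.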

Diagram 2: Data augmentation strategy.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Reagents for Automated Sperm Morphology Analysis

| Item / Tool | Function / Description | Application Context |
|---|---|---|
| RAL Diagnostics Staining Kit | Standardized staining of semen smears for clear visualization of sperm structures (head, midpiece, tail). | Sample preparation for image acquisition according to WHO guidelines [10]. |
| MMC CASA System | Computer-Assisted Semen Analysis system for automated image acquisition from stained sperm smears using a microscope with a digital camera. | Standardized capture of individual sperm images at 100x oil immersion [10]. |
| AndroGen Software Tool | Open-source tool for generating parametric, synthetic sperm images and videos, creating customizable datasets for machine learning. | Overcoming data scarcity and privacy limitations; generating data for detection, segmentation, and tracking tasks [24]. |
| SMD/MSS Dataset | A public dataset of 1000+ individual sperm images, classified by experts based on the modified David classification (12 defect classes). | Benchmarking and training deep learning models for sperm morphology classification [10]. |
| TensorFlow / PyTorch | Open-source machine learning frameworks used to build, train, and deploy deep neural networks for image classification. | Implementing CNN architectures (e.g., ResNet50), preprocessing pipelines, and data augmentation protocols [21] [20]. |

The analysis of sperm morphology is a cornerstone of male fertility assessment, providing critical diagnostic and prognostic information. Traditional manual evaluation, however, is plagued by subjectivity, significant inter-observer variability, and time-intensive procedures, with reported disagreement rates among experts as high as 40% [20]. These limitations have catalyzed the development of automated, objective analysis systems, with deep learning emerging as a particularly transformative technology. Within this domain, Convolutional Neural Networks (CNNs) have established themselves as the predominant and most successful architecture for sperm image classification tasks [8] [20]. Their ability to automatically learn hierarchical and discriminative features from raw pixel data—such as the subtle morphological variations in sperm head shape, acrosome integrity, and tail defects—makes them exceptionally suited for this clinical application. This document outlines the key CNN architectures, experimental protocols, and resources that form the foundation of modern, AI-driven sperm morphology analysis.

Predominant CNN Architectures and Performance

Research has explored a range of CNN-based models, from custom-built networks to sophisticated adaptations of established architectures enhanced with attention mechanisms. The following table summarizes the performance of several key models reported in recent literature.

Table 1: Performance of CNN Architectures in Sperm Morphology Classification

| Model Architecture | Key Features | Dataset(s) Used | Reported Performance | Reference |
|---|---|---|---|---|
| CBAM-enhanced ResNet50 | Residual learning blocks; Convolutional Block Attention Module (CBAM) for focused feature learning | SMIDS (3-class), HuSHeM (4-class) | 96.08% accuracy (SMIDS), 96.77% accuracy (HuSHeM) | [20] |
| DenseNet169 | Dense connectivity between layers to promote feature reuse; mitigates vanishing gradient | HuSHeM, SCIAN | 97.78% accuracy (HuSHeM), 78.79% accuracy (SCIAN) | [25] |
| Custom CNN | Basic convolutional network; data augmentation | SMD/MSS (12-class) | 55% to 92% accuracy (range across classes) | [10] [15] |
| Stacked Ensemble | Combination of multiple CNNs (e.g., VGG16, ResNet-34, DenseNet) | HuSHeM | ~98.2% accuracy | [20] |

The integration of attention mechanisms, such as the Convolutional Block Attention Module (CBAM), represents a significant advancement. These modules allow the network to dynamically focus computational resources on the most informative spatial regions and feature channels of the sperm image—for instance, the head acrosome or midpiece structure—while suppressing irrelevant background noise [20]. This leads to more robust and interpretable models. Furthermore, hybrid approaches that combine deep CNN feature extraction with classical machine learning classifiers (e.g., Support Vector Machines) have demonstrated state-of-the-art performance, achieving accuracy improvements of over 8% compared to end-to-end CNN models alone [20].
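
To illustrate how channel-wise attention reweights features, the sketch below implements only the channel-attention half of a CBAM-style block as a NumPy forward pass: average-pooled and max-pooled channel descriptors pass through a shared two-layer MLP, are summed, and are squashed by a sigmoid. The spatial-attention half is omitted, the weights are random rather than trained, and the function names and reduction ratio are assumptions for the example.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(features, w1, w2):
    """CBAM-style channel attention for a (C, H, W) feature map.

    w1 has shape (C // r, C) and w2 has shape (C, C // r); together they form
    the shared two-layer MLP with a ReLU in between.
    """
    avg_desc = features.mean(axis=(1, 2))   # (C,) average-pooled descriptor
    max_desc = features.max(axis=(1, 2))    # (C,) max-pooled descriptor

    def shared_mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)

    # One attention weight per channel, each between 0 and 1.
    weights = sigmoid(shared_mlp(avg_desc) + shared_mlp(max_desc))
    # Broadcast the weights over the spatial dimensions.
    return features * weights[:, None, None], weights

# Hypothetical feature map with 8 channels and reduction ratio r = 2.
rng = np.random.default_rng(0)
channels, reduction = 8, 2
features = rng.random((channels, 5, 5))
w1 = rng.normal(size=(channels // reduction, channels))   # untrained, random weights
w2 = rng.normal(size=(channels, channels // reduction))
refined, channel_weights = channel_attention(features, w1, w2)
```

In a trained network these weights would be learned jointly with the backbone, so that channels responding to informative structures receive larger attention values.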

Standardized Experimental Protocol for Sperm Morphology Classification

To ensure reproducible and reliable results, the following structured experimental protocol is recommended. The workflow is designed to systematically address common challenges in medical image analysis, such as limited data and class imbalance.

Diagram 1: Sperm morphology classification workflow.

Sample Preparation, Data Acquisition, and Annotation

- Sample Preparation: Semen samples are prepared as smears according to WHO guidelines and stained using standardized kits (e.g., RAL Diagnostics staining kit) [10]. Samples should have a concentration of at least 5 million/mL to ensure sufficient data, but high-concentration samples (>200 million/mL) should be excluded to prevent image overlap [10].

- Data Acquisition: Images of individual spermatozoa are captured using a Computer-Assisted Semen Analysis (CASA) system, such as the MMC CASA system. Acquisition should use an oil immersion 100x objective in bright-field mode to ensure high-resolution images suitable for morphological analysis [10].

- Expert Annotation & Ground Truth: Each sperm image is independently classified by multiple experienced embryologists (typically three) to establish a reliable ground truth. Classification should follow a recognized morphological classification system, such as the modified David classification (which defines 12 classes of defects across the head, midpiece, and tail) or the WHO criteria [10] [20]. A ground truth file is compiled, detailing the image name, annotations from all experts, and morphometric data.

Data Preprocessing and Augmentation

This critical phase prepares the raw image data for effective model training.

- Image Preprocessing:

- Cleaning: Handle missing values, outliers, and inconsistencies [10].

- Normalization: Resize images to a standard dimension (e.g., 80×80 pixels) and convert to grayscale to reduce computational complexity. Pixel values are normalized to a common scale, often [0, 1] [10].

- Denoising: Apply techniques to reduce noise from poor lighting or staining artifacts [10].

- Data Augmentation:

- To overcome the challenge of small, imbalanced datasets, apply augmentation techniques to artificially expand the dataset and improve model generalization. The SMD/MSS dataset, for instance, was expanded from 1,000 to 6,035 images using augmentation [10] [15]. Common operations include:

- Geometric transformations: Random cropping, horizontal/vertical flipping.

- Color space adjustments: Modifications to brightness, contrast.

Model Training, Validation, and Evaluation