Deep Neural Networks in Sperm Analysis: Advanced AI for Motility and Morphology Estimation


Abstract

This article comprehensively reviews the application of deep neural networks (DNNs) for automating and standardizing sperm motility and morphology analysis, crucial aspects of male infertility assessment. We explore the foundational principles driving AI adoption in reproductive medicine, detailing specific methodological approaches including convolutional neural networks (CNNs) for image-based classification and segmentation. The content addresses key challenges such as dataset limitations and model optimization, while providing a critical evaluation of model performance against traditional methods and expert consensus. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current evidence to highlight how DNNs enhance accuracy, objectivity, and efficiency in semen analysis, ultimately advancing both clinical diagnostics and pharmaceutical research in reproductive health.

The Imperative for AI: Overcoming Limitations in Traditional Sperm Analysis

Clinical Significance of Sperm Motility and Morphology in Male Infertility

Male infertility is a significant global health concern, contributing to approximately 50% of all infertility cases among couples [1]. The diagnostic and prognostic evaluation of male fertility potential has traditionally relied on the conventional semen analysis, which assesses key parameters, including sperm concentration, motility, and morphology [1] [2]. Among these, sperm motility and morphology provide critical insights into sperm function and health. However, traditional assessment methods are often plagued by subjectivity, poor reproducibility, and significant inter-laboratory variability [3] [4]. This document frames the clinical significance of these parameters within the emerging context of deep neural networks (DNNs), which offer the potential for automated, objective, and highly accurate analysis to revolutionize male infertility diagnostics and research.

Clinical Background and Significance

The Global Burden of Male Infertility

Infertility, defined as the inability to conceive after one year of unprotected intercourse, affects an estimated 15% of couples globally [1]. The male factor is a sole or contributing cause in approximately half of these cases. Alarmingly, recent meta-regression analyses have reported a substantial global decline in sperm counts, with the rate of decline accelerating after the year 2000 [1]. This trend underscores the growing importance of accurate and reliable male fertility assessment.

Sperm Motility: A Key Predictor of Fertility

Sperm motility refers to the movement capabilities of sperm, particularly progressive motility, which is the ability to swim forward effectively. It is a crucial functional parameter, as sperm must navigate the female reproductive tract to reach and fertilize an oocyte. Clinical evidence positions motility as one of the most discriminative semen parameters for differentiating fertile from infertile men [5]. A retrospective study comparing fertile men and men with male factor infertility found that motility had a sensitivity of 0.74 and a specificity of 0.90, with the smallest overlap range between the two groups (lower and upper cut-off values of 46% and 75%), making it a better predictor than the other conventional parameters [5].
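To make the sensitivity and specificity figures concrete, the sketch below computes both for a single motility cut-off, treating a man whose motility falls below the cut-off as test-positive (predicted infertile). The function name, the single-threshold rule, and the toy data are illustrative assumptions, not the cited study's method or data.

```python
from typing import Sequence


def sensitivity_specificity(
    motility_pct: Sequence[float],
    is_infertile: Sequence[bool],
    cutoff: float,
) -> tuple[float, float]:
    """Return (sensitivity, specificity) of the rule 'motility < cutoff => infertile'."""
    if len(motility_pct) != len(is_infertile):
        raise ValueError("inputs must have equal length")
    tp = fn = tn = fp = 0
    for value, infertile in zip(motility_pct, is_infertile):
        predicted_infertile = value < cutoff
        if infertile and predicted_infertile:
            tp += 1  # infertile man correctly flagged
        elif infertile:
            fn += 1  # infertile man missed by the cut-off
        elif predicted_infertile:
            fp += 1  # fertile man wrongly flagged
        else:
            tn += 1  # fertile man correctly passed
    sensitivity = tp / (tp + fn)  # share of infertile men that are flagged
    specificity = tn / (tn + fp)  # share of fertile men that are not flagged
    return sensitivity, specificity


# Hypothetical motility values (%) and true fertility status
motility = [30, 42, 50, 61, 38, 70, 80, 55]
infertile = [True, True, True, True, False, False, False, False]
print(sensitivity_specificity(motility, infertile, cutoff=46))
```

The same arithmetic explains why a low specificity is a problem: at a specificity of 0.51, 49% of fertile men would be flagged as abnormal.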

Sperm Morphology: Structure and Function

Sperm morphology assesses the size and shape of sperm, with ideal sperm featuring a smooth, oval head and a long, single tail [2]. Abnormalities in head shape or tail structure can impair the sperm's ability to fertilize an egg. The clinical value of morphology, often assessed using "strict" criteria (Tygerberg criteria), has been debated. While it is a cornerstone of semen analysis, its predictive power for natural pregnancy can be variable. Studies have shown that the percentage of normal forms is typically low, even in fertile populations, with one study of fertile men reporting a normal head morphology rate of 9.98% [6]. Furthermore, the specificity of abnormal morphology for diagnosing infertility was found to be as low as 0.51 in one study, meaning almost half of fertile men also presented with abnormal morphology [5]. Despite this, morphology remains critically important for selecting sperm for advanced reproductive techniques like Intracytoplasmic Sperm Injection (ICSI) [7].

Current Analytical Challenges and the Role of Deep Neural Networks

Limitations of Conventional Analysis

The current gold standard for semen analysis relies on manual assessment by trained technicians, a process that is inherently subjective and time-consuming [3] [4]. This subjectivity leads to significant inter-operator and inter-laboratory variability. Computer-Aided Sperm Analysis (CASA) systems were developed to mitigate these issues, but their performance, particularly for morphology assessment, remains inconsistent. A 2025 study comparing three CASA systems against the manual method found poor agreement in morphology analysis, with Intraclass Correlation Coefficients (ICC) of only 0.160 and 0.261 for the two systems evaluated for morphology [4]. This lack of reliability can lead to skewed treatment decisions, such as the inappropriate allocation of patients to ICSI or conventional IVF [4].

The Deep Learning Revolution

Deep Learning (DL), a subset of artificial intelligence (AI), offers a paradigm shift by enabling fully automated, objective, and highly accurate sperm analysis. DL models, particularly convolutional neural networks (CNNs), learn hierarchical features directly from raw sperm images, eliminating the manual feature extraction that conventional machine learning requires [8] [3]. This allows sperm to be segmented into their constituent parts (head, neck, and tail) and then classified into normal and abnormal categories using patterns learned from large, annotated datasets [3].

A recent experimental study demonstrated the power of this approach by developing an in-house AI model using a ResNet50 architecture to assess unstained, live sperm morphology from images captured via confocal laser scanning microscopy [7]. The model achieved a test accuracy of 93%, with high precision and recall for both normal and abnormal sperm classes, and showed a stronger correlation with manual morphology assessment than commercial CASA systems [7]. This highlights the potential of DNNs to not only match but exceed the performance of existing technologies while using live, unstained sperm, which is a significant advantage for Assisted Reproductive Technology (ART).

Experimental Data and Comparative Analysis

The following tables summarize key quantitative data from recent studies, illustrating the performance of traditional methods versus emerging AI-based approaches.

Table 1: Comparative Performance of Semen Analysis Methods for Morphology Assessment

| Analysis Method | Agreement with Manual Morphology | Key Performance Metrics | Major Limitations |
|---|---|---|---|
| Manual Assessment (Gold Standard) | Reference (1.00 by definition) | High inter-observer variability [3] | Subjective, time-consuming, requires extensive training [4] |
| Commercial CASA 1 (LensHooke X1 Pro) | ICC = 0.160 [4] | Poor agreement with manual method [4] | Low consistency; may skew IVF/ICSI treatment allocation [4] |
| Commercial CASA 2 (SQA-V Gold) | ICC = 0.261 [4] | Poor agreement with manual method [4] | Low consistency; may skew IVF/ICSI treatment allocation [4] |
| In-house AI/DL Model (ResNet50) | r = 0.76 [7] | Accuracy: 93%; Precision: 0.95 (abnormal), 0.91 (normal); Recall: 0.91 (abnormal), 0.95 (normal) [7] | Requires large, high-quality annotated datasets for training [7] |

Table 2: Reference Sperm Morphometry Parameters from a Fertile Population (n=21) [6]

| Morphometric Parameter | Mean Value | Parameter Description |
|---|---|---|
| Head Length (HL) | See [6] | Distance between the two furthest points along the long axis of the head. |
| Head Width (HW) | See [6] | Perpendicular distance between the two furthest points on the short axis. |
| Head Area (HA) | See [6] | Calculated area based on the contour of the sperm head. |
| Ellipticity (L/W) | See [6] | Ratio of the head length to the head width. |
| Acrosome Area (AcA) | See [6] | Area of the cap-like structure on the anterior part of the sperm head. |
| Normal Head Morphology | 9.98% | Percentage of sperm with normal head shape. |
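The head parameters defined in Table 2 can be estimated from a segmented sperm head. The sketch below assumes a binary NumPy mask of a single head and a known pixel size in µm (both hypothetical inputs); it measures length and width as the extents along the principal axes of the pixel cloud, which approximates, but is not identical to, the furthest-point definitions used by commercial CASA software.

```python
import numpy as np


def head_morphometry(mask: np.ndarray, um_per_px: float) -> dict[str, float]:
    """Estimate head length, width, area and ellipticity from a binary head mask."""
    ys, xs = np.nonzero(mask)
    if ys.size < 3:
        raise ValueError("mask must contain a segmented head")
    coords = np.column_stack([xs, ys]).astype(float)
    coords -= coords.mean(axis=0)  # centre the pixel cloud on its centroid
    # Principal axes = eigenvectors of the 2x2 coordinate covariance matrix;
    # np.linalg.eigh returns eigenvalues in ascending order.
    _, eigvecs = np.linalg.eigh(np.cov(coords, rowvar=False))
    major, minor = eigvecs[:, 1], eigvecs[:, 0]
    along_major = coords @ major
    along_minor = coords @ minor
    # Extent along each axis; +1 px so a single pixel has non-zero size
    length_px = along_major.max() - along_major.min() + 1
    width_px = along_minor.max() - along_minor.min() + 1
    return {
        "head_length_um": float(length_px * um_per_px),
        "head_width_um": float(width_px * um_per_px),
        "head_area_um2": float(ys.size * um_per_px**2),  # pixel count x pixel area
        "ellipticity": float(length_px / width_px),  # L/W ratio
    }
```

Applied to a synthetic ellipse with semi-axes of 20 and 10 pixels at 0.1 µm per pixel, the function reports a length of 4.1 µm, a width of 2.1 µm, and an L/W ratio of about 1.95.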

Detailed Experimental Protocols

Protocol: AI-Based Morphology Analysis of Unstained Live Sperm

This protocol is adapted from a 2025 study that developed a deep-learning model for analyzing live sperm without staining, preserving their viability for use in ART [7].

5.1.1 Sample Preparation and Image Acquisition

- Sample Collection: Collect semen samples from participants after 2-7 days of sexual abstinence. Allow samples to liquefy at room temperature for up to 30 minutes.

- Slide Preparation: Dispense a 6 µL droplet of the liquefied semen onto a standard two-chamber slide with a depth of 20 µm (e.g., Leja).

- Imaging: Capture sperm images using a confocal laser scanning microscope (e.g., ZEISS LSM 800) at 40x magnification in confocal mode (Z-stack). Set the Z-stack interval to 0.5 µm, covering a total range of 2 µm to ensure optimal focus. Capture at least 200 sperm images per sample.
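Only well-focused images are annotated in the next step. A common way to choose the sharpest plane of a Z-stack is the variance of the Laplacian; using this focus measure is our assumption, not something [7] is reported to use. The sketch also assumes that a 0.5 µm interval over a 2 µm range yields five planes (both ends included).

```python
import numpy as np


def laplacian_variance(img: np.ndarray) -> float:
    """Focus score: variance of a 4-neighbour discrete Laplacian (higher = sharper)."""
    img = img.astype(float)
    lap = (
        img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
        - 4.0 * img[1:-1, 1:-1]
    )
    return float(lap.var())


def sharpest_plane(z_stack: np.ndarray) -> int:
    """Index of the best-focused plane in a (n_planes, height, width) stack."""
    scores = [laplacian_variance(plane) for plane in z_stack]
    return int(np.argmax(scores))


# Assumed plane positions: 0.5 um steps over a 2 um range -> 0, 0.5, 1.0, 1.5, 2.0 um
z_positions_um = np.arange(0.0, 2.0 + 0.25, 0.5)
```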

5.1.2 Image Annotation and Dataset Curation

- Annotation: Manually annotate well-focused sperm images using a program like LabelImg. Experienced embryologists and researchers should draw bounding boxes around each sperm.

- Categorization: Categorize each sperm into one of nine datasets based on WHO (2021) criteria [7]. Key categories include:

- Normal Sperm: Smooth oval head, length-to-width ratio of 1.5–2, no vacuoles, slender and regular neck, uniform tail calibre.

- Abnormal Sperm: Tapered, amorphous, pyriform, or round head; observable vacuole; aberrant neck; or abnormal tail.

- Quality Control: Ensure a high inter-annotator agreement (e.g., correlation coefficient of 0.95 for normal morphology).

5.1.3 Deep Learning Model Training and Validation

- Model Selection: Employ a transfer learning approach using a pre-trained ResNet50 model, a deep CNN known for image classification.

- Training: Train the model on the annotated dataset to minimize the difference between predicted and actual labels. In the cited study, the dataset comprised 12,683 annotated sperm images, with a class-balanced training subset of 9,000 images (4,500 normal and 4,500 abnormal) [7].

- Validation: Evaluate model performance on a separate, unseen test dataset. The cited model achieved a test accuracy of 93% after 150 epochs, with a processing speed of approximately 0.0056 seconds per image [7].

Protocol: Reference Morphometry Analysis Using Stained Sperm and CASA

This protocol details how reference morphometry values are established for a population; such values serve as a benchmark for training and validating AI models [6].

5.2.1 Sample Preparation and Staining

- Sample Collection: Collect semen from a cohort of proven fertile men (e.g., those with partners who conceived within the last 12 months).

- Fixation and Staining: Fix smears in 95% ethanol for at least 15 minutes. Stain using the Papanicolaou (PAP) method as recommended by the WHO manual. This involves rehydration, nuclear staining with Harris's hematoxylin, and cytoplasmic counterstaining with Orange G-6 (OG-6) and EA-50 [6].

5.2.2 Image Capture and Morphometric Measurement

- Imaging System: Use an upright microscope (e.g., Olympus CX43) with a 100x oil immersion objective, coupled with a high-resolution CMOS camera and an automated slide scanning platform (e.g., BM8000).

- Analysis System: Utilize a CASA system (e.g., Suiplus SSA-II Plus) capable of automated morphometry.

- Measurement: The system should automatically calculate focal planes and measure key parameters for at least 400 sperm or 100 fields per sample. Parameters include:

- Head Length, Head Width, Head Area, Perimeter

- Ellipticity (Length/Width ratio)

- Acrosome Area and Acrosome Ratio

- Neck Length and Neck Width

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Research

| Item / Reagent | Function / Application | Example / Note |
|---|---|---|
| Confocal Laser Scanning Microscope | High-resolution imaging of unstained, live sperm for AI model development. | ZEISS LSM 800 [7] |
| Standard Microscopy Setup | Imaging of stained sperm slides for traditional morphometry or dataset creation. | Olympus CX43 with 100x oil objective [6] |
| Papanicolaou (PAP) Stain Kit | Staining sperm for detailed morphological assessment of head, acrosome, and cytoplasm. | WHO-recommended method [6] |
| Diff-Quik Stain Kit | Rapid staining for general sperm morphology assessment. | A Romanowsky stain variant [4] |
| Computer-Assisted Sperm Analysis (CASA) | Automated analysis of concentration, motility, and morphology; used for generating reference data. | Hamilton Thorne CEROS II, Suiplus SSA-II Plus [4] [6] |
| AndroGen Software | Generation of customizable synthetic sperm images to augment training datasets. | Open-source tool; reduces need for real, annotated data [9] |
| LabelImg Annotation Tool | Manual annotation of sperm images to create ground-truth datasets for training AI models. | Free, open-source tool [7] |
| Pre-trained Deep Learning Models | Transfer learning backbone for developing custom sperm classification models. | ResNet50 [7] |

Visual Workflows and Logical Diagrams

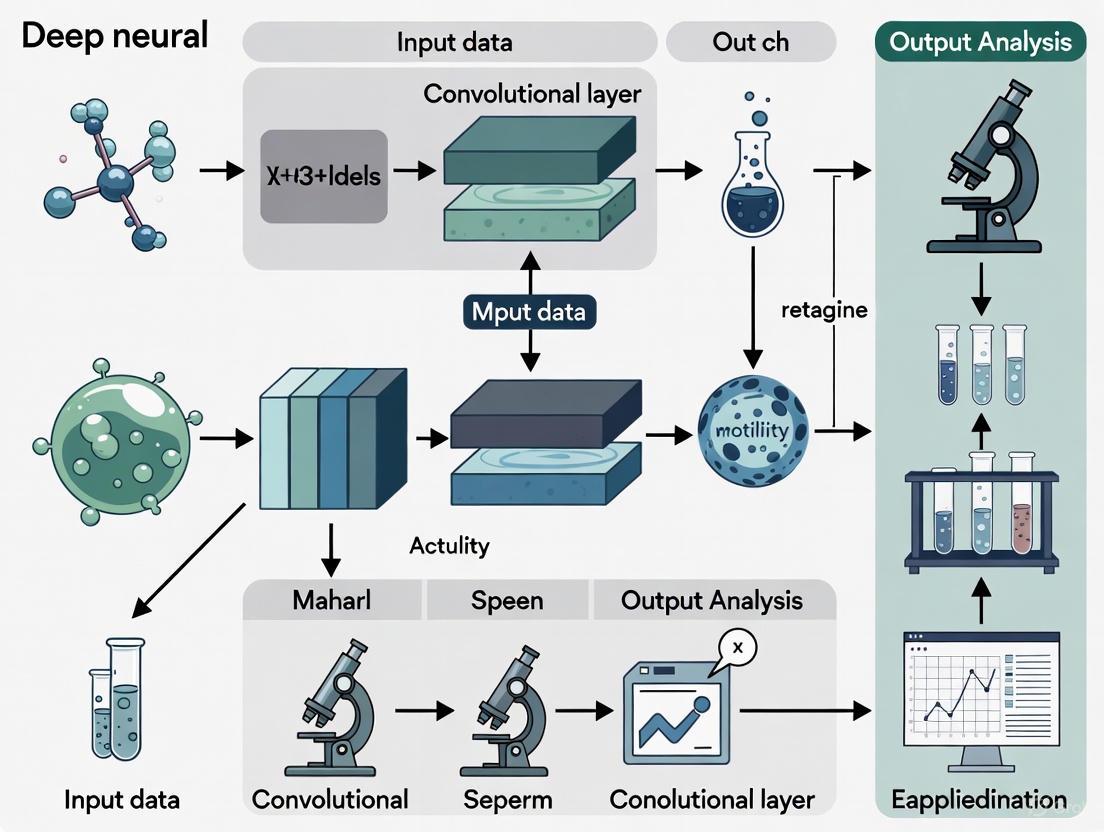

The following diagram illustrates the integrated clinical and computational workflow for deep learning-based sperm analysis, highlighting the pathway from sample collection to clinical decision support.

AI-Driven Sperm Analysis Workflow

The next diagram details the core technical process of the deep learning model for segmenting and classifying sperm structures, which is the foundation of automated analysis.

DNN-Based Sperm Segmentation and Classification

Subjectivity and Variability in Manual Semen Analysis

Semen analysis remains the cornerstone of male fertility assessment, playing a crucial role in both clinical diagnostics and research settings. Despite the publication of standardized World Health Organization (WHO) laboratory manuals, manual semen analysis continues to suffer from significant subjectivity and inter-laboratory variability [10] [11]. This technical application note examines the sources and implications of this variability, particularly within the context of developing deep neural networks for automated sperm analysis. For computational biologists and drug development professionals, understanding these pre-analytical and analytical challenges is essential for creating robust artificial intelligence (AI) models that can overcome human limitations in traditional assessment methods.

The fundamental challenge lies in the inherent complexity of semen analysis, which encompasses multiple parameters, each susceptible to different sources of variation. As researchers increasingly turn to AI solutions for sperm motility and morphology estimation, a comprehensive understanding of these sources of variability becomes critical for developing effective computational models. This document provides a detailed examination of variability sources, quantitative assessments, standardized protocols, and emerging computational approaches that collectively inform the development of more reliable analysis systems.

Analytical Variability in Semen Assessment

The examination of human semen involves multiple procedural steps, each introducing potential variability that can compromise the consistency and clinical utility of results. Evidence indicates that several critical points of variation require careful standardization:

Pre-analytical factors: The duration of sexual abstinence significantly impacts semen parameters, with WHO recommending 2-7 days [10]. Sample collection methods, transportation conditions, and liquefaction time (recommended 30-60 minutes at 37°C) further contribute to variability [11] [12]. Studies utilizing at-home sperm testing kits have demonstrated that even with standardized instructions, intra-subject variation remains substantial, particularly in men with oligozoospermia [13].

Analytical subjectivity: Sperm motility assessment suffers from significant inter-technician variability, as classification into progressive (rapid and slow), non-progressive, and immotile categories relies on subjective visual estimation [10] [14]. The evaluation of sperm morphology represents perhaps the most challenging parameter, with classification according to strict Kruger criteria requiring extensive technical expertise and demonstrating considerable inter-laboratory variation [15] [16].

Technical and methodological factors: The choice of counting chambers (e.g., Makler, MicroCell, Leja, or standard coverslip preparations) introduces substantial variability, particularly for concentration and motility assessments [14]. Staining techniques for morphology evaluation and equipment calibration issues further compound methodological variations [11].

Quantitative Assessment of Variability

Recent studies have quantified the degree of variability in semen parameters, providing essential baseline data for AI model development and validation. The following table summarizes key variability metrics from clinical studies:

Table 1: Quantitative Variability in Semen Parameters

| Parameter | Type of Variability | Coefficient of Variation | Clinical Implications |
|---|---|---|---|
| Sperm Concentration | Intra-subject (oligozoospermic) | 33.8% [13] | Requires multiple samples for accurate diagnosis |
| Sperm Concentration | Intra-subject (normozoospermic) | 24.5% [13] | Lower variability but still significant |
| Total Motile Sperm Count | Intra-subject (oligozoospermic) | 44.6% [13] | High variability affects treatment planning |
| Total Motility | Inter-laboratory | 10-20% [11] | Impacts consistency across facilities |
| Progressive Motility | Method-dependent (CASA vs. manual) | 5-15% [14] | Affects protocol comparisons |
| Normal Morphology | Inter-technician | Up to 30% [16] [3] | Significant diagnostic implications |

Analysis of 513 men providing multiple samples via at-home testing kits revealed that intra-subject variation was consistently lower than inter-subject variation across all parameters, with men exhibiting normozoospermia demonstrating greater stability in their semen parameters compared to those with oligozoospermia [13]. This variability underscores the recommendation by the American Urological Association and American Society for Reproductive Medicine to perform at least two semen analyses, spaced one month apart, particularly when initial results are abnormal [13].
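Within-subject and between-subject coefficients of variation like those in the table above can be computed from repeated samples per man. The sketch uses one common estimator for the within-subject CV (the root-mean-square of each subject's CV); the estimator choice, function names, and data are assumptions, and [13] may have used a different formula.

```python
from math import sqrt
from statistics import mean, stdev


def within_subject_cv(samples_by_subject: dict[str, list[float]]) -> float:
    """Root-mean-square of per-subject CVs (%), using subjects with >= 2 samples."""
    cvs = [
        stdev(values) / mean(values)
        for values in samples_by_subject.values()
        if len(values) >= 2
    ]
    return 100.0 * sqrt(mean(cv**2 for cv in cvs))


def between_subject_cv(samples_by_subject: dict[str, list[float]]) -> float:
    """CV (%) of the subjects' mean values."""
    subject_means = [mean(values) for values in samples_by_subject.values()]
    return 100.0 * stdev(subject_means) / mean(subject_means)


# Hypothetical sperm concentrations (million/mL) from repeated at-home samples
data = {"A": [20.0, 30.0], "B": [50.0, 50.0], "C": [10.0, 14.0]}
print(f"within: {within_subject_cv(data):.1f}%  between: {between_subject_cv(data):.1f}%")
```

As in the study above, the toy data show lower within-subject than between-subject variation.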

Standardized Experimental Protocols

WHO 6th Edition Basic Semen Examination

The WHO 6th edition manual (2021) introduced important terminology changes, replacing "standard tests" with "basic examinations," "optional tests" with "extended examinations," and "research tests" with "advanced examinations" [10]. The following protocol details the basic examination procedure:

Table 2: Research Reagent Solutions for Basic Semen Analysis

| Reagent/Equipment | Specification | Function | Quality Control |
|---|---|---|---|
| Collection Container | Wide-mouthed, sterile, nontoxic material | Complete ejaculate collection | Biocompatibility testing [12] |
| Transport Medium | mHTF (modified Human Tubal Fluid) with HEPES | Maintain sperm viability during transport | Osmolality: 280-300 mOsm/kg; pH: 7.3-7.5 [13] |
| Counting Chamber | Leja (20μm depth) or Makler (10μm depth) | Standardized depth for concentration/motility | Depth verification; QC bead calibration [11] [14] |
| Staining Solutions | Diff-Quik kit or RAL Diagnostics kit | Morphology assessment | Lot-to-lot consistency verification [13] |
| Phase Contrast Microscope | 10x-100x objectives with heated stage (37°C) | Motility assessment and morphology | Daily temperature calibration [11] |

Step-by-Step Protocol:

Sample Collection and Liquefaction: Collect the specimen by masturbation into a sterile, wide-mouthed container after 2-7 days of abstinence. Maintain the sample at 20-27°C during transport and allow complete liquefaction within 30-60 minutes at 37°C [10] [12]. Record any incomplete collection, especially loss of the first fraction of the ejaculate, because this fraction contains the highest sperm concentration.

Macroscopic Examination: Assess volume (lower reference limit: 1.4 mL), appearance, viscosity, and pH (lower reference limit: 7.2) [10] [12]. Note any unusual odor, as it may indicate urinary contamination or infection.

Motility Assessment: After complete liquefaction, mix the sample gently and load it onto a pre-warmed counting chamber. Assess a minimum of 200 spermatozoa using phase-contrast microscopy at 37°C. Classify motility as:

- Progressive motility (rapid and slow): Sperm moving actively, either in a straight line or large circles

- Non-progressive motility: All other patterns of motility with absence of progression

- Immotile: No movement [10]
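Tallying the three motility grades into percentages is simple bookkeeping; the sketch below uses the PR/NP/IM abbreviations for progressive, non-progressive, and immotile sperm and enforces the 200-sperm minimum stated above. The function name and the input format (one call per assessed spermatozoon) are assumptions.

```python
from collections import Counter


def motility_percentages(calls: list[str]) -> dict[str, float]:
    """Percent PR, NP, IM and total motility (PR + NP) from per-sperm calls."""
    if len(calls) < 200:
        raise ValueError("assess at least 200 spermatozoa")
    counts = Counter(calls)
    unknown = set(counts) - {"PR", "NP", "IM"}
    if unknown:
        raise ValueError(f"unexpected categories: {unknown}")
    n = len(calls)
    pct = {cat: 100.0 * counts[cat] / n for cat in ("PR", "NP", "IM")}
    pct["total_motility"] = pct["PR"] + pct["NP"]  # total motility = progressive + non-progressive
    return pct


# Hypothetical tally for 200 assessed spermatozoa
calls = ["PR"] * 90 + ["NP"] * 30 + ["IM"] * 80
print(motility_percentages(calls))
```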

Sperm Concentration and Total Count: Dilute the sample 1:20 with diluent and load it into an improved Neubauer hemocytometer or a dedicated counting chamber. Count a minimum of 200 spermatozoa in duplicate. Calculate the concentration (million/mL) and the total sperm count per ejaculate (lower reference limit: 39 million) [10].
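The concentration arithmetic can be sketched as follows, assuming "1:20" means one part semen plus 19 parts diluent (a 20-fold dilution) and that sperm are counted in a known chamber volume. In the improved Neubauer chamber each of the 25 large squares of the central grid holds 4 nL (0.2 mm × 0.2 mm × 0.1 mm). Function names are hypothetical.

```python
def concentration_millions_per_ml(count: int, volume_nl: float, dilution_factor: float) -> float:
    """Sperm concentration (10^6/mL) from cells counted in `volume_nl` nL of diluted sample."""
    # cells per nL of diluted sample, multiplied back up by the dilution factor;
    # 1 cell/nL equals 10^6 cells/mL, so cells/nL is numerically millions/mL
    return count / volume_nl * dilution_factor


def total_sperm_count_millions(conc_millions_per_ml: float, volume_ml: float) -> float:
    """Total sperm number per ejaculate (10^6) = concentration x semen volume."""
    return conc_millions_per_ml * volume_ml


# Example: 200 sperm counted in all 25 large squares (25 x 4 nL = 100 nL) at a 1 + 19 dilution
conc = concentration_millions_per_ml(count=200, volume_nl=25 * 4.0, dilution_factor=20)
total = total_sperm_count_millions(conc, volume_ml=3.0)
print(conc, total, total >= 39)  # last value: at or above the 39 x 10^6 lower reference limit?
```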

Sperm Vitality: Perform this test when total motility is below 40%. Use eosin-nigrosin stain to differentiate live (unstained) from dead (stained) spermatozoa. Lower reference limit for vitality: 54% [10].

Sperm Morphology: Prepare thin smears, air-dry them, and stain according to a standardized protocol. Assess a minimum of 200 spermatozoa using strict Kruger criteria. Classify each as normal or abnormal, with detailed annotation of head, midpiece, and tail defects. Lower reference limit for normal forms: 4% [10] [15].
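The lower reference limits quoted in this protocol can be collected into a simple screening function. The dictionary holds only the limits stated in the steps above; the parameter names are hypothetical, and the flags are descriptive, not a diagnosis.

```python
LOWER_REFERENCE_LIMITS = {
    "volume_ml": 1.4,
    "ph": 7.2,
    "total_count_millions": 39.0,
    "vitality_pct": 54.0,
    "normal_forms_pct": 4.0,
}


def flag_below_limits(results: dict[str, float]) -> dict[str, bool]:
    """True for each measured parameter that falls below its lower reference limit."""
    return {
        name: results[name] < limit
        for name, limit in LOWER_REFERENCE_LIMITS.items()
        if name in results  # parameters that were not measured are skipped
    }


def vitality_indicated(total_motility_pct: float) -> bool:
    """Vitality testing is performed when total motility is below 40%."""
    return total_motility_pct < 40.0


# Hypothetical sample results
sample = {"volume_ml": 2.5, "ph": 7.6, "total_count_millions": 30.0, "normal_forms_pct": 3.0}
print(flag_below_limits(sample))
print(vitality_indicated(35.0))
```

In WHO terminology, a total count below its limit corresponds to oligozoospermia and normal forms below 4% to teratozoospermia.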

Quality Control Procedures

Implementation of robust quality control (QC) measures is essential for reliable results:

Internal Quality Control (IQC): Perform daily temperature checks of instruments, monthly chamber accuracy verification with QC beads, and semi-annual technician proficiency assessments [11].

External Quality Control (EQC): Participate in external proficiency testing programs to assess inter-laboratory consistency and identify systematic errors [11].

Standardized Documentation: Maintain detailed records of all QC activities, including reagent lot numbers, equipment maintenance, and technician training [11].

Diagram 1: Semen Analysis Workflow

Emerging AI Approaches for Standardization

Deep Learning for Morphology Classification

Convolutional Neural Networks (CNNs) represent a promising solution to address the high subjectivity in morphological assessment. Recent research demonstrates several approaches:

Database Development and Augmentation: The SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset exemplifies the trend toward curated, expert-annotated image collections for training deep learning models [16]. This dataset initially contained 1,000 individual spermatozoa images classified according to modified David criteria (12 morphological defect classes) and was expanded to 6,035 images through data augmentation techniques including rotation, scaling, and intensity variations [16].
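An illustrative NumPy sketch of the three augmentation types named above (rotation, scaling, intensity variation) for grayscale images scaled to [0, 1]. Restricting rotation to 90° steps and using nearest-neighbour centre zoom are simplifications of ours, and the ranges (±10% scale, ±20% gain, ±0.1 offset) are arbitrary assumptions, not the SMD/MSS settings.

```python
import numpy as np


def augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Random 90-degree rotation, centre zoom and intensity jitter for a [0, 1] grayscale image."""
    out = np.rot90(img, k=int(rng.integers(4)))  # rotation in multiples of 90 degrees
    # Centre zoom by nearest-neighbour resampling, keeping the output size
    scale = rng.uniform(0.9, 1.1)
    h, w = out.shape
    rows = np.clip(np.round((np.arange(h) - (h - 1) / 2) / scale + (h - 1) / 2), 0, h - 1).astype(int)
    cols = np.clip(np.round((np.arange(w) - (w - 1) / 2) / scale + (w - 1) / 2), 0, w - 1).astype(int)
    out = out[np.ix_(rows, cols)]
    # Intensity variation: random gain and offset, clipped back to [0, 1]
    gain, offset = rng.uniform(0.8, 1.2), rng.uniform(-0.1, 0.1)
    return np.clip(out * gain + offset, 0.0, 1.0)


def expand_dataset(images: list[np.ndarray], copies: int, seed: int = 0) -> list[np.ndarray]:
    """Originals plus `copies` augmented variants of each image."""
    rng = np.random.default_rng(seed)
    expanded = list(images)
    for img in images:
        expanded.extend(augment(img, rng) for _ in range(copies))
    return expanded
```

Rotation by 90° keeps square inputs (such as 80×80 crops) the same shape; arbitrary angles would need interpolation, for example via an image-processing library.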

CNN Architecture for Morphology Classification: A typical implementation involves:

- Image Pre-processing: Convert images to grayscale, resize to standardized dimensions (e.g., 80×80 pixels), and normalize pixel values [16]

- Data Partitioning: Split dataset into training (80%), validation (10%), and testing (10%) subsets

- Model Training: Implement a CNN architecture with multiple convolutional and pooling layers for feature extraction, followed by fully connected layers for classification

- Performance Evaluation: Assess using accuracy, precision, recall, and F1-score metrics [16]
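A compact PyTorch sketch of the architecture pattern just listed, sized for pre-processed 80×80 grayscale inputs and, by default, the 12 defect classes of the modified David classification. Layer counts and widths, the dropout rate, and the 80/10/10 index-split helper are illustrative assumptions, not the published SMD/MSS model.

```python
import torch
from torch import nn


class SpermMorphologyCNN(nn.Module):
    """Small CNN for (N, 1, 80, 80) grayscale sperm images; layer sizes are illustrative."""

    def __init__(self, n_classes: int = 12) -> None:
        super().__init__()
        self.features = nn.Sequential(  # convolution + pooling blocks for feature extraction
            nn.Conv2d(1, 32, kernel_size=3, padding=1), nn.ReLU(), nn.MaxPool2d(2),  # 80 -> 40
            nn.Conv2d(32, 64, kernel_size=3, padding=1), nn.ReLU(), nn.MaxPool2d(2),  # 40 -> 20
            nn.Conv2d(64, 128, kernel_size=3, padding=1), nn.ReLU(), nn.MaxPool2d(2),  # 20 -> 10
        )
        self.classifier = nn.Sequential(  # fully connected layers for classification
            nn.Flatten(),
            nn.Linear(128 * 10 * 10, 256), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(256, n_classes),  # raw logits; pair with nn.CrossEntropyLoss
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.classifier(self.features(x))


def split_indices(n: int, seed: int = 0) -> tuple[torch.Tensor, torch.Tensor, torch.Tensor]:
    """Shuffle indices 0..n-1 and split them 80/10/10 into train/validation/test."""
    perm = torch.randperm(n, generator=torch.Generator().manual_seed(seed))
    n_train, n_val = n * 8 // 10, n // 10
    return perm[:n_train], perm[n_train:n_train + n_val], perm[n_train + n_val:]
```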

Reported accuracy ranges from 55% to 92% across different morphological classes, with the highest performance on distinct abnormalities such as macrocephalic and microcephalic heads [16].
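Per-class results like these are usually derived from a confusion matrix. The NumPy sketch below computes overall accuracy and per-class precision, recall, and F1; how [16] defined per-class accuracy is not stated here, so reading it as per-class recall would be an assumption. Function names are hypothetical.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, n_classes: int) -> np.ndarray:
    """Rows = true class, columns = predicted class."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm


def classification_metrics(cm: np.ndarray) -> dict:
    """Overall accuracy plus per-class precision, recall and F1 from a confusion matrix."""
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0)  # column sums: how often each class was predicted
    actual = cm.sum(axis=1)  # row sums: how often each class truly occurs
    with np.errstate(divide="ignore", invalid="ignore"):
        precision = np.where(predicted > 0, tp / predicted, 0.0)
        recall = np.where(actual > 0, tp / actual, 0.0)
        f1 = np.where(precision + recall > 0, 2 * precision * recall / (precision + recall), 0.0)
    return {"accuracy": float(tp.sum() / cm.sum()), "precision": precision, "recall": recall, "f1": f1}
```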

Motility Analysis Validation Framework

The Motility Ratio method provides a novel approach to validate sperm motility assessment techniques [14]. This method establishes a "gold standard" for motility measurement through controlled experimental design:

Experimental Protocol:

- Split semen sample into two equal fractions

- Fraction A: Maintain maximum motility (100% reference)

- Fraction B: Eliminate motility through freeze-thaw cycling (0% reference)

- Create standardized motility ratios by mixing Fractions A and B in predetermined proportions (e.g., 0%, 25%, 50%, 75%, 100%)

- Compare measured motility against theoretical values across different assessment methods [14]

This validation framework demonstrated that different chamber types introduce significant variability, with Leja chambers showing minimal bias (<1%) while coverslip preparations substantially overestimated motility (>7%) [14].
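Because every mix has a known theoretical motility, a method's bias is the average gap between what it measures and what the mixing ratio predicts. The sketch assumes that Fraction B contributes no motile sperm and that Fraction A defines the 100% reference, as described above; the optional scaling by Fraction A's own motility, expressing bias in percentage points, and the readings are our assumptions.

```python
from statistics import mean


def expected_motility(fraction_a: float, motility_a_pct: float = 100.0) -> float:
    """Expected motility (%) of a mix containing `fraction_a` of Fraction A (Fraction B = 0%)."""
    return fraction_a * motility_a_pct


def motility_bias(measured_pct: dict[float, float], motility_a_pct: float = 100.0) -> float:
    """Mean (measured - expected) motility, in percentage points, across mixing ratios."""
    return mean(
        measured - expected_motility(frac, motility_a_pct)
        for frac, measured in measured_pct.items()
    )


# Hypothetical readings for the 0/25/50/75/100% mixes with one chamber type
readings = {0.0: 1.0, 0.25: 33.0, 0.5: 58.0, 0.75: 83.0, 1.0: 100.0}
print(f"mean bias: {motility_bias(readings):+.1f} percentage points")
```

A positive bias indicates systematic overestimation, as reported above for coverslip preparations.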

Diagram 2: Motility Validation Method

Implications for Drug Development and Research

For pharmaceutical researchers developing compounds affecting male fertility, the documented variability in semen analysis presents both challenges and opportunities:

Clinical Trial Design: Account for intrinsic variability in semen parameters through appropriate sample size calculations and repeated-measures designs. The high within-subject variability in oligozoospermic men (CVw of 44.6% for total motile sperm count) necessitates larger sample sizes or multiple baseline assessments [13].

Endpoint Selection: Consider incorporating AI-based morphological assessment as exploratory endpoints to reduce measurement variability and increase sensitivity to detect treatment effects.

Quality Assurance: Implement centralized laboratories with standardized protocols and participation in external quality control programs to minimize inter-site variability in multicenter trials [11].
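Under the simplifying assumption that repeated samples from one man are independent and vary only with the within-subject CV (CVw), averaging k baseline samples shrinks the CV of the baseline estimate by a factor of √k, so the number of samples needed to reach a target CV is ⌈(CVw / target)²⌉. The 20% target below is an arbitrary example, not a recommendation from the cited sources.

```python
from math import ceil, sqrt


def cv_of_mean(cv_within_pct: float, n_samples: int) -> float:
    """CV (%) of the average of n independent samples from one man."""
    return cv_within_pct / sqrt(n_samples)


def samples_needed(cv_within_pct: float, target_cv_pct: float) -> int:
    """Smallest number of baseline samples whose mean has a CV at or below the target."""
    return ceil((cv_within_pct / target_cv_pct) ** 2)


# Total motile sperm count in oligozoospermic men: CVw = 44.6% [13]
print(samples_needed(44.6, 20.0), round(cv_of_mean(44.6, 5), 1))
```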

The integration of deep learning approaches into male fertility research offers the potential to not only reduce subjectivity but also to discover novel morphological signatures predictive of drug efficacy or toxicity that may escape conventional manual assessment.

Limitations of Current Computer-Assisted Semen Analysis (CASA) Systems

Computer-Assisted Semen Analysis (CASA) systems represent a significant technological advancement in the field of andrology, aiming to automate and objectify the evaluation of key sperm parameters such as sperm concentration, motility, and morphology [17]. The integration of artificial intelligence (AI), particularly deep neural networks, promises to enhance the analysis of sperm motility and morphology by learning complex patterns from image and video data, potentially overcoming the limitations of manual, subjective assessments [17].

However, despite these promising innovations, current CASA systems exhibit persistent limitations. Evidence shows that the results from various CASA systems are not fully consistent with those from the manual method, which is still considered the gold standard [4]. These inconsistencies can lead to skewed clinical decisions, particularly in the critical choice between conventional in vitro fertilization (IVF) and intracytoplasmic sperm injection (ICSI) [4]. This document details these limitations through structured data presentation, experimental protocols, and analytical diagrams to inform researchers and drug development professionals.

Quantitative Performance Data of CASA Systems

A 2025 study directly compared three CASA systems—the Hamilton-Thorne CEROS II Clinical, the LensHooke X1 Pro, and the SQA-V Gold Sperm Quality Analyzer—against the manual method, which was performed according to the WHO laboratory manual and served as the gold standard [4]. The study involved 326 participants and used statistical measures like the Intraclass Correlation Coefficient (ICC) and Cohen's Kappa (κ) to evaluate agreement.

Table 1: Agreement Between CASA Systems and Manual Method for Sperm Parameters

| Sperm Parameter | CASA System | Statistical Measure | Value | Interpretation |
|---|---|---|---|---|
| Concentration | Hamilton-Thorne CEROS II | ICC | 0.723 | Moderate [4] |
| Concentration | LensHooke X1 Pro | ICC | 0.842 | Good [4] |
| Concentration | SQA-V Gold | ICC | 0.631 | Moderate [4] |
| Motility | Hamilton-Thorne CEROS II | ICC | 0.634 | Moderate [4] |
| Motility | LensHooke X1 Pro | ICC | 0.417 | Poor [4] |
| Motility | SQA-V Gold | ICC | 0.451 | Poor [4] |
| Morphology | LensHooke X1 Pro | ICC | 0.160 | Poor [4] |
| Morphology | SQA-V Gold | ICC | 0.261 | Poor [4] |

Table 2: Agreement for Clinical Diagnosis Between CASA Systems and Manual Method

| Clinical Diagnosis | CASA System | Cohen's Kappa (κ) | Interpretation |
|---|---|---|---|
| Oligozoospermia | LensHooke X1 Pro | 0.701 | Substantial [4] |
| Oligozoospermia | Hamilton-Thorne CEROS II | 0.664 | Substantial [4] |
| Oligozoospermia | SQA-V Gold | 0.588 | Moderate [4] |
| Asthenozoospermia | LensHooke X1 Pro | 0.405 | Moderate [4] |
| Asthenozoospermia | Hamilton-Thorne CEROS II | 0.249 | Fair [4] |
| Asthenozoospermia | SQA-V Gold | 0.157 | Slight [4] |
| Teratozoospermia | LensHooke X1 Pro | 0.177 | Slight [4] |
| Teratozoospermia | SQA-V Gold | 0.008 | No agreement [4] |

A critical finding was the impact of these discrepancies on treatment allocation. When based on manual morphology assessment, the ratio for ICSI was approximately 0.5. However, when using the LensHooke X1 Pro and SQA-V Gold systems, the ratios skewed to about 0.31 and 0.15, respectively. This demonstrates a significant reduction in ICSI recommendation when relying on CASA morphology analysis, potentially affecting treatment outcomes [4].

Experimental Protocol for Validating CASA Systems

The following protocol, derived from contemporary research methodologies, outlines the steps for a rigorous validation study comparing CASA systems against the manual gold standard [4].

Sample Collection and Preparation

- Ethics and Consent: The study must be reviewed and approved by an institutional review board (e.g., CREC No. 2016.499). All participants must provide informed consent [4].

- Sample Size: Recruitment of a sufficient number of participants (e.g., n > 300) to ensure statistical power [4].

- Sample Handling: Semen samples are collected and processed according to standard clinical protocols to ensure sample integrity.

Manual Semen Analysis (Gold Standard)

- Procedure: Manual analysis is performed by an experienced andrologist in strict adherence to the World Health Organization (WHO) laboratory manual (5th Edition or current) [4].

- Quality Control: The andrology unit should implement regular internal quality control and participate in external quality assurance programs (e.g., United Kingdom National External Quality Assessment Service - UK NEQAS) [4] [18].

- Parameters Assessed:

- Concentration: Calculated using an improved Neubauer counting chamber at 400x magnification [4].

- Motility: Evaluated at 400x magnification and classified into progressive (PR), non-progressive (NP), and immotile (IM) categories [4].

- Morphology: Stained using the Diff-Quik method and evaluated at 1000x oil-immersion magnification [4].

- Replication: All manual analyses should be performed in duplicate to ensure reliability.

CASA System Analysis

- Systems Tested: The protocol should specify the CASA systems under evaluation (e.g., Hamilton-Thorne CEROS II, LensHooke X1 Pro, SQA-V Gold) [4].

- Calibration and Operation: Each CASA system must be operated according to the manufacturer's instructions. This includes using specific slides (e.g., Leja 4 chamber slides for CEROS II) or test cassettes (e.g., for LensHooke X1 Pro) and ensuring proper calibration [4].

- Parallel Assessment: The same semen sample, or an aliquot from the same ejaculate, should be analyzed by the manual method and each CASA system to allow for direct pairwise comparisons.

Data and Statistical Analysis

- Statistical Tests:

  - Intraclass Correlation Coefficient (ICC): A two-way random-effects model should be used to measure consistency for continuous data (concentration, motility). Values below 0.5 indicate poor agreement, 0.5-0.75 moderate, 0.75-0.9 good, and above 0.9 excellent agreement [4].

  - Bland-Altman Analysis: To assess the agreement between two measurement methods by calculating the mean difference and limits of agreement [4].

  - Cohen's Kappa (κ): To evaluate the agreement for categorical diagnoses (e.g., oligozoospermia, asthenozoospermia). Interpretation: ≤0 as no agreement, 0.01-0.20 as none to slight, 0.21-0.40 as fair, 0.41-0.60 as moderate, 0.61-0.80 as substantial, and 0.81-1.00 as almost perfect agreement [4].

- Clinical Impact Assessment: Analyze the discrepancy in treatment allocation (IVF vs. ICSI) based on morphology results from different methods [4].
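The three agreement statistics listed above can be sketched without any third-party dependencies. This is a minimal illustration, assuming paired results (manual vs. one CASA system) held in plain Python lists; the function names are hypothetical, and a validated statistics package would be the safer choice for a real study. `icc_2way_random` computes the single-measure ICC from a two-way random-effects ANOVA: the "agreement" form is Shrout and Fleiss ICC(2,1), which penalizes a systematic offset between methods, while the "consistency" form drops the between-method term from the denominator.

```python
import math
from collections import Counter

def icc_2way_random(ratings, form="agreement"):
    """Single-measure ICC from a two-way random-effects ANOVA.

    ratings: one row per semen sample, one column per method, e.g.
             [[manual_1, casa_1], [manual_2, casa_2], ...].
    form="agreement"   -> Shrout & Fleiss ICC(2,1); a constant bias lowers it.
    form="consistency" -> ignores a constant offset between methods.
    """
    n, k = len(ratings), len(ratings[0])
    grand = sum(map(sum, ratings)) / (n * k)
    row_means = [sum(r) / k for r in ratings]
    col_means = [sum(r[j] for r in ratings) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for r in ratings for x in r)
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    denom = ms_rows + (k - 1) * ms_err
    if form == "agreement":
        denom += k * (ms_cols - ms_err) / n
    return (ms_rows - ms_err) / denom

def bland_altman(method_a, method_b):
    """Mean difference (bias) and 95% limits of agreement (bias +/- 1.96 SD)."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = sum(diffs) / len(diffs)
    sd = math.sqrt(sum((d - bias) ** 2 for d in diffs) / (len(diffs) - 1))
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement for categorical diagnoses.

    Undefined (division by zero) if both raters use one identical category.
    """
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / n ** 2
    return (observed - expected) / (1 - expected)
```

With a constant +1 offset between two methods, the consistency ICC stays at 1.0 while the agreement ICC drops (to 10/13 for the four-sample example in the checks), which is why the choice of form should be stated in the protocol.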

Diagram 1: CASA System Validation Workflow. This chart outlines the key steps for a rigorous experimental protocol to validate CASA systems against the manual gold standard.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Semen Analysis Validation Studies

| Item | Function / Description | Example / Standard |
|---|---|---|
| Improved Neubauer Chamber | A hemocytometer used for the manual counting of sperm concentration [4]. | Standard laboratory equipment. |
| Diff-Quik Staining Kit | A rapid staining method for sperm morphology evaluation, based on a modified Romanowsky technique [4]. | Halotech [4]. |
| Leja Slides | Disposable counting chambers with a defined depth, specifically designed for sperm analysis and compatible with specific CASA systems [4]. | Leja 4 chambers (IMV Technologies) [4]. |
| LensHooke Test Cassettes | Disposable cassettes with dual drip areas for analyzing pH and other sperm parameters on the LensHooke X1 Pro system [4]. | Bonraybio [4]. |
| SQA-V Gold Capillary | A disposable capillary tube used to load the semen sample into the SQA-V Gold analyzer [4]. | Medical Electronic Systems [4]. |
| WHO Laboratory Manual | The definitive international standard and guideline for the examination and processing of human semen [4]. | World Health Organization (5th Edition or current). |
| External Quality Control (EQC) | Programs to detect and correct systematic errors, ensuring standardization and high-quality results across laboratories [18]. | E.g., External Quality Control for SCA [18]. |

Limitations and Challenges for Deep Learning Integration

The pursuit of more accurate CASA systems through deep learning faces several significant hurdles.

Algorithmic and Data Challenges: A primary issue is the inconsistency of results across different CASA platforms, as each system uses proprietary algorithms that have not been standardized [4] [17]. This is compounded by the "black-box" nature of complex deep learning models, which can make it difficult to understand how specific morphological or motility conclusions are reached, potentially hindering clinical trust and adoption [17]. Furthermore, the performance of these models is heavily dependent on large, high-quality, annotated datasets for training, which are often lacking. This can lead to challenges in model generalizability across diverse patient populations and clinical settings [17].

Clinical and Regulatory Hurdles: As demonstrated, deviations in morphology analysis can directly lead to skewed IVF/ICSI treatment allocation, a critical clinical decision [4]. Therefore, rigorous clinical validation through controlled trials is essential before AI-driven CASA systems can be widely adopted. This process is intertwined with the need for standardized evaluation protocols and clear regulatory frameworks to ensure patient safety and data privacy, especially given the sensitive nature of reproductive information [17].

Diagram 2: Key Limitations of Current CASA Systems. This diagram categorizes the major technical and clinical challenges facing current systems and the integration of deep learning.

Current CASA systems, despite their objective of automating and standardizing semen analysis, demonstrate significant limitations, particularly in the assessment of sperm morphology and motility, when compared to the manual method. These inconsistencies are not merely statistical but have direct, meaningful consequences for clinical treatment pathways. The integration of deep neural networks holds the potential to overcome these limitations by extracting subtle, predictive features from raw image data [17]. However, this path is fraught with challenges related to data, algorithms, and clinical validation. Future research must therefore focus on developing more transparent and robust AI models, curated multi-center datasets, and conducting rigorous external validation studies to ensure that these advanced systems can fulfill their promise of personalized, efficient, and accurate fertility care.

The Role of Deep Learning in Standardizing Reproductive Diagnostics

The diagnostic assessment of male fertility has long been constrained by subjective analytical techniques. Conventional semen analysis, particularly the evaluation of sperm motility and morphology, suffers from significant inter-observer variability despite standardized World Health Organization (WHO) protocols [19] [3]. This lack of standardization impedes diagnostic accuracy and reliable treatment planning in clinical andrology.

Deep learning (DL), a subset of artificial intelligence (AI), is emerging as a transformative technology for automating and standardizing reproductive diagnostics. Unlike traditional computer-aided sperm analysis (CASA) systems, deep convolutional neural networks (DCNNs) can learn discriminative features directly from image and video data, minimizing human subjectivity [19] [20]. This application note details experimental protocols and analytical frameworks for implementing deep learning solutions to quantify sperm motility and morphology, with direct relevance for researchers and drug development professionals working in reproductive medicine.

Quantitative Performance of Deep Learning Models

Recent validation studies demonstrate that deep learning models can achieve performance levels comparable to human experts in classifying sperm quality parameters. The tables below summarize quantitative results from published studies on sperm motility and morphology analysis.

Table 1: Performance of Deep Learning Models in Sperm Motility Analysis

| Study Reference | Model Architecture | Task | Performance Metrics |
|---|---|---|---|
| Scientific Reports, 2023 [19] | ResNet-50 (Optical Flow) | 3-category motility (Progressive, Non-progressive, Immotile) | MAE: 0.05; Correlation with manual: r=0.88 (Progressive) |
| Scientific Reports, 2023 [19] | ResNet-50 (Optical Flow) | 4-category motility (Rapid, Slow, Non-progressive, Immotile) | MAE: 0.07; Correlation: r=0.673 (Rapid progressive) |
| VISEM Dataset Study [20] | Custom CNN (MotionFlow) | Motility estimation | MAE: 6.842% |

Table 2: Performance of Deep Learning Models in Sperm Morphology Analysis

| Study Reference | Model/Dataset | Classification Task | Reported Accuracy/Performance |
|---|---|---|---|
| SMD/MSS Dataset, 2025 [16] | Custom CNN | 12 morphological defect classes (David's classification) | Accuracy range: 55% to 92% |
| VISEM Dataset Study [20] | Custom CNN | Morphology estimation | MAE: 4.148% |
| BMC Urology, 2025 [3] | Review of conventional ML | Sperm head classification | Up to 90% accuracy (Bayesian model) |

Experimental Protocols for Sperm Analysis

Protocol 1: DCNN for Sperm Motility Categorization

This protocol outlines the procedure for training a Deep Convolutional Neural Network (DCNN) to classify sperm motility into WHO categories using video data [19].

Materials:

- Fresh semen samples

- Microscope with video recording capability (400x magnification)

- Preheated slides and microscope stage (37°C)

- Computational resources (GPU recommended)

- Python with OpenCV, Keras, and TensorFlow/PyTorch

Procedure:

Sample Preparation & Video Acquisition:

- Incubate fresh semen samples at 37°C for 30-60 minutes after collection.

- Prepare wet preparations and record multiple random fields for 5-10 seconds each, ensuring a minimum of 200 spermatozoa are captured per sample.

- Maintain a constant temperature of 37°C during recording using a heated stage.

Ground Truth Labeling:

- Have trained technicians assess video recordings according to WHO 1999 [19] or current WHO criteria.

- Categorize spermatozoa into: (a) Rapid Progressive, (b) Slow Progressive, (c) Non-Progressive, and (d) Immotile. For a 3-category model, combine (a) and (b) into "Progressive."

- Use mean values from multiple reference laboratories if possible to reduce labeling bias.
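The label handling described above amounts to simple arithmetic on per-sample percentages. The sketch below assumes each laboratory report is a dictionary of motility percentages; the key names and helper functions are hypothetical, chosen only for illustration.

```python
def to_three_categories(four_cat):
    """Merge WHO 1999 grades (a) rapid and (b) slow progressive into one class."""
    return {
        "progressive": four_cat["rapid"] + four_cat["slow"],
        "non_progressive": four_cat["non_progressive"],
        "immotile": four_cat["immotile"],
    }

def consensus_label(lab_reports):
    """Average each motility percentage across reference laboratories."""
    categories = lab_reports[0].keys()
    return {c: sum(r[c] for r in lab_reports) / len(lab_reports) for c in categories}
```

Because every report sums to 100%, the averaged consensus label also sums to 100%, so it can serve directly as a regression target.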

Motion Representation Preprocessing:

- For each second of video, compute the Lucas–Kanade optical flow between consecutive frames. This compresses the temporal movement information into a single 2D image representing motion vectors.

- Use these optical flow images as the input to the DCNN, rather than raw video frames.
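The source does not state whether the Lucas-Kanade flow was computed densely or on tracked points, nor how the frames within one second were combined. OpenCV's `cv2.calcOpticalFlowPyrLK` tracks sparse points, so this minimal NumPy sketch instead uses a dense, windowed Lucas-Kanade solver. It also assumes, as a labeled assumption, that the per-second representation is the mean flow over all consecutive frame pairs, stored as an H x W x 2 array of (u, v) motion vectors. All function names are hypothetical.

```python
import numpy as np

def window_sum(a, r):
    """Sum over each pixel's (2r+1) x (2r+1) neighbourhood (edge-padded)."""
    h, w = a.shape
    padded = np.pad(a, r, mode="edge")
    out = np.zeros((h, w), dtype=float)
    for dy in range(2 * r + 1):
        for dx in range(2 * r + 1):
            out += padded[dy:dy + h, dx:dx + w]
    return out

def lucas_kanade_dense(f0, f1, r=2, eps=1e-6):
    """Per-pixel Lucas-Kanade flow (u, v) from frame f0 to frame f1."""
    f0 = f0.astype(float)
    f1 = f1.astype(float)
    iy, ix = np.gradient(f0)          # spatial derivatives (rows = y, cols = x)
    it = f1 - f0                      # temporal derivative
    sxx, syy, sxy = (window_sum(p, r) for p in (ix * ix, iy * iy, ix * iy))
    sxt, syt = window_sum(ix * it, r), window_sum(iy * it, r)
    det = sxx * syy - sxy ** 2
    ok = det > eps                    # leave textureless windows at zero flow
    u = np.zeros_like(f0)
    v = np.zeros_like(f0)
    # Closed-form solution of [[sxx, sxy], [sxy, syy]] @ [u, v] = -[sxt, syt].
    u[ok] = (-syy * sxt + sxy * syt)[ok] / det[ok]
    v[ok] = (sxy * sxt - sxx * syt)[ok] / det[ok]
    return u, v

def flow_image(frames, r=2):
    """Average the flow of consecutive frame pairs into one H x W x 2 array."""
    flows = [np.stack(lucas_kanade_dense(a, b, r), axis=-1)
             for a, b in zip(frames[:-1], frames[1:])]
    return np.mean(flows, axis=0)
```

For a Gaussian blob shifted one pixel to the right, the estimated u at the blob centre is close to 1 and v is essentially zero. Real sperm videos move faster than the small-displacement assumption allows, which is why pyramidal (coarse-to-fine) variants are normally used.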

Model Architecture & Training:

- Employ a ResNet-50 architecture, replacing the final layer with a number of neurons equal to your motility categories (3 or 4).

- Use the Adam optimizer with a learning rate of 0.0004 and Mean Absolute Error (MAE) as the loss function.

- Implement a 10-fold cross-validation strategy to robustly evaluate model performance and prevent overfitting.
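In Keras, the stated settings would correspond to `tf.keras.optimizers.Adam(learning_rate=0.0004)` with `loss="mean_absolute_error"`, on top of `tf.keras.applications.ResNet50(include_top=False, pooling="avg")` followed by a `Dense` layer with 3 or 4 units. The dependency-free sketch below covers only the cross-validation split and the loss. It assumes, because the source does not say, that folds are formed per semen sample rather than per optical-flow image, so that images from one ejaculate never appear in both training and test sets. The helper names are hypothetical.

```python
import random

def grouped_k_fold(sample_ids, k=10, seed=0):
    """Yield (train_ids, test_ids); each sample lands in exactly one test fold."""
    ids = sorted(set(sample_ids))
    random.Random(seed).shuffle(ids)
    folds = [ids[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        yield train, test

def mae(pred, target):
    """Mean absolute error between predicted and manual motility fractions."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)
```

Each of the 10 models is trained on its `train` samples and scored on the held-out fold, and the reported MAE is the average over folds.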

Validation & Statistical Analysis:

- Compare DCNN-predicted motility percentages against manual ground truth using Pearson’s correlation coefficient and MAE.

- Generate difference plots (Bland-Altman-style) to visualize agreement and identify any systematic biases.
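The correlation and difference-plot inputs can be computed with the standard library alone. This is a minimal sketch with hypothetical function names; plotting is left to any charting tool, with the mean of each prediction/manual pair on the x-axis and their difference on the y-axis.

```python
import math

def pearson_r(x, y):
    """Pearson's correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def difference_plot_points(pred, manual):
    """(mean, difference) pairs for a Bland-Altman-style difference plot."""
    return [((p + m) / 2, p - m) for p, m in zip(pred, manual)]
```

A high r alone can hide a constant bias, so the difference plot is the complementary check: a cloud of points centred away from zero indicates systematic over- or under-estimation.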

Figure 1: Sperm Motility Analysis Workflow. This diagram outlines the key steps for developing a deep learning model to classify sperm motility from video data, from sample preparation to model validation.

Protocol 2: CNN-Based Sperm Morphology Classification

This protocol details the development of a Convolutional Neural Network (CNN) for classifying sperm morphology using the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset based on modified David classification [16].

Materials:

- Stained sperm smears (e.g., RAL Diagnostics staining kit)

- Microscope with 100x oil immersion objective and digital camera

- MMC CASA system or equivalent for image acquisition

- Computational environment (Python 3.8 with deep learning frameworks)

Procedure:

Dataset Curation & Annotation:

- Collect semen samples and prepare smears according to WHO guidelines. Include samples with varying morphological profiles.

- Capture images of individual spermatozoa using a bright-field microscope with a 100x oil immersion objective.

- Establish ground truth through independent classification by multiple experienced embryologists. Use a classification system such as the modified David classification, which includes 12 defect classes across the head, midpiece, and tail.

Data Preprocessing & Augmentation:

- Resize all images to a uniform resolution (e.g., 80x80 pixels) and convert to grayscale.

- Normalize pixel values to a standard range (e.g., 0-1).

- Address class imbalance and limited data by applying augmentation techniques such as rotation, flipping, scaling, and brightness adjustment to increase the effective dataset size.
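The preprocessing steps above can be sketched in NumPy. This is an illustration under stated assumptions: nearest-neighbour resizing stands in for a library resize (such as OpenCV or PIL), the grayscale weights are the ITU-R BT.601 luma coefficients, and the augmentation ranges are arbitrary. Scaling augmentation is omitted for brevity. All function names are hypothetical.

```python
import numpy as np

def to_grayscale(rgb):
    """Luma conversion with ITU-R BT.601 weights; rgb is H x W x 3 in [0, 255]."""
    return rgb[..., :3] @ np.array([0.299, 0.587, 0.114])

def resize_nearest(img, size=(80, 80)):
    """Nearest-neighbour resize to `size` (rows, cols)."""
    h, w = img.shape
    rows = np.arange(size[0]) * h // size[0]
    cols = np.arange(size[1]) * w // size[1]
    return img[rows[:, None], cols[None, :]]

def normalize(img):
    """Scale 8-bit intensities to [0, 1]."""
    return np.clip(img.astype(np.float32) / 255.0, 0.0, 1.0)

def augment(img, rng):
    """Random 90-degree rotation, optional horizontal flip, brightness jitter."""
    out = np.rot90(img, k=int(rng.integers(4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    out = out * rng.uniform(0.8, 1.2)          # +/- 20% brightness (arbitrary)
    return np.clip(out, 0.0, 1.0)
```

Usage: `augment(normalize(resize_nearest(to_grayscale(raw))), np.random.default_rng(0))` yields one 80 x 80 variant per call. Calling it several times per image of a rare defect class is one way to counter class imbalance.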

Inter-Expert Agreement Analysis:

- Quantify the level of agreement between the three experts for each image. Categorize agreement as: Total Agreement (TA), Partial Agreement (PA), or No Agreement (NA).

- Use statistical tests (e.g., Fisher's exact test) to identify significant differences in classification between experts. This analysis reveals the inherent subjectivity and complexity of the task.
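With three annotators, the TA/PA/NA categories follow directly from the number of distinct labels per image. This minimal sketch assumes exactly three labels per image; the function names and the example class names are illustrative only.

```python
from collections import Counter

def agreement_level(labels):
    """Classify one image's three expert labels as TA, PA or NA."""
    distinct = len(set(labels))
    if distinct == 1:
        return "TA"   # all three experts chose the same class
    if distinct == 2:
        return "PA"   # two of the three experts agree
    return "NA"       # every expert chose a different class

def agreement_summary(annotations):
    """Fraction of images falling into each agreement category."""
    counts = Counter(agreement_level(labels) for labels in annotations)
    return {level: counts[level] / len(annotations) for level in ("TA", "PA", "NA")}
```

The resulting per-class TA fractions indicate which defect classes are inherently ambiguous, and therefore where model accuracy is likely to be capped.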

Model Development & Partitioning:

- Design a CNN architecture with multiple convolutional and pooling layers for feature extraction, followed by fully connected layers for classification.

- Partition the augmented dataset randomly, allocating 80% for training and 20% for testing. Further split the training set, using a portion for validation during training.

- Train the model using the training set, monitoring performance on the validation set to avoid overfitting.
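An 80/20 split with a further validation hold-out can be sketched as below. The sketch departs from the text in one labeled respect: it splits the original image IDs before augmentation, a common safeguard that keeps augmented copies of one spermatozoon out of both training and test sets. The 10% validation fraction and the function name are hypothetical.

```python
import random

def split_ids(image_ids, test_frac=0.2, val_frac=0.1, seed=42):
    """Shuffle once, then carve out test, validation and training ID lists."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)
    n_test = round(len(ids) * test_frac)
    test, rest = ids[:n_test], ids[n_test:]
    n_val = round(len(rest) * val_frac)      # validation taken from the 80%
    return rest[n_val:], rest[:n_val], test  # train, val, test
```

Augmentation would then be applied only to the `train` IDs, leaving validation and test images unaltered.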

Performance Evaluation:

- Evaluate the final model on the held-out test set.

- Report overall accuracy and, crucially, performance metrics (e.g., precision, recall) for each morphological defect class to ensure clinical utility.
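Per-class reporting can be sketched with the standard library. The function name is hypothetical; classes with no predictions or no true instances get a precision or recall of 0.0 rather than raising an error.

```python
def per_class_report(y_true, y_pred):
    """Overall accuracy plus precision, recall, F1 and support per class."""
    classes = sorted(set(y_true) | set(y_pred))
    report = {}
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[c] = {"precision": precision, "recall": recall, "f1": f1, "support": tp + fn}
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    return accuracy, report
```

In a dataset dominated by one class, overall accuracy can look acceptable while a rare defect class has zero recall, which is exactly what the per-class breakdown exposes.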

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Deep Learning-based Sperm Analysis

| Item Name | Function/Application | Specification Notes |
|---|---|---|
| Time-Lapse Incubator (e.g., EmbryoScope+) | Maintains stable culture conditions while capturing embryonic development images [21] [22]. | Integrated microscope and camera; key for embryo analysis. |
| Optical Microscope with Heated Stage | For live sperm video recording under physiological conditions [19]. | Must maintain 37°C; 400x magnification for motility. |
| 100x Oil Immersion Objective | High-resolution imaging of individual spermatozoa for morphology analysis [16]. | Essential for detailed head, midpiece, and tail assessment. |
| RAL Staining Kit | Differentiates sperm structures for morphological assessment on smears [16]. | Provides contrast for head, acrosome, and midpiece. |
| Global Culture Medium (e.g., G-TL) | Supports embryo development in time-lapse systems [22]. | Optimized for culture in time-lapse incubators. |
| ResNet-50 / Custom CNN | Deep learning architecture for image and motion analysis [19] [23]. | Pre-trained models can be adapted via transfer learning. |
| Python Deep Learning Frameworks | Model development, training, and validation environment [19] [16]. | TensorFlow, PyTorch, Keras with OpenCV for image processing. |

Analytical Framework & Data Interpretation

The integration of deep learning into reproductive diagnostics requires careful experimental design and critical evaluation of results.

Key Considerations for Model Validation:

- Ground Truth Quality: The performance of any DL model is capped by the quality of its training labels. In sperm morphology, significant inter-expert variability is a major challenge. Models trained on data with poor consensus will reflect this inconsistency [16] [3].

- Clinical Correlation: While high accuracy in classifying motility or morphology is important, the ultimate validation is the model's ability to predict clinically relevant outcomes, such as fertilization success or live birth rates. This requires linking model predictions to patient outcomes [23].

- Generalizability: A model must perform well on data from different clinics, using various microscopes, staining protocols, and sample preparation techniques. Testing on diverse, external datasets is crucial before clinical deployment [3].

Figure 2: Analytical Validation Framework. This diagram illustrates the core dependencies for validating a deep learning model in reproductive diagnostics, emphasizing the need for high-quality labels, clinical correlation, and multi-center testing.

Deep learning models provide a robust methodological foundation for standardizing the assessment of sperm motility and morphology. The protocols outlined herein enable the quantitative, automated analysis of sperm parameters, directly addressing the critical issue of subjectivity in conventional diagnostics. For researchers and pharmaceutical developers, these technologies offer reproducible biomarkers for evaluating male fertility and assessing the efficacy of novel therapeutic compounds. The continued development of large, high-quality annotated datasets and the validation of models against clinical outcomes will be essential to fully integrate these tools into mainstream reproductive medicine and drug development pipelines.

Deep learning (DL) is revolutionizing the field of andrology, particularly in the analysis of sperm morphology and motility. This paper provides a comprehensive overview of the two most pivotal deep learning architectures—Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs)—and details their specific applications and protocols in male infertility research. CNNs, with their superior spatial feature extraction capabilities, have become the de facto standard for image-based tasks such as sperm morphology analysis and head/vacuole detection. RNNs, especially their variants like Long Short-Term Memory (LSTM) networks, are uniquely suited for temporal sequence analysis, making them ideal for assessing dynamic parameters like sperm motility and trajectory patterns. This article presents structured data on model performance, standardized experimental protocols for implementing these architectures, and a curated list of essential research reagents and computational tools. By framing these technologies within the context of a broader thesis on deep neural networks for sperm quality estimation, this work aims to provide researchers, scientists, and drug development professionals with practical resources to advance andrology research.

The adoption of deep learning in andrology addresses critical challenges in traditional analysis methods, which are often characterized by subjectivity, high inter-observer variability, and substantial workload. Deep learning models, through their ability to learn hierarchical representations directly from data, offer a path toward automated, standardized, and high-throughput analysis.

Convolutional Neural Networks (CNNs) are a class of deep neural networks primarily designed for processing grid-like data such as images. Their architecture is inspired by the organization of the animal visual cortex, where individual neurons respond to stimuli only in a restricted region of the visual field known as the receptive field [24]. CNNs automatically and adaptively learn spatial hierarchies of features through backpropagation, from low-level edges in early layers to high-level conceptual features in deeper layers [25]. This makes them exceptionally powerful for tasks like classifying sperm as normal or abnormal based on head morphology.

Recurrent Neural Networks (RNNs) represent another fundamental class of neural networks engineered for sequential data. Unlike feedforward networks, RNNs contain loops that allow information to persist, enabling the network to maintain an internal state or "memory" of previous inputs in the sequence [26] [27]. This architectural characteristic is crucial for modeling temporal dynamics, such as those found in sperm motility tracks. However, vanilla RNNs often struggle with learning long-range dependencies due to the vanishing and exploding gradient problems. This limitation has been effectively addressed by more sophisticated gated architectures, such as Long Short-Term Memory (LSTM) networks and Gated Recurrent Units (GRUs), which incorporate mechanisms to selectively remember or forget information over long time periods [26] [28].

The integration of these technologies into andrology represents a paradigm shift from subjective manual assessment to objective, data-driven analysis, with the potential to unlock novel biomarkers for male fertility and drug efficacy testing.

Convolutional Neural Networks (CNNs) in Sperm Morphology Analysis

Core Architectural Principles

CNNs process images through a series of specialized layers that transform pixel values into a final prediction (e.g., a classification label). The core components of a typical CNN include [25] [24] [29]:

- Convolutional Layers: These layers apply a set of learnable filters (or kernels) to the input image. Each filter slides across the image, computing dot products to detect local spatial features such as edges, corners, and textures. Initial layers capture basic features, while deeper layers combine them into more complex structures like shapes and object parts.

- Activation Functions (ReLU): Following a convolution, an element-wise activation function such as the Rectified Linear Unit (ReLU), f(x) = max(0, x), is applied. This introduces non-linearity into the model, enabling it to learn complex patterns: positive filter responses pass forward unchanged, while negative responses are set to zero.

- Pooling Layers: Pooling (e.g., max pooling) performs non-linear down-sampling, reducing the spatial dimensions of the feature maps. This decreases the computational load, controls overfitting, and provides a basic form of translation invariance.

- Fully Connected Layers: After several cycles of convolution and pooling, the resulting high-level feature maps are flattened into a vector and fed into one or more fully connected layers. These layers integrate the distributed features to perform the final classification or regression task.
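The four layer types above can be chained in a toy NumPy forward pass. This is an illustrative sketch, not a trainable model: it assumes a single-channel image, one convolutional layer, 2 x 2 max pooling and arbitrary layer sizes, and the function names are hypothetical. As in most deep learning frameworks, the "convolution" is implemented as cross-correlation.

```python
import numpy as np

def conv2d(x, kernels, bias):
    """'Valid' convolution of an H x W image with F kernels of size k x k."""
    f, k, _ = kernels.shape
    h, w = x.shape
    out = np.empty((f, h - k + 1, w - k + 1))
    for i in range(h - k + 1):
        for j in range(w - k + 1):
            patch = x[i:i + k, j:j + k]
            # Dot product of every kernel with the current k x k patch.
            out[:, i, j] = np.tensordot(kernels, patch, axes=([1, 2], [0, 1])) + bias
    return out

def relu(z):
    return np.maximum(z, 0.0)

def max_pool(z, size=2):
    """Non-overlapping max pooling; rows/cols that don't fit are dropped."""
    f, h, w = z.shape
    h2, w2 = h // size, w // size
    z = z[:, :h2 * size, :w2 * size].reshape(f, h2, size, w2, size)
    return z.max(axis=(2, 4))

def forward(x, kernels, bias, w_fc, b_fc):
    """conv -> ReLU -> pool -> flatten -> fully connected class scores."""
    features = max_pool(relu(conv2d(x, kernels, bias))).ravel()
    return w_fc @ features + b_fc
```

For a 28 x 28 input and four 3 x 3 kernels, the feature maps are 4 x 26 x 26, pooling reduces them to 4 x 13 x 13, and the flattened 676-element vector feeds the fully connected layer that produces one score per morphology class.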

This hierarchical processing pipeline allows CNNs to achieve remarkable accuracy in image recognition tasks, with modern architectures like ResNet and GoogLeNet enabling the training of very deep networks [29].

Application in Sperm Morphology Analysis

Sperm Morphology Analysis (SMA) is a critical, yet challenging, component of male fertility assessment. According to World Health Organization (WHO) standards, the sperm cell is divided into the head, neck, and tail, with 26 types of abnormal morphology, and the assessment requires the analysis of more than 200 spermatozoa [30]. Manual observation is laborious and subject to inter-observer variability.

CNNs are being deployed to automate this process, demonstrating capabilities in two key areas [30]:

- Accurate automated segmentation of sperm morphological structures (head, neck, and tail).

- Substantial improvements in the efficiency and accuracy of sperm morphology classification.

Early machine learning approaches for SMA relied on handcrafted features (e.g., shape descriptors, grayscale intensity) and classifiers like Support Vector Machines (SVMs). A study by Bijar et al. achieved 90% accuracy in classifying sperm heads using Bayesian Density Estimation [30]. However, these methods are limited by their dependence on manual feature engineering.

Deep learning, particularly CNNs, overcomes this limitation by learning features directly from data. A study by Javadi et al. developed a CNN model to extract features such as the acrosome, head shape, and vacuoles from a dataset of 1,540 sperm images (the MHSMA dataset) [30]. This end-to-end learning paradigm has shown promising results in distinguishing between normal and abnormal sperm, as well as identifying specific defect types.

Table 1: Quantitative Performance of Selected Models for Sperm Morphology Analysis

| Study / Model | Task | Dataset | Key Metric & Performance |
|---|---|---|---|
| Bijar A et al. [30] | Head Morphology Classification | Not Specified | Accuracy: 90% (4 categories: normal, tapered, pyriform, small/amorphous) |
| Javadi S et al. [30] | Feature Extraction (acrosome, head, vacuoles) | MHSMA (1,540 images) | Qualitative demonstration of automated feature learning |
| Conventional CNN [30] | Morphology Classification | Various Public Datasets | Outperforms conventional ML reliant on handcrafted features |

The following diagram illustrates a typical CNN workflow for static sperm image analysis, from input to classification.

Experimental Protocol: CNN-Based Sperm Morphology Classification

Objective: To train a CNN model to automatically classify sperm images into predefined morphological categories (e.g., normal, tapered, pyriform, small, amorphous).

Materials:

- Annotated Sperm Image Dataset: Such as the MHSMA [30] or SVIA dataset [30]. The SVIA dataset contains 125,000 annotated instances for detection, 26,000 segmentation masks, and 125,880 cropped images for classification.

- Hardware: A computer with a powerful Graphics Processing Unit (GPU). GPUs are essential for efficient training of deep learning models [31].

- Software Frameworks: TensorFlow or PyTorch with Python.

Procedure:

Data Preprocessing:

- Resizing: Standardize all images to a fixed input size (e.g., 224x224 pixels).

- Normalization: Scale pixel values to a standard range (e.g., 0-1).

- Data Augmentation: Artificially expand the training dataset by applying random but realistic transformations to the images. This includes rotations, flips, slight zooms, and brightness variations. This step is crucial for improving model generalization and preventing overfitting [25].

Model Design & Training:

- Architecture Selection: Choose a pre-existing CNN architecture like VGG, ResNet, or a custom-designed simpler CNN.

- Transfer Learning: A common and effective technique is to initialize the model with weights pre-trained on a large dataset like ImageNet. The final layers of the pre-trained model are then replaced and fine-tuned on the sperm morphology dataset.

- Compilation: Define a loss function (e.g., Categorical Cross-Entropy for multi-class classification) and an optimizer (e.g., Adam).

- Training: Feed the training data into the model in mini-batches. The model's weights are updated iteratively via backpropagation to minimize the loss function.
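In Keras, the compilation step corresponds to `loss="categorical_crossentropy"` with `optimizer="adam"`. To show what those two choices compute, the dependency-free sketch below implements softmax, categorical cross-entropy, its gradient with respect to the logits, and one Adam update following Kingma and Ba's formulation with default hyperparameters. The function names and the dictionary layout of the optimizer state are hypothetical.

```python
import math

def softmax(logits):
    m = max(logits)                          # subtract the max for stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def categorical_cross_entropy(probs, true_index):
    return -math.log(probs[true_index])

def ce_grad_logits(probs, true_index):
    """Gradient of softmax + cross-entropy w.r.t. the logits: p - one_hot(y)."""
    return [p - (1.0 if i == true_index else 0.0) for i, p in enumerate(probs)]

def adam_step(params, grads, state, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8):
    """One in-place Adam update over a flat list of parameters."""
    state["t"] += 1
    t = state["t"]
    for i, g in enumerate(grads):
        state["m"][i] = b1 * state["m"][i] + (1 - b1) * g        # 1st moment
        state["v"][i] = b2 * state["v"][i] + (1 - b2) * g * g    # 2nd moment
        m_hat = state["m"][i] / (1 - b1 ** t)                    # bias correction
        v_hat = state["v"][i] / (1 - b2 ** t)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params
```

With five equally likely classes the loss starts at ln 5; one Adam step on the logits raises the score of the true class, lowers the others, and reduces the loss. In a real CNN the same gradient is backpropagated from the logits into every layer's weights.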

Model Evaluation:

- Validation: Use a held-out validation set to monitor model performance during training and tune hyperparameters.

- Testing: Evaluate the final model's performance on a completely unseen test set using metrics such as Accuracy, Precision, Recall, and F1-Score [29]. The model's predictions should be compared against annotations made by expert andrologists.

Recurrent Neural Networks (RNNs) in Sperm Motility Analysis

Core Architectural Principles

While CNNs excel with spatial data, RNNs are designed for sequential data where the order and temporal context are critical. Their fundamental feature is a recurrent connection that loops the hidden state of the network from one time step to the next, creating a form of memory [26] [28].

The update of the hidden state \(h_t\) at time step \(t\) is typically computed as:

\[ h_t = \sigma(W_{xh} x_t + W_{hh} h_{t-1} + b_h) \]

where \(x_t\) is the input, \(h_{t-1}\) is the previous hidden state, \(W_{xh}\) and \(W_{hh}\) are weight matrices, \(b_h\) is a bias, and \(\sigma\) is an activation function [26] [28].
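The recurrence above translates directly into a loop over time steps. This NumPy sketch assumes \(\sigma = \tanh\) and an all-zero initial state; `rnn_forward` is a hypothetical name. For many-to-one motility classification, the final state \(h_T\) would be passed to a fully connected output layer.

```python
import numpy as np

def rnn_forward(xs, W_xh, W_hh, b_h, h0=None, activation=np.tanh):
    """Apply h_t = sigma(W_xh x_t + W_hh h_{t-1} + b_h) over a sequence.

    xs: T x D inputs (e.g., per-frame sperm-head coordinates).
    Returns every hidden state (T x H) and the final state h_T.
    """
    h = np.zeros(W_hh.shape[0]) if h0 is None else h0
    states = []
    for x_t in xs:
        h = activation(W_xh @ x_t + W_hh @ h + b_h)   # same weights every step
        states.append(h)
    return np.stack(states), h
```

Because the same `W_hh` multiplies the state at every step, gradients flowing back through many steps are repeatedly scaled by it, which is the origin of the vanishing and exploding gradient problem described next.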

Basic RNNs suffer from the vanishing/exploding gradient problem, making it difficult to learn long-range dependencies. This has been successfully addressed by two advanced variants:

- Long Short-Term Memory (LSTM): LSTMs introduce a gating mechanism and a cell state that acts as a "conveyor belt," allowing information to flow across many time steps with minimal alteration. The gates (input, forget, and output) regulate the flow of information, deciding what to store, forget, and output [26] [28].

- Gated Recurrent Unit (GRU): GRUs are a simpler alternative to LSTMs, combining the input and forget gates into a single "update gate." They often achieve performance similar to LSTMs but with greater computational efficiency [26].
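A single GRU update can be sketched in NumPy to show how the gates interact. The parameter names in the dictionary `p` are hypothetical. The gate-sign convention also varies between papers and libraries: this sketch uses \(h_t = (1 - z) \odot h_{t-1} + z \odot \tilde{h}_t\), while some formulations swap the roles of \(z\) and \(1 - z\).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(x, h, p):
    """One GRU update; p maps hypothetical names to weight matrices/biases."""
    z = sigmoid(p["W_z"] @ x + p["U_z"] @ h + p["b_z"])          # update gate
    r = sigmoid(p["W_r"] @ x + p["U_r"] @ h + p["b_r"])          # reset gate
    h_cand = np.tanh(p["W_h"] @ x + p["U_h"] @ (r * h) + p["b_h"])
    return (1.0 - z) * h + z * h_cand      # interpolate old and candidate state
```

When the update gate is pushed towards zero, the previous state is copied almost unchanged to the next step. This near-identity path is what lets gated units carry information across long sperm tracks.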

Application in Sperm Motility and Trajectory Analysis

Sperm motility is a dynamic process where the movement pattern of a sperm cell over time is a strong indicator of its health and fertilizing potential. Analyzing this temporal sequence is a task well suited to RNNs.

RNNs can be applied to:

- Motility Classification: Classifying sperm tracks into categories (e.g., progressive, non-progressive, immotile) based on a sequence of positional coordinates.

- Trajectory Prediction: Forecasting the future path of a sperm cell based on its past movements.

- Time-Series Analysis: Modeling other temporal parameters, such as changes in velocity or flagellar beating patterns.

These models process the sequential location data \((x_1, y_1), (x_2, y_2), \ldots, (x_t, y_t)\) of individual sperm cells. The LSTM or GRU units learn the characteristic patterns of movement associated with different motility states, effectively capturing the temporal dependencies that define a sperm's swimming behavior.

Table 2: RNN Variants and Their Relevance to Andrology Applications

| RNN Variant | Key Characteristics | Potential Andrology Application |
|---|---|---|
| Simple RNN | Basic recurrent connection; struggles with long sequences. | Baseline model for short-track analysis. |
| Long Short-Term Memory (LSTM) | Gated architecture (input, forget, output gates); excels at learning long-term dependencies. | Analysis of long motility tracks; complex trajectory modeling. |
| Gated Recurrent Unit (GRU) | Simplified LSTM with fewer gates; computationally efficient. | Motility classification where training speed is a priority. |
| Bidirectional RNN (Bi-RNN) | Processes sequences both forward and backward for richer context. | Comprehensive analysis of completed sperm tracks. |

The following diagram illustrates the process of using an RNN for temporal sperm motility analysis.

Experimental Protocol: RNN-Based Sperm Motility Classification

Objective: To train an RNN model (e.g., LSTM or GRU) to classify the motility type of individual sperm cells based on their tracked trajectory sequences.

Materials:

- Sperm Tracking Data: A dataset of sperm trajectories, such as the VISEM-Tracking dataset, which contains 656,334 annotated objects with tracking details [30]. Each trajectory should be a time-series of 2D coordinates and be labeled with a motility class.

- Hardware/Software: Same as for CNN protocol.

Procedure:

Data Preprocessing:

- Trajectory Extraction: Use computer vision techniques (e.g., object detection and tracking algorithms) to extract the \((x, y)\) coordinate sequences for each sperm cell from video data.

- Sequence Standardization: Normalize coordinate values and pad or truncate all sequences to a uniform length.

- Velocity/Feature Calculation (Optional): Derive additional features like instantaneous speed and curvature for each time step to enrich the input sequence.
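The preprocessing steps above can be sketched for one track with the standard library. The sketch assumes a track is a list of (x, y) pixel positions, one per frame. It normalizes position by translating each track to start at the origin, one of several reasonable choices. Speeds are in pixels per frame because the microscope calibration (µm per pixel) and frame rate are not given here. All function names are hypothetical.

```python
import math

def normalize_track(track):
    """Translate a track so it starts at the origin (drops absolute position)."""
    x0, y0 = track[0]
    return [(x - x0, y - y0) for x, y in track]

def step_features(track):
    """Per-step (speed, turning angle) from consecutive (x, y) positions."""
    feats = []
    prev_heading = None
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        dx, dy = x1 - x0, y1 - y0
        heading = math.atan2(dy, dx)
        turn = 0.0 if prev_heading is None else heading - prev_heading
        turn = (turn + math.pi) % (2 * math.pi) - math.pi   # wrap to [-pi, pi)
        feats.append((math.hypot(dx, dy), turn))
        prev_heading = heading
    return feats

def pad_or_truncate(seq, length, pad=(0.0, 0.0)):
    """Fix a sequence to `length` steps by cutting the tail or right-padding."""
    return list(seq[:length]) + [pad] * max(0, length - len(seq))
```

Straight, fast tracks produce large speeds with turning angles near zero, while non-progressive cells show small net speeds and large, alternating turns. These are the cues a recurrent model can pick up in the enriched sequence.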

Model Design & Training:

- Architecture: Construct a model comprising one or more LSTM/GRU layers. A typical architecture is Many-to-One, where the sequence of inputs is processed to produce a single classification output at the end.

- Training: The model is trained using Backpropagation Through Time (BPTT), a variant of backpropagation adapted for RNNs that unrolls the network across time steps to calculate gradients [28].

Model Evaluation:

- Evaluate the model's classification performance on a held-out test set of trajectories using standard metrics (Accuracy, F1-Score). The results should be benchmarked against both manual expert analysis and traditional computational methods.

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of deep learning projects in andrology requires a combination of curated data, computational tools, and software resources.

Table 3: Essential Resources for Deep Learning in Andrology Research

| Resource Category | Item Name | Function & Application Notes |
|---|---|---|
| Public Datasets | SVIA (Sperm Videos and Images Analysis) [30] | Contains 125,000 annotated instances for detection, 26,000 segmentation masks, and 125,880 cropped images. Useful for both CNN (morphology) and RNN (motility from videos) tasks. |
| | VISEM-Tracking [30] | A multi-modal dataset with 656,334 annotated objects and tracking details. Primarily for training and validating sperm tracking and motility models. |
| | MHSMA (Modified Human Sperm Morphology Analysis) [30] | Contains 1,540 grayscale sperm head images. Suitable for developing and testing sperm head morphology classification models. |
| Computational Hardware | Graphics Processing Unit (GPU) [31] | Essential for accelerating the training of deep learning models, reducing computation time from weeks to hours. |
| Software Frameworks | TensorFlow / PyTorch | Open-source libraries that provide the foundation for building, training, and deploying deep learning models. Offer high-level APIs (e.g., Keras) for rapid prototyping. |

CNNs and RNNs offer powerful and complementary capabilities for advancing andrology research. CNNs provide an objective and scalable solution for the precise analysis of static sperm morphology, while RNNs are well suited to modeling the complex temporal patterns of sperm motility. Integrating these deep learning architectures into research and clinical workflows could help standardize male fertility assessment, reduce inter-observer variability, and uncover novel insights into sperm function. However, the field must continue to address challenges such as the need for larger, high-quality annotated datasets [30] and the "black box" nature of these models in order to build trust and ensure robust clinical translation [25]. The experimental protocols and resources outlined in this article provide a foundational roadmap for researchers aiming to harness deep learning for sperm motility and morphology estimation.

Architectures in Action: Implementing DNNs for Motility Classification and Morphological Segmentation

Deep Convolutional Neural Networks for WHO Motility Categorization

The analysis of sperm motility is a cornerstone of male fertility assessment, and manual evaluation according to World Health Organization (WHO) guidelines remains the gold standard in clinical practice. However, manual evaluation is inherently subjective and time-consuming, and it requires extensive technical training to produce reproducible results. Deep Convolutional Neural Networks (DCNNs) offer a route to automating this assessment while improving objectivity and analytical throughput. This protocol details how DCNNs can be applied to categorize sperm motility into WHO-defined classes and gives researchers and clinicians a framework for implementing these methods. It forms part of a broader research effort on deep learning for comprehensive sperm quality assessment, covering both motility and morphology estimation.

Background and Significance

WHO Motility Categorization

The WHO manual establishes a classification system for sperm motility that is essential for diagnosing male factor infertility. It can be applied with three categories (progressive, non-progressive, immotile) or with four, in which progressive motility is split into rapid and slow grades:

- Progressive Motility (Grade a+b): Sperm moving actively, either in a straight line or in a large circle.

  - Rapid Progressive (Grade a): Progressive sperm moving with high velocity.

  - Slow Progressive (Grade b): Progressive sperm moving forward at reduced speed.

- Non-Progressive Motility (Grade c): Sperm moving but without forward progression.

- Immotile (Grade d): Sperm showing no movement.
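The relationship between the four grades and the three-category scheme (progressive = grade a + grade b) can be sketched in code. The example assigns a grade to one trajectory from two kinematic measures, straight-line velocity (VSL) and curvilinear velocity (VCL). It then reports a sample's percentages under both schemes. The speed cut-offs, the use of VSL as the measure of forward progression, the 1 µm/s "motile" floor, and the assumption that positions are already calibrated in micrometres are all illustrative choices. They are not thresholds taken from this article and should be set from the WHO edition and laboratory SOP in use. This rule-based labeling is not the DCNN method described below, which regresses category percentages directly.

```python
import math

# Illustrative cut-offs in micrometres per second. These values are assumptions
# for this sketch; set them from the WHO edition and laboratory SOP in use.
RAPID_VSL_UM_S = 25.0        # VSL at or above this -> grade a (rapid progressive)
PROGRESSIVE_VSL_UM_S = 5.0   # VSL at or above this -> progressive (grade a or b)
MOTILE_VCL_UM_S = 1.0        # hypothetical floor: VCL below this -> grade d (immotile)


def _duration_s(track, fps):
    if len(track) < 2:
        raise ValueError("a track needs at least two positions")
    return (len(track) - 1) / fps


def straight_line_velocity(track, fps):
    """VSL: distance between first and last positions divided by elapsed time."""
    (x0, y0), (x1, y1) = track[0], track[-1]
    return math.hypot(x1 - x0, y1 - y0) / _duration_s(track, fps)


def curvilinear_velocity(track, fps):
    """VCL: total point-to-point path length divided by elapsed time."""
    path = sum(math.hypot(bx - ax, by - ay)
               for (ax, ay), (bx, by) in zip(track, track[1:]))
    return path / _duration_s(track, fps)


def who_grade(track, fps):
    """Assign grade a/b/c/d to one track given in micrometres."""
    if curvilinear_velocity(track, fps) < MOTILE_VCL_UM_S:
        return "d"                      # immotile
    vsl = straight_line_velocity(track, fps)
    if vsl < PROGRESSIVE_VSL_UM_S:
        return "c"                      # moving without forward progression
    return "a" if vsl >= RAPID_VSL_UM_S else "b"


def motility_percentages(grades):
    """Return (four-category, three-category) percentages for one sample."""
    n = len(grades)
    four = {g: 100.0 * grades.count(g) / n for g in "abcd"}
    three = {
        "progressive": four["a"] + four["b"],   # PR = grade a + grade b
        "non_progressive": four["c"],
        "immotile": four["d"],
    }
    return four, three
```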

Traditional manual assessment is vulnerable to inter-laboratory variability and technician subjectivity, creating a compelling need for automated, standardized solutions [19].

The Role of Deep Convolutional Neural Networks

DCNNs are a class of deep learning models exceptionally suited for image recognition and classification tasks. Their capacity to automatically learn hierarchical feature representations from raw pixel data makes them ideal for analyzing complex visual patterns in sperm video microscopy. Within reproductive medicine, DCNNs facilitate the development of objective, high-throughput analysis systems that can learn to replicate expert-level motility assessments, thereby overcoming the significant limitations of manual methods [19] [32].

Quantitative Performance of DCNN Models

The performance of DCNN models for sperm motility analysis is quantitatively evaluated using metrics such as Mean Absolute Error (MAE) and Pearson's correlation coefficient, which compare the model's predictions against manual assessments by trained experts.
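Both metrics are simple to compute from paired per-sample values, such as the DCNN-predicted and expert-assessed percentage of progressive spermatozoa in each video. The plain-Python sketch below uses hypothetical function names. If a p-value is also needed, `scipy.stats.pearsonr` returns the coefficient together with a two-sided p-value.

```python
import math


def mean_absolute_error(predicted, manual):
    """Average absolute difference between model and expert values (same units)."""
    if len(predicted) != len(manual) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - m) for p, m in zip(predicted, manual)) / len(predicted)


def pearson_r(x, y):
    """Pearson correlation coefficient between two paired samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired values")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)
```

MAE keeps the units of its inputs, so an MAE computed on proportions (0 to 1) and one computed on percentages (0 to 100) differ by a factor of 100. Check the scale before comparing reported values.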

Table 1: Performance Metrics of DCNN Models for WHO Motility Categorization

| Motility Category | Model Type | Mean Absolute Error (MAE) | Pearson's Correlation (r) | Citation |
|---|---|---|---|---|
| Progressive Motility | 3-Category Model | 0.06 | 0.88 (p<0.001) | [19] |
| Immotile Spermatozoa | 3-Category Model | 0.05 | 0.89 (p<0.001) | [19] |
| Non-Progressive Motility | 3-Category Model | 0.04 | Not Reported | [19] |
| Rapid Progressive Motility | 4-Category Model | Not Reported | 0.673 (p<0.001) | [19] |
| Overall Motility | MotionFlow + Transfer Learning | 6.842% | Not Reported | [20] |
| Overall Morphology | MotionFlow + Transfer Learning | 4.148% | Not Reported | [20] |

Recent research has demonstrated significant progress. One study utilizing the ResNet-50 architecture reported a strong correlation between DCNN-predicted values and manual assessments for progressive and immotile spermatozoa [19]. Another approach introduced a novel motion representation called MotionFlow, combined with transfer learning, achieving a mean absolute error of 6.842% for motility estimation, thereby outperforming previous state-of-the-art methods [20].

Experimental Protocols

Detailed Protocol: DCNN Motility Assessment with ResNet-50

This protocol outlines the procedure for training and validating a Deep Convolutional Neural Network, specifically the ResNet-50 architecture, to categorize sperm motility from video recordings according to WHO guidelines [19].

I. Materials and Equipment

- Microscope: Equipped with a heated stage (37°C), optics providing 400x total magnification, and a video camera.

- Semen Samples: Fresh ejaculates incubated at 37°C.

- Software: Python with deep learning libraries (e.g., TensorFlow, Keras) and a computer vision library (e.g., OpenCV).

- Computing Hardware: Computer with a high-performance GPU (e.g., NVIDIA GTX series or equivalent) for accelerated model training.

II. Step-by-Step Procedure

Step 1: Video Acquisition and Preprocessing

- Prepare Sample: Place a fresh, liquefied semen sample on a pre-warmed microscope slide (37°C). Use a coverslip to create a wet preparation.

- Record Videos: Between 30 and 60 minutes after collection, record multiple random fields of view. Each video should be 5-10 seconds long at 30 frames per second, and enough fields should be recorded to assess at least 200 spermatozoa.

- Data Curation: Collect a dataset of video recordings, such as the 65 videos from the ESHRE-SIGA EQA Programme used in the foundational study [19].

Step 2: Optical Flow Calculation

- Extract Motion Features: For every second of video, compute the Lucas-Kanade optical flow. This process compresses the temporal movement information of spermatozoa across 30 frames into a single 2D image that represents motion vectors.

- Generate Input Data: Use the resulting optical flow images as the input to the DCNN. This step transforms the problem from a complex video analysis task to a more manageable image classification task.
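The optical-flow step can be sketched without OpenCV as a dense Lucas-Kanade estimator in NumPy. For each pixel, the flow (u, v) is the least-squares solution of the brightness-constancy equations within a small window. The text does not specify how the 30 per-frame flows are merged into one image. Summing the per-pair flow vectors into a two-channel (dx, dy) array is an assumption made here, as are the 5-pixel window and the conditioning threshold. OpenCV's `cv2.calcOpticalFlowPyrLK` provides a pyramidal Lucas-Kanade implementation, but it tracks sparse points rather than producing a dense field, so a production pipeline may differ from this sketch. The code requires NumPy 1.20 or later for `sliding_window_view`.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _window_sum(a, window):
    """Sum of `a` over a window x window neighbourhood centred on each pixel."""
    pad = window // 2
    padded = np.pad(a, pad, mode="edge")
    return sliding_window_view(padded, (window, window)).sum(axis=(-2, -1))


def lucas_kanade_dense(prev, curr, window=5, eps=1e-9):
    """Per-pixel Lucas-Kanade flow (u, v) between two grayscale frames.

    Solves, for every pixel, the 2x2 least-squares system
        [Sxx Sxy] [u]     [Sxt]
        [Sxy Syy] [v] = - [Syt]
    built from image gradients summed over the local window.
    """
    prev = np.asarray(prev, dtype=np.float64)
    curr = np.asarray(curr, dtype=np.float64)
    Iy, Ix = np.gradient(prev)        # derivatives along rows (y) and columns (x)
    It = curr - prev                  # temporal derivative (frame difference)
    Sxx = _window_sum(Ix * Ix, window)
    Sxy = _window_sum(Ix * Iy, window)
    Syy = _window_sum(Iy * Iy, window)
    Sxt = _window_sum(Ix * It, window)
    Syt = _window_sum(Iy * It, window)
    det = Sxx * Syy - Sxy ** 2
    ok = det > eps                    # leave flat / ill-conditioned pixels at zero flow
    u = np.zeros_like(prev)
    v = np.zeros_like(prev)
    u[ok] = (-Syy[ok] * Sxt[ok] + Sxy[ok] * Syt[ok]) / det[ok]
    v[ok] = (Sxy[ok] * Sxt[ok] - Sxx[ok] * Syt[ok]) / det[ok]
    return u, v


def one_second_flow_image(frames, fps=30, window=5):
    """Accumulate flow over the first `fps` frames into one (H, W, 2) array.

    Summing per-pair flow is an assumption for illustration; the protocol only
    states that one second of video is compressed into a single 2D motion image.
    """
    clip = np.asarray(frames[:fps], dtype=np.float64)
    flow = np.zeros(clip.shape[1:] + (2,))
    for prev, curr in zip(clip[:-1], clip[1:]):
        u, v = lucas_kanade_dense(prev, curr, window)
        flow[..., 0] += u
        flow[..., 1] += v
    return flow
```

Lucas-Kanade assumes small displacements between frames. Fast spermatozoa that move several pixels per frame would usually call for a pyramidal (coarse-to-fine) variant.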

Step 3: Model Architecture and Training

- Select Model: Implement the ResNet-50 architecture. Replace its original classification head with a Global Average Pooling layer followed by an output layer with one node per motility category (3 or 4).

- Configure Training:

  - Optimizer: Adam (learning rate = 0.0004).

  - Loss Function: Mean Absolute Error (MAE).

  - Validation: Employ a ten-fold cross-validation strategy: split the dataset into 10 parts, train on 9 and validate on the remaining 1, and repeat this process 10 times so that each part serves once as the validation set.

  - Early Stopping: Halt training if the validation loss does not improve for 15 consecutive epochs, to prevent overfitting.
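The validation and stopping logic is framework-independent and can be sketched as follows. The learning rate, patience and fold count come from the protocol. Two choices are assumptions: splitting folds by video rather than by individual optical-flow image, which keeps images from one recording out of both training and validation sets, and the fixed random seed. In Keras, the corresponding building blocks would be:

- `tf.keras.applications.ResNet50(include_top=False, ...)`
- `tf.keras.layers.GlobalAveragePooling2D`
- `tf.keras.optimizers.Adam(learning_rate=4e-4)`
- `loss="mean_absolute_error"`
- `tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=15)`

```python
import numpy as np

LEARNING_RATE = 4e-4   # Adam learning rate stated in the protocol
PATIENCE = 15          # early-stopping patience (epochs) stated in the protocol
N_FOLDS = 10


def video_level_folds(n_videos, k=N_FOLDS, seed=0):
    """Yield (train_idx, val_idx) pairs; every video is validated exactly once.

    Splitting by video (not by 1-s optical-flow image) is an assumption made here
    so that images from one recording never appear in both sets.
    """
    order = np.random.default_rng(seed).permutation(n_videos)
    folds = np.array_split(order, k)
    for i in range(k):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        yield train, folds[i]


class EarlyStopping:
    """Stop when validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=PATIENCE, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def update(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


def mae_loss(predicted, target):
    """MAE between predicted and reference category proportions for one sample."""
    return float(np.mean(np.abs(np.asarray(predicted) - np.asarray(target))))
```

In use, each fold would train a freshly initialized model, call `update` once per epoch with the validation MAE, and stop when it returns `True`. The weights from `best_epoch` would then be kept for evaluation.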

Step 4: Model Validation and Statistical Analysis

- Compare Predictions: Calculate Pearson's correlation coefficient to assess the strength of the linear relationship between DCNN-predicted motility percentages and the mean manual assessments from reference laboratories.

- Evaluate Agreement: Use difference plots (Bland-Altman plots) to visualize the agreement between the two methods and identify any systematic biases.

- Benchmark Performance: Compare the model's MAE against a ZeroR baseline, which always predicts the mean of the training targets, to confirm that the DCNN has learned meaningful patterns.
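The agreement analysis and the baseline comparison can be sketched with the Python standard library. `bland_altman` returns the mean difference (bias) and the 95% limits of agreement (bias ± 1.96 SD of the differences), which assume roughly normally distributed differences. `zeror_mae` scores a baseline that predicts the training-set mean for every test sample. Function names and inputs are illustrative.

```python
import statistics


def bland_altman(predicted, manual, z=1.96):
    """Return (bias, lower_limit, upper_limit) for paired measurements."""
    if len(predicted) != len(manual) or len(predicted) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [p - m for p, m in zip(predicted, manual)]   # model minus manual
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)                         # sample standard deviation
    return bias, bias - z * sd, bias + z * sd


def zeror_mae(train_targets, test_targets):
    """MAE of a baseline that always predicts the mean training target."""
    baseline = statistics.mean(train_targets)
    return statistics.mean(abs(t - baseline) for t in test_targets)
```

A DCNN whose MAE is not clearly below `zeror_mae` on the same folds has learned little beyond the average motility of the training set.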

Workflow Visualization

The following diagram illustrates the integrated experimental and computational pipeline for DCNN-based sperm motility analysis.

The Scientist's Toolkit: Research Reagent Solutions

Implementing a DCNN for sperm motility analysis requires a combination of biological, computational, and data resources.

Table 2: Essential Research Reagents and Resources

| Item Name | Specification / Brand | Function and Application Note |
|---|---|---|
| Video Dataset | ESHRE-SIGA EQA Programme Dataset [19] | Provides ground-truth labeled videos of sperm motility for training and validating DCNN models. |
| Deep Learning Framework | TensorFlow / Keras [19] | An open-source software library used for designing, training, and deploying the DCNN model. |
| Pre-trained Model | ResNet-50 [19] | A proven DCNN architecture for image classification; transfer learning from ImageNet can improve performance with limited data. |
| Optical Flow Algorithm | Lucas-Kanade Method [19] | Converts sequential video frames into a single 2D image representing sperm motion, simplifying the input for the DCNN. |
| Microscope with Heated Stage | Standard clinical microscope | Maintains sperm at physiological temperature (37°C) during video recording to preserve native motility characteristics. |
| Performance Metrics | Mean Absolute Error (MAE), Pearson Correlation [19] | Quantitative measures used to objectively benchmark the model's accuracy against manual assessments. |

Discussion and Future Perspectives

The adoption of DCNNs for WHO motility categorization represents a paradigm shift towards more standardized and scalable semen analysis. While current models like ResNet-50 show high agreement with manual assessments for progressive and immotile sperm, challenges remain in accurately classifying rapid progressive motility, which often exhibits greater inter-laboratory variation in the training data [19]. Future research directions should focus on the development of multi-task learning frameworks that can simultaneously estimate motility and morphology from the same input video [20] [33]. Furthermore, the creation of large, public, and meticulously annotated datasets will be crucial for training more robust and generalizable models, ultimately paving the way for their integration into routine clinical practice [30].

Multi-Part Sperm Segmentation with Mask R-CNN, U-Net, and YOLO

Accurate segmentation of sperm components is a critical step in male infertility diagnosis and in assisted reproductive technologies. According to World Health Organization guidance, sperm morphology analysis provides crucial diagnostic information, and morphological abnormalities of the head, neck, and tail regions correlate strongly with infertility [30]. Traditional manual assessment is inherently subjective and time-consuming and shows significant inter-observer variability, creating an urgent need for automated, objective solutions [30] [34].

Deep learning-based computer vision systems have emerged as promising alternatives to address these limitations. These systems can automatically segment distinct sperm components—including the head, acrosome, nucleus, neck/midpiece, and tail—enabling precise morphological analysis essential for clinical diagnosis and treatment selection [35] [36]. The accurate segmentation of these components is particularly important for intracytoplasmic sperm injection (ICSI) procedures, where embryologists must select the most viable sperm based on morphological integrity [35].

This application note compares three prominent deep learning architectures for multi-part sperm segmentation: Mask R-CNN, U-Net, and YOLO models. It sets out a framework for quantitative performance evaluation, together with detailed experimental protocols and practical implementation guidelines, to assist researchers and clinicians in developing robust sperm analysis systems for reproductive medicine and drug development applications.

Quantitative Performance Comparison

Table 1: Comparative performance of deep learning models for sperm component segmentation

| Sperm Component | Model | IoU | Dice Coefficient | Precision | Recall | F1-Score |
|---|---|---|---|---|---|---|
| Head | Mask R-CNN | - | - | - | - | - |
| Head | YOLOv8 | - | - | - | - | - |
| Head | U-Net | - | - | - | - | - |
| Acrosome | Mask R-CNN | - | - | - | - | - |
| Acrosome | YOLO11 | - | - | - | - | - |
| Acrosome | U-Net | - | - | - | - | - |
| Nucleus | Mask R-CNN | - | - | - | - | - |
| Nucleus | YOLOv8 | - | - | - | - | - |
| Nucleus | U-Net | - | - | - | - | - |
| Neck/Midpiece | Mask R-CNN | - | - | - | - | - |
| Neck/Midpiece | YOLOv8 | - | - | - | - | - |
| Neck/Midpiece | U-Net | - | - | - | - | - |
| Tail | Mask R-CNN | - | - | - | - | - |
| Tail | YOLOv8 | - | - | - | - | - |
| Tail | U-Net | - | - | - | - | - |

Note: Quantitative values are not reported in this table. Its structure follows the standard reporting format for segmentation metrics, and values should be populated from experimental results obtained by following the protocol.
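Once predicted and reference masks are available, the metrics in the table header can be computed per component. The sketch below works at the pixel level on binary masks. Keeping one mask per component is an assumption that reflects anatomy: the acrosome and nucleus lie within the head outline, so their masks overlap the head mask and a single label map could not represent them. At the pixel level, F1 coincides with the Dice coefficient. Studies that report a separate F1 usually mean instance-level detection, which this sketch does not cover. Returning 0.0 when a denominator is zero (for example, when both masks are empty) is a convention chosen here, and the component names are hypothetical keys.

```python
import numpy as np

COMPONENTS = ("head", "acrosome", "nucleus", "neck_midpiece", "tail")


def _ratio(num, den):
    # Convention used in this sketch: report 0.0 when the denominator is zero.
    return num / den if den else 0.0


def segmentation_metrics(pred_mask, true_mask):
    """Pixel-level IoU, Dice, precision, recall and F1 for one binary mask pair."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    if pred.shape != true.shape:
        raise ValueError("masks must have the same shape")
    tp = int(np.logical_and(pred, true).sum())
    fp = int(np.logical_and(pred, ~true).sum())
    fn = int(np.logical_and(~pred, true).sum())
    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)
    return {
        "iou": _ratio(tp, tp + fp + fn),
        "dice": _ratio(2 * tp, 2 * tp + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": _ratio(2 * precision * recall, precision + recall),  # equals Dice here
    }


def evaluate_components(pred_masks, true_masks):
    """Score every component that has a reference mask (dicts: name -> mask)."""
    return {name: segmentation_metrics(pred_masks[name], true_masks[name])
            for name in COMPONENTS if name in true_masks}
```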

Performance Analysis by Sperm Component