Enhancing Sensitivity in Rare Fertility Outcomes Research: Methodologies for Detection, Analysis, and Clinical Translation


Abstract

Research on rare fertility outcomes, such as successful pregnancies in cases of extreme male factor infertility or advanced maternal age with autologous oocytes, is hampered by data scarcity and methodological challenges. This article provides a comprehensive framework for researchers and drug development professionals to improve the sensitivity and reliability of their studies. We explore the foundational definitions and challenges of rare fertility events, detail advanced statistical and machine learning methods tailored for imbalanced datasets, address common troubleshooting and optimization strategies for predictive modeling, and outline robust validation and comparative analysis techniques. The synthesis of these approaches aims to accelerate the development of effective interventions and enhance the translatability of research findings into clinical practice.

Defining the Challenge: The Landscape and Impact of Rare Fertility Events

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides researchers with targeted guidance for investigating rare fertility outcomes. The following FAQs and troubleshooting guides address specific methodological challenges and are framed within the thesis of improving sensitivity in rare event research.

Troubleshooting Guide: Advanced Maternal Age (AMA) & Autologous Oocyte Research

Researcher Challenge: Achieving sufficient statistical power and meaningful outcomes in studies involving women of advanced maternal age using their own oocytes.

- FAQ: What are the key quantitative benchmarks for oocyte and embryo counts in AMA populations to optimize study design?

- Answer: Based on clinical data, the required number of oocytes and embryos to achieve optimal live birth rates increases significantly with maternal age. The table below summarizes key cut-off points for researchers to use when designing studies or evaluating cohort stratification [1].

Table 1: Oocyte and Embryo Benchmarks for Autologous IVF in Advanced Maternal Age (≥35 years) [1]

| Parameter | Age Group | Target Number for Optimal LBR/CLBR | Notes |
|---|---|---|---|
| Metaphase II (MII) Oocytes | ≥35 | 10-12 | Needed to reach optimal live birth rate (LBR) [1]. |
| Developed Embryos | ≥35 | 10-11 | Needed to reach optimal cumulative live birth rate (CLBR) [1]. |
| MII Oocytes for CLBR/oocyte | ≥35 | 9 | Optimal cumulative live birth rate per single oocyte retrieved [1]. |
| Euploid Embryo Potential | ≥43 | <5% | Chance of producing a chromosomally normal blastocyst is very low [1]. |

FAQ: Our study on AMA is underpowered due to low participant recruitment. How can we refine inclusion criteria?

- Answer: To improve sensitivity, strictly define your AMA cohort. Include only women with confirmed good ovarian reserve (e.g., FSH <15 mIU/mL and AMH >0.5 ng/mL and <4.0 ng/mL) to isolate the effect of age-related oocyte quality from diminished reserve [1]. Exclude confounding conditions like polycystic ovary syndrome (PCOS), hydrosalpinx, severe endometriosis, and untreated uterine pathology [1].
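The inclusion and exclusion rules above can be encoded as a simple screening check. This is a minimal sketch: the `Candidate` record, its field names, and the condition labels are hypothetical and not taken from [1]; only the numeric thresholds come from the text.

```python
from dataclasses import dataclass

# Hypothetical labels for the exclusion conditions named in the text.
EXCLUDED_CONDITIONS = frozenset(
    {"pcos", "hydrosalpinx", "severe_endometriosis", "untreated_uterine_pathology"}
)


@dataclass
class Candidate:
    age_years: float
    fsh_miu_ml: float      # FSH in mIU/mL
    amh_ng_ml: float       # AMH in ng/mL
    conditions: frozenset = frozenset()


def is_eligible(c: Candidate) -> bool:
    """Apply the AMA cohort criteria: age, ovarian reserve window, exclusions."""
    return (
        c.age_years >= 35
        and c.fsh_miu_ml < 15
        and 0.5 < c.amh_ng_ml < 4.0
        and not (c.conditions & EXCLUDED_CONDITIONS)
    )


screened = [
    Candidate(38, 9.0, 1.8),
    Candidate(41, 9.0, 0.4),                        # AMH below the window
    Candidate(37, 8.0, 2.0, frozenset({"pcos"})),   # excluded condition
]
eligible = [c for c in screened if is_eligible(c)]
```

Keeping the criteria in one function makes it easier to report exactly how many candidates were lost at screening, which matters when recruitment is already the limiting factor.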

FAQ: What is a robust experimental protocol for an AMA autologous oocyte study?

- Answer: The following methodology provides a standardized framework [1]:

- Patient Selection: Recruit women ≥35 years meeting strict hormonal criteria (FSH <15 mIU/mL, AMH >0.5 ng/mL and <4.0 ng/mL) and excluding severe male factor and gynecological pathologies [1].

- Ovarian Stimulation: Implement a flexible GnRH antagonist protocol to control ovulation.

- Ovulation Trigger: Administer recombinant hCG when at least one dominant follicle ≥18 mm is observed alongside appropriate estradiol levels.

- Oocyte Retrieval & Preparation: Perform oocyte pick-up 36 hours post-trigger. Isolate and use only metaphase II (MII) oocytes for fertilization.

- Fertilization & Embryo Culture: Employ Intracytoplasmic Sperm Injection (ICSI) for fertilization. Culture embryos, with transfer typically on day 3. Surplus embryos should be cultured to the blastocyst stage (day 5/6) for vitrification [1].

- Embryo Transfer: Conduct both fresh and subsequent frozen embryo transfers (FET) of surplus vitrified embryos. Assess embryo quality based on blastomere number, regularity, fragmentation, and blastocyst morphology [1].

- Outcome Measurement: The primary endpoint should be cumulative live birth rate (CLBR) per initiated cycle, including all subsequent frozen transfers, to capture the full outcome of a single stimulation cycle [1].
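As an illustration of the primary endpoint, the sketch below computes CLBR per initiated cycle from a list of transfer outcomes. The data layout (a dict of cycle IDs mapping to fresh and frozen transfer results) is an assumption made for this example, not a structure prescribed by [1].

```python
def cumulative_live_birth_rate(cycles):
    """CLBR per initiated cycle.

    cycles: dict mapping a stimulation-cycle ID to the list of outcomes of all
    transfers (fresh and frozen) derived from it; True means live birth.
    A cycle counts at most once, however many transfers it produced.
    """
    if not cycles:
        raise ValueError("no initiated cycles")
    successes = sum(1 for transfers in cycles.values() if any(transfers))
    return successes / len(cycles)


# Hypothetical toy data.
cycles = {
    "c1": [False, True],   # fresh transfer failed, later FET led to live birth
    "c2": [False, False],
    "c3": [],              # no embryo available for transfer; still in the denominator
    "c4": [True],
}
clbr = cumulative_live_birth_rate(cycles)
```

Cycles without any transfer stay in the denominator, which is what distinguishes a per-initiated-cycle rate from a per-transfer rate.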


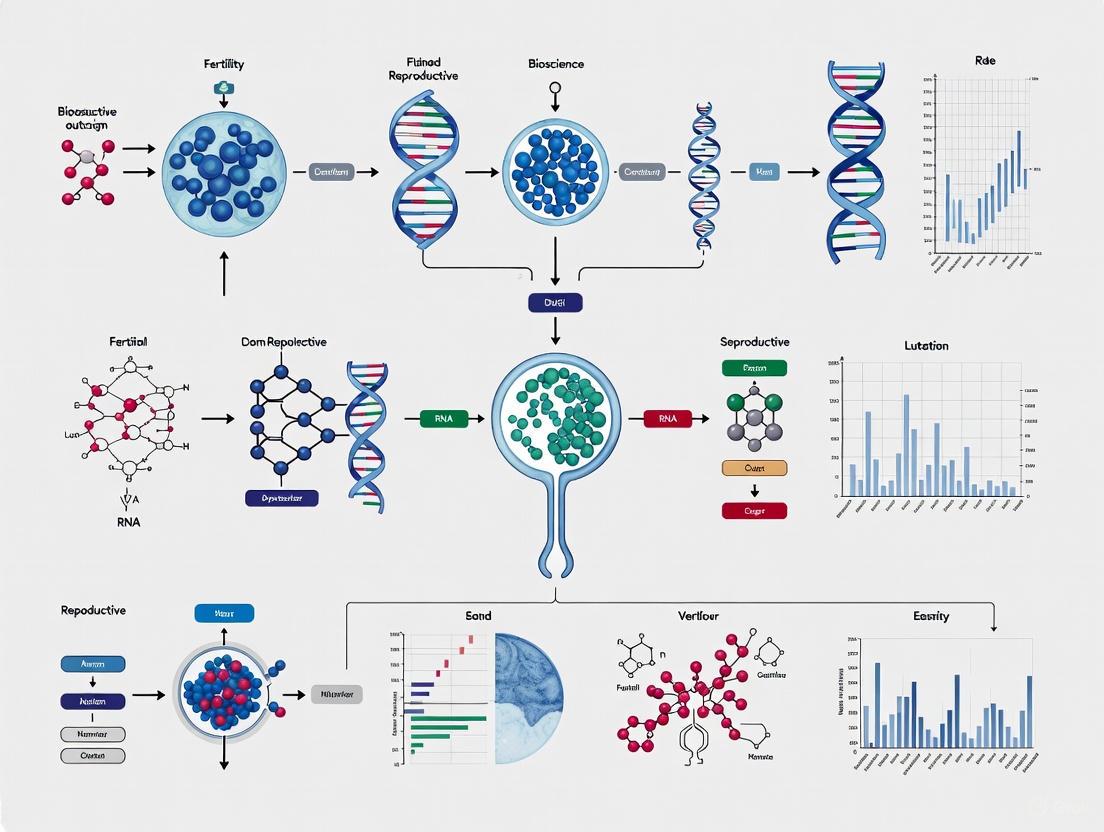

AMA Autologous Oocyte Research Workflow

Troubleshooting Guide: Severe Male Factor (SMF) Infertility Research

Researcher Challenge: Accounting for the impact of severe male factor infertility on IVF outcomes, particularly when compounded by female factors like diminished ovarian reserve.

- FAQ: How is Severe Male Factor (SMF) infertility definitively categorized for research purposes?

Table 2: Classification and Prevalence of Severe Male Factor Infertility [2]

| Category | Definition | Prevalence |
|---|---|---|
| Severe Oligozoospermia | Sperm concentration <5 million per ml of ejaculate. | Part of the 20-70% of infertility cases with a male factor [2]. |
| Cryptozoospermia | Spermatozoa absent in fresh sample but found in pellet after centrifugation. | --- |
| Azoospermia | Complete absence of spermatozoa in the ejaculate. | 1% of general male population; 10-15% of infertile male population [2]. |
| Obstructive Azoospermia (OA) | Azoospermia due to post-testicular blockage (e.g., CBAVD). | ~40% of azoospermia cases [2]. |
| Non-Obstructive Azoospermia (NOA) | Azoospermia due to testicular failure (e.g., genetic, cryptorchidism). | ~60% of azoospermia cases [2]. |

FAQ: Does severe male factor infertility, like azoospermia, affect embryo ploidy or implantation potential?

- Answer: A critical finding for researchers is that azoospermia itself does not appear to impair the euploidy rate at the blastocyst stage nor the implantation potential of euploid blastocysts [2]. This suggests the main challenge in SMF is generating blastocysts, and outcome disparities are likely due to correlated female factors (e.g., age, ovarian reserve).

FAQ: What is a standard experimental protocol for a study on SMF and ICSI outcomes?

- Answer: A comprehensive protocol must account for sperm retrieval and precise laboratory techniques [2]:

- Diagnostic & Stratification:

- Sperm Acquisition:

- Obstructive Azoospermia: Use Percutaneous Epididymal Sperm Aspiration (PESA) or Microsurgical Epididymal Sperm Aspiration (MESA).

- Non-Obstructive Azoospermia: Perform Microdissection Testicular Sperm Extraction (mTESE).

- Ovarian Stimulation & Oocyte Retrieval: Conduct controlled ovarian stimulation in the female partner, followed by transvaginal oocyte retrieval.

- ICSI & Embryo Culture: Fertilize all mature (MII) oocytes via ICSI using retrieved or ejaculated sperm. Culture resulting embryos to the blastocyst stage (day 5/6).

- Preimplantation Genetic Testing (Optional): For studies focusing on ploidy, perform PGT-A on trophectoderm biopsies.

- Embryo Transfer & Outcome Measurement: Transfer euploid or best-quality blastocysts. Measure outcomes like fertilization rate, blastocyst formation rate, and live birth rate, analyzing data in the context of both male and female factors.


Severe Male Factor Research Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Rare Fertility Outcomes Research

| Research Reagent / Material | Function / Application |
|---|---|
| Anti-Müllerian Hormone (AMH) & FSH Assays | Quantifying ovarian reserve for precise patient stratification in AMA studies [1]. |
| GnRH Antagonists (e.g., Ganirelix, Cetrorelix) | Used in flexible ovarian stimulation protocols to prevent premature luteinizing hormone surges [1]. |
| Recombinant Human Chorionic Gonadotropin (r-hCG) | Triggers final oocyte maturation in stimulation cycles [1]. |
| Intracytoplasmic Sperm Injection (ICSI) Pipettes | Essential micromanipulation tools for fertilizing oocytes, especially in SMF research where sperm count/motility is critically low [1] [2]. |
| Blastocyst Vitrification Media Kits | For cryopreserving surplus embryos, enabling the measurement of cumulative live birth rates from a single stimulation cycle [1]. |
| Preimplantation Genetic Testing for Aneuploidy (PGT-A) | A critical reagent/kit for investigating the relationship between maternal age or sperm source and embryo chromosomal status [1] [2]. |
| Microsurgical TESE (mTESE) Equipment | Specialized surgical tools for retrieving sperm from the testes of men with Non-Obstructive Azoospermia [2]. |
| Computer-Assisted Semen Analysis (CASA) / Andrologist | For accurate and standardized assessment of sperm parameters according to WHO guidelines; the human andrologist is considered more accurate for complex cases [3]. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our study on a rare fertility intervention failed to show a significant effect, despite a strong clinical hypothesis. The statistical reviewer noted our study was "underpowered." What does this mean, and how could we have avoided it?

A: An "underpowered" study is one whose sample size is too small to reliably detect a true effect of the intervention, even if that effect exists. In rare fertility outcomes, this is a common challenge. To avoid this:

- Perform an A Priori Power Analysis: Before enrolling any patients, conduct a power analysis to determine the sample size required to detect a clinically meaningful difference in your primary endpoint (e.g., live birth rate). For rare outcomes, this often reveals the need for multi-center collaborations to achieve sufficient numbers [4].

- Justify the Minimal Important Difference: Pre-specify and justify the smallest improvement in live birth rates that would be considered clinically worthwhile. A smaller difference will require a much larger sample size [5].

- Consider Alternative Trial Designs: Explore adaptive designs or Bayesian methods that can be more efficient with limited patient populations.
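To make the a priori power analysis concrete, the sketch below uses the standard normal-approximation formula for comparing two proportions. The live birth rates in the example are hypothetical and chosen only to show how quickly the required sample grows as the minimal important difference shrinks; a trial statistician may prefer an exact or simulation-based calculation for very rare outcomes.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(p_control, p_treat, alpha=0.05, power=0.80):
    """Approximate sample size per arm for a two-sided test of two proportions."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_control + p_treat) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p_control * (1 - p_control) + p_treat * (1 - p_treat))
    ) ** 2
    return ceil(numerator / (p_control - p_treat) ** 2)


# Hypothetical scenarios: 5% baseline live birth rate, two candidate effect sizes.
n_large_effect = n_per_group(0.05, 0.10)    # absolute difference of 5 points
n_small_effect = n_per_group(0.05, 0.075)   # absolute difference of 2.5 points
```

Halving the absolute difference roughly quadruples the number of participants needed per arm, which is why the minimal important difference has to be justified before recruitment starts.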

Q2: We have collected data on multiple treatment cycles per woman in our fertility study. Our statistician warns about a "unit of analysis" error. What is the problem, and how do we correctly analyze this data?

A: A "unit of analysis" error occurs when you treat multiple observations from the same patient (e.g., several embryos, multiple treatment cycles) as statistically independent. This violates a core assumption of standard tests like t-tests or chi-square tests and artificially inflates your sample size, leading to falsely narrow confidence intervals and unreliable p-values [5]. The correct approach is to use statistical methods that account for this clustering:

- Use Cluster-Robust Statistical Models: Employ generalized estimating equations (GEE) or mixed-effects models (e.g., random-intercept logistic regression). These models specifically account for the correlation between repeated measurements within the same individual [6].

- Choose the Right Unit: In many cases, the most straightforward solution is to define the outcome per woman (e.g., "live birth per woman randomized") rather than per cycle or per embryo [4].
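The size of the unit-of-analysis problem can be gauged with the design effect, 1 + (m - 1) × ICC, where m is the average number of observations per woman and ICC is the intra-cluster correlation. The sketch below is illustrative only; the cycle counts and ICC values are hypothetical.

```python
def design_effect(mean_cluster_size, icc):
    """Variance inflation caused by correlated observations within a cluster."""
    return 1 + (mean_cluster_size - 1) * icc


def effective_sample_size(n_observations, mean_cluster_size, icc):
    """Number of independent observations that carry the same information."""
    return n_observations / design_effect(mean_cluster_size, icc)


# Hypothetical study: 100 women contributing 3 cycles each (300 cycles in total).
n_eff_moderate = effective_sample_size(300, 3, icc=0.5)
n_eff_extreme = effective_sample_size(300, 3, icc=1.0)
```

With perfectly correlated cycles the 300 observations carry no more information than the 100 women who produced them, which is the intuition behind analyzing per woman.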

Q3: When analyzing our rare fertility outcome, our logistic regression model with GEE failed to converge. What are the likely causes, and what are the alternative analytical strategies?

A: Non-convergence in logistic regression with GEE is a classic problem when analyzing rare, correlated events. It is often caused by complete or quasi-complete separation—when the rare event occurs only in, or entirely avoids, one level of an exposure group [6]. Alternatives include:

- The Two-Step Additive-Permutation Method: First, use an additive (linear) model to estimate the risk difference, which converges more readily with rare outcomes. Then, use a permutation test—which shuffles patient exposure status while preserving their within-patient correlation structure—to obtain a valid empirical p-value [6].

- Exact Methods: For specific scenarios, exact conditional logistic regression can be used, though it can be computationally complex [6].

- Bayesian Methods: These incorporate prior knowledge through weakly informative priors to stabilize model estimation [6].
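Before fitting a logistic model, it can help to check whether any cell of the exposure-by-outcome table is empty, since an empty cell is the simplest sign of separation for a single binary exposure. The helper below is a hypothetical diagnostic written for this guide, not part of the method described in [6].

```python
from collections import Counter


def empty_cells(pairs):
    """Return the (exposure, outcome) cells of the 2x2 table with zero observations.

    pairs: iterable of (exposure, outcome) tuples with 0/1 values.
    Any empty cell means the log-odds ratio has no finite maximum likelihood estimate.
    """
    counts = Counter(pairs)
    return [(e, y) for e in (0, 1) for y in (0, 1) if counts[(e, y)] == 0]


# Hypothetical data: 3 events, all in the exposed group.
observations = [(1, 1)] * 3 + [(1, 0)] * 47 + [(0, 0)] * 50
problem_cells = empty_cells(observations)
```

Here the unexposed group has no events, so a logistic model would push the exposure coefficient toward infinity; this is the situation in which the alternatives listed above become relevant.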

Q4: To get our fertility study published, we were asked to specify a "primary outcome." Why is this so important, and what is the consequence of using surrogate outcomes instead of live birth?

A: Prespecifying a single primary outcome is a cornerstone of robust research design. It prevents multiple testing and selective outcome reporting, where researchers inadvertently (or intentionally) fish for a statistically significant result among many measured outcomes [4]. The consequence of flexible outcomes is a high chance of a false-positive finding.

- Live Birth as the Gold Standard: While surrogate outcomes like biochemical pregnancy or embryo quality are useful for understanding mechanisms, they are not reliable predictors of the ultimate patient-centered goal: a live-born baby. Relying on surrogates can lead to adopting interventions that appear to improve intermediate steps but do not actually increase live birth rates [4].

- Solution: Pre-register your study protocol, explicitly naming live birth as the primary outcome. All other outcomes should be clearly labeled as secondary or exploratory [4].
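The false-positive risk from testing several outcomes can be quantified directly. The sketch below assumes the tests are independent, which is a simplification because fertility outcomes such as clinical pregnancy and live birth are correlated; it is meant to show the direction and rough size of the problem.

```python
def familywise_error_rate(n_tests, alpha=0.05):
    """Probability of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(n_tests, alpha=0.05):
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / n_tests


# Hypothetical trial that tests five outcomes without naming a primary one.
fwer_five_outcomes = familywise_error_rate(5)
per_test_threshold = bonferroni_alpha(5)
```

Naming a single primary outcome keeps the error rate at the nominal level without paying the power penalty of a multiplicity correction.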

Troubleshooting Statistical Flaws in Study Design

Table 1: Common Statistical Flaws and Methodological Corrections in Rare Fertility Research

| Flaw Category | Common Manifestation | Consequence | Recommended Correction |
|---|---|---|---|
| Study Design | Using a crossover design for a fertility treatment where pregnancy ends the observation period [5]. | Women who conceive in the first period cannot cross over, and carry-over effects make results uninterpretable. | Use a parallel-group design instead. |
| Patient Selection | Failing to balance treatment groups for important prognostic factors like the number of previous IVF attempts [5]. | Confounding; observed effects may be due to baseline imbalance rather than the treatment. | Use stratified randomization or statistical adjustment (e.g., regression) for key prognostic factors. |
| Unit of Analysis | Analyzing pregnancy outcomes per embryo transferred, rather than per woman randomized [5]. | Overly optimistic precision and risk of false-positive conclusions. | Analyze data per randomized woman using methods that account for clustering (e.g., GEE). |
| Primary Endpoint | Reporting multiple primary outcomes (e.g., fertilization rate, clinical pregnancy, live birth) without prespecification [4]. | High probability of a false-positive finding due to multiple testing. | Pre-specify a single primary outcome (preferably live birth) in a registered protocol. |
| Outcome Definition | Using non-standard or multiple definitions for an outcome (e.g., 7 different definitions of "live birth") [4]. | Inability to compare or synthesize results across studies; selective reporting. | Adopt an agreed core outcome set and standard definitions consistently, and report according to CONSORT. |

Detailed Experimental Protocols

Protocol 1: Implementing the Two-Step Additive-Permutation Method for Correlated Rare Events

This protocol is for analyzing the effect of a binary exposure (e.g., a specific genetic marker) on a rare, recurring fertility event (e.g., miscarriage) in a longitudinal cohort [6].

Step 1: Data Preparation

- Structure your dataset in a long format where each row represents a patient-visit.

- Variables needed: PatientID, VisitNumber, BinaryOutcome (0/1), BinaryExposure (0/1).

Step 2: Estimate the Risk Difference using an Additive Model

- Fit a linear regression model with an independent working correlation structure using Generalized Estimating Equations (GEE).

- Model: P(Y_ij = 1) = β_0 + β_1 * x_i

- Here, β_1 is the risk difference (P_1 - P_0), representing the difference in the proportion of events between the exposed and unexposed groups across all visits.

Step 3: Perform the Permutation Test

- Compute the observed risk difference, β_1_observed, from Step 2.

- For k = 1 to N (where N is a large number, e.g., 10,000):

a. Randomly shuffle the BinaryExposure variable among the PatientIDs. This preserves the within-patient correlation structure of the outcomes.

b. For the permuted dataset, recalculate the risk difference, β_1_permuted_k.

- The empirical two-sided p-value is calculated as: p-value = [Number of times ( |β_1_permuted_k| >= |β_1_observed| )] / N

- This p-value provides a non-parametric test of the null hypothesis that the exposure is not associated with the outcome.
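The three steps of Protocol 1 can be sketched in plain Python. With a single binary exposure and an independent working correlation, the GEE point estimate of β_1 reduces to the difference in pooled event proportions across all visits, so the sketch computes that difference directly instead of calling a GEE routine. The function names and the toy data are hypothetical; this is an illustration of the idea under those assumptions, not the reference implementation from [6].

```python
import random


def risk_difference(visits, exposure):
    """Pooled event proportion in exposed minus unexposed, across all visits.

    visits: list of (patient_id, outcome) rows with outcome in {0, 1}.
    exposure: dict mapping patient_id to 0 or 1.
    """
    events = {0: 0, 1: 0}
    totals = {0: 0, 1: 0}
    for patient_id, outcome in visits:
        group = exposure[patient_id]
        events[group] += outcome
        totals[group] += 1
    return events[1] / totals[1] - events[0] / totals[0]


def permutation_p_value(visits, exposure, n_perm=10_000, seed=0):
    """Two-sided empirical p-value from shuffling exposure labels between patients."""
    rng = random.Random(seed)
    observed = risk_difference(visits, exposure)
    patient_ids = list(exposure)
    labels = [exposure[pid] for pid in patient_ids]
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(labels)  # whole patients change group; their visits stay together
        permuted = dict(zip(patient_ids, labels))
        # Small tolerance guards against floating-point ties with the observed value.
        if abs(risk_difference(visits, permuted)) >= abs(observed) - 1e-12:
            extreme += 1
    return observed, extreme / n_perm


# Hypothetical toy cohort: 8 patients, 3 visits each; events only among the exposed.
visits = [(pid, y) for pid in range(4) for y in (1, 1, 0)]
visits += [(pid, 0) for pid in range(4, 8) for _ in range(3)]
exposure = {pid: int(pid < 4) for pid in range(8)}
observed, p_value = permutation_p_value(visits, exposure, n_perm=2000, seed=42)
```

Because labels are shuffled at the patient level, each patient's sequence of visits is never broken apart, which is what preserves the within-patient correlation under the null hypothesis.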

Protocol 2: Core Protocol for a Randomized Trial of a Fertility Intervention

This protocol emphasizes methodological safeguards for robust results [4] [5].

- Registration: Prospectively register the trial on a public platform (e.g., ClinicalTrials.gov) with a detailed statistical analysis plan.

- Population & Randomization: Clearly define inclusion/exclusion criteria. Use a computerized random number generator for treatment allocation, with stratification for key prognostic factors (e.g., age, BMI).

- Primary Outcome: Explicitly define live birth as the primary outcome. The definition should include the gestational age threshold (e.g., ≥24 weeks). Live birth is defined as the delivery of one or more live babies after a specified gestation period [4].

- Analysis Principle: Commit to the Intention-to-Treat (ITT) principle, analyzing all randomized patients in the groups to which they were originally assigned [5].

- Blinding: Implement double-blinding (patient and outcome assessor) where feasible. If not possible, acknowledge the potential for bias.
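Stratified allocation is often implemented with permuted blocks within each stratum. The class below is a minimal sketch of that idea; the class name, block size, and stratum labels are assumptions made for illustration, and a real trial would use a validated, concealed central allocation system.

```python
import random
from collections import defaultdict


class StratifiedBlockRandomizer:
    """Permuted-block randomization carried out separately within each stratum."""

    def __init__(self, arms=("intervention", "control"), block_size=4, seed=None):
        if block_size % len(arms) != 0:
            raise ValueError("block_size must be a multiple of the number of arms")
        self.arms = tuple(arms)
        self.block_size = block_size
        self._rng = random.Random(seed)
        self._blocks = defaultdict(list)  # stratum -> remaining allocations in block

    def assign(self, stratum):
        block = self._blocks[stratum]
        if not block:
            # Start a new balanced block and shuffle its order.
            block.extend(self.arms * (self.block_size // len(self.arms)))
            self._rng.shuffle(block)
        return block.pop()


# Hypothetical strata combining an age band and a BMI band.
randomizer = StratifiedBlockRandomizer(seed=1)
allocations = [randomizer.assign(("age<38", "bmi<30")) for _ in range(8)]
```

Each completed block contains equal numbers per arm, so the arms stay balanced within every stratum even when recruitment stops early.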

Visualizing Methodological Pathways and Relationships

Research Challenges and Solutions Pathway

Analytical Method Decision Guide

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Methodological and Data Resources for Rare Outcomes Research

| Tool / Resource | Category | Primary Function | Application in Rare Fertility Research |
|---|---|---|---|
| Generalized Estimating Equations (GEE) | Statistical Model | Fits regression models for correlated longitudinal data, providing population-average estimates. | Models the effect of an intervention on outcomes measured over multiple treatment cycles per woman [6]. |
| Permutation Tests | Statistical Method | Provides non-parametric, distribution-free p-values by empirically simulating the null hypothesis. | Validates significance when model assumptions fail due to rare events and small samples [6]. |
| Registered Reports | Publication Format | Peer review of study methods before data collection; in-principle acceptance regardless of result. | Eliminates publication bias and HARKing (Hypothesizing After the Results are Known) in underpowered studies [4]. |
| RARE-X / Open Science Platforms | Data Repository | A patient-owned, open-science platform for standardized collection and sharing of rare disease data. | Enables pooling of data across institutions to achieve statistically viable sample sizes for analysis [7]. |
| Generative AI (GANs/VAEs) | Data Synthesis | Learns from real-world data (RWD) to generate realistic, synthetic patient datasets. | Creates augmented cohorts or synthetic control arms to power clinical trials and predictive models [8]. |
| Core Outcome Sets (COS) | Standardization | An agreed-upon minimum set of outcomes to be measured and reported in all clinical studies in a field. | Ensures consistency and comparability across studies, e.g., mandating live birth reporting in fertility trials [4]. |

Technical FAQs: Investigating Extremely Advanced Maternal Age (EAMA) Pregnancies

FAQ 1: What is the typical quantitative outlook for live birth in women ≥46 using autologous oocytes? The probability of live birth for women at the extremes of reproductive age using their own oocytes is exceptionally low. One large single-center report documented a live birth rate of just 1 in 268 cycles (0.37%) [9]. Another analysis estimates the overall probability at 0.3% [9] [10]. These outcomes are rare, with only six documented cases of live birth at age 46 reported in the literature before the 2023 case we analyze [9].
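To see what a rate of 1 in 268 implies for study planning, the sketch below estimates how many cycles a cohort would need to have a given chance of observing at least one live birth. It assumes independent cycles with a constant per-cycle probability, which is a simplification; the function names are hypothetical.

```python
from math import ceil, log


def prob_at_least_one(p_event, n_cycles):
    """Chance of observing one or more events in n independent cycles."""
    return 1 - (1 - p_event) ** n_cycles


def cycles_for_at_least_one_event(p_event, confidence=0.95):
    """Smallest number of cycles giving the requested chance of seeing an event."""
    return ceil(log(1 - confidence) / log(1 - p_event))


p_live_birth = 1 / 268  # per-cycle rate from the single-center report cited above
n_needed = cycles_for_at_least_one_event(p_live_birth)
```

Under these assumptions, several hundred cycles are needed just to expect a single event, which illustrates why single-center studies in this population rarely support inferential analysis.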

FAQ 2: What are the primary biological challenges in achieving pregnancy with autologous oocytes in EAMA? The central challenges are the age-related decline in both the quantity and quality of oocytes [9]. This leads to a double detriment: decreased fecundity rates and a significantly increased risk of miscarriage, largely due to rising rates of chromosomal abnormalities [9]. The molecular mechanisms underpinning this decline are complex and include telomere shortening, mitochondrial dysfunction, and errors in meiotic recombination [9].

FAQ 3: How does the ovarian reserve of a patient achieving a rare live birth compare to the expected population average? In the documented 2023 case, the patient, at age 45, had an Antral Follicle Count (AFC) of 5 and an Anti-Müllerian Hormone (AMH) level of 3.5 pmol/L [9]. While this indicates diminished ovarian reserve consistent with her age, the values are not at the absolute lowest end of the spectrum, allowing for a response to stimulation. The retrieval of 6 oocytes from 5 antral follicles demonstrates a successful response.
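AMH is reported here in pmol/L, whereas the inclusion thresholds earlier in this guide are in ng/mL, so comparing the two requires a unit conversion. The sketch below uses the commonly applied factor of 1 ng/mL = 7.14 pmol/L; the function name is hypothetical.

```python
PMOL_L_PER_NG_ML = 7.14  # commonly used AMH conversion factor


def amh_pmol_l_to_ng_ml(value_pmol_l):
    """Convert an AMH concentration from pmol/L to ng/mL."""
    return value_pmol_l / PMOL_L_PER_NG_ML


case_amh_ng_ml = amh_pmol_l_to_ng_ml(3.5)  # value from the 2023 case report [9]
```

The converted value is roughly 0.49 ng/mL, which sits just under the 0.5 ng/mL lower bound quoted for the AMA inclusion criteria; pooled analyses should therefore harmonize AMH units before applying thresholds.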

FAQ 4: What critical methodological considerations exist for analyzing rare outcomes like EAMA live births? Research in this area is often subject to outcome truncation, where a study outcome (e.g., birthweight) is only defined in a subset of the initial cohort (e.g., those who give birth) [11]. Analyzing data only within this subgroup, especially when the treatment influences the probability of being in that subgroup, conditions on a post-randomization event; this can introduce selection bias and forfeit the balance that randomization provides [11]. Standard statistical analyses in these contexts may be biased, particularly in small studies [11].

Troubleshooting Guide: Protocol Challenges and Solutions

| Challenge | Symptom | Solution & Rationale |
|---|---|---|
| Poor Oocyte Yield | Low antral follicle count (AFC), low AMH, few oocytes retrieved. | Use a high-dose stimulation protocol (e.g., 450 IU of rFSH + rLH). Rationale: Maximizes response in a context of severely diminished ovarian reserve [9]. |
| Low Fertilization Rate | Mature (Metaphase II) oocytes fail to fertilize normally. | Employ Intracytoplasmic Sperm Injection (ICSI). Rationale: Ensures sperm entry, bypassing potential zona pellucida issues which may be exacerbated by age or cryopreservation [9] [12]. |
| Poor Embryo Development | Embryos arrest before the blastocyst stage. | Utilize blastocyst culture. Rationale: Allows for self-selection of viable embryos, potentially identifying those with the highest implantation potential, even in EAMA cases [9]. |
| Implantation Failure | High-quality blastocysts fail to implant. | Use Embryo Glue (a high-concentration hyaluronan transfer medium). Rationale: May improve embryo-endometrial interaction and adhesion during the transfer procedure [9]. |
| Luteal Phase Deficiency | Short luteal phase, low mid-luteal progesterone, early pregnancy loss. | Implement progesterone luteal phase support. Rationale: Exogenous progesterone (e.g., Crinone 90 mg twice daily) compensates for potential corpus luteum insufficiency, supporting endometrial receptivity and early pregnancy maintenance [9] [13]. |

Experimental Protocol: Key Methodology from a Documented Live Birth

The following workflow details the successful protocol from the 2023 case report of a live birth in a 46-year-old woman [9].

Detailed Protocol Steps:

- Patient Preparation & Ovarian Reserve Assessment: The patient was 45 years old with secondary infertility. Baseline assessment confirmed an AFC of 5 and an AMH of 3.5 pmol/L [9].

- Stimulation Protocol (Short Flare): Initiated on cycle day 2 with a GnRH agonist (Nafarelin, 200 mcg twice daily). Ovarian stimulation began on cycle day 3 with a high dose of 450 IU per day of rFSH + rLH (Pergoveris) [9].

- Monitoring & Triggering: Follicle development was monitored via transvaginal ultrasound. On stimulation day 8, six developing follicles were noted. Final oocyte maturation was triggered with 250 μg of recombinant hCG [9].

- Oocyte Retrieval and Fertilization: Oocyte retrieval was performed 37 hours post-trigger, yielding 6 oocytes. Four were mature (Metaphase II) and underwent ICSI. Three oocytes demonstrated normal fertilization [9].

- Embryo Culture and Transfer: The three fertilized oocytes were cultured. Two developed into blastocysts by day 5. A fresh single blastocyst transfer was performed with a 4AA-graded embryo using Embryo Glue. The second blastocyst (4AB) was cryopreserved [9].

- Luteal Phase and Pregnancy Support: Luteal phase support was provided with progesterone gel (Crinone 90 mg twice daily) starting 2 days after retrieval [9]. A biochemical pregnancy was confirmed 9 days post-transfer (β-hCG 252 IU). The pregnancy progressed to a live birth at 39 weeks and 4 days via Caesarean section [9].

Research Reagent Solutions

The following table details key materials and reagents used in the documented successful protocol for EAMA autologous IVF [9].

| Reagent / Material | Function in the Protocol |
|---|---|
| GnRH Agonist (Nafarelin) | Initiates a "flare" effect to stimulate the pituitary gland, supporting the onset and progression of ovarian stimulation. |
| rFSH + rLH (Pergoveris) | Recombinant hormones used for controlled ovarian stimulation to promote the growth and development of multiple follicles. |
| Recombinant hCG (Ovidrel) | Mimics the natural LH surge to trigger the final maturation and ovulation of the developed oocytes. |
| Intracytoplasmic Sperm Injection (ICSI) | A specialized technique to fertilize a mature oocyte by injecting a single sperm directly into its cytoplasm, crucial for overcoming potential fertilization barriers. |
| Blastocyst Culture Medium | A specialized sequential culture system that supports embryo development from day 3 to the blastocyst stage (day 5/6). |
| Embryo Glue (high [HA]) | An embryo transfer medium enriched with hyaluronan, which may improve embryo-endometrial interaction and implantation rates. |
| Progesterone Gel (Crinone) | Provides hormonal support to the endometrium during the luteal phase, creating a receptive environment for implantation and supporting early pregnancy. |

Signaling Pathway: Hormonal Regulation in a Stimulated IVF Cycle

FAQs: Investigating Idiopathic Male Infertility

1. What defines a case of idiopathic male infertility in a research context?

Idiopathic male infertility is clinically defined as infertility where no specific aetiology can be found despite a detailed clinical examination, standard semen analysis, and endocrine evaluation [14] [15]. For researchers, this represents a complex, multi-factorial disorder where the underlying molecular and cellular mechanisms remain unknown [16]. It accounts for a significant portion of cases, with estimates suggesting around 30% of male infertility is idiopathic [14].

2. What are the primary technical challenges in modelling idiopathic infertility for drug discovery?

A major challenge is the significant heterogeneity of spermatozoa, both between individuals and between ejaculates from the same person [15]. This variability makes it difficult to establish reproducible experimental models. Furthermore, traditional animal models are limited due to profound species-specific variations in sperm morphology and function, such as the structural and genetic differences in the CatSper calcium ion channel between mice and humans [15]. The absence of a defined aetiology or known molecular targets also restricts the development of targeted drug interventions [15].

3. Which advanced sperm function tests are moving beyond the standard semen analysis for deeper mechanistic insights?

Standard semen analysis has limited ability to assess true sperm function. Research is increasingly focusing on tests for [14] [15]:

- Sperm DNA Fragmentation (SDF): Recognized as an "extended examination" in the WHO 6th edition manual [14].

- Oxidative Stress (OS): Measured via oxidation-reduction potential (ORP); the term Male Oxidative Stress Infertility (MOSI) has been proposed for a subset of idiopathic cases [14].

- Molecular and Functional Assays: Including assessments of capacitation, acrosomal reaction, hyperactivation, and cell signalling pathways [15].

- Epigenetic Tests: Evaluating DNA methylation, histone modifications, and seminal microRNA profiles [14].

4. How can researchers account for male factor heterogeneity in study design to improve sensitivity for rare outcomes?

To manage heterogeneity and improve sensitivity for rare fertility outcomes, researchers should:

- Implement Computer-Assisted Semen Analysis (CASA): This technology can objectively classify ejaculate subpopulations based on specific kinematic parameters [15].

- Utilize High-Throughput Screening (HTS): This allows for the rapid testing of thousands of sperm samples or chemical compounds to observe effects, helping to navigate biological variability [15].

- Adopt Strict Protocols: Control for sample collection methods, abstinence periods, and processing timelines to minimize pre-analytical variability [15] [3].

- Incorporate Multi-Omics Approaches: Combine genomic, proteomic, and epigenomic data to stratify idiopathic cases into more homogenous subgroups for analysis [16] [14].

Troubleshooting Guides for Common Experimental Hurdles

Guide 1: Inconsistent Results in Sperm Functional Assays

- Problem: High intra- and inter-individual variability in assay outcomes.

- Potential Cause: Biological fluctuation and the statistical phenomenon of "regression to the mean" [15].

- Solution:

- Increase sample size to power studies adequately for expected variability.

- Collect multiple baseline measurements from the same subject to establish a reliable mean.

- Use each subject as their own control in longitudinal studies where feasible.

- Standardize all procedures from sample collection to analysis across all experimental runs [15] [3].

Guide 2: Translating In Vitro Findings to Clinical Relevance

- Problem: Promising in vitro results fail to correlate with improved fertility outcomes.

- Potential Cause: Over-reliance on surrogate markers (e.g., count, motility) that do not fully capture fertilization competence [15].

- Solution:

- Develop and validate a panel of functional tests that more closely reflect the steps of fertilization (e.g., capacitation, zona binding, DNA integrity).

- Move beyond traditional semen analysis parameters to investigate molecular and epigenetic endpoints [16] [14].

- In drug screening, employ phenotypic assays that directly measure the desired functional outcome rather than just a single parameter [15].

Quantitative Data in Idiopathic Male Infertility Research

Table 1: Prevalence of Cytogenetic Anomalies in Male Infertility

This table summarizes the prevalence of karyotype anomalies in men with infertility, highlighting a key biological factor often associated with idiopathic presentations. Data adapted from a recent review [17].

| Patient Population | Prevalence of Karyotype Anomalies | Common Anomalies Identified |

|---|---|---|

| Men with infertility (overall) | ~6% | Klinefelter syndrome, sex chromosome aneuploidies, structural defects |

| Men with non-obstructive azoospermia | Increased (Specific % not listed) | Klinefelter syndrome, Y-chromosome microdeletions |

| Men with severe oligozoospermia (<5 million sperm/mL) | Increased (Specific % not listed) | Structural chromosomal defects (translocations, inversions) |

| Men with sperm counts <20 million/mL | Increased compared to fertile men | Various numerical and structural anomalies |

| Men with normozoospermia | Present in a subset | Often structural anomalies impacting reproductive function |

Table 2: Impact of Lifestyle Factors on Semen Parameters

This table outlines the quantitative impact of various lifestyle factors on male fertility, which can inform the stratification of idiopathic cases in research cohorts. Data synthesized from multiple sources [14] [18].

| Factor | Impact on Semen Parameters | Proposed Mechanism |

|---|---|---|

| Smoking | Significantly lower total sperm count (e.g., 139M vs. 103M in one study) [14]. | Introduces oxidative stress and toxicants [14]. |

| Obesity | Altered semen parameters; associated with aberrant sperm DNA methylation [14]. | Hormonal dysregulation (increased estradiol, leptin) and inflammation [14]. |

| E-Cigarette Use | Significantly lower total sperm count (e.g., 147M vs. 91M in one study) [14]. | Similar oxidative stress pathways as traditional smoking [14]. |

| Advanced Paternal Age | Increased time to pregnancy for men ≥40 years [18]. | Accumulation of genetic and epigenetic alterations in sperm [18]. |

Experimental Protocols for Investigating Idiopathic Infertility

Protocol 1: Assessing Sperm DNA Fragmentation (SDF) via TUNEL Assay

Principle: The Terminal deoxynucleotidyl transferase dUTP Nick End Labeling (TUNEL) assay detects DNA strand breaks in sperm, a key marker of genomic integrity.

Reagents:

- Paraformaldehyde (4%)

- Permeabilization buffer (0.1% Triton X-100 in 0.1% sodium citrate)

- TUNEL reaction mixture (enzyme and labeled nucleotides)

- Phosphate-buffered saline (PBS)

- Propidium iodide or DAPI for counterstaining

Procedure:

- Sperm Wash: Wash raw semen sample with PBS to remove seminal plasma. Centrifuge at 500 x g for 5 minutes.

- Fixation: Resuspend sperm pellet in 4% paraformaldehyde for 1 hour at room temperature.

- Permeabilization: Wash cells, then incubate in permeabilization buffer for 2 minutes on ice.

- Labeling: Incubate fixed and permeabilized sperm in the TUNEL reaction mixture for 1 hour at 37°C in a dark, humidified chamber. Include a positive control (e.g., DNase-treated sample) and a negative control (no enzyme).

- Analysis: Wash cells and analyze by flow cytometry or fluorescence microscopy. A minimum of 200 sperm per sample should be scored. SDF >15-20% is often considered clinically significant.

Troubleshooting: High background can be reduced by optimizing permeabilization time and ensuring thorough washing after fixation [14].
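Because only a few hundred sperm are scored per sample, the SDF percentage carries sampling uncertainty that matters near the 15-20% range. The following is a minimal sketch, assuming simple binomial counting of TUNEL-positive cells; the function name and the example counts are hypothetical and not part of the cited protocol.

```python
import math

def sdf_with_wilson_ci(tunel_positive, total_scored, z=1.96):
    """Return the SDF percentage and an approximate 95% Wilson interval (in %)."""
    if total_scored <= 0 or not 0 <= tunel_positive <= total_scored:
        raise ValueError("need total_scored > 0 and 0 <= tunel_positive <= total_scored")
    p = tunel_positive / total_scored
    denom = 1 + z**2 / total_scored
    centre = (p + z**2 / (2 * total_scored)) / denom
    half = z * math.sqrt(
        p * (1 - p) / total_scored + z**2 / (4 * total_scored**2)
    ) / denom
    return 100 * p, 100 * (centre - half), 100 * (centre + half)

# Hypothetical sample: 36 TUNEL-positive sperm out of 200 scored
sdf, low, high = sdf_with_wilson_ci(tunel_positive=36, total_scored=200)
print(f"SDF = {sdf:.1f}% (approx. 95% CI {low:.1f}% to {high:.1f}%)")
```

In this illustration the interval straddles the 15-20% range, which suggests that a sample scored on 200 cells may need more cells counted, or flow cytometry, before it is classified.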

Protocol 2: Measuring Seminal Oxidative Stress via Oxidation-Reduction Potential (ORP)

Principle: A bench-top ORP analyzer provides a static measurement of the overall redox state in a semen sample, indicating the balance between oxidants and antioxidants.

Reagents:

- None; proprietary equipment and software are used.

Procedure:

- Sample Preparation: Liquefy semen sample at 37°C for 20-30 minutes.

- Instrument Calibration: Calibrate the ORP analyzer according to the manufacturer's instructions using provided standards.

- Measurement: Load a specific volume of liquefied semen into the sample chamber. The analyzer directly measures the electron flow between a reference and a working electrode, providing an ORP value in mV.

- Interpretation: Higher positive ORP values indicate higher oxidative stress. The specific diagnostic thresholds for Male Oxidative Stress Infertility (MOSI) are defined by the analyzer's validated reference ranges [14].

Troubleshooting: Ensure the sample is analyzed promptly after liquefaction to prevent artifactual changes in ORP. Strict adherence to the manufacturer's protocol for sample volume and handling is critical for reproducibility [14].

Research Reagent Solutions for Male Infertility Studies

Table 3: Essential Reagents and Kits for Sperm Function Analysis

| Research Reagent / Kit | Primary Function in Experimentation |

|---|---|

| Computer-Assisted Semen Analysis (CASA) System | Provides objective, high-throughput kinematic analysis of sperm concentration, motility, and morphology, identifying subpopulations [15]. |

| Oxidation-Reduction Potential (ORP) Analyzer | Measures overall seminal oxidative stress, a key driver of Male Oxidative Stress Infertility (MOSI), from a single, direct measurement [14]. |

| Sperm DNA Fragmentation (SDF) Detection Kits (e.g., TUNEL, SCSA, SCD) | Quantify the level of sperm DNA damage, a crucial parameter beyond standard semen analysis that correlates with fertility outcomes [14]. |

| Antibody Panels for Flow Cytometry (e.g., for apoptotic markers, surface proteins) | Enable the characterization of specific sperm phenotypes and the detection of biomarkers associated with infertility at a single-cell level [16] [15]. |

| Whole Exome/Genome Sequencing Kits | Facilitate the identification of novel genetic variants and mutations underlying idiopathic infertility, allowing for improved patient stratification [14]. |

Signaling Pathways and Diagnostic Workflows

Diagnostic Workflow for Idiopathic Infertility

The following diagram outlines a systematic, multi-level diagnostic and research workflow for investigating idiopathic male infertility, moving from basic assessment to advanced molecular analysis.

Multi-factorial Nature of Idiopathic Infertility

This diagram conceptualizes the complex interplay of genetic, environmental, and molecular factors that contribute to idiopathic male infertility, illustrating why it is considered a multi-factorial disorder.

Advanced Analytical Frameworks for Rare Event Detection and Prediction

Frequently Asked Questions (FAQs)

1. What are the main statistical challenges when studying rare fertility outcomes? Researchers studying rare fertility outcomes, such as specific infertility causes or particular drug reactions, often face significant statistical challenges. Classical methods like standard logistic regression frequently fail when dealing with risk factors with extremely low prevalence (below 0.1%). These methods may not converge at all, produce biased coefficient estimates, or yield extremely wide confidence intervals, leading to a substantial loss of statistical power and accuracy. In count data scenarios, such as the number of children ever born, standard Poisson regression fails when there are more zeros than expected, violating its fundamental distributional assumptions [19] [20].

2. When should I consider using penalized regression methods over standard logistic regression? Penalized regression methods should be your primary consideration when analyzing risk factors with prevalences below 0.1%, when you encounter complete or quasi-complete separation in your data, or when the maximum likelihood estimation in logistic regression fails to converge. These methods are particularly valuable in low-dimensional settings (where the number of variables is not extremely large) with rare exposures. Research has demonstrated that Firth correction and boosting provide particularly strong improvements for ultra-rare prevalences, while the lasso and ridge regression also offer substantial benefits over standard approaches [19].

3. How do I choose between zero-inflated and hurdle models for count fertility data? The choice depends on the nature of the excess zeros in your dataset and the underlying data-generating mechanism. Zero-inflated models (like ZIP and ZINB) are appropriate when your data contains two types of zeros: "structural zeros" (individuals who cannot experience the event) and "sampling zeros" (individuals who might have experienced the event but didn't during the study period). Hurdle models are better suited when all zeros are considered structural, representing a single process that must be "crossed" before positive counts are observed. Model selection should be based on information criteria (AIC/BIC), with differences greater than 10 indicating clear superiority of one model [20] [21].

4. Can these advanced methods handle correlated rare events in longitudinal fertility studies? Yes, specialized methods exist for correlated rare events in longitudinal studies. When using generalized estimating equations (GEE) for correlated binary data with rare events, conventional methods often fail to converge. A robust two-step approach combines an additive model (linear regression) to measure associations, followed by a permutation test to estimate statistical significance. This method maintains the correlation structure within subjects while providing reliable inference for rare, recurrent events, such as repeated pregnancy complications or adverse drug reactions [6].
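The two-step idea in question 4 can be sketched as follows. This is only an illustration under stated assumptions, not the published procedure from [6]: the association is measured as a risk difference (the slope of a linear model on a binary exposure), and exposure labels are permuted at the subject level so that each subject's repeated outcomes stay together. All data and function names are hypothetical.

```python
import random

def risk_difference(exposure, outcomes):
    """Slope of an additive (linear) model of event on binary exposure,
    i.e. the difference in per-observation event rates between groups."""
    exp_events = exp_n = unexp_events = unexp_n = 0
    for exposed, subject_outcomes in zip(exposure, outcomes):
        if exposed:
            exp_events += sum(subject_outcomes)
            exp_n += len(subject_outcomes)
        else:
            unexp_events += sum(subject_outcomes)
            unexp_n += len(subject_outcomes)
    return exp_events / exp_n - unexp_events / unexp_n

def permutation_p_value(exposure, outcomes, n_perm=2000, seed=0):
    """Shuffle exposure across subjects; within-subject outcome blocks are untouched."""
    rng = random.Random(seed)
    observed = risk_difference(exposure, outcomes)
    shuffled = list(exposure)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if abs(risk_difference(shuffled, outcomes)) >= abs(observed) - 1e-12:
            extreme += 1
    return observed, (extreme + 1) / (n_perm + 1)

# Hypothetical cohort: 40 subjects, 3 cycles each, 6 subjects carry the rare exposure
exposure = [1] * 6 + [0] * 34
outcomes = (
    [[1, 1, 0], [1, 0, 0], [0, 1, 0], [1, 0, 0], [0, 0, 0], [1, 0, 1]]
    + [[1, 0, 0], [0, 0, 1]]
    + [[0, 0, 0] for _ in range(32)]
)
observed, p_value = permutation_p_value(exposure, outcomes)
print(f"risk difference = {observed:.3f}, permutation p = {p_value:.4f}")
```

Permuting whole subjects rather than individual cycles is the design choice that preserves the correlation structure described above.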

Comparison of Statistical Methods for Rare Outcomes

Table 1: Penalized Regression Methods for Rare Binary Outcomes

| Method | Key Mechanism | Best Use Cases | Advantages | Limitations |

|---|---|---|---|---|

| Firth Correction | Penalizes based on Fisher information matrix | Ultra-rare prevalences (<0.1%), small sample sizes | Prevents separation issues, reduces bias | Computational intensity for large datasets |

| LASSO | L1 penalty (sum of absolute coefficients) | Variable selection with rare exposures | Simultaneous estimation and selection | May overselect variables in high dimensions |

| Ridge Regression | L2 penalty (sum of squared coefficients) | Correlated predictors, rare outcomes | Stable estimates with multicollinearity | No inherent variable selection |

| Boosting | Sequential building of weak predictors | Low-prevalence risk factors, imbalanced data | Strong performance with complex patterns | Computational complexity, tuning parameters |

Table 2: Models for Zero-Inflated Count Data in Fertility Research

| Model | Data Structure | Distribution | Variance Handling | Regional CEB Zero Percentage* |

|---|---|---|---|---|

| Poisson (P) | Standard counts | Poisson | Equal mean and variance | Not recommended for excess zeros |

| Negative Binomial (NB) | Overdispersed counts | Negative Binomial | Variance > Mean | North West: 21.3% |

| Zero-Inflated Poisson (ZIP) | Two types of zeros | Poisson mixture | Handles excess zeros | South West: 30.7% |

| Zero-Inflated Negative Binomial (ZINB) | Overdispersed with excess zeros | NB mixture | Variance > Mean + excess zeros | South South: 42.4% |

| Hurdle Poisson (HP) | All zeros are structural | Poisson truncation | Models zero process separately | South East: 37.6% |

| Hurdle Negative Binomial (HNB) | Overdispersed with structural zeros | NB truncation | Handles overdispersion and zeros | North East: 23.9% |

*Percentage of zero count in Children Ever Born (CEB) responses across Nigerian regions, demonstrating varying zero inflation requiring different modeling approaches [20].
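Before choosing among the models in the table, it can help to compare the observed share of zeros with the share a plain Poisson model would predict at the same mean. The snippet below is a minimal sketch using made-up CEB counts (not the Nigerian data from [20]); the function name is hypothetical.

```python
import math

def zero_inflation_summary(counts):
    """Compare observed zeros with the Poisson-expected zero share, exp(-mean)."""
    n = len(counts)
    mean = sum(counts) / n
    variance = sum((c - mean) ** 2 for c in counts) / (n - 1)
    return {
        "mean": mean,
        "dispersion_ratio": variance / mean,   # about 1 under a Poisson model
        "observed_zero_share": sum(1 for c in counts if c == 0) / n,
        "poisson_zero_share": math.exp(-mean),
    }

# Hypothetical sample of 100 women: 40% report zero children ever born
counts = [0] * 40 + [1] * 10 + [2] * 15 + [3] * 15 + [4] * 10 + [5] * 6 + [6] * 4
summary = zero_inflation_summary(counts)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

An observed zero share well above the Poisson-expected share, together with a dispersion ratio above 1, points toward the zero-inflated or hurdle negative binomial rows of the table rather than the standard Poisson model.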

Experimental Protocols & Methodologies

Protocol 1: Implementing Firth-Corrected Logistic Regression for Rare Exposures

Purpose: To obtain reliable risk estimates for rare exposures (prevalence <0.1%) in fertility research.

Materials and Software: R statistical environment with logistf package or SAS with FIRTH option in PROC LOGISTIC.

Procedure:

- Data Preparation: Code binary outcome variable (e.g., 1=infertility diagnosis, 0=fertile) and rare exposure variables

- Model Specification: Fit logistic regression with the Firth penalty, which maximizes the penalized log-likelihood

$$\ell_{\text{Firth}}(\beta) = \ell(\beta) + \tfrac{1}{2}\log\det I(\beta)$$

where $I(\beta)$ is the Fisher information matrix.

- Effect Estimation: Obtain penalized maximum likelihood estimates for regression coefficients

- Variable Selection: Perform backward elimination using p-value threshold of 0.157 (equivalent to AIC-based selection)

- Validation: Compare results with standard logistic regression to assess improvement in coefficient stability [19]

Troubleshooting: If model fails to converge, check for complete separation using contingency tables and consider increasing the number of maximum iterations in optimization algorithm.
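To make the Firth penalty concrete, here is a small NumPy sketch of the estimation step. It is an illustration only, assuming plain Newton-type updates on the Firth-modified score with no step-halving; in practice the `logistf` package or the SAS FIRTH option named above would be used. The function name and the toy data are hypothetical.

```python
import numpy as np

def firth_logistic(X, y, max_iter=100, tol=1e-8):
    """Firth-penalized logistic regression. X must already contain an intercept column."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    beta = np.zeros(X.shape[1])
    for _ in range(max_iter):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        w = p * (1.0 - p)
        info = X.T @ (X * w[:, None])            # Fisher information I(beta)
        info_inv = np.linalg.inv(info)
        # Leverages: diagonal of W^(1/2) X I^-1 X^T W^(1/2)
        h = w * np.einsum("ij,jk,ik->i", X, info_inv, X)
        score = X.T @ (y - p + h * (0.5 - p))    # Firth-modified score
        step = info_inv @ score
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    return beta

# Hypothetical data with complete separation on a rare exposure:
# all 3 exposed subjects are cases; 20 of 197 unexposed subjects are cases.
exposed = np.array([1] * 3 + [0] * 197)
outcome = np.array([1] * 3 + [1] * 20 + [0] * 177)
design = np.column_stack([np.ones(exposed.size), exposed])
beta_hat = firth_logistic(design, outcome)
print("intercept, log odds ratio:", beta_hat)
```

Standard maximum likelihood has no finite estimate for the exposure coefficient here, whereas the penalized fit stays finite. For a single binary exposure, the result coincides with adding 0.5 to every cell of the 2x2 table.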

Protocol 2: EM Adaptive LASSO for Zero-Inflated Count Phenotypes

Purpose: To detect SNP associations with zero-inflated count phenotypes (e.g., number of offspring, pregnancy losses) while handling multicollinearity.

Materials: Genetic dataset with SNP information, zero-inflated count phenotype, R with pscl and glmnet packages.

Procedure:

- Initial Data Screening: Assess zero inflation using descriptive statistics and distribution plots

- Model Specification: Implement Zero-Inflated Negative Binomial (ZINB) model with two components:

- Count component: Negative binomial distribution for the counts, including sampling zeros

- Zero-inflation component: Logistic (logit) model for the probability of an excess (structural) zero

- EM Algorithm Implementation:

- E-step: Calculate expected values of latent variables

- M-step: Update parameters using coordinate descent with adaptive LASSO penalty

- Variable Selection: Apply data-adaptive weights to penalize SNPs differently

- Model Assessment: Evaluate prediction accuracy and empirical power using cross-validation [21]

Troubleshooting: For numerical instability, ensure proper standardization of predictors and verify that the Hessian matrix is positive definite.
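The E-step and M-step in the procedure can be illustrated with a deliberately simplified case. The sketch below assumes a zero-inflated Poisson model with no covariates and no LASSO penalty, so it is not the EM adaptive LASSO of [21]; it only shows how the latent "structural zero" indicator is handled. All names and simulation settings are hypothetical.

```python
import math
import random

def fit_zip_em(counts, n_iter=500, tol=1e-10):
    """EM for an intercept-only zero-inflated Poisson: returns (pi, lambda)."""
    n = len(counts)
    n_zero = sum(1 for c in counts if c == 0)
    total = sum(counts)
    pi, lam = 0.5, max(total / n, 1e-6)
    for _ in range(n_iter):
        # E-step: probability that an observed zero is a structural zero
        z = pi / (pi + (1 - pi) * math.exp(-lam))
        expected_structural = n_zero * z
        # M-step: update the mixing proportion and the Poisson mean
        new_pi = expected_structural / n
        new_lam = total / (n - expected_structural)
        converged = abs(new_pi - pi) < tol and abs(new_lam - lam) < tol
        pi, lam = new_pi, new_lam
        if converged:
            break
    return pi, lam

def poisson_draw(rng, lam):
    """Knuth's multiplication method for a Poisson random draw."""
    threshold, k, prod = math.exp(-lam), 0, rng.random()
    while prod > threshold:
        k += 1
        prod *= rng.random()
    return k

# Simulated phenotype: 30% structural zeros, Poisson mean 2 otherwise
rng = random.Random(42)
simulated = [0 if rng.random() < 0.3 else poisson_draw(rng, 2.0) for _ in range(5000)]
pi_hat, lam_hat = fit_zip_em(simulated)
print(f"estimated zero-inflation = {pi_hat:.3f}, estimated Poisson mean = {lam_hat:.3f}")
```

In the full method, the M-step would instead fit penalized regressions for both components, with SNP-specific adaptive weights.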

Methodological Workflows

Diagram 1: Analytical Decision Pathway for Rare Fertility Outcomes

Diagram 2: Zero-Inflated Model Framework for Fertility Count Data

Research Reagent Solutions

Table 3: Essential Statistical Tools for Advanced Fertility Research

| Tool/Software | Primary Function | Application Context | Key Features |

|---|---|---|---|

| R logistf Package | Firth-penalized logistic regression | Rare binary outcomes in infertility studies | Bias reduction, handles complete separation |

| R pscl Package | Zero-inflated and hurdle models | Count fertility outcomes with excess zeros | ZIP, ZINB, hurdle models, model diagnostics |

| EM Adaptive LASSO Algorithm | Variable selection for zero-inflated counts | Genetic association studies with count phenotypes | Handles multicollinearity, simultaneous selection |

| Permutation Test Framework | Inference for correlated rare events | Longitudinal fertility studies with recurrent events | Non-parametric, maintains correlation structure |

| Markov Chain with Rewards (MCWR) | Lifetime reproductive output analysis | Evolutionary demography and fertility forecasting | Calculates moments of LRO distribution |

Frequently Asked Questions (FAQs)

Q1: My Random Forest model for predicting rare fertility outcomes like live birth has a high overall accuracy but fails to identify the positive cases I care about. What should I do?

A: High overall accuracy with poor sensitivity is a classic sign of model bias towards the majority class in an imbalanced dataset. Accuracy is a misleading metric in this context. You should:

- Shift your evaluation metrics: Prioritize metrics like Recall (Sensitivity), F1-Score, and the Area Under the Precision-Recall Curve (AUPRC) over accuracy. The F1-Score, which is the harmonic mean of precision and recall, is particularly useful for quantifying the balance between correctly identifying rare events and minimizing false positives [22] [23].

- Implement algorithmic adjustments: Modify your Random Forest to directly address the imbalance. A highly effective method is using the `class_weight='balanced'` parameter, which assigns a higher penalty for misclassifying the minority class [24]. Alternatively, use a Balanced Random Forest, which performs down-sampling of the majority class for each bootstrap sample [23].

Q2: I am using an SVM to classify successful intrauterine insemination (IUI) cycles. Which kernel should I choose, and how can I improve its performance on the minority class?

A: For structured clinical data, a Linear SVM has been shown to be a strong performer, achieving high AUC (e.g., 0.78 in a study on IUI outcome prediction) [25]. The linear kernel is less prone to overfitting on high-dimensional data and is easier to interpret. To enhance sensitivity:

- Employ Class Weighting: During model training, set `class_weight='balanced'`. This instructs the SVM to penalize mistakes on the minority class (e.g., successful pregnancy) more heavily, forcing the algorithm to pay more attention to these rare cases [25].

- Pre-process with SMOTE: Before training, apply the Synthetic Minority Oversampling Technique (SMOTE) to your training data. SMOTE generates synthetic examples of the minority class in feature space, creating a more balanced dataset for the SVM to learn from [23] [26].

Q3: What is the most robust ensemble method for combining multiple models to predict rare live births from IVF treatment data?

A: Advanced hybrid ensemble methods have demonstrated superior performance for this specific task. Research on IVF live-birth prediction shows that a Stacking Ensemble can achieve exceptionally high performance (e.g., AUC of 0.999) [26].

- Architecture: A stacking model often uses diverse base learners (e.g., Random Forest, SVM, and Multi-layer Perceptron) to create predictions. These predictions are then used as input features for a meta-learner (commonly XGBoost), which learns the optimal way to combine them [26].

- Key Step: It is crucial to apply data pre-processing like SMOTE to the training data for each model in the ensemble to ensure the class imbalance is addressed at every level [26].

Q4: How can I understand why my "black-box" ensemble model is making specific predictions for certain patients?

A: Model interpretability is critical for clinical translation. Use SHapley Additive exPlanations (SHAP). SHAP is a unified framework that assigns each feature an importance value for a particular prediction [22].

- Application: In a study on fertility preferences, SHAP was used alongside a Random Forest model to identify and quantify the influence of key predictors like age, parity, and education level [22].

- Benefit: SHAP analysis provides both global interpretability (which features are most important overall) and local interpretability (why a specific patient was classified a certain way), building trust in the model and generating potential clinical hypotheses [22].

Troubleshooting Guides

Problem: Model with High False Negative Rate for Rare Events

Symptoms: Your model is failing to identify a large proportion of the rare positive outcomes (e.g., successful pregnancies, drug-resistant cases). The recall/sensitivity metric is unacceptably low.

Diagnosis: The model is biased towards the majority class because errors on the common outcome dominate the training objective, so misclassifying the rare outcome costs it very little.

Solutions:

- Algorithm-Level Fix (Cost-Sensitive Learning):

- For Random Forest: Instantiate your model with `RandomForestClassifier(class_weight='balanced')`. This automatically adjusts weights inversely proportional to class frequencies [24].

- For SVM: Similarly, use `SVC(class_weight='balanced')`. This significantly improves the model's attention to the minority class [25].

- Experimental Protocol: In your code, compare the classification report (precision, recall, F1) of the standard model versus the cost-sensitive model on a held-out test set using stratified k-fold validation.

- Data-Level Fix (Resampling):

- Technique: Apply SMOTE to the training data only. Never apply it to the test data, as this will create data leakage and over-optimistic performance.

- Procedure: Use the `imblearn` library to create a pipeline that first applies SMOTE and then fits the model. This generates synthetic samples for the minority class to balance the class distribution [23] [26].

Problem: Choosing the Wrong Evaluation Metrics

Symptoms: A model reports 95% accuracy, but a simple "dummy" classifier that always predicts the majority class achieves 94% accuracy.

Diagnosis: Reliance on metrics that are insensitive to class imbalance, such as Accuracy and Area Under the ROC Curve (AUC), which can be overly optimistic.

Solutions:

- Adopt a Robust Evaluation Framework:

- Primary Metrics: Make Recall (Sensitivity) and the F1-Score your primary metrics for model selection [23]. The F1-Score provides a single metric that balances the trade-off between precision and recall.

- Use the Precision-Recall Curve: For imbalanced problems, the Precision-Recall (PR) Curve is more informative than the ROC Curve because it focuses specifically on the performance of the minority class [23].

- Validation: Use Stratified K-Fold Cross-Validation to ensure that each fold preserves the percentage of samples for each class, giving a more reliable estimate of model performance [23].

Table 1: Key Performance Metrics for Imbalanced Fertility Data

| Metric | Formula | Focus & Interpretation |

|---|---|---|

| Recall (Sensitivity) | TP / (TP + FN) | Ability to correctly identify all true positive rare events (e.g., successful pregnancies). The most critical metric. |

| Precision | TP / (TP + FP) | Accuracy of positive predictions. Measures how many of the predicted rare events are actual rare events. |

| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of Precision and Recall. Provides a single score to balance the two. |

| Area Under the PRC (AUPRC) | Area under the Precision-Recall curve | Overall performance for the positive class. A value closer to 1 is ideal. Superior to AUC-ROC for imbalance. |
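The formulas in the table can be checked against the symptom described above. The sketch below uses hypothetical confusion-matrix counts for 1,000 cycles with 60 positive outcomes, comparing an always-negative "dummy" classifier with a model that finds one third of the positives.

```python
def imbalance_metrics(tp, fp, fn, tn):
    """Accuracy, recall, precision and F1 from confusion-matrix counts."""
    total = tp + fp + fn + tn
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall) else 0.0
    )
    return {
        "accuracy": (tp + tn) / total,
        "recall": recall,
        "precision": precision,
        "f1": f1,
    }

# Hypothetical counts: the dummy model predicts "negative" for every cycle
dummy = imbalance_metrics(tp=0, fp=0, fn=60, tn=940)
model = imbalance_metrics(tp=20, fp=10, fn=40, tn=930)

for name, scores in (("dummy", dummy), ("model", model)):
    print(name, {k: round(v, 3) for k, v in scores.items()})
```

The two classifiers differ by one percentage point in accuracy, while recall and F1 separate them clearly, which is why the latter are preferred for model selection here.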

Problem: Ineffective Feature Selection

Diagnosis: Standard feature selection methods may discard features that are weakly correlated with the outcome but are crucial for identifying the rare event.

Solutions:

- Use Model-Specific Feature Importance with Caution: While Random Forest provides feature importance, it can be biased in imbalanced settings.

- Leverage SHAP for Robust Feature Selection: Compute SHAP values for your model. Features with consistently high mean absolute SHAP values are the most important drivers of your model's predictions, including for the rare class [22]. This can guide you to a more robust subset of features.

Experimental Protocols for Rare Fertility Outcomes

Protocol 1: Building a High-Sensitivity Random Forest Classifier

Objective: To predict a rare fertility outcome (e.g., live birth) using a Random Forest model optimized for sensitivity.

Materials:

- Dataset with clinical features (e.g., maternal age, sperm concentration, ovarian stimulation protocol) and a binary outcome label.

- Python libraries: `scikit-learn`, `imbalanced-learn`, `numpy`, `pandas`.

Methodology:

- Data Preprocessing: Split data into training (80%) and test (20%) sets using stratified splitting. Handle missing values using median/mode imputation. Standardize numerical features.

- Address Class Imbalance: On the training set only, apply SMOTE to generate synthetic samples for the minority class.

- Model Training: Train a `RandomForestClassifier` on the resampled training data. Set `class_weight='balanced_subsample'` so that class weights are calculated for each bootstrap sample.

- Hyperparameter Tuning: Use `GridSearchCV` with stratified folds to tune hyperparameters like `n_estimators`, `max_depth`, and `min_samples_leaf`. Use `'f1'` or `'recall'` as the scoring parameter.

- Model Evaluation: Predict on the untouched test set. Generate a confusion matrix and calculate precision, recall, and F1-score for the positive class.
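The training, tuning, and evaluation steps of this protocol might look like the following in scikit-learn. This is a sketch under assumptions: the data are synthetic, the grid values are arbitrary, and the SMOTE and imputation steps are omitted so that the example depends on scikit-learn alone.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, confusion_matrix
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

# Synthetic stand-in for clinical features with roughly 7% positive outcomes
X, y = make_classification(
    n_samples=1500, n_features=12, n_informative=6,
    weights=[0.93, 0.07], random_state=1,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=1
)

# Grid search over stratified folds, selecting on recall for the positive class
search = GridSearchCV(
    estimator=RandomForestClassifier(
        class_weight="balanced_subsample", random_state=1
    ),
    param_grid={
        "n_estimators": [50, 100],
        "max_depth": [4, None],
        "min_samples_leaf": [1, 5],
    },
    scoring="recall",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=1),
)
search.fit(X_train, y_train)

# Evaluate the refitted best model on the untouched test set
y_pred = search.predict(X_test)
cm = confusion_matrix(y_test, y_pred)
print("Best parameters:", search.best_params_)
print(cm)
print(classification_report(y_test, y_pred, digits=3))
```

Selecting on recall alone can favor models that over-predict the positive class, so `scoring="f1"` is a reasonable alternative when false positives are also costly.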

Table 2: Research Reagent Solutions: Computational Tools

| Tool / "Reagent" | Function / Explanation |

|---|---|

| Stratified K-Fold | A cross-validation technique that preserves the class distribution in each fold, ensuring reliable performance estimates on imbalanced data. |

| SMOTE | A data augmentation method that synthesizes new examples for the minority class in feature space to balance the dataset. |

| SHAP | A unified interpretability framework that explains the output of any machine learning model, quantifying each feature's contribution to a prediction. |

| Cost-Sensitive Learning | An algorithm-level approach that increases the cost of misclassifying the minority class, "incentivizing" the model to learn its patterns. |

| Precision-Recall Curve (PRC) | A diagnostic plot that shows the trade-off between precision and recall for different probability thresholds, specialized for imbalanced data. |

Protocol 2: Developing an Interpretable SVM Model for Treatment Success

Objective: To predict IUI/IVF success using a Linear SVM and identify the most influential clinical factors.

Methodology:

- Data Preparation: Follow the same stratified splitting and standardization as in Protocol 1.

- Model Training: Train a `LinearSVC` model on the training data with `class_weight='balanced'`.

- Model Interpretation:

- Use a library like `shap` to compute explainer values for the trained Linear SVM.

- Generate a beeswarm plot to visualize the impact of the top features on the model's output across all patients.

- Analyze individual patient predictions to understand the driving factors behind a specific prognosis.

Workflow and Conceptual Diagrams

Title: End-to-End Workflow for Imbalanced Data Modeling

Title: SMOTE Data Balancing Process

Title: Stacking Ensemble Architecture

Handling Temporal and Spatial Dependencies in Longitudinal Fertility Cohort Data

Frequently Asked Questions & Troubleshooting Guides

Q1: What are the most effective models for analyzing spatiotemporal fertility trends, and how do I choose one?

Answer: The choice of model depends on whether your research prioritizes description or prediction, and on the scale of your spatial and temporal data.

- For analyzing age, period, and cohort effects simultaneously: Use the Age-Period-Cohort (APC) model. This is ideal for quantifying how aging, historical time, and birth cohort membership independently influence fertility outcomes. It helps determine if a trend is due to women getting older (age), a specific historical event (period), or the unique experiences of a generation (cohort) [27] [28].

- For modeling spatial diffusion and interdependence between regions: Apply a Spatial Durbin Model (SDM) or other spatial panel models. These models are essential when you suspect that fertility behaviors in one geographic unit are influenced by the behaviors in neighboring units, capturing a diffusion process [29] [30] [31].

- For large datasets and forecasting: The Space-Time Autoregressive Integrated Moving Average (STARIMA) model is powerful for data with large distances between space and time points [32].

- For smoothing estimates across large geographic areas and time: P-spline models can provide smoothed parameter estimates across longitude, latitude, and time on a global scale [32].

Troubleshooting Tip: If your model results are unstable or difficult to interpret, check for the Modifiable Areal Unit Problem (MAUP). Your results can change drastically based on whether you use states, zip codes, or census tracts for spatial data, and years versus months for temporal data. Always justify your definitions of space and time [32].

Q2: How do I handle spatial autocorrelation in my fertility data?

Answer: Spatial autocorrelation, where nearby observations are more similar than distant ones, violates the independence assumption of standard statistical models. It must be tested for and corrected.

- Diagnosis: Calculate Moran's I, a general test that can use point data or polygons and works with categorical or continuous variables [32].

- Solution: If spatial autocorrelation is detected, use models that explicitly account for it.

Troubleshooting Tip: Ignoring spatial autocorrelation leads to biased parameter estimates and unreliable p-values. Always map your data and test for spatial dependence as a first step [32].
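As a concrete illustration of the diagnosis step, global Moran's I can be computed directly from a vector of regional values and a spatial weights matrix. The sketch below assumes six hypothetical regions arranged in a line with binary contiguity weights; in practice a package such as `spdep` would also supply a significance test.

```python
import numpy as np

def morans_i(values, weights):
    """Global Moran's I for regional values and an n x n spatial weights matrix."""
    x = np.asarray(values, dtype=float)
    w = np.asarray(weights, dtype=float)
    z = x - x.mean()
    return (x.size / w.sum()) * (z @ w @ z) / (z @ z)

# Hypothetical chain of 6 regions: each region neighbours the next one
n_regions = 6
W = np.zeros((n_regions, n_regions))
for i in range(n_regions - 1):
    W[i, i + 1] = W[i + 1, i] = 1.0

smooth_gradient = [1, 2, 3, 4, 5, 6]   # neighbours are similar
alternating = [1, 5, 1, 5, 1, 5]       # neighbours are dissimilar

i_gradient = morans_i(smooth_gradient, W)
i_alternating = morans_i(alternating, W)
print(f"gradient: {i_gradient:.2f}, alternating: {i_alternating:.2f}")
```

Positive values indicate clustering of similar fertility levels among neighbours and negative values indicate a checkerboard-like pattern. Values near the null expectation of -1/(n-1) are consistent with no spatial dependence.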

Q3: My analysis shows unexpected fertility declines. What contextual factors should I investigate?

Answer: Unexplained fertility trends are often linked to unmeasured economic or social contextual factors. The table below summarizes key modifiable risk factors and their measured effects to guide your investigation.

Table 1: Contextual Factors Associated with Fertility Outcomes

| Category | Factor | Measured Effect / Association | Citation |

|---|---|---|---|

| Economic Context | Unemployment | Mixed spatial effects; can lower fertility, but one study found a positive correlation with TFR within an area and a negative impact from neighboring areas' unemployment. | [30] [31] |

| Economic Context | Economic Uncertainty | Leads to fertility postponement, particularly for younger women (<30). Prolonged uncertainty strengthens this effect. | [29] [30] |

| Social & Behavioral Context | Educational Attainment | Higher education is a key predictor for timing of first birth and can have a protective causal effect against infertility. | [28] [33] |

| Social & Behavioral Context | Union Stability | Measures of union instability are associated with lower fertility levels across space and time. | [30] |

| Social & Behavioral Context | Health & Lifestyle | Poor general health, elevated waist-to-hip ratio, and neuroticism are causal risk factors for infertility. Napping and higher body fat percentage show protective effects. | [28] |

| Spatial Context | Urbanization Level | A long-standing pattern of lower fertility in urban regions and higher fertility in rural regions persists. | [29] [30] |

| Spatial Context | Spatial Diffusion | A region's fertility level is significantly influenced by the fertility behaviors of its geographically proximate regions in preceding periods. | [29] [31] |

Experimental Protocols for Key Methodologies

Protocol 1: Implementing an Age-Period-Cohort (APC) Analysis

This protocol is based on applications from the Global Burden of Disease (GBD) studies [27] [28].

Objective: To disentangle the effects of age, time period, and birth cohort on fertility prevalence.

Materials & Steps:

- Data Preparation:

- Calculate Core Metrics:

- Age-Standardized Prevalence Rate (ASPR): Compute to allow for comparison between populations with different age structures. Express per 100,000 individuals [27] [28].

- Estimated Annual Percentage Change (EAPC): A positive EAPC indicates an increasing trend, while a negative EAPC indicates a decrease [27].

- Model Construction:

- Interpretation:

- Apply findings as seen in GBD studies: e.g., "ERPI burden was highest at ages 20–25 years, while ERSI burden was persistently higher at ages 20–45 years" [27], where ERPI and ERSI denote endometriosis-related primary and secondary infertility.
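The two core metrics in this protocol can be sketched in a few lines. The example assumes the common definition of EAPC as 100 × (exp(β) − 1), where β is the slope from regressing the natural log of the age-standardized rate on calendar year, and direct standardization for the ASPR. The rates and weights below are invented for illustration.

```python
import math

def age_standardized_rate(age_specific_rates, standard_weights):
    """Direct standardization: weighted mean of age-specific rates."""
    total_weight = sum(standard_weights)
    return sum(
        r * w for r, w in zip(age_specific_rates, standard_weights)
    ) / total_weight

def eapc(years, rates):
    """Estimated annual percentage change from a log-linear trend."""
    logs = [math.log(r) for r in rates]
    n = len(years)
    mean_year = sum(years) / n
    mean_log = sum(logs) / n
    slope = sum(
        (t - mean_year) * (v - mean_log) for t, v in zip(years, logs)
    ) / sum((t - mean_year) ** 2 for t in years)
    return 100 * (math.exp(slope) - 1)

# Hypothetical age-specific prevalence per 100,000 and standard population weights
aspr = age_standardized_rate([300.0, 800.0, 1200.0, 900.0], [0.30, 0.30, 0.25, 0.15])

# Hypothetical ASPR series rising by exactly 2% per year
years = list(range(2010, 2020))
rates = [500.0 * 1.02 ** k for k in range(10)]
trend = eapc(years, rates)
print(f"ASPR = {aspr:.1f} per 100,000; EAPC = {trend:.2f}% per year")
```

A confidence interval for the EAPC would come from the standard error of the slope, which is omitted here for brevity.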

Protocol 2: Setting Up a Spatial Durbin Panel Model

This protocol is derived from studies of European and Nordic fertility [29] [30].

Objective: To assess how a region's fertility rate is influenced by its own characteristics (e.g., unemployment) and the characteristics and fertility rates of its neighboring regions.

Materials & Steps:

- Define Spatial Weights Matrix (W):

- Prepare Panel Data:

- Model Execution:

- Effect Decomposition:

- After estimation, decompose the results into direct effects (impact of a change in X within a region on its own Y) and indirect effects (impact of a change in X in neighboring regions on a region's Y) [31].
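The effect decomposition step can be illustrated numerically. The sketch below assumes the usual Spatial Durbin form y = ρWy + Xβ + WXθ + ε with a row-standardized weights matrix, and uses the averaged partial-derivative matrix (I − ρW)⁻¹(βI + θW) to obtain direct and indirect effects. The adjacency structure and coefficient values are hypothetical rather than estimates from the cited studies.

```python
import numpy as np

def row_standardize(adjacency):
    """Scale each row of a binary contiguity matrix to sum to 1."""
    a = np.asarray(adjacency, dtype=float)
    return a / a.sum(axis=1, keepdims=True)

def sdm_effects(W, rho, beta, theta):
    """Average direct, indirect and total effects of one covariate in an SDM."""
    n = W.shape[0]
    identity = np.eye(n)
    partials = np.linalg.inv(identity - rho * W) @ (beta * identity + theta * W)
    direct = np.trace(partials) / n    # own-region impact
    total = partials.sum() / n         # average row sum
    return direct, total - direct, total

# Hypothetical chain of 4 regions (1-2, 2-3, 3-4 are neighbours)
adjacency = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
W = row_standardize(adjacency)

# Hypothetical estimates: spatial lag, own unemployment, neighbours' unemployment
direct, indirect, total = sdm_effects(W, rho=0.4, beta=-0.5, theta=-0.2)
print(f"direct = {direct:.3f}, indirect = {indirect:.3f}, total = {total:.3f}")
```

Because of the spatial lag, the direct effect differs from β itself: a change in one region feeds back through its neighbours. With row-standardized weights, the total effect equals (β + θ) / (1 − ρ).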

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Data & Analytical Tools for Longitudinal Fertility Research

| Item / Solution | Function / Application | Example / Citation |

|---|---|---|

| Global Burden of Disease (GBD) Data | Provides standardized, global estimates of fertility and impairment prevalence (e.g., endometriosis-related infertility) for cross-country comparisons and trend analysis. | [27] [28] |

| National Longitudinal Surveys (NLS) | Offers detailed, long-term panel data on individuals, enabling the construction of fertility event histories and analysis of life course transitions. | [34] |

| R Spatial Packages (spdep, splm) | Provides the computational engine for calculating spatial weights, testing for autocorrelation (Moran's I), and fitting spatial econometric models like the Spatial Durbin Model. | [29] [30] |

| Age-Period-Cohort R Packages (magrittr, dplyr) | Facilitates the construction of APC models to decompose temporal trends into age, period, and cohort effects using a Poisson distribution framework. | [27] [28] |

| Random Survival Forest (RSF) | A machine learning technique applied to longitudinal data to identify the most important predictors of fertility events (e.g., 1st, 2nd birth) and detect complex interactions. | [33] |

Incorporating Novel Biomarkers and High-Throughput Phenotypic Screening into Predictive Models

Frequently Asked Questions (FAQs)

Q1: Our high-throughput drug screening on 2D cell cultures consistently shows promising results, but these findings fail to translate in more complex 3D models. What could be causing this discrepancy?

A1: This is a common challenge, as results from traditional 2D models often correlate poorly with those from more clinically relevant 3D models. A 2025 study on ovarian cancer demonstrated that drug efficacy in 2D systems correlates poorly with efficacy in 3D patient-derived spheroids, which better mimic the clinical behavior of tumors. To address this, transition to a 3D high-throughput phenotypic screening pipeline using patient-derived polyclonal spheroids. This approach captures the tumor microenvironment more accurately and has been shown to identify more translatable drug candidates; for example, rapamycin synergized effectively with standard treatments in 3D models despite limited monotherapy activity [35].

Q2: We are analyzing spent embryo culture media (SCM) to predict implantation potential. What are the key metabolic biomarkers we should focus on, and what are the common methodological pitfalls?

A2: Your focus should be on low molecular weight metabolites. A 2025 Bayesian meta-analysis of SCM studies identified seven metabolites positively and ten negatively associated with favorable IVF outcomes. Key components to analyze include:

- Amino Acids (AAs): Such as glutamine (often stabilized as alanyl-glutamine in culture), taurine, glycine, and alanine, which act as osmolytes, antioxidants, and metabolic precursors [36].

- Energy Substrates: The trio of pyruvate, lactate, and glucose. Embryonic consumption and production of these shift from reliance on extracellular pyruvate during the initial cleavages to increased glucose uptake and lactate production as development progresses [36].

Common pitfalls include a lack of standardized protocols, unvalidated analytical methods, and insufficient methodological transparency, which currently impede full clinical translation. Using absolute metabolite concentrations and robust calibration is critical for reproducibility [36].

Q3: What machine learning (ML) model is most effective for integrating multiple biomarkers to predict rare fertility outcomes like live birth?

A3: The optimal model varies by dataset, but several have shown strong performance. For predicting live birth following fresh embryo transfer, a 2025 study with 11,728 records found that Random Forest (RF) demonstrated the best predictive performance, with an AUC exceeding 0.8. Key predictive features included female age, grades of transferred embryos, number of usable embryos, and endometrial thickness [37]. A 2024 study on Chinese couples also identified RF and Logistic Regression (LR) as top performers, with AUCs of 0.671 and 0.674, respectively, and highlighted maternal age, progesterone on HCG day, and estradiol on HCG day as top contributors [38]. For high-dimensional biomarker data (e.g., from RNA-seq), a connected network-constrained SVM (CNet-SVM) model has been developed to identify biologically relevant, interconnected biomarker networks, outperforming traditional feature selection methods [39].

Q4: We suspect sperm quality impacts embryo development beyond traditional parameters. Are there novel biomarkers that can help assess this?

A4: Yes, recent research has moved beyond motility and morphology. A study performing small RNA sequencing on individually selected sperm found that specific microRNAs (miRNAs) are strongly correlated with sperm quality and pregnancy outcomes. The miRNAs hsa-miR-15b-5p, hsa-miR-19a-5p, and hsa-miR-20a-5p were significantly associated with sperm impairments and IVF prognosis. Higher expression of these miRNAs was linked to negative β-hCG outcomes and failed IVF, while lower expression was associated with successful live births. A combined model of these three miRNAs achieved an AUC of 0.75 for diagnostic prediction, making them promising novel biomarkers for male fertility [40].

Q5: How can we leverage existing prenatal screening data to improve the prediction of rare adverse fetal growth outcomes?

A5: Routine mid-pregnancy biomarkers for Down syndrome screening can be repurposed for this goal. A 2025 study showed that serum unconjugated estriol (uE3) has higher predictive performance for small-for-gestational-age (SGA) infants than free β-hCG or AFP alone. Integrating these biochemical markers with maternal characteristics using machine learning models like Gradient Boosting Machine (GBM) significantly enhanced prediction performance, achieving an AUC of 0.873 in the training set and 0.717 in the test set for SGA. This approach allows for the early identification of growth issues without requiring new clinical tests [41].

Troubleshooting Guides

Issue 1: Poor Correlation Between High-Throughput Screening Hits and Clinical Relevance

Problem: Candidates identified in high-throughput screening (HTS) campaigns fail to show efficacy in subsequent, more complex models or in vivo.

| Possible Cause | Solution | Relevant Experimental Protocol |

|---|---|---|

| Use of oversimplified 2D cell cultures. | Implement a 3D phenotypic screening pipeline. Isolate patient-derived polyclonal spheroids from ascites fluid or tissue. These spheroids more closely mimic the clinical behavior of the target (e.g., ovarian cancer) and provide a more relevant drug response profile [35]. | 1. Model Establishment: Isolate cells from patient ascites or tumor tissue. 2. 3D Culture: Culture cells in low-adherence plates with suitable media to promote self-assembly into spheroids. 3. High-Throughput Screening: Treat spheroids in a 384-well format with a library of compounds (e.g., FDA-approved drugs). 4. Endpoint Assays: Simultaneously assess multiple phenotypes, such as cytotoxicity (e.g., CellTiter-Glo) and anti-migratory properties (e.g., imaging-based invasion assay). |

| Screening only for a single phenotype (e.g., cytotoxicity). | Adopt multiplexed phenotypic screening. In the same assay well, measure multiple endpoints like cytotoxicity, impact on migration, and spheroid integrity. This provides a more comprehensive view of drug action [35]. | As above, integrate multiple readouts in the same experimental run. |

| Ignoring drug synergy. | Perform combination screening. Test your HTS hits in combination with standard-of-care therapies (e.g., cisplatin, paclitaxel). A drug like rapamycin showed limited monotherapy activity but significant synergy in combination, which would have been missed in a standard screen [35]. | 1. Monotherapy Dose-Response: Establish IC50 values for single agents. 2. Combination Matrix: Treat 3D models with a range of concentrations of the HTS hit and the standard drug in a matrix format. 3. Synergy Analysis: Analyze data using software like Combenefit or Chalice to calculate synergy scores. |
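
The synergy analysis step in the combination-screening protocol can be illustrated with a simple reference model. The sketch below uses Bliss independence, which is one common choice and not necessarily the model used in [35]; dedicated tools offer additional models and statistics. Effects are assumed to be fractional inhibition values between 0 and 1, and all numbers and function names are hypothetical.

```python
def bliss_excess(effect_a, effect_b, effect_combo):
    """Observed combination effect minus the Bliss-expected effect.

    Under Bliss independence the expected fractional effect of two
    independently acting drugs is Ea + Eb - Ea * Eb. A positive excess
    suggests synergy; a negative excess suggests antagonism.
    """
    expected = effect_a + effect_b - effect_a * effect_b
    return effect_combo - expected

def bliss_matrix(single_a, single_b, combo):
    """Excess-over-Bliss for every cell of a dose-combination matrix.

    single_a[i] and single_b[j] are monotherapy effects at dose i and j;
    combo[i][j] is the observed effect of the two doses together.
    """
    return [
        [bliss_excess(single_a[i], single_b[j], combo[i][j])
         for j in range(len(single_b))]
        for i in range(len(single_a))
    ]

# Hypothetical fractional inhibition values (0 = no effect, 1 = full effect).
hit_alone = [0.05, 0.10]        # HTS hit at two doses, weak as monotherapy
standard_alone = [0.20, 0.40]   # standard-of-care drug at two doses
observed = [
    [0.30, 0.55],
    [0.45, 0.70],
]

for row in bliss_matrix(hit_alone, standard_alone, observed):
    print([round(x, 3) for x in row])
```

A compound with weak single-agent activity can still show a clearly positive excess across the matrix, which is the pattern a monotherapy-only screen would miss.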

Issue 2: High Variability and Lack of Reproducibility in Spent Culture Media (SCM) Metabolomic Studies

Problem: Biomarker signatures from SCM analysis are inconsistent across studies and cannot be validated.

| Possible Cause | Solution | Relevant Experimental Protocol |

|---|---|---|

| Lack of standardized protocols for sample collection and analysis. | Implement strict, standardized operating procedures (SOPs). Define the exact timing of media collection, storage conditions, and processing steps. Use absolute metabolite concentrations for analysis instead of relative peak intensities or ratios [36]. | 1. Sample Collection: Collect SCM at a strictly defined time point (e.g., day 5 of blastocyst culture). 2. Sample Preparation: Immediately freeze samples at -80°C. Use protein precipitation and centrifugation to clean the sample. 3. Metabolite Quantification: Use calibrated analytical platforms (e.g., LC-MS/MS) with internal standards to report absolute concentrations of key metabolites like amino acids, pyruvate, lactate, and glucose. 4. Data Analysis: Use a standardized mean difference (SMD) to compare successful vs. failed implantation groups. |

| Failure to account for the dynamic nature of embryo metabolism. | Profile energy substrates at multiple time points or use continuous monitoring technologies like fluorescence lifetime imaging microscopy (FLIM). This captures the metabolic shift from pyruvate dependency to glucose utilization [36]. | 1. Serial Sampling: Collect small aliquots of media at 24-hour intervals for non-destructive analysis. 2. Continuous Monitoring: Use specialized equipment (e.g., FLIM) to monitor metabolic states like NAD(P)H autofluorescence without disturbing the embryo. |

| Insufficient statistical power and poor study design. | Conduct a power analysis prior to the study. Ensure adequate sample size and perform cross-validation of findings. A Bayesian meta-analysis approach can help integrate data from heterogeneous studies [36]. | Follow a systematic review and meta-analysis protocol (e.g., PROSPERO registered). Use multilevel modeling to integrate data across studies and account for heterogeneity. |
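
The standardized mean difference (SMD) named in the data analysis step above can be computed as follows. This is a minimal sketch assuming a pooled-standard-deviation SMD (Cohen's d) with the usual small-sample correction (Hedges' g); the cited meta-analysis may have used a different estimator. The concentration values are hypothetical.

```python
import math
from statistics import mean, variance

def standardized_mean_difference(group_a, group_b, small_sample_correction=True):
    """SMD between two groups using the pooled standard deviation.

    With small_sample_correction=True the result is multiplied by
    1 - 3 / (4 * (n_a + n_b) - 9), giving Hedges' g.
    """
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    d = (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)
    if small_sample_correction:
        d *= 1.0 - 3.0 / (4.0 * (n_a + n_b) - 9.0)
    return d

# Hypothetical absolute concentrations of one metabolite in SCM.
implanted = [0.42, 0.47, 0.51, 0.39, 0.45]
not_implanted = [0.36, 0.40, 0.33, 0.41, 0.38]

g = standardized_mean_difference(implanted, not_implanted)
print(f"SMD (Hedges' g): {g:.2f}")
```

Because the SMD is unitless, it allows effect sizes to be compared across studies, but only if each study reports absolute concentrations measured with validated methods.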

Issue 3: Machine Learning Models for Outcome Prediction Are Overfit and Do Not Generalize

Problem: A predictive model performs excellently on the training data but fails when applied to a new patient cohort.

| Possible Cause | Solution | Relevant Experimental Protocol |

|---|---|---|

| Inclusion of too many predictors with a limited sample size. | Employ robust feature selection. Use machine learning algorithms (e.g., Random Forest, XGBoost) to rank feature importance and select a parsimonious set of top predictors. For biomarker discovery from high-dimensional data (e.g., RNA-seq), use methods like CNet-SVM that select connected networks of genes, reducing false positives [39] [38]. | 1. Data Preprocessing: Handle missing values (e.g., using missForest imputation). 2. Feature Selection: Use a tiered approach: (i) univariate analysis (p<0.05), (ii) top features from multiple ML algorithms (RF, XGBoost), (iii) validation by clinical experts. 3. Model Training & Validation: Train multiple models (RF, XGBoost, LightGBM, Logistic Regression). Use 5- or 10-fold cross-validation and bootstrap validation (e.g., 500 times) to assess performance and avoid overfitting. Evaluate with AUC and Brier score [37] [38]. |

| Model complexity obscures clinical interpretability. | Prioritize interpretable models and use explainable AI (XAI) techniques. While complex models like ANN can be powerful, a well-performing Logistic Regression model is often easier to implement clinically. Use techniques like partial dependence plots (PDP) and accumulated local (AL) plots to understand how key features (e.g., maternal age) impact the prediction [37] [42]. | 1. Model Interpretation: For the final model (e.g., RF), calculate feature importance scores. 2. Visualization: Generate PDP and AL plots for the top 5 most important features to visualize their marginal effect on the live birth probability. 3. Tool Deployment: Develop a simple web tool based on the logistic regression model for clinicians to input patient data and receive a risk score [37]. |
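
The evaluation metrics named in the validation step above (AUC and Brier score) can be computed directly from held-out predictions. The sketch below is illustrative only: it adds a percentile bootstrap interval for the AUC, which quantifies uncertainty on a fixed set of predictions and is a simpler procedure than the full bootstrap model validation described in [37] [38]. The labels, probabilities, and function names are hypothetical.

```python
import random

def auc(y_true, y_score):
    """Probability that a random positive case scores above a random
    negative case, counting ties as one half."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("both outcome classes are required")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))

def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

def bootstrap_auc(y_true, y_score, n_boot=500, seed=0):
    """Point estimate and 95% percentile bootstrap interval for the AUC."""
    point = auc(y_true, y_score)  # also validates that both classes exist
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [y_true[i] for i in idx]
        if len(set(ys)) < 2:
            continue  # a resample can miss the rare class; draw again
        stats.append(auc(ys, [y_score[i] for i in idx]))
    stats.sort()
    lower = stats[int(0.025 * n_boot)]
    upper = stats[int(0.975 * n_boot) - 1]
    return point, lower, upper

# Hypothetical held-out labels (1 = live birth) and predicted probabilities.
labels = [0, 0, 1, 0, 1, 0, 0, 1, 0, 0]
probs = [0.10, 0.30, 0.65, 0.20, 0.40, 0.35, 0.05, 0.80, 0.50, 0.15]

point, lower, upper = bootstrap_auc(labels, probs)
print(f"AUC={point:.3f} (95% CI {lower:.3f} to {upper:.3f})")
print(f"Brier={brier_score(labels, probs):.3f}")
```

For rare outcomes the interval is often wide, which is itself a useful signal that the cohort is too small to support strong claims about generalization.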

Key Experimental Workflows

Workflow 1: 3D High-Throughput Phenotypic Drug Screening Pipeline

This diagram illustrates the integrated pipeline for discovering drugs with repurposing potential using clinically relevant 3D models.

Workflow 2: Biomarker Discovery from High-Throughput Omics Data

This diagram outlines the process for identifying robust, biologically relevant biomarker networks from high-dimensional data.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and technologies used in the advanced methodologies discussed in this guide.

| Research Reagent / Technology | Function in Experiment | Key Considerations |

|---|---|---|

| Patient-Derived Spheroids | A 3D cell culture model that mimics the in vivo tumor microenvironment and clinical drug response more accurately than 2D cultures [35]. | Source from patient ascites or tumor tissue. Ensure polyclonal composition to maintain heterogeneity. Use low-adherence plates for culture. |

| Alanyl-Glutamine (Ala-Gln) Dipeptide | A stable substitute for glutamine in cell culture media. Glutamine is crucial for cellular functions but can degrade and release toxic ammonia [36]. | Use in embryo culture media formulations (the source of SCM samples) to provide a stable glutamine source and improve embryo development and metabolic stability. |