Ensemble Learning for Imbalanced Fertility Data: Advanced Methods for Predictive Modeling in Reproductive Medicine

Abstract

This article provides a comprehensive examination of ensemble learning techniques specifically designed to address class imbalance in fertility and reproductive health datasets. Tailored for researchers, scientists, and drug development professionals, it explores the foundational challenges of imbalanced data in clinical fertility studies, presents a methodological review of advanced ensemble frameworks like P-EUSBagging and hybrid neural network-optimization models, and discusses optimization strategies including data-level resampling and algorithm-level tuning. The content further synthesizes validation protocols and performance metrics essential for robust model evaluation, offering a critical resource for developing reliable, interpretable, and clinically actionable predictive tools in reproductive medicine.

The Imbalanced Data Challenge in Fertility Diagnostics and Prediction

The Prevalence and Impact of Class Imbalance in Reproductive Health Datasets

Class imbalance, a prevalent condition in machine learning where one class significantly outnumbers others, presents a substantial bottleneck for predictive modeling in reproductive health [1]. In this domain, the condition of interest—such as infertility, specific fertility disorders, or successful pregnancy outcomes—is often the minority class, making up a small fraction of the available data [1]. Conventional machine learning algorithms trained on such imbalanced datasets tend to exhibit an inductive bias toward the majority class, prioritizing overall accuracy at the expense of reliably identifying critical minority cases [1] [2]. This systematic bias can have profound consequences in reproductive medicine, where failing to correctly identify at-risk patients or underlying conditions may delay diagnosis, compromise treatment efficacy, and adversely affect patient outcomes [1]. This Application Note examines the prevalence and impact of class imbalance across reproductive health datasets and provides detailed protocols for employing ensemble learning techniques to address these challenges effectively.

Quantitative Landscape of Class Imbalance in Reproductive Health

The problem of class imbalance manifests across multiple domains of reproductive health research, influencing both clinical and public health studies. The following table summarizes documented prevalence rates and imbalance ratios from recent investigations:

Table 1: Documented Class Imbalance in Reproductive Health Studies

| Condition or Context | Reported Prevalence/Imbalance | Data Source/Study |

|---|---|---|

| Female Infertility | 14.8% (2017-2018) to 27.8% (2021-2023) | NHANES cross-cohort analysis (2015-2023) [3] |

| Anovulatory Cycles | 47% of tracked cycles lacked clear ovulation signs | Analysis of 211,000 tracked cycles [4] |

| Low Progesterone (PdG) | Present in 22% of tracked cycles | Hormonal health index report [4] |

| Elevated LH (Potential PCOS) | Noted in 13% of cases | Hormonal health index report [4] |

| Modern Contraceptive Use | Varies significantly by socioeconomic status | Analysis across 48 low- and middle-income countries [5] |

The increasing prevalence of self-reported infertility among U.S. women, rising from 14.8% in 2017-2018 to 27.8% in 2021-2023, underscores a growing public health challenge while also making the corresponding datasets less imbalanced for machine learning applications [3]. Other indicators in Table 1 remain more skewed: low progesterone was present in 22% of tracked cycles and elevated LH in 13% of cases, whereas anovulatory cycles, observed in nearly half of all tracked cycles, are close to balanced [4].

Beyond clinical conditions, class imbalance also arises in fertility-related agricultural research, which often serves as a model for human reproductive studies. In chicken egg fertility classification, for instance, imbalance ratios of 1:13 (minority:majority) are commonly encountered, creating significant predictive modeling challenges analogous to those in human fertility studies [2].
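To compare the figures above on a common scale, a prevalence can be converted into a majority-to-minority imbalance ratio and back. The short sketch below does this arithmetic for values quoted in this section; the helper names are illustrative and are not taken from any cited study.

```python
def imbalance_ratio(prevalence: float) -> float:
    """Majority-to-minority ratio implied by a minority-class prevalence (0 < p < 1)."""
    if not 0.0 < prevalence < 1.0:
        raise ValueError("prevalence must lie strictly between 0 and 1")
    return (1.0 - prevalence) / prevalence


def prevalence_from_ratio(minority: float, majority: float) -> float:
    """Minority-class share implied by a minority:majority ratio such as 1:13."""
    return minority / (minority + majority)


# Values quoted in the text above
for label, p in [("infertility 2017-2018", 0.148),
                 ("infertility 2021-2023", 0.278),
                 ("elevated LH", 0.13)]:
    print(f"{label}: about {imbalance_ratio(p):.1f} majority cases per minority case")

print(f"1:13 egg fertility ratio -> minority share {prevalence_from_ratio(1, 13):.1%}")
```

Expressing every dataset as a ratio makes it easier to decide whether plain class weighting is likely to suffice or whether resampling and balanced ensembles are worth evaluating.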

Ensemble Learning Frameworks for Imbalanced Fertility Data

Ensemble learning methods combine multiple models to achieve improved robustness and predictive performance compared to single-model approaches. These techniques are particularly valuable for addressing class imbalance in fertility datasets. The following table summarizes key ensemble approaches applicable to reproductive health data:

Table 2: Ensemble Learning Techniques for Imbalanced Fertility Data

| Ensemble Technique | Core Mechanism | Applicability to Fertility Data |

|---|---|---|

| Bagging (Bootstrap Aggregating) | Creates multiple dataset variants via bootstrapping; aggregates predictions [6]. | Reduces variance and mitigates overfitting to majority class in fertility datasets. |

| Boosting (e.g., XGBoost) | Sequentially builds models that focus on previously misclassified instances [3]. | Enhances detection of rare fertility disorders by emphasizing minority class cases. |

| Stacking Classifier Ensemble | Combines diverse base models via a meta-classifier [3]. | Leverages strengths of multiple algorithms for robust infertility risk prediction. |

| Easy Ensemble & Balance Cascade | Uses ensemble of undersampled models with specific sampling strategies [6]. | Efficiently handles severe imbalance in fertility treatment outcome prediction. |

| Bagging of Extrapolation Borderline-SMOTE SVM (BEBS) | Integrates borderline-informed sampling with ensemble of SVMs [6]. | Addresses critical borderline cases in fertility classification tasks. |

Recent research demonstrates the successful application of these ensemble methods for infertility risk prediction. One study utilizing NHANES data showed that multiple ensemble models, including Random Forest, XGBoost, and a Stacking Classifier, achieved excellent and comparable predictive ability (AUC > 0.96) despite relying on a streamlined feature set [3]. This reinforces the effectiveness of ensemble methods for infertility risk stratification even with minimal predictor sets.

Experimental Protocols for Ensemble Learning on Imbalanced Fertility Data

Protocol 1: Data Preprocessing and Feature Selection for Fertility Datasets

Objective: Prepare imbalanced reproductive health data for ensemble modeling through appropriate preprocessing and feature selection.

Materials and Reagents:

- Software: Python 3.8+ with scikit-learn, imbalanced-learn, pandas, numpy

- Dataset: NHANES reproductive health data (2015-2023) or equivalent fertility dataset

- Hardware: Standard computing workstation (8+ GB RAM recommended)

Procedure:

- Data Harmonization: Select only variables consistently available across all dataset cycles (e.g., age at menarche, total deliveries, menstrual irregularity, pelvic infection history) to ensure comparability [3].

- Inclusion Criteria Definition: Apply strict inclusion criteria (e.g., women aged 19-45 with complete infertility-related variable data) [3].

- Feature Engineering:

- Encode categorical variables (menstrual irregularity, PID history) using one-hot encoding

- Scale continuous variables (age at menarche, total deliveries) using standardization

- Create binary classification target based on self-reported infertility definition [3]

- Feature Selection:

- Apply LASSO (Least Absolute Shrinkage and Selection Operator) regularization for feature refinement and enhanced model interpretability [7]

- Use multivariate logistic regression to estimate adjusted odds ratios and inform feature importance [3]

- Retain features with significant associations (p < 0.05) in preliminary analysis

- Data Splitting: Partition data into training (70%), validation (15%), and test (15%) sets while preserving the imbalance ratio across splits
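The data splitting step can be sketched as follows. This is a minimal standard-library illustration of a stratified 70/15/15 split on synthetic labels, not the procedure used in [3]; in practice scikit-learn's `train_test_split` with its `stratify` argument provides equivalent behaviour. The function name and the synthetic labels are assumptions, and the sketch does not group records by subject identifier.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return index lists (train, val, test) that keep each class's share roughly constant."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]   # remainder goes to the test set
    return train, val, test


# Synthetic labels: 150 positives among 1000 records (15% minority class)
labels = [1] * 150 + [0] * 850
train, val, test = stratified_split(labels)
for name, part in [("train", train), ("val", val), ("test", test)]:
    share = sum(labels[i] for i in part) / len(part)
    print(f"{name}: n={len(part)}, minority share={share:.3f}")
```

Splitting within each class keeps the minority share close to its original value in all three partitions, which is what "preserving the imbalance ratio" requires.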

Quality Control:

- Address missing data using appropriate imputation methods or exclusion criteria

- Verify no data leakage between splits using subject identifiers

- Confirm consistent preprocessing across all dataset segments

Protocol 2: Hybrid Resampling and Ensemble Classification

Objective: Implement a combined resampling and ensemble approach to handle severe class imbalance in fertility prediction tasks.

Materials and Reagents:

- Software: Python with imbalanced-learn, scikit-learn, xgboost

- Dataset: Preprocessed fertility dataset from Protocol 1

- Hardware: Standard computing workstation

Procedure:

- Baseline Model Establishment:

- Train a ZeroR or dummy classifier as performance baseline

- Calculate baseline metrics (accuracy, F1-score, AUC-ROC) [2]

- Resampling Technique Evaluation:

- Ensemble Model Training:

- Implement Bagging with decision trees as base estimators

- Configure Random Forest with class weighting adjusted for imbalance

- Train XGBoost with the `scale_pos_weight` parameter adjusted for the imbalance ratio

- Construct Stacking Classifier combining diverse base models (LR, RF, XGBoost) with meta-classifier [3]

- Model Tuning and Validation:

Quality Control:

- Ensure synthetic samples from SMOTE conform to physiological plausibility for medical data [1]

- Validate model calibration using reliability curves

- Confirm robustness through multiple random seeds and dataset variations
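The class weighting and `scale_pos_weight` settings named in the ensemble training step are usually derived from class counts. The sketch below shows two common heuristics: the inverse-frequency formula that scikit-learn applies for `class_weight="balanced"`, and the negative-to-positive count ratio that the XGBoost documentation suggests as a typical starting value for `scale_pos_weight`. The label counts are hypothetical, and both values should be treated as starting points for tuning rather than fixed choices.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weights inversely proportional to class frequency: n_samples / (n_classes * count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}


def scale_pos_weight(labels, positive=1):
    """Ratio of negative to positive examples, a common starting value for XGBoost."""
    n_pos = sum(1 for y in labels if y == positive)
    n_neg = len(labels) - n_pos
    if n_pos == 0:
        raise ValueError("no positive examples in labels")
    return n_neg / n_pos


# Hypothetical training labels: 148 infertility cases among 1000 records
y_train = [1] * 148 + [0] * 852
print(balanced_class_weights(y_train))
print(scale_pos_weight(y_train))
```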

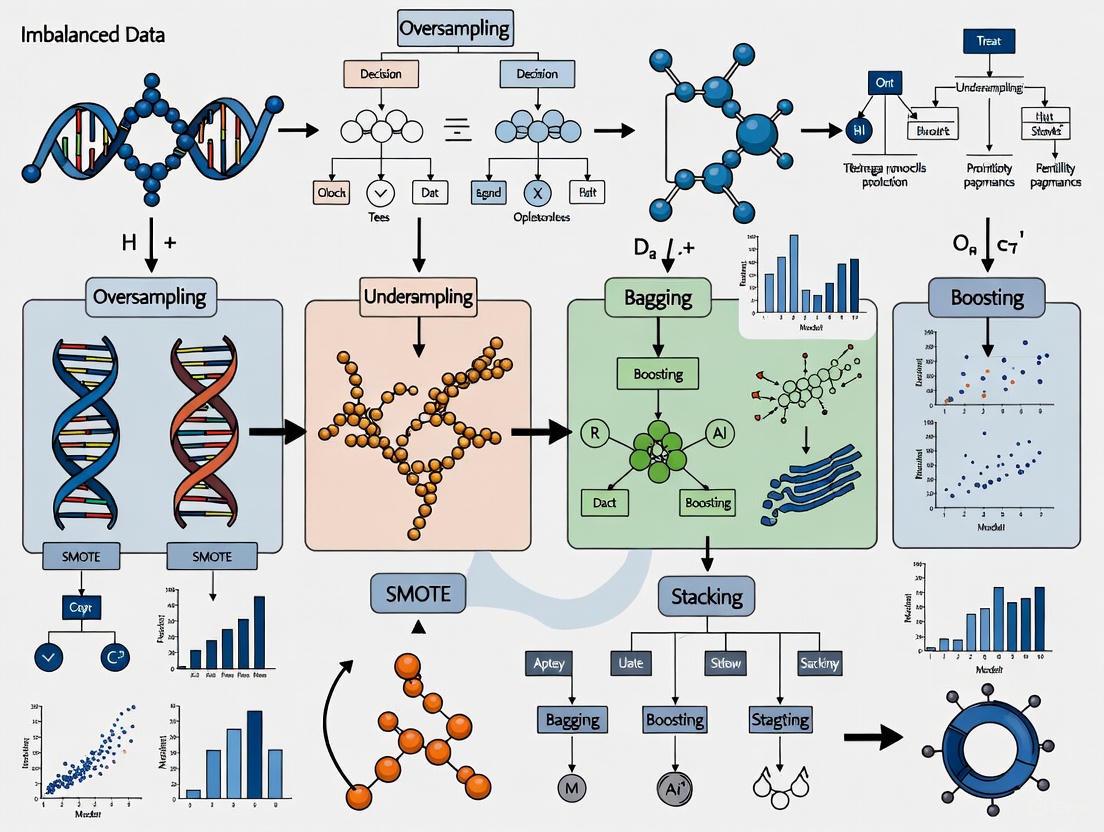

Workflow Visualization

Diagram 1: Ensemble learning workflow for imbalanced fertility data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Imbalanced Fertility Research

| Tool/Technique | Function | Application Context |

|---|---|---|

| SMOTE | Generates synthetic minority class instances | Addressing rarity of specific fertility conditions in datasets [6] |

| Borderline-SMOTE | Creates synthetic samples focusing on class boundary | Improving detection of borderline fertility disorder cases [6] |

| LASSO Regularization | Performs feature selection with regularization | Identifying most predictive fertility markers from high-dimensional data [7] |

| Stratified Cross-Validation | Preserves class distribution in data splits | Ensuring representative model validation on imbalanced fertility data [3] |

| Cost-Sensitive Learning | Adjusts misclassification costs during training | Prioritizing correct identification of rare fertility disorders [1] |

| UMAP (Uniform Manifold Approximation and Projection) | Reduces data dimensionality while preserving structure | Visualizing and analyzing patterns in high-dimensional fertility data [7] |

Class imbalance represents a fundamental challenge in reproductive health data science, potentially compromising the validity and clinical utility of predictive models. Ensemble learning techniques, particularly when combined with strategic resampling approaches and appropriate evaluation metrics, offer a powerful framework for addressing these challenges. The protocols outlined in this Application Note provide methodological guidance for developing robust predictive models capable of effectively identifying rare reproductive health conditions and outcomes. As reproductive health datasets continue to grow in scale and complexity, the thoughtful application of these ensemble methods will be essential for advancing both clinical care and public health initiatives in this domain.

Application Notes: Clinical Scenarios and Quantitative Findings

This section synthesizes key quantitative findings from recent investigations into male infertility biomarkers and diagnostic models. The data presented below supports the development of robust, ensemble-based predictive frameworks for managing imbalanced fertility datasets.

Table 1: Biomarker Expression and Sperm DNA Integrity Correlations

| Parameter | Oligozoospermic Group (Mean ± SD) | Normozoospermic Group (Mean ± SD) | Fold Change | P-value | Correlation with Progressive Motile Sperm (ρ) |

|---|---|---|---|---|---|

| 5'tRF-Glu-CTC Expression | 15.58 ± 4.34 | 12.53 ± 4.99 | 1.692 | 0.024 | Not Significant |

| Sperm DNA Fragmentation Index (DFI) | Not Significant | Not Significant | - | >0.05 | -0.537 (P=0.015) |

| Total Progressive Motile Sperm Count | - | - | - | - | -0.509 (P=0.026) |

Source: Adapted from [8]

Table 2: Performance Metrics of Advanced Diagnostic Models for Male Infertility

| Model / Framework | Reported Accuracy | Sensitivity | Specificity | Computational Time (seconds) | Key Innovation |

|---|---|---|---|---|---|

| Hybrid MLFFN–ACO Framework | 99% | 100% | Not Reported | 0.00006 | Ant Colony Optimization for parameter tuning [9] |

| Multi-Level Ensemble (Feature & Decision Fusion) | 67.70% | Not Reported | Not Reported | Not Reported | Fusion of multiple EfficientNetV2 features & classifier voting [10] |

| CNN-SVM/RF/MLP-A Hybrid | Not Reported | Not Reported | Not Reported | Not Reported | Combination of deep feature extraction with traditional classifiers [10] |

Source: Adapted from [9] and [10]

Experimental Protocols

Protocol: Quantification of 5'tRF-Glu-CTC in Seminal Plasma

Application: This protocol details the steps for extracting and measuring the expression level of the tRNA-derived fragment 5'tRF-Glu-CTC from human seminal plasma, a potential biomarker for oligozoospermia [8].

Reagents:

- SanPrep Column microRNA Miniprep Kit (Biobasic, Cat. No. SK8811)

- miRNA All-In-One cDNA Synthesis Kit (ABM, Cat. No. G898)

- BlasTaq 2X qPCR MasterMix (Applied Biological Materials)

- Stem-loop oligomer probes and primers specific for 5'tRF-Glu-CTC and reference miR-320 [8]

Procedure:

- Sample Preparation: Centrifuge freshly collected semen samples at 600 × g for 5 minutes to separate seminal plasma from the sperm pellet.

- RNA Isolation: Extract total RNA, including small RNAs, from 200 μL of seminal plasma using the SanPrep Column microRNA Miniprep Kit according to the manufacturer's instructions.

- Quality Assessment: Measure RNA concentration and purity (A260/A280 ratio) using a microplate spectrophotometer.

- Reverse Transcription (cDNA Synthesis):

- Use 200 ng of total RNA as a template.

- Perform reverse transcription in a 20 μL reaction volume containing 10 μL of 2x miRNA cDNA Synthesis SuperMix, enzyme mix, and stem-loop oligomer probes.

- Use the following sequence-specific probe for 5'tRF-Glu-CTC:

5’-GTCTCCTCTGGTGCAGGGTCCGAGGTATTCGCACCAGAGGAGACCGTGCCG-3’.

- Quantitative Real-Time PCR (qRT-PCR):

- Set up 20 μL reactions containing 10 μL of BlasTaq 2X qPCR MasterMix, 0.5 μL of each forward and reverse primer (10 μM), and template cDNA.

- Use the following 5'tRF-Glu-CTC-specific forward primer:

5’-GGCGGTCCCTGGTGGTCTAGTGGTTAGGATT-3’.

- Use a universal reverse primer.

- Run all samples in duplicate.

- Normalize the mean quantification cycle (Cq) values of 5'tRF-Glu-CTC to the reference small RNA miR-320 for each sample.

- Data Analysis: Calculate relative gene expression changes using the $2^{-\Delta\Delta Cq}$ method [8].
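The normalization and data analysis steps can be illustrated with a short calculation. Here ΔCq is the target Cq minus the reference Cq (miR-320 in this protocol), and ΔΔCq is the ΔCq of the sample of interest minus the ΔCq of the control. The Cq values are made up for illustration, and the function names are not part of the protocol in [8].

```python
def relative_expression(cq_target_sample, cq_ref_sample, cq_target_control, cq_ref_control):
    """Fold change of a target versus a control condition by the 2^(-ddCq) method."""
    delta_sample = cq_target_sample - cq_ref_sample      # dCq in the sample of interest
    delta_control = cq_target_control - cq_ref_control   # dCq in the control/calibrator
    delta_delta = delta_sample - delta_control           # ddCq
    return 2.0 ** (-delta_delta)


def mean_cq(replicates):
    """Average Cq over technical replicates (duplicates in the protocol above)."""
    return sum(replicates) / len(replicates)


# Made-up Cq values for illustration only
fold = relative_expression(
    cq_target_sample=mean_cq([24.0, 24.2]),   # 5'tRF-Glu-CTC, patient sample
    cq_ref_sample=mean_cq([20.0, 20.2]),      # miR-320, patient sample
    cq_target_control=mean_cq([25.0, 25.2]),  # 5'tRF-Glu-CTC, control
    cq_ref_control=mean_cq([20.0, 20.2]),     # miR-320, control
)
print(f"fold change: {fold:.2f}")
```

A ΔΔCq of -1 corresponds to a two-fold higher relative expression in the sample, because each cycle represents one doubling under the method's assumption of near-perfect amplification efficiency.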

Protocol: Sperm DNA Fragmentation Index (DFI) Assessment via TUNEL Assay

Application: This protocol describes the terminal deoxynucleotidyl transferase-mediated dUTP nick-end labeling (TUNEL) method for evaluating sperm DNA fragmentation, a key parameter correlated with sperm motility and ART outcomes [8].

Reagents:

- In situ Apoptosis Detection Kit (Takara Bio Inc., Shiga, Japan)

- 3.6% Paraformaldehyde

- Phosphate Buffer with Sucrose

- Poly-L-lysine coated slides

- DAPI (4',6-diamidino-2-phenylindole) for nuclear counterstaining

Procedure:

- Sperm Fixation: Fix the sperm pellet in 3.6% paraformaldehyde.

- Slide Preparation: Transfer fixed sperm onto poly-L-lysine coated slides using a phosphate buffer containing sucrose. Allow to adhere overnight.

- TUNEL Reaction: Perform the in situ labeling reaction using the In situ Apoptosis Detection Kit, strictly following the manufacturer's instructions. This step incorporates fluorescein-labeled nucleotides at the 3'-OH ends of fragmented DNA.

- Counterstaining: Apply DAPI to stain all sperm nuclei.

- Microscopy and Analysis:

- Analyze spermatozoa using a fluorescence microscope equipped with FITC and DAPI filters. Examine general morphology with a light microscope.

- For each sample, analyze at least 5 fields and a minimum of 200 cells.

- Use image analysis software (e.g., ImageJ, Version 1.54p) for quantification.

- DFI Calculation: Calculate the DNA Fragmentation Index (DFI) as the number of spermatozoa exhibiting FITC fluorescence (indicating DNA fragmentation) divided by the total number of spermatozoa counted [8].
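The DFI calculation can be sketched as below. The per-field counts are hypothetical, the 200-cell minimum follows the microscopy step above, and reporting the index as a percentage rather than a fraction is an assumption made for readability.

```python
def dna_fragmentation_index(tunel_positive, total_counted, min_cells=200):
    """DFI as a percentage: TUNEL-positive spermatozoa over all spermatozoa counted."""
    if total_counted < min_cells:
        raise ValueError(f"count at least {min_cells} cells (got {total_counted})")
    if not 0 <= tunel_positive <= total_counted:
        raise ValueError("tunel_positive must be between 0 and total_counted")
    return 100.0 * tunel_positive / total_counted


# Hypothetical per-field counts: (FITC-positive cells, DAPI-stained cells)
fields = [(6, 48), (9, 52), (4, 41), (7, 45), (5, 39)]
positive = sum(p for p, _ in fields)
total = sum(t for _, t in fields)
print(f"DFI = {dna_fragmentation_index(positive, total):.1f}% ({positive}/{total} cells)")
```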

Protocol: Ensemble Learning Framework for Sperm Morphology Classification

Application: This protocol outlines a novel ensemble-based approach for the automated classification of sperm morphology into multiple categories, addressing class imbalance and improving diagnostic robustness [10].

Reagents/Resources:

- Hi-LabSpermMorpho dataset (or equivalent, containing multiple morphological classes)

- Computational environment (e.g., Python with TensorFlow/PyTorch)

- Pretrained EfficientNetV2 models (S, M, L variants)

- Support Vector Machine (SVM), Random Forest (RF), and Multi-Layer Perceptron with Attention (MLP-A) classifiers

Procedure:

- Data Preparation:

- Utilize a comprehensive sperm image dataset (e.g., Hi-LabSpermMorpho with 18,456 images across 18 classes).

- Apply standard image preprocessing (resizing, normalization) and augment the data to mitigate class imbalance.

- Feature Extraction:

- Use multiple pretrained EfficientNetV2 models to extract deep features from the sperm images.

- Extract features from the penultimate layer of each network.

- Feature-Level Fusion:

- Concatenate the feature vectors obtained from the different EfficientNetV2 models to create a comprehensive, high-dimensional feature set.

- Classification with Ensemble:

- Train multiple classifiers, including SVM, RF, and MLP-A, on the fused feature set.

- Feature-Level Fusion Path: Use the fused features to train the SVM, RF, and MLP-A classifiers.

- Decision-Level Fusion Path: Perform soft voting on the predictions from the individual EfficientNetV2 models to combine their outputs.

- Model Evaluation:

- Evaluate the final ensemble model's performance on a held-out test set using metrics such as accuracy, precision, recall, and F1-score, with particular attention to performance on under-represented morphological classes [10].
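The two fusion paths in this protocol can be sketched with plain Python lists. Feature-level fusion concatenates the per-model feature vectors, while decision-level fusion averages per-class probabilities (soft voting) and selects the most probable class. The probabilities and feature values below are invented, and the sketch does not reproduce the architecture or weighting used in [10].

```python
def fuse_features(*feature_vectors):
    """Feature-level fusion: concatenate per-model feature vectors for one image."""
    fused = []
    for vec in feature_vectors:
        fused.extend(vec)
    return fused


def soft_vote(probabilities_per_model):
    """Decision-level fusion: average class probabilities, then pick the top class."""
    n_models = len(probabilities_per_model)
    n_classes = len(probabilities_per_model[0])
    mean_probs = [
        sum(model[c] for model in probabilities_per_model) / n_models
        for c in range(n_classes)
    ]
    predicted = max(range(n_classes), key=mean_probs.__getitem__)
    return predicted, mean_probs


# Toy example with three models and three morphology classes (values are invented)
probs = [
    [0.60, 0.30, 0.10],  # stand-in for EfficientNetV2-S
    [0.20, 0.50, 0.30],  # stand-in for EfficientNetV2-M
    [0.30, 0.45, 0.25],  # stand-in for EfficientNetV2-L
]
label, mean_probs = soft_vote(probs)
print(label, [round(p, 3) for p in mean_probs])
print(len(fuse_features([0.1, 0.2], [0.3], [0.4, 0.5, 0.6])))
```

In this toy case the first model alone would choose class 0, but the averaged probabilities favour class 1, which illustrates how soft voting can override a single confident but isolated prediction.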

Workflow Visualizations

tRNA-derivative Analysis Workflow

Ensemble Learning for Morphology

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Fertility Diagnostics Research

| Item / Reagent | Function / Application | Example / Specification |

|---|---|---|

| SanPrep Column microRNA Miniprep Kit | Isolation of total RNA, including small tRNA-derived fragments (tRFs), from seminal plasma. | Biobasic, Cat. No. SK8811 [8] |

| miRNA All-In-One cDNA Synthesis Kit | Reverse transcription of miRNA and tRFs using stem-loop probes for highly specific cDNA synthesis. | ABM, Cat. No. G898 [8] |

| Stem-loop RT and qPCR Primers | Sequence-specific detection and quantification of target tRFs (e.g., 5'tRF-Glu-CTC) via qRT-PCR. | Custom designed sequences [8] |

| In situ Apoptosis Detection Kit | Fluorescent labeling of DNA strand breaks in spermatozoa for TUNEL assay and DFI calculation. | Takara Bio Inc. [8] |

| Hi-LabSpermMorpho Dataset | A comprehensive image dataset for training and validating automated sperm morphology classification models. | 18,456 images across 18 distinct morphology classes [10] |

| Pretrained CNN Models (EfficientNetV2) | Deep feature extraction from sperm images for subsequent classification tasks. | EfficientNetV2 S, M, L variants [10] |

| Ant Colony Optimization (ACO) Algorithm | Nature-inspired metaheuristic for optimizing neural network parameters and feature selection in diagnostic models. | Used in hybrid MLFFN–ACO frameworks [9] |

Limitations of Traditional Statistical and Machine Learning Methods

In the specialized field of fertility research, the quality of data is paramount. A pervasive challenge that compromises this quality is class imbalance, a phenomenon where the number of observations in one category significantly outweighs those in another [11]. In contexts such as the prediction of successful embryo implantation or the classification of specific infertility etiologies, "positive" cases are often drastically outnumbered by "negative" ones [12]. Traditional statistical and machine learning (ML) methods, designed with the assumption of relatively balanced class distributions, frequently fail under these conditions, leading to models that are biased, inaccurate, and clinically unreliable [11] [13]. This application note details the specific limitations of these traditional approaches and provides structured, actionable protocols for adopting more robust ensemble learning techniques, framed within the critical context of imbalanced fertility data.

The Core Challenge: Why Traditional Methods Fail on Imbalanced Data

Traditional ML algorithms, including logistic regression, support vector machines (SVM), and standard decision trees, are typically trained to minimize overall error. When confronted with a dataset where the majority class comprises 90% or more of the samples, these models naturally develop a bias toward the majority class, as this is the easiest path to achieving high accuracy [14] [13]. For instance, if only 5% of in vitro fertilization (IVF) cycles in a dataset end in a successful pregnancy, a model can reach 95% accuracy by simply predicting "failure" for every case, thereby completely failing to identify the successful outcomes that are of primary clinical interest [13].
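The accuracy trap described above is easy to reproduce. The sketch below assumes a hypothetical cohort with a 5% success rate and scores a predictor that always outputs "failure"; the helper functions are illustrative.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary problem."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    tn = len(y_true) - tp - fp - fn
    return tp, fp, fn, tn


def summarize(y_true, y_pred):
    """Accuracy plus minority-class recall, precision and F1 (0.0 when undefined)."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "recall": recall, "precision": precision, "f1": f1}


# Hypothetical cohort: 50 successful cycles (1) among 1000, i.e. a 5% success rate
y_true = [1] * 50 + [0] * 950
always_failure = [0] * len(y_true)   # majority-class ("ZeroR") prediction
print(summarize(y_true, always_failure))
```

Accuracy looks strong while recall and F1 for the successful cycles are zero, which is why minority-class metrics are the more informative baseline under imbalance.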

The root problem is often not the imbalance itself but a combination of other factors exacerbated by it. These include the use of inappropriate evaluation metrics like accuracy, an absolute lack of sufficient minority class samples for the model to learn meaningful patterns, and poor inherent separability between the classes based on the available features [13]. Traditional methods, which are often less flexible, struggle to capture the complex, non-linear patterns that might distinguish a viable embryo from a non-viable one when such patterns are present in only a small fraction of the data [13].

Table 1: Limitations of Traditional Methods in Imbalanced Fertility Data Contexts

| Traditional Method | Primary Limitation | Manifestation in Fertility Research |

|---|---|---|

| Logistic Regression | Linear decision boundary; biased towards the majority class due to optimization for overall error minimization. | Misses complex, non-linear interactions between hormonal levels, genetic markers, and clinical outcomes. Produces poorly calibrated probabilities. |

| Standard Decision Trees | Splitting criteria (e.g., Gini impurity) are global and can ignore small minority class clusters. | A tree might fail to split on a key biomarker for implantation success because the split does not significantly improve overall node purity. |

| Support Vector Machines (SVM) | Tries to find a large-margin hyperplane, which can be skewed by the dense majority class, effectively ignoring the minority class. | The optimal hyperplane for classifying embryo quality might be pushed to a region that excludes all rare but viable embryo phenotypes. |

| k-Nearest Neighbours (k-NN) | The class of a new sample is based on its local neighbours, which are likely all from the majority class in imbalanced regions. | An embryo with a rare but promising morphological pattern may be misclassified because its nearest neighbours in the dataset are all non-viable. |

Advanced Techniques: Ensemble Learning and Data Augmentation

To overcome these limitations, the ML community has developed advanced techniques focused on altering the data distribution or the learning algorithm itself. The most effective strategies for imbalanced fertility data involve ensemble learning and data augmentation.

Ensemble Learning Methods

Ensemble learning combines multiple base models to produce a single, more robust and accurate predictive model [15]. Its power lies in leveraging the "wisdom of the crowd," where the collective decision of diverse models mitigates the individual errors of any single one [14] [16]. For imbalanced data, specialized ensemble techniques have been developed.

Table 2: Specialized Ensemble Methods for Imbalanced Data

| Ensemble Method | Core Mechanism | Advantage for Fertility Data |

|---|---|---|

| Balanced Random Forest | Each tree is trained on a bootstrap sample where the majority class is under-sampled to balance the classes [17]. | Ensures every decision tree in the forest has adequate exposure to rare positive outcomes (e.g., successful sperm retrieval in non-obstructive azoospermia), improving sensitivity. |

| Easy Ensemble | Uses bagging to train multiple AdaBoost classifiers on balanced subsets of the data created by random under-sampling of the majority class [17]. | Effectively creates several "experts" focused on different aspects of the hard-to-predict minority class, ideal for complex tasks like predicting live birth from multi-modal data. |

| Balanced Bagging | Similar to Balanced Random Forest but allows for any base estimator (e.g., SVM, decision trees) and can also incorporate oversampling [17]. | Offers flexibility to use the most appropriate base model for the specific fertility dataset (e.g., hormonal time-series, genetic data). |

| Boosting (e.g., AdaBoost, XGBoost) | Trains models sequentially, with each new model focusing on the instances previously misclassified, thereby giving more weight to the minority class over time [14] [16]. | Adaptively learns from "difficult" cases, such as patients with unexplained infertility, forcing the model to improve its predictions where it matters most. |
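The balanced ensembles in the table above share one core step: each base learner is trained on a subset in which the majority class has been randomly under-sampled to the size of the minority class. The sketch below shows only that sampling step, in simplified form and without any classifier; the function name and label counts are hypothetical, and library implementations differ in details such as bootstrapping.

```python
import random


def balanced_subsets(labels, n_subsets=5, minority=1, seed=0):
    """Yield index lists that pair all minority samples with an equally sized random
    draw (without replacement) from the majority class."""
    rng = random.Random(seed)
    minority_idx = [i for i, y in enumerate(labels) if y == minority]
    majority_idx = [i for i, y in enumerate(labels) if y != minority]
    for _ in range(n_subsets):
        sampled_majority = rng.sample(majority_idx, k=len(minority_idx))
        yield minority_idx + sampled_majority


# Hypothetical labels: 30 positive outcomes among 400 records
labels = [1] * 30 + [0] * 370
subsets = list(balanced_subsets(labels, n_subsets=3))
for k, subset in enumerate(subsets):
    positives = sum(labels[i] for i in subset)
    print(f"subset {k}: {len(subset)} samples, {positives} positives")
```

Because each subset draws a different part of the majority class, the ensemble as a whole still sees most majority records even though every individual learner trains on balanced data.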

Data Augmentation and Synthetic Data Generation

Data augmentation techniques artificially increase the number of samples in the minority class. The Synthetic Minority Over-sampling Technique (SMOTE) and its variants generate new synthetic examples by interpolating between existing minority class instances in feature space [11] [18]. More recently, Generative Adversarial Networks (GANs) have been used to create highly realistic synthetic tabular data, which is particularly valuable when the absolute number of minority samples is very low, a common scenario in rare infertility disorders [12] [19].

Table 3: Data Augmentation Techniques for Imbalanced Fertility Datasets

| Technique | Description | Considerations for Fertility Data |

|---|---|---|

| SMOTE | Creates synthetic samples along line segments joining k nearest neighbors of the minority class [11]. | Can help balance a dataset for embryo image classification but may generate non-physiological samples if features are not continuous. |

| Borderline-SMOTE | Focuses synthetic data generation on the "borderline" instances of the minority class that are near the decision boundary [11]. | Useful for highlighting the subtle morphological differences that separate high-grade from borderline-low-grade embryos. |

| SVM-SMOTE | Uses support vectors to identify areas of the minority class to oversample, often focusing on harder-to-learn instances [12]. | Can target patient subgroups that are most ambiguous, improving model performance on edge cases. |

| GAN-based (e.g., GBO, SSG) | Employs a generator network to create new synthetic data and a discriminator to critique it, leading to highly realistic synthetic samples [12]. | Promising for generating synthetic patient profiles for rare conditions while preserving patient privacy, enabling more robust model development. |
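The interpolation idea behind SMOTE can be sketched in a few lines. This is a simplified standard-library illustration on invented two-feature records and assumes continuous features and at least two minority samples; for real work the `SMOTE` implementation in imbalanced-learn is the usual choice.

```python
import math
import random


def smote_like_samples(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a minority sample and
    one of its k nearest minority neighbours (the core idea behind SMOTE)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic


# Invented minority-class records: (age in years, hormone level in arbitrary units)
minority = [(29.0, 1.2), (31.0, 1.5), (34.0, 0.9), (36.0, 1.1), (38.0, 0.7)]
for point in smote_like_samples(minority, n_new=4):
    print(tuple(round(v, 2) for v in point))
```

Every synthetic point lies on a segment between two real minority records, which is why interpolating categorical or integer-coded features can produce non-physiological values and why such features need separate handling.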

Experimental Protocols

Protocol 1: Implementing a Balanced Ensemble Model for Embryo Viability Classification

Objective: To train a classifier for predicting day-5 blastocyst viability using clinical and morphological data, mitigating the class imbalance where high-quality viable embryos are the minority.

Materials & Reagents:

- Dataset: Retrospective dataset of embryo records with features (morphology grade, patient age, fertilization method) and label (viable/not-viable).

- Software: Python 3.8+, `imbalanced-learn` (`imblearn`) library, `scikit-learn`.

- Computing: Computer with minimum 8GB RAM.

Step-by-Step Procedure:

- Data Preprocessing: Clean the data by handling missing values (e.g., imputation or removal) and encode categorical variables (e.g., one-hot encoding for fertilization method). Standardize numerical features (e.g., patient age) to have zero mean and unit variance.

- Train-Test Split: Split the preprocessed data into training (80%) and testing (20%) sets, using stratification to preserve the original class imbalance ratio in both splits.

- Baseline Model Training: Train a standard logistic regression model on the training set. Use this model's performance as a benchmark.

- Balanced Ensemble Training:

- Balanced Random Forest: Instantiate a `BalancedRandomForestClassifier` from the `imblearn.ensemble` module. Set parameters like `n_estimators=100` and `random_state=42` for reproducibility. Fit the model on the training data.

- Easy Ensemble: Instantiate an `EasyEnsembleClassifier` from the same module, also with `n_estimators=100`. Fit it on the training data.

- Model Evaluation: Generate predictions on the test set for all three models. Calculate evaluation metrics, with a primary focus on F1-Score and Area Under the Precision-Recall Curve (AUC-PR) for the minority class (viable embryos), as these are more informative than accuracy under imbalance [13].

- Model Selection: Deploy the model that demonstrates the highest F1-score and AUC-PR for the viable embryo class, ensuring it meets the clinical requirement for a balance between precision and recall.

Protocol 2: Generating Synthetic Patient Data Using GANs

Objective: To augment a small dataset of patients with a rare infertility syndrome by generating high-fidelity synthetic patient data, enabling more robust downstream analysis.

Materials & Reagents:

- Dataset: A small, curated dataset of patients diagnosed with the rare condition, containing clinical and lab values.

- Software: Python 3.8+, `ctgan` library or `PyTorch`/`TensorFlow` for custom GAN implementation.

- Computing: Computer with a GPU (recommended for faster training), 16GB RAM.

Step-by-Step Procedure:

- Data Preparation: Preprocess the original real-world dataset. This includes normalizing continuous variables (e.g., hormone levels) and encoding categorical variables (e.g., genetic markers).

- GAN Selection and Initialization: Select a GAN architecture designed for tabular data, such as a Conditional Tabular GAN (CTGAN). Initialize the generator and discriminator networks with random weights.

- Adversarial Training:

- In each training epoch, the discriminator is trained to distinguish real patient records from synthetic ones produced by the generator.

- The generator is then trained to produce synthetic data that can "fool" the discriminator.

- This iterative process continues for a predefined number of epochs or until the synthetic data quality is deemed sufficient [12] [19].

- Synthetic Data Generation and Validation: After training, use the generator to create a new set of synthetic patient records.

- Fidelity Check: Use metrics like Similarity Score [19] or statistical distance measures (e.g., Jensen-Shannon divergence) to compare the distributions of synthetic and real data.

- Privacy Check: Ensure no synthetic record is an exact copy of a real patient to prevent privacy breaches.

- Data Augmentation: Combine the validated synthetic data with the original real data to create a larger, balanced dataset for subsequent machine learning tasks.
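The fidelity and privacy checks in the validation step can be sketched without any GAN library. The example below computes the Jensen-Shannon divergence between one categorical column of the real and synthetic data and lists synthetic rows that exactly duplicate a real record. All records are invented, the Similarity Score from [19] is not implemented here, and an exact-match test is only a first, weak privacy screen.

```python
import math
from collections import Counter


def js_divergence(real_values, synthetic_values):
    """Jensen-Shannon divergence (base 2, range 0..1) between two categorical samples."""
    categories = set(real_values) | set(synthetic_values)
    real_counts, synth_counts = Counter(real_values), Counter(synthetic_values)
    p = {c: real_counts[c] / len(real_values) for c in categories}
    q = {c: synth_counts[c] / len(synthetic_values) for c in categories}
    m = {c: 0.5 * (p[c] + q[c]) for c in categories}

    def kl(a, b):
        # Kullback-Leibler divergence; terms with zero probability contribute nothing
        return sum(a[c] * math.log2(a[c] / b[c]) for c in categories if a[c] > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def exact_copies(real_rows, synthetic_rows):
    """Synthetic rows that duplicate a real record exactly (a basic privacy check)."""
    real_set = set(real_rows)
    return [row for row in synthetic_rows if row in real_set]


# Invented data: a genetic-marker column and (age, hormone level) records
real_marker = ["pos", "neg", "neg", "neg", "pos", "neg"]
synth_marker = ["pos", "neg", "neg", "pos", "pos", "neg"]
real_rows = [(31, 0.42), (35, 0.31), (38, 0.27), (33, 0.55)]
synth_rows = [(32, 0.40), (35, 0.31), (37, 0.29), (34, 0.50)]

print(f"JS divergence: {js_divergence(real_marker, synth_marker):.4f}")
print("exact copies:", exact_copies(real_rows, synth_rows))
```

A divergence near zero indicates similar marginal distributions for that column. Any row returned by the copy check should be removed or investigated before the synthetic data is combined with the real records.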

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Imbalanced Fertility Research

| Tool / Reagent | Type | Function / Application | Example / Source |

|---|---|---|---|

| imbalanced-learn | Software Library | Provides a wide range of resampling (SMOTE, ADASYN) and ensemble (BalancedRF, EasyEnsemble) algorithms specifically for imbalanced data. | Python Package Index (PyPI) |

| CTGAN & TVAE | Generative Model | Deep learning models specifically designed to generate synthetic tabular data for augmenting small or imbalanced datasets. | sdv.dev (Synthetic Data Vault) |

| XGBoost | Ensemble Algorithm | A highly efficient and effective gradient boosting framework that can be tuned for imbalanced data using the scale_pos_weight parameter. | xgboost.ai |

| SHAP (SHapley Additive exPlanations) | Explainable AI (XAI) Tool | Interprets model predictions by quantifying the contribution of each feature, crucial for validating model logic in clinical settings. | shap.readthedocs.io |

| Stratified K-Fold Cross-Validation | Evaluation Protocol | Ensures that each fold of cross-validation maintains the same class distribution as the full dataset, preventing biased performance estimates. | scikit-learn |

The journey from traditional, biased statistical models to advanced, fair ML systems for fertility research is necessitated by the field's inherent data challenges. Traditional methods, which fail when classes are imbalanced, can lead to clinically misleading conclusions. As outlined in this note, the path forward involves a strategic shift towards specialized ensemble methods like Balanced Random Forest and Easy Ensemble, complemented by sophisticated data augmentation techniques including SMOTE variants and GANs. By adopting the structured experimental protocols and tools provided, researchers and drug developers can build more reliable and actionable models, ultimately accelerating progress in the understanding and treatment of infertility.

Defining Ensemble Learning and its Core Principles for Data Imbalance

Ensemble learning is a machine learning technique that aggregates two or more learners (e.g., regression models, neural networks) to produce better predictions than any single model alone [20]. This approach operates on the principle that a collection of learners yields greater overall accuracy than an individual learner, effectively mitigating issues of bias and variance that often plague single-model approaches [21] [20]. In the context of imbalanced data, where one class significantly outnumbers others (a common challenge in fertility research and medical diagnostics), ensemble methods provide particularly valuable solutions by combining multiple models to improve detection of minority classes without sacrificing overall performance [14].

The relevance of ensemble learning to imbalanced fertility data research stems from its ability to address critical challenges in reproductive medicine. Fertility datasets often exhibit significant class imbalance, with normal semen quality samples substantially outnumbering altered or pathological cases [9]. This imbalance can lead to models that achieve high accuracy by simply predicting the majority class, while failing to identify clinically significant minority classes—a potentially disastrous outcome in diagnostic applications [14]. Ensemble methods effectively counter this tendency through various mechanisms that force models to focus on difficult-to-classify minority instances.

Core Principles and Mechanisms

Fundamental Principles

Ensemble learning operates on several core principles that explain its effectiveness, particularly for imbalanced data scenarios:

Diversity Principle: Ensemble methods combine diverse models trained on different data subsets or using different algorithms, creating a collective intelligence that captures patterns a single model might miss [21] [20]. This diversity is crucial for identifying rare patterns in minority classes.

Error Reduction Principle: By combining multiple models, ensemble methods average out individual model errors, reducing both variance (through techniques like bagging) and bias (through techniques like boosting) [21] [20].

Focus Principle: Specific ensemble techniques, particularly boosting algorithms, sequentially focus on misclassified instances, forcing subsequent models to pay greater attention to difficult cases that often belong to minority classes [14].

Key Ensemble Types for Imbalanced Data

Table 1: Ensemble Learning Types for Addressing Data Imbalance

| Ensemble Type | Core Mechanism | Advantages for Imbalanced Data | Common Algorithms |

|---|---|---|---|

| Bagging | Trains models in parallel on random data subsets and aggregates predictions [21] | Reduces variance and overfitting; can incorporate class weight adjustments [14] | Random Forest, Balanced Random Forest [14] |

| Boosting | Trains models sequentially with each new model focusing on previous errors [21] | Naturally prioritizes difficult minority class instances; reduces bias [14] | AdaBoost, Gradient Boosting, XGBoost [21] [20] |

| Stacking | Combines multiple models via a meta-learner that learns optimal combination [21] | Leverages diverse model strengths; can capture complex minority class patterns [21] | Stacked Generalization with heterogeneous classifiers [20] |

| Hybrid Approaches | Combines ensemble methods with sampling techniques like SMOTE [18] | Addresses imbalance at both data and algorithm levels [18] | Random Forest with SMOTE, Boosting with oversampling [18] |

Experimental Protocols for Fertility Data Research

Protocol 1: Balanced Random Forest for Fertility Classification

Objective: To implement a Balanced Random Forest (BRF) classifier for male fertility diagnosis using clinical, lifestyle, and environmental factors.

Materials and Dataset:

- Fertility Dataset from UCI Machine Learning Repository (100 samples, 10 attributes) [9]

- Preprocessing: Min-Max normalization to [0,1] range to handle heterogeneous feature scales [9]

- Class distribution: 88 "Normal" and 12 "Altered" seminal quality (moderate imbalance) [9]

Methodology:

- Data Preprocessing: Apply range scaling to all features using Min-Max normalization to ensure consistent contribution to the learning process [9].

- Bootstrap Sampling: For each tree in the forest, create balanced bootstrap samples with equal representation of both classes [14].

- Model Training: Train multiple decision trees on different balanced subsets, ensuring each tree has a balanced perspective [14].

- Prediction Aggregation: Combine predictions through majority voting or probability averaging across all trees [21].

- Evaluation: Assess using sensitivity, specificity, and AUC-PR in addition to accuracy, with emphasis on minority class performance [14].

Expected Outcomes: BRF typically demonstrates improved recall for the minority "Altered" class compared to standard Random Forest, while maintaining competitive overall accuracy [14].
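The balanced-bootstrap idea behind this protocol can be sketched by hand with scikit-learn decision trees. This is an illustrative stand-in, not the imbalanced-learn implementation. The data are synthetic placeholders with an 88/12 split rather than the UCI Fertility dataset, and the helper names are hypothetical.

```python
import numpy as np
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(42)
y = np.array([0] * 88 + [1] * 12)                   # 0 = "Normal", 1 = "Altered"
X = rng.normal(size=(100, 9)) + 0.8 * y[:, None]    # placeholder features

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)
scaler = MinMaxScaler().fit(X_tr)                   # fit the scaler on training data only

def fit_balanced_forest(X, y, n_trees=100, seed=0):
    rng = np.random.default_rng(seed)
    minority = np.flatnonzero(y == 1)
    majority = np.flatnonzero(y == 0)
    trees = []
    for _ in range(n_trees):
        # Balanced bootstrap: equal-sized draws (with replacement) from each class.
        idx = np.concatenate([
            rng.choice(minority, size=len(minority), replace=True),
            rng.choice(majority, size=len(minority), replace=True),
        ])
        tree = DecisionTreeClassifier(max_features="sqrt",
                                      random_state=int(rng.integers(1_000_000)))
        trees.append(tree.fit(X[idx], y[idx]))
    return trees

def predict_proba_forest(trees, X):
    # Probability averaging across trees; column 1 is the "Altered" class.
    return np.mean([t.predict_proba(X)[:, 1] for t in trees], axis=0)

trees = fit_balanced_forest(scaler.transform(X_tr), y_tr)
proba = predict_proba_forest(trees, scaler.transform(X_te))
pred = (proba >= 0.5).astype(int)
sensitivity = recall_score(y_te, pred, pos_label=1)
specificity = recall_score(y_te, pred, pos_label=0)
print(f"sensitivity={sensitivity:.2f} specificity={specificity:.2f}")
```

With only a handful of minority cases in the held-out split, single-split estimates like these are very noisy; repeated stratified cross-validation gives a more stable picture.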

Protocol 2: Feature-Level Fusion with Ensemble Classification

Objective: To develop an ensemble framework combining multiple CNN architectures with feature-level fusion for sperm morphology classification.

Materials:

- Hi-LabSpermMorpho dataset (18,456 images across 18 morphology classes) [10]

- Multiple EfficientNetV2 variants for feature extraction [10]

- Traditional classifiers: SVM, Random Forest, MLP with Attention mechanism [10]

Methodology:

- Feature Extraction: Extract deep features from multiple EfficientNetV2 models using penultimate layer activations [10].

- Feature-Level Fusion: Concatenate features from different architectures to create enriched feature representations [10].

- Classifier Training: Train multiple diverse classifiers (SVM, RF, MLP-A) on fused features [10].

- Decision-Level Fusion: Implement soft voting to combine classifier predictions [10].

- Evaluation: Assess using multi-class accuracy and per-class metrics, with particular attention to low-sample classes [10].

Expected Outcomes: The fusion-based ensemble achieved 67.70% accuracy on 18-class sperm morphology classification, significantly outperforming individual classifiers and effectively mitigating class imbalance issues [10].
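The two fusion stages in this protocol can be illustrated with scikit-learn. The sketch below makes several assumptions: random arrays stand in for EfficientNetV2 penultimate-layer features, three classes replace the 18 morphology classes, and a plain MLP replaces the attention-based MLP described in [10].

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier, VotingClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
n, n_classes = 300, 3
y = rng.integers(0, n_classes, size=n)
# Placeholder "deep features" from two hypothetical backbones.
feats_a = rng.normal(size=(n, 32)) + 0.5 * y[:, None]
feats_b = rng.normal(size=(n, 16)) + 0.3 * y[:, None]

# Feature-level fusion: concatenate the per-backbone feature vectors.
fused = np.concatenate([feats_a, feats_b], axis=1)

# Decision-level fusion: soft voting averages the predicted class probabilities.
ensemble = VotingClassifier(
    estimators=[
        ("svm", make_pipeline(StandardScaler(), SVC(probability=True, random_state=0))),
        ("rf", RandomForestClassifier(n_estimators=100, random_state=0)),
        ("mlp", make_pipeline(StandardScaler(),
                              MLPClassifier(hidden_layer_sizes=(64,), max_iter=500,
                                            random_state=0))),
    ],
    voting="soft",
)
ensemble.fit(fused[:200], y[:200])
proba = ensemble.predict_proba(fused[200:])
pred = proba.argmax(axis=1)
```

Soft voting requires every base classifier to expose calibrated-enough probabilities, which is why `SVC` is created with `probability=True`.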

Workflow Visualization

Ensemble Methods for Imbalanced Fertility Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Ensemble Learning in Fertility Research

| Research Reagent | Type | Function in Ensemble Fertility Research | Implementation Example |

|---|---|---|---|

| Random Forest with Class Weighting | Algorithm | Adjusts class weights to penalize minority class misclassification, improving sensitivity [14] | class_weight='balanced' in scikit-learn [14] |

| Balanced Random Forest (BRF) | Algorithm | Ensures each tree is trained on balanced bootstrap samples for fair minority class representation [14] | Imbalanced-learn library implementation [14] |

| AdaBoost with Sequential Focus | Algorithm | Iteratively increases weight on misclassified minority instances, forcing model attention [14] | AdaBoostClassifier with decision stumps in scikit-learn [21] |

| EfficientNetV2 Architectures | Feature Extractor | Provides multi-scale feature representations for fusion-based ensembles in image analysis [10] | Transfer learning from pre-trained models on sperm morphology images [10] |

| Ant Colony Optimization (ACO) | Bio-inspired Optimizer | Enhances neural network learning efficiency and convergence for fertility prediction [9] | Hybrid MLFFN-ACO framework for parameter tuning [9] |

| SMOTE + Ensemble Hybrids | Data Augmentation | Generates synthetic minority samples combined with ensemble classification [18] | Random Oversampling or SMOTE with Random Forest [18] |

| Multi-Level Fusion Framework | Architecture | Combines feature-level and decision-level fusion for robust ensemble predictions [10] | EfficientNetV2 features + SVM/RF/MLP-A + soft voting [10] |

Performance Metrics and Evaluation

Table 3: Quantitative Performance of Ensemble Methods on Fertility Data

| Ensemble Method | Dataset | Key Performance Metrics | Comparative Advantage |

|---|---|---|---|

| Hybrid MLFFN-ACO Framework [9] | UCI Fertility Dataset (100 cases) | 99% accuracy, 100% sensitivity, 0.00006s computational time [9] | Ultra-fast prediction suitable for real-time clinical applications with perfect sensitivity |

| Multi-Level Fusion Ensemble [10] | Hi-LabSpermMorpho (18,456 images, 18 classes) | 67.70% accuracy, significant improvement over individual classifiers [10] | Effectively handles multi-class imbalance in complex morphology classification |

| Random Forest with Class Weighting [14] | Imbalanced clinical datasets | High minority class recall, maintained specificity [14] | Simple implementation with immediate improvement over unweighted models |

| Data Augmentation + Ensemble Combinations [18] | Various benchmark imbalanced datasets | Significant performance improvements over single approach solutions [18] | Addresses imbalance at both data and algorithm levels for enhanced robustness |

The reported results suggest that ensemble methods can outperform individual classifiers on imbalanced fertility datasets. The hybrid MLFFN-ACO framework reports 100% sensitivity on the 100-case UCI dataset, meaning no positive cases were missed in that evaluation, which is a critical requirement in clinical diagnostics [9]. The multi-level fusion approach shows substantial gains in complex multi-class scenarios, proving particularly valuable for detailed morphological analysis in spermatology [10].

Implementation Considerations for Fertility Research

When implementing ensemble methods for imbalanced fertility data, several practical considerations emerge:

Data Quality and Annotation: High-quality, consistently annotated data is essential, particularly for medical images where expert labeling is costly but necessary [10].

Computational Resources: Complex ensembles, especially those combining multiple deep learning architectures, require significant computational resources and efficient implementation [10].

Clinical Interpretability: Model decisions must be interpretable for clinical adoption. Techniques like feature importance analysis and proximity search mechanisms provide necessary transparency [9].

Class Imbalance Strategies: The severity of imbalance should guide method selection—moderate imbalances may be addressed with class weighting, while severe imbalances may require hybrid approaches combining sampling with ensembles [18].

Ensemble learning represents a powerful methodology for addressing the pervasive challenge of class imbalance in fertility research and reproductive medicine. By leveraging the collective intelligence of multiple models, these techniques enhance detection of rare but clinically significant conditions, ultimately supporting more accurate diagnosis and personalized treatment planning in reproductive healthcare.

A Methodological Deep Dive: Ensemble Architectures for Fertility Data

Ensemble learning techniques, particularly bagging-based approaches, provide powerful methodological frameworks for addressing the critical challenge of class imbalance in biomedical datasets. This application note details the implementation of two robust bagging algorithms—Random Forest and the novel P-EUSBagging—within the context of imbalanced fertility data research. We present structured performance comparisons, detailed experimental protocols, and specialized toolkits to enable researchers to effectively apply these methods for predicting rare reproductive outcomes, ultimately supporting more accurate clinical decision-making in reproductive medicine and drug development.

Class imbalance represents a significant obstacle in fertility research, where outcomes of interest such as successful implantation or specific treatment-related complications naturally occur at low frequencies. Standard machine learning algorithms often exhibit bias toward the majority class, leading to poor predictive performance for these critical minority classes. Bagging (Bootstrap Aggregating) addresses this challenge by creating multiple models on bootstrapped dataset subsets and aggregating their predictions, thereby reducing variance and improving generalization [22] [23].

Random Forest extends bagging by incorporating random feature selection at each split, creating diverse trees that collectively form a robust classifier [23] [24]. P-EUSBagging represents a recent advancement specifically designed for imbalanced learning, utilizing data-level diversity metrics and adaptive voting to enhance minority class detection [25]. When applied to fertility datasets characterized by skewed class distributions, these bagging variants can significantly improve prediction of rare reproductive events, enabling more reliable research conclusions and clinical predictions.

Technical Performance Comparison

The following tables summarize key performance characteristics and diversity metrics for bagging-based approaches relevant to imbalanced fertility data research.

Table 1: Performance Comparison of Bagging Algorithms on Imbalanced Datasets

| Algorithm | Key Mechanism | Best Performing Context | Reported Accuracy | Reported AUC | Advantages for Fertility Data |

|---|---|---|---|---|---|

| Random Forest [26] [24] | Bootstrap samples + random feature selection | General imbalanced data with feature heterogeneity | 75-82% (with ROS) | 0.89-0.93 | Handles mixed data types; minimal preprocessing; provides feature importance |

| P-EUSBagging [25] | IED diversity metric + weight-adaptive voting | Severe imbalance with complex minority patterns | Significantly improves G-Mean | Significantly improves AUC | Explicitly maximizes data diversity; adaptive reward/penalty voting |

| Balanced Random Forest [17] | Under-samples majority class in each bootstrap | Severe imbalance where minority preservation critical | Comparable to RF with sampling | Comparable to RF with sampling | Maintains all minority instances; reduces bias toward majority class |

| EasyEnsemble [17] [27] | AdaBoost learners on balanced bootstrap samples | Complex minority class patterns requiring high recall | High recall (e.g., 0.86) potentially with lower precision | Not specified | Excellent minority class detection; hierarchical ensemble structure |

Table 2: Diversity Metrics in Ensemble Learning for Imbalanced Data

| Diversity Metric | Type | Computational Requirements | Correlation with Performance | Application in Fertility Research |

|---|---|---|---|---|

| IED (Instance Euclidean Distance) [25] | Data-level (no model training) | Low complexity; one-time evaluation | High (mean absolute correlation: 0.94 with classifier-based) | Pre-training diversity assessment; dataset optimization |

| Q-statistics [25] | Classifier-level (pairwise) | High (requires trained models) | Established reference metric | Post-hoc ensemble analysis |

| Disagreement Measure [25] | Classifier-level (pairwise) | High (requires trained models) | Established reference metric | Model selection and combination |

| Correlation Coefficient (ρ) [25] | Classifier-level (pairwise) | High (requires trained models) | Established reference metric | Diagnostic evaluation of ensemble components |

Experimental Protocols

Random Forest for Imbalanced Fertility Data

Principle: Construct multiple decision trees using bootstrap samples from the original dataset with random feature selection at each split, then aggregate predictions through majority voting [23] [24]. For imbalanced fertility data, this approach benefits from inherent variance reduction and can be enhanced with strategic sampling.

Protocol:

Data Preparation:

- Compile fertility dataset with clinical features and imbalanced outcome variable

- Partition data into training (70%) and test (30%) sets, preserving imbalance ratio

- Preprocess features: normalize continuous variables, encode categorical variables

Parameter Configuration:

- Set number of trees (n_estimators = 500)

- Determine feature subset size at each split (max_features = √p for classification)

- Specify minimum samples at leaf node (min_samples_leaf = 1-5 for high imbalance)

- Enable bootstrap sampling (bootstrap = True)

Imbalance-Specific Adjustments:

- Apply Random Oversampling (ROS) to training set prior to model building [26]

- Consider stratified bootstrap sampling to ensure minority class representation

- Implement weighted random forests using class_weight = 'balanced'

Model Training:
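A minimal training sketch using the parameter values listed above with scikit-learn's `RandomForestClassifier`. The dataset is a synthetic placeholder with a 9:1 class ratio, and `min_samples_leaf=2` is one arbitrary choice from the suggested 1-5 range.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(1)
y = np.array([0] * 450 + [1] * 50)                  # placeholder imbalanced outcome
X = rng.normal(size=(500, 12)) + 0.7 * y[:, None]   # placeholder clinical features

# 70/30 split that preserves the imbalance ratio in both partitions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=1
)

rf = RandomForestClassifier(
    n_estimators=500,
    max_features="sqrt",       # sqrt(p) features considered at each split
    min_samples_leaf=2,
    bootstrap=True,
    class_weight="balanced",   # weighted random forest
    oob_score=True,            # out-of-bag estimate as internal validation
    random_state=1,
    n_jobs=-1,
)
rf.fit(X_train, y_train)

oob_accuracy = rf.oob_score_
test_proba = rf.predict_proba(X_test)[:, 1]
print(f"OOB accuracy: {oob_accuracy:.3f}")
```

The out-of-bag score reported by scikit-learn is plain accuracy, so on imbalanced data it should be read alongside minority-focused metrics rather than on its own.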

Performance Validation:

- Evaluate using G-Mean and AUC-ROC in addition to accuracy

- Utilize out-of-bag (OOB) error estimation as internal validation [23]

- Perform 10-fold cross-validation with stratification to maintain imbalance
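The validation step can be sketched as follows. G-Mean is taken here as the geometric mean of sensitivity and specificity, and the data are synthetic placeholders. The helper name `g_mean` is illustrative rather than taken from a library.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import confusion_matrix, make_scorer
from sklearn.model_selection import StratifiedKFold, cross_validate

def g_mean(y_true, y_pred):
    """Geometric mean of sensitivity and specificity for a binary problem."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return float(np.sqrt(sensitivity * specificity))

rng = np.random.default_rng(2)
y = np.array([0] * 270 + [1] * 30)
X = rng.normal(size=(300, 8)) + 0.8 * y[:, None]

# Stratification keeps roughly 3 minority cases in each of the 10 folds.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=2)
model = RandomForestClassifier(n_estimators=200, class_weight="balanced", random_state=2)
scores = cross_validate(
    model, X, y, cv=cv,
    scoring={"g_mean": make_scorer(g_mean), "auc_roc": "roc_auc"},
)
print("G-Mean: %.3f | AUC-ROC: %.3f"
      % (scores["test_g_mean"].mean(), scores["test_auc_roc"].mean()))
```

A classifier that predicts only the majority class scores 0 on G-Mean regardless of its accuracy, which is what makes the metric useful here.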

P-EUSBagging for Severe Imbalance

Principle: Generate multiple balanced subsets with maximal data-level diversity using the Instance Euclidean Distance (IED) metric, then combine predictions through weight-adaptive voting that rewards correct minority class predictions [25].

Protocol:

Data Preprocessing:

- Compile fertility dataset with confirmed outcome labels

- Normalize all features to ensure comparable distance calculations

- Identify minority class instances for special consideration in sampling

IED Diversity Calculation:

- Apply optimal instance pairing or greedy instance pairing algorithm

- Compute pairwise diversity between all potential subsets using Euclidean distance

- Select sub-datasets with maximal IED values for base classifier training
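One plausible reading of the greedy instance pairing step is sketched below: instances from two subsets are paired closest-first, and the mean paired Euclidean distance serves as the diversity score. This is an assumption made for illustration; the published IED definition in [25] may differ in detail. The function name and the candidate subsets are hypothetical.

```python
import numpy as np

def greedy_pairing_distance(A, B):
    """Mean Euclidean distance after greedily pairing rows of A with rows of B."""
    D = np.linalg.norm(A[:, None, :] - B[None, :, :], axis=2)
    n_pairs = min(len(A), len(B))
    total = 0.0
    for _ in range(n_pairs):
        i, j = np.unravel_index(np.argmin(D), D.shape)  # closest unused pair
        total += D[i, j]
        D[i, :] = np.inf   # row i and column j cannot be paired again
        D[:, j] = np.inf
    return total / n_pairs

rng = np.random.default_rng(3)
majority = rng.normal(size=(200, 5))  # placeholder, already normalized features
# Six candidate under-sampled subsets of 20 majority instances each.
candidates = [majority[rng.choice(200, size=20, replace=False)] for _ in range(6)]

k = len(candidates)
div = np.zeros((k, k))
for a in range(k):
    for b in range(a + 1, k):
        div[a, b] = div[b, a] = greedy_pairing_distance(candidates[a], candidates[b])

# Keep the three subsets that are, on average, most distant from the others.
mean_div = div.sum(axis=1) / (k - 1)
selected = np.argsort(mean_div)[::-1][:3]
print("selected subsets:", selected)
```

An optimal pairing would replace the greedy loop with a minimum-cost assignment, at a higher computational cost.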

Population Based Incremental Learning (PBIL) Integration:

- Initialize probability vector for instance selection

- Generate candidate subsets using current probability vector

- Evaluate subset fitness using IED diversity metric

- Update probability vector toward high-diversity solutions

- Iterate until convergence or maximum generations
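The PBIL loop described above follows a standard pattern: sample binary masks from a probability vector, score them, and shift the vector toward the best mask. The sketch below uses a toy fitness (mean pairwise distance of the selected instances, with a penalty for deviating from a target subset size) as a stand-in for the IED metric. The learning rate, population size and clipping bounds are illustrative assumptions.

```python
import numpy as np

def pbil(fitness, n_bits, pop_size=30, generations=60, lr=0.1, seed=0):
    rng = np.random.default_rng(seed)
    p = np.full(n_bits, 0.5)                      # initial probability vector
    best_mask, best_fit = None, -np.inf
    for _ in range(generations):
        pop = rng.random((pop_size, n_bits)) < p  # sample candidate subsets
        fits = np.array([fitness(ind) for ind in pop])
        elite = pop[np.argmax(fits)]
        if fits.max() > best_fit:
            best_fit, best_mask = fits.max(), elite.copy()
        p = (1 - lr) * p + lr * elite             # move toward the best candidate
        p = np.clip(p, 0.02, 0.98)                # keep some exploration
    return best_mask, best_fit, p

rng = np.random.default_rng(4)
majority = rng.normal(size=(40, 3))   # placeholder majority-class instances
target_size = 10                      # hypothetical desired subset size

def spread_fitness(mask):
    if mask.sum() < 2:
        return -np.inf
    pts = majority[mask]
    d = np.linalg.norm(pts[:, None] - pts[None, :], axis=2)
    mean_dist = d.sum() / (len(pts) * (len(pts) - 1))
    return mean_dist - 0.1 * abs(int(mask.sum()) - target_size)

mask, fit, p = pbil(spread_fitness, n_bits=len(majority))
print("instances selected:", int(mask.sum()), "| fitness: %.3f" % fit)
```

Because the fitness function is passed in as an argument, a data-level diversity score can be substituted without changing the optimizer.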

Ensemble Construction:

- Train base classifiers (typically decision trees) on each diverse subset

- Apply weight-adaptive voting strategy:

- Initialize equal weights for all classifiers

- For each prediction, increase weight for correct classifiers

- Decrease weight for classifiers producing errors

- Particularly reward correct minority class predictions

Implementation Framework:
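As a hedged illustration of the weight-adaptive voting step, the sketch below applies a multiplicative reward/penalty update over a labeled validation set, with an extra bonus for correct minority-class votes. The update factors (`reward`, `penalty`, `minority_bonus`) and the function name are assumptions for illustration and are not taken from [25].

```python
import numpy as np

def weight_adaptive_vote(pred_matrix, y_true, reward=1.1, penalty=0.9,
                         minority_bonus=1.2, minority_label=1):
    """pred_matrix: (n_classifiers, n_samples) array of 0/1 votes."""
    n_clf, n_samples = pred_matrix.shape
    w = np.ones(n_clf) / n_clf                     # equal initial weights
    ensemble_pred = np.empty(n_samples, dtype=int)
    for t in range(n_samples):
        votes = pred_matrix[:, t]
        # Weighted vote with the weights learned so far (ties go to class 1).
        ensemble_pred[t] = int(w[votes == 1].sum() >= w[votes == 0].sum())
        correct = votes == y_true[t]
        # Correct minority-class votes earn a larger reward.
        gain = reward * minority_bonus if y_true[t] == minority_label else reward
        w = w * np.where(correct, gain, penalty)
        w = w / w.sum()                            # renormalize
    return ensemble_pred, w

rng = np.random.default_rng(5)
y_val = (rng.random(200) < 0.15).astype(int)       # placeholder labels, ~15% minority

def noisy_votes(y, accuracy):
    flip = rng.random(len(y)) > accuracy
    return np.where(flip, 1 - y, y)

# Three simulated base classifiers of decreasing quality.
preds = np.vstack([noisy_votes(y_val, 0.90), noisy_votes(y_val, 0.70),
                   noisy_votes(y_val, 0.55)])
ensemble_pred, weights = weight_adaptive_vote(preds, y_val)
print("final weights:", np.round(weights, 4))
```

In this toy run the most accurate simulated classifier ends up with the largest weight. In practice the weights would be learned on held-out data and then frozen before scoring the test set.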

Validation and Interpretation:

- Compare performance against standard Random Forest

- Analyze weight distribution across classifiers

- Examine diversity-performance correlation

- Conduct statistical testing on G-Mean improvements

Workflow Visualization

Bagging Workflow for Imbalanced Fertility Data: This diagram illustrates the complete analytical pathway for applying bagging-based approaches to imbalanced fertility datasets, highlighting key decision points between Random Forest and P-EUSBagging based on imbalance severity and research objectives.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Specific Function | Application in Fertility Research |

|---|---|---|---|

| scikit-learn [22] [24] | Python Library | Implements Random Forest and base bagging algorithms | Core machine learning framework for building ensemble models |

| imbalanced-learn [17] [27] | Python Library | Provides specialized ensemble methods for imbalanced data | Access to BalancedRandomForest, EasyEnsemble, and sampling methods |

| Instance Euclidean Distance (IED) [25] | Diversity Metric | Measures data-level diversity without model training | Pre-training assessment of dataset suitability for P-EUSBagging |

| Population Based Incremental Learning (PBIL) [25] | Evolutionary Algorithm | Generates diverse data subsets for ensemble training | Optimization of training subsets for maximum diversity in P-EUSBagging |

| Weight-Adaptive Voting [25] | Ensemble Strategy | Dynamically adjusts classifier weights based on performance | Enhanced focus on accurate minority class prediction in fertility outcomes |

| G-Mean & AUC-ROC [26] [25] | Evaluation Metrics | Assess model performance on imbalanced data | Comprehensive evaluation of fertility outcome prediction quality |

| Stratified Cross-Validation [26] | Validation Technique | Maintains class distribution in training/validation splits | Reliable performance estimation for rare fertility events |

Within the domain of ensemble learning, gradient boosting algorithms represent a powerful class of sequential learning techniques that build models in a stage-wise fashion, with each new model attempting to correct the errors of its predecessors. XGBoost (eXtreme Gradient Boosting) and LightGBM (Light Gradient Boosting Machine) have emerged as two of the most dominant and effective implementations of this paradigm, particularly for structured or tabular data commonly encountered in medical and biological research [28] [29]. Their application is especially potent in specialized fields like fertility research, where datasets are often characterized by class imbalance, a multitude of interacting clinical features, and a critical need for both high accuracy and model interpretability [30] [31] [32].

This article provides detailed application notes and experimental protocols for leveraging XGBoost and LightGBM within the specific context of imbalanced fertility data research. It synthesizes performance benchmarks from recent scientific studies, outlines structured implementation workflows, and provides a clear, comparative analysis of both algorithms to assist researchers and scientists in selecting and optimizing the appropriate tool for their predictive modeling tasks.

Performance Analysis and Comparative Evaluation

A critical step in experimental design is the selection of an appropriate algorithm based on empirical evidence. The following tables summarize quantitative performance metrics of XGBoost and LightGBM from various recent studies, including those focused on fertility outcomes.

Table 1: Comparative Model Performance in Fertility Research Applications

| Study / Prediction Task | Best Model | Key Performance Metrics | Comparative Models |

|---|---|---|---|

| Clinical Pregnancy Outcome after IVF [31] | LightGBM | Accuracy: 92.31%, Recall: 87.80%, F1-Score: 90.00%, AUC: 90.41% | XGBoost, KNN, Naïve Bayes, Random Forest, Decision Tree |

| Blastocyst Yield in IVF Cycles [29] | LightGBM | R²: ~0.67, MAE: ~0.79-0.81; Multi-class Accuracy: 67.8%, Kappa: 0.5 | XGBoost, SVM, Linear Regression |

| Live Birth Outcome after Fresh Embryo Transfer [32] | Random Forest | AUC: >0.80 | XGBoost (2nd best performer), GBM, AdaBoost, LightGBM, ANN |

| Type 2 Diabetes Risk Prediction [33] | XGBoost | Accuracy: 96.07%, AUC: 99.29% | CatBoost |

Table 2: Architectural and Operational Characteristics

| Characteristic | XGBoost | LightGBM |

|---|---|---|

| Tree Growth Strategy | Level-wise (grows tree breadth-first) [28] | Leaf-wise (best-first: splits the leaf with the highest gain, which can produce deeper trees) [28] |

| Handling of Sparse Data | Good, but may require more pre-processing [28] | Excellent, natively handles sparse data (e.g., csr_matrix) [28] |

| Memory & Speed | Higher memory usage; generally faster on smaller datasets [28] | Lower memory usage; often significantly faster on large datasets (>10,000 samples) [28] [29] |

| Overfitting Control | Strong, via regularization parameters in its objective function [28] [32] | Can be more prone on small datasets; controlled via max_depth and other leaf-growth parameters [28] |

Key Insights from Performance Data

- Performance Parity and Nuance: In many studies, XGBoost and LightGBM demonstrate remarkably similar top-line performance, as seen in the blastocyst yield prediction study where both achieved nearly identical R² and MAE [29]. The choice between them then becomes a matter of secondary factors such as training speed, memory efficiency, and model interpretability.

- Dataset Size and Structure Dependence: LightGBM's leaf-wise growth and histogram-based optimization often give it a distinct speed and memory advantage with high-dimensional and large-scale datasets, making it a preferred choice for massive data resources like biobanks [28] [29]. Conversely, XGBoost's level-wise approach can be more robust and easier to tune on smaller, cleaner datasets.

- Superior Performance in Specific Contexts: As shown in Table 1, LightGBM can achieve superior performance on specific fertility prediction tasks, such as clinical pregnancy outcome, where it identified key predictors like estrogen concentration at HCG injection and endometrium thickness [31]. However, other tasks, such as live birth prediction, may see other algorithms like Random Forest perform best, with XGBoost as a strong contender [32].

Experimental Protocols for Imbalanced Fertility Data

This section outlines a standardized, end-to-end protocol for developing and validating predictive models using XGBoost and LightGBM, with integrated techniques to address class imbalance commonly found in fertility datasets (e.g., where successful pregnancies are outnumbered by unsuccessful ones).

Data Pre-processing and Feature Engineering

Objective: To prepare a clean, well-scaled dataset with a robust set of features for model training. Materials: Raw clinical dataset (e.g., CSV file), Python with pandas, scikit-learn, and imbalanced-learn libraries.

Handling Missing Data:

- For fertility datasets, use non-parametric imputation methods suitable for mixed data types. The missForest algorithm, available in R, has been effectively used in fertility studies to impute missing values in pre-treatment patient characteristics [32].

- Alternatively, impute missing values for numerical features with the median and for categorical features with the mode.

Addressing Outliers:

- Use the Mahalanobis Distance for multivariate outlier detection in clinical features, as applied in IVF pregnancy outcome studies [31].

- Visually inspect features using boxplots to identify univariate outliers. Consider capping extreme values at a specified percentile (e.g., 5th and 95th).
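Both outlier steps can be sketched with NumPy. The feature values below are random placeholders, and the chi-square cutoff assumes the features are approximately multivariate normal, which should be checked before relying on it.

```python
import numpy as np

rng = np.random.default_rng(6)
# Placeholder continuous features (three hypothetical clinical measurements).
X = rng.normal(loc=[30.0, 2.5, 9.0], scale=[4.0, 0.6, 1.5], size=(200, 3))
X[0] = [60.0, 6.0, 20.0]   # one deliberately extreme record

# Squared Mahalanobis distance of every record from the sample mean.
mu = X.mean(axis=0)
cov_inv = np.linalg.pinv(np.cov(X, rowvar=False))
diff = X - mu
d2 = np.einsum("ij,jk,ik->i", diff, cov_inv, diff)

# 16.27 is the 0.999 quantile of the chi-square distribution with 3 degrees
# of freedom (one per feature); records above it are flagged for review.
outlier_mask = d2 > 16.27

# Univariate capping at the 5th and 95th percentiles of each feature.
lo, hi = np.percentile(X, [5, 95], axis=0)
X_capped = np.clip(X, lo, hi)
print("flagged records:", np.flatnonzero(outlier_mask))
```

Flagged records are better reviewed clinically than dropped automatically, since extreme values in fertility data can be genuine and informative.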

Feature Scaling:

- Apply min-max scaling to normalize continuous features to a [0, 1] range, ensuring all clinical features contribute equally during model fitting [31].

- Formula: \( X_{\text{scaled}} = \frac{X - X_{\text{min}}}{X_{\text{max}} - X_{\text{min}}} \)

Feature Selection:

- Option A (Embedded Method): Use the SelectFromModel transformer in scikit-learn with a LightGBM or XGBoost estimator as the base model to select the most important features based on importance thresholds [33].

- Option B (Wrapper Method): Employ Recursive Feature Elimination (RFE) to iteratively remove the least important features until the optimal subset is identified, as demonstrated in blastocyst yield prediction [29].

- Retain features based on both data-driven importance and clinical expert validation to ensure biological relevance [32].
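Both selection options can be sketched with scikit-learn. To keep the example free of extra dependencies, `GradientBoostingClassifier` stands in for a LightGBM or XGBoost estimator. The data are synthetic, and the median threshold and the target of five features are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.feature_selection import RFE, SelectFromModel

# Placeholder imbalanced dataset: 15 features, 4 of them informative.
X, y = make_classification(n_samples=400, n_features=15, n_informative=4,
                           n_redundant=2, weights=[0.85, 0.15], random_state=7)

# Option A: keep features whose importance is at least the median importance.
sfm = SelectFromModel(GradientBoostingClassifier(random_state=7),
                      threshold="median").fit(X, y)
selected_a = np.flatnonzero(sfm.get_support())

# Option B: recursively drop the weakest feature until five remain.
rfe = RFE(GradientBoostingClassifier(random_state=7),
          n_features_to_select=5, step=1).fit(X, y)
selected_b = np.flatnonzero(rfe.get_support())

print("SelectFromModel:", selected_a)
print("RFE:", selected_b)
```

In a real study both selectors would be fit on the training split only, for instance inside a scikit-learn `Pipeline`, so that the test set does not influence which features are kept.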

Addressing Class Imbalance

Objective: To mitigate model bias towards the majority class (e.g., non-pregnancy) and improve sensitivity to the minority class.

Primary Approach: Algorithm-Level Cost-Setting

- Both XGBoost and LightGBM allow for the specification of class weights directly in their loss functions. This is the most straightforward and often most effective method [30] [27].

- Set the scale_pos_weight parameter in XGBoost (typically the ratio of negative to positive training samples) or the class_weight parameter in LightGBM to be inversely proportional to the class frequencies. This increases the penalty for misclassifying minority class samples.
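A short worked example of the weighting arithmetic, assuming a placeholder training set with 880 negative and 120 positive cases. The two dictionaries are meant to be unpacked into `xgboost.XGBClassifier(**xgb_params)` and `lightgbm.LGBMClassifier(**lgbm_params)`; those calls are left out so the sketch runs without either library installed.

```python
import numpy as np

# Placeholder training labels: 1 = minority class (e.g., clinical pregnancy).
y_train = np.array([0] * 880 + [1] * 120)

n_neg, n_pos = np.bincount(y_train)

# XGBoost: ratio of negative to positive samples, 880 / 120 ≈ 7.33.
scale_pos_weight = n_neg / n_pos

# "Balanced" class weights: n_samples / (n_classes * class_count).
class_weight = {label: len(y_train) / (2 * count)
                for label, count in enumerate((n_neg, n_pos))}

xgb_params = {"objective": "binary:logistic", "scale_pos_weight": scale_pos_weight}
lgbm_params = {"objective": "binary", "class_weight": class_weight}

print(f"scale_pos_weight = {scale_pos_weight:.2f}")
print("class_weight =", {k: round(float(v), 3) for k, v in class_weight.items()})
```

These values are starting points. Treating the weight as a tunable hyperparameter often works better than fixing it at the raw class ratio.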

Secondary Approach: Data-Level Resampling

- If cost-setting is insufficient, especially with very weak learners, data resampling can be explored [27].

- Recommended Technique: Apply the Synthetic Minority Over-sampling Technique (SMOTE) to generate synthetic samples for the minority class. This has been successfully combined with gradient boosting models in medical studies [30] [33].

- Protocol:

- Import SMOTE from the imbalanced-learn library.

- Apply SMOTE only to the training split after train-test splitting to avoid data leakage.

- Use RandomOverSampler or RandomUnderSampler for a simpler, often equally effective, baseline [27].
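The ordering in the protocol above (split first, then oversample the training split only) is illustrated below. To avoid an extra dependency, a simplified SMOTE-style interpolation is written out by hand. The function `smote_like` is a hypothetical stand-in for `imblearn.over_sampling.SMOTE`, not a reimplementation of it, and the data are synthetic.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import NearestNeighbors

def smote_like(X_min, n_new, k=5, seed=0):
    """Create points on segments between minority rows and their k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    k = min(k, len(X_min) - 1)
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    neigh = nn.kneighbors(X_min, return_distance=False)[:, 1:]  # drop self-match
    base = rng.integers(0, len(X_min), size=n_new)
    partner = neigh[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                                # position along the segment
    return X_min[base] + gap * (X_min[partner] - X_min[base])

rng = np.random.default_rng(8)
y = np.array([0] * 180 + [1] * 20)
X = rng.normal(size=(200, 6)) + 1.0 * y[:, None]

# 1) Split first, so the test set contains only real records.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=8)

# 2) Oversample the minority class in the training split only.
X_min = X_tr[y_tr == 1]
n_new = int((y_tr == 0).sum() - (y_tr == 1).sum())
X_syn = smote_like(X_min, n_new)
X_bal = np.vstack([X_tr, X_syn])
y_bal = np.concatenate([y_tr, np.ones(n_new, dtype=int)])
print("balanced training counts:", np.bincount(y_bal))
```

When resampling is combined with cross-validation, the same rule applies inside each fold; the `Pipeline` class in imbalanced-learn is designed for that purpose.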

Model Training, Hyperparameter Tuning & Evaluation

Objective: To train a robust, high-performance model that generalizes well to unseen data.

Stratified Data Splitting

- Split the dataset into training (e.g., 70%) and testing (e.g., 30%) sets using stratified splitting (train_test_split with stratify=y) to preserve the original class distribution in both splits [30].

Hyperparameter Optimization with Optuna

- Manual tuning is inefficient. Use the Optuna framework for automated, efficient hyperparameter search, which has been shown to significantly boost model performance and reduce training time [34] [35].

- Key Hyperparameters to Tune:

- XGBoost: learning_rate, max_depth, subsample, colsample_bytree, reg_lambda, reg_alpha, scale_pos_weight.

- LightGBM: learning_rate, num_leaves, feature_fraction, bagging_fraction, lambda_l1, lambda_l2, min_data_in_leaf.

- Protocol Snippet:

Model Training with Cross-Validation

- Train the final model using the optimized hyperparameters from Optuna.

- Employ 5-fold cross-validation repeated 5 times on the training set to obtain a robust estimate of model performance and ensure stability [31].

Performance Evaluation on Test Set

- Critical Step: For threshold-dependent metrics like Precision and Recall, do not use the default 0.5 probability threshold. Optimize the decision threshold based on the Precision-Recall trade-off that is most relevant to the clinical problem (e.g., high recall for sensitive screening) [27].

- Recommended Metrics:

- Threshold-independent: AUC-ROC and AUC-PR (Precision-Recall Curve), with AUC-PR being more informative for imbalanced data [30].

- Threshold-dependent: Precision, Recall (Sensitivity), F1-Score (harmonic mean of Precision and Recall) [30] [31].

- Holistic Metrics: Confusion Matrix, Matthews Correlation Coefficient (MCC), and Cohen's Kappa, which provide a more balanced view for imbalanced classes [30].
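The threshold selection and metric reporting described above can be sketched as follows. The example is illustrative only: the data are synthetic, logistic regression stands in for the tuned ensemble, and `TARGET_RECALL` is a hypothetical clinical requirement. One assumption goes beyond the text: the threshold is chosen on a separate validation split so that the test set is used for reporting alone.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import (
    average_precision_score, cohen_kappa_score, confusion_matrix, f1_score,
    matthews_corrcoef, precision_recall_curve, precision_score, recall_score,
    roc_auc_score,
)
from sklearn.model_selection import train_test_split

TARGET_RECALL = 0.90  # hypothetical requirement for a sensitive screening tool

X, y = make_classification(
    n_samples=1000, n_features=8, weights=[0.9, 0.1], random_state=0
)
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X_dev, y_dev, test_size=0.25, stratify=y_dev, random_state=0
)

model = LogisticRegression(max_iter=1000).fit(X_train, y_train)

# Pick the highest threshold that still reaches the target recall on validation data.
# recall has one more entry than thresholds, so the final point is dropped.
val_proba = model.predict_proba(X_val)[:, 1]
precision, recall, thresholds = precision_recall_curve(y_val, val_proba)
threshold = thresholds[recall[:-1] >= TARGET_RECALL].max()

# Apply the frozen threshold to the untouched test set.
test_proba = model.predict_proba(X_test)[:, 1]
y_pred = (test_proba >= threshold).astype(int)
tn, fp, fn, tp = confusion_matrix(y_test, y_pred).ravel()

print(f"threshold (from validation): {threshold:.3f}")
print(f"AUC-ROC:     {roc_auc_score(y_test, test_proba):.3f}")
print(f"AUC-PR:      {average_precision_score(y_test, test_proba):.3f}")
print(f"Precision:   {precision_score(y_test, y_pred, zero_division=0):.3f}")
print(f"Recall:      {recall_score(y_test, y_pred):.3f}")
print(f"Specificity: {tn / (tn + fp):.3f}")
print(f"F1:          {f1_score(y_test, y_pred, zero_division=0):.3f}")
print(f"MCC:         {matthews_corrcoef(y_test, y_pred):.3f}")
print(f"Kappa:       {cohen_kappa_score(y_test, y_pred):.3f}")
```

Recall on the test set can fall slightly below the target, because the threshold was fixed on different data; that gap is itself worth reporting.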

Visualization of the Experimental Workflow

The following diagram illustrates the integrated experimental protocol for handling imbalanced fertility data, from pre-processing to model interpretation.

Diagram Title: Imbalanced Fertility Data Modeling Workflow

Table 3: Key Computational Tools and Libraries

| Item / Library | Function / Application | Reference |

|---|---|---|

| XGBoost Library | Implementation of the XGBoost algorithm; optimal for datasets where precision and regularization are critical. | [28] [32] |

| LightGBM Library | Implementation of the LightGBM algorithm; optimal for large, high-dimensional datasets requiring fast training and lower memory footprint. | [28] [31] [29] |

| Imbalanced-learn (imblearn) | Python library providing implementations of oversampling (e.g., SMOTE) and undersampling techniques. | [30] [27] |

| Optuna Framework | An automatic hyperparameter optimization software framework, particularly effective for tuning LightGBM and XGBoost. | [34] [35] |

| SHAP (SHapley Additive exPlanations) | A unified approach to explain the output of any machine learning model, crucial for identifying key predictive features in clinical models. | [29] [34] [33] |

| Scikit-learn | Provides fundamental utilities for data splitting, preprocessing, metrics, and baseline models. | [30] [31] |

Model Interpretation and Clinical Insight Generation

Objective: To translate model predictions into clinically actionable insights by identifying the most influential features and their directional impact on the prediction.

Global Interpretability with SHAP:

- Use the SHAP library to calculate Shapley values for the entire dataset.

- Generate a summary plot to visualize the global feature importance and the distribution of each feature's impact on the model output [33]. This can reveal, for instance, that female age and embryo grade are consistently the top predictors of live birth [32].

- Protocol Snippet:

Local Interpretability:

- For a single patient's prediction, use SHAP to create a force plot. This explains the "reasoning" behind the model's prediction for that specific instance, showing how each feature pushed the prediction from the base value towards the final outcome [33].

- This is invaluable for clinicians to understand and trust the model's recommendation for an individual patient.

Partial Dependence Analysis:

- Plot Partial Dependence Plots (PDPs) or Individual Conditional Expectation (ICE) plots to understand the functional relationship between a key feature (e.g., Estrogen Concentration) and the predicted outcome, marginalizing over the effects of all other features [29] [32].

- This can visually confirm known clinical relationships, such as the negative correlation between female age and probability of live birth.
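A partial dependence curve can be computed without plotting, as in the sketch below. The data are simulated so that the outcome probability falls with a hypothetical `age` feature; the coefficients are arbitrary and carry no clinical meaning. The `method="brute"` and `response_method="predict_proba"` arguments are chosen so the curve is on the probability scale.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.inspection import partial_dependence

# Simulated data: the success probability decreases with age (arbitrary coefficients).
rng = np.random.default_rng(0)
n = 600
age = rng.uniform(25, 45, size=n)
other = rng.normal(0, 1, size=n)  # a second, weakly informative feature
logit = -1.5 - 0.25 * (age - 35) + 0.3 * other
y = (rng.random(n) < 1 / (1 + np.exp(-logit))).astype(int)
X = np.column_stack([age, other])

model = GradientBoostingClassifier(
    n_estimators=100, max_depth=2, random_state=0
).fit(X, y)

# Average predicted probability as age (column 0) is varied over a 20-point grid,
# marginalizing over the observed values of the other feature.
pd_result = partial_dependence(
    model, X, features=[0], method="brute",
    response_method="predict_proba", kind="average", grid_resolution=20,
)
avg_prob = pd_result["average"][0]

print(f"mean predicted probability at the youngest grid point: {avg_prob[0]:.3f}")
print(f"mean predicted probability at the oldest grid point:   {avg_prob[-1]:.3f}")
```

A curve that falls from the first grid point to the last is what the simulated relationship should produce; on real data the same check helps confirm that the model has learned a clinically plausible direction of effect.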

The integration of neural networks with nature-inspired optimization algorithms represents a paradigm shift in computational intelligence, particularly for tackling complex, real-world problems characterized by high dimensionality, non-linearity, and data imbalance. These hybrid frameworks leverage the powerful pattern recognition and predictive capabilities of deep learning models, while nature-inspired metaheuristics enhance their efficiency, robustness, and generalizability by optimizing critical parameters and architectural components. Within the specific and critical domain of fertility data research—where datasets are often small, costly to obtain, and inherently imbalanced—these hybrid approaches offer a promising path toward more reliable, interpretable, and clinically actionable diagnostic tools. This document provides detailed application notes and experimental protocols for developing and validating such hybrid systems, framed within a broader thesis on ensemble learning techniques for imbalanced fertility data.

Conceptual Framework and Key Applications

At its core, a hybrid framework combines a neural network (e.g., a Multilayer Perceptron or Convolutional Neural Network) with a nature-inspired optimization algorithm (e.g., Ant Colony Optimization, Biogeography-Based Optimization). The neural network acts as the primary predictive model, whereas the metaheuristic algorithm performs a crucial supporting role, such as hyperparameter tuning, feature selection, or class imbalance mitigation, thereby overcoming key limitations of standalone deep learning models.

The logical workflow of such a system can be visualized as a cyclic process of improvement, as illustrated below.

Diagram 1: High-level workflow of a hybrid neural network and nature-inspired optimization framework.

Recent research demonstrates the efficacy of this approach across multiple domains, including biomedical diagnostics and environmental monitoring. Key applications relevant to fertility research include:

- Medical Diagnostics: A hybrid framework combining a multilayer feedforward neural network with Ant Colony Optimization (ACO) was developed for male fertility diagnostics. The ACO algorithm provided adaptive parameter tuning, enhancing predictive accuracy and overcoming the limitations of conventional gradient-based methods. This system achieved a remarkable 99% classification accuracy and 100% sensitivity on a clinical dataset, demonstrating high efficacy in handling imbalanced data [9].

- Medical Image Analysis: The HDL-ACO framework integrates CNNs with ACO for classifying ocular Optical Coherence Tomography (OCT) images. In this system, ACO is employed for hyperparameter optimization and feature space refinement, leading to a model that achieved 95% training accuracy and 93% validation accuracy, outperforming standard models like ResNet-50 and VGG-16 [36].

- Environmental Modeling: For drought susceptibility assessment, an ensemble deep learning model (CNN-Attention-LSTM) was enhanced using Biogeography-Based Optimization (BBO) and Differential Evolution (DE). The BBO-optimized ensemble achieved the best performance (AUROC = 0.91), showcasing the utility of metaheuristics in optimizing complex, multi-component neural architectures [37].

Quantitative Performance Comparison

The performance of various hybrid frameworks is summarized in the table below for easy comparison. These metrics highlight the potential gains in accuracy and efficiency from successful hybridization.

Table 1: Performance Metrics of Hybrid Frameworks in Various Applications

| Application Domain | Neural Network Component | Optimization Algorithm | Key Performance Metrics | Reference |

|---|---|---|---|---|

| Male Fertility Diagnostics | Multilayer Feedforward Network | Ant Colony Optimization (ACO) | 99% Accuracy, 100% Sensitivity, 0.00006 sec computational time | [9] |

| Ocular OCT Image Classification | Convolutional Neural Network (CNN) | Ant Colony Optimization (ACO) | 95% Training Accuracy, 93% Validation Accuracy | [36] |

| Plant Leaf Image Classification | Convolutional Neural Network (CNN) | Hybrid PB3C-3PGA | 98.96% Accuracy on Mendeley Dataset | [38] |

| Drought Susceptibility Assessment | CNN-Attention-LSTM Ensemble | Biogeography-Based Optimization (BBO) | AUROC = 0.91, R² = 0.79, RMSE = 0.22 | [37] |

Experimental Protocols

This section provides a detailed, step-by-step protocol for replicating a hybrid framework, using the male fertility diagnostics study [9] as a primary exemplar.

Protocol 4.1: ACO-Optimized Neural Network for Imbalanced Fertility Data

I. Objective: To develop a high-accuracy diagnostic model for male fertility that effectively handles class imbalance by integrating a Multilayer Feedforward Neural Network (MLFFN) with Ant Colony Optimization (ACO).

II. Dataset Preprocessing and Preparation

- Data Source: Acquire the "Fertility Dataset" from the UCI Machine Learning Repository. The dataset used contains 100 samples with 10 attributes: 9 input features (e.g., lifestyle and environmental factors) and a binary diagnosis label (Normal/Altered) [9].

- Data Cleansing: Remove incomplete records. The final dataset should be curated to 100 samples.

- Range Scaling (Normalization): Apply Min-Max normalization to rescale all feature values to a [0, 1] range. This ensures consistent contribution from features originally on different scales (e.g., binary 0/1 and discrete -1/0/1) [9].

- Formula: \( X_{\text{norm}} = \frac{X - X_{\min}}{X_{\max} - X_{\min}} \)
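As a small worked example of the formula, the sketch below rescales each column independently. The helper name `min_max_scale` and the toy values are illustrative; the guard for constant columns is an added assumption to avoid division by zero.

```python
import numpy as np


def min_max_scale(X):
    """Rescale each column of X to [0, 1] using its own minimum and maximum."""
    X = np.asarray(X, dtype=float)
    x_min = X.min(axis=0)
    x_max = X.max(axis=0)
    # A constant column has zero range; dividing by 1 maps it to all zeros.
    span = np.where(x_max > x_min, x_max - x_min, 1.0)
    return (X - x_min) / span


# Toy rows: a binary 0/1 feature, a discrete -1/0/1 feature, and a numeric feature.
X = np.array([
    [0, -1, 18],
    [1,  0, 27],
    [1,  1, 36],
])
print(min_max_scale(X))
# [[0.  0.  0. ]
#  [1.  0.5 0.5]
#  [1.  1.  1. ]]
```

In a train/test workflow the minimum and maximum would be taken from the training split and reused on held-out data, which is what scikit-learn's `MinMaxScaler` does when fitted on the training set.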

III. Model Architecture and Optimization Setup

- Neural Network Configuration:

- Type: Multilayer Feedforward Neural Network (MLFFN).

- Input Layer: Number of nodes equal to the number of features after preprocessing (9).

- Hidden Layers: The exact architecture (number and size of layers) is a key hyperparameter to be optimized by ACO. Start with a simple topology like one hidden layer with 5-10 neurons for exploration.

- Output Layer: 1 node with a sigmoid activation function for binary classification.

- Ant Colony Optimization Setup:

- Role of ACO: The ACO algorithm is used to optimize the hyperparameters of the MLFFN (e.g., learning rate, number of hidden units, momentum) and to perform feature selection, thereby enhancing learning efficiency and convergence [9].

- ACO Parameters: Initialize standard ACO parameters: number of ants (e.g., 20-50), pheromone evaporation rate (e.g., ρ=0.5), and heuristic information (based on feature importance or inverse of error).

IV. Experimental Workflow

The detailed, iterative process of training and optimizing the hybrid model is outlined in the following workflow.

Diagram 2: Detailed workflow of the ACO-based optimization loop for neural network tuning.

Steps:

- ACO Initialization: Initialize pheromone trails for all hyperparameters and/or features to be optimized [9].

- Solution Construction: For each ant in the colony, probabilistically construct a candidate solution. This solution represents a specific set of hyperparameters for the MLFFN and/or a selected subset of features.

- Model Training & Evaluation: For each ant's candidate solution:

- Configure the MLFFN with the proposed hyperparameters.

- Train the network on the (normalized) training data. To address class imbalance, use a fitness function like the F1-Score or Sensitivity instead of raw accuracy during evaluation [9].

- Evaluate the trained model on a validation set. The performance metric (fitness) is recorded.

- Pheromone Update: After all ants have constructed and evaluated their solutions, update the global pheromone trails. Paths (hyperparameters/features) that led to higher fitness models receive stronger pheromone reinforcement, guiding the search in subsequent iterations [9].

- Termination and Selection: Repeat the solution construction, model training and evaluation, and pheromone update steps for a predefined number of iterations or until convergence. The best solution (hyperparameter set and feature subset) found over all iterations is selected as the final model configuration.
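The loop above can be sketched in a few dozen lines. This is a simplified illustration and not the implementation from [9]: it searches hyperparameters only (no feature selection), omits the heuristic term so that sampling depends on pheromone alone, uses scikit-learn's `MLPClassifier` as a stand-in for the MLFFN, and runs on synthetic data. The search space, colony size, and iteration count are illustrative assumptions.

```python
import warnings

import numpy as np
from sklearn.datasets import make_classification
from sklearn.exceptions import ConvergenceWarning
from sklearn.metrics import f1_score
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.preprocessing import MinMaxScaler

warnings.filterwarnings("ignore", category=ConvergenceWarning)

# Illustrative discrete search space (assumed, not taken from the cited study).
SEARCH_SPACE = {
    "hidden_units": [3, 5, 8, 10],
    "learning_rate_init": [0.001, 0.01, 0.1],
    "momentum": [0.5, 0.9],
}
N_ANTS, N_ITERATIONS, RHO = 8, 5, 0.5  # colony size, iterations, evaporation rate

# Synthetic imbalanced data with 9 features, scaled to [0, 1].
X, y = make_classification(
    n_samples=200, n_features=9, n_informative=4, weights=[0.8, 0.2], random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)
scaler = MinMaxScaler().fit(X_train)
X_train, X_val = scaler.transform(X_train), scaler.transform(X_val)


def fitness(choice):
    """Train an MLP with the chosen hyperparameters and return its validation F1."""
    model = MLPClassifier(
        hidden_layer_sizes=(choice["hidden_units"],),
        learning_rate_init=choice["learning_rate_init"],
        momentum=choice["momentum"],
        solver="sgd", max_iter=300, random_state=0,
    )
    model.fit(X_train, y_train)
    return f1_score(y_val, model.predict(X_val), zero_division=0)


rng = np.random.default_rng(0)
# ACO initialization: equal pheromone on every option of every hyperparameter.
pheromone = {name: np.ones(len(opts)) for name, opts in SEARCH_SPACE.items()}
best_choice, best_fitness = None, -1.0

for iteration in range(N_ITERATIONS):
    ants = []
    for _ in range(N_ANTS):
        # Solution construction: sample one option per hyperparameter with
        # probability proportional to its pheromone level.
        idx = {
            name: rng.choice(len(tau), p=tau / tau.sum())
            for name, tau in pheromone.items()
        }
        choice = {name: SEARCH_SPACE[name][i] for name, i in idx.items()}
        # Model training and evaluation: F1 is the fitness, not raw accuracy.
        score = fitness(choice)
        ants.append((idx, score))
        if score > best_fitness:
            best_choice, best_fitness = choice, score
    # Pheromone update: evaporate, then reinforce each ant's options by its fitness.
    for name in pheromone:
        pheromone[name] *= 1.0 - RHO
        for idx, score in ants:
            pheromone[name][idx[name]] += score

print("best hyperparameters:", best_choice)
print("best validation F1:  ", round(best_fitness, 3))
```

Depositing pheromone in proportion to F1 means options that help minority-class detection are sampled more often in later iterations, which is the mechanism the steps above describe.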

V. Model Interpretation and Validation

- Feature Importance Analysis: Implement a Proximity Search Mechanism (PSM) or use SHapley Additive exPlanations (SHAP) to analyze the trained model. This identifies and ranks the contribution of lifestyle and environmental factors (e.g., sedentary habits, stress) to the prediction, providing crucial clinical interpretability [9] [37].

- Performance Reporting: Evaluate the final model on a completely held-out test set. Report standard classification metrics, with a strong emphasis on Sensitivity (Recall) and Specificity due to the imbalanced nature of the data. The use of the Area Under the Receiver Operating Characteristic Curve (AUROC) is also recommended.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Materials for Hybrid Framework Development

| Item Name / Category | Function / Purpose | Exemplars & Notes |

|---|---|---|

| Programming Frameworks | Provides the foundation for implementing neural networks and optimization algorithms. | Python with libraries: TensorFlow/PyTorch (neural networks), scikit-learn (preprocessing, metrics), NumPy/pandas (data handling). MATLAB is also used [38] [36]. |

| Optimization Algorithms | Nature-inspired metaheuristics for tuning hyperparameters and selecting features. | Ant Colony Optimization (ACO) [9] [36], Biogeography-Based Optimization (BBO) [37], Differential Evolution (DE) [37], Hybrid PB3C-3PGA [38]. |

| Explainable AI (XAI) Tools | Provides post-hoc interpretability of the "black-box" model, critical for clinical adoption. | SHapley Additive exPlanations (SHAP) [37], Proximity Search Mechanism (PSM) [9], One-At-a-Time (OAT) sensitivity analysis [37]. |

| Public Datasets | Standardized benchmarks for development, training, and validation. | UCI Machine Learning Repository (e.g., Fertility Dataset [9]), Mendeley Data [38], annotated medical image datasets (e.g., OCT datasets [36]). |