Evaluating AI Performance in Sperm Morphology Classification: Key Metrics, Model Architectures, and Clinical Validation

Abstract

This article provides a comprehensive analysis of performance metrics for artificial intelligence (AI) models in sperm morphology classification, a critical tool for objective male fertility assessment. It explores the foundational concepts of model evaluation, examines cutting-edge deep learning methodologies and their reported efficacy, addresses common optimization challenges such as dataset limitations and model generalization, and reviews rigorous validation frameworks against clinical standards. Tailored for researchers and drug development professionals, this review synthesizes current evidence to guide the development of robust, clinically applicable AI tools that can enhance diagnostic precision in reproductive medicine.

Foundations of Model Evaluation: Defining Accuracy, Precision, and Recall in Sperm Morphology AI

In the development of clinical diagnostic models, such as those for sperm morphology classification, simply knowing a model is "accurate" is insufficient. Evaluating model performance requires a nuanced understanding of different metrics that capture various aspects of model correctness and error. For researchers and scientists developing these tools, a deep understanding of accuracy, precision, recall, and the F1-score is fundamental. These metrics provide a multifaceted view of model performance, each highlighting different strengths and weaknesses crucial for assessing a model's real-world clinical applicability [1] [2] [3].

These metrics become particularly critical when dealing with imbalanced datasets, a common scenario in medical diagnostics where the number of normal cases often far exceeds the number of abnormal ones. A model might appear highly accurate by simply predicting the majority class, yet fail completely at its primary task—identifying the clinically significant abnormal cases. This article will define these core metrics, frame them within the clinical context of sperm morphology classification, and provide a comparative analysis of their interpretation for research professionals.

Defining the Core Metrics

Accuracy

Accuracy measures the overall correctness of a classification model across all classes. It answers the question: "Out of all the predictions, what fraction did the model get right?" [1] [3].

- Mathematical Definition:

Accuracy = (TP + TN) / (TP + TN + FP + FN) [1]

Here TP, TN, FP, and FN are the counts of true positives, true negatives, false positives, and false negatives, with "abnormal" taken as the positive class.

- Clinical Interpretation: In sperm morphology classification, accuracy represents the proportion of all sperm heads (both normal and abnormal) that were correctly classified. While intuitive, its utility is limited in imbalanced datasets. For instance, if only 5% of the sperm cells in a sample were morphologically abnormal, a model that blindly classified every cell as "normal" would still be 95% accurate, despite being clinically useless for detecting anomalies [3].
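As a minimal sketch of the imbalance problem described above, the following Python snippet works through the 5% example. The sample size (1,000 cells, 50 of them abnormal) and the variable names are illustrative assumptions, not data from any cited study.

```python
# Hypothetical sample: 1,000 sperm cells, 5% truly abnormal.
# Positive class = "abnormal".
n_cells = 1000
n_abnormal = 50
n_normal = n_cells - n_abnormal

# A trivial "classifier" that labels every cell as normal:
tp = 0            # abnormal cells correctly flagged
fn = n_abnormal   # abnormal cells missed
tn = n_normal     # normal cells correctly passed
fp = 0            # normal cells wrongly flagged

accuracy = (tp + tn) / (tp + tn + fp + fn)
recall = tp / (tp + fn)

print(f"accuracy = {accuracy:.2f}")  # accuracy = 0.95
print(f"recall   = {recall:.2f}")    # recall   = 0.00
```

The model scores 95% accuracy while detecting none of the abnormal cells, which is why accuracy alone cannot be used to judge a classifier on imbalanced data.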

Precision

Precision, also called Positive Predictive Value, measures the reliability of a model's positive predictions. It answers the question: "When the model predicts a positive case, how often is it correct?" [1] [3].

- Mathematical Definition:

Precision = TP / (TP + FP) [1]

- Clinical Interpretation: In the context of identifying abnormal sperm morphology, precision is the proportion of sperm heads classified as abnormal that were truly abnormal. A high precision means that when the model flags an anomaly, researchers can be confident it is a true anomaly. This is crucial when the cost of a false alarm (FP) is high, for example, if it leads to unnecessary and expensive further diagnostic procedures [1] [3].

Recall

Recall, also known as Sensitivity or True Positive Rate (TPR), measures a model's ability to detect all positive cases. It answers the question: "Out of all the actual positive cases, what fraction did the model successfully find?" [1] [3].

- Mathematical Definition:

Recall = TP / (TP + FN) [1]

- Clinical Interpretation: For a sperm morphology classifier, recall is the proportion of truly abnormal sperm heads that were correctly identified by the model. A high recall indicates that the model misses very few anomalies. This metric is paramount when the cost of missing a positive case (a false negative) is high. In a diagnostic setting, low recall could mean critical abnormalities are overlooked, potentially leading to misdiagnosis [1].

F1-Score

The F1-Score is the harmonic mean of precision and recall, providing a single metric that balances the trade-off between the two [1] [2].

- Mathematical Definition:

F1-Score = 2 × (Precision × Recall) / (Precision + Recall) [1]

- Clinical Interpretation: The F1-score is especially useful when seeking a balance between minimizing false positives and false negatives. It is a more informative metric than accuracy for the imbalanced datasets common in clinical research. A high F1-score indicates that the model performs well both in correctly identifying true anomalies (high recall) and in ensuring its positive predictions are reliable (high precision) [1].

Table 1: Summary of Core Classification Metrics

| Metric | Core Question | Formula | Clinical Focus in Sperm Morphology |
|---|---|---|---|
| Accuracy | How often is the model correct overall? | (TP + TN) / (TP + TN + FP + FN) | Overall correctness in classifying all sperm heads. |
| Precision | How often is a positive prediction correct? | TP / (TP + FP) | Reliability of an "abnormal" classification. |
| Recall | What fraction of positives are found? | TP / (TP + FN) | Ability to capture all true abnormal sperm heads. |
| F1-Score | What is the balance of precision and recall? | 2 × (Precision × Recall) / (Precision + Recall) | Single score balancing the detection of anomalies and the reliability of the alerts. |
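The four formulas summarized above can be collected into one small helper. This is a sketch only: the function name `classification_metrics`, the example counts, and the convention of returning 0.0 when a denominator is zero are assumptions made for illustration.

```python
def classification_metrics(tp: int, tn: int, fp: int, fn: int) -> dict:
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts.

    Undefined ratios (zero denominator) are reported as 0.0 by convention.
    """
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical counts for 1,000 sperm heads ("abnormal" = positive class).
metrics = classification_metrics(tp=40, tn=900, fp=50, fn=10)
for name, value in metrics.items():
    print(f"{name:9s} {value:.3f}")
```

With these counts, accuracy is 0.940 while precision is only about 0.444 (recall 0.800, F1 about 0.571), illustrating how a high accuracy figure can hide a large share of false alarms.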

Metric Relationships and Trade-offs

Understanding the interplay between precision and recall is critical for optimizing clinical models. These two metrics often exist in a state of tension; improving one can frequently lead to a decline in the other [1].

This relationship is often managed by adjusting the classification threshold—the probability value at which a model assigns a case to the positive class. A high threshold makes the model "cautious," only classifying a case as positive when it is very confident. This typically increases precision (fewer false alarms) but decreases recall (more missed positives). Conversely, a low threshold makes the model "sensitive," classifying more cases as positive. This increases recall (fewer missed positives) but decreases precision (more false alarms) [1].
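The threshold effect described above can be reproduced on a toy example. The scores and labels below are invented for illustration, and `precision_recall_at` is a hypothetical helper name.

```python
# Hypothetical model outputs: predicted probability that a cell is abnormal,
# paired with the expert label (1 = abnormal, 0 = normal).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.45, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]


def precision_recall_at(threshold, scores, labels):
    """Precision and recall when scores >= threshold are called positive."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(p == 1 and y == 1 for p, y in zip(preds, labels))
    fp = sum(p == 1 and y == 0 for p, y in zip(preds, labels))
    fn = sum(p == 0 and y == 1 for p, y in zip(preds, labels))
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


for t in (0.85, 0.50, 0.25):
    p, r = precision_recall_at(t, scores, labels)
    print(f"threshold={t:.2f}  precision={p:.3f}  recall={r:.3f}")
# threshold=0.85  precision=1.000  recall=0.400
# threshold=0.50  precision=0.800  recall=0.800
# threshold=0.25  precision=0.625  recall=1.000
```

Lowering the threshold moves the model from "cautious" (perfect precision, low recall) to "sensitive" (perfect recall, lower precision) on this toy data.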

The choice of which metric to prioritize is not a technical one but a clinical and strategic decision based on the relative costs of different types of errors [1].

- Prioritize Recall when the cost of missing a positive case (a False Negative) is very high. In a screening test for a severe disease, or in a primary diagnostic tool like a sperm morphology classifier, missing an abnormality is far worse than a false alarm. Therefore, researchers would tune the model to have high recall [1].

- Prioritize Precision when the cost of a false alarm (a False Positive) is high. For example, in a confirmatory test that triggers an invasive and costly follow-up procedure, ensuring that positive predictions are highly reliable is the top priority [1].

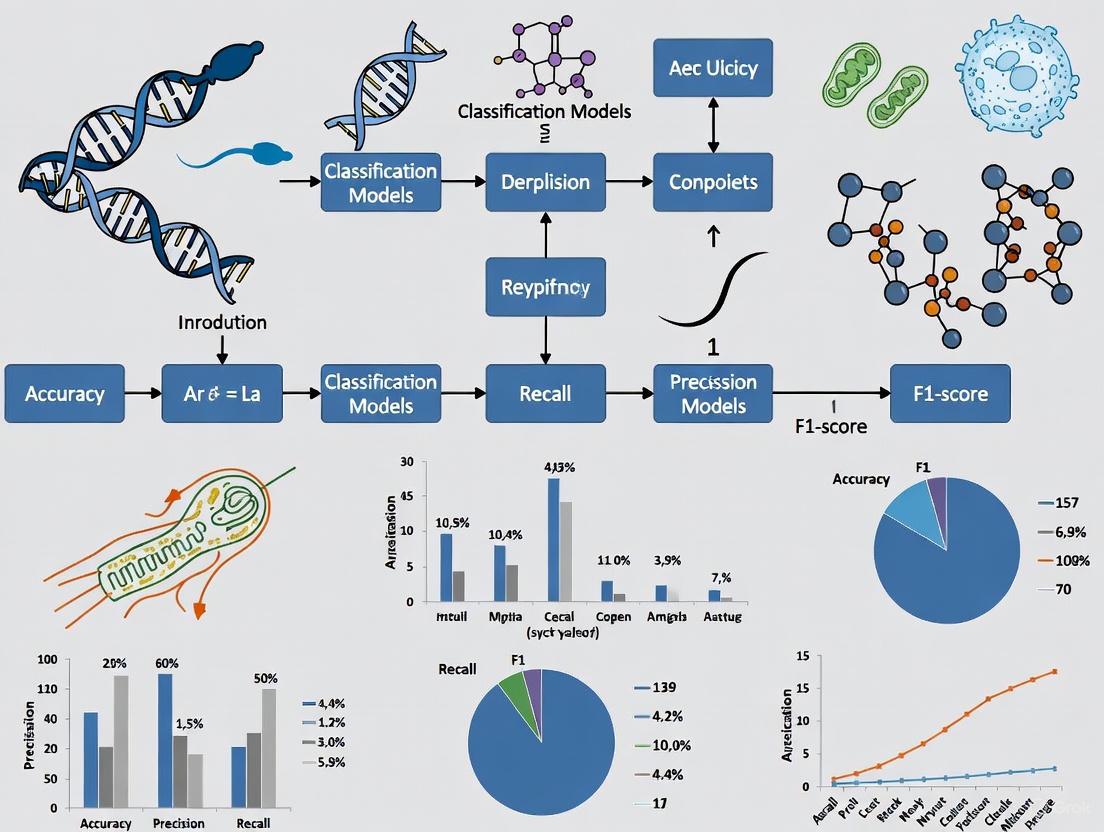

Figure 1: The Precision-Recall Trade-off and Threshold Adjustment.

Case Study & Experimental Data in Sperm Morphology Classification

To ground these concepts in a concrete research context, we examine how these metrics apply to a recent study on Human Sperm Head Morphology (HSHM) classification. The study proposed a Contrastive Meta-learning with Auxiliary Tasks (HSHM-CMA) algorithm to improve generalization across different datasets and HSHM categories [4].

The study evaluated the HSHM-CMA model against three rigorous testing objectives designed to measure generalizability, reporting accuracy scores of 65.83%, 81.42%, and 60.13% for these different scenarios [4]. While the published results focus on accuracy, a comprehensive evaluation for model selection and tuning would require analyzing all four core metrics under each condition.

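Table 2: Hypothetical Performance Comparison of Sperm Classification Models. Only the HSHM-CMA accuracy values are reported in [4]; the baseline row and all precision, recall, and F1 values are illustrative.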

| Model / Testing Scenario | Accuracy | Precision | Recall | F1-Score | Key Interpretation |
|---|---|---|---|---|---|
| Baseline CNN (same dataset, different categories) | 58% | 55% | 70% | 0.62 | Good at finding anomalies (high recall) but many false alarms (low precision). |
| HSHM-CMA Model (same dataset, different categories) | 65.83% | 72% | 75% | 0.73 | Better balance, improved precision and recall over baseline. |
| HSHM-CMA Model (different datasets, same categories) | 81.42% | 84% | 88% | 0.86 | High performance and strong generalizability to new data from same categories. |
| HSHM-CMA Model (different datasets, different categories) | 60.13% | 58% | 65% | 0.61 | Most challenging test; performance drops, highlighting domain adaptation limits. |

Experimental Protocol: The HSHM-CMA Approach

The HSHM-CMA algorithm's methodology provides a valuable template for robust model development in this field [4].

- Objective: To develop a generalized classification model for human sperm head morphology that performs robustly across different datasets and morphological categories.

- Algorithmic Innovation:

  - Meta-Learning Framework: The model was trained using a meta-learning approach, which "learns to learn" from a wide variety of classification tasks. This helps the model acquire fundamental features of sperm morphology that are invariant across different domains.

  - Contrastive Learning: Integrated into the outer loop of the meta-learning process, this technique helps the model learn to distinguish between different morphological categories by pulling similar examples closer and pushing dissimilar ones apart in the feature space.

  - Auxiliary Tasks: The meta-training tasks were separated into primary and auxiliary tasks. This strategy helps mitigate gradient conflicts during multi-task learning, stabilizing training and improving the model's ability to generalize to unseen categories and datasets.

- Evaluation Protocol: Generalization was tested under three distinct scenarios:

  - Objective 1: Testing on the same dataset but with HSHM categories not seen during training.

  - Objective 2: Testing on a completely different dataset but with the same HSHM categories used in training.

  - Objective 3: Testing on a different dataset with different HSHM categories (the most challenging generalization test).
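The source does not state which contrastive objective HSHM-CMA uses, so the following is only an illustration of the general "pull similar, push dissimilar" idea described above. It uses the classic margin-based pairwise contrastive loss as a stand-in; the function name, the margin value, and the toy embeddings are all assumptions.

```python
import numpy as np


def pairwise_contrastive_loss(emb_a, emb_b, same_class, margin=1.0):
    """Margin-based contrastive loss averaged over pairs of embeddings.

    emb_a, emb_b : arrays of shape (n_pairs, dim)
    same_class   : array of shape (n_pairs,), 1 if both cells share a
                   morphology category, else 0
    """
    emb_a = np.asarray(emb_a, dtype=float)
    emb_b = np.asarray(emb_b, dtype=float)
    same = np.asarray(same_class, dtype=float)

    dist = np.linalg.norm(emb_a - emb_b, axis=1)
    # Same-category pairs are penalized for being far apart ...
    pull = same * dist ** 2
    # ... different-category pairs for being closer than the margin.
    push = (1.0 - same) * np.maximum(0.0, margin - dist) ** 2
    return float(np.mean(pull + push))


# Toy 2-D embeddings (hypothetical).
a = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
b = [[0.1, 0.0], [0.2, 0.0], [2.0, 0.0]]
same = [1, 0, 0]

loss = pairwise_contrastive_loss(a, b, same)
print(f"loss = {loss:.4f}")
```

In this toy case the second pair (different categories, distance 0.2) dominates the loss, while the third pair (different categories, distance 2.0) already lies beyond the margin and contributes nothing.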

Figure 2: HSHM-CMA Experimental Workflow for Generalized Classification.

The Scientist's Toolkit: Research Reagent Solutions

For researchers aiming to replicate or build upon advanced computational experiments in sperm morphology classification, the following "reagent solutions" are essential.

Table 3: Essential Research Reagents for Computational Sperm Morphology Studies

| Research Reagent / Tool | Category | Function in the Research Pipeline |
|---|---|---|
| Annotated HSHM Datasets | Data | Confidential, specialized datasets of human sperm head images with expert morphological classifications are the fundamental substrate for training and evaluation [4]. |
| HSHM-CMA Algorithm | Model | The core meta-learning algorithm that integrates contrastive learning and auxiliary tasks to learn generalized, invariant features for robust cross-domain classification [4]. |
| Scikit-learn Library | Software | An open-source Python library that provides efficient implementations for calculating accuracy, precision, recall, F1-score, and generating confusion matrices [2]. |
| Synthetic Data Generators | Data | Tools like those in NumPy and Pandas to create controlled synthetic datasets for initial model prototyping and validation of metric calculations in a known environment [2]. |
| Confusion Matrix Visualization | Analysis | A visualization tool (e.g., via Seaborn/Matplotlib) that provides a detailed breakdown of model predictions versus actual labels, forming the basis for all metric calculations [2]. |
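As a brief illustration of the scikit-learn entry in the table, the snippet below computes the confusion matrix and the four core metrics on a small hand-made label set. The labels are hypothetical (1 = abnormal, 0 = normal) and the example assumes scikit-learn is installed.

```python
from sklearn.metrics import (accuracy_score, confusion_matrix, f1_score,
                             precision_score, recall_score)

# Hypothetical expert labels and model predictions for ten sperm heads.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

# With labels=[0, 1], ravel() returns the counts in the order TN, FP, FN, TP.
tn, fp, fn, tp = (int(v) for v in
                  confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel())

accuracy = accuracy_score(y_true, y_pred)
precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)

print(f"TP={tp} TN={tn} FP={fp} FN={fn}")
print(f"accuracy={accuracy:.2f} precision={precision:.2f} "
      f"recall={recall:.2f} f1={f1:.2f}")
```

Checking the library output against a hand-counted confusion matrix like this one is a simple way to validate a metric pipeline before applying it to real data.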

For researchers and drug development professionals working on sperm morphology classification models, a sophisticated understanding of accuracy, precision, recall, and F1-score is non-negotiable. These metrics are not interchangeable; they provide distinct, vital insights into model behavior. The choice to prioritize one over another—for instance, favoring recall to ensure all anomalies are captured in a diagnostic setting—is a direct consequence of the clinical context and the real-world costs of different types of errors.

As demonstrated by the HSHM-CMA case study, modern research is pushing the boundaries of generalizability. In this pursuit, moving beyond a single metric like accuracy to a holistic analysis using the full suite of performance indicators is what will ultimately yield robust, reliable, and clinically trustworthy diagnostic models.

Within male infertility research, the assessment of sperm morphology remains a critical, yet notoriously subjective, component of diagnostic semen analysis. This subjectivity directly challenges the development of robust and generalizable artificial intelligence (AI) models for automated classification. The performance of these models on standardized benchmarks is not merely a function of their algorithmic architecture but is profoundly influenced by the quality of the datasets on which they are trained. This guide examines the pivotal relationship between dataset quality—specifically the standardization of annotations—and the benchmark performance of sperm morphology classification models. By comparing contemporary research, we highlight how methodological choices in dataset construction and annotation serve as key determinants of model efficacy and clinical applicability.

Experimental Protocols and Performance Data

Research efforts have employed diverse methodologies to tackle the challenges of sperm morphology classification. The table below summarizes the experimental protocols and key performance outcomes from two prominent studies, illustrating the impact of different approaches to dataset creation and model training.

Table 1: Comparison of Sperm Morphology Classification Studies

| Study Focus | Dataset Details | Annotation & Augmentation Strategy | Model Architecture | Key Benchmark Performance (Accuracy) |
|---|---|---|---|---|
| General Sperm Morphology [5] | SMD/MSS Dataset: 1,000 initial images, extended to 6,035 after augmentation. [5] | Annotations from three experts using the modified David classification (12 defect classes); data augmentation techniques to balance morphological classes. [5] | Convolutional Neural Network (CNN) with image pre-processing (denoising, grayscale conversion, resizing). [5] | 55% to 92% accuracy range on the internal test set. [5] |
| Sperm Head Morphology Generalization [4] | Multiple HSHM datasets; specific dataset names and sizes not disclosed (data confidential). [4] | Focus on learning invariant features across domains and tasks; uses contrastive meta-learning to improve generalization. [4] | HSHM-CMA (Contrastive Meta-learning with Auxiliary Tasks). [4] | 65.83% (same dataset, new categories); 81.42% (different dataset, same categories); 60.13% (different dataset, different categories). [4] |
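The three generalization scenarios reported for HSHM-CMA can be made concrete with a small partitioning sketch. The dataset names ("A", "B"), the category names, and the helper `assign_split` are hypothetical; the actual datasets in [4] are confidential and their structure is not described in the source.

```python
# Hypothetical setup: the model is trained on dataset "A" using two categories.
TRAIN_DATASET = "A"
TRAIN_CATEGORIES = {"normal", "tapered"}


def assign_split(dataset: str, category: str) -> str:
    """Map an image's (dataset, category) pair to a training or test scenario."""
    same_dataset = dataset == TRAIN_DATASET
    seen_category = category in TRAIN_CATEGORIES
    if same_dataset and seen_category:
        return "train"
    if same_dataset:
        return "objective_1"   # same dataset, unseen categories
    if seen_category:
        return "objective_2"   # different dataset, seen categories
    return "objective_3"       # different dataset, unseen categories


records = [
    ("A", "normal"), ("A", "tapered"), ("A", "pyriform"), ("A", "amorphous"),
    ("B", "normal"), ("B", "tapered"), ("B", "pyriform"), ("B", "amorphous"),
]

splits = {}
for dataset, category in records:
    splits.setdefault(assign_split(dataset, category), []).append(
        (dataset, category))

for name in sorted(splits):
    print(name, splits[name])
```

Keeping the scenarios disjoint in this way is what allows each reported accuracy to be read as a distinct measure of generalization.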

The Critical Role of Annotation Quality and Methodology

The divergence in performance metrics between studies can be largely traced to the underlying strategies for ensuring dataset quality. High-quality, standardized annotations are the bedrock of reliable AI models, a principle that extends beyond reproductive medicine to all AI-driven healthcare applications [6] [7] [8].

Consequences of Poor Annotation Practices

Inaccurate or inconsistent annotations introduce noise and bias into training data, which directly compromises model performance. In computer vision, for example, imprecise bounding boxes can lead to models that confuse pathological features with healthy tissue, eroding trust and rendering the models unfit for clinical use [9]. One study demonstrated that introducing annotation errors like missing or shifted bounding boxes could degrade a model's tracking accuracy from 73.6% to 54.2% [9]. In the context of sperm morphology, a lack of agreement among expert annotators reflects the inherent complexity of the task and underscores the need for rigorous annotation protocols to establish a reliable ground truth [5].

Strategies for High-Quality Data Annotation

- Expert Consensus and Inter-Annotator Agreement: The use of multiple experts to classify each sperm cell and the statistical analysis of their agreement is a fundamental step in quantifying annotation quality and complexity [5]. Establishing performance benchmarks for annotation teams, with metrics for accuracy and consistency, is essential for maintaining data integrity [10].

- Data Augmentation for Class Balancing: The SMD/MSS dataset increased its volume from 1,000 to over 6,000 images through augmentation, a technique critical for creating balanced morphological classes and preventing model bias toward over-represented types [5].

- Advanced Learning for Generalization: The HSHM-CMA algorithm addresses the challenge of cross-domain application by using meta-learning and contrastive learning. This approach allows the model to learn invariant features, improving its ability to perform accurately on new datasets and categories that it was not explicitly trained on [4].
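To illustrate the class-balancing strategy listed above, the sketch below oversamples minority classes with flipped and rotated copies until every class matches the largest one. The source does not say which transformations were used for SMD/MSS, so the choice of flips and 90-degree rotations, the function names, and the toy data are assumptions; square grayscale images are assumed so that rotation preserves shape.

```python
import numpy as np


def augment(image, rng):
    """Return a randomly rotated (multiple of 90 degrees) and flipped copy."""
    out = np.rot90(image, k=int(rng.integers(0, 4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    return out.copy()


def balance_by_augmentation(images, labels, seed=0):
    """Add augmented copies of minority-class images until classes are equal."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    target = counts.max()

    out_images, out_labels = list(images), list(labels)
    for cls, count in zip(classes, counts):
        idx = np.flatnonzero(labels == cls)
        for _ in range(target - count):
            source = images[int(rng.choice(idx))]
            out_images.append(augment(source, rng))
            out_labels.append(cls)
    return out_images, np.array(out_labels)


# Toy data: six "normal" and two "tapered" 8x8 images (hypothetical).
demo_rng = np.random.default_rng(42)
images = [demo_rng.random((8, 8)) for _ in range(8)]
labels = ["normal"] * 6 + ["tapered"] * 2

balanced_images, balanced_labels = balance_by_augmentation(images, labels)
classes, counts = np.unique(balanced_labels, return_counts=True)
print(dict(zip(classes.tolist(), counts.tolist())))  # {'normal': 6, 'tapered': 6}
```

Augmentation should be applied to the training partition only; augmented copies of a test image in the training set would inflate reported accuracy.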

Visualizing Experimental Workflows

The following diagrams illustrate the core workflows for building high-quality datasets and training generalizable models, as identified in the reviewed literature.

[Diagram: Dataset Curation and Annotation Workflow]

[Diagram: Meta-Learning for Model Generalization]

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents, tools, and software essential for conducting research in automated sperm morphology assessment.

Table 2: Essential Research Reagents and Tools for Sperm Morphology AI Models

| Item Name | Type | Primary Function in Research |
|---|---|---|
| RAL Diagnostics Staining Kit | Chemical Reagent | Prepares semen smears for microscopic analysis by staining cellular structures for better visual contrast. [5] |
| MMC CASA System | Hardware | Computer-Assisted Semen Analysis system for automated image acquisition and initial morphometric analysis of sperm cells. [5] |
| Modified David Classification | Protocol & Schema | A standardized framework of 12 morphological defect classes used by experts to ensure consistent annotation of sperm images. [5] |
| Python with Deep Learning Libraries | Software | Primary programming environment for implementing and training Convolutional Neural Networks (CNNs) and meta-learning algorithms. [5] [4] |
| Data Augmentation Tools | Software | Algorithms to artificially expand dataset size and diversity, mitigating overfitting and addressing class imbalance. [5] |
| Contrastive Meta-Learning (HSHM-CMA) | Algorithm | An advanced machine learning algorithm designed to improve model generalization across different datasets and morphological categories. [4] |

The benchmark performance of AI models for sperm morphology classification is inextricably linked to the quality and standardization of their underlying datasets. As evidenced by the compared studies, achieving high accuracy and, more importantly, strong generalizability requires more than just sophisticated algorithms. It demands a rigorous, methodical approach to dataset construction that includes multi-expert annotation consensus, robust data augmentation, and inter-expert agreement analysis. The emerging use of advanced techniques like contrastive meta-learning further highlights the field's move towards models that can maintain performance across diverse clinical settings and population cohorts. For researchers and clinicians, the imperative is clear: investing in standardized, high-quality data annotation is not a preliminary step but a continuous core process that directly dictates the reliability and future clinical value of automated diagnostic tools.

The manual assessment of sperm morphology is recognized as a critical, yet highly variable, test of male fertility [11]. This variability stems primarily from the test's subjective nature, which relies heavily on the operator's expertise [5]. Traditional manual analysis performed by embryologists is time-intensive and prone to significant inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators [12]. Without robust standardization protocols, subjective tests are prone to bias and human error, leading to inaccurate and highly variable results [11]. This lack of standardization presents a fundamental challenge for both clinical diagnostics and the development of automated artificial intelligence (AI) models.

To address this challenge, the concept of "ground truth", a reliable reference standard, becomes paramount for training accurate and generalizable machine learning models. In the context of medical imaging, ground truth is established by the consensus diagnosis of multiple experts for each image [11]. This process, adapted from machine learning methodologies, provides the foundational labels that supervised learning models use to "learn" how to classify images. Without high-quality ground truth data, even the most sophisticated algorithms cannot achieve clinical-grade accuracy. This article examines how expert consensus and established WHO guidelines form the bedrock of reliable ground truth establishment, directly impacting the performance and clinical utility of sperm morphology classification models.

Establishing Ground Truth: Methodologies and Impact on Model Performance

The Expert Consensus Methodology

Establishing a reliable ground truth for sperm morphology classification requires a structured, multi-expert approach to mitigate individual subjectivity. The methodology employed in creating the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset provides a clear framework [5]. In this protocol, each spermatozoon is independently classified by three experts possessing extensive experience in semen analysis. The classification follows a detailed schema, such as the modified David classification, which categorizes defects into 7 head defects, 2 midpiece defects, and 3 tail defects [5].

The inter-expert agreement is then systematically analyzed, typically falling into three scenarios:

- No Agreement (NA): No consensus among the experts.

- Partial Agreement (PA): Two out of three experts agree on the same label for at least one category.

- Total Agreement (TA): All three experts agree on the same label for all categories [5].

This consensus-based labeling approach directly addresses the "inherent subjectivity of the test and the lack of a traceable standard" that has long been identified as a major contributor to variability in results [11].
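The three agreement scenarios can be sketched in a few lines of Python. This is a simplification: it assumes each expert assigns a single label per spermatozoon, whereas the SMD/MSS protocol assesses agreement across several defect categories. The function names and example labels are hypothetical.

```python
from collections import Counter


def agreement_level(expert_labels):
    """Classify agreement among three expert labels as TA, PA, or NA."""
    if len(expert_labels) != 3:
        raise ValueError("expected exactly three expert labels")
    top_count = Counter(expert_labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]


def consensus_label(expert_labels):
    """Return the majority label, or None when no two experts agree."""
    label, count = Counter(expert_labels).most_common(1)[0]
    return label if count >= 2 else None


# Hypothetical annotations for three spermatozoa.
annotations = {
    "cell_001": ["tapered", "tapered", "tapered"],
    "cell_002": ["tapered", "tapered", "pyriform"],
    "cell_003": ["normal", "tapered", "pyriform"],
}

for cell_id, votes in annotations.items():
    print(cell_id, agreement_level(votes), consensus_label(votes))
# cell_001 TA tapered
# cell_002 PA tapered
# cell_003 NA None
```

Cells with no agreement have no usable consensus label in this sketch; whether such cells are excluded or adjudicated is a protocol decision that the code does not settle.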

Impact of Classification System Complexity on Accuracy

The level of detail required in a classification system significantly impacts both human and model performance. Research has demonstrated a clear inverse relationship between system complexity and classification accuracy. A seminal study evaluated novice morphologists across different classification systems, with untrained users achieving the following accuracy rates [11]:

- 2-category (normal/abnormal): 81.0 ± 2.5%

- 5-category (normal; head, midpiece, tail defects; cytoplasmic droplet): 68 ± 3.59%

- 8-category (various specific defects): 64 ± 3.5%

- 25-category (all defects defined individually): 53 ± 3.69%

This pattern held even after extensive training, with final accuracy rates reaching 90 ± 1.38% for the 25-category system compared to 98 ± 0.43% for the simple 2-category system [11]. This evidence has led some expert groups, such as the French BLEFCO Group, to recommend against "systematic detailed analysis of abnormalities (or groups of abnormalities) during sperm morphology assessment" in clinical practice, while still advocating for detailed analysis to detect specific monomorphic syndromes like globozoospermia [13].

Figure 1: Expert Consensus Workflow for Ground Truth Establishment. This diagram illustrates the multi-expert review process used to establish reliable ground truth labels for sperm images, where only images with expert consensus proceed to training datasets.

Comparative Performance of Sperm Morphology Classification Models

Performance Metrics Across Algorithm Types

The establishment of robust ground truth through expert consensus has enabled significant advances in AI model development for sperm morphology classification. Different algorithmic approaches have demonstrated varying levels of performance, as detailed in Table 1.

Table 1: Performance Comparison of Sperm Morphology Classification Approaches

| Model Type | Specific Approach | Dataset Used | Reported Accuracy | Key Advantages | Limitations |
|---|---|---|---|---|---|
| Deep Learning with Feature Engineering | CBAM-enhanced ResNet50 + SVM | SMIDS | 96.08% [12] | High accuracy; attention visualization | Complex pipeline |
| Deep Learning | Convolutional Neural Network (CNN) | SMD/MSS | 55% to 92% [5] | Automated feature extraction | Requires large datasets |
| Meta-Learning | Contrastive Meta-Learning with Auxiliary Tasks (HSHM-CMA) | Multiple HSHM datasets | 60.13% to 81.42% [4] | Improved cross-domain generalization | Complex training process |
| Conventional Machine Learning | Bayesian Density Estimation | Not specified | ~90% [14] | Computational efficiency | Limited to handcrafted features |
| Human Experts (Trained) | Standard microscopic assessment | 25-category system | 90% [11] | Biological context | Subjectivity, time-intensive |

Impact of Training Protocols on Human Performance

The quality of training protocols significantly impacts classification performance, as evidenced by structured training interventions. A study utilizing a 'Sperm Morphology Assessment Standardisation Training Tool' based on machine learning principles demonstrated marked improvements in novice morphologists' performance [11]. Untrained users initially showed high variation (CV = 0.28) and accuracy scores ranging from 19% to 77% on complex classification tasks. However, after repeated training over four weeks, participants showed significant improvement in both accuracy (from 82% to 90%) and diagnostic speed (from 7.0 ± 0.4 s to 4.9 ± 0.3 s per image) [11]. This underscores the importance of standardized training protocols, whether for human morphologists or AI systems.

Experimental Protocols and Research Toolkit

Key Experimental Workflows in Model Development

The development of reliable sperm morphology classification models follows rigorous experimental protocols. The deep learning workflow employed in recent studies typically involves multiple stages of data processing and model optimization [5]. This begins with sample preparation following WHO guidelines, using stained semen smears from patients with varying morphological profiles. Data acquisition utilizes specialized microscopy systems, typically with 100x oil immersion objectives for sufficient resolution. The critical labeling phase involves independent classification by multiple domain experts to establish consensus-based ground truth. For AI development, this is followed by image pre-processing steps including denoising, normalization, and resizing to standard dimensions (e.g., 80×80×1 grayscale). The dataset is then partitioned, typically with 80% for training and 20% for testing. To address limited dataset sizes, data augmentation techniques are employed, expanding datasets significantly – for example, growing from 1,000 to 6,035 images in one study [5]. Finally, model training utilizes specialized architectures like Convolutional Neural Networks (CNNs), with rigorous evaluation against the expert-established ground truth.
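The pre-processing and partitioning steps in this workflow can be sketched with NumPy alone. The code below is an illustration under stated assumptions: it omits denoising, uses nearest-neighbour index sampling as a stand-in for a proper image-resizing routine, and the function names and the random test image are hypothetical.

```python
import numpy as np


def preprocess(image_rgb, size=80):
    """Convert an RGB image to a normalized grayscale array of shape (size, size, 1)."""
    img = np.asarray(image_rgb, dtype=np.float32)
    # Standard luminance weights for RGB-to-grayscale conversion.
    gray = img @ np.array([0.299, 0.587, 0.114], dtype=np.float32)
    # Nearest-neighbour resize: pick evenly spaced source rows and columns.
    rows = np.arange(size) * gray.shape[0] // size
    cols = np.arange(size) * gray.shape[1] // size
    resized = gray[rows[:, None], cols[None, :]]
    # Scale 8-bit intensities to [0, 1] and add a channel axis.
    return np.clip(resized / 255.0, 0.0, 1.0)[..., None]


def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into train and test sets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_fraction))
    return order[n_test:], order[:n_test]


# Hypothetical 120x160 RGB micrograph with 8-bit intensities.
demo_rng = np.random.default_rng(1)
raw = demo_rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)

x = preprocess(raw)
train_idx, test_idx = train_test_split_indices(1000)
print(x.shape, len(train_idx), len(test_idx))  # (80, 80, 1) 800 200
```

Splitting before augmentation, and by patient rather than by image where several images come from the same sample, helps keep the test set independent of the training data.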

Figure 2: AI Model Development Workflow. This diagram outlines the standard pipeline for developing sperm morphology classification models, from sample preparation to performance evaluation against expert consensus.

Essential Research Reagent Solutions and Materials

Table 2: Key Research Reagents and Materials for Sperm Morphology Analysis

| Reagent/Material | Function/Application | Examples/Specifications |
|---|---|---|
| Staining Kits | Enhances sperm structure visibility for morphology assessment | RAL Diagnostics staining kit [5] |
| Microscopy Systems | Image acquisition and visualization | Olympus CX31 microscope; MMC CASA system with 100x oil immersion objective [5] [15] |
| Annotation Tools | Manual labeling of sperm images for ground truth establishment | LabelBox platform [15] |
| Public Datasets | Training and validation of AI models | SMD/MSS [5], VISEM-Tracking [15], SMIDS [12], HuSHeM [12] |
| Data Augmentation Tools | Expands limited datasets for improved model generalization | Python libraries for image transformation; expanded 1,000 to 6,035 images in one study [5] |

Discussion and Future Directions

The establishment of reliable ground truth through expert consensus and adherence to standardized protocols remains the cornerstone of valid sperm morphology assessment, both for human evaluators and AI systems. The evidence clearly demonstrates that while detailed classification systems (up to 25 categories) provide richer morphological information, they come at the cost of reduced accuracy and higher variability for both human morphologists and AI models [11]. This understanding has led to a trend in clinical practice toward simplified classification systems, while maintaining detailed analysis for specific diagnostic purposes such as identifying monomorphic abnormalities [13].

Future research directions should focus on several key areas. First, there is a need for larger, more diverse, and meticulously labeled datasets using consensus-based approaches to improve model generalizability. Second, the development of standardized evaluation frameworks that can objectively compare different AI models against established ground truth is crucial. Finally, the integration of AI systems into clinical workflows as decision-support tools, rather than complete replacements for human expertise, represents the most promising path forward. As one study concluded, software that allows users to train indefinitely and independently would remove potential sources of bias and expense in morphology assessment [11], highlighting the synergistic potential between human expertise and AI capabilities in advancing male fertility diagnostics.

In the field of male fertility research, sperm morphology classification has traditionally been evaluated through the lens of accuracy, sensitivity, and specificity. While these metrics remain fundamental, the evolution of artificial intelligence (AI) models has unveiled a critical, yet often overlooked, dimension: computational efficiency. For researchers and clinicians, the practical implementation of these models in clinical workflows or high-throughput drug discovery screens depends heavily on processing speed and resource consumption. Real-time processing capabilities transform these tools from academic curiosities into practical assets, enabling rapid sperm selection for procedures like Intracytoplasmic Sperm Injection (ICSI) and facilitating large-scale data analysis in research settings. This guide moves beyond basic performance metrics to provide a detailed comparison of the computational efficiency of contemporary sperm morphology models, offering researchers a framework for selecting models that balance accuracy with operational practicality.

Performance and Efficiency Comparison of Sperm Morphology Models

The following tables synthesize experimental data from recent studies, comparing both classification performance and computational efficiency across a range of AI models.

Table 1: Comprehensive Performance Metrics of Sperm Morphology Models

| Model Name | Reported Accuracy (%) | F1-Score (%) | Dataset Used | Key Strengths |
|---|---|---|---|---|
| MADRNet (2025) | 96.3 | 96.8 | HuSHeM | Integrates key biomarkers (aspect ratio, acrosomal integrity); Real-time processing [16] |
| CBAM-enhanced ResNet50 (2025) | 96.08 - 96.77 | N/R | SMIDS, HuSHeM | Attention mechanism for interpretability; High accuracy [12] |
| In-house AI Model (ResNet50) | 93.0 (Test) | N/R | Novel Confocal Dataset | Assesses unstained, live sperm; High correlation with CASA (r=0.88) [17] |
| Multi-model CNN Fusion | 71.91 - 90.73 | N/R | SMIDS, HuSHeM, SCIAN-Morpho | Robust performance across multiple public datasets [18] |
| Deep Learning Model (SMD/MSS) | 55 - 92 | N/R | SMD/MSS (Augmented) | Data augmentation techniques; Covers 12 defect classes [5] |

Table 2: Computational Efficiency and Resource Requirements

| Model Name | Processing Speed | Computational Resources / Architecture | Clinical Practicality |
|---|---|---|---|
| MADRNet | 32 ms per image (Real-time) | Dual-path reversible network; Reduces GPU memory consumption | High; suitable for real-time clinical screening [16] |
| CBAM-enhanced ResNet50 | < 1 minute per sample (vs. 30-45 min manual) | ResNet50 backbone with Convolutional Block Attention Module (CBAM) | High; significant time savings for embryologists [12] |
| In-house AI Model (ResNet50) | ~0.0056 seconds per image (139.7s for 25,000 images) | ResNet50 transfer learning | Very High; enables high-throughput analysis [17] |
| Multi-model CNN Fusion | N/R | Ensemble of six CNN models with voting techniques | Moderate; ensemble may increase computational load [18] |
| Deep Learning Model (SMD/MSS) | N/R | Convolutional Neural Network (CNN) on Python 3.8 | Moderate; accuracy varies with defect class [5] |
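
The processing speeds in Table 2 are reported in different units, so it can help to put them on a common scale. The short sketch below converts the two per-image figures from the table into seconds per image and images per second. The helper names are illustrative, and the comparison is only indicative: the table does not report the hardware or timing protocol behind each figure.

```python
def per_image_seconds(total_seconds: float, n_images: int) -> float:
    """Average wall-clock time per image for a batch run."""
    if n_images <= 0:
        raise ValueError("n_images must be positive")
    return total_seconds / n_images


def images_per_second(seconds_per_image: float) -> float:
    """Throughput implied by a per-image latency."""
    return 1.0 / seconds_per_image


# Figures taken from Table 2.
resnet50_latency = per_image_seconds(139.7, 25_000)  # in-house ResNet50 [17]
madrnet_latency = 32 / 1000                          # MADRNet: 32 ms per image [16]

resnet50_fps = images_per_second(resnet50_latency)
madrnet_fps = images_per_second(madrnet_latency)

print(f"ResNet50: {resnet50_latency * 1000:.2f} ms/image, {resnet50_fps:.1f} images/s")
print(f"MADRNet:  {madrnet_latency * 1000:.2f} ms/image, {madrnet_fps:.2f} images/s")
```

The "< 1 minute per sample" figure for the CBAM-enhanced ResNet50 is a per-sample time, not a per-image time, so it is left out of this conversion.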

Detailed Experimental Protocols and Methodologies

MADRNet: A Morphology-Aware Dual-Path Reversible Network

The MADRNet architecture was specifically designed to align with WHO standards while maintaining computational efficiency.

- Experimental Workflow: The model was trained and evaluated on the public HuSHeM dataset. Its performance was measured using standard metrics like accuracy and F1-score. Processing speed was empirically measured as the average time to classify a single image [16].

- Key Technical Innovations:

- Dual-Path Attention Mechanism: Incorporates parallel spatial and channel attention. The channel attention is uniquely embedded with the acrosome anatomical constraint, directly integrating clinical biomarker evaluation [16].

- Dynamic Loss Function: A custom loss function was developed that considers head aspect ratio constraints, further aligning the model's outputs with WHO morphology standards [16].

- Reversible Architecture: This design choice allows the model to preserve fine-grained microscopic details in images while simultaneously reducing GPU memory consumption, a key factor in its efficiency [16].

Figure (not reproduced): MADRNet's integrated workflow, showing the flow from image input through the dual-path attention mechanism, with a reversible architecture and dynamic loss for efficient classification.

CBAM-enhanced ResNet50 with Deep Feature Engineering

This approach combines advanced deep learning with classical machine learning for performance gains.

- Experimental Workflow: The study utilized two public datasets, SMIDS and HuSHeM. A 5-fold cross-validation protocol was employed for robust evaluation. The model extracts deep features from a CBAM-enhanced ResNet50, applies Principal Component Analysis (PCA) for dimensionality reduction, and finally uses a Support Vector Machine (SVM) for classification [12].

- Key Technical Innovations:

- Hybrid Architecture: Integrates the Convolutional Block Attention Module (CBAM) into a ResNet50 backbone. CBAM sequentially applies channel and spatial attention, forcing the model to focus on morphologically significant regions like the sperm head and tail [12].

- Deep Feature Engineering (DFE): Instead of using the neural network for end-to-end classification, high-dimensional features are extracted from intermediate layers. These features are then refined using PCA and fed into a shallow classifier (SVM with RBF kernel), which proved more accurate than the standard softmax classifier [12].
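
The feature-extraction, PCA, and SVM stages described above can be sketched with scikit-learn. This is a minimal illustration, not the cited study's implementation. The deep features are replaced by synthetic data from `make_classification`, and the scaler, the number of PCA components, and the SVM hyperparameters are all assumptions chosen for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for deep features. In a real pipeline these would be
# activations extracted from the CBAM-enhanced ResNet50 (not done here).
X, y = make_classification(
    n_samples=300, n_features=256, n_informative=20,
    n_classes=3, random_state=0,
)

# Scaling and PCA live inside the pipeline so they are refit on the
# training portion of every fold, which avoids leaking test-fold statistics.
pipeline = make_pipeline(
    StandardScaler(),                       # assumption: not stated in the source
    PCA(n_components=32, random_state=0),   # assumption: component count is illustrative
    SVC(kernel="rbf", C=1.0, gamma="scale"),
)

# 5-fold stratified cross-validation, mirroring the protocol described above.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="accuracy")
print(f"fold accuracies: {np.round(scores, 3)}, mean = {scores.mean():.3f}")
```

The accuracies printed here reflect the synthetic data only and say nothing about the results reported in [12].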

ResNet50 Transfer Learning for Unstained Sperm Analysis

This protocol highlights the application of a standard architecture to a novel, clinically valuable dataset of unstained sperm.

- Experimental Workflow: Sperm images were captured using confocal laser scanning microscopy at 40x magnification, creating a high-resolution Z-stack dataset. After manual annotation by embryologists, a ResNet50 model was fine-tuned on this dataset. Its performance was compared against Computer-Aided Semen Analysis (CASA) and Conventional Semen Analysis (CSA) methods using correlation analysis [17].

- Key Technical Innovations:

- Novel Dataset: The creation of a high-resolution dataset of unstained, live sperm using confocal microscopy, addressing a significant limitation of traditional stained-sample analysis [17].

- Transfer Learning: Leveraging a pre-trained ResNet50 model accelerated development and improved performance on the specialized task of live sperm morphology assessment [17].
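
The correlation analysis mentioned in the workflow above can be illustrated with a plain-Python Pearson coefficient. The paired values below are hypothetical and exist only to make the example runnable. The assumption that the compared quantity is the per-sample percentage of normal forms is also ours, not the source's.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-sample percentages of normal forms (illustration only).
ai_normal_pct = [4.0, 6.5, 3.0, 8.0, 5.5, 2.0]
casa_normal_pct = [4.5, 6.0, 3.5, 7.0, 6.0, 2.5]

r = pearson_r(ai_normal_pct, casa_normal_pct)
print(f"Pearson r between AI and CASA estimates: {r:.3f}")
```

A high correlation shows that two methods rank samples similarly. It does not show that they agree in absolute terms, so agreement analyses are often reported alongside it.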

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for AI-Based Sperm Morphology Analysis

| Item Name | Function/Application | Relevance to AI Model Development |
|---|---|---|
| Confocal Laser Scanning Microscope | Capturing high-resolution, Z-stack images of unstained, live sperm [17]. | Creates high-quality datasets for training models to analyze viable sperm without staining artifacts. |
| RAL Diagnostics Staining Kit | Staining sperm smears for traditional morphological assessment [5]. | Prepares samples for creating ground-truth labels by experts, which are essential for supervised learning. |
| Hamilton Thorne IVOS II CASA | Automated system for concentration, motility, and morphology analysis of stained sperm [17]. | Provides a standardized, automated benchmark for comparing the performance of new AI models. |
| LabelImg Program | Manual annotation and bounding box drawing on sperm images [17]. | Used by embryologists to create precise "ground truth" datasets for training and validating object detection models. |
| Phase Contrast Microscope | Visualizing unstained sperm cells based on light phase differences [11]. | Common equipment for acquiring images for AI analysis in a clinical lab setting. |

The pursuit of higher accuracy in sperm morphology classification must be balanced with the practical demands of computational efficiency. As the data demonstrates, models like MADRNet and the CBAM-enhanced ResNet50 are at the forefront, achieving high accuracy while also offering real-time or near-real-time processing speeds [16] [12]. These advancements are crucial for translating research models into viable clinical tools that can integrate seamlessly into assisted reproductive technology (ART) workflows, ultimately improving diagnostic throughput and standardizing results across laboratories. Future research directions should continue to emphasize the optimization of model architecture for efficiency, the creation of larger and more diverse public datasets, and the rigorous clinical validation of these automated systems alongside traditional methods.

Advanced Architectures in Practice: From CNNs to Attention Mechanisms and Their Reported Performance

This guide provides an objective comparison of the performance of three foundational deep learning architectures—ResNet, YOLO, and Custom Convolutional Neural Networks (CNNs)—across public and private datasets. Framed within the critical research context of developing robust sperm morphology classification models, the analysis synthesizes contemporary experimental data from diverse fields, including medical imaging, industrial defect detection, and ecological monitoring. The comparison focuses on key performance metrics such as accuracy, mean Average Precision (mAP), and inference speed, while also detailing the experimental protocols that underpin these benchmarks. By presenting structured data and methodologies, this guide aims to assist researchers, scientists, and drug development professionals in selecting and optimizing deep learning models for specialized, data-constrained classification tasks prevalent in biomedical research.

The evaluation of deep learning models extends beyond generic accuracy metrics, especially in specialized domains like sperm morphology classification, where the cost of misdiagnosis is high. Performance must be assessed through a multifaceted lens that includes not only precision but also computational efficiency, robustness to data scarcity, and the ability to generalize from public benchmarks to private, domain-specific datasets. Architectures like ResNet have set benchmarks in image classification, YOLO variants dominate real-time object detection, and Custom CNNs offer tailored solutions for non-standard data or hardware constraints [19] [20] [21]. For biomedical researchers, the transition from large, public datasets like ImageNet to smaller, annotated private datasets, such as collections of sperm images, presents significant challenges in model selection and training. This guide systematically compares these architectures by collating recent experimental data, thereby providing an evidence-based foundation for model selection in advanced medical research.

Core Model Architectures

ResNet (Residual Network): Introduced in 2015, ResNet made very deep networks trainable by adding skip connections, which mitigate the vanishing-gradient and degradation problems that hamper plain deep stacks. These connections allow gradients to flow directly from later layers back to earlier ones, enabling the training of networks that are hundreds or even thousands of layers deep. Each residual block learns a residual function F(x) and outputs F(x) + x, which is easier to optimize than learning the full underlying mapping directly, making ResNet a powerful feature extractor for classification tasks [20].
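
The residual idea can be shown in a few lines of NumPy. This is a deliberately simplified sketch: it uses dense layers instead of convolutions and omits batch normalization, and the function and variable names are illustrative. It demonstrates one property that makes residual blocks easy to optimize: when the learned transform contributes nothing, the block simply passes its input through.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def residual_block(x, w1, w2):
    """Compute relu(F(x) + x), where F is a small two-layer transform."""
    f = relu(x @ w1) @ w2   # the residual function F(x)
    return relu(f + x)      # skip connection adds the input back


rng = np.random.default_rng(0)
x = relu(rng.normal(size=(4, 8)))  # non-negative activations from an earlier layer

# With all-zero weights F(x) = 0, so the block reduces to the identity.
zero = np.zeros((8, 8))
identity_out = residual_block(x, zero, zero)

# With random weights the block still preserves the input shape.
w1 = rng.normal(scale=0.1, size=(8, 8))
w2 = rng.normal(scale=0.1, size=(8, 8))
out = residual_block(x, w1, w2)
print(np.allclose(identity_out, x), out.shape)
```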

YOLO (You Only Look Once): As a family of single-stage object detectors, YOLO frames detection as a direct regression problem, predicting bounding boxes and class probabilities in a single forward pass. This design confers a significant speed advantage, making it ideal for real-time applications. Modern variants like YOLOv10–12 have incorporated attention mechanisms, NMS-free detection, and hybrid CNN-transformer approaches to improve accuracy and efficiency [19] [22].

Custom CNNs: These are specialized neural architectures designed to address unique constraints such as limited data, non-image modalities, or deployment on edge devices. Innovations in Custom CNNs include hybrid designs (e.g., CNN-SVM), novel layers inspired by other domains (e.g., clonal selection from Artificial Immune Systems), and the embedding of domain-specific knowledge or physical priors as custom, differentiable layers [21].

Key Performance Metrics

When comparing models, researchers should consider the following metrics, which are standard in computer vision and highly relevant to morphological analysis:

- Accuracy: The proportion of total correct predictions (both positive and negative) among the total number of cases examined. Crucial for classification tasks.

- mAP (mean Average Precision): The average of the Average Precision (AP) values across all classes. AP is the area under the precision-recall curve. This is the standard metric for object detection models [19] [22].

- mAP@50: mAP calculated at an Intersection over Union (IoU) threshold of 0.50.

- mAP@50:95: The average mAP over multiple IoU thresholds, from 0.50 to 0.95 in steps of 0.05, providing a stricter measure of detection accuracy [23].

- Inference Speed (FPS): The number of frames per second a model can process, indicating its suitability for real-time applications [19] [24].

- Model Size (Parameters): The number of trainable parameters, which influences memory requirements and computational cost [21].
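
The detection metrics listed above can be made concrete with a small, dependency-free sketch. It computes IoU for two axis-aligned boxes, a single-class AP from detections that have already been sorted by confidence and matched to ground truth, and the ten IoU thresholds used by mAP@50:95. The function names and the all-point interpolation of the precision-recall curve are choices made for this illustration. Benchmark toolkits differ in details such as interpolation and matching rules.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def average_precision(is_true_positive, n_ground_truth):
    """Area under the precision-recall curve for one class.

    `is_true_positive` lists detections in descending confidence order,
    flagged True when matched to a ground-truth box at the chosen IoU threshold.
    """
    if n_ground_truth <= 0:
        raise ValueError("n_ground_truth must be positive")
    tp = fp = 0
    precisions, recalls = [], []
    for hit in is_true_positive:
        tp += bool(hit)
        fp += not hit
        precisions.append(tp / (tp + fp))
        recalls.append(tp / n_ground_truth)
    # Make precision non-increasing from right to left (precision envelope).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


# IoU thresholds averaged over by mAP@50:95 (0.50 to 0.95 in steps of 0.05).
iou_thresholds = [round(0.50 + 0.05 * i, 2) for i in range(10)]

overlap = iou((0, 0, 2, 2), (1, 1, 3, 3))
ap_example = average_precision([True, False, True], n_ground_truth=2)
print(overlap, ap_example, iou_thresholds)
```

mAP is then the mean of such per-class AP values, and mAP@50:95 additionally averages over the thresholds in `iou_thresholds`.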

Performance Benchmarking on Public Datasets

Public datasets provide a standardized foundation for comparing model performance. The following tables summarize key benchmarks from recent studies.

Table 1: Performance of YOLO Variants on Human Detection Datasets (MOT17 and CityPersons) [23]

| Model | Dataset | Precision | Recall | mAP@50 | mAP@50:95 |
|---|---|---|---|---|---|
| YOLOv12 | MOT17 | 0.909 | 0.775 | 0.880 | 0.695 |
| YOLOv11 | CityPersons | 0.782 | 0.529 | 0.694 | 0.476 |

Table 2: Performance of Custom CNNs on Standard Public Datasets [21]

| Model/Architecture | Dataset | Metric | Performance | Parameter Efficiency |
|---|---|---|---|---|
| CNN-SVM | MNIST | Accuracy | 99.04% | - |
| CNN-SVM | Fashion-MNIST | Accuracy | 90.72% | - |
| OCNNA (Compressed VGG-16) | CIFAR-10 | Accuracy | <0.5% loss | Up to 86.68% parameter reduction |
| Lightweight Custom CNN | CIFAR-10 | Accuracy | 65% | 14,862 params, 0.17 MB size |

Table 3: Broader Model Performance on Common Object Detection Datasets (e.g., COCO) [19] [24]

| Model Type | Example Model | Reported mAP | Inference Speed (FPS) | Primary Use Case |
|---|---|---|---|---|
| Two-Stage Detector | Faster R-CNN | High (~40+%) | Lower | High-accuracy applications, batch processing |
| One-Stage Detector | YOLOv8 | Balanced | High (Real-Time) | Real-time detection with good accuracy |
| Transformer-Based | RT-DETR | 53.1-55%+ | 108 FPS (on T4 GPU) | State-of-the-art accuracy, competitive speed |
| Lightweight CNN | EdgeCNN | - | 1.37 FPS (Raspberry Pi) | Edge deployment, resource-constrained devices |

Insights from Public Benchmarking

The data reveals clear trade-offs. On public datasets like MOT17, newer YOLO variants achieve high precision and mAP [23]. Custom CNNs, while sometimes achieving lower absolute accuracy on generic benchmarks, can do so with a dramatically reduced parameter count, making them highly efficient [21]. Transformer-based models like RT-DETR are closing the gap with CNNs, offering state-of-the-art accuracy with real-time performance [19]. The choice of model is heavily influenced by the primary objective: raw accuracy, inference speed, or computational efficiency.

Performance on Private and Specialized Datasets

In domain-specific applications, models are trained and evaluated on private, often smaller, datasets. Their performance on these tasks is highly informative for fields like medical image analysis.

Table 4: Model Performance on Private/Specialized Datasets for Defect and Animal Detection [22] [24]

| Model | Dataset / Task | Key Metric | Performance | Context |
|---|---|---|---|---|
| EPSC-YOLO | NEU-DET (Steel Defects) | mAP@50 | 2% increase over YOLOv9c | Improved multi-scale defect detection |
| EPSC-YOLO | GC10-DET (Surface Defects) | mAP@50 | 2.4% increase over YOLOv9c | Complex backgrounds, small targets |
| WSS-YOLO | Steel Surface Defects | mAP | Improved over baseline | Incorporates dynamic convolutions |
| Transformer-augmented YOLO | Camera-trap Animal Detection | mAP | Up to 94% | Controlled illumination conditions |
| YOLOv7-SE / YOLOv8 | UAV-based Animal Detection | FPS | ≥ 60 FPS | Superior real-time performance |

Insights from Specialized Dataset Benchmarking

The performance on specialized tasks underscores the importance of architectural adaptations. Improved YOLO models like EPSC-YOLO show that integrating multi-scale attention modules and better convolutional blocks can significantly boost performance on challenging tasks like detecting small defects in complex backgrounds [22]. Furthermore, for real-time deployment on platforms like UAVs, lightweight models such as YOLOv7-SE offer an optimal balance of speed and accuracy [24]. This mirrors the challenge in sperm morphology analysis, where models must be both accurate and potentially deployable in resource-limited clinical settings.

Detailed Experimental Protocols

Reproducibility is a cornerstone of scientific research. The following workflows and methodologies are common to the experiments and studies cited in this guide.

Standard Model Training and Evaluation Workflow

Figure (not reproduced): generalized experimental protocol for training and evaluating deep learning models, as derived from the cited literature.

Key Methodologies in Cited Experiments

Data Augmentation Strategies: To combat overfitting, especially on smaller private datasets, studies consistently employ data augmentation. Common techniques include geometric transformations (rotation, flipping, cropping) and photometric adjustments (brightness, contrast, noise addition) [25]. For example, a study on crack detection demonstrated that augmentation significantly improved the accuracy of pre-trained CNNs like VGG-16 and EfficientNet, with some models achieving over 98% accuracy [25]. Advanced techniques like CutMix and SampleSelection for handling noisy labels are also employed [25].
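
As a rough illustration of the geometric and photometric transformations named above, the following NumPy sketch applies a random flip, a random 90-degree rotation, a contrast and brightness change, and additive Gaussian noise to a grayscale image. All parameter ranges are assumptions picked for the example, not values from the cited studies, and the random image stands in for a real micrograph.

```python
import numpy as np


def augment(image, rng):
    """Return a randomly augmented copy of a float image in [0, 1], shape (H, W)."""
    out = image.copy()
    # Geometric: random horizontal flip and rotation by a multiple of 90 degrees.
    if rng.random() < 0.5:
        out = np.fliplr(out)
    out = np.rot90(out, k=int(rng.integers(0, 4)))
    # Photometric: contrast scaling around the mean, then a brightness shift.
    gain = rng.uniform(0.8, 1.2)
    bias = rng.uniform(-0.1, 0.1)
    mean = out.mean()
    out = (out - mean) * gain + mean + bias
    # Additive Gaussian noise, then clip back to the valid intensity range.
    out = out + rng.normal(0.0, 0.02, size=out.shape)
    return np.clip(out, 0.0, 1.0)


rng = np.random.default_rng(42)
image = rng.random((64, 64))           # placeholder for a real sperm image
original = image.copy()
batch = [augment(image, rng) for _ in range(8)]
print(len(batch), batch[0].shape)
```

For morphology work, it is worth checking that each transformation preserves the label. Flips and rotations generally do, while aggressive cropping or blurring could remove the very defect being classified.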

Transfer Learning with Pre-trained Models: A prevalent protocol involves initializing models with weights from networks pre-trained on large-scale datasets like ImageNet. This is followed by fine-tuning on the target (often smaller) domain-specific dataset. This approach leverages generalized feature extraction capabilities and reduces training time and data requirements [25] [24]. The progressive unfreezing of layers during fine-tuning is a specific technique used to avoid catastrophic forgetting in lightweight custom CNNs [21].

Model Optimization and Compression: For deployment, especially on edge devices, experiments often include model compression techniques. The OCNNA method, for instance, uses Principal Component Analysis (PCA) and the coefficient of variation to identify and retain only the most task-informative filters, achieving up to 86.68% parameter reduction with minimal accuracy loss [21]. Other strategies include knowledge distillation and pruning [21].

Performance Evaluation and Benchmarking: Models are rigorously evaluated on held-out test sets. Standard metrics include accuracy for classification tasks and mAP@50/mAP@50:95 for detection tasks. Inference speed (FPS) is measured on standardized hardware (e.g., NVIDIA T4 GPU, Jetson Nano) to ensure fair comparison [19] [23] [24].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources, drawn from the experimental setups in the cited studies, that are essential for conducting deep learning research in this domain.

Table 5: Essential Research Reagents and Resources for Model Development

| Item Name | Function / Description | Example Use Case |
|---|---|---|
| Public Datasets (COCO, ImageNet) | Large-scale, annotated datasets for pre-training and benchmarking general model performance. | Serves as a starting point for transfer learning to specialized tasks [19] [26]. |
| Domain-Specific Datasets (e.g., NEU-DET, GC10-DET) | Curated datasets for specific problems (defect detection, animal detection) to test domain adaptation. | Benchmarking model performance on specialized, target-domain tasks [22]. |
| Pre-trained Model Weights | Initial model parameters learned from large datasets, providing a strong feature extraction foundation. | Accelerating convergence and improving performance via transfer learning [25] [24]. |
| Data Augmentation Pipelines | Software tools and protocols to artificially expand training datasets, improving model robustness. | Mitigating overfitting when working with limited private data [25]. |
| Hardware Accelerators (NVIDIA GPUs, Jetson Nano, Coral TPU) | Specialized hardware to significantly speed up model training and inference. | Enabling real-time inference and making complex model training feasible [19] [24]. |
| Annotation Tools (CVAT, Label Studio) | Software for manually or semi-automatically labeling images with bounding boxes or class labels. | Creating ground truth data for custom, private datasets [24]. |
| Model Compression Tools (Pruning, Quantization) | Techniques and libraries to reduce model size and computational cost for deployment. | Preparing models for edge devices with limited memory and compute [21]. |

The benchmark data and experimental protocols presented here show that there is no single "best" model, only a set of architectural choices defined by performance trade-offs. ResNet and similar CNNs provide robust backbone networks for feature extraction. The YOLO family, through continuous evolution, offers a strong balance of speed and accuracy for real-time object detection. Custom CNNs present a pathway to high efficiency and domain-specific optimization, particularly valuable when data is scarce or hardware constraints are paramount.

For researchers focused on sperm morphology classification and similar biomedical tasks, the implications are clear. Success hinges on strategically leveraging pre-trained models on public data through transfer learning, while employing rigorous data augmentation to maximize the value of small, annotated private datasets. The choice between a fine-tuned YOLO model for detecting and classifying individual sperm, a ResNet for overall sample categorization, or a purpose-built Custom CNN for a unique imaging modality must be guided by the specific performance requirements—be it utmost accuracy, real-time analysis, or deployment in a clinical setting. This guide provides the foundational data and methodological context to inform those critical decisions.

In the field of medical artificial intelligence, particularly in specialized domains like sperm morphology classification, the accurate extraction and interpretation of visual features are paramount. Traditional Convolutional Neural Networks (CNNs) have demonstrated remarkable capabilities in image analysis tasks. However, they often face challenges in medical applications where subtle morphological differences can have significant diagnostic implications. These models typically process all image regions with equal importance, lacking a mechanism to focus on clinically relevant structures while ignoring irrelevant background noise [27].

Attention mechanisms, particularly the Convolutional Block Attention Module (CBAM), represent a significant architectural advancement designed to address these limitations. By enabling neural networks to dynamically prioritize important spatial regions and channel-wise features, these mechanisms enhance both feature discrimination and model interpretability [28] [12]. This dual improvement is especially valuable in medical imaging, where understanding the rationale behind a model's decision is nearly as important as the decision itself for clinical adoption.

This article examines the transformative impact of attention mechanisms on feature extraction and model interpretability, with a specific focus on applications within sperm morphology classification research. Through comparative performance analysis, methodological breakdowns, and practical implementation guidelines, we provide researchers with a comprehensive resource for leveraging these advanced architectural components.

Performance Comparison: Attention Mechanisms vs. Traditional Approaches

The integration of attention mechanisms into deep learning architectures has yielded measurable improvements across various performance metrics in medical image classification tasks. The quantitative evidence demonstrates that models enhanced with attention modules consistently outperform their traditional counterparts.

Table 1: Performance Comparison of Models with and without CBAM on Sperm Morphology Classification

| Model Architecture | Dataset | Accuracy (%) | Improvement with CBAM | Key Advantages |
|---|---|---|---|---|
| ResNet50 + CBAM [12] | SMIDS (3-class) | 96.08 ± 1.2 | +8.08% | Enhanced focus on morphological defects |
| ResNet50 + CBAM [12] | HuSHeM (4-class) | 96.77 ± 0.8 | +10.41% | Better discrimination of head shapes |
| MedNet (Lightweight + CBAM) [27] | BloodMNIST | ~97.9 | Matches/exceeds ResNet-50 with fewer parameters | Computational efficiency |
| CA-CBAM-ResNetV2 [29] | Tobacco disease grading | 85.33 | +4.88% over InceptionResNetV2 | Robustness in complex backgrounds |

Beyond sperm morphology analysis, the pattern of improvement extends to other medical domains. The MedNet architecture, which integrates depthwise separable convolutions with CBAM, has demonstrated the ability to match or exceed the performance of larger models like ResNet-50 with significantly reduced computational requirements [27]. Similarly, in agricultural pathology, the CA-CBAM-ResNetV2 model achieved an 85.33% accuracy rate in grading target spot disease severity, outperforming InceptionResNetV2 by 4.88% [29]. These consistent improvements across diverse domains highlight the generalizability of attention mechanisms for enhancing feature extraction.

The interpretability advantages are equally noteworthy. Models incorporating CBAM generate spatial attention maps that visually highlight the image regions most influential in the classification decision [12]. This capability is particularly valuable in clinical settings, where it helps build trust in AI systems and facilitates validation by domain experts.

Inside the Black Box: How CBAM Enhances Feature Extraction

Architectural Principles of Attention Mechanisms

The Convolutional Block Attention Module (CBAM) enhances feature extraction through a structured, two-fold process that refines intermediate feature maps in convolutional neural networks. CBAM operates sequentially through channel attention and spatial attention components, each targeting different dimensions of the feature representation [12] [27].

The channel attention module first identifies "what" features are semantically important by modeling interdependencies between channels. It applies global average and max pooling to aggregate spatial information, processes these statistics through a shared multi-layer perceptron, and generates channel weights through element-wise summation and a sigmoid activation. This allows the model to emphasize informative feature channels while suppressing less useful ones [27].

The spatial attention module subsequently determines "where" these informative features are located. It computes spatial attention maps by pooling channel information, applying convolutional operations to generate spatial weights, and highlighting semantically significant regions while diminishing irrelevant background areas [27]. This dual approach enables CBAM to selectively amplify valuable features across both channel and spatial dimensions.

Comparative Experimental Protocols

Evaluating the effectiveness of attention mechanisms requires carefully designed experimental protocols. The methodology employed in seminal studies typically involves several key phases [12]:

- Baseline Model Training: Standard CNN architectures (e.g., ResNet50, Xception) are trained on benchmark datasets to establish baseline performance metrics.

- Attention Integration: CBAM modules are incorporated into the baseline architectures at strategic locations, typically after convolutional blocks where they can refine feature maps before subsequent processing.

- Ablation Studies: Controlled experiments isolate the contribution of attention mechanisms by comparing performance with and without CBAM modules while keeping other factors constant.

- Cross-Dataset Validation: Models are evaluated on multiple datasets (e.g., SMIDS, HuSHeM) to assess generalizability beyond training distributions.

- Interpretability Analysis: Gradient-weighted Class Activation Mapping (Grad-CAM) and similar techniques visualize attention maps to qualitatively assess whether the model focuses on clinically relevant regions.

Table 2: Key Research Reagents and Computational Tools for Attention Mechanism Research

| Resource Type | Specific Examples | Primary Function | Access Information |
|---|---|---|---|
| Public Datasets | SMIDS (3000 images, 3-class) [12] | Model training and validation | Publicly available for academic use |
| | HuSHeM (216 images, 4-class) [12] | Sperm head morphology classification | Publicly available for academic use |
| | SVIA dataset (125,000 instances) [14] | Detection, segmentation, and classification | Available for research purposes |
| Software Tools | TensorFlow/PyTorch | Model implementation | Open-source frameworks |
| | Grad-CAM [12] | Attention visualization | Open-source implementation |
| Evaluation Metrics | Classification Accuracy | Performance measurement | Standard metric |
| | McNemar's Test [12] | Statistical significance | Standard statistical method |
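
McNemar's test, listed in the table above, compares two classifiers evaluated on the same test images by looking only at the cases where they disagree. The sketch below uses the continuity-corrected chi-square form with one degree of freedom. The function name and the disagreement counts are hypothetical, and clamping the corrected difference at zero is an implementation choice for the case where the two counts are equal.

```python
from math import erfc, sqrt


def mcnemar_test(b, c):
    """Continuity-corrected McNemar test.

    b: cases the baseline classified correctly and the CBAM model did not.
    c: cases the CBAM model classified correctly and the baseline did not.
    Returns (chi-square statistic, p-value) with one degree of freedom.
    """
    if b < 0 or c < 0:
        raise ValueError("counts must be non-negative")
    if b + c == 0:
        return 0.0, 1.0  # the models never disagree
    statistic = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = erfc(sqrt(statistic / 2.0))
    return statistic, p_value


# Hypothetical disagreement counts, for illustration only.
statistic, p_value = mcnemar_test(b=5, c=20)
print(f"chi2 = {statistic:.2f}, p = {p_value:.4f}")
```

When the number of disagreements is small, an exact binomial version of the test is usually preferred over the chi-square approximation.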

Implementation Guide: Integrating CBAM into Research Pipelines

Architectural Integration Strategies

Integrating CBAM into existing CNN architectures requires strategic placement to maximize performance benefits. The most effective approach positions CBAM after the convolutional layers where it can refine feature maps before they propagate to subsequent layers [12] [27]. For residual networks like ResNet, CBAM modules are typically incorporated within each residual block, allowing the attention mechanism to enhance feature representation at multiple abstraction levels.

Implementation involves sequentially applying channel and spatial attention as follows [27]:

- Channel Attention: Generate a 1D channel attention map using both max-pooled and average-pooled features across spatial dimensions, process through a shared MLP, and apply sigmoid activation for channel-wise weighting.

- Spatial Attention: Create a 2D spatial attention map by applying max and average pooling along the channel dimension, concatenate the results, process through a convolutional layer, and apply sigmoid activation for spatial weighting.

This lightweight module adds minimal computational overhead while significantly enhancing representational power, making it particularly suitable for medical imaging applications where both accuracy and efficiency are critical [27].
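
The channel and spatial attention steps described above can be sketched in NumPy for a single feature map. This is a forward pass only, with randomly initialized weights standing in for learned ones. The reduction ratio of 4 and the 7x7 spatial kernel are assumptions made for the example, and all function names are illustrative.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def channel_attention(x, w1, w2):
    """Channel weights in (0, 1) for a feature map x of shape (C, H, W)."""
    avg = x.mean(axis=(1, 2))   # global average pooling over space
    mx = x.max(axis=(1, 2))     # global max pooling over space

    def shared_mlp(v):          # the same two-layer MLP is applied to both
        return w2 @ np.maximum(w1 @ v, 0.0)

    return sigmoid(shared_mlp(avg) + shared_mlp(mx))


def conv2d_same(x, kernel):
    """Cross-correlate x (C_in, H, W) with kernel (C_in, k, k), zero-padded."""
    _, k, _ = kernel.shape
    pad = k // 2
    h, w = x.shape[1:]
    padded = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    out = np.zeros((h, w))
    for i in range(k):
        for j in range(k):
            out += np.tensordot(kernel[:, i, j], padded[:, i:i + h, j:j + w], axes=1)
    return out


def spatial_attention(x, kernel):
    """Spatial weights in (0, 1), shape (H, W), from channel-pooled maps."""
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # shape (2, H, W)
    return sigmoid(conv2d_same(pooled, kernel))


def cbam(x, w1, w2, kernel):
    """Apply channel attention first, then spatial attention."""
    refined = x * channel_attention(x, w1, w2)[:, None, None]
    return refined * spatial_attention(refined, kernel)[None, :, :]


rng = np.random.default_rng(0)
C, H, W, reduction = 16, 8, 8, 4
x = rng.normal(size=(C, H, W))
w1 = rng.normal(scale=0.1, size=(C // reduction, C))
w2 = rng.normal(scale=0.1, size=(C, C // reduction))
kernel = rng.normal(scale=0.1, size=(2, 7, 7))

out = cbam(x, w1, w2, kernel)
print(out.shape)
```

Because both attention maps are multiplicative masks with values between 0 and 1, the output keeps the shape of the input, which is what allows the module to be dropped in after an existing convolutional block.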

Visualization and Interpretability Enhancement

A principal advantage of CBAM in research settings is its inherent interpretability. The attention weights generated during forward propagation can be visualized as heatmaps superimposed on original input images, revealing the specific regions and features most influential in the classification decision [12]. For sperm morphology analysis, this manifests as highlighted attention around head shape abnormalities, acrosome integrity, or tail defects, which are precisely the features embryologists assess manually [12].

These visualizations serve multiple research purposes: they provide model debugging capabilities by confirming the network focuses on biologically relevant features, offer qualitative validation of classification rationale, and facilitate knowledge transfer between AI researchers and domain experts by creating a common visual language for discussing model behavior [30] [12].

Future Directions and Research Opportunities

While attention mechanisms have demonstrated significant improvements in feature extraction and interpretability, several promising research directions remain underexplored, particularly in specialized domains like sperm morphology classification.

Future research should investigate multi-scale attention frameworks that dynamically integrate information across different spatial resolutions. The Progressive Multi-Scale Multi-Attention Fusion (PMMF) network, initially proposed for hyperspectral image classification, offers an interesting paradigm for sperm morphology analysis where features at different scales (cellular, subcellular, and organelle levels) may collectively inform classification decisions [31].

Another promising avenue involves developing standardized evaluation metrics for interpretability. While quantitative performance metrics like accuracy are well-established, standardized measures for assessing the quality and clinical relevance of attention maps remain limited. Research establishing validated metrics correlating attention map characteristics with diagnostic accuracy would significantly advance the field [30] [12].

Additionally, the integration of cross-domain attention transfer represents an intriguing possibility, where attention patterns learned from large-scale natural image datasets could be adapted to medical imaging domains with limited annotated data, potentially addressing the data scarcity challenges common in specialized medical applications [14] [32].

Attention mechanisms, particularly CBAM, represent a significant advancement in deep learning architecture that directly addresses two critical challenges in medical AI: feature extraction precision and model interpretability. The experimental evidence consistently demonstrates that these mechanisms provide substantial accuracy improvements—up to 10.41% in sperm morphology classification tasks—while generating intuitive visual explanations that align with clinical reasoning [12].

For researchers in sperm morphology classification and related medical imaging fields, integrating attention mechanisms offers a practical path to enhancing model performance without requiring fundamental architectural overhauls. The continued refinement of these approaches, coupled with standardized evaluation methodologies and cross-domain applications, promises to further bridge the gap between algorithmic performance and clinical utility in medical AI systems.

In the field of medical image analysis, particularly for sperm morphology classification, hybrid models that integrate deep feature engineering with classical classifiers represent a cutting-edge approach to overcoming the limitations of standalone methods. These strategies leverage the powerful feature extraction capabilities of deep convolutional neural networks (CNNs) while utilizing the robustness and efficiency of traditional machine learning classifiers like Support Vector Machines (SVM). This integration has demonstrated significant improvements in classification accuracy, computational efficiency, and model interpretability—critical factors for clinical diagnostics and drug development research.

The fundamental premise behind these hybrid approaches is the synergistic combination of deep learning's hierarchical feature learning with the strong generalization properties of classical algorithms. In sperm morphology analysis, where diagnostic precision directly impacts fertility treatment outcomes, these models offer a promising solution to challenges such as inter-observer variability, lengthy manual evaluation times, and the subtle nature of morphological defects. Research indicates that manual sperm morphology assessment suffers from substantial diagnostic disagreement, with reported kappa values as low as 0.05–0.15 even among trained technicians, highlighting the urgent need for automated, objective solutions [12].
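To make the reported kappa range concrete, the sketch below computes Cohen's kappa, defined as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The source does not state which kappa variant was used, so Cohen's two-rater form is an assumption here, and the two label lists are invented purely for illustration.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal label frequencies.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two technicians for 40 cells (N = normal, A = abnormal).
technician_1 = ["N"] * 20 + ["A"] * 20
technician_2 = ["N"] * 11 + ["A"] * 9 + ["N"] * 9 + ["A"] * 11
kappa = cohen_kappa(technician_1, technician_2)   # about 0.10
```

In this made-up example the technicians agree on 55% of cells, yet kappa is only about 0.10 because 50% agreement would be expected by chance alone. This is why raw agreement rates can overstate the consistency of manual assessment.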

Performance Comparison of Sperm Morphology Analysis Methods

Table 1: Performance Comparison of Sperm Morphology Classification Methods

| Method Category | Specific Approach | Dataset | Reported Accuracy | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Traditional Computer Vision | Wavelet denoising + directional masking + handcrafted features [12] | HuSHeM | ~10% improvement over baselines | Modest improvement on specific datasets | Limited ability to capture subtle morphological variations; computationally expensive preprocessing |
| | | SMIDS | ~5% improvement over baselines | | |
| Standard Deep Learning | MobileNet [12] | SMIDS | 87% | Computational efficiency suitable for mobile deployment | Limited representational capacity for complex morphological features |
| | Stacked CNN Ensemble (VGG16, ResNet-34, DenseNet) [12] | HuSHeM | 98.2% | High accuracy on specific datasets | Computational complexity; potential overfitting |
| Hybrid Deep Feature + Classical Classifier | CBAM-ResNet50 + PCA + SVM RBF [12] | SMIDS | 96.08% ± 1.2% | State-of-the-art performance; significantly improved accuracy over baseline CNN | Increased implementation complexity |
| | CBAM-ResNet50 + PCA + SVM RBF [12] | HuSHeM | 96.77% ± 0.8% | 10.41% improvement over baseline CNN; clinically interpretable results | |
| | Deep Feature Engineering (GAP + PCA + SVM RBF) [12] | Multiple | 96.08% (SMIDS), 96.77% (HuSHeM) | Superior to recent Vision Transformer and ensemble methods | |
| Other Hybrid Approaches | DeepF-SVM (1D CNN + SVM) [33] | UCI HAR | 96.44% | Effective for time-series sensor data | Not specifically designed for image-based morphology analysis |
| | Robust Feature Enhanced Deep Kernel SVM [34] | Image datasets (MNIST, USPS, etc.) | Outperformed state-of-the-art SVM methods | Enhanced robustness against noise | General image focus, not specialized for medical morphology |

The comparative analysis shows that hybrid approaches match or exceed most other methodologies. The one exception in Table 1 is the stacked CNN ensemble, which reports a higher accuracy on HuSHeM (98.2%) at the cost of greater computational complexity and a potential for overfitting. The CBAM-enhanced ResNet50 combined with SVM achieved test accuracies of 96.08% ± 1.2% on the SMIDS dataset and 96.77% ± 0.8% on the HuSHeM dataset using deep feature engineering, representing improvements of 8.08% and 10.41% respectively over baseline CNN performance [12]. McNemar's test confirmed that these improvements were statistically significant (p < 0.05), supporting the robustness of the hybrid approach [12].
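McNemar's test compares two classifiers on the same test images by looking only at the discordant cases, where one model is right and the other wrong. The sketch below uses the exact binomial form of the test. The source does not say which variant was applied, and the per-image outcomes are invented for illustration rather than taken from the cited study.

```python
from math import comb


def mcnemar_exact(baseline_correct, hybrid_correct):
    """Two-sided exact McNemar test on paired per-image correctness flags."""
    pairs = list(zip(baseline_correct, hybrid_correct))
    # Discordant pairs: images where exactly one of the two models is right.
    b = sum(base and not hyb for base, hyb in pairs)   # only the baseline is right
    c = sum(hyb and not base for base, hyb in pairs)   # only the hybrid is right
    n = b + c
    if n == 0:
        return b, c, 1.0
    # Under the null hypothesis the discordant pairs split 50/50.
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2 * tail)


# Hypothetical outcomes on 100 test images:
# 80 both right, 3 only baseline right, 14 only hybrid right, 3 both wrong.
baseline = [True] * 80 + [True] * 3 + [False] * 14 + [False] * 3
hybrid = [True] * 80 + [False] * 3 + [True] * 14 + [False] * 3
b, c, p_value = mcnemar_exact(baseline, hybrid)
```

With 3 versus 14 discordant images the two-sided p-value is roughly 0.013, so this invented example would clear the p < 0.05 threshold. Images that both models classify identically do not affect the result.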

Experimental Protocols and Methodologies

Deep Feature Engineering Pipeline for Sperm Morphology Classification

The most effective hybrid models for sperm morphology classification employ sophisticated feature engineering pipelines that combine attention mechanisms with dimensionality reduction techniques. The protocol described by Kılıç (2025) integrates a Convolutional Block Attention Module (CBAM) with ResNet50 architecture, enhanced by a comprehensive deep feature engineering pipeline [12]. This approach involves multiple sequential stages:

Stage 1: Attention-Enhanced Feature Extraction

A ResNet50 backbone network is augmented with CBAM attention mechanisms, enabling the model to focus on the most relevant sperm features (head shape, acrosome size, and tail defects) while suppressing background noise. The CBAM module sequentially applies channel-wise and spatial attention to intermediate feature maps, enhancing representational capacity for capturing subtle morphological differences [12].

Stage 2: Multi-Source Feature Pooling

The framework extracts features from multiple layers, including the CBAM output, Global Average Pooling (GAP), Global Max Pooling (GMP), and pre-final layers. This multi-source approach captures features at different abstraction levels, providing a more comprehensive representation of sperm morphological characteristics [12].

Stage 3: Feature Selection and Dimensionality Reduction

The pipeline employs 10 distinct feature selection and dimensionality reduction methods, including Principal Component Analysis (PCA), the Chi-square test, Random Forest importance, and variance thresholding, along with their intersections. PCA is particularly effective for reducing noise and dimensionality in the deep feature space while preserving discriminative information [12].

Stage 4: Classical Classification

The reduced feature set is passed to classical classifiers. Support Vector Machines with RBF or linear kernels and k-Nearest Neighbors performed best, and the SVM benefits from the compact feature space produced by the preceding stages [12].
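Stages 2 to 4 can be sketched with NumPy and scikit-learn as follows. This is a simplified illustration under stated assumptions, not the published pipeline. Random arrays stand in for the CBAM-ResNet50 feature maps, the `StandardScaler` step and the choice of 16 principal components are assumptions added for the example, and the other feature selection methods from Stage 3 are omitted.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def pool_features(feature_maps: np.ndarray) -> np.ndarray:
    """Concatenate global average and global max pooling: (N, C, H, W) -> (N, 2C)."""
    gap = feature_maps.mean(axis=(2, 3))
    gmp = feature_maps.max(axis=(2, 3))
    return np.concatenate([gap, gmp], axis=1)


# Stand-in for backbone outputs: random maps with a class-dependent offset.
rng = np.random.default_rng(0)
labels = np.repeat(np.arange(3), 40)                 # 3 hypothetical classes, 40 samples each
feature_maps = rng.normal(size=(120, 64, 7, 7)) + 0.5 * labels[:, None, None, None]

X = pool_features(feature_maps)                      # (120, 128) deep feature vectors
classifier = make_pipeline(
    StandardScaler(),                                # assumption: scale before PCA
    PCA(n_components=16),                            # assumption: 16 components
    SVC(kernel="rbf"),
)
classifier.fit(X[::2], labels[::2])                  # even rows for fitting
held_out_accuracy = classifier.score(X[1::2], labels[1::2])
```

Wrapping the scaler, PCA, and SVM in one pipeline ensures the reduction is fitted only on the training rows, which avoids leaking held-out data into the feature space.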

Evaluation Framework and Validation

The experimental validation used 5-fold cross-validation on two benchmark datasets: SMIDS (3000 images, 3 classes) and HuSHeM (216 images, 4 classes) [12]. Averaging results over folds gives a more stable performance estimate than a single train/test split and makes overfitting easier to detect, which matters most for a dataset as small as HuSHeM. The evaluation metrics included standard classification measures (accuracy, precision, recall, and F1-score), with statistical significance testing via McNemar's test to validate performance improvements [12].
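The fold construction and the "mean ± spread" reporting can be illustrated as below. Several details are assumptions rather than facts from the source: that the folds were stratified by class, that the ± values are standard deviations across folds, and the balanced class sizes and fold accuracies, which are invented for the example.

```python
import numpy as np


def stratified_folds(labels: np.ndarray, n_folds: int = 5, seed: int = 0) -> np.ndarray:
    """Assign each sample a fold id so every class is spread evenly over the folds."""
    rng = np.random.default_rng(seed)
    fold_of = np.empty(len(labels), dtype=int)
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        fold_of[idx] = np.arange(len(idx)) % n_folds   # deal samples out round-robin
    return fold_of


def summarize(fold_accuracies):
    """Mean and sample standard deviation of per-fold accuracies."""
    scores = np.asarray(fold_accuracies, dtype=float)
    return scores.mean(), scores.std(ddof=1)


# Hypothetical balanced labels for a 216-image, 4-class dataset.
labels = np.repeat(np.arange(4), 54)
fold_of = stratified_folds(labels)
fold_sizes = np.bincount(fold_of)

# Invented per-fold accuracies, only to show how a "mean ± std" figure arises.
mean_acc, std_acc = summarize([0.95, 0.97, 0.96, 0.98, 0.94])
```

With only 216 images, each held-out fold contains about 40 to 44 samples, so a single misclassified image shifts that fold's accuracy by more than two percentage points. This is one reason the spread across folds is worth reporting alongside the mean.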

Workflow Diagram of Hybrid Classification System

This workflow illustrates the sequential processing stages in hybrid sperm morphology classification systems, highlighting how raw images are transformed through deep feature extraction and engineering before final classification.

Table 2: Key Research Reagents and Computational Resources for Hybrid Model Development

| Resource Category | Specific Resource | Function in Research | Example Applications in Literature |
|---|---|---|---|
| Public Datasets | SMIDS (Sperm Morphology Image Data Set) [14] [12] | Provides 3000 stained sperm images across 3 classes for model training and validation | Used for benchmarking hybrid model performance (96.08% accuracy) [12] |
| | HuSHeM (Human Sperm Head Morphology) [14] [12] | Contains 216 sperm head images across 4 classes; higher resolution stained images | Validation of attention mechanisms and feature engineering [12] |
| | VISEM-Tracking [14] | Multimodal dataset with 656,334 annotated objects with tracking details | Supports detection, tracking, and regression tasks |
| | SVIA (Sperm Videos and Images Analysis) [14] | Comprehensive dataset with 125,000 annotated instances, 26,000 segmentation masks | Suitable for detection, segmentation, and classification tasks |
| Computational Frameworks | TensorFlow, PyTorch, Keras [35] | Open-source frameworks for building and training deep learning models | Implementation of CNN backbones and attention mechanisms |
| | Scikit-learn [35] | Library for traditional machine learning algorithms | SVM classifier implementation and feature selection methods |
| Architecture Components | ResNet50 [12] | CNN backbone for deep feature extraction; enables training of very deep networks | Base architecture in CBAM-enhanced hybrid models |
| | Convolutional Block Attention Module (CBAM) [12] | Lightweight attention module for channel and spatial attention | Feature enhancement in sperm morphology classification |
| Feature Engineering Tools | Principal Component Analysis (PCA) [12] | Dimensionality reduction while preserving variance | Critical for reducing deep feature dimensionality before SVM classification |
| | Global Average/Max Pooling (GAP/GMP) [12] | Alternative to fully connected layers for feature map aggregation | Multi-source feature extraction in hybrid pipelines |

Hybrid model strategies integrating deep feature engineering with classical classifiers like SVM represent a paradigm shift in sperm morphology analysis, addressing critical challenges in male infertility diagnostics and reproductive medicine. The experimental evidence demonstrates that these approaches consistently outperform standalone deep learning and traditional computer vision methods, achieving accuracy improvements of 8-10% over baseline CNN models while providing clinically interpretable results through attention visualization techniques like Grad-CAM [12].

The implications for drug development and clinical practice are substantial. These automated systems can reduce diagnostic variability between laboratories, significantly decrease evaluation time from 30-45 minutes to under one minute per sample, and improve reproducibility across clinical settings [12]. For pharmaceutical researchers investigating fertility treatments, these models offer standardized, quantitative metrics for assessing treatment efficacy through precise morphological analysis. Furthermore, the potential for real-time analysis during assisted reproductive procedures could transform patient care and treatment outcomes in reproductive medicine.

As research in this field advances, future work should focus on developing more sophisticated attention mechanisms, expanding standardized datasets to encompass rare morphological defects, and optimizing model efficiency for deployment in resource-constrained clinical environments. The integration of hybrid models into clinical workflows promises to enhance objective fertility assessment while providing researchers with powerful tools for understanding the complex relationship between sperm morphology and reproductive outcomes.

In the field of medical image analysis, the pursuit of high-performance classification models is crucial for advancing diagnostic capabilities and supporting clinical decision-making. This is particularly true in specialized domains like sperm morphology assessment, where manual classification is inherently subjective, challenging to standardize, and heavily reliant on operator expertise [5]. The development of robust, automated models is therefore not merely a technical exercise but a significant step toward standardizing and accelerating critical medical analyses [5]. Deep learning, especially convolutional neural networks (CNNs), has emerged as a powerful tool for such tasks. However, standard CNN models often face challenges such as inadequate handling of image noise, neglect of fine-grained texture patterns, and limited interpretability [36]. This case study explores how an advanced deep learning pipeline, built upon a Convolutional Block Attention Module (CBAM)-enhanced ResNet50 architecture and sophisticated feature engineering, achieved a notable 96.08% accuracy. We will contextualize this performance within the broader research landscape of medical image classification, using comparative experimental data from related fields to benchmark its effectiveness.

Performance Comparison: CBAM-ResNet50 vs. Alternative Models