Evaluating Machine Learning Performance in Sperm Concentration Prediction: Metrics, Models, and Clinical Translation

Abstract

This article provides a comprehensive analysis of performance metrics and methodologies for machine learning (ML) applications in predicting sperm concentration, a critical parameter in male fertility assessment. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of ML in andrology, details the application of specific algorithms like XGBoost and CNNs, addresses key challenges in model optimization and data standardization, and offers a comparative validation of ML approaches against traditional techniques. By synthesizing current evidence, this review aims to establish a framework for robust performance evaluation, guiding the development of reliable, clinically applicable ML tools in reproductive medicine.

The Foundation of AI in Andrology: Why Machine Learning is Revolutionizing Sperm Concentration Analysis

Sperm concentration, defined as the number of spermatozoa per milliliter of semen, represents a fundamental parameter in male fertility assessment. According to World Health Organization (WHO) guidelines, a normal sperm concentration is at least 15 million per milliliter, with a total sperm count of at least 39 million per ejaculate [1]. This metric serves as a cornerstone in andrology, providing critical insights into testicular function and spermatogenic efficiency. Beyond its reproductive implications, emerging evidence identifies sperm concentration as a marker of overall male health: several studies have found that impaired semen quality, including low concentration, is associated with increased all-cause mortality [2] [3]. A landmark study of 78,284 men followed for up to 50 years found that men with superior semen parameters could expect to live 2.7 years longer than those with severely impaired parameters, underscoring health implications that extend beyond fertility [2].

The clinical imperative to accurately measure and interpret sperm concentration has intensified against the backdrop of documented global declines. A comprehensive meta-regression analysis revealed a 52.4% decline in mean sperm concentration among unselected Western men between 1973 and 2011, representing an average decline of 1.4% per year [4]. This trend underscores a growing public health concern and highlights the need for advanced predictive approaches to better understand, diagnose, and address male infertility. The integration of machine learning (ML) methodologies offers promising avenues to enhance the predictive value of sperm concentration data, moving beyond traditional analytical limitations toward more personalized clinical applications.

Traditional Assessment and Current Challenges

The conventional assessment of sperm concentration relies on manual microscopy and computer-assisted semen analysis (CASA) systems, which, despite standardization efforts, face significant limitations. Semen analysis suffers from substantial intra-individual variability and inter-laboratory discrepancies [5]. Studies demonstrate that inexperienced laboratories show 2.9 times more variance in sperm counting and 1.4 times more variance in motility quantification than specialized centers [5]. This variability complicates clinical decision-making and treatment planning.

The clinical interpretation of sperm concentration extends beyond a binary classification of normal versus abnormal. The probability of natural conception correlates strongly with sperm concentration, yet many men with subnormal concentrations can still achieve pregnancy, albeit with diminished likelihood [5]. The parameter must be evaluated in conjunction with other semen parameters, particularly motility and morphology, to derive meaningful clinical insights. The calculation of Total Motile Sperm Count (TMSC), derived from concentration, volume, and motility, provides a more comprehensive functional assessment [1] [5]. Reproductive urologists often utilize TMSC to determine appropriate treatment pathways, with values below 3-5 million typically necessitating advanced assisted reproductive technologies [5].
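The TMSC calculation described above can be sketched in a few lines of Python. This is a minimal illustration, assuming TMSC = volume × concentration × motile fraction; laboratories differ on whether total or progressive motility is used, and the function names and sample values below are hypothetical. The banding cut-offs (>20 million, <5 million) are taken loosely from the figures quoted in this section and are illustrative, not a clinical rule.

```python
def total_sperm_count(volume_ml: float, concentration_m_per_ml: float) -> float:
    """Total sperm count (millions) = semen volume (mL) x concentration (million/mL)."""
    return volume_ml * concentration_m_per_ml


def total_motile_sperm_count(volume_ml: float,
                             concentration_m_per_ml: float,
                             motility_pct: float) -> float:
    """TMSC (millions): total sperm count scaled by the motile fraction.

    Assumption: motility_pct is the percentage of motile (here, progressively
    motile) sperm; laboratories differ in which motility grade they use.
    """
    if not 0.0 <= motility_pct <= 100.0:
        raise ValueError("motility_pct must lie between 0 and 100")
    return total_sperm_count(volume_ml, concentration_m_per_ml) * motility_pct / 100.0


def tmsc_band(tmsc_millions: float) -> str:
    """Illustrative banding using the cut-offs quoted in the text (not a clinical rule)."""
    if tmsc_millions > 20.0:
        return "above natural-conception reference (>20 million)"
    if tmsc_millions < 5.0:
        return "low (<5 million): advanced ART typically considered"
    return "intermediate (5-20 million)"


# Hypothetical sample: 3.0 mL at 20 million/mL with 40% progressive motility
tmsc = total_motile_sperm_count(3.0, 20.0, 40.0)
print(f"TMSC = {tmsc:.1f} million -> {tmsc_band(tmsc)}")
```

For this hypothetical sample the total count is 60 million and the TMSC is 24 million, which falls above the >20 million reference listed in Table 1.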

Table 1: Key Semen Parameters and Clinical Reference Values

| Parameter | Clinical Reference Value | Significance | Methodological Challenges |
|---|---|---|---|
| Sperm Concentration | ≥15 million/mL | Indicator of spermatogenic efficiency; predictor of natural conception odds | High inter-laboratory variability; sample heterogeneity |
| Total Sperm Count | ≥39 million per ejaculate | Total functional sperm output | Dependent on collection completeness and abstinence period |
| Total Motile Sperm Count (TMSC) | >20 million for natural conception | Integrated measure of functional sperm | Requires accurate assessment of volume, concentration, and motility |
| Progressive Motility | ≥40% | Sperm movement capability | Subjective assessment; rapid decline post-ejaculation |
| Morphology | ≥4% normal forms | Sperm structural integrity | High subjectivity in "strict" criteria application |

Machine Learning Approaches for Sperm Concentration Prediction

Artificial intelligence (AI) and machine learning (ML) are revolutionizing male infertility management by addressing critical limitations in traditional semen analysis. These technologies offer opportunities for proactive, cost-effective, and efficient assessment through the integration of complex, multidimensional data [6]. ML applications in sperm analysis have surged since 2021: 57% of the studies identified in a recent mapping review were published between 2021 and 2023 [7]. This reflects growing recognition of AI's potential to enhance diagnostic precision and predictive capability.

Multiple ML architectures have demonstrated efficacy in semen parameter assessment. Deep convolutional neural networks (CNNs) have achieved up to 97.37% accuracy in human sperm classification [6]. For motility assessment, CNN models show strong correlation with manual assessments for progressively motile spermatozoa (Pearson's r = 0.88) [6]. Alternative approaches include artificial neural networks that estimate sperm concentration from absorption spectra with 93% prediction accuracy [6]. The diversity of ML techniques enables selection of optimal architectures for specific clinical questions, from basic parameter assessment to complex outcome prediction.

Predictive modeling also extends beyond parameter quantification to forecasting therapeutic outcomes. Machine learning models can predict blastocyst yield in IVF cycles more accurately than traditional statistical approaches: LightGBM models achieved R² values of 0.67-0.68 in predicting blastocyst yield, surpassing linear regression (R² = 0.59) [8]. This enhanced predictive capability can support more personalized treatment planning and better counseling for couples undergoing fertility treatment.

Table 2: Performance Metrics of Machine Learning Models in Male Fertility Applications

| ML Application | Algorithm | Performance | Clinical Utility |
|---|---|---|---|
| Sperm Morphology Classification | Deep Convolutional Neural Network | 97.37% accuracy [6] | Automated abnormality detection; reduced subjectivity |
| Sperm Motility Assessment | Convolutional Neural Networks | Pearson's r=0.88 for progressive motility [6] | Standardized motility classification; eliminates inter-observer variability |
| Sperm Concentration Estimation | Artificial Neural Network | 93% prediction accuracy [6] | Alternative assessment methods; potential for novel diagnostic devices |
| IVF Success Prediction | Random Forest | AUC 84.23% on 486 patients [7] | Improved patient counseling; treatment pathway optimization |
| Sperm Retrieval Prediction (NOA) | Gradient Boosting Trees | AUC 0.807, 91% sensitivity [7] | Prognostication for surgical sperm retrieval in azoospermic men |
| Blastocyst Yield Prediction | LightGBM | R²: 0.673-0.676, MAE: 0.793-0.809 [8] | Informed decisions on extended embryo culture |

Experimental Protocols and Methodologies

Whole-Genome Sequencing for Genetic Biomarker Discovery

Advanced genomic approaches are elucidating the genetic underpinnings of sperm dysfunction, providing potential biomarkers for severe male factor infertility. A 2025 study performed whole-genome sequencing (WGS) on sperm samples from eight normozoospermic men and nine men with oligozoospermia, asthenozoospermia, or both [9]. The methodology involved meticulous sample purification using 45%-90% PureSperm gradients followed by centrifugation at 500 × g for 20 minutes to remove somatic cells and debris [9]. Genomic DNA extraction used the QIAamp DNA Mini Kit with modifications to improve DNA release efficiency, yielding DNA of higher purity and integrity suitable for WGS [9].

Comparative analysis revealed a higher burden of genomic variants in the sperm dysfunction infertility group (SDIG) versus the normozoospermic group (NG). The study identified several exclusively present nonsynonymous missense variants in the SDIG cohort, including mutations in DNAJB13 (p.Ile159Asn), MNS1 (p.Asp217Asn), DNAH6 (p.Ser2210Leu), HYDIN (p.Gly901Ala,

Semen analysis, universally regarded as the gold standard for diagnosing male infertility, is plagued by significant limitations that compromise its reliability and objectivity. Male factors contribute to approximately 50% of infertility cases, which makes accurate diagnostic methods critically important [10] [11]. Conventional semen analysis involves manual assessment of parameters including sperm concentration, motility, and morphology by laboratory technicians. However, this process is inherently subjective, labor-intensive, and prone to substantial inter-observer and intra-observer variability [12] [13]. Morphological evaluation in particular still has considerable limitations in reproducibility and objectivity [10]. This article examines the quantifiable limitations of conventional semen analysis methods and contrasts them with emerging machine learning (ML) approaches that may offer greater precision, objectivity, and predictive power for sperm concentration prediction in research contexts.

Comparative Analysis: Conventional vs. Machine Learning Methods

Table 1: Performance Comparison of Conventional and ML-Based Sperm Analysis Methods

| Method Category | Specific Technique | Key Performance Metrics | Primary Limitations | Reference |
|---|---|---|---|---|
| Conventional Manual Analysis | Standard semen analysis (WHO guidelines) | Subjective assessment; High inter-observer variability | Operator dependency; Time-consuming; Limited reproducibility | [10] [12] [13] |
| Machine Learning (Composite) | Elastic Net SQI (8 parameters + mtDNAcn) | AUC: 0.73 (95% CI: 0.61–0.84) for pregnancy at 12 cycles | Requires specialized computational expertise | [14] |
| Deep Learning (Image-Based) | VGG-16 (testicular ultrasound images) | AUC: 0.76 for sperm concentration classification | Dependency on high-quality annotated datasets | [12] |
| Machine Learning (Clinical) | XGBoost (multiple clinical parameters) | AUC: 0.987 for azoospermia prediction | Model interpretability challenges | [11] |
| Individual Biomarker | Sperm mtDNA copy number | AUC: 0.68 (95% CI: 0.58–0.78) for pregnancy at 12 cycles | Single parameter limitation | [14] |

Table 2: Direct Methodological Comparison for Sperm Concentration Assessment

| Aspect | Conventional Manual Methods | ML-Enhanced Methods |
|---|---|---|
| Basis of Assessment | Visual estimation by trained technicians | Quantitative algorithmic analysis |
| Time Requirement | 30–60 minutes per sample (morphology analysis of 200+ sperm) | Near real-time processing after model training |
| Standardization Potential | Low (high operator dependency) | High (consistent algorithm application) |
| Data Utilization | Limited to basic parameter quantification | Multidimensional parameter integration |
| Predictive Capability | Descriptive rather than predictive | Supports clinical outcome prediction; accuracy varies by task and dataset |
| Scalability | Limited by trained personnel availability | High scalability with computational resources |

Experimental Evidence: Quantifying the Limitations

Documented Variability in Conventional Methods

Morphological assessment is one of the most demanding parts of manual semen analysis because abnormal forms are difficult to recognize [10]. Under the classification standards established by the World Health Organization (WHO), sperm morphology is assessed across the head, neck, and tail, with 26 types of abnormal morphology, and more than 200 sperm must be analyzed and counted [10]. This labor-intensive process introduces variability, because manual observation involves a substantial workload and is always influenced by observer subjectivity [10]. Variability can enter the conventional pipeline at multiple points, including sample collection methods, technician training levels, the assessment environment, and interpretation criteria.

Recent studies applying machine learning algorithms to comprehensive andrological datasets have revealed significant yet previously hidden connections between parameters, suggesting potential intra-individual links that could provide valuable insights into male infertility [11]. This work is a prime example of how artificial intelligence can be harnessed to advance our understanding of the factors contributing to male infertility [11].

Performance Superiority of ML Approaches

Results from recent studies suggest that ML methods can improve on single conventional measures. In one study examining the utility of semen parameters and sperm mitochondrial DNA copy number (mtDNAcn) for predicting couples' time to pregnancy, a machine learning composite index outperformed every individual measure [14]. Among individual semen measures, sperm mtDNAcn was most predictive of pregnancy at 12 menstrual cycles in ROC analyses (AUC 0.68, 95% CI 0.58–0.78), but among multiparameter biomarkers, a composite machine learning Elastic Net SQI (ElNet-SQI) had the highest predictive power (AUC 0.73, 95% CI 0.61–0.84) [14]. Because these confidence intervals overlap, the size of the advantage should be interpreted cautiously.

Another innovative approach used deep learning algorithms to predict semen analysis parameters from testicular ultrasonography images [12]. The study achieved an AUC of 0.76 for classifying sperm concentration (oligospermia versus normal), suggesting that image-based AI assessment can predict semen parameters with moderate accuracy without direct semen analysis [12]. Such an approach could be particularly valuable for patients reluctant to provide samples or where advanced assessment capabilities are unavailable.

Experimental Protocols in ML-Based Sperm Analysis

Machine Learning Protocol for Pregnancy Outcome Prediction

Table 3: Key Research Reagent Solutions for ML-Based Sperm Analysis

| Reagent/Resource | Function in Research | Application Context |
|---|---|---|
| Sperm Mitochondrial DNA Copy Number (mtDNAcn) | Biomarker of overall sperm fitness and reproductive success | Prediction of time to pregnancy in conjunction with conventional parameters |
| Elastic Net Algorithm | Regularized regression method that combines L1 and L2 penalties | Development of weighted sperm quality indices from multiple parameters |
| XGBoost Classifier | Ensemble learning method using gradient boosted decision trees | Classification of semen analysis categories from clinical parameters |
| VGG-16 Architecture | Deep convolutional neural network for image classification | Prediction of semen parameters from testicular ultrasonography images |
| Annotated Sperm Datasets (e.g., SVIA, VISEM-Tracking) | Training and validation resources for machine learning models | Development of automated sperm recognition and classification systems |

A seminal study investigating machine learning approaches for predicting couples' fecundity provides a robust experimental protocol [14]. The study assessed the predictive power of sperm mtDNAcn and 34 conventional semen parameters using discrete-time proportional hazard models, logistic regression, and receiver operating characteristic (ROC) analyses [14]. The experimental workflow involved:

Participant Recruitment: 281 men from the Longitudinal Investigation of Fertility and the Environment study, a large preconception general population cohort.

Parameter Measurement: Comprehensive semen analysis following WHO guidelines alongside quantification of sperm mtDNAcn.

Index Development: Creation of two composite semen quality indices (SQIs): an unweighted ranked sperm quality index derived only from semen parameters, and a weighted sperm quality index generated with machine learning via elastic net.

Predictive Modeling: Evaluation of the predictive ability for achieving pregnancy at 3, 6, and 12 months, and overall time to pregnancy using multiple statistical approaches.

The machine learning implementation used elastic net regularization, which combines L1 and L2 penalties, to develop a weighted sperm quality index incorporating 8 semen parameters along with mtDNAcn [14]. The L1 penalty can shrink the weights of less informative parameters to exactly zero, which performs feature selection, while the L2 penalty stabilizes the weights of correlated parameters; together they help limit overfitting. The resulting index was more strongly associated with time to pregnancy than any individual parameter or other combination.
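To make the elastic net mechanism concrete, the sketch below fits a tiny elastic net by cyclic coordinate descent in plain Python. It is not the implementation used in [14]; the penalty parameterization (`alpha`, `l1_ratio`) mirrors the convention of scikit-learn's `ElasticNet`, and the three orthogonal toy predictors are hypothetical. On this data the L1 term sets the weight of the uninformative third predictor exactly to zero, while the other two weights are shrunk toward zero.

```python
def soft_threshold(z: float, gamma: float) -> float:
    """Soft-thresholding operator S(z, gamma) used by the L1 part of the penalty."""
    if z > gamma:
        return z - gamma
    if z < -gamma:
        return z + gamma
    return 0.0


def standardize(column):
    """Center a column and scale it to unit (population) standard deviation."""
    n = len(column)
    mean = sum(column) / n
    sd = (sum((v - mean) ** 2 for v in column) / n) ** 0.5
    return [(v - mean) / sd for v in column]


def elastic_net(columns, y, alpha=0.5, l1_ratio=0.5, n_iter=100):
    """Cyclic coordinate descent for
    (1/2n)||y - Xw||^2 + alpha*l1_ratio*||w||_1 + 0.5*alpha*(1 - l1_ratio)*||w||^2.

    `columns` is a list of standardized predictor columns; y is centered
    internally, so no intercept term is fitted.
    """
    n, p = len(y), len(columns)
    y_mean = sum(y) / n
    residual = [v - y_mean for v in y]  # residual = y_centered - Xw, with w = 0
    w = [0.0] * p
    for _ in range(n_iter):
        for j, xj in enumerate(columns):
            # Correlation of feature j with the partial residual (feature j added back)
            rho = sum(x * (r + x * w[j]) for x, r in zip(xj, residual)) / n
            denom = sum(x * x for x in xj) / n + alpha * (1.0 - l1_ratio)
            new_wj = soft_threshold(rho, alpha * l1_ratio) / denom
            delta = new_wj - w[j]
            if delta != 0.0:
                residual = [r - x * delta for x, r in zip(xj, residual)]
            w[j] = new_wj
    return w


# Toy data: three hypothetical, mutually orthogonal predictors; y uses only the first two
x0 = [1, 1, 1, 1, -1, -1, -1, -1]
x1 = [1, 1, -1, -1, 1, 1, -1, -1]
x2 = [1, -1, 1, -1, 1, -1, 1, -1]
y = [3 * a + b for a, b in zip(x0, x1)]
weights = elastic_net([standardize(c) for c in (x0, x1, x2)], y)
print([round(wj, 3) for wj in weights])  # [2.2, 0.6, 0.0]
```

The fitted weights play the role of a weighted index: a new sample's score would be the weighted sum of its standardized parameter values.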

Deep Learning Protocol for Image-Based Semen Parameter Prediction

An innovative experimental protocol demonstrated the prediction of semen analysis parameters from testicular ultrasonography images using deep learning algorithms [12]. The methodology included:

Patient Cohort: 249 patients (498 testicular images) presenting with infertility complaints despite at least one year of unprotected intercourse.

Comprehensive Assessment: All patients underwent blood hormone profiling, semen analysis, and scrotal ultrasonography by the same operator to minimize technical variability.

Image Processing: Longitudinal-axis images of both testes were obtained and manually segmented to remove patient information and minimize the influence of irrelevant areas.

Data Categorization: Patients were categorized based on semen analysis results according to WHO criteria, with each parameter subdivided into "low" and "normal" categories.

Model Training and Validation: Segmented images were organized into datasets, augmented, and partitioned into 80% training and 20% test sets. Classification was performed using the VGG-16 deep learning architecture.
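The 80%/20% partition in the protocol above can be implemented in several ways, and the source does not state whether images or patients were split. The sketch below assumes a patient-level split, so that the left and right testis images of one patient never fall into different partitions, which would otherwise let near-duplicate anatomy leak from training into testing. The ID format, function name, and seed are hypothetical.

```python
import random


def grouped_train_test_split(image_ids, patient_of, test_fraction=0.2, seed=42):
    """Split images roughly 80/20 by patient so no patient appears in both partitions."""
    patients = sorted({patient_of[img] for img in image_ids})
    random.Random(seed).shuffle(patients)
    n_test = round(len(patients) * test_fraction)
    test_patients = set(patients[:n_test])
    train = [img for img in image_ids if patient_of[img] not in test_patients]
    test = [img for img in image_ids if patient_of[img] in test_patients]
    return train, test


# Hypothetical IDs: 249 patients, each with a left (L) and right (R) testis image
image_ids = [f"p{p:03d}_{side}" for p in range(249) for side in ("L", "R")]
patient_of = {img: img.split("_")[0] for img in image_ids}
train, test = grouped_train_test_split(image_ids, patient_of)
print(len(train), len(test))  # 398 100
```

With 249 patients, 50 are held out, giving 100 test images and 398 training images. Augmentation is commonly applied only to the training partition after such a split, so that augmented copies of test images are never seen during training.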

This approach achieved an AUC of 0.76 for sperm concentration classification, demonstrating that deep learning can extract meaningful information from ultrasonography images that correlates with conventional semen analysis results [12].

Technical Requirements for ML Implementation

Data Quality and Annotation Standards

The successful implementation of machine learning in sperm analysis depends critically on data quality and standardization. Deep learning relies on big data for multidimensional data extraction and analysis, enabling automatic feature extraction and training [10]. However, limitations in datasets including low resolution, limited sample size, and insufficient categories still exist [10]. To ensure effective application of deep learning in sperm morphology research, attention must be paid to the quality and diversity of datasets to guarantee the generalization ability of the model [10].

Recent research has used publicly available datasets such as HSMA-DS (Human Sperm Morphology Analysis DataSet), MHSMA (Modified Human Sperm Morphology Analysis Dataset), and VISEM-Tracking [10]. More recently, the SVIA (Sperm Videos and Images Analysis) dataset was established, comprising 125,000 annotated instances for object detection, 26,000 segmentation masks, and 125,880 cropped image objects for classification tasks [10]. The field requires standardized processes for sperm morphology slide preparation, staining, image acquisition, and annotation to maximize model performance.

Algorithm Selection and Model Training Considerations

The selection of appropriate machine learning algorithms depends on the specific research question and data characteristics. In one comprehensive study, XGBoost was selected after evaluating other classifiers because it suits scenarios requiring high accuracy, large datasets, high scalability, diverse feature types, and adaptation to unbalanced classes [11]. The implementation included:

Pre-processing: Normalization for numeric variables and encoding for categorical ones, with imputation to fill missing values using nearest neighbor values for numerical features and most frequent values for categorical features.

Training Pipeline: 5-fold cross-validation on randomly partitioned data, with randomized search for hyperparameter tuning.

Multi-class Problem Addressing: Application of both One versus Rest (OvR) and One versus One (OvO) approaches to transform multi-class problems into binary classification tasks.
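The OvR and OvO decompositions mentioned above can be sketched as follows. The three class labels are illustrative (modeled on the normozoospermia / altered parameters / azoospermia grouping described elsewhere in this article), and the helper names are hypothetical; in practice a classifier such as XGBoost would be trained on each resulting binary task.

```python
from itertools import combinations

CLASSES = ["normozoospermia", "altered", "azoospermia"]  # illustrative labels


def one_vs_rest(labels, classes):
    """One binary task per class: that class (1) versus all other classes (0)."""
    return {c: [1 if y == c else 0 for y in labels] for c in classes}


def one_vs_one(labels, classes):
    """One binary task per class pair, keeping only samples from those two classes.

    Returns {(a, b): (sample_indices, binary_labels)}, where 1 means class a.
    """
    tasks = {}
    for a, b in combinations(classes, 2):
        idx = [i for i, y in enumerate(labels) if y in (a, b)]
        tasks[(a, b)] = (idx, [1 if labels[i] == a else 0 for i in idx])
    return tasks


labels = ["normozoospermia", "altered", "azoospermia", "altered", "normozoospermia"]
ovr = one_vs_rest(labels, CLASSES)
ovo = one_vs_one(labels, CLASSES)
print(ovr["altered"])                           # [0, 1, 0, 1, 0]
print(ovo[("normozoospermia", "azoospermia")])  # ([0, 2, 4], [1, 0, 1])
```

For k classes, OvR yields k binary problems and OvO yields k(k - 1)/2; with three classes both give three. OvR predictions are usually combined by taking the class with the highest score, and OvO predictions by majority voting across the pairwise classifiers.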

For image-based analysis, convolutional neural networks (CNNs) like VGG-16 have proven effective, particularly when trained on adequate datasets with appropriate augmentation techniques to increase sample diversity and model robustness [12].

The limitations of conventional semen analysis methods, particularly their high variability and subjectivity, present significant challenges in male infertility diagnostics and research. The quantitative evidence reviewed here suggests that machine learning approaches can outperform traditional methods in predictive accuracy, objectivity, and reproducibility. The integration of ML techniques, from elastic net regression for parameter weighting to deep learning for image-based assessment, offers researchers powerful tools to advance sperm concentration prediction and overall fertility assessment. While implementation challenges remain, particularly regarding data standardization and model interpretability, the performance advantages of ML methods position them as potentially transformative technologies for both clinical practice and research in male reproductive health.

The field of predictive modeling has undergone a significant transformation, evolving from traditional statistical methods to sophisticated machine learning (ML) and deep learning (DL) algorithms. In scientific research, particularly in specialized domains such as andrology and infertility research, this evolution presents both opportunities and challenges for researchers and drug development professionals. The core distinction in supervised machine learning lies between regression, which predicts continuous numerical values (e.g., sperm concentration), and classification, which categorizes data into discrete classes (e.g., "normal" vs. "abnormal" morphology) [15]. Traditional regression models, such as ordinary least squares (OLS) and logistic regression, have long been the foundation of statistical analysis, prized for their interpretability and well-understood theoretical properties. However, they often rely on strict assumptions about data linearity and variable relationships, which can limit their performance on complex, high-dimensional biological datasets [16] [17].

In contrast, machine learning approaches, including ensemble methods like Random Forest and XGBoost, offer flexible mechanisms to approximate estimation models without prespecified functional forms, potentially capturing complex nonlinear relationships and interactions within the data [16]. More recently, deep learning, a subset of machine learning based on artificial neural networks with multiple layers, has demonstrated remarkable success in pattern recognition tasks, including image-based analysis in medical research [18] [19]. This guide provides an objective comparison of the performance of these paradigms, with a specific focus on applications in male infertility and sperm analysis research, to inform methodological choices in scientific investigation and drug development.

Performance Comparison Across Domains

Quantitative comparisons across diverse medical and biological research domains reveal a consistent pattern: machine learning and deep learning models frequently outperform traditional regression, though the margin of improvement varies significantly by application.

Table 1: Performance Comparison of Modeling Paradigms Across Research Domains

| Research Domain | Traditional Model | ML/DL Model | Performance Metrics | Key Findings |
|---|---|---|---|---|
| Health Utility Mapping [16] | Ordinary Least Squares (OLS) | Bayesian Networks, LASSO | MAE, MSE, R-squared | ML showed minor average improvement (MAE: +0.007, R-squared: +0.058) over regression models. |
| Colon Cancer Survival [20] | Cox Proportional Hazard (CPH) | LSTM Deep Learning | AUC, Brier Score | Deep learning (AUC: 0.910) significantly outperformed CPH (AUC: 0.793). |
| IVF Blastocyst Yield Prediction [8] | Linear Regression | LightGBM (ML) | R², MAE | ML models (R²: 0.673-0.676) outperformed linear regression (R²: 0.587). |
| Sepsis Mortality Prediction [17] | Logistic Regression | Random Forest (ML) | AUC | Random Forest (AUC: 0.999) demonstrated advantages over logistic regression. |
| Heart Failure Preventable Utilization [19] | Logistic Regression | Deep Learning | Precision at 1% | Deep learning (Precision: 43%) outperformed enhanced logistic regression (Precision: 30%). |

The performance advantage of ML and DL models is particularly pronounced in complex pattern recognition tasks. For instance, in a study on heart failure, deep learning models achieved a precision of 43% at the 1st percentile for identifying preventable hospitalizations, compared to 30% for enhanced logistic regression [19]. Similarly, in colon cancer survival prediction, deep learning models like Long Short-Term Memory (LSTM) networks achieved Area Under the Curve (AUC) values of 0.910, substantially outperforming traditional Cox regression (AUC: 0.793) [20]. These results suggest that as task complexity increases, the relative performance of more flexible models improves.

Experimental Protocols in Male Infertility Research

The application of these modeling paradigms in male infertility research follows rigorous experimental protocols designed to ensure robust and generalizable results.

Dataset Preparation and Preprocessing

Research in sperm analysis typically relies on retrospective datasets compiled from clinical andrological evaluations. For example, a study using machine learning to identify infertility-related markers utilized two distinct Italian datasets: one (UNIROMA) encompassing semen analysis, sex hormones, and testicular ultrasound parameters (n=2,334 subjects), and another (UNIMORE) incorporating semen analysis, hormones, biochemical examinations, and environmental pollution parameters (n=11,981 records) [11]. Key preprocessing steps include:

- Data Cleaning: Handling missing values through imputation methods (e.g., using nearest neighbor values or most frequent values) [11].

- Normalization: Scaling numerical variables to ensure stable model training [11].

- Class Definition: Categorizing outcomes based on clinical criteria (e.g., normozoospermia, altered semen parameters, and azoospermia according to WHO standards) [11].

- Dataset Splitting: Randomly dividing data into training (70-80%) and validation (20-30%) sets, with some studies including an additional test set for final evaluation [8] [17].
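The imputation and normalization steps listed above can be illustrated with the following plain-Python sketch. It assumes a simple 1-nearest-neighbor rule (Euclidean distance over the other observed columns) and min-max scaling; the exact distance measure, number of neighbors, and scaling used in [11] are not specified here, and the helper names and column meanings in the example are hypothetical.

```python
from collections import Counter


def impute_most_frequent(column):
    """Categorical column: replace None with the most frequent observed value."""
    mode = Counter(v for v in column if v is not None).most_common(1)[0][0]
    return [mode if v is None else v for v in column]


def impute_nearest_neighbor(rows, j):
    """Numeric column j: copy the value from the closest row that has column j observed.

    Closeness is Euclidean distance over the other columns observed in both rows.
    """
    donors = [r for r in rows if r[j] is not None]
    out = []
    for r in rows:
        if r[j] is not None:
            out.append(r[j])
            continue

        def distance(d):
            shared = [k for k in range(len(r))
                      if k != j and r[k] is not None and d[k] is not None]
            return sum((r[k] - d[k]) ** 2 for k in shared) ** 0.5

        out.append(min(donors, key=distance)[j])
    return out


def min_max_normalize(column):
    """Rescale a numeric column to [0, 1]; a constant column maps to 0."""
    lo, hi = min(column), max(column)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in column]


# Hypothetical records: [hormone level, sperm concentration (million/mL)]; None = missing
rows = [[5.0, 20.0], [6.0, None], [9.0, 60.0]]
concentration = impute_nearest_neighbor(rows, 1)
print(concentration)                                     # [20.0, 20.0, 60.0]
print(min_max_normalize(concentration))                  # [0.0, 0.0, 1.0]
print(impute_most_frequent(["yes", None, "no", "yes"]))  # ['yes', 'yes', 'no', 'yes']
```

In a real pipeline, the donor rows, modes, and scaling bounds would normally be computed on the training set only and then applied to the validation set, to avoid leaking information across the split.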

Model Training and Validation

The model development process involves simultaneous training of multiple algorithms with cross-validation to identify the best performer.

- Model Selection: Researchers typically train multiple models simultaneously. For instance, a blastocyst yield prediction study compared Support Vector Machine (SVM), LightGBM, and XGBoost alongside traditional linear regression [8].

- Hyperparameter Tuning: Model configuration parameters are optimized using techniques like randomized search with cross-validation [11].

- Feature Selection: Methods such as Recursive Feature Elimination (RFE) are employed to identify the most predictive variables and reduce overfitting [8]. Least Absolute Shrinkage and Selection Operator (LASSO) regression is also used for variable selection in both regression and ML contexts [16] [17].

- Validation Method: K-fold cross-validation (commonly 5- or 10-fold) is standard practice, where the dataset is partitioned into k subsets, with each subset serving as a validation set while the remaining k-1 subsets are used for training [11].
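The k-fold scheme described above can be sketched as an index generator. This is a minimal illustration with a hypothetical function name; it shuffles once with a fixed seed and assigns every sample to exactly one validation fold.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; each sample lands in exactly one validation fold."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[f::k] for f in range(k)]  # k roughly equal, disjoint subsets
    for f in range(k):
        val = sorted(folds[f])
        train = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train, val


for train, val in k_fold_indices(10, k=5):
    print(len(train), len(val))  # prints "8 2" for each of the five folds
```

Each candidate model would be trained on `train` and scored on `val` for every fold, and the k scores averaged to compare algorithms or hyperparameter settings.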

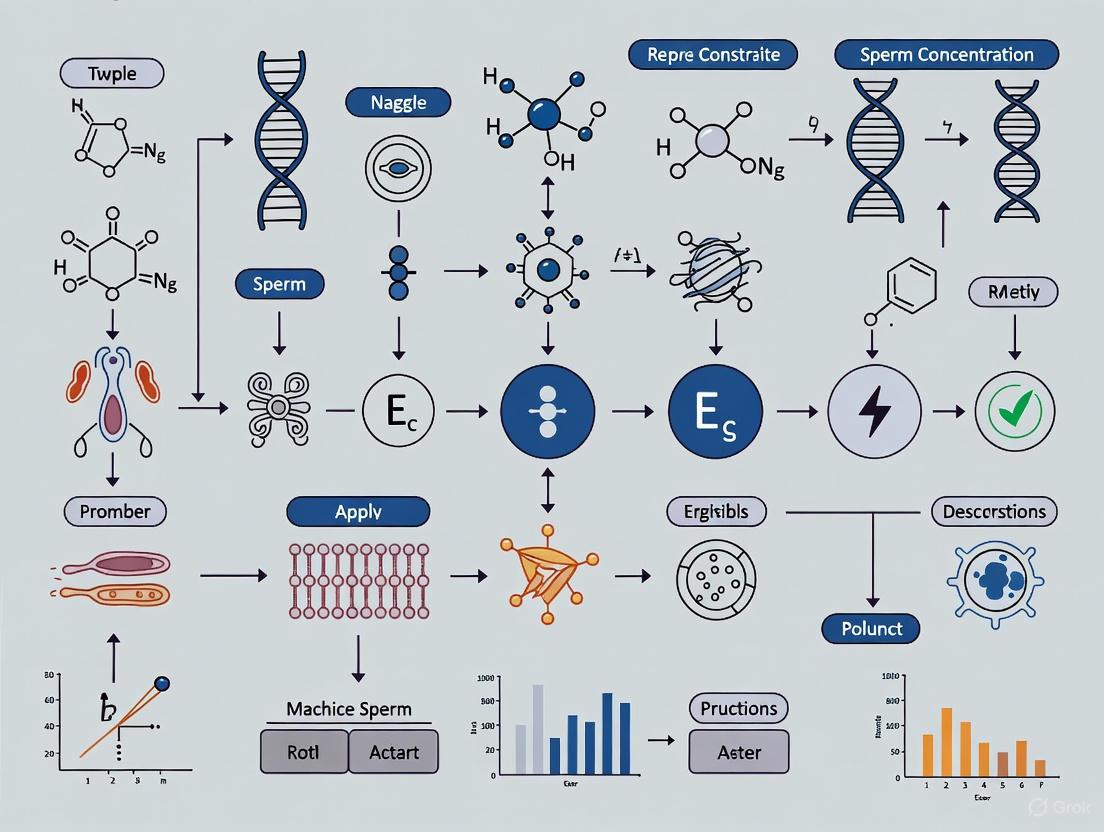

Diagram 1: Experimental workflow for comparing modeling paradigms.

Performance Evaluation Metrics

The choice of evaluation metrics depends on whether the task is regression or classification:

- Classification Tasks (e.g., normal vs. abnormal morphology): Use Area Under the Receiver Operating Characteristic Curve (AUC), Precision, Recall, F1-score, and Accuracy [11] [15] [17].

- Regression Tasks (e.g., predicting sperm concentration): Use R-squared (R²), Mean Absolute Error (MAE), Mean Squared Error (MSE), and Root Mean Squared Error (RMSE) [16] [21] [8].
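The regression metrics listed above can be computed directly from their definitions. The sketch below assumes R² = 1 − SS_res / SS_tot, with SS_res the sum of squared errors and SS_tot the total sum of squares about the mean of the observed values; the function name and concentration values are hypothetical.

```python
import math


def regression_metrics(y_true, y_pred):
    """MAE, MSE, RMSE and R^2 for a continuous target such as sperm concentration."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    mse = ss_res / n
    return {
        "MAE": sum(abs(e) for e in errors) / n,
        "MSE": mse,
        "RMSE": math.sqrt(mse),
        "R2": 1.0 - ss_res / ss_tot,
    }


# Hypothetical measured vs predicted concentrations (million/mL)
metrics = regression_metrics([10, 20, 30, 40], [12, 18, 33, 37])
print({k: round(v, 3) for k, v in metrics.items()})
# {'MAE': 2.5, 'MSE': 6.5, 'RMSE': 2.55, 'R2': 0.948}
```

MAE and RMSE are in the target's own units (here, million/mL); RMSE weights large errors more heavily than MAE, while R² is unitless.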

Application in Sperm Analysis Research

In male infertility research, machine learning applications span several critical domains, each with distinct methodological considerations.

Semen Analysis and Infertility Marker Identification

Machine learning models have demonstrated exceptional capability in identifying complex, non-linear relationships between clinical parameters and semen

In machine learning, particularly within sensitive domains like medical diagnostics and biological research, selecting the appropriate performance metrics is not a mere technicality—it is a foundational aspect of model validation that directly impacts interpretability and clinical utility. Metrics such as Accuracy, Area Under the Curve (AUC), Sensitivity, Specificity, and Mean Absolute Error (MAE) each provide a unique lens through which a model's performance can be evaluated. For predictive tasks in andrology, such as sperm concentration prediction, the choice of metric must align with the specific clinical or research question, whether it is a classification task (e.g., classifying samples as normozoospermic or oligozoospermic) or a regression task (e.g., predicting exact concentration values). This guide provides a structured comparison of these key metrics, supported by experimental data and protocols, to inform researchers and drug development professionals in their model assessment processes.

Metric Definitions and Core Trade-offs

The following table summarizes the purpose, calculation, and primary use case for each key metric.

Table 1: Definition and Application of Key Performance Metrics

| Metric | Definition | Calculation Formula | Primary Use Case |
|---|---|---|---|
| Accuracy | Proportion of total correct predictions [22] [23]. | (TP + TN) / (TP + TN + FP + FN) [22] | Overall performance on balanced datasets; provides a general snapshot [22]. |
| Sensitivity (Recall) | Proportion of actual positives correctly identified [24] [25]. | TP / (TP + FN) [22] [24] | Critical for "rule-out" tests; minimizes false negatives [26] [25]. |
| Specificity | Proportion of actual negatives correctly identified [24] [25]. | TN / (TN + FP) [22] [24] | Critical for "rule-in" tests; minimizes false positives [26] [25]. |
| AUC-ROC | Overall model discriminative ability across all thresholds [22] [23]. | Area under the ROC curve [22] | Evaluating model ranking capability, independent of a single threshold [22] [23]. |
| MAE | Average magnitude of absolute errors in regression [27] [28]. | (1/n) * Σ \|y_i - ŷ_i\| [27] | Regression tasks; interpreting error in the target variable's original units [27] [28]. |
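To make the formulas in Table 1 concrete, the following minimal Python sketch computes accuracy, sensitivity, and specificity from paired labels. It assumes a binary encoding in which `1` marks the positive class (here, hypothetically, oligozoospermic) and `0` the negative class; the function names and the toy labels are illustrative, not taken from any particular library or study.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, TN, FP, FN) for a binary task."""
    tp = tn = fp = fn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive:
            if t == positive:
                tp += 1
            else:
                fp += 1
        else:
            if t == positive:
                fn += 1
            else:
                tn += 1
    return tp, tn, fp, fn


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, sensitivity and specificity as defined in Table 1."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred, positive)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        # nan when a class is absent, rather than raising ZeroDivisionError
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }


# Hypothetical labels: 1 = oligozoospermic, 0 = normozoospermic
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 1, 0]
print(classification_metrics(y_true, y_pred))
# {'accuracy': 0.75, 'sensitivity': 0.6666666666666666, 'specificity': 0.8}
```

Note how one missed positive out of three costs a third of the sensitivity, while one false alarm out of five negatives costs only a fifth of the specificity; on imbalanced data the two error types weigh very differently.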

A key concept in binary classification is the inherent trade-off between Sensitivity and Specificity, which is governed by the classification threshold [24] [26]. Moving along the ROC curve, raising the threshold to reduce false positives (increasing specificity) typically decreases sensitivity (more false negatives), and vice versa [26] [29]. The optimal operating point on this curve is a decision informed by the relative costs of false-positive and false-negative errors in the specific application context.
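The trade-off can be made explicit by sweeping the threshold over a model's scores and charging a cost to each error type. The sketch below is a hypothetical illustration (scores, labels, and cost weights are invented for demonstration, and `pick_threshold` is not a library function): it returns the candidate threshold with the lowest weighted cost `cost_fn * FN + cost_fp * FP`, and it assumes both classes are present in `y_true`.

```python
def error_counts(y_true, scores, threshold):
    """Count TP, TN, FP, FN when score >= threshold is called positive."""
    tp = tn = fp = fn = 0
    for t, s in zip(y_true, scores):
        called_positive = s >= threshold
        if t == 1 and called_positive:
            tp += 1
        elif t == 1:
            fn += 1
        elif called_positive:
            fp += 1
        else:
            tn += 1
    return tp, tn, fp, fn


def pick_threshold(y_true, scores, cost_fn=1.0, cost_fp=1.0):
    """Try every observed score as a threshold and keep the cheapest one.

    Returns (threshold, cost, sensitivity, specificity).
    """
    best = None
    for thr in sorted(set(scores)):
        tp, tn, fp, fn = error_counts(y_true, scores, thr)
        cost = cost_fn * fn + cost_fp * fp
        if best is None or cost < best[1]:
            best = (thr, cost, tp / (tp + fn), tn / (tn + fp))
    return best


# Hypothetical model scores (probability of oligozoospermia) and true labels
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.35, 0.4, 0.2, 0.1]
print(pick_threshold(y_true, scores, cost_fn=5, cost_fp=1))  # missed cases are expensive
print(pick_threshold(y_true, scores, cost_fn=1, cost_fp=5))  # false alarms are expensive
```

With these toy scores, weighting false negatives more heavily selects a lower threshold (0.35, sensitivity 1.0), whereas weighting false positives more heavily selects a higher one (0.6, specificity 1.0), which is the trade-off described above.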

Quantitative Metric Comparison and Interpretation

To move beyond definitions, it is essential to understand how these metrics interact and how their values should be interpreted in a practical context, such as evaluating different diagnostic models.

Table 2: Metric Comparison and Interpretation Guide

| Metric | Range | Ideal Value | Interpretation in Context | Strengths | Weaknesses |
|---|---|---|---|---|---|
| Accuracy | 0 to 1 | 1 | In a balanced dataset, an accuracy of 0.95 means 95% of all samples were correctly classified. | Intuitive and easy to compute [22]. | Misleading with class imbalance [22] [23]. |
| Sensitivity | 0 to 1 | 1 | A sensitivity of 0.98 means the model detects 98% of truly oligozoospermic samples. | Excellent for ruling out disease [26]. | Does not consider false positives [24]. |
| Specificity | 0 to 1 | 1 | A specificity of 0.94 means 94% of normozoospermic samples are correctly identified as negative. | Excellent for confirming (ruling in) a condition [26]. | Does not consider false negatives [24]. |
| AUC-ROC | 0 to 1 | 1 | An AUC of 0.90 means there is a 90% probability that a randomly chosen positive sample is ranked higher than a randomly chosen negative one [22]; 0.5 corresponds to random ranking. | Single measure of overall performance; threshold-independent [22] [23]. | Does not indicate which threshold to use [26]. |
| MAE | 0 to ∞ | 0 | An MAE of 1.5 million/mL means predictions are, on average, 1.5 million/mL off from the true concentration. | Interpreted in original units; less sensitive to outliers than squared-error metrics such as RMSE [27] [28]. | Does not indicate the direction of error (over- vs. under-prediction) [27]. |
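Two of the interpretations in Table 2 can be checked directly in code. The sketch below (plain Python, illustrative function names, invented data) computes AUC as the fraction of positive-negative pairs ranked correctly, counting ties as half, which is the probabilistic reading given in the table. It also contrasts MAE with the mean signed error, which exposes the direction of error that MAE hides. The quadratic pairwise loop is chosen for clarity only; a rank-based formula or a library routine would be used on real datasets.

```python
def auc_pairwise(y_true, scores):
    """AUC = P(random positive scores higher than random negative); ties count 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def mae(y_true, y_pred):
    """Mean absolute error, in the target's own units (e.g. million/mL)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_signed_error(y_true, y_pred):
    """Positive = systematic over-prediction; negative = under-prediction."""
    return sum(p - t for t, p in zip(y_true, y_pred)) / len(y_true)


labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.35, 0.4, 0.2, 0.1]
print(auc_pairwise(labels, scores))  # 8 of the 9 pairs are ordered correctly

true_conc = [10.0, 20.0, 30.0]   # hypothetical concentrations, million/mL
pred_a = [11.5, 21.5, 31.5]      # always 1.5 too high
pred_b = [8.5, 21.5, 28.5]       # errors of mixed sign
print(mae(true_conc, pred_a), mean_signed_error(true_conc, pred_a))
print(mae(true_conc, pred_b), mean_signed_error(true_conc, pred_b))
```

Both hypothetical prediction sets have an MAE of 1.5 million/mL, but only the mean signed error reveals that the first set systematically over-predicts, so reporting the two together guards against the weakness listed in the table.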

Experimental Protocols for Metric Evaluation

Robust evaluation requires a standardized experimental protocol. A rigorous assessment of a sperm concentration prediction model proceeds through a series of key stages, from data preparation to final metric calculation.

Detailed Experimental Methodology

To execute this workflow, the following detailed methodologies should be employed:

- Dataset Construction: Assemble a dataset of sperm samples with ground-truth concentration values, ideally from multiple sources to enhance generalizability. For a classification task, samples should be labeled according to WHO guidelines (e.g., oligozoospermic: concentration < 15 million/mL). It is critical to document pre-processing steps like image normalization or noise reduction.

- Data Splitting: Hold out a stratified test set, then apply stratified k-fold cross-validation (e.g., k=5 or k=10) to the remaining data to generate training and validation folds [30]. Stratification preserves the proportion of each class (e.g., normozoospermic vs. oligozoospermic) in every split, providing a more reliable performance estimate, especially for imbalanced datasets.

- Model Training & Threshold Selection: Train the model on the training set. For classification models that output probabilities (e.g., Logistic Regression, Random Forests

Performance Metrics for ML in Sperm Concentration Prediction

The application of machine learning (ML) in male reproductive health is transforming the identification of key predictors for conditions like low sperm concentration. Traditional statistical methods often struggle with the complex, non-linear relationships between multiple factors, whereas ML algorithms excel at uncovering these intricate interactions from high-dimensional data [31].

The table below summarizes the performance and key findings of recent studies that employed machine learning to identify predictors of male fertility and sperm quality.

Table 1: Machine Learning Models for Predicting Male Fertility and Sperm Quality

| Study Focus | ML Models Used | Key Performance Metrics | Top Identified Predictors |
|---|---|---|---|
| Predicting Sperm Count [31] | Random Forest (RF), Stochastic Gradient Boosting (SGB), LASSO, Ridge Regression, XGBoost | Model performance evaluated using symmetric mean absolute percentage error, relative absolute error, root relative squared error, and root mean squared error. | Sleep Time (ST), Alpha-Fetoprotein (AFP), Body Fat (BF), Systolic Blood Pressure (SBP), and Blood Urea Nitrogen (BUN). |
| Predicting Male Infertility Risk from Serum [32] | Prediction One, AutoML Tables | AUC of 74.42% (Prediction One); AUC-ROC of 74.2% (AutoML Tables). | FSH (most important), Testosterone/Estradiol (T/E2) ratio, Luteinizing Hormone (LH). |
| Predicting Couples' Fecundity [14] | Elastic Net (ElNet) | AUC of 0.73 (95% CI: 0.61–0.84) for pregnancy at 12 cycles. | A weighted Sperm Quality Index (ElNet-SQI) comprising 8 semen parameters and sperm mitochondrial DNA copy number (mtDNAcn). |
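The regression error measures used in [31] can each be written in a few lines. The following sketch is an illustration under stated assumptions rather than the study's code: SMAPE has several published variants, and the one below uses the mean of |actual| and |forecast| in the denominator (treating 0/0 terms as zero). R² is included because it equals 1 − RRSE², since both compare the model against a baseline that always predicts the mean.

```python
import math


def regression_errors(y, y_hat):
    """SMAPE (%), RAE, RRSE, RMSE and R^2 for paired actual/predicted values."""
    n = len(y)
    mean_y = sum(y) / n
    abs_err = [abs(a - f) for a, f in zip(y, y_hat)]
    sq_err = [(a - f) ** 2 for a, f in zip(y, y_hat)]
    # SMAPE variant (assumption): denominator is the mean of |actual| and |forecast|
    smape = 100.0 / n * sum(
        e / ((abs(a) + abs(f)) / 2)
        for e, a, f in zip(abs_err, y, y_hat)
        if abs(a) + abs(f) > 0
    )
    # RAE and RRSE are relative to a baseline that always predicts mean_y
    rae = sum(abs_err) / sum(abs(a - mean_y) for a in y)
    rrse = math.sqrt(sum(sq_err) / sum((a - mean_y) ** 2 for a in y))
    rmse = math.sqrt(sum(sq_err) / n)
    return {"smape": smape, "rae": rae, "rrse": rrse, "rmse": rmse, "r2": 1.0 - rrse ** 2}


actual = [10.0, 20.0, 30.0, 40.0]       # hypothetical sperm counts
predicted = [12.0, 18.0, 33.0, 37.0]
print(regression_errors(actual, predicted))
```

RAE and RRSE below 1 mean the model beats the mean-only baseline; here RAE is 0.25, i.e. the model's total absolute error is a quarter of the baseline's.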

These studies demonstrate that ML models can achieve moderate predictive power (AUCs of roughly 0.73–0.74 where reported) and can surface influential factors that differ from those highlighted by traditional correlation analyses. For instance, novel factors such as sleep time and alpha-fetoprotein emerged as top predictors in an ML analysis of a general health screening database, offering fresh insights into sperm count regulation [31].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for future research, below are the detailed methodologies from two key studies that utilized machine learning approaches.

Table 2: Summary of Key Experimental Protocols

| Protocol Component | Study on Sperm Count Predictors [31] | Study on Male Infertility Risk from Hormones [32] |
|---|---|---|
| Data Source | MJ Health Research Foundation database, a major health screening center in Taiwan. | Medical records of 3,662 patients who underwent fertility testing. |
| Cohort Details | 1,375 eligible male subjects (average age 33.22 ± 4.36 years) from annual health screenings (2010-2017). | Patients were classified by semen analysis results: non-obstructive azoospermia (NOA), obstructive azoospermia (OA), cryptozoospermia, oligo/asthenozoospermia, and normal. |
| Predictor Variables | 30 health screening indicators and questionnaire variables, including lifestyle and metabolic factors. | Age, LH, FSH, prolactin (PRL), testosterone (T), estradiol (E2), and T/E2 ratio. |
| Outcome Variable | Sperm count. | Total motile sperm count, with a value below 9.408 × 10^6 defined as abnormal. |
| ML Preprocessing & Analysis | Five ML algorithms (RF, SGB, LASSO, Ridge, XGBoost) were used and compared. Models were trained to identify major risk factors associated with sperm count. | Two commercial AI software platforms (Prediction One and AutoML Tables) were used to build predictive models from hormone data alone. Feature importance was ranked by the models. |
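Because the outcome in [32] is defined by a total motile sperm count (TMSC) cutoff rather than by concentration alone, a labeling step is needed before any model is trained. A minimal sketch follows. It assumes TMSC is computed as semen volume × concentration × the fraction of motile sperm; which motility fraction (total or progressive) the study used is not stated here, so that choice is an assumption, and the function names are hypothetical.

```python
ABNORMAL_TMSC_CUTOFF = 9.408  # million sperm (9.408 x 10^6), cutoff reported for [32]


def total_motile_sperm_count(volume_ml, conc_million_per_ml, motile_percent):
    """TMSC in millions.

    ASSUMPTION: motile_percent is total (progressive + non-progressive) motility.
    """
    return volume_ml * conc_million_per_ml * motile_percent / 100.0


def is_abnormal(volume_ml, conc_million_per_ml, motile_percent):
    """Binary outcome label: True when TMSC falls below the cutoff."""
    tmsc = total_motile_sperm_count(volume_ml, conc_million_per_ml, motile_percent)
    return tmsc < ABNORMAL_TMSC_CUTOFF


# Hypothetical samples: (volume mL, concentration million/mL, motility %)
print(total_motile_sperm_count(2.0, 16.0, 25.0), is_abnormal(2.0, 16.0, 25.0))  # 8.0 True
print(total_motile_sperm_count(3.0, 20.0, 50.0), is_abnormal(3.0, 20.0, 50.0))  # 30.0 False
```

In the first hypothetical sample the concentration (16 million/mL) is above the WHO cutoff of 15 million/mL, yet the sample is labeled abnormal because of its low volume and motility, which is why outcome definitions need to be reported explicitly when comparing studies.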

Signaling Pathways and Logical Workflows

The predictors identified by machine learning models exert their influence on sperm concentration through specific biological pathways. The diagram below synthesizes findings from multiple studies to illustrate the complex interplay between lifestyle, environmental, hormonal, and cellular factors in regulating male fertility.

Diagram 1: Integrated Pathways Affecting Sperm Concentration and Fertility. This diagram illustrates how lifestyle, environmental, and metabolic factors converge on key physiological mechanisms to impair sperm production and function.

As shown, factors like tobacco use, alcohol consumption, and exposure to environmental toxins (e.g., phthalates, pesticides) can induce oxidative stress by generating reactive oxygen species (ROS) [33] [34]. This oxidative stress damages sperm cell membranes, proteins, and crucially, DNA integrity, leading to high sperm DNA fragmentation (SDF) [33]. It is also implicated in reducing the sperm mitochondrial DNA copy number (mtDNAcn), a biomarker for overall sperm fitness and energy production capability [14].

Concurrently, factors such as obesity, psychological stress, and certain endocrine-disrupting chemicals (EDCs) disrupt the hypothalamic-pituitary-gonadal (HPG) axis [34] [35]. This disruption can produce a hormonal imbalance, characterized by low testosterone, elevated estrogen, and high FSH levels, that is detrimental to spermatogenesis and directly reduces sperm concentration [33] [32]. The combination of poor sperm concentration, DNA damage, and inadequate cellular energy ultimately leads to impaired fertility and a longer time to pregnancy [14].

The Scientist's Toolkit: Research Reagent Solutions

To investigate the predictors and pathways outlined, researchers rely on a suite of specialized reagents and materials. The following table details key solutions essential for conducting studies in this field.

Table 3: Essential Research Reagents and Materials for Male Fertility Studies

| Reagent/Material | Primary Function in Research | Application Example |
|---|---|---|
| WHO Semen Analysis Kit | Standardized assessment of basic semen parameters (volume, concentration, motility, morphology) according to WHO guidelines. | The foundational diagnostic tool for classifying patient cohorts (e.g., normozoospermic vs. oligozoospermic) in clinical studies [33] [35]. |
| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | Quantitative measurement of reproductive hormone levels (FSH, LH, Testosterone, Estradiol, Prolactin) in blood serum. | Used to obtain hormonal predictor variables for ML models and to diagnose endocrine disruption [35] [32]. |
| Sperm Chromatin Dispersion (SCD) Test Kit | Evaluation of sperm DNA fragmentation (SDF), a key biomarker of DNA integrity. | Critical for assessing the impact of oxidative stress and environmental toxins on sperm genetic quality [33]. |
| Eosin-Nigrosin Staining Kit | Differential staining of viable (unstained) versus non-viable (pink/red) spermatozoa. | Used in manual semen analysis protocols to assess sperm viability, as per WHO guidelines [35]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | High-precision quantification of endogenous hormones and endocrine-disrupting chemicals (EDCs) in biological samples like urine or serum. | Employed in advanced exposure studies to precisely measure concentrations of dozens of EDCs (e.g., bisphenols, phthalates) and hormones for ML analysis [36] [37]. |
| qPCR Assay for mtDNAcn | Quantitative measurement of mitochondrial DNA copy number in sperm cells. | Used to assess sperm bioenergetic status and its correlation with fertility outcomes, as a novel biomarker in predictive models [14]. |

Algorithmic Deep Dive: Methodologies and Real-World Applications for Sperm Concentration Prediction

In the rapidly evolving field of reproductive medicine, machine learning (ML) has emerged as a transformative force, particularly in addressing complex diagnostic challenges such as predicting semen quality and fertility treatment outcomes. The application of supervised learning algorithms provides powerful tools for analyzing multidimensional clinical data and identifying subtle patterns that may elude conventional statistical methods. This review objectively compares the performance of three ML workhorses—XGBoost, Random Forest, and Support Vector Machines (SVM)—within the specific context of sperm concentration prediction research, a critical area in male fertility assessment.

Accurate prediction of semen parameters is essential for diagnosing male factor infertility, which contributes to approximately 50% of all infertility cases [38]. Traditional semen analysis, while foundational, suffers from subjectivity and variability, creating an urgent need for more standardized, data-driven approaches [13] [38]. Machine learning algorithms, with their capacity to handle complex, heterogeneous datasets and model nonlinear relationships, offer promising solutions to these challenges, potentially revolutionizing andrology laboratories and clinical decision-making [13] [38].

Algorithm Fundamentals and Comparative Mechanics

Random Forest: The Ensemble Robust

Random Forest is an ensemble method that constructs multiple decision trees during training and outputs predictions based on their collective voting (for classification) or averaging (for regression) [39]. Its robustness stems from injecting randomness into both data sampling (bootstrap aggregation, or bagging) for each tree and the subset of features considered at each split, which reduces the overfitting to which individual decision trees are prone [39]. The algorithm handles datasets with numerous features without significant performance deterioration and, depending on the implementation, can accommodate missing data without complex imputation techniques [39].
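The two sources of randomness can be illustrated with a deliberately simplified forest. The sketch below is hypothetical: each "tree" is a single-split stump (a real Random Forest grows deeper trees and draws a fresh feature subset at every split; for a stump the two coincide), trained on a bootstrap sample with a random subset of features, and predictions are averaged as in regression. An established implementation such as scikit-learn's `RandomForestRegressor` would be used in practice.

```python
import random


def fit_stump(X, y, feature_ids):
    """Best single split (lowest squared error) over the candidate features only."""
    best = None
    for f in feature_ids:
        for thr in sorted({row[f] for row in X}):
            left = [t for row, t in zip(X, y) if row[f] <= thr]
            right = [t for row, t in zip(X, y) if row[f] > thr]
            if not left or not right:
                continue  # this threshold leaves one side empty
            ml, mr = sum(left) / len(left), sum(right) / len(right)
            sse = sum((t - ml) ** 2 for t in left) + sum((t - mr) ** 2 for t in right)
            if best is None or sse < best[0]:
                best = (sse, f, thr, ml, mr)
    if best is None:  # no usable split: fall back to a constant prediction
        m = sum(y) / len(y)
        return (None, None, m, m)
    return best[1:]


def predict_stump(stump, row):
    f, thr, ml, mr = stump
    if f is None:
        return ml
    return ml if row[f] <= thr else mr


def fit_forest(X, y, n_trees=50, max_features=1, seed=0):
    rng = random.Random(seed)
    n, p = len(X), len(X[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]   # bootstrap: rows drawn with replacement
        feats = rng.sample(range(p), max_features)   # random feature subset for this stump
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], feats))
    return forest


def predict_forest(forest, row):
    return sum(predict_stump(s, row) for s in forest) / len(forest)  # averaging = regression


# Hypothetical data: feature 0 is informative, feature 1 is constant (uninformative)
X = [[i / 10, 1.0] for i in range(10)]
y = [10.0 if i < 5 else 40.0 for i in range(10)]
forest = fit_forest(X, y, n_trees=50, max_features=1, seed=3)
print(predict_forest(forest, [0.0, 1.0]), predict_forest(forest, [0.9, 1.0]))
```

Stumps that happen to draw the uninformative feature contribute only a constant, so averaging dilutes rather than corrupts the signal; this is the variance-reducing effect of decorrelated trees.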

XGBoost: The Sequential Optimizer

XGBoost (Extreme Gradient Boosting) represents an advanced implementation of gradient boosting that builds trees sequentially, with each new tree correcting errors made by previous ones [39] [40]. Its unique optimization objective and regularization techniques enhance efficiency and accuracy while controlling model complexity [39]. A key innovation in XGBoost is its compressed column-based storage structure, where data is pre-sorted, allowing each attribute to be processed only once and enabling parallel computation for split finding [39]. This makes it exceptionally efficient for large-scale datasets common in medical research.
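The split-finding step that this storage layout accelerates can be sketched as follows. Each feature column is sorted once (`presort`), and the order can be reused in every boosting round as the gradients change; a single left-to-right scan with running gradient sums then scores every candidate split with the gain formula from the XGBoost paper. This is a simplified, single-feature illustration assuming squared-error loss (gradient `g = prediction - target`, Hessian `h = 1`); it omits the library's compressed block storage and parallelism, and the function names are hypothetical.

```python
def presort(values):
    """Sort a feature column once; the resulting order can be reused every round."""
    return sorted(range(len(values)), key=lambda i: values[i])


def best_split(values, order, grads, hess, lam=1.0, gamma=0.0):
    """Exact greedy scan over one pre-sorted feature using gradient statistics.

    gain = 1/2 * [G_L^2/(H_L+lam) + G_R^2/(H_R+lam) - G^2/(H+lam)] - gamma
    Returns (threshold, gain); threshold is None if no split has positive gain.
    """
    G, H = sum(grads), sum(hess)
    g_left = h_left = 0.0
    best_thr, best_gain = None, 0.0
    for pos in range(len(order) - 1):
        i, nxt = order[pos], order[pos + 1]
        g_left += grads[i]
        h_left += hess[i]
        if values[i] == values[nxt]:
            continue  # cannot split between identical feature values
        g_right, h_right = G - g_left, H - h_left
        gain = 0.5 * (g_left ** 2 / (h_left + lam)
                      + g_right ** 2 / (h_right + lam)
                      - G ** 2 / (H + lam)) - gamma
        if gain > best_gain:
            best_thr, best_gain = (values[i] + values[nxt]) / 2, gain
    return best_thr, best_gain


# Hypothetical first-round statistics: all predictions start at 0
x = [1.0, 2.0, 3.0, 4.0]
target = [0.0, 0.0, 10.0, 10.0]
order = presort(x)                       # computed once, before training
grads = [0.0 - t for t in target]        # g_i = prediction - target
hess = [1.0] * len(x)                    # h_i = 1 for squared error
print(best_split(x, order, grads, hess, lam=1.0))
```

In later rounds only `grads` and `hess` change, so `order` is passed in again without re-sorting, which is the saving the column-based layout provides.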

Support Vector Machines: The Margin Maximizer

SVM operates on the principle of finding the optimal hyperplane that maximizes the margin between different classes in the feature space [41]. For linearly inseparable data, SVM utilizes kernel functions to transform input data into higher-dimensional spaces where effective separation becomes possible [41]. The linear SVM variant, which employs a simple linear kernel, often demonstrates strong performance with fewer computational demands compared to nonlinear alternatives, making it suitable for many clinical prediction tasks where interpretability is valued [41].
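A linear SVM can be trained in its primal soft-margin form with stochastic sub-gradient steps. The sketch below follows the Pegasos scheme (step size 1/(λt)) on the hinge loss. For brevity it folds the bias into the weight vector as a constant feature, which also regularizes the bias, a common simplification. Labels must be encoded as −1/+1, kernelized (non-linear) SVMs are not covered, and the data and function names are hypothetical.

```python
import random


def _augment(x):
    """Append a constant 1.0 so the last weight acts as a (regularized) bias."""
    return list(x) + [1.0]


def train_linear_svm(X, y, lam=0.1, epochs=500, seed=0):
    """Pegasos-style SGD on lam/2 * ||w||^2 + mean_i max(0, 1 - y_i * <w, x_i>)."""
    rng = random.Random(seed)
    data = [_augment(x) for x in X]
    w = [0.0] * len(data[0])
    t = 0
    for _ in range(epochs):
        for i in rng.sample(range(len(data)), len(data)):   # one shuffled pass
            t += 1
            eta = 1.0 / (lam * t)                            # Pegasos step-size schedule
            margin = y[i] * sum(wj * xj for wj, xj in zip(w, data[i]))
            w = [(1.0 - eta * lam) * wj for wj in w]         # step for the L2 term
            if margin < 1.0:                                 # hinge loss active
                w = [wj + eta * y[i] * xj for wj, xj in zip(w, data[i])]
    return w


def predict(w, x):
    score = sum(wj * xj for wj, xj in zip(w, _augment(x)))
    return 1 if score >= 0 else -1


# Hypothetical, linearly separable two-feature data (e.g. standardized predictors)
X = [[1, 1], [2, 1], [1, 2], [2, 2], [-1, -1], [-2, -1], [-1, -2], [-2, -2]]
y = [1, 1, 1, 1, -1, -1, -1, -1]
w = train_linear_svm(X, y)
print([predict(w, x) for x in X])
```

The learned weights can be read directly as per-feature contributions to the decision score, which is the interpretability advantage of the linear variant noted above.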

Table 1: Fundamental Characteristics of ML Algorithms in Sperm Analysis

| Algorithm | Core Mechanism | Key Strengths | Common Applications in Reproductive Medicine |
|---|---|---|---|
| Random Forest | Ensemble of decorrelated decision trees via bagging and random feature selection | High accuracy, robust to outliers and overfitting, handles missing data | Classification of fertilization success, semen quality assessment [39] [42] |
| XGBoost | Sequential building of trees with gradient boosting and regularization | High execution speed, handles mixed data types, superior performance in many domains | Predicting semen parameters from lifestyle factors, blastocyst yield prediction [39] [40] [8] |
| SVM | Finding optimal separating hyperplane with maximum margin | Effective in high-dimensional spaces, memory efficient, versatile with kernel functions | Pregnancy outcome prediction, semen parameter classification [41] [38] |

Performance Comparison in Semen Quality Prediction

Direct Performance Metrics

Recent studies have provided direct comparisons of these algorithms in reproductive medicine contexts. In developing a predictive model for conventional in vitro fertilization outcomes, researchers found that logistic regression surprisingly outperformed both Random Forest and XGBoost, with mean AUC values of 0.734±0.049 versus 0.714±0.034 and 0.697±0.038, respectively [42]. This highlights that simpler models may sometimes achieve superior performance in specific clinical prediction tasks, possibly due to their lower vulnerability to overfitting on limited datasets.

For intrauterine insemination (IUI) success prediction, linear SVM demonstrated superior performance compared to multiple ensemble methods including Random Forest, with an AUC of 0.78 [41]. The study analyzed 9,501 IUI cycles and found that pre-wash sperm concentration, ovarian stimulation protocol, cycle length, and maternal age were the strongest predictors, with paternal age being the least informative [41].

In quantitative blastocyst yield prediction for IVF cycles, XGBoost, LightGBM, and SVM showed remarkably similar performance (R²: 0.673-0.676), all significantly outperforming traditional linear regression (R²: 0.587) [8]. Researchers ultimately selected LightGBM as the optimal model due to its comparable accuracy with fewer features and superior interpretability [8].

Individual Algorithm Performance in Specialized Tasks

Beyond direct comparisons, each algorithm has demonstrated strengths in specific reproductive medicine applications. XGBoost has shown particular utility in predicting semen quality based on modifiable lifestyle factors, with AUC values ranging from 0.648 to 0.697 for parameters like semen volume, sperm concentration, and motility [40]. Feature importance analysis revealed smoking status as the major factor affecting semen volume, sperm concentration, and motility, while age was the primary predictor for DNA Fragmentation Index [40].

Random Forest has been applied to sperm concentration prediction, with an AUC of 0.72 reported in studies evaluating automated semen analysis systems [38]. Its ensemble structure makes it robust to the heterogeneous cellular populations present in semen samples, which include both sperm and non-sperm cells of comparable size [38].

Table 2: Quantitative Performance Comparison Across Studies

| Study Context | Best Performing Algorithm | Key Performance Metrics | Top Predictive Features Identified |
|---|---|---|---|
| c-IVF Outcome Prediction [42] | Logistic Regression | Mean AUC = 0.734 ± 0.049 | Male age (protective), TPMC (protective), Female BMI (risk), DFI (risk) |
| IUI Pregnancy Outcome [41] | Linear SVM | AUC = 0.78 | Pre-wash sperm concentration, ovarian stimulation protocol, cycle length, maternal age |
| Blastocyst Yield Prediction [8] | LightGBM/XGBoost/SVM | R² = 0.673-0.676, MAE = 0.793-0.809 | Number of extended culture embryos, mean cell number on Day 3, proportion of 8-cell embryos |
| Semen Quality Prediction [40] | XGBoost | AUC range: 0.648-0.697 | Smoking status, age, abstinence period, sleeplessness |

Experimental Protocols and Methodologies

Data Collection and Preprocessing Standards

High-quality data collection and rigorous preprocessing are fundamental to developing reliable prediction models in sperm concentration research. Studies typically incorporate demographic information, clinical parameters, and comprehensive semen analysis results based on World Health Organization guidelines [40] [38]. The dataset from one large-scale study included 5,109 men with complete information on 10 lifestyle factors, general characteristics, and comprehensive semen parameters including semen volume, sperm concentration, progressive and total sperm motility, sperm morphology, and DNA fragmentation index [40].

Data preprocessing commonly involves handling missing values through median/mode imputation when only a few features are missing, while excluding cases with extensive missing data [41] [40]. Feature normalization techniques like PowerTransformer have proven effective for aligning data distributions closer to Gaussian, enhancing model performance [41]. Categorical variables typically undergo one-hot encoding to make them suitable for ML algorithms [41].
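These preprocessing steps (dropping records with extensive missingness, median/mode imputation, and one-hot encoding) can be sketched in plain Python. The field names and the `max_missing=1` rule are hypothetical. In a real pipeline the medians, modes, and category levels should be learned on the training split only and then applied to validation and test data, and the PowerTransformer step is omitted here.

```python
from statistics import median, mode


def impute_and_encode(rows, numeric_cols, categorical_cols, max_missing=1):
    """Drop rows with too many gaps, impute the rest, and one-hot encode categoricals.

    rows: list of dicts, with None marking a missing value.
    """
    cols = numeric_cols + categorical_cols
    kept = [r for r in rows if sum(r[c] is None for c in cols) <= max_missing]
    medians = {c: median(r[c] for r in kept if r[c] is not None) for c in numeric_cols}
    modes = {c: mode(r[c] for r in kept if r[c] is not None) for c in categorical_cols}
    levels = {c: sorted({r[c] for r in kept if r[c] is not None}) for c in categorical_cols}
    encoded = []
    for r in kept:
        vec = {c: (r[c] if r[c] is not None else medians[c]) for c in numeric_cols}
        for c in categorical_cols:
            value = r[c] if r[c] is not None else modes[c]
            for level in levels[c]:
                vec[f"{c}={level}"] = 1 if value == level else 0  # one-hot column
        encoded.append(vec)
    return encoded


rows = [  # hypothetical records
    {"age": 30, "abstinence_days": 3, "smoking": "never"},
    {"age": None, "abstinence_days": 5, "smoking": "current"},
    {"age": 40, "abstinence_days": None, "smoking": "never"},
    {"age": 35, "abstinence_days": 4, "smoking": None},
    {"age": None, "abstinence_days": None, "smoking": None},  # excluded: too many gaps
]
for vec in impute_and_encode(rows, ["age", "abstinence_days"], ["smoking"]):
    print(vec)
```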

Model Training and Validation Frameworks

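Robust validation frameworks are essential for evaluating true model performance. Nested cross-validation with stratification separates hyperparameter tuning (inner loop) from performance estimation (outer loop), assessing generalizability without the optimistic bias that arises when the same folds are used for both [42]. The Synthetic Minority Over-sampling Technique (SMOTE) is frequently employed to address class imbalance in fertility outcomes [42]; to avoid information leakage, it should be applied only within the training folds.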
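Stratification, which underpins both plain and nested cross-validation, can be sketched as below. Samples are first labeled with the WHO cutoff (below 15 million/mL as oligozoospermic, following the definition used earlier in this article), and each class is then shuffled and dealt round-robin across the `k` folds so that every fold keeps roughly the overall class ratio. For nested cross-validation, the same function would be called again on each outer training set to form the inner tuning folds. The function names and data are illustrative.

```python
import random
from collections import defaultdict

WHO_CUTOFF_MILLION_PER_ML = 15.0  # lower reference limit for sperm concentration


def who_labels(concentrations):
    """1 = oligozoospermic (< 15 million/mL), 0 = normozoospermic."""
    return [1 if c < WHO_CUTOFF_MILLION_PER_ML else 0 for c in concentrations]


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs whose class proportions mirror the full data."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        for j, i in enumerate(members):
            folds[j % k].append(i)  # deal each class round-robin across folds
    for f in range(k):
        val = sorted(folds[f])
        train = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train, val


conc = [5, 8, 12, 14, 20, 30, 45, 60, 80, 100]  # hypothetical, million/mL
labels = who_labels(conc)
for train, val in stratified_kfold(labels, k=2, seed=1):
    print(val, sum(labels[i] for i in val), "oligozoospermic in this fold")
```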

Hyperparameter optimization follows systematic approaches, with studies often utilizing grid search or random search with cross-validation to identify optimal parameter combinations [39] [40]. For XGBoost, key hyperparameters include learning rate, number of estimators, maximum depth, and minimum child weight, which are systematically tuned to maximize performance metrics [40].
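A grid search simply evaluates every combination of candidate hyperparameter values under cross-validation and keeps the best one. The sketch below is generic over a dict of parameter lists (the shape one would use for XGBoost's learning rate, number of estimators, maximum depth, and minimum child weight), but to stay self-contained it tunes only the penalty `lam` of a one-feature ridge regression on synthetic data, scored by cross-validated MAE. The model, data, and fold scheme are assumptions for illustration.

```python
from itertools import product


def fit_ridge_1d(x, y, lam):
    """Closed-form ridge slope (intercept unpenalized) for a single predictor."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / (sxx + lam)
    return slope, my - slope * mx


def cv_mae(x, y, lam, k=5):
    """k-fold cross-validated MAE using simple interleaved folds."""
    errors = []
    for f in range(k):
        val = set(range(f, len(x), k))
        train = [i for i in range(len(x)) if i not in val]
        slope, icpt = fit_ridge_1d([x[i] for i in train], [y[i] for i in train], lam)
        errors += [abs(y[i] - (slope * x[i] + icpt)) for i in sorted(val)]
    return sum(errors) / len(errors)


def grid_search(param_grid, score_fn):
    """Evaluate every combination in param_grid; a lower score is better."""
    names = sorted(param_grid)
    best = None
    for values in product(*(param_grid[n] for n in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if best is None or score < best[1]:
            best = (params, score)
    return best


# Hypothetical noise-free data y = 2x + 1, so no shrinkage (lam = 0) should win
x = [float(i) for i in range(10)]
y = [2.0 * v + 1.0 for v in x]
grid = {"lam": [0.0, 1.0, 10.0, 100.0]}
best_params, best_score = grid_search(grid, lambda lam: cv_mae(x, y, lam))
print(best_params, best_score)
```

Because the cost grows multiplicatively with every added parameter list, random search over the same ranges is the usual alternative when the grid becomes large.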

Model evaluation typically incorporates multiple metrics including area under the curve (AUC), accuracy, precision, recall, F1-score, and for regression tasks, R-squared and mean absolute error [42] [41] [8]. These are complemented by feature importance analyses to enhance interpretability [40] [8].

Diagram 1: Standardized Experimental Workflow for ML in Sperm Prediction Research. This workflow illustrates the comprehensive methodology from data collection through model interpretation, as implemented across multiple studies [42] [41] [40].

Essential Research Toolkit

Laboratory and Analytical Reagents

Table 3: Essential Research Reagents for Semen Analysis and ML Integration

| Reagent/Equipment | Primary Function | Application Context | Reference |
|---|---|---|---|
| Computer-Aided Sperm Analysis (CASA) | Automated assessment of sperm concentration, motility, and kinematics | Standardized semen parameter quantification for feature engineering | [13] [38] [43] |
| Density Gradient Centrifugation Media | Isolation of motile spermatozoa based on density differences | Sample preparation for consistent analysis and treatment procedures | [41] |
| Acridine Orange Stain | DNA integrity assessment through flow cytometry | Measurement of DNA Fragmentation Index (DFI) as a predictive feature | [40] |
| Diff-Quick Staining Solution | Sperm morphology evaluation through visual assessment | Traditional semen analysis parameter for model feature sets | [40] |
| Recombinant Human Chorionic Gonadotropin | Ovulation triggering in treatment cycles | Controlled timing for insemination procedures in outcome studies | [41] |

The implementation of ML algorithms in sperm concentration prediction relies on specific computational frameworks and libraries. Python-based ecosystems with scikit-learn provide foundational support for algorithm implementation, while specialized libraries like XGBoost and LightGBM offer optimized gradient boosting capabilities [41] [40]. Data normalization tools such as PowerTransformer effectively prepare features for analysis by transforming distributions closer to Gaussian [41].

For handling class imbalance common in fertility outcomes, SMOTE (Synthetic Minority Over-sampling Technique) implementations help create balanced training datasets [42]. Model interpretation packages like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) enhance transparency by elucidating feature contributions to predictions [39].
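The core of SMOTE is interpolation between a minority-class sample and one of its nearest minority-class neighbours. The following sketch shows that step in plain Python; it is a simplified illustration rather than a full reimplementation, and the imbalanced-learn package provides a maintained `SMOTE` class for production use. The data are hypothetical, and synthetic points should be generated from training folds only.

```python
import math
import random


def smote(minority, n_new, k=5, seed=0):
    """Create n_new synthetic minority-class points.

    Each point lies on the segment between a random minority sample and one of
    its k nearest minority-class neighbours (Euclidean distance).
    """
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbours = sorted((p for p in minority if p is not base),
                            key=lambda p: math.dist(base, p))[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # uniform in [0, 1): position along the segment
        synthetic.append([b + gap * (q - b) for b, q in zip(base, partner)])
    return synthetic


# Hypothetical minority-class feature vectors taken from one training fold
minority = [[1.0, 1.0], [2.0, 1.0], [1.0, 2.0], [2.0, 2.0]]
new_points = smote(minority, n_new=10, k=2, seed=0)
print(len(new_points), new_points[0])
```

Because every synthetic point is a convex combination of two real minority samples, SMOTE fills in the region the minority class already occupies rather than duplicating records, but it can also blur class boundaries when minority and majority samples overlap.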

The comparative analysis of XGBoost, Random Forest, and SVM in sperm concentration prediction research reveals a complex landscape where no single algorithm universally dominates. Each method demonstrates distinct strengths contingent upon specific data characteristics, sample sizes, and clinical contexts. Linear SVM has shown superior performance in predicting IUI success [41], while XGBoost excelled in associating lifestyle factors with semen parameters [40]. Random Forest provides robust performance with reduced overfitting risk [39] [42], making it valuable for smaller datasets.

The integration of these supervised learning workhorses into reproductive medicine continues to evolve, with promising directions including enhanced interpretability techniques, algorithm integration with deep learning, and improved scalability for real-time clinical applications [39] [13]. As datasets expand and multimodal information incorporating genetic, environmental, and clinical factors becomes more accessible, these algorithms will play an increasingly vital role in developing personalized fertility treatments and improving diagnostic precision in andrology laboratories.

Ensemble learning is a machine learning technique that combines the predictions of multiple individual models, often referred to as "weak learners," to produce a more accurate and robust prediction than any single model alone [44]. A real-world analogy for this approach is a jury in a courtroom, where multiple individuals with varying backgrounds and perspectives collectively reach a more reliable verdict than a single judge could achieve independently [44]. Boosting is a specific ensemble approach in which models are built sequentially, with each new model focusing on correcting the errors made by previous ones [44]. This iterative process of learning from mistakes allows boosted ensembles to achieve high predictive accuracy, chiefly by progressively reducing the bias of the combined model.

In the context of reproductive medicine and sperm concentration prediction, these techniques have demonstrated significant utility for handling complex, multidimensional clinical data. Machine learning applications in this domain must navigate challenges including imbalanced datasets, non-linear relationships between predictors, and the need for robust generalization to diverse patient populations [45] [46]. The application of boosting algorithms has emerged as particularly valuable for building predictive models that can inform clinical decision-making for conditions such as male infertility, where traditional statistical methods often fail to capture intricate patterns within the data [40] [47].

Theoretical Foundations: Stochastic Gradient Boosting (SGB) and XGBoost

Stochastic Gradient Boosting (SGB)

Stochastic Gradient Boosting builds upon the standard gradient boosting framework by introducing randomness into the model training process [48]. The algorithm operates sequentially: each new weak learner (typically a decision tree) is trained to predict the residuals or errors of the ensemble constructed up to that point [44]. The "stochastic" element comes from training each tree on a random subsample of the training data, typically drawn without replacement, which introduces diversity among the base learners and helps prevent overfitting [48]. This approach differs from bagging methods like Random Forest, where trees are grown independently in parallel; in SGB, trees are built sequentially, with each new tree fitted to the residual errors (the negative gradient of the loss) left by the current ensemble [44] [48].

The mathematical foundation of SGB involves optimizing a loss function through gradient descent in function space. At each iteration \( m \), the algorithm computes the negative gradient of the loss function with respect to the current prediction, then fits a weak learner to this gradient. The model update can be represented as:

\[ F_m(x) = F_{m-1}(x) + \nu \cdot \gamma_m h_m(x) \]

Where \( \gamma_m \) is the multiplier obtained by line search, \( h_m(x) \) is the weak learner, and \( \nu \) is the learning rate that controls the contribution of each tree [44]. The stochastic element is introduced by fitting \( h_m(x) \) to a random subsample of the training data rather than the entire dataset.
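For squared-error loss the negative gradient is simply the residual \( y - F(x) \), so the update can be sketched with one-feature decision stumps. In this hypothetical example each round draws a subsample without replacement, fits a stump to the residuals of that subsample, and adds it with learning rate \( \nu \). When the stump's leaf values are the mean residuals, the line-search multiplier \( \gamma_m \) for squared error works out to 1, so it is absorbed into the stump. The parameter names (`n_rounds`, `nu`, `subsample`) and the data are illustrative.

```python
import random


def fit_stump_1d(x, r):
    """Least-squares stump on one feature: (threshold, left_value, right_value)."""
    best = None
    for thr in sorted(set(x))[:-1]:  # the largest value would leave the right side empty
        left = [ri for xi, ri in zip(x, r) if xi <= thr]
        right = [ri for xi, ri in zip(x, r) if xi > thr]
        ml, mr = sum(left) / len(left), sum(right) / len(right)
        sse = sum((v - ml) ** 2 for v in left) + sum((v - mr) ** 2 for v in right)
        if best is None or sse < best[0]:
            best = (sse, thr, ml, mr)
    if best is None:  # all x identical: constant stump
        m = sum(r) / len(r)
        return float("inf"), m, m
    return best[1:]


def fit_sgb(x, y, n_rounds=200, nu=0.1, subsample=0.5, seed=0):
    rng = random.Random(seed)
    f0 = sum(y) / len(y)              # F_0: best constant under squared error
    F = [f0] * len(y)
    m = max(2, int(subsample * len(y)))
    stumps = []
    for _ in range(n_rounds):
        idx = rng.sample(range(len(y)), m)    # random subsample WITHOUT replacement
        resid = [y[i] - F[i] for i in idx]    # negative gradient of 1/2 (y - F)^2
        thr, left, right = fit_stump_1d([x[i] for i in idx], resid)
        stumps.append((thr, left, right))
        # F_m = F_{m-1} + nu * h_m  (gamma_m = 1: leaves already hold mean residuals)
        F = [Fi + nu * (left if xi <= thr else right) for Fi, xi in zip(F, x)]
    return f0, nu, stumps


def predict_sgb(model, xi):
    f0, nu, stumps = model
    return f0 + nu * sum(left if xi <= thr else right for thr, left, right in stumps)


# Hypothetical step-shaped target
x = list(range(20))
y = [0.0 if v < 10 else 10.0 for v in x]
model = fit_sgb(x, y)
mse = sum((predict_sgb(model, xi) - yi) ** 2 for xi, yi in zip(x, y)) / len(x)
print(round(mse, 4))
```

A smaller `nu` needs more rounds but usually generalizes better, and `subsample` below 1 is what makes the procedure stochastic; with `subsample=1.0` this reduces to ordinary gradient boosting.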

XGBoost (Extreme Gradient Boosting)

XGBoost represents an optimized and scalable implementation of gradient boosting that incorporates several enhancements over traditional gradient boosting and SGB [44] [49]. Developed by Tianqi Chen, XGBoost introduces a more regularized model formalization to control over-fitting, which often provides better performance [44] [50]. The algorithm employs second-order derivatives of the loss function (Hessian information) to achieve more precise optimization, and includes explicit regularization terms in its objective function [44].

The core optimization problem in XGBoost can be represented by the following regularized objective function:

\[ \mathcal{L}^{(t)} = \sum_{i=1}^{n} l\left(y_i, \hat{y}_i^{(t-1)} + f_t(x_i)\right) + \Omega(f_t) \]

Where \( \Omega(f_t) = \gamma T + \frac{1}{2}\lambda \|w\|^2 \) is the regularization term, with \( T \) representing the number of leaves in the tree, \( w \) being the leaf weights, and \( \gamma \), \( \lambda \) being regularization parameters [44] [50]. This regularized approach, combined with algorithmic optimizations around tree pruning, missing value handling, and computational efficiency, distinguishes XGBoost from earlier gradient boosting implementations [44].
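For a fixed tree structure, XGBoost's second-order approximation of this objective has a closed-form solution. With \( G_j \) and \( H_j \) the sums of the first and second derivatives of \( l \) over the samples in leaf \( j \), the optimal weight is \( w_j^* = -G_j/(H_j + \lambda) \) and the objective becomes \( -\tfrac{1}{2}\sum_j G_j^2/(H_j+\lambda) + \gamma T \). The sketch below evaluates both for a toy assignment of samples to leaves, assuming squared-error loss \( l = \tfrac{1}{2}(y-\hat{y})^2 \), so that \( g_i = \hat{y}_i - y_i \) and \( h_i = 1 \); the function name and data are hypothetical.

```python
def leaf_weights_and_objective(leaf_of, grads, hess, lam=1.0, gamma=0.0):
    """Optimal leaf weights and structure score for a FIXED tree structure.

    leaf_of[i] is the leaf index of sample i; grads/hess hold g_i and h_i.
    w_j* = -G_j / (H_j + lam);  obj* = -1/2 * sum_j G_j^2 / (H_j + lam) + gamma * T
    """
    G, H = {}, {}
    for leaf, g, h in zip(leaf_of, grads, hess):
        G[leaf] = G.get(leaf, 0.0) + g
        H[leaf] = H.get(leaf, 0.0) + h
    weights = {j: -G[j] / (H[j] + lam) for j in G}
    objective = -0.5 * sum(G[j] ** 2 / (H[j] + lam) for j in G) + gamma * len(G)
    return weights, objective


# Hypothetical: squared-error loss, previous prediction 0 for every sample
target = [0.0, 0.0, 10.0, 10.0]
grads = [0.0 - t for t in target]  # g_i = y_hat_i - y_i
hess = [1.0] * len(target)         # h_i = 1
weights, obj = leaf_weights_and_objective([0, 0, 1, 1], grads, hess, lam=1.0, gamma=0.5)
print(weights, obj)
```

The right leaf's weight is 20/3 ≈ 6.67 rather than the raw mean residual of 10, showing how \( \lambda \) shrinks leaf weights, while \( \gamma \) adds a fixed penalty per leaf that an additional split must overcome.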

Key Algorithmic Differences and Implementation Considerations

Comparative Analysis of SGB and XGBoost

Table 1: Key Algorithmic Differences Between SGB and XGBoost

| Feature | Stochastic Gradient Boosting (SGB) | XGBoost |
|---|---|---|
| Regularization | Limited regularization options | Extensive L1 (Lasso) and L2 (Ridge) regularization |
| Tree Construction | Builds trees sequentially with stochastic sampling | Uses depth-first tree pruning with regularization penalties |
| Handling Missing Values | Requires preprocessing or surrogate splits | Built-in automatic handling of missing values |
| Computational Efficiency | Moderate training speed | Optimized with parallel processing, faster training |
| Flexibility | Standard implementation with less customization | Extensive parameter tuning and customization options |
| Gradient Calculation | First-order gradients typically used | Utilizes second-order derivatives (Hessian) for faster convergence |

The comparison reveals that XGBoost incorporates more sophisticated regularization techniques, which helps prevent overfitting and improves model generalization [44]. While both algorithms follow the principle of gradient boosting, XGBoost employs a more regularized model formalization and includes additional engineering optimizations for computational efficiency [50]. These differences become particularly significant when working with high-dimensional biomedical data where feature selection and regularization are critical for model performance.

Implementation in Reproductive Medicine Research

In practice, implementing these algorithms for sperm concentration prediction requires careful consideration of several factors. For SGB implementations, key parameters requiring optimization include the number of trees (iterations), learning rate (shrinkage), tree depth, and subsampling fraction [48]. For XGBoost, additional parameters such as regularization terms (lambda and alpha), tree pruning criteria, and missing value handling strategies must be tuned [44] [40].

The selection between SGB and XGBoost often depends on specific research constraints. XGBoost typically demonstrates advantages with larger datasets, when computational efficiency is prioritized, or when automatic handling of missing values is beneficial [44] [40]. SGB may be preferable when interpretability is paramount or when working with smaller datasets where XGBoost's complexity might lead to overfitting without extensive parameter tuning.

Experimental Comparison in Sperm Quality Prediction

Experimental Protocols and Methodologies

Recent studies have implemented rigorous experimental protocols to evaluate the performance of boosting algorithms in reproductive medicine applications. A comprehensive cattle reproduction study applied C5.0, Random Forest, and SGB algorithms to classify post-thawed semen samples from Holstein, Simmental, and Charolais bulls based on eight computer-assisted sperm analysis (CASA) derived variables [48]. The experimental workflow involved:

- Data Collection: 542 commercially produced semen samples analyzed with a CASA device measuring progressive motility (PM), non-progressive motility (non-PM), curvilinear velocity (VCL), straight-line velocity (VSL), beat-cross frequency (BCF), amplitude of lateral head displacement (ALH), hyperactivity, and average path velocity (VAP).

The application of machine learning (ML) in male fertility research marks a shift from traditional statistical methods, offering new ways to predict sperm concentration and overall fertility potential. This guide objectively compares the performance of various ML models and the critical parameters they utilize, framing the discussion within the broader context of performance metrics for ML in sperm concentration prediction research. By synthesizing experimental data from recent studies, we provide a structured analysis of feature engineering strategies, model efficacies, and methodological protocols that are advancing the field of computational andrology.

Traditional semen analysis, while foundational, faces significant challenges, including high inter-laboratory variability, subjective manual assessment, and limited predictive power for actual fertility outcomes [51] [52]. Machine learning approaches can address some of these limitations by capturing complex, non-linear relationships between parameters that conventional statistical methods might miss [31]. Declining global fertility rates and sperm counts underscore the need for more precise predictive tools to guide clinical interventions and personalize treatment strategies [31].

Comparative Analysis of Predictive Features for Sperm Quality

Feature Categories and Their Predictive Strength

Research indicates that the most robust predictive models incorporate multimodal data spanning several categories. The table below summarizes the critical parameters identified in recent studies and their relative predictive strength for sperm concentration and overall fertility potential.

Table 1: Critical Parameter Categories for Sperm Concentration Prediction

| Category | Specific Parameters | Predictive Strength | Key Findings |
|---|---|---|---|
| Conventional Semen Parameters | Total Motile Count (TMC), Sperm Concentration, Motility (Progressive & Total), Morphology, Volume [53] [54] | Foundational | TMC is one of the best predictors of male fertility; combines volume, concentration, and motility [53] [54]. Morphology has limited predictive value for pregnancy [53]. |
| Hormonal Profiles | Follicle-Stimulating Hormone (FSH), Luteinizing Hormone (LH), Testosterone (T) [55] [56] | Moderate | Elevated FSH is associated with impaired spermatogenesis [55] [56]. Specific patterns (e.g., low T, high FSH/LH) suggest primary hypogonadism [55]. |
| Molecular Sperm Markers | Sperm Mitochondrial DNA Copy Number (mtDNAcn), Sperm DNA Fragmentation [14] | High | mtDNAcn was the most predictive individual biomarker for pregnancy at 12 cycles (AUC: 0.68) and serves as a biomarker of overall sperm fitness [14]. |
| Patient Clinical & Lifestyle Data | Age, BMI, Body Fat (BF), Sleep Time (ST), Systolic Blood Pressure (SBP), Blood Urea Nitrogen (BUN), Alpha-fetoprotein (AFP) [31] | High | ST, AFP, BF, SBP, and BUN were identified as the top five risk factors associated with sperm count in a large-scale health screening study [31]. |
| Kinematic & Morphometric Data | Sperm Trajectory, Velocity, Head Morphology, Tail Defects [51] [52] | High (for specific tasks) | Deep learning models analyzing sperm videos can predict motility with high consistency. Morphology CNNs can classify head defects with >87% accuracy [51] [52]. |
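The arithmetic behind total sperm count and TMC is simple enough to show directly. This minimal sketch assumes volume in mL, concentration in millions per mL, and motility as a percentage. Conventions differ on whether TMC uses progressive or total motility, so the motility argument is left to the caller. The thresholds are the WHO values cited in this article (15 million/mL and 39 million per ejaculate); the function names are illustrative.

```python
def total_sperm_count(volume_ml: float, concentration_m_per_ml: float) -> float:
    """Total sperm count in millions per ejaculate = volume x concentration."""
    return volume_ml * concentration_m_per_ml


def total_motile_count(volume_ml: float, concentration_m_per_ml: float, motility_pct: float) -> float:
    """TMC in millions; motility_pct may be progressive or total motility, by convention."""
    return total_sperm_count(volume_ml, concentration_m_per_ml) * motility_pct / 100.0


def below_who_reference(volume_ml: float, concentration_m_per_ml: float) -> dict:
    """Flag values below the WHO limits cited in this article (15 M/mL; 39 M per ejaculate)."""
    return {
        "low_concentration": concentration_m_per_ml < 15.0,
        "low_total_count": total_sperm_count(volume_ml, concentration_m_per_ml) < 39.0,
    }


# Example: 3.0 mL at 20 million/mL with 40% progressive motility
print(total_sperm_count(3.0, 20.0))       # 60.0
print(total_motile_count(3.0, 20.0, 40))  # 24.0
print(below_who_reference(1.5, 20.0))     # normal concentration, but low total count
```

The last example shows why both limits matter: a sample can meet the concentration threshold while falling short on total count because of low volume.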

Performance Comparison of ML Models and Parameters

Different ML architectures are suited to different data types and prediction tasks. The following table compares the performance of various models as reported in recent experimental studies.

Table 2: Machine Learning Model Performance on Sperm-Related Prediction Tasks

| Study (Year) | Prediction Task | Model(s) Used | Key Features | Performance |
|---|---|---|---|---|
| F&S Reports (2025) [14] | Pregnancy within 12 menstrual cycles | Elastic Net (ElNet-SQI) | Composite of 8 semen parameters + mtDNAcn | AUC: 0.73 (Highest among multiparameter biomarkers) |
| Scientific Reports (2019) [52] | Sperm Motility (Progressive, Non-progressive, Immotile) | Convolutional Neural Networks (CNN) | Microscope videos of semen samples | Rapid and consistent prediction; MAE not specified for best model |
| JCM (2023) [31] | Sperm Count | Random Forest (RF), XGBoost, Stochastic Gradient Boosting, LASSO, Ridge Regression | 30 health screening indicators (e.g., ST, BF, SBP, BUN, AFP) | ML models outperformed traditional Multiple Linear Regression (MLR) |
| PMC (2024) [51] | Sperm Morphology Classification | Deep Neural Networks (DNN), CNN | Sperm head images (from HuSHeM, SCIAN datasets) | Accuracy up to 94.1% on HuSHeM dataset |
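Because the studies in Table 2 report different metrics (AUC, MAE, accuracy), it may help to see how each is computed. This is a minimal pure-Python sketch with illustrative function names. The AUC function uses the rank (Mann-Whitney) interpretation, counting ties as one half, which equals the area under the empirical ROC curve; its quadratic loop is only for illustration, and library routines such as scikit-learn's roc_auc_score are preferable for real data.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive case scores higher than a random negative one."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)  # ties count as half
    return wins / (len(pos) * len(neg))


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average absolute difference, in the units of the target (e.g., percentage points)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of class predictions that match the reference labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))  # 0.75
print(mean_absolute_error([40, 20], [45, 15]))       # 5.0
print(accuracy([1, 0, 1, 1], [1, 1, 1, 0]))          # 0.5
```

AUC depends only on how cases are ranked, whereas MAE and accuracy depend on the predicted values themselves, which is one reason these figures cannot be compared directly across rows of the table.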

Detailed Experimental Protocols and Methodologies

Protocol: Developing a Composite ML Model for Pregnancy Prediction

This protocol is based on the study that developed the Elastic Net Sperm Quality Index (ElNet-SQI), which showed the highest predictive ability for pregnancy among the multiparameter biomarkers evaluated (AUC 0.73 at 12 cycles) [14].

- Cohort Selection: Participants were recruited from a preconception cohort (e.g., the Longitudinal Investigation of Fertility and the Environment study). The study included 281 men from couples trying to conceive.

- Data Collection:

  - Semen Parameters: Collect 34 conventional and detailed semen parameters via standard semen analysis per WHO guidelines, including concentration, motility, and morphology.

  - Molecular Biomarker: Quantify sperm mitochondrial DNA copy number (mtDNAcn) from the semen sample.

  - Outcome Data: Record the couple's time to pregnancy (TTP) over 12 menstrual cycles.

- Feature Preprocessing: Normalize all semen parameters and mtDNAcn values.

- Model Training and Index Creation:

  - Use an Elastic Net regression model, which combines L1 (Lasso) and L2 (Ridge) regularization.

  - The model is trained to assign optimal weights to each of the input parameters (including mtDNAcn) to create a single, weighted SQI (ElNet-SQI) that is most predictive of TTP.

- Validation: Evaluate the predictive power of the ElNet-SQI using discrete-time proportional hazard models and Receiver Operating Characteristic (ROC) analysis for pregnancy status at 3, 6, and 12 cycles.
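The Elastic Net step can be approximated as follows. This is a minimal sketch, not the published ElNet-SQI procedure: it assumes scikit-learn and NumPy, uses synthetic data for 281 hypothetical men with nine inputs (eight semen parameters plus mtDNAcn), and replaces the time-to-pregnancy outcome and discrete-time hazard models with a simplified binary "pregnant within 12 cycles" label fitted by elastic-net-penalized logistic regression. The fitted coefficients act as the index weights, and the held-out index is scored with ROC AUC. All effect sizes are invented.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)
n_men, n_features = 281, 9  # 8 semen parameters + mtDNAcn (synthetic placeholders)
X = rng.normal(size=(n_men, n_features))
true_w = np.array([0.8, 0.5, 0.0, 0.0, 0.3, 0.0, 0.0, 0.2, -0.9])  # hypothetical effects
p = 1.0 / (1.0 + np.exp(-(X @ true_w)))
pregnant_by_12 = rng.binomial(1, p)  # simplified binary outcome instead of full TTP

X_tr, X_te, y_tr, y_te = train_test_split(
    X, pregnant_by_12, test_size=0.3, stratify=pregnant_by_12, random_state=0
)

model = make_pipeline(
    StandardScaler(),  # normalize each parameter so penalties treat them comparably
    LogisticRegression(penalty="elasticnet", solver="saga", l1_ratio=0.5, C=1.0, max_iter=5000),
)
model.fit(X_tr, y_tr)

weights = model[-1].coef_.ravel()  # one weight per parameter; the L1 part can zero some out
sqi_test = model[:-1].transform(X_te) @ weights  # weighted index for each held-out man
auc = roc_auc_score(y_te, sqi_test)
print("index weights:", np.round(weights, 3))
print(f"held-out AUC of the index: {auc:.2f}")
```

The l1_ratio and C values would normally be chosen by cross-validation rather than fixed as here.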

Diagram 1: ML Model Development Workflow for Sperm Quality Index (SQI) creation and validation, illustrating the integration of multimodal data and machine learning training processes.

Protocol: Video-Based Sperm Motility Analysis Using CNN

This protocol outlines the methodology for using deep learning on microscopic videos to objectively assess sperm motility [52].

- Sample Preparation and Video Acquisition:

  - Place 10 μL of liquefied semen on a glass slide and cover with a 22 × 22 mm coverslip.

  - Use a phase-contrast microscope (e.g., Olympus CX31) with a heated stage (37°C) and a mounted camera.

  - Record videos at 400× magnification for 2–7 minutes at a high frame rate (e.g., 50 frames per second). Store as AVI files.

- Ground Truth Annotation: An experienced laboratory technician manually assesses the sperm motility (progressive, non-progressive, immotile) for each sample, providing the ground truth labels for model training.

- Frame Extraction and Preprocessing: Extract sequences of frames from the videos. Preprocessing may include normalization and resizing.

- Model Architecture and Training:

  - Employ a Convolutional Neural Network (CNN) architecture designed for sequence or video processing.

  - The model learns to map sequences of frames to the three motility percentages.

- Evaluation: Use three-fold cross-validation and report the Mean Absolute Error (MAE) to evaluate model performance and estimate how well the model generalizes to unseen samples.
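The evaluation step can be sketched independently of the network itself. In this pure-Python sketch the CNN is replaced by a hypothetical placeholder that predicts the mean training percentages (a common naive baseline). Folds are formed at the sample level, so frames from one video never appear in both training and test folds, and MAE is averaged over samples and the three motility classes. It assumes at least three samples, each with a [progressive, non-progressive, immotile] percentage triple; the function names and example values are illustrative.

```python
from statistics import mean


def three_fold_splits(n_samples):
    """Yield (train_idx, test_idx) for 3-fold CV with interleaved, sample-level folds."""
    folds = [list(range(k, n_samples, 3)) for k in range(3)]
    for k in range(3):
        train = [i for j, fold in enumerate(folds) if j != k for i in fold]
        yield train, folds[k]


def fit_mean_baseline(train_targets):
    """Hypothetical placeholder for the CNN: predict the mean training percentage per class."""
    return [mean(t[c] for t in train_targets) for c in range(3)]


def mae_over_classes(y_true, y_pred):
    """MAE averaged over samples and the three motility classes."""
    errors = [abs(a - b) for t, p in zip(y_true, y_pred) for a, b in zip(t, p)]
    return sum(errors) / len(errors)


def cross_validated_mae(targets):
    """targets: one [progressive, non-progressive, immotile] % triple per sample (video)."""
    fold_maes = []
    for train, test in three_fold_splits(len(targets)):
        prediction = fit_mean_baseline([targets[i] for i in train])
        truth = [targets[i] for i in test]
        fold_maes.append(mae_over_classes(truth, [prediction] * len(truth)))
    return mean(fold_maes)


# Example with six hypothetical samples
samples = [[55, 10, 35], [40, 15, 45], [60, 5, 35], [30, 20, 50], [50, 10, 40], [45, 15, 40]]
print(f"baseline 3-fold MAE: {cross_validated_mae(samples):.2f} percentage points")
```

A trained model would replace fit_mean_baseline; reporting this baseline alongside the model's MAE shows how much the network adds beyond predicting typical values.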

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the aforementioned protocols requires specific reagents and equipment. The following table details the essential solutions and materials for researchers in this field.

Table 3: Research Reagent Solutions for Advanced Sperm Quality Analysis

| Item / Reagent | Specific Example / Brand | Critical Function in Research |
|---|---|---|
| Phase-Contrast Microscope with Heated Stage | Olympus CX31 [52] | Enables observation of live, unstained sperm and recording of motility videos under physiological temperature (37°C). |
| Microscope-Mounted Camera | UEye UI-2210C [52] | Captures high-frame-rate videos (e.g., 50 fps) for subsequent computer vision and deep learning analysis. |
| Staining Kits for Morphology | Rapi-Diff, Testsimplets [51] | Increases contrast for detailed morphological analysis of sperm heads, acrosomes, and vacuoles via AI models. |
| Staining for DNA Assessment | Acridine Orange [51] | Fluorescent stain used to assess sperm DNA quality, a critical feature for training models on genetic integrity. |
| DNA Extraction & qPCR Kits | Not Specified (Commercial Kits) | Essential for quantifying the biomarker sperm mitochondrial DNA copy number (mtDNAcn) from semen samples [14]. |
| Open Multimodal Datasets | VISEM Dataset [52], HuSHeM [51], SCIAN [51] | Provide curated, annotated data (videos, images, participant data) for training and benchmarking new ML models. |

Diagram 2: Multimodal data acquisition and feature engineering pipeline, showing the transformation of raw biological samples and clinical data into a feature vector for machine learning models.

The integration of machine learning with male fertility assessment is moving beyond conventional semen analysis. The evidence compared in this guide suggests that composite models, which combine engineered features from molecular biomarkers such as mtDNAcn, kinematic data from videos, and curated clinical and lifestyle factors, can provide greater predictive power for sperm concentration and couple fecundity than conventional parameters alone. The future of this field lies in the continued development of standardized, open multimodal datasets and the rigorous validation of these ML models across diverse populations to translate computational predictions into actionable clinical pathways.

The application of machine learning (ML) in andrology has demonstrated significant potential for improving the diagnosis of azoospermia and the prediction of treatment outcomes. The table below summarizes the performance metrics of various ML models as reported in recent, relevant studies.

Table 1: Performance Metrics of Machine Learning Models in Male Infertility Applications

| Study Focus | ML Model(s) Used | Key Performance Metric(s) | Dataset Size | Top Predictive Features Identified |
|---|---|---|---|---|
| Predicting Second microTESE Success [57] | Support Vector Machine (SVM) | Accuracy: 80% [57] | 47 patients | Histopathology, varicocele, FSH & testosterone levels, interval between procedures [57] |
| Differentiating NOA from OA [58] | Gradient Boosting Decision Trees (GBDT) | AUC: 0.974 [58] | 352 patients | Semen pH, FSH, Inhibin B (INHB), Mean Testicular Volume (MTV) [58] |
| Predicting Pregnancy within 12 Cycles [14] | Elastic Net (ElNet-SQI) | AUC: 0.73 (95% CI, 0.61–0.84) [14] | 281 men | Sperm mitochondrial DNA copy number combined with 8 semen parameters [14] |
| Classifying Azoospermia [11] | XGBoost | AUC: 0.987 (UNIROMA dataset) [11] | 2,334 men (UNIROMA) | FSH serum levels, Inhibin B, bitesticular volume [11] |
| Classifying Azoospermia with Environmental Data [11] | XGBoost | AUC: 0.668 (UNIMORE dataset) [11] | 11,981 records (UNIMORE) | Environmental pollution (PM10, NO2), white blood cell count [11] |

Detailed Experimental Protocols and Methodologies

Case Study 1: Predicting Success of a Second microTESE Procedure

- Research Objective: To develop and evaluate a machine learning algorithm to predict the success of a second microsurgical testicular sperm extraction (microTESE) in men with non-obstructive azoospermia (NOA) following an initial failed attempt [57].

- Data Source and Cohort: The study analyzed medical records of 47 patients who underwent a second microTESE. Variables included procedure side, histopathology, preoperative FSH, preoperative testosterone, testicular volume, and comorbidities like Klinefelter’s syndrome and cryptorchidism [57].

- ML Pipeline and Data Preprocessing:

  - Data Preparation: Categorical variables were converted to integers using scikit-learn's LabelEncoder. Duplicate entries were removed, and no missing values were reported [57].

  - Data Splitting: The dataset was split into 80% for training and 20% for testing due to the small sample size [57].

  - Model Training and Validation: Supervised algorithms, including SVM, logistic regression, XGBoost regressor, and random forests, were implemented using scikit-learn in Python. The SVM model underwent hyperparameter tuning using GridSearchCV [57].

- Key Findings: The tuned SVM model achieved an accuracy of 80%. Bilateral procedures and longer intervals between surgeries were associated with higher success rates, while a history of cancer correlated with negative outcomes [57].
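A minimal sketch of this pipeline, assuming scikit-learn and NumPy and using synthetic records for 47 hypothetical patients; the variable names, category labels, and effect sizes are invented. It encodes the categorical columns with OrdinalEncoder rather than the LabelEncoder named in the study, because scikit-learn intends LabelEncoder for target labels. It then holds out 20% of patients (stratifying the split is an added assumption) and tunes an SVM's C and kernel with GridSearchCV on the remaining 80%.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OrdinalEncoder, StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(1)
n = 47  # cohort size reported in the study; every value below is synthetic

# Hypothetical predictors
side = rng.choice(["unilateral", "bilateral"], size=n)
histopathology = rng.choice(["SCO", "MA", "HS"], size=n)  # illustrative category labels
fsh = rng.normal(20.0, 8.0, size=n)
testosterone = rng.normal(4.0, 1.5, size=n)
interval_months = rng.uniform(3.0, 36.0, size=n)

# Encode categorical feature columns as integers (OrdinalEncoder is meant for features)
categorical = OrdinalEncoder().fit_transform(np.column_stack([side, histopathology]))
X = np.hstack([categorical, np.column_stack([fsh, testosterone, interval_months])])

# Hypothetical outcome: bilateral procedures and longer intervals raise the odds of success
logit = 0.9 * (side == "bilateral") + 0.06 * (interval_months - 18.0) - 0.5
y = rng.binomial(1, 1.0 / (1.0 + np.exp(-logit)))

# 80/20 split, stratified so both outcomes appear in the small test set
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

pipeline = Pipeline([("scale", StandardScaler()), ("svm", SVC())])
param_grid = {"svm__C": [0.1, 1.0, 10.0], "svm__kernel": ["linear", "rbf"]}
search = GridSearchCV(pipeline, param_grid, cv=3, scoring="accuracy")
search.fit(X_train, y_train)

test_accuracy = search.score(X_test, y_test)
print("best params:", search.best_params_)
print(f"held-out accuracy: {test_accuracy:.2f}")
```

With only about ten test patients, each misclassification shifts accuracy by roughly ten percentage points, so a single 80/20 split gives a noisy estimate; repeated or nested cross-validation would give a more stable figure.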

Diagram 1: Workflow for Predicting Second microTESE Success

Case Study 2: Distinguishing Non-Obstructive from Obstructive Azoospermia

- Research Objective: To develop and validate a nomogram model using machine learning to accurately identify Non-Obstructive Azoospermia (NOA) among azoospermic patients, leveraging basic clinical parameters [58].

- Data Source and Cohort: A retrospective study of 352 azoospermia patients (200 NOA, 152 OA). Data included semen pH, serum levels of FSH and Inhibin B (INHB), and mean testicular volume (MTV) measured by Prader’s orchidometer [58].

- ML Pipeline and Data Preprocessing:

  - Data Splitting: The data were randomly divided into a training set (70%, n=244) and a validation set (30%, n=108) [58].

  - Model Training and Comparison: Nine different machine learning methods were employed, including Random Forest, Gradient Boosting Decision Trees (GBDT), XGBoost, and Support Vector Machine (SVM). Multivariate logistic regression identified four key predictors for the final nomogram [58].