Explainable AI in Fertility Diagnostics: A Comparative Analysis of Methods, Validation, and Clinical Translation

Abstract

This article provides a comprehensive comparative analysis of Explainable Artificial Intelligence (XAI) methodologies within fertility diagnostics and Assisted Reproductive Technology (ART). Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles necessitating a shift from 'black box' models to interpretable systems. The review critically examines and compares specific XAI techniques, including SHapley Additive exPlanations (SHAP), for applications in embryo selection, sperm analysis, and treatment personalization. It further addresses pivotal challenges in model optimization, data bias, and clinical deployment, while evaluating validation frameworks and performance metrics against traditional methods. The analysis concludes by synthesizing the translational pathway for XAI, highlighting its implications for fostering trust, ensuring equity, and accelerating personalized reproductive medicine.

The Imperative for Explainability: Foundations of XAI in Reproductive Medicine

The integration of artificial intelligence (AI) into clinical decision-making represents a paradigm shift in modern healthcare, promising enhanced diagnostic precision, optimized treatment protocols, and personalized patient care. Nowhere is this potential more significant than in the data-rich field of fertility diagnostics and treatment, where AI-driven tools are increasingly deployed for tasks ranging from embryo selection to outcome prediction [1] [2]. However, a fundamental challenge threatens to undermine this potential: the "black box" problem inherent in many conventional AI systems. These systems produce outputs and recommendations through processes that are opaque, complex, and often incomprehensible to clinicians, researchers, and patients alike [3] [4]. This opacity creates significant epistemic and ethical barriers to their responsible implementation, particularly in sensitive domains like reproductive medicine where decisions carry profound consequences. This analysis examines the limitations of conventional black-box AI through a comparative lens, contrasting its performance and methodological constraints against emerging explainable AI (XAI) approaches, with specific focus on applications within fertility diagnostics and research.

Performance Comparison: Black-Box AI vs. Explainable AI in Fertility Applications

Quantitative performance metrics alone provide an incomplete picture of AI efficacy in clinical settings. The following table synthesizes documented performance and key characteristics of AI approaches as applied to reproductive medicine, highlighting the critical trade-offs between accuracy and interpretability.

Table 1: Comparative Analysis of AI Approaches in Fertility Diagnostics and Embryology

| Feature | Conventional Black-Box AI | Explainable AI (XAI) Approaches |
|---|---|---|
| Reported Performance (Embryo Selection) | AUC >0.9 [3], Accuracy up to 96.94% for broad "good/poor" embryo classification [3] | Comparable accuracy with interpretable neural networks reported in literature [3] |
| Clinical Utility | Limited in differentiating embryos of similar quality [3] | Aims to assist in competitive selection between morphologically similar embryos |
| Interpretability | Opaque; reasoning process is not accessible or understandable [3] [4] | High; models are constrained for human understanding [3] or use post-hoc explanations (e.g., SHAP [5]) |
| Typical Techniques | Deep Neural Networks, proprietary algorithms [3] [2] | Interpretable neural networks, rule-based models combined with ML, SHAP analysis [5] [3] |
| Handling of Confounders | Prone to learning spurious correlations; difficult to detect or correct [3] | Allows for manual verification of features and reasoning, mitigating confounder risks [3] |
| Evidence Level | Primarily proof-of-concept efficacy studies; lack of RCTs [3] | Emerging literature; advocates call for RCTs and long-term follow-up [3] |

Methodological Limitations: Experimental Protocols and Inherent Flaws

The evaluation of conventional AI systems in fertility is hampered by methodological shortcomings that inflate perceived performance and limit clinical applicability. A critical analysis of experimental protocols reveals significant gaps.

Efficacy vs. Effectiveness in Embryo Selection Trials

Many seminal studies on AI for embryo selection demonstrate efficacy (performance under ideal conditions) but not effectiveness (performance in real-world practice) [3]. For instance, the IVY model reportedly achieved an Area Under the Curve (AUC) of 0.93 for predicting fetal heartbeat pregnancy [3]. However, a critical flaw in its experimental protocol was that the training and test datasets contained a high proportion of poor-quality embryos that would typically be discarded in clinical practice. This artificially inflated the algorithm's discriminatory power by having it distinguish between "obviously non-viable" and "potentially viable" embryos, rather than addressing the true clinical need: ranking a cohort of morphologically similar, good-quality embryos to select the single one with the highest implantation potential [3]. Similarly, Khosravi et al.'s (2019) algorithm achieved 96.94% accuracy in categorizing embryos as "good" or "poor" quality, aligning with embryologist consensus. Yet, the protocol excluded the "fair-quality" embryos, which constitute the very group where clinicians most need decision support [3].
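The inflation effect described above can be illustrated with a small calculation. The sketch below computes AUC as the probability that a randomly chosen positive case outranks a randomly chosen negative one. All scores are hypothetical values invented for illustration, not data from the IVY study or any other cited work.

```python
from itertools import product

def auc(pos_scores, neg_scores):
    """AUC as P(random positive outranks random negative); ties count 0.5."""
    wins = 0.0
    for p, n in product(pos_scores, neg_scores):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical model scores (assumptions for illustration only).
viable = [0.62, 0.55, 0.71, 0.48, 0.66]             # good-quality embryos that implanted
similar_nonviable = [0.58, 0.51, 0.69, 0.45, 0.60]  # good-quality embryos that did not
obvious_nonviable = [0.05, 0.08, 0.02, 0.10, 0.04,
                     0.07, 0.03, 0.09, 0.06, 0.01]  # embryos that would be discarded anyway

# Clinically relevant task: rank morphologically similar embryos.
auc_clinical = auc(viable, similar_nonviable)

# Same model, same good-quality embryos, but easy negatives added to the test set.
auc_inflated = auc(viable, similar_nonviable + obvious_nonviable)

print(f"AUC on similar embryos only:  {auc_clinical:.2f}")
print(f"AUC with discards included:   {auc_inflated:.2f}")
```

In this toy setting the model's ranking of the clinically relevant embryos is unchanged, yet the headline AUC rises simply because the test set contains cases that are trivial to separate.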

Data Scarcity and Generalizability Challenges

A common pitfall in AI development for reproductive medicine is the use of small, non-generalizable datasets. Over 50% of studies applying machine learning to analyze Intensive Care Unit (ICU) data utilized datasets from fewer than 1,000 patients, leading to performance overestimation in the absence of external validation [6]. This issue translates directly to fertility contexts, where data scarcity for rare occurrences or specific patient subgroups is a major constraint. Furthermore, population shift or population bias poses a significant threat. A model trained on data from one patient demographic, clinic population, or using specific laboratory protocols may generalize poorly to different populations [6] [3]. For example, an AI model trained predominantly on embryos from one demographic group may perform suboptimally for other patient populations, a risk that is difficult to assess without transparent, interpretable models.

The Cognitive Workflow and Impact of the Black Box in Clinical Practice

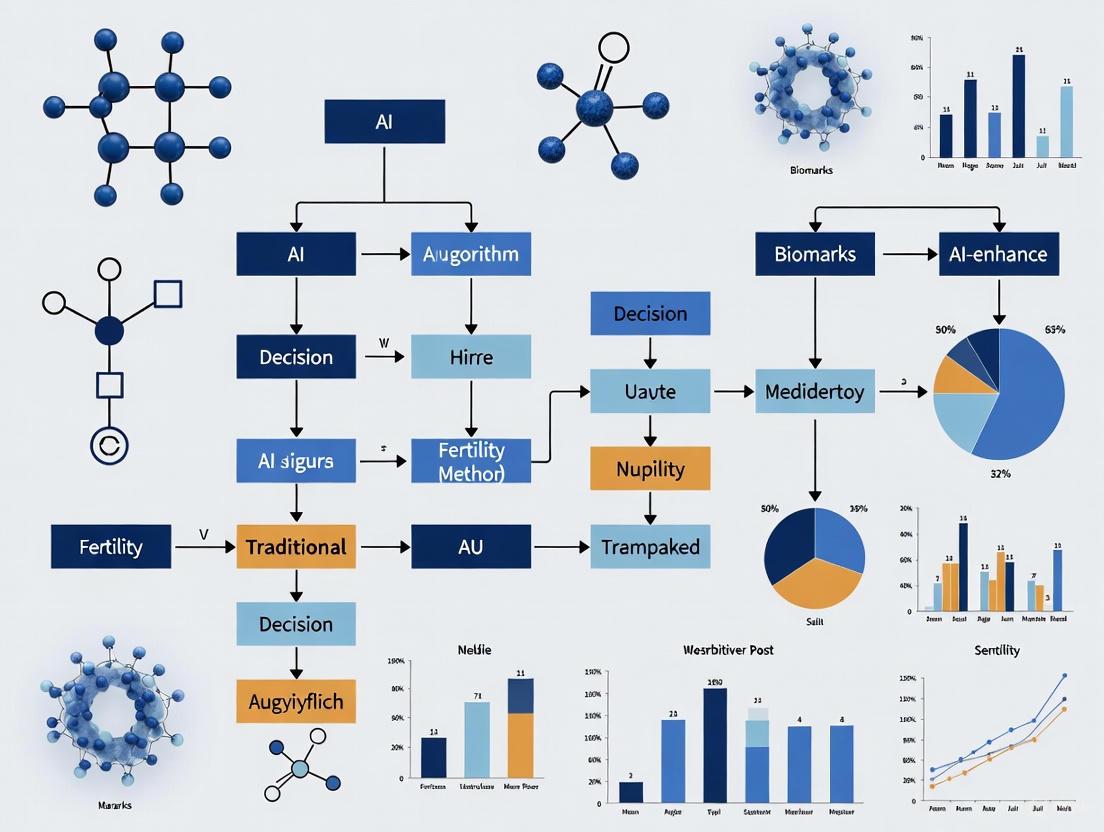

The interaction between a clinician and an AI-CDSS can be modeled as a process fraught with uncertainty when the AI is a black box. The following diagram illustrates this challenging workflow and its potential breakdown points.

This workflow highlights the core problem: the "Zone of Unexplainability" forces clinicians to make decisions based on a recommendation whose reasoning is hidden. This creates a trust gap and information asymmetry, where the clinician must rely on the output without understanding the underlying medical rationale [3] [7] [4]. Qualitative studies synthesizing clinician perspectives reveal that this opacity often leads to skepticism, as clinicians question the system's ability to compete with their own expertise, particularly when the AI lacks contextual patient information [7]. The result can be either under-utilization (discarding potentially correct recommendations due to lack of trust) or, conversely, over-reliance (accepting incorrect recommendations), both of which degrade clinical performance [7].

Essential Research Reagents and Tools for Fertility AI Research

The development and validation of AI tools in reproductive medicine rely on a specific set of data, software, and analytical tools. The following table details key components of the "research toolkit" for this field.

Table 2: Key Research Reagent Solutions for AI in Fertility Diagnostics

| Reagent / Tool Category | Specific Examples | Function & Application in Research |
|---|---|---|
| Embryo Imaging & Annotation | Time-lapse Imaging Systems, iDAScore [1], BELA system [1] | Provides continuous, high-dimensional visual data for model training; automated embryo assessment and ploidy prediction. |
| Clinical Data Repositories | Open Science Framework (OSF) U.S. fertility measures [5], Institutional EHRs | Supplies structured, tabular data on patient history, cycle outcomes, and biomarkers for predictive modeling. |
| AI Modeling Frameworks | Prophet (Time-series) [5], XGBoost [5], Interpretable Neural Networks [3] | Used for forecasting trends (e.g., birth rates) and building classification/regression models with varying interpretability. |
| Interpretability & Analysis Libraries | SHAP (SHapley Additive exPlanations) [5], LIME | Provides post-hoc explanations for black-box models and quantifies feature influence on predictions. |
| Statistical & Coding Environments | Python (pandas, scikit-learn) [5], R, Jupyter Notebooks [5] | Enables data cleaning, manipulation, model development, and validation in a reproducible research environment. |

The limitations of conventional black-box AI in clinical decision-making are not merely technical hurdles but represent fundamental epistemic and ethical challenges. In the high-stakes domain of fertility care, where decisions impact family-building outcomes and patient well-being, the inability to interrogate, understand, and trust an AI's reasoning is a critical barrier to adoption and safe integration [3] [7] [4]. The comparative analysis presented herein demonstrates that while black-box systems can show impressive efficacy in controlled experiments, their lack of transparency, vulnerability to confounders, and poor alignment with actual clinical workflows limit their real-world effectiveness and pose significant risks.

The path forward requires a concerted shift towards the development and implementation of explainable AI (XAI) systems. These models, whether interpretable by design or augmented with explanation interfaces, are essential for building clinician trust, ensuring regulatory compliance, facilitating the detection of bias, and ultimately, upholding the principles of patient-centered care [3] [2]. Future research must prioritize rigorous external validation, prospective randomized controlled trials, and long-term follow-up of children born following AI-assisted selection [3]. By moving beyond the black box, the field of reproductive medicine can harness the true potential of AI as a powerful, transparent, and trustworthy partner in advancing patient care.

The integration of Artificial Intelligence (AI) into reproductive medicine represents a paradigm shift in how clinicians diagnose and treat infertility. As these technologies become increasingly complex, they often operate as "black boxes," making decisions through processes that are opaque even to their developers [8]. This opacity creates significant challenges in fertility diagnostics, where treatment decisions have profound emotional, financial, and ethical implications for patients. Explainable AI (XAI) has emerged as a critical framework to address these challenges by making AI decisions transparent, interpretable, and accountable to clinicians, researchers, and patients [9].

The field of fertility diagnostics presents unique challenges that make explainability particularly vital. Treatment decisions often rely on complex, multimodal data including medical imagery, clinical history, and laboratory results. Furthermore, the high-stakes nature of fertility treatments demands that clinicians understand and trust AI recommendations before incorporating them into patient care pathways. This comparative analysis examines how XAI principles are being implemented across fertility diagnostic applications, evaluates their methodological approaches, and assesses their impact on clinical transparency and accountability.

Theoretical Foundations of Explainable AI

Core Principles and Definitions

Explainable AI is built upon three foundational principles that ensure AI systems remain transparent and accountable throughout their lifecycle:

Transparency: AI systems should provide clear explanations of their decision-making processes, including the data and algorithms used and the rationale behind predictions or recommendations [9] [10]. This principle requires that the internal logic of AI systems be accessible for examination rather than hidden within impenetrable code.

Interpretability: The reasoning behind AI decisions must be accessible and understandable to all stakeholders, including those without technical expertise [9]. This ensures that AI decision-making is comprehensible to clinicians, patients, and regulators who may lack deep knowledge of machine learning methodologies.

Accountability: It must be possible to track and trace AI decisions to detect biases and ensure fairness, particularly in high-stakes domains like healthcare where AI decisions significantly impact human lives [9]. Accountability mechanisms ensure that responsibility for AI-assisted decisions can be properly attributed.

XAI Methodologies and Techniques

Multiple technical approaches have been developed to implement these core principles across different AI systems:

Model-Agnostic Methods provide explanations applicable across various AI models without altering their internal structure. SHAP (SHapley Additive exPlanations) uses cooperative game theory to assign contribution scores to each feature in a prediction, quantifying which factors had the biggest impact on the final decision [8] [10]. LIME (Local Interpretable Model-agnostic Explanations) approximates complex models locally with interpretable surrogate models to explain individual predictions [8] [10].

Interpretable Models are algorithms designed with inherent transparency, including decision trees that offer clear rule paths, linear regression that provides coefficient interpretations tied directly to feature influence, and rule-based systems that encode human-readable conditions [8] [10]. These models often trade some predictive accuracy for better interpretability, making them preferable when explainability is critical.

Visualization Techniques help users grasp complex model behavior through feature importance charts, saliency maps that emphasize regions influencing computer vision outputs, and attention visualizations that reveal which elements influence natural language processing tasks [10]. These techniques are particularly valuable in medical imaging applications within fertility diagnostics.
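To make the game-theoretic idea behind SHAP concrete, the following minimal sketch computes exact Shapley values for a tiny model by averaging each feature's marginal contribution over every feature ordering. The toy model, the feature names (`age`, `amh`, `bmi`), and the all-zero baseline are assumptions chosen for illustration. Practical SHAP implementations rely on approximations or model-specific algorithms, because exact enumeration grows factorially with the number of features.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Exact Shapley values via all feature orderings.

    Features not yet added to the coalition keep their baseline value.
    Only feasible for a handful of features.
    """
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            current[name] = instance[name]      # add this feature to the coalition
            value = predict(current)
            totals[name] += value - previous    # marginal contribution in this ordering
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}

# Hypothetical toy model with an interaction term (not from any cited study).
def toy_model(x):
    return 2.0 * x["age"] + 1.0 * x["amh"] + 0.5 * x["age"] * x["bmi"]

instance = {"age": 1.0, "amh": 2.0, "bmi": 3.0}
baseline = {"age": 0.0, "amh": 0.0, "bmi": 0.0}

phi = shapley_values(toy_model, instance, baseline)
for name, value in phi.items():
    print(f"{name}: {value:+.2f}")
```

The contributions sum to the difference between the prediction for the instance and the prediction for the baseline, and the interaction term is split equally between the two features involved. That additive property is what makes per-feature attributions straightforward to present to a clinician.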

Comparative Analysis of XAI Applications in Fertility Diagnostics

Male Fertility Assessment

A hybrid diagnostic framework combining a multilayer feedforward neural network with a nature-inspired ant colony optimization algorithm has demonstrated remarkable performance in male fertility assessment. The system incorporates a Proximity Search Mechanism (PSM) to provide interpretable, feature-level insights for clinical decision-making [11]. When evaluated on a publicly available dataset of 100 clinically profiled male fertility cases representing diverse lifestyle and environmental risk factors, the model achieved exceptional performance metrics, as detailed in Table 1.

Table 1: Performance Metrics of XAI Framework for Male Fertility Diagnostics

| Metric | Performance Value | Clinical Significance |
|---|---|---|
| Classification Accuracy | 99% | Ultra-high diagnostic precision |
| Sensitivity | 100% | Identifies all true positive cases |
| Computational Time | 0.00006 seconds | Enables real-time clinical application |
| Key Features Identified | Sedentary habits, environmental exposures | Guides targeted interventions |

The study employed range-based normalization to standardize the feature space and facilitate meaningful correlations across variables operating on heterogeneous scales [11]. All features were rescaled to the [0, 1] range to ensure consistent contribution to the learning process, prevent scale-induced bias, and enhance numerical stability during model training. The resulting system provides a cost-effective, time-efficient approach to male reproductive health diagnostics that illustrates the effective synergy between machine learning and bio-inspired optimization.
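Range-based (min-max) normalization maps each value x of a feature to (x - min) / (max - min), so the smallest observed value becomes 0 and the largest becomes 1. The sketch below is a minimal illustration. The two feature columns and their values are hypothetical and are not taken from the dataset used in [11].

```python
def min_max_scale(column):
    """Rescale a list of numbers linearly to the [0, 1] range."""
    lo, hi = min(column), max(column)
    if hi == lo:
        # A constant feature carries no information; map it to 0 to avoid dividing by zero.
        return [0.0 for _ in column]
    return [(value - lo) / (hi - lo) for value in column]

# Hypothetical features on very different scales (assumptions for illustration).
age_years = [18, 27, 36]
sitting_hours_per_day = [2, 8, 16]

scaled_age = min_max_scale(age_years)
scaled_sitting = min_max_scale(sitting_hours_per_day)

print(scaled_age)      # both columns now lie in [0, 1]
print(scaled_sitting)
```

When a model is later evaluated on held-out data, the minimum and maximum would normally be computed on the training split only and then reused, so that information from the test set does not leak into preprocessing.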

Follicle Optimization for Oocyte Retrieval

In female fertility treatment, a multi-center study harnessing explainable artificial intelligence identified follicle sizes that contribute most to relevant downstream clinical outcomes during in vitro fertilization (IVF) [12]. The research, encompassing 19,082 treatment-naive female patients across 11 European IVF centers, employed a histogram-based gradient boosting regression tree model to determine optimal follicle characteristics.

The investigation revealed that intermediately-sized follicles (12-20 mm) on the day of trigger administration contributed most to the number of oocytes retrieved, while a tighter range of 13-18 mm follicles were most productive for yielding mature metaphase-II oocytes [12]. For downstream laboratory outcomes, follicles of 14-20 mm were most important for high-quality blastocysts. These findings enable more precise timing for trigger administration in IVF protocols, potentially improving live birth rates.

Table 2: Most Contributory Follicle Sizes for IVF Outcomes Identified Through XAI

| Clinical Outcome | Most Contributory Follicle Sizes | Patient Population | Sample Size |
|---|---|---|---|
| All Oocytes Retrieved | 12-20 mm | General IVF population | 19,082 patients |
| Mature (MII) Oocytes | 13-18 mm | General IVF population | 14,140 patients |
| Mature Oocytes (Women ≤35) | 13-18 mm | Younger patient subgroup | 5,707 patients |
| Mature Oocytes (Women >35) | 11-20 mm | Advanced maternal age | 4,717 patients |
| High-Quality Blastocysts | 14-20 mm | General IVF population | 17,488 patients |

The model performance was validated through internal-external validation across the eleven clinics, with the model for predicting mature oocytes in the ICSI population achieving a mean absolute error (MAE) of 3.60 and median absolute error (MedAE) of 2.59 [12]. SHAP analysis confirmed these findings, showing an accentuated increase in values across similar ranges of intermediately-sized follicles, corresponding to an increased expectation of mature oocytes.
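The two error metrics reported above summarize the same absolute errors in different ways. MAE is their mean and MedAE is their median, so a few large misses raise MAE more than MedAE. The sketch below shows the calculation on hypothetical oocyte counts that are not data from [12].

```python
from statistics import mean, median

def absolute_errors(observed, predicted):
    """Per-patient absolute difference between observed and predicted counts."""
    return [abs(o - p) for o, p in zip(observed, predicted)]

# Hypothetical mature-oocyte counts (assumptions for illustration only).
observed = [8, 12, 5, 20, 10]
predicted = [10, 11, 7, 9, 10]

errors = absolute_errors(observed, predicted)   # one large miss on the fourth patient
mae = mean(errors)
medae = median(errors)

print(f"errors = {errors}")
print(f"MAE    = {mae}")
print(f"MedAE  = {medae}")
```

A MAE that sits above the MedAE, as in the reported 3.60 versus 2.59, is consistent with a right-skewed error distribution in which most predictions are close and a minority are far off.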

Fertility Preference Prediction in Population Studies

Machine learning algorithms with SHAP analysis have been applied to identify key predictors of fertility preferences among reproductive-aged women in low-resource settings [13]. This cross-sectional study utilized data from the 2020 Somalia Demographic and Health Survey, encompassing 8,951 women aged 15-49 years, to predict fertility preferences dichotomized as either desire for more children or preference to cease childbearing.

Among seven evaluated ML algorithms, Random Forest emerged as the optimal model based on performance metrics including accuracy, precision, recall, F1-score, and the Area Under the Receiver Operating Characteristic Curve (AUROC) [13]. The model demonstrated superior performance, achieving an accuracy of 81%, precision of 78%, recall of 85%, F1-score of 82%, and AUROC of 0.89.
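For readers less familiar with these classification metrics, the sketch below derives accuracy, precision, recall, and F1-score from a binary confusion matrix. The counts are hypothetical and chosen only to keep the arithmetic simple. They are not the confusion matrix from [13].

```python
def classification_metrics(tp, fp, fn, tn):
    """Standard binary classification metrics from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)   # of predicted positives, the fraction that is correct
    recall = tp / (tp + fn)      # of actual positives, the fraction that is found
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical counts (assumptions for illustration only).
metrics = classification_metrics(tp=45, fp=15, fn=5, tn=35)

for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because F1 is the harmonic mean of precision and recall, it always lies between the two and is pulled toward the lower value.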

SHAP analysis identified the most influential predictors of fertility preferences as age group, region, number of births in the last five years, number of children born, marital status, wealth index, education level, residence, and distance to health facilities [13]. Specifically, age group was the most significant feature, followed by region and number of births in the last five years. Women aged 45-49 years and those with higher parity were significantly more likely to prefer no additional children. Distance to health facilities emerged as a critical barrier, with better access being associated with a greater likelihood of desiring more children.

Experimental Protocols and Methodologies

Common Workflows in Fertility XAI Research

The application of XAI in fertility diagnostics follows methodological patterns that ensure rigorous validation and clinical relevance. The following diagram illustrates a standardized workflow for developing and validating explainable AI systems in fertility research:

Technical Implementation Framework

The technical implementation of XAI systems in fertility research typically involves a structured approach to data handling, model selection, and validation:

Data Preprocessing Protocols: Studies consistently employ data normalization techniques to handle heterogeneous clinical data. Min-Max normalization linearly transforms each feature to a consistent scale, typically [0, 1], to prevent scale-induced bias and enhance numerical stability during model training [11]. Additional preprocessing may include handling missing data, addressing class imbalance through techniques like SMOTE, and feature selection to reduce dimensionality.

Model Selection and Training: Researchers typically compare multiple machine learning algorithms to identify the optimal approach for their specific fertility diagnostic task. Common algorithms include Random Forest, Gradient Boosting machines, Support Vector Machines, and neural networks [13] [12] [11]. Models are trained using cross-validation techniques to ensure robustness and avoid overfitting, with hyperparameter tuning to optimize performance.

Validation Methodologies: Internal-external validation approaches, where models are trained on multiple clinics and tested on held-out clinics, provide the most rigorous assessment of generalizability [12]. Performance metrics commonly reported include accuracy, precision, recall, F1-score, and Area Under the Receiver Operating Characteristic Curve (AUROC) for classification tasks, and Mean Absolute Error (MAE) or Median Absolute Error (MedAE) for regression tasks [13] [12].
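The internal-external validation scheme described above can be sketched as a leave-one-clinic-out loop: each clinic in turn is held out for testing while the model is fitted on all the others. In the sketch below, the clinic identifiers, the feature name, the records, and the deliberately trivial "predict the training mean" baseline are all assumptions for illustration. A real study would substitute its own model for `fit` and `predict`.

```python
from statistics import mean

def leave_one_clinic_out(records, fit, predict):
    """Return the MAE on each clinic when that clinic is excluded from training.

    records: list of (clinic_id, features, target) tuples.
    """
    clinics = sorted({clinic for clinic, _, _ in records})
    results = {}
    for held_out in clinics:
        train = [(x, y) for clinic, x, y in records if clinic != held_out]
        test = [(x, y) for clinic, x, y in records if clinic == held_out]
        model = fit(train)                                   # fitted without the held-out clinic
        errors = [abs(y - predict(model, x)) for x, y in test]
        results[held_out] = mean(errors)
    return results

# Hypothetical baseline model: always predict the mean target seen in training.
def fit_mean(train):
    return mean(y for _, y in train)

def predict_mean(model, features):
    return model  # ignores the features by design

# Hypothetical records (assumptions for illustration only).
records = [
    ("clinic_A", {"follicles_12_20mm": 6}, 8),
    ("clinic_A", {"follicles_12_20mm": 10}, 12),
    ("clinic_B", {"follicles_12_20mm": 4}, 5),
    ("clinic_B", {"follicles_12_20mm": 5}, 7),
    ("clinic_C", {"follicles_12_20mm": 8}, 10),
    ("clinic_C", {"follicles_12_20mm": 11}, 14),
]

results = leave_one_clinic_out(records, fit_mean, predict_mean)
for clinic, mae in results.items():
    print(f"held out {clinic}: MAE = {mae}")
```

Reporting the error per held-out clinic, rather than a single pooled figure, makes it easier to spot sites where the model generalizes poorly.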

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for XAI in Fertility Diagnostics

| Reagent/Resource | Function in XAI Research | Example Implementation |
|---|---|---|
| SHAP (SHapley Additive exPlanations) | Quantifies feature contribution to predictions using game theory | Identified age group as primary predictor of fertility preferences in Somali women [13] |
| LIME (Local Interpretable Model-agnostic Explanations) | Creates local surrogate models to explain individual predictions | Approximates complex IVF outcome models for specific patient cases [8] |
| Histogram-based Gradient Boosting | Handles complex, non-linear relationships in clinical data | Identified optimal follicle sizes for oocyte retrieval in IVF [12] |
| Ant Colony Optimization | Nature-inspired optimization for parameter tuning and feature selection | Enhanced neural network performance in male fertility assessment [11] |
| Random Forest Algorithm | Robust classification handling multiple feature types | Optimal model for fertility preference prediction with 81% accuracy [13] |
| Multilayer Perceptron | Deep learning approach for complex pattern recognition | Alternative model for oocyte yield prediction in IVF [12] |

Regulatory and Implementation Considerations

Compliance Frameworks

The implementation of XAI in fertility diagnostics operates within an evolving regulatory landscape that emphasizes transparency and accountability. Major regulatory frameworks influencing XAI adoption include:

The General Data Protection Regulation (GDPR) restricts solely automated decisions that significantly affect individuals and is often interpreted as conferring a right to explanation, so fertility clinics are expected to provide interpretable justifications for AI-assisted diagnoses and treatment recommendations [10] [14].

The EU AI Act categorizes AI systems used in healthcare as high-risk, mandating strict transparency requirements, detailed documentation, and human oversight provisions [10] [15].

The U.S. Food and Drug Administration (FDA) issues guidelines for AI/ML-based medical devices that emphasize transparency and clinical validation, requiring rigorous documentation of explainability for regulatory approval [10].

Adoption Barriers and Implementation Challenges

Despite its promise, the integration of XAI into fertility practice faces significant practical challenges:

Technical Complexity: Balancing model accuracy with interpretability remains challenging, as deep learning models often yield superior performance but lack inherent explainability [10]. Simplifying models to enhance interpretability may reduce predictive power in some applications.

Data Limitations: Many fertility datasets are limited in size, lack demographic diversity, and originate predominantly from high-income settings, limiting model generalizability and equity [15]. Most AI research in reproductive medicine utilizes private datasets with limited clinical and demographic diversity.

Implementation Costs: Financial barriers represent significant obstacles, with 38.01% of fertility specialists citing cost as a primary barrier to AI adoption in a 2025 global survey [16]. Additional resources required for training, integration with existing systems, and ongoing maintenance further increase implementation barriers.

Ethical Concerns: Over-reliance on technology and algorithmic bias represent significant risks, with 59.06% of specialists citing over-reliance as a concern in the same survey [16]. Ensuring that AI systems complement rather than replace clinical judgment remains a critical consideration.

The integration of Explainable AI into fertility diagnostics represents a transformative advancement with the potential to enhance precision, objectivity, and personalization in reproductive medicine. Through comparative analysis of current implementations, this review demonstrates that XAI methodologies—particularly SHAP analysis, model-agnostic interpretation techniques, and inherently interpretable models—provide critical insights into male fertility assessment, ovarian follicle optimization, and population-level fertility preferences.

The foundational principles of transparency, interpretability, and accountability provide a framework for developing AI systems that clinicians can understand, trust, and appropriately integrate into patient care pathways. As the field evolves, ongoing attention to validation standards, ethical implementation, and equitable access will be essential to realizing the full potential of these technologies. The continuing maturation of XAI in fertility diagnostics promises not only incremental improvements in laboratory performance but also a fundamental shift toward more transparent, accountable, and patient-centered reproductive care.

The Clinical and Ethical Demand for Interpretability in Fertility Diagnostics

The integration of artificial intelligence (AI) into reproductive medicine is transforming the diagnosis and treatment of infertility, a condition affecting an estimated one in six couples globally [12] [17]. Male infertility alone contributes to 30-50% of all cases, yet traditional diagnostic methods like manual semen analysis are often hampered by subjectivity, poor reproducibility, and an inability to capture the complex interplay of biological, lifestyle, and environmental factors [18] [11] [19]. AI, particularly machine learning (ML) and deep learning (DL), promises to overcome these limitations by enhancing the precision of sperm, oocyte, and embryo analysis, and by improving the prediction of treatment success for procedures like in vitro fertilization (IVF) [18] [20] [17].

However, the "black-box" nature of many complex AI models presents a significant barrier to their clinical adoption. When an AI model recommends a specific sperm for injection or an embryo for transfer, clinicians and patients must understand the reasoning behind that decision. This has created a clinical and ethical demand for interpretability and explainability in AI systems. Explainable AI (XAI) provides insights into model decisions, fostering trust, enabling verification, and ensuring that critical treatment decisions are transparent and actionable. This comparative analysis examines the current state of interpretable AI in fertility diagnostics, evaluating the methodologies, performance, and clinical applicability of various XAI frameworks.

Comparative Analysis of Explainable AI Approaches

Research in explainable AI for fertility diagnostics has produced diverse approaches, ranging from hybrid models that integrate optimization algorithms to deep learning frameworks capable of visualizing decision-making processes. The table below summarizes the performance of several key XAI frameworks as reported in recent studies.

Table 1: Performance Comparison of Explainable AI Frameworks in Fertility Diagnostics

| XAI Framework | Clinical Application | Dataset Size | Key Performance Metrics | Explainability Method |
|---|---|---|---|---|
| MLFFN–ACO Hybrid Model [11] [19] | Male fertility diagnosis | 100 records | Accuracy: 99%, Sensitivity: 100%, Computational Time: 0.00006s | Proximity Search Mechanism (PSM), Feature Importance Analysis |
| Histogram-Based Gradient Boosting [12] | Follicle contribution to mature oocytes | 19,082 patients | Model for MII oocytes: MAE: 3.60, MedAE: 2.59 | Permutation Importance, SHAP Values |
| CNN-LSTM with LIME [21] | Embryo selection (Blastocyst) | 98 images (augmented to 1,470) | Accuracy (After Augmentation): 97.7% | LIME (Local Interpretable Model-agnostic Explanations) |
| Deep Neural Network (DNN) [22] | IVF pregnancy prediction | 8,732 treatment cycles | Accuracy: 0.78, Specificity: 0.86, AUC: 0.68-0.86 | Feature Correlation Analysis (XGBoost) |
| Gradient Boosting Trees (GBT) [18] | Sperm retrieval in azoospermia | 119 patients | AUC: 0.807, Sensitivity: 91% | Not Specified |

Methodologies and Experimental Protocols

The experimental protocols and methodologies underpinning these XAI frameworks are critical to understanding their comparative value.

The MLFFN–ACO Hybrid Framework for Male Fertility Diagnosis

This framework was designed to address the multifactorial nature of male infertility by integrating clinical, lifestyle, and environmental factors [11] [19].

- Data Preprocessing: A publicly available dataset of 100 male fertility cases from the UCI repository was used. All features were rescaled to a [0, 1] range using min-max normalization to ensure consistent contribution and prevent scale-induced bias.

- Model Architecture: The core is a Multilayer Feedforward Neural Network (MLFFN). Its learning efficiency and predictive accuracy are enhanced by integration with a nature-inspired Ant Colony Optimization (ACO) algorithm. The ACO performs adaptive parameter tuning, mimicking ant foraging behavior to overcome the limitations of conventional gradient-based methods.

- Explainability Protocol: The framework incorporates a Proximity Search Mechanism (PSM) to provide feature-level interpretability. This allows clinicians to understand which specific factors (e.g., sedentary habits, environmental exposures) most contributed to a diagnosis of "altered" seminal quality, thereby enabling actionable clinical insights.

The CNN-LSTM and LIME Framework for Embryo Selection

This approach addresses the subjective and time-consuming nature of manual embryo grading by embryologists [21].

- Dataset and Augmentation: The model was trained on the STORK dataset, which initially contained only 98 blastocyst images. To overcome data scarcity, extensive image augmentation techniques (geometric transformations, rotations, etc.) were applied, expanding the dataset to 1,470 images.

- Model Architecture: A hybrid Convolutional Neural Network (CNN) and Long Short-Term Memory (LSTM) architecture was employed. The CNN extracts spatial features from the blastocyst images, while the LSTM captures temporal dependencies, together modeling both the structure and developmental sequence of the embryo.

- Explainability Protocol: LIME (Local Interpretable Model-agnostic Explanations) was used to interpret the "black-box" CNN-LSTM model. LIME works by perturbing the input image and observing changes in the prediction, thereby generating a heatmap that highlights the specific image regions (e.g., parts of the inner cell mass or trophectoderm) that were most influential in classifying an embryo as "good" or "poor." This visualization is crucial for embryologists to validate the AI's decision.
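The perturb-and-observe idea behind LIME in the explainability step above can be illustrated with a small sketch. It is a simplification under stated assumptions, not the study's pipeline: the image is represented only as a vector of eight on/off segments, `toy_black_box` is a hypothetical stand-in for the CNN-LSTM score, and proximity is measured by the fraction of hidden segments rather than the distance used by the LIME library.

```python
import numpy as np


def lime_explain(predict_fn, n_segments, n_samples=500, kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around one image (LIME-style)."""
    rng = np.random.default_rng(seed)
    # Each row is a mask over image segments: 1 keeps a segment, 0 hides it.
    masks = rng.integers(0, 2, size=(n_samples, n_segments))
    masks[0, :] = 1  # the first sample is the unperturbed image
    preds = np.array([predict_fn(m) for m in masks], dtype=float)
    # Samples that hide fewer segments are closer to the original and weigh more.
    distance = 1.0 - masks.mean(axis=1)
    weights = np.exp(-(distance ** 2) / kernel_width ** 2)
    # Weighted least squares with an intercept column.
    X = np.hstack([np.ones((n_samples, 1)), masks])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(X * sw[:, None], preds * sw, rcond=None)
    return coef[1:]  # one weight per segment; larger means more support for the class


def toy_black_box(mask):
    # Hypothetical score for the "good" class: segments 2 and 5 play the role
    # of the image regions the model relies on; all other segments are ignored.
    return 0.1 + 0.6 * mask[2] + 0.3 * mask[5]


segment_weights = lime_explain(toy_black_box, n_segments=8)
top_segment = int(np.argmax(segment_weights))
print(top_segment, np.round(segment_weights, 3))
```

In an image setting, the per-segment weights would be painted back onto the corresponding superpixels to form the heatmap that an embryologist inspects.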

The Histogram-Based Gradient Boosting for Follicle Analysis

This large multi-center study aimed to identify which follicle sizes on the day of trigger administration contribute most to successful IVF outcomes [12].

- Data Source: The model was trained on data from 19,082 treatment-naive female patients across 11 European IVF centers.

- Model and Analysis: A histogram-based gradient boosting regression tree model was used to predict the number of mature oocytes retrieved based on follicle sizes. The primary explainability technique used was permutation importance, which measures the decrease in model performance when a specific feature (in this case, a follicle size bin) is randomly shuffled. This identifies which follicle sizes the model relies on most for accurate predictions.

- Validation: The findings were further explained and validated using SHAP (SHapley Additive exPlanations) values, which quantify the marginal contribution of each feature to the model's prediction for an individual patient.
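Permutation importance, as described in the model and analysis step above, can be sketched without any machine learning library. The example below is a minimal illustration under assumptions: `toy_model`, its coefficients, and the three follicle size bins are hypothetical and do not reproduce the study's model or data.

```python
import random


def mean_abs_error(model, X, y):
    return sum(abs(model(row) - t) for row, t in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average increase in error when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_abs_error(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mean_abs_error(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def toy_model(row):
    # Hypothetical stand-in: predicted mature oocytes from follicle counts
    # in three size bins (small, mid-sized, large).
    small, mid, large = row
    return 0.1 * small + 0.8 * mid + 0.4 * large


data_rng = random.Random(1)
X = [[data_rng.randint(0, 10) for _ in range(3)] for _ in range(200)]
y = [toy_model(row) for row in X]  # targets generated by the toy model itself

imp = permutation_importance(toy_model, X, y)
print([round(v, 3) for v in imp])
```

Because the toy model leans most heavily on the middle bin, shuffling that column degrades its predictions the most, which is the pattern a permutation importance ranking is meant to expose.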

The following diagram illustrates the core workflow of an interpretable AI system in fertility diagnostics, from data input to clinical decision-making:

The Scientist's Toolkit: Essential Research Reagents and Solutions

For researchers aiming to develop or validate explainable AI tools in reproductive medicine, a specific set of data, algorithmic, and validation tools is essential. The table below details key components of this toolkit.

Table 2: Research Reagent Solutions for Explainable Fertility AI

| Tool Category | Specific Tool / Solution | Function in Research |

|---|---|---|

| Public Datasets | UCI Fertility Dataset [11] [19] | Provides structured clinical, lifestyle, and environmental data for model training in male fertility diagnosis. |

| Public Datasets | STORK Dataset [21] | Offers blastocyst images for developing and benchmarking embryo selection algorithms. |

| AI Algorithms | Ant Colony Optimization (ACO) [11] [19] | A nature-inspired metaheuristic for optimizing model parameters and feature selection. |

| AI Algorithms | CNN-LSTM Hybrid Models [21] | Captures both spatial and temporal features from image data, ideal for embryo development analysis. |

| Explainability Libraries | LIME (Local Interpretable Model-agnostic Explanations) [21] | Explains predictions of any classifier by approximating it locally with an interpretable model. |

| Explainability Libraries | SHAP (SHapley Additive exPlanations) [12] | Unpacks the contribution of each feature to a single prediction based on cooperative game theory. |

| Validation Frameworks | Internal-External Validation [12] [22] | Tests model performance across multiple clinics or datasets to ensure generalizability and robustness. |

Discussion and Future Directions

The comparative analysis reveals that no single XAI approach is universally superior; rather, the optimal choice is dictated by the specific clinical question and data type. For structured data (e.g., clinical parameters), models like gradient boosting with feature importance analysis (SHAP) provide clear, quantifiable insights [11] [12]. For complex image data (e.g., embryos), DL models combined with visual explanation tools (LIME) are necessary to bridge the interpretability gap [21].

A critical challenge is the trade-off between model performance and interpretability. The hybrid MLFFN-ACO model [11] [19] and the CNN-LSTM model [21] demonstrate that it is possible to achieve high accuracy (>97%) while maintaining a degree of interpretability. However, the clinical validation of these tools remains a work in progress. While studies report strong metrics like AUC and sensitivity, their ultimate impact on live birth rates needs confirmation through large-scale, prospective trials [18] [23].

Future development must also address ethical imperatives. The ability to explain an AI's decision is fundamental to ensuring accountability, mitigating bias, and maintaining patient autonomy. As these technologies evolve, interdisciplinary collaboration among AI experts, clinicians, embryologists, and ethicists will be paramount to developing solutions that are not only powerful but also transparent, fair, and trustworthy [17] [23]. The integration of XAI is not merely a technical enhancement but a clinical and ethical necessity for the responsible implementation of AI in the deeply human context of fertility care.

In the rapidly evolving field of fertility diagnostics, artificial intelligence (AI) systems are increasingly being deployed to analyze complex patterns in reproductive health data, from hormonal levels to embryo viability assessments. For researchers, scientists, and drug development professionals, the "black box" nature of many advanced algorithms presents significant challenges for clinical validation and regulatory approval. Explainable AI (XAI) has therefore emerged as a critical requirement—not merely an enhancement—for ensuring that AI-driven diagnostic tools are trustworthy, clinically actionable, and compliant with regulatory standards across major markets [24].

The global regulatory landscape for AI in healthcare is characterized by two dominant but divergent frameworks: the United States Food and Drug Administration (FDA) approach and the European Union's AI Act. Understanding their distinct requirements for transparency, interpretability, and validation is essential for successfully navigating the compliance pathway for fertility diagnostic technologies. This comparative analysis examines these frameworks through the specific lens of XAI requirements, providing researchers with strategic guidance for developing compliant and clinically effective AI solutions for reproductive medicine.

Philosophical Divide: Contrasting Regulatory Approaches

The FDA and EU approaches to AI regulation stem from fundamentally different philosophical foundations that directly impact XAI implementation strategies.

US FDA: Pro-Innovation with Lifecycle Oversight

The FDA's approach prioritizes fostering innovation while ensuring safety through a "total product lifecycle" model [25]. This framework acknowledges that AI systems, particularly those based on machine learning, evolve over time through continuous learning and improvement. Rather than treating AI-based medical devices as static products, the FDA has developed adaptive pathways that accommodate iterative updates within predefined boundaries [26].

Central to this approach is the Predetermined Change Control Plan (PCCP), which allows manufacturers to specify anticipated modifications—including algorithm updates and performance enhancements—during the initial premarket review [26] [27]. For fertility diagnostics research, this means that XAI methodologies can be integrated into the development pipeline with a clear roadmap for how explanatory capabilities will evolve alongside the core algorithm, without requiring a new submission for each improvement [25].

The FDA's guidance emphasizes Good Machine Learning Practices (GMLP) that align with XAI principles, including robust validation, transparency in design, and comprehensive documentation of model performance across relevant patient populations [25]. This principles-based approach offers flexibility for researchers to implement XAI techniques appropriate to their specific algorithmic architecture and clinical context in reproductive medicine.

EU AI Act: Precautionary Principle with Strict Categorization

In contrast, the EU AI Act establishes a comprehensive, risk-based regulatory framework that applies strict, legally binding requirements to AI systems based on their potential impact on health, safety, and fundamental rights [28]. The regulation adopts a precautionary approach, emphasizing thorough upfront validation and continuous monitoring of high-risk AI applications [26].

Most AI-powered fertility diagnostics are classified as "high-risk" AI systems under the EU framework, as they are considered safety components of medical devices that influence diagnostic or therapeutic decisions [28]. This categorization triggers extensive obligations for transparency, human oversight, and robust performance validation that directly implicate XAI requirements [25].

The EU's approach requires dual conformity assessment for AI-enabled medical devices, which must satisfy both the existing Medical Device Regulation (MDR) and the specific requirements of the AI Act [25]. This creates a multi-layered compliance landscape where XAI must demonstrate not only clinical validity but also adherence to fundamental rights protections, including non-discrimination and privacy—particularly relevant for fertility diagnostics that may involve sensitive genetic or health data [28].

Table 1: Foundational Philosophical Differences Between FDA and EU AI Act

| Aspect | US FDA Approach | EU AI Act Approach |

|---|---|---|

| Core Philosophy | Pro-innovation, lifecycle oversight | Precautionary, risk-based regulation |

| Regulatory Model | Flexible, adaptive pathways | Strict, legally binding requirements |

| Key Mechanism | Predetermined Change Control Plans (PCCPs) | Conformity assessment by Notified Bodies |

| XAI Emphasis | Transparency for clinical utility | Transparency for fundamental rights protection |

| Governance | Centralized FDA review | Distributed enforcement through member states |

Comparative Framework Analysis: XAI Requirements

FDA XAI Guidance and Expectations

The FDA's approach to XAI is contextual and focused on the clinical application of AI systems. Through its Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD) Action Plan and subsequent guidance documents, the FDA emphasizes that the level of explainability required should be commensurate with the device's risk profile, intended use, and the potential impact of incorrect outputs [27].

For fertility diagnostics, this means that XAI capabilities must be sufficient to enable healthcare providers to understand the basis for the AI's conclusions well enough to make informed clinical decisions. The FDA's draft guidance "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products" introduces a risk-based credibility assessment framework that can be applied to evaluate AI models in medical contexts [29] [30]. This framework emphasizes the importance of defining the "context of use" (COU) for the AI model, which for fertility diagnostics might include specific patient populations, clinical scenarios, or decision-support functions [29].

The FDA encourages the use of Real-World Evidence (RWE) and post-market monitoring to validate XAI performance across diverse populations—a critical consideration for fertility diagnostics that may exhibit varying performance across different ethnic groups, age ranges, or underlying health conditions [25]. This lifecycle approach allows for continuous refinement of XAI capabilities based on actual clinical experience.

EU AI Act XAI Mandates and Obligations

The EU AI Act establishes more prescriptive requirements for XAI through its provisions on transparency and human oversight for high-risk AI systems. Article 13 specifically requires that high-risk AI systems be "sufficiently transparent to enable users to interpret the system's output and use it appropriately" [28]. For fertility diagnostics, this translates to several concrete obligations:

- Technical Documentation: Providers must maintain detailed documentation of the AI system's logic, training methodologies, data protocols, and explanatory capabilities [28].

- Human Oversight: Systems must be designed with effective human oversight measures, including interpretation tools that enable clinicians to understand the AI's reasoning and potentially override decisions [25].

- Information to Users: Clear and adequate information must be provided to deployers about the system's capabilities, limitations, and the meaning of its outputs [28].

The EU's requirements extend beyond clinical utility to encompass fundamental rights impact assessments, particularly relevant for fertility diagnostics that may involve sensitive health data or have implications for reproductive autonomy [28]. XAI in this context must enable not just clinical validation but also ethical review and rights-based oversight.

Table 2: Comparative XAI Requirements for Fertility Diagnostics

| Requirement Category | FDA Expectations | EU AI Act Mandates |

|---|---|---|

| Explainability Level | Contextual based on intended use and risk | Sufficient for users to interpret output and use appropriately |

| Documentation | Good Machine Learning Practice (GMLP) principles | Detailed technical documentation of system logic and capabilities |

| Validation | Clinical validation across relevant populations | Fundamental rights impact assessment and clinical validation |

| Human Oversight | Emphasized for clinical decision support | Required design feature with override capabilities |

| Post-Market Monitoring | Real-World Evidence (RWE) collection for performance tracking | Post-market monitoring system with incident reporting |

Strategic Compliance Pathway for Fertility Diagnostics

Navigating the dual requirements of FDA and EU regulatory frameworks requires a strategic approach to XAI implementation from the earliest stages of development. The following workflow outlines a comprehensive compliance pathway for fertility diagnostic AI systems:

Figure 1: XAI Compliance Pathway for Fertility Diagnostics

Experimental Protocols for XAI Validation

Validating XAI systems for regulatory compliance requires a multi-dimensional approach that addresses both technical performance and clinical utility. The following experimental protocol provides a framework for generating the evidence required by both FDA and EU regulators:

Protocol: Multi-dimensional XAI Validation for Fertility Diagnostics

Objective: To comprehensively validate XAI methodologies for AI-based fertility diagnostic systems against FDA and EU regulatory requirements.

Primary Endpoints:

- Technical Explainability Metrics: Quantitative assessment of explanation accuracy, completeness, and stability using standardized metrics (e.g., faithfulness, monotonicity, sensitivity).

- Clinical Utility Metrics: Healthcare provider comprehension scores, diagnostic confidence improvement, and clinical decision correlation with explanations.

- Robustness Metrics: Performance consistency across diverse patient demographics and clinical scenarios.

Methodology:

- Dataset Curation: Collect retrospective fertility diagnostic data with comprehensive demographic representation, including age, ethnicity, reproductive history, and relevant comorbidities. Ensure appropriate ethical approvals and data governance protocols are in place [5].

- XAI Implementation: Integrate appropriate XAI methodologies (e.g., SHAP, LIME, counterfactual explanations) tailored to the specific AI architecture and clinical use case. For embryo viability prediction, this might include feature importance rankings for morphological characteristics [5].

- Technical Validation: Conduct quantitative experiments measuring explanation fidelity using perturbation tests, consistency across similar cases, and robustness to input variations.

- Clinical Validation: Deploy the XAI system in simulated clinical environments with reproductive endocrinologists and embryologists. Measure comprehension through structured surveys, diagnostic accuracy with and without explanations, and clinical workflow integration assessment.

- Bias and Fairness Assessment: Evaluate explanation consistency and model performance across demographic subgroups to identify potential disparities in explanatory quality or diagnostic accuracy [24].
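The subgroup comparison in the bias and fairness step can be illustrated with a short sketch that computes AUC separately for each demographic group. The labels, scores, and age groups below are hypothetical values chosen only to show the mechanics.

```python
def auc(labels, scores):
    """AUC as the probability that a random positive case outranks a random
    negative case, counting ties as one half."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def auc_by_group(labels, scores, groups):
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        result[g] = auc([labels[i] for i in idx], [scores[i] for i in idx])
    return result


# Hypothetical outcomes, model scores, and age groups for eight patients.
labels = [1, 0, 1, 0, 1, 0, 1, 0]
scores = [0.9, 0.2, 0.8, 0.3, 0.6, 0.7, 0.4, 0.5]
groups = ["<35"] * 4 + ["35+"] * 4

per_group = auc_by_group(labels, scores, groups)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, gap)
```

A large gap between groups, as in this contrived example, would flag the model for closer review before the explanations it produces are trusted for the weaker subgroup.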

Statistical Analysis:

- Employ appropriate statistical tests to compare performance across subgroups and validate explanation consistency.

- Calculate confidence intervals for clinical utility metrics to establish minimal acceptable thresholds for explainability performance.
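One common way to obtain the confidence intervals mentioned above is a percentile bootstrap. The sketch below assumes that approach (the protocol itself does not prescribe a method), and the comprehension scores are hypothetical.

```python
import random


def bootstrap_ci(values, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean."""
    rng = random.Random(seed)
    n = len(values)
    # Resample with replacement and record the mean of each resample.
    means = sorted(sum(rng.choices(values, k=n)) / n for _ in range(n_boot))
    lo = means[int((alpha / 2) * n_boot)]
    hi = means[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi


# Hypothetical clinician comprehension scores on a 0-1 scale.
comprehension = [0.6, 0.7, 0.8, 0.75, 0.9, 0.65, 0.85, 0.7, 0.8, 0.75]
lo, hi = bootstrap_ci(comprehension)
print(round(lo, 3), round(hi, 3))
```

If a minimal acceptable threshold is defined in advance, the lower bound of the interval, rather than the point estimate, is the natural quantity to compare against it.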

This comprehensive validation approach generates the evidence necessary to demonstrate compliance with both FDA's emphasis on clinical utility and the EU's requirements for transparency and fundamental rights protection.

Research Reagent Solutions for XAI Compliance

Successfully implementing XAI for regulatory compliance requires leveraging specialized tools and frameworks throughout the development lifecycle. The following table outlines essential "research reagents" for developing compliant XAI systems in fertility diagnostics:

Table 3: Essential Research Reagents for XAI Compliance in Fertility Diagnostics

| Research Reagent | Function | Regulatory Application |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | Quantifies feature contribution to model predictions using game theory | Generates quantitative explanations for technical documentation [5] |

| LIME (Local Interpretable Model-agnostic Explanations) | Creates local surrogate models to explain individual predictions | Provides case-specific explanations for clinical validation [24] |

| Counterfactual Explanation Frameworks | Generates "what-if" scenarios showing minimal changes to alter outcomes | Supports clinical decision-making and bias assessment [24] |

| Model Cards and Datasheets | Standardized documentation for model characteristics and limitations | Fulfills EU AI Act technical documentation requirements [28] |

| Fairness Assessment Toolkits | Quantifies model performance across demographic subgroups | Enables bias testing for fundamental rights compliance [24] |

| Predetermined Change Control Plan Templates | Structures planned modifications for iterative improvement | Supports FDA PCCP submissions for lifecycle management [26] |

| Real-World Performance Monitoring Platforms | Tracks model performance and explanation quality post-deployment | Addresses post-market monitoring requirements for both frameworks [25] |

The regulatory landscape for XAI in fertility diagnostics is characterized by two distinct but equally important frameworks. The FDA's flexible, lifecycle-oriented approach provides pathways for iterative improvement of explanatory capabilities, while the EU AI Act establishes comprehensive, legally binding requirements for transparency and human oversight. For researchers and developers, success in this environment requires a strategic approach that integrates XAI considerations from the earliest stages of development, employs robust validation methodologies addressing both technical and clinical dimensions, and maintains comprehensive documentation throughout the product lifecycle. By adopting the compliance pathway and experimental protocols outlined in this analysis, fertility diagnostics researchers can navigate this complex landscape effectively, accelerating the development of AI systems that are not only regulatory compliant but also clinically valuable and ethically sound.

XAI in Action: Comparative Methodologies and Diagnostic Applications

The integration of artificial intelligence (AI) in fertility diagnostics has created a critical need for model interpretability. Explainable AI (XAI) techniques address the "black-box" nature of complex machine learning models, making their decisions transparent and actionable for clinicians and researchers. Within this landscape, SHapley Additive exPlanations (SHAP) has emerged as a powerful unified framework for interpreting model predictions based on cooperative game theory [31]. SHAP quantifies the marginal contribution of each input feature to a model's final prediction, providing both global interpretability (overall model behavior) and local interpretability (individual prediction rationale) [32].

In fertility research, where treatment decisions have profound implications, SHAP offers a mathematically rigorous approach to feature importance analysis. By calculating Shapley values—a concept derived from game theory that fairly distributes the "payout" among "players" (features)—SHAP enables researchers to identify which factors most significantly influence predictions of treatment success, fertility preferences, or diagnostic outcomes [33] [13]. This capability is particularly valuable in assisted reproductive technology (ART), where multiple clinical parameters interact in complex, non-linear ways that traditional statistical methods may fail to capture adequately [34] [17].

SHAP Methodology: Core Principles and Implementation

Theoretical Foundation

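SHAP builds upon Shapley values, which provide a theoretically grounded solution to the problem of fairly distributing credit among collaborating features. The SHAP value for a specific feature i is a weighted average of that feature's marginal contribution over all possible feature coalitions, expressed as:

$$\phi_i = \sum_{S \subseteq F \setminus \{i\}} \frac{|S|!\,(|F| - |S| - 1)!}{|F|!} \left[ f_{S \cup \{i\}}\left(x_{S \cup \{i\}}\right) - f_S\left(x_S\right) \right]$$

where F is the set of all features, S ranges over the subsets of features that exclude i, and f_S denotes the model evaluated using only the features in S.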

This exhaustive averaging over coalitions ensures that SHAP values satisfy three key properties: local accuracy (the explanation model matches the original model for the specific instance being explained), missingness (a feature that is missing from the input receives zero attribution), and consistency (if a model changes so that a feature's marginal contribution increases or stays the same, its SHAP value does not decrease) [31].
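For a handful of features, Shapley values can be computed exactly by enumerating every coalition, weighting each marginal contribution by |S|!(|F| - |S| - 1)!/|F|!. The sketch below does this for a hypothetical three-feature model in which a feature outside the coalition is set to a baseline of 0; both the model and that baseline convention are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating all coalitions that exclude feature i."""
    phi = []
    for i in range(n_features):
        others = [j for j in range(n_features) if j != i]
        total = 0.0
        for size in range(n_features):
            weight = (factorial(size) * factorial(n_features - size - 1)
                      / factorial(n_features))
            for S in combinations(others, size):
                # Marginal contribution of feature i when added to coalition S.
                total += weight * (value_fn(set(S) | {i}) - value_fn(set(S)))
        phi.append(total)
    return phi


def value_fn(S):
    # Hypothetical model evaluated at x = (1, 1, 1); features not in S are
    # replaced by the baseline value 0. Features 0 and 2 interact.
    x0, x1, x2 = (1.0 if j in S else 0.0 for j in range(3))
    return 0.2 + 0.3 * x0 + 0.1 * x1 + 0.4 * x0 * x2


phi = shapley_values(value_fn, 3)
print([round(v, 3) for v in phi])
```

The interaction term of 0.4 is split evenly between the two interacting features, and the attributions sum to the difference between the full prediction and the baseline prediction, which is the local accuracy property in action. The cost grows exponentially with the number of features, which is why approximations are used in practice.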

Implementation Variants

SHAP offers multiple implementation approaches tailored to different model architectures:

- KernelSHAP: A model-agnostic method that approximates Shapley values using weighted linear regression, applicable to any machine learning model [31]

- TreeSHAP: A specialized, computationally efficient algorithm for tree-based models (e.g., Random Forest, XGBoost, LightGBM) that leverages the tree structure to compute exact Shapley values [33] [34]

- DeepSHAP: An approximation method for deep learning models that builds on DeepLIFT, providing faster computation than KernelSHAP for neural networks
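The weighted linear regression behind KernelSHAP can be sketched for a tiny model by enumerating every coalition instead of sampling them. This is an illustration of the idea, not the `shap` library's implementation: the function `kernel_shap`, the toy model, and the use of a large finite weight in place of the infinite weight on the empty and full coalitions are all assumptions of this sketch.

```python
from itertools import product
from math import comb

import numpy as np


def kernel_shap(value_fn, n_features, big_weight=1e6):
    """Shapley values via regression with the Shapley kernel over all coalitions."""
    M = n_features
    rows, targets, weights = [], [], []
    for z in product([0, 1], repeat=M):
        k = sum(z)
        if k == 0 or k == M:
            w = big_weight  # stands in for the infinite weight at the extremes
        else:
            w = (M - 1) / (comb(M, k) * k * (M - k))
        rows.append((1,) + z)  # leading 1 is the intercept (base value)
        targets.append(value_fn({i for i, on in enumerate(z) if on}))
        weights.append(w)
    X = np.array(rows, dtype=float)
    y = np.array(targets, dtype=float)
    sw = np.sqrt(np.array(weights))
    coef, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return coef[0], coef[1:]


def value_fn(S):
    # Hypothetical model at x = (1, 1, 1) with absent features set to 0.
    x0, x1, x2 = (1.0 if j in S else 0.0 for j in range(3))
    return 0.2 + 0.3 * x0 + 0.1 * x1 + 0.4 * x0 * x2


base_value, phi = kernel_shap(value_fn, 3)
print(round(base_value, 4), np.round(phi, 4))
```

With all coalitions included, the regression coefficients coincide (up to numerical error) with the exact Shapley values; KernelSHAP samples coalitions instead so that the same regression remains tractable for larger feature sets.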

In fertility research, TreeSHAP has gained particular prominence due to the widespread use of tree-based ensemble methods like XGBoost and Random Forest, which consistently demonstrate strong predictive performance for complex biological outcomes [33] [34] [35].

Comparative Analysis of XAI Techniques in Fertility Research

SHAP vs. Alternative XAI Methods

While SHAP has gained significant traction in fertility research, several alternative XAI methods offer complementary capabilities. The table below compares SHAP with other prominent interpretability techniques:

Table 1: Comparison of Explainable AI Techniques in Fertility Research

| Method | Theoretical Basis | Scope | Fertility Research Applications | Advantages | Limitations |

|---|---|---|---|---|---|

| SHAP | Game Theory (Shapley values) | Global & Local | Feature importance for live birth prediction [34], fertility preferences [33], PPH risk [32] | Mathematical rigor, consistent, unified framework | Computationally intensive for some variants |

| LIME | Perturbation-based Local Surrogate | Local | Interpreting individual predictions in complex models [31] | Model-agnostic, intuitive local explanations | Instability across different random samples |

| Feature Importance | Model-specific Metrics | Global | Preliminary feature ranking in fertility studies [35] | Computationally efficient, simple to implement | No individual prediction explanations, potentially biased |

| Partial Dependence Plots (PDP) | Marginal Effect Visualization | Global | Understanding feature relationships in fertility outcomes [12] | Intuitive visualization of feature relationships | Assumes feature independence, can be misleading |

Quantitative Performance Comparison in Fertility Studies

Multiple fertility diagnostics studies have implemented both SHAP and alternative interpretability methods, enabling direct comparison of their effectiveness:

Table 2: Empirical Performance of XAI Methods in Fertility Research Applications

| Study Focus | Best-Performing ML Model | XAI Methods Compared | Key Advantage of SHAP | Performance Metrics |

|---|---|---|---|---|

| PCOS Live Birth Prediction [34] | XGBoost (AUC: 0.822) | Feature Importance, SHAP | Identified non-linear relationships (maternal age, testosterone) | Revealed embryo transfer count as top predictor |

| Fertility Preferences in Somalia [33] [13] | Random Forest (Accuracy: 81%, AUROC: 0.89) | Permutation Importance, SHAP | Quantified directionality of effects (age, parity, distance to healthcare) | Identified age group as most influential feature |

| Female Infertility Risk [35] | LGBM (AUROC: 0.964) | Feature Importance, SHAP | Detected interaction effects (heavy metals, cardiovascular health) | Ranked Cd exposure, BMI, LE8 score as top predictors |

| Optimal Follicle Identification [12] | Histogram-based Gradient Boosting | Ablation Analysis, SHAP | Precise quantification of follicle size contributions | Identified 13-18mm as optimal follicle size range |

Experimental Protocols for SHAP Analysis in Fertility Research

Standardized Workflow for SHAP Implementation

Implementing SHAP analysis in fertility research follows a systematic protocol that ensures reproducible and meaningful results. The following diagram illustrates the complete workflow from data preparation to clinical interpretation:

Detailed Methodological Specifications

Data Collection and Preprocessing

Fertility studies employing SHAP analysis typically utilize diverse data sources, including electronic health records, demographic surveys, laboratory results, and medical imaging. For example, the PCOS live birth prediction study incorporated 1,062 fresh embryo transfer cycles, collecting demographic information, laboratory test results, and treatment procedure details [34]. The Somalia fertility preferences study utilized data from 8,951 women aged 15-49 years from the 2020 Somalia Demographic and Health Survey [33] [13].

Data preprocessing follows rigorous standards:

- Handling Missing Values: Techniques range from median imputation (for clinical datasets) to more advanced methods like missForest imputation [34] [32]

- Feature Selection: Employing methods like LASSO regression and Recursive Feature Elimination (RFE) to reduce dimensionality and enhance model interpretability [34]

- Data Splitting: Typically 70:30 or 80:20 splits for training and testing, with external validation on temporally or geographically distinct datasets [32]
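The imputation and splitting steps above interact: if the imputation statistic is computed on all rows, information from the test set leaks into training. A minimal sketch of a 70:30 split followed by median imputation fitted on the training rows only is shown below; the helper names and the BMI values are hypothetical.

```python
import random
from statistics import median


def train_test_split_indices(n, test_fraction=0.3, seed=0):
    """Shuffle row indices and split them into train and test parts."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = round(n * test_fraction)
    return idx[n_test:], idx[:n_test]


def fit_median(values):
    """Median of the observed (non-missing) values."""
    return median(v for v in values if v is not None)


def fill_missing(values, fill_value):
    return [fill_value if v is None else v for v in values]


# Hypothetical BMI column with two missing entries.
bmi = [22.1, None, 27.4, 30.2, 24.8, None, 21.5, 26.0, 29.3, 23.7]

train_idx, test_idx = train_test_split_indices(len(bmi), test_fraction=0.3)
train_median = fit_median([bmi[i] for i in train_idx])  # training rows only
bmi_filled = fill_missing(bmi, train_median)            # applied to every row
print(train_median, bmi_filled)
```

The same pattern applies to feature selection: any step that looks at the outcome or at feature distributions is best fitted inside the training partition.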

Model Training and Validation

The optimal machine learning model for SHAP analysis varies by application domain in fertility research:

- Random Forest: Demonstrated superior performance for fertility preference classification (81% accuracy, AUROC 0.89) [33] [13]

- XGBoost: Excelled in PCOS live birth prediction (AUC 0.822) and postpartum hemorrhage risk prediction (AUC 0.894) [34] [32]

- LightGBM: Achieved best performance for female infertility risk prediction based on lifestyle and environmental factors (AUROC 0.964) [35]

Model validation employs robust techniques including k-fold cross-validation (typically 5-fold), grid search for hyperparameter tuning, and comprehensive evaluation metrics (AUC, accuracy, precision, recall, F1-score, Brier score) [34] [32].
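The fold bookkeeping behind k-fold cross-validation is simple enough to sketch directly. The helper `k_fold_indices` below is a hypothetical illustration that assumes rows have already been shuffled; in practice a library routine would normally be used.

```python
def k_fold_indices(n, k=5):
    """Return (train_indices, validation_indices) pairs for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        folds.append((train, val))
        start += size
    return folds


folds = k_fold_indices(12, k=5)
for train, val in folds:
    print(len(train), val)
```

Each row is used for validation exactly once, so averaging a metric such as AUC over the five folds gives a less split-dependent estimate than a single hold-out set, and hyperparameter grids can be compared on that averaged score.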

SHAP Calculation and Interpretation

The SHAP computation process involves:

- Explainer Selection: Choosing appropriate SHAP explainers based on model type (TreeSHAP for tree-based models, KernelSHAP for other models)

- Value Calculation: Computing SHAP values for all instances in the validation set

- Visualization Generation: Creating multiple plot types to extract insights at different levels of granularity

Critical interpretation principles include:

- Global Feature Importance: Summary plots display mean absolute SHAP values across the dataset, ranking features by overall impact [33] [34]

- Feature Directionality: Dependence plots reveal how specific features influence predictions, showing positive/negative relationships and interaction effects [32] [35]

- Individual Prediction Explanations: Force plots decompose single predictions, showing how each feature contributes to moving the base value to the final prediction [32]
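The arithmetic behind the summary and force plots above is straightforward once SHAP values are available. The sketch below uses a small hypothetical SHAP matrix (rows are patients, columns are features) and a hypothetical base value; none of the numbers come from the cited studies.

```python
feature_names = ["maternal_age", "bmi", "testosterone"]

# Hypothetical SHAP values for three patients.
shap_matrix = [
    [-0.20, 0.05, 0.10],
    [0.30, -0.05, -0.10],
    [-0.10, 0.15, 0.00],
]
base_value = 0.40  # hypothetical average model output


def global_importance(shap_matrix, feature_names):
    """Rank features by mean absolute SHAP value, as in a summary bar plot."""
    n = len(shap_matrix)
    mean_abs = [
        sum(abs(row[j]) for row in shap_matrix) / n
        for j in range(len(feature_names))
    ]
    return sorted(zip(feature_names, mean_abs), key=lambda p: p[1], reverse=True)


def reconstruct_prediction(base_value, shap_row):
    """Local accuracy: base value plus all contributions gives the model output."""
    return base_value + sum(shap_row)


ranking = global_importance(shap_matrix, feature_names)
first_patient_output = reconstruct_prediction(base_value, shap_matrix[0])
print(ranking, round(first_patient_output, 2))
```

A force plot is a visual rendering of the second function: each feature pushes the output up or down from the base value. For tree-based classifiers the output being decomposed may be on the log-odds scale rather than the probability scale, depending on how the explainer is configured.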

Research Reagent Solutions: Essential Tools for SHAP Implementation

Successful implementation of SHAP analysis in fertility research requires specific computational tools and frameworks. The table below details essential "research reagents" for conducting SHAP-based interpretability studies:

Table 3: Essential Research Reagents for SHAP Analysis in Fertility Diagnostics

| Tool Category | Specific Solution | Function in SHAP Analysis | Implementation Example |

|---|---|---|---|

| Programming Languages | Python 3.9+ | Primary implementation environment for ML and SHAP | Fertility preference prediction [33] |

| SHAP Libraries | SHAP Python package (0.40.0+) | Core SHAP value computation and visualization | PCOS live birth prediction [34] |

| Machine Learning Frameworks | XGBoost, Scikit-learn, LightGBM | Model training and evaluation | Female infertility risk prediction [35] |

| Data Handling Libraries | pandas, NumPy | Data manipulation and preprocessing | Postpartum hemorrhage prediction [32] |

| Visualization Tools | matplotlib, Seaborn | Customizing SHAP plots and creating publication-quality figures | Follicle size optimization [12] |

| Clinical Data Platforms | Electronic Health Records, NHANES, DHS | Source of fertility-related features and outcomes | LE8 and heavy metal study [35] |

Applications in Fertility Diagnostics: Case Studies

Predicting Live Birth Outcomes in PCOS Patients

A recent study demonstrated SHAP's utility in explaining live birth predictions for polycystic ovary syndrome (PCOS) patients undergoing fresh embryo transfer [34]. Using XGBoost trained on 1,062 transfer cycles, researchers achieved an AUC of 0.822. SHAP analysis revealed that embryo transfer count, embryo type, maternal age, infertility duration, BMI, serum testosterone, and progesterone levels on HCG administration day were pivotal predictors. The analysis quantified non-linear relationships, showing how specific thresholds of maternal age and testosterone levels significantly impacted live birth probabilities, enabling more personalized treatment protocols.

Modeling Fertility Preferences in Somalia

The application of SHAP to fertility preferences in Somalia showcased its ability to handle complex sociodemographic data [33] [13]. Using Random Forest (accuracy: 81%, AUROC: 0.89) on data from 8,951 women, SHAP identified age group as the most influential predictor, followed by region, number of births in the last five years, and distance to health facilities. SHAP dependence plots revealed that better access to healthcare facilities was associated with a greater likelihood of desiring more children, challenging conventional assumptions about healthcare access and fertility preferences in low-resource settings.

Optimizing Follicle Selection in IVF Treatment

In a multi-center study of 19,082 patients, SHAP analysis identified optimal follicle sizes that contribute most to successful IVF outcomes [12]. The histogram-based gradient boosting model leveraged SHAP to determine that follicles sized 13-18mm on the day of trigger administration contributed most to mature oocyte yield. SHAP dependence plots further revealed that continuing ovarian stimulation beyond the optimal window resulted in follicles >18mm that secreted progesterone prematurely, negatively impacting live birth rates with fresh embryo transfer. These data-driven insights enable more precise timing of trigger administration in IVF protocols.

Assessing Female Infertility Risk from Environmental and Lifestyle Factors

A novel application of SHAP integrated cardiovascular health metrics (Life's Essential 8) and heavy metal exposure to predict female infertility risk [35]. The LightGBM model achieved exceptional performance (AUROC: 0.964) on NHANES data from 873 American women. SHAP analysis identified cadmium exposure, BMI, and overall LE8 score as the most influential predictors. The analysis revealed intricate interaction effects, showing how heavy metal exposure and cardiovascular health metrics jointly influence infertility risk, providing insights for multifactorial prevention strategies.

Limitations and Future Directions

Despite its significant advantages, SHAP analysis in fertility research faces several challenges. Computational demands can be substantial for large datasets or complex models, though TreeSHAP mitigates this for tree-based ensembles. The interpretation of SHAP values requires statistical expertise, particularly for understanding interaction effects and avoiding causal misinterpretations. Additionally, as with all explainable AI methods, SHAP provides explanations of model behavior rather than definitive causal relationships.

Future developments will likely focus on enhancing SHAP's efficiency for very large-scale fertility datasets, improving the visualization of complex feature interactions, and integrating temporal aspects for longitudinal fertility data. As prospective validation of AI systems in fertility care becomes more standardized [36], SHAP will play an increasingly critical role in translating predictive models into clinically actionable insights, ultimately advancing toward more personalized, effective fertility treatments.

In the high-stakes field of fertility diagnostics and in vitro fertilization (IVF), artificial intelligence (AI) offers unprecedented potential to process complex datasets and identify subtle patterns beyond human capability [37] [3]. However, the transition from experimental AI tools to clinically trusted systems hinges on a critical property: interpretability. Black-box models—those whose internal logic remains opaque—create significant epistemic and ethical concerns in medical contexts, including problems with trust, potential poor generalization to different populations, and a responsibility gap when selection choices prove suboptimal [3]. Local Interpretable Model-agnostic Explanations (LIME) represents a foundational technique in the Explainable AI (XAI) domain that addresses these challenges by providing case-specific insights into model predictions [38]. For researchers and clinicians working in fertility diagnostics, understanding LIME's comparative performance against alternatives like SHAP is essential for implementing transparent, trustworthy AI systems that can enhance clinical decision-making while maintaining human oversight.

Core Methodology: How LIME Generates Case-Specific Insights

LIME operates on a fundamentally local and model-agnostic principle: it explains individual predictions of any machine learning model by approximating its behavior locally with a simpler, interpretable model [38] [39]. The technique treats the original model as a black box, requiring no knowledge of its internal workings, and generates explanations by systematically perturbing input data and observing how the model responds to these variations [39].

The technical workflow of LIME follows a structured sequence:

- Instance Selection: A specific data instance (e.g., an embryo image or patient profile) is selected for explanation.

- Perturbation Generation: LIME creates numerous slightly modified versions of this instance by perturbing or tweaking its features. The perturbed samples carry no labels of their own; the black-box model supplies their outputs in the next step.

- Prediction Observation: Each perturbed sample is passed through the original black-box model to obtain its prediction.

- Weighting by Proximity: The generated samples are weighted based on their proximity to the original instance—closer samples exert more influence on the explanation.

- Surrogate Model Training: A simple, interpretable model (typically a sparse linear model or decision tree) is trained on this weighted, perturbed dataset to approximate the original model's predictions locally around the instance of interest.

- Explanation Extraction: The coefficients or feature importances of this transparent surrogate model highlight which input features most influenced the prediction for that specific instance [38] [39].
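The steps above can be sketched for tabular data in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the reference LIME implementation: the function `lime_explain`, the Gaussian perturbation scheme, the exponential kernel, the ridge penalty, and the toy `black_box` model with its feature names are all hypothetical choices made for the example.

```python
import numpy as np

def lime_explain(predict_fn, instance, feature_scales, n_samples=2000,
                 kernel_width=0.75, ridge=1e-3, seed=0):
    """Fit a weighted linear surrogate to predict_fn around one instance."""
    rng = np.random.default_rng(seed)
    instance = np.asarray(instance, dtype=float)
    scales = np.asarray(feature_scales, dtype=float)

    # Perturbation generation: Gaussian noise around the instance,
    # scaled per feature (an assumed perturbation scheme).
    noise = rng.normal(size=(n_samples, instance.size))
    samples = instance + noise * scales

    # Prediction observation: query the black box on every perturbed sample.
    preds = np.asarray(predict_fn(samples), dtype=float)

    # Weighting by proximity: exponential kernel on the scaled distance.
    dist_sq = (noise ** 2).sum(axis=1)
    width = kernel_width * np.sqrt(instance.size)
    weights = np.exp(-dist_sq / width ** 2)

    # Surrogate model training: weighted ridge regression with an
    # unpenalized intercept, solved in closed form.
    X = np.hstack([np.ones((n_samples, 1)), noise])
    penalty = ridge * np.eye(X.shape[1])
    penalty[0, 0] = 0.0
    gram = X.T @ (weights[:, None] * X) + penalty
    coef = np.linalg.solve(gram, X.T @ (weights * preds))

    # Explanation extraction: local effect of each feature per scale unit.
    return coef[1:]

def black_box(X):
    # Hypothetical model: probability falls with age, falls weakly with BMI,
    # and ignores the third feature entirely.
    z = 4.0 - 0.12 * X[:, 0] - 0.03 * X[:, 1]
    return 1.0 / (1.0 + np.exp(-z))

feature_names = ["maternal_age", "bmi", "noise_feature"]  # hypothetical
patient = [38.0, 27.0, 1.0]
coefs = lime_explain(black_box, patient, feature_scales=[5.0, 4.0, 1.0])
ranking = sorted(zip(feature_names, coefs), key=lambda t: -abs(t[1]))
for name, weight in ranking:
    print(f"{name}: {weight:+.4f}")
```

The surrogate's coefficients describe the model only in the neighborhood of this one patient; changing `seed`, `kernel_width`, or `n_samples` will shift them somewhat, which is the sampling dependence discussed later in this section.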

[Diagram: LIME's core operational workflow]

In fertility research contexts, LIME might explain a model's classification of an embryo as high-quality by highlighting that specific morphological features—such as trophectoderm structure or inner cell mass appearance—were most influential in that particular decision [40]. This case-specific insight provides embryologists with interpretable reasoning that builds trust and enables validation of the model's decision logic.

Performance Comparison: LIME Versus SHAP in Experimental Settings

When selecting XAI techniques for fertility diagnostics, researchers must evaluate comparative performance across multiple dimensions. The table below summarizes key experimental findings comparing LIME with SHAP (SHapley Additive exPlanations), another prominent explanation method:

| Performance Metric | LIME | SHAP |
|---|---|---|
| Computational Speed | Significantly faster; suitable for real-time applications [38] | Slower due to computation of Shapley values; can take minutes for 5,000 samples [38] |
| Explanation Scope | Local explanations for individual predictions [38] | Local and global explanations; unified approach [38] |
| Theoretical Foundation | Local linear approximations [38] | Game-theoretically optimal Shapley values [38] |
| Consistency Guarantees | No theoretical consistency guarantees [38] | Guarantees consistency and local accuracy [38] |
| Implementation Complexity | Lower complexity; direct compatibility with NumPy arrays [38] [41] | Higher complexity; requires compatibility with model architecture [38] |
| Fertility Research Applications | Limited direct documentation in fertility literature | Well-documented in fertility preference prediction and outcome studies [33] [42] |

The performance differential stems from fundamental methodological differences. SHAP employs a game-theoretic approach that averages each feature's marginal contribution over all possible coalitions of the other features, guaranteeing properties like consistency and local accuracy [38]. Because the number of coalitions grows exponentially with the number of features, this computation provides robust explanations but creates significant computational overhead unless the model's structure can be exploited, as TreeSHAP does for tree-based ensembles. In contrast, LIME's local sampling approach generates explanations more efficiently but lacks the same theoretical guarantees, and its explanations can differ between runs [38].
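To make the cost of the game-theoretic approach concrete, the sketch below computes exact Shapley values by brute force for a three-feature toy model. It is an illustration only: `exact_shapley`, the baseline-replacement convention for "absent" features, and the `toy_model` coefficients are assumptions for the example, and practical SHAP implementations rely on approximations or model-specific algorithms instead of this enumeration.

```python
from itertools import combinations
from math import factorial

def exact_shapley(value_fn, instance, baseline):
    """Brute-force Shapley values; absent features take their baseline value."""
    n = len(instance)

    def coalition_value(present):
        mixed = [instance[i] if i in present else baseline[i] for i in range(n)]
        return value_fn(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n-|S|-1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (coalition_value(s | {i}) - coalition_value(s))
    return phi

def toy_model(x):
    # Hypothetical live-birth score with an age x testosterone interaction.
    age, bmi, testosterone = x
    return (0.6 - 0.01 * (age - 30) - 0.005 * (bmi - 22)
            - 0.002 * (age - 30) * testosterone)

instance = [38.0, 27.0, 2.0]   # hypothetical patient
baseline = [30.0, 22.0, 1.0]   # hypothetical reference patient
phi = exact_shapley(toy_model, instance, baseline)
for name, value in zip(["age", "bmi", "testosterone"], phi):
    print(f"{name}: {value:+.4f}")
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, which is the local accuracy property. The nested loops evaluate on the order of 2^n coalitions per feature, so this enumeration becomes impractical well before the feature counts typical of clinical datasets.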

In fertility diagnostics, this trade-off manifests practically: SHAP might be preferable for thorough retrospective analysis of model behavior across population subgroups, while LIME offers advantages when integrating explanations into clinical workflows requiring rapid feedback, such as during time-sensitive embryo selection procedures.

Experimental Protocols for XAI Evaluation in Fertility Research

Protocol 1: Model Interpretation for Fertility Preference Prediction

A 2025 study published in Scientific Reports demonstrated the application of machine learning and explainable AI to predict fertility preferences among reproductive-aged women in Somalia, providing a template for XAI evaluation in demographic fertility research [33].

Dataset: The study utilized data from the 2020 Somalia Demographic and Health Survey (SDHS), encompassing 8,951 women aged 15-49 years. The outcome variable was fertility preference (desire for more children versus preference to cease childbearing), with predictors including sociodemographic factors, wealth index, education, residence, and distance to health facilities [33].

Model Training and Evaluation: Seven machine learning algorithms were evaluated using a cross-sectional design. The Random Forest model emerged as optimal, achieving accuracy of 81%, precision of 78%, recall of 85%, F1-score of 82%, and AUROC of 0.89. Although this particular study employed SHAP rather than LIME for interpretation, the experimental design provides a validated framework for comparing XAI techniques on identical models and datasets [33].
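For readers reproducing this kind of evaluation, the threshold-based metrics reported above all derive from the four cells of a confusion matrix. The sketch below shows the arithmetic; the helper name `classification_metrics` and the counts passed to it are hypothetical and are not taken from the study. AUROC is not included because it depends on the full ranking of predicted scores, not on a single confusion matrix.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        # F1 is the harmonic mean of precision and recall.
        "f1": 2 * precision * recall / (precision + recall),
    }

# Hypothetical counts chosen for illustration only (not the study's data).
metrics = classification_metrics(tp=85, fp=24, fn=15, tn=76)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```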

Explanation Generation: The SHAP analysis identified age group as the most significant predictor, followed by region and number of births in the last five years. Women aged 45-49 years and those with higher parity were significantly more likely to prefer no additional children. Distance to health facilities emerged as a critical barrier, with better access associated with a greater likelihood of desiring more children [33]. A LIME analysis of the same model would instead explain individual predictions one at a time, with different computational characteristics; population-level patterns like these would have to be assembled from many local explanations.

Protocol 2: Embryo Quality Classification Interpretation

Research in Nature Communications (2024) applied deep learning to classify blastocyst morphologic quality using 2,170 expert-annotated blastocyst images, achieving an AUC of 0.93 [40]. While this study used a specialized interpretability method called DISCOVER rather than LIME, it establishes rigorous validation protocols for XAI in embryo evaluation.

Image Preprocessing and Model Training: The protocol involved localizing blastocysts within images, followed by fine-tuning a pre-trained VGG-19 deep convolutional neural network to discriminate between high- versus low-quality blastocysts based on inner cell mass and trophectoderm morphology [40].

Interpretation Validation: Expert embryologists qualitatively assessed explanations against known embryo grading criteria (Gardner and Schoolcraft standards). This human-in-the-loop validation approach is essential for establishing clinical trustworthiness [40]. For LIME applications, similar validation would require embryologists to evaluate whether highlighted image regions align with biologically plausible features.

Quantitative Interpretation Metrics: The study measured the ability of explanations to identify known embryo properties, discover previously unmeasured properties, and determine which quality properties dominated classification decisions for specific embryos [40]. These metrics could be adapted to benchmark LIME's performance against alternative XAI methods in embryo assessment tasks.

| Tool Category | Specific Solution | Function in LIME Implementation |
|---|---|---|
| Software Libraries | marcotcr's LIME Package [41] | Python implementation for explaining text, tabular, and image classifiers; supports any model with prediction function |
| Software Libraries | Microsoft MMLSpark TabularLIME [38] | Apache Spark-based implementation for distributed computing environments |
| Model Framework | scikit-learn [41] | Compatible with LIME; provides built-in support for many standard classifiers |
| Visualization | LIME HTML Widgets [41] | Generates interactive explanations with highlighted features for text and images |
| Data Handling | NumPy Arrays [38] | Primary data format for marcotcr's LIME implementation |
| Validation Tools | Expert Annotation Protocols [40] | Framework for clinical validation of explanations by domain specialists |

Applications and Limitations of LIME in Fertility Diagnostics

Fertility Research Applications

LIME provides particular value in fertility diagnostics contexts requiring case-specific transparency:

- Embryo Selection Justification: Explaining why a particular embryo was ranked highest for transfer among a cohort of morphologically similar embryos [3] [40].

- Treatment Outcome Prediction: Interpreting predictions for individual patients in IVF outcome tools, highlighting which patient factors (age, infertility duration, diagnosis) most influenced their personalized prognosis [43].

- Clinical Decision Support: Providing embryologists with intuitive explanations that build appropriate trust in AI recommendations and enable identification of potential model errors or biases [3].

Technical Limitations and Considerations

Despite its advantages, LIME presents several limitations that researchers must consider:

- Linearity Constraints: The local explanation model is typically linear, potentially struggling to accurately explain highly non-linear decision boundaries [38].

- Instability: Explanations may vary between runs due to the random sampling component of the perturbation process [38] [39].

- Feature Representation Challenges: The method primarily works with NumPy arrays, potentially requiring data format conversions in big data environments using platforms like PySpark [38].

- Null Effect Oversight: Standard implementations may miss important null feature effects if the sampling process excludes null features [38].
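The instability limitation listed above can be checked empirically before an explanation method is trusted in a workflow. One simple approach, sketched here as an assumption and not as a method prescribed by the cited sources, is to repeat the explanation with different random seeds and measure how much the top-ranked feature sets overlap. The helper names and the three example runs are hypothetical.

```python
from itertools import combinations

def top_k_features(explanation, k):
    """Names of the k features with the largest absolute weight."""
    ranked = sorted(explanation, key=lambda name: abs(explanation[name]),
                    reverse=True)
    return set(ranked[:k])

def mean_jaccard_stability(explanations, k=3):
    """Average pairwise Jaccard overlap of top-k feature sets across runs."""
    tops = [top_k_features(e, k) for e in explanations]
    scores = [len(a & b) / len(a | b) for a, b in combinations(tops, 2)]
    return sum(scores) / len(scores)

# Hypothetical feature weights from three repeated runs on the same patient.
runs = [
    {"maternal_age": -0.21, "infertility_duration": -0.09,
     "bmi": -0.05, "testosterone": 0.02},
    {"maternal_age": -0.19, "infertility_duration": -0.08,
     "bmi": -0.03, "testosterone": 0.04},
    {"maternal_age": -0.22, "infertility_duration": -0.10,
     "bmi": -0.06, "testosterone": 0.01},
]
stability = mean_jaccard_stability(runs, k=3)
print(f"mean top-3 Jaccard stability: {stability:.3f}")
```

A score of 1.0 means every run selected the same top features; lower values indicate that the ranking depends on the random perturbations and that more samples or a different kernel setting may be needed.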

For fertility diagnostics researchers selecting XAI methods, LIME offers distinct advantages for generating rapid, case-specific explanations when computational efficiency and model-agnostic flexibility are priorities. Its ability to provide intuitive local interpretations makes it particularly valuable for clinical settings requiring transparent decision support. However, for studies requiring rigorous theoretical guarantees or population-level insights, SHAP may represent a more appropriate choice despite its computational intensity [38] [33].