Explaining Male Fertility AI Models with SHAP: A Guide for Biomedical Research and Clinical Translation

Abstract

This article provides a comprehensive exploration of Explainable AI (XAI) for male fertility prediction, specifically focusing on the application of SHapley Additive exPlanations (SHAP). Tailored for researchers, scientists, and drug development professionals, it details the transition from 'black-box' machine learning models to interpretable, clinically actionable tools. The content covers foundational principles, methodological implementation of algorithms like Random Forest and XGBoost, strategies for optimizing performance on imbalanced medical datasets, and rigorous validation protocols. By synthesizing current research and performance benchmarks, this guide aims to bridge the gap between computational model development and trustworthy clinical application in reproductive medicine.

Understanding the 'Black Box' Problem and the SHAP Solution in Male Infertility

Male factor infertility represents a significant and growing global health burden, implicated in approximately 50% of infertility cases among couples worldwide. Despite its prevalence, male infertility remains underprioritized in public health initiatives and research funding, particularly in low-resource settings. Recent epidemiological studies reveal a steady increase in the global burden of male infertility, with pronounced disparities across geographic regions and socioeconomic groups. Concurrently, artificial intelligence (AI) methodologies, particularly explainable AI (XAI) frameworks incorporating SHapley Additive exPlanations (SHAP), are emerging as transformative tools for male fertility prediction and analysis. These technologies offer unprecedented capabilities for identifying key predictive factors, demystifying model decision-making processes, and providing clinically actionable insights. This technical review synthesizes current evidence on the global epidemiology of male infertility, examines the application of AI/ML models with SHAP analysis for fertility prediction, and outlines standardized experimental protocols to advance this critical field of men's health research.

Global Epidemiology and Disease Burden

The quantification of male infertility's global burden has been systematically tracked through the Global Burden of Disease (GBD) studies, revealing substantial prevalence and concerning trends.

Table 1: Global Burden of Male Infertility (1990-2021)

| Metric | 1990 Estimate | 2019 Estimate | 2021 Estimate | Key Trends |
|---|---|---|---|---|
| Global Prevalence | Not specified | 56,530.4 thousand (95% UI: 31,861.5-90,211.7) [1] | >55 million cases [2] [3] | 76.9% increase from 1990 to 2019 [1] |
| Global DALYs | Not specified | Not specified | >300,000 [2] [3] | Steady increase globally, particularly in low and low-middle SDI regions [2] |
| Age-Standardized Prevalence Rate (per 100,000) | Not specified | 1,402.98 (95% UI: 792.24-2,242.45) [1] | Significantly increased in specific regions | 19% increase from 1990 to 2019 [1] |
| Peak Age Group | Not specified | 30-34 years [1] | 35-39 years [3] | Demographic shift observed in recent data |
| Highest Burden Regions | Not specified | Western Sub-Saharan Africa, Eastern Europe, East Asia [1] | Eastern Europe, Western Sub-Saharan Africa (1.5x global average) [2] [3] | Persistent geographic disparities |

The epidemiological profile shows that the global prevalence of male infertility reached approximately 56.5 million cases in 2019, a 76.9% increase since 1990 [1]. Analyses of 2021 data from separate studies report more than 55 million prevalent cases globally, with over 300,000 disability-adjusted life years (DALYs) attributed to the condition [2] [3]. The age-standardized prevalence rate was 1,402.98 per 100,000 population in 2019, a 19% increase over 1990 levels [1].
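The gap between the 76.9% rise in case counts and the 19% rise in the age-standardized rate is worth making explicit. A short back-calculation, assuming the reported percentages apply exactly to the 2019 figures in Table 1, gives the 1990 values these numbers imply. These are derived values for illustration, not published GBD estimates, and they are approximate because the percentages are rounded.

```python
def implied_baseline(current, pct_increase):
    """Value that, after growing by pct_increase percent, equals current."""
    return current / (1.0 + pct_increase / 100.0)

# Reported 2019 values and 1990-2019 increases from [1]. Results are approximate
# because the published percentages are rounded.
cases_2019_thousands = 56_530.4
asr_2019_per_100k = 1_402.98

cases_1990 = implied_baseline(cases_2019_thousands, 76.9)  # derived, not a published estimate
asr_1990 = implied_baseline(asr_2019_per_100k, 19.0)       # derived, not a published estimate

print(f"Implied 1990 cases: {cases_1990:,.0f} thousand (growth factor 1.769)")
print(f"Implied 1990 age-standardized rate: {asr_1990:,.0f} per 100,000 (growth factor 1.19)")
```

Case numbers grew by a factor of about 1.77 while the age-standardized rate grew by about 1.19. Most of the rise in absolute cases therefore reflects changes in population size and age structure rather than in age-specific risk, which is consistent with the decomposition analysis discussed below.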

Significant disparities in disease burden exist across geographic and socioeconomic dimensions. Regions with the highest age-standardized prevalence rates include Western Sub-Saharan Africa, Eastern Europe, and East Asia [1]. Eastern Europe and Western Sub-Saharan Africa stand out in particular, with rates approximately 1.5 times the global average [2] [3]. Stratification by Socio-demographic Index (SDI) reveals a complex relationship with male infertility burden: high-middle and middle SDI regions exceed the global average in both age-standardized prevalence and years lived with disability (YLD) rates [1]. Since 2010, low and low-middle SDI regions have shown notable upward trends in male infertility burden [1].

China represents a particularly significant case study, accounting for approximately 20% of the global male infertility burden, with age-standardized rates significantly exceeding the global average [2] [3]. Interestingly, while the global burden of male infertility has increased steadily from 1990 to 2021, China has exhibited a stable trend with a gradual decline after 2008 [2] [3]. Decomposition analysis indicates that population growth serves as the primary driver of global prevalence increases, while age-related factors play a more significant role in China's epidemiology [2] [3].

Clinical Underrepresentation and Comorbid Associations

Despite contributing to approximately 50% of couple infertility cases, male infertility receives disproportionately little attention in research, clinical practice, and public health policy [1] [2] [4]. This underrepresentation is particularly pronounced in less developed countries and in regions with strong cultural and societal norms that attribute infertility primarily to female factors [1] [3]. In many patriarchal societies, men are often reluctant to undergo fertility assessments, leading to systematic underdiagnosis and inadequate epidemiological data [3].

Beyond its reproductive implications, male infertility functions as a biomarker of overall male health, with significant comorbid associations. Large-scale cohort studies consistently demonstrate that men with infertility face elevated all-cause mortality compared to fertile counterparts, with a dose-dependent pattern whereby more severe semen parameter abnormalities correlate with higher risk of premature death [4]. A 2021 systematic review and meta-analysis spanning approximately 60,000 men found that infertile men have a 26% higher risk of all-cause mortality than fertile men (pooled HR = 1.26), with those exhibiting oligospermia or azoospermia facing a 67% higher mortality risk relative to men with normal sperm counts [4].

Table 2: Comorbidity Risks Associated with Male Infertility

| Health Condition | Risk Increase | Key Findings |
|---|---|---|
| All-Cause Mortality | HR = 1.26 (infertile vs. fertile) [4] | Dose-response relationship: more severe semen abnormalities correlate with higher mortality [4] |
| Testicular Cancer | RR = 1.86 (95% CI: 1.41-2.45) [4] | Significant association with germ cell tumors [4] |
| Prostate Cancer | RR = 1.66 (95% CI: 1.06-2.61) [4] | Higher risk of early-onset disease in infertile men [4] |
| Melanoma | RR = 1.30 (95% CI: 1.08-1.56) [4] | Consistent association across multiple studies [4] |
| Diabetes | HR = 1.39 (95% CI: 1.09-1.71) [4] | Linked through shared metabolic pathways [4] |
| Cardiovascular Events | HR = 1.20 (95% CI: 1.00-1.44) [4] | Associated with endothelial dysfunction and metabolic syndrome [4] |

Proposed mechanisms linking infertility to reduced life expectancy encompass genetic, hormonal, and lifestyle factors [4]. Klinefelter syndrome exemplifies a genetic cause of azoospermia that also predisposes to metabolic syndrome, diabetes, and certain malignancies [4]. Low testosterone, frequently identified in testicular dysfunction, is implicated in obesity, insulin resistance, and cardiovascular disease, all of which can shorten lifespan [4]. Additionally, psychosocial stress and depression—commonly reported among infertile men—may contribute to health-compromising behaviors that further exacerbate these risks [4].

AI and SHAP Experimental Framework for Male Fertility Prediction

Algorithm Selection and Performance Metrics

The application of artificial intelligence (AI) and machine learning (ML) models to male fertility prediction has shown considerable potential for early detection and clinical decision support. Comparative studies such as [5] evaluate seven industry-standard ML models: Support Vector Machine (SVM), Random Forest (RF), Decision Tree (DT), Logistic Regression (LR), Naïve Bayes (NB), AdaBoost (ADA), and Multi-Layer Perceptron (MLP) [5]. Performance is assessed with accuracy, precision, recall, F1-score, and the Area Under the Receiver Operating Characteristic Curve (AUROC) [6] [5].

In comparative studies, Random Forest consistently emerges as a top-performing algorithm for male fertility prediction. Using five-fold cross-validation on a balanced dataset, RF achieved a best accuracy of 90.47% and an AUC of 99.98% [5]. Other high-performing approaches include artificial neural networks trained with novel optimization techniques, which have reported accuracy of up to 99.96% [5], and transformer-based deep learning models combined with particle swarm optimization for IVF outcome prediction, which achieved 97% accuracy and 98.4% AUC [7].

Experimental Protocol for Male Fertility Prediction with SHAP Interpretation

Phase 1: Data Preprocessing and Feature Engineering

- Data Collection: Utilize the Fertility Dataset from the UCI Machine Learning Repository or equivalent clinical database containing semen parameters, environmental factors, and personal habits [5].

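- Feature Set: Standard parameters include semen concentration, motility, morphology, and volume, along with lifestyle factors (alcohol, smoking), duration of sexual abstinence, season, age, and medical history [5]. Note that the UCI Fertility Dataset itself is narrower: it records season, age, childhood diseases, accident or serious trauma, surgical intervention, high fevers in the past year, alcohol consumption frequency, smoking habit, and hours spent sitting per day, with the semen diagnosis (normal or altered) as the target rather than as input features.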

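- Class Imbalance Handling: Address dataset skew with sampling techniques, particularly the Synthetic Minority Oversampling Technique (SMOTE), to mitigate the problems of small sample size, class overlap, and small disjuncts [5]. Apply oversampling only to the training data (within each cross-validation fold), so that synthetic samples never appear in validation or test sets.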

- Data Splitting: Partition data into training (70%), validation (15%), and test (15%) sets using stratified sampling, so that class proportions are consistent across splits.
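The Phase 1 splitting and oversampling steps can be sketched without external libraries, assuming purely numeric features. The sketch implements the basic SMOTE idea (interpolating between a minority sample and one of its k nearest minority neighbors) rather than any particular library's implementation; an established package such as imbalanced-learn would normally be used instead. The data are hypothetical, roughly matching the size and imbalance of the UCI Fertility Dataset (100 records, 88 normal and 12 altered).

```python
import random

def stratified_split(labels, train_frac=0.70, val_frac=0.15, seed=0):
    """Split indices into train/validation/test sets, preserving class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    train, val, test = [], [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_train = round(train_frac * len(idxs))
        n_val = round(val_frac * len(idxs))
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]  # remainder (about 15%) goes to test
    return train, val, test

def smote(minority, n_synthetic, k=5, seed=0):
    """Basic SMOTE: interpolate between a minority sample and a random one of its k nearest minority neighbors."""
    rng = random.Random(seed)

    def dist2(a, b):
        return sum((u - v) ** 2 for u, v in zip(a, b))

    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        others = [j for j in range(len(minority)) if j != i]
        neighbors = sorted(others, key=lambda j: dist2(minority[i], minority[j]))[:k]
        j = rng.choice(neighbors)
        gap = rng.random()  # position along the segment between the two samples
        synthetic.append([a + gap * (b - a) for a, b in zip(minority[i], minority[j])])
    return synthetic

# Hypothetical toy data: 88 "normal" (0) and 12 "altered" (1) samples, 3 numeric features.
rng = random.Random(42)
y = [0] * 88 + [1] * 12
X = [[rng.gauss(0.0 if label == 0 else 1.0, 1.0) for _ in range(3)] for label in y]

train, val, test = stratified_split(y)

# Oversample the minority class in the TRAINING split only; validation and test stay untouched.
train_min = [X[i] for i in train if y[i] == 1]
train_maj = [X[i] for i in train if y[i] == 0]
synthetic = smote(train_min, n_synthetic=len(train_maj) - len(train_min))
X_train = train_maj + train_min + synthetic
y_train = [0] * len(train_maj) + [1] * (len(train_min) + len(synthetic))
print(len(train), len(val), len(test), y_train.count(0), y_train.count(1))
```

Because each synthetic point lies on a segment between two real training samples, oversampling before splitting would place near-copies of test patients in the training data; doing it after the split avoids that leakage.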

Phase 2: Model Training and Validation

- Algorithm Implementation: Train the seven industry-standard ML models using standardized hyperparameter tuning through grid search or random search with cross-validation [5].

- Validation Scheme: Employ k-fold cross-validation (typically k=5 or k=10) to assess model robustness and stability, detect overfitting, and obtain realistic performance estimates [5].

- Performance Benchmarking: Evaluate all models using consistent metrics: accuracy, precision, recall, F1-score, and AUROC, with statistical significance testing between top-performing algorithms [5].
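The cross-validation scheme can be sketched as follows, assuming a stratified k-fold split and a toy nearest-centroid classifier standing in for the seven models. Both are illustrative choices, not the protocol of [5], and the data are hypothetical. The key design point is that any resampling or tuning happens inside the loop, on the training folds only.

```python
import random
import statistics

def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs with each class spread evenly over k folds."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    folds = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        for pos, idx in enumerate(idxs):
            folds[pos % k].append(idx)  # deal samples of each class round-robin into folds
    for f in range(k):
        test_idx = sorted(folds[f])
        train_idx = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train_idx, test_idx

def fit_centroids(X, y):
    """Toy stand-in for a real classifier: store the mean feature vector of each class."""
    sums, counts = {}, {}
    for x, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(x))
        for d, v in enumerate(x):
            acc[d] += v
        counts[label] = counts.get(label, 0) + 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}

def predict(centroids, x):
    """Assign x to the class whose centroid is nearest (squared Euclidean distance)."""
    return min(centroids, key=lambda c: sum((a - b) ** 2 for a, b in zip(x, centroids[c])))

# Hypothetical toy data: 88 negatives (0) and 12 positives (1), 3 features each.
rng = random.Random(1)
y = [0] * 88 + [1] * 12
X = [[rng.gauss(1.5 * label, 1.0) for _ in range(3)] for label in y]

fold_acc = []
for train_idx, test_idx in stratified_kfold(y, k=5):
    # Resampling (e.g. SMOTE) and hyperparameter tuning belong here, using train_idx only.
    model = fit_centroids([X[i] for i in train_idx], [y[i] for i in train_idx])
    correct = sum(predict(model, X[i]) == y[i] for i in test_idx)
    fold_acc.append(correct / len(test_idx))

print(f"accuracy {statistics.mean(fold_acc):.3f} +/- {statistics.stdev(fold_acc):.3f}")
```

Reporting the spread across folds, not just the mean, is what makes the estimate informative about stability.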

Phase 3: SHAP Interpretation and Clinical Validation

- SHAP Implementation: Apply SHapley Additive exPlanations to quantify the feature impact on each model's decision-making process, using either TreeSHAP for tree-based models or KernelSHAP for other algorithms [5].

- Feature Importance Analysis: Generate SHAP summary plots to visualize global feature importance and dependence plots to examine individual feature effects [5].

- Clinical Correlation: Validate identified important features against established clinical knowledge, with particular attention to novel relationships discovered by the models [5].

- Model Deployment: Integrate the optimized model with SHAP explanation capabilities into clinical workflow systems, ensuring real-time interpretation of predictions for clinical users [5].
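The global ranking in a SHAP summary plot is typically based on each feature's mean absolute SHAP value across samples. A minimal sketch of that aggregation shows how individual explanations roll up into a global ranking. It uses a small matrix of hypothetical SHAP values (rows are patients, columns are features), not results from [5] or any other study. Taking absolute values discards direction, which is why beeswarm plots are usually read alongside the ranking.

```python
def global_importance(shap_matrix, feature_names):
    """Rank features by mean |SHAP| across samples (rows = samples, columns = features)."""
    n = len(shap_matrix)
    scores = {
        name: sum(abs(row[j]) for row in shap_matrix) / n
        for j, name in enumerate(feature_names)
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical SHAP values for three patients (illustrative only).
features = ["age", "alcohol", "smoking", "sitting_hours"]
shap_matrix = [
    [0.10, -0.30, 0.05, 0.20],
    [-0.05, 0.25, -0.10, 0.15],
    [0.02, -0.35, 0.00, -0.10],
]

ranking = global_importance(shap_matrix, features)
for name, score in ranking:
    print(f"{name:>14}: {score:.3f}")
```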

SHAP Interpretation Framework

SHAP (SHapley Additive exPlanations) provides a unified approach for interpreting model predictions by averaging each feature's marginal contribution to the prediction over all possible combinations of the other features [5]. The methodology is based on cooperative game theory: features are treated as "players" in a game, and their Shapley values represent their fair share of the final prediction [5].

For male fertility prediction, SHAP analysis typically identifies influential features such as lifestyle factors (alcohol consumption, smoking), environmental exposures, sexual abstinence duration, age, and specific semen parameters [5]. Applied to the Random Forest model, SHAP interpretation used these features to provide transparent explanations of fertility-status classifications, allowing clinicians to understand prediction drivers at both the individual and population levels [5].

Research Reagent Solutions for Male Fertility Assessment

Table 3: Essential Research Reagents for Male Fertility Studies

| Reagent/Category | Function | Application in Male Fertility Research |
|---|---|---|
| Semen Analysis Kits | Quantitative assessment of semen parameters | Measurement of sperm concentration, motility, and morphology [5] |
| Hormonal Assays | Evaluation of endocrine function | Testosterone, FSH, and LH level quantification [4] |
| DNA Fragmentation Kits | Assessment of sperm genetic integrity | Sperm chromatin structure assay (SCSA) [8] |
| Oxidative Stress Markers | Measurement of reactive oxygen species | Evaluation of oxidative damage to sperm membranes and DNA [8] |
| Cryopreservation Media | Long-term storage of gametes | Sperm banking for fertility preservation [9] |
| AI Training Datasets | Model development and validation | Curated clinical data for algorithm training [5] [7] |
| SHAP Visualization Tools | Model interpretation and explanation | Feature importance quantification and visualization [5] |

Future Directions and Clinical Integration

The integration of AI technologies in reproductive medicine is rapidly advancing, with global surveys indicating increased adoption among fertility specialists. Between 2022 and 2025, AI usage in IVF clinics increased from 24.8% to 53.22%, with embryo selection remaining the dominant application [9]. This trend is expected to continue, with 83.62% of 2025 survey respondents indicating likelihood to invest in AI within 1-5 years [9].

Future research priorities should focus on developing standardized AI validation frameworks specific to male fertility assessment, addressing current barriers including implementation costs (cited by 38.01% of specialists) and lack of training (33.92%) [9]. Ethical considerations around AI implementation, particularly regarding over-reliance on technology (cited by 59.06% of specialists), must be addressed through transparent, interpretable models that complement rather than replace clinical judgment [9].

The emerging recognition of male infertility as a marker of overall health necessitates a paradigm shift in clinical approach, moving beyond reproductive concerns to encompass comprehensive men's health screening and intervention [4]. AI-powered predictive models with robust explainability features represent a promising pathway toward personalized fertility treatments and improved long-term health outcomes for infertile men.

Limitations of Traditional 'Black-Box' AI in Clinical Decision-Making

The integration of artificial intelligence (AI) into clinical decision-making, particularly in sensitive fields like male fertility, represents a paradigm shift in reproductive medicine. However, the opaque nature of traditional "black-box" AI models poses significant challenges to their clinical adoption, including issues of trust, accountability, and generalizability. This technical guide examines the limitations of non-interpretable AI systems in male fertility assessment and demonstrates how Explainable AI (XAI) frameworks, specifically SHapley Additive exPlanations (SHAP), can transform these black boxes into transparent, clinically actionable tools. By providing a detailed methodology for implementing SHAP in male fertility prediction models, this review equips researchers and clinicians with the framework necessary to develop AI systems that are not only accurate but also interpretable and ethically sound, thereby bridging the critical gap between algorithmic performance and clinical utility.

Black-box AI refers to machine learning models whose internal decision-making processes are either too complex for humans to comprehend or proprietary, and therefore closed to outside scrutiny [10]. In clinical contexts, particularly in reproductive medicine, these models create significant information asymmetries between developers and healthcare providers, forcing clinicians to cede decision-making to systems they cannot fully understand or verify [10]. This opacity is particularly problematic in male infertility, where AI applications have expanded to include sperm morphology classification, motility analysis, prediction of successful sperm retrieval in non-obstructive azoospermia, and overall IVF success prediction [11].

The clinical imperative for explainability becomes evident when considering the consequences of erroneous AI recommendations. In male fertility treatment, where decisions directly impact family formation and involve significant emotional and financial investments, the inability to interrogate an AI's reasoning process introduces ethical, legal, and clinical challenges [10] [5]. Furthermore, epistemic concerns arise when black-box systems that performed well in initial trials fail to generalize to diverse patient populations, potentially due to unrecognized confounding factors or dataset shift issues that cannot be diagnosed without model transparency [10].

Critical Limitations of Black-Box AI in Clinical Practice

Epistemic and Validation Challenges

The implementation of black-box AI in clinical settings presents fundamental epistemic limitations that hinder proper scientific validation and clinical adoption:

Generalization Failures: Black-box models often exhibit performance degradation when applied to populations different from their training data. For instance, radiology AI systems that gained FDA approval subsequently performed poorly in clinical practice without clear reasons, raising concerns about their generalizability across diverse clinical settings and patient demographics [10].

Confounding Vulnerabilities: These models are particularly susceptible to learning spurious correlations from confounders present in training data. Without transparent reasoning processes, it is impossible to determine whether predictions are based on clinically relevant features or confounding variables that may not generalize to new patients [10].

Evaluation Limitations: Traditional performance metrics like area under the curve (AUC) can be misleading. Studies on embryo selection AI demonstrated outstanding AUC scores (>0.9), but closer examination revealed these results were artificially inflated because algorithms were tested on embryos that embryologists would readily discard, not on the clinically challenging task of differentiating between similar-quality embryos [10].

Ethical and Clinical Implementation Barriers

Beyond technical limitations, black-box AI introduces significant ethical concerns that directly impact patient care and clinical workflows:

Responsibility Gaps: When AI systems make erroneous clinical recommendations, the opacity of their decision processes creates ambiguity regarding responsibility and accountability, potentially leaving clinicians liable for decisions they cannot adequately verify or understand [10].

Trust Deficits: Clinicians are justifiably reluctant to trust systems whose reasoning remains opaque, particularly in high-stakes fields like fertility treatment where decisions have profound emotional and financial consequences for patients [5].

Value Misrepresentation: Black-box systems may optimize for statistical objectives that do not fully align with patient values and preferences, potentially introducing a more paternalistic decision-making process that excludes important patient-centered considerations [10].

Economic Implications: The adoption of proprietary black-box systems may create dependencies on specific vendors, potentially increasing healthcare costs and limiting flexibility for clinical institutions [10].

SHAP as a Solution for Male Fertility AI Interpretation

Theoretical Foundations of SHAP

SHapley Additive exPlanations (SHAP) is a unified approach based on cooperative game theory that explains the output of any machine learning model by calculating the marginal contribution of each feature to the final prediction [12] [13]. The method treats each feature as a "player" in a game where the prediction is the "payout," and fairly allocates the contribution among the features by considering all possible combinations of features [13].

The SHAP value for a specific feature i is calculated using the formula:

\[ \phi_i = \sum_{S \subseteq F \setminus \{i\}} \frac{|S|!\,(|F| - |S| - 1)!}{|F|!} \left[ f(S \cup \{i\}) - f(S) \right] \]

Where:

- F = the set of all features

- S = a subset of features without feature i

- f(S) = the prediction model using only the feature subset S

- |S| = the size of subset S

This approach satisfies several desirable properties including local accuracy (the explanation model matches the original model for the specific instance being explained), missingness (features absent from the model have no impact), and consistency (if a feature's contribution increases, its assigned importance should not decrease) [12] [13].
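To make the formula concrete, the sketch below enumerates every subset S exactly as written and checks local accuracy and missingness on a toy model. It assumes a simple value function in which "missing" features are set to a fixed, hypothetical baseline; SHAP's explainers typically average over a background dataset instead. It therefore illustrates the formula rather than any library's behavior.

```python
from itertools import combinations
from math import factorial

def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating every subset S of the other features."""
    phi = [0.0] * n_features
    for i in range(n_features):
        others = [j for j in range(n_features) if j != i]
        for size in range(len(others) + 1):
            # Weight |S|! (|F| - |S| - 1)! / |F|! from the formula above
            weight = factorial(size) * factorial(n_features - size - 1) / factorial(n_features)
            for subset in combinations(others, size):
                S = frozenset(subset)
                phi[i] += weight * (value_fn(S | {i}) - value_fn(S))
    return phi

def model(x):
    # Toy model (an assumption): additive effect of feature 0, interaction of 1 and 2, feature 3 unused.
    return 2.0 * x[0] + x[1] * x[2]

x = [1.0, 2.0, 3.0, 5.0]         # instance to explain
baseline = [0.0, 0.0, 0.0, 0.0]  # hypothetical reference values for "missing" features

def value_fn(S):
    # f(S): features in S keep the instance's values; all others are set to the baseline.
    return model([x[j] if j in S else baseline[j] for j in range(len(x))])

phi = shapley_values(value_fn, len(x))
print([round(p, 6) for p in phi])  # [2.0, 3.0, 3.0, 0.0]
# Local accuracy: the values sum to f(F) - f(empty set) = 8
print(sum(phi), value_fn(frozenset(range(4))) - value_fn(frozenset()))
```

Feature 0 always adds 2, features 1 and 2 split their interaction term of 6 equally, and the unused feature 3 receives zero. The loop also shows the cost: each feature requires 2^(|F|-1) subsets.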

SHAP Implementation Methodology for Male Fertility

Implementing SHAP for male fertility prediction involves a structured workflow that transforms black-box models into interpretable systems:

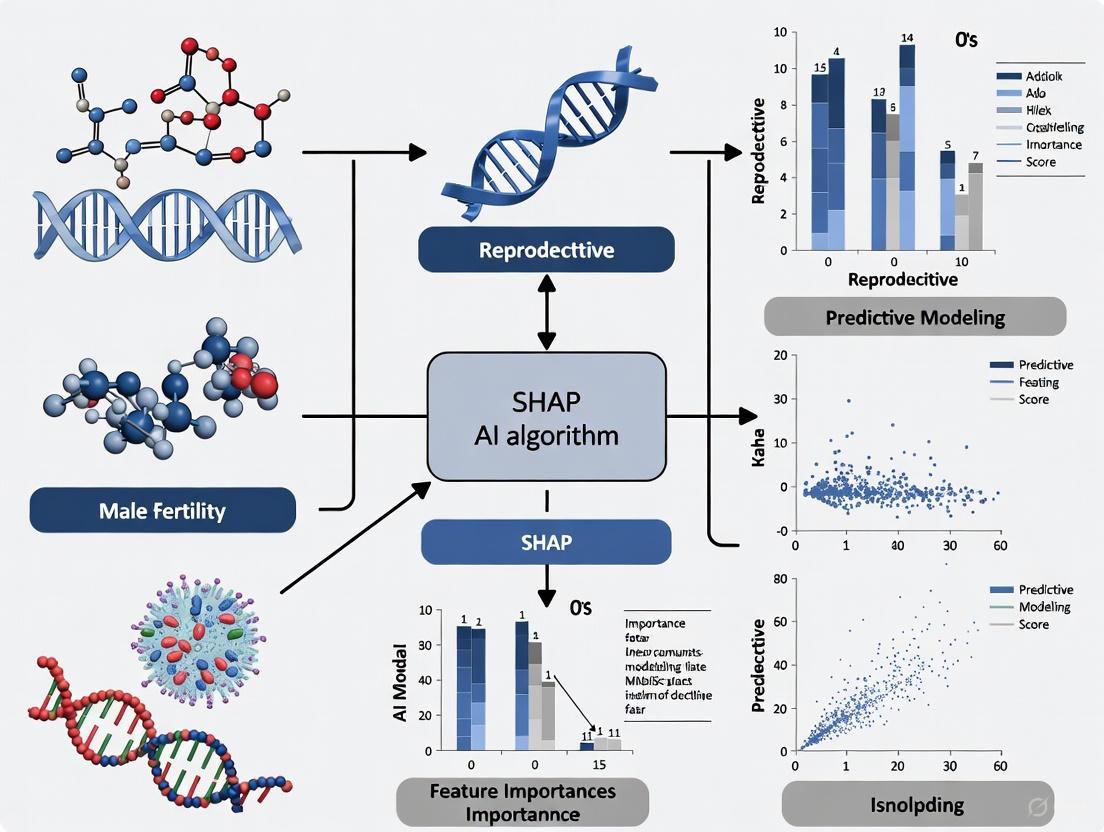

Figure 1: SHAP Implementation Workflow for Male Fertility Prediction. This diagram illustrates the comprehensive process from data preparation through model training to explainable AI implementation.

The experimental protocol for applying SHAP to male fertility prediction involves these critical steps:

Data Collection and Preprocessing:

- Collect male fertility parameters including lifestyle factors (smoking, alcohol consumption, sitting time), environmental factors, and clinical semen analysis results [5].

- Address class imbalance using techniques like SMOTE (Synthetic Minority Oversampling Technique) to prevent model bias toward majority classes [5].

- Partition data into training (70-80%), validation (10-15%), and test (10-15%) sets, maintaining consistent class distributions across splits.

Model Training and Validation:

- Train multiple industry-standard classifiers including Random Forests, Support Vector Machines, Logistic Regression, and Gradient Boosting machines [5].

- Implement rigorous cross-validation (5-fold or 10-fold) to assess model stability and detect overfitting.

- Evaluate performance using comprehensive metrics including AUC, accuracy, sensitivity, specificity, and F1-score.
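The threshold-based metrics and the AUC can be computed directly from their definitions. A minimal sketch, assuming binary labels with 1 as the positive class and hypothetical predicted probabilities; the AUC is computed as the probability that a randomly chosen positive case scores higher than a randomly chosen negative case (ties count one half), which equals the area under the ROC curve.

```python
def classification_metrics(y_true, y_pred):
    """Threshold-based metrics for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0  # also called recall
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    accuracy = (tp + tn) / len(y_true)
    return {"accuracy": accuracy, "sensitivity": sensitivity,
            "specificity": specificity, "precision": precision, "f1": f1}

def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative (ties = 0.5)."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical predicted probabilities for 8 patients (3 positives, 5 negatives).
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # fixed 0.5 decision threshold

metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(metrics, round(auc, 3))
```

The AUC depends only on how the scores rank patients, while the other metrics depend on the chosen threshold. This is one reason a model can report a near-perfect AUC alongside noticeably lower accuracy.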

SHAP Value Calculation:

- For tree-based models, utilize TreeSHAP algorithm for computational efficiency [12].

- For non-tree models, employ KernelSHAP as a model-agnostic approximation method.

- Compute SHAP values for both global model behavior and local individual predictions.
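KernelSHAP approximates Shapley values by fitting a weighted linear regression over sampled feature coalitions. It uses the "Shapley kernel" from the original SHAP paper: a coalition of size s out of M features receives weight (M - 1) / [C(M, s) · s · (M - s)], and with these weights the regression's solution recovers the Shapley values. A short sketch of the weighting alone (not the full estimator) shows that the smallest and largest coalitions carry the most weight, which is why sampling schemes tend to prioritize them.

```python
from math import comb

def shapley_kernel_weight(n_features, coalition_size):
    """Shapley kernel weight for a coalition of the given size (KernelSHAP's regression weight)."""
    if coalition_size in (0, n_features):
        # Empty and full coalitions get infinite weight; implementations enforce them
        # as constraints (base value and full prediction) instead of sampling them.
        return float("inf")
    return (n_features - 1) / (
        comb(n_features, coalition_size) * coalition_size * (n_features - coalition_size)
    )

for size in range(5):
    print(size, shapley_kernel_weight(4, size))
```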

Interpretation and Clinical Validation:

- Generate visualization plots including force plots, summary plots, and dependence plots.

- Correlate feature importance rankings with established clinical knowledge.

- Conduct clinical validation sessions with reproductive specialists to assess explanatory utility.

Research Reagent Solutions for Male Fertility AI

Table 1: Essential Research Tools for SHAP-Based Male Fertility AI Implementation

| Research Tool | Function | Implementation Considerations |
|---|---|---|
| Python SHAP Library | Calculates SHAP values and generates explanatory visualizations | Compatible with most ML libraries; optimized for tree-based models [12] [14] |
| Scikit-learn | Provides baseline ML models and preprocessing utilities | Essential for data normalization, feature selection, and model comparison [5] |
| XGBoost/LightGBM | High-performance gradient boosting frameworks | Particularly suitable for clinical tabular data; efficient SHAP implementation [5] |
| InterpretML | Framework for interpretable modeling, including Explainable Boosting Machines (EBMs) | Useful for creating inherently interpretable models as benchmarks [12] |
| Pandas/NumPy | Data manipulation and numerical computation | Required for data cleaning, feature engineering, and preprocessing pipelines [5] |
| Matplotlib/Seaborn | Custom visualization and plot generation | Enables customization of SHAP plots for clinical audiences [14] |

Quantitative Results and Comparative Performance

Performance Metrics of AI Models in Male Fertility

Table 2: Comparative Performance of AI Models in Male Fertility Assessment with SHAP Interpretation

| AI Model | Accuracy Range | AUC | Key Features Identified via SHAP | Clinical Interpretation |
|---|---|---|---|---|
| Random Forest | 88-90.5% [5] | 99.98% [5] | Lifestyle factors, semen parameters | Highest overall performance; robust to noise |
| Support Vector Machine | 86-89.9% [11] [5] | 88.59% [11] | Morphological features, motility patterns | Effective for sperm classification tasks |
| Gradient Boosting Trees | 90-95% [11] | 80.7% [11] | Clinical markers, hormonal profiles | Strong predictive power for sperm retrieval |
| Logistic Regression | 85-88% [5] | 84.23% [11] | Linear combinations of risk factors | Naturally interpretable but limited complexity |
| Multi-Layer Perceptron | 86-90% [5] | N/R | Non-linear feature interactions | Captures complex patterns but less interpretable |

N/R = Not Reported in Studies Analyzed

SHAP Visualization for Model Interpretation

SHAP provides multiple visualization modalities that facilitate clinical interpretation of male fertility models:

Beeswarm Plots: Offer global model interpretation by displaying the distribution of SHAP values for each feature across the entire dataset, revealing both feature importance and the direction of impact (positive or negative association with fertility) [14].

Force Plots: Provide local explanations for individual predictions, showing how each feature contributes to pushing the model output from the base value (average prediction) to the final predicted value for a specific case [14].

Waterfall Plots: Illustrate the sequential cumulative effect of features for a single prediction, visually demonstrating how each feature addition moves the prediction from the expected value to the final model output [12].

Dependence Plots: Reveal the relationship between a feature's value and its SHAP value, potentially uncovering non-linear relationships and interaction effects with other features [12].

Figure 2: SHAP Visualization Framework for Clinical Interpretation. This diagram illustrates how different SHAP plot types serve distinct clinical explanatory purposes in male fertility assessment.
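Force and waterfall plots both rely on additivity: the base value plus the SHAP values of all features equals the model output for that case. A small sketch with hypothetical values (in model-output units such as log-odds) computes the running totals a waterfall plot displays. Because the decomposition is additive, any ordering reaches the same final value; sorting by absolute contribution is simply a readable presentation choice.

```python
def waterfall_steps(base_value, contributions):
    """Return (feature, SHAP value, running total) rows, starting from the base value."""
    rows = []
    running = base_value
    # Sort by absolute contribution for readability; additivity means any order ends at the same total.
    for name, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
        running += value
        rows.append((name, value, running))
    return rows

# Hypothetical explanation for one patient, in model-output units (e.g. log-odds).
base_value = -1.5
contributions = {"alcohol": 0.8, "sitting_hours": 0.4, "age": -0.2, "smoking": 0.1}

rows = waterfall_steps(base_value, contributions)
print(f"base value: {base_value:+.2f}")
for name, value, total in rows:
    print(f"{name:>14} {value:+.2f} -> {total:+.2f}")
```

Positive values push the output above the base value and negative values pull it down, which is exactly what the red and blue segments of a force plot encode.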

Methodological Considerations and Limitations

Technical Implementation Challenges

While SHAP significantly advances model interpretability, researchers must consider several methodological limitations:

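Computational Complexity: Exact SHAP value calculation is NP-hard for general models, since the defining sum runs over all 2^|F| feature subsets; approximation methods are therefore required for models with many features, although TreeSHAP computes exact values efficiently for tree ensembles. KernelSHAP provides a model-agnostic approximation but remains computationally intensive for large datasets [13].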

Feature Correlation Effects: SHAP values can be misleading when features are highly correlated, because the method may distribute importance arbitrarily among correlated variables. Extensions such as SHAP interaction values help separate pairwise interaction effects from main effects, but they do not fully resolve how credit is shared among correlated features, and they increase computational demands [13].

Model Dependency: SHAP explanations are highly dependent on the underlying model. Different models trained on the same data may yield different feature importance rankings, necessitating careful model selection beyond mere predictive performance [13].

Clinical Context Integration: SHAP explains what features the model uses but not necessarily why they are clinically relevant. Effective implementation requires integration of clinical expertise to distinguish medically meaningful explanations from statistically significant but clinically irrelevant patterns [5].
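Permutation sampling illustrates both why approximations are needed and how they can work. A Shapley value equals a feature's marginal contribution averaged over all orderings of the features, so averaging over a random sample of orderings gives an unbiased estimate. This is a different approximation strategy from KernelSHAP's weighted regression. The sketch assumes a toy model and a fixed baseline whose exact Shapley values are 2, 3, 3, and 0: feature 0 adds 2 in every ordering, features 1 and 2 split their interaction term of 6 by symmetry, and feature 3 is unused.

```python
import random

def model(x):
    # Toy model (an assumption): additive feature 0, interaction of features 1 and 2, feature 3 unused.
    return 2.0 * x[0] + x[1] * x[2]

def permutation_shapley(model, x, baseline, n_permutations=2000, seed=0):
    """Monte Carlo Shapley estimate: average marginal contributions over random feature orderings."""
    rng = random.Random(seed)
    n_features = len(x)
    phi = [0.0] * n_features
    order = list(range(n_features))
    for _ in range(n_permutations):
        rng.shuffle(order)
        current = list(baseline)  # start with every feature "missing" (set to baseline)
        prev_out = model(current)
        for j in order:  # reveal features one at a time in this ordering
            current[j] = x[j]
            out = model(current)
            phi[j] += out - prev_out  # marginal contribution of feature j in this ordering
            prev_out = out
    return [p / n_permutations for p in phi]

x = [1.0, 2.0, 3.0, 5.0]
baseline = [0.0, 0.0, 0.0, 0.0]
estimate = permutation_shapley(model, x, baseline)
print([round(p, 2) for p in estimate])
```

Each sampled ordering costs |F| + 1 model evaluations, so the budget grows linearly rather than exponentially with the number of features. The price is Monte Carlo noise, visible in the estimates for features 1 and 2. The estimates still sum exactly to f(x) - f(baseline), because each ordering's contributions telescope.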

Validation Framework for Clinical Deployment

Robust validation is essential before deploying SHAP-enabled AI systems in clinical male fertility practice:

Prospective Clinical Trials: Conduct randomized controlled trials comparing AI-assisted decisions with standard care, measuring outcomes including pregnancy rates, time to conception, and patient satisfaction [10].

Multi-Center Validation: Validate models across diverse populations and clinical settings to ensure generalizability and identify potential biases in feature importance [11].

Long-Term Outcome Tracking: Implement longitudinal follow-up of children born through AI-assisted selection to assess long-term health outcomes [10].

Clinical Utility Assessment: Evaluate whether SHAP explanations actually improve clinician decision-making, trust, and patient outcomes through structured interviews and workflow analysis [5].
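Multi-center validation can be organized as leave-one-center-out evaluation. The model is trained on all centers but one and tested on the held-out center, which exposes shifts in performance and feature rankings between sites. A minimal sketch of the split logic, with hypothetical center labels:

```python
from collections import defaultdict

def leave_one_center_out(centers):
    """Yield (held_out, train_idx, test_idx), holding out one center's patients at a time."""
    by_center = defaultdict(list)
    for idx, center in enumerate(centers):
        by_center[center].append(idx)
    for held_out in sorted(by_center):
        test_idx = by_center[held_out]
        train_idx = [i for c in sorted(by_center) if c != held_out for i in by_center[c]]
        yield held_out, train_idx, test_idx

# Hypothetical center label for each of seven patients.
centers = ["A", "A", "B", "C", "B", "A", "C"]
for held_out, train_idx, test_idx in leave_one_center_out(centers):
    # Fit on train_idx, then report metrics and SHAP rankings on the held-out center.
    print(held_out, train_idx, test_idx)
```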

The transition from black-box AI to interpretable systems represents a critical evolution in clinical AI, particularly for sensitive domains like male fertility where decisions have profound implications. SHAP provides a mathematically rigorous framework for model explanation that bridges the gap between algorithmic performance and clinical utility. By implementing the methodologies and validation frameworks outlined in this technical guide, researchers and clinicians can develop AI systems that not only predict male fertility outcomes with increasing accuracy but do so in a transparent, accountable manner that enhances clinical trust and facilitates personalized treatment strategies. Future work should focus on standardizing SHAP implementation across clinical platforms, improving computational efficiency for real-time use, and developing specialized visualization tools tailored to clinical workflows in reproductive medicine.

The increasing adoption of sophisticated Artificial Intelligence (AI) and Machine Learning (ML) models, particularly complex "black-box" models like Deep Neural Networks (DNNs), has created a pressing need for transparency. When AI decisions impact critical domains like healthcare, finance, and law, stakeholders require an understanding of how these decisions are made [15]. Explainable AI (XAI) is a field of research that addresses this need by providing methods to make the reasoning behind AI models' predictions understandable and transparent to humans [16]. This is crucial for ensuring safety, scrutinizing automated decision-making, and building trust, which is a prerequisite for effective human-AI collaboration [17] [16]. This guide provides an in-depth technical overview of XAI and details one of its most powerful techniques, SHapley Additive exPlanations (SHAP), framing them within the applied context of male fertility research.

Demystifying Explainable AI (XAI)

Core Concepts and Definitions

At its core, XAI is a set of processes and methods that allows human users to comprehend and trust the results and output created by machine learning algorithms [17]. The field is built upon several key principles:

- Transparency: A model is transparent if the processes that extract its parameters from training data and generate predictions can be described and motivated by the designer [16]. This can be broken down into:

- Simulatability: The ability for a human to simulate the model's decision-making process.

- Decomposability: The ability to provide an intuitive explanation for each part of the model and its parameters.

- Algorithmic Transparency: The ability to understand the learning process of the algorithm itself [15].

- Interpretability: The degree to which a human can understand how the underlying AI technology works and follow the model's reasoning, with the basis for its decisions presented in a human-understandable way [16].

- Explainability: The degree to which a human can understand how an AI-based system arrived at a specific result for a given example, often by highlighting the features that contributed to that particular decision [16].

The Importance and Goals of XAI

XAI is not merely an academic exercise; it is a fundamental component of responsible AI deployment. Its importance is driven by several critical needs [15] [17]:

- Building Trust and Confidence: For medical professionals or other domain experts to accept and act upon AI-driven insights, they must trust the model's outputs. XAI provides the necessary visibility to foster this trust [17].

- Ensuring Fairness and Detecting Bias: AI models can inadvertently learn and amplify biases present in training data. XAI techniques allow developers and auditors to detect, and then correct, biases tied to sensitive attributes such as race or gender [15] [17].

- Model Debugging and Improvement: Understanding how a model makes predictions can help developers identify errors, weaknesses, or nonsensical rules learned from the data, leading to more robust and accurate models [18].

- Meeting Regulatory and Compliance Standards: As AI is integrated into regulated industries, the ability to justify and explain automated decisions is becoming a legal and ethical requirement, such as the "right to explanation" [16].

A Deep Dive into SHapley Additive exPlanations (SHAP)

Theoretical Foundations

SHAP (SHapley Additive exPlanations) is a unified framework for interpreting model predictions. It is based on Shapley values, a concept from cooperative game theory that fairly distributes the "payout" (the prediction) among all the "players" (the input features) [18] [19].

The core idea is to evaluate the importance of a feature by comparing the model's prediction with and without the feature. However, because features in a model often interact, the effect of removing one feature depends on which other features are present. SHAP resolves this by averaging a feature's marginal contribution over all possible orders in which the features can be added (equivalently, a weighted average over all subsets of the other features) [16]. The key characteristic of SHAP is its additive feature attribution: the SHAP values of all features sum to the difference between the model's prediction for that instance and the average prediction over the dataset (the base value) [18].
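The additive property is easiest to see for a linear model. Assuming features are treated as independent, the SHAP value of feature j reduces to its weight times the feature's deviation from its background mean, and the base value is the mean prediction over the background data. The sketch below uses hypothetical helper names, weights, and patients purely for illustration; it checks that base value plus SHAP values reproduces the prediction.

```python
def linear_shap(weights, intercept, x, background):
    """SHAP values of a linear model f(x) = intercept + sum(w_j * x_j), treating
    features as independent: phi_j = w_j * (x_j - mean of feature j)."""
    n_features = len(weights)
    means = [sum(row[j] for row in background) / len(background) for j in range(n_features)]
    # For a linear model, f(mean of x) equals the mean of f(x): this is the base value.
    base_value = intercept + sum(w * m for w, m in zip(weights, means))
    phi = [w * (xj - m) for w, xj, m in zip(weights, x, means)]
    return base_value, phi


def linear_score(weights, intercept, x):
    """Prediction of the same linear model."""
    return intercept + sum(w * xj for w, xj in zip(weights, x))


# Hypothetical risk score over (sitting hours/day, smoker 0/1, age in years).
weights, intercept = [0.05, 0.2, 0.01], -0.5
background = [[4, 0, 30], [8, 1, 25], [6, 0, 35], [2, 1, 28]]
patient = [10, 1, 33]

base_value, phi = linear_shap(weights, intercept, patient, background)
prediction = linear_score(weights, intercept, patient)
print("base value:", base_value, "SHAP values:", phi)
print("base + sum(phi):", base_value + sum(phi), "prediction:", prediction)
```

Non-linear models such as XGBoost have no closed form like this, which is why SHAP relies on subset enumeration or model-specific algorithms, but the additivity check is the same.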

Key Properties of SHAP

SHAP's foundation in game theory gives it several desirable properties [18]:

- Model-Agnostic: It can be used to explain the output of any machine learning model, from linear regressions to complex deep neural networks.

- Local and Global Explanations: It can explain individual predictions (local explainability) as well as provide a global overview of feature importance across the entire dataset.

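- Consistent and Fair Attribution: If a model is changed so that a feature's marginal contribution increases or stays the same, whatever other features are present, that feature's SHAP value does not decrease. Together with local accuracy (additivity) and missingness, this consistency property is what makes Shapley-based attribution "fair" in the game-theoretic sense.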

SHAP Explanation Workflow

The following diagram illustrates the standard workflow for generating and interpreting explanations using SHAP.

SHAP in Action: A Technical Protocol for Male Fertility Research

To ground the theory, we apply SHAP to a real-world research scenario: interpreting a model for male fertility diagnostics. The following experimental protocol is based on a study that achieved high predictive accuracy using a hybrid ML framework [20] [21].

Experimental Setup and Dataset

- Objective: To predict "Normal" or "Altered" seminal quality based on clinical, lifestyle, and environmental factors.

- Dataset: The publicly available Fertility Dataset from the UCI Machine Learning Repository, comprising 100 samples with 10 attributes after removal of incomplete records [21].

- Attributes: Key features include season, age, childhood diseases, accident/trauma, surgical intervention, high fever, alcohol consumption, smoking habit, and sitting hours per day [21].

- Class Distribution: The dataset exhibits moderate class imbalance (88 "Normal" vs. 12 "Altered" instances), a common challenge in medical diagnostics [21].

Detailed Methodology

Step 1: Install Required Libraries
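The install command itself is not reproduced in this text. Assuming the stack listed in the toolkit table below, plus imbalanced-learn for SMOTE, installation would typically be `pip install shap xgboost scikit-learn imbalanced-learn pandas numpy matplotlib`. The check below is a hypothetical helper, not part of any library; it reports which of those packages are not yet importable. Note that some distribution names differ from their import names (scikit-learn is imported as `sklearn`, imbalanced-learn as `imblearn`).

```python
import importlib.util

# Distribution name (what you pip install) -> top-level module name (what you import).
REQUIRED = {
    "shap": "shap",
    "xgboost": "xgboost",
    "scikit-learn": "sklearn",
    "imbalanced-learn": "imblearn",
    "pandas": "pandas",
    "numpy": "numpy",
    "matplotlib": "matplotlib",
}


def missing_packages(required=REQUIRED):
    """Return the distributions whose top-level module cannot be found."""
    return [dist for dist, module in required.items()
            if importlib.util.find_spec(module) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("pip install " + " ".join(missing))
    else:
        print("All analysis dependencies are importable.")
```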

Step 2: Data Preprocessing and Model Training

- Load and Prepare Data: Load the dataset and perform one-hot encoding on categorical variables (e.g., 'Season'). Separate features (X) from the target variable (y = 'Diagnosis').

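- Split Data: Divide the dataset into training (80%) and test (20%) sets, stratified by class so that both keep the original "Normal"/"Altered" ratio.

- Address Class Imbalance: Apply techniques like SMOTE (Synthetic Minority Over-sampling Technique) to the training set only, or adjust class weights in the model, to improve sensitivity to the minority "Altered" class [20]. Oversampling before the split would place synthetic points derived from test cases into the training data.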

- Train Model: Train an XGBoost classifier, a powerful tree-based algorithm well-suited for tabular data.
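The preprocessing steps above can be sketched without third-party dependencies, as below. The helper names are hypothetical; in practice `pandas.get_dummies` handles one-hot encoding and `sklearn.model_selection.train_test_split(..., stratify=y)` handles the stratified split. The class-weight helper computes the negative-to-positive ratio, a commonly suggested starting value for XGBoost's `scale_pos_weight` parameter; the labels mirror the 88/12 class distribution but are otherwise placeholders.

```python
import random
from collections import Counter


def one_hot(rows, column):
    """Replace a categorical `column` with one 0/1 indicator column per observed level."""
    levels = sorted({row[column] for row in rows})
    encoded = []
    for row in rows:
        new_row = {key: value for key, value in row.items() if key != column}
        for level in levels:
            new_row[f"{column}_{level}"] = int(row[column] == level)
        encoded.append(new_row)
    return encoded


def stratified_split(labels, test_fraction=0.2, seed=42):
    """Return (train_idx, test_idx), sampling the test set separately within each class."""
    rng = random.Random(seed)
    by_class = {}
    for i, label in enumerate(labels):
        by_class.setdefault(label, []).append(i)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


def positive_class_weight(train_labels, positive):
    """Negative-to-positive ratio in the training labels (a starting value for scale_pos_weight)."""
    counts = Counter(train_labels)
    return (len(train_labels) - counts[positive]) / counts[positive]


# Hypothetical labels mirroring the 88 "Normal" / 12 "Altered" distribution.
labels = ["Normal"] * 88 + ["Altered"] * 12
train_idx, test_idx = stratified_split(labels)
train_labels = [labels[i] for i in train_idx]
print(len(train_idx), len(test_idx), Counter(labels[i] for i in test_idx))
print("scale_pos_weight:", positive_class_weight(train_labels, "Altered"))

rows = [{"season": "winter", "age": 30}, {"season": "summer", "age": 25}]
print(one_hot(rows, "season"))
```

The class weight is computed from the training labels only, for the same leakage reason that resampling is restricted to the training split.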

Step 3: Compute SHAP Values
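For an XGBoost model, this step is usually done with `shap.TreeExplainer(model)`, which exploits the tree structure to compute SHAP values without enumerating feature subsets. To make the computed quantity explicit, the brute-force sketch below uses hypothetical helper names and a toy model. It enumerates every subset of the other features and fills "missing" features from a background dataset (the interventional formulation). Its cost grows as 2 to the number of features, so it is only a teaching aid. It then checks additivity and confirms that a feature the model ignores receives a SHAP value of zero.

```python
from itertools import combinations
from math import factorial


def coalition_value(predict, x, background, subset):
    """v(S): mean prediction when features in `subset` are fixed to x's values and
    the remaining features are filled in from each background row in turn."""
    total = 0.0
    for row in background:
        z = [x[j] if j in subset else row[j] for j in range(len(x))]
        total += predict(z)
    return total / len(background)


def exact_shap(predict, x, background):
    """Exact Shapley values by enumerating every subset S of the other features."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for combo in combinations(others, size):
                subset = set(combo)
                phi[i] += weight * (
                    coalition_value(predict, x, background, subset | {i})
                    - coalition_value(predict, x, background, subset)
                )
    return phi


def toy_risk(z):
    """Hypothetical model: z = (sitting >4 h/day, smoker, age band); age is ignored."""
    return 0.1 + 0.3 * z[0] + 0.2 * z[1] + 0.2 * z[0] * z[1]


BACKGROUND = [[0, 0, 0], [1, 0, 1], [0, 1, 0], [0, 0, 1]]
patient = [1, 1, 0]

phi = exact_shap(toy_risk, patient, BACKGROUND)
base_value = sum(toy_risk(row) for row in BACKGROUND) / len(BACKGROUND)
print("base value:", base_value, "SHAP values:", phi)
print("base + sum(phi):", base_value + sum(phi), "prediction:", toy_risk(patient))
```

The interaction term (sitting combined with smoking) is split between the two interacting features rather than assigned to either one, which is the behavior the Shapley weighting is designed to produce.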

Key SHAP Visualizations for Male Fertility Analysis

SHAP provides a suite of visualizations to dissect model behavior. The table below summarizes their utility in a clinical research context.

Table: SHAP Visualization Techniques for Clinical Research

| Visualization | Description | Clinical/Research Utility |
|---|---|---|
| Force Plot | Shows how features push the model's base value to the final prediction for a single patient [18]. | Personalized Diagnostics: Explains the prediction for an individual, highlighting their specific risk factors (e.g., high sitting hours and smoking). |
| Summary Plot | Combines feature importance with feature effects, showing the distribution of SHAP values per feature across the dataset [18]. | Global Risk Factor Identification: Reveals which factors (e.g., 'Sitting Hours', 'Age') are most important overall and how they impact risk. |
| Bar Plot (Mean \|SHAP\|) | A standard bar chart showing the mean absolute SHAP value for each feature [18]. | Prioritizing Research: Ranks features by their average impact on the model's output, guiding further investigation. |
| Dependence Plot | Plots each sample's value of a single feature against that feature's SHAP value, optionally colored by a second, interacting feature [18]. | Understanding Complex Interactions: Uncovers how the effect of one risk factor (e.g., 'Age') might depend on another (e.g., 'Alcohol Consumption'). |
| Waterfall Plot | Illustrates the sequential contribution of each feature from the base (average) value to the final output [18]. | Step-by-Step Justification: Provides a detailed, linear explanation of the prediction logic for a single case. |
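A text-only rendering of the waterfall logic shows what the plot encodes: features ordered by absolute SHAP value, each one moving a running total from the base value toward the final prediction. The sketch below is in the spirit of `shap.plots.waterfall` but is not that function; the helper name and the per-patient values are hypothetical.

```python
def waterfall_rows(base_value, feature_names, shap_values):
    """Order features by |SHAP| (largest first) and accumulate from the base value."""
    order = sorted(range(len(feature_names)), key=lambda i: abs(shap_values[i]), reverse=True)
    rows, running = [], base_value
    for i in order:
        running += shap_values[i]
        rows.append((feature_names[i], shap_values[i], running))
    return rows


# Hypothetical explanation for one patient (illustrative values, not study results).
base = 0.12
names = ["age", "sitting_hours", "alcohol", "smoking"]
contributions = [-0.03, 0.20, 0.01, 0.15]

print(f"{'base value':<15}{base:>8.2f}")
for name, phi, total in waterfall_rows(base, names, contributions):
    print(f"{name:<15}{phi:>+8.2f} -> {total:.2f}")
```

Because SHAP values are additive, the last running total always equals the model's output for that patient, whatever order the features are listed in.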

The Researcher's Toolkit: Essential Reagents and Computational Tools

For replicating SHAP-based analysis in male fertility or similar biomedical research, the following tools and "reagents" are essential.

Table: Essential Computational Toolkit for SHAP Analysis

| Tool/Reagent | Function | Explanation |
|---|---|---|
| SHAP Python Library | Core explanation engine. | Provides the algorithms (TreeExplainer, KernelExplainer, etc.) to compute Shapley values for any model [19]. |
| XGBoost / Scikit-learn | Model training frameworks. | Libraries used to build and train the predictive models that SHAP will later explain [18]. |
| Jupyter Notebook | Interactive development environment. | Ideal for exploratory data analysis, model building, and generating interactive SHAP visualizations. |
| Pandas & NumPy | Data manipulation and numerical computing. | Essential for loading, cleaning, and preprocessing the clinical dataset before model training and explanation. |
| Matplotlib/Seaborn | Static visualization libraries. | Used to customize and save SHAP plots for publications and reports. |
| Fertility Dataset (UCI) | Benchmark clinical data. | A standardized dataset that allows researchers to compare methods and validate findings [21]. |

Interpreting Results and Quantitative Analysis in a Fertility Context

Applying SHAP to the male fertility model yields quantifiable insights. The hypothetical results below are based on the reported high-performance metrics (99% accuracy, 100% sensitivity) of a similar study [20] [21].

Table: Hypothetical Model Performance Metrics on Fertility Dataset

| Metric | Value | Interpretation |
|---|---|---|
| Accuracy | 99% | The overall proportion of correct predictions made by the model. |
| Sensitivity (Recall) | 100% | The model's ability to correctly identify all patients with "Altered" fertility, crucial for a diagnostic test. |
| Computational Time | ~0.00006s | The efficiency of the explanation generation, highlighting feasibility for real-time use [20]. |
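Accuracy and sensitivity follow directly from the confusion matrix. The hypothetical helper below also reports specificity, which matters when the negative class dominates. The example is a placeholder 20-sample test split with two "Altered" cases, roughly what an 80/20 split of this dataset yields. With so few positives, sensitivity can only be 0%, 50%, or 100%, so repeated or cross-validated estimates are more informative than a single split.

```python
def diagnostic_metrics(y_true, y_pred, positive="Altered"):
    """Accuracy, sensitivity (recall for `positive`) and specificity from paired labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)

    def ratio(num, den):
        # Undefined ratios (e.g. no positives in the split) are reported as NaN.
        return num / den if den else float("nan")

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }


# Hypothetical test split: 2 "Altered" cases, both detected, plus one false alarm.
y_true = ["Altered"] * 2 + ["Normal"] * 18
y_pred = ["Altered"] * 3 + ["Normal"] * 17
print(diagnostic_metrics(y_true, y_pred))
```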

Table: Hypothetical Feature Importance Derived from Mean |SHAP| Values

| Rank | Feature | Mean \|SHAP\| Value | Clinical Interpretation |
|---|---|---|---|
| 1 | Sitting Hours per Day | 0.32 | Prolonged sedentary behavior is the strongest predictor of altered seminal quality. |
| 2 | Smoking Habit | 0.28 | Smoking has a high, consistent negative impact on fertility outcomes. |
| 3 | Age | 0.15 | Age is a moderate contributing factor within the studied age range (18-36). |
| 4 | Alcohol Consumption | 0.12 | Regular alcohol intake is an identifiable risk factor. |
| 5 | Childhood Disease | 0.08 | A weaker, but still relevant, predictor in the model. |
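A ranking like the one above is computed by averaging the absolute SHAP value of each feature over all explained samples. The sketch below uses a hypothetical helper and a made-up SHAP matrix, not the study's values. Taking absolute values discards direction: in the example, sitting hours raise predicted risk for two patients and lower it for one, which the summary plot shows but the mean |SHAP| bar chart does not. With NumPy, the same score is `np.abs(shap_values).mean(axis=0)`.

```python
def mean_abs_shap(shap_matrix, feature_names):
    """Global importance: mean |SHAP| per feature (column), sorted high to low."""
    n_rows = len(shap_matrix)
    scores = {
        name: sum(abs(row[j]) for row in shap_matrix) / n_rows
        for j, name in enumerate(feature_names)
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical SHAP values: 3 patients (rows) x 3 features (columns).
names = ["age", "sitting_hours", "smoking"]
shap_matrix = [
    [0.1, 0.3, -0.2],
    [0.0, -0.4, 0.3],
    [-0.2, 0.2, 0.1],
]
for name, score in mean_abs_shap(shap_matrix, names):
    print(f"{name:<15}{score:.3f}")
```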

The integration of Explainable AI and specifically SHAP into predictive modeling for male fertility represents a paradigm shift. It moves beyond opaque black-box models towards transparent, accountable, and clinically actionable AI systems. By providing both local and global explanations, SHAP empowers researchers and clinicians to not only predict fertility outcomes with high accuracy but also to understand the "why" behind each prediction. This fosters trust, validates the model's decision-making process, and ultimately uncovers the complex interplay of lifestyle and environmental factors affecting male reproductive health, paving the way for more personalized and effective interventions.

Key Lifestyle and Environmental Features for AI Model Input

The application of Explainable Artificial Intelligence (XAI), particularly SHapley Additive exPlanations (SHAP), is transforming the study of male fertility. SHAP values allow researchers and clinicians to interpret the output of complex machine learning models by quantifying the contribution of each input feature to a final prediction. This is critical in a clinical setting, where understanding why a model suggests a specific infertility risk is as important as the prediction itself. This guide details the core lifestyle and environmental factors that serve as model inputs, the experimental protocols for data collection, and the molecular pathways that link these exposures to clinical outcomes, providing a framework for building robust, interpretable AI models in andrology.

For AI models predicting male fertility outcomes, a specific set of quantifiable features is essential. The table below synthesizes the key lifestyle and environmental factors, their measurable aspects, and their quantified impact on semen quality and DNA integrity, providing a structured dataset for feature engineering.

Table 1: Key Lifestyle and Environmental Input Features for Male Fertility AI Models

| Feature Category | Specific Measurable Inputs | Impact on Semen Parameters & DNA | Quantitative Effect Size |
|---|---|---|---|
| Substance Use | Cigarette smoking status, pack-years, cotinine levels [22] | Increased sperm DNA fragmentation (SDF), reduced motility [22] [23] | ↑ SDF by ~10% [22] |
| | Alcohol consumption (type, units/week), chronic use [22] | Increased SDF, testicular atrophy, hormonal disruption [22] | ↑ SDF by a comparable magnitude to smoking [22] |
| | Cannabis, opioid, or anabolic steroid use [22] | Suppressed spermatogenesis, hormonal imbalance [22] | Not specified |
| Body Composition | Body Mass Index (BMI), Waist-to-Hip Ratio [24] [23] | Reduced sperm concentration, motility; decreased testosterone [24] [23] | Negative correlation with sperm concentration & testosterone (p < 0.05) [23] |
| Psychological Factors | Hospital Anxiety and Depression Scale (HADS) score [23] | Reduced sperm motility, viability, and concentration [23] | Significant association (p < 0.05) [23] |
| Environmental Exposures | Airborne Particulate Matter (PM2.5), Ozone levels [24] [25] | Lower sperm count, motility; abnormal morphology [25] | Effects observed below "safe" thresholds [25] |
| | Occupational heat exposure [24] | Reduced sperm concentration and motility [24] | Not specified |
| | Endocrine Disruptors (Bisphenol A, Phthalates) [24] [23] | Reduced sperm motility and concentration [23] | Not specified |
| Diet & Physical Activity | Caffeine consumption [23] | Increased progressive sperm motility [23] | Positive association [23] |
| | Physical activity level (moderate vs. excessive) [24] | Improved semen quality with moderation [24] | Not specified |

Experimental Protocols for Data Collection

Robust AI models require high-quality, standardized data. The following experimental protocols, derived from recent clinical studies, provide a template for generating reliable datasets for model training and validation.

Cross-Sectional Study Design for Lifestyle Factor Association

A standardized protocol for recruiting participants and collecting multimodal data is essential for building a coherent dataset [23].

- Participant Recruitment & Criteria:

- Recruit males (e.g., aged 25-55) from fertility clinics to ensure a relevant population base [23].

- Inclusion Criteria: Willingness to provide semen and blood samples [23].

- Exclusion Criteria: Known genetic disorders, chronic illnesses affecting fertility, recent febrile illness (within 3 months), history of vasectomy, or use of medications known to impair fertility (e.g., anabolic steroids) [23].

- Data Collection Modules:

- Structured Questionnaire: Administer a paper-based or digital questionnaire divided into key sections [23]:

- Demographics: Age, marital status, education level.

- Lifestyle Factors: Detailed history of smoking, alcohol, caffeine, use of alcoholic bitters, and physical activity.

- Psychological Stress: Assess using the validated Hospital Anxiety and Depression Scale (HADS), which provides scores for anxiety and depression subscales [23].

- Environmental Exposures: Document occupational heat exposure, mobile phone use patterns, and laptop usage.

- Reproductive History: Record previous fertility treatments and outcomes.

- Anthropometric Assessment: Measure body weight (kg) using a digital scale and height (m) using a stadiometer. Calculate BMI as weight/height² and classify according to WHO categories [23].

- Biological Sampling Protocol:

- Semen Collection: Instruct participants to maintain an abstinence period of 2-5 days before sample collection. Provide additional instructions to avoid caffeine, smoking, or alcohol prior to collection [23].

- Semen Analysis: Analyze samples following WHO 2010 guidelines [23]. Specific protocols include:

- Progressive Motility: Assess using light microscopy with a pre-warmed (37°C) phase-contrast microscope and a Neubauer counting chamber. Grade motility as A (rapid progressive), B (slow/sluggish progressive), C (non-progressive), and D (immotile) [23].

- Sperm Viability: Use the eosin-nigrosin staining method. Count at least 200 sperm per sample; viable sperm remain unstained, while non-viable sperm absorb the dye [23].

- Sperm Morphology: Evaluate using Papanicolaou or Diff-Quik staining, assessing under 1000x magnification with oil immersion using Kruger's strict criteria [23].

- Blood Collection: Collect blood samples for reproductive hormone profiling. Analyze Luteinizing Hormone (LH), Follicle-Stimulating Hormone (FSH), testosterone, and estradiol using a standardized enzyme-linked fluorescent assay (ELFA) [23].

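Several of the measurements above become model inputs only after a small derivation step. The sketch below uses hypothetical helper names and a made-up participant record to compute BMI and its WHO adult category (underweight below 18.5, normal below 25, overweight below 30, obese at 30 or above). It also totals one HADS subscale (7 items scored 0-3) and bands it with the commonly used cut-offs of 0-7 normal, 8-10 borderline, and 11-21 abnormal. Finally, it converts eosin-nigrosin counts into percent viability, enforcing the 200-sperm minimum from the protocol.

```python
def bmi(weight_kg, height_m):
    """Body mass index: weight (kg) divided by height (m) squared."""
    return weight_kg / height_m ** 2


def who_bmi_class(value):
    """WHO adult BMI categories."""
    if value < 18.5:
        return "underweight"
    if value < 25.0:
        return "normal"
    if value < 30.0:
        return "overweight"
    return "obese"


def hads_subscale(item_scores):
    """Total and band for one HADS subscale (7 items, each scored 0-3)."""
    if len(item_scores) != 7 or any(s not in (0, 1, 2, 3) for s in item_scores):
        raise ValueError("expected 7 item scores in the range 0-3")
    total = sum(item_scores)
    band = "normal" if total <= 7 else "borderline" if total <= 10 else "abnormal"
    return total, band


def viability_percent(unstained, stained, min_counted=200):
    """Eosin-nigrosin viability: unstained (live) sperm as % of all sperm counted."""
    counted = unstained + stained
    if counted < min_counted:
        raise ValueError(f"count at least {min_counted} sperm (got {counted})")
    return 100.0 * unstained / counted


# Hypothetical participant record.
features = {
    "bmi_class": who_bmi_class(bmi(85.0, 1.75)),
    "hads_anxiety": hads_subscale([2, 1, 2, 1, 1, 2, 1]),
    "viability_pct": round(viability_percent(150, 60), 1),
}
print(features)
```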

Environmental Exposure Assessment Protocol

Linking ambient environmental data to individual patient records requires a geospatial approach.

- Air Pollution Exposure Estimation:

- Data Sourcing: Utilize satellite observations and ground-based air quality monitoring networks to collect data on pollutants such as particulate matter (PM2.5), ozone (O3), nitrogen oxides (NOx), and volatile organic compounds (VOCs) [25].

- Modeling: Apply atmospheric modeling to create high-resolution pollution maps. Epidemiologists can then bridge this environmental data with health data by using the residential postal codes of study participants to estimate individual exposure levels [25].

- Exposure Time Windows: For studies on spermatogenesis, which lasts approximately 70-90 days, it is critical to analyze pollutant exposure during different developmental stages (early, middle, and late) of sperm production, as each stage may be differentially sensitive [25].
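Once daily pollutant estimates are linked to a participant's residence, the exposure windows can be computed as mean levels over lag ranges counted back from the semen collection date. The sketch below is a minimal version under stated assumptions. It assumes a hypothetical split of a ~90-day cycle into three equal 30-day thirds, which are not validated stage boundaries. It also uses a hypothetical 20-day minimum-coverage rule and a synthetic PM2.5 series.

```python
from datetime import date, timedelta

# Hypothetical lag windows (days before collection) splitting ~90 days into thirds;
# these are illustrative, NOT validated spermatogenic stage boundaries.
WINDOWS = {"late": (0, 29), "middle": (30, 59), "early": (60, 89)}


def window_means(daily_levels, collection_date, windows=WINDOWS, min_days=20):
    """Mean pollutant level in each lag window; None if too few days have data."""
    result = {}
    for label, (first_lag, last_lag) in windows.items():
        values = []
        for lag in range(first_lag, last_lag + 1):
            day = collection_date - timedelta(days=lag)
            if day in daily_levels:
                values.append(daily_levels[day])
        result[label] = sum(values) / len(values) if len(values) >= min_days else None
    return result


collection = date(2024, 6, 1)
# Synthetic daily PM2.5 series (µg/m³) for illustration only.
daily_pm25 = {
    collection - timedelta(days=lag): 30.0 if lag < 30 else 20.0 if lag < 60 else 10.0
    for lag in range(90)
}
print(window_means(daily_pm25, collection))
```

Keeping the windows in a single mapping makes it easy to test alternative stage definitions as separate model features.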

Signaling Pathways and Molecular Mechanisms

Understanding the biological pathways through which lifestyle and environmental factors impair fertility is crucial for validating model predictions and generating biologically plausible explanations. The primary convergent mechanism is oxidative stress.

Diagram 1: Oxidative Stress as a Central Pathway in Male Infertility

Detailed Pathway Breakdown

The diagram above illustrates how disparate risk factors converge on a common pathological endpoint.

- Induction of Oxidative Stress: Multiple factors directly increase reactive oxygen species (ROS) production. Cigarette smoke and air pollutants (e.g., particulate matter, ozone) contain pro-oxidant chemicals that directly stimulate ROS generation [24] [25]. Obesity-induced systemic inflammation, characterized by elevated tumor necrosis factor α (TNFα) and interleukin 6 (IL6), also potentiates oxidative stress [24].

- Hormonal Dysregulation: Obesity increases the activity of the aromatase enzyme complex in adipose tissue, which converts testosterone into 17β-estradiol. This leads to hypogonadism (low testosterone) and disrupts the feedback loop of the hypothalamic-pituitary-gonadal (HPG) axis, ultimately impairing spermatogenesis [24] [22]. Endocrine-disrupting chemicals (EDCs) like bisphenol A (BPA) and phthalates can mimic or block hormone action, exacerbating this dysregulation [24] [23].

- Sperm Damage: The resulting excessive ROS produce a chain of damaging events [24]:

- Sperm DNA Fragmentation: ROS directly attack and break the DNA strands in the sperm nucleus [24] [22].

- Lipid Peroxidation: ROS damage the polyunsaturated fatty acids in the sperm cell membrane, compromising its integrity and reducing motility [24].

- Mitochondrial Dysfunction: The sperm midpiece, packed with mitochondria, is a key target. ROS damage mitochondria, reducing adenosine triphosphate (ATP) production and crippling the energy supply needed for sperm movement [24].

The Scientist's Toolkit: Essential Research Reagents

The following table details key reagents and materials required to conduct the experimental research and biomarker analysis outlined in this guide.

Table 2: Essential Research Reagents and Materials for Male Fertility Studies

| Reagent/Material | Function & Application in Research |

|---|---|

| Enzyme-Linked Fluorescent Assay (ELFA) | Used for precise quantification of reproductive hormone profiles (LH, FSH, Testosterone, Estradiol) in blood serum [23]. |

| Eosin-Nigrosin Stain | A vital stain used to assess sperm viability. Non-viable sperm with compromised membranes absorb the eosin dye and appear pink, while viable sperm exclude the dye [23]. |

| Papanicolaou (PAP) Stain | A standardized staining method for evaluating sperm morphology (head, midpiece, tail defects) under light microscopy using Kruger's strict criteria [23]. |

| Phase-Contrast Microscope with Warming Stage | Essential for accurate assessment of sperm motility and concentration, as it allows for clear visualization of unstained sperm and maintains sample at 37°C during analysis [23]. |

| Hospital Anxiety & Depression Scale (HADS) | A validated, standardized questionnaire for assessing psychological stress (anxiety and depression) in clinical populations, providing a quantifiable score for analysis [23]. |

| Neubauer Counting Chamber | A calibrated hemocytometer used specifically for sperm concentration and motility analysis under the microscope [23]. |

| SHApley Additive exPlanations (SHAP) | A game theory-based method used in machine learning to interpret model output, providing a unified measure of feature importance for any model [26] [27]. |

The integration of Artificial Intelligence (AI) into male fertility diagnostics represents a paradigm shift with transformative potential for reproductive medicine. Male factor infertility contributes to approximately 30-50% of all infertility cases, yet it remains underdiagnosed and underrepresented as a disease entity [28] [11] [20]. Traditional diagnostic approaches, particularly manual semen analysis, suffer from significant limitations including inter-observer variability, subjectivity, and poor reproducibility [11]. AI technologies, especially machine learning (ML) models, have demonstrated remarkable capabilities in overcoming these limitations by automating sperm evaluation, analyzing complex multifactorial data, and predicting treatment outcomes with increasing accuracy [11] [20].

However, the "black-box" nature of many complex AI algorithms presents a critical barrier to clinical adoption. When AI systems provide diagnoses or recommendations without explanation, clinicians justifiably hesitate to trust and act upon them, particularly in sensitive domains like reproductive medicine where decisions carry profound emotional and ethical implications [28] [29]. This trust deficit is reflected in broader healthcare AI adoption trends, where surveys indicate both healthcare professionals and patients express significant concerns about AI reliability and transparency [29].

The emerging discipline of Explainable AI (XAI) directly addresses this challenge by making AI decision-making processes transparent, interpretable, and clinically actionable. Among XAI techniques, Shapley Additive Explanations (SHAP) has emerged as a particularly powerful framework for explaining ML model outputs in healthcare contexts [28] [26]. This technical guide examines the clinical necessity of transparency in AI-powered male fertility diagnostics, with specific focus on SHAP-based explanation methodologies and their critical role in building trust among researchers, clinicians, and patients.

The Male Fertility Diagnostic Landscape: Traditional Challenges and AI Opportunities

Limitations of Conventional Diagnostic Approaches

Traditional male fertility assessment relies primarily on semen analysis performed according to World Health Organization (WHO) guidelines, evaluating parameters such as sperm concentration, motility, and morphology. While foundational, this approach faces several significant limitations:

- Subjectivity and Variability: Manual assessment introduces substantial inter-observer variability, compromising result consistency and reliability [11]

- Multifactorial Complexity: Conventional analysis fails to adequately capture the complex interactions between biological, lifestyle, and environmental factors that collectively influence fertility outcomes [20]

- Diagnostic Incompleteness: Routine parameters often miss subtle but clinically significant aspects of sperm function, such as DNA fragmentation or early-stage testicular dysfunction [11]

These limitations help explain why approximately 70% of male infertility cases remain unexplained despite standard diagnostic evaluation [11].

The AI Revolution in Male Fertility Assessment

Artificial intelligence approaches have demonstrated significant potential to overcome these limitations through:

- Enhanced Diagnostic Accuracy: ML algorithms can analyze sperm morphology, motility, and DNA integrity with greater consistency and precision than manual methods [11]

- Multivariate Analysis Capability: AI models can integrate diverse data types—clinical parameters, imaging, lifestyle factors, and environmental exposures—to identify complex patterns beyond human analytical capacity [28] [20]

- Predictive Power: ML techniques show promising results in predicting sperm retrieval success, fertilization potential, and IVF outcomes, enabling more personalized treatment planning [11]

Table 1: Performance Metrics of Select AI Models in Male Fertility Applications

| AI Model | Application | Accuracy | AUC | Sample Size | Reference |
|---|---|---|---|---|---|
| Random Forest | Fertility Detection | 90.47% | 99.98% | 100 men | [28] |
| Hybrid MLFFN-ACO | Fertility Classification | 99% | N/R | 100 men | [20] |
| Support Vector Machine | Sperm Motility Analysis | 89.9% | N/R | 2,817 sperm | [11] |
| Gradient Boosting Trees | NOA Sperm Retrieval | 91% sensitivity | 0.807 | 119 patients | [11] |
| TabTransformer | IVF Live Birth Prediction | 97% | 98.4% | 486 patients | [7] |

The Transparency Challenge: AI's Trust Deficit in Clinical Practice

Despite demonstrated technical capabilities, AI adoption in clinical reproductive medicine faces significant trust-related barriers. Recent global surveys reveal critical insights into this adoption challenge:

- Limited Clinical Penetration: As of 2025, only approximately 29% of fertility specialists reported regularly or occasionally using AI in their clinical practice, despite recognizing its potential benefits [9]

- Implementation Barriers: Cost (38.01%) and lack of training (33.92%) are significant adoption barriers, and trust-related concerns, notably over-reliance on technology (59.06%) and ethical implications, also substantially affect integration [9]

- Professional-Patient Confidence Gap: Healthcare professionals express more confidence in AI than patients, with both groups sharing concerns about reliability, transparency, and potential for clinical error [29]

This trust deficit stems primarily from the opaque nature of many high-performing AI models. When clinicians cannot understand how an AI system arrives at a diagnosis or recommendation, they appropriately hesitate to incorporate it into clinical decision-making, particularly in high-stakes domains like fertility care.

SHAP Methodologies: Technical Foundations for Explainable AI in Male Fertility

Theoretical Foundations of SHAP

Shapley Additive Explanations (SHAP) is based on cooperative game theory concepts originally developed by economist Lloyd Shapley. In the context of ML model explanation, SHAP values quantify the marginal contribution of each input feature to the difference between a model's actual prediction and its baseline prediction (typically the average prediction across the dataset) [28] [26].

The mathematical foundation of SHAP derives from the Shapley value formula:

$$\phi_i(f,x) = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(|N|-|S|-1)!}{|N|!}\left[f_x(S \cup \{i\}) - f_x(S)\right]$$

Where:

- $\phi_i(f,x)$ = SHAP value for feature $i$

- $N$ = total set of features

- $S$ = subset of features excluding $i$

- $f_x(S)$ = prediction using feature subset $S$

- $|S|$ = size of subset $S$

This approach ensures that feature importance values satisfy desirable properties including local accuracy, missingness, and consistency [28].
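A small worked case shows why the weighting term makes $\phi_i$ an average of marginal contributions. Writing $w(|S|)$ as shorthand (introduced here for illustration) for the fraction in the formula, with $|N| = 3$ the other two features form one empty subset, two singletons, and one pair:

$$
w(|S|) = \frac{|S|!\,(|N|-|S|-1)!}{|N|!}: \qquad w(0) = \frac{0!\,2!}{3!} = \frac{1}{3}, \quad w(1) = \frac{1!\,1!}{3!} = \frac{1}{6}, \quad w(2) = \frac{2!\,0!}{3!} = \frac{1}{3}
$$

$$
\underbrace{1 \cdot \tfrac{1}{3}}_{S=\varnothing} + \underbrace{2 \cdot \tfrac{1}{6}}_{|S|=1} + \underbrace{1 \cdot \tfrac{1}{3}}_{|S|=2} = 1
$$

Each $w(|S|)$ equals the fraction of the $3! = 6$ possible feature orderings in which exactly the features in $S$ come before feature $i$, so the formula is the marginal contribution of $i$ averaged over all orderings.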

SHAP Implementation Workflows in Male Fertility Research

Implementing SHAP explanations in male fertility AI research involves a systematic process:

Figure 1: SHAP Implementation Workflow for Male Fertility AI Research

The critical stages in this workflow include:

- Comprehensive Data Collection: Male fertility datasets typically incorporate clinical parameters (hormone levels, semen analysis results), lifestyle factors (smoking, alcohol consumption, sedentary behavior), and environmental exposures (heavy metals, pollutants) [28] [20]

- Robust Model Training: Multiple ML algorithms are trained and evaluated using appropriate validation techniques, with tree-based models like Random Forest frequently demonstrating optimal performance in fertility prediction tasks [28] [26]

- SHAP Value Calculation: The trained model is analyzed using SHAP frameworks to quantify the contribution of each feature to individual predictions and overall model behavior

- Clinical Validation: Domain experts interpret SHAP explanations in clinical context, validating biological plausibility and clinical relevance of identified feature importance patterns

Experimental Protocols for SHAP-Based Male Fertility Studies

Research investigating SHAP explanations for male fertility AI models typically follows rigorous experimental protocols:

Dataset Characteristics:

- Sample sizes typically range from 100-500 male subjects with comprehensive feature profiling [28] [20]

- Data collection follows WHO standards for fertility assessment with additional lifestyle and environmental factor documentation

- Common datasets include the UCI Fertility Dataset and institutional clinical databases

Model Development Protocol:

- Data Preprocessing: Handling missing values, addressing class imbalance through techniques like SMOTE oversampling, and feature normalization [28] [20]

- Model Selection: Comparative evaluation of multiple ML algorithms including Random Forest, Support Vector Machines, Decision Trees, Logistic Regression, and Artificial Neural Networks [28] [30]

- Validation Framework: Implementation of k-fold cross-validation (typically 5-fold or 10-fold) to ensure robust performance estimation and mitigate overfitting [28]

- Performance Assessment: Comprehensive evaluation using metrics including accuracy, precision, recall, F1-score, and Area Under the Receiver Operating Characteristic Curve (AUC) [28] [26]
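Of the metrics listed, AUC is the least transparent. One way to see what it measures: AUC equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one, with ties counted as half. This is the normalized Mann-Whitney U statistic, and it matches `sklearn.metrics.roc_auc_score` for binary labels. The sketch below uses a hypothetical helper and made-up scores. The pairwise loop is quadratic in the class sizes, which is acceptable for cohorts of 100-500 subjects; under k-fold cross-validation it would be applied to each fold's held-out predictions.

```python
def roc_auc(y_true, scores, positive=1):
    """AUC as the probability that a random positive outranks a random negative
    (ties count half), i.e. the normalised Mann-Whitney U statistic."""
    pos = [s for y, s in zip(y_true, scores) if y == positive]
    neg = [s for y, s in zip(y_true, scores) if y != positive]
    if not pos or not neg:
        raise ValueError("AUC is undefined unless both classes are present")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


# Hypothetical predicted probabilities of "Altered" (label 1) for five men.
print(roc_auc([1, 1, 0, 0, 0], [0.9, 0.4, 0.5, 0.2, 0.1]))
```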

SHAP Explanation Phase:

- Explanation Generation: Calculation of SHAP values for each feature across the dataset using appropriate computational frameworks

- Global Interpretation: Analysis of overall feature importance patterns using summary plots and mean absolute SHAP values

- Local Interpretation: Examination of individual prediction explanations to understand specific clinical cases

- Clinical Correlation: Interpretation of SHAP outputs in context of established biological mechanisms and clinical knowledge

Research Reagent Solutions: Essential Tools for SHAP-Based Fertility Research

Table 2: Essential Research Tools for SHAP-Based Male Fertility Studies

| Tool Category | Specific Solutions | Function in Research | Application Example |
|---|---|---|---|
| ML Algorithms | Random Forest, XGBoost, SVM, ANN | Pattern recognition and prediction from complex fertility datasets | Random Forest achieved 90.47% accuracy in fertility detection [28] |
| Explainability Frameworks | SHAP, LIME, Partial Dependence Plots | Model interpretation and feature importance quantification | SHAP explained impact of lifestyle factors on RF model decisions [28] |
| Data Balancing Techniques | SMOTE, ADASYN, Random Undersampling | Address class imbalance in fertility datasets | SMOTE improved model sensitivity to rare fertility outcomes [28] |
| Validation Methods | k-Fold Cross-Validation, Bootstrapping | Robust performance estimation and overfitting prevention | 5-fold CV provided reliable accuracy estimates for fertility models [28] |
| Visualization Tools | SHAP summary plots, dependence plots, force plots | Communicate model behavior to clinical audiences | SHAP visualizations highlighted sedentary lifestyle impact [28] [20] |
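SMOTE creates synthetic minority samples by interpolating between a minority sample and one of its nearest minority-class neighbors. The sketch below is a simplified variant with a hypothetical function name and made-up data; in practice `imblearn.over_sampling.SMOTE(...).fit_resample(X_train, y_train)` would be used, on the training split only. Interpolation assumes continuous features. Most UCI Fertility attributes are binary or categorical, so interpolated values would be fractional there, and a mixed-type variant such as imbalanced-learn's `SMOTENC` is the more appropriate choice.

```python
import random


def smote(minority, n_new, k=5, seed=0):
    """Simplified SMOTE: each synthetic point lies on the segment between a random
    minority sample and one of its k nearest minority neighbours (Euclidean)."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    k = min(k, len(minority) - 1)
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        idx = rng.randrange(len(minority))
        base = minority[idx]
        others = [row for j, row in enumerate(minority) if j != idx]
        # Sort the other minority samples by squared Euclidean distance to `base`.
        others.sort(key=lambda row: sum((a - b) ** 2 for a, b in zip(base, row)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # uniform in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic


# Hypothetical scaled continuous features (e.g. age, sitting hours) for minority cases.
altered = [[0.20, 0.80], [0.25, 0.90], [0.30, 0.70], [0.10, 0.95]]
new_rows = smote(altered, n_new=6, k=2)
print(len(new_rows), new_rows[0])
```

Every synthetic point lies inside the region spanned by real minority cases, which is why SMOTE adds variety without extrapolating beyond observed values.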

Case Studies: SHAP-Enabled Transparency in Male Fertility AI

Lifestyle Factor Impact Analysis

A 2023 comprehensive study utilizing seven industry-standard ML models for male fertility detection demonstrated SHAP's capability to identify and quantify the impact of modifiable lifestyle factors on fertility risk [28]. The Random Forest model, which achieved optimal performance (90.47% accuracy, 99.98% AUC), was extensively analyzed using SHAP, revealing:

- Sedentary Behavior: Consistently identified as a high-impact factor, with prolonged sitting (>4 hours daily) significantly associated with higher proportions of immotile sperm

- Environmental Exposures: Occupational and environmental factors including air pollutants and heavy metals demonstrated substantial negative impact on semen quality

- Psychological Stress: Emerged as a significant contributor, with SHAP values quantifying its relative importance alongside biological parameters

The SHAP explanations provided biological plausibility to model predictions, enabling clinicians to understand not just the prediction but the reasoning behind it, significantly enhancing trust and clinical actionability [28].

Clinical Parameter Interpretation in Complex Cases

Research incorporating SHAP-based explanation of male fertility models has demonstrated particular utility in complex clinical scenarios where multiple factors interact. In these contexts, SHAP force plots visually communicate how different factors push model predictions toward normal or altered fertility classifications for individual patients [28] [20]. This granular interpretation capability:

- Supports personalized intervention planning by identifying dominant risk factors for specific individuals

- Enhances clinician confidence in AI recommendations by providing transparent rationale

- Facilitates patient education and shared decision-making through visual explanation of contributing factors
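A force plot starts from a base value (the expected model output) and shows each feature pushing the prediction up or down until it reaches the model's output for that patient. The sketch below computes those pushes as exact Shapley values by brute-force enumeration of feature coalitions, for a hypothetical three-feature log-odds risk score with one sitting-by-stress interaction. Two simplifications are assumptions of this sketch: features outside a coalition are set to a single reference patient (the `shap` library instead averages over a background dataset), and enumeration costs 2^M model calls, which is why `shap.TreeExplainer` relies on a tree-specific algorithm for forests and boosted trees.

```python
from itertools import combinations
from math import factorial

FEATURES = ["sitting_hours", "smoking", "high_stress"]  # hypothetical features

def model(x):
    """Hypothetical risk score (log-odds) with a sitting x stress interaction."""
    sitting, smoking, stress = x
    return -2.0 + 0.3 * sitting + 0.8 * smoking + 0.5 * stress + 0.1 * sitting * stress

def exact_shapley(f, x, baseline):
    """Exact Shapley values by enumerating every coalition of the other features.

    Features outside a coalition take their baseline value, so the values sum to
    f(x) - f(baseline) (the additivity a force plot visualizes).
    """
    n = len(x)

    def value(coalition):
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

patient = [6, 1, 1]    # hypothetical patient
reference = [2, 0, 0]  # hypothetical reference profile
phi = exact_shapley(model, patient, reference)

base_value = model(reference)
print(f"base value {base_value:+.2f}")
for name, contribution in zip(FEATURES, phi):
    print(f"{name:14s} pushes {contribution:+.2f}")
print(f"prediction {base_value + sum(phi):+.2f} (model output {model(patient):+.2f})")
```

The interaction term is split between `sitting_hours` and `high_stress` rather than being assigned to either one, which is the behavior clinicians should expect when reading force plots for correlated or interacting risk factors.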

Implementation Framework: Integrating SHAP-Explained AI into Clinical Workflows

Successfully integrating SHAP-explained AI into male fertility clinical practice requires a systematic approach:

Figure 2: Clinical Integration Framework for SHAP-Explained AI

Key implementation considerations include:

- Workflow Integration: SHAP explanations should be seamlessly incorporated into existing clinical documentation systems with intuitive visualization

- Clinician Training: Comprehensive education on interpreting SHAP outputs and understanding their clinical implications

- Validation Protocols: Ongoing monitoring of model performance and explanation accuracy in real-world clinical settings

- Feedback Mechanisms: Structured processes for clinician feedback on explanation utility and accuracy, enabling continuous refinement

Future Directions: Advancing SHAP Methodology in Male Fertility Research

The application of SHAP explanations in male fertility AI continues to evolve, with several promising research directions emerging:

- Longitudinal Explanation Development: Adapting SHAP methodologies to handle temporal patterns in fertility data, enabling explanation of how risk factors evolve over time

- Multimodal Data Integration: Extending SHAP approaches to incorporate diverse data types including genomic, proteomic, and imaging information within unified explanation frameworks

- Standardized Evaluation Metrics: Developing validated metrics for assessing explanation quality and clinical utility in fertility-specific contexts

- Regulatory Science Advancement: Establishing standardized protocols for SHAP-based model explanation that meet regulatory requirements for clinical AI validation

The clinical need for transparency in AI-powered male fertility diagnostics is both pressing and addressable through rigorous implementation of SHAP-based explanation methodologies. As AI adoption in healthcare accelerates—with healthcare organizations now implementing domain-specific AI tools at more than twice the rate of the broader economy [31] [32]—the imperative for transparent, interpretable systems intensifies correspondingly.

In male fertility care, where diagnostic and treatment decisions carry profound personal and societal implications, SHAP explanations bridge the critical trust gap between algorithmic performance and clinical adoption. By making visible the reasoning behind AI recommendations, SHAP empowers clinicians to understand, validate, and appropriately act upon AI insights, transforming black-box algorithms into collaborative clinical tools.

The continuing evolution of SHAP methodologies and their integration into clinical workflows promises to accelerate the responsible adoption of AI in reproductive medicine, ultimately advancing both the science and practice of male fertility care while maintaining the essential human values of trust, transparency, and shared decision-making.

Implementing SHAP with Industry-Standard AI Models for Fertility Prediction

Selecting and Training Core Machine Learning Algorithms (RF, XGBoost, SVM, ANN)

The application of artificial intelligence (AI) in male infertility represents a paradigm shift in reproductive medicine. Male factors contribute to 20-30% of infertility cases, yet traditional diagnostic methods face limitations in accuracy and consistency due to their reliance on manual assessment and subjective interpretation [33]. Machine learning (ML) algorithms are poised to revolutionize this field by enhancing diagnostic precision, predicting treatment outcomes, and ultimately improving success rates for in vitro fertilization (IVF) procedures.

The integration of ML in male fertility research has surged since 2021, with studies demonstrating promising results across various applications including sperm morphology analysis, motility assessment, and prediction of successful sperm retrieval in non-obstructive azoospermia (NOA) cases [33]. However, the transition of these models from research tools to clinical assets requires not only high predictive performance but also transparency and interpretability. This is particularly critical in healthcare domains like fertility treatment, where understanding the rationale behind a model's prediction is essential for clinical adoption and trust [5].

Explainable AI (XAI) techniques, particularly SHAP (SHapley Additive exPlanations), have emerged as vital tools for demystifying complex ML models. SHAP provides a unified framework for interpreting model outputs by quantifying the contribution of each input feature to individual predictions [34] [35]. This capability is invaluable for fertility researchers and clinicians who need to verify that models are leveraging clinically relevant factors in their decision-making process rather than spurious correlations in the data.
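In the standard SHAP formulation, the "additive" part means the model output for a patient x is decomposed into a base value plus one contribution per feature, and each contribution is the Shapley value of that feature. Here M is the number of features, F the full feature set, φ₀ the expected model output, and f_S(x) the model output when only the features in S are known (in practice approximated by marginalizing the remaining features over background data).

```latex
f(x) = \phi_0 + \sum_{i=1}^{M} \phi_i,
\qquad
\phi_i = \sum_{S \subseteq F \setminus \{i\}}
  \frac{|S|!\,(M - |S| - 1)!}{M!}
  \bigl[ f_{S \cup \{i\}}(x) - f_S(x) \bigr]
```

Each φᵢ is therefore a weighted average of how much adding feature i changes the prediction, taken over every subset of the other features.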

This technical guide provides a comprehensive framework for selecting, training, and interpreting four core ML algorithms—Random Forest (RF), XGBoost, Support Vector Machines (SVM), and Artificial Neural Networks (ANN)—within the context of male fertility research, with special emphasis on SHAP-based model explanation and validation.

Algorithm Fundamentals and Male Fertility Applications

Core Algorithm Theoretical Foundations

The effective application of ML in male fertility research requires a solid understanding of the underlying algorithms and their suitability for different types of fertility-related prediction tasks.

Random Forest (RF) is an ensemble method that constructs multiple decision trees during training and outputs the mode of the classes (classification) or mean prediction (regression) of the individual trees [5]. RF introduces randomness through bagging (bootstrap aggregating) and random feature selection, which helps mitigate overfitting—a common challenge with medical datasets that often have limited samples. For male fertility applications, this robustness to overfitting is particularly valuable when working with relatively small patient cohorts.
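The two sources of randomness named above can be illustrated without training any trees. The sketch below, using a hypothetical cohort of 200 patients and 9 features, draws bootstrap samples and random feature subsets of size √p. It shows that each tree sees only about 63% of distinct patients (1 − 1/e in the limit), leaving roughly a third out-of-bag for internal validation. For brevity it draws one feature subset per tree; Breiman's Random Forest and scikit-learn redraw the subset at every split.

```python
import numpy as np

rng = np.random.default_rng(0)
n_patients, n_features, n_trees = 200, 9, 500  # hypothetical cohort size

unique_fractions = []
feature_subsets = []
for _ in range(n_trees):
    # Randomness 1 (bagging): sample patients with replacement.
    idx = rng.integers(0, n_patients, size=n_patients)
    unique_fractions.append(len(np.unique(idx)) / n_patients)
    # Randomness 2: consider only sqrt(p) randomly chosen features
    # (drawn once per tree here; real RF implementations redraw per split).
    k = int(np.sqrt(n_features))
    feature_subsets.append(rng.choice(n_features, size=k, replace=False))

mean_in_bag = float(np.mean(unique_fractions))
print(f"mean fraction of distinct patients per tree: {mean_in_bag:.3f}")
print(f"theoretical value for n={n_patients}: {1 - (1 - 1 / n_patients) ** n_patients:.3f}")
```

Because each tree is trained on a different subsample with a different view of the features, individual trees overfit in different ways and averaging them reduces variance, which is the property that makes RF attractive for small fertility cohorts.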

XGBoost (Extreme Gradient Boosting) is an advanced implementation of gradient boosted decision trees that sequentially builds trees, with each new tree correcting errors made by previous ones [12]. XGBoost incorporates regularization techniques to control model complexity, enhancing generalization performance. This algorithm has demonstrated exceptional performance in various biomedical prediction tasks, including male fertility detection where it achieved 93.22% mean accuracy with five-fold cross-validation in recent studies [5].
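The sequential error-correcting idea can be seen in a minimal gradient-boosting loop. This sketch is plain squared-error gradient boosting with one-split trees (stumps) on a single synthetic feature, not XGBoost itself: XGBoost additionally uses second-order gradient information, explicit regularization of leaf weights, and deeper multi-feature trees. `learning_rate` plays the same shrinkage role as the XGBoost parameter of that name.

```python
import numpy as np

def fit_stump(x, r):
    """Least-squares stump on a 1-D feature: one threshold, a constant per side."""
    best = None
    for t in np.unique(x)[:-1]:  # skip the max value so both sides are non-empty
        left = x <= t
        lv, rv = r[left].mean(), r[~left].mean()
        sse = ((r[left] - lv) ** 2).sum() + ((r[~left] - rv) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, t, lv, rv)
    _, t, lv, rv = best
    return lambda z: np.where(z <= t, lv, rv)

def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Each new stump is fitted to the residuals left by all previous stumps."""
    base = float(y.mean())
    pred = np.full(len(y), base, dtype=float)
    stumps, losses = [], []
    for _ in range(n_rounds):
        residuals = y - pred  # negative gradient of 0.5 * (y - pred)^2
        stump = fit_stump(x, residuals)
        pred = pred + learning_rate * stump(x)  # shrunken correction
        stumps.append(stump)
        losses.append(float(((y - pred) ** 2).mean()))
    return base, stumps, losses

def predict(base, stumps, x_new, learning_rate=0.1):
    pred = np.full(len(x_new), base, dtype=float)
    for stump in stumps:
        pred = pred + learning_rate * stump(x_new)
    return pred

x = np.linspace(0.0, 10.0, 60)          # synthetic feature
y = np.sin(x) + 0.1 * x                 # synthetic target
base, stumps, losses = gradient_boost(x, y)
print(f"training MSE: round 1 = {losses[0]:.4f}, round {len(losses)} = {losses[-1]:.4f}")
```

A smaller `learning_rate` makes each correction more conservative, so more rounds (`n_estimators`) are needed; this trade-off is why the two are usually tuned together.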

Support Vector Machines (SVM) identify an optimal hyperplane that maximizes the margin between different classes in a high-dimensional feature space [33]. Through the use of kernel functions, SVM can effectively handle non-linear decision boundaries without explicit feature transformation. In male fertility research, SVM has been applied to sperm analysis tasks, achieving 89.9% accuracy in sperm motility classification [33].
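The kernel trick replaces explicit feature transformation with pairwise similarities. The sketch below builds the radial basis function (RBF) kernel matrix, K(xᵢ, xⱼ) = exp(−γ‖xᵢ − xⱼ‖²), and computes γ the way scikit-learn's `SVC` does for `gamma="scale"`, namely 1 / (n_features × X.var()). The input is synthetic standardized data standing in for patient features.

```python
import numpy as np

def gamma_scale(X):
    """gamma="scale" as documented for scikit-learn's SVC: 1 / (n_features * X.var())."""
    return 1.0 / (X.shape[1] * X.var())

def rbf_kernel_matrix(X, gamma):
    """K[i, j] = exp(-gamma * ||x_i - x_j||^2), computed without explicit loops."""
    sq_norms = (X ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))  # clip tiny negative rounding errors

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 4))  # synthetic: 8 patients, 4 standardized features
g = gamma_scale(X)
K = rbf_kernel_matrix(X, g)
print(f"gamma = {g:.3f}; K[0, 1:4] = {np.round(K[0, 1:4], 3)}")
```

Large γ makes similarity decay quickly with distance (flexible, overfitting-prone boundaries); small γ yields smoother boundaries. This is why γ and `C` are searched jointly in Table 1.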

Artificial Neural Networks (ANN) are composed of interconnected layers of nodes (neurons) that transform input data through non-linear activation functions [36]. Deep learning architectures, including multi-layer perceptrons (MLP), can learn hierarchical representations of complex patterns in data. In male fertility, ANN models have demonstrated 90% accuracy for sperm concentration prediction [5], leveraging their capacity to model intricate relationships in high-dimensional biomedical data.
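A multi-layer perceptron for a binary fertility outcome reduces to repeated affine transforms with non-linear activations. The sketch below is a forward pass only, in NumPy, with ReLU hidden layers and a sigmoid output; the 9-input, 64-32-1 layout is a hypothetical choice within the ranges of Table 1, and training (backpropagation, dropout, early stopping) is deliberately omitted.

```python
import numpy as np

def init_mlp(layer_sizes, rng):
    """He-initialized weights and zero biases for a fully connected network."""
    params = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        W = rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out))
        b = np.zeros(n_out)
        params.append((W, b))
    return params

def forward(params, X):
    """ReLU hidden layers, then a sigmoid output interpreted as P(altered fertility)."""
    h = X
    for W, b in params[:-1]:
        h = np.maximum(0.0, h @ W + b)  # ReLU non-linearity
    W, b = params[-1]
    logits = h @ W + b
    return 1.0 / (1.0 + np.exp(-logits))

rng = np.random.default_rng(0)
params = init_mlp([9, 64, 32, 1], rng)       # hypothetical architecture
X = rng.normal(size=(5, 9))                  # 5 synthetic patients, 9 features
probs = forward(params, X)
print(np.round(probs.ravel(), 3))
```

Without the non-linear activations the stacked layers would collapse into a single linear model, which is why the activation choice appears as a hyperparameter.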

Algorithm Selection Guidance for Male Fertility Tasks

Different ML algorithms offer distinct advantages for specific male fertility applications:

- For small to medium-sized datasets (n < 1000), SVM and RF often perform well, as they are less prone to overfitting with limited samples [5].

- For tabular data with mixed feature types (common in fertility patient records), tree-based methods (RF, XGBoost) typically outperform other algorithms, because their threshold-based splits are insensitive to feature scaling and cope with heterogeneous inputs without extensive preprocessing [5].

- For high-dimensional data such as sperm morphology images, convolutional neural network (CNN) architectures, a specialized class of ANN, are preferable for their proven capability in image analysis [33] [36].

- When model interpretability is paramount, RF and XGBoost paired with SHAP analysis offer an optimal balance between performance and explainability [5].

Experimental Design and Performance Benchmarking

Data Preparation Protocols for Male Fertility Research

Robust data preprocessing is foundational to developing reliable ML models for male fertility prediction. The unique characteristics of fertility-related datasets necessitate specialized handling approaches:

Data Collection and Annotation: Male fertility datasets typically comprise clinical parameters (age, BMI, medical history), lifestyle factors (smoking, alcohol consumption, sedentary behavior), environmental exposures, and semen analysis parameters (concentration, motility, morphology) [5]. Additional specialized measurements may include sperm DNA fragmentation index, hormonal profiles, and genetic markers. Establishing standardized protocols for data collection across multiple centers is essential for ensuring dataset consistency and model generalizability [33].

Addressing Class Imbalance: Male fertility datasets often exhibit significant class imbalance, with normal fertility cases outnumbering pathological cases or vice versa. This imbalance can severely impact model performance, particularly for minority classes. Effective strategies include:

- Synthetic Minority Oversampling Technique (SMOTE): Generates synthetic samples for the minority class to balance class distribution [5].

- Combined Sampling Approaches: Integrating both oversampling of minority classes and undersampling of majority classes can optimize performance [5].

- Algorithm-Specific Solutions: Utilizing class weighting parameters in algorithms like RF, XGBoost, and SVM to assign higher penalty costs for misclassifying minority class samples.
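Both the oversampling and the weighting strategies reduce to short computations. The sketch below is a from-scratch SMOTE (each synthetic point lies on the segment between a minority sample and one of its k nearest minority neighbors) plus the `class_weight="balanced"` formula used by scikit-learn, n_samples / (n_classes × class_count). In practice a maintained implementation such as `SMOTE` from the imbalanced-learn package would normally be used, and oversampling should be applied only to training folds so that synthetic points never leak into validation data. The 88/12 class split is a hypothetical example.

```python
import numpy as np

def smote(X_min, n_synthetic, k=5, rng=None):
    """Minimal SMOTE: interpolate between minority samples and their k nearest minority neighbors."""
    rng = np.random.default_rng() if rng is None else rng
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)                  # a sample is not its own neighbor
    neighbors = np.argsort(dists, axis=1)[:, :k]     # requires k <= len(X_min) - 1
    base = rng.integers(0, len(X_min), size=n_synthetic)
    picks = neighbors[base, rng.integers(0, k, size=n_synthetic)]
    gap = rng.random((n_synthetic, 1))               # position along each segment, in [0, 1)
    return X_min[base] + gap * (X_min[picks] - X_min[base])

def balanced_class_weights(y):
    """Same formula as scikit-learn's class_weight="balanced"."""
    classes, counts = np.unique(y, return_counts=True)
    weights = len(y) / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))

rng = np.random.default_rng(0)
X_min = rng.normal(size=(12, 3))                     # 12 hypothetical minority patients
synthetic = smote(X_min, n_synthetic=76, k=5, rng=rng)   # 12 + 76 = 88, matching the majority
y = np.array([0] * 88 + [1] * 12)
print(synthetic.shape, balanced_class_weights(y))
```

Oversampling changes the data the model sees, while class weighting changes only the loss; the latter leaves the original patients untouched, which can make SHAP explanations easier to relate back to real cases.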

Feature Engineering Considerations: Domain-specific feature engineering enhances model performance by incorporating clinical expertise:

- Creating composite indices that combine related semen parameters

- Developing temporal features for longitudinal fertility assessments

- Incorporating interaction terms between lifestyle factors and clinical measurements
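The three kinds of engineered features above can each be a one-line function. In the sketch below, the composite index follows one commonly used variant of total motile sperm count (volume × concentration × fraction progressively motile; some laboratories use total rather than progressive motility), while the interaction term and the temporal feature use hypothetical variable names chosen only for illustration.

```python
def total_motile_sperm_count(volume_ml, concentration_m_per_ml, progressive_motility_pct):
    """Composite index in millions: volume x concentration x fraction progressively motile."""
    return volume_ml * concentration_m_per_ml * progressive_motility_pct / 100.0

def sitting_bmi_interaction(sitting_hours_per_day, bmi):
    """Hypothetical lifestyle x clinical interaction term."""
    return sitting_hours_per_day * bmi

def monthly_change(earlier_value, later_value, months_between):
    """Hypothetical temporal feature: average change per month between two visits."""
    return (later_value - earlier_value) / months_between

print(total_motile_sperm_count(3.0, 20.0, 40.0))   # 3 mL, 20 M/mL, 40% progressive
print(sitting_bmi_interaction(6, 30.0))
print(monthly_change(15.0, 20.0, 6.0))              # concentration change, M/mL per month
```

Engineered features also appear as named columns in SHAP plots, so clinically meaningful constructions tend to produce explanations that are easier to interpret than raw inputs.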

Data Partitioning Strategy: Implement stratified splitting to preserve class distribution across training, validation, and test sets. Given the typically limited sample sizes in fertility studies, nested cross-validation approaches provide more reliable performance estimation [5].
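Nested cross-validation keeps hyperparameter tuning (inner loop) separate from performance estimation (outer loop), so the reported score is not inflated by the search. The sketch below uses scikit-learn with a synthetic, imbalanced stand-in for a small fertility cohort; the dataset, grid, fold counts, and choice of ROC AUC are assumptions for illustration. Stratified folds preserve the minority-class proportion in every split.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

# Synthetic stand-in: 100 patients, 9 features, roughly 88% / 12% class split.
X, y = make_classification(n_samples=100, n_features=9, n_informative=4,
                           weights=[0.88, 0.12], random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # evaluation

search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, class_weight="balanced", random_state=0),
    param_grid={"max_depth": [5, 10], "min_samples_leaf": [1, 3]},
    cv=inner_cv,
    scoring="roc_auc",
)

# Each outer fold re-runs the full inner search on its own training portion only.
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
print(f"nested CV ROC AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

With very few minority cases per fold, the spread across outer folds is often as informative as the mean and is worth reporting alongside it.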

Model Training and Hyperparameter Optimization

Systematic model training and hyperparameter tuning are critical for maximizing algorithmic performance:

Table 1: Optimal Hyperparameter Configurations for Male Fertility Prediction

| Algorithm | Key Hyperparameters | Recommended Ranges for Fertility Data | Optimization Technique |
|---|---|---|---|
| Random Forest | n_estimators, max_depth, min_samples_split, min_samples_leaf | n_estimators: 100-500; max_depth: 5-15; min_samples_split: 2-10; min_samples_leaf: 1-5 | Bayesian Optimization |
| XGBoost | learning_rate, n_estimators, max_depth, subsample, colsample_bytree | learning_rate: 0.01-0.3; n_estimators: 100-500; max_depth: 3-10; subsample: 0.6-1.0 | Bayesian Optimization |
| SVM | C, gamma, kernel | C: 0.1-100; gamma: scale, auto, or 0.001-1.0; kernel: rbf, linear | Grid Search |
| ANN | hidden_layers, neurons_per_layer, activation, dropout, learning_rate | hidden_layers: 1-3; neurons_per_layer: 32-256; activation: relu; dropout: 0.2-0.5; learning_rate: 0.0001-0.01 | Random Search |
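Table 1 lists random search for the ANN. A random-search sampler only needs a description of each parameter's range and scale; the sketch below transcribes the ANN ranges from Table 1 and assumes a log-uniform scale for the learning rate and uniform integer or float draws elsewhere (those scale choices are assumptions, not part of the table). Each sampled configuration would then be trained and scored, for example in the inner loop of a nested cross-validation. Bayesian optimization, listed for RF and XGBoost, would replace these independent draws with proposals guided by a surrogate model of past results.

```python
import math
import random

# ANN search space from Table 1; the per-parameter scales are assumptions.
ANN_SPACE = {
    "hidden_layers": ("int", 1, 3),
    "neurons_per_layer": ("int", 32, 256),
    "activation": ("choice", ("relu",)),
    "dropout": ("float", 0.2, 0.5),
    "learning_rate": ("log", 0.0001, 0.01),
}

def sample_config(space, rng):
    """Draw one configuration independently from each parameter's range."""
    config = {}
    for name, (kind, *args) in space.items():
        if kind == "int":
            low, high = args
            config[name] = rng.randint(low, high)  # inclusive bounds
        elif kind == "float":
            low, high = args
            config[name] = rng.uniform(low, high)
        elif kind == "log":
            low, high = args  # uniform in log space, so each decade is equally likely
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        elif kind == "choice":
            config[name] = rng.choice(args[0])
        else:
            raise ValueError(f"unknown kind {kind!r} for {name}")
    return config

rng = random.Random(42)
candidates = [sample_config(ANN_SPACE, rng) for _ in range(50)]
print(candidates[0])
```

Sampling learning rates in log space spends as many trials between 0.0001 and 0.001 as between 0.001 and 0.01, which a plain uniform draw over 0.0001-0.01 would not.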

Training Protocol Specifications:

- Implement k-fold cross-validation (typically k=5 or k=10) to mitigate overfitting and provide robust performance estimates [5].

- Utilize early stopping for gradient-based methods (XGBoost, ANN) to prevent overfitting and reduce training time.
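Early stopping watches a validation metric and halts training once it has failed to improve for a set number of rounds (the patience), keeping the best round seen so far. The sketch below isolates that rule as a framework-independent function applied to a made-up sequence of validation losses; XGBoost's `early_stopping_rounds` and the `patience` argument of Keras's `EarlyStopping` callback expose comparable settings, though their exact bookkeeping may differ from this simplified version.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a patience-based early-stopping rule.

    An epoch counts as an improvement only if its loss beats the best so far by
    more than min_delta; training stops once `patience` epochs pass without one.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1  # ran out of epochs without triggering

# Made-up validation losses: improvement stalls after epoch 2.
losses = [0.70, 0.60, 0.55, 0.56, 0.57, 0.58, 0.50]
best, stop = early_stopping_epoch(losses, patience=3)
print(f"best epoch {best}, stopped at epoch {stop}")
```

In this example the late improvement at epoch 6 is never seen, which illustrates the trade-off: a short patience saves computation but may stop before a genuine later gain.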