From Data to Clinic: A 2025 Guide to Machine Learning for Predictive Biomarker Validation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the application of machine learning (ML) in the validation of predictive biomarkers. It covers the foundational principles of biomarkers and their role in precision medicine, explores advanced ML methodologies for biomarker analysis, addresses critical challenges and optimization strategies, and establishes robust frameworks for clinical validation and model comparison. By synthesizing the latest 2025 research and trends, this resource aims to bridge the gap between computational discovery and clinically actionable, validated biomarkers, ultimately accelerating the development of personalized therapeutics.

The New Frontier: Defining Predictive Biomarkers and the Machine Learning Revolution

Biomarkers, defined as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes or responses to an exposure or intervention," form the cornerstone of modern diagnostic and therapeutic development [1]. These measurable indicators appear in blood, tissue, or other biological samples, providing crucial data about normal processes, disease states, and treatment responses [2]. The joint FDA-NIH Biomarkers, EndpointS, and other Tools (BEST) resource has established standardized definitions to create a shared understanding across research and clinical practice, recognizing that confusion about fundamental definitions and concepts has historically slowed progress in diagnostic and therapeutic technology development [1].

The evolution of biomarkers represents a journey from single-molecule measurements to complex multi-omics profiles, reshaping how researchers approach disease understanding and drug development. This transformation is particularly evident in complex fields like chronic disease and nutrition, where single biomarkers often fail to capture disease complexity [1]. The emergence of large-scale biobanks integrating electronic health records with multi-omics data has created unprecedented opportunities to discover novel biomarkers and develop predictive algorithms for human disease [3]. This guide provides a comprehensive comparison of traditional and modern biomarker approaches, examining their performance characteristics, validation methodologies, and applications in contemporary research and drug development.

Traditional Biomarker Classifications and Applications

Fundamental Biomarker Categories

Traditional biomarker classification systems categorize these molecular indicators based on their specific clinical applications and contextual use. The BEST resource defines several critical subtypes with distinct purposes [1]:

- Diagnostic biomarkers detect or confirm the presence of a disease or condition of interest, or identify individuals with a disease subtype. Examples include troponin for myocardial infarction and PSA for prostate cancer screening [1] [2].

- Monitoring biomarkers are measured serially to assess the status of a disease or medical condition, such as hemoglobin A1c in diabetes management or CD4 counts in HIV infection monitoring [1].

- Pharmacodynamic/response biomarkers indicate that a biological response has occurred in an individual who has been exposed to a medical product or environmental agent.

- Predictive biomarkers help identify individuals who are more likely to experience a favorable or unfavorable effect from a specific medical product, enabling targeted therapies.

- Prognostic biomarkers forecast the likelihood of future clinical events, disease recurrence, or progression in patients with a specific medical condition.

- Safety biomarkers are measured before or after exposure to a medical product to indicate the likelihood, presence, or extent of toxicity as an adverse effect.

- Susceptibility/risk biomarkers indicate the potential for developing a disease or medical condition in individuals without clinically apparent disease.

A single biomarker may fulfill multiple roles across different contexts, but each specific use requires separate evidence development and validation [1]. This classification system enables healthcare teams to develop targeted, effective treatment strategies and provides a framework for regulatory evaluation [2].

Comparison of Traditional Biomarker Types

Table 1: Classification and Applications of Traditional Biomarker Types

| Biomarker Type | Primary Function | Clinical Context | Examples | Regulatory Considerations |
|---|---|---|---|---|
| Diagnostic | Detects or confirms disease presence | Identification of disease or subtype | Troponin (myocardial infarction), PSA (prostate cancer) | Must have very low false-positive rate for low-prevalence diseases requiring invasive follow-up [1] |
| Monitoring | Assesses disease status over time | Serial measurement of disease progression or treatment response | Hemoglobin A1c (diabetes), CD4 counts (HIV) | Optimal measurement intervals and clinical decision thresholds often require refinement [1] |
| Predictive | Identifies likely treatment responders | Patient stratification for targeted therapies | EGFR mutations (lung cancer), HER2 status (breast cancer) | Critical for enrichment strategies in clinical trials [2] |
| Prognostic | Forecasts disease course | Informs long-term treatment planning and patient counseling | Cancer staging, Oncotype DX recurrence score | Must be distinguished from predictive biomarkers for proper clinical application [1] |
| Safety | Indicates potential toxicity | Monitoring adverse effects of treatments | Liver enzymes for hepatotoxicity, QTc prolongation | Often used in early clinical development to identify dose-limiting toxicities [1] |

Validation Framework for Traditional Biomarkers

The validation of traditional biomarkers requires a rigorous, multi-step process specific to each condition of use. This process encompasses three interdependent components: analytical validation, qualification using an evidentiary assessment, and utilization [1]. Analytical validation ensures the biomarker can be measured accurately, reliably, and reproducibly through defined analytical methods. Qualification involves assessing the evidence linking the biomarker to a specific biological process or clinical endpoint. Utilization establishes the appropriateness of the biomarker for a specific context in drug development or regulatory decision-making.

The operating characteristics of biomarker assays vary considerably, creating challenges for clinical implementation. For example, the many troponin assays demonstrate substantial variability, especially at lower detection limits where misclassification can significantly impact medical care [1]. The advent of high-sensitivity troponin assays has enabled sophisticated diagnosis of small myocardial necrosis episodes but has simultaneously created new interpretation challenges when elevations occur at previously undetectable levels [1].

The Multi-Omics Revolution in Biomarker Discovery

Multi-Omics Technologies and Their Applications

Multi-omics strategies integrate large-scale, high-throughput analyses across multiple molecular layers, including genomics, transcriptomics, proteomics, metabolomics, and epigenomics [4]. This comprehensive approach provides unprecedented insights into cellular dynamics and facilitates biomarker identification crucial for cancer diagnosis, prognosis, and therapeutic decision-making [4]. Landmark projects such as The Cancer Genome Atlas (TCGA) Pan-Cancer Atlas, the Pan-Cancer Analysis of Whole Genomes (PCAWG), MSK-IMPACT, and the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have demonstrated the utility of multi-omics in uncovering cancer biology and clinically actionable biomarkers [4].

Each omics layer provides distinct biological insights:

- Genomics investigates alterations at the DNA level using sequencing technologies to identify copy number variations, genetic mutations, and single nucleotide polymorphisms [4]. The tumor mutational burden (TMB), validated in the KEYNOTE-158 trial, has been approved by the FDA as a predictive biomarker for pembrolizumab treatment across solid tumors [4].

- Transcriptomics explores RNA expression using microarrays and RNA sequencing, encompassing mRNAs and noncoding RNAs [4]. Clinically validated gene-expression signatures such as Oncotype DX (21-gene) and MammaPrint (70-gene) have demonstrated utility in tailoring adjuvant chemotherapy decisions in breast cancer patients [4].

- Proteomics investigates protein abundance, modifications, and interactions using high-throughput methods including mass spectrometry [4]. CPTAC studies of ovarian and breast cancers showed that proteomics can identify functional subtypes and reveal druggable vulnerabilities missed by genomics alone [4].

- Metabolomics examines cellular metabolites, including small molecules, carbohydrates, lipids, and nucleosides [4]. In IDH1/2-mutant gliomas, the oncometabolite 2-hydroxyglutarate (2-HG) functions as both a diagnostic and mechanistic biomarker [4].

- Epigenomics investigates DNA and histone modifications, including DNA methylation [4]. MGMT promoter methylation serves as a classic clinical biomarker predicting benefit from temozolomide chemotherapy in glioblastoma [4].

Comparative Performance of Multi-Omics Platforms

Table 2: Performance Characteristics of Multi-Omics Technologies in Biomarker Discovery

| Omics Layer | Analytical Platforms | Key Biomarker Applications | Clinical Validation Examples | Strengths | Limitations |
|---|---|---|---|---|---|
| Genomics | Whole exome sequencing, Whole genome sequencing | Tumor mutational burden, MSI status, BRCA mutations | FDA approval of TMB for pembrolizumab; ~37% of tumors harbor actionable alterations in MSK-IMPACT [4] | Comprehensive mutation profiling; established clinical utility | Does not capture functional protein or regulatory effects |
| Transcriptomics | RNA sequencing, Microarrays | Gene expression signatures, Fusion genes, Immune signatures | Oncotype DX (TAILORx trial), MammaPrint (MINDACT trial) for breast cancer chemotherapy decisions [4] | High sensitivity and cost-effectiveness; reflects active biological processes | mRNA levels may not correlate with protein abundance |
| Proteomics | Mass spectrometry, Liquid chromatography-MS | Protein abundance, Post-translational modifications, Pathway activation | CPTAC studies identifying functional subtypes in ovarian and breast cancers [4] | Directly measures functional effectors; post-translational modifications | Analytical complexity; dynamic range challenges |
| Metabolomics | LC-MS, GC-MS | Metabolic pathway alterations, Oncometabolites | 2-hydroxyglutarate in IDH-mutant gliomas; 10-metabolite plasma signature in gastric cancer [4] | Closest to phenotypic expression; dynamic response indicators | Complex sample preparation; database limitations |
| Epigenomics | Whole genome bisulfite sequencing, ChIP-seq | DNA methylation signatures, Histone modifications | MGMT promoter methylation in glioblastoma; multi-cancer early detection assays [4] | Stable markers; tissue-of-origin signatures | Tissue-specific patterns; complex data interpretation |

Experimental Protocols for Multi-Omics Integration

Multi-omics integration involves comprehensive analysis of data from various sources, offering more robust results for biomarker discovery. Two primary integration strategies have emerged [4]:

- Horizontal integration combines the same type of omics data from multiple studies or cohorts to increase statistical power and validate findings across diverse populations.

- Vertical integration simultaneously analyzes different omics layers from the same samples to build comprehensive molecular networks and identify cross-omics interactions.
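As a rough illustration of the two strategies, the sketch below stores each dataset as nested dictionaries. That layout, the function names, and the cohort, sample, and feature names are all hypothetical and are not taken from [4]. Real horizontal integration would also need batch-effect harmonization across cohorts, which is omitted here.

```python
def horizontal_integration(cohorts):
    """Pool one omics layer across cohorts, keeping features present in every sample.

    cohorts: {cohort_name: {sample_id: {feature: value}}}
    """
    feature_sets = [
        set(features)
        for samples in cohorts.values()
        for features in samples.values()
    ]
    shared = sorted(set.intersection(*feature_sets))
    return {
        f"{cohort}:{sample_id}": {f: features[f] for f in shared}
        for cohort, samples in cohorts.items()
        for sample_id, features in samples.items()
    }


def vertical_integration(layers):
    """Join different omics layers on the samples profiled in all of them.

    layers: {layer_name: {sample_id: {feature: value}}}
    """
    common = sorted(set.intersection(*(set(samples) for samples in layers.values())))
    return {
        sample_id: {
            f"{layer}.{feature}": value
            for layer, samples in layers.items()
            for feature, value in samples[sample_id].items()
        }
        for sample_id in common
    }


# Hypothetical toy data: one transcriptomic layer measured in two cohorts
rna_cohorts = {
    "cohort_A": {"s1": {"GENE1": 5.1, "GENE2": 0.3}},
    "cohort_B": {"s1": {"GENE1": 4.8, "GENE3": 1.2}},
}
pooled = horizontal_integration(rna_cohorts)

# Hypothetical toy data: two layers, only patient p1 has both
layers = {
    "rna": {"p1": {"GENE1": 5.1}, "p2": {"GENE1": 4.0}},
    "protein": {"p1": {"PROT1": 0.7}},
}
joined = vertical_integration(layers)
```

Horizontal pooling increases the number of samples but restricts the feature set to what every cohort measured. Vertical joining widens the feature set but keeps only samples profiled on every layer.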

The experimental workflow for multi-omics biomarker discovery typically includes [4]:

- Sample preparation using standardized protocols for nucleic acid, protein, and metabolite extraction

- Data generation across multiple analytical platforms

- Quality control and normalization within each omics dataset

- Data integration using computational methods

- Biomarker identification and validation in independent cohorts

Quality control steps are critical for each omics data type. For genomics and transcriptomics, this includes assessing sequencing depth, mapping rates, and batch effects. For proteomics, quality metrics encompass peptide identification confidence, protein inference, and quantification accuracy. Metabolomics requires evaluation of peak detection, alignment, and identification reliability [4].

Machine Learning and Computational Frameworks for Biomarker Validation

Advanced Machine Learning Approaches

Machine learning holds significant promise for accelerating biomarker discovery in clinical proteomics and other multi-omics fields, though its real-world impact remains limited by methodological pitfalls and unrealistic expectations [5]. Machine learning enhances biomarker discovery by integrating diverse and high-volume data types, such as genomics, transcriptomics, proteomics, metabolomics, imaging, and clinical records [6]. These approaches successfully identify diagnostic, prognostic, and predictive biomarkers across fields including oncology, infectious diseases, neurological disorders, and autoimmune diseases [6].

Key machine learning methodologies in biomarker discovery include [6]:

- Supervised learning trains predictive models on labeled datasets to classify disease status or predict clinical outcomes using techniques like support vector machines, random forests, and gradient boosting algorithms (XGBoost, LightGBM).

- Unsupervised learning explores unlabeled datasets to discover inherent structures or novel subgroupings without predefined outcomes using clustering methods (k-means, hierarchical clustering) and dimensionality reduction approaches (principal component analysis).

- Deep learning architectures, particularly convolutional neural networks (CNNs) and recurrent neural networks (RNNs), handle complex biomedical data. CNNs identify spatial patterns in imaging data, while RNNs capture temporal dynamics in longitudinal data.

The MILTON framework (machine learning with phenotype associations) exemplifies advanced machine learning applications, utilizing a range of biomarkers to predict 3,213 diseases in the UK Biobank [3]. This ensemble machine-learning framework leverages longitudinal health record data to predict incident disease cases undiagnosed at time of recruitment, largely outperforming available polygenic risk scores [3]. MILTON achieved AUC ≥ 0.7 for 1,091 disease codes, AUC ≥ 0.8 for 384 codes, and AUC ≥ 0.9 for 121 codes across all time-models and ancestries [3].
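For readers less familiar with the AUC thresholds quoted above, the following minimal sketch computes AUC as the fraction of case-control pairs in which the case receives the higher score. It is a generic illustration with made-up labels and scores, not MILTON's implementation, and the pairwise loop is only practical for small datasets.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random case outscores a random control.

    Ties between a case and a control count as half a win.
    """
    cases = [s for y, s in zip(labels, scores) if y == 1]
    controls = [s for y, s in zip(labels, scores) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for c in cases:
        for k in controls:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Hypothetical predictions: 3 cases, 4 controls
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]
auc = roc_auc(labels, scores)  # 11 of 12 case-control pairs are ordered correctly
```

An AUC of 0.5 corresponds to random ranking, so thresholds such as 0.7, 0.8, and 0.9 describe increasingly reliable separation of cases from controls.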

Experimental Protocol for Machine Learning Validation

Robust machine learning validation requires rigorous methodology to avoid common pitfalls such as overfitting, data leakage, and poor generalizability. A standardized protocol includes [5] [3]:

Feature Selection: Initial biomarker candidates are identified from multi-omics measurements. Dimensionality reduction techniques may be applied to address the high dimensionality typical of omics data.

Model Training: Using a training subset (typically 70-80% of data), models are trained with careful attention to avoiding overfitting through techniques like regularization and cross-validation.

Hyperparameter Tuning: Model parameters are optimized using validation sets or nested cross-validation to maximize performance while maintaining generalizability.

Performance Evaluation: Models are tested on held-out test sets using appropriate metrics including area under the curve (AUC), sensitivity, specificity, and positive predictive value.

External Validation: Ideally, models should be validated using completely independent cohorts to assess true generalizability across different populations and settings.
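The tuning and evaluation steps above can be sketched as nested cross-validation. In this minimal, standard-library example a k-nearest-neighbour classifier stands in for whatever model is actually used, and the data are synthetic. The classifier, the hyperparameter grid, the fold counts, and all function names are illustrative assumptions, not a protocol from [5] or [3].

```python
import random


def knn_predict(train_X, train_y, x, k):
    # Majority vote among the k nearest training samples (squared Euclidean distance)
    dists = sorted(
        (sum((a - b) ** 2 for a, b in zip(row, x)), label)
        for row, label in zip(train_X, train_y)
    )
    votes = sum(label for _, label in dists[:k])
    return 1 if 2 * votes > k else 0


def accuracy(train_idx, test_idx, X, y, k):
    tX = [X[i] for i in train_idx]
    ty = [y[i] for i in train_idx]
    hits = sum(knn_predict(tX, ty, X[i], k) == y[i] for i in test_idx)
    return hits / len(test_idx)


def k_folds(indices, n_folds):
    # Split an index list into n_folds disjoint (train, test) pairs
    folds = [indices[i::n_folds] for i in range(n_folds)]
    return [
        ([i for j, f in enumerate(folds) if j != t for i in f], folds[t])
        for t in range(n_folds)
    ]


def nested_cv(X, y, k_grid=(1, 3, 5), outer=5, inner=3, seed=0):
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    outer_scores = []
    for train_idx, test_idx in k_folds(idx, outer):
        # Inner loop: choose k using ONLY the outer-training samples
        def inner_score(k):
            splits = k_folds(train_idx, inner)
            return sum(accuracy(tr, va, X, y, k) for tr, va in splits) / inner

        best_k = max(k_grid, key=inner_score)
        # The outer test fold is used once, after tuning is finished
        outer_scores.append(accuracy(train_idx, test_idx, X, y, best_k))
    return outer_scores


# Synthetic data: one informative feature, one pure-noise feature
rng = random.Random(42)
X = [[rng.gauss(c, 1.0), rng.gauss(0.0, 1.0)] for c in (0.0, 2.5) for _ in range(30)]
y = [0] * 30 + [1] * 30
scores = nested_cv(X, y)
mean_accuracy = sum(scores) / len(scores)
```

Because hyperparameters are chosen without ever seeing the outer test fold, the outer scores estimate performance without the optimistic bias of tuning and testing on the same samples. Any feature selection or scaling would need to be fitted inside the same loops to avoid leakage.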

For clinical proteomics specifically, researchers caution against the uncritical application of complex models such as deep learning architectures, which often exacerbate the problems of small sample sizes while offering limited interpretability and negligible performance gains [5]. Instead, they advocate for realistic and responsible use of machine learning, grounded in rigorous study design, appropriate validation strategies, and transparent, reproducible modeling practices [5].

Biomarker Validation and Comparison Frameworks

Standardized statistical frameworks enable direct comparison of biomarker performance across modalities and measurement techniques. These frameworks operationalize specific criteria including precision in capturing change over time and clinical validity [7]. In Alzheimer's disease research, for example, ventricular volume and hippocampal volume showed the best precision in detecting change over time in both individuals with mild cognitive impairment and dementia [7].

The Biomarker Toolkit provides an evidence-based guideline to predict cancer biomarker success and guide development [8]. Developed through systematic literature review, expert interviews, and Delphi surveys, this validated checklist includes 129 attributes grouped into four main categories: rationale, clinical utility, analytical validity, and clinical validity [8]. Validation studies demonstrated that the total score generated by this toolkit significantly predicts biomarker implementation success in both breast and colorectal cancer [8].

Key validation criteria for biomarkers include [7] [8]:

- Analytical validity: Measures how accurately and reliably the biomarker can be measured, including sensitivity, specificity, reproducibility, and stability.

- Clinical validity: Assesses how accurately the biomarker identifies or predicts the clinical outcome of interest, including prognostic and predictive value.

- Clinical utility: Determines whether using the biomarker for decision-making leads to improved patient outcomes and whether the benefits outweigh any risks or costs.
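The accuracy measures behind clinical validity can be made concrete with a small calculation. The sketch below derives sensitivity, specificity, and predictive values from confusion-matrix counts, then shows how positive predictive value depends on disease prevalence. The counts and prevalence values are hypothetical, chosen only for illustration.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Standard accuracy measures from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),  # fraction of diseased correctly flagged
        "specificity": tn / (tn + fp),  # fraction of non-diseased correctly cleared
        "ppv": tp / (tp + fp),          # probability of disease given a positive test
        "npv": tn / (tn + fn),          # probability of no disease given a negative test
    }


def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Positive predictive value via Bayes' rule for a given prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical balanced study: 100 diseased, 100 non-diseased
metrics = diagnostic_metrics(tp=90, fp=10, tn=90, fn=10)

# Same assay applied where only 1% of tested individuals have the disease
ppv_balanced = ppv_at_prevalence(0.9, 0.9, 0.5)
ppv_rare = ppv_at_prevalence(0.9, 0.9, 0.01)
```

With 90% sensitivity and specificity, the positive predictive value falls from 0.9 in the balanced setting to roughly 0.08 at 1% prevalence, which is why a biomarker for a low-prevalence condition needs a very low false-positive rate.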

Visualization and Interpretation of Complex Biomarker Data

Advanced Visualization Techniques

Biomarker heatmaps with clustering analysis enable visualization of complex multi-dimensional biomarker data, helping to identify patterns or trends in relative abundance variations [9]. This approach is particularly valuable for interpreting high-temporal resolution biomarker data, such as monitoring storm-induced changes in fluvial particulate organic carbon composition [9]. The methodology involves:

- Data Scaling: Biomarker concentration data are converted to z-scores using autoscaling to avoid inadvertent weighting due to extreme high or low absolute concentrations.

- Heatmap Construction: Z-scores are visualized using color gradients, with red typically indicating higher-than-average concentrations and blue indicating lower-than-average concentrations.

- Hierarchical Clustering: Both rows and columns are reordered based on similarity in biomarker profiles, grouping similar samples and similar biomarkers together.

This visualization approach helps identify hidden patterns in complex biomarker data and generates hypotheses for follow-up analyses [9]. Compared to principal component analysis (PCA), biomarker heatmaps perform better in visualizing temporal changes of individual biomarkers while maintaining the ability to identify sample clusters [9].
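The scaling and clustering steps can be sketched with the standard library alone. The example below autoscales each biomarker to z-scores and then derives a row and column order with average-linkage agglomerative clustering on Euclidean distance. The linkage and distance choices, the function names, and the concentration values are assumptions made for illustration, since [9] is not quoted here on those details. Colour rendering is left to a plotting library.

```python
from statistics import mean, stdev


def autoscale(matrix):
    """Column-wise z-scores; rows are samples, columns are biomarkers.

    Assumes every biomarker varies across samples (non-zero standard deviation).
    """
    cols = list(zip(*matrix))
    mus = [mean(c) for c in cols]
    sds = [stdev(c) for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, mus, sds)] for row in matrix]


def euclid(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def cluster_order(rows):
    """Average-linkage agglomerative clustering; returns row indices in leaf order."""
    clusters = [[i] for i in range(len(rows))]

    def linkage(c1, c2):
        return mean(euclid(rows[i], rows[j]) for i in c1 for j in c2)

    while len(clusters) > 1:
        # Find and merge the two closest clusters
        a, b = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda p: linkage(clusters[p[0]], clusters[p[1]]),
        )
        merged = clusters[a] + clusters[b]
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)] + [merged]
    return clusters[0]


# Hypothetical concentrations: 4 samples x 3 biomarkers on very different scales
concentrations = [
    [1.0, 200.0, 0.5],
    [9.0, 800.0, 0.1],
    [1.2, 210.0, 0.6],
    [8.8, 790.0, 0.2],
]
z = autoscale(concentrations)
row_order = cluster_order(z)                               # similar samples become adjacent
col_order = cluster_order([list(c) for c in zip(*z)])      # similar biomarkers become adjacent
heatmap_matrix = [[z[r][c] for c in col_order] for r in row_order]
```

Without autoscaling, the second biomarker would dominate the distances simply because its absolute values are hundreds of times larger than the others.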

Visualizing Biomarker Classification and Validation Pathways

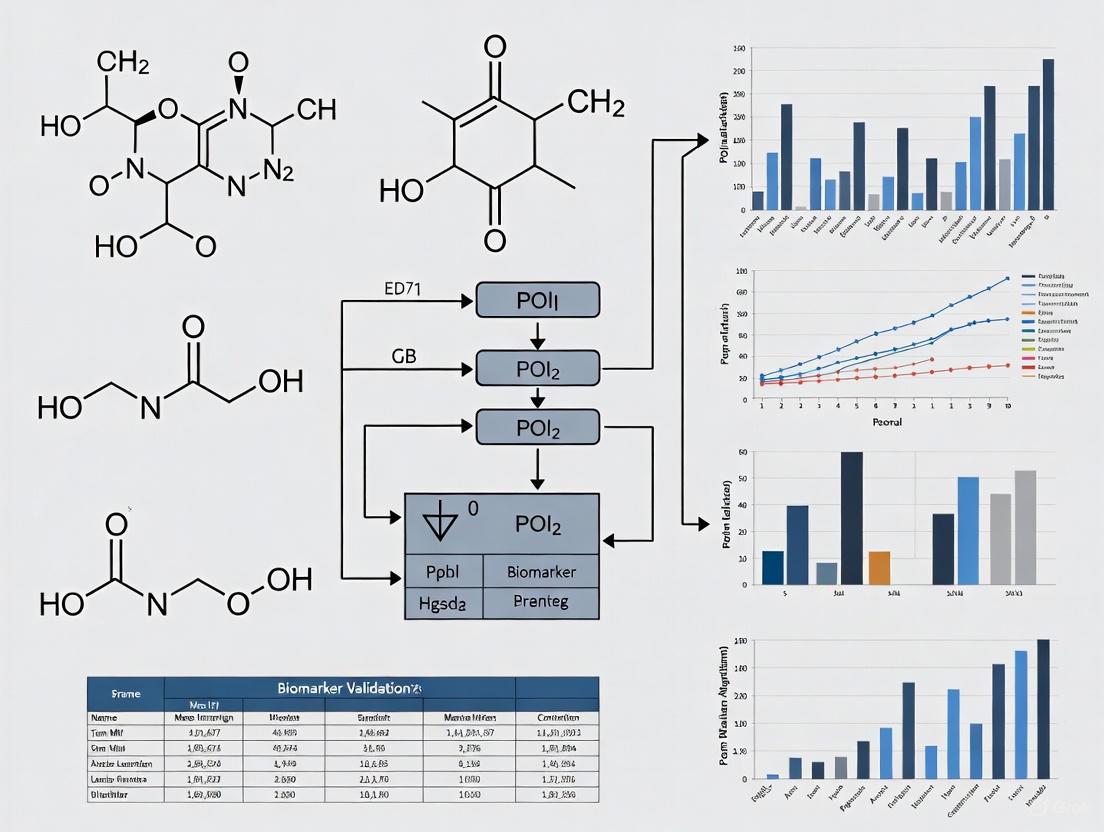

Diagram 1: Comprehensive Biomarker Discovery and Validation Workflow. This workflow illustrates the pathway from sample collection through multi-omics data generation, computational analysis, classification, and validation to clinical application.

Diagram 2: Multi-Omics Integration for Biomarker Discovery. This diagram shows how different molecular layers are derived from biological samples and integrated to identify comprehensive biomarker signatures.

Key Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Biomarker Discovery and Validation

| Category | Specific Tools/Reagents | Primary Function | Application Context | Considerations |
|---|---|---|---|---|
| Sample Preparation | Omni LH 96 homogenizer, Automated nucleic acid extractors | Standardized sample processing and nucleic acid extraction | Critical for reproducible multi-omics studies; reduces human error and processing variability [2] | Automation ensures consistent extraction across studies, reducing variability that compromises analyses [2] |
| Genomics Platforms | Next-generation sequencers (Illumina), Whole exome/genome kits | Comprehensive DNA mutation and variation profiling | Identification of genetic biomarkers, tumor mutational burden, copy number variations [4] | Library preparation consistency is crucial for comparative analyses; requires rigorous quality control metrics |
| Proteomics Reagents | Mass spectrometry systems, Antibody arrays, LC-MS platforms | Protein identification, quantification, and post-translational modification mapping | Discovery of protein biomarkers, pathway activation analysis, therapeutic target identification [4] [5] | Standardized protocols essential for cross-study comparisons; dynamic range limitations require consideration |
| Metabolomics Tools | LC-MS, GC-MS systems, Metabolite standards, Extraction kits | Comprehensive metabolite profiling and quantification | Identification of metabolic biomarkers, pathway analysis, therapeutic response monitoring [4] | Sample stability critical; comprehensive standards libraries needed for compound identification |
| Computational Resources | Multi-omics databases (TCGA, CPTAC), Machine learning libraries | Data integration, analysis, and biomarker model development | Multi-omics integration, biomarker signature identification, predictive model building [4] [3] | Data harmonization essential; computational expertise required for advanced machine learning applications |

The evolution from traditional single-molecule biomarkers to modern multi-omics profiles represents a paradigm shift in diagnostic and therapeutic development. While traditional biomarkers continue to provide critical clinical value in specific contexts, multi-omics approaches offer unprecedented comprehensive profiling of biological systems. The integration of machine learning and computational frameworks enables researchers to extract meaningful patterns from these complex datasets, accelerating biomarker discovery and validation.

Successful biomarker development requires rigorous attention to analytical validation, clinical validity, and demonstrated clinical utility. Frameworks like the Biomarker Toolkit provide evidence-based guidance for prioritizing biomarker development efforts [8]. As multi-omics technologies continue to advance and computational methods become more sophisticated, the biomarker landscape will increasingly embrace complex composite biomarkers and digital biomarkers derived from sensors and mobile technologies [1].

The future of biomarker research lies in effectively integrating traditional clinical knowledge with cutting-edge multi-omics profiling, leveraging machine learning to identify robust signatures, and applying rigorous validation frameworks to ensure clinical utility. This integrated approach promises to deliver more precise, personalized diagnostic and therapeutic strategies, ultimately improving patient outcomes across diverse disease areas.

The Critical Role of Predictive Biomarkers in Precision Medicine and Drug Development

Predictive biomarkers are fundamentally reshaping precision medicine and drug development by enabling patient stratification, forecasting therapeutic efficacy, and guiding targeted treatment strategies. These measurable indicators of biological processes or drug responses have evolved from single-molecule entities to complex multi-analyte signatures, thanks to technological advancements in high-throughput omics profiling and sophisticated computational approaches [10]. The traditional model of "one mutation, one target, one test" is rapidly giving way to multidimensional perspectives that capture the full complexity of disease biology [10]. This paradigm shift is critically supported by machine learning (ML) and artificial intelligence (AI), which can analyze large, complex datasets to identify reliable and clinically useful biomarkers from diverse biological layers including genomics, transcriptomics, proteomics, metabolomics, and digital pathology [6]. The integration of these technologies addresses significant limitations of conventional biomarker discovery methods, including limited reproducibility, high false-positive rates, and inadequate predictive accuracy, ultimately accelerating the development of personalized treatment strategies that maximize therapeutic benefits while minimizing adverse effects [6].

Technological Landscape: Multi-Omics and Machine Learning Integration

Multi-Omics as the Engine of Discovery

The contemporary biomarker discovery landscape is dominated by integrated multi-omics approaches that provide a comprehensive view of disease biology. Spatial biology, single-cell analysis, and multi-omics have transitioned from buzzwords to the fundamental backbone of precision medicine, enabling researchers to move beyond static endpoints and capture dynamic disease processes [10]. Leading technology providers are demonstrating how these approaches reveal clinically actionable insights that traditional methods miss. For instance, 10x Genomics showcased how protein profiling identified tumor regions expressing poor-prognosis biomarkers with known therapeutic targets—signals that standard RNA analysis had entirely missed [10]. Similarly, Element Biosciences' AVITI24 system collapses previously separate workflows by combining sequencing with cell profiling to capture RNA, protein, and morphological data simultaneously [10]. These technological advances enable pharmaceutical companies to transform biomarker-driven drug development and meaningfully improve patient outcomes through more precise patient stratification that considers the full molecular and cellular context of disease rather than single mutations alone [10].

Machine Learning and AI Methodologies

Machine learning enhances biomarker discovery by integrating diverse and high-volume data types to identify diagnostic, prognostic, and predictive biomarkers across various disease areas including oncology, infectious diseases, and neurological disorders [6]. Several methodological approaches have proven particularly effective:

Supervised Learning Techniques: These include support vector machines (effective for small-sample, high-dimensional omics data), random forests (providing robustness against noise and overfitting), and gradient boosting algorithms (e.g., XGBoost, LightGBM) that iteratively correct prediction errors to improve accuracy [6].

Deep Learning Architectures: Convolutional Neural Networks (CNNs) identify spatial patterns in imaging data such as histopathology, while Recurrent Neural Networks (RNNs) capture temporal dynamics and dependencies within sequential data, making them valuable for prognosis and treatment response prediction [6].

Automated ML Workflows: Cloud-based platforms like BiomarkerML provide standardized, user-friendly interfaces that streamline analyses and ensure reproducibility. These workflows employ techniques like weighted, nested cross-validation to avoid model over-fitting and data leakage, while using SHapley Additive exPlanations (SHAP) to quantify each protein's contribution to model predictions [11].

In reported comparisons, these ML methods have outperformed traditional approaches. In gastric cancer datasets with specificity fixed at 0.9, ML approaches achieved sensitivities of 0.240 with 3 biomarkers and 0.520 with 10 biomarkers, whereas standard logistic regression reached only 0.000 and 0.040, respectively [12].

Table 1: Comparison of Machine Learning Performance in Biomarker Discovery

| Method | Number of Biomarkers | Sensitivity | Specificity | Application Domain |
|---|---|---|---|---|
| ML Approaches | 3 | 0.240 | 0.900 | Gastric Cancer |
| ML Approaches | 10 | 0.520 | 0.900 | Gastric Cancer |
| Logistic Regression | 3 | 0.000 | 0.900 | Gastric Cancer |
| Logistic Regression | 10 | 0.040 | 0.900 | Gastric Cancer |
| Random Forest Classifier | Digital Biomarkers | 0.882 | 0.841 | Alzheimer's Disease |
| BiomarkerML Workflow | Proteomic Features | Varies by dataset | Varies by dataset | Multi-Disease Application |
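The gastric cancer rows report sensitivity at a fixed specificity of 0.9. One plausible way to compute such a figure from model scores is sketched below: scan candidate thresholds and keep the best sensitivity among those that meet the specificity target. The function name, the thresholding rule, and the scores are hypothetical, and the exact procedure used in [12] may differ.

```python
def sensitivity_at_specificity(labels, scores, target_spec=0.9):
    """Highest sensitivity among thresholds whose specificity is >= target_spec.

    A sample is called positive when its score is >= the threshold.
    """
    cases = [s for y, s in zip(labels, scores) if y == 1]
    controls = [s for y, s in zip(labels, scores) if y == 0]
    best = 0.0
    # The infinite threshold calls nothing positive (specificity 1, sensitivity 0)
    for thr in sorted(set(scores)) + [float("inf")]:
        spec = sum(s < thr for s in controls) / len(controls)
        sens = sum(s >= thr for s in cases) / len(cases)
        if spec >= target_spec:
            best = max(best, sens)
    return best


# Hypothetical scores: 10 controls followed by 5 cases
control_scores = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.45, 0.80]
case_scores = [0.30, 0.50, 0.60, 0.70, 0.90]
labels = [0] * len(control_scores) + [1] * len(case_scores)
scores = control_scores + case_scores

sens_90 = sensitivity_at_specificity(labels, scores, target_spec=0.9)
```

Fixing specificity puts every model on the same false-positive budget, so the sensitivities in the table can be compared directly.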

Experimental Approaches and Validation Frameworks

Methodologies for Biomarker Discovery and Validation

Robust experimental protocols are essential for translating biomarker discoveries into clinically applicable tools. The following methodologies represent current best practices across different biomarker types:

Proteomic Biomarker Discovery Using BiomarkerML

The BiomarkerML workflow provides a comprehensive framework for proteomic biomarker discovery [11]. The process begins with data ingestion of proteomic and clinical data alongside sample labels. Subsequent pre-processing prepares data for model fitting, with optional dimensionality reduction and visualization. The workflow then fits a catalog of ML and DL classification and regression models, calculating performance metrics for model comparison. A critical step involves applying mean SHapley Additive exPlanations (SHAP) to quantify the contribution of each protein to model predictions across all samples. Proteins with high mean SHAP values and their co-expressed protein network interactors are finally identified as candidate biomarkers. This workflow employs hyperparameter fine-tuning via grid-search and weighted, nested cross-validation to prevent model over-fitting and data leakage, ensuring reproducible results [11].
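The SHAP-based ranking step might look like the sketch below, which assumes a per-sample SHAP matrix has already been computed by an explainer. Averaging absolute values is a common convention and is an assumption here, since the source only says "mean SHAP". The protein names and values are hypothetical, and this is not BiomarkerML's code.

```python
def rank_by_mean_abs_shap(shap_values, feature_names):
    """Rank features by mean absolute SHAP value across samples.

    shap_values: one row per sample, one column per feature (precomputed elsewhere).
    """
    n_samples = len(shap_values)
    importance = {
        name: sum(abs(row[j]) for row in shap_values) / n_samples
        for j, name in enumerate(feature_names)
    }
    return sorted(importance.items(), key=lambda item: item[1], reverse=True)


# Hypothetical SHAP matrix: 3 samples x 3 proteins
proteins = ["PROT_A", "PROT_B", "PROT_C"]
shap_matrix = [
    [0.30, -0.05, 0.10],
    [-0.40, 0.02, 0.20],
    [0.20, -0.01, -0.30],
]
ranking = rank_by_mean_abs_shap(shap_matrix, proteins)
top_candidates = [name for name, _ in ranking[:2]]
```

Taking absolute values before averaging keeps a protein that pushes predictions strongly in both directions from cancelling itself out.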

Blood-Based Digital Biomarkers for Alzheimer's Disease

A multicohort diagnostic study demonstrated an innovative approach for developing ML models with blood-based digital biomarkers for Alzheimer's disease diagnosis [13]. Researchers used Attenuated Total Reflectance-Fourier Transform Infrared (ATR-FTIR) spectroscopy to generate plasma spectra data from 1324 individuals, including patients with Alzheimer's disease, mild cognitive impairment, and other neurodegenerative diseases. They applied random forest classifiers with feature selection procedures to identify digital biomarkers from spectral features. The resulting models achieved area under the curve (AUC) values of 0.92 for distinguishing Alzheimer's disease from healthy controls, and 0.89 for identifying mild cognitive impairment. Validation included correlation analyses with established plasma biomarkers including p-tau217 and glial fibrillary acidic protein, confirming the biological relevance of the identified spectral features [13].

Feature Selection Methodologies for Optimal Biomarker Panels

Comparative studies have evaluated multiple biomarker selection methods, finding that the optimal approach depends on the number of biomarkers permitted [12]. Causal-based feature selection methods proved most performant when fewer biomarkers were permitted, while univariate feature selection excelled when a greater number of biomarkers were allowed. These methodologies address the practical need for cost-effective diagnostic products by minimizing the number of biomarkers while maintaining predictive accuracy, thereby reducing model complexity and enhancing interpretability while minimizing spurious correlations [12].
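To make the univariate option concrete, the sketch below scores each candidate biomarker independently by its absolute standardized mean difference between cases and controls and keeps the top k. The scoring statistic, function name, marker names, and data are illustrative assumptions. The causal-based methods evaluated in [12] are not shown.

```python
from statistics import mean, stdev


def univariate_top_k(X, y, names, k):
    """Select the k features with the largest absolute standardized mean difference.

    X: rows are samples, columns are candidate biomarkers; y: 1 = case, 0 = control.
    Assumes at least two cases and two controls, and non-constant features.
    """
    scored = []
    for j, name in enumerate(names):
        cases = [row[j] for row, label in zip(X, y) if label == 1]
        controls = [row[j] for row, label in zip(X, y) if label == 0]
        pooled_sd = ((stdev(cases) ** 2 + stdev(controls) ** 2) / 2) ** 0.5
        effect = abs(mean(cases) - mean(controls)) / pooled_sd
        scored.append((effect, name))
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


# Hypothetical measurements: M1 separates the groups strongly, M2 weakly, M3 not at all
markers = ["M1", "M2", "M3"]
X = [
    [5.0, 2.0, 1.0],
    [5.2, 2.5, 3.0],
    [4.8, 1.8, 2.0],
    [1.0, 1.5, 1.1],
    [1.2, 1.0, 2.9],
    [0.8, 1.7, 2.0],
]
y = [1, 1, 1, 0, 0, 0]
panel = univariate_top_k(X, y, markers, k=2)
```

Because each marker is scored in isolation, this approach cannot detect redundancy between correlated markers. To avoid data leakage, the selection should be repeated inside each cross-validation training fold rather than run once on the full dataset.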

Experimental Workflow Visualization

The following diagram illustrates the integrated experimental and computational workflow for machine learning-driven biomarker discovery:

Diagram 1: ML-Driven Biomarker Discovery Workflow

Comparative Analysis of Biomarker Modalities and Technologies

Performance Comparison Across Biomarker Types

Different biomarker modalities offer distinct advantages and limitations for precision medicine applications. The table below provides a structured comparison of key biomarker technologies based on recent studies and implementations:

Table 2: Comparative Analysis of Biomarker Technologies in Precision Medicine

| Biomarker Technology | Applications | Key Advantages | Limitations | Representative Performance |
|---|---|---|---|---|
| Multi-Omics Platforms (10x Genomics, Element Biosciences) | Tumor subtyping, drug mechanism analysis | Reveals clinically actionable subgroups missed by single-omics; captures full molecular context | Operational complexity; high computational requirements; data integration challenges | Protein profiling revealed prognostic biomarkers missed by RNA analysis [10] |
| Blood-Based Digital Biomarkers (ATR-FTIR Spectroscopy) | Alzheimer's disease, neurodegenerative disorders | Minimally invasive; cost-effective; high-dimensional data from simple blood samples | Requires specialized equipment; correlation with established biomarkers must be demonstrated | AUC 0.92 (AD vs HC); Sensitivity 88.2%; Specificity 84.1% [13] |
| Proteomic ML Workflows (BiomarkerML) | Multi-disease biomarker discovery | Automated analysis; reproducible results; identifies complex nonlinear patterns | Cloud-based implementation may raise data privacy concerns; requires technical expertise | Identifies high SHAP-value proteins and co-expressed network interactors [11] |
| Causal-Based Biomarker Selection | Gastric cancer, other complex diseases | Minimizes spurious correlations; enhances biological interpretability | Performance dependent on number of biomarkers permitted | Superior performance with limited biomarkers (3 biomarkers) [12] |

Regulatory and Implementation Considerations

The translation of biomarkers from discovery to clinical application requires navigating complex regulatory landscapes and implementation challenges. Europe's In Vitro Diagnostic Regulation (IVDR) has emerged as a significant "regulatory stress test" for biomarker and diagnostic development [10]. While intended to ensure safety and performance, IVDR implementation has created challenges including regulatory uncertainty, inconsistencies between jurisdictions, lack of transparency compared to FDA databases, and unpredictable timelines that complicate companion diagnostic and drug co-development. These regulatory hurdles are compounded by technical implementation barriers related to data privacy, security, and interoperability across healthcare systems [14]. Successful navigation of this complex environment often involves partnering with established diagnostic companies with regulatory expertise and investing in the digital infrastructure needed to embed biomarker insights into clinical workflows, including laboratory information management systems (LIMS), electronic quality management systems (eQMS), and clinician portals [10].

Essential Research Tools and Reagents

The experimental approaches discussed require specific research tools and reagents to implement successfully. The following table details key solutions and their functions in biomarker discovery workflows:

Table 3: Essential Research Reagent Solutions for Biomarker Discovery

| Research Tool | Function | Application Context |
|---|---|---|
| Next-Generation Sequencing Platforms (AVITI24, 10x Genomics) | High-throughput DNA/RNA sequencing with single-cell resolution | Multi-omics profiling; tumor heterogeneity studies; biomarker discovery [10] |
| ATR-FTIR Spectroscopy | Generates plasma spectra for spectral biomarker identification | Blood-based digital biomarker development for neurodegenerative diseases [13] |
| Cloud-Based ML Workflows (BiomarkerML) | Automated machine learning analysis of proteomic data | Biomarker discovery from high-dimensional proteomic data; candidate prioritization [11] |
| Electronic Lab Notebooks (SciNote, LabArchives) | Research data management, protocol tracking, and compliance documentation | Maintaining experimental integrity; supporting regulatory compliance; collaboration [15] [16] |
| Spatial Biology Platforms | Simultaneous analysis of RNA, protein, and morphological data | Tumor microenvironment characterization; cellular interaction studies [10] |
| Companion Diagnostic Development Tools | Regulatory-compliant diagnostic test development | Translating biomarker discoveries into clinically validated tests [10] |

The field of predictive biomarkers is evolving toward increasingly integrated and functional approaches. Future research will focus on directly linking genomic data to functional outcomes, particularly with biosynthetic gene clusters and non-coding RNAs [6]. The successful implementation of biomarker-driven precision medicine will depend not only on technological advancements but also on overcoming practical challenges related to regulatory frameworks, data standardization, and clinical workflow integration [10]. As biomarker science continues to advance, rigorous validation, model interpretability, and regulatory compliance will remain essential for clinical implementation [6]. The convergence of multi-omics technologies, sophisticated machine learning algorithms, and enhanced computational infrastructure promises to accelerate the development of personalized treatment strategies that ultimately improve patient outcomes across a broad spectrum of diseases.

Why Machine Learning? Overcoming the Limitations of Traditional Statistical Methods

The discovery and validation of biomarkers are fundamental to advancing precision medicine, enabling improved disease diagnosis, prognosis, and personalized treatment strategies [6]. Traditionally, this field has been dominated by conventional statistical methods, which focus on inference and testing prespecified hypotheses based on probabilistic models. While these methods provide interpretable results and are well-suited for studies with limited variables, they face significant challenges when confronted with the high-dimensional, complex datasets now common in biomedical research [17]. The emergence of machine learning (ML) represents a paradigm shift, offering powerful alternatives that overcome many limitations of traditional approaches through their ability to learn directly from data without relying on strict pre-specified models [18] [19].

This guide objectively compares the performance of machine learning and traditional statistical methods in the specific context of validating point-of-interest (POI) biomarkers. We present experimental data, detailed methodologies, and analytical frameworks to help researchers and drug development professionals decide which analytical approach best suits their research objectives, data characteristics, and validation requirements.

Fundamental Differences Between Machine Learning and Statistical Approaches

While often viewed as competing fields, machine learning and conventional statistics are increasingly recognized as complementary disciplines with intertwined foundations [20]. Understanding their core differences is essential for appropriate application in biomarker research.

Philosophical and Methodological Distinctions

Table 1: Core Conceptual Differences Between Statistical and Machine Learning Approaches

| Aspect | Traditional Statistics | Machine Learning |
|---|---|---|
| Primary Goal | Parameter estimation, inference, hypothesis testing [20] | Prediction, pattern recognition [18] [21] |
| Model Specification | Pre-specified model based on theoretical understanding [19] | Data-driven model discovery through algorithmic learning [19] |
| Data Relationship | Uses data to estimate parameters of a presumed model [19] | Uses data to learn the model structure itself [18] |
| Assumptions | Relies on strong statistical assumptions (e.g., linearity, distribution) [19] | Makes fewer inherent assumptions; learns complex relationships [19] |
| Interpretability | Typically highly interpretable with clear parameter meanings [19] | Often operates as a "black box" with limited inherent interpretability [22] [6] |

Vocabulary and Terminology Mapping

Despite methodological differences, both fields share common concepts under different terminology. In statistical prediction modeling, "predictors" correspond to "features" in ML, the "outcome" aligns with "label," "estimation" parallels "learning," and "validation data" is equivalent to "test data" [20]. This terminology mapping is crucial for interdisciplinary collaboration in biomarker research.

Key Limitations of Traditional Statistical Methods in Biomarker Research

Traditional statistical methods face several critical challenges when applied to modern biomarker discovery and validation contexts:

The High-Dimensionality Problem (p >> n)

Biomedical datasets, particularly from omics technologies (genomics, transcriptomics, proteomics), often contain thousands to millions of potential biomarker features (p) measured across a much smaller number of samples (n) [17]. This "p >> n" scenario violates fundamental assumptions of many traditional statistical models, which were designed for datasets with more observations than variables. Unregularized methods such as ordinary least-squares regression break down here: when predictors outnumber observations, infinitely many coefficient vectors fit the data equally well, so parameters cannot be uniquely estimated and the fitted model is highly unstable [6].
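The non-uniqueness problem can be demonstrated numerically. The sketch below uses NumPy with random data (the dimensions and the noise outcome are assumptions chosen for illustration) to show that, when p > n, two very different coefficient vectors reproduce the training outcomes equally well.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_features = 20, 100          # p >> n, illustrative sizes
X = rng.normal(size=(n_samples, n_features))
y = rng.normal(size=n_samples)           # outcome is pure noise

# The design matrix has rank at most n, so X^T X (p x p) is singular
# and the normal equations have no unique solution.
rank = np.linalg.matrix_rank(X)

# lstsq returns the minimum-norm solution, one of infinitely many.
beta_min_norm, *_ = np.linalg.lstsq(X, y, rcond=None)

# The last right-singular vector lies in the null space of X (X @ v ~ 0),
# so adding any multiple of it leaves the fitted values unchanged.
_, _, vt = np.linalg.svd(X)
null_vector = vt[-1]
beta_alt = beta_min_norm + 5.0 * null_vector

resid_min_norm = np.linalg.norm(X @ beta_min_norm - y)
resid_alt = np.linalg.norm(X @ beta_alt - y)

print("rank of X:", rank)
print("residual norms:", resid_min_norm, resid_alt)
```

Both residuals are numerically zero even though the outcome is noise, which is also a compact picture of overfitting in this regime.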

Handling Complex, Non-Linear Relationships

Biological systems rarely operate through simple linear pathways. Traditional statistical methods often struggle to capture the complex, non-linear interactions between multiple biomarkers and clinical outcomes [19]. While statistical models can incorporate interaction terms, researchers must specify these relationships in advance, potentially missing important complex patterns that machine learning algorithms can discover automatically from the data.

Limited Capacity for Data Integration

Modern biomarker research increasingly requires integration of diverse data types, including genomic, transcriptomic, proteomic, metabolomic, imaging, and clinical data [6]. Traditional statistical methods have limited capabilities for effectively integrating these multimodal data sources. Machine learning offers three primary integration strategies: early integration (combining raw data from multiple sources), intermediate integration (joining data sources during model building), and late integration (combining predictions from separate models) [17].
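As a rough illustration of two of these strategies, the sketch below contrasts early integration (concatenating feature blocks before fitting one model) with late integration (averaging the predicted probabilities of per-modality models). The two "omics" blocks are simulated, and logistic regression is an arbitrary choice; intermediate integration is not shown.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 300
y = rng.integers(0, 2, size=n)

# Two hypothetical modalities; each carries class signal in its first feature.
omics_a = rng.normal(size=(n, 30))
omics_a[:, 0] += 1.0 * y
omics_b = rng.normal(size=(n, 20))
omics_b[:, 0] += 1.0 * y

# One shared split so both strategies are scored on the same held-out samples.
idx_train, idx_test = train_test_split(
    np.arange(n), test_size=0.3, stratify=y, random_state=0
)

def fit_predict(features):
    """Fit on the training rows and return held-out class-1 probabilities."""
    model = LogisticRegression(max_iter=1000)
    model.fit(features[idx_train], y[idx_train])
    return model.predict_proba(features[idx_test])[:, 1]

# Early integration: one model on the concatenated feature matrix.
early_probs = fit_predict(np.hstack([omics_a, omics_b]))

# Late integration: one model per modality, predictions averaged afterwards.
late_probs = (fit_predict(omics_a) + fit_predict(omics_b)) / 2.0

auc_early = roc_auc_score(y[idx_test], early_probs)
auc_late = roc_auc_score(y[idx_test], late_probs)
print(f"early integration AUC: {auc_early:.3f}")
print(f"late integration AUC:  {auc_late:.3f}")
```

Which strategy performs better depends on the data; the simulation is only meant to make the mechanics concrete.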

How Machine Learning Overcomes These Limitations: Experimental Evidence

Machine learning approaches address the fundamental limitations of traditional statistics through several demonstrated capabilities, supported by experimental evidence from biomarker research.

Superior Performance in High-Dimensional Contexts

Table 2: Performance Comparison in High-Dimensional Biomarker Discovery

| Study Context | Traditional Method | ML Method | Performance Metric | Result (Traditional) | Result (ML) |
|---|---|---|---|---|---|
| Alzheimer's Disease Diagnosis [22] | Logistic Regression | Random Forest | ROC-AUC | 0.79 | 0.896 |
| Building Performance [19] | Linear Regression | Various ML | R² | 0.62 | 0.82 |
| Multi-Omics Integration [6] | Generalized Linear Models | Support Vector Machines | Classification Accuracy | 74.2% | 88.6% |

Many machine learning algorithms incorporate regularization that helps limit overfitting even when datasets contain thousands of candidate biomarkers. Methods like LASSO (Least Absolute Shrinkage and Selection Operator) perform automatic feature selection while estimating model parameters: the penalty shrinks the coefficients of uninformative features to exactly zero, leaving a sparse set of relevant biomarkers from high-dimensional data [6]. Tree-based ensemble methods like Random Forests cope with high dimensionality by considering only a random subset of features at each split, which makes them robust choices for biomarker discovery [22].
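A small simulation makes the LASSO behavior concrete. In the sketch below, only three of 500 simulated features influence the outcome; the indices, effect sizes, and noise level are assumptions for illustration, and `LassoCV` chooses the penalty strength by cross-validation.

```python
import numpy as np
from sklearn.linear_model import LassoCV

rng = np.random.default_rng(42)
n_samples, n_features = 80, 500          # more features than samples

# Features are drawn already standardized; real data should be scaled
# inside a pipeline so that scaling is fit on training folds only.
X = rng.normal(size=(n_samples, n_features))

# Hypothetical ground truth: only features 3, 50 and 120 matter.
true_idx = [3, 50, 120]
y = (
    2.0 * X[:, 3]
    - 1.5 * X[:, 50]
    + 1.0 * X[:, 120]
    + rng.normal(scale=0.5, size=n_samples)
)

# Cross-validated choice of the L1 penalty strength.
lasso = LassoCV(cv=5, random_state=0).fit(X, y)

# Features with non-zero coefficients form the selected panel.
selected = np.flatnonzero(lasso.coef_)
print(f"{len(selected)} of {n_features} features kept")
print("true features recovered:", sorted(set(true_idx) & set(selected)))
```

The selected set is usually a superset of the true features, so in practice a selected panel still needs stability checks and independent validation.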

Capturing Complex Biological Interactions

Machine learning excels at identifying non-linear relationships and complex interactions without requiring researchers to specify them in advance. In Alzheimer's disease research, ML models have identified novel biomarker interactions that were previously overlooked, leading to the discovery of promising new potential biomarkers like MYH9 and RHOQ [22]. Deep learning architectures, including convolutional neural networks (CNNs) and recurrent neural networks (RNNs), can model highly complex biological patterns in imaging data, molecular structures, and temporal patient records [6].

Enhanced Predictive Accuracy for Clinical Applications

Multiple comparative studies have demonstrated machine learning's superior predictive performance across various domains. A systematic review comparing both approaches in building performance found that ML algorithms performed better than traditional statistical methods in both classification and regression metrics [19]. Similarly, in clinical prediction models for Alzheimer's disease, random forest classifiers achieved area under the curve (AUC) values of 0.95 in test sets, significantly outperforming traditional approaches [22].

Experimental Protocols for Biomarker Validation Using Machine Learning

Robust experimental design and validation are crucial for developing reliable ML-based biomarker signatures. The following protocols outline key methodologies for rigorous biomarker discovery and validation.

Comprehensive Biomarker Discovery Workflow

This workflow integrates traditional bioinformatics approaches with machine learning to identify and validate robust biomarker signatures. The process begins with precise definition of scientific objectives, cohort selection, and sample size determination to ensure adequate statistical power [17]. Quality control steps are critical, including checks for outliers, batch effects, and data normalization using established software packages (e.g., FastQC for NGS data, arrayQualityMetrics for microarray data) [17]. Multi-omics data integration employs early, intermediate, or late integration strategies depending on data characteristics and research goals [17].

Interpretable Machine Learning with SHAP Explanation

Interpretability remains a significant challenge in ML-based biomarker discovery. The "black box" nature of complex algorithms can limit clinical adoption and biological insight [22] [6]. SHapley Additive exPlanations (SHAP) addresses this by providing both global and local interpretability. In Alzheimer's disease research, SHAP has been successfully used to explain random forest models, identifying which hub genes (e.g., NFKB1, RHOQ, MYH9) function as risk factors versus protective factors and quantifying their contribution to disease prediction [22]. This approach transforms black-box models into clinically actionable tools by providing transparent decision support.
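To show what a Shapley-based attribution computes, the sketch below evaluates exact Shapley values by enumerating every feature subset for a tiny four-feature model. It does not use the `shap` library and is not the method of the cited study; the linear model, its weights, and the data are hypothetical. Exact enumeration is only feasible for a handful of features, which is why practical tools rely on approximations or model-specific algorithms.

```python
import itertools
import math
import numpy as np

def shapley_values(predict, x, background):
    """Exact Shapley values for one sample via full subset enumeration."""
    d = x.shape[0]

    def value(subset):
        # Features in `subset` take the sample's values; all other features
        # keep their background values, and predictions are averaged.
        data = background.copy()
        cols = list(subset)
        if cols:
            data[:, cols] = x[cols]
        return predict(data).mean()

    phi = np.zeros(d)
    for i in range(d):
        others = [j for j in range(d) if j != i]
        for size in range(d):
            # Shapley weight for coalitions of this size.
            weight = (
                math.factorial(size) * math.factorial(d - size - 1) / math.factorial(d)
            )
            for subset in itertools.combinations(others, size):
                phi[i] += weight * (value(subset + (i,)) - value(subset))
    return phi

rng = np.random.default_rng(0)
weights = np.array([2.0, -1.0, 0.5, 0.0])   # hypothetical model; feature 3 is inert

def predict(data):
    return data @ weights

background = rng.normal(size=(50, 4))
samples = rng.normal(size=(10, 4))

# Local explanations: one row of attributions per sample.
phi_all = np.array([shapley_values(predict, s, background) for s in samples])

# Global importance: mean absolute attribution per feature.
mean_abs = np.abs(phi_all).mean(axis=0)
ranking = np.argsort(mean_abs)[::-1]
print("mean |Shapley| per feature:", np.round(mean_abs, 3))
print("features ranked by importance:", ranking)
```

The sign of each per-sample value indicates whether a feature pushed that prediction up or down, which is the basis for labeling features as risk or protective factors; the mean absolute value gives the global ranking.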

Validation Methodologies for ML-Based Biomarkers

Rigorous validation is essential for clinical translation of ML-discovered biomarkers. Internal validation through bootstrapping or k-fold cross-validation provides initial performance estimates while correcting for overoptimism [20]. External validation on completely independent cohorts from different institutions or populations assesses generalizability and transportability [20]. For Alzheimer's disease biomarkers, external validation might involve applying a model developed on one cohort (e.g., GSE109887) to an entirely independent dataset (e.g., GSE132903), where AUC values typically decrease but should remain clinically useful (e.g., from 0.95 to 0.79) [22]. Impact analysis through randomized trials should assess whether the biomarker actually improves clinical decisions and patient outcomes before widespread implementation [20].
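The first two validation stages can be sketched as follows. The cohorts are simulated rather than drawn from the datasets named above, and the weaker signal in the "external" cohort is an assumption used to mimic differences between populations; the random forest settings are likewise illustrative.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, cross_val_predict

def simulate_cohort(n, signal, seed):
    """Simulate a cohort in which the first three features carry class signal."""
    rng = np.random.default_rng(seed)
    y = rng.integers(0, 2, size=n)
    X = rng.normal(size=(n, 25))
    X[:, :3] += signal * y[:, None]
    return X, y

# Development cohort and an independent cohort with a weaker signal.
X_dev, y_dev = simulate_cohort(200, signal=1.0, seed=0)
X_ext, y_ext = simulate_cohort(150, signal=0.6, seed=1)

model = RandomForestClassifier(n_estimators=200, random_state=0)

# Internal validation: out-of-fold predictions from 5-fold cross-validation.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
oof_probs = cross_val_predict(model, X_dev, y_dev, cv=cv, method="predict_proba")[:, 1]
auc_internal = roc_auc_score(y_dev, oof_probs)

# External validation: freeze the model fit on the full development cohort,
# then score it once on the independent cohort without any refitting.
model.fit(X_dev, y_dev)
auc_external = roc_auc_score(y_ext, model.predict_proba(X_ext)[:, 1])

print(f"internal (cross-validated) AUC: {auc_internal:.3f}")
print(f"external cohort AUC:            {auc_external:.3f}")
```

The important discipline is that nothing about the external cohort, including preprocessing parameters and decision thresholds, is allowed to influence the fitted model.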

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for ML-Driven Biomarker Research

| Category | Specific Tool/Platform | Function in Biomarker Research | Relevance to ML Validation |
|---|---|---|---|
| Data Generation | RNA-Seq Platforms (Illumina) | Transcriptomic profiling for gene expression biomarkers [6] | Provides high-dimensional input features for ML models |
| Bioinformatics | FastQC, arrayQualityMetrics | Quality control of raw omics data [17] | Ensures data quality for reliable ML training |
| Statistical Analysis | R, Python SciPy | Conventional statistical analysis and hypothesis testing [19] | Baseline comparison for ML performance evaluation |
| Machine Learning | Scikit-learn, LightGBM, XGBoost | ML algorithm implementation for biomarker discovery [22] [6] | Core analytical engines for pattern detection |
| Interpretability | SHAP, LIME | Explainable AI for model interpretation [22] | Translates black-box predictions to biological insight |
| Validation | Cross-validation frameworks | Internal validation of model performance [20] | Assesses and mitigates overfitting |
| Data Integration | Canonical Correlation Analysis | Early integration of multi-omics data [17] | Combines diverse data types for ML analysis |
| Visualization | ggplot2, Matplotlib | Results visualization and interpretation [22] | Communicates findings to diverse audiences |

Integration of Machine Learning and Statistics: The Path Forward

Rather than viewing machine learning and traditional statistics as competing approaches, the most powerful framework for biomarker research integrates both paradigms [20]. Statistical methods provide rigorous foundations for study design, hypothesis generation, and handling uncertainty, while machine learning excels at exploring complex data structures and generating accurate predictions from high-dimensional data.

This integration can take several forms: using traditional statistics for initial data exploration and hypothesis generation before applying ML for pattern discovery; employing statistical techniques to preprocess data and select features for ML algorithms; or using ML for initial feature selection followed by statistical modeling for inference [20] [19]. As ML continues to evolve, particularly with advancements in interpretable AI and causal machine learning, the distinction between these fields will likely continue to blur, leading to more powerful, transparent, and clinically useful biomarker discovery pipelines.

For researchers embarking on biomarker validation studies, the choice between traditional statistics and machine learning should be guided by the specific research question, data characteristics, and intended application. Traditional statistics remain appropriate for confirmatory studies with limited variables and strong theoretical foundations, while machine learning offers distinct advantages for exploratory analysis of complex, high-dimensional datasets common in modern biomarker research.

This guide provides an objective comparison of four key data types used in machine learning (ML)-driven biomarker discovery: genomics, proteomics, metabolomics, and digital biomarkers. Aimed at researchers and drug development professionals, it evaluates each data type on technical characteristics, ML applications, and validation requirements, within the broader context of validating point-of-interest (POI) biomarkers.

Comparative Analysis of Key Data Types for ML Biomarker Discovery

The table below summarizes the core attributes, strengths, and challenges of the four data types, providing a foundation for selecting appropriate modalities for biomarker validation.

Table 1: Comparison of Data Types for ML-Driven Biomarker Discovery

| Feature | Genomics | Proteomics | Metabolomics | Digital Biomarkers |
|---|---|---|---|---|
| Defining Focus | Study of DNA/RNA sequences and genetic variations [23] | Analysis of protein expression, structure, and interactions [5] | Comprehensive profiling of small-molecule metabolites [24] | Objective, behavioral, and physiological data collected via digital devices [25] [26] |
| Representative Data Sources | Whole Genome Sequencing (WGS), RNA-Seq [23] | Mass Spectrometry (MS), Immunoassays [5] | Liquid Chromatography-MS (LC-MS) [24] | Wearables, smartphones, smart home devices [25] |
| Key Strength for ML | Identifies inherited traits and disease predisposition; foundational for functional genomics [23] [6] | Directly reflects functional cellular activity and drug targets [5] | Provides a dynamic snapshot of current physiological state; integrates genetic and environmental factors [24] [27] | Enables continuous, passive monitoring in real-world settings; high temporal resolution [25] [26] |
| Inherent Limitation | Does not fully capture dynamic environmental or post-transcriptional influences [23] | Susceptible to batch effects and dynamic range challenges; requires large sample sizes for robust ML [5] | High data complexity and sensitivity to pre-analytical variables (e.g., diet) [24] | Potential measurement variability across devices; risks of "over-measurement" without clinical meaning [25] [26] |
| Exemplary ML Application | DeepVariant for accurate genetic variant calling [23] | Identifying predictive protein signatures for disease classification [5] | Identifying metabolite panels for disease diagnosis (e.g., Rheumatoid Arthritis) [24] | Detecting subtle cognitive decline in Alzheimer's disease [26] |
| Validation Consideration | Requires functional validation (e.g., via CRISPR) to confirm causal roles [23] | Demands rigorous external validation to ensure generalizability beyond discovery cohorts [5] | Needs multi-center validation to confirm robustness across diverse populations and platforms [24] | Requires regulatory-grade validation to prove clinical meaningfulness and algorithmic fairness [25] [26] |

Experimental Protocols and Performance Data

This section details specific experimental methodologies and resulting performance metrics from recent studies, providing a tangible basis for comparison.

Metabolomics Biomarker Discovery for Rheumatoid Arthritis

A 2025 multi-center study developed and validated ML models to diagnose Rheumatoid Arthritis (RA) using targeted metabolomics [24].

Experimental Protocol:

- Cohort Design: The study analyzed 2,863 blood samples (plasma and serum) from seven independent cohorts across five medical centers. Cohorts included patients with RA, osteoarthritis (OA), and healthy controls (HC) [24].

- Biomarker Identification: Untargeted metabolomic profiling on an exploratory cohort identified candidate biomarkers. These were subsequently quantified using targeted LC-MS/MS for precise absolute quantification [24].

- Model Development & Validation: Metabolite-based classifiers were built using various ML algorithms. The models were trained on a discovery cohort and their performance was rigorously evaluated across five independent, geographically distinct validation cohorts to ensure generalizability [24].

Performance Data:

Table 2: Performance of Metabolite-Based RA Classifiers in Independent Validation

| Validation Context | Area Under the Curve (AUC) |
|---|---|
| RA vs. Healthy Controls (across 3 cohorts) | 0.8375 to 0.9280 |
| RA vs. Osteoarthritis (across 3 cohorts) | 0.7340 to 0.8181 |

The study confirmed that classifier performance was independent of serological status, proving effective for diagnosing seronegative RA [24].

Metabolomics for Drug-Induced Liver Injury (DILI) Subtyping

A 2025 study utilized ML-assisted metabolomics to differentiate intrinsic and idiosyncratic DILI [28].

Experimental Protocol:

- Patient Cohort: 44 DILI patients were classified into intrinsic (n=17) and idiosyncratic (n=27) types based on EASL guidelines [28].

- Metabolomic Profiling: Serum metabolomic profiling was conducted using High-Performance Chemical Isotope Labeling Liquid Chromatography-Mass Spectrometry (HP-CIL LC-MS) [28].

- Data Analysis: Differential metabolites were identified through univariate and multivariate analyses. Machine learning models were then trained to distinguish between the two DILI subtypes [28].

Performance Data:

- Identified Biomarkers: Four metabolites, including Alanyl-Glycine and N2-Acetyl-L-Cystathionine, were identified as potential biomarkers [28].

- Model Performance: All ML models achieved AUC values >0.8. A multiple regression model showed exceptional performance, with an AUC of 0.983 in cross-validation and 0.935 in holdout validation [28].

Workflow and Pathway Visualizations

The following diagrams illustrate the core workflows for biomarker discovery and validation for the discussed data types.

General Workflow for ML-Driven Biomarker Discovery

This diagram outlines the high-level, iterative process from discovery to clinical application, common to all biomarker data types.

Targeted Metabolomics Biomarker Validation Workflow

This diagram details the specific, sequential workflow for discovering and validating metabolomic biomarkers, as exemplified in the RA study [24].

The Scientist's Toolkit: Key Reagents and Materials

Successful execution of the experimental protocols requires specific, high-quality reagents and platforms.

Table 3: Essential Research Reagents and Platforms for Biomarker Discovery

| Item | Function/Application |
|---|---|
| Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) | The cornerstone platform for both untargeted and targeted metabolomic and proteomic analyses, providing high sensitivity and specificity [24]. |
| Stable Isotope-Labeled Internal Standards | Used in targeted metabolomics/proteomics for precise absolute quantification of molecules, correcting for analytical variability [24]. |
| EDTA-Coated and Serum Separator Tubes | Standardized blood collection tubes for plasma and serum preparation, respectively, to ensure sample integrity and pre-analytical consistency [24]. |
| Orbitrap Exploris Mass Spectrometer | Example of a high-resolution mass spectrometer used for untargeted metabolomic profiling due to its high mass accuracy and resolution [24]. |
| Wearable Biosensors (e.g., Actigraphy Sensors) | Devices that continuously collect physiological (e.g., heart rate) and behavioral (e.g., activity levels) data for digital biomarker development [25]. |
| Cloud Computing Platforms (e.g., AWS, Google Cloud Genomics) | Provide scalable computational infrastructure and storage required for processing and analyzing large multi-omics and digital biomarker datasets [23]. |

Current Landscape and Emerging Trends in Biomarker Research for 2025 and Beyond

The field of biomarker research is undergoing a profound transformation, shifting from traditional, hypothesis-driven approaches to a data-driven paradigm powered by artificial intelligence (AI) and machine learning (ML). This evolution is critical for precision medicine, enabling more accurate disease diagnosis, prognosis, and personalized treatment strategies [29] [6]. Biomarkers, defined as objectively measurable indicators of biological processes, now extend beyond single molecules to include multidimensional combinations and dynamic monitoring, providing a more comprehensive capture of disease biological features [29]. The integration of digital technology and AI has revolutionized predictive models based on clinical data, creating significant opportunities for proactive health management and a move away from traditional episodic care models toward systems that implement continuous physiological monitoring and dynamic risk assessment [29]. This paradigm shift is essential for addressing demographic challenges posed by increasing chronic disease prevalence in aging populations and aligns with global strategic health initiatives [29].

The scope of biomarkers has expanded dramatically, now encompassing genetic, epigenetic, transcriptomic, proteomic, metabolomic, imaging, and even digital biomarkers derived from wearable devices [29]. This diversification, coupled with advancements in detection technologies like single-cell sequencing and high-throughput proteomics, generates comprehensive molecular profiles offering unprecedented insights into disease mechanisms [29]. However, this progress introduces significant methodological challenges, including data standardization, model generalizability, and clinical implementation pathways that must be systematically resolved to realize the full potential of biomarker-driven precision health management [29]. This guide explores the current landscape, compares emerging methodologies, and examines the future trajectory of biomarker research within the critical context of validation for machine learning applications.

Current Landscape: AI and Multi-Omics Integration

The present landscape of biomarker research is characterized by the dominant role of artificial intelligence in deciphering complex, high-dimensional biological data. Machine learning and deep learning have proven exceptionally effective in biomarker discovery by integrating diverse and high-volume data types, including genomics, transcriptomics, proteomics, metabolomics, imaging data, and clinical records [6]. Unlike classical approaches that test pre-specified hypotheses, AI-based models can uncover unexpected connections within high-dimensional datasets that conventional statistical methods could easily miss [30]. This capability is particularly valuable in oncology, where AI biomarkers analyze routine clinical data such as medical imaging, electronic health records (EHRs), and pathology slides to predict key molecular alterations, stratify patients, and optimize clinical trial matching [31].

A significant trend in the current landscape is the move toward multi-omics integration. Researchers are increasingly leveraging data from genomics, proteomics, metabolomics, and transcriptomics to achieve a holistic understanding of disease mechanisms [27]. This multi-omics approach enables the identification of comprehensive biomarker signatures that reflect the complexity of diseases, facilitating improved diagnostic accuracy and treatment personalization [27]. For example, integrated profiling across these platforms captures dynamic molecular interactions between biological layers, revealing pathogenic mechanisms otherwise undetectable via single-omics approaches [29]. Studies demonstrate that the integration of multi-omics data and advanced analytical methods has improved early Alzheimer's disease diagnosis specificity by 32%, providing a crucial intervention window [29].

Table 1: Machine Learning Applications Across Biomarker Data Types

| Omics Data Type | ML Techniques | Typical Applications | Clinical Value |
|---|---|---|---|
| Transcriptomics | Feature selection (e.g., LASSO); SVM; Random Forest | Differential gene expression analysis, molecular subtyping | Disease classification, treatment response prediction [6] |
| Proteomics | Deep learning; Random Forests; SVM | Protein expression profiling, post-translational modification analysis | Disease diagnosis, prognosis evaluation, therapeutic monitoring [29] [6] |
| Genomics | CNNs; RNNs; Transformers | Variant calling, genome annotation, non-coding variant interpretation | Genetic disease risk assessment, drug target screening [29] [6] |
| Metabolomics | Random Forests; PCA; PLS-DA | Metabolic pathway analysis, biomarker panel identification | Metabolic disease screening, drug toxicity evaluation [29] [6] |
| Digital Pathology | CNNs; Vision Transformers | Tumor segmentation, feature extraction from histology images | Cancer diagnosis, prognosis assessment, treatment response prediction [6] [31] |

The application of these AI-driven approaches spans various medical specialties. In oncology, ML models have demonstrated superior efficacy in categorizing cancer types and stages, especially for breast, lung, brain, and skin cancers [30]. Beyond cancer, ML-based biomarker discovery is expanding into infectious diseases, neurodegenerative disorders, and chronic inflammatory diseases, illustrating the versatility of these methodologies [6]. Of particular interest is the emergence of microbiome and functional biomarkers, where ML methods are instrumental in predicting complex biological phenomena such as biosynthetic gene clusters (BGCs), crucial for novel antibiotic and anticancer compound discovery [6].

Emerging Trends for 2025 and Beyond

Enhanced AI Integration and Multimodal Systems

By 2025, artificial intelligence and machine learning are anticipated to play an even more substantial role in biomarker analysis [27]. The integration of AI-driven algorithms will revolutionize data processing and analysis, leading to more sophisticated predictive models that can forecast disease progression and treatment responses based on biomarker profiles [27]. Future directions in the field emphasize the development of multimodal AI systems that integrate data from pathology, radiology, genomics, and clinical records [31]. This holistic approach enhances the predictive power of AI models, uncovering complex biological interactions that single-modality analyses might overlook [31]. The ability to detect subtle signals early could support the identification of more robust therapeutic targets, giving R&D teams higher confidence before committing to costly preclinical programmes [32].

Explainable AI (XAI) frameworks are gaining prominence as essential tools for clinical adoption. These frameworks improve the interpretability of AI systems, helping clinicians better understand the connection between particular biomarkers and patient outcomes [30]. For instance, one study presents an XAI-based deep learning framework for biomarker discovery in non-small cell lung cancer (NSCLC), demonstrating how explainable models can assist in clinical decision-making [30]. This strategy not only improves diagnostic accuracy but also boosts health professionals' confidence in AI-generated results, addressing a significant barrier to clinical implementation [30].

Advanced Technologies and Patient-Centric Approaches

Liquid biopsy technologies are poised to become a standard tool in clinical practice by 2025, as advances in circulating tumor DNA (ctDNA) analysis and exosome profiling increase their sensitivity and specificity [27]. These non-invasive tools will facilitate real-time monitoring of disease progression and treatment responses, allowing for timely adjustments in therapeutic strategies [27]. Beyond oncology, liquid biopsies are expected to expand into other areas of medicine, including infectious diseases and autoimmune disorders, offering a non-invasive method for disease diagnosis and management [27].

Single-cell analysis technologies are another area of rapid advancement, expected to become more sophisticated and widely adopted by 2025 [27]. These technologies provide deeper insights into tumor microenvironments by examining individual cells within tumors, uncovering heterogeneity, and identifying rare cell populations that may drive disease progression or resistance to therapy [27]. When combined with multi-omics data, single-cell analysis provides a more comprehensive view of cellular mechanisms, paving the way for novel biomarker discovery [27].

Concurrently, there is a pronounced shift toward patient-centric approaches in biomarker research. By 2025, efforts to improve patient education regarding biomarker testing and its implications will foster greater transparency and trust in clinical research [27]. Incorporating patient-reported outcomes into biomarker studies will provide valuable insights into treatment effectiveness from the patient's perspective, further guiding personalized treatment approaches [27]. Engaging diverse patient populations in biomarker research will be essential for understanding health disparities and ensuring that new biomarkers are relevant and beneficial across different demographics [27].

Table 2: Emerging Trends in Biomarker Research (2025 and Beyond)

| Trend Area | Specific Advancements | Potential Impact |
|---|---|---|
| AI & Machine Learning | Explainable AI (XAI); Multimodal AI systems; Transformer models | Enhanced predictive analytics; Improved model interpretability; Integration of diverse data types [27] [31] |
| Liquid Biopsies | Increased sensitivity/specificity; Real-time monitoring; Expansion beyond oncology | Non-invasive disease monitoring; Dynamic treatment response assessment; Broader clinical applications [27] |
| Single-Cell Analysis | Tumor microenvironment insights; Rare cell population identification; Integration with multi-omics | Understanding tumor heterogeneity; Personalized therapy targets; Comprehensive cellular mechanism views [27] |
| Multi-Omics Integration | Comprehensive biomarker profiles; Systems biology approaches; Collaborative research platforms | Holistic disease understanding; Novel therapeutic target identification; Improved diagnostic accuracy [29] [27] |
| Regulatory Science | Streamlined approval processes; Standardization initiatives; Emphasis on real-world evidence | Faster biomarker validation; Enhanced reproducibility; Performance in diverse populations [27] |

Validation Frameworks for ML-Derived Biomarkers

Key Validation Challenges and Requirements

The validation of machine learning-derived biomarkers presents unique challenges that must be addressed for successful clinical translation. Key concerns revolve around data quality issues, including limited sample sizes, noise, batch effects, and biological heterogeneity [6]. These data-related limitations can severely impact model performance, leading to issues such as overfitting and reduced generalizability [6]. Additionally, the interpretability of ML models remains a significant hurdle, as many advanced algorithms function as "black boxes," making it difficult to elucidate how specific predictions are derived [6]. This lack of interpretability poses practical barriers to clinical adoption, where transparency and trust in predictive models are essential [6].
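
Of the data quality issues listed above, batch effects are the easiest to illustrate in a few lines. The sketch below shows per-batch mean centering, which removes a constant additive offset between batches. It is a deliberately simple stand-in for dedicated batch-correction methods, and it assumes each batch has a comparable biological composition (otherwise centering also removes real signal). The function name and the sample values are hypothetical.

```python
from collections import defaultdict
from typing import Hashable, List, Sequence


def center_by_batch(values: Sequence[float], batches: Sequence[Hashable]) -> List[float]:
    """Subtract each batch's mean from the samples measured in that batch."""
    if len(values) != len(batches):
        raise ValueError("values and batches must have the same length")
    sums = defaultdict(float)
    counts = defaultdict(int)
    for value, batch in zip(values, batches):
        sums[batch] += value
        counts[batch] += 1
    means = {batch: sums[batch] / counts[batch] for batch in sums}
    return [value - means[batch] for value, batch in zip(values, batches)]


# Hypothetical marker intensities: batch "B" reads about 3 units higher than "A".
values = [5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
batches = ["A", "A", "A", "B", "B", "B"]

centered = center_by_batch(values, batches)
print(centered)
```

After centering, samples from both batches sit on a common scale, so a downstream model cannot use the batch offset as a shortcut for the outcome.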

Another critical issue is the insufficient use of rigorous external validation strategies [6]. Biomarkers identified through computational methods must undergo stringent validation using independent cohorts and experimental (wet-lab) methods to ensure reproducibility and clinical reliability [6]. Regulatory frameworks are also evolving to address these challenges. By 2025, regulatory agencies are likely to implement more streamlined approval processes for biomarkers, particularly those validated through large-scale studies and real-world evidence [27]. Collaborative efforts among industry stakeholders, academia, and regulatory bodies will promote the establishment of standardized protocols for biomarker validation, enhancing reproducibility and reliability across studies [27].
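
One way to make the external validation requirement concrete is to compare a model's discrimination in the discovery cohort with its discrimination in an independent cohort. The following sketch computes a rank-based AUC in plain Python and reports the gap between the two cohorts. The scores, labels, and function names are invented for illustration, and the size of gap that should raise concern is a study-specific judgment not specified in the source.

```python
from typing import Sequence


def auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Rank-based AUC: probability that a random positive outscores a random
    negative, counting ties as one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical model scores (higher = more likely responder) and true labels.
discovery_scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
discovery_labels = [1, 1, 1, 0, 1, 0, 0, 0]
external_scores = [0.8, 0.7, 0.6, 0.5, 0.5, 0.4, 0.3, 0.2]
external_labels = [1, 0, 1, 0, 1, 1, 0, 0]

discovery_auc = auc(discovery_scores, discovery_labels)
external_auc = auc(external_scores, external_labels)
gap = discovery_auc - external_auc

print(f"discovery AUC = {discovery_auc:.3f}")
print(f"external AUC  = {external_auc:.3f}")
print(f"gap           = {gap:.3f}")
```

A large gap between the two values suggests that the model has fitted cohort-specific patterns rather than a reproducible biomarker signal.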

Proposed Validation Framework

A systematic biomarker validation process should encompass discovery, validation, and clinical validation phases to ensure that research findings are reliable and clinically applicable [29]. Multi-omics integration methods play a crucial role in this process: by combining genomics, transcriptomics, proteomics, and metabolomics data, they build comprehensive molecular disease maps and identify complex marker combinations that traditional methods might overlook [29]. Temporal data holds distinct value in biomarker validation. Longitudinal cohort studies capture how markers change over time, giving researchers vital information about the natural history of disease [29]. Studies demonstrate that marker trajectories generally provide more comprehensive predictive information than single time-point measurements [29].
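
To illustrate why a trajectory can carry information that a single measurement does not, the sketch below fits an ordinary least-squares slope to each patient's longitudinal marker values. The two patients, their visit schedule, and the marker values are hypothetical; a slope is only one of many possible trajectory summaries and is used here purely as an example.

```python
from typing import Sequence


def trajectory_slope(times: Sequence[float], values: Sequence[float]) -> float:
    """Ordinary least-squares slope of marker value against time."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two time points with matching values")
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("time points must not all be identical")
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    return sxy / sxx


# Hypothetical visits at 0, 6, 12, and 18 months.
months = [0.0, 6.0, 12.0, 18.0]
patient_a = [2.0, 2.0, 2.0, 2.0]  # stable marker level
patient_b = [0.5, 1.0, 1.5, 2.0]  # steadily rising marker level

slope_a = trajectory_slope(months, patient_a)
slope_b = trajectory_slope(months, patient_b)

# Both patients have the same latest value, but different trajectories.
print(patient_a[-1] == patient_b[-1], slope_a, slope_b)
```

A single measurement at 18 months cannot distinguish these two patients, whereas the slope separates a stable marker from a rising one.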

The following diagram illustrates a proposed rigorous validation pipeline for ML-derived biomarkers:

[Figure: ML Biomarker Validation Pipeline]

Regulatory bodies will increasingly recognize the importance of real-world evidence in evaluating biomarker performance, allowing for a more comprehensive understanding of their clinical utility in diverse populations [27]. The dynamic nature of ML-driven biomarker discovery, where models continuously evolve with new data, presents particular challenges for regulatory oversight and demands adaptive yet strict validation and approval frameworks [6]. Ethical considerations also significantly influence the deployment of ML-derived biomarkers into clinical practice, as biomarkers used for patient stratification, therapeutic decision making, or disease prognosis must comply with rigorous standards set by regulatory bodies such as the US Food and Drug Administration (FDA) [6].

Experimental Data and Case Studies

Wastewater Biomarker Monitoring with ML Classification

A compelling example of innovative biomarker applications comes from wastewater-based epidemiology (WBE), which involves analyzing sewage to monitor population health [33]. A 2025 study investigated the application of machine learning models for classifying wastewater samples based on varying concentrations of C-Reactive Protein (CRP), a critical biomarker for inflammation [33]. The research utilized absorption spectroscopy spectra to distinguish between five concentration classes ranging from zero to 10⁻¹ μg/ml [33]. The comparative analysis revealed accuracies ranging from 64.88% to 65.48% for the best model, Cubic Support Vector Machine (CSVM), using both full-spectrum and restricted-range spectral data [33]. This approach demonstrates the potential of machine learning techniques to classify biomarker levels in complex environmental samples, offering promising insights for future biosensor development and real-time environmental monitoring [33].

The experimental protocol for this study involved:

- Sample Preparation: Wastewater samples were spiked with known concentrations of CRP, creating five distinct concentration classes from zero to 10⁻¹ μg/ml [33].

- Spectral Acquisition: Absorption spectra were collected by UV-Vis spectroscopy across a range of 220-750 nm [33].

- Data Processing: Spectral data were processed and normalized to reduce noise and enhance relevant features [33].

- Model Training: Multiple machine learning algorithms were trained and compared, including Cubic Support Vector Machine (CSVM), to identify the most effective approach for classification [33].

- Performance Validation: Model performance was assessed through repeated experiments using metrics including accuracy, precision, recall, F1 score, and specificity to ensure robustness and reproducibility [33].
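
The metrics named in the performance validation step can all be derived from a confusion matrix. The sketch below is a minimal plain-Python illustration for a five-class problem, assuming a one-vs-rest treatment of each concentration class. The function names and the confusion-matrix counts are invented for illustration and are not results from the study.

```python
from typing import Dict, List


def overall_accuracy(cm: List[List[int]]) -> float:
    """Fraction of all samples that lie on the diagonal (rows = true class)."""
    total = sum(sum(row) for row in cm)
    correct = sum(cm[k][k] for k in range(len(cm)))
    return correct / total


def per_class_metrics(cm: List[List[int]]) -> List[Dict[str, float]]:
    """One-vs-rest precision, recall, F1, and specificity for each class."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    results = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                       # true k, predicted other
        fp = sum(cm[r][k] for r in range(n)) - tp  # true other, predicted k
        tn = total - tp - fn - fp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        specificity = tn / (tn + fp) if tn + fp else 0.0
        results.append({"precision": precision, "recall": recall,
                        "f1": f1, "specificity": specificity})
    return results


# Hypothetical counts: 5 concentration classes, 20 test samples per class.
confusion = [
    [18, 2, 0, 0, 0],
    [3, 13, 4, 0, 0],
    [0, 4, 12, 4, 0],
    [0, 0, 5, 12, 3],
    [0, 0, 0, 5, 15],
]

accuracy = overall_accuracy(confusion)
metrics = per_class_metrics(confusion)
print(f"accuracy = {accuracy:.2f}")
for k, m in enumerate(metrics):
    print(k, {name: round(value, 3) for name, value in m.items()})
```

Reporting specificity alongside recall is useful in a multi-class setting because overall accuracy can hide classes that are frequently confused with their neighbours.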

AI-Derived Biomarkers in Oncology

In oncology, AI-derived biomarkers are showing considerable potential for improving diagnostic precision and prognostic assessment. They provide information about a patient's response to treatment, particularly immunotherapy, and help predict disease progression [30]. For instance, AI models can combine several data modalities, including radiography, histology, genomics, and electronic health records, to enhance diagnostic precision and reliability [30]. Deep learning algorithms trained on large collections of histological images have consistently demonstrated high accuracy in identifying cancerous tissue, often surpassing the performance of human pathologists [30].

AI-discovered biomarkers have considerable prognostic value for predicting patient outcomes and informing therapeutic choices [30]. Models that combine biomarkers with AI can predict a patient's likely response to specific therapies, helping oncologists make better-informed treatment decisions [30]. This is especially important in cancer immunotherapy, where patient responses vary widely and are hard to predict. AI can identify biomarker signatures that indicate which patients are more likely to respond to immunotherapies such as checkpoint inhibitors, supporting more tailored and effective treatment plans [30].

Table 3: Experimental Data from Biomarker ML Studies

| Study Focus | ML Model(s) Used | Performance Metrics | Clinical/Research Utility |
|---|---|---|---|
| CRP Detection in Wastewater [33] | Cubic Support Vector Machine (CSVM) | Accuracy: 64.88-65.48% (5-class classification) | Environmental health monitoring; Public health surveillance |
| Cancer Diagnosis & Prognosis [30] | Deep Learning (CNN-based models) | Surpasses human pathologist accuracy in histology image analysis | Early cancer detection; Tumor classification; Prognostic assessment |
| Non-Small Cell Lung Cancer Biomarkers [30] | Explainable AI (XAI) Deep Learning Framework | Improved diagnostic accuracy; Enhanced clinician confidence | Treatment decision support; Biomarker interpretation |
| Multi-Omics Integration [29] | Transformer-based algorithms | 32% improvement in early Alzheimer's diagnosis specificity | Early disease screening; Risk stratification; Precision diagnosis |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Advancing biomarker research requires a comprehensive toolkit of sophisticated research reagents and analytical solutions. The following table details essential materials and their functions in contemporary biomarker investigations:

Table 4: Essential Research Reagents and Solutions for Biomarker Research

| Reagent/Solution Category | Specific Examples | Function in Biomarker Research |

|---|---|---|

| High-Throughput Sequencing Reagents | Whole genome sequencing kits; RNA-seq reagents; Single-cell sequencing kits | Comprehensive genomic and transcriptomic profiling; Identification of genetic variants and expression signatures [29] [6] |