Human Expert vs. AI in Sperm Morphology Classification: A Comprehensive Analysis of Accuracy, Standardization, and Future Directions

Abstract

This article provides a systematic comparison between human expert and artificial intelligence (AI) methodologies for sperm morphology classification, a critical component of male infertility diagnosis. We explore the foundational challenges of manual assessment, including high inter-observer variability and subjectivity, and contrast them with emerging AI solutions leveraging convolutional neural networks (CNNs) and deep feature engineering. The analysis covers methodological advances in automated systems, optimization strategies to overcome data and technical limitations, and rigorous validation of AI performance against expert benchmarks. Recent studies report AI models achieving accuracy rates of 90-96% while reducing analysis time from tens of minutes per sample to under one minute and improving standardization. For researchers and drug development professionals, this synthesis offers critical insights into the evolving landscape of reproductive diagnostics, highlighting pathways for integrating AI to enhance precision, efficiency, and clinical outcomes in fertility care.

The Foundational Challenge: Understanding Variability in Human Sperm Morphology Assessment

Male infertility constitutes a significant global health challenge, implicated in approximately 50% of all infertility cases among couples, either as a sole factor or in combination with female factors [1] [2]. Within the diagnostic landscape of male infertility, sperm morphology—the study of sperm size, shape, and structural integrity—has emerged as a cornerstone parameter due to its profound clinical relevance. The morphological assessment of spermatozoa provides crucial diagnostic and prognostic information, serving as a key predictor of fertilization potential in both natural conception and assisted reproductive technologies (ART) [1] [3]. Despite its established importance, sperm morphology evaluation has historically presented significant challenges in standardization, often relying on subjective manual analysis by experienced technicians, which leads to considerable inter-observer and inter-laboratory variability [1] [4].

The clinical imperative for accurate morphology assessment stems from its ability to reflect underlying testicular and epididymal function, offering insights that extend beyond fertility to encompass broader male health concerns [5] [3]. With the declining trends in semen quality parameters observed globally, particularly among young men, the rigorous evaluation of sperm morphology has gained renewed importance in the clinical evaluation of male fertility potential [3]. This article examines the evolving landscape of sperm morphology assessment, comparing traditional manual techniques with emerging artificial intelligence (AI) approaches, and explores how technological advancements are addressing long-standing limitations in standardization and accuracy, ultimately enhancing the clinical utility of this fundamental diagnostic parameter.

Traditional Morphology Assessment: Methods, Limitations, and Clinical Significance

Established Staining Techniques and Methodological Approaches

The accurate evaluation of sperm morphology requires staining techniques that clearly differentiate sperm components while minimizing structural artifacts. The World Health Organization (WHO) manual endorses several staining methods, with Diff-Quik and Papanicolaou among the most widely used in clinical andrology laboratories [4]. These protocols enable detailed visualization of the sperm head, midpiece, and tail, allowing morphological abnormalities to be identified and classified according to standardized criteria.

A comparative study of Diff-Quik and Spermac staining revealed methodological differences that affect morphological classification. While both techniques assessed head and tail abnormalities comparably, Spermac staining gave superior visualization of the midpiece and identified substantially higher rates of midpiece defects than Diff-Quik (55.7% ± 2.1% versus 24.8% ± 2.0%, p<0.0001) [4]. This discrepancy shows how technical methodology can directly influence diagnostic outcomes and, potentially, patient management. The same study found that Diff-Quik staining yielded a significantly higher percentage of morphologically normal sperm (3.98% ± 0.4% versus 2.8% ± 0.3%, p=0.0385), underscoring that the choice of stain alone can alter the clinical interpretation of semen quality [4].

Classification Systems and Reference Standards

Sperm morphology assessment employs standardized classification systems to categorize observed abnormalities. The most prominent frameworks include the Tygerberg strict criteria and the modified David classification, which provide systematic approaches for identifying and documenting specific defect types [1] [4]. The David classification, for instance, delineates 12 distinct morphological classes encompassing seven head defects (tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome), two midpiece defects (cytoplasmic droplet, bent), and three tail defects (coiled, short, multiple) [1].
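For annotation or software work, the 12 classes listed above can be held in a simple lookup structure. This is a minimal sketch: the dictionary layout and the `region_of` helper are illustrative assumptions rather than part of the David classification itself.

```python
# Modified David classification as described above: 7 head, 2 midpiece, 3 tail defects.
# The dictionary layout is an illustrative choice, not a published schema.
DAVID_CLASSES = {
    "head": [
        "tapered",
        "thin",
        "microcephalous",
        "macrocephalous",
        "multiple",
        "abnormal post-acrosomal region",
        "abnormal acrosome",
    ],
    "midpiece": ["cytoplasmic droplet", "bent"],
    "tail": ["coiled", "short", "multiple"],
}


def region_of(defect: str) -> list:
    """Return every region whose defect list contains `defect`.

    A list is returned because "multiple" appears under both head and tail.
    """
    return [region for region, defects in DAVID_CLASSES.items() if defect in defects]


if __name__ == "__main__":
    print(sum(len(d) for d in DAVID_CLASSES.values()))
    print(region_of("multiple"))
```

Because "multiple" appears under both head and tail, a label stored for each spermatozoon should carry its region (for example as a `(region, defect)` pair) to remain unambiguous.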

The reference range for normal sperm morphology has become increasingly stringent over time, with the current WHO lower reference limit established at 4% morphologically normal forms [4]. This threshold serves as a critical benchmark in male fertility assessment, with values below this cutoff associated with reduced fertilization potential in both natural conception and ART cycles. The clinical significance of morphology is particularly pronounced when multiple semen parameter abnormalities coexist, with morphology often demonstrating the strongest correlation with fertility outcomes among conventional semen parameters [1] [5].

Table 1: Comparison of Staining Techniques for Sperm Morphology Assessment

| Staining Method | Normal Morphology (%) | Midpiece Defects (%) | Head Defects (%) | Tail Defects (%) | Key Advantages |
|---|---|---|---|---|---|
| Diff-Quik | 3.98 ± 0.41 | 24.82 ± 2.05 | 93.42 ± 0.66 | 16.60 ± 1.34 | Rapid procedure, established standard |
| Spermac | 2.80 ± 0.33 | 55.74 ± 2.06 | 94.24 ± 0.61 | 14.84 ± 1.39 | Superior midpiece visualization |
| Papanicolaou | - | - | - | - | WHO reference standard; comprehensive structural detail |

Inherent Limitations of Traditional Assessment

Despite its clinical importance, traditional sperm morphology assessment faces several fundamental limitations. The process remains inherently subjective and operator-dependent, with classification consistency heavily influenced by technician expertise and experience [1] [3]. This subjectivity is evident in significant inter-expert variability, even among highly trained professionals. In one study in which three experts classified the same 1,000 sperm images, total agreement (3/3 experts) was reached for 55% of images, partial agreement (2/3 experts) for 92%, and no agreement for 8% [1].

The manual evaluation process is also notably time-consuming and labor-intensive, requiring the systematic assessment of at least 200 individual spermatozoa per sample under high magnification (1000×) with oil immersion [4] [3]. This substantial analytical workload, combined with the inherent subjectivity, has compromised both the reproducibility and clinical reliability of traditional morphology assessment, creating an imperative for more standardized, objective approaches [3]. Additionally, conventional staining methods render sperm unsuitable for subsequent therapeutic use in ART, necessitating separate sample processing for diagnostic and treatment purposes [6].

The Rise of AI in Sperm Morphology Analysis

Artificial Intelligence Methodologies and Approaches

Artificial intelligence, particularly deep learning algorithms, has emerged as a transformative approach to overcoming the limitations of traditional sperm morphology assessment. Convolutional Neural Networks (CNNs) represent the predominant architectural framework in this domain, capable of automating the extraction of discriminative features from sperm images without relying on manual feature engineering [1] [3]. These AI models are trained on extensive datasets of annotated sperm images, learning to recognize and classify morphological patterns with increasing accuracy through iterative exposure to labeled examples.
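The core operation that lets a CNN derive features directly from pixels is the 2D convolution: a small kernel slides across the image and produces a feature map. The sketch below uses a hand-chosen vertical-edge kernel on a toy 5×5 patch purely for illustration; in a trained CNN the kernel weights are learned from labeled sperm images rather than set by hand. Like the convolution layers in common deep learning libraries, it computes cross-correlation (the kernel is not flipped).

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Computes cross-correlation, which is what deep-learning "convolution" layers do.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Toy grayscale patch: dark background (0) on the left, bright region (1) on the right.
patch = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-chosen vertical-edge kernel (hypothetical; a CNN would learn its own weights).
edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

fmap = conv2d_valid(patch, edge_kernel)
```

The feature map responds where the patch changes from dark to bright and is zero over the uniform bright region; stacking many such learned filters is what allows a CNN to build up descriptors of head shape, midpiece, and tail outline without manual feature engineering.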

The AI pipeline for sperm morphology analysis typically encompasses several sequential stages: image acquisition, pre-processing, data augmentation, model training, and validation [1]. Pre-processing techniques are employed to enhance image quality, denoise signals, and standardize dimensions, while data augmentation strategies expand limited datasets through transformations like rotation, scaling, and contrast adjustment, improving model robustness and generalizability [1]. Following training, model performance is rigorously evaluated using separate test datasets to assess metrics such as accuracy, precision, recall, and area under the curve (AUC) values [6] [2].
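The augmentation step described above can be illustrated in plain Python on a grayscale image stored as a list of rows. This minimal sketch assumes 8-bit intensities (0-255) and implements three of the transformations mentioned: a 90° rotation, a horizontal flip, and a linear contrast adjustment. The function names are hypothetical, and a real pipeline would normally use an imaging library and add arbitrary-angle rotation and scaling.

```python
def rotate90(img):
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def flip_horizontal(img):
    """Mirror the image left-to-right."""
    return [row[::-1] for row in img]


def adjust_contrast(img, factor, center=128):
    """Scale intensities around `center`, then clip to the 0-255 range."""
    return [
        [min(255, max(0, round(center + factor * (p - center)))) for p in row]
        for row in img
    ]


def augment(img):
    """Return the original image plus three augmented variants."""
    return [img, rotate90(img), flip_horizontal(img), adjust_contrast(img, 1.5)]


img = [
    [100, 200],
    [50, 150],
]
variants = augment(img)
```

Each source image yields several training examples that keep the same label, which is how a dataset can be expanded (for example from 1,000 to 6,035 images in [1], although the exact transformations used there are not specified here).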

Transfer learning approaches, in which pre-trained models such as ResNet50 are adapted for sperm classification tasks, have proven particularly effective when training data are limited [6]. One study using this approach reported a test accuracy of 93%, a precision of 0.95 for abnormal sperm detection, and a recall of 0.95 for normal sperm identification [6]. Processing was also fast, with an average prediction time of approximately 0.0056 seconds per image, enabling rapid analysis of thousands of sperm cells [6].
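Per-class precision and recall, as reported for the ResNet50 model, are derived from a confusion matrix. The sketch below computes them for a binary normal/abnormal task; the counts and the `binary_metrics` helper are hypothetical and are not taken from [6].

```python
def binary_metrics(tp, fp, fn, tn):
    """Accuracy, precision and recall, treating one class as 'positive'.

    tp: positives predicted positive    fp: negatives predicted positive
    fn: positives predicted negative    tn: negatives predicted negative
    """
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall


# Hypothetical test-set counts (invented for illustration, not from [6]).
abnormal_as_abnormal = 90
abnormal_as_normal = 10
normal_as_abnormal = 5
normal_as_normal = 95

# "Abnormal" as the positive class.
acc, prec_abn, rec_abn = binary_metrics(
    tp=abnormal_as_abnormal, fp=normal_as_abnormal,
    fn=abnormal_as_normal, tn=normal_as_normal,
)
# "Normal" as the positive class: the same matrix read the other way round.
_, prec_nor, rec_nor = binary_metrics(
    tp=normal_as_normal, fp=abnormal_as_normal,
    fn=normal_as_abnormal, tn=abnormal_as_abnormal,
)
```

With these invented counts, abnormal precision (90/95, about 0.95) and normal recall (95/100) are both reduced only by the five normal cells misclassified as abnormal, which is why, in a binary task, one class's precision and the other class's recall tend to move together.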

Performance Comparison: AI Versus Human Experts

Multiple studies have directly compared the performance of AI-based systems against traditional manual assessment by human experts, with consistently promising results. A deep learning model developed on the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset demonstrated classification accuracy ranging from 55% to 92% across different morphological categories, approaching the consistency level of expert embryologists [1]. This study utilized a substantial dataset initially comprising 1,000 sperm images, expanded to 6,035 images through data augmentation techniques, with classifications established by consensus among three human experts serving as the reference standard [1].

In another investigation focusing on unstained live sperm evaluation—a significant advancement beyond conventional fixed and stained preparations—an AI model exhibited strong correlation with both computer-aided semen analysis (CASA) (r=0.88) and conventional semen analysis (r=0.76) [6]. This capability to assess sperm without staining is particularly valuable in ART settings, as it preserves sperm viability for subsequent therapeutic use immediately after evaluation [6]. The performance advantages of AI systems extend beyond classification accuracy to encompass superior consistency, processing speed, and freedom from fatigue-related variability that affects human analysts.
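The agreement figures above are Pearson correlation coefficients (r) computed over paired per-sample results from two methods. The sketch below computes r from scratch on invented percentages of normal forms for six samples (not data from [6]); Python 3.10 and later also provide `statistics.correlation` for the same calculation.

```python
import math
import statistics


def pearson_r(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Hypothetical % normal forms for six samples, measured by two methods.
ai_model = [3.0, 5.5, 2.0, 8.0, 4.5, 6.0]
casa = [3.5, 5.0, 2.5, 7.0, 5.0, 6.5]

r = pearson_r(ai_model, casa)
```

Note that r measures linear association rather than agreement: a method that consistently reported values twice as high as CASA would still give r = 1, so correlation is usually reported alongside measures of absolute difference.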

Table 2: Performance Metrics of AI Models in Sperm Morphology Classification

| Study | AI Methodology | Dataset Size | Accuracy / AUC | Precision | Recall/Sensitivity | Key Finding |
|---|---|---|---|---|---|---|
| Deep-learning based model for sperm morphology [1] | Convolutional Neural Network (CNN) | 1,000 images (expanded to 6,035) | 55-92% | - | - | Accuracy approaches expert-level consistency |
| AI model for unstained sperm assessment [6] | ResNet50 Transfer Learning | 21,600 images (12,683 annotated) | 93% | 0.95 (abnormal), 0.91 (normal) | 0.91 (abnormal), 0.95 (normal) | Strong correlation with CASA (r=0.88) and conventional analysis (r=0.76) |
| SVM Classifier [3] | Support Vector Machine | >1,400 sperm cells | AUC: 88.59% | >90% | - | High discriminatory power for sperm head classification |

Figure: Performance of AI versus human experts in sperm morphology assessment

Experimental Protocols in Sperm Morphology Research

Protocol 1: Traditional Staining and Manual Assessment

The conventional methodology for sperm morphology assessment follows a standardized protocol established by WHO guidelines. Semen samples are initially collected after 2-7 days of sexual abstinence and allowed to liquefy at 37°C [4]. Smear preparation involves placing a small semen aliquot (typically 6-10μL) onto a clean glass slide, with spreading techniques designed to achieve a monolayer distribution of spermatozoa to prevent overlap and ensure optimal visualization [4].

For Diff-Quik staining, the established protocol involves sequential immersion of air-dried smears in specific solutions: fixation in a 0.1% triarylmethane solution for 5 seconds, followed by immersion in a 0.1% xanthene solution for 5 seconds and a 0.1% thiazine solution for 5 seconds, and a final rinse in distilled water for 5 seconds before air drying [4]. Spermac staining, by contrast, uses a more complex procedure: fixation in formaldehyde solution for 5 minutes, followed by sequential staining in three different solutions (A, B, and C) for 1 minute each, with distilled water washes between staining steps [4].
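Encoding the two staining protocols as ordered step lists makes them easy to audit and to time. The sketch below records the steps and durations given above; the data layout and step names are illustrative assumptions. Because no durations are given for Spermac's water washes, those steps are stored with an unknown duration and excluded from the total, as is the final air drying.

```python
from typing import NamedTuple, Optional


class Step(NamedTuple):
    reagent: str
    seconds: Optional[int]  # None = duration not specified in the protocol text


DIFF_QUIK = [
    Step("fixative: 0.1% triarylmethane", 5),
    Step("0.1% xanthene solution", 5),
    Step("0.1% thiazine solution", 5),
    Step("distilled water rinse", 5),
]

SPERMAC = [
    Step("formaldehyde fixative", 5 * 60),
    Step("solution A", 60),
    Step("distilled water wash", None),
    Step("solution B", 60),
    Step("distilled water wash", None),
    Step("solution C", 60),
]


def timed_total(protocol):
    """Sum the specified step durations in seconds; unspecified steps are skipped."""
    return sum(step.seconds for step in protocol if step.seconds is not None)


if __name__ == "__main__":
    for name, protocol in (("Diff-Quik", DIFF_QUIK), ("Spermac", SPERMAC)):
        print(f"{name}: {timed_total(protocol)} s of timed steps")
```

The timed steps total 20 seconds for Diff-Quik and 8 minutes for Spermac, which makes the difference in hands-on complexity explicit.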

Manual morphological assessment is performed by trained technologists using brightfield microscopy under oil immersion at 1000× magnification. A minimum of 200 spermatozoa are systematically evaluated and classified according to established criteria (Tygerberg or David classification), with results expressed as the percentage of morphologically normal forms and the prevalence of specific defect categories [4]. Quality control measures include regular participation in external quality assurance programs and internal consistency checks to minimize inter-technician variability.
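The reporting step, converting a tally of at least 200 classified spermatozoa into percentages, is simple arithmetic. The sketch below assumes each cell is recorded as a tuple of affected regions (empty for a normal cell); because one cell can carry several defects, region prevalences need not sum to 100%. The record format and counts are hypothetical, and the result is compared with the WHO lower reference limit of 4% normal forms.

```python
from collections import Counter

WHO_LOWER_REFERENCE_NORMAL_PCT = 4.0  # lower reference limit for normal forms
MIN_CELLS = 200


def summarize(cells):
    """cells: one tuple of defect regions per spermatozoon; an empty tuple means normal."""
    n = len(cells)
    if n < MIN_CELLS:
        raise ValueError(f"only {n} cells assessed; at least {MIN_CELLS} are required")
    normal = sum(1 for defects in cells if not defects)
    # Count each region at most once per cell (a cell may have several head defects).
    region_counts = Counter(region for defects in cells for region in set(defects))
    pct_normal = 100.0 * normal / n
    prevalence = {region: 100.0 * c / n for region, c in region_counts.items()}
    return pct_normal, prevalence, pct_normal >= WHO_LOWER_REFERENCE_NORMAL_PCT


# Hypothetical tally of 200 cells.
cells = [()] * 6 + [("head",)] * 150 + [("head", "midpiece")] * 20 + [("tail",)] * 24
pct_normal, prevalence, within_reference = summarize(cells)
```

Here 6 of 200 cells (3%) are normal, which falls below the 4% reference limit, while 85% of cells carry at least one head defect.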

Protocol 2: AI-Assisted Morphology Classification

AI-based morphology assessment begins with image acquisition, typically using specialized microscopy systems. One protocol utilizes the MMC CASA (Computer-Assisted Semen Analysis) system for image capture, employing brightfield mode with an oil immersion 100× objective to acquire individual sperm images [1]. Each image contains a single spermatozoon encompassing the head, midpiece, and tail regions, ensuring comprehensive morphological assessment.

Image pre-processing represents a critical step in the AI pipeline, involving data cleaning to handle missing values or inconsistencies, and normalization to standardize image dimensions and intensity values [1]. Specific pre-processing techniques include resizing images to standardized dimensions (e.g., 80×80×1 grayscale) using linear interpolation strategies to minimize distortion artifacts [1].
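Resizing each crop to a fixed 80×80 grayscale input with linear interpolation can be sketched as bilinear interpolation, the two-dimensional form of linear interpolation. This pure-Python version is an illustration only, assuming a non-empty image stored as a list of rows. It uses an "align-corners" mapping (corner pixels map to corner pixels); the exact coordinate convention used in [1] is not stated, so library implementations may give slightly different values.

```python
def resize_bilinear(img, out_h=80, out_w=80):
    """Resize a 2D grayscale image with bilinear interpolation (align-corners mapping)."""
    in_h, in_w = len(img), len(img[0])
    out = []
    for i in range(out_h):
        # Map the output row index onto the input grid.
        y = i * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        wy = y - y0
        row = []
        for j in range(out_w):
            x = j * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            wx = x - x0
            # Interpolate along x on the two neighbouring rows, then along y.
            top = img[y0][x0] * (1 - wx) + img[y0][x1] * wx
            bottom = img[y1][x0] * (1 - wx) + img[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out
```

Each output pixel is a weighted average of its four nearest input pixels, which avoids the blocky artifacts of nearest-neighbour resizing.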

For model development, datasets are typically partitioned into training (80%) and testing (20%) subsets, with a portion of the training set often reserved for validation during the development phase [1]. Data augmentation techniques are employed to address class imbalance and expand effective dataset size, including transformations such as rotation, scaling, flipping, and contrast adjustment [1]. The deep learning model is then trained using iterative optimization algorithms to minimize classification error, with performance validation conducted on the withheld test set to evaluate generalizability to unseen data.
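The partitioning step can be sketched as a seeded, stratified split, so that each morphological class keeps roughly the same proportion in every subset. The 80/20 train/test ratio follows the text; the 10% validation share taken from the training portion, the seed, and the `stratified_split` name are illustrative assumptions.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_frac=0.2, val_frac=0.1, seed=42):
    """Return (train, val, test) index lists, splitting each class separately.

    test_frac is taken from each whole class; val_frac is taken from what
    remains of that class for training.
    """
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, val, test = [], [], []
    for label in sorted(by_class):
        idxs = by_class[label][:]
        rng.shuffle(idxs)
        n_test = round(len(idxs) * test_frac)
        n_val = round((len(idxs) - n_test) * val_frac)
        test += idxs[:n_test]
        val += idxs[n_test:n_test + n_val]
        train += idxs[n_test + n_val:]
    return train, val, test


labels = ["normal"] * 100 + ["tapered"] * 50
train, val, test = stratified_split(labels)
```

Splitting before augmentation, and augmenting only the training portion, keeps transformed copies of a test image out of training, so the held-out score reflects performance on genuinely unseen cells.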

Figure: AI-assisted sperm morphology analysis workflow

Comparative Analysis: Human Expertise Versus AI Algorithms

Accuracy and Consistency Metrics

The fundamental distinction between human experts and AI algorithms in sperm morphology classification centers on the trade-off between experiential knowledge and computational consistency. Human experts bring sophisticated pattern recognition capabilities honed through extensive training and practical experience, enabling nuanced interpretation of borderline cases and integration of contextual clinical information [1] [3]. However, this expertise is inevitably accompanied by inherent subjectivity and inter-observer variability, even among highly trained professionals within the same laboratory [1].

In contrast, a trained AI model is highly consistent: it returns the same classification for the same image, applying identical criteria to every sperm cell analyzed without influence from fatigue, distraction, or temporal performance fluctuations [2] [3]. This computational objectivity addresses one of the most significant limitations of traditional morphology assessment. Studies directly comparing classification consistency have shown that while human experts show varying agreement levels (total, partial, or no agreement across three experts), AI models maintain stable performance when presented with the same images [1]. The emerging consensus suggests that AI systems can achieve accuracy approaching or even exceeding that of human experts for well-defined morphological classes, particularly obvious abnormalities, although challenging borderline cases may still benefit from human oversight [1] [6] [3].

Processing Efficiency and Clinical Workflow Integration

A decisive advantage of AI-based systems lies in their processing efficiency and potential for workflow integration. Manual morphology assessment is notoriously time-consuming, requiring 15-30 minutes per sample for a trained technologist to evaluate 200 spermatozoa under high magnification [3]. This analytical burden creates practical limitations in high-volume clinical settings and restricts the number of sperm that can be reasonably assessed, potentially compromising statistical reliability.
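The statistical-reliability concern can be made concrete with the binomial standard error of a proportion, SE = sqrt(p(1 - p)/n). This sketch uses the normal-approximation (Wald) 95% interval, ±1.96 SE, as a simplifying assumption; it is crude at proportions of a few percent, and exact or Wilson intervals are preferable in practice. At 4% normal forms, 200 counted cells give a half-width of roughly 2.7 percentage points, while 2,000 cells narrow it to under 1 point.

```python
import math


def normal_approx_ci(pct_normal, n_cells, z=1.96):
    """Approximate 95% CI (in %) for the proportion of normal forms.

    Wald interval: p +/- z * sqrt(p * (1 - p) / n), clipped to 0-100%.
    """
    p = pct_normal / 100.0
    se = math.sqrt(p * (1.0 - p) / n_cells)
    half_width = 100.0 * z * se
    return max(0.0, pct_normal - half_width), min(100.0, pct_normal + half_width)


for n in (200, 2000):
    low, high = normal_approx_ci(4.0, n)
    print(f"n={n}: 4.0% normal forms, approx. 95% CI {low:.1f}%-{high:.1f}%")
```

With 200 cells, a true value of exactly 4% is statistically compatible with counts well on either side of the reference limit, which is one reason larger automated counts are attractive.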

AI systems are dramatically faster: one study reported an average prediction time of 0.0056 seconds per image, so thousands of sperm images can be classified within seconds of compute time (excluding image acquisition), whereas manual assessment covers a few hundred cells over a much longer period [6]. This efficiency permits more comprehensive sample characterization through the evaluation of larger numbers of sperm, while reducing technologist workload and freeing staff for higher-value tasks [2] [3].
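A back-of-the-envelope comparison using the figures quoted in this section: at about 0.0056 s per image, inference for 200 images takes roughly 1.1 s and for 10,000 images roughly 56 s, versus 15-30 minutes for a manual 200-cell read. The sketch assumes inference time scales linearly with image count and ignores image acquisition, segmentation, and batching effects, so it compares classification time only.

```python
SECONDS_PER_IMAGE = 0.0056      # average AI prediction time reported in [6]
MANUAL_MINUTES_200 = (15, 30)   # manual range quoted in the text for 200 sperm


def ai_inference_seconds(n_images, per_image=SECONDS_PER_IMAGE):
    """Estimated pure inference time, assuming linear scaling with image count."""
    return n_images * per_image


for n in (200, 1_000, 10_000):
    print(f"{n:>6} images: ~{ai_inference_seconds(n):.1f} s of inference")

low, high = (m * 60 for m in MANUAL_MINUTES_200)
print(f"manual, 200 sperm: {low}-{high} s")
```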

From a clinical workflow perspective, AI systems offer the additional advantage of operating effectively on unstained, live sperm samples, as demonstrated by confocal laser scanning microscopy approaches [6]. This capability is particularly valuable in ART settings where preserving sperm viability for subsequent procedures is essential, eliminating the trade-off between diagnostic assessment and therapeutic utility that characterizes conventional staining methods.

Table 3: Comparative Analysis of Human Expert vs. AI-Based Sperm Morphology Assessment

| Parameter | Human Expert Assessment | AI-Based Assessment |
|---|---|---|
| Classification Basis | Subjective pattern recognition | Computational algorithm |
| Consistency | Variable (inter- and intra-observer variability) | High (a trained model returns the same output for the same image) |
| Processing Speed | 15-30 minutes per sample (200 sperm) | ~0.0056 seconds per image (model inference) |
| Throughput | Limited by human fatigue and attention | Scales with computing resources and image acquisition |
| Staining Requirement | Generally required for optimal visualization | Possible with unstained, live sperm |
| Borderline Case Handling | Contextual interpretation and judgment | Algorithmic classification based on training |
| Standardization | Variable between laboratories and technicians | Consistent wherever the same trained model and imaging setup are used |

Essential Research Reagents and Materials

The experimental protocols for sperm morphology assessment, whether traditional or AI-assisted, rely on specific research reagents and materials that directly impact analytical outcomes. The selection of appropriate staining kits, fixation methods, and microscopy equipment represents a critical methodological consideration with substantial implications for result interpretation and cross-study comparability.

Table 4: Essential Research Reagents for Sperm Morphology Analysis

| Reagent/Material | Function/Purpose | Examples/Alternatives |
|---|---|---|
| Diff-Quik Stain | Rapid staining for general morphology assessment | Panótico Rápido kit (Laborclin) |
| Spermac Stain | Enhanced midpiece visualization and differentiation | Spermac stain (FertiPro N.V.) |
| Formaldehyde Solution | Fixation for morphological preservation | 4% paraformaldehyde for specific protocols |
| Glutaraldehyde | Alternative fixative for structural integrity | 2.5% glutaraldehyde in 0.1 M sodium cacodylate buffer |
| RAL Diagnostics Kit | Staining for conventional morphology assessment | Used in SMD/MSS dataset development |
| Computer-Assisted Semen Analysis (CASA) System | Automated image acquisition and initial morphometry | MMC CASA system, IVOS II (Hamilton Thorne) |
| Confocal Laser Scanning Microscope | High-resolution imaging of unstained, live sperm | LSM 800 for AI model development |
| Phase Contrast Microscope | Evaluation of unstained sperm morphology | Alternative to brightfield microscopy |

Future Directions and Clinical Implications

The integration of AI technologies into sperm morphology assessment represents a paradigm shift in male fertility evaluation, with profound implications for clinical practice and research. Current evidence indicates that AI-assisted approaches can enhance diagnostic accuracy, improve standardization, and increase analytical efficiency, addressing longstanding limitations of conventional methodology [1] [6] [2]. The ability to analyze unstained, live sperm samples using confocal microscopy and AI algorithms is particularly promising for ART applications, enabling simultaneous diagnostic assessment and therapeutic utilization [6].

Future developments in this field will likely focus on several key areas: the creation of larger, more diverse, and standardized datasets to enhance model generalizability; the refinement of algorithms for detecting subtle morphological features with clinical significance; and the integration of multi-parameter assessments combining morphology with motility, DNA fragmentation, and other functional parameters [2] [3]. Additionally, the validation of AI systems across diverse clinical settings and population groups will be essential to establish universal reliability and facilitate widespread adoption.

From a clinical perspective, the greater objectivity and efficiency of AI-assisted morphology assessment could improve the accuracy of infertility diagnosis, optimize treatment selection, and provide more precise prognostic information for couples [2] [3]. As these technologies mature and are validated in rigorous clinical studies, they are poised to transform sperm morphology from a subjective, highly variable parameter into a precise, reproducible cornerstone of male fertility evaluation, ultimately advancing the standard of care in reproductive medicine.

The accurate classification of biological samples is a cornerstone of diagnostic medicine and scientific research. In fields ranging from reproductive biology to auditory neuroscience, manual analysis by human experts has traditionally been the gold standard. However, this approach is inherently susceptible to subjectivity, leading to potential inconsistencies in interpretation and diagnosis. This guide objectively examines the quantifiable variability between human experts across multiple domains, with a specific focus on sperm morphology classification, and contrasts this performance with emerging artificial intelligence (AI) solutions. As male factors contribute to approximately 50% of infertility cases, the precision of sperm analysis is of paramount importance [7]. The integration of AI and computer-assisted semen analysis (CASA) systems represents a paradigm shift, offering enhanced objectivity, standardization, and throughput in fertility diagnostics [8]. This analysis leverages recent comparative studies to provide researchers and drug development professionals with a clear understanding of the capabilities and limitations of both human and automated classification methods.

Quantifying Inter-Expert Variability in Manual Classification

Inter-expert variability is a well-documented phenomenon that challenges the reliability of manual classification across numerous scientific disciplines.

Evidence from Sperm Morphology Assessment

The assessment of sperm morphology is particularly prone to subjectivity. A study that developed a deep-learning model for sperm classification quantified this variability directly. Three experts independently classified 1,000 sperm images according to the modified David classification, which includes 12 distinct morphological defect classes. The analysis revealed a clear lack of consensus [1]:

- Total Agreement (TA): All three experts agreed on the same label for all categories in only 55% of cases.

- Partial Agreement (PA): Two out of three experts agreed on the same label for at least one category in 92% of cases.

- No Agreement (NA): The experts completely disagreed on the classification for 8% of the sperm images.

This discrepancy occurred despite all experts being from the same laboratory and possessing extensive experience, underscoring the inherent challenge of standardizing subjective visual criteria [1].
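The three agreement categories can be derived mechanically from the experts' labels for each image. This sketch assumes one label per expert per image (simpler than the study's per-category scheme) and uses hypothetical labels. Because the reported partial-agreement (92%) and no-agreement (8%) figures sum to 100%, partial agreement appears to have been counted as "at least two of three", which includes total-agreement cases; the sketch therefore reports both the exactly-two and the at-least-two rates.

```python
from collections import Counter


def agreement(triple):
    """Classify one image's three expert labels as 'TA', 'PA' (exactly 2/3) or 'NA'."""
    if len(triple) != 3:
        raise ValueError("expected exactly three expert labels")
    top_count = Counter(triple).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]


def agreement_rates(all_labels):
    """Percentages of TA, exactly-2/3 PA, NA, and at-least-2/3 agreement."""
    counts = Counter(agreement(triple) for triple in all_labels)
    n = len(all_labels)
    pct = {k: 100.0 * counts[k] / n for k in ("TA", "PA", "NA")}
    pct["at_least_2_of_3"] = pct["TA"] + pct["PA"]
    return pct


# Hypothetical labels from three experts for four images.
images = [
    ("normal", "normal", "normal"),
    ("tapered", "tapered", "thin"),
    ("thin", "microcephalous", "tapered"),
    ("coiled", "coiled", "coiled"),
]
rates = agreement_rates(images)
```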

Variability in Other Diagnostic Fields

This phenomenon is not isolated to sperm analysis. Research into Auditory Brainstem Response (ABR) interpretation, a key tool for evaluating hearing capacity, found significant inconsistencies. Four expert examiners manually classified wave components in 160 ABR samples. While differences in latency annotations were generally below 0.1 ms (a clinically acceptable threshold), several comparisons showed larger errors and standard deviations exceeding 0.1 ms, indicating notable discrepancies in their identification of key signal components [9].
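Checking whether examiners stay within the 0.1 ms tolerance amounts to comparing every pair of annotations for the same wave. The sketch below does this for one hypothetical ABR recording annotated by four examiners; the latency values, the examiner names, and the choice of wave V are invented for illustration.

```python
from itertools import combinations

TOLERANCE_MS = 0.1  # clinically acceptable latency difference cited in the text


def pairwise_outliers(latencies_ms, tol=TOLERANCE_MS):
    """Return (examiner_a, examiner_b, difference) for pairs differing by more than tol."""
    flagged = []
    for (a, la), (b, lb) in combinations(latencies_ms.items(), 2):
        diff = abs(la - lb)
        if diff > tol:
            flagged.append((a, b, round(diff, 3)))
    return flagged


# Hypothetical wave V latencies (ms) from four examiners for one recording.
wave_v = {"E1": 5.62, "E2": 5.66, "E3": 5.60, "E4": 5.81}
print(pairwise_outliers(wave_v))
```

In this example three examiners agree within 0.06 ms, but every comparison involving E4 exceeds the tolerance, which is the kind of outlier pattern the study describes.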

Similarly, a study on the manual classification of fixations in eye-tracking data concluded that "fixation classification by experienced untrained human coders is not a gold standard." Researchers found that while coders showed high agreement using sample-based Cohen’s kappa, substantial differences emerged when examining specific parameters like fixation duration and the number of fixations, suggesting the application of different implicit thresholds [10].
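Sample-based Cohen's kappa corrects the raw agreement between two coders for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch computes it for two coders labelling each eye-tracking sample as fixation (F) or not (N), using invented labels, and also counts fixations to show how a high kappa can coexist with different fixation counts.

```python
from collections import Counter
from itertools import groupby


def cohens_kappa(a, b):
    """Cohen's kappa for two equal-length label sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(a)
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    p_expected = sum(ca[k] * cb[k] for k in set(ca) | set(cb)) / (n * n)
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


def count_fixations(seq, label="F"):
    """Number of contiguous runs of `label`, i.e. separate fixations."""
    return sum(1 for key, _ in groupby(seq) if key == label)


# Hypothetical per-sample codes: F = fixation, N = not fixation.
coder_a = "FFFFFFFF" + "NN" + "FFFFFFFF" + "NN"
coder_b = "FFFF" + "N" + "FFF" + "NN" + "FFFFFFFF" + "NN"  # splits the first fixation

kappa = cohens_kappa(coder_a, coder_b)
```

The coders disagree on a single sample out of 20 and kappa is about 0.86, yet coder B reports three fixations where coder A reports two, with correspondingly shorter durations, mirroring the discrepancy described above.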

Table 1: Quantified Inter-Expert Variability Across Different Fields

| Field of Study | Classification Task | Number of Experts | Key Metric of Variability |
|---|---|---|---|
| Sperm Morphology [1] | David classification (12 defect classes) | 3 | Total expert agreement: 55% |
| Auditory Brainstem Response (ABR) [9] | Identification of Jewett wave latency | 4 | Outliers with latency differences > 0.1 ms |
| Eye-Tracking [10] | Fixation classification in adult and infant data | 12 | Substantial differences in fixation duration and number |

Experimental Protocols for Benchmarking Human vs. AI Performance

To compare human expert and AI performance objectively, researchers use structured experimental protocols. The following methodologies are drawn from recent studies.

Protocol 1: Developing an AI Model for Unstained Sperm Morphology

A 2024 study aimed to develop an AI model that could assess live, unstained sperm, which is crucial for use in Assisted Reproductive Technology (ART) as it keeps sperm viable for procedures like Intracytoplasmic Sperm Injection (ICSI) [6].

- Sample Preparation: Semen samples were collected from 30 healthy volunteers. Each sample was aliquoted into three parts for parallel analysis.

- Imaging and Dataset Creation: A 6 µL droplet of sample was dispensed onto a standard chamber slide. Sperm images were captured using a confocal laser scanning microscope at 40x magnification in Z-stack mode (0.5 µm interval). Embryologists and researchers manually annotated over 12,000 sperm images, categorizing them into "normal" or one of eight "abnormal" classes based on strict WHO 6th edition criteria. The inter-observer correlation for this annotation was high (0.95 for normal, 1.0 for abnormal morphology).

- AI Model Training: A ResNet50 deep learning model was trained on this dataset. The model's performance was evaluated on a separate test set of images it had not seen during training.

- Comparison: The performance of the AI model was benchmarked against both Conventional Semen Analysis (CSA) and a Commercial CASA system (IVOS II, Hamilton Thorne) that analyzed fixed, stained sperm.

Protocol 2: Deep Learning for Stained Sperm Head Classification

Another approach leverages transfer learning to classify stained sperm heads according to WHO criteria [11].

- Datasets: The study used two public datasets, the Human Sperm Head Morphology (HuSHeM) and the SCIAN-MorphoSpermGS, which contain pre-classified images of sperm heads.

- AI Model Training: Instead of training a model from scratch, the VGG16 convolutional neural network, pre-trained on the general ImageNet database, was retrained (a process called transfer learning) on the sperm image datasets. The model was fine-tuned to classify sperm into five WHO categories: Normal, Tapered, Pyriform, Small, and Amorphous.

- Performance Benchmarking: The AI's classification accuracy was tested on dataset images and its performance was compared directly against earlier, non-deep-learning machine learning approaches (like Cascade Ensemble-Support Vector Machines) that relied on manual extraction of shape-based features.

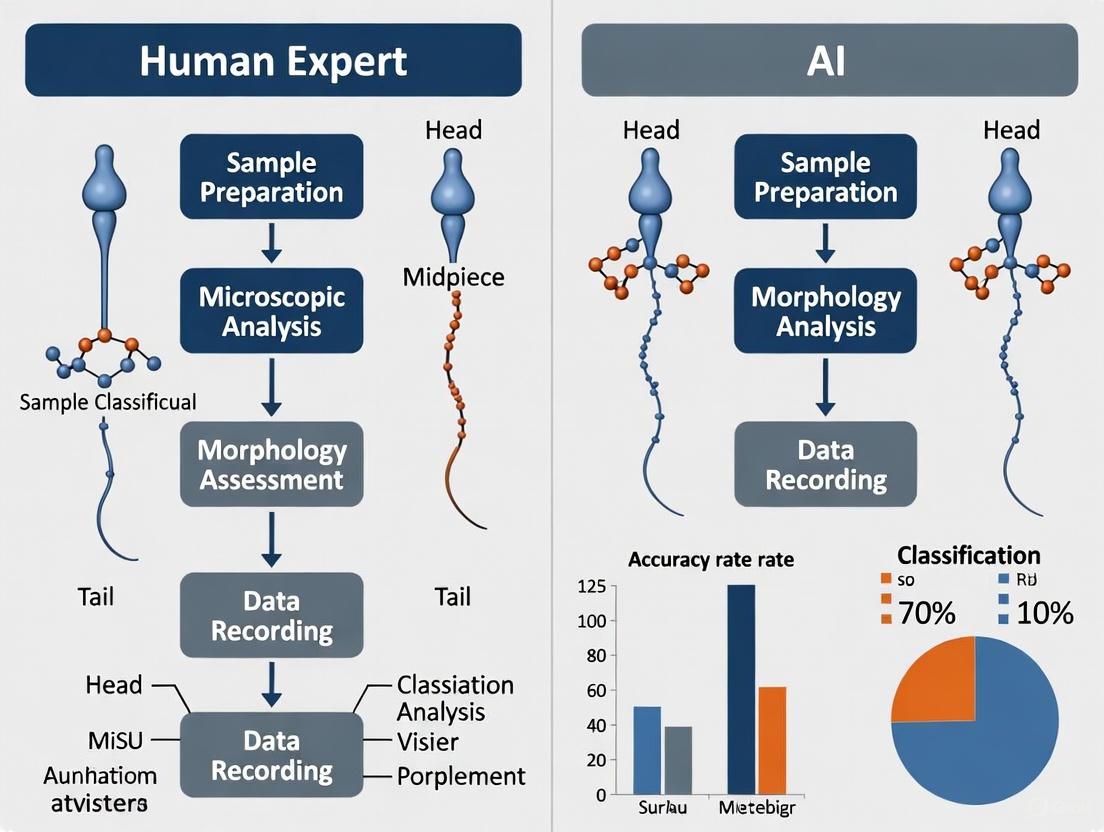

Diagram 1: Experimental Workflow for Comparing Human and AI Classification. This flowchart illustrates the parallel pathways for evaluating human expert and AI-based sperm classification, culminating in a comparative performance analysis.

Comparative Performance: Human Experts vs. AI Models

Quantitative data from controlled experiments suggest that AI models can match, and in some tasks exceed, the performance of human experts or earlier automated methods, while offering greater consistency.

Performance in Sperm Morphology Classification

- Unstained Sperm Analysis: The in-house AI model demonstrated a stronger correlation with the Commercial CASA system (r=0.88) than Conventional Semen Analysis did with the same CASA system (r=0.57). This indicates that the AI's assessment of live sperm was more aligned with an established automated method than the manual method was [6].

- Stained Sperm Head Classification: On the HuSHeM dataset, the deep learning model (VGG16) achieved an average true positive rate of 94.1%, matching the performance of a state-of-the-art dictionary learning approach and significantly exceeding a Cascade Ensemble-SVM approach (78.5%) [11].

- Addressing Expert Disagreement: A deep learning model trained on a dataset in which images were labeled by total expert agreement (3/3 experts) achieved an accuracy of 92%. In contrast, when tested on images on which experts had only partial agreement (2/3), accuracy dropped to 55%, highlighting that the model's performance is closely tied to the consistency of the human "gold standard" used for its training [1].
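An "average true positive rate" across classes, as reported for the VGG16 model, is commonly computed as per-class recall averaged with equal weight per class (macro-averaging). The sketch below assumes that definition, which may differ from the averaging actually used in [11], and uses an invented 5×5 confusion matrix over the five WHO head categories.

```python
CLASSES = ["Normal", "Tapered", "Pyriform", "Small", "Amorphous"]

# Hypothetical confusion matrix: rows = true class, columns = predicted class.
CONFUSION = [
    [18, 1, 0, 0, 1],
    [1, 17, 1, 0, 1],
    [0, 1, 18, 0, 1],
    [0, 0, 0, 4, 1],
    [1, 0, 1, 0, 18],
]


def per_class_tpr(matrix):
    """True positive rate (recall) per class: diagonal count / row total."""
    return [row[i] / sum(row) for i, row in enumerate(matrix)]


def macro_tpr(matrix):
    """Unweighted mean of per-class TPRs, so small classes count as much as large ones."""
    rates = per_class_tpr(matrix)
    return sum(rates) / len(rates)


def overall_accuracy(matrix):
    """Micro-averaged accuracy: all correct predictions over all images."""
    correct = sum(row[i] for i, row in enumerate(matrix))
    total = sum(sum(row) for row in matrix)
    return correct / total
```

With these counts the macro-averaged TPR (0.87) is lower than overall accuracy (75/85, about 0.88) because the rare "Small" class, with the lowest TPR, weighs as much as each larger class; reporting which average is used matters when classes are imbalanced.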

Performance in Other Medical Imaging Tasks

The value of AI in reducing variability is also evident in other areas. A study on intravascular ultrasound (IVUS) image segmentation found that the difference between algorithmic contours and experts' contours was within the range of inter-expert variability. Furthermore, inter-expert variability was itself lower when using higher-resolution 60 MHz imaging compared to 40 MHz, showing that improved data quality can reduce human subjectivity [12].

Table 2: Performance Comparison of Human Experts vs. AI Classification Models

| Study & Task | Human Expert Performance Metric | AI Model Performance Metric | Conclusion |
|---|---|---|---|
| Sperm Morphology (Stained) [11] | Used as benchmark | 94.1% True Positive Rate (VGG16 model) | AI performance competitive with, and sometimes superior to, expert-level classification. |
| Sperm Morphology (Unstained) [6] | Correlation with CASA: r=0.57 | Correlation with CASA: r=0.88 (AI model) | AI assessment of live sperm showed stronger alignment with an automated standard than manual analysis. |
| IVUS Image Segmentation [12] | Measured inter-expert variability | Algorithmic differences within inter-expert variability | AI performance can fall within the range of human expert disagreement. |

The Scientist's Toolkit: Research Reagent Solutions

The experiments cited rely on a suite of specialized materials and software. The following table details key components essential for research in this field.

Table 3: Essential Research Reagents and Tools for Sperm Classification Studies

| Item Name | Type | Function in Research |
|---|---|---|
| RAL Diagnostics Staining Kit [1] | Chemical Reagent | Stains sperm smears for manual or CASA-based morphological analysis according to WHO standards. |
| Diff-Quik Stain [6] | Chemical Reagent | A Romanowsky-type stain variant used for rapid staining of sperm for morphological assessment. |
| Leja Chamber Slides (20 µm) [6] | Laboratory Consumable | Standardized chambers for preparing semen samples for microscopic analysis, ensuring consistent depth. |
| IVOS II CASA System [6] | Instrumentation | A commercial computer-assisted semen analyzer used for automated assessment of sperm concentration, motility, and morphology. |
| LensHooke X1 PRO [13] | Instrumentation | A portable, AI-enabled CASA device that uses optical microscopy and algorithms to provide rapid semen analysis. |
| Confocal Laser Scanning Microscope [6] | Instrumentation | Provides high-resolution, Z-stack images of unstained live sperm for creating detailed training datasets for AI models. |
| HuSHeM / SCIAN Datasets [11] | Digital Resource | Publicly available, expert-annotated image datasets of sperm heads used for training and benchmarking AI algorithms. |
| ResNet50 / VGG16 Models [6] [11] | Software/Algorithm | Pre-trained deep convolutional neural networks that can be adapted for specific image classification tasks like sperm morphology. |

Diagram 2: Logical Hierarchy of Sperm Classification Technologies. This diagram categorizes the main technological approaches to sperm classification, from traditional manual methods to advanced AI-driven techniques, highlighting the shift towards deep learning.

The diagnostic evaluation of male infertility heavily relies on semen analysis, with sperm morphology assessment—the detailed examination of sperm size, shape, and structural integrity—being a critical prognostic factor for natural conception and the success of Assisted Reproductive Technologies (ART) such as In Vitro Fertilization (IVF) [14] [15]. Historically, this assessment has been performed manually by trained embryologists following World Health Organization (WHO) guidelines, which define normal sperm morphology by specific metrics, including an oval head (length: 4.0–5.5 μm, width: 2.5–3.5 μm) and an intact acrosome covering 40–70% of the head [15]. However, this manual process is fraught with subjectivity, leading to what is known as a "standardization crisis" characterized by significant inconsistencies both across different laboratories and between various classification systems. This crisis undermines diagnostic reliability, compromises patient care, and confounds research outcomes.
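The dimensional part of these criteria is easy to express as a rule check. Writing it out also shows where subjectivity enters, because head outline, midpiece, tail, and vacuole assessment are not captured by three numbers. The sketch below assumes that head length, width, and acrosome coverage have already been measured (for example, with a CASA morphometry tool). The ranges are the ones quoted above, exposed as editable constants rather than offered as an authoritative restatement of the WHO manual. Inclusive bounds are an assumption.

```python
from dataclasses import dataclass

# Ranges as quoted in the text above (assumption: bounds are inclusive).
HEAD_LENGTH_UM = (4.0, 5.5)
HEAD_WIDTH_UM = (2.5, 3.5)
ACROSOME_FRACTION = (0.40, 0.70)


@dataclass
class HeadMeasurement:
    length_um: float          # head length in micrometres
    width_um: float           # head width in micrometres
    acrosome_fraction: float  # fraction of the head covered by the acrosome


def _within(value, bounds):
    low, high = bounds
    return low <= value <= high


def failed_head_criteria(m):
    """Return the names of failed dimensional criteria (empty list = all pass)."""
    failures = []
    if not _within(m.length_um, HEAD_LENGTH_UM):
        failures.append("length")
    if not _within(m.width_um, HEAD_WIDTH_UM):
        failures.append("width")
    if not _within(m.acrosome_fraction, ACROSOME_FRACTION):
        failures.append("acrosome")
    return failures


print(failed_head_criteria(HeadMeasurement(4.6, 3.0, 0.55)))  # []
print(failed_head_criteria(HeadMeasurement(6.1, 3.0, 0.30)))  # ['length', 'acrosome']
```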

The core of the problem lies in the inherent limitations of human-based visual analysis. Manual sperm morphology assessment is labor-intensive, requiring the examination of at least 200 sperm per sample, a process that can take 30 to 45 minutes and is prone to substantial inter-observer variability [14] [15]. Studies report diagnostic disagreements of up to 40% between expert evaluators, with kappa values, a statistical measure of inter-rater reliability, sometimes falling as low as 0.05–0.15, indicating minimal agreement beyond chance [15]. This lack of reproducibility stems from subjective interpretations of complex and subtle morphological features, differences in training, and the immense mental fatigue associated with visually scrutinizing hundreds of cells per sample. These inconsistencies are compounded when different classification systems (e.g., WHO strict criteria, David classification, Kruger criteria) are applied, further complicating the comparability of results between clinics and clinical studies [14].
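Cohen's kappa corrects raw agreement for the agreement expected by chance. This is why two technicians can agree on most cells and still produce a low kappa when one category (here, abnormal forms) dominates. Below is a minimal two-rater sketch in plain Python. The labels are illustrative, and multi-rater studies typically use an extension such as Fleiss' kappa instead.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and
    p_e is the agreement expected by chance from each rater's label frequencies.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / (n * n)
    if p_e == 1.0:
        return 1.0  # convention: both raters used one identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Ten illustrative sperm cells; both raters call most of them abnormal.
rater_1 = ["abn"] * 8 + ["norm"] * 2
rater_2 = ["abn"] * 7 + ["norm", "abn", "norm"]
raw = sum(a == b for a, b in zip(rater_1, rater_2)) / len(rater_1)
print(raw)                                     # 0.8
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.375
```

In this example, 80% raw agreement corresponds to a kappa of only 0.375, because most of the agreement on "abnormal" is expected by chance.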

In response to this crisis, Artificial Intelligence (AI) has emerged as a transformative tool. AI-powered systems, particularly those employing deep learning, offer a pathway to standardized, objective, and highly reproducible sperm morphology analysis. By automating the classification process, these systems can overcome human subjectivity, reduce analysis time from 30–45 minutes to under a minute, and establish a consistent benchmark for sperm quality evaluation [15]. This article provides a comparative guide, contextualized within broader research on human expert versus AI classification accuracy, to objectively evaluate the performance of these emerging technologies against traditional manual methods, detailing the experimental protocols and data that underscore their potential to resolve the standardization crisis.

Quantitative Performance Comparison: Human Experts vs. AI Models

A direct comparison of performance metrics reveals the significant advantage AI models hold over traditional manual analysis in terms of both accuracy and consistency. The following table summarizes key quantitative findings from recent studies, highlighting the objective improvements offered by automation.

Table 1: Performance Comparison of Sperm Morphology Analysis Methods

| Method / Study | Reported Accuracy / Metric | Dataset / Context | Key Performance Insight |
|---|---|---|---|
| Manual Analysis by Embryologists | Kappa values of 0.05 - 0.15 [15] | Routine clinical practice | High inter-observer variability, indicating poor diagnostic agreement. |
| Manual Analysis by Embryologists | Up to 40% coefficient of variation (CV) between experts [15] | Multiple laboratory comparisons | Significant inconsistency in results across different labs. |
| Conventional ML (Bayesian Model) | 90% accuracy [14] | 4-class head morphology classification | Good performance but reliant on handcrafted features, limiting its scope. |
| Deep Learning (Proposed CBAM-ResNet50) | 96.08% ± 1.2% accuracy [15] | SMIDS dataset (3,000 images, 3-class) | Statistically significant improvement over baselines; high reproducibility. |
| Deep Learning (Proposed CBAM-ResNet50) | 96.77% ± 0.8% accuracy [15] | HuSHeM dataset (216 images, 4-class) | Demonstrates model robustness and superior performance on a different dataset. |
| Stacked CNN Ensemble (Spencer et al.) | 95.2% accuracy [15] | HuSHeM dataset | Example of a high-performing alternative AI architecture. |
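The coefficient of variation expresses the spread of results as a percentage of their mean, so it can be compared across samples with very different proportions of normal forms. The sketch below uses the sample standard deviation from Python's `statistics` module. The four expert readings are hypothetical. They show how a few percentage points of disagreement turn into a large CV when the mean itself is small.

```python
import statistics


def coefficient_of_variation(values):
    """Sample CV (standard deviation / mean) expressed as a percentage."""
    if len(values) < 2:
        raise ValueError("need at least two measurements")
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100 * statistics.stdev(values) / mean


# Hypothetical % normal forms reported by four experts for the same smear.
readings = [4, 6, 8, 10]
print(round(coefficient_of_variation(readings), 1))  # 36.9
```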

The data indicate that AI models match or exceed human experts on these benchmarks and are considerably more consistent. The two sides are measured differently, however. The human figures are inter-observer agreement statistics (kappa, CV), whereas the AI figures are accuracy against expert-annotated labels. What makes the consistency argument hold is that a trained model returns the same output for the same image every time, which eliminates inter-observer variability by construction. This transition from subjective judgment to quantitative, algorithm-driven assessment is the cornerstone for resolving the standardization crisis.

Experimental Protocols: Validating AI Performance

The superior performance of AI models is validated through rigorous, standardized experimental protocols. The following workflow details the standard methodology for training and evaluating a deep learning model for sperm morphology classification, as used in state-of-the-art research.

Diagram 1: AI Sperm Classification Workflow illustrates the standard pipeline for automated sperm morphology analysis, from image input to final classification.

Detailed Methodology

The experimental protocol can be broken down into the following key stages, which ensure the validity and reliability of the results:

Dataset Preparation and Curation:

- Public Datasets: Research is typically conducted on publicly available benchmark datasets to enable direct comparison with other models. Commonly used datasets include HuSHeM (216 sperm head images, 4 classes) and SMIDS (3,000 images, 3 classes) [15].

- Data Annotation: Images in these datasets are annotated by experts, providing the "ground truth" labels used to train and test the AI models. The quality and consistency of these annotations are critical, though the existence of multiple datasets itself hints at the challenge of standardization.

AI Model Training and Validation:

- Deep Learning Architecture: A typical advanced approach involves using a pre-trained Convolutional Neural Network (CNN) like ResNet50 as a "backbone." This is often enhanced with a Convolutional Block Attention Module (CBAM), which helps the model learn to focus on the most diagnostically relevant parts of the sperm cell (e.g., head shape, acrosome) while ignoring irrelevant background noise [15].

- Deep Feature Engineering (DFE): This is a hybrid approach where the deep learning model is not used end-to-end. Instead, high-dimensional feature representations are extracted from intermediate layers of the network. These features are then processed using classical techniques like Principal Component Analysis (PCA) to reduce noise and dimensionality before being fed into a classifier like a Support Vector Machine (SVM) with an RBF kernel. This DFE pipeline has been shown to boost performance significantly, for instance, from ~88% accuracy to over 96% [15].

- Validation Protocol: To estimate how well the model generalizes to images it did not see during training, 5-fold cross-validation is standard. The dataset is split into five parts, and the model is trained on four and tested on the fifth. This is repeated five times so that every part serves once as the test set. The final accuracy is the average across the five test folds, which gives a more robust estimate of generalizability than a single split [15].
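The fold logic described above can be sketched in a few lines. This is a plain k-fold split under stated assumptions: samples are shuffled once with a fixed seed, and `train_and_score` is a hypothetical callback that stands in for model training and evaluation. With class-imbalanced data, a stratified variant that preserves class proportions in every fold is usually preferable, and scikit-learn's `StratifiedKFold` provides one.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation.

    Each sample appears in exactly one test fold; fold sizes differ by at most one.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


def cross_validate(n_samples, train_and_score, k=5, seed=0):
    """Return the mean score and per-fold scores from `train_and_score(train_idx, test_idx)`."""
    scores = [train_and_score(tr, te) for tr, te in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores), scores


# Fold sizes for a 216-image dataset such as HuSHeM.
print([len(test) for _, test in k_fold_indices(216, k=5)])  # [44, 43, 43, 43, 43]
```

Reporting the spread of the per-fold scores alongside their mean gives a "mean ± SD" summary.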

Performance Evaluation and Statistical Analysis:

- Key Metrics: Performance is evaluated using standard metrics including accuracy, sensitivity, specificity, and F1-score. The Area Under the Receiver Operating Characteristic Curve (AUC) is also frequently reported [2].

- Statistical Significance: The improvement offered by a new model is validated using statistical tests like McNemar's test to confirm that the performance gain over a baseline model is not due to random chance [15].
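McNemar's test compares two classifiers evaluated on the same images by looking only at the discordant cases, meaning images that one model classifies correctly and the other does not. Below is a minimal sketch with Edwards' continuity correction. It uses the closed form of the chi-square survival function for one degree of freedom, P(X > x) = erfc(√(x/2)). When there are few discordant pairs, an exact binomial version of the test is generally recommended instead. The labels in the usage example are synthetic.

```python
import math


def mcnemar_test(y_true, pred_a, pred_b, continuity=True):
    """McNemar's chi-square test on the paired correctness of two classifiers.

    b = items model A gets right and model B gets wrong; c = the reverse.
    Returns (chi2, p_value) using the chi-square distribution with 1 df.
    """
    b = sum(1 for t, ya, yb in zip(y_true, pred_a, pred_b) if ya == t and yb != t)
    c = sum(1 for t, ya, yb in zip(y_true, pred_a, pred_b) if ya != t and yb == t)
    if b + c == 0:
        return 0.0, 1.0  # the models never differ in correctness
    diff = abs(b - c)
    numerator = max(0, diff - 1) ** 2 if continuity else diff ** 2
    chi2 = numerator / (b + c)
    p_value = math.erfc(math.sqrt(chi2 / 2))  # survival function, 1 df
    return chi2, p_value


# Synthetic example: model A is right where B is wrong on 10 images, the reverse on 2.
truth = [1] * 15
model_a = [1] * 10 + [0] * 2 + [1] * 3
model_b = [0] * 10 + [1] * 2 + [1] * 3
chi2, p = mcnemar_test(truth, model_a, model_b)
print(round(chi2, 2), round(p, 3))
```

Here b = 10 and c = 2, giving χ² = 49/12 ≈ 4.08 and p ≈ 0.04.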

The Scientist's Toolkit: Essential Research Reagents and Materials

To replicate or build upon this research, scientists require access to specific datasets, software, and hardware. The following table lists key research resources for AI-based sperm morphology analysis.

Table 2: Essential Research Tools for AI-Based Sperm Morphology Analysis

| Tool Name / Category | Type / Format | Primary Function in Research |
|---|---|---|
| HuSHeM Dataset [15] | Image Dataset (216 images) | Benchmarking model performance on 4-class sperm head morphology classification. |
| SMIDS Dataset [15] | Image Dataset (3,000 images) | Training and validating models for larger-scale 3-class classification tasks. |
| SVIA Dataset [14] | Multimodal Dataset (Videos & Images) | Developing models for detection, segmentation, and classification from video data. |
| ResNet50 Architecture | Deep Learning Model | Serving as a powerful, pre-trained backbone for feature extraction from sperm images. |
| Convolutional Block Attention Module (CBAM) | Software Algorithm | Enhancing CNN performance by forcing the model to focus on salient sperm features. |
| Support Vector Machine (SVM) | Machine Learning Classifier | Performing final classification on engineered deep features in hybrid pipelines. |
| Principal Component Analysis (PCA) | Statistical Algorithm | Reducing dimensionality of deep features to improve classifier efficiency and performance. |

The field is actively evolving, with new, larger datasets like VISEM-Tracking [14] and SVIA [14] emerging. These datasets contain hundreds of thousands of annotated objects and video data, enabling the development of next-generation models for not just classification, but also detection, tracking, and segmentation of sperm cells.

The "standardization crisis" in sperm morphology analysis, driven by the inherent subjectivity and variability of manual expert assessment, presents a significant obstacle in both clinical andrology and reproductive research. The quantitative data and experimental protocols detailed in this comparison guide provide compelling evidence that AI-driven classification is not merely an incremental improvement but a paradigm shift. In the benchmark studies reviewed here, deep learning models, particularly those enhanced with attention mechanisms and feature engineering, delivered consistently higher accuracy, objectivity, and reproducibility than human experts.

For researchers, scientists, and drug development professionals, the adoption of these AI tools offers a path toward globally comparable and reliable diagnostic standards. This will not only enhance the quality of clinical diagnostics and personalized treatment planning for infertility but also ensure that data from multi-center clinical trials for new pharmaceuticals is robust and consistent. By transitioning from subjective human judgment to quantitative, algorithm-based analysis, the field can finally overcome the inconsistencies of laboratory-specific practices and historical classification systems, ushering in a new era of precision and reliability in male fertility assessment.

Sperm morphology assessment is a cornerstone of male fertility evaluation, providing critical diagnostic and prognostic information for assisted reproductive technologies (ART). For decades, this analysis has relied on the expertise of trained technologists who manually classify sperm cells according to World Health Organization (WHO) guidelines, establishing a de facto gold standard. This manual process requires technologists to examine at least 200 sperm per sample, categorizing them based on strict criteria for head, neck, and tail abnormalities. However, despite its foundational role, this expert-driven approach is inherently subjective, leading to significant variability that directly impacts diagnostic consistency and clinical decision-making. Understanding the precise performance metrics and limitations of human experts is crucial for contextualizing the emergence of artificial intelligence (AI) solutions in reproductive medicine and for establishing a meaningful baseline against which automated systems can be fairly evaluated [3].

This guide objectively compares the performance of expert technologists against standardized benchmarks and emerging AI methodologies. By synthesizing data from recent studies, we quantify the accuracy, variability, and efficiency of human sperm morphology assessment, providing researchers and clinicians with a comprehensive evidence base for evaluating current practices and future innovations in semen analysis.

Quantitative Performance Metrics of Expert Technologists

The performance of expert technologists in sperm morphology assessment is characterized by several key metrics, including diagnostic accuracy, inter-observer variability, and processing speed. The table below summarizes quantitative findings from controlled studies.

Table 1: Performance Metrics of Expert Technologists in Sperm Morphology Analysis

| Performance Metric | Reported Value/Range | Study Context / Classification System | Comparative AI Performance |
|---|---|---|---|
| Initial Accuracy (Untrained) | 53% - 81% | Varies by system complexity (2 to 25 categories) [16] | AI models (e.g., CBAM-ResNet50) report >96% accuracy [15] |
| Post-Training Accuracy | 90% - 98% | After 4 weeks of standardized training [16] | |
| Inter-Observer Agreement (Kappa) | 0.05 - 0.15 (Very low) [15] | Among trained technicians | AI offers consistent, non-varying outputs |
| Inter-Observer Variability (CV) | Up to 40% [15] | Coefficient of variation between experts | |
| Time per Sample | 30 - 45 minutes [15] | Manual assessment of ~200 sperm | AI processing in <1 minute [15] |
| Correlation with CASA | r = 0.57 [6] | Conventional Semen Analysis vs. CASA | AI correlation with CASA: r = 0.88 [6] |

Key Findings from Performance Data

- Impact of Classification Complexity: Technologist accuracy is heavily influenced by the complexity of the classification system used. One study demonstrated that untrained users' accuracy dropped from 81% using a simple 2-category (normal/abnormal) system to 53% when using a detailed 25-category system [16]. This inverse relationship between system complexity and accuracy highlights a fundamental trade-off in manual diagnosis.

- Effectiveness of Standardized Training: Training significantly improves human performance. A cohort of novices exposed to a visual aid and video training significantly improved their initial test accuracy, achieving 94.9% on a 2-category system. Repeated training over four weeks further increased final accuracy rates to 98% (2-category) and 90% (25-category), while also improving diagnostic speed from 7.0 seconds to 4.9 seconds per image [16].

- Correlation with Other Methods: The strength of correlation between conventional semen analysis (CSA) performed by experts and computer-aided semen analysis (CASA) is reported to be r=0.57, indicating a moderate relationship. In contrast, an AI model showed a stronger correlation with CASA (r=0.88), suggesting that AI may align more closely with automated systems than human experts do [6].
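Pearson's r quantifies how closely two methods' results follow a straight-line relationship. It does not measure agreement. A method that systematically reads 10 points higher than CASA would still have r = 1, so correlation is usually complemented by an agreement analysis such as Bland–Altman limits of agreement. Below is a plain-Python sketch that also runs on the Python 3.8 environment mentioned elsewhere in this article. Python 3.10+ also provides `statistics.correlation`. The series in the example are illustrative.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two paired measurement series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length series with at least two points")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


# A constant offset leaves r unchanged: perfect correlation, poor agreement.
casa = [1, 2, 3, 4]
offset_method = [11, 12, 13, 14]
print(pearson_r(casa, offset_method))  # 1.0
```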

Detailed Experimental Protocols for Benchmarking

To ensure reproducibility and transparent comparison, the following section outlines the key methodological details from the studies cited in this guide.

Protocol 1: Assessing Inter-Expert Variability and Training Efficacy

This protocol, derived from Seymour et al. (2025), details the process for quantifying baseline performance and the impact of standardized training on expert technologists [16].

- Sample Preparation: Semen smears are prepared following WHO guidelines and stained with a RAL Diagnostics staining kit or similar Romanowsky-type stain (e.g., Diff-Quik) [16].

- Data Acquisition: Images are acquired using a microscope equipped with a 100x oil immersion objective in bright-field mode. The CASA (Computer-Aided Semen Analysis) system's morphometric tool can be used to determine the precise dimensions of each spermatozoon [1].

- Expert Classification: Each sperm image is independently classified by multiple expert morphologists. The classification follows a defined system, such as the modified David classification, which includes up to 12 classes of morphological defects across the head, midpiece, and tail [1].

- Establishing Ground Truth: A consensus diagnosis from multiple experts establishes the "ground truth" for each image. This borrows the consensus-labeling practice used to curate machine-learning datasets, so that trainees are scored against reliable reference labels [16].

- Blinded Assessment & Re-Testing: Technologists, both novice and experienced, are tested on standardized image sets. Their accuracy and speed are recorded. The training involves repeated testing over a period, such as four weeks, to measure improvement and plateau effects [16].
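A small scoring routine shows one mechanism behind the effect of classification granularity on measured accuracy. A trainee who confuses two abnormal classes is wrong under a detailed scheme but right once labels are collapsed to normal/abnormal. The sketch below is hypothetical: the class names, timings, and collapsing rule are illustrative and do not reproduce the protocol of [16]. It scores one trainee against consensus labels at both levels.

```python
def accuracy(pred, truth):
    """Fraction of items where the prediction equals the reference label."""
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)


def collapse(label, normal_label="normal"):
    """Map a detailed defect class onto a 2-category normal/abnormal scheme."""
    return "normal" if label == normal_label else "abnormal"


def score_trainee(trainee_labels, consensus_labels, seconds_per_image):
    """Score one trainee against consensus labels at two levels of granularity."""
    detailed = accuracy(trainee_labels, consensus_labels)
    binary = accuracy([collapse(l) for l in trainee_labels],
                      [collapse(l) for l in consensus_labels])
    mean_time = sum(seconds_per_image) / len(seconds_per_image)
    return {"detailed_accuracy": detailed, "binary_accuracy": binary,
            "mean_seconds": mean_time}


# Hypothetical consensus labels and trainee answers for four images.
consensus = ["normal", "tapered_head", "coiled_tail", "microcephalic"]
trainee = ["normal", "microcephalic", "coiled_tail", "tapered_head"]
print(score_trainee(trainee, consensus, [7.0, 6.0, 5.0, 4.0]))
# {'detailed_accuracy': 0.5, 'binary_accuracy': 1.0, 'mean_seconds': 5.5}
```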

Protocol 2: Comparative Performance Analysis vs. AI

This protocol, based on Kılıç (2025) and others, describes a methodology for direct comparison between expert technologists and AI models [6] [15].

- Dataset Curation: A high-resolution dataset of sperm images is created. For live sperm assessment, confocal laser scanning microscopy at 40x magnification can be used to capture images without staining [6]. For fixed sperm, stained images at 100x magnification are standard.

- Blinded Evaluation: The same set of images (e.g., a test dataset of at least 200 sperm cells per sample) is presented to both expert technologists and the AI model for classification [15].

- Ground Truth Validation: The classifications from both humans and AI are compared against a pre-established, validated ground truth, typically defined by a consensus panel of senior experts [16] [15].

- Metric Calculation: Key performance metrics are calculated for both groups, including accuracy, precision, recall, F1-score, and processing time. Statistical tests (e.g., McNemar's test) are applied to determine the significance of performance differences [15].
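The per-class metrics listed above follow directly from counts of true positives, false positives, and false negatives. Below is a dependency-free sketch with illustrative labels. For a binary normal/abnormal task, specificity for the "abnormal" class equals the recall of the "normal" class. Libraries such as scikit-learn (`sklearn.metrics.classification_report`) provide equivalent, more complete implementations.

```python
from collections import Counter


def per_class_metrics(y_true, y_pred):
    """Overall accuracy plus per-class precision, recall (sensitivity) and F1."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be equal-length and non-empty")
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p, but it was wrong
            fn[t] += 1  # true class t was missed
    report = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        precision = tp[cls] / (tp[cls] + fp[cls]) if tp[cls] + fp[cls] else 0.0
        recall = tp[cls] / (tp[cls] + fn[cls]) if tp[cls] + fn[cls] else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[cls] = {"precision": precision, "recall": recall, "f1": f1}
    return sum(tp.values()) / len(y_true), report


acc, report = per_class_metrics(
    ["normal", "normal", "abnormal", "abnormal"],
    ["normal", "abnormal", "abnormal", "abnormal"],
)
print(acc)                         # 0.75
print(report["normal"]["recall"])  # 0.5 (= specificity for "abnormal")
```

With imbalanced morphology classes, averaging F1 across classes (macro-averaging) is often more informative than overall accuracy.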

Table 2: Essential Research Reagent Solutions for Sperm Morphology Studies

| Reagent / Material | Function in Experimental Protocol |
|---|---|
| Diff-Quik Stain | A Romanowsky-type stain variant used to color sperm structures (head, midpiece, tail) for clear visualization under a microscope on fixed samples [6]. |
| RAL Diagnostics Stain | A commercial staining kit used for preparing semen smears, enabling the differentiation of sperm morphological components [1]. |
| LEJA Slides (20 µm depth) | Standardized glass slides with a fixed chamber depth of 20 micrometers, used for creating consistent wet preparations for motility assessment or fixed smears for morphology analysis [6]. |
| Confocal Laser Scanning Microscope | Provides high-resolution, Z-stack images of unstained, live sperm, allowing for the creation of detailed datasets while keeping sperm viable for use in ART [6]. |
| CASA System (e.g., IVOS II) | An automated system that acquires sequential images via a microscope camera, used for objective analysis of sperm concentration, motility, and, to a limited extent, morphology [6] [8]. |

Visualizing Workflows and Relationships

The following diagrams illustrate the core experimental workflows and logical relationships involved in establishing expert technologist baselines.

Expert Technologist Assessment Workflow

Comparative Analysis Logic: Human vs. AI

The established performance baseline for expert technologists reveals a critical paradox in reproductive medicine: while human expertise forms the diagnostic gold standard, it is characterized by significant variability and inefficiency. The data show that even with extensive training, human accuracy plateaus, particularly with complex classification systems, and remains susceptible to subjective interpretation. These limitations have tangible consequences for clinical diagnostics, research consistency, and ultimately, patient care pathways.

This quantitative baseline is not merely a record of limitations but an essential framework for innovation. It provides the rigorous, empirical foundation necessary for the development and validation of AI-driven tools designed to augment human expertise. By addressing the specific gaps in accuracy, speed, and reproducibility identified in human performance, next-generation computational pathology solutions can transition from research concepts to clinically validated tools that enhance diagnostic precision and standardize male infertility assessment on a global scale.

Methodological Revolution: How AI and Deep Learning are Transforming Sperm Analysis

The field of medical image analysis is undergoing a revolutionary transformation, driven by advanced deep learning architectures capable of extracting meaningful patterns from pixel data. For researchers investigating complex diagnostic challenges like sperm morphology classification—a task historically plagued by subjectivity and inter-expert variability—understanding these architectures is crucial. Convolutional Neural Networks (CNNs), Residual Networks (ResNet), and Vision Transformers (ViTs) each offer distinct approaches to visual data processing, with significant implications for diagnostic accuracy and reliability [17] [1]. As of 2025, over half of surveyed fertility specialists report using AI tools in their practice, with embryo and sperm selection remaining dominant applications [17]. This guide provides an objective comparison of these core architectures, framed within the context of ongoing research comparing human expert versus AI classification accuracy in reproductive medicine.

Architectural Foundations: How AI Processes Visual Data

Core Components and Operational Mechanisms

Each architecture employs fundamentally different approaches to processing visual information, leading to varied performance characteristics in medical imaging tasks.

Convolutional Neural Networks (CNNs) process images through a hierarchical series of convolutional layers that detect patterns in local regions. Using filters that slide across the image, CNNs first identify elementary features such as edges and textures, then build progressively more complex patterns in deeper layers. This design builds in inductive biases of locality and translation equivariance (pooling adds a degree of translation invariance), which makes CNNs sample-efficient for pattern recognition in images [18] [19]. Their architecture typically alternates between convolutional layers for feature extraction and pooling layers for spatial dimension reduction, culminating in fully connected layers for classification [19] [20].
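Local, weight-shared filtering can be demonstrated without any framework. The sketch below applies one hand-set 2×2 vertical-edge kernel to a tiny image, followed by ReLU and 2×2 max pooling. The kernel is an illustrative choice, since a trained CNN learns its kernels. Each output value depends only on a small neighbourhood of the input, and the same kernel weights are reused at every position. Shifting the edge shifts the response by the same amount, which is translation equivariance.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (stride 1, no padding) and return the feature map.

    Like most deep-learning libraries, this computes cross-correlation
    (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Element-wise max(0, x) on a 2D feature map."""
    return [[max(0.0, float(v)) for v in row] for row in feature_map]


def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    return [
        [
            max(feature_map[i + di][j + dj] for di in range(size) for dj in range(size))
            for j in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for i in range(0, len(feature_map) - size + 1, size)
    ]


edge_kernel = [[-1, 1], [-1, 1]]                 # responds to dark-to-bright vertical edges
image = [[0, 0, 1, 1, 1] for _ in range(4)]      # edge between columns 1 and 2
shifted = [[0, 0, 0, 1, 1] for _ in range(4)]    # same edge, one column to the right

print(conv2d_valid(image, edge_kernel)[0])       # [0, 2, 0, 0]
print(conv2d_valid(shifted, edge_kernel)[0])     # [0, 0, 2, 0]
print(max_pool2d(relu(conv2d_valid(image, edge_kernel))))  # [[2.0, 0.0]]
```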

ResNet (Residual Networks) addresses the vanishing-gradient and degradation problems of very deep CNNs through skip connections that let signals and gradients flow directly across layers. These identity mappings make it possible to train substantially deeper networks (e.g., ResNet-18, ResNet-50, ResNet-152) without the loss of accuracy that plain networks of similar depth exhibit, so the network can capture more complex feature hierarchies [21]. This architectural innovation has proven particularly valuable in medical imaging, where subtle morphological differences can have significant diagnostic implications.
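The core idea of a residual block is that its layers learn a correction F(x) that is added back onto the block's input. The sketch below deliberately simplifies an actual ResNet by using small fully connected layers instead of convolution and batch-normalisation layers. It shows that when the residual branch outputs zero, the block returns ReLU(x). For non-negative inputs, such as those produced by a preceding ReLU, that is exactly the identity, which is why stacking more blocks need not make a network worse.

```python
def linear(x, weights, bias):
    """y = W x + b for a vector x (all values as lists of floats)."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(v):
    """Element-wise max(0, x) on a vector."""
    return [max(0.0, a) for a in v]


def residual_block(x, w1, b1, w2, b2):
    """Basic residual block: out = relu(F(x) + x), with F = linear -> relu -> linear.

    The identity shortcut requires F(x) to have the same length as x.
    """
    f = linear(relu(linear(x, w1, b1)), w2, b2)
    return relu([fi + xi for fi, xi in zip(f, x)])


# If the residual branch contributes nothing, the block reduces to relu(x).
zeros = [[0.0] * 3 for _ in range(3)]
zero_bias = [0.0] * 3
print(residual_block([1.0, -2.0, 3.0], zeros, zero_bias, zeros, zero_bias))  # [1.0, 0.0, 3.0]
```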

Vision Transformers (ViTs) fundamentally depart from the convolutional paradigm by treating images as sequences of patches. These patches are flattened, linearly embedded, and processed through self-attention mechanisms that model global relationships between all patches simultaneously [18] [22]. Unlike CNNs that build from local features, ViTs maintain a global view throughout processing, enabling them to capture long-range dependencies more effectively—a potential advantage for complex morphological assessments where contextual relationships matter [23].
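Patch tokenization and self-attention can be sketched in plain Python. This is a stripped-down illustration. It omits the learned linear patch embedding, the position embeddings, the class token, and the learned query/key/value projections of a real Vision Transformer, and uses the flattened patches directly as queries, keys, and values. It still shows the property emphasised above: every output token is a weighted mix of all patches, from the first layer onward.

```python
import math


def patchify(image, patch):
    """Split an H x W image into non-overlapping patch x patch tiles, each flattened
    row-major into a vector. Patches are ordered left-to-right, top-to-bottom."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    return [
        [image[i + di][j + dj] for di in range(patch) for dj in range(patch)]
        for i in range(0, h, patch)
        for j in range(0, w, patch)
    ]


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product self-attention with identity Q/K/V projections.

    Returns (outputs, attention_weights); row i of the weights says how much
    token i draws on every token in the image.
    """
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wi * v[c] for wi, v in zip(w, tokens)) for c in range(d)])
    return outputs, weights


image = [[r * 4 + c for c in range(4)] for r in range(4)]  # toy 4x4 "image"
patches = patchify(image, 2)
print(patches)  # [[0, 1, 4, 5], [2, 3, 6, 7], [8, 9, 12, 13], [10, 11, 14, 15]]
outputs, attn = self_attention(patches)
```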

Table 1: Core Architectural Characteristics Comparison

| Architecture | Core Operating Principle | Key Innovation | Inductive Bias |
|---|---|---|---|
| CNN | Local feature extraction via convolutional filters | Hierarchical feature learning | Strong (locality, translation equivariance) |
| ResNet | Deep network training via residual/skip connections | Identity mappings enabling very deep networks | Strong (inherited from CNN) |
| Vision Transformer | Global context via self-attention on image patches | Sequence-based image processing | Weak (learned from data) |

Visualizing Architectural Differences

The diagram below illustrates the fundamental workflow differences between these three architectures for image classification tasks:

Diagram 1: Architectural Workflows for Image Classification. CNNs process images through local feature extraction hierarchies, ResNet enhances deep CNNs with skip connections, while Vision Transformers use global self-attention mechanisms from the onset.

Performance Comparison: Experimental Data and Diagnostic Accuracy

Quantitative Performance Metrics Across Medical Imaging Tasks

Table 2: Performance Comparison Across Medical Imaging Applications

| Application Domain | Architecture | Reported Accuracy | Dataset Characteristics | Key Strengths |
|---|---|---|---|---|
| Sperm Morphology Classification [1] | CNN | 55-92% (across morphological classes) | 1,000 images expanded to 6,035 via augmentation | Effective with limited data, handles class imbalance |
| Colorectal Cancer Detection [21] | ResNet-50 | >80% (accuracy), >87% (sensitivity) | Colon gland images, 20-40% test splits | High sensitivity for malignant cases, deep feature learning |
| General Medical Diagnostics [24] | Generative AI models | 52.1% (overall diagnostic accuracy) | 83 studies across multiple specialties | Competitive with non-expert physicians |
| Robustness to Image Corruption [22] | FAN-ViT (Base) | 83.9% (clean), 66.4% (corrupted) | ImageNet-1K with corruption benchmarks | Superior generalization under noisy conditions |
| Hybrid Human-AI Diagnostics [25] | Ensemble (Multiple AI + Physicians) | Highest accuracy among the configurations compared | 2,100+ clinical vignettes, 40,000+ diagnoses | Complementary error patterns improve collective accuracy |

Sperm Morphology Classification: A Case Study in Methodology

Recent research on sperm morphology classification provides a detailed experimental framework for comparing AI and human performance. A 2025 study developed the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset containing 1,000 individual spermatozoa images classified by three experts according to the modified David classification, which includes 12 distinct morphological defect categories spanning head, midpiece, and tail anomalies [1].

Experimental Protocol:

- Image Acquisition: Samples were prepared from 37 patients, excluding high-concentration samples (>200 million/mL) to prevent image overlap. Images were captured using an MMC CASA system with bright field mode and oil immersion 100x objective [1].

- Expert Classification: Three experienced embryologists independently classified each spermatozoon. Fisher's exact test was used to assess whether the experts' classifications differed significantly (p < 0.05) [1].

- Data Augmentation: The original 1,000-image dataset was expanded to 6,035 images using augmentation techniques to balance morphological class representation and mitigate overfitting [1].

- CNN Implementation: A convolutional neural network was developed in Python 3.8 with preprocessing including image denoising, normalization, and resizing to 80×80×1 grayscale. The dataset was partitioned 80:20 for training and testing, with 20% of the training set used for validation [1].

- Performance Validation: The model's 55-92% accuracy range across different morphological classes was benchmarked against the expert consensus, with analysis of inter-expert agreement distribution (categorized as no agreement, partial agreement, or total agreement) [1].
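The split proportions above imply roughly 64% training, 16% validation, and 20% test data overall (0.8 × 0.8, 0.8 × 0.2, and 0.2). The text does not say whether the split was made before or after augmentation. If augmented copies of the same spermatozoon fall into both the training and test sets, test accuracy can be inflated. Below is a minimal sketch of a grouped split that keeps every copy of a source image in one partition. `source_ids` is an assumed bookkeeping list and is not part of the published dataset.

```python
import random


def grouped_split(source_ids, test_frac=0.2, val_frac=0.2, seed=0):
    """Split augmented images into train/val/test by *source* spermatozoon.

    `source_ids[i]` is the ID of the original image from which augmented image i
    was derived. All copies of one source land in the same partition, so the test
    set never contains a rotated or flipped twin of a training image.
    `val_frac` is a fraction of the non-test data. Returns three index lists.
    """
    sources = sorted(set(source_ids))
    random.Random(seed).shuffle(sources)
    n_test = round(len(sources) * test_frac)
    test_src = set(sources[:n_test])
    remaining = sources[n_test:]
    n_val = round(len(remaining) * val_frac)
    val_src = set(remaining[:n_val])
    train, val, test = [], [], []
    for i, src in enumerate(source_ids):
        if src in test_src:
            test.append(i)
        elif src in val_src:
            val.append(i)
        else:
            train.append(i)
    return train, val, test


# Illustrative bookkeeping: 1,000 source cells, 6 augmented copies each.
ids = [s for s in range(1000) for _ in range(6)]
train, val, test = grouped_split(ids)
print(len(train), len(val), len(test))  # 3840 960 1200
```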

The experimental workflow for this case study is visualized below:

Diagram 2: Sperm Morphology Classification Workflow. Experimental protocol showing image acquisition, expert classification with agreement analysis, data augmentation, and CNN model development stages.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for AI-Based Morphological Analysis

| Item | Specification | Research Function |
|---|---|---|
| MMC CASA System [1] | Microscope with digital camera, bright field capability | Standardized image acquisition of sperm samples |
| RAL Diagnostics Staining Kit [1] | Standard staining reagents | Enhances morphological feature contrast for imaging |
| Python 3.8 with Deep Learning Libraries [1] | TensorFlow/PyTorch, OpenCV | CNN model implementation and training |
| Data Augmentation Pipeline [1] | Rotation, flipping, scaling transformations | Expands limited datasets and improves model generalization |
| SMD/MSS Dataset [1] | 1,000+ annotated sperm images, 12 morphological classes | Benchmarking model performance against expert classification |
| High-Performance Computing [22] | NVIDIA L4 GPUs with Ada Lovelace architecture | Accelerates ViT training and inference (FP8 with sparsity) |
| TAO Toolkit 5.0 [22] | Low-code AI toolkit with pre-trained ViT models | Streamlines implementation of advanced architectures |
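Of the augmentation transformations listed in the table, flips and 90° rotations are lossless and easy to sketch without an imaging library. Arbitrary-angle rotation and scaling require interpolation (for example, via OpenCV) and are not shown. The sketch below produces the eight flip/rotation variants of an image stored as a 2D list. It illustrates the technique and is not the augmentation pipeline used in [1].

```python
def rotate90(image):
    """Rotate a 2D list clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]


def hflip(image):
    """Mirror a 2D list left-to-right."""
    return [row[::-1] for row in image]


def dihedral_augment(image):
    """Return the 8 flips/rotations (the dihedral group D4) of an image.

    Exact 90-degree rotations avoid the interpolation needed for arbitrary angles.
    """
    variants = []
    current = image
    for _ in range(4):
        variants.append(current)
        variants.append(hflip(current))
        current = rotate90(current)
    return variants


tile = [[1, 2], [3, 4]]
print(rotate90(tile))                # [[3, 1], [4, 2]]
print(len(dihedral_augment(tile)))   # 8
```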

Human vs. AI Diagnostic Performance: Comparative Insights

The interplay between human expertise and AI classification capabilities represents a critical research frontier. A comprehensive meta-analysis of generative AI models in medical diagnostics found an overall diagnostic accuracy of 52.1%, with no significant performance difference compared to physicians overall (p = 0.10) or non-expert physicians specifically (p = 0.93) [24]. However, AI models performed significantly worse than expert physicians (p = 0.007), highlighting the continued value of specialized expertise [24].

Research on hybrid human-AI collectives further shows that combining human expertise with AI models produces the most accurate diagnostic outcomes [25]. This synergy arises from error complementarity: humans and AI make systematically different types of errors, so each can compensate for the other's limitations [25]. In studies involving over 2,100 clinical vignettes and 40,000 diagnoses, adding even a single AI model to a group of human diagnosticians, or vice versa, substantially improved diagnostic quality [25].
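A small calculation shows why uncorrelated errors make a collective stronger than its members. The sketch below assumes each rater's errors are statistically independent. This idealizes the error complementarity described above, since real human and AI errors are only partly uncorrelated. It computes the probability that a strict majority of raters gives the correct call. The function name and the accuracies are hypothetical.

```python
from itertools import product


def majority_vote_accuracy(accuracies):
    """Probability that a strict majority of independent raters is correct.

    Assumes statistically independent errors (an idealization); with
    perfectly correlated errors the vote would be no better than one rater.
    """
    n = len(accuracies)
    total = 0.0
    # Enumerate every pattern of correct (True) / incorrect (False) raters
    for pattern in product([True, False], repeat=n):
        prob = 1.0
        for correct, p in zip(pattern, accuracies):
            prob *= p if correct else (1.0 - p)
        if sum(pattern) > n / 2:  # strict majority correct
            total += prob
    return total


# Hypothetical: two human raters and one AI model, each 70% accurate
print(round(majority_vote_accuracy([0.7, 0.7, 0.7]), 3))  # 0.784
```

Three independent raters that are each 70% accurate reach about 78% as a majority. The gain shrinks as their errors become correlated, which is why error complementarity matters more than adding more raters of the same kind.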

For sperm morphology classification specifically, the inherent subjectivity of manual assessment creates particular challenges. Studies report significant inter-expert variability, with technicians classifying the same spermatozoon differently based on their experience and interpretive frameworks [1]. This variability underscores the potential value of AI systems in standardizing assessments, particularly in contexts where specialized expertise is unavailable.

Architectural Selection Guidelines for Research Applications

Choosing the appropriate architecture depends on multiple factors specific to the research context:

Data Availability Considerations:

- Limited datasets (<10,000 images): CNNs and ResNet architectures typically outperform ViTs due to their stronger inductive biases and more sample-efficient learning [18] [23].

- Large-scale datasets (>100,000 images): Vision Transformers increasingly dominate, showing superior scaling behavior and potential for higher asymptotic performance [18] [22].

- Data quality issues: ViTs demonstrate enhanced robustness against image corruption and noise in some studies, though this varies by specific architecture and task [22].

Computational Resource Constraints:

- Edge deployment/limited compute: Lightweight CNN variants (MobileNet, EfficientNet) offer the best tradeoff between performance and efficiency [18] [23].

- Cloud-based/high-performance computing: ViTs and very deep ResNet models leverage available computational power for maximum accuracy [22].

Task-Specific Considerations:

- Localized feature detection: CNNs maintain advantages for tasks requiring identification of local morphological patterns [20].

- Global context understanding: ViTs excel when diagnostic decisions require integration of information across the entire image [22] [23].

- Transfer learning scenarios: Pretrained CNNs (ResNet, VGG) offer proven effectiveness for fine-tuning on medical tasks with limited data [20].
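The guidelines above can be condensed into a rough decision helper. The function `suggest_architecture` is hypothetical. Its thresholds mirror the text (fewer than 10,000 and more than 100,000 images), but the order in which the checks run is an illustrative assumption and has not been validated.

```python
def suggest_architecture(n_images: int, edge_deployment: bool = False,
                         needs_global_context: bool = False) -> str:
    """Hypothetical encoding of the rule-of-thumb guidelines above."""
    if edge_deployment:
        # Limited compute: lightweight CNN variants give the best efficiency tradeoff
        return "lightweight CNN (MobileNet, EfficientNet)"
    if n_images < 10_000:
        # Small datasets: CNN inductive biases are more sample-efficient,
        # so fine-tune a pretrained model rather than train a ViT from scratch
        return "pretrained CNN (ResNet, VGG) with fine-tuning"
    if n_images > 100_000:
        # Large datasets: ViTs tend to scale better
        return "Vision Transformer"
    if needs_global_context:
        # Whole-image reasoning favors attention-based models
        return "Vision Transformer or hybrid"
    # Between the thresholds the guidelines give no clear winner
    return "benchmark CNN and ViT candidates"


# The augmented SMD/MSS dataset (6,035 images) falls in the small-data regime
print(suggest_architecture(6_035))
```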

Future Directions and Research Opportunities

The architectural landscape continues to evolve rapidly, with several emerging trends particularly relevant to medical image classification:

Hybrid and transformer-inspired architectures are gaining prominence, offering potential pathways to overcome the limitations of both approaches [18] [23]. Examples include Swin Transformers, which restrict self-attention to local windows within a hierarchical, CNN-like feature pyramid, and ConvNeXt, a pure CNN modernized with design choices borrowed from transformers. These designs aim to combine CNN efficiency for local feature extraction with the context modeling and scaling behavior associated with ViTs.

Self-supervised pretraining methods (e.g., MAE, DINO) are reducing the labeled data requirements for ViTs, potentially mitigating one of their primary limitations in medical domains where expert annotations are scarce and expensive [23].
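Masked autoencoders (MAE) reduce the need for labels by hiding most image patches and training the model to reconstruct them. The original MAE work masks 75% of patches. The sketch below shows only that masking step. It assumes a 224×224 image split into 16×16 patches (196 patches), which is a common ViT setting chosen for illustration. The function name is hypothetical.

```python
import random


def random_patch_mask(num_patches: int, mask_ratio: float = 0.75, seed: int = 0):
    """Split patch indices into visible and masked sets, MAE-style.

    The encoder would see only the visible patches and the decoder would be
    trained to reconstruct the masked ones, so no expert labels are needed.
    """
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)  # uniform random masking
    n_keep = int(round(num_patches * (1 - mask_ratio)))
    visible = sorted(indices[:n_keep])
    masked = sorted(indices[n_keep:])
    return visible, masked


# 224x224 image in 16x16 patches -> 14 * 14 = 196 patches
visible, masked = random_patch_mask(196)
print(len(visible), len(masked))  # 49 147
```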

Multimodal integration represents another frontier, with ViT-based architectures increasingly powering models that combine image analysis with clinical text data, potentially enabling more comprehensive diagnostic assessments [23].

For researchers specifically investigating sperm morphology classification, promising directions include developing specialized architectures that address class imbalance issues inherent in morphological datasets and creating standardized benchmarking frameworks to enable more systematic comparison of AI performance against human expert consensus across multiple laboratories and classification systems.
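A common first remedy for class imbalance is to weight the training loss by inverse class frequency. The sketch below uses the formula n_samples / (n_classes × class_count), which matches scikit-learn's "balanced" heuristic. The class names and counts are hypothetical. Specialized architectures, as suggested above, would go further than this.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count).

    Rare morphological classes receive larger weights, so a weighted loss
    does not let the majority class dominate training.
    """
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}


# Hypothetical label distribution skewed toward one defect class
labels = ["tapered_head"] * 600 + ["coiled_tail"] * 300 + ["microcephalic"] * 100
weights = inverse_frequency_weights(labels)
print(weights)
```

With these weights, each class contributes equally to the total weighted count. The rare class therefore carries six times the per-sample weight of the most common one.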

As the field progresses, the most impactful applications will likely emerge from human-AI collaborative systems that leverage the complementary strengths of both approaches, rather than positioning AI as a simple replacement for human expertise [25].

The application of Artificial Intelligence (AI) in male infertility treatment represents a paradigm shift in assisted reproductive technology (ART). Male factors contribute to approximately 50% of infertility cases, making accurate sperm analysis crucial for successful treatment outcomes [3]. Traditional manual sperm morphology assessment faces significant challenges with standardization due to its subjective nature, which is heavily reliant on operator expertise [1]. This subjectivity results in substantial inter-observer variability, complicating both diagnosis and treatment planning [2].

AI technologies, particularly deep learning models, promise to overcome these limitations by providing objective, automated, and accurate sperm analysis [6]. However, the performance and reliability of these systems depend heavily on the quality, size, and diversity of the curated datasets used for training. Sperm morphology is inherently complex, with subtle structural variations across the head, neck, and tail, and this makes robust automated analysis difficult to develop [3]. This review examines the critical role of curated datasets and augmentation techniques in bridging the gap between human expertise and AI classification accuracy in sperm morphology analysis.

Comparative Analysis of Key Sperm Morphology Datasets

The development of high-performance AI models for sperm morphology classification relies on specialized datasets curated for this specific task. The landscape of available datasets has evolved significantly, with each offering distinct advantages and limitations.

Table 1: Comparison of Key Sperm Morphology Datasets for AI Training

| Dataset Name | Initial Image Count | Augmented Image Count | Annotation Basis | Key Features | Notable Limitations |
|---|---|---|---|---|---|
| SMD/MSS [1] | 1,000 | 6,035 | Modified David classification (12 defect classes) | Covers head, midpiece, and tail anomalies; Expert consensus labeling | Limited initial sample size; Requires augmentation |
| HuSHeM [3] | 1,475 | Not specified | WHO criteria | Publicly available; Focus on head morphology | Does not cover full sperm structure |
| SVIA [3] | 125,000 annotated instances | Not specified | Comprehensive annotation | Includes detection, segmentation, and classification tasks | Complex annotation process |
| Confocal Microscopy Dataset [6] | 12,683 annotated images from 21,600 total | Not specified | WHO criteria for unstained sperm | Uses confocal laser scanning microscopy; Assesses live sperm without staining | Specialized equipment required |

The SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset exemplifies the modern approach to dataset creation. Initially comprising 1,000 images of individual spermatozoa, it was expanded to 6,035 images through data augmentation techniques [1]. This dataset stands out for its use of the modified David classification system, which includes 12 distinct classes of morphological defects covering head, midpiece, and tail anomalies [1]. This comprehensive coverage enables AI systems to learn the nuanced differences between various sperm abnormalities that are critical for accurate diagnosis.

In contrast, the HuSHeM (Human Sperm Head Morphology) dataset and the related MHSMA (Modified Human Sperm Morphology Analysis) dataset focus primarily on sperm head morphology. MHSMA contains 1,540 images annotated for features such as the acrosome, head shape, and vacuoles [3]. While valuable for specific applications, this narrow focus limits a model's ability to assess complete sperm structures. The newer SVIA (Sperm Videos and Images Analysis) dataset represents a more ambitious effort, comprising 125,000 annotated instances for object detection, 26,000 segmentation masks, and 125,880 cropped image objects for classification tasks [3]. This multi-task design supports more comprehensive model training but requires extensive annotation resources.

A specialized dataset developed using confocal laser scanning microscopy demonstrates how advances in imaging technology can enhance dataset quality. This dataset contains 12,683 annotated images of unstained live sperm captured at 40× magnification. It enables morphology assessment without traditional staining, which renders sperm unusable for subsequent procedures [6]. This approach highlights how dataset curation methodologies can directly address clinical limitations.

Experimental Protocols for Dataset Creation and AI Model Training

Dataset Development Methodologies

The creation of high-quality sperm morphology datasets follows rigorous experimental protocols to ensure accuracy and consistency. For the SMD/MSS dataset, researchers began with sample collection from 37 patients with varying morphological profiles [1]. Samples with sperm concentrations exceeding 200 million/mL were excluded to prevent image overlap and to facilitate capture of complete sperm structures. Smears were prepared according to WHO manual guidelines and stained with the RAL Diagnostics staining kit [1].

Image acquisition utilized the MMC CASA system with an optical microscope equipped with a digital camera, using bright field mode with an oil immersion 100× objective [1]. Each image contained a single spermatozoon, ensuring clear structural representation. Critical to the dataset's reliability was the annotation process involving three independent experts with extensive experience in semen analysis. These experts classified each spermatozoon according to the modified David classification system, which includes 12 distinct morphological defect categories [1]. To handle inevitable inter-expert disagreement, the researchers established three agreement scenarios: no agreement (NA), partial agreement (PA) where 2/3 experts concurred, and total agreement (TA) with complete consensus [1].
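The three agreement scenarios can be assigned mechanically from the experts' labels. The sketch below is a minimal illustration. The function name, image IDs, and class names are hypothetical, and the source does not state which agreement subsets were used for training.

```python
from collections import Counter


def agreement_level(labels):
    """Map three expert labels to the NA / PA / TA agreement scenarios."""
    if len(labels) != 3:
        raise ValueError("expected labels from exactly three experts")
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == 3:
        return "TA"  # total agreement: all three experts concur
    if top_count == 2:
        return "PA"  # partial agreement: two of three concur
    return "NA"      # no agreement: three different labels


# Hypothetical annotations for three spermatozoa
annotations = {
    "img_001": ("normal", "normal", "normal"),
    "img_002": ("tapered", "tapered", "thin"),
    "img_003": ("tapered", "thin", "microcephalic"),
}
for image_id, labels in annotations.items():
    print(image_id, agreement_level(labels))  # TA, PA, NA respectively
```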

The confocal microscopy dataset followed a different protocol optimized for live sperm analysis. Semen samples from 30 healthy volunteers were dispensed as 6 μL droplets onto standard two-chamber slides [6]. Images were captured using a confocal laser scanning microscope at 40× magnification in confocal mode, with Z-stack intervals of 0.5 μm covering a total range of 2 μm [6]. Embryologists and researchers manually annotated well-focused sperm images using the LabelImg program, with correlation coefficients of 0.95 for normal sperm morphology detection and 1.0 for abnormal morphology detection [6]. This high inter-annotator agreement indicates that the protocol supports consistent labeling.
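The source does not specify which correlation coefficient was used. The sketch below assumes Pearson's r, computed here between two annotators' per-sample counts of normal sperm. The counts are hypothetical. Pearson's r measures linear association rather than exact agreement, so chance-corrected statistics such as Cohen's kappa are often reported alongside it for categorical labels.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Hypothetical counts of normal-morphology sperm per sample from two annotators
annotator_a = [12, 30, 45, 8, 22]
annotator_b = [14, 29, 43, 9, 25]
print(round(pearson_r(annotator_a, annotator_b), 3))
```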

Data Augmentation Techniques and AI Training

To address the common challenge of limited dataset size, researchers employ sophisticated data augmentation techniques. For the SMD/MSS dataset, augmentation transformed the original 1,000 images into 6,035 images, significantly expanding the training database [1]. Standard augmentation approaches include geometric transformations (rotation, scaling, flipping), color space adjustments, and noise injection, which help create a more diverse and robust training set while maintaining label integrity.
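Rotations and flips are the simplest label-preserving transformations, because a spermatozoon's defect category does not depend on its orientation in the frame. The sketch below generates the eight rotation/flip variants of a square image stored as a list of rows. It is a stdlib-only illustration, not the SMD/MSS pipeline. Scaling, color, and noise transformations are omitted, and the function names are hypothetical. Augmentation should be applied only to the training split, so that transformed copies of a test image do not leak into training.

```python
def rotate90(img):
    """Rotate a 2D grid (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def hflip(img):
    """Mirror a 2D grid left to right."""
    return [row[::-1] for row in img]


def dihedral_variants(img):
    """Return the 8 rotation/flip variants of a square image."""
    variants = []
    current = img
    for _ in range(4):
        variants.append(current)         # rotation by 0, 90, 180, 270 degrees
        variants.append(hflip(current))  # same rotation, mirrored
        current = rotate90(current)
    return variants


# Tiny asymmetric "image" standing in for a cropped spermatozoon
image = [[1, 2], [3, 4]]
variants = dihedral_variants(image)
print(len(variants))  # 8
```

Rotations and flips alone yield at most eight variants per image. Reaching roughly six times the original count, as in the 1,000 to 6,035 expansion, therefore involves choices the source does not detail, such as per-class sampling or combinations with scaling.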

The AI training pipeline typically follows a structured workflow. For the deep learning model trained on the confocal microscopy dataset, researchers utilized a ResNet50 transfer learning model, a deep neural network designed for image classification [6]. The model was trained on 9,000 images (4,500 normal and 4,500 abnormal sperm morphology) with the objective of minimizing differences between predicted and actual labels [6]. The training achieved a test accuracy of 0.93 after 150 epochs, with precision of 0.95 and recall of 0.91 for detecting abnormal sperm morphology, and precision of 0.91 and recall of 0.95 for normal sperm morphology [6]. The model's processing speed was approximately 0.0056 seconds per image, demonstrating the potential for real-time clinical application [6].
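The reported per-class precision and recall are mutually consistent with the overall accuracy if the test set is balanced. The sketch below checks this against a hypothetical confusion matrix of 1,000 abnormal and 1,000 normal cells. These counts are an assumption, because the source does not give the test-set composition. The sketch also converts the per-image latency into throughput.

```python
def binary_metrics(tp, fp, fn, tn):
    """Precision, recall, and accuracy for the class treated as positive."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return precision, recall, accuracy


# Hypothetical balanced test set: 910 of 1,000 abnormal cells detected,
# 950 of 1,000 normal cells correct (50 mislabeled as abnormal).
p_abn, r_abn, acc = binary_metrics(tp=910, fp=50, fn=90, tn=950)
# Swap roles to score the normal class as positive
p_nor, r_nor, _ = binary_metrics(tp=950, fp=90, fn=50, tn=910)
print(f"abnormal: precision={p_abn:.2f} recall={r_abn:.2f}")  # 0.95 / 0.91
print(f"normal:   precision={p_nor:.2f} recall={r_nor:.2f}")  # 0.91 / 0.95
print(f"accuracy={acc:.2f}")                                  # 0.93

# Throughput implied by ~0.0056 s per image
print(f"{1 / 0.0056:.0f} images/s")  # 179
```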

Diagram 1: AI Dataset Creation Workflow

Performance Comparison: AI Models vs. Human Experts

The ultimate validation of AI systems in sperm morphology analysis lies in their performance compared to human experts and conventional analysis methods. Quantitative comparisons reveal significant insights into the current state of AI capabilities in this domain.

Table 2: Performance Metrics of AI Models vs. Conventional Methods

| Assessment Method | Accuracy | Precision | Recall/Sensitivity | Correlation with Reference | Key Strengths |
|---|---|---|---|---|---|
| In-house AI Model (Confocal) [6] | 93% | 91-95% | 91-95% | r = 0.88 with CASA | Assesses live unstained sperm; High speed |
| Deep Learning (SMD/MSS) [1] | 55-92% | Not specified | Not specified | Not specified | Comprehensive defect classification |
| Conventional Semen Analysis (CSA) [6] | Not specified | Not specified | Not specified | r = 0.76 with CASA | Standard clinical method |
| Computer-Aided Semen Analysis (CASA) [6] | Not specified | Not specified | Not specified | r = 0.57 with CSA | Automated but limited accuracy |