Implementing Convolutional Neural Networks for Human Sperm Morphology Classification: A Comprehensive Guide for Biomedical Research


Abstract

This article provides a comprehensive exploration of the implementation of Convolutional Neural Networks (CNNs) for the automated classification of human sperm morphology, a critical parameter in male fertility assessment. Tailored for researchers, scientists, and drug development professionals, it covers the foundational motivation for automating this traditionally subjective analysis, delves into specific methodological approaches and CNN architectures, addresses common troubleshooting and optimization challenges, and presents rigorous validation and performance comparison frameworks. By synthesizing current research and clinical applications, this guide aims to equip professionals with the knowledge to develop robust, AI-driven tools that enhance the standardization, accuracy, and efficiency of semen analysis in clinical and research settings.

The Why and What: Foundations of AI in Sperm Morphology Analysis

Male factor infertility is a significant public health issue, contributing to approximately 50% of all infertility cases among couples [1]. The cornerstone initial investigation is semen analysis, within which sperm morphology assessment (the evaluation of sperm size, shape, and structure) is considered one of the most clinically informative yet challenging parameters [2]. Traditionally, technicians perform this assessment manually by microscopy, a method with high subjectivity and inter-laboratory variability because it relies on individual expertise [2]. The manual process is also slow and difficult to standardize, and it can lead to inconsistent clinical diagnoses.

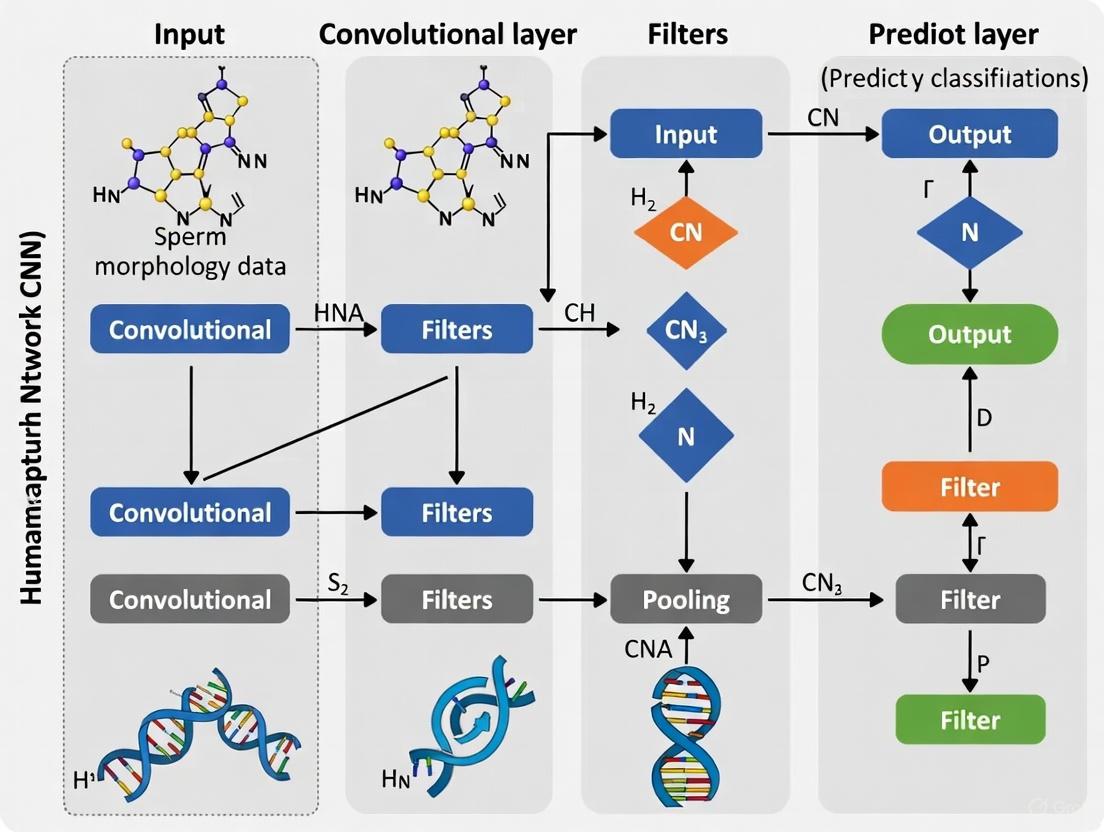

The integration of Convolutional Neural Networks (CNNs), a class of deep learning algorithms, presents a paradigm shift for andrology laboratories. CNNs are uniquely suited for image analysis tasks as they can learn hierarchical features directly from pixel data, automating the classification process and minimizing human bias [3] [4]. This document outlines the application of a CNN-based framework for the standardization of human sperm morphology classification, detailing the experimental protocols, data handling procedures, and technical specifications required for robust implementation.

The following tables summarize the key quantitative aspects of developing a CNN model for sperm morphology classification, from dataset composition to model performance.

Table 1: SMD/MSS Dataset Composition and Augmentation

| Component | Description | Quantity |
|---|---|---|
| Initial Image Collection | Individual sperm images acquired via MMC CASA system (100x oil immersion) | 1,000 images [2] |
| Data Augmentation | Application of techniques to create variant images (e.g., rotation, scaling) to balance classes and increase dataset size | Final dataset: 6,035 images [2] |
| Expert Classification | Three independent experts classifying based on modified David criteria (12 defect classes) | 3 experts per image [2] |
| Inter-Expert Agreement | Percentage of images where all three experts assigned identical labels for all categories | "Total Agreement" (TA) on a subset of images [2] |

Table 2: CNN Model Configuration and Performance Metrics

| Parameter | Specification | Value / Range |
|---|---|---|
| Programming Environment | Language and key libraries | Python 3.8 [2] |
| Image Pre-processing | Resizing, normalization, denoising | 80x80 pixels, grayscale [2] |
| Data Partitioning | Train/Test split | 80% Training, 20% Testing [2] |
| Reported Model Accuracy | Performance on the test set | 55% to 92% [2] |

Experimental Protocols & Workflows

Protocol 1: Sample Preparation and Data Acquisition

This protocol ensures the consistent creation of high-quality sperm image smears for subsequent digitization.

Materials:

- Fresh semen sample (sperm concentration ≥ 5 million/mL and < 200 million/mL) [2]

- RAL Diagnostics staining kit or equivalent [2]

- Microscope slides and coverslips

- Optical microscope with 100x oil immersion objective and digital camera (e.g., MMC CASA system) [2]

Procedure:

- Smear Preparation: Prepare a thin smear of the semen sample on a clean glass slide, following the guidelines outlined in the WHO laboratory manual [2].

- Staining: Apply the RAL Diagnostics stain according to the manufacturer's instructions to enhance cellular contrast and detail.

- Image Acquisition: Using the MMC CASA system or equivalent, capture images of individual spermatozoa with the 100x oil immersion objective in bright-field mode.

- Data Export: Ensure each captured image contains a single spermatozoon. Save images in a standard format (e.g., PNG, JPEG) and assign a unique filename.

Protocol 2: Image Labeling and Ground Truth Establishment

This protocol defines the process for creating a reliable "ground truth" dataset, which is critical for supervised learning.

Materials:

- Acquired sperm images (from Protocol 1)

- Standardized classification form (e.g., Excel spreadsheet) based on modified David classification [2]

Procedure:

- Expert Panel: Provide the set of images to three independent experts, each with extensive experience in semen analysis.

- Blinded Classification: Each expert independently classifies every spermatozoon into one or more of the 12 morphological classes defined by the modified David criteria (e.g., tapered head, microcephalous, bent midpiece, coiled tail) [2].

- Data Consolidation: Compile all classifications from the experts into a single ground truth file. This file should link each image filename to the classifications from all three experts and any associated morphometric data.

- Consensus Analysis: Analyze the level of inter-expert agreement. Images with total agreement (TA) among all three experts provide the highest confidence labels for model training.
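
The consolidation and consensus steps above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [2]: the filenames, label strings, and the "PA"/"NA" level names are hypothetical, and each expert's annotation is assumed to be stored as a set of class labels so that multi-defect classifications are compared as a whole.

```python
from collections import Counter

def agreement_level(annotations):
    """Classify expert agreement for one image.

    `annotations` holds one frozenset of class labels per expert.
    Returns "TA" if all experts match, "PA" if a majority match, else "NA".
    """
    top_count = Counter(annotations).most_common(1)[0][1]
    if top_count == len(annotations):
        return "TA"
    return "PA" if top_count > len(annotations) / 2 else "NA"

def consolidate(ground_truth):
    """Map each filename to (agreement level, majority annotation or None)."""
    consensus = {}
    for filename, annotations in ground_truth.items():
        level = agreement_level(annotations)
        majority = Counter(annotations).most_common(1)[0][0] if level != "NA" else None
        consensus[filename] = (level, majority)
    return consensus

# Hypothetical ground truth file content: filename -> annotations of experts 1..3
ground_truth = {
    "sperm_0001.png": [frozenset({"normal"})] * 3,
    "sperm_0002.png": [frozenset({"tapered_head"}), frozenset({"tapered_head"}),
                       frozenset({"microcephalous"})],
    "sperm_0003.png": [frozenset({"coiled_tail"}), frozenset({"bent_midpiece"}),
                       frozenset({"normal"})],
}
consensus = consolidate(ground_truth)
# Images with total agreement give the highest-confidence training labels
ta_images = [name for name, (level, _) in consensus.items() if level == "TA"]
```

Whether partially agreed images are kept with their majority label or excluded from training is a design choice that should be reported alongside the model results.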

Protocol 3: CNN Model Development and Training

This protocol covers the computational steps for building and training the deep learning model.

Materials:

- Hardware: Computer with a dedicated Graphics Processing Unit (GPU)

- Software: Python 3.8 with deep learning libraries (e.g., TensorFlow, PyTorch, Keras) [2] [4]

Procedure:

- Image Pre-processing:

  - Resize: Scale all images to a uniform size of 80x80 pixels using a linear interpolation strategy.

  - Grayscale Conversion: Convert color images to single-channel grayscale to simplify the initial model input.

  - Normalization: Normalize pixel intensity values to a range of 0 to 1 to aid model convergence [2].

- Data Partitioning: Randomly split the entire dataset of 6,035 images into a training set (80% of data) for model learning and a hold-out test set (20% of data) for final performance evaluation [2].

- Model Training:

  - Architecture Definition: Design a CNN architecture comprising convolutional layers for feature extraction, pooling layers for dimensionality reduction, and fully connected layers for final classification [3] [4].

  - Compilation: Define a loss function (e.g., categorical cross-entropy) and an optimizer (e.g., Adam).

  - Iteration: Train the model by iteratively presenting batches of images from the training set, adjusting the model's internal weights to minimize classification error.
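
The image pre-processing steps of this protocol can be sketched with NumPy alone. This is a minimal illustration under stated assumptions: the input is an RGB array already loaded into memory, the grayscale weights are the common ITU-R BT.601 luma coefficients (the source does not specify a conversion), and the function names are hypothetical. In practice an imaging library would normally handle loading and resizing.

```python
import numpy as np

TARGET_SIZE = 80  # pixels per side, as specified in the protocol

def to_grayscale(rgb):
    """Convert an H x W x 3 RGB array to H x W (assumed BT.601 luma weights)."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(np.float64) @ weights

def resize_bilinear(image, size=TARGET_SIZE):
    """Resize a 2-D array to size x size using bilinear (linear) interpolation."""
    height, width = image.shape
    rows = np.linspace(0, height - 1, size)
    cols = np.linspace(0, width - 1, size)
    r0 = np.floor(rows).astype(int)
    c0 = np.floor(cols).astype(int)
    r1 = np.minimum(r0 + 1, height - 1)
    c1 = np.minimum(c0 + 1, width - 1)
    wr = (rows - r0)[:, None]  # vertical interpolation weights
    wc = (cols - c0)[None, :]  # horizontal interpolation weights
    top = image[r0][:, c0] * (1 - wc) + image[r0][:, c1] * wc
    bottom = image[r1][:, c0] * (1 - wc) + image[r1][:, c1] * wc
    return top * (1 - wr) + bottom * wr

def preprocess(rgb_uint8):
    """Grayscale -> 80x80 resize -> scale 8-bit intensities to [0, 1]."""
    gray = to_grayscale(rgb_uint8)
    resized = resize_bilinear(gray)
    return np.clip(resized / 255.0, 0.0, 1.0).astype(np.float32)

# Synthetic stand-in for one acquired image (random pixels, not real data)
rng = np.random.default_rng(0)
fake_image = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)
x = preprocess(fake_image)  # shape (80, 80), dtype float32
```

Grayscale conversion is applied before resizing here only for brevity; both operations are linear, so the order does not change the result beyond rounding.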

Visualization of Workflows

The following diagrams, generated with the Graphviz DOT language, illustrate the logical relationships and workflows described in the protocols.

[Diagram: Sperm Morphology Analysis Workflow]

[Diagram: Convolutional Neural Network Architecture]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for CNN-based Sperm Morphology Analysis

| Item | Function / Application |
|---|---|
| MMC CASA System | An integrated hardware and software system for the automated, sequential acquisition of images from sperm smears using a microscope-equipped camera. [2] |
| RAL Diagnostics Staining Kit | A ready-to-use staining solution used to prepare semen smears for morphological analysis, enhancing the contrast and visibility of sperm structures under a microscope. [2] |
| Modified David Classification Sheet | A standardized form detailing 12 specific classes of sperm defects (affecting the head, midpiece, and tail) used by experts to generate consistent ground truth labels. [2] |
| GPU-Accelerated Computing Workstation | A computer equipped with a dedicated Graphics Processing Unit (GPU) essential for performing the vast number of calculations required to train deep learning models in a feasible timeframe. [4] |
| Python with Deep Learning Libraries (TensorFlow/PyTorch) | The core programming environment and software libraries that provide the tools and functions necessary to define, train, and evaluate convolutional neural network models. [2] [4] |

The diagnostic evaluation of male infertility relies heavily on semen analysis, with sperm morphology assessment representing one of its most prognostically significant yet challenging components. For decades, this analysis has been performed manually by trained technicians observing stained sperm smears under a microscope, a method subject to significant subjectivity and variability. The introduction of Computer-Assisted Semen Analysis (CASA) systems promised to revolutionize the field by introducing automation, objectivity, and standardization. However, current CASA methodologies exhibit considerable limitations that impact their reliability and clinical utility, particularly in morphological assessment. This application note critically examines the limitations inherent in both manual and conventional CASA approaches, contextualized within the framework of emerging convolutional neural network (CNN) technologies that offer potential solutions to these longstanding challenges.

Manual Sperm Morphology Assessment: Inherent Subjectivity and Variability

Fundamental Methodological Constraints

Traditional manual sperm morphology assessment follows World Health Organization (WHO) guidelines, requiring technicians to classify at least 200 spermatozoa into normal and abnormal categories based on strict morphological criteria. The process involves staining semen smears (typically with RAL, Papanicolaou, or Diff-Quik stains) and systematic examination under high-power magnification (100x oil immersion) [2]. Despite standardized protocols, this method suffers from inherent limitations:

- Subjectivity in Classification: Borderline morphological features often receive different classifications between technicians, even within the same laboratory.

- Cognitive Fatigue: The visual intensity of scanning and classifying hundreds of sperm cells leads to declining accuracy over time.

- Inter-laboratory Variability: Differences in staining techniques, microscope calibration, and technician training create substantial inconsistencies between facilities.

Quantitative Evidence of Manual Method Limitations

Table 1: Documented Variability in Manual Sperm Morphology Assessment

| Parameter | Evidence of Variability | Impact on Diagnostic Reliability |
|---|---|---|
| Inter-observer Agreement | Kappa values as low as 0.05-0.15 reported between technicians [5] | Poor diagnostic reproducibility even among trained experts |
| Time Consumption | 30-45 minutes per sample for proper assessment [5] | Practical limitations in high-volume clinical settings |
| Disagreement Rate | Up to 40% coefficient of variation between evaluators [5] | Significant potential for misclassification and diagnostic error |
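
For readers unfamiliar with the kappa statistic cited in the table, Cohen's kappa compares the observed agreement between two raters, p_o, with the agreement expected by chance, p_e, as kappa = (p_o - p_e) / (1 - p_e). The sketch below computes it for two technicians; the label sequences are invented for illustration and are not data from [5].

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must label the same non-empty set of items")
    # Observed agreement: fraction of items with identical labels
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical classifications of ten spermatozoa (N = normal, A = abnormal)
technician_1 = ["N", "N", "A", "A", "N", "A", "N", "A", "N", "N"]
technician_2 = ["N", "A", "A", "N", "N", "A", "A", "A", "N", "N"]
kappa = cohen_kappa(technician_1, technician_2)  # observed 0.7, chance 0.5, kappa about 0.4
```

A kappa near 0 means agreement is no better than chance, which is why values of 0.05 to 0.15 indicate poor reproducibility even when raw percentage agreement looks acceptable.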

Computer-Assisted Semen Analysis (CASA): Persistent Technological Limitations

Conventional CASA systems utilize optical microscopy coupled with digital cameras and specialized software to capture and analyze sperm images. The general workflow involves:

- Sample Preparation: Semen samples are loaded into specialized chambers (e.g., Makler, MicroCell) of standardized depth

- Image Acquisition: Multiple digital images or videos are captured under phase-contrast or bright-field microscopy

- Software Analysis: Proprietary algorithms identify sperm cells, distinguish them from debris, and calculate parameters

- Data Output: Quantitative results for concentration, motility, and in some systems, morphological metrics

Despite four decades of technological evolution, current CASA systems face significant challenges in accurate morphological classification due to fundamental limitations in image analysis capabilities and algorithmic approaches.

Specific Limitations of Conventional CASA Systems

Table 2: Documented Limitations of Conventional CASA Systems in Morphology Assessment

| Limitation Category | Specific Technical Challenges | Impact on Analysis |
|---|---|---|
| Image Resolution & Quality | Limited ability to distinguish subtle morphological features; difficulty with overlapping sperm or debris-filled samples [6] [2] | Inaccurate detection and classification of abnormal forms |
| Algorithmic Constraints | Inability to properly classify midpiece and tail abnormalities; poor performance with complex defects [2] | Systematic under-reporting of specific abnormality types |
| Standardization Issues | High sensitivity to instrument settings (illumination, contrast, chamber depth) [7] | Poor inter-system reproducibility and comparability |
| Concentration Dependency | Increased variability in low (<15 million/mL) and high (>60 million/mL) concentration specimens [6] | Restricted reliable operating range |
| Morphological Heterogeneity | Difficulty handling the natural shape variation within samples and subjects [6] | Oversimplification of complex morphological patterns |

Experimental Protocols for Methodology Comparison

Protocol 1: Manual Morphology Assessment (WHO Standard)

Principle: Visual classification of stained spermatozoa based on standardized morphological criteria.

Materials:

- RAL, Papanicolaou, or Diff-Quik staining kits

- Microscope with 100x oil immersion objective

- Tally counters or specialized data entry software

- Standardized data collection forms

Procedure:

- Prepare thin semen smears on clean glass slides and air dry

- Fix and stain according to manufacturer protocols for selected stain

- Systematically scan slides in a meander pattern using the 100x oil immersion objective

- Classify each of 200+ consecutive spermatozoa into:

  - Normal

  - Head defects (tapered, thin, microcephalic, macrocephalic, multiple, abnormal acrosome)

  - Midpiece defects (bent, cytoplasmic droplet)

  - Tail defects (coiled, short, multiple, broken)

- Calculate percentage of normal forms and specific defect categories

- Record all data with quality control documentation
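
The percentage calculation in the procedure above is simple arithmetic, sketched here for clarity. The category names and counts are hypothetical, and the sketch assumes one primary category is recorded per spermatozoon; a cell with defects in several compartments would need a multi-label tally instead.

```python
from collections import Counter

def morphology_summary(classifications, min_count=200):
    """Return the percentage of assessed spermatozoa in each category."""
    total = len(classifications)
    if total < min_count:
        raise ValueError(f"only {total} cells assessed; at least {min_count} required")
    counts = Counter(classifications)
    return {category: 100.0 * n / total for category, n in counts.items()}

# Hypothetical tally for one slide (200 cells)
tally = (["normal"] * 10 + ["head_defect"] * 120
         + ["midpiece_defect"] * 30 + ["tail_defect"] * 40)
summary = morphology_summary(tally)
# summary["normal"] is 5.0, i.e. 5% normal forms
```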

Quality Control: Participation in external quality assurance programs; regular inter-technician comparison exercises [8].

Protocol 2: Conventional CASA Morphology Analysis

Principle: Automated image capture and analysis of sperm morphological parameters.

Materials:

- CASA system (e.g., Hamilton Thorne IVOS/CEROS, SCA, SQA-V)

- Disposable counting chambers (e.g., Leja, MicroCell)

- Quality control beads (e.g., latex Accu-Beads) [6]

- Temperature-controlled stage (if assessing motility)

Procedure:

- Calibrate system using quality control beads according to manufacturer specifications

- Pre-warm counting chamber and stage to 37°C if analyzing motility

- Load appropriately diluted semen sample (following manufacturer recommendations)

- Set acquisition parameters:

  - Number of fields to analyze (minimum 10)

  - Sperm detection thresholds (size, intensity, contrast)

  - Morphology classification criteria (based on WHO standards)

- Execute automated acquisition and analysis

- Review results for artifacts or misclassifications; manually override if necessary

- Generate and export comprehensive report

Quality Control: Regular calibration and validation; standardized operating procedures for all technicians; documentation of all instrument settings [7].

Visualizing Methodological Limitations and Solutions

[Diagram: Comparative Workflow: Traditional vs. AI-Enhanced Analysis]

[Diagram: Technical Pathway: CASA System Operation and Failure Points]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Sperm Morphology Analysis

| Reagent/Material | Function/Application | Specific Examples & Notes |
|---|---|---|
| Staining Kits | Cellular staining for manual morphology assessment | RAL Diagnostics kit [2], Papanicolaou, Diff-Quik |
| Standardized Chambers | Consistent sample depth for analysis | Leja 20μm chambers [7], MicroCell, Makler |
| Quality Control Beads | System calibration and validation | Latex Accu-Beads [6] |
| CASA Systems | Automated semen analysis | Hamilton Thorne IVOS/CEROS [6], SCA Microptics [6] |
| Dataset Images | Algorithm training and validation | HuSHeM (216 images) [5], SCIAN (1,854 images) [9], SMIDS (3,000 images) [5] |
| Deep Learning Model Architectures | CNN model development | YOLOv7 [10], VGG16 [11], ResNet50 [5], DenseNet169 [12] |

The limitations of current sperm morphology assessment methodologies—both manual and CASA-based—represent significant challenges in male infertility diagnostics. Manual methods suffer from irreproducible subjectivity and substantial inter-observer variability, while conventional CASA systems demonstrate inadequate performance in morphological classification, particularly for complex defects and challenging samples. These limitations necessitate technological innovation, with deep learning approaches—particularly CNN-based architectures—emerging as promising solutions. The integration of AI technologies offers the potential to overcome longstanding limitations through automated, standardized, and highly accurate sperm morphology classification, ultimately advancing both clinical diagnostics and research capabilities in reproductive medicine.

The morphological evaluation of human sperm is a cornerstone of male fertility assessment, providing critical diagnostic and prognostic information. Traditional manual analysis, however, is notoriously subjective, time-consuming, and plagued by significant inter-observer variability, with reported disagreement rates among experts reaching up to 40% [5]. This lack of standardization directly impacts the reliability of infertility diagnostics and treatment planning.

Convolutional Neural Networks (CNNs) offer a powerful solution to these challenges by enabling the automation, standardization, and acceleration of sperm morphology analysis. This document outlines the foundational classification task for a CNN-based system, defining the core categories of "Normal" versus "Abnormal" and detailing the key morphological defects that the model must learn to identify. By establishing a clear and consistent classification framework, researchers can develop robust models that enhance objectivity and reproducibility in reproductive medicine [2] [11].

Defining the Classification Classes

The primary task for a CNN in sperm morphology analysis is a classification problem. The system must analyze an input image of an individual spermatozoon and assign it to one of several predefined categories. These categories are hierarchically organized, starting with the broad distinction between normal and abnormal forms, followed by a more granular classification of specific defect types and their locations.

The "Normal" Spermatozoon

A morphologically normal spermatozoon is the reference point for all classification. According to World Health Organization (WHO) guidelines, it is characterized by the following features [5]:

- Head: Smooth, oval configuration with a well-defined acrosome covering 40-70% of the head area. The length should be between 4.0–5.5 µm and the width between 2.5–3.5 µm.

- Midpiece: Slender, approximately the same length as the head, and axially attached.

- Tail: A single, unbroken tail that is thinner than the midpiece and approximately 45 µm long, without sharp bends or coils.

Any deviation from this strict definition qualifies the sperm as abnormal. In clinical practice, a sample with ≥ 4% normal forms is generally considered within the normal range, though this threshold can vary [13].
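
The numeric criteria above translate directly into range checks. The sketch below is only an illustration of those thresholds, using hypothetical type and function names; a dimension check alone is not a morphological assessment, since head shape, vacuoles, midpiece, and tail must also be evaluated.

```python
from dataclasses import dataclass

@dataclass
class HeadMeasurement:
    length_um: float          # head length in micrometres
    width_um: float           # head width in micrometres
    acrosome_fraction: float  # acrosome area divided by head area

def head_within_reference(m):
    """Check head dimensions against the ranges quoted in the text."""
    return (4.0 <= m.length_um <= 5.5
            and 2.5 <= m.width_um <= 3.5
            and 0.40 <= m.acrosome_fraction <= 0.70)

def sample_meets_threshold(n_normal, n_total, threshold_pct=4.0):
    """True if the percentage of normal forms reaches the quoted 4% threshold."""
    return 100.0 * n_normal / n_total >= threshold_pct

typical = HeadMeasurement(length_um=4.8, width_um=3.0, acrosome_fraction=0.55)
elongated = HeadMeasurement(length_um=6.1, width_um=3.0, acrosome_fraction=0.55)
checks = (head_within_reference(typical), head_within_reference(elongated))
```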

The "Abnormal" Spermatozoon: A Framework of Defects

Abnormal sperm are categorized based on the specific part of the sperm cell that is defective. The most comprehensive systems, such as the modified David classification, define numerous specific anomaly types [2]. For a CNN-based system, these can be consolidated into a structured hierarchy of defects.

Table 1: Key Morphological Defects for CNN Classification

| Defect Category | Specific Defect Types | Key Morphological Characteristics |
|---|---|---|
| Head Defects | Tapered, Thin, Microcephalous (small), Macrocephalous (large), Multiple heads, Abnormal acrosome, Abnormal post-acrosomal region [2] | Irregular head shape (pyriform, round, amorphous), vacuolization, size discrepancies, disordered acrosome [9] |
| Midpiece Defects | Bent midpiece, Cytoplasmic droplet [2] | Thickened, asymmetrical, or bent midpiece; presence of a cytoplasmic remnant >1/3 the head size [9] |
| Tail Defects | Coiled tail, Short tail, Multiple tails [2] | Absent, broken, coiled, or multiple tails; sharp angular bends [9] |

It is common for a single spermatozoon to exhibit multiple defects across different compartments (e.g., a microcephalic head with a coiled tail). This is classified as a sperm with associated anomalies [2]. Some studies also include a distinct "Non-Sperm" class to identify cellular debris or other artifacts that are not sperm cells, which is crucial for reducing false positives in an automated system [14].

Quantitative Performance of CNN Models

Deep learning approaches have demonstrated significant success in automating the classification task. Performance varies based on the model architecture, dataset size and quality, and the specific classification scheme used.

Table 2: Reported Performance of Selected CNN Models for Sperm Morphology Classification

| Model Architecture / Approach | Dataset(s) Used | Reported Performance | Key Highlights |
|---|---|---|---|
| CBAM-enhanced ResNet50 with Deep Feature Engineering [5] | SMIDS (3-class), HuSHeM (4-class) | Accuracy: 96.08% (SMIDS), 96.77% (HuSHeM) | Integrates attention mechanisms; uses feature selection & SVM classifier. |
| Multi-model CNN Fusion (Soft-Voting) [14] | SMIDS, HuSHeM, SCIAN-Morpho | Accuracy: 90.73% (SMIDS), 85.18% (HuSHeM), 71.91% (SCIAN) | Fuses six different CNN models for robust prediction. |
| VGG16 with Transfer Learning [11] | HuSHeM, SCIAN | True Positive Rate: 94.1% (HuSHeM), 62% (SCIAN) | Applies transfer learning from ImageNet, avoiding manual feature extraction. |
| Custom CNN [2] | SMD/MSS (12-class) | Accuracy: 55% to 92% (varies by class) | Based on the modified David classification with 12 detailed defect classes. |

Experimental Protocol for CNN-Based Classification

The following protocol provides a detailed methodology for developing and validating a CNN model for human sperm morphology classification, synthesizing best practices from recent literature.

Sample Preparation and Image Acquisition

- Sample Preparation: Collect semen samples after obtaining informed consent. Prepare smears on glass slides according to WHO guidelines [2]. Stain using a standardized protocol (e.g., RAL Diagnostics staining kit) to ensure consistent contrast and nuclear/morphological detail [2].

- Image Acquisition: Use an optical microscope equipped with a high-resolution digital camera and a 100x oil immersion objective [2]. The CASA (Computer-Assisted Semen Analysis) system's morphometric tool can be utilized to capture images and initially determine basic dimensions of the head and tail [2]. Capture images of individual spermatozoa, ensuring they are well-separated to avoid overlap.

Data Preprocessing and Annotation

- Image Preprocessing:

  - Cleaning: Handle missing values or inconsistent data.

  - Normalization: Resize images to a uniform dimension (e.g., 80x80 pixels) and convert to grayscale to standardize input and reduce computational load [2].

  - Denoising: Apply techniques like wavelet denoising to remove noise from insufficient lighting or poor staining, which can improve model accuracy [2] [15].

- Expert Annotation (Ground Truth Creation): Each sperm image must be independently classified by multiple experienced embryologists or technicians (e.g., three experts) [2]. The annotation should follow a standardized classification system (e.g., WHO criteria or modified David classification). A ground truth file is compiled, listing the image name and the classifications from all experts [2].

- Data Augmentation: To balance morphological classes and increase dataset size, apply augmentation techniques such as rotation, flipping, scaling, and changes in brightness and contrast. For example, one study expanded a dataset from 1,000 to 6,035 images through augmentation [2].
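
The augmentation step can be sketched as follows. This is an illustrative scheme, not the one used in [2]: it assumes images are square float arrays already scaled to [0, 1], restricts rotations to multiples of 90 degrees so no interpolation is needed, and uses hypothetical function names and a small arbitrary brightness range.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly rotated, optionally mirrored, brightness-shifted copy."""
    out = np.rot90(image, k=int(rng.integers(0, 4)))  # 0, 90, 180 or 270 degrees
    if rng.random() < 0.5:
        out = np.fliplr(out)                          # horizontal flip
    shift = float(rng.uniform(-0.1, 0.1))             # assumed brightness range
    return np.clip(out + shift, 0.0, 1.0).astype(np.float32)

def balance_classes(images_by_class, target_per_class, rng):
    """Oversample each class with augmented copies until it reaches the target size."""
    balanced = {}
    for label, images in images_by_class.items():
        items = list(images)
        while len(items) < target_per_class:
            source = images[int(rng.integers(0, len(images)))]
            items.append(augment(source, rng))
        balanced[label] = items
    return balanced

# Synthetic stand-in data: 5 'normal' images and 2 'coiled_tail' images
rng = np.random.default_rng(42)
images_by_class = {
    "normal": [rng.random((80, 80), dtype=np.float32) for _ in range(5)],
    "coiled_tail": [rng.random((80, 80), dtype=np.float32) for _ in range(2)],
}
balanced = balance_classes(images_by_class, target_per_class=5, rng=rng)
```

Augmented copies of an image are best kept in the same partition as their original, since placing variants of one spermatozoon in both training and test sets tends to inflate test performance.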

Model Training and Evaluation

- Dataset Partitioning: Randomly split the entire dataset into a training set (80%) and a testing set (20%) [2]. A portion of the training set (e.g., 20%) can be used as a validation set for hyperparameter tuning.

- Model Selection and Training:

  - Architecture Choice: Select a CNN architecture (e.g., ResNet50, VGG16, custom CNN) [14] [11] [5].

  - Transfer Learning: Consider using a pre-trained model (on datasets like ImageNet) and fine-tune it on the sperm morphology dataset, which can be effective, especially with limited data [11].

  - Training: Train the model using the training set. Employ a suitable optimizer (e.g., Adam) and a loss function (e.g., categorical cross-entropy).

- Model Evaluation:

  - Metrics: Evaluate the model on the held-out test set using metrics such as Accuracy, True Positive Rate (Sensitivity), Precision, and F1-Score [11] [5].

  - Validation Technique: Use k-fold cross-validation (e.g., k=5 or k=10) to ensure the model's performance is consistent and not dependent on a particular data split [14] [16].

  - Comparison to Baseline: Compare the model's performance (e.g., using Mean Absolute Error) against a simple baseline model (ZeroR) to demonstrate its predictive power [16].
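
The evaluation metrics and the ZeroR comparison can be illustrated with a short standard-library sketch. The labels below are invented, the function names are hypothetical, and accuracy is used for the baseline comparison here because the example is a classification task; the cited work may have compared other error measures.

```python
from collections import Counter

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def per_class_metrics(y_true, y_pred):
    """Precision, recall (true positive rate) and F1-score for every class."""
    metrics = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics

def zero_r_predictions(y_train, n_samples):
    """ZeroR baseline: always predict the most frequent training class."""
    majority = Counter(y_train).most_common(1)[0][0]
    return [majority] * n_samples

# Hypothetical labels: training set, test set, and model predictions on the test set
y_train = ["abnormal"] * 6 + ["normal"] * 4
y_true = ["normal", "normal", "abnormal", "abnormal",
          "abnormal", "normal", "abnormal", "abnormal"]
y_pred = ["normal", "abnormal", "abnormal", "abnormal",
          "normal", "normal", "abnormal", "abnormal"]

model_accuracy = accuracy(y_true, y_pred)                                     # 6 of 8 correct
baseline_accuracy = accuracy(y_true, zero_r_predictions(y_train, len(y_true)))  # 5 of 8 correct
report = per_class_metrics(y_true, y_pred)
```

On imbalanced morphology datasets the ZeroR accuracy can be high, so a model is only informative to the extent that it clearly exceeds this baseline and performs well on per-class metrics.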

The following workflow diagram summarizes the complete experimental pipeline:

[Diagram: CNN-Based Sperm Morphology Classification Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Analysis Research

| Item | Function / Application | Examples / Specifications |
|---|---|---|
| Staining Kits | Provides contrast for microscopic examination of sperm structures (head, acrosome, midpiece). | RAL Diagnostics kit [2] |
| Public Datasets | Benchmarks for training, validating, and comparing CNN models. | SMIDS, HuSHeM, SCIAN-MorphoSpermGS, SMD/MSS Dataset [2] [14] [5] |
| Deep Learning Frameworks | Software libraries for building, training, and deploying CNN models. | Python with TensorFlow/Keras or PyTorch [2] [16] |
| Microscopy Systems | Image acquisition for creating new datasets or validating model predictions. | Microscope with 100x oil objective, digital camera, CASA system [2] |
| Pre-trained Models | Accelerates development via transfer learning, improving performance with limited data. | VGG16, ResNet-50, InceptionV3 [11] [16] [5] |

Core Datasets in the Field: SMD/MSS, MHSMA, VISEM, and HuSHeM

The implementation of Convolutional Neural Networks (CNNs) for human sperm morphology classification represents a paradigm shift in male fertility research, offering a path to standardize a traditionally subjective and variable analysis. The robustness of any deep learning model is intrinsically linked to the quality, size, and diversity of the dataset used for its training. This application note provides a detailed examination of four core public datasets—SMD/MSS, MHSMA, VISEM-Tracking, and HuSHeM—that are pivotal for developing and benchmarking CNN-based sperm morphology analysis systems. We present structured quantitative comparisons, detailed experimental protocols for dataset utilization, and a scientist's toolkit to guide researchers in selecting and applying these resources effectively within a computational andrology framework.

Core Dataset Specifications and Quantitative Comparison

A critical first step in experimental design is the selection of an appropriate dataset. The core datasets vary significantly in their focus, encompassing static morphology from stained samples and dynamic motility from live video recordings. The quantitative specifications and primary applications of the SMD/MSS, MHSMA, VISEM-Tracking, and HuSHeM datasets are summarized in Table 1.

Table 1: Quantitative Comparison of Core Sperm Morphology and Motility Datasets

| Dataset Name | Primary Focus | Original Sample Size | Augmented/Extended Size | Annotation & Classification Standard | Key Strengths |
|---|---|---|---|---|---|
| SMD/MSS [2] [17] | Static Morphology | 1,000 images | 6,035 images (after augmentation) | Modified David Classification (12 defect classes) by 3 experts | Comprehensive defect annotation across head, midpiece, and tail; expert consensus |
| MHSMA [18] | Static Morphology | 1,540 images | Not specified | WHO-based guidelines for head, acrosome, and vacuole defects | Freely available; benchmark for head/acrosome/vacuole classification |
| VISEM-Tracking [19] | Motility & Tracking | 20 videos (29,196 frames) | 166 additional unlabeled video clips | Bounding boxes with tracking IDs; labels: normal, pinhead, cluster | Rich motility data; manually annotated tracking coordinates |
| HuSHeM [20] | Static Morphology (Head) | 216 images [5] | Not specified | Four head morphology categories (normal, tapered, pyriform, amorphous) | Focused on sperm head morphology classification |

[Diagram 1: Dataset Type Drives CNN Application Focus. Logical relationship between dataset type and CNN model development focus.]

Detailed Experimental Protocols

Protocol 1: Implementing a CNN for Morphology Classification Using SMD/MSS

The SMD/MSS dataset, with its detailed annotations based on the modified David classification, is ideal for training a CNN to perform multi-class defect identification [2].

Sample Preparation & Image Acquisition (as per SMD/MSS protocol):

- Prepare semen smears from samples with a concentration of at least 5 million/mL, excluding samples >200 million/mL to prevent image overlap.

- Stain smears using the RAL Diagnostics staining kit, following WHO guidelines.

- Acquire images of individual spermatozoa using an MMC CASA system, employing a bright field mode with an oil immersion x100 objective [2].

Data Pre-processing for CNN Input:

- Data Cleaning: Inspect images for artifacts and inconsistencies. The SMD/MSS protocol involves handling missing values and outliers to ensure data quality.

- Normalization: Resize all images to a consistent dimension (e.g., 80x80 pixels) and convert to grayscale. Normalize pixel values to a common scale, e.g., [0, 1], to stabilize and accelerate CNN training [2].

- Data Partitioning: Randomly split the augmented dataset of 6,035 images into training (80%), validation (10%), and testing (10%) subsets; note that the SMD/MSS study itself reports an 80%/20% train/test split [2]. Stratify the split to maintain the class distribution across subsets.
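
A stratified split of the kind described in the partitioning step can be sketched with the standard library. The function name, seed, and class counts are illustrative assumptions; the split is performed per class so that each subset keeps roughly the same class proportions.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) lists of sample indices, stratified by label."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)                      # shuffle within each class
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]         # remainder goes to the test set
    return train, val, test

# Hypothetical label list for 100 images in three classes
labels = ["normal"] * 50 + ["tapered_head"] * 30 + ["coiled_tail"] * 20
train_idx, val_idx, test_idx = stratified_split(labels)
```

When the dataset contains augmented variants, splitting by original image first and assigning each variant to its original's subset helps avoid leakage between training and test data.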

CNN Training & Evaluation:

- Architecture Selection: Implement a CNN architecture such as ResNet50, which has demonstrated effectiveness on this dataset [21]. The network should culminate in a softmax output layer corresponding to the 12 morphological classes plus a 'normal' class.

- Training Configuration: Use categorical cross-entropy loss and the Adam optimizer. Mitigate overfitting by employing techniques like dropout and data augmentation (e.g., rotations, flips) beyond the initial dataset augmentation.

- Performance Metrics: Evaluate the model on the held-out test set using accuracy, precision, recall, and F1-score. The SMD/MSS study reported accuracies ranging from 55% to 92% across different morphological classes, reflecting the varying complexity of the classification task [2] [17].
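As a minimal sketch of the evaluation step, the metrics named above can be derived from a confusion matrix. The helper name `per_class_metrics` and the toy predictions are illustrative assumptions; per-class reporting is useful here because the source notes accuracy varies widely between morphological classes.

```python
import numpy as np

def per_class_metrics(y_true, y_pred, n_classes):
    """Compute overall accuracy plus per-class precision, recall, and F1."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1                      # rows: true class, columns: predicted class
    tp = np.diag(cm).astype(float)
    pred_pos = cm.sum(axis=0)              # how often each class was predicted
    actual_pos = cm.sum(axis=1)            # how often each class actually occurs
    precision = np.divide(tp, pred_pos, out=np.zeros_like(tp), where=pred_pos > 0)
    recall = np.divide(tp, actual_pos, out=np.zeros_like(tp), where=actual_pos > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    accuracy = tp.sum() / cm.sum()
    return accuracy, precision, recall, f1

# Toy example with three classes.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
accuracy, precision, recall, f1 = per_class_metrics(y_true, y_pred, 3)
```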

Protocol 2: Sperm Detection and Motility Analysis Using VISEM-Tracking

The VISEM-Tracking dataset enables the development of models for sperm detection and movement analysis in video sequences, a key step towards automated CASA systems [19].

Data Acquisition (as per VISEM-Tracking protocol):

- Place unstained semen samples on a heated microscope stage (37°C) and examine under 400x magnification using a microscope with phase-contrast optics.

- Record videos using a microscope-mounted camera (e.g., IDS UI-2210C). VISEM-Tracking provides 20 annotated videos of 30 seconds each, saved as AVI files [19].

Data Pre-processing and Annotation:

- Frame Extraction: Decompose videos into individual frames for processing.

- Bounding Box Formatting: The dataset provides annotations in YOLO format, which includes normalized bounding box coordinates and class labels (0: normal sperm, 1: sperm clusters, 2: small/pinhead sperm) [19].
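To make the annotation format concrete, the sketch below converts one YOLO-style label line (class index followed by normalized centre x, centre y, width, height) into pixel corner coordinates. The function name and the 640x480 frame size are assumptions for illustration; the real frame dimensions should be read from the video files.

```python
# Class indices as listed for VISEM-Tracking in the text above.
CLASS_NAMES = {0: "normal sperm", 1: "sperm cluster", 2: "small/pinhead sperm"}

def yolo_to_pixel_box(line, img_w, img_h):
    """Parse 'cls xc yc w h' (normalized) into (cls, (x_min, y_min, x_max, y_max)) in pixels."""
    parts = line.split()
    cls = int(parts[0])
    xc, yc, w, h = (float(v) for v in parts[1:5])
    x_min = (xc - w / 2) * img_w
    y_min = (yc - h / 2) * img_h
    x_max = (xc + w / 2) * img_w
    y_max = (yc + h / 2) * img_h
    return cls, (x_min, y_min, x_max, y_max)

# Hypothetical label line and frame size.
cls, box = yolo_to_pixel_box("0 0.5 0.5 0.1 0.2", img_w=640, img_h=480)
label = CLASS_NAMES[cls]
```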

YOLO Model for Detection and Tracking:

- Model Training: Train a YOLO-based object detection model (e.g., YOLOv5 or YOLOv7) on the annotated frames. This model will learn to localize and classify spermatozoa in each frame [19] [10].

- Tracking Implementation: Utilize the provided tracking identifiers to link detections of the same sperm across consecutive frames. This allows for the computation of kinematic parameters (e.g., velocity, linearity).

- Baseline Performance: The dataset publishers established a baseline using YOLOv5, demonstrating the dataset's suitability for training complex deep learning models for sperm detection [19].
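Once detections are linked by tracking ID, kinematic parameters follow from the sequence of box centres. The sketch below uses the standard CASA definitions of curvilinear velocity (VCL), straight-line velocity (VSL), and linearity (LIN = VSL / VCL); these names, the frame rate, and the toy track are assumptions not taken from the source, and results are in pixels per second until a pixel-to-micrometre calibration is applied.

```python
import math

def kinematics(track, fps):
    """track: list of (x, y) centroids from consecutive frames of one sperm."""
    # Curvilinear path: sum of frame-to-frame displacements.
    path = sum(math.dist(a, b) for a, b in zip(track, track[1:]))
    # Straight-line path: first to last position.
    straight = math.dist(track[0], track[-1])
    duration = (len(track) - 1) / fps
    vcl = path / duration
    vsl = straight / duration
    lin = vsl / vcl if vcl > 0 else 0.0
    return {"VCL": vcl, "VSL": vsl, "LIN": lin}

# Hypothetical three-frame track at an assumed 50 frames per second.
result = kinematics([(0, 0), (3, 4), (6, 0)], fps=50)
```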

Diagram 2: End-to-End Morphology Classification Workflow (workflow for CNN-based sperm morphology classification)

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of the protocols above requires both wet-lab materials and computational tools. The following table details key reagents, software, and datasets essential for research in this field.

Table 2: Essential Research Reagents and Resources for Automated Sperm Analysis

| Category | Item / Resource | Specification / Example | Primary Function in Research |

|---|---|---|---|

| Wet-Lab Reagents | RAL Diagnostics Staining Kit | As used in SMD/MSS protocol [2] | Provides contrast for detailed morphological analysis of sperm structures in static images. |

| Wet-Lab Reagents | Optixcell Extender | As used in bovine studies [10] | Preserves sperm viability and morphology during sample preparation and imaging. |

| Wet-Lab Reagents | Non-Capacitating / Capacitating Media | As used in 3D-SpermVid dataset [22] | Enables study of sperm motility under different physiological conditions. |

| Software & Tools | Python with Deep Learning Libraries | Python 3.8, PyTorch/TensorFlow [2] | Core programming environment for building, training, and evaluating CNN models. |

| Software & Tools | YOLO Framework | YOLOv5, YOLOv7 [19] [10] | Real-time object detection and tracking of sperm in video sequences. |

| Software & Tools | LabelBox | Commercial annotation tool [19] | Facilitates manual annotation of bounding boxes for creating ground-truth datasets. |

| Public Datasets | SMD/MSS, MHSMA, VISEM-Tracking | See Table 1 | Benchmark datasets for training and validating models on morphology and motility. |

| Synthetic Data | AndroGen Software | Open-source synthetic generator [23] | Generates customizable, annotated sperm images to augment real datasets and address data scarcity. |

Discussion and Future Perspectives

The reviewed datasets provide foundational resources for automating sperm analysis, yet they present complementary strengths and limitations. SMD/MSS offers exceptional morphological detail via expert annotation but is limited to static images [2]. Conversely, VISEM-Tracking provides rich motility data but less granular morphological classification [19]. MHSMA is a valuable, publicly available benchmark, though it may have limitations in resolution and sample size [18]. A significant challenge across the field is the lack of standardized, high-quality annotated datasets, which are crucial for developing robust, generalizable models [20].

Future research will likely focus on multi-dimensional datasets that combine high-resolution morphology with 3D motility tracking, as seen in emerging resources like the 3D-SpermVid dataset [22]. Furthermore, to combat data scarcity, the use of synthetic data generation tools like AndroGen provides a promising avenue to create large, balanced, and annotated datasets for training more accurate models without privacy concerns [23]. The clinical integration of these AI tools is advancing, with recent expert reviews providing a positive opinion on their use after rigorous qualification and validation within individual laboratories [13]. This progression from bespoke manual analysis to standardized, AI-driven pipelines heralds a new era of objectivity and efficiency in male fertility assessment.

The Role of Deep Learning and CNNs in Revolutionizing Reproductive Biology

Infertility represents a significant global health challenge, affecting approximately 15% of couples worldwide, with male factors contributing to nearly half of all cases [2] [20]. The morphological analysis of sperm remains a cornerstone in male fertility assessment, providing critical diagnostic and prognostic value for natural conception and assisted reproductive technologies (ART) [24] [25]. Traditional manual sperm morphology assessment, however, suffers from substantial limitations including subjectivity, extensive time requirements (30-45 minutes per sample), and significant inter-observer variability with reported disagreement rates reaching up to 40% among experts [2] [5].

The emergence of deep learning, particularly convolutional neural networks (CNNs), is transforming reproductive biology by introducing automated, standardized, and highly accurate analytical capabilities [24]. These artificial intelligence technologies demonstrate remarkable potential to exceed human expert performance in sperm classification tasks, offering improved reliability, throughput, and diagnostic consistency across laboratories [11] [25]. This paradigm shift addresses fundamental challenges in reproductive medicine while opening new avenues for precise male fertility assessment.

Deep Learning Architectures for Sperm Analysis

Convolutional Neural Network Fundamentals

CNNs represent a specialized class of deep neural networks particularly suited for processing structured grid data such as images [11]. Their architecture typically consists of multiple convolutional layers that automatically learn hierarchical feature representations directly from raw pixel data, followed by pooling layers for spatial invariance, and fully-connected layers for final classification [26] [5]. Because features are learned directly from the data, manual feature engineering is unnecessary, and CNNs can discern subtle morphological patterns often imperceptible to human observers [11].

Specialized Architectures and Performance

Recent research has investigated numerous CNN architectures optimized for sperm analysis, demonstrating exceptional classification performance across various morphological parameters:

Table 1: Performance of Deep Learning Models in Sperm Morphology Classification

| Architecture | Dataset | Classes | Performance | Reference |

|---|---|---|---|---|

| VGG16 (Transfer Learning) | HuSHeM | 5 WHO categories | 94.1% TPR | [11] |

| Custom CNN | SMD/MSS | 12 David classes | 55-92% Accuracy | [2] |

| CBAM-ResNet50 + DFE | SMIDS | 3-class | 96.08% Accuracy | [5] |

| CBAM-ResNet50 + DFE | HuSHeM | 4-class | 96.77% Accuracy | [5] |

| Sequential DNN | MHSMA | Head/Vacuole/Acrosome | 89-92% Accuracy | [26] |

| Specialized CNN | SCIAN | 5 WHO categories | 88% Recall | [25] |

The integration of attention mechanisms with traditional CNNs represents a significant advancement. The Convolutional Block Attention Module (CBAM) enhanced ResNet50 architecture sequentially applies channel-wise and spatial attention to feature maps, enabling the network to focus on diagnostically relevant sperm structures while suppressing irrelevant background information [5]. When combined with deep feature engineering pipelines incorporating multiple feature selection methods, this approach has achieved state-of-the-art performance with accuracy improvements of 8.08-10.41% over baseline CNN models [5].
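The sequential channel-then-spatial attention described above can be illustrated with a small NumPy forward pass. This is a sketch of the general CBAM idea, not the implementation from [5]: the random weights stand in for learned parameters, and the channel count, reduction ratio of 4, and 7x7 spatial kernel are assumptions chosen for the example.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w1, w2):
    """feat: (C, H, W). A shared two-layer MLP scores avg- and max-pooled channel descriptors."""
    avg = feat.mean(axis=(1, 2))
    mx = feat.max(axis=(1, 2))

    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)   # ReLU bottleneck

    scale = sigmoid(mlp(avg) + mlp(mx))        # one weight per channel
    return feat * scale[:, None, None]

def spatial_attention(feat, kernel):
    """kernel: (2, k, k) filter over the stacked channel-wise mean and max maps."""
    stacked = np.stack([feat.mean(axis=0), feat.max(axis=0)])
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(stacked, ((0, 0), (pad, pad), (pad, pad)))
    h, w = feat.shape[1:]
    response = np.zeros((h, w))
    # Same-padded cross-correlation written as a sum of shifted, weighted maps.
    for c in range(2):
        for i in range(k):
            for j in range(k):
                response += kernel[c, i, j] * padded[c, i:i + h, j:j + w]
    return feat * sigmoid(response)[None, :, :]   # one weight per spatial location

def cbam(feat, w1, w2, kernel):
    return spatial_attention(channel_attention(feat, w1, w2), kernel)

# Random placeholders for a feature map and the learned parameters.
rng = np.random.default_rng(0)
C, H, W, r = 8, 10, 10, 4
feat = rng.standard_normal((C, H, W))
w1 = rng.standard_normal((C // r, C)) * 0.1
w2 = rng.standard_normal((C, C // r)) * 0.1
kernel = rng.standard_normal((2, 7, 7)) * 0.1
out = cbam(feat, w1, w2, kernel)
```

Because both attention maps pass through a sigmoid, each output value is the input value scaled by a factor between 0 and 1, which is how the module suppresses background while keeping the feature map shape unchanged.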

Experimental Protocols for CNN-Based Sperm Morphology Classification

Dataset Curation and Preparation

Protocol 1: SMD/MSS Dataset Development [2]

Sample Collection and Preparation: Collect semen samples from patients with sperm concentration ≥5 million/mL. Prepare smears following WHO manual guidelines and stain with RAL Diagnostics staining kit.

Image Acquisition: Capture individual sperm images using MMC CASA system with bright field mode under oil immersion at 100x objective magnification. Ensure each image contains a single spermatozoon with clearly visible head, midpiece, and tail structures.

Expert Annotation and Ground Truth Establishment: Engage three independent experts with extensive semen analysis experience to classify each spermatozoon according to modified David classification (12 morphological classes). Resolve disagreements through consensus review.

Data Augmentation and Balancing: Apply transformation techniques including rotation, scaling, and flipping to address class imbalance. Expand original dataset from 1,000 to 6,035 images to enhance model generalizability.

Protocol 2: Deep Feature Engineering Pipeline [5]

Backbone Feature Extraction:

- Implement CBAM-enhanced ResNet50 architecture pre-trained on ImageNet

- Extract multi-level feature representations from CBAM, Global Average Pooling (GAP), Global Max Pooling (GMP), and pre-final layers

Feature Selection and Optimization:

- Apply multiple feature selection methods including Principal Component Analysis (PCA), Chi-square test, Random Forest importance, and variance thresholding

- Evaluate intersection combinations of selection methods to identify optimal feature subsets

Classification:

- Implement Support Vector Machines with RBF and Linear kernels

- Utilize k-Nearest Neighbors algorithms as complementary classifiers

- Apply 5-fold cross-validation for robust performance estimation
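A compact way to see how feature selection, a shallow classifier, and 5-fold cross-validation fit together is sketched below. To stay NumPy-only it uses PCA with a k-Nearest Neighbors classifier (one of the classifiers listed above) rather than an SVM, and it runs on synthetic features rather than CBAM-ResNet50 outputs; all function names and parameter values are assumptions for illustration.

```python
import numpy as np

def pca_fit(x, n_components):
    """Return the training mean and the top principal directions."""
    mean = x.mean(axis=0)
    _, _, vt = np.linalg.svd(x - mean, full_matrices=False)
    return mean, vt[:n_components]

def pca_transform(x, mean, components):
    return (x - mean) @ components.T

def knn_predict(train_x, train_y, test_x, k=3):
    """Majority vote among the k nearest training samples (squared Euclidean distance)."""
    d = ((test_x[:, None, :] - train_x[None, :, :]) ** 2).sum(axis=2)
    nearest = np.argsort(d, axis=1)[:, :k]
    return np.array([np.bincount(v).argmax() for v in train_y[nearest]])

def cross_validate(x, y, n_folds=5, n_components=10, k=3, seed=0):
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(x)), n_folds)
    scores = []
    for i in range(n_folds):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(n_folds) if j != i])
        # Fit PCA on the training fold only, so no test information leaks in.
        mean, comps = pca_fit(x[train], n_components)
        pred = knn_predict(pca_transform(x[train], mean, comps), y[train],
                           pca_transform(x[test], mean, comps), k)
        scores.append((pred == y[test]).mean())
    return np.array(scores)

# Synthetic stand-in for deep features: 3 well-separated classes, 64 dimensions.
rng = np.random.default_rng(1)
y = np.repeat(np.arange(3), 40)
x = rng.standard_normal((120, 64)) + 5.0 * y[:, None]
scores = cross_validate(x, y)
```

The key design choice is refitting the dimensionality reduction inside every fold; fitting it once on the full dataset would let the held-out fold influence the features.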

Model Training and Validation

Protocol 3: Transfer Learning Implementation [11]

Network Adaptation:

- Modify VGG16 architecture by replacing final fully-connected layers with task-specific classifiers

- Maintain pre-trained weights from ImageNet for initial feature detection capabilities

Progressive Fine-Tuning:

- Initially freeze early layers, training only replacement layers for 100 epochs

- Gradually unfreeze and fine-tune intermediate layers with reduced learning rates (0.0004)

- Employ Adam optimizer with default parameters from Keras Python package

Validation Strategy:

- Implement strict train-test splits (80-20%)

- Utilize ten-fold cross-validation to account for limited dataset sizes

- Apply early stopping with 15-epoch patience to prevent overfitting
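The early-stopping rule in the validation strategy can be expressed independently of any framework. The class below is a minimal sketch, assuming validation loss is the monitored quantity; the class name and the loss curve are hypothetical, and in Keras the same behaviour is normally obtained through its built-in early-stopping callback.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=15):
        self.patience = patience
        self.best = float("inf")
        self.best_epoch = -1
        self.wait = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best:
            self.best, self.best_epoch, self.wait = val_loss, epoch, 0
        else:
            self.wait += 1
        return self.wait >= self.patience

# Hypothetical loss curve: improves for five epochs, then plateaus.
losses = [1.0, 0.8, 0.6, 0.5, 0.45] + [0.5] * 95
stopper = EarlyStopping(patience=15)
for epoch, loss in enumerate(losses):
    if stopper.step(epoch, loss):
        break
```

In a real training loop the model weights from `best_epoch` would be restored after stopping, since the final epoch is by construction not the best one.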

Diagram 1: Sperm Morphology Analysis Workflow

Table 2: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Function/Application | Implementation Notes |

|---|---|---|---|

| Public Datasets | HuSHeM [11], SCIAN [25], SMD/MSS [2], MHSMA [26], VISEM [27] | Model training and benchmarking | Annotated by domain experts; Varied classification schemes (WHO/David) |

| Staining Reagents | RAL Diagnostics staining kit [2] | Sperm structural visualization | Follow WHO manual protocols for consistent results |

| Imaging Systems | MMC CASA System [2] | Digital image acquisition | 100x oil immersion objective; Bright field mode |

| CNN Architectures | VGG16 [11], ResNet50 [5], Custom CNN [2], Sequential DNN [26] | Feature extraction and classification | Transfer learning from ImageNet; Attention mechanisms (CBAM) |

| Data Augmentation | Rotation, scaling, flipping [2] | Dataset expansion and balancing | Address class imbalance; Improve model generalization |

| Programming Tools | Python 3.8 [2], Keras [16] | Algorithm implementation | Open-source libraries; GPU acceleration support |

Analytical Framework and Validation Methodologies

Performance Metrics and Statistical Validation

Rigorous validation constitutes an essential component of CNN implementation for sperm morphology classification. Established metrics include true positive rate (TPR), accuracy, recall, and mean absolute error (MAE) [11] [27]. Statistical significance testing, such as McNemar's test, validates performance improvements against baseline models and establishes clinical reliability [5].

Cross-validation strategies, particularly k-fold (k=5 or k=10) approaches, mitigate overfitting concerns with limited dataset sizes [16] [5]. The implementation of multiple agreement scenarios (no agreement, partial agreement, total agreement among experts) further strengthens validation frameworks by acknowledging the inherent subjectivity in morphological assessment [2].
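McNemar's test, mentioned above, compares two classifiers evaluated on the same test samples by looking only at the cases where exactly one of them is correct. The sketch below uses the continuity-corrected chi-square form with one degree of freedom; the function name and the toy predictions are assumptions, and for small discordant counts an exact binomial version is generally preferred.

```python
import math

def mcnemar(y_true, pred_a, pred_b):
    """Return (chi-square statistic, p-value) for paired predictions of two models."""
    # b: model A correct, model B wrong; c: model A wrong, model B correct.
    b = sum(1 for t, a, m in zip(y_true, pred_a, pred_b) if a == t and m != t)
    c = sum(1 for t, a, m in zip(y_true, pred_a, pred_b) if a != t and m == t)
    if b + c == 0:
        return 0.0, 1.0
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value

# Toy data: baseline model A errs on 12 samples, improved model B on 2 different ones.
y_true = [0] * 40
pred_a = [1] * 12 + [0] * 28
pred_b = [0] * 12 + [1] * 2 + [0] * 26
stat, p_value = mcnemar(y_true, pred_a, pred_b)
```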

Diagram 2: CNN Architecture with Feature Engineering

Clinical Implementation Considerations

The transition from experimental validation to clinical implementation necessitates addressing several practical considerations. Computational efficiency remains paramount, with processing times reduced from 30-45 minutes for manual assessment to under 1 minute per sample with optimized CNN implementations [5]. Real-time classification capabilities (approximately 25 milliseconds per sperm) enable comprehensive morphological analysis of sufficient sperm populations (200+ cells) as recommended by WHO guidelines [26].

Model interpretability, facilitated through Grad-CAM attention visualization, provides clinical transparency by highlighting the specific morphological features influencing classification decisions [5]. This explainability component enhances clinician trust and supports diagnostic verification, accelerating adoption within clinical workflows.

Deep learning approaches, particularly CNNs, are fundamentally transforming reproductive biology by addressing critical limitations in traditional sperm morphology assessment. The implementation of specialized architectures, comprehensive feature engineering pipelines, and rigorous validation frameworks has established new standards for accuracy, efficiency, and reproducibility in male fertility evaluation.

Future research directions include the development of multi-modal models integrating morphological, motile, and clinical parameters; expansion of classification schemes to encompass rare morphological variants; and standardization of validation protocols across institutions. As these technologies continue to mature, their integration into clinical practice promises to enhance diagnostic precision, personalize treatment strategies, and ultimately improve outcomes for couples facing infertility challenges.

Building the Model: CNN Architectures and Practical Implementation

The implementation of Convolutional Neural Networks (CNNs) for human sperm morphology classification research hinges on the quality and integrity of the digital image data fed into these models. The process of transforming a biological sample into a curated, analysis-ready digital dataset is critical, as the performance of any deep learning system is fundamentally bounded by its input data. This document details standardized protocols for acquiring and preparing microscopic images of human sperm, providing a foundational framework for building robust and reliable CNN-based classification systems within reproductive research and diagnostics.

Microscopy Acquisition Modalities

The choice of microscopy technique directly influences the type and quality of morphological information that can be extracted. The following modalities are particularly relevant for sperm analysis.

Conventional Optical Microscopy

Principle: This is the traditional method for semen analysis, often involving stained sperm smears examined under brightfield illumination. Staining (e.g., with hematoxylin and eosin) enhances the contrast of sperm structures, facilitating visual distinction between the head, midpiece, and tail [28].

Protocol for Stained Smear Preparation:

- Fixation: Place a drop of liquefied semen on a clean glass slide and allow it to air dry. Fix the smear by immersing it in 95% ethanol for 10 minutes. Air dry once more.

- Staining: Follow a standardized staining protocol, such as the Papanicolaou method or Quick-Diff stain, as recommended by the WHO laboratory manual.

- Mounting: Apply a coverslip using an appropriate mounting medium.

- Image Acquisition: Use a high-magnification oil immersion objective (e.g., 100x) on a brightfield microscope. Capture images from multiple, randomly selected fields to ensure a representative sample of the sperm population. Consistent Köhler illumination is crucial for uniform image quality.

Digital Holographic Microscopy (DHM)

Principle: DHM is a label-free, interferometric technique that quantifies the phase shift of light passing through a specimen. This allows for the reconstruction of three-dimensional topographic profiles of live, unstained spermatozoa [29].

Protocol for Live Sperm DHM Imaging:

- Sample Preparation: Use intact, live spermatozoa directly after semen liquefaction. Place a small droplet (~5-10 µL) of the sample on a microscope slide. For wet mounting, a coverslip can be applied.

- Acquisition: The DHM system records holograms via a CCD camera by interfering the laser beam that has passed through the sample with a reference beam.

- Reconstruction: Numerically back-propagate the recorded hologram to reconstruct the optical wavefront of the sperm cells. This process generates quantitative phase images.

- 3D Parameter Extraction: Extract novel 3D morphological parameters, such as head height (hh), acrosome/nucleus height (anh), and head/midpiece height (hmh), which have been shown to be less variable in sperm from fertile men [29].
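The source does not state which reconstruction algorithm is used; one widely used option for numerical back-propagation is the angular spectrum method, sketched below under that assumption. The wavelength, pixel size, propagation distance, and the synthetic phase object are all hypothetical, and a real off-axis DHM pipeline would also need to isolate the object term in the hologram spectrum before this step.

```python
import numpy as np

def angular_spectrum_propagate(field, wavelength, pixel_size, distance):
    """Propagate a complex field by `distance` (same length unit as wavelength and pixel_size)."""
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx, d=pixel_size)
    fy = np.fft.fftfreq(ny, d=pixel_size)
    fxx, fyy = np.meshgrid(fx, fy)
    arg = 1.0 / wavelength**2 - fxx**2 - fyy**2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    # Free-space transfer function; evanescent components (arg <= 0) are dropped.
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

# Synthetic phase-only object standing in for a sperm head (units: micrometres).
n = 64
yy, xx = np.mgrid[:n, :n] - n / 2
phase = 1.5 * np.exp(-(xx**2 + yy**2) / (2 * 6.0**2))
obj = np.exp(1j * phase)

# Simulate the field at the sensor plane, then back-propagate to the object plane.
sensor_field = angular_spectrum_propagate(obj, wavelength=0.633, pixel_size=1.0, distance=50.0)
recovered = angular_spectrum_propagate(sensor_field, wavelength=0.633, pixel_size=1.0, distance=-50.0)
phase_image = np.angle(recovered)   # quantitative phase map
```

Height-type parameters such as those listed above would then be derived from the phase map using the refractive index difference between cell and medium, which is outside the scope of this sketch.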

Image-Based Flow Cytometry (IBFC)

Principle: IBFC combines the high-throughput capabilities of traditional flow cytometry with high-speed, single-cell imaging. It allows for the rapid collection of thousands of individual sperm images, which is ideal for building large-scale datasets for deep learning [28].

Protocol for Sperm Imaging via IBFC:

- Sample Fixation: Fix sperm samples in 2% formaldehyde for 40 minutes at room temperature. Wash and resuspend in phosphate-buffered saline (PBS) for analysis [28].

- Instrument Configuration: Use an instrument such as the ImageStreamX Mark II, which can be fitted with 20x, 40x, and 60x objective lenses.

- Acquisition: Hydrodynamically focus the sperm suspension to pass cells single-file past the objective. Trigger the camera to capture a brightfield image of each individual spermatozoon. Magnifications of 60x are recommended for high-fidelity morphological classification [28].

Table 1: Comparison of Microscopy Modalities for Sperm Image Acquisition

| Modality | Sample State | Key Advantages | Key Limitations | Suitability for CNN |

|---|---|---|---|---|

| Conventional (Stained) | Fixed & Stained | High contrast, standardized protocols, familiar to clinics | Staining artefacts, 2D only, destructive process | High, but may not reflect live-state morphology |

| Digital Holographic (DHM) | Live & Unstained | Label-free, provides 3D parameters, non-invasive | Specialized equipment, complex data reconstruction | High for novel 3D feature extraction |

| Image-Based Flow Cytometry | Fixed or Live | Very high throughput, single-cell images, scalable | Lower resolution per image compared to microscopy, cost | Excellent for building large training datasets |

From Raw Images to Analysis-Ready Data

Once acquired, raw images must undergo a series of preprocessing and annotation steps to be usable for supervised CNN training.

Image Preprocessing

The goal of preprocessing is to standardize images and enhance relevant features.

- Contrast Enhancement: Apply techniques like histogram equalization to improve the distinction between sperm structures and the background, which is especially critical for unstained images with low signal-to-noise ratios [30].

- Denoising: Use algorithms or self-supervised deep learning models (e.g., Noise2Void) to reduce noise without altering the underlying morphological structures [31].

- Normalization: Scale pixel intensity values to a standard range (e.g., 0 to 1) to ensure consistent input for the CNN and stabilize the training process.
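As a minimal sketch of the contrast enhancement and normalization steps above, global histogram equalization can be written directly in NumPy. The function names and the synthetic low-contrast image are assumptions; libraries such as OpenCV or scikit-image provide equivalent, more complete routines, and denoising is not covered here.

```python
import numpy as np

def equalize_histogram(img):
    """Global histogram equalization for a 2-D uint8 image."""
    hist = np.bincount(img.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]            # CDF value at the darkest occupied bin
    denom = cdf[-1] - cdf_min
    if denom == 0:                        # constant image: nothing to stretch
        return img.copy()
    # Lookup table mapping each grey level to its rescaled cumulative frequency.
    lut = np.round((cdf - cdf_min) / denom * 255).clip(0, 255).astype(np.uint8)
    return lut[img]

def normalize(img):
    """Scale 8-bit intensities to the range [0, 1]."""
    return img.astype(np.float32) / 255.0

# Synthetic low-contrast image: intensities confined to 100..139.
rng = np.random.default_rng(0)
raw = rng.integers(100, 140, size=(80, 80), dtype=np.uint8)
equalized = equalize_histogram(raw)
ready = normalize(equalized)
```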

Annotation and Ground Truth Labeling

For supervised learning, every image in the training set requires accurate annotation. This is a critical and time-consuming step.

- Multi-Part Segmentation: The most detailed annotation involves pixel-level segmentation of each sperm component. As per recent research, advanced models like Mask R-CNN, YOLOv8, and U-Net are used to segment the head, acrosome, nucleus, neck, and tail [30]. Annotators use software to manually outline these regions, creating "ground truth" masks.

- Whole-Cell Classification: For broader categorization, entire sperm cells are labeled according to WHO morphology classes (e.g., "normal", "head defect", "coiled tail") or specific research criteria [32] [33].

- Annotation Tools: Utilize specialized software that supports instance segmentation, such as those integrated in ZeroCostDL4Mic, or other bioimage analysis platforms [31].

Data Augmentation

Data augmentation artificially expands the size and diversity of the training dataset by applying random, realistic transformations to the original images. This technique is vital for improving model robustness and reducing overfitting.

- Common Techniques: Include random rotations, flipping (horizontal/vertical), zooming, shearing, and adjusting brightness/contrast.

- Implementation: This can be performed on-the-fly during CNN training using built-in functions in deep learning frameworks (e.g., Keras ImageDataGenerator) or using Python packages like Augmentor or imgaug, which are integrated into platforms like ZeroCostDL4Mic [31].

Experimental Workflow for Data Acquisition and Model Training

The following diagram summarizes the integrated workflow from sample preparation to CNN model evaluation.

Table 2: Key Research Reagent Solutions and Computational Tools

| Category | Item / Tool | Function / Application |

|---|---|---|

| Wet Lab Reagents | Formaldehyde (2%) | Fixation of sperm for IBFC or stained smears to preserve morphology [28]. |

| Wet Lab Reagents | Papanicolaou Stain | Standardized staining solution for enhancing contrast of sperm structures in brightfield microscopy. |

| Wet Lab Reagents | Phosphate-Buffered Saline (PBS) | Washing and suspension medium for sperm samples. |

| Wet Lab Reagents | Percoll Gradient | Density gradient medium for selecting morphologically normal spermatozoa for specific studies [29]. |

| Computational Tools | ZeroCostDL4Mic | A cloud-based platform (Google Colab) providing free-access Jupyter Notebooks for training DL models (U-Net, YOLO, CARE) without coding expertise [31]. |

| Computational Tools | Mask R-CNN / YOLOv8 / U-Net | Deep learning models for instance segmentation (Mask R-CNN, YOLO) and semantic segmentation (U-Net) of sperm components [30]. |

| Computational Tools | ResNet-50 | A deep CNN architecture used for classification tasks, such as assigning sperm motility categories from video data [16]. |

| Computational Tools | Augmentor / imgaug | Python packages for implementing data augmentation to increase the effective size and diversity of training datasets [31]. |

Quantitative Performance Metrics for Data Quality and Model Evaluation

Establishing quantitative metrics is essential for evaluating both the quality of the annotations and the performance of the trained CNN model.

Table 3: Key Quantitative Metrics for Segmentation and Classification

| Metric | Definition | Interpretation and Target Value |

|---|---|---|

| Intersection over Union (IoU) | Area of Overlap / Area of Union between predicted and ground truth mask. | Measures segmentation accuracy. A score of 0.8 or higher is generally considered reliable [34]. For sperm nuclei, advanced models can achieve ~0.97 [30]. |

| Dice Coefficient (F1 Score) | 2 × \|Prediction ∩ Truth\| / (\|Prediction\| + \|Truth\|) | Similar to IoU, it quantifies the overlap between segmentation masks. Values closer to 1.0 indicate better performance. |

| Precision | True Positives / (True Positives + False Positives) | Measures the reliability of positive predictions. High precision means fewer false alarms. |

| Recall | True Positives / (True Positives + False Negatives) | Measures the ability to find all relevant positive cases. High recall means fewer missed detections. |

| Mean Absolute Error (MAE) | Average absolute difference between predicted and actual values. | Used in regression or motility classification. A lower MAE is better. For 3-category motility classification, MAE can be as low as 0.05 [16]. |
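The two overlap metrics in the table can be computed from binary masks in a few lines. The sketch below is illustrative: the function name and the toy masks are assumptions, and empty-versus-empty masks are treated as a perfect match by convention.

```python
import numpy as np

def iou_and_dice(pred, truth):
    """Return (IoU, Dice) for two binary masks of identical shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    inter = np.logical_and(pred, truth).sum()
    union = np.logical_or(pred, truth).sum()
    total = pred.sum() + truth.sum()
    iou = inter / union if union else 1.0
    dice = 2 * inter / total if total else 1.0
    return iou, dice

# Toy masks: two 4x4 squares offset by two rows.
pred = np.zeros((10, 10), dtype=bool)
truth = np.zeros((10, 10), dtype=bool)
pred[2:6, 2:6] = True
truth[4:8, 2:6] = True
iou, dice = iou_and_dice(pred, truth)
```

The two scores are related by Dice = 2·IoU / (1 + IoU), so Dice is always at least as large as IoU for the same pair of masks; thresholds quoted for one metric should not be applied directly to the other.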

Pre-processing and Augmentation Techniques to Enhance Model Robustness

The manual assessment of sperm morphology is a cornerstone of male fertility diagnosis but remains highly subjective, prone to significant inter-observer variability, and challenging to standardize across laboratories [2] [9]. Convolutional Neural Networks (CNNs) offer a promising path toward the automation, standardization, and acceleration of this analysis [2] [24]. However, the performance and robustness of these deep learning models are critically dependent on the quality and quantity of the training data. This section provides detailed protocols for the essential pre-processing and data augmentation techniques required to build reliable CNN models for human sperm morphology classification.

Core Techniques and Their Impact

Effective data preparation involves a pipeline of techniques designed to clean the data, expand its diversity, and ultimately teach the model to focus on biologically relevant features while ignoring irrelevant noise. The following table summarizes the quantitative impact of these techniques as reported in recent literature.

Table 1: Impact of Pre-processing and Augmentation Techniques on Model Performance

| Technique Category | Specific Method | Reported Outcome/Performance | Source/Context |

|---|---|---|---|

| Dataset Augmentation | Multiple techniques (e.g., geometric, noise) | Expanded dataset from 1,000 to 6,035 images; Model accuracy 55-92% | SMD/MSS Dataset Development [2] |

| Deep Feature Engineering | CBAM + ResNet50 + PCA + SVM RBF | Accuracy of 96.08% on SMIDS dataset; ~8% improvement over baseline CNN | Sperm Classification with Feature Engineering [5] |

| Deep Feature Engineering | CBAM + ResNet50 + PCA + SVM RBF | Accuracy of 96.77% on HuSHeM dataset; ~10.4% improvement over baseline CNN | Sperm Classification with Feature Engineering [5] |

| Object Detection | YOLOv7 on bovine sperm | Global mAP@50: 0.73; Precision: 0.75; Recall: 0.71 | Veterinary Reproduction Study [35] [10] |

Detailed Experimental Protocols

Protocol 1: Image Pre-processing for Sperm Morphology Analysis

This protocol outlines the essential steps for preparing raw sperm images for CNN model training, aiming to reduce noise and standardize input data [2].

3.1.1 Materials and Equipment

- Source Images: Raw sperm images acquired via CASA system or bright-field microscope [2].

- Analysis Software: Python 3.8 with libraries (e.g., OpenCV, SciKit-Image, TensorFlow/PyTorch) [2] [5].

3.1.2 Step-by-Step Procedure

- Data Cleaning: Identify and handle missing values, outliers, or any inconsistencies in the dataset. Cleaning the data ensures the model is not influenced by noise or inaccuracies that hinder performance [2].

- Grayscale Conversion: Convert all input images to a single-channel (grayscale) format to reduce computational complexity and focus on morphological structures rather than potential staining color variations [2].

- Resizing: Resize all images to consistent dimensions using a linear interpolation strategy. A common size used in research is 80x80 pixels [2]. This standardization is required for batch processing in a CNN.

- Normalization: Normalize pixel intensity values to a common scale, typically [0, 1], by dividing all values by the maximum possible value (e.g., 255). This ensures no particular feature dominates the learning process due to differences in magnitude and improves numerical stability during training [2] [36].
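The grayscale, resize, and normalize steps of this protocol can be chained as in the sketch below. It is a NumPy-only illustration under stated assumptions: the luma weights are the common ITU-R BT.601 values, the bilinear resize maps corner pixels to corner pixels (library routines such as OpenCV's use a slightly different sampling grid), and the input image is random placeholder data.

```python
import numpy as np

def to_grayscale(rgb):
    """(H, W, 3) uint8 image -> (H, W) float image using BT.601 luma weights."""
    return rgb.astype(np.float64) @ np.array([0.299, 0.587, 0.114])

def resize_bilinear(img, out_h, out_w):
    """Resize a 2-D image with linear interpolation along both axes."""
    in_h, in_w = img.shape
    ys = np.linspace(0, in_h - 1, out_h)     # source row coordinate for each output row
    xs = np.linspace(0, in_w - 1, out_w)     # source column coordinate for each output column
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, in_h - 1)
    x1 = np.minimum(x0 + 1, in_w - 1)
    wy = (ys - y0)[:, None]
    wx = (xs - x0)[None, :]
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

def preprocess(rgb, size=80):
    """Grayscale -> resize to size x size -> scale intensities to [0, 1]."""
    gray = to_grayscale(rgb)
    resized = resize_bilinear(gray, size, size)
    return (resized / 255.0).astype(np.float32)

# Placeholder for one raw RGB sperm image.
rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(120, 160, 3), dtype=np.uint8)
x = preprocess(raw)
```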

Protocol 2: Data Augmentation for Enhanced Generalization

This protocol describes methods to artificially expand the training dataset, which is crucial for improving model robustness and mitigating overfitting, especially given the common challenge of limited and class-imbalanced medical datasets [2] [9].

3.2.1 Materials and Equipment

- Pre-processed Images: The standardized images output from Protocol 1.

- Software: Python with deep learning frameworks (e.g., TensorFlow/Keras, PyTorch) that include built-in image transformation functions.

3.2.2 Step-by-Step Procedure

Apply a series of geometric and pixel-wise transformations to generate new training samples from the existing dataset. The following transformations are recommended:

- Geometric Transformations:

- Rotation: Randomly rotate images by a defined range of angles (e.g., ±15°).

- Flipping: Randomly flip images horizontally and/or vertically.

- Shearing and Zooming: Apply slight shearing and zoom transformations to simulate different perspectives.

- Pixel-wise Transformations:

3.2.3 Implementation Note

These transformations can be applied in real-time during training (on-the-fly augmentation) or as a pre-processing step to create a larger, static dataset. The parameters for each transformation should be chosen to create plausible sperm images without distorting critical morphological features.
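A minimal sketch of on-the-fly augmentation with the geometric transformations listed in this protocol is given below. It is NumPy-only and makes several assumptions: rotation uses nearest-neighbour sampling with zero fill, flip probabilities are 0.5, and the function names, batch size, and random placeholder images are hypothetical.

```python
import numpy as np

def rotate_nearest(img, angle_deg):
    """Rotate a 2-D image about its centre; pixels mapped from outside the frame become 0."""
    h, w = img.shape
    theta = np.deg2rad(angle_deg)
    cy, cx = (h - 1) / 2, (w - 1) / 2
    yy, xx = np.mgrid[:h, :w]
    # Inverse mapping: for every output pixel, locate the source pixel it comes from.
    src_x = np.cos(theta) * (xx - cx) + np.sin(theta) * (yy - cy) + cx
    src_y = -np.sin(theta) * (xx - cx) + np.cos(theta) * (yy - cy) + cy
    sx = np.rint(src_x).astype(int)
    sy = np.rint(src_y).astype(int)
    valid = (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)
    out = np.zeros_like(img)
    out[valid] = img[sy[valid], sx[valid]]
    return out

def augment(img, rng, max_angle=15.0):
    """Random rotation within +/- max_angle degrees plus random horizontal/vertical flips."""
    out = rotate_nearest(img, rng.uniform(-max_angle, max_angle))
    if rng.random() < 0.5:
        out = out[:, ::-1]
    if rng.random() < 0.5:
        out = out[::-1, :]
    return np.ascontiguousarray(out)

def augmented_batches(images, labels, batch_size, rng):
    """Endless generator; every batch is augmented afresh when it is drawn."""
    while True:
        idx = rng.choice(len(images), size=batch_size, replace=False)
        yield np.stack([augment(images[i], rng) for i in idx]), labels[idx]

# Placeholder pre-processed images (80x80, values in [0, 1]) and labels.
rng = np.random.default_rng(0)
images = rng.random((20, 80, 80)).astype(np.float32)
labels = rng.integers(0, 3, size=20)
batch_x, batch_y = next(augmented_batches(images, labels, batch_size=8, rng=rng))
```

Zero fill leaves dark corners after rotation, which may look implausible on bright-field images with a light background; filling with the background intensity or reflecting the border are alternatives worth evaluating against the plausibility requirement stated above.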

The workflow below illustrates the sequential steps of a robust data preparation pipeline for sperm morphology classification.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Sperm Morphology Analysis Experiments

| Item Name | Function/Application | Example/Specification |

|---|---|---|

| CASA System | Automated image acquisition and initial morphometric analysis of sperm cells. | MMC CASA system [2] |

| Optical Microscope | High-resolution imaging of sperm smears. | Microscope with oil immersion 100x objective in bright-field mode [2] |

| Staining Kit | Enhances contrast for visual and computational analysis of sperm structures. | RAL Diagnostics staining kit [2] |

| Annotation Software | For labeling images and creating ground truth data for model training. | Software like Roboflow [35] |

| Deep Learning Framework | Provides the programming environment to build, train, and test CNN models. | Python 3.8 with TensorFlow/PyTorch [2] [5] |

Advanced Integrated Framework

For researchers aiming to achieve state-of-the-art performance, combining advanced architectural components with pre-processing and augmentation yields significant benefits. The following workflow integrates an attention mechanism and feature engineering into a high-accuracy classification system.

Procedure for Advanced Framework Implementation:

- Model Architecture Setup: Employ a pre-trained CNN (e.g., ResNet50) as a feature extraction backbone. Integrate a Convolutional Block Attention Module (CBAM) that sequentially applies channel and spatial attention to intermediate feature maps. This mechanism enables the network to focus on the most relevant sperm features (e.g., head shape, acrosome, tail) while suppressing background or noise [5].

- Deep Feature Extraction & Selection: Instead of using the CNN for direct end-to-end classification, extract high-dimensional feature representations from the network's intermediate layers. Subsequently, apply classical feature selection and dimensionality reduction techniques, such as Principal Component Analysis (PCA), to this feature set. This process reduces noise and creates a more compact and discriminative feature vector for classification [5].

- Hybrid Classification: Feed the optimized feature vector into a shallow classifier, such as a Support Vector Machine (SVM) with an RBF kernel. This hybrid approach (CNN + feature engineering + SVM) has been demonstrated to yield superior performance compared to standalone CNNs, achieving accuracy levels above 96% on benchmark datasets [5].
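To make the attention step in the procedure above concrete, the following sketch runs a CBAM-style forward pass on a single feature map: channel attention first, then spatial attention. It is a framework-agnostic NumPy illustration with random weights, not the trained module from [5]. The reduction ratio of 4, the 7x7 spatial kernel, and the names `w1`, `w2` and `kernel` are assumptions chosen for the example; in practice the module would be a trainable layer inside the backbone.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w1, w2):
    """feat: (C, H, W). Re-weight channels using avg- and max-pooled descriptors."""
    def shared_mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)    # ReLU hidden layer, shared weights
    avg = feat.mean(axis=(1, 2))               # (C,)
    mx = feat.max(axis=(1, 2))                 # (C,)
    scale = sigmoid(shared_mlp(avg) + shared_mlp(mx))
    return feat * scale[:, None, None]

def spatial_attention(feat, kernel):
    """kernel: (2, k, k), applied to the channel-wise average and max maps."""
    pooled = np.stack([feat.mean(axis=0), feat.max(axis=0)])     # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))    # zero padding
    windows = sliding_window_view(padded, (k, k), axis=(1, 2))   # (2, H, W, k, k)
    attn = sigmoid(np.einsum("chwij,cij->hw", windows, kernel))  # (H, W)
    return feat * attn[None, :, :]

def cbam(feat, w1, w2, kernel):
    """Channel attention followed by spatial attention."""
    return spatial_attention(channel_attention(feat, w1, w2), kernel)

# Hypothetical example: an 8-channel 14x14 feature map with random weights.
rng = np.random.default_rng(0)
C, reduction = 8, 4
feat = rng.normal(size=(C, 14, 14))
w1 = rng.normal(size=(C // reduction, C))
w2 = rng.normal(size=(C, C // reduction))
kernel = rng.normal(size=(2, 7, 7))
out = cbam(feat, w1, w2, kernel)
print(out.shape)
```

Because both attention maps pass through a sigmoid, every activation is scaled by a factor between 0 and 1, which is how the module suppresses channels and locations it considers uninformative.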

The implementation of Convolutional Neural Networks (CNNs) for human sperm morphology classification represents a paradigm shift in male fertility assessment. Traditional manual analysis is highly subjective, time-intensive (taking 30–45 minutes per sample), and suffers from significant inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators [5] [37]. Automated CNN-based systems address these limitations by providing objective, standardized assessments that can reduce analysis time to under one minute per sample while improving diagnostic consistency across laboratories [5] [37]. These systems are particularly valuable in clinical settings where subtle morphological differences in sperm head shape, acrosome integrity, and tail structure must be consistently identified according to World Health Organization (WHO) criteria [20] [11].

The evolution of CNN architectures for this specialized domain has progressed from using pre-trained networks as feature extractors to developing sophisticated custom hybrids that integrate attention mechanisms and ensemble strategies. ResNet50 has emerged as a particularly effective backbone architecture due to its residual learning framework, which mitigates vanishing gradient problems in deep networks and enables effective training even with limited medical imaging data [5]. More recently, researchers have enhanced ResNet50 with Convolutional Block Attention Modules (CBAM) to help networks focus on morphologically discriminative sperm regions while suppressing irrelevant background information [5] [37]. Simultaneously, ensemble approaches combining multiple EfficientNetV2 variants have demonstrated robust performance across diverse abnormality classes by leveraging complementary feature representations [32].

Landscape of CNN Architectures: Performance Comparison

Table 1: Quantitative Performance Comparison of CNN Architectures for Sperm Morphology Classification

| Architecture | Key Features | Dataset | Classes | Performance |
|---|---|---|---|---|
| CBAM-Enhanced ResNet50 + Deep Feature Engineering | Attention mechanism + PCA + SVM classifier | SMIDS | 3 | 96.08% ± 1.2% accuracy [5] |
| CBAM-Enhanced ResNet50 + Deep Feature Engineering | Attention mechanism + PCA + SVM classifier | HuSHeM | 4 | 96.77% ± 0.8% accuracy [5] [37] |
| Multi-Level Ensemble (EfficientNetV2) | Feature-level & decision-level fusion | Hi-LabSpermMorpho | 18 | 67.70% accuracy [32] |
| VGG16 with Transfer Learning | Fine-tuning pre-trained weights | HuSHeM | 5 | 94.1% true positive rate [11] |
| VGG16 with Transfer Learning | Fine-tuning pre-trained weights | SCIAN | 5 | 62% true positive rate [11] |
| Custom CNN | Five convolutional layers | SMD/MSS | 12 | 55-92% accuracy range [2] |

Table 2: Technical Specifications of Featured CNN Architectures

| Architecture | Feature Extraction Method | Classifier | Attention Mechanism | Data Augmentation |
|---|---|---|---|---|
| CBAM-Enhanced ResNet50 | Multiple layers (CBAM, GAP, GMP, pre-final) | SVM with RBF/Linear kernels | CBAM (Channel & Spatial) | Not specified [5] |
| Multi-Level Ensemble | Multiple EfficientNetV2 variants | SVM, Random Forest, MLP-Attention | MLP-Attention | Yes (dataset specific) [32] |
| VGG16 Transfer Learning | Pre-trained on ImageNet | Fine-tuned fully connected layers | None | Not specified [11] |
| Custom CNN | Five convolutional layers | Fully connected layers | None | Yes (6035 images from 1000 originals) [2] |

Experimental Protocols for CNN Implementation

Protocol 1: CBAM-Enhanced ResNet50 with Deep Feature Engineering

Purpose: To implement an attention-based deep learning framework combining ResNet50 with comprehensive feature engineering for superior sperm morphology classification [5] [37].

Materials and Reagents:

- SMIDS dataset (3000 images, 3-class) or HuSHeM dataset (216 images, 4-class)

- Python 3.x with TensorFlow/PyTorch frameworks

- Hardware: GPU-enabled computing environment

- Stained semen smears following WHO guidelines [20]

Procedure:

- Backbone Architecture Preparation:
  - Implement the ResNet50 architecture as the feature extraction backbone
  - Integrate the Convolutional Block Attention Module (CBAM) sequentially after the residual blocks
  - Configure CBAM to perform channel attention first, followed by spatial attention

- Multi-Layer Feature Extraction:
  - Extract deep features from four strategic layers: CBAM attention layers, Global Average Pooling (GAP), Global Max Pooling (GMP), and the pre-final layer
  - Concatenate features from multiple layers to capture both spatial and semantic information

- Feature Selection and Dimensionality Reduction:
  - Apply Principal Component Analysis (PCA) to reduce feature dimensionality while preserving discriminative information
  - Evaluate alternative feature selection methods, including the Chi-square test, Random Forest importance, and variance thresholding
  - Select the optimal feature subset based on classification performance

- Classifier Training and Evaluation:
  - Implement a Support Vector Machine (SVM) classifier with RBF and linear kernels
  - Train the classifier on the reduced feature set using 5-fold cross-validation
  - Evaluate performance using accuracy, precision, recall, and F1-score metrics
  - Generate Grad-CAM visualizations to interpret model focus areas
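The feature-engineering half of this procedure (pooling, concatenation, PCA, SVM, 5-fold cross-validation) can be sketched with scikit-learn as follows. This is an illustrative outline under stated assumptions rather than the configuration reported in [5]: the feature maps are synthetic random arrays standing in for backbone activations, only GAP and GMP descriptors are concatenated, and the 16 PCA components and the SVM hyperparameters are arbitrary placeholder values.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def pooled_descriptor(feature_maps):
    """(N, C, H, W) activations -> (N, 2C) vector of concatenated GAP and GMP."""
    gap = feature_maps.mean(axis=(2, 3))   # global average pooling
    gmp = feature_maps.max(axis=(2, 3))    # global max pooling
    return np.concatenate([gap, gmp], axis=1)

# Synthetic stand-in for backbone activations: 3 classes, 40 samples each.
rng = np.random.default_rng(0)
n_per_class, n_classes, channels = 40, 3, 32
labels = np.repeat(np.arange(n_classes), n_per_class)
feature_maps = rng.normal(size=(labels.size, channels, 7, 7))
# Class-dependent offset so the toy problem is learnable.
feature_maps += 0.5 * labels[:, None, None, None]

features = pooled_descriptor(feature_maps)

# Scaling and PCA live inside the pipeline so they are re-fit on each training fold.
model = make_pipeline(
    StandardScaler(),
    PCA(n_components=16),
    SVC(kernel="rbf", C=1.0, gamma="scale"),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, features, labels, cv=cv, scoring="accuracy")
print(f"accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```

Keeping PCA inside the cross-validated pipeline matters on small datasets such as HuSHeM: fitting it on all images before splitting would leak information from the held-out fold into the reduced features.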

Validation: Perform statistical significance testing using McNemar's test (p < 0.05) to compare against baseline CNN performance [5] [37].
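McNemar's test compares two classifiers evaluated on the same images by looking only at the cases where they disagree. The source does not state which variant was used, so the sketch below assumes the exact (binomial) form, which is the usual choice when the number of disagreements is small. The function name `mcnemar_exact` and the toy predictions are illustrative only.

```python
from math import comb

def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact two-sided McNemar test on paired predictions.

    Returns (b, c, p) where b counts samples model A got right and B got wrong,
    and c counts samples A got wrong and B got right.
    """
    b = sum(1 for t, a, m in zip(y_true, pred_a, pred_b) if a == t and m != t)
    c = sum(1 for t, a, m in zip(y_true, pred_a, pred_b) if a != t and m == t)
    n = b + c
    if n == 0:
        return b, c, 1.0   # the models never disagree
    # Under the null hypothesis, b ~ Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2.0 * tail)

# Toy example: 20 test images, all of true class 1.
y_true = [1] * 20
baseline = [0] * 10 + [1] * 10      # baseline CNN misses the first 10
proposed = [1] * 19 + [0]           # proposed model misses only the last one
b, c, p_value = mcnemar_exact(y_true, baseline, proposed)
print(b, c, p_value < 0.05)
```

Samples that both models classify correctly, or both misclassify, do not enter the statistic, so a large accuracy gap built on very few disagreements can still fail to reach significance.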

Protocol 2: Multi-Level Ensemble Learning with EfficientNetV2

Purpose: To develop an ensemble framework combining multiple CNN architectures through feature-level and decision-level fusion for comprehensive sperm morphology classification across 18 distinct morphological classes [32].

Materials and Reagents:

- Hi-LabSpermMorpho dataset (18,456 images across 18 classes)

- Multiple EfficientNetV2 variants (S, M, L)

- Support Vector Machines (SVM), Random Forest, and MLP-Attention classifiers

- Data augmentation pipeline for class imbalance mitigation

Procedure:

- Multi-Model Feature Extraction:
  - Implement multiple EfficientNetV2 variants (S, M, L) as parallel feature extractors
  - Extract deep features from the penultimate layer of each network
  - Apply dimensionality reduction using dense-layer transformations

- Feature-Level Fusion:
  - Concatenate features from all EfficientNetV2 variants into a unified feature representation
  - Normalize fused features to ensure balanced contribution from each network
  - Apply feature selection techniques to reduce dimensionality while preserving discriminative power

- Classifier Implementation and Decision-Level Fusion:
  - Train multiple classifier types (SVM, Random Forest, MLP-Attention) on fused features
  - Implement a soft voting mechanism for decision-level fusion
  - Optimize fusion weights based on individual classifier performance
  - Apply weighted averaging of class probabilities for final prediction

- Class Imbalance Mitigation:
  - Analyze performance across minority and majority classes
  - Implement data augmentation strategies specific to underrepresented classes
  - Adjust classification thresholds or loss functions to address imbalance
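The decision-level fusion step in this procedure (soft voting with performance-based weights) reduces to a weighted average of each classifier's class-probability matrix. The sketch below shows that arithmetic in NumPy. The probabilities and the weights, which stand in for hypothetical validation accuracies, are invented for illustration and are not values from [32].

```python
import numpy as np

def weighted_soft_vote(prob_list, weights):
    """Fuse per-classifier probabilities of shape (n_samples, n_classes)."""
    probs = np.stack(prob_list)               # (n_classifiers, n_samples, n_classes)
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()                           # normalize weights to sum to 1
    fused = np.tensordot(w, probs, axes=1)    # weighted average over classifiers
    return fused, fused.argmax(axis=1)

# Hypothetical class probabilities for 2 samples and 3 classes.
svm_probs = np.array([[0.6, 0.3, 0.1], [0.2, 0.5, 0.3]])
rf_probs = np.array([[0.4, 0.4, 0.2], [0.1, 0.3, 0.6]])
mlp_probs = np.array([[0.5, 0.2, 0.3], [0.2, 0.2, 0.6]])

# Assumed weights, e.g. each classifier's accuracy on a validation split.
weights = [0.70, 0.60, 0.65]

fused, predictions = weighted_soft_vote([svm_probs, rf_probs, mlp_probs], weights)
print(predictions)
```

In the second sample the SVM alone would predict class 1, but the two other classifiers outweigh it and the fused prediction becomes class 2, which is the behaviour soft voting is meant to provide.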

Validation: Evaluate framework using stratified k-fold cross-validation, with particular attention to performance consistency across all 18 morphological classes [32].
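Checking consistency across classes means looking past overall accuracy, which can be dominated by the majority classes. One simple way to do this, shown here as an assumed approach rather than the exact analysis in [32], is to compute recall per class from the confusion matrix and compare it with the macro average. The labels below are a toy 3-class example.

```python
import numpy as np

def per_class_recall(y_true, y_pred, n_classes):
    """Return the confusion matrix (rows = true class) and recall for each class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)        # count each (true, predicted) pair
    support = cm.sum(axis=1)                  # number of true samples per class
    recall = np.divide(
        np.diag(cm), support,
        out=np.full(n_classes, np.nan),       # NaN for classes absent from y_true
        where=support > 0,
    )
    return cm, recall

# Toy labels: class 1 is the minority class.
y_true = [0, 0, 0, 0, 1, 1, 2, 2, 2, 2]
y_pred = [0, 0, 0, 1, 1, 0, 2, 2, 2, 2]
cm, recall = per_class_recall(y_true, y_pred, n_classes=3)
accuracy = np.trace(cm) / cm.sum()
macro_recall = np.nanmean(recall)
print(recall, accuracy, macro_recall)
```

Here overall accuracy is higher than macro recall because the minority class is recognized only half the time, the kind of gap that stratified evaluation across all 18 classes is intended to expose.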

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for CNN-Based Sperm Morphology Analysis

| Item | Specification | Function/Application |
|---|---|---|
| Benchmark Datasets | HuSHeM (216 images), SCIAN, SMIDS (3000 images), Hi-LabSpermMorpho (18,456 images) | Model training, validation, and benchmarking [32] [5] [11] |
| Data Augmentation Tools | Python libraries (TensorFlow, PyTorch, OpenCV) | Address class imbalance, expand training data, improve generalization [2] |
| Attention Mechanisms | Convolutional Block Attention Module (CBAM) | Enhance focus on discriminative morphological features [5] [37] |
| Feature Selection Methods | PCA, Chi-square test, Random Forest importance, variance thresholding | Dimensionality reduction, noise suppression, performance optimization [5] |
| Classification Algorithms | SVM (RBF/Linear), Random Forest, MLP-Attention | Final morphology classification using deep features [32] [5] |
| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, McNemar's test | Performance assessment and statistical validation [32] [5] |

Architectural Decision Framework and Clinical Implementation

The selection of an appropriate CNN architecture for sperm morphology classification depends on several factors, including dataset characteristics, computational resources, and clinical requirements. For laboratories with limited data (200-1000 images), transfer learning with VGG16 or ResNet50 provides robust performance without requiring extensive training data [11]. When classifying a broad spectrum of morphological abnormalities (10+ classes), ensemble approaches with EfficientNetV2 variants offer superior performance through complementary feature representations, though at increased computational cost [32]. For maximum classification accuracy on well-defined morphological categories, CBAM-enhanced ResNet50 with deep feature engineering currently represents the state of the art, achieving up to 96.77% accuracy on benchmark datasets [5] [37].

Clinical implementation requires careful consideration of interpretability needs alongside raw performance. Attention mechanisms like CBAM can improve accuracy, and pairing the model with Grad-CAM visualizations helps clinicians understand model decisions and build trust in automated systems [5]. Furthermore, the reduction in analysis time from 30-45 minutes to under 1 minute per sample represents a substantial efficiency gain for clinical workflows, potentially increasing laboratory throughput and standardizing diagnostic criteria across institutions [5] [37]. As these technologies mature, integration with existing laboratory information systems and validation against clinical outcomes (e.g., pregnancy success rates) will be essential for widespread adoption in reproductive medicine.

The implementation of Convolutional Neural Networks (CNNs) for human sperm morphology classification represents a significant paradigm shift in male fertility diagnostics. This section compares two fundamental training approaches: transfer learning, which leverages knowledge from pre-trained models, and training from scratch, which builds models exclusively on target domain data. The morphological classification of human sperm is a well-established indicator of biological function and male fertility, yet manual assessment remains laborious, time-consuming, and subject to inter-observer variability [38] [25]. Deep learning approaches offer the potential to automate, standardize, and accelerate this analysis, with the choice of training strategy profoundly affecting model performance, computational efficiency, and practical applicability in clinical and research settings [2].

Comparative Analysis of Training Strategies

Theoretical Foundations and Performance Characteristics

Transfer Learning utilizes knowledge gained from solving a source problem (S) to improve learning efficiency and effectiveness on a target problem (T), where the domains or tasks may differ [39]. In practical implementation, this typically involves pre-training a model on a large, general dataset (e.g., ImageNet) followed by fine-tuning on the specific target task with a smaller dataset [38] [40]. This approach is particularly valuable in medical imaging domains where annotated data is scarce and expert labeling is costly.