Inter-Algorithm Agreement in Sperm Morphology Assessment: Resolving Variability with AI and Standardized Methods

Sperm morphology assessment is a critical yet highly variable component of male fertility evaluation, with significant implications for clinical decision-making in assisted reproductive technologies.

Abstract

Sperm morphology assessment is a critical yet highly variable component of male fertility evaluation, with significant implications for clinical decision-making in assisted reproductive technologies. This article explores the current landscape of inter-algorithm agreement across conventional semen analysis, computer-assisted systems, and emerging artificial intelligence models. Through examination of methodological approaches, troubleshooting strategies, and validation frameworks, we synthesize evidence from recent studies demonstrating how deep learning algorithms achieve superior correlation with expert assessment (r=0.88) compared to conventional methods. For researchers and drug development professionals, this review provides a comprehensive analysis of technological advancements that enhance reproducibility while addressing persistent challenges in dataset standardization, algorithm validation, and clinical implementation.

The Fundamental Challenge: Understanding Variability in Sperm Morphology Assessment

The Clinical Significance of Sperm Morphology in Male Infertility Diagnostics

Sperm morphology, which describes the size, shape, and structural integrity of spermatozoa, represents one of the fundamental parameters assessed during male infertility diagnostics. Its clinical significance, however, has been subject to considerable debate and evolution in interpretation over recent decades. Historically, the percentage of normally formed spermatozoa served as a key prognostic indicator for natural conception and success rates in assisted reproductive technologies (ART). Contemporary evidence, particularly from the 2025 French BLEFCO Group guidelines, now challenges this practice, indicating that the prognostic value of the percentage of normal forms for selecting ART procedures (IUI, IVF, or ICSI) is limited [1]. This paradigm shift underscores a critical transition in andrology: from utilizing morphology as a simple quantitative metric to understanding its role within a more nuanced diagnostic framework that emphasizes the detection of specific, severe morphological syndromes.

This comparison guide objectively evaluates the primary methodologies employed in sperm morphology assessment, with a specific focus on inter-algorithm agreement between conventional manual techniques and emerging computational approaches. The consistent variability observed across all assessment modalities highlights the complex challenge of standardizing morphological evaluation in both clinical and research settings. Understanding the capabilities, limitations, and concordance of these diverse assessment strategies is paramount for researchers, scientists, and drug development professionals working to advance male infertility diagnostics and develop novel therapeutic interventions.

Comparative Analysis of Sperm Morphology Assessment Methodologies

The evaluation of sperm morphology rests on a continuum of methodologies, ranging from subjective visual analysis to fully automated artificial intelligence (AI) systems. The following section provides a structured comparison of these approaches, detailing their core principles, performance characteristics, and experimental protocols.

Table 1: Comparison of Sperm Morphology Assessment Methodologies

| Methodology | Core Principle | Reported Accuracy/Variability | Key Advantages | Inherent Limitations |
|---|---|---|---|---|
| Manual Microscopy (WHO Standard) | Visual assessment by trained morphologists using strict criteria [2]. | High inter-observer variability; Untrained novices: 53-81% accuracy (vs. expert consensus); Trained novices: 90-98% accuracy [3]. | Low initial cost; Direct implementation per WHO guidelines. | Subjectivity; High workload (>200 sperm/analysis); Classification drift over time [4]. |
| Conventional Machine Learning (ML) | Automated classification using handcrafted features (e.g., shape, texture) with classifiers like SVM [5]. | SVM classification accuracy: 49-90% [5]; Highly dependent on feature engineering and dataset quality. | Reduces subjective bias; Faster than manual analysis. | Limited to pre-defined features; Struggles with complex or overlapping sperm structures; Poor generalizability. |
| Deep Learning (DL) | End-to-end automated classification using complex neural networks to learn features directly from images [6] [7]. | Outperforms conventional ML; High accuracy in segmenting head, midpiece, and tail [5]. | Superior accuracy and objectivity; High-throughput analysis; Detects subtle, predictive patterns. | "Black-box" nature; Requires very large, high-quality annotated datasets for training [7] [5]. |
| Expert Consensus Training Tool | Trains morphologists using "ground-truth" datasets validated by multiple experts [3]. | Final test accuracy: 90% (25-category) to 98% (2-category) [3]. | Standardizes human assessment; Significantly reduces inter-observer variation. | Does not fully eliminate subjectivity; Requires access to validated datasets and training protocols. |

Experimental Protocols for Key Methodologies

Protocol for Manual Morphology Assessment (WHO Guidelines)

The manual assessment protocol remains the foundational method against which new technologies are benchmarked.

- Sample Preparation: Semen samples are collected after 2-7 days of abstinence. Smears are prepared on glass slides, air-dried, and stained (e.g., Diff-Quik, Papanicolaou) for clear visualization of sperm structures [2].

- Assessment Procedure: A systematic examination of at least 200 individual spermatozoa is performed under 1000x oil immersion magnification. Each sperm is categorized as "normal" or "abnormal" based on strict Tygerberg criteria, which define precise dimensions and shapes for a normal head, acrosome, midpiece, and tail [2] [8].

- Data Interpretation: The result is expressed as the percentage of spermatozoa with normal morphology. Abnormal forms may be sub-classified by defect location (head, midpiece, tail) [2]. Current guidelines, however, recommend against relying solely on this percentage for ART prognosis [1].
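To make the sampling uncertainty behind a 200-cell count concrete, the following minimal Python sketch computes the percentage of normal forms together with a Wilson score interval. The function names and the example count (8 normal cells out of 200) are illustrative assumptions, not values taken from the cited guidelines.

```python
import math
from typing import Tuple


def percent_normal(normal_count: int, total_count: int) -> float:
    """Percentage of spermatozoa scored as morphologically normal."""
    if total_count <= 0:
        raise ValueError("total_count must be positive")
    return 100.0 * normal_count / total_count


def wilson_interval(normal_count: int, total_count: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% Wilson score interval for the normal-form percentage."""
    if total_count <= 0:
        raise ValueError("total_count must be positive")
    p = normal_count / total_count
    denom = 1.0 + z ** 2 / total_count
    centre = (p + z ** 2 / (2 * total_count)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / total_count + z ** 2 / (4 * total_count ** 2)
    ) / denom
    return 100.0 * max(0.0, centre - half_width), 100.0 * min(1.0, centre + half_width)


# Hypothetical count: 8 normal forms among 200 assessed spermatozoa.
pct = percent_normal(8, 200)
low, high = wilson_interval(8, 200)
print(f"{pct:.1f}% normal forms (95% interval roughly {low:.1f}% to {high:.1f}%)")
```

Even with the full 200-cell count, the interval around a low percentage is wide relative to the value itself, which is one reason a single percentage is a fragile basis for ART decisions.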

Protocol for AI-Based Assessment (Deep Learning)

AI-based assessment represents the cutting edge of automated, objective analysis.

- Data Acquisition and Curation: A large dataset of sperm images is compiled. High-quality, expert-annotated datasets are crucial, such as the SVIA dataset, which contains over 125,000 annotated instances for object detection and 26,000 segmentation masks [5].

- Model Training: A deep learning model, typically a convolutional neural network (CNN), is trained on the annotated dataset. The model learns to identify and segment sperm components (head, neck, tail) and classify morphological abnormalities directly from the pixel data [6] [5].

- Validation and Testing: The trained model's performance is validated against a separate set of images not used in training. Metrics such as accuracy, precision, recall, and the Dice coefficient (for segmentation overlap) are used to quantify performance against expert consensus or manual results [7] [5].
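The validation metrics named above can be sketched in a few lines of plain Python. The masks below are tiny, flattened, hypothetical examples (1 = pixel labelled as sperm head, 0 = background); a real evaluation would apply the same formulas to full-size masks from a held-out test set.

```python
from typing import Dict, Sequence, Tuple


def confusion_counts(pred: Sequence[int], truth: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (tp, fp, fn, tn) for two equally long binary sequences."""
    if len(pred) != len(truth):
        raise ValueError("pred and truth must have the same length")
    tp = fp = fn = tn = 0
    for p, t in zip(pred, truth):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def segmentation_metrics(pred: Sequence[int], truth: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, and Dice coefficient for binary masks."""
    tp, fp, fn, tn = confusion_counts(pred, truth)
    total = tp + fp + fn + tn
    dice_denominator = 2 * tp + fp + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        # Two empty masks overlap perfectly by convention.
        "dice": 2 * tp / dice_denominator if dice_denominator else 1.0,
    }


# Hypothetical flattened masks: expert annotation vs. model prediction.
truth_mask = [0, 1, 1, 1, 0, 0]
pred_mask = [0, 1, 1, 0, 1, 0]
metrics = segmentation_metrics(pred_mask, truth_mask)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```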

Inter-Algorithm Agreement and Clinical Implications

A central question in modern andrology research is inter-algorithm agreement, meaning the consistency of results between different methods and observers. The data reveal significant variability not only between manual and AI assessments but also among human morphologists themselves. This lack of standardization has direct clinical consequences.

- Classification Drift: A historical study comparing IUI outcomes between two eras found that the average sperm morphology scores decreased significantly over a decade (from 37% to 23% using WHO 3rd criteria), while pregnancy rates for couples with "poor" morphology improved. This suggests a drift in classification standards over time, leading to an over-diagnosis of teratozoospermia and a loss of the parameter's predictive value [4].

- The "Black Box" Challenge: While AI systems offer superior consistency, the "black-box" nature of complex DL models can be a barrier to clinical adoption. Understanding why a model classifies a sperm as abnormal is as important as the classification itself for gaining clinician trust and providing diagnostic insights [7].

- Shifting Clinical Utility: The 2025 French BLEFCO guidelines reflect an evolution in clinical thinking. The primary value of morphology assessment is no longer the percentage of normal forms but the detection of specific monomorphic syndromes, such as globozoospermia (round-headed sperm without acrosomes) or macrocephalic spermatozoa syndrome. These severe and consistent abnormalities have clear implications for fertilization failure and dictate specific ART treatment pathways (e.g., requiring ICSI) [1].

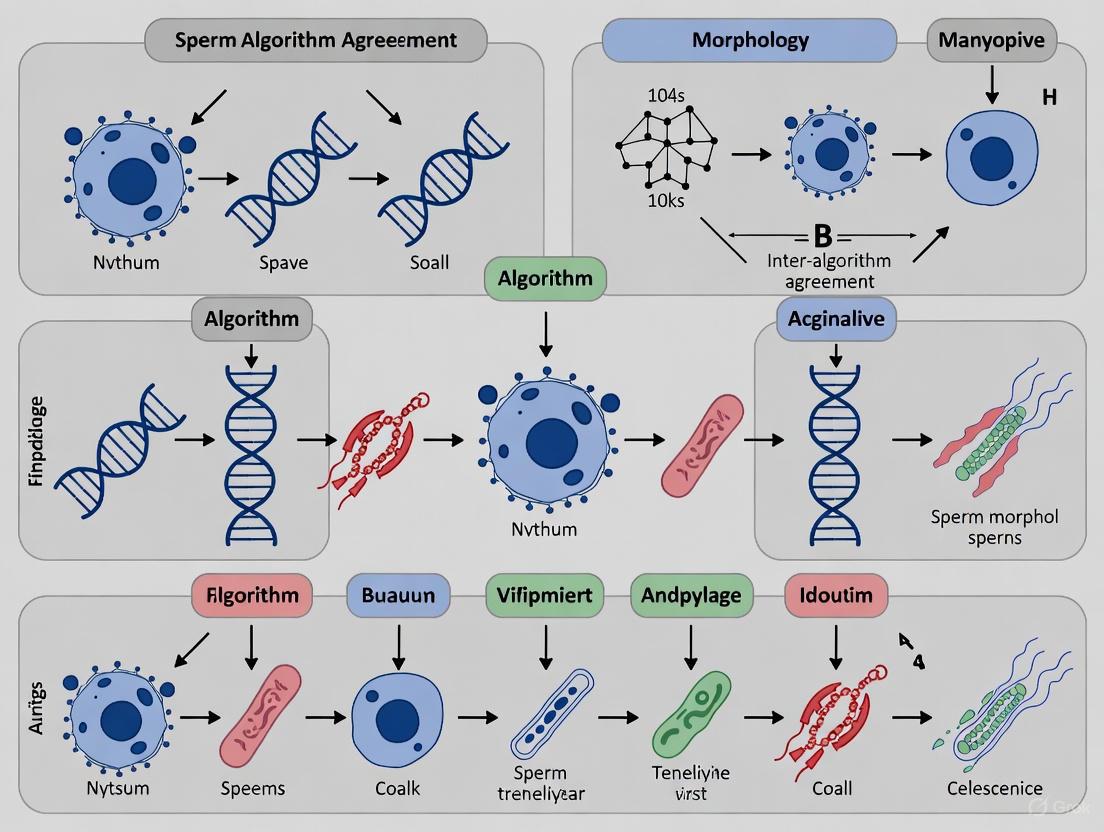

The diagram below illustrates the logical relationships and workflow between the different assessment methodologies and the overarching clinical goal of standardizing diagnosis.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful research in sperm morphology assessment depends on a suite of reliable reagents and technologies. The following table details key solutions and their functions in experimental workflows.

Table 2: Key Research Reagent Solutions for Sperm Morphology Assessment

| Item Name | Function/Application | Key Characteristics |
|---|---|---|
| Staining Kits (e.g., Diff-Quik, Papanicolaou) | Cytological staining of sperm smears for manual microscopy. | Provides contrast for visualizing sperm head acrosome, nucleus, midpiece, and tail defects [2]. |
| Validated "Ground-Truth" Image Datasets (e.g., SVIA, MHSMA) | Training and validation of AI/ML models for automated sperm analysis. | Contain thousands of sperm images with expert annotations for classification and segmentation tasks [5]. |
| Standardized Morphology Training Tools | Training and re-training of human morphologists to reduce inter-observer variability. | Utilizes expert-consensus-labeled images to train novices via supervised learning principles, improving accuracy to >90% [3]. |
| Computer-Aided Sperm Analysis (CASA) Systems | Automated, high-throughput analysis of sperm concentration, motility, and, with advanced modules, morphology. | Integrates with AI algorithms for objective assessment; requires qualification and validation within each laboratory [1] [7]. |
| Sperm DNA Fragmentation (SDF) Assays (e.g., TUNEL, SCD) | Extended examination of sperm nuclear integrity, a key parameter beyond basic morphology. | Quantifies DNA damage, which is increasingly recognized as a critical factor in male infertility and ART outcomes [2]. |

The clinical significance of sperm morphology is undergoing a critical redefinition. The field is moving away from reliance on the percentage of normal forms as a standalone prognostic tool and toward a more sophisticated diagnostic approach. This new paradigm prioritizes the identification of severe monomorphic abnormalities and leverages advanced AI technologies to overcome the long-standing challenges of subjectivity and variability inherent in manual assessment.

Future research must focus on several key areas to solidify this transition. There is a pressing need for large-scale, multi-center studies to clinically validate AI models and establish standardized performance benchmarks. Furthermore, developing explainable AI that can provide diagnostically meaningful insights, not just classifications, will be crucial for bridging the gap between computational output and clinical decision-making. Finally, integrating morphology data with other advanced parameters, such as DNA fragmentation and genetic and epigenetic markers, will pave the way for a truly comprehensive and personalized diagnostic framework for male infertility. For researchers and drug developers, this evolving landscape presents significant opportunities to create novel, standardized tools and therapies that directly address the identified limitations and harness the power of computational biology.

Sperm morphology assessment is a cornerstone of male fertility evaluation, providing critical diagnostic and prognostic information for researchers and clinicians. However, its utility is fundamentally challenged by multiple sources of variability that can compromise result reliability and inter-laboratory comparability. The World Health Organization (WHO) has continually refined its laboratory manual to standardize semen analysis procedures, with the 6th edition emphasizing the importance of robust methodology for fertility diagnosis, assessment of male reproductive health, and guiding assisted reproductive technology choices [9]. Despite these efforts, significant variability persists across three primary domains: staining techniques, classification criteria, and technician subjectivity. This methodological comparison guide examines how these variables influence sperm morphology assessment outcomes, synthesizing experimental data to illuminate their individual and collective impacts on diagnostic accuracy. Understanding these sources of variability is particularly crucial within the emerging research context of inter-algorithm agreement, where consistent input data is essential for validating computational approaches to sperm morphology analysis.

Comparative Analysis of Staining Techniques

Methodological Principles and Protocol Variations

The choice of staining technique directly influences cellular visualization, which subsequently affects morphological classification. Different stains provide varying levels of contrast and definition for specific sperm components, leading to systematic differences in abnormality detection rates.

Diff-Quik Protocol: This rapid, three-step method involves air-drying slides followed by sequential immersion in fixative (0.1% triarylmethane solution for 5 seconds), solution I (0.1% xanthenes solution for 5 seconds), and solution II (0.1% thiazines solution for 5 seconds), concluding with a distilled water rinse and air-drying [10]. Originally developed for hematological examinations, it has been adapted for sperm morphology assessment due to its simplicity and speed.

Spermac Protocol: This specialized spermatological stain provides enhanced structural differentiation through a more complex procedure. Slides are fixed in formaldehyde solution for 5 minutes, then sequentially stained in three solutions: Solution A (containing rose Bengal and neutral red), Solution B (containing pyronin Y, orange G, and phosphomolybdic acid), and Solution C (containing janus green and fast green FCF), with each step lasting 1 minute and interspersed with distilled water washes [10]. The multi-color approach offers superior compartmental differentiation.

Experimental Data: Quantitative Comparison of Staining Efficacy

A 2023 study directly compared these staining methods using semen samples from fifty men, with morphological parameters classified based on Tygerberg criteria and statistical analysis performed using paired t-tests or Wilcoxon rank-sum tests [10]. The findings demonstrate significant staining-dependent variations:

Table 1: Comparison of Sperm Morphology Assessment Between Diff-Quik and Spermac Staining Methods

| Parameter | Diff-Quik Stain (%) | Spermac Stain (%) | p-value |
|---|---|---|---|
| Normal Morphology | 3.98 ± 0.41 | 2.8 ± 0.33 | 0.0385 |
| Head Defects | 93.42 ± 0.66 | 94.24 ± 0.61 | 0.3665 |
| Midpiece Defects | 24.82 ± 2.05 | 55.74 ± 2.06 | <0.0001 |
| Tail Defects | 16.6 ± 1.34 | 14.84 ± 1.39 | 0.3032 |

Data presented as mean ± SEM (Standard Error of the Mean) [10].

The experimental data reveal that Spermac staining detected significantly fewer normal spermatozoa (2.8% vs. 3.98%, p=0.0385) and more than double the rate of midpiece abnormalities (55.74% vs. 24.82%, p<0.0001) compared to Diff-Quik [10]. This discrepancy stems from Spermac's superior visualization of the midpiece, which gives clearer demarcation of its boundaries and enables more accurate identification of structural abnormalities in this region. Conversely, Diff-Quik's limited capacity to delineate midpiece thickness likely resulted in underestimation of defects, contributing to its higher normal morphology percentage [10].
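Because each semen sample in such a design is stained with both methods, the comparison is a paired one. The sketch below computes a paired t-statistic with the Python standard library; the five pairs of midpiece-defect percentages are invented for illustration and are not the study's data. Obtaining the p-value additionally requires the t distribution, for which a routine such as `scipy.stats.ttest_rel` could be used.

```python
import math
import statistics
from typing import Sequence, Tuple


def paired_t_statistic(first: Sequence[float], second: Sequence[float]) -> Tuple[float, int]:
    """Return (t, degrees of freedom) for paired measurements on the same samples."""
    if len(first) != len(second):
        raise ValueError("paired samples must have the same length")
    if len(first) < 2:
        raise ValueError("at least two pairs are required")
    differences = [a - b for a, b in zip(first, second)]
    mean_difference = statistics.mean(differences)
    sd_difference = statistics.stdev(differences)  # sample standard deviation
    if sd_difference == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    standard_error = sd_difference / math.sqrt(len(differences))
    return mean_difference / standard_error, len(differences) - 1


# Hypothetical midpiece-defect percentages for the same five samples.
spermac = [50.0, 58.0, 61.0, 49.0, 60.0]
diff_quik = [22.0, 25.0, 30.0, 18.0, 27.0]
t_value, dof = paired_t_statistic(spermac, diff_quik)
print(f"t = {t_value:.2f} with {dof} degrees of freedom")
```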

Classification Systems: Evolving Criteria and Diagnostic Implications

WHO Criteria Versus Strict (Tygerberg) Criteria

Sperm morphology classification has evolved significantly, with two primary systems employed in clinical and research settings:

WHO Criteria: The traditional WHO classification system defines a morphologically normal spermatozoon as having an oval head with a well-defined acrosome covering 40-70% of the head area, no neck/midpiece or tail abnormalities, and no cytoplasmic droplets larger than 50% of the sperm head [11]. This system historically used a threshold of ≥30% normal forms for normozoospermia diagnosis.

Strict (Tygerberg) Criteria: Developed to enhance objectivity and reduce variability, the strict criteria impose more stringent parameters: head length of 4.0-5.0 µm, width of 2.5-3.5 µm, length-to-width ratio of 1.50-1.75, well-defined acrosome covering 40-70% of the head, thin midpiece (<1 µm wide and approximately 1.5 times head length), and a thin, uniform, uncoiled tail approximately 45 µm long [11]. All borderline forms are classified as abnormal, with the reference threshold reduced to <4% normal forms for teratozoospermia diagnosis in later WHO editions [10].
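The numeric thresholds listed for the strict criteria lend themselves to a simple rule check. The sketch below is a hypothetical illustration: the `SpermMeasurement` class and its field names are assumptions, and only the explicit ranges quoted above are tested. Midpiece length, tail length, and tail coiling are omitted because the text gives them only approximately or qualitatively.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SpermMeasurement:
    """Hypothetical morphometric record for one spermatozoon."""
    head_length_um: float
    head_width_um: float
    acrosome_fraction: float   # acrosome area / head area, 0.0 to 1.0
    midpiece_width_um: float


def strict_criteria_violations(m: SpermMeasurement) -> List[str]:
    """List the strict-criteria ranges (as quoted in the text) that a cell fails."""
    violations = []
    if not 4.0 <= m.head_length_um <= 5.0:
        violations.append("head length outside 4.0-5.0 um")
    if not 2.5 <= m.head_width_um <= 3.5:
        violations.append("head width outside 2.5-3.5 um")
    ratio = m.head_length_um / m.head_width_um
    if not 1.50 <= ratio <= 1.75:
        violations.append("length-to-width ratio outside 1.50-1.75")
    if not 0.40 <= m.acrosome_fraction <= 0.70:
        violations.append("acrosome outside 40-70% of head area")
    if not m.midpiece_width_um < 1.0:
        violations.append("midpiece width not below 1 um")
    return violations


# Two hypothetical cells: one within all quoted ranges, one failing several.
cell_a = SpermMeasurement(4.5, 2.8, 0.55, 0.8)
cell_b = SpermMeasurement(5.4, 2.6, 0.30, 1.2)
for name, cell in (("cell_a", cell_a), ("cell_b", cell_b)):
    problems = strict_criteria_violations(cell)
    print(name, "normal" if not problems else f"abnormal: {problems}")
```

Any single violation marks the cell as abnormal, which mirrors the rule that borderline forms are classified as abnormal under the strict criteria.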

Diagnostic Concordance and Clinical Impact

A Croatian study comparing these classification systems in 49 patients found high concordance in diagnosing teratozoospermia, with both criteria agreeing in 45 of 49 cases (92% concordance rate) [11]. However, systematic differences emerged in defect detection rates:

Table 2: Sperm Defect Detection Rates by Classification System

| Defect Category | WHO Criteria (%) | Strict Criteria (%) | Statistical Significance |
|---|---|---|---|
| Head Defects | Higher in normozoospermia, asthenozoospermia, and oligoasthenozoospermia groups | Consistently lower | p = 0.001-0.031 |
| Neck/Midpiece Defects | Lower in oligoasthenozoospermia group | Significantly higher | p = 0.005 |
| Tail Defects | Lower in normozoospermia and asthenozoospermia groups | Significantly higher | p = 0.002-0.005 |

Adapted from Čipak et al. [11]

The stringency of classification systems has clinical consequences. Research on intrauterine insemination (IUI) outcomes revealed that between 1996-1997 and 2005-2006, average sperm morphology decreased from 37% to 23% by WHO 3rd criteria and from 8.0% to 4.0% by strict criteria [4]. This "classification drift" increased teratozoospermia diagnoses and diminished the predictive value of morphology for IUI success, as the strong relationship between morphology and pregnancy rates present in the earlier era was no longer evident in the later period [4].

Technician Subjectivity: The Human Factor in Morphology Assessment

Magnitude of Inter-Observer Variability

Despite standardized criteria, technician subjectivity remains a substantial source of variability in sperm morphology assessment. A study examining inter-observer agreement found similar kappa values for both WHO and strict criteria (0.700 vs. 0.715), indicating only a "good" rather than "excellent" level of agreement between technicians [11]. This variability stems from individual differences in interpreting borderline cases and applying classification criteria consistently.
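Kappa corrects raw percentage agreement for the agreement expected by chance. A minimal sketch of Cohen's kappa for two technicians is shown below; the ten normal/abnormal labels are invented for illustration and do not reproduce the cited study's data.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(rater_1: Sequence[str], rater_2: Sequence[str]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_1) != len(rater_2) or not rater_1:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_1)
    observed = sum(a == b for a, b in zip(rater_1, rater_2)) / n
    counts_1, counts_2 = Counter(rater_1), Counter(rater_2)
    categories = set(counts_1) | set(counts_2)
    # Chance agreement: product of each rater's marginal proportions per category.
    expected = sum(counts_1[c] * counts_2[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two technicians (N = normal, A = abnormal).
technician_1 = ["N", "N", "N", "A", "A", "A", "A", "A", "A", "A"]
technician_2 = ["N", "N", "A", "A", "A", "A", "A", "A", "A", "N"]
kappa = cohens_kappa(technician_1, technician_2)
print(f"kappa = {kappa:.3f}")
```

In this toy example the technicians agree on 8 of 10 cells, yet kappa is noticeably lower than 0.8 because much of that agreement would be expected by chance when most cells are abnormal.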

Training as a Standardization Tool

Recent research demonstrates that standardized training can significantly reduce technician variability. A 2025 study utilizing a "Sperm Morphology Assessment Standardisation Training Tool" based on machine learning principles showed remarkable improvements in accuracy and consistency [3].

Table 3: Impact of Standardized Training on Technician Accuracy

| Classification System Complexity | Untrained Accuracy (%) | Trained Accuracy (%) | Improvement (percentage points) |
|---|---|---|---|
| 2-category (normal/abnormal) | 81.0 ± 2.5 | 98 ± 0.43 | +17 |
| 5-category (by defect location) | 68 ± 3.59 | 97 ± 0.58 | +29 |
| 8-category (specific abnormality types) | 64 ± 3.5 | 96 ± 0.81 | +32 |
| 25-category (individual defects) | 53 ± 3.69 | 90 ± 1.38 | +37 |

Data from Seymour et al. [3]

The training not only improved accuracy but also reduced assessment time (from 7.0±0.4s to 4.9±0.3s per image) and decreased inter-technician variation [3]. This demonstrates that standardized training incorporating expert consensus labels ("ground truth") and machine learning principles can effectively mitigate human subjectivity in sperm morphology assessment.
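The arithmetic behind these figures is easy to verify. The short sketch below recomputes the accuracy gains in percentage points from the mean values in Table 3 and the relative reduction in assessment time; the variable names are illustrative.

```python
# Mean accuracies (%) before and after training, taken from Table 3.
accuracy_by_system = {
    "2-category": (81.0, 98.0),
    "5-category": (68.0, 97.0),
    "8-category": (64.0, 96.0),
    "25-category": (53.0, 90.0),
}

# Gain expressed in percentage points (trained minus untrained).
gains = {
    system: trained - untrained
    for system, (untrained, trained) in accuracy_by_system.items()
}

# Relative reduction in mean assessment time per image (7.0 s to 4.9 s).
time_before_s, time_after_s = 7.0, 4.9
time_reduction_percent = 100.0 * (time_before_s - time_after_s) / time_before_s

for system, gain in gains.items():
    print(f"{system}: +{gain:.0f} percentage points")
print(f"assessment time reduced by about {time_reduction_percent:.0f}%")
```

The gain grows with the number of categories, which suggests that training matters most where the classification task is most complex.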

Integrated Workflow and Interrelationships

The relationship between staining methods, classification systems, and technician factors follows a sequential workflow pattern where outputs from earlier stages become inputs for subsequent stages, creating cumulative variability.

In this sequential workflow, technical, methodological, and human factors interact to produce the final morphology assessment. Staining quality directly impacts the application of classification systems, while technician expertise mediates the entire interpretive process. This cascade effect means that variability at any stage propagates through the entire assessment pathway.

Essential Research Reagents and Materials

Standardization across research and clinical settings requires consistent use of high-quality reagents and materials. The following toolkit represents essential components for sperm morphology assessment:

Table 4: Essential Research Reagent Solutions for Sperm Morphology Assessment

| Reagent/Material | Primary Function | Application Notes |
|---|---|---|
| Diff-Quik Stain | Rapid sperm staining | Provides quick differentiation of basic structures; less effective for midpiece visualization [10] |
| Spermac Stain | Multi-color sperm staining | Superior compartmental differentiation; especially effective for midpiece assessment [10] |
| Giemsa Stain | General sperm staining | Traditional method for WHO criteria assessment; requires temperature control [11] |
| Eosin-Nigrosin | Vitality staining and morphology | Differentiates live/dead sperm; suitable for field conditions [12] |
| Formaldehyde Fixative | Cellular structure preservation | Used in Spermac protocol (5 minutes fixation) [10] |
| Triarylmethane Fixative | Rapid cellular fixation | Used in Diff-Quik protocol (5 seconds immersion) [10] |
| Sperm Washing Medium | Semen preparation | Removes seminal plasma; improves staining quality [11] |
| Standardized Classification Atlas | Reference for morphology assessment | Reduces technician subjectivity; improves inter-observer agreement [3] |

Taken together, the experimental data show that staining methods, classification systems, and technician subjectivity each introduce significant variability in sperm morphology assessment. Diff-Quik staining yields higher normal sperm percentages, primarily because of its limited midpiece visualization, while Spermac provides a more comprehensive structural assessment and correspondingly lower normal-form percentages [10]. Classification stringency directly impacts teratozoospermia diagnosis rates, with strict criteria identifying more abnormalities but potentially overdiagnosing fertility impairment in some populations [4] [11]. Finally, technician subjectivity remains a substantial challenge, though standardized training built on expert consensus and machine learning principles has markedly improved both accuracy and consistency [3].

For the research community pursuing inter-algorithm agreement studies, these findings underscore the critical importance of standardizing pre-analytical conditions when developing and validating computational morphology assessment tools. Consistent staining protocols, classification criteria, and comprehensive technician training are foundational prerequisites for generating reliable data sets capable of supporting robust algorithm development. Future standardization efforts should focus on establishing universally accepted staining protocols for specific morphological questions, refining classification systems to better correlate with clinical outcomes, and implementing continuous training and quality control programs to minimize human variability. Only through such comprehensive standardization can sperm morphology assessment fully realize its potential as an objective, reproducible diagnostic tool in both clinical and research settings.

Sperm morphology, the study of the size and shape of spermatozoa, is a cornerstone of male fertility evaluation. It is widely recognized as one of the most significant predictors of fertilization potential, both in natural conception and in assisted reproductive technology (ART) procedures [1] [13]. Despite its clinical importance, sperm morphology assessment remains one of the most challenging and subjective analyses to standardize in the andrology laboratory [3]. The inherent variability in human sperm shapes, combined with differences in staining techniques, microscopy, and operator expertise, has led to the development of multiple classification systems in an effort to improve consistency and prognostic value.

The three predominant systems in use today are the World Health Organization (WHO) guidelines, Kruger's strict criteria, and David's classification (also known as the modified David classification). Each system provides a framework for categorizing sperm as "normal" or "abnormal" based on specific morphological characteristics, but they differ significantly in their stringency, categorization of defects, and clinical application. This guide provides a detailed, objective comparison of these three systems, focusing on their methodological approaches, reliability, and clinical correlations, framed within the context of inter-algorithm agreement in sperm morphology research.

Comparative Analysis of Classification Systems

The following section provides a systematic comparison of the three main sperm morphology classification systems, detailing their fundamental principles, technical requirements, and performance characteristics.

Table 1: Key Characteristics of Sperm Morphology Classification Systems

| Feature | WHO Guidelines | Kruger Strict Criteria | David Classification |
|---|---|---|---|
| Primary Philosophy | Tolerant, descriptive | Highly stringent, prognostic | Detailed, descriptive |
| Classification Basis | Broad descriptive categories | Strict quantitative thresholds | Specific defect-based (12 classes) |
| Key Defects Categorized | Head, midpiece, tail, cytoplasmic droplets | Head (focus), midpiece, tail | 7 head defects, 2 midpiece defects, 3 tail defects |
| Staining Preference | Diff-Quik, Papanicolaou | Papanicolaou | RAL Diagnostics kit |
| Clinical Correlation | Moderate correlation with fertility | Better predictor of IVF success [14] | Used widely; debate on switching to strict criteria [14] |
| Inter-Laboratory Variability | High | Lower due to strict thresholds | High due to complexity |

Table 2: Analytical Performance and Research Findings

| Performance Metric | WHO Guidelines | Kruger Strict Criteria | David Classification |
|---|---|---|---|
| Correlation with Manual Assessment | - | - | Moderate correlation with strict criteria (r=0.386 for Diff-Quik) [15] |
| Inter-Expert Agreement | High variability [3] | Improved agreement with training | High complexity leads to variability [13] |
| Automation Potential | Challenging due to broad categories | More suitable for AI/automation | Complex for automation due to many classes |
| Key Research Findings | Overestimation of normal forms compared to strict criteria [15] | Abnormal results not reliably predicted by other SA parameters [16] | A 2011 study argued for its replacement by strict criteria for standardization [14] |

Experimental Protocols for Morphology Assessment

The reliability of sperm morphology assessment is heavily dependent on strict adherence to standardized laboratory protocols, from sample preparation to staining and analysis.

Sample Preparation and Staining Protocols

Consistent sample preparation is critical for minimizing analytical variability. According to WHO guidelines, semen smears should be prepared from a well-mixed, liquefied sample. For the David classification, as used in developing the SMD/MSS dataset, samples with a sperm concentration of at least 5 million/mL are selected, while samples with very high concentrations (>200 million/mL) are excluded to prevent image overlap and facilitate the capture of whole sperm [13]. The smears are then air-dried and fixed.

Staining methods vary by classification system:

- Diff-Quik Staining: This rapid, three-step Romanowsky stain is commonly used for WHO assessments and has been shown to produce a higher correlation (r=0.386) between WHO and strict criteria outcomes compared to Papanicolaou staining (r=0.110) in CASA systems [15].

- Papanicolaou Staining: This more complex, multi-step staining method is preferred for Kruger strict criteria evaluations as it provides superior cellular detail, particularly of the sperm head.

- RAL Diagnostics Staining: This kit was specifically used in the SMD/MSS dataset creation for David's classification, demonstrating the variety of staining solutions employed in different laboratories [13].
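Correlation coefficients such as those quoted for Diff-Quik and Papanicolaou staining can be computed from paired per-sample results. The sketch below implements Pearson's r in plain Python; the normal-form percentages are hypothetical and are not the cited CASA data. Note that r measures linear association rather than agreement, so two systems can correlate strongly while one consistently reports higher values than the other.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two paired series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired and contain at least two values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sum((a - mean_x) ** 2 for a in x)
    spread_y = sum((b - mean_y) ** 2 for b in y)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return covariance / math.sqrt(spread_x * spread_y)


# Hypothetical normal-form percentages for the same five samples.
who_percent_normal = [12.0, 9.0, 15.0, 7.0, 11.0]
strict_percent_normal = [4.0, 3.0, 6.0, 2.0, 5.0]
r = pearson_r(who_percent_normal, strict_percent_normal)
print(f"r = {r:.3f}")
```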

Microscopy and Image Acquisition

For manual assessment, a bright-field microscope with 100x oil immersion objective is standard. The use of phase-contrast optics is also common, especially for unstained samples. For CASA and AI-based systems, the process involves:

- Using an optical microscope equipped with a digital camera for image acquisition [13].

- Capturing a sufficient number of images (e.g., 37 ± 5 images per sample in the SMD/MSS study) to ensure a representative sample of spermatozoa [13].

- Ensuring each image contains a single spermatozoon for precise morphological classification, which is crucial for building AI training datasets [13].

Classification and Quality Control

A minimum of 200 spermatozoa should be evaluated and classified according to the chosen system's criteria. To establish ground truth data for research and training, the consensus of multiple experts is required. In the SMD/MSS study, each spermatozoon was independently classified by three experts, and the level of agreement (No Agreement, Partial Agreement, or Total Agreement) was analyzed to gauge the inherent complexity of the task [13]. Furthermore, studies have shown that standardized training tools, which use principles of supervised learning from expert-validated image datasets, can significantly improve the accuracy and reduce variation among novice morphologists [3].
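A three-expert labelling scheme of this kind can be summarized per spermatozoon with a small helper. The sketch below is illustrative only: mapping two matching labels to "Partial Agreement" and using the majority label as ground truth are assumptions about how such categories might be operationalized, and the class names are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def agreement_level(labels: Sequence[str]) -> str:
    """Classify the agreement among exactly three expert labels."""
    if len(labels) != 3:
        raise ValueError("exactly three expert labels are expected")
    largest_group = Counter(labels).most_common(1)[0][1]
    if largest_group == 3:
        return "Total Agreement"
    if largest_group == 2:
        return "Partial Agreement"
    return "No Agreement"


def consensus_label(labels: Sequence[str]) -> Optional[str]:
    """Return the majority label, or None when all three experts differ."""
    if len(labels) != 3:
        raise ValueError("exactly three expert labels are expected")
    label, count = Counter(labels).most_common(1)[0]
    return label if count >= 2 else None


# Hypothetical expert labels for three spermatozoa.
annotations = [
    ["normal", "normal", "normal"],
    ["tapered head", "tapered head", "normal"],
    ["normal", "tapered head", "coiled tail"],
]
for labels in annotations:
    print(agreement_level(labels), "->", consensus_label(labels))
```

Cells with no majority label could be excluded from a training set or sent for adjudication, depending on how conservative the ground truth needs to be.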

Analytical Workflow for Sperm Morphology Assessment

The following diagram illustrates the general experimental workflow for sperm morphology analysis, from sample collection to final classification, highlighting steps where different standards may diverge.

Diagram 1: Sperm morphology assessment workflow.

The Scientist's Toolkit: Essential Reagents and Materials

Successful sperm morphology assessment relies on a set of specific laboratory reagents and instruments. The following table details key solutions and their functions in the analytical process.

Table 3: Essential Research Reagent Solutions for Sperm Morphology Analysis

| Reagent/Material | Primary Function | Application Notes |
|---|---|---|
| Diff-Quik Staining Kit | A rapid Romanowsky-type stain for cytological preparation. | Preferred for WHO assessments; shown to yield better inter-system correlation in CASA [15]. |
| Papanicolaou Stain | A multi-step stain providing detailed cellular morphology. | The preferred method for Kruger strict criteria due to superior nuclear and acrosomal detail. |
| RAL Diagnostics Stain | A staining kit for sperm morphology. | Used in studies employing David's classification for sample preparation [13]. |
| Phase-Contrast Microscope | Enables observation of unstained, live sperm. | Useful for initial motility and basic morphology checks; often used in simple "normal/abnormal" classifications. |
| Bright-Field Microscope | The standard microscope for observing stained cells. | Equipped with a 100x oil immersion objective for detailed morphology assessment of stained smears. |
| Computer-Assisted Semen Analysis (CASA) | Automated system for image acquisition and analysis. | Systems like SCA, IVOS, or CEROS capture images; performance varies, especially with morphology [17]. |
| Quality Control Beads (e.g., Accu-Beads) | Validated quality control beads for personnel training and proficiency testing. | Used to train technicians and standardize analysis across operators and laboratories [17]. |

The comparative analysis of WHO guidelines, Kruger strict criteria, and David classification reveals a fundamental trade-off in sperm morphology assessment: the balance between descriptive detail and analytical consistency. While the WHO system offers a broad overview and David's classification provides detailed defect categorization, the Kruger strict criteria demonstrate superior prognostic value for ART outcomes and better potential for standardization due to its stringent, quantitative thresholds.

The future of sperm morphology assessment lies in technological innovation to overcome human subjectivity. Artificial Intelligence (AI) and deep learning models are showing significant promise in automating the analysis, offering standardization, and accelerating the process [5] [13]. The development of large, high-quality, and expertly annotated datasets is the critical foundation for these technologies. As these AI-based systems evolve and are validated against robust, expert-derived ground truth data, they hold the potential to seamlessly integrate the diagnostic strengths of all three classification systems, ultimately providing andrology laboratories with a tool that is not only consistent and efficient but also deeply insightful for clinical decision-making in male infertility.

Impact of Assessment Variability on Clinical Decision-Making and ART Outcomes

Sperm morphology assessment, the evaluation of the size and shape of spermatozoa, serves as a cornerstone in the diagnostic evaluation of male infertility [18]. This parameter is established as the most prominent component in semen analysis, as it defines fertility status and potential, as well as the course of natural or assisted reproduction [18]. The clinical significance of morphology is profound; it directly influences critical treatment decisions in assisted reproductive technology (ART), particularly the choice between intrauterine insemination (IUI), in vitro fertilization (IVF), and intracytoplasmic sperm injection (ICSI) [18]. When the percentage of sperm with normal morphology falls below 4%, fertilization with IUI and IVF is typically poor, making ICSI the preferred treatment option [18].

Despite its clinical importance, sperm morphology assessment is widely recognized as one of the most challenging semen parameters to standardize due to its highly subjective nature [18] [13]. This variability presents a significant challenge in reproductive medicine, as inconsistent morphological evaluation can lead to misdiagnoses and inadequate treatment of infertile patients [18]. The fundamental issue lies in the inherent subjectivity of the test, which relies heavily on the technician's expertise and visual interpretation [3]. This paper examines the impact of assessment variability on clinical decision-making and ART outcomes, framed within the broader context of inter-algorithm agreement in sperm morphology assessment research, exploring both traditional manual methods and emerging artificial intelligence solutions.

Classification Systems and Reference Value Evolution

The landscape of sperm morphology classification has undergone significant evolution, contributing substantially to inter-laboratory variability. Normal sperm morphology reference values have been dramatically revised over successive World Health Organization (WHO) manuals, descending from ≥80.5% in the 1st edition to ≥14% in the 4th edition, and further reduced to ≥4% in the 5th edition [18]. This progression reflects the ongoing refinement of what constitutes "normal" sperm morphology, but it has simultaneously created inconsistency across laboratories using different classification standards.

The complexity of classification systems themselves directly impacts assessment accuracy and variability. Research has demonstrated that more complex classification systems result in lower overall accuracy and higher variability among morphologists [3]. Studies evaluating different category systems revealed significant differences in accuracy, with untrained users achieving 81.0% accuracy for simple 2-category (normal/abnormal) classification, compared to only 53% accuracy for a detailed 25-category system that defines all defects individually [3]. This demonstrates a fundamental trade-off between diagnostic detail and reliability in morphological assessment.

Technical and Human Factors in Assessment Variability

Multiple technical and human factors contribute to the substantial variability observed in sperm morphology assessment:

- Staining Methods and Preparation Techniques: Variations in staining methods (Papanicolaou, Shorr, Diff-Quik) and sample preparation techniques affect morphological interpretation [18]. Centrifugation may alter sperm morphology and must be carefully standardized [18].

- Microscopy and Measurement Inconsistencies: Without an ocular micrometer to accurately measure sperm dimensions, precise morphological evaluation is compromised [18]. The refractive index of immersion oil and microscope calibration further contribute to variability.

- Subjective Interpretation and Expertise Dependence: Manual assessment remains highly subjective and strongly dependent on the technician's experience [13] [3]. Inter-expert agreement analysis reveals significant discrepancies, with experts agreeing on normal/abnormal classification for only 73% of sperm images in some studies [3].

Table 1: Factors Contributing to Variability in Sperm Morphology Assessment

| Factor Category | Specific Source of Variability | Impact on Assessment |
|---|---|---|
| Classification Systems | Evolution of WHO reference values (80.5% → 4%) | Creates historical inconsistencies between laboratories |
| Classification Systems | Complexity of classification categories | Higher complexity reduces accuracy (81% vs 53% for 2 vs 25 categories) |
| Technical Methods | Staining techniques (Papanicolaou, Diff-Quik, Shorr) | Affects cellular detail visualization and interpretation |
| Technical Methods | Sample preparation and centrifugation | May artificially alter sperm morphology |
| Human Factors | Technician expertise and training | Untrained users show high variation (CV=0.28) and lower accuracy |
| Human Factors | Subjective interpretation of criteria | Experts disagree on 27% of normal/abnormal classifications |

Experimental Protocols and Methodological Approaches

Standardized Manual Assessment Protocol

The WHO-endorsed methodology for sperm morphology assessment involves a detailed, multi-step protocol designed to maximize consistency [18]. The process begins with semen sample collection in a sterile container followed by incubation at 37°C for 30 minutes to allow liquefaction. For viscous samples, proteolytic enzymes such as α-chymotrypsin or bromelain may be added. Smear preparation requires placing 10 µL of well-mixed semen on a clean frosted slide and using a second slide at a 45° angle to create a smooth, even smear, which is then air-dried before staining [18].

Staining follows standardized protocols, such as the Diff-Quik method, which consists of sequential immersion in fixative (triarylmethane dye, methanol), solution I (xanthene dye, sodium azide, pH buffer), and solution II (thiazine dye, pH buffer). The stained smear is examined under a bright field microscope with 100× objective and 10× eyepiece, with immersion oil having a refractive index of 1.52. Critical to this process is the use of an ocular micrometer to accurately measure sperm dimensions, as the sperm head should be 5 to 6 µm long and 2.5 to 3.5 µm wide, with specific acrosome (40-70% of head area) and midpiece (same length as head) proportions [18]. At least 200 spermatozoa must be evaluated across replicates, with all borderline forms considered abnormal.
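The head-dimension criteria above translate directly into a range check. The following is a minimal sketch only: the class and function names are hypothetical, the range limits are treated as inclusive (an assumption), and a full assessment would also evaluate the midpiece and tail.

```python
from dataclasses import dataclass

@dataclass
class HeadMeasurement:
    length_um: float          # head length in micrometres
    width_um: float           # head width in micrometres
    acrosome_fraction: float  # acrosome area / head area, between 0 and 1

def head_within_reference(m: HeadMeasurement) -> bool:
    """True only if every measurement lies inside the ranges quoted above.

    A head that fails any single range is treated as abnormal, in line
    with the rule that borderline forms are counted as abnormal.
    """
    return (
        5.0 <= m.length_um <= 6.0
        and 2.5 <= m.width_um <= 3.5
        and 0.40 <= m.acrosome_fraction <= 0.70
    )

# Hypothetical measurements taken with a calibrated ocular micrometer.
typical = HeadMeasurement(length_um=5.5, width_um=3.0, acrosome_fraction=0.5)
short_head = HeadMeasurement(length_um=4.8, width_um=3.0, acrosome_fraction=0.5)
results = [head_within_reference(typical), head_within_reference(short_head)]
```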

Deep Learning and Artificial Intelligence Protocols

Recent research has developed automated assessment approaches using convolutional neural networks (CNNs) to address human subjectivity. One representative study created the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) containing 1,000 images of individual spermatozoa acquired using an MMC computer-assisted semen analysis (CASA) system [13]. Each spermatozoon was manually classified by three experts according to the modified David classification, which includes 12 classes of morphological defects: 7 head defects (tapered, thin, microcephalous, etc.), 2 midpiece defects (cytoplasmic droplet, bent), and 3 tail defects (coiled, short, multiple) [13].

The experimental workflow involved several stages: image acquisition, expert classification and consensus labeling, data augmentation to expand the dataset to 6,035 images, and algorithm development using Python 3.8. The processing pipeline comprised image pre-processing (denoising, normalization, standardization), database partitioning (80% training, 20% testing), data augmentation, model training, and evaluation. This approach achieved accuracy rates ranging from 55% to 92%, demonstrating the potential for AI to standardize morphological assessment [13].
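The partitioning and augmentation steps can be sketched with NumPy. This is an illustration under stated assumptions, not the study's code: the images are random placeholders, the specific augmentations (flips and a 180-degree rotation) are chosen for simplicity, and augmentation is applied to the training portion only so that no transformed copy of a test image leaks into training.

```python
import numpy as np

def split_train_test(images, labels, test_fraction=0.2, seed=0):
    """Shuffle once, then hold out `test_fraction` of the samples for testing."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(images))
    n_test = int(round(len(images) * test_fraction))
    test_idx, train_idx = order[:n_test], order[n_test:]
    return images[train_idx], labels[train_idx], images[test_idx], labels[test_idx]

def normalize(images):
    """Scale 8-bit pixel values to the range [0, 1]."""
    return images.astype(np.float32) / 255.0

def augment(images, labels):
    """Return originals plus horizontal flip, vertical flip, and 180-degree rotation."""
    variants = [
        images,
        images[:, :, ::-1],                   # horizontal flip
        images[:, ::-1, :],                   # vertical flip
        np.rot90(images, k=2, axes=(1, 2)),   # 180-degree rotation
    ]
    return np.concatenate(variants), np.tile(labels, len(variants))

# Placeholder data: 10 grayscale 8x8 "images" with binary labels.
rng = np.random.default_rng(1)
images = rng.integers(0, 256, size=(10, 8, 8), dtype=np.uint8)
labels = np.arange(10) % 2

x_train, y_train, x_test, y_test = split_train_test(normalize(images), labels)
x_train_aug, y_train_aug = augment(x_train, y_train)
```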

Standardization Training Protocols

To address variability through improved training, researchers have developed specialized Sperm Morphology Assessment Standardisation Training Tools based on machine learning principles [3]. These tools utilize expert consensus labels ("ground truth") and supervised learning methodologies to train morphologists. The protocol involves iterative testing across different classification systems (2-category, 5-category, 8-category, and 25-category) with immediate feedback on accuracy [3].

In validation studies, novice morphologists underwent repeated training over four weeks, resulting in significant improvement in accuracy (from 82% to 90%) and diagnostic speed (from 7.0 to 4.9 seconds per image). The most substantial improvements occurred after the first intensive day of training, with final accuracy rates reaching 98%, 97%, 96%, and 90% across the 2-, 5-, 8- and 25-category systems respectively [3]. This demonstrates the critical importance of standardized, iterative training in reducing assessment variability.

Diagram 1: Sperm Morphology Assessment Workflow showing parallel manual and AI-assisted methodologies that inform clinical ART decisions. The workflow begins with sample collection and progresses through preparation, imaging, and alternative assessment pathways.

Quantitative Impact on Assessment Accuracy and Consistency

Inter-Expert Agreement and Training Efficacy

The fundamental challenge in sperm morphology assessment is reflected in inter-expert agreement studies, which reveal significant disparities in morphological classification. Analysis of agreement distribution among three experts shows three distinct scenarios: no agreement (NA) among experts, partial agreement (PA) where 2/3 experts concur, and total agreement (TA) where all three experts agree on the same label for all categories [13]. Without standardized training, users demonstrate high variation (coefficient of variation = 0.28) with accuracy scores ranging dramatically from 19% to 77% [3].
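The coefficient of variation quoted above is the standard deviation of the users' accuracy scores divided by their mean. The sketch below uses the sample standard deviation; whether the cited study used the sample or population form is not stated, and the scores are hypothetical.

```python
from statistics import mean, stdev

def coefficient_of_variation(scores):
    """Sample standard deviation divided by the mean of the scores."""
    if len(scores) < 2:
        raise ValueError("need at least two scores")
    return stdev(scores) / mean(scores)

# Hypothetical per-user accuracy scores for an untrained cohort.
untrained_scores = [0.19, 0.45, 0.52, 0.60, 0.77]
cv_untrained = coefficient_of_variation(untrained_scores)
```

Because the measure is scale-free, it allows variation to be compared between cohorts whose mean accuracy differs.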

Structured training programs produce measurable improvements in assessment quality. Research demonstrates that repeated training over four weeks significantly enhances accuracy (from 82% to 90%) and reduces interpretation time (from 7.0 to 4.9 seconds per image) [3]. The most substantial improvements occur during initial training, with smaller incremental gains after the first intensive day (Table 2). This training effect is consistent across classification system complexities, though absolute accuracy remains inversely related to system complexity.

Table 2: Impact of Training and Classification System Complexity on Assessment Accuracy

| Training Status | 2-Category System (Normal/Abnormal) | 5-Category System (By Location) | 8-Category System (Cattle Veterinary) | 25-Category System (All Defects) |
|---|---|---|---|---|
| Untrained Novices | 81.0% ± 2.5% | 68.0% ± 3.6% | 64.0% ± 3.5% | 53.0% ± 3.7% |
| After 1st Training Day | 94.9% ± 0.7% | 92.9% ± 0.8% | 90.0% ± 0.9% | 82.7% ± 1.1% |
| Final Accuracy (4 Weeks) | 98.0% ± 0.4% | 97.0% ± 0.6% | 96.0% ± 0.8% | 90.0% ± 1.4% |
| Coefficient of Variation | 0.027-0.137 | 0.027-0.137 | 0.027-0.137 | 0.027-0.137 |

Algorithm Performance and Comparative Effectiveness

Emerging artificial intelligence approaches show promising but variable performance in sperm morphology classification. Deep learning models using convolutional neural networks trained on the SMD/MSS dataset demonstrate accuracy ranging from 55% to 92% compared to expert classifications [13]. This performance variability reflects both the challenges of algorithm training and the inherent subjectivity in the "ground truth" expert classifications used for training.

The comparative effectiveness of different assessment methodologies reveals important trade-offs. Manual assessment by trained experts remains the reference standard but suffers from throughput limitations and residual subjectivity. Computer-assisted semen analysis (CASA) systems automate the image acquisition process but have limited ability to accurately distinguish between spermatozoa and cellular debris, and struggle to classify midpiece and tail abnormalities [13]. AI-based approaches offer potential for standardization and increased throughput but require extensive validation and may be limited by training dataset quality and diversity.

Consequences for Clinical Decision-Making and ART Outcomes

Direct Impact on Treatment Pathway Selection

Sperm morphology assessment directly determines clinical treatment pathways in assisted reproduction. The critical threshold of 4% normal forms established in the WHO 5th edition manual serves as a key decision point [18]. When the percentage of morphologically normal sperm is ≥4%, the probability of fertilization with conventional IVF or intrauterine insemination (IUI) is sufficient to justify these less invasive approaches. Conversely, when normal morphology falls below 4%, fertilization rates with IUI and conventional IVF decline significantly, making intracytoplasmic sperm injection (ICSI) the preferred treatment option [18].
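Even with perfect classification of each cell, a count of 200 spermatozoa carries sampling uncertainty that matters near a 4% cut-off. The sketch below computes a Wilson score interval for a binomial proportion; treating each spermatozoon as an independent draw is a simplifying assumption, and the function name is illustrative.

```python
import math

def wilson_interval(normal_count, total_count, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for about 95%)."""
    if total_count <= 0 or not 0 <= normal_count <= total_count:
        raise ValueError("require total_count > 0 and 0 <= normal_count <= total_count")
    p = normal_count / total_count
    denom = 1 + z ** 2 / total_count
    centre = (p + z ** 2 / (2 * total_count)) / denom
    half = z * math.sqrt(
        p * (1 - p) / total_count + z ** 2 / (4 * total_count ** 2)
    ) / denom
    return centre - half, centre + half

# 8 normal forms out of 200 counted spermatozoa is exactly 4%.
low, high = wilson_interval(8, 200)
straddles_threshold = low < 0.04 < high
```

For 8 normal forms in 200, the interval runs from roughly 2% to 8%, so a single count at the threshold cannot by itself place a sample confidently on either side of it.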

Inaccurate morphology assessment therefore directly leads to suboptimal treatment selection. Overestimation of normal forms may result in failed fertilization cycles with conventional IVF, while underestimation may lead to unnecessary use of ICSI with its increased costs, technical demands, and theoretical genetic concerns [18] [19]. This is particularly significant given that ART-conceived pregnancies already demonstrate increased risks of adverse outcomes, including preterm delivery and small for gestational age infants, even after controlling for multiple gestations [20].

Broader Implications for ART Safety and Efficacy

Assessment variability contributes to broader challenges in evaluating ART safety and efficacy. Research has shown associations between ART and adverse perinatal outcomes, including cerebral palsy, autism, neurodevelopmental imprinting disorders, and cancer [19]. However, uncertainty persists regarding whether these outcomes relate to the ART procedures themselves, underlying infertility factors, or other medical and environmental influences. Inconsistent morphology assessment and treatment selection based on variable criteria complicate this determination.

The significant state-level variations in ART outcomes further highlight the impact of assessment and treatment variability. Massachusetts, which has comprehensive insurance coverage for ART services, demonstrates significantly lower rates of twins, triplets, and higher-order births compared to Florida and Michigan, where coverage is more limited [20]. This suggests that financial factors influencing treatment decisions, including those based on morphology assessment, significantly impact multiple gestation rates and associated complications.

Diagram 2: Clinical Decision Impact Pathway illustrating how morphology assessment results direct treatment choices and how assessment errors lead to clinical consequences. The pathway shows how variability contributes to broader ART outcome variations.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Sperm Morphology Assessment

| Item | Specification/Function | Application Context |
|---|---|---|
| Diff-Quik Stain | Triarylmethane dye fixative, xanthene dye (Solution I), thiazine dye (Solution II) | Rapid staining for manual morphology assessment [18] |
| Papanicolaou Stain | Gold standard staining method per WHO guidelines | Reference standard morphology assessment [18] |
| RAL Diagnostics Stain | Commercial staining kit for semen smears | Research use in standardized studies [13] |
| α-Chymotrypsin/Bromelain | Proteolytic enzymes for viscous sample preparation | Liquefaction of viscous semen samples [18] |
| Ocular Micrometer | Microscope calibration for sperm dimension measurement | Essential for accurate head size measurement (5-6 µm × 2.5-3.5 µm) [18] |
| MMC CASA System | Computer-assisted semen analysis with digital camera | Automated image acquisition for AI studies [13] |
| SMD/MSS Dataset | 1,000+ sperm images with expert classifications | Training and validation of AI algorithms [13] |
| Standardized Training Tool | Machine learning-based training with expert consensus | Morphologist training and standardization [3] |

The impact of assessment variability on clinical decision-making and ART outcomes represents a significant challenge in reproductive medicine. Current evidence demonstrates that inconsistency in sperm morphology assessment stems from multiple sources, including evolving classification standards, technical methodological differences, and inherent human subjectivity in interpretation. This variability directly influences critical treatment decisions, particularly the selection between conventional IVF and ICSI, with profound implications for treatment success, economic costs, and patient outcomes.

Promising approaches to address these challenges include standardized training tools based on machine learning principles, which demonstrate significant improvements in assessment accuracy and consistency, and artificial intelligence-based classification systems that offer potential for automation and standardization. Future research directions should focus on validating and refining these approaches across diverse laboratory settings, establishing robust quality control and quality assurance programs, and developing consensus standards that balance diagnostic detail with practical reliability. Through such efforts, the field can progress toward more consistent, accurate morphological assessment that optimizes clinical decision-making and ART outcomes for the millions affected by infertility worldwide.

The Emergence of Algorithm-Based Approaches to Reduce Subjectivity

Sperm morphology analysis, a cornerstone of male fertility evaluation, has long been constrained by its inherent subjectivity. Conventional semen analysis (CSA) relies on visual assessment by laboratory technicians, introducing substantial inter-observer variability that compromises result reproducibility and clinical reliability [3] [5]. This variability stems from multiple factors: the complexity of classification systems encompassing 26 distinct abnormality types, the challenge of evaluating over 200 sperm per sample, and inevitable human fatigue [5]. According to recent validation studies, even expert morphologists demonstrate only 73% agreement on basic normal/abnormal classifications for sperm images, highlighting the profound impact of subjective interpretation [3].

The emergence of artificial intelligence (AI) and machine learning (ML) technologies promises to address these limitations through algorithmic standardization. By applying computational methods to sperm assessment, researchers aim to establish objective, reproducible morphological analysis while minimizing human bias [21] [5]. This comparison guide examines the performance of various algorithm-based approaches against conventional methods, with particular focus on their agreement levels, technical capabilities, and potential to transform clinical andrology practices. The shift toward computational assessment represents not merely technical advancement but a fundamental reorientation toward evidence-based, standardized male fertility evaluation.

Comparative Performance Analysis of Assessment Methodologies

Quantitative Performance Metrics Across Assessment Platforms

Table 1: Correlation and Agreement Between Assessment Methods for Sperm Morphology

| Assessment Method | Comparison Reference | Correlation Coefficient (r) | Key Performance Metrics | Limitations |
|---|---|---|---|---|
| In-house AI Model (Unstained live sperm) | Computer-Aided Semen Analysis | 0.88 [21] | Precision: 0.95 (abnormal), 0.91 (normal); Recall: 0.91 (abnormal), 0.95 (normal) [21] | Requires specialized imaging equipment [21] |
| In-house AI Model (Unstained live sperm) | Conventional Semen Analysis | 0.76 [21] | Test accuracy: 0.93; Processing speed: 0.0056 seconds per image [21] | Limited clinical validation studies [21] |
| Computer-Aided Semen Analysis (CASA) | Conventional Semen Analysis | 0.57 [21] | Correctly classified sperm morphology compared to manual analysis [22] | Higher results for morphology vs. manual method [22] |
| Electro-Optical System (SQA-Vision) | Conventional Semen Analysis | Moderate to high correlation [22] | Acceptable sensitivity and specificity for classification [22] | Performance slightly poorer than CASA for morphology [22] |
| Conventional ML Algorithms (SVM, Bayesian) | Expert Morphologist Consensus | Not reported as r | AUC-ROC: 88.59% (SVM); accuracy approximately 90% (Bayesian); precision rates >90% for sperm head classification [5] | Limited to sperm head analysis only [5] |

Diagnostic Accuracy Across Classification System Complexities

Table 2: Impact of Classification Complexity on Assessment Accuracy

| Classification System | Untrained Morphologist Accuracy | Trained Morphologist Accuracy | Expert Consensus Accuracy | AI Model Performance |
|---|---|---|---|---|
| 2-Category (Normal/Abnormal) | 81.0% ± 2.5% [3] | 98.0% ± 0.43% [3] | 73% agreement [3] | Precision: 0.91-0.95 [21] |
| 5-Category (Head, Midpiece, Tail, Cytoplasmic Droplet, Normal) | 68.0% ± 3.59% [3] | 97.0% ± 0.58% [3] | Not Reported | Not Specifically Reported |
| 8-Category (Pyriform, Knobbed, Vacuoles, etc.) | 64.0% ± 3.5% [3] | 96.0% ± 0.81% [3] | Not Reported | Not Specifically Reported |
| 25-Category (Individual Defects) | 53.0% ± 3.69% [3] | 90.0% ± 1.38% [3] | Not Reported | Not Specifically Reported |

Experimental Protocols and Methodologies

Deep Learning Model Development Protocol

The development of AI models for sperm morphology assessment follows a structured protocol centered on dataset creation, model training, and validation. Recent approaches utilize confocal laser scanning microscopy at 40× magnification to capture high-resolution Z-stack images (0.5 μm interval) of unstained live sperm [21]. This imaging protocol generates approximately 200 sperm images per sample, with each capture containing 2-3 sperm within a 159.7×159.7 μm field at 512×512 pixel resolution [21].
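The stated field size and resolution fix the spatial sampling of each image, which in turn determines how many pixels a sperm head occupies. The short calculation below uses only the figures quoted above plus a nominal 5 µm head length; the variable and function names are illustrative.

```python
FIELD_WIDTH_UM = 159.7   # field of view per image, in micrometres
IMAGE_WIDTH_PX = 512     # image width in pixels

# Physical size represented by one pixel.
um_per_pixel = FIELD_WIDTH_UM / IMAGE_WIDTH_PX

def um_to_pixels(length_um):
    """Convert a physical length in micrometres to a length in pixels."""
    return length_um / um_per_pixel

# A nominal 5 µm sperm head spans about 16 pixels at this sampling.
head_length_px = um_to_pixels(5.0)
```

At roughly 0.31 µm per pixel, a head is represented by only about 16 pixels along its long axis, which gives a sense of how little margin there is for resolving fine features such as vacuoles.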

The annotation phase involves manual labeling by embryologists and researchers using specialized programs like LabelImg, with established inter-observer correlation coefficients of 0.95 for normal morphology detection and 1.0 for abnormal morphology detection [21]. Classification follows WHO sixth edition criteria, categorizing sperm into nine distinct datasets based on head shape (smooth oval, length-to-width ratio 1.5-2), vacuole presence, neck characteristics, tail uniformity, and cytoplasmic droplet size [21].

For model architecture, a ResNet50 transfer learning model is used for deep learning-based classification. In the cited study, the dataset comprised 21,600 images with 12,683 annotated sperm, with 4,500 images each for normal and abnormal morphology [21]. The model reached a test accuracy of 0.93 after 150 epochs, with precision and recall of at least 0.91 for both normal and abnormal classification [21].

Conventional Machine Learning Implementation

Traditional machine learning approaches employ distinct methodologies for feature extraction and classification. Conventional ML pipelines begin with shape-based descriptors including Hu moments, Zernike moments, and Fourier descriptors for manual feature extraction [5]. The k-means clustering algorithm serves for initial sperm head localization, complemented by histogram statistical methods for segmentation [5].
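As an illustration of a shape-based descriptor, the sketch below computes the first Hu moment invariant of a binary head mask with NumPy. It is not taken from the cited pipelines: the synthetic elliptical mask and the function name are assumptions, and a real pipeline would typically compute all seven invariants with an image-processing library.

```python
import numpy as np

def first_hu_invariant(mask):
    """Hu's first invariant (eta20 + eta02) of a binary mask."""
    ys, xs = np.nonzero(mask)
    area = float(len(xs))              # zeroth moment m00 of a binary mask
    if area == 0:
        raise ValueError("mask is empty")
    dx = xs - xs.mean()                # coordinates relative to the centroid
    dy = ys - ys.mean()
    mu20 = np.sum(dx ** 2)             # second-order central moments
    mu02 = np.sum(dy ** 2)
    # Normalised central moments: eta_pq = mu_pq / m00 ** (1 + (p + q) / 2)
    return (mu20 + mu02) / area ** 2

# Synthetic masks standing in for segmented sperm heads.
yy, xx = np.mgrid[0:64, 0:64]
oval_head = ((xx - 20) / 12.0) ** 2 + ((yy - 30) / 7.0) ** 2 <= 1.0
round_head = (xx - 32) ** 2 + (yy - 32) ** 2 <= 9 ** 2

shifted = np.roll(oval_head, shift=(10, 15), axis=(0, 1))  # translated copy
rotated = np.rot90(oval_head)                              # rotated copy

phi_oval = first_hu_invariant(oval_head)
phi_round = first_hu_invariant(round_head)
```

The value is unchanged by translation and rotation of the mask and grows as the shape becomes more elongated, which is why such descriptors are useful for separating, for example, tapered from round heads.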

For classification, support vector machines (SVM) represent the most frequently employed algorithm, trained on datasets of 1,400-1,540 human sperm cells from multiple donors [5]. Performance evaluation typically incorporates area under the receiver operating characteristic curve (AUC-ROC) and area under the precision-recall curve (AUC-PR), with reported values of 88.59% and 88.67% respectively [5]. Bayesian density estimation models achieve approximately 90% accuracy for sperm head classification into four morphological categories (normal, tapered, pyriform, small/amorphous) [5].
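AUC-ROC can be read as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition; the labels and scores are illustrative, and the pairwise loop is meant for clarity rather than efficiency.

```python
def auc_roc(labels, scores):
    """AUC-ROC as the fraction of positive/negative pairs ranked correctly.

    Labels are 1 (positive) or 0 (negative); tied scores count as half.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Illustrative classifier scores for four sperm heads (1 = abnormal).
example_auc = auc_roc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

In this example three of the four positive/negative pairs are ordered correctly, giving an AUC of 0.75.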

Morphologist Training Validation Protocol

Recent research demonstrates the efficacy of standardized training tools based on machine learning principles. The "Sperm Morphology Assessment Standardisation Training Tool" employs expert consensus labels as "ground truth" for training novice morphologists [3]. Validation studies involve training cohorts of 16-22 participants across multiple classification systems (2-category to 25-category) [3].

The protocol incorporates repeated training over four weeks with initial tests establishing baseline accuracy (81.0% for 2-category), followed by intensive training with visual aids and instructional videos [3]. Post-training assessment reveals significant improvement in accuracy (94.9% for 2-category) and diagnostic speed (reduction from 7.0±0.4s to 4.9±0.3s per image) [3]. This approach demonstrates that standardized training can achieve final accuracy rates of 98.0% for 2-category systems, though more complex 25-category systems plateau at 90.0% accuracy [3].

Visualization of Algorithm-Based Sperm Assessment Workflow

Diagram: AI sperm assessment workflow.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Algorithm-Based Sperm Morphology Assessment

| Item | Specification | Research Function |
|---|---|---|
| Confocal Laser Scanning Microscope | LSM 800, 40× magnification, Z-stack capability [21] | High-resolution imaging of unstained live sperm without fixation [21] |
| Standardized Slides | Two-chamber slides, 20 μm depth (Leja) [21] | Consistent sample preparation for reliable imaging [21] |
| Annotation Software | LabelImg program [21] | Manual annotation by embryologists for ground truth establishment [21] |
| Staining Solutions | Diff-Quik stain (Romanowsky variant) [21] | Conventional staining for CASA and manual assessment comparison [21] |
| CASA Systems | IVOS II (Hamilton Thorne) or SCA (Microptic) [21] [22] | Automated semen analysis for method comparison [21] [22] |
| AI Training Datasets | HSMA-DS (1,475 images), MHSMA (1,540 images), SVIA (125,000 annotations) [5] | Model training and validation with diverse sperm morphology examples [5] |
| Computational Resources | ResNet50 architecture, Python/NumPy, GPU acceleration [21] [5] | Deep learning model implementation and training [21] [5] |

Inter-Algorithm Agreement and Clinical Implications

The correlation between algorithm-based approaches and conventional methods reveals substantial variation across platforms. In-house AI models demonstrate strong correlation with CASA (r=0.88) and moderate correlation with conventional semen analysis (r=0.76), outperforming the correlation between CASA and conventional analysis (r=0.57) [21]. This pattern suggests that AI systems may capture morphological features more consistently than human observers, potentially bridging the gap between established automated systems and conventional manual assessment.
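When comparing methods, it helps to report a measure of systematic offset alongside the correlation coefficient, because r captures linear association rather than agreement. The sketch below computes both from paired per-sample results; the percentages are hypothetical and the function names are illustrative.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two paired sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def mean_difference(x, y):
    """Average of (y - x): the systematic offset of method y relative to x."""
    return sum(b - a for a, b in zip(x, y)) / len(x)

# Hypothetical % normal forms for five samples by two methods, where the
# second method reads exactly 3 percentage points higher on every sample.
manual = [2.0, 4.0, 5.0, 7.0, 10.0]
automated = [v + 3.0 for v in manual]

r = pearson_r(manual, automated)
bias = mean_difference(manual, automated)
```

Here r equals 1 even though the two methods never give the same value, and a 3-point offset is enough to move a sample across the 4% threshold. A high correlation is therefore necessary but not sufficient evidence of agreement.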

Recent clinical guidelines from the French BLEFCO Group question the prognostic value of sperm morphology assessment before assisted reproductive technologies, highlighting the need for more objective assessment methods [1]. Algorithm-based approaches address this limitation by detecting subtle morphological patterns potentially imperceptible to human observers, while enabling analysis of unstained live sperm – a significant advantage for clinical applications where sperm viability must be preserved [21]. Furthermore, standardized training tools leveraging machine learning principles demonstrate that morphologist accuracy can be significantly improved (from 81.0% to 98.0% for 2-category systems) through structured training protocols [3], suggesting a hybrid approach combining human expertise with algorithmic standardization may offer optimal outcomes.

The philosophical dimensions of algorithmic subjectivity warrant consideration alongside technical performance. Research in personalized image aesthetic assessment demonstrates that algorithms often reflect the biases of their training datasets, with ground truth labels predicting user choices with highly variable accuracy (55% for some participants, <30% for others) [23]. This underscores the importance of diverse, representative training datasets in sperm morphology algorithms to ensure equitable performance across varied patient populations and clinical contexts. As algorithmic approaches continue to evolve, their integration into clinical practice must balance technical precision with acknowledgment of inherent limitations in capturing the full spectrum of biological variation relevant to male fertility assessment.

Algorithmic Approaches: From Conventional ML to Advanced Deep Learning Architectures

The assessment of sperm morphology is a critical, yet notoriously subjective, component of male fertility diagnostics. Traditional manual analysis, performed by trained embryologists, is plagued by significant inter-observer variability, with studies reporting diagnostic disagreement as high as 40% between expert evaluators [24] [25]. This lack of standardization presents a major challenge for research and clinical practice, directly motivating the investigation into inter-algorithm agreement among automated methods. In this landscape, conventional machine learning (ML) pipelines that leverage robust feature engineering offer a pathway toward objective, reproducible analysis. This guide provides a comparative analysis of two cornerstone algorithms in this domain: K-means clustering for unsupervised pattern discovery and Support Vector Machines (SVM) for supervised classification, evaluating their performance and roles within the specific context of sperm morphology assessment.

Experimental Protocols in Sperm Morphology Analysis

To objectively compare algorithm performance, it is essential to understand the standard experimental workflows and metrics used for validation in this field.

Standardized Morphological Classification and Evaluation Metrics

Researchers typically develop predictive models using datasets of sperm images where the "ground truth" is established by expert andrologists based on standardized classification systems, such as the modified David classification or WHO guidelines [13] [5]. These systems categorize sperm into multiple classes based on defects in the head, midpiece, and tail.

The performance of ML models is then quantified using standard classification metrics and clustering validity indices, which are crucial for inter-algorithm comparison [26] [27].

- Classification Metrics (with "abnormal" treated as the positive class):

  - Accuracy: The proportion of total sperm images correctly classified.

  - Precision: Of the sperm labeled abnormal, the proportion that are truly abnormal; it reflects the ability of the classifier not to label a normal sperm as abnormal.

  - Recall (Sensitivity): Of the truly abnormal sperm, the proportion the classifier identifies; it reflects the ability of the classifier to find all the abnormal sperm.

  - F1-Score: The harmonic mean of precision and recall.

- Clustering Metrics:

  - Silhouette Score: Measures how similar an object is to its own cluster compared to other clusters. Ranges from -1 to 1, where higher values are better [26].

  - Davies-Bouldin Index (DBI): Measures the average similarity between each cluster and its most similar one. Lower values indicate better cluster separation [26].

  - Calinski-Harabasz Index (CHI): Measures the ratio of between-cluster dispersion to within-cluster dispersion. Higher values indicate better clustering [27].
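The metrics listed above can be made concrete with a small sketch. The code below is illustrative only and is not taken from any of the cited studies: it uses the Python standard library, treats "abnormal" as the positive class, and the function names `binary_metrics` and `silhouette_score` are chosen here for the example. In practice, scikit-learn offers equivalent functions in `sklearn.metrics` (for example `precision_score`, `recall_score`, `f1_score`, `silhouette_score`, `davies_bouldin_score`, and `calinski_harabasz_score`).

```python
from math import dist


def binary_metrics(y_true, y_pred, positive="abnormal"):
    """Accuracy, precision, recall and F1, with `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = len(pairs) - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    if len(clusters) < 2:
        raise ValueError("silhouette score needs at least two clusters")
    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # usual convention for singleton clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(dist(point, q) for q in own) / (len(own) - 1)
        # b: smallest mean distance to the members of any other cluster
        b = min(
            sum(dist(point, q) for q in members) / len(members)
            for other, members in clusters.items()
            if other != label
        )
        scores.append((b - a) / max(a, b) if max(a, b) else 0.0)
    return sum(scores) / len(scores)


# Toy example: six expert labels versus six model predictions.
expert = ["abnormal", "abnormal", "abnormal", "normal", "normal", "abnormal"]
model = ["abnormal", "abnormal", "normal", "normal", "abnormal", "abnormal"]
print(binary_metrics(expert, model))

# Toy example: two well-separated clusters in a 2-D feature space.
features = [(0, 0), (0, 1), (10, 0), (10, 1)]
print(silhouette_score(features, [0, 0, 1, 1]))
```

In the toy classification example, three of the four predicted abnormal cells are truly abnormal and three of the four truly abnormal cells are found, so precision, recall, and F1 all equal 0.75.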

Workflow for Conventional Machine Learning Analysis

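The generalized workflow for applying conventional machine learning to sperm morphology analysis proceeds from image acquisition and preprocessing, through extraction of handcrafted features, to either unsupervised grouping with K-means or supervised classification with SVM, followed by evaluation with the metrics described above.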

Comparative Performance Analysis

The table below summarizes the performance of conventional machine learning models, particularly SVM, as reported in studies on sperm morphology analysis, and contrasts them with deep learning alternatives.

Table 1: Performance Comparison of Machine Learning Models in Sperm Morphology Analysis

| Algorithm | Reported Performance | Key Strengths | Key Limitations / Challenges |
|---|---|---|---|
| SVM with Feature Engineering | ~88-91% accuracy [5] [24] | High precision (reports of >90%); effective in high-dimensional spaces; robust with clear margin of separation. | Performance heavily dependent on quality of manual feature extraction; may struggle with complex, non-linear morphological patterns. |
| K-means Clustering | Evaluated via validity indices (Silhouette Score, DBI) [26] | Useful for exploratory data analysis; identifies inherent structures/groupings without pre-labeled data. | Purely descriptive; requires post-hoc analysis for clinical relevance; predefined K can be a limitation. |
| Deep Learning (CNN-based) | Up to 96% accuracy [24] [25] | Automatic feature extraction from raw pixels; handles complex and subtle patterns; state-of-the-art accuracy. | Requires very large datasets (<1000 images often insufficient [13]); computationally intensive; "black box" nature. |

Analysis of Inter-Algorithm Agreement and Disagreement

The performance data reveals a central thesis in automated sperm analysis: the "agreement" between a model's output and expert consensus is a function of its feature representation capability.

SVM Performance: The high precision of SVMs, as demonstrated by Mirsky et al. with rates consistently above 90% [5], shows strong agreement with experts on morphologically distinct classes. However, this agreement can degrade with more subtle or complex anomalies that are difficult to capture with handcrafted features. Chang et al. reported classification accuracy as low as 49% for non-normal sperm heads using Fourier descriptors and SVM, highlighting a significant point of disagreement with expert judgment when feature engineering is inadequate [5].

K-means vs. Supervised Models: As an unsupervised technique, K-means does not directly agree or disagree with expert labels. Instead, its value lies in uncovering inherent structures in the data. A high Silhouette Score indicates only that the clusters are internally cohesive and well separated; it says nothing about whether they match expert categories. Researchers must then interpret whether these clusters align with biological morphology, which is a different kind of agreement: between data-driven structure and clinical knowledge.
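One common way to quantify whether data-driven clusters line up with expert categories is the adjusted Rand index (ARI), which compares two partitions of the same cells without requiring cluster IDs and class names to match. The sketch below is an illustration rather than a method reported in the cited studies; it uses only the standard library, and the toy labels are hypothetical. scikit-learn provides the same measure as `sklearn.metrics.adjusted_rand_score`.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand index between two labelings of the same items.

    1.0 means identical partitions; values near 0 mean chance-level overlap.
    """
    n = len(labels_a)
    if n != len(labels_b) or n < 2:
        raise ValueError("need two labelings of the same length (at least 2 items)")
    # Pairs of items placed together in both partitions.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    # Pairs placed together within each partition separately.
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case: both partitions are trivial
    return (sum_cells - expected) / (max_index - expected)


# Hypothetical K-means cluster IDs and expert classes for six cells.
kmeans_clusters = [0, 0, 1, 1, 2, 2]
expert_classes = ["normal", "normal", "head", "head", "tail", "tail"]
print(adjusted_rand_index(kmeans_clusters, expert_classes))  # 1.0
```

Because ARI is corrected for chance, it can be negative when two partitions overlap less than random assignment would predict.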

The Performance Gap with Deep Learning: The superior accuracy of deep learning models (e.g., 96.08% [24]) underscores a fundamental limitation of conventional ML. The automated feature extraction in deep learning leads to higher agreement with expert classification because it can learn a more nuanced and comprehensive representation of sperm morphology compared to manually engineered features.
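Agreement of the kind discussed above, whether between a model and expert labels or between two models, is often summarized with Cohen's kappa, which corrects raw percent agreement for the agreement expected by chance. The following is a minimal standard-library sketch with hypothetical SVM and CNN outputs; it is not drawn from the cited studies. scikit-learn exposes the same statistic as `sklearn.metrics.cohen_kappa_score`.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa between two raters (or algorithms) labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty labelings of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label by both.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if expected == 1.0:
        return 1.0  # both raters used a single, identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical predictions from two algorithms on the same eight cells.
svm_pred = ["normal", "abnormal", "abnormal", "normal",
            "abnormal", "normal", "abnormal", "abnormal"]
cnn_pred = ["normal", "abnormal", "abnormal", "abnormal",
            "abnormal", "normal", "normal", "abnormal"]
print(round(cohen_kappa(svm_pred, cnn_pred), 3))  # 0.467
```

Here the two algorithms agree on 6 of 8 cells (75%), but because both label most cells abnormal, chance agreement is already about 53%, which leaves a kappa of roughly 0.47.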

Table 2: Key Research Reagents and Computational Tools for Sperm Morphology ML

| Item / Resource | Function in Research | Example / Note |
|---|---|---|
| Stained Sperm Smears | Creates contrast for microscopic imaging, enabling visualization of morphological details. | RAL Diagnostics staining kit is used following WHO manual guidelines [13]. |
| CASA System | Automated platform for image acquisition and basic morphometric analysis. | MMC CASA system used for image capture with a 100× oil immersion objective [13]. |
| Public Datasets | Benchmark for training and validating new algorithms. | HuSHeM (216 images), SMIDS (3000 images), SMD/MSS (1000+ images) [13] [5] [24]. |
| Feature Extraction Libraries | Software tools to compute handcrafted features from images. | Scikit-image (Python); used for shape descriptors (Hu moments, Zernike moments), texture. |
| Machine Learning Frameworks | Environment for building, training, and evaluating SVM, K-means, and other models. | Scikit-learn (Python) provides implementations of SVM, K-means, and clustering metrics [26]. |

The comparative analysis confirms that while SVM paired with careful feature engineering can achieve solid performance and high precision in classifying sperm morphology, its dependency on manual feature extraction limits its ultimate accuracy and generalizability. K-means clustering serves as a valuable exploratory tool for uncovering hidden patterns in unlabeled data but does not directly produce a diagnostic classification.

The prevailing trend in the field points toward hybrid models and deep learning. Future research for inter-algorithm agreement will likely focus on how conventional ML can augment deep learning, for instance, by using K-means for initial data stratification or SVM for classifying deep features extracted by a CNN—a method that has already pushed accuracies to 96% [24]. As datasets continue to grow in size and quality [13] [5], the role of conventional ML may evolve, but its principles of feature space optimization and model evaluation will remain foundational to developing robust, standardized tools for male fertility assessment.

The assessment of sperm morphology is a cornerstone of male fertility evaluation, yet it remains one of the most challenging and subjective tests in reproductive medicine. Traditional manual morphology assessment suffers from significant inter-laboratory and inter-technician variability, undermining its clinical reliability. A striking demonstration of this limitation comes from a 2022 multisite trial which found poor reproducibility of sperm morphology assessment using World Health Organization Fifth Edition (WHO5) strict grading criteria, with no correlation between fertility centers and a core laboratory for the same semen samples [28]. This variability poses a substantial challenge for both clinical diagnosis and reproductive research.

In response to these challenges, deep learning approaches, particularly Convolutional Neural Networks (CNNs), have emerged as powerful tools for automating sperm classification. These technologies offer the potential to standardize morphology assessment, reduce human subjectivity, and provide rapid, quantitative analyses. This review comprehensively compares the performance of various CNN architectures and traditional machine learning approaches for automated sperm classification, focusing on their experimental validation, technical capabilities, and agreement with expert andrology assessment.

Experimental Protocols and Methodologies in Sperm Imaging AI

Data Acquisition and Preparation Protocols

The foundation of any robust deep learning model is a high-quality, well-annotated dataset. Research in automated sperm classification utilizes diverse acquisition methodologies:

- Brightfield Microscopy with Stained Samples: Conventional approaches using RAL Diagnostics staining kits and optical microscopes with 100x oil immersion objectives provide standard brightfield images for morphological analysis [13]. These typically yield 2D images focusing on head, midpiece, and tail structures.

- Quantitative Phase Imaging (QPI): Advanced imaging using Partially Spatially Coherent Digital Holographic Microscopy (PSC-DHM) provides label-free, quantitative phase maps of sperm cells. This technique offers nanometric sensitivity to subcellular structures and has been used to distinguish normal sperm from those under stress conditions (cryopreservation, oxidative stress, alcohol exposure) [29].

- Video Analysis for Motility Assessment: The VISEM dataset, featuring videos captured at 400-fold magnification with an integrated heated table (37°C), enables both motility tracking and morphological analysis [30]. This multimodal approach captures dynamic movement patterns alongside static morphology.

Dataset Annotation and Ground Truth Establishment

Establishing reliable ground truth labels is crucial for model training and validation. The most rigorous studies employ multi-expert consensus approaches:

- The SMD/MSS Dataset: Three independent experts with extensive experience in semen analysis classified each spermatozoon according to modified David classification, which includes 12 classes of morphological defects (7 head defects, 2 midpiece defects, and 3 tail defects) [13].

- Analysis of Inter-Expert Agreement: Studies typically categorize agreement as No Agreement (NA), Partial Agreement (PA: 2/3 experts agree), or Total Agreement (TA: 3/3 experts agree). Fisher's exact test is then used to assess the statistical significance of agreement between experts [13].

- Data Augmentation Techniques: To address limited dataset sizes and class imbalance, researchers employ augmentation strategies including rotation, scaling, and contrast adjustments. One study expanded an initial set of 1,000 images to 6,035 images after augmentation [13].
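The three-level agreement scheme described above (NA, PA, TA) can be sketched in a few lines. This is an illustration under stated assumptions, not the procedure used in [13]: the function names are chosen here for the example, the class names in the toy data are placeholders, and the majority label is used as the consensus only when at least two experts agree.

```python
from collections import Counter


def agreement_category(votes):
    """Return (category, consensus_label) for one cell rated by three experts.

    TA: all three agree; PA: two of three agree; NA: all three differ.
    The consensus label is None when there is no agreement.
    """
    if len(votes) != 3:
        raise ValueError("expected exactly three expert labels")
    label, count = Counter(votes).most_common(1)[0]
    if count == 3:
        return "TA", label
    if count == 2:
        return "PA", label
    return "NA", None


def agreement_summary(annotations):
    """Fraction of cells falling into each agreement category."""
    counts = Counter(agreement_category(votes)[0] for votes in annotations)
    return {cat: counts[cat] / len(annotations) for cat in ("NA", "PA", "TA")}


# Placeholder labels for four cells, each rated by three experts.
annotations = [
    ("normal", "normal", "normal"),
    ("tapered_head", "tapered_head", "thin_head"),
    ("coiled_tail", "short_tail", "bent_midpiece"),
    ("normal", "normal", "normal"),
]
print(agreement_category(annotations[1]))  # ('PA', 'tapered_head')
print(agreement_summary(annotations))      # {'NA': 0.25, 'PA': 0.25, 'TA': 0.5}
```

How NA cells are handled (excluded, re-reviewed, or kept with a flag) is a study-level design choice and is left open here.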

Deep Learning Model Architectures and Training Protocols

Various neural network architectures have been adapted for sperm classification tasks:

- Basic CNN Architectures: Custom CNNs with multiple convolutional layers followed by fully connected layers represent the fundamental approach. These typically operate on pre-processed (denoised, normalized) grayscale images resized to standard dimensions (e.g., 80×80 pixels) [13].

- Transfer Learning Approaches: Pre-trained architectures including VGG16, ResNet-18, ResNet-34, and ResNet-50, initially trained on ImageNet, are fine-tuned for sperm classification tasks. This approach leverages feature hierarchies learned from natural images [30].

- Region-Based CNNs (R-CNN): Faster R-CNN architectures have been implemented for simultaneous sperm head detection and segmentation, particularly useful for motility analysis in video data [30].

- Experimental Validation: Standard practice involves dataset partitioning (typically 80% training, 20% testing), k-fold cross-validation, and performance evaluation using metrics including accuracy, mean absolute error (MAE), sensitivity, specificity, and area under the curve (AUC) [13] [31].
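The partitioning schemes mentioned above, an 80/20 hold-out split and k-fold cross-validation, can be sketched with the standard library alone. The function names, the fixed seed, and the choice of k=5 are assumptions for illustration; scikit-learn's `train_test_split` and `KFold` (or `StratifiedKFold` for imbalanced classes) are the usual tools in practice.

```python
import random


def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into (train, test) lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train, validation) index lists for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        training = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield training, validation


# 80/20 split of 1,000 images.
train_idx, test_idx = train_test_split_indices(1000)
print(len(train_idx), len(test_idx))  # 800 200

# 5-fold cross-validation over the training portion.
for fold, (tr, val) in enumerate(k_fold_indices(len(train_idx), k=5)):
    print(fold, len(tr), len(val))
```

When augmentation is used, splitting the original images before augmenting keeps augmented copies of the same cell from appearing on both sides of the split, which would otherwise inflate test performance.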

Table 1: Summary of Key Datasets for Sperm Morphology Analysis

| Dataset Name | Sample Size | Image Type | Annotation Classes | Key Features |
|---|---|---|---|---|
| SMD/MSS [13] | 1,000 (extended to 6,035 with augmentation) | Brightfield, stained | 12 classes (modified David classification) | Multi-expert annotation, comprehensive defect classification |
| VISEM-Tracking [32] | 656,334 annotated objects | Video, low-resolution unstained | Detection, tracking, and regression | Multimodal with videos and biological data from 85 participants |
| SVIA [32] | 125,000 annotated instances | Images and videos | Detection, segmentation, classification | Comprehensive annotations for multiple computer vision tasks |
| MHSMA [32] | 1,540 images | Grayscale, non-stained | Acrosome, head shape, vacuoles | Focus on sperm head morphology features |
| QPI Dataset [29] | 10,163 phase maps | Quantitative phase imaging | Normal, cryopreserved, oxidative stress, alcohol affected | Label-free, nanometric sensitivity to subcellular changes |

Comparative Performance Analysis of Algorithm Architectures

Convolutional Neural Networks for Morphology Classification

CNN architectures have demonstrated remarkable capabilities in classifying sperm morphological abnormalities:

- SMD/MSS Dataset Performance: A custom CNN implementation achieved accuracy ranging from 55% to 92% across different morphological classes when trained on the augmented SMD/MSS dataset. This range reflects the varying complexity of distinguishing specific defect types, with some abnormalities being more challenging to classify than others [13].