Interpreting Male Fertility Machine Learning Models with SHAP: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive exploration of SHapley Additive exPlanations (SHAP) for interpreting machine learning (ML) models in male fertility research. It addresses the critical need for transparency in AI-driven diagnostics, where models have traditionally been treated as black boxes. Covering foundational theory, practical implementation, and optimization strategies, this guide demonstrates how SHAP values enhance model interpretability by quantifying feature contributions to predictions. We review successful applications across fertility assessment domains, including sperm morphology analysis, treatment outcome prediction, and lifestyle factor impact evaluation. For researchers and drug development professionals, this resource offers methodological frameworks for model validation, comparative performance analysis, and clinical translation, ultimately supporting the development of more reliable and clinically actionable AI tools in reproductive medicine.

Understanding SHAP and Male Fertility Machine Learning Fundamentals

The Growing Role of AI in Male Infertility Assessment

Male infertility accounts for approximately 30-40% of all infertility cases, with azoospermia (a condition in which no measurable sperm are present in semen) affecting up to 10% of infertile men [1] [2]. Traditional diagnostic methods rely heavily on manual microscopic analysis, which can miss rare sperm cells in severe cases. Artificial intelligence (AI) and machine learning (ML) are changing this field by automating the identification of sperm cells and by modeling treatment outcomes from clinical data [1] [3]. Integrating SHapley Additive exPlanations (SHAP) into ML models adds interpretability: researchers and clinicians can see which factors most strongly influence a model's predictions, which narrows the gap between black-box algorithms and clinically actionable insights [3] [4].

Current AI Applications in Male Infertility

Sperm Identification and Analysis

AI systems have demonstrated remarkable capabilities in identifying viable sperm in cases of severe male factor infertility. The Sperm Tracking and Recovery (STAR) system, developed at the Columbia University Fertility Center, uses a high-speed camera and high-powered imaging technology to scan semen samples, taking over 8 million images in under an hour to locate sperm cells [1]. In one documented case, skilled embryologists searched for two days without finding sperm, but the STAR system identified 44 sperm cells in just one hour [1]. This technology enables the recovery of extremely rare sperm cells—sometimes as few as two or three in an entire sample compared to the typical 200-300 million—allowing for successful fertilization through Intracytoplasmic Sperm Injection (ICSI) [1].

Predictive Modeling for Treatment Outcomes

Machine learning algorithms are increasingly used to predict the success of Assisted Reproductive Technology (ART) treatments. Multiple studies have employed various ML models to forecast clinical pregnancy and live birth outcomes based on clinical and laboratory parameters [5] [6] [4]. These models analyze complex relationships among multiple variables to provide personalized success probabilities, helping clinicians set realistic expectations and optimize treatment strategies.

Table 1: Performance Metrics of ML Algorithms in Male Fertility Assessment

| ML Algorithm | Reported Accuracy | Area Under Curve (AUC) | Primary Application |
|---|---|---|---|
| Random Forest (RF) | 90.47% | 99.98% | Male fertility detection [3] |
| Extreme Gradient Boosting (XGBoost) | 79.71% | 0.858 | Predicting clinical pregnancy with surgical sperm retrieval [4] |
| Support Vector Machine (SVM) | 86% | - | Sperm concentration and morphology [3] |
| Logistic Regression (LR) | - | 0.674 | Live birth prediction in IVF [6] |
| Artificial Neural Network (ANN) | 97% | - | Male fertility classification [3] |

Experimental Protocols for AI-Assisted Male Infertility Assessment

Protocol 1: AI-Assisted Sperm Identification in Azoospermic Samples

Principle: This protocol details the procedure for using the STAR AI system to identify and recover rare sperm cells from semen samples of patients diagnosed with azoospermia [1].

Materials:

- STAR system (microscope with high-speed camera and imaging technology)

- Specially designed chip for sample placement

- Semen sample collected through masturbation after 2-5 days of abstinence

- Tiny droplets of media for sperm isolation

Procedure:

- Sample Preparation: Place the freshly collected semen sample on a specially designed chip under the microscope.

- System Setup: Connect the STAR system to the microscope and ensure the high-speed camera is properly calibrated.

- Automated Scanning: Initiate the AI system to scan the entire sample, capturing over 8 million high-resolution images in under one hour.

- Sperm Identification: The AI algorithm analyzes each image in real-time, identifying objects that match the trained characteristics of sperm cells (morphology, size, shape).

- Sperm Recovery: The system automatically isolates identified sperm cells into tiny droplets of media using gentle fluidics, avoiding harmful lasers or stains that could damage the sperm.

- Quality Control: Embryologists verify the recovered sperm cells under the microscope before use in IVF/ICSI procedures.

Notes: This method has enabled successful pregnancies in couples with 18 years of infertility history where conventional methods failed. The entire process from sample collection to sperm recovery can be completed within a few hours [1].

Protocol 2: Developing SHAP-Interpretable ML Models for Treatment Prediction

Principle: This protocol outlines the development of machine learning models for predicting clinical pregnancy outcomes following surgical sperm retrieval, with model interpretability provided through SHAP analysis [4].

Materials:

- Retrospective dataset of 345 infertile couples who underwent ICSI with surgical sperm retrieval

- Clinical parameters: female age, testicular volume, smoking status, AMH, FSH (male and female), etiology of infertility

- Python/R programming environment with ML libraries (XGBoost, SHAP)

- Computing hardware capable of handling ML model training and validation

Procedure:

- Data Collection: Compile a comprehensive dataset including patient demographics, clinical parameters, laboratory results, and treatment outcomes (clinical pregnancy yes/no).

- Data Preprocessing: Handle missing values, normalize continuous variables, and encode categorical variables.

- Model Training: Train six different ML models (including XGBoost, Random Forest, Logistic Regression) using the compiled dataset with cross-validation.

- Model Evaluation: Compare model performance using AUROC, accuracy, precision, recall, F1 score, Brier score, and area under the precision-recall curve.

- SHAP Analysis: Apply SHAP to the best-performing model (typically XGBoost) to interpret feature importance and direction of effect.

- Validation: Validate the model on a hold-out test set to ensure generalizability.

Notes: Research has demonstrated that female age is consistently the most important feature influencing clinical pregnancy outcomes, followed by testicular volume, smoking status, and hormone levels [4]. SHAP analysis reveals how each factor contributes to the prediction, showing that younger female age, larger testicular volume, non-tobacco use, higher AMH, and lower FSH levels increase the probability of clinical pregnancy.
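Several of the evaluation metrics named in the procedure can be computed directly from predicted probabilities. The following is a minimal sketch in plain Python; the labels and probabilities are made up for illustration and are not taken from the cited study, and the function names are hypothetical. Area under the precision-recall curve is omitted for brevity.

```python
def classification_metrics(y_true, y_prob, threshold=0.5):
    """Accuracy, precision, recall and F1 after thresholding probabilities."""
    tp = fp = tn = fn = 0
    for y, p in zip(y_true, y_prob):
        pred = 1 if p >= threshold else 0
        if pred == 1 and y == 1:
            tp += 1
        elif pred == 1 and y == 0:
            fp += 1
        elif pred == 0 and y == 0:
            tn += 1
        else:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def auroc(y_true, y_prob):
    """Probability that a random positive outranks a random negative.

    Ties count as half a win (the rank-based definition of AUROC).
    """
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    wins = sum(
        1.0 if pp > pn else 0.5 if pp == pn else 0.0
        for pp in pos
        for pn in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical outcomes (1 = clinical pregnancy) and model probabilities.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_prob = [0.9, 0.2, 0.7, 0.4, 0.6, 0.1, 0.8, 0.3]

metrics = classification_metrics(y_true, y_prob)
print(metrics, brier_score(y_true, y_prob), auroc(y_true, y_prob))
```

Reporting a calibration measure such as the Brier score alongside AUROC is useful because a model can rank patients well while still producing poorly calibrated probabilities.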

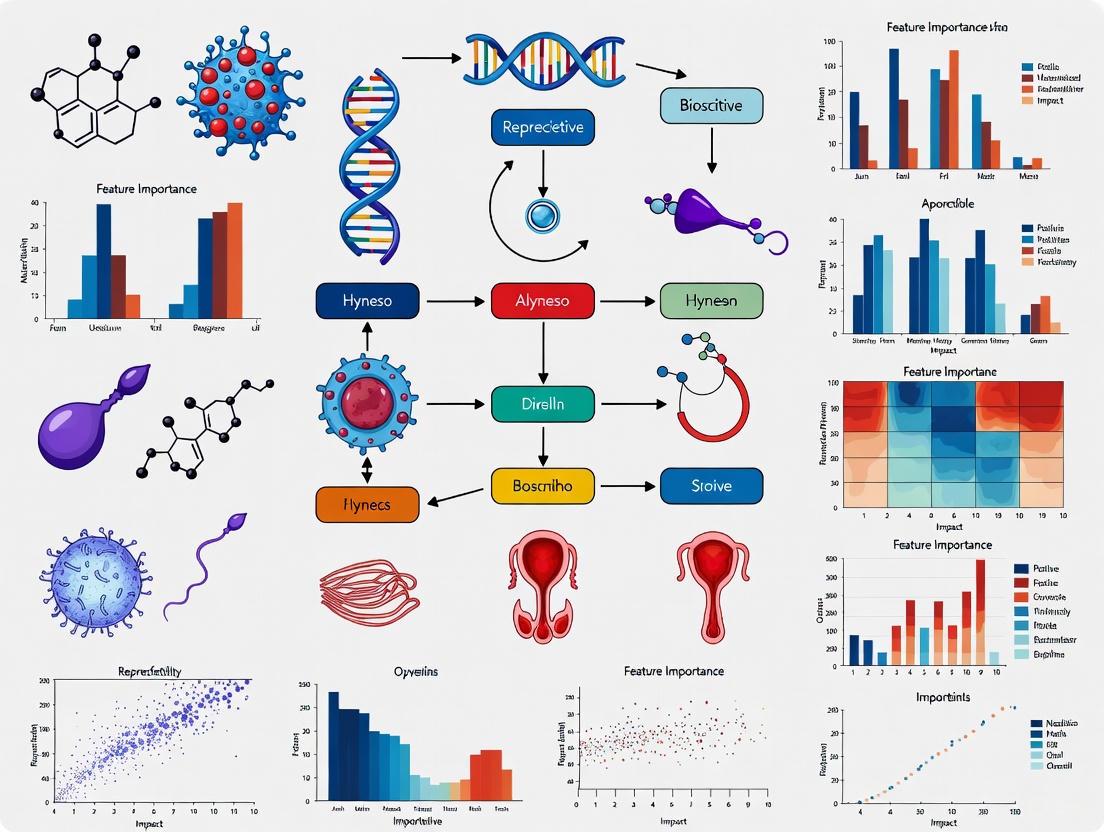

Visualization of AI Workflows in Male Infertility

AI-Assisted Sperm Identification Workflow

Diagram 1: AI sperm identification workflow

SHAP-Interpretable ML Model Development

Diagram 2: SHAP ML model development process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Materials for AI-Assisted Male Infertility Studies

| Reagent/Material | Function | Application Example |
|---|---|---|
| High-Speed Camera System | Captures millions of high-resolution images for AI analysis | STAR system for sperm identification in azoospermia [1] |
| Specialized Sample Chips | Provides optimized surface for semen sample analysis | Custom chips for microscope mounting in STAR system [1] |
| HPLC-MS/MS System | Precisely measures hormone and biomarker levels | Analysis of 25-hydroxy vitamin D3 in infertility studies [7] |
| SHAP Python Library | Provides model interpretability for ML predictions | Explaining feature importance in clinical pregnancy models [3] [4] |
| Synthetic Media Droplets | Enables gentle isolation of identified sperm | Recovery of rare sperm cells without damage [1] |
| Colour Maps (e.g., Viridis, Cividis) | Ensures accessible, perceptually uniform data visualization | Creating CVD-friendly charts for research publications [8] |

AI technologies are fundamentally transforming male infertility assessment, from enabling successful sperm retrieval in previously hopeless cases of azoospermia to providing accurate predictions for treatment outcomes. The integration of SHAP interpretation addresses the critical need for model transparency in clinical decision-making. As these technologies continue to evolve, they promise to further personalize infertility treatments and improve reproductive outcomes for couples worldwide. Future directions include the development of AI-guided surgical robots and virtual patient assistants, potentially further revolutionizing the field of reproductive medicine [9].

SHapley Additive exPlanations (SHAP) is a unified framework for interpreting machine learning model predictions, rooted in concepts from cooperative game theory. The core theoretical foundation of SHAP lies in the Shapley value, a solution concept developed by Lloyd Shapley in 1953 that fairly distributes the payout among players who collaborate. In the context of machine learning, the "players" are the input features, the "game" is the prediction task, and the "payout" is the difference between the actual prediction and the average prediction. SHAP provides a mathematically rigorous approach to explain how much each feature contributes to an individual prediction, bridging the gap between complex model internals and human-interpretable explanations [10] [11].

The significance of SHAP is particularly pronounced in high-stakes fields like healthcare and drug development, where understanding model decisions is crucial for clinical adoption. In male fertility research, where machine learning models are increasingly deployed for prediction tasks, the black-box nature of advanced algorithms can hinder their practical utility. SHAP addresses this limitation by offering transparent, quantifiable explanations for model outputs, enabling researchers and clinicians to verify predictions against domain knowledge and biological plausibility. This interpretability is essential for building trust in AI-assisted clinical decision support systems [10] [12] [13].

Mathematical Foundations

Shapley Values from Game Theory

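The Shapley value is calculated by considering every subset of the remaining features, which is equivalent to averaging over all orderings in which features can be added. For a machine learning model with feature set N, the Shapley value for a feature i is given by:

$$\phi_i(f) = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(|N| - |S| - 1)!}{|N|!}\,\bigl[f(S \cup \{i\}) - f(S)\bigr]$$

Where:

- S ranges over all subsets of features that exclude i

- f(S) is the model prediction using only the feature subset S

- N is the set of all features, and |N| is the total number of features

- The term |S|!(|N| - |S| - 1)!/|N|! acts as a weighting factor: it is the fraction of feature orderings in which exactly the features in S come before i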

This formula ensures that the contribution of each feature is calculated fairly by considering its marginal contribution across all possible feature combinations, then taking a weighted average of these marginal contributions [11] [14].
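The weighted sum over subsets can be evaluated by brute force when the number of features is small. The sketch below is a direct transcription of the Shapley formula, not the algorithm used by any SHAP explainer; the feature names, the toy value function, and its coefficients are hypothetical and chosen only so the result can be checked by hand.

```python
from itertools import combinations
from math import factorial


def shapley_values(features, value_fn):
    """Exact Shapley values by enumerating every subset S that excludes i."""
    n = len(features)
    phi = {}
    for i in features:
        others = [f for f in features if f != i]
        total = 0.0
        for size in range(n):
            # Weight |S|! (|N| - |S| - 1)! / |N|! depends only on |S|.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value_fn(s | {i}) - value_fn(s))
        phi[i] = total
    return phi


def toy_value(subset):
    """Hypothetical 'prediction using only the features in subset'."""
    score = 0.50  # baseline when no feature is known
    if "age" in subset:
        score += 0.10
    if "smoking" in subset:
        score -= 0.20
    if "sitting_hours" in subset:
        score -= 0.05
    # Interaction: smoking and long sitting hours together lower the score further.
    if "smoking" in subset and "sitting_hours" in subset:
        score -= 0.10
    return score


features = ["age", "smoking", "sitting_hours"]
phi = shapley_values(features, toy_value)
print(phi)
```

In this toy game the interaction term is split equally between the two interacting features, and the three values sum to the difference between the full prediction and the baseline. The enumeration visits 2^(|N|-1) subsets per feature, which is why exact computation is practical only for a handful of features.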

From Shapley Values to SHAP

SHAP adapts the classical Shapley values from game theory to machine learning interpretation by establishing a unified framework that connects various explanation methods. The SHAP explanation method defines an additive feature attribution method that explains a model's output as a linear function of binary variables:

$$g(z') = \phi_0 + \sum_{i=1}^{M} \phi_i z'_i$$

Where:

- g is the explanation model

- z' ∈ {0,1}^M is the simplified input, where z'_i represents the presence (1) or absence (0) of feature i

- M is the number of simplified input features

- φ_i ∈ R is the Shapley value for feature i, representing the feature importance

- φ_0 is the model's base value when all features are absent (the average model output)

This formulation allows SHAP to provide consistent and locally accurate explanations for individual predictions across different model types and explanation methods [11] [14].
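As a short worked consequence of the additive form: when every simplified feature is present (z'_i = 1 for all i), the explanation model reproduces the model output for that instance, a property usually called local accuracy. The numbers in the second line are hypothetical and serve only to illustrate the arithmetic.

$$f(x) = g(x') = \phi_0 + \sum_{i=1}^{M} \phi_i$$

$$\phi_0 = 0.50,\quad (\phi_1, \phi_2, \phi_3) = (+0.10,\ -0.25,\ -0.10) \;\Rightarrow\; f(x) = 0.50 + 0.10 - 0.25 - 0.10 = 0.25$$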

SHAP Implementation Frameworks

Computational Approaches

The direct computation of Shapley values is computationally expensive due to the exponential growth of possible feature combinations with increasing features. To address this challenge, several approximation methods and model-specific implementations have been developed:

Table 1: SHAP Computational Implementation Methods

| Method | Best Suited For | Computational Complexity | Key Advantages |
|---|---|---|---|
| KernelSHAP | Model-agnostic (any ML model) | High for many features | Works with any model; provides local explanations |
| TreeSHAP | Tree-based models (RF, XGBoost, DT) | Polynomial time O(TL·D²) | Exact calculations; fast for tree ensembles |
| DeepSHAP | Deep learning models | Moderate | Leverages deep learning architecture for efficient approximations |
| LinearSHAP | Linear models | Low O(n) | Exact and efficient for linear models |
| SegmentSHAP | Time series, image data | Variable based on segmentation | Reduces features via segmentation; handles temporal data |

In male fertility research, TreeSHAP has been particularly valuable due to the prevalence of tree-based models like Random Forest and XGBoost, which have demonstrated strong performance in fertility prediction tasks [10] [15] [13].
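To make the idea of approximation concrete, the sketch below estimates Shapley values by Monte Carlo sampling of feature orderings. This is a generic sampling approximation, named plainly as such; it is not the KernelSHAP or TreeSHAP algorithm. The weights and feature names are hypothetical, and the value function is deliberately additive so that the estimate can be checked against the known answer.

```python
import random


def sampled_shapley(features, value_fn, n_permutations=200, seed=0):
    """Estimate Shapley values by averaging marginal contributions
    over randomly sampled feature orderings."""
    rng = random.Random(seed)
    totals = {f: 0.0 for f in features}
    for _ in range(n_permutations):
        order = list(features)
        rng.shuffle(order)
        present = frozenset()
        previous = value_fn(present)
        for f in order:
            present = present | {f}
            current = value_fn(present)
            totals[f] += current - previous  # marginal contribution of f
            previous = current
    return {f: t / n_permutations for f, t in totals.items()}


# Hypothetical additive game: each feature contributes a fixed amount.
weights = {"age": 0.10, "smoking": -0.20, "alcohol": -0.05, "season": 0.02}


def additive_value(subset):
    return sum(weights[f] for f in subset)


estimate = sampled_shapley(list(weights), additive_value)
print(estimate)
```

Each sampled ordering costs |N| + 1 model evaluations instead of the 2^|N| needed for full enumeration. For a non-additive model the estimate carries sampling noise that shrinks as `n_permutations` grows, whereas the contributions within any single ordering always sum to the full prediction minus the baseline.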

Handling Computational Challenges

For high-dimensional data such as time series or medical imaging data, feature segmentation strategies are employed to make SHAP computations tractable. Recent empirical evaluations have demonstrated that equal-length segmentation often outperforms more complex time series segmentation algorithms, with the number of segments having greater impact on explanation quality than the specific segmentation method. Additionally, introducing attribution normalization that weights segments by their length has been shown to consistently improve attribution quality in time series classification tasks [14].
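Equal-length segmentation and a length-based normalization can be sketched in a few lines. The exact normalization used in [14] is not specified here, so the second function is one plausible reading (an assumption): each time point receives its segment's attribution divided by the segment length. Function names are hypothetical.

```python
def equal_length_segments(n_points, n_segments):
    """Split indices 0..n_points-1 into contiguous, near-equal segments.

    Returns a list of (start, end) pairs with end exclusive.
    """
    if not 1 <= n_segments <= n_points:
        raise ValueError("n_segments must be between 1 and n_points")
    base, extra = divmod(n_points, n_segments)
    segments, start = [], 0
    for k in range(n_segments):
        length = base + (1 if k < extra else 0)  # first `extra` segments get one more point
        segments.append((start, start + length))
        start += length
    return segments


def spread_by_length(segments, segment_attributions):
    """Distribute each segment's attribution evenly over its time points."""
    per_point = []
    for (start, end), phi in zip(segments, segment_attributions):
        length = end - start
        per_point.extend([phi / length] * length)
    return per_point


segments = equal_length_segments(10, 3)
# Hypothetical segment-level attributions, e.g. from treating each segment as one feature.
segment_phi = [0.4, -0.3, 0.6]
point_phi = spread_by_length(segments, segment_phi)
print(segments, point_phi)
```

Treating each segment as a single feature reduces the number of "players" from the series length to the number of segments, which is what makes the Shapley computation tractable.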

SHAP in Male Fertility Research: Experimental Protocols

Protocol 1: Model Development and Interpretation for Fertility Prediction

Table 2: Research Reagent Solutions for Male Fertility ML Experiments

| Research Component | Function in Experiment | Implementation Example |
|---|---|---|
| Male Fertility Dataset | Model training and validation | 100+ samples with lifestyle, environmental factors, and clinical measurements [10] |
| Tree-Based Algorithms | Baseline predictive models | Random Forest, XGBoost, Decision Trees [10] [15] |
| SHAP Framework | Model interpretation and explanation | SHAP library (Python) with TreeExplainer [10] [13] |
| SMOTE | Handling class imbalance | Synthetic minority oversampling for improved model performance [10] [16] |
| Cross-Validation | Robust model evaluation | 5-fold or 10-fold CV to assess generalizability [10] [15] |
| Performance Metrics | Model assessment | Accuracy, precision, AUC-ROC [10] |

Experimental Workflow:

Data Collection and Preprocessing: Collect male fertility data including lifestyle factors (alcohol consumption, smoking habits, sitting hours), environmental factors (season, age), and clinical measurements. Preprocess data by handling missing values, encoding categorical variables, and normalizing numerical features [10].

Class Imbalance Handling: Address potential class imbalance in fertility status using Synthetic Minority Over-sampling Technique (SMOTE) or similar approaches to ensure robust model performance across both fertile and infertile categories [10].

Model Training: Implement multiple machine learning algorithms including Random Forest, XGBoost, Decision Trees, Support Vector Machines, and Logistic Regression. Utilize cross-validation to tune hyperparameters and prevent overfitting [10] [15].

Model Interpretation with SHAP: Apply SHAP to the trained model to explain individual predictions and global feature importance. Generate force plots for individual explanations and summary plots for global model behavior [10] [13].
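The class-imbalance step in the workflow above rests on a simple idea: new minority samples are created by interpolating between an existing minority sample and one of its nearest minority neighbours. The sketch below is a simplified, SMOTE-like illustration in plain Python, not the reference implementation; the data points are hypothetical normalized feature vectors.

```python
import random


def smote_like_oversample(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a random
    minority point and one of its k nearest minority neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Nearest minority neighbours of `base` by squared Euclidean distance.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(p, base)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to other
        synthetic.append(tuple(a + gap * (b - a) for a, b in zip(base, other)))
    return synthetic


# Hypothetical minority-class samples: (age_scaled, sitting_hours_scaled).
minority = [(0.20, 0.80), (0.25, 0.90), (0.30, 0.70), (0.35, 0.85)]
majority_count = 12

new_points = smote_like_oversample(minority, n_new=majority_count - len(minority))
balanced_minority = minority + new_points
print(len(balanced_minority), new_points[0])
```

In practice the `SMOTE` class from the imbalanced-learn library would normally be used instead of a hand-written version. Oversampling should be applied to the training data only, after the train/test split, so that synthetic points do not leak information into the evaluation set.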

Protocol 2: Clinical Validation of SHAP Explanations

Experimental Design for Clinical Utility Assessment:

Participant Recruitment: Engage clinicians (surgeons, physicians) with experience in fertility treatment. A sample size of 60+ participants provides sufficient statistical power for evaluating explanation effectiveness [12].

Explanation Format Design: Create three explanation conditions:

- Results Only (RO): Basic model predictions without explanations

- Results with SHAP (RS): Predictions with standard SHAP visualizations

- Results with SHAP and Clinical Context (RSC): SHAP explanations augmented with clinical interpretations [12]

Clinical Decision Assessment: Measure Weight of Advice (WOA) to quantify how much clinicians adjust their decisions based on AI recommendations. Assess trust, satisfaction, and usability through standardized questionnaires including the System Usability Scale (SUS) and Explanation Satisfaction Scale [12].

Statistical Analysis: Use Friedman tests and post-hoc Conover analysis to compare explanation formats across multiple metrics. Perform correlation analysis between explanation acceptance and trust/satisfaction/usability scores [12].
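The Weight of Advice measure used in the clinical decision assessment is commonly defined as the fraction of the distance between the clinician's initial estimate and the AI recommendation that the clinician moves after seeing the advice. The sketch below assumes that standard definition; whether [12] additionally clips values to the range 0 to 1 is not stated here, and the example estimates are hypothetical.

```python
def weight_of_advice(initial, advice, final):
    """WOA = (final - initial) / (advice - initial).

    0 means the advice was ignored, 1 means it was fully adopted.
    Returns None when the advice equals the initial estimate, because
    the ratio is undefined in that case.
    """
    if advice == initial:
        return None
    return (final - initial) / (advice - initial)


# Hypothetical (initial estimate, AI advice, final estimate) triples,
# e.g. predicted success probabilities in percent.
cases = [(40, 60, 55), (30, 50, 40), (70, 50, 55)]

scores = [weight_of_advice(*case) for case in cases]
valid = [s for s in scores if s is not None]
mean_woa = sum(valid) / len(valid)
print(scores, mean_woa)
```

Per-participant mean WOA values computed this way are the kind of repeated-measures data that the Friedman test in the statistical analysis step compares across explanation formats.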

Quantitative Results in Male Fertility Applications

Model Performance with SHAP Interpretation

Table 3: Performance of ML Models in Male Fertility Prediction with SHAP Interpretation

| ML Model | Accuracy | AUC-ROC | Key Features Identified by SHAP | Clinical Interpretation |
|---|---|---|---|---|
| Random Forest | 90.47% | 99.98% | Lifestyle factors, environmental exposures | Strong non-linear pattern detection; robust to outliers [10] |
| XGBoost | 93.22% | Not reported | Season, age, alcohol consumption | Handles complex interactions; feature importance reliability [10] |
| AdaBoost | 95.10% | Not reported | Multiple clinical and lifestyle factors | Ensemble method with sequential learning [10] |
| Decision Tree | 86.00% | Not reported | Simplified feature relationships | Highly interpretable but prone to overfitting [10] |
| SVM | 86.00% | Not reported | Selected key predictors | Effective for high-dimensional spaces [10] |

SHAP Explanation Effectiveness Metrics

Table 4: Clinical Impact of Different Explanation Formats

| Explanation Format | Weight of Advice (WOA) | Trust Score | Satisfaction Score | Usability Score (SUS) |
|---|---|---|---|---|
| Results Only (RO) | 0.50 | 25.75 | 18.63 | 60.32 |
| Results + SHAP (RS) | 0.61 | 28.89 | 26.97 | 68.53 |
| Results + SHAP + Clinical (RSC) | 0.73 | 30.98 | 31.89 | 72.74 |

The superior performance of the RSC condition demonstrates that while SHAP provides valuable interpretability, augmenting with clinical context significantly enhances practical utility in healthcare settings [12].

Advanced Applications and Future Directions

Integration with Biological Pathways

SHAP explanations can be mapped to biological pathways to enhance understanding of male infertility mechanisms, by linking SHAP-identified features to the biological processes in male reproduction that they reflect.

Emerging Research Applications

Recent studies have demonstrated SHAP's versatility across various reproductive medicine applications:

Follicle Size Optimization: In IVF treatment, SHAP analysis identified that intermediately-sized follicles (13-18mm) contributed most to successful mature oocyte retrieval, enabling more precise trigger timing decisions [13].

Fertility Preference Modeling: SHAP has been applied to women's fertility preferences in low-resource settings, identifying age group, region, and number of recent births as key predictors [15].

Personalized Treatment Planning: The integration of SHAP with survival prediction models in oncology demonstrates potential for adaptation to male fertility treatments, particularly for assessing intervention outcomes [11].

Future research directions include developing domain-specific SHAP variants optimized for medical data types, enhancing longitudinal SHAP analysis for tracking fertility changes over time, and creating standardized SHAP reporting frameworks for clinical validation of AI explanations in fertility medicine. As SHAP methodologies evolve, their integration into clinical decision support systems promises to enhance both the interpretability and actionable insights derived from male fertility prediction models [10] [13].

Why Interpretability Matters in Clinical Fertility Applications

The integration of Artificial Intelligence (AI) and Machine Learning (ML) into clinical fertility represents a paradigm shift in diagnosing and treating infertility, a condition affecting an estimated 15% of couples globally, with male factors being the sole cause in approximately 20% of cases and a contributing factor in 30-40% [3] [10]. Traditional diagnostic methods, such as manual semen analysis, are often hampered by subjectivity, inter-observer variability, and poor reproducibility [17] [18]. While AI models demonstrate superior predictive accuracy, their complex, non-linear structures often render them "black boxes," limiting clinical trust and adoption [3] [10].

The emergence of Explainable AI (XAI) frameworks, particularly SHapley Additive exPlanations (SHAP), addresses this critical gap by providing transparent, quantitative insights into model decision-making processes [15] [3]. In the high-stakes domain of clinical fertility, where decisions impact patient treatment pathways and emotional well-being, model interpretability is not merely a technical luxury but a clinical necessity. This document outlines the application notes and experimental protocols for implementing SHAP-based interpretability in male fertility ML research, providing scientists and clinicians with a framework for developing transparent, trustworthy, and clinically actionable AI tools.

Quantitative Performance of ML Models in Fertility Applications

Extensive research has evaluated the performance of various ML models in fertility applications. The following tables summarize key quantitative findings from recent studies, highlighting the performance metrics of different algorithms and the specific features they analyze.

Table 1: Performance of ML Models in Male Fertility Detection (Based on [3] [10])

| Machine Learning Model | Reported Accuracy (%) | Area Under Curve (AUC) | Key Predictors Identified |
|---|---|---|---|
| Random Forest (RF) | 90.47 | 99.98% | Lifestyle, environmental factors |
| Support Vector Machine (SVM) | 86.00 - 94.00 | Not Reported | Sperm concentration, morphology |
| Multi-layer Perceptron (MLP) | 69.00 - 93.30 | Not Reported | Sperm concentration, motility |
| Naïve Bayes (NB) | 87.75 - 88.63 | 77.90% | General fertility status |
| AdaBoost (ADA) | 95.10 | Not Reported | General fertility status |
| XGBoost (XGB) | 93.22 | Not Reported | General fertility status |

Table 2: Key Features in Male Fertility Models and Their Clinical Relevance (Based on [10] [18])

| Feature Category | Specific Examples | Clinical/Research Relevance |
|---|---|---|
| Lifestyle Factors | Sedentary habits, tobacco use, alcohol consumption, stress | Modifiable risk factors for personalized intervention [18]. |
| Environmental Exposures | Air pollutants, heavy metals, endocrine disruptors | Explains declining semen quality trends [18]. |
| Sperm Parameters | Morphology, motility, concentration, DNA fragmentation | Core diagnostic indicators for infertility [17]. |
| Clinical History | History of pelvic infection, surgical history (e.g., varicocele) | Provides context for underlying etiology [19]. |

Experimental Protocols for SHAP-Based Model Interpretation

This section provides a detailed, step-by-step protocol for developing an interpretable ML model for male fertility, from data preparation to clinical interpretation. The workflow is designed to ensure robustness, transparency, and clinical applicability.

Data Preprocessing and Feature Engineering

Objective: To prepare a clean, balanced, and well-structured dataset suitable for training machine learning models.

Materials: Raw fertility dataset (e.g., from UCI Machine Learning Repository), Python environment with pandas, scikit-learn, and imbalanced-learn libraries.

Procedure:

- Data Cleaning: Handle missing values using techniques like Predictive Mean Matching (PMM) or removal of records with excessive missingness. Address outliers using the Interquartile Range (IQR) method [20].

- Feature Engineering: Transform continuous variables into categorical formats where clinically appropriate (e.g., age groups) to enhance model interpretability. Normalize or standardize all features to a consistent scale, such as [0, 1], to prevent bias from heterogeneous value ranges [18].

- Addressing Class Imbalance: Medical datasets often underrepresent one class (e.g., "altered fertility"). Two options are available:

  - Apply the Synthetic Minority Oversampling Technique (SMOTE) to generate synthetic samples for the minority class, creating a balanced dataset [20].

  - Alternatively, employ undersampling techniques, though this may lead to loss of information.
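The outlier and scaling steps of the procedure above can be sketched with the standard library alone. This is a minimal illustration, assuming the common 1.5 × IQR fence and min-max scaling to [0, 1]; the age values are hypothetical, and quartile conventions differ slightly between libraries, so fence positions may not match other tools exactly.

```python
from statistics import quantiles


def iqr_filter(values, k=1.5):
    """Keep values inside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)  # three quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


def min_max_scale(values):
    """Rescale values linearly so the minimum maps to 0 and the maximum to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant feature: no spread to scale
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical ages in years; 120 is a data-entry error.
ages = [21, 25, 28, 30, 32, 35, 38, 40, 120]

kept = iqr_filter(ages)
scaled = min_max_scale(kept)
print(kept, scaled)
```

Scaling parameters (minimum and maximum) should be derived from the training portion of the data and then reused for the test portion, for the same leakage reasons that apply to oversampling.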

Model Training and Validation

Objective: To train multiple ML models and select the best-performing one based on robust validation.

Materials: Processed dataset from the data preprocessing step, Python environment with scikit-learn, XGBoost, and other relevant ML libraries.

Procedure:

- Feature Selection: Use Recursive Feature Elimination (RFE) to iteratively remove the least significant predictors, retaining the most relevant feature subset for the final model [20].

- Model Selection and Training: Train a suite of industry-standard ML models, including Random Forest (RF), XGBoost, Support Vector Machine (SVM), and Logistic Regression [3] [10].

- Model Validation:

  - Split the dataset into training and testing sets (e.g., 80/20).

  - Employ five-fold cross-validation (CV) on the training set to tune hyperparameters and assess model stability [3] [19].

  - Evaluate the final model on the held-out test set using metrics such as Accuracy, Precision, Recall, F1-score, and Area Under the Receiver Operating Characteristic Curve (AUROC) [15] [3].
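The procedure above (feature selection, an 80/20 split, five-fold cross-validation, and a final held-out evaluation) might be wired together with scikit-learn roughly as follows. This is a sketch under stated assumptions: the data are synthetic stand-ins generated with `make_classification`, not a real fertility dataset, and the choice of five retained features and of Random Forest as the classifier is arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import Pipeline

# Synthetic, imbalanced stand-in for a fertility dataset (NOT patient data).
X, y = make_classification(
    n_samples=300, n_features=9, n_informative=4,
    weights=[0.8, 0.2], random_state=0,
)

# 80/20 split, stratified so both sets keep the class ratio.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0,
)

# RFE sits inside the pipeline so it is refit within every CV fold.
model = Pipeline([
    ("select", RFE(LogisticRegression(max_iter=1000), n_features_to_select=5)),
    ("clf", RandomForestClassifier(n_estimators=200, random_state=0)),
])

# Five-fold cross-validated AUROC on the training set only.
cv_auc = cross_val_score(model, X_train, y_train, cv=5, scoring="roc_auc")

# Final fit on all training data, then a single evaluation on the test set.
model.fit(X_train, y_train)
test_auc = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])

print(cv_auc.mean(), test_auc)
```

Placing feature selection inside the pipeline is a deliberate design choice: selecting features on the full dataset before cross-validation would let information from the validation folds influence the model and inflate the reported scores.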

Model Interpretation with SHAP

Objective: To interpret the trained model by quantifying the contribution of each feature to individual predictions and the model's overall behavior.

Materials: Trained ML model from the model training step, test dataset, Python environment with the SHAP library.

Procedure:

- Initialize a SHAP Explainer: Select the appropriate SHAP explainer for the model (e.g., `TreeExplainer` for tree-based models like Random Forest and XGBoost).

- Calculate SHAP Values: Compute SHAP values for the instances in the test set. SHAP values represent the marginal contribution of each feature to the model's prediction for each individual sample [15] [3].

- Visualize and Interpret Results:

  - Summary Plot: Generate a summary plot that shows the global feature importance and the distribution of each feature's impact on the model output. This identifies the most influential predictors, such as sedentary lifestyle or environmental exposures [18].

  - Force Plot: Create individual force plots for specific predictions to illustrate how features combined to push the model's output from the base value to the final prediction for a single patient. This is crucial for understanding individual case decisions [10].

  - Dependence Plot: Plot a specific feature's SHAP value against its feature value to explore the model's functional relationship for that feature (e.g., whether the relationship is linear, monotonic, or more complex) [21].

Diagram 1: SHAP Interpretation Workflow.

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogues essential computational tools and data resources required for developing interpretable ML models in fertility research.

Table 3: Essential Research Reagents and Computational Tools for Interpretable Fertility ML

| Item Name | Function/Application | Specification/Example |
|---|---|---|
| Annotated Sperm Datasets | Training & validation data for sperm morphology & motility models. | HSMA-DS [22], VISEM-Tracking [22], SVIA dataset [22]. |
| Clinical & Lifestyle Datasets | Training & validation data for fertility status prediction models. | UCI Fertility Dataset [18], NHANES reproductive health data [19]. |
| SHAP (SHapley Additive exPlanations) Library | Python library for explaining output of any ML model. | Quantifies feature importance for model interpretability [15] [3]. |
| TreeExplainer | High-speed SHAP value calculator for tree-based models. | Used with Random Forest, XGBoost; enables fast explanation of industry-standard models [10]. |
| SMOTE (Synthetic Minority Oversampling Technique) | Algorithm to address class imbalance in medical datasets. | Generates synthetic samples for minority class (e.g., 'Altered' fertility) to improve model sensitivity [3] [20]. |
| Ant Colony Optimization (ACO) | Nature-inspired optimization algorithm for feature selection & parameter tuning. | Enhances model accuracy & efficiency in hybrid diagnostic frameworks [18]. |

The integration of SHAP-based interpretability is a critical step in translating black-box ML models into clinically trustworthy tools for fertility care. The protocols outlined provide a roadmap for researchers to build models that not only predict but also explain, thereby fostering clinician confidence and facilitating personalized patient interventions. Future work must focus on multi-center validation of these explainable models, integration with deep learning for image-based sperm analysis [22], and the development of standardized reporting guidelines for SHAP outputs in clinical settings. By prioritizing interpretability, the field can fully harness the power of AI to advance reproductive medicine in an ethical, transparent, and effective manner.

Key Male Fertility Prediction Tasks and Dataset Characteristics

Male infertility contributes to approximately 50% of infertility cases among couples globally, representing a significant clinical challenge with profound social and psychological implications [23] [17]. The etiology of male infertility is multifactorial, encompassing genetic predispositions, hormonal imbalances, anatomical abnormalities, environmental exposures, and lifestyle factors [23] [18]. Traditional diagnostic methods, such as manual semen analysis, suffer from substantial subjectivity, inter-observer variability, and limited predictive value for clinical outcomes [17] [24]. These limitations have stimulated growing interest in artificial intelligence (AI) and machine learning (ML) approaches to enhance diagnostic precision, prognostic accuracy, and clinical decision-making in male reproductive medicine [23] [10].

The integration of Explainable AI (XAI) frameworks, particularly SHapley Additive exPlanations (SHAP), has emerged as a critical advancement for interpreting complex ML models in clinical contexts [25] [10]. SHAP provides a mathematically rigorous framework for quantifying the contribution of individual features to model predictions, thereby addressing the "black-box" nature of many sophisticated algorithms [25]. This interpretability is essential for clinical adoption, as it enables researchers and clinicians to validate model reasoning, identify key predictive factors, and generate biologically plausible hypotheses [4] [10]. This article examines the primary prediction tasks, dataset characteristics, and experimental protocols in male fertility research, with particular emphasis on SHAP-based interpretation within the context of ML model development.

Key Prediction Tasks in Male Fertility

Research applying machine learning to male fertility has focused on several clinically significant prediction tasks, each with distinct methodological considerations and dataset requirements.

Table 1: Key Prediction Tasks in Male Fertility Research

| Prediction Task | Clinical Significance | Common Algorithms | Typical Dataset Size |

|---|---|---|---|

| Clinical Pregnancy Outcome | Predicts success of ICSI/IVF treatments following surgical sperm retrieval [4] | XGBoost, Random Forest [4] [10] | 100-500 patients [4] [18] |

| Semen Quality Classification | Distinguishes normal vs. altered seminal quality based on lifestyle and environmental factors [10] [18] | Random Forest, SVM, XGBoost [10] [18] | 50-200 samples [10] [18] |

| Sperm Retrieval Success | Predicts successful sperm extraction in non-obstructive azoospermia patients [17] | Gradient Boosting Trees [17] | 100-200 patients [17] |

| Sperm Motility Analysis | Automates assessment of progressive, non-progressive, and immotile spermatozoa [24] | CNN, Linear Regression [24] | 85-500 videos [24] |

| Molecular Biomarker Identification | Detects infertility-associated miRNA signatures [26] | Statistical Analysis, PCR Validation [26] | 100-200 samples [26] |

Clinical Pregnancy Prediction

The prediction of clinical pregnancy following assisted reproductive technologies represents one of the most clinically valuable applications of ML in male fertility. A 2024 retrospective study developed an interpretable ML model for predicting clinical pregnancies after surgical sperm retrieval from testes with different etiologies [4]. The study utilized data from 345 infertile couples who underwent ICSI treatment, evaluating six ML models before selecting Extreme Gradient Boosting (XGBoost) as the optimal performer (AUROC: 0.858, accuracy: 79.71%) [4]. SHAP analysis revealed that female age constituted the most important predictive feature, followed by testicular volume, tobacco use, anti-müllerian hormone (AMH) levels, and female follicle-stimulating hormone (FSH) [4]. This application demonstrates how ML models can integrate both male and female factors to predict couple-based reproductive outcomes.

Semen Quality Classification

Multiple studies have focused on classifying semen quality based on clinical, lifestyle, and environmental parameters. A comprehensive comparison of seven industry-standard ML models for male fertility detection found that Random Forest achieved optimal performance (90.47% accuracy, 99.98% AUC) using five-fold cross-validation with balanced data [10]. Another study proposed a hybrid diagnostic framework combining a multilayer feedforward neural network with an ant colony optimization algorithm, reporting 99% classification accuracy on a publicly available dataset of 100 clinically profiled male fertility cases [18]. These approaches typically incorporate features such as sedentary behavior, environmental exposures, smoking history, and age to distinguish between normal and altered seminal quality [10] [18].

Sperm Retrieval Prediction

For patients with non-obstructive azoospermia (NOA), predicting successful sperm retrieval represents a critical clinical challenge. Research in this area has employed ML models to preoperatively assess the likelihood of finding viable sperm during testicular sperm extraction procedures. One study applied gradient boosting trees to this prediction task, achieving an AUC of 0.807 with 91% sensitivity in a cohort of 119 patients [17]. These models typically integrate clinical parameters, hormonal profiles, and genetic markers to guide surgical decision-making for azoospermic men.

Dataset Characteristics and Preprocessing

The quality, size, and composition of datasets significantly influence ML model performance and generalizability in male fertility research.

Table 2: Characteristic Features in Male Fertility Datasets

| Feature Category | Specific Features | Data Type | Preprocessing Methods |

|---|---|---|---|

| Clinical Parameters | Testicular volume, FSH levels, AMH, sperm concentration [4] | Continuous & Categorical | Min-Max normalization [18] |

| Lifestyle Factors | Tobacco use, alcohol consumption, sedentary hours, stress [10] [18] | Binary & Ordinal | Range scaling [18] |

| Molecular Biomarkers | miRNA expression (hsa-miR-9-3p, hsa-miR-30b-5p, hsa-miR-122-5p) [26] | Continuous | Statistical normalization, PCR validation [26] |

| Demographic Information | Age, BMI, region, abstinence period [18] [24] | Continuous & Categorical | Min-Max normalization [18] |

| Sperm Parameters | Motility, morphology, DNA fragmentation [23] [17] | Continuous | Manual assessment, CASA systems [24] |

Male fertility datasets typically derive from clinical records, structured lifestyle questionnaires, and laboratory measurements. The Fertility Dataset from the UCI Machine Learning Repository represents a commonly used benchmark containing 100 samples with 10 attributes encompassing socio-demographic characteristics, lifestyle habits, and environmental exposures [18]. Larger clinical studies often incorporate data from hundreds of patients undergoing fertility treatment, with variables systematically recorded according to WHO guidelines [4]. For molecular biomarker discovery, datasets typically include miRNA expression profiles derived from techniques such as TaqMan real-time PCR, as demonstrated in a study analyzing 161 sperm samples to identify infertility-associated miRNAs [26].

Data Preprocessing and Imbalance Handling

Appropriate data preprocessing is essential for robust model performance. Common techniques include Min-Max normalization to rescale features to a [0, 1] range, addressing heterogeneity in measurement scales [18]. Class imbalance represents a frequent challenge in male fertility datasets, which often contain disproportionate numbers of fertile versus infertile samples [10]. To address this, researchers employ sampling strategies such as the Synthetic Minority Oversampling Technique (SMOTE), which generates synthetic samples from the minority class to balance dataset distribution [10]. One study demonstrated that combining SMOTE with Random Forest significantly improved model performance on imbalanced fertility data [10].
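As a minimal sketch of the Min-Max step, the following dependency-free Python rescales each feature with x' = (x - min) / (max - min). The records, the feature choice, and the function names (fit_min_max, apply_min_max) are hypothetical. Fitting the bounds on the training rows only is an assumption made here to keep test data out of the scaling parameters; scikit-learn's MinMaxScaler performs the same operation in practice.

```python
from typing import List, Sequence, Tuple

def fit_min_max(rows: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Return the (min, max) pair of every column in the training rows."""
    return [(min(col), max(col)) for col in zip(*rows)]

def apply_min_max(rows: Sequence[Sequence[float]],
                  bounds: Sequence[Tuple[float, float]]) -> List[List[float]]:
    """Rescale every column with x' = (x - min) / (max - min)."""
    scaled = []
    for row in rows:
        scaled_row = []
        for value, (lo, hi) in zip(row, bounds):
            # A constant column has hi == lo; map it to 0.0 to avoid dividing by zero.
            scaled_row.append(0.0 if hi == lo else (value - lo) / (hi - lo))
        scaled.append(scaled_row)
    return scaled

# Hypothetical records: [age in years, sedentary hours per day]
train = [[18.0, 2.0], [27.0, 8.0], [36.0, 16.0]]
test = [[30.0, 5.0]]

bounds = fit_min_max(train)            # learned from training data only
train_scaled = apply_min_max(train, bounds)
test_scaled = apply_min_max(test, bounds)
```

Because the bounds come from the training set, a test value outside the training range will fall outside [0, 1]; that is expected and usually preferable to refitting on test data.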

Experimental Protocols and Workflows

Protocol for ML Model Development with SHAP Interpretation

Objective: To develop and interpret a machine learning model for male fertility prediction using SHAP explainability.

Materials:

- Clinical dataset with fertility parameters

- Python programming environment with scikit-learn, XGBoost, and SHAP libraries

- Computing hardware with adequate processing power

Procedure:

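- Data Preprocessing and Splitting: Clean the dataset by removing incomplete records, then split the data into training (70%) and testing (30%) sets. Fit Min-Max normalization on the training set and apply it to both sets to scale continuous features to the [0, 1] range [18].

- Class Imbalance Handling: Apply SMOTE to the training set only, generating synthetic minority-class samples to balance it [10]. Leave the test set untouched so that evaluation reflects the real class distribution and no synthetic sample is derived from test records.

- Model Training: Train multiple ML algorithms (Random Forest, XGBoost, SVM, etc.) on the training set using cross-validation [10].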

- Model Evaluation: Assess performance using accuracy, precision, recall, F1-score, and AUROC. Select the best-performing model based on these metrics [4] [10].

- SHAP Interpretation: Compute SHAP values for the selected model. Generate summary plots to visualize feature importance and dependency plots to examine individual feature effects [4] [25].

- Clinical Validation: Interpret results in context of biological plausibility and compare with domain knowledge [4].
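The threshold-based metrics named in the Model Evaluation step can be derived from the four confusion-matrix counts. The sketch below is illustrative only: the labels and predictions are invented, class 1 is assumed to be the positive class, and classification_metrics is a hypothetical helper rather than a library function. AUROC is not covered here because it needs predicted scores rather than hard labels.

```python
from typing import Dict, Sequence

def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    # Guard against empty denominators (e.g., no positive predictions).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Invented example: 8 held-out patients
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
metrics = classification_metrics(y_true, y_pred)
```

On imbalanced fertility data, accuracy alone can look strong while recall on the minority class is poor, which is why the protocol lists several metrics side by side.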

Protocol for miRNA Biomarker Discovery

Objective: To identify and validate miRNA signatures associated with male infertility.

Materials:

- Sperm samples from infertile patients and fertile controls

- RNA isolation kits

- TaqMan real-time PCR system

- Specific primers for target miRNAs

Procedure:

- Sample Collection: Obtain sperm samples from 161 participants (cases and controls) following ethical guidelines and informed consent [26].

- RNA Isolation: Extract total RNA from sperm samples using appropriate isolation methods [26].

- Literature Search: Conduct systematic review of existing studies to identify candidate miRNAs consistently associated with infertility [26].

- miRNA Quantification: Measure miRNA expression levels using TaqMan real-time PCR with specific primers [26].

- Statistical Analysis: Perform differential expression analysis between cases and controls. Use receiver operating characteristic (ROC) curve analysis to evaluate diagnostic potential [26].

- Meta-Analysis: Apply Comprehensive Meta-Analysis Software to validate findings across multiple studies [26].

- Validation: Confirm potential biomarkers in an independent validation set of cases and controls [26].
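For the ROC step, the area under the curve of a single biomarker equals the probability that a randomly chosen case has a higher value than a randomly chosen control. The sketch below computes it by direct pairwise comparison. The expression values are invented, the helper name auroc is hypothetical, and the code assumes the marker is higher in cases; for a down-regulated miRNA the two arguments would be swapped.

```python
from typing import Sequence

def auroc(case_values: Sequence[float], control_values: Sequence[float]) -> float:
    """AUROC as P(case > control), counting ties as one half."""
    wins = 0.0
    for case in case_values:
        for control in control_values:
            if case > control:
                wins += 1.0
            elif case == control:
                wins += 0.5
    return wins / (len(case_values) * len(control_values))

# Invented relative expression levels for one candidate miRNA
cases = [2.1, 1.8, 2.5, 1.2]
controls = [1.0, 1.3, 0.9, 1.9]
auc = auroc(cases, controls)  # 13 of 16 case-control pairs are correctly ordered
```

A value near 0.5 indicates no discrimination between cases and controls, while values approaching 1.0 indicate that the marker separates the groups well.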

Visualization of Experimental Workflows

Figure: ML Workflow for Fertility Prediction

Figure: miRNA Biomarker Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Analytical Tools

| Tool/Reagent | Specific Examples | Function/Application | Reference |

|---|---|---|---|

| SHAP Library | Python SHAP package (version 0.44.0) | Model interpretation and feature importance visualization | [25] [27] |

| ML Algorithms | XGBoost, Random Forest, SVM | Predictive model development | [4] [10] |

| miRNA Analysis | TaqMan Real-Time PCR System | Quantification of sperm miRNA expression | [26] |

| Sperm Analysis | Computer-Assisted Sperm Analysis (CASA) | Automated assessment of sperm motility and morphology | [23] [24] |

| Data Balancing | SMOTE, ADASYN | Handling class imbalance in datasets | [10] |

| Optimization | Ant Colony Optimization | Hyperparameter tuning and feature selection | [18] |

Male fertility prediction represents a rapidly evolving research domain where machine learning approaches are demonstrating significant potential to enhance diagnostic and prognostic accuracy. The integration of SHAP interpretation frameworks addresses the critical need for model transparency and clinical interpretability, enabling researchers to validate model decisions and identify biologically plausible predictive factors. Optimal experimental design requires careful attention to dataset characteristics, appropriate preprocessing methodologies, and robust validation strategies. The protocols and workflows outlined in this article provide a structured approach for developing interpretable ML models in male fertility research, facilitating more reproducible and clinically relevant predictive analytics. Future research directions should include larger multicenter validation studies, standardized benchmarking datasets, and enhanced integration of multimodal data sources to further improve model performance and generalizability.

The Challenge of Black-Box Models in Medical Diagnostics

The integration of artificial intelligence (AI) in medical diagnostics promises significant advancements but introduces a critical challenge: the "black-box" nature of many sophisticated machine learning (ML) models. These models produce decisions based on complex algorithms that cannot be easily understood by examining their internal workings, creating a transparency barrier for patients, physicians, and even model designers [28]. In clinical practice, this lack of interpretability is particularly problematic as it obstructs understanding of how or why a specific diagnostic recommendation or treatment pathway was generated [28].

This opacity carries significant risks. Failures in medical AI could lead to serious consequences for clinical outcomes and patient experience, potentially eroding trust in healthcare institutions [29]. Furthermore, the unexplainability of black-box models can limit patient autonomy within patient-centered care models, where physicians are obligated to provide adequate information for shared medical decision-making [28]. Beyond these ethical considerations, the black-box problem creates practical barriers for clinical adoption, as healthcare professionals are trained to rely on evidence-based reasoning and may resist systems that cannot explain their outputs [12].

Black-Box Challenges in Male Fertility Diagnostics

Male fertility represents a particularly compelling domain for examining these challenges. Infertility affects a significant proportion of couples globally, with male factors being the sole cause in approximately 20% of cases and a contributing factor in 30-40% [3]. The application of AI and ML models has emerged as an effective solution for early fertility detection, with studies employing seven industry-standard algorithms including support vector machines, random forests, and multi-layer perceptrons [3].

Despite promising performance, these models frequently operate as black boxes, which limits their clinical utility. Most existing studies report high accuracy in detecting male fertility but present only performance metrics, so they cannot show how or why a specific decision was made [3]. This limitation is especially significant in fertility diagnostics, where treatment planning requires understanding the relative contribution of various lifestyle, environmental, and clinical factors.

Performance of Standard ML Models in Male Fertility Detection

Table 1: Performance Comparison of ML Models in Male Fertility Detection [3]

| Machine Learning Model | Reported Accuracy (%) | AUC | Key Findings |

|---|---|---|---|

| Random Forest | 90.47 | 99.98% | Achieved optimal performance with 5-fold cross-validation on balanced data |

| Support Vector Machine (SVM-PSO) | 94.00 | Not reported | Outperformed other classifiers in specific implementations |

| Optimized Multi-Layer Perceptron | 93.30 | Not reported | Provided maximum outcome among selected AI tools |

| AdaBoost | 95.10 | Not reported | Performed best among three classifiers tested |

| Extra Tree Classifier | 90.02 | Not reported | Achieved maximum accuracy among eight classifiers |

| Naïve Bayes | 87.75 | 77.90% | Provided best outcome in specific comparative studies |

| Feed-Forward Neural Network | 97.50 | Not reported | High accuracy reported in training phase |

SHAP Interpretation as a Solution Framework

SHapley Additive exPlanations (SHAP) represents a groundbreaking approach to addressing the black-box problem in medical AI. Rooted in cooperative game theory, SHAP provides a mathematically rigorous framework for explaining the output of any machine learning model [25]. For an individual prediction, the method computes each feature's marginal contribution, that is, the change in model output when the feature is added to a subset of the other features, and takes a weighted average of these contributions over all possible feature subsets [25].

SHAP analysis has gained significant traction in medical diagnostics due to its versatility in providing both local and global explanations. Local explanations illuminate the reasoning behind individual predictions, while global explanations characterize overall model behavior and feature importance patterns across the entire dataset [25]. This dual capability makes SHAP particularly valuable in clinical contexts, where understanding both specific case decisions and general model behavior is essential for trust and verification.

The mathematical foundation of SHAP values derives from Shapley values, which provide a fair distribution of "payout" among players in a collaborative game according to four key properties: efficiency (the feature contributions sum to the difference between the model's prediction for an instance and the base value, i.e., the average model output), symmetry (features that contribute equally to every feature combination receive equal attribution), additivity (contributions are additive across multiple models), and null player (features that don't affect the prediction receive zero attribution) [25].
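These properties can be checked on a small example by computing Shapley values exactly, enumerating every subset of the remaining features. The sketch below is a brute-force illustration, not how the SHAP library computes values for real models. The three feature names and the toy_value payoff function are invented for the purpose.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet, List

def shapley_values(players: List[str],
                   value: Callable[[FrozenSet[str]], float]) -> Dict[str, float]:
    """Exact Shapley values by enumerating all coalitions (feasible only for few players)."""
    n = len(players)
    phi = {}
    for player in players:
        others = [p for p in players if p != player]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = frozenset(subset)
                total += weight * (value(coalition | {player}) - value(coalition))
        phi[player] = total
    return phi

def toy_value(coalition: FrozenSet[str]) -> float:
    """Invented payoff: a risk score when only the features in `coalition` are known."""
    score = 0.10                      # base value with no features known
    if "smoking" in coalition:
        score += 0.20
    if "age" in coalition:
        score += 0.10
    if "smoking" in coalition and "age" in coalition:
        score += 0.06                 # interaction, shared equally between the two
    return score                      # "season" never changes the score

players = ["smoking", "age", "season"]
phi = shapley_values(players, toy_value)
```

Here the contributions sum to the full-coalition score minus the base value (efficiency), the interaction term is split evenly between the two features that create it, and the feature with no effect receives zero (null player). The enumeration grows exponentially with the number of features, which is why practical explainers rely on model-specific or sampling-based algorithms.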

SHAP Experimental Protocol for Male Fertility Models

Implementing SHAP interpretation for male fertility ML models requires a systematic approach to ensure meaningful and clinically actionable explanations. The following protocol outlines a standardized methodology for applying SHAP analysis to fertility diagnostic models:

Protocol: SHAP Interpretation for Male Fertility ML Models

Objective: To explain predictions from black-box male fertility classification models using SHAP values, enabling clinical interpretation and verification.

Materials and Computational Environment:

- Python 3.7+ programming environment

- SHAP Python library (version 0.40.0+)

- Trained male fertility classification model (Random Forest, XGBoost, etc.)

- Preprocessed male fertility dataset with feature names

- Jupyter Notebook or similar computational notebook environment

- Visualization libraries (matplotlib, seaborn)

Procedure:

Model Training and Preparation

- Split the data into training and test sets (typically a 70-30 or 80-20 ratio).

- Apply necessary data preprocessing, including handling of missing values and feature scaling, fitting each transformation on the training set only. Address class imbalance through techniques such as SMOTE (Synthetic Minority Over-sampling Technique), applied to the training set only [3].

- Train the male fertility classification model on the training set using standard procedures.

- Evaluate model performance using standard metrics (accuracy, precision, recall, F1-score, AUC-ROC).

SHAP Explainer Initialization

- Select appropriate SHAP explainer based on model type:

- TreeExplainer for tree-based models (Random Forest, XGBoost, Decision Trees)

- KernelExplainer for model-agnostic applications (neural networks, SVMs)

- LinearExplainer for linear models

- Initialize explainer with trained model:

explainer = shap.TreeExplainer(trained_model)

SHAP Value Calculation

- Calculate SHAP values for test set instances:

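shap_values = explainer.shap_values(X_test)

- For classification problems, specify whether to explain predictions for the positive (fertile) or negative (infertile) class. Depending on the SHAP version and model type, shap_values may contain one set of values per class, in which case select the class of interest explicitly before plotting.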

Global Model Interpretation

- Generate summary plot of feature importance:

shap.summary_plot(shap_values, X_test, feature_names=feature_names)

- For a single-output explanation, this produces a beeswarm plot by default: features are ranked by mean absolute SHAP value, and each point shows the SHAP value of one instance, colored by the feature's value. Passing plot_type="bar" displays only the mean absolute SHAP value per feature.

- Analyze which features (e.g., lifestyle factors, environmental exposures, clinical measurements) contribute most significantly to fertility classifications.

Local Instance Interpretation

- Select individual cases from the test set for detailed explanation.

- Generate force plots for specific predictions:

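shap.force_plot(explainer.expected_value, shap_values[instance_index], X_test.iloc[instance_index], feature_names=feature_names)

- These plots illustrate how each feature pushes the model's output from the base value (average model output) to the final prediction for that specific instance. The call above assumes that X_test is a pandas DataFrame (hence .iloc) and that expected_value and shap_values refer to a single model output; for a classifier with per-class outputs, index both by the class being explained.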

- Document how specific feature values (e.g., smoking status, age, sperm parameters) contribute to the classification of individual patients.

Dependence Analysis

- Create SHAP dependence plots to examine the relationship between a feature's value and its SHAP value, i.e., its contribution to the prediction:

shap.dependence_plot('feature_name', shap_values, X_test, feature_names=feature_names)

- Analyze whether the relationship between key predictors (e.g., duration of infertility, hormonal levels) and fertility status aligns with clinical knowledge.

Clinical Validation and Verification

- Present SHAP explanations to clinical domain experts for verification.

- Assess whether the identified feature importance patterns and individual case explanations align with established medical knowledge.

- Identify any potentially spurious relationships or biases in the model's reasoning process.

Troubleshooting Tips:

- For large datasets, use a representative sample to calculate SHAP values to reduce computation time.

- If SHAP values appear unstable, verify data preprocessing consistency between training and explanation phases.

- Ensure feature names are human-readable for clinical interpretation.

Expected Outcomes:

- Quantitative assessment of feature importance for the fertility classification model.

- Explanations for individual patient predictions that can be reviewed by clinicians.

- Identification of potential model biases or inconsistencies with clinical knowledge.

- Enhanced trust and transparency in the AI-assisted diagnostic process.
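The link between the local and global steps of this protocol can be illustrated without any library. For a linear model whose features are treated as independent, the SHAP value of feature i has the closed form w_i * (x_i - mean(x_i)). The sketch below uses that closed form to check additivity (base value plus contributions equals the prediction) and to derive a global ranking from mean absolute SHAP values. The coefficients, feature names, and patient rows are invented, and a real tree-based or neural model would need the corresponding SHAP explainer instead.

```python
from statistics import mean
from typing import List

feature_names = ["age", "sedentary_hours", "smoker"]   # hypothetical features
weights = [0.02, 0.05, 0.30]                           # hypothetical linear coefficients
intercept = -0.50

# Hypothetical patients: [age, sedentary hours per day, smoker flag]
X = [
    [25.0, 4.0, 0.0],
    [35.0, 10.0, 1.0],
    [30.0, 7.0, 0.0],
    [40.0, 3.0, 1.0],
]

def predict(row: List[float]) -> float:
    """Linear risk score."""
    return intercept + sum(w * x for w, x in zip(weights, row))

feature_means = [mean(col) for col in zip(*X)]
base_value = predict(feature_means)     # model output at the average patient

def linear_shap(row: List[float]) -> List[float]:
    """phi_i = w_i * (x_i - mean(x_i)); valid for linear models with independent features."""
    return [w * (x - m) for w, x, m in zip(weights, row, feature_means)]

shap_matrix = [linear_shap(row) for row in X]

# Local view: base value + sum of contributions reproduces each prediction.
additivity_errors = [
    abs(base_value + sum(phis) - predict(row)) for row, phis in zip(X, shap_matrix)
]

# Global view: mean absolute SHAP value per feature, then rank.
global_importance = {
    name: mean([abs(phis[j]) for phis in shap_matrix])
    for j, name in enumerate(feature_names)
}
ranking = sorted(global_importance, key=global_importance.get, reverse=True)
```

The same two checks are useful sanity tests on real SHAP output: contributions that do not sum to the prediction minus the base value usually indicate a mismatch between the data passed to the model and the data passed to the explainer.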

Research Reagent Solutions for SHAP-Based Fertility Studies

Table 2: Essential Research Reagents and Computational Tools for SHAP Interpretation in Fertility Studies

| Research Reagent/Tool | Function/Application | Specifications/Alternatives |

|---|---|---|

| SHAP Python Library | Model-agnostic implementation of Shapley values for explaining ML model outputs | Versions 0.40.0+; Compatible with scikit-learn, XGBoost, LightGBM, CatBoost |

| SMOTE | Addresses class imbalance in fertility datasets through synthetic minority oversampling | Critical for male fertility data where infertile cases may be underrepresented [3] |

| TreeExplainer | High-speed exact algorithm for computing SHAP values for tree-based models | Specifically optimized for Random Forest, GBDT models commonly used in fertility prediction |

| scikit-learn | Provides baseline interpretable models and data preprocessing utilities | Includes logistic regression, decision trees for comparison with black-box approaches |

| Matplotlib/Seaborn | Creation of publication-quality visualizations for SHAP explanations | Customization of summary plots, dependence plots for clinical audiences |

| Jupyter Notebook | Interactive computational environment for exploratory model explanation | Enables iterative analysis and documentation of explanation process |

SHAP Interpretation Workflow for Male Fertility Models

The following diagram illustrates the comprehensive workflow for implementing SHAP interpretation in male fertility diagnostic models:

Figure: SHAP Interpretation Workflow for Male Fertility Models

Comparative Effectiveness of Explanation Methods

Recent research has systematically evaluated the effectiveness of different explanation formats in clinical environments. A 2025 study compared three explanation conditions in clinical decision support systems: results-only (RO), results with SHAP plots (RS), and results with SHAP plots plus clinical explanation (RSC) [12]. The findings demonstrated that while SHAP explanations alone improved clinician acceptance compared to results-only presentations, the highest levels of acceptance, trust, satisfaction, and perceived usability occurred when SHAP visualizations were accompanied by clinical interpretations [12].

Table 3: Comparative Effectiveness of Explanation Methods in Clinical Settings [12]

| Explanation Method | Acceptance (WOA Score) | Trust Score | Satisfaction Score | Usability Score | Clinical Utility |

|---|---|---|---|---|---|

| Results Only (RO) | 0.50 | 25.75 | 18.63 | 60.32 | Limited - provides no insight into decision process |

| Results with SHAP (RS) | 0.61 | 28.89 | 26.97 | 68.53 | Moderate - shows feature contributions but requires technical interpretation |

| Results with SHAP + Clinical Explanation (RSC) | 0.73 | 30.98 | 31.89 | 72.74 | High - combines technical explanation with clinical context for comprehensive understanding |

These findings have significant implications for implementing SHAP explanations in male fertility diagnostics. While SHAP provides the technical foundation for model interpretability, its clinical utility is maximized when domain expertise is integrated to translate mathematical feature contributions into clinically meaningful narratives. This approach aligns with the need for transdisciplinary collaboration in medical AI, where computer scientists and clinical fertility specialists work together to create explanations that are both mathematically sound and clinically relevant [29].

The challenge of black-box models in medical diagnostics, particularly in sensitive domains like male fertility, requires sophisticated solutions that balance predictive performance with interpretability. SHAP analysis provides a mathematically rigorous framework for explaining complex model predictions, offering both global and local insights into feature contributions. The experimental protocols and workflows outlined in this document provide researchers with standardized methodologies for implementing SHAP interpretation in male fertility studies. By embracing these explainable AI approaches and combining them with clinical expertise, the field can advance toward AI-assisted diagnostic systems that are not only accurate but also transparent, trustworthy, and clinically actionable.

Implementing SHAP for Male Fertility Model Interpretation: Methods and Case Studies

Data Preparation and Preprocessing for Fertility Datasets

The application of machine learning (ML) in reproductive medicine represents a paradigm shift in fertility research and diagnostics. Explainable AI (XAI) techniques, particularly SHapley Additive exPlanations (SHAP), have emerged as crucial tools for interpreting complex model predictions in male fertility research [10] [30]. The reliability of these ML models is fundamentally dependent on the quality and appropriateness of the underlying data preparation and preprocessing pipeline. This protocol outlines comprehensive procedures for preparing fertility datasets optimized for developing interpretable ML models with SHAP-based explanation capabilities, with specific emphasis on male fertility applications where these techniques have demonstrated significant utility [31] [10] [30].

Data Collection and Initial Assessment

Fertility research utilizes diverse data sources, each with distinct characteristics and preprocessing requirements:

Clinical and Lifestyle Data: Data encompassing lifestyle factors, environmental exposures, and clinical history parameters, typically structured in tabular format [31] [30]. The Fertility Dataset from the UCI Machine Learning Repository represents a standardized example containing 100 samples with 10 attributes related to male fertility factors [31].

Medical Imaging Data: Sperm morphology images and videos requiring specialized preprocessing pipelines [32]. Datasets such as HSMA-DS (Human Sperm Morphology Analysis DataSet) and VISEM-Tracking provide annotated images for deep learning applications [32].

Demographic and Survey Data: Large-scale population data, such as that from demographic health surveys, which often require specialized preprocessing to handle complex sampling designs [33] [34].

Initial Data Quality Assessment

The initial assessment phase should document key dataset characteristics that fundamentally influence preprocessing strategy:

Table 1: Data Quality Assessment Metrics

| Assessment Dimension | Evaluation Method | Acceptance Criteria |

|---|---|---|

| Missing Data | Percentage of missing values per feature | <5% for critical features; <10% overall |

| Class Distribution | Ratio between majority and minority classes | Document imbalance ratio; flag if >4:1 |

| Sample Size Adequacy | Power analysis or heuristic assessment | Minimum 50 samples per feature |

| Feature Type Diversity | Categorical vs. numerical distribution | Balance appropriate for selected algorithms |
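Two of the checks in the table above, the missing-value percentage per feature and the class imbalance ratio, are simple to compute. The sketch below is a dependency-free illustration; the records are invented, missing values are assumed to be encoded as None, and the helper names are hypothetical.

```python
from collections import Counter
from typing import Any, Dict, List, Sequence

def missing_percent(records: Sequence[Dict[str, Any]], feature: str) -> float:
    """Percentage of records in which `feature` is missing (encoded as None)."""
    missing = sum(1 for record in records if record.get(feature) is None)
    return 100.0 * missing / len(records)

def imbalance_ratio(labels: Sequence[str]) -> float:
    """Size of the largest class divided by the size of the smallest class."""
    counts = Counter(labels)
    return max(counts.values()) / min(counts.values())

# Invented example: 10 records, one missing age, 8 normal vs. 2 altered
ages: List[Any] = [31, 28, None, 35, 40, 29, 33, 38, 27, 36]
labels = ["normal"] * 8 + ["altered"] * 2
records = [{"age": age, "label": label} for age, label in zip(ages, labels)]

age_missing = missing_percent(records, "age")
ratio = imbalance_ratio(labels)
report = {
    "age_missing_percent": age_missing,
    "age_fails_5_percent_criterion": age_missing >= 5.0,   # criterion: <5% for critical features
    "imbalance_ratio": ratio,
    "imbalance_flagged": ratio > 4.0,                       # criterion: flag if >4:1
}
```

In this example the age feature fails the 5% criterion, while the 4:1 class ratio sits exactly at the flagging boundary and is therefore documented but not flagged.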

Data Preprocessing Pipeline

Handling Missing Data

Missing data represents a critical challenge in fertility datasets, particularly when combining multiple data sources:

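Assessment Phase: Characterize the missing data mechanism (MCAR, MAR, MNAR). Little's MCAR test can assess whether data are missing completely at random; distinguishing MAR from MNAR cannot be settled by a statistical test alone and relies on domain knowledge about why values are absent.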

Numerical Features: Apply K-nearest neighbors (KNN) imputation for datasets with strong feature correlations or multiple imputation by chained equations (MICE) for complex missingness patterns.

Categorical Features: Utilize mode imputation for features with <5% missing values or create separate "missing" category for patterns suggesting informative missingness.
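The rule for categorical features can be written as a small decision function. This is a sketch under stated assumptions: missing values are encoded as None, the 5% threshold is taken from the text above, and the function name and the "missing" token are illustrative choices. KNN and MICE imputation for numerical features are not shown.

```python
from collections import Counter
from typing import List, Optional

def impute_categorical(values: List[Optional[str]],
                       threshold: float = 0.05,
                       missing_token: str = "missing") -> List[str]:
    """Mode-impute when under `threshold` missing; otherwise add a separate category."""
    n_missing = sum(1 for v in values if v is None)
    if n_missing == 0:
        return list(values)
    if n_missing / len(values) < threshold:
        # Few gaps: fill with the most frequent observed category.
        fill = Counter(v for v in values if v is not None).most_common(1)[0][0]
    else:
        # Many gaps: keep missingness visible to the model as its own category.
        fill = missing_token
    return [fill if v is None else v for v in values]

# Invented smoking-status columns
few_missing = ["no"] * 20 + ["yes"] * 4 + [None]        # 4% missing
many_missing = ["yes", None, "no", None]                # 50% missing

imputed_few = impute_categorical(few_missing)
imputed_many = impute_categorical(many_missing)
```

Keeping a separate category has a side benefit for interpretation: if missingness itself is predictive, it will appear as its own feature contribution in a later SHAP analysis rather than being hidden inside the mode.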

Addressing Class Imbalance

Class imbalance presents a significant challenge in fertility datasets, where "altered" fertility status often represents the minority class [31]. Effective balancing techniques include:

Table 2: Class Imbalance Handling Techniques

| Technique | Mechanism | Best Suited Scenarios |

|---|---|---|

| SMOTE | Generates synthetic minority class samples | Moderate imbalance (ratio 3:1 to 5:1) |

| ADASYN | Adaptive synthetic sampling focusing on difficult examples | Complex decision boundaries |

| Random Undersampling | Reduces majority class instances | Large datasets with extreme imbalance |

| Combined Sampling | Both oversampling and undersampling | Severe imbalance with limited data |

Research demonstrates that applying SMOTE (Synthetic Minority Over-sampling Technique) significantly improves model performance in male fertility prediction, particularly when combined with ensemble methods like Random Forest [30].
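The core idea of SMOTE is interpolation: each synthetic sample lies on the line segment between a minority-class sample and one of its nearest minority-class neighbours. The sketch below is a simplified, dependency-free illustration of that idea, not the reference algorithm. The function name smote_like, the default k, and the sample points are assumptions, and at least two minority samples are required. The SMOTE class in imbalanced-learn is the usual choice in practice.

```python
import random
from typing import List

Point = List[float]

def squared_distance(a: Point, b: Point) -> float:
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def smote_like(minority: List[Point], n_new: int, k: int = 2, seed: int = 0) -> List[Point]:
    """Create n_new synthetic minority samples by interpolating towards near neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # The k nearest minority-class neighbours of the base point (itself excluded).
        others = [p for p in minority if p is not base]
        neighbours = sorted(others, key=lambda p: squared_distance(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # random position on the segment base -> neighbour
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Invented, already scaled minority-class samples with two features
minority = [[0.2, 0.8], [0.3, 0.7], [0.25, 0.9]]
new_points = smote_like(minority, n_new=5)
```

Because the method relies on distances, features should be scaled before resampling, and resampling should be restricted to training data so that no synthetic point is derived from a held-out record.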

Feature Engineering and Selection

Feature engineering enhances predictive signals while selection reduces dimensionality:

Domain-Informed Feature Creation:

- Calculate derived clinical ratios (e.g., motility indices)

- Create interaction terms between lifestyle factors (e.g., smoking × alcohol consumption)

- Generate temporal features from historical data

Feature Selection Techniques:

- Filter Methods: Mutual information, chi-square tests

- Wrapper Methods: Recursive feature elimination with cross-validation

- Embedded Methods: L1 regularization (Lasso), tree-based importance

- Nature-Inspired Optimization: Ant Colony Optimization (ACO) for feature selection [31]
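As an example of a filter method, mutual information between a discrete feature and the class label can be computed directly from co-occurrence counts. The sketch below is a minimal illustration for categorical data with invented values. It does not handle continuous features, for which scikit-learn's mutual_info_classif is a common option.

```python
from collections import Counter
from math import log2
from typing import Hashable, Sequence

def mutual_information(xs: Sequence[Hashable], ys: Sequence[Hashable]) -> float:
    """Mutual information in bits between two discrete variables."""
    n = len(xs)
    count_x = Counter(xs)
    count_y = Counter(ys)
    count_xy = Counter(zip(xs, ys))
    mi = 0.0
    for (x, y), joint_count in count_xy.items():
        p_joint = joint_count / n
        p_independent = (count_x[x] / n) * (count_y[y] / n)
        mi += p_joint * log2(p_joint / p_independent)
    return mi

# Invented data: 1 = altered seminal quality, 0 = normal
labels = [1, 1, 1, 1, 0, 0, 0, 0]
smoker = [1, 1, 1, 1, 0, 0, 0, 0]   # perfectly aligned with the label
season = [0, 1, 0, 1, 0, 1, 0, 1]   # unrelated to the label

mi_smoker = mutual_information(smoker, labels)
mi_season = mutual_information(season, labels)
```

Features would then be ranked by score and the lowest-scoring ones dropped. With datasets as small as those common in this field, the estimates are noisy, so any cut-off should be chosen inside cross-validation rather than on the full dataset.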

Data Transformation and Scaling

Appropriate data transformation ensures optimal model performance:

Numerical Features:

- Standardization (Z-score normalization) for algorithms sensitive to feature scale (SVM, neural networks)

- Robust Scaling for datasets with outliers using median and interquartile range

- Power Transforms for heavily skewed distributions (Yeo-Johnson for any real values; Box-Cox for strictly positive values only)

Categorical Features:

- One-Hot Encoding for nominal features with limited categories (<10)

- Target Encoding for high-cardinality categorical features

- Ordinal Encoding for features with inherent hierarchy
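The difference between standardization and robust scaling is easiest to see on a column with an outlier. The sketch below implements both, plus one-hot encoding, using only the standard library (Python 3.8+ for statistics.quantiles). The values and helper names are invented, and non-constant columns are assumed so that the denominators are non-zero.

```python
from statistics import mean, median, pstdev, quantiles
from typing import List, Sequence, Tuple

def z_score(values: Sequence[float]) -> List[float]:
    """Standardize to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

def robust_scale(values: Sequence[float]) -> List[float]:
    """Center on the median and divide by the interquartile range."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    center = median(values)
    return [(v - center) / (q3 - q1) for v in values]

def one_hot(values: Sequence[str]) -> Tuple[List[List[int]], List[str]]:
    """One indicator column per category, in sorted category order."""
    categories = sorted(set(values))
    encoded = [[1 if v == c else 0 for c in categories] for v in values]
    return encoded, categories

# Invented column with one extreme value
hours = [1.0, 2.0, 3.0, 4.0, 100.0]
standardized = z_score(hours)
robust = robust_scale(hours)

encoded, categories = one_hot(["summer", "winter", "summer"])
```

With robust scaling the four typical values remain evenly spread around zero, whereas under standardization the outlier inflates the standard deviation and compresses them into a narrow band.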

Experimental Protocols

Complete Data Preprocessing Protocol

Objective: To systematically preprocess raw fertility data into an analysis-ready format suitable for interpretable ML modeling.

Materials:

- Raw fertility dataset (clinical, lifestyle, or morphological)

- Computing environment (Python/R with necessary libraries)

- Data documentation and codebooks

Procedure:

Data Ingestion and Validation (Duration: 1-2 hours)

- Load raw data from source files (CSV, Excel, database)

- Validate data against predefined schema and value constraints

- Document any schema violations or unexpected values

Initial Quality Assessment (Duration: 1 hour)

- Generate missing data report with percentages per feature

- Visualize class distribution using bar charts or pie charts

- Calculate basic descriptive statistics for numerical features

- Create correlation matrices to identify highly correlated features

Data Cleaning (Duration: 2-3 hours)

- Apply appropriate missing data handling strategy based on assessment

- Identify and handle outliers using IQR method or isolation forests

- Resolve data type inconsistencies and formatting issues

- Standardize categorical value representations

Feature Engineering (Duration: 2-4 hours)

- Create domain-informed derived features

- Encode categorical variables using appropriate scheme

- Generate interaction terms for theoretically important combinations

- Apply temporal feature engineering for longitudinal data

Data Balancing (Duration: 1-2 hours)

- Assess class imbalance ratio

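- Apply selected balancing technique (e.g., SMOTE) to the training portion of the data only, so that synthetic samples never enter the validation or test sets

- Validate that the balanced dataset preserves feature relationships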

Data Splitting (Duration: 30 minutes)

- Partition data into training (70%), validation (15%), and test (15%) sets

- Ensure consistent class distribution across splits using stratification

- Apply feature scaling fitted exclusively on training data
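
The 70/15/15 stratified partition with training-only scaling can be sketched as two successive calls to scikit-learn's `train_test_split`. The data here are synthetic and the random seeds are arbitrary.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
X = rng.normal(size=(200, 4))            # synthetic features
y = np.array([0] * 170 + [1] * 30)       # synthetic imbalanced labels (15% positive)

# Hold out 30%, then split that portion half-and-half into validation and test
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=42)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=42)

# Scaling statistics come from the training set only
scaler = StandardScaler().fit(X_train)
X_train_s, X_val_s, X_test_s = (scaler.transform(a) for a in (X_train, X_val, X_test))

print(len(X_train), len(X_val), len(X_test))
print(y_train.mean().round(3), y_val.mean().round(3), y_test.mean().round(3))
```

Because the scaler never sees validation or test rows, their scaled means will not be exactly zero, which is expected.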

Documentation and Versioning (Duration: 1 hour)

- Document all preprocessing decisions and parameter values

- Create data lineage tracking from raw to processed data

- Version the final processed dataset for reproducibility

Quality Control:

- Compare summary statistics before and after preprocessing

- Validate that preprocessing transformations are reversible where appropriate

- Ensure no data leakage between splits by confirming no test data influences training transformations

Data Preprocessing Workflow

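Figure: Workflow diagram of the complete data preprocessing pipeline for fertility datasets (ingestion and validation, quality assessment, cleaning, feature engineering, balancing, splitting, documentation).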

Research Reagent Solutions

Table 3: Essential Tools for Fertility Data Preprocessing

| Tool/Category | Specific Examples | Application in Fertility Research |
|---|---|---|
| Programming Environments | Python 3.8+, R 4.0+ | Primary computational environments for data manipulation and analysis |
| Data Manipulation Libraries | pandas, dplyr, numpy | Core data structures and operations for tabular fertility data |
| Imbalanced Learning | imbalanced-learn, SMOTE | Addressing class distribution issues in fertility datasets [30] |
| Feature Selection | scikit-learn, Ant Colony Optimization | Identifying most predictive features for fertility outcomes [31] |
| Data Visualization | matplotlib, seaborn, plotly | Exploratory data analysis and result communication |
| Explainable AI | SHAP, LIME, ELI5 | Interpreting model predictions for clinical relevance [10] [30] [35] |
| Deep Learning Frameworks | TensorFlow, PyTorch | Handling image-based sperm morphology data [32] |
| Optimization Algorithms | Particle Swarm Optimization, Genetic Algorithms | Hyperparameter tuning and feature selection [31] [36] |

Integration with SHAP Interpretation

Proper data preprocessing directly enhances the reliability and clinical utility of SHAP interpretations in male fertility models:

Feature Consistency: Consistent preprocessing ensures SHAP values accurately reflect feature contributions across different datasets and model iterations.

Handling Data Artifacts: Addressing class imbalance and missing data prevents SHAP explanations from being skewed by dataset artifacts rather than true biological signals.

Clinical Interpretability: Appropriate feature engineering and selection promote clinically meaningful SHAP explanations that align with domain knowledge.

Model Robustness: Rigorous preprocessing contributes to model generalizability, ensuring SHAP interpretations remain valid on new patient data.

Research demonstrates that combining sophisticated preprocessing with SHAP explanation enables transparent and clinically actionable male fertility assessment systems, bridging the gap between black-box predictions and clinical decision-making [10] [30].

Selecting and Training ML Models for Fertility Prediction

The application of machine learning (ML) in fertility represents a paradigm shift from traditional diagnostic methods, offering the potential to unravel complex, non-linear interactions between biological, lifestyle, and environmental factors that influence reproductive outcomes. Male fertility, in particular, has become a critical focus area, with male factors contributing to approximately 30-50% of all infertility cases [10] [31]. The emergence of Explainable AI (XAI) frameworks, particularly SHAP (SHapley Additive exPlanations), is addressing a crucial challenge in healthcare implementation: model interpretability. By providing transparent insights into model decision-making processes, SHAP enables clinicians to understand and trust ML-driven predictions, thereby facilitating their integration into clinical workflow and supporting personalized treatment planning [10].

Fertility prediction inherently presents as both a classification problem (distinguishing between fertile and infertile status) and a regression problem (predicting continuous outcomes like blastocyst yield or oocyte count). Success in this domain requires careful consideration of dataset characteristics, appropriate algorithm selection, and rigorous validation methodologies to ensure clinical reliability [21] [37]. This protocol outlines comprehensive procedures for developing, validating, and interpreting ML models specifically for male fertility prediction, with emphasis on practical implementation and explainability.

Comparative Performance of ML Models for Fertility Prediction

Quantitative Model Performance Metrics

Extensive benchmarking studies have evaluated numerous industry-standard machine learning algorithms for fertility prediction tasks. The performance metrics across different model architectures and fertility applications reveal distinct advantages for ensemble and tree-based methods.

Table 1: Performance Comparison of ML Models in Fertility Prediction Applications

| Model | Application Context | Accuracy (%) | AUC | Sensitivity/Specificity | Key Strengths |
|---|---|---|---|---|---|
| Random Forest | Male Fertility Detection [10] | 90.47 | 0.9998 | N/A | Robust to overfitting, handles mixed data types |
| LightGBM | Blastocyst Yield Prediction [21] | 67.5-71.0 | N/A | F1(0): Increased in subgroups | High speed, efficiency with large datasets |
| XGBoost | Natural Conception Prediction [38] | 62.5 | 0.580 | N/A | Advanced regularization, handles high dimensions |
| AdaBoost | Male Fertility Detection [10] | 95.1 | N/A | N/A | Ensemble boosting, handles weak learners |
| SVM | Male Fertility Detection [10] | 86.0 | N/A | N/A | Effective in high-dimensional spaces |
| MLP (Neural Network) | Male Fertility Detection [10] | 90.0 | N/A | N/A | Captures complex non-linear relationships |
| Hybrid MLFFN–ACO | Male Fertility Diagnostics [31] | 99.0 | N/A | Sensitivity: 100% | Ultra-fast computation (0.00006 s), high sensitivity |

Random Forest demonstrates strong performance in male fertility detection, with a reported accuracy of 90.47% and a near-perfect AUC of 0.9998 [10]. Its ensemble approach, which builds many decision trees and outputs the majority vote of their predicted classes, provides robustness against overfitting, a critical advantage with limited medical datasets [10]. Gradient boosting methods such as LightGBM and XGBoost offer complementary strengths: for blastocyst yield prediction, LightGBM used fewer features (8 vs. 10-11 for SVM/XGBoost), enhancing clinical interpretability without sacrificing predictive performance (R²: 0.673-0.676) [21].

The exceptional performance of specialized hybrid architectures like the Multilayer Feedforward Neural Network with Ant Colony Optimization (MLFFN–ACO) highlights the potential of bio-inspired optimization algorithms in fertility diagnostics. This approach achieved 99% classification accuracy with 100% sensitivity, meaning that no positive case in the evaluation data was missed, and it required minimal computational time (0.00006 seconds), which supports real-time clinical application [31].

Contextual Performance Considerations

Model performance must be evaluated relative to specific clinical contexts and outcome measures. For instance, although XGBoost was the best-performing model tested for natural conception prediction, its accuracy of 62.5% and ROC-AUC of 0.580 indicate limited predictive capacity for this application [38]. This underscores the challenge of predicting complex reproductive outcomes using primarily sociodemographic data without clinical biomarkers.

Furthermore, model performance often varies across patient subgroups. LightGBM maintained robust accuracy (0.675-0.71) in blastocyst prediction across both the overall cohort and poor-prognosis subgroups, though Kappa coefficients showed greater variation (0.365-0.5), indicating differential performance in classifying minority categories [21]. These nuances emphasize the importance of stratified validation in fertility prediction models.
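
To see why accuracy and Cohen's kappa can diverge when one class is rare, consider the small worked example below. The labels are made up for illustration and are unrelated to the cited study; the sketch assumes scikit-learn.

```python
from sklearn.metrics import accuracy_score, cohen_kappa_score

# Hypothetical labels: 16 majority-class cases, 4 minority-class cases
y_true = [0] * 16 + [1] * 4
# The classifier gets every majority case right but misses 3 of 4 minority cases
y_pred = [0] * 16 + [1, 0, 0, 0]

acc = accuracy_score(y_true, y_pred)        # 17 of 20 correct = 0.85
kappa = cohen_kappa_score(y_true, y_pred)   # (0.85 - 0.77) / (1 - 0.77), about 0.35

print(f"accuracy = {acc:.2f}, kappa = {kappa:.2f}")
```

Chance agreement here is 0.8 × 0.95 + 0.2 × 0.05 = 0.77, so most of the 0.85 accuracy is explained by the majority class alone, and kappa exposes the weak minority-class performance.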

Experimental Protocols for Model Development

Data Preprocessing and Feature Selection Protocol

Data Collection Standards

Comprehensive data collection should encompass multidimensional factors influencing fertility status. Based on validated methodologies, the following data categories should be included:

- Sociodemographic Factors: Age, BMI, lifestyle habits (smoking, alcohol consumption, caffeine intake) [38]

- Clinical History: Childhood diseases, accidents/trauma, surgical interventions, high fever episodes [31]

- Environmental Exposures: Chemical agent exposure, occupational heat exposure, sedentary behavior (sitting hours per day) [10] [38]

- Reproductive Specifics: Menstrual cycle characteristics, varicocele presence, sexual intercourse frequency [38]

- Laboratory Parameters: Semen quality metrics, hormonal assays, follicle sizes via ultrasound [13]

Data should be collected using structured forms with consistent encoding schemes (e.g., categorical variables appropriately binarized) to facilitate preprocessing.

Data Cleaning and Imputation Procedure

Missing Data Handling: Apply appropriate imputation strategies based on data type and missingness pattern:

- For continuous variables with <5% missingness: median imputation

- For categorical variables with <5% missingness: mode imputation

- For extensive missingness (>20%): consider exclusion with documentation
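
The threshold rules above can be sketched with pandas as follows. The function name `impute_by_missingness` and the toy columns are hypothetical. The protocol does not state what to do for missingness between 5% and 20%, so this sketch leaves such columns unchanged and reports them for a case-by-case decision.

```python
import numpy as np
import pandas as pd

def impute_by_missingness(df, impute_below=0.05, drop_above=0.20):
    """Apply median/mode imputation or exclusion based on the fraction missing."""
    out = df.copy()
    undecided = []                                   # columns between the two thresholds
    for col in df.columns:
        frac = df[col].isna().mean()
        if frac == 0:
            continue
        if frac > drop_above:
            out = out.drop(columns=col)              # extensive missingness: exclude and document
        elif frac < impute_below:
            if pd.api.types.is_numeric_dtype(df[col]):
                out[col] = df[col].fillna(df[col].median())
            else:
                out[col] = df[col].fillna(df[col].mode().iloc[0])
        else:
            undecided.append(col)                    # left unchanged on purpose
    return out, undecided

# Hypothetical 40-row dataset
df = pd.DataFrame({
    "age": [30.0] * 20 + [40.0] * 19 + [np.nan],             # 2.5% missing
    "smoking": ["never"] * 25 + ["daily"] * 14 + [None],     # 2.5% missing
    "fsh": [5.0] * 30 + [np.nan] * 10,                       # 25% missing
    "bmi": [24.0] * 36 + [np.nan] * 4,                       # 10% missing
})
clean, undecided = impute_by_missingness(df)
print(list(clean.columns), undecided)
```

In a modeling pipeline the median and mode would be computed on the training split and reused for the other splits.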

Class Imbalance Remediation: Address skewed distribution between fertile and infertile cases using:

- SMOTE (Synthetic Minority Oversampling Technique): Generates synthetic samples from minority class [10]

- Combination Sampling: Integrates both oversampling and undersampling approaches

- Stratified Cross-Validation: Maintains original distribution in training/validation splits
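
The core idea of SMOTE, interpolating between a minority sample and one of its nearest minority neighbors, can be sketched in a few lines. This is a simplified illustration rather than the reference algorithm; a maintained implementation such as the one in the imbalanced-learn library would normally be used. The function name `smote_sketch` and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority samples by interpolating toward minority neighbors."""
    rng = np.random.default_rng(seed)
    k = min(k, len(X_min) - 1)
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    # Column 0 is the query point itself, so keep only the k true neighbors
    neighbor_idx = nn.kneighbors(X_min, return_distance=False)[:, 1:]
    base = rng.integers(0, len(X_min), size=n_new)              # random base samples
    chosen = neighbor_idx[base, rng.integers(0, k, size=n_new)]  # one neighbor per base
    gap = rng.random((n_new, 1))                                 # interpolation weight in [0, 1)
    return X_min[base] + gap * (X_min[chosen] - X_min[base])

rng = np.random.default_rng(1)
X_majority = rng.normal(0.0, 1.0, size=(88, 3))   # synthetic majority class
X_minority = rng.normal(2.0, 1.0, size=(12, 3))   # synthetic minority class

X_synth = smote_sketch(X_minority, n_new=len(X_majority) - len(X_minority))
X_balanced = np.vstack([X_majority, X_minority, X_synth])
y_balanced = np.array([0] * len(X_majority) + [1] * (len(X_minority) + len(X_synth)))
print(X_balanced.shape, np.bincount(y_balanced))
```

Oversampling of this kind should be applied to training data only; validation and test sets keep their original class distribution.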

Feature Scaling and Normalization:

- Standardize continuous variables to zero mean and unit variance

- Apply min-max scaling for neural network architectures

- Encode categorical variables using one-hot encoding for tree-based models

Feature Selection Methodology

Implement a multi-stage feature selection process to identify the most predictive variables:

- Initial Filtering: Remove low-variance features (<1% variance threshold)

- Correlation Analysis: Calculate Pearson's correlation coefficients; for each highly correlated pair (|r| > 0.85), remove one of the two features to reduce multicollinearity [37]

- Permutation Feature Importance: Evaluate feature significance by measuring performance decrease when individual features are permuted [38]

- Recursive Feature Elimination (RFE): Iteratively remove the least important features until optimal subset is identified (e.g., 8-11 features for blastocyst prediction) [21]
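
The four stages can be chained as in the sketch below, which assumes scikit-learn and uses synthetic data from `make_classification`. The variance threshold of 0.01 is one reading of the "<1%" rule, the Random Forest estimator is an arbitrary choice, and the target of four features is illustrative only.

```python
import numpy as np
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE, VarianceThreshold
from sklearn.inspection import permutation_importance

X_arr, y = make_classification(n_samples=300, n_features=10, n_informative=4,
                               n_redundant=2, random_state=0)
X = pd.DataFrame(X_arr, columns=[f"f{i}" for i in range(10)])
X["constant"] = 1.0                       # zero-variance column that stage 1 should remove

# Stage 1: drop low-variance features
vt = VarianceThreshold(threshold=0.01).fit(X)
X = X.loc[:, vt.get_support()]

# Stage 2: for each pair with |r| > 0.85, drop the later feature of the pair
corr = X.corr().abs()
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
X = X.drop(columns=[c for c in upper.columns if (upper[c] > 0.85).any()])

# Stage 3: permutation importance (performance drop when a feature is shuffled)
model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)
perm = permutation_importance(model, X, y, n_repeats=5, random_state=0)
ranking = pd.Series(perm.importances_mean, index=X.columns).sort_values(ascending=False)

# Stage 4: recursive feature elimination down to a fixed subset size
rfe = RFE(RandomForestClassifier(n_estimators=100, random_state=0),
          n_features_to_select=4).fit(X, y)
selected = list(X.columns[rfe.support_])
print(ranking.head(), selected)
```

For an unbiased importance estimate, permutation importance would be computed on held-out data rather than on the rows used for fitting, as is done here for brevity.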

Model Training and Validation Protocol

Dataset Partitioning Strategy

- Stratified Split: Partition data into training (80%) and testing (20%) sets while preserving the original class distribution [38]

- Cross-Validation Implementation: Apply k-fold cross-validation (typically k=5 or k=10) to assess model robustness and mitigate overfitting [10]
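
A minimal sketch of the partitioning strategy, assuming scikit-learn: a stratified 80/20 split followed by stratified 5-fold cross-validation on the training portion. The data are synthetic, and the Random Forest estimator and ROC-AUC scoring are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split

# Synthetic imbalanced data (about 15% positives)
X, y = make_classification(n_samples=200, n_features=8, weights=[0.85, 0.15],
                           random_state=0)

# Stratified 80/20 split preserves the class ratio in both parts
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=0)

# Stratified k-fold cross-validation on the training set only
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(RandomForestClassifier(random_state=0),
                         X_train, y_train, cv=cv, scoring="roc_auc")
print(f"CV ROC-AUC: {scores.mean():.3f} +/- {scores.std():.3f}")
```

The test set stays untouched until the final evaluation; model selection relies on the cross-validation scores.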

Hyperparameter Optimization Framework

Execute systematic hyperparameter tuning for selected algorithms:

Table 2: Key Hyperparameters for Optimal Fertility Model Performance

| Model | Critical Hyperparameters | Recommended Search Range | Optimization Technique |
|---|---|---|---|
| Random Forest | n_estimators, max_depth, min_samples_split, min_samples_leaf | n_estimators: [100, 500], max_depth: [5, 30] | Grid Search or Random Search |
| LightGBM | num_leaves, learning_rate, feature_fraction, reg_alpha | num_leaves: [31, 127], learning_rate: [0.01, 0.1] | Bayesian Optimization |
| XGBoost | max_depth, learning_rate, subsample, colsample_bytree | max_depth: [3, 10], learning_rate: [0.01, 0.3] | Random Search with Early Stopping |
| SVM | C, gamma, kernel | C: [0.1, 10], gamma: [0.001, 0.1] | Grid Search |
| Neural Networks | hidden_layer_sizes, activation, learning_rate_init | hidden_layer_sizes: [(50,), (100,50)] | Bayesian Optimization |
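
As an illustration of the Random Forest row, the sketch below runs a small random search with scikit-learn, using that library's parameter names (`n_estimators`, `max_depth`, `min_samples_split`, `min_samples_leaf`). The ranges for the two `min_samples_*` parameters are not given in the table and are assumed here; the data are synthetic and the number of iterations is kept low for speed.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, StratifiedKFold

X, y = make_classification(n_samples=200, n_features=8, weights=[0.85, 0.15],
                           random_state=0)

param_distributions = {
    "n_estimators": randint(100, 501),     # table range [100, 500]; upper bound is exclusive
    "max_depth": randint(5, 31),           # table range [5, 30]
    "min_samples_split": randint(2, 11),   # assumed range, not from the table
    "min_samples_leaf": randint(1, 6),     # assumed range, not from the table
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=5,                              # small budget for the sketch
    cv=StratifiedKFold(n_splits=3, shuffle=True, random_state=0),
    scoring="roc_auc",
    random_state=0,
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

A larger `n_iter` and more folds would be appropriate for a real study, at the cost of longer run time.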

Model Validation and Performance Assessment

Implement comprehensive evaluation using multiple metrics: