Leveraging Transfer Learning for Advanced Sperm Morphology Classification: A Roadmap for Biomedical AI

Abstract

This article provides a comprehensive exploration of transfer learning applications for automating human sperm classification, a critical task in male fertility diagnostics. We cover the foundational challenges of traditional sperm morphology analysis, including its subjective nature and lack of standardization. The methodological section details how pre-trained convolutional neural networks (CNNs) like AlexNet can be adapted for high-accuracy sperm head classification, significantly reducing computational costs. We further address key troubleshooting aspects, such as overcoming limited dataset size through data augmentation and techniques for enhancing segmentation precision. Finally, the article presents a framework for the rigorous validation and comparative analysis of these models against expert classifications and traditional methods, discussing their clinical applicability and potential to revolutionize andrology workflows.

The Clinical Imperative and Foundational Challenges in Automated Sperm Analysis

The Global Burden of Male Infertility and the Role of Sperm Morphology

Male infertility constitutes a significant and growing global health challenge, affecting millions of reproductive-aged individuals and couples worldwide. According to the World Health Organization, infertility affects one in every six people of reproductive age globally, with male factors contributing to approximately 50% of all cases [1]. Among the various parameters assessed in male fertility evaluation, sperm morphology represents a critical diagnostic indicator with profound prognostic value for reproductive outcomes. This application note examines the escalating global burden of male infertility and details advanced computational methodologies, with a specific focus on transfer learning approaches for sperm morphological classification. We present comprehensive epidemiological data, experimental protocols, and resource guidance to support research and development efforts in male reproductive health.

Global Burden of Male Infertility

Epidemiological Trends

Quantitative analyses from the Global Burden of Disease (GBD) 2021 study reveal a substantial and increasing worldwide prevalence of male infertility. The condition represents a persistent and growing public health concern with significant geographical disparities.

Table 1: Global Burden of Male Infertility (1990-2021)

| Metric | 1990-2021 Change | 2021 Absolute Global Burden | Region with Highest Burden | Most Affected Age Group |
|---|---|---|---|---|
| Prevalence | +74.66% [2] | >55 million cases [3] | South & East Asia (50% of global burden) [3] | 35-39 years [3] [2] |
| DALYs | +74.64% [2] | >300,000 DALYs [3] | Eastern Europe & Western Sub-Saharan Africa (1.5x global average ASRs) [3] | 35-39 years [3] [2] |
| ASPR Trend | Steady increase globally (EAPC=0.5) [4] | 760.4/100,000 in High-middle SDI [4] | Fastest growth in Low-middle SDI regions [3] [4] | - |

| Metric | Noteworthy National Context | Primary Drivers |
|---|---|---|
| Prevalence | China accounts for ~20% of global cases [3] | Population growth (global), aging (China) [3] |
| DALYs | China shows declining trend post-2008 [3] | Environmental factors, STDs, lifestyle [3] |
| ASPR Trend | Andean Latin America: fastest increase (EAPC=2.2) [4] | Socio-demographic factors, healthcare access [3] |

DALYs: Disability-Adjusted Life Years; ASPR: Age-Standardized Prevalence Rate; ASRs: Age-Standardized Rates; EAPC: Estimated Annual Percentage Change; SDI: Socio-Demographic Index
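For readers unfamiliar with the EAPC values quoted in Table 1, the sketch below shows the usual way this statistic is derived: fit a straight line to the natural logarithm of the age-standardized rate against calendar year and convert the slope β to 100 × (exp(β) − 1). The function name `eapc` and the input series are illustrative assumptions; the series is synthetic and is not GBD data.

```python
import math

def eapc(years, rates):
    """Estimated annual percentage change from a log-linear fit ln(rate) = a + b * year."""
    n = len(years)
    if n < 2 or n != len(rates):
        raise ValueError("need two or more (year, rate) pairs of equal length")
    logs = [math.log(r) for r in rates]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx  # ordinary least-squares slope
    return 100.0 * (math.exp(slope) - 1.0)

# Synthetic series (NOT GBD data): a rate that grows by exactly 0.5% per year.
years = list(range(1990, 2022))
rates = [700.0 * 1.005 ** (y - 1990) for y in years]
print(round(eapc(years, rates), 3))
```

A positive result indicates a rising age-standardized rate over the period, and a negative result indicates a falling one.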

Socioeconomic and Regional Disparities

The burden of male infertility demonstrates a complex relationship with socioeconomic development. The Socio-Demographic Index (SDI), a composite measure of income, education, and fertility rates, reveals distinctive patterns across development spectra. While the absolute number of cases is highest in middle SDI regions, the age-standardized rates are most elevated in high-middle SDI regions [4]. Low and low-middle SDI regions, particularly in South Asia, Southeast Asia, and Sub-Saharan Africa, are experiencing the most rapid increases in both prevalence and DALYs [3]. This trend highlights the critical need for targeted interventions in regions with developing healthcare infrastructure.

Sperm Morphology in Infertility Assessment

Clinical Significance

Sperm morphology assessment provides crucial diagnostic and prognostic information in male fertility evaluation. The World Health Organization recognizes abnormal sperm shape as one of the primary causes of male infertility [1]. Morphological evaluation encompasses analysis of the head, midpiece, and tail compartments, with specific defects in each region associated with impaired fertilizing capacity [5] [6]. Traditional manual assessment, while considered the clinical standard, faces significant challenges including subjectivity, inter-technician variability, and time-intensive procedures [6] [7].

Classification Systems

Multiple classification systems exist for sperm morphological assessment:

- WHO Classification: Categorizes sperm into normal and abnormal classes, with further subdivision of abnormal sperm into head defects, neck and midpiece defects, tail defects, and excess residual cytoplasm [5].

- David's Modified Classification: Employs 12 distinct morphological defect classes including 7 head defects (tapered, thin, microcephalous, etc.), 2 midpiece defects, and 3 tail defects [6].

Transfer Learning Approach for Sperm Classification

Experimental Protocol: Transfer Learning with AlexNet Architecture

Table 2: Protocol for Sperm Morphology Classification Using Transfer Learning

| Step | Procedure | Parameters/Specifications |
|---|---|---|
| 1. Data Acquisition | Utilize publicly available datasets (HuSHeM, SCIAN, SMD/MSS) | HuSHeM: 216 sperm images (4 classes) [5]; SMD/MSS: 1000 images extended to 6035 via augmentation [6] |
| 2. Data Preprocessing | Crop and align sperm heads; convert to grayscale; normalize pixel values | Image resizing to 64×64 or 80×80 pixels; histogram normalization; noise reduction filters [5] [6] |
| 3. Data Augmentation | Apply transformations to increase dataset size and diversity | Rotation (±15°), horizontal/vertical flipping, brightness adjustment (±20%), contrast variation [6] |
| 4. Model Architecture | Adapt pre-trained AlexNet with modified classifier | Batch Normalization layers added; final fully-connected layer adjusted for 4-5 output classes [5] |
| 5. Transfer Learning | Utilize pre-trained weights from ImageNet; fine-tune on sperm dataset | Feature extraction layers frozen initially; learning rate: 0.001; optimizer: Adam [5] [8] |
| 6. Training | Train model on annotated sperm images | Batch size: 32; epochs: 100-200; validation split: 20% [5] [8] |
| 7. Evaluation | Assess model performance on test dataset | Metrics: Accuracy, Precision, Recall, F1-score; Confusion matrix analysis [5] |
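The augmentation step in the table can be sketched with NumPy as follows. This is a minimal illustration rather than the pipeline used in the cited studies: it covers random horizontal and vertical flips and a brightness change within ±20%, and it omits rotation and contrast variation, which would need an interpolation routine from an image library. The function name `augment` and the random stand-in image are assumptions.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly flipped, brightness-jittered copy of a grayscale image in [0, 1]."""
    out = image.copy()
    if rng.random() < 0.5:
        out = np.fliplr(out)              # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)              # vertical flip
    factor = rng.uniform(0.8, 1.2)        # brightness change within +/-20%
    return np.clip(out * factor, 0.0, 1.0)

rng = np.random.default_rng(0)
image = rng.random((64, 64))              # stand-in for a normalized 64x64 sperm-head crop
augmented = [augment(image, rng) for _ in range(5)]
print(len(augmented), augmented[0].shape)
```

Augmented copies should be generated from training images only, so that no variant of a validation or test image leaks into training.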

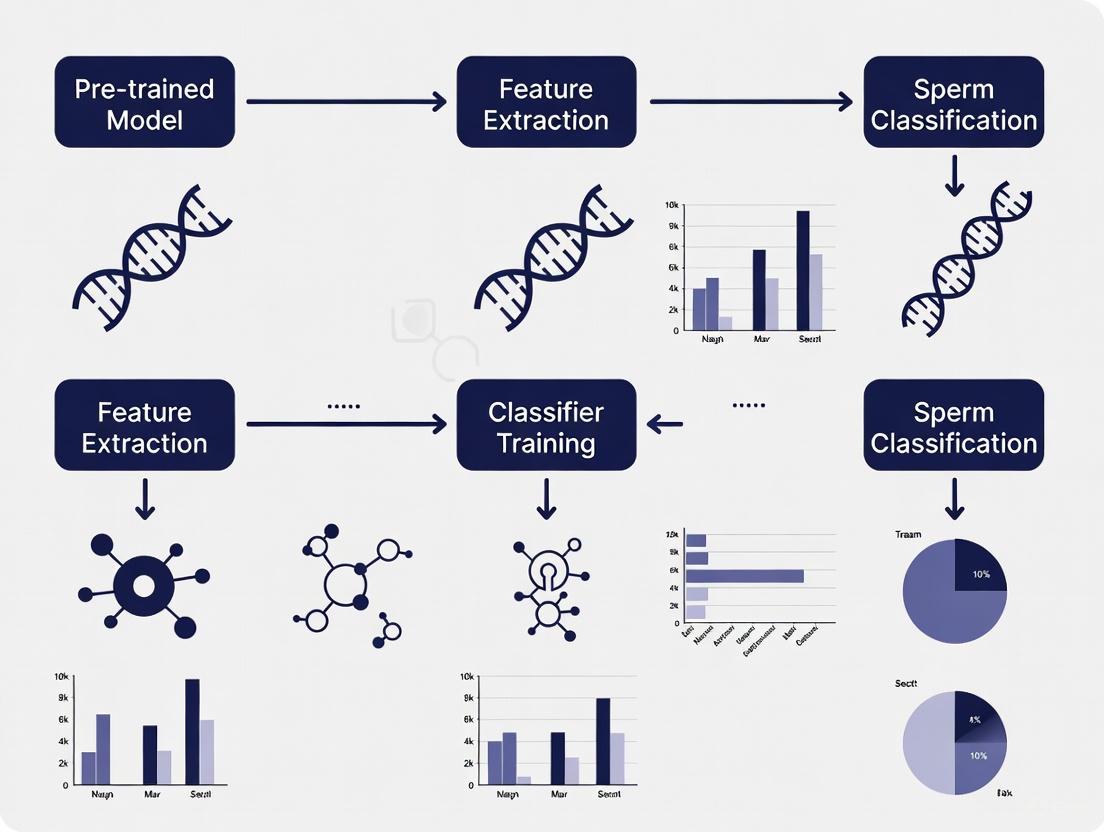

Workflow Visualization

Transfer Learning Workflow for Sperm Morphology Classification

Performance Metrics

The transfer learning approach detailed above has demonstrated strong performance in sperm morphology classification. When evaluated on the HuSHeM dataset, the modified AlexNet architecture achieved an average accuracy of 96.0% and precision of 96.4%, surpassing previous traditional machine learning and deep learning approaches [5]. Comparable transfer learning implementations using VGG16 architectures have achieved 94.1% accuracy on the same dataset, well above conventional feature-based methods, which showed accuracy rates of 58% to 62% on more challenging datasets [8].
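To make the reported metrics concrete, the following sketch computes accuracy and macro-averaged precision, recall, and F1-score from a confusion matrix. The function name `classification_metrics` and the 4-class matrix are illustrative assumptions; the counts are invented and do not reproduce any result from the cited studies.

```python
def classification_metrics(confusion):
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    k = len(confusion)
    total = sum(sum(row) for row in confusion)
    accuracy = sum(confusion[i][i] for i in range(k)) / total
    precisions, recalls, f1s = [], [], []
    for c in range(k):
        tp = confusion[c][c]
        predicted = sum(confusion[r][c] for r in range(k))  # column sum
        actual = sum(confusion[c])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(2 * p * r / (p + r) if (p + r) else 0.0)
    return {
        "accuracy": accuracy,
        "macro_precision": sum(precisions) / k,
        "macro_recall": sum(recalls) / k,
        "macro_f1": sum(f1s) / k,
    }

# Invented counts for four classes (e.g., normal, tapered, pyriform, amorphous).
confusion = [
    [10, 1, 0, 1],
    [0, 11, 1, 0],
    [1, 0, 9, 2],
    [0, 1, 1, 10],
]
report = classification_metrics(confusion)
print({name: round(value, 3) for name, value in report.items()})
```

Macro averaging weights every class equally, which matters when some morphology classes are much rarer than others.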

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Category | Item/Resource | Specification/Function | Application Context |
|---|---|---|---|
| Datasets | HuSHeM Dataset | 216 sperm images; 4 morphology classes [5] | Algorithm training & validation |
| Datasets | SCIAN-MorphoSpermGS | 1854 sperm cell images; 5 expert-classified categories [8] | Benchmarking & comparison |
| Datasets | SMD/MSS Dataset | 1000+ images; David's modified classification [6] | Multi-class morphology assessment |
| Software | Python 3.8+ | With TensorFlow/PyTorch frameworks [6] | Deep learning implementation |
| Libraries | OpenCV | Image processing and augmentation [5] | Data preprocessing |
| Hardware | GPU-enabled Workstation | NVIDIA CUDA-compatible graphics cards | Model training acceleration |
| Staining | RAL Diagnostics Kit | Sperm staining for morphological clarity [6] | Sample preparation |
| Imaging | MMC CASA System | Computer-assisted semen analysis with camera [6] | Standardized image acquisition |

The escalating global burden of male infertility demands innovative approaches to diagnosis and analysis. Sperm morphology represents a critical diagnostic parameter that benefits significantly from computational approaches, particularly transfer learning methodologies. The experimental protocols outlined herein provide researchers with robust frameworks for implementing these advanced classification systems. Transfer learning techniques have demonstrated superior performance compared to traditional methods, achieving classification accuracy of about 96% in controlled assessments on the HuSHeM dataset. As the field advances, the integration of these computational tools with standardized epidemiological data offers promising avenues for addressing male infertility through improved diagnostic precision, resource optimization in healthcare systems, and ultimately, enhanced clinical outcomes for affected individuals worldwide.

Sperm morphology assessment is a cornerstone of male fertility evaluation, providing critical diagnostic and prognostic information. Despite its clinical importance, the manual analysis of sperm shape, as outlined by the World Health Organization (WHO), remains plagued by significant inherent limitations. This application note details the core issues of subjectivity and poor reproducibility that undermine conventional manual assessment. Furthermore, it positions these challenges within the context of modern andrology laboratories, where automated solutions, particularly those leveraging transfer learning for sperm classification, are emerging as viable solutions to standardize and enhance diagnostic accuracy.

The Core Challenge: Subjectivity and Variability

The fundamental limitation of manual sperm morphology assessment lies in its reliance on the visual interpretation of a technologist. This process is inherently subjective, leading to substantial inter- and intra-observer variability. The complexity of the task—requiring the simultaneous evaluation of the head, midpiece, and tail against multiple strict criteria—makes consistent application of rules challenging, even among seasoned experts.

Quantitative Evidence of Inter-Expert Disagreement

Recent studies provide stark quantitative evidence of this variability. An analysis of inter-expert agreement in sperm cell classification revealed a concerning distribution: total agreement (TA) among three experts occurred in only 41% of cases, while partial agreement (PA) was seen in 35%, and no agreement (NA) was found in 24% of the spermatozoa analyzed [6]. This indicates that for nearly a quarter of sperm cells, three qualified experts could not concur on a classification.
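The TA/PA/NA breakdown can be computed from raw annotations as sketched below. The code assumes that partial agreement means exactly two of the three experts chose the same class, which is an interpretation and not a definition quoted from the source; the function names and the four example cells are hypothetical.

```python
from collections import Counter

def agreement_level(labels):
    """Return 'TA', 'PA', or 'NA' for the labels three experts gave one cell."""
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == len(labels):
        return "TA"   # all experts agree
    if top_count >= 2:
        return "PA"   # assumed definition: two of three experts agree
    return "NA"       # every expert chose a different class

def agreement_distribution(annotations):
    """Percentage of cells falling in each agreement level."""
    counts = Counter(agreement_level(labels) for labels in annotations)
    n = len(annotations)
    return {level: 100.0 * counts[level] / n for level in ("TA", "PA", "NA")}

# Hypothetical labels from three experts for four cells.
annotations = [
    ("normal", "normal", "normal"),
    ("normal", "tapered", "normal"),
    ("normal", "tapered", "amorphous"),
    ("pyriform", "pyriform", "pyriform"),
]
print(agreement_distribution(annotations))
```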

External Quality Control (EQC) data over a six-year period (2015-2020) further highlights which specific morphological criteria are most prone to subjective interpretation. The table below summarizes the agreement levels for various WHO strict criteria among EQC participants [9].

Table 1: Variability in the Assessment of WHO Sperm Morphology Criteria Based on EQC Data (2015-2020)

| Morphological Criterion | Agreement Level | Agreement Percentage |
|---|---|---|
| Head Ovality | Poor | <60% |
| Regularity of Head Contour | Poor | <60% |
| Midpiece Regularity | Poor | <60% |
| Midpiece/Head Alignment | Poor | <60% |
| Acrosomal Region (40-70%) | Intermediate | 60-90% |
| Major Axes Alignment | Intermediate | 60-90% |
| Acrosomal Vacuoles (<20%) | Good | >90% |
| Excessive Residual Cytoplasm | Good | >90% |
| Tail Thinner than Midpiece | Good | >90% |

These data identify head shape and midpiece contour/alignment as the primary sources of diagnostic inconsistency, whereas assessments of the acrosome, residual cytoplasm, and tail are more reliable [9].

Impact of Classification System Complexity

The level of disagreement is directly correlated with the complexity of the classification system used. A 2025 training study demonstrated that untrained morphologists showed significantly higher accuracy and lower variation when using a simple 2-category system (normal/abnormal) compared to more detailed systems [10].

Table 2: Classification Accuracy of Untrained Morphologists Across Different Systems

| Classification System | Number of Categories | Untrained User Accuracy |
|---|---|---|
| Normal/Abnormal | 2 | 81.0 ± 2.5% |
| Defect Location | 5 | 68.0 ± 3.6% |
| Specific Defect Types | 8 | 64.0 ± 3.5% |
| Granular Defect Types | 25 | 53.0 ± 3.7% |

This demonstrates a clear inverse relationship between system complexity and classification accuracy: accuracy falls from 81% with 2 categories to 53% with 25. It also underscores the difficulty of standardizing manual assessment across laboratories that may use different classification schemes [10].

Experimental Protocols for Investigating Assessment Variability

To quantify and address these limitations, researchers can employ the following experimental protocols.

Protocol 1: Quantifying Inter-Observer Variability

This protocol measures the degree of disagreement between different analysts within a laboratory.

- Sample Preparation: Prepare semen smears from at least 10 different patient samples using the Papanicolaou (PAP) staining method, as recommended by the WHO [11] [9].

- Image Acquisition: Acquire a minimum of 200 high-quality, digitized images of individual spermatozoa per sample using a bright-field microscope with a 100x oil immersion objective [6] [11].

- Blinded Assessment: Have at least three trained morphologists independently classify each sperm image according to a predefined system (e.g., the modified David classification with 12 classes or WHO strict criteria) [6] [9].

- Data Analysis: Calculate the percentage agreement for each morphological category. Use statistical measures such as Fleiss' Kappa to quantify the level of inter-observer agreement beyond what would be expected by chance [6].
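The data analysis step of Protocol 1 can be sketched in plain Python. Fleiss' kappa compares the mean observed agreement per item with the agreement expected from the overall category frequencies. The function name `fleiss_kappa` and the small rating table are illustrative assumptions, not data from the cited studies.

```python
def fleiss_kappa(ratings):
    """ratings[i][j] = number of raters who assigned item i to category j.

    Every item must be rated by the same number of raters (at least two).
    """
    n_items = len(ratings)
    n_raters = sum(ratings[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in ratings):
        raise ValueError("each item needs the same number (>= 2) of ratings")
    n_categories = len(ratings[0])
    # Overall proportion of assignments that went to each category.
    p_j = [sum(row[j] for row in ratings) / (n_items * n_raters)
           for j in range(n_categories)]
    # Observed agreement for each item.
    p_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
           for row in ratings]
    p_bar = sum(p_i) / n_items
    p_e = sum(p * p for p in p_j)
    if p_e == 1.0:
        return 1.0  # every rating fell in a single category
    return (p_bar - p_e) / (1.0 - p_e)

# Hypothetical counts: 5 sperm images, 3 morphologists, 3 classes.
ratings = [
    [3, 0, 0],
    [2, 1, 0],
    [0, 3, 0],
    [1, 1, 1],
    [0, 0, 3],
]
print(round(fleiss_kappa(ratings), 3))
```

Values near 1 indicate agreement well beyond chance, and values near 0 indicate agreement no better than chance.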

Protocol 2: Evaluating the Impact of Training on Reproducibility

This protocol assesses whether standardized training can improve consistency among novice morphologists.

- Recruitment: Recruit a cohort of novice morphologists (n=16-22) with no prior experience in sperm morphology assessment [10].

- Baseline Testing: Administer a baseline test using a dataset of expert-validated sperm images across multiple classification systems (e.g., 2, 5, 8, and 25 categories) to establish initial accuracy and speed [10].

- Structured Training: Implement a training intervention using a standardized tool, such as the "Sperm Morphology Assessment Standardisation Training Tool," which employs machine learning principles and expert consensus "ground truth" labels [10].

- Post-Training Evaluation: Conduct repeated tests over a period of several weeks to monitor improvements in accuracy and diagnostic speed. Compare post-training results with baseline performance to quantify the effect of standardized training [10].

The Pathway to Standardization: AI and Transfer Learning

The documented limitations of manual assessment have accelerated the development of automated solutions. Artificial Intelligence (AI), particularly deep learning, offers a path toward objective, standardized, and high-throughput sperm morphology analysis [6] [7] [12].

The Role of Transfer Learning in Sperm Classification

A significant challenge in developing robust AI models is the scarcity of large, high-quality, annotated datasets of sperm images [7] [13]. Transfer learning is a powerful technique that addresses this bottleneck. It involves taking a pre-trained deep learning model (e.g., AlexNet, ResNet)—already skilled at feature extraction from a vast general image database like ImageNet—and fine-tuning it for the specific task of sperm classification [5]. This approach reduces computational costs, saves time, and achieves high accuracy even with limited medical image datasets [5].

Experimental Protocol: Implementing a Transfer Learning Approach

This protocol outlines the key steps for developing a sperm classifier using transfer learning.

- Data Curation: Collect or obtain a dataset of sperm images with expert-annotated ground truth labels. Publicly available datasets such as HuSHeM or SCIAN can be used for this purpose [5].

- Data Preprocessing: Clean and preprocess the images. This includes resizing images to the input dimensions of the pre-trained model, normalization, and data augmentation techniques (e.g., rotation, flipping, brightness/contrast adjustment) to increase dataset diversity and improve model robustness [6] [5] [14].

- Model Selection and Adaptation: Select a pre-trained Convolutional Neural Network (CNN) architecture like AlexNet or ResNet. Modify the final classification layer to match the number of sperm morphology classes in your dataset (e.g., 4 classes: normal, tapered, pyriform, amorphous) [5].

- Model Training (Fine-Tuning): Train the model on your sperm image dataset. Initially, freeze the weights of the early layers (which detect general features) and only train the later, task-specific layers. This preserves general feature knowledge while adapting the model to the new task [5].

- Validation and Testing: Evaluate the model's performance on a separate, unseen test set of images. Report standard metrics such as accuracy, precision, recall, and F1-score to benchmark its performance against manual assessment [5].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents and materials essential for conducting standardized sperm morphology research, whether for manual assessment or the development of AI-based models.

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Reagent / Material | Function / Application | Specifications / Standards |
|---|---|---|
| Papanicolaou (PAP) Stain | Standard staining for sperm morphology; allows clear differentiation of head, acrosome, midpiece, and tail. | Recommended as the reference staining method by WHO and ISO 23162 [11] [9]. |
| RAL Diagnostics Stain | A standardized staining kit used for sperm morphology assessment as an alternative to PAP. | Used in developing the SMD/MSS dataset for deep learning model training [6]. |
| SSA-II Plus CASA System | Computer-Assisted Semen Analysis system for automated image acquisition and morphometric parameter measurement. | Used for high-throughput data collection and to provide objective measurements of head length, width, area, etc. [11]. |
| Expert-Validated Datasets | Publicly available image datasets with "ground truth" labels for training and validating AI models. | Examples: HuSHeM (216 images), SCIAN (1854 images), VISEM-Tracking (656k+ annotations) [7] [13] [5]. |
| Pre-trained CNN Models | Deep learning models (e.g., AlexNet, ResNet, U-Net) pre-trained on large image datasets. | Serves as the foundation for transfer learning, significantly reducing development time and data requirements for sperm classification tasks [5] [14]. |

The subjectivity and poor reproducibility of manual sperm morphology assessment are well-documented, quantifiable problems that compromise diagnostic consistency. These limitations are primarily driven by inter-observer variability and the complexity of classification systems. The integration of AI, specifically deep learning with transfer learning, presents a transformative solution. By leveraging pre-trained models and standardized protocols, researchers and clinicians can overcome data scarcity and develop robust, automated systems. This shift towards computational andrology promises to deliver the objective, reproducible, and high-throughput analysis necessary for advanced male fertility diagnostics and research.

Within the broader scope of research on transfer learning for sperm classification, it is crucial to understand the foundations and limitations of the methods it aims to supersede. Conventional machine learning (ML) has played a pivotal role in automating sperm morphology analysis, a critical diagnostic procedure for male infertility where male factors contribute to approximately 50% of cases [13] [7]. These traditional algorithms sought to introduce objectivity and consistency into a process traditionally burdened by high inter-observer variability and subjectivity [13] [6].

The defining characteristic of these conventional ML approaches is their fundamental reliance on handcrafted features. This methodology depends on manual image analysis and the extraction of specific, pre-defined characteristics—such as shape, texture, and grayscale intensity—before these features are fed into a classifier [13] [5]. This document details the standard protocols for building such models, quantitatively summarizes their performance, and analyzes the inherent pitfalls of this paradigm, thereby framing the rationale for the shift towards deep learning and transfer learning in modern sperm classification research.

Experimental Protocols & Workflows

The development of a conventional ML model for sperm morphology analysis follows a standardized, multi-stage pipeline. The workflow is fundamentally sequential, where the output of each stage directly influences the success of the next.

Protocol: Traditional Sperm Image Analysis via Handcrafted Features

Objective: To classify human sperm cells into morphological categories (e.g., normal, tapered, pyriform, amorphous) using a conventional machine learning pipeline based on manually engineered features.

Sample Preparation and Data Acquisition

- Sample Collection & Staining: Collect semen samples and prepare smears following World Health Organization (WHO) guidelines. Stain slides using a commercially available staining kit (e.g., RAL Diagnostics kit or Diff-Quik method) to enhance cellular contrast [6] [5].

- Image Acquisition: Capture digital images of individual spermatozoa using an optical microscope equipped with a digital camera, typically with a 100x oil immersion objective in bright-field mode [6] [5]. Systems like the MMC Computer-Assisted Semen Analysis (CASA) system can be used for this purpose.

Image Pre-processing and Manual Feature Engineering

- Goal: Isolate the sperm head and extract descriptive numerical features.

- Steps:

  - Denoising: Apply filters (e.g., wavelet denoising, low-pass filters) to reduce background noise and enhance the sperm signal [15] [5].

  - Conversion to Monochrome: Transform RGB images to grayscale to simplify subsequent analysis [5].

  - Sperm Head Segmentation:

    - Apply edge detection operators (e.g., Sobel operator) to highlight sperm contours [5].

    - Use adaptive thresholding algorithms to create a binary image separating the sperm head from the background [5].

    - Perform morphological operations (erosion and dilation) to eliminate small interference spots and smooth the contour [5].

    - Employ clustering algorithms like K-means to isolate the sperm head based on color or intensity [13] [7].

  - Contour Analysis & Feature Extraction: Fit an ellipse to the sperm head contour to determine its major and minor axes. This allows for cropping and rotational alignment to a uniform direction [5].

  - Manual Feature Extraction: This is the core, hands-on engineering step. Extract descriptive features from the processed sperm head image, such as shape descriptors (e.g., Hu moments, Zernike moments, Fourier descriptors), texture, and grayscale intensity [13] [5] [7].
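As a rough illustration of the shape-based part of this step, the sketch below derives area, orientation, and axis lengths from a binary head mask using second-order image moments. This is a moment-based stand-in for contour ellipse fitting, not the procedure of the cited studies, and it covers shape only, not texture or grayscale features. The function name `head_shape_features` and the synthetic ellipse mask are assumptions.

```python
import math
import numpy as np

def head_shape_features(mask):
    """Area, orientation, and equivalent-ellipse axes of a binary mask."""
    ys, xs = np.nonzero(mask)
    area = int(xs.size)
    if area == 0:
        raise ValueError("mask contains no foreground pixels")
    dx, dy = xs - xs.mean(), ys - ys.mean()
    # Normalized second-order central moments (variances and covariance).
    mu20, mu02, mu11 = (dx * dx).mean(), (dy * dy).mean(), (dx * dy).mean()
    theta = 0.5 * math.atan2(2.0 * mu11, mu20 - mu02)  # major-axis angle, image coords
    spread = math.sqrt((mu20 - mu02) ** 2 + 4.0 * mu11 ** 2)
    lam_major = (mu20 + mu02 + spread) / 2.0
    lam_minor = max((mu20 + mu02 - spread) / 2.0, 0.0)
    # For a filled ellipse, the variance along a semi-axis a equals a^2 / 4.
    major, minor = 4.0 * math.sqrt(lam_major), 4.0 * math.sqrt(lam_minor)
    return {
        "area": area,
        "orientation_rad": theta,
        "major_axis": major,
        "minor_axis": minor,
        "axis_ratio": major / minor if minor > 0 else float("inf"),
    }

# Synthetic mask: a horizontal ellipse with semi-axes of 20 and 10 pixels.
yy, xx = np.mgrid[0:64, 0:64]
mask = ((xx - 32) / 20.0) ** 2 + ((yy - 32) / 10.0) ** 2 <= 1.0
features = head_shape_features(mask)
print({name: round(value, 2) for name, value in features.items()})
```

Rotating each crop by the negative of the estimated orientation gives the uniform alignment described in the contour analysis step.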

Model Training and Classification

- Dataset Partitioning: Randomly split the dataset of extracted feature vectors into a training subset (e.g., 80%) and a testing subset (e.g., 20%) [6].

- Classifier Training: Train a selected classifier on the training feature vectors and their corresponding morphological labels (provided by expert embryologists).

- Model Evaluation: Use the held-out testing set to evaluate the final model's performance using metrics such as accuracy, precision, recall, and area under the curve (AUC) [6] [15].
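The 80/20 partition can be sketched with the standard library alone. The version below stratifies by class so that each morphological category keeps roughly the same proportion in both subsets; stratification is an added assumption, since the protocol only specifies a random split. The function name `stratified_split` and the label list are hypothetical.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices), splitting each class separately."""
    rng = random.Random(seed)                 # fixed seed for a reproducible split
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)

# Hypothetical labels: 10 feature vectors for each of four head classes.
labels = ["normal"] * 10 + ["tapered"] * 10 + ["pyriform"] * 10 + ["amorphous"] * 10
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

The same indices select both the feature vectors and their expert labels, so the two stay aligned after the split.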

The following diagram illustrates this multi-stage workflow, highlighting its sequential and engineered nature.

Performance Data and Pitfalls

The conventional ML pipeline has demonstrated variable but ultimately limited success in research settings. The table below summarizes the performance of several representative approaches, highlighting the algorithms and datasets used.

Table 1: Performance of Conventional Machine Learning Models in Sperm Morphology Analysis

| Study Citation | ML Algorithm(s) Used | Key Handcrafted Features | Reported Performance | Noted Limitations |
|---|---|---|---|---|
| Bijar A et al. [7] | Bayesian Density Estimation | Shape-based descriptors (Hu moments, Zernike moments, Fourier descriptors) | 90% accuracy (4-class head classification) | Relies exclusively on shape; lacks texture/grayscale data [7]. |
| Mirsky SK et al. [7] | Support Vector Machine (SVM) | Unspecified sperm head features | AUC-ROC: 88.59%, Precision >90% | Binary classification (good/bad) only [7]. |
| Chang V et al. [7] | Fourier Descriptor + SVM | Fourier shape descriptors | 49% accuracy (non-normal head classification) | Highlights high inter-expert variability and task difficulty [7]. |
| Shaker F et al. [5] | Adaptive Patch-based Dictionary Learning | Image patches from sperm heads | Avg. True Positive Rate: 62% (SCIAN dataset) | Requires manual feature extraction, not end-to-end [5]. |

Critical Analysis of Pitfalls

The data in Table 1 reveals several fundamental pitfalls inherent to the handcrafted feature approach:

Limited Scope and Granularity: Most conventional models are restricted to classifying sperm heads into a small number of categories (e.g., normal, tapered, pyriform, amorphous) [7] [5]. They generally fail to address the complete sperm structure, ignoring critical diagnostic information from the neck, midpiece, and tail. According to WHO standards, abnormal morphology across these structures encompasses 26 types [13] [7].

Inadequate Generalization and Accuracy: The performance of these models is highly variable and often unsatisfactory for clinical application. As shown in Table 1, accuracy can be as low as 49% on more challenging classification tasks, a figure that reflects the high inter-expert variability in the field [7]. These algorithms also struggle to distinguish sperm heads from impurities and cellular debris in semen samples, leading to misclassification [7].

Technical Drawbacks: The reliance on manually set thresholds and texture features frequently results in over-segmentation or under-segmentation [7]. Furthermore, the process of manual feature extraction is not only cumbersome and time-consuming but also inherently limits the algorithm's generalization ability. A feature set tuned for one dataset often performs poorly on another, as it cannot learn new, relevant features on its own [7].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Conventional ML in Sperm Analysis

| Item / Resource | Type | Function / Description | Example / Citation |
|---|---|---|---|
| SCIAN-MorphoSpermGS | Public Dataset | A gold-standard dataset with 1,854 sperm images for training and evaluating models, classified into five categories. | [7] [5] |
| HuSHeM | Public Dataset | A publicly available dataset containing 216 human sperm head images across four morphological classes. | [15] [5] |
| RAL Diagnostics Staining Kit | Laboratory Reagent | Used for staining semen smears to enhance contrast and morphological detail for microscopic imaging. | [6] |
| Support Vector Machine (SVM) | Computational Algorithm | A robust classifier frequently used as the final step in the pipeline to categorize sperm based on engineered features. | [7] [16] |
| K-means Clustering | Computational Algorithm | An unsupervised algorithm commonly used for segmenting and isolating the sperm head from the image background. | [13] [7] |
| Shape Descriptors (Hu, Zernike) | Computational Feature | Mathematical representations of shape and contour used as handcrafted features for the classifier. | [7] [15] |

Conventional machine learning, built on a foundation of handcrafted features, established the initial pathway toward automated sperm morphology analysis. The experimental protocols and performance data detailed herein underscore both its historical contribution and its profound limitations. The paradigm's reliance on manual feature engineering results in models with restricted classification granularity, inconsistent performance, and poor generalizability.

These identified pitfalls provide the critical context and justification for the ongoing research shift towards deep learning and, more specifically, transfer learning. Deep learning models, with their ability to automatically learn hierarchical and discriminative features directly from raw pixel data, represent a necessary evolution to overcome the constraints outlined in this document and build more robust, accurate, and clinically viable automated sperm analysis systems.

In the field of male infertility research, sperm morphology analysis is a crucial diagnostic procedure. The application of deep learning, particularly transfer learning, to automate and standardize this analysis shows significant promise [17]. However, the development of robust, generalizable models is fundamentally constrained by a critical bottleneck: the lack of standardized, high-quality annotated datasets [13]. This Application Note details this central challenge, quantitatively summarizes existing data resources, and provides detailed protocols for dataset creation to empower research in transfer learning for sperm classification.

The Core Dataset Challenge

Deep learning models require large volumes of high-quality, consistently annotated data to train effectively. In sperm morphology analysis, this requirement is difficult to meet for several key reasons:

- Inherent Data Complexity and Subjectivity: Sperm defect assessment requires the simultaneous evaluation of the head, vacuoles, midpiece, and tail, which substantially increases annotation difficulty and complexity [13]. The process is inherently subjective, leading to significant inter-observer variability even among experts, with reported kappa values as low as 0.05–0.15 [15].

- Acquisition and Annotation Difficulties: Sperm may appear intertwined in images, or only partial structures may be displayed, complicating both automated and manual analysis [13]. The process of manual annotation is time-intensive and requires specialized expertise [15].

- Impact on Model Performance: Without standardized datasets, models trained on one dataset often fail to generalize well to images from different clinics or acquired under different conditions [13]. This limits their clinical applicability and robustness.
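When a dataset is annotated by two experts, or when a single annotator is compared against a consensus label, pairwise agreement of the kind summarized by the kappa values above can be estimated with Cohen's kappa. The sketch below is a plain-Python illustration; the function name `cohen_kappa` and the label sequences are hypothetical, and the source does not state which kappa variant the reported values refer to.

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two label sequences of equal length."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if the raters labeled independently at their own base rates.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one and the same class throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical annotations of eight cells by two experts.
expert_1 = ["normal", "normal", "tapered", "amorphous", "normal", "pyriform", "tapered", "normal"]
expert_2 = ["normal", "tapered", "tapered", "amorphous", "amorphous", "pyriform", "normal", "normal"]
print(round(cohen_kappa(expert_1, expert_2), 3))
```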

A Landscape of Existing Datasets and Performance

Numerous public and private datasets have been developed to address these needs. The table below summarizes key datasets, highlighting their characteristics and the performance benchmarks achieved by deep learning models on them.

Table 1: Summary of Key Sperm Morphology Datasets and Model Performance

| Dataset Name | Key Characteristics | Image Count (Original) | Annotation Type | Noted Limitations | Representative Model Performance |
|---|---|---|---|---|---|
| HuSHeM [13] [15] | Stained sperm heads, higher resolution | 725 (216 public) | Classification | Limited public availability, focuses only on heads | 96.77% accuracy with CBAM-enhanced ResNet50 & DFE [15] |
| SMIDS [13] [15] | Stained sperm images | 3,000 | Classification (3-class) | - | 96.08% accuracy with CBAM-enhanced ResNet50 & DFE [15] |
| MHSMA [13] [18] | Non-stained, grayscale sperm heads | 1,540 | Classification | No-stain, noisy, low resolution [13] | Used for deep learning model development [18] |
| VISEM-Tracking [13] | Low-resolution unstained sperm and videos | 656,334 annotated objects | Detection, Tracking, Regression | Low-resolution, unstained [13] | A multi-modal dataset for sperm analysis tasks [13] |
| SVIA [13] [18] [19] | Low-resolution unstained sperm and videos | 4,041 images; 125,000 annotated instances | Detection, Segmentation, Classification | Low-resolution, unstained [13] | Used for training segmentation models like Mask R-CNN [19] |
| SMD/MSS [6] | Stained sperm, based on David classification | 1,000 (extended to 6,035 via augmentation) | Classification (12 defect classes) | - | Deep learning model accuracy ranged from 55% to 92% [6] |

A comparative analysis of state-of-the-art deep learning models further illustrates the interplay between data and architecture. The following table synthesizes quantitative results from recent studies.

Table 2: Performance of Deep Learning Models on Sperm Morphology Tasks

| Model / Framework | Primary Task | Dataset Used | Key Metric | Performance |
|---|---|---|---|---|
| CBAM-ResNet50 with DFE [15] | Head Morphology Classification | SMIDS | Accuracy | 96.08% |
| CBAM-ResNet50 with DFE [15] | Head Morphology Classification | HuSHeM | Accuracy | 96.77% |
| In-house AI (ResNet50) [18] | Unstained Live Sperm Morphology | Novel Confocal Microscopy Dataset | Correlation with CASA | r = 0.88 |
| Mask R-CNN [19] | Multi-part Segmentation (Head, Nucleus, Acrosome) | Live Unstained Sperm Dataset | IoU | Outperformed YOLOv8 & YOLO11 |
| U-Net [19] | Multi-part Segmentation (Tail) | Live Unstained Sperm Dataset | IoU | Highest performance for tail segment |
| CNN with Augmentation [6] | Multi-class Defect Classification | SMD/MSS | Accuracy | 55% - 92% (varies by class) |

Detailed Experimental Protocols

To facilitate the creation of high-quality datasets, we outline two detailed experimental protocols from recent literature.

Protocol 1: Creating a High-Resolution Unstained Sperm Dataset for Transfer Learning

This protocol is adapted from a 2025 study that used confocal microscopy to create a high-quality dataset for training an AI model to assess unstained, live sperm [18]. This is particularly valuable for clinical applications where staining is undesirable.

1. Sample Preparation

- Participants: Enroll donors following ethical guidelines. Donors should maintain sexual abstinence for 2-7 days prior to sample collection [18].

- Liquefaction: Check semen samples within 30 minutes of ejaculation. Preserve specimens at 37°C before and during analysis [18].

- Slide Preparation: Dispense a 6 µL droplet onto a standard two-chamber slide with a depth of 20 µm [18].

2. Image Acquisition via Confocal Microscopy

- Microscope: Use a Confocal Laser Scanning Microscope (e.g., LSM 800) [18].

- Settings: Set magnification to 40x in confocal mode (LSM, Z-stack). Set Z-stack interval to 0.5 µm, covering a total range of 2 µm. Use a frame time of ~633 ms and an image size of 512 x 512 pixels [18].

- Data Collection: Capture at least 200 sperm images per sample, with each capture containing 2-3 sperm [18].

3. Expert Annotation and Categorization

- Annotation Tool: Use a program like LabelImg to manually draw bounding boxes around each sperm [18].

- Criteria for Normal Sperm: Based on WHO guidelines, classify sperm as normal if they have a smooth oval head (length-to-width ratio of 1.5–2), no vacuoles, a slender/regular neck, and a uniform tail [18].

- Categorization: Categorize each sperm image into normal or abnormal datasets. Abnormal sperm are characterized by defects in the head (tapered, amorphous, pyriform, round), observable vacuoles, aberrant neck, or abnormal tail [18].
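The normal/abnormal criteria listed above can be written down as an explicit rule check. The sketch below is illustrative only: in the source protocol these judgments are made visually by experts [18], so the function names and the idea of passing pre-measured head dimensions and boolean flags are assumptions, and the "smooth oval" shape criterion is not modelled.

```python
def head_ratio_is_normal(length_um, width_um, low=1.5, high=2.0):
    """True if the head length-to-width ratio lies in the 1.5-2 range."""
    if length_um <= 0 or width_um <= 0:
        raise ValueError("head dimensions must be positive")
    return low <= length_um / width_um <= high

def categorize_sperm(length_um, width_um, has_vacuoles,
                     neck_regular, tail_uniform):
    """Label a sperm 'normal' only if every listed criterion is met."""
    is_normal = (
        head_ratio_is_normal(length_um, width_um)
        and not has_vacuoles
        and neck_regular
        and tail_uniform
    )
    return "normal" if is_normal else "abnormal"

# Hypothetical measurements (micrometres) for two annotated sperm.
example_a = categorize_sperm(4.5, 2.8, has_vacuoles=False,
                             neck_regular=True, tail_uniform=True)
example_b = categorize_sperm(4.5, 4.4, has_vacuoles=False,
                             neck_regular=True, tail_uniform=True)
print(example_a, example_b)
```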

4. Model Training with Transfer Learning

- Model Selection: Choose a pre-trained model such as ResNet50 for image classification tasks [18].

- Training: Train the model on the annotated dataset to minimize the difference between predicted and actual labels. A study using this method reported a test accuracy of 93% after 150 epochs [18].

Protocol 2: Building an Augmented Dataset for Multi-Class Defect Classification

This protocol, based on the creation of the SMD/MSS dataset, focuses on using data augmentation to balance morphological classes and train a model for detailed defect classification according to the modified David classification [6].

1. Sample Preparation and Staining

- Sample Inclusion: Use samples with a sperm concentration of at least 5 million/mL. Exclude samples with high concentrations (>200 million/mL) to avoid image overlap [6].

- Smear Preparation and Staining: Prepare smears following WHO guidelines and stain with a Romanowsky stain variant (e.g., RAL Diagnostics kit) [6].

2. Data Acquisition and Expert Classification

- System: Use a Computer-Aided Semen Analysis (CASA) system (e.g., MMC) with an optical microscope equipped with a digital camera and a 100x oil immersion objective in bright field mode [6].

- Classification: Have each spermatozoon manually classified by three independent experts. The classification should follow a structured system like the modified David classification, which includes 12 classes of defects (e.g., 7 head defects, 2 midpiece defects, 3 tail defects) [6].

- Inter-Expert Agreement Analysis: Calculate the level of agreement among experts (e.g., Total Agreement: 3/3, Partial Agreement: 2/3, No Agreement: 0/3) using statistical tests like Fisher's exact test [6].
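The three agreement levels described above can be derived mechanically from the expert labels. This is a small sketch under assumed data structures (a dictionary mapping a hypothetical sperm ID to three labels); it does not reproduce the statistical testing step.

```python
from collections import Counter

def agreement_level(labels):
    """Return (level, consensus_label) for three expert labels."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    top_label, top_count = Counter(labels).most_common(1)[0]
    if top_count == 3:
        return "total", top_label      # 3/3 experts agree
    if top_count == 2:
        return "partial", top_label    # 2/3 experts agree
    return "none", None                # all three experts disagree

# Hypothetical annotations for three spermatozoa.
annotations = {
    "sperm_001": ["normal", "normal", "normal"],
    "sperm_002": ["tapered", "tapered", "amorphous"],
    "sperm_003": ["tapered", "amorphous", "pyriform"],
}
summary = {sid: agreement_level(labels) for sid, labels in annotations.items()}
level_counts = Counter(level for level, _ in summary.values())
print(summary)
print(dict(level_counts))
```

Images with no consensus label can then be reviewed again or excluded, depending on how strict the ground truth needs to be.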

3. Data Augmentation and Pre-processing

- Augmentation Techniques: Apply techniques such as rotation, scaling, and flipping to augment the dataset size and balance the representation of different morphological classes. One study increased a dataset from 1,000 to 6,035 images using augmentation [6].

- Image Pre-processing: Resize images (e.g., to 80x80 pixels for grayscale) and normalize pixel values to bring them to a common scale [6].
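As a rough illustration of augmentation used for class balancing, the sketch below oversamples minority classes with flipped and rotated copies until every class matches the largest one. It assumes NumPy, uses synthetic 80x80 grayscale arrays in place of real images, and restricts itself to flips and 90-degree rotations; arbitrary-angle rotation and scaling would need an interpolation library and are not shown.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly flipped and rotated copy of a square image."""
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)
    if rng.random() < 0.5:
        out = np.flipud(out)
    # Rotate by a random multiple of 90 degrees (shape is preserved).
    return np.rot90(out, k=int(rng.integers(0, 4))).copy()

def balance_classes(images, labels, rng):
    """Add augmented copies until all classes match the largest class."""
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    target = counts.max()
    new_images, new_labels = list(images), list(labels)
    for cls, count in zip(classes, counts):
        idx = np.flatnonzero(labels == cls)
        for _ in range(target - count):
            source = images[rng.choice(idx)]
            new_images.append(augment(source, rng))
            new_labels.append(cls)
    return np.stack(new_images), np.array(new_labels)

rng = np.random.default_rng(0)
# Synthetic stand-in images, already scaled to [0, 1].
images = rng.integers(0, 256, size=(10, 80, 80)).astype(np.float32) / 255.0
labels = ["normal"] * 7 + ["tapered"] * 2 + ["coiled_tail"]
aug_images, aug_labels = balance_classes(images, labels, rng)
print(aug_images.shape, np.unique(aug_labels, return_counts=True))
```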

4. Model Training and Evaluation

- Data Partitioning: Split the augmented dataset randomly, using 80% for training and 20% for testing [6].

- Algorithm Implementation: Implement a Convolutional Neural Network (CNN) in a programming language such as Python. Train the model on the training set and evaluate its performance on the unseen test set [6].
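The random 80/20 partition can be sketched with the standard library alone. The function name and fixed seed are assumptions made for reproducibility of the example; 6,035 is the augmented dataset size reported above.

```python
import random

def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into train and test lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]

train_idx, test_idx = train_test_split_indices(6035)
print(len(train_idx), len(test_idx))
```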

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Sperm Morphology Analysis

| Item | Function / Application |
|---|---|
| RAL Diagnostics Staining Kit (Romanowsky-type stain) | Stains sperm cells on smears to provide contrast for visualizing morphological details under a light microscope [6]. |
| Diff-Quik Stain (Romanowsky stain variant) | Used for rapid staining of sperm for morphology assessment, typically in Computer-Aided Semen Analysis (CASA) [18]. |
| Leja Standard Two-Chamber Slides (20 µm depth) | Provides standardized chambers for preparing semen samples for microscopic analysis, ensuring consistent depth for imaging [18]. |
| Confocal Laser Scanning Microscope (e.g., Zeiss LSM 800) | Enables high-resolution, Z-stack image acquisition of live, unstained sperm at lower magnifications, preserving sperm viability [18]. |
| MMC or IVOS II CASA System | Automated system for acquiring and analyzing sperm images, used for measuring concentration, motility, and morphometric parameters [18] [6]. |
| LabelImg Program | An open-source graphical image annotation tool used to draw bounding boxes around sperm for creating ground truth data [18]. |

The field of artificial intelligence (AI) has undergone a fundamental transformation, moving from traditional, manually engineered algorithms to sophisticated, data-driven learning systems. This paradigm shift is most evident in the application of Deep Learning (DL) and Transfer Learning (TL) to complex scientific domains, where they are changing how researchers extract information from data. Traditional machine learning approaches have long been hampered by their reliance on handcrafted features: domain experts must manually identify and quantify relevant characteristics from raw data, a process that is both time-consuming and inherently biased by human perception [20].

Deep learning represents a radical departure from this tradition. As a subfield of machine learning, it utilizes artificial neural networks with multiple layers (hence "deep") to automatically learn hierarchical representations of data directly from raw inputs, such as images. This automatic feature extraction eliminates the need for manual feature engineering, allowing models to discover complex, non-linear patterns that may be imperceptible to human experts [7] [20]. However, the power of deep learning comes with significant requirements: it is notoriously "data-hungry," often needing thousands or even millions of labeled examples to perform effectively, and demands substantial computational resources, typically requiring high-end GPUs for training [20].

The critical innovation that bridges the gap between deep learning's potential and practical application in data-scarce scientific fields is transfer learning. TL addresses the fundamental challenge of limited dataset sizes in specialized domains by leveraging knowledge gained from solving one problem (typically on a large, general-purpose dataset) and applying it to a different but related problem. This approach allows researchers to bootstrap specialized models with minimal data by fine-tuning pre-trained networks, dramatically reducing both data requirements and training time while improving overall performance [5] [21]. Together, deep learning and transfer learning are creating a new paradigm for scientific discovery, enabling breakthroughs in fields from medical imaging to reproductive biology.

Technical Foundations

Deep Learning Architectures

At the heart of the deep learning revolution are several key neural network architectures, each with unique capabilities for processing different types of data:

Convolutional Neural Networks (CNNs): Specifically designed for processing grid-like data such as images, CNNs use a series of convolutional layers that act as learnable filters to detect hierarchical patterns—from simple edges and textures in early layers to complex object parts in deeper layers. This architecture is particularly effective for image classification, object detection, and segmentation tasks [6] [5]. The spatial hierarchy learned by CNNs makes them ideally suited for analyzing medical images, including sperm morphology.

Transformer Networks: Originally developed for natural language processing, transformers utilize an attention mechanism to weigh the importance of different parts of the input data when making predictions. This architecture has recently been adapted for tabular data through models like TabTransformer, which creates robust embeddings for categorical variables and demonstrates strong performance even with limited labeled data [22]. Transformers excel at capturing long-range dependencies in data, making them powerful for diverse scientific applications.

Siamese Networks: A specialized architecture for contrastive learning, Siamese networks consist of two or more identical subnetworks that process different inputs simultaneously, then compare their outputs to learn similarity metrics. This approach is particularly valuable for one-shot or few-shot learning scenarios where labeled examples are extremely scarce, such as detecting rare defects in industrial quality control or classifying unusual morphological variants [23].

The Transfer Learning Workflow

Transfer learning operationalizes knowledge transfer through a systematic workflow that maximizes learning efficiency:

Pre-training on Source Domain: A base model (typically a CNN like AlexNet, VGG, or ResNet) is first trained on a large-scale benchmark dataset such as ImageNet, whose commonly used ILSVRC subset contains over a million images across 1,000 categories [5] [21]. This phase requires substantial computational resources but needs to be done only once, as the learned feature representations, especially in the early layers, capture universal visual patterns like edges, shapes, and textures.

Knowledge Transfer: The pre-trained model's weights are imported, preserving the general feature extraction capabilities developed during pre-training. The architecture is typically modified by replacing the final classification layer(s) with new layers tailored to the target task (e.g., classifying sperm morphology into specific abnormality categories rather than generic object classes) [5].

Fine-tuning on Target Domain: The model is further trained (fine-tuned) on the specialized target dataset, allowing the weights to adapt to the specific characteristics of the new domain. Strategic approaches vary in how many layers are fine-tuned—options include updating only the new final layers while keeping earlier layers frozen, or progressively unfreezing layers with lower learning rates to balance specificity and generality [5] [21].
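The core mechanic of this workflow, keeping transferred weights fixed while training only a new head, can be shown with a toy NumPy example. Everything here is a stand-in chosen for illustration: the "backbone" is a fixed random projection rather than a real ImageNet-trained CNN, the data are synthetic, and the head is a plain softmax classifier trained by gradient descent.

```python
import numpy as np

rng = np.random.default_rng(42)

# Stand-in for a pre-trained backbone: fixed projection followed by ReLU.
W_backbone = rng.normal(size=(64, 16)) / np.sqrt(64)
backbone_before = W_backbone.copy()

def extract_features(x):
    """Frozen feature extractor: W_backbone is never updated."""
    return np.maximum(x @ W_backbone, 0.0)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(probs, y):
    return float(-np.log(probs[np.arange(len(y)), y] + 1e-12).mean())

# Synthetic target-domain data: 200 flattened 8x8 "images", 3 classes.
n, num_classes = 200, 3
X = rng.normal(size=(n, 64))
y = rng.integers(0, num_classes, size=n)
X[np.arange(n), y] += 3.0  # inject a class-dependent signal

# New, untrained classification head replacing the original one.
W_head = np.zeros((16, num_classes))
b_head = np.zeros(num_classes)

features = extract_features(X)  # computed once because the backbone is frozen
initial_loss = cross_entropy(softmax(features @ W_head + b_head), y)
lr = 0.1
for _ in range(200):
    probs = softmax(features @ W_head + b_head)
    grad_logits = probs.copy()
    grad_logits[np.arange(n), y] -= 1.0
    grad_logits /= n
    W_head -= lr * features.T @ grad_logits      # only the head is updated
    b_head -= lr * grad_logits.sum(axis=0)
final_loss = cross_entropy(softmax(features @ W_head + b_head), y)
print(f"loss: {initial_loss:.3f} -> {final_loss:.3f}")
```

Fine-tuning would extend this by also updating some of the backbone weights, typically with a smaller learning rate than the head.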

Application in Sperm Morphology Research

The Clinical Challenge

Infertility affects approximately 15% of couples globally, with male factors contributing to nearly 50% of cases [6] [7]. Sperm morphology analysis represents a crucial diagnostic procedure in male fertility assessment, as the shape and structure of spermatozoa are proven indicators of biological function and fertilization potential [6] [5]. Traditional manual morphological assessment is exceptionally challenging, characterized by:

- High subjectivity and variability between different technicians and laboratories [6] [7]

- Substantial time requirements for analyzing 200+ sperm cells per sample according to WHO standards [7]

- Classification complexity with up to 26 types of abnormalities across head, neck, and tail compartments [7]

- Disagreement among experts, with studies showing significant inter-observer variability in morphological classification [6]

These limitations of conventional analysis have created a pressing need for automated, objective, and standardized approaches that can deliver consistent, reproducible results across clinical settings.

Comparative Performance: Traditional ML vs. Deep Learning vs. Transfer Learning

The evolution of computational approaches for sperm morphology analysis demonstrates a clear trajectory of performance improvement, with transfer learning emerging as the most effective strategy, particularly given the limited dataset sizes typical in medical domains.

Table 1: Performance Comparison of Different Computational Approaches for Sperm Morphology Classification

| Methodological Approach | Key Characteristics | Reported Accuracy | Data Requirements | Limitations |
|---|---|---|---|---|
| Traditional Machine Learning (SVM, K-means, Decision Trees) | Relies on handcrafted features (shape, texture, size); expert-driven feature selection | 49%-90% [7] | Moderate (hundreds of samples) | Limited to pre-defined features; poor generalization; often only classifies head defects |
| Deep Learning from Scratch (CNN trained on target domain only) | Automatic feature extraction; end-to-end learning | 62%-94.1% [5] | Very high (thousands of labeled samples) | Computationally intensive; requires large datasets; risk of overfitting with small datasets |
| Transfer Learning (Pre-trained CNN fine-tuned on sperm images) | Leverages pre-trained features; adapts to target domain | Up to 96.0% [5] | Low (hundreds of samples sufficient) | Optimal performance depends on source-target domain similarity; requires careful fine-tuning strategy |

The quantitative advantage of transfer learning is exemplified by a 2021 study that modified the AlexNet architecture with batch normalization layers and initialized it with parameters pre-trained on ImageNet, achieving 96.0% accuracy on the HuSHeM dataset for sperm head classification and significantly outperforming both traditional machine learning methods and deep learning models trained from scratch [5]. This approach demonstrated not only higher accuracy but also greater computational efficiency, with a parameter count less than one-sixth that of a VGG16-based approach [5].

Experimental Protocol: Transfer Learning for Sperm Morphology Classification

For researchers implementing transfer learning for sperm classification, the following detailed protocol provides a robust methodological framework:

Data Acquisition and Preprocessing

Sample Preparation: Prepare semen smears following WHO laboratory guidelines [6]. Stain using standardized protocols (e.g., RAL Diagnostics staining kit or Diff-Quik method) to ensure consistent imaging characteristics [6] [5].

Image Acquisition: Capture individual sperm images using a Computer-Assisted Semen Analysis (CASA) system with a 100x oil immersion objective in bright-field mode [6]. Ensure each image contains a single spermatozoon with clear visibility of head, midpiece, and tail structures.

Expert Annotation: Have each sperm image independently classified by multiple experienced embryologists according to standardized classification systems (WHO criteria or modified David classification) [6] [5]. Resolve disagreements through consensus review with additional experts. A minimum of three expert annotations per image is recommended for establishing reliable ground truth.

Data Preprocessing Pipeline:

- Cropping: Automatically crop sperm heads using contour detection and elliptical fitting to focus on the most discriminative region [5].

- Rotation: Align sperm heads to a uniform orientation (e.g., major axis horizontal) to reduce rotational variance [5].

- Resizing: Standardize image dimensions to match input requirements of pre-trained models (typically 64×64 to 224×224 pixels) [5].

- Normalization: Scale pixel values to a standard range (e.g., 0-1), or standardize them using the dataset mean and standard deviation.
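The normalization step can take two common forms, range scaling and mean/standard-deviation standardization. The sketch below shows both with NumPy on synthetic 8-bit images; the function names are illustrative, and reusing training-set statistics for the test set is an assumed (though conventional) design choice.

```python
import numpy as np

def scale_to_unit_range(images_uint8):
    """Map 8-bit pixel values from [0, 255] to [0, 1]."""
    return images_uint8.astype(np.float32) / 255.0

def standardize(images, mean=None, std=None):
    """Subtract a mean and divide by a standard deviation.

    When mean/std are omitted they are computed from `images`.
    """
    mean = images.mean() if mean is None else mean
    std = images.std() if std is None else std
    return (images - mean) / (std + 1e-8), mean, std

rng = np.random.default_rng(0)
train_raw = rng.integers(0, 256, size=(50, 64, 64)).astype(np.uint8)
test_raw = rng.integers(0, 256, size=(10, 64, 64)).astype(np.uint8)

train_scaled = scale_to_unit_range(train_raw)
train_std, mean, std = standardize(train_scaled)
# Reuse training statistics so the test set is transformed identically.
test_std, _, _ = standardize(scale_to_unit_range(test_raw), mean, std)
print(float(train_scaled.min()), float(train_scaled.max()))
```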

Data Augmentation: Apply limited, realistic transformations to increase dataset diversity while preserving morphological integrity: slight rotations (±5°), minimal horizontal/vertical shifts (±10%), and careful brightness adjustments [6]. Avoid aggressive transformations that may alter morphological characteristics.

Transfer Learning Implementation

Model Selection: Choose an appropriate pre-trained architecture based on dataset size and computational resources. For smaller datasets (<1000 images), AlexNet or EfficientNetB0 is recommended; for larger datasets, ResNet50 or VGG16 may be suitable [5] [21].

Architecture Modification:

Fine-tuning Strategy:

- Phase 1: Freeze all pre-trained layers and train only the newly added classification layers for 20-30 epochs with a modest learning rate (e.g., 0.001).

- Phase 2: Unfreeze and fine-tune deeper layers of the network with an even lower learning rate (e.g., 0.0001) for an additional 30-50 epochs.

- Use early stopping with a patience of 10-15 epochs to prevent overfitting.
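The two-phase schedule and early stopping rule can be captured in a few lines of framework-independent Python. This is a sketch: the `EarlyStopping` class, the `phases` structure, and the simulated validation losses are all hypothetical, and the numeric values are simply the example figures quoted in the list above.

```python
class EarlyStopping:
    """Stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Two-phase plan using the example values from the protocol.
phases = [
    {"name": "head_only", "trainable": "new classification layers",
     "lr": 1e-3, "max_epochs": 30},
    {"name": "fine_tune", "trainable": "deeper pre-trained layers + head",
     "lr": 1e-4, "max_epochs": 50},
]

# Simulated validation losses: improve for five epochs, then plateau.
simulated_val_loss = [1.0, 0.8, 0.7, 0.65, 0.64] + [0.66] * 20
stopper = EarlyStopping(patience=10)
stopped_at = None
for epoch, loss in enumerate(simulated_val_loss):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
print(f"best epoch {stopper.best_epoch}, stopped at epoch {stopped_at}")
```

In a real pipeline the weights from `best_epoch` would normally be restored before moving on to the next phase or to evaluation.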

Training Configuration:

- Optimizer: Adam with default parameters (beta1=0.9, beta2=0.999)

- Loss Function: Categorical cross-entropy for multi-class classification

- Batch Size: 16-32 depending on available GPU memory

- Validation Split: 15-20% of training data for monitoring generalization performance

Model Evaluation

Performance Metrics: Compute comprehensive metrics including accuracy, precision, recall, F1-score, and area under the ROC curve (AUC) for each morphological class [5] [21].
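Per-class precision, recall, and F1 follow directly from true-positive, false-positive, and false-negative counts. The sketch below computes them in plain Python for made-up predictions; AUC is omitted because it requires predicted scores rather than hard labels.

```python
def per_class_metrics(y_true, y_pred):
    """Return overall accuracy and a per-class precision/recall/F1 report."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and equal in length")
    pairs = list(zip(y_true, y_pred))
    report = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == cls and p == cls for t, p in pairs)
        fp = sum(t != cls and p == cls for t, p in pairs)
        fn = sum(t == cls and p != cls for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[cls] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    return accuracy, report

# Hypothetical ground truth and model predictions.
y_true = ["normal", "normal", "normal", "tapered", "tapered", "amorphous"]
y_pred = ["normal", "normal", "tapered", "tapered", "tapered", "normal"]
accuracy, report = per_class_metrics(y_true, y_pred)
print(f"accuracy = {accuracy:.3f}")
for cls, scores in report.items():
    print(cls, {name: round(value, 3) for name, value in scores.items()})
```

Reporting per-class scores matters here because a rare defect class can be missed entirely while overall accuracy still looks acceptable.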

Cross-Validation: Implement k-fold cross-validation (k=5 or 10) to obtain robust performance estimates and reduce variance from data partitioning [5].
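A k-fold partition can be generated without any external library. The generator below is a minimal, unstratified sketch; the function name and seed are assumptions, and 216 is used only because it matches the public HuSHeM image count mentioned earlier.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx

splits = list(k_fold_indices(216, k=5))
for fold_number, (train_idx, val_idx) in enumerate(splits, start=1):
    print(f"fold {fold_number}: train={len(train_idx)} val={len(val_idx)}")
```

Each sample appears in exactly one validation fold, so averaging the k validation scores uses every image once for evaluation.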

Statistical Validation: Perform bootstrap resampling to calculate confidence intervals for performance metrics and assess the statistical reliability of results [23].
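A percentile bootstrap is one simple way to attach a confidence interval to an accuracy estimate. The sketch below resamples per-image correctness flags with replacement; the function name, the 2,000 resamples, and the example of 90 correct predictions out of 100 are all illustrative assumptions.

```python
import random

def bootstrap_accuracy_ci(correct, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for accuracy from 0/1 correctness flags."""
    if not correct:
        raise ValueError("need at least one prediction")
    rng = random.Random(seed)
    n = len(correct)
    stats = []
    for _ in range(n_resamples):
        resample = rng.choices(correct, k=n)  # sample with replacement
        stats.append(sum(resample) / n)
    stats.sort()
    lower = stats[int((alpha / 2) * n_resamples)]
    upper = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return sum(correct) / n, lower, upper

# Hypothetical test set: 90 correct and 10 incorrect predictions.
correct_flags = [1] * 90 + [0] * 10
point, ci_low, ci_high = bootstrap_accuracy_ci(correct_flags)
print(f"accuracy = {point:.2f}, 95% CI = [{ci_low:.2f}, {ci_high:.2f}]")
```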

Clinical Validation: Compare model classifications with independent expert annotations not used in training to assess real-world clinical relevance and diagnostic agreement.

Research Reagent Solutions

Successful implementation of deep learning for sperm morphology analysis requires both computational resources and specialized experimental materials:

Table 2: Essential Research Reagents and Resources for Sperm Morphology Analysis

| Resource Category | Specific Examples | Function/Application |
|---|---|---|
| Staining Kits | RAL Diagnostics kit, Diff-Quik method | Standardized staining of semen smears for consistent morphological visualization |
| Public Datasets | HuSHeM (216 images), SCIAN-MorphoSpermGS (1854 images), SMD/MSS (1000+ images), SVIA dataset (125,000+ annotations) [6] [7] [5] | Benchmarking algorithms; training and validation of models; comparative studies |
| Image Acquisition Systems | CASA (Computer-Assisted Semen Analysis) systems with digital cameras [6] | Standardized capture of sperm images under consistent magnification and lighting |
| Deep Learning Frameworks | TensorFlow, PyTorch, Keras with pre-trained models (AlexNet, VGG, ResNet, EfficientNet) [5] [21] | Implementation of transfer learning pipelines; model training and inference |
| Computational Infrastructure | GPU-accelerated workstations (NVIDIA RTX series or equivalent), cloud computing platforms | Handling computational demands of deep learning model training and evaluation |

Visualizing the Workflow

The following diagram illustrates the complete transfer learning workflow for sperm morphology classification, from data preparation through to model deployment:

Diagram 1: Complete transfer learning workflow for sperm morphology classification, showing knowledge transfer from general image recognition to specialized medical image analysis.

The paradigm shift represented by deep learning and transfer learning continues to evolve, with several emerging trends poised to further transform sperm morphology research and other biomedical applications:

Foundation models for tabular data, such as TabPFN, demonstrate that the transfer learning paradigm can extend beyond image data to structured clinical information, potentially enabling integrated analysis of both visual morphological data and associated clinical parameters [24]. Hybrid approaches combining the strengths of Contrastive Learning (CL) and Deep Transfer Learning (DTL) show promise for addressing extreme class imbalance situations, such as when certain morphological defects are exceptionally rare in patient populations [23]. Additionally, explainable AI techniques are being developed to address the "black box" nature of deep learning models, making their decision processes more interpretable to clinicians and researchers [22].

In conclusion, the integration of deep learning with transfer learning has fundamentally reshaped the landscape of sperm morphology analysis and biomedical research more broadly. This paradigm shift from manual feature engineering to automated, data-driven learning has enabled unprecedented accuracy in classification tasks while simultaneously addressing the critical challenge of limited dataset sizes in specialized medical domains. As these technologies continue to mature and become more accessible, they hold the potential to standardize and automate male fertility assessment globally, reducing inter-laboratory variability and providing clinicians with more reliable diagnostic information. The methodological framework presented in this article provides researchers with a comprehensive foundation for leveraging these transformative technologies in their own work, contributing to the ongoing advancement of computational approaches in reproductive medicine and beyond.

Implementing Transfer Learning Models for Sperm Classification: Architectures and Workflows

The manual assessment of sperm morphology is a cornerstone of male infertility diagnosis, yet it remains highly subjective, challenging to standardize, and dependent on the technician's expertise [25] [7]. Artificial intelligence (AI), particularly deep learning, offers a path toward automation, standardization, and improved accuracy in this critical area of reproductive medicine [6] [7]. However, a significant challenge in developing robust deep learning solutions is the frequent scarcity of large, annotated medical image datasets [25] [6].

Transfer learning has emerged as a powerful technique to overcome this data limitation [26]. This approach involves taking a model pre-trained on a very large dataset, such as ImageNet, and adapting it to a new, specific task—like sperm classification [27]. By leveraging the generic feature detectors (e.g., for edges, textures, shapes) learned from millions of images, researchers can achieve high performance on specialized medical tasks with limited data, saving substantial computational resources and time [26] [28]. This document provides a structured review of popular pre-trained architectures and detailed experimental protocols to guide researchers in selecting and implementing the most suitable model for sperm morphology analysis.

A Review of Popular Pre-trained Architectures

The selection of an appropriate pre-trained model is a critical first step. The following section reviews key architectures, highlighting their core innovations, strengths, and weaknesses in the context of biomedical image analysis.

Model Architectures: From AlexNet to ResNet

AlexNet (2012): A pioneering deep convolutional neural network that demonstrated the power of deep learning on a large scale by winning the ImageNet challenge in 2012 [29] [30]. Its key innovations included the use of the ReLU activation function to speed up training, and dropout layers to reduce overfitting [29] [30]. While foundational, its use of large filters (11x11, 5x5) and lower depth make it less efficient and performant compared to more modern architectures for complex tasks like sperm segmentation [29] [31].

VGG (VGG16 & VGG19) (2014): The VGG network family emphasized the importance of network depth by using a very uniform architecture built from stacks of small 3x3 convolutional filters [29] [31] [30]. This design increased depth and non-linearity while controlling the number of parameters, leading to significantly improved accuracy over AlexNet [31]. VGG's simple and consistent structure has made it a popular choice for feature extraction in transfer learning [31]. A primary drawback is its computational expense; the model has a large number of parameters, and the trained VGG16 model is over 500MB, making it potentially cumbersome for some deployment scenarios [29].
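The parameter saving from stacking small filters can be checked with simple arithmetic: stacked stride-1 3x3 convolutions cover the same receptive field as one larger filter while using fewer weights. The sketch below assumes equal input and output channel counts and ignores biases; the channel width of 64 is an arbitrary illustration.

```python
def stacked_receptive_field(kernel, n_layers):
    """Receptive field of n stacked stride-1 k x k convolutions."""
    return n_layers * (kernel - 1) + 1

def conv_weights(kernel, channels, n_layers=1):
    """Weight count (no biases) with `channels` inputs and outputs."""
    return n_layers * kernel * kernel * channels * channels

channels = 64  # assumed channel width, for illustration only
comparison = []
for n_layers in (2, 3):
    field = stacked_receptive_field(3, n_layers)
    stacked = conv_weights(3, channels, n_layers)
    single = conv_weights(field, channels)
    comparison.append((n_layers, field, stacked, single))
    print(f"{n_layers} x 3x3 covers {field}x{field}: "
          f"{stacked} weights vs {single} for one {field}x{field} layer")
```

The stacked version also inserts a non-linearity after every layer, which is the source of the added non-linearity noted above.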

GoogLeNet (Inception v1) (2014): This architecture introduced the "Inception module," which allowed the network to be wider rather than just deeper [29]. Its key innovation was using parallel convolution paths with filters of different sizes (1x1, 3x3, 5x5) within the same layer, enabling the efficient capture of features at multiple scales [29] [30]. Crucially, it used 1x1 convolutions for dimensionality reduction, which helped control computational cost [29]. This efficient design was a precursor to more complex and powerful modern networks.

ResNet (2015): The Residual Network (ResNet) addressed a fundamental problem in very deep networks: degradation. As networks become deeper, accuracy can saturate and then degrade, not due to overfitting but because of optimization difficulties [30]. ResNet introduced "skip connections" (residual connections) that add a block's input directly to its output, so the stacked layers only need to learn a residual correction to the identity mapping. This makes it easier to train networks with hundreds or even thousands of layers [30]. The architecture mitigates the vanishing gradient problem and has become a default choice for many computer vision tasks due to its robustness and high performance [26] [30].
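The effect of a skip connection is easy to see in a toy forward pass. The sketch below uses NumPy with small dense layers in place of convolutions and omits the batch normalization and final activation found in real ResNet blocks; all shapes and weights are arbitrary illustrations.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def plain_block(x, W1, W2):
    """Two stacked layers without a shortcut: the output is F(x)."""
    return relu(x @ W1) @ W2

def residual_block(x, W1, W2):
    """Same layers with a skip connection: the output is x + F(x)."""
    return x + plain_block(x, W1, W2)

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))           # batch of 4 feature vectors
W1 = rng.normal(size=(8, 8)) * 0.1
W2 = np.zeros((8, 8))                 # F contributes nothing yet

identity_out = residual_block(x, W1, W2)
plain_out = plain_block(x, W1, W2)
print(np.allclose(identity_out, x), np.allclose(plain_out, 0.0))
```

With the second layer at zero, the residual block passes its input through unchanged while the plain block outputs nothing useful, which illustrates why adding residual blocks does not have to hurt a network that is already performing well.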

Comparative Analysis of Architectures

Table 1: Comparative analysis of popular pre-trained model architectures.

| Architecture | Key Innovation | Depth (Layers) | Strengths | Weaknesses / Suitability for Sperm Analysis |
|---|---|---|---|---|
| AlexNet [29] [30] | ReLU, Dropout, GPU Training | 8 | Pioneering architecture; proven effectiveness on ImageNet | Lower depth and performance vs. newer models; less suitable for fine-grained sperm feature extraction |
| VGG16/VGG19 [29] [31] | Depth with small (3x3) filters | 16 / 19 | Simple, uniform architecture; high accuracy, excellent for feature extraction | Very large model size (>500MB) [29]; computationally expensive; good candidate if resources allow |
| GoogLeNet [29] [30] | Inception modules (multi-scale) | 22 | Captures features at multiple scales; computationally efficient | More complex architecture; potential for fine-grained multi-scale sperm analysis (head, tail) |
| ResNet [26] [30] | Residual (skip) connections | 50, 101, 152+ | Solves degradation in very deep networks; robust training, state-of-the-art performance | Often the preferred starting point for its balance of depth and trainability |

Experimental Protocols for Sperm Morphology Analysis

This section outlines a standardized experimental workflow for applying pre-trained models to sperm morphology analysis, from data preparation to model evaluation.

Workflow for Transfer Learning in Sperm Analysis

The following diagram visualizes the end-to-end experimental protocol for applying transfer learning to sperm classification.

Transfer Learning Workflow for Sperm Analysis

Data Preparation and Augmentation Protocol

Objective: To create a robust and generalized dataset for training a deep learning model, mitigating the risk of overfitting given the typically limited data available [25] [6].

Data Acquisition:

- Acquire sperm images using a Computer-Assisted Semen Analysis (CASA) system or a microscope with a digital camera [6]. Standardize the staining process (e.g., modified Hematoxylin/Eosin) and use an oil immersion 100x objective for consistent, high-quality images [25] [6].

- Annotation: Each sperm image must be meticulously annotated by multiple experts to establish a reliable ground truth. This involves labeling different morphological parts (head, acrosome, nucleus, midpiece, tail) and classifying them according to a standard like the modified David classification [6]. Analyze inter-expert agreement (e.g., Total Agreement, Partial Agreement) to quantify the subjectivity of the task and ensure label quality [6].

Data Preprocessing:

- Cleaning: Identify and handle any missing or corrupt images.

- Resizing and Normalization: Resize all images to the input dimensions required by the chosen pre-trained model (e.g., 224x224 for VGG) [31]. Normalize pixel values to a standard range, typically [0, 1] or [-1, 1].

- Denoising: Apply techniques to reduce noise from insufficient lighting or poor staining, which can improve model performance [6].

Data Augmentation (Critical Step):

- To artificially expand the dataset and improve model generalization, apply a series of random transformations to the training images. Recommended techniques include [25] [6]:

- Geometric: Random rotation (±10°), horizontal and vertical flipping, slight zooming (±10%), and shearing.

- Photometric: Adjusting brightness, contrast, and saturation within a small range (±20%).

- Implementation: This is typically performed in real-time during training using frameworks like TensorFlow/Keras or PyTorch.
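On-the-fly photometric augmentation can be sketched with NumPy alone. The example below jitters brightness and contrast by up to 20% on a synthetic RGB array; interpreting "±20%" as multiplicative factors is an assumption, saturation adjustment is left out, and in practice the augmentation utilities of the chosen framework would normally be used instead.

```python
import numpy as np

def photometric_jitter(image, rng, max_change=0.2):
    """Randomly scale brightness and contrast of an image in [0, 1]."""
    brightness = 1.0 + rng.uniform(-max_change, max_change)
    contrast = 1.0 + rng.uniform(-max_change, max_change)
    mean = image.mean()
    out = (image - mean) * contrast + mean   # stretch around the mean
    out = out * brightness                   # then scale overall intensity
    return np.clip(out, 0.0, 1.0)

rng = np.random.default_rng(1)
image = rng.random((224, 224, 3))  # synthetic stand-in for a stained image
# A fresh random variant is drawn each time an image is requested.
batch = np.stack([photometric_jitter(image, rng) for _ in range(4)])
print(batch.shape, float(batch.min()), float(batch.max()))
```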

Transfer Learning and Model Training Protocol

Objective: To adapt a pre-trained model to the specific task of sperm morphology classification or segmentation.

Model Selection & Adaptation:

- Selection: Choose a pre-trained model (see Section 2). ResNet-based models are often a strong starting point because their residual connections make deep networks easier to train [30].

- Adaptation: Remove the original classification head (the final fully-connected layer) of the pre-trained model. Replace it with a new, untrained head tailored to the sperm classification task. This could be a simple softmax layer for binary (normal/abnormal) classification or multiple output layers for a multi-label problem [27].

Training Strategies:

- Feature Extraction: Freeze the weights of all convolutional layers from the pre-trained model. Only train the weights of the newly added classification head. This is a fast, computationally cheap method suitable for very small datasets [27].

- Fine-Tuning: After feature extraction, unfreeze some of the deeper layers of the pre-trained model and jointly train them with the new head. This allows the model to adapt its more specialized features to the sperm image domain, potentially leading to higher performance [27] [28]. Use a lower learning rate (e.g., 10 times smaller) for the fine-tuned layers to avoid destructive updates to the pre-trained weights [27].
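The two training strategies can be illustrated with a deliberately tiny NumPy model rather than a real CNN. Everything in this sketch is a stand-in: random vectors play the role of images, a single ReLU layer plays the "pre-trained backbone", and a softmax layer is the new head. Stage 1 keeps the backbone frozen (feature extraction); stage 2 unfreezes it with a learning rate 10 times smaller than the head's (fine-tuning). The learning rates and epoch counts are illustrative assumptions, not values from the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy stand-ins (assumptions): random vectors instead of sperm images and a
# one-layer "backbone" instead of a pre-trained CNN.
X = rng.normal(size=(200, 32))
y = (X[:, 0] + X[:, 1] > 0).astype(int)            # two synthetic classes
W_backbone = rng.normal(scale=0.3, size=(32, 16))  # "pre-trained" weights
W_head = np.zeros((16, 2))                         # new, untrained head

def forward(X, W_backbone, W_head):
    hidden = np.maximum(X @ W_backbone, 0.0)       # ReLU features
    logits = hidden @ W_head
    logits -= logits.max(axis=1, keepdims=True)    # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)
    return hidden, probs

def loss_fn(probs, y):
    return -np.log(probs[np.arange(len(y)), y] + 1e-12).mean()

def train(X, y, W_backbone, W_head, epochs, lr_head, lr_backbone=0.0):
    """Full-batch gradient descent; lr_backbone=0.0 keeps the backbone frozen."""
    W_backbone, W_head = W_backbone.copy(), W_head.copy()
    for _ in range(epochs):
        hidden, probs = forward(X, W_backbone, W_head)
        grad_logits = probs.copy()
        grad_logits[np.arange(len(y)), y] -= 1.0
        grad_logits /= len(y)
        grad_head = hidden.T @ grad_logits
        if lr_backbone > 0.0:
            # Backpropagate through the head and the ReLU into the backbone.
            grad_hidden = (grad_logits @ W_head.T) * (hidden > 0)
            W_backbone -= lr_backbone * (X.T @ grad_hidden)
        W_head -= lr_head * grad_head
    return W_backbone, W_head

loss0 = loss_fn(forward(X, W_backbone, W_head)[1], y)

# Stage 1: feature extraction (only the head is updated).
Wb1, Wh1 = train(X, y, W_backbone, W_head, epochs=300, lr_head=0.05)
loss1 = loss_fn(forward(X, Wb1, Wh1)[1], y)

# Stage 2: fine-tuning (backbone unfrozen with a 10x smaller learning rate).
Wb2, Wh2 = train(X, y, Wb1, Wh1, epochs=300, lr_head=0.05, lr_backbone=0.005)
loss2 = loss_fn(forward(X, Wb2, Wh2)[1], y)
```

With a real framework, the same pattern is usually expressed by disabling gradient updates for the backbone parameters in stage 1 and then assigning separate learning rates to backbone and head parameter groups in stage 2.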

Training Configuration:

- Optimizer: Use optimizers like Stochastic Gradient Descent (SGD) with momentum (e.g., 0.9) or Adam [29] [27].

- Learning Rate: Employ a learning rate scheduler, either one that reduces the rate when validation performance plateaus or a fixed step schedule (e.g., reduce by a factor of 10 every 10 epochs) [29].

- Regularization: Utilize dropout layers in the fully-connected layers to prevent overfitting [29] [27].
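The plateau-based variant of learning rate scheduling can be sketched in plain Python as follows. The class name, the `patience` value, and the validation losses are hypothetical; deep learning frameworks provide equivalent built-in schedulers (for example `ReduceLROnPlateau` in both PyTorch and Keras) that would normally be preferred.

```python
class ReduceOnPlateau:
    """Divide the learning rate by `factor` when validation loss stops improving."""

    def __init__(self, lr, factor=10.0, patience=3, min_lr=1e-6):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss and return the current rate."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            # Reduce only after more than `patience` epochs without improvement.
            if self.bad_epochs > self.patience:
                self.lr = max(self.lr / self.factor, self.min_lr)
                self.bad_epochs = 0
        return self.lr

# Hypothetical validation losses: improvement stalls after the third epoch.
scheduler = ReduceOnPlateau(lr=0.01, patience=2)
val_losses = [0.90, 0.70, 0.65, 0.66, 0.65, 0.67, 0.64]
history = [scheduler.step(loss) for loss in val_losses]
```

In this run the rate stays at 0.01 for the first five epochs and is cut by a factor of 10 at the sixth, after three consecutive epochs without a new best loss.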

Model Evaluation and Validation Protocol

Objective: To rigorously assess the model's performance and ensure its generalizability to new, unseen data.

- Data Splitting: Split the annotated dataset into three subsets: Training Set (~70-80%), Validation Set (~10-15%), and Test Set (~10-15%) [6]. The test set must be held back and used only for the final evaluation.

- Evaluation Metrics: Move beyond simple accuracy. Report a comprehensive set of metrics calculated on the test set [32]:

- Precision: The ability of the classifier not to label a negative sample as positive.

- Recall (Sensitivity): The ability of the classifier to find all the positive samples.

- F1-Score: The harmonic mean of precision and recall.

- Confusion Matrix: A detailed breakdown of the model's predictions versus the actual labels.

- Comparative Analysis: Benchmark the performance of the transfer learning approach against custom-built models (e.g., a simple CNN) or traditional machine learning methods (e.g., SVM with handcrafted features) to quantify the benefits [32] [7].
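The metrics listed above follow directly from the confusion matrix. The sketch below computes them per class in plain Python; the function names and the label lists are hypothetical illustration data, and a library such as scikit-learn would typically be used for real evaluations.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Rows are true classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix

def per_class_metrics(matrix, labels):
    """Return {label: (precision, recall, f1)} from a confusion matrix."""
    results = {}
    for i, label in enumerate(labels):
        tp = matrix[i][i]
        predicted = sum(row[i] for row in matrix)  # column total
        actual = sum(matrix[i])                    # row total
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        results[label] = (precision, recall, f1)
    return results

# Hypothetical test-set labels and predictions.
labels = ["normal", "abnormal"]
y_true = ["normal", "normal", "normal", "abnormal",
          "abnormal", "abnormal", "abnormal", "abnormal"]
y_pred = ["normal", "normal", "abnormal", "abnormal",
          "abnormal", "abnormal", "normal", "abnormal"]

matrix = confusion_matrix(y_true, y_pred, labels)
metrics = per_class_metrics(matrix, labels)
```

Reporting the per-class values alongside the confusion matrix makes it visible when a model performs well on the majority class but poorly on a rarer morphological class.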

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key reagents, materials, and computational tools for deep learning-based sperm analysis.

| Item Name | Function / Explanation | Example / Specification |
|---|---|---|
| CASA System [6] | For standardized, high-throughput acquisition of digital sperm images. | MMC CASA system or equivalent. |
| Standardized Staining Kit [6] | To ensure consistent contrast and visibility of sperm structures for image analysis. | RAL Diagnostics kit, modified Hematoxylin/Eosin procedure [25]. |
| Pre-trained Models [26] [27] | Provides foundational feature detectors, saving time and computational resources. | VGG16, ResNet-50, available in the Keras/TensorFlow and PyTorch model zoos. |
| Deep Learning Framework | Provides the programming environment to build, train, and evaluate models. | TensorFlow/Keras, PyTorch, Python 3.x. |
| Computational Hardware [28] | Accelerates the model training process, which is computationally intensive. | NVIDIA GPUs (e.g., TITAN series, RTX series) with sufficient VRAM. |
| Annotation Software | Allows experts to manually label sperm parts and classes to create ground truth data. | LabelImg, VGG Image Annotator (VIA), or custom in-house tools. |

Model Selection Decision Framework

The final choice of a pre-trained model depends on the specific project constraints and goals. The following diagram provides a logical pathway for making this decision.

Pre-trained Model Selection Pathway

Within the broader scope of developing a robust transfer learning approach for sperm classification, the construction of a standardized data preprocessing pipeline is a critical foundational step. The accuracy of any deep learning model, particularly those leveraging transfer learning, is heavily dependent on the quality and consistency of its input data [13] [7]. In the specific domain of sperm morphology analysis, this challenge is pronounced. Manual assessments, which are the traditional standard, suffer from significant subjectivity and inter-observer variability, hindering the creation of unified datasets needed for reliable model generalization [6] [15]. Furthermore, existing public sperm image datasets are often characterized by issues such as low resolution, noise, and inconsistent staining, which can drastically reduce model performance if not adequately addressed [13] [7]. This document outlines a detailed preprocessing protocol to standardize sperm images, thereby enhancing the performance and reproducibility of subsequent transfer learning-based classification models. By implementing rigorous cropping, rotation, and normalization techniques, researchers can mitigate data-induced biases and create a solid foundation for advanced artificial intelligence (AI) applications in reproductive medicine.

Data Preprocessing Workflow

The preprocessing of sperm images is a multi-stage pipeline designed to transform raw, variable-quality microscopic images into a clean, uniform set of inputs suitable for deep learning models. The following workflow diagram illustrates the logical sequence of these operations, from initial acquisition to the final preprocessed data ready for model training.

Workflow Diagram

Detailed Experimental Protocols

Cropping and Isolation of Individual Sperm

Objective: To extract regions of interest (ROIs) containing individual spermatozoa from a larger microscopic field, which may contain multiple cells, debris, or artifacts.

Rationale: Whole-field images are unsuitable for direct model input. Isolating individual sperm enables the model to focus on morphological features of a single cell and is a prerequisite for many subsequent steps [6]. Automated systems can struggle with overlapping sperm or debris, making initial manual verification crucial [13].

Protocol:

- Input: Raw semen smear images, typically stained (e.g., RAL Diagnostics kit) and captured using a CASA system or similar microscope with a x100 oil immersion objective [6].

- Bounding Box Generation:

- Manual Annotation: An expert annotator draws a tight bounding box around each complete spermatozoon (head, midpiece, and tail), ensuring minimal background. This is the gold standard for creating ground truth data [13] [7].

- Automated Detection: For larger datasets, employ an object detection model (e.g., YOLO, Faster R-CNN) pre-trained on annotated sperm data. The SVIA dataset, which contains 125,000 annotated instances for object detection, is a valuable resource for this purpose [13].

- Exclusion Criteria: Discard images where the sperm is overlapping with another cell or debris, is truncated at the image border, or has significant artifacts that obscure morphological details [13].

- Output: A dataset of cropped images, each containing a single, centered spermatozoon. The image dimensions should be standardized. A common practice is to resize images to 80x80 pixels in grayscale [6].
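The cropping and exclusion logic can be sketched as follows, assuming bounding boxes are already available from manual annotation or a detector. The `(x0, y0, x1, y1)` pixel convention (with `x1` and `y1` exclusive), the helper names, and the example boxes are assumptions for illustration. Overlap between bounding boxes is used here as a simple proxy for overlapping cells, which is stricter than necessary and does not catch debris.

```python
import numpy as np

def touches_border(box, image_shape, margin=1):
    """True if the box lies within `margin` pixels of the image edge."""
    x0, y0, x1, y1 = box
    h, w = image_shape[:2]
    return x0 < margin or y0 < margin or x1 > w - margin or y1 > h - margin

def boxes_overlap(a, b):
    """True if two (x0, y0, x1, y1) boxes share any area."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]

def crop_isolated_sperm(image, boxes):
    """Crop every box that is neither truncated nor overlapping another box."""
    crops = []
    for i, box in enumerate(boxes):
        if touches_border(box, image.shape):
            continue
        if any(boxes_overlap(box, other)
               for j, other in enumerate(boxes) if j != i):
            continue
        x0, y0, x1, y1 = box
        crops.append(image[y0:y1, x0:x1].copy())
    return crops

# Synthetic stand-in for one microscopic field and its annotations.
field = np.zeros((480, 640), dtype=np.uint8)
boxes = [
    (50, 60, 130, 140),    # isolated cell: kept
    (300, 200, 380, 280),  # overlaps the next box: discarded
    (350, 250, 430, 330),  # overlaps the previous box: discarded
    (600, 100, 640, 180),  # reaches the right border: discarded
]
crops = crop_isolated_sperm(field, boxes)
```

Each retained crop can then be converted to grayscale and resized to the standard dimensions chosen for the dataset.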

Rotation and Orientation Normalization

Objective: To achieve rotational invariance by aligning all sperm images to a canonical orientation, reducing unnecessary variability for the model.

Rationale: A sperm cell can be captured in any rotational orientation. A model should classify morphology based on shape, not angle. Normalizing orientation simplifies the learning task and improves convergence [15].

Protocol:

- Input: Cropped images of individual sperm from the previous step.

- Reference Axis Definition: Define the primary axis of the sperm. The most stable reference is the long axis of the sperm head.

- Angle Calculation:

- Convert the image to grayscale if necessary.

- Use image moments or the Hough Transform to detect the orientation of the sperm head's elongated shape.

- Calculate the angle of this primary axis relative to the horizontal.

- Image Rotation: Apply an affine transformation to rotate the image so that the sperm's primary axis is aligned horizontally. Use an interpolation method such as bilinear interpolation to limit the loss of image quality during rotation.

- Output: A set of orientation-normalized sperm images, with heads consistently aligned.
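The angle calculation based on image moments can be sketched as below. The sketch assumes a binary mask of the sperm head is already available (for example from thresholding, which is not shown) and tests the estimate on a synthetic ellipse with a known tilt; the function names are hypothetical. The orientation follows from the second-order central moments as $\theta = \tfrac{1}{2}\,\operatorname{atan2}(2\mu_{11},\ \mu_{20} - \mu_{02})$.

```python
import numpy as np

def principal_axis_angle(mask):
    """Angle (degrees) of the major axis relative to the horizontal x-axis.

    Uses second-order central moments. Image rows grow downward, so a
    positive angle means the axis tilts toward increasing row index.
    """
    ys, xs = np.nonzero(mask)
    x_c, y_c = xs.mean(), ys.mean()
    mu20 = ((xs - x_c) ** 2).mean()
    mu02 = ((ys - y_c) ** 2).mean()
    mu11 = ((xs - x_c) * (ys - y_c)).mean()
    return np.degrees(0.5 * np.arctan2(2.0 * mu11, mu20 - mu02))

def ellipse_mask(size, a, b, angle_deg):
    """Synthetic head-like ellipse with semi-axes a > b, tilted by angle_deg."""
    ys, xs = np.mgrid[0:size, 0:size]
    cx = cy = (size - 1) / 2.0
    t = np.deg2rad(angle_deg)
    u = (xs - cx) * np.cos(t) + (ys - cy) * np.sin(t)
    v = -(xs - cx) * np.sin(t) + (ys - cy) * np.cos(t)
    return (u / a) ** 2 + (v / b) ** 2 <= 1.0

mask = ellipse_mask(size=80, a=25, b=12, angle_deg=30.0)
angle = principal_axis_angle(mask)  # close to the 30 degree tilt
```

Two caveats apply. The moment-based angle is only defined up to 180°, so it cannot distinguish which end of the axis the tail is on; an additional cue is needed if a consistent head direction matters. The sign convention for the corrective rotation also differs between imaging libraries, so it is worth verifying on a synthetic image such as the one above.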

Image Normalization

Objective: To standardize the pixel value distribution across the entire dataset, mitigating variations caused by staining intensity, lighting conditions, and microscope settings.

Rationale: Inconsistent pixel value distributions can cause the model to learn these artifacts rather than the underlying biological features. Normalization stabilizes and accelerates the training process [6] [15].

Protocol:

- Input: Oriented, cropped sperm images (e.g., 80x80 pixels).

- Grayscale Conversion: Ensure all images are single-channel (grayscale). This reduces computational complexity and is sufficient for morphological analysis based on shape and texture. The SMD/MSS dataset preprocessing used grayscale conversion [6].

- Pixel Intensity Normalization: Rescale pixel intensities from their original range (e.g., 0-255) to a standardized range of [0, 1] by dividing all values by 255. Alternatively, for models that require it, standardize the data by subtracting the mean and dividing by the standard deviation of the dataset.

- Noise Reduction (Denoising):

- Problem: Sperm images can contain noise from insufficient lighting or poor staining [6].

- Solution: Apply a smoothing filter such as a Gaussian blur or a median filter to reduce high-frequency noise without significantly eroding important morphological edges. More advanced methods, like wavelet denoising, have also been employed in prior research [15].

- Output: A finalized, preprocessed image with normalized pixel values, ready for input into a deep learning model.
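The normalization steps above can be combined into a short NumPy sketch: grayscale conversion, rescaling to [0, 1], a 3x3 median filter for denoising, and optional dataset-level standardization. The function names, the luminance weights (the common ITU-R BT.601 values), and the synthetic input are assumptions; the cited studies do not specify these details.

```python
import numpy as np

def to_grayscale(rgb):
    """Luminance-weighted grayscale (ITU-R BT.601 weights) from an RGB array."""
    weights = np.array([0.299, 0.587, 0.114], dtype=np.float32)
    return rgb[..., :3].astype(np.float32) @ weights

def median_filter3(image):
    """3x3 median filter with edge replication."""
    padded = np.pad(image, 1, mode="edge")
    h, w = image.shape
    windows = np.stack([padded[dy:dy + h, dx:dx + w]
                        for dy in range(3) for dx in range(3)])
    return np.median(windows, axis=0)

def preprocess(rgb):
    """Grayscale conversion, [0, 1] scaling, then median denoising."""
    gray = to_grayscale(rgb) / 255.0
    return median_filter3(gray)

def standardize(images, mean, std):
    """Zero-mean, unit-variance scaling with dataset-level statistics."""
    return (images - mean) / std

# Synthetic stand-in for a small set of oriented RGB crops.
rng = np.random.default_rng(1)
rgb_crops = rng.integers(0, 256, size=(16, 80, 80, 3), dtype=np.uint8)

images = np.stack([preprocess(crop) for crop in rgb_crops])
train_mean, train_std = images.mean(), images.std()
standardized = standardize(images, train_mean, train_std)
```

When standardization is used, the mean and standard deviation should be computed on the training split only and then reused unchanged for validation and test images.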

The following table summarizes the performance improvements attributed to robust preprocessing and subsequent deep-learning modeling as reported in recent literature.

Table 1: Impact of Preprocessing and Modeling on Sperm Morphology Classification Performance

| Study / Dataset | Dataset Size (Preprocessed) | Key Preprocessing Steps | Model Architecture | Reported Accuracy | Key Improvement |
|---|---|---|---|---|---|
| SMD/MSS [6] | 1,000 → 6,035 (after augmentation) | Grayscale, Resizing (80x80), Data Augmentation | Custom CNN | 55% to 92% | Standardization and augmentation enabled effective model training. |
| SMIDS [15] | 3,000 images | Not Specified (Implicit Cropping/Norm.) | CBAM-ResNet50 + Feature Engineering | 96.08% ± 1.2% | Hybrid approach leveraging deep features. |
| HuSHeM [15] | 216 images | Not Specified (Implicit Cropping/Norm.) | CBAM-ResNet50 + Feature Engineering | 96.77% ± 0.8% | High accuracy even on a smaller dataset. |
| Conventional ML [13] | Varies | Manual Feature Extraction | SVM, K-means, Decision Trees | Up to ~90% | Highlights limitation of manual feature reliance. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Sperm Morphology Analysis

| Item Name | Function / Application in Protocol |
|---|---|
| RAL Diagnostics Staining Kit | Provides differential staining of sperm structures (head, midpiece, tail) for enhanced visual contrast under bright-field microscopy [6]. |
| Computer-Assisted Semen Analysis (CASA) System | Integrated system (microscope, camera, software) for standardized image acquisition and initial morphometric analysis (head width/length, tail length) [6]. |
| MMC CASA System | A specific CASA system used for acquiring high-resolution images with an oil immersion x100 objective, facilitating detailed morphological examination [6]. |
| Sperm Morphology Datasets (e.g., SVIA, SMD/MSS, SMIDS) | Publicly available, annotated datasets providing crucial ground-truth data for training and validating preprocessing algorithms and deep learning models [13] [6]. |
| Data Augmentation Tools (e.g., in Python) | Software libraries (e.g., TensorFlow, PyTorch, Keras) used to artificially expand dataset size and diversity through transformations, combating overfitting [6]. |

Visualization of the Complete Experimental Pathway

The entire pathway, from raw biological sample to a trained diagnostic model, integrates wet-lab practices with computational analysis. The following diagram maps this comprehensive workflow, highlighting the central role of the data preprocessing pipeline.