Leveraging VGG16 Transfer Learning for Advanced Sperm Head Morphology Classification in Male Fertility Assessment

Abstract

This article provides a comprehensive analysis of applying VGG16-based transfer learning to automate the morphological classification of human sperm heads, a critical yet subjective task in male infertility diagnosis. We explore the foundational challenges in manual semen analysis and the theoretical superiority of deep learning over conventional methods. A detailed methodological guide for implementing a VGG16 transfer learning pipeline is presented, covering data preprocessing, model adaptation, and fine-tuning strategies specifically for sperm images. The content further addresses common computational and data-related challenges, offering practical optimization techniques, including selective fine-tuning and data augmentation. Finally, we validate the approach through a comparative performance analysis against other state-of-the-art methods and architectures, demonstrating its high accuracy and potential for clinical integration to standardize and enhance reproductive diagnostics.

The Clinical Problem and Deep Learning Solution: Foundations of Sperm Morphology Classification

The Critical Challenge of Male Infertility and Sperm Morphology Analysis

Male infertility represents a significant global health challenge, affecting approximately 15% of couples worldwide, with male factors contributing to nearly half of all infertility cases [1]. The epidemiological burden is substantial, with the global number of cases and Disability-Adjusted Life Years (DALYs) for male infertility having increased by 74.66% and 74.64%, respectively, since 1990 [2]. This condition transcends reproductive health alone, as emerging evidence indicates that male infertility may reflect broader health concerns and is associated with increased all-cause mortality, positioning it as a biomarker of overall male health status [1].

Sperm morphology analysis represents a critical component in the diagnostic evaluation of male infertility. Traditional manual assessment methods, however, are characterized by significant subjectivity, labor-intensiveness, and substantial inter-laboratory variability, with coefficients of variation reported from 4.8% to as high as 132% [3]. These limitations have prompted the development of automated approaches, particularly leveraging artificial intelligence and deep learning methodologies to standardize and enhance the accuracy of sperm morphology evaluation.

Table 1: Global Epidemiological Burden of Male Infertility (1990-2021)

| Metric | 1990-2021 Change | 2021 Global Burden | Highest Burden SDI Region |
|---|---|---|---|
| Cases | +74.66% | 180 million couples affected worldwide | Middle SDI region (~1/3 of total) |
| DALYs | +74.64% | Significant years of healthy life lost | Middle SDI region |
| Age Distribution | - | Highest cases in 35-39 age group | Consistent across SDI regions |

Clinical Significance of Sperm Morphology Assessment

Sperm morphology evaluation provides crucial diagnostic and prognostic information in male fertility assessment. A typical sperm head is oval-shaped and consists primarily of the acrosome and nucleus, with abnormalities in size, shape, or structure directly impairing fertilization potential by compromising motility and the ability to penetrate the egg's protective layers [3]. The World Health Organization (WHO) classification system categorizes sperm morphology into head, neck, and tail compartments, with 26 distinct types of abnormalities recognized [4].

Despite its clinical importance, the assessment of sperm morphology faces significant challenges. The French BLEFCO Group's 2025 expert review indicates that the overall level of evidence supporting current practices is low, and they do not recommend using the percentage of normal morphology as a prognostic criterion before assisted reproductive technologies (ART) such as IUI, IVF, or ICSI [5]. The review does, however, emphasize the importance of detecting specific monomorphic abnormalities including globozoospermia, macrocephalic spermatozoa syndrome, pinhead spermatozoa syndrome, and multiple flagellar abnormalities [5].

Table 2: Sperm Morphology Abnormalities and Clinical Impact

| Abnormality Type | Morphological Characteristics | Functional Consequences | Clinical Recommendations |
|---|---|---|---|
| Amorphous Heads | Lack symmetry and defined structure, irregular borders | Impairs motility, acrosome function, and DNA integrity | Qualitative detection recommended |
| Pyriform Heads | Pear-shaped, symmetrical along long axis but asymmetrical in short axis | Reduces fertilization potential | Numerical reporting of percentage |
| Tapered Heads | Excessively elongated with sharp or pointed tip | Compromises protective barrier penetration | Interpretative commentary |
| Monomorphic Defects | Consistent abnormal pattern across sperm population | Severe fertility impairment | Essential for clinical diagnosis |

VGG16 Transfer Learning for Sperm Head Classification

Theoretical Framework and Architecture

The application of VGG16 transfer learning to sperm head classification represents a significant advancement in automated sperm morphology analysis. This approach takes a deep convolutional neural network (CNN) initially trained on ImageNet, a large-scale dataset of everyday images, and retrains it for sperm classification using specialized sperm head datasets such as HuSHeM and SCIAN [6]. The VGG16 architecture is built from stacks of small 3×3 convolutional layers whose number of channels grows with depth. This simple, deep design is well suited to image classification and adapts effectively to sperm morphology analysis through transfer learning.

Transfer learning methodology involves replacing the final classification layer of the pre-trained VGG16 network with a new layer containing nodes corresponding to the sperm morphology categories of interest (normal, amorphous, pyriform, tapered, etc.). The earlier layers, which contain generic feature detectors learned from ImageNet, are fine-tuned using sperm images to adapt to the specific characteristics of sperm morphology. This approach avoids excessive computational requirements while leveraging the powerful feature extraction capabilities of deep CNNs [6].

Experimental Protocol for VGG16 Implementation

Dataset Preparation and Preprocessing:

- Dataset Acquisition: Obtain annotated sperm image datasets (HuSHeM: 216 RGB images; SCIAN-MorphoSpermGS: 1,854 images) [6] [4]

- Image Standardization: Resize all images to 224×224 pixels to match VGG16 input requirements

- Data Augmentation: Apply rotation (±15°), translation (±10%), brightness adjustment (±20%), and color jittering to expand training data and improve model robustness

- Dataset Partitioning: Split data into training (70%), validation (15%), and test (15%) sets, ensuring no data leakage between partitions
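As a minimal sketch of the partitioning step, the following Python snippet performs a stratified 70/15/15 split over image indices, so that each morphology class keeps roughly the same proportion in every partition. The function name `stratified_split`, the seed, and the evenly balanced label list are illustrative assumptions and are not taken from any cited pipeline.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical labels: 216 images spread evenly over four classes.
labels = [c for c in ("normal", "tapered", "pyriform", "amorphous") for _ in range(54)]
train_idx, val_idx, test_idx = stratified_split(labels)
```

If several crops originate from the same patient or slide, splitting by patient rather than by image is a safer way to honor the no-leakage requirement.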

Model Training and Fine-tuning:

- Base Model Loading: Initialize with VGG16 weights pre-trained on ImageNet

- Architecture Modification: Replace final fully connected layer with new classification layer matching sperm morphology categories

- Layer Freezing: Initially freeze early convolutional layers to preserve generic feature detectors

- Training Configuration: Use categorical cross-entropy loss function with Adam optimizer (initial learning rate: 0.0001)

- Progressive Unfreezing: Gradually unfreeze deeper layers during training for specialized feature adaptation

Performance Evaluation:

- Accuracy Assessment: Compare predicted classifications against expert-annotated ground truth

- Cross-Validation: Implement 5-fold cross-validation to ensure result reliability

- Comparative Analysis: Benchmark against traditional methods (CE-SVM, APDL) and human expert performance

Performance and Validation

The VGG16 transfer learning approach has demonstrated strong performance in sperm head classification, achieving up to 94% accuracy on the HuSHeM dataset, whose four classes are normal, tapered, pyriform, and amorphous heads [3] [6]. This is a substantial improvement over traditional machine learning methods such as the Cascade Ensemble of Support Vector Machines (CE-SVM), and it is comparable to the more complex Adaptive Patch-based Dictionary Learning (APDL) method while requiring substantially fewer computational resources [6].

The model's effectiveness stems from its ability to automatically learn discriminative features from sperm images without relying on manual feature engineering, which has been a limitation of conventional computer-aided sperm analysis (CASA) systems. Furthermore, the transfer learning approach demonstrates robust generalization across different dataset characteristics and staining protocols, making it suitable for diverse clinical laboratory settings.

Advanced Experimental Protocols in Sperm Morphology Analysis

Comprehensive Sperm Morphology Assessment Protocol

Sample Preparation and Staining:

- Semen Sample Collection: Collect semen samples after 2-7 days of sexual abstinence following WHO guidelines

- Sample Liquefaction: Allow samples to liquefy at 37°C for 20-30 minutes

- Slide Preparation: Create thin smears on pre-cleaned glass slides and air-dry for 30 minutes

- Staining Procedure: Apply Diff-Quik or Papanicolaou staining according to manufacturer protocols

- Slide Mounting: Use permanent mounting medium and coverslips for long-term preservation

Image Acquisition and Processing:

- Microscopy Configuration: Use brightfield microscope with 100× oil immersion objective

- Image Capture: Acquire minimum 200 sperm images per sample using calibrated digital camera

- Quality Control: Exclude blurred, overlapping, or improperly stained sperm from analysis

- Image Standardization: Maintain consistent lighting, contrast, and resolution across all captures

Morphological Classification Criteria:

- Normal Sperm: Smooth, oval head configuration 4.0-5.0μm in length and 2.5-3.5μm in width; well-defined acrosome covering 40-70% of head; no neck or tail defects

- Amorphous Heads: Irregular head shape with disordered contour and structure

- Pyriform Heads: Pear-shaped morphology with widened base and narrowed apex

- Tapered Heads: Elongated, slender form with significantly increased length-to-width ratio

Integrated Deep Learning Framework with Pose Correction

Recent work has combined the VGG16 classification approach with dedicated preprocessing stages to improve robustness. A 2024 automated deep learning framework incorporates EdgeSAM for precise sperm head segmentation and a dedicated Sperm Head Pose Correction Network that standardizes orientation and position before classification [3]. This integrated system achieves a test accuracy of 97.5% on the combined HuSHeM and Chenwy datasets, exceeding the accuracy reported for a standalone VGG16 implementation.

Pose Correction Protocol:

- Segmentation: Apply EdgeSAM with single coordinate point prompts for rough sperm head localization

- Contour Detection: Extract precise sperm head boundaries using refined segmentation masks

- Orientation Analysis: Determine primary axis and rotation angle using principal component analysis

- Spatial Transformation: Apply calculated rotation and translation to standardize head position

- Polarity Assessment: Identify acrosome position to establish correct anatomical orientation
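The orientation and transformation steps above can be illustrated with a small, dependency-free sketch. It estimates the major-axis angle of a contour from the 2×2 covariance matrix of its points (the closed-form equivalent of a two-dimensional PCA) and rotates the contour back to a horizontal pose. The function names and the synthetic ellipse are assumptions made for illustration. The cited framework uses a learned pose correction network, which this sketch does not reproduce.

```python
import math


def principal_axis_angle(points):
    """Angle in degrees of the major axis of a 2-D point set (2x2 covariance PCA)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points) / n
    syy = sum((y - mean_y) ** 2 for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points) / n
    # Closed-form direction of the leading eigenvector of [[sxx, sxy], [sxy, syy]].
    return math.degrees(0.5 * math.atan2(2.0 * sxy, sxx - syy))


def rotate(points, degrees, center=(0.0, 0.0)):
    """Rotate points counter-clockwise by `degrees` around `center`."""
    theta = math.radians(degrees)
    c, s = math.cos(theta), math.sin(theta)
    cx, cy = center
    return [(cx + c * (x - cx) - s * (y - cy), cy + s * (x - cx) + c * (y - cy))
            for x, y in points]


# Synthetic "head contour": an ellipse with semi-axes 5 and 3, tilted by 30 degrees.
ellipse = [(5.0 * math.cos(math.radians(t)), 3.0 * math.sin(math.radians(t)))
           for t in range(0, 360, 5)]
tilted = rotate(ellipse, 30.0)
angle = principal_axis_angle(tilted)   # recovered tilt of the major axis
aligned = rotate(tilted, -angle)       # contour rotated back to horizontal
```

PCA recovers the axis only up to a 180° flip, which is why a separate polarity step based on the acrosome position is still required.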

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Reagent/Material | Specification | Application Purpose | Protocol Notes |
|---|---|---|---|
| Diff-Quik Stain Kit | Commercial triple stain solution | Rapid sperm morphology staining | Fixed smear staining (5 dips per solution) |
| Papanicolaou Stain | Modified for sperm morphology | Detailed nuclear and acrosomal assessment | Progressive staining with multiple solutions |
| HuSHeM Dataset | 216 annotated sperm images | Model training and validation | Publicly available benchmark dataset |
| SCIAN-MorphoSpermGS | 1,854 classified sperm images | Expanded training dataset | Five morphology classes |
| SVIA Dataset | 125,000 annotated instances | Large-scale model training | Includes detection, segmentation, classification tasks |
| EdgeSAM | Parameter-efficient segmenter | Sperm head segmentation and feature extraction | 1.5% trainable parameters of original SAM |
| VGG16 Pre-trained Weights | ImageNet initialization | Transfer learning foundation | PyTorch or TensorFlow implementation |

The integration of VGG16 transfer learning into sperm morphology analysis marks a substantial change in male infertility diagnostics, offering greater accuracy, standardization, and efficiency than traditional manual methods. The documented classification accuracy of 94-97.5% suggests that deep learning approaches can match, and potentially exceed, human experts in consistency and throughput [6] [3].

Future research directions should focus on developing more comprehensive and diverse annotated datasets to address current limitations in generalization across different population demographics and laboratory protocols [4]. Additionally, the integration of multifactorial assessment combining morphology with motility, DNA fragmentation, and clinical parameters will likely provide enhanced diagnostic and prognostic value. As these technologies mature, their implementation in clinical laboratories promises to transform the standardization and accuracy of male fertility evaluation, ultimately improving diagnostic precision and therapeutic outcomes for infertile couples.

The critical challenge of male infertility demands continued innovation in diagnostic methodologies, and the application of advanced deep learning architectures like VGG16 transfer learning represents a significant step forward in addressing the global burden of this condition.

Conventional semen analysis remains the cornerstone of male fertility assessment, yet it is fraught with inherent limitations that compromise its diagnostic utility. Despite the publication of successive World Health Organization (WHO) laboratory manuals to standardize procedures, manual morphological assessment continues to be highly subjective and variable [7]. This application note details the critical limitations of conventional sperm analysis and contextualizes these challenges within research on automated deep learning solutions, specifically VGG16 transfer learning for sperm head classification. For researchers and drug development professionals, understanding these limitations is paramount for driving innovation in diagnostic technologies and developing more objective, quantitative biomarkers of male fertility potential.

Critical Limitations of Conventional Analysis

Sperm morphology evaluation is among the most difficult components of semen analysis, characterized by high recognition difficulty and substantial inter-observer variability [4]. The primary limitations stem from the manual, visual nature of the assessment.

Subjectivity and Inter-Observer Variability

Traditional sperm morphology assessment is labor-intensive and susceptible to variability among observers [3]. This subjectivity arises from the reliance on human expertise to classify sperm based on complex morphological criteria defined by the WHO.

Table 1: Quantified Variability in Manual Sperm Morphology Assessment

| Source of Variability | Metric | Reported Impact/Value |
|---|---|---|
| Inter-laboratory Consistency | Coefficient of Variation | Ranges from 4.8% to as high as 132% [3] |
| Clinical Predictive Power | Ability to differentiate fertile from infertile men | Weak and inconsistent except in extreme cases [7] |
| Manual Workload | Minimum number of sperm assessed per sample | Over 200 sperm [4] |
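For readers less familiar with the coefficient of variation (CV) reported in the table, the short sketch below shows how it is computed: the sample standard deviation divided by the mean, expressed as a percentage. The five laboratory values are invented purely for illustration and do not come from any cited study.

```python
import statistics


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation divided by the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical percentages of normal forms reported by five laboratories
# for the same slide (illustrative numbers only).
reported = [4.0, 6.0, 3.0, 9.0, 5.0]
cv = coefficient_of_variation(reported)
print(f"Inter-laboratory CV: {cv:.1f}%")
```

Because normal-form percentages are often in the low single digits, even a disagreement of a few percentage points between laboratories produces a large CV.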

Limitations of Computer-Assisted Sperm Analysis (CASA)

Computer-Assisted Sperm Analysis (CASA) systems brought initial automation but have significant constraints. They are often costly, inflexible, and limited in functionality, particularly when analyzing noisy or low-quality samples [8]. Their analytical scope is also narrow: many CASA systems focus primarily on motility and vitality in fresh, unstained semen, and therefore overlook subtle morphological details that are visible only in the stained, fixed smears recommended by the WHO [8].

The Shift to Automated Deep Learning Solutions

To overcome these limitations, the field is moving toward fully automated, deep learning-based classification systems. These systems aim to reduce subjectivity, minimize misclassification between visually similar categories, and provide more reliable diagnostic support [8].

VGG16 Transfer Learning for Sperm Head Classification

A pivotal study demonstrated the effectiveness of a deep learning approach by retraining the VGG16 convolutional neural network (CNN) initially trained on the ImageNet database, a technique known as transfer learning [9]. This method was trained and evaluated on labeled sperm head images from publicly available datasets (HuSHeM and SCIAN) to classify sperm into WHO categories: Normal, Tapered, Pyriform, Small, and Amorphous [9].

Table 2: Performance Comparison of Sperm Classification Methods on HuSHeM Dataset

| Classification Method | Key Features | Reported Average True Positive Rate |
|---|---|---|
| Manual Assessment | Subjective visual analysis | High variability (see Table 1) |
| Cascade Ensemble SVM (CE-SVM) | Manual extraction of shape-based descriptors (area, perimeter, eccentricity) | 78.5% [9] |
| VGG16 with Transfer Learning | Automated feature extraction from raw images | 94.1% [9] |

The VGG16 transfer learning approach does not require pre-extraction of shape descriptors and relies solely on image inputs, making it an effective and efficient method for sperm classification that is competitive with, and often superior to, previous machine learning approaches [9].

VGG16 Transfer Learning Workflow

Experimental Protocol: VGG16 Transfer Learning for Sperm Morphology

This protocol outlines the methodology for retraining the VGG16 network for sperm head classification using transfer learning, as validated in the literature [9].

Dataset Preparation and Preprocessing

- Datasets: Utilize publicly available, expert-annotated datasets such as the Human Sperm Head Morphology (HuSHeM) dataset or the SCIAN-MorphoSpermGS dataset [9].

- Image Standardization: Resize all input images to 224x224 pixels to match the VGG16 network's input size. This may involve reflection padding and upsampling of smaller images [3].

- Data Augmentation: Apply real-time data augmentation to the training set to increase diversity and prevent overfitting. Techniques should include:

  - Random rotation

  - Translation (shifting)

  - Brightness jittering

  - Color jittering [3]

- Data Splitting: Split the dataset into training and testing sets, typically at an 8:2 ratio. Employ k-fold cross-validation (e.g., 5-fold) for robust model validation [3].
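The k-fold validation mentioned in the last step can be sketched without any external library. The generator below shuffles the sample indices once and yields five train/test index pairs. The function name `k_fold_indices`, the seed, and the use of 216 samples (the HuSHeM image count) are illustrative choices, not details of the cited studies.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


splits = list(k_fold_indices(216, k=5))
for fold_number, (train, test) in enumerate(splits, start=1):
    print(f"fold {fold_number}: {len(train)} training / {len(test)} test images")
```

Augmentation should be applied only to the training indices of each fold, so that every test fold contains unmodified images.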

Model Retraining and Fine-Tuning

This two-phase process leverages pre-trained knowledge and adapts it to the specific task.

Phase 1: Classifier Training

- Load the VGG16 model pre-trained on ImageNet, excluding its top classification layers.

- Attach new, randomly initialized fully-connected layers tailored to the number of sperm morphology classes (e.g., 5 classes).

- Freeze the convolutional base (feature extractor) to preserve the pre-learned weights.

- Train only the new classifier layers on the sperm image dataset. This allows the network to learn class-specific features based on robust, general-purpose visual features from ImageNet.

Phase 2: Fine-Tuning

- Unfreeze some or all of the layers of the convolutional base.

- Continue training the entire network with a very low learning rate (e.g., 10 to 100 times smaller than the initial training phase).

- This step gently adapts the foundational features to be more specific to the nuances of sperm morphology, potentially leading to higher accuracy [9].
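The two phases can be summarized as a small, framework-independent plan that records which convolutional layers are trainable and which learning rate applies. The layer names follow the `blockN_convM` pattern used by the Keras VGG16 implementation. The choice to unfreeze only block 5 and the learning rates of 1e-3 and 1e-5 are assumptions picked to respect the "10 to 100 times smaller" guideline, not values prescribed by the cited study.

```python
CONVS_PER_BLOCK = {1: 2, 2: 2, 3: 3, 4: 3, 5: 3}  # the 13 VGG16 conv layers


def conv_layer_names():
    """Names of the conv layers, e.g. 'block1_conv1' ... 'block5_conv3'."""
    return [f"block{b}_conv{i}"
            for b, n in CONVS_PER_BLOCK.items()
            for i in range(1, n + 1)]


def trainable_flags(first_trainable_block):
    """Map each conv layer name to True (trainable) or False (frozen).

    Pass None to freeze the whole convolutional base.
    """
    flags = {}
    for name in conv_layer_names():
        block = int(name[len("block")])
        flags[name] = (first_trainable_block is not None
                       and block >= first_trainable_block)
    return flags


# Hypothetical schedule: phase 2 uses a learning rate 100x smaller than phase 1.
PHASES = [
    {"name": "classifier training", "first_trainable_block": None, "learning_rate": 1e-3},
    {"name": "fine-tuning", "first_trainable_block": 5, "learning_rate": 1e-5},
]

for phase in PHASES:
    flags = trainable_flags(phase["first_trainable_block"])
    print(phase["name"], "->", sum(flags.values()), "trainable conv layers,",
          "lr =", phase["learning_rate"])
```

In a framework such as Keras, flags like these would typically be copied onto each layer's `trainable` attribute, and the model recompiled, before the second phase starts.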

Model Evaluation

- Performance Metrics: Evaluate the final model on the held-out test set.

- Validation: The model's performance should be benchmarked against established methods and expert annotations.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Resources for DL-Based Sperm Morphology Research

| Resource Name | Type | Key Features / Function |
|---|---|---|
| HuSHeM Dataset [9] | Dataset | 216 RGB sperm head images; 4 morphology classes; Expert-annotated contours. |
| SCIAN-MorphoSpermGS [9] | Dataset | 1,854 sperm images; 5 WHO classes; Serves as a gold-standard benchmark. |
| Hi-LabSpermMorpho [8] | Dataset | Large-scale; 18 morphology classes; Images from 3 staining techniques. |
| VGG16 Architecture [9] | Deep Learning Model | Proven CNN for transfer learning; High performance on sperm classification. |
| EdgeSAM [3] | Deep Learning Model | Used for precise sperm head segmentation, isolating the head from tails/noise. |

Conventional manual sperm morphology analysis is fundamentally limited by subjectivity and high variability, which undermines its diagnostic reliability and clinical utility. The integration of deep learning, specifically through transfer learning with architectures like VGG16, presents a robust and automated solution. This approach demonstrates superior classification accuracy, operational efficiency, and objectivity, offering researchers and clinicians a powerful tool to advance male fertility diagnostics and drug development. Future work should focus on the development of larger, high-quality annotated datasets and the rigorous clinical validation of these automated systems to ensure their generalizability and efficacy in diverse patient populations.

Convolutional Neural Networks (CNNs) are a class of deep neural networks that have become predominant in analyzing visual imagery. In medical imaging, CNNs automatically and adaptively learn spatial hierarchies of features from images, from low-level edges to high-level semantic concepts. A typical CNN architecture consists of convolutional layers for feature extraction, pooling layers for spatial invariance, and fully connected layers for classification.

Transfer learning is a machine learning technique where a model developed for one task is reused as the starting point for a model on a second task. This is particularly valuable in medical imaging, where large, annotated datasets are often scarce. By leveraging models pre-trained on large-scale natural image datasets like ImageNet, researchers can achieve high performance with limited medical data. The VGG16 architecture, a 16-layer deep CNN, has been extensively applied in medical image analysis due to its strong feature extraction capabilities and widespread adoption [11] [9].

Quantitative Performance of CNN Architectures in Medical Imaging

The application of CNNs, particularly through transfer learning, has demonstrated remarkable success across various medical domains. The table below summarizes the quantitative performance of several architectures, highlighting the consistent effectiveness of VGG16.

Table 1: Performance of Pre-trained CNN Models in Medical Image Classification Tasks

| Medical Application | Model Architecture | Key Performance Metrics | Reference / Source |
|---|---|---|---|
| Sperm Head Classification | VGG16 (Transfer Learning) | Average True Positive Rate: 94.1% (HuSHeM dataset), 62% (SCIAN dataset) | [9] |
| Liver Tumor Classification | Hybrid V-Net & VGG16 | Classification Accuracy: 96.52% | [12] |
| Lung Disease Classification | ResNet50 with Fuzzy Logic | Accuracy: 98.7%, Sensitivity: 98.4%, Specificity: 98.8% | [13] |
| Lung Disease Classification | VGG16 with Fuzzy Logic | Accuracy: 97.8% | [13] |
| Heart Disease Detection | VGG16-Random Forest (Hybrid) | Accuracy: 92%, Precision: 91.3%, Recall: 92.2%, F1-Score: 91.75% | [11] |

Experimental Protocol: VGG16 Transfer Learning for Sperm Head Classification

This protocol details the methodology for adapting the VGG16 architecture to classify human sperm heads into morphological categories (e.g., Normal, Tapered, Pyriform, Small, Amorphous) based on established WHO criteria [9] [14].

Data Acquisition and Preprocessing

- Dataset Sourcing: Obtain a publicly available sperm image dataset, such as the Human Sperm Head Morphology (HuSHeM) dataset or the SCIAN dataset [9].

- Data Partitioning: Randomly split the dataset into three subsets:

  - Training Set: 80% of the data for model training.

  - Validation Set: 10% of the data for hyperparameter tuning and monitoring training.

  - Test Set: 10% of the data for the final, unbiased evaluation of model performance [15].

- Image Preprocessing:

  - Resizing: Resize all images to 224×224 pixels to match the VGG16 input size.

  - Color Normalization: Convert images to RGB format and normalize pixel values using the mean and standard deviation of the ImageNet dataset.

  - Data Augmentation (Training Phase): Apply random transformations to the training images to improve model robustness and prevent overfitting. Techniques include:

    - Random rotation (±10°)

    - Horizontal and vertical flipping

    - Brightness and contrast adjustments [15]
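The color normalization step can be made concrete with a per-pixel sketch. The mean and standard deviation values below are the per-channel ImageNet statistics commonly used with PyTorch/torchvision pre-trained models. They are an assumption here, since the protocol does not state which convention it follows.

```python
# Per-channel RGB statistics commonly used with torchvision pre-trained models
# (assumed convention; the protocol above does not specify exact values).
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((value / 255.0 - mean) / std
                 for value, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


black = normalize_pixel((0, 0, 0))
white = normalize_pixel((255, 255, 255))
print("black:", [round(v, 3) for v in black])
print("white:", [round(v, 3) for v in white])
```

The Keras VGG16 preprocessing function follows a different convention (conversion to BGR and per-channel mean subtraction without scaling), so the normalization should match whichever library supplied the pre-trained weights.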

Model Adaptation and Training

- Load Pre-trained Model: Initialize the model with weights from VGG16 pre-trained on ImageNet, excluding the top classification layers.

- Add Custom Classifier: Replace the original classifier with new layers tailored to the sperm classification task (e.g., a Global Average Pooling layer followed by a Dense layer with 5 units and a softmax activation for 5-class classification).

- Two-Phase Training:

  - Phase 1 - Classifier Training: Freeze the convolutional base of VGG16. Train only the newly added classifier layers for a limited number of epochs (e.g., 20-50) using the training data. Use an optimizer like Adam with a relatively high learning rate (e.g., 1e-3).

  - Phase 2 - Fine-Tuning: Unfreeze a portion of the deeper layers in the VGG16 base. Continue training the entire unfrozen model with a very low learning rate (e.g., 1e-5) to gently adapt the pre-trained features to the specifics of sperm morphology [9].
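To show why the suggested custom classifier is attractive for small datasets, the sketch below compares the number of trainable parameters in the original ImageNet classifier with the Global Average Pooling plus 5-unit Dense head described above. The helper `dense_params` is introduced only for this illustration.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


# Original ImageNet classifier: flatten(7 x 7 x 512) -> 4096 -> 4096 -> 1000
original_head = (dense_params(7 * 7 * 512, 4096)
                 + dense_params(4096, 4096)
                 + dense_params(4096, 1000))

# Replacement head: global average pooling (no weights) -> Dense(5)
num_classes = 5
gap_head = dense_params(512, num_classes)

print(f"original classifier: {original_head:,} parameters")
print(f"GAP + Dense({num_classes}) head: {gap_head:,} parameters")
```

With only a few thousand parameters to fit in Phase 1, the new head is far less prone to overfitting a dataset of a few hundred images than the original fully connected stack would be.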

Model Evaluation

- Performance Metrics: Evaluate the final model on the held-out test set using metrics such as Accuracy, Precision, Recall, F1-Score, and a confusion matrix.

- Validation: Compare the model's classifications against expert annotations from the dataset to establish ground truth [9].
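The metrics listed above can all be derived from a confusion matrix, as in the following sketch. The matrix values are invented for illustration. The "average true positive rate" is computed here as the unweighted mean of per-class recall, which is an assumption about how that figure is defined in the cited work.

```python
def per_class_metrics(confusion, class_names):
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    n = len(class_names)
    metrics = {}
    for k, name in enumerate(class_names):
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp
        fp = sum(confusion[i][k] for i in range(n)) - tp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[name] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


# Hypothetical test-set confusion matrix (rows: expert label, columns: prediction).
classes = ["normal", "tapered", "pyriform", "amorphous"]
confusion = [
    [10, 0, 0, 1],
    [0, 9, 1, 1],
    [0, 1, 10, 0],
    [1, 0, 0, 10],
]

metrics = per_class_metrics(confusion, classes)
accuracy = sum(confusion[k][k] for k in range(len(classes))) / sum(map(sum, confusion))
average_tpr = sum(m["recall"] for m in metrics.values()) / len(metrics)
```

Reporting per-class values alongside overall accuracy makes it easier to see which visually similar categories the model confuses.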

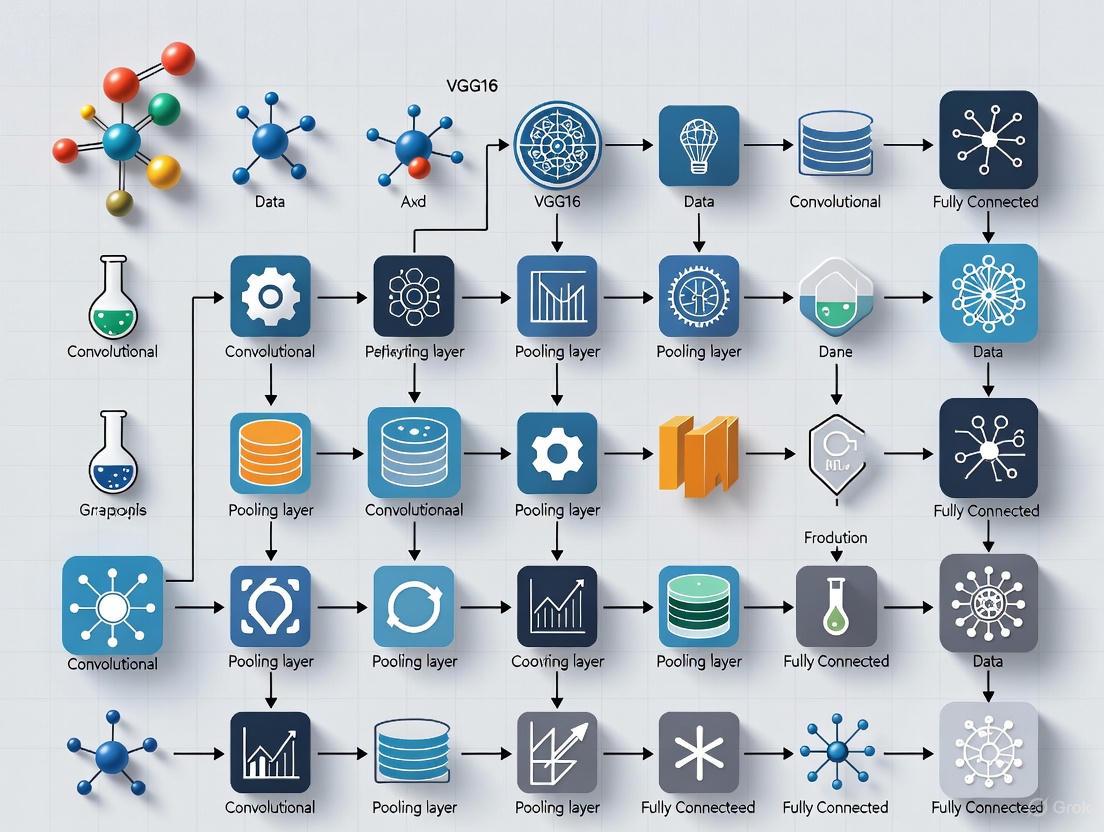

The following workflow diagram illustrates the complete experimental pipeline:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of a deep learning project for medical image analysis requires both computational and data resources. The following table lists key solutions and materials.

Table 2: Essential Research Reagent Solutions for VGG16-based Sperm Classification Research

| Item Name | Function / Description | Specification / Notes |
|---|---|---|
| Annotated Sperm Image Datasets | Provides ground-truth labeled data for model training and evaluation. | HuSHeM [9] or SCIAN [9] datasets; SVIA dataset [14] offers extensive annotations for detection and segmentation. |
| Computational Hardware (GPU) | Accelerates the training of deep neural networks, reducing computation time from weeks to hours. | NVIDIA GPUs (e.g., RTX A5000 [16]) with high VRAM are recommended for processing large image datasets. |
| Deep Learning Frameworks | Software libraries that provide the building blocks for designing, training, and validating deep learning models. | TensorFlow or PyTorch; often used with Python [15] [16]. |
| Image Annotation Software | Tools used by domain experts to label sperm images, creating the ground truth for supervised learning. | Software capable of precise segmentation and classification of sperm components (head, midpiece, tail) [14]. |
| Pre-trained VGG16 Weights | The knowledge base of the model learned from the ImageNet dataset, serving as the starting point for transfer learning. | Typically downloaded automatically within Keras or PyTorch libraries. |

Deep Learning and Convolutional Neural Networks represent a paradigm shift in medical image analysis. The VGG16 architecture, applied via transfer learning, has proven to be a powerful and accessible tool for specific classification tasks such as sperm head morphology analysis. The provided protocols and quantitative benchmarks offer a foundation for researchers to implement these methods, contributing to more standardized, efficient, and objective diagnostic tools in clinical and research settings. Future work will continue to focus on improving model interpretability, handling data imbalance, and expanding applications to more complex segmentation and detection tasks.

Why VGG16? Exploring the Architecture's Strengths for Image Classification Tasks

The VGG16 model, introduced by the Visual Geometry Group (VGG) at the University of Oxford in 2014, is a convolutional neural network (CNN) architecture that significantly advanced the state of the art in image recognition. Its primary contribution was demonstrating that network depth is critical to high performance in visual recognition tasks. The model achieved 92.7% top-5 test accuracy on the ImageNet classification benchmark (ILSVRC), whose 1,000-class subset of roughly 1.2 million training images is drawn from the full ImageNet database of over 14 million images [17] [18].

VGG16's architecture consists of 16 weight layers, comprising 13 convolutional layers and 3 fully connected layers. Unlike earlier networks that used larger filters, VGG16 consistently uses small 3×3 convolution filters throughout the entire network, with a stride of 1 and same padding, followed by max-pooling layers with a 2×2 window and stride of 2 [17] [18]. This simple yet effective design philosophy has made VGG16 a timeless architecture that continues to be widely used in research and applications, particularly in transfer learning scenarios.

Architectural Advantages of VGG16

Core Architectural Features

The VGG16 architecture possesses several distinctive features that contribute to its enduring popularity and effectiveness in image classification tasks:

- Depth with Small Filters: By stacking multiple 3×3 convolutional layers, VGG16 effectively increases its receptive field while using fewer parameters than larger filters would require. For instance, two 3×3 convolutional layers have an effective receptive field of 5×5, but with more non-linearities and fewer parameters than a single 5×5 layer [17].

- Uniform Design: The architecture follows a consistent pattern of convolutional layers followed by max-pooling, making it easy to understand, implement, and modify for different tasks.

- Feature Hierarchy: The network naturally learns a hierarchy of features, with early layers capturing basic edges and textures, middle layers learning more complex patterns, and deeper layers identifying object parts and complex structures [17].
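The "depth with small filters" argument can be checked with a few lines of arithmetic. The sketch below computes the receptive field of stacked stride-1 convolutions and compares the weight count of two 3×3 layers with that of a single 5×5 layer. The channel count of 64 is an arbitrary illustrative choice, and biases are ignored for simplicity.

```python
def stacked_receptive_field(num_layers, kernel=3):
    """Receptive field of `num_layers` stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel - 1)


def conv_weights(kernel, channels):
    """Weight count (biases ignored) of one conv layer with `channels` in and out."""
    return kernel * kernel * channels * channels


channels = 64
two_3x3 = 2 * conv_weights(3, channels)   # 18 * C^2 weights
one_5x5 = conv_weights(5, channels)       # 25 * C^2 weights

print("receptive field of two 3x3 layers:", stacked_receptive_field(2))
print("weights, two 3x3 layers:", two_3x3)
print("weights, one 5x5 layer: ", one_5x5)
```

Two stacked 3×3 layers therefore cover the same 5×5 region with 28% fewer weights, while applying two ReLU non-linearities instead of one.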

Advantages for Transfer Learning

VGG16 offers particular benefits for transfer learning applications, which are crucial for domains with limited labeled data:

- Feature Reusability: The generic visual features learned on ImageNet transfer well to other visual recognition tasks, especially the early and middle layers of the network.

- Proven Effectiveness: The architecture has demonstrated strong performance across diverse domains including medical imaging, satellite imagery, and biological analysis [17] [12].

- Implementation Simplicity: The uniform architecture makes it straightforward to remove the original classification head and replace it with custom layers for new tasks.

Table 1: VGG16 Architectural Configuration

| Block | Layer Type | Filter Size | Output Size | Parameters |

|---|---|---|---|---|

| Input | - | - | 224×224×3 | 0 |

| Block 1 | Conv+ReLU | 3×3 | 224×224×64 | 1,792 |

| | Conv+ReLU | 3×3 | 224×224×64 | 36,928 |

| | Max Pooling | 2×2 | 112×112×64 | 0 |

| Block 2 | Conv+ReLU | 3×3 | 112×112×128 | 73,856 |

| | Conv+ReLU | 3×3 | 112×112×128 | 147,584 |

| | Max Pooling | 2×2 | 56×56×128 | 0 |

| Block 3 | Conv+ReLU | 3×3 | 56×56×256 | 295,168 |

| | Conv+ReLU | 3×3 | 56×56×256 | 590,080 |

| | Conv+ReLU | 3×3 | 56×56×256 | 590,080 |

| | Max Pooling | 2×2 | 28×28×256 | 0 |

| Block 4 | Conv+ReLU | 3×3 | 28×28×512 | 1,180,160 |

| | Conv+ReLU | 3×3 | 28×28×512 | 2,359,808 |

| | Conv+ReLU | 3×3 | 28×28×512 | 2,359,808 |

| | Max Pooling | 2×2 | 14×14×512 | 0 |

| Block 5 | Conv+ReLU | 3×3 | 14×14×512 | 2,359,808 |

| | Conv+ReLU | 3×3 | 14×14×512 | 2,359,808 |

| | Conv+ReLU | 3×3 | 14×14×512 | 2,359,808 |

| | Max Pooling | 2×2 | 7×7×512 | 0 |

| Classifier | Fully Connected | - | 4096 | 102,764,544 |

| | Fully Connected | - | 4096 | 16,781,312 |

| | Fully Connected | - | 1000 | 4,097,000 |
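The parameter counts in Table 1 follow from two formulas: a 3×3 convolution has 3·3·C_in·C_out weights plus C_out biases, and a fully connected layer has N_in·N_out weights plus N_out biases. The short sketch below recomputes the totals from the channel widths in the table; the variable names are illustrative.

```python
# (input channels, output channels) of the 13 convolutional layers, in Table 1 order
CONV_LAYERS = [
    (3, 64), (64, 64),
    (64, 128), (128, 128),
    (128, 256), (256, 256), (256, 256),
    (256, 512), (512, 512), (512, 512),
    (512, 512), (512, 512), (512, 512),
]
# (input units, output units) of the three fully connected layers
FC_LAYERS = [(7 * 7 * 512, 4096), (4096, 4096), (4096, 1000)]


def conv_params(c_in, c_out, kernel=3):
    """Weights plus biases of one convolutional layer."""
    return kernel * kernel * c_in * c_out + c_out


def fc_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


conv_total = sum(conv_params(i, o) for i, o in CONV_LAYERS)
fc_total = sum(fc_params(i, o) for i, o in FC_LAYERS)

print(conv_total)             # 14714688
print(fc_total)               # 123642856
print(conv_total + fc_total)  # 138357544
```

Roughly 89% of the parameters sit in the fully connected classifier, which is the part that transfer learning discards and replaces with a much smaller task-specific head.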

VGG16 for Sperm Head Classification: Experimental Evidence

Performance in Reproductive Medicine

Research has demonstrated the effectiveness of VGG16 for sperm head classification, a critical task in reproductive medicine and infertility treatment. In a landmark 2019 study, researchers applied transfer learning with VGG16 to classify human sperm into World Health Organization (WHO) shape-based categories using two publicly available datasets: HuSHeM and SCIAN [9] [6].

The approach involved retraining VGG16, initially trained on ImageNet, for sperm classification. This method achieved an average true positive rate of 94.1% on the HuSHeM dataset, comparable to the 92.3% reported for adaptive patch-based dictionary learning (APDL) and well above the 78.5% achieved by cascade ensemble support vector machine (CE-SVM) classifiers [9]. On the more challenging SCIAN dataset, the VGG16-based approach achieved a true positive rate of 62%, equal to APDL and above the 58% of CE-SVM, with the added advantage of automated feature extraction [9].

Table 2: Performance Comparison of Sperm Classification Methods

| Method | Dataset | Accuracy/True Positive Rate | Key Characteristics |

|---|---|---|---|

| VGG16 (Transfer Learning) | HuSHeM | 94.1% | Automated feature extraction, end-to-end learning |

| VGG16 (Transfer Learning) | SCIAN | 62.0% | Automated feature extraction, matches traditional methods |

| Adaptive Patch-based Dictionary Learning | HuSHeM | 92.3% | Requires manual patch extraction |

| Adaptive Patch-based Dictionary Learning | SCIAN | 62.0% | Requires manual patch extraction |

| Cascade Ensemble SVM | HuSHeM | 78.5% | Requires manual feature engineering |

| Cascade Ensemble SVM | SCIAN | 58.0% | Requires manual feature engineering |

| Modified AlexNet | HuSHeM | 96.0% | Lower computational requirements |

Comparative Advantages in Biological Imaging

The application of VGG16 to sperm classification highlights several advantages over traditional machine learning approaches:

- Elimination of Manual Feature Extraction: Unlike traditional methods that require manual extraction of features such as head area, perimeter, eccentricity, Zernike moments, and Fourier descriptors, VGG16 automatically learns relevant features directly from raw images [9].

- Robust Performance with Limited Data: The transfer learning approach enables effective learning even with relatively small datasets, which is common in medical domains where data collection is expensive and time-consuming.

- Computational Efficiency: While training deep networks from scratch requires substantial resources, fine-tuning a pre-trained VGG16 model is computationally efficient and doesn't require learning massive dictionaries or parameters from scratch [9].

Further research has built upon these foundations, with recent studies exploring hybrid approaches such as V-Net-VGG16 for liver tumor segmentation and classification, achieving 96.52% accuracy [12], and VGG16-ViT hybrids for white blood cell classification with up to 99.6% accuracy [19], demonstrating the continued relevance of VGG16 in modern medical image analysis pipelines.

Experimental Protocol: VGG16 Transfer Learning for Sperm Classification

Dataset Preparation and Preprocessing

The following protocol outlines the methodology for applying VGG16 transfer learning to sperm head classification, based on established approaches from the literature [9] [20]:

Materials and Datasets:

- HuSHeM Dataset: 216 sperm cell images (54 normal, 53 tapered, 57 pyriform, 52 amorphous) in RGB format with size 131×131 pixels [20]

- SCIAN Dataset: 1854 sperm cell images across five categories (normal, tapered, pyriform, small, amorphous) [9]

- Computational environment with deep learning framework (TensorFlow/Keras)

Preprocessing Pipeline:

- Image Cropping: Crop sperm heads using contour detection and elliptical fitting to focus on relevant regions

- Size Standardization: Resize all images to 224×224 pixels to match VGG16 input requirements

- Orientation Normalization: Rotate sperm heads to uniform direction using major axis detection

- Data Augmentation: Apply rotations, flips, and brightness adjustments to increase dataset variability

- Pixel Value Normalization: Scale pixel values to [0,1] range

Transfer Learning Implementation

Model Adaptation Protocol:

- Load Pre-trained VGG16: Initialize with weights trained on ImageNet, excluding the top classification layers

- Add Custom Classifier: Replace the original fully connected layers with task-specific layers:

  - Flatten layer to convert the 7×7×512 feature maps to a 1D vector

  - Fully connected layer with 256 units and ReLU activation

  - Dropout layer (0.5 rate) for regularization

  - Output layer with softmax activation (one unit per morphology class: 4 for HuSHeM, 5 for SCIAN)

- Training Configuration:

  - Freeze the convolutional base initially

  - Train only the added classifier layers for initial convergence

  - Unfreeze deeper convolutional blocks for fine-tuning

  - Use categorical cross-entropy loss and the Adam optimizer (learning rate=0.0001)
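The adaptation steps above can be sketched in Keras roughly as follows. This is a minimal illustration rather than the exact code of the cited studies: the function name `build_sperm_classifier` and its arguments are assumptions, and the head simply mirrors the layer sizes listed in the protocol.

```python
import tensorflow as tf
from tensorflow.keras import layers


def build_sperm_classifier(num_classes, weights="imagenet", learning_rate=1e-4):
    """VGG16 base with a small custom head; the base starts fully frozen."""
    base = tf.keras.applications.VGG16(
        weights=weights,          # "imagenet" downloads the pre-trained weights
        include_top=False,        # drop the original 1000-class classifier
        input_shape=(224, 224, 3),
    )
    base.trainable = False        # Phase 1: train only the new head

    inputs = tf.keras.Input(shape=(224, 224, 3))
    x = base(inputs)
    x = layers.Flatten()(x)       # 7x7x512 feature maps -> 25088-vector
    x = layers.Dense(256, activation="relu")(x)
    x = layers.Dropout(0.5)(x)
    outputs = layers.Dense(num_classes, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Calling `build_sperm_classifier(num_classes=4)` would give a HuSHeM-sized model and `num_classes=5` a SCIAN-sized one. After any change to a layer's `trainable` flag, the model has to be compiled again for the change to take effect.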

Training and Evaluation Protocol

Two-Phase Training Approach:

Phase 1: Classifier Training (Epochs 1-100)

- Freeze all VGG16 convolutional layers

- Train only the newly added fully connected layers

- Monitor validation accuracy for convergence

Phase 2: Fine-tuning (Epochs 101-200)

- Unfreeze the last convolutional block (Block 5: three convolutional layers and one max-pooling layer)

- Use lower learning rate (0.00001) for gentle weight adjustments

- Employ early stopping if validation loss plateaus
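Which layers Phase 2 unfreezes can be made explicit by layer name. The sketch below assumes the naming scheme used by the Keras VGG16 implementation (`block1_conv1` through `block5_pool`) and marks only Block 5 as trainable; the helper name is hypothetical.

```python
# Convolutional and pooling layer names of the Keras VGG16 base, in order
VGG16_BASE_LAYERS = [
    "block1_conv1", "block1_conv2", "block1_pool",
    "block2_conv1", "block2_conv2", "block2_pool",
    "block3_conv1", "block3_conv2", "block3_conv3", "block3_pool",
    "block4_conv1", "block4_conv2", "block4_conv3", "block4_pool",
    "block5_conv1", "block5_conv2", "block5_conv3", "block5_pool",
]


def fine_tune_flags(layer_names, trainable_prefix="block5"):
    """Map each layer name to True (trainable) or False (frozen)."""
    return {name: name.startswith(trainable_prefix) for name in layer_names}


flags = fine_tune_flags(VGG16_BASE_LAYERS)
trainable = [name for name, is_trainable in flags.items() if is_trainable]
print(trainable)
# ['block5_conv1', 'block5_conv2', 'block5_conv3', 'block5_pool']
```

Block 5 spans four Keras layers, of which three are convolutional and carry weights; the pooling layer has no parameters. With a real model, the same rule could be applied by setting `layer.trainable = layer.name.startswith("block5")` for every layer of the base and then recompiling with the lower learning rate.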

Evaluation Metrics:

- True Positive Rate (TPR) for each sperm morphology class

- Average accuracy across all classes

- Confusion matrix analysis

- Comparison with expert human annotations
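Per-class and average true positive rates can be read directly off a confusion matrix, as the sketch below shows. The matrix values are made-up counts for illustration only and are not results from any of the cited studies.

```python
def per_class_tpr(confusion):
    """TPR (recall) per class; confusion[i][j] counts class-i samples predicted as class j."""
    rates = []
    for i, row in enumerate(confusion):
        total = sum(row)
        rates.append(row[i] / total if total else 0.0)
    return rates


def average_tpr(confusion):
    """Unweighted mean of the per-class true positive rates."""
    rates = per_class_tpr(confusion)
    return sum(rates) / len(rates)


labels = ["normal", "tapered", "pyriform", "amorphous"]
# Illustrative counts only (rows: true class, columns: predicted class)
confusion = [
    [9, 0, 0, 1],
    [0, 8, 1, 1],
    [0, 1, 9, 0],
    [1, 1, 0, 8],
]

for label, rate in zip(labels, per_class_tpr(confusion)):
    print(f"{label}: {rate:.2f}")
print(f"average TPR: {average_tpr(confusion):.3f}")
```

Because the average is unweighted, every class contributes equally regardless of its size, which matters for imbalanced datasets where overall accuracy can hide poor performance on a rare class.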

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for VGG16 Transfer Learning Experiments

| Resource Category | Specific Resource | Function in Research | Implementation Notes |

|---|---|---|---|

| Computational Framework | TensorFlow/Keras with VGG16 | Deep learning infrastructure | Pre-trained models readily available in keras.applications |

| Hardware Acceleration | GPU with CUDA support | Accelerate training and inference | Minimum 8GB VRAM recommended for efficient fine-tuning |

| Public Datasets | HuSHeM Dataset | Benchmark for sperm head classification | 216 annotated sperm images across 4 morphology classes [20] |

| Public Datasets | SCIAN-MorphoSpermGS | Gold-standard for algorithm comparison | 1854 sperm images across 5 WHO categories [9] |

| Data Augmentation Tools | TensorFlow ImageDataGenerator | Dataset expansion and variability | Apply rotation, flipping, brightness adjustments |

| Evaluation Metrics | sklearn.metrics | Performance quantification | Calculate precision, recall, F1-score, confusion matrices |

| Visualization Tools | Grad-CAM | Model interpretability and feature visualization | Identify which image regions influence classification decisions [19] |

VGG16 remains a powerful architecture for image classification tasks, particularly in specialized domains like reproductive medicine where transfer learning is essential due to limited labeled data. Its strengths for sperm head classification research include a proven track record of performance (94.1% average true positive rate on the HuSHeM dataset), simplified implementation through automated feature extraction, and computational efficiency compared to training networks from scratch.

The architectural advantages of VGG16 (its depth, its uniform design with 3×3 filters, and its effective feature hierarchy) make it well suited to transfer learning applications. While newer architectures have emerged, VGG16 continues to offer a practical balance of performance, interpretability, and implementation simplicity for research applications in biological image analysis, which has made it a foundational tool in computational reproductive medicine.

The application of deep learning in medicine often faces a significant hurdle: the scarcity of large, annotated datasets. This challenge is particularly acute in specialized fields like reproductive medicine, where data collection is expensive, time-consuming, and requires expert knowledge. Transfer learning has emerged as a powerful strategy to overcome this limitation by leveraging knowledge gained from large-scale general image datasets (like ImageNet) to solve specific, data-scarce medical problems [11] [21].

Within this context, sperm morphology classification represents a compelling case study. Traditional manual assessment of sperm heads is subjective, labor-intensive, and prone to inter-observer variability [4] [22]. This article details the application of the VGG16 architecture, via transfer learning, to automate and standardize the classification of human sperm head morphology, providing detailed application notes and experimental protocols for researchers and drug development professionals.

Technical Protocols: Implementing VGG16 Transfer Learning for Sperm Head Classification

This section provides a detailed, step-by-step methodology for replicating a VGG16-based transfer learning pipeline for sperm head morphology classification, based on established protocols [21] and recent literature [8] [4].

Protocol: Bottleneck Feature Transfer Learning with VGG16

- Objective: To adapt the pre-trained VGG16 model for a 6-class sperm head morphology classification task using a bottleneck feature transfer learning approach.

Principle: The initial layers of a pre-trained CNN act as generic feature extractors. By freezing these layers and only re-training the top classifier layers, effective learning can be achieved even with small datasets.

Procedure:

Data Preparation:

- Input Specification: Resize all sperm head images to 224x224 pixels with 3 channels (RGB) to match VGG16's input expectations [11].

- Data Augmentation: Apply real-time data augmentation to the training set to increase diversity and prevent overfitting. Recommended operations include random rotations (±15°), horizontal/vertical flips, and slight brightness/contrast variations [4].

- Dataset Splitting: Divide the annotated sperm image dataset into training (60%), validation (20%), and testing (20%) subsets, ensuring class balance is maintained across splits [21].

Model Adaptation:

- Load the VGG16 model pre-trained on ImageNet, excluding its original top fully-connected layers.

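- Freeze Base Layers: Fix the weights of the first 15 layers of the VGG16 base to preserve their learned feature representations [21]. VGG16 has only 13 convolutional layers, so the count of 15 presumably follows the Keras layer list, where the input layer, the ten convolutional layers of Blocks 1-4, and their four pooling layers make up the first 15 entries. For a pure bottleneck approach, freeze the entire convolutional base.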

- Add Custom Classifier: Append a new custom classifier head on top of the frozen base. This typically consists of:

- A flattening layer.

- One or more fully-connected (Dense) layers (e.g., with 512 units).

- A Dropout layer (rate=0.5) for regularization.

- A final Dense layer with 6 units and a Softmax activation function for class probability output.

Model Training:

- Compilation:

- Loss Function: Categorical Cross-Entropy.

- Optimizer: Adam with default parameters (learning rate = 0.001, β₁ = 0.9, β₂ = 0.999, ε = 1e-07) [21].

- Metrics: Accuracy.

- Execution:

- Epochs: Set to a maximum of 100.

- Batch Size: Determine based on available computational memory (e.g., 32).

- Validation: Use the validation subset to monitor performance after each epoch.

- Callbacks: Implement an Early Stopping callback to halt training if the validation accuracy does not improve for 10 consecutive epochs, preventing overfitting and saving computational time [21].
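The early stopping rule described above reduces to a small counter over the validation history. The sketch below is a framework-independent illustration with a made-up accuracy sequence; in Keras the same behaviour is available through `tf.keras.callbacks.EarlyStopping(monitor="val_accuracy", patience=10)`.

```python
def early_stopping_epoch(val_accuracies, patience=10):
    """Return the 1-based epoch at which training stops, or None if it never triggers."""
    best = float("-inf")
    epochs_without_improvement = 0
    for epoch, accuracy in enumerate(val_accuracies, start=1):
        if accuracy > best:
            best = accuracy
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None


# Illustrative history: improvement for three epochs, then a plateau
history = [0.50, 0.60, 0.70] + [0.69] * 12
print(early_stopping_epoch(history))  # 13
```

The best value occurs at epoch 3, so ten consecutive epochs without improvement end at epoch 13, well before the 100-epoch maximum.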

Model Evaluation:

- Use the held-out test set for final model assessment.

- Generate a full classification report (Precision, Recall, F1-Score) and a Receiver Operating Characteristic (ROC) curve for all classes to evaluate performance comprehensively [21].

Advanced Framework: Two-Stage Divide-and-Ensemble Classification

For more complex classification tasks involving a wider spectrum of abnormalities (e.g., 18 classes [8]), a basic transfer learning model may be insufficient. An advanced two-stage framework has been developed to enhance performance [8].

- Workflow:

- Stage 1 - Splitting: A dedicated "splitter" model first categorizes sperm images into two major groups:

- Category 1: Head and neck region abnormalities.

- Category 2: Normal morphology and tail-related abnormalities.

- Stage 2 - Ensemble Classification: Images from each category are routed to a specialized ensemble model for fine-grained classification. This ensemble integrates multiple deep learning architectures (e.g., NFNet and Vision Transformer variants) and uses a multi-stage voting strategy for final decision-making, which has been shown to improve reliability over simple majority voting [8].
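The routing logic of the two-stage workflow can be sketched as follows. This is an assumption-laden illustration: the models are stand-in callables, the class labels are hypothetical, and a plain majority vote replaces the multi-stage voting strategy of [8], whose details are not given here.

```python
from collections import Counter


def majority_vote(predictions):
    """Most frequent label; ties go to the label that appears first."""
    return Counter(predictions).most_common(1)[0][0]


def classify_two_stage(image, splitter, ensembles, vote=majority_vote):
    """Stage 1 picks a category; Stage 2 lets that category's ensemble vote."""
    category = splitter(image)
    member_predictions = [model(image) for model in ensembles[category]]
    return category, vote(member_predictions)


# Stand-in models (hypothetical): real ones would be trained networks
splitter = lambda image: "head_neck" if image["head_defect"] else "normal_tail"
ensembles = {
    "head_neck": [lambda im: "amorphous", lambda im: "amorphous", lambda im: "tapered"],
    "normal_tail": [lambda im: "normal", lambda im: "normal", lambda im: "coiled_tail"],
}

print(classify_two_stage({"head_defect": True}, splitter, ensembles))
# ('head_neck', 'amorphous')
```

Passing a different `vote` function is the natural place to plug in a more elaborate voting scheme without changing the routing.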

The following diagram illustrates the logical workflow of this advanced two-stage framework.

Performance Data and Comparative Analysis

Quantitative results from recent studies demonstrate the effectiveness of transfer learning and advanced frameworks for sperm morphology analysis. The following table summarizes key performance metrics.

Table 1: Performance Metrics of Deep Learning Models in Sperm Analysis

| Model / Framework | Task Focus | Key Performance Metrics | Reference / Dataset |

|---|---|---|---|

| VGG16 Transfer Learning | Sperm Head Morphology Classification | Training converged using early stopping (patience=10). ROC curves generated for all six classes. | [21] |

| Two-Stage Ensemble Framework | 18-class Sperm Morphology Classification | Accuracy: 69.43% - 71.34% (across staining protocols). Statistically significant +4.38% improvement over previous approaches. | Hi-LabSpermMorpho Dataset [8] |

| CNN for DNA Integrity | Predicting DNA Fragmentation Index (DFI) from Brightfield Images | Bivariate correlation between predicted/actual DFI: ~0.43. Can select sperm in the 86th percentile for DNA integrity. | [23] |

The performance of these models is intrinsically linked to the quality of the input data. The table below lists open-source datasets available for training and validating such models.

Table 2: Open-Source Datasets for Sperm Morphology Analysis

| Dataset Name | Key Characteristics | Content & Annotations | Reference |

|---|---|---|---|

| Hi-LabSpermMorpho | Images from 3 staining protocols (BesLab, Histoplus, GBL). | 18 distinct sperm morphology classes. | [8] |

| MHSMA (Modified Human Sperm Morphology Analysis) | 1,540 grayscale sperm head images. | Features related to acrosome, head shape, vacuoles. | [4] |

| SVIA (Sperm Videos and Images Analysis) | A large, multi-purpose dataset. | 125,000 instances for detection; 26,000 segmentation masks; 125,880 images for classification. | [4] |

| VISEM-Tracking | A multi-modal dataset with videos. | 656,334 annotated objects with tracking details. | [4] |

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of these protocols requires a combination of computational and biological materials. The following table details the essential "research reagents" for this field.

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Item Name | Specification / Example | Function / Purpose |

|---|---|---|

| Pre-trained Model | VGG16 (Pre-trained on ImageNet) | Provides a robust foundational feature extractor, enabling effective learning with limited medical image data. |

| Staining Reagents | Diff-Quick Staining Kits (e.g., BesLab, Histoplus, GBL) [8] | Enhances contrast and visibility of sperm morphological structures (head, neck, tail) for microscopic imaging. |

| Imaging Setup | Bright-field Microscope with Mobile Phone Camera [8] | A customizable and relatively low-cost system for acquiring high-quality sperm images. |

| Optimization Algorithms | Enhanced Hunger Games Search (EHGS) [24] | Metaheuristic algorithm for automated hyperparameter tuning of deep learning models, improving performance. |

| Validation Tool | Sperm Morphology Assessment Standardisation Training Tool [22] | Provides expert-consensus "ground truth" labels for training and validating both human morphologists and AI models. |

Experimental Workflow Visualization

The entire process, from sample preparation to model prediction, can be visualized as an integrated workflow. The following diagram maps the key stages of the experiment, aligning with the described protocols.

The integration of transfer learning, particularly using established architectures like VGG16, provides a powerful and pragmatic solution for automating sperm head classification in data-scarce medical domains. The detailed protocols and performance data outlined in this article offer researchers a clear roadmap for replicating and building upon these methods. Framing the problem with a hierarchical two-stage ensemble and leveraging high-quality, expert-annotated datasets can further push the boundaries of accuracy and clinical applicability. This approach demonstrates a viable path toward standardizing sperm morphology assessment, ultimately contributing to more objective and efficient diagnostic processes in reproductive medicine.

Building the Classifier: A Step-by-Step VGG16 Transfer Learning Pipeline for Sperm Images

The application of deep learning, particularly transfer learning with architectures like VGG16, has emerged as a powerful approach for automating sperm morphology analysis, a critical yet subjective task in male fertility assessment [14] [25]. The performance of these models is fundamentally dependent on the quality, scale, and appropriate preprocessing of the training data [14]. This document provides detailed application notes and protocols for sourcing and preprocessing three pivotal public datasets—HuSHeM, SCIAN, and SVIA—explicitly framed within a research context utilizing VGG16 transfer learning for sperm head classification. By standardizing the methodologies for dataset handling, we aim to enhance the reproducibility and reliability of computational andrology research.

Dataset Specifications and Comparative Analysis

A critical first step in any machine learning project is the selection of a dataset whose characteristics align with the research objectives. The following section provides a detailed overview of three relevant sperm image datasets.

Table 1: Quantitative Summary of Key Sperm Image Datasets for VGG16 Transfer Learning

| Dataset Feature | HuSHeM [26] | SCIAN-MorphoSpermGS [27] | SVIA [14] |

|---|---|---|---|

| Primary Content | Cropped sperm head images | Sperm head images from stained smears | Videos & extracted images for multiple tasks |

| Total Volume | 216 images | 1,854 images | 125,000 annotated instances; 26,000 segmentation masks |

| Image Dimensions | 131 x 131 pixels (RGB) | Information Missing | Information Missing |

| Key Annotations | Head morphology class | Head morphology class | Bounding boxes, segmentation masks, object categories |

| Morphology Classes | 4 (Normal, Tapered, Pyriform, Amorphous) | 5 (Normal, Tapered, Pyriform, Small, Amorphous) | Includes sperm and impurities |

| Staining Method | Diff-Quick | Information Missing | Information Missing |

| Primary Use Case | Sperm head classification | Sperm head classification | Object detection, segmentation, & classification |

Table 2: Dataset Suitability for Model Training

| Aspect | HuSHeM | SCIAN-MorphoSpermGS | SVIA |

|---|---|---|---|

| Ideal for VGG16 Fine-Tuning | Excellent (Focused, pre-cropped) | Excellent (Focused, pre-cropped) | Good (Requires cropping for head-specific tasks) |

| Data Augmentation Need | Critical (Limited samples) | Critical (Assumed limited samples) | Moderate (Large-scale) |

| Annotation Overhead | Low | Low | High (Requires parsing multiple annotation types) |

| Challenge | Limited sample size, class imbalance | Information Missing | Complex preprocessing pipeline |

Experimental Protocols for Dataset Preprocessing

The following protocols describe standardized methodologies for preparing the HuSHeM, SCIAN, and SVIA datasets for training a VGG16 model for sperm head classification.

Protocol 1: HuSHeM Preprocessing for VGG16 Transfer Learning

Objective: To prepare the HuSHeM dataset for fine-tuning a VGG16 model to classify sperm heads into one of four morphological classes.

Materials:

- Dataset Source: Mendeley Data repository (DOI: 10.17632/tt3yj2pf38.3) [26].

- Software: Python 3.x with libraries: OpenCV, Pillow, TensorFlow/Keras or PyTorch.

Method:

- Data Acquisition and Verification:

- Download the dataset, which is organized into four folders: 'Normal', 'Tapered', 'Pyriform', and 'Amorphous'.

- Validate the integrity of the dataset by checking for corrupt image files and ensuring a total of 216 images.

Data Partitioning:

- Partition the images in each class into training (80%), validation (10%), and test (10%) sets using a stratified approach to preserve class distribution. Use a fixed random seed for reproducibility.
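A stratified 80/10/10 partition with a fixed seed can be sketched with the standard library alone. The class sizes below are the HuSHeM counts quoted in this article; the file names and the function name `stratified_split` are hypothetical.

```python
import random


def stratified_split(items_by_class, fractions=(0.8, 0.1, 0.1), seed=42):
    """Split each class separately so every subset keeps the class proportions."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    splits = {"train": [], "val": [], "test": []}
    for label, items in items_by_class.items():
        items = list(items)
        rng.shuffle(items)
        n_val = round(len(items) * fractions[1])
        n_test = round(len(items) * fractions[2])
        n_train = len(items) - n_val - n_test  # remainder goes to training
        splits["train"] += [(x, label) for x in items[:n_train]]
        splits["val"] += [(x, label) for x in items[n_train:n_train + n_val]]
        splits["test"] += [(x, label) for x in items[n_train + n_val:]]
    return splits


# HuSHeM class sizes; file names are placeholders
counts = {"Normal": 54, "Tapered": 53, "Pyriform": 57, "Amorphous": 52}
data = {c: [f"{c}_{i:03d}.png" for i in range(n)] for c, n in counts.items()}

splits = stratified_split(data)
print({name: len(subset) for name, subset in splits.items()})
# {'train': 174, 'val': 21, 'test': 21}
```

With only about five images per class in the validation and test subsets, single-split estimates are noisy, so repeated splits or cross-validation may be worth considering.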

Data Augmentation (Critical for HuSHeM):

- Apply a rigorous set of augmentation techniques to the training set to combat overfitting, given the small initial dataset size. This is a key step inspired by successful practices in the field [15]. The following operations should be applied randomly:

- Rotation: ±15°

- Width and Height Shifts: ±10%

- Shear: ±10%

- Zoom: ±10%

- Horizontal Flipping

- Note: Augmentation should not be applied to the validation or test sets.
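The augmentation ranges listed above can be captured as a small configuration from which random parameters are drawn per training image. The sketch below only samples the parameters and leaves the image transformation to whichever library is used; the key names are illustrative and not tied to a specific API.

```python
import random

# Symmetric limits taken from the list above (degrees for rotation, fractions otherwise)
AUGMENTATION_LIMITS = {
    "rotation_deg": 15.0,
    "width_shift": 0.10,
    "height_shift": 0.10,
    "shear": 0.10,
    "zoom": 0.10,
}


def sample_augmentation(rng):
    """Draw one random set of augmentation parameters within the limits."""
    params = {name: rng.uniform(-limit, limit) for name, limit in AUGMENTATION_LIMITS.items()}
    params["horizontal_flip"] = rng.random() < 0.5
    return params


rng = random.Random(0)  # seeded so that experiments can be repeated
print(sample_augmentation(rng))
```

Sampling fresh parameters on every epoch means the network rarely sees exactly the same image twice, which is the main benefit of applying augmentation at training time instead of writing augmented copies to disk.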

Image Preprocessing for VGG16:

- Resizing: Resize all images to 224x224 pixels, the default input size for the standard VGG16 model.

- Color Scaling: Normalize pixel values to the range [0, 1] or apply VGG16-specific preprocessing (e.g., subtracting the mean RGB values computed on ImageNet).
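The two color-scaling options are not interchangeable, which a single pixel makes clear. The sketch below contrasts [0, 1] scaling with the VGG16-style preprocessing performed by `tf.keras.applications.vgg16.preprocess_input`, which reorders RGB to BGR and subtracts the ImageNet channel means without rescaling; the function names here are illustrative.

```python
# ImageNet channel means in BGR order, as used for the original VGG16 weights
IMAGENET_MEAN_BGR = (103.939, 116.779, 123.68)


def scale_to_unit(pixel_rgb):
    """Option 1: map 8-bit values to the [0, 1] range."""
    return tuple(value / 255.0 for value in pixel_rgb)


def vgg16_mean_subtract(pixel_rgb):
    """Option 2: RGB -> BGR, then subtract the per-channel ImageNet mean."""
    r, g, b = pixel_rgb
    return tuple(value - mean for value, mean in zip((b, g, r), IMAGENET_MEAN_BGR))


pixel = (200, 150, 100)  # an arbitrary RGB pixel
print(scale_to_unit(pixel))
print(vgg16_mean_subtract(pixel))
```

The outputs differ by roughly two orders of magnitude in scale. Since the ImageNet weights were trained on mean-subtracted inputs, the second option keeps a frozen base in the input range it was trained on, and whichever option is chosen has to be applied identically at training and inference time.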

Troubleshooting Tip: If model performance plateaus, consider increasing the intensity of data augmentation parameters or employing more advanced techniques like synthetic data generation [15].

Protocol 2: SVIA Dataset Preprocessing for Sperm Head Detection and Classification

Objective: To utilize the SVIA dataset for the dual task of localizing sperm heads within full images (detection) and subsequently classifying their morphology, creating a pipeline for end-to-end analysis.

Materials:

- Dataset Source: SVIA dataset, comprising 125,000 annotated instances for object detection [14].

- Software: Python with OpenCV, Pillow, and a deep learning framework supporting object detection (e.g., TensorFlow Object Detection API, PyTorch with Detectron2).

Method:

- Data Acquisition and Parsing:

- Download the SVIA dataset, specifically the subsets relevant to object detection and classification.

- Parse the provided annotation files (e.g., JSON, XML) to extract bounding box coordinates and class labels for sperm and impurities.

Sperm Head Detection Model Training:

- Objective: Train a model to localize and crop sperm heads from larger images.

- Utilize the bounding box annotations to train an object detection model like YOLOv5 or Faster R-CNN. This model will be used to automatically crop sperm heads from full images, similar to the pre-cropped nature of HuSHeM.

Dataset Generation for Classification:

- Run the trained detection model on the SVIA training images to generate a new dataset of cropped sperm head images.

- Filter out low-confidence detections and images classified as 'impurities' to create a clean set of sperm head crops.

- Manually verify a subset of these cropped images to ensure quality.
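The filtering and cropping step can be sketched independently of the detector. The detection format below (a dictionary with `label`, `confidence`, and a pixel `box`) and the 0.5 threshold are assumptions for illustration; the actual output format depends on the detection framework, and the label names depend on how the SVIA annotations are parsed.

```python
def select_head_boxes(detections, min_confidence=0.5, keep_label="sperm"):
    """Keep confident detections of the wanted class with a non-empty box."""
    boxes = []
    for det in detections:
        if det["label"] != keep_label or det["confidence"] < min_confidence:
            continue
        x1, y1, x2, y2 = det["box"]
        if x2 > x1 and y2 > y1:
            boxes.append((x1, y1, x2, y2))
    return boxes


def crop(image, box):
    """Crop a row-major image (list of rows) to box = (x1, y1, x2, y2)."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in image[y1:y2]]


# Hypothetical detector output for one 6x6 image
image = [[10 * y + x for x in range(6)] for y in range(6)]
detections = [
    {"label": "sperm", "confidence": 0.92, "box": (1, 1, 4, 3)},
    {"label": "sperm", "confidence": 0.30, "box": (0, 0, 2, 2)},     # too uncertain
    {"label": "impurity", "confidence": 0.95, "box": (2, 2, 5, 5)},  # wrong class
]

crops = [crop(image, box) for box in select_head_boxes(detections)]
print(crops)  # [[[11, 12, 13], [21, 22, 23]]]
```

Raising `min_confidence` gives a cleaner but smaller classification set, so the threshold is worth tuning against the manually verified subset.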

Classification Model Training:

- Use the newly generated dataset of cropped sperm heads to fine-tune the VGG16 model for morphology classification, following a similar protocol to that used for HuSHeM (including data partitioning and augmentation).

Note: This two-stage detection-and-classification pipeline is a common and effective strategy for analyzing complex image data where objects of interest must first be localized [14] [8].

Workflow Visualization: End-to-End Preprocessing Pipeline

The following diagram illustrates the logical workflow for preprocessing the SVIA dataset, as described in Protocol 2, highlighting its more complex, two-stage nature compared to the simpler HuSHeM workflow.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools for Sperm Image Analysis

| Item Name | Function/Application | Specifications/Notes |

|---|---|---|

| Diff-Quick Staining Kit | Staining semen smears to enhance morphological features for microscopy [26]. | Used in the preparation of the HuSHeM dataset. Provides contrast for head, midpiece, and tail structures. |

| RAL Diagnostics Staining Kit | Staining semen smears for morphological evaluation per WHO guidelines [15]. | An alternative staining method used in dataset creation (e.g., SMD/MSS). |

| MMC CASA System | Automated image acquisition from sperm smears for dataset creation [15]. | Consists of an optical microscope with a digital camera. Used for capturing and storing individual sperm images. |

| Olympus CX21 Microscope | Imaging system for acquiring sperm morphology images [26]. | Used with a 100x objective lens and a Sony color camera for the HuSHeM dataset. |

| VGG16 Model | Deep convolutional neural network for image classification tasks [8] [25]. | Pre-trained on ImageNet; can be fine-tuned for sperm head classification using datasets like HuSHeM. |

| YOLOv5 Model | Deep learning model for real-time object detection [27]. | Can be trained on the SVIA dataset to detect and localize sperm cells in images or video frames. |

| NFNet & Vision Transformer | Advanced deep learning architectures for image classification [8]. | Shown to be effective in complex sperm morphology classification tasks, potentially outperforming older architectures. |

The journey from a raw, public dataset to a robustly preprocessed input for a VGG16 model is a foundational process in computational andrology. This document has detailed the specific protocols for handling the HuSHeM, SCIAN, and SVIA datasets, highlighting the critical role of data augmentation, partitioning, and tailored preprocessing. Adherence to these standardized protocols ensures that researchers can build reliable, high-performing models for sperm head classification, thereby contributing to the broader goal of standardizing and automating male fertility assessment. The "Scientist's Toolkit" provides a concise reference for the key materials required to undertake this work, from wet-lab staining kits to state-of-the-art deep learning models.

Within the broader scope of developing a VGG16 transfer learning model for sperm head classification, the preparation of robust, high-quality training data is a critical prerequisite for success. The performance of deep learning models in this domain is often hindered by challenges such as limited public dataset sizes, class imbalance, and the inherent subjectivity of manual morphological assessments [4] [14]. This protocol details a comprehensive data preparation pipeline, encompassing cropping, rotation, and augmentation, specifically designed to overcome these hurdles and create optimal input data for a VGG16-based classifier. Standardizing this process is essential for automating sperm morphology analysis, reducing inter-observer variability, and ultimately enhancing the reliability of male fertility diagnostics [15].

A primary challenge in sperm morphology analysis is the scarcity of large, publicly available, and consistently annotated datasets. The following table summarizes key datasets used in recent research, highlighting their scope and limitations.

Table 1: Overview of Publicly Available Sperm Morphology Datasets

| Dataset Name | Number of Images | Annotation Type | Key Characteristics | Notable Limitations |

|---|---|---|---|---|

| HuSHeM [20] | 216 | Classification (Head) | Stained images; classified into normal, tapered, pyriform, amorphous. | Very limited sample size. |

| SCIAN-MorphoSpermGS [4] [20] | 1,854 | Classification (Head) | Five-class classification (normal, tapered, pyriform, small, amorphous). | --- |

| MHSMA [4] [14] | 1,540 | Classification (Head) | Grayscale sperm head images. | Non-stained, noisy, low resolution. |

| SMD/MSS [15] | 1,000 (extended to 6,035) | Classification (Full Sperm) | Based on modified David classification (12 defect classes); uses data augmentation. | Single-institution source. |

| SVIA [4] [14] | 4,041 images & videos | Detection, Segmentation, Classification | Includes 125,000 detection instances and 26,000 segmentation masks. | Low-resolution, unstained samples. |

The small size of datasets like HuSHeM necessitates the use of data augmentation to prevent overfitting and improve model generalizability [20]. Furthermore, the SMD/MSS dataset demonstrates a common strategy where the original dataset is significantly expanded (from 1,000 to 6,035 images) through data augmentation techniques to balance morphological classes and enhance model training [15].

Experimental Protocols for Data Preparation

Core Preprocessing: Cropping and Rotation

A critical first step is to isolate the region of interest—the sperm head—and standardize its orientation. This reduces computational complexity and forces the model to focus on morphological features rather than spatial orientation [20]. The following workflow, adapted from published methods, outlines this automated process.

Figure 1: Workflow for automated sperm head cropping and rotation.

Detailed Protocol:

- Input: Acquire a raw sperm image, typically in RGB format. The example protocol uses images from the HuSHeM dataset with an original size of 131x131 pixels [20].

- Denoising and Conversion: Apply a denoising algorithm (e.g., Gaussian blur) to reduce high-frequency noise. Convert the resulting image to a monochrome (grayscale) format to simplify subsequent processing [20].

- Gradient Calculation: Use the Sobel operator to obtain a gradient image. This highlights regions with high horizontal gradients and low vertical gradients, effectively outlining the sperm head's edges [20].

- Filtering and Binarization: Employ a low-pass filter to remove any remaining noise in the gradient image. Then, use an adaptive thresholding algorithm (e.g., Otsu's method) to convert the filtered image into a binary image, separating the sperm head from the background [20].

- Morphological Cleaning: Perform morphological operations—specifically, erosion followed by dilation—to eliminate small interference spots and smooth the contour of the sperm head in the binary image [20].

- Contour Fitting: Identify the largest contour in the processed binary image. Fit an ellipse to this contour to determine the precise orientation (major and minor axes) of the sperm head [20].

- Cropping: Extract the image region centered on the fitted ellipse. This yields a standardized, smaller image (e.g., 64x64 pixels) containing primarily the sperm head, as shown in Table 2 [20].

- Rotation: Based on the orientation of the major axis, rotate the cropped image to a uniform direction (e.g., with the head pointing right). This removes orientation as a source of variation, so the model does not have to learn rotational invariance from a small dataset [20].

Table 2: Impact of Preprocessing Steps on Image Characteristics

| Processing Step | Output Image Size | Key Objective | Tool/Algorithm Used |

|---|---|---|---|

| Raw Input Image | 131 x 131 px (RGB) | Original data from microscope. | Microscope with camera. |

| Denoising & Conversion | 131 x 131 px (Grayscale) | Reduce noise and simplify processing. | Gaussian blur, color conversion. |

| Gradient & Binarization | 131 x 131 px (Binary) | Highlight and isolate sperm head edges. | Sobel operator, automatic thresholding (e.g., Otsu's method). |

| Cropping | 64 x 64 px (Grayscale) | Isolate the region of interest (sperm head). | Elliptical fitting, image cropping. |

| Rotation | 64 x 64 px (Grayscale) | Standardize head orientation so the model need not learn rotational invariance. | Affine transformation. |

Data Augmentation Techniques and Performance

With limited initial data, augmentation is indispensable. It increases dataset size and diversity by applying label-preserving transformations to existing images, thereby improving model generalization and combating overfitting [28]. The techniques can be broadly categorized, and their effectiveness has been quantitatively demonstrated in reproductive biology research.

Table 3: Categorization and Application of Image Augmentation Methods

| Augmentation Category | Example Methods | Application in Sperm Image Analysis |

|---|---|---|

| Pixel Transformation [28] | ColorJitter, Gaussian blur, Noise injection (Gaussian, salt-and-pepper), Histogram equalization (CLAHE). | Simulates variations in staining intensity, lighting conditions, and optical noise. |

| Geometric Deformation [28] | Random rotation, Horizontal/Vertical flip, Scaling, Elastic transformations. | Encourages rotational and scale invariance; use flips with caution due to sperm asymmetry. |

| Region Cropping/Padding [28] | RandomResizedCrop, CenterCrop, Padding. | Forces the model to learn from different spatial contexts and partial views. |

| Advanced/Generative [29] | Denoising Diffusion Probabilistic Models (DDPM), Conditional GANs (e.g., ImbCGAN, BAGAN). | Generates high-quality synthetic samples of rare morphological classes to address severe data imbalance. |
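As a rough illustration of the pixel-transformation and geometric categories in Table 3, the following NumPy-only sketch applies brightness jitter, Gaussian noise, salt-and-pepper noise, and an optional flip to a single image. The function names, default magnitudes, and the `allow_flip` switch are assumptions chosen for this example, not settings reported in [28] or [15].

```python
import numpy as np


def jitter_brightness(img, rng, max_delta=0.2):
    """Scale intensities by a random factor in [1 - max_delta, 1 + max_delta]."""
    factor = 1.0 + rng.uniform(-max_delta, max_delta)
    return np.clip(img.astype(np.float32) * factor, 0, 255).astype(np.uint8)


def add_gaussian_noise(img, rng, sigma=8.0):
    """Add zero-mean Gaussian noise with standard deviation `sigma` (grey levels)."""
    noisy = img.astype(np.float32) + rng.normal(0.0, sigma, img.shape)
    return np.clip(noisy, 0, 255).astype(np.uint8)


def add_salt_and_pepper(img, rng, amount=0.02):
    """Set roughly `amount` of the pixels to pure black or pure white."""
    out = img.copy()
    mask = rng.random(img.shape[:2])
    out[mask < amount / 2] = 0
    out[mask > 1 - amount / 2] = 255
    return out


def augment(img, rng, allow_flip=False):
    """Apply the pixel-level steps in sequence; flipping is off by default
    because sperm heads are asymmetric (see the caution in Table 3)."""
    out = jitter_brightness(img, rng)
    out = add_gaussian_noise(out, rng)
    out = add_salt_and_pepper(out, rng)
    if allow_flip and rng.random() < 0.5:
        out = np.flipud(out)  # flip across the horizontal axis
    return out


# Example: create five augmented copies of one 64 x 64 grayscale crop.
rng = np.random.default_rng(seed=0)
crop = np.full((64, 64), 128, dtype=np.uint8)  # placeholder image
copies = [augment(crop, rng) for _ in range(5)]
```

In practice the same idea is usually expressed through a library pipeline (for example torchvision or Keras preprocessing layers); the explicit version here only makes the individual operations visible.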

A study on the SMD/MSS dataset for full-sperm morphology classification provides a clear example of augmentation's impact. Researchers initially had 1,000 sperm images and employed various augmentation techniques to expand the dataset to 6,035 images. The subsequent deep learning model achieved accuracies ranging from 55% to 92% across different morphological classes, underscoring how augmentation enables the training of complex models that would otherwise be infeasible [15]. For extremely rare cell types, advanced generative models like DDPM have been shown to boost identification accuracy dramatically, from 45.5% to 87.0%, by creating high-fidelity examples of under-represented classes [29].

The following diagram illustrates how these techniques are integrated into a complete model training pipeline, from raw data to the final VGG16 classifier.

Figure 2: Integrated data preparation pipeline for VGG16 transfer learning.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools and Software for Sperm Image Analysis Pipelines

| Tool/Solution | Function | Application Note |

|---|---|---|

| ImageJ / Fiji [30] | Open-source image analysis platform for visualization, inspection, and quantification. | The "Fiji" distribution is recommended for its built-in bioimage analysis plugins and deep learning capabilities (e.g., CSBDeep, DeepImageJ) [30]. |

| OpenCV [20] | Library for real-time computer vision; used for image and video processing. | Ideal for implementing automated preprocessing scripts for cropping, rotation, and filtering in batch mode. |

| TensorFlow / PyTorch | Open-source libraries for machine learning and deep learning. | Used to build, train, and deploy deep learning models (e.g., VGG16); often integrated with ImageJ via plugins [30]. |

| RAL Diagnostics Stain [15] | Staining kit for semen smears. | Used in the creation of the SMD/MSS dataset to enhance the contrast and visibility of sperm structures [15]. |

| MMC CASA System [15] | Computer-Assisted Semen Analysis system for image acquisition. | Used for standardized capture of individual spermatozoa images in bright-field mode at 100x magnification. |

The meticulous preparation of data through standardized cropping, rotation, and strategic augmentation is not merely a preliminary step but a cornerstone of successful VGG16 transfer learning for sperm head classification. The protocols and data presented herein provide a reproducible framework that directly addresses the critical challenges of data scarcity and morphological variability in this field. By implementing this comprehensive data preparation pipeline, researchers can construct robust, generalizable, and high-performing models, thereby advancing the objective and automated analysis of sperm morphology for clinical diagnosis and drug development.

The morphological classification of human sperm is a fundamental procedure in the diagnosis of male infertility, but traditional manual assessment is highly subjective, time-consuming, and suffers from significant inter- and intra-laboratory variability [9] [14]. Deep learning approaches, particularly transfer learning with pre-trained convolutional neural networks (CNNs), have emerged as powerful solutions to automate this process, offering improvements in accuracy, reliability, and throughput [9] [31]. Within this context, the VGG16 architecture has proven to be exceptionally effective for sperm head classification when its final fully-connected layers are properly adapted to this specialized task [9] [20].

Transfer learning allows researchers to leverage features learned from large-scale natural image datasets (e.g., ImageNet) and refine them for domain-specific applications like medical image analysis, significantly reducing computational requirements and mitigating the challenges associated with limited biomedical dataset sizes [9] [20]. The modification of VGG16's classifier component represents a critical step in this adaptation process, enabling the network to effectively distinguish between subtle morphological differences in sperm heads according to World Health Organization (WHO) criteria [9] [31]. This protocol details the methodology for optimizing VGG16's fully-connected layers specifically for sperm morphology classification, providing a robust framework that has demonstrated state-of-the-art performance on benchmark datasets [9] [20].

The VGG16 model, originally developed for the ImageNet Large Scale Visual Recognition Challenge, employs a deep architecture consisting of 13 convolutional layers and 3 fully-connected layers [9] [20]. The convolutional layers function as robust feature extractors that learn hierarchical representations of visual patterns, while the fully-connected layers at the end of the network serve as a classifier that makes final predictions based on these extracted features [9]. For sperm classification, the standard VGG16 architecture presents two significant limitations: (1) its original fully-connected layers are designed for 1000-class ImageNet classification, and (2) these layers contain a substantial portion of the network's parameters, increasing the risk of overfitting on typically small medical imaging datasets [20].
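The second limitation can be made concrete with a short parameter count. The sketch below uses the standard VGG16 layer widths and, as an assumption for illustration, compares the original ImageNet classifier with a hypothetical replacement head (64×64 input, one 512-unit dense layer, 4 output classes).

```python
# Output channels of the 13 convolutional layers in VGG16 (3x3 kernels).
VGG16_CONV_CHANNELS = [64, 64, 128, 128, 256, 256, 256,
                       512, 512, 512, 512, 512, 512]


def conv_params(in_channels=3, kernel=3):
    """Weights plus biases of all convolutional layers."""
    total = 0
    for out_channels in VGG16_CONV_CHANNELS:
        total += kernel * kernel * in_channels * out_channels + out_channels
        in_channels = out_channels
    return total


def dense_params(sizes):
    """Weights plus biases of a stack of dense layers with the given widths."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


conv = conv_params()
# Original head: 224x224 input -> 7x7x512 features -> 4096 -> 4096 -> 1000.
original_head = dense_params([7 * 7 * 512, 4096, 4096, 1000])
# Hypothetical custom head: 64x64 input -> 2x2x512 features -> 512 -> 4.
custom_head = dense_params([2 * 2 * 512, 512, 4])

print(f"convolutional layers: {conv:,}")            # 14,714,688
print(f"original FC layers:   {original_head:,}")   # 123,642,856
print(f"custom FC layers:     {custom_head:,}")     # 1,051,140
print(f"FC share of original: {original_head / (conv + original_head):.1%}")  # 89.4%
```

Under these assumptions the original fully-connected layers hold roughly nine tenths of the network's parameters, while the replacement head is about two orders of magnitude smaller.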

Modifying the final fully-connected layers addresses both issues by creating a custom classifier specifically optimized for sperm morphology categories. This approach maintains the powerful, generic feature extraction capabilities developed during pre-training on ImageNet while adapting the classification component to the specific requirements of sperm head analysis [9] [20]. Research has demonstrated that this strategy yields superior performance compared to traditional machine learning approaches and even matches the performance of more complex deep learning frameworks while being computationally more efficient [9] [20].

Table 1: Performance Comparison of VGG16 Adaptation Against Other Methods on HuSHeM Dataset

| Method | Average Accuracy | Average Precision | Average Recall | Average F-Score |

|---|---|---|---|---|

| VGG16 with FC modifications [20] | 96.0% | 96.4% | 96.1% | 96.0% |

| AlexNet with Batch Normalization [20] | 96.0% | 96.4% | 96.1% | 96.0% |

| Adaptive Patch-based Dictionary Learning [9] | 92.3% | - | - | - |

| Cascade Ensemble SVM [9] | 78.5% | - | - | - |
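For readers reproducing such comparisons, averaged precision, recall, and F-score are commonly computed as unweighted (macro) means of the per-class values derived from a confusion matrix. Whether the cited studies used exactly this averaging is an assumption; the sketch and the small confusion matrix below are purely illustrative and are not results from [9] or [20].

```python
import numpy as np


def macro_metrics(confusion):
    """Accuracy and macro-averaged precision/recall/F1.

    `confusion[i][j]` counts samples of true class i predicted as class j.
    """
    cm = np.asarray(confusion, dtype=float)
    tp = np.diag(cm)
    predicted = cm.sum(axis=0)  # column sums: how often each class was predicted
    actual = cm.sum(axis=1)     # row sums: how many samples each class has

    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)

    return {
        "accuracy": float(tp.sum() / cm.sum()),
        "precision": float(precision.mean()),
        "recall": float(recall.mean()),
        "f1": float(f1.mean()),
    }


# Hypothetical two-class example (not data from the cited studies).
example = macro_metrics([[2, 0],
                         [1, 1]])
```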

Experimental Protocols for VGG16 Adaptation

Dataset Preparation and Preprocessing

The successful adaptation of VGG16 for sperm classification requires careful dataset preparation. Two publicly available datasets have been extensively used in literature: the Human Sperm Head Morphology (HuSHeM) dataset and the SCIAN-MorphoSpermGS dataset [9] [31].

The HuSHeM dataset contains 216 RGB sperm cell images (131×131 pixels) categorized into four classes: Normal (54 images), Tapered (53 images), Pyriform (57 images), and Amorphous (52 images) [20]. The SCIAN dataset is more extensive, containing 1854 sperm cell images classified into five categories: Normal, Tapered, Pyriform, Small, and Amorphous [31]. For the SCIAN dataset, researchers have employed different agreement levels, with the "total agreement" subset containing only images where all three experts concurred on the classification [31].

A critical preprocessing pipeline should be implemented to ensure optimal performance:

- Image Cropping: Extract the sperm head region using contour detection and elliptical fitting to focus on relevant features [20]. This typically reduces image size to 64×64 pixels centered on the sperm head.

- Orientation Normalization: Rotate sperm heads to a uniform direction (typically pointing right) to reduce rotational variance [20].

- Data Augmentation: Apply transformations including rotation, flipping, scaling, and brightness adjustment to increase dataset size and improve model generalization [32] [15].

- Dataset Splitting: Divide data into training (60-80%), validation (10-20%), and test (10-20%) sets, ensuring stratified sampling to maintain class distribution across splits [15] [23].
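A stratified split can be sketched in a few lines of plain Python. The example below uses the HuSHeM class counts quoted above and a 70/15/15 split; the function name, the split fractions, and the seed are assumptions for illustration, and a library routine such as scikit-learn's `train_test_split` with `stratify=` would serve equally well.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Class counts of the HuSHeM dataset as given in the text.
HUSHEM_COUNTS = {"Normal": 54, "Tapered": 53, "Pyriform": 57, "Amorphous": 52}
labels = [name for name, count in HUSHEM_COUNTS.items() for _ in range(count)]
train_idx, val_idx, test_idx = stratified_split(labels)
```

Splitting should be done before augmentation, so that augmented copies of a test image never appear in the training set.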

Table 2: Dataset Characteristics for Sperm Morphology Classification

| Dataset | Total Images | Classes | Image Size | Agreement Level |

|---|---|---|---|---|

| HuSHeM [20] | 216 | 4 (Normal, Tapered, Pyriform, Amorphous) | 131×131 pixels (original), 64×64 (processed) | Full expert agreement |

| SCIAN [9] [31] | 1132 (gray-scale) / 1854 | 5 (Normal, Tapered, Pyriform, Small, Amorphous) | ~35×35 pixels | Partial (2/3 experts) and total (3/3 experts) agreement |

| MHSMA [32] | 1540 | 3 (Head, Vacuole, Acrosome abnormalities) | 128×128 pixels (gray-scale) | Expert annotations |

VGG16 Modification Methodology

The adaptation of VGG16 for sperm classification involves a systematic approach to transfer learning with specific modifications to the fully-connected layers:

Base Model Preparation:

- Load the VGG16 model pre-trained on ImageNet, excluding the original fully-connected layers (often referred to as the "top" of the network).

- Freeze the convolutional layers initially to prevent destruction of pre-trained features during early training phases [9].

Custom Classifier Design:

- Replace the original fully-connected layers with a custom classifier tailored to sperm morphology classification.

- The standard adaptation includes:

  - A flattening layer to convert 2D feature maps to 1D vectors.

  - A fully-connected (dense) layer with 512-1024 units and ReLU activation.

  - A dropout layer with rate of 0.5-0.7 to mitigate overfitting.

  - A final output layer with softmax activation containing units corresponding to the number of sperm classes (4 or 5) [9] [20].

Training Strategy:

- Implement a two-phase training approach:

  - Phase 1: Train only the custom fully-connected layers while keeping convolutional layers frozen, using a relatively low learning rate (e.g., 0.001) [9].

  - Phase 2: Unfreeze some or all convolutional layers for fine-tuning with an even lower learning rate (e.g., 0.0001) to gently adapt pre-trained features to sperm images [9].

- Utilize batch sizes of 16-32, and train for 100-200 epochs with early stopping based on validation loss to prevent overfitting [9].
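The modification and training steps above might look as follows in Keras. This is a sketch under stated assumptions rather than the configuration used in [9] or [20]: the helper names, the Adam optimizer, the 512-unit dense layer, the choice to unfreeze only the last convolutional block, and the early-stopping patience are all illustrative. Grayscale 64×64 crops are assumed to have been replicated to three channels and passed through `tf.keras.applications.vgg16.preprocess_input` beforehand.

```python
import tensorflow as tf


def build_sperm_vgg16(num_classes=4, input_shape=(64, 64, 3), weights="imagenet",
                      dense_units=512, dropout_rate=0.5):
    """Frozen VGG16 convolutional base plus a small custom classifier."""
    base = tf.keras.applications.VGG16(
        weights=weights, include_top=False, input_shape=input_shape)
    base.trainable = False  # Phase 1: keep the pre-trained features fixed.

    inputs = tf.keras.Input(shape=input_shape)
    x = base(inputs)
    x = tf.keras.layers.Flatten()(x)
    x = tf.keras.layers.Dense(dense_units, activation="relu")(x)
    x = tf.keras.layers.Dropout(dropout_rate)(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    return tf.keras.Model(inputs, outputs), base


def compile_for_phase(model, learning_rate):
    """(Re)compile after changing which layers are trainable."""
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"])


def unfreeze_last_block(base, prefix="block5"):
    """Phase 2: make only layers whose name starts with `prefix` trainable."""
    base.trainable = True
    for layer in base.layers:
        layer.trainable = layer.name.startswith(prefix)


def early_stopping(patience=15):
    """Stop when validation loss stops improving and restore the best weights."""
    return tf.keras.callbacks.EarlyStopping(
        monitor="val_loss", patience=patience, restore_best_weights=True)


# Intended usage (train_ds and val_ds are hypothetical tf.data datasets
# batched at 16-32 images):
#   model, base = build_sperm_vgg16()
#   compile_for_phase(model, 1e-3)
#   model.fit(train_ds, validation_data=val_ds, epochs=100,
#             callbacks=[early_stopping()])
#   unfreeze_last_block(base)
#   compile_for_phase(model, 1e-4)
#   model.fit(train_ds, validation_data=val_ds, epochs=100,
#             callbacks=[early_stopping()])
```

Recompiling after changing the `trainable` flags matters: Keras fixes the set of trainable weights at compile time, so the lower Phase 2 learning rate only applies to the unfrozen block once the model has been compiled again.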