Machine Learning in Male Infertility Prediction: A Systematic Review of AI Models, Clinical Applications, and Future Directions

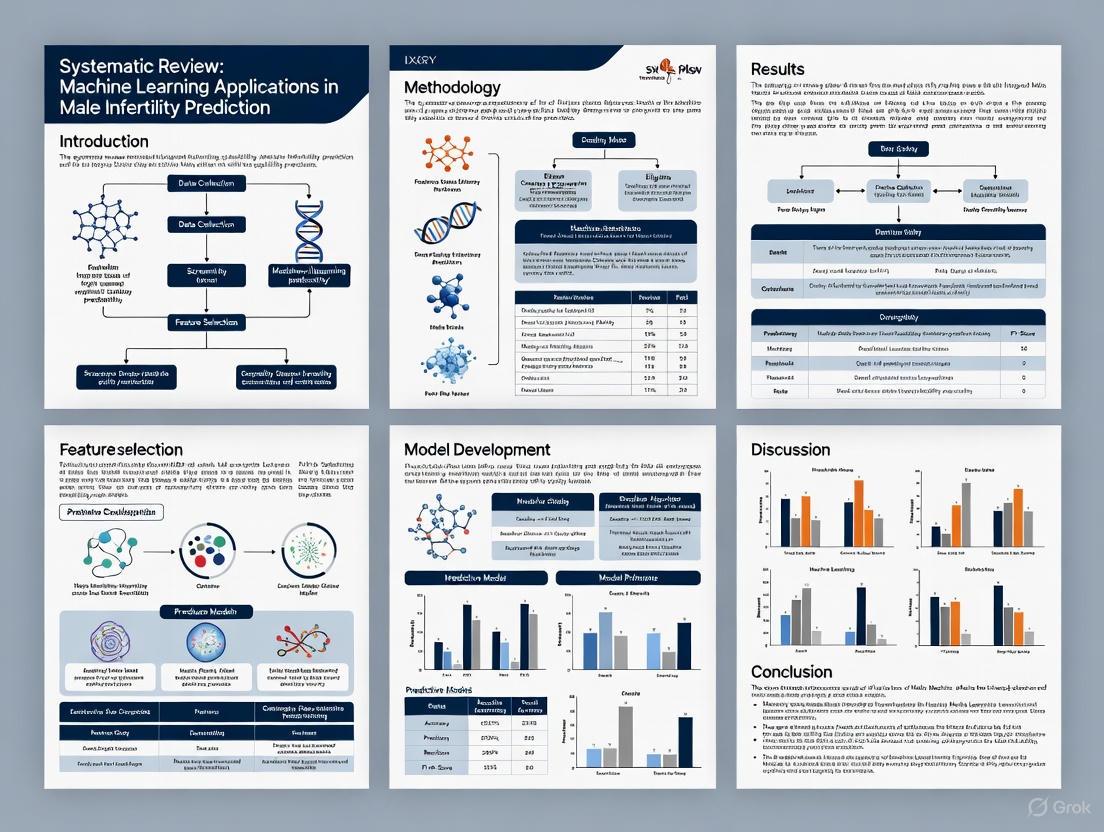


Abstract

This systematic review synthesizes the current landscape of artificial intelligence (AI) and machine learning (ML) applications for predicting and diagnosing male infertility. It explores foundational concepts, including the clinical need for new diagnostic tools and the role of key biomarkers. The review meticulously catalogs the performance of various ML algorithms—from support vector machines to random forests and neural networks—across diverse clinical tasks such as sperm analysis, treatment outcome prediction, and genetic factor assessment. It further addresses critical methodological challenges, including data quality and model interpretability, while providing a comparative analysis of model validation and performance metrics. Aimed at researchers, scientists, and drug development professionals, this article outlines a roadmap for the future integration of robust, clinically-adopted AI tools to enhance precision and accessibility in male infertility management.

The Rising Tide of Male Infertility and the AI Imperative

Epidemiology and Clinical Burden of Male Infertility

Abstract

Male infertility constitutes a significant and growing global health challenge, with profound clinical, societal, and economic implications. This in-depth technical guide synthesizes the latest epidemiological data on its burden, detailing the established and emerging methodologies for its clinical assessment. Framed within the context of advancing machine learning (ML) prediction research, this review provides a foundational resource for researchers, scientists, and drug development professionals. It systematically presents quantitative burden trends, details key experimental protocols, and outlines the essential toolkit for contemporary andrological investigation, thereby setting the stage for the development of data-driven diagnostic and prognostic tools.

1. Global Epidemiological Burden: A Steady Increase

Quantifying the burden of male infertility is essential for understanding its public health impact. Recent analyses from the Global Burden of Disease (GBD) studies reveal a consistent and substantial increase in its prevalence over the past decades.

Table 1: Global Burden of Male Infertility (1990-2021)

| Metric | Time Period | Findings | Data Source |
|---|---|---|---|
| Global Prevalence | 1990-2021 | Number of cases increased by 74.66%, from approximately 31.5 million to 55 million [1] [2]. | GBD 2021 |
| Age-Standardized Prevalence Rate (ASPR) | 1990-2021 | Significant growth, with an Estimated Annual Percentage Change (EAPC) of 0.5 [2]. | GBD 2021 |
| Global Prevalence | 1990-2019 | Increased by 76.9%, from ~32 million to 56.53 million cases [3]. | GBD 2019 |
| Age-Standardized Prevalence Rate (ASPR) | 1990-2019 | Stood at 1,402.98 per 100,000 in 2019, a 19% increase since 1990 [3]. | GBD 2019 |
| Regional Variation | 2019 | Highest ASPR and age-standardized YLD rate (ASYR) observed in Western Sub-Saharan Africa, Eastern Europe, and East Asia [3]. | GBD 2019 |
| Socio-demographic Index (SDI) | 2019 | The burden in High-middle and Middle SDI regions exceeded the global average [3]. A negative correlation exists between national SDI and infertility burden [1]. | GBD 2019/2021 |
| Peak Age Group | 2021 | The 35-39 age group has the highest number of prevalent cases globally [1] [2]. | GBD 2021 |

The data show that male infertility is not uniformly distributed. The heaviest burden falls on middle SDI regions and on specific areas such as Eastern Europe and Sub-Saharan Africa [3] [2]. China alone accounts for over one-fifth of global prevalence and disability-adjusted life years (DALYs), with rates significantly higher than the global average, though its domestic trend has recently stabilized [2].
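The EAPC quoted in Table 1 is conventionally obtained by regressing the natural logarithm of the age-standardized rate on calendar year and converting the slope β into a percentage, EAPC = 100 × (exp(β) − 1). The sketch below illustrates that calculation on a synthetic series; the function name `eapc` and the input values are illustrative assumptions and are not GBD data.

```python
import math

def eapc(years, rates):
    """Estimated annual percentage change from a least-squares fit of ln(rate) on year."""
    n = len(years)
    logs = [math.log(r) for r in rates]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    beta = sxy / sxx  # slope of the log-linear trend
    return 100.0 * (math.exp(beta) - 1.0)

# Synthetic series that grows by exactly 0.5% per year (illustrative only).
years = list(range(1990, 2022))
rates = [1000.0 * 1.005 ** (y - 1990) for y in years]
print(round(eapc(years, rates), 3))
```

Because the fit is made on the log scale, the EAPC describes a constant relative change per year rather than an absolute one.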

2. Core Clinical Assessment and Experimental Protocols

The clinical evaluation of male infertility relies on a multi-faceted approach, ranging from basic semen analysis to advanced hormonal and genetic testing.

2.1. Standard Semen Analysis Protocol (WHO Guidelines)

Semen analysis is the cornerstone of male fertility assessment, though its predictive value for natural conception has limitations [4]. The protocol involves:

- Sample Collection: After a recommended abstinence period of 2-7 days, the sample is collected via masturbation, preferably near the laboratory to limit the time before analysis [4].

- Physical & Microscopic Analysis: The sample is analyzed for volume, pH, liquefaction, and viscosity. It is then evaluated under a microscope for concentration (million/mL), motility (%), and vitality (%) [4].

- Morphology Assessment: The percentage of sperm with a normal cellular structure is determined.

- Reference Values: The WHO 2010 manual established lower reference limits based on the 5th centile of a fertile population. Key thresholds include [4]:

  - Volume: 1.5 mL

  - Sperm Concentration: 15 million/mL

  - Total Motility (progressive + non-progressive): 40%

  - Progressive Motility: 32%

  - Normal Forms: 4%

It is critical to note that these thresholds are statistical references; men with parameters below these limits can still conceive, and those above may be infertile due to other factors [4]. The Total Motile Sperm Count (volume × concentration × motility) is often considered the most predictive individual parameter from standard semen analysis [4] [5].
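As a worked illustration of the Total Motile Sperm Count formula, the sketch below evaluates it for a sample sitting exactly at the WHO 2010 lower reference limits listed above. The function name is hypothetical, and the use of total (rather than progressive) motility is an assumption that follows the formula as stated in the text.

```python
def total_motile_sperm_count(volume_ml, concentration_m_per_ml, total_motility_pct):
    """TMSC in millions: volume (mL) x concentration (million/mL) x motile fraction."""
    if volume_ml < 0 or concentration_m_per_ml < 0 or not 0 <= total_motility_pct <= 100:
        raise ValueError("inputs out of range")
    return volume_ml * concentration_m_per_ml * (total_motility_pct / 100.0)

# Volume 1.5 mL, concentration 15 million/mL, total motility 40%.
tmsc = total_motile_sperm_count(1.5, 15.0, 40.0)
print(f"TMSC = {tmsc:.1f} million")
```

The result for this boundary case is 9 million motile sperm per ejaculate, which shows how three individually borderline values combine into a single count.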

2.2. Hormonal Profiling Protocol

Serum hormone levels are measured to assess the hypothalamic-pituitary-gonadal (HPG) axis, which regulates spermatogenesis.

- Key Hormones: The standard panel includes Follicle-Stimulating Hormone (FSH), Luteinizing Hormone (LH), total Testosterone (T), Prolactin (PRL), and Estradiol (E2). The Testosterone-to-Estradiol ratio (T/E2) is also clinically significant [6].

- Clinical Correlation: FSH is a key marker of spermatogenic function and is often elevated in cases of impaired sperm production. LH stimulates testosterone production, and disruptions in the T/E2 ratio can indicate hormonal imbalances affecting fertility [6] [7].

2.3. Emerging Machine Learning Evaluation Protocols

ML is being applied to complex andrological datasets to uncover hidden patterns and improve diagnostics.

- Dataset Construction: ML models are trained on diverse datasets that can include [8]:

  - Semen Analysis Parameters: Concentration, motility, morphology.

  - Clinical Variables: Hormone levels (FSH, LH, Testosterone, Inhibin B), testicular volume (from ultrasound).

  - Lifestyle & Environmental Factors: Data on pollution (PM10, NO2), smoking, alcohol use.

  - Genetic Data: Karyotypic abnormalities, Y-chromosome microdeletions [7].

- Model Training and Validation: A common approach uses the XGBoost algorithm. The workflow involves [8]:

  - Data pre-processing: Normalizing numerical variables and encoding categorical ones.

  - Handling missing values through imputation.

  - Using 5-fold cross-validation for model training and hyperparameter tuning.

  - Assessing performance via metrics like Area Under the Curve (AUC), with models achieving AUCs from 0.668 to over 0.98 in predicting conditions like azoospermia [8] [7].

- Novel Predictive Models: AI models have been developed to predict the risk of male infertility using only serum hormone levels (FSH, LH, T, E2, T/E2), achieving AUCs of approximately 74-75% [6]. This offers a potential non-invasive screening tool.
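The pre-processing steps in the workflow above (imputation, normalization, categorical encoding) can be sketched with the Python standard library alone. This is a minimal illustration under stated assumptions: median imputation and z-score scaling are one common choice among several, the toy values are invented, and the model-fitting step (XGBoost in the cited work) is deliberately left out.

```python
import statistics

def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    med = statistics.median(observed)
    return [med if v is None else v for v in values]

def standardize(values):
    """Z-score normalization: zero mean, unit (population) standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd if sd > 0 else 0.0 for v in values]

def one_hot(labels):
    """Encode a categorical column as one indicator column per category."""
    categories = sorted(set(labels))
    return categories, [[1 if lab == c else 0 for c in categories] for lab in labels]

# Hypothetical toy records: FSH (IU/L, one value missing) and smoking status.
fsh = [4.2, None, 12.8, 6.1, 9.5]
smoking = ["never", "current", "former", "never", "current"]

fsh_filled = impute_median(fsh)
fsh_scaled = standardize(fsh_filled)
cats, smoking_encoded = one_hot(smoking)

# One feature row per patient: scaled FSH followed by the indicator columns.
rows = [[z] + enc for z, enc in zip(fsh_scaled, smoking_encoded)]
```

In a cross-validated workflow, the imputation and scaling statistics would normally be estimated on the training folds only and then applied to the held-out fold, so that no information leaks from the test data.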

The following diagram illustrates the diagnostic workflow integrating traditional and ML-based approaches.

3. The Scientist's Toolkit: Key Research Reagents and Materials

This section details essential materials and assays used in male infertility research, forming the basis for reproducible experimental protocols.

Table 2: Essential Research Reagents and Assays

| Reagent / Material | Primary Function / Application | Technical Notes |
|---|---|---|
| WHO Laboratory Manual | Provides standardized protocols for semen analysis, ensuring global consistency and reproducibility [4]. | The definitive reference for laboratory procedures; multiple editions exist (IV, V, VI). |
| Hormone Assay Kits | Quantify serum levels of FSH, LH, Testosterone, Estradiol, and Prolactin to assess endocrine function [6]. | Typically immunoassay-based (e.g., ELISA, CLIA). Critical for HPG axis evaluation. |
| Testicular Ultrasound | Non-invasive imaging to measure testicular volume and detect structural abnormalities like varicoceles [8]. | Bitesticular volume is a key predictive variable in ML models for azoospermia [8]. |
| Environmental Data | Publicly available parameters (e.g., PM10, NO2 levels) are used to correlate pollution exposure with semen quality [8]. | Sourced from environmental protection agencies; integrated as variables in ML datasets. |
| Genetic Test Panels | Identify known genetic causes of infertility, such as karyotype abnormalities and Y-chromosome microdeletions [7]. | Used for patient stratification; genetic factors are key variables in some ML classifiers [7]. |

4. Discussion and Integration with ML Prediction Research

The escalating global burden of male infertility, coupled with the limitations of traditional diagnostic methods, creates a pressing need for innovative solutions. The integration of machine learning into this field represents a paradigm shift. The established clinical protocols and reagents detailed herein form the foundational data layers upon which ML models are built.

The high discriminative performance (AUC >0.96 in some studies) of models using diverse features, from semen parameters and FSH levels to environmental data [8] [7], supports this approach. Furthermore, the ability to predict infertility risk from serum hormones alone demonstrates the power of ML to extract latent patterns from existing, less invasive data [6]. For drug development, these models can enable better patient stratification for clinical trials, identifying homogeneous subgroups within the heterogeneous population labeled "idiopathic infertility" [8]. This paves the way for targeted therapeutic development and personalized treatment strategies, ultimately aiming to mitigate the significant clinical and societal burden of male infertility.

Limitations of Conventional Semen Analysis and Diagnostic Methods

Male infertility constitutes a significant global health challenge: male factors are the sole cause in 20–30% of infertile couples and are involved in approximately 50% of cases overall [9] [10]. The diagnostic journey for male infertility traditionally begins with semen analysis, which has served as the cornerstone of male fertility assessment for decades. Despite its widespread use, conventional semen analysis faces substantial limitations in accurately predicting male fertility potential and treatment outcomes [10] [11]. Within the context of a systematic review of machine learning applications for male infertility prediction, understanding these limitations becomes paramount. The subjectivity, variability, and inadequate predictive power of conventional methods create precisely the challenges that computational approaches aim to overcome. This technical guide provides an in-depth examination of these limitations, details experimental protocols for emerging alternatives, and establishes a framework for evaluating new diagnostic technologies in male reproductive medicine.

Fundamental Limitations of Conventional Semen Analysis

Subjectivity and Variability in Assessment

The inherent subjectivity of conventional semen analysis represents one of its most significant limitations. Traditional assessment relies heavily on manual evaluation by laboratory technicians, leading to considerable inter-observer and intra-observer variability [9]. This subjectivity complicates the accurate evaluation of critical sperm parameters such as morphology, motility, and concentration, which are essential for treatment planning and prognosis [9]. The visual assessment of sperm motility exemplifies this challenge, as technicians must distinguish between progressive, non-progressive, and immotile sperm categories in real-time, a classification that suffers from poor reproducibility across different laboratories and technicians [10].

Morphology assessment presents similar challenges, with the classification of "normal" sperm forms being particularly problematic. The World Health Organization (WHO) has modified its criteria for normal morphology across successive manual editions, yet the assessment remains largely subjective and based on the "nice is good" principle (the καλὸς καὶ ἀγαθός principle of the ancient Greeks), despite evidence from assisted reproduction technologies that "ugly" sperm can still produce viable embryos [10]. This subjectivity directly impacts diagnostic consistency, with studies showing significant variability in morphology classification even among experienced technicians.

Poor Predictive Value for Fertility Outcomes

Conventional semen parameters demonstrate limited correlation with reproductive outcomes, particularly in predicting the ultimate goal of pregnancy. Numerous systematic reviews and large cohort studies have failed to establish clear threshold values that reliably predict pregnancy achievement [10]. In approximately 25% of infertility cases, conventional semen parameters fall within established "normal" ranges, leading to a diagnosis of unexplained infertility despite the couple's inability to conceive [10].

The predictive limitations extend to assisted reproductive technologies (ART), where semen parameters often poorly correlate with success rates. The advent of intracytoplasmic sperm injection (ICSI) has further diminished the prognostic value of routine semen analysis, as this technique requires only a few spermatozoa and bypasses many natural selection barriers [10]. This technological advancement has reduced the emphasis on evaluating male fertility potential through conventional parameters, as even semen with markedly suboptimal characteristics can result in successful fertilization with ICSI [10].

Inability to Assess Functional Competence

Conventional semen analysis provides essentially quantitative metrics but offers limited insight into the functional competence of spermatozoa. The diagnostic approach fails to measure the fertilizing potential of spermatozoa and the complex functional changes that occur in the female reproductive tract before fertilization [11]. Key functional aspects such as sperm capacitation, acrosome reaction capability, and chromosomal integrity are not assessed through standard analysis yet are crucial for successful fertilization and embryo development.

The diagnostic gap is particularly evident in cases of idiopathic male infertility, where routine semen parameters appear normal despite the couple's inability to conceive. This population represents approximately 40% of infertile men and highlights the critical need for diagnostic methods that probe beyond basic sperm characteristics [8]. The limitations of conventional analysis in these cases underscore the necessity of developing more sophisticated assessment techniques that evaluate functional sperm competence rather than merely counting and categorizing sperm cells.

Table 1: Key Limitations of Conventional Semen Analysis

| Limitation Category | Specific Issues | Clinical Impact |
|---|---|---|
| Analytical Subjectivity | Inter-observer variability, Manual assessment reliance, Classification inconsistency | Reduced diagnostic reproducibility, Inconsistent treatment recommendations |
| Poor Predictive Value | Weak correlation with pregnancy rates, Inability to distinguish fertile from infertile men except in extreme cases | Limited clinical utility for prognosis and treatment planning |
| Functional Assessment Gaps | No evaluation of DNA integrity, No assessment of fertilizing capacity, Limited molecular characterization | Failure to identify causes of idiopathic infertility, Incomplete diagnostic picture |
| Technical Standardization Challenges | Evolving WHO criteria, Laboratory-specific protocols, Variable quality control | Difficulties comparing results across centers and over time |

Quantitative Evidence of Diagnostic Shortcomings

Statistical Relationships Between Conventional Parameters and Outcomes

Research has demonstrated that the statistical associations between conventional semen parameters and fertility outcomes are generally weak and inconsistent. While extreme abnormalities in parameters such as concentration and motility show some correlation with reduced fertility, the vast middle range of values provides limited discriminatory power [10]. This diagnostic ambiguity creates significant challenges for clinicians attempting to prognosticate and plan treatments based solely on conventional semen analysis results.

The limitations extend beyond natural conception to assisted reproductive technologies. A comprehensive mapping review of artificial intelligence applications in male infertility examined 14 studies and found that traditional diagnostic methods struggle to integrate the complex interplay of clinical, environmental, and lifestyle factors, resulting in suboptimal accuracy for forecasting IVF outcomes or treatment success [9]. This fundamental shortcoming has driven the exploration of alternative assessment methods, including advanced sperm function tests and computational approaches.

Impact of Non-Seminal Factors on Diagnostic Interpretation

The interpretation of conventional semen analysis occurs in clinical isolation, often without adequate consideration of modifiable lifestyle factors and hormonal influences that significantly impact sperm quality and function. A 2025 cross-sectional study of 278 men demonstrated that factors such as advanced age (>40 years), tobacco use, alcohol consumption, abnormal BMI, and occupational heat exposure significantly affected semen quality and sperm DNA fragmentation, yet these elements are not routinely incorporated into diagnostic algorithms [12].

Table 2: Impact of Lifestyle and Hormonal Factors on Semen Quality (Based on a Study of 278 Men) [12]

| Factor | Impact on Conventional Semen Parameters | Impact on Sperm DNA Fragmentation |
|---|---|---|
| Age >40 years | No significant differences observed | Significant increase (p=0.038) |
| Tobacco Use | Significant reduction in concentration, motility, and morphology (p<0.001) | Increasing trend (not statistically significant) |
| Alcohol Consumption | Associated with reduced semen quality | Significant increase (p=0.023) |
| Abnormal BMI | Correlated with poorer semen quality (p<0.001) | Significant increase (p<0.001) |
| Occupational Heat Exposure | Not specified in study | Significant increase (p=0.013) |
| Low AMH Levels | Association with abnormal semen profiles | Significant correlation (p=0.011) |

Emerging Methodologies to Overcome Conventional Limitations

Advanced Sperm Function Assessment Protocols

Sperm DNA Fragmentation (SDF) Testing

Principle: The Sperm Chromatin Dispersion (SCD) test evaluates DNA integrity in spermatozoa, which has emerged as a key molecular biomarker for assessing sperm functional competence. Elevated SDF levels have been linked to lower fertilization rates, compromised embryo development, recurrent pregnancy loss, and poor outcomes in ART [12].

Experimental Protocol:

- Sample Preparation: Collect semen samples after a recommended 2-5 days of sexual abstinence. Allow samples to liquefy completely at 37°C for 20-30 minutes.

- Solution Preparation: Prepare agarose solution (1% in PBS) and maintain at 37°C in water bath. Prepare acid denaturation solution (0.08N HCl) and lysis solution (0.4M Tris, 0.8M DTT, 1% SDS, 0.05M EDTA, pH 7.5).

- Cell Embedding: Mix 25μL of semen with 50μL of agarose. Place 10-15μL of mixture on pre-coated slides and cover with coverslip. Place slides on cold plate (4°C) for 5 minutes to solidify.

- Denaturation and Lysis: Remove coverslip carefully. Incubate slides in acid denaturation solution for 7 minutes at room temperature. Transfer to lysis solution for 25 minutes at room temperature.

- Washing and Dehydration: Wash slides in distilled water for 5 minutes. Dehydrate through ethanol series (70%, 90%, 100%) for 2 minutes each. Air dry completely.

- Staining and Analysis: Stain with Diff-Quick or similar chromatin dyes. Examine under 100x oil immersion objective. Sperm with large, distinct halos of dispersed DNA loops are classified as non-fragmented, while those with small or absent halos are classified as fragmented.

- Interpretation: Count a minimum of 500 spermatozoa per sample. Calculate DNA Fragmentation Index (DFI) as percentage of sperm without halos or with small halos.
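The interpretation step reduces to a simple proportion. The sketch below computes the DFI from a tally of halo categories; the category names, the function name, and the counts are illustrative assumptions, while the 500-cell minimum follows the protocol above.

```python
def dna_fragmentation_index(halo_counts):
    """DFI (%) = sperm with small or absent halos / total sperm scored x 100."""
    total = sum(halo_counts.values())
    if total < 500:
        raise ValueError("score at least 500 spermatozoa per sample")
    fragmented = halo_counts.get("small", 0) + halo_counts.get("none", 0)
    return 100.0 * fragmented / total

# Hypothetical tally from one slide.
counts = {"large": 395, "small": 70, "none": 35}
dfi = dna_fragmentation_index(counts)
print(f"DFI = {dfi:.1f}%")
```

Here 105 of 500 scored cells show a small or absent halo, giving a DFI of 21%.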

Automated Sperm Morphology Analysis Using Deep Learning

Principle: Deep learning algorithms automatically segment and classify complete sperm structures (head, neck, and tail) to overcome subjectivity of manual morphology assessment [13].

Experimental Protocol:

- Dataset Preparation: Utilize publicly available datasets (e.g., SVIA dataset containing 125,000 annotated instances for object detection, 26,000 segmentation masks, and 125,880 cropped image objects for classification tasks) [13].

- Image Acquisition: Capture sperm images using standardized microscopy protocols. Ensure consistent staining (Diff-Quick or Papanicolaou) and magnification (100x oil immersion).

- Data Preprocessing: Apply image normalization, contrast enhancement, and artifact removal algorithms. Augment dataset through rotation, flipping, and scaling transformations.

- Model Architecture: Implement U-Net or Mask R-CNN architecture for segmentation task. Use ResNet or DenseNet backbone for classification.

- Training Protocol: Train model using 5-fold cross-validation. Apply randomized data sampling to address class imbalance.

- Validation: Compare model performance against manual assessment by multiple experienced technicians. Calculate inter-observer concordance metrics.
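The validation step calls for concordance metrics without naming one. Cohen's kappa is a common choice for two raters and is used here purely as an example; the labels below are invented, and the function name is hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters over the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if both raters labeled independently at their own base rates.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label
    return (observed - expected) / (1.0 - expected)

# Hypothetical labels for ten sperm images: model vs. one technician.
model = ["normal", "normal", "abnormal", "abnormal", "normal",
         "abnormal", "abnormal", "normal", "abnormal", "abnormal"]
technician = ["normal", "abnormal", "abnormal", "abnormal", "normal",
              "abnormal", "normal", "normal", "abnormal", "abnormal"]
kappa = cohens_kappa(model, technician)
print(round(kappa, 3))
```

With 8 of 10 labels matching and an expected chance agreement of 0.52, kappa is about 0.58, noticeably lower than the raw 80% agreement. When several technicians are compared at once, a multi-rater statistic such as Fleiss' kappa would be the analogous choice.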

Diagram 1: Automated Sperm Morphology Analysis Workflow

Machine Learning Approaches for Male Infertility Prediction

Predictive Modeling for Non-Obstructive Azoospermia

Principle: Machine learning algorithms integrate multiple clinical variables to predict sperm retrieval success in patients with non-obstructive azoospermia (NOA) prior to microdissection testicular sperm extraction [14].

Experimental Protocol:

- Cohort Selection: Include patients with confirmed NOA diagnosis undergoing microTESE procedure. Multi-center design enhances generalizability (study included >2800 men) [14].

- Feature Selection: Collect preoperative clinical variables including reproductive hormones (FSH, LH, testosterone, inhibin B), testicular volume, age, genetic factors, and clinical history.

- Model Training: Train multiple machine learning models including Extreme Gradient Boosting (XGBoost), Random Forest, and Light Gradient Boosting Machine. Use 5-fold cross-validation to prevent overfitting.

- Model Evaluation: Assess predictive performance using area under the receiver operating characteristic curve (AUC), accuracy, sensitivity, and specificity. XGBoost achieved AUC of 0.9183 in development, maintaining AUC of 0.8469 in internal validation and 0.8301 in external validation [14].

- Clinical Implementation: Develop web-based prediction tool (SpermFinder) for preoperative assessment. Provide personalized sperm retrieval probabilities to support clinical decision-making.
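The evaluation metrics named in the protocol can be computed directly from labels and predicted probabilities. The following is a minimal sketch with invented data; the 0.5 decision threshold is an assumption, and the AUC uses the rank-based (Mann-Whitney) definition rather than any specific library routine.

```python
def confusion_metrics(y_true, y_pred):
    """Accuracy, sensitivity and specificity for binary labels (1 = sperm retrieved)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    return {
        "accuracy": (tp + tn) / len(y_true),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical outcomes and model probabilities for eight patients.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.91, 0.72, 0.40, 0.35, 0.55, 0.10, 0.66, 0.48]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

metrics = confusion_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(metrics, auc)
```

Unlike accuracy, sensitivity, and specificity, the AUC does not depend on the chosen threshold, which is one reason it is the headline metric when development, internal, and external validation results are compared.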

Hybrid Bio-Inspired Optimization Framework

Principle: Integration of multilayer feedforward neural network with nature-inspired ant colony optimization algorithm to enhance predictive accuracy for male fertility diagnostics [15].

Experimental Protocol:

- Dataset Preparation: Utilize publicly available Fertility Dataset from UCI Machine Learning Repository (100 samples with 10 attributes encompassing socio-demographic characteristics, lifestyle habits, medical history, and environmental exposures) [15].

- Data Preprocessing: Apply min-max normalization to rescale all features to [0, 1] range. Address class imbalance (88 normal vs. 12 altered) through synthetic sampling techniques.

- Model Architecture: Implement hybrid MLFFN-ACO framework combining multilayer feedforward neural network with ant colony optimization for parameter tuning.

- Proximity Search Mechanism: Incorporate interpretability component for feature-level insights to support clinical decision-making.

- Validation: Evaluate model performance on unseen samples using classification accuracy, sensitivity, and computational time. Reported performance: 99% accuracy, 100% sensitivity, and computational time of 0.00006 seconds [15].
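The two pre-processing steps in this protocol can be illustrated as follows. Min-max normalization maps each value x to (x − min) / (max − min). For the imbalance step, the sketch substitutes plain random oversampling for the synthetic sampling techniques mentioned above, because it is simpler to show; the function names and placeholder rows are hypothetical.

```python
import random

def min_max_scale(column):
    """Rescale one feature column to [0, 1]; constant columns map to 0."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]
    return [(v - lo) / (hi - lo) for v in column]

def random_oversample(rows, labels, seed=0):
    """Duplicate minority-class rows at random until all classes are the same size."""
    rng = random.Random(seed)
    by_class = {}
    for row, lab in zip(rows, labels):
        by_class.setdefault(lab, []).append(row)
    target = max(len(members) for members in by_class.values())
    out_rows, out_labels = [], []
    for lab, members in by_class.items():
        extra = [rng.choice(members) for _ in range(target - len(members))]
        out_rows.extend(members + extra)
        out_labels.extend([lab] * target)
    return out_rows, out_labels

ages = [18, 27, 36]
scaled = min_max_scale(ages)

# Mirror the 88 "normal" vs. 12 "altered" split with placeholder feature rows.
rows = [[i] for i in range(100)]
labels = ["normal"] * 88 + ["altered"] * 12
bal_rows, bal_labels = random_oversample(rows, labels)
print(scaled, bal_labels.count("normal"), bal_labels.count("altered"))
```

Resampling is usually applied to the training portion only. If duplicated or synthetic minority samples also appear in the evaluation set, reported accuracy on a 100-sample dataset can be inflated.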

Diagram 2: Machine Learning Prediction Framework

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Advanced Male Infertility Research

| Reagent/Material | Specification | Research Application | Experimental Function |
|---|---|---|---|
| Sperm Chromatin Dispersion Kit | Commercial SCD kit (Halosperm or similar) | Sperm DNA fragmentation testing | Differential staining of sperm based on DNA integrity; identifies sperm with fragmented DNA |
| Agarose for Embedding | Molecular biology grade, low gelling temperature | Sperm functional assessment | Creates matrix for sperm immobilization during SCD testing and other functional assays |
| Computer-Assisted Sperm Analysis (CASA) | CASA system with minimum 60fps capture capability | Automated sperm motility and morphology | High-throughput, objective assessment of kinetic parameters and basic morphology |
| Deep Learning Training Dataset | Annotated sperm image datasets (e.g., SVIA, VISEM-Tracking) | AI model development | Provides ground truth data for training and validating segmentation and classification algorithms |
| Hormonal Assay Kits | ELISA-based FSH, LH, Testosterone, Inhibin B assays | Endocrine profiling | Quantifies reproductive hormones for integrative diagnostic models |
| Ant Colony Optimization Library | Python-based ACO implementation (ACO-Python or similar) | Algorithm development | Enhances neural network optimization for improved predictive accuracy |

The limitations of conventional semen analysis are substantial and multifaceted, encompassing issues of subjectivity, poor predictive value, and inadequate functional assessment. These shortcomings directly impact clinical decision-making and patient outcomes in male infertility management. Within the context of machine learning research for male infertility prediction, recognizing these limitations provides both justification for and direction toward novel computational approaches. The emerging methodologies detailed in this technical guide—from advanced sperm functional assessment to machine learning prediction models—represent promising avenues for overcoming the constraints of conventional diagnostics. As research progresses, the integration of these advanced techniques into standardized diagnostic workflows will be essential for advancing the field of male reproductive medicine and improving care for infertile couples. Future validation studies and standardized protocols will be necessary to establish these innovative approaches as mainstays in clinical practice.

Artificial intelligence (AI), particularly machine learning (ML), represents a transformative force in healthcare, enabling the analysis of complex datasets to uncover patterns that can inform diagnosis, prognosis, and treatment personalization. Unlike traditional statistical methods that often rely on testing pre-specified hypotheses, ML is designed to learn patterns directly from data, making it exceptionally suited for tasks involving large-scale, multi-dimensional biomedical data [6]. This paradigm shift is critically important in managing multifactorial health conditions, such as male infertility, where the interplay of genetic, hormonal, environmental, and lifestyle factors creates a complex etiological landscape that is difficult to decipher with conventional approaches [5] [16]. This technical guide provides an in-depth exploration of the core principles, methodologies, and applications of AI in healthcare, with a specific focus on its role in advancing male infertility prediction research, framing this within the context of a systematic review of the field.

Core ML Concepts and Clinical Workflows

Machine learning in healthcare encompasses a range of algorithms that can be broadly categorized into supervised, unsupervised, and reinforcement learning. For predictive modeling in clinical contexts, supervised learning is most prevalent, wherein algorithms learn from labeled historical data to make predictions on new, unseen data [7]. Key algorithms employed in male infertility research include Support Vector Machines (SVM), Random Forests (RF), decision trees, K-Nearest Neighbors (KNN), Naive Bayes, and ensemble methods like SuperLearner, which combines multiple algorithms to achieve superior predictive performance [7]. More complex artificial neural networks (ANNs) and deep learning models are also being applied, especially for image-based tasks such as analyzing sperm morphology and motility [9] [5].

The clinical workflow for implementing an ML solution, as detailed across numerous studies, follows a structured pipeline. It begins with problem definition, such as predicting infertility risk or blastocyst yield in IVF cycles. This is followed by data acquisition and pre-processing, which involves collecting and cleaning structured data (e.g., hormone levels, patient demographics) or unstructured data (e.g., microscopic sperm images). Feature engineering identifies the most predictive variables, such as follicle-stimulating hormone (FSH) levels or sperm concentration. The model training and validation phase uses part of the dataset to train the algorithm and another held-out part to test its performance, often employing k-fold cross-validation to ensure robustness [7]. Finally, the model undergoes deployment and monitoring in a clinical setting, where its real-world performance is tracked [17].
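To make the k-fold step concrete, the sketch below partitions sample indices so that every record is held out exactly once. It is an illustrative stand-alone version with a hypothetical function name; it does not stratify by class, which is often added when outcome classes are imbalanced.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; every sample is held out exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k near-equal, disjoint folds
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx

# 23 hypothetical patient records split into 5 folds.
splits = list(k_fold_indices(23, k=5))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx))
```

A model is trained on each `train_idx` and scored on the matching `test_idx`, and the k scores are then averaged, so the performance estimate does not hinge on a single arbitrary split.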

AI Applications in Male Infertility Prediction: A Systematic Quantitative Review

A systematic mapping of the literature reveals that AI applications in male infertility are diverse and have demonstrated high performance across several key clinical tasks. Research in this domain has surged since 2021, with 57% of identified studies in one review published between 2021 and 2023, reflecting growing interest in the field [9]. The following table synthesizes quantitative performance data from recent studies, providing a clear comparison of AI efficacy across different prediction tasks.

Table 1: Performance Metrics of AI Models in Male Infertility and Related IVF Applications

| Clinical Application | AI Model(s) Used | Performance Metrics | Sample Size | Data Modality |
|---|---|---|---|---|
| Male Infertility Risk Prediction | Support Vector Machines (SVM) [7] | AUC: 96% | 644 patients | Genetic, Hormonal & Clinical Factors |
| Male Infertility Risk Prediction | SuperLearner (Ensemble) [7] | AUC: 97% | 644 patients | Genetic, Hormonal & Clinical Factors |
| Male Infertility Screening | Prediction One / AutoML [6] | AUC: ~74.4% | 3,662 patients | Serum Hormone Levels Only |
| Sperm Morphology Analysis | Support Vector Machine (SVM) [9] | AUC: 88.59% | 1,400 sperm | Sperm Images |
| Sperm Motility Analysis | Support Vector Machine (SVM) [9] | Accuracy: 89.9% | 2,817 sperm | Sperm Motility Videos |
| Non-Obstructive Azoospermia (NOA) Sperm Retrieval Prediction | Gradient Boosting Trees (GBT) [9] | AUC: 0.807, Sensitivity: 91% | 119 patients | Clinical & Diagnostic Data |
| Sperm DNA Fragmentation Prediction | Multi-layer Perceptron (MLP) [9] | Not Specified | Not Specified | Clinical & Semen Parameters |
| Overall Male Infertility Prediction (Median Accuracy) | Various ML Models [5] | Median Accuracy: 88% | 43 Studies | Mixed Modalities |
| Overall Male Infertility Prediction (Median Accuracy) | Artificial Neural Networks (ANNs) [5] | Median Accuracy: 84% | 7 Studies | Mixed Modalities |
| Blastocyst Yield Prediction in IVF | LightGBM [18] | R²: 0.673, MAE: 0.793 | 9,649 cycles | Embryo Morphology & Patient Data |
| Embryo Implantation Prediction | Life Whisperer / FiTTE System [19] | Accuracy: 64.3-65.2%, AUC: 0.7 | Multiple Studies | Blastocyst Images & Clinical Data |

The data show that model performance is closely tied to the data modality and the specific clinical question. For instance, models predicting general infertility risk from a rich set of genetic, hormonal, and clinical factors can achieve exceptional performance (AUC >95%) [7]. In contrast, models that rely solely on serum hormone levels for screening are less accurate (AUC around 74%) but offer a less invasive and more accessible alternative to traditional semen analysis [6]. AI has also performed well in automating and objectifying sperm analysis: the SVM models for morphology and motility in Table 1 reached an AUC of 88.59% and an accuracy of 89.9%, respectively [9].

Detailed Experimental Protocols in AI-Driven Infertility Research

Protocol 1: Predicting Infertility Risk from Hormonal Profiles

A pivotal 2024 study by Kobayashi et al. developed a non-invasive screening model using only serum hormone levels, bypassing the need for initial semen analysis [6]. This protocol is a prime example of using structured health data for prediction.

1. Objective: To develop and validate an AI model that predicts the risk of male infertility using only serum hormone levels and patient age.

2. Data Collection:

- Cohort: 3,662 patients who underwent both semen analysis and serum hormone testing between 2011-2020.

- Predictor Variables (Features): Age, Luteinizing Hormone (LH), Follicle-Stimulating Hormone (FSH), Prolactin (PRL), Testosterone, Estradiol (E2), and Testosterone/Estradiol ratio (T/E2).

- Outcome Variable (Label): A binary classification of "normal" (0) or "abnormal" (1) based on the total motile sperm count, with a threshold of 9.408 × 10^6 derived from WHO guidelines.

3. Data Pre-processing:

- Data was extracted from electronic medical records.

- Patients were classified into diagnostic categories (e.g., NOA, oligozoospermia) for descriptive analysis, but the model was trained on the binary outcome.

4. Model Training and Validation:

- Algorithms: Two proprietary AI platforms, Prediction One and Google's AutoML Tables, were used. These platforms automate much of the model selection and hyperparameter tuning process.

- Validation: Model performance was evaluated with a standard train-test split, in which one portion of the dataset was used for training and a held-out portion for testing.

- Performance Metrics: Area Under the Receiver Operating Characteristic Curve (AUC ROC), Area Under the Precision-Recall Curve (AUC PR), Accuracy, Precision, Recall, and F-value were calculated at different classification thresholds.

5. Model Interpretation:

- Feature importance analysis was conducted to identify the hormones most predictive of infertility risk. FSH was consistently the top-ranked feature, followed by T/E2 ratio and LH [6].
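The labeling rule and the threshold-dependent metrics in Protocol 1 can be illustrated with a short sketch. The cut-off value is taken from the protocol; treating counts strictly below it as "abnormal" is an assumption, as are the function names and the toy scores, and none of this reproduces the proprietary platforms used in the study.

```python
TMSC_THRESHOLD = 9.408e6  # total motile sperm count cut-off quoted in the protocol

def label_from_tmsc(total_motile_sperm_count):
    """Return 1 ("abnormal") or 0 ("normal").

    Assumption: counts strictly below the cut-off are labeled abnormal.
    """
    return 1 if total_motile_sperm_count < TMSC_THRESHOLD else 0

def threshold_metrics(y_true, y_prob, threshold):
    """Accuracy, precision, recall and F-value at one classification threshold."""
    tp = fp = tn = fn = 0
    for truth, prob in zip(y_true, y_prob):
        predicted = 1 if prob >= threshold else 0
        if predicted == 1 and truth == 1:
            tp += 1
        elif predicted == 1 and truth == 0:
            fp += 1
        elif predicted == 0 and truth == 0:
            tn += 1
        else:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f_value = (2 * precision * recall / (precision + recall)
               if precision + recall else 0.0)
    accuracy = (tp + tn) / len(y_true)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f_value": f_value}

# Usage with made-up counts and model scores (illustrative only).
counts = [3.0e6, 8.0e6, 15.0e6, 40.0e6]
y_true = [label_from_tmsc(c) for c in counts]
y_prob = [0.9, 0.4, 0.6, 0.1]
for threshold in (0.3, 0.5):
    print(threshold, threshold_metrics(y_true, y_prob, threshold))
```

Lowering the threshold trades precision for recall, which is why the protocol reports these metrics at several thresholds rather than at a single operating point.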

Protocol 2: Quantitative Prediction of Blastocyst Yield in IVF

A 2025 study developed ML models to quantitatively predict the number of blastocysts an IVF cycle will yield, moving beyond simple binary classification [18]. This protocol highlights the use of ML for a more nuanced clinical decision.

1. Objective: To develop and validate machine learning models for the quantitative prediction of usable blastocyst yield per IVF cycle.

2. Data Collection:

- Cohort: 9,649 IVF/ICSI cycles.

- Predictor Variables: An initial set of 21 features related to demographic, treatment, and embryo morphology data from Day 2 and Day 3 of development.

- Outcome Variable: The number of usable blastocysts formed per cycle, later categorized into 0, 1-2, and ≥3 blastocysts for a multi-class evaluation.

3. Data Pre-processing:

- The dataset was randomly split into training and testing sets.

- Feature Selection: Recursive Feature Elimination (RFE) was employed to iteratively remove the least informative features, identifying the optimal subset for each model.

4. Model Training and Validation:

- Algorithms: Three ML models (SVM, LightGBM, XGBoost) were trained and compared against a baseline linear regression model.

- Validation: Internal validation was performed on the held-out test set. Model performance was assessed using R-squared (R²) and Mean Absolute Error (MAE) for regression, and accuracy and Kappa coefficient for the multi-class task.

5. Model Interpretation:

- The LightGBM model was selected as optimal due to its strong performance, use of fewer features (8), and superior interpretability.

- Feature importance analysis identified the number of embryos in extended culture, mean cell number on Day 3, and the proportion of 8-cell embryos as the most critical predictors [18].
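The regression and multi-class metrics named in Protocol 2 follow standard definitions, sketched below in plain Python. The function names are introduced here for illustration, and rounding a continuous prediction to the nearest integer before categorizing it is an assumption, since the source does not state how predictions were binned.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted counts."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - residual SS / total SS."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def yield_category(n_blastocysts):
    """Map a blastocyst count to the three classes used in the protocol."""
    if n_blastocysts <= 0:
        return "0"
    if n_blastocysts <= 2:
        return "1-2"
    return ">=3"

def cohen_kappa(labels_a, labels_b):
    """Agreement between two label sequences, corrected for chance agreement."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    categories = set(labels_a) | set(labels_b)
    expected = sum((labels_a.count(c) / n) * (labels_b.count(c) / n)
                   for c in categories)
    if expected == 1.0:  # both sequences use a single identical class
        return 1.0
    return (observed - expected) / (1.0 - expected)

# Usage with made-up cycles (illustrative only).
observed_counts = [0, 1, 2, 3, 4]
predicted_counts = [0.4, 1.2, 1.7, 2.6, 3.8]
print(mean_absolute_error(observed_counts, predicted_counts))
print(r_squared(observed_counts, predicted_counts))
true_classes = [yield_category(c) for c in observed_counts]
# Assumption: predictions are rounded to the nearest integer before binning.
pred_classes = [yield_category(round(p)) for p in predicted_counts]
print(cohen_kappa(true_classes, pred_classes))
```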

The Scientist's Toolkit: Essential Research Reagents and Materials

The development and validation of AI models for male infertility prediction rely on a foundation of specific clinical data, computational tools, and biological reagents. The following table details key resources referenced in the cited literature.

Table 2: Essential Research Reagents and Computational Tools for AI-based Infertility Research

| Item Name | Type | Primary Function in Research | Example Context |

|---|---|---|---|

| Serum Hormone Panels | Biological Reagent / Diagnostic Test | Provides key input features for non-invasive prediction models. Measures FSH, LH, Testosterone, Estradiol, etc. | Used as primary predictors in the hormone-only infertility risk model [6]. |

| WHO Laboratory Manual for Human Semen | Standardized Protocol | Provides the gold-standard definitions and methodologies for semen analysis, used to create ground-truth labels for model training. | Used to define "normal" vs. "abnormal" semen parameters for labeling data [6]. |

| Prediction One | Commercial AI Software | An end-to-end automated machine learning platform used to build, validate, and deploy predictive models without extensive coding. | Used to develop the primary prediction model from hormonal data [6]. |

| AutoML Tables | Commercial AI Software (Google) | A cloud-based automated machine learning service for building high-quality models on structured data. | Used as an alternative platform to build and validate the infertility prediction model [6]. |

| LightGBM (Light Gradient Boosting Machine) | Open-Source ML Algorithm | A highly efficient, gradient-boosting framework that uses tree-based learning algorithms. Valued for its speed and high accuracy. | Selected as the optimal model for predicting blastocyst yield due to performance and interpretability [18]. |

| R Statistical Software with 'caret' & 'SuperLearner' packages | Open-Source Software / Library | A comprehensive environment for statistical computing and graphics. 'caret' streamlines model training, and 'SuperLearner' creates ensemble models. | Used to implement and compare multiple classifiers (SVM, RF, etc.) and ensemble methods [7]. |

| Computer-Assisted Sperm Analysis (CASA) | Laboratory Instrumentation | Automates the analysis of sperm concentration, motility, and morphology, generating quantitative data for AI model training. | Fundamental technology for generating high-quality, consistent sperm analysis data [9] [5]. |

The integration of AI and ML into male infertility prediction represents a significant advancement, moving the field toward more objective, data-driven diagnostics and prognostics. Current models demonstrate robust performance in tasks ranging from risk screening based on hormones to precise analysis of sperm and embryos [9] [6]. The consistent identification of key predictors like FSH, sperm concentration, and early embryo morphology provides valuable biological insights and validates the clinical relevance of these models [18] [7]. However, challenges remain, including the need for large, multi-center validation studies to ensure generalizability across diverse populations, addressing ethical concerns regarding data privacy and algorithm transparency, and the transition from research prototypes to clinically validated, user-friendly tools [9] [16] [17]. Future research should focus on developing multi-modal models that integrate imaging, clinical, and omics data, and on rigorous real-world trials to demonstrate improved patient outcomes, ultimately solidifying AI's role as an essential partner in reproductive medicine.

Key Infertility Biomarkers and Data Types for ML Models

Infertility, affecting an estimated one in six couples globally, represents a significant challenge in reproductive medicine [15]. The etiology of infertility is multifactorial, with male factors contributing to approximately 50% of cases, female factors accounting for 40%, and the remainder being unexplained or combined [20] [9]. Traditional diagnostic methods, such as semen analysis and hormonal assays, are often limited by subjectivity, inter-observer variability, and an inability to capture the complex interplay of genetic, environmental, and lifestyle factors [9] [15]. The emergence of artificial intelligence (AI) and machine learning (ML) promises to revolutionize infertility management by enhancing diagnostic precision, enabling personalized treatment predictions, and uncovering novel biomarkers from complex, high-dimensional data [20] [9]. This technical guide synthesizes current research on key infertility biomarkers and data types utilized in ML models, providing a foundational resource for researchers and drug development professionals engaged in the systematic review of machine learning for male infertility prediction.

Key Biomarker Categories for ML in Infertility

ML models leverage diverse biomarker categories to predict infertility diagnoses, treatment outcomes, and underlying pathophysiology. These biomarkers provide a multi-faceted view of reproductive health.

Table 1: Key Male Infertility Biomarkers for ML Models

| Biomarker Category | Specific Biomarkers | Clinical/Experimental Utility | Relevant ML Application |

|---|---|---|---|

| Hormonal Profiles | Follicle-Stimulating Hormone (FSH), Inhibin B, Testosterone | Assess hypothalamic-pituitary-gonadal axis function and spermatogenic status [8]. | Predicting azoospermia and sperm retrieval success [14] [8]. |

| Semen Parameters | Sperm Concentration, Motility, Morphology, DNA Fragmentation Index (DFI) | Core functional assessment of sperm quality; DFI indicates genetic integrity [9]. | Automated analysis, classification of normozoospermia vs. altered semen, IVF outcome prediction [9] [8]. |

| Anatomical & Ultrasonographic | Testicular Volume (Bitesticular) | Surrogate for spermatogenic potential and tubular mass [8]. | Key predictor for azoospermia in ensemble ML models [8]. |

| Environmental & Lifestyle | PM10, NO2 exposure, Sedentary hours, Caffeine intake [15] [8] | Quantifies impact of external factors on semen quality and reproductive function. | Identifying hidden risk factors and classifying fertility status [15] [8]. |

| Genetic & Molecular | SEMA3F, ANXA2, LCK (from transcriptomic studies) [21] [22] | Insights into molecular mechanisms of idiopathic and non-obstructive azoospermia (NOA). | Diagnostic biomarker discovery for conditions like unexplained infertility (UI) and premature ovarian insufficiency (POI) [21] [22]. |

Table 2: Key Female Infertility Biomarkers for ML Models

| Biomarker Category | Specific Biomarkers | Clinical/Experimental Utility | Relevant ML Application |

|---|---|---|---|

| Ovarian Reserve | Anti-Müllerian Hormone (AMH), Antral Follicle Count (AFC), basal FSH | Quantifies ovarian follicular pool and predicts response to stimulation [20]. | Personalizing treatment strategies and predicting success rates in Assisted Reproductive Technology (ART) [20]. |

| Endocrine & Metabolic | 25-hydroxy vitamin D3 (25OHVD3), Thyroid Function Tests, Blood Lipids [23] | 25OHVD3 deficiency is prominently associated with infertility and pregnancy loss; links to broader metabolic health. | Core feature in high-accuracy diagnostic models for infertility and pregnancy loss [23]. |

| Immune & Inflammatory | Immune cell infiltration (e.g., NK T cells, memory CD8 T cells) [21] | Correlates with unexplained infertility (UI) and endometrial receptivity. | Identifying immune-related diagnostic biomarkers via bioinformatics and ML [21]. |

| Genetic & Transcriptomic | COX5A, UQCRFS1, RPS2, EIF5A (from POI studies) [22] | Associated with oxidative phosphorylation and apoptotic pathways in Premature Ovarian Insufficiency (POI). | Biomarker discovery from full-length transcript profiles using Random Forest and Boruta algorithms [22]. |

Data Types and ML Model Applications

The performance of ML models is intrinsically linked to the types and quality of data used for training and validation.

Structured Clinical and Lifestyle Data

Structured data, often organized in tabular format, includes clinical parameters, lifestyle factors, and environmental exposures. ML algorithms such as Random Forest, XGBoost, and Support Vector Machines (SVM) are particularly effective for this data type [20] [15] [24]. For instance, a hybrid model combining a multilayer neural network with an Ant Colony Optimization algorithm achieved 99% accuracy in classifying male fertility using a dataset of 100 subjects, with key features including sedentary behavior and environmental exposures [15]. Similarly, a model predicting live birth before IVF treatment using 25 clinical features achieved an F1-score of 76.49% with Random Forest [24].

Unstructured and Complex Data

Unstructured data, including medical images and textual reports, requires more complex deep-learning approaches.

- Imaging Data: Convolutional Neural Networks (CNNs) are the standard for analyzing images such as embryo micrographs, sperm morphology, and testicular ultrasounds [20] [9]. These models automate assessments and identify subtle patterns imperceptible to the human eye.

- Genomic and Transcriptomic Data: High-throughput sequencing data, including that from Oxford Nanopore Technology (ONT), is used to identify novel biomarkers. Studies employ bioinformatics pipelines integrated with ML algorithms like LASSO and Random Forest to filter thousands of genes down to a few key diagnostic biomarkers for conditions like POI and unexplained infertility [21] [22].

Detailed Experimental Protocols

Reproducible experimental protocols are crucial for advancing ML applications in infertility research. Below are detailed methodologies from key studies.

Protocol for ML-Based Prediction of Sperm Retrieval in NOA

This multi-center cohort study developed a model to predict successful sperm retrieval via microdissection testicular sperm extraction (micro-TESE) in men with non-obstructive azoospermia (NOA) [14].

- Cohort Establishment: The study included over 2,800 men with a confirmed NOA diagnosis who underwent micro-TESE. Data was sourced from multiple centers to enhance generalizability.

- Variable Selection: Preoperative clinical variables were collected, which typically include age, hormonal profiles (FSH, LH, Testosterone, Inhibin B), testicular volume, and genetic markers.

- Model Training and Validation: Eight different machine learning models were trained, tested, and validated. The dataset was split into training and testing sets, and external validation was performed on a cohort from a different center.

- Model Evaluation: Performance was assessed using the Area Under the Receiver Operating Characteristic Curve (AUC), accuracy, and other classification metrics. The Extreme Gradient Boosting (XGBoost) model consistently outperformed others, achieving a mean AUC of 0.9183.

- Clinical Implementation: The best-performing model was deployed as an online calculator named "SpermFinder" to aid clinicians and patients in preoperative counseling [14].
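The AUC used in the evaluation step can be read as the probability that a randomly chosen positive case (here, successful retrieval) receives a higher score than a randomly chosen negative case. The sketch below computes it directly from that definition. It is illustrative only: the function name and the toy scores are assumptions, and the pairwise loop is quadratic, so it suits small examples rather than cohorts of thousands.

```python
def roc_auc(y_true, y_score):
    """AUC as the fraction of positive-negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    positives = [s for t, s in zip(y_true, y_score) if t == 1]
    negatives = [s for t, s in zip(y_true, y_score) if t == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")
    correct = 0.0
    for pos in positives:
        for neg in negatives:
            if pos > neg:
                correct += 1.0
            elif pos == neg:
                correct += 0.5
    return correct / (len(positives) * len(negatives))

# Usage with made-up retrieval outcomes and model scores (illustrative only).
outcomes = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
print(roc_auc(outcomes, scores))  # 3 of 4 pairs ranked correctly -> 0.75
```

On this scale 0.5 corresponds to random ranking and 1.0 to perfect separation, so a value such as 0.9183 is the same quantity that other studies in this review report as a percentage.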

Protocol for Discovering Immune-Related Biomarkers in Unexplained Infertility

This study used bioinformatics and ML to identify immune-related diagnostic biomarkers for unexplained infertility (UI) from transcriptional data [21].

- Data Acquisition: The gene expression dataset (GSE165004) was obtained from the Gene Expression Omnibus (GEO) database. Immune-related genes (IRGs) were sourced from the Immport and InnateDB databases.

- Differential Analysis and Weighted Gene Co-expression Network Analysis (WGCNA): Differentially expressed genes (DEGs) between UI and control samples were identified. WGCNA was used to find gene modules highly correlated with the UI phenotype.

- Machine Learning for Feature Selection: Three distinct ML algorithms were applied to narrow down candidate biomarkers:

- Least Absolute Shrinkage and Selection Operator (LASSO) Regression: Shrinks coefficients to zero, effectively selecting a subset of non-redundant features.

- Support Vector Machine (SVM) Recursive Feature Elimination: Iteratively builds an SVM model and removes the feature with the smallest weight.

- Random Forest: Uses mean decrease in Gini impurity to rank feature importance.

- Biomarker Validation: The diagnostic performance of the final candidate biomarkers (e.g., ANXA2, CD300E, IL27RA) was evaluated in the original dataset and a separate validation set (GSE16532) using Receiver Operating Characteristic (ROC) analysis. Immune cell infiltration was analyzed to correlate biomarkers with the immune microenvironment.
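The Gini-based ranking used by Random Forest in the feature selection step builds on a simple quantity: how much a split on one feature reduces class impurity. The sketch below computes that decrease for a single threshold split. A real forest averages such decreases over every split and tree where the feature is used; the function names and the toy expression values are assumptions made for illustration.

```python
from collections import Counter

def gini_impurity(labels):
    """1 - sum of squared class proportions; 0 means the node is pure."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def gini_decrease(values, labels, threshold):
    """Impurity decrease from splitting samples into value <= threshold and > threshold."""
    left = [lab for val, lab in zip(values, labels) if val <= threshold]
    right = [lab for val, lab in zip(values, labels) if val > threshold]
    n = len(labels)
    weighted_children = (len(left) / n) * gini_impurity(left) \
        + (len(right) / n) * gini_impurity(right)
    return gini_impurity(labels) - weighted_children

# Usage: a made-up gene whose expression separates controls (0) from cases (1).
expression = [1.0, 2.0, 3.0, 4.0]
status = [0, 0, 1, 1]
print(gini_decrease(expression, status, threshold=2.0))  # perfect split
print(gini_decrease(expression, status, threshold=1.0))  # weaker split
```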

Diagram 1: ML workflow for infertility prediction, showing structured and unstructured data paths.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential reagents, tools, and technologies used in the experiments cited herein, forming a core toolkit for researchers in this field.

Table 3: Essential Research Reagents and Tools for ML-Driven Infertility Research

| Tool/Reagent | Specific Example/Product | Function in Experimental Protocol |

|---|---|---|

| High-Performance Liquid Chromatography-Mass Spectrometry (HPLC-MS/MS) | Agilent 1200 HPLC system coupled with API 3200 QTRAP MS/MS [23] | Precise quantification of steroid hormones and metabolites (e.g., 25OHVD2 and 25OHVD3) from serum samples. |

| RNA Extraction & cDNA Library Kits | PAXgene Blood RNA tube (BD) and matching RNA extraction kit [22] | Standardized collection, stabilization, and extraction of high-quality total RNA from peripheral blood for transcriptomic studies. |

| Next-Generation Sequencing (NGS) & Third-Generation Sequencing | Oxford Nanopore Technology (ONT), specifically PromethION platform [22] | Generation of full-length transcriptome profiles for identifying novel isoforms and biomarkers without assembly. |

| Real-Time PCR Systems & Reagents | SYBR Green qPCR Master Mix and specific primer sets [22] | Validation of differentially expressed genes identified from transcriptomic sequencing or bioinformatics analysis. |

| Protein-Protein Interaction (PPI) Databases & Software | STRING database, Cytoscape software with CytoHubba plugin [22] | Construction and analysis of PPI networks to identify hub genes from lists of differentially expressed genes. |

| Machine Learning Libraries & Frameworks | XGBoost, Scikit-learn (for RF, SVM), Python Boruta package [14] [22] | Implementation of machine learning algorithms for feature selection, classification, and predictive model building. |

Signaling Pathways and Molecular Mechanisms

ML-driven biomarker discovery has shed light on key dysregulated pathways in infertility. Bioinformatics analyses, such as Gene Set Enrichment Analysis (GSEA), are critical for interpreting the functional role of identified biomarkers.

Diagram 2: Key pathways in Premature Ovarian Insufficiency (POI) identified via ML and transcriptomics [22].

For male infertility, particularly non-obstructive azoospermia, the pathophysiology is linked to disruptions in the hypothalamic-pituitary-gonadal axis, reflected in hormonal biomarkers like elevated FSH and decreased Inhibin B [8]. Furthermore, environmental factors are hypothesized to induce oxidative stress, leading to sperm DNA fragmentation, which is increasingly used as a predictive biomarker in ML models [9] [15].

Defining the Clinical Prediction Goals for AI Systems

This technical guide outlines a framework for establishing clinical prediction goals within the specific research domain of machine learning (ML) for male infertility. For researchers conducting systematic reviews or developing new models, a precise definition of these goals is paramount for ensuring clinical relevance, methodological rigor, and interpretability of findings.

Taxonomy of Clinical Prediction Goals in Male Infertility

The integration of AI into male infertility research focuses on distinct clinical prediction goals, each with a specific clinical use case. These goals can be systematically categorized as follows.

Table 1: Clinical Prediction Goals in AI for Male Infertility

| Prediction Goal Category | Clinical Use Case | Exemplary AI Model & Performance | Key Predictors/Inputs |

|---|---|---|---|

| Sperm Analysis & Characterization | Automate and objectify the assessment of sperm quality for diagnosis [25]. | SVM: 89.9% accuracy for motility (2,817 sperm) [25]. | Microscopic images and videos for morphology, motility, and concentration [25]. |

| Sperm Retrieval Prediction | Predict the success of surgical sperm retrieval in non-obstructive azoospermia (NOA) patients [25]. | Gradient Boosting Trees (GBT): 91% sensitivity, AUC 0.807 (119 patients) [25]. | Clinical patient profiles, hormonal assays, and genetic markers [25]. |

| IVF/ICSI Success Prediction | Forecast the likelihood of a successful pregnancy following assisted reproductive technology (ART) [26]. | Random Forest: AUC 84.23% (486 patients) [25]. | Female age (most common feature), sperm quality parameters, and embryological data [26]. |

| Quantitative Blastocyst Yield Prediction | Inform the decision to extend embryo culture to the blastocyst stage by predicting the number of blastocysts [18]. | LightGBM: R² 0.673-0.676, Mean Absolute Error 0.793-0.809 (9,649 cycles) [18]. | Number of extended culture embryos, mean cell number on Day 3, proportion of 8-cell embryos [18]. |

| Diagnostic Classification | Provide a non-invasive, early diagnostic classification of male fertility status based on multifactorial data [15]. | Hybrid MLP-ACO: 99% accuracy, 100% sensitivity (100 patients) [15]. | Lifestyle factors (e.g., sedentary habits), environmental exposures, and clinical history [15]. |

Methodological Protocols for Key Prediction Goals

Detailed experimental methodology is required to ensure the development of robust and clinically applicable prediction models.

Protocol for Diagnostic Classification Using Hybrid AI Models

This protocol is based on a hybrid framework combining a multilayer feedforward neural network (multilayer perceptron, MLP) with a nature-inspired Ant Colony Optimization (ACO) algorithm [15].

- Dataset Curation: Utilize a clinically profiled dataset (e.g., the UCI Fertility Dataset) with ~100 samples and ~10 attributes encompassing socio-demographics, lifestyle habits, and environmental exposures. Preprocess data with Min-Max normalization to rescale all features to a [0,1] range to mitigate scale-induced bias [15].

- Feature Engineering: The ACO algorithm is employed for adaptive parameter tuning and feature selection, mimicking ant foraging behavior to identify the most discriminative pathways through the feature space, thereby enhancing model efficiency and generalizability [15].

- Model Training & Optimization: The MLP is trained with the ACO-optimized parameters. The ACO component helps overcome limitations of conventional gradient-based methods, improving convergence and predictive accuracy. A Proximity Search Mechanism (PSM) is integrated to provide feature-level interpretability for clinical decision-making [15].

- Model Evaluation: Evaluate the model on unseen samples using standard performance metrics. The cited study achieved 99% accuracy and 100% sensitivity, with an ultra-low computational time of 0.00006 seconds, highlighting real-time applicability [15].
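The Min-Max normalization in the dataset curation step maps each feature x to (x - min) / (max - min). A minimal sketch follows; the function name, the handling of constant columns, and the toy rows are assumptions, not details from the cited study.

```python
def min_max_scale(rows):
    """Rescale every column of a list of numeric rows to the [0, 1] range."""
    columns = list(zip(*rows))
    mins = [min(col) for col in columns]
    maxs = [max(col) for col in columns]
    scaled = []
    for row in rows:
        scaled.append([
            # Assumption: a constant column carries no information and maps to 0.0.
            (value - lo) / (hi - lo) if hi > lo else 0.0
            for value, lo, hi in zip(row, mins, maxs)
        ])
    return scaled

# Usage: made-up rows of (age in years, sedentary hours per day).
subjects = [[18, 2.0], [27, 9.0], [36, 16.0]]
for row in min_max_scale(subjects):
    print(row)
```

When a held-out test set is used, the minimum and maximum would normally be taken from the training rows only and then applied to the test rows, so that no information from the test set leaks into preprocessing.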

Protocol for Quantitative Blastocyst Yield Prediction

This protocol outlines the development of a model to predict the exact number of usable blastocysts, a key decision point in IVF [18].

- Cohort Definition & Data Splitting: Include a large number of IVF/ICSI cycles (>9,000). Randomly split the dataset into training and testing sets, ensuring a representative distribution of cycles with 0, 1-2, and ≥3 usable blastocysts across both sets [18].

- Predictor Selection & RFE: Establish an initial set of clinical and embryological features. Use Recursive Feature Elimination (RFE) to iteratively remove the least informative features, identifying the optimal subset (e.g., 8-11 features) that maintains model performance while enhancing simplicity [18].

- Model Selection & Benchmarking: Train multiple ML models (e.g., SVM, LightGBM, XGBoost) and benchmark them against a traditional linear regression model. Select the optimal model based on a balance of performance metrics (R², Mean Absolute Error), number of features required, and clinical interpretability. LightGBM has been identified as a strong candidate for this purpose [18].

- Validation & Subgroup Analysis: Perform internal validation on the held-out test set. Conduct stratified analysis in poor-prognosis subgroups (e.g., advanced maternal age, poor embryo morphology) to assess model robustness across clinically relevant patient categories [18].
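One way to obtain the representative class distribution described in the data splitting step is to split each outcome class separately, as sketched below. The source does not state how the split was balanced or what proportion was held out, so the stratified approach, the 20% test fraction, the seed, and the function name are all assumptions.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with each class split in the same proportion."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)  # fixed seed (assumption) for repeatability
    train_idx, test_idx = [], []
    for label in sorted(by_class):
        members = by_class[label]
        rng.shuffle(members)
        n_test = round(len(members) * test_fraction)
        test_idx.extend(members[:n_test])
        train_idx.extend(members[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Usage: made-up cycles labeled with the three blastocyst-yield classes.
cycle_classes = ["0"] * 10 + ["1-2"] * 20 + [">=3"] * 10
train_idx, test_idx = stratified_split(cycle_classes)
print(len(train_idx), len(test_idx))
```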

Workflow Visualization of AI Model Development and Maintenance

The following diagram illustrates the core lifecycle for developing and maintaining a clinical prediction model, integrating key concepts like the Lifelong ML (LML) framework to address performance degradation over time [27].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key computational and data resources essential for research in this field.

Table 2: Key Research Reagent Solutions for AI in Male Infertility

| Item Name | Function/Application | Specification Notes |

|---|---|---|

| Clinical Fertility Dataset | Serves as the foundational data for training and validating diagnostic and prognostic models. | Publicly available datasets (e.g., UCI Fertility Dataset) with ~100 samples and attributes like lifestyle, environmental exposures, and clinical outcomes [15]. |

| Ant Colony Optimization (ACO) Algorithm | A nature-inspired metaheuristic used for feature selection and hyperparameter tuning in hybrid models. | Enhances model convergence and predictive accuracy by adaptively optimizing parameters, overcoming limitations of gradient-based methods [15]. |

| LightGBM (Light Gradient Boosting Machine) | A highly efficient gradient boosting framework used for tasks like quantitative blastocyst yield prediction. | Selected for its high performance (R² ~0.67), ability to work well with fewer features, and superior interpretability compared to other complex models [18]. |

| Lifelong Machine Learning (LML) Framework | A model maintenance system that continuously monitors performance and updates models to counteract "calibration drift." | Uses a knowledge base to store past models and performance, enabling updates that address performance degradation caused by changes in data distributions over time [27]. |

| Explainable AI (XAI) & Feature Importance Tools | Techniques like SHAP or built-in feature importance plots to interpret model decisions and build clinical trust. | Critical for identifying key predictors (e.g., sedentary habits, number of extended culture embryos) and ensuring model transparency for clinical adoption [15] [18]. |
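
The calibration drift that a model maintenance system is meant to counteract can be monitored with a very simple check: compare the mean predicted probability with the observed event rate in successive time windows. The sketch below is not the LML framework of [27]; the function names, the windowing, and the 0.1 tolerance are assumptions chosen for illustration.

```python
def calibration_gap(y_true, y_prob):
    """Mean predicted probability minus observed event rate for one window."""
    return sum(y_prob) / len(y_prob) - sum(y_true) / len(y_true)

def flag_drift(windows, tolerance=0.1):
    """Return the indices of windows whose absolute calibration gap exceeds tolerance.

    windows is a list of (y_true, y_prob) pairs, one per monitoring period.
    The tolerance is a hypothetical value that would need clinical justification.
    """
    return [
        index
        for index, (y_true, y_prob) in enumerate(windows)
        if abs(calibration_gap(y_true, y_prob)) > tolerance
    ]

# Usage with two made-up monitoring periods (illustrative only).
period_1 = ([1, 0, 1, 0], [0.5, 0.5, 0.5, 0.5])  # predictions match the event rate
period_2 = ([0, 0, 0, 1], [0.6, 0.6, 0.6, 0.6])  # model now over-predicts risk
print(flag_drift([period_1, period_2]))
```

A flagged window would be the trigger for recalibration or retraining in a maintenance workflow; how that update is performed is outside the scope of this sketch.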

AI Algorithms in Action: From Sperm Analysis to Outcome Prediction

Taxonomy of Machine Learning Models in Male Infertility

Male infertility is a prevalent global health issue, contributing to 20–30% of all infertility cases and affecting an estimated 30 million men worldwide [9]. The diagnosis and management of male infertility have traditionally relied on manual semen analysis, which is often subjective and prone to inter-observer variability [9]. The complex, multifactorial nature of male infertility, encompassing genetic, hormonal, environmental, and lifestyle factors, presents significant challenges for traditional statistical methods [5] [6].

Artificial intelligence (AI) and machine learning (ML) have emerged as transformative technologies in healthcare, offering powerful tools to analyze complex datasets and identify subtle patterns beyond human capability [5]. In male infertility, ML approaches are revolutionizing diagnosis, treatment selection, and outcome prediction by enhancing precision, objectivity, and personalization [28]. This whitepaper establishes a comprehensive taxonomy of machine learning models applied to male infertility, providing researchers and drug development professionals with a structured framework of methodologies, performance metrics, and experimental protocols currently advancing this field.

Taxonomy of Machine Learning Applications in Male Infertility

ML applications in male infertility can be categorized into distinct domains based on their clinical purpose and the type of data they analyze. The table below summarizes these key application areas, their specific tasks, and the algorithms commonly employed.

Table 1: Taxonomy of Machine Learning Applications in Male Infertility

| Application Domain | Specific Task | Common ML Algorithms | Key Performance Metrics |

|---|---|---|---|

| Sperm Analysis & Characterization | Morphology Classification | SVM, MLP, Deep Neural Networks [9] | Accuracy (up to 89.9%), AUC (up to 88.59%) [9] |

| Sperm Analysis & Characterization | Motility Analysis | SVM, Gaussian Mixture Models, CNN [9] [28] | Accuracy (up to 89.9%) [9] |

| Sperm Analysis & Characterization | DNA Fragmentation Assessment | AI-based Halo Evaluation, Deep Learning [28] | Processing time (40 min vs. 70 min conventional) [28] |

| Diagnostic & Predictive Modeling | Infertility Risk Prediction | RF, SVM, SuperLearner, XGBoost [7] [29] | Accuracy (median 88%), AUC (up to 97%) [5] [7] |

| Diagnostic & Predictive Modeling | Hormone-Based Screening | AutoML, Prediction One [6] | AUC (≈74.4%), Feature Importance (FSH primary) [6] |

| Diagnostic & Predictive Modeling | Azoospermia Identification | XGBoost [8] | AUC (up to 0.987) [8] |

| Treatment Outcome Prediction | IVF/ICSI Success Prediction | SVM, Random Forest, Bayesian Networks [26] | AUC (up to 0.997) [26] |

| Treatment Outcome Prediction | Sperm Retrieval Prediction (NOA) | Gradient Boosting Trees (GBT) [9] | AUC (0.807), Sensitivity (91%) [9] |

Sperm Analysis and Characterization

This domain focuses on automating and enhancing the objectivity of traditional semen analysis.

Morphology and Motility Analysis: ML models, particularly Support Vector Machines (SVM) and Multi-Layer Perceptrons (MLP), have demonstrated high accuracy in classifying sperm morphology and assessing motility. For instance, one study achieved 88.59% AUC for morphology on 1,400 sperm images and 89.9% accuracy for motility on 2,817 sperm cells [9]. Deep learning-based region convolutional neural networks (R-CNN) further automate this process by distinguishing sperm from impurities, a significant limitation of conventional Computer-Assisted Semen Analysis (CASA) [28].

DNA Fragmentation Assessment: Sperm DNA Fragmentation (SDF) is a crucial biomarker for male infertility. AI-based halo evaluation and deep learning models can rapidly and objectively assess DNA integrity, with platforms like the LensHooke X1 PRO reducing evaluation time from 70 to 40 minutes compared to manual methods [28].

Diagnostic and Predictive Modeling

These models integrate diverse data types to diagnose infertility and predict its risk.

Infertility Risk Prediction: Supervised learning algorithms are extensively used. Studies comparing multiple classifiers often find Support Vector Machines (SVM) and ensemble methods like SuperLearner and Random Forest (RF) to be top performers, with AUCs reaching 96-97% [7]. A systematic review reported a median accuracy of 88% across various ML models for predicting male infertility [5].

Hormone-Based Screening: To circumvent the social stigma or unavailability of semen analysis, models have been developed using only serum hormone levels. Follicle-Stimulating Hormone (FSH) is consistently the most critical predictor, with models achieving AUCs of approximately 74.4%. The testosterone-to-estradiol (T/E2) ratio and Luteinizing Hormone (LH) are also significant features [6].

Azoospermia Identification: XGBoost algorithms have shown exceptional performance in identifying patients with azoospermia, achieving an AUC of 0.987. Key predictive variables include FSH serum levels, inhibin B, and bitesticular volume [8].

Treatment Outcome Prediction

ML models are critical for personalizing treatment and setting realistic expectations.

IVF/ICSI Success Prediction: Predicting the success of Assisted Reproductive Technology (ART) is a complex task involving numerous variables. Female age is the most consistently used feature. Models employing Random Forests, SVM, and Bayesian Networks have reported high performance, with one study achieving a remarkable AUC of 0.997 [26].

Sperm Retrieval Prediction: For men with non-obstructive azoospermia (NOA), predicting the success of surgical sperm retrieval is vital. Gradient Boosting Trees (GBT) have demonstrated strong performance in this area, with an AUC of 0.807 and sensitivity of 91% on a cohort of 119 patients [9].

Experimental Protocols and Methodologies

This section details the standard experimental workflows and data handling procedures used in developing ML models for male infertility.

Data Sourcing and Preprocessing

Data Sources: Research data is typically sourced from electronic health records (EHRs) of tertiary hospitals or fertility clinics. These datasets encompass clinical parameters (semen analysis, hormone levels, testicular ultrasound), lifestyle factors, and genetic information [8] [7].

Data Preprocessing: This is a critical step to ensure model robustness. Protocols generally include:

- Handling Missing Values: Techniques range from complete case analysis to imputation using nearest neighbor values or the most frequent value [8].

- Addressing Class Imbalance: Infertility datasets are often imbalanced (e.g., more fertile than infertile samples). Standard techniques include the Synthetic Minority Oversampling Technique (SMOTE) and its variants (ADASYN, SLSMOTE) to generate synthetic samples for the minority class [29] [30].

- Feature Scaling: Numerical variables are typically normalized (e.g., Z-score normalization) so that features measured on larger numeric scales do not dominate the model [7].
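
The three preprocessing steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the pipeline used in any cited study: the function names, the example FSH values, and the toy minority-class rows are all hypothetical, and the oversampling routine implements only the core interpolation idea behind SMOTE rather than a full library implementation.

```python
import math
import random
import statistics
from collections import Counter

def impute_most_frequent(column):
    """Replace None entries with the most frequent observed value."""
    observed = [v for v in column if v is not None]
    mode = Counter(observed).most_common(1)[0][0]
    return [mode if v is None else v for v in column]

def z_score(column):
    """Standardize a numeric column to zero mean and unit variance."""
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    if std == 0:
        return [0.0 for _ in column]
    return [(v - mean) / std for v in column]

def smote_like_oversample(minority, n_new, k=2, seed=0):
    """Create n_new synthetic rows by interpolating between a minority-class
    row and one of its k nearest minority-class neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority rows to interpolate")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbours = sorted(
            (row for row in minority if row is not base),
            key=lambda row: math.dist(base, row),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append([b + gap * (o - b) for b, o in zip(base, other)])
    return synthetic

# Hypothetical FSH values with one missing entry.
fsh = [4.2, None, 12.5, 4.2, 8.1]
fsh_filled = impute_most_frequent(fsh)
fsh_scaled = z_score(fsh_filled)

# Hypothetical, already-scaled minority-class rows (two features each).
minority = [[1.0, 2.0], [1.5, 2.5], [2.0, 3.0]]
new_rows = smote_like_oversample(minority, n_new=4)
```

In practice the imputation value and the scaling mean and standard deviation would be estimated on the training portion only and then applied to the test portion, so that no information leaks from held-out data.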

Model Training and Validation

A rigorous validation framework is essential for generating clinically relevant models.

Model Selection and Comparison: Studies commonly employ a multi-model approach, comparing the performance of several industry-standard algorithms such as SVM, RF, XGBoost, Decision Trees, and Artificial Neural Networks (ANNs) to identify the optimal one for a specific task [29] [7].

Validation Schemes: k-fold cross-validation (CV), typically with k=5 or k=10, is standard practice for assessing model generalizability and limiting the risk of overfitting [7] [29]. In addition, the dataset is commonly split into training and testing sets (typical splits are 80/20, 70/30, or 60/40) so that the final model is evaluated on data it has not seen during training [7].
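
The two validation schemes described above, k-fold cross-validation and a hold-out split, can be illustrated with a short index-splitting sketch. The helper names `k_fold_indices` and `hold_out_split` and the sample counts are assumptions made for this example; the cited studies do not specify their implementation.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs for shuffled k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so that fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size

def hold_out_split(n_samples, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) for a single shuffled hold-out split."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]

# 5-fold CV on 23 hypothetical patients, and an 80/20 split on 100.
folds = list(k_fold_indices(n_samples=23, k=5))
train_idx, test_idx = hold_out_split(n_samples=100, test_fraction=0.2)
```

Each sample appears in exactly one test fold, so every patient contributes to the performance estimate once. For imbalanced infertility datasets, a stratified variant that preserves the class ratio in each fold is usually preferable; it is omitted here for brevity.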

Performance Metrics: A wide range of metrics is used for comprehensive evaluation. The Area Under the Receiver Operating Characteristic Curve (AUC-ROC) is the most frequently reported metric [26]. Other common metrics include accuracy, sensitivity (recall), specificity, precision, and F1-score [26] [6].
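
The metrics listed above can all be derived from predicted labels and predicted scores. The following sketch uses hypothetical labels and scores; AUC is computed through its rank interpretation (the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half).

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, sensitivity, specificity, precision and F1 for binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    def safe_div(num, den):
        return num / den if den else 0.0

    sensitivity = safe_div(tp, tp + fn)  # also called recall
    precision = safe_div(tp, tp + fp)
    return {
        "accuracy": safe_div(tp + tn, len(y_true)),
        "sensitivity": sensitivity,
        "specificity": safe_div(tn, tn + fp),
        "precision": precision,
        "f1": safe_div(2 * precision * sensitivity, precision + sensitivity),
    }

def roc_auc(y_true, scores):
    """AUC-ROC via pairwise comparison of positive and negative scores."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical ground truth (1 = infertile) and model scores.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
```

Unlike the other metrics, AUC does not depend on the 0.5 decision threshold, which is one reason it is convenient for comparing models across studies.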

The following workflow diagram illustrates the standard experimental protocol from data collection to model deployment.

Performance Analysis of ML Models

Quantitative performance varies significantly across different clinical tasks and algorithms. The table below provides a comparative summary of model performance as reported in the literature.

Table 2: Comparative Performance of Machine Learning Models in Male Infertility

| Clinical Task | Best-Performing Algorithm(s) | Reported Performance | Sample Size | Key Features |
|---|---|---|---|---|
| General Infertility Prediction | SuperLearner, SVM [7] | AUC: 97%, 96% | 644 patients | Sperm concentration, FSH, LH, genetic factors [7] |
| General Infertility Prediction | Random Forest [29] | Accuracy: 90.47%, AUC: 99.98% | N/A | Lifestyle and environmental factors [29] |
| Hormone-Based Risk Screening | AutoML (Prediction One) [6] | AUC: 74.42% | 3,662 patients | FSH, T/E2 ratio, LH [6] |
| Azoospermia Identification | XGBoost [8] | AUC: 0.987 | 2,334 subjects | FSH, Inhibin B, Bitesticular Volume [8] |
| Sperm Morphology Classification | SVM [9] | AUC: 88.59% | 1,400 sperm | Sperm images [9] |
| Sperm Motility Analysis | SVM [9] | Accuracy: 89.9% | 2,817 sperm | Sperm video sequences [9] |
| IVF Success Prediction | Bayesian Network [26] | AUC: 0.997 | 106,640 cycles | 24 features including female age [26] |
| NOA Sperm Retrieval Prediction | Gradient Boosting Trees [9] | AUC: 0.807, Sensitivity: 91% | 119 patients | Clinical and biomarker data [9] |

Key Insights from Performance Data

Algorithm Suitability: No single algorithm dominates all tasks. Ensemble methods (Random Forest, XGBoost, SuperLearner) often excel in predictive modeling with tabular clinical data [7] [29] [8], while SVMs show strong performance in image-based tasks like morphology and motility analysis [9]. For extremely large datasets, such as IVF cycles, Bayesian Networks can achieve exceptional performance [26].

Feature Importance: Identifying key predictors is crucial for model interpretability. FSH is consistently the most important hormonal predictor [6] [8]. For non-hormonal predictions, sperm concentration, genetic factors, and environmental parameters (e.g., PM10, NO2) are highly influential [7] [8].
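
One simple way to see how a feature-importance ranking is obtained is permutation importance: shuffle one feature at a time and measure how much accuracy drops. This is a deliberately simpler stand-in for the attribution methods used in the cited studies, not a reproduction of them. The threshold rule standing in for a trained model, the 7.6 cut-off, and the data values are all hypothetical.

```python
import random

def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            # Rebuild each row with only feature j replaced by a shuffled value.
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical rows: [FSH level, uninformative noise feature].
rows = [[3.1, 0.2], [4.5, 0.9], [5.0, 0.4], [6.2, 0.7],
        [9.8, 0.3], [11.4, 0.8], [14.0, 0.1], [18.3, 0.6]]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

def toy_model(row):
    """Hypothetical rule standing in for a trained classifier."""
    return 1 if row[0] > 7.6 else 0

importances = permutation_importance(toy_model, rows, labels)
```

Because the toy rule ignores the second feature, shuffling it never changes a prediction and its importance is exactly zero, whereas shuffling the FSH column degrades accuracy.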

The Scientist's Toolkit: Research Reagents and Materials

The following table catalogues essential reagents, tools, and software platforms frequently employed in ML-driven male infertility research.

Table 3: Essential Research Reagents and Solutions for ML in Male Infertility

| Reagent / Tool / Platform | Type | Primary Function in Research |
|---|---|---|
| WHO Laboratory Manual | Protocol | Provides standardized protocols for semen analysis, ensuring consistent and reproducible data generation for model training [6] [8]. |
| LensHooke X1 PRO | FDA-approved Device | AI-powered optical microscope for automated analysis of sperm concentration, motility, and DNA fragmentation; serves as a data source and validation tool [28]. |
| Computer-Assisted Semen Analysis (CASA) | Technology Platform | Automated system for objective assessment of sperm concentration and motility; often used as a baseline or data source for developing new AI models [28]. |
| SHAP (SHapley Additive exPlanations) | Software Library | Explainable AI (XAI) tool that interprets ML model outputs by quantifying the contribution of each feature to individual predictions, enhancing clinical trust [29]. |
| Synthetic Minority Oversampling Technique (SMOTE) | Algorithmic Technique | Addresses class imbalance in datasets by generating synthetic samples of the minority class, improving model performance on underrepresented conditions [29] [30]. |
| Prediction One / AutoML Tables | Commercial Software | User-friendly AI platforms that enable researchers without deep coding expertise to develop and validate predictive models from complex datasets [6]. |
| FSH, LH, Testosterone, Inhibin B Assays | Biochemical Reagents | Hormone measurement kits for generating critical endocrine input data for diagnostic and predictive models [6] [8]. |

Signaling Pathways and Biological Mechanisms

Understanding the endocrine pathways regulating male reproduction is fundamental to interpreting ML models that use hormonal inputs. The following diagram illustrates the key signaling axes and feedback mechanisms.

Sperm Morphology and Motility Analysis with Deep Learning

The diagnosis and treatment of male infertility, which contributes to approximately 50% of infertility cases among couples, rely heavily on the accurate assessment of semen parameters [13] [9]. Among these parameters, sperm morphology and motility are critically important, as they are most closely correlated with fertility potential [31] [32]. Traditional manual assessment of these parameters, however, is inherently subjective, time-consuming, and prone to significant inter-observer variability, which hinders standardized diagnosis and reproducible clinical outcomes [31] [9] [32].

Artificial intelligence (AI), particularly deep learning, is revolutionizing this field by introducing automated, objective, and high-throughput evaluation systems [32]. This technical guide explores the current state of deep learning applications in sperm morphology and motility analysis, detailing the technical architectures, experimental protocols, and performance benchmarks that are shaping the future of male infertility diagnostics within the broader context of machine learning-based prediction research.

Technical Approaches for Sperm Analysis

Deep Learning for Sperm Morphology Classification

Sperm morphology analysis involves categorizing individual spermatozoa based on structural defects in the head, midpiece, and tail, according to standardized classifications such as the modified David classification or WHO criteria [31] [13]. Convolutional Neural Networks (CNNs) have become the cornerstone of automated morphology assessment, capable of learning discriminative features directly from sperm images.

A representative study developed a predictive model using a CNN architecture trained on the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS) [31]. The initial dataset contained 1,000 individual sperm images, which was expanded to 6,035 images after applying data augmentation techniques to balance morphological classes and improve model generalization. The dataset encompassed 12 morphological defect classes, including seven head defects (tapered, thin, microcephalous, macrocephalous, multiple, abnormal post-acrosomal region, abnormal acrosome), two midpiece defects (cytoplasmic droplet, bent), and three tail defects (coiled, short, multiple) [31]. The deep learning model achieved promising accuracy ranging from 55% to 92% across different morphological classes, approaching the level of expert judgment [31].

Another implementation leveraged the YOLOv7 (You Only Look Once) object detection framework for bovine sperm morphology analysis, demonstrating the transferability of these approaches across species [33]. This system achieved a mean Average Precision (mAP@50) of 0.73, with precision and recall values of 0.75 and 0.71 respectively, indicating a balanced trade-off between accurate identification and comprehensive detection of sperm abnormalities [33].
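
To make the detection metrics above concrete, the sketch below computes precision and recall at an intersection-over-union (IoU) threshold of 0.5, the matching criterion behind the "@50" in mAP@50. It is a simplified illustration: the boxes and confidences are hypothetical, matching is greedy in order of confidence, and the full mAP calculation (averaging precision over recall levels and classes) is not reproduced.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def precision_recall_at_iou(predictions, ground_truth, threshold=0.5):
    """predictions: list of (confidence, box). Each ground-truth box can be
    matched at most once; predictions are processed by descending confidence."""
    matched = set()
    tp = 0
    for _, box in sorted(predictions, key=lambda p: p[0], reverse=True):
        best_iou, best_j = 0.0, None
        for j, gt_box in enumerate(ground_truth):
            if j in matched:
                continue
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_j = overlap, j
        if best_j is not None and best_iou >= threshold:
            matched.add(best_j)
            tp += 1
    fp = len(predictions) - tp   # detections with no matching annotation
    fn = len(ground_truth) - tp  # annotated sperm that were missed
    precision = tp / (tp + fp) if predictions else 0.0
    recall = tp / (tp + fn) if ground_truth else 0.0
    return precision, recall

# Hypothetical annotated sperm boxes and model detections.
ground_truth = [(0, 0, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50)]
predictions = [
    (0.9, (1, 1, 10, 10)),
    (0.8, (21, 20, 31, 30)),
    (0.6, (60, 60, 70, 70)),
    (0.5, (0, 0, 4, 4)),
]
precision, recall = precision_recall_at_iou(predictions, ground_truth)
```

In this toy case two of four detections match an annotation and one of three annotated boxes is missed, giving a precision of 0.5 and a recall of 2/3.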

Advanced Architectures for Motility and Morphology Estimation

Beyond static morphology assessment, deep learning approaches have evolved to analyze sperm motility through novel motion representation techniques. One innovative approach proposed a visual representation called MotionFlow, which encodes sperm cell motion from video sequences into a format suitable for deep neural networks [34].

The system constructed separate yet complementary neural networks for motility and morphology estimation, utilizing transfer learning from other domains to enhance performance [34]. Through K-fold cross-validation, this method achieved a mean absolute error (MAE) of 6.842% for motility estimation and 4.148% for morphology estimation, outperforming previous state-of-the-art solutions [34].
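
For reference, the MAE reported above is the average absolute gap between predicted and reference values. The notation below (n samples, reference value y_i, prediction ŷ_i, K folds) is introduced here for illustration and is not taken from the cited study; averaging per-fold MAE values is one common way to summarize cross-validated error and is assumed rather than confirmed for that work.

```latex
\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| y_i - \hat{y}_i \right|,
\qquad
\overline{\mathrm{MAE}} = \frac{1}{K} \sum_{k=1}^{K} \mathrm{MAE}_k
```

As a worked example with made-up numbers: if the reference motile fractions for two samples are 45% and 70% and the model predicts 50% and 62%, the absolute errors are 5 and 8 percentage points, giving an MAE of 6.5 percentage points.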

Table 1: Performance Benchmarks of Deep Learning Models in Sperm Analysis

| Study Focus | Model Architecture | Dataset | Key Performance Metrics |
|---|---|---|---|
| Morphology Classification [31] | Convolutional Neural Network (CNN) | SMD/MSS: 6,035 images (after augmentation) | Accuracy: 55-92% (across morphological classes) |
| Bovine Morphology Analysis [33] | YOLOv7 | 277 annotated images | mAP@50: 0.73, Precision: 0.75, Recall: 0.71 |
| Motility & Morphology Estimation [34] | MotionFlow with Deep Neural Networks | VISEM dataset | MAE (Motility): 6.842%, MAE (Morphology): 4.148% |
| Human Infertility Prediction [8] | XGBoost | UNIROMA: 2,334 subjects; UNIMORE: 11,981 records | AUC for azoospermia prediction: 0.987 (UNIROMA) |

Experimental Protocols and Workflows

Dataset Creation and Image Preprocessing

The robustness of deep learning models depends critically on the quality and diversity of the training data. A standardized protocol for dataset creation typically involves multiple meticulous steps:

Sample Preparation and Staining: Semen samples are obtained following ethical guidelines and institutional review board approvals. Smears are prepared according to WHO manual guidelines and are typically stained with the RAL Diagnostics staining kit or similar reagents to enhance contrast and morphological detail [31]. Alternative fixation methods that avoid staining also exist; these use controlled pressure and temperature to immobilize spermatozoa for evaluation [33] [9].