Multi-Layer Perceptron Architectures for Semen Parameter Prediction: A Comprehensive Guide for Biomedical Research



Abstract

This article comprehensively explores the application of Multi-Layer Perceptron (MLP) architectures in predicting semen parameters, a critical task in male infertility diagnosis and reproductive health. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles establishing MLPs as a core technique in andrology, detailing specific architectural designs and data processing methodologies. The scope extends to troubleshooting common implementation challenges like data imbalance and model optimization, and provides a rigorous framework for model validation and performance comparison against other industry-standard machine learning algorithms. By synthesizing current research and performance metrics, this review serves as a technical reference for developing robust, clinically applicable AI tools for semen analysis.

Laying the Groundwork: The Role of MLPs in Modern Andrology and Male Fertility Assessment

The Critical Need for Objective Semen Analysis in Male Infertility Management

Male infertility is a prevalent global health issue; a male factor is implicated in approximately 50% of infertile couples [1]. Conventional semen analysis, the diagnostic cornerstone, is limited by substantial intra-individual variability and subjective assessment [2] [3] [4]. This variability undermines clinical consistency and reliable fertility prediction, creating a critical need for more objective and automated analysis methods.

Artificial intelligence (AI) and machine learning (ML) approaches, particularly multi-layer perceptron (MLP) architectures, are emerging as transformative solutions. These technologies offer the potential to standardize semen analysis, improve diagnostic accuracy, and uncover complex, non-linear relationships between semen parameters and fertility outcomes that traditional statistics may miss. This document outlines the quantitative evidence supporting this need and provides detailed protocols for implementing AI-driven analysis in male infertility research.

Quantitative Evidence: Variability in Conventional Semen Analysis

The inherent variability of manual semen analysis is well-documented across multiple studies. The tables below summarize key quantitative evidence on this variability and the performance of emerging machine learning models designed to address it.

Table 1: Within-Subject Variability of Semen Analysis Parameters

| Semen Parameter | Within-Subject Coefficient of Variation (CVw) | Study Population | Citation |

|---|---|---|---|

| Total Motile Count (TMC) | 82% | Youths (18.8 ± 1.2 years) at risk for infertility | [2] |

| Sperm Motility | 36% | Youths (18.8 ± 1.2 years) at risk for infertility | [2] |

| Semen Volume | 36% | Youths (18.8 ± 1.2 years) at risk for infertility | [2] |

| All Major Parameters | 28% - 34% | Male partners of subfertile couples (n=5,240) | [3] |
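To make the CVw values in Table 1 concrete, the following minimal sketch computes a within-subject coefficient of variation from repeated measurements. It assumes one common definition (the per-subject standard deviation divided by the per-subject mean, averaged across subjects); the cited studies [2] [3] may use a different estimator, and the sample values are hypothetical.

```python
from statistics import mean, stdev

def within_subject_cv(repeated_measures):
    """Average of per-subject CVs (SD / mean), returned as a percentage."""
    cvs = []
    for values in repeated_measures:
        # A CV needs at least two measurements and a non-zero mean.
        if len(values) < 2 or mean(values) == 0:
            continue
        cvs.append(stdev(values) / mean(values))
    return 100.0 * mean(cvs)

# Hypothetical motility percentages: two subjects, three samples each.
samples = [[40.0, 50.0, 60.0], [30.0, 30.0, 30.0]]
print(round(within_subject_cv(samples), 1))  # 10.0
```

The first subject varies by 20% around his own mean and the second not at all, so the averaged CVw is 10%.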

Table 2: Performance of Machine Learning Models in Male Infertility

| Model Application | Model Type(s) | Reported Performance | Citation |

|---|---|---|---|

| Overall Male Infertility Prediction | Various ML Models (n=40) | Median Accuracy: 88% (across 43 studies) | [5] |

| Male Infertility Prediction | Artificial Neural Networks (ANNs) | Median Accuracy: 84% (across 7 studies) | [5] |

| Sperm Motility Prediction | Linear Support Vector Regressor | Mean Absolute Error (MAE): 7.31 (on a 0-100 scale) | [6] |

| Semen Parameter Classification from US | VGG-16 (Deep Learning) | AUC: 0.76 (Concentration), 0.89 (Motility), 0.86 (Morphology) | [7] |

Experimental Protocols for AI-Driven Semen Analysis

Protocol 1: Sperm Motility Prediction Using Video Analysis and Feature Quantization

This protocol is adapted from a study that achieved state-of-the-art results in automatically predicting sperm motility from video data [6].


Detailed Methodology:

- Sample Preparation: Collect semen samples following WHO guidelines. Place 10 µL of liquefied semen on a glass slide, cover with a 22 × 22 mm coverslip, and maintain at 37°C on a heated microscope stage [4].

- Video Acquisition: Record videos using a phase-contrast microscope (e.g., Olympus CX31) with a mounted camera (e.g., UEye UI-2210C). Use 400x magnification, a frame rate of 50 frames per second, and a duration of 2-7 minutes [4]. Store videos in AVI format.

- Sperm Tracking and Feature Extraction:

- Apply an off-the-shelf tracking algorithm to generate individual sperm trajectories across video sequences.

- For each tracked sperm cell, calculate displacement features (e.g., total path length, straight-line distance, velocity) and custom movement statistics.

- Aggregate and quantize the features from all individual sperm cells into a unified representation for the entire sample.

- Model Training and Prediction:

- Train a Linear Support Vector Regressor (SVR) on the quantized features. The model should be trained to predict the percentage (0-100) of progressive, non-progressive, and immotile spermatozoa.

- Use a published dataset like VISEM [4] for training and benchmarking.

- Evaluate model performance using the Mean Absolute Error (MAE) against manually assessed motility values.
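The aggregation step in this protocol turns a variable number of tracked cells into one fixed-length vector per sample. A minimal sketch of that idea follows, assuming simple histogram binning of a single per-cell feature; the bin edges and velocity values are hypothetical, and the quantization scheme used in [6] may differ.

```python
def quantize_features(values, bin_edges):
    """Histogram of per-cell values, normalized so the fractions sum to 1.

    Values outside [bin_edges[0], bin_edges[-1]] are ignored.
    """
    counts = [0] * (len(bin_edges) - 1)
    for v in values:
        if v == bin_edges[-1]:
            counts[-1] += 1  # include the right edge in the last bin
            continue
        for i in range(len(counts)):
            if bin_edges[i] <= v < bin_edges[i + 1]:
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total if total else 0.0 for c in counts]

# Hypothetical per-cell velocities (µm/s) for one sample.
velocities = [2.0, 7.5, 12.0, 30.0, 55.0]
edges = [0, 10, 25, 50, 100]
print(quantize_features(velocities, edges))  # [0.4, 0.2, 0.2, 0.2]
```

Because the output length depends only on the number of bins, samples with different sperm counts yield vectors of the same size, which is what a regressor such as an SVR or MLP requires.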

Protocol 2: Predicting Semen Parameters from Testicular Ultrasonography

This protocol describes an innovative approach using deep learning to predict semen analysis parameters from testicular ultrasound images, which can serve as a non-invasive adjunct [7].


Detailed Methodology:

- Patient Selection and Standardization:

- Inclusion Criteria: Men aged 18-54 presenting with infertility (failure to conceive after ≥1 year of unprotected intercourse). Exclude patients with substance abuse, testicular tumors, microlithiasis, azoospermia, or other confounding genitourinary conditions [7].

- Data Collection: For each patient, collect blood for hormone profiling (FSH, LH, Testosterone), perform semen analysis per WHO 2021 guidelines, and conduct scrotal ultrasonography on the same day.

- Ultrasonography Imaging:

- Use a standardized ultrasonography device and linear probe (e.g., Samsung RS85 Prestige with LA2-14A probe).

- Set parameters to a testicular preset, tissue harmonic imaging (THI) mode, and 13.0 MHz. Keep Time Gain Compensation (TGC) and gain settings constant.

- Capture longitudinal-axis images of both testes, ensuring the entire testicular contour is visible and the mediastinum testis is excluded.

- Image Preprocessing and Dataset Creation:

- Convert images to PNG format.

- Manually outline and crop testicular contours to remove patient information and irrelevant areas.

- Categorize images into folders based on corresponding semen analysis results (e.g., "oligospermia" vs. "normal" for concentration).

- Augment the datasets and split them randomly into 80% training and 20% test sets.

- Model Training and Evaluation:

- Utilize a pre-defined deep learning architecture like VGG-16 for image classification.

- Train the model to perform binary classification for each semen parameter (e.g., oligospermia vs. normal, asthenozoospermia vs. normal).

- Evaluate model performance using the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve.
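The AUC used in the evaluation step can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The sketch below computes it directly from that definition; the labels and scores are hypothetical, and a library routine would normally be preferred for large datasets.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical labels (1 = abnormal parameter) and model scores.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.6, 0.7, 0.2, 0.8, 0.4]
print(round(roc_auc(labels, scores), 3))  # 0.889
```

Here 8 of the 9 positive/negative pairs are ordered correctly, giving an AUC of 8/9.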

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Semen Analysis Research

| Item | Function/Application | Specification/Example |

|---|---|---|

| Phase-Contrast Microscope | Visualization of live spermatozoa without staining. | E.g., Olympus CX31 with heated stage (37°C) [4]. |

| Microscope-Mounted Camera | Digital capture of sperm videos for computer analysis. | E.g., UEye UI-2210C camera [4]. |

| Sperm Analysis Chamber | Standardized volume chamber for sperm concentration and motility count. | Improved Neubauer Hemocytometer [7]. |

| Linear Array Ultrasound Probe | High-resolution imaging of testicular parenchyma. | E.g., LA2-14A linear probe at 13.0 MHz [7]. |

| Hormone Assay Kits | Quantification of reproductive hormones (FSH, LH, Testosterone) for patient stratification. | Chemiluminescent Microparticle Immunoassay (CMIA) on an Abbott Architect i2000 autoanalyzer [7]. |

| Public Datasets | Benchmarking and training data for algorithm development. | E.g., VISEM dataset (85+ semen videos with participant data) [4]. |

Fundamental Principles of Multi-Layer Perceptron (MLP) Neural Networks

The prediction of male fertility potential through semen analysis is a critical objective in reproductive medicine. Traditional semen analysis, guided by World Health Organization (WHO) manuals, is widely acknowledged to lack sufficient predictive value for reproductive outcomes [8]. Multi-Layer Perceptron (MLP) neural networks represent a promising computational approach to address this limitation. As a class of artificial neural networks, MLPs can model complex, non-linear relationships between basic semen parameters and clinical outcomes, offering the potential to transform andrology diagnostics from descriptive assessment to predictive analytics [8] [9]. This document establishes fundamental principles and protocols for implementing MLP architectures within semen parameter prediction research, providing scientists and drug development professionals with standardized methodologies for building robust predictive models.

Theoretical Foundations of MLP Architecture

Core Structural Components

A Multi-Layer Perceptron is a type of feedforward artificial neural network characterized by its fully connected layered structure [10] [11]. The architecture consists of:

- Input Layer: The initial layer where each neuron corresponds to a feature in the input data. In semen parameter prediction, these may include sperm concentration, motility, morphology, molecular features, or mitochondrial DNA copy number [8].

- Hidden Layers: One or more intermediate layers that perform the bulk of computational processing. Each hidden layer transforms the input data through weighted connections and non-linear activation functions, enabling the network to learn complex feature representations [12].

- Output Layer: The final layer that produces the network's prediction. For regression tasks (e.g., predicting motility percentage), this may be a single neuron; for multi-class classification (e.g., morphology categorization), multiple neurons with softmax activation are typically used [9] [12].

The term "multi-layer" specifically denotes the presence of at least one hidden layer between the input and output layers. Each connection between neurons has an associated weight, and each neuron has an associated bias term, which are iteratively adjusted during training to minimize prediction error [11].

Mathematical Formulation

The information processing within an MLP occurs through two fundamental mathematical operations at each layer:

Linear Transformation: Each neuron computes a weighted sum of its inputs plus a bias term. For a neuron in layer \( l \), this is expressed as: \[ z_i^{[l]} = \sum_{j=1}^{n} w_{ij}^{[l]} a_j^{[l-1]} + b_i^{[l]} \] where \( w_{ij}^{[l]} \) are the weights, \( a_j^{[l-1]} \) are the activations from the previous layer, and \( b_i^{[l]} \) is the bias [12] [10].

Non-Linear Activation: The weighted sum \( z_i^{[l]} \) is passed through a non-linear activation function \( g \) to produce the neuron's output: \[ a_i^{[l]} = g(z_i^{[l]}) \] This introduction of non-linearity is crucial for enabling the network to learn complex patterns beyond what linear models can capture [12].
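The two operations can be combined into a single layer computation. The following sketch applies the weighted sum and a ReLU activation to one fully connected layer; the weights, biases, and inputs are arbitrary illustrative values.

```python
def relu(z):
    return max(0.0, z)

def dense_forward(weights, biases, activations_prev, g=relu):
    """Compute a_i = g(sum_j w_ij * a_j + b_i) for every neuron i in the layer."""
    outputs = []
    for w_row, b in zip(weights, biases):
        z = sum(w * a for w, a in zip(w_row, activations_prev)) + b  # linear step
        outputs.append(g(z))                                         # activation
    return outputs

# Two neurons, each receiving two inputs from the previous layer.
W = [[0.5, -1.0], [1.0, 1.0]]
b = [0.0, -0.5]
a_prev = [2.0, 1.0]
print(dense_forward(W, b, a_prev))  # [0.0, 2.5]
```

Stacking several such calls, with each layer's output passed as the next layer's input, gives the full forward pass of an MLP.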

Table 1: Common Activation Functions in MLP Architectures

| Function Name | Mathematical Expression | Properties | Typical Use Case |

|---|---|---|---|

| ReLU (Rectified Linear Unit) | \( f(z) = \max(0, z) \) | Computationally efficient; mitigates vanishing gradient | Hidden Layers [12] |

| Sigmoid | \( \sigma(z) = \frac{1}{1 + e^{-z}} \) | Output range (0, 1); smooth gradient | Binary Classification Output [12] [11] |

| Tanh (Hyperbolic Tangent) | \( \tanh(z) = \frac{2}{1 + e^{-2z}} - 1 \) | Output range (-1, 1); zero-centered | Hidden Layers [12] |

| Softmax | \( \sigma(\mathbf{z})_i = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}} \) | Output sums to 1; multi-class probability | Multi-class Output [12] |
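The expressions in the table translate directly into code. The sketch below is one straightforward rendering; subtracting the maximum inside the softmax is a standard numerical-stability step that does not change the result.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def tanh_via_exp(z):
    # Same form as in the table; equivalent to math.tanh(z).
    return 2.0 / (1.0 + math.exp(-2.0 * z)) - 1.0

def softmax(zs):
    m = max(zs)  # shift by the maximum to avoid overflow in exp
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]

print(sigmoid(0.0))         # 0.5
print(softmax([1.0, 1.0]))  # [0.5, 0.5]
```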

The Learning Process: Forward and Backward Propagation

MLPs learn from data through an iterative process of forward propagation and backpropagation [12] [10]:

Forward Propagation: Input data is passed through the network layer by layer, with each layer applying its linear transformations and activation functions, ultimately generating a prediction at the output layer [10].

Loss Calculation: A loss function quantifies the discrepancy between the network's prediction and the true target value. For regression tasks in semen analysis (e.g., predicting motility percentage), Mean Squared Error (MSE) is commonly used: \[ L = \frac{1}{N} \sum_{i=1}^{N} (y_i - \hat{y}_i)^2 \] For classification tasks (e.g., morphology classification), binary or categorical cross-entropy is typically employed [12] [6].

Backpropagation: The gradients of the loss function with respect to all weights and biases in the network are calculated using the chain rule of calculus. This process efficiently propagates the error backward through the network to determine how each parameter should be adjusted to reduce the loss [12] [11].

Parameter Update: An optimization algorithm, such as Stochastic Gradient Descent (SGD) or Adam, uses the computed gradients to update the weights and biases, moving them in a direction that minimizes the loss [12].

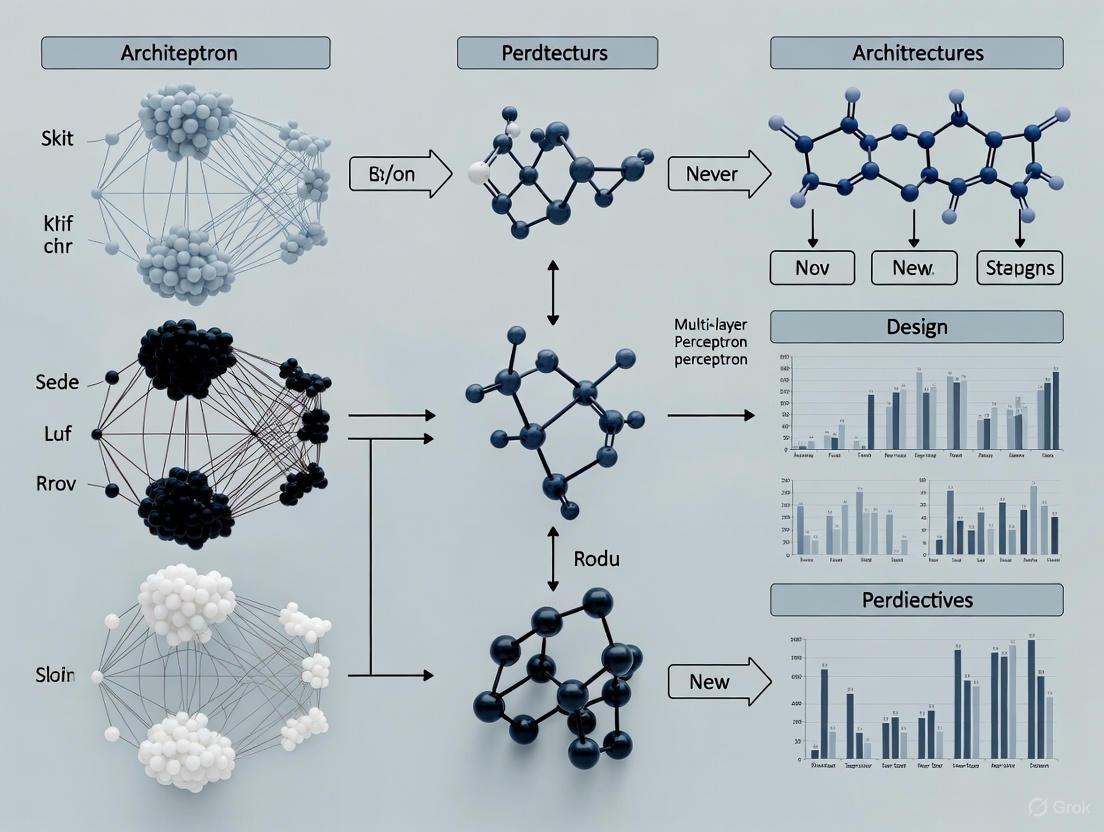

Diagram 1: MLP Training Cycle. This workflow illustrates the iterative process of training a Multi-Layer Perceptron.
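The four steps of the training cycle can be traced in a few dozen lines. The sketch below trains a tiny MLP (one input, one tanh hidden layer, one linear output) with full-batch gradient descent on a toy regression problem. The data, layer size, learning rate, and seed are illustrative assumptions, and plain gradient descent stands in for the SGD or Adam optimizers mentioned above.

```python
import math
import random

def train_mlp(xs, ys, hidden=4, lr=0.1, epochs=500, seed=0):
    """Train a 1-hidden-layer MLP with MSE loss; return the loss per epoch."""
    rng = random.Random(seed)
    w1 = [rng.uniform(-1, 1) for _ in range(hidden)]  # input -> hidden weights
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]  # hidden -> output weights
    b2 = 0.0
    n = len(xs)
    losses = []
    for _ in range(epochs):
        gw1, gb1 = [0.0] * hidden, [0.0] * hidden
        gw2, gb2 = [0.0] * hidden, 0.0
        loss = 0.0
        for x, y in zip(xs, ys):
            # 1. Forward propagation
            h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
            y_hat = sum(w2[j] * h[j] for j in range(hidden)) + b2
            # 2. Loss calculation (this sample's share of the MSE)
            err = y_hat - y
            loss += err * err / n
            # 3. Backpropagation (chain rule, accumulated over the batch)
            d_out = 2.0 * err / n
            gb2 += d_out
            for j in range(hidden):
                gw2[j] += d_out * h[j]
                d_hidden = d_out * w2[j] * (1.0 - h[j] ** 2)  # tanh'(z) = 1 - tanh(z)^2
                gw1[j] += d_hidden * x
                gb1[j] += d_hidden
        losses.append(loss)
        # 4. Parameter update
        for j in range(hidden):
            w1[j] -= lr * gw1[j]
            b1[j] -= lr * gb1[j]
            w2[j] -= lr * gw2[j]
        b2 -= lr * gb2
    return losses

# Toy target: y = x^2 on five points.
xs = [-1.0, -0.5, 0.0, 0.5, 1.0]
ys = [x * x for x in xs]
losses = train_mlp(xs, ys)
print(losses[0] > losses[-1])
```

In practice a framework computes the gradients automatically, but the sequence of steps is the same.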

Experimental Protocols for Semen Parameter Prediction

Protocol 1: MLP for Sperm Motility Regression

Objective: To train an MLP model for predicting the percentage of progressively motile spermatozoa based on movement statistics and displacement features [6].

Dataset Preparation:

- Data Source: Use labeled video recordings of human semen samples from the VISEM dataset or an equivalent internal dataset [6].

- Feature Extraction: Implement unsupervised tracking algorithms to extract two distinct feature sets from sperm trajectories:

- Custom Movement Statistics: Velocity, linearity, and amplitude of lateral head displacement.

- Displacement Features: Time-series data of sperm head positioning across frames.

- Feature Aggregation: Apply quantization techniques to create an aggregated representation of individual sperm cell features for each sample [6].

- Data Partitioning:

Table 2: Data Partitioning Strategy for Motility Prediction

| Subset | Percentage | Purpose |
|---|---|---|
| Training Set | 70% | Model parameter learning |
| Validation Set | 15% | Hyperparameter tuning and early stopping |
| Test Set | 15% | Final unbiased performance evaluation |
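A 70/15/15 partition can be produced by shuffling sample indices once and slicing. The sketch below assumes 85 samples (the approximate size of the VISEM dataset noted earlier) and a fixed seed for reproducibility; both are illustrative choices.

```python
import random

def split_indices(n_samples, train=0.70, val=0.15, seed=42):
    """Shuffle indices and split into train / validation / test lists."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_train = int(n_samples * train)
    n_val = int(n_samples * val)
    # The test set takes whatever remains after rounding down.
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

train_idx, val_idx, test_idx = split_indices(85)
print(len(train_idx), len(val_idx), len(test_idx))  # 59 12 14
```

When several recordings come from the same participant, the split should be made by participant rather than by recording, so that no individual appears in more than one subset.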

Model Architecture Specifications:

- Input Layer: 50 neurons (matching feature dimension)

- Hidden Layer 1: 128 neurons, ReLU activation

- Hidden Layer 2: 64 neurons, ReLU activation

- Output Layer: 1 neuron, linear activation
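It is worth checking how many trainable parameters this architecture implies before training it on a small dataset. The sketch below counts the weights and biases of a fully connected network from its layer sizes.

```python
def count_parameters(layer_sizes):
    """Weights (n_in * n_out) plus biases (n_out) for each pair of adjacent layers."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

print(count_parameters([50, 128, 64, 1]))  # 14849
```

With roughly 14,800 parameters and a dataset on the order of 85 videos, the model has far more parameters than samples, which is why early stopping and the other regularization measures in this document matter.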

Training Configuration:

- Loss Function: Mean Squared Error (MSE)

- Optimizer: Adam (learning rate = 0.001)

- Batch Size: 32

- Early Stopping: Monitor validation loss with patience of 20 epochs

- Maximum Epochs: 200
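Early stopping with a patience value can be expressed as a simple rule over the sequence of validation losses. The sketch below is a framework-independent illustration; the loss values are hypothetical and a patience of 3 is used only to keep the example short.

```python
def early_stopping_epoch(val_losses, patience=20):
    """Return (stop_epoch, best_epoch), both 0-indexed.

    Training stops once `patience` epochs have passed without a new best
    validation loss; the weights from `best_epoch` would then be restored.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

history = [1.0, 0.8, 0.7, 0.75, 0.72, 0.71, 0.9]
print(early_stopping_epoch(history, patience=3))  # (5, 2)
```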

Performance Metrics:

- Primary: Mean Absolute Error (MAE)

- Secondary: Root Mean Squared Error (RMSE), R² coefficient
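The three regression metrics can be computed together from paired true and predicted values. The sketch below uses hypothetical motility percentages.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return (MAE, RMSE, R^2) for paired true and predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot  # undefined if all true values are identical
    return mae, rmse, r2

y_true = [40, 50, 60, 70]  # hypothetical manual motility assessments (%)
y_pred = [45, 50, 55, 70]  # hypothetical model predictions (%)
mae, rmse, r2 = regression_metrics(y_true, y_pred)
print(mae, round(rmse, 3), round(r2, 3))  # 2.5 3.536 0.9
```

MAE is expressed in the same units as the target (percentage points), which makes it the easiest of the three to interpret clinically.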

Protocol 2: MLP for Sperm Morphology Classification

Objective: To develop an MLP model for automated classification of sperm morphological abnormalities, minimizing inter-observer variability [9].

Dataset Preparation:

- Data Source: Utilize the Hi-LabSpermMorpho dataset (18,456 images across 18 morphological classes) or equivalent clinical datasets [9].

- Image Preprocessing:

- Resize images to consistent dimensions (e.g., 128×128 pixels)

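- Feature Extraction: Pass the preprocessed images through a pre-trained CNN (e.g., an EfficientNetV2 variant) and use the resulting feature vectors as MLP input [9].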

- Class Imbalance Handling: Apply data augmentation or class weighting to address unequal representation across morphological classes.
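Class weighting can be derived from label frequencies. The sketch below uses the inverse-frequency rule n / (k × count), the same heuristic scikit-learn applies for its "balanced" class weights; the class names and counts are hypothetical and do not reflect the actual distribution of the Hi-LabSpermMorpho dataset.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * cnt) for cls, cnt in counts.items()}

# Hypothetical, heavily imbalanced label set.
labels = ["normal"] * 6 + ["tapered"] * 3 + ["amorphous"] * 1
for cls, w in balanced_class_weights(labels).items():
    print(cls, round(w, 3))
```

Rare classes receive larger weights, so their misclassifications contribute more to the loss during training.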

Model Architecture Specifications:

- Input Layer: 512 neurons (matching CNN-extracted feature dimension)

- Hidden Layer 1: 256 neurons, ReLU activation

- Hidden Layer 2: 128 neurons, ReLU activation

- Output Layer: 18 neurons, Softmax activation

Training Configuration:

- Loss Function: Categorical Cross-Entropy

- Optimizer: Adam (learning rate = 0.0005)

- Batch Size: 64

- Regularization: Dropout (rate = 0.3) after each hidden layer

Performance Metrics:

- Primary: Classification Accuracy

- Secondary: Per-class Precision, Recall, F1-Score
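Per-class metrics matter here because overall accuracy can hide poor performance on rare morphological classes. The sketch below computes precision, recall, and F1 for each class from hypothetical integer labels.

```python
def per_class_prf(y_true, y_pred):
    """Return {class: (precision, recall, f1)} for every class observed."""
    report = {}
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[c] = (precision, recall, f1)
    return report

y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
for cls, (p, r, f1) in per_class_prf(y_true, y_pred).items():
    print(cls, round(p, 3), round(r, 3), round(f1, 3))
```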

Diagram 2: Morphology Classification Pipeline. This diagram outlines the complete workflow from raw sperm images to morphological classification.

Advanced Implementation Considerations

Ensemble Learning and Feature Fusion

For enhanced predictive performance in semen analysis, consider advanced MLP integration strategies:

- Feature-Level Fusion: Combine features extracted from multiple CNN architectures (e.g., different EfficientNetV2 variants) before input into the MLP classifier. This leverages complementary feature representations [9].

- Decision-Level Fusion: Implement soft voting mechanisms across multiple MLP models (e.g., trained with different initializations or feature subsets) to improve robustness and classification accuracy [9].

- Hybrid Architectures: Integrate MLPs with other machine learning classifiers (Support Vector Machines, Random Forest) as final decision layers, potentially enhancing performance on specific morphological classification tasks [9].
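Soft voting, the decision-level fusion strategy above, averages the class probabilities produced by several models and then selects the most probable class. The sketch below uses hypothetical outputs from three models on a two-class problem.

```python
def soft_vote(prob_lists):
    """Average class probabilities across models; return (label, averages)."""
    n_models = len(prob_lists)
    n_classes = len(prob_lists[0])
    avg = [sum(p[c] for p in prob_lists) / n_models for c in range(n_classes)]
    return avg.index(max(avg)), avg

# Hypothetical softmax outputs from three MLPs for one image.
model_outputs = [[0.90, 0.10], [0.40, 0.60], [0.45, 0.55]]
label, avg = soft_vote(model_outputs)
print(label, [round(a, 3) for a in avg])  # 0 [0.583, 0.417]
```

In this example a hard majority vote would choose class 1 (two of three models), whereas soft voting chooses class 0 because one model is far more confident than the other two.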

Mitigating Overfitting in Medical Data

MLPs are particularly prone to overfitting on limited medical datasets. Employ these strategies to ensure generalization:

- Regularization Techniques:

- L1/L2 regularization on weights

- Dropout layers during training

- Early stopping based on validation performance

- Data Augmentation: Artificially expand training datasets through geometric transformations, noise injection, and synthetic sample generation.

- Cross-Validation: Implement k-fold cross-validation (k=5 or k=10) for more reliable performance estimation and hyperparameter tuning.
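For k-fold cross-validation, each sample serves as validation data exactly once. The sketch below generates the index splits; it assumes the indices have already been shuffled and does not stratify by class.

```python
def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, val_indices) for each of k contiguous folds."""
    # Distribute any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, val
        start += size

for train, val in k_fold_indices(10, k=5):
    print(val)
```

As with a single train/test split, samples from the same patient should be kept within one fold to avoid optimistic performance estimates.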

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for MLP-based Semen Analysis

| Item | Function/Application | Specifications/Alternatives |

|---|---|---|

| Hi-LabSpermMorpho Dataset | Provides standardized image data for sperm morphology classification; contains 18,456 images across 18 morphological classes [9]. | Alternative: HuSHeM, SCIAN-SpermMorphoGS, or SMIDS datasets. |

| VISEM Dataset | Video dataset for sperm motility analysis; enables tracking and feature extraction for motility prediction models [6]. | Publicly available dataset with annotated semen sample videos. |

| TensorFlow with Keras | Open-source deep learning framework for implementing and training MLP architectures [12]. | Alternative: PyTorch, Scikit-learn. |

| Computer-Assisted Sperm Analysis (CASA) System | Automated system for initial sperm parameter quantification (count, motility); can provide input features for MLP models [9]. | Multiple commercial systems available. |

| Support Vector Regressor (SVR) | Baseline model comparison for regression tasks; linear SVR has demonstrated state-of-the-art performance on motility prediction [6]. | Implemented in Scikit-learn. |

| EfficientNetV2 CNN Variants | Pre-trained convolutional neural networks for feature extraction from sperm images prior to MLP classification [9]. | Multiple size variants (S, M, L) available. |

| Adam Optimizer | Adaptive optimization algorithm for efficient MLP training; combines advantages of momentum and adaptive learning rates [12]. | Default parameters: lr=0.001, β₁=0.9, β₂=0.999. |

| Elastic Net Regularization | Regularization technique combining L1 and L2 penalties; used in feature selection for semen quality indices [8]. | Controls model complexity and prevents overfitting. |

Performance Evaluation and Validation Framework

Quantitative Assessment Metrics

Rigorous evaluation is essential for validating MLP models in clinical research contexts:

Table 4: Model Evaluation Metrics for Semen Parameter Prediction Tasks

| Task Type | Primary Metric | Secondary Metrics | Benchmark Performance |

|---|---|---|---|

| Motility Regression | Mean Absolute Error (MAE) | RMSE, R² | MAE of 7.31 achieved vs. 8.83 baseline [6] |

| Morphology Classification | Accuracy | Precision, Recall, F1-Score | 67.70% accuracy with ensemble MLP [9] |

| Time-to-Pregnancy Prediction | Hazard Ratio | AUC-ROC | Sperm epigenetic aging biomarker [8] |

Clinical Validation Protocols

- Correlation with Clinical Outcomes: Validate model predictions against actual reproductive outcomes (pregnancy success, fertilization rates) rather than intermediate laboratory parameters [8].

- Prospective Validation: Conduct studies on independent, prospectively collected datasets to assess real-world performance.

- Multi-Center Validation: Evaluate model generalizability across different clinics and patient populations to ensure robustness.

Multi-Layer Perceptron neural networks represent a powerful methodology for advancing predictive andrology beyond the limitations of conventional semen analysis. By implementing the standardized protocols and architectural principles outlined in this document, researchers can develop robust models for predicting clinically relevant outcomes from basic semen parameters. The integration of MLPs with ensemble techniques, appropriate validation frameworks, and clinical correlation establishes a foundation for meaningful decision support in reproductive medicine. Future research directions should focus on incorporating female factors, expanding sample sizes, and translating these predictive models into clinical workflows to optimize fertility treatments and minimize emotional and financial burdens associated with unsuccessful interventions.

Why MLPs? Advantages over Traditional Statistical Models for Complex Biomedical Data

Multi-Layer Perceptrons (MLPs), a foundational class of artificial neural networks, have emerged as powerful tools for analyzing complex biomedical data where traditional statistical models often reach their limitations. MLPs are particularly valuable in semen parameter prediction research due to their ability to model intricate, non-linear relationships between diverse input variables—such as environmental factors, lifestyle habits, and clinical measurements—and seminal outcomes that are not easily captured by conventional methods [13] [5]. This capability is crucial in male infertility assessment, where interactions between predictors are rarely linear or additive in nature.

The architecture of MLPs enables them to automatically learn relevant features and complex patterns directly from raw data without relying on strong prior assumptions about data distribution or variable relationships [14]. This characteristic makes them well suited to biomedical domains like semen analysis, where the underlying biological mechanisms are incompletely understood and data may contain hidden interactions that escape theoretical specification in traditional models. Across published studies, artificial neural networks, the model family that includes MLPs, have reached a median accuracy of about 84% in predicting male infertility, which makes them candidates for early diagnosis and clinical decision support [5].

Comparative Performance: MLPs Versus Traditional Statistical Models

Quantitative Performance Comparisons

Extensive research comparing machine learning approaches with traditional statistical models across biomedical domains reveals a consistent pattern: MLPs and other ML methods often demonstrate superior performance for complex prediction tasks, particularly when handling non-linear relationships and high-dimensional data [14]. In male fertility prediction specifically, artificial neural networks (including MLPs) have achieved a median accuracy of 84% across multiple studies, with some implementations reaching up to 97% accuracy in training phases [5].

Table 1: Performance Comparison of Prediction Models in Male Fertility Research

| Model Type | Specific Model | Reported Accuracy | Application Context | Data Characteristics |

|---|---|---|---|---|

| MLP | Artificial Neural Network | 84% (median) [5] | Male infertility prediction | Clinical & lifestyle factors |

| MLP | Multi-Layer Perceptron | 86% [15] | Sperm concentration detection | Lifestyle & environmental data |

| MLP | Multi-Layer Perceptron | 69% [15] | Sperm morphology detection | Lifestyle & environmental data |

| Traditional | Logistic Regression | Varied | Clinical prediction models | Structured tabular data |

| Ensemble | Random Forest | 90.47% [15] | Male fertility detection | Balanced dataset with 5-fold CV |

| Support Vector | SVM-PSO | 94% [15] | Male fertility detection | Optimized feature set |

Context-Dependent Performance Advantages

The performance advantage of MLPs is not universal but highly dependent on dataset characteristics and problem context. Research indicates that traditional statistical models like logistic regression often perform comparably to machine learning approaches on small, structured datasets with predominantly linear relationships [14] [16]. However, MLPs tend to demonstrate clearer advantages as data complexity increases, particularly when dealing with:

- Non-linear relationships between predictors and outcomes [14]

- Complex interaction effects among multiple variables [14]

- Larger sample sizes sufficient for training data-hungry algorithms [14]

- High-dimensional data with numerous potential predictors [16]

In semen parameter prediction, one study found that MLPs achieved 90% accuracy for predicting sperm concentration and 82% for sperm motility using environmental factors and lifestyle data [15]. This demonstrates their utility for modeling the multifactorial nature of male fertility, where complex interactions between environmental exposures, lifestyle factors, and clinical parameters collectively influence seminal outcomes.

Advantages of MLP Architecture for Complex Biomedical Data

Handling Non-Linear Relationships and Automatic Feature Learning

The fundamental advantage of MLPs lies in their ability to model complex non-linear relationships without requiring researchers to specify these relationships in advance. Unlike traditional statistical models that rely on researchers to explicitly define potential interactions and non-linearities, MLPs automatically learn these relationships directly from data during training [14]. This capability is particularly valuable in semen parameter research, where the biological mechanisms linking environmental exposures, lifestyle factors, and seminal outcomes are incompletely understood and likely involve complex, non-linear pathways.

MLPs can discover and represent intricate patterns through their layered architecture of interconnected neurons with activation functions. Each layer progressively transforms inputs into more abstract representations, enabling the network to capture hierarchical features in the data. This hierarchical feature learning eliminates the need for manual feature engineering, which is often necessary in traditional statistical modeling [14]. For sperm motility prediction, this means MLPs can automatically identify which combinations of input variables—such as interactions between BMI, abstinence period, and environmental exposures—are most predictive without researchers having to hypothesize these interactions beforehand.

Flexibility with Data Types and Missing Data

MLPs can combine the diverse data types commonly encountered in biomedical research, including semen analysis studies, within a single model. Like other models, they require numeric inputs, so categorical variables must be encoded and missing values imputed or masked beforehand. With that preprocessing in place, MLPs can accommodate:

- Continuous clinical measurements (sperm concentration, motility percentages)

- Categorical lifestyle factors (smoking status, alcohol consumption)

- Ordinal variables (frequency of exposure)

- Missing data through various imputation techniques [14]

This flexibility extends to MLPs' ability to integrate multiple data modalities—a capability particularly relevant with advances in semen analysis that now incorporate video data alongside traditional clinical and questionnaire data [4]. While one study found that adding participant data (age, BMI, abstinence days) to video analysis did not significantly improve sperm motility prediction, the architectural flexibility of MLPs makes them well-suited for such multimodal integration as research progresses [4].

Table 2: MLP Capabilities for Handling Complex Data Challenges in Semen Research

| Data Challenge | Traditional Statistical Approach | MLP Approach | Advantage in Semen Parameter Prediction |

|---|---|---|---|

| Non-linear relationships | Manual specification of polynomial terms | Automatic learning through activation functions | Discovers complex dose-response relationships between environmental factors and semen parameters |

| Interaction effects | Manual specification of interaction terms | Automatic detection through network connections | Identifies synergistic effects between multiple lifestyle factors |

| Mixed data types | Transformation and encoding required | Same scaling and encoding required; heterogeneous inputs are then combined in shared hidden layers | Integrates clinical, lifestyle, and environmental data in a single model |

| Missing data | Listwise deletion or imputation | Multiple approaches including masking | Preserves statistical power with incomplete clinical records |

| High-dimensional data | Stepwise selection or penalization | Feature weighting learned implicitly during training, usually combined with regularization | Handles numerous potential predictors with less manual feature selection |

Experimental Protocols for MLP Implementation in Semen Research

Protocol 1: MLP Development for Semen Parameter Prediction

Objective: Develop an MLP model to predict semen parameters (concentration, motility, morphology) from environmental factors, lifestyle variables, and clinical data.

Materials and Reagents:

- Dataset: Structured dataset containing semen parameters and predictor variables (minimum recommended: 100-200 samples with at least 10 events per predictor variable) [14]

- Programming Environment: Python with TensorFlow/Keras or R with neural network packages

- Computational Resources: Standard workstation with GPU acceleration recommended for larger datasets

- Data Collection Tools: Standardized questionnaires for lifestyle factors, clinical assessment forms for semen parameters

Procedure:

- Data Preparation and Preprocessing

- Collect and clean dataset containing semen parameters and predictor variables

- Handle missing values using appropriate imputation methods (e.g., k-nearest neighbors, multiple imputation)

- Split data into training (70%), validation (15%), and test (15%) sets using stratified sampling to maintain outcome distribution

- Standardize continuous variables to zero mean and unit variance; one-hot encode categorical variables
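The standardization and encoding steps can be sketched as follows. The variable names and values are hypothetical; the key design point is that the mean and standard deviation are estimated on the training set only and then reused for the validation and test sets.

```python
from statistics import mean, pstdev

def standardize(values, mu=None, sigma=None):
    """Scale to zero mean and unit variance; return (scaled, mu, sigma).

    Pass the training-set mu and sigma when transforming validation or test data.
    """
    mu = mean(values) if mu is None else mu
    sigma = pstdev(values) if sigma is None else sigma
    scaled = [(v - mu) / sigma if sigma else 0.0 for v in values]
    return scaled, mu, sigma

def one_hot(values, categories):
    """Encode each categorical value as a 0/1 vector over `categories`."""
    return [[1.0 if v == c else 0.0 for c in categories] for v in values]

# Hypothetical training data.
bmi_train = [22.0, 26.0, 30.0]
scaled, mu, sigma = standardize(bmi_train)
print([round(z, 3) for z in scaled])  # [-1.225, 0.0, 1.225]

# Reuse the training statistics for a new (test) value.
print(round(standardize([28.0], mu, sigma)[0][0], 3))

smoking = ["never", "current"]
print(one_hot(smoking, ["never", "former", "current"]))
```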

- Model Architecture Specification

- Initialize MLP with input layer matching the number of predictor variables

- Add 1-3 hidden layers with a decreasing number of neurons (e.g., 64, 32, 16). As a starting heuristic, begin with a single hidden layer whose size is two-thirds of the input layer size plus the output layer size

- Select appropriate activation functions: ReLU for hidden layers (to mitigate vanishing gradient problem), sigmoid for binary classification outputs

- Add output layer with neuron(s) matching prediction task: single neuron with sigmoid for binary classification, multiple neurons with softmax for multi-class
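To make the architecture concrete, the sketch below builds the layer sizes described above and runs one forward pass in NumPy. It is illustrative only: the helper names are hypothetical, the input is random, and 12 predictors is an assumed feature count. A production model would normally use a framework such as TensorFlow/Keras.

```python
import numpy as np

def heuristic_hidden_size(n_inputs, n_outputs):
    """Starting point: about two-thirds of the input size plus the output size."""
    return max(1, round(2 * n_inputs / 3) + n_outputs)

def init_layers(sizes, seed=0):
    """He-initialised weights and zero biases for consecutive layer sizes."""
    rng = np.random.default_rng(seed)
    return [
        (rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(sizes[:-1], sizes[1:])
    ]

def forward(X, layers):
    """ReLU on hidden layers, sigmoid on the single output neuron."""
    a = X
    for W, b in layers[:-1]:
        a = np.maximum(0.0, a @ W + b)
    W, b = layers[-1]
    return 1.0 / (1.0 + np.exp(-(a @ W + b)))

n_features = 12  # assumed number of predictor variables
single_layer_size = heuristic_hidden_size(n_features, 1)

# Three hidden layers with decreasing width, one sigmoid output (binary task).
layers = init_layers([n_features, 64, 32, 16, 1])
X = np.random.default_rng(1).normal(size=(5, n_features))
probs = forward(X, layers)  # one predicted probability per sample
```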

Model Training and Optimization

- Initialize weights using He or Xavier initialization methods

- Select appropriate loss function: binary cross-entropy for binary classification, categorical cross-entropy for multi-class classification, mean squared error for regression

- Choose adaptive learning rate optimizer (Adam, RMSprop) with initial learning rate of 0.001

- Implement batch training with batch size of 16-32 samples

- Apply early stopping with patience of 20-50 epochs based on validation performance

- Regularize using dropout (rate 0.2-0.5) and L2 weight regularization (lambda 0.001-0.01)
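The early-stopping rule can be expressed independently of any framework. The following sketch assumes a hypothetical `EarlyStopping` helper and an artificial validation-loss curve; in Keras the equivalent behaviour is provided by a built-in callback.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Illustrative curve: improves for 30 epochs, then degrades slowly (overfitting).
val_losses = [1.0 / (1 + e) for e in range(30)] + [0.05 + 0.001 * e for e in range(100)]

stopper = EarlyStopping(patience=20)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break

# The weights saved at `stopper.best_epoch` would be restored for evaluation.
```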

Model Validation and Evaluation

- Assess model discrimination using area under ROC curve (AUC) or concordance index

- Evaluate calibration using calibration plots and metrics (Brier score)

- Compute classification metrics (accuracy, sensitivity, specificity) at a prespecified threshold or one selected on the validation set (e.g., by maximizing Youden's index)

- Perform internal validation using bootstrap or repeated k-fold cross-validation

- Conduct external validation on completely independent dataset when available
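As a reference for the metrics listed above, the sketch below computes AUC, the Brier score, and sensitivity/specificity in plain Python. The labels and probabilities are invented for illustration, and the 0.5 threshold is an arbitrary assumption rather than a recommended cut-off.

```python
def auc_score(y_true, y_prob):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)

def sensitivity_specificity(y_true, y_prob, threshold=0.5):
    """Sensitivity and specificity when predicting positive at p >= threshold."""
    tp = sum(1 for y, p in zip(y_true, y_prob) if y == 1 and p >= threshold)
    fn = sum(1 for y, p in zip(y_true, y_prob) if y == 1 and p < threshold)
    tn = sum(1 for y, p in zip(y_true, y_prob) if y == 0 and p < threshold)
    fp = sum(1 for y, p in zip(y_true, y_prob) if y == 0 and p >= threshold)
    return tp / (tp + fn), tn / (tn + fp)

# Invented test-set outcomes and model probabilities.
y_true = [0, 0, 0, 0, 1, 1, 1, 1]
y_prob = [0.1, 0.3, 0.4, 0.6, 0.35, 0.7, 0.8, 0.9]

auc = auc_score(y_true, y_prob)
brier = brier_score(y_true, y_prob)
sens, spec = sensitivity_specificity(y_true, y_prob, threshold=0.5)
```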

Troubleshooting Tips:

- If model fails to converge: reduce learning rate, check data preprocessing, verify activation functions

- If overfitting occurs: increase dropout rate, strengthen L2 regularization, reduce model complexity

- If training is unstable: adjust the batch size, apply gradient clipping, or try a different weight initialization

Protocol 2: Comparative Model Evaluation Framework

Objective: Systematically compare MLP performance against traditional statistical models for semen parameter prediction.

Materials:

- Dataset: As in Protocol 1

- Software: Statistical packages for traditional models (R, SPSS, SAS) alongside MLP implementation

- Evaluation Framework: Standardized performance metrics and validation procedures

Procedure:

Baseline Model Development

- Develop traditional statistical models: logistic regression for classification, Cox regression for time-to-event outcomes [16] [17]

- For logistic regression: include potential non-linear terms (polynomials, splines) and prespecified interaction effects based on domain knowledge

- Use stepwise selection or penalized regression (LASSO, ridge) for variable selection if needed

MLP Model Development

- Follow Protocol 1 for MLP development

- Use identical training, validation, and test sets as baseline models

- Apply hyperparameter tuning using grid or random search

Comprehensive Performance Assessment

- Evaluate discrimination using AUC/C-index with 95% confidence intervals

- Assess calibration using calibration plots, intercept, and slope

- Compute clinical utility measures using decision curve analysis [14]

- Evaluate stability through repeated cross-validation or bootstrap resampling
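Decision curve analysis rests on the net benefit statistic, which weighs true positives against false positives at a chosen threshold probability. The sketch below is a minimal plain-Python illustration with invented data; the function names are hypothetical, and a dedicated package (such as dcurves in R) would normally be used in practice.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit = TP/n - FP/n * pt / (1 - pt) at threshold probability pt."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1.0 - threshold)

def net_benefit_treat_all(y_true, threshold):
    """Reference strategy: classify every patient as positive."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)

# Invented outcomes and predicted probabilities from a single model.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
y_prob = [0.05, 0.10, 0.15, 0.20, 0.30, 0.60, 0.40, 0.55, 0.70, 0.90]

# One point of the decision curve per threshold probability.
curve = {pt: (net_benefit(y_true, y_prob, pt), net_benefit_treat_all(y_true, pt))
         for pt in (0.10, 0.25, 0.50)}
```

Computing the same curve for the MLP and for the baseline model on the identical test set gives a like-for-like comparison of clinical utility.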

Interpretation and Explanation

- Apply explainable AI techniques (SHAP, LIME) to interpret MLP predictions [15]

- Compare feature importance with statistical model coefficients

- Assess clinical relevance of identified predictors and interactions

Visualization of MLP Workflow and Architecture

MLP Architecture for Semen Parameter Prediction

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for MLP Implementation

| Category | Item | Specification/Version | Application in Semen Research |

|---|---|---|---|

| Data Collection Tools | Standardized questionnaires | WHO-based or validated instruments | Collection of lifestyle, environmental, and medical history data |

| Data Collection Tools | Clinical data forms | Customized for semen analysis | Standardized recording of semen parameters (concentration, motility, morphology) |

| Data Collection Tools | Video recording system | Microscope with camera attachment [4] | Capture sperm motility videos for analysis |

| Computational Environment | Python | 3.8+ with TensorFlow/Keras | Primary platform for MLP implementation and training |

| Computational Environment | R | 4.0+ with neuralnet, nnet packages | Alternative platform, particularly for statistical comparisons |

| Computational Environment | GPU acceleration | NVIDIA CUDA-compatible GPU | Accelerate model training for larger datasets |

| Data Management | Data preprocessing tools | pandas, scikit-learn (Python) | Handle missing data, feature scaling, encoding |

| Data Management | Cross-validation frameworks | scikit-learn, tidymodels | Model validation and hyperparameter tuning |

| Model Interpretation | SHAP | Latest stable release [15] | Explain MLP predictions and identify important features |

| Model Interpretation | LIME | Latest stable release | Create local explanations for individual predictions |

| Performance Assessment | ROC analysis | pROC (R), scikit-learn (Python) | Evaluate model discrimination capability |

| Performance Assessment | Calibration assessment | rms (R), scikit-learn (Python) | Assess agreement between predicted and observed probabilities |

| Performance Assessment | Decision curve analysis | dcurves (R), custom implementation | Evaluate clinical utility of prediction models |

MLPs offer distinct advantages for semen parameter prediction research by effectively handling the complex, non-linear relationships between diverse predictors and seminal outcomes. Their ability to automatically learn relevant features and interactions from data makes them particularly valuable when underlying biological mechanisms are incompletely understood. While traditional statistical models remain important for interpretability and with smaller sample sizes, MLPs provide enhanced predictive performance for complex biomedical data patterns characteristic of multifactorial conditions like male infertility.

Future research directions should focus on developing more sophisticated hybrid architectures that combine MLPs with other neural network types for multimodal data integration, incorporating explainable AI techniques to enhance model interpretability, and establishing standardized implementation protocols specific to andrology applications. As dataset sizes grow and computational resources become more accessible, MLPs are poised to become increasingly valuable tools for advancing male reproductive health research and clinical practice.

Within the framework of developing multi-layer perceptron (MLP) architectures for male fertility assessment, the precise and automated evaluation of key semen parameters is paramount. These parameters—sperm motility, morphology, concentration, and DNA integrity—serve as critical biomarkers for predicting reproductive outcomes and are essential for validating the predictive models in our thesis research. Traditional manual analysis of these parameters is inherently subjective, time-consuming, and prone to inter-laboratory variability [18] [4]. This Application Note details standardized protocols and data analysis methods that leverage artificial intelligence (AI), particularly deep learning, to automate and standardize the assessment of these key parameters, thereby providing robust, high-quality data for training and validating predictive MLP models.

Key Parameters and Predictive Relevance

The following semen parameters are widely recognized as fundamental in male fertility evaluation. Their quantitative assessment provides the feature set for building accurate predictive models.

Table 1: Key Semen Parameters for Predictive Modeling

| Parameter | Clinical Significance | AI-Prediction Relevance | Common Assessment Method |

|---|---|---|---|

| Motility | Indicator of sperm viability and ability to reach the ovum. Crucial for natural conception. | High; motion patterns from videos can be analyzed with 3D CNNs and MLPs for accurate prediction [4]. | Manual microscopy or CASA; deep learning analysis of sperm videos [19]. |

| Morphology | Reflects sperm health and fertilization competence. Correlates with success in IVF [18]. | High; CNNs can classify sperm head, midpiece, and tail defects with accuracy rivaling experts [18]. | Stained smears assessed manually (e.g., David or Kruger classification) or via AI. |

| Concentration | Fundamental measure of sperm production. Below-reference values can indicate subfertility. | High; can be predicted from lifestyle data using MLPs [20] or from images/videos using CNNs [21]. | Hemocytometer or CASA; deep learning-based image analysis. |

| DNA Integrity | Biomarker for internal sperm quality. High DNA fragmentation index (DFI) is linked to poor embryonic development and miscarriage. | Emerging; mitochondrial DNA copy number (mtDNAcn) has been shown to be a predictive biomarker for fecundity [22]. | Specialized assays (e.g., SCSA, TUNEL). |

Experimental Protocols for Data Acquisition

The following protocols are designed to generate consistent, high-quality data suitable for computational analysis.

Sample Collection and Preparation

- Participant Recruitment and Questionnaire: Recruit participants following institutional ethics committee approval and informed consent. Administer a validated questionnaire to collect data on lifestyle, environmental exposures, health status, and abstinence period. These variables serve as crucial input features for predictive models [13] [20].

- Semen Collection: Collect semen samples via masturbation into a sterile container after 2-5 days of sexual abstinence [23] [24].

- Liquefaction: Allow the sample to liquefy for 30-60 minutes at room temperature (22-24°C) or in an incubator at 37°C before analysis [23].

Protocol for Motility Analysis via Deep Learning

Principle: Sperm motility is classified as progressive, non-progressive, or immotile. Deep learning models, particularly Convolutional Neural Networks (CNNs), can directly analyze video data to estimate these proportions with high consistency [4].

Steps:

- Video Acquisition: Place 10 µL of liquefied semen on a glass slide and cover with a 22x22 mm coverslip. Use a phase-contrast microscope with a heated stage (37°C) and a mounted camera. Record videos at 400x magnification with a frame rate of 50 frames-per-second (fps) for 2-7 minutes. Save videos in AVI or MP4 format [4].

- Pre-processing: Extract sequential frames from the video. For 3D-CNN models, stack frames to create a volume that captures temporal motion information [21]. Normalize pixel values.

- Model Training & Prediction:

- Input: Stacked video frames or pre-computed motion features (e.g., Optical Flow, MotionFlow) [19].

- Architecture: Employ a 3D-CNN to learn spatiotemporal features or a pre-trained 2D CNN (e.g., ResNet) with an MLP head for regression/classification [21].

- Output: The model directly predicts the percentages of progressive, non-progressive, and immotile spermatozoa. Mean Absolute Error (MAE) for such models has been reported to be as low as 6.84% for motility [19].
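The pre-processing step for video input can be illustrated as follows. This is a sketch with NumPy on synthetic frames; the clip length of 16 frames and stride of 8 are arbitrary assumptions, and real pipelines would first decode frames from the AVI/MP4 file with a video library.

```python
import numpy as np

def make_clips(frames, clip_length=16, stride=8):
    """Stack consecutive frames into clips of shape (n_clips, clip_length, H, W)."""
    starts = range(0, len(frames) - clip_length + 1, stride)
    return np.stack([frames[s:s + clip_length] for s in starts])

def normalize_pixels(clips):
    """Scale 8-bit pixel intensities to the range [0, 1]."""
    return clips.astype(np.float32) / 255.0

# Synthetic stand-in for one second of 50 fps grayscale video (50 frames, 64x64 px).
frames = np.random.default_rng(0).integers(0, 256, size=(50, 64, 64), dtype=np.uint8)

# Each clip is one temporal volume that a 3D-CNN could consume.
clips = normalize_pixels(make_clips(frames))
```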

Protocol for Morphology Analysis via Deep Learning

Principle: Sperm morphology is assessed by classifying normal and abnormal forms based on head, midpiece, and tail defects. Convolutional Neural Networks (CNNs) automate this classification, reducing subjectivity [18].

Steps:

- Smear Preparation and Staining: Prepare thin smears of semen on glass slides. Fix and stain using a standardized staining kit (e.g., RAL, Shorr, or Papanicolaou) according to manufacturer protocols [18] [23].

- Image Acquisition: Capture images of individual spermatozoa using a bright-field microscope with a 100x oil immersion objective. A Computer-Assisted Semen Analysis (CASA) system can be used for automated image capture [18].

- Pre-processing and Augmentation:

- Resize images to a standard dimension (e.g., 80x80 pixels).

- Convert to grayscale and apply denoising algorithms to minimize staining or illumination artifacts [18].

- For small datasets, apply data augmentation techniques (rotation, flipping, scaling) to balance morphological classes and improve model generalizability. One study expanded a dataset from 1,000 to 6,035 images using augmentation [18].

- Model Training & Prediction:

- Input: Pre-processed individual sperm images.

- Architecture: A CNN architecture (e.g., custom CNN with convolutional and pooling layers) can be trained to classify sperm into multiple morphological classes based on modified David or WHO criteria [18].

- Output: The model classifies each spermatozoon, providing a percentage of morphologically normal forms. Deep learning models have achieved a MAE of 4.15% for morphology estimation [19].
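Augmentation for class balancing can be sketched with simple rotations and flips. The example below is an assumption-laden illustration: it uses random arrays in place of sperm images, covers only rotation and flipping (not scaling), and the class names and helper functions are hypothetical.

```python
import numpy as np

def augment(image):
    """Return the eight rotation/flip variants of a 2-D grayscale image."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k)
        variants.append(rotated)
        variants.append(np.fliplr(rotated))
    return variants

def balance_classes(images_by_class, seed=0):
    """Oversample minority classes with augmented copies up to the largest class size."""
    rng = np.random.default_rng(seed)
    target = max(len(v) for v in images_by_class.values())
    balanced = {}
    for label, images in images_by_class.items():
        # Skip variant 0, which is the unmodified original.
        pool = [variant for img in images for variant in augment(img)[1:]]
        extra = target - len(images)
        picks = rng.choice(len(pool), size=extra, replace=extra > len(pool)) if extra else []
        balanced[label] = list(images) + [pool[i] for i in picks]
    return balanced

# Random 80x80 arrays standing in for pre-processed sperm images.
rng = np.random.default_rng(1)
dataset = {
    "normal": [rng.random((80, 80)) for _ in range(10)],
    "abnormal_head": [rng.random((80, 80)) for _ in range(4)],
}
balanced = balance_classes(dataset)
```

Augmented copies should be generated from training images only, so that near-duplicates of a test image never appear in the training set.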

Assessment of DNA Integrity

Principle: Sperm mitochondrial DNA copy number (mtDNAcn) has emerged as a biomarker for overall sperm fitness and is predictive of a couple's time to pregnancy (TTP) [22].

Procedure:

- DNA Extraction: Isolate total DNA from purified sperm samples using commercial DNA extraction kits, ensuring removal of any somatic cells.

- Quantitative PCR (qPCR): Perform qPCR to quantify the number of mitochondrial DNA genes relative to nuclear DNA genes. Use standardized primers and probes for both mitochondrial and nuclear targets.

- Data Analysis: Calculate the relative mtDNAcn using the ΔΔCt method. This continuous variable can be used directly as a feature in predictive MLP models.
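The ΔΔCt calculation can be written out explicitly. The sketch below assumes 100% amplification efficiency for both targets and normalizes each sample to a reference (calibrator) sample; the function name and the Ct values are illustrative, not taken from the cited study.

```python
def relative_mtdna_copy_number(ct_mito, ct_nuclear, ref_ct_mito, ref_ct_nuclear):
    """Relative mtDNAcn = 2 ** -(dCt_sample - dCt_reference), with dCt = Ct_mito - Ct_nuclear."""
    delta_ct_sample = ct_mito - ct_nuclear
    delta_ct_reference = ref_ct_mito - ref_ct_nuclear
    return 2.0 ** -(delta_ct_sample - delta_ct_reference)

# Illustrative Ct values: the mitochondrial target of the sample amplifies
# one cycle earlier (relative to nuclear DNA) than that of the calibrator.
sample_mtdnacn = relative_mtdna_copy_number(
    ct_mito=18.0, ct_nuclear=24.0, ref_ct_mito=19.0, ref_ct_nuclear=24.0
)

# A sample identical to the calibrator has a relative copy number of 1.
calibrator_mtdnacn = relative_mtdna_copy_number(19.0, 24.0, 19.0, 24.0)
```

Because the resulting distribution is typically right-skewed, a log transformation before standardization may be worth considering when the value is used as an MLP input.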

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Semen Analysis Protocols

| Item | Function/Application | Example/Note |

|---|---|---|

| RAL Diagnostics Staining Kit | For staining sperm smears for morphological analysis. Provides clear differentiation of sperm heads, midpieces, and tails [18]. | Used in the development of the SMD/MSS dataset for AI-based morphology classification [18]. |

| Eosin-Nigrosin Stain | Vitality staining to distinguish live (unstained) from dead (pink/red) spermatozoa. | A standard stain used according to WHO manuals across studies [23]. |

| Makler Counting Chamber | A specialized chamber for manual assessment of sperm concentration and motility. | Reduces the need for sample dilution and allows for direct analysis [23]. |

| MMC CASA System | Integrated system for automated image acquisition and initial morphometric analysis of sperm. | Used for acquiring images of individual spermatozoa for deep learning datasets [18]. |

| Sperm Mitochondrial DNA (mtDNA) Assay Kits | For quantifying mitochondrial DNA copy number, a biomarker for sperm fitness and fecundity prediction. | qPCR-based kits are commonly used. mtDNAcn was a key feature in a machine learning model predicting pregnancy [22]. |

| VISEM Dataset | An open, multimodal dataset containing sperm videos and participant data. | Serves as a benchmark for developing and testing AI models for motility and concentration prediction [4]. |

| SMD/MSS Dataset | A dataset of 1,000+ annotated sperm images based on modified David classification. | Used for training and testing deep learning models for sperm morphology classification [18]. |

Data Integration and Predictive Modeling with MLP

The protocols above generate structured quantitative data ideal for MLP models. MLPs, a foundational class of artificial neural networks, excel at learning complex, non-linear relationships between input features (semen parameters, mtDNAcn, and questionnaire data) and clinical outcomes (e.g., pregnancy success, improvement in sperm concentration after varicocelectomy) [22] [20].

Model Performance: Research demonstrates the power of this approach:

- An MLP model achieved up to 86% accuracy in predicting sperm concentration from lifestyle and environmental data [20].

- A penalized regression model (Elastic Net) that included mtDNAcn and semen parameters showed moderate discrimination for pregnancy status at 12 cycles (AUC 0.73) [22].

- A random forest model (a tree-based ensemble method, shown here as a non-MLP comparator) predicted which men would experience a clinically meaningful improvement in sperm concentration after varicocelectomy with moderate discrimination (AUC 0.72) [24].

Table 3: Quantitative Performance of Featured AI Models

| Model/Study | Parameter/Outcome | Performance Metric | Result |

|---|---|---|---|

| Deep Learning [19] | Motility Estimation | Mean Absolute Error (MAE) | 6.84% |

| Deep Learning [19] | Morphology Estimation | Mean Absolute Error (MAE) | 4.15% |

| MLP / SVM [20] | Sperm Concentration | Prediction Accuracy | 86% |

| MLP / SVM [20] | Sperm Motility | Prediction Accuracy | 73-76% |

| Elastic Net SQI [22] | Pregnancy at 12 cycles | Area Under Curve (AUC) | 0.73 |

| Random Forest [24] | Post-Varicocelectomy Upgrade | Area Under Curve (AUC) | 0.72 |

The integration of standardized wet-lab protocols with advanced AI analysis, particularly deep learning for motility and morphology and MLPs for integrated prediction, represents a paradigm shift in male fertility assessment. The methods detailed in this Application Note provide a robust framework for generating high-quality, reproducible data on key semen parameters. This data is fundamental for training and validating sophisticated multi-layer perceptron architectures, moving the field toward more objective, accurate, and clinically meaningful predictive models for male fertility and treatment outcomes.

The integration of artificial intelligence (AI) into reproductive medicine is revolutionizing the diagnosis and treatment of infertility. This transformation is particularly evident in the evolution from Computer-Aided Sperm Analysis (CASA) systems to sophisticated deep learning models, including multi-layer perceptron (MLP) architectures. These technologies enable more objective, accurate, and high-throughput analysis of reproductive cells, moving the field toward data-driven, personalized care [25]. For researchers and drug development professionals, understanding this technological progression is crucial for developing next-generation diagnostic tools and therapeutic interventions. This document details the key applications, experimental protocols, and reagent solutions shaping the current and future landscape of AI in reproductive medicine.

Application Notes: Performance and Quantitative Data

The performance of AI models in predicting infertility-related outcomes has been quantitatively demonstrated across numerous studies. The tables below summarize key predictive performance metrics for models focused on male infertility and in vitro fertilization (IVF) outcomes.

Table 1: AI Model Performance in Predicting Male Infertility and Fecundity

| Prediction Target | AI Model / Input | Key Performance Metrics | Citation/Study |

|---|---|---|---|

| Male Infertility (General) | Various Machine Learning Models (40 models across 43 studies) | Median Accuracy: 88% | [5] |

| Male Infertility (General) | Artificial Neural Networks (ANNs) (7 studies) | Median Accuracy: 84% | [5] |

| Biochemical Markers (Protein, Fructose, etc.) | Back Propagation Neural Network (BPNN) | Mean Absolute Error: 0.025 - 0.166 (across markers) | [26] |

| Pregnancy at 12 Cycles | Sperm mtDNAcn alone | AUC: 0.68 (95% CI: 0.58–0.78) | [22] |

| Pregnancy at 12 Cycles | Elastic Net SQI (8 semen params + mtDNAcn) | AUC: 0.73 (95% CI: 0.61–0.84) | [22] |

Table 2: AI Model Performance in Predicting IVF and Embryo Outcomes

| Prediction Target | AI Model | Key Performance Metrics | Citation/Study |

|---|---|---|---|

| Blastocyst Yield | LightGBM | R²: ~0.675, MAE: ~0.793-0.809 | [27] |

| Blastocyst Yield | Linear Regression (Baseline) | R²: 0.587, MAE: 0.943 | [27] |

| Embryo Implantation | AI-based Selection (Pooled) | Sensitivity: 0.69, Specificity: 0.62, AUC: 0.7 | [28] |

| Clinical Pregnancy | Life Whisperer AI Model | Accuracy: 64.3% | [28] |

| Clinical Pregnancy | FiTTE System (Images + Clinical) | Accuracy: 65.2%, AUC: 0.7 | [28] |

| Live Birth | TabTransformer with PSO | Accuracy: 97%, AUC: 98.4% | [29] |

Experimental Protocols

Protocol: Developing an MLP for Semen Parameter Prediction

This protocol outlines the methodology for developing and validating a multi-layer perceptron (MLP) model to predict crucial biochemical markers from standard semen parameters, based on the work of Vickram et al. [26].

1. Sample Collection and Preparation

- Collect fresh semen samples from both fertile and infertile donors following ethical guidelines and informed consent.

- Immediately process samples for routine semen analysis based on World Health Organization (WHO) protocols.

- Categorize samples into diagnostic groups: normospermia, oligospermia, asthenospermia, oligoasthenospermia, azoospermia, and control.

2. Data Acquisition and Feature Engineering

- Input Features: Record standard semen parameters including sperm concentration, motility (total and progressive), and volume.

- Output Targets: Quantify key biochemical markers from seminal plasma using standard assays:

- Total Protein: Bradford or Lowry method.

- Fructose: Colorimetric resorcinol method.

- Glucosidase: Spectrophotometric enzymatic assay.

- Zinc: Atomic absorption spectroscopy (AAS).

- Create a structured dataset where semen parameters are inputs and biochemical levels are target outputs.

3. Model Architecture and Training

- Network Structure: Design an MLP with:

- Input Layer: Number of nodes equals the number of semen parameters.

- Hidden Layers: 1-2 fully connected layers with a sigmoid or ReLU activation function.

- Output Layer: A linear output node for each biochemical marker to be predicted.

- Training Algorithm: Train the network by backpropagation with gradient descent (a Back Propagation Neural Network, BPNN).

- Model Validation: Perform k-fold cross-validation (e.g., 10-fold) to ensure robustness and avoid overfitting.

4. Model Evaluation

- Evaluate model performance by calculating the Mean Absolute Error (MAE) between predicted and actual biochemical values.

- Compare the performance of the MLP against other ANN architectures, such as Radial Basis Function Networks (RBFN).
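A compact NumPy sketch of the training and evaluation steps is given below. It is not the implementation used by Vickram et al.; it assumes one sigmoid hidden layer, linear outputs, full-batch gradient descent on mean squared error, and synthetic data in place of real semen parameters and biochemical markers. All function names and hyperparameters are illustrative.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_bpnn(X, Y, n_hidden=8, lr=0.2, epochs=3000, seed=0):
    """One-hidden-layer MLP trained by backpropagation with gradient descent."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(0.0, 0.5, size=(X.shape[1], n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, size=(n_hidden, Y.shape[1]))
    b2 = np.zeros(Y.shape[1])
    n = len(X)
    for _ in range(epochs):
        H = sigmoid(X @ W1 + b1)             # forward pass, hidden layer
        err = (H @ W2 + b2) - Y              # linear output minus target
        dH = (err @ W2.T) * H * (1.0 - H)    # error propagated to hidden layer
        W2 -= lr * (H.T @ err) / n
        b2 -= lr * err.mean(axis=0)
        W1 -= lr * (X.T @ dH) / n
        b1 -= lr * dH.mean(axis=0)
    return W1, b1, W2, b2

def predict(X, params):
    W1, b1, W2, b2 = params
    return sigmoid(X @ W1 + b1) @ W2 + b2

def mean_absolute_error(Y_true, Y_pred):
    return float(np.mean(np.abs(Y_true - Y_pred)))

# Synthetic data: 3 standardized inputs, 2 continuous targets with small noise.
rng = np.random.default_rng(1)
X = rng.normal(size=(150, 3))
Y = np.column_stack([0.5 * X[:, 0] + 0.3 * X[:, 1], np.tanh(X[:, 2])])
Y += rng.normal(scale=0.05, size=Y.shape)
X_train, Y_train, X_test, Y_test = X[:120], Y[:120], X[120:], Y[120:]

params = train_bpnn(X_train, Y_train)
mae_model = mean_absolute_error(Y_test, predict(X_test, params))
# Naive reference: always predict the training-set mean of each marker.
mae_baseline = mean_absolute_error(Y_test, Y_train.mean(axis=0))
```

Reporting the MAE alongside a naive baseline, as here, helps show whether the network has learned anything beyond the average marker level.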

Protocol: Machine Learning for Predicting Blastocyst Yield

This protocol describes the development of a machine learning model to quantitatively predict blastocyst yield from an IVF cycle, as demonstrated by Liu et al. [27].

1. Data Cohort and Preprocessing

- Include a large number of completed IVF/ICSI cycles (e.g., n > 9,000).

- Define the outcome variable as the number of usable blastocysts formed per cycle.

- Randomly split the dataset into training and testing subsets (e.g., 70/30 or 80/20).

2. Feature Selection and Engineering

- Compile an initial set of potential clinical and embryological features, including:

- Female age

- Number of oocytes retrieved

- Number of 2PN embryos

- Number of embryos in extended culture

- Day 2 and Day 3 embryo morphology parameters (cell number, symmetry, fragmentation).

- Apply Recursive Feature Elimination (RFE) to identify the optimal subset of features (e.g., 8-11) that maintains model performance.

3. Model Training and Selection

- Train multiple machine learning models, including LightGBM, XGBoost, and Support Vector Machines (SVM), alongside a traditional linear regression baseline.

- Use the training set to optimize model hyperparameters via grid or random search.

- Select the optimal model based on:

- Predictive Performance: R² and Mean Absolute Error (MAE).

- Simplicity: Number of features required.

- Interpretability: Ease of understanding feature contributions.

4. Model Validation and Interpretation

- Evaluate the final model on the held-out test set.

- Perform a subgroup analysis to assess performance in poor-prognosis patients.

- Use feature importance analysis (e.g., Gini importance for tree-based models) and Partial Dependence Plots (PDPs) to interpret the model and understand how key features influence the prediction.
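The two regression metrics used for model selection can be computed as shown below. The observed and predicted blastocyst counts are invented for illustration and do not reproduce the results of Liu et al.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Invented held-out cycles: observed usable blastocysts and two sets of predictions.
observed = [0, 1, 2, 3, 4, 5]
candidate_pred = [0.5, 1.0, 1.5, 3.0, 3.5, 5.0]   # e.g., a gradient-boosting model
baseline_pred = [1.0, 1.0, 2.0, 2.0, 3.0, 4.0]    # e.g., a linear regression baseline

candidate = (r_squared(observed, candidate_pred), mean_absolute_error(observed, candidate_pred))
baseline = (r_squared(observed, baseline_pred), mean_absolute_error(observed, baseline_pred))
```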

Visualization of Workflows and Architectures

MLP Model Development Workflow

The diagram below outlines the end-to-end experimental workflow for developing an MLP model to predict seminal biochemical markers.

From CASA to Deep Learning: An Evolutionary Pipeline

This diagram illustrates the technological evolution from traditional CASA systems to modern deep learning pipelines for comprehensive sperm and embryo analysis.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for AI-Driven Reproductive Research

| Item/Category | Function/Application | Specific Examples / Notes |

|---|---|---|

| Semen Analysis Kits | Standardized assessment of basic semen parameters per WHO guidelines. | Kits for concentration, motility, vitality. Forms input features for ML models. |

| Biochemical Assay Kits | Quantification of seminal plasma biomarkers for model validation. | Colorimetric kits for Fructose, Glucosidase, Total Protein, Zinc. |

| Embryo Culture Media | Support development of embryos to blastocyst stage for outcome data. | Sequential media systems for Day 1-3 and Day 3-5/6 culture. |

| Time-Lapse Imaging (TLI) Systems | Automated, continuous imaging for non-invasive morphokinetic data collection. | Provides rich image and video datasets for deep learning models. |

| DNA/Genetic Kits | Assessment of genetic integrity, a key predictor of fertility success. | Kits for sperm mtDNA copy number quantification [22]. |

| CASA Systems | Automated, objective analysis of sperm motility and morphology. | Generates high-throughput, quantitative data for classical ML input. |

| Programmable Freezing Platforms | Automated cryopreservation of gametes/embryos; potential for AI integration. | Microfluidic systems for gradual introduction/removal of cryoprotectants [30]. |

| Electronic Medical Record (EMR) Systems | Data integration hub for clinical, laboratory, and outcome data. | Critical for building comprehensive datasets that combine image and clinical data. |

Architectural Design and Implementation: Building Effective MLP Models for Semen Analysis

Application Note: Data Typology and Sourcing for Semen Quality Prediction

This document details the comprehensive data sourcing and preprocessing protocols for developing multi-layer perceptron (MLP) architectures in semen parameter prediction research. The integration of diverse data modalities addresses the multifactorial nature of male infertility, where environmental factors, lifestyle conditions, and clinical parameters collectively influence reproductive outcomes [31].

Clinical Semen Analysis Parameters

Standard clinical semen analysis provides fundamental quantitative metrics for model development. These parameters are routinely collected in andrology laboratories and serve as both input features and prediction targets for MLP architectures. The World Health Organization (WHO) has established reference values for these parameters, which are essential for data standardization across different research cohorts [32].

Table 1: Clinical Semen Analysis Parameters and WHO Reference Standards

| Parameter | Normal Range | Measurement Method | Clinical Significance |

|---|---|---|---|

| Sperm Concentration | ≥15 million/mL (WHO 5th edition; ≥16 million/mL in the 6th edition) | Hemocytometer or CASA | Indicator of sperm production efficiency |

| Total Sperm Count | ≥39 million/ejaculate | Calculated (concentration × volume) | Total functional sperm capacity |

| Progressive Motility | ≥32% | Microscopic assessment or CASA | Sperm movement capability |

| Total Motility | ≥40% | Microscopic assessment | Overall sperm viability |

| Normal Morphology | ≥4% | Stained smear microscopy | Structural integrity of sperm |

| Semen Volume | ≥1.5 mL | Graduated cylinder | Accessory gland function |

| pH | 7.2-8.0 | pH indicator paper | Biochemical environment |

| Liquefaction Time | <60 minutes | Visual assessment | Seminal coagulum dissolution |

Lifestyle and Environmental Data

Lifestyle factors significantly impact semen quality, with studies demonstrating that environmental factors, climate conditions, smoking, alcohol use, lifestyle habits, and occupational exposures all influence sperm production and transport, thereby affecting male fertility [31]. These parameters require systematic collection through structured questionnaires and environmental monitoring.

Table 2: Lifestyle and Environmental Exposure Parameters

| Parameter Category | Specific Metrics | Collection Method | Quantification Approach |

|---|---|---|---|

| Substance Use | Smoking (pack-years), Alcohol (units/week), Recreational drugs | Structured interview | Frequency and duration coding |

| Occupational Factors | Chemical exposures, Heat stress, Physical strain, Sedentary time | Occupational history | Binary exposure indicators with duration |

| Dietary Patterns | Antioxidant intake, Omega-3 fatty acids, Processed food consumption | Food frequency questionnaire | Categorical (low/medium/high) or continuous scales |

| Physical Activity | Exercise frequency, Intensity, Type | International Physical Activity Questionnaire (IPAQ) | Metabolic equivalent (MET) hours/week |

| Environmental Exposures | Air quality index, Endocrine disruptors, Pesticides | Geographic mapping | Concentration levels or proximity-based metrics |

Image-Based Sperm Morphology Data

Advanced sperm morphology assessment extends beyond the basic WHO criteria through high-resolution imaging techniques. These methods enable detailed evaluation of sperm structures, including the presence of vacuoles, chromatin integrity, and tail abnormalities, which are critical for predicting fertilization potential [33].

Experimental Protocols for Data Acquisition and Preprocessing

Protocol: Clinical Data Collection and Standardization

Purpose: To systematically collect, validate, and standardize clinical semen analysis data for MLP model training.

Materials:

- Computer-assisted semen analysis (CASA) system

- Phase-contrast microscope with heated stage

- Makler counting chamber or hemocytometer

- pH indicator strips (range 6.0-9.0)

- Incubator maintained at 37°C

Procedure:

Sample Collection and Processing:

Macroscopic Parameters Assessment:

- Measure volume using a graduated pipette or by weighing the collection container.

- Assess pH using indicator strips calibrated against standard solutions.

- Note color and consistency as categorical variables (white/gray/yellow; normal/viscous).

Sperm Concentration and Count:

- Prepare appropriate dilutions (1:10 to 1:50) using sodium bicarbonate-formalin solution.

- Load into counting chamber and assess minimum of 200 sperm in 5-10 fields.

- Calculate concentration (million/mL) and total sperm count (concentration × volume).

Motility Analysis:

- Place 10 µL of liquefied sample on a pre-warmed Makler chamber.

- Assess minimum of 200 sperm, classifying as:

- Progressive motile (rapid and linear movement)

- Non-progressive motile (all other patterns of movement)

- Immotile (no movement)

- Express results as percentages for each category.
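Converting raw motility counts to the percentages used as model features is straightforward; a minimal sketch follows. The function name and the counts are illustrative, and the 200-cell check mirrors the minimum stated in the procedure.

```python
def motility_percentages(progressive, non_progressive, immotile, minimum_cells=200):
    """Convert raw counts into percentage features; enforce the minimum cell count."""
    total = progressive + non_progressive + immotile
    if total < minimum_cells:
        raise ValueError(f"only {total} spermatozoa assessed; at least {minimum_cells} required")
    return {
        "progressive_pct": 100.0 * progressive / total,
        "non_progressive_pct": 100.0 * non_progressive / total,
        "immotile_pct": 100.0 * immotile / total,
        "total_motility_pct": 100.0 * (progressive + non_progressive) / total,
    }

# Illustrative counts from one sample (200 cells assessed).
motility = motility_percentages(progressive=96, non_progressive=24, immotile=80)
```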

Morphology Assessment:

- Prepare thin smears on clean glass slides and air-dry.

- Stain using Diff-Quik or Papanicolaou method.

- Evaluate 200 sperm under oil immersion (1000× magnification).

- Classify as normal or abnormal based on WHO criteria [32].

Data Recording and Quality Control:

- Implement double-data entry system with automated discrepancy checking.

- Include internal quality control samples with known values in each batch.

- Calculate coefficients of variation for repeat measurements (<10% acceptable).
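The coefficient of variation check can be automated at data entry. The sketch below uses the sample standard deviation and invented replicate readings; the 10% limit is the acceptance criterion stated above.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Sample CV in percent: 100 * standard deviation / mean."""
    return 100.0 * stdev(values) / mean(values)

# Illustrative replicate concentration readings (million/mL) for one QC sample.
replicates = [48.0, 52.0, 50.0]
cv = coefficient_of_variation(replicates)
acceptable = cv < 10.0
```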

Protocol: Lifestyle Data Collection Through Structured Interviews

Purpose: To systematically capture lifestyle and environmental exposure variables that influence semen quality parameters.

Materials:

- Validated lifestyle assessment questionnaire

- Secure electronic data capture system

- Environmental exposure databases (regional air quality, water quality)

Procedure:

Questionnaire Administration:

- Conduct face-to-face or electronic administration in controlled setting.

- Ensure informed consent and explain confidentiality measures.

- Use standardized response options to minimize free-text entries.

Substance Use Quantification:

- Record smoking history as pack-years (packs/day × years smoked).

- Document alcohol consumption as standard units per week (1 unit = 10 g pure alcohol).

- Note recreational drug use with frequency, duration, and type.
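The substance-use quantities above reduce to simple arithmetic, sketched here. The helper names are hypothetical; the ethanol density of 0.789 g/mL is used to convert beverage volume and strength into grams of pure alcohol, and the drinking pattern is invented.

```python
ETHANOL_DENSITY_G_PER_ML = 0.789

def pack_years(packs_per_day, years_smoked):
    """Cumulative smoking exposure: packs per day multiplied by years smoked."""
    return packs_per_day * years_smoked

def grams_pure_alcohol(volume_ml, abv_percent):
    """Grams of ethanol in a drink of given volume and alcohol by volume."""
    return volume_ml * abv_percent / 100.0 * ETHANOL_DENSITY_G_PER_ML

def alcohol_units(grams, grams_per_unit=10.0):
    """Standard units, using the 10 g definition given in the protocol."""
    return grams / grams_per_unit

# Illustrative participant: 1.5 packs/day for 10 years, six 330 mL beers (5% ABV) per week.
smoking_exposure = pack_years(1.5, 10)
weekly_units = alcohol_units(6 * grams_pure_alcohol(330, 5.0))
```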

Occupational Exposure Assessment:

- Document job title, industry, and specific exposures using standardized classification codes.

- Assess physical demands (sedentary, light, moderate, heavy) and heat exposure.

- Record use of personal protective equipment where applicable.

Dietary Pattern Evaluation:

- Administer validated food frequency questionnaire focusing on antioxidants (vitamins C, E, selenium, zinc).

- Calculate dietary antioxidant score based on fruit/vegetable intake frequency.

- Document supplement use (type, dose, duration).

Data Integration and Scoring:

- Develop composite lifestyle score incorporating all domains.

- Weight each domain according to effect sizes reported in the literature.

- Create categorical variables (low/medium/high risk) for MLP input.
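
One way the quantification and scoring steps could be combined is sketched below. Only the pack-year and alcohol-unit definitions come from the protocol; the domain weights, the scaling caps, and the low/medium/high cut points are hypothetical placeholders that would have to be replaced with literature-derived values.

```python
def pack_years(packs_per_day: float, years_smoked: float) -> float:
    """Smoking history in pack-years (packs/day x years smoked)."""
    return packs_per_day * years_smoked


def alcohol_units(grams_pure_alcohol_per_week: float) -> float:
    """Weekly alcohol intake in standard units (1 unit = 10 g pure alcohol)."""
    return grams_pure_alcohol_per_week / 10.0


def scale_to_unit(value: float, cap: float) -> float:
    """Clip a non-negative exposure to [0, cap] and rescale it to [0, 1]."""
    return min(max(value, 0.0), cap) / cap


# HYPOTHETICAL weights: real values should reflect published effect sizes.
DOMAIN_WEIGHTS = {"smoking": 0.4, "alcohol": 0.3, "occupation": 0.2, "diet": 0.1}


def composite_score(domain_scores: dict) -> float:
    """Weighted sum of domain scores, each already scaled to [0, 1]."""
    return sum(DOMAIN_WEIGHTS[name] * domain_scores[name] for name in DOMAIN_WEIGHTS)


def risk_category(score: float, low_cut: float = 1 / 3, high_cut: float = 2 / 3) -> str:
    """Map a composite score to a categorical MLP input (cut points are assumed)."""
    if score < low_cut:
        return "low"
    if score < high_cut:
        return "medium"
    return "high"


# Hypothetical participant; the caps of 20 pack-years and 28 units are assumptions
domain_scores = {
    "smoking": scale_to_unit(pack_years(1.0, 10.0), cap=20.0),  # 10 pack-years
    "alcohol": scale_to_unit(alcohol_units(140.0), cap=28.0),   # 14 units/week
    "occupation": 0.25,
    "diet": 0.0,
}
score = composite_score(domain_scores)
category = risk_category(score)
```

Keeping the weights in a single dictionary makes it straightforward to run sensitivity analyses on the weighting scheme before the categorical variable is passed to the MLP.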

Protocol: Sperm Image Acquisition and Preprocessing for Morphology Analysis

Purpose: To acquire high-quality sperm images and preprocess them for morphological feature extraction in MLP models.

Materials:

- Phase-contrast microscope with digital camera

- Computer-assisted sperm analysis (CASA) system with morphology module

- Staining reagents (Diff-Quik, Papanicolaou, or eosin-nigrosin)

- Image processing software (ImageJ, MATLAB)

Procedure:

Sample Preparation and Staining:

- Prepare semen smears on pre-cleaned glass slides.

- Fix with methanol or ethanol-based fixatives.

- Stain using standardized protocols for consistent staining intensity.

- Air-dry completely before imaging.

Image Acquisition:

- Use 100× oil immersion objective with consistent lighting conditions.

- Capture minimum of 200 sperm images per sample.

- Maintain consistent focal plane and exposure settings.

- Include calibration micrometer images for pixel-size conversion.

Image Preprocessing Pipeline:

- Apply background subtraction to correct uneven illumination.

- Use contrast-limited adaptive histogram equalization to enhance features.

- Implement median filtering (3×3 kernel) to reduce noise.

- Apply Otsu's thresholding for binary segmentation.

Individual Sperm Isolation:

- Employ watershed algorithm for separating touching sperm.

- Extract connected components with size filtering (remove non-sperm objects).

- Generate bounding boxes for each isolated sperm.

Feature Extraction:

- Measure geometric parameters (head area, perimeter, ellipticity).

- Calculate intensity features (mean, standard deviation, texture).

- Detect specific structures (acrosome, vacuoles, midpiece, tail) [33].

- Export feature matrix for MLP model training.
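
Two steps of the preprocessing pipeline, the 3×3 median filter and Otsu's thresholding, can be sketched in plain NumPy as shown below. This is an illustrative sketch rather than a reference implementation: it assumes 8-bit grayscale images in which stained sperm appear darker than the background, and it runs on a synthetic image. Background subtraction, contrast-limited adaptive histogram equalization, and watershed separation are omitted here and would normally come from an image-processing library.

```python
import numpy as np


def median_filter_3x3(image: np.ndarray) -> np.ndarray:
    """3x3 median filter; borders are handled by replicating edge pixels."""
    padded = np.pad(image, 1, mode="edge")
    h, w = image.shape
    # Stack the nine shifted views that make up each pixel's neighbourhood
    windows = np.stack(
        [padded[r:r + h, c:c + w] for r in range(3) for c in range(3)], axis=0
    )
    return np.median(windows, axis=0).astype(image.dtype)


def otsu_threshold(image: np.ndarray) -> int:
    """Return the 8-bit grey level that maximises between-class variance."""
    hist = np.bincount(image.ravel(), minlength=256).astype(float)
    prob = hist / hist.sum()
    levels = np.arange(256)
    omega0 = np.cumsum(prob)           # weight of the class with level <= t
    mu_cum = np.cumsum(prob * levels)  # cumulative mean up to level t
    mu_total = mu_cum[-1]
    omega1 = 1.0 - omega0
    valid = (omega0 > 0) & (omega1 > 0)
    sigma_b = np.zeros(256)
    sigma_b[valid] = (mu_total * omega0[valid] - mu_cum[valid]) ** 2 / (
        omega0[valid] * omega1[valid]
    )
    return int(np.argmax(sigma_b))


# Synthetic test image: bright background, one dark object, one noise pixel
image = np.full((32, 32), 200, dtype=np.uint8)
image[10:20, 12:22] = 50   # dark rectangle standing in for a stained sperm head
image[2, 2] = 0            # isolated noise pixel

denoised = median_filter_3x3(image)
threshold = otsu_threshold(denoised)
mask = denoised <= threshold   # True where the dark object is
```

The median filter removes the isolated noise pixel while leaving the object largely intact (only its four corner pixels are lost), which is why it is applied before thresholding.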

Data Integration and Preprocessing Workflows

The effective integration of multimodal data requires sophisticated preprocessing pipelines that address heterogeneity in data types, scales, and distributions. The workflow below illustrates the comprehensive data processing pathway from raw data acquisition to MLP-ready feature sets.

Advanced Image Processing for Sperm Morphology Classification

The application of deep learning approaches to sperm morphology analysis represents a significant advancement over traditional manual assessment. The following workflow details the specific processing steps for convolutional neural networks integrated with MLP architectures for comprehensive semen quality prediction.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Semen Analysis Studies

| Reagent/Material | Function | Application Specifics |
|---|---|---|
| Diff-Quik Stain Kit | Sperm morphology assessment | Rapid staining of acrosome, nucleus, and tail structures |
| SpermSlow Medium | Motility reduction for analysis | Enables detailed motility scoring and imaging |
| Phosphate Buffered Saline (PBS) | Sample dilution and washing | Maintains osmotic balance and pH during processing |
| Formalin-Saline Solution | Sperm fixation | Preserves cellular structure for morphological analysis |
| Propidium Iodide | Viability staining | Membrane integrity assessment through DNA labeling |
| Computer-Assisted Semen Analysis (CASA) System | Automated parameter quantification | Standardized assessment of concentration, motility, and kinematics |
| Phase-Contrast Microscope with Digital Camera | Image acquisition | High-resolution imaging for morphological evaluation |
| Eosin-Nigrosin Stain | Viability and morphology | Simultaneous assessment of live/dead ratio and structure |
| Anti-ROS Reagents | Oxidative stress measurement | Quantification of reactive oxygen species in semen |
| Sperm DNA Fragmentation Kit | Genetic integrity assessment | Detection of DNA damage using TUNEL or SCSA assays |

Data Quality Assessment and Preprocessing Protocol

Purpose: To implement comprehensive quality control measures and preprocessing techniques for multimodal semen quality data.

Materials:

- Statistical software (R, Python with pandas/scikit-learn)

- Data visualization tools (Matplotlib, Seaborn)

- Quality control checklists and protocols

Procedure:

Data Quality Assessment:

- Calculate completeness index for each variable (>95% target).

- Assess outliers using Tukey's fences (Q1 - 1.5×IQR, Q3 + 1.5×IQR).

- Evaluate distribution characteristics (skewness, kurtosis).

Missing Data Handling:

- Apply multiple imputation by chained equations (MICE) for clinical variables.

- Use k-nearest neighbors imputation for lifestyle data (k=5).

- Implement model-based imputation for image-derived features.

Feature Engineering:

- Create interaction terms between significant clinical and lifestyle variables.

- Generate polynomial features for non-linear relationships.

- Develop composite scores (e.g., overall semen quality index).

Data Transformation:

- Apply Box-Cox transformation to skewed, strictly positive continuous variables.

- Standardize continuous features to zero mean and unit variance, estimating the mean and variance on the training partition only.

- Encode categorical variables using one-hot encoding.

Dataset Partitioning:

- Split data into training (70%), validation (15%), and test (15%) sets.

- Maintain consistent distribution of outcome variables across partitions.

- Implement stratified sampling for rare outcome categories.
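
Three of the steps above (Tukey's fences, a stratified 70/15/15 split, and standardization) can be sketched in NumPy as follows. The helper names and the synthetic data are hypothetical. The sketch also assumes that the scaling statistics are estimated on the training partition only, so that no information from the validation or test sets leaks into preprocessing. Libraries such as scikit-learn provide equivalent, more complete utilities.

```python
import numpy as np


def tukey_outlier_mask(values: np.ndarray, k: float = 1.5) -> np.ndarray:
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)


def stratified_split(labels: np.ndarray, fractions=(0.70, 0.15, 0.15), seed: int = 0):
    """Return train/validation/test indices, preserving class proportions."""
    rng = np.random.default_rng(seed)
    train, val, test = [], [], []
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        test.extend(idx[n_train + n_val:])  # remainder goes to the test set
    return np.array(train), np.array(val), np.array(test)


def standardize(train: np.ndarray, *others: np.ndarray) -> list:
    """Scale all partitions with the mean and std of the training set only."""
    mean = train.mean(axis=0)
    std = train.std(axis=0)
    std[std == 0] = 1.0  # guard against constant features
    return [(x - mean) / std for x in (train, *others)]


# Synthetic data: 100 samples, 3 features, 20% in the rarer outcome class
rng = np.random.default_rng(42)
labels = np.array([0] * 80 + [1] * 20)
X = rng.normal(loc=50.0, scale=10.0, size=(100, 3))

train_idx, val_idx, test_idx = stratified_split(labels)
X_train, X_val, X_test = standardize(X[train_idx], X[val_idx], X[test_idx])

outliers = tukey_outlier_mask(np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 100.0]))
```

Splitting per class keeps the rare outcome at the same 20% share in every partition, which is the purpose of the stratified sampling step.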

The protocols and methodologies detailed in this document provide a robust framework for sourcing and preprocessing diverse data types relevant to semen quality prediction. By systematically addressing the unique challenges of clinical, lifestyle, and image-based data, researchers can develop more accurate and generalizable MLP architectures for male fertility assessment. The integration of these multimodal data streams enables comprehensive modeling of the complex factors influencing semen parameters, ultimately advancing both clinical andrology and reproductive toxicology research.

Multilayer Perceptrons (MLPs) are a fundamental class of artificial neural networks with demonstrated utility in computational andrology, particularly for predicting semen parameters from lifestyle and environmental factors. An MLP is a feedforward neural network of fully connected neurons with nonlinear activation functions, organized in distinct layers; its defining strength is the ability to separate data that are not linearly separable [34]. These networks form the basis of deep learning applications across diverse domains, including medical diagnostics and reproductive health [34] [20]. In semen parameter prediction, studies of MLPs have reported prediction accuracies of 86% for sperm concentration and 73-76% for motility parameters [20]. Because the architecture can model complex, non-linear relationships between input variables (such as environmental factors and lifestyle habits) and output semen parameters, it is particularly valuable for researchers and clinicians seeking to identify individuals at risk of fertility issues without immediately resorting to expensive laboratory tests [20].

The fundamental structure of an MLP includes an input layer that receives feature data, one or more hidden layers that progressively transform the inputs, and an output layer that produces predictions [35] [12]. This layered architecture enables the network to learn hierarchical representations of the input data, with earlier layers capturing basic patterns and subsequent layers building more complex abstractions [36]. For semen parameter prediction, this hierarchical learning capability allows the model to identify both straightforward and subtle relationships between factors like smoking, alcohol consumption, psychological stress, and physiological outcomes affecting fertility [5] [37].

MLP Architectural Components and Their Functions

Input Layer Configuration

The input layer serves as the entry point for feature data into the MLP architecture. Each neuron in this layer corresponds to a specific input variable relevant to semen quality prediction. Research in male fertility prediction has utilized various input features, including socio-demographic data, environmental factors, health status indicators, and lifestyle habits [38] [20]. These input variables are typically normalized to ensure consistent scaling across features, with continuous variables like age and cigarette consumption normalized between 0 and 1, and categorical variables converted to binary or ternary representations [20].

The design of the input layer requires careful consideration of feature selection and engineering. Studies have shown that appropriate feature selection significantly impacts model performance in semen parameter prediction [37]. The number of neurons in the input layer directly corresponds to the number of selected features after preprocessing. For example, a study by Gil et al. utilized a normalized questionnaire from young healthy volunteers, with the resulting features determining the input layer dimensionality [20].
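
The input encoding described above can be illustrated with a short sketch. The field names, value ranges, and the specific ternary coding (-1, 0, 1) are assumptions made for illustration; the cited studies should be consulted for the encodings they actually used.

```python
import numpy as np


def min_max_scale(value: float, lower: float, upper: float) -> float:
    """Rescale a continuous value from [lower, upper] to [0, 1]."""
    return (value - lower) / (upper - lower)


# Hypothetical codings for categorical questionnaire answers
BINARY = {"no": 0.0, "yes": 1.0}
TERNARY = {"never": -1.0, "occasional": 0.0, "daily": 1.0}


def encode_record(record: dict) -> np.ndarray:
    """Turn one questionnaire record into an MLP input vector."""
    return np.array([
        min_max_scale(record["age"], 18, 36),                # assumed age range
        min_max_scale(record["cigarettes_per_day"], 0, 20),  # assumed range
        BINARY[record["fever_last_year"]],
        TERNARY[record["smoking_habit"]],
    ])


record = {
    "age": 27,
    "cigarettes_per_day": 5,
    "fever_last_year": "no",
    "smoking_habit": "occasional",
}
x = encode_record(record)
n_input_neurons = x.shape[0]  # one input neuron per encoded feature
```

In this sketch the record maps to the vector [0.5, 0.25, 0.0, 0.0], so the input layer would have four neurons.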

Hidden Layers: The Computational Core

Hidden layers constitute the computational engine of the MLP, transforming inputs through weighted connections and nonlinear activation functions. A single hidden layer can in theory approximate any continuous function on a compact input domain to arbitrary accuracy, given sufficient neurons, but multiple hidden layers often represent complex problems more efficiently [36]. In semen parameter prediction, both two-layer and three-layer MLP architectures have been empirically evaluated; three-layer perceptrons performed slightly better, with error rates around 0.13 versus 0.16 for two-layer architectures [38].

Each neuron in a hidden layer receives inputs from all neurons in the previous layer, computes a weighted sum, and applies an activation function. The transformation in a hidden neuron can be represented as:

\( h_j = \frac{1}{1 + \exp\left(-\left(w_{0j} + \sum_{i=1}^{l} w_{ij} x_i\right)\right)} \) [35]

where \(x_i\) represents the inputs, \(w_{ij}\) represents the weights, \(w_{0j}\) represents the bias term of hidden neuron \(j\), and \(l\) is the number of inputs. The universal approximation capability of MLPs with even one hidden layer makes them particularly suitable for modeling the complex, multifactorial relationships between lifestyle factors and semen parameters [36].
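
The hidden-neuron transformation can be sketched in NumPy as shown below, assuming the logistic sigmoid is applied to the bias plus the weighted sum of the inputs. The weights, biases, and inputs are arbitrary illustrative numbers, not values from a trained model.

```python
import numpy as np


def sigmoid(z: np.ndarray) -> np.ndarray:
    """Logistic activation: 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + np.exp(-z))


def hidden_layer(x: np.ndarray, W: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Compute h_j = sigmoid(w_0j + sum_i w_ij * x_i) for every hidden neuron j.

    x has shape (l,), W has shape (l, m), and b has shape (m,),
    where l is the number of inputs and m the number of hidden neurons.
    """
    return sigmoid(b + x @ W)


# Illustrative values: l = 3 inputs, m = 2 hidden neurons
x = np.array([0.5, 0.25, 0.0])
W = np.array([
    [0.2, 0.4],
    [0.8, 0.0],
    [-0.5, 1.0],
])
b = np.array([-0.3, 0.2])

h = hidden_layer(x, W, b)
```

For the first neuron the bias exactly cancels the weighted sum (-0.3 + 0.1 + 0.2 = 0), so its activation is 0.5, the midpoint of the sigmoid; the second neuron has a pre-activation of 0.4 and therefore an activation above 0.5.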

Output Layer Design for Semen Parameter Prediction

The output layer produces the final predictions of the network, with its structure determined by the specific prediction task. For binary classification tasks (e.g., normal vs. abnormal semen quality), a single neuron with sigmoid activation is typically used [12]. For multi-class classification or prediction of multiple continuous semen parameters, multiple output neurons with appropriate activation functions (softmax for classification, linear for regression) may be employed.

In semen quality prediction research, MLPs have been configured to predict various output parameters, including sperm concentration, motility, and morphology [20]. The choice of output layer activation function depends on the nature of the prediction: sigmoid functions for binary outcomes or probability estimates, and linear functions for continuous value predictions [35] [12].
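
The three output configurations mentioned above differ only in the activation applied to the final weighted sum. The following sketch shows this with NumPy; the `task` argument, the weights, and the hidden activations are hypothetical and serve only to illustrate the shapes involved.

```python
import numpy as np


def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))


def softmax(z: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = z - np.max(z, axis=-1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)


def output_layer(h: np.ndarray, W: np.ndarray, b: np.ndarray, task: str) -> np.ndarray:
    """Apply the task-appropriate activation to the output pre-activations."""
    z = h @ W + b
    if task == "binary":        # e.g. normal vs. abnormal semen quality
        return sigmoid(z)
    if task == "multiclass":    # mutually exclusive classes
        return softmax(z)
    if task == "regression":    # continuous parameters, linear output
        return z
    raise ValueError(f"unknown task: {task}")


h = np.array([0.5, 0.5])  # hypothetical hidden-layer activations

p_abnormal = output_layer(h, np.array([[1.0], [-1.0]]), np.array([0.0]), "binary")
class_probs = output_layer(
    h, np.array([[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]]), np.zeros(3), "multiclass"
)
continuous = output_layer(
    h, np.array([[2.0, 0.0], [0.0, 4.0]]), np.array([1.0, -1.0]), "regression"
)
```

A single sigmoid neuron yields one probability, softmax yields a probability vector that sums to 1, and the linear output leaves the weighted sum unchanged so that it can take any real value.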

Table 1: MLP Architectural Configurations for Semen Parameter Prediction

| Architectural Component | Configuration Options | Considerations for Semen Prediction |

|---|---|---|

| Input Layer Size | Based on feature count (e.g., 10-30 features from questionnaires) | Feature selection crucial; includes lifestyle, environmental, health factors [20] |

| Hidden Layer Count | 1-3 hidden layers | 3 layers show slightly better performance (0.13 error vs. 0.16 for 2 layers) [38] |

| Hidden Layer Size | Varies (e.g., 8-256 neurons); 21 neurons mentioned but not confirmed as optimal [38] | Limited sample size (n=100) may prevent definitive optimal size determination [38] |