Navigating Cellular Heterogeneity: A Comprehensive Guide for Accurate Methylation-Expression Integration


Abstract

Integrating DNA methylation with transcriptome data offers powerful insights into gene regulation but is profoundly confounded by cellular heterogeneity. This article provides researchers and drug development professionals with a current and actionable framework for correcting this bias. We explore the foundational impact of cell-type mixture on epigenetic and transcriptional signals, detail and compare key bioinformatic deconvolution methodologies, offer strategies for troubleshooting and optimization, and establish best practices for validating cell-type-specific findings in downstream analyses. By synthesizing recent benchmarking studies and advanced techniques, this guide empowers robust, reproducible multi-omics research.

The Cellular Mixture Problem: How Heterogeneity Confounds Methylation-Expression Integration

Defining Intersample Cellular Heterogeneity (ISCH) as a Major Source of Variation

Intersample Cellular Heterogeneity (ISCH) refers to the variation in cell type composition across different biological samples. In epigenome-wide association studies (EWAS), particularly those investigating DNA methylation (DNAme), ISCH is one of the largest contributors to observable variability [1]. When analyzing bulk tissue samples, differences in DNAme between experimental groups can reflect genuine epigenetic changes or simply mirror differences in the underlying cellular makeup [1]. Failure to properly account for ISCH can confound results, inflating both false-positive and false-negative rates and compromising the interpretation of methylation-expression relationships [1] [2]. This technical support guide provides a foundational understanding and practical solutions for researchers aiming to correct for cellular heterogeneity in their analyses.

FAQs on Intersample Cellular Heterogeneity (ISCH)

1. What is Intersample Cellular Heterogeneity (ISCH) and why is it a problem in epigenetic studies? ISCH describes the differences in the proportions of constituent cell types across samples collected from a seemingly homogeneous tissue or source [1]. In DNA methylation (DNAme) studies, it is a major source of variation because the epigenetic profile of a bulk tissue sample is a weighted average of the profiles of its component cells. If the cell type composition differs systematically between your case and control groups, any observed differential methylation might be falsely attributed to the condition of interest rather than the underlying cellular composition [1] [2]. This can severely confound your analysis and lead to incorrect biological conclusions.

2. How can I estimate or predict ISCH in my DNA methylation dataset? ISCH can be estimated using bioinformatic deconvolution methods applied to bulk DNA methylation data. These tools fall into two main categories:

- Reference-based Deconvolution: These algorithms require a pre-existing reference dataset containing the DNAme profiles of pure cell types. They estimate the proportion of each cell type in your mixed bulk samples. Examples include `EpiDISH` and `minfi`'s `estimateCellCounts` function [1].

- Reference-free Deconvolution: These methods do not require an external reference and instead infer cellular components directly from the data itself, often using statistical approaches like Principal Component Analysis (PCA) [1].

The choice between methods depends on the tissue being studied and the availability of a validated reference panel for your tissue of interest.
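To make the reference-based idea concrete, here is a minimal sketch in plain Python. It is not the algorithm implemented by EpiDISH or minfi; it simply fits proportions that are non-negative and sum to one by projected gradient descent on a least-squares objective. The reference matrix, the true proportions, and all function names are hypothetical and chosen only for illustration.

```python
def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1}."""
    u = sorted(v, reverse=True)
    cumulative, theta = 0.0, 0.0
    for i, ui in enumerate(u, start=1):
        cumulative += ui
        t = (cumulative - 1.0) / i
        if ui - t > 0:
            theta = t
    return [max(x - theta, 0.0) for x in v]


def estimate_proportions(bulk, reference, steps=2000):
    """bulk: beta values per CpG; reference: rows = CpGs, columns = cell types."""
    n_types = len(reference[0])
    lr = 1.0 / n_types  # safe step size when all reference values lie in [0, 1]
    w = [1.0 / n_types] * n_types
    for _ in range(steps):
        residuals = [sum(r * wk for r, wk in zip(row, w)) - b
                     for row, b in zip(reference, bulk)]
        grad = [sum(res * row[k] for res, row in zip(residuals, reference)) / len(bulk)
                for k in range(n_types)]
        w = project_to_simplex([wk - lr * g for wk, g in zip(w, grad)])
    return w


# Hypothetical reference: 5 CpGs x 3 purified cell types.
reference = [
    [0.9, 0.1, 0.2],
    [0.1, 0.8, 0.3],
    [0.2, 0.2, 0.9],
    [0.8, 0.7, 0.1],
    [0.5, 0.1, 0.6],
]
true_w = [0.6, 0.3, 0.1]
# Noise-free bulk profile: the weighted average of the reference columns.
bulk = [sum(r * t for r, t in zip(row, true_w)) for row in reference]

estimated_w = estimate_proportions(bulk, reference)
print([round(x, 3) for x in estimated_w])
```

With real data the bulk profile is noisy and the reference is imperfect, so the recovered proportions are estimates rather than exact values.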

3. What are the main methods to account for ISCH in downstream statistical analyses? Once you have estimated cell type proportions, you can adjust for ISCH in your models to isolate the true biological signal. Common strategies include:

- Including proportions as covariates: Adding the estimated cell type proportions as covariates in a linear regression model for your EWAS.

- Robust Linear Regression: Using regression methods that are less sensitive to outliers, which can be introduced during cell type estimation.

- PCA-based Adjustment: Including top principal components from the cell proportion estimates as covariates to capture major sources of heterogeneity [1].
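The first strategy, adding proportions as covariates, can be sketched as follows. This is an illustrative plain-Python example with simulated values, not output from any EWAS package: methylation depends only on the neutrophil proportion, yet an unadjusted model attributes an effect to the phenotype. The helper names `solve_linear_system` and `ols` are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ols(design, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    p = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(design, y)) for i in range(p)]
    return solve_linear_system(xtx, xty)


# Simulated cohort: cases (1) carry more neutrophils than controls (0).
phenotype = [0, 0, 0, 0, 1, 1, 1, 1]
neutrophil = [0.50, 0.55, 0.60, 0.65, 0.60, 0.65, 0.70, 0.75]
# Methylation is driven by composition only; there is no phenotype effect.
beta = [0.1 + 0.8 * p for p in neutrophil]

unadjusted = ols([[1.0, ph] for ph in phenotype], beta)
adjusted = ols([[1.0, ph, p] for ph, p in zip(phenotype, neutrophil)], beta)

print("phenotype effect, unadjusted:", round(unadjusted[1], 4))
print("phenotype effect, adjusted:  ", round(adjusted[1], 4))
```

When several proportions that sum to one are used together with an intercept, one cell type has to be left out of the model to avoid perfect collinearity.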

4. Can I obtain cell-type-specific signals from bulk DNA methylation data? Yes, computational advances now make this possible. Methods like Tensor Composition Analysis (TCA) can deconvolute bulk DNAme data to infer cell-type-specific methylomes for each sample [2]. This allows you to test for differential methylation within a specific cell type, rather than across the entire heterogeneous tissue, providing a much more precise and biologically meaningful analysis [2].

5. My research involves tumor samples, which are highly heterogeneous. Are there specialized tools for this context?

Yes, the high level of cellular heterogeneity in tumors, including both cancer and immune cells, has driven the development of specialized deconvolution tools. Packages like MethylResolver and HiTIMED are designed to estimate the relative proportions of tumor and immune cells in the tumor microenvironment from bulk DNA methylation data [1]. Using these tissue-specific tools is crucial for accurate interpretation of cancer epigenomics data.

Troubleshooting Common Experimental & Analytical Issues

Problem: High Background Staining in In Situ Hybridization (ISH) Protocols

- Potential Cause: Inadequate stringent washing after hybridization.

- Solution: Ensure the stringent wash step is performed correctly. Use an SSC buffer at a temperature between 75-80°C for the wash. If processing multiple slides, increase the temperature by 1°C per slide, but do not exceed 80°C [3].

- Potential Cause: Probes with repetitive sequences (like Alu or LINE elements).

- Solution: Block probe binding to these repetitive sequences by adding COT-1 DNA to the specific hybridization mixture [3].

- Potential Cause: Using incorrect wash solutions.

- Solution: Always use the specified buffers (e.g., PBST) for washing steps. Washing with distilled water or PBS without detergent (e.g., Tween 20) can lead to elevated background [3].

Problem: Weak or No Signal in ISH Experiments

- Potential Cause: Improper tissue handling or fixation, leading to RNA/DNA degradation.

- Solution: Minimize the time between tissue collection and fixation. Ensure the tissue specimen is an appropriate size for the volume of fixative used and that fixation time is sufficient [3].

- Potential Cause: Over- or under-digestion during the pepsin digestion (permeabilization) step.

- Solution: Optimize the enzyme pretreatment conditions for your specific tissue type. Typically, 3-10 minutes at 37°C is recommended, but this requires empirical testing [3].

- Potential Cause: Inefficient denaturation.

- Solution: Perform the denaturation step at 95 ± 5°C for 5-10 minutes on a calibrated hot plate, ensuring the sections are cover-slipped in a humidified environment to prevent drying [3].

Problem: Inflated False Discoveries in EWAS Despite Accounting for ISCH

- Potential Cause: The deconvolution method or reference panel used is not optimal for your specific tissue.

- Solution: Consult resources such as Table 1 of the primer [1] to select a method and reference dataset that have been validated for your tissue of interest (e.g., blood, brain, saliva).

- Potential Cause: The statistical model used for adjustment is not adequately capturing the complexity of the cellular heterogeneity.

- Solution: Consider using more robust regression techniques or PCA-based adjustments on the estimated cell proportions. Furthermore, if your goal is to find cell-type-specific effects, directly use a method like TCA for deconvolution rather than just adjusting for proportions [1] [2].

Problem: Tissue Loss or Degraded Morphology in ISH

- Potential Cause: Insufficient fixation or the use of incorrect slides.

- Solution: Optimize fixation by potentially changing fixatives or increasing fixation duration. Use positively charged, pre-cleaned adhesive slides to ensure tissue sections adhere properly [4] [5].

- Potential Cause: Excessive pretreatment, such as over-digestion with protease.

- Solution: Carefully optimize the tissue digestion time and temperature to ensure tissues are not over-processed, which degrades morphology [5].

Essential Experimental Protocols

Protocol 1: Bioinformatic Estimation of ISCH from DNA Methylation Array Data

This protocol outlines the key steps for estimating cell type proportions from Illumina Infinium BeadChip data (450K, EPIC) in R [1].

- Data Preprocessing: Begin with raw data (IDAT files) and perform quality control, background correction, and normalization. The `minfi` package in R is standard for this.

- Select a Deconvolution Method: Choose a reference-based or reference-free method suitable for your tissue. For blood, `minfi::estimateCellCounts` is a common choice; `EpiDISH` is a reference-based alternative.

- Inspect Output: The result is a matrix of estimated cell type proportions for each sample, which can then be used as covariates in downstream analyses.

Protocol 2: Deconvolution of Bulk Methylation to Cell-Type-Specific Signals

This protocol uses Tensor Composition Analysis (TCA) to obtain cell-type-specific DNA methylation values from bulk data [2].

- Input Data Preparation: You will need:

- A bulk DNA methylation data matrix (CpG sites x Samples).

- A matrix of estimated cell type proportions for each sample (from Protocol 1).

- Apply TCA: Use the `TCA` package in R to deconvolute the bulk data.

- Downstream Analysis: The output (called `cell_specific_methylation` here) is a tensor containing inferred methylation levels for each CpG, each sample, and each cell type. You can now perform differential methylation analysis on a per-cell-type basis.

Research Reagent Solutions

Table 1: Essential Reagents and Tools for Cellular Heterogeneity Research

| Item | Function/Description | Example Application |
|---|---|---|
| Illumina Methylation Arrays | Platform for genome-wide DNA methylation profiling. | Generating beta value matrices for ISCH deconvolution from whole blood, saliva, or tissue samples [1] [2]. |
| Reference Methylation Panels | Pre-defined DNAme signatures of pure cell types. | Enabling reference-based deconvolution with tools like EpiDISH or minfi (e.g., FlowSorted.Blood.EPIC) [1]. |
| COT-1 DNA | A reagent rich in repetitive DNA sequences. | Blocking non-specific binding of probes to repetitive genomic elements during ISH, reducing background [3]. |
| Formamide | A denaturing agent used in hybridization buffers. | Allows hybridization to occur at lower temperatures, helping to preserve tissue morphology during ISH procedures [4]. |
| Protease (e.g., Pepsin) | Enzyme for tissue permeabilization. | Digests proteins surrounding the target nucleic acid, increasing probe accessibility in fixed tissue samples for ISH [3] [5]. |
| TCA (Tensor Composition Analysis) Software | Computational tool for cell-type-specific signal deconvolution. | Extracting cell-type-specific methylomes and transcriptomes from bulk tissue data [2]. |
| CIBERSORTx | Analytical tool for imputing cell type abundances and gene expression profiles. | Deconvoluting transcriptome data from bulk tissue to estimate cell fractions and cell-type-specific expression [2]. |

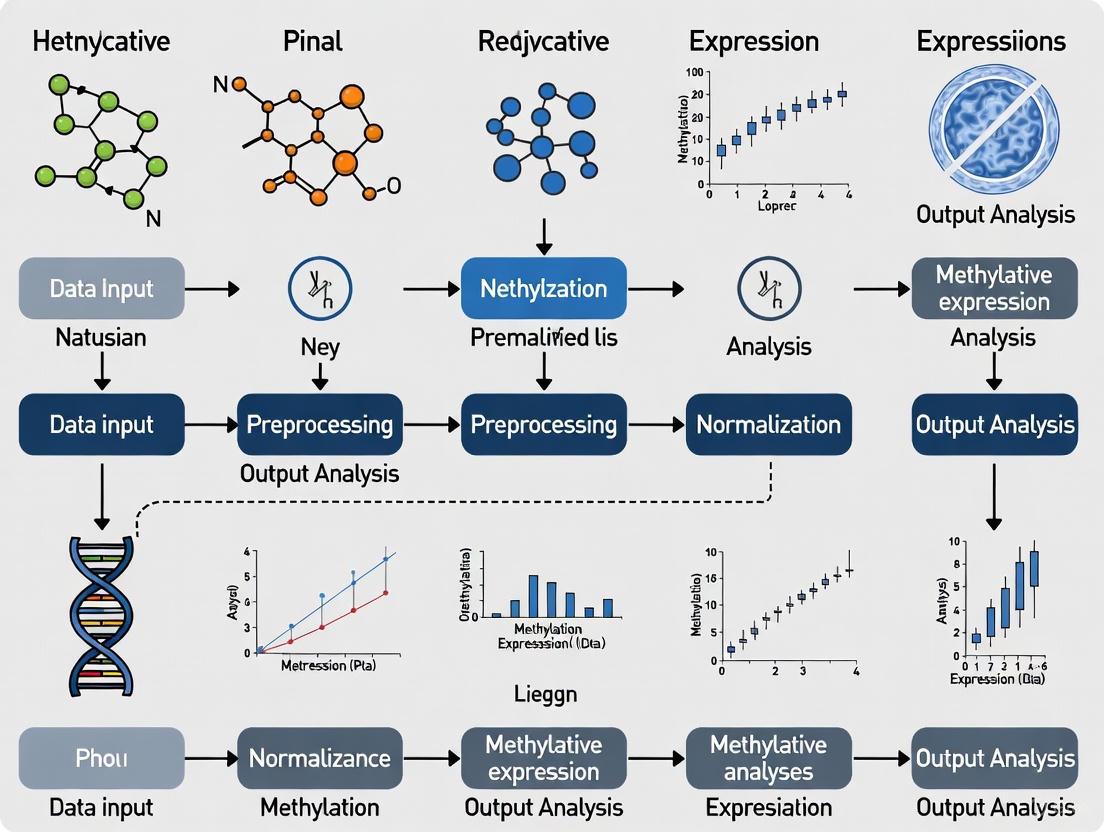

Workflow and Signaling Diagrams

Data Analysis Workflow for Correcting ISCH in Epigenomic Studies

How ISCH Acts as a Confounder in Bulk Tissue Analysis

Core Concept: Understanding the Confounding Mechanism

Bulk tissue samples, such as whole blood or solid tumors, are composed of multiple cell types. The measured molecular profile (e.g., DNA methylation or gene expression) from these samples represents an average across all constituent cells. When cell-type proportions vary between individuals and are associated with both the phenotype (e.g., a disease) and the molecular mark being studied, they introduce a confounding effect that can lead to spurious associations or mask true signals [6] [7].

This confounding occurs because:

- Phenotype Association: Disease states can actively alter tissue composition. For example, the proportion of immune cells in blood can change significantly in autoimmune diseases like rheumatoid arthritis [8] [7].

- Molecular Mark Association: Different cell types have distinct, inherent molecular profiles. For instance, DNA methylation levels can differ by over 80% at specific loci between cell types like neutrophils and CD4+ T cells [7].

The diagram below illustrates this confounding relationship and the principle of deconvolution.

Figure 1: Confounding by Cell-type Heterogeneity. Cell-type proportions are associated with both the phenotype and the bulk molecular measurement, creating a confounding path (blue arrows). Computational deconvolution aims to dissect the bulk signal into its constituent parts: cell-type-specific signatures (H) and estimated cell proportions (W).
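The weighted-average mechanism can be illustrated with a few lines of plain Python. The numbers are hypothetical: the two cell types differ by 80 percentage points at one CpG, the cell-type-specific values are identical in cases and controls, and only the proportions change.

```python
# Cell-type-specific methylation at a single CpG (hypothetical values),
# identical in cases and controls.
profiles = {"neutrophil": 0.90, "cd4_t": 0.10}


def bulk_methylation(proportions, profiles):
    """Bulk beta value as the proportion-weighted average across cell types."""
    return sum(proportions[cell] * profiles[cell] for cell in profiles)


# Only the composition differs between the two groups.
control = {"neutrophil": 0.60, "cd4_t": 0.40}
case = {"neutrophil": 0.70, "cd4_t": 0.30}

bulk_control = bulk_methylation(control, profiles)
bulk_case = bulk_methylation(case, profiles)
delta = bulk_case - bulk_control

print(round(bulk_control, 3), round(bulk_case, 3), round(delta, 3))
```

A 10-point shift in neutrophil proportion produces an apparent 8-point methylation difference in bulk, even though no cell has changed its methylation state.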

Troubleshooting Guides

Guide: Poor Deconvolution Performance

Problem: Your deconvolution algorithm is returning inaccurate estimates of cell-type proportions, or the results are highly unstable.

| Symptom | Potential Cause | Recommended Solution |
|---|---|---|
| High error in estimated proportions compared to ground truth (if available) | Incorrect number of cell types (K) specified. | Use a scree plot and Cattell's rule to determine the optimal K [9]. |
| Inconsistent results between runs | Sensitivity to random initialization in the algorithm. | Run the algorithm with multiple random initializations and average the results [9]. |
| Poor performance even with large sample sizes | Probe selection includes markers correlated with confounders (e.g., age, sex) rather than cell type. | Pre-filter the input data to remove probes strongly correlated with known confounders. This can reduce error by 30-35% [9]. |
| Biased estimates in reference-based methods | Reference profile does not match the biology of samples in your study. | Use a reference generated from a context (e.g., disease state, demographic) that matches your study population. If this is not possible, consider reference-free methods [6]. |
| Low power to detect cell-type-specific signals | Insufficient inter-sample variability in cell-type proportions. | Ensure your cohort has natural diversity in cell-type composition. Performance is best when this variability is large [9]. |

Guide: Interpreting EWAS/TWAS Results Amidst Heterogeneity

Problem: Your epigenome- or transcriptome-wide association study has identified significant hits, but you suspect many are driven by cell-type composition rather than the phenotype of interest.

| Symptom | Potential Cause | Recommended Solution |
|---|---|---|
| A large number of significant hits in genes known to be cell-type-specific markers. | Phenotype is correlated with a shift in cell-type proportions. The detected molecular change reflects this shift, not intra-cellular alteration. | Re-run the association analysis, including the estimated cell-type proportions as covariates in the model [6] [8]. |
| Inability to replicate findings from a bulk tissue study. | The original association was confounded by cell-type heterogeneity that differed between the original and replication cohorts. | Perform deconvolution and adjusted analysis in both cohorts to identify true, cell-type-independent signals [8] [7]. |
| An ensemble-averaged signal (e.g., from bulk RNA-seq) does not represent the state of any major cell subpopulation. | The population is a mixture of distinct subpopulations with different molecular states [10]. | Apply deconvolution to identify the major subpopulations and analyze their signals separately. |

Frequently Asked Questions (FAQs)

Q1: When is it absolutely critical to adjust for cell-type heterogeneity? Adjustment is critical when studying accessible, highly heterogeneous tissues (e.g., blood, saliva, tumor biopsies) and when investigating phenotypes known to alter tissue composition, such as immune-related diseases, cancer, or aging. In these cases, the data variance from cell-type composition can be 5 to 10 times larger than the signal from the phenotype itself, severely confounding results [7].

Q2: What is the fundamental difference between reference-based and reference-free deconvolution methods?

- Reference-based (Supervised) methods require an a priori defined reference matrix containing cell-type-specific molecular profiles (e.g., gene expression or DNA methylation signatures). They solve for the proportion matrix by using this fixed reference [6]. Examples include CIBERSORT [6] and EPIC [6].

- Reference-free (Unsupervised) methods do not require pre-defined reference profiles. They simultaneously estimate both the cell-type proportions and the cell-type-specific signatures directly from the bulk data [6]. Examples include MeDeCom [9] and RefFreeEWAS [9].

Q3: How do I choose the right number of cell types (K) for a reference-free method? The most robust method is to use a scree plot (a plot of the model error against the number of cell types K) and apply Cattell's rule. The optimal K is typically found at the "elbow" of the plot, where adding more cell types no longer significantly improves the model fit [9].

Q4: Can I use deconvolution to analyze my existing archive of bulk genomic data? Yes. A key advantage of computational deconvolution is the ability to perform in silico re-analysis of historical bulk datasets (e.g., from microarrays) to extract cell-type-level information, which is impossible to obtain experimentally for samples that are no longer available [6].

Q5: What are the limitations of these computational approaches?

- Reference Reliability: Reference-based methods are highly sensitive to the accuracy and biological relevance of the reference profile used [6].

- Rare or Unknown Cell Types: Both approaches, especially reference-based ones, may fail to identify rare, unknown, or uncharacterized cell types present in the sample [6].

- Interpretation: Results from reference-free methods require careful biological validation to assign cell identity to the estimated latent factors [9].

Essential Research Reagent Solutions

The following table lists key computational tools and their properties, which serve as essential "reagents" in the field.

| Tool / Resource Name | Function / Category | Key Features & Applications |
|---|---|---|
| CIBERSORT [6] | Reference-based deconvolution (Gene Expression) | Uses support vector regression to estimate cell proportions from bulk tissue gene expression profiles. |
| EPIC [6] | Reference-based deconvolution (Gene Expression) | Estimates proportions of immune and stromal cells in tumor samples, accounting for uncharacterized cell types. |
| MuSiC [6] | Reference-based deconvolution (Gene Expression) | Leverages single-cell RNA-seq data to create references for deconvoluting bulk data, accounting for cross-subject and cross-cell variation. |
| MeDeCom [9] | Reference-free deconvolution (DNA Methylation) | Uses non-negative matrix factorization (NMF) to simultaneously infer cell proportions and methylomes from bulk DNA methylation data. |
| RefFreeEWAS [9] | Reference-free deconvolution (DNA Methylation) | Applies NMF to identify latent cell types and their proportions for use as covariates in EWAS. |
| TOAST [6] | Reference-free deconvolution (DNA Methylation) | A comprehensive toolkit for the analysis of heterogeneous tissues, including deconvolution and differential analysis. |
| SVA / ISVA [8] | Surrogate Variable Analysis | A general method for identifying and adjusting for unknown sources of heterogeneity, including cell-type effects, in high-dimensional data. |

Standardized Experimental Protocol for a Benchmarking Pipeline

Based on comparative analyses, the following pipeline provides a robust starting point for inferring cell-type proportions from DNA methylation data using a reference-free approach.

Workflow Diagram:

Figure 2: Reference-free Deconvolution Workflow. A step-by-step protocol for estimating cell-type proportions from bulk DNA methylation data.

Step-by-Step Protocol:

Pre-processing & Quality Control (QC):

- Input: Raw DNA methylation data (e.g., from Illumina Infinium arrays).

- Perform standard normalization (e.g., quantile normalization) and background correction. Recommendation: Using non-log data at this stage has been shown to be optimal for subsequent deconvolution [11].

- Filter out probes with low signal, known SNPs, or cross-reactive probes.

Confounder Adjustment:

- Identify technical or biological variables (e.g., batch, age, sex) that are not of primary interest.

- Use a regression model to remove the variation in the methylation data associated with these confounders. This step is critical and can reduce estimation error by 30-35% [9].
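As a minimal illustration of this regression step, the sketch below removes the linear effect of a single covariate (age) from one probe while keeping the probe's mean. It uses plain Python with made-up values; a real analysis would typically fit several covariates at once across all probes, and `residualize` is a hypothetical helper name.

```python
from statistics import mean


def residualize(values, covariate):
    """Remove the linear effect of one covariate from a probe, keeping its mean."""
    v_bar, c_bar = mean(values), mean(covariate)
    sxx = sum((c - c_bar) ** 2 for c in covariate)
    sxy = sum((c - c_bar) * (v - v_bar) for c, v in zip(covariate, values))
    slope = sxy / sxx  # simple linear regression slope of probe on covariate
    return [v - slope * (c - c_bar) for v, c in zip(values, covariate)]


# Hypothetical probe whose methylation drifts upward with age.
age = [30, 40, 50, 60, 70]
probe = [0.40, 0.44, 0.47, 0.52, 0.55]

adjusted = residualize(probe, age)

# After adjustment the probe no longer covaries with age.
age_bar, adj_bar = mean(age), mean(adjusted)
remaining_cov = sum((a - age_bar) * (v - adj_bar) for a, v in zip(age, adjusted))
print([round(v, 4) for v in adjusted], round(remaining_cov, 10))
```

Only variables that are not of primary interest should be removed this way; regressing out the phenotype itself would erase the signal under study.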

Feature Selection:

- Select a set of informative CpG probes that are most likely to vary by cell type.

- This can be achieved by selecting probes with high variance across samples or those known to be differentially methylated across cell types. This step improves performance similarly to confounder adjustment [9].
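Variance-based selection can be sketched in a few lines. The probe IDs and beta values below are hypothetical, and `top_variable_probes` is an illustrative helper rather than a function from any deconvolution package.

```python
from statistics import pvariance


def top_variable_probes(beta, n_top):
    """beta maps probe ID -> beta values across samples; return the n_top most variable IDs."""
    ranked = sorted(beta, key=lambda probe: pvariance(beta[probe]), reverse=True)
    return ranked[:n_top]


# Hypothetical beta values for four probes across four samples.
beta = {
    "cg_a": [0.10, 0.11, 0.10, 0.12],  # nearly constant
    "cg_b": [0.20, 0.80, 0.35, 0.65],  # highly variable
    "cg_c": [0.50, 0.55, 0.45, 0.52],  # nearly constant
    "cg_d": [0.90, 0.30, 0.70, 0.40],  # highly variable
}

selected = top_variable_probes(beta, n_top=2)
print(selected)
```

High variance alone does not guarantee that a probe tracks cell type, which is one reason confounder adjustment is performed before this step.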

Determine the Number of Cell Types (K):

- Run the deconvolution algorithm (e.g., MeDeCom) over a range of possible K values (e.g., K=2 to 10).

- For each K, record the model error. Plot these errors to create a scree plot.

- Apply Cattell's rule to identify the "elbow" point, which represents the optimal K [9].
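Cattell's rule is usually applied by eye, but a simple numerical heuristic can flag a candidate elbow. The sketch below picks the K with the largest second difference in the error curve. The error values are invented, `elbow_k` is a hypothetical helper, and the heuristic assumes that K was scanned in consecutive steps.

```python
def elbow_k(errors_by_k):
    """Return the K where the error curve bends most sharply (largest second difference)."""
    ks = sorted(errors_by_k)
    best_k, best_bend = None, float("-inf")
    for prev_k, k, next_k in zip(ks, ks[1:], ks[2:]):
        drop_before = errors_by_k[prev_k] - errors_by_k[k]
        drop_after = errors_by_k[k] - errors_by_k[next_k]
        bend = drop_before - drop_after
        if bend > best_bend:
            best_k, best_bend = k, bend
    return best_k


# Hypothetical model errors recorded for K = 2..7.
errors = {2: 0.120, 3: 0.070, 4: 0.030, 5: 0.027, 6: 0.025, 7: 0.024}
chosen_k = elbow_k(errors)
print(chosen_k)
```

The suggested K is best treated as a starting point and confirmed against the plotted curve.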

Deconvolution:

- Using the selected K and the pre-processed matrix from the feature selection step, run the core deconvolution algorithm.

- Critical: To mitigate sensitivity to random initialization, run the algorithm multiple times (e.g., 10-20) with different random seeds and average the stable solutions [9].

- The algorithm returns two matrices: (i) the estimated cell-type proportions (A), and (ii) the estimated cell-type-specific methylation profiles (T).

Validation and Interpretation:

- Validate the results by correlating the estimated proportions with known cell-type markers (if available) or with proportions estimated from orthogonal methods (e.g., flow cytometry, histology) [11] [9].

- Use the estimated proportions (matrix A) as covariates in downstream association studies (EWAS/TWAS) to correct for confounding [6] [8].
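The comparison against an orthogonal measurement can be summarized with a correlation and an error metric. The sketch below uses plain Python and invented values for one cell type in five samples; the choice of Pearson correlation and root-mean-square error is an assumption for illustration, not a metric prescribed by the cited benchmarks.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equally long lists."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    syy = sum((b - y_bar) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def rmse(x, y):
    """Root-mean-square error between estimated and measured proportions."""
    return sqrt(mean([(a - b) ** 2 for a, b in zip(x, y)]))


# Hypothetical proportions of one cell type in five samples.
estimated = [0.52, 0.61, 0.48, 0.70, 0.66]       # from deconvolution
flow_cytometry = [0.50, 0.63, 0.45, 0.72, 0.64]  # orthogonal measurement

r = pearson_r(estimated, flow_cytometry)
error = rmse(estimated, flow_cytometry)
print(round(r, 3), round(error, 4))
```

Reporting both numbers is useful because a high correlation can coexist with a systematic offset that only the error metric reveals.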

Troubleshooting Guides

Troubleshooting Guide 1: Diagnosing Spurious Associations in EWAS

Problem: Your epigenome-wide association study (EWAS) identifies numerous significant CpG sites, but you suspect many are false positives driven by cellular heterogeneity.

Symptoms:

- Q-Q plots of p-values show substantial genomic inflation (λ >> 1).

- A high proportion of significant hits are located in genomic regions known to be cell-type-specific (e.g., enhancers, cell-type-specific regulatory regions).

- Results fail to replicate in an independent dataset with different cell composition.

Diagnostic Steps:

Calculate Genomic Inflation Factor (λ)

Interpretation: λ > 1.05 suggests potential confounding.
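A common way to compute λ is to convert each p-value to a 1-degree-of-freedom chi-square statistic and divide the median by the expected median under the null (about 0.455). The sketch below does this with the Python standard library only; the p-values are synthetic and `genomic_inflation` is an illustrative helper name.

```python
from statistics import NormalDist, median

# Median of the chi-square distribution with 1 degree of freedom.
CHI2_1DF_MEDIAN = 0.4549364


def genomic_inflation(p_values):
    """Lambda = median observed chi-square (1 df) / expected median under the null."""
    normal = NormalDist()
    # For a two-sided p-value, the chi-square statistic is the squared z-score.
    # Each p-value must satisfy 0 < p <= 1.
    chi2 = [normal.inv_cdf(p / 2.0) ** 2 for p in p_values]
    return median(chi2) / CHI2_1DF_MEDIAN


# Synthetic example: uniformly spread p-values behave like a well-calibrated test.
null_p = [(i + 0.5) / 1000 for i in range(1000)]
# Squaring shifts the p-values toward zero, mimicking systematic inflation.
inflated_p = [p ** 2 for p in null_p]

lambda_null = genomic_inflation(null_p)
lambda_inflated = genomic_inflation(inflated_p)
print(round(lambda_null, 3), round(lambda_inflated, 3))
```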

Annotate Significant Probes to Cell-Type-Specific Regions

Apply and Compare Multiple Correction Methods

Test whether associations persist across different adjustment approaches:

- Reference-based deconvolution (e.g., Houseman method)

- Reference-free methods (e.g., RefFreeEWAS)

- Surrogate variable analysis (SVA)

Troubleshooting Guide 2: Addressing Irreproducible Findings Across Studies

Problem: Differential methylation findings from one study fail to replicate in another, potentially due to differing cellular compositions across cohorts.

Symptoms:

- Effect sizes and directions vary substantially between studies.

- CpG sites significant in one study show no association in another.

- Between-study heterogeneity (I² statistic) is high in meta-analyses.
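For reference, I² for a single CpG can be computed from per-study effect sizes and standard errors using Cochran's Q. The sketch below uses inverse-variance (fixed-effect) weights and invented numbers; `i_squared` is a hypothetical helper name.

```python
def i_squared(effects, std_errors):
    """Return Cochran's Q and I² (in percent) using inverse-variance weights."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I² is the share of total variation attributable to between-study heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, i2


# Hypothetical effect sizes for one CpG in three cohorts.
q_mixed, i2_mixed = i_squared([0.05, -0.02, 0.08], [0.01, 0.01, 0.01])
q_same, i2_same = i_squared([0.05, 0.05, 0.05], [0.01, 0.02, 0.01])
print(round(i2_mixed, 1), round(i2_same, 1))
```

A high I² at a CpG whose methylation differs strongly between cell types is a hint that cohort-specific composition, rather than biology, may be driving the disagreement.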

Diagnostic Steps:

Assess and Compare Cell Composition

Test for Cell-Type-Specific Effects

Determine whether associations are driven by specific cell types.

Apply Robust Adjustment Methods

Use methods that perform well across different simulation scenarios.

Frequently Asked Questions (FAQs)

Q1: What are the primary consequences of failing to correct for cellular heterogeneity in DNA methylation studies?

Uncorrected cellular heterogeneity leads to two major problems: (1) Spurious associations - false positive findings where methylation differences appear associated with a phenotype but actually reflect underlying differences in cell-type composition, and (2) Irreproducible findings - results that fail to replicate across studies due to different cell-type proportions in independent cohorts [12] [8]. Simulation studies show that the number of false positives can be "unrealistically high" without proper adjustment, severely limiting the ability to distinguish true biological signals from confounding effects [8].

Q2: Which cell type adjustment method should I use for my DNA methylation study?

Method selection depends on your specific context and available data. Based on comparative evaluations:

- Surrogate Variable Analysis (SVA) demonstrated stable performance across diverse simulated scenarios and is generally recommended [8].

- Reference-based methods (e.g., Houseman method) require external reference data but provide biologically interpretable cell proportion estimates [12] [8].

- Reference-free methods are valuable when appropriate reference data is unavailable, though interpretation of estimated components can be challenging [12].

Consider your sample size, availability of reference data, and need for biological interpretability when selecting a method [12] [8].

Q3: How can I determine if my findings are affected by cellular heterogeneity?

Several diagnostic approaches can help identify cellular heterogeneity confounding:

- Examine Q-Q plots of p-values - pronounced deviations from the expected null distribution suggest confounding [8].

- Annotate significant CpGs to genomic regions - enrichment in cell-type-specific regulatory regions indicates potential confounding.

- Calculate genomic inflation factors (λ) - values substantially greater than 1 suggest systematic bias [8].

- Compare results with and without cell type adjustment - substantial changes in significant hits indicate sensitivity to cellular heterogeneity.

Q4: What are the best practices for reporting cell type adjustment in publications?

Always transparently report:

- The specific adjustment method used (including software and version)

- Parameters and reference data (if applicable)

- Comparisons between adjusted and unadjusted results

- Estimated cell proportions or surrogate variables in supplementary materials

- Justification for method selection based on your study design and data availability

Table 1: Performance Comparison of Cell Type Adjustment Methods in Simulation Studies

| Method | False Positives | True Positives | Stability | Ease of Use |
|---|---|---|---|---|
| SVA | Low | High | Stable | Moderate |
| Reference-based | Moderate | High | Variable | Moderate |
| Reference-free | Variable | Moderate | Variable | Moderate |
| No Adjustment | Very High | High (but biased) | N/A | Easy |

Data adapted from an extensive simulation study comparing eight correction methods [8].

Table 2: Impact of Cell Type Adjustment on Association Results

| Scenario | Number of Significant CpGs | Genomic Inflation (λ) | Replication Rate |
|---|---|---|---|
| Unadjusted | 1,542 | 1.78 | 23% |
| SVA Adjusted | 647 | 1.02 | 89% |
| Reference-based | 711 | 1.05 | 85% |

Hypothetical example based on simulation results showing how adjustment reduces false positives and improves replicability [8].

Experimental Protocols

Reference-Based Cell Type Deconvolution Protocol

Purpose: Estimate cell-type proportions in bulk tissue samples using established reference methylation signatures.

Materials:

- Illumina Infinium Methylation BeadChip data (450k or EPIC)

- Reference methylation profiles from purified cell types

- R statistical environment with appropriate packages

Procedure:

Data Preprocessing

Cell Proportion Estimation

Downstream Statistical Analysis

Troubleshooting Notes:

- If reference data doesn't match your tissue type, consider tissue-specific reference datasets.

- High correlations between cell types in the reference can cause estimation instability.

- For non-blood tissues, explore tissue-specific reference datasets or consider reference-free methods.

Surrogate Variable Analysis (SVA) Protocol

Purpose: Capture unknown sources of variation, including cellular heterogeneity, without requiring reference data.

Procedure:

Data Preparation

Surrogate Variable Estimation

Differential Methylation Analysis

Validation:

- Compare results with and without SVA adjustment

- Check if genomic inflation is reduced

- Verify that known biological signals are preserved

Signaling Pathways and Workflows

DNA Methylation Analysis Workflow with Heterogeneity Correction

Consequences of Uncorrected Heterogeneity

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Addressing Cellular Heterogeneity

| Tool/Package | Function | Application Context | Key Features |
|---|---|---|---|
| minfi (R/Bioconductor) | Data preprocessing & quality control | Illumina BeadChip data | Import IDAT files, normalization, quality metrics |
| FlowSorted.Blood.450k | Reference-based deconvolution | Blood tissue studies | Pre-computed reference matrices for blood cell types |
| sva (R/Bioconductor) | Surrogate variable analysis | General use, no reference needed | Captures unknown sources of variation |
| EpiDISH (R/Bioconductor) | Cell type deconvolution | Multiple tissue types | Reference-based method for various tissues |
| RefFreeEWAS (R) | Reference-free decomposition | When reference data unavailable | Estimates latent variables without reference |
| missMethyl (R/Bioconductor) | Normalization and analysis | Accounting for technical bias | Gene set analysis, region-based analysis |

Table 4: Experimental Reference Materials

| Resource | Description | Use Case | Access |
|---|---|---|---|
| FlowSorted.Blood.450k | Reference methylation data for purified blood cells | Blood-based EWAS studies | Bioconductor |
| FlowSorted.DLPFC.450k | Reference data for brain cell types | Neurological disorder studies | Bioconductor |
| IlluminaHumanMethylation450kanno.ilmn12.hg19 | Comprehensive annotation for 450k array | Probe annotation and interpretation | Bioconductor |
| BLUEPRINT Epigenome | Reference epigenomes for hematopoietic cells | Blood cell-specific analysis | Public database |
| ENCODE | Reference epigenomic data across cell types | Various tissue-specific studies | Public database |

Frequently Asked Questions (FAQs)

1. Why is DNA methylation considered a more stable biomarker than transcriptomic signals for cell identity? DNA methylation is an inherently stable epigenetic mark. The DNA double helix's structure provides physical stability, offering greater protection against degradation compared to single-stranded RNA [13]. Furthermore, DNA methylation patterns are faithfully inherited through multiple cell divisions by maintenance DNA methyltransferases like DNMT1, which shows a strong preference for hemimethylated DNA during replication [14]. This stability allows methylation profiles to reflect the history of a cell, serving as a cellular memory that persists even after long-term culture, unlike more dynamic transcriptomic profiles [14].

2. How does cellular heterogeneity confound DNA methylation analysis, and what can be done? Tissues like blood, saliva, or tumors are mixtures of different cell types, each with unique methylation profiles. If cell-type proportions vary between experimental groups (e.g., cases vs. controls), observed methylation differences may reflect this cellular heterogeneity rather than the biological process under study [8] [12]. This is a major source of confounding. To address this, computational deconvolution methods are used to estimate and adjust for cell-type proportions in analyses. It is recommended to account for this intersample cellular heterogeneity (ISCH) to accurately interpret results in epigenome-wide association studies [12].

3. My PCR amplification after bisulfite conversion is failing. What are the common causes? Several factors can cause amplification failure with bisulfite-converted DNA:

- Primer Design: Primers must be designed to amplify the converted template (where unmethylated cytosines are converted to uracil). They should be 24-32 nucleotides long and contain no more than 2-3 mixed bases. The 3’ end should not contain a mixed base [15].

- Polymerase Choice: Use a hot-start Taq polymerase. Proof-reading polymerases are not recommended as they cannot read through uracil in the template [15].

- Template DNA: Bisulfite treatment is harsh and can cause strand breaks, making it difficult to amplify large fragments. It is recommended to target amplicons around 200 bp [15].

- DNA Quality: Ensure the DNA used for conversion is pure and not degraded [15].

4. I am not detecting my methylated DNA target after enrichment. What could be wrong?

- Insufficient Input DNA: When using low DNA input, MBD (Methyl-CpG Binding Domain) proteins can bind non-specifically to non-methylated DNA. Always follow the protocol specified for your DNA input amount. If the target is not detected, increasing the input DNA to at least 1 µg can help if the target has low levels of CpG methylation [16].

- Inefficient Elution: The methylated DNA might not be eluting from the enrichment beads. Raising the elution temperature to 98°C can improve yield, though this will render the DNA single-stranded [16].

- Degraded DNA: Always verify the quantity, quality, and size of your input DNA on an agarose gel to rule out degradation [16].

5. What are the primary sources of error in sequencing-based methylation analysis? In Oxford Nanopore sequencing, prevalent errors include deletions within homopolymer stretches and errors at specific methylation sites, notably the central position of the Dcm site (CCTGG or CCAGG) and the Dam site (GATC) [17]. These regions require special care during data analysis and interpretation.

Troubleshooting Guides

Table 1: Common Bisulfite Conversion and PCR Issues

| Observed Problem | Potential Cause | Recommended Solution |

|---|---|---|

| Very little or no amplification | Poor bisulfite conversion efficiency | Ensure DNA is pure before conversion; centrifuge particulate matter [15]. |

| | Suboptimal PCR conditions | Use recommended hot-start polymerases; lower annealing temperature to 55°C; use 2-4 µl of eluted DNA per reaction [15] [16]. |

| | Large amplicon size | Design amplicons closer to 200 bp; bisulfite treatment causes DNA fragmentation [15]. |

| No detection of methylated target after enrichment | DNA is degraded | Run DNA on agarose gel to check quality; increase EDTA concentration to 10 mM to inhibit nucleases [16]. |

| | Target has low methylation | Increase input DNA concentration to at least 1 µg [16]. |

| | DNA did not elute from beads | Raise elution temperature to 98°C (note: yields single-stranded DNA) [16]. |

Table 2: Addressing Data Analysis and Specificity Challenges

| Challenge | Impact on Research | Corrective Methodology |

|---|---|---|

| Cellular Heterogeneity | Major confounder in EWAS; can cause both false positives and false negatives [8] [18]. | Use reference-based or reference-free deconvolution algorithms (e.g., MeDeCom, EDec, RefFreeEWAS) to estimate and adjust for cell-type proportions [12] [9]. |

| Global Methylation Variation | Can lead to test statistic inflation (λ >>1) or deflation (λ <<1), severely increasing false positive/negative rates in candidate-gene studies [18]. | Perform epigenome-wide analysis where possible; use Principal Component Analysis (PCA) or Surrogate Variable Analysis (SVA) to adjust for unmeasured confounders [8] [18]. |

| Low Abundance of ctDNA | Challenging detection in liquid biopsies, especially in early-stage cancer [13]. | Use highly sensitive targeted methods (dPCR, targeted NGS); select optimal liquid biopsy source (e.g., local fluids like urine for bladder cancer) [13]. |
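The inflation factor λ mentioned in the table can be checked directly from a list of EWAS p-values. The sketch below assumes the common definition of λ as the median observed 1-df chi-square statistic divided by its expected median under the null. The function name and the toy p-values are illustrative and do not come from the cited studies.

```python
from statistics import NormalDist, median

def genomic_inflation(p_values):
    """Estimate lambda from two-sided p-values (each strictly between 0 and 1)."""
    norm = NormalDist()
    # A two-sided p-value maps to a 1-df chi-square statistic via z**2.
    chi2 = [norm.inv_cdf(1.0 - p / 2.0) ** 2 for p in p_values]
    # Median of a 1-df chi-square distribution under the null (about 0.455).
    expected_median = norm.inv_cdf(0.75) ** 2
    return median(chi2) / expected_median

# Toy data: evenly spaced p-values mimic a well-calibrated null distribution.
n = 1001
null_p = [(i + 0.5) / n for i in range(n)]
# Squaring pushes p-values toward zero, mimicking inflated test statistics.
inflated_p = [p ** 2 for p in null_p]

lambda_null = genomic_inflation(null_p)
lambda_inflated = genomic_inflation(inflated_p)
print(f"lambda (null): {lambda_null:.3f}")
print(f"lambda (inflated): {lambda_inflated:.3f}")
```

A value close to 1 suggests well-calibrated statistics. Values well above or below 1 are a prompt to revisit the adjustment for cell composition and other unmeasured confounders.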

Experimental Protocols for Key Applications

Protocol 1: A Basic Workflow for Cell-Type Heterogeneity Adjustment in EWAS

Accurately accounting for cell-type composition is critical for robust methylation analysis. The following workflow is adapted from best practices identified in the literature [8] [12] [9].

Step-by-Step Methodology:

- Data Preprocessing and Filtering: Begin with quality-controlled methylation data (e.g., from arrays or sequencing). Remove probes that are strongly correlated with known confounders (e.g., age, sex) or those with low variance. This step can reduce inference error by 30-35% [9].

- Cell-Type Proportion Estimation: Apply a deconvolution algorithm to estimate the proportion of constituent cell types in each sample.

- Reference-Based Methods: Require an external dataset of methylation profiles from purified cell types. Useful when such references are available and reliable.

- Reference-Free Methods: Use computational approaches like MeDeCom, EDec, or RefFreeEWAS to simultaneously estimate proportions and cell-type-specific methylation profiles from the mixed data [9]. These are essential for solid tissues or cancer cells where pure reference profiles are scarce.

- Determining the Number of Cell Types (K): Use statistical heuristics like Cattell's scree plot to choose the appropriate number of underlying cell types (K) [9].

- Statistical Model Adjustment: Include the estimated cell-type proportions as covariates in the linear model for your EWAS. For example:

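Methylation ~ Phenotype + CellType_1 + CellType_2 + ... + CellType_K + Other_Covariates

This adjustment controls for heterogeneity and reduces spurious associations [8] [12]. If the estimated proportions sum to 1 in every sample, leave one cell type out of the model to avoid collinearity with the intercept.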
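To make the effect of the adjustment concrete, the sketch below simulates a CpG whose methylation depends only on the proportion of one cell type, while that proportion differs between cases and controls. It is a toy illustration under stated assumptions: the data are simulated, the `ols` helper is a hypothetical minimal solver written for this example, and a real analysis would use an established regression package.

```python
import random

def ols(X, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y], solved by Gauss-Jordan elimination.
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    for c in range(k):
        pivot = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[pivot] = A[pivot], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]

rng = random.Random(0)
n = 200
phenotype = [i % 2 for i in range(n)]  # 0 = control, 1 = case
# Cases carry more of cell type 1 (simulated proportions, clipped to [0, 1]).
prop = [min(max(rng.gauss(0.4 + 0.2 * ph, 0.05), 0.0), 1.0) for ph in phenotype]
# Methylation depends on cell composition only, not on the phenotype itself.
meth = [0.2 + 0.6 * p + rng.gauss(0, 0.02) for p in prop]

# Unadjusted model: Methylation ~ Phenotype
unadjusted = ols([[1.0, ph] for ph in phenotype], meth)
# Adjusted model: Methylation ~ Phenotype + CellType_1
# (the second cell type is omitted because the two proportions sum to 1)
adjusted = ols([[1.0, ph, p] for ph, p in zip(phenotype, prop)], meth)

print(f"phenotype effect, unadjusted: {unadjusted[1]:.3f}")
print(f"phenotype effect, adjusted:   {adjusted[1]:.3f}")
```

In this simulation the unadjusted phenotype coefficient is clearly non-zero even though the phenotype has no direct effect, and it shrinks toward zero once the cell-type proportion enters the model.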

Protocol 2: Deconvolution of Tumor Methylation Data Using Reference-Free Methods

Tumors are highly heterogeneous. This protocol outlines how to infer cell-type proportions from tumor DNA methylation data without pre-defined references.

Detailed Procedure:

- Input Data: Start with a matrix D of dimensions M (number of CpG probes) by N (number of tumor samples).

- Core Deconvolution Equation: The core of reference-free methods involves solving the equation D ≈ T × A through non-negative matrix factorization (NMF) [9].

- D is the original matrix of mixed tumor methylation profiles.

- T is the estimated matrix of M probes by K cell-type-specific methylation profiles.

- A is the estimated matrix of K cell-type proportions by N samples.

- Implementation:

- Software: Use packages like MeDeCom, EDec (Stage 1), or RefFreeEWAS in R.

- Initialization: Be aware that these methods can be sensitive to random initialization. It is good practice to run the analysis with multiple initializations and average the results [9].

- Performance: The accuracy of proportion estimation improves significantly with larger sample sizes (N) and greater inter-sample variability in cell-type mixtures [9].
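The factorization D ≈ T × A can be illustrated with a generic NMF using multiplicative updates. This is a minimal sketch under stated assumptions, not the algorithm used by MeDeCom, EDec, or RefFreeEWAS: the column normalization of A and the clipping of T to [0, 1] are simple heuristics, all function names are hypothetical, and the input is simulated. Instead of averaging over initializations, which requires matching components across runs, it keeps the restart with the lowest reconstruction error.

```python
import random

def matmul(X, Y):
    Yt = list(zip(*Y))
    return [[sum(a * b for a, b in zip(row, col)) for col in Yt] for row in X]

def transpose(X):
    return [list(col) for col in zip(*X)]

def sq_error(D, T, A):
    R = matmul(T, A)
    return sum((d - r) ** 2 for dr, rr in zip(D, R) for d, r in zip(dr, rr))

def nmf_once(D, K, rng, n_iter=150, eps=1e-9):
    """One NMF run from a random start; returns T (M x K), A (K x N), error."""
    M, N = len(D), len(D[0])
    T = [[rng.uniform(0.05, 0.95) for _ in range(K)] for _ in range(M)]
    A = [[rng.uniform(0.05, 0.95) for _ in range(N)] for _ in range(K)]
    for _ in range(n_iter):
        # Multiplicative update for A, then rescale each sample's column to sum to 1.
        Tt = transpose(T)
        num, den = matmul(Tt, D), matmul(matmul(Tt, T), A)
        A = [[a * x / (y + eps) for a, x, y in zip(ar, nr, dr)]
             for ar, nr, dr in zip(A, num, den)]
        sums = [sum(col) + eps for col in zip(*A)]
        A = [[a / s for a, s in zip(row, sums)] for row in A]
        # Multiplicative update for T, clipped so methylation stays in [0, 1].
        At = transpose(A)
        num, den = matmul(D, At), matmul(T, matmul(A, At))
        T = [[min(t * x / (y + eps), 1.0) for t, x, y in zip(tr, nr, dr)]
             for tr, nr, dr in zip(T, num, den)]
    return T, A, sq_error(D, T, A)

# Simulated input: 30 CpGs, 20 samples, 2 hidden cell types.
rng = random.Random(1)
T_true = [[rng.random(), rng.random()] for _ in range(30)]
p = [rng.uniform(0.1, 0.9) for _ in range(20)]
A_true = [p, [1.0 - x for x in p]]
D = matmul(T_true, A_true)

# Several random initializations; keep the best-fitting run.
runs = [nmf_once(D, K=2, rng=random.Random(seed)) for seed in range(3)]
T_hat, A_hat, best_error = min(runs, key=lambda run: run[2])
print(f"best reconstruction error: {best_error:.4f}")
```

The order of the recovered components is arbitrary, so rows of A_hat have to be matched to cell types using prior knowledge before they are interpreted.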

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DNA Methylation Analysis

| Reagent / Kit | Primary Function | Key Considerations |

|---|---|---|

| Sodium Bisulfite Conversion Kit | Chemically converts unmethylated cytosine to uracil, allowing for methylation status determination. | Ensure input DNA is pure. Conversion efficiency is critical for accuracy [15] [14]. |

| Methylated DNA Enrichment Kit (e.g., EpiMark) | Enriches for methylated DNA fragments using MBD2a-Fc beads. | Follow protocols for different DNA input amounts to minimize non-specific binding. High-temperature (98°C) elution may be needed [16]. |

| Hot-Start Taq Polymerase (e.g., Platinum Taq) | PCR amplification of bisulfite-converted DNA. | Essential because it can read through uracil residues in the template. Proof-reading polymerases are not suitable [15]. |

| Infinium MethylationEPIC BeadChip | High-throughput microarray for profiling methylation at >850,000 CpG sites. | Cost-effective for large studies. Covers promoter, gene body, and enhancer regions [14]. |

| Cell-Type Deconvolution Software (MeDeCom, EDec, RefFreeEWAS) | Computationally estimates cell-type proportions from mixed-tissue methylation data. | Choice between reference-free or reference-based methods depends on the availability of purified cell-type profiles [9]. |

A Practical Toolkit for Deconvolution: From Reference-Based to Reference-Free Algorithms

Frequently Asked Questions (FAQs)

General Principles

1. What is reference-based deconvolution and why is it important for DNA methylation analysis? Reference-based deconvolution is a computational method that estimates the proportions of different cell types within a complex biological sample (like whole blood or tissue) by leveraging known cell-type-specific DNA methylation patterns. It is crucial for correcting cellular heterogeneity in methylation-expression analyses, as variations in cell composition can confound association studies and lead to inaccurate biological interpretations. By mathematically decomposing the bulk methylation signal into its cellular constituents, researchers can control for this confounding and identify true epigenetic signatures related to disease, exposure, or other phenotypes [19].

2. How does reference-based deconvolution differ from reference-free methods? Reference-based methods are supervised and require a pre-defined reference panel containing DNA methylation profiles (signatures) of purified cell types. These signatures are used to estimate the proportion of each cell type in a mixed sample. In contrast, reference-free methods are unsupervised and do not require external references; they simultaneously estimate both putative cellular proportions and methylation profiles directly from the bulk data. While reference-based methods are generally more accurate and robust when high-quality references are available, reference-free methods offer a solution for tissues where reference panels are lacking [20] [19].

Technical and Experimental Setup

3. What are the key considerations when selecting or building a reference library? Selecting an optimal reference library is critical for accurate deconvolution. Key considerations include:

- Cell Type Specificity: The library must be built from highly specific differentially methylated regions (DMRs) that are invariant between individuals but distinct between cell types [19].

- Platform Compatibility: The reference must match the profiling technology used for your samples (e.g., Illumina 450K, EPIC array). References built for one platform (e.g., 450K) may perform suboptimally on another (e.g., EPIC), as a significant proportion of optimal probes can be unique to the newer platform [21].

- Library Size and Optimization: The number of marker CpGs matters. Larger libraries are not always better; optimized libraries like those identified by the IDOL algorithm can contain around 450 probes and achieve superior performance (R² > 99% for major cell types) compared to automatic selection methods [21].

- Biological Context: The reference should be appropriate for your biological question. For example, libraries derived from adult peripheral blood are not suitable for deconvoluting cord blood samples due to differences in cellular composition like nucleated erythrocytes [19].

4. My deconvolution results are inaccurate. What could have gone wrong? Inaccurate results can stem from several sources:

- Reference-Sample Mismatch: A common issue is using a reference library generated from a different dataset, profiling platform, or population than your study samples. This can lead to a consistent overestimation or underestimation of certain cell fractions [22].

- Poor Marker Specificity: The selected marker CpGs may not be sufficiently specific in your sample dataset, leading to cross-talk and error between similar cell types. The performance is highly dependent on the F-statistic (specificity) of the markers [22].

- Low Abundance Cell Types: Deconvolving the proportions of rare cell types (e.g., those present at <1%) remains challenging and is highly sensitive to the choice of algorithm and the number of marker loci used [22].

- Incorrect Algorithm Choice: The performance of deconvolution algorithms varies significantly depending on the context, such as the number of cell types, their abundance, and their similarity. Benchmarking studies show that no single algorithm performs best in all scenarios [22].

Data Analysis and Interpretation

5. Which deconvolution algorithm should I choose for my project? There is no one-size-fits-all algorithm. Comprehensive benchmarking of 16 algorithms revealed that performance depends heavily on specific experimental variables [22]. The choice should be tailored based on:

- Cell abundance: Some algorithms are better at estimating rare cell fractions.

- Cell type similarity: Complex mixtures with highly similar cell types may require more sophisticated methods.

- Reference panel size: The number of markers can interact with algorithm performance.

- Profiling method: Algorithms may perform differently on array-based (450K/EPIC) versus sequencing-based (WGBS/RRBS) data. Systematic evaluation using your specific data structure is recommended for optimal selection [22].

6. How can I validate my deconvolution results? The gold standard for validation is the use of orthogonal measurements—independent methods to quantify cell compositions—from the same samples. These can include [23] [24]:

- Fluorescence-activated cell sorting (FACS)

- Immunohistochemistry (IHC)

- Single-molecule fluorescent in situ hybridization (smFISH)

In the absence of such matched data, using artificially constructed mixtures with known cell proportions is a common strategy to benchmark algorithm accuracy before applying it to unknown samples [22] [21].

Troubleshooting Guides

Problem 1: High Error in Specific Cell Type Estimates

Symptoms: One cell type is consistently over- or under-estimated across multiple samples, while others are accurately predicted.

Possible Causes and Solutions:

- Cause: Non-specific marker CpGs. The marker loci for the problematic cell type lack specificity in your dataset.

- Solution: Re-evaluate your marker selection. Consider using an optimized, pre-curated library like those from the IDOL algorithm, which has been shown to reduce variance and improve accuracy [21].

- Cause: High similarity to another cell type. The epigenomic profiles of two cell types are very similar.

- Cause: Algorithm is biased against low-abundance or high-abundance cells.

- Solution: Test alternative deconvolution algorithms. Benchmarking studies indicate that algorithms like MethylResolver or EMeth variants may perform differently across various abundance ranges [22].

Problem 2: High Error and Low Correlation in Validation Mixtures

Symptoms: High root mean square error (RMSE) and low correlation (Spearman's R²) between predicted and expected proportions in validation mixtures.

Possible Causes and Solutions:

- Cause: Fundamental reference-to-sample mismatch. The reference library and the sample data are generated from different sources, leading to systematic bias.

- Solution: Ensure the reference and sample data are profiled on the same platform. If possible, use a reference generated from a population matched to your study. If a perfect match is unavailable, try different normalization methods for the bulk data, as this can significantly impact the results of some algorithms [22].

- Cause: Suboptimal deconvolution algorithm for your data structure.

- Cause: Insufficient sequencing depth (for sequencing-based data).

- Solution: For whole-genome bisulfite sequencing (WGBS) or reduced representation bisulfite sequencing (RRBS) data, ensure adequate sequencing depth. Performance degrades significantly with low coverage [22].

Problem 3: Results are Inconsistent or Non-reproducible

Symptoms: Large variance in estimates between technical replicates or when re-running the analysis.

Possible Causes and Solutions:

- Cause: Noisy data or low-quality DNA.

- Solution: Apply stringent quality control (QC) metrics to your raw methylation data before deconvolution. Remove low-quality samples or probes with high detection p-values or low bead counts.

- Cause: Instability in the algorithm or marker set.

- Solution: Use a larger, more robust set of marker CpGs. Optimized libraries like the 450-CpG set from IDOL have been shown to produce estimates with significantly lower variance compared to other methods [21].
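The QC step above can be sketched as a simple filter on detection p-values. The 0.01 p-value cutoff and the 5% failure threshold are assumptions chosen for illustration, not values taken from the cited studies, and `qc_filter` is a hypothetical helper. In practice the detection p-values would come from your array-processing package.

```python
def qc_filter(det_p, p_cutoff=0.01, max_fail_frac=0.05):
    """Drop unreliable samples, then unreliable probes.

    det_p maps a probe ID to its list of detection p-values, one per sample.
    Assumes at least one sample passes QC.
    """
    probes = list(det_p)
    n_samples = len(det_p[probes[0]])
    # A sample fails if too many of its probes have a high detection p-value.
    keep_samples = [
        j for j in range(n_samples)
        if sum(det_p[p][j] > p_cutoff for p in probes) / len(probes) <= max_fail_frac
    ]
    # A probe fails if it is unreliable in too many of the retained samples.
    keep_probes = [
        p for p in probes
        if sum(det_p[p][j] > p_cutoff for j in keep_samples) / len(keep_samples)
        <= max_fail_frac
    ]
    return keep_probes, keep_samples

# Toy data: the fourth sample is poor throughout and one probe fails everywhere.
det_p = {f"cg{i:02d}": [1e-5, 1e-5, 1e-5, 0.5] for i in range(39)}
det_p["cg_bad"] = [0.2, 0.3, 0.4, 0.5]

kept_probes, kept_samples = qc_filter(det_p)
print(len(kept_probes), kept_samples)
```

Filtering samples before probes keeps a single failed sample from causing otherwise reliable probes to be discarded.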

Experimental Protocols & Workflows

Workflow 1: Standardized Protocol for Blood Sample Deconvolution using EPIC Array

This protocol is adapted from methods used to generate highly accurate deconvolution estimates for whole-blood biospecimens [21].

1. DNA Extraction and Quality Control:

- Extract DNA from whole blood or buffy coat using a standard kit.

- Quantify DNA and assess quality (e.g., via Nanodrop or Qubit). DNA should be of high quality, but deconvolution is compatible with archival samples.

2. Methylation Profiling:

- Process DNA using the Illumina Infinium MethylationEPIC BeadChip according to the manufacturer's instructions.

- This array interrogates over 860,000 CpG sites, providing ample data for deconvolution.

3. Data Preprocessing and Normalization:

- Process raw intensity data (IDAT files) using the minfi R/Bioconductor package.

- Perform background correction and normalization (e.g., using preprocessNoob or preprocessQuantile).

- Extract beta-values for downstream analysis.

4. Reference Library Application and Deconvolution:

- Obtain the optimized reference library. The study by Salas et al. (2018) identified a 450-CpG library using the IDOL algorithm, which is highly recommended [21].

- Use the constrained projection method implemented in minfi (e.g., the projectCellType function) to estimate cell proportions.

- Input: A matrix of beta-values for your samples, filtered to the 450 CpGs in the IDOL library.

- Output: A matrix of estimated cell proportions for neutrophils, monocytes, B-cells, NK cells, and CD4+ and CD8+ T-cells.

5. Validation (If Possible):

- Compare deconvolution results with orthogonal cell counts from flow cytometry performed on a subset of matched samples.
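The idea behind step 4 can be sketched outside of R. The example below estimates proportions for a single sample by minimizing the squared difference between its beta-values and a weighted sum of reference profiles, with weights constrained to be non-negative. It is a simplified stand-in rather than minfi's implementation: it forces the weights to sum to exactly 1 and uses projected gradient descent instead of quadratic programming. The reference values and function names are hypothetical.

```python
def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1}."""
    u = sorted(v, reverse=True)
    cumulative, theta = 0.0, 0.0
    for i, ui in enumerate(u, start=1):
        cumulative += ui
        candidate = (cumulative - 1.0) / i
        if ui - candidate > 0:
            theta = candidate
    return [max(x - theta, 0.0) for x in v]

def estimate_proportions(beta, reference, n_iter=2000):
    """Minimize ||beta - reference * w||^2 over the simplex by projected gradient."""
    n_types = len(reference[0])
    # Conservative step size: the sum of squared entries bounds the
    # largest eigenvalue of reference' * reference.
    step = 1.0 / sum(x * x for row in reference for x in row)
    w = [1.0 / n_types] * n_types
    for _ in range(n_iter):
        resid = [sum(r * wk for r, wk in zip(row, w)) - b
                 for row, b in zip(reference, beta)]
        grad = [sum(row[k] * e for row, e in zip(reference, resid))
                for k in range(n_types)]
        w = project_to_simplex([wk - step * g for wk, g in zip(w, grad)])
    return w

# Hypothetical reference: 6 marker CpGs (rows) x 3 cell types (columns).
reference = [
    [0.9, 0.1, 0.1], [0.8, 0.2, 0.1],
    [0.1, 0.9, 0.2], [0.2, 0.8, 0.1],
    [0.1, 0.1, 0.9], [0.2, 0.1, 0.8],
]
true_w = [0.6, 0.3, 0.1]
# Noise-free bulk profile built as the weighted average of the references.
bulk = [sum(r * w for r, w in zip(row, true_w)) for row in reference]

estimated = estimate_proportions(bulk, reference)
print([round(w, 3) for w in estimated])
```

With real data the bulk profile is noisy and the reference is imperfect, so the estimates will not match the truth exactly. Comparison against flow cytometry, as described in step 5, remains the meaningful check.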

Deconvolution Workflow for Blood Samples

Workflow 2: Benchmarking Deconvolution Algorithms

Before analyzing your full dataset, it is critical to benchmark algorithms to identify the best performer for your specific context [22] [25].

1. Create a Ground Truth Dataset:

- In-silico Mixtures: Combine DNA methylation profiles from purified cell types in predefined proportions. Sample proportions from a uniform distribution and rescale to sum to 1. Use 200+ such mixtures for robust testing.

- In-vitro Mixtures: Physically mix DNA from purified cell types in known proportions and profile them on your chosen platform.

2. Algorithm Selection and Configuration:

- Select a panel of algorithms for testing (e.g., CIBERSORT, EpiDISH, FARDEEP, minfi, NNLS, Ridge, Lasso, Elastic Net, EMeth variants).

- Apply different normalization methods to the mixture data (e.g., no normalization, quantile normalization, z-score transformation). Note that some algorithms are incompatible with certain normalizations.

3. Performance Evaluation:

- For each algorithm-normalization combination, compute performance metrics by comparing deconvolved proportions to the known ground truth.

- Key Metrics:

- Root Mean Square Error (RMSE): Measures absolute error.

- Spearman's R²: Measures correlation between true and predicted ranks.

- Jensen-Shannon Divergence (JSD): Assesses similarity between the distributions.

- Compile these into a summary Accuracy Score (AS) to rank the methods.

4. Select and Apply the Best Performer:

- Choose the algorithm-normalization combination with the highest AS or the best performance on the metric most critical to your study.

- Use this optimized configuration to deconvolve your actual study samples.
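The evaluation steps above can be sketched with the standard library. The example draws in-silico mixture proportions from a uniform distribution, rescales them to sum to 1, and scores a set of predictions with RMSE, Spearman correlation, and Jensen-Shannon divergence. The "predicted" proportions here are simply the true values plus noise, a placeholder for the output of whichever deconvolution algorithm is being benchmarked. All function names are illustrative, and the summary Accuracy Score is not reproduced because its exact definition is not given here.

```python
import math
import random

def simulate_proportions(n_mixtures, n_types, rng):
    """Draw uniform weights and rescale each mixture to sum to 1."""
    mixtures = []
    for _ in range(n_mixtures):
        w = [rng.random() for _ in range(n_types)]
        total = sum(w)
        mixtures.append([x / total for x in w])
    return mixtures

def rmse(truth, pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(truth, pred)) / len(truth))

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return r

def spearman(truth, pred):
    """Pearson correlation of the ranks."""
    rt, rp = ranks(truth), ranks(pred)
    mt, mp = sum(rt) / len(rt), sum(rp) / len(rp)
    cov = sum((a - mt) * (b - mp) for a, b in zip(rt, rp))
    var_t = sum((a - mt) ** 2 for a in rt)
    var_p = sum((b - mp) ** 2 for b in rp)
    return cov / math.sqrt(var_t * var_p)

def jsd(p, q):
    """Jensen-Shannon divergence (log base 2, so between 0 and 1)."""
    m = [(a + b) / 2 for a, b in zip(p, q)]
    def kl(x, y):
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

rng = random.Random(42)
truth = simulate_proportions(200, 4, rng)
# Placeholder predictions: truth plus noise, clipped at 0 and rescaled.
predicted = []
for mix in truth:
    noisy = [max(x + rng.gauss(0, 0.02), 0.0) for x in mix]
    total = sum(noisy)
    predicted.append([x / total for x in noisy])

# RMSE and Spearman are computed per cell type across mixtures.
for k in range(4):
    t_k = [mix[k] for mix in truth]
    p_k = [mix[k] for mix in predicted]
    print(f"cell type {k}: RMSE={rmse(t_k, p_k):.3f}, Spearman={spearman(t_k, p_k):.3f}")
# JSD compares whole composition vectors, one mixture at a time.
mean_jsd = sum(jsd(t, p) for t, p in zip(truth, predicted)) / len(truth)
print(f"mean JSD: {mean_jsd:.4f}")
```

Reporting the metrics per cell type, rather than pooled, makes it easier to spot the situation described in Problem 1, where a single cell type is estimated poorly while the others look fine.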

Quantitative Data and Performance Metrics

Table 1: Performance Comparison of Selected Deconvolution Algorithms on Tissue Mixtures

This table summarizes findings from a large-scale benchmarking study on mixtures of four tissues (small intestine, blood, kidney, liver), illustrating how performance varies [22].

| Algorithm Category | Example Algorithm | Normalization Used | Median RMSE | Median Spearman's R² | Notes on Performance |

|---|---|---|---|---|---|

| Non-negative Least Squares | NNLS | None | 0.07 | 0.90 | Stable, middle-of-the-road performance. |

| Constrained Projection | minfi | Illumina | 0.06 | 0.92 | Robust and commonly used, integrated into minfi. |

| Regularized Regression | Ridge Regression | Z-score | 0.08 | 0.88 | Performance can vary with the regularization parameter. |

| Robust Regression | FARDEEP | Log | 0.09 | 0.85 | Designed to be outlier-resistant. |

| Expectation-Maximization | EMeth-Binomial | None | 0.05 | 0.94 | Showed top-tier performance in specific benchmarking scenarios. |

RMSE: root mean square error; lower is better. R²: Spearman's coefficient; closer to 1 is better.

Table 2: Impact of Reference Library on Deconvolution Accuracy in Blood

Data from Salas et al. (2018) demonstrating the improvement gained by using an optimized reference library on the EPIC array for deconvolving immune cell types [21].

| Reference Library | Deconvolution Method | Average R² (across cell types) | Key Advantage |

|---|---|---|---|

| Reinius (450K) | Automatic (minfi) | >86% but highly variable | Historical standard, but suboptimal for EPIC. |

| EPIC - Automatic | Automatic (minfi) | ~90% | Better than 450K but not optimized. |

| EPIC - IDOL (450 CpGs) | Constrained Projection | 99.2% | Dramatically reduced variance, highest accuracy. |

| Resource Name | Type | Function / Application | Notes |

|---|---|---|---|

| Illumina MethylationEPIC BeadChip | Microarray | Genome-wide DNA methylation profiling. | The current standard array; covers >860,000 CpGs. Ideal for deconvolution with optimized libraries [21]. |

| FlowSorted.Blood.EPIC | Reference Dataset | Pre-built reference of methylation profiles for sorted blood cells. | Contains data for neutrophils, monocytes, B-cells, CD4+ T, CD8+ T, and NK cells. Essential for building or validating blood deconvolution models [21]. |

| IDOL Algorithm | Computational Method | Identifies Optimal L-DMR libraries for deconvolution. | Used to find the most informative CpGs for a given cell type panel, significantly improving accuracy over automatic selection [21]. |

| minfi (R/Bioconductor) | R Package | Comprehensive toolbox for analyzing methylation array data. | Includes functions for data preprocessing, quality control, and the Houseman method for constrained projection deconvolution [21] [19]. |

| EpiDISH (R/Bioconductor) | R Package | Suite for deconvolving DNA methylation data. | Implements multiple deconvolution algorithms (e.g., CIBERSORT, RPC) allowing for easy method comparison [22]. |

| Fluorescent Beads (for PSF) | Reagent | Used to generate empirical Point Spread Functions. | Note: This is a critical reagent for image deconvolution in microscopy, a different field. It is included here to prevent confusion, as it often appears in searches for "deconvolution" [26]. |

The Problem of Cellular Heterogeneity

In DNA methylation studies, most tissues of interest are complex mosaics of different cell types. For example, whole blood contains a mixture of granulocytes, lymphocytes, and other immune cells, while solid tissues like breast or tumor samples can be composed of numerous distinct cell types. The measured DNA methylation level in a bulk tissue sample represents a weighted average of the methylation levels from all constituent cell types. When the proportions of these cell types vary between individuals and are associated with the phenotype of interest (e.g., disease state), this can create spurious associations or mask true signals. This confounding effect is one of the largest contributors to DNA methylation variability and must be accounted for to accurately interpret analysis results. [27] [12] [7]

When Are Reference-Free and Semi-Supervised Methods Needed?

Reference-based deconvolution methods require an external reference dataset containing cell-type-specific methylation profiles for a predefined set of cell types. While powerful, such reference data only exist for a limited number of tissues like blood, breast, and brain. Furthermore, available references may not match the study population in terms of age, genetics, or environmental exposures. For instance, a blood reference from adults may fail to accurately estimate cell proportions in newborns. In these situations, reference-free (unsupervised) and semi-supervised methods become essential. [28] [7]

This Technical Support Center guide addresses the specific challenges researchers face when applying these advanced computational methods.

Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: What is the fundamental difference between reference-free and semi-supervised deconvolution methods?

- Answer: Reference-free (or unsupervised) methods, such as ReFACTor or non-negative matrix factorization (NNMF), aim to infer underlying cell composition directly from the bulk methylation data matrix without any prior information. They identify components that capture the major sources of variation, which often correspond to cell-type proportions. In contrast, semi-supervised methods, like BayesCCE, incorporate easily obtainable prior knowledge about the cell-type composition distribution of the studied tissue. This allows them to construct components that correspond more directly to specific cell types rather than just linear combinations of them. [28]

FAQ 2: My reference-free method output components that are highly correlated with cell types, but why can't I interpret them as direct cell proportions?

- Answer: This is a common point of confusion. Most reference-free methods are mathematically limited to inferring linear combinations of the true cell-type proportions, not the proportions themselves. A component might, for example, represent "0.5 × CD4+ T cells + 0.5 × Monocytes - 0.2 × B cells." While this component is useful for adjusting for confounding in a linear regression, it cannot be used to report the actual percentage of CD4+ T cells or in any non-linear downstream analysis. Semi-supervised methods like BayesCCE were specifically designed to overcome this identifiability problem. [28]

FAQ 3: How do I choose the number of cell types (K) in a reference-free decomposition?

- Answer: Determining the correct number of constituent cell types (K) is a critical step. Some methods, like the one proposed by Houseman et al., incorporate a resampling-based procedure to evaluate the stability of the decomposition across different values of K and select the most reasonable estimate. It is recommended to run the method over a range of potential K values and use the built-in model selection criteria, if available, or to evaluate the biological interpretability of the resulting methylomes. Using a negative control, such as data from a relatively pure tissue like sperm, can help validate that the method correctly identifies a low number of components when heterogeneity is minimal. [27]

FAQ 4: After deconvolution, how can I biologically validate the estimated cell-type-specific methylomes?

- Answer: You can evaluate the biological relevance of the estimated methylomes (matrix M) by analyzing the CpG loci with the highest variance across the K components. These high-variance CpGs are the most informative for distinguishing the putative cell types. You can then test these CpGs for enrichment in known cell-type-specific regulatory markers using auxiliary annotation data from projects like The Roadmap Epigenomics Project. Significant enrichment provides evidence that the decomposed methylomes reflect true biological distinctions between cell types. [27]

FAQ 5: I have cell count data for a small subset of my samples. Can I use this information?

- Answer: Yes, and this is a major strength of semi-supervised methods like BayesCCE. While existing reference-based and reference-free methods typically ignore this valuable information, BayesCCE's Bayesian framework is flexible and allows for the incorporation of known cell counts from a subset of individuals (or from external data). This leads to a significant improvement in the correlation of the estimated components with the true cell counts, effectively imputing the missing cell counts for the rest of the cohort. [28]

Method Comparison & Selection Guide

The table below summarizes key reference-free and semi-supervised methods, their core principles, and typical use cases to help you select the right tool.

| Method Name | Core Methodology | Key Features | Best Use Cases |

|---|---|---|---|

| ReFACTor [28] | Reference-free (Unsupervised) | Computes principal components (PCs) that are prioritized to capture cell composition variation. | Adjusting for cell-type confounding in EWAS when the goal is not to obtain actual proportions. |

| Non-Negative Matrix Factorization (NNMF) [27] [28] | Reference-free (Unsupervised) | Decomposes the bulk methylation matrix (Y) into two non-negative matrices: putative methylomes (M) and proportions (Ω). | Exploring underlying cell-type structure and estimating putative proportions and methylomes without any prior data. |

| BayesCCE [28] | Semi-Supervised | A Bayesian framework that incorporates prior knowledge on the cell-type composition distribution of the tissue. | When approximate cell proportion distributions are known and the goal is to obtain estimates that correspond to specific cell types. |

| Meth-SemiCancer [29] | Semi-Supervised (Classification) | A neural network that uses pseudo-labeling to leverage unlabeled DNA methylome data during training. | Cancer subtype classification when you have a small set of labeled data and a larger set of unlabeled data. |

Experimental Protocols & Workflows

Standardized Workflow for Reference-Free Deconvolution

The following diagram illustrates a general, recommended workflow for performing and validating a reference-free deconvolution analysis.

Protocol: Conducting a Reference-Free Deconvolution with NNMF

This protocol is based on the method described in Houseman et al. (2016). [27]

1. Input Data Preparation:

- Data Type: An m × n matrix Y of DNA methylation data, where m is the number of CpG probes and n is the number of subjects/specimens. Values are typically beta-values between 0 and 1.

- Preprocessing: Perform standard quality control (e.g., probe filtering, normalization) and adjust for any technical artifacts or batch effects. The data should be formatted and cleaned as for a standard EWAS.

2. Algorithm Execution:

- Principle: The goal is to factorize the data matrix such that Y ≈ MΩ^T, where M is an m × K matrix of putative cell-type-specific methylomes and Ω is an n × K matrix of subject-specific cell-type proportions. Entries in M and Ω are constrained to the unit interval [0,1].

- Implementation: Use a non-negative matrix factorization (NNMF) algorithm. Due to computational intensity, use the fast approximation and resampling procedure suggested by the authors to determine the number of components K.

- Determine K: Run the NNMF algorithm over a range of K values (e.g., K=2 to K=10). Use the resampling approach to evaluate the stability of the solutions. The optimal K is the one that provides a stable decomposition where the components demonstrate anticipated associations with phenotypes.

3. Downstream Analysis:

- Phenotype Association: Include the estimated proportion matrix Ω as covariates in your EWAS model to adjust for cell-type heterogeneity:

Phenotype ~ Methylation_at_CpG_j + Ω_1 + Ω_2 + ... + Ω_K + Covariates

- Interpret M: For biological interpretation, calculate the variance of each CpG across the K columns of M. The CpGs with the highest row-wise variance are the most differential across the inferred cell types. Use these CpGs for functional enrichment analysis against databases of cell-type-specific marks.
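Ranking CpGs by their row-wise variance in M takes only a few lines. The sketch below assumes the estimated methylomes are available as a mapping from CpG ID to its K estimated values. The CpG names, the values, and the `top_variable_cpgs` helper are made up for illustration.

```python
from statistics import pvariance

def top_variable_cpgs(methylomes, n_top):
    """Return the n_top CpG IDs with the highest variance across the K components.

    methylomes maps a CpG ID to its row of M (K estimated methylation values).
    """
    ranked = sorted(methylomes, key=lambda cpg: pvariance(methylomes[cpg]), reverse=True)
    return ranked[:n_top]

# Toy estimate of M with K = 3 putative cell types.
M_hat = {
    "cg_a": [0.90, 0.10, 0.15],  # differs strongly between components
    "cg_b": [0.50, 0.52, 0.49],  # nearly constant
    "cg_c": [0.20, 0.80, 0.75],  # differs strongly between components
    "cg_d": [0.05, 0.06, 0.05],  # nearly constant
}

selected = top_variable_cpgs(M_hat, 2)
print(selected)
```

The selected CpGs are the candidates to carry forward into the enrichment analysis. How many to keep is a judgment call that depends on array size and on the annotation resource used.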

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key computational tools and resources that are fundamental to this field of research.

| Tool / Resource Name | Type | Function & Application |

|---|---|---|

| Illumina Infinium BeadChip [27] [30] | Experimental Platform | Genome-wide methylation profiling array (e.g., 450K, EPIC). Provides the primary bulk methylation data matrix (Y) for deconvolution. |

| ReFACTor [28] | Software / Algorithm | A reference-free method for estimating components that capture cell composition variation, useful for EWAS adjustment. |

| BayesCCE [28] | Software / Algorithm | A semi-supervised Bayesian method for estimating cell-type composition by incorporating prior knowledge on cell count distributions. |

| Roadmap Epigenomics Project [27] | Data Resource | A public repository of reference epigenomes for various cell types and tissues. Used for biological validation of estimated methylomes (M). |

| Metheor [31] | Software / Algorithm | A toolkit for measuring DNA methylation heterogeneity from bisulfite sequencing data, which can inform on cellular diversity. |

Troubleshooting Guides

SVA Troubleshooting: Common Errors and Solutions

Problem 1: "Subscript out of bounds" error in irwsva.build

- Error Description: The SVA process fails with a "subscript out of bounds" error during the iterative procedure of the `irwsva.build` function.

- Root Cause: This often occurs in datasets with a small number of features (genes) and a high-dimensional response variable (e.g., many phenotype classes). The algorithm can down-weight features associated with the response so aggressively that the data matrix effectively becomes a matrix of all zeros. A subsequent singular value decomposition (SVD) on this zero matrix fails because no positive singular values can be found, causing the error [32].

- Solutions:

- Reduce the number of response variable classes: If biologically justified, reducing the number of levels in your phenotype variable can help [32].

- Use the two-step SVA method: Run `sva` with the argument `method = 'two-step'`. Be aware that this method has different properties and subsequent functions like `fsva` might not be fully compatible [32].

- Limit the number of iterations: Setting `B=1` (for one iteration) may allow the function to complete, though the results should be interpreted with caution [32].

- Check for sufficient surrogate variables: Use `num.sv` to verify that a non-zero number of surrogate variables is detected. If `num.sv` returns 0, it indicates that all features are significantly associated with the variable of interest, leaving no residual variation for SVA to capture [32].
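
The root cause described above can be reproduced in isolation. The sketch below is not the `sva` source code; it only illustrates, on an assumed random matrix, that a feature matrix whose row weights have all collapsed to zero has no positive singular values, so any later step that indexes into them has nothing to select. The helper name `n_positive_singular_values` is hypothetical.

```python
import numpy as np

def n_positive_singular_values(X, weights, tol=1e-12):
    """Count singular values above tol after weighting each feature (row) of X."""
    weighted = X * weights[:, None]
    s = np.linalg.svd(weighted, compute_uv=False)
    return int(np.sum(s > tol))

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 8))     # 5 features, 8 samples (assumed toy data)

n_full = n_positive_singular_values(X, np.ones(5))    # no down-weighting
n_zero = n_positive_singular_values(X, np.zeros(5))   # every feature weighted to 0
print(n_full, n_zero)
```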

Problem 2: SVA fails to identify any surrogate variables

- Error Description: The `num.sv` function returns 0 significant surrogate variables.

- Root Cause: This typically happens when the number of features is small, and most or all of them are strongly associated with the primary variable of interest (e.g., disease status). In this case, there is little to no unmodeled variation for SVA to detect [32].

- Solutions:

- Consider a different method: If SVA cannot find surrogate variables, its application may not be appropriate for your dataset. Consider alternative batch correction methods like ComBat or linear regression-based approaches [33] [34].

- Verify feature selection: Ensure that the input data matrix contains a sufficient number of features that are not directly driven by the primary variable.

General Workflow Troubleshooting for Cellular Heterogeneity Correction

Problem: Corrected data shows loss of biological signal

- Error Description: After applying a correction method (e.g., batch correction or deconvolution), the data no longer shows expected biological differences between groups.

- Root Cause: Over-correction. The method may be removing biological variation along with technical noise, especially if the batch is confounded with the biological variable of interest [34] [35].

- Solutions:

- Apply correction methods carefully: Methods like SVA and ComBat can remove biological signal if it is correlated with batch. Where possible, include biological variables in the model to protect them during correction [34].

- Evaluate correction quality: Always assess the result. For batch correction, check that batches are mixed but known biological groups remain distinct. Use metrics like SVM accuracy to quantify batch mixing and preservation of within-batch structure [35].
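
The over-correction risk is easy to see on a toy example. The sketch below uses simple batch mean centering as a stand-in for a correction method and made-up values (a group effect of 2 and a batch effect of 5); the helper names are hypothetical. When group and batch are balanced, the group difference survives correction; when they are fully confounded, it is removed along with the batch effect.

```python
import numpy as np

def batch_mean_center(x, batch):
    """Subtract each batch's mean from the samples in that batch."""
    x = np.asarray(x, dtype=float)
    batch = np.asarray(batch)
    out = x.copy()
    for b in np.unique(batch):
        out[batch == b] -= x[batch == b].mean()
    return out

def group_difference(x, group):
    """Mean of group 1 minus mean of group 0."""
    group = np.asarray(group)
    return x[group == 1].mean() - x[group == 0].mean()

# Balanced design: each batch contains both groups
batch_bal = np.array([0, 0, 1, 1])
group_bal = np.array([0, 1, 0, 1])
x_bal = 2.0 * group_bal + 5.0 * batch_bal
diff_balanced = group_difference(batch_mean_center(x_bal, batch_bal), group_bal)

# Confounded design: batch and group are identical
batch_conf = np.array([0, 0, 1, 1])
group_conf = np.array([0, 0, 1, 1])
x_conf = 2.0 * group_conf + 5.0 * batch_conf
diff_confounded = group_difference(batch_mean_center(x_conf, batch_conf), group_conf)

print(diff_balanced, diff_confounded)
```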

Frequently Asked Questions (FAQs)

Q1: When should I use SVA versus a linear model-based method like removeBatchEffect or ComBat?

- A: The choice depends on your experimental design and prior knowledge.

- Use linear model-based methods (e.g., `removeBatchEffect`, `ComBat`, `rescaleBatches`) when you have a known batch or technical factor you wish to remove. These methods are statistically efficient and work best when the cell population composition is the same across batches or known a priori [33] [35].

- Use SVA when you suspect there are unknown sources of variation (e.g., unknown subpopulations, unmeasured clinical variables) that are confounding your analysis. SVA is an unsupervised approach designed to discover and account for these "surrogate variables" [34].

Q2: How can I assess the performance of different normalization or correction methods in my own data?

- A: Benchmarking performance requires defining a gold standard and relevant metrics. Common strategies include:

- Downstream Analysis Accuracy: If you have prior biological knowledge, such as validated differentially expressed genes or known cell-type markers, you can measure how well each method recovers these signals after correction [36] [37].

- Technical Metric: Use metrics like the Area Under the Precision-Recall Curve (auPRC) to evaluate how well the corrected data recapitulates known functional relationships between genes from databases like Gene Ontology [37].

- Visual and Quantitative Diagnostics: For batch correction, use visualizations (t-SNE, PCA) and quantitative metrics (e.g., SVM accuracy for predicting batch) to check that technical variation is reduced without over-mixing biological groups [35].
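
As a concrete illustration of the precision-recall metric, the sketch below computes average precision, one common estimator of auPRC. It assumes binary gold-standard labels (for example, 1 for gene pairs that share an annotation) and a score per item (for example, a co-expression weight); the cited benchmark may compute auPRC differently, and the function name is hypothetical.

```python
def average_precision(labels, scores):
    """Average precision: mean of precision values at the rank of each true positive."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("at least one positive label is required")
    # Rank items from highest to lowest score
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    total = 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i]:
            hits += 1
            total += hits / rank   # precision at this recall point
    return total / n_pos

ap_mixed = average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1])
ap_perfect = average_precision([1, 1, 0, 0], [0.9, 0.8, 0.7, 0.1])
print(ap_mixed, ap_perfect)
```

Running the same function on the output of each candidate workflow gives a single number per workflow that can be compared directly.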

Q3: My dataset is small and has high heterogeneity. What normalization methods are most robust for prediction tasks?

- A: Studies evaluating normalization for cross-study prediction under heterogeneity have found that:

- Batch Correction Methods (e.g., BMC, Limma) consistently outperform other approaches [36].

- Transformation Methods designed to achieve data normality, such as Blom and NPN, can effectively align data distributions across different populations [36].

- Among scaling methods, TMM and RLE generally show more consistent performance compared to Total Sum Scaling (TSS)-based methods like UQ, MED, and CSS when population effects are present [36].
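
For readers unfamiliar with the Blom transformation, a minimal sketch follows. It maps the rank r of each value among n values to the normal quantile of (r - 3/8) / (n + 1/4). The example assumes no ties (tied values simply receive consecutive ranks here), and the function name is hypothetical.

```python
from statistics import NormalDist

def blom_transform(values):
    """Rank-based inverse normal transformation using Blom's offset."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0] * n
    for r, i in enumerate(order, start=1):
        ranks[i] = r                      # rank 1 = smallest value
    nd = NormalDist()                     # standard normal
    return [nd.inv_cdf((r - 0.375) / (n + 0.25)) for r in ranks]

transformed = blom_transform([10.0, 3000.0, 200.0])
print([round(v, 3) for v in transformed])
```

Because only ranks are used, features measured on very different scales in different populations end up on the same approximately normal scale.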

Q4: What is the role of deconvolution methods in correcting for cellular heterogeneity?

- A: Deconvolution methods (e.g., CIBERSORT, DeconmiR) are used to estimate the proportion of different cell types within a bulk tissue sample. This is crucial because observed molecular changes in bulk data could be due to either a shift in cell-type proportions or a change in expression within a cell type. By estimating these proportions, you can either adjust for them as covariates in statistical models or analyze cell-type-specific expression, thereby reducing confounding [38].
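
The idea behind reference-based deconvolution can be sketched with non-negative least squares. This is not how CIBERSORT or DeconmiR work internally; it is a simplified stand-in that assumes a known signature matrix (features by cell types), a noise-free bulk profile, and synthetic data. The function name `estimate_proportions` is hypothetical.

```python
import numpy as np

def estimate_proportions(signature, bulk, n_iter=5000):
    """Non-negative least squares by projected gradient, rescaled to sum to 1."""
    A = np.asarray(signature, dtype=float)
    step = 1.0 / np.linalg.norm(A.T @ A, 2)      # 1 / Lipschitz constant
    p = np.full(A.shape[1], 1.0 / A.shape[1])    # start from equal proportions
    for _ in range(n_iter):
        # Gradient step on ||A p - bulk||^2 / 2, then project onto p >= 0
        p = np.maximum(p - step * A.T @ (A @ p - bulk), 0.0)
    return p / p.sum()

rng = np.random.default_rng(0)
signature = rng.uniform(size=(50, 3))            # 50 marker features, 3 cell types
true_p = np.array([0.6, 0.3, 0.1])
bulk = signature @ true_p                        # simulated bulk sample
est_p = estimate_proportions(signature, bulk)
print(np.round(est_p, 3))
```

The estimated proportions can then be used as covariates in a regression model, as described in the answer above.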

Comparative Performance Tables

Table 1: Summary of Normalization Method Performance in Cross-Study Prediction under Heterogeneity [36]

| Method Category | Specific Method | Key Strengths | Key Limitations |

|---|---|---|---|

| Scaling Methods | TMM, RLE | More consistent performance under population effects compared to TSS-based methods. | Performance declines rapidly with increasing population effects. |

| Transformation Methods | Blom, NPN | Effective at aligning data distributions across populations; good for capturing complex associations. | Can lead to high sensitivity but low specificity in predictions. |

| Batch Correction | BMC, Limma | Consistently outperforms other categories; provides high AUC, accuracy, sensitivity, and specificity. | May over-correct if biological signal is correlated with batch. |

| TSS-based Methods | UQ, MED, CSS | Standard methods for microbiome data. | Performance is generally inferior to TMM/RLE and batch correction methods in heterogeneous settings. |

Table 2: Troubleshooting Guide for Common SVA Errors [32]

| Error Symptom | Likely Cause | Recommended Solutions |

|---|---|---|

| "Subscript out of bounds" in `irwsva.build` | Data matrix down-weighted to all zeros due to small features/high response dimensions. | 1. Reduce phenotype classes. 2. Use `method='two-step'`. 3. Run with `B=1` (single iteration). |

| `num.sv` returns 0 | All features are associated with primary variable; no residual variation for SVA. | 1. SVA may be inappropriate; try a different method (e.g., ComBat). 2. Verify feature selection. |

| SVs correlate with biological variable of interest | Unmodeled variation is biologically relevant. | Reconsider use of SVA or include the variable in the model to protect it. |

Experimental Protocols

Protocol 1: Benchmarking Batch Correction Methods for scRNA-Seq Data

Objective: To evaluate the effectiveness of different batch correction methods in integrating single-cell RNA sequencing data from multiple batches.

Data Preparation and Preprocessing:

- Obtain your single-cell dataset(s) from multiple batches.

- Perform quality control (QC) and normalization within each batch separately. This includes filtering cells and genes, and computing size factors to normalize for library size [35].

- Subset all batches to a common set of features (e.g., genes) [33].

- Rescale batches to adjust for systematic differences in sequencing depth using a function like `multiBatchNorm` [33] [35].

- Identify highly variable genes (HVGs) by averaging variance components across all batches [33].

Application of Correction Methods:

- Apply the batch correction methods you wish to benchmark. Common choices include:

- Follow the standard workflow for each method to obtain corrected low-dimensional embeddings or expression values.

Performance Evaluation:

- Mixing Efficiency: Assess how well cells from different batches are intermingled. A common approach is to train a non-linear classifier (e.g., a radial SVM) to predict the batch of each cell based on the corrected data. Lower cross-validation accuracy indicates better batch mixing [35].

- Biological Signal Preservation: Evaluate whether the correction has preserved biologically meaningful structure. Use metrics that compare the distance distributions or local neighborhood structures within each batch before and after correction [35].

- Visual Inspection: Generate low-dimensional embeddings (e.g., t-SNE, UMAP) of the corrected data, colored by batch and by known cell-type labels, to visually check for batch integration and biological separation.
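
The mixing-efficiency check can be sketched as below. To keep the example dependency-light, it swaps the radial SVM for a leave-one-out k-nearest-neighbour classifier, which is an assumption of this sketch and not the cited procedure; the interpretation is the same (accuracy near chance suggests well-mixed batches). The embeddings are synthetic and the function name is hypothetical.

```python
import numpy as np

def knn_batch_accuracy(embedding, batch, k=5):
    """Leave-one-out k-NN accuracy for predicting batch; lower means better mixing."""
    X = np.asarray(embedding, dtype=float)
    batch = np.asarray(batch)
    # Pairwise Euclidean distances; a cell may not be its own neighbour
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)
    neighbours = np.argsort(d, axis=1)[:, :k]
    correct = 0
    for i in range(len(X)):
        labels, counts = np.unique(batch[neighbours[i]], return_counts=True)
        correct += int(labels[np.argmax(counts)] == batch[i])   # majority vote
    return correct / len(X)

rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 100)
mixed = rng.normal(size=(200, 2))                 # batches drawn from one distribution
separated = mixed + 10.0 * batch[:, None]         # second batch shifted far away

acc_mixed = knn_batch_accuracy(mixed, batch)
acc_separated = knn_batch_accuracy(separated, batch)
print(acc_mixed, acc_separated)
```

Running the same function with known cell-type labels in place of `batch` gives a rough companion check: there, high accuracy after correction suggests that biological structure was preserved.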

Protocol 2: Evaluating Normalization Methods for Co-expression Network Analysis

Objective: To construct accurate gene co-expression networks from RNA-seq data by identifying the optimal normalization workflow.

Data Collection and Preprocessing:

- Gather RNA-seq datasets (e.g., from the recount2 database), including both large homogeneous datasets (e.g., from GTEx) and smaller, heterogeneous datasets (e.g., from SRA) [37].

- Apply lenient filters to retain as many genes and samples as possible.

Workflow Construction:

- Test all combinations of the following stages to create multiple analysis workflows [37]:

- Within-sample normalization: CPM, TPM, RPKM, or none.

- Between-sample normalization: TMM, UQ, Quantile, or none. Also consider count-adjusted methods like CTF (Counts adjusted with TMM Factors).

- Network Transformation: Weighted Topological Overlap (WTO), Context Likelihood of Relatedness (CLR), or none.
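
Enumerating the workflow grid is straightforward. The sketch below naively crosses the three stages listed above; it leaves out the count-adjusted methods such as CTF, which the text mentions separately, and it makes no claim that every combination is meaningful or was part of the cited benchmark.

```python
from itertools import product

within_sample = ["CPM", "TPM", "RPKM", None]
between_sample = ["TMM", "UQ", "Quantile", None]
network_transform = ["WTO", "CLR", None]

# One dictionary per candidate workflow; None means the stage is skipped
workflows = [
    {"within": w, "between": b, "network": t}
    for w, b, t in product(within_sample, between_sample, network_transform)
]

print(len(workflows))
print(workflows[0])
```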


Network Construction and Evaluation:

- For each dataset and each workflow, construct a gene co-expression network.