Navigating Data Quality Challenges in Sperm Image Analysis: From Dataset Biases to AI-Ready Solutions


Abstract

This article provides a comprehensive analysis of the critical data quality issues plaguing sperm image datasets, which are essential for developing robust AI-assisted fertility diagnostics. We explore the foundational challenges of small sample sizes, class imbalance, and inconsistent annotations that undermine model generalizability. The review covers methodological advances in deep learning for segmentation and classification, alongside practical troubleshooting strategies including data augmentation and synthetic data generation. Finally, we examine validation frameworks and performance benchmarks for these technologies, synthesizing key takeaways and future directions to guide researchers and clinicians in building more reliable, standardized, and clinically applicable sperm morphology analysis systems.

The Foundational Data Crisis: Understanding Core Quality Issues in Sperm Image Datasets

Frequently Asked Questions (FAQs) on Sperm Image Data

Q1: What are the primary data quality challenges in developing sperm image datasets? The creation of high-quality sperm image datasets is hindered by several interconnected challenges. Size and Diversity Limitations are fundamental; deep learning models require large volumes of data, yet many datasets contain only a few thousand images, which is insufficient for robust model training [1]. This is compounded by a Lack of Standardization in sample preparation, staining, and image acquisition across different clinical laboratories, leading to inconsistent data that harms model generalizability [1]. Furthermore, achieving High-Quality Annotations is difficult due to the intrinsic complexity of sperm morphology. Annotating defects in the head, midpiece, and tail requires expert knowledge, and even among experts, there is significant inter-observer disagreement on classification, making it difficult to establish a reliable "ground truth" [2] [3] [1].

Q2: How does inter-expert disagreement affect dataset quality? Inter-expert disagreement directly challenges the reliability of the dataset's labels, which are the foundation for training AI models. In one study, analysis of agreement among three experts showed varying levels of consensus: some cases had total agreement, others only partial agreement (2/3 experts), and some no agreement at all [3]. This subjectivity introduces noise and inconsistency into the training data. When an AI model learns from such ambiguous labels, its performance and reliability are compromised, as it cannot learn a consistent pattern for what constitutes, for example, a "tapered head" versus a "thin head" [2] [3].

Q3: Why are conventional machine learning methods limited for sperm morphology analysis? Conventional machine learning algorithms, such as Support Vector Machines (SVM) and k-means clustering, are fundamentally limited by their reliance on handcrafted features [1]. These models require researchers to manually design and extract image features (e.g., shape descriptors, texture, contours) for the algorithm to process. This process is not only tedious and time-consuming but also often results in models that focus only on the sperm head and struggle to distinguish complete sperm structures from cellular debris in semen samples [1]. Consequently, these models often suffer from poor generalization, with performance varying significantly from one dataset to another [1].

Q4: What is the role of data augmentation in addressing dataset scarcity? Data augmentation is a critical technique for artificially expanding the size and diversity of a dataset. It involves applying random but realistic transformations—such as rotation, flipping, and color/contrast adjustments—to existing images [3]. This process generates new training samples from the original data, which helps prevent the AI model from overfitting and improves its ability to generalize to new, unseen images. For instance, one research team expanded their dataset from 1,000 to 6,035 images using augmentation techniques, which was crucial for effectively training their deep learning model [3].
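The transformations named above can be illustrated with a minimal, standard-library sketch. It assumes grayscale images stored as nested lists of 0-255 integers, and the function names (`hflip`, `rot90`, `adjust_brightness`, `augment`) are hypothetical. It is not the pipeline used in [3]; a production workflow would more likely rely on an image library.

```python
import random

def hflip(img):
    """Mirror the image left-to-right."""
    return [row[::-1] for row in img]

def rot90(img):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def adjust_brightness(img, factor):
    """Scale pixel intensities and clip to the valid 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]

def augment(img, rng):
    """Return one randomly transformed copy; the class label stays unchanged."""
    out = [list(row) for row in img]
    if rng.random() < 0.5:
        out = hflip(out)
    for _ in range(rng.randrange(4)):  # 0 to 3 quarter turns
        out = rot90(out)
    return adjust_brightness(out, rng.uniform(0.8, 1.2))

rng = random.Random(0)                    # fixed seed for reproducibility
image = [[0, 50, 100], [150, 200, 250]]   # toy 2x3 "image"
copies = [augment(image, rng) for _ in range(5)]
```

Flips, quarter-turn rotations, and mild brightness changes leave the outline of the cell intact, which is why they are generally treated as label-preserving; stretching or shearing would not be.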

Troubleshooting Guides for Common Data Issues

Issue 1: Managing Small and Imbalanced Datasets

| Solution | Description | Key Considerations |

|---|---|---|

| Data Augmentation | Apply transformations (rotations, flips, brightness/contrast changes) to existing images to create new training samples [3]. | Ensure transformations are biologically plausible. Avoid alterations that could change the morphological class (e.g., making a normal head appear tapered). |

| Synthetic Data Generation | Use Generative Adversarial Networks (GANs) to create artificial sperm images, particularly for rare morphological classes [4]. | The quality and realism of synthetic data must be rigorously validated by domain experts before use in model training. |

| Strategic Sampling | In the model training loop, use techniques like oversampling of rare classes or undersampling of over-represented classes to balance class influence [1]. | Be cautious not to amplify noise or artifacts through excessive oversampling of a very small number of original examples. |
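As an illustration of the strategic sampling row, the sketch below performs plain random oversampling on a list of labels. The class names and the helper `random_oversample` are hypothetical, and the approach is only one of several possible balancing schemes.

```python
import random
from collections import Counter, defaultdict

def random_oversample(labels, rng):
    """Return sample indices in which every class appears as often as the largest class."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    target = max(len(idxs) for idxs in by_class.values())
    indices = []
    for idxs in by_class.values():
        indices.extend(idxs)                                     # keep every original sample
        indices.extend(rng.choices(idxs, k=target - len(idxs)))  # duplicate at random
    rng.shuffle(indices)
    return indices

labels = ["normal"] * 8 + ["coiled_tail"] * 2   # toy, hypothetical class names
balanced = random_oversample(labels, random.Random(0))
class_counts = Counter(labels[i] for i in balanced)
```

Oversampling of this kind should be applied to the training split only; duplicating images before the split would let copies of the same cell appear in both training and test data.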

Issue 2: Ensuring Annotation Consistency and Quality

| Solution | Description | Key Considerations |

|---|---|---|

| Develop Detailed Guidelines | Create comprehensive, visual documentation defining each morphological class and how to handle edge cases [2]. | Treat guidelines as a living document; update them as new cases are encountered and communicate changes to all annotators promptly [2]. |

| Implement Multi-Stage Validation | Adopt a pipeline where initial annotations are reviewed by a second annotator, with conflicts escalated to a senior expert for adjudication [2]. | This process, while quality-critical, increases the time and cost of dataset creation and requires a hierarchy of annotator expertise [2]. |

| Analyze Inter-Annotator Agreement | Use statistical metrics (e.g., Fleiss' Kappa) to quantify the level of agreement between multiple annotators on the same set of images [3]. | Low agreement scores often indicate ambiguous class definitions in the guidelines or a need for further annotator training. |
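For the agreement analysis listed in the table, Fleiss' kappa can be computed directly from a count matrix. The sketch below is a plain-Python version of the standard formula; the input layout (one row per image, one column per class, each cell holding the number of annotators who chose that class) and the toy data are assumptions for illustration.

```python
def fleiss_kappa(ratings):
    """ratings[i][j] = number of annotators who assigned item i to category j.

    Every item must be rated by the same number of annotators (at least two).
    The degenerate case where all ratings fall in one category is not handled.
    """
    n_items = len(ratings)
    n_raters = sum(ratings[0])
    n_cats = len(ratings[0])
    # Share of all assignments that went to each category
    p_cat = [sum(row[j] for row in ratings) / (n_items * n_raters) for j in range(n_cats)]
    # Observed pairwise agreement for each item
    p_item = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
              for row in ratings]
    p_obs = sum(p_item) / n_items          # mean observed agreement
    p_exp = sum(p * p for p in p_cat)      # agreement expected by chance
    return (p_obs - p_exp) / (1 - p_exp)

# Toy example: 4 images, 3 annotators, 3 classes
toy = [[3, 0, 0], [0, 3, 0], [2, 1, 0], [1, 1, 1]]
kappa = fleiss_kappa(toy)
```

A value of 1 indicates perfect agreement and values near 0 indicate agreement no better than chance.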

Experimental Protocols from Current Research

Protocol: Building an Annotated Sperm Morphology Dataset (SMD/MSS)

The following methodology outlines the process used to create the Sperm Morphology Dataset/Medical School of Sfax (SMD/MSS), as detailed in recent research [3].

1. Sample Preparation and Image Acquisition

- Sample Collection: Semen samples are collected from patients after obtaining informed consent. Samples with very high concentrations (>200 million/mL) are typically excluded to prevent image overlap and facilitate the capture of whole spermatozoa [3].

- Smear Preparation and Staining: Smears are prepared according to World Health Organization (WHO) guidelines and stained using a standardized kit (e.g., RAL Diagnostics) [3].

- Image Capture: A Computer-Assisted Semen Analysis (CASA) system, comprising an optical microscope with a digital camera, is used for image acquisition. Images are captured in bright field mode using an oil immersion 100x objective [3]. Each image contains a single spermatozoon.

2. Expert Annotation and Ground Truth Establishment

- Classification Standard: Each spermatozoon is classified by multiple experts (e.g., three) based on a standardized classification system like the modified David classification, which includes 12 classes of defects across the head, midpiece, and tail [3].

- Independent Annotation: Experts perform their classifications independently, documenting their findings in a shared, structured file.

- Ground Truth File: A master file is compiled containing the image name, the classifications from all experts, and morphometric data (e.g., head width/length, tail length). For spermatozoa with associated anomalies, all defects are detailed in this file [3].

3. Data Preprocessing and Augmentation

- Image Pre-processing: This critical step involves denoising images and standardizing their format. Techniques include handling missing values or outliers, and normalization, such as resizing all images to a uniform grayscale resolution (e.g., 80x80 pixels) [3].

- Data Augmentation: To balance morphological classes and increase dataset size, augmentation techniques are applied. This can expand a dataset from 1,000 original images to over 6,000 usable images for model training [3].

- Data Partitioning: The entire dataset is randomly split into a training set (e.g., 80%) for model development and a hold-out test set (e.g., 20%) for final evaluation [3].
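The partitioning step can be sketched as follows. The source describes a random 80/20 split; the version below additionally stratifies by class so that rare defects appear in both subsets, which is an assumption on our part rather than part of the published protocol. The helper name `stratified_split` is hypothetical.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Split sample indices into train/test while keeping class proportions similar."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, test = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # At least one test sample per class, so every class can be evaluated
        n_test = max(1, round(len(idxs) * test_fraction))
        test.extend(idxs[:n_test])
        train.extend(idxs[n_test:])
    return train, test

labels = ["normal"] * 10 + ["tapered_head"] * 5   # toy, hypothetical class names
train_idx, test_idx = stratified_split(labels)
```

If augmentation is used, a common precaution is to split the original images first and augment only the training subset, so that transformed copies of a test image cannot leak into training.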

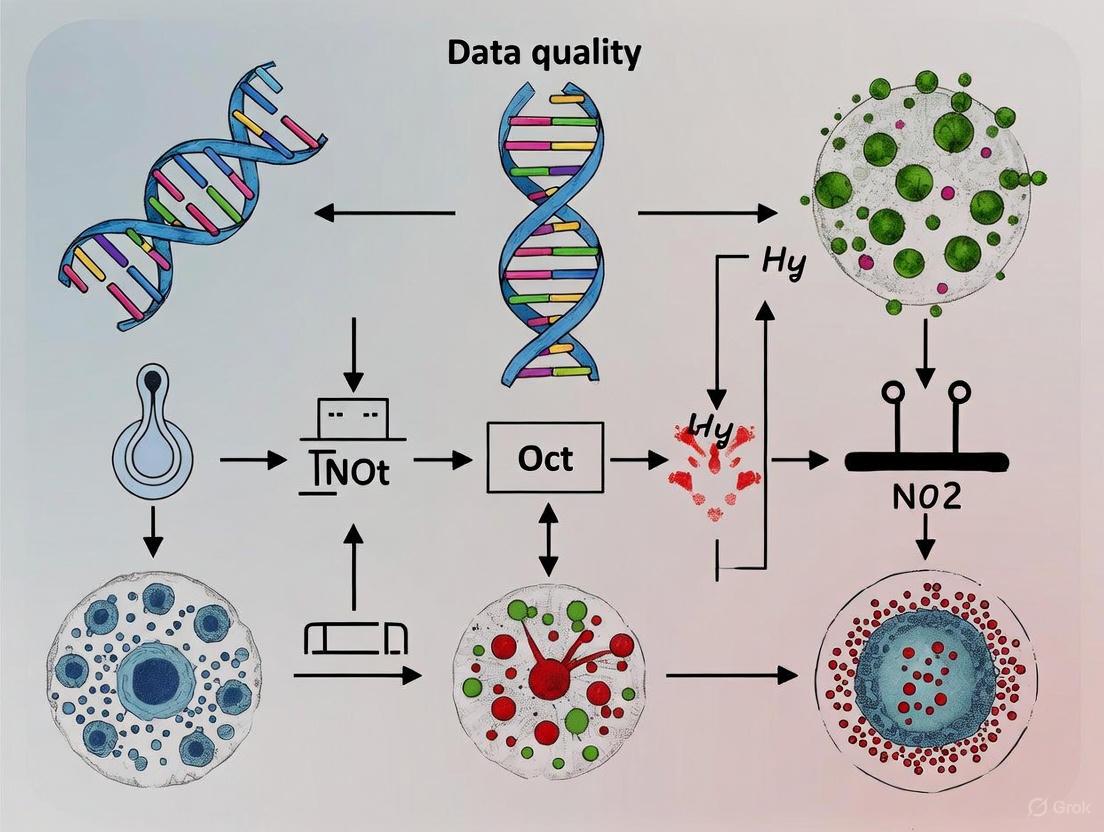

The workflow for this protocol is summarized in the following diagram:

Research Reagent and Resource Solutions

The table below lists key materials and computational tools essential for research in automated sperm morphology analysis.

| Item/Category | Function/Description | Example/Note |

|---|---|---|

| CASA System | Integrated hardware/software for automated semen analysis; captures images and videos for motility and morphology assessment [3] [5]. | Systems like the MMC CASA are used for high-throughput image acquisition [3]. |

| Staining Kits | Provide contrast for microscopic evaluation of sperm structures, crucial for consistent morphology assessment [3]. | RAL Diagnostics kit used in the SMD/MSS protocol [3]. |

| Public Datasets | Serve as benchmarks for training and validating new AI models, though they often have limitations in size and diversity [1]. | Examples: HSMA-DS, MHSMA, VISEM-Tracking, SVIA [1]. |

| Deep Learning Frameworks | Software libraries that provide the building blocks for designing, training, and deploying deep neural networks. | Python-based frameworks like TensorFlow and PyTorch are standard [3]. |

| Data Augmentation Tools | Software modules that automatically apply transformations to images to artificially expand the dataset. | Integrated into frameworks like TensorFlow and PyTorch, or available as standalone libraries (e.g., Albumentations) [3]. |

FAQs on Inter-Expert Variability and Data Quality

What is inter-expert variability and why is it a problem in sperm morphology analysis? Inter-expert variability refers to the differences in annotations or classifications made by different human experts examining the same data. In sperm morphology analysis, this is a significant problem because the assessment is highly subjective and relies on the operator's expertise [3] [1]. Manual classification is challenging to standardize, leading to inconsistencies in datasets that can compromise the reliability of AI models trained on this data. One study analyzing 1,000 sperm images found varying levels of agreement among three experts [3].

What are the primary data quality issues caused by annotation variability? Annotation variability directly impacts several key dimensions of data quality [6] [7]:

- Accuracy: Subjective judgments can lead to data that does not accurately reflect the true biological construct.

- Consistency: The same sperm cell may be classified into different morphological categories by different experts.

- Completeness: Ambiguous cases might be skipped or inconsistently recorded, leading to missing values.

How can inter-expert variability be quantitatively measured? The level of agreement among experts can be statistically assessed. In one study involving three experts, the agreement was categorized into three scenarios [3]:

- No Agreement (NA): All three experts disagreed on the classification.

- Partial Agreement (PA): Two out of three experts agreed on the same label for at least one category.

- Total Agreement (TA): All three experts agreed on the same label for all categories.

Statistical tests like Fisher's exact test can then evaluate whether differences between experts in each morphology class are significant [3].
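The three scenarios can be assigned programmatically once each expert's label is recorded. The sketch below simplifies the scheme to a single label per expert per image (the study applies it per category), and the image names and labels are invented for illustration.

```python
from collections import Counter

def agreement_scenario(expert_labels):
    """Classify three expert labels for one image as TA, PA, or NA."""
    if len(expert_labels) != 3:
        raise ValueError("this sketch assumes exactly three experts")
    top_count = Counter(expert_labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[top_count]

# Hypothetical annotations: image name -> labels from experts 1 to 3
annotations = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["tapered_head", "thin_head", "tapered_head"],
    "img_003": ["normal", "bent_neck", "coiled_tail"],
}
scenarios = {name: agreement_scenario(labs) for name, labs in annotations.items()}
distribution = Counter(scenarios.values())
```

The resulting `distribution` gives the share of images in each scenario, which is the quantity the study reports before applying significance tests.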

What methodologies can reduce variability in manual annotation? To improve consistency, studies employ rigorous protocols [3] [8]:

- Multiple Annotators: Each data point is classified independently by several experts.

- Clear Classification Schemes: Using established standards like the modified David classification, which defines 12 classes of morphological defects [3].

- Analysis of Agreement: Compiling a ground truth file that documents each expert's classification for later analysis of consensus and disagreement [3].

- Annotation Guides: Providing detailed, domain-agnostic guides that help manage complex annotation projects, define goals, and mitigate human biases [8].

Can AI and synthetic data help overcome challenges posed by human annotation? Yes, AI and synthetic data offer promising solutions [9] [1]:

- Deep Learning Models: Convolutional Neural Networks (CNNs) can be trained to classify sperm morphology, aiming to automate, standardize, and accelerate the analysis, thereby reducing reliance on subjective human judgment [3] [1].

- Synthetic Data Generation: Tools like AndroGen can create realistic, labeled synthetic sperm images [9]. This approach bypasses the need for costly and variable manual annotation, provides a virtually unlimited supply of data, and ensures perfect ground truth labels, which is crucial for robust AI development.

Quantitative Data on Annotation Variability

The following table summarizes key metrics from a study that quantified inter-expert variability in sperm morphology classification across 1,000 images [3].

Table 1: Inter-Expert Agreement in Sperm Morphology Classification

| Agreement Scenario | Abbreviation | Description | Quantitative Findings |

|---|---|---|---|

| No Agreement | NA | All three experts assigned different labels to the same sperm cell. | Distribution among the three scenarios was analyzed to understand the underlying complexity of the classification task [3]. |

| Partial Agreement | PA | Two out of three experts agreed on the label for at least one category. | |

| Total Agreement | TA | All three experts agreed on the same label for all categories. | |

For comparison, the table below shows variability metrics from a related field (prostate segmentation in TRUS imaging), illustrating how inter-observer variability is measured in medical image analysis [10].

Table 2: Inter-Individual Variability in Medical Image Segmentation (Prostate TRUS)

| Segmentation Method | Metric | Value (Median and IQR) |

|---|---|---|

| Manual (Statistical Shape Model) | Average Surface Distance (ASD) | 2.6 mm (IQR 2.3-3.0) |

| Manual (Deformable Model) | Average Surface Distance (ASD) | 1.5 mm (IQR 1.2-1.8) |

| Semi-Automatic | Average Surface Distance (ASD) | 1.4 mm (IQR 1.1-1.9) |

| Semi-Automatic | Dice Similarity Coefficient | 0.90 (IQR 0.88-0.92) |

Experimental Protocols for Quantifying Variability

Protocol 1: Establishing a Dataset with Inter-Expert Consensus

This protocol is based on the methodology used to create the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset [3].

1. Sample Preparation: Collect semen samples from consenting patients. Prepare smears according to WHO guidelines and stain them (e.g., with RAL Diagnostics staining kit). Include samples with varying morphological profiles to maximize the diversity of classes.

2. Data Acquisition: Use a Computer-Assisted Semen Analysis (CASA) system with an optical microscope and a digital camera. Capture images in bright field mode with a 100x oil immersion objective. Ensure each image contains a single spermatozoon.

3. Expert Classification: Engage multiple experts (e.g., three) with extensive experience in semen analysis. Each expert should independently classify every spermatozoon based on a standardized classification system (e.g., the modified David classification). They should document their findings in a shared, structured format (e.g., an Excel spreadsheet).

4. Image Labeling and Ground Truth Compilation: Assign a filename to each image that encodes the type of anomaly. Create a master ground truth file that includes the image name, the classification from all experts, and morphometric dimensions (head width/length, tail length).

5. Variability Analysis: Analyze the compiled data to determine the level of inter-expert agreement. Categorize each sperm image into "No Agreement," "Partial Agreement," or "Total Agreement" scenarios. Use statistical software (e.g., IBM SPSS) and tests like Fisher's exact test to assess the significance of differences between experts for each morphological class.

Protocol 2: A General Framework for Annotation Quality Assurance

This protocol adapts general data quality assurance principles to the scientific annotation process [7] [8].

1. Prevention:

- Define Clear Policies: Establish unambiguous annotation guidelines, including definitions of classes and detailed instructions for boundary cases.

- Annotator Training: Train annotators on the guidelines and the subject matter to improve recognition of complex scientific objects.

- Validation Rules: Implement software checks during data entry where possible (e.g., for format consistency).

2. Detection:

- Regular Audits: Schedule periodic checks where a subset of annotations is reviewed by a lead expert or a different annotator.

- Data Profiling: Use tools to analyze the annotated dataset for patterns, outliers, and inconsistencies in label distribution.

- Cross-Validation: Compare annotations for the same item from different experts to identify discrepancies.

3. Resolution:

- Consensus Meetings: Hold discussions among experts to resolve disagreements and establish a gold standard for difficult cases.

- Correction Procedures: Define clear procedures for correcting identified errors in the dataset.

- Root Cause Analysis: Investigate recurring issues to improve guidelines and training.

Workflow and Pathway Visualizations

Diagram 1: Sperm image annotation and quality workflow.

Diagram 2: Data quality management lifecycle.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sperm Image Dataset Creation

| Item | Function in the Experiment |

|---|---|

| RAL Diagnostics Staining Kit | Stains sperm cells on smears to make morphological features (head, midpiece, tail) visible for microscopic analysis [3]. |

| Non-Capacitating Media (NCC) | A physiological medium used as an experimental control to observe sperm motility and morphology in a baseline state [11]. |

| Capacitating Media (CC) | A medium containing Bovine Serum Albumin (BSA) and bicarbonate that induces capacitation, enabling the study of hyperactivated motility [11]. |

| CASA System | A Computer-Assisted Semen Analysis system, typically comprising a microscope with a digital camera, used for automated image acquisition and initial morphometric analysis [3] [11]. |

| High-Speed Camera | Captures video at very high frame rates (e.g., 5,000-8,000 fps), essential for recording fast flagellar movement and detailed 3D motility [11]. |

| Multifocal Imaging (MFI) System | An advanced setup with a piezoelectric device that moves the microscope objective, allowing rapid capture of images at different focal heights to reconstruct 3D movement from 2D slices [11]. |

FAQs on Class Imbalance in Sperm Morphology Analysis

Q1: What is the core problem of class imbalance in sperm morphology datasets? Class imbalance occurs when certain sperm morphology categories (e.g., normal sperm) are vastly overrepresented compared to others (e.g., specific tail defects). This is not merely a statistical issue but a fundamental data quality problem that can cause deep learning models to perform poorly on underrepresented classes, despite high overall accuracy. The root challenge is often an insufficient absolute number of rare defect samples for the model to learn meaningful patterns, not just the skewed ratio itself [12].

Q2: My model has high overall accuracy but fails to detect rare abnormalities. What should I check first? First, move beyond accuracy as your primary metric. A model can achieve high accuracy by simply always predicting the majority class. Instead, employ a suite of evaluation tools [12]:

- Use Confusion Matrices: To see per-class performance.

- Calculate F1-Score, Precision, and Recall for the minority classes.

- Leverage ROC-AUC and Precision-Recall (PR) Curves: The PR curve is particularly informative under heavy class skew [12].

- Apply Proper Scoring Rules: Such as log-loss or the Brier score, to evaluate the quality of the probability estimates [12].
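To make the point about accuracy concrete, the sketch below computes per-class precision, recall, and F1 from label lists using only the standard library. The toy labels are invented, and `per_class_metrics` is a hypothetical helper; established libraries provide equivalent reports.

```python
def per_class_metrics(y_true, y_pred):
    """Return precision, recall, and F1 for every class seen in the labels."""
    report = {}
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[c] = {"precision": precision, "recall": recall, "f1": f1}
    return report

# A model that always predicts the majority class
y_true = ["normal"] * 9 + ["coiled_tail"]
y_pred = ["normal"] * 10
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
report = per_class_metrics(y_true, y_pred)
```

In this toy case overall accuracy is 0.9 while recall for `coiled_tail` is 0, which is exactly the failure mode that accuracy alone hides.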

Q3: Are oversampling techniques like SMOTE always the best solution for class imbalance? Not necessarily. Recent evidence suggests that for strong classifiers (e.g., XGBoost, modern CNNs), the benefits of SMOTE can be minimal, and the same effect can often be achieved by tuning the decision threshold instead of resampling the data [13]. Oversampling is most helpful for "weak" learners (e.g., decision trees, SVMs) or when the absolute number of minority samples is so low that the model cannot learn from them at all [13] [12]. In many cases, simpler random oversampling can be as effective as more complex methods like SMOTE [13].

Q4: How can my experimental design mitigate class imbalance from the start? Incorporate a two-stage classification framework. A proven method is to first use a "splitter" model to categorize sperm into major groups (e.g., "head/neck abnormalities" vs. "normal/tail abnormalities"), then use dedicated, smaller ensemble models for fine-grained classification within each group. This divide-and-conquer strategy has been shown to significantly reduce misclassification among visually similar categories [14].

Q5: What are the risks of blindly applying data balancing techniques? Artificially balancing a dataset through resampling corrupts the model's understanding of the true class distribution. This leads to miscalibrated probabilities—a prediction of "0.7" from a model trained on balanced data does not mean a 70% chance of the event in the real world. This breaks the model's utility for cost-sensitive decision-making [12].

Troubleshooting Guides

Issue: Poor Performance on Rare Sperm Defects

Symptoms:

- High overall accuracy but zero or near-zero recall for specific morphological classes (e.g., "bent neck," "coiled tail").

- The model consistently misclassifies rare defects as more common abnormalities.

Diagnostic Steps:

- Audit Your Dataset: Create a table of your class distribution. A high variation in class counts confirms imbalance.

- Analyze the Confusion Matrix: Identify which minority classes are being confused with which majority classes.

- Evaluate with Correct Metrics: Calculate per-class F1-scores and generate a Precision-Recall curve.

Solutions:

- Algorithmic Approach (Recommended): Fine-tune a strong pre-trained model (e.g., NFNet, Vision Transformer) using a weighted loss function. This assigns a higher penalty for errors on the minority class during training, forcing the model to pay more attention to them without distorting the dataset [15].

- Data-Level Approach: If the absolute number of rare defect images is extremely low (e.g., fewer than 50), consider data augmentation (rotation, flipping, brightness adjustment) or synthetic data generation with tools like AndroGen to carefully increase the sample size [3] [9].

- Architectural Approach: Implement a two-stage ensemble framework [14]. The workflow for this proven method is detailed below.
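As an illustration of the algorithmic approach listed above (separate from the two-stage workflow), the sketch below derives inverse-frequency class weights and applies them in a weighted cross-entropy. The weighting rule `N / (K * n_c)` is one common heuristic and an assumption here, not the scheme reported in [15]; deep learning frameworks accept such weights in their own loss functions.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """weight_c = N / (K * n_c): rarer classes receive larger weights."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * count) for c, count in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class), normalized by the sum of weights."""
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)

labels = ["normal"] * 8 + ["bent_neck"] * 2   # toy, hypothetical class names
weights = inverse_frequency_weights(labels)
# Hypothetical predicted probabilities: confident on normal, poor on bent_neck
probs = ([{"normal": 0.9, "bent_neck": 0.1}] * 8
         + [{"normal": 0.7, "bent_neck": 0.3}] * 2)
loss = weighted_cross_entropy(probs, labels, weights)
```

With these weights the two `bent_neck` samples contribute as much to the loss as the eight `normal` samples, so errors on the rare class are no longer drowned out.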

Issue: Model Predictions Are Not Calibrated for Clinical Use

Symptoms:

- The model's predicted probabilities do not reflect real-world likelihoods (e.g., a "0.9" prediction is correct only 50% of the time).

- This is often a hidden consequence of using resampling techniques.

Solutions:

- Train on the True Distribution: Whenever possible, train your final model on the original, unaltered dataset to preserve real-world priors [12].

- Apply Post-Hoc Calibration: If you must use resampling, or if your model is naturally miscalibrated, apply Platt Scaling or Isotonic Regression to the output probabilities. This fits a regressor to map your model's outputs to well-calibrated probabilities [12].

- Optimize the Decision Threshold: Do not use the default 0.5 threshold. Determine the optimal threshold based on clinical costs using the formula:

$$p^* = \frac{C_{FP}}{C_{FP} + C_{FN}}$$

where $C_{FP}$ is the cost of a false positive and $C_{FN}$ is the cost of a false negative [12].
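A short worked example of the cost-based threshold follows. The cost values are invented for illustration; in practice they would come from a clinical assessment of what a missed abnormality costs relative to a false alarm.

```python
def cost_optimal_threshold(cost_fp, cost_fn):
    """Threshold p* = C_FP / (C_FP + C_FN) for calibrated probabilities."""
    if cost_fp <= 0 or cost_fn <= 0:
        raise ValueError("costs must be positive")
    return cost_fp / (cost_fp + cost_fn)

def flag_abnormal(prob_abnormal, threshold):
    """Flag a cell as abnormal when its predicted probability reaches the threshold."""
    return prob_abnormal >= threshold

# Hypothetical costs: missing an abnormal cell is four times worse than a false alarm
threshold = cost_optimal_threshold(cost_fp=1.0, cost_fn=4.0)
decisions = [flag_abnormal(p, threshold) for p in (0.10, 0.25, 0.60)]
```

Here the threshold drops to 0.2, so a cell with a predicted probability of 0.25 is flagged even though it would pass under the default 0.5 cut-off. The rule is only meaningful when the probabilities are calibrated, which is why calibration comes first.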

Experimental Protocols & Data from Key Studies

The following tables summarize quantitative results and methodologies from recent studies that successfully addressed class imbalance.

Table 1: Performance of a Two-Stage Ensemble Framework on the Hi-LabSpermMorpho Dataset (18 classes) [14]

| Staining Protocol | Framework Accuracy | Baseline Accuracy | Improvement (percentage points) |

|---|---|---|---|

| BesLab | 69.43% | ~65.05% | +4.38 |

| Histoplus | 71.34% | ~66.96% | +4.38 |

| GBL | 68.41% | ~64.03% | +4.38 |

Protocol Summary:

- Dataset: Hi-LabSpermMorpho dataset with 18 morphology classes across 3 staining types [14].

- Stage 1 (Splitter): A model categorizes images into two super-groups: 1) Head/Neck abnormalities, and 2) Normal/Tail abnormalities [14].

- Stage 2 (Ensemble): Each super-group is processed by a custom ensemble of four deep learning models (including NFNet and Vision Transformers). A multi-stage voting strategy is used for the final decision [14].
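The control flow of the two stages can be sketched as below. The splitter and ensemble members are stand-in functions operating on a toy dictionary, not the deep networks used in [14], and a simple majority vote replaces the study's multi-stage voting strategy; all names are hypothetical.

```python
from collections import Counter

def majority_vote(predictions):
    """Return the most frequent prediction among ensemble members."""
    return Counter(predictions).most_common(1)[0][0]

def two_stage_predict(image, splitter, ensembles):
    """Stage 1 routes the image to a super-group; stage 2 votes within that group."""
    group = splitter(image)
    votes = [model(image) for model in ensembles[group]]
    return group, majority_vote(votes)

# Stand-in "models": each is a function of a toy feature dictionary
splitter = lambda img: "head_neck" if img["head_defect"] else "normal_tail"
ensembles = {
    "head_neck": [lambda img: "tapered_head",
                  lambda img: "tapered_head",
                  lambda img: "thin_head"],
    "normal_tail": [lambda img: "normal",
                    lambda img: "coiled_tail",
                    lambda img: "normal"],
}
result_a = two_stage_predict({"head_defect": True}, splitter, ensembles)
result_b = two_stage_predict({"head_defect": False}, splitter, ensembles)
```

Because each ensemble only ever sees images from its own super-group, it can specialize on the visually similar classes inside that group.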

Table 2: Impact of Classification System Complexity on Novice Morphologist Accuracy [16]

| Classification System | Untrained User Accuracy | Trained User Accuracy |

|---|---|---|

| 2-category (Normal/Abnormal) | 81.0% ± 2.5% | 98.0% ± 0.43% |

| 5-category (by defect location) | 68.0% ± 3.59% | 97.0% ± 0.58% |

| 8-category (cattle industry standard) | 64.0% ± 3.5% | 96.0% ± 0.81% |

| 25-category (individual defects) | 53.0% ± 3.69% | 90.0% ± 1.38% |

Protocol Summary:

- Tool: Sperm Morphology Assessment Standardisation Training Tool [16].

- Method: Novice morphologists were trained using a "ground truth" dataset established by expert consensus, following machine learning principles of supervised learning [16].

- Key Finding: Accuracy decreases and variability increases with system complexity, highlighting the challenge of fine-grained classification.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Sperm Morphology Analysis

| Item Name | Function / Description | Relevance to Class Imbalance |

|---|---|---|

| Hi-LabSpermMorpho Dataset [14] | A large-scale, expert-labeled dataset with 18 distinct sperm morphology classes from three staining protocols. | Provides a benchmark for developing and testing imbalanced learning strategies on a complex, real-world dataset. |

| AndroGen Software [9] | Open-source tool for generating customizable synthetic sperm images without requiring real data or model training. | Mitigates the lack of data for rare morphological classes by creating realistic synthetic samples for data augmentation. |

| SMD/MSS Dataset [3] | Sperm Morphology Dataset from the Medical School of Sfax, using the modified David classification (12 defect classes). | An example of a dataset built with data augmentation (extending 1,000 to 6,035 images) to balance morphological classes. |

| Imbalanced-Learn Library [13] | A Python library offering resampling techniques like SMOTE, random over/undersampling, and special ensemble methods. | Provides readily available implementations of various resampling algorithms for experimental comparison. |

| YOLOv7 Framework [17] | An object detection model used for automatically detecting and classifying sperm abnormalities in micrographs. | Demonstrates an end-to-end automated system that must handle class imbalance in a real-world veterinary application. |

Workflow: A Principled Approach to Handling Class Imbalance

The following diagram synthesizes the insights from the FAQs and troubleshooting guides into a logical, step-by-step workflow for researchers.

Troubleshooting Guides

Guide: Addressing Staining-Related Inconsistencies

Staining procedures introduce significant variability in sperm morphology assessment, affecting both sperm viability and image analysis.

Problem: High inter-laboratory variability in assessing head shape and midpiece contours on stained smears.

- Cause: Subjective interpretation of staining criteria. One study found that criteria for head ovality and regularity of head contours had among the poorest agreement (below 60%) among experts [18].

- Solution: Implement stringent internal quality control and standardized training using a defined set of reference images. For clinical applications, consider stain-free methods to eliminate this variability entirely [19] [20].

Problem: Inability to use sperm for Assisted Reproductive Technology (ART) after analysis.

- Cause: Traditional staining procedures damage sperm cells, rendering them unusable [19].

- Solution: Adopt stain-free assessment methods. An AI model developed for unstained, live sperm showed a strong correlation (r=0.88) with Computer-Assisted Semen Analysis (CASA) of stained sperm, providing a reliable non-invasive alternative [19].

Guide: Managing Magnification and Image Resolution Challenges

The choice of magnification involves a trade-off between cell viability, resolution, and measurement accuracy.

Problem: Blurred boundaries and loss of detail in low-magnification images leading to inaccurate morphological measurements.

- Cause: Sperm selection for ART is often performed at lower magnifications (e.g., 20x) to prevent sperm from swimming out of view. This results in reduced resolution [20]. One study noted this can cause errors, such as a head whose length falls in the normal range (3.7–4.7 µm) being measured at 4.85 µm and therefore classed as abnormal [20].

- Solution: Employ a measurement accuracy enhancement strategy. One protocol uses statistical analysis (Interquartile Range for outliers) and signal processing (Gaussian filtering) on segmented images to correct measurement errors, reducing errors in head, midpiece, and tail parameters by up to 35.0% [20].
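The outlier-handling part of such a strategy can be illustrated with the interquartile range rule. The sketch below assumes repeated head-length measurements of one cell across frames; the values, the 1.5 multiplier, and the helper `iqr_filter` are illustrative assumptions rather than the published protocol, and the Gaussian filtering step is not shown.

```python
import statistics

def iqr_filter(values, k=1.5):
    """Drop values lying more than k * IQR outside the first or third quartile."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]

# Hypothetical head-length measurements (µm) of one cell across nine frames
head_lengths = [4.0, 4.1, 4.1, 4.2, 4.2, 4.2, 4.3, 4.3, 4.85]
kept = iqr_filter(head_lengths)
estimate = statistics.mean(kept)
```

In this toy series the single 4.85 µm reading is rejected, and the mean of the remaining measurements stays within the normal range quoted above.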

Problem: Need for high-resolution detail without staining or high magnification.

- Cause: High magnification (100x) with staining is standard for morphology but makes sperm unusable for ART [19].

- Solution: Use confocal laser scanning microscopy at lower magnifications (40x). This method captures Z-stack images, providing high-resolution detail from live, unstained sperm, enabling reliable AI-based morphology assessment [19].

Guide: Standardizing Microscope and Imaging Settings

Inconsistent imaging settings introduce noise and reduce the reliability of automated analysis.

Problem: Sperm image classification is strongly affected by noise, reducing the accuracy of deep learning models.

- Cause: Practical CASA applications are prone to conventional noise and adversarial attacks in imaging systems. Models based on local information (e.g., CNNs) are more vulnerable [21].

- Solution: Select deep learning models with strong anti-noise robustness. Research indicates that Visual Transformers (VT), which use global image information, maintain higher accuracy under noise interference (e.g., a drop from 91.45% to only 91.08% under Poisson noise) compared to Convolutional Neural Networks (CNNs) [21].

Problem: Inaccurate tracks of spermatozoa in motility analysis due to fast movement and occlusion.

- Cause: Traditional CASA algorithms can fail in complex scenarios with high sperm density and fast, nonlinear movements [22].

- Solution: Utilize a Hybrid Dynamic Bayesian Network (HDBN) for multi-target tracking. This method improves data association between frames in phase-contrast microscopy videos, leading to more accurate extraction of kinematic parameters like velocity [22].

Frequently Asked Questions (FAQs)

Q1: What are the most subjective criteria in manual sperm morphology assessment, and how can we standardize them? The most variable criteria are related to the head and midpiece, specifically head ovality, smoothness/regularity of contours, and alignment of the midpiece with the head. Standardization requires continuous training, internal quality control, and the use of reference images. For the most objective results, transitioning to automated, AI-based systems is recommended [18].

Q2: Can I use the same sperm sample for both morphology analysis and IVF/ICSI procedures? Yes, but only if you use stain-free methods. Traditional staining methods such as Diff-Quik or Papanicolaou (PAP) render sperm unusable for fertilization. Techniques that image unstained sperm with confocal or phase-contrast microscopy and analyze them with an AI model allow viable sperm to be selected for subsequent injection (ICSI) [19] [20].

Q3: What is a key advantage of using deep learning over traditional CASA for morphology? Deep learning models offer greater objectivity and standardization. Manual and traditional CASA assessments suffer from high inter-observer variability. Deep learning models, such as those combining CNN architectures with feature engineering, can achieve high accuracy (e.g., 96%) and process thousands of images in minutes, reducing operator subjectivity and giving more consistent results [3] [23].

Q4: How does low magnification affect the accuracy of sperm morphology measurements, and how can this be corrected? Low magnification leads to blurred boundaries and pixelation, causing errors in measuring critical parameters like head length and width. This can be corrected computationally by applying a measurement accuracy enhancement strategy after image segmentation, which uses statistical filtering and smoothing to significantly reduce measurement errors [20].

Quantitative Data on Assessment Variability

The table below summarizes data from an external quality control scheme, highlighting morphological criteria with the highest and lowest variability among laboratories [18].

Table 1: Variability in Sperm Morphology Assessment Based on EQC Data

| Morphological Criterion | Agreement Level | Implication for Data Uniformity |

|---|---|---|

| Criteria with High Variability (Poor Agreement <60%) | | |

| Head Ovality | Poor | Major source of inconsistency in dataset labeling |

| Smooth, Regularly Contoured Head | Poor | Leads to conflicting normal/abnormal classifications |

| Slender and Regular Midpiece | Poor | High subjectivity in midpiece assessment |

| Major Axis Midpiece = Major Axis Head | Poor | Alignment judgment is highly subjective |

| Criteria with Low Variability (Good Agreement >90%) | | |

| Acrosomal Vacuoles <20% of Head Surface | Good | Consistent interpretation across labs |

| Excessive Residual Cytoplasm (ERC) <1/3 Head | Good | Well-defined criterion with low subjectivity |

| Tail Thinner than Midpiece | Good | Easily identifiable feature with high consensus |

| Tail About 10x Head Length | Good | Metric-based criterion leads to high agreement |

Experimental Protocol for Standardized Image Acquisition

This protocol is adapted from methodologies used to build standardized datasets for AI model training [3] [19].

Objective: To acquire a uniform dataset of sperm images for morphological analysis, minimizing inconsistencies from staining and acquisition settings.

Materials:

- Fresh semen samples

- Staining reagents (e.g., RAL Diagnostics kit, Diff-Quik) [3] [19] OR chamber slides for live imaging (e.g., Leja) [19]

- Optical microscope with camera and 100x oil immersion objective [3] OR Confocal Laser Scanning Microscope (e.g., LSM 800) [19]

- MMC CASA system or equivalent for image capture and storage [3]

Procedure:

- Sample Preparation: Use samples with a sperm concentration of at least 5 million/mL. For stained smears, prepare and fix slides according to WHO guidelines. For unstained analysis, dispense a 6 µL droplet into a 20 µm deep chamber slide [3] [19].

- Image Acquisition:

- Expert Annotation: Have at least three experienced experts classify each sperm image independently based on a standardized classification (e.g., modified David or WHO criteria). Resolve disagreements to establish a consensus ground truth [3] [18].

- Data Augmentation: To increase dataset size and balance morphological classes, apply augmentation techniques like rotation and flipping to the raw images [3].

Research Reagent Solutions

Table 2: Key Materials and Reagents for Sperm Image Acquisition

| Item | Function/Application | Example |

|---|---|---|

| RAL Diagnostics Staining Kit | Staining sperm smears for traditional morphology assessment under high magnification [3]. | Used in the SMD/MSS dataset creation [3]. |

| Leja Chamber Slides (20 µm) | Standardized slides for preparing unstained, live sperm samples for motility and morphology analysis under low magnification [19]. | Used in unstained live sperm AI model development [19]. |

| Confocal Laser Scanning Microscope (LSM) | High-resolution imaging of live, unstained sperm at low magnification via Z-stacking, preserving cell viability [19]. | LSM 800 used for creating a high-resolution dataset [19]. |

| MMC CASA System | Integrated system for the automated acquisition and storage of sperm images from microscopes, often used for building datasets [3]. | Used for image acquisition in the SMD/MSS dataset study [3]. |

| ResNet50 (with CBAM) | A deep learning model architecture enhanced with an attention mechanism to focus on key morphological features of sperm [23]. | Achieved 96.08% accuracy in sperm morphology classification [23]. |

Troubleshooting Guides

Guide 1: Addressing Low Inter-Annotator Agreement

Problem: Significant inconsistency in annotations between different experts, leading to unreliable training data for deep learning models.

Explanation: The manual classification of sperm morphology is inherently subjective and heavily reliant on the operator's expertise. This can lead to substantial disagreements between annotators on how to label the same sperm structure, especially for complex or borderline cases [3].

Solution: A multi-faceted approach is recommended to improve consistency:

- Implement Multi-Annotator Validation: Assign the same image to multiple annotators and use a system to automatically compare their annotations. This helps measure agreement scores and highlights discrepancies for review before the data is used in production [24].

- Establish Comprehensive Guidelines: Develop detailed annotation guidelines that cover edge cases and provide clear decision trees. These guidelines should be based on a standardized classification system like the modified David classification and include numerous visual examples [3] [25].

- Utilize Honeypot Tasks: Insert pre-labeled samples into the annotators' workflow without marking them as test items. This lets you measure annotator accuracy in real time and identify annotators who may need additional training, helping to maintain consistent quality [24].
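
The multi-annotator comparison described above can be sketched in a few lines. The example assumes three experts per image and sorts each image into total, partial, or no agreement, returning a majority label where one exists. The image identifiers and label strings are hypothetical, and a real project would more likely rely on an annotation platform for this step.

```python
from collections import Counter

def agreement_category(labels):
    """Classify one image's expert labels as 'total', 'partial', or 'none'."""
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == len(labels):
        return "total"
    if top_count > len(labels) / 2:
        return "partial"
    return "none"

def consensus_label(labels):
    """Return the majority label, or None when no majority exists."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None

# Hypothetical annotations: one list of three expert labels per image.
annotations = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["tapered_head", "normal", "tapered_head"],
    "img_003": ["coiled_tail", "normal", "microcephalic"],
}

summary = {
    name: (agreement_category(labels), consensus_label(labels))
    for name, labels in annotations.items()
}
for name, (category, label) in summary.items():
    print(name, category, label)
```

Images in the "none" category are natural candidates for review by a senior expert, while the "total" subset can serve as a high-confidence training or evaluation set.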

Guide 2: Managing Class Imbalance in Morphological Datasets

Problem: Certain morphological classes (e.g., specific head defects) are underrepresented in the dataset, causing models to be biased toward more common classes.

Explanation: In sperm morphology, "normal" sperm or certain common anomalies may appear frequently, while other defect types are rare. This natural imbalance results in a dataset that does not equally represent all morphological classes, which hampers the model's ability to learn rare features [3].

Solution: Apply data augmentation techniques specifically tailored to microscopic sperm images to create a more balanced dataset.

Table: Common Data Augmentation Techniques for Sperm Images

| Technique | Description | Application in Sperm Morphology |

|---|---|---|

| Color Augmentation | Randomly changes brightness, contrast, saturation, and hue of the image. | Helps the model become invariant to staining variations and differences in microscope lighting conditions [26]. |

| Geometric Transformations | Includes rotation, flipping, and scaling of the original image. | Allows the model to recognize sperm structures from different orientations, improving robustness [3]. |

| Synthetic Data Generation | Using advanced models to create new, realistic sperm images for rare classes. | Directly addresses class imbalance by artificially increasing the number of samples in under-represented morphological categories [3]. |
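
To make the balancing step concrete, the sketch below oversamples under-represented classes with random 90-degree rotations and horizontal flips until every class matches the largest one. It is a minimal example that assumes square images held in memory as NumPy arrays; the class names and the choice of transformations are illustrative rather than taken from [3].

```python
import numpy as np

def random_geometric_augment(image, rng):
    """Apply a random 90-degree rotation and an optional horizontal flip."""
    rotated = np.rot90(image, k=int(rng.integers(0, 4)))
    return np.fliplr(rotated) if rng.random() < 0.5 else rotated

def balance_by_augmentation(images, labels, rng):
    """Add augmented copies of minority classes until all classes match the largest."""
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    target = counts.max()
    out_images, out_labels = list(images), list(labels)
    for cls, count in zip(classes, counts):
        idx = np.flatnonzero(labels == cls)
        for _ in range(target - count):
            source = images[int(rng.choice(idx))]
            out_images.append(random_geometric_augment(source, rng))
            out_labels.append(cls)
    return out_images, out_labels

# Hypothetical toy dataset: five "normal" images and one "coiled_tail" image.
rng = np.random.default_rng(0)
images = [rng.random((8, 8)) for _ in range(6)]
labels = ["normal"] * 5 + ["coiled_tail"]

balanced_images, balanced_labels = balance_by_augmentation(images, labels, rng)
print(len(balanced_images), balanced_labels.count("coiled_tail"))
```

Augmentation of this kind should be applied to the training split only, so that augmented copies of an image never appear in the test set.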

Guide 3: Handling Image Pre-processing and Color Variation

Problem: Variations in image color, contrast, and brightness due to different staining protocols or microscope settings reduce segmentation accuracy.

Explanation: Sperm images can be affected by insufficient lighting, poorly stained semen smears, and varying laboratory protocols. These color and contrast inconsistencies are a form of noise that can confuse the segmentation model [3] [26].

Solution: Implement a robust pre-processing pipeline.

- Color Space Conversion: Convert images from RGB (Red, Green, Blue) to a color space like HSV (Hue, Saturation, Value). This separates the color information (hue) from the intensity information, making the segmentation process less sensitive to lighting variations [26].

- Color Normalization: Apply techniques like histogram equalization to enhance contrast and standardize the brightness distribution across all images in the dataset [26].

- Data Cleaning and Denoising: Use filters to remove noise signals that overlap with the sperm images, enabling a more accurate estimation of each spermatozoon's true signal [3].
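
The color-space and normalization steps above can be combined in a small NumPy sketch. It equalizes only the HSV value channel (by definition, V is the maximum of R, G, and B) and rescales the three RGB channels by the same factor per pixel, which leaves hue and saturation unchanged. This is one possible reading of the pipeline in [26], not a reproduction of it; the function names are hypothetical.

```python
import numpy as np

def equalize_uint8(channel):
    """Histogram-equalize a uint8 channel using its cumulative distribution."""
    hist = np.bincount(channel.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]
    denom = max(int(cdf[-1] - cdf_min), 1)
    lut = np.clip(np.round((cdf - cdf_min) / denom * 255), 0, 255).astype(np.uint8)
    return lut[channel]

def normalize_brightness(rgb):
    """Equalize the HSV value channel while keeping hue and saturation."""
    rgb = np.asarray(rgb, dtype=np.uint8)
    value = rgb.max(axis=2)  # HSV value channel
    new_value = equalize_uint8(value).astype(float)
    # Per-pixel scale factor; pixels with value 0 stay black.
    scale = np.divide(new_value, value, out=np.zeros_like(new_value), where=value > 0)
    return np.clip(np.round(rgb * scale[..., None]), 0, 255).astype(np.uint8)

# Hypothetical low-contrast image with intensities squeezed into 100..139.
rng = np.random.default_rng(0)
dull = rng.integers(100, 140, size=(32, 32, 3), dtype=np.uint8)
normalized = normalize_brightness(dull)
print(dull.min(), dull.max(), normalized.min(), normalized.max())
```

Global equalization can exaggerate background noise in nearly uniform fields, so it is worth inspecting a few outputs visually before applying it to the whole dataset.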

Frequently Asked Questions (FAQs)

Q1: What is the best way to measure the quality of my annotated sperm dataset? Beyond simple accuracy, you should measure Inter-Annotator Agreement (IAA). This involves having multiple experts annotate the same set of images and calculating the degree to which they agree. In one study, agreement was categorized as Total Agreement (3/3 experts), Partial Agreement (2/3 experts), or No Agreement [3]. Low IAA indicates a need for better guidelines or annotator training. Additionally, using honeypot tasks and reviewer scoring systems can provide continuous quality metrics [24].

Q2: Which classification system should I use for annotating sperm morphology? The choice depends on your clinical context. Many laboratories use the modified David classification, which defines 12 specific classes of morphological defects across the head, midpiece, and tail [3]. Alternatively, the WHO (World Health Organization) and Kruger (strict criteria) classifications are also widely used [3]. Consistency within your project is more important than the specific system chosen.

Q3: Our model performs well on training data but poorly on new images. What could be wrong? This is often a sign of overfitting due to a lack of dataset diversity or underlying data quality issues. Ensure you have used color augmentation [26] and other techniques to make your model robust to real-world variations. Also, re-examine your annotations for consistency; poor quality annotations can cause models to learn incorrect correlations and fail on new data [24].

Q4: What are the main technical challenges in segmenting the head, midpiece, and tail simultaneously? The primary challenge is the complexity of nested and overlapping structures. The midpiece and tail are long, thin, and often overlap with other cells or debris, making it difficult for the model to distinguish boundaries. Furthermore, a single spermatozoon can have defects in multiple compartments, requiring the annotation system to capture several labels for one cell simultaneously, which is a complex task known as handling nested entities [25].

Q5: Are there any open-source datasets available for training sperm segmentation models? Yes, several datasets are available. The VISEM-Tracking dataset provides video recordings with annotated bounding boxes and tracking information, which is valuable for motility and kinematics analysis [27]. The SMD/MSS dataset focuses on morphology, containing over 1,000 individual sperm images classified by experts according to the modified David classification [3]. The SCIAN-MorphoSpermGS and HuSHEM datasets are also available, focusing specifically on sperm head morphology [27].

Experimental Protocols & Workflows

Protocol 1: Building a Robust Sperm Morphology Dataset

This protocol outlines the steps for creating a high-quality dataset for training multi-structure segmentation models, as demonstrated in recent studies [3].

- Sample Preparation: Collect semen samples with a concentration of at least 5 million/mL. Prepare smears according to WHO guidelines and stain them (e.g., with RAL Diagnostics staining kit) [3].

- Data Acquisition: Use a CASA (Computer-Assisted Semen Analysis) system or a microscope with a mounted camera (e.g., Olympus CX31 with a UEye camera). Capture images at 400x magnification with phase-contrast optics. Ensure each image contains a single spermatozoon [3] [27].

- Multi-Expert Annotation: Have at least three experienced experts classify each spermatozoon independently using a standardized system (e.g., modified David classification). Use a shared spreadsheet or annotation platform to record their classifications for each part of the sperm (head, midpiece, tail) [3].

- Annotation Consolidation & Quality Control: Analyze inter-expert agreement. Resolve discrepancies through consensus or by deferring to a senior expert. Use a platform with multi-annotator validation to flag inconsistencies [3] [24].

- Data Augmentation: Apply techniques such as color jittering, rotation, and flipping to balance the representation across all morphological classes and increase the dataset's size and diversity [3].

Protocol 2: A Deep Learning Workflow for Segmentation

This protocol describes the steps for developing a Convolutional Neural Network (CNN) model for sperm segmentation and classification [3].

- Image Pre-processing:

- Denoising: Remove noise attributed to poor lighting or staining [3].

- Normalization: Resize images to a consistent scale (e.g., 80x80 pixels) and convert to grayscale to simplify the initial model [3].

- Color Space Adjustment: If color input is retained rather than converted to grayscale, convert images to HSV or LAB color space to reduce the impact of color variation [26].

- Data Partitioning: Randomly split the dataset into a training set (80%) and a testing set (20%). A portion of the training set can be used for validation [3].

- Model Training: Train a CNN architecture on the pre-processed and augmented training set. The model will learn to extract features and map them to the annotated morphological classes (e.g., normal, tapered head, coiled tail, etc.) [3].

- Model Evaluation: Test the trained model on the held-out test set. Evaluate performance using metrics like accuracy, precision, recall, and F1-score, comparing the model's predictions to the expert-validated ground truth [3].
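
The partitioning and evaluation steps can be sketched without any deep learning framework. The example below draws a random 80/20 split and computes per-class precision, recall, and F1-score from predicted and true labels. The label names and predictions are made up for illustration; in a real workflow the predictions would come from the trained CNN.

```python
import numpy as np

def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) from a random permutation of the samples."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_fraction))
    return order[n_test:], order[:n_test]

def per_class_metrics(y_true, y_pred):
    """Compute precision, recall, and F1 for every class present in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    results = {}
    for cls in np.unique(y_true):
        tp = int(np.sum((y_pred == cls) & (y_true == cls)))
        fp = int(np.sum((y_pred == cls) & (y_true != cls)))
        fn = int(np.sum((y_pred != cls) & (y_true == cls)))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        results[str(cls)] = {"precision": precision, "recall": recall, "f1": f1}
    return results

train_idx, test_idx = train_test_split_indices(100)

# Hypothetical ground truth and predictions for six test images.
y_true = ["normal", "normal", "normal", "coiled_tail", "coiled_tail", "tapered_head"]
y_pred = ["normal", "normal", "coiled_tail", "coiled_tail", "coiled_tail", "normal"]
metrics = per_class_metrics(y_true, y_pred)
accuracy = float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))
print(accuracy, metrics)
```

Reporting per-class scores alongside overall accuracy matters for imbalanced morphology datasets, because a rare class such as `tapered_head` here can score zero while accuracy still looks acceptable.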

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Sperm Image Analysis Research

| Item | Function/Benefit |

|---|---|

| RAL Diagnostics Staining Kit | Stains semen smears to provide contrast for clear visualization of sperm structures under a microscope [3]. |

| Phase-Contrast Microscope | Essential for examining unstained, live sperm preparations, allowing for the assessment of motility and morphology without fixation [27]. |

| CASA System (e.g., MMC) | An integrated system for automated sperm analysis. It typically consists of a microscope with a camera and software to acquire images and analyze motility and concentration [3]. |

| Annotation Platform (e.g., LabelBox) | Software that enables efficient manual annotation of images and videos. Supports drawing bounding boxes and labeling different sperm parts and defects [27]. |

| Python 3.8 with Deep Learning Libraries | The programming environment and tools used to develop, train, and test convolutional neural network (CNN) models for automated classification [3]. |

| Data Augmentation Tools | Software functions (e.g., in PyTorch or TensorFlow) to perform rotations, color jittering, etc., which improve model robustness and address class imbalance [3] [26]. |

Methodological Breakthroughs: Advanced Techniques for Sperm Image Processing and Analysis

In the field of male fertility research, the morphological analysis of sperm cells represents a critical diagnostic procedure. Traditional manual assessment is inherently subjective, reliant on technician expertise, and challenging to standardize across laboratories [3]. While deep learning offers a pathway to automation and improved objectivity, researchers consistently encounter a fundamental obstacle: data quality and availability issues in sperm image datasets. These challenges include limited sample sizes, heterogeneous representation of morphological classes, and difficulties in obtaining consistent expert annotations [3] [1]. This technical support article addresses the specific implementation hurdles faced by researchers and drug development professionals when applying Convolutional Neural Networks (CNNs) and Sequential Deep Neural Networks (DNNs) to sperm morphology classification within this constrained data environment.

Frequently Asked Questions (FAQs)

Q1: What are the most common data-related challenges when training a CNN for sperm morphology classification?

- A1: The primary challenges are:

- Limited Dataset Size: Medical image datasets, including sperm images, are often limited to a few hundred or thousand samples, which is insufficient for training complex deep learning models from scratch and leads to overfitting [28] [1].

- Class Imbalance: Sperm datasets often have a heterogeneous representation of different morphological classes (e.g., many more normal sperm than specific tail defects), causing models to be biased toward majority classes [3].

- Annotation Subjectivity: Ground truth labels rely on manual expert classification, which can have significant inter-observer variability, confusing the model during training [3] [1].

- Image Quality Issues: Images can suffer from noise from staining artifacts, insufficient lighting, or overlapping debris, which the model must learn to ignore [3] [29].

Q2: How can I improve my model's performance when I have a small sperm image dataset?

- A2: Several strategies can mitigate data scarcity:

- Data Augmentation: Systematically create slightly different versions of your existing training images through transformations like flipping, rotation, and scaling [3] [28]. This artificially expands your dataset and encourages generalization.

- Transfer Learning: Initialize your model with weights pre-trained on a large, general-purpose image dataset (e.g., ImageNet). The early-layer features learned to detect edges and shapes are often transferable, allowing you to fine-tune the model on your smaller sperm dataset [28].

- Hybrid and Compact Architectures: Instead of using very deep CNNs, consider more compact models designed for small datasets. Hybrid architectures that combine CNNs with mathematical morphology operations have shown reliable performance with limited samples [28].

Q3: My model's predictions are not trusted by clinicians. How can I make the CNN's decision-making process more interpretable?

- A3: You can employ model interpretation techniques to generate visual explanations:

- Activation Heatmaps: Techniques like Grad-CAM can produce heatmaps that highlight which regions of the input sperm image were most influential in the model's classification decision [30] [31]. This allows a clinician to verify that the model is focusing on biologically relevant structures (e.g., the acrosome or tail) rather than artifacts.

Troubleshooting Guides

Problem: Poor Validation Accuracy (Model Overfitting)

Symptoms: Training accuracy is high and continues to improve, but validation accuracy stagnates or decreases after a few epochs.

Solutions:

- Aggressive Data Augmentation: Implement a robust augmentation pipeline. The table below summarizes effective techniques for sperm images.

| Augmentation Technique | Description | Application Note |

|---|---|---|

| Geometric Transformations | Rotation, flipping, scaling, shearing | Ensure transformations do not create biologically impossible sperm shapes [28]. |

| Color Space Adjustments | Variations in brightness, contrast, saturation | Simulate different staining intensities and lighting conditions [3]. |

| Elastic Deformations | Mild, non-rigid distortions | Can help the model become invariant to slight shape variations [28]. |

- Incorporate Regularization: Add Dropout layers to your CNN architecture to randomly disable a fraction of neurons during training, preventing complex co-adaptations on the training data.

- Use a Simpler Model: If overfitting persists, your model may be too complex for your dataset size. Consider a shallower network or a hybrid Morphological-Convolutional Neural Network (MCNN), which has been shown to perform well on medical images with few samples [28].

Problem: Inconsistent Model Performance Across Different Datasets

Symptoms: A model that performs well on one sperm image dataset shows a significant drop in accuracy when applied to images from another clinic or acquired with different equipment.

Solutions:

- Standardize Pre-processing: Ensure consistent image pre-processing across all data sources. This typically includes:

- Resizing: Standardize all input images to the same dimensions (e.g., 80x80 pixels) [3].

- Normalization: Scale pixel values to a standard range, typically [0, 1], to ensure stable model training [3] [32].

- Denoising: Apply a noise-removal filter, such as a Wiener filter, as a first step to clean the images and facilitate more accurate segmentation and analysis [29].

- Analyze Inter-Expert Agreement: Inconsistencies in the ground truth data can propagate into model errors. Analyze the level of agreement between the experts who annotated your training data. Models can be trained specifically on samples where experts show total agreement to improve reliability [3].
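
A single pre-processing function applied to every data source is the easiest way to keep these steps consistent. The sketch below assumes 8-bit grayscale input and chains SciPy's Wiener filter, a resize to 80x80 pixels, and scaling to [0, 1]. The window size, the bilinear interpolation, and the function name are assumptions rather than settings reported in [3] or [29].

```python
import numpy as np
from scipy.ndimage import zoom
from scipy.signal import wiener

def preprocess(image, size=80, window=5):
    """Denoise, resize to size x size, and scale an 8-bit grayscale image to [0, 1]."""
    image = np.asarray(image, dtype=float)
    # Step 1: local adaptive denoising with a window x window neighbourhood.
    denoised = wiener(image, mysize=window)
    # Step 2: resize with bilinear interpolation (order=1).
    factors = (size / image.shape[0], size / image.shape[1])
    resized = zoom(denoised, factors, order=1)
    # Step 3: map the 0..255 range to 0..1 and clip any filter overshoot.
    return np.clip(resized / 255.0, 0.0, 1.0)

# Hypothetical 120x160 grayscale frame filled with random intensities.
rng = np.random.default_rng(0)
frame = rng.integers(0, 256, size=(120, 160), dtype=np.uint8)
processed = preprocess(frame)
print(processed.shape, processed.min(), processed.max())
```

Keeping the parameters as function arguments makes it straightforward to record them with the dataset, so images from a new clinic can be processed identically.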

Problem: Failure to Accurately Segment Sperm Subcellular Structures

Symptoms: The model cannot correctly delineate the boundaries between the sperm head, midpiece, and tail, leading to erroneous feature extraction.

Solutions:

- Adopt a Multi-Stage Framework: Do not rely on a single model for both segmentation and classification. Implement a dedicated segmentation step first. A proven methodology is a three-stage framework [29]:

- Stage 1: Pre-processing with a dedicated denoising filter.

- Stage 2: Segmentation using an entropy-based method (e.g., modified Havrda-Charvat entropy) to isolate individual sperm and their parts.

- Stage 3: Abnormality Detection using a CNN on the segmented cells.

- Explore Advanced Segmentation Architectures: For more complex segmentation tasks, investigate specialized neural networks like U-Net, which are designed for biomedical image segmentation.

Experimental Protocols & Workflows

Standardized Workflow for Sperm Morphology Classification

The following diagram, generated using Graphviz, outlines a robust experimental workflow that integrates solutions to common data quality issues.

Detailed Protocol: Implementing a Hybrid Morphological-Convolutional Network

For researchers dealing with particularly small datasets (< 1000 images), a hybrid MCNN can be more effective than a standard deep CNN [28]. The protocol below outlines its implementation.

Objective: Classify sperm images into morphological classes (e.g., normal, tapered head, coiled tail) using a compact, data-efficient architecture.

Methodology:

Input Pre-processing:

Architecture Configuration:

- The MCNN uses independent neural networks to process information from different signal channels (e.g., color channels or morphological gradients).

- It incorporates a morphological layer that performs non-linear mathematical morphology operations (e.g., erosion, dilation). These operations are excellent at highlighting topological structures like edges and shapes, which are crucial for morphology assessment [28] [33].

- The features extracted by the convolutional and morphological pathways are then fused.

- A Random Forest classifier is often used on the fused features for the final classification, as it is a robust and interpretable model [28].
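
To show what the morphological operations contribute, the sketch below implements grayscale erosion, dilation, and the morphological gradient (dilation minus erosion) with a flat square structuring element in plain NumPy. It illustrates the fixed operations only and is not the morphological layer of the MCNN in [28]; the 3x3 window is an assumption, and `size` is expected to be odd.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def _windows(image, size):
    """Return all size x size neighbourhoods of an edge-padded image."""
    pad = size // 2
    padded = np.pad(image, pad, mode="edge")
    return sliding_window_view(padded, (size, size))

def dilate(image, size=3):
    """Grayscale dilation: maximum over each neighbourhood."""
    return _windows(image, size).max(axis=(-2, -1))

def erode(image, size=3):
    """Grayscale erosion: minimum over each neighbourhood."""
    return _windows(image, size).min(axis=(-2, -1))

def morphological_gradient(image, size=3):
    """Dilation minus erosion, which highlights object boundaries."""
    return dilate(image, size) - erode(image, size)

# Toy "sperm head": a 3x3 bright block on a dark 7x7 background.
image = np.zeros((7, 7))
image[2:5, 2:5] = 1.0
gradient = morphological_gradient(image)
print(gradient)
```

The gradient is non-zero only in a band around the block's boundary, which is the kind of shape cue a morphology-oriented pathway can pass on to the fusion stage.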

Training with Extreme Learning Machine (ELM):

- The independent neural networks within the MCNN can be trained using the ELM algorithm, which is computationally efficient and well-suited for models with a single hidden layer [28].

Expected Outcome: This architecture is designed to achieve reliable performance on small medical image datasets, potentially outperforming deeper CNNs like ResNet-18 in tasks such as binary classification of normal vs. abnormal sperm [28].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and their functions for building effective sperm morphology classification models.

| Research Reagent | Function & Explanation |

|---|---|

| Convolutional Neural Network (CNN) | The foundational architecture for image analysis. It automatically learns hierarchical features from raw pixels, from simple edges to complex morphological structures [34] [32]. |

| Hybrid MCNN | A compact architecture combining CNN layers with mathematical morphology operations. It is particularly effective for learning from small datasets by emphasizing shape-based features [28]. |

| Data Augmentation Pipeline | A software module that applies predefined transformations (rotation, flipping, etc.) to training images. It is essential for combating overfitting caused by limited data [3] [28]. |

| Grad-CAM / Saliency Maps | Model interpretation techniques that generate heatmaps. They are critical for validating that the model's attention aligns with biologically relevant regions of the sperm cell, building clinical trust [30] [31]. |

| Wiener Filter | A pre-processing filter used for image denoising. It helps remove noise and staining artifacts from the original microscopic images, leading to cleaner input data for segmentation and classification [29]. |

| Random Forest Classifier | A traditional machine learning model that can be used as the final classifier in a hybrid pipeline (e.g., after feature extraction by a CNN). It is less prone to overfitting on small datasets than a fully connected DNN layer [28]. |

The Cascade SAM for Sperm Segmentation (CS3) represents a significant innovation in addressing one of the most persistent challenges in automated sperm morphology analysis: the accurate segmentation of overlapping sperm structures in clinical samples. This unsupervised approach specifically tackles the limitations of existing segmentation techniques, including the foundational Segment Anything Model (SAM), which proves inadequate for handling the complex sperm overlaps frequently encountered in real-world laboratory settings [35] [36].

The core innovation of CS3 lies in its cascade application of SAM in multiple stages to progressively segment sperm heads, simple tails, and complex tails separately, followed by meticulous matching and joining of these segmented masks to construct complete sperm masks [36]. This methodological breakthrough is particularly valuable within research on data quality in sperm image datasets, as it functions effectively without requiring extensive labeled training data—a significant constraint in this specialized domain.

Technical Support & Troubleshooting

Frequently Asked Questions

Q1: Why does the standard Segment Anything Model (SAM) perform poorly on overlapping sperm tails? SAM primarily prioritizes segmentation by color before considering geometric features. When sperm tails overlap and share similar coloration, SAM tends to group them as a single entity rather than distinguishing individual tails. This fundamental limitation necessitates the specialized cascade approach implemented in CS3 [36].

Q2: What are the specific filtration criteria CS3 uses to identify single tail masks? The CS3 algorithm employs two critical filtration criteria after skeletonizing obtained masks into one-pixel-wide lines:

- Presence of a single connected segment (excludes masks representing multiple aggregated tails)

- The line must terminate in exactly two endpoints (confirms the mask outlines a solitary tail)

Masks satisfying both specifications are classified as single tail masks, saved, and removed from the original image for subsequent processing stages [36].
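
The two filtration criteria can be expressed compactly once a mask has been skeletonized. The sketch below assumes the skeleton is already available as a one-pixel-wide boolean array (for example, from `skimage.morphology.skeletonize`) and uses 8-connectivity for both the segment count and the endpoint test. It illustrates the criteria as described here and is not the CS3 authors' implementation.

```python
import numpy as np
from scipy.ndimage import convolve, label

EIGHT_CONNECTED = np.ones((3, 3), dtype=int)

def count_endpoints(skeleton):
    """Count skeleton pixels that have exactly one 8-connected neighbour."""
    skel = np.asarray(skeleton).astype(int)
    kernel = EIGHT_CONNECTED.copy()
    kernel[1, 1] = 0  # do not count the pixel itself
    neighbours = convolve(skel, kernel, mode="constant", cval=0)
    return int(np.sum((skel == 1) & (neighbours == 1)))

def is_single_tail(skeleton):
    """True when the skeleton is one connected segment with exactly two endpoints."""
    skeleton = np.asarray(skeleton, dtype=bool)
    _, n_segments = label(skeleton, structure=EIGHT_CONNECTED)
    return n_segments == 1 and count_endpoints(skeleton) == 2

# Toy skeletons on a 9x9 grid.
single = np.zeros((9, 9), dtype=bool)
single[4, 1:8] = True                      # one straight tail

crossed = single.copy()
crossed[1:8, 4] = True                     # two tails crossing: four endpoints

separate = np.zeros((9, 9), dtype=bool)
separate[2, 1:8] = True
separate[6, 1:8] = True                    # two disjoint tails

print(is_single_tail(single), is_single_tail(crossed), is_single_tail(separate))
```

Masks that fail the check would stay in the image for the next cascade round, where SAM gets another chance to separate them.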

Q3: How does CS3 handle cases where cascade processing fails to separate intertwined tails? For the small subset of overlaps that resist separation through cascade processing, CS3 employs an enlargement and line-thickening technique. This process magnifies the challenging regions and thickens the slender tail structures, making them more discernible to SAM. After segmentation, the results are resized to their original dimensions [36].

Q4: What computational resources are required for implementing CS3? While the original research doesn't provide detailed computational specifications, reviewers have noted that the multi-stage cascade process and iterative SAM applications potentially make CS3 computationally intensive. This may limit practical deployment in settings with constrained computational resources [35].

Q5: How does CS3 compare to other deep learning models for sperm segmentation? Comparative evaluations demonstrate CS3's superior performance in handling overlapping sperm instances. However, for specific sub-tasks, other models show particular strengths:

- Mask R-CNN excels at segmenting smaller, more regular structures (head, nucleus, acrosome)

- U-Net achieves the highest IoU for morphologically complex tails

- YOLOv8 performs comparably to Mask R-CNN for neck segmentation [37]

Common Experimental Challenges & Solutions

Table 1: Troubleshooting Common CS3 Implementation Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Incomplete head segmentation | Insufficient image pre-processing | Enhance background whitening and contrast adjustment in pre-processing stage |

| Poor tail mask separation | Overly complex overlapping regions | Apply enlargement and line-thickening technique to challenging areas before re-segmenting |

| Incorrect head-tail matching | Suboptimal distance/angle criteria | Recalibrate matching parameters based on specific dataset characteristics |

| Cascade process not converging | Too many iterative stages | Implement early stopping if segmentation outputs remain consistent across successive rounds |

Experimental Protocols & Methodologies

CS3 Cascade Segmentation Workflow

The CS3 methodology follows a structured, multi-stage process:

Image Pre-processing: Apply adjustments to brightness, contrast, and saturation, along with background whitening to reduce noise and emphasize primary sperm features [36].

Initial Head Segmentation (S₁): Use the first SAM instance to segment sperm heads. Intersect the resulting masks with the purple regions of the raw image, then apply a threshold on the intersection proportion to isolate the head masks [36].

Head Removal: Remove identified head masks from original image, creating an image containing only sperm tails.

Cascade Tail Segmentation (S₂ to Sₙ): Iteratively apply SAM to segment tails from simpler to more complex forms. After each round:

- Save the single tail masks that pass the filtration criteria and remove them from the image

- Focus subsequent iterations on overlapping and undetected tails

- Continue until segmentation outputs stabilize between successive rounds [36]

Complex Overlap Resolution: For persistently intertwined tails, apply enlargement and line-thickening before further SAM segmentation, then resize to original dimensions.

Head-Tail Matching: Assemble complete sperm masks by matching obtained head and tail masks based on distance and angle criteria.
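
The head-tail matching step can be sketched as a greedy nearest-neighbour assignment. This is a minimal sketch under stated assumptions rather than the procedure from [36]: the `Head` and `Tail` records, the greedy strategy, and the `max_dist` and `max_angle` defaults are all hypothetical and would need recalibrating for each dataset.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Head:
    x: float
    y: float
    axis_deg: float  # assumed: direction in which the tail should leave the head


@dataclass(frozen=True)
class Tail:
    x: float  # assumed: the tail endpoint that attaches to a head
    y: float
    direction_deg: float  # assumed: tail direction at that endpoint


def angle_diff(a: float, b: float) -> float:
    """Smallest absolute difference between two angles, in degrees (0..180)."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def match_heads_to_tails(
    heads: List[Head],
    tails: List[Tail],
    max_dist: float = 15.0,   # pixels, illustrative
    max_angle: float = 45.0,  # degrees, illustrative
) -> Dict[int, int]:
    """Return {head_index: tail_index}, pairing closest valid candidates first."""
    candidates: List[Tuple[float, int, int]] = []
    for hi, h in enumerate(heads):
        for ti, t in enumerate(tails):
            dist = math.hypot(h.x - t.x, h.y - t.y)
            if dist <= max_dist and angle_diff(h.axis_deg, t.direction_deg) <= max_angle:
                candidates.append((dist, hi, ti))

    matches: Dict[int, int] = {}
    used_tails: Set[int] = set()
    for _, hi, ti in sorted(candidates):
        if hi not in matches and ti not in used_tails:
            matches[hi] = ti
            used_tails.add(ti)
    return matches
```

Heads or tails left unmatched are simply absent from the result, which makes it easy to count incomplete sperm masks when tuning the two thresholds.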

Figure: The complete CS3 workflow.

Quantitative Performance Evaluation

Table 2: Comparative Performance of Segmentation Methods on Sperm Images

| Method | Segmentation Type | Key Strengths | Reported Limitations |

|---|---|---|---|

| CS3 | Unsupervised instance segmentation | Superior handling of overlapping sperm; no labeled data required | Computationally intensive; struggles with >10 intertwined sperm [35] |

| Mask R-CNN | Supervised instance segmentation | Excellent for small, regular structures (head, nucleus, acrosome) [37] | Requires extensive labeled training data |

| U-Net | Semantic segmentation | Highest IoU for complex tail structures [37] | Limited capability for overlapping instances |

| YOLOv8/YOLO11 | Instance segmentation | Competitive neck segmentation; single-stage efficiency [37] | Lower performance on tiny subcellular structures |

Research Reagent Solutions

Table 3: Essential Research Materials for Sperm Segmentation Experiments

| Resource Category | Specific Solution | Function/Purpose |

|---|---|---|

| Base Model | Segment Anything Model (SAM) [36] | Foundation model providing zero-shot segmentation capabilities |

| Dataset | ~2,000 unlabeled sperm images + 240 expert-annotated images [36] | Method refinement and model evaluation benchmark |

| Image Pre-processing | Brightness/contrast/saturation adjustment tools [36] | Image quality enhancement for improved segmentation |

| Validation Framework | Expert-annotated sperm masks [36] | Ground truth for performance quantification |

| Comparative Models | Mask R-CNN, U-Net, YOLOv8, YOLO11 [37] | Baseline methods for performance comparison |

Advanced Technical Considerations

Theoretical Foundations of Cascade Segmentation

The CS3 approach is grounded in three key insights derived from preliminary experimentation with SAM:

Color Priority Principle: SAM prioritizes segmentation by color, only considering geometric features when color differentiation is minimal [36].

Exclusion Activation: Removing easily segmentable regions from images prompts SAM to target more complex areas that would otherwise be overlooked [36].

Morphological Enhancement: Enlarging and thickening overlapping sperm tail lines transforms previously indistinct areas into separable structures [36].

These principles enable CS3 to overcome fundamental limitations of conventional segmentation approaches in handling the specific challenges presented by sperm microscopy images, particularly the frequent overlapping of elongated tail structures that confounds standard instance segmentation methods.
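
The morphological enhancement principle can be illustrated on a small binary mask. This is a minimal pure-Python sketch, assuming the mask is a nested list of 0/1 values; nearest-neighbour upscaling and a 3x3 dilation are stand-ins chosen for illustration, since the exact operators used in [36] are not specified here. Resizing back to the original dimensions is omitted.

```python
from typing import List

Grid = List[List[int]]


def enlarge(mask: Grid, factor: int) -> Grid:
    """Nearest-neighbour upscaling of a binary mask by an integer factor."""
    return [
        [value for value in row for _ in range(factor)]
        for row in mask
        for _ in range(factor)
    ]


def thicken(mask: Grid, iterations: int = 1) -> Grid:
    """Binary dilation with a 3x3 square structuring element."""
    h, w = len(mask), len(mask[0])
    for _ in range(iterations):
        out = [[0] * w for _ in range(h)]
        for r in range(h):
            for c in range(w):
                if mask[r][c]:
                    # Switch on the pixel and its in-bounds neighbours.
                    for dr in (-1, 0, 1):
                        for dc in (-1, 0, 1):
                            rr, cc = r + dr, c + dc
                            if 0 <= rr < h and 0 <= cc < w:
                                out[rr][cc] = 1
        mask = out
    return mask


# A one-pixel-wide horizontal line becomes a four-pixel-wide band.
line = [[0, 0, 0], [1, 1, 1], [0, 0, 0]]
enhanced = thicken(enlarge(line, 2))
```

In practice an image library would handle both operations; the nested loops here only make the effect of each step explicit.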

Integration with Data Quality Research Frameworks

Within the context of data quality issues in sperm image datasets, CS3 offers significant advantages by reducing dependency on large annotated datasets—a critical constraint in this domain. The unsupervised nature of the approach directly addresses challenges of annotation scarcity and inter-rater variability that commonly plague sperm morphology research [38]. Furthermore, by specifically targeting overlapping sperm instances, CS3 enhances the completeness and accuracy of extracted morphological data, potentially reducing the sample preparation constraints typically required to minimize overlap in clinical samples.

This section provides a comparative overview of publicly available sperm image datasets to help researchers select the most appropriate one for their specific research objectives, whether focused on motility, morphology, or 3D dynamics.

Table 1: Comparison of Publicly Available Sperm Image Datasets

| Dataset Name | Primary Focus | Data Type & Volume | Key Annotations | Primary Use Cases in Model Development |

|---|---|---|---|---|

| VISEM-Tracking [27] [39] [40] | Motility & Kinematics | 20 videos (29,196 frames) [27] | Bounding boxes, tracking IDs, motility labels (normal, cluster, pinhead) [27] | Sperm detection, real-time tracking, motility classification, prediction of progressive vs. non-progressive motility [27] [39]. |

| MHSMA (Modified Human Sperm Morphology Analysis Dataset) [27] [11] | Morphology | 1,540 cropped sperm images [27] | Morphological defects (head, midpiece, tail) based on modified David classification [3]. | Classification of sperm into normal and abnormal morphological categories, often using CNNs [3]. |

| 3D-SpermVid [11] | 3D Flagellar Dynamics | 121 multifocal video-microscopy hyperstacks [11] | 3D+t raw image data of sperm under non-capacitating (49 samples) and capacitating conditions (72 samples) [11]. | Analysis of 3D motility patterns, flagellar beating, identification of hyperactivated sperm, development of next-generation CASA systems [11]. |

| SMD/MSS [3] | Morphology | 1,000 images (extendable to 6,035 with augmentation) [3] | 12 classes of morphological defects according to the modified David classification [3]. | Training predictive models for automated sperm morphological classification using Convolutional Neural Networks (CNNs) [3]. |

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: The bounding boxes in my VISEM-Tracking data have varying sizes for the same sperm cell. Is this an annotation error?

A: No, this is an expected and accurate representation of the data. The area of a bounding box for a single spermatozoon changes over time because the sperm moves and rotates within the video frame. As its position and orientation relative to the microscope change, the dimensions of its rectangular bounding box will naturally fluctuate to fully enclose the cell in each frame [27]. This is a normal characteristic that your tracking model must be designed to handle.

Q2: My morphology classification model, trained on MHSMA, performs poorly on new clinical images. What could be the cause?

A: This is a common challenge often stemming from data quality and domain shift issues. Consider the following:

- Staining and Preparation Variance: The MHSMA dataset consists of images from stained semen smears [3]. Your clinical images may use different staining protocols or preparation techniques, leading to different visual characteristics that your model has not learned.

- Class Imbalance: The original MHSMA dataset may represent the morphological classes unevenly [3]. Check the class distribution in your training set. A model trained on an imbalanced dataset will be biased toward the majority classes.

- Solution: Apply extensive data augmentation techniques (e.g., color jitter, noise addition, blurring) to simulate the variations in your target clinical setting. Furthermore, as done with the SMD/MSS dataset, you can use data augmentation to balance the morphological classes before training, which can significantly improve model robustness and accuracy [3].
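
A first step toward balancing is to work out how many augmented copies each class needs. The sketch below assumes a simple policy of bringing every class up to the size of the largest one; the class names are hypothetical, and [3] does not state that this exact policy was used.

```python
from collections import Counter
from typing import Dict, List


def augmentation_plan(labels: List[str]) -> Dict[str, int]:
    """Number of augmented images to generate per class so that every class
    reaches the size of the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items()}


# Hypothetical label list for a small training set.
train_labels = ["normal"] * 5 + ["tapered_head"] * 2 + ["coiled_tail"] * 1
plan = augmentation_plan(train_labels)
```

Computing the plan on the training split only, after the test set has been held out, avoids augmented copies of a test image leaking into training.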

Q3: For motility prediction with VISEM-Tracking, can I rely on a single frame for analysis?

A: No, single-frame analysis is insufficient for predicting motility. The core of motility assessment lies in analyzing the movement over time. A single frame provides no information about the sperm's trajectory, speed, or movement patterns (progressive vs. non-progressive). The task organizers for VISEM-Tracking explicitly state that single-frame analysis is unable to capture the movement information necessary for tasks like predicting motility percentages [39]. Your model must be designed to process temporal sequences of frames.

Q4: How do I handle frames in VISEM-Tracking that have no spermatozoa present?

A: This is a real-world scenario accounted for in the dataset. For example, the video titled video_23 contains 174 frames without spermatozoa [27]. Your detection and tracking pipeline should be robust to this. During training, these frames serve as negative samples, helping your model learn to avoid false positives. During inference, your system should correctly identify these frames as having a count of zero, which is crucial for accurate overall analysis.

Experimental Protocols for Key Tasks

Protocol: Sperm Motility Analysis Using VISEM-Tracking

This protocol outlines the steps for training a deep learning model to classify sperm motility based on tracking data.

1. Data Preprocessing:

- Frame Extraction: Extract all frames from the provided AVI or MP4 video files. The dataset contains approximately 1,470 frames per 30-second video, with a resolution of 640x480 pixels [27] [39].

- Bounding Box Parsing: Load the corresponding bounding box annotations. The annotations are provided in YOLO format, which includes the class label and normalized bounding box coordinates (center x, center y, width, height) [27].

- Data Structuring: Organize the data into a format suitable for a temporal model. Create sequences of frames (e.g., 10-30 consecutive frames) that will be used to track individual sperm cells over time.
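
The parsing and structuring steps above can be sketched as follows. This is an illustrative sketch: it assumes each label line starts with the five standard YOLO fields and ignores any extra columns, it takes the 640x480 frame size quoted above as the default, and the window length and stride are arbitrary example values.

```python
from typing import List, Tuple

# class_id, x_min, y_min, x_max, y_max (pixels)
Box = Tuple[int, float, float, float, float]


def parse_yolo_labels(text: str, img_w: int = 640, img_h: int = 480) -> List[Box]:
    """Convert YOLO label lines (class cx cy w h, normalized) to pixel boxes.
    An empty label file yields an empty list, i.e. a frame with no sperm."""
    boxes: List[Box] = []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        cls = int(parts[0])
        cx, cy, w, h = (float(v) for v in parts[1:5])
        boxes.append((
            cls,
            (cx - w / 2) * img_w,
            (cy - h / 2) * img_h,
            (cx + w / 2) * img_w,
            (cy + h / 2) * img_h,
        ))
    return boxes


def sliding_windows(n_frames: int, length: int = 16, stride: int = 8) -> List[range]:
    """Frame-index windows of fixed length for a temporal model."""
    return [range(s, s + length) for s in range(0, n_frames - length + 1, stride)]
```

Overlapping windows (stride smaller than length) yield more training sequences per video, at the cost of correlated samples.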

2. Model Training (YOLOv5 Baseline for Detection):

- The VISEM-Tracking paper provides a baseline using the YOLOv5 model for sperm detection [27].

- Configure the model to work with the three class labels: 0 (normal sperm), 1 (sperm clusters), and 2 (small or pinhead sperm) [27].

- Train the model on the extracted frames and their corresponding bounding box labels. For YOLOv5, the loss combines bounding box regression, objectness, and classification terms.

3. Tracking and Motility Classification:

- Tracking: Use the detection results and assign a unique tracking ID to each sperm cell across frames. The dataset provides text files with tracking identifiers for this purpose [27].

- Trajectory Analysis: For each tracked sperm, calculate its trajectory and movement parameters, such as velocity and linearity.

- Motility Labeling: Classify each sperm based on its movement pattern:

- Progressive: Moves forward actively.

- Non-progressive: Moves in circles or without forward progression [39].

- Immotile: Shows no movement.
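
Trajectory analysis and labeling can be sketched with three standard kinematic quantities: curvilinear velocity (VCL), straight-line velocity (VSL), and linearity (LIN = VSL / VCL). The thresholds below are hypothetical placeholders, not values from [27] or [39], and velocities are in pixels per second unless the track has been calibrated to micrometres.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def kinematics(track: List[Point], fps: float) -> Tuple[float, float, float]:
    """Return (VCL, VSL, LIN) for one tracked centroid path."""
    if len(track) < 2:
        return 0.0, 0.0, 0.0
    duration = (len(track) - 1) / fps
    path = sum(math.dist(a, b) for a, b in zip(track, track[1:]))
    straight = math.dist(track[0], track[-1])
    vcl = path / duration
    vsl = straight / duration
    lin = vsl / vcl if vcl > 0 else 0.0
    return vcl, vsl, lin


def label_motility(
    track: List[Point],
    fps: float,
    immotile_vcl: float = 2.0,     # hypothetical threshold
    progressive_lin: float = 0.5,  # hypothetical threshold
) -> str:
    """Assign one of the three motility labels to a track."""
    vcl, _, lin = kinematics(track, fps)
    if vcl < immotile_vcl:
        return "immotile"
    return "progressive" if lin >= progressive_lin else "non-progressive"
```

A sperm swimming in a circle has a high VCL but a LIN near zero, which is why linearity rather than speed separates progressive from non-progressive movement in this sketch.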

4. Evaluation:

- Detection: Evaluate using mean Average Precision (mAP) at a standard Intersection-over-Union (IoU) threshold [39].

- Motility Prediction: Evaluate using regression metrics like Mean Absolute Error (MAE) or Mean Squared Error (MSE) when predicting the overall percentage of progressive and non-progressive sperm in a sample [39].
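
Two building blocks of these metrics are easy to write down: the IoU between a predicted and a ground-truth box, and the MAE over predicted percentages. The sketch below assumes boxes are given as (x_min, y_min, x_max, y_max) in pixels. A full mAP computation additionally ranks detections by confidence and integrates the precision-recall curve, which is not shown here.

```python
from typing import Sequence, Tuple

BoxXYXY = Tuple[float, float, float, float]


def box_iou(a: BoxXYXY, b: BoxXYXY) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mean_absolute_error(pred: Sequence[float], true: Sequence[float]) -> float:
    """Average absolute difference between predictions and targets."""
    if len(pred) != len(true) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - t) for p, t in zip(pred, true)) / len(pred)
```

For example, predicting 60% progressive and 25% non-progressive against true values of 50% and 30% gives an MAE of 7.5 percentage points.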

Workflow for Sperm Motility Analysis

Protocol: Sperm Morphology Classification Using MHSMA/SMD-MSS

This protocol describes the workflow for building a CNN-based model to classify sperm morphology, using insights from the SMD/MSS dataset study [3].

1. Data Preprocessing and Augmentation:

- Cleaning: Identify and handle any inconsistencies. The SMD/MSS study highlights the importance of denoising images to address issues from insufficient lighting or poor staining [3].

- Normalization: Resize images to a fixed size (e.g., 80x80 pixels) and normalize pixel values. The SMD/MSS study converted images to grayscale [3].

- Augmentation: To address class imbalance and increase dataset size, apply augmentation techniques. The SMD/MSS study expanded their dataset from 1,000 to 6,035 images using augmentation [3]. Techniques include:

- Rotation

- Flips

- Changes in brightness and contrast

- Zoom
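
Some of these transforms can be shown on a tiny grayscale image stored as a nested list. This is a minimal sketch for illustration only: it covers flips, 90-degree rotation, and a linear brightness/contrast adjustment, and does not reproduce the augmentation settings of [3]. Arbitrary-angle rotation and zoom need interpolation and are normally left to an image library.

```python
from typing import List

Image = List[List[int]]  # grayscale, values 0..255


def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def rotate90(img: Image) -> Image:
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def adjust(img: Image, contrast: float = 1.0, brightness: int = 0) -> Image:
    """Apply p -> contrast * p + brightness, clipped to the 0..255 range."""
    return [
        [min(255, max(0, round(contrast * p + brightness))) for p in row]
        for row in img
    ]


sample = [[1, 2], [3, 4]]
augmented = [hflip(sample), rotate90(sample), adjust(sample, contrast=2.0, brightness=250)]
```

Each function returns a new image and leaves its input unchanged, so the transforms can be chained or applied independently to the same source image.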

2. Model Training (CNN Architecture):

- Partitioning: Split the dataset randomly, for example, 80% for training and 20% for testing [3].

- Architecture: Design a Convolutional Neural Network (CNN). The typical structure includes:

- Convolutional layers for feature extraction.

- Pooling layers for down-sampling.

- Fully connected layers at the end for classification.

- Training: Train the model to classify sperm into the relevant morphological categories (e.g., normal, tapered head, coiled tail, etc.).
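
The random 80/20 partition can be sketched with the standard library. The fixed seed is an assumption added here for reproducibility; [3] does not report one.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_test_split(
    items: Sequence[T], test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[T], List[T]]:
    """Shuffle indices with a seeded generator and split into (train, test)."""
    indices = list(range(len(items)))
    random.Random(seed).shuffle(indices)
    n_test = round(len(items) * test_fraction)
    test = [items[i] for i in indices[:n_test]]
    train = [items[i] for i in indices[n_test:]]
    return train, test


image_ids = list(range(1000))
train_ids, test_ids = train_test_split(image_ids)
```

Splitting the original images before augmentation keeps augmented variants of a test image out of the training set.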

3. Evaluation and Handling of Expert Disagreement:

- Accuracy: Report the model's overall accuracy on the held-out test set.

- Expert Agreement: It is critical to analyze inter-expert agreement in your ground truth data. The SMD/MSS study categorized labels into "Total Agreement" (3/3 experts agree), "Partial Agreement" (2/3 agree), and "No Agreement" [3]. Train your model on samples with the highest expert agreement to improve reliability.
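
The three agreement categories can be derived directly from the per-image expert labels. The sketch below assumes exactly three labels per image, as in the SMD/MSS study [3]; the label strings are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def agreement(labels: Sequence[str]) -> Tuple[str, Optional[str]]:
    """Categorise three expert labels; returns (category, consensus_label).
    The consensus label is None when no two experts agree."""
    label, votes = Counter(labels).most_common(1)[0]
    if votes == len(labels):
        return "total", label
    if votes >= 2:
        return "partial", label
    return "none", None


def high_agreement_subset(annotations: Sequence[Sequence[str]]) -> list:
    """Indices of images on which all experts agree."""
    return [i for i, labs in enumerate(annotations) if agreement(labs)[0] == "total"]
```

Filtering to the total-agreement subset shrinks the training set, so it is worth checking the per-class counts again after filtering.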

Workflow for Morphology Classification

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials and Reagents for Sperm Image Analysis Experiments

| Item Name | Function/Application | Example from Datasets |

|---|---|---|

| Phase-Contrast Microscope | Essential for all examinations of unstained, fresh semen preparations to visualize live, motile spermatozoa as recommended by WHO [27]. | Olympus CX31 microscope with 400x magnification [27]. |

| High-Speed Camera | Captures rapid movement of spermatozoa for detailed motility and kinematic analysis. | UEye UI-2210C camera [27]; MEMRECAM Q1v camera (5000-8000 fps) for 3D-SpermVid [11]. |

| Heated Microscope Stage | Maintains samples at body temperature (37°C), which is critical for preserving natural sperm motility during observation [27]. | Used in VISEM-Tracking sample preparation [27]. |