Navigating Linkage Disequilibrium in Trans-Ancestry GWAS: Methods, Challenges, and Clinical Translation

Abstract

Trans-ancestry genome-wide association studies (GWAS) are revolutionizing our understanding of complex trait genetics across diverse populations. However, significant challenges persist due to population-specific differences in linkage disequilibrium (LD) patterns, which complicate genetic discovery, fine-mapping, and polygenic risk prediction. This article provides a comprehensive framework for handling LD differences in trans-ancestry analyses, covering foundational concepts, advanced methodological approaches, practical optimization strategies, and validation techniques. Drawing from recent advances in pathway analysis, fine-mapping algorithms, and polygenic risk score development, we offer researchers and drug development professionals actionable insights to improve statistical power, enhance causal variant identification, and ensure equitable translation of genetic discoveries across ancestral groups.

Understanding LD Heterogeneity: The Foundation of Trans-Ancestry Genetics

Defining Linkage Disequilibrium and Its Population-Specific Characteristics

Frequently Asked Questions (FAQs)

1. What is Linkage Disequilibrium (LD) and why is it important in genetic studies? Linkage disequilibrium (LD) refers to the non-random association of alleles at different loci in a population [1] [2]. It's a crucial concept in population genetics because it helps researchers understand how genes are inherited together and serves as a sensitive indicator of population genetic forces that structure a genome [2]. In practical terms, LD is fundamental for genome-wide association studies (GWAS) as it allows scientists to use tag SNPs to identify disease-associated genes without genotyping every single variant, significantly reducing costs while maintaining statistical power [3].

2. What's the difference between linkage and linkage disequilibrium? Linkage and linkage disequilibrium are distinct concepts. Linkage refers to whether genes are physically located on the same chromosome in an individual, which is a mechanical relationship. Linkage disequilibrium, in contrast, describes the statistical association between genes in a population [1]. There's no necessary relationship between the two—genes that are closely linked may or may not be associated in populations, and LD can occur between unlinked loci due to factors like population structure [1] [4].

3. What are the key metrics for measuring LD and when should I use each? The two primary metrics for measuring LD are D' and r², each serving different purposes as outlined in the table below.

Table 1: Key LD Metrics and Their Applications

| Metric | Definition | Primary Use Cases | Interpretation Guidelines |
|---|---|---|---|
| D | Raw difference between observed and expected haplotype frequencies: D = pAB - pApB [1] [5] | Foundational calculation | Scale-dependent; not ideal for comparisons [4] [5] |
| D' | D normalized by its theoretical maximum [5] [6] | Recombination mapping, historical events, haplotype block discovery [3] | ≥0.9 often indicates "complete" LD; less sensitive to MAF but inflated by rare alleles [3] |
| r² | Squared correlation coefficient between alleles at two loci: r² = D²/(pA(1-pA)pB(1-pB)) [1] [5] | Tag SNP selection, GWAS power, imputation quality [3] | 0.2 = low, 0.5 = moderate, ≥0.8 = strong for tagging; sensitive to MAF [4] [3] |
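To make the table concrete, the sketch below computes D, |D'| and r² for two biallelic loci from one haplotype frequency and the two allele frequencies. It is a minimal illustration that assumes phased haplotype frequencies are already known (tools such as PLINK estimate them from genotypes); the function name `ld_metrics` is hypothetical. The second usage line reproduces the pattern noted in the table: D' can signal complete LD while r² stays low because the allele frequencies differ.

```python
def ld_metrics(p_ab, p_a, p_b):
    """Return (D, |D'|, r2) for two biallelic loci.

    p_ab: frequency of the haplotype carrying allele A (locus 1) and B (locus 2)
    p_a, p_b: frequencies of alleles A and B, strictly between 0 and 1
    """
    d = p_ab - p_a * p_b  # D = pAB - pA*pB
    # Lewontin's Dmax depends on the sign of D
    if d >= 0:
        d_max = min(p_a * (1 - p_b), (1 - p_a) * p_b)
    else:
        d_max = min(p_a * p_b, (1 - p_a) * (1 - p_b))
    d_prime = abs(d) / d_max if d_max > 0 else 0.0
    r2 = d ** 2 / (p_a * (1 - p_a) * p_b * (1 - p_b))
    return d, d_prime, r2


print(ld_metrics(0.3, 0.3, 0.3))  # |D'| and r2 both approximately 1
print(ld_metrics(0.1, 0.1, 0.5))  # |D'| approximately 1, but r2 approximately 0.111
```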

4. What factors create or maintain LD in populations? Several evolutionary and demographic forces influence LD patterns, as detailed in the table below.

Table 2: Factors Affecting Linkage Disequilibrium

| Factor | Effect on LD | Practical Implications |
|---|---|---|
| Recombination | Decreases LD over time [1] [2] | Creates LD decay with distance; hotspots create sharp LD boundaries [2] [3] |
| Population Structure & Admixture | Creates LD, even for unlinked loci [1] [4] | Can generate spurious associations in GWAS if not accounted for [6] |
| Genetic Drift | Can create strong LD in small populations [4] [7] | Particularly impactful in founder populations and after bottlenecks [3] |
| Natural Selection | Selective sweeps increase LD around selected sites [1] [3] | Can create extended LD regions independent of recombination rate |
| Mutation | New mutations begin in complete LD with their background haplotypes [3] | Creates very recent LD that decays over generations |

5. How do LD patterns differ across populations and why does this matter for trans-ancestry studies? LD exhibits significant population specificity due to different demographic histories, selection pressures, and recombination patterns [7]. For example, a study of long-range LD (LRLD) found "substantially more population-specific LRLDs than coincident LRLDs" across African, European, and East Asian populations [7]. These differences have critical implications for trans-ancestry GWAS: they can introduce artificial signals of association and reduce power to detect true associations in case-control designs, even when meta-analytic approaches are used to account for stratification [6]. Leveraging these differential LD patterns through trans-ancestry fine-mapping, however, can help break apart correlated variants and improve causal variant identification [8] [3].

Troubleshooting Common LD-Related Experimental Issues

Problem 1: Inflated False Positive Rates in Multi-Population GWAS

Symptoms: Association tests show significant p-values that fail to replicate, particularly when analyzing combined datasets from different ancestral backgrounds.

Root Cause: Unaccounted population structure creates spurious associations due to allele frequency differences and variations in LD patterns between populations [6]. This can include "opposing LD" where the correlation between two SNPs occurs in opposite directions across different populations [6].

Solutions:

- Stratified Analysis: Perform association tests within homogeneous population groups first, then meta-analyze results [6].

- Statistical Correction: Use principal components analysis (PCA) or mixed models to account for population structure [3].

- Quality Control: Implement rigorous LD-based QC filters, excluding regions known to have long-range LD (e.g., MHC region, centromeres) during clumping [7] [3].
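A minimal sketch of the PCA correction follows, assuming a quantitative trait and an ordinary least-squares test (case-control studies would use logistic regression or a mixed model instead). The helper names `top_pcs` and `assoc_with_pcs` are hypothetical; in practice PLINK or EIGENSTRAT would compute the PCs, ideally from LD-pruned SNPs with long-range LD regions excluded as described above, a step this sketch skips.

```python
import numpy as np


def top_pcs(genotypes, k):
    """Sample scores on the top-k principal components of a genotype matrix.

    genotypes: (n_samples x n_snps) array of 0/1/2 allele counts, ideally
    LD-pruned with long-range LD regions (e.g., MHC) removed beforehand.
    """
    g = np.asarray(genotypes, dtype=float)
    freq = g.mean(axis=0) / 2.0
    keep = (freq > 0) & (freq < 1)  # drop monomorphic SNPs
    f = freq[keep]
    std = (g[:, keep] - 2 * f) / np.sqrt(2 * f * (1 - f))
    u, s, _ = np.linalg.svd(std, full_matrices=False)
    return u[:, :k] * s[:k]


def assoc_with_pcs(y, snp, pcs):
    """OLS test of one SNP against a quantitative trait, with PCs as covariates.

    Returns (beta, se, t) for the SNP term. Pass an (n x 0) array as pcs
    to obtain the unadjusted test.
    """
    n = len(y)
    x = np.column_stack([np.ones(n), snp, pcs])
    coef, *_ = np.linalg.lstsq(x, y, rcond=None)
    resid = y - x @ coef
    sigma2 = resid @ resid / (n - x.shape[1])
    se = np.sqrt(sigma2 * np.linalg.inv(x.T @ x)[1, 1])
    return coef[1], se, coef[1] / se
```

In a simulation with two populations that differ in allele frequencies and in mean trait value, but where the tested SNP has no effect, the unadjusted test tends to show a spurious association that largely disappears once the top PCs are included as covariates.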

Table 3: Experimental Protocol for Handling Population Structure in GWAS

| Step | Procedure | Tools/Parameters |
|---|---|---|
| 1. Population Assignment | Confirm ancestry using PCA or similar methods | PLINK, EIGENSTRAT [3] |
| 2. LD Calculation | Compute LD metrics within each population group | PLINK (window: 200-1000 kb; MAF filter: ≥5%) [5] [3] |
| 3. Structure Correction | Include principal components as covariates in association testing | Typically 5-10 PCs sufficient for most studies [6] |
| 4. Meta-Analysis | Combine results across populations using appropriate methods | Fixed-effects or random-effects models [8] |
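Step 4 can be sketched with the standard inverse-variance-weighted fixed-effects model. This is a minimal illustration, not METAL's implementation; the function name is hypothetical, and it assumes every population's estimate has already been aligned to the same effect allele. A random-effects model (e.g., DerSimonian-Laird) would additionally widen the standard error by the estimated between-population variance.

```python
import math


def ivw_fixed_effects(betas, ses):
    """Inverse-variance-weighted fixed-effects meta-analysis of one SNP.

    betas, ses: per-population effect estimates and standard errors,
    aligned to the same effect allele.
    Returns (beta_meta, se_meta, z, two_sided_p).
    """
    weights = [1.0 / se ** 2 for se in ses]
    w_sum = sum(weights)
    beta = sum(w * b for w, b in zip(weights, betas)) / w_sum
    se = math.sqrt(1.0 / w_sum)
    z = beta / se
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided normal p-value
    return beta, se, z, p
```

For example, `ivw_fixed_effects([0.1, 0.3], [0.1, 0.1])` gives a pooled effect of 0.2 with a standard error of about 0.071.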

Problem 2: Poor Fine-Mapping Resolution in Association Loci

Symptoms: Large credible sets with many potentially causal variants, making functional validation costly and inefficient.

Root Cause: Extensive LD in the region creates large haplotype blocks where multiple highly correlated variants show similar association signals [2] [3].

Solutions:

- Trans-ancestry Fine-Mapping: Combine data from populations with different LD patterns to break apart correlated variants [8]. A 2025 trans-ancestry GWAS of kidney stone disease demonstrated this approach, identifying 59 susceptibility loci and detecting 25 causal signals with posterior inclusion probability >0.5 [8].

- Advanced Fine-Mapping Methods: Use methods like MESuSiE that leverage cross-population LD differences. In the kidney stone study, MESuSiE identified more causal signals with PIP >0.5 than population-specific methods [8].

- Credible Set Refinement: Integrate functional genomics data (e.g., chromatin state, regulatory elements) with LD information to prioritize variants [3].
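Why combining ancestries shrinks credible sets can be illustrated with a deliberately simplified model rather than MESuSiE itself: Wakefield's approximate Bayes factor (ABF) per variant, posterior inclusion probabilities (PIPs) assuming exactly one causal variant per locus and a flat prior, and a trans-ancestry step that sums per-population log ABFs on the assumption that the same causal variant acts in every population and that the samples are independent. The prior standard deviation of 0.2 and all function names are assumptions of this sketch.

```python
import math


def log_abf(beta, se, prior_sd=0.2):
    """Wakefield's approximate Bayes factor (log scale) favoring association.

    prior_sd: assumed prior standard deviation of the true effect.
    """
    v, w = se ** 2, prior_sd ** 2
    r = w / (v + w)
    z = beta / se
    return 0.5 * math.log(1 - r) + 0.5 * r * z ** 2


def credible_set(log_bfs, coverage=0.95):
    """PIPs and credible set under a single-causal-variant, flat-prior model."""
    top = max(log_bfs)
    bfs = [math.exp(x - top) for x in log_bfs]  # subtract max for stability
    total = sum(bfs)
    pips = [b / total for b in bfs]
    order = sorted(range(len(pips)), key=lambda i: pips[i], reverse=True)
    chosen, cum = [], 0.0
    for i in order:
        chosen.append(i)
        cum += pips[i]
        if cum >= coverage:
            break
    return pips, chosen


def trans_ancestry_log_abf(per_pop_stats, prior_sd=0.2):
    """Sum per-population log ABFs for each variant.

    per_pop_stats: one list per population of (beta, se) tuples, with the
    same variant order in every population. Assumes a shared causal variant
    and independent samples (a simplification, not MESuSiE).
    """
    n_snps = len(per_pop_stats[0])
    return [
        sum(log_abf(b, s, prior_sd) for b, s in (pop[j] for pop in per_pop_stats))
        for j in range(n_snps)
    ]
```

With three variants that are nearly indistinguishable in one population (z of 5.0, 4.9 and 4.8), the 95% credible set keeps all three; adding a second population in which LD is weaker and only the first variant remains strongly associated collapses the set to that single variant.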

Problem 3: Suboptimal Tag SNP Selection for Genotyping Arrays

Symptoms: Inefficient coverage of genetic variation, missing important variants, or redundant genotyping that increases costs without adding information.

Root Cause: Using inappropriate LD thresholds or failing to account for population-specific LD patterns when selecting tag SNPs [4] [3].

Solutions:

- Population-Specific Tagging: Select tag SNPs within each population of interest rather than using a universal set [3].

- Threshold Optimization: Use r² ≥ 0.8 for strong tagging, which indicates one SNP effectively predicts another [4] [3].

- MAF Considerations: Apply minor allele frequency filters (typically ≥5%) before tag selection to avoid unstable LD estimates [7] [3].

Experimental Protocol for Tag SNP Selection:

- Compute Pairwise LD: Calculate r² values for all SNP pairs within sliding windows (200-1000 kb) using tools like PLINK [5] [3].

- Apply MAF Filter: Remove variants with MAF <5% to ensure stable LD estimates [7].

- Tag Selection: For each SNP, if it has r² ≥ 0.8 with another SNP already in the tag set, exclude it; otherwise, include it [4].

- Validate Coverage: Ensure selected tags capture a high percentage (typically >80%) of common variation in the target population [3].
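The selection rule and the coverage check above can be sketched in a few lines, assuming a precomputed pairwise r² matrix (for example exported from PLINK) for SNPs that already passed the MAF filter, ordered as they should be considered. The function names are hypothetical. Evaluating tags chosen in one population against another population's r² matrix shows why population-specific tagging is recommended.

```python
import numpy as np


def greedy_tags(r2, threshold=0.8):
    """Greedy tag selection: keep a SNP unless it already has
    r2 >= threshold with a SNP in the tag set.

    r2: (m x m) symmetric pairwise r^2 matrix with ones on the diagonal.
    """
    tags = []
    for j in range(r2.shape[0]):
        if not any(r2[j, t] >= threshold for t in tags):
            tags.append(j)
    return tags


def tag_coverage(r2, tags, threshold=0.8):
    """Fraction of SNPs with r2 >= threshold to at least one tag."""
    covered = (r2[:, tags] >= threshold).any(axis=1)
    return covered.mean()
```

For example, tags chosen where three SNPs form one tight block give complete coverage in that population but can leave one SNP uncovered in a population where recombination has broken the block.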

Problem 4: Inaccurate Imputation in Underrepresented Populations

Symptoms: Poor imputation quality metrics, discordant genotypes upon validation, or systematic differences in imputation accuracy across ancestral groups.

Root Cause: Reference panels that don't adequately represent the LD patterns and haplotype diversity of the study population [3].

Solutions:

- Population-Matched Reference Panels: Use reference panels that specifically match the ancestry of your study samples [3].

- LD-Aware Quality Control: Monitor pre- and post-imputation r² distributions as a QC measure for sample swaps, batch effects, or reference mismatches [4].

- Cross-Population PRS Methods: For polygenic risk scores, use methods like PRS-CSx that leverage trans-ancestry information. The 2025 kidney stone disease study showed that a cross-population PRS exhibited superior predictive performance compared to population-specific scores [8].

Table 4: Key Resources for LD Analysis in Trans-ancestry Studies

| Resource | Type | Primary Function | Application Notes |
|---|---|---|---|
| PLINK [5] | Software Toolset | LD calculation, pruning, clumping | Industry standard for GWAS workflows; fast and efficient for large datasets [5] [3] |
| LDlink [5] | Web Suite | Exploring population-specific haplotype structure | Includes LDproxy for querying proxies of a variant; supports multiple populations including EUR, EAS, AFR [5] |
| Haploview [3] | Software | Block visualization, D' heatmaps | Classic for haplotype block visualization; useful for defining block boundaries [3] |
| MESuSiE [8] | Statistical Method | Cross-population fine-mapping | Leverages LD differences across ancestries to improve causal variant identification [8] |
| 1000 Genomes Project [7] | Reference Data | Comprehensive LD reference | Provides haplotype data across diverse populations; essential for imputation and comparison [7] |
| PRS-CSx [8] | Algorithm | Cross-population polygenic risk scores | Improves PRS prediction by integrating data from multiple ancestries [8] |

The Impact of Differential LD on Genetic Association Studies

Frequently Asked Questions (FAQs)

1. What is Linkage Disequilibrium (LD) and why is it important in genetic association studies? Linkage Disequilibrium (LD) refers to the non-random association of alleles at different loci in a population. It is a crucial concept because it forms the foundation for genome-wide association studies (GWAS). In GWAS, researchers rely on the fact that genotyped markers can "tag" or serve as proxies for nearby causal variants due to LD. However, the patterns and extent of LD vary significantly between populations, which can greatly impact the resolution, power, and interpretation of association studies, especially in trans-ancestry research [9].

2. How do LD patterns differ across ancestral populations? LD patterns are highly dependent on population-specific demographic history, including factors like effective population size, selection, admixture, and genetic drift [10] [1]. For example, populations of European descent often have larger blocks of LD due to historical bottlenecks. In contrast, populations with larger effective population sizes or more ancient histories, such as many African populations, typically show a more rapid decay of LD, resulting in shorter LD blocks and finer-scale genomic structure [11]. These differences are a primary source of heterogeneity in trans-ancestry genetic studies.

3. What specific problems does differential LD create in trans-ancestry GWAS? Differential LD can lead to several major issues:

- Spurious Associations: It can create false positives if population structure is not properly accounted for [10] [9].

- Reduced Fine-Mapping Resolution: The same causal variant can be tagged by different sets of SNPs in different populations, making it difficult to pinpoint the true functional variant when meta-analyzing data [11].

- Heterogeneous Effect Sizes: The marginal effect size of a tagging SNP can differ across populations due to variations in LD with the underlying causal variant, complicating the detection of true associations [11].

- Inconsistent Gene/Pathway Assignment: In gene-based or pathway-based analyses, a SNP may be assigned to different genes depending on the population's LD structure, leading to inconsistent biological interpretations [12].

4. What is LD-based binning and how can it improve my GWAS? Traditional "positional binning" assigns SNPs to a gene based solely on physical proximity. LD-based binning is an alternative method that also assigns a SNP to a gene if it is in high LD with another SNP located within that gene's physical boundaries. This approach recovers valuable information; for instance, in studies of bipolar disorder, LD-based binning increased gene coverage by 6.1%–9.3% and assigned tens of thousands more SNPs to genes, thereby improving the concordance of results between independent studies [12].

5. What statistical methods can control for population structure in trans-ancestry studies? Several robust methods are available:

- Genetic Relationship Matrix (GRM)/Mixed Models: Mixed-model tools such as BOLT-LMM and SAIGE use a genetic relationship matrix to correct for population stratification in biobank-scale datasets [9].

- Principal Components Analysis (PCA): Including the top principal components as covariates in regression models is a standard approach to control for broad-scale population structure [9].

- LD Score Regression: This technique, applied to GWAS summary statistics, can quantify the extent of confounding from population stratification and is also used to estimate heritability and genetic correlation [13].

- Trans-ancestry Meta-analysis Methods: Advanced meta-analysis techniques model the genetic differences among populations to account for heterogeneity in effect sizes, offering a more powerful alternative to simple replication-based approaches [11].
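To show what LD score regression estimates, here is an unweighted least-squares version of its model, in which the expected χ² of SNP j is 1 + Na + (N h²/M) ℓ_j, with N the GWAS sample size, M the number of SNPs, ℓ_j the LD score and a the confounding term. This is a simplification: the ldsc software uses regression weights and block-jackknife standard errors, and the LD scores must come from an ancestry-matched reference panel. The function name `ldsc_fit` is hypothetical.

```python
import numpy as np


def ldsc_fit(chi2, ld_scores, n, m):
    """Unweighted LD score regression of GWAS chi-square statistics.

    Model: E[chi2_j] = 1 + n*a + (n * h2 / m) * l_j.
    Returns (intercept, h2). An intercept well above 1 points to confounding
    such as residual population stratification or cryptic relatedness.
    """
    ell = np.asarray(ld_scores, dtype=float)
    x = np.column_stack([np.ones_like(ell), ell])
    (intercept, slope), *_ = np.linalg.lstsq(x, np.asarray(chi2, dtype=float), rcond=None)
    return intercept, slope * m / n
```

Fitting simulated statistics generated with an intercept of 1.1 recovers an intercept close to 1.1, which is the signature of inflation not explained by polygenicity.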

Troubleshooting Guides

Problem 1: Inconsistent Replication of Associations Across Populations

Symptoms:

- A variant significantly associated with a trait in one ancestry group shows a weak or non-significant effect in another.

- The lead (most significant) SNP differs between populations for the same trait locus.

Diagnosis: This is a classic symptom of differential LD. The causal variant is likely tagged by different SNPs (or tagged with different strengths) in each population due to distinct LD patterns [11].

Solution: Implement trans-ancestry fine-mapping.

- Collect Summary Statistics: Obtain GWAS summary statistics from each ancestry group.

- Use Trans-ancestry Fine-mapping Tools: Apply trans-ancestry fine-mapping methods (e.g., MESuSiE [8]) that leverage differential LD to narrow down the set of putative causal variants. The varying LD patterns across populations can actually help break correlations, increasing fine-mapping resolution [11].

- Identify Credible Set: Generate a much smaller set of candidate causal variants that is consistent across ancestries.

Experimental Protocol: Trans-Ancestry Fine-Mapping

- Input: GWAS summary statistics from at least two distinct ancestry groups (e.g., European, East Asian, African) for the same trait.

- Software: Use dedicated trans-ancestry fine-mapping tools (e.g., MESuSiE [8]).

- Reference Panels: Use ancestry-matched reference panels (e.g., from the 1000 Genomes Project) to accurately estimate LD patterns for each population.

- Procedure:

  - Harmonize summary statistics and reference panels to the same genomic build and allele configurations.

  - Run the trans-ancestry fine-mapping algorithm to jointly analyze all datasets.

  - Output a merged credible set of variants that is refined using the combined LD information from all populations.

- Validation: Follow up with functional genomic assays (e.g., reporter assays, CRISPR editing) on the top candidate variants from the credible set.
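The harmonization step in the procedure above is easy to get wrong, so a sketch may help. It assumes biallelic SNPs with single-letter alleles and a reference effect/other allele pair per variant; the function name and the choice to drop strand-ambiguous (A/T, C/G) SNPs rather than resolve them with allele frequencies are assumptions of this illustration.

```python
COMPLEMENT = {"A": "T", "T": "A", "C": "G", "G": "C"}


def harmonize(ref_a1, ref_a2, a1, a2, beta):
    """Align one study's effect size to the reference allele coding.

    ref_a1/ref_a2: effect/other allele in the reference.
    a1/a2, beta: effect/other allele and effect size in the study.
    Returns the aligned beta, or None if the variant cannot be aligned
    safely (allele mismatch or a strand-ambiguous palindromic SNP).
    """
    if {a1, a2} in ({"A", "T"}, {"C", "G"}):
        return None  # palindromic: strand cannot be inferred from alleles alone
    if (a1, a2) == (ref_a1, ref_a2):
        return beta  # already aligned
    if (a1, a2) == (ref_a2, ref_a1):
        return -beta  # effect allele swapped: flip the sign
    c1, c2 = COMPLEMENT.get(a1), COMPLEMENT.get(a2)
    if (c1, c2) == (ref_a1, ref_a2):
        return beta  # opposite strand, same effect allele
    if (c1, c2) == (ref_a2, ref_a1):
        return -beta  # opposite strand and swapped
    return None  # alleles do not match the reference
```

When the sign is flipped, the study's effect-allele frequency must be replaced by one minus its value as well.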

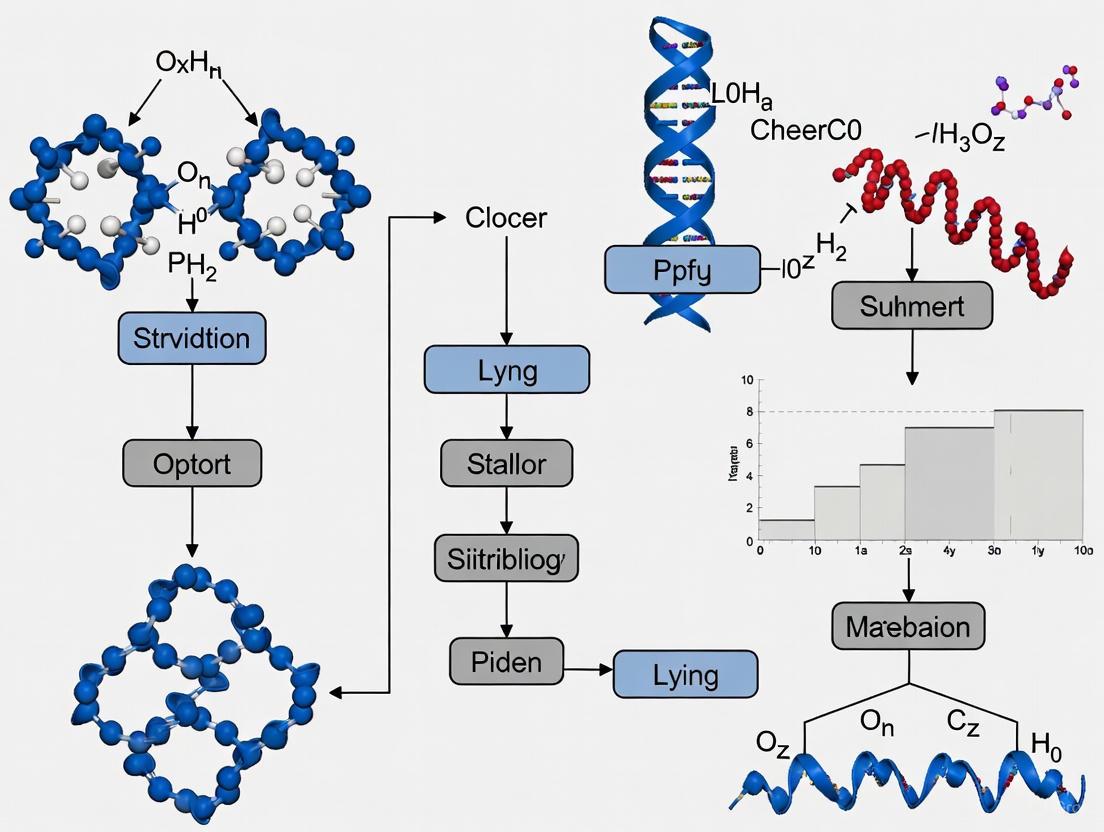

Workflow for Trans-ancestry Fine-mapping

Problem 2: Poor Concordance in Gene-Based or Pathway Analysis

Symptoms:

- A gene or pathway is significant in a GWAS of one population but not another.

- Gene-level results from independent studies of the same trait show low correlation.

Diagnosis: Standard gene-based tests that assign SNPs to genes based only on physical position (e.g., within 50 kb of the gene) fail to account for SNPs that are in high LD with the gene but are located farther away. This problem is exacerbated when LD structures differ [12].

Solution: Adopt an LD-based binning approach for gene and pathway analysis.

- Calculate Pairwise LD: Generate a matrix of pairwise LD (e.g., using r²) for your genotyped variants from a reference panel that matches your study population.

- Implement LD-based Assignment: Use tools like the LDsnpR package to assign SNPs to genes not only by physical location but also by LD. A common threshold is to include SNPs with an r² value above 0.8 with a SNP inside the gene [12].

- Perform Gene-Based Test: Conduct your gene or pathway analysis (e.g., using the Adaptive Rank Truncated Product method) using this expanded, LD-informed set of SNPs [11].
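A minimal sketch of LD-based binning as described above: SNPs are first assigned to genes by position, then any SNP with r² at or above 0.8 to a positionally assigned SNP is added. This does not reproduce the LDsnpR interface; the function name, the dictionary inputs, and the single-step (non-transitive) extension are assumptions of this illustration.

```python
def ld_based_binning(snp_pos, genes, r2_pairs, window=0, r2_min=0.8):
    """Assign SNPs to genes by position, then extend the bins via LD.

    snp_pos: dict snp_id -> (chrom, bp)
    genes: dict gene -> (chrom, start, end)
    r2_pairs: dict (snp_i, snp_j) -> r^2 from an ancestry-matched panel
    window: bp added on each side of a gene for positional binning
    Returns dict gene -> set of SNP ids.
    """
    bins = {}
    for gene, (chrom, start, end) in genes.items():
        bins[gene] = {
            s for s, (c, bp) in snp_pos.items()
            if c == chrom and start - window <= bp <= end + window
        }
    for members in bins.values():
        extra = set()  # collect first so the extension is one step only
        for (a, b), r2 in r2_pairs.items():
            if r2 >= r2_min:
                if a in members and b not in members:
                    extra.add(b)
                elif b in members and a not in members:
                    extra.add(a)
        members |= extra
    return bins
```

A SNP far outside the gene but in strong LD with an intragenic SNP is added to that gene's bin, whereas positional binning alone would leave it unassigned.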

Problem 3: Apparent Heterogeneity in Genetic Effects

Symptoms:

- Meta-analysis of multi-ancestry data shows high statistical heterogeneity (e.g., high I² statistic).

- Effect sizes for the same SNP vary widely across populations.

Diagnosis: This heterogeneity can arise from genuine biological differences but can also be a technical artifact caused by population-specific LD between the genotyped tag-SNP and the underlying causal variant [11].
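The technical artifact can be made explicit. With standardized genotypes and a single causal variant, the expected marginal effect of a tag SNP is its signed correlation r with the causal variant multiplied by the causal effect, so identical biology yields different tag effects when r differs between populations. The sketch below, with hypothetical function names, computes these expected effects and then Cochran's Q and I² for the resulting estimates.

```python
def tag_effect(beta_causal, r_tag_causal):
    """Expected marginal (standardized) effect of a tag SNP correlated at
    r (signed correlation, not r^2) with a single causal variant."""
    return r_tag_causal * beta_causal


def cochran_q_i2(betas, ses):
    """Cochran's Q and the I^2 heterogeneity statistic (in percent)."""
    w = [1.0 / s ** 2 for s in ses]
    pooled = sum(wi * b for wi, b in zip(w, betas)) / sum(w)
    q = sum(wi * (b - pooled) ** 2 for wi, b in zip(w, betas))
    df = len(betas) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, i2
```

For a causal effect of 0.1 and tag-causal correlations of 0.95 and 0.3 in two populations, each estimated with a standard error of 0.01, Q is about 21.1 and I² about 95%, even though the causal effect is identical in both.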

Solution: Apply a trans-ancestry meta-analysis framework that models this heterogeneity.

- Choose an Appropriate Framework: Select a method designed for trans-ancestry data, such as the Trans-Ancestry Gene Consistency (TAGC) framework, which posits that a subset of genes within a pathway is associated with the outcome across ancestries, even if the strength of association differs [11].

- Integrate at the Correct Level: These frameworks can integrate data at different levels:

  - SNP-centric: Combine single-ancestry SNP summary statistics into trans-ancestry statistics before aggregation to the gene level.

  - Gene-centric: Aggregate SNPs within genes for each ancestry first, then combine the gene-level statistics across ancestries [11].

- Interpret with Caution: Significant heterogeneity at the SNP level may resolve at the gene or pathway level, providing more robust cross-population insights.

Key Quantitative Data on Linkage Disequilibrium

Table 1: Measures of Linkage Disequilibrium and Their Applications

| Measure | Formula/Symbol | Interpretation | Primary Use in Association Studies |
|---|---|---|---|
| Coefficient of LD | D = pAB - pApB | Raw deviation from independence. Highly dependent on allele frequencies [1]. | Foundational calculation; less commonly used directly in reporting. |
| Standardized D' | D' = D / Dmax | Its absolute value ranges from 0 (equilibrium) to 1 (complete LD). Reflects recombination history and is less dependent on allele frequencies than D, although it is inflated for rare alleles [10]. | Useful for identifying historical recombination hotspots and cold spots. |
| Squared Correlation (r²) | r² = D² / (pA(1-pA)pB(1-pB)) | Ranges from 0 to 1. Directly related to statistical power in association studies [10] [1]. | The preferred measure for power and tagging efficiency. An r² of 0.8 is a common threshold for defining a tag SNP. |

Table 2: Impact of LD-based Binning on Gene Coverage in GWAS [12]

| Study | Genotyping Platform | Genes Covered (Positional Binning) | Genes Covered (LD-based Binning) | Additional Genes (Increase Relative to Positional Binning) |
|---|---|---|---|---|
| WTCCC Bipolar | Affymetrix 500K | 30,610 (83.4%) | 33,443 (91.1%) | 2,833 genes (+9.3%) |
| TOP Bipolar | Affymetrix 6.0 | 31,823 (86.7%) | 33,905 (92.4%) | 2,082 genes (+6.5%) |
| German Bipolar | Illumina HumanHap550 | 31,708 (86.4%) | 33,861 (92.3%) | 2,153 genes (+6.8%) |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Analytical Tools for Handling Differential LD

| Tool/Resource Name | Type | Primary Function | Key Application in Differential LD Context |
|---|---|---|---|
| PLINK | Software Toolset | Whole-genome association analysis [9]. | Basic QC, stratification control via PCA, and fundamental association testing. |
| LD Score Regression (LDSC) | Statistical Method | Quantifying confounding and estimating heritability from summary statistics [13]. | Detecting and correcting for residual population stratification in trans-ancestry meta-analyses. |
| METAL | Software Tool | Meta-analysis of GWAS results [9]. | Combining summary statistics from multiple studies/ancestries using fixed or random effects models. |
| Trans-ancestry ARTP Framework | Statistical Framework | Pathway-based analysis of multi-ancestry GWAS data [11]. | Aggregating weak association signals across genes and pathways while accounting for ancestry-specific LD. |
| LDsnpR | R Package | SNP-to-gene assignment using LD-based binning [12]. | Improving gene-based analysis and cross-study concordance by accurately mapping SNPs to genes via LD. |
| 1000 Genomes Project | Reference Dataset | Catalog of human genetic variation and haplotype information [9]. | Providing population-specific LD reference panels for imputation and fine-mapping. |
| RICOPILI | Pipeline | Rapid imputation and analysis pipeline for consortium data [9]. | Streamlining the workflow for pre-processing and analyzing large-scale multi-ancestry GWAS data. |

[Figure: Logical Flow for Addressing Differential LD Challenges]

Linkage disequilibrium (LD) refers to the non-random association of alleles at different loci in a population. Understanding LD patterns is fundamental to genome-wide association studies (GWAS) because it affects the ability to detect and fine-map trait-associated variants. Different ancestral groups exhibit distinct LD patterns due to their unique demographic histories, including population bottlenecks, expansions, and migrations.

Trans-ancestry genetic studies leverage these differences in LD patterns across populations to improve the identification and fine-mapping of causal variants underlying complex traits and diseases. When genetic variants are in strong LD in one population but not in another, combining data from multiple ancestries can help pinpoint the likely causal variant within a risk locus. This approach has become increasingly important as the field moves toward more inclusive genetic studies that encompass global diversity.

FAQs: LD Patterns in Trans-ancestry Research

How do LD patterns differ across major ancestral groups? African ancestry populations typically show shorter-range LD and lower correlation between variants due to their greater genetic diversity and older population history. In contrast, non-African populations, including Europeans and East Asians, generally exhibit longer-range LD patterns as a result of population bottlenecks during migration out of Africa. These differences create complementary patterns that can be leveraged in trans-ancestry analyses.

Why do trans-ancestry GWAS have improved fine-mapping resolution? Trans-ancestry GWAS enhance fine-mapping resolution by exploiting differences in LD patterns across populations. A causal variant may be in strong LD with many other variants in one population, making it difficult to identify. However, in another population with different LD patterns, the same causal variant may be in LD with a different, often smaller, set of variants. By combining data, researchers can narrow down the set of candidate causal variants to those that show consistent association signals across diverse LD backgrounds.

What is the "trans-ancestry gene consistency" assumption? This assumption posits that a specific subset of genes within a biological pathway is associated with a particular outcome across various ancestry groups, although the strength of their association may differ due to genetic and environmental variations. This principle underpins many trans-ancestry pathway analysis methods and is considered reasonable because functional variants, especially common ones, are often shared among diverse populations.

How does heterogeneity in effect sizes impact trans-ancestry analyses? Effect size heterogeneity across populations presents significant challenges for trans-ancestry association methods. It has two main sources: differences in the direct effects of functional SNPs, which may be modified by population-specific environmental interactions, and differences in the marginal effects of tagging SNPs, which arise from population-specific LD with the underlying functional variants. Robust methods must account for both.

What are the key methodological considerations for trans-ancestry conditional analysis? Multi-ancestry conditional and joint analysis methods like Manc-COJO are designed to identify independent associations across diverse ancestral backgrounds. These approaches assume that most causal variants are shared across ancestries with comparable effect sizes but remain robust when this assumption is relaxed. They outperform methods applied to single-ancestry datasets of equivalent size by leveraging LD differences across populations.

Troubleshooting Common Experimental Challenges

Challenge: Inconsistent Replication of Associations Across Populations

| Issue | Cause | Solution |
|---|---|---|
| Association signals fail to replicate in populations of different ancestry | Differences in LD structure, allele frequency, or genetic architecture; insufficient statistical power in replication cohort | Calculate statistical power considering effect size and allele frequency in target population; use trans-ancestry methods that account for heterogeneity |

Challenge: Inaccurate Fine-mapping Due to LD

| Issue | Cause | Solution |
|---|---|---|
| Large credible sets containing many potential causal variants | Strong LD in the region makes it difficult to distinguish causal from non-causal variants | Combine data from multiple ancestries with different LD patterns; use methods like trans-ancestry fine-mapping that leverage LD differences |

Challenge: Heterogeneous Genetic Effects

| Issue | Cause | Solution |
|---|---|---|
| Effect sizes vary substantially across populations | True biological differences in variant impact, gene-environment interactions, or differences in LD with causal variants | Apply methods that allow for effect size heterogeneity; examine potential modifying environmental factors; check for differences in LD patterns |

Challenge: Accounting for LD in Replicability Analysis

Standard replicability analysis often assumes independence among single-nucleotide polymorphisms (SNPs), ignoring the LD structure. This can produce results that are either overly liberal or overly conservative. Methods like ReAD (Replicability Analysis accounting for Dependence) use a hidden Markov model to capture the local dependence structure of SNPs across studies, providing more accurate significance rankings while controlling the false discovery rate.
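For contrast with ReAD, the independence-based approach it improves on can be sketched: take each SNP's larger p-value across the two studies (a partial-conjunction p-value) and apply the Benjamini-Hochberg procedure across SNPs. This is not ReAD, which replaces the independence assumption with a hidden Markov model over neighboring SNPs; the function names are hypothetical, and consistency of effect direction between studies is not checked here.

```python
def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def naive_replicable(p_study1, p_study2, fdr=0.05):
    """Indices of SNPs whose max(p1, p2) survives BH at the given FDR.
    Treats SNPs as independent, i.e., ignores LD."""
    p_max = [max(a, b) for a, b in zip(p_study1, p_study2)]
    q = bh_adjust(p_max)
    return [i for i, qi in enumerate(q) if qi <= fdr]
```

A SNP that is highly significant in only one study is not declared replicable, because its larger p-value is what enters the FDR procedure.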

Experimental Protocols & Data Analysis

Protocol: Trans-ancestry Pathway Analysis Framework

This protocol outlines a comprehensive approach for trans-ancestry pathway analysis that integrates genetic data at multiple levels [11].

Step 1: Data Preparation and Quality Control

- Gather summary statistics from multiple single-ancestry GWAS

- Ensure consistent genomic build and allele coding across studies

- Apply quality filters (e.g., minor allele frequency, imputation quality, Hardy-Weinberg equilibrium)

Step 2: SNP to Gene Assignment

- Assign SNPs to genes based on genomic position (typically within 50 kb of gene boundaries)

- Account for overlapping genes and SNPs assigned to multiple genes

Step 3: Gene-Level Association Statistics

- Aggregate SNP-level association signals within genes using methods like Adaptive Rank Truncated Product (ARTP)

- Account for LD structure using ancestry-matched reference panels

Step 4: Trans-ancestry Integration (Three Approaches)

- SNP-centric: Combine single-ancestry SNP-level summary data to generate trans-ancestry SNP statistics before gene and pathway analysis

- Gene-centric: Aggregate single-ancestry SNP data within genes first, then combine gene-level statistics across ancestries

- Pathway-centric: Conduct pathway analysis separately for each ancestry, then integrate p-values across studies

Step 5: Pathway Association Testing

- Test self-contained null hypothesis that no SNP in the pathway is associated with the outcome across all ancestral populations

- Use resampling-based procedures to account for correlation between genes and pathways

- Apply multiple testing corrections for the number of pathways tested
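The gene-level aggregation in Step 3 can be sketched as a simplified adaptive rank truncated product (ARTP) test for one gene in one ancestry. Null z-scores are simulated from a multivariate normal distribution with the reference-panel LD matrix as covariance, so the resampling respects LD; the truncation points (1, 2, 3) and the number of simulations are arbitrary choices. The trans-ancestry ARTP framework [11] adds pathway-level aggregation and the integration strategies of Step 4, none of which is reproduced here, and the function names are hypothetical.

```python
from math import erfc, sqrt

import numpy as np


def _two_sided_p(z):
    return np.vectorize(lambda v: erfc(abs(v) / sqrt(2.0)))(z)


def _rtp(pvals, ks):
    """Rank truncated product statistics: -sum(log p) over the k smallest p."""
    csum = np.cumsum(-np.log(np.sort(pvals, axis=-1)), axis=-1)
    return csum[..., [k - 1 for k in ks]]


def artp_gene_p(z_obs, ld, ks=(1, 2, 3), n_sim=5000, seed=0):
    """Simplified ARTP p-value for one gene from SNP z-scores.

    z_obs: observed SNP z-scores in the gene (one ancestry)
    ld: SNP correlation matrix from an ancestry-matched reference panel
    ks: truncation points, each at most the number of SNPs
    """
    rng = np.random.default_rng(seed)
    z_null = rng.multivariate_normal(np.zeros(len(z_obs)), ld, size=n_sim)
    w_obs = _rtp(_two_sided_p(np.asarray(z_obs, dtype=float)), ks)
    w_null = _rtp(_two_sided_p(z_null), ks)

    # Per-truncation-point p-values for the observed data
    p_obs = (1 + (w_null >= w_obs).sum(axis=0)) / (n_sim + 1)
    # ...and for each null replicate (share of replicates at least as extreme)
    p_null = np.empty_like(w_null)
    for j in range(len(ks)):
        col = np.sort(w_null[:, j])
        p_null[:, j] = (n_sim - np.searchsorted(col, w_null[:, j], side="left")) / n_sim
    # Adaptive step: best truncation point, calibrated against the same null
    min_obs = p_obs.min()
    min_null = p_null.min(axis=1)
    return (1 + (min_null <= min_obs).sum()) / (n_sim + 1)
```

The adaptive step matters because the best truncation point is chosen after seeing the data; calibrating the minimum p-value against the same null replicates keeps the final test valid.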

Quantitative Data on Trans-ancestry Replicability

Table: Replicability Rates of GWAS Findings Across Ancestral Groups [14]

| Ancestral Comparison | Replicability Rate (P<0.05) | Expected by Chance | Powered Subset (≥80% power) |
|---|---|---|---|
| Within Europeans | 85.6% (155/181) | ~5% | 87.5% (147/168), close to the 149.1 replications expected given power |
| European to East Asian | 45.8% (103/225) | ~5% | 76.5% (62/81) |
| European to African | Lower than East Asian | ~5% | Limited by sample size and power |

[Figure: Trans-ancestry Pathway Analysis Workflow]

Protocol: Multi-ancestry Conditional and Joint Analysis (Manc-COJO)

This protocol identifies independent genetic associations across diverse ancestral backgrounds [15].

Step 1: Input Data Preparation

- Collect GWAS summary statistics from multiple ancestry groups

- Obtain ancestry-matched LD reference panels (e.g., from 1000 Genomes Project)

- Ensure variant alignment across studies (same chromosomal positions and alleles)

Step 2: Effect Size Harmonization

- Check strand alignment and allele coding consistency

- Account for differences in allele frequencies across populations

- Model effect sizes under the assumption that most causal variants are shared

Step 3: Stepwise Association Testing

- Identify the most significant variant in the multi-ancestry dataset

- Condition on this variant and re-test remaining variants

- Iterate until no additional variants reach significance threshold

Step 4: Robustness Checks

- Apply Manc-COJO-MDISA extension to identify ancestry-specific associations

- Validate findings in independent cohorts when possible

- Compare results with single-ancestry COJO analyses
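The stepwise logic of Step 3 can be illustrated with the single-ancestry conditional approximation used by COJO-type methods: given marginal z-scores z and an LD matrix R, the z-score of SNP j conditional on a selected set S is (z_j - r_jS R_SS^-1 z_S) / sqrt(1 - r_jS R_SS^-1 r_Sj). This assumes equal sample size across SNPs and an accurate reference LD matrix; Manc-COJO's multi-ancestry model is more involved and is not reproduced here. The function names and the |z| threshold of 5.45 (roughly p = 5e-8) are assumptions of this sketch.

```python
import numpy as np


def conditional_z(z, ld, selected, j):
    """Approximate z-score of SNP j conditional on the selected SNPs,
    using only marginal z-scores and an LD (correlation) matrix."""
    if not selected:
        return z[j]
    s = list(selected)
    r_ss = ld[np.ix_(s, s)]
    r_js = ld[j, s]
    coef = np.linalg.solve(r_ss, r_js)
    num = z[j] - coef @ z[s]
    var = 1.0 - r_js @ coef
    return num / np.sqrt(var) if var > 1e-8 else 0.0


def stepwise_select(z, ld, z_threshold=5.45):
    """Forward selection: repeatedly add the SNP with the largest
    |conditional z| until none exceeds z_threshold."""
    z = np.asarray(z, dtype=float)
    selected = []
    while True:
        candidates = [j for j in range(len(z)) if j not in selected]
        if not candidates:
            break
        cond = {j: conditional_z(z, ld, selected, j) for j in candidates}
        best = max(cond, key=lambda j: abs(cond[j]))
        if abs(cond[best]) < z_threshold:
            break
        selected.append(best)
    return selected
```

For two SNPs at r = 0.9 where only the first is causal (marginal z of 10 and 9), conditioning on the first reduces the second's z-score to about zero, so only one independent signal is retained.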

Table: Key Analytical Tools for Trans-ancestry LD Analysis

| Tool/Method | Primary Function | Application Context |
|---|---|---|
| PRS-CSx [16] | Bayesian polygenic risk score construction | Integrates GWAS from multiple populations using a continuous shrinkage prior; accounts for population-specific LD |
| Manc-COJO [15] | Multi-ancestry conditional & joint analysis | Identifies independent associations across diverse ancestries; improves fine-mapping |
| ReAD [17] | Replicability analysis accounting for LD | Detects replicable SNPs from two GWAS using a hidden Markov model to capture LD structure |
| Trans-ancestry ARTP [11] | Pathway analysis with multi-ancestry data | Tests pathway associations using SNP-, gene-, or pathway-level integration strategies |
| LD Reference Panels | Population-specific LD patterns | 1000 Genomes Project provides ancestry-matched LD estimates for European, African, and East Asian populations |

Manc-COJO Analysis Workflow

Advanced Applications and Future Directions

Trans-ancestry Polygenic Risk Scores

Integrating GWAS from multiple populations enables more accurate polygenic risk scores (PRS) that perform better across diverse populations. For example, a trans-ancestry PRS for type 2 diabetes (T2D) developed with PRS-CSx was significantly associated with T2D status in European, African, and East Asian ancestral groups. Individuals in the top 2% of the PRS distribution had a 2.5- to 4.5-fold increase in T2D risk, comparable to the risk increase for first-degree relatives of affected individuals [16].

Drug Target Prioritization

Trans-ancestry GWAS can improve drug target prioritization by identifying robust genetic associations that replicate across populations. The enhanced fine-mapping resolution enables more precise identification of causal genes and pathways, which is particularly valuable for target identification in drug development pipelines.

Clinical Translation

As genetic risk prediction moves toward clinical implementation, trans-ancestry methods help distribute its benefits equitably across population groups. Methods that express polygenic risk on the same scale for ancestrally diverse individuals allow a single risk threshold to be used in diverse clinical settings.
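One simple way to put scores on a common scale is to standardize each person's raw PRS against the mean and standard deviation of a reference sample of the same ancestry, then apply one percentile cutoff to everyone. This is shown only as an illustration. The ancestry labels, reference values, and top-2% cutoff below are hypothetical. Some published approaches instead model the PRS mean and variance as functions of genetic principal components. The normal-quantile cutoff also assumes standardized scores are roughly normal within each ancestry.

```python
from statistics import NormalDist, mean, stdev

def standardize_prs(raw_scores, ancestry_labels, reference):
    """Convert raw PRS to z-scores using ancestry-matched reference moments.

    reference: dict mapping ancestry label -> list of raw PRS values from a
    reference sample of that ancestry (hypothetical input).
    """
    moments = {anc: (mean(vals), stdev(vals)) for anc, vals in reference.items()}
    z = []
    for score, anc in zip(raw_scores, ancestry_labels):
        mu, sd = moments[anc]
        z.append((score - mu) / sd)
    return z

def flag_high_risk(z_scores, top_fraction=0.02):
    """Apply one shared threshold on the standardized scale (top 2% by default)."""
    cutoff = NormalDist().inv_cdf(1 - top_fraction)  # about 2.05 for the top 2%
    return [zs >= cutoff for zs in z_scores]

# Hypothetical reference samples whose raw PRS distributions differ by ancestry
ref = {"EUR": [0.0, 1.0, 2.0, 3.0, 4.0], "AFR": [10.0, 12.0, 14.0, 16.0, 18.0]}
z = standardize_prs([2.0, 14.0, 8.0], ["EUR", "AFR", "EUR"], ref)
print(z, flag_high_risk(z))
```

Both 2.0 (EUR) and 14.0 (AFR) map to z = 0, the middle of their own ancestry's distribution, even though the raw values differ sevenfold.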

LD as Both Challenge and Opportunity in Trans-Ancestry Studies

Frequently Asked Questions (FAQs) & Troubleshooting Guides

The Fundamental Challenges

Q1: Why does Linkage Disequilibrium (LD) pose a unique problem in trans-ancestry genetic studies?

LD, the non-random association of alleles, varies significantly across populations due to differences in their demographic history, including migrations, population bottlenecks, and natural selection [18]. In trans-ancestry studies, this heterogeneity is a primary source of technical challenges.

- Challenge: Differences in LD patterns between populations can lead to spurious associations in Genome-Wide Association Studies (GWAS) if population structure is not properly accounted for [18]. Furthermore, it complicates the comparison and combination of genetic data, as the same causal variant may be tagged by different sets of SNPs in different ancestries.

- Troubleshooting Tip: Always use ancestry-specific LD reference panels when performing analyses that rely on LD structure, such as fine-mapping or heritability estimation. Do not assume an LD reference from one population (e.g., European) is applicable to another.
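To see why an LD reference from one population should not be reused for another, the sketch below computes genotype-based r² for the same SNP pair in two toy samples. The dosage vectors are invented for illustration. With unphased data, the correlation of allele dosages equals the haplotype correlation only under Hardy-Weinberg equilibrium, so treat it as an approximation.

```python
def genotype_r2(snp_a, snp_b):
    """Squared Pearson correlation between two SNPs' allele dosages (0/1/2)."""
    n = len(snp_a)
    if n != len(snp_b) or n < 2:
        raise ValueError("need two equal-length dosage vectors with n >= 2")
    mean_a = sum(snp_a) / n
    mean_b = sum(snp_b) / n
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(snp_a, snp_b))
    var_a = sum((a - mean_a) ** 2 for a in snp_a)
    var_b = sum((b - mean_b) ** 2 for b in snp_b)
    if var_a == 0 or var_b == 0:
        return float("nan")  # monomorphic SNP in this sample
    return cov * cov / (var_a * var_b)

# Hypothetical dosages: the same SNP pair in two populations
snp1 = [0, 1, 2, 1, 0, 2]
snp2_pop1 = [0, 1, 2, 1, 0, 2]   # perfectly tagged in population 1
snp2_pop2 = [1, 1, 1, 0, 2, 1]   # weakly correlated in population 2
print(genotype_r2(snp1, snp2_pop1), genotype_r2(snp1, snp2_pop2))
```

Here the second SNP tags the first perfectly in population 1 (r² = 1.0) but only weakly in population 2 (r² = 0.125).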

Q2: What is the "LD bottleneck" and how does it impact post-GWAS analysis?

The "LD bottleneck" refers to the computational and methodological burdens imposed by the reliance on massive, population-specific LD matrices [19]. The lack of standardized, portable LD resources hampers the progress and reproducibility of research.

- Challenge: Popular software tools (e.g., LDSC, LDpred) often ship with their own incompatible LD reference files, creating a fragmented ecosystem [19]. As sequencing resolution improves and more diverse populations are studied, managing these large LD matrices becomes increasingly computationally prohibitive.

- Troubleshooting Tip: Explore emerging computational methods that use more efficient approximations of LD. The development of deep learning models that can learn and generate LD patterns without explicit enumeration is a promising future direction [19].

Methodological Solutions and Protocols

Q3: What are the primary methodological strategies for conducting a multi-ancestry GWAS, and how do I choose?

There are two main strategies, each with advantages and limitations, as systematically evaluated in recent literature [20]:

Table 1: Comparison of Multi-ancestry GWAS Strategies

| Method | Description | Advantages | Disadvantages | Best Use Case |
|---|---|---|---|---|
| Pooled Analysis | Individuals from all ancestries are analyzed in a single model, often using Principal Components (PCs) to control for stratification. | Maximizes sample size and statistical power; accommodates admixed individuals [20]. | Risk of residual confounding if population structure is not perfectly captured by PCs [20]. | When studying shared genetic effects and maximizing discovery power is the priority [20]. |
| Meta-Analysis | Separate GWAS are run per ancestry, and summary statistics are combined. | Better controls for fine-scale population structure; easier data sharing [20]. | May lose power for ancestry-specific effects; requires careful handling of effect size heterogeneity [20]. | When ancestry-specific effects are of key interest or when combining consortia data with individual-level data access restrictions. |

Experimental Protocol: Conducting a Multi-ancestry Meta-analysis with Fine-mapping

Aim: To identify and refine trait-associated loci across diverse ancestries.

Workflow: The key steps below outline a robust trans-ancestry meta-analysis and fine-mapping protocol that integrates methods such as Manc-COJO [15] and MESuSiE [8].

Key Steps:

- Cohort Collection & GWAS: Perform quality-controlled (QC'd) GWAS on each ancestry group separately, using mixed models or PCs to control for population structure [20].

- Meta-Analysis: Combine summary statistics using a fixed-effects or random-effects model. Tools like MR-MEGA can explicitly account for ancestry differences [20].

- Identify Independent Loci: Use multi-ancestry conditional & joint analysis tools like Manc-COJO. This method enhances the detection of independent associations and reduces false positives compared to single-ancestry approaches [15].

- Fine-Mapping: Apply cross-population fine-mapping methods like MESuSiE. These leverage differences in LD patterns across ancestries to narrow down the set of probable causal variants, often resulting in smaller "credible sets" than single-population fine-mapping [8].
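The Meta-Analysis step can be illustrated for a single variant with a fixed-effects inverse-variance weighted (IVW) model, plus Cochran's Q and I² as a check on between-ancestry heterogeneity. This is a minimal sketch, not METAL or MR-MEGA. It assumes effect sizes are already harmonized to the same effect allele across ancestries. The input values are made up.

```python
import math
from statistics import NormalDist

def ivw_meta(betas, ses):
    """Fixed-effects inverse-variance weighted meta-analysis of one variant."""
    weights = [1.0 / se ** 2 for se in ses]
    w_sum = sum(weights)
    beta = sum(w * b for w, b in zip(weights, betas)) / w_sum
    se = math.sqrt(1.0 / w_sum)
    z = beta / se
    p = 2 * NormalDist().cdf(-abs(z))  # two-sided p-value
    # Cochran's Q and I^2 summarize between-ancestry effect heterogeneity
    q = sum(w * (b - beta) ** 2 for w, b in zip(weights, betas))
    df = len(betas) - 1
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return {"beta": beta, "se": se, "z": z, "p": p, "Q": q, "I2": i2}

# Hypothetical per-ancestry estimates (e.g., EUR and EAS) for one variant
print(ivw_meta([0.10, 0.12], [0.02, 0.04]))
```

A random-effects model would widen the standard error when Q exceeds its degrees of freedom. The fixed-effects version is shown because it matches the IVW step described in this article.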

Q4: How can I estimate genetic correlation across ancestries, especially with unbalanced sample sizes?

Trans-ancestry genetic correlation measures the similarity of genetic architectures between populations. A new class of methods has been developed to handle the common scenario where one ancestry (e.g., European) has a much larger sample size than another (e.g., non-European).

- Protocol: The TAGC (Trans-ancestry Genetic Correlation) estimator is designed for this situation [21]. It uses genetically-predicted traits in the smaller non-European GWAS, where genetic effects are learned from the large-scale European GWAS. The method then explicitly corrects for prediction-induced bias and LD heterogeneity [21].

- Application: This approach is vital for understanding the transferability of polygenic risk scores and for assessing whether disease mechanisms are shared across populations.

Practical Implementation & Tools

Q5: What computational tools are available for advanced, genome-wide LD analysis?

Genome-wide LD analysis, including LD between chromosomes and at biobank scale, requires more than single-chromosome LD calculators. The following tools enable efficient, large-scale LD computation.

Table 2: Key Software for Linkage Disequilibrium Analysis

| Tool Name | Language | Key Features | Application in Trans-ancestry Studies |
|---|---|---|---|
| X-LDR [22] | C++ | A stochastic algorithm for biobank-scale data. Can create high-resolution LD grids for the entire genome. | Draft an atlas of LD across species and populations; analyze global LD patterns and the impact of population structure. |
| GWLD [23] | R & C++ | Rapidly calculates conventional LD measures (D/D', r²) and information-theoretic measures (MI, RMI) both within and across chromosomes. | Visualize genome-wide interchromosomal LD patterns, which may reflect selection intensity and other evolutionary forces. |
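The conventional measures listed for GWLD follow textbook definitions from haplotype and allele frequencies. The sketch below implements those definitions directly. It is not GWLD's code. The frequencies are hypothetical and are chosen so that two populations share allele frequencies but differ in LD.

```python
def ld_from_haplotypes(p_ab, p_a, p_b):
    """Return D, D' and r² for allele A (locus 1) and allele B (locus 2).

    p_ab: frequency of the A-B haplotype; p_a, p_b: allele frequencies.
    """
    d = p_ab - p_a * p_b
    if d >= 0:
        d_max = min(p_a * (1 - p_b), (1 - p_a) * p_b)
    else:
        d_max = min(p_a * p_b, (1 - p_a) * (1 - p_b))
    d_prime = d / d_max if d_max > 0 else 0.0  # signed; |D'| is often reported
    denom = p_a * (1 - p_a) * p_b * (1 - p_b)
    r2 = d * d / denom if denom > 0 else 0.0
    return d, d_prime, r2

# Same allele frequencies, different haplotype frequencies (hypothetical)
print(ld_from_haplotypes(0.45, 0.5, 0.5))  # population 1
print(ld_from_haplotypes(0.30, 0.5, 0.5))  # population 2
```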

Q6: How can I improve polygenic risk score (PRS) portability in trans-ancestry contexts?

PRS trained on one population often perform poorly in others, partly due to differences in LD and allele frequency. Trans-ancestry GWAS is a key solution.

- Solution: Construct a cross-population PRS using trans-ancestry GWAS summary statistics. For example, a study on kidney stone disease showed that a PRS built from both European and East Asian data (PRS-CSx EAS&EUR) had superior predictive performance compared with scores built from either population alone [8].

- Result: Individuals in the highest quintile of this cross-population PRS had an 83% higher risk of kidney stone disease than those in the middle quintile, demonstrating the clinical potential of this approach [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Trans-ancestry GWAS

| Resource / Reagent | Type | Function | Example/Reference |
|---|---|---|---|
| Multi-ancestry LD Reference Panels | Dataset | Provides population-specific LD structure for accurate fine-mapping and heritability estimation. | TOPMed [18], 1KG, and population-specific biobanks. |
| Manc-COJO [15] | Software/Algorithm | Conducts conditional and joint analysis on multi-ancestry GWAS summary data to identify independent loci. | Identifies novel associations and reduces false positives in trans-ancestry meta-analyses [15]. |
| MESuSiE [8] | Software/Algorithm | A cross-population fine-mapping method that improves resolution by leveraging heterogeneous LD. | Pinpoints shared and ancestry-specific causal signals with higher confidence than single-ancestry methods [8]. |
| TAGC [21] | Software/Algorithm | Estimates trans-ancestry genetic correlation, robust to unbalanced sample sizes and LD differences. | Assesses the transferability of genetic findings from well-powered to under-represented populations [21]. |
| Pangenome Reference | Dataset | A more complete human genome reference that includes diverse haplotypes, improving variant discovery and alignment. | The Telomere-to-Telomere (T2T) and Human Pangenome Reference consortia [19]. |

Current Landscape and Persistent Gaps in Diverse Genomic Representation

Frequently Asked Questions

FAQ: Why is diverse genomic representation a critical issue in modern genetics research?

Historically, over 80% of genome-wide association study (GWAS) participants have been of European ancestry, creating major limitations for the generalizability of findings and equitable distribution of health benefits [24] [19]. This Eurocentric bias can lead to false pathogenic classifications and health disparities when findings are applied to underrepresented populations [19]. Expanding GWAS to multi-ancestry populations enhances the identification and fine-mapping of disease loci and provides more comprehensive insights into disease manifestation across different genetic backgrounds [11] [24].

FAQ: What is the primary genetic challenge when working with multi-ancestry datasets?

Linkage disequilibrium (LD) differences across populations present the most significant challenge [19]. LD patterns vary substantially between ancestry groups, complicating the identification of independent associations and true causal variants [15]. This "LD bottleneck" hampers post-GWAS analyses and requires specialized methods that can appropriately handle these differences without introducing false positives [19] [15].

FAQ: What practical approaches can improve variant prioritization in trans-ancestry studies?

Multi-ancestry conditional and joint analysis (Manc-COJO) represents a significant advancement over single-ancestry methods [15]. This approach conducts stepwise association testing across diverse ancestral backgrounds under the assumption that most causal variants are shared across ancestries, though it remains robust when this assumption is relaxed [15]. The method enhances detection of independent disease-associated loci while reducing false positives compared to European-only datasets of equivalent size [15].

Troubleshooting Guides

Problem: Inadequate Statistical Power in Non-European Cohorts

Symptoms

- Inability to replicate known variant-trait associations in underrepresented populations

- Wide confidence intervals for effect size estimates in non-European ancestries

- Failure to reach genome-wide significance for population-specific variants

Solutions

- Utilize Specialized Biobanks: Leverage diverse population biobanks to increase sample sizes

- Implement Meta-Analysis Frameworks: Join consortia initiatives like the Global Biobank Meta-analysis Initiative (GBMI) to combine datasets across institutions [25]

- Apply Power-Enhancing Methods: Use approaches like Manc-COJO-MDISA that enhance detection of ancestry-specific variants even with limited sample sizes [15]

Table 1: Global Biobanks for Diverse Genomic Research

| Biobank Name | Primary Population Focus | Sample Size | Key Features |
|---|---|---|---|
| All of Us [26] | Multi-ethnic, with focus on underrepresented groups | Goal: 1 million+ | NIH program capturing diverse genomic data |
| Biobank Japan [26] | Japanese ancestry | 200,000+ | Genetic and clinical data for East Asian populations |
| H3Africa [26] | Various African populations | Varies | Addresses historical underrepresentation of African ancestries |

Problem: Linkage Disequilibrium Mismatch in Trans-Ancestry Analysis

Symptoms

- Inconsistent association signals across populations for the same genomic region

- Failure to fine-map causal variants due to different LD patterns

- Spurious associations when using inappropriate LD reference panels

Solutions

- Select Appropriate LD References: Use ancestry-matched LD reference panels rather than European-only panels [15]

- Implement Robust Methods: Apply multi-ancestry frameworks like Manc-COJO designed to handle LD heterogeneity [15]

- Validate with Conditional Analysis: Perform stepwise conditional analyses to identify independent signals across ancestries [15]

Problem: Technical and Analytical Incompatibilities

Symptoms

- Inability to harmonize data across different genotyping platforms

- Computational bottlenecks when handling massive multi-ancestry LD matrices

- Limited portability of analysis tools and reference files between research groups

Solutions

- Adopt Advanced Computational Strategies: Explore deep learning models that can learn LD patterns without explicit enumeration of massive matrices [19]

- Utilize Flexible Software: Implement tools like Manc-COJO that accept either individual-level genotype data or precomputed LD matrices for better compatibility [15]

- Standardize Genomic Resources: Transition to newer genome assemblies (e.g., T2T, pangenome) despite technological inertia around GRCh37 [19]

Experimental Protocols & Methodologies

Protocol: Trans-Ancestry Pathway Analysis Framework

This protocol outlines the comprehensive framework for trans-ancestry pathway analysis that effectively utilizes diverse genetic information [11].

Principle: The Trans-Ancestry Gene Consistency (TAGC) assumption posits that a specific subset of genes within a pathway is associated with the outcome across various ancestry groups, although association strength may differ due to genetic and environmental variations [11].

Diagram 1: Trans-ancestry pathway analysis workflow.

Step-by-Step Procedure:

Data Preparation

- Collect summary data from $L$ single-ancestry GWAS, the $l$-th of which includes $n^{(l)}$ subjects

- For each study, obtain summary statistics for $T$ SNPs: estimated coefficients $\hat{\beta}_i^{(l)}$ and standard errors $\tau_i^{(l)}$

- Calculate z-scores $Z_i^{(l)} = \hat{\beta}_i^{(l)} / \tau_i^{(l)}$ and the corresponding p-values [11]

SNP-to-Gene Assignment

- Assign SNPs to genes within 50 kb of gene boundaries (allow for multiple assignments)

- Alternative assignment strategies can be employed based on research objectives [11]

Select Integration Level

- SNP-centric: Consolidate single-ancestry (SA) SNP summary data to generate trans-ancestry SNP-level statistics

- Gene-centric: Aggregate SA SNP data within each gene to produce single-ancestry gene-level statistics

- Pathway-centric: Integrate p-values from pathway analyses across each SA-GWAS [11]

Apply Adaptive Rank Truncated Product (ARTP) Method

- Obtain association p-values for each component (SNP or gene)

- Use resampling to simulate M replicas of p-values under global null hypothesis

- Calculate Negative Log Product (NLP) statistics for candidate thresholds

- Estimate empirical p-values using resampled data [11]

Protocol: Multi-Ancestry Conditional and Joint Analysis (Manc-COJO)

This protocol enables identification of independent associations across diverse ancestries while addressing LD differences [15].

Diagram 2: Manc-COJO analysis workflow.

Implementation Steps:

Input Data Requirements

- Multi-ancestry GWAS meta-analysis summary statistics

- Ancestry-matched LD reference panels or individual-level genotype data

- Precomputed LD matrices (if data sharing restrictions exist) [15]

Stepwise Association Testing

- Conduct association testing across diverse ancestral backgrounds

- Assume most causal variants are shared across ancestries with comparable effect sizes

- Maintain robustness when this assumption is relaxed [15]

Ancestry-Specific Extension (Manc-COJO-MDISA)

- Use multi-ancestry data to inform single-ancestry analyses

- Identify ancestry-specific associations

- Incorporate causal variants validated through wet-lab experiments, even if below conventional significance thresholds [15]

The Scientist's Toolkit

Table 2: Essential Research Reagents & Computational Tools

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| PLINK [26] | Whole-genome association analysis | Quality control, basic association testing | Command-line toolset for association and population-based linkage analyses |
| Manc-COJO [15] | Multi-ancestry conditional & joint analysis | Fine-mapping across diverse populations | Identifies independent associations while handling LD differences |
| ARTP Framework [11] | Pathway-based association testing | Trans-ancestry pathway analysis | Aggregates association evidence across correlated components |
| Global Biobank Meta-analysis Initiative [25] | Multi-ancestry meta-analysis resource | Large-scale trans-ancestry studies | Provides standardized framework for combining biobank data |
| HapMap/1000 Genomes [25] | LD reference panels | Imputation and fine-mapping | Ancestry-specific linkage disequilibrium patterns |

Key Technical Considerations

LD Reference Selection: The similarity of a target population to a reference panel significantly impacts portability. LD in Europeans is moderate compared to other populations, enhancing portability within European groups but limiting applicability to other ancestries [24]. Always use ancestry-matched LD reference panels for accurate results [15].

Statistical Power Calculations: When designing trans-ancestry studies, estimate minimum ancestry-specific sample sizes required to achieve adequate statistical power. Manc-COJO provides tools for these calculations, which are essential for robust study design [15].
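A rough per-ancestry power and sample-size calculation can be sketched with the standard non-centrality approximation for a quantitative trait, NCP ≈ N·q²/(1 − q²). Here q² is the fraction of trait variance the variant explains. For an additive SNP with allele frequency f and per-allele effect β on a standardized trait, q² = 2f(1 − f)β². Because f differs by ancestry, so does the required sample size. This is not Manc-COJO's calculator. The effect size and allele frequencies below are hypothetical.

```python
import math
from statistics import NormalDist

_STD = NormalDist()

def variance_explained(beta, maf):
    """Fraction of variance in a standardized trait explained by an additive SNP."""
    return 2 * maf * (1 - maf) * beta ** 2

def gwas_power(n, q2, alpha=5e-8):
    """Approximate power of a 1-df test; noncentrality = n * q2 / (1 - q2)."""
    ncp = n * q2 / (1.0 - q2)
    z_alpha = _STD.inv_cdf(1 - alpha / 2)
    root = math.sqrt(ncp)
    return _STD.cdf(root - z_alpha) + _STD.cdf(-root - z_alpha)

def min_sample_size(q2, target_power=0.8, alpha=5e-8):
    """Smallest n whose approximate power reaches target_power."""
    z_alpha = _STD.inv_cdf(1 - alpha / 2)
    z_beta = _STD.inv_cdf(target_power)
    n = math.ceil((z_alpha + z_beta) ** 2 * (1.0 - q2) / q2)
    while gwas_power(n, q2, alpha) < target_power:  # guard against rounding
        n += 1
    return n

# Same per-allele effect, different allele frequencies in two hypothetical ancestries
for ancestry, maf in [("ancestry A", 0.30), ("ancestry B", 0.05)]:
    q2 = variance_explained(0.05, maf)
    print(ancestry, round(q2, 6), min_sample_size(q2))
```

The rarer allele in ancestry B explains less variance, so detecting the same per-allele effect needs a much larger cohort.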

Harmonization Challenges: Differences in genotype platforms and filtering criteria across various SA-GWAS often result in missing SNP summary data. Implement rigorous quality control measures and imputation strategies to address these gaps [11].

Advanced Analytical Frameworks for LD-Aware Trans-Ancestry Integration

Pathway analysis is a powerful tool that moves beyond looking at individual genetic markers to examine the combined effects of multiple markers within biological pathways. This method is particularly effective for detecting subtle genetic influences on diseases that might be missed when analyzing individual single nucleotide polymorphisms (SNPs) alone [27]. Trans-ancestry pathway analysis expands this approach to include data from diverse ancestry groups, which has often been overlooked in traditional single-ancestry genetic studies [27].

The integration of multi-ancestry data presents both opportunities and challenges. While it enhances the identification of disease loci and improves generalizability, it must account for heterogeneity in genetic architecture among ancestral populations, particularly effect-size variability arising from differential environmental interactions and population-specific linkage disequilibrium (LD) patterns [27]. This section provides guidance for implementing trans-ancestry pathway analysis methods while addressing LD differences across populations.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental assumption underlying trans-ancestry pathway analysis methods?

The foundation of trans-ancestry pathway analysis is the Trans-Ancestry Gene Consistency (TAGC) assumption, which posits that a specific subset of genes within a pathway is associated with the outcome across various ancestry groups, though the strength of their association may differ across populations due to genetic and environmental variations [27]. This assumption is reasonable because functional variants, especially common ones, are likely shared among diverse populations [27]. Even when functional variants aren't directly genotyped, genes containing those variants should consistently show association with outcomes across different populations, provided each population has sufficient sample size [27].

Q2: How do LD differences between populations impact trans-ancestry analysis, and what strategies can mitigate these effects?

LD patterns differ significantly across populations, which can confound genetic association results [28]. In trans-ancestry pathway analysis, these differences affect how SNPs tag causal variants in each population. To address this:

- Use population-specific reference panels that accurately capture LD patterns for each ancestry group [27]

- Implement careful SNP pruning at r² thresholds between 0.20 and 0.75 to balance false-positive control and power [28]

- Apply cross-population fine-mapping methods that leverage differential LD patterns to pinpoint causal variants [8]

- Account for ancestry-specific LD blocks when interpreting epistasis results [28]
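SNP pruning is often done greedily: keep the highest-priority SNP, drop anything in LD above the threshold with a kept SNP, and repeat. The sketch below is a clumping-style version of that idea for one population's LD. The SNP IDs, scores, and r² values are hypothetical. In a trans-ancestry setting you could run it per ancestry with that ancestry's own r², or use the maximum r² across ancestries as a conservative choice. The default threshold of 0.2 is the lower end of the range cited above.

```python
def greedy_prune(snp_ids, scores, r2, threshold=0.2):
    """Keep SNPs by descending priority, dropping any with r² > threshold to a kept SNP.

    scores: dict snp -> priority (e.g., -log10 p); higher is kept first.
    r2: dict mapping frozenset({id1, id2}) -> r²; missing pairs are treated as 0.
    """
    order = sorted(snp_ids, key=lambda s: scores[s], reverse=True)
    kept = []
    for snp in order:
        if all(r2.get(frozenset((snp, k)), 0.0) <= threshold for k in kept):
            kept.append(snp)
    return kept

# Hypothetical three-SNP region
r2 = {frozenset(("a", "b")): 0.9, frozenset(("a", "c")): 0.1, frozenset(("b", "c")): 0.3}
scores = {"a": 10.0, "b": 8.0, "c": 5.0}
print(greedy_prune(["a", "b", "c"], scores, r2))
```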

Q3: What are the main strategies for integrating genetic data in trans-ancestry pathway analysis?

There are three primary approaches for data integration in trans-ancestry pathway analysis [27]:

- SNP-centric approach: Consolidates single-ancestry SNP-level summary data from multiple GWAS to generate trans-ancestry SNP-level summary statistics, which are then aggregated to derive gene-level and pathway-level statistics.

- Gene-centric approach: Aggregates SNP summary data within each gene from each GWAS to produce single-ancestry gene-level statistics, which are then unified across different GWAS.

- Pathway-centric approach: Integrates p-values from pathway analyses conducted separately for each ancestry group.

Table: Comparison of Trans-Ancestry Pathway Analysis Integration Approaches

| Approach | Integration Level | Key Advantage | Consideration for LD Handling |
|---|---|---|---|
| SNP-centric | SNP-level | Maximizes signal from individual variants | Requires careful alignment of LD patterns across populations |
| Gene-centric | Gene-level | More robust to LD differences within genes | Less sensitive to population-specific LD structures |
| Pathway-centric | Pathway-level | Accommodates heterogeneity in gene effects | May miss consistent subtle signals across ancestries |

Q4: What quality control steps are essential when preparing multi-ancestry GWAS summary data?

When preparing multi-ancestry GWAS summary data for pathway analysis, these QC steps are critical:

- Standardize SNP filtering across all datasets (MAF > 1%, missingness rate < 10%, HWE significance level 5·10⁻¹⁵) [28]

- Ensure consistent genomic build and alignment across all datasets

- Apply genomic control correction to account for residual population stratification; a genomic inflation factor (λ) near 1.0 indicates little residual inflation [8]

- Verify effect size consistency using metrics like Lin's concordance correlation coefficient (values >0.90 indicate good consistency) [8]

- Check for discordant effect directions across ancestry groups
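Two of these checks are short calculations:

- **Genomic inflation factor (λ):** the median observed 1-df chi-square divided by the median of a chi-square(1) distribution, about 0.4549.
- **Lin's concordance correlation coefficient:** unlike Pearson's r, it penalizes systematic differences in scale or location between two effect-size vectors, not only scatter.

The sketch below implements both definitions. The inputs are hypothetical.

```python
from statistics import NormalDist, median

def genomic_inflation(z_scores):
    """lambda_GC: median observed chi-square over the median of chi-square(1)."""
    chi2 = [z * z for z in z_scores]
    expected_median = NormalDist().inv_cdf(0.75) ** 2  # about 0.4549
    return median(chi2) / expected_median

def lins_ccc(x, y):
    """Lin's concordance correlation coefficient between two effect-size vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2 * sxy / (sxx + syy + (mx - my) ** 2)

# Perfectly correlated effects, but ancestry 2 estimates are twice as large
print(lins_ccc([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))
```

The example prints about 0.36 even though Pearson's r is exactly 1. That gap is why the concordance coefficient is the stricter consistency check.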

Q5: How can researchers assign SNPs to genes appropriately in trans-ancestry analysis?

SNP-to-gene assignment follows established conventions but should be applied consistently:

- Physical position mapping: Assign SNPs to genes if they fall within 50 kb of gene boundaries [27]

- Account for multiple assignments: Allow SNPs to be assigned to multiple genes when applicable

- Consider alternative strategies: In real data analysis, researchers may implement additional assignment strategies based on functional annotations or chromatin interactions [27]

- Maintain consistency: Use identical assignment rules across all ancestry groups to ensure comparability
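The positional rule above can be sketched as a window check. A SNP maps to every gene whose boundaries, extended by 50 kb on each side, contain it. The gene names and coordinates below are hypothetical, and SNPs and genes must use the same genome build. The nested loop is fine for a sketch; genome-wide use would call for an interval tree or sorted sweep.

```python
from dataclasses import dataclass

@dataclass
class Gene:
    name: str
    chrom: str
    start: int  # gene start (bp)
    end: int    # gene end (bp)

def assign_snps_to_genes(snps, genes, window=50_000):
    """Map each SNP to every gene whose boundaries +/- window contain it.

    snps: dict snp_id -> (chrom, position). A SNP may map to several genes.
    Returns dict gene name -> sorted list of SNP ids.
    """
    mapping = {g.name: [] for g in genes}
    for snp_id, (chrom, pos) in snps.items():
        for g in genes:
            if g.chrom == chrom and g.start - window <= pos <= g.end + window:
                mapping[g.name].append(snp_id)
    return {name: sorted(ids) for name, ids in mapping.items()}

# Hypothetical genes and SNPs; rs1 falls within 50 kb of both genes
genes = [Gene("GENE1", "chr1", 100_000, 120_000), Gene("GENE2", "chr1", 160_000, 200_000)]
snps = {"rs1": ("chr1", 155_000), "rs2": ("chr1", 30_000), "rs3": ("chr2", 110_000)}
print(assign_snps_to_genes(snps, genes))
```

Applying the same function and window to every ancestry group keeps the assignment identical across groups, as the last point requires.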

Experimental Protocols and Workflows

Core Framework for Trans-Ancestry Pathway Analysis

The comprehensive framework for trans-ancestry pathway analysis builds on the Adaptive Rank Truncated Product (ARTP) method, a flexible, resampling-based approach originally developed for pathway analysis in single-ancestry GWAS [27]. It supports the three integration strategies (SNP-centric, gene-centric, and pathway-centric) described above.

Detailed ARTP Algorithm Implementation

The Adaptive Rank Truncated Product (ARTP) method forms the core statistical framework for pathway analysis. Implement it as follows [11]:

1. Obtain association p-values: for each of the $q$ components (SNPs or genes), compile the association p-values into the vector $\mathbf{p}_0 = (p_{0,1}, p_{0,2}, \ldots, p_{0,q})$.

2. Resample under the null hypothesis: use a resampling-based procedure to simulate $M$ replicas of $\mathbf{p}_0$ under the global null hypothesis, denoted $\mathbf{p}_m = (p_{m,1}, p_{m,2}, \ldots, p_{m,q})$, $m = 1, \ldots, M$.

3. Calculate NLP statistics: for each truncation point (threshold) $c_k$ from the candidate values $\{c_k, k = 1, \ldots, K\}$:

- Arrange the elements of $\mathbf{p}_0$ in ascending order: $p_{0,(i)}$, $i = 1, \ldots, q$.

- Calculate the Negative Log Product (NLP) statistic $w_{0,k} = -\sum_{i=1}^{c_k} \log p_{0,(i)}$.

4. Repeat for resampled data: repeat step 3 for each resampled $\mathbf{p}_m$, obtaining NLP statistics $w_{m,k}$, $m = 1, \ldots, M$, $k = 1, \ldots, K$.

5. Estimate empirical p-values: for each threshold $c_k$, estimate the empirical p-value of $w_{0,k}$ by comparing it with the distribution of $w_{m,k}$.

6. Determine final significance: the final test statistic is the smallest p-value across candidate thresholds (the minP statistic). Its significance is evaluated with the same resampled replicas, so no second round of resampling is needed.
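The procedure above can be sketched compactly with NumPy. Null replicas here are generated by drawing component z-scores from a multivariate normal with a user-supplied correlation matrix (for SNP components, an ancestry-matched LD matrix). That is one common way to respect correlation. The ARTP3 package uses its own, more efficient resampling, and the function names below are hypothetical. The replica-level p-values compare every replica with every other, which needs memory proportional to M²K. That is acceptable for M = 1000 but not for very large M.

```python
import math
import numpy as np

def nlp_stats(pvals, cutoffs):
    """NLP statistic -sum(log p) over the c smallest p-values, for each cutoff c."""
    logs = -np.log(np.sort(pvals, axis=-1))
    cums = np.cumsum(logs, axis=-1)
    return cums[..., np.asarray(cutoffs) - 1]

def artp_pvalue(p_obs, corr, cutoffs, n_resamples=1000, seed=0):
    """ARTP-style minP p-value with null z-scores drawn from MVN(0, corr)."""
    rng = np.random.default_rng(seed)
    z_null = rng.multivariate_normal(np.zeros(len(p_obs)), corr, size=n_resamples)
    p_null = np.vectorize(math.erfc)(np.abs(z_null) / math.sqrt(2))  # two-sided p
    w_obs = nlp_stats(np.asarray(p_obs, dtype=float), cutoffs)  # shape (K,)
    w_null = nlp_stats(p_null, cutoffs)                          # shape (M, K)
    # Empirical p-value of the observed NLP statistic at each cutoff
    p_obs_k = (1 + (w_null >= w_obs).sum(axis=0)) / (n_resamples + 1)
    # Reuse the same replicas to get each replica's p-value at each cutoff
    p_null_k = (w_null[None, :, :] >= w_null[:, None, :]).sum(axis=1) / n_resamples
    # Compare the observed minP with the null distribution of minP
    min_obs = p_obs_k.min()
    min_null = p_null_k.min(axis=1)
    return (1 + (min_null <= min_obs).sum()) / (n_resamples + 1)

# Hypothetical pathway with four independent components
print(artp_pvalue([1e-8, 0.5, 0.3, 0.9], np.eye(4), cutoffs=[1, 2]))
```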

Trans-Ancestry GWAS Meta-Analysis Protocol

For the initial trans-ancestry GWAS that provides input for pathway analysis, follow this protocol [8]:

Table: Trans-Ancestry GWAS Meta-Analysis Steps

| Step | Procedure | Quality Control |
|---|---|---|
| 1. Data Collection | Obtain GWAS summary statistics from multiple ancestry groups | Ensure consistent phenotype definitions across studies |
| 2. Variant Alignment | Harmonize SNPs across datasets using a reference genome | Check strand alignment, allele flipping, and genome build consistency |
| 3. Meta-Analysis | Perform fixed-effect inverse-variance weighted meta-analysis | Apply genomic control correction; a genomic inflation factor (λ) near 1.0 indicates little residual inflation |
| 4. Heterogeneity Assessment | Calculate heterogeneity statistics (e.g., Cochran's Q) | Identify variants with significant heterogeneity across ancestries |
| 5. Locus Definition | Define susceptibility loci as non-overlapping genomic regions within 1000 kb of lead SNPs | Merge lead SNPs within 1000 kb of each other |
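Step 5 can be sketched as an interval merge. Each lead SNP gets a window, and overlapping windows on the same chromosome are merged into one locus, so the resulting loci never overlap. The sketch reads the protocol as windows of ±500 kb, so that lead SNPs within 1000 kb of each other merge. This reading is an assumption; if your protocol means ±1000 kb windows, change half_width. SNP IDs and positions are hypothetical.

```python
def define_loci(lead_snps, half_width=500_000):
    """Merge lead SNPs into non-overlapping loci.

    Each lead SNP gets a window of +/- half_width bp; overlapping windows on the
    same chromosome are merged, so leads within 2 * half_width bp share a locus.
    lead_snps: list of (snp_id, chrom, position).
    """
    loci = []
    for snp_id, chrom, pos in sorted(lead_snps, key=lambda s: (s[1], s[2])):
        start, end = max(0, pos - half_width), pos + half_width
        if loci and loci[-1]["chrom"] == chrom and start <= loci[-1]["end"]:
            loci[-1]["end"] = max(loci[-1]["end"], end)
            loci[-1]["snps"].append(snp_id)
        else:
            loci.append({"chrom": chrom, "start": start, "end": end, "snps": [snp_id]})
    return loci

leads = [("rs1", "1", 5_000_000), ("rs2", "1", 5_800_000),
         ("rs3", "1", 7_000_000), ("rs4", "2", 5_000_000)]
for locus in define_loci(leads):
    print(locus)
```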

Troubleshooting Common Experimental Issues

Problem: Inconsistent Effect Directions Across Ancestry Groups

Solution: This may indicate genuine biological differences or methodological issues. First, verify data harmonization and strand alignment. Then calculate Lin's concordance correlation coefficient (ρc) to quantify agreement in effect sizes across ancestries [8]; values >0.90 indicate good consistency. If heterogeneity persists, consider methods such as MR-MEGA that explicitly model ancestry heterogeneity [8].

Problem: Low Pathway Detection Power Despite Large Sample Sizes

Solution:

- Check LD reference compatibility: Ensure population-specific LD reference panels match the ancestry composition of your GWAS data

- Adjust SNP pruning thresholds: Overly stringent pruning (r² < 0.20) can severely reduce power [28]

- Verify the TAGC assumption: test whether the same genes are associated across ancestries, rather than different genes in different populations

- Consider alternative integration approaches: If SNP-centric approach underperforms, try gene-centric or pathway-centric methods

Problem: Computational Challenges with Large-Scale Resampling

Solution: The ARTP method is computationally intensive. Optimize by:

- Using efficient resampling algorithms that leverage the correlation structure among components

- Implementing parallel processing for resampling steps

- Starting with smaller resampling sizes (M = 1000) for initial analysis, then increasing (M = 10000) for final results

- Utilizing the ARTP3 R package available at https://github.com/KevinWFred/ARTP3 [27]

Problem: Incomplete Fine-Mapping of Causal Variants

Solution: Implement cross-population fine-mapping to leverage differential LD patterns:

- Use methods like MESuSiE that identify shared and ancestry-specific causal signals [8]

- Compare credible set sizes between trans-ancestry and single-ancestry fine-mapping

- Focus on variants with high posterior inclusion probability (PIP > 0.5) in trans-ancestry analysis [8]
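To see how differential LD shrinks credible sets, here is a textbook simplification, not MESuSiE. It makes four assumptions:

- **One causal SNP:** exactly one causal SNP in the locus.
- **Same causal SNP:** that SNP is the same in every ancestry.
- **Independent effects:** effect sizes are independent across ancestries.
- **Non-overlapping samples:** no individuals are shared between ancestry GWAS.

Under these assumptions, per-ancestry Wakefield approximate Bayes factors can be multiplied. The resulting PIPs then define a 95% credible set. The prior effect SD of 0.2 and all inputs are hypothetical.

```python
import math

def log_abf(beta, se, prior_sd=0.2):
    """Wakefield log approximate Bayes factor (causal vs. null) for one SNP."""
    v, w = se ** 2, prior_sd ** 2
    z2 = (beta / se) ** 2
    return 0.5 * math.log(v / (v + w)) + z2 * w / (2 * (v + w))

def pips(log_bfs):
    """Posterior inclusion probabilities assuming exactly one causal SNP."""
    top = max(log_bfs)
    weights = [math.exp(x - top) for x in log_bfs]  # subtract max for stability
    total = sum(weights)
    return [w / total for w in weights]

def credible_set(pip, coverage=0.95):
    """Smallest set of SNP indices whose PIPs sum to at least `coverage`."""
    order = sorted(range(len(pip)), key=lambda i: pip[i], reverse=True)
    chosen, cum = [], 0.0
    for i in order:
        chosen.append(i)
        cum += pip[i]
        if cum >= coverage:
            break
    return chosen

def combined_log_bfs(per_ancestry):
    """Sum per-ancestry log ABFs SNP by SNP (shared causal variant assumed)."""
    return [sum(vals) for vals in zip(*per_ancestry)]

# Hypothetical locus: SNPs 0 and 1 look alike in EUR (high LD) but not in AFR
eur = [log_abf(b, s) for b, s in [(0.06, 0.01), (0.059, 0.01), (0.01, 0.01)]]
afr = [log_abf(b, s) for b, s in [(0.08, 0.02), (0.03, 0.02), (0.01, 0.02)]]
print("EUR only:", credible_set(pips(eur)))
print("EUR + AFR:", credible_set(pips(combined_log_bfs([eur, afr]))))
```

The European data alone cannot separate SNPs 0 and 1, so both stay in the set. Adding the African data, where the two SNPs are only weakly correlated, narrows the credible set to SNP 0.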

Research Reagent Solutions

Table: Essential Tools and Resources for Trans-Ancestry Pathway Analysis

| Resource Type | Specific Tool/Resource | Function and Application |
|---|---|---|
| Software Packages | ARTP3 R package [27] | Implements trans-ancestry pathway analysis framework with all three integration approaches |
| Meta-Analysis Tools | METAL [8] | Performs efficient trans-ancestry GWAS meta-analysis using fixed-effect inverse-variance weighted models |
| Heterogeneity Modeling | MR-MEGA [8] | Accounts for ancestry heterogeneity in trans-ancestry meta-analysis |
| Fine-Mapping Methods | MESuSiE [8] | Cross-population fine-mapping that identifies shared and ancestry-specific causal signals |
| LD Reference Panels | 1000 Genomes Project [29] | Provides population-specific LD patterns for diverse ancestry groups |
| Pathway Databases | MSigDB C2 Curated Gene Sets [27] | Source of biological pathways for analysis (6,970 pathways available) |
| GWAS Catalog | NHGRI-EBI GWAS Catalog [30] | Repository of published GWAS results for comparison and validation |
| Data Harmonization | RICOPILI [9] | Pipeline for imputation and quality control in consortium studies |

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using cross-population data over single-population data for fine-mapping?

Cross-population fine-mapping leverages differences in Linkage Disequilibrium (LD) patterns across diverse populations. In a single population, high LD can make it difficult to distinguish the true causal variant from other highly correlated non-causal variants. Populations, such as those of African ancestry, often have shorter LD blocks, which can help break these correlations and narrow down the set of putative causal variants, thereby increasing fine-mapping resolution and power [31] [32] [33].

Q2: My fine-mapping analysis has identified a large credible set. What could be the reason?

Large credible sets are often a result of high LD within the locus, where many SNPs are strongly correlated with each other, making it difficult for the statistical model to prioritize a single variant. This can be addressed by:

- Integrating diverse populations: Using data from populations with different LD structures can help break these correlations [32] [33].

- Accounting for confounding: Hidden confounding bias in GWAS summary statistics can produce spurious signals and inflate credible sets. Using methods like XMAP that explicitly account for this can help [31].

- Increasing sample size: The power to pinpoint causal variants often improves with larger sample sizes from each population.
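To illustrate how integrating populations can shrink a credible set, the sketch below uses Wakefield's approximate Bayes factor under a single-causal-variant assumption with a uniform prior over SNPs, and sums log Bayes factors across populations. That sum corresponds to assuming the same causal SNP in both populations, independent samples, and independent effect-size priors. It is a deliberately simple stand-in, not the XMAP or MsCAVIAR model; the z-scores, standard errors and prior variance are hypothetical.

```python
import numpy as np


def log_abf(z, se, prior_var=0.04):
    """Wakefield approximate log Bayes factor (causal vs null) per variant."""
    v = np.asarray(se, dtype=float) ** 2
    z = np.asarray(z, dtype=float)
    shrink = prior_var / (v + prior_var)
    return 0.5 * np.log(v / (v + prior_var)) + 0.5 * z**2 * shrink


def credible_set(log_bf, coverage=0.95):
    """Smallest set of variants whose posterior mass reaches `coverage`,
    assuming exactly one causal variant and a uniform prior over variants."""
    post = np.exp(log_bf - np.max(log_bf))       # subtract max for stability
    post /= post.sum()
    order = np.argsort(post)[::-1]               # highest posterior first
    cumulative = np.cumsum(post[order])
    n_keep = int(np.searchsorted(cumulative, coverage) + 1)
    return [int(i) for i in order[:n_keep]], post


se = [0.05, 0.05, 0.05]
z_eur = [5.0, 4.9, 1.0]   # SNPs 0 and 1 in strong LD: hard to separate
z_afr = [4.0, 1.5, 0.5]   # weaker LD: the SNP 1 signal decays

eur_set, _ = credible_set(log_abf(z_eur, se))
joint_set, _ = credible_set(log_abf(z_eur, se) + log_abf(z_afr, se))
print("EUR-only 95% set:", eur_set)
print("EUR+AFR 95% set:", joint_set)
```

In this toy, the single-population set keeps both correlated SNPs while the combined analysis retains only SNP 0.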

Q3: How do methods handle the scenario where a variant's effect on a trait is different across populations (effect heterogeneity)?

Modern cross-population fine-mapping methods employ different strategies to handle effect heterogeneity. Some methods, like MsCAVIAR, use a random-effects model that allows the effect sizes of a causal variant to vary across different studies or populations around a common mean [33]. This approach is more robust than assuming exactly the same effect size everywhere, which can lead to a loss of power if the assumption is violated.
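A generic Bayesian random-effects formulation shows why allowing heterogeneity helps. This is in the spirit of, but not identical to, MsCAVIAR's parameterization, which is not reproduced here. Let the shared effect be μ ~ N(0, σ²), the population-specific effect β_k = μ + δ_k with δ_k ~ N(0, τ²), and the observed estimate b_k ~ N(β_k, s_k²). Integrating out μ and δ gives b ~ N(0, diag(s² + τ²) + σ²·11ᵀ). The sketch compares the marginal log-likelihood with τ² = 0 (identical effects everywhere) against τ² > 0. All values of σ², τ² and the estimates are hypothetical.

```python
import numpy as np


def re_log_marginal(b, s, sigma2, tau2):
    """Log marginal likelihood of per-population estimates b under a
    random-effects model: b ~ N(0, diag(s^2 + tau2) + sigma2 * 1 1^T)."""
    b = np.asarray(b, dtype=float)
    s = np.asarray(s, dtype=float)
    k = b.size
    cov = np.diag(s**2 + tau2) + sigma2 * np.ones((k, k))
    _, logdet = np.linalg.slogdet(cov)
    quad = b @ np.linalg.solve(cov, b)
    return -0.5 * (quad + logdet + k * np.log(2 * np.pi))


s = [0.03, 0.03, 0.03]
heterogeneous = [0.30, 0.05, 0.25]   # effect differs across populations
homogeneous = [0.20, 0.20, 0.20]
for label, b in [("heterogeneous", heterogeneous), ("homogeneous", homogeneous)]:
    fixed_eff = re_log_marginal(b, s, sigma2=0.04, tau2=0.0)
    rand_eff = re_log_marginal(b, s, sigma2=0.04, tau2=0.01)
    print(f"{label}: tau2=0 -> {fixed_eff:.2f}, tau2=0.01 -> {rand_eff:.2f}")
```

With discordant estimates the τ² > 0 model fits far better, while with concordant estimates the τ² = 0 model is preferred. This is the trade-off behind the robustness argument above.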

Q4: What are the basic input requirements for running tools like XMAP or MsCAVIAR?

Most modern fine-mapping tools require only summary statistics from GWAS conducted in each population. The essential inputs typically are:

- Association statistics: Usually Z-scores, or effect sizes (β) with their standard errors, for SNPs in the locus of interest.

- Linkage Disequilibrium (LD) matrices: A matrix of signed pairwise correlation coefficients (r, not r²) between the same SNPs for each population, with alleles coded consistently with the association statistics. These can be computed from in-sample genotype data or from appropriate reference panels like the 1000 Genomes Project [31] [33].
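Preparing these inputs can be sketched with NumPy, assuming a dosage matrix (individuals × SNPs, coded 0 to 2) from an ancestry-matched reference panel whose alleles are already aligned to the GWAS effect alleles. The sketch computes z = β / SE and a signed LD matrix, then checks that the matrix is close to positive semi-definite, because a mismatched or incomplete reference can yield an invalid LD matrix. The function names are hypothetical, and tool-specific file formats for XMAP or MsCAVIAR are not shown. Monomorphic SNPs must be removed first, since their correlations are undefined.

```python
import numpy as np


def z_scores(beta, se):
    """Convert effect sizes and standard errors to z-scores."""
    return np.asarray(beta, dtype=float) / np.asarray(se, dtype=float)


def signed_ld(dosages):
    """Signed Pearson correlation (r) between SNP columns.

    dosages: array of shape (n_individuals, n_snps) with values in [0, 2],
    coded for the same effect alleles as the GWAS summary statistics.
    """
    return np.corrcoef(np.asarray(dosages, dtype=float), rowvar=False)


def min_eigenvalue(ld):
    """Smallest eigenvalue; clearly negative values flag an invalid LD matrix."""
    return float(np.linalg.eigvalsh(ld).min())


rng = np.random.default_rng(1)
g = rng.binomial(2, 0.3, size=(500, 3)).astype(float)  # hypothetical panel
g[:, 1] = g[:, 0]            # make SNP 1 a perfect proxy of SNP 0
ld = signed_ld(g)
print(z_scores([0.12, 0.11, -0.02], [0.02, 0.02, 0.02]))
print(np.round(ld, 2), min_eigenvalue(ld))
```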

Troubleshooting Common Experimental Issues

Computational and Memory Errors

| Problem & Symptoms | Possible Cause | Solution Steps |
|---|---|---|
| Job failure with memory-related exit codes (e.g., 2, 130, 137). The pipeline terminates unexpectedly, often when handling large files or custom resources [34]. | The default memory allocation for the job is insufficient for the provided data. | 1. Re-run with increased memory: Use command-line arguments (e.g., --memory) to allocate more memory to the process [34]. 2. Check cluster options: Ensure the memory requested from the computing cluster is equal to or greater than the memory given to the software tool [34]. |
| Unexpected job termination after a few hours (e.g., around 4 hours). | The job is being killed because it exceeded the time limit of the default compute queue (e.g., a "short" queue) [34]. | 1. Re-submit to a longer queue: Use arguments like --queue 'medium' or --queue 'long' to allow the job more time to complete [34]. |
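When deciding how much memory to request, note that a dense LD matrix alone grows quadratically with the number of SNPs in the locus. The back-of-the-envelope helper below (the name is hypothetical) assumes 8-byte double-precision values and counts a single copy of each population's matrix. Real tools usually hold extra working copies, so treat the result as a lower bound.

```python
def dense_ld_gib(n_snps, n_populations=1, bytes_per_value=8):
    """Lower-bound memory (GiB) to hold dense n_snps x n_snps LD matrices."""
    return n_snps**2 * bytes_per_value * n_populations / 2**30


for m in (5_000, 20_000, 50_000):
    print(f"{m:>6} SNPs x 3 populations: {dense_ld_gib(m, 3):6.1f} GiB")
```

For example, 20,000 SNPs across three populations already needs roughly 9 GiB before any model fitting begins.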

Data Preparation and QC Issues

| Problem & Symptoms | Possible Cause | Solution Steps |
|---|---|---|
| Missing or incorrect 'fromPath' argument error. The pipeline fails immediately, stating a required input is missing [34]. | A required input file (e.g., genotype file, summary statistics) was not correctly specified in the command or configuration [34]. | 1. Double-check file paths: Verify that all required input files are listed and the paths are correct [34]. 2. Validate file formats: Ensure the files are in the expected format (e.g., VCF, BGEN, PGEN) and are not corrupted. |
| chrX has very few tested variants in the Manhattan plot. The analysis for the X chromosome yields unexpected or incomplete results [34]. | Incorrect specification of the chromosome name in the input file list [34]. | 1. Standardize chromosome naming: In the file listing VCF/BGEN/PGEN files, ensure the chromosome is specified as "chrX" (or "chr1", "chr2", etc.), and not as "X" or 23 [34]. |
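A small helper for the chromosome-naming fix is sketched below. It assumes, per the table, that the pipeline expects chr-prefixed names, and that numeric codes follow the PLINK convention of 23 = X and 24 = Y. The function name is hypothetical. Other numeric codes (for example, PLINK's separate code for the pseudo-autosomal region) raise an error for manual review rather than being guessed.

```python
import re

# PLINK-style numeric codes for the sex chromosomes.
_NUMERIC_TO_NAME = {"23": "X", "24": "Y"}


def to_chr_prefixed(name):
    """Normalize '1', 'chr1', 'X', '23', 'chrx', etc. to 'chr1' / 'chrX'."""
    core = re.sub(r"^chr", "", str(name).strip(), flags=re.IGNORECASE)
    core = _NUMERIC_TO_NAME.get(core, core).upper()
    if re.fullmatch(r"[1-9]|1[0-9]|2[0-2]|X|Y", core):
        return f"chr{core}"
    raise ValueError(f"Unrecognized chromosome name: {name!r}")


print([to_chr_prefixed(c) for c in ["1", "chr22", "X", "23", "chrx", 7]])
```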

The table below summarizes key findings from simulation studies and analyses reported in the literature, comparing the performance of various fine-mapping methods.

Table 1: Performance Comparison of Fine-Mapping Methods

| Method | Key Features / Approach | Reported Performance Advantages |
|---|---|---|
| XMAP [31] | Leverages genetic diversity; Accounts for confounding bias; Assumes sum of single effects. | Achieved greater statistical power and better control of the false positive rate compared to existing methods. Identified three times more putative causal SNPs for LDL than SuSiE. Offers substantially higher computational efficiency [31]. |
| MsCAVIAR [33] | Multi-study extension of CAVIAR; Uses a random-effects model to account for effect size heterogeneity. | Outperformed PAINTOR and single-study CAVIAR in simulations, resulting in a reduction in the number of variants needed for functional follow-up testing. Improved fine-mapping resolution in trans-ethnic analysis of HDL [33]. |
| Trans-ethnic PAINTOR [33] | Leverages different LD patterns from multiple populations to improve fine-mapping. | An established method for trans-ethnic fine-mapping, but can be limited in power compared to newer methods that explicitly model heterogeneity [33]. |
| SuSiE & SuSiEx [31] | Sum of Single Effects model; Efficient algorithm for multiple causal variants. SuSiEx extends to cross-population analysis. | A computationally efficient framework for detecting multiple causal SNPs. However, power can be limited in single-population settings with high LD. XMAP showed substantial power gain over SuSiE in real data analysis [31]. |

Experimental Protocols for Key Methodologies

Protocol: Fine-Mapping with XMAP

Objective: To identify putative causal variants by jointly analyzing GWAS summary statistics from multiple populations, while accounting for confounding bias [31].

Input Requirements:

- Summary Statistics: GWAS summary data (Z-scores or effect sizes and standard errors) for two or more populations (e.g., EUR, EAS, AFR).

- LD Matrices: Reference LD matrices for each population, estimated from a reference panel like 1000 Genomes or from the study samples if available [31].

Procedure:

- Data Preparation: Format summary statistics and LD matrices for each population as required by the XMAP software.

- Model Fitting: Run the XMAP algorithm. The model jointly analyzes all populations, leveraging their distinct LD structures. It corrects for confounding bias hidden in the summary statistics and uses an efficient algorithm to infer multiple causal variants [31].

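- Output Interpretation: The primary output is the Posterior Inclusion Probability (PIP) for each SNP; a higher PIP indicates a higher probability that the SNP is causal. A 90% or 95% credible set is formed from the smallest set of SNPs whose cumulative posterior probability meets the threshold. In sum-of-single-effects models, this is done separately for each inferred causal signal, so a locus with several signals yields several credible sets [31].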
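In sum-of-single-effects models (the framework the comparison table attributes to XMAP), each of the L single effects carries its own probability vector α_l over SNPs. A SNP's PIP is 1 − ∏_l (1 − α_lj), and a credible set is built separately from each α_l. The sketch below uses a hypothetical α matrix and does not assume XMAP's actual output format. Post-filters such as SuSiE's credible-set purity check are omitted.

```python
import numpy as np


def pips_from_alpha(alpha):
    """PIP_j = 1 - prod_l (1 - alpha[l, j]) for an (L effects x p SNPs) matrix."""
    return 1.0 - np.prod(1.0 - np.asarray(alpha, dtype=float), axis=0)


def per_effect_credible_sets(alpha, coverage=0.95):
    """For each single effect, the smallest SNP set whose alpha mass >= coverage."""
    sets = []
    for row in np.asarray(alpha, dtype=float):
        order = np.argsort(row)[::-1]                 # most probable SNP first
        n_keep = int(np.searchsorted(np.cumsum(row[order]), coverage) + 1)
        sets.append(sorted(int(j) for j in order[:n_keep]))
    return sets


# Hypothetical posterior for 2 effects over 5 SNPs (each row sums to 1).
alpha = np.array([
    [0.90, 0.08, 0.01, 0.005, 0.005],   # effect 1: concentrated on SNP 0
    [0.01, 0.01, 0.48, 0.49, 0.01],     # effect 2: split between SNPs 2 and 3
])
print(np.round(pips_from_alpha(alpha), 3))
print(per_effect_credible_sets(alpha))
```

In this toy, SNPs 2 and 3 each have PIP just below 0.5 yet jointly form a tight credible set for the second signal. A PIP > 0.5 filter alone would therefore miss that signal.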

Downstream Analysis:

- Functional Enrichment: Test the identified putative causal SNPs for enrichment in functional annotations (e.g., regulatory elements, conserved regions).

- Integration with Single-cell Data: As demonstrated in the original study, XMAP results can be integrated with single-cell datasets to identify trait-relevant cell types, enhancing the biological interpretation of the findings [31].
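A simple version of the functional-enrichment step is a one-sided hypergeometric (Fisher-type) test. It asks whether putative causal SNPs overlap an annotation more often than expected from all tested SNPs at the fine-mapped loci. This is a generic sketch, not the enrichment procedure used in the XMAP study. The counts are hypothetical, and because nearby SNPs are correlated, the p-value should be treated as approximate.

```python
from math import comb


def annotation_enrichment(n_total, n_annotated, n_selected, n_selected_annotated):
    """One-sided hypergeometric test for over-representation.

    n_total: all tested SNPs; n_annotated: those in the annotation;
    n_selected: putative causal SNPs (e.g. PIP > 0.5);
    n_selected_annotated: putative causal SNPs in the annotation.
    Returns (fold_enrichment, p_value) with p = P(X >= observed).
    """
    denom = comb(n_total, n_selected)
    upper = min(n_annotated, n_selected)
    p_value = sum(
        comb(n_annotated, k) * comb(n_total - n_annotated, n_selected - k)
        for k in range(n_selected_annotated, upper + 1)
    ) / denom
    fold = (n_selected_annotated / n_selected) / (n_annotated / n_total)
    return fold, p_value


fold, p = annotation_enrichment(n_total=2000, n_annotated=200,
                                n_selected=40, n_selected_annotated=12)
print(f"fold enrichment = {fold:.1f}, one-sided p = {p:.2e}")
```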

Protocol: Fine-Mapping with MsCAVIAR

Objective: To compute a minimal-sized "causal set" of variants that contains all true causal variants with a high probability (e.g., 95%), using data from multiple studies and accounting for effect heterogeneity [33].

Input Requirements:

- Association Statistics: Z-scores for all SNPs at the locus of interest for each study/population.

- LD Matrices: An LD matrix for the same SNPs from each study/population, calculable from reference panels [33].

Procedure:

- Input Preparation: Compile Z-scores and LD matrices for the target locus across all studies.

- Configuration Analysis: MsCAVIAR uses a Bayesian framework. It models the effect sizes in different studies as being drawn from a distribution (random effects) to account for heterogeneity. It calculates the posterior probability for each possible combination of causal SNPs ("configurations"); in practice, the enumeration is restricted to configurations with a limited number of causal SNPs to keep it tractable [33].

- Causal Set Construction: The algorithm starts with causal sets containing one SNP, then two, and so on. It sums the posterior probabilities of all configurations compatible with each set (i.e., where all causal SNPs are inside the set). The first set whose total posterior probability exceeds the user-defined threshold (e.g., ρ = 0.95) is reported as the output causal set [33].
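The causal-set search described above can be sketched as follows. The sketch assumes the configuration posteriors are already computed; in MsCAVIAR they come from its random-effects likelihood, which is not reproduced here, and the values below are hypothetical. The toy also assigns no mass to the empty (no causal SNP) configuration. At each set size k, the set with the largest compatible posterior mass is kept, and the first size at which that mass reaches ρ gives the minimal causal set. Exhaustive search over subsets is only feasible for small loci.

```python
from itertools import combinations


def minimal_causal_set(config_posteriors, n_snps, rho=0.95):
    """Smallest SNP set whose compatible configurations reach posterior mass rho.

    config_posteriors: dict mapping a frozenset of causal SNP indices (one
    configuration) to its posterior probability. A configuration is
    compatible with a candidate set if all its causal SNPs lie inside it.
    """
    best_set, best_mass = frozenset(), 0.0
    for k in range(1, n_snps + 1):
        best_set, best_mass = frozenset(), -1.0
        for candidate in combinations(range(n_snps), k):
            cand = frozenset(candidate)
            mass = sum(p for config, p in config_posteriors.items()
                       if config <= cand)
            if mass > best_mass:
                best_set, best_mass = cand, mass
        if best_mass >= rho:          # first (smallest) size reaching rho
            break
    return set(best_set), best_mass


# Hypothetical configuration posteriors for a 4-SNP locus.
posteriors = {
    frozenset({0}): 0.70,
    frozenset({1}): 0.20,
    frozenset({0, 1}): 0.08,
    frozenset({2}): 0.02,
}
print(minimal_causal_set(posteriors, n_snps=4, rho=0.95))
```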

Diagram 1: XMAP Workflow for multi-population fine-mapping.

Table 2: Essential Resources for Cross-Population Fine-Mapping Analysis

| Resource / Tool | Function / Description | Key Considerations |
|---|---|---|
| GWAS Summary Statistics | The foundational input data containing the strength of association between genetic variants and a trait for each population. | Ensure consistency in genome build, allele coding, and quality control (QC) metrics across different studies. |
| Reference Panels (e.g., 1000 Genomes, HapMap) | Provide population-specific genotype data used to estimate the LD matrices required for summary-statistics-based fine-mapping [33]. | Choose a reference panel that is ancestrally matched to your GWAS cohorts to ensure accurate LD estimation. |
| Fine-Mapping Software (XMAP, MsCAVIAR, PAINTOR) | The statistical software that performs the core fine-mapping analysis by integrating summary data and LD from multiple sources. | Select a method based on your needs: ability to handle multiple causal variants, effect size heterogeneity, and computational efficiency [31] [33]. |
| LD Calculation Tools (e.g., PLINK) | Used to compute the signed correlation (r) between SNPs in a genomic region from genotype data, generating the LD matrix input; r² discards the sign that summary-statistics fine-mapping requires. | Memory errors can occur with large sample sizes or many SNPs; adjust memory allocation as needed [34]. |
| High-Performance Computing (HPC) Cluster | Provides the necessary computational power and memory to run fine-mapping analyses, especially on genome-wide scales. | Be aware of queue time limits and memory allocation policies to avoid job termination [34]. |
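The "consistency in allele coding" consideration is a common source of silent errors. If a SNP's alleles are swapped relative to the LD reference, its z-score (or β) must change sign; otherwise the signed LD matrix and the summary statistics disagree. The minimal sketch below assumes both sources use the same genome build and report forward-strand alleles. It does not resolve strand flips, and it drops strand-ambiguous A/T and C/G SNPs, a common conservative choice. The function name `align_z` is hypothetical.

```python
from typing import Optional

_AMBIGUOUS = {frozenset("AT"), frozenset("CG")}


def align_z(z: float, gwas_effect: str, gwas_other: str,
            ref_a1: str, ref_a2: str) -> Optional[float]:
    """Return z aligned to the reference panel's A1 allele, or None to drop.

    The sign is kept if the GWAS effect allele equals ref_a1 and flipped if
    the alleles are swapped. The SNP is dropped if the alleles do not match
    or the pair is strand-ambiguous (A/T or C/G).
    """
    gwas = (gwas_effect.upper(), gwas_other.upper())
    ref = (ref_a1.upper(), ref_a2.upper())
    if frozenset(gwas) in _AMBIGUOUS:
        return None
    if gwas == ref:
        return z
    if gwas == ref[::-1]:
        return -z
    return None


print(align_z(4.2, "A", "G", "A", "G"))   # same orientation
print(align_z(4.2, "G", "A", "A", "G"))   # swapped: sign flipped
print(align_z(4.2, "A", "T", "A", "T"))   # strand-ambiguous: dropped
```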

Diagram 2: MsCAVIAR's causal set construction logic.

Core Concepts FAQ

Q1: What is the fundamental challenge with standard PRS in cross-ancestry applications? Standard PRS, typically derived from Genome-Wide Association Studies (GWAS) in European-ancestry populations, show reduced predictive performance in non-European populations. This stems from genetic differences including varied linkage disequilibrium (LD) patterns, allele frequencies, and causal variant effect sizes across populations [35]. LD, the non-random association of alleles at different loci, differs markedly between populations. For instance, African-ancestry populations typically have smaller LD blocks, requiring more variants to capture the same genetic information compared to European or East Asian populations [35].

Q2: How do LD-informed methods improve cross-ancestry prediction? LD-informed methods explicitly model or account for population-specific LD structure to improve PRS portability. They enhance cross-ancestry prediction by:

- Improving fine-mapping resolution to better identify causal variants [36].

- Providing more accurate effect size estimates for variants by accounting for LD differences between the base (discovery) and target (application) populations [35].

- Increasing the number of genome-wide significant loci discovered in trans-ancestry GWAS, which in turn provides a better foundation for PRS construction [36].

Implementation & Troubleshooting Guide

Q1: Our trans-ancestry PRS shows poor portability. What are the primary genetic factors to investigate? When facing poor portability, systematically evaluate these genetic factors, which are often interconnected.

| Genetic Factor | Impact on PRS Portability | Diagnostic Check |
|---|---|---|
| LD Pattern Differences | Variants chosen because they tag a causal variant well in the base population may tag it weakly, or not at all, in the target population, so the score partly captures non-causal signal and loses accuracy [35]. | Compare LD decay plots or reference LD scores (e.g., from 1000 Genomes) for base and target populations [37]. |
| Allele Frequency Spectrum | Causal variants common in the base population might be rare in the target population, and vice versa, leading to missed heritability [35]. | Compare Minor Allele Frequency (MAF) distributions of GWAS-significant variants in the target population. |
| Cross-Population Genetic Correlation | A genetic correlation below 1 suggests that causal variants or their effect sizes differ between populations, i.e., the trait's underlying genetic architecture is not fully shared [35]. | Estimate the cross-ancestry genetic correlation between base and target cohorts with a method that models population-specific LD (e.g., Popcorn); standard cross-trait LD Score Regression assumes both samples share one LD structure. |
| Heritability (h²) | Differences in SNP-based heritability for the trait can limit the maximum achievable prediction accuracy in the target population [35]. | Estimate heritability within the target population, ensuring sufficient sample size [38]. |
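The first two diagnostic checks can be roughed out directly from reference-panel genotypes. The sketch below bins pairwise r² by physical distance (a crude LD-decay summary) and computes per-SNP MAF. It assumes a dosage matrix (individuals × SNPs, coded 0 to 2) and base-pair positions for each population, and a tiny hand-made matrix stands in for real panels. The function names are hypothetical. Computing all pairs is only practical for a region, not a whole chromosome.

```python
import numpy as np


def ld_decay(dosages, positions_bp, bin_edges_bp):
    """Mean pairwise r^2 within distance bins [edge_i, edge_i+1)."""
    r = np.corrcoef(np.asarray(dosages, dtype=float), rowvar=False)
    pos = np.asarray(positions_bp)
    i, j = np.triu_indices(len(pos), k=1)          # each SNP pair once
    dist = np.abs(pos[j] - pos[i])
    r2 = r[i, j] ** 2
    bins = np.digitize(dist, bin_edges_bp)         # bin index per pair
    return [float(r2[bins == b].mean()) if np.any(bins == b) else float("nan")
            for b in range(1, len(bin_edges_bp))]


def minor_allele_frequency(dosages):
    """MAF per SNP from 0/1/2 dosages."""
    freq = np.asarray(dosages, dtype=float).mean(axis=0) / 2.0
    return np.minimum(freq, 1.0 - freq)


# Hypothetical 9-individual panel: SNP 1 copies SNP 0, SNP 2 is uncorrelated.
g = np.array([[0, 0, 0], [1, 1, 0], [2, 2, 0], [0, 0, 1], [1, 1, 1],
              [2, 2, 1], [0, 0, 2], [1, 1, 2], [2, 2, 2]], dtype=float)
print(ld_decay(g, [0, 1_000, 50_000], [0, 10_000, 100_000]))
print(minor_allele_frequency(g))
```

In practice, run `ld_decay` on base and target panels with the same bins and compare the curves. Faster decay, as is typical of African-ancestry panels, means each tag SNP covers a shorter region.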