Optimizing Deep Learning Parameters for Sperm Classification: A Guide for Biomedical Research

This article provides a comprehensive guide for researchers and scientists on optimizing deep learning parameters for automated sperm morphology analysis.

Abstract

This article provides a comprehensive guide for researchers and scientists on optimizing deep learning parameters for automated sperm morphology analysis. It covers the foundational challenges of traditional analysis and dataset creation, explores the application of Convolutional Neural Networks (CNNs) and transfer learning for classification, and details advanced hyperparameter tuning and troubleshooting strategies. The content further addresses model validation, performance comparison with expert assessments and other ML techniques, and discusses the clinical implications and future directions of this technology for improving diagnostic accuracy in male infertility.

The Foundation: Understanding the Clinical Problem and Data Landscape

The Critical Need for Automation in Sperm Morphology Analysis

Male infertility is a significant global health concern, contributing to approximately 50% of all infertility cases. [1] [2] Among various diagnostic parameters, sperm morphology analysis is considered one of the most critical yet challenging assessments in male fertility evaluation. Traditional manual morphology assessment is highly subjective, time-consuming, and prone to significant inter-observer variability, creating a substantial bottleneck in clinical and research settings. [3] [1] This technical resource center explores how deep learning approaches are addressing these challenges by bringing automation, standardization, and enhanced accuracy to sperm classification research.

Experimental Protocols in Automated Sperm Morphology Analysis

The transition from manual assessment to automated, AI-driven analysis involves several sophisticated experimental workflows. The table below summarizes key methodologies from recent pioneering studies.

Table 1: Experimental Protocols for Automated Sperm Morphology Analysis

| Study Focus | Dataset Details | Deep Learning Architecture | Preprocessing & Augmentation | Key Performance Metrics |
|---|---|---|---|---|
| Sperm Morphology Classification [3] | SMD/MSS dataset: Initially 1,000 images, expanded to 6,035 images after augmentation | Convolutional Neural Network (CNN) | Data augmentation techniques to balance morphological classes; image normalization and resizing to 80×80×1 grayscale | Accuracy ranging from 55% to 92% |
| Unstained Live Sperm Analysis [4] | 21,600 images captured via confocal laser scanning microscopy; 12,683 annotated sperm | ResNet50 transfer learning model | Z-stack imaging at 0.5 μm intervals; manual annotation with bounding boxes | Precision: 0.95 (abnormal), 0.91 (normal); Recall: 0.91 (abnormal), 0.95 (normal); Processing speed: 0.0056 seconds per image |
| Bovine Sperm Morphology [5] | 277 annotated images across 6 morphological categories | YOLOv7 object detection framework | Standardized bright-field microscopy; pressure and temperature fixation without dyes | Global mAP@50: 0.73; Precision: 0.75; Recall: 0.71 |

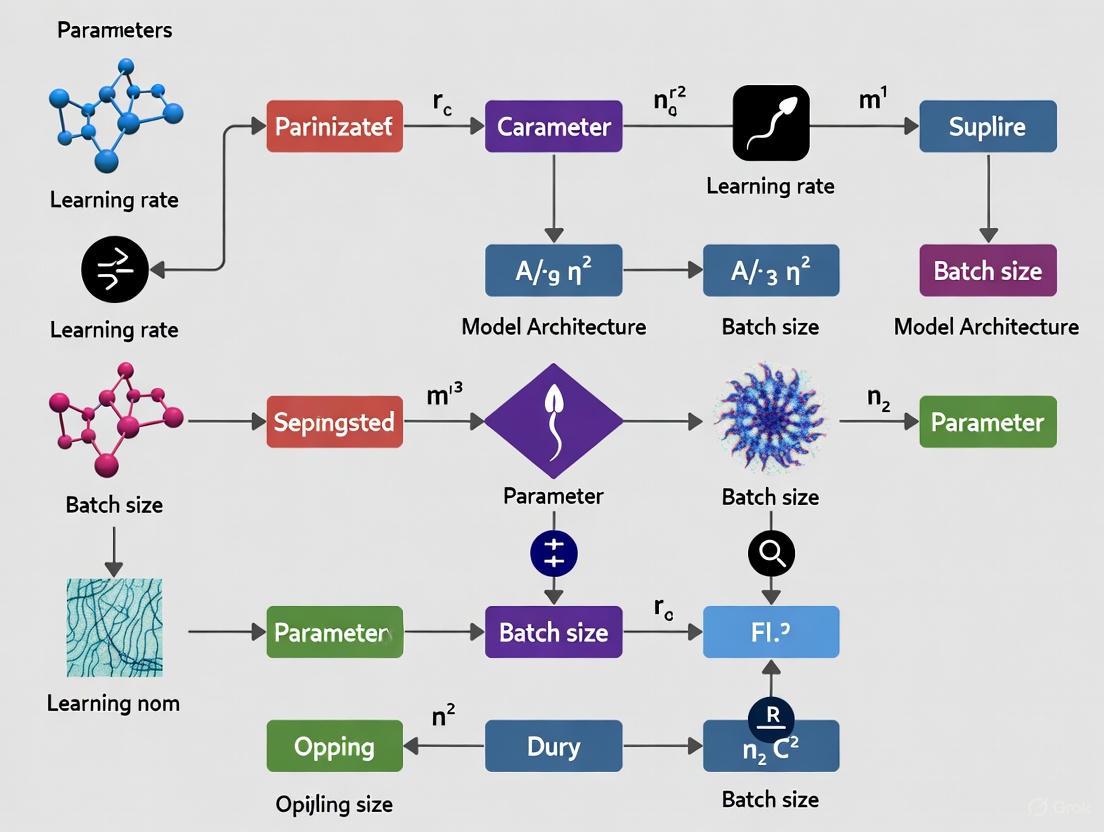

Deep Learning Workflow for Sperm Morphology Analysis

The following diagram illustrates the generalized experimental workflow for implementing deep learning in sperm morphology analysis, synthesized from current research methodologies:

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of deep learning for sperm morphology analysis requires specific laboratory materials and computational resources. The following table catalogues essential components for establishing an automated sperm classification pipeline.

Table 2: Essential Research Reagents and Materials for Automated Sperm Analysis

| Category | Item | Specification/Function | Research Application |
|---|---|---|---|
| Sample Preparation | Optixcell extender [5] | Semen diluent maintained at 37°C | Preserves sperm viability during processing |
| Sample Preparation | RAL Diagnostics staining kit [3] | Staining for traditional morphology assessment | Creates reference standards for model validation |
| Sample Preparation | Diff-Quik stain [4] | Romanowsky stain variant for CASA systems | Enables comparative analysis with automated systems |
| Image Acquisition | MMC CASA system [3] | Microscope with digital camera for image capture | Sequential acquisition of individual sperm images |
| Image Acquisition | Confocal laser scanning microscope [4] | High-resolution imaging at lower magnification | Captures subcellular features without staining |
| Image Acquisition | Trumorph system [5] | Pressure and temperature fixation | Enables dye-free sperm morphology evaluation |
| Computational Resources | Python 3.8 [3] | Programming environment for algorithm development | Implementation of CNN architectures and training pipelines |
| Computational Resources | Roboflow [5] | Image labeling and annotation platform | Preprocessing and managing datasets for model training |
| Computational Resources | YOLOv7 framework [5] | Real-time object detection system | Identification and classification of sperm abnormalities |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: What are the primary data-related challenges in developing robust deep learning models for sperm classification, and how can they be mitigated?

Challenge: The lack of standardized, high-quality annotated datasets significantly impedes model development. Existing datasets often suffer from low resolution, limited sample size, insufficient morphological categories, and class imbalance. [1] [2]

Solution:

- Implement comprehensive data augmentation techniques to expand dataset size and balance morphological classes, as demonstrated in the SMD/MSS dataset which grew from 1,000 to 6,035 images after augmentation. [3]

- Establish standardized protocols for sperm slide preparation, staining, image acquisition, and annotation to ensure consistency across samples. [1]

- Utilize multi-expert annotation processes with statistical analysis of inter-expert agreement to establish reliable ground truth labels. [3]

FAQ 2: How do I select the appropriate deep learning architecture for sperm morphology analysis, and what performance metrics should I prioritize?

Architecture Selection:

- For classification tasks: Convolutional Neural Networks (CNNs) and ResNet50 models have demonstrated strong performance, with ResNet50 achieving 93% accuracy in classifying unstained live sperm. [4]

- For real-time detection and localization: YOLO frameworks (YOLOv5, YOLOv7) offer efficient single-pass inference. In a bovine sperm study, YOLOv7 reached a precision of 0.75 and a recall of 0.71. [5]

Performance Metrics:

- Beyond overall accuracy, prioritize precision and recall for abnormal sperm detection, as false negatives can significantly impact clinical decisions.

- Consider processing speed requirements, with state-of-the-art models achieving prediction times of 0.0056 seconds per image, enabling real-time analysis. [4]

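- Utilize mAP@50 for object detection models. This metric counts a detection as correct when its intersection-over-union (IoU) with the ground truth box is at least 0.5. [5] To evaluate localization across a range of IoU thresholds, also report mAP@50:95.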
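As a rough illustration of the metrics listed above, the following sketch computes precision, recall, and F1 from confusion counts, along with the IoU between two bounding boxes. It assumes "abnormal" is the positive class; the class and function names are illustrative and are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass
class BinaryCounts:
    """Confusion counts with 'abnormal' treated as the positive class."""
    tp: int  # abnormal sperm correctly flagged as abnormal
    fp: int  # normal sperm incorrectly flagged as abnormal
    fn: int  # abnormal sperm missed (predicted normal)


def precision(c: BinaryCounts) -> float:
    """Fraction of 'abnormal' predictions that are truly abnormal."""
    denom = c.tp + c.fp
    return c.tp / denom if denom else 0.0


def recall(c: BinaryCounts) -> float:
    """Fraction of truly abnormal sperm that the model detected."""
    denom = c.tp + c.fn
    return c.tp / denom if denom else 0.0


def f1_score(c: BinaryCounts) -> float:
    """Harmonic mean of precision and recall."""
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


def iou(box_a, box_b) -> float:
    """IoU of two axis-aligned boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0
```

With hypothetical counts of 91 true positives, 5 false positives, and 9 false negatives, recall is 0.91 and precision is roughly 0.948. Two 10×10 boxes offset by 5 pixels horizontally give an IoU of 1/3, which would not count as a match at the 0.5 threshold used by mAP@50.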

FAQ 3: What are the common pitfalls in preprocessing sperm images for deep learning applications, and how can they be addressed?

Common Pitfalls:

- Inconsistent staining intensity and illumination variations affecting feature extraction. [3]

- Presence of overlapping sperm, cellular debris, or impurities misclassified as abnormalities. [1]

- Poor image resolution limiting the model's ability to detect subtle morphological defects. [2]

Optimization Strategies:

- Implement normalization and standardization techniques to minimize technical variations, such as resizing images to consistent dimensions (e.g., 80×80×1 grayscale) with linear interpolation. [3]

- Employ advanced segmentation algorithms to separate touching objects and remove non-sperm elements before classification. [1]

- Utilize confocal laser scanning microscopy to enhance image quality without staining, preserving sperm viability for clinical use. [4]

FAQ 4: How can I improve model generalization and avoid overfitting to specific dataset characteristics?

Regularization Techniques:

- Incorporate data augmentation methods that simulate real-world variations in sperm presentation, focus, and orientation.

- Implement hybrid approaches combining deep learning with nature-inspired optimization algorithms like Ant Colony Optimization to enhance feature selection and model generalization. [6]

- Apply transfer learning from models pre-trained on larger biomedical image datasets, then fine-tune on domain-specific sperm morphology data. [4]

Validation Protocols:

- Employ rigorous train-test splits with 80-20% partitioning and cross-validation techniques. [3]

- Establish external validation protocols using samples from different clinical centers to assess true generalizability. [2]

- Utilize statistical analysis of inter-expert agreement as a benchmark for model performance evaluation. [3]

Performance Benchmarking Across Methodologies

To facilitate objective comparison of model effectiveness, the following table synthesizes performance metrics across diverse approaches documented in recent literature.

Table 3: Performance Benchmarking of Sperm Morphology Analysis Methods

| Methodology | Classification Scope | Accuracy (or mAP@50) | Precision | Recall | Clinical Applicability |
|---|---|---|---|---|---|
| Manual Assessment [1] | Head, midpiece, tail defects | Subjective (Expert-dependent) | Variable | Variable | Gold standard but limited by inter-observer variability |
| Conventional CASA [4] | Strict criteria morphology | Limited by image quality | Moderate | Moderate | Routine clinical use with staining requirements |
| Deep Learning (CNN) [3] | 12 morphological classes | 55%-92% | Not specified | Not specified | Research phase with promising standardization potential |
| Transfer Learning (ResNet50) [4] | Normal/Abnormal classification | 93% | 0.91-0.95 | 0.91-0.95 | High - enables unstained live sperm analysis |
| YOLO Object Detection [5] | 6 morphological categories | mAP@50: 0.73 | 0.75 | 0.71 | Veterinary applications with transfer potential to human samples |
| Hybrid ML-ACO Optimization [6] | Normal/Altered seminal quality | 99% | Not specified | 100% | Early prediction using clinical and lifestyle factors |

The automation of sperm morphology analysis through deep learning represents a paradigm shift in male fertility assessment, addressing critical limitations of traditional methods while opening new avenues for standardized, high-throughput diagnostic and research applications. By leveraging optimized experimental protocols, appropriate architectural choices, and comprehensive troubleshooting approaches, researchers can develop robust systems that enhance accuracy, efficiency, and clinical utility. As the field evolves, continued refinement of datasets, algorithms, and validation frameworks will further solidify the role of AI in advancing reproductive medicine.

Frequently Asked Questions (FAQs)

Q1: Why is data standardization critical specifically for deep learning models in sperm classification?

Data standardization is crucial because it ensures that features like sperm head dimensions (length, width) and tail length, which may be measured in different units or have different numerical ranges, contribute equally to the model's analysis [7]. Without standardization, a feature with a naturally larger range (e.g., tail length) could disproportionately influence a distance-based model, leading to biased and inaccurate classifications [7]. Standardizing data to have a mean of 0 and a standard deviation of 1 mitigates this risk [7].

Q2: My dataset of sperm images is limited. How can data augmentation help?

Data augmentation creates new, synthetic training examples from your existing dataset by applying realistic transformations to the images [8]. This technique is vital for preventing overfitting, where a model memorizes the limited training examples instead of learning generalizable patterns [8]. For sperm morphology, this can involve rotations (to account for different orientations), flips, slight adjustments to brightness/contrast (to simulate staining variations), and adding minor blur to improve the model's robustness [8] [9].

Q3: What are the most effective data augmentation techniques for sperm image analysis?

The effectiveness of a technique can depend on your specific dataset, but some generally powerful methods exist. Geometric transformations like random rotation and affine transformation are highly effective as they help the model recognize sperm from various angles [9]. Color jittering (adjusting brightness and contrast) is also valuable for making the model robust to differences in staining quality and lighting conditions during microscopy [9]. Techniques like CutOut (randomly obscuring parts of the image) can further train the model to classify sperm based on partial views [8].

Q4: How do I integrate a data augmentation pipeline into my existing deep learning workflow?

You can integrate augmentation into your training process using data loaders in frameworks like PyTorch. The pipeline is defined as a series of transformations that are applied on the fly during each training epoch, so every epoch sees a slightly different version of each training image [9].
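A minimal sketch of such a pipeline using torchvision is shown here. The rotation, flip, and color jitter values follow the starting ranges suggested elsewhere in this guide; the helper names, the class-per-subfolder directory layout, and the batch size are assumptions made for illustration only.

```python
# Augmentation settings; the values are starting points, not tuned results.
AUG_PARAMS = {
    "image_size": (80, 80),
    "rotation_degrees": 20,
    "flip_probability": 0.5,
    "brightness": 0.2,
    "contrast": 0.2,
}


def build_transforms(train: bool):
    """Return a torchvision pipeline; augmentation is applied only when train=True."""
    # Imported lazily so the settings above can be inspected without torchvision.
    from torchvision import transforms

    steps = [
        transforms.Grayscale(num_output_channels=1),
        transforms.Resize(AUG_PARAMS["image_size"]),
    ]
    if train:
        steps += [
            transforms.RandomRotation(degrees=AUG_PARAMS["rotation_degrees"]),
            transforms.RandomHorizontalFlip(p=AUG_PARAMS["flip_probability"]),
            transforms.ColorJitter(
                brightness=AUG_PARAMS["brightness"],
                contrast=AUG_PARAMS["contrast"],
            ),
        ]
    steps.append(transforms.ToTensor())  # PIL image -> float tensor in [0, 1]
    return transforms.Compose(steps)


def build_loader(image_dir: str, train: bool, batch_size: int = 32):
    """Wrap a class-per-subfolder image directory (assumed layout) in a DataLoader."""
    from torch.utils.data import DataLoader
    from torchvision import datasets

    dataset = datasets.ImageFolder(image_dir, transform=build_transforms(train))
    # Shuffle only the training data; evaluation order stays fixed.
    return DataLoader(dataset, batch_size=batch_size, shuffle=train)
```

A training loader would then be created with something like `build_loader("data/train", train=True)` and an evaluation loader with `build_loader("data/test", train=False)`, where both paths are hypothetical. Because the random transforms run each time an image is loaded, no augmented files need to be written to disk.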

Q5: I've standardized and augmented my data, but my model performance is poor. What should I check?

This is a common troubleshooting point. First, validate your ground truth labels. In sperm morphology, inter-expert disagreement can be high. If your training labels are inconsistent, the model cannot learn effectively [3]. Second, re-evaluate your augmentation choices. Excessively aggressive transformations (e.g., extreme rotations that never occur biologically) can generate unrealistic images and confuse the model [8]. Start with subtle transformations and monitor performance. Finally, ensure you are continuously monitoring data quality even after the pipeline is built, as drift in source data can occur [10].

Troubleshooting Guides

Problem: Model Performance is Inconsistent or Poor After Implementing Standardization

- Check: Whether you applied standardization correctly to both training and test sets.

- Solution: The parameters for standardization (mean, standard deviation) must be calculated only from the training data and then applied to the validation and test sets. Using the entire dataset to calculate these parameters causes data leakage and over-optimistic performance estimates [7].

- Check: If you are using a model that is inherently insensitive to feature scaling.

- Solution: Confirm that your model requires standardization. Tree-based models (e.g., Random Forests) are generally unaffected by feature scaling, while distance-based models (KNN, SVM) and models using gradient descent (Neural Networks) are highly sensitive [7].
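To make the leakage point concrete, here is a minimal sketch of standardizing a single morphometric feature with statistics computed from the training split only. The function names and the head-length values are hypothetical.

```python
from statistics import mean, pstdev


def fit_standardizer(train_values):
    """Compute the mean and standard deviation from the training data only."""
    mu = mean(train_values)
    sigma = pstdev(train_values)
    if sigma == 0:
        sigma = 1.0  # constant feature: avoid division by zero
    return mu, sigma


def standardize(values, mu, sigma):
    """Apply previously fitted statistics to any split (train, validation, test)."""
    return [(v - mu) / sigma for v in values]


# Hypothetical sperm head lengths in micrometres.
train_lengths = [4.0, 4.5, 5.0, 5.5, 6.0]
test_lengths = [5.0, 6.0]

mu, sigma = fit_standardizer(train_lengths)        # fitted on training data only
train_scaled = standardize(train_lengths, mu, sigma)
test_scaled = standardize(test_lengths, mu, sigma)  # reuses the training statistics
```

The key design choice is that `fit_standardizer` never sees the test values, so the test set remains a fair estimate of performance on unseen samples.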

Problem: Model is Overfitting Despite Using Data Augmentation

- Check: The diversity and "realism" of your augmented images.

- Solution: An augmentation strategy that is too narrow will not provide enough variation for the model to generalize. Expand your techniques to include a mix of geometric and photometric transformations that reflect real-world variances in your data acquisition process [9]. Refer to the comparison of data augmentation techniques in the Data Presentation section for guidance.

- Check: The strength or probability of the applied transformations.

- Solution: If augmentation parameters are too strong (e.g., a 90-degree rotation for a sperm cell), the generated images may become biologically implausible. Tune parameters like rotation degree, color jitter range, and the probability (p) of applying each transformation to ensure augmented data remains realistic [8].

Problem: High Expert Disagreement in the Training Labels

- Check: The level of agreement among the experts who labeled your dataset.

- Solution: As highlighted in the SMD/MSS dataset study, it is critical to analyze inter-expert agreement (e.g., Total Agreement, Partial Agreement, No Agreement) [3]. For classes with low agreement, consider consolidating fine-grained classes into broader, more reliably identifiable categories or implementing a consensus-based labeling system before training [3].
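The agreement analysis and a consensus rule can be sketched as follows. This is an illustration under stated assumptions rather than the procedure used in the SMD/MSS study: with three experts, it treats "total" as all experts agreeing, "partial" as a strict majority agreeing, and "none" otherwise. The image names and labels are made up.

```python
from collections import Counter


def agreement_level(labels):
    """Classify one image's expert labels as 'total', 'partial', or 'none'."""
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == len(labels):
        return "total"
    if top_count > len(labels) / 2:
        return "partial"
    return "none"


def consensus_label(labels):
    """Return the majority label, or None when no strict majority exists."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


def summarize_agreement(annotations):
    """Count agreement levels over a dict of image name -> list of expert labels."""
    return Counter(agreement_level(labels) for labels in annotations.values())


# Hypothetical annotations from three experts.
annotations = {
    "img_001.png": ["normal", "normal", "normal"],
    "img_002.png": ["tapered", "tapered", "amorphous"],
    "img_003.png": ["normal", "pyriform", "amorphous"],
}
summary = summarize_agreement(annotations)
training_labels = {
    name: consensus_label(labels) for name, labels in annotations.items()
}
```

Images whose consensus label is `None` could be excluded from training or sent back for adjudication. Computing the summary per morphological class shows which categories are candidates for merging.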

Data Presentation

Table 1: Impact of Data Standardization on Different Model Types in Sperm Classification

This table summarizes when and why to apply data standardization based on the underlying algorithm.

| Model Type | Standardization Required? | Rationale |
|---|---|---|
| K-Nearest Neighbors (KNN) | Yes [7] | Distance-based; ensures all features contribute equally. |
| Support Vector Machine (SVM) | Yes [7] | Maximizes margin; prevents features with large scales from dominating. |
| Principal Component Analysis (PCA) | Yes [7] | Components are directed by maximum variance, which is scale-dependent. |
| Convolutional Neural Networks (CNNs) | Yes (Recommended) | Accelerates convergence and improves performance during gradient descent. |
| Tree-Based Models (Random Forest) | No [7] | Splits are based on feature value order, not absolute scale. |

Table 2: Comparison of Data Augmentation Techniques for Sperm Morphology Images

This table lists common augmentation techniques and their specific utility in simulating biological and technical variation.

| Augmentation Technique | Primary Effect | Use Case in Sperm Morphology |
|---|---|---|
| Random Rotation [9] | Alters object orientation. | Teaches model invariance to sperm rotation on the slide. |
| Color Jitter [9] | Changes brightness/contrast. | Compensates for variations in staining intensity and microscope lighting. |
| Horizontal/Vertical Flip [8] | Reverses image along an axis. | A simple way to increase viewpoint variation. |
| Random Cropping [8] | Changes scale and perspective. | Helps the model focus on the sperm cell amidst background debris. |
| CutOut / Random Erasing [8] | Occludes parts of the image. | Improves robustness by forcing classification based on partial visual data. |

Experimental Protocols

Detailed Methodology: Building a Deep Learning Model for Sperm Classification

The following protocol is adapted from a 2025 study that developed a Convolutional Neural Network (CNN) for sperm morphological evaluation using the SMD/MSS dataset [3].

1. Data Acquisition and Ground Truth Labeling

- Sample Preparation: Prepare semen smears from samples with a concentration of at least 5 million/mL, stained per standard protocols (e.g., RAL Diagnostics kit) [3].

- Image Acquisition: Use a Computer-Assisted Semen Analysis (CASA) system with a 100x oil immersion objective in bright-field mode to capture images of individual spermatozoa [3].

- Expert Classification: Have each sperm image classified independently by multiple experienced analysts based on a standardized classification system (e.g., modified David classification). Compile a ground truth file that includes the image name and the classifications from all experts [3].

2. Data Pre-processing and Partitioning

- Image Pre-processing: Convert images to grayscale and resize them to a consistent size (e.g., 80x80 pixels). Normalize pixel values to a 0-1 range to stabilize training [3].

- Data Partitioning: Randomly split the entire dataset into a training set (80%) and a test set (20%). To tune hyperparameters, further split the training set to extract a validation subset (e.g., 20% of the training data) [3].
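The partitioning step can be sketched in plain Python as shown here. The function name, the default seed, and the file names are illustrative assumptions. The split is performed on the original images, before any augmentation.

```python
import random


def split_dataset(items, test_fraction=0.2, val_fraction=0.2, seed=42):
    """Shuffle once, hold out a test set, then carve validation out of the rest."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible

    n_test = round(len(shuffled) * test_fraction)
    test, remainder = shuffled[:n_test], shuffled[n_test:]

    # The validation fraction is relative to the remaining training data.
    n_val = round(len(remainder) * val_fraction)
    val, train = remainder[:n_val], remainder[n_val:]
    return train, val, test


# Hypothetical file names standing in for 1,000 original images.
image_names = [f"sperm_{i:04d}.png" for i in range(1000)]
train, val, test = split_dataset(image_names)
```

With 1,000 images this yields 640 training, 160 validation, and 200 test images. For strongly imbalanced classes, a stratified variant that splits each class separately may be preferable so that rare categories appear in every subset.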

3. Data Augmentation Pipeline Implementation

- Technique Selection: Based on the comparison table above, select a set of augmentations. A strong starting pipeline includes Random Rotation (±20 degrees), Random Horizontal Flip, and Color Jitter (brightness and contrast of 0.2) [9].

- Integration: Implement this pipeline using a library like PyTorch's torchvision.transforms and integrate it into the data loader for the training set. Crucially, the test set should not be augmented.

4. Model Training and Evaluation

- Model Architecture: Design a CNN architecture. The SMD/MSS study used a CNN implemented in Python 3.8 [3].

- Training: Train the model using the augmented training data. Monitor the loss and accuracy on both the training and validation sets to detect overfitting.

- Evaluation: Finally, evaluate the trained model's performance on the held-out, non-augmented test set. Report standard metrics like accuracy, precision, and recall.
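One common way to act on the training and validation curves is patience-based early stopping. The source study does not describe using it, so the sketch below is offered as an assumption about how the monitoring step could be automated; the function name and the loss values are hypothetical.

```python
def find_best_epoch(val_losses, patience=5, min_delta=0.0):
    """Return (best_epoch_index, stopped_early) under patience-based early stopping.

    Training is considered finished once the validation loss has failed to
    improve by more than `min_delta` for `patience` consecutive epochs.
    """
    best_idx, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_idx, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                return best_idx, True
    return best_idx, False


# Hypothetical validation losses: improvement stalls after epoch index 2.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]
best_idx, stopped = find_best_epoch(val_losses, patience=3)
```

In this example the validation loss rises for three consecutive epochs after index 2, so the function reports index 2 as the best epoch and signals an early stop. In a real training loop, the model weights saved at that epoch would be the ones evaluated on the test set.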

Workflow Visualization

The following diagram illustrates the integrated workflow for data standardization and augmentation in a deep learning project for sperm classification.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools for Sperm Morphology Analysis

| Item | Function / Description |
|---|---|
| RAL Diagnostics Stain [3] | A staining kit used to prepare semen smears, providing contrast for microscopic examination of sperm morphology. |
| CASA System [3] | Computer-Assisted Semen Analysis system; an optical microscope with a digital camera for automated acquisition and morphometric analysis of sperm images. |
| Python with PyTorch/TensorFlow [9] | Core programming language and deep learning frameworks used to build, train, and evaluate the convolutional neural network (CNN) models. |
| VisualDL / TensorBoard [11] | Visualization tools that allow researchers to track model training metrics in real-time, visualize model graphs, and debug performance. |
| Data Augmentation Library (e.g., Albumentations) | A specialized Python library that offers a wide variety of optimized image augmentation techniques for machine learning projects. |

Frequently Asked Questions (FAQs)

1. What are the most common challenges in automating sperm morphology analysis?

The primary challenges include the high subjectivity of manual assessment, which relies heavily on the technician's experience, and the limitations of early automated systems (CASA) in accurately distinguishing sperm from cellular debris or classifying midpiece and tail abnormalities [3] [1]. Furthermore, creating robust deep learning models requires large, high-quality, and well-annotated datasets, which are difficult and time-consuming to produce [1].

2. How does deep learning improve upon conventional machine learning for this task?

Conventional machine learning models (e.g., SVM, K-means) rely on manually engineered features (e.g., area, length-to-width ratio, Fourier descriptors). This process is cumbersome, and the features may not capture all relevant morphological complexities, leading to issues like over-segmentation or under-segmentation [1]. Deep learning models, particularly Convolutional Neural Networks (CNNs), can automatically learn hierarchical and discriminative features directly from images, often resulting in higher accuracy and robustness [3] [1].

3. My deep learning model's performance is inconsistent. What could be the cause?

A common issue is sensitivity to the position and orientation of the sperm head in the image. Models can be confused by rotational and translational variations. Implementing a pose correction network as a preprocessing step can standardize the orientation and significantly improve classification consistency and accuracy [12]. Additionally, check for class imbalance in your training data and consider using data augmentation to create a more balanced and varied dataset [3].

4. What is the role of data augmentation in building a sperm morphology dataset?

Data augmentation is crucial for creating a balanced and powerful dataset. Techniques such as rotation, translation, and color jittering can artificially expand a limited number of original images (e.g., from 845 to over 26,000 images), helping to prevent overfitting and improve the model's ability to generalize to new, unseen data [3] [12].

Troubleshooting Guides

Issue 1: Poor Segmentation Accuracy

Problem: The model fails to accurately segment the sperm head from the background or other components like the tail.

Solutions:

- Refine Segmentation Models: Move beyond traditional algorithms and employ state-of-the-art segmentation models. For instance, EdgeSAM can be used with a single coordinate point as a prompt to accurately extract and segment the specific sperm head, suppressing irrelevant features [12].

- Adopt an Integrated Framework: Use architectures designed for joint segmentation and classification. The SHMC-Net, for example, integrates features across multiple scales, leading to more precise segmentation and, consequently, better classification [1] [12].

Issue 2: Low Classification Accuracy on Abnormal Morphologies

Problem: The model performs well on normal sperm but is inaccurate when classifying specific head defects (e.g., pyriform, tapered, amorphous).

Solutions:

- Implement Pose Correction: Introduce a Sperm Head Pose Correction Network that uses Rotated RoI alignment to normalize the position and orientation of each sperm head before classification. This dramatically improves the model's robustness to spatial variations [12].

- Leverage Morphological Symmetries: Use architectural innovations to capture key morphological features. A flip feature fusion module can help the network learn the symmetrical characteristics of certain abnormal heads (e.g., pyriform), enhancing classification accuracy [12].

- Explore Advanced Architectures: Go beyond standard CNNs. Methods combining Generative Adversarial Networks (GANs) and Capsule Networks (CapsNets) can synthesize sperm images to address data imbalance and have demonstrated accuracies as high as 97.8% [12].

Issue 3: Handling Small or Imbalanced Datasets

Problem: It is difficult to train a high-performing model due to a limited number of images or an uneven number of examples across different morphological classes.

Solutions:

- Aggressive Data Augmentation: Systematically apply a suite of augmentation techniques to expand your dataset. This includes rotation, translation, brightness adjustment, and color jittering [12].

- Utilize Data Augmentation Techniques: As demonstrated in the SMD/MSS dataset creation, augmentation can expand a dataset from 1,000 to 6,035 images, making it more balanced across morphological classes and improving model training [3].

- Hybrid Models for Efficiency: If computational resources are a constraint, consider frameworks that combine a multilayer feedforward neural network with a nature-inspired optimization algorithm (like Ant Colony Optimization). These can achieve high accuracy (e.g., 99%) and require ultra-low computational time, making them suitable for scenarios with limited data [6].

The tables below summarize key quantitative data from recent studies to help you benchmark your own experiments.

Table 1: Deep Learning Model Performance on Sperm Morphology Tasks

| Model / Framework | Task | Accuracy | Key Features | Source |
|---|---|---|---|---|
| Deep Learning Model (SMD/MSS) [3] | Morphology Classification | 55% - 92% | CNN, Data Augmentation | PMC |
| Automated DL Model (HuSHem & Chenwy) [12] | Head Classification | 97.5% | EdgeSAM, Pose Correction, Flip Feature Fusion | MDPI |
| Hybrid MLFFN–ACO Framework [6] | Fertility Diagnosis | 99% | Neural Network with Ant Colony Optimization | Scientific Reports |
| VGG16 [12] | Head Classification | 94% | Standard CNN Architecture | MDPI |
| GAN + CapsNet [12] | Head Classification | 97.8% | Addresses Data Imbalance | MDPI |

Table 2: Summary of Publicly Available Sperm Image Datasets

| Dataset Name | Image Count | Key Annotations | Notable Features |
|---|---|---|---|
| SMD/MSS [3] | 1,000 (extended to 6,035 with augmentation) | Head, midpiece, tail anomalies (Modified David classification) | Includes expert classifications from three experts |
| HuSHem [12] | 216 | Contour, vertex, morphology category | Sperm head contours annotated by fertility specialists |
| Chenwy Sperm-Dataset [12] | 320 (1,314 extracted heads) | Contours of head, midpiece, tail; acrosome, nucleus, vacuole | Higher resolution images (1280x1024) |
| SVIA [1] | 125,000 annotated instances | Object detection, segmentation masks, classification | Large-scale dataset with multiple annotation types |

Experimental Protocols

Protocol 1: Building a Deep Learning Pipeline for Sperm Head Classification

This protocol is based on a state-of-the-art approach that integrates segmentation, pose correction, and classification [12].

Data Preprocessing:

- Resize and Normalize: Resize all images to a consistent size (e.g., 131×131 or 201×201 pixels) using reflection padding. Normalize pixel values to a standard range (e.g., [0, 1]) to ensure consistent contribution to the learning process and prevent scale-induced bias [3] [12].

- Data Augmentation: Apply a combination of augmentation techniques to the training set, including rotation, translation, brightness adjustment, and color jittering. This step is critical for expanding the dataset and improving model generalization [12].

Segmentation with EdgeSAM:

- Use EdgeSAM, an efficient segmentation model, for the initial sperm head segmentation.

- Provide a single coordinate point as a prompt to guide the model to the rough location of the sperm head, enabling precise feature extraction and suppressing irrelevant content like tails or debris [12].

Pose Correction:

- Pass the segmented head to a Sperm Head Pose Correction Network.

- This network predicts the precise position and angle of the sperm head.

- Use Rotated RoI (Region of Interest) Alignment to standardize the orientation and position of every sperm head, creating a normalized input for the classifier [12].

Classification with Deformable Convolutions:

- Employ a classification network that incorporates a flip feature fusion module. This module processes both the original and flipped feature maps to better capture symmetrical and asymmetrical characteristics of the sperm head.

- Integrate deformable convolutions to allow the network to adaptively adjust its receptive field, better capturing morphological variations in abnormal sperm heads [12].

Model Training and Evaluation:

- Split the dataset into training and testing sets (e.g., 80:20 ratio). Use five-fold cross-validation on the training set for robust hyperparameter tuning and model selection.

- Ensure that original and augmented images of the same sperm head are not leaked across the training and validation sets in the same fold.

- Evaluate the final model on the held-out test set using metrics like accuracy, sensitivity, and F1-score [12].
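The leakage constraint in the cross-validation step can be enforced by assigning folds at the level of the original sperm head rather than the individual image. The following is a minimal pure-Python sketch; the function name and the sample naming scheme are assumptions made for illustration.

```python
import random
from collections import defaultdict


def grouped_k_fold(sample_to_group, k=5, seed=0):
    """Yield (train_samples, val_samples) so that each group stays in one fold.

    `sample_to_group` maps every image (original or augmented) to the
    identifier of the sperm head it was derived from.
    """
    groups = defaultdict(list)
    for sample, group in sample_to_group.items():
        groups[group].append(sample)

    group_ids = sorted(groups)
    random.Random(seed).shuffle(group_ids)
    folds = [group_ids[i::k] for i in range(k)]  # round-robin group assignment

    for i, val_groups in enumerate(folds):
        val = [s for g in val_groups for s in groups[g]]
        train = [
            s
            for j, fold in enumerate(folds) if j != i
            for g in fold
            for s in groups[g]
        ]
        yield train, val


# Hypothetical data: 10 sperm heads, each with 3 augmented variants.
sample_to_group = {
    f"head{h}_aug{a}.png": f"head{h}" for h in range(10) for a in range(3)
}
folds = list(grouped_k_fold(sample_to_group, k=5))
```

Every variant of a given head lands either in the training part or in the validation part of a fold, never both. Libraries such as scikit-learn offer a comparable grouped splitter if an external dependency is acceptable.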

Protocol 2: Creating an Augmented Morphology Dataset

This protocol outlines the process used to create the SMD/MSS dataset, highlighting best practices for dataset curation [3].

Sample Preparation and Image Acquisition:

- Prepare semen smears according to WHO guidelines and stain them (e.g., with RAL Diagnostics staining kit).

- Acquire images using a CASA system or a microscope with a digital camera. Use a 100x oil immersion objective in bright field mode.

- Capture images of individual spermatozoa to facilitate annotation and analysis.

Expert Annotation and Ground Truth Creation:

- Have each sperm image classified independently by multiple experts (e.g., three) based on a standardized classification system like the modified David classification.

- Create a ground truth file that includes the image name, classifications from all experts, and morphometric data (e.g., head dimensions, tail length).

Data Augmentation and Balancing:

- Analyze the inter-expert agreement (Total Agreement, Partial Agreement, No Agreement) to understand the complexity of the classification task.

- Apply data augmentation techniques to significantly increase the number of images and, more importantly, to balance the representation of rare morphological classes.
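To plan class balancing, it helps to estimate how many augmented copies each original image needs. The sketch below is one simple way to do this and is not the procedure reported for SMD/MSS; the function name and class counts are hypothetical.

```python
import math
from collections import Counter


def augmentation_plan(labels, target_per_class=None):
    """Return {class: augmented copies needed per original image}.

    By default every class is brought up to the size of the largest class.
    """
    counts = Counter(labels)
    target = target_per_class or max(counts.values())
    return {
        cls: max(0, math.ceil(target / n) - 1)  # subtract the original image itself
        for cls, n in counts.items()
    }


# Hypothetical label distribution before augmentation.
labels = ["normal"] * 400 + ["tapered"] * 100 + ["pyriform"] * 50
plan = augmentation_plan(labels)
```

Here the majority class needs no extra copies, each tapered image needs 3 augmented variants, and each pyriform image needs 7, bringing every class to 400 images. Very high multipliers for rare classes are a signal that more original samples may be needed, since heavily reused images add limited new information.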

Workflow and Pathway Diagrams

Deep Learning Pipeline for Sperm Morphology Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Analysis

| Item | Function / Application | Example / Specification |

|---|---|---|

| RAL Staining Kit [3] | Staining semen smears to provide contrast for microscopic examination of sperm morphology. | Standard staining kit used in andrology labs. |

| CASA System [3] | Computer-Assisted Semen Analysis system for automated image acquisition and initial morphometric analysis. | MMC CASA system; includes microscope with digital camera. |

| Brightfield Microscope [3] | High-magnification imaging of stained sperm samples. | Equipped with 100x oil immersion objective. |

| HuSHeM Dataset [12] | Publicly available benchmark dataset for sperm head morphology classification. | Contains 216 images across 4 categories (Normal, Pyriform, Amorphous, Tapered). |

| Chenwy Sperm-Dataset [12] | Publicly available dataset for sperm segmentation tasks. | Contains 320 high-resolution images with detailed contour annotations. |

| Python with Deep Learning Frameworks [3] | Programming environment for developing and training CNN and other deep learning models. | Python 3.8, with libraries like TensorFlow or PyTorch. |

| EdgeSAM Model [12] | Efficient segmentation model for precise sperm head extraction from images. | Pre-trained model fine-tuned with sperm contour annotations. |

Frequently Asked Questions (FAQs)

1. What is "ground truth" in sperm morphology analysis and why is it critical for deep learning?

In deep learning for sperm classification, "ground truth" refers to the expert-validated labels assigned to sperm images that your model learns from. It is the benchmark against which your model's predictions are measured. Its importance cannot be overstated; the quality and reliability of your ground truth directly determine the performance and clinical applicability of your model. Inconsistent or low-quality annotations will lead to a model that learns these same inconsistencies, resulting in poor generalization to new data. Establishing a robust ground truth is the foundational step for any successful deep learning project in this field [3] [1].

2. Our model's performance is unstable. How can inter-expert disagreement be a cause, and how do we address it?

Inter-expert disagreement is a major source of "label noise" and a common cause of unstable model performance. If experts disagree on how to classify the same sperm image, the model receives conflicting signals during training, confusing its learning process [1].

Solutions include:

- Quantify Disagreement: Before training, analyze the level of agreement between your experts using statistics. One study categorized agreement into "No Agreement (NA)," "Partial Agreement (PA): 2/3 experts agree," and "Total Agreement (TA): 3/3 experts agree" [3]. This allows you to understand the inherent difficulty of your dataset.

- Train on Consensus Subsets: For initial model development, train your model only on the subset of data where experts fully agree (TA). This provides a cleaner learning signal. You can then cautiously introduce the "partial agreement" data in later stages [3] [13].

- Data Augmentation: If you have a limited number of images with expert consensus, use data augmentation techniques (e.g., rotation, flipping, brightness adjustment) to artificially expand your training set. This helps the model learn more robust features and reduces overfitting. One study successfully expanded a dataset of 1,000 images to over 6,000 through augmentation [3].
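
The agreement categories and the consensus subset described above can be computed in a few lines. This minimal sketch assumes exactly one label per expert per image, which simplifies the multi-category scheme of the cited study; the file names and labels are invented for illustration.

```python
from collections import Counter

def agreement_level(expert_labels):
    """Classify one image's three expert labels as 'TA', 'PA', or 'NA'."""
    top_count = Counter(expert_labels).most_common(1)[0][1]
    if top_count == len(expert_labels):
        return "TA"   # all experts agree
    if top_count >= 2:
        return "PA"   # two of three experts agree
    return "NA"       # no two experts agree

# Hypothetical annotations: image name -> labels from three experts.
annotations = {
    "img_001.png": ["normal", "normal", "normal"],
    "img_002.png": ["tapered", "tapered", "amorphous"],
    "img_003.png": ["normal", "tapered", "amorphous"],
}
levels = {name: agreement_level(labs) for name, labs in annotations.items()}
consensus_subset = [name for name, lvl in levels.items() if lvl == "TA"]
```

Training can start from `consensus_subset`, with the "PA" images added later once a stable baseline exists.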

3. We have limited data with expert annotations. What strategies can we use to build an effective model?

Limited data is a common challenge in medical AI. Beyond data augmentation, consider these strategies:

- Transfer Learning: Instead of training a model from scratch, start with a pre-trained model (e.g., VGG16, ResNet50) that has already learned general feature representations from a large dataset like ImageNet. You can then "fine-tune" this model on your smaller, specialized sperm morphology dataset. This approach has been shown to be highly effective, achieving true positive rates over 94% on some datasets [13] [14].

- Leverage Public Datasets: Use publicly available datasets for pre-training or benchmarking. Examples include HuSHeM, SCIAN-MorphoSpermGS, SMIDS, and the more recent SVIA dataset [1] [14]. This can supplement your own data.

4. What are the key performance metrics beyond accuracy that we should monitor?

While accuracy is important, it can be misleading, especially if your dataset has class imbalance (e.g., many more normal sperm than abnormal ones).

- Precision and Recall (Sensitivity): These metrics are crucial. High precision ensures that when your model predicts an abnormality, it is likely correct. High recall ensures that the model captures most of the actual abnormalities [6].

- F1-Score: This is the harmonic mean of precision and recall, providing a single metric to balance both concerns.

- Area Under the Curve (AUC): The Area Under the Receiver Operating Characteristic (ROC) curve shows the model's ability to distinguish between classes across different classification thresholds [1].

- Per-Class Accuracy: Monitor accuracy for each morphological class (e.g., tapered, macrocephalous, coiled tail) to ensure your model isn't biased toward the majority classes [3].
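
To see why accuracy alone can mislead, the following sketch computes per-class precision, recall, and F1 on a small imbalanced toy example. The labels are invented, and `per_class_metrics` is an illustrative helper rather than a library function.

```python
def per_class_metrics(y_true, y_pred):
    """Return {class: (precision, recall, f1)} from two equal-length label lists."""
    metrics = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[cls] = (precision, recall, f1)
    return metrics

# Toy example: 8 normal and 2 abnormal cells; the model misses one abnormal cell.
y_true = ["normal"] * 8 + ["abnormal"] * 2
y_pred = ["normal"] * 8 + ["abnormal", "normal"]
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
metrics = per_class_metrics(y_true, y_pred)
```

Here overall accuracy is 90%, yet recall for the abnormal class is only 50%, which is the kind of gap that per-class reporting is meant to expose.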

Troubleshooting Guides

Problem: Model Performance Does Not Match Expert Clinical Judgment

Symptoms: Your model achieves high accuracy on the test set, but domain experts (embryologists) disagree with its classifications on new, real-world samples.

Diagnosis and Resolution:

Audit Your Ground Truth: This is the most likely cause.

- Action: Re-examine the classification guidelines used to create your labels. Ensure they align with current WHO standards or the modified David classification used in your clinic. Inconsistencies in the original labeling will be learned and reproduced by the model [3] [15].

- Action: If possible, have a senior expert re-annotate a sample of your test set to check for drift from clinical intuition.

Check for Dataset Bias:

- Action: Analyze your training data distribution. Does it adequately represent all the morphological variants and staining artifacts experts encounter in practice? If not, your model has not learned to handle these cases. Actively collect more diverse data to fill these gaps [1].

Implement Explainable AI (XAI) Techniques:

- Action: Use tools like Grad-CAM to generate visual explanations of which parts of the sperm image influenced your model's decision. This allows experts to validate whether the model is focusing on biologically relevant features (like acrosome shape) or irrelevant artifacts (like staining noise). This builds trust and helps diagnose faulty logic [14].

Problem: High Variance in Model Performance Across Dataset Splits

Symptoms: Model performance (e.g., accuracy, F1-score) changes dramatically when you re-split your data into training and test sets.

Diagnosis and Resolution:

Investigate Inter-Expert Agreement:

- Action: Stratify your dataset by the level of expert agreement and evaluate each stratum separately. You will likely find that performance is high and stable on the "Total Agreement" subset but poor on the "No Agreement" subset. If so, the variance is more likely driven by inherent ambiguity in the data than by your model architecture [3].

Refine Your Data Splitting Strategy:

- Action: Instead of random splitting, use a stratified split to ensure that each fold of your cross-validation has a similar distribution of expert agreement levels and morphological classes. This provides a more reliable performance estimate.

Review Your Augmentation Pipeline:

- Action: Overly aggressive data augmentation might be generating unrealistic or misleading images, confusing the model. Review your augmentation parameters (e.g., range of rotations, degree of shear) with a domain expert to ensure they produce biologically plausible variations of a sperm cell [3].

Experimental Protocols & Data

Protocol: Establishing a Ground Truth Dataset with Multiple Experts

This protocol outlines a method for creating a robustly labeled sperm morphology dataset [3].

- Sample Preparation and Image Acquisition: Prepare semen smears according to WHO guidelines and stain them (e.g., RAL Diagnostics kit). Acquire images of individual spermatozoa using a microscope with a 100x oil immersion objective, ideally coupled with a CASA system for consistent capture [3].

- Expert Classification: Have at least three experienced embryologists classify each sperm image independently. The classification should be based on a standardized system, such as the modified David classification, which includes head defects, midpiece defects, and tail defects [3].

- Blinded Annotation: Ensure experts perform their classifications blindly, without knowledge of each other's labels, to prevent bias.

- Compile Ground Truth File: Create a central file (e.g., CSV) for each image containing: Image filename, classifications from all experts, and the final consensus label. The consensus can be defined as the label assigned by at least 2 out of 3 experts [3].

- Analyze Inter-Expert Agreement: Use statistical software (e.g., IBM SPSS) to calculate agreement levels using metrics like Fleiss' Kappa. Categorize data into "Total Agreement," "Partial Agreement," and "No Agreement" [3].
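
The protocol above relies on statistical software for Fleiss' Kappa; the statistic can also be computed directly from the ground truth file. A minimal sketch, assuming every image has the same number of expert labels and at least two categories occur overall (the ratings shown are invented):

```python
from collections import Counter

def fleiss_kappa(ratings):
    """ratings: one list of expert labels per image (same number of experts each)."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    categories = sorted({label for row in ratings for label in row})
    counts = [Counter(row) for row in ratings]
    # Observed agreement per image, averaged over all images.
    p_bar = sum(
        (sum(c[cat] ** 2 for cat in categories) - n_raters) / (n_raters * (n_raters - 1))
        for c in counts
    ) / n_items
    # Agreement expected by chance from the overall category proportions.
    p_e = sum(
        (sum(c[cat] for c in counts) / (n_items * n_raters)) ** 2 for cat in categories
    )
    # Undefined (division by zero) if every rating falls in a single category.
    return (p_bar - p_e) / (1 - p_e)

# Hypothetical ratings from three experts on four images.
ratings = [
    ["normal", "normal", "normal"],
    ["tapered", "tapered", "tapered"],
    ["normal", "normal", "tapered"],
    ["tapered", "amorphous", "amorphous"],
]
kappa = fleiss_kappa(ratings)
```

A value of 1 indicates perfect agreement and a value near 0 indicates agreement no better than chance.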

Protocol: A Deep Learning Workflow for Sperm Classification

This is a high-level workflow for training a classification model, based on common practices in recent literature [3] [13] [14].

- Image Pre-processing:

- Resize: Standardize all images to a fixed size (e.g., 80x80 pixels).

- Normalize: Scale pixel values to a standard range (e.g., 0 to 1).

- Grayscale Conversion: Convert color images to grayscale to simplify the model input [3].

- Data Partitioning: Split your dataset into three sets: Training (e.g., 80%), Validation (e.g., 10%), and Test (e.g., 10%). Ensure the splits are stratified to maintain class distribution.

- Data Augmentation (on Training Set only): Apply random but realistic transformations to the training images, such as rotation, horizontal/vertical flipping, and slight changes to brightness and contrast [3].

- Model Selection and Training:

- Select a Base Architecture: Choose a proven CNN architecture like VGG16 or ResNet50.

- Transfer Learning: Load the model pre-trained on ImageNet. Replace the final classification layer to match your number of sperm morphology classes.

- Train: First, freeze the pre-trained layers and train only the new final layers. Then, unfreeze all layers and fine-tune the entire model on your sperm data with a very low learning rate [13].

- Evaluation: Finally, evaluate the trained model on the held-out Test set that was not used during training or validation, reporting a comprehensive set of metrics.
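
The stratified partitioning step in this workflow can be sketched without any framework. The helper below is a hypothetical illustration of an 80/10/10 split that preserves class proportions; the class names and counts are invented, and very small classes may still end up with empty validation or test portions.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]   # remainder goes to the test set
    return train, val, test

# Hypothetical class distribution.
labels = ["normal"] * 100 + ["tapered"] * 30 + ["pyriform"] * 20
train, val, test = stratified_split(labels)
```

Augmentation would then be applied to the `train` indices only, so that the validation and test sets contain unmodified images.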

Diagram: This workflow summarizes the key steps in a deep learning-based sperm classification project.

Quantitative Data on Expert Agreement & Model Performance

Table 1: Categorization of Inter-Expert Agreement Levels. This framework helps diagnose dataset complexity [3].

| Agreement Level | Definition | Implication for Model Training |

|---|---|---|

| Total Agreement (TA) | 3/3 experts assign the same label for all categories. | High-Quality Data: Ideal for initial model training, provides a clean learning signal. |

| Partial Agreement (PA) | 2/3 experts agree on the same label for at least one category. | Moderate-Quality Data: Can be used for training but may introduce some noise. |

| No Agreement (NA) | No consensus among the experts on the labels. | Low-Quality Data: Highly ambiguous; consider excluding it or reserving it for advanced training only. |

Table 2: Performance of Selected Deep Learning Models on Public Sperm Morphology Datasets. Note the variance in performance and classes [13] [14].

| Model / Approach | Dataset | Number of Classes | Key Performance Metric |

|---|---|---|---|

| CBAM-Enhanced ResNet50 + Feature Engineering | SMIDS | 3 | Accuracy: 96.08% ± 1.2 [14] |

| CBAM-Enhanced ResNet50 + Feature Engineering | HuSHeM | 4 | Accuracy: 96.77% ± 0.8 [14] |

| VGG16 (Transfer Learning) | HuSHeM | 4 | Average True Positive Rate: 94.1% [13] |

| VGG16 (Transfer Learning) | SCIAN (Partial Agreement) | 5 | Average True Positive Rate: 62% [13] |

| Custom CNN | SMD/MSS (Augmented) | 12 | Accuracy Range: 55% to 92% [3] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and computational tools for deep learning-based sperm morphology research.

| Item | Function / Application |

|---|---|

| RAL Diagnostics Staining Kit | Stains sperm cells on semen smears to provide contrast for visualizing morphological details under a microscope [3]. |

| CASA System | Computer-Assisted Semen Analysis system; an automated microscope and software platform for standardized image acquisition and initial morphometric analysis [3]. |

| Pre-trained CNN Models (VGG16, ResNet50) | Deep learning models pre-trained on the large ImageNet dataset. Used as a starting point via transfer learning to avoid training from scratch, significantly improving performance on small medical datasets [13] [14]. |

| Data Augmentation Libraries (e.g., in Python) | Software tools (e.g., TensorFlow, PyTorch) used to programmatically create variations of training images, expanding the effective size of the dataset and improving model robustness [3]. |

| Grad-CAM Visualization Tool | An explainable AI (XAI) technique that produces visual explanations for decisions from CNNs, allowing researchers to verify if the model focuses on biologically relevant features [14]. |

Methodologies in Practice: Architectures and Training Strategies

In the field of male fertility research, the analysis of sperm morphology is a critical diagnostic procedure. Traditional manual assessment is highly subjective, time-consuming, and prone to significant inter-observer variability, with reported disagreement rates among experts as high as 40% [14]. Convolutional Neural Networks (CNNs) have emerged as a powerful solution, offering the potential for automated, standardized, and accelerated semen analysis [3]. This guide addresses common challenges researchers face when selecting and optimizing CNN architectures specifically for image-based sperm classification, providing practical troubleshooting advice and experimental protocols to enhance your model's performance.

CNN Architectures for Sperm Classification: A Comparative Analysis

Selecting an appropriate CNN architecture is a foundational decision that significantly impacts classification performance. The table below summarizes the documented performance of various architectures on benchmark sperm morphology datasets.

Table 1: Performance of CNN Architectures on Sperm Morphology Classification

| Architecture | Key Features | Dataset | Reported Accuracy | Strengths and Applications |

|---|---|---|---|---|

| CBAM-enhanced ResNet50 [14] | Integration of Convolutional Block Attention Module (CBAM) with ResNet50 backbone. | SMIDS (3-class) | 96.08% ± 1.2% | Excellent for focusing on morphologically relevant regions (head, acrosome, tail). |

| CBAM-enhanced ResNet50 [14] | Deep Feature Engineering (DFE) with PCA and SVM. | HuSHeM (4-class) | 96.77% ± 0.8% | State-of-the-art performance; suitable for fine-grained classification. |

| VGG16 (Transfer Learning) [13] | Pre-trained on ImageNet, fine-tuned with sperm images. | HuSHeM | 94.1% | Strong baseline; effective even with limited data via transfer learning. |

| Custom CNN [3] | 5-layer CNN trained on augmented dataset. | SMD/MSS (12-class) | 55% to 92% | Adaptable for complex, multi-class problems (e.g., David classification). |

| Bi-Model CNN (Bi-CNN) [16] | Dual-path network capturing both global and local features. | Fundus Images (AMD; non-sperm dataset) | 99.5% | Promising for analyzing sperm with multiple defect localizations, though the reported result comes from a different imaging domain. |

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: My model's accuracy is inconsistent and highly dependent on the initial random seed. What could be the issue?

Answer: This is a classic symptom of underspecification, a common challenge in deep learning where models with similar training performance can have wildly different behaviors on new data [17].

- Solution: Implement a rigorous validation strategy.

- K-Fold Cross-Validation: Use 5-fold or 10-fold cross-validation to ensure your performance metrics are stable across different data splits [14].

- Multiple Runs: Run your experiment multiple times with different random seeds and report the mean and standard deviation of the performance, as seen in the ResNet50 results (e.g., 96.08% ± 1.2%) [14].

- Simplify the Model: Reduce model complexity or increase regularization (e.g., dropout, weight decay) to prevent the model from latching onto spurious correlations in the small training set.
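
Reporting results across seeds, as recommended above, amounts to computing a mean and a sample standard deviation over repeated runs. The scores below are invented purely to show the calculation.

```python
import statistics

def summarize_runs(scores):
    """Return (mean, sample standard deviation) for per-seed scores."""
    return statistics.mean(scores), statistics.stdev(scores)

# Hypothetical test accuracies (%) from five runs with different random seeds.
seed_scores = [95.1, 96.4, 97.0, 94.8, 96.2]
mean_acc, std_acc = summarize_runs(seed_scores)
report = f"{mean_acc:.2f}% ± {std_acc:.2f}%"
```

A large standard deviation relative to the differences between candidate models suggests that those differences may not be meaningful.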

FAQ 2: How can I improve performance when I have a limited number of sperm images?

Answer: Small datasets are a major constraint. You can address this through data and algorithmic techniques.

- Solution A: Data Augmentation. Systematically increase your dataset size by applying label-preserving transformations. The SMD/MSS dataset was expanded from 1,000 to 6,035 images using techniques like rotation, flipping, and scaling [3].

- Solution B: Transfer Learning. This is often the most effective approach when annotated data are scarce. Leverage a pre-trained model (e.g., VGG16, ResNet50) that has been trained on a large dataset like ImageNet. Fine-tune the final layers, or the entire network, on your specific sperm morphology dataset [13] [14]. This allows the model to apply general feature detection knowledge to your specialized task.

- Solution C: Deep Feature Engineering (DFE). Extract features from a pre-trained CNN and then use classic machine learning classifiers like Support Vector Machines (SVM) on these features. This hybrid approach has been shown to boost accuracy significantly, for instance, from 88% to 96% [14].

FAQ 3: My model confuses different classes of abnormal sperm. How can I make it more discriminative?

Answer: The model is likely struggling to focus on the most relevant morphological structures.

- Solution: Integrate Attention Mechanisms. Add a Convolutional Block Attention Module (CBAM) to your CNN backbone. CBAM sequentially infers attention maps along both the channel and spatial dimensions, encouraging the model to prioritize informative features like head shape or tail integrity while suppressing irrelevant background noise [14]. This has been reported to improve performance, and the resulting attention maps offer visual explanations for classifications.

FAQ 4: What should I do if my model is overfitting to the training data?

Answer: Overfitting occurs when a model learns the training data too well, including its noise, and fails to generalize to new data.

- Solution: Employ a combination of strategies.

- Enhanced Augmentation: Increase the diversity and robustness of your augmentation techniques.

- Regularization: Apply L2 regularization (weight decay) and Dropout layers to prevent complex co-adaptations of neurons.

- Early Stopping: Monitor the validation loss and stop training when it stops improving.

- Data Balance: Ensure your dataset has a balanced representation of all morphological classes. Use augmentation specifically for underrepresented classes [3] [17].

Experimental Protocols for Key Scenarios

Protocol 1: Implementing a Baseline Model with Transfer Learning

This protocol outlines the steps to establish a strong baseline using the pre-trained VGG16 architecture.

- Data Preprocessing:

- Resizing: Resize all input images to 224x224 pixels, the default input size for VGG16.

- Normalization: Normalize pixel values using the mean and standard deviation of the ImageNet dataset.

- Model Setup:

- Load the VGG16 model pre-trained on ImageNet.

- Remove the original classification head (the final fully connected layers).

- Add new, randomly initialized fully connected layers tailored to your number of sperm classes (e.g., Normal, Tapered, Pyriform).

- Training Scheme:

- Stage 1 (Feature Extractor): Freeze the weights of all convolutional layers and train only the new classification head. Use a low learning rate (e.g., 1e-4).

- Stage 2 (Fine-Tuning): Unfreeze some or all of the convolutional layers and continue training with an even lower learning rate (e.g., 1e-5). This allows the model to adapt pre-trained features to the specifics of sperm images [13].

- Evaluation: Use a hold-out test set and report metrics like accuracy, precision, recall, and F1-score.

Protocol 2: Enhancing Performance with Attention and Feature Engineering

This advanced protocol builds on the baseline to achieve state-of-the-art results.

- Architecture Modification: Replace the standard CNN backbone (e.g., ResNet50) with a CBAM-enhanced version. The CBAM module should be integrated after each convolutional block.

- Deep Feature Extraction: After training the CBAM-CNN, remove the final classification layer. Use the model to extract high-dimensional feature vectors from your pre-processed images.

- Feature Processing: Apply Principal Component Analysis (PCA) to the extracted features to reduce dimensionality and noise [14].

- Classification: Train a Support Vector Machine (SVM) with a Radial Basis Function (RBF) kernel on the PCA-reduced features for the final classification.

The following workflow diagram illustrates this advanced experimental pipeline.

The Scientist's Toolkit: Essential Research Reagents & Materials

A successful computational experiment relies on a foundation of high-quality data and software tools. The table below lists the key "research reagents" for sperm morphology classification.

Table 2: Essential Materials and Computational Tools for Sperm Classification Research

| Item Name | Type | Function / Application | Example / Source |

|---|---|---|---|

| Benchmarked Datasets | Data | Publicly available, annotated image sets for training and fair model comparison. | HuSHeM [13], SCIAN [13], SMIDS [14], SMD/MSS [3] |

| Data Augmentation Pipeline | Software | Algorithmically expands training dataset to improve model generalization and combat overfitting. | Rotation, flipping, scaling, color jitter (e.g., in TensorFlow/Keras or PyTorch) |

| Pre-trained CNN Models | Model | Provides a powerful starting point for feature extraction or transfer learning, reducing training time and data needs. | VGG16, ResNet50, EfficientNet (e.g., from TensorFlow Hub or PyTorch Vision) |

| Attention Modules | Algorithm | Enhances model discriminative power by focusing on semantically relevant image regions (e.g., sperm head). | Convolutional Block Attention Module (CBAM) [14] |

| Feature Selection Methods | Algorithm | Identifies the most discriminative features from deep networks to improve classifier performance. | PCA, Chi-square test, Random Forest importance [14] |

| Classification Algorithms | Algorithm | The final model that makes the class prediction based on extracted features. | Support Vector Machine (SVM), k-Nearest Neighbors (k-NN) [14] |

Workflow Diagram: From Data to Diagnosis

The following diagram maps the logical progression of a complete, optimized deep learning pipeline for automated sperm morphology classification, integrating the components and protocols discussed in this guide.

Frequently Asked Questions (FAQs)

1. What is transfer learning and why is it used in sperm morphology analysis? Transfer learning is a machine learning technique where a pre-trained model (a "teacher model") developed for one task is repurposed as the starting point for a related yet different task [18]. For sperm morphology analysis, this is particularly valuable because it allows researchers to leverage features learned from large datasets (like ImageNet) even when the available medical image datasets are limited [3] [19]. This approach can cut training time, reduce data requirements, and improve classification accuracy [18].

2. What does "freezing layers" mean and which layers should I freeze? Freezing a layer means preventing its weights from being updated during training. When a layer is frozen, data still flows through it in the forward pass, but its weights receive no updates during backpropagation and remain fixed [18]. As a general best practice, freeze the early layers of a Convolutional Neural Network (CNN) like VGG16, as they capture universal features like edges and textures [18]. The later, task-specific layers should typically be left unfrozen and fine-tuned on your sperm morphology dataset.

3. I am getting constant validation accuracy during fine-tuning. What is wrong? This is a common issue, often related to an incorrect model configuration. The table below summarizes potential causes and solutions based on experimental observations:

Table: Troubleshooting Constant Validation Accuracy

| Observed Symptom | Potential Cause | Recommended Solution |

|---|---|---|

| Constant validation accuracy of 0.0 or 1.0 [20] | Mismatch between the loss function and the final-layer activation | Ensure your output layer activation (e.g., softmax) aligns with your loss function (e.g., categorical_crossentropy) [21]. |

| Training and validation loss do not change [20] | Too many layers are frozen, preventing learning | Progressively unfreeze middle or higher-level layers to allow the model to adapt to the new task [18]. |

| Loss values become NaN or spike to infinity [21] | Exploding gradients | Implement gradient clipping in your optimizer to set a maximum gradient norm [21]. |
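
To illustrate the gradient clipping remedy in the table above, the sketch below rescales a set of gradient vectors so that their joint L2 norm does not exceed a threshold. It is a framework-free illustration with invented values; in practice the frameworks provide this (for example, the `clipnorm` and `global_clipnorm` arguments of Keras optimizers, or `torch.nn.utils.clip_grad_norm_` in PyTorch).

```python
import math

def clip_by_global_norm(gradients, max_norm):
    """Rescale a list of gradient vectors so their joint L2 norm is at most max_norm.
    Returns the (possibly rescaled) gradients and the norm before clipping."""
    global_norm = math.sqrt(sum(g * g for vec in gradients for g in vec))
    if global_norm <= max_norm:
        return gradients, global_norm
    scale = max_norm / global_norm
    return [[g * scale for g in vec] for vec in gradients], global_norm

# Hypothetical gradients for two parameter groups: joint norm = sqrt(9 + 16 + 144) = 13.
grads = [[3.0, 4.0], [12.0]]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
```

Clipping preserves the direction of the update and only limits its magnitude, which is why it can stop a loss from diverging without changing what the model is learning.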

4. My model is overfitting to the sperm image dataset. How can I address this? Overfitting, where your model performs well on training data but poorly on validation data, is a frequent challenge, especially with smaller medical datasets. Strategies to combat this include:

- Data Augmentation: Artificially expand your dataset using techniques like rotation, flipping, and scaling. One study on sperm morphology extended a dataset from 1,000 to 6,035 images using augmentation [3].

- Regularization Techniques: Use L1/L2 regularization to penalize large weights or incorporate Dropout layers, which randomly ignore neurons during training [21].

- Early Stopping: Halt the training process when the validation performance plateaus or starts to degrade [21].
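
The early stopping rule can be expressed as a small piece of bookkeeping around the validation loss. The class below is a minimal, framework-independent sketch; the `patience` and `min_delta` parameters and the loss values are illustrative assumptions.

```python
class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without validation-loss improvement."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss, self.best_epoch = val_loss, epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation losses: improvement stalls after epoch 3.
val_losses = [0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.57, 0.60]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
```

After stopping, the weights saved at `stopper.best_epoch` would normally be restored rather than those from the final epoch.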

Troubleshooting Guide: Common Errors and Fixes

Problem: Vanishing/Exploding Gradients

Deep networks can suffer from gradients that become excessively small (vanish) or large (explode) during backpropagation, hindering learning.

- Solutions:

- Use Proper Weight Initialization: Frameworks often handle this automatically, but ensure you are not using sigmoid activations in deep networks, as they can exacerbate this problem [21].

- Switch to ReLU or its Variants: These activation functions help maintain healthier gradients [21].

- Add Batch Normalization: This technique is a "game-changer for gradient stability" [21].

- Use Residual Connections: Skip connections allow gradients to flow directly to earlier layers [21].
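
A short calculation shows why sigmoid activations aggravate vanishing gradients while ReLU does not. The sketch deliberately ignores the weight matrices and looks only at the product of activation derivatives along a 20-layer chain, which is a simplifying assumption.

```python
import math

def sigmoid_grad(x):
    """Derivative of the sigmoid; its maximum value is 0.25, reached at x = 0."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)

def relu_grad(x):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0

def chain_factor(grad_fn, pre_activation, depth):
    """Product of activation derivatives along a chain of `depth` layers (weights ignored)."""
    return grad_fn(pre_activation) ** depth

sigmoid_factor = chain_factor(sigmoid_grad, 0.0, depth=20)  # best case for sigmoid: 0.25 ** 20
relu_factor = chain_factor(relu_grad, 1.0, depth=20)        # active ReLU units: 1.0 ** 20
```

Even in the sigmoid's best case the factor is below 1e-12 after 20 layers, whereas active ReLU units pass the gradient through unchanged.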

Problem: The Model is Underfitting

Underfitting occurs when the model is too simple to capture patterns, resulting in poor performance on both training and validation data.

- Solutions:

Problem: Poor Performance Despite Fine-Tuning

If your model's accuracy remains low, the issue may lie with the data or a suboptimal fine-tuning strategy.

- Solutions:

- Verify Data Quality: Always start with exploratory data analysis. Plot your data, check distributions, and look for outliers or mislabeled examples [21]. Inconsistent staining or illumination in sperm images can significantly impact performance [3].

- Incorporate Attention Mechanisms: Research has shown that adding a Convolutional Block Attention Module (CBAM) to architectures like ResNet50 can significantly boost performance by helping the network focus on morphologically relevant parts of the sperm (e.g., head shape, tail defects) [14].

- Try a Hybrid Approach: One study achieved 92% accuracy in a medical classification task by using VGG16 for feature extraction and then feeding those features into a Random Forest classifier [19]. This hybrid deep learning-machine learning approach can sometimes outperform end-to-end CNNs.

Experimental Protocols for Sperm Classification

Protocol 1: Basic Fine-Tuning of VGG16 for Sperm Morphology

This protocol outlines a standard transfer learning workflow using Keras/TensorFlow.

Methodology:

- Load Base Model: Load the VGG16 model pre-trained on ImageNet, excluding its top (fully connected) classification layers.

- Freeze Layers: Freeze the early convolutional layers to retain their general feature detectors.

- Add Custom Classifier: Add new, trainable layers on top for the specific sperm morphology classification task (e.g., normal vs. abnormal, or defect-specific classes).

- Compile and Train: Compile the model with a low initial learning rate and train on your sperm image dataset.
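
The four steps above might look as follows in Keras/TensorFlow. This is a sketch under stated assumptions, not the configuration of any cited study: the function name `build_vgg16_classifier`, the freezing boundary `n_frozen=15`, the head sizes, the dropout rate, and the use of integer labels with a sparse cross-entropy loss are all illustrative choices.

```python
import tensorflow as tf

def build_vgg16_classifier(num_classes, weights="imagenet", n_frozen=15):
    """VGG16 backbone with its first `n_frozen` layers frozen and a new classifier head."""
    # Step 1: load VGG16 without its fully connected top layers.
    base = tf.keras.applications.VGG16(
        weights=weights, include_top=False, input_shape=(224, 224, 3)
    )
    # Step 2: freeze the early layers. In Keras' VGG16, the first 15 layers run from
    # the input through block4, so block5 stays trainable.
    for layer in base.layers[:n_frozen]:
        layer.trainable = False
    # Step 3: add a new, trainable classification head (sizes are assumptions).
    x = tf.keras.layers.GlobalAveragePooling2D()(base.output)
    x = tf.keras.layers.Dense(256, activation="relu")(x)
    x = tf.keras.layers.Dropout(0.5)(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    model = tf.keras.Model(inputs=base.input, outputs=outputs)
    # Step 4: compile with a low initial learning rate.
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="sparse_categorical_crossentropy",  # assumes integer class labels
        metrics=["accuracy"],
    )
    return model
```

Calling `build_vgg16_classifier(num_classes=4)` downloads the ImageNet weights on first use; passing `weights=None` builds the same architecture with random initialization, which is useful for a quick structural check.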

Protocol 2: Advanced Hybrid and Attention-Based Models

For researchers seeking state-of-the-art results, more advanced architectures have been documented.

Methodology (Based on Published Research): A 2025 study achieved a test accuracy of 96.08% on the SMIDS sperm morphology dataset by using a hybrid framework [14]. The workflow is as follows:

- Backbone with Attention: Use a ResNet50 architecture as a backbone, enhanced with a Convolutional Block Attention Module (CBAM) to help the model focus on critical sperm structures [14].

- Deep Feature Engineering: Extract multi-layered features from the network (e.g., from CBAM, Global Average Pooling - GAP, and Global Max Pooling - GMP layers) [14].

- Feature Selection: Apply feature selection methods like Principal Component Analysis (PCA) to reduce noise and dimensionality [14].

- Classification: Instead of a standard softmax layer, train a Support Vector Machine (SVM) with an RBF kernel on the refined feature set for the final classification [14].
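
The final two steps (feature reduction and SVM classification) can be sketched with scikit-learn. Because the real inputs would be features extracted from the trained CBAM-ResNet50, the example substitutes synthetic vectors; the feature dimension, the number of PCA components, the SVM settings, and the added standardization step are assumptions rather than values from the cited study.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def build_feature_classifier(n_components=32):
    """Standardize deep features, reduce them with PCA, then classify with an RBF-kernel SVM."""
    return make_pipeline(
        StandardScaler(),                      # assumption: not described in the source
        PCA(n_components=n_components),
        SVC(kernel="rbf", C=1.0, gamma="scale"),
    )

# Synthetic stand-in for CNN features: 3 classes, 60 samples each, 512 dimensions.
rng = np.random.default_rng(0)
centers = rng.normal(scale=3.0, size=(3, 512))
features = np.vstack([c + rng.normal(size=(60, 512)) for c in centers])
labels = np.repeat(np.arange(3), 60)

clf = build_feature_classifier()
clf.fit(features[::2], labels[::2])                       # even rows for training
held_out_accuracy = clf.score(features[1::2], labels[1::2])  # odd rows held out
```

Wrapping the steps in one pipeline ensures that the scaler and PCA are fitted on training features only, which avoids leaking held-out statistics into the classifier.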

Table: Performance Comparison of Different Models for Medical Image Classification

| Model Architecture | Dataset / Application | Reported Performance | Key Advantage |

|---|---|---|---|

| VGG16 + Random Forest [19] | Heart Disease Detection | 92% Accuracy | Combines deep feature extraction with robust classical ML. |

| CBAM-ResNet50 + PCA + SVM [14] | Sperm Morphology (SMIDS) | 96.08% Accuracy | State-of-the-art; uses attention for better feature refinement. |

| YOLOv7 [5] | Bovine Sperm Morphology | mAP@50 of 0.73 | Unified object detection for locating and classifying sperm. |

| Custom CNN [3] | Sperm Morphology (SMD/MSS) | 55% to 92% Accuracy | Demonstrates the potential of deep learning for standardization. |

Workflow Visualization

Fine-Tuning Workflow for Sperm Classification

Advanced Hybrid Model Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Sperm Morphology Analysis Experiments

| Reagent / Material | Function in Experiment |

|---|---|

| RAL Diagnostics Stain [3] | Staining kit used to prepare semen smears, enhancing the contrast and visibility of sperm structures for microscopic analysis. |

| Optixcell Extender [5] | A commercial semen extender used to dilute and preserve bull sperm samples, maintaining sperm viability during processing. |

| Trumorph System [5] | A fixation system that uses controlled pressure and temperature (60°C, 6 kp) for dye-free immobilization of spermatozoa for morphology evaluation. |

Troubleshooting Guide: Hyperparameter-Related Issues

FAQ 1: My model's validation loss is oscillating and fails to converge. What should I check?

Answer: This is a classic symptom of an incorrectly set learning rate. Your troubleshooting should focus on two main areas:

Primary Suspect: Learning Rate is Too High. A learning rate that is too large causes the optimization algorithm to overshoot the minimum of the loss function, leading to oscillations [22] [23] [24]. The model updates its weights too aggressively with each step.

- Action: Systematically reduce your learning rate. Try decreasing it by an order of magnitude (e.g., from `0.01` to `0.001`). Using a learning rate scheduler like `ReduceLROnPlateau`, which automatically reduces the learning rate when validation performance stops improving, can also resolve this [23].

Secondary Check: Batch Size is Too Small. A very small batch size introduces high variance (noise) in the gradient estimates. Each update is based on a small, potentially non-representative sample of data, which can cause the training process to become unstable and bounce around [22] [25] [26].

- Action: If your computational resources allow, try increasing the batch size. This provides a more stable gradient estimate for each update, leading to smoother convergence [27].
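The effect of an oversized learning rate can be seen on a toy problem. The sketch below is an illustration only and is not taken from the cited studies: it runs plain gradient descent on a one-dimensional quadratic loss and pairs it with a simplified plateau rule in the spirit of `ReduceLROnPlateau`. The names `gradient_descent` and `PlateauScheduler`, the curvature value, and the validation losses are all assumptions chosen for clarity.

```python
def gradient_descent(lr, steps=20, w0=1.0, curvature=10.0):
    """Minimise f(w) = 0.5 * curvature * w**2; return the loss after each step."""
    w = w0
    losses = []
    for _ in range(steps):
        grad = curvature * w          # derivative of the quadratic loss
        w -= lr * grad                # plain gradient-descent update
        losses.append(0.5 * curvature * w * w)
    return losses


class PlateauScheduler:
    """Simplified reduce-on-plateau rule (hypothetical helper, not a library class)."""

    def __init__(self, lr, factor=0.1, patience=2):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs > self.patience:
                self.lr *= self.factor    # cut the learning rate
                self.bad_epochs = 0
        return self.lr


# Each update multiplies w by (1 - lr * curvature).
too_high = gradient_descent(lr=0.25)    # factor -1.5: sign flips and loss grows
lower = gradient_descent(lr=0.025)      # factor 0.75: loss shrinks steadily

# Made-up validation losses that stop improving after the second epoch.
scheduler = PlateauScheduler(lr=0.01)
for val_loss in [0.9, 0.7, 0.71, 0.72, 0.73]:
    current_lr = scheduler.step(val_loss)
```

With these settings the larger learning rate overshoots the minimum on every step, while the run with a tenfold smaller rate converges. In a real training loop, the scheduler provided by your framework would normally replace the hand-written class.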

FAQ 2: My model is training very slowly, and convergence takes too long. How can I speed it up?

Answer: Slow training often stems from hyperparameters that are too conservative, preventing the model from making meaningful progress.

Primary Suspect: Learning Rate is Too Low. A very small learning rate means the model only makes tiny adjustments to its weights with each update. While this can lead to precise convergence, it dramatically increases the number of steps required to reach the minimum [23] [24].

- Action: Gradually increase the learning rate. You can also use a Cyclical Learning Rate scheduler, which varies the learning rate between a lower and upper bound, often leading to faster convergence as it can escape shallow local minima more effectively [23].

Secondary Check: Batch Size is Too Large. While large batches provide stable gradients, they also mean the model performs fewer weight updates per epoch. In some cases, this can slow down the overall convergence process [25].
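As a rough illustration of a cyclical schedule, the sketch below implements the triangular policy, in which the learning rate rises linearly from a lower bound to an upper bound and then falls back. The function name `triangular_lr`, the bounds, and the step size are illustrative assumptions, not values recommended by the cited sources.

```python
import math


def triangular_lr(iteration, base_lr=1e-4, max_lr=1e-2, step_size=100):
    """Triangular cyclical learning rate.

    Rises linearly from base_lr to max_lr over step_size iterations,
    then falls back to base_lr over the next step_size iterations.
    """
    cycle = math.floor(1 + iteration / (2 * step_size))
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)


# Learning rate sampled every 50 iterations across two full cycles.
schedule = [triangular_lr(i) for i in range(0, 401, 50)]
```

A common way to pick the bounds is to run a short range test: increase the learning rate gradually and note where the loss starts to fall and where it starts to diverge.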

FAQ 3: My model performs well on training data but poorly on validation data (overfitting). Which hyperparameters can help?

Answer: Overfitting indicates that the model has memorized the training data instead of learning generalizable patterns. Several hyperparameters act as regularizers.

Primary Tuning Levers:

- Increase Regularization Strength (L1/L2): These techniques add a penalty to the loss function based on the magnitude of the weights, discouraging the model from becoming overly complex and reliant on any specific feature [22].

- Increase Dropout Rate: Dropout randomly "drops out" (ignores) a fraction of neurons during training, which prevents complex co-adaptations among neurons and forces the network to learn more robust features [22] [28].

Indirect Lever: Reduce Batch Size. Training with smaller batch sizes has a natural regularizing effect. The noise in the gradient estimates can prevent the model from overfitting to the specific training examples and help it find broader, more generalizable patterns in the data [25] [26].
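The two primary levers can be written down in a few lines. The following is a minimal sketch in plain Python, assuming the common "inverted dropout" formulation and a squared-weight (L2) penalty; the helper names `l2_penalty` and `dropout` are hypothetical, and deep learning frameworks provide their own equivalents.

```python
import random


def l2_penalty(weights, strength):
    """L2 term added to the loss: strength * sum of squared weights."""
    return strength * sum(w * w for w in weights)


def dropout(activations, rate, training, rng=random):
    """Inverted dropout.

    During training, zero each activation with probability `rate` and
    rescale the survivors so the expected value is unchanged. At
    evaluation time the activations pass through untouched.
    """
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


rng = random.Random(0)                       # fixed seed for reproducibility
acts = [1.0] * 1000
dropped = dropout(acts, rate=0.5, training=True, rng=rng)
unchanged = dropout(acts, rate=0.5, training=False)
penalty = l2_penalty([0.5, -1.0, 2.0], strength=0.01)
```

Raising `rate` or `strength` increases the regularization pressure. Both are usually tuned together with the learning rate rather than in isolation.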

FAQ 4: How do I choose an optimizer for my sperm morphology classification model?

Answer: The choice of optimizer can significantly impact both the speed of convergence and the final model performance. The following table summarizes key optimizers.

Table 1: Comparison of Common Optimization Algorithms

| Optimizer | Key Characteristics | Best For | Considerations for Sperm Morphology |

|---|---|---|---|

| SGD | Simple, often finds good minima but can be slow. | Well-understood problems, good generalizability [22]. | A solid baseline, but may require more tuning of the learning rate schedule. |

| Adam | Adaptive learning rates per parameter; fast convergence. | A wide range of problems; a popular default choice [29] [28]. | Excellent for quickly prototyping and testing new model architectures on image data. |

| RMSprop | Adapts learning rates based on a moving average of recent gradients. | Recurrent Neural Networks (RNNs) and non-stationary objectives [22] [24]. | Useful if dealing with sequential data or if Adam is overfitting. |

For a sperm classification task using CNNs on image data, Adam is a strong and recommended starting point due to its adaptive nature and fast convergence [3] [28]. If you find Adam leads to overfitting or unstable validation performance, switching to SGD with momentum or RMSprop is a good alternative strategy.
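To make the difference between adaptive and non-adaptive updates concrete, the sketch below writes out one update step of SGD with momentum and one of Adam for a single scalar weight. It follows the standard published update rules with commonly used default constants; the function names are hypothetical, and real projects would use the optimizer classes shipped with their framework.

```python
import math


def sgd_momentum_step(w, grad, velocity, lr=0.01, momentum=0.9):
    """One SGD-with-momentum update; returns (new_w, new_velocity)."""
    velocity = momentum * velocity - lr * grad
    return w + velocity, velocity


def adam_step(w, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar weight at step t (t starts at 1)."""
    m = beta1 * m + (1 - beta1) * grad           # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad * grad    # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                 # bias correction
    v_hat = v / (1 - beta2 ** t)
    w = w - lr * m_hat / (math.sqrt(v_hat) + eps)
    return w, m, v


# Same starting weight, gradients that differ by a factor of 1000.
w_small, _, _ = adam_step(w=1.0, grad=0.01, m=0.0, v=0.0, t=1)
w_large, _, _ = adam_step(w=1.0, grad=10.0, m=0.0, v=0.0, t=1)
w_sgd, vel = sgd_momentum_step(w=1.0, grad=10.0, velocity=0.0)
```

On the first step Adam moves the weight by roughly `lr` regardless of the gradient's magnitude, whereas the SGD step scales directly with the gradient. This is one reason Adam tends to be less sensitive to the initial learning rate, and why SGD often needs a more carefully tuned schedule.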

Experimental Protocols for Hyperparameter Tuning

Protocol 1: Systematic Hyperparameter Optimization with Bayesian Methods

For a rigorous thesis project, moving beyond manual tuning is recommended. This protocol outlines a systematic approach using Bayesian Optimization.

Table 2: Quantitative Ranges for Hyperparameter Tuning in Sperm Classification

| Hyperparameter | Typical Search Range | Notes for Sperm Image Data |

|---|---|---|

| Learning Rate | \(10^{-5}\) to \(10^{-2}\) (log scale) | Crucial for stable training; the optimum is often at the lower end of this range when fine-tuning [24]. |

| Batch Size | 16, 32, 64, 128 (powers of 2) | Limited by GPU memory. Smaller sizes (32, 64) can offer a regularization benefit [3] [26]. |

| Optimizer | {Adam, SGD, RMSprop} | Compare adaptive vs. non-adaptive methods [28]. |

| Dropout Rate | 0.2 to 0.5 | Helps prevent overfitting, which is critical for medical image models with limited data [22] [3]. |

Methodology:

- Define the Search Space: Create a dictionary of hyperparameters and their ranges, as shown in Table 2.

- Define the Objective Function: Write a function that takes a set of hyperparameters, builds and trains the model, and returns the validation accuracy (or any other target metric).

- Run Bayesian Optimization: Use a library like `bayes_opt` or `hyperopt` to perform Sequential Model-Based Global Optimization (SMBO) [29] [28]. Unlike random search, this method builds a probabilistic model to predict which hyperparameters will perform best, focusing the search on promising regions.

- Validate: Retrain the model with the best-found hyperparameters, then evaluate it once on a held-out test set to obtain an unbiased performance estimate.

Protocol 2: Establishing a Baseline with Random Search

If computational resources are limited, Random Search is a more efficient alternative to an exhaustive Grid Search [22] [29].

Methodology:

- Define Ranges: Specify the distributions for your hyperparameters (e.g., learning rate from a log-uniform distribution between \(10^{-5}\) and \(10^{-2}\)).

- Random Sampling: Randomly sample a fixed number of configurations (e.g., 50-100) from these distributions.

- Train and Evaluate: For each sampled configuration, train a model and evaluate its performance on the validation set.

- Select Best: Choose the configuration that yields the highest validation performance.
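The four steps above can be sketched in plain Python. This is a minimal outline under stated assumptions: the ranges mirror Table 2, and `validation_score` is a placeholder objective invented for this example so that the code runs without a dataset. In practice it would train the model with the sampled configuration and return the validation metric.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration from the ranges in Table 2."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),      # log-uniform
        "batch_size": rng.choice([16, 32, 64, 128]),
        "optimizer": rng.choice(["adam", "sgd", "rmsprop"]),
        "dropout": rng.uniform(0.2, 0.5),
    }


def validation_score(config):
    """Placeholder objective (an assumption, not a real metric).

    Replace with code that trains the model using `config` and returns
    validation accuracy.
    """
    lr_term = -abs(math.log10(config["learning_rate"]) + 3.5)
    return lr_term - abs(config["dropout"] - 0.3)


def random_search(n_trials, seed=0):
    """Sample n_trials configurations and return (best, all_trials)."""
    rng = random.Random(seed)
    trials = [sample_config(rng) for _ in range(n_trials)]
    return max(trials, key=validation_score), trials


best, trials = random_search(n_trials=50)
```

Sampling the exponent uniformly, rather than the learning rate itself, is what makes the draw log-uniform: each decade between the bounds receives an equal share of the trials.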

Workflow and Relationship Diagrams

Diagram: Hyperparameter Troubleshooting Logic

Diagram: Causal Influence of Batch Size on Generalization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Deep Learning in Reproductive Biology

| Tool / Solution | Function / Rationale | Example / Note |

|---|---|---|

| Deep Learning Framework | Provides the foundation for building and training neural network models. | TensorFlow/Keras [3] [28] or PyTorch [29] are industry standards. |

| Hyperparameter Tuning Library | Automates the search for optimal hyperparameters, saving significant time and computational resources. | bayes_opt (for Bayesian Optimization) [28], scikit-learn (for Random/Grid Search). |

| Data Augmentation Pipeline | Artificially expands the training dataset by applying random transformations to images, which is crucial for preventing overfitting in medical imaging with limited data [3]. | Includes rotations, flips, brightness/contrast adjustments applied to sperm microscopy images. |

| Learning Rate Scheduler | Dynamically adjusts the learning rate during training to improve convergence and model performance [23]. | ReduceLROnPlateau, CosineAnnealingLR, or a custom exponential decay schedule. |

| Optimizer Algorithm | The engine that updates model weights to minimize the loss function during training [22] [24]. | Adam, SGD, and RMSprop are key options to evaluate (see Table 1). |

| Hardware Accelerator | Dramatically speeds up the model training process, which is essential for iterative experimentation. | GPUs (e.g., NVIDIA) are essential for practical deep learning research timelines [27]. |

Frequently Asked Questions (FAQs)

1. What are the most common data-related issues that degrade model performance in sperm morphology analysis? The primary issues are limited dataset size, low image quality, and high annotation complexity. Many public datasets, such as MHSMA (1,540 images) and HuSHeM (only 216 sperm heads publicly available), contain a limited number of samples, which can lead to model overfitting [2] [1]. Furthermore, images are often acquired with low resolution and contain noise from insufficient microscope lighting or poorly stained semen smears, complicating the model's ability to learn distinct features [3] [30]. Finally, the annotation process itself is challenging, as it requires experts to simultaneously evaluate head, midpiece, and tail abnormalities, leading to subjective labels and inter-expert variability [2] [3].

2. How can I effectively use data augmentation for a small sperm image dataset? A strategic approach combines basic and advanced augmentation techniques. For sperm image analysis, a successful protocol involved expanding a dataset from 1,000 to 6,035 images using a combination of techniques [3]. Beyond standard transformations (rotation, flipping), consider a "learning-to-augment" strategy that uses Bayesian optimization to determine the optimal type and parameters of noise to add to images, which has been shown to improve model generalization [31]. The key is to apply augmentations that reflect real-world variations in your data, such as differences in staining, lighting, and orientation.
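The standard transformations mentioned above are simple to express. The sketch below operates on a grayscale image stored as a list of rows and is only meant to show the idea; the function names and the brightness offsets are assumptions, and it does not reproduce the augmentation protocol of the cited study. Production pipelines would typically use the augmentation utilities of an image or deep learning library.

```python
def horizontal_flip(image):
    """Mirror a 2D image (list of rows) left to right."""
    return [row[::-1] for row in image]


def rotate_90(image):
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def adjust_brightness(image, delta, lo=0, hi=255):
    """Add `delta` to every pixel and clip to the valid intensity range."""
    return [[min(hi, max(lo, p + delta)) for p in row] for row in image]


def augment(image):
    """Return the original plus a fixed set of transformed variants."""
    variants = [image, horizontal_flip(image)]
    rotated = image
    for _ in range(3):                       # 90, 180 and 270 degrees
        rotated = rotate_90(rotated)
        variants.append(rotated)
    variants.append(adjust_brightness(image, 20))
    variants.append(adjust_brightness(image, -20))
    return variants


image = [[0, 50], [100, 250]]                # tiny stand-in for a microscopy crop
augmented = augment(image)
```

Each input image yields seven training samples here. Whether a given transformation preserves the morphology label should be confirmed with a domain expert before it is added to the pipeline.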

3. My model trains successfully but performs poorly on new data. What steps should I take? This is a classic sign of overfitting or a data pipeline bug. Follow this troubleshooting sequence [32] [33]:

- Overfit a single batch: First, ensure your model can learn. Take a small batch (e.g., 2-4 examples) and drive the training error to near zero. If it cannot, there is likely a fundamental bug in your model architecture or loss function [32].

- Check for distribution shifts: Verify that the statistics (mean, standard deviation) of the pre-processed inputs match between your training and validation/test sets, and that the same normalization parameters are applied to every split. Inconsistent normalization is a common culprit [33].

- Validate input data: Implement tests to check that input images are correctly normalized and that features are within the expected value range [33]. A model trained on incorrectly preprocessed data will fail on correctly preprocessed new data.

- Simplify the problem: Test your model on a simpler, smaller dataset where you can achieve a known baseline performance. This helps isolate whether the issue is with the data or the model itself [32].
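The first check in this sequence, overfitting a tiny batch, can be illustrated without any deep learning framework. The sketch below is a toy stand-in, assuming a one-dimensional logistic regression in place of a CNN and four hand-made examples in place of images; the function names and data are invented for illustration. The point is only the pattern: train on a handful of samples and confirm the loss approaches zero.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def overfit_tiny_batch(xs, ys, lr=0.5, steps=2000):
    """Fit a 1-D logistic regression to a handful of examples.

    Uses full-batch gradient descent and returns the final mean
    cross-entropy loss on those same examples.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        preds = [sigmoid(w * x + b) for x in xs]
        grad_w = sum((p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(p - y for p, y in zip(preds, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    preds = [sigmoid(w * x + b) for x in xs]
    return -sum(
        y * math.log(p) + (1 - y) * math.log(1 - p) for p, y in zip(preds, ys)
    ) / n


# Four separable examples: the loss should be driven close to zero.
final_loss = overfit_tiny_batch(xs=[-2.0, -1.0, 1.0, 2.0], ys=[0, 0, 1, 1])
```

If the equivalent check on your real model stalls at a high loss, the problem is more likely in the model, loss function, or labels than in the hyperparameters.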

4. Why is normalization critical for deep learning models in this domain? Normalization stabilizes and accelerates training by ensuring all input features (pixels) are on a comparable scale [33]. This helps keep gradients well-scaled during backpropagation and reduces the risk of them exploding or vanishing. For images, common practices include scaling pixel values to a [0, 1] or [-0.5, 0.5] range, or standardizing them to have a mean of zero and a standard deviation of one [32]. The normalization parameters should be computed on the training set and applied unchanged to the validation and test sets so that the model can generalize effectively.
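A minimal sketch of both practices follows, assuming 8-bit grayscale intensities held in flat Python lists. The helper names and pixel values are hypothetical. The detail to note is that the mean and standard deviation are computed once on the training data and then reused for the validation data.

```python
import math


def fit_standardizer(train_pixels):
    """Compute mean and standard deviation on the TRAINING pixels only."""
    n = len(train_pixels)
    mean = sum(train_pixels) / n
    var = sum((p - mean) ** 2 for p in train_pixels) / n
    return mean, math.sqrt(var)


def standardize(pixels, mean, std, eps=1e-8):
    """Shift and scale pixels with previously fitted statistics."""
    return [(p - mean) / (std + eps) for p in pixels]


def scale_to_unit(pixels, max_value=255.0):
    """Map 8-bit intensities to the [0, 1] range."""
    return [p / max_value for p in pixels]


train = [0, 64, 128, 192, 255]
val = [32, 96, 160]

mean, std = fit_standardizer(train)
train_std = standardize(train, mean, std)
val_std = standardize(val, mean, std)    # reuse the training statistics
unit = scale_to_unit(train)
```

Recomputing the statistics separately on the validation or test split is a frequent source of silent train/test mismatch.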

5. What are the main approaches to denoising microscopic sperm images? Denoising techniques can be broadly classified as follows [30]:

- Spatial Domain Filtering: This includes linear filters (e.g., Mean, Wiener) and non-linear filters (e.g., Median, Bilateral). While simple, they can blur important image structures like edges [30].

- Variational Methods: These methods formulate denoising as an energy minimization problem. A prominent example is Total Variation (TV) regularization, which is effective at preserving edges but can introduce a "stair-casing" effect in smooth regions [30].

- Non-Local Means (NLM): This advanced method leverages the self-similarity property of images, averaging pixels from patches that are similar across the entire image. It is particularly robust to noise but can be computationally intensive [30].

- Deep Learning-Based Denoising: Modern approaches use CNNs and autoencoders to learn a mapping from noisy to clean images. These can be highly effective but require large amounts of training data [30].
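As a concrete instance of a non-linear spatial filter, the sketch below applies a 3x3 median filter to a small grayscale image stored as a list of rows. It is a plain-Python illustration with an invented test image; the border handling (using only the neighbours that fall inside the image) is one assumption among several possible conventions, and image-processing libraries offer much faster implementations.

```python
from statistics import median


def median_filter_3x3(image):
    """Apply a 3x3 median filter to a 2D grayscale image (list of rows).

    Border pixels use only the neighbours that fall inside the image.
    """
    height, width = len(image), len(image[0])
    output = []
    for r in range(height):
        row = []
        for c in range(width):
            window = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(height, r + 2))
                for cc in range(max(0, c - 1), min(width, c + 2))
            ]
            row.append(median(window))
        output.append(row)
    return output


# Uniform background with one bright and one dark impulse ("salt and pepper").
noisy = [
    [10, 10, 10, 10],
    [10, 255, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 10, 0],
]
denoised = median_filter_3x3(noisy)
```

Isolated impulse pixels are replaced by the background value, which is why median filtering suits salt-and-pepper noise. On real sperm images, the window size trades noise removal against the loss of fine structures such as the tail.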

Troubleshooting Guide: A Step-by-Step Workflow

Follow this structured workflow to diagnose and resolve issues in your data pre-processing pipeline.

Diagram 1: A systematic workflow for troubleshooting deep learning model training.

Phase 1: Establish a Baseline

The initial and most critical step is to start with a simple, controllable experimental setup [32].

- Action: Choose a simple model architecture (e.g., a few-layer CNN or a basic LSTM). Normalize your inputs and train on a small, manageable subset of your data (e.g., 10,000 samples) [32].