Optimizing Feature Selection for Fertility Prediction Models: A Machine Learning Roadmap for Researchers

Abstract

This article provides a comprehensive analysis of feature selection methodologies for enhancing the performance and clinical applicability of machine learning models in fertility prediction. Targeting researchers and scientists, it systematically explores the foundational challenges of high-dimensional infertility data, evaluates advanced algorithmic approaches from hybrid filters to deep learning, and outlines rigorous optimization and validation frameworks. By synthesizing recent evidence and comparative studies, this review serves as a strategic guide for developing robust, interpretable, and clinically actionable prediction tools for assisted reproductive technology outcomes, from embryo selection to live birth prediction.

The High-Stakes Challenge: Why Feature Selection is Critical in Fertility Prediction

The Data-Rich, Outcome-Complex Landscape of Assisted Reproduction

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions for Researchers

Q1: What are the common statistical pitfalls in assisted reproduction study design and how can we avoid them?

The analysis of complex ART datasets presents several specific challenges that can lead to spurious conclusions if not properly addressed [1]:

Multiplicity and Multicollinearity: ART research typically involves numerous variables (patient parameters, laboratory conditions, clinical outcomes). Running multiple statistical comparisons without correction increases the risk of false-positive findings. Similarly, high correlation between predictor variables (multicollinearity) can destabilize regression models and make interpretation unreliable [1].

Overfitting Regression Models: With many candidate predictors, there is a risk of building models that fit the development dataset almost perfectly but predict new observations poorly. This occurs when a model includes too many variables relative to the number of outcome events [1].

Inappropriate Handling of Female Age: Female age remains one of the most critical prognostic factors, yet methods to accurately account for it in research models are often inadequate. More sophisticated statistical approaches are becoming necessary to properly control for this variable [1].

Misinterpretation of "Trends": Researchers increasingly use the term "trend" to describe nonsignificant results, which can be misleading. Proper statistical thresholds (typically p < 0.05) should be maintained for claiming meaningful associations [1].
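Multicollinearity is usually screened with the variance inflation factor, VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing predictor j on all the other predictors. The sketch below computes it with plain NumPy so the definition is visible; `statsmodels.stats.outliers_influence.variance_inflation_factor` is a library equivalent. The three synthetic columns (two near-duplicate ovarian reserve markers and one unrelated variable) are hypothetical data, and common rules of thumb flag VIF values above roughly 5 to 10.

```python
import numpy as np


def vif(X: np.ndarray) -> np.ndarray:
    """Variance inflation factor for each column of X (n_samples x n_features).

    VIF_j = 1 / (1 - R_j^2), where R_j^2 is from an ordinary least-squares
    regression of column j on all other columns plus an intercept.
    """
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(n), others])  # add an intercept column
        coef, *_ = np.linalg.lstsq(A, target, rcond=None)
        resid = target - A @ coef
        r2 = 1.0 - resid.var() / target.var()
        out[j] = 1.0 / (1.0 - r2)
    return out


# Hypothetical data: two strongly correlated markers plus an independent variable.
rng = np.random.default_rng(0)
amh = rng.normal(size=500)
afc = 0.95 * amh + 0.1 * rng.normal(size=500)  # nearly collinear with amh
age = rng.normal(size=500)                     # unrelated to the other two
X = np.column_stack([amh, afc, age])

vifs = vif(X)
print(dict(zip(["amh", "afc", "age"], vifs.round(1))))
```

The two correlated markers receive very large VIFs while the independent column stays near 1; typical responses are to keep one marker, combine them, or use a penalized model.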

Q2: What technical parameters should be documented for embryo culture and quality assessment?

Proper documentation of laboratory conditions is essential for reproducible research and model building [2]:

Table: Essential Laboratory Parameters for ART Research Documentation

| Parameter Category | Specific Variables to Document | Research Impact |
|---|---|---|
| Culture Conditions | Incubator temperature, gas concentrations, pH levels, media composition | Affects embryo development rates and viability endpoints |
| Procedural Timing | Fertilization check timing, embryo division intervals, culture duration | Influences developmental scoring and transfer selection criteria |
| Embryo Quality Metrics | Cell number, symmetry, degree of fragmentation, blastocyst grading | Critical input variables for implantation success prediction models |
| Cryopreservation Data | Freezing method, thaw survival rates, post-thaw development | Affects cycle outcome data and cumulative success calculations |

Q3: How should we handle missing data or failed fertilization in ART experiments?

Failed fertilization presents both a clinical challenge and a data integrity issue for researchers [3]:

Diagnostic Assessment: When fertilization fails, investigate whether the issue originates from sperm factors (assessed via semen analysis), egg factors (evaluated through maturity status), or combined factors [3].

Rescue Protocols: For conventional IVF failures, intracytoplasmic sperm injection (ICSI) can be employed where a single sperm is directly injected into each mature egg. ICSI has revolutionized treatment of male factor infertility and can achieve fertilization rates comparable to standard IVF [3] [4].

Data Reporting: In research contexts, complete documentation of fertilization failures is crucial. Studies should report fertilization rates (typically 65-75% of mature eggs) and clearly specify whether failed cases were excluded from analysis or handled with appropriate statistical methods [4].
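The reporting point above is simple arithmetic, but the result changes noticeably when cycles with total fertilization failure are silently dropped. A minimal sketch, assuming a hypothetical per-cycle record holding the number of mature (MII) oocytes and the number showing two pronuclei (2PN) at the fertilization check; the field names are illustrative, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class Cycle:
    cycle_id: str
    mature_oocytes: int   # MII oocytes inseminated or injected
    fertilized_2pn: int   # oocytes with two pronuclei at the fertilization check


def fertilization_summary(cycles):
    """Pooled fertilization rates reported with and without failed cycles."""
    eligible = [c for c in cycles if c.mature_oocytes > 0]
    failures = [c for c in eligible if c.fertilized_2pn == 0]
    successes = [c for c in eligible if c.fertilized_2pn > 0]

    def pooled_rate(group):
        mature = sum(c.mature_oocytes for c in group)
        return sum(c.fertilized_2pn for c in group) / mature if mature else float("nan")

    return {
        "n_cycles": len(eligible),
        "n_total_fertilization_failure": len(failures),
        "rate_all_cycles": pooled_rate(eligible),
        "rate_excluding_failures": pooled_rate(successes),
    }


cycles = [
    Cycle("A", 10, 7),
    Cycle("B", 8, 6),
    Cycle("C", 5, 0),  # total fertilization failure
]
summary = fertilization_summary(cycles)
print(summary)
```

Here the pooled rate is 13/23 (about 57%) when the failed cycle is kept and 13/18 (about 72%) when it is excluded, which is why the handling of failed cases should be stated explicitly.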

Experimental Protocols for Feature Selection in Fertility Prediction

Protocol 1: Building a Predictive Model for IVF Success

This methodology outlines the key steps for developing fertility prediction models using ART data [5]:

Workflow for IVF Outcome Prediction

Materials and Methods:

Data Collection: Compile comprehensive cycle data including patient demographics (age, BMI, infertility duration), ovarian reserve markers (AMH, FSH, antral follicle count), stimulation parameters (medication types and doses), laboratory data (fertilization method, embryo quality metrics), and outcome data (implantation, clinical pregnancy, live birth) [3] [4].

Feature Selection: Apply multiple feature selection techniques to identify the most predictive variables. Research indicates that ensemble methods combining logistic regression and decision trees can achieve prediction accuracy of approximately 87% for fertility outcomes [5].

Model Validation: Use temporal validation (training on earlier cycles, testing on later cycles) or cross-validation to assess model performance on unseen data. Report precision, recall, accuracy, and F1-score to comprehensively evaluate predictive capability [5].
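Temporal validation can be sketched as ordering cycles by date, training on the earlier portion, and scoring the later portion with the metrics listed above. This sketch uses scikit-learn on synthetic data; the five predictors, the 80/20 cutoff, and the logistic regression model are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score

rng = np.random.default_rng(42)
n = 600
cycle_dates = rng.integers(0, 3 * 365, size=n)  # days since start of collection (unsorted)
X = rng.normal(size=(n, 5))                     # hypothetical predictors
logit = 1.2 * X[:, 0] - 0.8 * X[:, 1]
y = (rng.random(n) < 1 / (1 + np.exp(-logit))).astype(int)

# Temporal split: earliest 80% of cycles for training, latest 20% for testing.
order = np.argsort(cycle_dates, kind="stable")
cut = int(0.8 * n)
train_idx, test_idx = order[:cut], order[cut:]

model = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
pred = model.predict(X[test_idx])

metrics = {
    "precision": precision_score(y[test_idx], pred),
    "recall": recall_score(y[test_idx], pred),
    "accuracy": accuracy_score(y[test_idx], pred),
    "f1": f1_score(y[test_idx], pred),
}
print({k: round(v, 3) for k, v in metrics.items()})
```

Unlike a random split, this ordering checks whether a model built on past cycles still holds for later ones, which is closer to how it would be deployed.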

Protocol 2: Troubleshooting Ovarian Response Prediction Models

Poor ovarian response remains a significant challenge in ART cycles and represents an important prediction problem [3]:

Table: Feature Categories for Ovarian Response Prediction

| Feature Category | Specific Parameters | Data Type | Collection Method |
|---|---|---|---|
| Baseline Hormonal | FSH, AMH, Estradiol | Continuous | Blood testing (cycle day 2-3) |
| Ultrasound Metrics | Antral follicle count, Ovarian volume | Continuous/Count | Transvaginal ultrasound |
| Demographic | Age, BMI, Smoking status | Continuous/Categorical | Patient questionnaire |
| Stimulation Protocol | Medication type and dosage, GnRH analog type | Categorical | Treatment records |

Troubleshooting Approach:

Low Response Prediction: When models fail to accurately predict poor ovarian response, incorporate additional biomarkers such as AMH levels and antral follicle counts, which more directly reflect ovarian reserve than age alone [3].

Protocol Optimization: For predicted poor responders, consider alternative stimulation protocols including agonist flare protocols, luteal phase stimulation, or natural cycle IVF. Document the outcomes of these alternative approaches to refine future predictions [3].

Lifestyle Factors: Include modifiable factors such as BMI, smoking status, and environmental exposures in prediction models, as these can impact ovarian response and provide opportunities for pre-treatment optimization [3].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Materials for ART Investigations

| Reagent/Technology | Primary Function | Research Application | Considerations |
|---|---|---|---|
| Preimplantation Genetic Testing (PGT) | Screens embryos for chromosomal abnormalities | Research on aneuploidy rates and implantation failure | Distinguish between PGT for specific disorders (validated) and general screening (considered experimental) [3] |
| Intracytoplasmic Sperm Injection (ICSI) | Direct sperm injection into oocytes | Male factor infertility studies, fertilization mechanism research | Fertilization rates typically 65-75%; genetic counseling advised for inherited male factor issues [3] [4] |
| Assisted Hatching (AH) | Creates opening in zona pellucida | Investigation of implantation mechanisms | Limited evidence for efficacy; may be considered for older patients or previous IVF failures [4] |
| Cryopreservation Media | Preserves embryos via freezing | Studies on freeze-thaw survival, timing of transfer | Successful pregnancies reported with embryos frozen for extended periods (up to 20 years) [4] |
| Time-Lapse Imaging Systems | Continuous embryo monitoring without disturbance | Morphokinetic studies, developmental pattern analysis | Provides rich dataset for machine learning algorithms predicting embryo viability |

Data Analysis Framework for ART Research

The complex, multidimensional nature of ART data requires sophisticated statistical approaches [1]:

ART Data Analysis Framework

Key Considerations for Robust Analysis:

Multiplicity Adjustments: Apply appropriate statistical corrections (e.g., Bonferroni, False Discovery Rate) when conducting multiple hypothesis tests on the same dataset to reduce false positive findings [1].

Feature Selection Implementation: Employ rigorous feature selection methods before model building to identify the most relevant predictors and reduce dimensionality. Techniques including Random Forest importance, LASSO regression, and recursive feature elimination have shown utility in fertility prediction research [5].

Validation Frameworks: Implement both internal validation (cross-validation, bootstrap) and external validation (temporal, geographical) to assess model generalizability rather than relying solely on performance metrics from the training dataset [1] [5].

Federated Learning Approaches: For multi-center studies, consider federated learning techniques that allow model training across institutions without sharing raw patient data, thus addressing privacy concerns while leveraging larger datasets [5].
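The multiplicity adjustment in the first point takes only a few lines. The sketch below implements the Bonferroni and Benjamini-Hochberg (false discovery rate) procedures directly in NumPy so the logic is visible; `statsmodels.stats.multitest.multipletests` offers the same corrections through its `method` argument (for example `"bonferroni"` and `"fdr_bh"`). The p-values are made up for illustration.

```python
import numpy as np


def bonferroni(pvals, alpha=0.05):
    """Reject H0_i when p_i <= alpha / m (controls the family-wise error rate)."""
    p = np.asarray(pvals, dtype=float)
    return p <= alpha / p.size


def benjamini_hochberg(pvals, alpha=0.05):
    """Step-up Benjamini-Hochberg procedure controlling the FDR at alpha."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    thresholds = alpha * np.arange(1, m + 1) / m
    below = p[order] <= thresholds
    reject = np.zeros(m, dtype=bool)
    if below.any():
        k = np.max(np.nonzero(below)[0])  # largest rank i with p_(i) <= alpha * i / m
        reject[order[: k + 1]] = True     # reject all hypotheses up to that rank
    return reject


# Hypothetical p-values from testing 8 candidate predictors against live birth.
pvals = [0.001, 0.008, 0.012, 0.030, 0.041, 0.20, 0.45, 0.90]
print("Bonferroni:", bonferroni(pvals))
print("BH (FDR):  ", benjamini_hochberg(pvals))
```

With these values Bonferroni keeps one predictor and Benjamini-Hochberg keeps three, illustrating the usual trade-off between strict family-wise control and FDR control when screening many candidate features.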

Frequently Asked Questions (FAQs)

Q1: My feature selection process yields unstable results with different feature subsets on each run. What is the cause and how can I resolve it?

A1: Instability in feature selection often stems from inherent randomness in certain algorithms or high interdependency between features. To resolve this:

- Use Deterministic Models: Consider switching from wrapper methods, which can have random and unstable results [6], to more deterministic filter or embedded methods.

- Apply Advanced Techniques: Implement a Fractal Feature Selection (FFS) model, which is designed to perform feature selection with high stability regarding the sets of features selected [6]. The FFS model divides features into blocks and uses root mean square error (RMSE) to measure similarity, selecting features based on low RMSE values to ensure consistency [6].

- Leverage Ensemble Feature Selection: Use tree-based ensemble methods like Random Forest or Extreme Gradient Boosting (XGBoost), which provide robust embedded feature importance scores and are effective at capturing complex, non-linear relationships in data [7].
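Before switching methods, it helps to quantify the instability. One common approach, assumed here rather than taken from the cited studies, is to rerun the selector on bootstrap resamples and report the mean pairwise Jaccard similarity of the selected subsets (1.0 means the same subset every time). The sketch uses a hypothetical top-k rule on random forest importances; any selector can be substituted.

```python
from itertools import combinations

import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier


def top_k_rf(X, y, k, seed):
    """Select the k features with the highest impurity-based RF importance."""
    rf = RandomForestClassifier(n_estimators=100, random_state=seed).fit(X, y)
    return set(np.argsort(rf.feature_importances_)[::-1][:k])


def selection_stability(X, y, k=5, n_runs=10, seed=0):
    """Mean pairwise Jaccard similarity of subsets selected on bootstrap resamples."""
    rng = np.random.default_rng(seed)
    subsets = []
    for run in range(n_runs):
        idx = rng.integers(0, len(y), size=len(y))  # bootstrap resample of rows
        subsets.append(top_k_rf(X[idx], y[idx], k, seed=run))
    scores = [len(a & b) / len(a | b) for a, b in combinations(subsets, 2)]
    return float(np.mean(scores)), subsets


X, y = make_classification(n_samples=300, n_features=30, n_informative=5,
                           n_redundant=5, random_state=1)
stability, subsets = selection_stability(X, y)
print(f"mean pairwise Jaccard: {stability:.2f}")
```

Reporting this number for each candidate method makes "more stable" a measurable comparison rather than an impression.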

Q2: What is the most effective way to handle missing data in high-dimensional biological studies before feature selection?

A2: The choice of imputation method is critical for preserving data integrity.

- Recommended Method: Multiple Imputation by Chained Equations (MICE) is widely recommended. It assumes that values are missing at random (missingness can be explained by the observed data), so each incomplete variable is predicted from the others and the imputed values reflect the underlying distribution more accurately [7]. In the Pune Maternal Nutrition Study, MICE outperformed K-Nearest Neighbors (KNN) imputation, reducing imputation error by 23% and achieving 89% accuracy in maintaining temporal consistency in longitudinal data [7].

- Alternative Approaches: Other viable methods include missForest, a random-forest-based imputation method. The Data Analytics Challenge on Missing Data Imputation (DACMI) also highlighted competitive models such as LightGBM and XGBoost for clinical time-series imputation [7].
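scikit-learn has no function named MICE, but its `IterativeImputer` (still marked experimental, hence the enabling import) implements the same chained-equations idea, and its documentation suggests running it with `sample_posterior=True` and different random seeds to obtain multiple imputations. The sketch does that on synthetic data; the variables, the 20% missing-completely-at-random pattern, and m = 5 are assumptions, and pooling estimates across the imputed datasets (Rubin's rules) is left out.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401 (enables IterativeImputer)
from sklearn.impute import IterativeImputer

rng = np.random.default_rng(0)
n = 200
age = rng.normal(35, 4, n)
amh = 6 - 0.12 * age + rng.normal(0, 0.5, n)  # hypothetical marker that declines with age
afc = 4 + 2.5 * amh + rng.normal(0, 1.5, n)   # hypothetical count that tracks the marker
X = np.column_stack([age, amh, afc])

# Remove 20% of the second column completely at random (illustrative missingness).
X_missing = X.copy()
mask = rng.random(n) < 0.2
X_missing[mask, 1] = np.nan

# m imputed datasets from different seeds approximate multiple imputation.
imputed_sets = [
    IterativeImputer(sample_posterior=True, max_iter=10, random_state=seed).fit_transform(X_missing)
    for seed in range(5)
]

# Imputation error on the masked cells, averaged over the m datasets.
rmse = np.mean([np.sqrt(np.mean((Xi[mask, 1] - X[mask, 1]) ** 2)) for Xi in imputed_sets])
print(f"mean RMSE on imputed values: {rmse:.2f}")
```

As with feature selection, the imputer should be fit on training folds only and then applied to validation or test data, so that no information leaks from held-out samples.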

Q3: Which feature selection methods are best suited for identifying non-linear relationships in fertility prediction data?

A3: Traditional statistical methods may miss complex interactions.

- Mutual Information (MI): This is a powerful filter method that can capture both linear and non-linear relationships between features and the target variable [7].

- Tree-Based Ensemble Methods: Algorithms like Random Forest, Gradient Boosting, and XGBoost are highly effective as they naturally handle non-linearities and complex interactions [7]. Their embedded feature importance scores can be directly used for selection.

- Fractal Feature Selection (FFS): This model is particularly adept at detecting underlying self-similarities and hierarchical relationships across different scales in high-dimensional data, allowing it to uncover hidden, non-linear patterns [6].
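To see why mutual information catches what a correlation filter misses, the sketch below builds a synthetic outcome that depends on a U-shaped function of one feature (both very low and very high values are unfavourable; this framing is purely illustrative). Pearson correlation with that feature is close to zero, while `mutual_info_classif` from scikit-learn ranks it first. All data are simulated.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_classif

rng = np.random.default_rng(0)
n = 2000
u_shaped = rng.normal(size=n)  # outcome depends on |x|, not on its sign
linear = rng.normal(size=n)    # weak linear effect
noise = rng.normal(size=n)     # unrelated to the outcome
X = np.column_stack([u_shaped, linear, noise])

score = -1.5 * np.abs(u_shaped) + 0.3 * linear + rng.normal(scale=0.5, size=n)
y = (score > np.median(score)).astype(int)

pearson = [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(X.shape[1])]
mi = mutual_info_classif(X, y, random_state=0)

for name, r, m in zip(["u_shaped", "linear", "noise"], pearson, mi):
    print(f"{name:9s} |Pearson r| = {r:.3f}   MI = {m:.3f}")
```

A correlation-based filter would discard the most informative feature here, which is the practical argument for including MI (or tree-based importance) in the screening step.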

Q4: Our model for predicting natural conception achieved limited accuracy (e.g., ~62%). How can we improve its predictive performance? [8]

A4: Limited model performance can be addressed through several strategic improvements:

- Expand Dataset Size and Diversity: A primary limitation is often a small dataset. Future studies should use larger datasets to improve accuracy and generalizability [8].

- Incorporate Additional Predictors: Supplement sociodemographic and health history data with clinical and biochemical markers. Key predictors identified in fertility research include BMI, age, menstrual cycle characteristics, varicocele presence, caffeine consumption, history of endometriosis, and exposure to chemical agents or heat [8]. Expanding the predictor set is crucial for enhancing model accuracy [8].

- Optimize Feature Selection: Ensure you are using a robust feature selection method, like the FFS model, which has been shown to increase machine learning accuracy from an average of 79% (using all features) to 94% on high-dimensional biological datasets [6].

Troubleshooting Guides

Problem: Dimensionality Curse Leading to Model Overfitting

Symptoms: The model performs excellently on training data but poorly on unseen test data, and it becomes overly complex and computationally expensive as the number of features grows [6].

Diagnosis and Solution:

| Step | Action | Methodology & Tools |
|---|---|---|
| 1 | Apply Robust Feature Selection | Use the Fractal Feature Selection (FFS) model to eliminate redundant and irrelevant features, streamlining the analysis and reducing overfitting risk [6]. |
| 2 | Validate with Cross-Validation | Use k-fold cross-validation techniques to assess the generalizability and robustness of your models, ensuring reliable performance comparisons [8]. |
| 3 | Utilize Regularized Models | Implement algorithms like the XGB Classifier, which incorporates advanced regularization techniques to prevent overfitting in high-dimensional spaces [8]. |
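The steps in the table can be combined in one scikit-learn pipeline. To keep the example dependent on scikit-learn only, the sketch swaps in an L1-penalised (LASSO-style) logistic regression as the regularised selector instead of XGBoost, and compares mean training accuracy with 5-fold cross-validated accuracy to expose overfitting. The synthetic data and the penalty strengths (`C=100` for the weakly penalised baseline, `C=0.1` for the L1 step) are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Few samples, many features: the setting where overfitting bites (synthetic data).
X, y = make_classification(n_samples=150, n_features=200, n_informative=6,
                           n_redundant=0, random_state=0)

weak_penalty = make_pipeline(StandardScaler(), LogisticRegression(C=100.0, max_iter=5000))
l1_selected = make_pipeline(
    StandardScaler(),
    SelectFromModel(LogisticRegression(penalty="l1", C=0.1, solver="liblinear")),
    LogisticRegression(max_iter=5000),
)

results = {}
for name, model in [("weak penalty, all features", weak_penalty),
                    ("L1 selection, then fit", l1_selected)]:
    # Selection happens inside each training fold because it is a pipeline step.
    cv = cross_validate(model, X, y, cv=5, return_train_score=True)
    results[name] = (cv["train_score"].mean(), cv["test_score"].mean())
    print(f"{name:28s} train={results[name][0]:.2f}  cv={results[name][1]:.2f}")

n_kept = l1_selected.fit(X, y).named_steps["selectfrommodel"].get_support().sum()
print("features kept by the L1 step:", n_kept)
```

A large gap between training and cross-validated accuracy is the overfitting symptom described above; the L1 step is one way to shrink it.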

Problem: Inefficient Search During Feature Selection

Symptoms: The feature selection process is slow, gets stuck in local optima, or fails to find an optimal feature subset due to a constrained search space [6].

Diagnosis and Solution:

| Step | Action | Methodology & Tools |
|---|---|---|
| 1 | Adopt a Metaheuristic or Fractal Approach | Replace random search strategies with models that offer a more comprehensive exploration of the feature space. The FFS model, for example, penetrates deeper into the dataset and broadens analytical horizons without high computational overhead [6]. |
| 2 | Leverage Efficient Algorithms | For wrapper methods, use efficient algorithms like Extended Particle Swarm Optimization (EPSO) or a modified Harris-Hawks optimizer, which can improve the search process for optimal feature subsets [6]. |
| 3 | Set Clear Stopping Criteria | Define performance-based criteria (e.g., minimal improvement in cross-validation score) to terminate the search efficiently once a satisfactory feature subset is found. |
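Step 3 can be sketched as a greedy forward search that stops as soon as the best remaining feature improves the cross-validated score by less than a tolerance. This is a plain wrapper search for illustration, not EPSO or a Harris-Hawks optimizer; the tolerance `min_gain=0.005`, the ROC-AUC metric, and the synthetic data are assumptions. scikit-learn's `SequentialFeatureSelector` with `n_features_to_select="auto"` and `tol` implements a similar rule.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score


def forward_select(X, y, model, min_gain=0.005, cv=5, scoring="roc_auc"):
    """Greedy forward selection with a minimal-improvement stopping rule."""
    remaining = list(range(X.shape[1]))
    selected, best_score, history = [], -np.inf, []
    while remaining:
        # Score every subset formed by adding one remaining feature.
        trials = {j: cross_val_score(model, X[:, selected + [j]], y,
                                     cv=cv, scoring=scoring).mean()
                  for j in remaining}
        j_best = max(trials, key=trials.get)
        if trials[j_best] - best_score < min_gain:
            break  # stopping criterion: gain too small to justify another feature
        selected.append(j_best)
        remaining.remove(j_best)
        best_score = trials[j_best]
        history.append(round(best_score, 3))
    return selected, history


X, y = make_classification(n_samples=400, n_features=15, n_informative=4,
                           n_redundant=0, random_state=3)
selected, history = forward_select(X, y, LogisticRegression(max_iter=1000))
print("selected features:", selected)
print("CV AUC after each addition:", history)
```

Because the same folds drive both the search and the reported score, the final AUC is optimistic; an outer validation loop or held-out set is still needed for an unbiased estimate.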

Experimental Protocols & Data

The following table summarizes the performance of various feature selection and modeling approaches as reported in the literature, providing a benchmark for expected outcomes.

| Method / Model | Application Context | Key Performance Metrics | Reference |
|---|---|---|---|
| Fractal Feature Selection (FFS) | High-dimensional biological datasets | Increased avg. ML accuracy from 79% (full features) to 94%; stable feature sets [6]. | [6] |
| XGB Classifier | Predicting natural conception | Accuracy: 62.5%; ROC-AUC: 0.580; limited predictive capacity [8]. | [8] |
| Random Forest | Fetal birthweight prediction | Achieved a coefficient of determination (R²) of 0.87 [7]. | [7] |
| Support Vector Machines (SVM) | Fetal birthweight prediction | Achieved a coefficient of determination (R²) of 0.83 [7]. | [7] |
| Multiple Imputation by Chained Equations (MICE) | Handling missing data (Pune Maternal Nutrition Study) | Superior to KNN; reduced imputation error by 23%; 89% temporal consistency accuracy [7]. | [7] |

Detailed Methodology: Fractal Feature Selection (FFS) Model

This protocol outlines the steps for implementing the FFS model to enhance classification performance on high-dimensional biological data [6].

1. Principle: The FFS model divides features into blocks and measures the similarity between blocks using Root Mean Square Error (RMSE). Features are ranked and selected based on low RMSE values, identifying highly relevant and correlated features that improve predictive ability [6].

2. Procedure:

- Input: High-dimensional dataset (e.g., Gene Expression Profiling data).

- Step 1 - Feature Blocking: Divide the entire set of features into distinct blocks. Each block should correspond to a particular data category or a random grouping.

- Step 2 - Similarity Measurement: For each feature block, calculate the RMSE to quantify its similarity to other blocks or a target pattern.

- Step 3 - Feature Ranking: Rank all features based on their computed RMSE values. Features with the lowest RMSE are considered the most important, as they indicate high similarity and relevance to the target category.

- Step 4 - Subset Selection: Select the top-k features from the ranked list to form the final feature subset for model training.

- Output: A reduced set of highly relevant features for use in machine learning classifiers.

3. Validation:

- Evaluate the selected feature subset using standard metrics (Accuracy, Precision, Recall, F1-score) on a chosen ML classifier.

- Compare performance against the model trained using all features to demonstrate improvement [6].
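The procedure above leaves some choices open (for instance, whether the RMSE in Step 2 is taken against other blocks or against a target pattern) and is not enough to reproduce the published FFS model. The sketch below is therefore only a simplified, hypothetical illustration of the block-and-RMSE idea under the "target pattern" reading: features and the outcome are z-scored, features are grouped into consecutive blocks, each feature's RMSE against the standardized outcome is computed, and the k lowest-RMSE features are kept. For z-scored variables RMSE^2 = 2(1 - r), so this simplification amounts to ranking by Pearson correlation r (and ranks strongly negative associations last). `ffs_like_select`, `block_size`, and `k` are assumed names and values, and no fractal analysis is reproduced.

```python
import numpy as np


def zscore(a, axis=0):
    return (a - a.mean(axis=axis)) / a.std(axis=axis)


def ffs_like_select(X, y, k, block_size=10):
    """Hypothetical, simplified block/RMSE ranking mirroring Steps 1-4 above.

    This is NOT the published FFS algorithm.
    """
    Z = zscore(X)                # standardize each feature column
    t = zscore(y.astype(float))  # standardized target pattern
    n_features = X.shape[1]
    rmse = np.empty(n_features)
    block_means = []
    # Step 1: split feature indices into consecutive blocks.
    for start in range(0, n_features, block_size):
        cols = np.arange(start, min(start + block_size, n_features))
        # Step 2: RMSE between each feature in the block and the target pattern.
        rmse[cols] = np.sqrt(np.mean((Z[:, cols] - t[:, None]) ** 2, axis=0))
        block_means.append(rmse[cols].mean())
    # Steps 3-4: rank by ascending RMSE and keep the top-k features.
    ranking = np.argsort(rmse)
    return ranking[:k], rmse, np.array(block_means)


rng = np.random.default_rng(7)
n, p = 300, 40
X = rng.normal(size=(n, p))
y = (X[:, 3] + X[:, 17] + 0.5 * rng.normal(size=n) > 0).astype(int)
top, rmse, block_means = ffs_like_select(X, y, k=5)
print("top features:", top.tolist())
```

The two features that generate the outcome (columns 3 and 17) appear among the top five; the validation step above would then compare a classifier trained on this subset against one trained on all 40 features.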

Detailed Methodology: Permutation Feature Importance for Predictor Identification

This protocol describes how to identify key predictors in a dataset for a specific outcome, such as natural conception, using a couple-based approach [8].

1. Principle: This method evaluates the importance of a feature by randomly shuffling its values and measuring the resulting decrease in the model's performance. A significant drop in performance indicates that the feature is important for prediction [8].

2. Procedure:

- Input: A dataset containing a large number of candidate variables (e.g., 63 parameters from both female and male partners) [8].

- Step 1 - Train a Model: First, train a machine learning model (e.g., Random Forest, XGBoost) on the original dataset and record its baseline performance (e.g., using R² score).

- Step 2 - Permute and Re-evaluate: For each feature column:
  - Permute (shuffle) the values of that feature, breaking its relationship with the outcome.
  - Use the permuted data to make new predictions with the already-trained model.
  - Calculate the new model performance score.

- Step 3 - Calculate Importance: The importance of the feature is the difference between the baseline performance and the performance after permutation.

- Step 4 - Rank Features: Rank all features based on their calculated importance scores.

- Output: A ranked list of the most influential predictors (e.g., the top 25 key variables from the original 63) [8].

3. Key Predictors for Natural Conception: The method identified a balance of medical, lifestyle, and reproductive factors for both partners, including BMI, caffeine consumption, history of endometriosis, and exposure to chemical agents or heat [8].
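The permutation steps above translate directly into code. The sketch follows Steps 1 to 4 with a random forest on synthetic data, with two stated deviations: it scores on a held-out split rather than on the training data (permuting training data can overstate features the model has memorised), and it uses ROC-AUC because the toy outcome is binary, whereas the procedure above mentions R². `sklearn.inspection.permutation_importance` provides an equivalent, repeat-averaged implementation. Sample size, feature count, and `n_repeats=5` are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

# shuffle=False puts the 3 informative features in columns 0-2 (synthetic data).
X, y = make_classification(n_samples=600, n_features=12, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0, stratify=y)

# Step 1: train once and record the baseline score on held-out data.
model = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_tr, y_tr)
baseline = roc_auc_score(y_te, model.predict_proba(X_te)[:, 1])

rng = np.random.default_rng(0)
n_repeats = 5
importance = np.zeros(X.shape[1])
for j in range(X.shape[1]):
    drops = []
    for _ in range(n_repeats):
        X_perm = X_te.copy()
        X_perm[:, j] = rng.permutation(X_perm[:, j])  # Step 2: break feature j's link to y
        score = roc_auc_score(y_te, model.predict_proba(X_perm)[:, 1])
        drops.append(baseline - score)                # Step 3: performance drop
    importance[j] = np.mean(drops)

ranking = np.argsort(importance)[::-1]                # Step 4: rank features
print(f"baseline AUC = {baseline:.3f}")
print("features ranked by importance:", ranking.tolist())
```

Averaging over several shuffles per feature reduces the noise of a single permutation; strongly correlated features can share importance and each appear less important than they are, a known caveat of this method.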

Research Reagent Solutions: Essential Materials for Feature Selection Experiments

The following table details key computational tools and methodological approaches essential for conducting feature selection in high-dimensional fertility prediction research.

| Item / Solution | Function in Research |
|---|---|
| Fractal Feature Selection (FFS) Model | A novel feature selection model that uses fractal concepts to identify highly relevant features by dividing them into blocks and measuring similarity via RMSE, leading to high accuracy and stability [6]. |
| Multiple Imputation by Chained Equations (MICE) | An advanced statistical technique for handling missing data by creating multiple plausible imputations, preserving data integrity and relationships more accurately than simpler methods [7]. |
| Permutation Feature Importance | A model-inspection technique used to identify the most influential predictors in a dataset by measuring the drop in model performance when a single feature's values are randomly shuffled [8]. |
| Tree-Based Ensemble Algorithms (XGBoost, Random Forest) | Machine learning algorithms that provide robust, embedded feature importance scores and are highly effective at capturing non-linear relationships and complex interactions in data [7] [8]. |
| Mutual Information (MI) | A filter-based feature selection method that measures the statistical dependency between variables, capable of capturing both linear and non-linear relationships [7]. |

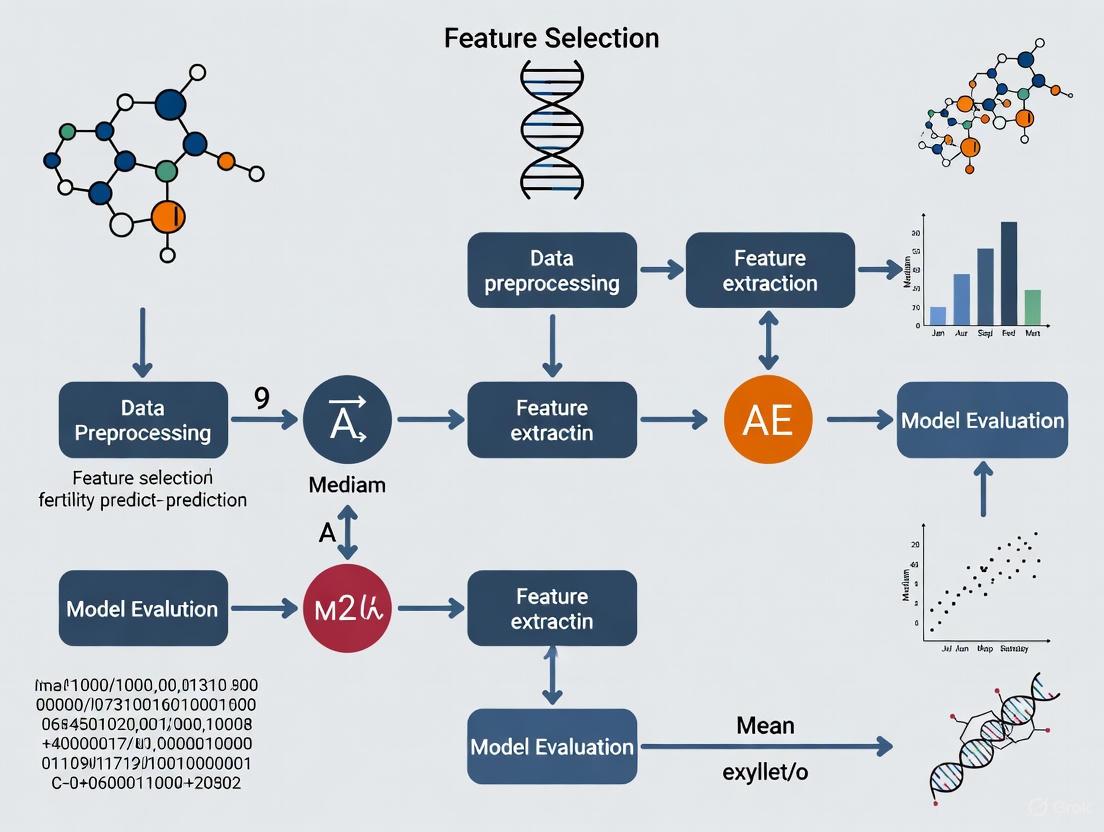

Workflow and Model Diagrams

Diagram 1: High-Dimensional Data Analysis Workflow.

Diagram 2: Permutation Feature Importance Process.

In the field of assisted reproductive technology (ART), machine learning models are increasingly deployed to predict treatment outcomes and optimize success rates. The performance and clinical utility of these models depend critically on the selection of input variables, a process known as feature selection. Effective feature selection directly enhances IVF prediction models by eliminating redundant and irrelevant features, reducing overfitting, improving model interpretability, and decreasing computational costs [9]. This technical support guide explores how strategic feature selection directly influences the accuracy and reliability of fertility prediction models, providing researchers with practical methodologies to enhance their experimental designs.

FAQs: Technical Challenges in Feature Selection for IVF Research

Q1: Why is feature selection critical specifically for IVF prediction models compared to other medical applications?

IVF involves complex, multifactorial processes with numerous interacting clinical, demographic, and laboratory parameters. Without effective feature selection, models suffer from the "curse of dimensionality": having too many features relative to the number of patients (a common scenario in single-center IVF studies) severely impairs model generalizability [9]. Feature selection addresses this by identifying the most predictive factors, such as female age, total number of embryos, and number of injected oocytes, which have been consistently validated as top predictors of live birth [10] [11]. This improves model performance while providing clinically interpretable insights into the key determinants of IVF success.

Q2: What are the most common feature selection pitfalls in fertility research, and how can we avoid them?

A frequent pitfall is relying solely on filter methods without considering feature interactions with the model, potentially missing biologically relevant but weakly correlated variables [9]. Another critical issue is data leakage, where information from the test set influences feature selection, creating optimistically biased performance estimates. To avoid this, always perform feature selection within each cross-validation fold using only training data. Additionally, many studies lack external validation, with one review noting that all 20 examined papers on machine learning in ART relied only on internal validation [12]. Implement rigorous train-validation-test splits and collaborate with multiple institutions for external validation to ensure feature robustness across diverse populations.
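The leakage problem is easy to demonstrate. In the sketch below the labels are pure noise, so any honest estimate should sit near 50% accuracy. Selecting the top features on the full dataset before cross-validation produces a clearly optimistic score, while placing the same `SelectKBest` step inside a scikit-learn `Pipeline` (so it is refit on each training fold only) does not. The dataset shape and `k=20` are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2000))  # many features, few patients: pure noise
y = rng.integers(0, 2, size=100)  # labels unrelated to X
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features chosen using ALL samples, including the future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Correct: the pipeline refits the selection step inside each training fold.
pipe = Pipeline([
    ("select", SelectKBest(f_classif, k=20)),
    ("clf", LogisticRegression(max_iter=1000)),
])
honest = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"leaky CV accuracy:  {leaky:.2f}")
print(f"honest CV accuracy: {honest:.2f}")
```

The same rule applies to imputation, scaling, and any other data-dependent preprocessing: fit it inside the fold, never on the full dataset.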

Q3: How does feature selection directly impact clinical decision-making in IVF?

By identifying the most influential predictors, feature selection enables the development of simplified, highly accurate models that clinicians can trust and interpret. For instance, research has demonstrated that with proper feature selection, models can achieve up to 96.35% accuracy in predicting IVF success using key variables like female age, ovarian reserve markers, and embryo quality metrics [13]. This allows for:

- Personalized treatment protocols based on patient-specific feature profiles

- Improved patient counseling with transparent success probability estimates

- Optimized resource allocation by focusing on the most diagnostically valuable tests and measurements

- Reduced diagnostic costs by eliminating redundant or non-predictive assessments

Q4: What advanced computational techniques show promise for feature selection in high-dimensional fertility data?

For high-dimensional fertility datasets (e.g., those incorporating omics data or extensive clinical variables), advanced optimization techniques are emerging. The Dynamic Multitask Learning with Competitive Elites (DMLC-MTO) framework generates complementary tasks through multi-criteria strategies that combine feature relevance indicators like Relief-F and Fisher Score, resolving conflicts between different metrics [14]. Bio-inspired approaches, such as Ant Colony Optimization (ACO) integrated with neural networks, have demonstrated 99% classification accuracy in male fertility diagnostics by adaptively tuning parameters and selecting optimal feature subsets [15]. These methods balance global exploration and local exploitation in the feature space, overcoming premature convergence common in traditional algorithms.

Experimental Protocols & Methodologies

Protocol: Wrapper-Based Feature Selection for Live Birth Prediction

This protocol employs recursive feature elimination (RFE) with cross-validation, suitable for medium-sized IVF datasets (typically hundreds to thousands of samples with 20-100 potential features).

Materials:

- Pre-processed IVF dataset with confirmed outcome labels (live birth yes/no)

- Computing environment with Python/R and necessary libraries (scikit-learn, pandas, numpy)

- Validation framework (nested cross-validation recommended)

Procedure:

- Data Preparation: Partition data into training (70%) and hold-out test (30%) sets, ensuring no patient overlap between sets [10].

- Initial Filtering: Remove zero-variance features and those with >40% missing values. Impute the remaining missing values with the median (continuous) or mode (categorical), estimating these statistics on the training set only.

- Classifier Selection: Choose a tree-based ensemble method (e.g., Random Forest or XGBoost) as the base model due to their robust feature importance metrics [10].

- Recursive Feature Elimination:
  - Initialize with all features
  - Train model and rank features by importance (Gini impurity or permutation importance)
  - Eliminate bottom 10% of features
  - Re-train and evaluate model performance via 5-fold cross-validation
  - Repeat until performance metric (AUC-ROC) declines significantly

- Validation: Assess final feature subset on held-out test set. Calculate sensitivity, specificity, and AUC [10].

Technical Notes: For datasets with strong multicollinearity (e.g., multiple correlated hormone measurements), consider grouping features or applying variance inflation factor (VIF) analysis prior to RFE [9].
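A compact version of this protocol can be sketched with scikit-learn. Assumptions: a hypothetical `patient_id` array so that no patient appears in both the training and hold-out sets (`GroupShuffleSplit`) or in both sides of a CV fold (`GroupKFold`); median imputation fit on training data only; random forest impurity importance for ranking; and `RFECV` with `step=0.1`, which removes 10% of the features per round (rounded down, at least one) and is scored by ROC-AUC. `RFECV` keeps the feature count with the best mean cross-validated score rather than applying a "declines significantly" rule. Data, sizes, and `n_estimators=50` are illustrative.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV
from sklearn.impute import SimpleImputer
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GroupKFold, GroupShuffleSplit

rng = np.random.default_rng(0)
n_cycles, n_features = 400, 20
patient_id = rng.integers(0, 200, size=n_cycles)  # hypothetical: ~2 cycles per patient
X = rng.normal(size=(n_cycles, n_features))
y = (X[:, 0] - X[:, 1] + 0.5 * X[:, 2] + rng.normal(size=n_cycles) > 0).astype(int)
X[rng.random(X.shape) < 0.05] = np.nan                  # scattered missing values
X[rng.random(n_cycles) < 0.6, n_features - 1] = np.nan  # one sparsely recorded feature

# Data preparation: 70/30 split with no patient in both sets.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.3, random_state=0)
train_idx, test_idx = next(splitter.split(X, y, groups=patient_id))
X_tr, X_te = X[train_idx], X[test_idx]
y_tr, y_te = y[train_idx], y[test_idx]

# Initial filtering on training data: drop features with >40% missing values,
# then median-impute with statistics learned from the training set only.
keep = np.isnan(X_tr).mean(axis=0) <= 0.40
imputer = SimpleImputer(strategy="median").fit(X_tr[:, keep])
X_tr_imp = imputer.transform(X_tr[:, keep])
X_te_imp = imputer.transform(X_te[:, keep])

# RFE with patient-grouped 5-fold CV and ROC-AUC scoring.
selector = RFECV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    step=0.1,
    cv=GroupKFold(n_splits=5),
    scoring="roc_auc",
)
selector.fit(X_tr_imp, y_tr, groups=patient_id[train_idx])

# Validation on the held-out patients.
auc = roc_auc_score(y_te, selector.predict_proba(X_te_imp)[:, 1])
kept_features = np.flatnonzero(keep)[selector.support_].tolist()
print("features kept:", kept_features)
print(f"held-out AUC: {auc:.3f}")
```

Sensitivity and specificity at a chosen threshold can be computed from `selector.predict` on the same held-out set.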

Protocol: Hybrid Filter-Wrapper Approach for High-Dimensional Data

For studies incorporating extensive feature sets (including genetic, proteomic, or extensive clinical variables), this hybrid approach balances computational efficiency with model-specific optimization.

Procedure:

- Filter Stage:
  - Calculate feature relevance scores using multiple filter methods (e.g., Mutual Information, Fisher Score, Relief-F)
  - Apply a multi-indicator integration strategy to resolve conflicts between different relevance measures [14]
  - Retain features that rank in top percentiles across multiple methods

- Wrapper Stage:
  - Use the filtered feature subset as input to an evolutionary multitasking optimization algorithm
  - Implement a dual-task framework that simultaneously optimizes a global task (full filtered feature set) and an auxiliary task (reduced subset) [14]
  - Enable knowledge transfer between tasks to accelerate search efficiency

- Validation:
  - Perform statistical testing on selected features using appropriate methods (e.g., Bonferroni correction for multiple comparisons)
  - Validate feature stability through bootstrap resampling
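The filter stage can be sketched by scoring features with more than one relevance measure, converting each to ranks, and keeping only features that land in the top percentile under every measure. This sketch uses mutual information and the ANOVA F statistic from scikit-learn (`f_classif`); the F statistic is a rescaled ratio of between-class to within-class variance, so it orders features the same way as the classic Fisher score. Relief-F is not part of scikit-learn and is omitted, the "top 20% under every method" rule is an assumed stand-in for the multi-indicator integration strategy of [14], and the evolutionary wrapper stage is not sketched.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import f_classif, mutual_info_classif


def rank_desc(scores):
    """Rank 0 = most relevant (highest score)."""
    order = np.argsort(-np.asarray(scores))
    ranks = np.empty(len(order), dtype=int)
    ranks[order] = np.arange(len(order))
    return ranks


# shuffle=False puts the 5 informative features in columns 0-4 (synthetic data).
X, y = make_classification(n_samples=400, n_features=50, n_informative=5,
                           n_redundant=0, shuffle=False, random_state=0)

f_scores, _ = f_classif(X, y)  # same ordering as the Fisher score
mi_scores = mutual_info_classif(X, y, random_state=0)

ranks = np.vstack([rank_desc(f_scores), rank_desc(mi_scores)])
top_fraction = 0.20
cutoff = int(np.ceil(top_fraction * X.shape[1]))  # top 10 of 50 under each method
consensus = np.flatnonzero((ranks < cutoff).all(axis=0))
print("features in the top 20% under every filter:", consensus.tolist())
```

Requiring agreement across measures trades recall for robustness: a feature that only one criterion favours is dropped before the more expensive wrapper search.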

Data Presentation: Quantitative Evidence

Table 1: Performance Comparison of Models with Different Feature Selection Methods in IVF Prediction

Data compiled from multiple studies examining live birth prediction

| Feature Selection Method | Number of Initial Features | Final Features Selected | Model Accuracy | AUC-ROC | Key Top-Ranked Features |
|---|---|---|---|---|---|
| Random Forest (Embedded) [10] | 23 | 8 | 81% | 0.85 | Total embryos, Injected oocytes, Female age, PCOS status |
| Logit Boost (Embedded) [13] | 67 | 15 | 96.35% | N/R | Female age, Ovarian reserve, Embryo quality, Infertility duration |
| Hybrid MLFFN–ACO [15] | 10 | 5 | 99% | N/R | Lifestyle factors, Environmental exposures, Clinical markers |
| XGBoost (Embedded) [16] | 67 | 22 | Top 26% in competition | N/R | Treatment history, Patient age, Stimulation parameters |
| SVM-RFE (Wrapper) [10] | 23 | 10 | 78% | 0.82 | Female age, Injected oocytes, Infertility cause, Embryo count |

Table 2: Most Influential Features for IVF Success Identified Through Selection Algorithms

Consensus features across multiple studies ordered by frequency of identification

| Feature | Frequency in Studies | Clinical Category | Direction of Association |
|---|---|---|---|
| Female Age | 100% [10] [11] [13] | Demographic | Negative |
| Total Number of Embryos | 80% [10] [11] | Embryological | Positive |
| Number of Injected Oocytes | 80% [10] [11] | Stimulation | Positive |
| Ovarian Reserve Markers (AMH, AFC) | 75% [17] [11] | Endocrine | Positive |
| Body Mass Index (BMI) | 70% [10] [11] | Demographic | Negative (when elevated) |
| Infertility Duration | 65% [10] [11] | History | Negative |
| Sperm Parameters | 60% [11] [15] | Male Factor | Positive |
| Embryo Quality Metrics | 60% [10] [11] | Embryological | Positive |
| Previous Pregnancy History | 55% [11] | History | Positive |
| Polycystic Ovary Syndrome (PCOS) | 50% [10] | Diagnosis | Context-dependent |

Visual Workflows

Diagram 1: Feature Selection Methodology for IVF Data

Diagram 2: Relationship Between Feature Selection and Clinical Impact in IVF

| Tool/Resource | Type | Primary Function | Implementation Example |
|---|---|---|---|
| scikit-learn feature_selection module [9] | Python Library | Provides VarianceThreshold, RFE, SelectKBest | from sklearn.feature_selection import RFE |
| XGBoost [10] | Algorithm | Embedded feature selection via gain importance | xgb.XGBClassifier().fit(X, y).feature_importances_ |
| Ant Colony Optimization [15] | Bio-inspired Algorithm | Feature subset optimization inspired by ant foraging | Hybrid MLFFN-ACO framework |
| Multitask Evolutionary Algorithm [14] | Optimization Framework | Solves multiple feature selection tasks simultaneously | DMLC-MTO for high-dimensional data |
| SHAP (SHapley Additive exPlanations) | Interpretability Library | Quantifies feature contribution to predictions | Post-hoc explanation of selected features |
| Variance Inflation Factor (VIF) [9] | Statistical Measure | Identifies multicollinearity in feature subsets | statsmodels.stats.outliers_influence.variance_inflation_factor |
| Boruta [9] | Wrapper Method | Compares original features with shadow features | All-relevant feature selection for comprehensive discovery |
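To make the VIF entry concrete, the sketch below computes VIF_j = 1 / (1 - R^2_j), where R^2_j comes from regressing feature j on all other features with an intercept. It uses scikit-learn instead of statsmodels to stay dependency-light; as far as we know, statsmodels' variance_inflation_factor uses the design matrix as given, so a constant column should be added there to match this version. The column names and the simulated AMH/AFC relationship are assumptions for illustration only. Values above roughly 5 to 10 are a commonly used warning sign.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression


def variance_inflation_factors(X: pd.DataFrame) -> pd.Series:
    """Return VIF_j = 1 / (1 - R^2_j) for every column of X.

    R^2_j is the in-sample R^2 of an OLS regression (with intercept)
    of column j on all remaining columns.
    """
    vifs = {}
    for col in X.columns:
        others = X.drop(columns=col)
        r2 = LinearRegression().fit(others, X[col]).score(others, X[col])
        vifs[col] = np.inf if r2 >= 1.0 else 1.0 / (1.0 - r2)
    return pd.Series(vifs, name="VIF")


# Hypothetical cohort: AFC is simulated to track AMH closely.
rng = np.random.default_rng(0)
n = 300
amh = rng.normal(3.0, 1.0, n)
X = pd.DataFrame({
    "amh": amh,
    "afc": 4.0 * amh + rng.normal(0.0, 0.5, n),  # strongly collinear with amh
    "bmi": rng.normal(24.0, 3.0, n),             # roughly independent
})
vif = variance_inflation_factors(X)
print(vif.round(1))
```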

Frequently Asked Questions (FAQs)

Q1: Why do some predictive features perform well in one population but poorly in another? Feature performance variation often stems from population-specific genetic diversity, environmental exposures, lifestyle factors, or hormonal baseline differences. Features with high universal predictive value typically relate to fundamental biological pathways, while context-dependent features may correlate with population-specific characteristics. Implement cross-population validation protocols to identify robust features.

Q2: What is the minimum acceptable color contrast for experimental workflow diagrams in publications? For standard text in diagrams, the Web Content Accessibility Guidelines (WCAG) require a minimum contrast ratio of 4.5:1. For large-scale text (approximately 18pt or 14pt bold), the minimum is 3:1. For enhanced compliance (Level AAA), the requirements are stricter at 7:1 for standard text and 4.5:1 for large text [18]. Insufficient contrast can render diagrams unreadable for some users and may lead to publication rejection.

Q3: How can I quickly check contrast ratios in my graphical abstracts?

Use online color contrast analyzers. Input your foreground (text) and background (node fill) colors to receive a pass/fail rating against WCAG standards. For Graphviz, always explicitly set the fontcolor attribute to ensure it contrasts sufficiently with the fillcolor or color attribute of nodes [19].
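As a scriptable alternative to an online checker, here is a minimal sketch of the WCAG 2.x computation: linearize each sRGB channel, form the relative luminance L, then take (L_lighter + 0.05) / (L_darker + 0.05). The function names are our own. By this formula, white text on #FBBC05 falls far below 4.5:1, while dark gray #202124 on the same yellow clears 7:1.

```python
def _linear_channel(c8: int) -> float:
    """Convert an 8-bit sRGB channel value to linear light (WCAG 2.x definition)."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _linear_channel(r)
            + 0.7152 * _linear_channel(g)
            + 0.0722 * _linear_channel(b))


def contrast_ratio(fg: str, bg: str) -> float:
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)),
                             reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def wcag_level(ratio: float, large_text: bool = False) -> str:
    """Classify a ratio against the WCAG 2.x text thresholds."""
    aa, aaa = (3.0, 4.5) if large_text else (4.5, 7.0)
    if ratio >= aaa:
        return "AAA"
    if ratio >= aa:
        return "AA"
    return "Fail"


for fg, bg in [("#FFFFFF", "#FBBC05"), ("#202124", "#FBBC05"), ("#FFFFFF", "#4285F4")]:
    r = contrast_ratio(fg, bg)
    print(f"{fg} on {bg}: {r:.2f}:1 -> {wcag_level(r)}")
```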

Q4: Which technical attributes in Graphviz control text and background colors?

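In Graphviz DOT language, use the fontcolor attribute for text color, fillcolor for the node's interior, color for the node's border, and bgcolor for the graph's overall background [19]. Note that fillcolor is only painted when the node's style includes filled (for example, style=filled).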
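These attributes can also be set programmatically. The sketch below builds a DOT string in plain Python (the helper names are hypothetical and no Graphviz binding is required); the text can then be rendered with the dot command-line tool. Every node gets an explicit fontcolor, fillcolor, border color, and style=filled, and the example pairs are taken from the palette used later in this section, each comfortably above 4.5:1 by the WCAG formula.

```python
from typing import List, Optional, Tuple


def dot_node(node_id: str, label: str, fontcolor: str, fillcolor: str,
             border: Optional[str] = None) -> str:
    """One DOT node statement with every color attribute set explicitly.

    style=filled is included because Graphviz ignores fillcolor without it.
    """
    border = border or fillcolor
    return (f'  {node_id} [label="{label}", style=filled, '
            f'fillcolor="{fillcolor}", fontcolor="{fontcolor}", color="{border}"];')


def dot_graph(nodes: List[Tuple[str, str, str, str]],
              edges: List[Tuple[str, str]], bgcolor: str = "#FFFFFF") -> str:
    """Assemble a digraph; each node tuple is (id, label, fontcolor, fillcolor)."""
    lines = ["digraph workflow {", f'  bgcolor="{bgcolor}";']
    lines += [dot_node(*node) for node in nodes]
    lines += [f"  {a} -> {b};" for a, b in edges]
    lines.append("}")
    return "\n".join(lines)


dot = dot_graph(
    nodes=[("raw", "Raw IVF data", "#202124", "#F1F3F4"),
           ("filt", "Univariate filter", "#202124", "#FBBC05"),
           ("model", "Final model", "#FFFFFF", "#202124")],
    edges=[("raw", "filt"), ("filt", "model")],
)
print(dot)  # save as workflow.dot and render with: dot -Tpng workflow.dot
```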

Troubleshooting Guides

Problem: Inconsistent Feature Performance Across Populations

Symptoms:

- A feature set achieves high accuracy in Population A but low accuracy in Population B.

- Model performance degrades significantly when applied to a new cohort.

Diagnosis and Resolution:

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Conduct Feature Stability Analysis: Calculate the coefficient of variation (CV) for each feature's importance score across multiple bootstrap samples within each population. | Identification of features with highly variable importance (high CV), indicating potential context-dependence. |
| 2 | Perform Clustering Analysis: Use unsupervised clustering (e.g., k-means) on normalized feature values to see if population subgroups emerge naturally. | Determination of whether population structure is a major driver of feature performance variation. |
| 3 | Apply Statistical Tests: Use Mann-Whitney U tests for unadjusted comparisons of feature values between populations, or ANCOVA when you need to control for covariates such as age or BMI. | A p-value < 0.05 (after correction for multiple testing) indicates a feature with statistically significant population-specific differences. |
| 4 | Validate with Cross-Population Protocol: Split data into discovery (Population A) and validation (Population B) sets. Train on A and test on B. | Quantification of the generalizability gap. A significant performance drop suggests features are not universal. |
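Step 1 can be sketched as follows. Assumptions: impurity-based random-forest importances serve as the importance score (the guide does not fix a model), and synthetic data stands in for one population. Running the same function on each population and comparing the resulting CV columns is the intended use; the CV bands in Table 1 below (under 15% low, over 40% high) could then be applied.

```python
import numpy as np
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier


def importance_cv(X: pd.DataFrame, y: np.ndarray, n_boot: int = 20,
                  seed: int = 0) -> pd.Series:
    """Coefficient of variation (std / mean, in %) of random-forest impurity
    importances across bootstrap resamples drawn from one population."""
    rng = np.random.default_rng(seed)
    runs = []
    for b in range(n_boot):
        idx = rng.integers(0, len(X), size=len(X))  # rows drawn with replacement
        rf = RandomForestClassifier(n_estimators=50, random_state=b)
        rf.fit(X.iloc[idx], y[idx])
        runs.append(rf.feature_importances_)
    runs = np.asarray(runs)
    cv_pct = 100 * runs.std(axis=0, ddof=1) / runs.mean(axis=0)
    return pd.Series(cv_pct, index=X.columns, name="importance_CV_pct").sort_values()


# Synthetic stand-in for one population: 3 informative and 5 noise features.
X_arr, y = make_classification(n_samples=400, n_features=8, n_informative=3,
                               n_redundant=0, shuffle=False, random_state=1)
X = pd.DataFrame(X_arr, columns=[f"f{i}" for i in range(8)])
cv_table = importance_cv(X, y)
print(cv_table.round(1))  # repeat per population and compare
```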

Problem: Poor Readability in Experimental Workflow Diagrams

Symptom:

- Text within diagram nodes is difficult to read against the background color.

Diagnosis and Resolution:

This is almost always caused by insufficient color contrast between the text (fontcolor) and the node's background (fillcolor).

- Explicitly Set Colors: In your Graphviz DOT script, never rely on default colors. Always define fontcolor and fillcolor for nodes, and set style=filled so that fillcolor is actually drawn [19].

- Choose High-Contrast Colors: Select color pairs from the approved palette that meet WCAG standards, for example dark text on a light background or vice versa.

- Test the Contrast: Use the following protocol to verify:

- Extract the HEX codes for your chosen fontcolor and fillcolor.

- Input them into a color contrast checker.

- Confirm the contrast ratio is at least 4.5:1.

Incorrect Code Example (Low Contrast):

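A yellow fill such as #FBBC05 fails accessibility standards when paired with light text: white text on it reaches a contrast ratio of only about 1.7:1. (Black text on the same yellow passes comfortably, at roughly 12:1, so the problem is the pairing, not the fill color itself.)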

Corrected Code Example (High Contrast):

This combination provides a contrast ratio of over 9:1, ensuring excellent readability [18].

Data Presentation

Table 1: Quantitative Comparison of Universal vs. Context-Dependent Features in Fertility Prediction

| Feature Category | Example Features | Performance Stability (Cross-Population AUC) | Coefficient of Variation (CV) | Recommended Use Case |
|---|---|---|---|---|
| Universal Features | Basal Hormone Level (AMH), Antral Follicle Count (AFC) | 0.85 - 0.88 | Low (< 15%) | Core features for generalizable model development |
| Context-Dependent Features | Vitamin D Level, Specific Genetic Polymorphism (e.g., FSHR) | 0.65 - 0.92 | High (> 40%) | Population-specific model refinement; requires validation |
| Environmental Covariates | BMI, Smoking Status | 0.70 - 0.82 | Medium (15-30%) | Model adjustment factors to improve local accuracy |

Experimental Protocols

Protocol 1: Cross-Population Feature Validation

Objective: To identify and validate predictive features for ovarian reserve that are robust across distinct ethnic and geographic populations.

Methodology:

- Cohort Selection: Recruit cohorts from at least three geographically and ethnically diverse populations (e.g., East Asian, European, Hispanic). Match cohorts for key covariates like age range.

- Data Collection: Collect serum samples for hormone assays (AMH, FSH, Inhibin B) and perform standardized transvaginal ultrasonography for AFC.

- Feature Quantification: Assay hormones using a single, validated platform to minimize inter-assay variability. AFC should be performed by certified sonographers using a standardized counting method.

- Statistical Analysis:

- Perform logistic regression to build a prediction model for a defined outcome (e.g., poor ovarian response) in the discovery population.

- Apply the model to the validation populations and record the change in AUC.

- Features that maintain an AUC > 0.8 with minimal deviation (< 0.05) across all populations are classified as "universal."
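The statistical analysis can be sketched as below. All of the following are our assumptions: two standardized predictors (AMH and vitamin D) simulated so that only AMH has a population-invariant effect, one univariate logistic model per feature fitted on the discovery cohort, and the protocol's thresholds (AUC > 0.8, deviation < 0.05) applied literally.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score

FEATURES = ["amh", "vitamin_d"]  # hypothetical standardized predictors


def make_population(n, vitd_effect, seed):
    """Synthetic cohort; outcome 1 = poor ovarian response.

    AMH has the same strong effect everywhere; the vitamin D effect is
    population-specific by construction.
    """
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, 2))
    logit = -4.0 * X[:, 0] + vitd_effect * X[:, 1]
    y = (rng.random(n) < 1.0 / (1.0 + np.exp(-logit))).astype(int)
    return X, y


def cross_population_auc(discovery, validations):
    """Fit one univariate logistic model per feature on the discovery cohort;
    return {feature: [AUC in discovery, AUC in each validation cohort]}."""
    Xd, yd = discovery
    aucs = {}
    for j, name in enumerate(FEATURES):
        model = LogisticRegression().fit(Xd[:, [j]], yd)
        aucs[name] = [roc_auc_score(y, model.predict_proba(X[:, [j]])[:, 1])
                      for X, y in [discovery, *validations]]
    return aucs


def classify_universal(aucs, min_auc=0.8, max_drop=0.05):
    """'Universal' = AUC above min_auc everywhere and every validation AUC
    within max_drop of the discovery AUC."""
    return {name: min(v) > min_auc and max(abs(v[0] - a) for a in v[1:]) < max_drop
            for name, v in aucs.items()}


discovery = make_population(1000, vitd_effect=1.0, seed=0)
validations = [make_population(1000, vitd_effect=0.0, seed=s) for s in (1, 2)]
aucs = cross_population_auc(discovery, validations)
for name, v in aucs.items():
    print(name, [round(a, 3) for a in v])
print(classify_universal(aucs))
```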

Protocol 2: High-Contrast Scientific Diagram Creation

Objective: To generate accessible and publication-ready workflow diagrams using Graphviz that comply with WCAG contrast standards.

Methodology:

- Define Color Palette: Restrict all diagram elements to the approved color palette: #4285F4 (blue), #EA4335 (red), #FBBC05 (yellow), #34A853 (green), #FFFFFF (white), #F1F3F4 (light gray), #202124 (dark gray), #5F6368 (medium gray).

- Author DOT Script: Write the Graphviz script, explicitly defining fontcolor and fillcolor for every node.

- Contrast Verification: Before final rendering, validate all color pairs used in nodes. For example:

- #202124 (dark gray) text on a #F1F3F4 (light gray) background has a ratio of about 14.5:1 (Pass).

- #FFFFFF (white) text on a #4285F4 (blue) background has a ratio of about 3.6:1, which meets the 3:1 threshold for large text but fails the 4.5:1 threshold for standard text.

- #FFFFFF (white) text on a #FBBC05 (yellow) background has a ratio of about 1.7:1 (Fail).

- Render and Inspect: Render the diagram and visually inspect it for clarity. Use an automated accessibility checker for final confirmation [18].

Visual Workflows

Diagram 1: Universal Feature Selection Workflow

Diagram 2: Diagram Accessibility Contrast Logic

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Fertility Prediction Studies

| Reagent / Material | Function | Specification Notes |
|---|---|---|
| AMH ELISA Kit | Quantifies Anti-Müllerian Hormone levels in serum, a key universal biomarker for ovarian reserve. | Choose a kit with validated cross-reactivity across ethnicities; check for standardization to the new WHO reference preparation. |
| RNA Stabilization Tube | Preserves RNA integrity in whole blood for transcriptomic feature discovery. | Ensures stability of labile RNA, allowing for batch processing and reducing pre-analytical variation in multi-center studies. |
| Genotyping Microarray | Interrogates millions of single nucleotide polymorphisms (SNPs) for genetic feature identification. | Select arrays with content relevant to reproductive traits and adequate coverage in diverse populations to minimize bias. |
| Ultrasound Gel | Acoustic coupling medium for transvaginal ultrasonography to perform Antral Follicle Counts (AFC). | Hypoallergenic, non-interfering formulation is critical for patient comfort and standardized image acquisition. |

Algorithmic Arsenal: Advanced Feature Selection Techniques for Fertility Data

Frequently Asked Questions

FAQ 1: What are univariate filter methods, and why are they used as an initial screening step in fertility prediction research?

Univariate filter methods evaluate and rank each feature individually according to a statistical criterion, completely independently of any machine learning algorithm [20]. They are typically the first step in a feature selection pipeline because they are computationally inexpensive and fast to execute, allowing you to process thousands of features in seconds [20]. This helps to quickly remove obviously irrelevant features, such as those that are constant or quasi-constant, thereby reducing the dataset's dimensionality before applying more complex, computationally expensive models [20] [21]. In fertility prediction research, where datasets may contain hundreds of genetic markers or patient characteristics, this initial screening is crucial for improving model performance and interpretability.
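A minimal sketch of this first screening step with scikit-learn's VarianceThreshold; the column names and the 0.01 threshold are illustrative assumptions, not recommendations from the cited studies.

```python
import numpy as np
import pandas as pd
from sklearn.feature_selection import VarianceThreshold

rng = np.random.default_rng(0)
n = 200
rare = np.zeros(n)
rare[0] = 1.0  # a 0/1 marker present in a single patient (quasi-constant)

# Hypothetical screening table; column names are illustrative.
X = pd.DataFrame({
    "age": rng.normal(34, 4, n),
    "amh": rng.normal(2.5, 1.2, n),
    "assay_batch": np.ones(n),  # constant
    "rare_variant": rare,
})

# Drop columns whose variance does not exceed the threshold. For a 0/1 column
# the variance is p(1 - p), so 0.01 removes binary features whose minority
# value occurs in roughly 1% of rows or fewer. Variance depends on scale,
# so choose thresholds per data type rather than one value for everything.
selector = VarianceThreshold(threshold=0.01)
X_reduced = selector.fit_transform(X)
kept = X.columns[selector.get_support()].tolist()
print(kept)
```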

FAQ 2: What is the main limitation of using univariate filter methods?

The primary limitation of univariate filter methods is that they evaluate each feature in isolation, independently of the rest of the feature space [20] [21]. Because of this, they cannot account for interactions or dependencies between features: a feature might be weakly informative on its own but become highly predictive when combined with another, and univariate methods risk filtering it out. They may also select redundant variables that provide similar information [20] [21].

FAQ 3: Which univariate statistical test should I use for my dataset?

The choice of statistical test depends on the data types of your features and target variable. The table below summarizes common tests and their applications:

Table 1: Common Univariate Statistical Tests for Feature Filtering

| Statistical Test | Feature Type | Target Variable Type | Key Characteristics |
|---|---|---|---|
| ANOVA F-test [22] [21] | Numerical | Categorical | Assesses if there are significant differences between the means of two or more groups. A large F-value indicates the feature is a good discriminator. |
| Chi-Squared (χ²) Test [20] [22] | Categorical | Categorical | Tests the independence between two categorical variables. A high χ² statistic suggests a strong association between the feature and the target. |
| Mutual Information [22] [21] | Any | Any | Measures the amount of information gained about the target by observing the feature. Captures any kind of statistical dependency, including non-linear relationships. |
| Pearson Correlation [20] | Numerical | Numerical | Measures the strength of a linear relationship between two variables. |
| Spearman's Rank Correlation [20] | Ordinal | Ordinal | A non-parametric test that measures the strength of a monotonic (increasing or decreasing) relationship. |

FAQ 4: I've performed univariate filtering. What should be the next step in my feature selection pipeline?

After univariate filtering, it is common to use multivariate filter methods or model-based embedded methods [23] [21]. Multivariate filters evaluate the entire feature space and can handle duplicated and correlated features, which univariate methods cannot [20]. Embedded methods, such as Lasso regression or tree-based algorithms, perform feature selection as part of the model construction process and naturally account for feature interactions [23] [22]. A robust pipeline might involve: 1) Univariate filtering to remove clearly irrelevant features, 2) A multivariate method to remove redundancy, and 3) An embedded or wrapper method for the final selection optimized for your specific prediction algorithm [23].
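A sketch of the three-stage pipeline described above, under stated assumptions: mRMR is not part of scikit-learn, so a hypothetical CorrelationFilter class (a greedy absolute-correlation filter that visits features in order of ANOVA F-score) stands in for the multivariate step, and an L1-penalized logistic regression inside SelectFromModel plays the embedded role. Wrapping all stages in one Pipeline means cross-validation re-runs every selection step inside each training fold.

```python
import numpy as np
from sklearn.base import BaseEstimator, TransformerMixin
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectFromModel, SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler


class CorrelationFilter(BaseEstimator, TransformerMixin):
    """Hypothetical redundancy filter standing in for mRMR: visit features in
    order of ANOVA F-score and keep one only if its absolute Pearson correlation
    with every feature already kept is below max_corr."""

    def __init__(self, max_corr=0.9):
        self.max_corr = max_corr

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        relevance = np.nan_to_num(f_classif(X, y)[0])
        corr = np.abs(np.corrcoef(X, rowvar=False))
        keep = []
        for j in np.argsort(-relevance):  # most relevant first
            if all(corr[j, k] < self.max_corr for k in keep):
                keep.append(j)
        self.keep_ = np.sort(np.array(keep))
        return self

    def transform(self, X):
        return np.asarray(X)[:, self.keep_]


# Synthetic data: 30 features (first 5 informative) plus near-copies of those 5.
X_base, y = make_classification(n_samples=300, n_features=30, n_informative=5,
                                n_redundant=0, shuffle=False, random_state=0)
rng = np.random.default_rng(0)
X = np.hstack([X_base, X_base[:, :5] + rng.normal(0, 0.1, size=(300, 5))])

pipe = Pipeline([
    ("univariate", SelectKBest(f_classif, k=20)),     # 1) cheap screen
    ("redundancy", CorrelationFilter(max_corr=0.9)),  # 2) drop near-duplicates
    ("scale", StandardScaler()),
    ("embedded", SelectFromModel(                     # 3) L1-based selection
        LogisticRegression(penalty="l1", solver="liblinear", C=0.5))),
    ("clf", LogisticRegression(max_iter=1000)),
])

auc = cross_val_score(pipe, X, y, cv=5, scoring="roc_auc")
pipe.fit(X, y)
print(f"CV AUC {auc.mean():.3f}; kept after redundancy filter: "
      f"{pipe.named_steps['redundancy'].keep_.size} of 20")
```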

FAQ 5: How can I implement a basic univariate feature selection in Python?

You can easily implement univariate feature selection using the scikit-learn library. The SelectKBest class is commonly used to select the top k features based on a scoring function. The example below uses the ANOVA F-test for a classification problem:

Other scoring functions like chi2 (for categorical data) and mutual_info_classif (for any dependency) can be swapped in for f_classif [22].

Troubleshooting Guides

Problem 1: Poor Model Performance After Univariate Feature Selection

- Symptoms: Your final predictive model (e.g., for live birth outcomes) has low accuracy or poor generalization, even after selecting features with high univariate scores.

- Possible Causes:

- Ignored Feature Interactions: Critical features that are only predictive in combination with others were filtered out [21].

- Redundant Features: The selected feature set contains multiple highly correlated features, introducing noise and multicollinearity [20].

- Data Leakage: Information from the test set was used during the feature selection process, leading to over-optimistic scores and model overfitting [21].

- Solutions:

- Incorporate Multivariate Filters: Follow up with a method like "mrmr" (Maximum Relevance Minimum Redundancy) which explicitly balances feature relevance and redundancy [23].

- Use Embedded Methods: Apply algorithms like Lasso or tree-based models (e.g., Random Forest, XGBoost) that inherently perform feature selection during training and can capture interactions [23] [24] [22].

- Ensure Proper Data Handling: Always split your data into training and testing sets first. Perform univariate feature selection only on the training set, then apply the selected features to the test set [21].
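The leakage point is easy to demonstrate. In the sketch below (pure-noise synthetic data, chosen so that any AUC above 0.5 is spurious), selecting features on the full dataset before cross-validation yields an optimistic AUC, whereas placing SelectKBest inside a Pipeline, so that it is re-fit on each training fold, does not.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Pure noise: 100 patients, 2,000 uninformative features, random outcome.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2000))
y = rng.integers(0, 2, size=100)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# WRONG: features are chosen using all rows, including future test rows.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_auc = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y,
                            cv=cv, scoring="roc_auc").mean()

# RIGHT: selection is a pipeline step, so it is re-fit inside each training fold.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest_auc = cross_val_score(pipe, X, y, cv=cv, scoring="roc_auc").mean()

print(f"leaky AUC  = {leaky_auc:.2f}")   # inflated even though there is no signal
print(f"honest AUC = {honest_auc:.2f}")  # should hover around chance (0.5)
```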

Problem 2: Inconsistent Selected Features Across Different Data Samples

- Symptoms: The list of top features selected by a univariate method changes significantly when applied to different subsets of your dataset (e.g., different clinical trial cohorts).

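- Possible Causes:

- Small Sample Size: With few patients, univariate scores and p-values have high sampling variance, so features with similar scores readily swap places between subsets.

- Correlated Features: When several features carry nearly the same information, which of them reaches the top k can hinge on small differences between subsets.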

- Solutions:

- Assess Stability: Use stability metrics to quantify the reproducibility of your feature selection results across multiple data subsamples [23].

- Prioritize Stable Filters: Research indicates that for genomic data, filters like Spearman's correlation ("spearcor") and "mrmr" can produce more stable results compared to tree-based univariate methods [23].

- Increase Sample Size: If possible, use a larger dataset to make the statistical estimates more robust.
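One common stability measure is the mean pairwise Jaccard index between the feature sets selected on different subsamples (the cited work may use a different metric). A minimal sketch, assuming SelectKBest with the ANOVA F-test, k = 10, and random 70% subsamples:

```python
from itertools import combinations

import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif


def selection_stability(X, y, k=10, n_subsamples=20, frac=0.7, seed=0):
    """Mean pairwise Jaccard similarity of the top-k feature sets chosen by
    SelectKBest on random subsamples (1.0 = the same set every time)."""
    rng = np.random.default_rng(seed)
    subsets = []
    for _ in range(n_subsamples):
        idx = rng.choice(len(y), size=int(frac * len(y)), replace=False)
        support = SelectKBest(f_classif, k=k).fit(X[idx], y[idx]).get_support()
        subsets.append(frozenset(np.flatnonzero(support).tolist()))
    return float(np.mean([len(a & b) / len(a | b)
                          for a, b in combinations(subsets, 2)]))


X, y = make_classification(n_samples=150, n_features=200, n_informative=5,
                           random_state=0)
small = selection_stability(X[:60], y[:60])
full = selection_stability(X, y)
print(f"stability with 60 samples: {small:.2f}; with 150 samples: {full:.2f}")
```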

Problem 3: Handling Mixed Data Types (Numerical and Categorical)

- Symptoms: Your fertility dataset contains a mix of numerical (e.g., patient age, hormone levels) and categorical (e.g., ethnicity, infertility type) features, and you are unsure how to apply univariate filters.

- Solution:

- Split by Type: Separate your numerical and categorical features.

- Apply Appropriate Tests: Use ANOVA F-test or Mutual Information for numerical features against your target. Use the Chi-squared test for categorical features [20] [22].

- Combine Results: Rank the features from both groups by p-value (the raw F and χ² statistics are on different scales and cannot be compared directly), then select the top k from the combined list, or select a pre-defined number from each group.

Workflow Visualization

The following diagram illustrates a typical feature selection pipeline that incorporates univariate statistical filters as an initial step, within the context of building a fertility prediction model.

Research Reagent Solutions

Table 2: Essential Tools for Feature Selection Experiments

| Tool / Reagent | Function / Description | Example in Research Context |
|---|---|---|
| scikit-learn Library [22] | A comprehensive open-source machine learning library for Python. Provides unified implementations of feature selection algorithms. | Used for implementing SelectKBest, VarianceThreshold, and SelectFromModel in a study predicting live birth from IVF treatment data [24] [22]. |
| VarianceThreshold [22] | A basic filter method that removes all features whose variance does not meet a specified threshold. Used to eliminate constant and quasi-constant features. | The first preprocessing step in a pipeline to remove non-informative genetic markers or patient questionnaire answers that show almost no variation [20] [22]. |
| SelectKBest [22] | A univariate filter that removes all but the k highest scoring features, based on a provided statistical test. | Selecting the top 500 SNPs most associated with residual feed intake in pigs from a high-dimensional genomic dataset [23]. |
| Statistical Tests (f_classif, chi2, mutual_info_classif) [22] | Scoring functions used by SelectKBest to evaluate feature importance. | Using f_classif (ANOVA) to find patient age and hormone levels most predictive of successful fertility treatment [24] [22]. |
| Pandas & NumPy | Foundational Python libraries for data manipulation and numerical computation. Essential for data cleaning and preprocessing before feature selection. | Handling and cleaning clinical data from a fertility center, including encoding categorical variables and handling missing values [24]. |

Frequently Asked Questions

Q1: What is the main advantage of wrapper methods over filter methods for feature selection? Wrapper methods evaluate feature subsets based on the performance of a specific machine learning model, allowing them to detect complex feature interactions that filter methods, which rely on intrinsic statistical properties, might miss. While computationally more expensive, this model-specific evaluation often leads to better predictive performance [25] [26].

Q2: My Sequential Feature Selection algorithm is running very slowly. How can I improve its efficiency? The computational expense stems from repeatedly training and evaluating a model. To improve efficiency:

- Set a k_features limit: Instead of testing all possible subset sizes, specify a target number of features [25] [27].

- Use a faster model: A less complex estimator (e.g., Logistic Regression over Random Forest) can speed up each iteration [25].

- Leverage cross-validation wisely: While important for robustness, setting cv=0 during initial prototyping performs evaluation on the training set only, reducing runtime [25]. Because training-set scores are optimistic, restore cross-validation before making the final selection.

Q3: When should I use floating selection methods (SFFS, SBFS) over standard forward or backward selection? Use floating methods when you suspect that a feature excluded in an early round of Sequential Forward Selection (SFS) might become valuable after other features are added, or that a feature removed in Sequential Backward Selection (SBS) should be reconsidered. The floating mechanism allows for backtracking, which can help escape local performance maxima and find a better feature subset [25].

Q4: In the context of fertility prediction, what are some common features selected by these algorithms? Research in IVF outcome prediction has identified several clinically relevant features. Studies building models for live birth prediction have found features such as maternal age, BMI, Anti-Müllerian Hormone (AMH) levels, duration of infertility, and previous pregnancy history to be highly significant [13] [24]. Wrapper methods can help refine this set further for a specific dataset and model.

Q5: How do I choose between forward selection and backward elimination? The choice often depends on the number of features in your initial dataset.

- Forward Selection starts with no features and adds them iteratively. It is computationally efficient when you suspect the optimal number of features k is much smaller than the total number of features d [25] [27].

- Backward Elimination starts with all features and removes them iteratively. It is often preferable when k is large relative to d, as it considers the impact of removing features that might be part of important interactions from the beginning [25] [27].
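The mlxtend class is discussed throughout this section; to keep the sketch dependency-light, it swaps in scikit-learn's own SequentialFeatureSelector (available since scikit-learn 0.24), which supports forward and backward search but has no floating variants. It runs both directions on synthetic data with a fixed target of four features and a fast logistic-regression estimator, and reports the features both directions agree on. Passing n_jobs to the selector can further parallelize the cross-validation.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression

X, y = make_classification(n_samples=300, n_features=12, n_informative=4,
                           n_redundant=2, random_state=0)
estimator = LogisticRegression(max_iter=1000)  # a fast model keeps each step cheap

selected = {}
for direction in ("forward", "backward"):
    sfs = SequentialFeatureSelector(estimator, n_features_to_select=4,
                                    direction=direction, scoring="roc_auc", cv=5)
    sfs.fit(X, y)
    selected[direction] = set(np.flatnonzero(sfs.get_support()).tolist())

print("forward :", sorted(selected["forward"]))
print("backward:", sorted(selected["backward"]))
print("chosen by both:", sorted(selected["forward"] & selected["backward"]))
```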

Troubleshooting Guides

Problem: Inconsistent Feature Subsets Across Different Runs or Similar Datasets

- Potential Cause: High variance in the model performance estimate, often due to a small dataset or high model instability.

- Solution:

- Use Cross-Validation: When configuring SequentialFeatureSelector, set the cv parameter to a value greater than 0 (e.g., cv=5). This provides a more robust performance estimate for each subset, leading to a more stable feature selection [25] [27].

- Increase Dataset Size: If possible, work with larger datasets to reduce the variance of performance estimates.

- Consensus Approach: Run multiple wrapper methods (e.g., SFS, SBS, SFFS) and identify the features that are consistently selected across them, as proposed in unifying approaches to feature selection [28].

Problem: Selected Feature Subset Performs Poorly on a Hold-Out Test Set

- Potential Cause: Data leakage during the feature selection process. If the feature selection algorithm had access to the entire dataset (including the test set) during its search, it may have overfitted to the noise in the data.

- Solution:

- Perform Feature Selection Within Training Folds: The most robust method is to integrate the feature selection process into a cross-validation loop. This means that for each training fold in your cross-validation, the feature selection is performed only on that training fold, and the selected features are used to transform the validation fold.

- Use Nested Cross-Validation: For an unbiased estimate of your model's generalization performance, use nested cross-validation, where an outer loop evaluates the model and an inner loop is dedicated to feature selection and hyperparameter tuning [24].
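A sketch of nested cross-validation with the wrapper placed inside the model pipeline. scikit-learn's SequentialFeatureSelector is used here in place of mlxtend's class, and the synthetic data and the grid of subset sizes are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline

X, y = make_classification(n_samples=200, n_features=10, n_informative=3,
                           random_state=1)

# The wrapper is a pipeline step, so it only sees the data it is fitted on.
pipe = Pipeline([
    ("sfs", SequentialFeatureSelector(LogisticRegression(max_iter=1000),
                                      direction="forward", cv=3)),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Inner loop: choose the subset size using only the outer-training data.
inner = GridSearchCV(pipe, param_grid={"sfs__n_features_to_select": [2, 4]},
                     cv=StratifiedKFold(n_splits=3, shuffle=True, random_state=0),
                     scoring="roc_auc")

# Outer loop: each held-out fold is untouched by selection and tuning.
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
outer_scores = cross_val_score(inner, X, y, cv=outer, scoring="roc_auc")
print(f"nested-CV AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```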

Comparative Analysis of Sequential Search Algorithms

The table below summarizes the core characteristics of different sequential wrapper methods.

| Algorithm | Initial Feature Set | Primary Operation | Key Advantage | Key Disadvantage |
|---|---|---|---|---|
| Sequential Forward Selection (SFS) | Empty | Adds the one feature that most improves model performance [25] [27]. | Computationally efficient for a small target k [27]. | Cannot remove features added in previous steps [25]. |
| Sequential Backward Selection (SBS) | Full | Removes the one feature whose removal causes the least performance drop [25] [27]. | Considers all features initially; good for large k [27]. | Cannot add back features removed in previous steps [25]. |
| Sequential Forward Floating Selection (SFFS) | Empty | Adds one feature, then conditionally removes the least important feature from the currently selected set [25]. | Can correct previous additions, often finds a better subset [25]. | More computationally expensive than SFS [25]. |
| Sequential Backward Floating Selection (SBFS) | Full | Removes one feature, then conditionally adds back the most important feature from the excluded set [25]. | Can correct previous removals, often finds a better subset [25]. | More computationally expensive than SBS [25]. |

Experimental Protocol: Applying SFS/SBS to an IVF Dataset

This protocol provides a step-by-step guide for using Sequential Feature Selection in a fertility prediction project, such as predicting live birth outcomes from IVF treatment.

1. Data Preparation and Baseline Modeling

- Data Source: Utilize a curated clinical dataset, like the one used in the study from Shengjing Hospital, which included pre-treatment variables like age, AMH, BMI, and infertility duration [24].

- Preprocessing: Handle missing values and encode categorical variables. Scale numerical features if using a model sensitive to feature magnitudes.

- Establish Baseline: Train and evaluate your chosen model (e.g., Logistic Regression, XGBoost [24]) using all available features. This performance (e.g., 80.2% accuracy [25]) serves as a baseline for comparison.

2. Configure and Execute the Wrapper Method

- Tool Selection: Use the SequentialFeatureSelector from the mlxtend library in Python [25] [27].

- Parameter Configuration:

- estimator: Your machine learning model (e.g., LogisticRegression(max_iter=1000)).

- k_features: The number of features to select. You can specify a fixed number or a range (e.g., (3, 11)) to find the optimal value [27].

- forward: True for SFS, False for SBS.

- floating: Set to True for floating variants [25].

- scoring: The performance metric (e.g., 'accuracy', 'roc_auc').

- cv: The number of cross-validation folds for robust evaluation [25] [27].

- Execution: Fit the selector object on your training data.

3. Result Analysis and Validation

- Identify Optimal Subset: Retrieve the best feature subset using sfs.k_feature_names_ [25].

- Visualize Performance: Plot the cross-validated performance against the number of features to understand the trade-off. The following workflow diagram illustrates this process.

- Final Evaluation: Train your final model on the entire training set using only the selected features and evaluate its performance on a held-out test set that was not used during the feature selection process.
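The three protocol steps can be sketched end to end as follows. Assumptions: synthetic data with placeholder column names, ROC AUC as the metric, and a standardized logistic regression as the estimator. Plain forward selection is written out by hand so that the score for every subset size is visible (the data behind the performance-versus-size plot); mlxtend's SequentialFeatureSelector with a k_features range performs the same greedy search and reports the winning columns via k_feature_names_.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def forward_selection_path(model, X, y, max_features, cv=5, scoring="roc_auc"):
    """Greedy sequential forward selection. Returns [(subset, mean CV score)]
    for every subset size from 1 to max_features."""
    selected, remaining, path = [], list(X.columns), []
    while remaining and len(selected) < max_features:
        scores = {f: cross_val_score(model, X[selected + [f]], y, cv=cv,
                                     scoring=scoring).mean()
                  for f in remaining}
        best = max(scores, key=scores.get)  # feature giving the best CV score
        selected.append(best)
        remaining.remove(best)
        path.append((list(selected), scores[best]))
    return path


# Synthetic stand-in for pre-treatment variables; names are placeholders.
X_arr, y = make_classification(n_samples=500, n_features=11, n_informative=4,
                               n_redundant=2, random_state=7)
X = pd.DataFrame(X_arr, columns=[f"var_{i}" for i in range(11)])

# Step 1: hold out a test set before any selection takes place.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y,
                                          random_state=0)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Step 2: run the wrapper on the training split only.
path = forward_selection_path(model, X_tr, y_tr, max_features=8)
for subset, score in path:
    print(len(subset), round(score, 3))  # data for a score-vs-size plot
best_subset, best_cv = max(path, key=lambda item: item[1])

# Step 3: refit on the whole training split; score the untouched test set once.
model.fit(X_tr[best_subset], y_tr)
test_auc = roc_auc_score(y_te, model.predict_proba(X_te[best_subset])[:, 1])
print("selected:", best_subset, f"CV AUC {best_cv:.3f}, test AUC {test_auc:.3f}")
```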

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational "reagents" for implementing wrapper methods in fertility prediction research.

| Tool/Resource | Function in Experiment | Key Parameters & Notes |
|---|---|---|
| mlxtend.feature_selection.SequentialFeatureSelector [25] [27] | The core Python class for implementing SFS, SBS, and their floating variants. | k_features, forward, floating, scoring, cv. Essential for the feature selection pipeline. |
| scikit-learn-compatible estimators (e.g., LogisticRegression, XGBClassifier) [25] [24] | The predictive model used to evaluate the quality of a selected feature subset. | Model choice is critical. Simpler models (e.g., Logistic Regression) reduce computation time. |
| UCI Fertility Dataset [15] | A publicly available benchmark dataset containing 100 samples with 10 attributes related to male lifestyle and seminal quality. | Useful for initial method validation and benchmarking against published studies. |
| SHAP (SHapley Additive exPlanations) [29] | A post-selection analysis tool for interpreting the output of the final model and validating the clinical relevance of the selected features. | Helps bridge the gap between model performance and clinical interpretability. |
| Ant Colony Optimization (ACO) [15] | A nature-inspired optimization algorithm that can be used as an alternative to sequential methods for feature selection, especially in complex, high-dimensional scenarios. | Part of advanced hybrid frameworks for overcoming limitations of greedy sequential searches. |

Foundational Concepts & FAQs

This section addresses frequently asked questions about embedded feature selection methods, which integrate the selection process directly into the model training. This approach is central to building efficient and interpretable predictive models for fertility research.

Q1: What are the primary advantages of using embedded feature selection methods like L1 regularization over filter and wrapper methods?

Unlike univariate filters, embedded methods judge features jointly, within the model being fitted. Compared with wrapper searches, they also offer a significant computational advantage, particularly with the high-dimensional data commonly encountered in genomics and healthcare: Lasso (L1 regularization) can operate on datasets containing tens of thousands of variables, where subset-search methods become impractical [30]. Furthermore, because regularization operates over a continuous space rather than choosing among discrete subsets, it often produces more accurate predictive models than discrete feature selection methods [30].

Q2: In a Random Forest model for fertility prediction, how is feature importance actually calculated?

In tree-based models like Random Forest, feature importance is typically calculated using Gini Importance (also known as Mean Decrease in Impurity). As each tree is built, the algorithm selects features to split the data based on criteria like Gini impurity or entropy. The importance of a feature is then the total reduction in the impurity criterion achieved by that feature, averaged across all the trees in the forest [31]. This score provides a powerful, model-integrated measure of which features (e.g., age, parity, education level) contribute most to predicting fertility preferences.
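A minimal sketch of reading Gini (mean decrease in impurity) importances from a fitted scikit-learn random forest; the synthetic data and placeholder column names are assumptions.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

X_arr, y = make_classification(n_samples=500, n_features=6, n_informative=3,
                               n_redundant=0, shuffle=False, random_state=0)
X = pd.DataFrame(X_arr, columns=[f"x{i}" for i in range(6)])  # placeholder names

rf = RandomForestClassifier(n_estimators=300, random_state=0).fit(X, y)

# feature_importances_ is the mean decrease in impurity: within each tree, the
# sample-weighted impurity reduction credited to each feature (normalized per
# tree), averaged over the forest and renormalized so the values sum to 1.
mdi = pd.Series(rf.feature_importances_, index=X.columns).sort_values(ascending=False)
print(mdi.round(3))
```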

Q3: My Lasso regression model is forcing all coefficients to zero. What could be the cause and how can I address it?

This behavior is typically caused by an excessive regularization strength (λ, called alpha in scikit-learn). Lasso adds a penalty proportional to the sum of the absolute values of the coefficients, and if this penalty is too strong, it shrinks every coefficient to exactly zero [32] [30]. To address this:

- Systematic Tuning: Use cross-validation to find the optimal regularization parameter. The LassoCV class in Python automates this process [33].

- Check Feature Scaling: The performance of Lasso is highly sensitive to the scale of the features. Ensure all features are standardized (e.g., using StandardScaler) before training [33].

- Consider Alternative Models: If you suspect many features are relevant, Ridge regression or Elastic Net (which combines L1 and L2 penalties) might be more appropriate, as they handle correlated predictors differently [30].
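A minimal sketch of both the symptom and the fix, assuming synthetic regression data: a fixed, overly large alpha zeroes every coefficient, while LassoCV chooses alpha by 5-fold cross-validation over an automatically generated grid, with StandardScaler applied first.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, LassoCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 20 features, 5 with truly non-zero coefficients.
X, y = make_regression(n_samples=200, n_features=20, n_informative=5,
                       noise=10.0, random_state=0)

# Overly strong penalty: every coefficient is driven to exactly zero.
too_strong = make_pipeline(StandardScaler(), Lasso(alpha=1e4)).fit(X, y)
print("non-zero (alpha=1e4):", np.count_nonzero(too_strong[-1].coef_))

# Let cross-validation choose alpha instead.
tuned = make_pipeline(StandardScaler(), LassoCV(cv=5)).fit(X, y)
lasso = tuned[-1]
print(f"chosen alpha: {lasso.alpha_:.3f}; non-zero: {np.count_nonzero(lasso.coef_)}")
```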

Q4: How can I visually communicate the results of feature importance from an embedded method to a non-technical audience?

Creating clear visualizations is key to communicating your findings:

- Bar Charts: The most common and intuitive method is a bar chart where each bar represents a feature and its height corresponds to the feature's importance score [31].

- SHAP (SHapley Additive exPlanations): For complex models, SHAP values can provide a unified measure of feature importance and show the direction of each feature's impact (positive or negative). This is invaluable for interpreting models in fertility research, showing, for instance, how an increase in age or parity influences the prediction [34].
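For the bar-chart option, a minimal matplotlib sketch. The importance values are placeholders, not results from any cited study, and the off-screen Agg backend is an assumption for script use.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; omit this line in a notebook
import matplotlib.pyplot as plt
import pandas as pd


def importance_bar_chart(importances: pd.Series, title: str):
    """Horizontal bar chart with the most important feature at the top."""
    s = importances.sort_values()
    fig, ax = plt.subplots(figsize=(6, 0.4 * len(s) + 1))
    ax.barh(s.index, s.values, color="#4285F4")
    ax.set_xlabel("Importance")
    ax.set_title(title)
    fig.tight_layout()
    return fig, ax


# Placeholder scores, e.g. copied from a fitted model's feature_importances_.
scores = pd.Series({"age": 0.31, "parity": 0.22, "education": 0.12,
                    "region": 0.08, "wealth_index": 0.07})
fig, ax = importance_bar_chart(scores, "Predictors of fertility preference")
fig.savefig("feature_importance.png", dpi=200)
```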

Troubleshooting Common Experimental Issues

The table below outlines common problems, their potential diagnoses, and recommended solutions based on established experimental protocols.

Table 1: Troubleshooting Guide for Embedded Feature Selection Experiments

| Problem | Potential Diagnosis | Recommended Solution |
|---|---|---|
| Model performance degrades after feature selection. | The selection process may have been too aggressive, removing informative features. | Use a smaller alpha (a weaker L1 penalty) in Lasso, or employ Elastic Net to retain more features. Combine embedded-method results with domain knowledge to validate the features removed [35]. |
| Feature importance rankings are inconsistent between different runs or algorithms. | High correlation between features (multicollinearity) can make rankings unstable. Data resampling can also introduce variance. | Use permutation importance on held-out data, which avoids the bias of impurity-based importance toward high-cardinality features [31]. Permuting one of two strongly correlated features understates its importance, so group correlated features (e.g., by hierarchical clustering on their correlations) and keep one representative per group. Report results over multiple runs or random seeds and calculate average rankings. |
| Tree-based model (e.g., Random Forest) shows low feature importance for all predictors. | In scikit-learn, impurity-based importances are normalized to sum to 1, so uniformly low scores mean the model uses many features weakly, or the dataset has a low signal-to-noise ratio. | Increase the depth of the trees or use the min_impurity_decrease parameter to enforce more selective splits. Verify the predictive power of your dataset with a simpler model first. |
| Lasso regression selects different features for slight variations in the training data. | The L1 penalty is known to be unstable with highly correlated features, tending to pick one feature from a correlated group somewhat arbitrarily. | Use bootstrap aggregating (bagging) with Lasso and keep the features selected in a high fraction of resamples. Alternatively, switch to Elastic Net, which tends to keep correlated features together. Ridge stabilizes coefficients but never sets them to zero, so it does not perform feature selection [30]. |
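The bagging remedy in the last row can be sketched by refitting Lasso on bootstrap resamples and recording how often each feature keeps a non-zero coefficient. This is a simplified form of stability selection. The fixed `alpha`, the number of resamples, and the function name `bootstrap_lasso_frequency` are illustrative assumptions, not values from the cited studies.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def bootstrap_lasso_frequency(X, y, alpha=0.1, n_boot=100, random_state=0):
    """Fraction of bootstrap refits in which each feature has a non-zero Lasso coefficient."""
    rng = np.random.default_rng(random_state)
    n_samples, n_features = X.shape
    counts = np.zeros(n_features)
    for _ in range(n_boot):
        idx = rng.integers(0, n_samples, size=n_samples)  # resample rows with replacement
        model = make_pipeline(StandardScaler(), Lasso(alpha=alpha, max_iter=10_000))
        model.fit(X[idx], y[idx])
        counts += model[-1].coef_ != 0  # count features that survived the L1 penalty
    return counts / n_boot
```

The consensus set consists of the features selected in a large fraction of resamples. A cut-off such as 0.8 is common but arbitrary.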

Experimental Protocols & Methodologies

This section details standardized protocols for implementing embedded feature selection, drawing from successful applications in predictive modeling.

Protocol for L1 Regularization (Lasso) in Fertility Prediction

This protocol is adapted from studies applying machine learning to predict fertility preferences using demographic and health survey data [34].

Data Preprocessing:

- Handle missing values using imputation suitable for your data (e.g., mean/mode for continuous/categorical variables).

- Standardize Features: Scale all continuous predictor variables (e.g., age, number of children, distance to health facility) to have a mean of 0 and a standard deviation of 1. This is critical for Lasso, as it is sensitive to feature scale [33].
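A sketch of this preprocessing step with scikit-learn follows. It assumes a pandas DataFrame with named continuous and categorical columns. One-hot encoding the categorical columns is an added assumption, because Lasso needs numeric inputs. The function name `build_preprocessor` is hypothetical. Fit the transformer on the training split only and reuse it on the test split, so that imputation means and scaling statistics do not leak from the test data.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler


def build_preprocessor(continuous_cols, categorical_cols):
    continuous = Pipeline([
        ("impute", SimpleImputer(strategy="mean")),  # mean imputation
        ("scale", StandardScaler()),  # mean 0, SD 1: Lasso is scale-sensitive
    ])
    categorical = Pipeline([
        ("impute", SimpleImputer(strategy="most_frequent")),  # mode imputation
        ("onehot", OneHotEncoder(handle_unknown="ignore")),  # numeric dummies for Lasso
    ])
    return ColumnTransformer(
        [("cont", continuous, continuous_cols), ("cat", categorical, categorical_cols)],
        sparse_threshold=0,  # always return a dense array
    )


# Hypothetical survey-style data with missing values
df = pd.DataFrame({
    "age": [25, np.nan, 35, 40],
    "children": [0, 2, np.nan, 3],
    "region": ["urban", "rural", np.nan, "urban"],
})
pre = build_preprocessor(["age", "children"], ["region"])
Xt = pre.fit_transform(df)  # in practice: fit on the training split only
```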

Model Training with Cross-Validation:

- Employ `LassoCV` from the `scikit-learn` library, which uses cross-validation to find the optimal regularization parameter `alpha`.

- Fit the model on the training data. `LassoCV` automatically evaluates a grid of candidate `alpha` values along the regularization path.

Feature Selection & Interpretation:

- Extract the model's `coef_` attribute. Features with a coefficient of zero have been eliminated by the L1 penalty.

- The non-zero coefficients represent the selected feature set. Their sign indicates the direction, and their magnitude (on the standardized scale) the strength, of the relationship with the target variable (e.g., desire for more children).
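The training and selection steps can be combined in a short sketch. It assumes a continuous target. For a binary outcome such as desire for more children, the analogous tool is L1-penalized logistic regression, for example `LogisticRegressionCV` with `penalty="l1"` and the `"liblinear"` or `"saga"` solver. The function name `lasso_select`, the 80/20 split, and five-fold CV are illustrative choices.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def lasso_select(X, y, feature_names, cv=5, random_state=0):
    """Fit LassoCV on standardized features; return alpha, selected coefficients, test R^2."""
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=random_state
    )
    model = make_pipeline(
        StandardScaler(),  # learned from the training split only
        LassoCV(cv=cv, random_state=random_state, max_iter=10_000),
    )
    model.fit(X_train, y_train)
    lasso = model[-1]
    # Zero coefficients were eliminated by the L1 penalty; keep the rest
    selected = {name: float(c) for name, c in zip(feature_names, lasso.coef_) if c != 0}
    return lasso.alpha_, selected, model.score(X_test, y_test)
```

Keeping the scaler and the estimator in one pipeline ensures the test split is transformed with statistics learned from the training split only.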

The workflow below visualizes this protocol.

Protocol for Tree-Based Feature Importance

This protocol is based on standard practices for using Random Forest, a common algorithm in fertility and health prediction studies [34] [31].

Model Training:

- Train a `RandomForestClassifier` or `RandomForestRegressor` on your data. No feature standardization is required for tree-based models.

- Ensure the forest is sufficiently large (e.g., `n_estimators=100` or more) for stable importance estimates.

Importance Calculation:

- After training, the feature importance scores (computed via mean decrease in impurity) are available in the `feature_importances_` attribute.
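The training and impurity-based importance steps can be sketched as follows. The function name `rf_impurity_importance` and the choice of 200 trees are illustrative assumptions. In scikit-learn these scores are normalized to sum to 1.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier


def rf_impurity_importance(X, y, feature_names, n_estimators=200, random_state=0):
    """Fit a Random Forest and rank features by mean decrease in impurity."""
    rf = RandomForestClassifier(n_estimators=n_estimators, random_state=random_state)
    rf.fit(X, y)  # trees need no feature standardization
    importances = rf.feature_importances_
    order = np.argsort(importances)[::-1]  # most important first
    return rf, [(feature_names[i], float(importances[i])) for i in order]
```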

Validation via Permutation:

- For a more reliable estimate of importance, use permutation importance. Impurity-based scores are computed on the training data and are biased toward high-cardinality features.

- Using `sklearn.inspection.permutation_importance`, shuffle the values of each feature one at a time on a held-out set and measure the drop in the model's performance (e.g., accuracy). A large drop indicates an important feature [31].
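The permutation check can be sketched with `sklearn.inspection.permutation_importance`, computed on a held-out split rather than on the training data. The 75/25 stratified split, accuracy as the metric, and the helper name `rf_permutation_importance` are assumptions for illustration.

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split


def rf_permutation_importance(X, y, feature_names, n_repeats=10, random_state=0):
    """Rank features by the mean accuracy drop when each is shuffled on held-out data."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.25, stratify=y, random_state=random_state
    )
    rf = RandomForestClassifier(n_estimators=200, random_state=random_state).fit(X_tr, y_tr)
    result = permutation_importance(
        rf, X_te, y_te, scoring="accuracy", n_repeats=n_repeats, random_state=random_state
    )
    order = result.importances_mean.argsort()[::-1]  # largest drop first
    return [
        (feature_names[i], float(result.importances_mean[i]), float(result.importances_std[i]))
        for i in order
    ]
```

Treat a feature as unimportant if its mean importance is small relative to its standard deviation across repeats.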

The following diagram illustrates the validation of feature importance.

The Scientist's Toolkit: Research Reagent Solutions

The table below catalogs essential computational "reagents" for conducting experiments with embedded feature selection methods.

Table 2: Essential Tools for Embedded Feature Selection Research

| Tool/Reagent | Function | Application Context |
|---|---|---|
| scikit-learn (Python) | A comprehensive machine learning library. | Provides implementations for LassoCV, RandomForest, and permutation importance, forming the core toolkit for applying these methods [33] [31]. |
| SHAP (SHapley Additive exPlanations) | A game theory-based library for explaining model predictions. | Critical for interpreting complex models post-feature selection, revealing how each feature contributes to an individual prediction in fertility studies [34]. |
| Pandas (Python) | A fast, powerful data analysis and manipulation tool. | Used for loading, cleaning, and managing structured data (e.g., from demographic surveys) before model training [32]. |
| Matplotlib/Seaborn (Python) | Libraries for creating static, animated, and interactive visualizations. | Essential for generating feature importance bar charts, correlation heatmaps, and other diagnostic plots to communicate results [33] [31]. |

Troubleshooting Guides and FAQs

Q1: Why is my hybrid feature selection model underperforming compared to individual methods?

A: This is often due to incorrect aggregation of results from the different feature selection phases. When combining filter, wrapper, and embedded methods using Hesitant Fuzzy Sets (HFS), ensure you are properly handling the hesitant information from multiple decision sources.

- Solution: Implement a weighted aggregation strategy for HFS that accounts for the performance of each base method. For example, assign higher weights to methods that demonstrate better performance on a validation set. A sample workflow is below:

- Experimental Protocol:

- Phase 1 - Individual Selection: Run Filter (e.g., Information Gain), Wrapper (e.g., Sequential Forward Selection), and Embedded (e.g., Lasso) methods independently.

- Phase 2 - HFS Scoring: For each feature, create a Hesitant Fuzzy Set that contains the membership degrees assigned by the different methods, after normalizing each method's raw scores to the interval [0, 1].

- Phase 3 - Weight Assignment: Use a validation set to evaluate the performance of a simple classifier (e.g., k-NN) using the top-k features from each method. Assign weights to each method based on its achieved accuracy (e.g., higher accuracy gets a higher weight).

- Phase 4 - Aggregation: Calculate the final score for each feature using a weighted aggregation operator (like the hesitant fuzzy weighted average) on the HFS. Select the features with the highest final scores.
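Phases 2 to 4 can be sketched under two labeled assumptions. First, each method's raw scores are mapped to membership degrees in (0, 1) by percentile rank. Min-max scaling would give every method's top feature a degree of exactly 1, which saturates the operator. Second, each method contributes one membership value γ_m per feature. These values are combined with the hesitant fuzzy weighted average formula 1 - Π_m (1 - γ_m)^(w_m), where w_m is method m's validation accuracy normalized so the weights sum to 1. All function names are hypothetical.

```python
import numpy as np
from scipy.stats import rankdata


def to_membership(scores):
    """Map raw scores to membership degrees in (0, 1) via percentile ranks (ties averaged)."""
    s = np.asarray(scores, dtype=float)
    return (rankdata(s) - 0.5) / len(s)


def hfwa_scores(method_scores, method_accuracy):
    """Aggregate per-method memberships with the hesitant fuzzy weighted average.

    For single-valued memberships gamma_m and weights w_m summing to 1:
    HFWA = 1 - prod_m (1 - gamma_m) ** w_m
    """
    methods = list(method_scores)
    gamma = np.vstack([to_membership(method_scores[m]) for m in methods])  # (methods, features)
    w = np.array([method_accuracy[m] for m in methods], dtype=float)
    w = w / w.sum()  # Phase 3: weights proportional to validation accuracy
    return 1.0 - np.prod((1.0 - gamma) ** w[:, None], axis=0)


def top_k(features, agg, k):
    """Phase 4: names of the k features with the highest aggregated score."""
    order = np.argsort(agg)[::-1][:k]
    return [features[i] for i in order]


# Hypothetical raw scores from the three base methods and their validation accuracies
scores = {"filter": [0.0, 0.5, 1.0], "wrapper": [1, 2, 3], "embedded": [0.1, 0.2, 0.9]}
accuracy = {"filter": 0.70, "wrapper": 0.80, "embedded": 0.75}
agg = hfwa_scores(scores, accuracy)
```

When all methods agree on a feature's membership, the aggregate equals that membership. When they disagree, the more accurate methods pull the score toward their own assessment.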

Q2: How do I handle high computational cost in the wrapper phase of my hybrid pipeline for large fertility datasets?

A: The wrapper method, while effective, is computationally expensive because it repeatedly trains a model to evaluate feature subsets.

Solution: Optimize the search process using a metaheuristic algorithm and use the filter method to pre-reduce the feature space.

- Use a filter method as a pre-processing step to eliminate clearly irrelevant features, thus reducing the search space for the wrapper method.

- Implement a metaheuristic wrapper like the Artificial Bee Colony (ABC) or Genetic Algorithm (GA) instead of an exhaustive search. These can find near-optimal feature subsets with fewer evaluations [36].

- Use a robust evaluator such as Random Forest within the wrapper. It handles moderate-sized datasets effectively and also provides feature importance scores [37].

Experimental Protocol for Efficient Hybrid Selection:

- Pre-filtering: Apply a filter method (e.g., Information Gain or Chi-squared) to the original dataset and retain only the top 60% of features.

- Metaheuristic Wrapper: On the reduced feature set, run a Genetic Algorithm wrapper:

  - Encoding: Represent a feature subset as a binary chromosome.

  - Fitness Function: Use the accuracy of a Random Forest classifier with 10-fold cross-validation.

  - Operators: Use standard crossover and mutation (e.g., single-point crossover and bit-flip mutation).

  - Stopping Criterion: Run for a maximum of 50 generations or until fitness plateaus.
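Here is a compact sketch of this protocol. It uses scikit-learn's `mutual_info_classif` as a stand-in for Information Gain, together with a hand-written GA. Tournament selection, elitism, the mutation rate, and the `patience` rule for detecting a plateau are all assumptions, as are the function names. The same cross-validated accuracy drives the search, so it is optimistically biased. Evaluate the final subset on a held-out test set that the GA never saw.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import mutual_info_classif
from sklearn.model_selection import cross_val_score


def prefilter(X, y, keep_frac=0.6, random_state=0):
    """Indices of the top `keep_frac` features ranked by mutual information."""
    mi = mutual_info_classif(X, y, random_state=random_state)
    k = max(1, int(round(keep_frac * X.shape[1])))
    return np.sort(np.argsort(mi)[::-1][:k])


def fitness(mask, X, y, cv, n_trees, seed):
    """Mean k-fold CV accuracy of a Random Forest on the features switched on in `mask`."""
    if not mask.any():
        return 0.0
    rf = RandomForestClassifier(n_estimators=n_trees, random_state=seed)
    return cross_val_score(rf, X[:, mask], y, cv=cv, scoring="accuracy").mean()


def tournament(pop, scores, rng):
    """Binary tournament: the fitter of two random chromosomes becomes a parent."""
    i, j = rng.integers(len(pop), size=2)
    return pop[i] if scores[i] >= scores[j] else pop[j]


def ga_wrapper(X, y, pop_size=20, generations=50, cv=10, p_mut=0.05,
               patience=10, n_trees=100, seed=0):
    rng = np.random.default_rng(seed)
    n = X.shape[1]
    pop = rng.random((pop_size, n)) < 0.5  # each row is a binary chromosome
    scores = np.array([fitness(m, X, y, cv, n_trees, seed) for m in pop])
    best_mask, best_score, stale = pop[scores.argmax()].copy(), scores.max(), 0
    for _gen in range(generations):
        children = []
        for _child in range(pop_size - 1):  # one slot is reserved for the elite
            a, b = tournament(pop, scores, rng), tournament(pop, scores, rng)
            cut = rng.integers(1, n) if n > 1 else 0  # single-point crossover
            child = np.concatenate([a[:cut], b[cut:]])
            child ^= rng.random(n) < p_mut  # bit-flip mutation
            children.append(child)
        children.append(best_mask.copy())  # elitism: keep the best subset so far
        pop = np.array(children)
        scores = np.array([fitness(m, X, y, cv, n_trees, seed) for m in pop])
        if scores.max() > best_score:
            best_mask, best_score, stale = pop[scores.argmax()].copy(), scores.max(), 0
        else:
            stale += 1
            if stale >= patience:  # fitness has plateaued
                break
    return best_mask, best_score


def hybrid_select(X, y, **ga_kwargs):
    """Pre-filter to 60% of features, then run the GA wrapper on the reduced set."""
    kept = prefilter(X, y)
    mask, score = ga_wrapper(X[:, kept], y, **ga_kwargs)
    return kept[mask], score  # indices into the original feature set
```

The defaults mirror the protocol: 10-fold CV and at most 50 generations. For quick experiments, smaller values of `pop_size`, `generations`, `cv`, and `n_trees` shorten the run considerably.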

Q3: How can I ensure my hybrid HFS model is interpretable for clinical experts in fertility treatment?

A: The "black-box" nature of complex models can hinder clinical adoption. Interpretability can be achieved by providing clear feature importance scores and using explainable AI (XAI) techniques.

Solution: Employ a two-step interpretability process:

- Feature Importance Ranking: After the HFS aggregation, provide a final, ranked list of selected features. This list is inherently interpretable.

- Post-hoc Explanation: Use SHapley Additive exPlanations (SHAP) on the final predictive model to explain how each selected feature contributes to individual predictions [29].

Experimental Protocol for SHAP Analysis:

- Train your final fertility prediction model (e.g., a Random Forest) using the features selected by your hybrid HFS method.

- Create a SHAP explainer object (e.g., `TreeExplainer` for Random Forest) using the trained model.

- Calculate SHAP values for a representative sample of your test dataset.

- Generate summary plots to show the global importance of features and force plots to explain individual predictions for specific patient cases.
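A brute-force sketch can make the quantity that SHAP estimates concrete without depending on the `shap` package. It computes exact Shapley values with an interventional value function: the average prediction over background rows when the coalition's features are fixed to the patient's values. The cost doubles with every added feature, so the sketch suits only a handful of features or sanity checks. For a Random Forest, `shap.TreeExplainer` computes tree SHAP values efficiently. The function name `exact_shapley` is hypothetical.

```python
from itertools import combinations
from math import factorial

import numpy as np


def exact_shapley(predict, x, background):
    """Exact Shapley values of `predict` at instance `x`.

    Value function: v(S) = mean over background rows of the prediction when the
    features in S are set to x's values. Cost grows as 2 ** n_features.
    """
    x = np.asarray(x, dtype=float)
    background = np.asarray(background, dtype=float)
    n = x.shape[0]

    def value(subset):
        data = background.copy()
        idx = list(subset)
        if idx:
            data[:, idx] = x[idx]  # overwrite the coalition's features with x
        return float(np.mean(predict(data)))

    phi = np.zeros(n)
    for j in range(n):
        others = [k for k in range(n) if k != j]
        for size in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                phi[j] += weight * (value(subset + (j,)) - value(subset))
    return phi
```

For a fitted classifier, pass a probability function, e.g. `exact_shapley(lambda X: rf.predict_proba(X)[:, 1], X_test[0], X_train[:100])`. The values sum to the patient's prediction minus the average background prediction. For a linear model, each value reduces to coefficient × (feature value - background mean), which makes a useful check.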

Performance Comparison of Feature Selection Methods

The table below summarizes the performance of various feature selection methods as reported in recent literature, particularly in the context of fertility and biomedical diagnostics.

Table 1: Performance Comparison of Different Feature Selection Approaches

| Feature Selection Method | Reported Accuracy | Number of Selected Features | Key Strengths | Application Context |
|---|---|---|---|---|
| Hybrid Filter (HFS + Rough Sets) | N/A | Significant reduction reported [38] | Handles high-dimensional, noisy data; manages uncertainty. | Microarray data classification [38] |
| Hybrid Filter-Wrapper (Ensemble Filter + ABC+GA) | High precision and fitness score [36] | Minimal feature subset [36] | Balances exploration & exploitation; helps avoid local optima. | Text classification [36] |
| Hybrid Feature Selection (HFS + Random Forest) | 79.5% [39] | 7 [39] | Identifies clinically relevant factors; uses multi-center data. | IVF/ICSI success prediction [39] |
| Embedded (Lasso Regularization) | N/A | Varies with penalty [37] | Intrinsic feature selection during model training; fast. | General-purpose / Medical data [37] |
| Embedded (Random Forest Importance) | N/A | Varies with threshold [37] | Predictions are robust to multicollinearity, although importance is split among correlated features; provides importance measures. | General-purpose / Medical data [37] |
| Wrapper (GA) with Deep Learning | 76% [13] | N/A | Personalized predictions; handles complex feature interactions. | Initial IVF cycle success prediction [13] |
| PSO + TabTransformer | 97% [29] | N/A | High accuracy and AUC; model interpretability via SHAP. | IVF live birth prediction [29] |

The Scientist's Toolkit: Essential Reagents & Algorithms

Table 2: Key Research Reagents and Computational Tools for Hybrid Feature Selection Experiments

| Item / Algorithm Name | Function / Purpose | Specifications / Notes |
|---|---|---|
| Hesitant Fuzzy Sets (HFS) | A framework to model and aggregate uncertainty from multiple feature selection methods. | Allows a set of possible values for membership degree; crucial for combining filter/wrapper/embedded scores [39] [38]. |
| Ant Colony Optimization (ACO) | A nature-inspired metaheuristic used in wrapper methods to efficiently search for optimal feature subsets. | Mimics ant foraging behavior; effective for combinatorial optimization problems like feature selection [15]. |
| Genetic Algorithm (GA) | A population-based metaheuristic for wrapper feature selection. | Uses selection, crossover, and mutation to evolve high-performing feature subsets over generations [36]. |
| Lasso (L1) Regularization | An embedded feature selection method whose L1 penalty can shrink the coefficients of less important features exactly to zero. | Implemented in sklearn.linear_model.Lasso (regression) or LogisticRegression with penalty='l1' and a compatible solver such as 'liblinear' or 'saga' (classification) [37]. |
| Random Forest Classifier | A powerful ensemble learning algorithm used both as a predictor and for deriving embedded feature importance scores. | Impurity-based importance is the total decrease in node impurity from splits on a feature, weighted by the probability of reaching each node and averaged over all trees [37]. |
| SHAP (SHapley Additive exPlanations) | A unified framework for interpreting the output of any machine learning model. | Used to explain the contribution of each selected feature to the final prediction, building trust with clinicians [29]. |
| StandardScaler | A pre-processing tool to standardize features by removing the mean and scaling to unit variance. | Essential when using methods like Lasso that are sensitive to the scale of features [37]. |

FAQs: Troubleshooting Common Experimental Issues

Q1: My high-dimensional fertility dataset is causing my PSO algorithm to converge slowly. What can I do?

A1: This is a classic "curse of dimensionality" problem. Implement a dynamic dimension-reduction strategy using Principal Component Analysis (PCA). Rather than applying PCA once as a static pre-processing step, periodically execute a modified PCA after a fixed number of PSO iterations. This dynamically identifies the most important dimensions during the optimization process, focusing the computational effort and accelerating convergence. One study reports that this cooperative method reduces computational cost by at least 40% compared with standard PSO [40].