Optimizing Hyperparameters for Infertility Prediction Models: Advanced Methods and Clinical Applications


Abstract

This article provides a comprehensive guide to hyperparameter optimization (HPO) methods for developing robust machine learning models in infertility prediction. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of HPO, details advanced methodologies like Bayesian optimization and population-based algorithms, and addresses critical troubleshooting and optimization challenges. The content further covers rigorous validation strategies and performance comparisons, synthesizing insights from recent studies on predicting outcomes such as live birth, blastocyst formation, and ovarian reserve. By bridging technical machine learning processes with clinical application needs, this resource aims to enhance the accuracy, interpretability, and clinical utility of predictive models in reproductive medicine.

The Critical Role of Hyperparameter Optimization in Reproductive Medicine AI

Defining Hyperparameter Optimization and Its Impact on Model Performance

In the specialized field of infertility prediction research, developing robust machine learning models is paramount for advancing diagnostic and prognostic capabilities. Hyperparameter optimization stands as a critical, yet often challenging, step in this process. Unlike model parameters learned during training, hyperparameters are configuration variables set prior to the training process that control the learning algorithm's behavior. Their optimal selection is not merely a technical exercise; it directly governs a model's ability to uncover complex, non-linear relationships in clinical data, ultimately impacting the predictive accuracy that informs patient counseling and treatment strategies [1] [2]. This technical support center is designed to guide researchers through the intricacies of hyperparameter optimization, providing clear methodologies and troubleshooting advice tailored to the unique challenges of reproductive medicine data.

Core Concepts and Quantitative Comparisons

What is Hyperparameter Optimization and Why is it Critical for Infertility Prediction Models?

Hyperparameter optimization is the systematic process of searching for the ideal set of hyperparameters that maximize a model's performance on a given task. In the context of infertility research, this is crucial because these models often deal with high-dimensional clinical data where complex, non-linear interactions between features—such as female age, anti-Müllerian hormone (AMH) levels, and embryo morphology—determine outcomes like blastocyst formation or live birth [3] [2]. Selecting appropriate hyperparameters ensures the model is neither too simple (underfitting) nor too complex (overfitting), allowing it to generalize well to new patient data and provide reliable clinical insights.

What are the Common Hyperparameter Optimization Techniques?

Researchers have several strategies at their disposal, each with distinct advantages and computational trade-offs. The table below summarizes the primary methods.

Table 1: Comparison of Common Hyperparameter Optimization Techniques

| Technique | Core Principle | Advantages | Disadvantages | Ideal Use Case in Infertility Research |
|---|---|---|---|---|
| Grid Search [4] | Exhaustive search over a predefined set of hyperparameter values. | Simple, comprehensive, guarantees finding the best combination within the grid. | Computationally expensive; cost grows exponentially with more parameters. | Small, well-defined hyperparameter spaces where computational resources are ample. |
| Random Search [4] | Randomly samples hyperparameter combinations from defined distributions. | More efficient than grid search; better for high-dimensional spaces. | Can miss the optimal combination; results may vary between runs. | Initial exploration of a large hyperparameter space with limited resources. |
| Bayesian Optimization [5] [4] | Builds a probabilistic model of the objective function to direct future searches. | Highly sample-efficient; requires fewer evaluations to find a good solution. | Higher computational overhead per iteration; more complex to implement. | Optimizing complex models like XGBoost or neural networks where each model evaluation is costly [1] [2]. |
| Genetic Algorithms [4] | Mimics natural selection by evolving a population of hyperparameter sets. | Good for complex, non-differentiable spaces; can find robust solutions. | Can require a very large number of evaluations; slow convergence. | Non-standard model architectures or highly complex, multi-modal search spaces. |

The impact of these methods is evident in real-world studies. For instance, in developing a model to predict blastocyst yield in IVF cycles, researchers tested multiple machine learning models and found that advanced algorithms like LightGBM, XGBoost, and SVM, which inherently benefit from careful hyperparameter tuning, significantly outperformed traditional linear regression (R²: 0.673–0.676 vs. 0.587) [3].

How Do I Choose the Right Optimization Technique for My Project?

The choice depends on your computational budget, the size of your hyperparameter space, and the cost of evaluating a single model configuration.

- For a small number of hyperparameters (e.g., 2-3) with limited value ranges, Grid Search is a straightforward choice.

- When dealing with more than 3-4 hyperparameters, Random Search is typically more efficient.

- If you are training a single, large model where each training cycle takes hours or days (e.g., a deep neural network), Bayesian Optimization is the preferred method due to its sample efficiency.
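To make the trade-off concrete, the sketch below counts how many fits an exhaustive grid needs and encodes the rule of thumb from the list above. The function names and the cutoffs (3 hyperparameters, 1 hour per fit) are illustrative assumptions, not values taken from the cited studies.

```python
from math import prod


def grid_size(grid):
    """Number of model fits an exhaustive grid needs (before multiplying by CV folds)."""
    return prod(len(values) for values in grid.values())


def suggest_hpo_method(n_hyperparameters, hours_per_fit):
    """Rule of thumb from the text; the numeric cutoffs are assumptions."""
    if hours_per_fit >= 1.0:
        return "bayesian"  # each evaluation is costly, so sample efficiency matters most
    if n_hyperparameters <= 3:
        return "grid"      # small space: exhaustive search is affordable
    return "random"        # larger space: random sampling covers it more efficiently


small_grid = {"max_depth": [3, 5, 7], "learning_rate": [0.01, 0.1]}
large_grid = {name: [1, 2, 3, 4, 5] for name in ("a", "b", "c", "d", "e", "f")}

print(grid_size(small_grid))  # 6
print(grid_size(large_grid))  # 15625
```

Going from two to six hyperparameters with five values each already pushes the grid past fifteen thousand fits, which is why grid search is only recommended for small spaces.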

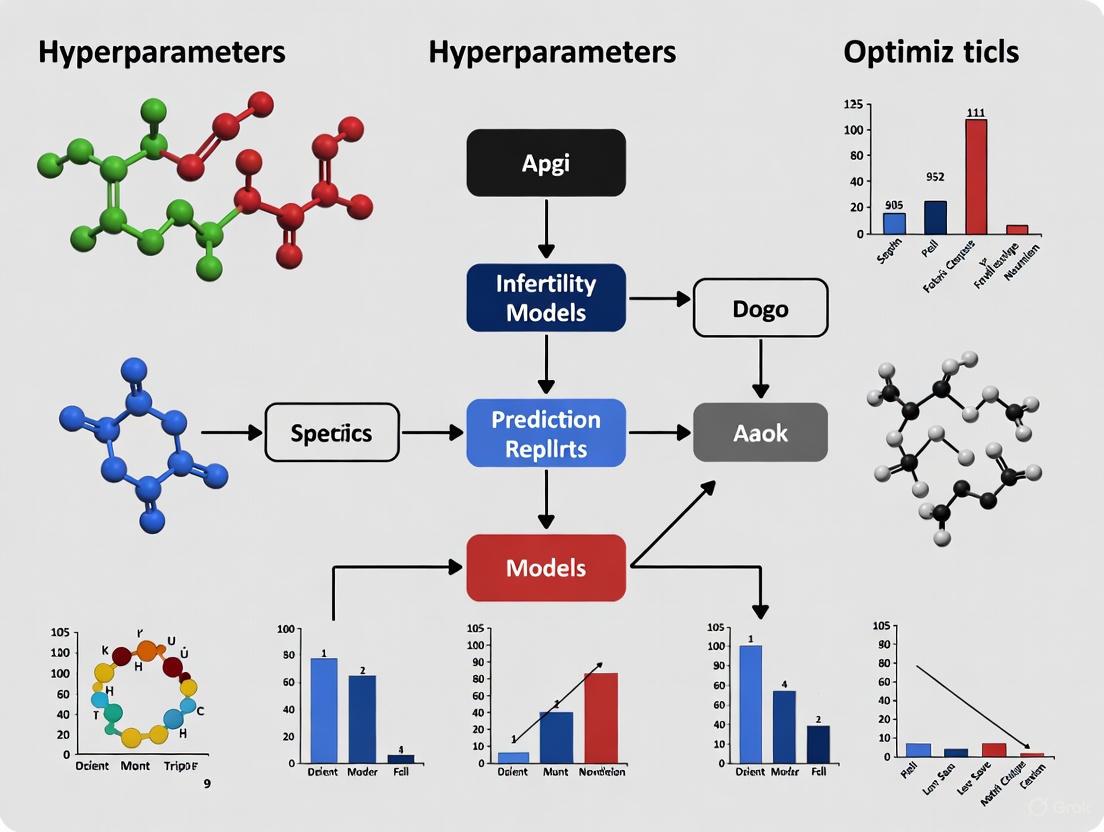

The following workflow diagram outlines a decision-making process for selecting an optimization technique.

Troubleshooting Common Experimental Issues

My Model is Overfitting Despite Hyperparameter Tuning. What Can I Do?

Overfitting indicates your model has learned the noise in your training data rather than the underlying signal. Beyond hyperparameter tuning, consider these steps:

- Simplify the Model: Directly tune hyperparameters that control model complexity. For tree-based models like XGBoost or Random Forest, this includes increasing `min_child_weight` or `min_samples_split`, and decreasing `max_depth` [1] [4].

- Increase Regularization: Most algorithms have regularization hyperparameters. For XGBoost, increase `gamma`, `reg_alpha`, and `reg_lambda`. For neural networks, increase dropout rates or L2 regularization factors [4].

- Re-evaluate Your Data: Ensure your training dataset is large and representative enough. Use techniques like cross-validation during tuning to get a more robust estimate of model performance [1] [2].
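A quick way to see whether complexity limits help is to compare the gap between training and validation accuracy with and without them. The sketch below does this with scikit-learn's `RandomForestClassifier` on synthetic data; the dataset, the noise level, and the specific parameter values are assumptions chosen only for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a clinical table; flip_y injects label noise.
X, y = make_classification(n_samples=600, n_features=20, n_informative=5,
                           flip_y=0.2, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)


def train_val_gap(**params):
    """Return training accuracy minus validation accuracy for one setting."""
    model = RandomForestClassifier(n_estimators=100, random_state=0, **params)
    model.fit(X_train, y_train)
    return model.score(X_train, y_train) - model.score(X_val, y_val)


gap_unconstrained = train_val_gap(max_depth=None, min_samples_split=2)
gap_constrained = train_val_gap(max_depth=3, min_samples_split=20)

print(f"gap without limits: {gap_unconstrained:.3f}")
print(f"gap with limits:    {gap_constrained:.3f}")
```

A shrinking gap suggests the limits are reducing memorization of noise; whether validation performance itself improves still has to be checked separately.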

The Optimization Process is Taking Too Long. How Can I Speed It Up?

Computational cost is a major constraint. To improve efficiency:

- Use a Faster Technique: Switch from Grid Search to Random Search or Bayesian Optimization [4].

- Reduce the Search Space: Start with a wider, coarser search to identify promising regions, then perform a finer-grained search in those areas. Leverage domain knowledge to set intelligent initial bounds for hyperparameters.

- Parallelize: Most optimization libraries (e.g., `scikit-learn`, `Optuna`) support parallelization. Distribute the evaluation of different hyperparameter sets across multiple CPU cores or machines [4].

- Use a Subset of Data: For initial exploration, use a smaller but representative subset of your training data to quickly rule out poor hyperparameter combinations.
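Several of these speed-ups can be combined in a few lines. The following sketch runs a small random search on a data subset with scikit-learn's `RandomizedSearchCV`; the synthetic data, the subset size, and the parameter ranges are assumptions, and `GradientBoostingClassifier` is used only as a readily available tree-based model.

```python
from scipy.stats import loguniform, randint
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import RandomizedSearchCV

X, y = make_classification(n_samples=2000, n_features=15, random_state=0)
# make_classification shuffles rows, so the first 400 act as a random subset.
X_small, y_small = X[:400], y[:400]

search = RandomizedSearchCV(
    GradientBoostingClassifier(random_state=0),
    param_distributions={
        "learning_rate": loguniform(0.01, 0.3),
        "max_depth": randint(2, 6),       # integers 2..5
        "n_estimators": randint(20, 80),  # integers 20..79
    },
    n_iter=8,          # number of sampled configurations
    cv=3,
    scoring="roc_auc",
    n_jobs=1,          # set to -1 to spread fits across all CPU cores
    random_state=0,
)
search.fit(X_small, y_small)
print(search.best_params_, round(search.best_score_, 3))
```

Configurations that look promising on the subset should be re-evaluated on the full training data before any conclusion is drawn.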

How Can I Ensure My Optimized Model is Clinically Relevant?

A model with high accuracy on a test set may not be useful if it does not generalize or is not interpretable for clinicians.

- Implement Rigorous Validation: Always use a held-out test set or nested cross-validation to evaluate the final model selected by hyperparameter optimization. This prevents information leakage from the tuning process and provides an unbiased performance estimate [3] [2].

- Focus on Interpretability: Use tools like SHAP (SHapley Additive exPlanations) or analyze feature importance to ensure the model's predictions are driven by clinically plausible features, such as female age and embryo quality, which aligns with biological understanding [3] [6] [2].

- Perform Subgroup Analysis: Evaluate your model's performance on key patient subgroups (e.g., advanced maternal age, poor ovarian response) to ensure it does not fail for specific populations [3].
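Subgroup analysis in particular is easy to automate. The sketch below computes AUC separately for each subgroup label; the helper name `auc_by_subgroup` and the toy age groups are hypothetical and only show the mechanics.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def auc_by_subgroup(y_true, y_prob, groups):
    """AUC per subgroup; None when a subgroup contains only one outcome class."""
    y_true, y_prob, groups = map(np.asarray, (y_true, y_prob, groups))
    results = {}
    for g in np.unique(groups):
        mask = groups == g
        if len(np.unique(y_true[mask])) < 2:
            results[str(g)] = None  # AUC is undefined without both classes
        else:
            results[str(g)] = float(roc_auc_score(y_true[mask], y_prob[mask]))
    return results


# Toy predictions for two hypothetical age groups.
y_true = [0, 1, 0, 1, 0, 1, 1, 0]
y_prob = [0.2, 0.9, 0.3, 0.8, 0.6, 0.4, 0.7, 0.5]
age_group = ["<35", "<35", "<35", "<35", ">=40", ">=40", ">=40", ">=40"]

print(auc_by_subgroup(y_true, y_prob, age_group))
```

In this toy example the model ranks the younger group perfectly but is no better than chance in the older group, which an overall AUC would hide.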

Experimental Protocols & The Scientist's Toolkit

Detailed Methodology: Hyperparameter Optimization for an Infertility Prediction Model

The following protocol is adapted from studies that successfully developed predictive models for IVF outcomes [3] [1] [2].

Problem Formulation and Metric Definition:

- Objective: Define the prediction task (e.g., classification of live birth success, regression of blastocyst yield).

- Performance Metric: Select an appropriate evaluation metric (e.g., Area Under the Curve (AUC), Accuracy, F1-Score, R²). For class-imbalanced data common in medical studies, AUC is often preferred.

Data Preprocessing and Splitting:

- Split the dataset into Training, Validation, and Test sets. A typical split is 70/15/15. The validation set is used for guiding hyperparameter optimization, and the test set is used only once for the final evaluation.

- Handle missing values (e.g., using imputation methods) and normalize or standardize features as required by the algorithm.
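A stratified 70/15/15 split can be sketched with two calls to scikit-learn's `train_test_split`. The helper name and the synthetic data are assumptions; absolute sizes are passed so the three parts add up exactly.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split


def split_70_15_15(X, y, seed=0):
    """Stratified train/validation/test split with 15% each for validation and test."""
    n_holdout = round(0.15 * len(y))
    # First carve out the test set, then take the validation set from the rest.
    X_rest, X_test, y_rest, y_test = train_test_split(
        X, y, test_size=n_holdout, stratify=y, random_state=seed)
    X_train, X_val, y_train, y_val = train_test_split(
        X_rest, y_rest, test_size=n_holdout, stratify=y_rest, random_state=seed)
    return (X_train, y_train), (X_val, y_val), (X_test, y_test)


X, y = make_classification(n_samples=1000, weights=[0.7, 0.3], random_state=0)
train, val, test = split_70_15_15(X, y)
print(len(train[1]), len(val[1]), len(test[1]))  # 700 150 150
```

Imputers and scalers should be fitted on the training part only and then applied to the validation and test parts, so that no information from held-out data leaks into preprocessing.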

Define the Model and Hyperparameter Space:

- Select a machine learning algorithm (e.g., XGBoost, Random Forest, LightGBM).

- Define the hyperparameter search space. The table below provides a starting point for a tree-based model like XGBoost.

Table 2: Essential Research Reagent Solutions - Hyperparameters for a Tree-Based Model

| Hyperparameter | Function | Common Search Range/Values |
|---|---|---|
| `n_estimators` | Number of boosting rounds (trees). | 50 - 1000 |
| `max_depth` | Maximum depth of a tree. Controls complexity; higher can lead to overfitting. | 3 - 10 |
| `learning_rate` | Shrinks the contribution of each tree. Lower rate often requires more trees. | 0.01 - 0.3 |
| `subsample` | Fraction of samples used for fitting individual trees. Prevents overfitting. | 0.6 - 1.0 |
| `colsample_bytree` | Fraction of features used for fitting individual trees. Prevents overfitting. | 0.6 - 1.0 |
| `reg_alpha` (L1) | L1 regularization term on weights. | 0, 0.001, 0.01, 0.1, 1 |
| `reg_lambda` (L2) | L2 regularization term on weights. | 0, 0.001, 0.01, 0.1, 1, 10 |
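The ranges in Table 2 can be written down as a search space that sampling-based tools understand. The sketch below uses SciPy distributions and scikit-learn's `ParameterSampler`; the ranges come from the table, while the choice of distribution for each hyperparameter (for example, log-uniform for the learning rate) is an assumption.

```python
from scipy.stats import loguniform, randint, uniform
from sklearn.model_selection import ParameterSampler

# Ranges follow Table 2; the distribution shapes are assumptions.
search_space = {
    "n_estimators": randint(50, 1001),       # integers 50..1000 (upper bound is exclusive)
    "max_depth": randint(3, 11),             # integers 3..10
    "learning_rate": loguniform(0.01, 0.3),  # sampled on a log scale
    "subsample": uniform(0.6, 0.4),          # uniform(loc, scale) covers [0.6, 1.0]
    "colsample_bytree": uniform(0.6, 0.4),
    "reg_alpha": [0, 0.001, 0.01, 0.1, 1],   # lists are sampled uniformly
    "reg_lambda": [0, 0.001, 0.01, 0.1, 1, 10],
}

samples = list(ParameterSampler(search_space, n_iter=5, random_state=0))
for params in samples:
    print(params)
```

Each sampled dictionary uses the XGBoost-style parameter names from the table and could be unpacked into a compatible estimator's constructor.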

Execute the Optimization:

- Choose an optimization technique (e.g., Bayesian Optimization with a tool like `Optuna` or `scikit-optimize`).

- Run the optimization for a set number of trials (e.g., 100). Each trial involves training the model with a specific hyperparameter set on the training data and evaluating it on the validation set using the chosen metric.

Final Model Training and Evaluation:

- Train a new model on the combined training and validation data using the best-found hyperparameters.

- Perform a single, final evaluation of this model on the held-out test set to report its generalized performance.
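The protocol above can be sketched end to end in a few lines. To keep the example dependent on scikit-learn and SciPy only, it substitutes plain random sampling for Bayesian optimization and `GradientBoostingClassifier` for XGBoost; the synthetic data, trial count, and ranges are likewise assumptions rather than settings from the cited studies.

```python
import numpy as np
from scipy.stats import loguniform, randint
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import ParameterSampler, train_test_split

X, y = make_classification(n_samples=800, n_features=12, random_state=0)
X_rest, X_test, y_rest, y_test = train_test_split(
    X, y, test_size=120, stratify=y, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X_rest, y_rest, test_size=120, stratify=y_rest, random_state=0)

space = {
    "n_estimators": randint(20, 100),
    "max_depth": randint(2, 6),
    "learning_rate": loguniform(0.01, 0.3),
}

# Each trial: fit on the training set, score on the validation set.
best_auc, best_params = -1.0, None
for params in ParameterSampler(space, n_iter=10, random_state=0):
    model = GradientBoostingClassifier(random_state=0, **params)
    model.fit(X_train, y_train)
    auc = roc_auc_score(y_val, model.predict_proba(X_val)[:, 1])
    if auc > best_auc:
        best_auc, best_params = auc, params

# Retrain on training + validation data, then touch the test set exactly once.
final_model = GradientBoostingClassifier(random_state=0, **best_params)
final_model.fit(np.vstack([X_train, X_val]), np.concatenate([y_train, y_val]))
test_auc = roc_auc_score(y_test, final_model.predict_proba(X_test)[:, 1])
print(best_params, round(best_auc, 3), round(test_auc, 3))
```

Swapping the sampling loop for an optimizer such as Optuna changes how the next configuration is proposed, but the train, validate, retrain, and test-once structure stays the same.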

The following diagram visualizes this end-to-end workflow.

Frequently Asked Questions & Troubleshooting Guides

This technical support resource addresses common challenges in developing and optimizing predictive models for infertility treatment outcomes.

Predicting Live Birth after Fresh Embryo Transfer

Q: What are the key predictive features for live birth following fresh embryo transfer, and which machine learning models show the best performance?

A: Research on a large dataset of over 11,000 ART records identified several critical predictors. The Random Forest (RF) model demonstrated superior performance, achieving an Area Under the Curve (AUC) exceeding 0.8 [1].

- Top Predictive Features: The most important features identified were female age, the quality (grades) of the transferred embryos, the number of usable embryos, and endometrial thickness [1].

- Model Comparison: The study compared six machine learning models. The performance ranking indicated that RF was the best, followed by eXtreme Gradient Boosting (XGBoost) [1].

Troubleshooting Guide: Addressing Poor Model Generalizability

| Challenge | Possible Cause | Solution |
|---|---|---|
| Model performs well on training data but poorly on new data (Overfitting). | Model is too complex or has learned noise in the training data. | Implement hyperparameter tuning techniques like Bayesian Optimization to find the optimal settings that balance complexity [7]. |
| Low predictive accuracy (AUC) across all data. | The selected features may not be sufficiently informative, or the model architecture is not suitable. | Re-evaluate feature selection. Consider incorporating the key predictors listed above and explore ensemble methods like Random Forest, which are often robust [1]. |

Forecasting Blastocyst Formation Yield

Q: For patients with Diminished Ovarian Reserve (DOR), what factors best predict the formation of viable blastocysts?

A: In patients diagnosed with DOR, the presence of Day 3 (D3) available cleavage-stage embryos is the strongest independent predictor of viable blastocyst formation [8]. The quality of these cleavage-stage embryos is also crucial.

- Predictor for Clinical Pregnancy: For women under 40, having a D3 top-quality cleavage-stage embryo is a more reliable predictor of clinical pregnancy than age itself. For women aged 40 or above, age becomes the dominant predictive factor [8].

- Ovarian Reserve Tests (ORTs): For predicting earlier outcomes like oocyte retrieval, AMH is a more effective predictor than Antral Follicle Count (AFC) or basal FSH. For predicting the obtainment of D3 available cleavage-stage embryos, AFC shows superior predictive accuracy, with a threshold of 3.5 [8].

Experimental Protocol: Key Steps for Predictive Modeling of Blastocyst Yield

- Patient Cohort Definition: Define your study population using established DOR criteria (e.g., AMH < 1.1 ng/mL, AFC < 7 follicles, and/or basal FSH ≥ 10 IU/L) [8].

- Data Collection: Collect data on key variables, including:

- Ovarian Reserve Markers: AMH, AFC, basal FSH.

- Embryo Morphology: Record the number and quality of D3 available cleavage-stage embryos (defined as embryos with ≥6 blastomeres and ≤25% fragmentation) [8].

- Blastocyst Outcome: Culture remaining embryos and record the formation of viable blastocysts.

- Model Development: Use logistic regression or machine learning models to identify independent predictors and establish predictive thresholds for blastocyst formation.

Assessing Ovarian Reserve and Response

Q: Which biomarkers are most reliable for predicting ovarian response in ART, and how should they be interpreted?

A: Ovarian reserve tests are critical for personalizing stimulation protocols and setting expectations.

- Anti-Müllerian Hormone (AMH): AMH is a key biomarker due to its stability during the menstrual cycle and strong correlation with the number of preantral and antral follicles. It is a fundamental component of ovarian reserve assessment and is widely used to tailor ovulation induction plans [9].

- Antral Follicle Count (AFC): AFC, assessed via transvaginal ultrasound, is another strong predictor of ovarian response, often considered comparable to AMH [9].

- Follicle-Stimulating Hormone (FSH): Basal FSH (measured on cycle day 3) is a traditional marker. Lower day 3 FSH levels (e.g., <15 mIU/mL) are correlated with higher pregnancy rates, while consistently high levels (>20 mIU/mL) are inversely related to pregnancy achievement [9].

Troubleshooting Guide: Inconsistent Biomarker Readings

| Challenge | Possible Cause | Solution |
|---|---|---|
| AMH level is very low or undetectable. | Very low ovarian reserve. | Counsel patients on the high likelihood of cycle cancellation and poor outcomes, but note that undetectable AMH does not equate to absolute sterility, especially in younger patients [9]. |
| Discrepancy between AMH, AFC, and FSH values. | Biological variability or technical issues with assays/ultrasound. | Use an age-specific analysis for a more accurate assessment. Always interpret biomarkers in the context of the patient's age and clinical history, as AMH and AFC levels decline with age [9]. |

Optimizing Hyperparameters for Prediction Models

Q: What are the most effective techniques for hyperparameter optimization in deep learning models for infertility prediction?

A: Hyperparameter tuning is a critical step that significantly influences model performance and computational efficiency [7].

- Core Hyperparameters: Key hyperparameters include learning rate, batch size, number of epochs, optimizer type (e.g., Adam, SGD), and dropout rate [7].

- Optimization Techniques: The choice of technique often depends on computational resources and the number of hyperparameters [7]:

- Grid Search: Systematically tries all combinations in a predefined set. Best for a small number of hyperparameters due to high computational cost.

- Random Search: Randomly samples combinations from defined distributions. More efficient than grid search for exploring a large hyperparameter space.

- Bayesian Optimization: Builds a probabilistic model to predict promising hyperparameter combinations. It is especially effective for deep learning where model training is expensive, as it reduces the number of training runs needed [7].

Essential Research Reagent Solutions

The following table details key materials and assays used in the featured research on infertility prediction.

| Research Reagent / Material | Function / Explanation |
|---|---|
| Anti-Müllerian Hormone (AMH) Assay | Quantifies serum AMH levels to assess ovarian reserve and predict response to controlled ovarian stimulation [9] [8]. |
| Follicle-Stimulating Hormone (FSH) Assay | Measures basal FSH (on cycle day 3) as part of the initial assessment of ovarian function [9] [10]. |
| Transvaginal Ultrasound Probe | Used to perform Antral Follicle Count (AFC) and measure endometrial thickness, both key predictive features [1] [9]. |
| Embryo Culture Media | Supports the in-vitro development of zygotes to cleavage-stage embryos and viable blastocysts for quality assessment and transfer [8]. |
| Gonadotropins (e.g., FSH, HMG) | Medications used for controlled ovarian stimulation to promote the growth of multiple follicles [10]. |

Experimental Workflow and Logical Diagrams

Predictive Modeling Workflow for Infertility Outcomes

Biomarker Decision Pathway for Ovarian Response

Frequently Asked Questions

What are the typical AUC and accuracy targets for high-performing infertility prediction models?

High-performing machine learning models for infertility prediction typically achieve AUC values between 0.8 and 0.92 and accuracy values between 78% and 82%, as demonstrated by recent studies. The most successful models are usually tree-based ensembles.

The table below summarizes performance benchmarks from recent, key studies in reproductive medicine:

| Study Focus | Best Model(s) | AUC | Accuracy | Key Predictors |
|---|---|---|---|---|
| Live Birth Prediction (Fresh ET) [1] | Random Forest (RF) | > 0.80 | Information Not Provided | Female age, embryo grades, usable embryo count, endometrial thickness |
| IVF Outcome Prediction (Pre-procedural) [2] | XGBoost | 0.876 - 0.882 | 81.7% | Female age, AMH, BMI, FSH, LH, sperm parameters |
| Blastocyst Yield Prediction [3] | LightGBM | R²: 0.673-0.676 (Regression) | 67.5% - 71.0% (Multi-class) | Number of extended culture embryos, Day 3 mean cell number, proportion of 8-cell embryos |

My model's AUC is acceptable, but accuracy is poor. What should I investigate?

This discrepancy often indicates issues with class imbalance or an inappropriate classification threshold.

- Problem: In medical datasets, the outcome of interest (e.g., successful live birth) is often the minority class. A model can achieve a decent AUC by correctly ranking a few high-risk patients but still misclassify many samples if the default threshold (typically 0.5) is not optimal for the imbalanced data [11] [12].

- Solution:

- Analyze the ROC and Precision-Recall Curves: Do not rely on a single metric. The ROC curve might look good, but the Precision-Recall curve is more informative for imbalanced datasets.

- Adjust the Decision Threshold: Move the probability threshold away from 0.5 to maximize a metric that is more relevant to your clinical goal, such as F1-score or a balance of sensitivity and specificity [12].

- Use Resampling Techniques: Apply methods like SMOTE (Synthetic Minority Over-sampling Technique) on the training set only to artificially balance the classes before model training [11].

- Apply Cost-Sensitive Learning: Use algorithms that assign a higher penalty for misclassifying the minority class during training [12].
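Threshold adjustment is the cheapest of these remedies to try. The sketch below picks the probability cutoff that maximizes F1 using scikit-learn's `precision_recall_curve`; the helper name `best_f1_threshold` and the simulated scores are assumptions, and F1 is only one possible target.

```python
import numpy as np
from sklearn.metrics import f1_score, precision_recall_curve


def best_f1_threshold(y_true, y_prob):
    """Return (threshold, F1) for the cutoff with the highest F1 score."""
    precision, recall, thresholds = precision_recall_curve(y_true, y_prob)
    # The final precision/recall pair has no matching threshold, so drop it.
    p, r = precision[:-1], recall[:-1]
    denom = p + r
    f1 = np.divide(2 * p * r, denom, out=np.zeros_like(denom), where=denom > 0)
    best = int(np.argmax(f1))
    return float(thresholds[best]), float(f1[best])


# Simulated imbalanced outcome (about 20% positives) with poorly centered scores.
rng = np.random.default_rng(0)
y_true = (rng.random(500) < 0.2).astype(int)
y_prob = np.clip(0.25 + 0.2 * y_true + rng.normal(0, 0.1, 500), 0, 1)

threshold, f1_tuned = best_f1_threshold(y_true, y_prob)
f1_default = f1_score(y_true, (y_prob >= 0.5).astype(int), zero_division=0)
print(f"tuned threshold={threshold:.3f}  F1={f1_tuned:.3f}  (F1 at 0.5: {f1_default:.3f})")
```

The threshold should be chosen on validation data and then applied unchanged to the test set; tuning it on the test set would inflate the reported score.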

How can I select the most informative features for my model without overfitting?

Employ robust feature selection techniques integrated with your model training process.

- Recursive Feature Elimination (RFE): This is a powerful and widely used method. It works by recursively training the model, removing the least important feature(s), and then retraining until the optimal number of features is found. This directly links feature selection to model performance [13] [3].

- Model-Based Selection: Tree-based models like Random Forest and XGBoost provide native feature importance scores (e.g., "Gain"). You can use these scores to filter out low-impact features, creating a more parsimonious and interpretable model without significant performance loss [2].

- Stability Focus: Look for features that are consistently selected as important across different models or cross-validation folds. This increases confidence that the features are robustly associated with the outcome [13] [14].
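As an illustration of RFE tied to cross-validated performance, the sketch below uses scikit-learn's `RFECV` with a logistic regression ranker. The synthetic data, the estimator, and the minimum of two features are assumptions chosen to keep the example small.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

X, y = make_classification(n_samples=400, n_features=15, n_informative=4,
                           n_redundant=0, random_state=0)

selector = RFECV(
    estimator=LogisticRegression(max_iter=1000),  # coefficients rank the features
    step=1,                                       # drop one feature per round
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="roc_auc",
    min_features_to_select=2,
)
selector.fit(X, y)

print("features kept:", selector.n_features_)
print("selected mask:", selector.support_)
```

For an unbiased performance estimate, the selector should sit inside the same cross-validation loop as the final model (for example in a pipeline) rather than being run once on all available data.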

A competing study reports an AUC >0.9, but my similar model only achieves 0.81. Is my approach flawed?

Not necessarily. An AUC above 0.9 is exceptional in medical prediction. It is crucial to critically evaluate the validity of the reported performance.

- Benchmark Realistically: Compare your results against the performance spectrum shown in the table above. An AUC of 0.81 is consistent with robust models in this field [1] [2].

- Beware of "AUC Hacking": Widespread evidence shows an excess of AUC values just above common thresholds like 0.9, suggesting that some results may be over-inflated due to questionable research practices. These can include trying multiple analyses and only reporting the best one, or improper handling of data splits [15].

- Validate Rigorously: Ensure your model was evaluated on a held-out test set or via cross-validation that was completely separate from the feature selection and model tuning process. Performance on the training set is always over-optimistic.

Troubleshooting Guides

Diagnosing and Correcting for Class Imbalance

Class imbalance is a major challenge in infertility prediction, where successful outcomes are often less frequent.

Diagram: A workflow for diagnosing and addressing class imbalance in predictive models.

Experimental Protocol:

Diagnosis:

- Calculate the ratio of your outcome classes (e.g., Live Birth vs. No Live Birth).

- Plot the ROC curve and the Precision-Recall curve side-by-side. A large gap between the two is a tell-tale sign.

Intervention (Apply one at a time and evaluate):

- SMOTE: Use the `imbalanced-learn` library in Python. Implement SMOTE only on the training data during cross-validation to avoid data leakage.

- Class Weighting: Set `class_weight='balanced'` in Scikit-learn models or use the `scale_pos_weight` parameter in XGBoost.

- Threshold Tuning: Use the ROC curve to find the probability threshold that maximizes sensitivity or specificity for your clinical need.
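The class-weighting option can be tried with scikit-learn alone. The sketch below compares minority-class recall with and without `class_weight='balanced'` and computes the negative-to-positive ratio commonly used as a starting value for XGBoost's `scale_pos_weight`; the synthetic data and the logistic regression model are assumptions, and SMOTE is not shown.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Roughly 15% positives, standing in for a less frequent outcome.
X, y = make_classification(n_samples=2000, weights=[0.85, 0.15], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

n_neg, n_pos = np.bincount(y_train)
scale_pos_weight = n_neg / n_pos  # candidate value for XGBoost; not used below

plain = LogisticRegression(max_iter=1000).fit(X_train, y_train)
weighted = LogisticRegression(max_iter=1000, class_weight="balanced").fit(X_train, y_train)

recall_plain = recall_score(y_test, plain.predict(X_test))
recall_weighted = recall_score(y_test, weighted.predict(X_test))
print(f"scale_pos_weight ~ {scale_pos_weight:.2f}")
print(f"minority recall: plain={recall_plain:.3f}, balanced={recall_weighted:.3f}")
```

Higher recall from weighting usually comes at the cost of more false positives, so precision or specificity should be reported alongside it.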

Optimizing Hyperparameters for Tree-Based Models

Tree-based models like XGBoost and Random Forest are state-of-the-art for structured medical data but require careful tuning [11] [1] [2].

Experimental Protocol:

A standard protocol for hyperparameter optimization using Grid Search with Cross-Validation:

Data Preparation: Split your data into a training set (e.g., 80%) and a final hold-out test set (e.g., 20%). The test set should only be used for the final evaluation.

Define the Model and Parameter Grid:

Execute Grid Search:

Validate and Finalize:

- Identify the best parameters from `grid_search.best_params_`.

- Retrain a model on the entire training set using these best parameters.

- Perform the final, unbiased evaluation on the held-out test set.
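The steps of this protocol could look like the following with scikit-learn's `GridSearchCV`. This is a minimal sketch rather than the code from any cited study: the synthetic data, the `RandomForestClassifier` model, and the grid values are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

# Data preparation: 80% training, 20% final hold-out test set.
X, y = make_classification(n_samples=500, n_features=12, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Define the model and parameter grid (2 x 2 x 2 = 8 combinations).
param_grid = {
    "n_estimators": [25, 50],
    "max_depth": [3, 6],
    "min_samples_split": [2, 10],
}

# Execute grid search with 5-fold cross-validation on the training set only.
grid_search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid,
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="roc_auc",
    refit=True,  # retrains the best configuration on the full training set
)
grid_search.fit(X_train, y_train)

# Validate and finalize: one evaluation on the held-out test set.
best_model = grid_search.best_estimator_
test_auc = roc_auc_score(y_test, best_model.predict_proba(X_test)[:, 1])
print(grid_search.best_params_, round(test_auc, 3))
```

With `refit=True`, the retraining step happens automatically, so `best_estimator_` is already the model trained on the entire training set.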

Implementing a Rigorous Model Validation Framework

Proper validation is non-negotiable to ensure your performance estimates are reliable and generalizable.

Diagram: A nested validation workflow separating tuning and testing data.

Experimental Protocol:

Hold-Out Test Set: Before doing anything, set aside a portion of your data (ideally 20-30%) as the final test set. Do not use it for any aspect of model development.

Nested Cross-Validation (Gold Standard):

- Outer Loop: Split the remaining data (training pool) into K-folds (e.g., 5).

- Inner Loop: For each fold in the outer loop, use the remaining K-1 folds to perform hyperparameter tuning (e.g., another 5-fold CV). This prevents optimistic bias from tuning on the entire dataset.

- The final performance is the average of the performance across the outer K test folds.
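Nested cross-validation is compact to express in scikit-learn: a tuner object is passed to an outer cross-validation call, so tuning is repeated inside every outer fold. The model, the grid over `C`, and the synthetic data below are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

X, y = make_classification(n_samples=400, n_features=10, random_state=0)

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # evaluation

# Inner loop: hyperparameter search, refitted separately in each outer fold.
tuner = GridSearchCV(
    LogisticRegression(max_iter=1000),
    {"C": [0.01, 0.1, 1, 10]},
    cv=inner_cv,
    scoring="roc_auc",
)

# Outer loop: each test fold is never seen by the tuning that produced its model.
outer_scores = cross_val_score(tuner, X, y, cv=outer_cv, scoring="roc_auc")
print("outer-fold AUCs:", outer_scores.round(3))
print("nested CV estimate:", round(outer_scores.mean(), 3))
```

The reported number estimates the performance of the whole tuning procedure, not of one fixed hyperparameter set, since each outer fold may select different values.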

External Validation: The most robust method is to evaluate the final model on a completely independent dataset collected from a different center or time period [2].

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Experiment | Example Context |
|---|---|---|
| Absolute IDQ p180 Kit | A targeted metabolomics kit used to quantitatively measure the concentrations of 188 endogenous metabolites from a plasma sample [13]. | Identifying plasma metabolite biomarkers associated with large-artery atherosclerosis [13]. |
| missForest Imputation | A non-parametric imputation method based on Random Forests, capable of handling mixed data types (continuous and categorical) and complex interactions [1]. | Handling missing values in clinical datasets from IVF cycles prior to model training [1]. |
| SHAP (SHapley Additive exPlanations) | A unified framework for interpreting model predictions by quantifying the marginal contribution of each feature to the final prediction, providing both global and local interpretability [11]. | Explaining feature importance in cardiovascular disease prediction models based on tree-based ensembles or transformer models [11]. |
| SMOTETomek | A hybrid resampling technique that combines SMOTE (Synthetic Minority Over-sampling Technique) to generate synthetic minority class samples and Tomek links to clean the resulting data by removing overlapping examples [11]. | Addressing class imbalance in clinical datasets, such as the Framingham heart study, to improve model sensitivity [11]. |
| Recursive Feature Elimination (RFE) | A feature selection wrapper method that recursively removes the least important features and rebuilds the model to identify an optimal subset of features that maintain high performance [13] [3]. | Identifying a minimal set of predictive biomarkers for large-artery atherosclerosis or key predictors for blastocyst yield in IVF [13] [3]. |

Identifying High-Impact Clinical Features for Model Input

Frequently Asked Questions

What are the most frequently identified high-impact features for predicting infertility treatment outcomes?

Across numerous studies, several clinical features consistently demonstrate high predictive value. The most common features include maternal age, sperm concentration and motility, hormone levels (such as follicle-stimulating hormone, estradiol, and progesterone on HCG day), and ovarian stimulation protocols [16] [17] [18]. Female age is the most universally utilized feature in predictive models for assisted reproductive technology [18].

Which machine learning algorithms show the best performance for infertility prediction models?

Studies have evaluated various algorithms, with optimal performance depending on specific datasets and prediction targets. Support Vector Machines (SVM), particularly Linear SVM, have shown strong performance for predicting pregnancy following intrauterine insemination (IUI) [16] [18]. For IVF live birth prediction, random forest and logistic regression models have demonstrated excellent performance, with transformer-based models achieving particularly high accuracy in recent research [6] [17].

How should researchers handle missing data in infertility prediction datasets?

Appropriate data imputation is crucial for maintaining dataset integrity. For cycles with only one or two missing features, replacing missing values with the feature's median or mode is a validated approach [16]. Cycles with more extensive missing data (e.g., three or more missing features) should typically be excluded from analysis to preserve model reliability.
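The exclusion-then-imputation rule can be sketched with pandas as follows. The helper name `filter_and_impute`, the column names, and the toy values are hypothetical.

```python
import numpy as np
import pandas as pd


def filter_and_impute(df, max_missing=2):
    """Drop rows with more than `max_missing` gaps, then fill the rest.

    Numeric columns are filled with the median, other columns with the mode.
    """
    kept = df[df.isna().sum(axis=1) <= max_missing].copy()
    for col in kept.columns:
        if kept[col].isna().any():
            if pd.api.types.is_numeric_dtype(kept[col]):
                fill_value = kept[col].median()
            else:
                fill_value = kept[col].mode(dropna=True).iloc[0]
            kept[col] = kept[col].fillna(fill_value)
    return kept


# Hypothetical cycle records; row 3 has three missing features and is excluded.
cycles = pd.DataFrame({
    "maternal_age": [31, np.nan, 38, np.nan, 29],
    "cycle_length": [28, 30, np.nan, np.nan, 27],
    "protocol": ["A", "B", "A", None, None],
})
clean = filter_and_impute(cycles)
print(clean)
```

In a modeling pipeline, the medians and modes should be computed on the training split only and then reused for validation and test data.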

What evaluation metrics are most appropriate for assessing model performance?

The area under the receiver operating characteristic curve (AUC) is the most frequently reported performance indicator, used in approximately 74% of studies [18]. Accuracy (55.6%), sensitivity (40.7%), and specificity (25.9%) are also commonly reported. The Brier score is recommended for calibration assessment, with values closer to 0 indicating better performance [17].
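These metrics can be computed together from predicted probabilities. The sketch below uses scikit-learn; the helper name `summarize` and the 0.5 threshold are assumptions.

```python
import numpy as np
from sklearn.metrics import brier_score_loss, confusion_matrix, roc_auc_score


def summarize(y_true, y_prob, threshold=0.5):
    """AUC and Brier score from probabilities; other metrics at a fixed threshold."""
    y_pred = (np.asarray(y_prob) >= threshold).astype(int)
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return {
        "auc": float(roc_auc_score(y_true, y_prob)),
        "accuracy": float((tp + tn) / (tp + tn + fp + fn)),
        "sensitivity": float(tp / (tp + fn)),  # true positive rate
        "specificity": float(tn / (tn + fp)),  # true negative rate
        "brier": float(brier_score_loss(y_true, y_prob)),  # closer to 0 is better
    }


report = summarize([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
for name, value in report.items():
    print(f"{name}: {value:.3f}")
```

AUC and the Brier score depend only on the probabilities, while accuracy, sensitivity, and specificity change with the chosen threshold.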

Troubleshooting Guides

Poor Model Performance Despite Comprehensive Features

Problem: Your model shows inadequate predictive performance even after including numerous clinical parameters.

Solution:

- Verify Feature Quality: Ensure you're including the highest-impact features identified in literature, particularly maternal age, which is the most consistent predictor across studies [18].

- Implement Feature Optimization: Apply feature selection techniques like Principal Component Analysis (PCA) or Particle Swarm Optimization (PSO) to identify the most relevant feature subsets [6].

- Check Data Preprocessing: Utilize appropriate normalization methods such as PowerTransformer, which has proven effective for aligning reproductive health data distributions more closely with Gaussian distributions [16].

Inconsistent Feature Importance Across Validation Sets

Problem: Feature importance rankings vary significantly between training and validation datasets.

Solution:

- Increase Dataset Size: Utilize larger datasets; studies with better performance often include thousands of cycles (e.g., 9,501 IUI cycles or 11,486 IVF cycles) [16] [17].

- Apply Robust Validation: Implement stratified cross-validation (e.g., four-fold) and bootstrap methods (500 iterations) rather than simple data splitting [16] [17].

- Analyze Feature Interactions: Use SHAP analysis to understand complex feature interactions and improve interpretability [6].
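One way to apply bootstrap resampling with 500 iterations is to put a percentile confidence interval around a held-out AUC. The sketch below is one such use and is not the exact procedure of the cited studies; the helper name and the simulated predictions are assumptions.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def bootstrap_auc_ci(y_true, y_prob, n_boot=500, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for AUC."""
    y_true, y_prob = np.asarray(y_true), np.asarray(y_prob)
    rng = np.random.default_rng(seed)
    aucs = []
    while len(aucs) < n_boot:
        idx = rng.integers(0, len(y_true), len(y_true))  # resample with replacement
        if len(np.unique(y_true[idx])) < 2:
            continue  # AUC is undefined without both classes; draw again
        aucs.append(roc_auc_score(y_true[idx], y_prob[idx]))
    lower, upper = np.percentile(aucs, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return float(lower), float(upper)


# Simulated held-out predictions with a moderate signal.
rng = np.random.default_rng(1)
y_true = rng.integers(0, 2, 300)
y_prob = np.clip(0.4 + 0.2 * y_true + rng.normal(0, 0.2, 300), 0, 1)

lower, upper = bootstrap_auc_ci(y_true, y_prob)
print(f"AUC = {roc_auc_score(y_true, y_prob):.3f}, 95% CI [{lower:.3f}, {upper:.3f}]")
```

A wide interval is a signal that the validation set is too small to support fine-grained comparisons between models or feature rankings.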

High-Impact Clinical Features for Infertility Prediction

Feature Performance Across Study Types

| Feature Category | Specific Features | Prediction Context | Performance Impact |
|---|---|---|---|
| Female Factors | Maternal Age [16] [17] [18] | IUI, IVF Live Birth | Strong predictor; most common feature in models |
| | Ovarian Stimulation Protocol [16] | IUI Pregnancy | Strong predictor (AUC=0.78) |
| | Cycle Length [16] | IUI Pregnancy | Strong predictor |
| | Basal FSH [17] | IVF Live Birth | Among top 7 predictors |
| Male Factors | Pre-wash Sperm Concentration [16] | IUI Pregnancy | Strong predictor (AUC=0.78) |
| | Progressive Sperm Motility [17] | IVF Live Birth | Among top 7 predictors |
| | Paternal Age [16] | IUI Pregnancy | Weakest predictor |
| Treatment Parameters | Hormone Levels (E2, P) on HCG Day [17] | IVF Live Birth | Highest contribution to prediction |
| | Duration of Infertility [17] | IVF Live Birth | Among top 7 predictors |

Algorithm Performance Comparison

| Algorithm | Prediction Task | Performance | Reference |

|---|---|---|---|

| Linear SVM | IUI Pregnancy | AUC=0.78 | [16] |

| Random Forest | IVF Live Birth | AUC=0.671 | [17] |

| Logistic Regression | IVF Live Birth | AUC=0.674 | [17] |

| TabTransformer with PSO | IVF Live Birth | AUC=0.984, Accuracy=97% | [6] |

| Support Vector Machine | ART Success | Most frequently applied technique (44.44%) | [18] |

Experimental Protocols

Protocol 1: Developing IUI Pregnancy Prediction Models

Objective: Predict positive pregnancy test following intrauterine insemination.

Dataset Characteristics:

- 3,535 couples aged 18-43 years

- 9,501 IUI cycles

- 21 clinical and laboratory parameters

- Single-center study (2011-2015) [16]

Methodology:

- Data Preprocessing:

  - Exclude cycles with >2 missing features

  - Impute 1-2 missing features with median/mode

  - Apply PowerTransformer normalization

  - Perform one-hot encoding for categorical variables [16]

- Model Training:

  - Split data into training, validation, and test sets

  - Apply stratified four-fold cross-validation

  - Compare Linear SVM, AdaBoost, Kernel SVM, Random Forest, Extreme Forest, Bagging, and Voting classifiers [16]

- Feature Importance Analysis:

  - Rank features by impact on model performance

  - Identify strongest predictors: pre-wash sperm concentration, ovarian stimulation protocol, cycle length, maternal age [16]
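The missing-data rule in the preprocessing step of Protocol 1 (exclude cycles with more than two missing features, impute the remainder with the median or mode) might be sketched as below. The field names and example records are hypothetical, and the normalization and one-hot encoding steps are not shown.

```python
from statistics import median, mode


def clean_cycles(records, numeric_keys, categorical_keys, max_missing=2):
    """Drop records with more than `max_missing` missing values, then impute the rest."""
    keys = list(numeric_keys) + list(categorical_keys)
    kept = [
        dict(r) for r in records
        if sum(r.get(k) is None for k in keys) <= max_missing
    ]
    for k in keys:
        observed = [r[k] for r in kept if r.get(k) is not None]
        # Median for numeric features, most frequent value for categorical ones.
        fill = median(observed) if k in numeric_keys else mode(observed)
        for r in kept:
            if r.get(k) is None:
                r[k] = fill
    return kept


# Hypothetical IUI cycle records; None marks a missing value.
cycles = [
    {"maternal_age": 31, "cycle_length": 28, "protocol": "letrozole"},
    {"maternal_age": None, "cycle_length": 30, "protocol": "letrozole"},
    {"maternal_age": 38, "cycle_length": None, "protocol": None},
    {"maternal_age": None, "cycle_length": None, "protocol": None},  # 3 missing: excluded
]
cleaned = clean_cycles(cycles, ["maternal_age", "cycle_length"], ["protocol"])
print(cleaned)
```

When this is combined with cross-validation, the imputation statistics are usually computed on the training portion only, so that no information from held-out cycles leaks into training.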

Protocol 2: Advanced Feature Optimization for IVF Live Birth Prediction

Objective: Predict live birth success following IVF treatment using optimized feature selection.

Methodology:

- Feature Optimization:

  - Apply Principal Component Analysis (PCA) for dimensionality reduction

  - Implement Particle Swarm Optimization (PSO) for feature selection [6]

- Model Architecture:

  - Utilize transformer-based models with attention mechanisms

  - Compare with traditional machine learning algorithms (Random Forest, Decision Tree) [6]

- Interpretability Analysis:

  - Perform SHAP analysis to identify clinically relevant features

  - Validate robustness across different preprocessing techniques [6]

Research Reagent Solutions

| Reagent/Resource | Application in Research | Function | Example Specifications |

|---|---|---|---|

| Gonadotropins (Gonal-F, Puregon) | Ovarian Stimulation | Induce follicular development; dose range 37.5-300 IU [16] | Recombinant FSH |

| Ovulation Triggers (Ovidrel) | Cycle Timing | Trigger final oocyte maturation; 250 μg subcutaneous [16] | Recombinant hCG |

| Sperm Processing Media (Gynotec Sperm filter) | Semen Preparation | Density gradient centrifugation for sperm selection [16] | Colloidal silica gradient |

| Luteal Support (Prometrium) | Endometrial Preparation | Support implantation; 200 mg daily micronized progesterone [16] | Micronized progesterone |

| Hormone Assays | Ovarian Reserve Testing | Measure FSH, AMH, estradiol levels [19] | Quantitative immunoassays |

Advanced HPO Techniques and Their Implementation in Infertility Prediction

FAQs: Choosing Your Optimization Paradigm

What is the core difference between gradient-based and population-based optimization methods?

Gradient-based optimization algorithms use the gradient (the derivative) of the loss function with respect to the model's parameters to find the direction of the steepest descent and iteratively update parameters to minimize the loss [20] [21]. They are like a hiker carefully feeling the slope of the hill to find the quickest way down.

Population-based optimization methods, in contrast, imitate natural processes like biological evolution or swarm intelligence. They maintain a population of candidate solutions and use mechanisms like selection, crossover, and mutation to iteratively improve the entire population toward better regions of the design space [22]. They are like a flock of birds exploring a large valley, sharing information about good locations they discover.

When should I use a gradient-based method for my infertility prediction model?

Gradient-based methods are the default choice for training deep learning models and are highly recommended when [20] [23] [21]:

- You have a large number of parameters: They are computationally efficient for models with many parameters, such as neural networks.

- Your problem is continuous and differentiable: The loss landscape is relatively smooth.

- You need fast convergence: They can converge to a good solution quickly, especially with a well-tuned learning rate.

- Computational resources are limited: They are generally less computationally expensive than population-based methods for high-dimensional problems.

In the context of infertility prediction, gradient-based optimizers like Adam are typically used to train the neural networks or other differentiable models once the architecture and hyperparameters are chosen [1] [6].

When is a population-based method more suitable?

Population-based methods are often preferred, or serve as a fallback when other approaches fail, in the following scenarios [22]:

- The problem is non-differentiable or noisy: The loss function has discontinuities or flat regions where gradients are zero or uninformative.

- You need to avoid local minima: Their global exploration nature helps escape local minima and has a better chance of finding a global optimum.

- The search space contains discrete or binary variables: They can naturally handle variables that are not continuous.

- Gradient-based methods fail: Population-based methods are robust and can still perform well even when a large number of design evaluations fail.

For hyperparameter tuning of your infertility prediction model (e.g., finding the optimal learning rate, number of layers in a neural network, or max depth of a decision tree), population-based methods can be very effective as they treat the hyperparameter optimization as a black-box problem [22] [6].

What are common failure modes of gradient-based optimizers and how can I troubleshoot them?

| Problem | Symptoms | Troubleshooting Steps |

|---|---|---|

| Vanishing/Exploding Gradients | Loss stops improving very early (vanishing) or becomes NaN (exploding). | Use gradient clipping to cap gradient values [20]. Use specific activation functions (e.g., ReLU, Leaky ReLU) and weight initialization schemes [21]. |

| Oscillation or Slow Convergence | Loss jumps around the minimum or decreases very slowly. | Implement a learning rate schedule to decay the learning rate over time [20]. Use optimizers with momentum to smooth the update path [20] [21]. |

| Convergence to Poor Local Minima | Model converges but performance is suboptimal. | Restart training from different initial parameters. Use a population-based method for initial exploration [22]. |
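The three remedies in the table (gradient clipping, a decaying learning rate, and momentum) can be combined in a few lines. The following is a toy sketch on a one-dimensional quadratic loss; the loss, starting point, and all constants are assumptions chosen for illustration, not settings from any cited study.

```python
def clip_value(g, limit):
    """Cap a scalar gradient to the interval [-limit, limit]."""
    return max(-limit, min(limit, g))


def minimise(grad_fn, x0, lr0=0.1, decay=0.01, momentum=0.9, clip=1.0, steps=500):
    """Gradient descent with clipping, inverse-time learning-rate decay, and momentum."""
    x, velocity = x0, 0.0
    for t in range(steps):
        g = clip_value(grad_fn(x), clip)          # guards against exploding gradients
        lr = lr0 / (1.0 + decay * t)              # learning rate shrinks over time
        velocity = momentum * velocity - lr * g   # momentum smooths the update path
        x += velocity
    return x


# Toy loss (x - 3)^2 with gradient 2 * (x - 3); the start point is deliberately far away,
# so the raw gradient is large and clipping is active in the early steps.
x_final = minimise(lambda x: 2.0 * (x - 3.0), x0=50.0)
print(x_final)
```

Deep learning frameworks expose the same ideas as built-in options, so this loop is meant only to make the mechanics visible.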

What are common failure modes of population-based optimizers and how can I troubleshoot them?

| Problem | Symptoms | Troubleshooting Steps |

|---|---|---|

| Premature Convergence | The population diversity drops too quickly, getting stuck in a suboptimal region. | Increase the mutation rate to introduce more randomness [22]. Use a larger population size. Implement techniques like "island models" to maintain diversity. |

| Slow Convergence | Steady but very slow improvement over many generations. | Hybrid approach: Use a population-based method to find a good region, then switch to a gradient-based method for fine-tuning [22] [24]. |

Can I combine these two paradigms?

Yes, hybrid approaches that combine the strengths of both paradigms are powerful. A common strategy is to [22] [24]:

- Use a population-based method (e.g., an evolutionary algorithm) for global exploration to locate promising regions in the complex hyperparameter space of your prediction model.

- Then, hand off the best-found solution to a gradient-based method for local exploitation and fine-tuning, quickly converging to a high-quality minimum.

Research in reinforcement learning has also successfully combined policy networks (trained with gradients) with gradient-based Model Predictive Control (MPC) for improved performance [24].
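A minimal sketch of the two-stage hybrid strategy follows: a small evolutionary search explores a multimodal toy loss, and gradient descent then refines the best candidate. The loss function (a one-dimensional Rastrigin-style curve), population settings, and step size are all illustrative assumptions.

```python
import math
import random


def loss(x):
    """Multimodal toy loss with many local minima and a global minimum at x = 0."""
    return x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))


def grad(x):
    return 2.0 * x + 20.0 * math.pi * math.sin(2.0 * math.pi * x)


def evolutionary_search(rng, pop_size=20, n_parents=5, generations=15, sigma=0.5):
    """Stage 1: global exploration by selection plus Gaussian mutation."""
    pop = [rng.uniform(-5.0, 5.0) for _ in range(pop_size)]
    for _ in range(generations):
        parents = sorted(pop, key=loss)[:n_parents]
        children = [
            rng.choice(parents) + rng.gauss(0.0, sigma)
            for _ in range(pop_size - n_parents)
        ]
        pop = parents + children
    return min(pop, key=loss)


def gradient_refine(x, lr=0.002, steps=2000):
    """Stage 2: local exploitation with plain gradient descent."""
    for _ in range(steps):
        x -= lr * grad(x)
    return x


rng = random.Random(0)
x_coarse = evolutionary_search(rng)
x_fine = gradient_refine(x_coarse)
print(x_coarse, x_fine, loss(x_fine))
```

The refinement stage can only reach the bottom of the basin it starts in, so the quality of the final answer still depends on how well the first stage explored.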

Troubleshooting Guides

Guide 1: Diagnosing and Fixing Poor Convergence in Gradient-Based Optimization

Symptoms: The model's loss value does not decrease, decreases very slowly, or is unstable (oscillates wildly).

Methodology:

- Check your gradients: Use tools in deep learning frameworks to monitor the magnitude of gradients. If they are extremely small, you may have a vanishing gradient problem. If they are extremely large, you may have an exploding gradient problem.

- Monitor the loss: Plot the training and validation loss over iterations (epochs). This visual cue is essential for diagnosis.

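- Systematically adjust hyperparameters: Change one setting at a time (for example, the learning rate first, then gradient clipping or momentum) and re-check the gradient magnitudes and loss curves after each change, so that the cause of any improvement can be identified.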

Guide 2: Tuning Hyperparameters Using Population-Based Methods

Objective: Find the optimal set of hyperparameters for a machine learning model (e.g., Random Forest, XGBoost) used in infertility prediction when the search space is large or contains discrete values.

Experimental Protocol (using Evolutionary Algorithms):

- Initialization: Randomly generate an initial population of µ individuals, where each individual is a vector representing a specific set of hyperparameters (e.g., `{n_estimators=100, max_depth=5, ...}`) [22].

- Evaluation: Train and evaluate a model for each individual in the population using a performance metric like Area Under the Curve (AUC) or accuracy on a validation set [1] [3].

- Selection: Select the fittest individuals (those with the highest AUC/accuracy) to be parents for the next generation. Selection is often rank-based [22].

- Variation (Crossover & Mutation):

  - Crossover: Recombine pairs of parent individuals to produce offspring. This exchanges hyperparameter values between parents.

  - Mutation: Randomly alter some hyperparameter values in the offspring with a low probability. This introduces new genetic material.

- Replacement: Form the new population for the next generation from the offspring and, optionally, the best individuals from the previous generation (e.g., using a (µ + λ) strategy) [22].

- Termination: Repeat steps 2-5 for many generations until a stopping criterion is met (e.g., a maximum number of generations, or convergence of the fitness score).
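The protocol above can be sketched as a small (µ + λ) evolutionary loop. To keep the example self-contained, `toy_validation_auc` is a hypothetical stand-in for "train the model and return its validation AUC"; the search bounds, mutation rate, and population sizes are likewise assumptions.

```python
import random

SPACE = {"n_estimators": (50, 500), "max_depth": (2, 12)}  # inclusive integer bounds


def toy_validation_auc(hp):
    """Hypothetical fitness surface replacing real model training and evaluation."""
    return 0.80 - 1e-6 * (hp["n_estimators"] - 300) ** 2 - 2e-3 * (hp["max_depth"] - 6) ** 2


def random_individual(rng):
    return {k: rng.randint(lo, hi) for k, (lo, hi) in SPACE.items()}


def crossover(a, b, rng):
    """Each hyperparameter is inherited from one of the two parents."""
    return {k: rng.choice((a[k], b[k])) for k in SPACE}


def mutate(hp, rng, rate=0.3):
    """With probability `rate`, shift a value by a small random step inside its bounds."""
    child = dict(hp)
    for k, (lo, hi) in SPACE.items():
        if rng.random() < rate:
            step = max(1, (hi - lo) // 10)
            child[k] = min(hi, max(lo, child[k] + rng.randint(-step, step)))
    return child


def evolve(fitness, mu=6, lam=12, generations=20, seed=0):
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(mu)]           # initialization
    history = []
    for _ in range(generations):
        parents = sorted(population, key=fitness, reverse=True)[:mu]   # evaluation + selection
        offspring = []
        for _ in range(lam):                                           # variation
            a, b = rng.sample(parents, 2)
            offspring.append(mutate(crossover(a, b, rng), rng))
        # (mu + lambda) replacement: parents compete with their offspring.
        population = sorted(parents + offspring, key=fitness, reverse=True)[:mu]
        history.append(fitness(population[0]))
    return population[0], history


best, history = evolve(toy_validation_auc)
print(best, history[-1])
```

Because parents survive into the pool they compete in, the best fitness never decreases from one generation to the next.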

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" used in optimizing models for infertility prediction research.

| Item | Function / Description | Application Context in Infertility Prediction |

|---|---|---|

| Gradient-Based Optimizers (e.g., Adam, SGD with Momentum) | Algorithms that use the gradient of the loss function to update model parameters efficiently. Often include adaptive learning rates [20] [21]. | Training deep learning models (e.g., Artificial Neural Networks, TabTransformer) for classifying live birth outcomes [1] [6]. |

| Population-Based Optimizers (e.g., EA, PSO) | Algorithms that maintain and evolve a population of solutions to explore the search space globally, useful for non-differentiable problems [22]. | Hyperparameter tuning for classic ML models (RF, XGBoost) and feature selection to identify the most predictive clinical features [6]. |

| Hyperparameter Tuning Strategies (Bayesian, Random Search) | Frameworks for systematically searching hyperparameter spaces. Bayesian optimization builds a surrogate model to guide the search [25] [26]. | Finding optimal model configurations (e.g., C in Logistic Regression, max_depth in Decision Trees) to maximize prediction accuracy [26]. |

| Feature Selection Algorithms (e.g., PSO, PCA) | Techniques for reducing input feature dimensionality to improve model generalizability and interpretability. PSO is a population-based method for this task [6]. | Identifying a parsimonious set of key predictors (e.g., female age, embryo grade, endometrial thickness) from dozens of clinical features [1] [6]. |

| Model Interpretation Tools (e.g., SHAP, Partial Dependence Plots) | Methods to explain the output of any ML model, showing the contribution of each feature to a prediction [3]. | Providing clinical insights by highlighting the most influential factors for live birth success, aiding in transparent and trustworthy AI [3] [6]. |

Comparative Analysis: Gradient-Based vs. Population-Based Optimization

The following table provides a structured comparison to guide your choice of optimization paradigm, with examples from infertility prediction research.

| Aspect | Gradient-Based Optimization | Population-Based Optimization |

|---|---|---|

| Core Mechanism | Uses gradient calculus for steepest descent [20] [21]. | Imitates natural processes (evolution, swarms) [22]. |

| Typical Use Cases | Training deep neural networks; large, continuous, differentiable problems [1] [21]. | Hyperparameter tuning; dealing with discrete, noisy, or non-differentiable spaces [22] [6]. |

| Handling of Local Minima | Can get stuck in local minima [20] [21]. | Better at avoiding local minima due to global exploration [22]. |

| Convergence Speed | Faster convergence to a (local) optimum [21]. | Slower convergence, requires more function evaluations [22]. |

| Computational Cost | Lower cost per iteration, efficient in high dimensions [23]. | Higher cost per iteration due to population size [22]. |

| Key Hyperparameters | Learning rate, momentum, learning rate schedule [20] [21]. | Population size, mutation rate, crossover strategy [22]. |

| Example in Infertility Research | Training an Artificial Neural Network (ANN) classifier for live birth prediction [1]. | Using Particle Swarm Optimization (PSO) for feature selection to improve a prediction model [6]. |

Troubleshooting Guide: Common Bayesian Optimization Issues

My optimization is converging too slowly or seems stuck in a local minimum. What should I do?

This is a common problem often related to the balance between exploration and exploitation.

- Problem Diagnosis: Check if your acquisition function is over-prioritizing exploitation (refining known good areas) at the expense of exploring new regions [27].

- Recommended Solution: Adjust the exploration parameter in your acquisition function. For the Expected Improvement (EI) function, increase the `xi` parameter to encourage more exploration. Studies suggest starting with a default value of 0.01 [28] or 0.075 [27] and increasing it if the model gets stuck [27].

- Alternative Approach: If using a Gaussian Process (GP) surrogate model, review the prior width and kernel choice. An incorrectly specified prior can lead to poor model performance and slow convergence [29].
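To make the role of `xi` concrete, here is a sketch of the commonly used closed-form Expected Improvement for a maximization problem, given a surrogate's predicted mean and standard deviation at a candidate point. The two candidates and the incumbent AUC are made-up numbers for illustration.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization: mu and sigma are the surrogate's mean and std at a candidate."""
    if sigma <= 0.0:
        return max(0.0, mu - best - xi)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))        # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # standard normal PDF
    return (mu - best - xi) * cdf + sigma * pdf


best_auc = 0.75
safe = {"mu": 0.76, "sigma": 0.01}   # close to the incumbent, low uncertainty
risky = {"mu": 0.72, "sigma": 0.05}  # less explored region, high uncertainty

for xi in (0.0, 0.075):
    ei_safe = expected_improvement(best=best_auc, xi=xi, **safe)
    ei_risky = expected_improvement(best=best_auc, xi=xi, **risky)
    print(f"xi={xi}: safe={ei_safe:.5f}, risky={ei_risky:.5f}")
```

In this toy comparison the low-uncertainty candidate is preferred at `xi=0.0`, while the high-uncertainty candidate is preferred at `xi=0.075`, which is the shift toward exploration described above.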

Which surrogate model should I choose: Gaussian Process or Tree-Structured Parzen Estimator?

The choice depends on the nature of your hyperparameter space and computational constraints.

- For high-dimensional spaces or many categorical parameters: The Tree-Structured Parzen Estimator (TPE) is often more efficient. TPE models the probability of good (`l(x)`) versus bad (`g(x)`) hyperparameters separately, which scales better than GP in these scenarios [30] [31].

- For smaller, continuous search spaces: Gaussian Processes (GP) can be more sample-efficient, providing well-calibrated uncertainty estimates which help the acquisition function make better decisions [32] [28].

- Practical Recommendation in Medical Context: For optimizing infertility prediction models, which may have a mix of continuous (e.g., learning rate) and categorical (e.g., activation function) hyperparameters, TPE is often a robust starting point due to its handling of complex spaces [30] [33].

My optimization is too computationally expensive. How can I reduce the runtime?

Bayesian optimization is designed for expensive black-box functions, but the optimization itself can become costly.

- With Gaussian Processes: The computational cost of GP scales poorly (`O(n³)`) with the number of evaluations (`n`), due to matrix inversion [30] [32].

- Strategy 1: Use TPE instead. TPE relies on efficient density estimation, which is often faster for large datasets or high-dimensional spaces [30].

- Strategy 2: For GP, ensure you are using an appropriate kernel. The RBF kernel is common, but simpler kernels can sometimes reduce computational load [29] [32].

- Strategy 3: Begin with a short random search that covers the space coarsely (`n_seed` in TPE) before letting the Bayesian algorithm take over [31].

Frequently Asked Questions (FAQs)

What is the fundamental difference between Gaussian Process and TPE-based optimization?

Both are Bayesian optimization methods, but they differ in their core approach:

- Gaussian Process (GP): Directly models the objective function `f(x)` itself as a probability distribution, typically a Gaussian. It provides a posterior distribution of the function given the data [29] [28].

- Tree-Structured Parzen Estimator (TPE): Instead of modeling the objective function, it models the probability `p(x|y)` of the hyperparameters `x` given the performance `y`. It splits observations into "good" and "bad" distributions (`l(x)` and `g(x)`) and selects new hyperparameters that are more likely under the "good" distribution [30] [31].
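The TPE idea can be illustrated with a one-dimensional from-scratch sketch: split past trials into "good" and "bad" groups, fit a Gaussian kernel density (a Parzen estimator) to each, and pick the candidate with the highest `l(x)/g(x)` ratio. The observations, the fixed bandwidth, and the caller-supplied candidate list are simplifying assumptions; full TPE implementations typically draw candidates from `l(x)` and choose bandwidths adaptively.

```python
import math


def kde(points, bandwidth):
    """Return a Gaussian kernel density estimate over `points`."""
    norm = len(points) * bandwidth * math.sqrt(2.0 * math.pi)

    def density(x):
        return sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points) / norm

    return density


def tpe_suggest(observations, candidates, gamma=0.2, bandwidth=0.5):
    """observations: (x, score) pairs where a higher score is better."""
    ranked = sorted(observations, key=lambda o: o[1], reverse=True)
    n_good = max(1, math.ceil(gamma * len(ranked)))
    good = [x for x, _ in ranked[:n_good]]   # top gamma fraction defines l(x)
    bad = [x for x, _ in ranked[n_good:]]    # the remainder defines g(x)
    l, g = kde(good, bandwidth), kde(bad, bandwidth)
    return max(candidates, key=lambda x: l(x) / (g(x) + 1e-12))


# Hypothetical trials: x is log10(learning rate), score is validation AUC.
trials = [(-5.0, 0.61), (-4.5, 0.64), (-4.0, 0.68), (-3.5, 0.72), (-3.0, 0.74),
          (-2.5, 0.71), (-2.0, 0.66), (-1.5, 0.60), (-1.0, 0.55), (-0.5, 0.52)]
suggestion = tpe_suggest(trials, candidates=[-4.75, -3.25, -1.75, -0.25])
print(suggestion)
```

The suggested point lies between the two best trials, where the "good" density is high and the "bad" density is low.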

How do I decide on the initial number of random points to evaluate?

This is a crucial step because the initial random evaluations provide the data from which the surrogate model is first fitted.

- General Guidance: A common practice is to start with 10-20 random evaluations, which helps build an initial model of the space without consuming too much of the budget [31].

- Trade-off Consideration: Using more random points leads to a better initial approximation but at a higher computational cost. If the optimal hyperparameters are not captured in this initial random sample, the algorithm may struggle to converge to the best region later [31].

- Formal Recommendation: Some literature suggests starting with a number of points equal to 5-10% of your total evaluation budget [33].

Can I use these methods for optimizing deep learning models in medical image analysis?

Yes, absolutely. Bayesian optimization is particularly well-suited for this task.

- Evidence from Research: A 2025 study on Polycystic Ovary Syndrome (PCOS) detection from ultrasound images successfully used Bayesian optimization to fine-tune critical hyperparameters (learning rate, batch size, dropout rate) of a convolutional neural network (CNN), achieving a classification accuracy of 94.8% [34].

- Why it Works: Deep learning model training is a perfect example of an expensive, black-box function. Bayesian optimization efficiently navigates the high-dimensional hyperparameter space to find a good configuration with fewer trials compared to random or grid search [30] [35].

Comparison of Bayesian Optimization Methods

The table below summarizes the key characteristics of Gaussian Process and Tree-Structured Parzen Estimator methods to guide your selection.

| Feature | Gaussian Process (GP) | Tree-Structured Parzen Estimator (TPE) |

|---|---|---|

| Core Modeling Approach | Models the posterior of the objective function `f(x)` directly [29] [28]. | Models `p(x \| y)`, the density of hyperparameters given performance [30] [31]. |

| Handling of Categorical/Discrete Params | Can be challenging; requires special kernels [30]. | Native and efficient handling [30]. |

| Scalability to High Dimensions | Poor; computational cost scales as O(n³) in the number of evaluations [30] [32]. | Good; more efficient density estimation [30]. |

| Uncertainty Estimation | Provides natural, well-calibrated uncertainty estimates [32] [28]. | Uncertainty is implicit in the density models `l(x)` and `g(x)` [31]. |

| Best Use Case | Smaller search spaces (<20 dimensions), continuous parameters, where uncertainty quantification is critical [29] [32]. | High-dimensional spaces, many categorical/discrete parameters, large datasets [30] [33]. |

Experimental Protocol for Hyperparameter Optimization in Infertility Prediction

This protocol outlines a standard workflow for optimizing a model designed to predict infertility outcomes, such as those based on follicle ultrasound data [36] or other clinical markers.

Step 1: Define the Objective Function

- Formulate your objective, which for infertility prediction is typically the maximization of a performance metric like the Area Under the ROC Curve (AUC) or F1-score on a validation set. The function should take a set of hyperparameters as input and return this metric [34] [36].

Step 2: Specify the Hyperparameter Search Space

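- Define the plausible range for each hyperparameter. For a tree-based model (e.g., XGBoost), this might include the number of trees, the maximum tree depth, the learning rate, and the row and column subsampling ratios.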

- For a CNN analyzing medical images, key parameters are learning rate, batch size, and dropout rate [34].
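For the tree-based case in Step 2, one possible search space could be written as a plain dictionary together with a sampler, as sketched below. The parameter names follow XGBoost's scikit-learn interface, but every range shown is an illustrative assumption rather than a recommendation from the cited studies.

```python
import math
import random

# (kind, low, high) for each hyperparameter; ranges are assumptions for illustration.
SEARCH_SPACE = {
    "n_estimators": ("int", 100, 1000),
    "max_depth": ("int", 2, 10),
    "learning_rate": ("log_float", 1e-3, 0.3),
    "subsample": ("float", 0.5, 1.0),
    "colsample_bytree": ("float", 0.5, 1.0),
}


def sample_configuration(space, rng):
    """Draw one random configuration from the search space."""
    config = {}
    for name, (kind, low, high) in space.items():
        if kind == "int":
            config[name] = rng.randint(low, high)
        elif kind == "float":
            config[name] = rng.uniform(low, high)
        elif kind == "log_float":
            # Sample uniformly in log space so small values are not under-represented.
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            raise ValueError(f"unknown parameter kind: {kind}")
    return config


config = sample_configuration(SEARCH_SPACE, random.Random(0))
print(config)
```

Sampling the learning rate on a log scale is a common design choice because plausible values span several orders of magnitude.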

Step 3: Configure the Bayesian Optimizer

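- For a TPE-based optimization using Optuna, create a study that uses `TPESampler()` and run it for a fixed number of trials.

- Key Configuration: `TPESampler()` uses a quantile threshold `gamma` (e.g., `gamma=0.2`, which treats the best 20% of observations as "good") to split observations into "good" and "bad" groups [30] [31]. Note that in Optuna, `gamma` is supplied as a function of the number of completed trials rather than as a fixed fraction. The number of trials (`n_trials`) should be set based on your computational budget.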

Step 4: Execute and Validate

- Run the optimization. Upon completion, validate the best hyperparameter set on a held-out test set that was not used during the optimization process to ensure generalizability, a critical step for clinical applications [34] [36].

Workflow and Logical Diagrams

Bayesian Optimization Core Loop

TPE Hyperparameter Selection Logic

Research Reagent Solutions: The Hyperparameter Optimization Toolkit

| Tool / Reagent | Function / Purpose | Example/Notes |

|---|---|---|

| Optuna | A hyperparameter optimization framework that implements TPE and other algorithms. | Used to define the objective function and search space for an XGBoost model [30]. |

| Scikit-learn | Provides machine learning models and tools like `KernelDensity` for building Parzen estimators. | Can be used to implement the core TPE density estimation from scratch [31]. |

| GaussianProcessRegressor | A surrogate model for GP-based Bayesian optimization. | From scikit-learn, can be configured with kernels like RBF or Matérn [28]. |

| Acquisition Function | Decides the next point to evaluate by balancing exploration and exploitation. | Expected Improvement (EI) is a widely used and effective choice [27] [28]. |

| XGBoost / CNN Model | The "expensive black-box function" being optimized. | In our context, this is the infertility prediction model (e.g., for PCOS [34] or follicle analysis [36]). |

Frequently Asked Questions & Troubleshooting

This section addresses common technical challenges researchers face when implementing evolutionary and swarm intelligence algorithms for hyperparameter optimization in infertility prediction models.

Q1: Our Particle Swarm Optimization (PSO) algorithm converges to suboptimal solutions prematurely when tuning our deep learning model for IVF outcome prediction. How can we improve exploration?

A: Premature convergence often indicates an imbalance between exploration and exploitation. Implement these strategies:

- Adaptive Inertia Weight: Start with a high inertia weight (e.g., w=0.9) to encourage global exploration and linearly decrease it to a lower value (e.g., w=0.4) over iterations to refine the search [37].

- Parameter Tuning: Adjust the cognitive (c1) and social (c2) coefficients. Values of 1.5-2.0 for each are typical, but slightly increasing c1 can enhance individual particle exploration [38] [37].

- Neighborhood Topologies: Instead of a global best (gbest), use a local best (lbest) topology where particles only share information with immediate neighbors. This maintains swarm diversity and helps avoid local optima [38].
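Two of the strategies above are easy to express in code: a linearly decreasing inertia weight and a ring-shaped local-best neighbourhood. This is a minimal sketch; the function names and the example fitness values are hypothetical.

```python
def inertia_weight(iteration, max_iterations, w_start=0.9, w_end=0.4):
    """Linearly decrease the inertia weight from w_start to w_end over the run."""
    frac = iteration / max(1, max_iterations - 1)
    return w_start - (w_start - w_end) * frac


def ring_local_best(fitness, i):
    """Index of the best particle among particle i and its two ring neighbours."""
    n = len(fitness)
    neighbours = [(i - 1) % n, i, (i + 1) % n]
    return max(neighbours, key=lambda j: fitness[j])


weights = [inertia_weight(t, 50) for t in range(50)]
fitness = [0.61, 0.70, 0.66, 0.90, 0.64]  # hypothetical validation AUC per particle
print(weights[0], weights[-1], ring_local_best(fitness, 0))
```

With the ring topology, particle 0 is attracted toward particle 1 rather than toward the globally best particle 3, which slows the spread of information and helps preserve swarm diversity.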

Q2: What are the primary advantages of using PSO over Genetic Algorithms (GAs) for hyperparameter optimization in a clinical research setting with limited computational resources?

A: PSO offers several beneficial characteristics for such environments [39] [37]:

- Simpler Implementation and Fewer Parameters: PSO typically requires tuning fewer parameters (inertia weight, acceleration coefficients) compared to GAs (crossover rate, mutation rate).

- Faster Convergence: PSO often converges more quickly to good solutions, especially with smaller population sizes, reducing the number of expensive model evaluations [39].

- No Gradient Requirement: Like GAs, PSO is a gradient-free optimizer, making it suitable for the complex, non-convex search spaces of deep learning hyperparameters [37].

Q3: When building a prediction model for infertility treatment outcomes, which feature selection method—Genetic Algorithm (GA) or PSO—has been shown to yield higher performance?

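A: Recent research in infertility prediction demonstrates the effectiveness of both, although the results come from separate studies rather than a head-to-head comparison. One study integrating PSO for feature selection with a Transformer-based deep learning model achieved 97% accuracy and a 98.4% AUC in predicting live birth outcomes [6], while GA-based feature selection raised AdaBoost accuracy to 89.8% and Random Forest accuracy to 87.4% for IVF success prediction [41]. Because the datasets and classifiers differ, these figures suggest that PSO is a powerful method for identifying the most relevant clinical features in this domain, not that it is strictly superior to GA.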

Q4: How do we handle categorical and continuous hyperparameters simultaneously within a PSO or GA framework?

A: For PSO, which is naturally designed for continuous spaces, categorical parameters can be handled by mapping the particle's continuous position to discrete choices (e.g., rounding to the nearest integer for the number of layers). GAs are more inherently flexible, as their representation (binary, integer, real-valued) can be mixed, and crossover/mutation operators can be designed to handle different data types [40] [37].
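One way to realize the mapping described above is to let every particle live in the unit cube and decode its position into a mixed configuration. The sketch below is an assumption-laden illustration: the three hyperparameters, their ranges, and the activation list are hypothetical choices.

```python
ACTIVATIONS = ["relu", "tanh", "gelu"]


def decode_position(position):
    """Map a particle position in [0, 1]^3 to a mixed hyperparameter configuration."""
    clipped = [min(1.0, max(0.0, p)) for p in position]
    learning_rate = 10 ** (-4 + 3 * clipped[0])   # continuous, log scale from 1e-4 to 1e-1
    n_layers = 1 + round(clipped[1] * 5)          # integer from 1 to 6 via rounding
    # Categorical choice via equal-width bins; min() keeps position 1.0 in the last bin.
    act_index = min(len(ACTIVATIONS) - 1, int(clipped[2] * len(ACTIVATIONS)))
    return {
        "learning_rate": learning_rate,
        "n_layers": n_layers,
        "activation": ACTIVATIONS[act_index],
    }


config = decode_position([0.5, 0.42, 0.95])
edge = decode_position([1.7, -0.3, 1.0])  # out-of-range positions are clipped first
print(config, edge)
```

The optimizer itself keeps working with continuous positions; only the fitness function sees the decoded, discrete values.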

Performance Comparison of Optimization Algorithms

The following table summarizes the quantitative performance of different hyperparameter optimization algorithms as reported in recent research, including studies focused on medical prediction.

Table 1: Performance Comparison of Hyperparameter Optimization Techniques

| Optimization Algorithm | Application Context | Reported Performance | Key Strengths |

|---|---|---|---|

| Particle Swarm Optimization (PSO) | Feature selection for IVF live birth prediction [6] | 97% Accuracy, 98.4% AUC [6] | Effective in high-dimensional search spaces; fast convergence [37] |

| Genetic Algorithm (GA) | Feature selection for IVF success prediction [41] | Boosted AdaBoost accuracy to 89.8% and Random Forest to 87.4% [41] | Robust wrapper method; handles complex variable interactions well [41] |

| Bayesian Optimization | Hyperparameter tuning for Convolutional Neural Networks (CNNs) [42] | High efficiency for computationally expensive models [42] [40] | Builds a probabilistic model to balance exploration and exploitation [40] |

| Random Search | General hyperparameter optimization [40] | Often more efficient than Grid Search [40] | Simple to implement; good for initial explorations of the search space [40] |

Detailed Experimental Protocol for Hyperparameter Optimization

This protocol outlines a methodology for optimizing a machine learning model for infertility prediction using a swarm intelligence approach, based on recent successful research [6].

Objective: To optimize the hyperparameters and feature set of a deep learning model (e.g., TabTransformer) to maximize its predictive accuracy for IVF live birth outcomes.

Materials and Dataset:

- Clinical Data: A dataset containing de-identified patient records from IVF cycles. Key features may include maternal age, duration of infertility, basal FSH, sperm motility, endometrial thickness, and hormone levels on the day of HCG trigger [41] [17].

- Computing Environment: A machine with sufficient CPU/GPU resources, as the process involves training multiple deep learning models.

Procedure:

- Data Preprocessing: Handle missing values, normalize continuous variables, and encode categorical variables.

- Problem Definition: Define the search space for hyperparameters (e.g., learning rate, number of layers, attention dimensions) and the set of all available features.

- Fitness Function: Design the objective function. This function should:

  - Receive a set of hyperparameters and a feature subset from the optimizer.

  - Train the prediction model using the provided configuration.

  - Evaluate the model on a validation set.

  - Return a performance metric (e.g., AUC, accuracy) to be maximized.

- PSO Setup:

  - Swarm Initialization: Initialize a population of particles. Each particle's position vector represents a candidate solution (values for hyperparameters and feature subset).

  - Parameter Setting: Set PSO parameters: swarm size (e.g., 20-50), inertia weight (w), cognitive coefficient (c1), and social coefficient (c2) [37].

- Optimization Loop:

  - Evaluation: Evaluate each particle's position using the fitness function.

  - Update Memory: Update each particle's personal best (pbest) and the swarm's global best (gbest).

  - Move Swarm: Update particle velocities and positions using the standard PSO equations [38] [37].

  - Iterate: Repeat the evaluation-update-move cycle until a stopping criterion is met (e.g., max iterations, convergence).

- Validation: Assess the final model, configured with the gbest solution, on a held-out test set to estimate its real-world performance.
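The optimization loop in this procedure can be sketched with the standard global-best PSO update, in which each velocity is the sum of an inertia term, a cognitive pull toward pbest, and a social pull toward gbest. In the sketch, `toy_validation_auc` is a hypothetical stand-in for training and validating the real model, and the bounds and coefficients are illustrative assumptions.

```python
import random


def pso(fitness, bounds, swarm_size=20, iterations=40,
        w_start=0.9, w_end=0.4, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(swarm_size)]
    vel = [[0.0] * dim for _ in range(swarm_size)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = max(range(swarm_size), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]
    history = [gbest_fit]
    for t in range(iterations):
        w = w_start - (w_start - w_end) * t / max(1, iterations - 1)
        for i in range(swarm_size):                      # move swarm
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
        for i in range(swarm_size):                      # evaluate and update memory
            fit = fitness(pos[i])
            if fit > pbest_fit[i]:
                pbest[i], pbest_fit[i] = pos[i][:], fit
        g = max(range(swarm_size), key=lambda i: pbest_fit[i])
        gbest, gbest_fit = pbest[g][:], pbest_fit[g]
        history.append(gbest_fit)
    return gbest, gbest_fit, history


def toy_validation_auc(position):
    """Hypothetical fitness surface: position = (log10 learning rate, dropout rate)."""
    log_lr, dropout = position
    return 0.85 - 0.02 * (log_lr + 3.0) ** 2 - 0.5 * (dropout - 0.2) ** 2


best, best_fit, history = pso(toy_validation_auc, bounds=[(-5.0, -1.0), (0.0, 0.5)])
print(best, best_fit)
```

In a real run each fitness call trains a model, so the total cost is roughly swarm size times the number of iterations plus the initial evaluation of the swarm.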

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Algorithms for Hyperparameter Optimization Research

| Item / Algorithm | Function in Research | Key Application in Infertility Prediction |

|---|---|---|

| Particle Swarm Optimization (PSO) | Optimizes model hyperparameters and/or selects the most predictive features from clinical datasets. | Used in a pipeline that achieved state-of-the-art results (97% accuracy) in predicting IVF live birth [6]. |

| Genetic Algorithm (GA) | A robust wrapper method for feature selection; evolves a population of solutions to find an optimal feature subset. | Significantly improved classifier accuracy (to ~90%) for predicting IVF success by identifying key features like female age and AMH [41]. |

| TabTransformer Model | A deep learning architecture that uses attention mechanisms to handle tabular clinical data effectively. | Served as the high-performance classifier in the PSO-based optimization pipeline [6]. |

| SHAP (SHapley Additive exPlanations) | Provides post-hoc model interpretability by quantifying the contribution of each feature to a prediction. | Crucial for clinical trust; used to identify and rank the most important clinical predictors of live birth (e.g., maternal age, progesterone levels) [6]. |

Workflow Visualization

PSO Hyperparameter Optimization Workflow for IVF Prediction

PSO Algorithm Core Mechanics

Technical Support Center: Troubleshooting Guides & FAQs

This guide addresses common challenges researchers face when replicating and adapting the integrated Particle Swarm Optimization (PSO) and TabTransformer pipeline for predicting in vitro fertilization (IVF) success.

Frequently Asked Questions (FAQs)

Q1: Our model's performance is significantly lower than the reported 97% accuracy. What are the most likely causes?

- A: A large performance gap can stem from several sources in this complex pipeline. First, verify your data preprocessing. The original study used a meticulously cleaned dataset from the Human Fertilisation and Embryology Authority (HFEA). Ensure you have effectively handled missing values, normalized numerical features, and encoded categorical variables [43]. Second, examine your feature selection. The high performance was achieved using PSO for feature selection. If you are using a different method (like PCA) or a suboptimal PSO configuration, your feature set may be less informative [6]. Third, check for data leakage, where information from the test set inadvertently influences the training process. Always ensure a strict separation between the data used for training and the data used for evaluation, using techniques like 10-fold cross-validation as performed in the original study [6] [43].

Q2: How do we prevent the PSO algorithm from converging on a suboptimal set of features?

- A: PSO is a metaheuristic algorithm, and its performance depends on its hyperparameters. To improve its search:

- Tune the PSO hyperparameters: Adjust parameters like the inertia weight, and cognitive and social scaling factors to balance exploration and exploitation [44].

- Define an appropriate fitness function: The study used a cost function that maximized the F1 score of a logistic regression model while penalizing large feature sets. An ill-defined fitness function will lead to poor feature subsets [43].

- Increase swarm size and iterations: A larger swarm and more iterations allow for a more extensive search of the feature space, though this increases computational cost [6].

Q3: The TabTransformer model is overfitting, with high training accuracy but low validation accuracy. How can we improve generalization?

- A: Overfitting in deep learning models like TabTransformer is a common issue. Implement these strategies:

- Increase Regularization: Apply stronger dropout rates and weight decay within the TabTransformer architecture.

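- Use Cross-Validation: The original study employed 10-fold cross-validation, which provides a more robust estimate of model performance and makes overfitting easier to detect, since a large gap between training and fold-level validation scores signals poor generalization [6] [43].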

- Simplify the Architecture: Reduce the number of attention heads, layers, or the embedding dimensions if your dataset is not large enough to support a very complex model.

- Early Stopping: Halt the training process when the validation performance stops improving.
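As an illustration of the early stopping strategy listed above, the following framework-agnostic sketch tracks the validation loss and signals when it has not improved for a fixed number of epochs. The class name, the patience value, and the loss sequence are assumptions chosen for the example; deep learning libraries typically ship their own equivalents.

```python
class EarlyStopping:
    """Stop training once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Hypothetical validation losses: improvement until epoch 3, then overfitting.
val_losses = [0.70, 0.55, 0.48, 0.45, 0.46, 0.47, 0.49, 0.52]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch  # training halts here; weights from best_epoch would be restored
        break
```

In this toy run the best epoch is 3 and training halts at epoch 6, after three consecutive epochs without improvement.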

Q4: What is the best way to interpret the predictions made by the PSO-TabTransformer model for clinical relevance?

- A: SHapley Additive exPlanations (SHAP) analysis is recommended. SHAP was integral to the original study, helping to identify the most significant clinical predictors of live birth and supporting the model's clinical relevance [6]. It provides a unified measure of feature importance and shows how each feature contributes to individual predictions, moving beyond the "black box" nature of complex AI models.

Q5: How does the performance of the TabTransformer compare to traditional machine learning models on this specific task?

- A: In the referenced study, the TabTransformer model, especially when combined with PSO for feature selection, consistently outperformed traditional models. The table below summarizes the key quantitative findings from the research.

Table 1: Comparative Model Performance on IVF Live Birth Prediction [6]

| Model | Feature Selection Method | Accuracy | AUC (Area Under the Curve) |

|---|---|---|---|

| TabTransformer | Particle Swarm Optimization (PSO) | 97% | 98.4% |

| Transformer-based Model | Particle Swarm Optimization (PSO) | Not reported | Not reported |

| Random Forest | PSO / PCA | Not reported | Not reported |

| Decision Tree | PSO / PCA | Not reported | Not reported |

Troubleshooting Guide: Common Experimental Pitfalls

Table 2: Troubleshooting Common Implementation Issues

| Problem | Symptom | Potential Solution |

|---|---|---|

| Poor PSO Convergence | Fitness function score stagnates; selected features are not predictive. | Increase swarm size; tune PSO hyperparameters (inertia, acceleration coefficients); re-evaluate the fitness function [44]. |

| Unstable TabTransformer Training | Validation loss fluctuates wildly between epochs. | Adjust learning rate (likely too high); use a learning rate scheduler; check batch size; ensure proper data normalization [45]. |

| Long Training Times | The pipeline takes impractically long to complete one run. | Leverage GPU acceleration; use a smaller feature subset from PSO as an initial test; consider transfer learning if possible. |

| Low Interpretability | The model makes good predictions but the "why" is unclear. | Integrate SHAP analysis post-training to explain global and local feature importance [6]. |

Experimental Protocol: PSO-TabTransformer Integration for IVF Prediction

This section outlines the detailed methodology for replicating the integrated optimization and deep learning pipeline as described in the core case study [6] and a related implementation [43].

Data Source and Preprocessing

- Data: Utilize the dataset from the Human Fertilisation and Embryology Authority (HFEA), containing hundreds of thousands of patient records with numerous features [43].

- Cleaning: Apply a rigorous preprocessing pipeline: remove columns with excessive missing values (>99% null), impute remaining missing values, and normalize numerical features.

- Inclusion Criteria: Filter records to include only those with valid outcomes, identifiable infertility causes, and non-negative treatment cycles to ensure data quality for binary classification [43].
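The cleaning step described above can be sketched in a few lines. This example assumes records stored as dictionaries with None marking missing values, and it uses median imputation, which is an assumption because the source does not state the imputation strategy. The column names and values are hypothetical.

```python
from statistics import median

def drop_sparse_columns(rows, max_null_fraction=0.99):
    """Remove columns whose fraction of missing (None) values exceeds the threshold."""
    columns = list(rows[0].keys())
    keep = [c for c in columns
            if sum(r[c] is None for r in rows) / len(rows) <= max_null_fraction]
    return [{c: r[c] for c in keep} for r in rows]

def impute_median(rows, column):
    """Fill missing values in a numeric column with the median of the observed values."""
    fill = median(r[column] for r in rows if r[column] is not None)
    return [{**r, column: fill if r[column] is None else r[column]} for r in rows]

# Hypothetical records: "rare_field" is 99.5% null, "amh" is 25% null.
rows = [
    {
        "age": 30 + i % 10,
        "amh": None if i % 4 == 0 else 1.0 + (i % 5) * 0.5,
        "rare_field": 1.0 if i == 0 else None,
    }
    for i in range(200)
]
cleaned = impute_median(drop_sparse_columns(rows), "amh")
```

When the data are later split for cross-validation, the imputation value would ideally be computed from the training portion only, for the same leakage reasons discussed in the FAQ.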

Feature Selection via Particle Swarm Optimization (PSO)

- Objective: To find an optimal subset of features that maximizes predictive performance while minimizing redundancy.

- PSO Setup:

- Representation: Each particle in the swarm represents a feature subset as a binary vector, where '1' denotes inclusion and '0' denotes exclusion of a feature [43].

- Fitness Function: A cost function is designed to maximize the F1 score of a simple logistic regression model, with a penalty term for larger feature sets to promote parsimony [43].

- Process: The swarm iteratively updates particle velocities and positions based on personal and global best solutions until a stopping criterion is met.
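The setup above can be sketched as a small binary PSO. This is an illustrative implementation under stated assumptions, not the study's code: it uses the common sigmoid transfer rule for binary positions, default values for inertia and the cognitive and social coefficients that are chosen arbitrarily, and a toy fitness function that stands in for the F1 score of a logistic regression model. In a real pipeline, toy_fitness would be replaced by a cross-validated F1 score computed on the selected columns.

```python
import math
import random

def binary_pso(fitness, n_features, n_particles=20, n_iterations=50,
               inertia=0.7, cognitive=1.5, social=1.5, v_max=4.0, seed=0):
    """Maximize `fitness(mask)` over binary feature masks with binary PSO."""
    rng = random.Random(seed)
    positions = [[rng.randint(0, 1) for _ in range(n_features)]
                 for _ in range(n_particles)]
    velocities = [[rng.uniform(-1.0, 1.0) for _ in range(n_features)]
                  for _ in range(n_particles)]
    personal_best = [p[:] for p in positions]
    personal_score = [fitness(p) for p in positions]
    best = max(range(n_particles), key=personal_score.__getitem__)
    global_best, global_score = personal_best[best][:], personal_score[best]

    for _ in range(n_iterations):
        for i in range(n_particles):
            for d in range(n_features):
                r1, r2 = rng.random(), rng.random()
                v = (inertia * velocities[i][d]
                     + cognitive * r1 * (personal_best[i][d] - positions[i][d])
                     + social * r2 * (global_best[d] - positions[i][d]))
                v = max(-v_max, min(v_max, v))  # clamp so the sigmoid does not saturate
                velocities[i][d] = v
                # The sigmoid maps velocity to the probability of including the feature.
                positions[i][d] = 1 if rng.random() < 1.0 / (1.0 + math.exp(-v)) else 0
            score = fitness(positions[i])
            if score > personal_score[i]:
                personal_best[i], personal_score[i] = positions[i][:], score
                if score > global_score:
                    global_best, global_score = positions[i][:], score
    return global_best, global_score

# Toy stand-in fitness (an assumption): features 0, 2 and 5 are "informative".
INFORMATIVE = {0, 2, 5}
N_FEATURES = 10

def toy_fitness(mask, penalty=0.1):
    hits = sum(mask[d] for d in INFORMATIVE) / len(INFORMATIVE)  # proxy for F1
    return hits - penalty * sum(mask) / len(mask)                # penalty for larger subsets

best_mask, best_score = binary_pso(toy_fitness, N_FEATURES)
```

The penalty weight controls the trade-off between predictive performance and parsimony: a larger value pushes the swarm toward smaller feature subsets.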

Model Training with TabTransformer

- Architecture: The TabTransformer is a deep learning model specifically designed for tabular data.

- Categorical Embeddings: Categorical features are passed through embedding layers to create dense vector representations.

- Attention Mechanism: The embedded features are then processed by a transformer layer with multi-head self-attention. This allows the model to learn contextual relationships and complex interactions between different features [6] [45].

- MLP for Prediction: The output from the transformer is concatenated with the continuous numerical features and fed into a final Multi-Layer Perceptron (MLP) for classification [6].

- Training:

Model Interpretation

- SHAP Analysis: Apply SHapley Additive exPlanations (SHAP) to the trained model. This identifies the most significant predictors of the modeled outcome (live birth in the original study) and helps validate the clinical relevance of the model's decisions [6].
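To show what a Shapley attribution computes, the sketch below evaluates exact Shapley values for a toy three-feature scoring function by averaging marginal contributions over all feature orderings. It does not use the SHAP library, which relies on far more efficient approximations; the scoring function, its coefficients, and the patient and reference values are hypothetical and serve only to illustrate the principle.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Exact Shapley values via marginal contributions over all feature orderings.

    Features absent from a coalition take their baseline value. The cost grows
    factorially with the number of features, so this is for illustration only.
    """
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = predict(current)
        for feature in order:
            current[feature] = instance[feature]
            value = predict(current)
            totals[feature] += value - previous
            previous = value
    return [t / len(orderings) for t in totals]

# Hypothetical scoring function with an interaction term (not a fitted model).
def toy_model(x):
    age, amh, progesterone = x
    return -0.04 * age + 0.10 * amh + 0.02 * progesterone + 0.01 * amh * progesterone

patient = [38.0, 1.2, 10.0]    # hypothetical patient values
reference = [32.0, 2.5, 8.0]   # hypothetical baseline (e.g., cohort averages)
contributions = shapley_values(toy_model, patient, reference)
```

The contributions sum to the difference between the prediction for the patient and the prediction for the reference, which is the additivity property that makes these values usable for explaining individual predictions.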

The following workflow diagram illustrates the entire integrated pipeline:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Frameworks

| Item Name | Function / Role in the Experiment |

|---|---|

| TabTransformer Architecture | A deep learning model based on transformer attention mechanisms, specifically engineered for high performance on tabular data. It captures complex interactions between categorical and numerical features [6] [46]. |

| Particle Swarm Optimization (PSO) | A metaheuristic optimization algorithm used for feature selection. It efficiently searches the high-dimensional space of possible feature subsets to find a performant and parsimonious set [6] [44]. |

| SHAP (SHapley Additive exPlanations) | A game-theoretic method for explaining the output of any machine learning model. It is used post-hoc to interpret the PSO-TabTransformer model, identifying key predictive features and ensuring clinical trustworthiness [6]. |

| k-Fold Cross-Validation | A resampling procedure used to evaluate the model on limited data. It helps detect overfitting and provides a more reliable estimate of model performance on unseen data [6] [47]. |

| Synthetic Data Generation (e.g., GPT-4) | In scenarios with class imbalance or data scarcity, synthetic data generation can be used to augment the dataset, improving model robustness and generalization, as demonstrated in other medical prediction studies [48]. |

Automated Machine Learning (AutoML) Frameworks for Pipeline Optimization

Framework Comparison & Selection Guide

The table below summarizes key open-source AutoML frameworks suitable for optimizing machine learning pipelines, particularly for structured data common in medical research.

| Framework | Primary Language | Optimization Focus | Key Strengths | Best Suited For |

|---|---|---|---|---|

| Auto-Sklearn [49] | Python | CASH (Combined Algorithm Selection and Hyperparameter optimization), HPO | Leverages meta-learning & ensemble construction; drop-in scikit-learn replacement [49]. | Researchers seeking a robust, out-of-the-box solution for tabular data. |

| AutoGluon [49] | Python | Automated Stack Ensembling | Achieves high accuracy via multi-layer model stacking; excels with tabular data [49]. | Projects where predictive accuracy is paramount and computational resources are adequate. |

| FLAML [49] | Python | HPO, Model Selection | A fast and lightweight library optimized for low computational cost [49]. | Resource-constrained environments or for rapid prototyping. |

| TPOT [49] | Python | Pipeline Optimization | Uses genetic programming to optimize full ML pipelines; has a focus on biomedical data [49]. | Pipeline design exploration and biomedical applications [49]. |

| H2O AutoML [49] | Python, R, Java, Scala | HPO, Model Training | Highly scalable, trains a diverse set of models and ensembles quickly [49]. | Large datasets and users requiring scalability and a user-friendly interface. |

The following table synthesizes performance data from large-scale benchmarks on open-source AutoML frameworks, providing a basis for expectation setting [49].

| Framework | Performance Note | Typical Training Time for High Accuracy |

|---|---|---|

| AutoGluon | Often outperforms other frameworks and even best-in-hindsight competitor combinations; can beat 99% of data scientists in some Kaggle competitions [49]. | ~4 hours on raw data for competitive performance [49]. |

| Auto-Sklearn 2.0 | Can reduce the relative error of its predecessor by up to a factor of 4.5 [49]. | ~10 minutes for performance substantially better than Auto-Sklearn 1.0 achieved in 1 hour [49]. |

| FLAML | Significantly outperforms top-ranked AutoML libraries under equal or smaller budget constraints [49]. | Optimized for low computational resource consumption [49]. |

Troubleshooting Common AutoML Issues

This section addresses specific issues you might encounter during your experiments.

FAQ 1: My model imports or executions are failing with "ModuleNotFoundError" or "AttributeError" after an SDK/packages update. How can I resolve this?

- Issue: Version dependencies between your AutoML framework and other packages (like scikit-learn or pandas) can break, causing import or attribute errors [50].

- Solution: Identify the version of your AutoML training SDK and install the compatible package versions [50].

- If your AutoML SDK training version is > 1.13.0, install the pandas and scikit-learn versions pinned for that release.

- If your AutoML SDK training version is <= 1.12.0, install the pandas and scikit-learn versions pinned for that release.

FAQ 2: My automated ML job has failed. What is the most efficient way to diagnose the root cause?

- Issue: A high-level job failure that requires drilling down to the specific error.

- Solution: Follow a structured diagnostic workflow [51]:

- Check the failure message in the AutoML job's overview in your platform's UI (e.g., Azure ML Studio).

- Navigate to the details of the failed trial or child job.

- In the failed trial's overview, look for error messages in the status section.

- For detailed logs, check the std_log.txt file in the Outputs + Logs tab, which contains exception traces and logs [51].

FAQ 3: I am setting up my AutoML environment and the setup script fails, especially on Linux. What could be wrong?

- Issue: Failed environment setup due to missing system dependencies.

- Solution: Ensure essential build tools are installed. On Ubuntu Linux, install them (for example, the build-essential package) before re-executing the setup script [50].

Experimental Protocol: Optimizing an Infertility Prediction Model

This protocol outlines how to use AutoML for a hyperparameter optimization task in the context of infertility prediction research, based on methodologies from published studies [16] [52].

The diagram below illustrates the end-to-end experimental workflow for building an infertility prediction model using AutoML.

Phase 1: Data Preparation

- Data Sourcing: Utilize a retrospective dataset of assisted reproduction cycles. A typical study might include data from thousands of couples and cycles [16].

- Feature Selection: Include a comprehensive set of 20+ clinical and laboratory parameters. Based on existing research, key features for infertility prediction often include [16] [52]:

- Maternal Age

- Paternal Age