Optimizing Sperm Image Pre-processing: Advanced Techniques for Enhanced AI Analysis in Male Fertility Research

Abstract

This article provides a comprehensive guide to sperm image pre-processing, a critical step for accurate automated morphology analysis in male fertility diagnostics. Aimed at researchers and biomedical professionals, it explores the foundational challenges of manual assessment, details state-of-the-art methodological approaches including data augmentation and noise reduction, and addresses common troubleshooting scenarios. Furthermore, it presents a comparative validation of emerging deep learning architectures, such as Vision Transformers, against conventional methods. The synthesis of these elements offers a roadmap for developing robust, standardized, and highly accurate computational tools for sperm image analysis, with significant implications for improving assisted reproductive technology outcomes.

The Critical Role of Pre-processing in Overcoming Sperm Morphology Analysis Challenges

Frequently Asked Questions (FAQs)

Q1: What are the primary sources of subjectivity in manual sperm morphology assessment? The primary sources of subjectivity are the reliance on visual estimation under a microscope and the application of strict morphological criteria (like Tygerberg's) by human technicians. This leads to significant inter- and intra-laboratory variability in classifying a sperm as "normal." Factors such as technician experience, visual fatigue, and subtle differences in the interpretation of borderline cases for head, midpiece, and tail defects contribute heavily to inconsistent results [1] [2].

Q2: How does manual assessment's reliability compare to Computer-Aided Sperm Analysis (CASA)? Manual assessment is the traditional standard, but it is prone to human error and relatively slow. CASA systems offer greater objectivity, reproducibility, and high-throughput analysis. However, CASA performance can be hindered by high sperm concentration, where overlapping sperm cells cause detection errors, and CASA systems require rigorous standardization and validation to ensure accuracy across different instruments [2].

Q3: What are the key WHO reference standards for normal sperm morphology? According to the World Health Organization (WHO) laboratory manual, the lower reference limit for normal sperm morphology is 4% (5th percentile, 95% CI: 3–4%), as established using strict criteria. This means fertility may be impaired if the percentage of morphologically normal forms falls below this threshold [1].

Q4: What specific morphological criteria define a "normal" sperm cell? The WHO standard defines a normal sperm by the following characteristics [2]:

- Head: Smooth, oval configuration, 3–5 μm in length and 2–3 μm in width.

- Midpiece: Slender, less than 1 μm in width, 5–7.5 μm long, and axially attached to the head.

- Tail: Uniform, thinner than the midpiece, approximately 45 μm long, and without sharp bends.
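The size ranges above can be expressed as a simple morphometric check. The sketch below is illustrative only: the class and function names are hypothetical, the tail tolerance is an assumption (the text says only "approximately 45 μm"), and size alone does not establish normality, since shape, smoothness, axial attachment, and the absence of sharp bends also have to be assessed.

```python
from dataclasses import dataclass
from typing import List

# Ranges copied from the criteria listed above (all values in micrometres).
HEAD_LENGTH_UM = (3.0, 5.0)
HEAD_WIDTH_UM = (2.0, 3.0)
MIDPIECE_LENGTH_UM = (5.0, 7.5)
MIDPIECE_MAX_WIDTH_UM = 1.0
TAIL_LENGTH_UM = 45.0
# Assumption: the source gives no tolerance for "approximately 45 um".
TAIL_TOLERANCE_UM = 5.0


@dataclass
class Morphometry:
    head_length: float
    head_width: float
    midpiece_length: float
    midpiece_width: float
    tail_length: float


def out_of_range(m: Morphometry) -> List[str]:
    """Return the names of the measurements that fall outside the listed ranges."""
    failures = []
    if not HEAD_LENGTH_UM[0] <= m.head_length <= HEAD_LENGTH_UM[1]:
        failures.append("head_length")
    if not HEAD_WIDTH_UM[0] <= m.head_width <= HEAD_WIDTH_UM[1]:
        failures.append("head_width")
    if not MIDPIECE_LENGTH_UM[0] <= m.midpiece_length <= MIDPIECE_LENGTH_UM[1]:
        failures.append("midpiece_length")
    if not m.midpiece_width < MIDPIECE_MAX_WIDTH_UM:
        failures.append("midpiece_width")
    if abs(m.tail_length - TAIL_LENGTH_UM) > TAIL_TOLERANCE_UM:
        failures.append("tail_length")
    return failures
```

An empty list means every measurement is within range; a non-empty list names the dimensions a reviewer should look at first.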

Q5: Why is image pre-processing critical for automated sperm morphology analysis? Consistent and minimal pre-processing is fundamental for accurate automated analysis. It ensures that the input images are standardized, thereby reducing background noise and enhancing relevant features without introducing artifacts. This directly improves the reliability of downstream tasks like segmentation, classification, and morphological measurement. Adherence to image integrity guidelines, such as applying adjustments to the entire image and avoiding oversaturation, is mandatory for scientific validity [3] [4].

Troubleshooting Guides

Guide 1: Addressing High Variability in Morphology Scores Between Technicians

Problem: Significant disagreement in normal morphology percentages when the same sample is assessed by different technicians.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inconsistent application of "strict" criteria. | Review classified images together; have technicians re-score a set of reference images. | Implement regular, mandatory calibration sessions using standardized training slides. Adopt a double-blind scoring system for critical samples. |

| Fatigue and high workload. | Monitor scoring results over time to identify drift. | Enforce periodic rest breaks and limit continuous microscope evaluation sessions. |

| Suboptimal sample preparation. | Check for staining consistency and presence of debris. | Standardize the staining protocol (e.g., Diff-Quik, Papanicolaou) and ensure uniform smear thickness across all samples. |

Guide 2: Managing Borderline Morphology Classifications

Problem: Difficulty in consistently classifying spermatozoa with subtle or mixed defects.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Ambiguity in classification rules for specific defects. | Create a shared digital library of "borderline" cases for group discussion and consensus. | Develop a detailed, visual internal standard operating procedure (SOP) with clear examples of accept/reject decisions for vague defects. |

| Lack of high-quality imaging. | Capture still images of borderline cells during analysis. | Use a high-resolution microscope with oil immersion and digital imaging capabilities to capture and archive stills of difficult cells for secondary review and training. |

The following table summarizes the key reference values for semen parameters as defined by the WHO, which provide the essential context for interpreting morphology results [1].

Table 1: WHO Laboratory Manual Lower Reference Limits for Semen Analysis

| Parameter | Lower Reference Limit (5th Percentile) | 95% Confidence Interval |

|---|---|---|

| Semen Volume | 1.5 ml | 1.4 – 1.7 |

| Sperm Concentration | 15 million/ml | 12 – 16 |

| Total Sperm Number | 39 million per ejaculate | 33 – 46 |

| Normal Morphology (Strict Criteria) | 4% | 3 – 4 |

| Total Motility | 40% | 39 – 42 |

| Vitality | 58% | 55 – 63 |

Experimental Protocols

Protocol 1: Standardized Sperm Morphology Staining and Assessment (Based on WHO Guidelines)

Title: Manual Sperm Morphology Assessment Using Strict Criteria

Principle: This protocol describes the staining and evaluation of human spermatozoa for morphological anomalies based on the WHO's strict criteria, aiming to minimize subjectivity through standardized procedures.

Reagents and Materials:

- Microscope slides

- Fixative solution (e.g., 95% ethanol)

- Staining solutions (e.g., Diff-Quik, Hematolor, or Papanicolaou stains)

- Immersion oil

- Bright-field microscope with 100x oil immersion objective

Procedure:

- Smear Preparation: Create a thin, uniform smear of a well-mixed liquefied semen sample on a clean glass slide. Allow to air-dry completely.

- Fixation: Immerse the air-dried smear in 95% ethanol for 15 minutes. Allow to dry.

- Staining: Follow the specific protocol for the chosen stain (e.g., for Diff-Quik: dip in Solution A for 10 seconds, Solution B for 5 seconds, then rinse gently with water).

- Microscopy: Examine the stained smear under oil immersion at 1000x total magnification.

- Assessment: Systematically scan the slide and evaluate at least 200 spermatozoa. Classify each sperm as either "normal" or having one or more defects (head, neck/midpiece, tail, or cytoplasmic droplets) according to the strict morphological criteria [1].

- Calculation: Calculate the percentage of morphologically normal forms.
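The calculation step is simple arithmetic, sketched below with hypothetical function names. The 200-cell minimum comes from the Assessment step and the 4% threshold from the WHO lower reference limit quoted earlier.

```python
WHO_LOWER_REFERENCE_LIMIT = 4.0  # percent normal forms (WHO lower reference limit)
MIN_CELLS = 200                  # minimum number of spermatozoa to evaluate


def percent_normal(n_normal: int, n_total: int) -> float:
    """Percentage of morphologically normal forms among the cells assessed."""
    if n_total < MIN_CELLS:
        raise ValueError(f"assess at least {MIN_CELLS} spermatozoa, got {n_total}")
    if not 0 <= n_normal <= n_total:
        raise ValueError("n_normal must be between 0 and n_total")
    return 100.0 * n_normal / n_total


def below_reference_limit(pct: float) -> bool:
    """True when the percentage falls below the WHO lower reference limit."""
    return pct < WHO_LOWER_REFERENCE_LIMIT
```

For example, 7 normal forms out of 200 gives 3.5%, which is below the limit, while 8 out of 200 gives exactly 4% and is not.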

Safety Notes: Treat all semen samples as potentially infectious and handle using appropriate personal protective equipment (PPE) and biosafety level 2 practices.

Protocol 2: Image Acquisition for Automated Analysis

Title: Standardized Digital Image Capture for CASA and ML Models

Principle: To acquire consistent, high-quality digital images of sperm cells for input into Computer-Aided Sperm Analysis (CASA) systems or machine learning algorithms, ensuring reproducible pre-processing.

Reagents and Materials:

- Prepared sperm morphology slides (from Protocol 1)

- Research-grade microscope with a high-resolution digital camera

- Calibrated micrometer

Procedure:

- System Calibration: Calibrate the digital imaging system using a stage micrometer to define the pixel-to-micrometer ratio accurately.

- Image Settings: Set up Köhler illumination on the microscope for even lighting. Use a consistent magnification (100x oil immersion objective). Fix the resolution (e.g., 1920x1080) and bit depth, and disable automatic gain and gamma adjustments.

- Image Capture: Capture images from multiple, randomly selected fields of view to ensure a representative sample. Each field should be in sharp focus.

- Data Logging: In the methods section, document all key acquisition details including the microscope model, camera model, objective lens specifications, and software used, as per image integrity standards [4].

- Pre-processing (Minimal): If adjustments like brightness/contrast are necessary for clarity, apply them uniformly across the entire image. Document any such adjustments in the figure legend or methods [3].
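The calibration and minimal pre-processing steps above can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed implementation: images are plain nested lists of 8-bit grayscale values, and the function names are hypothetical.

```python
def micrometres_per_pixel(known_length_um: float, measured_length_px: float) -> float:
    """Scale factor from a stage-micrometer span of known length."""
    if measured_length_px <= 0:
        raise ValueError("measured length must be positive")
    return known_length_um / measured_length_px


def to_micrometres(length_px: float, scale_um_per_px: float) -> float:
    """Convert a length measured in pixels to micrometres."""
    return length_px * scale_um_per_px


def adjust_uniform(image, gain: float, offset: float):
    """Apply one linear brightness/contrast transform to every pixel.

    Returns the adjusted 8-bit image and the number of pixels that had to be
    clipped at 0 or 255, so oversaturation can be detected and reported.
    """
    adjusted, clipped = [], 0
    for row in image:
        new_row = []
        for value in row:
            raw = gain * value + offset
            if raw < 0 or raw > 255:
                clipped += 1
            new_row.append(int(round(min(255, max(0, raw)))))
        adjusted.append(new_row)
    return adjusted, clipped
```

Logging the gain, offset, and clipped-pixel count alongside each image gives the documentation trail that the Data Logging step asks for.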

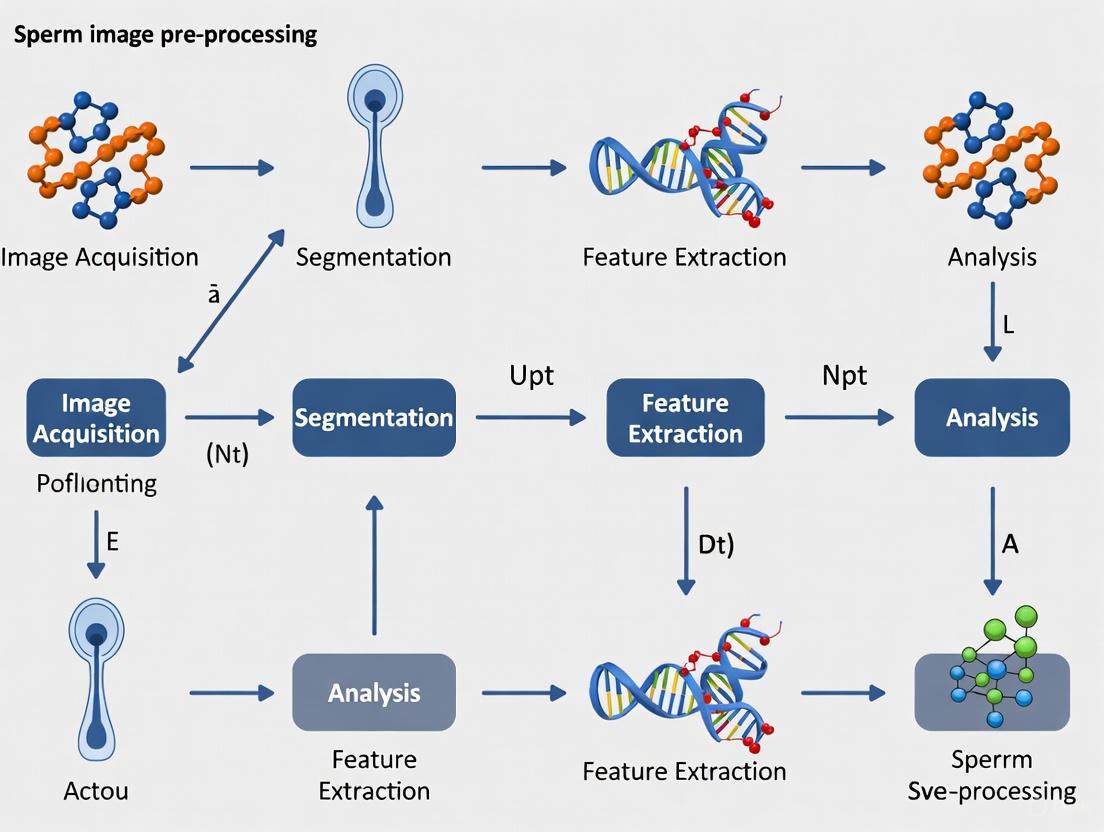

Experimental Workflow Visualization

Diagram: Sperm Morphology Analysis Workflow

Diagram: Subjectivity Factors in Manual Assessment

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Sperm Morphology Research

| Item | Function/Description |

|---|---|

| Diff-Quik Staining Kit | A rapid Romanowsky-type stain for differential staining of sperm cell components (head, midpiece, tail), enabling clear visualization of morphology. |

| Papanicolaou Stain | A more complex, multi-step staining procedure often considered the gold standard for detailed morphological assessment of sperm heads. |

| Computer-Aided Sperm Analysis (CASA) System | An integrated system comprising a microscope, camera, and software for the automated, objective analysis of sperm concentration, motility, and morphology. |

| High-Resolution Microscope & Camera | A research-grade microscope with a 100x oil immersion objective and a high-resolution digital camera is essential for acquiring images for both manual analysis and CASA/ML input. |

| Strict Criteria Classification Guide | A visual guide, often based on the WHO manual, containing reference images of normal and abnormal sperm forms to standardize technician scoring. |

| Ant Colony Optimization (ACO) Algorithm | A nature-inspired optimization algorithm that can be integrated with machine learning models to enhance feature selection and predictive accuracy in classifying sperm health [5]. |

Frequently Asked Questions (FAQs)

Q1: What are the primary sources of noise and debris in sperm images, and how do they impact analysis? The main sources include poorly stained semen smears, insufficient lighting during microscopy, and the presence of cellular fragments or impurities in the seminal fluid [6] [7]. These factors significantly compromise the accuracy of both manual and Computer-Assisted Sperm Analysis (CASA) by obscuring sperm morphology, leading to misclassification of sperm cells and impurities [8] [9]. In automated systems, this can cause an overestimation or underestimation of key parameters like sperm concentration and morphology [8].

Q2: How does staining variability affect the reliability of sperm morphology assessment? Different staining techniques (e.g., Giemsa, Spermac, Papanicolaou) and smear preparation protocols yield varying levels of detail and contrast [10]. This variability is a major cause of significant inter- and intra-laboratory variation, making it difficult to compare results across different studies or clinics [10] [7]. For instance, the same sample assessed with Giemsa (using WHO criteria) versus Spermac stain (using strict criteria) can yield different percentages of normal sperm, directly affecting the diagnosis of teratozoospermia [10].

Q3: What advanced methods are available to mitigate the impact of debris in automated semen analysis? Deep learning-based detection models have shown superior performance in distinguishing sperm from debris compared to traditional image processing [11]. For example, an improved YOLO-v7 model achieved an Average Precision (AP50) of 95.1% for sperm detection and 62.4% for impurity detection, significantly reducing the need for manual intervention [11]. Furthermore, ensuring that operators of automated systems like the SQA-V are highly competent in correctly assessing debris levels is crucial, as misestimation can directly skew results [8].
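AP50 counts a predicted box as a correct detection when its intersection-over-union (IoU) with a ground-truth box is at least 0.5. The sketch below shows that overlap test only; the box layout `(x_min, y_min, x_max, y_max)` and the function names are assumptions, and the full average-precision calculation over ranked detections is not reproduced here.

```python
def iou(box_a, box_b) -> float:
    """Intersection-over-union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = min(box_a[2], box_b[2]) - max(box_a[0], box_b[0])
    inter_h = min(box_a[3], box_b[3]) - max(box_a[1], box_b[1])
    intersection = max(0, inter_w) * max(0, inter_h)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def is_match(predicted, truth, threshold: float = 0.5) -> bool:
    """True when a predicted box overlaps a ground-truth box enough to count at AP50."""
    return iou(predicted, truth) >= threshold
```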

Q4: Which imaging classification models demonstrate the best robustness against noise? Recent comparative studies indicate that Visual Transformer (VT) models, which are based on global information, exhibit stronger robustness against conventional noise and adversarial attacks compared to Convolutional Neural Network (CNN) models that rely on local information [6]. Under the influence of Poisson noise, one study showed VT models maintained an accuracy of 91.08%, a sperm recall of 93.8%, and an impurity precision of 91.3%, with minimal performance degradation [6].
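To run a comparable robustness check on your own model, you can corrupt a held-out image set with Poisson (shot) noise and compare accuracy before and after. The sketch below is one simple way to generate such noise with the standard library; how the cited study applied its noise is not stated here, so treating each pixel intensity as the Poisson mean is an assumption, as are the function names.

```python
import math
import random


def poisson_sample(lam: float, rng: random.Random) -> int:
    """Draw one Poisson variate with Knuth's method (adequate for lam <= 255)."""
    if lam <= 0:
        return 0
    limit = math.exp(-lam)
    count = 0
    product = rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def add_poisson_noise(image, seed: int = 0):
    """Replace each 8-bit pixel by a Poisson draw whose mean is the pixel value."""
    rng = random.Random(seed)
    # Clip to the 8-bit range so the result is still a valid image.
    return [[min(255, poisson_sample(value, rng)) for value in row] for row in image]
```

Fixing the seed keeps the corrupted test set identical across model comparisons.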

Troubleshooting Guides

Quantitative Impact of Common Issues on Sperm Analysis

Table 1: Impact of Analysis Challenges on Key Sperm Parameters

| Challenge | Affected Parameter | Impact Description | Quantitative Effect | Citation |

|---|---|---|---|---|

| Noise in Imaging | Deep Learning Model Accuracy | Reduces classification accuracy of sperm and impurities under noisy conditions. | Accuracy drop from 91.45% to 91.08% (Poisson noise); Impurity Precision: 92.7% to 91.3%. | [6] |

| Debris in Sample (Automated System) | Sperm Concentration | Underestimation of debris levels leads to overestimation of sperm concentration. | High correlation with manual count (rho=0.987) requires correct debris assessment. | [8] |

| Debris in Sample (Automated System) | Progressive Motility | Overestimation of debris levels leads to increased motility readings. | High correlation (rho=0.949) dependent on accurate debris level input. | [8] |

| Debris in Sample (Automated System) | Normal Morphology | Overestimation of debris artificially increases % of normal forms. | Moderate correlation (rho=0.694); highly dependent on operator's debris assessment. | [8] |

| Staining Variability | Normal Morphology Diagnosis | Concordance in teratozoospermia diagnosis using different stains/criteria. | Concordant diagnosis in 45 out of 49 cases (91.8%). | [10] |

| Staining Variability | Inter-Observer Agreement | Agreement between different technicians assessing morphology. | Kappa values: 0.700 (WHO criteria) and 0.715 (Strict criteria). | [10] |
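The kappa values in the table measure agreement beyond what chance alone would produce. To compute the same kind of statistic for two of your own technicians, a minimal sketch follows. It assumes Cohen's kappa for two raters, which may not be the exact variant used in [10], and the function name is hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b) -> float:
    """Cohen's kappa for two raters who labelled the same cells."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("both raters must label the same non-empty set of cells")
    n = len(labels_a)
    # Observed agreement: fraction of cells given the same label by both raters.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: computed from each rater's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)
```

A value of 1 indicates perfect agreement and 0 indicates agreement no better than chance.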

Step-by-Step Troubleshooting Protocols

Protocol A: Addressing Staining Variability and Improving Morphology Assessment

- Problem: Inconsistent morphology scores due to different staining methods.

- Objective: Standardize staining and assessment to minimize inter-laboratory variation.

- Materials: Spermac stain kit, Quinn’s Sperm Washing Medium, centrifuge, brightfield microscope with oil immersion.

- Procedure:

  a. Sample Preparation: After liquefaction, wash an aliquot of semen with Quinn’s Sperm Washing Medium and centrifuge at 300 g for 10 minutes [10].

  b. Smear Preparation: Remove the supernatant, resuspend the pellet in 0.5 mL of medium. Place 10 µL of washed semen on a glass slide, air-dry, and fix [10].

  c. Staining: Follow the specific protocol for Spermac stain: wash the smear with distilled water, apply the stain, then wash again with distilled water [10].

  d. Assessment: Use brightfield illumination at 1000x magnification with oil immersion. Assess at least 200 spermatozoa per smear according to strict (Tygerberg) criteria [10].

- Expected Outcome: This method reduces borderline forms considered as normal and provides a more stringent assessment, enhancing objectivity and decreasing variability [10].

Protocol B: Minimizing Debris Interference in Automated Sperm Analysis

- Problem: Debris causing inaccurate readings for concentration, motility, and morphology on automated systems.

- Objective: To correctly identify and input debris levels to ensure the automated analyzer provides accurate results.

- Materials: SQA-V automated semen analyzer (or similar), microscope.

- Procedure:

  a. Initial Calibration: Prior to sample analysis, ensure the automated system is properly calibrated.

  b. Manual Debris Assessment: Using a microscope, independently assess the level of debris in the sample. Categorize it into one of the four standard levels: None/Few, Moderate, Many, or Gross [8].

  c. Input and Analysis: Input the correct debris level category into the SQA-V system before initiating the automated analysis of the sample [8].

  d. Verification: For critical samples, consider verifying automated results with a manual count, especially if the results are inconsistent with clinical expectations.

- Troubleshooting Tip: Underestimation of debris will falsely increase the sperm concentration reading and decrease motility and morphology percentages. Overestimation of debris will have the opposite effect [8].

Experimental Protocols from Key Research

Deep Learning Model Training for Robust Sperm Image Classification

- Background: Deep learning models can automate and standardize sperm morphology analysis, but their performance is highly dependent on the quality and size of the training dataset [7] [9].

- Objective: To develop a Convolutional Neural Network (CNN) model for classifying sperm morphology according to the modified David classification, enhancing the dataset using augmentation techniques to improve model robustness [7].

- Materials: MMC CASA system for image acquisition, RAL Diagnostics staining kit, Python 3.8 with deep learning libraries (e.g., TensorFlow, PyTorch).

- Procedure:

  a. Dataset Creation (SMD/MSS): Capture images of individual spermatozoa from stained smears. Have three independent experts classify each spermatozoon into one of 12 morphological defect classes based on the modified David classification [7].

  b. Data Augmentation: To balance the representation of different morphological classes and increase dataset size, apply augmentation techniques such as rotation, flipping, and scaling to the original images. One study expanded a dataset from 1,000 to 6,035 images using these methods [7].

  c. Image Pre-processing: Resize images to a uniform size (e.g., 80x80 pixels) and convert them to grayscale. Normalize pixel values to a common scale to facilitate model training [7].

  d. Model Training and Testing: Randomly partition the augmented dataset into a training set (80%) and a testing set (20%). Train the CNN model on the training set and evaluate its final performance on the unseen testing set [7].

- Expected Outcome: A deep learning model capable of classifying sperm morphology with an accuracy that can range from 55% to 92%, reducing subjectivity and workload [7].
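The pre-processing and partitioning steps of this protocol can be sketched with the standard library alone. The sketch rests on several assumptions the source does not specify: images are nested lists of 8-bit RGB tuples, grayscale conversion uses the common ITU-R BT.601 luma weights, resizing is nearest-neighbour, and normalization maps values to [0, 1]. All function names are hypothetical.

```python
import random


def to_grayscale(rgb_image):
    """Convert rows of (R, G, B) tuples to luma values (BT.601 weights, an assumption)."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for r, g, b in row] for row in rgb_image]


def resize_nearest(image, size: int = 80):
    """Nearest-neighbour resize of a 2D image to size x size pixels."""
    height, width = len(image), len(image[0])
    return [
        [image[y * height // size][x * width // size] for x in range(size)]
        for y in range(size)
    ]


def normalize(image):
    """Scale 8-bit intensities to the range [0, 1]."""
    return [[value / 255.0 for value in row] for row in image]


def preprocess(rgb_image, size: int = 80):
    """Grayscale, resize, and normalize one image."""
    return normalize(resize_nearest(to_grayscale(rgb_image), size))


def train_test_split(items, test_fraction: float = 0.2, seed: int = 0):
    """Randomly partition items into (train, test) with a reproducible shuffle."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]
```

The protocol partitions the augmented dataset. If augmented copies of one original image land on both sides of the split, test accuracy can look optimistic, so splitting by original image before augmenting is a common precaution.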

Microfluidic Sperm Separation for High-Quality Sample Preparation

- Background: Traditional sperm separation methods like density gradient centrifugation can cause DNA fragmentation and have low recovery rates. Microfluidic devices leverage sperm's intrinsic behavior (rheotaxis) to gently isolate high-motility sperm [12].

- Objective: To separate motile sperm with improved morphology from raw human semen using a simple, rapid, and centrifugation-free microfluidic device.

- Materials: Fabricated PDMS microfluidic device (four chambers interconnected by channels), raw human semen sample, syringe pump.

- Procedure:

  a. Device Priming: Introduce a medium into the device to remove air bubbles and prepare the channels.

  b. Sample Loading: Introduce the raw, unwashed semen sample into the device's inlet.

  c. Flow Control: Use a syringe pump to create a low shear rate flow (optimized at 40-50 nL/s) through the device. This flow encourages motile and morphologically normal sperm to exhibit rheotaxis (swimming against the flow) and navigate to specific isolation chambers [12].

  d. Sample Collection: After a short runtime (under 5 minutes), increase the flow rate to 500 nL/s to recover the separated, high-quality sperm from the outlet of the isolation chambers [12].

- Expected Outcome: The method can achieve up to 100% motility in the isolated sperm fraction and improve the proportion of morphologically normal sperm by up to 56%, providing superior samples for Assisted Reproductive Technology (ART) [12].

Visualization Diagrams

Diagram: Sperm Analysis Challenge-Solution Map

Diagram: Microfluidic Sperm Separation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Sperm Imaging and Analysis

| Item Name | Function/Application | Key Feature/Benefit | Citation |

|---|---|---|---|

| Spermac Stain | Staining for morphology assessment by strict criteria. | Provides clear delineation of sperm structures (head, acrosome, midpiece, tail) for precise measurement. | [10] |

| Giemsa Stain | Staining for morphology assessment by WHO criteria. | A common stain for general sperm morphology and differential counting of leukocytes/immature cells. | [10] |

| Quinn’s Sperm Washing Medium | Preparation of semen samples for staining. | Used to wash semen samples prior to smear preparation, removing seminal plasma. | [10] |

| RAL Diagnostics Staining Kit | Staining for morphology based on David's classification. | Used in the creation of datasets for deep learning model training. | [7] |

| HyperSperm Preparation Media | Sequential media for sperm capacitation. | Enhances sperm hyperactivation, leading to improved blastocyst development rates in IVF. | [13] |

| PDMS-based Microfluidic Device | Sperm separation from raw semen. | Uses rheotaxis and parallelization for centrifugation-free, rapid (under 5 min) isolation of motile sperm. | [12] |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Why does my deep learning model for sperm head classification perform poorly despite having a large number of images? Poor performance with a large image dataset often stems from underlying data quality issues rather than model architecture. Common causes include noisy labels, where sperm images are misclassified by experts [7] [14], class imbalance, where certain morphological defect classes are underrepresented [7] [15], and low image quality due to factors like insufficient lighting or poorly stained semen smears [7]. A data-centric approach, focusing on improving data quality through techniques like confident learning to detect mislabeled images and data augmentation to balance classes, has been shown to improve performance by at least 3% compared to a model-centric approach [14].

Q2: What are the most effective methods to detect and correct mislabeled sperm images in my dataset? The most effective method is confident learning, a technique that estimates the noise in labels and identifies examples with a high probability of being mislabeled [14]. It computes a probability threshold for each class; instances whose predicted probability falls below the optimized threshold are flagged as potentially noisy labels [14]. The flagged images should then be reviewed and corrected through human annotation [14]. Duplicate images, which can also skew model training, can be removed with a multi-stage hashing technique that includes Perceptual Hashing (pHash) [14].
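The per-class thresholding idea can be sketched as follows. This is a simplified illustration of one common formulation of confident learning, and it may differ in detail from the procedure in [14]. Each class threshold is the mean predicted probability of that class over the images carrying that label, and an image is flagged when the model is confident in a class other than its given label. The function names are hypothetical, and `probs` is assumed to hold out-of-sample predicted probabilities.

```python
def class_thresholds(probs, labels, n_classes: int):
    """Per-class threshold: mean predicted probability of class c over images labelled c."""
    sums, counts = [0.0] * n_classes, [0] * n_classes
    for p, y in zip(probs, labels):
        sums[y] += p[y]
        counts[y] += 1
    # A class with no labelled examples gets threshold 1.0, so it is rarely "confident".
    return [sums[c] / counts[c] if counts[c] else 1.0 for c in range(n_classes)]


def flag_suspects(probs, labels):
    """Indices of images whose confident class disagrees with the given label."""
    n_classes = len(probs[0])
    thresholds = class_thresholds(probs, labels, n_classes)
    suspects = []
    for index, (p, y) in enumerate(zip(probs, labels)):
        confident = [c for c in range(n_classes) if p[c] >= thresholds[c]]
        if confident and max(confident, key=lambda c: p[c]) != y:
            suspects.append(index)
    return suspects
```

The returned indices are candidates for expert re-annotation, not automatic corrections.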

Q3: How can I improve my model's ability to generalize to new, unseen sperm samples? Generalizability is heavily influenced by the representativeness and quality of the training data [16] [15]. To improve it:

- Ensure Dataset Diversity: Your training data should cover the biological variability and different staining conditions encountered in real-world clinical practice [15].

- Apply Data Augmentation: Use techniques like rotation to artificially create more varied examples of underrepresented morphological classes, making the dataset more balanced and robust [7] [14].

- Remove Duplicates and Noisy Labels: This prevents the model from overfitting to specific, potentially erroneous, data points [14].

- Implement Continuous Monitoring: After deployment, use automated data quality checks to monitor for performance degradation and retrain the model periodically with new, curated data [17].
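As an illustration of the augmentation point above, the sketch below generates rotated and flipped variants of minority-class images until every class matches the largest one. It assumes images are nested lists and that labels are dictionary keys; the function names are hypothetical, and a real pipeline would typically use an image library for this.

```python
from itertools import cycle, islice


def rotate90(image):
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def flip_horizontal(image):
    """Mirror a 2D image left to right."""
    return [row[::-1] for row in image]


def variants(image):
    """Four rotations and their mirrors; the first entry is the original image."""
    result = []
    current = [list(row) for row in image]
    for _ in range(4):
        result.append(current)
        result.append(flip_horizontal(current))
        current = rotate90(current)
    return result


def balance(images_by_class):
    """Top up each class with augmented variants until it matches the largest class."""
    target = max(len(images) for images in images_by_class.values())
    balanced = {}
    for label, images in images_by_class.items():
        extra = (v for image in cycle(images) for v in variants(image)[1:])
        balanced[label] = list(images) + list(islice(extra, target - len(images)))
    return balanced
```

A class that needs more than seven extra copies per original image will start repeating variants, so very rare classes still benefit from collecting more real examples.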

Troubleshooting Guides

Problem: Model performance is inconsistent with real-world data after promising validation results. This indicates a data drift or data quality mismatch between your training set and production environment [17].

- Step 1: Profile Incoming Data. Use automated data quality checks to compare the statistical properties (e.g., mean, distribution) of incoming real-world data against your training dataset [17].

- Step 2: Check for New Artifacts. Investigate if new, unseen image artifacts are present, such as different staining patterns or debris introduced during new sample preparation cycles [7] [18].

- Step 3: Retrain with New Data. Create a new version of your model by incorporating a carefully validated subset of the new real-world data into your training pipeline [17]. Continuously monitor performance to ensure improvements [17].
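Step 1 can start from something as simple as comparing intensity statistics. The sketch below flags drift when the mean intensity of incoming images shifts by more than a chosen number of training-set standard deviations. The threshold of 0.5 and the function names are assumptions for illustration, and images are taken to be nested lists of grayscale values.

```python
from statistics import mean, pstdev


def intensity_profile(images):
    """Mean and population standard deviation of all pixel values in a batch."""
    pixels = [value for image in images for row in image for value in row]
    return mean(pixels), pstdev(pixels)


def drift_detected(train_images, new_images, max_shift_in_std: float = 0.5) -> bool:
    """True when the incoming mean intensity has moved too far from the training mean."""
    train_mean, train_std = intensity_profile(train_images)
    new_mean, _ = intensity_profile(new_images)
    if train_std == 0:
        return new_mean != train_mean
    return abs(new_mean - train_mean) / train_std > max_shift_in_std
```

A shift in mean intensity often points to a change in staining or illumination, which is the kind of artifact Step 2 asks you to investigate.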

Problem: The deep learning model is biased towards predicting "normal" morphology and misses rare defects. This is a classic symptom of a severe class imbalance in your training dataset [7] [15].

- Step 1: Conduct Class Distribution Analysis. Calculate the number of images per morphological class (e.g., normal, tapered head, coiled tail) to identify underrepresented categories [7].

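- Step 2: Apply Data-Level Techniques. Apply data augmentation (e.g., rotation, flipping) to the underrepresented morphological classes to create a more balanced dataset [7] [14].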

- Step 3: Consider Algorithm-Level Techniques. If data-level methods are insufficient, adjust the loss function (e.g., using weighted cross-entropy) to penalize misclassifications on the minority classes more heavily [15].
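For Step 3, class weights are often set inversely proportional to class frequency. The sketch below computes such weights and a weighted cross-entropy over predicted probabilities. The weighting formula and function names are illustrative assumptions; deep learning frameworks provide their own weighted losses, and some normalize by the sum of weights rather than by the sample count used here.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


def weighted_cross_entropy(probs, labels, weights) -> float:
    """Mean of -weight[y] * log(p[y]); probs is a list of {label: probability} dicts."""
    total = sum(-weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    return total / len(labels)
```

With 8 "normal" and 2 "coiled_tail" images, the weights are 0.625 and 2.5, so each error on the rare class costs four times as much as one on the common class.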

Quantitative Data on Data Quality Impact

Table 1: Performance Comparison of Model-Centric vs. Data-Centric Approaches on Benchmark Datasets

This table summarizes the findings from a study that systematically compared the two approaches using the same ResNet-18 model architecture [14].

| Dataset | Model-Centric Approach (Accuracy) | Data-Centric Approach (Accuracy) | Relative Performance Improvement |

|---|---|---|---|

| MNIST | Baseline (Not Specified) | Not Specified | ≥ 3% [14] |

| Fashion MNIST | Baseline (Not Specified) | Not Specified | ≥ 3% [14] |

| CIFAR-10 | Baseline (Not Specified) | Not Specified | ≥ 3% [14] |

Table 2: Common Data Quality Issues in Sperm Image Analysis and Their Impact

This table outlines specific data issues relevant to the domain of sperm morphology analysis.

| Data Quality Issue | Impact on Deep Learning Model | Relevant Technique for Mitigation |

|---|---|---|

| Noisy Labels [14] | Model learns incorrect patterns, reducing accuracy [15]. | Confident Learning & Human Re-annotation [14] |

| Class Imbalance [7] [15] | Model is biased toward majority classes, failing to detect rare defects [15]. | Data Augmentation [7] [14] |

| Duplicate Images [14] | Inflates validation performance, causes overfitting [14]. | Multi-stage Hashing (pHash, CityHash) [14] |

| Low Image Quality [7] | Obscures morphological features, hindering learning. | Image Pre-processing (Denoising, Normalization) [7] |

Detailed Experimental Protocols

Protocol 1: Implementing a Data-Centric Workflow for Sperm Morphology Classification

This protocol is based on methodologies used to create the SMD/MSS dataset and improve model performance through data quality [7] [14].

Data Acquisition & Expert Labeling:

- Acquire images of individual spermatozoa using a system like the MMC CASA system with a 100x oil immersion objective [7].

- Have multiple experts (e.g., three) classify each spermatozoon independently based on a standardized classification system like the modified David classification (which includes 12 classes for head, midpiece, and tail defects) [7].

- Compile a ground truth file that includes the image name, all expert classifications, and morphometric data [7].

Data Quality Enhancement:

- Remove Duplicates: Apply a multi-stage hashing process. Use Perceptual Hashing (pHash) to identify and remove duplicate or near-duplicate images to prevent dataset bias [14].

- Detect Noisy Labels: Use confident learning to analyze the expert classifications and probability distributions from an initial model to identify images with a high likelihood of being mislabeled [14].

- Correct Labels: Re-annotate the images flagged in the previous step through a consensus review by experts [14].

- Address Class Imbalance: Apply data augmentation techniques (e.g., rotation, flipping) to the underrepresented morphological classes to create a more balanced dataset [7] [14].
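The duplicate-removal step can be illustrated with a simpler relative of pHash. The sketch below uses an average hash (aHash), which thresholds a downsampled image at its mean, rather than the DCT-based perceptual hash named above, and it is not the multi-stage pipeline from [14]. The Hamming-distance cutoff of 2 and the function names are assumptions; images are nested lists of grayscale values.

```python
def shrink(image, size: int):
    """Downsample a 2D image to size x size by averaging pixel blocks."""
    height, width = len(image), len(image[0])
    small = []
    for by in range(size):
        y0 = by * height // size
        y1 = max((by + 1) * height // size, y0 + 1)
        row = []
        for bx in range(size):
            x0 = bx * width // size
            x1 = max((bx + 1) * width // size, x0 + 1)
            block = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(block) / len(block))
        small.append(row)
    return small


def average_hash(image, size: int = 8):
    """Bit tuple: True where a downsampled pixel is brighter than the mean."""
    flat = [value for row in shrink(image, size) for value in row]
    average = sum(flat) / len(flat)
    return tuple(value > average for value in flat)


def hamming(hash_a, hash_b) -> int:
    """Number of differing bits between two hashes."""
    return sum(a != b for a, b in zip(hash_a, hash_b))


def drop_near_duplicates(images, max_distance: int = 2):
    """Keep the first image of each group whose hashes differ by at most max_distance bits."""
    kept, seen_hashes = [], []
    for image in images:
        current = average_hash(image)
        if all(hamming(current, seen) > max_distance for seen in seen_hashes):
            kept.append(image)
            seen_hashes.append(current)
    return kept
```

Running this before the train/test split helps prevent the same cell from appearing in both sets.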

Model Training & Evaluation:

Diagram 1: Data-centric workflow for sperm image analysis.

Protocol 2: Assessing Image Quality for Microscopy Data

This protocol outlines key factors to assess when ensuring the quality of sperm microscopy images, based on standard image quality factors [18].

Sharpness (MTF - Modulation Transfer Function) Assessment:

- Importance: Determines the amount of detail an image can convey; arguably the most important factor for identifying morphological defects [18].

- Method: Use slanted-edge patterns (e.g., with SFR or SFRplus modules) to measure the Spatial Frequency Response (SFR), also called MTF. The 50% MTF frequency correlates well with perceived sharpness [18].
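A slanted-edge MTF measurement requires a test chart and dedicated software. For quickly screening individual sperm images, a much simpler focus proxy is the variance of the Laplacian. This is a different technique from MTF and is shown only as a rough triage sketch: the function name is hypothetical, images are assumed to be nested lists of grayscale values, and any pass/fail cutoff would have to be calibrated on your own images.

```python
from statistics import pvariance


def laplacian_variance(image) -> float:
    """Variance of the 4-neighbour Laplacian response; higher values suggest sharper edges."""
    height, width = len(image), len(image[0])
    responses = [
        image[y - 1][x] + image[y + 1][x] + image[y][x - 1] + image[y][x + 1]
        - 4 * image[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return pvariance(responses)
```

Comparing this score across fields of view captured in the same session can help flag out-of-focus frames before they enter a training set.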

Noise Measurement:

Tonal Response (Contrast) Check:

- Importance: Ensures that the staining allows for clear differentiation between the sperm cell and its background, as well as between different parts of the sperm [18].

- Method: Use grayscale step charts to measure the tonal response curve of the imaging system. Higher contrast generally improves quality but must be balanced to avoid clipping of details [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sperm Image Pre-processing and Analysis

| Item | Function / Purpose | Example / Specification |

|---|---|---|

| RAL Diagnostics Staining Kit | Stains sperm smears to provide contrast for morphological assessment under a microscope [7]. | Standard staining kit as used in the SMD/MSS dataset creation [7]. |

| MMC CASA System | An integrated system for Computer-Assisted Semen Analysis. Used for automated image acquisition from sperm smears, often with morphometric capabilities [7]. | Microscope with digital camera and software for capturing and storing individual sperm images [7]. |

| 100x Oil Immersion Objective | A high-magnification microscope objective lens essential for visualizing detailed morphological structures of sperm heads, midpieces, and tails [7]. | Standard optical component for high-resolution microscopy [7]. |

| Slanted-Edge MTF Chart | A test chart used to quantitatively measure the sharpness (Modulation Transfer Function) of the entire imaging system (lens, sensor, software) [18]. | eSFR ISO or SFRplus chart from commercial providers [18]. |

| ColorChecker Chart | Used to assess and calibrate color accuracy and tonal response of the imaging system, ensuring consistency across different samples and sessions [18]. | X-Rite ColorChecker or similar [18]. |

This guide provides technical support for researchers working with key public datasets for sperm morphology analysis: HuSHeM, SMIDS, and SMD/MSS. The standardization of sperm image pre-processing is critical for developing robust, generalizable AI models in male fertility diagnostics. The table below summarizes the core characteristics of these datasets for your initial assessment [7] [19] [20].

Table 1: Key Characteristics of Sperm Morphology Datasets

| Feature | HuSHeM & SCIAN-MorphoSpermGS | SMIDS | SMD/MSS |

|---|---|---|---|

| Primary Focus | Sperm head, flagellum, vacuole, and acrosome morphology [20] | A general-purpose dataset for feature detection; images labelled as normal, abnormal, or non-sperm [19] | Detailed morphological classification based on the modified David classification [7] |

| Image Content | High-resolution images focusing on structural details [20] | RGB images; may include noise, multiple sperm heads, and mixed tails [19] | 1,000 individual spermatozoa images, extended to 6,035 via augmentation [7] |

| Classification System | Morphology of sperm head and other specific components [20] | Binary (Normal/Abnormal) and Non-sperm class [19] | Multi-class (12 defect types across head, midpiece, and tail) [7] |

| Key Application | Advanced morphological studies of specific sperm structures [20] | General sperm detection and classification tasks [19] | Training deep learning models for fine-grained anomaly detection [7] |

Frequently Asked Questions (FAQs)

Q1: Which dataset is most suitable for training a model to perform a detailed, multi-class analysis of sperm defects? The SMD/MSS dataset is explicitly designed for this purpose. It uses the modified David classification, which includes 12 distinct classes of morphological defects across the sperm head, midpiece, and tail, such as tapered heads, microcephalous heads, bent midpieces, and coiled tails [7]. This level of granularity is essential for models that go beyond a simple normal/abnormal binary classification.

Q2: Our research aims to develop a new 3D sperm motility analysis tool. Are any of these datasets appropriate? No. The HuSHeM, SMIDS, and SMD/MSS datasets are primarily focused on static 2D morphology. For 3D motility analysis, you should consider the 3D-SpermVid dataset, a newer repository comprising 121 multifocal video-microscopy hyperstacks that capture sperm movement in a volumetric space over time, enabling the study of 3D flagellar beating patterns [20].

Q3: We are encountering high disagreement in image labels from different human experts. How can we address this? This is a common challenge in sperm morphology analysis. The creators of the SMD/MSS dataset proactively addressed this by implementing an inter-expert agreement analysis. They categorized labels into "No Agreement" (NA), "Partial Agreement" (PA), and "Total Agreement" (TA). When training your model, you can treat the TA subset as a high-confidence ground truth to improve label reliability and model performance. For the PA subset, you might use the majority vote from the agreeing experts [7].
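The agreement-based filtering described above can be sketched in a few lines. This is a minimal illustration that assumes three expert labels per image; the function names, and the rule that "PA" means at least two matching labels, are assumptions made for the example and are not taken from the SMD/MSS authors' code.

```python
from collections import Counter


def agreement_level(labels):
    """Classify the expert labels for one image as 'TA', 'PA', or 'NA'."""
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == len(labels):
        return "TA"  # all experts chose the same class
    if top_count > 1:
        return "PA"  # at least two experts agree
    return "NA"      # every expert chose a different class


def consensus_label(labels):
    """Return the majority label, or None when no two experts agree."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > 1 else None
```

Images for which `consensus_label` returns `None` could be excluded from training or sent back for re-annotation, depending on how much data you can afford to lose.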

Q4: Our image pre-processing pipeline is struggling with noisy images that contain multiple cells or debris. Which dataset reflects this real-world challenge? The SMIDS dataset explicitly states that its images may include noise, multiple sperm heads, and mixed tails [19]. While this adds complexity, it is highly representative of real-world laboratory conditions. Using this dataset can help you develop and test more robust pre-processing and segmentation algorithms that can handle these challenges effectively.

Troubleshooting Common Experimental Issues

Issue 1: Model Performance is Poor Due to Limited and Imbalanced Data

Problem: You are working with the SMD/MSS dataset and find that your model's accuracy is low for morphological classes that had few original samples.

Solution:

- Implement Data Augmentation: The SMD/MSS team successfully expanded their dataset from 1,000 to 6,035 images using augmentation techniques [7]. Integrate a real-time augmentation pipeline into your training process.

- Recommended Augmentation Techniques: Apply transformations such as:

- Geometric: Rotation, flipping, and scaling.

- Photometric: Adjusting brightness and contrast, and adding slight noise or blur to simulate focus variations.

- Strategic Data Splitting: When splitting your data into training and test sets, use stratified partitioning. This ensures that all morphological classes are proportionally represented in both sets, preventing your model from being blind to rare but clinically important defects during evaluation [7].
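Stratified partitioning can be illustrated with the standard library alone. The sketch below is one possible implementation, not the procedure used in [7]; the function name, the 20% test fraction, and the rule of keeping at least one test image per class are assumptions.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with every class split proportionally."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        # Assumption: keep at least one test image for any class with 2+ images.
        if len(indices) > 1:
            n_test = max(1, n_test)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)
```

Splitting before augmentation, and augmenting only the training indices, keeps augmented copies of a test image from leaking into training.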

Issue 2: Inconsistent Results When Transitioning from Clean to Messy Images

Problem: Your model, trained on a clean dataset, fails when applied to new, noisier data from your own lab.

Solution:

- Hybrid Training: Utilize a multi-dataset training approach. Start with a larger, more varied dataset like SMIDS, which includes noise and multiple cells, to teach your model robust feature detection [19].

- Transfer Learning: Then, fine-tune the pre-trained model on the high-quality, meticulously labeled SMD/MSS dataset to refine its ability to perform detailed morphological classification [7]. This two-stage process enhances generalizability.

- Advanced Pre-processing: Incorporate a denoising step in your pre-processing workflow. This can involve techniques to handle insufficient lighting or poorly stained smears, accurately isolating the spermatozoon's signal from the background [7].

Experimental Workflow & Signaling Pathways

The following diagram illustrates a standardized image pre-processing and analysis workflow, integrating best practices for handling these datasets. This workflow is designed to optimize data quality for downstream AI model training.

Data Pre-processing & Model Training Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key reagents and materials used in the creation of the featured datasets, which are crucial for replicating experimental protocols or designing new studies.

Table 2: Key Research Reagents and Materials for Sperm Image Analysis

| Reagent/Material | Function/Application | Example from Datasets |

|---|---|---|

| RAL Diagnostics Staining Kit | Stains semen smears to enhance visual contrast for morphological analysis under a microscope. | Used in the SMD/MSS dataset preparation [7]. |

| Non-Capacitating Media (NCC) | A physiological medium used as an experimental control to maintain sperm in a non-capacitated state. | Used in the 3D-SpermVid dataset to study baseline motility [20]. |

| Capacitating Media (CC) | Media containing BSA and bicarbonate to induce hyperactivation, a motility pattern essential for fertilization. | Used in the 3D-SpermVid dataset to study changes in 3D flagellar dynamics [20]. |

| Computer-Assisted Semen Analysis (CASA) System | An integrated system (microscope, camera, software) for automated image acquisition and morphometric analysis. | The MMC CASA system was used for image acquisition in the SMD/MSS study [7]. |

| High-Speed Camera (e.g., MEMRECAM Q1v) | Captures high-frame-rate videos required for detailed 2D or 3D motility and flagellar beating analysis. | Critical for acquiring the 3D+t multifocal videos in the 3D-SpermVid dataset [20]. |

A Technical Deep Dive: Implementing Modern Sperm Image Pre-processing Pipelines

This technical support guide is framed within a broader thesis on optimizing sperm image pre-processing techniques for research in male fertility. It addresses the critical challenges researchers face in acquiring high-quality morphological data, bridging traditional stained methods and emerging stain-free technologies. The following FAQs and troubleshooting guides provide targeted, practical solutions for common experimental hurdles.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What are the best practices for creating a high-quality stained sperm smear for morphological analysis?

Answer: A well-prepared stained smear is foundational for accurate morphology assessment. Adhere to the following validated protocol [7]:

- Sample Preparation: Use liquefied semen samples with a concentration of at least 5 million/mL. Avoid samples with concentrations exceeding 200 million/mL to prevent image overlap.

- Smear Creation: Prepare smears strictly according to the guidelines outlined in the WHO manual.

- Staining: Use a RAL Diagnostics staining kit or similar Romanowsky stain variants (e.g., Diff-Quik) for clear contrast [7] [21].

- Image Acquisition: Use an optical microscope with a 100x oil immersion objective in bright-field mode. A CASA (Computer-Assisted Semen Analysis) system, such as the MMC system or IVOS II, can be used for sequential image acquisition and storage [7] [21].

Troubleshooting Common Issues:

| Problem | Possible Cause | Solution |

|---|---|---|

| Blurry or low-contrast images | Insufficient staining; Poorly stained semen smear [7]. | Optimize staining time; ensure proper smear thickness and air-drying. |

| High debris in images | Improper sample washing or preparation. | Centrifuge the sample and resuspend in a clean medium before smear preparation. |

| Sperm overlapping in images | Sample concentration too high [7]. | Dilute the sample to an appropriate concentration before creating the smear. |

FAQ 2: How can I assess the morphology of live, unstained sperm for use in assisted reproductive technology?

Answer: Traditional staining renders sperm unusable for further procedures. For unstained live sperm analysis [21]:

- Microscopy Technique: Use Confocal Laser Scanning Microscopy (CLSM) at 40x magnification in confocal mode (Z-stack). This provides high-resolution images without damaging the sperm.

- Sample Preparation: Dispense a 6 µL droplet onto a standard two-chamber slide with a 20 µm depth (e.g., Leja).

- AI Analysis: Train a deep learning model (e.g., ResNet50) on a dataset of images captured via CLSM. This model can classify sperm as normal or abnormal based on criteria like head shape (smooth oval, length-to-width ratio of 1.5–2), absence of vacuoles, and tail uniformity [21].

Troubleshooting Common Issues:

| Problem | Possible Cause | Solution |

|---|---|---|

| Sperm swim out of field of view | Use of high-magnification objective on live samples. | Perform sperm selection under a 20x objective and use a microfluidic chamber or slide with a defined depth (20 µm) to restrict movement [21] [22]. |

| Low-resolution images | Use of low magnification for live-cell imaging [22]. | Employ CLSM to achieve high resolution at low magnification. Implement measurement enhancement algorithms to correct boundary errors [22]. |

| Poor AI model accuracy | Limited or low-quality training dataset. | Use data augmentation techniques (flipping, rotation, scaling) to expand and balance your dataset. Manually annotate images with bounding boxes for training [21] [23]. |

FAQ 3: My segmentation model performs poorly with overlapping sperm. How can I resolve this?

Answer: Sperm overlap is a common challenge that standard models like the Segment Anything Model (SAM) struggle with. The CS3 (Cascade SAM) framework provides an effective, unsupervised solution through a cascade process [24]:

- Pre-processing: Adjust brightness, contrast, and saturation, and whiten the background to reduce noise.

- Cascade Segmentation: Apply SAM in stages.

- Stage 1: Segment and remove easily identifiable sperm heads.

- Stage 2-N: Iteratively segment simple, non-overlapping tails and remove them from the image after each round, forcing SAM to focus on increasingly complex, overlapping structures.

- Post-processing: For persistently overlapping tails, enlarge and thicken the lines in the image to make them more distinct for SAM. Finally, match the segmented heads and tails based on distance and angle to assemble complete sperm masks [24].
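The final matching step, pairing heads and tails by distance and angle, can be sketched as a greedy nearest-neighbour assignment. The tuple layout, thresholds, and function names below are hypothetical choices for illustration; the CS3 paper [24] may use a different matching rule.

```python
import math


def angle_diff(a, b):
    """Smallest absolute difference between two angles, in radians."""
    d = abs(a - b) % (2 * math.pi)
    return min(d, 2 * math.pi - d)


def match_heads_to_tails(heads, tails, max_dist=15.0, max_angle=math.radians(45)):
    """Greedily pair each head with the closest compatible, unused tail.

    heads, tails: lists of (x, y, angle) tuples, angle in radians.
    Returns a sorted list of (head_index, tail_index) pairs.
    """
    candidates = []
    for h, (hx, hy, ha) in enumerate(heads):
        for t, (tx, ty, ta) in enumerate(tails):
            dist = math.hypot(hx - tx, hy - ty)
            if dist <= max_dist and angle_diff(ha, ta) <= max_angle:
                candidates.append((dist, h, t))

    pairs, used_heads, used_tails = [], set(), set()
    for _dist, h, t in sorted(candidates):  # closest pairs are assigned first
        if h not in used_heads and t not in used_tails:
            pairs.append((h, t))
            used_heads.add(h)
            used_tails.add(t)
    return sorted(pairs)
```

Heads or tails left unmatched by this rule would need manual review or a looser threshold.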

Troubleshooting Common Issues:

| Problem | Possible Cause | Solution |

|---|---|---|

| SAM fails to segment any tails | The model prioritizes segmentation by color over geometric features [24]. | Remove the sperm heads (which have a different color) from the image. This forces SAM to switch to a geometry-based segmentation for the remaining parts. |

| Incomplete segmentation of overlapping tails | SAM's inherent limitation with slender, intersecting structures [24]. | In the final cascade stage, apply an image transformation that enlarges and thickens the overlapping tail regions before re-running SAM. |

Experimental Protocols for Key Methodologies

Protocol 1: Stained Sperm Morphology Analysis Using Deep Learning

This protocol details the creation of a deep-learning model for classifying sperm morphology from stained images, as used in the SMD/MSS dataset study [7].

- Data Acquisition: Capture at least 1,000 images of individual spermatozoa using a CASA system with a 100x oil immersion objective.

- Expert Annotation: Have three independent experts classify each spermatozoon based on a standard classification system (e.g., modified David classification). Resolve disagreements to establish a robust ground truth.

- Data Augmentation: Augment the dataset to at least 6,000 images using techniques like rotation, flipping, and scaling to balance morphological classes and improve model generalizability [7].

- Image Pre-processing:

- Clean the data by handling missing values or inconsistencies.

- Normalize pixel values to a common scale.

- Resize images to a fixed size (e.g., 80x80 pixels) and convert to grayscale [7].

- Model Training & Testing:

- Partition the dataset: 80% for training, 20% for testing.

- Develop a Convolutional Neural Network (CNN) in an environment like Python 3.8.

- Train the model on the training set and evaluate its accuracy on the unseen test set.
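The Image Pre-processing step of this protocol (grayscale conversion, resizing to 80x80, and normalization) might look like the following NumPy sketch. The luma weights and nearest-neighbour resizing are assumptions chosen for simplicity; the study [7] does not specify them here, and a library resize with interpolation would usually be preferable in practice.

```python
import numpy as np


def preprocess(image, size=80):
    """Convert an RGB (H, W, 3) or grayscale (H, W) uint8 image to a
    float32 array of shape (size, size) scaled to [0, 1]."""
    image = np.asarray(image, dtype=np.float32)
    if image.ndim == 3:
        # ITU-R BT.601 luma weights for RGB -> grayscale (an assumed choice).
        weights = np.array([0.299, 0.587, 0.114], dtype=np.float32)
        image = image[..., :3] @ weights
    # Nearest-neighbour resize by sampling evenly spaced rows and columns.
    rows = np.arange(size) * image.shape[0] // size
    cols = np.arange(size) * image.shape[1] // size
    resized = image[rows][:, cols]
    return resized / 255.0
```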

Protocol 2: Stain-Free Live Sperm Morphology Analysis Using AI

This protocol enables the functional assessment of live, unstained sperm for use in ART [21].

- Sample Preparation: Aliquot liquefied semen into a microfluidic chamber or a standard two-chamber slide with a 20 µm depth.

- Image Acquisition: Use a Confocal Laser Scanning Microscope (e.g., LSM 800) at 40x magnification. Capture Z-stack images with an interval of 0.5 µm over a 2 µm range.

- Dataset Curation: Manually annotate well-focused sperm images using a program like LabelImg. Categorize sperm into "normal" and "abnormal" based on WHO criteria for unstained sperm.

- AI Model Development:

- Select a Transfer Learning Model: Use a pre-trained architecture like ResNet50.

- Training: Train the model on your annotated dataset (e.g., 9,000 images: 4,500 normal and 4,500 abnormal) to minimize the difference between predicted and actual labels.

- Validation: Evaluate the model on a separate test set. A well-trained model should achieve high precision and recall (e.g., >0.90) for both normal and abnormal classes [21].
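For the validation step, per-class precision and recall can be computed directly from the true and predicted labels. The helper below is a generic sketch (the function name is illustrative) and is not tied to any particular framework.

```python
def precision_recall(y_true, y_pred, positive):
    """Return (precision, recall) for the class named `positive`."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

Calling it once with `positive="normal"` and once with `positive="abnormal"` gives the two pairs of values to compare against the 0.90 target mentioned above.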

Workflow Visualization

Stained vs. Unstained Sperm Analysis Workflow

Cascade SAM (CS3) Segmentation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Application Context |

|---|---|---|

| RAL Diagnostics Stain | Provides contrast for clear visualization of sperm structures (head, midpiece, tail). | Stained smear morphology analysis [7]. |

| Diff-Quik Stain | A variant of Romanowsky stain for rapid staining of sperm cells on slides. | Stained smear morphology analysis with CASA systems [21]. |

| Leja Standard Slides | Two-chamber slides with a defined depth (20 µm) for preparing standardized samples. | Unstained live sperm analysis; restricts sperm movement for imaging [21]. |

| MMC CASA System | Computer-Assisted Semen Analysis system for automated image acquisition and morphometry. | Stained sperm image capture and basic analysis [7]. |

| IVOS II CASA System | Advanced CASA system for analyzing concentration, motility, and stained morphology. | Standardized semen analysis in clinical settings [21]. |

| Confocal Laser Scanning Microscope | Enables high-resolution, non-destructive imaging of live cells at low magnification. | Unstained live sperm morphology analysis [21]. |

| ResNet50 Model | A deep neural network for image classification; can be fine-tuned for sperm assessment. | AI-based classification of normal/abnormal sperm morphology [21]. |

| Segment Anything Model (SAM) | Foundational model for image segmentation that can be adapted for complex tasks. | Segmenting overlapping sperm structures via the CS3 framework [24]. |

Frequently Asked Questions

1. Why is pre-processing crucial for automated sperm morphology analysis? Raw sperm images captured via microscopy often contain noise, varying brightness, and impurities. Without pre-processing, these inconsistencies can severely degrade the performance of deep learning models and automated analysis systems. Proper pre-processing enhances image quality, standardizes inputs, and is a critical step for achieving accurate, reproducible results in clinical diagnostics and research [25] [9].

2. My deep learning model for sperm classification is sensitive to noisy images. What are robust solutions? Your model may be overly reliant on local image features. Recent research indicates that Visual Transformer (VT) models, which leverage global image information, demonstrate superior anti-noise robustness compared to Convolutional Neural Networks (CNNs). Under common noise types such as Poisson noise, VT models can limit the drop in accuracy to less than 0.5 percentage points, significantly outperforming CNN-based approaches [25].

3. How can I accurately segment overlapping sperm, a common issue in clinical images? Segmenting overlapping sperm, particularly their tails, is a recognized challenge. Traditional Segment Anything Model (SAM) applications often fail in these scenarios. A proven method is the CS3 (Cascade SAM for Sperm Segmentation) framework, which uses a cascade application of SAM. It strategically removes easily segmentable parts like sperm heads and then processes the remaining complex, overlapping tails, significantly improving segmentation accuracy [26].

4. What are the standard steps for preparing a sperm image dataset for deep learning? A robust pre-processing pipeline for deep learning typically includes several stages [26]:

- Brightness, Contrast, and Saturation Adjustment: To standardize images from different sources.

- Background Whitening: To reduce noise and isolate sperm structures.

- Resizing: To normalize image dimensions for network input.

- Data Augmentation: Applying synthetic noise or transformations to improve model generalizability and robustness [25].
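The first two stages of the list above can be sketched on a grayscale image scaled to [0, 1]. The threshold-based whitening is an assumption that suits bright-field images with a light background; the cited work [26] does not describe its exact whitening rule here, and both function names are illustrative.

```python
import numpy as np


def adjust_brightness_contrast(gray, brightness=0.0, contrast=1.0):
    """Scale contrast around mid-gray, then shift brightness; input in [0, 1]."""
    out = (gray - 0.5) * contrast + 0.5 + brightness
    return np.clip(out, 0.0, 1.0)


def whiten_background(gray, threshold=0.8):
    """Set pixels at or above `threshold` to pure white (1.0)."""
    out = gray.copy()
    out[out >= threshold] = 1.0
    return out
```

The threshold would need tuning per stain and microscope, since a value that is too low erases faint tail pixels.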

Troubleshooting Guides

Problem: High Variability in Model Performance Due to Image Noise

Issue: Your deep learning model for sperm classification or segmentation performs inconsistently when applied to images from different microscopes or staining batches, often due to unseen noise.

Solution: Integrate anti-noise robustness testing into your training pipeline and consider model architecture choices.

Experimental Protocol: Comparative Noise Robustness Analysis This methodology is designed to evaluate and select models based on their resilience to noise [25].

- Dataset Selection: Use a large-scale, publicly available dataset like SVIA (subset-C), which contains over 125,000 sperm and impurity images [25].

- Model Selection: Choose a range of deep learning models for comparison, including both CNN-based (e.g., ResNet50, VGG16) and Visual Transformer (VT) architectures.

- Noise Introduction: Systematically introduce various types of conventional and adversarial noise to the test dataset. Common examples include:

- Gaussian Noise

- Poisson Noise

- Salt-and-Pepper Noise

- Evaluation: Measure standard performance metrics (Accuracy, Precision, Recall, F1-Score) on the noisy test sets and compare them to the baseline performance on the clean images.
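The three conventional noise types listed above can be generated with NumPy for images scaled to [0, 1]. The default parameter values (`sigma`, `peak`, `amount`) are placeholders for illustration and are not the settings used in [25].

```python
import numpy as np


def add_gaussian_noise(img, sigma=0.05, rng=None):
    """Add zero-mean Gaussian noise with standard deviation `sigma`."""
    rng = np.random.default_rng() if rng is None else rng
    return np.clip(img + rng.normal(0.0, sigma, img.shape), 0.0, 1.0)


def add_poisson_noise(img, peak=255.0, rng=None):
    """Simulate shot noise; `peak` is the assumed photon count at intensity 1.0."""
    rng = np.random.default_rng() if rng is None else rng
    return np.clip(rng.poisson(img * peak) / peak, 0.0, 1.0)


def add_salt_and_pepper(img, amount=0.02, rng=None):
    """Set roughly `amount` of the pixels to black or white, half each."""
    rng = np.random.default_rng() if rng is None else rng
    out = img.copy()
    mask = rng.random(img.shape)
    out[mask < amount / 2] = 0.0      # pepper
    out[mask > 1 - amount / 2] = 1.0  # salt
    return out
```

Applying the noise only to the test set, as the protocol describes, measures robustness rather than the benefit of noise augmentation during training.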

Table 1: Example Performance of a Visual Transformer Model Under Poisson Noise

| Metric | Clean Images | With Poisson Noise | Change (Percentage Points) |

|---|---|---|---|

| Overall Accuracy | 91.45% | 91.08% | -0.37 |

| Impurity Precision | 92.7% | 91.3% | -1.40 |

| Impurity Recall | 88.8% | 89.5% | +0.70 |

| Impurity F1-Score | 90.7% | 90.4% | -0.30 |

| Sperm Precision | 90.9% | 90.5% | -0.40 |

| Sperm Recall | 92.5% | 93.8% | +1.30 |

| Sperm F1-Score | 92.1% | 90.4% | -1.70 |

Source: Adapted from [25]

Interpretation: The data in Table 1 shows that a robust model like a VT maintains stable overall accuracy even with added noise. The small fluctuations in precision and recall across categories indicate resilience, which is critical for reliable clinical application [25].

Problem: Failure to Segment Overlapping Sperm Tails

Issue: Instance segmentation models, including the standard Segment Anything Model (SAM), often fail to separate individual sperm when their tails are intertwined, leading to inaccurate morphology analysis.

Solution: Implement the CS3 framework, an unsupervised cascade method designed to address sperm overlap [26].

Experimental Protocol: CS3 for Overlapping Sperm Segmentation The following workflow details the CS3 process.

Explanation of the Workflow:

- Initial Pre-processing: Adjust brightness, contrast, and whiten the background to improve feature visibility [26].

- Cascade Stage 1 (Head Segmentation): Apply SAM to the pre-processed image. Sperm heads, due to their distinct color and shape, are easily segmented. These masks are isolated using color filters, saved, and then removed from the original image, leaving an image containing only tails [26].

- Cascade Stage 2..n (Tail Segmentation): Apply SAM iteratively to the tail-only image. After each round, the resulting masks are filtered to identify "single tails" (using criteria like skeleton endpoints). These identified single tails are saved and removed from the image. This cascade process continues until no new single tails are found in consecutive rounds [26].

- Processing Complex Tails: For the remaining intertwined tails, the image region is enlarged, and the tail lines are thickened. This enhancement makes the overlapping structures more distinct and separable for SAM [26].

- Final Segmentation and Reconstruction: SAM is run on the enhanced image. The final step involves matching the segmented head masks with the corresponding tail masks based on proximity and angle to reconstruct complete, individual sperm instances [26].
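The control flow of the tail cascade (stages 2 to n) can be outlined independently of SAM itself. In the sketch below, `segment`, `is_single_tail`, and `remove_mask` are hypothetical callables that you would supply (for example, a wrapper around a SAM mask generator and a skeleton-endpoint check); none of them is part of the CS3 code base, and `patience` and `max_rounds` are assumed parameters.

```python
def cascade_segment(image, segment, is_single_tail, remove_mask,
                    patience=2, max_rounds=20):
    """Iteratively segment and remove simple tails until none are found.

    segment(image) -> list of masks        (stand-in for a SAM call)
    is_single_tail(mask) -> bool           (e.g. a skeleton-endpoint check)
    remove_mask(image, mask) -> image      (erases the mask from the image)
    Returns (saved_masks, remaining_image).
    """
    saved, empty_rounds = [], 0
    for _ in range(max_rounds):
        singles = [m for m in segment(image) if is_single_tail(m)]
        if not singles:
            empty_rounds += 1
            if empty_rounds >= patience:
                break  # no new single tails in consecutive rounds
            continue
        empty_rounds = 0
        for mask in singles:
            saved.append(mask)
            image = remove_mask(image, mask)
    return saved, image
```

The image returned at the end contains only the intertwined tails, which is the input to the enlargement and thickening step.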

The Scientist's Toolkit

Table 2: Essential Research Reagents and Resources for Sperm Image Pre-processing

| Resource / Tool | Function / Description | Relevance in Pre-processing & Analysis |

|---|---|---|

| SVIA Dataset [25] | A public large-scale sperm image dataset. | Provides over 125,000 annotated images for training and testing deep learning models, essential for benchmarking pre-processing techniques. |

| OpenCASA [27] | An open-source software for Computer-Assisted Sperm Analysis. | Used for validating pre-processing results by analyzing classical sperm parameters like motility, morphometry, and concentration. |

| Segment Anything Model (SAM) [26] | A foundational model for image segmentation. | Serves as the core engine in advanced segmentation pipelines like CS3 for segmenting sperm structures without manual prompts. |

| SpermQ [28] | An ImageJ plugin for flagellar beat analysis. | A specialized tool for analyzing sperm tail motility, which can benefit from high-quality pre-processed images. |

Experimental Framework for Pre-processing Optimization

To systematically evaluate the impact of different pre-processing operations on downstream analysis, researchers can adopt the following comparative framework.

Implementation:

- Define Pipelines: Establish two or more pre-processing pipelines. For example, Pipeline A could be a basic standardization (resizing, simple normalization), while Pipeline B incorporates advanced denoising techniques and robust normalization [25] [9].

- Apply Downstream Task: Process the outputs of each pipeline with the same segmentation (e.g., CS3) or classification model (e.g., VT or CBAM-enhanced ResNet50) [25] [26] [29].

- Quantitative Evaluation: Compare the performance of the downstream tasks using relevant metrics. Superior performance from one pipeline indicates its effectiveness for the specific image analysis challenge.
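The comparative framework reduces to running the same model on the output of each pipeline and tabulating a metric. The following sketch uses accuracy and treats pipelines and the model as plain callables; the names and the dictionary-based interface are assumptions for illustration.

```python
def compare_pipelines(pipelines, model, images, labels):
    """Return {pipeline_name: accuracy}, using one model for every pipeline.

    pipelines: dict mapping a name to a function image -> processed image
    model:     function processed image -> predicted label
    """
    scores = {}
    for name, preprocess in pipelines.items():
        predictions = [model(preprocess(img)) for img in images]
        correct = sum(p == y for p, y in zip(predictions, labels))
        scores[name] = correct / len(labels)
    return scores
```

Holding the model and test images fixed is what allows a difference in score to be attributed to the pre-processing alone.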

Frequently Asked Questions

How can data augmentation improve the accuracy of my sperm morphology classification model? Data augmentation artificially increases the size and diversity of your training dataset by creating modified versions of existing images. This technique helps prevent overfitting, a common problem where a model performs well on its training data but fails to generalize to new, unseen images. By exposing your model to a wider variety of sperm orientations, sizes, and appearances, you enable it to learn more robust and generalizable features, ultimately leading to higher accuracy on your test set [30]. One deep learning study on sperm morphology reported that using data augmentation to expand a dataset from 1,000 to 6,035 images was crucial for achieving a classification accuracy of 55% to 92% [7].

My dataset has a class imbalance for certain sperm defects. Can augmentation help? Yes, data augmentation is a powerful strategy for addressing class imbalance. For under-represented morphological classes (e.g., specific head defects like microcephaly or tail defects like coiled tails), you can selectively apply augmentation techniques like rotation, flipping, and scaling to generate more samples. This creates a more balanced dataset, which helps the model learn to recognize these rare defects without being biased toward the more common classes [5] [30].
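To decide how many augmented images each class needs, you can count the existing samples and compute the shortfall relative to a target. This is a minimal sketch; balancing every class up to the size of the largest one is an assumed default, and the function name is illustrative.

```python
from collections import Counter


def augmentation_targets(labels, target=None):
    """Return {class: number of augmented images needed to reach `target`}."""
    counts = Counter(labels)
    if target is None:
        target = max(counts.values())  # default: match the largest class
    return {cls: max(0, target - n) for cls, n in counts.items()}
```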

Which augmentation techniques are most suitable for sperm image analysis? The most appropriate techniques are those that mimic the natural variations found in microscopic semen analysis. Rotation is highly relevant as sperm can be oriented in any direction on a smear. Flipping (horizontally) is effective because sperm morphology is not laterally biased. Scaling (zooming) can simulate minor differences in the distance between the sperm and the microscope camera [31]. Techniques that alter color properties, like brightness adjustment, can also help the model become robust to variations in staining intensity [32] [31].

What are the common pitfalls when implementing rotation and scaling? A common error is applying excessive transformations that destroy biologically relevant information. For example, rotating a sperm image by 90 degrees might incorrectly alter the perceived orientation of the head and tail, and aggressive scaling can make critical structural details, like the acrosome or midpiece, unrecognizable [31]. It is crucial to define reasonable parameter ranges (e.g., rotation within a ±30 degree range) and to visually inspect the augmented images to ensure they remain biologically plausible.

Troubleshooting Guides

Problem: Model Performance is Poor on New Clinical Images

Possible Causes and Solutions:

- Cause 1: Overfitting to the Original Training Data.

- Cause 2: Augmentation Parameters are Too Extreme.

- Solution: Review the parameters for your scaling and rotation operations. If the zoom factor is too high, the sperm may be cropped out. If the rotation range is too large, it may generate anatomically impossible orientations. Tune these parameters to reflect real-world variability [31].

- Cause 3: Inconsistent Staining in Original Images.

Problem: "Invalid Argument" Error During Model Training

Possible Causes and Solutions:

- Cause 1: Data Type or Range Mismatch.

- Solution: After augmentation, ensure that your image pixel values are in the expected data type (e.g., float32) and normalized to the correct range (typically [0,1] or [-1,1]). Most deep learning models require consistent input normalization for stable training [7].

- Cause 2: Incorrect Image Dimensions.

- Solution: Verify that all images, including those generated by scaling and cropping, are resized to the same dimensions required by your model's input layer. A common practice is to resize all images to a fixed size (e.g., 80x80 pixels) after augmentation [7].
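Both causes can be caught before training with a small validation function run on each batch. The sketch below assumes 80x80 single-channel float32 inputs in [0, 1]; adjust `expected_shape` and `value_range` to your own model, and note that `check_batch` is an illustrative name rather than a library function.

```python
import numpy as np


def check_batch(batch, expected_shape=(80, 80, 1), value_range=(0.0, 1.0)):
    """Raise ValueError if a batch does not match the model's input contract."""
    batch = np.asarray(batch)
    if batch.dtype != np.float32:
        raise ValueError(f"expected float32, got {batch.dtype}")
    if batch.shape[1:] != tuple(expected_shape):
        raise ValueError(
            f"expected images of shape {expected_shape}, got {batch.shape[1:]}"
        )
    low, high = value_range
    if batch.min() < low or batch.max() > high:
        raise ValueError(f"pixel values outside [{low}, {high}]")
    return batch
```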

The following table summarizes a referenced experimental protocol that successfully employed data augmentation for a sperm morphology deep learning model.

Table 1: Summary of Experimental Setup from SMD/MSS Dataset Study [7]

| Aspect | Description |

|---|---|

| Original Dataset Size | 1,000 images of individual spermatozoa |

| Final Augmented Dataset Size | 6,035 images |

| Augmentation Goal | Balance the representation across different morphological classes. |

| Classification Standard | Modified David classification (12 classes of defects) [7]. |

| Deep Learning Model | Convolutional Neural Network (CNN) |

| Reported Outcome | Model accuracy ranged from 55% to 92% after augmentation. |

Detailed Methodology for Image Augmentation

The workflow for implementing a standard augmentation pipeline for sperm images is as follows. This protocol can be implemented using deep learning libraries like TensorFlow/Keras or PyTorch.

Table 2: Common Parameter Ranges for Sperm Image Augmentation

| Technique | Example Implementation | Key Parameters | Biological Justification |

|---|---|---|---|

| Rotation | RandomRotation(factor=0.1) [31] | factor: 0.1 (≡ ±36°) | Sperm orientation on a smear is random. |

| Flipping | RandomFlip("horizontal") [31] | mode: "horizontal" | Sperm morphology has no inherent left-right bias. |

| Scaling/Zoom | RandomZoom(height_factor=0.2, width_factor=0.2) [31] | height_factor/width_factor: 0.2 (80%-120% zoom) | Minor variations in distance from the microscope objective. |

| Brightness | RandomBrightness(factor=0.2) [31] | factor: 0.2 (±20% change) | Compensates for variations in staining intensity and light source. |
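The rotation factor in the table is expressed as a fraction of a full turn, which is why 0.1 corresponds to ±36°. The helpers below convert between the two representations; they assume this fraction-of-360° interpretation, as the table's "≡ ±36°" implies, and their names are illustrative.

```python
def rotation_factor_for_degrees(max_degrees):
    """Convert a +/- rotation limit in degrees to a fraction of a full turn."""
    return max_degrees / 360.0


def degrees_for_rotation_factor(factor):
    """Convert a fraction-of-a-turn factor back to a +/- limit in degrees."""
    return factor * 360.0
```

For example, restricting rotation to the ±30° range suggested earlier corresponds to a factor of about 0.083, slightly below the 0.1 shown in the table.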

Data Augmentation Workflow for Sperm Images

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Solution | Function in Experiment |

|---|---|

| RAL Diagnostics Staining Kit | Used to prepare semen smears, providing contrast for morphological analysis of sperm heads, midpieces, and tails [7]. |

| MMC CASA System | A Computer-Assisted Semen Analysis system used for the automated acquisition and storage of high-quality individual sperm images from smears [7]. |

| Python 3.8+ with Deep Learning Libraries | The primary programming environment for implementing data augmentation pipelines and convolutional neural networks (CNNs) [7]. |

| TensorFlow/Keras RandomFlip, RandomRotation, RandomZoom | Pre-built layers for easily integrating geometric image transformations into a deep learning model [31]. |

| TensorFlow/Keras RandomBrightness | A pre-built layer for adjusting image brightness to make models robust to staining and lighting variations [31]. |

Frequently Asked Questions (FAQs)

Q1: Why should we use synthetic data from game engines instead of real medical images for sperm analysis research? Synthetic data addresses the critical lack of large, diverse, and well-annotated datasets in medical imaging [33] [34]. It is especially useful in data-scarce environments, as it allows for the creation of highly customizable datasets without relying on real images, which can be limited, expensive to acquire, and raise privacy concerns [34] [35]. Game engines like Unity and Unreal Engine enable the generation of thousands of images with precise control over parameters, creating a wide variety of permutations and backgrounds that a model might encounter in the real world [36].

Q2: Our synthetic images look visually unrealistic. How can we improve their photorealism for model training? A common solution is to combine game engine rendering with advanced machine learning models. You can feed the game engine outputs into a model like a Composable Adapter (CoAdapter), which uses input modalities such as canny edge maps and depth maps to enhance realism while preserving the underlying structure [37]. Additionally, leverage domain adaptation techniques during preprocessing. These can normalize color and brightness or use style transfer to bridge the gap between synthetic and real visual domains, improving model generalization [38].

Q3: What are the key parameters we should randomize when generating synthetic sperm imagery? To ensure dataset diversity and model robustness, you should randomize several key parameters. Using a game engine's procedural generation capabilities, you can randomize camera angles (including off-nadir angle), lighting conditions (the equivalent of time-of-day changes), environmental effects (cloud cover, which in a microscopy scene stands in for optical noise), and the level of activity in the scene (e.g., density of cells) [37]. Furthermore, you can programmatically alter scene parameters like textures, colors, and object locations through domain randomization to expose your model to a wide array of visual scenarios [35].

Q4: We are getting poor model accuracy when transitioning from synthetic to real clinical images. What steps can we take? This issue, known as domain shift, can be mitigated through several strategies. First, ensure your synthetic data is as diverse as possible by using the domain randomization techniques mentioned above [35]. Second, employ targeted image preprocessing on your real-world images, such as histogram equalization for contrast enhancement and noise reduction filters, to make the input characteristics more consistent [38] [39] [40]. Finally, if possible, incorporate a small set of real, annotated clinical images into your training process to fine-tune the model, which can significantly improve its performance on the target domain [33].

Troubleshooting Guides

Issue 1: Handling Limited or Low-Quality Real Datasets

Problem: A researcher cannot assemble a large, high-quality dataset of real sperm images for training, due to factors like data loss, low resolution, or high annotation difficulty [33].

Solution: Implement a synthetic data generation pipeline to augment or create your dataset.

Step-by-Step Protocol:

- Select a Tool: Choose a synthetic data generation platform.

  - For specialized sperm analysis: Use AndroGen, an open-source tool designed specifically for generating customizable synthetic microscopic images of sperm cells [34].

  - For general-purpose high-quality synthesis: Use game engines like Unity or Unreal Engine, which offer powerful real-time 3D creation tools [36].

  - For integrated physical AI workflows: Use NVIDIA Omniverse and Isaac Sim, which are built for generating physically accurate synthetic data [35].

- Model the Scene: Create 3D models of sperm cells, including structures like the head, neck, and tail. Use a game engine's procedural generation algorithms to create different layouts and configurations automatically [37].

- Apply Domain Randomization: Systematically randomize parameters within the synthetic environment to ensure diversity. The table below summarizes key areas for randomization [35] [37]:

| Randomization Area | Specific Parameters | Purpose |

|---|---|---|

| Camera Properties | Off-nadir angle, distance to sample, focal length | Simulate different microscopes and viewing angles. |

| Lighting Conditions | Intensity, color, direction (e.g., time-of-day simulation) | Build invariance to illumination changes in the clinic. |

| Environmental Effects | Cloud cover (simulated optical noise), background texture | Add visual noise and complexity. |

| Object Appearance | Cell texture, color, size, and shape (within biologically plausible limits) | Increase morphological diversity. |

| Level of Activity | Density of cells in a sample, presence of debris | Mimic different sample qualities. |

- Generate and Annotate: Render the images. A key advantage of synthetic data is that annotations (e.g., bounding boxes, segmentation masks) are generated automatically and perfectly during the rendering process [36] [35].
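The domain randomization step in the protocol above amounts to drawing a fresh set of scene parameters for every rendered image. The following is a minimal, engine-agnostic sketch using only the Python standard library. The parameter names, the numeric ranges, and the `SceneParams` class are hypothetical and would need to be mapped onto whatever your rendering tool actually exposes.

```python
import random
from dataclasses import dataclass

# Hypothetical sampling ranges, chosen only for illustration.
FLOAT_RANGES = {
    "camera_tilt_deg": (0.0, 10.0),    # camera properties
    "camera_distance": (0.8, 1.2),     # relative distance to the sample
    "light_intensity": (0.6, 1.4),     # lighting conditions
    "background_noise": (0.0, 0.05),   # environmental effects
    "cell_scale": (0.9, 1.1),          # object appearance
}
INT_RANGES = {
    "cell_count": (1, 40),             # level of activity
    "debris_count": (0, 15),
}

@dataclass
class SceneParams:
    camera_tilt_deg: float
    camera_distance: float
    light_intensity: float
    background_noise: float
    cell_scale: float
    cell_count: int
    debris_count: int

def sample_scene(rng):
    """Draw one randomized scene configuration from the ranges above."""
    floats = {k: rng.uniform(lo, hi) for k, (lo, hi) in FLOAT_RANGES.items()}
    ints = {k: rng.randint(lo, hi) for k, (lo, hi) in INT_RANGES.items()}
    return SceneParams(**floats, **ints)

# A seeded generator keeps the synthetic dataset reproducible.
rng = random.Random(42)
scenes = [sample_scene(rng) for _ in range(1000)]
```

Each `SceneParams` instance would then be passed to the renderer, and storing it next to the rendered image makes it possible to trace later model failures back to specific scene settings.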

Issue 2: Preprocessing Synthetic Images for Optimal Model Performance

Problem: A model trained solely on synthetic images fails to generalize to real-world clinical images due to domain gap and poor preprocessing.

Solution: Apply a robust preprocessing pipeline to both synthetic and real images to standardize inputs and highlight relevant features.

Step-by-Step Protocol:

- Resize and Crop: Resize all images to a standard dimension required by your model (e.g., 224x224). Use interpolation methods like `cv2.INTER_CUBIC` in OpenCV for high-quality resizing. Center-crop images to maintain a consistent field of view [39] [40].

- Normalize Pixel Values: Rescale pixel intensity values to a range of 0 to 1 by dividing by the maximum value (255) to stabilize and speed up training [39] [40].

- Enhance Contrast: Apply Histogram Equalization (e.g., using `cv2.equalizeHist`) to improve contrast and make features like sperm heads and tails more distinguishable [39] [40].

- Reduce Noise: Apply filters to minimize noise. The table below compares common choices [23] [39] [40]:

| Filter | Primary Use | Key Advantage |

|---|---|---|

| Gaussian Blur | General noise reduction and smoothing. | Smooths the image by averaging each pixel with its neighbors, weighted by a Gaussian kernel. |

| Median Blur | Removing "salt-and-pepper" noise. | Preserves edges while effectively removing noise. |

| Bilateral Filter | Strong noise reduction while preserving edges. | Weights neighboring pixels by both spatial distance and intensity similarity. |

- Augment the Data (Real and Synthetic): Further increase dataset diversity by applying random transformations such as horizontal/vertical flipping, slight rotations (±10°), and adding minor random noise [23] [40]. Tools like `albumentations` or TensorFlow's image module can automate this.
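The crop, contrast, noise, and normalization steps above can be sketched in a few lines. This version uses NumPy only so that it runs without OpenCV; in practice `cv2.equalizeHist` and a median filter from OpenCV would typically replace the hand-written helpers. All function names are hypothetical. Resizing is left out, so the input is assumed to be an 8-bit grayscale image at least as large as the target size, and normalization is done last here because the equalization lookup table operates on 8-bit integers.

```python
import numpy as np

def center_crop(img, size):
    """Crop the central size x size window of a 2-D grayscale image."""
    h, w = img.shape
    top, left = (h - size) // 2, (w - size) // 2
    return img[top:top + size, left:left + size]

def equalize_hist(img):
    """Histogram equalization for a uint8 grayscale image."""
    hist = np.bincount(img.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]   # cumulative count at the darkest level present
    total = cdf[-1]
    if total == cdf_min:        # constant image: nothing to equalize
        return img.copy()
    lut = np.rint((cdf - cdf_min) / (total - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[img]             # map every pixel through the lookup table

def median_filter3(img):
    """3x3 median filter with replicated borders."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    windows = np.stack([padded[dy:dy + h, dx:dx + w]
                        for dy in range(3) for dx in range(3)])
    return np.median(windows, axis=0).astype(img.dtype)

def preprocess(img, size=224):
    """Crop, equalize, denoise, then rescale to float32 values in [0, 1]."""
    cropped = center_crop(img, size)
    enhanced = equalize_hist(cropped)
    denoised = median_filter3(enhanced)
    return denoised.astype(np.float32) / 255.0

# Hypothetical low-contrast input standing in for a micrograph.
example = np.random.default_rng(0).integers(90, 130, size=(300, 400)).astype(np.uint8)
result = preprocess(example)
```

Applying the same `preprocess` function to both synthetic and real images is the point of the protocol: both domains then reach the model with the same size, intensity range, and contrast treatment.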

Workflow for Synthetic Data in Sperm Image Analysis

The following diagram illustrates the integrated workflow for generating and utilizing synthetic imagery in research.

Research Reagent Solutions: Essential Tools for Synthetic Sperm Image Experiments

The table below details key software and hardware tools for building a synthetic data pipeline for sperm image analysis.

| Item Name | Function / Purpose | Key Features |

|---|---|---|

| Unity / Unreal Engine [36] | Real-time 3D game engines for creating and rendering synthetic microscopic scenes. | High visual quality, procedural generation, extensive asset libraries, and powerful lighting simulation. |

| NVIDIA Omniverse [35] | A platform for 3D simulation and synthetic data generation based on Universal Scene Description (OpenUSD). | Physically accurate rendering, seamless tool interoperability, and built-in annotators for various AI tasks. |

| AndroGen [34] | Open-source software specifically designed for generating synthetic microscopic images of sperm cells. | Highly customizable for the domain, user-friendly GUI, and does not require generative model training. |

| OpenCV / Pillow [39] [40] | Core Python libraries for implementing image preprocessing pipelines. | Comprehensive functions for resizing, filtering, color space conversion, and histogram equalization. |

| YOLOv8 [41] | A state-of-the-art object detection algorithm used for tasks like locating and classifying sperm in images. | High precision and efficiency, suitable for real-time applications, and can be fine-tuned with synthetic data. |

| NVIDIA RTX PRO Server [35] | High-performance computing platform for accelerating simulation and AI training workloads. | Speeds up the rendering of complex scenes and reduces the time required for model training. |

FAQs and Troubleshooting Guides

1. Why is accurate multi-part sperm segmentation important, and what are its main challenges? Accurate segmentation of the head, midpiece, and tail is fundamental for evaluating sperm morphology, which is a key indicator of male fertility potential [42]. The main challenges, especially when working with unstained live human sperm, include poor image quality, low signal-to-noise ratio, indistinct structural boundaries (particularly the neck), and overlapping sperm heads [42]. These factors complicate the process of distinguishing the small, intricate parts of the sperm, such as separating the acrosome from the nucleus or cleanly isolating the tail [42].

2. Which deep learning model is best for segmenting different sperm components? No single model excels at segmenting every part perfectly. Performance varies by component, and the choice of model should be guided by your primary segmentation target [42]. The table below summarizes a quantitative comparison of different models.

Table: Performance Comparison of Deep Learning Models for Sperm Part Segmentation (Quantified by IoU)

| Sperm Component | Mask R-CNN | YOLOv8 | YOLO11 | U-Net |

|---|---|---|---|---|

| Head | Best Performance [42] | Good Performance [42] | Not Specified | Good Performance [42] |

| Nucleus | Best Performance [42] | Good Performance [42] | Not Specified | Good Performance [42] |

| Acrosome | Best Performance [42] | Not Specified | Good Performance [42] | Good Performance [42] |

| Neck | Good Performance [42] | Best Performance [42] | Not Specified | Good Performance [42] |

| Tail | Good Performance [42] | Good Performance [42] | Not Specified | Best Performance [42] |

3. How can I improve my model's performance when I have a limited dataset? Data augmentation is a crucial technique to improve model generalizability and robustness, especially with limited data. Effective strategies include [43]:

- Rotation and Flipping: To make the model invariant to the random orientation of sperm cells.

- Brightness and Contrast Adjustment: To simulate variations in lighting and image quality from different microscopes or sample preparations.

- Adding Gaussian Noise: To train the model to distinguish between image noise and actual sperm features, enhancing real-world performance.
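The three augmentation strategies listed above can be combined into one function, sketched here with NumPy only. The jitter ranges and the noise level are assumptions chosen for illustration, and rotation is restricted to multiples of 90° because arbitrary angles would need an interpolation routine from a library such as OpenCV or `albumentations`.

```python
import numpy as np

def augment(img, rng):
    """Randomly flip, rotate, jitter brightness/contrast, and add noise.

    `img` is a 2-D float array with values in [0, 1].
    """
    out = img
    if rng.random() < 0.5:
        out = np.fliplr(out)                     # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)                     # vertical flip
    out = np.rot90(out, k=int(rng.integers(0, 4)))  # 0, 90, 180 or 270 degrees
    contrast = rng.uniform(0.8, 1.2)             # assumed contrast range
    brightness = rng.uniform(-0.1, 0.1)          # assumed brightness range
    out = (out - 0.5) * contrast + 0.5 + brightness
    out = out + rng.normal(0.0, 0.02, size=out.shape)  # Gaussian noise
    return np.clip(out, 0.0, 1.0).astype(np.float32)

rng = np.random.default_rng(0)
image = rng.random((64, 64)).astype(np.float32)  # stand-in for a sperm image
augmented = augment(image, rng)
```

For segmentation training, the geometric operations (flips and rotation) must also be applied to the mask with the same random draws, while the brightness, contrast, and noise changes apply to the image only.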

4. My segmentation results are inconsistent. What could be the issue? Inconsistencies can stem from the microscope setup itself. The optical resolution of your system is critical for visualizing fine details [44]. Key factors to check are:

- Numerical Aperture (NA): Use objectives with a high NA (e.g., >1.0 with immersion oil) for the best resolution [44].

- Condenser NA: Ensure your condenser's NA is appropriately matched to your objective's NA [44].

- Corrected Objectives: Verify that your objectives are corrected for the thickness of the dish or coverslip you are using [44].

A poorly optimized system will fail to resolve the details needed for accurate segmentation.
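The effect of objective and condenser NA on resolvable detail can be made concrete with the standard transmitted-light estimate d = 1.22λ / (NA_objective + NA_condenser). The sketch below applies it to two example setups. The 550 nm wavelength and the NA values are assumptions for illustration and are not taken from [44].

```python
def lateral_resolution_nm(wavelength_nm, na_objective, na_condenser):
    """Smallest resolvable distance in nm for transmitted-light microscopy:
    d = 1.22 * wavelength / (NA_objective + NA_condenser)."""
    # A condenser NA above the objective NA gives no further gain.
    effective_condenser = min(na_condenser, na_objective)
    return 1.22 * wavelength_nm / (na_objective + effective_condenser)

# Oil-immersion objective with a matched condenser, green light (assumed values).
matched = lateral_resolution_nm(550, na_objective=1.4, na_condenser=1.4)

# Dry objective with an under-filled condenser (assumed values).
mismatched = lateral_resolution_nm(550, na_objective=0.65, na_condenser=0.3)
```

Under these assumptions the matched high-NA setup resolves roughly 240 nm, while the mismatched dry setup resolves roughly 700 nm, which is coarse relative to fine structures such as the neck and tail.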

Experimental Protocols

Protocol 1: Multi-Part Segmentation of Unstained Sperm Using Deep Learning

This protocol is adapted from recent research on segmenting live, unstained human sperm [42].

Dataset Preparation:

- Source: Use a clinically labeled dataset of live, unstained human sperm images.

- Selection: To ensure consistency, select images labeled as "Normal Fully Agree Sperms" by multiple morphology experts.

- Annotation: Each sperm component (acrosome, nucleus, head, midpiece, and tail) must be accurately annotated with pixel-wise masks.

- Division: Split the annotated images into training and validation sets.

Model Selection and Training:

- Model Choice: Based on your target component (see FAQ #2), select an appropriate model (e.g., Mask R-CNN for heads, U-Net for tails).

- Training: Train the selected model on the training set. Employ data augmentation techniques as described in FAQ #3.

- Evaluation: Quantitatively evaluate model performance on the validation set using metrics like Intersection over Union (IoU), Dice coefficient, Precision, and Recall.
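The evaluation metrics named in the last step can be computed directly from a predicted and a ground-truth binary mask. The sketch below is a minimal NumPy version; the function name is hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here (some toolkits return 1.0 when both masks are empty).

```python
import numpy as np

def segmentation_metrics(pred, target):
    """IoU, Dice, precision and recall for two binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = int(np.logical_and(pred, target).sum())    # correctly predicted pixels
    fp = int(np.logical_and(pred, ~target).sum())   # predicted but not in truth
    fn = int(np.logical_and(~pred, target).sum())   # in truth but missed

    def safe_div(num, den):
        return num / den if den else 0.0

    return {
        "iou": safe_div(tp, tp + fp + fn),
        "dice": safe_div(2 * tp, 2 * tp + fp + fn),
        "precision": safe_div(tp, tp + fp),
        "recall": safe_div(tp, tp + fn),
    }

# Toy example: two 2x2 squares that overlap in one column.
target_mask = np.zeros((4, 4), dtype=bool)
target_mask[0:2, 0:2] = True
pred_mask = np.zeros((4, 4), dtype=bool)
pred_mask[0:2, 1:3] = True
scores = segmentation_metrics(pred_mask, target_mask)
```

In the toy example two pixels overlap, two are false positives, and two are missed, so IoU is 2/6 while Dice, precision, and recall are each 0.5. For multi-part segmentation the function would be called once per component mask.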

Protocol 2: Traditional Image Analysis Workflow for Sperm Segmentation

This protocol details a pre-deep learning method for segmenting sperm parts using an image-flow cytometer and analysis software, highlighting the logical steps involved [45].

Create an Entire Cell Mask:

- Use the morphology function on the bright-field image to detect all pixels containing the sperm cell.

- Perform a one-pixel erosion to refine the mask [45].

Create a Head Mask:

- Apply the "adaptive erode" function with a circular coefficient to identify the sperm head's round region.

- Dilate the resulting mask by two pixels to ensure complete head coverage [45].

Create a Principal Piece (Tail) Mask:

- Dilate the head mask by approximately 13 pixels to cover the midpiece area.

- Subtract this dilated mask from the entire cell mask. The result is the mask for the principal piece of the tail [45].

Create a Midpiece Mask:

- Subtract the principal piece mask from the entire cell mask, resulting in a mask containing both the head and midpiece.

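- Subtract the head mask (the two-pixel-dilated mask from the head step, not the 13-pixel dilation, which would remove the midpiece as well) from this new mask to isolate the midpiece region.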

- Erode one pixel and then dilate two pixels to finalize the midpiece mask [45].
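The mask arithmetic in this protocol can be reproduced with plain binary morphology. The sketch below is not the analysis software used in [45]: it uses NumPy with a 3x3 square structuring element, and because the "adaptive erode" function is specific to that software, a rough head core mask is assumed to be supplied as an input. All function names and the toy geometry are hypothetical.

```python
import numpy as np

def dilate(mask, pixels):
    """Binary dilation with a 3x3 square element, repeated `pixels` times."""
    out = np.asarray(mask, dtype=bool).copy()
    h, w = out.shape
    for _ in range(pixels):
        padded = np.pad(out, 1, mode="constant", constant_values=False)
        grown = np.zeros_like(out)
        for dy in range(3):
            for dx in range(3):
                grown |= padded[dy:dy + h, dx:dx + w]
        out = grown
    return out

def erode(mask, pixels):
    """Binary erosion, computed as the complement of dilating the background."""
    return ~dilate(~np.asarray(mask, dtype=bool), pixels)

def split_sperm_parts(raw_cell, head_core, midpiece_reach=13):
    """Derive head, midpiece and principal-piece masks from a whole-cell mask."""
    cell = erode(raw_cell, 1)                        # refine the entire cell mask
    head = dilate(head_core, 2)                      # ensure full head coverage
    principal = cell & ~dilate(head, midpiece_reach) # tail beyond the midpiece
    head_and_mid = cell & ~principal
    midpiece = head_and_mid & ~head
    midpiece = dilate(erode(midpiece, 1), 2)         # final clean-up step
    return head, midpiece, principal

# Toy cell: a square "head" with a straight 5-pixel-thick "flagellum".
raw = np.zeros((21, 80), dtype=bool)
raw[4:17, 3:16] = True
raw[8:13, 16:76] = True
core = np.zeros_like(raw)
core[7:14, 6:13] = True                              # stand-in for adaptive erode
head, midpiece, principal = split_sperm_parts(raw, core)
```

Because the last step erodes by one pixel and then dilates by two, the final midpiece mask can extend slightly into its neighbors, and a structure thinner than three pixels would be removed by the erosion, so the pixel counts need to be adjusted to the image resolution.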

The following workflow diagram illustrates this multi-step segmentation process:

Sperm Segmentation via Traditional Image Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Reagents for Sperm Segmentation Experiments

| Item Name | Function / Application | Specific Example / Note |

|---|---|---|

| Beltsville Thawing Solution (BTS) | Semen extender and diluent; used to reduce dilution shock and for sample storage. | Used for diluting and low-temperature storage (e.g., 17°C) of porcine semen in motility studies [46]. |

| CELL-TAK | Cell adhesive; used to fix sperm heads to culture dishes for detailed motility analysis. | Allows for immobilization of sperm for confocal microscopy, enabling analysis of flagellar beats [46]. |