Overcoming Data Scarcity in Sperm Morphology Analysis: Strategies for Robust AI Model Development

Abstract

The application of artificial intelligence (AI) for automated sperm morphology analysis represents a paradigm shift in male fertility diagnostics, offering a solution to the high subjectivity and inter-observer variability of manual methods. However, the development of robust, generalizable AI models is critically constrained by the limited size and quality of annotated datasets. This article synthesizes current research to provide a comprehensive framework for addressing data scarcity, exploring the root causes of limited datasets, detailing methodological solutions like data augmentation and transfer learning, presenting optimization techniques for model architecture, and establishing rigorous validation protocols. Aimed at researchers and drug development professionals, this review underscores how overcoming data limitations is essential for translating AI-powered diagnostic tools from research into reliable clinical practice, ultimately advancing the field of reproductive medicine.

The Data Bottleneck: Understanding the Core Challenges in Sperm Morphology Datasets

The foundational step in diagnosing male infertility is the basic semen analysis. However, for researchers and drug development professionals, the inherent subjectivity and high variability of manual assessment methods present a significant barrier to generating robust, reproducible data. This technical support guide outlines common experimental pitfalls and solutions, framed within the critical research challenge of limited dataset size in sperm morphology studies. The manual evaluation of sperm concentration, motility, and morphology is notoriously prone to inter-laboratory and inter-technician discrepancy [1]. This variability directly compromises the quality and size of reliable datasets, as data from different sources cannot be pooled or compared with confidence. Automation through Computer-Aided Sperm Analysis (CASA) and machine learning (ML) offers a path toward standardization, but its success is contingent on the availability of large, accurately annotated datasets [2].

Troubleshooting Guides: Common Experimental Pitfalls and Solutions

Sample Preparation and Handling

| Problem Area | Common Issue | Impact on Research Data | Verified Solution |
|---|---|---|---|
| Temperature Control | Microscope stage, slides, and pipette tips not maintained at 37°C [3]. | Alters sperm metabolism, leading to inaccurate motility measurements and kinetics (e.g., VCL) [3]. | Pre-warm all consumables. Use a temperature-controlled microscope stage, essential for CASA [3]. |
| Sample Collection | Use of negative displacement pipettes for viscous semen [3]. | Inaccurate sperm concentration due to air bubbles and sperm sticking to pipette surface [3]. | Use a positive displacement pipette for aspirating semen [3]. |
| Timing | Measuring motility and vitality after one hour post-ejaculation [3]. | Rapid decline in these parameters, especially in poor-quality samples, skews time-series data [3]. | Perform all motility and vitality measurements within one hour of collection [3]. |
| Sample Viscosity | Patient not properly hydrated before sample collection [4]. | Abnormal semen viscosity, which can influence sperm motility analysis [4]. | Ensure patients are properly hydrated prior to sample provision [4]. |

Microscopy and Motility Analysis

| Problem Area | Common Issue | Impact on Research Data | Verified Solution |
|---|---|---|---|
| Microscope Setup | Failure to achieve Critical and Köhler illumination [3]. | Uneven background illumination and poor image quality, crippling accuracy for both manual and CASA assessment [3]. | Train staff on basic microscope settings for both bright field and positive phase contrast optics [3]. |
| Standardized Slides | Use of cover slip/slide method without fixed chambers [3]. | Motility over-estimated due to variability in different parts of the slide; inaccurate concentration with low counts [3]. | Use standardized, fixed-depth chambers (e.g., Leja) for consistent measurements [3]. |
| Manual Motility Assessment | Counting motile/immotile sperm only in central areas of a slide [3]. | Systematic over-estimation of motility percentage, reducing data comparability across studies [3]. | Adhere to standardized counting protocols across all fields or use CASA with fixed chambers [3]. |

Morphology Assessment and Staining

| Problem Area | Common Issue | Impact on Research Data | Verified Solution |
|---|---|---|---|
| Technician Variability | Differences in staining techniques and application of "strict" criteria [5] [1]. | Extremely poor inter-observer agreement (κ = 0.05-0.15); no correlation between expert labs on % normal forms [5]. | Implement rigorous, continuous training and regular proficiency testing for all technologists [1]. |
| Smear Preparation | Pushing the semen drop instead of dragging it to make a smear [3]. | Sperm can be broken by the sharp edge of the slide, creating morphological artifacts [3]. | Use a standardized dragging technique for creating sperm smears [3]. |
| Lack of Standardization | Use of different WHO manual editions or lab-specific criteria [1]. | Inconsistent classifications of "normal," rendering multi-study meta-analyses unreliable [5] [1]. | Adopt a single, community-agreed standard and use CASA to reduce subjectivity [6] [7]. |

Frequently Asked Questions (FAQs) for Researchers

Q1: What is the primary clinical evidence that manual sperm morphology assessment is too variable for robust research? A key secondary analysis of the Males, Antioxidants, and Infertility trial compared morphological assessments on the same semen sample performed by local Reproductive Medicine Network laboratories and a central core laboratory. The study found no overall correlation between the percent normal sperm values. When using clinical cut-offs (4% or 0%), the agreement between expert sites was extremely poor (κ = 0.05 and 0.15, respectively) [5]. This demonstrates that even world-class laboratories cannot consistently agree on a fundamental morphological assessment, severely limiting the pooling of data from different research sites.
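The κ values quoted above are Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The following is a minimal sketch of that calculation in plain Python; the two label lists are invented for illustration and are not data from the cited trial.

```python
from collections import Counter

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both raters must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: fraction of items given identical labels.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    classes = freq_a.keys() | freq_b.keys()
    p_chance = sum(freq_a[c] * freq_b[c] for c in classes) / (n * n)
    if p_chance == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_chance) / (1.0 - p_chance)

# Hypothetical example: two labs classify the same 10 samples
# as above or below a normal-forms cut-off.
local_lab = ["normal", "normal", "abnormal", "normal", "abnormal",
             "normal", "abnormal", "normal", "normal", "abnormal"]
core_lab = ["normal", "abnormal", "abnormal", "abnormal", "normal",
            "normal", "normal", "abnormal", "normal", "abnormal"]
kappa = cohen_kappa(local_lab, core_lab)
```

In this invented example the labs agree on half of the samples, which is exactly what chance alone would produce given their label frequencies, so kappa is zero. Raw percent agreement would hide this.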

Q2: How does laboratory variability directly impact the problem of limited dataset size in sperm analysis research? The high variability between laboratories acts as a confounder that effectively fragments the available data. If results from Lab A are not comparable to results from Lab B, the data from each must be treated as originating from separate, non-combinable populations. This prevents researchers from building larger, more powerful datasets from multiple studies or clinics, thereby perpetuating the problem of small sample sizes and underpowered statistical analyses in male fertility research [8] [1].

Q3: We are developing a new CASA algorithm. What open-source datasets are available for training and validation? The VISEM-Tracking dataset is a key resource for ML-based motility and morphology research. It provides [2]:

- 20 video recordings of 30 seconds each (29,196 frames) of wet semen preparations.

- Manually annotated bounding-box coordinates and tracking data for individual spermatozoa.

- Sperm characteristics analyzed by domain experts, including classification into 'normal sperm', 'pinhead', and 'cluster'.

- Additional unlabeled video clips for self-supervised learning.

This dataset is specifically designed to support the training of complex deep learning models for tasks like sperm detection, tracking, and motility classification [2].

Q4: What are the validated performance metrics of automated systems compared to manual analysis? Studies have demonstrated that automated systems can perform comparably to manual methods for key parameters. One double-blind prospective study of 50 semen samples found that an automated SQA-V analyzer could be used interchangeably with manual analysis for examining sperm concentration and motility. The study also reported that the automated assessment of morphology showed high sensitivity (89.9%) for identifying percent normal morphology and exhibited considerably higher precision compared to the manual method, which had significant inter-operator variability [7].

Q5: Beyond core semen parameters, what are the emerging areas for automated analysis? Research is increasingly focused on using machine learning for more advanced applications. The VISEM-Tracking dataset, for instance, enables research into sperm kinematics and movement patterns [2]. Furthermore, there is a push to incorporate sperm functional tests—such as assessing hyperactivation—into more comprehensive fertility potential assessments, moving beyond the limitations of basic parameters alone [3].

The Researcher's Toolkit: Essential Materials and Reagents

| Item | Function in Research | Technical Note |
|---|---|---|
| Positive Displacement Pipette | Accurate aspiration of viscous semen for concentration dilution [3]. | Eliminates error from air bubbles and sperm adhesion common with negative displacement pipettes. |
| Phase-Contrast Microscope with Köhler Illumination | High-contrast imaging of live, unstained sperm for motility and morphology [3]. | Critical for both manual and CASA analysis to ensure even illumination and sharp focus. |
| Temperature-Controlled Microscope Stage | Maintains sample at 37°C during analysis [3]. | Essential for consistent metabolic activity and accurate motility kinetics (VCL, etc.). |
| Standardized Counting Chamber (e.g., Leja) | Provides a fixed depth for evaluating sperm concentration and motility [3]. | Reduces field-to-field variability, improving consistency and repeatability of measurements. |
| VISEM-Tracking Dataset | Open-access video data with bounding box and tracking annotations [2]. | Serves as a benchmark for training and validating novel ML and CASA algorithms. |
| Staining Solutions (e.g., Eosin-Nigrosin) | Differentiates live (unstained) from dead (stained) sperm for vitality testing [3]. | Requires strict temperature control (37°C) of solutions and slides for accurate results. |

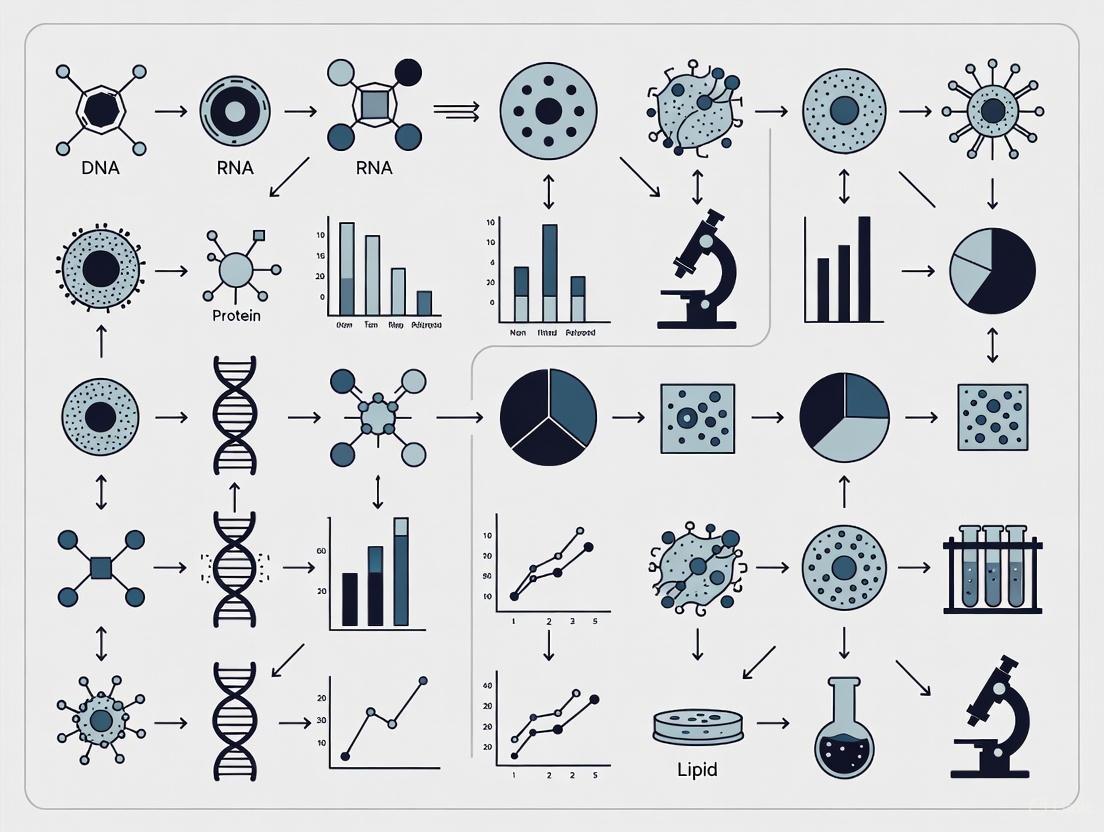

Workflow: From Manual Variability to Automated Standardization

The following diagram illustrates the logical pathway that connects the problem of manual analysis variability to its solution through automation and expanded datasets, while also highlighting the critical feedback loop that improves machine learning models.

Troubleshooting Guide & FAQs

This guide addresses common challenges in creating high-quality datasets for sperm morphology analysis, a critical step for developing robust AI models in male infertility research.

Annotation Complexity

FAQ: Why is achieving consistent annotation in sperm morphology datasets so difficult?

Manual annotation of sperm morphology is inherently complex and subjective. Experts must simultaneously evaluate defects in the head, vacuoles, midpiece, and tail across thousands of sperm, a task with high cognitive load [9]. Inconsistent annotations are a known source of "noise" in AI development, where even highly experienced clinical experts can disagree on labels due to inherent bias, judgment differences, and occasional slips [10]. This problem is pervasive in medical AI, where agreement between different clinical experts can be as low as 70% [11].

Troubleshooting Guide: Problem - High Inter-Expert Variability in Annotations

- Symptoms: AI models trained on the dataset show poor performance and low agreement when validated against labels from a different expert. The model's classifications are inconsistent.

- Root Cause: The annotation task involves subjective judgment. Differences in how experts interpret subtle or borderline morphological features lead to a "shifting ground truth" [10].

- Solution - Standardized Annotation Protocol:

  - Develop Precise Guidelines: Create detailed, visual documentation defining each morphological defect category based on WHO standards [9]. Use high-quality reference images.

  - Conduct Consensus Training: Hold joint annotation sessions with all experts to calibrate their judgments and discuss borderline cases before the main annotation task begins.

  - Implement Multi-Stage Review: Have annotations reviewed by a second expert, with a third expert serving as an arbiter for disputed labels.
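The multi-stage review step can be sketched in a few lines. This is an illustrative outline only: the function names, the sperm identifiers, and the defect labels are hypothetical, and it assumes the arbiter's label is taken as final whenever the first two experts disagree.

```python
def resolve_label(first, second, arbiter=None):
    """Return (final_label, status) for one annotated spermatozoon."""
    if first == second:
        return first, "agreed"
    if arbiter is None:
        # Disputed and not yet seen by the third expert.
        return None, "needs_arbitration"
    return arbiter, "arbitrated"

def review_batch(first_pass, second_pass, arbiter_pass):
    """Combine two expert passes; arbiter_pass maps id -> label for disputes."""
    final, disputed = {}, []
    for sperm_id, first in first_pass.items():
        label, status = resolve_label(
            first, second_pass[sperm_id], arbiter_pass.get(sperm_id)
        )
        final[sperm_id] = label
        if status != "agreed":
            disputed.append(sperm_id)
    return final, disputed

# Hypothetical annotations for three cells.
first_pass = {"s1": "normal", "s2": "tapered_head", "s3": "coiled_tail"}
second_pass = {"s1": "normal", "s2": "normal", "s3": "bent_tail"}
arbiter_pass = {"s2": "tapered_head"}  # s3 has not been arbitrated yet

final_labels, disputed_ids = review_batch(first_pass, second_pass, arbiter_pass)
```

Keeping the list of disputed identifiers is useful beyond arbitration: the share of disputed cells per defect class shows which guideline definitions need refinement.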

The workflow below illustrates a structured protocol to standardize annotations and manage disagreements.

Stain Variability

FAQ: How does staining variability affect the development of AI models for digital pathology?

Staining variation is a pivotal problem in slide preparation. Hematoxylin and eosin (H&E) staining, while routine, exhibits high levels of variation between labs due to different staining methods and protocols [12]. The human visual system can compensate for these variations, but they can profoundly impact the performance and generalizability of AI models in digital pathology [12] [13]. A model trained on slides from one lab may fail on slides from another due to differences in stain color and intensity.

Troubleshooting Guide: Problem - Model Performance Drops on Slides from a Different Lab

- Symptoms: An AI model for sperm segmentation or classification that was highly accurate on its training data performs poorly when presented with new images stained at a different facility.

- Root Cause: The model has overfitted to the specific color distribution and stain intensity of its original training set. It perceives stain differences as meaningful features.

- Solution - Stain Quantification and Standardization:

  - Quantify the Variation: Use quantitative digital analysis to measure stain color in your slides. Techniques include H&E color deconvolution and color difference determination (ΔE) [12].

  - Use a Reference Standard: Implement stain assessment slides that use a stain-responsive biopolymer film to objectively quantify stain uptake and monitor inter-instrument variation [13].

  - Apply Stain Normalization: As a pre-processing step, use digital image analysis algorithms to standardize the color appearance of all images in your dataset, mapping them to a common stain appearance profile [14].
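As a rough illustration of the normalization step, the sketch below shifts and scales each color channel of an image so that its mean and standard deviation match a target profile. This is a deliberately simplified stand-in, loosely in the spirit of statistics-matching color transfer (which normally operates in a perceptual color space rather than RGB); it is not the algorithm from the cited work, and the target values are arbitrary assumptions.

```python
import numpy as np

def match_channel_statistics(image, target_mean, target_std, eps=1e-6):
    """Map each channel of an HxWx3 uint8 image to a target mean and std."""
    img = image.astype(np.float64)
    mean = img.mean(axis=(0, 1))   # per-channel mean of the source image
    std = img.std(axis=(0, 1))     # per-channel standard deviation
    normalized = (img - mean) / (std + eps) * target_std + target_mean
    # Round, then clip to the valid 8-bit range before converting back.
    return np.clip(np.rint(normalized), 0, 255).astype(np.uint8)

# Hypothetical target profile, e.g. measured on a reference slide.
reference_mean = np.array([180.0, 120.0, 160.0])
reference_std = np.array([20.0, 25.0, 20.0])

rng = np.random.default_rng(0)
source_image = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)  # stand-in image
normalized_image = match_channel_statistics(source_image, reference_mean, reference_std)
```

In practice the target profile would be estimated once from slides judged to have acceptable staining, then applied to every image before training and inference.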

The following workflow outlines steps for quality control using quantitative stain assessment.

Table 1: Quantitative Stain Quality Assessment in H&E Staining (Based on a Multi-Lab Study) [12]

| Assessment Method | Performance Metric | Result / Value | Implication for AI Dataset Creation |
|---|---|---|---|
| Expert EQA Score | Percentage of labs with "Good" or "Excellent" staining | 69% | Even with expert assessment, a significant portion of labs may produce data requiring normalization. |
| Expert Concordance | Inter-observer agreement (within one mark) | 92.5% | High agreement on what constitutes "good" staining, enabling the definition of a clear quality target. |
| Digital Color Analysis | Percentage of labs within 2 ΔE of the mean stain | 60% | A majority of labs cluster near the mean, but stain variation is a continuous, widespread issue. ΔE measures color difference. |
| Digital vs. Expert | Correlation between H&E intensity and assessor score | Little correlation found | Objective intensity measures alone are insufficient; the relationship (ratio) between stains is more critical. |

High Costs

FAQ: What are the primary cost drivers when creating a large-scale dataset for medical AI?

The costs are multifaceted but dominated by data acquisition and, most significantly, annotation. Labelling costs are among the most common and most underestimated problems for ML teams [15]. Acquiring medical data requires highly skilled clinical personnel, significant capital investment in equipment, and navigation of complex regulatory and privacy constraints, all of which add to the cost [11]. The specialized expertise needed for annotating medical data further increases the price compared to annotating everyday objects.

Troubleshooting Guide: Problem - Prohibitively High Cost of Annotating a Large Dataset

- Symptoms: A project has a large volume of unlabelled sperm images but the estimated cost and time to annotate them all are unsustainable, potentially running into millions of dollars and thousands of working days for large datasets [15].

- Root Cause: Attempting to use a brute-force, "label everything" approach without strategically selecting the most valuable samples to annotate.

- Solution - Implement Active Learning and Data-Centric Strategy:

  - Shift to a Data-Centric Approach: Prioritize budget for curating clean, varied, and well-annotated data over endless model architecture tweaks [15].

  - Use Active Learning: Employ algorithms (e.g., Coreset Selection) to intelligently select the most "informative" or uncertain data points for your experts to label next [15].

  - Targeted Labelling: Instead of labelling the entire dataset, use active learning to identify and label a critical subset (e.g., 20,000 images instead of 1,000,000), dramatically reducing costs and time while preserving model performance [15].
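One common way to realize coreset selection is the greedy k-center heuristic: repeatedly pick the unlabelled sample that lies farthest from everything selected so far, so the labelled subset covers the feature space. The sketch below assumes each image has already been reduced to a feature vector (for example by a pre-trained network); the function name and the toy features are illustrative, not taken from the cited platform.

```python
import numpy as np

def k_center_greedy(features, budget, seed_index=0):
    """Select `budget` row indices that spread out over the feature space."""
    features = np.asarray(features, dtype=np.float64)
    budget = min(budget, len(features))
    selected = [seed_index]
    # Distance from every sample to its nearest already-selected sample.
    min_dist = np.linalg.norm(features - features[seed_index], axis=1)
    while len(selected) < budget:
        next_index = int(np.argmax(min_dist))  # farthest from current selection
        selected.append(next_index)
        new_dist = np.linalg.norm(features - features[next_index], axis=1)
        min_dist = np.minimum(min_dist, new_dist)
    return selected

# Toy features: three tight pairs of near-duplicate images.
toy_features = [[0.0, 0.0], [0.1, 0.0],
                [10.0, 0.0], [10.1, 0.0],
                [0.0, 10.0], [0.0, 10.1]]
to_label = k_center_greedy(toy_features, budget=3)
```

With a budget of three, the selection takes one image from each pair rather than spending annotation effort on near-duplicates.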

Table 2: Estimated Labelling Cost Breakdown for a Medical Image Dataset [15]

| Cost Factor | Scenario: 100,000 Images | Scenario: 1,000,000 Images (Projected) | Impact and Mitigation |
|---|---|---|---|
| Total Labelling Cost | $200,000 | ~$2,000,000 | The cost scales linearly with dataset size, quickly becoming prohibitive. |
| Total Labelling Time | 1,041 working days | ~10,000 working days | The time delay can render projects obsolete before completion. |
| Assumptions | 50 bounding boxes per image | 50 bounding boxes per image | Common in object detection tasks for locating sperm parts and defects. |
| Mitigation with Active Learning | Reduce to a targeted subset (e.g., 20,000 images) | Reduce to a targeted subset (e.g., 20,000 images) | Cost Saved: ~$1,600,000+; Time Saved: ~8,000+ working days |
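The projections in Table 2 rest on linear scaling, which makes them easy to reproduce for other dataset sizes. The short sketch below extrapolates from the 100,000-image figures in the table; the function name is hypothetical, and the strictly linear model is an assumption that ignores fixed costs such as tooling or annotator training.

```python
# Reference figures taken from the 100,000-image scenario in Table 2.
REFERENCE_IMAGES = 100_000
REFERENCE_COST_USD = 200_000
REFERENCE_WORKING_DAYS = 1_041

def labelling_estimate(n_images):
    """Linearly extrapolate labelling cost (USD) and time (working days)."""
    cost = REFERENCE_COST_USD / REFERENCE_IMAGES * n_images
    days = REFERENCE_WORKING_DAYS / REFERENCE_IMAGES * n_images
    return cost, days

full_cost, full_days = labelling_estimate(1_000_000)   # label everything
subset_cost, subset_days = labelling_estimate(20_000)  # active-learning subset
saved_cost = full_cost - subset_cost
saved_days = full_days - subset_days
```

Under this purely linear model the 20,000-image subset costs about $40,000 and roughly 208 working days, so the savings relative to labelling one million images exceed the rounded lower bounds quoted in the table.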

The Scientist's Toolkit: Research Reagent & Solution Guide

Table 3: Essential Materials and Tools for Sperm Morphology AI Research

| Item / Solution | Function / Description | Application in Dataset Creation |
|---|---|---|
| Stain Assessment Slides [13] | Microscope slides with a biopolymer film that quantifies stain uptake during H&E processing. | Provides an objective, quantitative measure of stain quality for quality control and stain normalization. |
| Active Learning Platforms [15] | Software that uses algorithms (e.g., Coreset Selection) to identify the most valuable data points to label. | Drastically reduces the cost and time required for annotation by strategically selecting samples for expert review. |
| Quantitative Digital Analysis Tools [12] [13] | Image processing software capable of H&E color deconvolution and color difference (ΔE) calculation. | Measures and quantifies stain variability across slides and labs, enabling digital standardization. |
| Fine-tuned Large Language Models (LLMs) [16] | Specialized AI models trained to map biological sample labels to ontological concepts (e.g., Cell Ontology). | Can automate and accelerate the initial stages of metadata annotation for dataset organization, though expert validation is still required. |
| Sperm Morphology Analysis Datasets [9] | Publicly available datasets like VISEM-Tracking, SVIA, and MHSMA. | Serve as benchmark datasets for initial model prototyping and testing, though they often have limitations in size and annotation breadth. |

Frequently Asked Questions

Q1: What are the immediate technical consequences of a small dataset for a sperm morphology analysis model? A small dataset directly increases the risk of overfitting, where a model learns the specific patterns, and even noise, of the training images instead of the generalizable features of sperm morphology. This results in high accuracy on the training data but a significant performance drop on new, unseen data from a different clinic or patient population, a phenomenon known as poor generalization [17] [9]. Furthermore, if the limited data does not represent the full spectrum of biological and staining variations, the model can develop algorithmic bias, performing poorly on subtypes of samples that were underrepresented during training [18].

Q2: Beyond collecting more data, what are effective strategies to mitigate overfitting in deep learning models for medical images? Several technical strategies can help mitigate overfitting without requiring an exponential increase in data collection:

- Data Augmentation: Artificially expand your dataset using techniques like rotations, flips, and color adjustments to create more diverse training examples [19].

- Noise Injection: Intentionally adding noise (e.g., Gaussian, Speckle) during training can force the model to become more robust and rely on core morphological features rather than sharp, potentially irrelevant details [20].

- Leveraging Pre-trained Models & Feature Engineering: Using a pre-trained architecture (like ResNet50) and fine-tuning it for your specific task, or using it as a feature extractor combined with a classical classifier (like SVM), can yield high accuracy and reduce overfitting, especially with limited data [21].
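The data augmentation strategy can be illustrated with a small NumPy routine that combines a random flip, a random 90-degree rotation, and a mild brightness and contrast jitter. The parameter ranges are assumptions chosen for illustration; in a real pipeline they should be tuned so that augmented images remain plausible for the staining and optics in use.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly flipped, rotated, and intensity-jittered copy.

    `image` is an HxWxC uint8 array; square images keep their shape.
    """
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)                 # horizontal flip
    quarter_turns = int(rng.integers(0, 4))  # 0, 90, 180, or 270 degrees
    out = np.rot90(out, quarter_turns)       # rotates the first two axes
    gain = rng.uniform(0.9, 1.1)             # contrast jitter (assumed range)
    bias = rng.uniform(-10.0, 10.0)          # brightness jitter (assumed range)
    out = out.astype(np.float64) * gain + bias
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)

rng = np.random.default_rng(42)
original = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)  # stand-in image
augmented_batch = [augment(original, rng) for _ in range(5)]
```

Flips and right-angle rotations are label-preserving for sperm morphology because defect classes do not depend on orientation, which is why they are a safe starting point.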

Q3: How can we assess a model's generalization capability before clinical deployment? Robust validation is key. This involves:

- Stratified K-Fold Cross-Validation: This technique maximizes the use of limited data for both training and validation, providing a more reliable estimate of model performance [17].

- Out-of-Distribution (OOD) Testing: The model must be tested on data from a completely different source (e.g., a new hospital, different microscope, or new staining protocol) that was not represented in the training set. A large performance gap between internal and OOD test results indicates poor generalization [20] [18].
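Stratified k-fold splitting keeps the class proportions roughly equal in every fold, which matters when rare defect classes contain only a handful of examples. The following is a minimal standard-library sketch; the class names and counts are invented, and established libraries provide more complete implementations.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k, seed=0):
    """Yield (train_indices, validation_indices) for k stratified folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class out across the folds like cards.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    for i in range(k):
        validation = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, validation

# Hypothetical imbalanced label set: 20 normal, 10 abnormal.
labels = ["normal"] * 20 + ["abnormal"] * 10
splits = list(stratified_k_fold(labels, k=5))
```

For OOD testing, the held-out source should be excluded before this split is made, so that no image from the external site leaks into any training fold.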

Troubleshooting Guides

Problem: My model's accuracy is >95% on the training set but falls below 60% on the validation set. This is a classic sign of overfitting.

- Step 1: Apply or intensify data augmentation. Ensure your augmentation pipeline reflects real-world variations in sperm images (e.g., slight staining differences, illumination changes) [19].

- Step 2: Introduce regularization techniques. Implement dropout layers or L2 regularization within your neural network to penalize model complexity.

- Step 3: Simplify the model architecture. A model with too many parameters for a small dataset will easily memorize the data. Consider a simpler network or using a pre-trained model with frozen initial layers [22].

- Step 4: Try noise injection. As demonstrated in chest X-ray analysis, adding basic noise types (e.g., Gaussian or speckle) during training can significantly improve robustness and close the performance gap between training and validation data [20].

Problem: The model performs well in our lab but fails when tested with data from a collaborating clinic. This indicates a failure to generalize, likely due to dataset bias and distribution shift.

- Step 1: Analyze data divergence. Use metrics like Kullback–Leibler Divergence (KLD) to quantitatively measure the difference in data distributions (e.g., in staining intensity, sperm head size) between your lab and the collaborator's. This can predict generalization performance [18].

- Step 2: Diversify the training data. If possible, incorporate a small, carefully selected set of images from the collaborator's clinic into your training process to help the model adapt to the new distribution.

- Step 3: Re-evaluate the preprocessing. Ensure that image preprocessing steps (e.g., normalization, segmentation) are robust and applicable to images from both sources [19] [21].

- Step 4: Utilize synthetic data. In resource-constrained scenarios, generating high-quality synthetic imagery that mimics the collaborator's data characteristics can be a viable strategy to bridge the distribution gap [23].
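The divergence analysis in Step 1 can be approximated by comparing histograms of a simple per-image feature from each site. The sketch below uses mean staining intensity scaled to the range 0 to 1 as that feature; the feature choice, bin count, and simulated values are assumptions for illustration only.

```python
import numpy as np

def kl_divergence(samples_p, samples_q, bins=32, value_range=(0.0, 1.0), eps=1e-9):
    """Estimate KL(P || Q) from two sample sets via shared-bin histograms."""
    p, _ = np.histogram(samples_p, bins=bins, range=value_range)
    q, _ = np.histogram(samples_q, bins=bins, range=value_range)
    # Smooth with a small constant so empty bins do not produce log(0).
    p = (p + eps) / (p + eps).sum()
    q = (q + eps) / (q + eps).sum()
    return float(np.sum(p * np.log(p / q)))

rng = np.random.default_rng(0)
# Simulated mean staining intensity per image at two sites.
own_lab = np.clip(rng.normal(0.50, 0.05, 2000), 0.0, 1.0)
collaborator = np.clip(rng.normal(0.65, 0.08, 2000), 0.0, 1.0)

same_site = kl_divergence(own_lab, own_lab)
cross_site = kl_divergence(own_lab, collaborator)
```

KL divergence is asymmetric, so it is worth reporting both directions, or a symmetric variant, when comparing sites.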

Experimental Data & Protocols

Table 1: Impact of Data Augmentation on Model Performance (Sperm Morphology Classification)

| Dataset Size (Original) | Augmentation Technique | Final Dataset Size | Model Accuracy (Baseline) | Model Accuracy (After Augmentation) | Key Finding |
|---|---|---|---|---|---|
| 1,000 images [19] | Multiple techniques (unspecified) | 6,035 images [19] | Not Reported | 55% to 92% [19] | Augmentation enabled model training, achieving near-expert level accuracy. |
| 3,000 images (SMIDS) [21] | Not the primary focus | Not Augmented | ~88% (CNN baseline) [21] | 96.08% (with feature engineering) [21] | Highlights that advanced feature engineering can compensate for data limitations. |

Table 2: Generalization Performance: Single-Institution vs. Multi-Institution Models

These data, from a clinical text classification study, illustrate a generalization trade-off that is highly relevant to sperm image analysis.

| Model Training Strategy | Internal Test Performance (F1 Score) | External Test Performance (F1 Score) | Generalization Gap (F1 Score) |
|---|---|---|---|
| Single-Institution Model [18] | 0.923 | 0.700 | -0.223 |
| Multi-Institution (All-data) Model [18] | 0.878 | 0.860 | -0.018 |

Experimental Protocol: Mitigating Overfitting with Noise Injection

This protocol is based on research showing that noise injection improves Out-of-Distribution (OOD) generalization for limited-size datasets [20].

- Data Preparation: Split your data into In-Distribution (ID) sets (training, validation, and test) and a hold-out Out-of-Distribution (OOD) test set from a different source.

- Baseline Model Training: Train your chosen model architecture (e.g., ResNet-50) on the ID training set without any noise injection. Evaluate its performance on both the ID test set and the OOD test set to establish a baseline generalization gap.

- Noise Injection Training: Apply a noise injection pipeline to the ID training set. For each epoch, randomly apply one of the following noise types to each image:

  - Gaussian Noise: Mean=0.0, Variance=0.01.

  - Salt and Pepper Noise: Density=0.05, Salt-to-Pepper ratio=0.5.

  - Speckle Noise: Variance=0.01.

  - Poisson Noise.

- Model Evaluation: Train an identical model architecture on the noise-augmented ID training set. Evaluate the final model on the same ID and OOD test sets.

- Result Analysis: Compare the performance gap between ID and OOD tests for the baseline model and the noise-injected model. The successful mitigation of overfitting is indicated by a significantly reduced performance gap in the noise-injected model.
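The noise injection step of this protocol can be sketched with NumPy as follows. The sketch assumes images are floating-point arrays scaled to the range 0 to 1, interprets the salt-to-pepper ratio of 0.5 as the fraction of corrupted pixels set to white, and uses a peak of 255 counts for the Poisson model; these interpretations are assumptions and are not taken from the cited study.

```python
import numpy as np

def gaussian_noise(img, rng, var=0.01):
    return img + rng.normal(0.0, np.sqrt(var), img.shape)

def salt_and_pepper(img, rng, density=0.05, salt_ratio=0.5):
    out = img.copy()
    u = rng.random(img.shape)
    out[u < density * salt_ratio] = 1.0                       # salt (white)
    out[(u >= density * salt_ratio) & (u < density)] = 0.0    # pepper (black)
    return out

def speckle_noise(img, rng, var=0.01):
    # Multiplicative noise: the perturbation scales with pixel intensity.
    return img + img * rng.normal(0.0, np.sqrt(var), img.shape)

def poisson_noise(img, rng, peak=255.0):
    # Treat scaled intensities as expected photon counts (assumed peak).
    return rng.poisson(img * peak) / peak

NOISE_FUNCTIONS = [gaussian_noise, salt_and_pepper, speckle_noise, poisson_noise]

def inject_noise(img, rng):
    """Apply one randomly chosen noise type and clip back to [0, 1]."""
    noise_fn = NOISE_FUNCTIONS[int(rng.integers(len(NOISE_FUNCTIONS)))]
    return np.clip(noise_fn(img, rng), 0.0, 1.0)

rng = np.random.default_rng(7)
clean = np.full((32, 32), 0.5)            # stand-in grayscale image
noisy = inject_noise(clean, rng)          # call once per image per epoch
sp_only = salt_and_pepper(clean, rng)     # example of a single noise type
```

Calling `inject_noise` inside the data loader, rather than pre-computing noisy copies, gives each image a different perturbation every epoch, which matches the per-epoch random selection described in the protocol.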

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sperm Morphology Analysis Experiments

| Reagent / Material | Function in Experiment |
|---|---|
| RAL Diagnostics Staining Kit [19] | Provides differential staining for sperm cells to enhance contrast and visibility of morphological structures (head, midpiece, tail) under a microscope. |
| MMC CASA System [19] | A Computer-Assisted Semen Analysis system used for the automated acquisition and storage of high-quality digital images of sperm smears. |
| Whatman Filter Paper [23] | Serves as the substrate for fabricating low-cost, paper-based colorimetric sensors for point-of-care semen analysis (e.g., pH, count). |
| Pre-trained Deep Learning Models (e.g., ResNet50, YOLOv8) [21] [23] | Provides a powerful starting point for feature extraction or object detection, reducing the need for massive datasets from scratch and accelerating model development. |
| Synthetic Image Generation Software (e.g., Unity, Unreal Engine) [23] | Used to create procedurally generated, realistic synthetic images of sperm or test kits to augment small real-world datasets and improve model robustness. |

Workflow and Relationship Diagrams

Diagram 1: OOD Generalization Workflow

Diagram 2: Model Training Strategy Trade-offs

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common limitations of existing public sperm morphology datasets? Most public datasets face challenges related to limited sample size, lack of diversity in morphological classes, and variable image quality. For instance, many datasets contain only a few thousand images, which is insufficient for training robust deep learning models without augmentation. An analysis of available resources shows that datasets often have heterogeneous representation of different sperm defects, with a focus on head abnormalities while underrepresenting neck and tail defects [24] [9]. Additionally, issues with staining consistency, image resolution, and the presence of cellular debris further complicate automated analysis [19].

FAQ 2: Which public datasets are available for sperm morphology analysis research? Researchers have access to several public datasets, each with specific characteristics and use cases. The table below summarizes key available datasets:

Table 1: Publicly Available Sperm Morphology Datasets

| Dataset Name | Sample Size | Data Type | Key Features | Primary Use Cases |
|---|---|---|---|---|
| VISEM-Tracking [2] | 20 videos (29,196 frames); 656,334 annotated objects | Video, Clinical data | Sperm tracking IDs, motility analysis, clinical participant data | Sperm detection, tracking, motility analysis |
| VISEM [25] | 85 participants | Multi-modal (videos, biological data) | Semen analysis data, fatty acid profiles, sex hormone levels | Multimodal analysis, quality prediction |
| HSMA-DS [24] | 1,457 sperm images from 235 patients | Images | Unstained sperm, classification of abnormalities | Morphology classification |
| MHSMA [24] | 1,540 grayscale sperm head images | Images | Modified version of HSMA-DS, grayscale heads | Head morphology classification |
| SMD/MSS [19] | 1,000 images (augmented to 6,035) | Images | Modified David classification (12 defect classes), expert annotations | Multi-class morphology classification |
| SCIAN-MorphoSpermGS [24] | 1,854 sperm images | Images | Stained sperm, higher resolution, 5-class classification | Head morphology classification |
| HuSHeM [24] | 725 images (216 publicly available) | Images | Stained sperm heads, morphology classification | Head morphology analysis |
| SMIDS [24] | 3,000 images | Images | Stained sperm, 3 classes (normal, abnormal, non-sperm) | Classification and detection |
| SVIA [24] | 125,000 annotated instances; 26,000 segmentation masks | Videos, Images | Object detection, segmentation masks, classification tasks | Detection, segmentation, classification |

FAQ 3: What methodologies can overcome limited dataset size in sperm morphology research? Data augmentation and transfer learning are among the most widely used strategies for addressing limited dataset size. Technical approaches include:

- Geometric Transformations: Rotation, scaling, and flipping of existing images to create synthetic variants [19].

- Color Space Manipulations: Adjusting brightness, contrast, and hue to simulate different staining conditions [19].

- Advanced Augmentation: Employing generative adversarial networks (GANs) to create realistic synthetic sperm images that preserve morphological features while expanding dataset diversity.

- Cross-Dataset Validation: Training models on multiple public datasets to improve generalization and mitigate dataset-specific biases [24].

- Transfer Learning: Leveraging pre-trained models from related computer vision tasks and fine-tuning them on available sperm morphology data.

Diagram: Experimental Workflow for Leveraging Limited Datasets

FAQ 4: How do I select the appropriate dataset for my specific research question? Dataset selection should align with your specific research objectives and analytical requirements:

- For motility and kinematic studies: Prioritize video-based datasets like VISEM-Tracking with tracking annotations [2].

- For detailed morphological classification: Opt for datasets with comprehensive defect annotations across head, neck, and tail regions, such as SMD/MSS with its 12-class David classification [19].

- For multimodal predictive modeling: Choose VISEM which combines video with clinical, hormonal, and fatty acid data [25].

- For method comparison and benchmarking: Select datasets that are commonly referenced in literature to enable direct comparison with existing approaches.

FAQ 5: What are the key technical challenges in annotating sperm morphology datasets? Annotation complexity arises from several factors: the small size and rapid movement of spermatozoa, the need to classify multiple defect types simultaneously, and significant inter-expert variability. Studies show that even among experienced technicians, total agreement on sperm classification can be limited, with one study reporting only partial agreement among experts for many morphological classes [19]. This challenge is compounded by the need to evaluate multiple sperm components (head, vacuoles, midpiece, and tail) according to standardized criteria like WHO guidelines [24] [26].

Troubleshooting Guides

Problem 1: Poor Model Generalization Across Different Datasets

Symptoms: Your model performs well on training data but shows significantly reduced accuracy on validation data or different datasets.

Solutions:

- Implement Domain Adaptation Techniques
  - Use style transfer methods to normalize image characteristics across different staining protocols and microscope settings
  - Apply test-time augmentation to make predictions more robust to variations in image quality

- Adopt Cross-Dataset Validation Protocols
  - Train your model on multiple publicly available datasets simultaneously
  - Perform leave-one-dataset-out validation to assess generalization capability
  - Utilize ensemble methods that combine models trained on different data sources

- Focus on Data Quality Over Quantity
  - Curate a smaller, but well-annotated validation set with high expert agreement
  - Implement quality control metrics to filter poor-quality images before training
  - Prioritize consistent annotation standards across your entire dataset

Problem 2: Insufficient Training Data for Specific Morphological Classes

Symptoms: Your model performs poorly on rare morphological defects or imbalanced classes.

Solutions:

- Strategic Data Augmentation

- Apply Class-Imbalance Learning Techniques
  - Use weighted loss functions that assign higher penalties to misclassifications of rare classes
  - Implement focal loss to focus learning on difficult-to-classify examples
  - Employ oversampling strategies for minority classes and undersampling for majority classes

- Leverage Transfer Learning
  - Utilize pre-trained models from related domains (e.g., general cell morphology)
  - Fine-tune final layers on your specific sperm morphology task
  - Use few-shot learning approaches specifically designed for limited data scenarios
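As a rough illustration of the weighted-loss and focal-loss items, the sketch below computes inverse-frequency class weights and a per-sample focal loss. The weighting formula (n_samples / (n_classes × class_count)) and the default `gamma=2.0` are common conventions assumed here, not settings reported by the cited studies.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

def focal_loss(p_true, gamma=2.0, weight=1.0):
    """Focal loss for one sample, given the predicted probability of its true class.

    With gamma = 0 this reduces to (weighted) cross-entropy; larger gamma
    down-weights samples the model already classifies confidently.
    """
    p = min(max(p_true, 1e-12), 1.0)  # guard against log(0)
    return -weight * (1.0 - p) ** gamma * math.log(p)
```

For example, with 8 "normal" and 2 "coiled_tail" labels, the weights come out as 0.625 and 2.5, so an error on the rare class costs four times as much as an error on the common one.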

Problem 3: Inconsistent Annotations and Inter-Expert Variability

Symptoms: High disagreement in labels between experts, leading to noisy training data and unstable model performance.

Solutions:

- Implement Annotation Quality Control
  - Use multiple annotators for each image and employ majority voting
  - Calculate inter-annotator agreement metrics (e.g., Cohen's kappa) to identify problematic cases
  - Focus training on samples with high expert agreement initially

- Develop Robust Training Strategies
  - Train models with label smoothing to account for annotation uncertainty
  - Utilize noisy label learning approaches that are robust to annotation errors
  - Implement co-teaching frameworks where two models learn concurrently and select likely clean samples for each other
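Two of the items above, Cohen's kappa and label smoothing, are simple enough to sketch directly. The code below assumes two annotators who labelled the same images, and uses the label-smoothing variant that spreads `eps` evenly over the non-true classes; the `eps=0.1` default is an assumed value, not one taken from the cited work.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance from each annotator's class frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical class throughout
    return (observed - expected) / (1.0 - expected)

def smooth_label(true_index, n_classes, eps=0.1):
    """Soft target: 1 - eps on the true class, eps shared by the other classes."""
    off = eps / (n_classes - 1)
    return [1.0 - eps if i == true_index else off for i in range(n_classes)]
```

Kappa is defined for a pair of annotators; with three or more experts it can be computed for each pair, or a multi-rater statistic can be used instead.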

Diagram: Data Augmentation and Annotation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Sperm Morphology Analysis

| Tool/Category | Specific Examples | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Public Datasets | VISEM-Tracking, SMD/MSS, HSMA-DS | Training and benchmarking models | Combine multiple datasets for increased sample diversity [2] [19] |

| Data Augmentation Tools | Albumentations, Imgaug, TensorFlow Data Augmentation | Expanding effective dataset size | Apply domain-specific transformations mimicking real morphological variations [19] |

| Deep Learning Frameworks | TensorFlow, PyTorch, Keras | Model development and training | Utilize pre-trained models (ResNet, VGG) with transfer learning [24] |

| Annotation Tools | LabelBox, VGG Image Annotator, Computer Vision Annotation Tool (CVAT) | Creating ground truth labels | Implement multi-expert annotation protocols to measure inter-rater reliability [2] [19] |

| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, Cohen's Kappa | Assessing model performance | Use metrics robust to class imbalance; report performance per morphological class [19] |

| Class Imbalance Techniques | SMOTE, Focal Loss, Weighted Sampling, Class Weights | Addressing rare morphological classes | Combine multiple techniques for optimal results on imbalanced sperm morphology data [19] |

Experimental Protocols for Leveraging Limited Data

Protocol 1: Cross-Dataset Validation Framework

Purpose: To evaluate model generalization across different data sources and mitigate dataset-specific biases.

Procedure:

- Select at least three public datasets with varying characteristics (e.g., VISEM-Tracking, SMD/MSS, HSMA-DS)

- Preprocess all images to consistent specifications (size, normalization, color space)

- Train your model on combined data from multiple sources

- Implement leave-one-dataset-out cross-validation:
  - Train on N-1 datasets, validate on the excluded dataset
  - Rotate through all dataset combinations

- Calculate overall performance metrics as mean ± standard deviation across all folds

- Compare against single-dataset training to quantify generalization improvement
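The fold rotation and the mean ± standard deviation summary in this protocol can be sketched as follows. The dataset names are placeholders, and the actual training and evaluation step is left out because it depends on your model and framework.

```python
from statistics import mean, stdev

def leave_one_dataset_out(dataset_names):
    """Yield (training_datasets, held_out_dataset) for each fold."""
    for held_out in dataset_names:
        train = [name for name in dataset_names if name != held_out]
        yield train, held_out

def summarize(scores):
    """Mean and sample standard deviation of the per-fold scores."""
    return mean(scores), stdev(scores)

# Each fold trains on N-1 datasets and validates on the excluded one.
for train, held_out in leave_one_dataset_out(["dataset_a", "dataset_b", "dataset_c"]):
    print(f"train on {train}, validate on {held_out}")
```

With only three folds the standard deviation is a coarse estimate, so it is worth reporting the individual per-dataset scores alongside the summary.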

Protocol 2: Data Augmentation for Rare Morphological Classes

Purpose: To balance class distribution and improve model performance on under-represented sperm defects.

Procedure:

- Analyze class distribution in your dataset and identify rare morphological classes

- For classes with insufficient representation (<5% of total samples):
  - Apply aggressive augmentation (rotation ±45°, scaling 80-120%, brightness variation ±30%)
  - Use generative adversarial networks (GANs) to create synthetic examples
  - Implement copy-paste augmentation by transplanting rare morphological features onto normal sperm

- For moderately represented classes (5-15% of total):
  - Apply moderate augmentation (rotation ±15°, scaling 90-110%)
  - Use elastic transformations and mild noise injection

- Continuously monitor per-class performance metrics during training

- Adjust augmentation strategies based on validation performance
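The class-share thresholds in this protocol can be turned into a small planning helper. The sketch below copies the rotation, scaling, and brightness ranges from the procedure; the tier names, the dictionary layout, and the decision to leave classes above 15% without class-specific augmentation are assumptions made for illustration.

```python
from collections import Counter

# Parameter ranges copied from the protocol; key names are illustrative.
AGGRESSIVE = {"rotation_deg": 45, "scale": (0.80, 1.20), "brightness": 0.30}
MODERATE = {"rotation_deg": 15, "scale": (0.90, 1.10)}

def augmentation_plan(labels):
    """Map each class to an augmentation tier based on its share of the dataset."""
    counts = Counter(labels)
    total = len(labels)
    plan = {}
    for cls, count in counts.items():
        share = count / total
        if share < 0.05:
            plan[cls] = ("aggressive", AGGRESSIVE)
        elif share <= 0.15:
            plan[cls] = ("moderate", MODERATE)
        else:
            # Assumption: well-represented classes get no class-specific boost.
            plan[cls] = ("standard", None)
    return plan
```

The returned parameters would then be passed to whatever augmentation library performs the transformations.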

Protocol 3: Multi-Expert Annotation Quality Control

Purpose: To establish reliable ground truth labels despite inherent subjectivity in sperm morphology assessment.

Procedure:

- Engage multiple domain experts (minimum 3) for annotation tasks

- Conduct training sessions to calibrate annotation standards using WHO guidelines [26]

- Implement blinded annotation where experts independently classify the same images

- Calculate inter-annotator agreement using Cohen's kappa or intraclass correlation coefficients

- Categorize samples based on agreement level:
  - High-confidence: unanimous expert agreement
  - Medium-confidence: majority agreement (2/3 experts)
  - Low-confidence: no agreement (review with additional experts)

- Weight training samples based on agreement level or focus initially on high-confidence samples
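The agreement tiers in this protocol map directly onto a majority-vote helper. In the sketch below, the tier names follow the procedure, while the `SAMPLE_WEIGHT` values are purely illustrative and would need to be chosen for your own training setup.

```python
from collections import Counter

def consensus(votes):
    """Return (label, tier) for one image, given each expert's label."""
    label, top = Counter(votes).most_common(1)[0]
    if top == len(votes):
        return label, "high"      # unanimous agreement
    if top > len(votes) / 2:
        return label, "medium"    # strict majority, e.g. 2 of 3 experts
    return None, "low"            # no majority: send for additional review

# Assumed per-tier training weights; low-confidence samples are excluded.
SAMPLE_WEIGHT = {"high": 1.0, "medium": 0.5, "low": 0.0}
```

For three experts, `consensus(["normal", "normal", "tapered_head"])` yields the majority label with the "medium" tier.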

This technical resource will continue to expand as new datasets and methodologies emerge. Researchers are encouraged to contribute to community-driven data initiatives and adopt standardized evaluation protocols to advance the field collectively.

Building from Scratch: Methodological Solutions for Data Augmentation and Feature Extraction

Frequently Asked Questions (FAQs)

1. Why is my model performing well on the training set but poorly on the validation set after applying data augmentation? This is a classic sign of overfitting, which means your model has memorized the training data instead of learning to generalize. Data augmentation is a primary tool to combat this. If performance remains poor, your augmentation strategy might not be realistic or diverse enough. Ensure you are applying a sufficient mix of geometric and photometric transformations that reflect real-world variations in sperm images, such as slight differences in staining intensity or orientation [27] [28]. Also, verify that you are applying augmentation only to your training set and not your validation or test sets [29].

2. My dataset has a severe class imbalance. How can I use data augmentation to address this? Data augmentation is highly effective for tackling class imbalance. The strategy is to selectively augment the underrepresented classes in your dataset. For instance, if you have fewer examples of sperm with "coiled tails" compared to "normal" sperm, you can apply augmentation techniques (like rotations, flips, and color jitters) more aggressively on the "coiled tail" class to balance the dataset size [28] [30]. This prevents the model from being biased toward the majority class.

3. What is the most effective data augmentation technique for sperm morphology analysis? There is no single "best" technique; effectiveness depends on your specific data and task. However, research in medical imaging has shown that geometric transformations like rotation and flipping are often effective [31]. For instance, one study on prostate cancer detection in MRI found that random rotation yielded the largest performance improvement among the augmentations it evaluated [31]. A good starting point is to combine horizontal flipping, small-degree rotations, and slight color adjustments, as these mimic plausible variations in microscopic image acquisition [28].

4. Should I implement data augmentation offline or online? For most scenarios, online augmentation is recommended. In online augmentation, transformations are applied randomly on-the-fly during each training epoch. This means your model sees a new, randomly varied version of each image every time, which improves generalization and provides far more variety than a fixed augmented set, without consuming additional disk space [28]. Offline augmentation (pre-generating and saving transformed images) is useful for inspecting the quality of your augmented dataset but can be storage-intensive [28].
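To make the online approach concrete, here is a minimal sketch of a batch generator that re-draws the random transformations in every epoch and never writes augmented images to disk. It assumes images stored as lists of rows and uses only flips; `online_batches` and `random_flip` are illustrative names, and deep learning frameworks provide their own data-loading equivalents.

```python
import random

def random_flip(img, rng):
    """Apply a horizontal and a vertical flip, each with probability 0.5."""
    if rng.random() < 0.5:
        img = [row[::-1] for row in img]
    if rng.random() < 0.5:
        img = img[::-1]
    return img

def online_batches(images, labels, batch_size, epochs, seed=0):
    """Yield (epoch, batch_images, batch_labels), re-augmenting in every epoch."""
    rng = random.Random(seed)
    order = list(range(len(images)))
    for epoch in range(epochs):
        rng.shuffle(order)  # new sample order each epoch
        for start in range(0, len(order), batch_size):
            idx = order[start:start + batch_size]
            batch = [random_flip(images[i], rng) for i in idx]
            yield epoch, batch, [labels[i] for i in idx]
```

Because the generator only reads from `images`, the same loop run without `random_flip` can serve the validation and test sets, which should not be augmented.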

5. How can I determine if my data augmentations are too aggressive? Excessively aggressive augmentation can create unrealistic images that harm model performance. For example, a 180-degree rotation might not be valid for sperm images if it creates implausible orientations, or extreme color shifts might simulate staining artifacts never seen in a real lab [28]. To diagnose this, visually inspect a batch of your augmented images. If the transformed images no longer resemble realistic sperm cells or the semantic label is ambiguous, you should reduce the magnitude of your transformations (e.g., lower the rotation degree range, decrease the color jitter factor) [27].

Experimental Protocols from Key Studies

The following table summarizes the data augmentation methodologies from recent, influential research in automated sperm morphology analysis.

Table 1: Data Augmentation Protocols in Sperm Morphology Research

| Study / Model | Augmentation Techniques Applied | Dataset & Initial Size | Impact on Performance |

|---|---|---|---|

| Deep-learning model (SMD/MSS Dataset) [32] [19] | Data augmentation techniques were used to expand and balance the dataset. | SMD/MSS Dataset; 1,000 images extended to 6,035 images [32] [19] | Model accuracy ranged from 55% to 92%, demonstrating the role of augmentation in achieving expert-level accuracy [32] [19]. |

| CBAM-enhanced ResNet50 with Deep Feature Engineering [21] | Not reported here; the focus was on deep feature engineering and attention mechanisms. | SMIDS (3,000 images) and HuSHeM (216 images) [21] | Achieved state-of-the-art test accuracies of 96.08% (SMIDS) and 96.77% (HuSHeM) [21]. |

| Prostate Cancer Detection (DW-MRI) [31] | Random rotation, horizontal flip, vertical flip, random crop, and translation were evaluated separately. | 217 patients (10,128 2D slices) [31] | Random rotation provided the highest performance boost (AUC: 0.85), highlighting the value of geometric transformations in medical image analysis [31]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Sperm Morphology Analysis Experiments

| Item Name | Function / Explanation |

|---|---|

| MMC CASA System | A Computer-Assisted Semen Analysis (CASA) system used for the automated acquisition and storage of high-quality individual sperm images from prepared smears [19]. |

| RAL Diagnostics Staining Kit | A staining solution used to prepare semen smears, enhancing the contrast and visibility of sperm structures (head, midpiece, tail) for microscopic evaluation [19]. |

| Modified David Classification | A standardized morphological classification system with 12 defect classes, used by experts to create ground truth labels for model training [19]. |

| Python 3.8 with Deep Learning Libraries (e.g., PyTorch, TensorFlow) | The programming environment and libraries used to implement convolutional neural networks (CNNs), data augmentation pipelines, and model training procedures [19] [33]. |

| imgaug / Albumentations Libraries | Specialized Python libraries that provide a wide range of image augmentation techniques, making it efficient to build complex augmentation pipelines for online data generation [28]. |

Workflow: Data Augmentation for Sperm Morphology Analysis

The diagram below illustrates a recommended workflow for developing and applying a data augmentation pipeline in this research context.

In the field of male fertility research, sperm morphology analysis is a crucial diagnostic procedure. However, the development of robust, automated analysis systems using deep learning is significantly hindered by a fundamental challenge: the limited availability of high-quality, standardized, and large-scale annotated image datasets [24]. Manual analysis is subjective, time-consuming, and suffers from inter-observer variability [24]. Generative Adversarial Networks (GANs) present a promising solution by generating realistic, synthetic sperm images to augment small datasets. This technical support guide explores the application of GANs for this purpose, addressing common pitfalls and providing best practices for researchers.

Troubleshooting GAN Training

Training GANs is notoriously unstable. The table below outlines common failure modes, their symptoms, and potential remedies, specifically contextualized for sperm image synthesis.

Table 1: Common GAN Failures and Troubleshooting Guide

| Problem | What It Looks Like | Potential Solutions & Practical Checks |

|---|---|---|

| Mode Collapse [34] [35] | The generator produces a very limited variety of sperm images (e.g., the same head shape or tail orientation repeatedly). | Architecture Tweaks: Use Wasserstein loss (WGAN) [34] or unrolled GANs [34]. Parameter Tuning: Add dropout and batch normalization layers to the generator [35]. Check: Manually inspect a large batch of generated images for diversity. |

| Vanishing Gradients [34] | The generator fails to improve, as indicated by its loss becoming stagnant or rising, while the discriminator loss drops to near zero. | Loss Function: Switch to a modified loss function like Wasserstein loss, which provides more useful gradients even with a strong discriminator [34]. Check: Monitor the loss curves for both networks. A discriminator loss near zero is a key indicator. |

| Failure to Converge [34] | The training process is unstable, with oscillating losses, and the generated images never reach a realistic quality. | Regularization: Add noise to the inputs of the discriminator or penalize its weights [34]. Training Strategy: Ensure the generator and discriminator are balanced; do not let one become too powerful too quickly. |

| Slow Convergence [35] | Training takes an impractically long time to produce usable images, even with powerful hardware. | Patience & Hardware: Train for more epochs and ensure you have a GPU with sufficient CUDA cores [35]. Architecture Simplification: Try removing some inner layers from the generator or discriminator [35]. |

| Deceptive Loss [35] | The loss values for both networks appear to be improving and converging, but the generated images are of poor quality. | Use Better Metrics: Do not rely solely on loss. Use additional metrics like the Structural Similarity Index (SSIM) [35] or task-specific metrics (e.g., the accuracy of a pre-trained classifier on generated images). |

Frequently Asked Questions (FAQs)

Q1: What are the main data-related challenges in applying GANs to sperm morphology analysis? The primary challenge is the lack of standardized, high-quality annotated datasets [24]. Existing public datasets often have limitations such as low resolution, small sample sizes, and insufficient morphological categories [24]. Furthermore, sperm images can be intertwined or show only partial structures, and the annotation process itself is difficult, requiring experts to simultaneously evaluate head, vacuoles, midpiece, and tail abnormalities [24].

Q2: My GAN's loss values look good, but the generated sperm images are clearly unrealistic. What is happening? This is a classic case of a deceptive loss function [35]. The loss function in GAN training may not always correlate perfectly with image quality. It is essential to use supplemental metrics to evaluate progress. For medical imaging, relevant metrics include the Structural Similarity Index (SSIM) and, more importantly, task-assisted evaluation [36]. For instance, you can use a pre-trained sperm classifier or segmentation model to see if it can correctly process your generated images.
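As an illustration of the SSIM metric mentioned in this answer, the sketch below computes a simplified, single-window SSIM over the whole image. This is an assumption made for brevity: standard SSIM implementations average the same expression over local sliding windows, so values from this function will not match library output exactly. The constants 0.01 and 0.03 are the conventional SSIM stabilizers.

```python
def global_ssim(x, y, data_range=1.0):
    """SSIM computed once over the whole image instead of over local windows."""
    a = [p for row in x for p in row]
    b = [p for row in y for p in row]
    if len(a) != len(b) or not a:
        raise ValueError("images must be non-empty and the same size")
    n = len(a)
    mu_a, mu_b = sum(a) / n, sum(b) / n
    var_a = sum((p - mu_a) ** 2 for p in a) / n
    var_b = sum((q - mu_b) ** 2 for q in b) / n
    cov = sum((p - mu_a) * (q - mu_b) for p, q in zip(a, b)) / n
    # Small constants that stabilise the ratio when means or variances are near zero.
    c1, c2 = (0.01 * data_range) ** 2, (0.03 * data_range) ** 2
    numerator = (2 * mu_a * mu_b + c1) * (2 * cov + c2)
    denominator = (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2)
    return numerator / denominator
```

Identical images score 1, and structurally inverted images score below zero. Comparing a generated image against a real one this way only makes sense when the two are expected to depict the same content, as in paired image-to-image settings.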

Q3: How can I ensure that the synthetic sperm images generated by the GAN are biologically accurate and not just visually plausible? To ensure biological fidelity, consider using a Task-Assisted GAN (TA-GAN) architecture [36]. This approach incorporates an auxiliary task, such as the segmentation of the sperm head or the classification of morphological defects, directly into the GAN's training loop. This guides the generator to produce images where the biological structures of interest are not only realistic but also accurate and analyzable, making the synthetic data much more useful for downstream analysis tasks [36].

Q4: Are there specific GAN architectures that are more suited for this domain? Yes. While vanilla GANs can be used, more advanced architectures have shown promise:

- StyleGAN: Known for generating very high-resolution, realistic images [37].

- Projected GANs & Stylized Projected GANs: These can improve training speed and convergence. Recent work on "Stylized Projected GANs" aims to reduce artifacts in generated samples, a common issue in earlier versions [38].

- CycleGAN / TA-CycleGAN: Useful for unpaired image-to-image translation, such as converting images from one staining technique to another. The TA-CycleGAN variant incorporates a task network to preserve biological structures during translation [36].

Experimental Protocols for Sperm Image Synthesis

Protocol: Implementing a Task-Assisted GAN (TA-GAN)

This protocol is designed to generate high-resolution sperm images while ensuring the accuracy of key morphological features.

Objective: To train a GAN that synthesizes realistic sperm images, with a focus on biologically accurate segmentation of the sperm head.

Materials:

- A dataset of paired low-resolution and high-resolution sperm images, with corresponding segmentation masks for the sperm heads.

- Computing resources with a powerful GPU (e.g., NVIDIA RTX series or higher).

Methodology:

- Data Preprocessing: Normalize all images to a standard pixel range (e.g., [0,1] or [-1,1]). Resize images to a uniform resolution (e.g., 256x256 pixels).

- Model Architecture Setup: Implement a TA-GAN comprising three core networks [36]:

- Generator (G): A U-Net-like architecture that takes a low-resolution or noisy sperm image and generates a high-resolution, synthetic version.

- Discriminator (D): A convolutional network that distinguishes between real high-resolution images and synthetic ones from the generator.

- Task Network (T): A pre-trained segmentation network (e.g., U-Net) whose goal is to accurately segment the sperm head.

- Training Loop:

- Step A: Train the generator and discriminator in an adversarial manner using a loss function like Wasserstein loss to improve stability [34].

- Step B: Simultaneously, pass the generator's output through the task network. Calculate the segmentation loss (e.g., Dice loss) between the task network's prediction and the ground-truth segmentation mask.

- Step C: Combine the adversarial loss and the segmentation loss to update the generator's weights. This forces the generator to produce images that are not only realistic to the discriminator but also segmentable by the task network, ensuring biological accuracy [36].

- Validation: Evaluate the generated images using:

- Pixel-wise metrics: PSNR, SSIM.

- Task-specific metrics: Dice coefficient or IoU achieved by an independent segmentation model on the generated images.
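Steps B and C of the training loop can be sketched numerically as a combined generator objective. The code assumes masks stored as lists of rows with values in [0, 1] and a scalar critic score for the generated image; the `lambda_task` weight is a hypothetical hyperparameter, not a value from [36], and a real implementation would express the same terms in a deep learning framework so gradients can flow to the generator.

```python
def dice_coefficient(pred, target, eps=1e-7):
    """Soft Dice overlap between two masks with values in [0, 1]."""
    p = [v for row in pred for v in row]
    t = [v for row in target for v in row]
    intersection = sum(a * b for a, b in zip(p, t))
    return (2.0 * intersection + eps) / (sum(p) + sum(t) + eps)

def generator_loss(critic_score_fake, pred_mask, true_mask, lambda_task=10.0):
    """Wasserstein generator term plus a weighted Dice segmentation term."""
    adversarial = -critic_score_fake                    # generator wants a high critic score
    task = 1.0 - dice_coefficient(pred_mask, true_mask)  # Dice loss from the task network
    return adversarial + lambda_task * task
```

The same `dice_coefficient` function can be reused in the validation step, applied to masks predicted by an independent segmentation model on the generated images.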

The following diagram illustrates the TA-GAN workflow and the logical relationship between its core components:

Diagram 1: TA-GAN Training Workflow

Protocol: Experimental Pipeline for Data Augmentation

This protocol describes a complete experimental workflow to validate the utility of GAN-generated images for improving a sperm morphology classification model.

Objective: To assess whether augmenting a small training dataset with GAN-synthetic images improves the performance of a deep learning-based sperm morphology classifier.

Methodology: The logical flow of the entire experiment is outlined below:

Diagram 2: Data Augmentation Validation Pipeline

- Dataset Splitting: Split the original, limited dataset into a small training set and a held-out test set.

- GAN Training: Train a GAN (e.g., the TA-GAN from the preceding protocol) exclusively on the training split to create a bank of synthetic images.

- Data Augmentation: Create an augmented training set by combining the original small training set with the generated synthetic images.

- Model Training & Evaluation:

- Baseline: Train a sperm morphology classifier (e.g., a CNN) on the original small training set.

- Augmented Model: Train the same classifier architecture on the augmented training set.

- Evaluation: Compare the performance (e.g., accuracy, F1-score) of both models on the same held-out test set. A statistically significant improvement in the augmented model's performance validates the utility of the GAN-generated data.
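For the evaluation step, per-class and macro-averaged F1 are often more informative than accuracy when rare defect classes matter. The sketch below computes both from label lists; it does not include a significance test, which would still be needed before claiming an improvement. The function names are illustrative.

```python
def per_class_f1(y_true, y_pred):
    """F1 score for every class that appears in the labels or the predictions."""
    scores = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores[cls] = 2 * tp / denom if denom else 0.0
    return scores

def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)
```

A baseline that predicts "normal" for every sample in a test set of three normal and one abnormal sperm reaches 75% accuracy but a macro F1 of only about 0.43, which is the kind of gap this metric is meant to expose.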

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description | Relevance to Sperm Image GANs |

|---|---|---|

| SVIA Dataset [24] | A public dataset containing annotated sperm images for detection, segmentation, and classification. | Provides a potential benchmark dataset for training and evaluating GAN models in this domain. |

| VISEM-Tracking Dataset [24] | A multi-modal dataset with over 650,000 annotated objects, including sperm videos and tracking data. | Useful for more complex GAN architectures that can model temporal relationships, such as Video-to-Video GANs. |

| Wasserstein Loss (WGAN) [34] | A loss function designed to combat mode collapse and vanishing gradients. | A key technical choice to stabilize the difficult training process of GANs for medical images. |

| Task Network [36] | A pre-trained model (e.g., for segmentation) used to guide the GAN generator. | Crucial for the TA-GAN approach, ensuring generated sperm images are not just realistic but also biologically accurate for analysis tasks. |

| Structural Similarity Index (SSIM) [35] | A metric for measuring the perceptual similarity between two images. | A more meaningful evaluation metric than pixel-wise loss for assessing the quality of generated sperm images. |

Core Concepts: A Technical FAQ

FAQ: What is deep feature engineering, and how does it differ from traditional feature engineering?

Deep feature engineering is a hybrid approach that combines the automated feature learning capabilities of Deep Neural Networks, particularly Convolutional Neural Networks (CNNs), with the statistical rigor of classical feature selection methods. Traditional feature engineering is a manual process that relies heavily on domain expertise to handcraft, select, or transform input features (e.g., creating specific shape or texture measurements from sperm images) [39] [40]. In contrast, CNNs learn hierarchical features directly from raw data: early layers learn simple features like edges, with later layers combining them into complex patterns [41]. Deep feature engineering leverages these CNN-learned representations but applies subsequent classical screening to the original feature space, marrying the strengths of both paradigms [42].

FAQ: Why is this hybrid approach particularly useful for sperm morphology analysis with limited datasets?

Sperm morphology analysis often faces the "high-dimension, low-sample-size" problem, where the number of potential image features is vast, but the number of annotated sperm images is small [19] [42]. In this context, the hybrid approach offers key advantages:

- Overcoming Dimensionality: It mitigates overfitting and computational challenges associated with traditional methods on small datasets [42].

- Model-Free Insights: It is distribution-free and can capture complex, non-linear relationships between features (e.g., interactions between head shape and tail structure) without pre-specified model assumptions [42].

- Handling Subjectivity: It helps standardize analysis, reducing the high inter-expert variability (coefficient of variation up to 80%) common in manual morphology assessment [19] [43].

FAQ: What are the common failure points when implementing a deep feature screening pipeline?

- Data Quality and Preparation: Insufficient pre-processing of raw microscopic images, such as inadequate denoising, normalization, or handling of staining artifacts, will corrupt the features learned by the CNN [19].

- Incorrect Feature Space Application: A critical failure is performing feature selection only on the CNN's extracted low-dimensional space. The validated approach is to use this representation to guide screening back in the original input feature space, ensuring biological interpretability [42].

- Ignoring Expert Agreement: Disregarding the level of consensus among human experts (e.g., Total Agreement vs. Partial Agreement on labels) when establishing ground truth can lead to models learning from noisy or unreliable labels [19].

Implementation Workflow & Protocols

The following diagram illustrates the integrated pipeline for deep feature engineering, from raw data input to a finalized, interpretable model.

Experimental Protocol: Data Augmentation for Sperm Morphology CNNs

This protocol is based on the methodology used to create the SMD/MSS dataset, which expanded 1,000 original images to 6,035 augmented samples [19].

- Objective: To artificially increase the size and diversity of a limited sperm image dataset, improving CNN generalizability and robustness.

- Materials:

- Procedure:

- Image Pre-processing: Resize all images to a consistent resolution (e.g., 80x80 pixels). Convert to grayscale and apply pixel normalization [19].

- Augmentation Techniques: Apply a series of random transformations to the original images to generate new variants. Key techniques include:

- Geometric Transformations: Random rotation (e.g., ±15°), horizontal and vertical flipping, and slight shearing.

- Photometric Transformations: Adjust brightness and contrast within a small range to simulate varying staining intensities.

- Balancing: Ensure augmented images are distributed across all morphological classes (e.g., normal, tapered head, coiled tail) to avoid biasing the CNN [19].

- Troubleshooting: If model performance does not improve, verify that the augmentations are biologically plausible. For example, excessive rotation might create implausible sperm orientations.

Experimental Protocol: Deep Feature Screening (DeepFS)

This protocol is adapted from the Deep Feature Screening methodology designed for high-dimensional, low-sample-size data [42].

- Objective: To identify the most significant original input features by leveraging a low-dimensional representation learned by a deep neural network.

- Materials:

- Pre-processed and (optionally) augmented dataset.

- Deep learning framework (e.g., TensorFlow, PyTorch).

- Statistical computing environment for calculating correlation metrics.

- Procedure:

- Feature Extraction: Train an autoencoder or supervised CNN on your dataset. Extract the activations from the central "bottleneck" layer as the low-dimensional feature representation, Z [42].

- Importance Scoring: For each feature $X_j$ in the original input space, compute its importance score by calculating the multivariate rank distance correlation between $X_j$ and the deep representation $Z$ [42]:

$$\mathrm{Score}_j = \mathrm{RdCov}(X_j, Z)$$

- Feature Selection: Rank all original features based on their importance scores. Select the top k features with the highest scores for your final model.

- Troubleshooting: If the selected features lack interpretability, ensure the screening is applied to the original input features and not just the deep learning embeddings. The strength of DeepFS is its link back to the original domain [42].
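The scoring and selection steps can be sketched as below. Note the substitution: this code uses the ordinary sample distance correlation as a simplified stand-in for the multivariate rank distance correlation described in [42], so it illustrates the screening logic rather than reproducing the published method. `X` is assumed to be a list of samples (rows of original features) and `Z` a list of bottleneck vectors, one per sample.

```python
import math

def _centered_distances(values):
    """Double-centred pairwise Euclidean distance matrix for a list of vectors."""
    n = len(values)
    d = [[math.dist(values[i], values[j]) for j in range(n)] for i in range(n)]
    row = [sum(r) / n for r in d]  # equals the column means, since d is symmetric
    grand = sum(row) / n
    return [[d[i][j] - row[i] - row[j] + grand for j in range(n)] for i in range(n)]

def distance_correlation(x, z):
    """Sample distance correlation between two lists of vectors (one per sample)."""
    a, b = _centered_distances(x), _centered_distances(z)
    n = len(a)

    def inner(p, q):
        return sum(p[i][j] * q[i][j] for i in range(n) for j in range(n)) / (n * n)

    dcov2, dvar_x, dvar_z = inner(a, b), inner(a, a), inner(b, b)
    if dvar_x * dvar_z == 0:
        return 0.0  # a constant input carries no dependence information
    return math.sqrt(max(dcov2, 0.0) / math.sqrt(dvar_x * dvar_z))

def screen_features(X, Z, k):
    """Score each column of X against Z and return (top-k column indices, all scores)."""
    n_features = len(X[0])
    scores = [distance_correlation([[row[j]] for row in X], Z) for j in range(n_features)]
    ranked = sorted(range(n_features), key=lambda j: scores[j], reverse=True)
    return ranked[:k], scores
```

The pairwise distance matrices make this quadratic in the number of samples per feature, which is acceptable for the small datasets discussed here but would need a more efficient implementation at scale.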

The table below summarizes quantitative results from key studies that inform the deep feature engineering approach.

Table 1: Performance Metrics of Relevant Models and Datasets

| Model / Dataset | Dataset Size (Pre-/Post-Augmentation) | Key Performance Metric | Application Context & Notes |

|---|---|---|---|

| CNN for Sperm Morphology [19] | 1,000 / 6,035 images | Accuracy: 55% to 92% | Classification based on modified David criteria (12 defect classes). Performance range reflects task complexity and inter-expert agreement levels. |

| SMD/MSS Dataset [19] | 1,000 original images | N/A | Includes 12 classes of defects (head, midpiece, tail). Ground truth established by three experts, with analysis of inter-expert agreement (TA, PA, NA). |

| 3D-SpermVid Dataset [44] | 121 hyperstack videos | N/A | Enables 3D+t analysis of sperm flagellar motility under non-capacitating (NCC) and capacitating conditions (CC). Represents next-generation data for dynamic feature extraction. |

| Manual Morphology Assessment [43] | ~200 sperm evaluated per sample | Coefficient of Variation (CV): ~80% | Highlights the high subjectivity of manual analysis, underscoring the need for automated, standardized methods like deep learning. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Sperm Morphology Analysis Experiments

| Item | Function / Application | Specification / Note |

|---|---|---|

| Computer-Assisted Semen Analysis (CASA) System | Automated image acquisition and initial morphometric analysis (head width/length, tail length) [19]. | Systems like the MMC CASA system are used for standardized 2D image capture [19]. |

| RAL Diagnostics Staining Kit | Staining semen smears for clear visualization of sperm structures under a microscope [19]. | Follows WHO manual guidelines for preparation [19]. |

| Multifocal Imaging (MFI) System | Capturing 3D and temporal (3D+t) data of sperm movement, crucial for analyzing dynamic flagellar patterns [44]. | Based on an inverted microscope with a piezoelectric objective controller and high-speed camera [44]. |

| HTF Medium & Bovine Serum Albumin (BSA) | Media for sperm preparation. HTF is used for initial incubation; BSA is added to induce capacitation [44]. | Capacitation is a key biological process that affects sperm motility and is a variable in advanced studies [44]. |

| Python with Deep Learning Libraries | Core programming environment for implementing CNNs (e.g., Keras, TensorFlow, PyTorch), data augmentation, and feature screening algorithms [19] [42]. | High-level libraries like Keras are recommended for beginners because they simplify experimentation [45]. |

Male infertility is a significant global health concern, with male factors contributing to approximately 50% of infertility cases [24]. Sperm morphology analysis (SMA) represents one of the most important examinations for evaluating male fertility, but it presents substantial challenges for automation using deep learning approaches [24]. The primary obstacle researchers face is the limited availability of high-quality, annotated datasets, which creates a fundamental constraint for training robust models from scratch [24] [19].

This technical support guide addresses how transfer learning—the practice of adapting pre-trained models to new tasks—provides a viable pathway to overcome data limitations in sperm analysis research. By leveraging knowledge from models trained on large-scale vision datasets, researchers can develop accurate sperm morphology classification systems even with constrained medical image data [46] [47].

Technical FAQs: Overcoming Implementation Challenges

Q1: Why should I use transfer learning instead of training a custom model for sperm analysis?

Transfer learning significantly reduces training time and computational costs while improving performance with limited data [48] [47]. Pre-trained models have already learned general visual features (edges, textures, shapes) that are transferable to medical imaging tasks [49]. For sperm morphology analysis, which typically has small datasets (often only 1,000-6,000 images initially), training from scratch would likely lead to overfitting, whereas transfer learning leverages previously learned patterns [19] [47].

Q2: How do I select the right pre-trained model for sperm morphology tasks?

Consider both your dataset characteristics and model architecture. For sperm analysis, models pre-trained on ImageNet (like ResNet, VGG, or Inception) provide a strong foundation [48] [49]. The key factors in selection are:

- Dataset size: Small datasets (<1,000 images per class) benefit from simpler architectures [49]

- Similarity to natural images: Sperm images differ significantly from ImageNet, favoring approaches that prioritize feature extraction [46]

- Computational constraints: MobileNet-v2 or SqueezeNet offer good performance with lower resource requirements [47]

Q3: What is the recommended strategy for fine-tuning with small sperm datasets?

For limited sperm morphology data (small datasets that also differ from the pre-training domain), the recommended starting point is feature extraction rather than full fine-tuning [49]. Freeze the convolutional base of the pre-trained model and train only a new classifier on top. This limits overfitting while adapting the model to sperm-specific classes [50] [49]. Recent studies report accuracy improvements of up to 30% with this approach compared to training from scratch [47].

Q4: How can I address the domain gap between natural images and sperm microscopy?

The significant differences between natural images (ImageNet) and sperm microscopy images can reduce the effectiveness of transfer learning [46]. To bridge this domain gap:

- Use in-domain pre-training when possible—first train on unlabeled medical images, then fine-tune on labeled sperm data [46]

- Implement extensive data augmentation to increase effective dataset size and variability [19] [51]

- Apply gradual unfreezing during fine-tuning, starting with later layers and progressively including earlier layers [49]

Q5: What are the solutions for limited or poorly annotated sperm datasets?

Several approaches can mitigate data limitations:

- Data augmentation: Apply transformations (rotation, flipping, contrast adjustment) to increase dataset size [19] [51]

- Semi-supervised learning: Leverage unlabeled images through consistency regularization [51]

- Data synthesis: Use GAN-based methods to generate artificial sperm images (though this requires careful validation) [51]

- Collaborative datasets: Utilize publicly available datasets like SVIA, VISEM-Tracking, or MHSMA to supplement your data [24]
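The basic transformations named in the data augmentation bullet (rotation, flipping, contrast adjustment) can be sketched without any dependencies. The example below is a minimal illustration, assuming an 8-bit grayscale image stored as a list of pixel rows. The function names and the tiny sample image are hypothetical, and a real pipeline would more likely rely on an imaging library.

```python
def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def rotate_90_clockwise(image):
    """Rotate the image by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def adjust_contrast(image, factor, midpoint=128, max_value=255):
    """Scale pixel distances from the midpoint, clamping to the valid range."""
    return [
        [min(max_value, max(0, round(midpoint + factor * (px - midpoint))))
         for px in row]
        for row in image
    ]


# Hypothetical 2x3 grayscale patch standing in for a sperm image crop.
image = [[0, 50, 100],
         [150, 200, 250]]

augmented = [
    flip_horizontal(image),
    rotate_90_clockwise(image),
    adjust_contrast(image, factor=1.5),
]
```

Each transformation returns a new image and leaves the original untouched, so the three variants can be added to the training set alongside the source image.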

Quantitative Data Comparison

Table 1: Publicly Available Sperm Morphology Datasets for Transfer Learning

| Dataset Name | Images | Ground Truth (Task) | Key Characteristics | Annotation Details / Notes |

|---|---|---|---|---|

| HSMA-DS [24] | 1,457 | Classification | Non-stained, noisy, low resolution | 235 patients, unstained sperm |

| MHSMA [24] | 1,540 | Classification | Non-stained, noisy, low resolution | Grayscale sperm heads |

| SCIAN-MorphoSpermGS [24] | 1,854 | Classification | Stained, higher resolution | 5 classes: normal, tapered, pyriform, small, amorphous |

| HuSHeM [24] | 725 (216 public) | Classification | Stained, higher resolution | Sperm heads only |

| SVIA [24] | 4,041 | Detection, Segmentation, Classification | Low-resolution unstained grayscale | 125K annotated instances, 26K segmentation masks |

| VISEM-Tracking [24] | 656,334 objects | Detection, Tracking, Regression | Low-resolution unstained grayscale | Annotated objects with tracking details |

| SMD/MSS [19] | 6,035 (after augmentation) | Classification | Bright-field, stained | 12 morphological defect classes |

Table 2: Performance Comparison of Transfer Learning Approaches in Medical Imaging

| Application | Base Model | Dataset Size | Approach | Performance |

|---|---|---|---|---|

| Skin Cancer Classification [46] | Custom DCNN | 200K unlabeled + limited labeled | In-domain pre-training | F1-score: 98.53% (vs. 89.09% from scratch) |

| Breast Cancer Classification [46] | Custom DCNN | 200K unlabeled + limited labeled | In-domain pre-training | Accuracy: 97.51% (vs. 85.29% from scratch) |

| Diabetic Foot Ulcer [46] | Skin Cancer Pre-trained | Small dataset | Double-transfer learning | F1-score: 99.25% |

| Sperm Morphology [19] | CNN | 1,000 → 6,035 (augmented) | Data augmentation + transfer learning | Accuracy: 55-92% |

| General Medical Imaging [47] | MobileNet-v2 | Limited data | Feature extraction | Accuracy: 96.78%, Sensitivity: 98.66% |

Experimental Protocols

Protocol 1: Basic Transfer Learning Workflow for Sperm Morphology Classification

Data Preparation

- Collect sperm images using standardized acquisition protocols (RAL staining, bright-field microscopy, 100x oil immersion) [19]

- Annotate images following modified David classification (12 defect classes) with multiple expert reviewers [19]

- Split data into training (80%), validation (10%), and test sets (10%) [19]
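The 80/10/10 split above can be sketched as a per-class (stratified) split, so that rare defect classes appear in every subset. This is a minimal illustration using only the standard library. The function name, the class labels, and the class counts are assumptions made for the example, not details taken from the cited study.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) lists of sample indices, split per class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = int(len(indices) * fractions[0])
        n_val = int(len(indices) * fractions[1])
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical label list: three classes with 100, 50, and 20 images.
labels = ["normal"] * 100 + ["tapered_head"] * 50 + ["coiled_tail"] * 20
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))
```

Fixing the seed keeps the split reproducible, which matters when results from several training runs are compared on the same held-out images.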

Pre-trained Model Selection

Model Adaptation

Training Configuration

Evaluation

- Validate on separate test set not used during training

- Report accuracy, precision, recall, and F1-score across morphology classes [19]
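The per-class metrics listed above follow directly from counts of true positives, false positives, and false negatives. The sketch below computes them in plain Python. The function name and the six example predictions are hypothetical and serve only to show the calculation.

```python
from collections import Counter


def per_class_metrics(y_true, y_pred):
    """Return overall accuracy and per-class precision, recall, and F1."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gains a false positive
            fn[truth] += 1  # true class gains a false negative

    report = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        predicted = tp[cls] + fp[cls]
        actual = tp[cls] + fn[cls]
        precision = tp[cls] / predicted if predicted else 0.0
        recall = tp[cls] / actual if actual else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[cls] = {"precision": precision, "recall": recall, "f1": f1}

    accuracy = sum(tp.values()) / len(y_true)
    return accuracy, report


# Hypothetical test-set labels and predictions.
y_true = ["normal", "normal", "tapered", "tapered", "coiled", "coiled"]
y_pred = ["normal", "tapered", "tapered", "tapered", "coiled", "normal"]
accuracy, report = per_class_metrics(y_true, y_pred)
```

Reporting the per-class values alongside overall accuracy makes it visible when a model performs well on frequent classes but poorly on rare defects.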

Protocol 2: Advanced Fine-tuning for Optimal Performance

Initial Feature Extraction

- Follow Protocol 1 for initial training with frozen base [50]

- Achieve validation accuracy plateau before proceeding

Selective Unfreezing

Progressive Fine-tuning

- Gradually unfreeze earlier layers while monitoring validation performance [49]

- Stop if validation loss increases significantly, indicating overfitting
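The control flow of progressive fine-tuning can be sketched independently of any framework. In the example below, `train_and_validate` is a hypothetical callback that would fine-tune the model with the given layer groups trainable and return the validation loss. The layer group names and loss values are invented for illustration. A real implementation would also restore the model checkpoint from before a rejected step.

```python
def progressive_unfreeze(layer_groups, train_and_validate, tolerance=0.0):
    """Unfreeze layer groups from last to first until validation loss rises.

    layer_groups: names ordered from earliest (input side) to latest.
    train_and_validate: hypothetical callback taking a tuple of trainable
        group names and returning the validation loss after fine-tuning.
    """
    trainable = []
    best_loss = train_and_validate(tuple(trainable))  # frozen base, head only
    history = [(tuple(trainable), best_loss)]

    for group in reversed(layer_groups):
        candidate = [group] + trainable
        loss = train_and_validate(tuple(candidate))
        history.append((tuple(candidate), loss))
        if loss > best_loss + tolerance:
            break  # validation loss rose: keep the previous configuration
        trainable, best_loss = candidate, loss
    return trainable, best_loss, history


# Stand-in callback with made-up losses keyed by number of unfrozen groups.
fake_losses = {0: 0.62, 1: 0.55, 2: 0.51, 3: 0.58, 4: 0.70}


def fake_train_and_validate(trainable_groups):
    return fake_losses[len(trainable_groups)]


groups = ["block1", "block2", "block3", "block4"]
kept, loss, history = progressive_unfreeze(groups, fake_train_and_validate)
```

With these made-up losses the loop accepts `block4` and `block3`, then stops when unfreezing `block2` raises the validation loss. The `tolerance` argument is one way to interpret "increases significantly" and would need tuning in practice.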

Regularization

Workflow Visualization

[Figure: Transfer Learning Workflow for Sperm Morphology Analysis]

[Figure: Data Processing Pipeline for Limited Sperm Datasets]

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Resource Type | Specific Examples | Function/Application |

|---|---|---|

| Staining Kits | RAL Diagnostics staining kit [19] | Standardized sperm staining for morphology analysis |

| Microscopy Systems | MMC CASA system [19] | Automated image acquisition with bright-field microscopy |

| Pre-trained Models | ResNet, VGG, Inception, MobileNet [48] [47] | Feature extraction and transfer learning backbone |

| Deep Learning Frameworks | PyTorch [52], TensorFlow [50], Keras [49] | Model implementation and training infrastructure |

| Public Datasets | SVIA, VISEM-Tracking, MHSMA, SMD/MSS [24] [19] | Benchmark data for training and validation |

| Data Augmentation Tools | Online Automatic Augmenter (OAA) [51] | Automated image transformation for dataset expansion |

| Annotation Standards | Modified David classification [19], WHO criteria [24] | Consistent labeling of sperm morphology defects |

Frequently Asked Questions (FAQs)

1. What is the primary challenge of using deep learning for sperm morphology analysis? A major challenge is the lack of large, high-quality, and diverse annotated datasets. Deep learning models require substantial data to learn effectively, but obtaining real, labeled microscopic sperm samples is often costly, time-consuming, and can be limited by privacy concerns [9] [53] [54].

2. Why can't I just use a smaller dataset to train my model? Using a small dataset significantly increases the risk of overfitting, where the model memorizes the training examples rather than learning generalizable features. This leads to poor performance on new, unseen data [9]. Data augmentation is a key strategy to mitigate this by artificially increasing the dataset's size and diversity [19].

3. What are the main types of data augmentation techniques? Data augmentation techniques can be broadly categorized as basic image transformations and advanced generation methods [55].

- Basic Transformations: Include geometric and color space changes like rotation, flipping, cropping, scaling, and adjusting brightness or contrast.

- Advanced Methods: Include Generative Adversarial Networks (GANs) and the use of realistic simulation software to create synthetic data from scratch [56] [53].

4. My model's performance is inconsistent across different classes of sperm defects. What could be wrong? This is often a symptom of class imbalance, where some morphological classes have many more examples than others in your training set. When applying data augmentation, ensure you augment the under-represented classes more heavily to balance the dataset. Advanced feature engineering and ensemble learning methods can also help improve robustness against such imbalances [21] [57].
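One way to augment under-represented classes more heavily is to compute, per class, how many extra images are needed to match the largest class, and then spread that quota evenly over the available originals. The sketch below is a minimal illustration. The function names, class labels, and class counts are assumptions made for the example.

```python
import random
from collections import Counter


def augmentation_quota(labels, target_per_class=None):
    """Return {class: number of extra augmented images needed}."""
    counts = Counter(labels)
    if target_per_class is None:
        target_per_class = max(counts.values())
    return {cls: max(0, target_per_class - n) for cls, n in counts.items()}


def pick_sources(indices, quota, seed=0):
    """Choose originals to augment, cycling evenly through a shuffled class."""
    rng = random.Random(seed)
    shuffled = indices[:]
    rng.shuffle(shuffled)
    return [shuffled[i % len(shuffled)] for i in range(quota)]


# Hypothetical imbalanced training labels.
labels = (["normal"] * 400
          + ["microcephalous"] * 120
          + ["coiled_tail"] * 30)
quota = augmentation_quota(labels)

coiled_idx = [i for i, lab in enumerate(labels) if lab == "coiled_tail"]
sources = pick_sources(coiled_idx, quota["coiled_tail"])
usage = Counter(sources)  # how often each original will be augmented
```

In this example each `coiled_tail` original is reused 12 or 13 times. Copies derived from so few originals are strongly correlated, so heavy oversampling of this kind is probably best combined with varied transformations and checked against a held-out set of untouched images.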

Troubleshooting Guides

Problem: After augmentation, my model's accuracy does not improve or gets worse.

| Potential Cause | Description | Diagnostic Steps | Solution |

|---|---|---|---|

| Excessive Distortion | The augmented images no longer resemble realistic sperm morphology. | Review the parameters of your augmentation techniques (e.g., rotation angles that are too extreme). | Ensure augmentations preserve biologically plausible structures. Use domain knowledge to set reasonable limits for transformations. |

| Data Leakage | Information from the test set is inadvertently used during training. | Check your data splitting procedure. Ensure the training and test sets are separated before any augmentation is applied. | Apply augmentation only to the training set after it has been isolated. The validation and test sets should remain completely untouched and representative of original data. |

| Ineffective Augmentations | The chosen augmentations do not reflect the real-world variations in your problem. | Analyze the types of variations present in your original, un-augmented dataset and in real-world clinical settings. | Tailor augmentation strategies to the task. For sperm morphology, rotations and flips may be effective, while extreme color changes might not be relevant for stained samples. |
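The data leakage row above comes down to the order of operations: split first, then augment only the training originals. The sketch below illustrates that ordering with sample identifiers in place of images. The function names, the `augment` callback, and the identifiers are hypothetical. If several images come from the same patient or slide, splitting by that identifier may be the safer unit.

```python
import random


def split_then_augment(sample_ids, augment, test_fraction=0.2, copies=2, seed=0):
    """Split first, then augment only the training originals."""
    rng = random.Random(seed)
    ids = list(sample_ids)
    rng.shuffle(ids)
    n_test = round(len(ids) * test_fraction)
    test_ids, train_ids = ids[:n_test], ids[n_test:]

    # Each entry keeps its source id so leakage can be checked afterwards.
    train_set = [(sid, "original") for sid in train_ids]
    for sid in train_ids:
        for k in range(copies):
            train_set.append((sid, augment(sid, k)))

    test_set = [(sid, "original") for sid in test_ids]  # never augmented
    return train_set, test_set


def has_leakage(train_set, test_set):
    """True if any source image contributes to both training and test data."""
    train_sources = {sid for sid, _ in train_set}
    test_sources = {sid for sid, _ in test_set}
    return bool(train_sources & test_sources)


# Hypothetical identifiers and a placeholder augmentation that only tags copies.
sample_ids = [f"img_{i:03d}" for i in range(10)]
train_set, test_set = split_then_augment(
    sample_ids, augment=lambda sid, k: f"augmented_{k}")
print(len(train_set), len(test_set), has_leakage(train_set, test_set))
```

Running the `has_leakage` check after building the sets is a cheap safeguard, since an augmented copy of a test image in the training data can inflate reported accuracy.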

Problem: My model is struggling to segment the different parts of the sperm (head, midpiece, tail).

| Potential Cause | Description | Diagnostic Steps | Solution |

|---|---|---|---|

| Insufficient Feature Focus | The model does not know which parts of the image are most important. | Use visualization tools like Grad-CAM to see what image regions the model is using for its decisions [21]. | Integrate attention mechanisms (like CBAM) into your neural network architecture. This forces the model to learn to focus on morphologically relevant regions like the acrosome or tail [21]. |

| Lack of Spatial Diversity | The augmented dataset does not contain enough variation in the structure and connection of sperm parts. | Manually inspect a sample of your augmented images, paying specific attention to the integrity of the head, midpiece, and tail. | If using synthetic generation, ensure the simulation software or GAN can accurately model the connections between sperm components [53]. |

Quantitative Data from the SMD/MSS Case Study

The following table summarizes the key quantitative outcomes from a real-world study that successfully augmented a sperm morphology dataset [19] [32].

Table: Dataset Augmentation and Model Performance Metrics