Overcoming Sperm Morphology Assessment Standardization Challenges: From Human Bias to AI Solutions

Abstract

This article examines the critical challenges in standardizing sperm morphology assessment, a cornerstone of male fertility evaluation that remains plagued by significant subjectivity and inter-observer variability. We explore the foundational causes of this variability, including the lack of robust training protocols and inconsistent methodology. The content delves into emerging technological solutions, including standardized training tools validated by expert consensus and advanced deep learning algorithms for automated classification. For researchers and drug development professionals, we provide a comparative analysis of troubleshooting strategies and validation metrics, highlighting how standardization improves diagnostic accuracy, reproducibility, and clinical correlation. The synthesis of these areas underscores a pivotal shift toward data-driven, objective assessment methods that promise to enhance reliability in both research and clinical andrology.

The Root of Variability: Understanding Foundational Challenges in Sperm Morphology Assessment

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the evidence that sperm morphology assessment is highly subjective? Multiple studies document significant inter-observer variability in sperm morphology assessment. A 2023 quality control initiative found that when three different assessors examined the same semen samples, the mean coefficient of variation (CV) was 6.24% for sperm concentration and 10.14% for sperm vitality [1]. Morphology showed lower variability (CV 2.66%) in this specific study, but other research highlights substantial disagreement, particularly with complex classification systems. One study found that expert morphologists agreed on the normal/abnormal classification for only 73% of sperm images, demonstrating fundamental subjectivity in even basic assessments [2].
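The CV figures above follow the usual definition. For each sample, take the standard deviation of the assessors' results, divide by their mean, and express it as a percentage; then average across samples. The following is a minimal Python sketch, assuming one result per assessor per sample. The function names and readings are hypothetical. Using the sample (n - 1) standard deviation is an assumption, because the estimator used in [1] is not stated here.

```python
from statistics import mean, stdev


def coefficient_of_variation(values: list[float]) -> float:
    """CV (%) of one sample's results across assessors.

    Uses the sample standard deviation (n - 1 denominator); this is an
    assumption, since the source does not state which estimator was used.
    """
    if len(values) < 2:
        raise ValueError("need results from at least two assessors")
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(values) / m * 100


def mean_cv(samples: dict[str, list[float]]) -> float:
    """Average the per-sample CVs across a set of samples."""
    return mean(coefficient_of_variation(v) for v in samples.values())


# Hypothetical % normal forms reported by three assessors for two samples.
readings = {
    "sample_A": [4.0, 5.0, 6.0],
    "sample_B": [10.0, 10.0, 10.0],
}
for name, values in readings.items():
    print(name, f"CV = {coefficient_of_variation(values):.1f}%")
print(f"mean CV = {mean_cv(readings):.1f}%")
```

Because CV is relative, the same absolute spread produces a larger CV when the mean is low. This matters for the low percentages typical of normal-form counts.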

Q2: How does the complexity of the classification system affect assessment variability? The number of categories in a classification system directly impacts accuracy and variability. A 2025 training study demonstrated that as classification systems become more complex, accuracy decreases and variation increases [2]. The table below summarizes these findings:

Table 1: Impact of Classification System Complexity on Assessment Accuracy

| Classification System | Untrained User Accuracy | Trained User Accuracy | Key Finding |
|---|---|---|---|
| 2-category (Normal/Abnormal) | 81.0 ± 2.5% | 98.0 ± 0.4% | Highest accuracy and lowest variation |
| 5-category (by defect location) | 68.0 ± 3.6% | 97.0 ± 0.6% | Moderate impact on untrained users |
| 8-category (specific defects) | 64.0 ± 3.5% | 96.0 ± 0.8% | Significant complexity challenge |
| 25-category (individual defects) | 53.0 ± 3.7% | 90.0 ± 1.4% | Lowest accuracy and highest variation |

Q3: What are the clinical implications of this variability in morphology assessment? Variability in sperm morphology assessment can directly affect patient management and clinical outcomes. Over time it can accumulate as "classification drift", which can alter the diagnostic criteria for teratozoospermia and potentially change treatment recommendations [3]. Studies show that the predictive value of sperm morphology for outcomes such as intrauterine insemination (IUI) success has diminished over certain periods, likely due to such drift and to standardization problems [4] [3]. Consequently, treatment decisions based on morphology alone may be unreliable without proper laboratory quality control.

Q4: What methodologies can reduce inter-observer variability? Implementing standardized training tools based on expert consensus is highly effective. A 2025 study used a "Sperm Morphology Assessment Standardisation Training Tool" that applied machine learning principles, using images with 100% expert consensus as "ground truth" [2] [5]. After four weeks of repeated training with this tool, novice morphologists significantly improved their accuracy (from 82% to 90%). They also reduced the time needed to classify each image (from 7.0 to 4.9 seconds) and showed less variation [2]. This demonstrates that structured, consistent training with validated reference images can markedly improve standardization.

Experimental Protocol: Validating a Sperm Morphology Training Tool

Objective: To assess and improve the accuracy and consistency of sperm morphologists using a standardized training tool.

Methodology Summary (Based on Seymour et al., 2025 [2]):

Image Database Creation:

- Source: Collect semen samples from 72 rams (the same approach could be adapted to human samples).

- Imaging: Use an Olympus BX53 microscope with DIC optics at 40x magnification.

- Processing: Capture 50 fields of view per sire (3,600 total). Use a machine-learning algorithm to crop images to individual sperm, yielding 9,365 single-sperm images.

Establishing Ground Truth:

- Three experienced assessors independently classify all single-sperm images.

- Only images with 100% consensus on all labels (4,821 images) are integrated into the training tool as validated "ground truth."

Training and Assessment Protocol:

- Experiment 1 (Initial Accuracy): Novice morphologists (n=22) classify images using 2-, 5-, 8-, and 25-category systems without training to establish baseline accuracy.

- Experiment 2 (Training Efficacy): A second cohort (n=16) undergoes repeated training over four weeks using the tool, which provides instant feedback on classification accuracy.

- Metrics: Track accuracy (%) and time spent per image (seconds) across tests.
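Both metrics in this protocol can be computed from a log of trainee responses scored against the consensus labels. The following is a minimal sketch. The `Response` record, its fields, and `score_test` are hypothetical; they are not the data format of the tool described in [2].

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Response:
    image_id: str
    label: str       # class chosen by the trainee
    seconds: float   # time spent on the image


def score_test(responses: list[Response],
               ground_truth: dict[str, str]) -> tuple[float, float]:
    """Return (accuracy %, mean seconds per image) for one test session.

    Only images that have a consensus ground-truth label are scored.
    """
    scored = [r for r in responses if r.image_id in ground_truth]
    if not scored:
        raise ValueError("no responses match ground-truth images")
    correct = sum(r.label == ground_truth[r.image_id] for r in scored)
    accuracy = 100 * correct / len(scored)
    return accuracy, mean(r.seconds for r in scored)


# Hypothetical consensus labels and one trainee session.
truth = {"img1": "normal", "img2": "abnormal", "img3": "abnormal", "img4": "normal"}
session = [
    Response("img1", "normal", 6.5),
    Response("img2", "normal", 8.0),
    Response("img3", "abnormal", 5.5),
    Response("img4", "normal", 4.0),
]
acc, secs = score_test(session, truth)
print(f"accuracy {acc:.1f}%, {secs:.1f} s/image")
```

Running `score_test` on each test in the series gives the accuracy and time trends that the protocol tracks.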

Key Resources: High-resolution microscope with DIC or phase contrast optics, web-based training interface, database of consensus-classified sperm images.

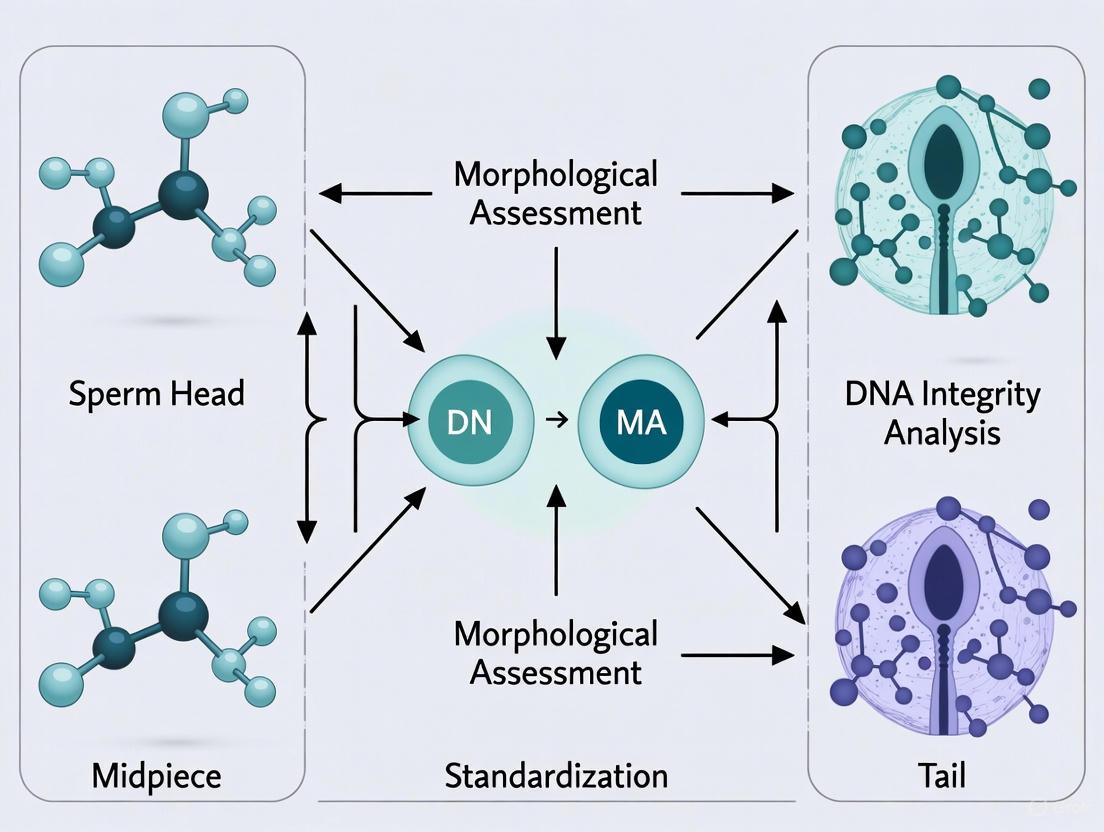

Workflow Diagram: Standardized Training Tool Development

Research Reagent Solutions

Table 2: Essential Materials for Standardized Sperm Morphology Assessment

| Item | Function | Specification / Example |
|---|---|---|
| Research Microscope | High-resolution imaging of sperm cells | Olympus BX53 with DIC or phase contrast objectives [5] |
| High-Performance Camera | Capture detailed digital images for analysis | Olympus DP28 camera (8.9-megapixel CMOS sensor) [5] |
| Standardized Staining Reagents | Prepare semen slides for consistent morphology evaluation | As per WHO Laboratory Manual recommendations [1] |
| Consensus Image Database | Provides "ground truth" for training and validation | Database of 4,821 sperm images with 100% expert consensus [2] |
| Web-Based Training Interface | Platform for delivering standardized training and assessment | Custom tool providing instant feedback on classification accuracy [2] |
| Quality Control Materials | For regular equipment and procedure calibration | Used in internal quality control programs per WHO guidelines [1] |

Historical Lack of Standardized Training and Proficiency Testing

The subjective assessment of sperm morphology has long been a critical, yet highly variable, test in male fertility evaluation. This variability stems fundamentally from a historical lack of standardized training and proficiency testing for morphologists [2]. Without robust, universally adopted standardization protocols, this subjective test remains prone to human bias and error, compromising the reliability of results across different laboratories and practitioners [5]. This FAQ guide addresses the specific challenges researchers face due to this lack of standardization and provides evidence-based troubleshooting strategies.

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary evidence that training improves sperm morphology assessment accuracy?

Multiple studies demonstrate that structured training significantly improves the accuracy and reduces the variation in sperm morphology classification. The data below summarizes the performance improvement observed in novice morphologists after using a standardized training tool.

Table: Impact of Standardized Training on Morphology Assessment Accuracy

| Classification System Complexity | Untrained User Accuracy (%) | Trained User Accuracy (%) | Statistical Significance (p-value) |
|---|---|---|---|
| 2-category (Normal/Abnormal) | 81.0 ± 2.5 | 98.0 ± 0.4 | < 0.001 |
| 5-category (Head, Midpiece, etc.) | 68.0 ± 3.6 | 97.0 ± 0.6 | < 0.001 |
| 8-category (Specific defects) | 64.0 ± 3.5 | 96.0 ± 0.8 | < 0.001 |
| 25-category (Individual defects) | 53.0 ± 3.7 | 90.0 ± 1.4 | < 0.001 |

Furthermore, training significantly increased diagnostic speed, reducing the time taken to classify an image from 7.0 ± 0.4 seconds to 4.9 ± 0.3 seconds [2].

FAQ 2: How was "ground truth" established for training in a subjective field?

Establishing a reliable "ground truth" dataset is a major challenge. The recommended methodology, adapted from machine learning principles, involves a consensus-based approach among multiple experts [2] [5].

- Image Sourcing: Collect high-resolution field-of-view images from numerous samples (e.g., 50 FOVs from each of 72 rams = 3,600 FOVs) [5].

- Image Cropping: Use a machine-learning algorithm to crop fields of view into images containing a single spermatozoon for unambiguous assessment [5].

- Expert Labelling: Have multiple experienced assessors (e.g., three) independently classify each individual sperm image using a comprehensive classification system [5].

- Consensus Validation: Include in the final training set only those sperm images where all experts demonstrate 100% consensus on the classification. In one study, this resulted in 4,821 out of 9,365 images (51.5%) being validated for the training tool [5].
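The consensus-validation step is a unanimity filter. The following is a minimal Python sketch, assuming each image's labels are stored as one entry per expert. The image IDs, label strings, and `split_by_consensus` are illustrative. Where experts assign several labels per sperm, each entry can be a tuple of labels, so an image passes only if the experts agree on all of them.

```python
def split_by_consensus(labels: dict[str, list]) -> tuple[list[str], list[str]]:
    """Partition image IDs into (unanimous, disputed).

    `labels` maps an image ID to the label (or tuple of labels) given by
    each expert. An image is accepted only when every expert agrees.
    """
    unanimous, disputed = [], []
    for image_id, votes in labels.items():
        (unanimous if len(set(votes)) == 1 else disputed).append(image_id)
    return unanimous, disputed


# Hypothetical labels from three experts.
expert_labels = {
    "img001": ["normal", "normal", "normal"],
    "img002": ["pyriform head", "pyriform head", "tapered head"],
    "img003": ["coiled tail", "coiled tail", "coiled tail"],
}
accepted, rejected = split_by_consensus(expert_labels)
print(accepted, rejected)

# Retention rate quoted above: 4,821 of 9,365 images.
print(f"{4821 / 9365:.1%}")
```

The disputed list is what a protocol with a consensus meeting would send back to the panel. In the study described here, those images were simply excluded.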

FAQ 3: What are the current expert recommendations regarding sperm morphology assessment?

Recent guidelines significantly simplify the role of sperm morphology assessment. The 2025 expert review from the French BLEFCO Group provides the following key recommendations [6] [7]:

- R1: Do not recommend systematic detailed analysis of all abnormality groups during routine assessment.

- R2: Do recommend that laboratories use qualitative or quantitative methods to detect specific monomorphic abnormalities (e.g., globozoospermia, macrocephalic spermatozoa syndrome).

- R3: Do not recommend the use of sperm abnormality indexes (TZI, SDI, MAI) for infertility investigation and ART, due to insufficient evidence of clinical value.

- R5: Do not recommend using the percentage of normal-form spermatozoa as a prognostic criterion for selecting IUI, IVF, or ICSI procedures.

FAQ 4: What modern technological solutions are emerging to address standardization challenges?

Artificial Intelligence (AI) and deep learning models are being developed to automate sperm morphology classification, thereby reducing reliance on human subjective judgment. One study created a convolutional neural network (CNN) model trained on an expert-validated dataset of 1,000 sperm images, which was augmented to 6,035 images [8]. This model achieved classification accuracies ranging from 55% to 92%, demonstrating the potential for AI to standardize and accelerate semen analysis [8].

Experimental Protocols & Workflows

Protocol for Implementing a Standardized Training Program

This protocol is based on the validation of a "Sperm Morphology Assessment Standardisation Training Tool" [2].

- Tool Setup: Implement a web-based training tool that provides instant feedback on user classifications.

- Baseline Testing: Have novice morphologists complete an initial assessment on a validated image set to establish baseline accuracy and speed.

- Structured Training: Expose trainees to visual aids, instructional videos, and repeated practice sessions using the tool. One effective regimen involved repeated training and testing over a four-week period.

- Progressive Complexity: Start training with a simple 2-category system (Normal/Abnormal) before progressing to more complex classification systems (5, 8, or 25 categories).

- Proficiency Evaluation: Conduct final proficiency tests to confirm that morphologists have achieved the required accuracy levels (e.g., >90% for complex systems) and reduced their classification time.
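The progressive-complexity step can be written as a promotion rule. The trainee advances to the next classification system only after clearing an accuracy target on the current one. This is a sketch under stated assumptions. The 90% figure is taken from the proficiency step above, but applying the same threshold at every level, and the `next_level` function itself, are hypothetical choices rather than part of the published protocol.

```python
# Classification systems in order of increasing complexity (number of categories).
LEVELS = [2, 5, 8, 25]


def next_level(current: int, accuracy_pct: float, threshold_pct: float = 90.0) -> int:
    """Return the classification system the trainee should practise next.

    The trainee is promoted one level only when accuracy on the current
    system exceeds the threshold; otherwise the current level is repeated.
    The most complex system is the final level.
    """
    if current not in LEVELS:
        raise ValueError(f"unknown classification system: {current}")
    idx = LEVELS.index(current)
    if accuracy_pct > threshold_pct and idx < len(LEVELS) - 1:
        return LEVELS[idx + 1]
    return current


print(next_level(2, 94.0))   # promoted to the 5-category system
print(next_level(8, 85.0))   # repeats the 8-category system
print(next_level(25, 95.0))  # already at the most complex system
```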

Workflow Diagram: Traditional vs. Modern Assessment

The following diagram contrasts the traditional, highly variable workflow with a modern approach incorporating standardized training and technology.

The Scientist's Toolkit: Key Research Reagents & Materials

Table: Essential Materials for Standardized Sperm Morphology Research

| Item | Function/Description | Key Consideration |
|---|---|---|
| High-Resolution Microscope | For detailed visualization of sperm structures. | Equip with high-numerical-aperture (NA) objectives (e.g., 0.95 for DIC) and a high-megapixel camera [5]. |
| DIC/Phase Contrast Optics | Enhances contrast for viewing unstained or live sperm without staining artifacts. | Superior to bright-field for assessing subtle morphological details [5]. |
| Standardized Staining Kits | Provides consistent staining for cytological analysis. | Required for detailed assessment after staining; must be validated within the lab [6]. |
| "Ground Truth" Image Dataset | A consensus-validated library of sperm images for training and calibration. | The cornerstone for standardized training and tool validation [2] [5]. |
| Classification System Guide | A detailed reference defining normal and abnormal sperm categories. | Can range from simple (2-category) to complex (25+ categories); must be consistently applied [2]. |
| Automated/AI Analysis Software | For objective, high-throughput morphology assessment. | Deep-learning models (CNNs) can standardize and accelerate analysis [8]. |
| Proficiency Testing (PT) Scheme | External quality control program to monitor morphologist performance. | e.g., CAP's SPERM MORPHOLOGY ONLINE-SM1CD program [9]. |

In the field of male fertility assessment, sperm morphology analysis remains a cornerstone diagnostic test. However, its clinical value is critically undermined by a lack of standardization, leading to data of questionable reliability. This technical support document outlines the specific challenges posed by non-standardized data collection and analysis, provides evidence-based troubleshooting guidance, and details standardized protocols to enhance the accuracy, reproducibility, and clinical utility of sperm morphology assessment in research and development.

Troubleshooting Guides & FAQs

FAQ 1: Why do our sperm morphology results show high variability, even when repeated on the same sample?

- Problem: High intra- and inter-laboratory variation in morphology assessment.

- Solution:

  - Primary Cause: The subjective nature of the test and lack of standardized training for morphologists are the most significant contributors [10] [2]. Without a traceable standard, individual perception and bias lead to inconsistent scoring.

  - Actionable Steps:

    - Implement a structured, ongoing training program for all morphologists using validated tools. Studies show that using a "Sperm Morphology Assessment Standardisation Training Tool" based on expert consensus labels can significantly improve novice accuracy and reduce variation [2].

    - Establish and adhere to a single, clearly defined classification system (e.g., 2-category: normal/abnormal) as more complex systems increase diagnostic error [2].

    - Introduce regular internal quality control (QC) and participate in external quality assurance (QA) schemes [10].

FAQ 2: How should we handle viscous semen samples for morphology smears without damaging sperm?

- Problem: Sample viscosity prevents the creation of even, interpretable smears.

- Solution:

  - Primary Cause: Incomplete liquefaction of the semen sample.

  - Actionable Steps:

    - After initial incubation at 37°C for 30 minutes, if viscosity persists, add proteolytic enzymes such as α-chymotrypsin or bromelain to the sample.

    - Incubate at 37°C for an additional 10 minutes [10].

    - Gently vortex the sample for 10 seconds before preparing the smear. Avoid vigorous pipetting or shaking, which can mechanically damage sperm.

FAQ 3: Is the percentage of normal sperm forms a reliable prognostic criterion for selecting ART procedures like IUI, IVF, or ICSI?

- Problem: Conflicting evidence on the predictive value of sperm morphology for ART outcomes.

- Solution:

  - Evidence-Based Guidance: Recent 2025 clinical guidelines from the French BLEFCO Group state that the percentage of spermatozoa with normal morphology should not be used as a prognostic criterion for selecting the ART procedure (IUI, IVF, or ICSI) [11]. The overall level of evidence supporting its predictive value is low. The focus should shift to detecting specific, clinically relevant monomorphic abnormalities (e.g., globozoospermia) rather than relying on a single percentage threshold [11].

FAQ 4: Which steps are most critical for accurate sperm head assessment on stained smears?

- Problem: Inconsistent staining and imprecise measurement lead to inaccurate identification of normal sperm heads.

- Solution:

  - Critical Step: The use of an ocular micrometer is essential for precise measurement of sperm dimensions [10]. Without it, morphology cannot be evaluated precisely.

  - Protocol Adherence: Follow the staining protocol (e.g., Diff-Quik, Papanicolaou) meticulously, including exact immersion times and proper drying. This ensures consistent staining of the acrosomal and post-acrosomal regions, which is critical for head assessment [10].

Quantitative Data on Standardization Impact

The tables below summarize quantitative evidence demonstrating the effect of standardization and training on the accuracy of sperm morphology assessment.

Table 1: Impact of Training on Morphology Assessment Accuracy (Experiment 2) [2]

| Training Stage | 2-Category System Accuracy (%) | 5-Category System Accuracy (%) | 8-Category System Accuracy (%) | 25-Category System Accuracy (%) | Mean Time per Image (s) |
|---|---|---|---|---|---|
| Test 1 (Untrained) | 82.0 ± 1.05 | 79.0* | 76.0* | 70.0* | 7.0 ± 0.4 |
| Test 14 (Trained) | 98.0 ± 0.43 | 97.0 ± 0.58 | 96.0 ± 0.81 | 90.0 ± 1.38 | 4.9 ± 0.3 |

Note: Data for 5, 8, and 25-category systems at Test 1 are approximated from graphical data in [2] for comparison purposes.

Table 2: Initial Accuracy of Novice Morphologists Using Different Classification Systems (Experiment 1) [2]

| Classification System | Untrained User Accuracy (%) | Trained User Accuracy, with Visual Aid (%) |
|---|---|---|
| 2-Category (Normal/Abnormal) | 81.0 ± 2.5 | 94.9 ± 0.66 |
| 5-Category (by defect location) | 68.0 ± 3.59 | 92.9 ± 0.81 |
| 8-Category (specific defects) | 64.0 ± 3.5 | 90.0 ± 0.91 |
| 25-Category (individual defects) | 53.0 ± 3.69 | 82.7 ± 1.05 |

Experimental Protocols for Standardized Morphology Assessment

Protocol 1: Standardized Smear Preparation and Staining (Diff-Quik)

This protocol is adapted from established WHO guidelines and relevant literature [10].

- Sample Preparation: Collect semen in a sterile container and incubate at 37°C for 30 minutes to allow liquefaction. For viscous samples, add enzymes like α-chymotrypsin and incubate for a further 10 minutes at 37°C [10].

- Smear Creation:

  - Vortex the liquefied sample for 10 seconds.

  - Place a 10 µL aliquot on one end of a clean, frosted slide.

  - Use a second slide at a 45° angle to swiftly and smoothly spread the drop, creating a thin, even smear.

  - Prepare duplicate slides and air-dry completely [10].

- Staining (Diff-Quik):

  - Immerse the dry smear slide in fixative five times. Allow to dry completely for 15 minutes.

  - Immerse the slide three times in Solution I (xanthene dye) for 10 seconds each. Drain excess stain.

  - Immerse the slide five times in Solution II (thiazine dye) for 10 seconds each.

  - Rinse the slide gently but thoroughly in sterile water to remove excess stain.

  - Place the slide vertically on absorbent paper to air-dry.

- Mounting:

  - Once dry, apply a few drops of mounting medium (e.g., Cytoseal) and carefully lower a coverslip onto the slide, avoiding air bubbles.

  - Allow the mounted slide to dry completely before examination [10].

Protocol 2: Microscopy and Evaluation Based on Strict Criteria

- Microscopy Setup: Examine the stained smear using a bright-field microscope with a 100x oil immersion objective and 10x eyepieces. Use immersion oil with a refractive index (RI) of 1.52. An ocular micrometer must be placed in one of the eyepieces for accurate measurement [10].

- Evaluation of Morphology:

  - Head: Must be smooth, regularly contoured, and oval. Length: 5–6 µm; Width: 2.5–3.5 µm. The acrosome should cover 40–70% of the head area and contain no more than two small vacuoles (<20% of head area) [10].

  - Mid-piece: Should be slender, regular, approximately the same length as the head, and aligned with its axis. The presence of excess residual cytoplasm (>one-third of the head area) is considered abnormal [10].

  - Tail: Should be approximately 45 µm long, uniform, thinner than the mid-piece, and without sharp bends [10].

- Scoring:

  - Score at least 200 spermatozoa (in replicates of 100) per sample.

  - Classify all borderline forms as abnormal.

  - The reference threshold for morphologically normal forms is ≥4% according to the WHO 5th edition strict criteria [10].
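The strict criteria and scoring rules above can be encoded as a checklist. Any criterion that is not met makes the cell abnormal, which is consistent with classifying borderline forms as abnormal. The following is a minimal sketch. The numeric limits are those listed in this protocol, but the `SpermMeasurement` fields are hypothetical. Vacuole size is treated as total vacuole area, and the qualitative criteria (contours, alignment, tail shape) are collapsed into one yes/no flag recorded by the morphologist. Replicate counting (2 × 100) is not modelled.

```python
from dataclasses import dataclass, replace


@dataclass
class SpermMeasurement:
    head_length_um: float
    head_width_um: float
    acrosome_pct_of_head: float
    vacuole_count: int
    vacuole_pct_of_head: float            # total vacuole area (assumption)
    residual_cytoplasm_pct_of_head: float
    shape_criteria_met: bool              # oval head, regular contours, aligned mid-piece, normal tail


def is_normal(s: SpermMeasurement) -> bool:
    """Apply the strict-criteria limits; anything outside them is abnormal."""
    return (
        5.0 <= s.head_length_um <= 6.0
        and 2.5 <= s.head_width_um <= 3.5
        and 40.0 <= s.acrosome_pct_of_head <= 70.0
        and s.vacuole_count <= 2
        and s.vacuole_pct_of_head < 20.0
        and s.residual_cytoplasm_pct_of_head <= 100 / 3
        and s.shape_criteria_met
    )


def percent_normal(cells: list[SpermMeasurement], minimum: int = 200) -> float:
    """Return % normal forms, refusing to report on fewer than `minimum` cells."""
    if len(cells) < minimum:
        raise ValueError(f"score at least {minimum} spermatozoa (got {len(cells)})")
    return 100 * sum(is_normal(c) for c in cells) / len(cells)


WHO5_THRESHOLD_PCT = 4.0  # reference threshold for normal forms (WHO 5th edition)

normal = SpermMeasurement(
    head_length_um=5.5, head_width_um=3.0, acrosome_pct_of_head=55.0,
    vacuole_count=1, vacuole_pct_of_head=5.0,
    residual_cytoplasm_pct_of_head=10.0, shape_criteria_met=True,
)
too_long = replace(normal, head_length_um=6.4)  # head outside the 5-6 µm range
sample = [normal] * 10 + [too_long] * 190
pct = percent_normal(sample)
print(f"{pct:.1f}% normal forms; at or above threshold: {pct >= WHO5_THRESHOLD_PCT}")
```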

Process Visualization: Pathways and Workflows

Standardization Deficit Pathway

Standardized Assessment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Standardized Sperm Morphology Assessment

| Item | Function / Purpose | Key Considerations |
|---|---|---|
| Diff-Quik Stain | A rapid, standardized stain for sperm morphology. Allows differentiation of acrosome (light blue) and post-acrosomal region (dark blue) [10]. | Consistent immersion times are critical for staining quality and result reproducibility. |
| Ocular Micrometer | A calibrated graticule placed in the microscope eyepiece. Essential for accurately measuring sperm head dimensions (5-6 µm long, 2.5-3.5 µm wide) against strict criteria [10]. | Without this tool, precise morphology classification is impossible, leading to subjective and variable results. |
| Mounting Medium (e.g., Cytoseal) | A clear resin used to preserve the stained smear under a coverslip for microscopy. | Prevents damage to the smear and allows for clear, high-resolution imaging under oil immersion. |
| Proteolytic Enzymes (α-chymotrypsin/bromelain) | Used to reduce viscosity in semen samples that have not fully liquefied, enabling the creation of even smears [10]. | Incubation time should be controlled (e.g., 10 mins) to avoid potential damage to sperm morphology. |
| Sperm Morphology Training Tool | Software or image sets using expert consensus labels ("ground truth") to train and standardize morphologists, applying machine learning principles to human training [2]. | Shown to significantly improve accuracy and reduce variation among novice and experienced staff. |

FAQs: Core Challenges in Sperm Morphology Assessment

Q1: What is the primary source of variability in sperm morphology assessment? The primary source is the inherent subjectivity in interpreting morphological criteria. Studies highlight significant inter-observer variability, even among experienced technicians. The criteria concerning head ovality, the regularity of head and midpiece contours, and the alignment of the midpiece with the head consistently show the highest variability in outcomes [12] [13]. In external quality control programs, agreement on these specific criteria can fall below 60%, which classifies them as having "poor" consensus [12].
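Per-criterion agreement in an external quality control round can be summarised as the share of participants whose answer matches the reference answer. Criteria that fall below the 60% "poor" level are then flagged. This sketch assumes one yes/no answer per participant per criterion and a known reference answer. Only the 60% cut-off comes from the text; the criterion names and answers are invented for illustration.

```python
POOR_AGREEMENT_PCT = 60.0  # "poor" consensus cut-off cited above


def agreement_pct(answers: list[bool], reference: bool) -> float:
    """Percentage of participants whose yes/no answer matches the reference."""
    if not answers:
        raise ValueError("no participant answers")
    return 100 * sum(a == reference for a in answers) / len(answers)


def flag_poor_criteria(round_data: dict[str, tuple[bool, list[bool]]]) -> dict[str, float]:
    """Return {criterion: agreement %} for criteria below the poor cut-off."""
    flagged = {}
    for criterion, (reference, answers) in round_data.items():
        pct = agreement_pct(answers, reference)
        if pct < POOR_AGREEMENT_PCT:
            flagged[criterion] = pct
    return flagged


# Hypothetical answers from five laboratories for one sperm image:
# criterion -> (reference answer, participant answers)
eqc_round = {
    "head oval": (True, [True, False, False, True, False]),
    "midpiece aligned": (False, [True, True, False, False, True]),
    "acrosomal vacuoles acceptable": (True, [True, True, True, True, False]),
}
print(flag_poor_criteria(eqc_round))
```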

Q2: Why is establishing a "ground truth" for sperm morphology so difficult? Establishing "ground truth" is challenging due to the lack of a traceable standard and reliance on subjective human judgment. Without robust, standardized training, each morphologist may apply slightly different interpretations to the same sperm image. Research indicates that untrained users classifying sperm into 25 different abnormality categories showed initial accuracies as low as 53% and high variation (CV=0.28) [2]. This underscores that expert consensus, rather than a single opinion, is required to establish a reliable baseline [2].

Q3: Which specific sperm parts are most prone to inconsistent classification? Based on analyses from multi-year external quality control schemes, the most problematic criteria are [12] [13]:

- Head: Oval shape and a smooth, regular contour.

- Midpiece: Slender, regular contour and alignment of its major axis with the head's axis.

In contrast, criteria related to acrosomal vacuoles, excessive residual cytoplasm, and tail metrics show significantly higher agreement among evaluators [12].

Q4: How does the complexity of the classification system impact accuracy and reliability? The complexity of the classification system is inversely related to accuracy and reliability. Studies demonstrate that as the number of categories increases, accuracy drops and variability rises [2]. The table below summarizes the performance of trained morphologists across different systems:

Table 1: Impact of Classification System Complexity on Assessment Accuracy

| Classification System | Reported Final Accuracy (after training) | Key Characteristics |
|---|---|---|
| 2-category (Normal/Abnormal) | 98 ± 0.43% [2] | Highest accuracy and lowest variability. |
| 5-category (by defect location) | 97 ± 0.58% [2] | Moderate complexity, based on head, midpiece, tail, and droplet. |
| 8-category (specific defects) | 96 ± 0.81% [2] | Common in veterinary medicine (e.g., cattle). |
| 25-category (individual defects) | 90 ± 1.38% [2] | Highest complexity, leads to lowest accuracy and highest variability. |

Q5: What are the proven methods to reduce variability and standardize assessment? Structured, repeated training is the best-supported method. Using a standardized training tool built on an expert-validated image dataset ("ground truth") has been shown to significantly improve performance. In one study, novice morphologists who underwent such training improved their accuracy in a 25-category system from 82% to 90%. They also cut the time spent per image by 30%, from 7.0 to 4.9 seconds [2]. In addition, e-learning modules have successfully standardized analysis across multiple laboratories, significantly improving agreement with expert consensus [14].

Troubleshooting Guides

Issue 1: High Inter-Technician Variability

Problem: Different technicians in the same lab produce significantly different morphology reports for the same sample.

Solution:

- Implement a Standardized Training Tool: Use a training tool based on machine learning principles, which provides a large set of pre-classified sperm images validated by expert consensus (the "ground truth"). This creates a consistent and traceable training standard [2].

- Schedule Regular Proficiency Testing (PT): Run frequent internal proficiency tests and participate in external quality control (EQC) schemes. Regular testing helps technicians maintain their skills and allows early identification of deviations [12] [14].

- Simplify the Reporting System: If high precision for specific defects is not clinically critical, consider collapsing complex classification systems (e.g., 25 categories) into a simpler one (e.g., 5 or 8 categories). This directly improves inter-observer agreement [2].
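Collapsing a detailed system into a coarser one is a many-to-one mapping applied to every label before agreement is calculated. The following is a minimal sketch. The detailed defect names and their grouping by location (normal, head, midpiece, tail, droplet) are illustrative placeholders, not the category lists used in [2].

```python
# Hypothetical mapping from detailed defect labels to defect location.
DETAILED_TO_LOCATION = {
    "normal": "normal",
    "pyriform head": "head",
    "tapered head": "head",
    "vacuolated head": "head",
    "bent midpiece": "midpiece",
    "thickened midpiece": "midpiece",
    "coiled tail": "tail",
    "short tail": "tail",
    "proximal droplet": "droplet",
    "distal droplet": "droplet",
}


def collapse(label: str) -> str:
    """Map a detailed label to its coarse category; fail loudly on unknown labels."""
    try:
        return DETAILED_TO_LOCATION[label]
    except KeyError:
        raise ValueError(f"no coarse category defined for {label!r}") from None


def percent_agreement(a: list[str], b: list[str]) -> float:
    """Percentage of images on which two observers gave the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("label lists must be non-empty and the same length")
    return 100 * sum(x == y for x, y in zip(a, b)) / len(a)


obs1 = ["pyriform head", "coiled tail", "proximal droplet", "normal"]
obs2 = ["tapered head", "short tail", "proximal droplet", "normal"]
print(percent_agreement(obs1, obs2))  # detailed labels
print(percent_agreement([collapse(x) for x in obs1],
                        [collapse(x) for x in obs2]))  # collapsed labels
```

A fixed mapping sends identical labels to identical categories, so agreement after collapsing can never be lower than before. The cost is the loss of defect-specific detail.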

Issue 2: Discrepancies with External Quality Control (EQC) Results

Problem: Your laboratory's results consistently fall outside the acceptable range in EQC programs.

Solution:

- Re-train on High-Variability Criteria: Focus retraining efforts on the specific morphological criteria known to have the highest variability. Use EQC report feedback to identify weak spots, paying particular attention to the assessment of head shape and midpiece contour/alignment [12].

- Blinded Re-assessment: Have technicians re-assess the EQC samples blindly after a cooling-off period. Compare the results internally to identify inconsistent application of criteria.

- Adopt a Consensus-Based "Ground Truth": Do not rely on a single expert for internal standards. Establish your laboratory's reference values and "ground truth" image library through the consensus of multiple senior morphologists to minimize individual bias [2] [15].
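The blinded re-assessment can be organised so that the technician sees only neutral codes in a shuffled order. A coordinator holds the key and compares the two rounds afterwards. The following is a minimal sketch; the code format, seed, 1-point tolerance, and results are all hypothetical.

```python
import random


def blind(sample_ids: list[str], seed: int) -> tuple[list[str], dict[str, str]]:
    """Shuffle samples and assign neutral codes.

    Returns the codes in presentation order and the key (code -> sample ID),
    which the QC coordinator keeps away from the technician.
    """
    order = list(sample_ids)
    random.Random(seed).shuffle(order)
    key = {f"B{i + 1:03d}": sample_id for i, sample_id in enumerate(order)}
    return list(key), key


def unblind(results: dict[str, float], key: dict[str, str]) -> dict[str, float]:
    """Translate results reported against blinded codes back to sample IDs."""
    return {key[code]: value for code, value in results.items()}


def discrepancies(first: dict[str, float], second: dict[str, float],
                  tolerance: float) -> dict[str, float]:
    """Samples whose % normal forms changed by more than `tolerance` points."""
    return {
        sid: round(second[sid] - first[sid], 2)
        for sid in first
        if sid in second and abs(second[sid] - first[sid]) > tolerance
    }


# Hypothetical original EQC results (% normal forms) from one technician.
original = {"EQC-1": 4.0, "EQC-2": 12.0, "EQC-3": 7.0}
codes, key = blind(list(original), seed=2024)

# Simulated blinded re-assessment: the technician reports against codes only.
simulated_readings = {"EQC-1": 6.0, "EQC-2": 11.5, "EQC-3": 7.0}
reported = {code: simulated_readings[key[code]] for code in codes}

repeat = unblind(reported, key)
print(codes)
print(discrepancies(original, repeat, tolerance=1.0))
```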

Issue 3: Inefficient and Time-Consuming Analysis

Problem: Manual morphology assessment is too slow, causing workflow bottlenecks and technician fatigue, which can increase error rates.

Solution:

- Invest in Automated Systems: Implement qualified computer-assisted sperm analysis (CASA) systems or AI-based models for morphology. Recent deep learning models have been reported to analyze a sample in under one minute with accuracies exceeding 96%. This approaches expert-level performance and removes much of the observer-dependent variability [8] [16].

- Validate Automated Systems Rigorously: Any automated system must be validated within your own laboratory using your specific protocols and reagents. The system's analytical performance must be qualified against manual assessments based on expert consensus [6] [15].

- Continuous Training for Speed and Accuracy: Use training tools that track both accuracy and time. Studies show that with repeated training, technicians not only become more accurate but also significantly faster, reducing the time spent per image from 7.0 seconds to 4.9 seconds [2].

Experimental Protocols

Protocol 1: Establishing Expert Consensus for "Ground Truth" Image Labeling

Objective: To create a validated dataset of sperm images for use in training and quality control.

Methodology:

- Image Acquisition: Capture high-quality images of stained spermatozoa (Papanicolaou is the reference stain [12]) using a standardized microscope and camera setup. Ensure images are in focus, with multiple focal planes if necessary [15].

- Independent Expert Review: A panel of at least three experienced morphologists independently classifies each sperm image. Experts should have a proven record of reliability, such as publications in the field or leadership in EQC programs [12].

- Consensus Meeting: For images where there is initial disagreement, experts review them together in a consensus meeting. The goal is to discuss and resolve discrepancies based on strict morphological criteria definitions.

- Final Labeling: The agreed-upon classification for each image is recorded as the "ground truth" label. This curated dataset becomes the gold standard for all subsequent training and proficiency testing [2].

Protocol 2: Validating a New Training or Automated System

Objective: To evaluate the performance of a new training tool or an AI-based sperm morphology analysis system against the established "ground truth."

Methodology:

- Baseline Assessment: A cohort of novice or trained morphologists (or the AI system) performs an initial classification test on the validated image dataset. Accuracy and time per image are recorded [2].

- Intervention: The participants undergo training using the new tool, or the AI model is trained on the "ground truth" dataset. For AI, this involves using a Convolutional Neural Network (CNN) architecture, often enhanced with attention mechanisms (e.g., CBAM-enhanced ResNet50), and a large dataset potentially augmented to improve learning [8] [16].

- Post-Intervention Testing: After the training period, participants are tested again on a different set of images from the validated dataset.

- Data Analysis: Compare pre- and post-training scores for accuracy, variability (e.g., coefficient of variation), and speed. Statistical tests (e.g., McNemar's test for AI models [16]) are used to determine if improvements are significant. Successful validation is indicated by a significant increase in accuracy and a decrease in inter-observer variation [2] [14].
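For paired comparisons on the same images, such as an AI model against a human assessor, McNemar's test uses only the discordant images. These are the images that one method classified correctly and the other did not. The following is a minimal sketch of the exact (binomial) form, using only the Python standard library. Whether [16] used this form or the chi-squared approximation is not stated here, and the per-image results are hypothetical.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value.

    b: images correct under method A only; c: correct under method B only.
    Under the null hypothesis each discordant image is equally likely to
    fall in b or c, so min(b, c) follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


def paired_counts(correct_a: list[bool], correct_b: list[bool]) -> tuple[int, int]:
    """Count discordant pairs: (A right and B wrong, A wrong and B right)."""
    b = sum(x and not y for x, y in zip(correct_a, correct_b))
    c = sum(y and not x for x, y in zip(correct_a, correct_b))
    return b, c


# Hypothetical per-image correctness against ground truth for 100 images.
ai_correct = [True] * 90 + [False] * 10
human_correct = [True] * 75 + [False] * 20 + [True] * 5
b, c = paired_counts(ai_correct, human_correct)
print(b, c, round(mcnemar_exact(b, c), 4))
```

Images that both methods got right, or both got wrong, carry no information about which method is better. That is why only `b` and `c` enter the test.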

Workflow and Relationship Diagrams

Diagram 1: Ground Truth Establishment Workflow

This diagram illustrates the multi-step process for creating a consensus-driven "ground truth" dataset.

Diagram 2: Classification Complexity vs. Performance

This diagram shows the inverse relationship between the number of classification categories and key performance metrics.

Research Reagent Solutions

Table 2: Essential Materials for Standardized Sperm Morphology Analysis

| Item | Function / Application | Key Considerations |
|---|---|---|
| Papanicolaou (PAP) Stain | Reference staining method for sperm morphology. Provides clear differentiation of sperm structures (head, acrosome, midpiece) [12] [15]. | Adherence to standardized staining protocols is critical for consistency. |
| Standardized Staining Kits | Commercial kits ensure reagent consistency, reducing technical variability in sample preparation. | Must be validated against the laboratory's established reference ranges. |
| Computer-Assisted Sperm Analysis (CASA) System | Automated system for objective analysis of sperm concentration, motility, and morphometry (head dimensions) [15]. | Requires rigorous internal validation; morphology modules may still need expert verification. |
| Validated "Ground Truth" Image Datasets | Serves as the primary reference for training, proficiency testing, and validating new methods (e.g., AI models) [2] [16]. | Quality is paramount; must be built on multi-expert consensus. Public datasets are available but may have limitations. |
| E-Learning & Proficiency Testing Platforms | Digital tools for standardized training, continuous skill assessment, and participation in external quality control schemes [2] [14]. | Effective for scaling standardized training across multiple laboratories and technicians. |

Standardization in Practice: Methodological Advances and Training Applications

Sperm morphology assessment serves as a fundamental diagnostic tool in male fertility evaluation, yet it remains plagued by significant subjectivity and inter-observer variability. This inconsistency stems from the lack of robust, standardized training protocols for morphologists, leading to diagnostic inaccuracies that can directly impact clinical decision-making and patient management. Traditional training methods often rely on side-by-side assessment with a senior morphologist, an approach that is not only time-consuming but also inherently propagates existing biases [5]. Within clinical and research settings, this variability translates to unreliable data, compromised diagnostic accuracy, and ultimately, suboptimal patient care. The emergence of sperm-by-sperm standardization platforms represents a paradigm shift, leveraging digital technologies and expert-validated "ground truth" to fundamentally address these long-standing challenges in reproductive science [2].

Frequently Asked Questions (FAQs)

1. What is the primary source of variability in traditional sperm morphology assessment? The primary source is human subjectivity. Without standardized training, different morphologists can classify the same sperm differently. Studies show that even expert morphologists only achieved 73% consensus on a simple normal/abnormal classification for ram sperm images, highlighting the inherent subjectivity of the test [2].

2. How does a "sperm-by-sperm" training tool improve accuracy? These tools apply a principle from machine learning: the "ground truth." Each sperm image in the platform has been pre-classified with 100% consensus by multiple expert morphologists. When trainees classify a sperm, they receive instant feedback on whether their label matches the ground truth, enabling supervised, self-paced learning based on validated data rather than a single opinion [5] [2].

3. What is the impact of using a more complex classification system? Research demonstrates that accuracy decreases as the number of classification categories increases. One study found that untrained users had an accuracy of 81% with a 2-category system (normal/abnormal), which dropped to 53% with a 25-category system. However, with training, final accuracy rates improved to 98% and 90% for the 2- and 25-category systems, respectively [2].

4. Can these platforms be adapted for different research needs? Yes, a key design feature of modern training tools is their adaptability. They can be configured for various species (e.g., human, ram, cattle), different microscope optics, and multiple morphological classification systems, making them a versatile resource for diverse research environments [5] [2].

5. What quantitative improvements can be expected after training? Structured training leads to significant gains in both accuracy and efficiency. One validation study showed trainee accuracy improved from 82% to 90% over a four-week period, while the time taken to classify a single sperm image decreased from 7.0 seconds to 4.9 seconds [2].

Troubleshooting Common Experimental Issues

Issue 1: High Inter-Operator Variability in Results

Problem: Different technicians in the same lab produce significantly different morphology reports for the same sample, leading to unreliable data.

Solution:

- Implement a Standardized Training Regimen: Utilize a sperm-by-sperm training tool for all new and existing staff. In one study, this approach significantly reduced variation among users, with the greatest improvement observed after the first intensive day of training [2].

- Establish a Validation Workflow: Follow a protocol where trainees must achieve a predefined accuracy score (e.g., >95% for a 2-category system) against the expert-consensus "ground truth" before analyzing patient samples.

- Conduct Regular Proficiency Testing: Schedule periodic re-testing using the platform's assessment mode to monitor for drift in classification accuracy and ensure long-term standardization.
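The validation gate and the inter-operator check described above reduce to two small calculations: each trainee's accuracy against the expert-consensus labels, and the coefficient of variation (CV, sample standard deviation divided by the mean) of the results that different technicians report for the same sample. A minimal sketch follows; the 0.95 threshold mirrors the example above, and the data structures are hypothetical.

```python
import statistics


def accuracy_vs_ground_truth(user_labels, ground_truth):
    """Fraction of ground-truth images the user labeled correctly."""
    scored = [img for img in ground_truth if img in user_labels]
    if not scored:
        raise ValueError("no overlap between user labels and ground truth")
    correct = sum(user_labels[img] == ground_truth[img] for img in scored)
    return correct / len(scored)


def cleared_for_patient_samples(accuracy, threshold=0.95):
    """Validation gate: accuracy must exceed the predefined threshold."""
    return accuracy > threshold


def coefficient_of_variation(values):
    """Sample SD / mean, e.g. of % normal forms reported by several technicians."""
    return statistics.stdev(values) / statistics.mean(values)
```

Re-running the same functions at each scheduled proficiency test gives a simple record against which drift can be spotted.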

Issue 2: Low Accuracy with Complex Classification Systems

Problem: Technicians struggle to correctly identify and categorize sperm using detailed, multi-category classification systems (e.g., systems with 8 or 25 categories).

Solution:

- Adopt a Phased Training Approach: Begin training with a simple 2-category system (normal/abnormal). Once high proficiency is achieved, progressively introduce more complex categories. This builds a solid foundational understanding before tackling finer distinctions [2].

- Leverage Visual Aids and Feedback: The training tool's instant feedback is crucial. It corrects misclassifications in real-time, reinforcing correct identification. Studies show that cohorts using visual aids and video instruction achieved significantly higher first-test accuracy (94.9%) compared to untrained users (81%) [2].
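One way to support the phased approach is to score the same answers at several levels of detail by collapsing fine-grained labels into coarser ones, and to promote a trainee to the next system only after a target accuracy is reached. The category names, the mapping, and the 0.95 promotion threshold below are hypothetical; the level sequence (2, 5, 8, 25) follows the classification systems discussed in this section.

```python
# Hypothetical fine-grained labels collapsed to the 2-category system.
TO_TWO_CATEGORY = {
    "normal": "normal",
    "pyriform head": "abnormal",
    "proximal droplet": "abnormal",
    "coiled tail": "abnormal",
}

LEVELS = (2, 5, 8, 25)


def accuracy_at_level(answers, truths, mapping=None):
    """Accuracy of paired answers, optionally after collapsing labels."""
    if len(answers) != len(truths) or not truths:
        raise ValueError("answers and truths must be non-empty and paired")
    if mapping is not None:
        answers = [mapping[a] for a in answers]
        truths = [mapping[t] for t in truths]
    return sum(a == t for a, t in zip(answers, truths)) / len(truths)


def next_level(current, accuracy, promote_at=0.95):
    """Advance to the next classification system once accuracy is high enough."""
    i = LEVELS.index(current)
    if accuracy >= promote_at and i + 1 < len(LEVELS):
        return LEVELS[i + 1]
    return current
```

A trainee who mistakes a pyriform head for a coiled tail is wrong at the detailed level but still correct at the 2-category level, which is one reason accuracy falls as categories are added.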

Issue 3: Integrating New Technology with Existing Workflows

Problem: Resistance to adopting new digital tools due to perceived complexity, cost, or disruption to established laboratory routines.

Solution:

- Demonstrate Efficiency Gains: Emphasize data showing that trained users not only become more accurate but also faster, reducing the time spent per analysis [2].

- Highlight Versatility: Showcase the platform's adaptability to the specific classification system and species already in use within your lab, ensuring it complements rather than replaces existing protocols [5].

- Start with a Pilot Program: Implement the platform initially for training and quality control of a small group or for a specific project to demonstrate its value before a lab-wide rollout.

Data Presentation: Quantitative Evidence for Standardization

Table 1: Impact of Standardized Training on Morphology Assessment Accuracy

| Classification System | Untrained User Accuracy | Trained User Accuracy (Final Test) | Improvement (percentage points) | Source |
|---|---|---|---|---|
| 2-Category (Normal/Abnormal) | 81.0% ± 2.5% | 98.0% ± 0.43% | +17.0 | [2] |
| 5-Category (Head, Midpiece, etc.) | 68.0% ± 3.59% | 97.0% ± 0.58% | +29.0 | [2] |
| 8-Category (Industry Standard) | 64.0% ± 3.5% | 96.0% ± 0.81% | +32.0 | [2] |
| 25-Category (Detailed) | 53.0% ± 3.69% | 90.0% ± 1.38% | +37.0 | [2] |

Table 2: Technical Comparison of Sperm Analysis Modalities

| Parameter | Manual Analysis | Traditional CASA | AI-Guided & Standardized Platforms |
|---|---|---|---|
| Inter-Operator Variability | High (20-30% CV) [17] | Moderate | Low (CV < 0.14 after training) [2] |
| Statistical Basis | Limited fields of view | Standard FOV (~1 × 1 mm) | Expanded FOV (~13× larger) [17] |
| Training Methodology | Apprenticeship, subjective | System operation | "Ground truth" consensus, objective [5] |
| Key Innovation | - | Automation | Standardization and validation |

Experimental Protocols for Validation

Protocol 1: Validating a Sperm Morphology Training Tool

This protocol is adapted from studies that developed and tested a standardized sperm morphology assessment training tool [5] [2].

1. Image Database Creation:

- Sample Collection: Collect semen samples from a relevant cohort (e.g., 72 rams were used in one study).

- Image Acquisition: Use a high-resolution microscope (e.g., Olympus BX53 with DIC optics, 40x magnification, high NA objectives) to capture thousands of field-of-view (FOV) images.

- Single-Cell Isolation: Employ a machine-learning algorithm to automatically crop FOV images, generating thousands of images containing a single sperm cell.

2. Establishing "Ground Truth" Labels:

- Expert Consensus: Have multiple experienced morphologists independently classify each single-sperm image.

- Data Validation: Use only images where classifiers achieve 100% consensus on all morphological labels for integration into the training tool. In one study, this resulted in 4,821 consensus-classified images from an initial set of 9,365 [5].

3. Tool Development and Testing:

- Web Interface: Develop an interactive platform that presents users with randomized sperm images from the validated dataset.

- Training Mode: Provide instant feedback on classification accuracy.

- Assessment Mode: Test user proficiency without feedback to establish baseline and post-training accuracy.

- Validation Experiment: Train a cohort of novice morphologists over several weeks (e.g., 14 tests over 4 weeks), recording their accuracy and speed for different classification systems [2].
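The training and assessment modes above differ mainly in whether feedback is shown. The loop below sketches one session under that assumption: it draws a random sample of validated images, records correctness and response time, and calls a feedback hook only in training mode. The `classify` and `on_feedback` callables stand in for the web interface and are hypothetical.

```python
import random
import time


def run_session(ground_truth, classify, mode="training", n_images=10,
                on_feedback=None, seed=None):
    """Run one session; return (accuracy, mean seconds per image)."""
    if mode not in ("training", "assessment"):
        raise ValueError("mode must be 'training' or 'assessment'")
    if not ground_truth:
        raise ValueError("no validated images available")
    rng = random.Random(seed)
    # Randomized presentation order drawn from the validated dataset.
    image_ids = rng.sample(sorted(ground_truth), k=min(n_images, len(ground_truth)))
    n_correct = 0
    total_time = 0.0
    for image_id in image_ids:
        start = time.perf_counter()
        answer = classify(image_id)
        total_time += time.perf_counter() - start
        correct = answer == ground_truth[image_id]
        n_correct += correct
        # Instant feedback only in training mode; assessment stays silent.
        if mode == "training" and on_feedback is not None:
            on_feedback(image_id, correct, ground_truth[image_id])
    return n_correct / len(image_ids), total_time / len(image_ids)
```

Logging the returned accuracy and time per image for each of the scheduled tests gives the learning curves used in the validation experiment.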

Protocol 2: Assessing an Expanded Field-of-View (FOV) System

This protocol is based on the evaluation of the LuceDX system, which uses an expanded FOV to improve statistical accuracy [17].

1. System Setup:

- Utilize an imaging system with an expanded FOV (e.g., 3 × 4.2 mm, approximately 13 times larger than a standard 1 × 1 mm FOV).

- Ensure the system maintains resolution comparable to standard Computer-Assisted Semen Analysis (CASA) systems.

2. Sample Analysis:

- Prepare semen samples according to standard laboratory protocols (e.g., WHO guidelines).

- Load samples into the system for analysis. The large FOV captures a substantially larger portion of the sample in a single frame.

3. Data Comparison and Analysis:

- Precision Measurement: Compare the measurement precision of the expanded-FOV system against conventional CASA or manual analysis. Pilot data for LuceDX indicated a 3.6-fold improvement in precision [17].

- Statistical Reliability: Evaluate the system's performance, particularly in oligospermic samples, by its ability to mitigate biases from non-uniform sperm distribution and clustering effects, reducing false negatives in low-concentration specimens.
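A simple counting model helps explain why a larger FOV improves precision. If sperm are counted as a Poisson process, the relative standard error of a count of N cells is about 1/sqrt(N), and N grows in proportion to the imaged area. A 3 × 4.2 mm field has 12.6 times the area of a 1 × 1 mm field, so the predicted precision gain is sqrt(12.6), about 3.5-fold, in line with the roughly 3.6-fold figure reported above. This is an idealized sketch: it ignores the clustering and non-uniform distribution the protocol mentions, which add variance beyond the Poisson term, and it is not necessarily how [17] obtained its estimate.

```python
import math


def poisson_relative_error(expected_count):
    """Relative standard error (CV) of a Poisson count with the given mean."""
    return 1 / math.sqrt(expected_count)


def fov_precision_gain(expanded_mm2, standard_mm2):
    """Predicted precision gain from imaging a larger area (Poisson model only)."""
    return math.sqrt(expanded_mm2 / standard_mm2)


standard_area = 1.0 * 1.0   # mm^2
expanded_area = 3.0 * 4.2   # mm^2
gain = fov_precision_gain(expanded_area, standard_area)

# Hypothetical low-concentration sample: about 20 cells in a standard FOV.
cells_standard = 20
cells_expanded = cells_standard * expanded_area / standard_area
print(f"standard FOV CV ~ {poisson_relative_error(cells_standard):.1%}")
print(f"expanded FOV CV ~ {poisson_relative_error(cells_expanded):.1%}")
```

In this idealized case the counting CV falls from roughly 22% to roughly 6%, which illustrates why low-concentration specimens benefit most.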

Visualization of Workflows and Systems

Diagram 1: Ground Truth Training Workflow

This diagram illustrates the process of creating a validated image dataset and using it for standardized training.

Diagram 2: Expanded FOV vs. Standard Analysis

This diagram contrasts the statistical basis of traditional analysis with expanded FOV technology.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sperm Morphology Standardization Research

| Item | Function/Description | Example/Note |
|---|---|---|
| High-Resolution Microscope | Capturing clear, detailed images for classification and analysis. | Microscope with DIC optics and 40× high-NA objective (e.g., Olympus BX53) [5]. |
| Digital Camera & Sensor | High-resolution image acquisition for single-sperm analysis. | 8.9-megapixel CMOS sensor camera [5]. |
| Sperm Morphology Training Tool | Web-based platform for standardized training and proficiency testing. | Platform populated with expert-consensus "ground truth" images [5] [2]. |
| Expanded FOV Imaging System | Increases analyzed sample volume to improve statistical accuracy. | System like LuceDX with a 13× larger FOV than standard CASA [17]. |
| Stained Slides & Cytology Kits | For automated morphology analysis systems requiring stained samples. | Used by AI-CASA systems for cytological analysis after staining [6]. |
| AI-Enabled CASA System | Provides automated, objective assessment of concentration, motility, and morphology. | Systems like LensHooke X1 PRO or SCA [18] [19]. |

The Role of Expert Consensus in Creating Validated Image Datasets

Frequently Asked Questions

Q1: What is the primary challenge in creating a ground truth for sperm image datasets, and how is it addressed? The primary challenge is the inherent subjectivity of manual sperm morphology assessment, which can lead to inconsistent labels. This is addressed by employing a multi-expert consensus model. In practice, each sperm image is independently classified by multiple experts [20]. A ground truth file is then compiled, detailing the classification from each expert. The level of agreement among them is analyzed, categorizing results into "Total Agreement," "Partial Agreement," or "No Agreement," which helps quantify the subjectivity of the task and establish a more reliable reference standard [20].

Q2: Our model performs well on the training data but fails on external datasets. What might be the cause? This is typically a problem of dataset representativeness. Your training dataset may not reflect the diversity of the target population or real-world clinical settings. To ensure robustness, a benchmark dataset must encompass a broad spectrum of disease severity, demographic diversity (e.g., age, ethnicity), and variations in data collection systems (e.g., different microscope vendors, staining protocols) [21]. Failure to include this heterogeneity can lead to biased models that do not generalize effectively [21].

Q3: How do we handle cases where experts disagree on an image label? Establish a pre-defined protocol for managing discordant expert opinions. One method is to use a consensus meeting where experts review disagreed-upon cases and deliberate to reach a common conclusion. Alternatively, a majority vote (e.g., 2 out of 3 experts) can be used as the final label. It is also critical to document the level of inter-expert agreement, as cases with persistent disagreement might indicate particularly challenging or ambiguous morphological features that require special attention [20].

Q4: What are the key considerations for properly labeling medical images? Proper labeling requires involvement from domain experts, whose years of experience should be considered and reported [21]. Key considerations include:

- Label Type and Instructions: Use clear, standardized instructions to ensure homogeneous labeling across different experts or institutions [21].

- Annotation Format: Decide on a consistent format for annotations (e.g., DICOM-SEG, NIfTI) [21].

- Metadata: Include relevant, de-identified metadata such as patient demographics and clinical history to provide context for the labeled data [21].

Troubleshooting Guides

Problem: Low Inter-Expert Agreement on Image Labels

Description: A high rate of disagreement among experts during the image labeling phase threatens the reliability of the ground truth.

Solution:

- Standardize Classification Criteria: Provide all experts with detailed, written guidelines based on a recognized classification system (e.g., the modified David classification for sperm morphology) [20].

- Conduct Training Sessions: Before formal labeling, hold joint sessions where experts label a sample set of images and discuss discrepancies to align their understanding.

- Implement a Structured Agreement Framework: Categorize agreement levels as Total (3/3 experts agree), Partial (2/3 agree), or None. Statistically analyze agreement using tools like IBM SPSS Statistics with Fisher’s exact test to identify significant discrepancies [20].

- Escalate to Consensus Panel: For cases with persistent disagreement (No Agreement), escalate them to a panel of senior experts for a final, consensus-based decision [20].

Problem: Creating a Representative and Unbiased Dataset

Description: The curated dataset is too homogenous, leading to an AI model that fails when applied to data from different sources or populations.

Solution:

- Define the Use Case: Clearly identify the clinical context, target population, and healthcare setting for which the AI is intended [21].

- Ensure Population Diversity: Actively gather data that reflects diversity in demographics, disease prevalence, and imaging equipment vendors [21].

- Address Rare Findings: For rare diseases or morphological anomalies, consider data augmentation techniques or generating synthetic data to ensure the model has sufficient examples to learn from [21].

- External Validation: Always validate your model's performance on a completely independent, well-curated benchmark dataset from a different source to test its true generalizability [21].
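External validation only tests generalizability if the held-out data are truly independent. A common safeguard, sketched below under an assumed record schema (the `site`, `patient_id`, and `microscope` keys are hypothetical), is to hold out entire acquisition sites rather than random images, check that no patient appears on both sides of the split, and tally the equipment represented in each subset.

```python
from collections import Counter


def split_by_site(records, held_out_sites):
    """Hold out whole acquisition sites as an external validation set."""
    train = [r for r in records if r["site"] not in held_out_sites]
    external = [r for r in records if r["site"] in held_out_sites]
    # A patient imaged at two sites would leak information across the split.
    shared = {r["patient_id"] for r in train} & {r["patient_id"] for r in external}
    if shared:
        raise ValueError(f"patients in both splits: {sorted(shared)}")
    return train, external


def equipment_mix(records):
    """Count images per microscope model, to audit vendor diversity."""
    return Counter(r["microscope"] for r in records)
```

A training subset dominated by a single microscope model is an early warning that the model may not transfer to other laboratories.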

Problem: Integrating Expert Consensus at Scale

Description: The process of having experts manually validate a large number of AI-generated labels is prohibitively time-consuming and not scalable.

Solution: Implement a Human-AI Hybrid Pipeline. This multi-stage process efficiently leverages both AI scalability and expert knowledge [22].

- AI Initial Labeling: Use a large language model (LLM) or deep learning system to generate initial labels and chain-of-thought explanations for dataset items [22].

- Initial Human Review: Have experts review the AI-generated outputs against a structured rubric, scoring them for correctness and sufficiency [22].

- AI Re-verification: Re-process the human-reviewed questions through the AI to check for consistency and identify persistently problematic items [22].

- Expert Panel Refinement: Escalate items that fail AI re-verification (e.g., using a "five-strike" rule where the AI fails five times) to a senior expert panel for final refinement and correction [22].
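The re-verification and escalation steps can be expressed as a small loop. The sketch assumes hypothetical `ask_model` and `is_correct` callables and reads the "five-strike" rule as five failed attempts in a row; the pipeline in [22] may count attempts differently.

```python
def reverify(item, ask_model, is_correct, max_strikes=5):
    """Return 'verified' if the model succeeds within max_strikes attempts,
    otherwise 'escalate' for the senior expert panel."""
    for _ in range(max_strikes):
        if is_correct(item, ask_model(item)):
            return "verified"
    return "escalate"


def route_items(items, ask_model, is_correct, max_strikes=5):
    """Split reviewed items into verified ones and an expert-panel queue."""
    verified, escalated = [], []
    for item in items:
        outcome = reverify(item, ask_model, is_correct, max_strikes)
        (verified if outcome == "verified" else escalated).append(item)
    return verified, escalated
```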

Experimental Protocols & Data

Protocol 1: Multi-Expert Consensus for Sperm Morphology Labeling

This protocol is adapted from the methodology used to create the Sperm Morphology Dataset (SMD/MSS) [20].

- Sample Preparation: Prepare semen smears from patient samples, stained with a RAL Diagnostics kit following WHO guidelines [20].

- Image Acquisition: Capture images of individual spermatozoa using a CASA system with a 100× oil immersion objective in bright-field mode [20].

- Expert Classification: Three independent experts, each with extensive experience in semen analysis, classify every spermatozoon based on the modified David classification (covering 12 classes of defects across the head, midpiece, and tail) [20].

- Data Compilation: Record all classifications in a shared ground truth file. Each image is assigned a filename that encodes the identified anomaly type [20].

- Agreement Analysis: Use statistical software (e.g., IBM SPSS Statistics 23) to analyze inter-expert agreement. Categorize outcomes as Total Agreement (3/3), Partial Agreement (2/3), or No Agreement.
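The three agreement categories, and the majority-vote option described earlier, can be computed per image from the three experts' labels. A minimal sketch for a three-expert panel follows; the label strings are hypothetical, and a `None` majority marks images to escalate for consensus review.

```python
from collections import Counter


def agreement_level(labels):
    """Classify a three-expert vote as Total, Partial, or No Agreement."""
    if len(labels) != 3:
        raise ValueError("this sketch assumes exactly three experts")
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == 3:
        return "Total Agreement"
    if top_count == 2:
        return "Partial Agreement"
    return "No Agreement"


def majority_label(labels):
    """Label chosen by at least 2 of 3 experts, or None if all differ."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count >= 2 else None


def summarize(expert_labels):
    """Tally agreement levels across a dataset of {image_id: [labels]}."""
    return Counter(agreement_level(v) for v in expert_labels.values())
```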

Table 1: Sperm Morphology Dataset Composition and Expert Agreement [20]

| Category | Description | Quantity / Metric |
|---|---|---|
| Initial Image Count | Individual spermatozoa images acquired | 1,000 |
| Final Image Count | After data augmentation techniques | 6,035 |
| Expert Count | Number of classifying experts | 3 |
| Agreement Scenarios | Total, Partial, No Agreement | 3 |
| Analysis Software | Software used for agreement analysis | IBM SPSS Statistics 23 |
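The table notes that augmentation expanded 1,000 acquired images to 6,035. The specific transforms used for SMD/MSS are not described here; as a hedged illustration only, geometric augmentation of single-cell images often uses rotations and mirror images, because a spermatozoon's orientation on the slide typically carries no diagnostic meaning. The NumPy sketch below generates the eight rotation/reflection variants of an image.

```python
import numpy as np


def dihedral_variants(image):
    """Return the 8 rotations/reflections of an H x W (or H x W x C) image."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k, axes=(0, 1))  # rotate by k * 90 degrees
        variants.append(rotated)
        variants.append(np.flip(rotated, axis=1))   # mirror left-right
    return variants
```

Augmented copies of an original image should stay in the same data split as that original; otherwise near-duplicates leak between training and test sets.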

Protocol 2: Human-AI Hybrid Validation Pipeline

This protocol is designed for scalable, expert-driven validation of large datasets, as demonstrated in clinical reasoning datasets [22].

- Initial AI Generation: Use an LLM to generate initial answers and reasoning chains (e.g., Chain-of-Thought) for a set of questions [22].

- Structured Human Review: Medical experts independently review each AI-generated item against a multi-dimensional rubric (e.g., medical correctness, reasoning structure, information sufficiency) and provide a binary score (pass/fail) [22].

- AI Re-Answering and Verification: The reviewed questions are fed back into the AI to see if it can now answer them correctly based on the refined data. This step helps identify ambiguous or flawed questions [22].

- Five-Strike Escalation Rule: If the AI fails to answer a question correctly after five attempts, the item is automatically flagged and escalated to a panel of senior medical experts for in-depth review and final correction [22].

Table 2: Key Reagents and Materials for Dataset Creation [20] [21]

| Research Reagent / Material | Function in Experiment |
|---|---|
| RAL Diagnostics Staining Kit | Stains semen smears to visualize spermatozoa morphology for analysis [20]. |
| Computer-Assisted Semen Analysis (CASA) System | Microscope-based system with a digital camera for automated acquisition and storage of sperm images [20]. |
| Modified David Classification Guide | A standardized framework of 12 defect classes used by experts to ensure consistent morphological labeling [20]. |
| Benchmark Dataset | A well-curated, expert-labeled collection of data representing the full spectrum of target diseases and population diversity, used for robust AI validation [21]. |
| DICOM-SEG or NIfTI Format | Standardized file formats for storing medical images and their associated annotations, ensuring compatibility and consistency [21]. |

Workflow Visualization

Multi-Expert Consensus Workflow for Image Labeling

Human-AI Hybrid Pipeline for Dataset Validation

Within the broader thesis on standardizing sperm morphology assessment, this technical support guide addresses a critical and practical bottleneck: the variability introduced by staining protocols and microscope optics. Recent expert reviews highlight that "there is a huge variability in the performance and interpretation of this test," challenging its clinical relevance [6]. This variability stems directly from methodological choices in the laboratory. This resource provides targeted troubleshooting guides and FAQs to help researchers and drug development professionals overcome these specific challenges, ensuring their data is both reliable and reproducible.

Staining Technique Comparison and Selection Guide

The choice of staining technique directly impacts morphological clarity, measurement accuracy, and the reliability of diagnostic outcomes. Different stains offer varying levels of contrast, detail for specific organelles, and stability over time.

Comparative Analysis of Common Staining Techniques

| Staining Method | Key Advantages | Key Limitations | Best Use Cases |
|---|---|---|---|
| Eosin & Eosin-Nigrosin | Fastest; most cost-effective; provides strong contrast [23]. | Causes structural alterations; eosin-nigrosin forms colored crystals over time [23]. | Routine, high-throughput morphological evaluation where cost and speed are priorities [23]. |
| Diff-Quik | Quick, standardized analysis; good initial performance [23]. | Performance may vary with storage; part of a commercial kit. | Rapid clinical assessment and automated sperm cell analysis [23]. |
| Spermac | Delivers high contrast; valuable for acrosomal integrity assessment [23]. | Time-consuming procedure [23]. | Detailed evaluation of acrosome status, particularly post-cryopreservation [23]. |
| Papanicolaou | Recommended by WHO manuals; widely used in clinical settings [15]. | Procedure is complex and requires multiple steps. | Gold-standard clinical diagnosis; establishing reference values for CASA systems [15]. |
| Formol-Citrate-Rose Bengal | Detailed morphology analysis [23]. | Requires extensive preparation; significant post-storage changes [23]. | Specialized morphological studies when immediate analysis is guaranteed. |
| Methyl Violet | Simple protocol. | Lacks sufficient resolution; highly unstable over time; significantly lower interpretability [23]. | Limited to basic, immediate assessments where no other stains are available. |

Staining Techniques FAQ

Q: Which stain is the most practical for routine morphological evaluation of semen?

- A: Based on recent comparative studies, Eosin emerges as the most practical and economical option for routine evaluation, offering a strong balance of speed, cost, and contrast. However, users should be aware that it may increase the detection of structural alterations [23].

Q: Why do my stained slides become difficult to interpret after a few weeks of storage?

- A: Storage instability is a known issue with several stains. Eosin-nigrosin is prone to colored crystal formation, and Hemacolor can lose pigment clarity over months. For long-term archival stability, Diff-Quik or Spermac may offer better performance, though all slides should be analyzed as soon as possible after staining [23].

Q: Our lab is implementing a CASA system. How does stain choice affect this?

- A: Stain choice is critical for CASA. The stain must provide consistent, high-contrast images for the software to analyze accurately. Papanicolaou staining is commonly used to establish reference values for CASA systems. It is essential to validate your CASA system's performance with the specific staining protocol you adopt [15].

Microscope Optics and Calibration for Reproducible Imaging

Quantitative sperm morphology analysis, especially with advanced techniques like AI, demands rigorous calibration of microscope optics. Inconsistent illumination, uncalibrated detectors, and poor resolution directly undermine measurement reproducibility.

Essential Microscope Calibration Parameters

| Parameter | Importance | Calibration Method & Troubleshooting Tips |
|---|---|---|
| Illumination Power | Critical for fluorescence intensity, signal-to-noise ratio, and photobleaching. Inconsistent power causes non-comparable results. | Use a calibrated power meter. Follow protocols to estimate power density at the focal plane. Troubleshooting Tip: If fluorescence intensity is inconsistently low, check laser alignment and stability, and ensure no oil or dust is on the objective lens [24]. |
| Spatial Resolution | Determines the level of morphological detail resolvable. Essential for detecting head vacuoles or tail defects. | Monitor with patterned glass slides or sub-diffraction-sized fluorescent beads (100 nm) to determine the Point Spread Function (PSF). Troubleshooting Tip: Blurry images may indicate a misaligned pinhole (in confocal systems) or an incorrect coverglass thickness for the objective lens [24]. |
| Detector Sensitivity & Linearity | The quantum efficiency and linear response of the camera/PMT affect the accuracy of intensity measurements. | Evaluate using calibration slides or a calibrated external light source (e.g., reference standard LED). Troubleshooting Tip: If image data appears saturated or "clipped," even with low laser power, check the detector's dynamic range settings and ensure it is operating within its linear range [24]. |
| Field Uniformity | Ensures even illumination and detection across the entire field of view, preventing location-based bias. | Use fluorescent slides designed for flat-field correction. Troubleshooting Tip: If one edge of the image is consistently darker, perform a flat-field correction and check the alignment of the light source and condenser [24]. |
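The flat-field correction recommended in the table is commonly computed as C = (R - D) × mean(F - D) / (F - D), where R is the raw image, F an image of a uniform reference slide, and D a dark frame taken without illumination. The NumPy sketch below applies that formula and adds a simple check of the fraction of saturated pixels, relevant to the "clipped" data tip above. Array names are illustrative, and acquiring the reference and dark frames is instrument-specific.

```python
import numpy as np


def flat_field_correct(raw, flat, dark):
    """Flat-field correction: (raw - dark) * mean(gain) / gain, gain = flat - dark."""
    raw, flat, dark = (np.asarray(a, dtype=np.float64) for a in (raw, flat, dark))
    gain = flat - dark
    if np.any(gain <= 0):
        raise ValueError("flat frame must be brighter than dark frame everywhere")
    return (raw - dark) * gain.mean() / gain


def saturated_fraction(image, max_value):
    """Fraction of pixels at the detector's maximum value (possible clipping)."""
    return float(np.mean(np.asarray(image) >= max_value))
```

For 8-bit images `max_value` is 255; for other bit depths, use the detector's full-scale value.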

Microscope Optics FAQ

Q: How often should I calibrate my microscope for quantitative sperm morphology work?

- A: A full calibration should be performed at least quarterly, or whenever you change a critical component (e.g., objective lens, laser, or camera). It is good practice to perform a quick check of illumination uniformity and intensity using a reference slide at the beginning of each imaging session [24].

Q: Our AI model for sperm morphology performs well in our lab but fails in a collaborator's lab. Could microscope optics be the cause?

- A: Absolutely. Differences in microscope components, calibration status, and imaging parameters (e.g., illumination power, camera gain) can create a "domain shift" that severely degrades AI model performance. This highlights the need for standardized imaging protocols and the use of reference materials to ensure cross-instrument reproducibility [24].

Q: What is the most overlooked aspect of microscope maintenance that affects image quality?

- A: The cleanliness of optical components, particularly the front element of the objective lens. Accumulated oil, dust, and dirt significantly reduce light throughput and image contrast. Always clean the objective lens carefully before use and after every session that uses immersion oil [24].

Emerging Methodologies: Artificial Intelligence and Standardized Training

To overcome the subjectivity of manual assessment, laboratories are turning to Artificial Intelligence (AI) and standardized training tools. These approaches promise greater objectivity and reproducibility in sperm morphology analysis.

AI Model Development Workflow for Sperm Morphology

Recent studies demonstrate that AI models can be trained to assess sperm morphology from high-resolution images captured at lower magnifications, even on unstained, living sperm [25]. This is a significant advancement for Assisted Reproductive Technology (ART), as it allows for the selection of high-quality sperm without the damaging effects of staining. One in-house AI model showed a stronger correlation with CASA (r=0.88) than the correlation between CASA and conventional semen analysis (r=0.57) [25].
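Correlation coefficients like the r values quoted above are typically Pearson coefficients computed over paired per-sample results from two methods. A minimal NumPy sketch follows, with hypothetical inputs. Note that r measures linear association, not agreement: two methods can correlate perfectly while one reads consistently higher than the other.

```python
import numpy as np


def pearson_r(method_a, method_b):
    """Pearson correlation between paired measurements of the same samples."""
    a = np.asarray(method_a, dtype=float)
    b = np.asarray(method_b, dtype=float)
    if a.shape != b.shape or a.size < 2:
        raise ValueError("need at least two paired measurements")
    return float(np.corrcoef(a, b)[0, 1])
```

For example, results of 1, 2, 3, 4 against 11, 12, 13, 14 give r = 1 even though the second method reads 10 units higher on every sample.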

Standardized Training Tools for Morphologists

For manual assessment, standardized training is crucial. Research shows that novice morphologists using a "Sperm Morphology Assessment Standardisation Training Tool"—built on machine learning principles with expert-validated "ground truth" images—significantly improved their accuracy and reduced variation.

- Without training, novice accuracy for a 2-category (normal/abnormal) system was 81.0% ± 2.5%, which dropped to 53% ± 3.69% for a complex 25-category system [2].

- With standardized training, final accuracy rates improved dramatically to 98% ± 0.43% for the 2-category system and 90% ± 1.38% for the 25-category system [2].

AI and Training FAQ

Q: What is the main challenge in developing a robust AI model for sperm morphology?

- A: The primary challenge is the lack of large, standardized, and high-quality annotated datasets. Models require thousands of sperm images with accurate "ground truth" labels, agreed upon by multiple experts, to learn effectively and generalize to new data [26].

Q: Can AI completely replace manual assessment by a trained morphologist?

- A: Not yet. Current guidelines caution against using normal morphology percentages alone for ART procedure selection [6]. AI is best used as a powerful tool to augment and standardize the analysis, reducing subjectivity and workload. The detection of specific monomorphic abnormalities (e.g., globozoospermia) still requires expert verification [6] [26].

Q: How can our lab quickly improve the consistency of our manual morphology assessments?

- A: Implement a standardized training and re-training protocol using a tool with validated image sets. Studies show that even a single day of intensive, standardized training can lead to significant improvements in accuracy and reductions in inter-technician variation [2].

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item Name | Function/Benefit | Application Note |
|---|---|---|
| Papanicolaou Stain | Provides detailed staining of sperm head (pink), acrosome (blue), and nucleus (purple) as per WHO guidelines. | Essential for establishing reference values and gold-standard clinical diagnosis [15]. |
| Computer-Assisted Sperm Analysis (CASA) System | Automates sperm analysis, reducing subjective errors and providing high repeatability for concentration, motility, and morphometry. | Systems like SSA-II Plus can measure over 10 head, neck, and acrosome parameters; requires validation for each lab [15]. |
| Sperm Morphology Training Tool | Software-based tool using expert-consensus images to train and standardize morphologists, improving accuracy and reducing variability. | A study showed training improved novice accuracy in a 2-category system from ~81% to 98% [2]. |
| Reference Material Slides (e.g., Fluorescent Beads, Patterned Slides) | Used to benchmark microscope performance for parameters like spatial resolution, illumination uniformity, and detector sensitivity. | Critical for ensuring quantitative and reproducible imaging, especially for cross-instrument comparisons [24]. |
| Diff-Quik Stain | A rapid, standardized Romanowsky stain variant used for quick assessment of sperm morphology. | Provides good initial performance and is suitable for routine analysis [23]. |
| Confocal Laser Scanning Microscope | Enables high-resolution Z-stack imaging of sperm, capturing subcellular features without the need for staining. | Key for creating high-quality datasets to train AI models for unstained sperm analysis [25]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the primary source of variability in sperm morphology assessment, and how can it be mitigated? The primary source of variability is the subjective nature of the test and the lack of standardized training for morphologists. This human bias leads to unreliable assessments, as different experts may classify the same sperm differently. A key mitigation strategy is the use of a standardized training tool developed using principles from machine learning. This tool trains novices using a robust dataset of sperm images that have been classified with high confidence via expert consensus, establishing a reliable "ground truth" for learning [5] [2].

Q2: How does the complexity of a classification system impact assessment accuracy? Accuracy falls as the classification system becomes more complex. Untrained users are more accurate and less variable with simpler systems, and performance degrades as the number of categories increases [2]. The table below summarizes the quantitative data from the training tool experiments.

| Classification System Complexity | Untrained User Accuracy (Mean ± Variation) | Final Trained User Accuracy (Mean ± Variation) |
|---|---|---|
| 2-Category (Normal/Abnormal) | 81.0% ± 2.5% | 98.0% ± 0.43% |
| 5-Category (by sperm part) | 68.0% ± 3.59% | 97.0% ± 0.58% |
| 8-Category (e.g., Cattle Vets) | 64.0% ± 3.5% | 96.0% ± 0.81% |
| 25-Category (Individual defects) | 53.0% ± 3.69% | 90.0% ± 1.38% |

Table 1: Impact of classification system complexity on assessment accuracy. Source: [2]
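Re-expressing Table 1 as error rates (100% minus accuracy) makes the training effect easier to compare across systems. A short Python sketch using only the mean accuracies from Table 1 (the ± variation terms are ignored):

```python
# Mean accuracies (%) from Table 1 [2]: (untrained, trained)
TABLE_1 = {
    "2-category": (81.0, 98.0),
    "5-category": (68.0, 97.0),
    "8-category": (64.0, 96.0),
    "25-category": (53.0, 90.0),
}


def error_reduction(untrained: float, trained: float) -> float:
    """Fraction of the untrained error rate that training removed."""
    untrained_error = 100.0 - untrained
    trained_error = 100.0 - trained
    return (untrained_error - trained_error) / untrained_error


for system, (before, after) in TABLE_1.items():
    print(
        f"{system}: +{after - before:.0f} points, "
        f"error rate {100 - before:.0f}% -> {100 - after:.0f}% "
        f"({error_reduction(before, after):.0%} of errors removed)"
    )
```

Run as-is, it reports gains of 17, 29, 32, and 37 percentage points and shows training removing roughly 79% to 91% of errors in every system, while the 25-category system still retains a 10% error rate against 2% for the 2-category system.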

Q3: Are there any clinical guidelines for sperm morphology assessment in human infertility workups? Recent expert reviews, such as the 2025 guidelines from the French BLEFCO Group, suggest a significant simplification of sperm morphology assessment in clinical practice. They do not recommend using the percentage of normal forms as a prognostic criterion before Assisted Reproductive Technology (ART) procedures like IUI, IVF, or ICSI. The guidelines emphasize that the test's clinical value lies primarily in detecting specific monomorphic abnormalities (e.g., globozoospermia) rather than providing a general percentage of normal sperm [6] [7].

Q4: What is the role of "ground truth" in standardizing sperm morphology training? In machine learning, "ground truth" refers to data that has been accurately classified, typically through consensus among multiple experts. Applying this principle to human training is crucial for standardization. For sperm morphology, this involves having multiple experienced assessors label individual sperm images, and only those images with 100% consensus are integrated into the training tool. This ensures that trainees learn from a validated, unbiased dataset, which is foundational for improving and maintaining accuracy across different morphologists [5] [2].
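The 100% consensus rule can be sketched as a simple filter. This is a minimal illustration, not the published tool's code: the function name, image IDs, and category labels are hypothetical, and it assumes three assessors, as in the protocol below, which reports 4,821 of 9,365 images passing the filter.

```python
def consensus_ground_truth(
    labels_by_image: dict[str, list[str]], n_assessors: int = 3
) -> dict[str, str]:
    """Return {image_id: label} for images where all assessors agree.

    An image is kept only if it has exactly n_assessors labels and they are
    all identical (100% consensus); everything else is excluded.
    """
    ground_truth: dict[str, str] = {}
    for image_id, labels in labels_by_image.items():
        if len(labels) == n_assessors and len(set(labels)) == 1:
            ground_truth[image_id] = labels[0]
    return ground_truth


# Hypothetical image IDs and category names, for illustration only.
labels = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["normal", "proximal droplet", "normal"],  # 2 of 3: excluded
    "img_003": ["bent tail", "bent tail", "bent tail"],
    "img_004": ["normal", "normal"],  # one assessor missing: excluded
}
print(consensus_ground_truth(labels))
# {'img_001': 'normal', 'img_003': 'bent tail'}
print(f"Retention in the cited study: {4821 / 9365:.1%}")
# Retention in the cited study: 51.5%
```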

Experimental Protocol: Validating a Sperm Morphology Training Tool

The following methodology details the development and validation of a standardized training tool as described in recent scientific literature [5] [2].

1. Objective: To develop and validate an interactive web-based training tool that improves the accuracy and reduces the variability of sperm morphology assessments across different classification systems.

2. Materials and Reagents:

- Semen Samples: Collected from 72 rams (the approach is applicable to other species).

- Microscopy: Olympus BX53 microscope with DIC and phase contrast objectives (40x magnification, high numerical aperture).

- Camera: Olympus DP28 camera (8.9-megapixel CMOS sensor).

- Software: A novel machine-learning algorithm for cropping single sperm from field-of-view images; a web interface for user interaction.

3. Image Database Creation:

- Image Collection: Capture 50 fields of view (FOV) per sire, totaling 3,600 FOV images.

- Single-Sperm Cropping: Use a custom machine-learning algorithm to crop FOV images, resulting in 9,365 individual sperm images.

- Expert Labelling: Three experienced assessors label all individual sperm images according to a comprehensive 30-category classification system.

- Establishing Ground Truth: Only images with 100% consensus from all assessors (4,821 out of 9,365) are integrated into the training tool as the validated dataset.

4. Training Tool Application:

- User Testing: Novice morphologists (n=22 in Experiment 1; n=16 in a second cohort) use the web interface.

- Functionality: The tool provides instant feedback on classification choices and assesses user proficiency.

- Training Regimen: Experiment 2 involved repeated training and testing over four weeks (14 tests total) to measure improvement in accuracy and diagnostic speed.

5. Outcome Measures:

- Primary: Accuracy of classification against the expert consensus "ground truth."

- Secondary: Time taken to classify each image (speed) and coefficient of variation between users.
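The two accuracy-related outcome measures can be computed as below. This sketch assumes accuracy is the proportion of a user's answers matching the consensus label and that the between-user coefficient of variation uses the sample standard deviation; the source does not specify the formula, and the user answers are hypothetical.

```python
from statistics import mean, stdev


def user_accuracy(choices: dict[str, str], ground_truth: dict[str, str]) -> float:
    """Fraction of a user's answers that match the consensus label."""
    scored = [image_id for image_id in choices if image_id in ground_truth]
    if not scored:
        raise ValueError("no answers overlap the ground-truth set")
    hits = sum(choices[i] == ground_truth[i] for i in scored)
    return hits / len(scored)


def coefficient_of_variation(values: list[float]) -> float:
    """CV (%) = sample standard deviation / mean x 100."""
    return stdev(values) / mean(values) * 100


truth = {"a": "normal", "b": "bent tail", "c": "normal", "d": "normal"}
users = {  # hypothetical answers from three trainees
    "u1": {"a": "normal", "b": "bent tail", "c": "normal", "d": "normal"},
    "u2": {"a": "normal", "b": "normal", "c": "normal", "d": "normal"},
    "u3": {"a": "normal", "b": "bent tail", "c": "bent tail", "d": "normal"},
}
accuracies = [user_accuracy(answers, truth) for answers in users.values()]
print(accuracies)
print(f"Between-user CV: {coefficient_of_variation(accuracies):.1f}%")
```

Here the three hypothetical trainees score 100%, 75%, and 75%, giving a between-user CV of about 17.3%. Time per image (the speed measure) can be averaged with `mean` in the same way.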

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item Name & Specification | Function in Experiment |
|---|---|
| High-Resolution Microscope (e.g., Olympus BX53 with DIC) | Provides high-resolution, clear images of sperm for accurate morphological analysis. |
| Machine Learning Cropping Algorithm | Automates the extraction of individual sperm from field-of-view images, ensuring consistency and saving time. |
| Expert-Validated Image Database ("Ground Truth") | Serves as the standardized reference for training and testing morphologists, reducing human bias. |
| Web-Based Training Interface | Delivers self-paced, accessible training and instantaneous feedback to users, facilitating independent standardization. |
| Multi-Category Classification System Framework | Allows the training tool to be adapted for various existing classification systems (e.g., 2, 5, 8, or 25 categories). |

Table 2: Key materials and reagents for developing a sperm morphology standardization tool.
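One plausible way to adapt a single fine-grained consensus dataset to coarser systems (the "Multi-Category Classification System Framework" row) is a lookup table from detailed labels to coarse ones. The mapping and category names below are hypothetical; the source does not describe how its tool implements this.

```python
# Hypothetical fine-to-coarse mapping; category names are illustrative only.
TO_TWO_CATEGORY = {
    "normal": "normal",
    "pyriform head": "abnormal",
    "proximal droplet": "abnormal",
    "bent tail": "abnormal",
}


def collapse(ground_truth: dict[str, str], mapping: dict[str, str]) -> dict[str, str]:
    """Relabel a fine-grained ground-truth set into a coarser system.

    Raises KeyError if a fine label has no entry in the mapping, so gaps
    in the mapping surface immediately instead of being silently dropped.
    """
    return {image_id: mapping[label] for image_id, label in ground_truth.items()}


fine = {"img_001": "normal", "img_003": "bent tail", "img_007": "pyriform head"}
print(collapse(fine, TO_TWO_CATEGORY))
# {'img_001': 'normal', 'img_003': 'abnormal', 'img_007': 'abnormal'}
```

Because identical fine labels always collapse to identical coarse labels, every image in the fine-grained ground truth remains valid after collapsing. The reverse does not hold: an image excluded because assessors chose two different defect labels would still agree at the 2-category level, so a collapsed set built this way is conservative.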

Workflow and Relationship Diagrams (figures not reproduced): Training Tool Development Workflow; System Complexity vs. Accuracy.

Troubleshooting Laboratory Practice and Optimizing Assessment Workflows

A technical guide for researchers navigating the practical challenges of sperm morphology assessment.

Frequently Asked Questions

FAQ 1: With limited funding for expensive stains, what is a cost-effective alternative that provides good morphological detail?

Rapid Papanicolaou stain is identified as the ideal simple, cost-effective stain for overall assessment of sperm morphology, giving very clear visualization of the acrosome and head and clear views of the middle piece and tail [27]. For assessment focused specifically on the sperm head, Haematoxylin and Eosin (H&E) is the best option [27]. These basic stains are recommended for settings where commercial stains such as Shorr, Janus Green, or Sperm Blue are too expensive [27].

FAQ 2: Does the choice of fixative affect the long-term stability of sperm samples for morphological studies?

Yes, the fixative choice significantly affects morphological integrity over time. A study on avian sperm found that formalin is superior to ethanol for long-term preservation [28]. While sperm cell length remained relatively stable in both fixatives over periods of 227 days and even three years, the proportion of sperm cells with head damage was much higher in ethanol (70%) than in formalin (3%) [28]. Sperm cells initially fixed in formalin also remained quite stable in dry storage on glass slides for at least six months [28].

FAQ 3: We observe high variability in morphology assessments between technicians. How can this be improved?

The lack of standardization is a well-documented challenge. A 2025 proof-of-concept study highlighted the development of a standardized sperm morphology assessment training tool to address this exact issue [5]. Unlike traditional methods, this tool uses a large dataset of sperm images that have been classified with 100% consensus by multiple expert assessors, establishing a reliable "ground truth." It provides instant feedback to users, enabling self-paced, independent training to reduce human bias and improve assessment reliability [5].
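The instant-feedback loop can be sketched as a small class that checks each answer against the consensus label and keeps a running accuracy. Class and method names are hypothetical; a real web tool would add persistence, timing, and image display.

```python
from dataclasses import dataclass, field


@dataclass
class TrainingSession:
    """Hypothetical instant-feedback loop against a consensus ground truth."""

    ground_truth: dict[str, str]
    answers: dict[str, str] = field(default_factory=dict)

    def submit(self, image_id: str, choice: str) -> str:
        """Record the trainee's choice and return feedback immediately."""
        expected = self.ground_truth[image_id]
        self.answers[image_id] = choice
        if choice == expected:
            return "Correct"
        return f"Incorrect: expert consensus is '{expected}'"

    def accuracy(self) -> float:
        """Running proportion of submitted answers that were correct."""
        if not self.answers:
            return 0.0
        hits = sum(self.answers[i] == self.ground_truth[i] for i in self.answers)
        return hits / len(self.answers)


session = TrainingSession({"img_001": "normal", "img_003": "bent tail"})
print(session.submit("img_001", "normal"))
print(session.submit("img_003", "normal"))
print(session.accuracy())
```

In this sketch a repeated submission for the same image overwrites the earlier answer, so `accuracy()` reflects each image's latest attempt; a tool tracking learning curves over repeated tests would instead log every attempt.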

FAQ 4: For a basic viability assessment, which stain is most appropriate?

Eosin-Nigrosin stain is commonly used to distinguish live from dead sperm [27]. Viable sperm with intact cell membranes exclude the dye and appear white, while non-viable sperm with damaged membranes take up the eosin dye and stain pink [27]. This stain is commercially available and is a standard component of a male fertility exam [29].
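Vitality from an eosin-nigrosin smear is the share of counted sperm that excluded the dye. A minimal Python helper, with a hypothetical tally rather than data from the cited studies:

```python
def percent_vital(unstained: int, stained: int) -> float:
    """Percentage of live sperm from an eosin-nigrosin smear.

    unstained: white heads (membrane intact, dye excluded) -> live
    stained:   pink heads (membrane damaged, eosin taken up) -> dead
    """
    if unstained < 0 or stained < 0:
        raise ValueError("counts cannot be negative")
    total = unstained + stained
    if total == 0:
        raise ValueError("no spermatozoa counted")
    return 100.0 * unstained / total


# Hypothetical tally, not data from the cited studies.
print(percent_vital(unstained=150, stained=50))  # 75.0
```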

Staining Method Comparison and Protocols

The table below summarizes the performance of four common staining techniques for assessing different parts of the spermatozoon, as evaluated by independent observers [27].

Table: Clarity of Sperm Morphology Assessment Using Different Staining Techniques

| Staining Technique | Acrosome | Head | Middle Piece | Tail |
|---|---|---|---|---|
| Haematoxylin & Eosin | Very clear | Very clear | Not clear | Clear |
| Giemsa | Very clear | Clear | Not clear | Not clear |
| Eosin-Nigrosin | Clear | Clear | Pale | Pale |
| Rapid Papanicolaou | Very clear | Very clear | Clear | Clear |
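To compare stains across all four regions at once, the qualitative grades can be mapped to an ordinal score. The 0 to 3 scale below, including placing "Pale" above "Not clear", is an assumption for illustration, not part of [27].

```python
# Assumed ordinal scale for the qualitative grades in the table above.
CLARITY_SCORE = {"Not clear": 0, "Pale": 1, "Clear": 2, "Very clear": 3}
REGIONS = ("acrosome", "head", "middle piece", "tail")
STAIN_GRADES = {
    "Haematoxylin & Eosin": ("Very clear", "Very clear", "Not clear", "Clear"),
    "Giemsa": ("Very clear", "Clear", "Not clear", "Not clear"),
    "Eosin-Nigrosin": ("Clear", "Clear", "Pale", "Pale"),
    "Rapid Papanicolaou": ("Very clear", "Very clear", "Clear", "Clear"),
}


def total_score(stain: str) -> int:
    """Sum of ordinal scores across all four regions."""
    return sum(CLARITY_SCORE[grade] for grade in STAIN_GRADES[stain])


def best_for(region: str) -> list[str]:
    """All stains sharing the top grade for one region (ties kept)."""
    idx = REGIONS.index(region)
    top = max(CLARITY_SCORE[grades[idx]] for grades in STAIN_GRADES.values())
    return sorted(
        stain
        for stain, grades in STAIN_GRADES.items()
        if CLARITY_SCORE[grades[idx]] == top
    )


ranking = sorted(STAIN_GRADES, key=total_score, reverse=True)
print(ranking)
print(best_for("head"))
print(best_for("middle piece"))
```

Under this scale Rapid Papanicolaou ranks first overall (10 of 12 points) and is the only stain graded clear for the middle piece; for the head it ties with H&E, so the grades alone do not separate those two.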

Detailed Staining Protocols

Based on the research, here are the methodologies for the two most effective stains:

Protocol for Rapid Papanicolaou Staining [27]

- Smear Preparation: Prepare a thin smear of the liquefied semen sample.

- Fixation: Immediately fix the smear in ethyl alcohol (wet fixation). Note: this is a key difference from the air-dried smears used for H&E.

- Staining: Follow the standard rapid Papanicolaou staining procedure.

- Analysis: Examine under a light microscope. This method shows acrosomal condensation and head morphology very clearly, makes the middle piece and tail clearly visible, and also helps distinguish immature germ cells.

Protocol for Haematoxylin and Eosin (H&E) Staining [27]

- Smear Preparation: Prepare a thin smear of the liquefied semen sample.

- Fixation: Air-dry the smear at room temperature.

- Staining: Stain using Harris’ Haematoxylin and Eosin Y.

- Analysis: Examine under a light microscope. The stain is uniformly distributed over the head with dark purple condensation, making the acrosome and head extremely clear. The tail is also clearly visible, but the middle piece was graded as not clear, whereas Papanicolaou rendered both clearly.

Research Reagent Solutions

Table: Essential Materials for Sperm Morphology Assessment

| Item | Function/Benefit | Example Product/Note |
|---|---|---|
| Papanicolaou Stain | Cost-effective stain for overall sperm morphology | Recommended as the ideal balance of cost and clarity [27] |
| Haematoxylin & Eosin | Best stain for detailed sperm head morphology | A standard, widely available histological stain [27] |
| Eosin-Nigrosin Stain | Distinguishes between live and dead sperm for viability assessment | Available as a pre-made kit [29] |
| Formalin (10%) | Fixative for long-term preservation of sperm morphological integrity | Superior to ethanol for preventing sperm head damage during storage [28] |
| Pre-stained Morphology Slides | Quality control and saving preparation time | e.g., Testsimplets, Cell-Vu [30] |
| Sperm Cryopreservation Media | Long-term storage of semen samples for future analysis | e.g., Test yolk buffer with a programmable freezer [31] |