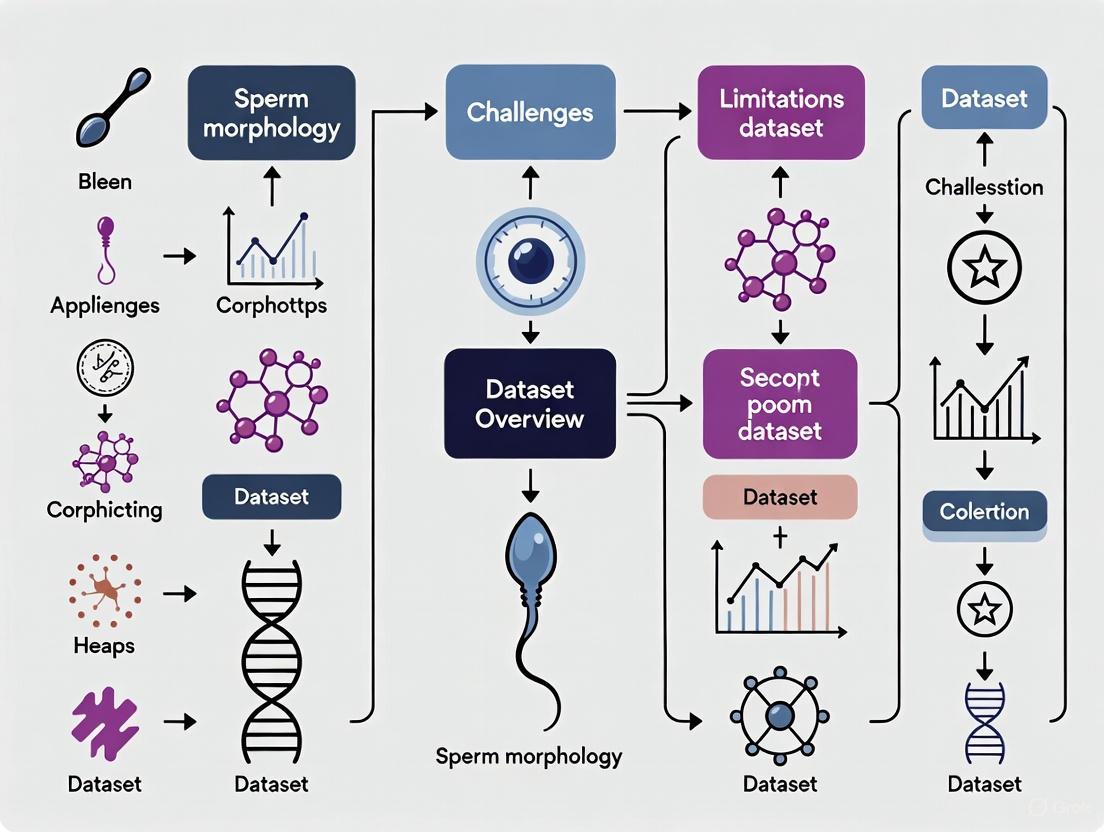

Overcoming the Data Barrier: Current Challenges and Future Directions in Sperm Morphology Datasets for AI-Driven Male Fertility Research

Abstract

This article synthesizes the critical challenges and limitations facing sperm morphology datasets, which are foundational for developing robust artificial intelligence (AI) models in male fertility research. For an audience of researchers and drug development professionals, we explore the foundational issues of data scarcity and annotation complexity. We then investigate methodological advancements in deep learning and data augmentation, analyze strategies for optimizing model performance and generalizability, and review validation frameworks and comparative analyses of existing datasets. The conclusion underscores the necessity for standardized, high-quality data to translate algorithmic success into reliable clinical tools for infertility diagnosis and treatment.

The Core Hurdles: Scarcity, Subjectivity, and Standardization in Sperm Morphology Data

The Critical Shortage of Public, Large-Scale Datasets

The field of male infertility research, particularly sperm morphology analysis, is confronting a significant bottleneck: a critical shortage of public, large-scale, and high-quality datasets. This scarcity fundamentally impedes the development of robust, generalizable, and clinically reliable artificial intelligence (AI) models for automated semen analysis. Sperm morphology analysis is a cornerstone of male fertility assessment, providing crucial diagnostic and prognostic information [1]. According to the World Health Organization (WHO) guidelines, a thorough evaluation requires the analysis of at least 200 spermatozoa, categorizing abnormalities across the head, neck, and tail, which encompasses 26 different types of defects [1]. This labor-intensive process is notoriously subjective, with manual analysis suffering from significant inter-observer variability, reported to be as high as 40% among expert evaluators [2].

While deep learning (DL) has demonstrated remarkable potential to automate this task, reduce diagnostic variability, and save time—potentially cutting analysis time from 30–45 minutes to under one minute per sample [2]—its success is intrinsically tied to access to large, diverse, and accurately annotated datasets. Current public datasets are often constrained by issues of small scale, limited morphological categories, low image resolution, and a lack of standardized annotation protocols [1]. This data deficit forms a critical barrier to translating AI research into validated clinical tools, ultimately affecting patient care and treatment outcomes in reproductive medicine.

The Current Landscape and Quantitative Deficits of Sperm Morphology Datasets

A review of the available public datasets for sperm morphology analysis reveals a landscape fragmented by limitations in size, quality, and scope. Table 1 provides a comparative overview of existing datasets, highlighting their specific characteristics and inherent constraints. The quantitative shortfall is evident: many datasets contain only a few thousand images or fewer, a volume that is insufficient for training complex deep-learning models without a high risk of overfitting.

The "small data" problem is compounded by issues of quality and diversity. Many datasets, such as HSMA-DS and MHSMA, are derived from non-stained samples and are described as having noisy and low-resolution images [1]. Others, like the original HuSHeM dataset, are of higher quality but are extremely limited in scale, with only 216 sperm head images publicly available [1]. This lack of standardized, high-quality data stems from challenges in the systematic acquisition and annotation of sperm images. In clinical practice, valuable image data is often not systematically saved, leading to irretrievable data loss [1]. Furthermore, the annotation process itself is exceptionally complex, requiring skilled embryologists to simultaneously evaluate defects in the head, vacuoles, midpiece, and tail, which increases the difficulty and cost of creating high-fidelity datasets [1].

Table 1: Overview of Publicly Available Sperm Morphology Datasets

| Dataset Name | Year | Image Count | Key Characteristics | Reported Limitations |
|---|---|---|---|---|
| HSMA-DS [1] | 2015 | 1,457 | Images from 235 patients; unstained sperm. | Non-stained, noisy, low resolution. |
| SCIAN-MorphoSpermGS [1] | 2017 | 1,854 | Stained sperm; classified into five morphological classes. | Limited sample size and categories. |
| HuSHeM [1] | 2017 | 216 (public) | Stained sperm heads with higher resolution. | Very limited publicly available sample size. |
| MHSMA [1] | 2019 | 1,540 | Grayscale images of sperm heads. | Non-stained, noisy, low resolution. |
| VISEM [1] | 2019 | Multi-modal | Includes videos and biological data from 85 participants. | Low-resolution, unstained grayscale data. |
| SMIDS [1] | 2020 | 3,000 | Stained images across three classes. | Limited to three classes (normal, abnormal, non-sperm). |
| SVIA [1] | 2022 | 4,041 images & videos | Includes detection, segmentation, and classification tasks; 125,000 annotated instances. | Low-resolution, unstained sperm. |
| VISEM-Tracking [1] | 2023 | 656,334 objects | Extensive annotations for detection and tracking. | Low-resolution, unstained grayscale data. |

Root Causes and Broader Implications of Data Scarcity

Fundamental Challenges in Dataset Curation

The creation of high-quality public datasets is a challenging endeavor hampered by several interconnected factors. First, many medical institutions still rely on conventional assessment methods that are not designed for systematic data capture, leading to the loss of valuable image information [1]. Second, the technical acquisition of sperm images is fraught with difficulties; sperm cells may appear intertwined in images, or only partial structures may be visible at the edges of the frame, compromising the accuracy and utility of the acquired data [1]. Finally, as previously mentioned, the annotation process requires specialized expertise and is time-consuming, creating a significant bottleneck.

Beyond these technical and logistical hurdles, a broader trend threatens all data-driven research: the vanishing public record. Reports indicate that public data in many countries is becoming increasingly fragile, subject to political intervention and systemic neglect [3]. For instance, in early 2025, the U.S. government removed thousands of datasets across agencies like the EPA, NOAA, and CDC, effectively scrubbing key sources of scientific data from the public record [3]. This loss of reliable public data, which once underpinned an estimated $750 billion of business activity, blindsides companies and researchers who build models for everything from supply chain forecasting to biomedical discovery [3].

Impact on Algorithm Development and Clinical Applicability

The shortage of adequate data has direct and profound consequences on the AI models being developed. Conventional machine learning (ML) algorithms, such as K-means and Support Vector Machines (SVM), are fundamentally limited by their reliance on handcrafted features (e.g., grayscale intensity, edge detection) [1]. While they can achieve accuracies around 90% in controlled settings, their performance is often not generalizable [1]. Deep learning models promise automatic feature extraction but require massive datasets to do so effectively. Without large-scale and diverse data, even advanced DL models can suffer from poor generalization, overfitting, and an inability to handle the vast variability of sperm abnormalities encountered in a real-world clinical setting.
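
To make the notion of handcrafted features concrete, the following minimal sketch computes two such descriptors, mean grayscale intensity and a crude edge-strength measure, from a tiny image stored as nested lists. The function name `handcrafted_features`, the input format, and the toy images are assumptions made for illustration; this is not the feature set of any study cited here.

```python
def handcrafted_features(image):
    """Return (mean_intensity, mean_edge_strength) for a 2D grayscale image.

    `image` is a list of equal-length rows of numbers (hypothetical format).
    Edge strength is the mean absolute difference between horizontally and
    vertically adjacent pixels, a crude stand-in for a real edge detector.
    """
    rows, cols = len(image), len(image[0])
    mean_intensity = sum(map(sum, image)) / (rows * cols)

    diffs = []
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:  # right-hand neighbour
                diffs.append(abs(image[r][c + 1] - image[r][c]))
            if r + 1 < rows:  # neighbour below
                diffs.append(abs(image[r + 1][c] - image[r][c]))
    mean_edge = sum(diffs) / len(diffs) if diffs else 0.0
    return mean_intensity, mean_edge


flat = [[10, 10], [10, 10]]    # uniform patch: no edges
edged = [[0, 255], [0, 255]]   # sharp vertical boundary
print(handcrafted_features(flat))
print(handcrafted_features(edged))
```

Descriptors of this kind are fixed in advance by the designer, which is why a classifier built on them may not transfer to images acquired with different staining or optics.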

Emerging Solutions and Methodological Advances

Synthetic Data Generation

To circumvent the challenges of acquiring real-world clinical data, researchers are turning to synthetic data generation. Tools like AndroGen offer an open-source solution for creating customized, realistic synthetic images of sperm from different species [4]. The significant advantage of this approach is that it requires no real data or intensive training of generative models, drastically reducing costs and annotation effort [4]. AndroGen allows researchers to specify parameters to create task-specific datasets, providing a fast and interactive way to generate large volumes of labeled data for training and evaluating machine learning models, particularly for computer-aided semen analysis (CASA) systems [4].
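
The general idea of parameter-driven synthetic data can be illustrated with a toy renderer. The sketch below is not AndroGen and does not reproduce its interface or realism; `synth_sperm_image`, its parameters, and the ellipse-plus-curve shape model are hypothetical. It only shows how an image and its label (here a bounding box) can be produced together without any manual annotation.

```python
import math
import random


def synth_sperm_image(size=32, head_a=5, head_b=3, tail_len=14, seed=0):
    """Render a toy binary image of one sperm-like shape plus its label.

    Returns (image, bbox): `image` is a size x size list of 0/1 pixels and
    `bbox` is (row_min, col_min, row_max, col_max) of the drawn pixels.
    All parameters are illustrative, not taken from any real tool.
    """
    rng = random.Random(seed)
    image = [[0] * size for _ in range(size)]

    # Head centre chosen so the head and the tail both fit inside the frame.
    centre_row = rng.randint(head_b + 1, size - head_b - 2)
    centre_col = rng.randint(head_a, size - head_a - tail_len - 1)

    # Head: filled ellipse with semi-axes head_a (columns) and head_b (rows).
    for r in range(size):
        for c in range(size):
            dx = (c - centre_col) / head_a
            dy = (r - centre_row) / head_b
            if dx * dx + dy * dy <= 1.0:
                image[r][c] = 1

    # Tail: one-pixel-wide wavy curve leaving the right edge of the head.
    for i in range(1, tail_len + 1):
        r = centre_row + round(math.sin(i / 2.0))
        c = centre_col + head_a + i
        image[r][c] = 1

    drawn = [(r, c) for r in range(size) for c in range(size) if image[r][c]]
    rows = [r for r, _ in drawn]
    cols = [c for _, c in drawn]
    return image, (min(rows), min(cols), max(rows), max(cols))


image, bbox = synth_sperm_image(seed=1)
print("\n".join("".join("#" if p else "." for p in row) for row in image))
print("bounding box:", bbox)
```

Because the label is derived from the same parameters that drew the shape, it is correct by construction, which is the property that makes synthetic generation attractive when expert annotation is the bottleneck.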

Advanced Feature Engineering and Model Architectures

Another strategy to maximize the utility of limited data involves sophisticated model architectures and feature engineering techniques. For example, a 2025 study proposed a hybrid framework combining a ResNet50 backbone with a Convolutional Block Attention Module (CBAM) [2]. This architecture directs the model's focus to the most relevant sperm features (e.g., head shape, acrosome) while suppressing background noise [2]. The model is further enhanced by a comprehensive deep feature engineering (DFE) pipeline, which extracts high-dimensional features from the network and applies classical dimensionality-reduction and feature-selection methods such as Principal Component Analysis (PCA) before classification with a Support Vector Machine (SVM) [2]. This hybrid approach achieved state-of-the-art test accuracies of 96.08% on the SMIDS dataset and 96.77% on the HuSHeM dataset, demonstrating that advanced methodologies can partially compensate for data limitations [2].
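
As a rough illustration of how an attention module reweights features, the sketch below implements only the channel-attention half of CBAM, in its commonly published form: a shared two-layer MLP is applied to the average-pooled and max-pooled channel descriptors, the two outputs are summed, and a sigmoid turns the result into per-channel weights. The spatial-attention half, the ResNet50 backbone, and the PCA and SVM stages are omitted. The weights `w1` and `w2` and the tiny feature map are made-up values, and nothing here reproduces the cited study's implementation.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def shared_mlp(vec, w1, w2):
    """Two-layer MLP with ReLU; w1 is hidden x C, w2 is C x hidden."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def channel_attention(fmap, w1, w2):
    """Channel attention over `fmap`, a list of C channels (2D lists).

    Returns (weights, scaled): one weight in (0, 1) per channel, and the
    feature map with every channel multiplied by its weight.
    """
    avg_desc = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in fmap]
    max_desc = [max(map(max, ch)) for ch in fmap]
    from_avg = shared_mlp(avg_desc, w1, w2)
    from_max = shared_mlp(max_desc, w1, w2)
    weights = [sigmoid(a + m) for a, m in zip(from_avg, from_max)]
    scaled = [[[v * wt for v in row] for row in ch]
              for ch, wt in zip(fmap, weights)]
    return weights, scaled


# Two 2x2 channels and arbitrary illustrative MLP weights (hidden size 1).
fmap = [[[1.0, 2.0], [3.0, 4.0]],
        [[0.0, 0.0], [0.0, 1.0]]]
w1 = [[0.5, -0.5]]
w2 = [[1.0], [-1.0]]
weights, scaled = channel_attention(fmap, w1, w2)
print([round(w, 3) for w in weights])
```

In a trained network the MLP weights are learned, so the module can emphasize channels that respond to structures such as the head outline and down-weight channels dominated by background.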

Data Rescue and Alternative Sourcing

In response to the disappearance of public data, grassroots "data rescue" initiatives have emerged. These efforts involve researchers and non-profit organizations racing to archive public data before it is taken down. CIOs and researchers are advised to explore resources such as the Wayback Machine for historical versions of websites, the Harvard Dataverse, the Environmental Data & Governance Initiative (EDGI), and the Data Rescue Project tracker to locate and preserve critical datasets [3]. Furthermore, enterprises are increasingly looking to monetize their internal data assets through licensing or Data-as-a-Service (DaaS) models, which could open up new, though potentially costly, sources of information for research [3].

Experimental Protocols for Proteomic Profiling as a Complementary Approach

While image-based morphology analysis is vital, the molecular profiling of sperm offers a deeper understanding of male infertility. Mass spectrometry-based proteomics is one such powerful methodology. The following workflow, derived from a 2025 study that built a comprehensive proteomic dataset of human spermatozoa, can serve as a template for generating rich, multi-modal datasets [5]. This detailed protocol is summarized in Table 2 and visualized in the diagram below.

Diagram 1: Experimental workflow for sperm proteomic profiling

Table 2: Detailed Experimental Protocol for Sperm Proteomics [5]

| Protocol Step | Detailed Methodology & Reagents | Function & Purpose |
|---|---|---|
| 1. Sample Collection & Preparation | Collect semen samples after 3-5 days of abstinence. Analyze per WHO (2021) guidelines using Computer-Aided Sperm Analysis (CASA). Group as Normozoospermic (total motility >40%, PR >32%) or Asthenozoospermic (total motility ≤40%, PR ≤32%). Liquefy at 37°C, centrifuge, and wash pellet 3x with ice-cold PBS. | To obtain purified sperm cells, categorized by motility characteristics, for downstream protein analysis. |
| 2. Protein Extraction | Resuspend sperm pellet in T-PER (Tissue Protein Extraction Reagent) or UA Lysis Buffer (8 M Urea, 100 mM Tris-HCl, pH 8.0). Perform sonication on ice (1s on/2s off, 99 cycles, 200W). Centrifuge at 14,000 × g for 15 min and collect supernatant. | To effectively lyse sperm cells and solubilize proteins while minimizing degradation and activity loss. |
| 3. Protein Quantification | Use the Bradford Assay. | To accurately measure protein concentration for loading consistency in subsequent steps. |
| 4. Filter-Aided Sample Preparation (FASP) | Use a 30 kDa MWCO ultrafiltration device. Denaturation/Reduction: Add UA buffer + 20 mM Dithiothreitol (DTT), incubate at 37°C for 4h. Alkylation: Add UA buffer + 50 mM Iodoacetamide, incubate in the dark at room temperature. | To remove detergents, denature proteins, reduce disulfide bonds, and alkylate cysteine residues to prevent reformation. |
| 5. Enzymatic Digestion | Digest proteins with Trypsin at 37°C overnight. | To cleave proteins into peptides suitable for mass spectrometry analysis. |
| 6. Peptide Fractionation | Use basic reversed-phase chromatography. | To reduce sample complexity and increase proteome coverage by separating peptides prior to MS injection. |
| 7. LC-MS/MS Analysis | Use a Vanquish Neo UHPLC system coupled to an Orbitrap Astral mass spectrometer. Operate in Data-Independent Acquisition (DIA) mode. | To separate peptides by liquid chromatography (LC) and generate high-resolution MS/MS spectra for precise protein identification and quantification. |
| 8. Data Analysis | Process raw files with Spectronaut software. Search against a protein database. Perform functional analysis (GO, ssGSEA). | To identify and quantify proteins, and to elucidate biological processes and pathways related to sperm function. |
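
The grouping rule in step 1 of the protocol can be written as a small function. This is a sketch that follows the thresholds exactly as they appear in Table 2 and assumes that "PR" denotes progressive motility. The function name `motility_group` is hypothetical, and the `"indeterminate"` label for samples meeting only one of the two thresholds is an assumption, since the table does not say how such samples were handled.

```python
def motility_group(total_motility_pct, progressive_pct):
    """Assign a sample to a motility group using the Table 2 thresholds.

    total_motility_pct: total motile sperm, in percent.
    progressive_pct:    progressively motile sperm (PR), in percent.
    """
    if total_motility_pct > 40 and progressive_pct > 32:
        return "normozoospermic"
    if total_motility_pct <= 40 and progressive_pct <= 32:
        return "asthenozoospermic"
    # Mixed case (only one threshold met): not covered by the table.
    return "indeterminate"


for sample in [(55, 40), (30, 20), (45, 30)]:
    print(sample, "->", motility_group(*sample))
```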

The Scientist's Toolkit: Key Research Reagents and Materials

The successful execution of the proteomic workflow above, and similar experiments, relies on a suite of critical reagents and tools.

Table 3: Essential Research Reagent Solutions for Sperm Proteomics

| Reagent / Tool | Function / Application |
|---|---|
| T-PER (Tissue Protein Extraction Reagent) | A ready-to-use, proprietary reagent for efficient extraction of soluble proteins from tissues and cells, including resilient sperm cells [5]. |
| Urea-Assisted (UA) Lysis Buffer | A classical, strong denaturing buffer (8 M Urea) that effectively disrupts cellular structures and solubilizes proteins, including membrane-associated proteins [5]. |
| Dithiothreitol (DTT) | A reducing agent that breaks disulfide bonds within and between protein molecules, aiding denaturation and unfolding [5]. |
| Iodoacetamide | An alkylating agent that modifies cysteine residues by adding carbamidomethyl groups, preventing disulfide bond reformation and aiding in accurate protein identification [5]. |
| Trypsin | A protease enzyme that cleaves peptide bonds specifically at the carboxyl side of lysine and arginine residues, generating peptides ideal for MS analysis [5]. |
| Orbitrap Astral Mass Spectrometer | A high-resolution mass spectrometer that combines Orbitrap full-scan precision with the high speed and sensitivity of the Astral analyzer, ideal for comprehensive DIA proteomics [5]. |
| Spectronaut Software | A specialized software platform for the analysis of DIA-MS data, enabling precise identification and quantification of thousands of proteins from complex samples [5]. |

The critical shortage of public, large-scale datasets for sperm morphology analysis is a multi-faceted problem with deep implications for the advancement of male infertility diagnostics and treatment. This shortage stems from technical challenges in data acquisition and annotation, as well as broader societal trends affecting the availability of public scientific data. The field has responded with innovative technological solutions, including synthetic data generation, advanced deep feature engineering, and sophisticated proteomic workflows that maximize the value of limited samples.

Moving forward, a concerted effort is needed from the global research community. This includes establishing standardized protocols for sperm image acquisition and annotation to ensure consistency, promoting the public sharing of de-identified datasets in curated repositories, and continuing to invest in novel methods like synthetic data and multi-omics integration. By addressing the data scarcity challenge head-on, researchers can unlock the full potential of AI and molecular profiling, paving the way for more objective, efficient, and personalized care in reproductive medicine.

Inherent Subjectivity and High Inter-Expert Variability in Manual Annotation

The manual annotation of sperm morphology is a cornerstone of male fertility assessment, yet it is fundamentally compromised by inherent subjectivity and significant variability between experts. This variability presents a critical challenge in reproductive science, where the accurate classification of sperm into normal and abnormal categories directly influences clinical diagnoses and treatment pathways. The World Health Organization (WHO) recognizes 26 distinct types of abnormal sperm morphology and requires the analysis of at least 200 sperm per sample to obtain a reliable assessment [1]. However, this manual process is characterized by high recognition difficulty and is heavily influenced by the observer's subjectivity, leading to substantial limitations in the reproducibility and objectivity of morphological evaluations [1]. This section examines the quantitative evidence of this variability, explores its underlying causes, and outlines experimental protocols and emerging solutions, including artificial intelligence (AI), aimed at standardizing sperm morphology analysis within the broader context of dataset challenges.

Quantitative Evidence of Inter-Expert Variability

Empirical studies consistently demonstrate troubling levels of disagreement among highly trained experts annotating the same biological phenomena. The implications of this variability extend beyond sperm morphology to other critical medical fields.

Table 1: Measured Inter-Expert Variability Across Medical Domains

| Field of Study | Metric of Agreement | Result | Interpretation |
|---|---|---|---|
| General Clinical Judgment [6] | Fleiss' κ | 0.383 | Fair agreement |
| General Clinical Judgment [6] | Average Cohen's κ (External Validation) | 0.255 | Minimal agreement |
| Sperm Morphology Assessment [2] | Inter-observer Variability | Up to 40% disagreement | High diagnostic variability |
| Sperm Morphology Assessment [2] | Kappa values | 0.05 - 0.15 | Substantial diagnostic disagreement |
| ICU Discharge Decisions [6] | Fleiss' κ | 0.174 | Higher disagreement than mortality prediction |
| ICU Mortality Prediction [6] | Fleiss' κ | 0.267 | More agreement than discharge decisions |
| Breast Proliferative Lesions [6] | Fleiss' κ | 0.34 | Fair agreement |
| Major Depressive Disorder [6] | Diagnostic Agreement | 4-15% | Very low consensus |
| EEG Identification [6] | Average pairwise Cohen's κ | 0.38 | Minimal agreement |
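
For readers less familiar with the agreement statistics in the table above, the sketch below computes Fleiss' κ from a matrix of rating counts using the standard textbook formula. The function name and the toy data are illustrative assumptions; none of the values in the table are reproduced here.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa for a fixed number of raters per item.

    counts[i][j] is the number of raters who assigned item i to category j.
    Every row must sum to the same number of raters n (n >= 2).
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    n_cats = len(counts[0])

    # Share of all assignments that went to each category.
    p_cat = [sum(row[j] for row in counts) / (n_items * n_raters)
             for j in range(n_cats)]
    # Observed agreement per item: fraction of rater pairs that agree.
    p_item = [(sum(c * c for c in row) - n_raters)
              / (n_raters * (n_raters - 1)) for row in counts]

    p_observed = sum(p_item) / n_items
    p_expected = sum(p * p for p in p_cat)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when only one category is used")
    return (p_observed - p_expected) / (1.0 - p_expected)


# Three raters, two categories ("normal", "abnormal"), four sperm images.
ratings = [[3, 0], [0, 3], [2, 1], [1, 2]]
print(round(fleiss_kappa(ratings), 3))
```

κ corrects raw agreement for the agreement expected by chance, so it can be low, or even negative, when raters agree on many items only because one category dominates.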

In the specific context of sperm morphology, the challenge of achieving consensus is further quantified by dataset construction efforts. One study aimed at creating a high-quality dataset for a training tool began with 9,365 individual ram sperm images. After being labeled by three experienced assessors, only 5,121 images (54.7%) achieved 100% consensus on all labels, meaning nearly half of all images provoked some degree of disagreement among the experts [7]. This figure starkly illustrates the pervasive nature of subjectivity in even seemingly straightforward morphological classifications.

Root Causes of Annotation Subjectivity

The inconsistency in expert annotations is not primarily due to a lack of training or diligence, but stems from deeper, systemic sources inherent to subjective judgment tasks.

- Subjectivity and Bias in Labeling Tasks: The very nature of morphological assessment often involves judgment calls, particularly for sperm that exhibit borderline or subtle abnormal features. Experts may apply internal thresholds differently, leading to systematic biases between individuals [6].

- Human Error and Cognitive "Slips": Even highly skilled experts are susceptible to momentary lapses in concentration or cognitive overload, especially during the tedious process of evaluating hundreds of sperm cells per sample [6].

- Complexity of Defect Assessment: A single sperm cell must be evaluated for concurrent defects in multiple compartments—the head, vacuoles, midpiece, and tail [1]. This multi-parametric assessment increases the cognitive load and the number of potential decision points where observers can disagree.

- Insufficient or Ambiguous Guidelines: While the WHO provides standards, applying these to the vast spectrum of real-world sperm appearances can be challenging. Ambiguities in classification criteria or a lack of clear examples for rare morphological types can lead to inconsistent application of the rules [8].

Experimental Protocols for Establishing Ground Truth

To overcome the challenges of inter-expert variability, particularly for building reliable datasets for AI model training, rigorous experimental protocols for establishing "ground truth" data have been developed.

Protocol: Multi-Consensus Expert Labeling for High-Quality Dataset Creation

- Objective: To create a dataset of sperm morphology images with verified ground truth labels for use in training and standardizing human assessors and machine learning models [7].

- Image Acquisition:

  - Microscope: Olympus BX53 with DIC optics.

  - Magnification: 40x objective with high numerical apertures (0.95 for DIC) to maximize resolution.

  - Camera: Olympus DP28 with an 8.9-megapixel CMOS sensor.

  - Process: Capture 50 random fields of view (FOV) per semen sample (total of 3,600 FOV from 72 rams) [7].

- Sperm Isolation and Cropping:

  - Use a novel machine-learning algorithm to automatically crop individual sperm cells from each FOV, resulting in a library of 9,365 single-sperm images [7].

- Annotation and Consensus Process:

  - Step 1: Three independent, experienced assessors label each of the 9,365 sperm images according to a predefined, comprehensive classification system (e.g., a 30-category system for adaptability) [7].

  - Step 2: Analyze the labels to identify images where all three assessors are in 100% agreement on all morphological categories.

  - Step 3: Establish the "ground truth" dataset using only the 5,121 images that passed this strict consensus filter. Images without full consensus are excluded to ensure label reliability [7].

- Application: The validated dataset is integrated into a web interface to serve as a benchmark for training new morphologists and assessing their proficiency against a standardized reference [7].
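
The consensus filter in Steps 2 and 3 amounts to keeping an image only when every assessor produced identical labels. The sketch below shows that rule on two made-up images and then re-derives two figures quoted in the protocol. The function name, the dictionary layout, and the category names are assumptions for illustration, not the study's actual data format.

```python
def consensus_filter(labels_by_image):
    """Keep only images on which all assessors agree for every category.

    labels_by_image maps an image id to a list with one dict per assessor,
    each dict mapping a morphological category to the assigned label.
    Returns a dict of image id -> agreed labels.
    """
    kept = {}
    for image_id, assessor_labels in labels_by_image.items():
        reference = assessor_labels[0]
        if all(labels == reference for labels in assessor_labels[1:]):
            kept[image_id] = reference
    return kept


toy_labels = {
    "img_001": [{"head": "normal", "tail": "normal"}] * 3,
    "img_002": [
        {"head": "pyriform", "tail": "normal"},
        {"head": "normal", "tail": "normal"},   # one dissenting assessor
        {"head": "pyriform", "tail": "normal"},
    ],
}
print(sorted(consensus_filter(toy_labels)))

# Arithmetic check of the figures quoted in the protocol.
total_fov = 50 * 72                        # fields of view per ram x rams
consensus_pct = round(100 * 5121 / 9365, 1)
print(total_fov, consensus_pct)
```

Discarding every image with any disagreement gives very clean labels, but it also removes exactly the borderline cases that are hardest to classify, which is worth keeping in mind when such a set is used as a benchmark.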

This multi-consensus approach is recognized as a best practice. One study noted that the precision-recall of a machine learning model for sperm morphology improved by 12.6% to 26% when a two-person consensus strategy was used for generating the training labels [7].

Diagram 1: Multi-consensus ground truth workflow.

AI and Standardization as Mitigation Strategies

The limitations of manual annotation have accelerated the development of AI and standardized training tools to mitigate human subjectivity.

Table 2: Key Research Reagent Solutions for Sperm Morphology Analysis

| Item / Solution | Function / Description | Role in Standardization |
|---|---|---|
| DIC Microscope (e.g., Olympus BX53) | Provides high-resolution, clear images with detailed structural contrast without staining. | Essential for consistent, high-quality image acquisition across different labs [7]. |
| High-NA Objectives (0.75-0.95) | Maximizes resolution and light-gathering capability, crucial for discerning subtle morphological defects. | Reduces image quality variability, a key source of annotation disagreement [7]. |
| Standardized Morphology Classification System | A comprehensive set of categories (e.g., 30 categories) for labeling sperm defects (Normal, Pyriform, Bent Midpiece, etc.). | Provides a common, unambiguous vocabulary for all annotators, reducing bias and inconsistency [7]. |
| Consensus-Based Ground Truth Datasets | Datasets where labels are only valid after multiple experts achieve 100% agreement. | Serves as an objective benchmark for both training human assessors and developing AI models [7]. |
| Deep Learning Models (e.g., CBAM-enhanced ResNet50) | Automated systems that extract features and classify sperm morphology with high accuracy. | Reduces human subjectivity, cuts analysis time from 30-45 minutes to under 1 minute per sample, and provides consistent results [2]. |

Deep learning frameworks represent a paradigm shift in addressing annotation variability. A novel framework combining a ResNet50 backbone with a Convolutional Block Attention Module (CBAM) and deep feature engineering has demonstrated exceptional performance, achieving test accuracies of 96.08% and 96.77% on standard datasets [2]. This approach not only surpasses the performance of conventional machine learning models, which rely on manually engineered features, but also provides clinically interpretable results through attention visualization, offering a path toward standardized, objective, and efficient fertility assessment [1] [2].

The inherent subjectivity and high inter-expert variability in manual sperm morphology annotation are well documented and quantifiable, and together they pose a significant barrier to reproducible diagnostics and reliable dataset creation. Evidence shows that even experienced consultants exhibit only "fair" to "minimal" agreement on clinical judgments. The path forward requires a concerted shift towards rigorous, consensus-driven protocols for establishing ground truth data and the integration of robust AI systems. By adopting multi-expert consensus strategies and leveraging deep learning models, the field can overcome the limitations of human annotation, paving the way for standardized, accurate, and objective sperm morphology analysis that enhances both clinical decision-making and research reproducibility.

The quantitative assessment of sperm morphology represents a critical component in the diagnosis of male infertility, providing crucial insights into testicular and epididymal function and predicting natural pregnancy outcomes [1] [9]. According to World Health Organization (WHO) standards, a comprehensive morphological evaluation requires the analysis and classification of at least 200 sperm cells, each divided into three primary structural compartments: the head, neck/midpiece, and tail, with up to 26 recognized types of abnormal morphology [1] [9]. This intricate classification system, while essential for clinical assessment, introduces significant analytical challenges that are further compounded by substantial inter-observer variability in manual evaluations, with reported disagreement rates reaching up to 40% among expert embryologists [2].

The core complexity of sperm morphology annotation stems from the simultaneous and interdependent evaluation of multiple structural domains. As noted in recent literature, "sperm defect assessment under microscopy requires simultaneous evaluation of head, vacuoles, midpiece, and tail abnormalities, which substantially increases annotation difficulty" [1] [9]. This multi-compartment approach necessitates specialized expertise and introduces subjectivity at each analytical stage. Furthermore, technical limitations in image acquisition, including low-resolution images, overlapping sperm cells, and partial structures captured at image boundaries, create additional barriers to establishing standardized annotation protocols [1] [9]. The absence of large, high-quality, and diversely annotated public datasets continues to hinder the development of robust automated systems, ultimately affecting the consistency and clinical utility of sperm morphology assessment across different laboratories and clinical settings [1] [2] [9].

Structural Components and Annotation Complexities

Head and Acrosome

The sperm head presents the most complex annotation target due to its multifaceted morphological characteristics and critical functional importance. Normal morphology is strictly defined by WHO criteria as an oval configuration with specific dimensional parameters (length: 4.0-5.5 μm, width: 2.5-3.5 μm) and an intact acrosome covering 40-70% of the head surface area [2]. Annotation protocols must capture deviations from this standard, including tapered, pyriform, small, or amorphous shapes; microcephalic (abnormally small) or macrocephalic (abnormally large) sizing; and structural anomalies in the post-acrosomal region [2] [10]. The acrosome itself requires precise annotation for integrity and coverage, while the presence, number, and size of vacuoles—cytoplasmic inclusions associated with reduced DNA integrity—represent additional grading criteria that demand high-resolution imaging and experienced annotation [1] [9].
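
The dimensional criteria quoted above lend themselves to a simple range check. The sketch below encodes only the three numeric ranges given in this paragraph (length, width, and acrosome coverage). The function name and return format are hypothetical, and a real assessment also covers shape, vacuoles, and the post-acrosomal region, none of which is modelled here.

```python
def head_within_reference(length_um, width_um, acrosome_fraction):
    """Check head measurements against the ranges quoted in the text.

    length_um, width_um: head length and width in micrometres.
    acrosome_fraction:   share of the head area covered by the acrosome (0-1).
    Returns (all_ok, per_criterion) so a failing criterion can be reported.
    """
    per_criterion = {
        "length": 4.0 <= length_um <= 5.5,
        "width": 2.5 <= width_um <= 3.5,
        "acrosome": 0.40 <= acrosome_fraction <= 0.70,
    }
    return all(per_criterion.values()), per_criterion


print(head_within_reference(4.8, 3.0, 0.55))
print(head_within_reference(3.2, 3.0, 0.55))   # too short: microcephalic range
```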

The complexity of head annotation is further heightened by the subtlety of distinguishing normal variations from pathological forms and the technical challenges of consistently identifying acrosomal boundaries and vacuolar inclusions across different staining protocols and image qualities [1]. Studies utilizing the Modified Human Sperm Morphology Analysis (MHSMA) dataset have demonstrated that even deep learning models face challenges in achieving consistent performance across these fine-grained head abnormalities, with F0.5 scores varying significantly between acrosome (84.74%), head shape (83.86%), and vacuole (94.65%) detection tasks [11].
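
The F0.5 score mentioned above is the F-beta score with β = 0.5, which weights precision more heavily than recall: F_β = (1 + β²) · P · R / (β² · P + R). The sketch below implements that general formula; the precision and recall values in the example are invented and are not taken from the MHSMA results.

```python
def f_beta(precision, recall, beta=0.5):
    """F-beta score; beta < 1 favours precision, beta > 1 favours recall."""
    if precision == 0 and recall == 0:
        return 0.0
    beta_sq = beta * beta
    return (1 + beta_sq) * precision * recall / (beta_sq * precision + recall)


# Same two numbers, swapped: F0.5 rewards the high-precision case more.
print(round(f_beta(0.8, 0.5), 4))
print(round(f_beta(0.5, 0.8), 4))
```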

Midpiece and Neck

The midpiece and neck region connects the sperm head to the flagellar tail and contains the mitochondria essential for energy production. Annotation protocols for this region focus on structural integrity and alignment, with specific criteria for identifying bent necks, asymmetrical midpiece attachments, and the presence of cytoplasmic droplets—residual cytoplasm that should have been extruded during spermatogenesis [10] [11]. According to the modified David classification system used in annotation protocols, midpiece defects primarily include "cytoplasmic droplet (h), bent (j)" [10].

The anatomical complexity of this region presents unique annotation challenges, as the midpiece's helical structure and subtle bending can be difficult to assess in two-dimensional microscopy images. Furthermore, distinguishing pathological bending from normal flexibility requires careful consideration of angle thresholds and consistency across multiple viewing planes. These challenges are reflected in dataset statistics, where midpiece abnormalities often show lower annotation consistency compared to more obvious head defects, highlighting the need for specialized training and standardized criteria for this anatomical region [10].
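
One way to make a bending criterion explicit is to measure the angle between the head axis and the midpiece axis from three annotated landmarks. The sketch below does this in 2D. The landmark names, the function names, and in particular the 30-degree threshold are hypothetical, since the sources summarized here do not state a numeric cutoff.

```python
import math


def bend_angle_deg(head_centre, neck, midpiece_end):
    """Angle in degrees between head axis and midpiece axis (0 = straight).

    Each argument is an (x, y) point in image coordinates. The head axis
    runs from head_centre to neck, the midpiece axis from neck onward.
    """
    ax, ay = neck[0] - head_centre[0], neck[1] - head_centre[1]
    bx, by = midpiece_end[0] - neck[0], midpiece_end[1] - neck[1]
    dot = ax * bx + ay * by
    cross = ax * by - ay * bx
    return abs(math.degrees(math.atan2(cross, dot)))


def is_bent(angle_deg, threshold_deg=30.0):
    """Flag a bent neck; the default threshold is an illustrative guess."""
    return angle_deg > threshold_deg


straight = bend_angle_deg((0, 0), (1, 0), (2, 0))
right_angle = bend_angle_deg((0, 0), (1, 0), (1, 1))
print(straight, is_bent(straight))
print(right_angle, is_bent(right_angle))
```

A measurement of this kind only captures bending within the image plane, so it shares the limitation described above: a midpiece bent toward or away from the objective can look straight in a single 2D view.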

Tail and Flagellum

The sperm tail, or flagellum, is structurally divided into the principal piece and end piece, and is responsible for propulsion through whip-like movements. Annotation protocols classify tail abnormalities according to the modified David system as "coiled (n), short (l), multiple (o)" tails, along with complete absence [10]. Additional classification systems used in bovine studies further categorize tail defects as "folded tail," "loose tail," or complete detachment [11].

The dynamic, three-dimensional nature of tail movement introduces significant annotation complexity, particularly in static images where the full extent of coiling or bending may not be apparent. Recent advances in 3D+t multifocal imaging have enabled more comprehensive flagellar assessment by capturing movement in volumetric space over time, addressing critical limitations of traditional two-dimensional analysis [12]. However, these technological advances introduce new annotation challenges, including the need for specialized tracking algorithms and computational resources capable of processing complex four-dimensional data structures [12] [13].

Table 1: Sperm Morphology Classification Systems and Defect Types

| Structural Component | Classification System | Defect Categories | Annotation Challenges |
|---|---|---|---|
| Head & Acrosome | WHO Standards [2] | Tapered, thin, microcephalous, macrocephalous, multiple heads, abnormal acrosome, abnormal post-acrosomal region | Subtle shape distinctions, acrosome coverage measurement, vacuole identification |
| Midpiece & Neck | Modified David [10] | Cytoplasmic droplet, bent | 2D assessment of 3D structure, bending angle quantification |
| Tail & Flagellum | Modified David [10] | Coiled, short, multiple tails | Dynamic assessment in static images, 3D movement patterns |

Quantitative Analysis of Annotation Challenges

Dataset Limitations and Variability

The development of robust sperm morphology annotation systems is fundamentally constrained by limitations in existing datasets, which exhibit considerable variability in size, quality, and annotation consistency. Current public datasets, including HSMA-DS, MHSMA, HuSHeM, and SMIDS, typically contain between 216 and 3,000 images, with significant variations in resolution, staining protocols, and class representation [1] [2]. This heterogeneity directly impacts annotation quality and model generalizability, as algorithms trained on limited or imbalanced datasets struggle to maintain performance across diverse clinical settings and population characteristics.

Recent studies have quantified these annotation inconsistencies through rigorous inter-expert agreement analysis. Research utilizing the SMD/MSS (Sperm Morphology Dataset/Medical School of Sfax) dataset, which employed three independent experts for annotation, revealed a complex distribution of consensus: "There were three separate agreement scenarios among the three experts: 1: No agreement (NA) among the experts; 2: partial agreement (PA): 2/3 experts agree on the same label for at least one category, and 3: total agreement (TA): 3/3 experts agree on the same label for all categories" [10]. This multi-tiered agreement structure highlights the inherent subjectivity in morphological assessment, particularly for borderline cases and subtle abnormalities that lack definitive classification criteria.
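The three agreement scenarios can be tallied with a few lines of code. The sketch below is illustrative only: it assumes a simplified setting in which each expert assigns a single label per image (the quoted protocol scores agreement per category), and the function names and example labels are hypothetical rather than taken from the SMD/MSS study.

```python
from collections import Counter

def agreement_level(labels):
    """Return 'TA', 'PA', or 'NA' for one image labelled by three experts."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    # Size of the largest group of experts who chose the same label.
    top_count = Counter(labels).most_common(1)[0][1]
    if top_count == 3:
        return "TA"  # total agreement: 3/3 experts
    if top_count == 2:
        return "PA"  # partial agreement: 2/3 experts
    return "NA"      # no agreement

def agreement_distribution(annotations):
    """Fraction of images falling into each agreement scenario."""
    counts = Counter(agreement_level(labels) for labels in annotations)
    total = len(annotations)
    return {level: counts.get(level, 0) / total for level in ("NA", "PA", "TA")}

# Hypothetical labels from three experts for four images.
annotations = [
    ("normal", "normal", "normal"),
    ("tapered", "tapered", "thin"),
    ("coiled", "short", "bent"),
    ("normal", "normal", "tapered"),
]
distribution = agreement_distribution(annotations)
```

Reporting the distribution per defect class, rather than over the whole dataset, is one way to see which categories are most contentious.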

Table 2: Sperm Morphology Datasets and Annotation Characteristics

| Dataset | Image Count | Annotation Scope | Key Limitations |
|---|---|---|---|
| HSMA-DS [1] | 1,457 | Classification | Non-stained, noisy, low resolution |
| MHSMA [1] [11] | 1,540 | Classification | Non-stained, low resolution, limited sample size |
| HuSHeM [1] [2] | 216 (publicly available) | Sperm head morphology | Small size, limited structural coverage |
| SMIDS [1] [2] | 3,000 | 3-class classification | Restricted abnormality categories |
| VISEM-Tracking [1] | 656,334 annotated objects | Detection, tracking, regression | Limited morphological detail |
| SVIA [1] | 125,000 annotated instances | Detection, segmentation, classification | Low-resolution, unstained samples |
| SMD/MSS [10] | 6,035 (after augmentation) | 12-class David classification | Single-institution source |

Performance Metrics of Automated Annotation Systems

Recent advances in deep learning have produced increasingly sophisticated automated annotation systems, though their performance varies significantly across different morphological structures and defect types. Convolutional neural network (CNN) approaches have improved markedly on conventional machine learning methods: one CBAM-enhanced ResNet50 architecture achieved test accuracies of 96.08% ± 1.2% on the SMIDS dataset and 96.77% ± 0.8% on the HuSHeM dataset, improvements of 8.08 and 10.41 percentage points, respectively, over baseline CNN performance [2].

For complete sperm structure analysis, more complex multi-stage frameworks have been developed. One comprehensive system utilizing the BlendMask segmentation method coupled with SegNet for component separation achieved a morphological accuracy of 90.82% when validated against experienced embryologists [13]. In bovine applications, YOLOv7-based frameworks demonstrated a global mAP@50 of 0.73, precision of 0.75, and recall of 0.71 across six morphological categories, indicating a balanced tradeoff between accuracy and efficiency [11]. These metrics reveal persistent challenges in achieving consistent performance across all sperm structures: midpiece and tail annotations typically show lower accuracy than head structures because of their greater variability and more complex morphological features.
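The "@50" in mAP@50 refers to an intersection-over-union (IoU) threshold of 0.5: a predicted box counts as correct only if it overlaps a ground-truth box by at least that much. The sketch below shows this matching criterion and the resulting precision and recall. It is a simplified, class-agnostic illustration with hypothetical boxes; it does not rank predictions by confidence or average over classes, so it is not a full mAP computation and is not the evaluation code of the cited study.

```python
def box_iou(box_a, box_b):
    """IoU of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Greedy one-to-one matching of predicted boxes to ground-truth boxes."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predictions:
        best = max(unmatched, key=lambda gt: box_iou(pred, gt), default=None)
        if best is not None and box_iou(pred, best) >= iou_threshold:
            true_positives += 1
            unmatched.remove(best)  # each ground-truth box is matched once
    false_positives = len(predictions) - true_positives
    false_negatives = len(unmatched)
    precision = true_positives / (true_positives + false_positives) if predictions else 0.0
    recall = true_positives / (true_positives + false_negatives) if ground_truth else 0.0
    return precision, recall

# Hypothetical boxes: one good detection, one spurious detection, one missed cell.
ground_truth = [(0, 0, 10, 10), (20, 20, 30, 30)]
predictions = [(1, 1, 11, 11), (50, 50, 60, 60)]
precision, recall = precision_recall(predictions, ground_truth)
```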

Experimental Protocols for Structural Annotation

Sample Preparation and Image Acquisition

Standardized sample preparation is fundamental to reproducible morphological annotation. Established protocols specify that semen smears should be prepared following WHO guidelines using staining kits such as RAL Diagnostics to enhance structural contrast [10]. For live sperm analysis without staining, alternative fixation methods employing controlled pressure (6 kp) and temperature (60°C) can immobilize sperm while maintaining structural integrity for morphological evaluation [11]. Bright-field microscopy with oil immersion 100x objectives is standard for image acquisition, though negative phase contrast at 40x magnification is employed in bovine studies using systems like the Trumorph [11].

Advanced imaging systems such as the MMC CASA (Computer-Assisted Semen Analysis) system facilitate sequential image acquisition using a microscope equipped with a digital camera [10]. For three-dimensional dynamic analysis, innovative multifocal imaging (MFI) systems based on inverted microscopes (e.g., Olympus IX71) with piezoelectric devices that oscillate objectives at 90 Hz with 20 μm amplitude enable capture of sperm movement in volumetric space [12]. These systems, coupled with high-speed cameras recording at 5000-8000 fps, generate multifocal video-microscopy hyperstacks that support detailed 4D (3D + time) analysis of sperm dynamics, though they require sophisticated computational resources for subsequent annotation and analysis [12].

Annotation Workflows and Quality Control

Robust annotation workflows incorporate multiple quality control mechanisms to address inherent subjectivity in morphological assessment. A standardized protocol involves independent classification by multiple experts (typically three) with documented experience in semen analysis, using established classification systems such as the modified David criteria or WHO standards [10]. For each sperm image, experts independently document morphological classes for each sperm component, with results compiled in a centralized ground truth file that includes image names, expert classifications, and morphometric measurements [10].

To resolve inter-expert discrepancies, statistical analysis of agreement distribution using methods such as Fisher's exact test (with significance at p < 0.05) helps identify systematically contentious morphological categories [10]. For automated systems, data augmentation techniques—including rotation, scaling, and contrast adjustment—are employed to address class imbalance and improve model generalization [10]. The SMD/MSS dataset, for instance, expanded from 1,000 to 6,035 images through such augmentation strategies, significantly enhancing training stability and classification performance [10].
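As an illustration of the statistical step, the following sketch implements a two-sided Fisher's exact test for a 2×2 contingency table using only the standard library. The choice of a 2×2 layout (two defect categories by agreement versus disagreement) and the counts are assumptions made for the example; the cited study may have used a different table layout or software.

```python
from math import comb

def fisher_exact_two_sided(table):
    """Two-sided Fisher's exact test p-value for a 2x2 table [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of observing x in the top-left cell.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # Sum the probabilities of all tables at least as extreme as the observed one.
    p_value = sum(
        prob(x) for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)
    )
    return min(p_value, 1.0)

# Hypothetical counts: rows are two defect categories,
# columns are (total agreement, partial or no agreement).
table = [[18, 2], [9, 11]]
p_value = fisher_exact_two_sided(table)
significant = p_value < 0.05
```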

Diagram 1: Sperm annotation workflow showing key stages from sample preparation to model training.

Computational Approaches and Methodologies

Deep Learning Architectures for Morphological Analysis

Contemporary approaches to automated sperm morphology annotation predominantly utilize deep learning architectures, with convolutional neural networks (CNNs) demonstrating particular efficacy. The ResNet50 backbone enhanced with Convolutional Block Attention Module (CBAM) mechanisms has emerged as a leading architecture, with studies reporting that "the integration of CBAM into ResNet50 aims to enhance the representational capacity of extracted features, particularly for capturing subtle morphological differences between normal and teratozoospermic sperm" [2]. These attention mechanisms enable the network to focus computational resources on the most morphologically relevant regions—such as head shape anomalies, acrosome integrity, and tail defects—while suppressing background noise and irrelevant artifacts.

Advanced ensemble methods further enhance classification performance by combining multiple architectures. Recent research has explored "feature-level fusion by combining features extracted from multiple EfficientNetV2 models to leverage complementary strengths and enhance classification accuracy" [14]. These multi-level ensemble approaches integrate feature-level fusion (combining CNN-derived features) with decision-level fusion (using soft voting mechanisms) to achieve robust performance across diverse abnormality classes. One such framework achieved 67.70% accuracy on a challenging 18-class morphology dataset, significantly outperforming individual classifiers and demonstrating particular effectiveness in addressing class imbalance [14].

For complete sperm structural analysis, hybrid frameworks combining multiple specialized networks have shown promising results. One comprehensive system employs an improved FairMOT tracking algorithm that incorporates "the distance and angle of the same sperm head movement in adjacent frames, as well as the head target detection frame IOU value, into the cost function of the Hungarian matching algorithm" for robust sperm tracking [13]. This tracking backbone is integrated with BlendMask for instance segmentation and SegNet for separating head, midpiece, and principal piece components, enabling comprehensive morphological analysis of moving sperm without requiring staining or fixation [13].

Performance Optimization Strategies

Optimizing automated annotation systems requires addressing several domain-specific challenges, including class imbalance, limited dataset size, and generalization across imaging protocols. Deep feature engineering (DFE) approaches that combine the representational power of deep neural networks with classical feature selection methods have demonstrated significant performance improvements [2]. One study reported that "applying PCA to the deep feature embeddings and subsequently training an SVM led to a classification accuracy of 96.08%, representing a substantial improvement of approximately 8 percentage points" over end-to-end CNN classification [2].

Data augmentation represents another critical optimization strategy, particularly for addressing the severe class imbalance inherent in sperm morphology datasets where normal sperm may be significantly outnumbered by various abnormal forms. Standard geometric transformations (rotation, scaling, flipping) are supplemented with more advanced techniques such as generative adversarial networks (GANs) to create synthetic training samples that increase minority class representation [10]. These approaches have enabled studies to expand limited original datasets (e.g., 1,000 images) to more robust training sets (e.g., 6,035 images) with improved class balance [10].

Diagram 2: Deep learning architecture with attention mechanisms and feature engineering.

Essential Research Reagents and Materials

The development of robust sperm morphology annotation systems requires carefully selected reagents and materials throughout the analytical pipeline, from sample preparation to computational analysis. The following table summarizes critical components and their functions in supporting reproducible, high-quality morphological assessment.

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Category | Specific Examples | Function & Application |
|---|---|---|
| Staining Kits | RAL Diagnostics staining kit [10] | Enhances structural contrast for morphological evaluation |
| Fixation Systems | Trumorph system (pressure: 6 kp, temperature: 60°C) [11] | Dye-free immobilization preserving native sperm structure |
| Microscopy Systems | Olympus IX71 with piezoelectric device [12], Optika B-383Phi [11] | High-resolution image acquisition with z-axis capability |
| Imaging Media | Non-capacitating media (NaCl, KCl, CaCl₂, MgCl₂, pyruvate, glucose, HEPES, lactate) [12] | Maintains sperm viability while preventing hyperactivation |
| Capacitation Media | Bovine Serum Albumin (5 mg/ml) + NaHCO₃ (2 mg/ml) [12] | Induces hyperactivated motility for functional assessment |
| Annotation Software | Roboflow [11] | Image labeling and dataset management for model training |
| Deep Learning Frameworks | YOLOv7 [11], ResNet50-CBAM [2], BlendMask [13] | Automated detection, segmentation, and classification |

The structural annotation of sperm morphology—encompassing the head, vacuoles, midpiece, and tail—remains a formidable challenge at the intersection of reproductive biology, clinical medicine, and computer science. While current automated systems have made significant strides in standardization and accuracy, achieving performance levels of 90.82% to 96.77% in validation studies [2] [13], fundamental limitations persist in dataset quality, annotation consistency, and computational methodology. The complexity of simultaneous multi-structure assessment, coupled with the subtlety of morphological distinctions and technical variations in imaging protocols, continues to necessitate expert intervention and manual verification in clinical settings.

Future progress in this domain will likely emerge from several promising research directions. The development of larger, more diverse, and consistently annotated datasets following standardized protocols for slide preparation, staining, image acquisition, and annotation represents an urgent priority [1] [9]. Advanced imaging technologies, particularly 3D+t multifocal systems that capture sperm dynamics in volumetric space over time, offer unprecedented opportunities for analyzing the functional implications of morphological defects [12]. Computational innovations in explainable artificial intelligence, including Grad-CAM attention visualization and hierarchical classification approaches, will enhance clinical interpretability and trust in automated systems [2]. Finally, the integration of morphological assessment with complementary parameters such as DNA fragmentation, molecular biomarkers, and clinical outcomes will enable more comprehensive fertility prediction models that transcend the limitations of pure morphological analysis. Through coordinated advances across these domains, the field can overcome current annotation complexities and deliver increasingly robust, standardized, and clinically impactful sperm morphology assessment systems.

The accurate analysis of sperm morphology is a critical component of male fertility assessment, with abnormal morphology strongly correlated with reduced fertility rates and poor outcomes in assisted reproductive technology [1] [2]. However, the diagnostic process is fundamentally constrained by technical variability introduced during laboratory procedures, particularly in staining protocols, image acquisition parameters, and image resolution. This technical variability presents significant challenges for both traditional manual analysis and emerging artificial intelligence (AI) methodologies, impacting the reproducibility, reliability, and clinical utility of sperm morphology datasets [1] [15].

Within the broader context of research on sperm morphology dataset challenges, technical variability represents a primary source of data inconsistency and model generalizability failure. This whitepaper provides an in-depth technical examination of these variability sources, summarizes experimental evidence of their impacts, details standardized protocols for mitigation, and visualizes the complex relationships between variability sources and data quality. The guidance is specifically framed for researchers, scientists, and drug development professionals working to build robust, standardized, and clinically applicable sperm morphology analysis systems.

Stain Variation: Causes, Impacts, and Normalization Techniques

Origins and Impact of Stain Variability

Staining variation is an inherent challenge in histological and cytological preparations, with Hematoxylin and Eosin (H&E) staining alone accounting for over 80% of slides stained worldwide [16]. A large-scale international study evaluating H&E staining across 247 laboratories found that while 69% of labs achieved good or excellent staining scores, significant inter-laboratory variation persisted due to differing staining methods and protocols [16]. This variation introduces substantial noise into morphological datasets, complicating both manual analysis and automated classification.

The impact of stain variation on AI-driven analysis is particularly profound. A controlled study demonstrated that a well-trained Deep Neural Network (DNN) model for predicting metastasis in non-small cell lung cancer, which achieved AUCs of 0.74-0.81 when trained and tested on slides from the same batch, fell to near-chance performance (AUC = 0.52-0.53) when applied to adjacent recuts from the same tissue blocks prepared at a different time [15]. This degradation occurred even though the cellular content was nearly identical, highlighting the tendency of DNNs to fixate on extraneous staining variations rather than biologically relevant morphological features [15].

Stain Normalization Methodologies

Traditional Image-Processing Based Methods:

- Vahadane Method: Utilizes sparse non-negative matrix factorization to separate different staining in source and target images, normalizing images while preserving structural information [15].

- Macenko Method: Operates by projecting stained images into the optical density space and utilizing singular value decomposition to identify the principal stain vectors for normalization [15].

- Reinhard Method: Transforms images between color spaces by matching the mean and standard deviation of the intensity distributions in the target image to a reference image [15].
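The statistic-matching step at the heart of the Reinhard method can be sketched for a single channel as below. This is a minimal illustration under stated assumptions: the pixel values are assumed to be already converted to the working color space, the color-space conversion itself and clipping to a valid range are omitted, and `match_channel_statistics` is a hypothetical helper name.

```python
from statistics import mean, pstdev

def match_channel_statistics(source, reference):
    """Shift and scale source values so their mean and std match the reference."""
    src_mean, src_std = mean(source), pstdev(source)
    ref_mean, ref_std = mean(reference), pstdev(reference)
    if src_std == 0:
        # A constant channel carries no contrast to rescale.
        return [float(ref_mean) for _ in source]
    scale = ref_std / src_std
    return [(value - src_mean) * scale + ref_mean for value in source]

# Hypothetical flattened channel values from an image and a reference image.
source_channel = [10, 20, 30, 40]
reference_channel = [100, 120, 140]
normalized = match_channel_statistics(source_channel, reference_channel)
```

In a full pipeline the same operation would be applied to each channel independently before converting back to RGB.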

Machine Learning-Based Normalization:

- CycleGAN-Based Methods: Employ generative adversarial networks to learn a mapping between different color spaces, enabling stain normalization by projecting all images into a unified color space defined by reference images [15]. This approach can more effectively handle complex stain variations but may alter cellular morphology if not properly constrained.

Table 1: Comparison of Stain Normalization Techniques

| Method | Underlying Principle | Advantages | Limitations |
|---|---|---|---|
| Vahadane | Sparse non-negative matrix factorization | Preserves structural information in images | Requires representative sample images |
| Macenko | Singular value decomposition in optical density space | Computationally efficient | Sensitive to outlier pixels |
| Reinhard | Color distribution matching | Simple and fast implementation | Limited to global color statistics |
| CycleGAN | Generative adversarial networks | Can handle complex stain variations | May alter cellular morphology |

Image Acquisition and Resolution Variability

Image acquisition in sperm morphology analysis introduces multiple dimensions of technical variability that directly impact analysis reliability. Imaging flow cytometry systems, such as the ImageStream MkII, exemplify these challenges through their configurable parameters, including multiple magnification options (20×, 40×, and 60×), varying pixel resolutions (1, 0.5, and 0.3 μm), and multiple fluorescence channels [17]. These systems, while providing rich morphological data, demonstrate how acquisition settings become embedded in dataset characteristics.

Sample preparation further compounds acquisition variability. As detailed in imaging flow cytometry protocols, cells must be concentrated at 20-30 million cells per milliliter, with deviations affecting image quality and analysis consistency [17]. The concentration requirement highlights the interaction between sample preparation and image acquisition parameters, where suboptimal preparation can undermine even carefully controlled acquisition settings.

Impact on Automated Analysis

Variability in image acquisition parameters directly challenges deep learning approaches for sperm morphology classification. Current state-of-the-art models, including CBAM-enhanced ResNet50 architectures, achieve exceptional performance (96.08% accuracy) on standardized datasets but remain vulnerable to domain shift induced by acquisition parameter changes [2]. This vulnerability is particularly problematic for clinical deployment, where acquisition systems may differ from those used in model development.

The limitations of existing public sperm morphology datasets exacerbate acquisition variability challenges. As noted in a comprehensive review, datasets such as HSMA-DS, MHSMA, and VISEM-Tracking frequently suffer from low resolution, limited sample size, and insufficient categories of morphological abnormalities [1]. This lack of standardized, high-quality annotated datasets containing diverse acquisition parameters fundamentally limits the development of robust analysis models.

Table 2: Public Sperm Morphology Datasets and Their Characteristics

| Dataset Name | Year | Key Characteristics | Limitations |
|---|---|---|---|
| HSMA-DS [1] | 2015 | 1,457 sperm images from 235 patients | Non-stained, noisy, low resolution |
| HuSHeM [1] | 2017 | 725 images (216 publicly available) | Stained, higher resolution, limited availability |
| MHSMA [1] | 2019 | 1,540 grayscale sperm head images | Non-stained, noisy, low resolution |
| VISEM-Tracking [1] | 2023 | 656,334 annotated objects with tracking details | Low-resolution unstained grayscale sperm and videos |
| SVIA [1] | 2022 | 125,000 annotated instances, 26,000 segmentation masks | Low-resolution unstained grayscale images |

Experimental Protocols for Assessing Technical Variability

Protocol for Quantifying Stain Variation Impact

Objective: To evaluate the effect of stain variation on deep learning model generalizability using adjacent tissue sections stained at different times.

Materials:

- Tissue sections from the same donor block

- Standard H&E staining reagents

- Aperio/Leica AT2 slide scanner or equivalent

- Computational resources for DNN training and evaluation

Methodology:

- Slide Preparation: Obtain adjacent recuts (10-20μm thickness) from the same tissue block.

- Staining Procedure: Process slides in the same laboratory but at different time points (e.g., 8 months apart) using identical staining protocols.

- Digital Imaging: Scan all slides at 40× magnification using consistent scanner settings.

- Region of Interest (ROI) Annotation: Have an expert pathologist circle the primary tumor region or area of morphological interest on each slide.

- Tile Sampling: Apply Otsu thresholding within annotated ROIs to exclude empty areas, then randomly sample 1000 image tiles (256×256 pixels) from each whole-slide image.

- Model Training and Evaluation:

  - Train identical DNN architectures (e.g., ResNet models) on tiles from each batch separately.

  - Implement stain normalization techniques (Vahadane, CycleGAN) as an intermediate processing step.

  - Evaluate cross-batch performance by testing each model on the opposite batch's tiles.

  - Quantify performance using Area Under the Curve (AUC) metrics [15].
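The AUC used in the final evaluation step can be computed directly from its rank interpretation: it is the probability that a randomly chosen positive tile receives a higher score than a randomly chosen negative tile. The sketch below uses that definition; the labels and scores are hypothetical and chosen only to contrast a same-batch result with a near-chance cross-batch result.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative sample")
    correctly_ranked = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                correctly_ranked += 1.0
            elif p == n:
                correctly_ranked += 0.5  # ties count as half
    return correctly_ranked / (len(positives) * len(negatives))

# Hypothetical model scores for four tiles (1 = positive class, 0 = negative class).
labels = [1, 1, 0, 0]
same_batch_scores = [0.9, 0.8, 0.3, 0.1]
cross_batch_scores = [0.6, 0.2, 0.5, 0.3]
same_batch_auc = roc_auc(labels, same_batch_scores)
cross_batch_auc = roc_auc(labels, cross_batch_scores)
```

An AUC of 0.5 corresponds to random ranking, which is why cross-batch values just above 0.5 indicate that a model has effectively stopped discriminating.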

Protocol for Evaluating Resolution and Acquisition Variability

Objective: To assess the impact of image resolution and acquisition parameters on sperm morphology classification accuracy.

Materials:

- Fresh semen samples from consented donors

- Imaging flow cytometer (e.g., ImageStream MkII) or microscope with multiple magnification capabilities

- Standard staining reagents for sperm morphology

- Computing infrastructure for deep feature engineering

Methodology:

- Sample Preparation: Prepare sperm samples according to standardized protocols, concentrating to 20-30 million cells per milliliter [17].

- Multi-Resolution Image Acquisition: Capture images of the same sample population at different magnifications (20×, 40×, and 60×), corresponding to pixel resolutions of 1, 0.5, and 0.3 μm.

- Expert Annotation: Have trained embryologists annotate at least 200 sperm per sample according to WHO guidelines, classifying normal and abnormal morphology across head, neck, and tail compartments [1] [2].

- Model Training with Deep Feature Engineering:

  - Implement a CBAM-enhanced ResNet50 architecture for feature extraction.

  - Apply multiple feature selection methods (PCA, Chi-square test, Random Forest importance).

  - Train classifiers (SVM with RBF/Linear kernels, k-Nearest Neighbors) on features extracted from different resolution tiers.

  - Evaluate performance using 5-fold cross-validation to ensure statistical robustness [2].

- Cross-Resolution Validation: Test models trained on one resolution tier against images acquired at different resolution tiers to quantify performance degradation.
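The evaluation design in the last two steps can be sketched with the standard library alone: a 5-fold split of sample indices for within-tier validation, and an enumeration of train/test tier pairs for cross-resolution validation. The helper names, the seed, and the tier labels are assumptions for illustration; model training itself is omitted.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k nearly equal-sized folds
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

def cross_resolution_pairs(tiers):
    """All (train_tier, test_tier) pairs in which the two tiers differ."""
    return [(train, test) for train in tiers for test in tiers if train != test]

# Hypothetical setup: 10 annotated images per tier, three magnification tiers.
splits = list(k_fold_indices(10, k=5))
tier_pairs = cross_resolution_pairs(["20x", "40x", "60x"])
```

Comparing the within-tier cross-validation score with the score on each cross-tier pair gives a direct estimate of the degradation caused by the resolution change.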

Visualizing Technical Variability Impacts

The following diagram illustrates the sources of technical variability in sperm morphology analysis and their cascading effects on data quality and model performance:

Figure 1: Pathways of technical variability impact on sperm morphology analysis, showing how sources of variability affect data quality and ultimately model performance.

The experimental workflow for assessing and mitigating technical variability involves multiple coordinated steps:

Figure 2: Experimental workflow for comprehensive assessment of technical variability in sperm morphology analysis.

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents and Materials for Sperm Morphology Analysis

| Reagent/Material | Function | Technical Specifications | Considerations |
|---|---|---|---|
| Anti-CD45 Antibody [17] | Pan-white blood cell marker | Fluorescently conjugated for detection | Enables distinction of white blood cells from red blood cells and debris |
| Nuclear Dye [17] | Nuclear staining for localization studies | Must be carefully titrated to avoid signal saturation | Essential for measuring nuclear occupancy of transcription factors |
| Fixative Solution [17] | Cell structure preservation | 2-5% formaldehyde concentration | Critical for maintaining morphological integrity during processing |
| Permeabilization Agent [17] | Enables intracellular antibody access | TritonX-100, Nonidet-P40, or Saponin | Concentration must be optimized for sperm cell membranes |
| H&E Staining Reagents [16] | Standard morphological staining | Variable between laboratories | Major source of technical variability; requires standardization |
| Fluorescent Antibody Panels [17] | Multi-parameter cell phenotyping | Requires spectral compatibility assessment | Low-expressed markers should be assigned to bright fluorochromes |

Technical variability in staining, image acquisition, and resolution represents a fundamental challenge in sperm morphology analysis that directly impacts diagnostic reliability and the development of robust AI solutions. The experimental evidence demonstrates that even state-of-the-art deep learning models experience significant performance degradation when faced with domain shift induced by technical variability. Addressing these challenges requires standardized protocols, comprehensive stain normalization strategies, and the development of more diverse and well-annotated datasets that account for real-world technical variations. For researchers and drug development professionals, acknowledging and systematically controlling for these sources of variability is essential for advancing the field toward clinically applicable, reliable, and standardized sperm morphology assessment.

From Pixels to Diagnosis: Methodological Advances in Dataset Utilization and AI Modeling

Leveraging Deep Learning for Automated Feature Extraction and Classification

The analysis of sperm morphology is a cornerstone of male fertility assessment, with its results being highly correlated with fertility outcomes [10] [9]. Traditionally, this analysis is performed manually via visual inspection under a microscope, a process that is not only time-consuming and labor-intensive but also plagued by significant subjectivity and inter-observer variability [10] [18]. This lack of standardization presents a major challenge for clinical diagnosis and large-scale research, particularly in the context of drug development for male infertility, where objective and reproducible biomarkers are critically needed [19] [9].

Deep learning, a subset of artificial intelligence (AI), is poised to revolutionize this field by enabling the automation, standardization, and acceleration of semen analysis [10] [18]. By leveraging convolutional neural networks (CNNs) and other sophisticated architectures, these models can learn to extract complex features from sperm images directly, without relying on manual feature engineering [9]. This technical guide explores the application of deep learning for the automated feature extraction and classification of sperm cells, focusing on the technical methodologies, performance, and experimental protocols that are relevant for researchers and drug development professionals working within the constraints of current sperm morphology datasets.

The Central Challenge: Data Scarcity and Quality

The development of robust deep learning models is fundamentally dependent on large, high-quality, and well-annotated datasets. In the domain of sperm morphology, the creation of such datasets remains a primary bottleneck [9]. Key challenges include:

- Lack of Standardization: Many medical institutions rely on conventional assessment methods that do not systematically save valuable image data, leading to data loss [9].

- Annotation Difficulty: Accurate assessment requires the simultaneous evaluation of the head, midpiece, and tail for defects, a complex task that demands expert knowledge and time [10] [9]. Furthermore, sperm cells can appear intertwined or partially displayed at image edges, complicating annotation [9].

- Inter-Expert Variability: Even among experts, there can be significant disagreement in morphological classification, highlighting the inherent complexity of the task and complicating the establishment of a definitive "ground truth" [10].

To overcome the issue of limited data, researchers have turned to data augmentation techniques. These methods artificially expand the size and diversity of training datasets by applying random but realistic transformations to existing images, such as rotation, flipping, and changes in contrast [10]. For instance, one study expanded its initial dataset of 1,000 sperm images to 6,035 images through augmentation, which was crucial for training a more robust model [10].
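The transformations named above can be sketched on a grayscale image stored as a nested list of 0-255 values. This is a minimal, deterministic illustration: real pipelines typically sample the transformation parameters at random and use an image library, and the function names and the contrast factor here are hypothetical rather than those of the cited study.

```python
def rotate_90(image):
    """Rotate a 2D image clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]

def flip_horizontal(image):
    """Mirror a 2D image left to right."""
    return [row[::-1] for row in image]

def adjust_contrast(image, factor):
    """Scale pixel deviations from the mean intensity, clipped to 0-255."""
    pixels = [p for row in image for p in row]
    mid = sum(pixels) / len(pixels)
    return [
        [min(255, max(0, round(mid + factor * (p - mid)))) for p in row]
        for row in image
    ]

def augment(image):
    """Return one augmented copy per transformation."""
    return [rotate_90(image), flip_horizontal(image), adjust_contrast(image, 1.5)]

# Hypothetical 2x2 grayscale patch.
patch = [[100, 150], [100, 150]]
augmented = augment(patch)
```

Augmented copies should be generated only from training images, so that near-duplicates of a test image never leak into training.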

Table 1: Publicly Available Datasets for Sperm Morphology Analysis

| Dataset Name | Key Features | Number of Images/Instances | Notable Characteristics |
|---|---|---|---|
| SMD/MSS [10] | Sperm images based on modified David classification (12 defect classes) | 1,000 original, extended to 6,035 with augmentation | Annotated by three experts; includes head, midpiece, and tail anomalies |
| MHSMA [9] | Focus on features like acrosome, head shape, and vacuoles | 1,540 images | Contains different sperm types; used for deep learning model training |
| SVIA [9] | Comprehensive dataset for detection, segmentation, and classification | 125,000 annotated instances for detection; 26,000 segmentation masks | Includes video data and cropped image objects for multiple tasks |

Deep Learning Architectures and Workflows

From Conventional Machine Learning to Deep Learning

Early attempts to automate sperm analysis relied on conventional machine learning algorithms, such as Support Vector Machines (SVM), K-means clustering, and decision trees [9]. While these methods demonstrated some success, they were fundamentally limited by their dependence on handcrafted features. Experts had to manually design and extract features from images—such as grayscale intensity, shape descriptors (e.g., Hu moments, Zernike moments), and texture—before these features could be fed into a classifier [9]. This process was cumbersome, time-consuming, and often failed to capture the full complexity of morphological variations, leading to issues with generalization across different datasets [9].
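To make the notion of a handcrafted shape descriptor concrete, the sketch below computes the first Hu moment of a binary mask, defined as the sum of the normalized second-order central moments η20 + η02. It is an illustrative example only: the masks are hypothetical toy shapes, and a practical pipeline would compute the full set of seven Hu moments with an image-processing library.

```python
def first_hu_moment(mask):
    """First Hu moment (eta20 + eta02) of a 2D binary or grayscale mask."""
    m00 = m10 = m01 = 0.0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            m00 += value
            m10 += x * value
            m01 += y * value
    if m00 == 0:
        raise ValueError("mask is empty")
    cx, cy = m10 / m00, m01 / m00  # centroid
    mu20 = mu02 = 0.0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            mu20 += (x - cx) ** 2 * value
            mu02 += (y - cy) ** 2 * value
    # For second-order moments the normalization exponent 1 + (p + q) / 2 equals 2.
    return (mu20 + mu02) / m00 ** 2

# Hypothetical masks: an elongated shape, its 90-degree rotation, and a compact shape.
elongated = [[0, 0, 0, 0], [1, 1, 1, 1], [0, 0, 0, 0]]
rotated = [list(column) for column in zip(*elongated)]
compact = [[1, 1], [1, 1]]
hu_elongated = first_hu_moment(elongated)
hu_rotated = first_hu_moment(rotated)
hu_compact = first_hu_moment(compact)
```

The descriptor is unchanged by the rotation and larger for the elongated shape than for the compact one, which is the kind of property that made such moments attractive for describing head shape, although each had to be chosen and tuned by hand.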

Deep learning models, particularly Convolutional Neural Networks (CNNs), overcome this limitation. CNNs are capable of automatically learning a hierarchy of relevant features directly from raw pixel data, from simple edges and textures in early layers to complex morphological structures in deeper layers [10] [9]. This end-to-end learning paradigm has led to significant improvements in the accuracy and robustness of automated sperm analysis systems.

Core Deep Learning Architectures for Sperm Analysis

Two main architectural paradigms are employed for sperm analysis:

- Classification Models: These models, typically standard CNNs, take a pre-processed image of a single sperm cell and output a probability distribution over predefined morphological classes (e.g., normal, tapered head, coiled tail) [10]. They are used when the location of the sperm cell is already known.

- Object Detection and Segmentation Models: Detection networks such as YOLO (You Only Look Once) both locate and classify multiple sperm cells within a larger microscope image [20]. Segmentation models go further and delineate the precise boundaries of individual sperm components (head, midpiece, tail), which is a prerequisite for detailed morphological analysis [9]. The recent adaptation of foundation models such as the Segment Anything Model (SAM) to microscopy, known as μSAM, shows promise as a unified tool for this segmentation task [21].
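For the classification case, the "probability distribution over predefined morphological classes" is typically obtained by applying a softmax to the network's final-layer scores. The sketch below shows only that last step. The three class names are the examples from the text, and the logit values in the usage note are invented for illustration rather than taken from any trained model.

```python
import math

# Illustrative subset of classes; a real model would cover the full label set.
CLASSES = ["normal", "tapered head", "coiled tail"]


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(logits, classes=CLASSES):
    """Return the most probable class name and the full distribution."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return classes[best], probs
```

Calling `predict([0.1, 3.0, -1.0])` selects "tapered head", because the second score is the largest. The returned probabilities can also be thresholded when a low-confidence prediction should be referred to a human expert.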

The following diagram illustrates a typical end-to-end workflow for training and applying a deep learning model to sperm morphology analysis.

Figure 1: Sperm Morphology Analysis Workflow

Experimental Protocols and Performance

A Detailed Methodology for CNN-based Classification

The following protocol outlines a representative methodology for developing a deep learning model for sperm classification, as detailed in the research building the SMD/MSS dataset [10].

1. Sample Preparation and Image Acquisition:

- Sample Source: Semen samples are obtained from patients after informed consent. Samples with very high concentration (>200 million/mL) are often excluded to avoid image overlap [10].

- Smear Preparation: Smears are prepared according to WHO manual guidelines and stained using a standardized kit (e.g., RAL Diagnostics) [10].

- Image Capture: Images are acquired using a Computer-Assisted Semen Analysis (CASA) system, such as the MMC system, consisting of an optical microscope with a digital camera. A bright field mode with an oil immersion 100x objective is typically used [10]. Each image should contain a single spermatozoon.

2. Data Annotation and Ground Truth Establishment:

- Expert Classification: Each sperm image is independently classified by multiple experts (e.g., three) based on a standardized classification system like the modified David classification. This system defines 12 classes of defects across the head (tapered, thin, microcephalous, etc.), midpiece (cytoplasmic droplet, bent), and tail (coiled, short, multiple) [10].

- Labeling and Ground Truth File: A ground truth file is compiled for each image, containing the image name, classifications from all experts, and morphometric dimensions (head width/length, tail length) [10].

- Analysis of Inter-Expert Agreement: The level of agreement among experts (Total Agreement, Partial Agreement, No Agreement) is statistically assessed using tools like IBM SPSS, with Fisher's exact test used to evaluate differences [10].
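The three agreement levels can be derived directly from the per-image expert labels. The following sketch assumes each image carries exactly one label per expert and three experts in total. It is a simplification, since the source describes agreement across all categories, and it does not reproduce the Fisher's exact test performed in SPSS.

```python
from collections import Counter


def agreement_level(labels):
    """Classify three expert labels as total (TA), partial (PA), or no agreement (NA)."""
    if len(labels) != 3:
        raise ValueError("expected exactly three expert labels")
    most_common_count = Counter(labels).most_common(1)[0][1]
    return {3: "TA", 2: "PA", 1: "NA"}[most_common_count]


def agreement_summary(annotations):
    """Return the fraction of images falling into each agreement level."""
    counts = Counter(agreement_level(labels) for labels in annotations)
    n = len(annotations)
    return {level: counts.get(level, 0) / n for level in ("TA", "PA", "NA")}
```

For example, `agreement_level(["tapered", "tapered", "thin"])` yields `"PA"`, because two of the three experts concur.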

3. Image Pre-processing and Data Augmentation:

- Pre-processing: This step is critical for denoising images and standardizing inputs. Steps include:

  - Data Cleaning: Handling missing values or inconsistent data.

  - Normalization/Standardization: Rescaling pixel values to a common range (e.g., 0-1).

  - Resizing: Images are resized to a fixed dimension (e.g., 80x80 pixels) and often converted to grayscale (1 channel) [10].

- Data Augmentation: Techniques such as rotation, flipping, and scaling are applied to the training dataset to increase its size and variability, thus improving model generalization [10].
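The pre-processing steps in this stage (grayscale conversion, rescaling to the 0-1 range, and resizing to 80x80) can be sketched as follows. The source does not specify the grayscale formula or the interpolation method, so channel averaging and nearest-neighbor sampling are assumptions here, as are the function names. Input images are assumed to be rows of 8-bit `(r, g, b)` tuples.

```python
def to_grayscale(rgb_image):
    """Collapse RGB to one channel by averaging (assumed; other weightings exist)."""
    return [[sum(px) / 3 for px in row] for row in rgb_image]


def normalize(image, max_value=255):
    """Rescale pixel values from [0, max_value] to [0, 1]."""
    return [[p / max_value for p in row] for row in image]


def resize_nearest(image, size=80):
    """Resize to size x size by picking the nearest source pixel."""
    h, w = len(image), len(image[0])
    return [[image[r * h // size][c * w // size] for c in range(size)]
            for r in range(size)]


def preprocess(rgb_image, size=80):
    """Grayscale, normalize, then resize to a fixed square input."""
    return resize_nearest(normalize(to_grayscale(rgb_image)), size)
```

The output is an 80x80 grid of floats in [0, 1], matching the single-channel input shape described above.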

4. Model Training and Evaluation:

- Data Partitioning: The entire dataset is randomly split into a training set (e.g., 80%) and a testing set (e.g., 20%). A portion of the training set (e.g., 20%) may be used for validation during training [10].

- Algorithm Implementation: A CNN model is implemented in a programming environment like Python 3.8. The architecture typically consists of multiple convolutional and pooling layers for feature extraction, followed by fully connected layers for classification.

- Training: The model is trained on the training set, learning to map input images to the expert-defined morphological classes.

- Evaluation: The trained model's performance is evaluated on the unseen test set using metrics such as accuracy, precision, and recall [10].
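The partitioning and evaluation steps can be illustrated without any deep learning framework. The sketch below performs a seeded random 80/20 split and computes accuracy, precision, and recall from label lists. The function names and the default seed are illustrative, and the CNN itself is omitted.

```python
import random


def train_test_split(items, test_fraction=0.2, seed=0):
    """Shuffle with a fixed seed and split into (train, test) lists."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall(y_true, y_pred, positive):
    """Precision and recall for one class treated as the positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

Splitting 1,000 images this way gives 800 for training and 200 for testing. Applying the same function to the 800 training images yields a 640/160 training/validation split, which corresponds to the percentages given in the protocol.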

Quantitative Performance of Deep Learning Models

Deep learning approaches have demonstrated strong performance in automating sperm morphology analysis, as evidenced by recent studies summarized in the table below.

Table 2: Performance of AI Models in Sperm Analysis

| Application / Study Focus | AI Model / Technique | Reported Performance | Dataset / Sample Size |
|---|---|---|---|
| Sperm Morphology Classification [10] | Convolutional Neural Network (CNN) | Accuracy: 55% to 92% | 1,000 images extended to 6,035 (SMD/MSS) |
| Sperm Head Classification [9] | Support Vector Machine (SVM) | AUC-ROC: 88.59%; Precision >90% | >1,400 sperm cells from 8 donors |
| Bull Sperm Morphology & Vitality [20] | YOLO-based CNN | Accuracy: 82%; Precision: 85% | 8,243 annotated images |
| Sperm Motility Analysis [18] | Support Vector Machine (SVM) | Accuracy: 89.9% | 2,817 sperm |
| Non-Obstructive Azoospermia Prediction [18] | Gradient Boosting Trees (GBT) | AUC: 0.807; Sensitivity: 91% | 119 patients |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, software, and equipment essential for conducting experiments in deep learning-based sperm morphology analysis, as derived from the cited methodologies.

Table 3: Research Reagent Solutions for Sperm Morphology Analysis

| Item Name | Function / Application in the Workflow |
|---|---|
| RAL Diagnostics Staining Kit [10] | Stains semen smears to enhance visual contrast of sperm structures for microscopic imaging. |
| MMC CASA System [10] | An integrated system (microscope, camera, software) for automated acquisition and storage of sperm images. |
| Python 3.8 [10] | The programming environment used for implementing and training deep learning algorithms (CNNs). |
| IBM SPSS Statistics [10] | Statistical software used for analyzing inter-expert agreement and validating annotation consistency. |
| YOLO (You Only Look Once) Networks [20] | A type of convolutional neural network designed for real-time object detection and classification in images. |
| Segment Anything for Microscopy (μSAM) [21] | A foundation model fine-tuned for microscopy, used for accurate segmentation of cells and nuclei in images. |
| Napari [21] | A multi-dimensional image viewer for Python that hosts plugins (e.g., for μSAM) for interactive image analysis. |

Advanced Applications and Future Directions

The application of deep learning in male infertility extends beyond basic morphology classification. It plays an increasingly important role in drug development and advanced reproductive technologies.

- High-Throughput Screening for Drug Discovery: The lengthy and costly traditional drug discovery pathway for male subfertility is a significant hurdle [19]. Deep learning models can be integrated with high-throughput screening platforms to rapidly test the effects of thousands of chemical compounds on sperm function. This allows for the observation of phenotypic changes in sperm morphology and motility at scale, accelerating the identification of promising therapeutic candidates [19].

- Predicting IVF Success and Sperm Retrieval: AI models are being developed to predict the success of assisted reproductive technologies like IVF and Intracytoplasmic Sperm Injection (ICSI). For instance, random forest models have been used to predict IVF success with an AUC of 84.23% based on patient data [18]. Furthermore, for the most severe form of male infertility, non-obstructive azoospermia (NOA), gradient boosting trees have shown 91% sensitivity in predicting the success of surgical sperm retrieval, helping to guide clinical decisions [18].
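The two metrics quoted for these predictive models, AUC and sensitivity, can be computed from first principles. In the sketch below, AUC is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one (ties count as half), and sensitivity is the fraction of true positives that are detected. Labels are assumed to be coded 1 (e.g., successful retrieval) and 0; the data in the usage note are invented for illustration.

```python
def sensitivity(y_true, y_pred):
    """True positive rate: TP / (TP + FN), with labels coded as 1 and 0."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp / (tp + fn)


def auc(y_true, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

For instance, `auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])` evaluates to 0.75, because three of the four positive/negative pairs are ranked correctly. The pairwise form is quadratic in sample size, which is acceptable for cohorts on the scale of the 119-patient study cited above.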

The integration of deep learning with other 'omics' data represents a powerful future direction. As seen in other fields like histopathology, ensemble deep learning approaches that merge segmentation results from multiple models can provide robust cellular composition data that strongly correlates with gene expression variance [22]. Applying similar multi-modal approaches to sperm analysis—correlating deep learning-derived morphological features with genomic or proteomic data—could unlock new diagnostic and prognostic biomarkers for male infertility.

Deep learning has demonstrated clear potential to transform the analysis of sperm morphology by automating feature extraction and classification. This transition from subjective manual assessment to objective, AI-driven analysis directly addresses the challenges of standardization and reproducibility that have long plagued the field. For researchers and drug development professionals, these technologies offer not only a gain in efficiency but also a path to discovering novel, quantifiable biomarkers of sperm quality. While challenges related to dataset quality and model generalizability persist, the ongoing development of standardized, high-quality datasets and of more sophisticated foundation models such as μSAM promises a future in which deep learning is integral to both clinical diagnostics and the development of novel therapies for male infertility.

The Role of Data Augmentation in Mitigating Limited Sample Sizes

In the field of male fertility research, sperm morphology analysis represents a critical diagnostic tool, yet it confronts a fundamental challenge: the scarcity of large, well-annotated datasets. Male factors contribute to approximately 50% of infertility cases, making accurate sperm assessment crucial for diagnosis and treatment [1]. Traditional manual sperm morphology assessment performed by embryologists is notoriously subjective, time-intensive (requiring 30-45 minutes per sample), and prone to significant inter-observer variability, with studies reporting up to 40% disagreement between expert evaluators and kappa values as low as 0.05–0.15 [2]. This diagnostic inconsistency underscores the urgent need for automated, objective analysis methods.

Deep learning approaches offer a promising solution for standardizing sperm morphology assessment but face substantial data limitations. The robustness of these artificial intelligence technologies relies primarily on the creation of large and diverse databases [10]. However, researchers encounter two major issues during database construction: the limited number of available sperm images and heterogeneous representation across different morphological classes [10]. Data augmentation emerges as a critical strategy to compensate for these shortcomings, artificially expanding training datasets to improve model generalization and mitigate overfitting in this data-scarce domain.

The Data Scarcity Challenge in Sperm Morphology Analysis

Current Limitations in Sperm Image Datasets

The development of robust deep learning models for sperm morphology classification faces significant hurdles due to several dataset limitations. Existing public datasets, such as HSMA-DS, MHSMA, VISEM-Tracking, and HuSHeM, typically contain only thousands of images—insufficient for training complex neural networks without overfitting [1]. These collections often suffer from low resolution, poor staining quality, and limited representation of rare morphological abnormalities, creating an imbalance in class distribution that biases model learning [1].
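Class imbalance of the kind described here is easy to quantify before training. The sketch below reports the share of each class, the ratio between the largest and smallest class, and the classes that fall below a chosen share. The 5% cut-off, the function names, and the label counts in the usage note are hypothetical and not drawn from any of the datasets named above.

```python
from collections import Counter


def class_distribution(labels):
    """Fraction of the dataset belonging to each class."""
    counts = Counter(labels)
    total = len(labels)
    return {cls: n / total for cls, n in counts.items()}


def imbalance_ratio(labels):
    """Size of the largest class divided by the size of the smallest."""
    counts = Counter(labels)
    return max(counts.values()) / min(counts.values())


def rare_classes(labels, min_fraction=0.05):
    """Classes whose share falls below `min_fraction` (an assumed threshold)."""
    dist = class_distribution(labels)
    return sorted(cls for cls, frac in dist.items() if frac < min_fraction)
```

On a toy label list with 90 "normal", 9 "coiled tail", and 1 "microcephalous" entries, the imbalance ratio is 90 and only "microcephalous" is flagged as rare. A model trained on such data could reach 90% accuracy by predicting "normal" for every image, which illustrates how imbalance biases learning.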

The annotation process itself presents considerable challenges. Sperm defect assessment requires simultaneous evaluation of head, vacuoles, midpiece, and tail abnormalities, substantially increasing annotation difficulty [1]. Furthermore, the complexity of sperm morphology classification is evidenced by studies showing limited inter-expert agreement, with analyses revealing three distinct agreement scenarios among experts: no agreement (NA), partial agreement (PA) where 2/3 experts concur, and total agreement (TA) where all three experts agree on labels for all categories [10]. This annotation inconsistency introduces noise into training data, further complicating model development.

Impact on Model Performance