Performance Evaluation of Fertility Diagnostic Models: From Bench to Bedside in Reproductive Medicine

Abstract

This article provides a comprehensive performance evaluation of next-generation diagnostic models for fertility assessment, tailored for researchers and drug development professionals. It explores the foundational principles of computational fertility diagnostics, examines the application of diverse methodologies from traditional machine learning to advanced neural networks and bio-inspired optimization, addresses critical troubleshooting and optimization challenges like class imbalance and feature selection, and conducts rigorous validation and comparative analysis of model generalizability and clinical interpretability. The review synthesizes performance metrics, clinical applicability, and future directions for integrating predictive models into biomedical research and clinical workflows to advance personalized reproductive care.

The Evolving Landscape of Computational Fertility Diagnostics: Foundations and Clinical Imperatives

Infertility, defined as the failure to achieve a pregnancy after 12 months or more of regular unprotected sexual intercourse, represents a significant global health crisis [1]. Recent data indicate that approximately 1 in 6 adults worldwide experience infertility during their lifetime, establishing it as a common condition with substantial personal, social, and economic ramifications [2] [1]. The global burden has intensified over recent decades, with female infertility cases alone rising from approximately 59.7 million in 1990 to over 110 million in 2021, an increase of 84.4% [2] [3]. Similarly, male infertility cases have risen by 74.66% over the same period [4]. This escalating prevalence has fueled parallel growth in the assisted reproduction technology market, with the in vitro fertilization (IVF) sector projected to reach $37.7 billion by 2027 [2].

The economic impact of infertility extends beyond treatment costs to include broader societal consequences. IVF remains financially inaccessible to many, with costs exceeding $60,000 per live birth in the U.S., creating significant disparities in care access [2]. Concurrently, declining global fertility rates—now at a total fertility rate (TFR) of 2.2—signal impending demographic challenges including shrinking workforces and strained social systems in many countries [2]. This complex landscape of rising clinical need and economic barrier is driving innovation across the diagnostic spectrum, from conventional clinical evaluations to cutting-edge computational approaches aimed at improving accessibility, accuracy, and personalization in fertility care.

Conventional Diagnostic Frameworks: Establishing the Baseline

Standardized Diagnostic Pathways

Conventional infertility diagnosis follows a systematic, stepwise approach that starts with the least invasive methods to identify the most common causes [5]. The diagnostic process begins with a comprehensive assessment of both partners simultaneously, as male factors contribute to approximately 50% of infertility cases either alone or in combination with female factors [6]. Evaluation is recommended after 12 months of unsuccessful conception attempts for women under 35, and after only 6 months for women aged 35 and older, reflecting the impact of aging on female fertility [7] [5].

The standard female fertility evaluation includes several key components beginning with assessment of ovulatory function through menstrual history and mid-luteal progesterone testing [7]. Approximately 25% of infertility diagnoses are attributed to ovulatory disorders, with polycystic ovary syndrome (PCOS) representing the most common cause [7]. Additionally, tubal patency testing via hysterosalpingogram and ovarian reserve assessment through biomarkers like anti-Müllerian hormone (AMH) and antral follicle count (AFC) constitute fundamental elements of the basic infertility workup [7] [6]. For male partners, the cornerstone of evaluation remains the semen analysis, though this assessment is often supplemented by endocrine profiling and physical examination when abnormalities are detected [7] [6].

Table 1: Key Performance Indicators in Conventional Infertility Diagnosis

| Diagnostic Parameter | Clinical Application | Performance Metrics | Limitations |
|---|---|---|---|
| Semen Analysis | Initial male factor assessment | Identifies ~90% of severe male factor cases [6] | Poor predictor of functional sperm capacity; inter-laboratory variability |
| Hysterosalpingogram (HSG) | Tubal patency evaluation | Sensitivity: 65%; Specificity: 83% [7] | Limited in detecting peritubular adhesions, endometriosis |
| Serum Progesterone | Ovulation confirmation | Single value >3 ng/mL confirms ovulation [7] | Does not assess oocyte quality or endometrial receptivity |
| Anti-Müllerian Hormone (AMH) | Ovarian reserve assessment | Strong correlation with antral follicle count [6] | Limited predictability for natural conception; cycle variability |
| Day 3 FSH/E2 | Ovarian reserve assessment | FSH >10-15 IU/L suggests diminished reserve [7] | High cycle-to-cycle variability; affected by estrogen levels |

Limitations Driving Innovation

Despite standardized protocols, conventional diagnostic approaches face significant limitations that impact their effectiveness and efficiency. The comprehensive evaluation of an infertile couple traditionally requires multiple cycle days and specialized testing facilities, creating logistical barriers and extending time-to-diagnosis [5]. Furthermore, even after exhaustive assessment, approximately 15% of couples receive a diagnosis of "unexplained infertility" without identifiable causation [7]. This diagnostic gap highlights critical limitations in current paradigms, particularly regarding functional rather than anatomical fertility assessment.

Additional challenges include the subjective interpretation of diagnostic tests like semen analysis and hysterosalpingography, which demonstrate significant inter-observer variability [6]. The predictive value of conventional tests for live birth outcomes also remains modest, with even the most sophisticated models achieving limited clinical utility for individual prognosis [7]. These limitations, combined with rising global prevalence and increasing cost pressures, have created an urgent need for innovative diagnostic technologies that offer greater precision, efficiency, and accessibility.

Innovative Diagnostic Models: Performance Comparison

Bio-Inspired Computational Approaches

Recent advances in computational diagnostics have introduced new capabilities for infertility assessment, particularly in male factor evaluation. A hybrid diagnostic framework combining a multilayer feedforward neural network with nature-inspired ant colony optimization has been reported for male fertility classification [8]. This bio-inspired approach uses adaptive parameter tuning modeled on ant foraging behavior to overcome limitations of conventional gradient-based methods, with a reported 99% classification accuracy and a computational time of 0.00006 seconds [8].
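To make the idea of pheromone-guided tuning concrete, the following is a minimal sketch of an ant-colony-style search over a small set of discrete candidates. It is not the algorithm from the cited study [8]: the function `aco_select`, its parameters, the candidate hidden-layer sizes, and the synthetic `toy_score` function are all assumptions chosen for illustration.

```python
import random


def aco_select(candidates, score, n_ants=20, n_iters=30, evaporation=0.3, seed=0):
    """Return (best_candidate, best_score) from a pheromone-guided search."""
    rng = random.Random(seed)
    pheromone = [1.0] * len(candidates)  # equal attractiveness at the start
    best_idx, best_score = 0, float("-inf")
    for _ in range(n_iters):
        # Each ant picks one candidate with probability proportional to pheromone.
        visits = rng.choices(range(len(candidates)), weights=pheromone, k=n_ants)
        # Evaporation lets earlier choices fade; the floor keeps every option reachable.
        pheromone = [max((1.0 - evaporation) * p, 1e-6) for p in pheromone]
        for idx in visits:
            s = score(candidates[idx])
            pheromone[idx] += max(s, 0.0)  # better candidates attract more ants
            if s > best_score:
                best_idx, best_score = idx, s
    return candidates[best_idx], best_score


def toy_score(hidden_units):
    # Synthetic stand-in for a validation score (hypothetical); peaks at 8 units.
    return 1.0 - abs(hidden_units - 8) / 16


best, best_score = aco_select([2, 4, 8, 16], toy_score)
```

In a real pipeline the `score` callable would train and validate a network for each candidate setting, which is far more expensive than this toy function; the search is stochastic, so results depend on the seed and the number of ants.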

Table 2: Performance Metrics of Innovative Diagnostic Models

| Model/Protocol | Classification Accuracy | Sensitivity | Computational Time | Clinical Validation |
|---|---|---|---|---|
| Bio-Inspired Optimization with Neural Network [8] | 99% | 100% | 0.00006 seconds | 100 clinically profiled male cases |
| Fertility Pathways Protocol [2] | Not applicable (treatment protocol) | Not applicable | Not applicable | 60% live birth rate without IVF; 84% with IVF |
| Standard Clinical Workup [7] | ~85% (identifies causation) | Varies by test | Days to weeks | Identifies cause in 85% of couples |

This model was trained and validated on a dataset of 100 clinically profiled male fertility cases representing diverse lifestyle and environmental risk factors [8]. The system achieved 99% classification accuracy with 100% sensitivity and a reported computational time of 0.00006 seconds, highlighting its potential for real-time clinical application [8]. Beyond raw performance metrics, the model offers clinical interpretability through feature-importance analysis, which identifies and ranks contributory factors such as sedentary habits and environmental exposures, thereby enabling healthcare professionals to readily understand and act upon the predictions [8].
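When an accuracy figure rests on 100 cases, an interval estimate can help when comparing it with results from larger cohorts. The sketch below computes a Wilson score interval, a standard interval for a binomial proportion; it is not part of the cited study. The count of 99 correct classifications out of 100 is inferred here from the reported 99% accuracy and is an assumption.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Assumed counts: 99 of 100 cases classified correctly.
low, high = wilson_interval(99, 100)
print(f"approx. 95% interval for accuracy: {low:.3f} to {high:.3f}")
```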

Protocol-Based Integrated Care Models

Parallel to technological innovations, structured clinical protocols represent another innovative approach to improving diagnostic efficiency and treatment outcomes. The Fertility Pathways protocol (based on the Rockford or Holden Protocol) guides primary care providers through individualized diagnosis and treatment without requiring specialized reproductive endocrinology training [2]. This system emphasizes root-cause correction addressing hormonal, anatomical, and ovulatory issues before conception attempts, achieving 59.8% live birth rates without IVF—nearly double the highest national average reported in Denmark (34.2% without IVF) [2].

When combined with IVF, the Fertility Pathways approach demonstrates 84% live birth rates, dramatically surpassing U.S. national averages of approximately 30% per transfer [2]. Beyond outcomes, this protocol significantly improves accessibility by reducing costs by approximately 91% per live birth compared to conventional specialty care, potentially extending fertility services to the estimated 86% of infertile couples currently untreated due to financial, geographic, or cultural barriers [2].

Experimental Protocols and Methodologies

Hybrid Computational Diagnostic Framework

The development and validation of the bio-inspired optimization model for male fertility diagnosis followed a rigorous methodological pathway designed to ensure robustness and clinical relevance [8]. The experimental protocol encompassed several critical phases:

Data Acquisition and Preprocessing: The research utilized a publicly available dataset of 100 clinically profiled male fertility cases representing diverse lifestyle and environmental risk factors. Each case included comprehensive parameters encompassing semen quality metrics, lifestyle factors, environmental exposures, and clinical outcomes. Data normalization procedures were applied to ensure comparability across features with different measurement scales.

Model Architecture and Training: The core system implemented a multilayer feedforward neural network with architecture optimized for the specific dimensionality of the fertility dataset. This network was integrated with an ant colony optimization (ACO) algorithm that employed a proximity search mechanism simulating ant foraging behavior to refine network parameters and overcome local minima limitations of conventional backpropagation.

Validation and Testing: Model performance was assessed using rigorous k-fold cross-validation techniques on unseen samples to prevent overfitting and ensure generalizability. The evaluation metrics included standard classification measures (accuracy, sensitivity, specificity) as well as computational efficiency parameters relevant to clinical implementation.

Clinical Interpretability Analysis: A critical final phase applied feature-importance analysis to identify the relative contribution of different risk factors to the model's predictions, thereby enhancing clinical utility by highlighting modifiable lifestyle and environmental factors.
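The preprocessing and validation phases above can be sketched in a few lines. This is a minimal illustration assuming min-max scaling as the normalization step and plain contiguous folds; the source does not state which normalization or fold scheme the study used, and the helper names `min_max_normalize` and `k_fold_indices` are hypothetical.

```python
def min_max_normalize(rows):
    """Rescale each feature column of a list-of-lists dataset to [0, 1]."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        # Constant columns carry no information, so map them to 0.0.
        [(v - lo) / (hi - lo) if hi > lo else 0.0 for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]


def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, test
        start += size


scaled = min_max_normalize([[1, 10], [3, 20], [5, 30]])
folds = list(k_fold_indices(100, 5))  # 100 cases, as in the dataset described above
```

In practice the rows are usually shuffled (or stratified by class) before folding, and the scaling limits are computed on each training fold only so that no information from the held-out fold leaks into training.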

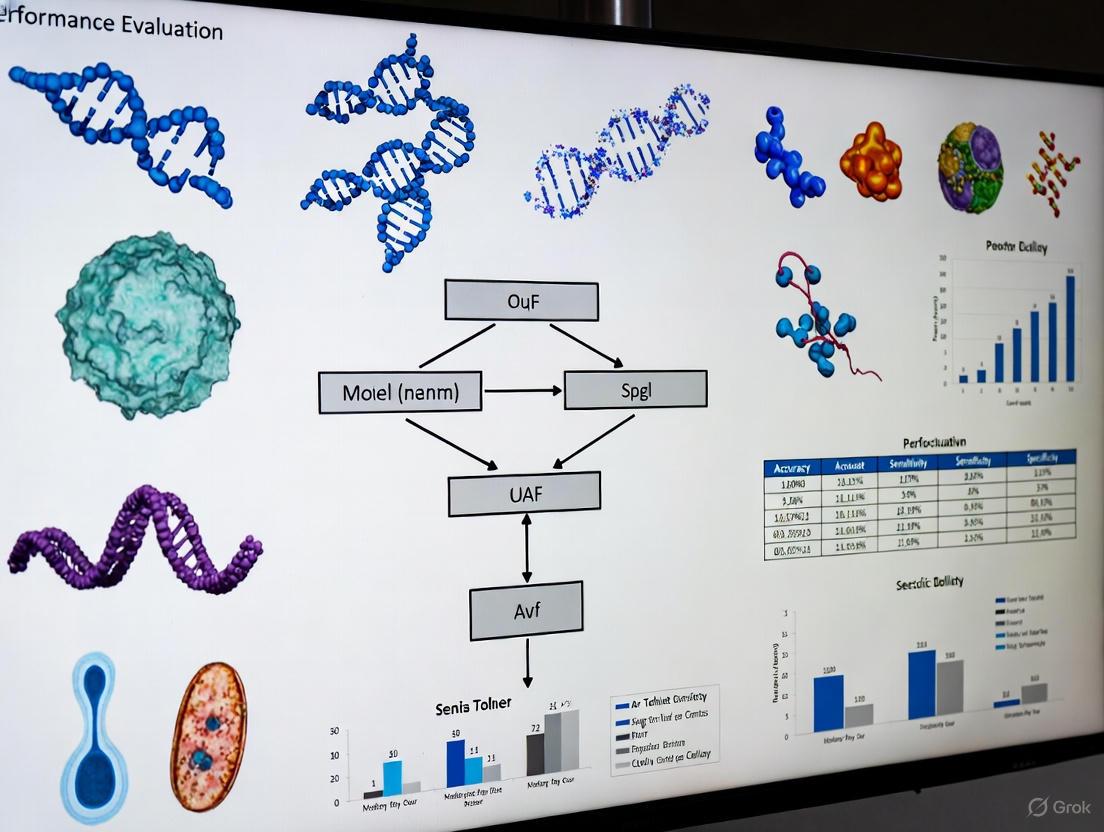

The following workflow diagram illustrates the experimental design:

Key Performance Indicator Monitoring in Clinical Settings

For conventional IVF settings, recent consensus guidelines from Italian fertility societies have established standardized key performance indicators (KPIs) to monitor clinical and laboratory quality [9]. The experimental framework for implementing these KPIs involves:

Stratified Patient Allocation: The reference population is stratified by female age (≤34 years, 35-39 years, ≥40 years) and ovarian response (poor, normal, high responders) based on the number of oocytes retrieved, recognizing that performance benchmarks vary significantly across these categories [9].

Cycle Cancellation Rate Monitoring: This KPI measures treatment discontinuation before oocyte pickup, with competence values set at ≤30% for poor responders and ≤3% for normal and hyper-responders, while benchmark goals aim for ≤10% and ≤0.5% respectively [9].

Follicle-to-Oocyte Index (FOI) Calculation: This metric assesses the consistency between the antral follicle pool at stimulation initiation and the number of oocytes retrieved, providing a quantitative measure of ovarian stimulation efficiency [9].

The systematic application of these KPIs enables continuous quality improvement in clinical settings through rigorous internal quality control systems that benchmark performance against established competence values and aspirational goals [9].
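As a minimal sketch of how such KPI monitoring could be automated, the code below grades a cycle cancellation rate against the competence and benchmark values quoted above and computes a follicle-to-oocyte index. The function names and the dictionary layout are illustrative assumptions, and the FOI is assumed here to be the simple ratio of oocytes retrieved to antral follicles at the start of stimulation.

```python
# Cancellation-rate limits in percent, taken from the values quoted in the text [9].
CANCELLATION_LIMITS = {
    "poor": {"competence": 30.0, "benchmark": 10.0},
    "normal": {"competence": 3.0, "benchmark": 0.5},
    "high": {"competence": 3.0, "benchmark": 0.5},
}


def cancellation_rate(cancelled_cycles, started_cycles):
    """Percentage of started cycles discontinued before oocyte pickup."""
    return 100.0 * cancelled_cycles / started_cycles


def grade_cancellation(rate_pct, responder):
    """Classify a rate as meeting the benchmark, the competence value, or neither."""
    limits = CANCELLATION_LIMITS[responder]
    if rate_pct <= limits["benchmark"]:
        return "benchmark"
    if rate_pct <= limits["competence"]:
        return "competence"
    return "below competence"


def follicle_to_oocyte_index(oocytes_retrieved, antral_follicles):
    """Assumed definition: oocytes retrieved divided by antral follicle count."""
    return oocytes_retrieved / antral_follicles


rate = cancellation_rate(6, 200)          # 6 cancelled out of 200 started cycles
grade = grade_cancellation(rate, "normal")
foi = follicle_to_oocyte_index(9, 12)
```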

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of innovative fertility diagnostic models requires specific research reagents and analytical tools. The following table details essential components for establishing these experimental systems in research settings:

Table 3: Research Reagent Solutions for Fertility Diagnostic Innovation

| Reagent/Material | Specifications | Research Application | Performance Considerations |
|---|---|---|---|
| Clinical Fertility Datasets | Minimum 100 clinically profiled cases with lifestyle, environmental, and laboratory parameters [8] | Model training and validation | Dataset diversity critical for generalizability; must include varied etiologies |
| Multilayer Feedforward Neural Network Framework | Python/TensorFlow with customizable architecture | Core computational classification | Architecture must match data dimensionality; typically 3-5 hidden layers |
| Ant Colony Optimization Library | Customizable proximity search mechanisms; adaptive parameter tuning | Enhanced model accuracy and convergence | Reduces local minima trapping; improves gradient descent efficiency |
| Feature Importance Analysis Tools | SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) | Clinical interpretability of model predictions | Identifies key contributory factors like sedentary habits, environmental exposures [8] |
| Statistical Validation Suite | k-fold cross-validation; receiver operating characteristic (ROC) analysis | Model performance assessment | Must include sensitivity, specificity, accuracy, computational time metrics [8] |

The evolving landscape of infertility diagnostics reflects a necessary response to the growing global burden of this complex condition. Conventional diagnostic frameworks, while establishing important baseline protocols, face significant limitations in comprehensiveness, predictive value, and accessibility. Innovative approaches, particularly bio-inspired computational models and standardized clinical pathways, demonstrate promising advances in accuracy, efficiency, and cost-effectiveness.

The integration of machine learning with nature-inspired optimization algorithms achieves unprecedented classification accuracy for male factor infertility while providing crucial clinical interpretability through feature importance analysis [8]. Simultaneously, structured clinical protocols like Fertility Pathways dramatically improve live birth outcomes while reducing costs by approximately 91% per live birth, potentially expanding access to the estimated 86% of infertile couples currently untreated [2]. These innovations represent a paradigm shift from descriptive diagnosis to predictive, personalized fertility assessment that addresses both the biological complexity of infertility and the practical barriers to care.

Future directions will likely focus on multimodal diagnostic integration, combining computational approaches with novel biomarker discovery to further enhance predictive accuracy. Additionally, the development of point-of-care diagnostic technologies based on these innovative models could revolutionize fertility care accessibility, particularly in resource-limited settings. As the global burden of infertility continues to grow, these diagnostic innovations offer promising pathways toward more effective, efficient, and equitable fertility care for the millions of individuals and couples worldwide facing this challenging condition.

Limitations of Traditional Diagnostic Methods in Reproductive Medicine

Reproductive medicine remains heavily reliant on diagnostic methods that have seen minimal evolution over recent decades, creating significant bottlenecks in patient care and research. Traditional techniques for assessing fertility in men and women, as well as for evaluating gametes and embryos in assisted reproductive technology (ART) laboratories, are fundamentally constrained by their subjectivity, invasiveness, and limited predictive capacity. These limitations persist despite infertility affecting an estimated 17.5% of the global adult population, with male factors contributing to approximately 50% of cases [10] [11]. This analysis systematically examines the specific constraints of conventional diagnostic approaches across key domains of reproductive medicine, supported by experimental data and structured comparisons. By framing these limitations within the context of emerging technological alternatives, this review provides researchers and drug development professionals with a comprehensive evidence base for evaluating next-generation diagnostic models in fertility research and clinical practice.

Limitations in Male Infertility Diagnostics

Semen Analysis: The Outdated Gold Standard

Conventional semen analysis, encompassing parameters of concentration, motility, and morphology, constitutes the cornerstone of male infertility evaluation. Despite its longstanding status as a gold standard, this approach suffers from critical limitations that impair its diagnostic and prognostic utility.

Table 1: Limitations of Conventional Semen Analysis

| Parameter | Limitation | Clinical Impact | Experimental Evidence |
|---|---|---|---|
| Morphology Assessment | High inter-observer variability and subjectivity | Poor consistency in treatment planning | SVM models achieved 88.59% AUC on 1400 sperm images, surpassing manual assessment [11] |
| Motility Evaluation | Manual grading lacks precision and reproducibility | Inaccurate prediction of fertilization potential | AI motility analysis achieved 89.9% accuracy on 2817 sperm samples [11] |
| DNA Fragmentation | Not detected in routine analysis | Missed underlying causes of infertility | Conventional methods lack precision for subtle SDF detection [11] |
| Integration Complexity | Inability to capture multifactorial interactions | Limited prognostic value for ART outcomes | Random forest models integrating multiple factors achieved 84.23% AUC for IVF prediction vs. 65-70% for conventional methods [11] |

The fundamental constraint of traditional semen analysis lies in its reliance on manual assessment, which introduces substantial inter-observer variability and subjectivity [11]. This variability complicates accurate evaluation of critical sperm parameters, ultimately affecting treatment planning decisions. Experimental evidence demonstrates that artificial intelligence (AI) approaches significantly outperform conventional methods, with support vector machine (SVM) models achieving 88.59% area under the curve (AUC) in morphological assessment of 1400 sperm images, and 89.9% accuracy in motility analysis of 2817 sperm samples [11].

Beyond basic parameter assessment, conventional diagnostics struggle to detect subtle underlying causes of infertility such as sperm DNA fragmentation (SDF), which requires specialized testing not routinely performed [11]. Perhaps most significantly, traditional methods lack the capacity to integrate the complex interplay of clinical, environmental, and lifestyle factors that collectively influence fertility outcomes. This integration limitation results in suboptimal accuracy for forecasting IVF success, with traditional statistical models achieving only 65-70% prediction accuracy compared to the 84.23% AUC demonstrated by random forest models incorporating multifactorial data [11].

Diagnostic Challenges in Severe Male Factor Infertility

Non-obstructive azoospermia (NOA), the most severe form of male infertility affecting 10-15% of infertile men, presents particular diagnostic challenges [11]. Conventional approaches, including hormonal profiles and histopathological evaluation of testicular biopsies, offer limited predictive value for sperm retrieval success. This prognostic uncertainty complicates patient counseling and decision-making regarding invasive surgical sperm retrieval procedures.

Advanced machine learning models have demonstrated potential to overcome these limitations. Gradient boosting trees (GBT) applied to 119 NOA patients achieved an AUC of 0.807 with 91% sensitivity in predicting successful sperm retrieval, significantly outperforming conventional predictive methods [11]. This performance differential highlights the substantial limitations of traditional diagnostic paradigms in severe male factor infertility.

Figure 1: Comparative Diagnostic Pathways in Male Infertility. Traditional approaches (yellow/red) operate in isolation with limited integration, while modern methods (green/blue) leverage multifactorial data for enhanced prognostic accuracy.

Limitations in Female Infertility Assessment

Ultrasound-Based Diagnostics in PCOS and Endometrial Evaluation

Conventional ultrasound imaging, while invaluable for assessing female reproductive anatomy, provides limited information about tissue functional status and biomechanical properties. This limitation is particularly evident in the diagnosis of polycystic ovary syndrome (PCOS) and evaluation of endometrial receptivity.

In PCOS assessment, traditional transvaginal ultrasound evaluates ovarian morphology but cannot assess tissue stiffness, which represents an important pathophysiological aspect of the syndrome [12]. Research utilizing real-time elastography (RTE) has revealed that women with PCOS exhibit significantly increased ovarian stiffness compared to healthy controls, attributed to alterations in stromal structure and fibrosis that may contribute to anovulation and impaired ovarian function [12]. This diagnostic gap in conventional imaging limits comprehensive PCOS evaluation.

Similarly, endometrial assessment traditionally relies on thickness measurement, echogenicity, and blood flow evaluation via Doppler imaging. However, these parameters provide only indirect markers of receptivity and fail to assess biomechanical properties that critically influence implantation potential [12]. Shear wave elastography (SWE) studies have demonstrated that endometrial stiffness is significantly higher in women with unexplained infertility compared to fertile controls, with increased stiffness associated with poor blood perfusion and reduced implantation potential [12].

Table 2: Limitations in Female Reproductive Tissue Assessment

| Diagnostic Context | Traditional Method | Key Limitations | Advanced Alternative |
|---|---|---|---|
| PCOS Diagnosis | Transvaginal ultrasound (ovarian morphology) | Cannot assess tissue stiffness; limited to anatomical evaluation | Real-time elastography shows increased ovarian stiffness in PCOS patients [12] |
| Endometrial Receptivity | Endometrial thickness + Doppler blood flow | Poor prediction of implantation potential; no biomechanical data | Shear wave elastography measures stiffness; higher values correlate with reduced implantation [12] |
| Uterine Contractility | Visual assessment of peristalsis | Subjective, operator-dependent, inconsistent | Elastography quantifies tissue stiffness as contractility surrogate; correlates with IUI success [12] |
| Ovarian Reserve | Antral follicle count + AMH | Anatomical and biochemical data without functional tissue assessment | Elastography emerging for ovarian tissue characterization [12] |

Endometrial Receptivity and the Window of Implantation

The histological evaluation of endometrial receptivity, utilized for over 60 years, lacks the precision and accuracy necessary for reliable prediction of implantation potential [12]. The assumption of a consistent "window of implantation" across all patients has been challenged by evidence suggesting that some patients with recurrent implantation failure may benefit from personalized embryo transfer timing based on individual endometrial receptivity patterns [12].

Molecular technologies represent a paradigm shift beyond conventional histological evaluation. Endometrial receptivity array (ERA) testing utilizes molecular analysis to identify the optimal window of implantation, demonstrating superior personalization compared to traditional histological dating [13]. This advancement addresses a critical limitation in conventional endometrial assessment that has previously compromised outcomes in assisted reproduction.

Limitations in Embryo Assessment and Selection

Morphological Grading Systems

Embryo selection represents perhaps the most critical determinant of success in assisted reproductive technologies. Conventional morphological grading systems, while widely implemented, face substantial limitations that constrain their predictive value.

Table 3: Comparative Performance: Traditional vs. Advanced Embryo Assessment

| Assessment Method | Key Features | Performance Data | Study Details |
|---|---|---|---|
| Traditional Morphological Grading | Static evaluation at single time points; subjective scoring | Limited predictive value for implantation potential | Manual grading prone to inter-observer variability [10] |
| Time-Lapse Morphokinetics | Dynamic monitoring without culture disturbance; objective timing parameters | Improved but labor-intensive; requires expert analysis | Subjective interpretation challenges persist despite automated imaging [10] |
| AI-Based Assessment (FEMI Model) | Self-supervised learning on 18M time-lapse images; multiple prediction tasks | AUROC >0.75 for ploidy prediction using image data only | 17,968,959 time-lapse images; outperformed benchmarks [14] |
| BELA Algorithm | Multitask learning on time-lapse sequences; no embryologist input | AUC 0.76 for ploidy prediction | Surpassed models relying on manual embryologist scoring [14] |

Manual embryo grading is inherently subjective and prone to significant inter-observer variability, leading to inconsistent assessments across laboratories and embryologists [10]. Static morphological grading systems, such as Gardner's blastocyst grading, provide only limited predictive insights as they evaluate embryos at isolated time points rather than tracking developmental patterns [10]. This static assessment fails to capture dynamic processes critical to embryonic viability.

Morphokinetic analysis using time-lapse imaging (TLI) adds predictive value by monitoring cell division timings, but remains labor-intensive, inconsistent, and difficult to standardize across clinics [10]. Furthermore, manual evaluations lack scalability for high-throughput IVF settings, requiring substantial time and expertise from highly trained personnel [10].

Preimplantation Genetic Testing Limitations

Preimplantation genetic testing for aneuploidy (PGT-A) has transitioned from fluorescence in situ hybridization (FISH) screening, limited to analyzing a restricted number of chromosomes, to comprehensive chromosomal assessment via next-generation sequencing (NGS) and chromosomal microarrays [13]. Despite this advancement, current PGT-A techniques remain constrained by several factors.

A significant limitation of PGT-A involves the presence of chromosomal mosaicism within blastocysts, where the cells analyzed may not represent the chromosomal status of the entire embryo, potentially resulting in misdiagnosis [13]. Additionally, current PGT-A requires invasive embryo biopsy, which raises concerns about potential impacts on embryo development despite trophectoderm biopsy being generally considered safe [13]. The biopsy procedure itself is technically demanding, requiring experienced embryologists, and significantly increases the overall costs of ART cycles [13].

Figure 2: Embryo Assessment Methodology Evolution. The transition from subjective, static evaluation to objective, dynamic AI-driven analysis significantly enhances prediction accuracy and standardization.

The Researcher's Toolkit: Experimental Reagents and Technologies

Table 4: Essential Research Solutions for Advanced Fertility Diagnostics

| Technology/Reagent | Primary Research Application | Function and Utility | Experimental Evidence |
|---|---|---|---|
| Shear Wave Elastography (SWE) | Quantitative tissue stiffness measurement | Assesses ovarian stiffness in PCOS, endometrial receptivity | Quantitative stiffness measurement in kPa; objective, reproducible [12] |
| Time-Lapse Imaging Systems | Continuous embryo monitoring | Captures morphokinetic parameters without culture disturbance | 17,968,959 images used for FEMI model training [14] |
| Next-Generation Sequencing | Preimplantation genetic testing | Comprehensive aneuploidy screening; mosaic detection | Higher resolution than FISH; detects mosaic aneuploidy [13] |
| Vision Transformer Models | Embryo image analysis | Self-supervised learning on large image datasets | FEMI model trained on ~18 million time-lapse images [14] |
| Chromosomal Microarrays | Embryo ploidy assessment | Detects all mitotic/meiotic abnormalities in biopsied cells | Comprehensive aneuploidy detection beyond FISH limitations [13] |
| Ant Colony Optimization | Male infertility classification | Bio-inspired algorithm enhances neural network performance | 99% classification accuracy in male fertility assessment [15] |

Traditional diagnostic methods in reproductive medicine face fundamental limitations across all domains of fertility assessment. Semen analysis remains constrained by subjectivity and inability to detect subtle functional abnormalities, while conventional ultrasound and histological evaluations provide anatomical information without insight into tissue biomechanical properties or functional status. Embryo assessment continues to rely heavily on subjective morphological evaluation with limited predictive value for implantation potential. These diagnostic shortcomings collectively contribute to suboptimal treatment outcomes and inefficient resource utilization in reproductive medicine.

The emerging generation of diagnostic technologies, including artificial intelligence, elastography, and molecular profiling, demonstrates significant potential to overcome these limitations through quantitative, objective, and personalized assessment approaches. Experimental evidence confirms that these advanced methods consistently outperform traditional techniques across critical parameters including prediction accuracy, reproducibility, and clinical utility. For researchers and drug development professionals, these technological advances create new opportunities to develop more effective, data-driven diagnostic models that can ultimately enhance patient outcomes in reproductive medicine.

The evaluation of fertility diagnostic and predictive models relies on a suite of quantitative performance metrics that provide researchers and clinicians with critical insights into model reliability and clinical applicability. These metrics—including accuracy, sensitivity, specificity, and area under the curve (AUC)—serve as fundamental benchmarks for comparing emerging technologies against established methodologies. In the context of infertility, which affects a significant proportion of couples worldwide, the development of accurate diagnostic tools is paramount for directing appropriate treatment interventions [16]. The integration of artificial intelligence (AI) and machine learning (ML) has introduced sophisticated predictive models that require rigorous performance validation against clinical standards.

This guide provides an objective comparison of performance metrics across various fertility diagnostic approaches, focusing specifically on predictive models for treatment success and condition identification. The comparative analysis presented herein is framed within the broader thesis of performance evaluation in fertility diagnostic research, offering researchers and drug development professionals a standardized framework for assessing technological innovations in reproductive medicine. By examining experimental protocols and resulting performance data across multiple studies, this analysis aims to establish reference points for evaluating model efficacy in both male and female fertility assessment.

Performance Metrics Comparison Across Diagnostic Models

Quantitative Performance Metrics for Fertility Diagnostics

Table 1: Performance metrics of AI-based fertility diagnostic and predictive models

| Study Focus | Model/Technique | Accuracy (%) | Sensitivity/Recall | Specificity | AUC | Sample Size |

|---|---|---|---|---|---|---|

| Male Fertility Diagnostics [8] | ANN with Ant Colony Optimization | 99 | 1.00 | - | - | 100 cases |

| PCOS Risk Assessment [17] | Calibrated Random Forest | 90.8 | - | - | - | 541 instances |

| IVF/ICSI Treatment Prediction [16] | Random Forest | - | 0.76 | - | 0.73 | 733 cycles |

| IUI Treatment Prediction [16] | Random Forest | - | 0.84 | - | 0.70 | 1,196 cycles |

| First IVF Cycle Prediction [18] | Logistic Regression | - | - | - | 0.68 | 22,413 cycles |

| Embryo Selection for IVF [19] | AI-based Methods (Pooled) | - | 0.69 | 0.62 | 0.70 | Multiple studies |

Table 2: Advanced performance metrics for fertility prediction models

| Study Focus | F1-Score | Positive Predictive Value | Brier Score | Matthews Correlation Coefficient | Computational Time (seconds) |

|---|---|---|---|---|---|

| Male Fertility Diagnostics [8] | - | - | - | - | 0.00006 |

| IVF/ICSI Treatment Prediction [16] | 0.73 | 0.80 | 0.13 | 0.50 | - |

| IUI Treatment Prediction [16] | 0.80 | 0.82 | 0.15 | 0.34 | - |

| PCOS Risk Assessment [17] | - | - | 0.0678 | - | - |

Comparative Analysis of Model Performance

The performance metrics reveal significant variation across different fertility diagnostic applications. The hybrid diagnostic framework for male fertility, combining a multilayer feedforward neural network with a nature-inspired ant colony optimization algorithm, demonstrated exceptional performance with 99% classification accuracy and 100% sensitivity, highlighting the potential of bio-inspired optimization techniques in reproductive health diagnostics [8]. This model also achieved an ultra-low computational time of just 0.00006 seconds, emphasizing its efficiency and real-time applicability potential for clinical settings.

For treatment outcome prediction, Random Forest models applied to Intrauterine Insemination (IUI) data showed higher sensitivity (0.84) compared to models for In Vitro Fertilization/Intracytoplasmic Sperm Injection (IVF/ICSI) cycles (0.76), suggesting better performance at identifying true positive outcomes in IUI treatments [16]. The F1-scores (0.80 for IUI vs. 0.73 for IVF/ICSI) and Positive Predictive Values (0.82 for IUI vs. 0.80 for IVF/ICSI) further support this observation, indicating better balance between precision and recall in IUI prediction models.
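The metrics compared in Tables 1 and 2 all derive from the four cells of a confusion matrix. The following is a minimal Python sketch of those derivations; the function name and the example counts are hypothetical and are not taken from any of the cited studies.

```python
import math


def _safe_div(numerator, denominator):
    """Return NaN instead of raising when a denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def classification_metrics(tp, fp, tn, fn):
    """Derive common diagnostic metrics from confusion-matrix counts."""
    sensitivity = _safe_div(tp, tp + fn)   # recall / true positive rate
    specificity = _safe_div(tn, tn + fp)   # true negative rate
    ppv = _safe_div(tp, tp + fp)           # positive predictive value (precision)
    accuracy = _safe_div(tp + tn, tp + fp + tn + fn)
    f1 = _safe_div(2 * ppv * sensitivity, ppv + sensitivity)
    mcc_denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = _safe_div(tp * tn - fp * fn, mcc_denominator)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "ppv": ppv,
        "accuracy": accuracy,
        "f1": f1,
        "mcc": mcc,
    }


# Hypothetical counts, chosen only to illustrate the arithmetic.
metrics = classification_metrics(tp=84, fp=18, tn=60, fn=16)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because F1 and PPV ignore true negatives while MCC uses all four cells, a model can score well on F1 and still show a modest MCC, which is consistent with the pattern in the IUI row of Table 2.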

In embryo selection for IVF, AI-based methods demonstrated pooled sensitivity of 0.69 and specificity of 0.62 according to a recent meta-analysis, with an AUC of 0.70, indicating moderate overall diagnostic performance for implantation prediction [19]. The study noted that specific models like Life Whisperer achieved 64.3% accuracy in predicting clinical pregnancy, while the FiTTE system, which integrates blastocyst images with clinical data, improved prediction accuracy to 65.2%.

Experimental Protocols and Methodologies

Data Collection and Preprocessing Standards

Across the studies examined, consistent data collection and preprocessing methodologies were employed to ensure model reliability. For male fertility assessment, the study utilized a publicly available dataset of 100 clinically profiled male fertility cases representing diverse lifestyle and environmental risk factors [8]. The research on treatment outcome prediction incorporated data from 1,931 patients consisting of IVF/ICSI (733 cycles) and IUI (1,196 cycles) treatments, with exclusion criteria applied to cycles using donor gametes [16]. The large-scale IVF prediction study analyzed 22,413 first autologous oocyte IVF cycles from 2001 to 2018, excluding cycles with donor oocytes or no embryo transfers [18].

A critical methodological step involved handling missing data, with approaches varying by study. The IVF prediction research excluded variables with more than 99% missing data and employed median imputation for continuous variables while using indicator variables for missing categorical data [18]. Another study used a Multilayer Perceptron (MLP) to predict missing values, reporting that this approach provided better results than classic imputation strategies despite data noise [16]. For the PCOS risk assessment model, rows containing missing values were removed entirely to ensure a complete dataset [17].
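As an illustration of the median-plus-indicator strategy, the sketch below imputes a continuous variable with its median and encodes a categorical variable with an explicit missing-value indicator. The function names, the `is_` column prefix, and the example values are assumptions made for this example; the cited study does not publish its code.

```python
from statistics import median


def median_impute(values):
    """Replace None entries in a continuous variable with the observed median."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def one_hot_with_missing_indicator(values):
    """One-hot encode a categorical variable, adding an 'is_missing' indicator."""
    levels = sorted({v for v in values if v is not None})
    rows = []
    for v in values:
        row = {f"is_{level}": int(v == level) for level in levels}
        row["is_missing"] = int(v is None)
        rows.append(row)
    return rows


# Hypothetical values for illustration only.
amh = [1.2, None, 3.4, 2.0]
infertility_type = ["primary", None, "secondary", "primary"]

print(median_impute(amh))
print(one_hot_with_missing_indicator(infertility_type))
```

In practice the median would be computed on the training split only and then reused for the test split, so that no information leaks from held-out data.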

Feature selection techniques played a crucial role in model development. The male fertility study implemented feature-importance analysis to identify key contributory factors such as sedentary habits and environmental exposures [8]. The IVF prediction research addressed collinearity by retaining only one variable from highly correlated pairs (threshold of 0.8), selecting variables based on AUC impact and clinical expertise [20]. The PCOS study divided features into binary categorical features (kept as unscaled variables) and continuous features (standardized using StandardScaler) [17].
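The collinearity filter can be sketched as a greedy pass over a ranked feature list: a feature is kept only if its absolute Pearson correlation with every already-kept feature does not exceed 0.8. In this sketch the `priority` ordering is a hypothetical stand-in for the ranking by AUC impact and clinical expertise described in the study, and the feature values are invented.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mean_x, mean_y = mean(x), mean(y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return covariance / (var_x * var_y) ** 0.5


def drop_collinear(features, priority, threshold=0.8):
    """Keep features in priority order, skipping any that exceed the
    correlation threshold with a feature that was already kept."""
    kept = []
    for name in priority:
        if all(abs(pearson(features[name], features[k])) <= threshold for k in kept):
            kept.append(name)
    return kept


# Invented values: AMH falls steeply with age, BMI is only weakly related.
features = {
    "age": [28, 31, 35, 38, 41, 43],
    "amh": [4.1, 3.5, 2.2, 1.6, 0.9, 0.5],
    "bmi": [22, 27, 21, 30, 24, 26],
}
selected = drop_collinear(features, priority=["age", "amh", "bmi"])
print(selected)
```

With these invented values, `amh` is dropped because it is almost perfectly (negatively) correlated with `age`, while `bmi` is retained.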

Diagram 1: Experimental workflow for fertility diagnostic models: This diagram illustrates the standardized experimental workflow for developing and validating fertility diagnostic models, from initial data collection through clinical validation, highlighting critical preprocessing steps and performance evaluation metrics.

Model Development and Validation Approaches

The studies employed diverse machine learning algorithms with rigorous validation methodologies. The male fertility study combined a multilayer feedforward neural network with an ant colony optimization algorithm, implementing adaptive parameter tuning through ant foraging behavior to enhance predictive accuracy [8]. The IVF/IUI prediction research compared six well-known machine learning algorithms: Logistic Regression (LR), Random Forest (RF), k-Nearest Neighbors (KNN), Artificial Neural Network (ANN), Support Vector Machine (SVM), and Gaussian Naïve Bayes (GNB), with random search and cross-validation used to optimize hyperparameters [16].

A distinctive approach was employed in the longitudinal IVF study, which developed four successive predictive models corresponding to different stages of the IVF process: (1) demographic parameters after initial consultation, (2) ovarian stimulation parameters, (3) laboratory data after oocyte retrieval, and (4) embryo transfer parameters [20]. This sequential modeling approach allowed researchers to determine which parameters were predictive at each stage and how predictive power evolved throughout treatment.

Validation methodologies consistently emphasized robust performance assessment. The IVF/IUI study used k-fold cross-validation with k=10 to evaluate models and avoid overfitting, particularly important for smaller datasets [16]. The PCOS risk assessment study incorporated probabilistic calibration metrics including Brier Score and Expected Calibration Error (ECE) to ensure reliable risk predictions across subgroups, with Random Forest achieving the best balance between calibration and interpretability (Brier=0.0678, ECE=0.0666) [17]. The large-scale IVF prediction study divided input data into training (80%) and test (20%) sets, with five-fold cross-validation over the training set to select optimal hyperparameters [18].
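The two calibration metrics mentioned above can be computed directly from predicted probabilities and observed outcomes. The sketch below assumes equal-width probability bins for ECE with ten bins by default; the binning scheme used in the PCOS study is not stated here, so that choice is an assumption, and the example data are hypothetical.

```python
def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome (0 or 1)."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def expected_calibration_error(y_true, y_prob, n_bins=10):
    """Weighted average gap between mean predicted probability and observed
    event rate, computed over equal-width probability bins."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(y_true, y_prob):
        index = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[index].append((y, p))
    total = len(y_true)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        observed_rate = sum(y for y, _ in members) / len(members)
        mean_predicted = sum(p for _, p in members) / len(members)
        ece += len(members) / total * abs(observed_rate - mean_predicted)
    return ece


# Hypothetical outcomes and predicted risks.
y_true = [1, 0, 1, 0]
y_prob = [0.9, 0.1, 0.8, 0.3]
print(brier_score(y_true, y_prob))
print(expected_calibration_error(y_true, y_prob))
```

Lower values are better for both metrics. The Brier score mixes discrimination and calibration, whereas ECE isolates calibration, which is why reporting both is informative.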

Key Predictive Features and Clinical Parameters

Significant Prognostic Factors Across Studies

The research identified consistent predictive features across different fertility diagnostic applications. Female age emerged as a dominant factor across multiple studies, with a strong relationship demonstrated between clinical pregnancy and a woman's age [16]. The large-scale IVF study found age in three groups (38-40, 41-42, and above 42 years old) to be among the most important predictors, along with the number of transferred embryos and the number of cryopreserved embryos [18]. The sequential IVF prediction model identified eight parameters predictive of live birth after the first consultation, expanding to thirteen parameters by the embryo transfer stage [20].

For PCOS risk assessment, SHAP analysis identified follicle count, weight gain, and menstrual irregularity as the most influential features, aligning with established Rotterdam diagnostic criteria [17]. The male fertility study emphasized key contributory factors such as sedentary habits and environmental exposures through feature-importance analysis [8]. In the IVF/IUI prediction research, essential features included age, follicle-stimulating hormone (FSH), endometrial thickness, and infertility duration, with endometrial thickness and the number of follicles noted to decrease with increasing female age in both treatments [16].

Diagram 2: Key predictive features in fertility diagnostics: This diagram illustrates the hierarchy of significant prognostic factors identified across fertility diagnostic studies, categorized into demographic, ovarian reserve, treatment parameters, and lifestyle/environmental factors.

Research Reagent Solutions and Essential Materials

Table 3: Essential research reagents and materials for fertility diagnostics development

| Reagent/Material | Application in Research | Key Function | Example Usage in Studies |

|---|---|---|---|

| Anti-Müllerian Hormone (AMH) Testing | Ovarian Reserve Assessment | Quantifies ovarian reserve; predicts response to stimulation | Pre-cycle fertility evaluation [18]; Predictive parameter in IVF models [20] |

| Follicle Stimulating Hormone (FSH) Testing | Ovarian Function Assessment | Evaluates follicular development potential; measured on cycle day 3 | Basal day 3 FSH assessment [16]; Included in predictive models for treatment success [18] |

| Antral Follicle Count (AFC) Protocol | Ovarian Reserve Quantification | Ultrasound assessment of resting follicle count; predicts ovarian response | Ovarian reserve assessment [21]; Categorized into ranges (≤5, 6-10, 11-15, >15) for modeling [20] |

| Semen Analysis Reagents | Male Fertility Assessment | Evaluates sperm concentration, motility, and morphology | Initial infertility evaluation [21]; Included in male factor assessment [5] |

| Embryo Culture Media | IVF Laboratory Procedures | Supports embryo development in vitro | Essential for embryo culture in IVF/ICSI cycles [16] |

| Time-Lapse Imaging Systems | Embryo Morphokinetic Assessment | Continuous monitoring of embryo development without disturbance | Used in AI-based embryo selection studies [19] |

| Hormonal Assays (LH, Estradiol, Progesterone) | Cycle Monitoring and Assessment | Tracks follicular development and endometrial preparation | Part of standard infertility evaluation [21]; Used in predictive model development [18] |

The comparative analysis of performance metrics across fertility diagnostic models reveals a complex landscape where accuracy, sensitivity, and clinical utility must be balanced against computational efficiency and interpretability. The exceptionally high accuracy (99%) and sensitivity (100%) demonstrated by the bio-inspired optimization approach for male fertility diagnostics [8] must be contextualized within its limited sample size (100 cases). By comparison, the large-scale IVF prediction study (22,413 cycles) achieved more moderate but potentially more generalizable performance (AUC 0.68) [18].

The variation in performance metrics across different clinical applications—from male fertility assessment to PCOS risk prediction and treatment outcome forecasting—highlights the importance of context-specific metric evaluation. For instance, sensitivity may be prioritized over overall accuracy in screening contexts where missing true cases has significant clinical consequences, while specificity might be more valuable in diagnostic confirmation scenarios. The emergence of advanced metrics like Brier Score and Expected Calibration Error in more recent studies [17] reflects growing recognition that prediction reliability across subgroups is as important as overall performance.

These comparative findings suggest that while raw performance metrics provide valuable benchmarking data, researchers and clinicians must consider the clinical context, population characteristics, and intended use case when evaluating fertility diagnostic models. The integration of AI and machine learning continues to advance the field, but rigorous validation against established clinical standards remains essential for translating technical performance into improved patient outcomes.

The evaluation of human fertility has evolved dramatically, moving from the assessment of isolated semen parameters to the prediction of the ultimate clinical outcome: live birth. This paradigm shift is driven by advances in artificial intelligence (AI) and multimodal data integration, which together enhance the precision of assisted reproductive technology (ART). Contemporary prediction targets now form a continuum, spanning from basic seminal quality to complex blastocyst viability. This guide objectively compares the performance of these emerging predictive models against conventional analytical methods, providing researchers and drug development professionals with a clear comparison of their experimental protocols, performance data, and reagent requirements. By systematically evaluating these technologies, this analysis aims to inform strategic decisions in research tool selection and clinical translation.

Comparative Performance of Fertility Prediction Models

The table below summarizes the key performance metrics of contemporary models targeting different endpoints in the fertility treatment journey.

Table 1: Performance Comparison of Fertility Prediction Models and Conventional Methods

| Prediction Target & Model | Key Performance Metrics | Data Inputs | Clinical Utility |

|---|---|---|---|

| Sperm Morphology (AI Model) [22] | Correlation with CASA: r=0.88 [22]; Test Accuracy: 0.93 [22]; Precision/Recall (Normal Sperm): 0.91/0.95 [22] | Confocal laser scanning microscopy images (40x) [22] | Enables selection of viable, unstained sperm with normal morphology for ICSI, improving fertilization potential [22]. |

| Sperm Morphology (CASA) [22] | Correlation with AI model: r=0.88 [22]; Correlation with CSA: r=0.57 [22] | Stained sperm images (100x magnification) [22] | Standardized, automated assessment of fixed sperm; cannot be used for subsequent treatment cycles [22]. |

| Sperm Morphology (CSA) [22] | Correlation with AI model: r=0.76 [22]; Correlation with CASA: r=0.57 [22] | Stained sperm assessed manually per WHO guidelines [22] | Traditional benchmark; subject to inter-observer variability; renders sperm unusable [22]. |

| ICSI Outcome (Seminal ORP) [23] | Live Birth Prediction (AUC): 0.728 [23]; Correlation with Live Birth: r=-0.366 [23] | Oxidation-reduction potential measured via MiOXSYS system [23] | Measures oxidative stress in semen, a negative predictor of blastocyst development, clinical pregnancy, and live birth after ICSI [23]. |

| Live Birth (Multimodal AI) [24] | Live Birth Prediction (AUC): 0.77 [24] | Blastocyst images + 103 clinical features from the patient couple [24] | Integrates embryo morphology with maternal/clinical context for superior blastocyst selection in IVF [24]. |

| Live Birth (Image-Only AI) [24] | Live Birth Prediction (AUC): ~0.65 [24] | Static blastocyst images (focus on ICM and Trophectoderm) [24] | Automates embryo grading; provides an objective, consistent assessment but lacks clinical context [24]. |

| National Average (SART Data) [25] | Live Birth Rate (Age <35): 53.5% [25]; Live Birth Rate (Age 41-42): 13.0% [25] | Population-level aggregated clinical data [25] | Provides broad, population-based benchmarks for success rates by female age group [25]. |

Detailed Experimental Protocols for Key Prediction Models

AI-Based Morphology Assessment of Unstained Live Sperm

This protocol outlines the methodology for developing an AI model to assess sperm morphology without staining, preserving sperm viability for clinical use [22].

- Sample Preparation: Semen samples are collected from donors after 2-7 days of abstinence. A 6 µL droplet of the sample is dispensed onto a standard two-chamber Leja slide with a 20 µm depth [22].

- Image Acquisition: Sperm images are captured using a confocal laser scanning microscope (e.g., ZEISS LSM 800) at 40x magnification in confocal mode (LSM, Z-stack). A Z-stack interval of 0.5 µm over a 2 µm range is used to generate high-resolution, multi-focal plane images. At least 200 sperm images are collected per sample [22].

- Data Annotation and Categorization: Embryologists and researchers manually annotate well-focused sperm in the images using a tool like LabelImg. Each sperm is categorized as normal or abnormal based on strict WHO criteria (e.g., smooth oval head, length-to-width ratio of 1.5-2, no vacuoles, normal tail). Normal morphology must be confirmed across all five focal frames [22].

- AI Model Training and Validation: A deep learning model (e.g., ResNet50) is trained using transfer learning. The dataset is split into training and validation sets. The model is trained to minimize the difference between predicted and actual labels, with performance evaluated on a separate test set. The final model in the cited study achieved a test accuracy of 0.93 after 150 epochs [22].

Figure 1: AI sperm morphology assessment workflow.

Predictive Power of Seminal Oxidation-Reduction Potential (ORP) for ICSI

This protocol describes the measurement of seminal ORP and its correlation with reproductive outcomes after Intracytoplasmic Sperm Injection (ICSI) [23].

- Study Population and Sample Collection: The study includes couples undergoing fresh ICSI cycles with autologous gametes. Semen samples are collected and processed according to standard laboratory protocols. Cases of azoospermia are excluded [23].

- ORP Measurement: Seminal ORP is measured using the MiOXSYS system. The ORP value is normalized per 10^6 sperm/mL. Measurements are taken in accordance with the manufacturer's instructions [23].

- Outcome Measures: Reproductive outcomes are rigorously tracked, including fertilization rate, blastocyst development rate, implantation/clinical pregnancy, and live birth. Correlations between seminal ORP and these outcomes are analyzed using statistical methods like Pearson correlation [23].

- Statistical Analysis and Predictive Power Assessment: Receiver operating characteristic (ROC) curve analysis is performed to determine the predictive power of ORP for each reproductive outcome. The area under the curve (AUC) is calculated, and a cut-off value for ORP with significant effects on odds ratios is identified, often controlling for male age as a confounding factor [23].
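The ROC step in this protocol can be illustrated with a small Python sketch. AUC is computed here as the probability that a randomly chosen positive case is ranked above a randomly chosen negative case. The cut-off is chosen by maximizing Youden's J (sensitivity + specificity - 1), which is an assumption made for illustration: the cited study describes selecting a cut-off by its effect on odds ratios. The ORP values and outcomes are invented.

```python
def auc_from_scores(labels, scores):
    """AUC as the fraction of positive/negative pairs in which the positive
    case has the higher score (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


def youden_cutoff_low_is_positive(labels, values):
    """Return the threshold t maximizing Youden's J when a case is predicted
    positive if value <= t (suitable for a negative predictor such as ORP)."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    best_t, best_j = None, float("-inf")
    for t in sorted(set(values)):
        sensitivity = sum(1 for y, v in zip(labels, values) if y == 1 and v <= t) / n_pos
        specificity = sum(1 for y, v in zip(labels, values) if y == 0 and v > t) / n_neg
        j = sensitivity + specificity - 1
        if j > best_j:
            best_t, best_j = t, j
    return best_t, best_j


# Invented normalized ORP values and live-birth outcomes (1 = live birth).
orp = [0.3, 0.5, 0.8, 1.1, 1.4, 1.9, 2.5, 3.0]
live_birth = [1, 1, 1, 1, 0, 1, 0, 0]

# Higher ORP indicates lower odds of live birth, so rank by negated ORP.
auc = auc_from_scores(live_birth, [-v for v in orp])
cutoff, j_stat = youden_cutoff_low_is_positive(live_birth, orp)
print(auc, cutoff, j_stat)
```

Negating the ORP values before computing AUC reflects the direction of the association reported in the study (a negative correlation with live birth).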

Multimodal AI for Live Birth Prediction from Blastocysts

This protocol details the development of a multimodal AI model that integrates blastocyst images with clinical data to predict live birth [24].

- Dataset Curation: A large, retrospective dataset is compiled, including blastocysts with known live birth outcomes. For each blastocyst, two grayscale images are captured using a standard optical light microscope: one focused on the inner cell mass (ICM) and the other on the trophectoderm (TE). Images are cropped and padded to a standard size (e.g., 500x500 pixels) [24].

- Clinical Feature Compilation: A comprehensive set of 103 clinical features from the patient couple is assembled. This includes maternal factors (e.g., age, hormone profiles, endometrium thickness, antral follicle count), paternal factors (e.g., semen quality), and cycle details (e.g., day of blastocyst transfer) [24].

- Multimodal Model Architecture: A hybrid AI model is developed, typically consisting of:

  - A Convolutional Neural Network (CNN) branch for processing blastocyst images.

  - A Multilayer Perceptron (MLP) branch for processing the structured clinical data.

  - A fusion layer that combines features from both branches to make the final prediction [24].

- Model Evaluation and Feature Importance: The model's performance is evaluated using the Area Under the ROC Curve (AUC). The model is compared against benchmarks, including models using only images. The top contributing clinical features to the prediction are identified (e.g., maternal age, transfer day, antral follicle count) [24].
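To make the fusion idea concrete, the following NumPy sketch shows a single forward pass through a late-fusion head. It is not the architecture from the cited study: the embedding size, hidden size, random untrained weights, and the use of a precomputed image embedding in place of a real CNN branch are all assumptions made for illustration. Only the count of 103 clinical features comes from the protocol above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed sizes: IMAGE_DIM and HIDDEN_DIM are arbitrary; N_CLINICAL is from the text.
BATCH, IMAGE_DIM, N_CLINICAL, HIDDEN_DIM = 4, 32, 103, 16


def relu(x):
    return np.maximum(x, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def fusion_forward(image_embedding, clinical, params):
    """Concatenate image features with an MLP encoding of the clinical
    features, then map the fused vector to a live-birth probability."""
    clinical_hidden = relu(clinical @ params["W_clin"] + params["b_clin"])
    fused = np.concatenate([image_embedding, clinical_hidden], axis=1)
    logits = fused @ params["W_out"] + params["b_out"]
    return sigmoid(logits).ravel()


# Random, untrained weights: the outputs are meaningless beyond their shape.
params = {
    "W_clin": 0.1 * rng.normal(size=(N_CLINICAL, HIDDEN_DIM)),
    "b_clin": np.zeros(HIDDEN_DIM),
    "W_out": 0.1 * rng.normal(size=(IMAGE_DIM + HIDDEN_DIM, 1)),
    "b_out": np.zeros(1),
}

image_embedding = rng.normal(size=(BATCH, IMAGE_DIM))  # stand-in for CNN output
clinical = rng.normal(size=(BATCH, N_CLINICAL))        # stand-in for scaled features

probabilities = fusion_forward(image_embedding, clinical, params)
print(probabilities.shape)
```

Concatenation is the simplest fusion choice. An image-only baseline of the kind used for comparison corresponds to dropping the clinical branch and feeding the image embedding straight to the output layer.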

Figure 2: Multimodal AI model for live birth prediction.

The Scientist's Toolkit: Essential Reagents and Materials

The table below lists key reagents, instruments, and software solutions essential for implementing the advanced prediction models described.

Table 2: Key Research Reagent Solutions for Fertility Prediction Studies

| Item | Function/Application | Specific Example/Model |

|---|---|---|

| Confocal Laser Scanning Microscope | High-resolution, multi-plane imaging of unstained live sperm for AI morphology analysis [22]. | ZEISS LSM 800 [22] |

| Computer-Aided Semen Analysis (CASA) System | Automated, standardized analysis of sperm concentration, motility, and stained sperm morphology [22]. | IVOS II with DIMENSIONS II Software (Hamilton Thorne) [22] |

| MiOXSYS System | Measures seminal oxidation-reduction potential (ORP) to quantify oxidative stress as a predictor of ICSI outcomes [23]. | MiOXSYS System [23] |

| Standard Optical Light Microscope | Capturing static, high-quality images of blastocysts for AI-based embryo evaluation and live birth prediction [24]. | Not Specified [24] |

| Deep Learning Framework | Platform for developing and training convolutional neural networks (CNNs) and multimodal models for image and data analysis [24]. | ResNet50, Custom CNN/MLP Architectures [22] [24] |

| Gradient Boosting Algorithms | Building ensemble prediction models for complex, multivariate clinical outcomes from large datasets [26]. | LightGBM, CatBoost [26] |

The comparative data presented in this guide illuminates a clear trajectory in fertility diagnostics: models that integrate multiple data types—such as cellular images and clinical parameters—consistently outperform those relying on a single data source. The progression from assessing static, stained sperm to analyzing dynamic, functional properties like oxidative stress and live blastocyst development represents a fundamental shift towards more holistic and predictive evaluation.

For researchers and drug developers, these findings highlight critical strategic considerations. First, investment in multimodal AI platforms is essential for pushing the boundaries of prediction accuracy. Second, functional sperm assays, like ORP measurement, provide valuable, non-invasive prognostic information complementary to morphology. Finally, the research community must prioritize the creation of large, high-quality, and diverse datasets to train these next-generation models, ensuring they are robust and generalizable across patient populations. By focusing on these integrated and data-rich approaches, the field can continue to improve ART success rates and deliver on the promise of personalized fertility care.

Infertility, a complex condition affecting an estimated 8-12% of reproductive-aged couples globally, presents a multifaceted challenge that demands increasingly sophisticated diagnostic approaches [27]. The limitations of traditional univariate or limited-factor models in predicting reproductive outcomes have become increasingly apparent, with even the most established clinical parameters offering incomplete prognostic value. The integration of multifactorial data—spanning clinical, lifestyle, and environmental domains—represents a paradigm shift in fertility research and clinical practice. This approach leverages advanced machine learning (ML) and artificial intelligence (AI) methodologies to synthesize diverse data types into comprehensive predictive models. By moving beyond the conventional focus on female-specific factors, these integrated models offer unprecedented opportunities for personalized prognosis, targeted intervention, and improved assisted reproductive technology (ART) outcomes. This analysis objectively compares the performance of various data-integration approaches in fertility diagnostics, examining their experimental foundations, methodological rigor, and translational potential for researchers and clinicians.

Comparative Performance of Multifactorial Prediction Models

Table 1: Performance Comparison of Machine Learning Models in Fertility Prediction

| Study Focus | Best Performing Model | Accuracy | AUC/ROC | Key Predictive Features | Data Source |

|---|---|---|---|---|---|

| IVF Live Birth Prediction [27] | XGBoost | 70.0% | 0.73 | Female age, AMH, BMI, infertility duration, previous live birth/miscarriage/abortion, infertility type | 7,188 first IVF cycles (Single center) |

| Fertility Preferences (Nigeria) [28] | Random Forest | 92.0% | 0.92 | Number of living children, woman's age, ideal family size, region, contraception intention | 37,581 women (NDHS 2018) |

| Natural Conception Prediction [29] | XGB Classifier | 62.5% | 0.58 | BMI (both partners), caffeine consumption, endometriosis history, chemical/heat exposure | 197 couples (Prospective study) |

| Oocyte Quality Prediction [30] | Random Forest | 76.1% (K-Fold) | N/A | Cortical Tension, Deformation Index, oocyte diameter, critical flow rate | 54 oocytes (Microfluidic analysis) |

| Population Birth Forecasting [31] | Prophet Time-Series | N/A (RMSE: 6,231.41, California) | N/A | Miscarriage totals, abortion access, state-level policy variation | State-level data (1973-2020) |

Table 2: Data Type Integration Across Fertility Prediction Studies

| Study | Clinical/Demographic | Lifestyle & Environmental | Genetic/Epigenetic | Biomechanical | Policy Context |

|---|---|---|---|---|---|

| IVF Live Birth [27] | Age, AMH, BMI, reproductive history | (Limited in this model) | Not included | Not included | Not included |

| Fertility Preferences [28] | Age, region, education, number of children | Contraception intention, spouse's occupation | Not included | Not included | Not included |

| Natural Conception [29] | BMI, medical history (endometriosis) | Caffeine, smoking, chemical/heat exposure | Not included | Not included | Not included |

| Oocyte Quality [30] | (Implicit via oocyte source) | Not included | Not included | Cortical Tension, Deformation Index | Not included |

| Sperm Epigenetics [32] | Male age, medical history | Paternal smoking, obesity, alcohol, occupation | Sperm epigenome | Not included | Not included |

| Population Forecasting [31] | Pregnancy, miscarriage, abortion rates | (Aggregated population level) | Not included | Not included | State identifier (CA vs. TX) |

The performance data reveal significant variation in model accuracy across different fertility prediction tasks. Models predicting population-level trends or demographic preferences, which utilize large, standardized datasets, achieve the highest accuracy (e.g., 92% for fertility preferences in Nigeria) [28]. In contrast, models forecasting individual clinical outcomes, such as natural conception or IVF success, demonstrate more modest performance, with accuracy ranging from 62.5% to 76.1% [29] [30] [27]. This discrepancy underscores the greater complexity of predicting biological outcomes compared to stated preferences. The consistently superior performance of ensemble methods such as Random Forest and XGBoost across multiple studies highlights their utility for handling the non-linear relationships and complex interactions characteristic of multifactorial fertility data [28] [30] [27]. Furthermore, the type of data integrated significantly influences predictive power. While clinical and demographic factors remain foundational, the emerging incorporation of male lifestyle factors, biomechanical properties of gametes, and policy contexts represents a critical expansion of the traditional diagnostic paradigm [31] [32] [29].

Experimental Protocols and Methodologies

Machine Learning for Demographic Prediction (Nigeria Study)

Objective: To predict fertility preferences (desire for another child vs. no more children) among Nigerian women using machine learning algorithms [28].

Data Source & Preprocessing: The study utilized data from the 2018 Nigeria Demographic and Health Survey (NDHS), comprising 37,581 women. The dataset exhibited class imbalance, which was addressed using the Synthetic Minority Oversampling Technique (SMOTE). Missing data (<10%) were handled using Multiple Imputation by Chained Equations (MICE). Continuous variables were categorized, and low-frequency categories were recategorized to ensure data quality [28].
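The core idea behind SMOTE is to create synthetic minority-class samples by interpolating between a minority sample and one of its nearest minority neighbours. The sketch below shows that idea for continuous features only; the function name, the value of `k`, and the example points are hypothetical, and a maintained library implementation would normally be used in practice.

```python
import random


def smote_like_oversample(minority, n_new, k=3, seed=0):
    """Generate n_new synthetic points, each lying on the line segment between
    a random minority point and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(tuple(a + gap * (b - a) for a, b in zip(base, neighbour)))
    return synthetic


# Hypothetical two-feature minority-class samples.
minority_points = [(1.0, 2.0), (1.5, 1.8), (2.0, 2.2), (1.2, 2.5)]
new_points = smote_like_oversample(minority_points, n_new=6)
print(new_points)
```

Oversampling is typically applied to the training split only, so that the held-out test set keeps the original class distribution.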

Feature Selection & Model Training: A multi-step feature selection process was employed, combining:

- Recursive Feature Elimination (RFE)

- Bivariate logistic regression

- Correlation heatmaps to eliminate multicollinearity

Six machine learning algorithms were trained on 70% of the dataset: Logistic Regression, Support Vector Machine, K-Nearest Neighbors, Decision Tree, Random Forest, and eXtreme Gradient Boosting. Model performance was evaluated on the remaining 30% holdout test set using accuracy, precision, recall, F1-score, and Area Under the Receiver Operating Characteristic Curve (AUROC) [28].

Validation & Interpretation: Model validation included k-fold cross-validation. Permutation importance and Gini importance techniques were used to interpret the final model and identify key predictors, with number of living children, woman's age, and ideal family size emerging as the most influential features [28].
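Permutation importance measures how much a model's score drops when the values of one feature are shuffled, which breaks that feature's link to the outcome. The sketch below is a minimal illustration under stated assumptions: the rule-based `toy_model`, the feature layout, and the data are hypothetical and only demonstrate the mechanics.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy after shuffling each feature column in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for col in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in X]
            rng.shuffle(shuffled)
            X_perm = [row[:col] + [v] + row[col + 1:] for row, v in zip(X, shuffled)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical rule: predict 'wants another child' when fewer than
    three living children; the second feature is ignored."""
    return int(row[0] < 3)


# Columns: [number of living children, unrelated noise feature].
X = [[0, 7], [1, 3], [2, 9], [3, 1], [4, 4], [5, 8], [1, 2], [4, 6]]
y = [toy_model(row) for row in X]

importances = permutation_importance(toy_model, X, y)
print(importances)
```

The ignored feature receives an importance of exactly zero, while the feature that drives the rule shows a positive drop in accuracy when shuffled.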

Microfluidic and ML Framework for Oocyte Quality Assessment

Objective: To non-invasively predict oocyte quality for IVF by integrating biomechanical profiling with machine learning [30].

Experimental Workflow: Immature oocytes were individually passed through a custom-designed microfluidic channel under controlled flow rates. Using image processing, two key biomechanical features were extracted: Cortical Tension (CT) and Deformation Index (DI). Additional measured variables included oocyte diameter and the critical flow rate (Q), defined as the minimum flow rate required for an oocyte to pass through the channel [30].

Data Labeling & Model Development: A dataset of 54 oocytes was labeled based on post-hoc maturation, fertilization, and cleavage outcomes. The dataset was used to train and evaluate eight supervised learning models (including Random Forest, Decision Tree, SVM) and four unsupervised learning models (K-Means, DBSCAN, etc.). Model performance was assessed using K-Fold and Leave-One-Out Cross-Validation [30].
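With only 54 samples, the choice of resampling scheme matters. The sketch below generates the train/test index splits for K-Fold and Leave-One-Out Cross-Validation; the value k=5 is an assumption for illustration, since the number of folds used in the study is not stated here.

```python
def k_fold_indices(n_samples, k):
    """Yield (train, test) index lists for k contiguous folds of near-equal size."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, test
        start += size


def leave_one_out_indices(n_samples):
    """Leave-one-out is k-fold with one fold per sample."""
    return k_fold_indices(n_samples, n_samples)


N_OOCYTES = 54  # dataset size reported in the study

five_fold = list(k_fold_indices(N_OOCYTES, k=5))
loo = list(leave_one_out_indices(N_OOCYTES))

print([len(test) for _, test in five_fold])
print(len(loo))
```

In practice the sample order would be shuffled (and often stratified by label) before splitting. Leave-one-out uses 53 samples for training in every round, at the cost of 54 model fits and a higher-variance estimate.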

Explainable AI for Population-Level Fertility Forecasting

Objective: To forecast annual births and identify key drivers of fertility trends in California and Texas using explainable AI [31].

Data Source & Preparation: The study used publicly available state-level data from 1973 to 2020, sourced from the Open Science Framework (OSF) repository, which aggregates data from the CDC and National Center for Health Statistics. Key variables included annual totals of births, abortions, miscarriages, and pregnancies. Data were formatted for time-series analysis, with missing values addressed via forward-filling or interpolation [31].

Modeling Framework: The methodology employed a dual-model approach:

- Forecasting Model: The Prophet time-series algorithm was used to forecast annual births through 2030, decomposing trends into seasonal and long-term components. Its performance was benchmarked against a linear regression baseline using Root Mean Squared Error (RMSE) and Mean Absolute Percentage Error (MAPE).

- Interpretability Framework: An XGBoost regression model was trained on the historical data. SHapley Additive exPlanations (SHAP) values were then calculated to quantify the relative influence of each predictor (e.g., miscarriage totals, abortion access) on the model's birth total predictions [31].
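SHAP values are based on Shapley values from cooperative game theory: each feature's contribution is its average marginal effect on the prediction over all subsets of the other features. The sketch below computes exact Shapley values by brute force for a tiny hypothetical model, replacing "absent" features with a single baseline value. It illustrates the underlying definition only; it is not the SHAP library or the tree-based algorithm used with XGBoost in [31], and the model and feature values are invented for illustration.

```python
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence


def shapley_values(f: Callable[[List[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> List[float]:
    """Exact Shapley values of f at x relative to a baseline input.
    Cost grows as 2^n, so this is only practical for a few features."""
    n = len(x)

    def value(subset: set) -> float:
        # Features in the subset take their actual value, others the baseline.
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def toy_birth_model(z: List[float]) -> float:
    """Hypothetical regression model with one interaction term."""
    return 50.0 + 2.0 * z[0] - 1.5 * z[1] + 0.1 * z[0] * z[2]


x = [3.0, 2.0, 10.0]        # hypothetical observation
baseline = [1.0, 1.0, 5.0]  # hypothetical reference input

phi = shapley_values(toy_birth_model, x, baseline)
print(phi)
print(sum(phi), toy_birth_model(x) - toy_birth_model(baseline))
```

The contributions sum to the difference between the prediction and the baseline prediction, which is the additivity property that makes SHAP attributions straightforward to present to clinicians and policy analysts.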

Validation: A standard 80/20 train-test split was used for the XGBoost model, with hyperparameter tuning conducted via grid search. The Prophet model was validated by its lower RMSE and MAPE relative to the linear regression baseline [31].
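Both error metrics used in this comparison are simple to compute. RMSE is expressed in the units of the forecast quantity and penalizes large errors more heavily, while MAPE expresses error as a percentage of the observed value. The sketch below uses hypothetical numbers rather than results from [31].

```python
from math import sqrt
from typing import Sequence


def rmse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Root Mean Squared Error, in the same units as the data."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return sqrt(sum(squared) / len(squared))


def mape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean Absolute Percentage Error in percent.
    Undefined when any actual value is zero."""
    ratios = [abs((a - p) / a) for a, p in zip(actual, predicted)]
    return 100.0 * sum(ratios) / len(ratios)


# Hypothetical annual birth totals (thousands) and two competing forecasts.
observed = [480.0, 470.0, 455.0, 440.0]
forecast_a = [478.0, 468.0, 458.0, 443.0]  # stand-in for a time-series model
forecast_b = [490.0, 480.0, 470.0, 460.0]  # stand-in for a linear baseline

for name, forecast in [("A", forecast_a), ("B", forecast_b)]:
    print(name, rmse(observed, forecast), mape(observed, forecast))
```

Lower values are better for both metrics, so a model that beats its baseline on RMSE and MAPE is more accurate in absolute and in relative terms.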

The Scientist's Toolkit: Key Reagents and Research Solutions

Table 3: Essential Research Tools for Multifactorial Fertility Studies

| Tool / Reagent | Specific Example / Model | Research Application | Key Function |
|---|---|---|---|
| Machine Learning Libraries | Scikit-learn, XGBoost, SHAP [31] [27] | Model development and interpretation | Enable predictive modeling and feature importance analysis on complex datasets |
| Microfluidic Devices | Custom-designed oocyte channels [30] | Gamete quality assessment | Provide controlled environment for measuring biomechanical properties of oocytes |
| Hormone Assays | Anti-Müllerian Hormone (AMH) tests [27] | Ovarian reserve assessment | Quantify key hormonal biomarkers for female fertility potential |
| Demographic Survey Data | Nigeria Demographic and Health Survey (NDHS) [28] | Population-level studies | Provide large-scale, standardized demographic and health data |
| Time-Series Analysis Tools | Prophet algorithm [31] | Population trend forecasting | Decompose and forecast long-term fertility trends from temporal data |
| Epigenetic Profiling Kits | Sperm epigenome analysis kits [32] | Male factor infertility research | Assess epigenetic modifications in sperm that influence embryo development |

Discussion and Research Implications

The integration of multifactorial data represents the frontier of fertility diagnostics research, yet it presents significant methodological challenges. A primary limitation across studies is data heterogeneity and accessibility. While studies like the Nigerian fertility preference analysis benefit from large, national datasets [28], many clinical models rely on single-center data, limiting their generalizability [27]. Furthermore, the integration of novel data types, such as epigenetic markers [32] and biomechanical properties [30], remains in its infancy, with sample sizes often too small for robust validation.

The choice of modeling framework critically influences interpretability and clinical utility. The superior performance of ensemble methods like Random Forest and XGBoost is consistent across studies [28] [27], but their "black box" nature can impede clinical adoption. The integration of explainable AI (XAI) techniques, such as SHAP analysis [31] and permutation importance [28], is therefore a crucial development, enabling researchers to identify key drivers behind predictions and build trust in model outputs.

Future research directions should prioritize standardized data collection protocols to facilitate multi-center validation studies. There is also a pressing need to incorporate male-factor data more comprehensively, as current models remain predominantly female-centric [32] [29]. Finally, the transition from static prediction to dynamic treatment planning represents the next major challenge, requiring longitudinal data integration and adaptive learning algorithms to guide personalized intervention strategies throughout the fertility journey.

Methodological Architectures in Fertility Diagnostics: From Machine Learning to Bio-Inspired Optimization

This guide provides an objective comparison of Support Vector Machine (SVM), Random Forest, and eXtreme Gradient Boosting (XGBoost) models within the specific context of fertility diagnostics research. It synthesizes performance data, experimental protocols, and key resources to aid researchers and scientists in model selection and implementation.

Performance Comparison in Fertility Diagnostics

The following table summarizes the documented performance of SVM, Random Forest, and XGBoost models across various fertility and reproductive health studies.

| Model | Application Context | Reported Performance | Key Strengths | Key Limitations / Notes |
|---|---|---|---|---|
| Support Vector Machine (SVM) | Detecting Multiple System Atrophy (Neurodegenerative) [33] | Accuracy: 88.1%, F1-Score: 87.1% [33] | Superior performance in a direct comparative benchmark on clinical features [33]. | |
| Support Vector Machine (SVM) | Sperm Morphology Classification (with deep feature engineering) [34] | Accuracy: 96.08% [34] | Effective as a final-stage classifier on engineered features from deep learning models [34]. | Performance is tied to the quality of upstream feature extraction. |
| Random Forest (RF) | Detecting Multiple System Atrophy (Neurodegenerative) [33] | Accuracy: 85.4%, F1-Score: 83.9% [33] | Robust and less prone to overfitting on test data in some scenarios [35]. | Can produce "spikes of probability" and near-perfect training AUCs, which may not always harm test AUC but affect calibration [35]. |
| Random Forest (RF) | Predicting Live Birth from first IVF treatment [27] | Performance below XGBoost [27] | | Outperformed by XGBoost in a large clinical study (n=7188) [27]. |
| XGBoost | Predicting Live Birth from first IVF treatment [27] | AUC: 0.73 [27] | Handles complex variable interactions; identified as best-performing model for this task [27]. | Demonstrates strong performance in clinical prediction tasks. |
| XGBoost | Predicting Clinical Pregnancy in IVF [36] | AUC: 0.999 [36] | Achieved near-perfect discrimination for clinical pregnancy prediction in one study [36]. | Extreme performance should be validated for generalizability. |
| XGBoost | Predicting Live Birth in IVF [36] | Performance below LightGBM (AUC: 0.913) [36] | | While powerful, may be outperformed by other advanced boosting algorithms in specific tasks [36]. |

Detailed Experimental Protocols

To ensure reproducibility and critical appraisal, this section details the methodologies from key studies cited in the performance comparison.

Protocol: Comparative Analysis of SVM and Random Forest

This protocol is derived from a study comparing SVM and Random Forest for detecting Multiple System Atrophy (MSA) based on clinical features [33].

- Objective: To benchmark the performance of Random Forest (RF) and SVM algorithms in detecting MSA using a clinical dataset [33].

- Data: The study utilized a dataset of 300 patient records. The "clinical features" were the input variables, and the diagnosis of MSA was the outcome [33].

- Model Training & Validation:

- Performance Metrics: The models were compared based on key classification metrics: Accuracy, Precision, Recall, and F1-Score [33].

Protocol: XGBoost for Live Birth Prediction

This protocol outlines the methodology from a study that developed a machine learning model to predict the chance of a live birth prior to the first IVF treatment [27].

- Objective: To develop and assess a prediction model for estimating the cumulative live birth chance of the first complete IVF cycle using pre-treatment variables [27].

- Data:

  - Cohort: 7188 women undergoing their first IVF/ICSI cycle [27].

  - Predictors: Pre-treatment variables including age, AMH, BMI, duration of infertility, previous live birth, previous miscarriage, previous abortion, and type of infertility [27].

  - Outcome: Ongoing pregnancy leading to at least one live birth [27].

- Model Training & Validation:

  - Data Split: The dataset was randomly split, with 70% used for training and 30% for validation [27].

  - Nested Cross-Validation: A repeated nested cross-validation (5 folds inner, 5 folds outer, repeated 11 times) was used to obtain an unbiased estimate of the model's generalization performance and to avoid overfitting [27].

  - Comparison: XGBoost was compared against Logistic Regression, Random Forest, and SVM [27].

  - Hyperparameter Tuning: Grid-search with k-fold cross-validation (k=5) was used to find the optimal hyperparameters for each model [27].

- Performance Metrics: Discrimination was evaluated using the Area Under the Receiver Operating Characteristic Curve (AUC), and calibration was assessed with calibration plots [27].
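The nested cross-validation scheme in this protocol separates hyperparameter selection (inner loop) from performance estimation (outer loop), so that the reported score is never computed on data that influenced tuning. The sketch below shows the control flow under simplifying assumptions: a k-nearest-neighbors classifier stands in for XGBoost, accuracy stands in for AUC, the grid contains only the neighbor count, and the data are synthetic. None of these choices come from [27].

```python
import random
from statistics import mean
from typing import List, Tuple

Sample = Tuple[List[float], int]  # (features, binary label)


def k_fold_indices(indices: List[int], k: int, rng: random.Random) -> List[List[int]]:
    """Shuffle the indices and deal them into k roughly equal folds."""
    shuffled = indices[:]
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]


def knn_predict(train: List[Sample], x: List[float], k: int) -> int:
    """Majority vote among the k nearest training samples."""
    nearest = sorted(train, key=lambda s: sum((a - b) ** 2
                                              for a, b in zip(s[0], x)))[:k]
    return 1 if 2 * sum(label for _, label in nearest) > len(nearest) else 0


def fold_accuracy(data: List[Sample], train_idx: List[int],
                  test_idx: List[int], k: int) -> float:
    train = [data[i] for i in train_idx]
    hits = sum(knn_predict(train, data[i][0], k) == data[i][1] for i in test_idx)
    return hits / len(test_idx)


def cross_val_score(data: List[Sample], indices: List[int], n_folds: int,
                    k: int, rng: random.Random) -> float:
    """Plain k-fold CV restricted to the given indices."""
    folds = k_fold_indices(indices, n_folds, rng)
    scores = []
    for f, test_idx in enumerate(folds):
        train_idx = [i for g, fold in enumerate(folds) if g != f for i in fold]
        scores.append(fold_accuracy(data, train_idx, test_idx, k))
    return mean(scores)


def nested_cv(data: List[Sample], grid: List[int], outer_k: int = 5,
              inner_k: int = 5, repeats: int = 11, seed: int = 0) -> List[float]:
    """Return one outer-fold score per fold and repeat."""
    rng = random.Random(seed)
    outer_scores = []
    for _ in range(repeats):
        folds = k_fold_indices(list(range(len(data))), outer_k, rng)
        for f, test_idx in enumerate(folds):
            train_idx = [i for g, fold in enumerate(folds) if g != f for i in fold]
            # Inner loop: grid search that only ever sees outer-training data.
            best_k = max(grid, key=lambda k: cross_val_score(
                data, train_idx, inner_k, k, rng))
            # Outer loop: score the tuned model on the untouched outer fold.
            outer_scores.append(fold_accuracy(data, train_idx, test_idx, best_k))
    return outer_scores


# Synthetic two-class data (hypothetical, 60 samples with 2 features).
rng = random.Random(42)
data = [([rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)], 0) for _ in range(30)]
data += [([rng.gauss(2.0, 1.0), rng.gauss(2.0, 1.0)], 1) for _ in range(30)]

scores = nested_cv(data, grid=[1, 3, 5])
print(len(scores), round(mean(scores), 3))
```

With 5 outer folds repeated 11 times the procedure yields 55 outer-fold scores, whose spread gives a sense of how stable the performance estimate is.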

Research Workflow and Model Logic

The diagram below illustrates a generalized experimental workflow for developing and comparing machine learning models in fertility diagnostic research, integrating key steps from the cited protocols.

The Scientist's Toolkit: Key Research Reagents & Materials

The table below lists essential materials and computational tools frequently employed in fertility diagnostics research involving machine learning.

| Item / Reagent | Function / Application in Research |
|---|---|
| Clinical Datasets | Curated patient data (e.g., from UCI Repository, clinical trials) used as the foundational input for training and validating predictive models. Examples include fertility-related clinical profiles and lifestyle factors [15] [27]. |
| HPLC-MS/MS Systems | Used for precise quantification of biomarkers (e.g., 25-hydroxy vitamin D3) from serum samples, which can serve as critical predictive features in models for infertility and pregnancy loss [37]. |
| Python with Scikit-learn & XGBoost | The primary programming environment and libraries for implementing machine learning algorithms, including SVM, Random Forest, and XGBoost, and for performing data preprocessing and model evaluation [27]. |
| High-Performance Computing (HPC) Cluster | Essential for handling computationally intensive tasks such as training on large datasets (e.g., thousands of patient records or medical images) and running complex procedures like nested cross-validation [27]. |
| Convolutional Neural Network (CNN) Models | Used for automated feature extraction from medical images (e.g., hysteroscopic images, sperm morphology). These deep features can then be classified using traditional ML models like SVM [38] [34]. |

The evaluation of fertility diagnostic models represents a critical frontier in reproductive medicine, where the precision of predictions directly impacts clinical outcomes and patient counseling. Within this domain, deep learning architectures, particularly Convolutional Neural Networks (CNNs), have emerged as powerful tools for analyzing both structured Electronic Medical Record (EMR) data and medical images. While CNNs are traditionally applied to image-based diagnosis, recent methodological innovations have demonstrated their adaptability to structured EMR data, creating opportunities for comprehensive fertility assessment models that leverage multiple data types [39] [40]. This comparative guide examines the performance of CNN architectures against traditional machine learning models in fertility diagnostics, providing researchers and drug development professionals with experimental data and implementation frameworks to inform model selection for specific research and clinical applications.

The integration of artificial intelligence in fertility care addresses several persistent challenges, including the suboptimal live birth rates per In Vitro Fertilization (IVF) cycle, which often remain below 40% globally [39]. Accurate prediction of IVF outcomes enables improved clinical decision-making, better resource allocation, and realistic patient expectations. Meanwhile, image-based diagnostic systems offer transformative potential for conditions like Asherman's syndrome, where early and accurate detection significantly impacts treatment success [38]. This performance evaluation systematically assesses how different deep learning architectures address these clinical needs through comparative analysis of experimental results across multiple studies and datasets.

Performance Comparison of Deep Learning Architectures in Fertility Diagnostics

Quantitative Performance Metrics Across Model Architectures

Table 1: Performance comparison of CNN models versus traditional machine learning in fertility diagnostics

| Model Architecture | Application Context | Dataset Size | Key Performance Metrics | Best-Performing Model |
|---|---|---|---|---|
| CNN (Structured EMR) | IVF Live Birth Prediction | 48,514 IVF cycles [39] | Accuracy: 0.9394 ± 0.0013, AUC: 0.8899 ± 0.0032, Recall: 0.9993 ± 0.0012 [39] | Random Forest (AUC: 0.9734 ± 0.0012) [39] |
| Random Forest | IVF Live Birth Prediction | 48,514 IVF cycles [39] | Accuracy: 0.9406 ± 0.0017, AUC: 0.9734 ± 0.0012 [39] | Random Forest [39] |
| Proportional Hazard CNN | Hysteroscopic Fertility Assessment | 555 cases with 4,922 images [38] | AUC: 0.982-0.992 (1-year prediction), c-index: 0.920-0.940 (2-year prediction) [38] | Proportional Hazard CNN [38] |
| InceptionV3 | Hysteroscopic Fertility Assessment | 555 cases with 4,922 images [38] | Lower AUC values compared to Proportional Hazard CNN [38] | Proportional Hazard CNN [38] |
| Feedforward Neural Network | IVF Live Birth Prediction | 48,514 IVF cycles [39] | Lower performance compared to CNN and Random Forest [39] | CNN/Random Forest [39] |
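The c-index reported for the proportional hazard model in Table 1 generalizes AUC to time-to-event data with censoring: it is the fraction of comparable patient pairs in which the patient with the earlier observed event also received the higher model score. The following is a simplified sketch of Harrell's concordance index that ignores tied event times; the follow-up times, event indicators, and scores are hypothetical, and the convention that a higher score means an earlier expected event is an assumption made here.

```python
from typing import Sequence


def concordance_index(times: Sequence[float], events: Sequence[int],
                      scores: Sequence[float]) -> float:
    """Simplified Harrell's c-index.

    times  : follow-up time per patient
    events : 1 if the event (e.g., conception) was observed, 0 if censored
    scores : model output, higher meaning an earlier expected event
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's event or censoring time.
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if scores[i] > scores[j]:
                    concordant += 1.0
                elif scores[i] == scores[j]:
                    concordant += 0.5  # tied scores count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical follow-up data: months to event, event flag, model score.
times = [2.0, 4.0, 6.0, 8.0]
events = [1, 1, 0, 1]
scores = [0.9, 0.7, 0.5, 0.1]

print(concordance_index(times, events, scores))
```

A value of 0.5 corresponds to random ordering and 1.0 to perfect ordering, so c-index values are read on the same scale as AUC.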

Table 2: Performance of deep learning models in general medical imaging diagnostics