Predicting Infertility Risk with Machine Learning: A Data-Driven Approach Using Serum Hormone Biomarkers

This article provides a comprehensive review for researchers and scientists on the development, application, and validation of machine learning (ML) models for predicting infertility risk from serum hormone levels.

Abstract

This article provides a comprehensive review for researchers and scientists on the development, application, and validation of machine learning (ML) models for predicting infertility risk from serum hormone levels. It explores the foundational relationship between hormones like FSH, LH, testosterone, and estradiol with fertility status. The manuscript details methodological approaches, including data preprocessing and the application of ensemble models like Random Forest and XGBoost, which have demonstrated AUC values exceeding 0.7 in recent studies. It further addresses critical challenges in model optimization, such as feature selection and handling class imbalance, and provides a framework for the rigorous internal and clinical validation of these predictive tools. The synthesis of current evidence underscores the potential of ML to offer a minimally invasive screening method, paving the way for personalized diagnostic strategies in reproductive medicine.

The Biological Basis: Linking Serum Hormone Profiles to Infertility Risk

The Hypothalamic-Pituitary-Gonadal (HPG) Axis and Its Role in Fertility

The Hypothalamic-Pituitary-Gonadal (HPG) axis is a fundamental neuroendocrine system that regulates reproductive development, fertility, and aging across mammalian species [1]. This intricate axis coordinates signaling between the brain and gonads to control gamete production and the secretion of sex steroid hormones, making it essential for reproductive success [2] [3]. The HPG axis functions through a cascade of hormonal signals: the hypothalamus secretes gonadotropin-releasing hormone (GnRH), which stimulates the anterior pituitary to produce luteinizing hormone (LH) and follicle-stimulating hormone (FSH), which in turn act on the gonads (ovaries or testes) to promote gametogenesis and secretion of sex steroids like estradiol and testosterone [1] [3]. These gonadal steroids then complete critical feedback loops to the hypothalamus and pituitary, modulating further GnRH and gonadotropin release [2]. Understanding the precise regulation of this axis is crucial for developing diagnostic tools and therapeutic interventions for infertility.

Recent advances in machine learning have created new opportunities to analyze HPG axis function for clinical applications. Several studies have demonstrated that hormone levels within this axis can serve as biomarkers for predicting infertility risk [4] [5]. These computational approaches leverage the quantitative relationships between HPG axis components to identify patterns indicative of impaired reproductive function, offering less invasive screening methods and potentially earlier detection of fertility issues.

Core Physiology and Signaling Pathways

Neural Regulation of GnRH Secretion

The pulsatile secretion of GnRH from hypothalamic neurons initiates and maintains HPG axis activity [2] [1]. This pulsatile release pattern is critical for proper gonadotropin secretion; continuous GnRH exposure leads to desensitization of pituitary gonadotropes and suppressed LH and FSH production [1]. The frequency and amplitude of GnRH pulses are tightly regulated, with different frequencies preferentially stimulating synthesis of either LH or FSH—rapid pulsatility promotes LH synthesis while slower pulsatility favors FSH production [1].

Key neuronal populations upstream of GnRH neurons provide essential regulation:

- Kisspeptin neurons located in the arcuate nucleus (ARC) and anteroventral periventricular nucleus (AVPV) directly stimulate GnRH release through kisspeptin receptor (Kiss1R) signaling [2]. ARC kisspeptin neurons are implicated in pulsatile GnRH secretion and negative sex steroid feedback, while AVPV kisspeptin neurons mediate positive estrogen feedback that generates the preovulatory LH surge in females [2].

- RFRP-3 neurons in the dorsomedial nucleus of the hypothalamus produce RFamide-related peptide-3 (RFRP-3), which has potent inhibitory effects on LH secretion in many mammalian species [2]. RFRP-3 may suppress the reproductive axis by signaling directly to GnRH neurons or indirectly via kisspeptin populations [2].

Metabolic signals also significantly influence GnRH secretion:

- Leptin (from adipocytes) and insulin stimulate GnRH secretion through indirect pathways, as GnRH neurons lack receptors for these hormones [1].

- Ghrelin (the "hunger hormone") inhibits GnRH neuronal activity, suppressing reproductive function during energy deficit [1].

Figure 1: HPG Axis Regulatory Pathways. The core HPG axis (yellow to green) shows the primary hormonal cascade, while regulatory inputs (blue) illustrate modulation by neural and metabolic factors. ARC: arcuate nucleus; AVPV: anteroventral periventricular nucleus; DMN: dorsomedial nucleus.

Pituitary Gonadotropin Production and Regulation

GnRH binding to its receptor on anterior pituitary gonadotrope cells activates complex intracellular signaling pathways that control synthesis and secretion of LH and FSH [2]. The GnRH receptor is a G protein-coupled receptor that primarily activates Gαq/11, leading to phospholipase C activation, generation of inositol trisphosphate (IP3) and diacylglycerol (DAG), increased intracellular calcium, and activation of protein kinase C isoforms [2]. These signaling events stimulate both the secretion of stored gonadotropins and the transcription of gonadotropin subunit genes.

LH and FSH production is regulated through both transcriptional and epigenetic mechanisms:

- LHβ gene transcription is highly sensitive to GnRH stimulation and depends on conserved promoter elements including binding sites for early growth response protein 1 (Egr-1) and steroidogenic factor 1 (SF-1) [2].

- Epigenetic regulation involves GnRH-induced chromatin modifications including histone acetylation by p300, increased H3K4me3 marks by menin-MLL complexes, and citrullination of histone H3 arginine residues [2].

Gonadal Function and Feedback Mechanisms

The gonads respond to LH and FSH stimulation by producing gametes and secreting sex steroids. These steroids then complete feedback loops to regulate upstream HPG axis activity:

In Males:

- LH stimulates Leydig cells to produce testosterone, which drives spermatogenesis and maintains secondary sexual characteristics [3].

- FSH acts on Sertoli cells to support spermatogenesis and production of androgen-binding protein (ABP), inhibins, and aromatase [3].

- Testosterone and inhibin B provide negative feedback at the hypothalamus and pituitary to suppress GnRH, LH, and FSH secretion [4].

In Females:

- FSH stimulates follicular development and granulosa cell aromatase activity, converting androgens to estrogens [3].

- LH triggers ovulation and supports the corpus luteum to produce progesterone [3].

- Estrogen exhibits biphasic feedback: moderate levels inhibit (negative feedback) while sustained high levels stimulate (positive feedback) gonadotropin secretion [3].

- The HPG axis exhibits bistability, with distinct hormonal profiles characterizing the follicular and luteal phases, ensuring proper timing of ovulation [1].

Machine Learning Approaches for Infertility Risk Assessment

Male Infertility Prediction Models

Recent research has demonstrated the feasibility of using machine learning algorithms to predict male infertility risk based solely on serum hormone levels from the HPG axis, potentially reducing reliance on traditional semen analysis [4] [6]. A 2024 study of 3,662 patients developed AI models that achieved an area under the curve (AUC) of approximately 74.4% for predicting infertility conditions including non-obstructive azoospermia (NOA), obstructive azoospermia, cryptozoospermia, and oligozoospermia [4]. Using only hormone profiles, the models reportedly identified every case of the most severe condition, NOA, in the validation years (100% accuracy for that subgroup) [4].

Table 1: Feature Importance in Male Infertility Prediction Models

| Rank | Prediction One Model [4] | AutoML Tables Model [4] | SVM/SuperLearner Models [5] |

|---|---|---|---|

| 1 | FSH | FSH (92.24%) | Sperm Concentration |

| 2 | Testosterone/Estradiol (T/E2) ratio | T/E2 ratio (3.37%) | FSH |

| 3 | LH | LH (1.81%) | LH |

| 4 | Age | Testosterone | Genetic Factors |

| 5 | Testosterone | Age | Age |

| 6 | Estradiol (E2) | E2 | Testosterone |

| 7 | Prolactin (PRL) | PRL | Estradiol |

The comparative analysis of feature importance across multiple studies reveals that FSH consistently ranks as the most significant predictor of male infertility, reflecting its crucial role in spermatogenesis [4] [5]. The testosterone-to-estradiol (T/E2) ratio and LH levels also demonstrate substantial predictive value across different algorithmic approaches [4]. These findings align with the physiological understanding that both FSH and testosterone are required for normal spermatogenesis, with FSH often elevated in cases of spermatogenic dysfunction [4].

Table 2: Performance Metrics of Machine Learning Algorithms for Male Infertility Prediction

| Algorithm | AUC | Accuracy | Precision | Recall | F-Value | Data Source |

|---|---|---|---|---|---|---|

| SuperLearner | 97% | N/R | N/R | N/R | N/R | [5] |

| Support Vector Machine (SVM) | 96% | N/R | N/R | N/R | N/R | [5] |

| Prediction One | 74.42% | 69.67% | 76.19% | 48.19% | 59.04% | [4] |

| AutoML Tables | 74.2% | 71.2% | 83.0% | 47.3% | 60.2% | [4] |

| Random Forest | N/R | 84.8% | 85.3% | 84.8% | 85.0% | [5] |

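In the reported results, the SuperLearner ensemble reached the highest AUC (97%), narrowly ahead of a single SVM (96%) [5]. These figures are not directly comparable with the hormone-only models of [4] (AUC of about 74%), because the models in [5] also drew on sperm concentration, which ranked as their most important feature (Table 1). Both families of ML approaches can nonetheless identify complex, non-linear relationships between HPG axis hormones that may not be apparent through conventional statistical analysis.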
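The F-Value column in Table 2 can be sanity-checked from the precision and recall columns. The sketch below assumes that "F-Value" means the standard F1 score (the harmonic mean of precision and recall); the source does not state this explicitly, and the function name is illustrative.

```python
def f_value(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (same units in, same units out)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision, recall, and reported F-Value from Table 2, all in percent.
reported = {
    "Prediction One": (76.19, 48.19, 59.04),
    "AutoML Tables": (83.0, 47.3, 60.2),
    "Random Forest": (85.3, 84.8, 85.0),
}

for name, (precision, recall, f_reported) in reported.items():
    computed = f_value(precision, recall)
    print(f"{name}: computed F = {computed:.2f}, reported F = {f_reported}")
```

Under that assumption the recomputed values agree with the table to within rounding, which also makes the trade-off visible: the hormone-only models pair fairly high precision with recall below 50%.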

Female Fertility Assessment and Ovarian Reserve Testing

In females, HPG axis hormones are commonly measured to assess ovarian reserve, which refers to the quantity of remaining oocytes [7] [8]. Commonly used biomarkers include anti-Müllerian hormone (AMH), FSH, estradiol, and inhibin B [7] [8]. However, unlike in male infertility prediction, current evidence suggests limitations in using these biomarkers alone for predicting future fertility in women without diagnosed infertility.

Key findings from cohort studies include:

- Women with diminished ovarian reserve (AMH < 0.7 ng/mL or FSH ≥ 10 mIU/mL) showed no significant difference in probability of future live birth compared to women with normal ovarian reserve after adjusting for age (RR 1.32 and RR 1.28, respectively) [7].

- No significant association was found between diminished ovarian reserve and risk of future infertility diagnosis (RR 0.65 for AMH and RR 1.69 for FSH) [7].

- A single AMH measurement in women with presumed fertility does not reliably predict time to pregnancy and should not be used for routine fertility counseling [8].

These findings highlight important physiological differences between male and female fertility assessment and underscore that ovarian reserve biomarkers reflect oocyte quantity rather than quality, which is more strongly influenced by chronological age [7] [8].

Experimental Protocols for HPG Axis Investigation

Protocol: Serum Hormone Analysis for Infertility Risk Assessment

Purpose: To quantitatively measure HPG axis hormone levels for machine learning-based infertility risk prediction.

Materials:

- Serum collection tubes (SST)

- Centrifuge

- Automated immunoassay platforms

- LH, FSH, testosterone, estradiol, prolactin assay kits

- Data collection form

Procedure:

- Sample Collection: Collect 5-10 mL venous blood in SST following standard phlebotomy procedures. Fasting samples are preferred, collected between 7-10 AM to control for diurnal variation.

- Sample Processing: Allow blood to clot at room temperature for 30 minutes, then centrifuge at 1300-2000 × g for 10 minutes. Aliquot serum into cryovials and store at -20°C if not analyzed immediately.

- Hormone Assay: Perform hormone measurements using FDA-approved automated immunoassays according to manufacturer protocols:

- LH and FSH: Use two-site chemiluminescent immunoassays with reported detection limits of 0.07 mIU/mL and 0.3 mIU/mL, respectively.

- Testosterone: Employ competitive electrochemiluminescent immunoassay with sensitivity of 0.5 ng/mL.

- Estradiol: Use competitive immunoassay with analytical sensitivity of 10 pg/mL.

- Prolactin: Utilize two-site immunoenzymatic "sandwich" assay with detection limit of 0.6 ng/mL.

- Quality Control: Include two levels of quality control materials in each assay run. Accept results only when controls fall within established ranges.

- Data Calculation: Calculate T/E2 ratio from absolute values. Compile data with patient age for ML model input.
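The data calculation step can be sketched as follows. The unit convention for the T/E2 ratio is an assumption: the protocol does not state one, so this sketch converts testosterone from ng/mL to ng/dL (factor 100) before dividing by estradiol in pg/mL. The function and field names are hypothetical.

```python
from typing import Dict, Optional


def t_e2_ratio(testosterone_ng_ml: Optional[float],
               estradiol_pg_ml: Optional[float],
               testosterone_scale: float = 100.0) -> Optional[float]:
    """Testosterone-to-estradiol ratio, or None when it cannot be computed.

    testosterone_scale=100 converts ng/mL to ng/dL; this is an assumed
    convention, not one specified by the protocol.
    """
    if testosterone_ng_ml is None or estradiol_pg_ml is None or estradiol_pg_ml <= 0:
        return None
    return testosterone_ng_ml * testosterone_scale / estradiol_pg_ml


def build_model_input(age: float, lh: float, fsh: float, prl: float,
                      testosterone: float, e2: float) -> Dict[str, Optional[float]]:
    """Assemble one patient's record in the order the ML model will consume."""
    return {
        "age": age,
        "LH": lh,
        "FSH": fsh,
        "PRL": prl,
        "testosterone": testosterone,
        "E2": e2,
        "T_E2_ratio": t_e2_ratio(testosterone, e2),
    }


# Illustrative values only, not patient data.
print(build_model_input(age=35, lh=5.7, fsh=8.8, prl=10.5, testosterone=5.0, e2=25.0))
```

Returning None for a missing or non-positive estradiol value keeps the gap visible so that it can be handled during imputation rather than silently producing an infinite ratio.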

Validation: The Kobayashi et al. study validated this approach on 3,662 patients, demonstrating clinical utility for infertility risk stratification [4].

Protocol: Machine Learning Model Development for Infertility Prediction

Purpose: To develop and validate predictive models for infertility risk using HPG axis hormone data.

Materials:

- R or Python programming environment

- Machine learning libraries (caret, SuperLearner, e1071 in R; scikit-learn, pandas in Python)

- Clinical dataset with hormone levels and fertility outcomes

Procedure:

Data Preprocessing:

- Handle missing values using appropriate imputation methods

- Normalize numerical data using Z-score standardization

- Encode categorical variables

- Split data into training (70-80%) and testing (20-30%) sets
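A minimal sketch of these preprocessing steps using only the Python standard library is shown below. Median imputation is one possible "appropriate imputation method", chosen here as an assumption. The split is performed first and the imputation and scaling parameters are estimated on the training portion only, so no information from the test set leaks into training. All names and values are illustrative.

```python
import random
import statistics


def train_test_split_indices(n, test_fraction=0.2, seed=0):
    """Shuffle row indices and return (train_indices, test_indices)."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_test = round(n * test_fraction)
    return indices[n_test:], indices[:n_test]


def fit_imputer_scaler(column):
    """Learn the median (for imputation) and mean/SD (for Z-scores) from training data."""
    observed = [v for v in column if v is not None]
    median = statistics.median(observed)
    filled = [median if v is None else v for v in column]
    return median, statistics.fmean(filled), statistics.pstdev(filled)


def transform(column, median, mean, sd):
    """Impute missing values, then standardize with the training-set parameters."""
    filled = [median if v is None else v for v in column]
    return [(v - mean) / sd if sd > 0 else 0.0 for v in filled]


# Illustrative FSH values (mIU/mL) with two missing measurements.
fsh = [4.0, None, 12.0, 6.5, 30.0, 8.0, None, 5.5, 9.0, 7.0]
train_idx, test_idx = train_test_split_indices(len(fsh), test_fraction=0.2)
params = fit_imputer_scaler([fsh[i] for i in train_idx])
fsh_train_z = transform([fsh[i] for i in train_idx], *params)
fsh_test_z = transform([fsh[i] for i in test_idx], *params)
print(len(fsh_train_z), len(fsh_test_z))
```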

Algorithm Selection and Training:

- Implement multiple classifiers: Decision Trees, Random Forest, Naive Bayes, K-Nearest Neighbors, Support Vector Machines, and SuperLearner ensemble method

- Use 10-fold cross-validation on training data to tune hyperparameters

- For Random Forest, set number of trees (ntree = 500) and number of variables sampled for splitting at each node (mtry = square root of total variables)
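For readers working in Python rather than R, the Random Forest settings above map onto scikit-learn as `n_estimators=500` (ntree) and `max_features="sqrt"` (mtry). The sketch below runs 10-fold stratified cross-validation on synthetic data; the feature matrix and labels are randomly generated placeholders, not patient data, and the link between one column and the label is an assumption made purely so the example has signal.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(0)
n_samples = 300

# Synthetic stand-in for seven inputs (age, LH, FSH, PRL, testosterone, E2, T/E2).
X = rng.normal(size=(n_samples, 7))
# Hypothetical labels driven mostly by column 2 (standing in for FSH) plus noise.
y = (X[:, 2] + 0.5 * rng.normal(size=n_samples) > 0).astype(int)

model = RandomForestClassifier(
    n_estimators=500,      # ntree = 500
    max_features="sqrt",   # mtry = square root of the number of variables
    random_state=0,
)

# Stratification keeps the class ratio similar in every fold.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
auc_scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
print(f"10-fold AUC: mean = {auc_scores.mean():.3f}, SD = {auc_scores.std():.3f}")
```

Cross-validation here is run on the training portion only; the held-out test set stays untouched until the final evaluation.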

Model Validation:

- Evaluate performance on held-out test set using AUC, accuracy, precision, recall, and F-value

- Assess feature importance through variable importance plots or permutation importance

- Perform external validation with temporal or geographical validation cohorts when possible
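To make the AUC metric concrete, it can be computed directly as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. The pairwise sketch below is written for clarity rather than speed, and the labels and scores are made-up illustrations.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(positives) * len(negatives))


# Illustrative held-out labels (1 = abnormal) and model scores.
y_true = [1, 1, 1, 0, 0, 0]
y_score = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]
print(f"AUC = {roc_auc(y_true, y_score):.3f}")  # 8 of 9 pairs ranked correctly
```

Read this way, an AUC of about 0.74 means that roughly three out of four such case pairs are ranked in the right order.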

Model Interpretation:

- Generate variable importance rankings to identify most predictive HPG axis components

- Create partial dependence plots to visualize relationship between hormone levels and predicted risk

- Develop clinical risk stratification thresholds based on model probabilities
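One way to derive a screening threshold from model probabilities is to fix a target sensitivity and read off the cutoff that achieves it on validation data. The sketch below illustrates that idea; the target value, data, and function names are hypothetical, and an actual clinical threshold would need to be chosen and validated with clinical input.

```python
import math


def threshold_for_sensitivity(labels, probabilities, target=0.9):
    """Largest cutoff at which at least `target` of true positives are flagged."""
    positive_probs = sorted(
        (p for y, p in zip(labels, probabilities) if y == 1), reverse=True
    )
    if not positive_probs:
        raise ValueError("no positive cases available")
    needed = max(1, math.ceil(target * len(positive_probs)))
    return positive_probs[needed - 1]


def sensitivity_specificity(labels, probabilities, cutoff):
    """Sensitivity and specificity when cases with probability >= cutoff are flagged."""
    tp = sum(1 for y, p in zip(labels, probabilities) if y == 1 and p >= cutoff)
    fn = sum(1 for y, p in zip(labels, probabilities) if y == 1 and p < cutoff)
    tn = sum(1 for y, p in zip(labels, probabilities) if y == 0 and p < cutoff)
    fp = sum(1 for y, p in zip(labels, probabilities) if y == 0 and p >= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


# Illustrative validation labels (1 = abnormal) and predicted probabilities.
y_val = [1, 1, 1, 1, 0, 0, 0, 0]
p_val = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]
cutoff = threshold_for_sensitivity(y_val, p_val, target=0.75)
print(cutoff, sensitivity_specificity(y_val, p_val, cutoff))
```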

Application: The validated model can be integrated into clinical decision support systems to identify high-risk individuals requiring comprehensive fertility evaluation [4] [5].

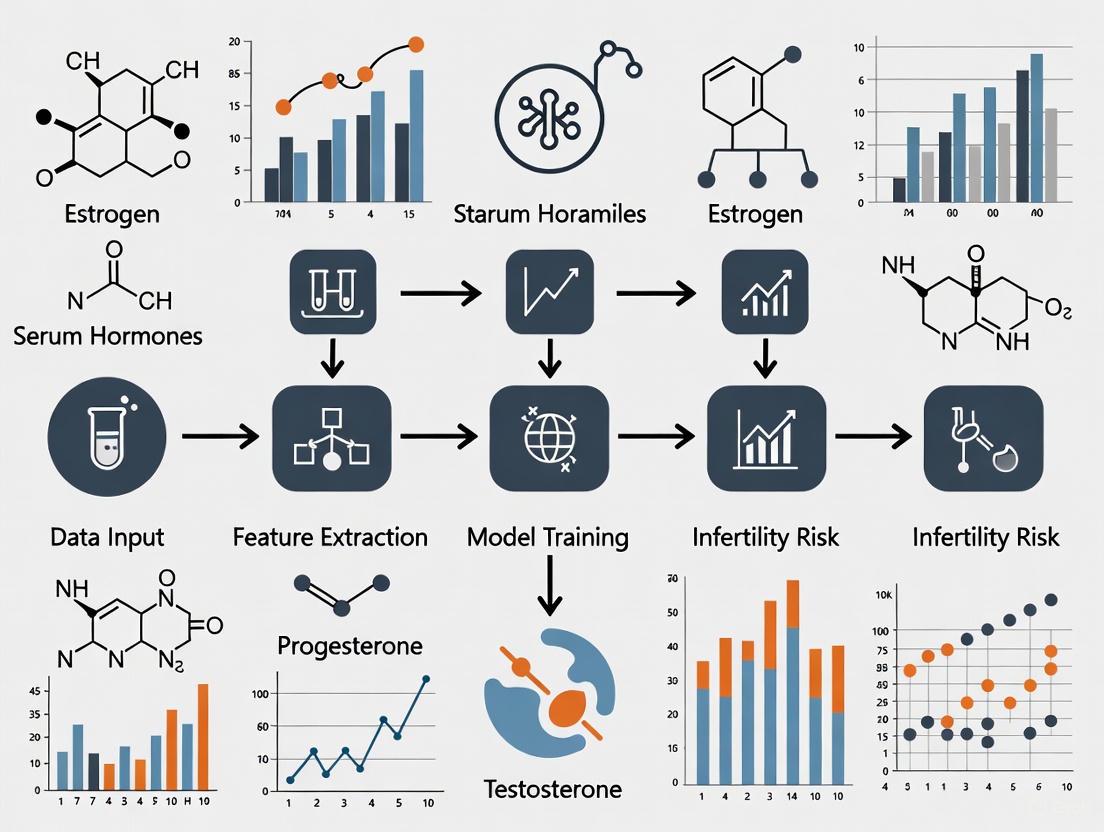

Figure 2: Machine Learning Workflow for HPG-Based Infertility Prediction. The pipeline illustrates the sequential process from data collection through clinical application, with blue nodes representing input data and computational elements.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for HPG Axis Investigation

| Reagent/Category | Specific Examples | Research Application | Technical Notes |

|---|---|---|---|

| GnRH Agonists/Antagonists | Leuprolide, Cetrorelix, Ganirelix | Manipulation of HPG axis; studying pulsatile vs continuous GnRH effects | Continuous administration causes receptor desensitization; used in prostate cancer treatment [3] |

| Hormone Immunoassays | ELISA, CLIA, EIA kits for LH, FSH, testosterone, estradiol | Quantifying hormone levels in serum/plasma; assessing feedback mechanisms | AMH assays lack international standardization; interpret with caution [8] |

| Cell Culture Models | LβT2 gonadotrope cells, αT3-1 cells | Studying gonadotropin synthesis and regulation | LβT2 cells express both LHβ and FSHβ; useful for studying gonadotropin gene regulation [2] |

| Kisspeptin Analogues | Kisspeptin-10, Kisspeptin-54 | Probing GnRH regulation mechanisms; potential therapeutic applications | Different effects based on administration route and pattern (bolus vs continuous) [2] |

| Signal Transduction Inhibitors | PKC inhibitors, MAPK pathway inhibitors, calcium chelators | Elucidating intracellular signaling pathways in gonadotrope cells | GnRH activates multiple MAPKs (ERK1/2, JNK, p38) forming complex regulatory networks [2] [1] |

| Gene Expression Tools | Egr-1 reporters, SF-1 binding assays, chromatin immunoprecipitation | Studying gonadotropin gene regulation and epigenetic mechanisms | LHβ promoter contains conserved Egr-1 and SF-1 binding sites critical for GnRH responsiveness [2] |

The HPG axis represents a sophisticated neuroendocrine system that integrates neural, hormonal, and metabolic signals to regulate reproductive function. Understanding its complex regulatory mechanisms provides the foundation for developing advanced diagnostic and therapeutic approaches for infertility. The emergence of machine learning methods that leverage HPG axis hormone data offers promising avenues for minimally invasive infertility risk assessment, particularly in male patients, where FSH, LH, and the testosterone-to-estradiol ratio demonstrate strong predictive value.

Future research directions should focus on:

- Multi-omics integration combining HPG axis hormones with genetic, epigenetic, and proteomic biomarkers

- Dynamic testing protocols that capture HPG axis responsiveness to stimulation challenges rather than just baseline levels

- Standardized assay platforms to enable direct comparison of hormone measurements across studies and populations

- Longitudinal studies tracking HPG axis function and fertility outcomes across the reproductive lifespan

- Interventional trials testing whether ML-guided early identification of at-risk individuals improves reproductive outcomes through timely intervention

As machine learning algorithms continue to evolve and datasets expand, HPG axis profiling is poised to become an increasingly powerful tool for personalized fertility assessment and management, ultimately improving care for individuals and couples facing reproductive challenges.

The quantitative analysis of serum hormones represents a cornerstone of diagnostic endocrinology. Within the specific field of human reproduction, the hormones Follicle-Stimulating Hormone (FSH), Luteinizing Hormone (LH), Testosterone, Estradiol, and Prolactin have established roles in regulating physiological function. The contemporary research landscape is now defined by a paradigm shift: the use of these classic biomarkers as features for machine learning (ML) models predicting clinical outcomes. This application note details the precise experimental protocols and analytical frameworks required to generate high-quality data for such research, with a specific focus on developing ML models for assessing infertility risk. The reproducibility and clinical validity of these models are fundamentally dependent on standardized data acquisition, a principle central to the methodologies described herein.

Hormonal Biomarkers: Reference Ranges and Clinical Significance

A precise understanding of hormonal reference ranges and their clinical correlations is essential for both interpreting individual patient status and for crafting meaningful predictive features for ML models. The following tables summarize key quantitative data and functional significance for the central hormonal biomarkers.

Table 1: Key Hormonal Biomarkers in Male Reproductive Endocrinology

| Hormone | Primary Function | Clinical Significance in Infertility | Key Quantitative Findings |

|---|---|---|---|

| FSH | Stimulates Sertoli cells and spermatogenesis [4] | Often elevated in spermatogenic dysfunction; clear top feature in AI infertility prediction models [4] [6] | Mean in infertile cohort: 8.845 mIU/mL (95% CI: 8.535–9.155) [4] |

| LH | Stimulates Leydig cells to produce Testosterone [4] | Elevated with low T indicates primary hypogonadism; ranked 3rd in AI feature importance [4] [9] | Mean in infertile cohort: 5.681 mIU/mL (95% CI: 5.545–5.817) [4] |

| Testosterone | Essential for libido, erectile function, and spermatogenesis [9] [10] | Low levels associated with reduced libido and ED; but not always correlated with ED in eugonadal men [9] [10] | Mean in infertile cohort: 4.741 ng/mL (95% CI: 4.672–4.810) [4] |

| Estradiol | Maintains bone density, modulates libido [9] | Imbalances can disrupt erectile function; significant independent association with ED in men without hypoandrogenism [9] [10] | Mean in infertile cohort: 26.166 pg/mL (95% CI: 25.802–26.530) [4] |

| Prolactin | Modulates dopaminergic pathways for sexual desire [9] | Hyperprolactinemia can cause hypogonadism; very low levels may also contribute to ED [9] | Mean in infertile cohort: 10.540 ng/mL (95% CI: 9.865–11.214) [4] |

Table 2: Hormonal Associations with Clinical Conditions Beyond Infertility

| Condition | Relevant Hormones | Key Associations and Findings |

|---|---|---|

| Erectile Dysfunction (ED) | Testosterone, Free Testosterone, DHEA-S, Estradiol, SHBG | Total and Free Testosterone levels progressively decrease with ED severity. Free Testosterone is a more sensitive marker, with median levels below the normal threshold in all ED groups [9]. |

| Gender-Affirming Hormone Therapy (GAHT) | Testosterone, Estradiol, Prolactin | GAHT is associated with QTc interval prolongation in transgender women and shortening in transgender men, corresponding to the restoration of sexual dimorphism observed in cisgender adults [11]. |

| Polycystic Ovary Syndrome (PCOS) | Anti-Müllerian Hormone (AMH), LH, Testosterone | AMH has emerged as a key biomarker reflecting ovarian reserve and may play a role in pathogenesis. PCOS is now considered a cardiovascular disease risk-enhancing factor [12]. |

| Turner Syndrome | Anti-Müllerian Hormone (AMH) | AMH is a reliable biomarker for ovarian reserve and prediction of spontaneous puberty, with significantly lower levels in TS patients versus controls (WMD: -3.04 ng/mL) [13]. |

Experimental Protocols for Hormone Assay and Data Collection

Standardized Pre-Analytical Protocol for Blood Sample Collection

Robust ML models require datasets generated from standardized laboratory practices to minimize technical noise.

- Patient Preparation: Participants should provide samples after an 8–10 hour fast. For male infertility studies, a defined period of sexual abstinence (e.g., 2-5 days) may be recommended prior to semen analysis [4].

- Sample Timing: Blood collection must be performed in the morning (e.g., before 10:00 AM) to account for diurnal variation in hormone levels, particularly for testosterone [9] [10].

- Sample Processing: Collect venous blood into serum separator tubes (e.g., BD Vacutainer). After collection, allow samples to clot for 30 minutes at room temperature. Subsequently, centrifuge to isolate serum, aliquot into sterile tubes (e.g., Eppendorf), and store at -80°C until analysis to prevent degradation [9].

Analytical Protocol for Hormone Quantification

The choice of assay methodology significantly impacts result accuracy and inter-study comparability.

- Recommended Platform: Utilize automated chemiluminescence immunoassay (CLIA) systems, such as the ARCHITECT i1000 or i2000 series (Abbott Diagnostics) or similar platforms from Roche Diagnostics [11] [9]. These systems provide the high throughput and precision required for large-scale studies.

- Methodology Specifics:

- For the majority of hormones (FSH, LH, Prolactin, Estradiol), standard CLIA methods are sufficient and widely used [9] [10].

- For Total Testosterone, liquid chromatography–mass spectrometry (LC-MS/MS) is considered the gold standard due to its high specificity and accuracy, especially at lower concentrations [11].

- Quality Control: Each assay run must include internal quality controls at low, medium, and high concentrations. Participation in external quality assurance (proficiency testing) programs is mandatory for laboratory accreditation.
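The run-acceptance rule can be encoded so that hormone results enter the ML dataset only from runs whose controls pass. The sketch below is a simple range check; the control levels and acceptance ranges shown are hypothetical placeholders, since real ranges come from the laboratory's own control material.

```python
def run_is_acceptable(control_results, control_ranges):
    """True only if every control level was measured and lies within its range."""
    for level, (low, high) in control_ranges.items():
        value = control_results.get(level)
        if value is None or not (low <= value <= high):
            return False
    return True


# Hypothetical acceptance ranges for FSH controls (mIU/mL).
fsh_control_ranges = {"low": (4.0, 6.0), "medium": (18.0, 24.0), "high": (55.0, 70.0)}

passing_run = {"low": 5.1, "medium": 20.7, "high": 61.3}
failing_run = {"low": 5.1, "medium": 25.2, "high": 61.3}  # medium control out of range

print(run_is_acceptable(passing_run, fsh_control_ranges))
print(run_is_acceptable(failing_run, fsh_control_ranges))
```

Treating a missing control as a failure is a deliberate choice here: a run without all control levels cannot be shown to be in range.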

Clinical Phenotyping Protocol for Model Ground Truth

The predictive power of an ML model is contingent on the accuracy of its diagnostic labels.

- For Male Infertility Studies: The ground truth for model training must be established via standard semen analysis conducted according to the latest World Health Organization (WHO) laboratory manual [4] [6]. Key parameters include:

- Sperm Concentration: Azoospermia, cryptozoospermia, oligozoospermia.

- Sperm Motility: Asthenozoospermia.

- Total Motile Sperm Count (TMSC): Often used as a key outcome threshold (e.g., 9.408 × 10^6 defined as lower limit of normal) [4].

- For Erectile Dysfunction Studies: Patient assessment should be conducted using the validated International Index of Erectile Function (IIEF-15 or IIEF-5) questionnaire to provide a quantitative and standardized measure of dysfunction severity [9] [10].

The Hypothalamic-Pituitary-Gonadal (HPG) Axis: A Systems View

The hormonal biomarkers detailed in this document do not function in isolation but are components of an integrated endocrine system. The following diagram illustrates the core feedback loops of the HPG axis, the primary system governing reproductive function. A systems-level understanding of these interactions is critical for generating meaningful features for machine learning models, as it reveals potential synergies and regulatory relationships between biomarkers.

Machine Learning Workflow for Infertility Risk Prediction

Translating standardized hormone data into a predictive ML model requires a structured pipeline from data pre-processing to model deployment. The following diagram outlines this workflow, highlighting the critical steps that ensure the developed model is robust, accurate, and clinically actionable.

Protocol for Model Development and Validation

The workflow illustrated above depends on rigorous execution at each stage.

- Data Pre-processing: Address missing values appropriately (e.g., imputation or removal). Apply Z-score normalization to scale numerical hormone data, preventing features with larger intrinsic scales from dominating the model [5].

- Feature Engineering: Beyond raw hormone levels, create derived ratios that have biological plausibility. The Testosterone-to-Estradiol (T/E2) ratio has been identified as the second most important predictive feature after FSH in several models [4].

- Model and Algorithm Selection: Implement and compare multiple supervised learning algorithms to identify the best performer for your dataset. High-performing algorithms reported in this domain include the SuperLearner ensemble, Support Vector Machines (SVM), and Random Forest [5].

- Model Validation: Employ 10-fold cross-validation to assess model generalizability and avoid overfitting. Evaluate performance using Area Under the Curve (AUC) for Receiver Operating Characteristic (ROC) and Precision-Recall (PR) curves. The model by Kobayashi et al. achieved an AUC of 74.42% [4] [6].
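Because ROC AUC can look favourable even when many positive cases are missed, it helps to inspect precision and recall across decision thresholds. The sketch below computes the points of a precision-recall curve by hand; the labels and scores are invented for illustration.

```python
def precision_recall_points(labels, scores):
    """Return (threshold, precision, recall) for each distinct score, highest first."""
    total_positives = sum(labels)
    if total_positives == 0:
        raise ValueError("no positive cases available")
    points = []
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
        # tp + fp >= 1 because the case scoring exactly `threshold` is always flagged.
        points.append((threshold, tp / (tp + fp), tp / total_positives))
    return points


# Illustrative labels (1 = abnormal) and model scores.
y_true = [1, 0, 1, 1, 0, 0]
y_score = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
for threshold, precision, recall in precision_recall_points(y_true, y_score):
    print(f"threshold {threshold:.1f}: precision {precision:.2f}, recall {recall:.2f}")
```

Lowering the threshold raises recall at the cost of precision, which is the trade-off to weigh when a model is intended for screening.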

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Reagents and Materials for Hormone and ML Research

| Item | Specification/Example | Critical Function |

|---|---|---|

| Automated Immunoassay System | ARCHITECT i1000/i2000SR (Abbott), Cobas e801 (Roche) | High-throughput, precise quantification of FSH, LH, Prolactin, Estradiol via chemiluminescence (CLIA) [9]. |

| LC-MS/MS System | Agilent 6470, Sciex Triple Quad 6500+ | Gold-standard quantification for testosterone and other steroids, providing superior specificity and accuracy [11]. |

| Blood Collection System | BD Vacutainer (Serum Separator Tubes with clot activator) | Standardized sample collection and serum separation for consistent pre-analytical conditions [9]. |

| Laboratory Software | CalECG, Version 3.7 (AMPS LLC) | For semi-automatic analysis of complex physiological data (e.g., ECG), demonstrating the principle of using specialized software for feature extraction [11]. |

| AI Development Platform | No-code AI software (e.g., Prediction One, AutoML Tables) | Allows researchers without deep coding expertise to build and compare initial predictive models from structured data [4]. |

| Statistical & Coding Environment | R Programming Language (with packages: caret, SuperLearner, e1071, rpart) | Provides a flexible, open-source environment for data pre-processing, machine learning, and statistical validation [5]. |

The diagnosis and treatment of infertility rely heavily on the precise correlation between serum hormone levels and direct measures of reproductive function: semen analysis in men and ovarian reserve in women. Hormonal dysregulation of the hypothalamic-pituitary-gonadal (HPG) axis serves as a critical indicator of underlying pathology and treatment response. This document synthesizes recent clinical evidence and establishes standardized protocols for investigating these correlations, providing a foundational context for the development of machine learning models that predict infertility risk from serum biomarkers. The integration of quantitative hormone data with clinical outcomes enables more precise, individualized treatment strategies and enhances the predictive capability of computational tools.

Quantitative Data Synthesis

Key Hormonal Correlates in Male Infertility

Table 1: Hormonal Profiles and Predictive Values for Male Infertility Conditions

| Condition | FSH (mIU/mL) | LH (mIU/mL) | Testosterone (ng/mL) | T/E2 Ratio | Predictive Accuracy |

|---|---|---|---|---|---|

| Study cohort, mean (95% CI) [4] | 8.85 (CI: 8.54-9.16) | 5.68 (CI: 5.55-5.82) | 4.74 (CI: 4.67-4.81) | 19.92 (CI: 19.54-20.29) | - |

| Non-Obstructive Azoospermia (NOA) [4] [6] [14] | Significantly Elevated | Variable | Variable | Significant Reduction | 100% (AI Model Prediction) [14] |

| Oligo/Asthenozoospermia [4] | Elevated | Variable | Variable | Reduced | - |

| AI Model Feature Importance [4] | 1st (92.24%) | 3rd (1.81%) | 4th/5th | 2nd (3.37%) | AUC: 74.2-74.4% [4] |

Key Hormonal Correlates in Female Infertility and Ovarian Reserve

Table 2: Hormonal and Ultrasonographic Predictors of Ovarian Response in IVF

| Parameter | Role in Ovarian Reserve Assessment | Correlation with Gn Starting Dose | Predictive Value in POI |

|---|---|---|---|

| AMH [15] [16] | Reflects pool of early antral follicles; cycle-stable [15] | Significant negative correlation (P<0.05) [16] | Superior predictor of follicular growth (AUC: 0.957); optimal threshold: 2.45 pg/mL [15] |

| Basal FSH (bFSH) [15] [16] | Indirect measure of follicular pool; high levels indicate diminished reserve | Significant positive correlation (P<0.05) [16] | Shorter amenorrhea duration and lower levels in POI patients with follicular development [15] |

| Antral Follicle Count (AFC) [16] | Direct ultrasonographic count of recruitable follicles | Significant negative correlation (P<0.05) [16] | - |

| Age [16] | Non-hormonal factor influencing oocyte quantity and quality | Significant positive correlation (P<0.05) [16] | - |

| BMI [16] | Modifies metabolic and endocrine environment | Significant positive correlation (P<0.05) [16] | - |

Experimental Protocols

Protocol for Investigating Male Infertility Using Serum Hormones and AI Modeling

Objective: To develop a machine learning model for predicting male infertility risk based solely on serum hormone levels, bypassing initial semen analysis [4] [6].

Patient Population and Data Collection:

- Cohort: 3,662 patients undergoing fertility evaluation (2011-2020) [4].

- Inclusion: Patients with complete semen analysis and serum hormone profiles.

- Data Extracted: Age, LH, FSH, Prolactin (PRL), Testosterone, Estradiol (E2), and calculated T/E2 ratio [4].

- Outcome Variable: Total motile sperm count (TMSC), with a value below 9.408 × 10^6 defined as abnormal [4] [14].
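
The derived ratio and the binary outcome described above can be expressed in a few lines of pandas. This is only a minimal sketch: the column names (`testosterone`, `estradiol`, `tmsc`) and the toy values are hypothetical, TMSC is assumed to be stored as an absolute count, and the hormone units are not taken from the source study, so the ratio values are illustrative.

```python
import pandas as pd

# Threshold from the protocol: TMSC below 9.408 x 10^6 is labelled abnormal.
TMSC_THRESHOLD = 9.408e6

# Hypothetical toy records; column names and values are illustrative only.
df = pd.DataFrame({
    "age": [31, 38, 45],
    "testosterone": [5.1, 3.2, 4.0],
    "estradiol": [25.0, 40.0, 20.0],
    "tmsc": [42.0e6, 3.5e6, 9.408e6],
})

# Derived feature: testosterone-to-estradiol ratio.
df["t_e2_ratio"] = df["testosterone"] / df["estradiol"]

# Binary outcome: 1 = abnormal (strictly below the threshold), 0 = normal.
df["abnormal"] = (df["tmsc"] < TMSC_THRESHOLD).astype(int)
```

Using a strict inequality means a value exactly at the threshold is treated as normal, which matches the "below" wording of the outcome definition.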

Machine Learning Methodology:

- Software & Algorithms: Utilize no-code AI platforms (e.g., Prediction One) or code-based libraries (e.g., caret in R). Apply algorithms such as Support Vector Machines (SVM) and ensemble methods (e.g., SuperLearner) [4] [5].

- Model Training & Validation: Split data into training (e.g., 80%) and validation (e.g., 20%) sets. Use 10-fold cross-validation to estimate out-of-sample performance and detect overfitting [5].

- Performance Metrics: Evaluate using Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve, accuracy, precision, and recall [4] [5]. Analyze feature importance to identify key hormonal predictors [4].
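
For readers working in code rather than a no-code platform, the split-and-cross-validate procedure might look like the sketch below. It is written in Python with scikit-learn rather than the R packages named above, and it runs on synthetic data: the seven columns merely stand in for age, LH, FSH, PRL, testosterone, E2, and T/E2, and the outcome rule is invented.

```python
import numpy as np
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)

# Synthetic stand-in for seven predictors; not real patient data.
n = 500
X = rng.normal(size=(n, 7))
# Invented outcome driven mostly by the third column (standing in for FSH).
y = (X[:, 2] + 0.5 * rng.normal(size=n) > 0).astype(int)

# 80/20 split, stratified so both sets keep a similar class balance.
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Scaling sits inside the pipeline so each CV fold is scaled on its own training part.
model = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

# 10-fold cross-validated AUC on the training set.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
cv_auc = cross_val_score(model, X_train, y_train, cv=cv, scoring="roc_auc")

# Final fit, then a single check on the held-out 20%.
model.fit(X_train, y_train)
val_auc = roc_auc_score(y_val, model.decision_function(X_val))
```

A large gap between the mean of `cv_auc` and `val_auc` would be one warning sign of overfitting or an unlucky split.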

Protocol for Correlating AMH with Follicular Growth in Primary Ovarian Insufficiency (POI)

Objective: To evaluate the efficacy of a highly sensitive AMH assay in predicting follicular development during prolonged controlled ovarian stimulation (COS) in POI patients [15].

Patient Selection and Design:

- Design: Retrospective cohort study.

- Patients: 165 POI patients undergoing 504 long COS cycles [15].

- Inclusion Criteria: Age 20-48, last menstruation before 40, serum FSH >25 mIU/mL and E2 <20 pg/mL on two occasions, >3 months amenorrhea without hormone therapy [15].

- Stimulation Protocol: Use of GnRH-agonist (Buserelin acetate) for pituitary down-regulation, followed by stimulation with human menopausal gonadotrophin or recombinant FSH for over four weeks [15].

Measurement and Analysis:

- AMH Measurement: Serum AMH levels measured at 3 weeks (days 18-27) post-stimulation initiation using the highly sensitive pico AMH ELISA (MenoCheck pico AMH, Ansh Labs) with a LoD of 1.3 pg/mL [15].

- Primary Outcome: Follicular development defined by ultrasonically detectable antral follicles (≥2 mm) [15].

- Statistical Analysis: ROC curve analysis to determine the predictive power and optimal threshold of 3-week AMH levels for follicular growth. Correlation analysis (e.g., Pearson's r) between AMH levels and time to follicular detection [15].
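
The ROC step can be sketched as follows. The AMH values are synthetic draws, not the study data, and the cut-off is chosen with Youden's J statistic, which is an assumption: the source does not state which criterion defined its optimal threshold.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, roc_curve

rng = np.random.default_rng(1)

# Synthetic 3-week AMH values (pg/mL); distributions are invented for illustration.
amh_no_growth = rng.gamma(shape=2.0, scale=0.6, size=200)
amh_growth = rng.gamma(shape=2.0, scale=3.0, size=60)
amh = np.concatenate([amh_no_growth, amh_growth])
growth = np.concatenate([np.zeros(200, dtype=int), np.ones(60, dtype=int)])

# Discrimination of AMH for follicular growth.
auc = roc_auc_score(growth, amh)
fpr, tpr, thresholds = roc_curve(growth, amh)

# Youden's J = sensitivity + specificity - 1 = TPR - FPR (assumed criterion).
j = tpr - fpr
best = int(np.argmax(j))
optimal_threshold = thresholds[best]
sensitivity_at_best = tpr[best]
specificity_at_best = 1.0 - fpr[best]
```

Other criteria, such as fixing a minimum sensitivity, would generally give a different cut-off from the same curve.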

Protocol for Individualizing Gonadotropin Starting Dose in Normal Ovarian Responders

Objective: To create and validate a clinical prediction model (nomogram) for determining the optimal Gn starting dose in NOR patients undergoing their first IVF/ICSI-ET cycle [16].

Study Population and Design:

- Design: Retrospective analysis of 535 first IVF/ICSI-ET cycles.

- Inclusion: NOR patients (aged 20-38) with 5-15 oocytes retrieved, undergoing GnRH-agonist or antagonist protocols [16].

- Exclusion: Patients with PCOS, endocrine, metabolic, or autoimmune diseases [16].

Data Collection and Model Development:

- Predictor Variables: Collect age, BMI, basal FSH (bFSH), AMH, and AFC on cycle day 2-3 [16].

- Outcome Variable: The actual Gn starting dose (IU) used in the cycle [16].

- Statistical Analysis:

  - Randomly split data into training (60%) and validation (40%) sets.

  - Perform univariate and multivariate linear regression to identify factors significantly (P<0.05) associated with the Gn dose.

  - Construct a nomogram based on the significant predictors.

  - Validate the model by comparing the predicted dose to the actual dose in the validation set, using metrics like Mean Absolute Error (MAE) and a t-test (P>0.05 indicates no significant difference) [16].
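
A nomogram of this kind is a graphical rendering of a linear regression, so the fitting and validation steps can be sketched directly. Everything numeric below is invented: the predictor ranges, the coefficients, and the noise level are placeholders whose signs merely follow the correlations reported in the table above. The paired t-test is also an assumption, since the source says only "a t-test".

```python
import numpy as np
from scipy import stats
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(2)
n = 535

# Synthetic predictors; ranges are illustrative, not taken from the study.
age = rng.uniform(20, 38, n)
bmi = rng.uniform(18, 30, n)
bfsh = rng.uniform(3, 10, n)
amh = rng.uniform(1, 6, n)
afc = rng.integers(5, 20, n).astype(float)
X = np.column_stack([age, bmi, bfsh, amh, afc])

# Invented dose rule: positive for age, BMI, bFSH; negative for AMH, AFC.
dose = (150 + 2.0 * age + 1.5 * bmi + 3.0 * bfsh
        - 5.0 * amh - 1.0 * afc + rng.normal(0, 15, n))

# 60/40 split into training and validation sets.
X_train, X_val, y_train, y_val = train_test_split(
    X, dose, test_size=0.4, random_state=0
)

model = LinearRegression().fit(X_train, y_train)
pred = model.predict(X_val)

# Validation: mean absolute error and a paired t-test of predicted vs. actual dose.
mae = mean_absolute_error(y_val, pred)
t_stat, p_value = stats.ttest_rel(pred, y_val)
```

A non-significant t-test only shows that the mean predicted and actual doses do not differ detectably; MAE is the more informative measure of per-patient error.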

Signaling Pathways and Physiological Correlations

The Hypothalamic-Pituitary-Gonadal (HPG) Axis

The HPG axis is the central regulatory system for reproduction, and its dysregulation is a primary source of infertility [17]. Understanding this pathway is fundamental to interpreting hormone profiles.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Assays for Hormonal and Functional Analysis in Infertility Research

| Item Name | Manufacturer (Example) | Function & Application |

|---|---|---|

| Pico AMH ELISA | Ansh Labs (MenoCheck pico AMH) [15] | Highly sensitive quantification of very low AMH levels (LoD: 1.3 pg/mL); crucial for assessing patients with severely diminished ovarian reserve, such as POI. |

| Automated Immunoassay Analyzer | TOSOH (AIA-900) [15] | Automated, high-throughput measurement of reproductive hormones (FSH, LH, E2, P, PRL) in serum samples. |

| Access AMH Immunoassay / Gen II AMH ELISA | Beckman Coulter [15] | Standard clinical assays for measuring AMH levels in patients with normal to moderately reduced ovarian reserve. |

| Recombinant FSH / Human Menopausal Gonadotrophin (hMG) | Various | Used in Controlled Ovarian Stimulation (COS) protocols to induce multifollicular development for IVF [15] [16]. |

| GnRH Agonist (e.g., Buserelin acetate) | Various | Used for pituitary down-regulation in long-protocol IVF cycles to prevent premature luteinizing hormone surge [15] [16]. |

| GnRH Antagonist | Various | Used in flexible IVF protocols to prevent premature LH surge by competitively blocking GnRH receptors [16]. |

| Vitrification Kit | Kitazato Corp. (Cryotop) [15] | For the cryopreservation of oocytes and embryos post-retrieval, utilizing ultra-rapid cooling to maintain cellular viability. |

| No-Code AI Creation Software | Prediction One, AutoML Tables [4] [6] | Enables researchers without advanced programming skills to develop and validate predictive machine learning models using clinical data. |

Infertility, defined as the failure to conceive after 12 months of regular unprotected intercourse, affects approximately 15% of couples worldwide [18]. Traditional diagnostic approaches have relied heavily on isolated hormone measurements, including follicle-stimulating hormone (FSH), luteinizing hormone (LH), anti-Müllerian hormone (AMH), and prolactin, to assess reproductive function [19]. These biomarkers are typically interpreted individually using population-based reference ranges, despite compelling evidence that their predictive value is limited when examined in isolation [20]. The complex, multifactorial nature of infertility necessitates a more sophisticated analytical approach that can integrate hormonal data with demographic, clinical, and lifestyle factors to provide clinically meaningful prognostic information.

The fundamental limitation of single-hormone testing lies in its reductionist approach to a systems biology challenge. Female reproductive function involves intricate feedback mechanisms between the hypothalamic-pituitary-ovarian axis, where hormones interact in dynamic, non-linear patterns throughout the menstrual cycle [20]. Isolated measurements capture merely a static snapshot of this complex, fluctuating system, failing to represent the integrated hormonal milieu that ultimately determines reproductive outcomes. Furthermore, hormone concentrations exhibit significant variation across different female hormonal statuses—including oral contraceptive pill users, menstrual cycle phases, and menopausal status—further complicating the interpretation of single measurements without proper contextualization [20].

Quantitative Evidence: The Limitations of Single-Marker Approaches

Robust scientific evidence demonstrates the inherent limitations of isolated hormone testing for infertility assessment. A comprehensive analysis of 171 serum biomarkers revealed that 68% (117 analytes) showed significant variation with sex and female hormonal status, indicating that single hormone measurements without proper contextualization can be highly misleading [20]. This biological variability directly impacts clinical test reproducibility and diagnostic accuracy, contributing to the poor translational success of biomarker studies from research to clinical practice.

Table 1: Impact of Biological Variability on Serum Biomarker Levels

| Variability Factor | Number of Affected Biomarkers | False Discovery Rate in Unmatched Studies | Key Clinical Implications |

|---|---|---|---|

| Sex differences | 96 biomarkers | Up to 39.6% | Male and female reference ranges required for accurate interpretation |

| Oral contraceptive use | 55 biomarkers | Up to 41.4% | Contraceptive status must be recorded and matched in study designs |

| Menopausal status | 26 biomarkers | Not quantified | Age and menopausal status critically impact reference values |

| Menstrual cycle phase | 5 biomarkers | Not quantified | Timing within cycle essential for proper interpretation |

The clinical consequences of these limitations are substantial. Simulation studies demonstrate that when patient and control groups are not matched for sex, researchers can encounter false positive findings in nearly 40% of measured analytes [20]. Similarly, when premenopausal female groups differ in oral contraceptive usage, false discoveries can affect over 41% of biomarkers. These staggering rates of misinterpretation highlight the critical inadequacy of single-marker approaches that fail to account for fundamental biological variabilities.

Beyond the statistical challenges, isolated hormone testing provides insufficient prognostic value for clinical decision-making. A retrospective study of 1,931 patients showed that no single hormone parameter could accurately predict clinical pregnancy rates in either IVF/ICSI or IUI treatments [21]. A random forest model that integrated multiple hormonal, demographic, and treatment parameters achieved higher accuracy than standalone hormone assessments, underscoring the limitation of reductionist approaches [21].

Machine Learning Solutions for Infertility Risk Assessment

Machine learning (ML) approaches represent a paradigm shift in infertility assessment by simultaneously analyzing multiple hormonal, demographic, and clinical parameters to generate integrated risk predictions. These models capture complex, non-linear relationships between variables that conventional statistical methods often miss, providing superior prognostic accuracy [19]. The HyNetReg model exemplifies this approach, combining deep feature extraction using neural networks with regularized logistic regression to achieve enhanced predictive performance for infertility outcomes based on hormonal and demographic profiles [19].

Table 2: Performance Comparison of Predictive Modeling Approaches

| Model Type | Key Features | Accuracy Metrics | Advantages | Limitations |

|---|---|---|---|---|

| Isolated hormone testing | Single hormone interpretation | Varies by hormone | Simple to implement, low cost | Poor prognostic value, high false discovery rates |

| Traditional statistical models | Multivariable regression | Not consistently reported | Familiar methodology, interpretable | Limited capture of complex interactions |

| Random forest | Ensemble decision trees | Highest accuracy in comparative studies [21] | Handles non-linear relationships, robust to outliers | Less interpretable than simpler models |

| HyNetReg hybrid model | Neural network feature extraction + logistic regression | Superior to traditional logistic regression [19] | Captures complex patterns, improved classification | Computationally intensive |

| Machine learning center-specific (MLCS) | Center-specific training and validation | Improved minimization of false positives/negatives vs. SART model [22] | Adapts to local patient populations, clinically relevant | Requires substantial center-specific data |

The clinical utility of ML approaches extends beyond basic infertility prediction to specific treatment applications. For fresh embryo transfer in patients with endometriosis, an XGBoost model incorporating eight key predictors—including AMH, female age, antral follicle count, infertility duration, and GnRH agonist protocol—demonstrated superior predictive performance for live birth outcomes compared to seven other machine learning models [23]. The model achieved an AUC of 0.852 in the test set, significantly outperforming traditional approaches and enabling more personalized treatment recommendations for this challenging patient population [23].

The implementation of ML models in clinical settings has demonstrated tangible improvements in treatment outcomes. An AI model trained on 53,000 IVF cycles and designed to optimize trigger timing resulted in significantly improved oocyte yield when clinicians followed the model's recommendations compared to physician estimates alone [24]. Cycles aligned with AI-guided trigger timing yielded an average of 3.8 more mature oocytes and 1.1 more usable embryos, highlighting the clinical impact of data-driven decision support systems [24].

Experimental Protocols for Predictive Model Development

Data Collection and Preprocessing Methodology

Comprehensive data collection forms the foundation of robust predictive models for infertility risk assessment. The following protocol outlines standardized procedures for acquiring and preparing data for model development:

Patient Population and Inclusion Criteria: Recruit patients presenting for infertility evaluation and treatment. Inclusion criteria should encompass complete demographic data, hormonal profiles, and treatment outcomes. Standard exclusion criteria typically include use of donor gametes, surrogacy arrangements, and cycles with incomplete data (>50% missing values) [21].

Hormonal Assessment Protocol: Collect blood samples during the early follicular phase (day 2-4) of the menstrual cycle for basal hormone measurements. Process samples within 2 hours of collection and store at -80°C until analysis. Analyze reproductive hormones using standardized immunoassay platforms (e.g., Beckman Coulter DxI 800 Immunoassay Analyzer) with consistent quality control procedures [25]. Essential hormones include FSH, LH, AMH, estradiol (E2), and prolactin.

Clinical and Demographic Data Collection: Record comprehensive patient characteristics including female age, male age, body mass index (BMI), infertility duration and type (primary/secondary), ovarian reserve markers (antral follicle count), and semen analysis parameters according to WHO guidelines [25].

Data Preprocessing Pipeline: Implement a multi-step preprocessing protocol:

- Missing Data Imputation: Apply sophisticated imputation methods such as a Multi-Layer Perceptron (MLP) to predict missing values, which provides superior results compared to traditional mean imputation [21].

- Data Normalization: Use standard scaling or normalization techniques to address varying measurement scales across different biomarkers.

- Class Imbalance Handling: Apply Synthetic Minority Over-sampling Technique (SMOTE) to address class imbalance in the dataset, particularly when modeling relatively rare outcomes such as clinical pregnancy or live birth [25].
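
The scaling and oversampling steps can be sketched as below. The `smote_like` helper is a simplified, hand-written illustration of the SMOTE idea (interpolating between a minority sample and one of its nearest minority neighbours); it is hypothetical and not the implementation used in the cited studies. In practice the `SMOTE` class from the imbalanced-learn package is the usual choice. The data are synthetic.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors
from sklearn.preprocessing import StandardScaler

def smote_like(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority samples by interpolating between a
    randomly chosen minority sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    # For distinct points, column 0 is the sample itself, so it is dropped.
    neighbours = nn.kneighbors(X_min, return_distance=False)[:, 1:]
    base = rng.integers(0, len(X_min), size=n_new)
    picked = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))  # interpolation weight in [0, 1)
    return X_min[base] + gap * (X_min[picked] - X_min[base])

rng = np.random.default_rng(3)
# Synthetic training data standing in for FSH, LH, AMH; 30 positives, 170 negatives.
X_train = rng.normal(loc=[8.0, 5.0, 2.5], scale=[3.0, 2.0, 1.0], size=(200, 3))
y_train = np.array([1] * 30 + [0] * 170)

# Standard scaling, fitted on the training data only.
scaler = StandardScaler().fit(X_train)
X_scaled = scaler.transform(X_train)

# Oversample the minority class until both classes have the same size.
X_min = X_scaled[y_train == 1]
n_new = int((y_train == 0).sum() - (y_train == 1).sum())
X_syn = smote_like(X_min, n_new)

X_bal = np.vstack([X_scaled, X_syn])
y_bal = np.concatenate([y_train, np.ones(n_new, dtype=int)])
```

Oversampling is applied to the training portion only; synthetic samples in a validation or test set would inflate the apparent performance.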

Model Development and Validation Framework

The development of robust, clinically applicable predictive models requires a structured approach to model selection, training, and validation:

Predictor Variable Selection: Employ feature selection algorithms such as Least Absolute Shrinkage and Selection Operator (LASSO) and Recursive Feature Elimination (RFE) to identify the most informative predictors for model inclusion [23]. For infertility applications, key predictors typically include female age, AMH, FSH, infertility duration, and specific treatment parameters.
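
Both selection methods are available in scikit-learn, and a minimal sketch on synthetic data is shown below. The feature names, the outcome rule, and the regularization strength `C=0.05` are assumptions made for illustration; for a binary outcome, LASSO is represented here by L1-penalized logistic regression.

```python
import numpy as np
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
names = ["female_age", "amh", "fsh", "infertility_duration", "noise_1", "noise_2"]
n = 600

# Synthetic, standardized predictors; not real patient data.
X = StandardScaler().fit_transform(rng.normal(size=(n, len(names))))
# Invented outcome that depends on the first three columns only.
logit = -1.0 * X[:, 0] + 1.2 * X[:, 1] - 0.8 * X[:, 2]
y = (logit + rng.logistic(size=n) > 0).astype(int)

# L1-penalized (LASSO-type) logistic regression: weak predictors shrink to exactly zero.
lasso = LogisticRegression(penalty="l1", solver="liblinear", C=0.05).fit(X, y)
lasso_selected = [f for f, c in zip(names, lasso.coef_[0]) if c != 0]

# RFE: refit repeatedly, dropping the weakest feature until three remain.
rfe = RFE(LogisticRegression(max_iter=1000), n_features_to_select=3).fit(X, y)
rfe_selected = [f for f, keep in zip(names, rfe.support_) if keep]
```

To avoid optimistic bias, selection of this kind is normally run inside the cross-validation loop rather than once on the full dataset.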

Model Architecture and Training: Implement multiple machine learning algorithms to compare performance, including random forest, XGBoost, logistic regression, support vector machines, and artificial neural networks [21] [23]. Use a nested cross-validation framework: an outer stratified 5-fold loop provides the training/testing splits for performance estimation, and an inner stratified 5-fold loop within each outer training set handles hyperparameter optimization [25].
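
Nested cross-validation can be assembled in scikit-learn by passing a grid search object to `cross_val_score`. The sketch below uses synthetic data, a random forest, and a deliberately small, hypothetical parameter grid.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

rng = np.random.default_rng(5)
# Synthetic predictors and an invented outcome rule.
X = rng.normal(size=(300, 5))
y = (X[:, 0] - X[:, 1] + rng.normal(scale=0.8, size=300) > 0).astype(int)

# Inner loop tunes hyperparameters; outer loop estimates performance.
inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Hypothetical grid, kept small so the example runs quickly.
param_grid = {"max_depth": [2, 4], "n_estimators": [25, 50]}
search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid,
    cv=inner_cv,
    scoring="roc_auc",
)

# Each outer fold reruns the whole grid search on its own training part,
# so the outer test fold never influences hyperparameter choice.
outer_auc = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
```

The mean of `outer_auc` estimates the performance of the whole tuning procedure rather than of one fixed hyperparameter setting.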

Model Validation Protocol: Implement comprehensive validation procedures:

- Internal Validation: Use k-fold cross-validation (typically k=10) to evaluate model performance and avoid overfitting, particularly important for smaller datasets [21].

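- Hold-Out and External Validation: Reserve a portion of the dataset (typically 20-30%) that is not used in model development for final performance assessment [21] [23]. Because such a split comes from the same source population, it is strictly a form of internal validation; external validation in the strict sense requires data from a different center or time period.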

- Live Model Validation (LMV): Test model performance on out-of-time test sets comprising patients who received treatment contemporaneous with clinical model usage to assess ongoing applicability and detect data drift [22].

Performance Metrics and Clinical Utility Assessment: Evaluate models using multiple metrics including area under the receiver operating characteristic curve (ROC-AUC), precision-recall AUC (PR-AUC), F1 score, Brier score, and calibration curves [23] [22]. Supplement statistical evaluation with decision curve analysis to assess clinical utility across different probability thresholds [23] [25].
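
Most of these metrics are single function calls in scikit-learn; net benefit, the quantity plotted in decision curve analysis, is computed by hand below using the standard formula TP/n - (FP/n) × pt/(1 - pt). The labels and predicted probabilities are a small invented example.

```python
import numpy as np
from sklearn.metrics import (
    average_precision_score,
    brier_score_loss,
    f1_score,
    roc_auc_score,
)

# Invented outcomes and predicted probabilities for ten patients.
y_true = np.array([0, 0, 0, 0, 1, 0, 1, 1, 0, 1])
y_prob = np.array([0.05, 0.10, 0.20, 0.30, 0.35, 0.40, 0.60, 0.70, 0.80, 0.90])

roc_auc = roc_auc_score(y_true, y_prob)            # discrimination
pr_auc = average_precision_score(y_true, y_prob)   # precision-recall summary
brier = brier_score_loss(y_true, y_prob)           # mean squared probability error
f1 = f1_score(y_true, (y_prob >= 0.5).astype(int)) # F1 at a 0.5 cut-off

def net_benefit(y_true, y_prob, threshold):
    """Net benefit at one probability threshold pt: TP/n - FP/n * pt / (1 - pt)."""
    pred = y_prob >= threshold
    n = len(y_true)
    tp = np.sum(pred & (y_true == 1))
    fp = np.sum(pred & (y_true == 0))
    return tp / n - fp / n * threshold / (1 - threshold)

# A decision curve is net benefit evaluated over a range of thresholds.
nb_curve = {pt: net_benefit(y_true, y_prob, pt) for pt in (0.1, 0.3, 0.5)}
```

Calibration curves can be built in a similar way with `sklearn.calibration.calibration_curve`, which bins the predicted probabilities and compares them with observed event rates.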

The Scientist's Toolkit: Essential Research Reagents and Analytical Platforms

Table 3: Essential Research Reagents and Platforms for Hormonal Predictive Modeling

| Reagent/Platform | Specific Function | Application Context | Technical Considerations |

|---|---|---|---|

| Multiplex Immunoassay Platforms (e.g., Human DiscoveryMAP) | Simultaneous measurement of 171+ proteins and small molecules | Comprehensive biomarker profiling for model development [20] | Enables broad biomarker discovery but requires validation of individual assays |

| Chemiluminescence Immunoassay Analyzer (e.g., Beckman Coulter DxI 800) | Quantitative measurement of reproductive hormones | Standardized AMH, FSH, LH, E2 assessment in clinical samples [25] | Provides clinical-grade accuracy essential for valid model inputs |

| Leica Biosystems Aperio AT2 Digital Pathology Scanner | Digitization of H&E-stained histopathology slides at 20x magnification | Digital pathology feature extraction for multimodal AI models [26] | Enables integration of histopathological features with clinical data |

| Isolate Double-Density Gradient Centrifugation Media | Sperm selection and preparation for ART procedures | Standardized semen processing for consistent parameter assessment [25] | Critical for obtaining reproducible male factor parameters |

| Sperm Chromatin Structure Assay (SCSA) Reagents | Assessment of sperm DNA fragmentation index (DFI) | Evaluation of sperm quality parameter predictive of fertilization success [25] | Standardized protocol essential for comparable results across studies |

| ResNet-50 Feature Extraction Model | Self-supervised learning for digital pathology image analysis | Extraction of meaningful features from histopathology images without manual annotation [26] | Requires substantial computational resources for training and implementation |

The limitations of isolated hormone testing in infertility assessment are both significant and well-documented. Single hormone measurements fail to capture the complex, dynamic interactions of the endocrine system and exhibit substantial biological variability that compromises their diagnostic and prognostic utility. Machine learning approaches that integrate multiple hormonal parameters with clinical, demographic, and treatment factors represent a transformative advancement in infertility risk assessment. These models demonstrate superior performance compared to both traditional isolated hormone testing and conventional statistical approaches, providing more accurate prognostic information to guide clinical decision-making.

The implementation of standardized protocols for data collection, preprocessing, and model validation is essential for developing robust, clinically applicable predictive tools. As the field progresses toward a systems medicine approach to infertility care, integrating multi-omics data and leveraging advanced analytical techniques will further enhance our ability to provide personalized, predictive, and preventive reproductive healthcare. The era of data-driven medicine in infertility has arrived, offering new hope for the millions of couples struggling with infertility worldwide.

Building the Model: Data, Algorithms, and Feature Engineering for Hormonal Data

Within the research domain of developing machine learning (ML) models for predicting infertility risk from serum hormones, the integrity of the underlying data is paramount. This document outlines critical application notes and protocols for data sourcing and preprocessing, with a specific focus on handling missing values and defining patient cohorts. These steps are foundational to building robust, accurate, and reliable predictive models. Proper execution ensures that the model's findings on the relationship between hormone levels (e.g., FSH, LH, Testosterone) and infertility outcomes are valid and clinically meaningful [4].

Handling Missing Data in Hormonal Datasets

Missing data is a common occurrence in medical datasets and, if not handled appropriately, can introduce significant bias, reduce statistical power, and lead to incorrect conclusions [27]. The approach to handling missing values must be deliberate and justified.

Types and Identification of Missing Values

Understanding why data is missing is crucial for selecting the correct handling strategy. The underlying mechanism is typically categorized as follows:

- Missing Completely at Random (MCAR): The probability of data being missing is unrelated to any observed or unobserved variables. For example, a data point is missing due to a random processing error.

- Missing at Random (MAR): The probability of missingness depends on other observed variables but not on the missing value itself. For instance, the missingness of a specific hormone value might be related to the patient's age group, which is fully recorded.

- Missing Not at Random (MNAR): The probability of missingness is related to the unobserved missing value itself. An example would be if individuals with very high or very low hormone levels are less likely to report them [27] [28].

The first step is to identify missing values, which can be represented as NaN, NULL, None, or other placeholders like -999 [27] [28]. In Python, the pandas library is the standard tool for detecting them.
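
As a minimal sketch of this identification step, the snippet below counts missing values per column after converting a placeholder code to NaN. The column names and values are hypothetical, and -999 is assumed to be the only placeholder in use.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data; -999 is used here as a code for "not measured".
df = pd.DataFrame({
    "fsh": [6.2, np.nan, 12.4, -999],
    "lh": [4.1, 5.0, None, 3.3],
    "testosterone": [4.8, 5.2, 3.9, 4.4],
})

# Convert placeholder codes to proper missing values before counting.
df = df.replace(-999, np.nan)

# Number and percentage of missing values per column.
missing_count = df.isna().sum()
missing_pct = df.isna().mean() * 100
```

Reporting the percentage per variable makes it easier to judge whether deletion is acceptable or imputation is needed.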

Strategies and Protocols for Handling Missing Values

The choice of strategy depends on the proportion of missing data, its mechanism, and the specific analytical goals. The following table summarizes the primary methods.

Table 1: Strategies for Handling Missing Values in Hormonal Data

| Strategy | Description | Best Use Case | Pros & Cons |

|---|---|---|---|

| Listwise Deletion | Removing any row (participant) that has a missing value in any of the variables used in the analysis. | Data is MCAR and the number of deleted rows is small (<5% of the dataset). | Pros: Simple, quick. Cons: Can reduce sample size significantly and introduce bias if data is not MCAR [27] [28]. |

| Mean/Median/Mode Imputation | Replacing missing values with the mean (for normally distributed data), median (for skewed data), or mode (for categorical data) of the available cases in that column. | MCAR data; numerical variables where a simple, fast fix is needed for a small number of missing values. | Pros: Easy and fast to implement. Cons: Can distort the data distribution and underestimate variance [27] [29]. |

| Forward Fill/ Backward Fill | Filling missing values with the last (forward fill) or next (backward fill) valid observation in the dataset. | Time-series data or data where the order of records is meaningful. | Pros: Preserves the order of data points. Cons: Can be inaccurate if the adjacent values are not similar [27]. |

| Interpolation | Estimating missing values based on other data points, often using methods like linear or polynomial interpolation to capture trends. | Data with a discernible trend, such as hormone levels measured over time. | Pros: More accurate than simple imputation as it captures trends. Cons: Assumes a specific pattern (e.g., linear) between points [27] [29]. |

| K-Nearest Neighbors (KNN) Imputation | Replacing a missing value with the mean or median of the 'k' most similar participants (neighbors) based on other available variables. | MAR data; datasets with multiple correlated variables. | Pros: Can be more accurate than simple imputation by using information from similar cases. Cons: Computationally intensive for large datasets [28]. |

| Model-Based Imputation | Using a predictive model (e.g., regression, Random Forest) to estimate missing values based on all other available variables. | MAR data; complex datasets where other variables are strong predictors of the missing one. | Pros: Potentially the most accurate method. Cons: Complex to implement; risk of overfitting [29]. |

Recommended Protocol for Hormonal Data: For a dataset of serum hormone levels (FSH, LH, Testosterone, etc.) aimed at training an ML model, the following workflow is recommended.
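
One way such a workflow could look in code is sketched below, under stated assumptions: the data are split before imputation, a KNN imputer (one of the options in the table above) is fitted on the training portion only, and the same fitted imputer is then applied to the test portion. The synthetic values and the choice of `n_neighbors=5` are illustrative, not taken from any cited study.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(6)
n = 200

# Synthetic hormone values; the FSH-LH relationship is invented.
fsh = rng.gamma(shape=4.0, scale=2.0, size=n)
lh = 0.6 * fsh + rng.normal(0, 1.0, n)
testo = rng.normal(5.0, 1.5, n)
X = np.column_stack([fsh, lh, testo])

# Remove about 10% of LH values at random (MCAR by construction).
mask = rng.random(n) < 0.10
X_missing = X.copy()
X_missing[mask, 1] = np.nan

# Split first, so the test set cannot leak into the imputation model.
X_train, X_test = train_test_split(X_missing, test_size=0.2, random_state=0)

# Fit the imputer on training data, then apply it to both parts.
imputer = KNNImputer(n_neighbors=5).fit(X_train)
X_train_imp = imputer.transform(X_train)
X_test_imp = imputer.transform(X_test)
```

If missingness is suspected to be MNAR, no imputer of this kind removes the bias, and a sensitivity analysis is the safer course.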

Cohort Definition for Infertility Risk Studies

A cohort study is an observational research design that follows a group of people (a cohort) over a period of time to investigate how specific factors affect the incidence of an outcome [30] [31]. In the context of infertility risk, this design is powerful for establishing temporality—confirming that exposure (serum hormone levels) was measured before the outcome (infertility diagnosis) was determined.

Cohort Study Design and Selection

The two primary types of cohort studies are prospective and retrospective, both applicable to infertility research.

Table 2: Types of Cohort Studies for Infertility Research

| Cohort Type | Description | Application in Infertility Risk | Advantages & Disadvantages |

|---|---|---|---|

| Prospective Cohort | A group of participants without the outcome of interest is recruited and followed forward in time to see who develops the outcome. | Recruiting men with no current infertility diagnosis, measuring their baseline serum hormones, and following them for several years to see who later receives an infertility diagnosis. | Advantages: High data quality control, clear temporality. Disadvantages: Time-consuming and expensive [30] [31]. |

| Retrospective Cohort | Researchers look back at historical data to identify a cohort based on past exposure status and then determine if they have since developed the outcome. | Using existing medical records to identify men whose serum hormones were measured 5 years ago, and then reviewing their subsequent fertility status up to the present. | Advantages: Faster and less costly than prospective studies. Disadvantages: Reliance on pre-existing data of potentially variable quality [30] [31]. |

Key Considerations for Cohort Definition:

- Inclusion/Exclusion Criteria: Clearly define the cohort's characteristics. For example: "The cohort will include males aged 20-45 who presented for fertility evaluation, with complete baseline serum hormone profiles (FSH, LH, Testosterone). Exclusion criteria: history of vasectomy, obstructive azoospermia, or hormonal treatment within the last 6 months." [30]

- Exposure and Outcome Measurement:

  - Exposure: Precisely define the serum hormone measures (e.g., "baseline FSH level in mIU/mL").

  - Outcome: Clearly define the infertility outcome based on standardized criteria, such as the WHO semen analysis guidelines [4] or clinical diagnosis.

- Minimizing Bias: Be aware of biases like attrition bias (participants dropping out in a prospective study) and information bias (inaccurate measurement of exposure or outcome) [31].
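
The example inclusion and exclusion criteria above translate directly into a pandas filter. The sketch below uses hypothetical column names and invented records; a real extraction would depend on how the medical record system encodes these fields.

```python
import pandas as pd

# Invented records; column names are hypothetical.
records = pd.DataFrame({
    "patient_id": [1, 2, 3, 4, 5],
    "age": [29, 52, 34, 41, 38],
    "fsh": [5.1, 7.0, None, 14.2, 6.3],
    "lh": [4.0, 5.5, 3.8, 9.1, 4.4],
    "testosterone": [5.0, 4.1, 4.7, 2.9, 5.3],
    "vasectomy": [False, False, False, False, True],
    "obstructive_azoospermia": [False, False, False, False, False],
    "hormone_tx_last_6m": [False, False, False, False, False],
})

hormones = ["fsh", "lh", "testosterone"]
exclusions = ["vasectomy", "obstructive_azoospermia", "hormone_tx_last_6m"]

# Inclusion: age 20-45 (inclusive) and a complete baseline hormone profile.
included = records["age"].between(20, 45) & records[hormones].notna().all(axis=1)

# Exclusion: any one of the listed conditions.
excluded = records[exclusions].any(axis=1)

cohort = records[included & ~excluded]
```

Keeping the inclusion and exclusion masks separate makes it straightforward to report how many patients were removed at each step, as a cohort flow diagram requires.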

The following diagram illustrates the logical structure of a cohort study in this context.

Experimental Protocol: Building an ML Model for Infertility Risk

This protocol integrates the concepts of data preprocessing and cohort definition, drawing from recent research that successfully predicted male infertility risk using serum hormones and AI [4].

Study Design and Data Sourcing

- Cohort Definition: A retrospective cohort study design is employed.

- Participants: The study uses data from 3,662 male patients who underwent both semen analysis and serum hormone testing [4].

- Inclusion/Exclusion: Participants are classified based on semen analysis results (e.g., normal, oligozoospermia, azoospermia) according to WHO standards.

Data Collection and Preprocessing

- Variables Collected: The following data are extracted from medical records: age, LH, FSH, prolactin (PRL), testosterone, estradiol (E2), and the calculated T/E2 ratio [4].

- Handling Missing Values: The specific method used in the source study is not detailed, but based on best practices (see Strategies and Protocols for Handling Missing Values), model-based imputation or KNN imputation would be appropriate for a dataset of this nature to preserve sample size and statistical power.

Model Training and Evaluation

- ML Technique: The referenced study used AI/Machine Learning models (Prediction One and AutoML Tables) [4].

- Feature Importance: The study found that FSH was the most important predictive feature, followed by T/E2 ratio and LH [4].

- Performance Metrics: The model's performance was evaluated using the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve, achieving approximately 74.4%, and other metrics like Precision and Recall [4].
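
The referenced study relied on no-code platforms, so the code below is not its method. It is a stand-in sketch showing how the same three outputs (AUC, precision and recall, and a feature importance ranking) could be obtained with a random forest in scikit-learn. The data are synthetic, and the outcome rule is deliberately dominated by the FSH column so that the ranking mimics the reported pattern.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import precision_score, recall_score, roc_auc_score
from sklearn.model_selection import train_test_split

features = ["age", "lh", "fsh", "prl", "testosterone", "e2", "t_e2_ratio"]
rng = np.random.default_rng(7)
n = 1000

# Synthetic predictors; the outcome rule is invented for illustration.
X = rng.normal(size=(n, len(features)))
y = (1.5 * X[:, 2] + 0.4 * X[:, 6] + rng.normal(size=n) > 0).astype(int)

X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)
clf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_tr, y_tr)

# Discrimination and classification metrics on the held-out set.
prob = clf.predict_proba(X_te)[:, 1]
pred = clf.predict(X_te)
auc = roc_auc_score(y_te, prob)
precision = precision_score(y_te, pred)
recall = recall_score(y_te, pred)

# Impurity-based importances, sorted from most to least important.
ranking = sorted(
    zip(features, clf.feature_importances_), key=lambda p: p[1], reverse=True
)
```

Impurity-based importances can favour continuous, high-variance features, so permutation importance on held-out data is a common cross-check.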

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and tools essential for research in this field.

Table 3: Essential Research Reagents and Materials for Serum Hormone-Based Infertility Studies

| Item | Function/Description | Example/Note |

|---|---|---|

| Immunoassay Kits | To quantitatively measure serum levels of specific hormones (FSH, LH, Testosterone, Estradiol, Prolactin). | Commercial ELISA (Enzyme-Linked Immunosorbent Assay) or CLIA (Chemiluminescent Immunoassay) kits are standard. |

| WHO Laboratory Manual | The international standard for the examination and processing of human semen to define infertility outcomes. | "WHO Laboratory Manual for the Examination and Processing of Human Semen" (e.g., 6th Edition, 2021) [4]. |

| Data Analysis Software | For statistical analysis, data preprocessing, and machine learning model development. | Python (with pandas, scikit-learn) or R. The cited study used "Prediction One" and "AutoML Tables" [4]. |

| Biobank Storage | For the long-term, stable storage of serum samples at ultra-low temperatures for future validation or testing. | Freezers maintaining -80°C. |

| Automated Semen Analyzer (CASA) | For objective, computer-assisted analysis of semen parameters (concentration, motility, morphology). | Provides standardized, reproducible data for defining the outcome variable. |

Within the development of machine learning (ML) models for assessing infertility risk from serum hormones, feature selection is a critical step that directly impacts model performance, interpretability, and clinical applicability. Identifying the most predictive biochemical markers allows for the creation of robust, efficient, and cost-effective diagnostic tools. This document outlines key predictive hormones and ratios, summarizes supporting quantitative evidence, and provides detailed protocols for their measurement and integration into ML workflows, contextualized within a broader thesis on computational approaches to infertility risk assessment.

Research demonstrates that a select group of serum hormones and their derived ratios serve as powerful predictors for male infertility risk. The table below summarizes the key features and their relative importance as identified in a large-scale study developing an AI model for determining male infertility risk without semen analysis [4].

Table 1: Key Predictive Hormones and Ratios for Male Infertility Risk Assessment

| Feature Name | Feature Type | Reported Feature Importance (Ranking) | Key Rationale & Association |
|---|---|---|---|
| Follicle-Stimulating Hormone (FSH) | Hormone | 1st (Highest) [4] | Primary indicator of spermatogenic function; often elevated in spermatogenic dysfunction [4]. |
| Testosterone to Estradiol Ratio (T/E2) | Calculated Ratio | 2nd [4] | Reflects androgen-estrogen balance; crucial for spermatogenesis and bone health [4] [32]. |
| Luteinizing Hormone (LH) | Hormone | 3rd [4] | Stimulates Leydig cells to produce testosterone; indicates pituitary-testicular axis function [4]. |
| Testosterone | Hormone | 4th-5th [4] | Primary androgen required, with FSH, for spermatogenesis [4] [32]. |
| Estradiol (E2) | Hormone | 6th [4] | Formed from testosterone via aromatase; has negative feedback effects [4] [32]. |
| Prolactin (PRL) | Hormone | 7th [4] | Hyperprolactinemia can suppress hypothalamic-pituitary-gonadal axis [4]. |
| Age | Demographic Variable | 4th-5th [4] | Confounding factor influencing hormonal levels and overall fertility potential [4]. |

The predictive value of these features is supported by ML model performance. A model using these serum markers achieved an Area Under the Curve (AUC) of 74.42% (0.7442 on the 0 to 1 scale) in predicting male infertility risk, demonstrating the viability of this approach [4].
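To make the AUC figure concrete: AUC can be read as the probability that a randomly chosen at-risk case receives a higher model score than a randomly chosen normal case, with ties counted as one half. The sketch below computes it directly from pairwise comparisons. The labels, scores, and the function name `auc_from_scores` are illustrative assumptions, not data or code from [4].

```python
def auc_from_scores(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    labels: 1 = at-risk case, 0 = normal case (hypothetical coding).
    scores: model-predicted risk, higher = more likely at risk.
    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Illustrative values only (not taken from the cited study).
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.6, 0.4, 0.7, 0.3, 0.2, 0.4]
auc = auc_from_scores(labels, scores)
print(f"AUC = {auc:.4f}")
```

Here 9.5 of the 12 positive-negative pairs are ordered correctly, so the AUC is about 0.79. The pairwise loop is quadratic in sample size, which is fine for illustration; library implementations use a rank-based computation instead.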

Experimental Protocols for Key Feature Assessment

Protocol: Blood Collection and Serum Hormone Profiling

This protocol details the standard procedure for obtaining the serum samples used for hormone analysis in predictive modeling.

1. Principle: To collect high-quality blood serum for the accurate quantification of reproductive hormones via immunoassay or mass spectrometry.

2. Reagents & Equipment:

- Serum separator tubes (SST)

- Venipuncture kit (tourniquet, alcohol swabs, needles, adhesive bandage)

- Centrifuge

- -20°C or -80°C freezer for sample storage

- HPLC-MS/MS system (for 25OHVD3 analysis, as an example of advanced testing [33])

3. Procedure:

   1. Patient Preparation: Instruct the patient to fast for 8-12 hours prior to blood collection. Blood draws should ideally be performed in the morning (e.g., 7 AM - 10 AM) to account for diurnal variation in hormone levels, particularly testosterone [34].
   2. Phlebotomy: Perform venipuncture and collect blood into a serum separator tube.
   3. Clot Formation: Allow the blood to clot at room temperature for 30-60 minutes.
   4. Centrifugation: Centrifuge the sample at 1,500 - 2,000 RCF for 10-15 minutes to separate the serum.
   5. Aliquoting and Storage: Gently aliquot the clear serum into cryovials without disturbing the cellular layer. Store aliquots at -20°C for short-term use (within weeks) or -80°C for long-term preservation to maintain analyte integrity.

4. Notes: Adherence to standardized phlebotomy and processing protocols is critical to minimize pre-analytical variability, which can significantly impact ML model performance.

Protocol: Calculation of Testosterone to Estradiol (T/E2) Ratio

The T/E2 ratio is a critical derived feature that requires precise measurement of its components.

1. Principle: The T/E2 ratio is calculated from serum concentrations of total testosterone (T) and estradiol (E2), integrating gonadal output and peripheral aromatase activity into a single balance metric [32] [34].

2. Reagents & Equipment:

- Results from testosterone and estradiol assays, reported in consistent units.

3. Procedure:

   1. Unit Conversion: Ensure testosterone and estradiol concentrations are expressed on a consistent basis before computing the ratio. Laboratories often report T in ng/dL and E2 in pg/mL.
      - To convert T from ng/dL to pmol/L: T (pmol/L) = T (ng/dL) × 34.66 [35].
      - To convert E2 from pg/mL to pmol/L: E2 (pmol/L) = E2 (pg/mL) × 3.6713 [35].
   2. Ratio Calculation: T/E2 Ratio = Testosterone Concentration / Estradiol Concentration [35].
   3. Interpretation: While a universally defined "optimal" range is debated, a range of 10 to 30 (calculated from T in ng/dL and E2 in pg/mL) has been associated with beneficial outcomes for spermatogenesis and bone density [32]. The numerical value of the ratio depends on the units used: a ratio computed from molar concentrations (both in pmol/L) is about 9.44 times larger (34.66 / 3.6713), so the 10 to 30 range corresponds to roughly 94 to 283 on a molar basis. Always record which unit basis was used, and use the same basis for every sample in a dataset.

4. Notes: Significant variability exists between different hormone assays. It is imperative that the ML model is trained and validated using data generated from the same assay platform and methodology to ensure consistency.
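Because the numerical value of the T/E2 ratio depends on the unit basis, it can help to compute both versions explicitly. The following is a minimal sketch using the conversion factors quoted in the protocol [35]. The function names and the patient values are hypothetical and chosen only for illustration.

```python
# Conversion factors as quoted in the protocol above [35].
T_NGDL_TO_PMOLL = 34.66      # testosterone: ng/dL -> pmol/L
E2_PGML_TO_PMOLL = 3.6713    # estradiol:    pg/mL -> pmol/L


def te2_ratio_conventional(t_ng_dl, e2_pg_ml):
    """T/E2 ratio from T in ng/dL and E2 in pg/mL (the basis of the 10-30 range)."""
    if e2_pg_ml <= 0:
        raise ValueError("estradiol must be positive")
    return t_ng_dl / e2_pg_ml


def te2_ratio_molar(t_ng_dl, e2_pg_ml):
    """T/E2 ratio after converting both hormones to pmol/L."""
    if e2_pg_ml <= 0:
        raise ValueError("estradiol must be positive")
    return (t_ng_dl * T_NGDL_TO_PMOLL) / (e2_pg_ml * E2_PGML_TO_PMOLL)


# Hypothetical patient values, for illustration only.
t, e2 = 500.0, 25.0
conventional = te2_ratio_conventional(t, e2)
molar = te2_ratio_molar(t, e2)
print(f"conventional-unit ratio: {conventional:.1f}")
print(f"molar ratio:             {molar:.1f}")
```

For this hypothetical patient the conventional-unit ratio is 20.0 and the molar ratio is about 188.8. Both describe the same hormonal balance, but only the first is comparable with the 10 to 30 range cited from [32]. Whichever basis is chosen as an ML feature, it should be applied uniformly to training and validation data.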

Workflow and Signaling Pathways Visualization

Hormonal Regulation of Spermatogenesis Pathway

The following diagram illustrates the hypothalamic-pituitary-testicular (HPT) axis, showing the functional relationships between the key predictive hormones.

ML Feature Selection and Model Building Workflow

This workflow outlines the process from data collection to model deployment, highlighting the role of feature selection.

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogues essential materials and tools for conducting research in this field.

Table 2: Essential Research Reagents and Materials for Predictive Hormone Modeling

| Item Name | Function/Application | Specific Examples & Notes |
|---|---|---|
| Serum Separator Tubes (SST) | Collection and processing of blood for serum isolation. | Standard tubes for clinical phlebotomy. Ensure compatibility with downstream analyzers. |
| Immunoassay Kits | Quantifying hormone levels (FSH, LH, Testosterone, Estradiol, Prolactin). | Commercial kits from diagnostic companies (e.g., Roche, Siemens). Critical for generating the input data. |
| HPLC-MS/MS System | Gold-standard method for precise hormone quantification and validation; used for novel biomarkers like Vitamin D [33]. | Agilent 1200 HPLC system coupled with API 3200 QTRAP MS/MS [33]. |
| Aromatase Enzyme | Key for in vitro studies of testosterone to estradiol conversion. | Human recombinant aromatase (product of CYP19A1 gene) for mechanistic studies [32]. |
| Machine Learning Software Libraries | Building and testing predictive models (e.g., Random Forest, XGBoost). | Python (Scikit-learn, XGBoost) or R. AutoML platforms like "Prediction One" were used in foundational studies [4]. |
| Statistical Analysis Software | Performing data cleaning, normalization, and basic statistical tests. | R, SPSS, or Python (Pandas, SciPy) [36] [33]. |

The strategic selection of hormonal features, particularly FSH, the T/E2 ratio, and LH, forms the cornerstone of performant ML models for non-invasive infertility risk assessment. The experimental protocols and workflows detailed herein provide a reproducible framework for generating high-quality data and building robust predictive tools. Future work should focus on the external validation of these models across diverse populations and the integration of novel biomarkers to further enhance predictive accuracy and clinical utility.

Infertility, affecting an estimated 10–15% of couples globally, represents a significant challenge in reproductive medicine [37] [38]. The diagnosis and treatment of conditions leading to infertility, such as polycystic ovary syndrome (PCOS) and other endocrine disorders, rely heavily on the interpretation of complex serum hormone panels and clinical markers [39]. Traditional statistical methods often struggle to capture the intricate, non-linear relationships between these multifaceted biomarkers and patient outcomes.

Machine learning (ML) has emerged as a powerful tool to address this complexity, offering enhanced predictive accuracy for infertility risk assessment, diagnosis, and treatment success [40] [38]. This article provides a comprehensive overview of ML algorithms—from foundational logistic regression to advanced ensemble methods like Random Forest (RF), XGBoost, and LightGBM—within the context of infertility research based on serum hormones and clinical biomarkers. We detail their applications, provide structured protocols for implementation, and discuss their relative performance in this specialized field.

Machine Learning Algorithms in Infertility Research

Logistic Regression

Logistic Regression (LR) remains a widely used baseline model in medical research due to its high interpretability and computational efficiency [39]. It models the relationship between a set of independent variables (e.g., hormone levels) and a binary dependent variable (e.g., infertile vs. fertile) by estimating probabilities using the logistic function.
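As a minimal illustration of the logistic function, the sketch below maps a linear combination of predictors to a probability between 0 and 1. The coefficient values, the choice of predictors, and the function name `predicted_probability` are assumptions made for this example and are not estimates from any cited study.

```python
import math


def predicted_probability(intercept, coefficients, features):
    """Logistic regression probability: 1 / (1 + exp(-(b0 + sum(b_i * x_i))))."""
    if len(coefficients) != len(features):
        raise ValueError("coefficients and features must have the same length")
    linear = intercept + sum(b * x for b, x in zip(coefficients, features))
    return 1.0 / (1.0 + math.exp(-linear))


# Hypothetical coefficients for standardized FSH, LH and testosterone;
# these are NOT estimates from any cited study.
intercept = -0.5
coefficients = [1.2, 0.4, -0.8]
patient = [1.5, 0.2, -1.0]   # z-scored hormone values for one hypothetical patient

p = predicted_probability(intercept, coefficients, patient)
print(f"predicted probability of the positive class: {p:.3f}")
```

Each coefficient is the change in log-odds per unit change in its predictor, which is the main reason LR is considered highly interpretable.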

Recent studies demonstrate its continued relevance. A 2025 diagnostic model for PCOS achieved robust performance using LR, with an Area Under the Curve (AUC) of 0.86, based on predictors including luteinising hormone (LH), anti-Müllerian hormone (AMH), and testosterone (T) [39]. Furthermore, hybrid models that combine LR with optimization algorithms like the Artificial Bee Colony (ABC) have shown potential to enhance predictive performance for in vitro fertilization (IVF) outcomes, achieving accuracy up to 91.36% in proof-of-concept studies [41] [42].

Ensemble Methods: Random Forest, XGBoost, and LightGBM

Ensemble methods combine multiple base models to create a single, superior predictive model. They are particularly effective for the high-dimensional data common in biomarker research.

- Random Forest (RF): An ensemble of decision trees, RF reduces overfitting by aggregating predictions from trees trained on random subsets of data and features. It has demonstrated top-tier performance in predicting live birth outcomes from fresh embryo transfer, achieving an AUC exceeding 0.8. Key predictive features identified by RF included female age, embryo grades, and endometrial thickness [38].

- XGBoost (eXtreme Gradient Boosting): This model builds trees sequentially, with each new tree fitted to correct the errors of the ensemble so far. It incorporates regularization to limit overfitting and often delivers state-of-the-art results. In a study predicting blastocyst yield in IVF cycles, XGBoost demonstrated strong performance (R²: ~0.67) [43]. Its performance depends on the predictors available, however: another study that used mainly sociodemographic data to predict natural conception reported limited discriminative capacity (AUC: 0.580) [44].

- LightGBM (Light Gradient Boosting Machine): Designed for speed and efficiency, LightGBM grows trees leaf-wise (always splitting the leaf that yields the largest loss reduction) rather than level-wise. A 2025 study on blastocyst yield prediction found LightGBM to be the optimal model: it matched the performance of XGBoost and SVM (R²: 0.673–0.676) while requiring fewer features (8 vs. 10-11), which makes it more practical and easier to interpret [43].
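The contrast between bagging-style and boosting-style ensembles can be sketched with scikit-learn alone. In the example below, `GradientBoostingClassifier` is used as a generic stand-in for boosting libraries such as XGBoost and LightGBM, which have their own APIs not shown here. The data are synthetic, and the column names are labels attached to random numbers, not real hormone measurements.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n = 600
feature_names = ["FSH", "LH", "Testosterone", "Estradiol"]

# Synthetic, standardized "hormone" features; the outcome depends non-linearly
# on the first two columns so that tree ensembles have something to learn.
X = rng.normal(size=(n, len(feature_names)))
signal = 1.5 * X[:, 0] + 1.0 * (X[:, 1] > 0.5) - 0.5 * X[:, 2]
y = (signal + rng.normal(scale=1.0, size=n) > 0.3).astype(int)

models = {
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=0),
    "gradient_boosting": GradientBoostingClassifier(random_state=0),
}

cv_auc = {}
for name, model in models.items():
    # 5-fold cross-validated AUC (folds are stratified by default for classifiers).
    scores = cross_val_score(model, X, y, cv=5, scoring="roc_auc")
    cv_auc[name] = scores.mean()
    print(f"{name}: mean AUC = {cv_auc[name]:.3f}")

# Impurity-based importances from a forest fitted on all synthetic data.
forest = models["random_forest"].fit(X, y)
importances = dict(zip(feature_names, forest.feature_importances_))
for name, value in sorted(importances.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:.3f}")
```

Impurity-based importances are convenient but can favor high-cardinality features, so rankings like these are best cross-checked with permutation importance or SHAP values on held-out data.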

Table 1: Performance Comparison of Machine Learning Algorithms in Recent Infertility Studies

| Algorithm | Application Context | Key Performance Metrics | Key Predictors Identified |
|---|---|---|---|
| Logistic Regression | PCOS Diagnosis [39] | AUC: 0.86 | LH, LH/FSH, AMH, Testosterone |
| Random Forest (RF) | Live Birth Prediction [38] | AUC > 0.8 | Female Age, Embryo Grade, Endometrial Thickness |
| XGBoost | Blastocyst Yield Prediction [43] | R²: 0.673-0.676, MAE: 0.793-0.809 | Number of Extended Culture Embryos, Day 3 Embryo Morphology |
| LightGBM | Blastocyst Yield Prediction [43] | R²: 0.673-0.676, MAE: 0.793-0.809 | Number of Extended Culture Embryos, Proportion of 8-cell Embryos |
| SVM | Infertility Diagnosis [33] | AUC > 0.958, Sens. > 86.52%, Spec. > 91.23% | 25OHVD3, Lipids, Thyroid Function |

Additional Machine Learning Algorithms

Other algorithms also play significant roles. Support Vector Machines (SVM) have been successfully employed for infertility diagnosis, creating models with high sensitivity (>86.52%) and specificity (>91.23%) [33]. Furthermore, hybrid models, such as LR-ABC, demonstrate the potential of meta-optimization to enhance the performance of base algorithms for specific clinical tasks like IVF outcome prediction [42].
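Sensitivity and specificity, as reported for the SVM models, are both derived from the confusion matrix: sensitivity is the fraction of truly infertile cases that the model flags, and specificity is the fraction of fertile cases it correctly clears. A minimal sketch follows, using made-up predictions and an assumed label coding (1 = infertile, 0 = fertile).

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels coded 1 = infertile, 0 = fertile."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one case of each class")
    return tp / (tp + fn), tn / (tn + fp)


# Made-up predictions for ten hypothetical patients (not study data).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

Unlike AUC, both metrics depend on the classification threshold, so they should be reported together with the threshold used.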

Experimental Protocols for Model Development

Protocol 1: Data Collection and Preprocessing for Serum Hormone-Based Models

Objective: To systematically collect and preprocess clinical and hormonal data for training ML models to assess infertility risk.

Materials and Reagents:

- Serum Samples: Collected from participants following standardized protocols [39].

- Hormone Assay Kits: For example, electrochemiluminescence kits for AMH detection (e.g., Roche Cobas 6000) [39].

- Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) System: For precise quantification of steroid hormones (e.g., Agilent 1290-AB Sciex 5500 system) [39].

Procedure:

- Participant Recruitment & Criteria: Define clear inclusion/exclusion criteria. For PCOS diagnosis, this typically involves adhering to the Rotterdam criteria, with age ranges (e.g., 20-35 years) and exclusion of other endocrine disorders [39].

- Sample Collection: Collect venous blood serum from participants in the morning after fasting. For cycling women, sample collection should be standardized, e.g., on day 3-5 of the menstrual cycle [39].

- Hormone Level Quantification:

- Data Curation: Store all laboratory results, patient histories, and demographic information in a secure database. Ensure data anonymization [33].

- Data Preprocessing:

  - Handle Missing Values: Use imputation methods suitable for the data type and proportion of missingness (e.g., the missForest non-parametric method for mixed-type data) [38].

  - Address Class Imbalance: If the outcome classes are unbalanced (e.g., many more negative outcomes than positive), apply techniques like the Synthetic Minority Over-sampling Technique (SMOTE) during model training [42].

  - Feature Scaling: Normalize or standardize continuous variables, especially for models like SVM and Logistic Regression.
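The preprocessing steps above can be chained in a single scikit-learn pipeline so that every transformation is learned from the training split only. This is a sketch under stated substitutions: median imputation stands in for missForest, and class weighting stands in for SMOTE (which is provided by the separate imbalanced-learn package). The data are synthetic and the column meanings are assumed.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
n = 500

# Synthetic data: 4 continuous "hormone" columns, roughly 20% positive outcomes.
X = rng.normal(size=(n, 4))
y = (X[:, 0] + 0.5 * X[:, 1] + rng.normal(size=n) > 1.3).astype(int)

# Knock out ~10% of values at random to mimic missing laboratory results.
missing_mask = rng.random(X.shape) < 0.10
X[missing_mask] = np.nan

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=42
)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),   # stand-in for missForest
    ("scale", StandardScaler()),                    # zero mean, unit variance
    # class_weight="balanced" is a stand-in for SMOTE oversampling
    ("model", LogisticRegression(class_weight="balanced", max_iter=1000)),
])

# Fitting the pipeline on the training split only keeps imputation and scaling
# statistics from leaking information out of the held-out data.
pipeline.fit(X_train, y_train)
test_probabilities = pipeline.predict_proba(X_test)[:, 1]
print("held-out rows scored:", test_probabilities.shape[0])
```

The same principle applies if SMOTE is used: resampling belongs inside the training folds, never on validation or test data, otherwise performance estimates become optimistic.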

Protocol 2: Building and Evaluating a Predictive Model

Objective: To train, validate, and interpret a machine learning model for infertility risk prediction.

Procedure:

- Feature Selection:

- Filter Methods: Use statistical tests (e.g., p-value < 0.05) or correlation analysis to remove redundant features.