Replication Studies for Candidate Genes: A Comprehensive Guide for Robust Genetic Association Research

This article provides a comprehensive framework for designing, executing, and interpreting replication studies for candidate genes, tailored for researchers and drug development professionals.

Abstract

This article provides a comprehensive framework for designing, executing, and interpreting replication studies for candidate genes, tailored for researchers and drug development professionals. It explores the critical role of replication in validating genetic associations, addressing the historical challenges and contentious findings in the field. The content covers foundational principles, rigorous methodological workflows, strategies for troubleshooting common pitfalls, and comparative analysis with genome-wide approaches. By integrating evidence from empirical studies and current best practices, this guide aims to enhance the reliability and translational potential of genetic research in biomedicine.

The Critical Role and Historical Context of Replication in Candidate Gene Research

In the field of human genetics, the identification of a disease-associated gene is merely the first step in a long validation process. For research on Premature Ovarian Insufficiency (POI) candidate genes, establishing a truly validated genetic finding requires robust replication studies that confirm initial discoveries and assess their clinical significance. This guide examines the framework for validating genetic findings in POI research, comparing key validation criteria and providing detailed experimental methodologies used in this specialized field.

The Validation Framework for Genetic Findings

A genetically validated finding is not a single discovery but a conclusion supported by consistent evidence across multiple independent studies. This process evaluates findings across several critical dimensions.

Table 1: Core Components of Genetic Test and Finding Validation

| Component | Definition | Key Question | Replication Study Focus |
|---|---|---|---|
| Analytical Validity | How accurately and reliably the test detects specific genetic variants [1] [2] | Can the test consistently detect whether a specific genetic variant is present or absent? [1] | Verify that laboratory methods can reproduce the initial variant detection with high precision. |
| Clinical Validity | How well the genetic variant is linked to the presence, absence, or risk of a specific disease [1] [2] | Is the variant conclusively associated with increased risk of POI? [3] | Confirm the statistical association between the variant and POI in an independent cohort. |
| Clinical Utility | Whether the test provides information useful for diagnosis, treatment, or prevention [1] [2] | Does knowledge of the variant lead to improved health outcomes? [3] | Assess whether the finding informs clinical management, counseling, or therapeutic decisions. |

The process of establishing these validation components follows a logical progression, building from technical accuracy to clinical application.

Validation Evidence Hierarchy

Quantitative Landscape of POI Candidate Genes: Known vs. Novel Findings

Recent large-scale genomic studies have dramatically expanded our understanding of the genetic architecture of Premature Ovarian Insufficiency. The comparison between established and newly discovered genes reveals important patterns for validation efforts.

Table 2: Validation Status of POI-Associated Genes from Recent Large-Scale Study

| Gene Category | Number of Genes | Cases Explained | Key Characteristics | Validation Evidence |
|---|---|---|---|---|
| Known POI-Causative Genes | 59 | 18.7% (193/1030) | Primarily involved in meiosis, homologous recombination (HR) repair, and mitochondrial function [4] | Multiple independent reports, functional studies, inclusion in clinical panels |
| Novel POI-Associated Genes | 20 | Additional contribution (total 23.5%) | Roles in gonadogenesis, meiosis, folliculogenesis [4] | Significant burden of loss-of-function (LoF) variants in case-control analysis (P < 1 × 10^(-5)) |
| Genes with Higher Yield in Primary Amenorrhea (PA) | FSHR, etc. | 4.2% in PA vs. 0.2% in secondary amenorrhea (SA) | More severe phenotype association [4] | Biallelic or multiple heterozygous variants more common in PA (8.3%) than SA (3.1%) |

The distribution of genetic findings across different biological mechanisms highlights the diverse pathways that can lead to POI when disrupted.

POI Gene Functional Distribution

Experimental Protocols for Validating POI Genetic Findings

Whole Exome Sequencing in POI Cohort Studies

The identification of novel POI genes typically employs a case-control design with comprehensive genetic analysis.

Protocol Overview:

- Cohort Selection: 1,030 unrelated POI patients meeting ESHRE criteria (amenorrhea before age 40 + FSH >25 IU/L on two occasions) compared to 5,000 controls [4]

- Exclusion Criteria: Chromosomal abnormalities, autoimmune diseases, iatrogenic causes [4]

- Sequencing Method: Whole exome sequencing using Illumina platforms with minimum 30x coverage

- Variant Filtering: Remove common variants (MAF >0.01 in gnomAD or population-matched controls) [4]

- Variant Classification: Follow ACMG guidelines with functional validation of uncertain significance variants [4]
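The frequency filter in the protocol above can be sketched in a few lines. This is a minimal illustration, not the pipeline used in the cited study: the `Variant` record, its field names, and the example variants are hypothetical, and treating a variant that is absent from a database as rare is an assumption made here for simplicity.

```python
from dataclasses import dataclass
from typing import Optional

MAF_THRESHOLD = 0.01  # from the protocol: remove variants with MAF > 0.01


@dataclass
class Variant:
    gene: str                     # hypothetical gene label
    variant_id: str               # hypothetical identifier, for illustration only
    gnomad_maf: Optional[float]   # None if the variant is absent from gnomAD
    control_maf: Optional[float]  # None if absent from the matched controls


def is_rare(variant: Variant, threshold: float = MAF_THRESHOLD) -> bool:
    """Keep a variant only if no available frequency exceeds the threshold.

    Assumption: a variant with no frequency record at all is treated as rare.
    """
    frequencies = [f for f in (variant.gnomad_maf, variant.control_maf) if f is not None]
    return all(f <= threshold for f in frequencies)


def filter_rare(variants):
    """Return the variants that pass the frequency filter, in input order."""
    return [v for v in variants if is_rare(v)]


example = [
    Variant("GENE_A", "var1", 0.0002, 0.0),   # rare in both sources: kept
    Variant("GENE_B", "var2", 0.15, 0.12),    # common: removed
    Variant("GENE_C", "var3", None, None),    # unseen in both sources: kept
    Variant("GENE_D", "var4", 0.004, 0.02),   # common in matched controls: removed
]
print([v.variant_id for v in filter_rare(example)])
```

Checking both frequency sources matters because a variant can be rare globally yet common in the population the cohort was drawn from.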

Functional Validation of Candidate Variants

For variants of uncertain significance, functional studies are essential for establishing pathogenicity.

Gene-Specific Functional Assays:

- Homologous Recombination Repair Efficiency: Measure RAD51 foci formation or run host cell reactivation assays for genes such as HFM1, MCM8, and MCM9 [4]

- Gene Expression Analysis: Quantitative RT-PCR to assess mRNA expression in ovarian tissue or appropriate cell lines

- Protein Function Assays: Western blotting, subcellular localization, and protein-protein interaction studies

Statistical Framework for Association Testing

Robust statistical methods are critical for distinguishing true associations from background noise.

Burden Testing Protocol:

- Compare burden of loss-of-function variants between cases and controls

- Apply sequence kernel association test (SKAT) for gene-based analysis

- Establish the significance threshold for gene-based tests (P < 1 × 10^(-5) in the cited study) [4]

- Calculate contribution yield as percentage of cases with pathogenic variants
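As a rough illustration of the first and last steps above, the sketch below compares loss-of-function carrier counts between cases and controls for a single gene and computes a contribution yield. It uses a one-sided Fisher's exact test as a simple stand-in; it is not SKAT and not necessarily the test used in the cited study. The carrier counts for the example gene are hypothetical, while the cohort sizes and the 193/1,030 figure come from the text above.

```python
from math import comb


def fisher_exact_greater(case_carriers, case_total, control_carriers, control_total):
    """One-sided Fisher's exact test.

    Returns the probability of seeing at least `case_carriers` carriers among
    the cases if carrier status were unrelated to case/control status.
    """
    total = case_total + control_total
    carriers = case_carriers + control_carriers
    denominator = comb(total, case_total)
    p_value = 0.0
    # Sum hypergeometric probabilities for the observed count and anything more extreme.
    for k in range(case_carriers, min(carriers, case_total) + 1):
        p_value += comb(carriers, k) * comb(total - carriers, case_total - k) / denominator
    return p_value


def contribution_yield(cases_with_pathogenic_variant, total_cases):
    """Percentage of cases carrying a pathogenic variant."""
    return 100.0 * cases_with_pathogenic_variant / total_cases


# Hypothetical gene: 12 LoF carriers among 1,030 cases, 2 among 5,000 controls.
p = fisher_exact_greater(12, 1030, 2, 5000)
print(f"burden p-value: {p:.2e}; below 1e-5: {p < 1e-5}")

# Figure quoted in the text: 193 of 1,030 cases explained by known genes.
print(f"yield: {contribution_yield(193, 1030):.1f}%")
```

An exact test is a reasonable choice when carrier counts are this small, because normal approximations behave poorly with sparse tables.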

The Scientist's Toolkit: Essential Reagents for POI Genetic Research

Table 3: Key Research Reagent Solutions for POI Gene Validation

| Reagent/Category | Specific Examples | Function in Validation Pipeline |
|---|---|---|
| Exome Capture Kits | Illumina Nextera, IDT xGen | Uniform coverage of coding regions for variant discovery [4] |
| NGS Platforms | Illumina NovaSeq, Ion Torrent | High-throughput sequencing for large cohort studies [5] |
| Variant Annotation | ANNOVAR, SnpEff, CADD | Functional prediction and pathogenicity assessment [4] |
| Functional Assay Kits | RAD51 foci detection, GTP/GDP exchange assays | Experimental validation of variant impact [4] |
| Gene Panel Solutions | Custom AmpliSeq panels | Targeted validation of candidate genes in replication cohorts [5] |
| CNV Detection | Microarray platforms, CNVpytor | Identification of copy number variations associated with POI [6] |
| Data Sharing | ENIGMA, gnomAD, ClinVar | Comparison with population frequencies and previous findings [4] |

Interpretation Framework for Replication Success

The validation of POI genetic findings requires careful consideration of multiple evidence types:

Confirmatory Evidence:

- Statistical Replication: Consistent association (same direction and comparable effect size) in independent cohort

- Functional Concordance: Experimental evidence supporting plausible biological mechanism

- Phenotypic Correlation: Genotype-phenotype relationships (e.g., PA vs. SA distinction) [4]
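The statistical replication criterion (same direction, comparable effect size) can be made concrete with a small check on log odds ratios. This is a sketch under stated assumptions: the effect estimates and standard errors are hypothetical, and using a z-test on the difference between estimates to judge "comparable" is one common convention rather than a rule taken from the cited sources.

```python
from math import sqrt
from statistics import NormalDist


def compare_effects(beta_orig, se_orig, beta_rep, se_rep, alpha=0.05):
    """Compare an original and a replication effect estimate (e.g. log odds ratios)."""
    normal = NormalDist()
    same_direction = beta_orig * beta_rep > 0

    # Is the replication estimate itself distinguishable from zero?
    z_rep = beta_rep / se_rep
    p_rep = 2 * (1 - normal.cdf(abs(z_rep)))

    # Do the two estimates differ by more than sampling error would explain?
    z_diff = (beta_orig - beta_rep) / sqrt(se_orig ** 2 + se_rep ** 2)
    p_diff = 2 * (1 - normal.cdf(abs(z_diff)))

    return {
        "same_direction": same_direction,
        "replication_significant": p_rep < alpha,
        "effect_sizes_comparable": p_diff >= alpha,
        "p_replication": p_rep,
        "p_difference": p_diff,
    }


# Hypothetical estimates: original log-OR 0.69 (SE 0.20), replication 0.53 (SE 0.15).
result = compare_effects(0.69, 0.20, 0.53, 0.15)
print(result)
```

Reporting the difference test alongside the replication p-value helps separate "the effect is smaller than first reported" from "the effect is absent".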

Discordant Results Analysis:

- Population Differences: Allele frequency variations across ethnic groups

- Technical Artifacts: Platform-specific biases or variant calling differences

- Methodological Heterogeneity: Differences in inclusion criteria or phenotypic characterization

Validating genetic findings in POI research requires a multi-dimensional approach that spans analytical precision, clinical association, and functional relevance. The framework presented here enables systematic comparison between established and novel POI genes, providing researchers with clear criteria for assessing replication success. As the field moves toward clinical application, these validation standards will ensure that genetic findings provide meaningful insights for diagnosis, counseling, and therapeutic development.

The replication crisis, a period of intense self-scrutiny across scientific fields, has revealed that many high-profile research findings are not replicable when independent labs repeat the experiments. In biomedical research, this has profound implications, potentially slowing drug development and misdirecting research resources. A landmark project by the Center for Open Science found that 54% of attempted preclinical cancer studies could not be replicated [7], while other internal surveys from industry reported even higher failure rates, with one finding that 89% of hematology and oncology results were irreproducible [7]. This guide examines key case studies from this crisis, focusing on candidate gene research, to compare robust and failed scientific approaches and extract lessons for improving research practices in drug development.

The Fall of Candidate Gene Studies in Psychiatry and Well-Being

Candidate gene studies were once a dominant approach for linking specific genetic variants to traits and diseases. Researchers would select genes based on their presumed biological relevance (their "candidate" status) and test for associations with particular conditions. However, this field has become one of the most prominent casualties of the replication crisis.

Case Study: Genetic Foundations of Well-Being

For years, researchers proposed that specific genes were responsible for variations in human well-being. The serotonin transporter gene (SLC6A4), particularly its 5-HTTLPR promoter region, was a star candidate based on serotonin's known role in mood disorders [8]. Initial, smaller studies reported promising associations, suggesting that individuals with different versions of this gene experienced different levels of life satisfaction.

A comprehensive 2022 re-evaluation systematically reviewed this literature and tested these candidate genes against large-scale genetic databases, including the UK Biobank. The results were stark: no support was found for any of the previously proposed candidate genes or their interactions with the environment for well-being [8]. The only reliable genetic associations were identified through hypothesis-free genome-wide approaches, not the candidate gene method. The study strongly advised well-being researchers to abandon the candidate gene approach in favor of genome-wide methods [8].

Case Study: Pharmacogenetics of Amphetamine Response

This failure to replicate extends beyond observational studies to experimental pharmacogenetics. A research group conducted a series of 12 candidate gene analyses on acute responses to amphetamine, studying genes including ADORA2A, SLC6A3 (dopamine transporter), and COMT [9]. When they attempted to replicate their own initial findings in a larger sample of over 200 new participants using identical methods, the result was clear: they were unable to replicate any of their previous findings [9]. This self-contained replication failure highlights the pervasiveness of the problem, even within the same research team.

Table 1: Summary of Candidate Gene Study Replication Failures

| Research Area | Prominent Candidate Genes | Replication Outcome | Key Reason for Failure |
|---|---|---|---|
| Subjective Well-Being | SLC6A4 (5-HTTLPR), BDNF, DRD4 [8] | No support found for any candidate genes in large-scale analysis [8] | Small sample sizes; small true effect sizes of individual genes [8] |
| Amphetamine Response | ADORA2A, SLC6A3, COMT, OPRM1 [9] | Zero out of 12 prior findings replicated in a larger sample [9] | Inadequate statistical power; multiple testing issues [9] |
| Depression & Schizophrenia | Historical candidate genes (e.g., 5-HTTLPR for depression) [8] | No robust evidence in large genomic studies [8] | Over-reliance on presumed biological function without genome-wide evidence [8] [10] |

A Shift in Paradigm: Lessons from Genome-Wide Association Studies (GWAS)

The repeated failure of candidate gene studies forced a major methodological shift in genetics. The field moved toward genome-wide association studies (GWAS), which scan the entire genome without pre-selecting specific genes. This approach revealed that most behavioral and psychological traits are influenced by hundreds to thousands of genetic variants, each with minuscule effects [8]. Detecting these tiny effects requires massive sample sizes, often in the hundreds of thousands, which is the opposite of the small-sample approach that plagued candidate gene research [8] [10]. This shift is a key lesson for other fields: abandoning simplistic models for data-driven, large-scale collaboration is essential for robust discovery.
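To see why tiny effects demand such large samples, a back-of-the-envelope power calculation helps. The sketch below uses a standard normal approximation for a single variant that explains a given fraction of trait variance; the chosen fractions (0.01% and 1%) are illustrative assumptions, not estimates from the cited studies.

```python
from math import ceil
from statistics import NormalDist


def required_sample_size(variance_explained, alpha, power=0.80):
    """Approximate n needed to detect a variant explaining `variance_explained`
    of trait variance in a two-sided test at level `alpha` (normal approximation)."""
    normal = NormalDist()
    z_alpha = normal.inv_cdf(1 - alpha / 2)
    z_power = normal.inv_cdf(power)
    r2 = variance_explained
    return ceil((z_alpha + z_power) ** 2 * (1 - r2) / r2)


# A variant explaining 0.01% of variance, tested at genome-wide significance.
n_tiny_effect = required_sample_size(0.0001, alpha=5e-8)
# The same variant tested once at the conventional 0.05 level.
n_single_test = required_sample_size(0.0001, alpha=0.05)
# A hypothetical "large" candidate-gene effect explaining 1% of variance.
n_large_effect = required_sample_size(0.01, alpha=0.05)

print(n_tiny_effect, n_single_test, n_large_effect)
```

Under these assumptions the required sample runs to several hundred thousand at genome-wide significance, whereas an effect explaining 1% of variance would need under a thousand participants. Early candidate gene studies were typically sized as if effects of the latter kind were common.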

Case Study: A Contrast in Reproducibility - Fruit-Fly Immunology

Not all replication stories are negative. A massive project analyzing over 1,000 claims from fruit-fly immunity research published over 50 years found that at least 61% were verifiable [11]. This suggests that some biological research fields may have a stronger foundation of replicable findings. The high rate of verification in this field can be attributed to the use of model organisms with highly controlled genetics and environments, which reduces variability and increases the reliability of experimental outcomes.

Experimental Protocols in Replication Research

Conducting a rigorous replication study involves a meticulous process to ensure the results are comparable and meaningful. The following workflow outlines the standard protocol used in large-scale replication projects, such as those in cancer biology [12] [13].

Detailed Methodologies for Key Replication Experiments

Protocol Development and Preregistration: The first critical step is to write a detailed experimental plan, or a Registered Report, which is peer-reviewed and published before any data is collected [12] [14]. This prevents later manipulation of the hypotheses or analysis methods based on the results. The plan must specify:

- The exact experimental effects to be assessed.

- All protocols, including animal models, cell lines, drug doses, and treatment schedules.

- The primary outcome measures and the precise statistical analysis plan, including corrections for multiple comparisons [12].

Reagent Sourcing and Validation: A major hurdle in replication is obtaining the exact materials used in the original study. The Reproducibility Project: Cancer Biology found that none of the 53 papers they selected contained enough detail to repeat the experiments [13]. Replicators must:

- Request key reagents (cell lines, plasmids, model organisms) directly from the original authors, a process with only a 69% success rate [13].

- If unavailable, source from reputable repositories and perform validation tests (e.g., STR profiling for cell lines, sequencing for plasmids) to ensure identity and functionality.

In Vivo Replication Experiment (Example: Xenograft Model):

- Cell Line Preparation: Culture the relevant cell lines (e.g., A549 for lung adenocarcinoma, ACHN for renal carcinoma) under conditions matching the original study [12].

- Animal Model Generation: Implant cells into immunodeficient mice to grow xenograft tumors. Randomize mice into treatment and control groups to eliminate selection bias.

- Intervention: Administer the drug (e.g., cimetidine at 100 mg/kg) or vehicle control according to the original treatment schedule [12].

- Outcome Measurement: Monitor tumor volume regularly using calipers. The primary endpoint is often the relative change in tumor volume at a predetermined day (e.g., Day 11) [12].

- Data Analysis: Analyze the results strictly according to the preregistered plan, such as using an ANOVA to test for interaction effects between cell line and drug treatment, followed by planned pairwise comparisons with appropriate multiple-testing corrections [12].
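Two of the steps above, randomization and the tumor-volume endpoint, are simple enough to sketch. The code below is illustrative only: the animal identifiers, group names, seed, and caliper readings are hypothetical, and the volume formula (length × width² / 2) is a widely used caliper convention assumed here, not one stated in the cited protocol.

```python
import random


def randomize(animal_ids, groups=("vehicle", "treatment"), seed=2024):
    """Shuffle animals with a fixed seed and deal them into groups in turn,
    so the allocation is unbiased yet reproducible and auditable."""
    rng = random.Random(seed)
    shuffled = list(animal_ids)
    rng.shuffle(shuffled)
    return {group: sorted(shuffled[i::len(groups)]) for i, group in enumerate(groups)}


def tumor_volume(length_mm, width_mm):
    """Caliper-based volume estimate in mm^3 (assumed convention: L x W^2 / 2)."""
    return length_mm * width_mm ** 2 / 2


def relative_change(baseline_volume, endpoint_volume):
    """Change in volume relative to baseline (0.5 means a 50% increase)."""
    return (endpoint_volume - baseline_volume) / baseline_volume


allocation = randomize(range(1, 13))
print(allocation)

baseline = tumor_volume(8.0, 5.0)    # hypothetical reading at treatment start
endpoint = tumor_volume(10.0, 6.0)   # hypothetical reading at the predetermined day
print(f"relative change: {relative_change(baseline, endpoint):.2f}")
```

Recording the seed in the preregistered plan lets reviewers confirm that group assignment was fixed before any outcome data existed.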

The Scientist's Toolkit: Research Reagent Solutions

The replication crisis has underscored the critical importance of reliable and well-documented research materials. The following table details essential reagents and the best practices for their use.

Table 2: Key Research Reagents and Best Practices for Replicable Science

| Reagent / Material | Critical Function | Replication Challenge & Solution |
|---|---|---|
| Validated Cell Lines | Fundamental in vitro and xenograft model system. | Challenge: Contamination and misidentification. Solution: Obtain from authenticated repositories (e.g., ATCC); perform regular STR profiling. |
| Key Biological Reagents | Specific plasmids, antibodies, or chemical compounds for intervention. | Challenge: Often unpublished "secret sauce" not commercially available [13]. Solution: Journals should mandate deposition in repositories; authors must share upon request. |
| Genetically Defined Model Organisms | Controlled in vivo studies (e.g., Drosophila, mice). | Challenge: Genetic drift and differences in housing conditions. Solution: Use standardised strains from major suppliers; document housing and breeding conditions in detail. |
| Computable Phenotypes | For studies using real-world data (EHR/claims) [15]. | Challenge: Indications and endpoints often not easily extracted from structured data [15]. Solution: Develop and share precise algorithms for defining patient cohorts and outcomes. |

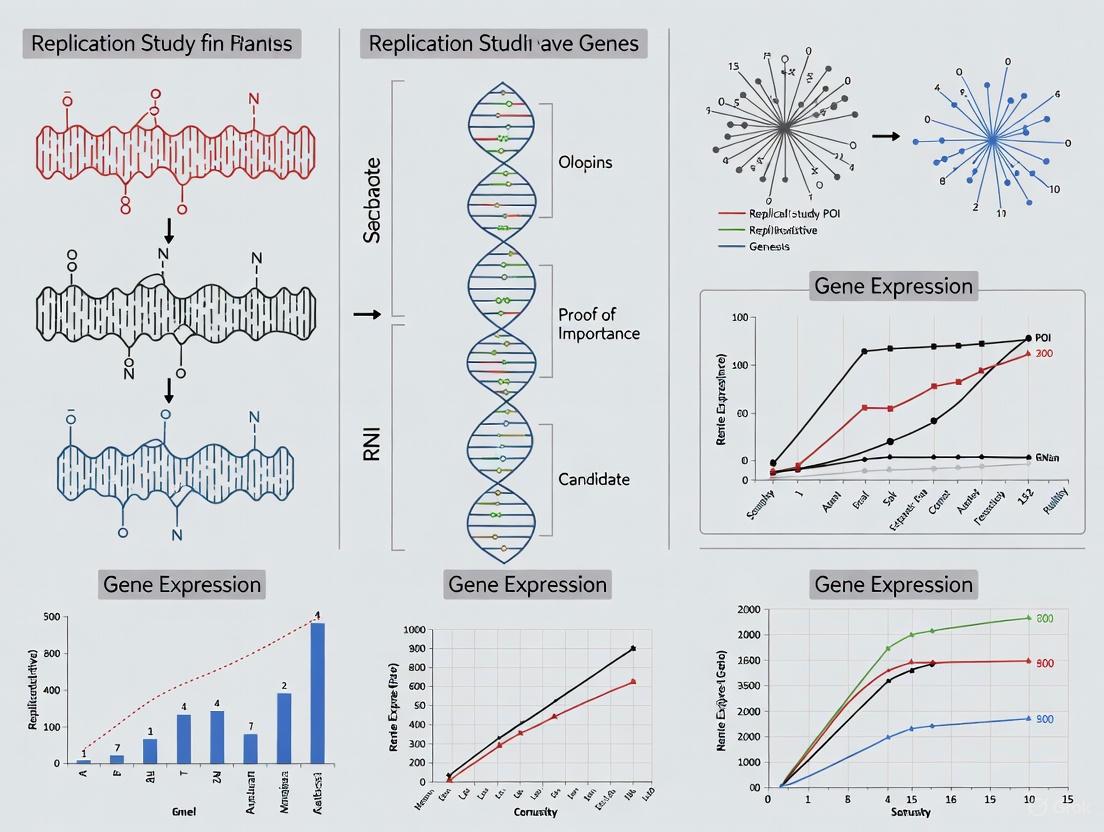

Visualization: The Shift from Candidate Gene to GWAS

The fundamental logic of genetic discovery has undergone a radical transformation, moving from a hypothesis-driven but often flawed approach to an open, data-driven one. The following diagram contrasts these two paradigms.

The high-profile failures in candidate gene research offer a clear lesson: scientific rigor must trump compelling narratives. The path forward requires a fundamental change in practice and incentives. Key solutions include:

- Embracing Large-Scale Collaboration: As genetics has shown, many complex questions can only be answered by pooling data and resources across institutions to achieve sufficient statistical power [8] [10].

- Mandating Transparency: Journals and funders must require full protocol sharing, data archiving, and detailed "failure analyses" that document the tricks and hurdles essential for making an experiment work [7] [13].

- Reforming Incentives: The scientific ecosystem must reward robust, replicated findings over novel but potentially flimsy breakthroughs. This involves supporting replication studies financially and publishing their results regardless of outcome [16] [14].

The replication crisis is not a sign of science's failure but a painful yet necessary phase of self-correction. By learning from the past and adopting more rigorous, transparent, and collaborative methods, researchers and drug developers can build a more reliable foundation for future discoveries.

Replication is a cornerstone of the scientific method, serving as a critical check on the validity and reliability of research findings. In fields like candidate gene research, where findings often form the foundation for further drug development and clinical studies, the inability to replicate results can lead to a waste of resources and misdirected scientific effort. This guide examines the indispensable role of replication in combating false positives and publication bias, providing researchers with a framework for evaluating and implementing rigorous replication practices.

The Replication Crisis and Its Core Drivers

Science is facing a “replication crisis” in which many high-profile experimental findings cannot be replicated in subsequent studies [17]. Large-scale analyses have revealed that many published papers in fields ranging from cancer biology to psychology cannot be replicated, raising concerns that many published conclusions may be false [17]. This crisis is primarily driven by two interconnected factors: publication bias and the misuse of statistical significance.

Publication bias arises when the probability that a study is published is not independent of its results [17]. A substantial majority of published scientific results are positive, with more than 80% of papers across disciplines reporting positive findings, and this number exceeds 90% in fields such as psychology and ecology [17]. This bias is starkly illustrated by research on antidepressant studies, which found that 37 of 38 positive studies were published, but only 3 of 24 negative studies were published as negative results [17].

This preference for positive findings creates a "file drawer problem," where statistically non-significant results are filed away and never published [17] [18]. Consequently, the published literature presents a distorted view of the evidence, systematically overrepresenting positive findings. When a scientific community relies on this biased body of literature, false claims can frequently become canonized as fact [17].
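The distortion can be quantified with a small model. The sketch below is illustrative: the share of tested hypotheses that are true (10%), the power (50%), and the significance level (0.05) are assumptions chosen for the example, while the publication rates (37 of 38 positive, 3 of 24 negative) are the antidepressant figures quoted above.

```python
def published_literature(prior_true, power, alpha, pub_if_positive, pub_if_negative):
    """Return (share of published results that are positive,
               share of positive results that are false positives)."""
    true_positives = prior_true * power
    false_positives = (1 - prior_true) * alpha
    negatives = 1 - true_positives - false_positives

    published_positive = (true_positives + false_positives) * pub_if_positive
    published_negative = negatives * pub_if_negative

    share_positive = published_positive / (published_positive + published_negative)
    false_share = false_positives / (true_positives + false_positives)
    return share_positive, false_share


# Assumed research landscape: 10% of tested hypotheses true, 50% power, alpha 0.05.
biased_share, false_share = published_literature(0.10, 0.50, 0.05, 37 / 38, 3 / 24)
unbiased_share, _ = published_literature(0.10, 0.50, 0.05, 1.0, 1.0)

print(f"positive results among all studies run: {unbiased_share:.1%}")
print(f"positive results among published studies: {biased_share:.1%}")
print(f"false positives among positive results: {false_share:.1%}")
```

In this toy setting selective publication raises the apparent share of positive findings several-fold without making any individual positive result more likely to be true.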

A Framework for Interpreting Replication Results

Interpreting the outcomes of replication studies requires moving beyond a simple "success/failure" dichotomy. Even statistically sophisticated researchers often struggle with this interpretation [19]. The table below outlines key statistical outcomes and their interpretations when an original study and a replication study are compared.

Table 1: Interpretation of Replication Study Outcomes

| Original Study Result | Replication Result | Probability when H₀ is True | Probability when H₁ is True (50% power) | Interpretation & Implications |
|---|---|---|---|---|
| Significant | Significant | 0.25% (alpha²) | 25% ((1-beta)²) | Strong evidence against H₀; unlikely to occur by chance if H₀ is true. |
| Significant | Non-Significant | 4.75% (alpha*(1-alpha)) | 25% ((1-beta)*beta) | Inconsistent results; difficult to interpret without meta-analysis. |
| Non-Significant | Significant | 4.75% ((1-alpha)*alpha) | 25% (beta*(1-beta)) | Inconsistent results; difficult to interpret without meta-analysis. |
| Non-Significant | Non-Significant | 90.25% ((1-alpha)²) | 25% (beta²) | Suggests H₀ may be true, but does not prove it (absence of evidence). |
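The probabilities in this table follow from treating the two studies as independent, each reaching significance with probability alpha under H₀ or with probability equal to the power under H₁. The short check below recomputes them, assuming alpha = 0.05 and 50% power as in the table.

```python
def outcome_probabilities(p_significant):
    """Joint probabilities for two independent studies that each reach
    significance with probability `p_significant`."""
    p = p_significant
    return {
        ("significant", "significant"): p * p,
        ("significant", "non-significant"): p * (1 - p),
        ("non-significant", "significant"): (1 - p) * p,
        ("non-significant", "non-significant"): (1 - p) * (1 - p),
    }


under_h0 = outcome_probabilities(0.05)   # each study significant with probability alpha
under_h1 = outcome_probabilities(0.50)   # each study significant with probability = power

for outcome in under_h0:
    print(outcome, f"H0: {under_h0[outcome]:.2%}", f"H1: {under_h1[outcome]:.2%}")
```

Each column sums to 100%, which is a quick way to catch arithmetic slips when adapting the table to other values of alpha or power.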

A single replication failure does not necessarily invalidate an original finding. Inconsistent results (one significant, one non-significant) are surprisingly common, especially when statistical power is low [19]. With a typical power of 50%, a real effect produces this mixed pattern 50% of the time (2 × 0.5 × 0.5), whereas under a true null hypothesis it arises about 9.5% of the time (2 × 0.05 × 0.95), so one non-significant replication is weak evidence that the original finding was false. A more powerful approach is effect size meta-analysis, which combines results from original and replication studies to provide a more accurate overall estimate [19].
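A minimal sketch of such a meta-analysis is the fixed-effect, inverse-variance pooled estimate shown below. The two effect estimates are hypothetical, and a fixed-effect model is an assumption; when studies differ in population or design, a random-effects model may be more appropriate.

```python
from math import sqrt
from statistics import NormalDist


def fixed_effect_meta(estimates):
    """Pool (effect, standard_error) pairs by inverse-variance weighting.

    Returns (pooled_effect, pooled_standard_error, two_sided_p_value).
    """
    weights = [1 / se ** 2 for _, se in estimates]
    pooled = sum(w * effect for w, (effect, _) in zip(weights, estimates)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    p_value = 2 * (1 - NormalDist().cdf(abs(pooled / pooled_se)))
    return pooled, pooled_se, p_value


# Hypothetical pair: a significant original study and a non-significant replication.
original = (0.50, 0.25)      # z = 2.0
replication = (0.30, 0.20)   # z = 1.5

pooled, pooled_se, p_value = fixed_effect_meta([original, replication])
print(f"pooled effect {pooled:.3f} (SE {pooled_se:.3f}), p = {p_value:.4f}")
```

In this example the pair would be scored as "inconsistent" by a significance count, yet the pooled estimate still points to a non-zero effect that is smaller than the original report.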

Types and Methodologies of Replication Studies

Replication is not a monolithic concept. Different types of replication studies serve distinct purposes in validating scientific claims [20].

- Direct Replication: A new study follows the original study's methods as closely as possible. The goal is to determine whether the finding can be reproduced under similar conditions, though samples, specific conditions, and research teams are necessarily different [20].

- Conceptual Replication: A study employs different methodologies or measures to test the same underlying hypothesis or theoretical construct as the original study. This approach tests the robustness and generalizability of a finding beyond the specific original operationalization [20].

The distinction between "direct" and "conceptual" is increasingly seen as a continuum rather than a strict dichotomy. Both are valuable, and the choice depends on the specific research question being investigated [20].

A Protocol for Conducting Replication Research

Conducting a rigorous replication study requires careful planning and execution. The following workflow, based on guidelines from the Open Science Framework, outlines the key stages [20].

Diagram 1: Replication Study Workflow

Key steps include [20]:

- Identifying a study that is feasible to replicate given available time, expertise, and resources.

- Obtaining the original materials and protocols used in the initial study.

- Developing a detailed plan specifying the type of replication and research design.

- Implementing the study according to pre-specified best practices.

- Conducting the study, analyzing the data, and sharing the results regardless of the outcome.

When conducting replications, researchers should not use critiques of the original study's design as the basis for explaining the replication findings, and they should communicate with the original authors throughout the process [20].

Replication in Action: A Case Study from Genetics

The critical importance of functional validation through replication is powerfully illustrated in genome-wide association studies (GWAS). GWAS identify statistical associations between genetic markers and traits, but these associations are prone to false positives [21]. Therefore, functional validation is necessary to make strong claims about gene function [21].

A landmark study in Medicago truncatula demonstrated this principle. Researchers began with a GWAS that identified an initial list of 100 candidate single nucleotide polymorphisms (SNPs) most strongly associated with variation in nodulation [21]. This list was filtered based on statistical support, linkage disequilibrium, location near annotated genes, and expression in relevant tissues [21]. Ten candidate genes were selected for functional validation using three independent reverse genetics mutagenesis tools [21].
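The filtering logic described above can be sketched as a short pipeline. Everything concrete in this example is hypothetical: the record fields, the SNP and gene names, and the rule of keeping one SNP per linkage disequilibrium block are assumptions for illustration, not the criteria used in the cited study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CandidateSnp:
    snp_id: str
    p_value: float
    ld_block: str                  # hypothetical label for SNPs in linkage disequilibrium
    nearest_gene: Optional[str]    # None when no annotated gene lies nearby
    expressed_in_target_tissue: bool


def shortlist(snps, top_n=100):
    """Rank by association strength, keep one SNP per LD block, then require
    a nearby annotated gene that is expressed in the relevant tissue."""
    ranked = sorted(snps, key=lambda s: s.p_value)[:top_n]
    best_per_block = {}
    for snp in ranked:
        best_per_block.setdefault(snp.ld_block, snp)   # first seen has the lowest p-value
    return [
        snp for snp in best_per_block.values()
        if snp.nearest_gene is not None and snp.expressed_in_target_tissue
    ]


snps = [
    CandidateSnp("snp1", 1e-9, "block1", "geneA", True),
    CandidateSnp("snp2", 5e-9, "block1", "geneA", True),    # same LD block as snp1
    CandidateSnp("snp3", 2e-8, "block2", None, False),      # no annotated gene nearby
    CandidateSnp("snp4", 3e-8, "block3", "geneB", False),   # gene not expressed
    CandidateSnp("snp5", 4e-8, "block4", "geneC", True),
]
print([s.snp_id for s in shortlist(snps)])
```

Filters of this kind narrow the list to testable genes, but they do not by themselves reduce the false positive rate; that is the job of the functional experiments.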

Table 2: Key Research Reagent Solutions for Genetic Replication

| Research Reagent / Tool | Function in Validation | Application in the Case Study |
|---|---|---|
| Tnt1 Retrotransposon Mutants | Disrupts gene function by inserting a mobile genetic element into the gene sequence. | Used to create homozygous mutant lines (e.g., pho2-likeTnt, pen3-likeTnt). |
| Hairpin RNA-Interference (RNAi) | Knocks down gene expression by triggering the degradation of specific mRNA sequences. | Generated stable whole-plant knockdown lines (e.g., pno1-likeHP). |
| CRISPR/Cas9 Nuclease | Provides precise, targeted gene editing to create specific knock-out mutations. | Used to generate eight independent mutant lines for validation. |
| Phenotypic Assays | Measures the observable characteristics (phenotypes) resulting from genetic manipulation. | Well-replicated experiments to count nodule production in mutants vs. wild types. |

The use of multiple, independent mutagenesis platforms (Tnt1, RNAi, CRISPR/Cas9) constituted a form of internal replication, where the same research team tests the hypothesis using different methods [21] [20]. The results were striking: of the ten candidate genes identified by the initial GWAS, only three (PHO2-like, PNO1-like, and PEN3-like) showed statistically significant effects on nodule production in the validation experiments, and each of these was confirmed in two independent mutants [21]. This demonstrates how rigorous replication separates true signals from statistical noise.

The Economic Value and Strategic Funding of Replication

Given limited scientific resources, a key question is how much should be allocated to replication versus new research. A data-driven framework suggests that a well-calibrated replication program could productively spend about 1.4% of the National Institutes of Health (NIH) annual budget before hitting negative returns relative to funding new science [22]. This is significantly less than some political suggestions of 20%, but still represents a substantial investment that would far exceed current levels [22].

The value of a replication study is highest when it is targeted at recent, influential studies that are likely to receive substantial downstream attention. The return on investment is driven by four key factors [22]:

- The probability the finding would be overturned by a replication.

- The amount of downstream attention the study would receive without a replication.

- The proportion of that attention preempted by a failed replication.

- The cost of replication relative to a new study.

A conservative analysis suggests that about 11% of published studies may be genuinely unreliable [22]. By shutting down these false leads early, replications prevent follow-on resources from being spent on fruitless research, thereby accelerating genuine discovery [22].
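One simple way to combine the four factors is shown below. This is a toy model written for illustration; the cited framework may combine them differently. Downstream attention and replication cost are both expressed in hypothetical "new-study equivalents", and all numeric inputs other than the 11% unreliability figure quoted above are assumptions.

```python
def replication_net_value(p_overturn, downstream_attention, share_preempted, relative_cost):
    """Expected downstream effort saved by a replication, minus its cost.

    p_overturn           -- probability the finding would be overturned
    downstream_attention -- follow-on effort the finding would attract (study equivalents)
    share_preempted      -- fraction of that effort avoided after a failed replication
    relative_cost        -- cost of the replication relative to one new study
    """
    expected_savings = p_overturn * downstream_attention * share_preempted
    return expected_savings - relative_cost


# Influential finding: attracts the equivalent of 20 follow-on studies.
influential = replication_net_value(0.11, 20, 0.5, 0.5)
# Obscure finding: attracts the equivalent of 2 follow-on studies.
obscure = replication_net_value(0.11, 2, 0.5, 0.5)

print(f"influential: {influential:+.2f}, obscure: {obscure:+.2f}")
```

Even in this crude form the model reproduces the qualitative point that replication pays off mainly for findings expected to attract substantial follow-on work.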

Building a More Robust Scientific Future

Combating false positives and publication bias requires a systemic shift in scientific incentives and practices. Promising reforms include [20]:

- Full transparency of materials, methods, and data.

- Study pre-registration, where researchers publicly declare their hypotheses and analysis plan before data collection begins.

- Result-blind peer review, where journals accept studies based on the importance of the research question and rigor of the methodology, not the nature of the results.

- Statistical reform, including redefining the p-value threshold for statistical significance and encouraging the use of Bayesian methods, which can reduce the occurrence of publication bias [23].

Replication is a form of scientific checks and balances. It is no more or less important than other parts of the scientific method, but it is absolutely necessary to perpetuate the self-correcting cycle of scientific discovery [20]. For researchers in candidate gene research and drug development, embracing replication is not an admission of failure but a commitment to building a more reliable, efficient, and credible foundation for future innovation.

The field of genetic epidemiology has undergone a profound transformation over the past two decades, moving from hypothesis-driven candidate gene studies to comprehensive, hypothesis-generating genome-wide association studies (GWAS). This paradigm evolution represents more than just a methodological shift—it reflects a fundamental change in how researchers approach complex genetic architectures. Candidate gene studies, which focus on one or a small number of biologically presumed genes, once dominated the literature but have increasingly been supplanted by GWAS, which agnostically test hundreds of thousands to millions of genetic variants across the genome [24].

This transition was driven by growing recognition that previous biological knowledge was often insufficient to correctly specify candidate gene hypotheses, leading to high rates of false positives and low replication rates [25] [24]. Meanwhile, technological advances in high-throughput genotyping and computational methods have made GWAS increasingly accessible and powerful. The implications of this shift extend throughout biomedical research, affecting how studies are designed, how findings are validated, and how genetic discoveries are translated into clinical applications.

Fundamental Methodological Differences

Core Principles and Approaches

The fundamental distinction between these approaches lies in their starting points and underlying philosophies. Candidate gene studies begin with prior biological knowledge about gene function and presumed relevance to a phenotype, typically testing a limited number of single nucleotide polymorphisms (SNPs) in genes selected based on their understood biological roles [26] [24]. This targeted approach offers dense coverage of specific genomic regions but is inherently limited by current biological understanding.

In contrast, GWAS takes an agnostic, data-driven approach that scans the entire genome without pre-specified hypotheses about particular genes. By examining hundreds of thousands to millions of genetic markers simultaneously, GWAS can discover entirely novel genetic associations independent of prior biological knowledge [26] [25]. This comprehensive coverage comes with significant multiple testing burdens, requiring stringent statistical thresholds (typically P < 5 × 10⁻⁸) to account for the vast number of comparisons being made [26] [27].

Statistical Power and Multiple Testing Challenges

The statistical properties of these approaches differ dramatically, particularly regarding power and multiple testing corrections. The table below summarizes key comparative aspects:

Table 1: Fundamental Methodological Differences Between Approaches

| Aspect | Candidate Gene Studies | Genome-Wide Association Studies (GWAS) |
|---|---|---|
| Hypothesis Framework | Hypothesis-driven based on prior biological knowledge | Hypothesis-generating, agnostic scan |
| Genomic Coverage | Limited to preselected genes | Comprehensive genome-wide coverage |
| Number of Variants Tested | Typically 1-100 variants | 100,000 to millions of variants |
| Statistical Threshold | Standard significance (P < 0.05) | Genome-wide significance (P < 5 × 10⁻⁸) |
| Multiple Testing Burden | Minimal | Extreme, requiring stringent correction |
| Discovery Potential | Limited to known biology | Can reveal novel biological pathways |
| Primary Strength | Deep investigation of specific genes | Unbiased discovery capacity |

Statistical power represents a crucial differentiator between these approaches. Candidate gene studies tend to have greater inherent statistical power for detecting effects in their targeted regions because they test far fewer variants and thus require less stringent multiple testing corrections [26]. However, this apparent advantage is offset by a critical limitation: if the true causal genes lie outside the preselected candidates, these studies have zero power to detect them.

GWAS addresses this limitation through comprehensive genomic coverage but pays a substantial penalty in multiple testing. With one million independent common variants in the human genome, the Bonferroni-corrected significance threshold becomes approximately 5 × 10⁻⁸, making it difficult to detect variants with small effect sizes without extremely large sample sizes [26] [25]. This fundamental trade-off between genomic coverage and statistical stringency has driven the formation of large international consortia to achieve sample sizes in the tens to hundreds of thousands.
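
The arithmetic behind these thresholds can be made explicit. The sketch below applies a plain Bonferroni correction to two scenarios: a candidate gene study assumed to test 100 variants (the upper end of the range in Table 1) and a GWAS assumed to test one million independent variants. The function name and the choice of 100 variants are illustrative assumptions, not values from a specific study.

```python
def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at alpha."""
    if n_tests < 1:
        raise ValueError("n_tests must be at least 1")
    return alpha / n_tests


# Assumed scenario: a candidate gene study testing 100 variants.
candidate_threshold = bonferroni_threshold(0.05, 100)

# Assumed scenario: a GWAS with one million independent common variants.
gwas_threshold = bonferroni_threshold(0.05, 1_000_000)

print(f"Candidate gene threshold: {candidate_threshold:.1e}")
print(f"GWAS threshold:           {gwas_threshold:.1e}")
```

Under these assumptions the GWAS threshold is four orders of magnitude stricter than the candidate gene threshold, which is the trade-off the paragraph above describes.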

Comparative Performance and Empirical Evidence

Replication Rates and Validation

The ultimate test of any genetic association study lies in its ability to produce replicable findings across independent samples. By this metric, GWAS has demonstrated clear advantages over traditional candidate gene approaches. Candidate gene studies have suffered from notoriously high rates of false positives and low replication rates across many fields, including psychiatry [25] [24]. Many initially promising candidate gene associations have failed to replicate in larger, more rigorous studies.

In contrast, GWAS-identified loci have shown remarkably consistent replication across diverse populations when sample sizes are sufficient. For instance, in a recent childhood B-cell acute lymphoblastic leukemia (B-ALL) GWAS involving 840 African American cases and 3,360 controls, multiple loci achieved genome-wide significance, with established trans-ancestral susceptibility regions at IKZF1 and ARID5B replicating previous findings [27]. Similarly, a GWAS meta-analysis of body weight traits in chickens identified 77 novel independent variants that were consistently associated across populations [28].

The table below illustrates quantitative comparisons from empirical studies:

Table 2: Empirical Performance Comparison from Published Studies

| Study Example | Approach | Sample Size | Significant Findings | Replication Success |
|---|---|---|---|---|
| B-ALL in African Americans [27] | GWAS | 840 cases, 3,360 controls | 9 genome-wide significant loci | 2 established loci replicated; 7 novel ancestry-specific loci |
| Chicken Body Weight Meta-analysis [28] | GWAS meta-analysis | 1,143 individuals across 3 populations | 77 novel variants | Consistent effects across populations |
| Chronic Post-Surgical Pain [29] | GWAS | 1,350 individuals | 77 SNPs in 24 loci | SNP-based heritability ~39% |
| Bovine Tuberculosis Susceptibility [26] | Candidate gene vs. GWAS simulation | 250-2,000 cases/controls | Candidate genes had higher power with known variants | GWAS struggled with weak effects in small samples |

Biological Insights and Novel Discoveries

Beyond mere replication, the true value of genetic studies lies in their ability to generate novel biological insights. GWAS has excelled in this domain, repeatedly identifying unsuspected biological pathways involved in complex diseases. The unbiased nature of GWAS has revealed that the majority of disease-associated variants reside in noncoding regions of the genome, suggesting they influence gene regulation rather than protein structure [25] [30] [24].

For example, GWAS of psychiatric disorders have identified numerous risk variants in genomic regions with no previously known connection to neurobiology, opening entirely new avenues for investigation [25]. Similarly, in sickle cell disease, GWAS have identified novel fetal hemoglobin-associated variants at loci including ASB3, BACH2, PFAS, ZBTB7A, and KLF1, which were subsequently replicated and shown to have functional effects in erythroid cells [31].

Candidate gene studies, while limited in discovery potential, can provide deep mechanistic insights when focused on truly causal genes. The key distinction is that GWAS is more reliable for identifying which genes warrant such intensive investigation.

Experimental Protocols and Methodological Frameworks

GWAS Workflow and Functional Validation

Modern GWAS follows a structured pipeline from genotyping to functional validation. The standard workflow begins with sample collection and genotyping using microarray platforms covering hundreds of thousands to millions of SNPs. After quality control and imputation to increase variant coverage, association analysis tests each variant for statistical association with the phenotype of interest [27] [28].
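
To make the per-variant association step concrete, here is a minimal sketch of one common choice: a Pearson chi-square test (1 degree of freedom) on a 2x2 table of allele counts in cases and controls. Production pipelines typically use regression models with covariates instead; the function name and the allele counts below are hypothetical and chosen only for illustration.

```python
import math


def allelic_chi_square(case_alt, case_ref, ctrl_alt, ctrl_ref):
    """Pearson chi-square test (1 df) on a 2x2 allele-count table.

    Returns (chi2, p_value, odds_ratio).
    """
    n = case_alt + case_ref + ctrl_alt + ctrl_ref
    row_case = case_alt + case_ref
    row_ctrl = ctrl_alt + ctrl_ref
    col_alt = case_alt + ctrl_alt
    col_ref = case_ref + ctrl_ref
    if min(row_case, row_ctrl, col_alt, col_ref) == 0:
        raise ValueError("every row and column total must be non-zero")

    cross_diff = case_alt * ctrl_ref - case_ref * ctrl_alt
    chi2 = n * cross_diff ** 2 / (row_case * row_ctrl * col_alt * col_ref)

    # For 1 df, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2.0))

    denominator = case_ref * ctrl_alt
    odds_ratio = (case_alt * ctrl_ref) / denominator if denominator else math.inf
    return chi2, p_value, odds_ratio


# Hypothetical allele counts for a single variant (not from any cited study).
chi2, p_value, odds_ratio = allelic_chi_square(300, 700, 200, 800)
print(f"chi2={chi2:.2f}, p={p_value:.2e}, OR={odds_ratio:.2f}")
print("genome-wide significant:", p_value < 5e-8)
```

In a real GWAS the same test would be repeated for every genotyped or imputed variant, and each p-value compared against the genome-wide threshold.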

Significant associations undergo replication in independent samples to confirm validity, followed by fine-mapping to identify likely causal variants within associated regions [30]. The final and most challenging step involves functional validation using experimental methods to demonstrate biological mechanisms.

Figure 1: Standard GWAS Workflow from Sample Collection to Functional Validation

Functional Validation of GWAS Findings

Once GWAS identifies associated loci, determining their biological mechanisms requires sophisticated experimental approaches. Genome editing technologies, particularly CRISPR-based systems, have revolutionized this process by enabling precise modification of putative causal variants in relevant cell models [30].

A typical functional validation pipeline involves:

- Priority setting using functional genomic annotations (chromatin accessibility, TF binding sites)

- Regulatory element mapping through chromatin conformation capture (3C, Hi-C)

- Expression quantitative trait loci (eQTL) analysis to connect variants to target genes

- Genome editing in disease-relevant cell types

- Phenotypic assays to measure effects on gene expression and cellular functions [30]

For noncoding variants, protein binding assays including ChIP-Seq and electrophoretic mobility shift assays (EMSAs) can determine whether variants alter transcription factor binding affinities [30]. High-throughput approaches like SNP-seq enable unbiased identification of functional SNPs that allelically modulate regulatory protein binding [30].

Research Reagent Solutions and Experimental Tools

Success in both candidate gene and GWAS research depends on appropriate selection of research reagents and experimental platforms. The table below details essential tools and their applications:

Table 3: Essential Research Reagents and Experimental Platforms

| Reagent/Platform | Primary Function | Application Context | Key Considerations |
|---|---|---|---|
| SNP Microarrays | Genome-wide variant genotyping | GWAS discovery phase | Density (300K-5M variants), population-specific content |
| Whole Genome Sequencing | Comprehensive variant discovery | GWAS fine-mapping, rare variants | Coverage depth, structural variant detection |
| CRISPR-Cas9 Systems | Precise genome editing | Functional validation of causal variants | Delivery efficiency, off-target effects |
| ChIP-Seq Kits | Genome-wide protein-DNA interaction mapping | TF binding disruption by noncoding variants | Antibody specificity, cell type applicability |
| Reporter Gene Assays | Regulatory element activity measurement | Functional testing of putative enhancers | Minimal promoter choice, normalization controls |
| eQTL/sQTL Resources | Variant-gene expression association | Target gene prioritization | Cell type relevance, sample size |

Implications for Drug Development and Precision Medicine

The paradigm shift from candidate genes to GWAS has profound implications for therapeutic development. GWAS discoveries have increasingly revealed novel therapeutic targets in unsuspected biological pathways, expanding the potential intervention landscape for complex diseases [30]. The statistical robustness of well-powered GWAS findings provides greater confidence in investing in target validation and drug development programs.

Furthermore, GWAS findings enable polygenic risk scores that aggregate the effects of many variants to stratify individuals by disease risk. For instance, in the African American B-ALL study, children in the top polygenic risk score decile had a 7.9-fold greater odds of disease compared to those with median risk or lower [27]. Such risk prediction tools hold promise for targeting preventive interventions to high-risk subgroups.
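
As a rough illustration of how a polygenic risk score aggregates many variants, the sketch below computes a weighted sum of risk-allele dosages and flags the top decile of a small cohort. The weights (log odds ratios), genotypes, and function names are all hypothetical; real scores use effect sizes from a discovery GWAS and far more variants.

```python
import math


def polygenic_risk_score(dosages, weights):
    """Weighted sum of risk-allele dosages (0, 1, or 2 copies per variant)."""
    if len(dosages) != len(weights):
        raise ValueError("dosages and weights must have the same length")
    return sum(d * w for d, w in zip(dosages, weights))


def top_decile(scores):
    """Indices of individuals whose scores fall in the highest 10% of the cohort."""
    n_top = max(1, len(scores) // 10)
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:n_top]


# Hypothetical per-variant weights: log odds ratios for three variants.
weights = [math.log(1.2), math.log(1.1), math.log(1.5)]

# Hypothetical cohort of ten individuals, one dosage per variant.
cohort = [
    [0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 0], [2, 0, 0],
    [0, 2, 0], [1, 1, 1], [2, 1, 1], [2, 2, 1], [2, 2, 2],
]

scores = [polygenic_risk_score(person, weights) for person in cohort]
print("Top-decile individuals (by index):", top_decile(scores))
```

Comparing disease odds between the flagged group and the rest of the cohort is what produces stratified estimates such as the one cited above.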

However, challenges remain in translating GWAS findings to clinical applications. Most associated variants reside in noncoding regions with unclear functional consequences, and determining their target genes and mechanisms requires substantial additional investigation [30]. Additionally, the majority of GWAS have been conducted in European ancestry populations, limiting the translatability of findings across diverse populations—a concern that recent efforts have begun to address through more inclusive sampling [27].

The evolution from candidate gene to GWAS approaches represents genuine scientific progress in understanding complex trait genetics. Rather than completely replacing candidate gene research, GWAS has redefined its role—from initial discovery to focused mechanistic follow-up of robust genetic associations. The most productive research strategy now leverages the complementary strengths of both approaches: using GWAS for unbiased discovery of authentic genetic associations, then applying candidate gene-style intensive investigation to validate mechanisms and therapeutic implications [32] [30].

As genomic technologies continue advancing, this integrated approach will likely further evolve to include whole genome sequencing, multi-omics integration, and even larger-scale collaboration. The paradigm shift chronicled here has fundamentally improved the rigor, reliability, and biological insights from human genetics research, with lasting benefits for understanding disease mechanisms and developing targeted interventions.

Dopamine and serotonin represent two pivotal monoamine neurotransmitter systems that regulate a vast array of brain functions, including motivation, reward processing, mood stability, cognition, and motor control. The genetic architecture underlying these systems has become a primary focus in neuropsychiatric research, particularly for replication studies seeking to validate candidate genes across populations. Understanding the specific genes that encode synthesis enzymes, receptors, transporters, and metabolic components for these neurotransmitters provides crucial insights into individual differences in behavior, treatment response, and disease susceptibility [33] [34]. This guide systematically compares the key genetic elements of dopamine and serotonin pathways, summarizes experimental approaches for their investigation, and provides resources to facilitate rigorous replication studies in this domain.

Comparative Genetics of Dopamine and Serotonin Systems

Core Genetic Components of Major Neurotransmitter Pathways

Table 1: Key Gene Families in Dopamine and Serotonin Pathways

| System | Gene Category | Representative Genes | Protein Function | Chromosomal Location |
|---|---|---|---|---|
| Dopamine | Receptors | DRD1, DRD2, DRD3, DRD4, DRD5 | Dopamine receptor subtypes | Chromosome 5 (DRD1), 11 (DRD2), 3 (DRD3), 4 (DRD5) [33] |
| Dopamine | Synthesis & Metabolism | DDC, DBH, COMT | Dopamine synthesis (DDC), conversion to norepinephrine (DBH), degradation (COMT) | Chromosome 7 (DDC), 9 (DBH), 22 (COMT) [33] |
| Dopamine | Transport | DAT1/SLC6A3, VMAT1/SLC18A1, VMAT2/SLC18A2 | Dopamine reuptake (DAT1), vesicular packaging (VMAT1/2) | Chromosome 5 (DAT1), 8 (VMAT1), 10 (VMAT2) [33] |
| Serotonin | Receptors | HTR1A, HTR1B, HTR2A, HTR2C, HTR3A, HTR4, HTR5A, HTR6, HTR7 | Serotonin receptor subtypes | Multiple chromosomes [34] |
| Serotonin | Synthesis & Metabolism | TPH1, TPH2, DDC, MAOA | Serotonin synthesis (TPH1/2), conversion from 5-HTP (DDC), degradation (MAOA) | Chromosome 11 (TPH1), 12 (TPH2), 7 (DDC), X (MAOA) [34] |
| Serotonin | Transport | SLC6A4/SERT | Serotonin reuptake | Chromosome 17 [35] |

Functionally Significant Polymorphisms and Associated Phenotypes

Table 2: Key Polymorphisms in Dopamine and Serotonin Genes and Their Research Implications

| Gene | Polymorphism | Functional Impact | Associated Phenotypes/Responses |
|---|---|---|---|
| COMT | rs4680 (Val158Met) | Met allele reduces enzyme activity by 4-fold, increasing synaptic dopamine [36] | Met/Met genotype linked to lower cooperation expectations in social dilemmas; influences motor learning response to rewards [37] [36] |
| DRD4 | 48-bp VNTR in exon III | 7-repeat allele associated with reduced receptor sensitivity [36] | Linked to reduced altruism, higher impulsivity, and aggression risk [36] |
| DAT1 | VNTR at 3'UTR | 9- and 10-repeat alleles most common; affects gene expression [35] | Associated with anxiety and stress sensitivity; epigenetic regulation under stress [35] |
| SLC6A4 | 5-HTTLPR (S/L alleles) | S allele reduces transcriptional efficiency; L allele increases serotonin reuptake [36] [35] | S allele: anxiety-related traits; L/L genotype: lower contributions in cooperative games without punishment [36] |
| HTR1B | rs13212041 (T/C) | T allele reduces gene expression via microRNA interaction [36] | T/T genotype associated with lower expectations of antisocial punishment [36] |
| Polygenic Score | Combined dopamine gene score | Summarizes cumulative genetic effects on dopamine transmission [37] | Predicts motor learning performance: low scores benefit from rewards, high scores learn better without rewards [37] |

Experimental Methodologies for Pathway Gene Investigation

Genetic Association Protocol: Dopamine-Related Motor Learning

Objective: To assess how individual variations in dopamine-related genes affect motor sequence learning with and without monetary rewards in children and young adults with and without cerebral palsy [37].

Participants: Inclusion of subjects aged 5-25 years with cerebral palsy and healthy volunteers, excluding those on medications affecting dopamine transmission (e.g., levodopa, methylphenidate) [37].

Genetic Analysis:

- Blood collection for genetic analysis of dopamine-related genes (DRD1-5, DAT1, COMT, DBH, DDC)

- Calculation of individual polygenic dopamine gene scores based on methodology by Pearson-Fuhrhop et al. [37]

- Stratification of participants into high and low gene score groups
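
The scoring and stratification steps above can be sketched as follows. This is a hypothetical additive scheme, not the published Pearson-Fuhrhop method: each gene contributes 1 if the participant's genotype is assumed to increase dopamine neurotransmission and 0 otherwise, and participants are split at the cohort median. The gene subset, participant IDs, and indicator values are invented for illustration.

```python
from statistics import median


def gene_score(indicators):
    """Sum of 0/1 indicators, one per gene (hypothetical additive coding)."""
    if any(value not in (0, 1) for value in indicators.values()):
        raise ValueError("each indicator must be 0 or 1")
    return sum(indicators.values())


def median_split(scores):
    """Label each participant 'high' (above the cohort median) or 'low'."""
    cutoff = median(scores.values())
    return {pid: ("high" if s > cutoff else "low") for pid, s in scores.items()}


# Hypothetical participants; 1 = genotype assumed to raise dopamine transmission.
participants = {
    "P01": {"COMT": 1, "DAT1": 1, "DRD1": 1, "DRD2": 1, "DRD3": 0},
    "P02": {"COMT": 0, "DAT1": 1, "DRD1": 0, "DRD2": 0, "DRD3": 0},
    "P03": {"COMT": 1, "DAT1": 0, "DRD1": 1, "DRD2": 1, "DRD3": 0},
    "P04": {"COMT": 0, "DAT1": 1, "DRD1": 0, "DRD2": 1, "DRD3": 0},
}

scores = {pid: gene_score(genes) for pid, genes in participants.items()}
groups = median_split(scores)
print(scores)
print(groups)
```

A replication study would need to adopt the exact allele coding and cutoff of the study being replicated, since a different coding changes group membership.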

Behavioral Task:

- Administration of serial reaction time task (SRTT) under two conditions:

  - Unrewarded condition: Baseline performance assessment

  - Rewarded condition: Monetary rewards for correct/rapid responses

- Measurement of learning rate as reduction in reaction times across sequence blocks
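
One way to operationalize "reduction in reaction times across sequence blocks" is the negative least-squares slope of mean reaction time against block number. The sketch below assumes that definition, which the source does not specify; the reaction times are hypothetical.

```python
def learning_rate(block_rts):
    """Negative least-squares slope of reaction time (ms) over block index.

    A positive value means reaction times fell across blocks.
    """
    n = len(block_rts)
    if n < 2:
        raise ValueError("at least two blocks are required")
    mean_x = (n - 1) / 2
    mean_y = sum(block_rts) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(block_rts))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    return -sxy / sxx


# Hypothetical mean reaction times (ms) per sequence block for one participant.
unrewarded = [520, 510, 495, 490]
rewarded = [520, 500, 480, 460]

reward_benefit = learning_rate(rewarded) - learning_rate(unrewarded)
print(f"Unrewarded: {learning_rate(unrewarded):.1f} ms/block")
print(f"Rewarded:   {learning_rate(rewarded):.1f} ms/block")
print(f"Benefit:    {reward_benefit:.1f} ms/block")
```

The per-participant difference between conditions is the quantity a gene score × condition interaction would then compare between the high and low score groups.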

Statistical Analysis:

- Mixed-model ANOVA with gene score group as between-subject factor and reward condition as within-subject factor

- Post-hoc testing of gene score × condition interaction effects on learning rates [37]

Epigenetic Regulation Protocol: Transporter Gene Methylation in Stress

Objective: To evaluate DAT1 and SERT gene regulation through DNA methylation analysis in university students under perceived stress [35].

Participant Characterization:

- Administration of Highly Sensitive Person (HSP) scale (12-item) and Perceived Stress Scale (PSS-10)

- Stratification into low, medium, and high cumulative risk groups based on HSP × PSS interactions [35]

Sample Collection and Processing:

- Saliva collection (2 mL) after at least 2 hours without food, drink (except water), smoking, or tooth brushing

- Genomic DNA extraction using salting-out method

- DNA methylation analysis at specific CpG sites in DAT1 5'UTR and SERT promoter regions [35]

Molecular Analysis:

- VNTR genotyping for DAT1 3'UTR (9- and 10-repeat alleles)

- Methylation-specific analysis of identified CpG sites

- Expression analysis of regulatory microRNAs (miR-491 for DAT1, miR-135 for SERT) [35]

Data Interpretation:

- Correlation of methylation patterns with stress sensitivity phenotypes

- Assessment of genotype × methylation interactions on stress vulnerability [35]

Signaling Pathway Architecture

The diagram above illustrates the parallel organization of dopamine and serotonin systems, highlighting shared elements like the AADC (DDC) enzyme while emphasizing system-specific components. Notably, the serotonin system originates from the essential amino acid tryptophan, while dopamine synthesis begins with tyrosine. Both systems employ similar regulatory mechanisms including transporter-mediated reuptake (SERT for serotonin, DAT for dopamine) and enzymatic degradation (MAOA for serotonin, COMT for dopamine), with the dopamine system additionally feeding into norepinephrine synthesis via DBH [33] [34].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Dopamine and Serotonin Gene Studies

| Reagent/Category | Specific Examples | Research Application | Function in Investigation |
|---|---|---|---|
| Genotyping Assays | TaqMan SNP Genotyping, VNTR analysis | Polymorphism screening (5-HTTLPR, COMT Val158Met, DAT1 VNTR) | Determines genetic variants of interest in candidate genes [37] [35] |
| DNA Methylation Kits | Bisulfite conversion kits, Methylation-specific PCR | Epigenetic regulation studies | Quantifies DNA methylation at promoter/regulatory regions of transporter genes [35] |
| RNA-Seq Platforms | Illumina, Thermo Fisher | Transcriptome profiling | Measures gene expression changes in response to experimental conditions [38] |
| Behavioral Task Software | Serial Reaction Time Task (SRTT), Weather Prediction Task (WPT), Public Goods Game | Cognitive and motor learning assessment | Quantifies behavioral phenotypes associated with genetic variants [37] [36] |
| LC-MS/MS Systems | HPLC with electrochemical or mass spectrometry detection | Neurotransmitter and metabolite quantification | Measures serotonin, dopamine, and metabolites (5-HIAA, DOPAC) [39] |
| microRNA Analysis Kits | miRNA extraction, qRT-PCR assays | Epigenetic regulation studies | Evaluates expression of miRNAs regulating transporter genes (miR-132, miR-491, miR-135) [35] |

Research Implications and Future Directions

The comparative analysis of dopamine and serotonin pathway genes reveals distinctive genetic architectures with important implications for replication studies. The dopamine system features a compact family of five receptors (DRD1-DRD5) distributed across multiple chromosomes, while serotonin signaling involves a more extensive receptor repertoire (HTR1-HTR7) with diverse signaling properties [33] [34]. Methodologically, comprehensive assessment requires integrated approaches spanning genetics (SNPs, VNTRs), epigenetics (DNA methylation, miRNA regulation), and precise behavioral phenotyping.

Future research directions should prioritize:

- Developing standardized polygenic scoring methods for cumulative genetic effects [37]

- Establishing best practices for epigenetic studies in accessible tissues (e.g., saliva) with brain relevance [35]

- Validating candidate genes across diverse populations and diagnostic categories

- Integrating multi-omics approaches to understand gene × environment interactions in neurotransmitter system regulation

These approaches will strengthen replication studies and facilitate the translation of genetic findings into personalized therapeutic strategies targeting dopamine and serotonin systems in neuropsychiatric disorders.

A Step-by-Step Protocol for Designing and Executing a Robust Replication Study

In the field of premature ovarian insufficiency (POI) research, the identification of candidate genes has accelerated dramatically, with over 90 genes now linked to this condition that affects 1-3.7% of women under 40 [40] [41]. This rapid expansion of genetic discoveries creates a critical bottleneck: determining which findings represent robust, reliable biological relationships worthy of further investigation and clinical translation. The selection of appropriate replication targets has thus become a fundamental challenge in the transition from initial discovery to validated knowledge. This guide provides a systematic framework for identifying the most valuable POI candidate genes for replication studies, enabling researchers to prioritize limited resources toward verifying the most promising genetic associations.

The POI Genetic Landscape: Quantifying Current Knowledge

POI represents a genetically heterogeneous condition, with established genetic causes accounting for approximately 20-25% of cases [42] [40]. Recent large-scale sequencing studies have significantly expanded our understanding of this genetic architecture. The table below summarizes the current genetic contribution to POI based on a 2023 study of 1,030 patients:

Table 1: Genetic Landscape of POI Based on Large-Scale Sequencing

| Genetic Category | Contribution to POI Cases | Key Genes and Examples |
|---|---|---|
| Overall Genetic Contribution | 23.5% (242/1030 cases) | Combinations of known and novel genes [4] |
| Known POI Genes | 18.7% (193/1030 cases) | 59 well-characterized genes [4] |
| Novel POI-Associated Genes | Additional 4.8% | 20 newly identified genes [4] |
| Chromosomal Abnormalities | 10-13% | Turner syndrome, X-structural variations [42] |
| Primary vs Secondary Amenorrhea | PA: 25.8% vs SA: 17.8% | Higher diagnostic yield in primary amenorrhea [4] |
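
The percentages in the table follow directly from the reported case counts. The sketch below recomputes them and adds a Wilson 95% confidence interval for the overall yield, to indicate how much sampling uncertainty a replication cohort of similar size should expect. The interval is an illustrative addition, not a figure reported in the cited study, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def yield_pct(cases_with_finding, cohort_size):
    """Diagnostic yield as a percentage of the cohort."""
    return 100.0 * cases_with_finding / cohort_size


def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for a binomial proportion."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half, center + half


overall = yield_pct(242, 1030)   # cases with any genetic finding
known = yield_pct(193, 1030)     # cases explained by known POI genes
novel = overall - known          # additional cases from novel genes

low, high = wilson_interval(242, 1030)
print(f"Overall: {overall:.1f}%  Known: {known:.1f}%  Novel: {novel:.1f}%")
print(f"95% CI for overall yield: {100 * low:.1f}% to {100 * high:.1f}%")
```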

The pathways implicated in POI pathogenesis are diverse, encompassing meiotic processes, DNA repair, mitochondrial function, and folliculogenesis. The diagram below illustrates the key biological pathways and their interrelationships in POI pathogenesis:

Core Criteria for Selecting Replication Targets

Assessing Value and Impact

The theoretical importance and potential clinical applicability of a genetic finding should be primary considerations in replication target selection. In POI research, value can be operationalized through specific criteria:

Clinical Impact: Genes associated with more severe phenotypic presentations typically warrant higher priority. For instance, variants in genes like NR5A1 and MCM9 demonstrate relatively high prevalence among POI patients (1.1% each in recent studies) [4], suggesting broader clinical relevance.

Biological Plausibility: Genes functioning in pathways critically important for ovarian development and function, particularly those with strong animal model evidence, represent stronger candidates. Meiosis and DNA repair genes constitute nearly half (48.7%) of genetically explained POI cases [4], highlighting this pathway's central importance.

Therapeutic Potential: Genes encoding potentially druggable targets or those that might inform clinical management decisions (e.g., cancer risk associations) should be prioritized. Recent research indicates that 37.4% of POI cases with genetic findings have implications for cancer susceptibility surveillance [41].

Evaluating Uncertainty and Robustness

Not all initial genetic associations are equally reliable. Assessing the degree of uncertainty surrounding a finding is crucial for replication prioritization:

Statistical Strength: Initial associations with modest statistical support require verification. Many POI gene discoveries originate from single-family studies without independent validation [41], representing high-uncertainty candidates.

Evidence Consistency: Genes supported by multiple independent case reports or functionally validated variants present stronger candidates. For example, the EIF2B2 gene showed the highest prevalence of pathogenic alleles in a recent study (0.8% of cases) due to a recurrent variant [4].

Technical Considerations: Findings from studies with methodological limitations or those that have not employed optimal sequencing approaches (e.g., lack of trio-based sequencing to confirm de novo mutations) merit replication.

Considering Practical Feasibility

The practical aspects of replication studies significantly influence target selection:

Cohort Availability: Genes associated with more prevalent POI subtypes enable adequate sample collection. The higher diagnostic yield in primary amenorrhea (25.8%) versus secondary amenorrhea (17.8%) [4] suggests that carriers of candidate variants may be easier to recruit among patients with primary amenorrhea.

Variant Characterization: Well-documented variants with clear pathogenicity assessments are more straightforward to replicate. The 2023 Nature Medicine study established 195 pathogenic/likely pathogenic variants across 59 genes [4], providing a solid foundation for replication design.

Technical Infrastructure: Genes requiring specialized functional validation approaches (e.g., meiotic phenotyping) may present greater logistical challenges for replication.

Application to POI Candidate Genes

The following table applies the selection criteria to representative POI gene categories, providing a practical framework for replication prioritization:

Table 2: Replication Priority Assessment for POI Gene Categories

| Gene Category | Value/Impact | Uncertainty | Feasibility | Replication Priority |
|---|---|---|---|---|
| Meiosis/DNA Repair Genes (e.g., HFM1, MCM8, MCM9) | High (48.7% of solved cases) [4] | Medium (growing evidence) | Medium (requires specialized assays) | High |
| Syndromic Genes (e.g., AIRE, ATM) | Medium (multi-system involvement) | Low (well-established) | High (clinical features aid recruitment) | Medium |
| Novel Candidates (e.g., LGR4, PRDM1) | Unknown (pathway relevance) | High (limited evidence) | Variable | Research Question-Dependent |
| Mitochondrial Genes (e.g., AARS2, MRPS22) | Medium (22.3% of solved cases) [4] | Medium (emerging evidence) | Medium (requires functional studies) | Medium-High |
| FMR1 Premutation | High (well-established cause) | Low (extensively replicated) | High (standard testing available) | Low (already validated) |

Experimental Design for POI Gene Replication

Cohort Selection and Design Considerations

Successful replication requires careful attention to cohort composition and study design:

Sample Size Considerations: Large cohorts are essential for statistically meaningful replication. The 2023 study of 1,030 patients represents the current standard for sufficiently powered genetic studies in POI [4].

Phenotypic Stratification: Separating primary amenorrhea (PA) and secondary amenorrhea (SA) cases is crucial, as they demonstrate different genetic contributions (25.8% vs. 17.8% respectively) [4].

Population Considerations: Accounting for ethnic diversity is essential, as some genetic associations show population-specific effects.

Methodological Approaches

A tiered approach to replication provides comprehensive validation:

Technical Validation: Confirm initial variants using orthogonal methods (Sanger sequencing, etc.).

Independent Cohort Replication: Recruit new patient cohorts matching original study characteristics.

Functional Validation: Implement pathway-specific assays to confirm biological impact.

The diagram below illustrates a comprehensive replication workflow for POI candidate genes:

Essential Research Reagents and Solutions

The table below outlines key reagents and methodologies essential for conducting replication studies in POI genetics:

Table 3: Research Reagent Solutions for POI Gene Replication Studies

| Reagent/Methodology | Primary Function | Application in POI Research |
|---|---|---|
| Whole Exome Sequencing | Comprehensive coding variant detection | Initial gene discovery and variant identification [4] |
| Sanger Sequencing | Targeted variant confirmation | Orthogonal validation of putative pathogenic variants [4] |
| Array CGH | Copy number variation detection | Identification of chromosomal structural variants [42] |
| FSH/LH/AMH Assays | Hormonal profiling | Phenotypic characterization of POI patients [40] |
| T-clone/10x Genomics | Phase determination | Establishing cis/trans configuration of multiple variants [4] |
| Functional Assays | Pathogenicity assessment | MEIOSIN, CPEB1 validation for meiotic genes [4] |

Systematic selection of replication targets in POI research requires balanced consideration of multiple factors, with genes involved in DNA repair and meiotic pathways currently representing the highest-value candidates based on their substantial contribution to explained cases. As the field evolves toward personalized medicine approaches—where genetic diagnoses may inform cancer risk management (37.4% of cases) [41] or potential fertility interventions—the importance of robust, replicated genetic data becomes increasingly critical. By applying the structured framework presented in this guide, researchers can prioritize their replication efforts to maximize scientific yield and accelerate the translation of genetic discoveries to clinical applications in ovarian insufficiency.

In the field of genetic epidemiology, the "winner's curse" represents a critical statistical phenomenon that profoundly impacts the validation of disease-associated genetic variants. When a variant is identified as significant in an initial genome-wide association study (GWAS), the estimated genetic effect size is often substantially inflated, particularly when the original study had low or moderate statistical power [43]. This overestimation occurs because, for a genuinely associated variant with a modest effect, only those chance occurrences where the effect appears largest will reach the statistical significance threshold [43]. This ascertainment bias has far-reaching implications, as it frequently leads to underpowered replication studies that fail to confirm genuine associations, ultimately wasting scientific resources and delaying discoveries [43] [44].

The challenge is particularly acute in complex genetic disorders like premature ovarian insufficiency (POI), where researchers must identify genuine genetic signals from a sea of candidates. POI affects approximately 1-2% of women before age 40 and represents a clinically heterogeneous condition with substantial knowledge gaps in its etiology [45] [46]. Nearly 70% of POI cases remain unexplained, making genetic discovery a paramount research priority [45]. In this context, properly addressing the winner's curse through rigorous power and sample size calculation becomes not merely a statistical formality, but a fundamental requirement for robust gene discovery and replication.

Statistical Foundations: Understanding Power, Sample Size, and Effect Size

The Interrelationship of Key Statistical Parameters

Statistical power, sample size, effect size, and significance thresholds form an interconnected framework that determines the validity of genetic association studies. Power, defined as the probability of correctly rejecting a false null hypothesis (1-β), is critically dependent on three factors: the chosen significance threshold (α), the true effect size of the variant, and the sample size available for analysis [44]. The delicate balance between Type I error (false positives) and Type II error (false negatives) must be carefully managed through appropriate experimental design [44].

The traditional convention in scientific research sets α at 0.05 and power at 0.8 (80%), though these values should be adjusted based on the specific research context [44]. For pilot studies with exploratory aims, α may be relaxed to 0.10 or 0.20, while in situations with severe consequences for false positives (such as drug development studies), α might be set at 0.001 or lower [44]. Understanding these relationships is essential for designing replication studies that can overcome the winner's curse.
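The dependence of power on α, effect size, and sample size can be made concrete with a short calculation. The following is a minimal sketch, assuming a two-sided z-test comparing an event (or carrier) proportion between two equally sized groups; the function name and the example proportions are illustrative and do not come from the cited studies.

```python
from math import sqrt
from statistics import NormalDist


def power_two_proportions(p1, p2, n_per_group, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two proportions.

    Assumes equal group sizes and the normal approximation with unpooled
    variance; the negligible opposite-tail rejection probability is ignored.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)  # critical value for the chosen alpha
    se = sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / n_per_group)
    return z.cdf(abs(p1 - p2) / se - z_crit)


if __name__ == "__main__":
    # Illustrative values only: 30% vs 20% carriers in cases and controls.
    for n in (100, 200, 400):
        for alpha in (0.05, 0.001):
            pw = power_two_proportions(0.30, 0.20, n, alpha)
            print(f"n={n:4d} per group, alpha={alpha}: power={pw:.2f}")
```

Running the loop shows the trade-off described above: power rises with sample size and falls when α is made more stringent, so a stricter threshold has to be paid for with more samples.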

Quantifying the Winner's Curse Effect

The magnitude of the winner's curse bias is inversely related to the power of the initial study. When power is high (e.g., >90%), most random samples from the true distribution will yield significant results, making the ascertainment bias minimal. However, when power is low, conditioning on significant association in the discovery phase creates substantial upward bias in effect size estimates [43]. Empirical evidence demonstrates this problem is widespread in genetic association studies. A meta-analysis of 301 studies across 25 putative disease loci found that 24 of the 25 initial reports showed higher odds ratios than subsequent replication studies [43].

This effect has direct consequences for POI research, where genetic variants often have modest effects and initial sample sizes may be limited. If replication studies are designed around inflated effect sizes from initial discoveries, their sample sizes will be too small to detect the true, more modest effects. The result is failed replication and, potentially, the abandonment of genuine associations [43].
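The inverse relationship between discovery power and effect-size inflation can be illustrated by simulation. The sketch below is a simplification: it assumes the estimated log odds ratio is normally distributed around the true value with a known standard error, and the parameter values are hypothetical rather than taken from any POI study.

```python
import random
from statistics import NormalDist, mean


def simulate_winners_curse(true_beta, se, alpha=0.05, n_sim=20000, seed=1):
    """Return (empirical power, mean estimate among significant studies).

    Each simulated study draws beta_hat ~ Normal(true_beta, se) and is
    'published' only if its two-sided z-test is significant at alpha.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    significant = []
    for _ in range(n_sim):
        beta_hat = rng.gauss(true_beta, se)
        if abs(beta_hat / se) > z_crit:
            significant.append(beta_hat)
    return len(significant) / n_sim, mean(significant)


if __name__ == "__main__":
    true_beta = 0.2  # hypothetical true log odds ratio
    for se in (0.04, 0.07, 0.10, 0.15):  # larger se = smaller discovery study
        power, mean_sig = simulate_winners_curse(true_beta, se)
        print(f"se={se:.2f}  power={power:.2f}  "
              f"mean significant estimate={mean_sig:.3f} (true {true_beta})")
```

In this setup the average estimate among significant studies stays close to the true value when power is high and drifts upward as power falls, which is the pattern the empirical meta-analysis describes.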

Methodological Approaches for Bias Correction

Statistical Methods for Correcting Parameter Estimates

Several methodological approaches have been developed to correct for the winner's curse in genetic association studies. The maximum likelihood estimation (MLE) approach provides a framework for generating corrected estimates of penetrance and allele frequency parameters [43]. This method calculates the likelihood of the observed genotype counts conditional on having detected a significant association, effectively weighting the naive estimates by the power of the initial test [43].

The mathematical foundation of this approach can be represented as:

L(θ,φ) = Pr(D|θ,φ,S) / Pr(B|θ,φ,S)

Where D represents the observed genotype counts, B indicates a significant association, S denotes the sampling design, θ represents the penetrance parameters, and φ represents the genotype frequencies [43]. The denominator represents the statistical power to detect association, which acts as a correction factor, tilting the maximum likelihood toward more conservative effect sizes [43].
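To show how dividing by power pulls the estimate toward more conservative values, the following sketch applies the same conditioning idea to a much simpler setting. It is not the genotype-count likelihood of [43]: it assumes a single effect estimate (for example a log odds ratio) that is normally distributed with a known standard error, and it maximizes the conditional likelihood by a plain grid search. All names and numbers are illustrative.

```python
from math import log
from statistics import NormalDist


def conditional_mle(beta_hat, se, alpha=0.05, grid=2000):
    """Winner's-curse-adjusted estimate under a normal approximation.

    Maximizes log[ density(beta_hat | beta) / power(beta) ], i.e. the
    likelihood of the estimate conditional on two-sided significance.
    The maximizer lies between 0 and |beta_hat|, so only that interval
    is searched.
    """
    z = NormalDist()
    c = z.inv_cdf(1 - alpha / 2)
    b_obs = abs(beta_hat)

    def log_cond_lik(beta):
        density = z.pdf((b_obs - beta) / se)  # constant 1/se factor omitted
        power = z.cdf(beta / se - c) + z.cdf(-beta / se - c)
        return log(density) - log(power)

    candidates = [b_obs * i / grid for i in range(grid + 1)]
    best = max(candidates, key=log_cond_lik)
    return best if beta_hat >= 0 else -best


if __name__ == "__main__":
    # A barely significant discovery (z = 2.5) versus a strong one (z = 10).
    for beta_hat, se in ((0.25, 0.10), (1.00, 0.10)):
        print(beta_hat, se, round(conditional_mle(beta_hat, se), 3))
```

Estimates that only just cross the significance threshold are shrunk substantially, while strongly significant estimates are left almost unchanged, because power is already close to one in their neighbourhood and the correction factor is nearly flat.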

Alternative approaches include the two-stage method, which randomly splits samples into discovery and estimation sets, although the resulting estimates have higher standard errors [43]. Another method relaxes the significance threshold (that is, uses a larger α) in the initial test to increase power and reduce ascertainment bias, at the cost of more false positives [43].
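The trade-off in the sample-splitting approach can be sketched with a small simulation. The code below assumes a normally distributed effect estimate and an even split, so each half has a standard error larger than the full sample by a factor of √2; the values are hypothetical.

```python
import random
from statistics import NormalDist, mean


def split_sample_estimates(true_beta, se_full, alpha=0.05, n_sim=20000, seed=7):
    """Return (mean discovery-half estimate, mean estimation-half estimate),
    both averaged over simulations in which the discovery half is significant.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se_half = se_full * 2 ** 0.5  # halving the sample inflates the SE by sqrt(2)
    discovery, estimation = [], []
    for _ in range(n_sim):
        b_disc = rng.gauss(true_beta, se_half)  # used only for testing
        b_est = rng.gauss(true_beta, se_half)   # used only for estimation
        if abs(b_disc / se_half) > z_crit:
            discovery.append(b_disc)
            estimation.append(b_est)
    return mean(discovery), mean(estimation)


if __name__ == "__main__":
    disc, est = split_sample_estimates(true_beta=0.2, se_full=0.10)
    print(f"discovery half: {disc:.3f}, estimation half: {est:.3f}, true: 0.2")
```

Because the estimation half plays no part in the significance decision, its estimate is not inflated, but each half is tested and estimated with only half the data, which is the loss of power and precision noted above.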

Sample Size Calculation for Replication Studies

Proper sample size calculation for replication studies must account for the corrected effect sizes rather than the naive estimates from the initial discovery. The required sample size depends on the specific genetic model (additive, dominant, recessive), minor allele frequency, and the corrected effect size [43] [44].

Table 1: Sample Size Calculation Formulas for Different Study Types

| Study Type | Formula (n per group) | Parameters |
|---|---|---|
| Two proportions | \( n = \frac{(Z_{1-\alpha/2} + Z_{1-\beta})^2 \, [p_1(1-p_1) + p_2(1-p_2)]}{(p_1 - p_2)^2} \) | p₁, p₂ = event proportions in groups [44] |
| Two means | \( n = \frac{(Z_{1-\alpha/2} + Z_{1-\beta})^2 \, 2\sigma^2}{d^2} \) | σ = pooled standard deviation, d = difference of means [44] |
| Odds ratio | \( n = \frac{(Z_{1-\alpha/2} + Z_{1-\beta})^2 \left[\frac{1}{p_1(1-p_1)} + \frac{1}{p_2(1-p_2)}\right]}{[\ln(OR)]^2} \) | OR = odds ratio, p₁, p₂ = event proportions [44] |
| Correlation | \( n = \frac{(Z_{1-\alpha/2} + Z_{1-\beta})^2}{\left[0.5 \ln\left(\frac{1+r}{1-r}\right)\right]^2} + 3 \) | r = correlation coefficient (n = total sample size) [44] |

These calculations can be implemented using various statistical software packages and online calculators, though understanding their theoretical foundations remains essential for appropriate application [44].
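As one possible implementation, the sketch below codes the two-proportion, two-mean, and correlation formulas using only the Python standard library, and adds a hypothetical helper that converts a control-group frequency and an odds ratio into the corresponding case-group frequency. The example odds ratios are invented to contrast a naive discovery estimate with a more conservative corrected one.

```python
from math import ceil, log
from statistics import NormalDist


def _z_term(alpha, power):
    """(Z_{1-alpha/2} + Z_{1-beta})^2 for a two-sided test."""
    z = NormalDist()
    return (z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) ** 2


def n_two_proportions(p1, p2, alpha=0.05, power=0.8):
    """Sample size per group for comparing two proportions."""
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil(_z_term(alpha, power) * variance / (p1 - p2) ** 2)


def n_two_means(sigma, d, alpha=0.05, power=0.8):
    """Sample size per group for comparing two means."""
    return ceil(_z_term(alpha, power) * 2 * sigma ** 2 / d ** 2)


def n_correlation(r, alpha=0.05, power=0.8):
    """Total sample size to detect a correlation r (Fisher z-transform)."""
    fisher_z = 0.5 * log((1 + r) / (1 - r))
    return ceil(_z_term(alpha, power) / fisher_z ** 2 + 3)


def case_frequency(p_control, odds_ratio):
    """Hypothetical helper: exposure frequency in cases implied by an OR."""
    odds = odds_ratio * p_control / (1 - p_control)
    return odds / (1 + odds)


if __name__ == "__main__":
    p_control = 0.20  # illustrative carrier frequency in controls
    for label, odds_ratio in (("naive OR", 1.5), ("corrected OR", 1.2)):
        p_case = case_frequency(p_control, odds_ratio)
        n = n_two_proportions(p_case, p_control)
        print(f"{label} = {odds_ratio}: about {n} cases and {n} controls")
```

Planning a replication around the smaller corrected odds ratio requires a markedly larger cohort than planning around the naive estimate, which is the practical reason to correct the effect size before fixing the sample size.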

Application to POI Candidate Gene Research

Current Genetic Research Landscape in POI

POI research has increasingly relied on advanced genetic technologies to identify causative variants. Recent studies utilize a combination of array comparative genomic hybridization (array-CGH) and next-generation sequencing (NGS) panels targeting genes known or suspected to be involved in ovarian function [45]. This approach has identified genetic anomalies in 57.1% (16/28) of idiopathic POI patients, with causal copy number variations found in 3.6% and causal single nucleotide variations/indels in 28.6% [45].

The complex genetic architecture of POI means that most individual variants have modest effects, making them particularly susceptible to the winner's curse. Family studies demonstrate the strong genetic component of POI, with familial forms identified in 12-31% of cases [45]. The emerging understanding of POI pathophysiology includes abnormalities in follicular pool establishment, accelerated follicular atresia, and alterations in primordial follicle recruitment [45].

Research Workflow in POI Genetic Studies

The standard research workflow in contemporary POI genetic studies incorporates multiple validation steps to ensure robust findings, though additional attention to power considerations is needed to fully address the winner's curse.

Experimental Protocols for POI Genetic Studies

Sample Collection and Diagnostic Criteria

Well-defined patient cohorts are fundamental to robust genetic studies of POI. Current research typically includes women meeting the following criteria: (i) age < 40 years; (ii) at least 4 months of oligo/amenorrhea; and (iii) elevated serum follicle-stimulating hormone (FSH) > 25 IU/L on two occasions at least 4 weeks apart [46]. Exclusion criteria generally encompass other endocrine diseases, history of ovarian surgery, and recent hormone use (within 3 months) [46]. Control groups typically consist of women with infertility due to tubal factors but with normal menstrual cycles and basal sex hormone levels [46].
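Applying the same criteria identically at every recruiting site is easier when they are written down as an explicit rule. The following is a minimal sketch of such a check; the record layout and field names are hypothetical, and treating "within 3 months" as strictly fewer than 3 months is an assumption.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Participant:
    # Hypothetical record layout for illustration only.
    age_years: float
    months_oligo_amenorrhea: float
    fsh_iu_per_l: Tuple[float, float]      # two separate FSH measurements
    weeks_between_fsh_tests: float
    other_endocrine_disease: bool = False
    prior_ovarian_surgery: bool = False
    months_since_hormone_use: Optional[float] = None  # None = no hormone use


def meets_poi_case_criteria(p: Participant) -> bool:
    """Apply the inclusion and exclusion criteria described above."""
    included = (
        p.age_years < 40
        and p.months_oligo_amenorrhea >= 4
        and all(fsh > 25 for fsh in p.fsh_iu_per_l)
        and p.weeks_between_fsh_tests >= 4
    )
    # Assumption: "recent" means strictly fewer than 3 months ago.
    recent_hormone_use = (
        p.months_since_hormone_use is not None
        and p.months_since_hormone_use < 3
    )
    excluded = (
        p.other_endocrine_disease
        or p.prior_ovarian_surgery
        or recent_hormone_use
    )
    return included and not excluded


if __name__ == "__main__":
    candidate = Participant(35, 6, (40.0, 38.0), 5)
    print(meets_poi_case_criteria(candidate))
```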

Genetic Analysis Techniques

Comprehensive genetic analysis in POI research involves multiple complementary approaches:

Array-CGH: Conducted using platforms such as SurePrint G3 Human CGH Microarray 4 × 180 K technology (Agilent Technologies), with data analysis using Feature Extraction and CytoGenomics software. This technique identifies copy number variations (CNVs) with minimum resolution of 60 kb [45].

Next-Generation Sequencing: Performed using capture-based targeted sequencing (e.g., SureSelect XT-HS) of gene panels encompassing 163 genes associated with ovarian function. Sequencing occurs on platforms such as Illumina NextSeq 550, with variant calling using Alissa Align&Call and interpretation with Alissa Interpret [45].

Third-Generation Sequencing: Emerging approaches utilizing Oxford Nanopore Technology (ONT) enable full-length transcript characterization, overcoming limitations of short-read sequencing and improving detection of structural variants [46].

Validation Methods

Independent validation of genetic findings employs several experimental approaches:

qRT-PCR: Used to confirm expression changes of candidate genes in independent sample sets. RNA is extracted from monocytes or granulosa cells, reverse transcribed, and quantified using SYBR Green chemistry with normalization to housekeeping genes like GAPDH [46].

Functional Assays: Include in vitro models to test the impact of genetic variants on protein function, pathway analysis, and biomarker development.
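For the qRT-PCR step, normalization to a housekeeping gene is commonly expressed as a relative fold change. The source does not state which quantification model was used, so the sketch below assumes the widely used 2^-ΔΔCt approach with roughly 100% amplification efficiency; the Ct values in the example are invented.

```python
def relative_expression(ct_target_case, ct_ref_case, ct_target_ctrl, ct_ref_ctrl):
    """Fold change of a target gene in cases versus controls (2^-ddCt).

    ct_ref_* are Ct values of the housekeeping gene (e.g. GAPDH) measured
    in the same samples. Assumes about 100% amplification efficiency.
    """
    delta_ct_case = ct_target_case - ct_ref_case   # normalize within cases
    delta_ct_ctrl = ct_target_ctrl - ct_ref_ctrl   # normalize within controls
    delta_delta_ct = delta_ct_case - delta_ct_ctrl
    return 2 ** (-delta_delta_ct)


if __name__ == "__main__":
    # Invented Ct values: the target amplifies one cycle later in cases.
    print(relative_expression(25.0, 18.0, 24.0, 18.0))  # 0.5 = halved expression
```

A fold change below 1 indicates lower expression in cases than in controls, and a value above 1 indicates higher expression; the direction can then be compared with the trend reported in the discovery data.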

Table 2: Essential Research Reagents for POI Genetic Studies

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| RNA Collection | PAXgene Blood RNA tubes (BD) | Standardized RNA preservation from peripheral blood [46] |
| Nucleic Acid Extraction | QIAsymphony DNA midi kits (Qiagen) | Automated nucleic acid extraction [45] |
| Microarray | SurePrint G3 Human CGH Microarray 4×180K (Agilent) | CNV detection [45] |
| NGS Library Prep | SureSelect XT-HS (Agilent) | Target enrichment for sequencing [45] |
| Sequencing Platforms | Illumina NextSeq 550, Oxford Nanopore PromethION | DNA and RNA sequencing [45] [46] |
| Variant Interpretation | CytoGenomics, Alissa Interpret, STRING, Cytoscape | Bioinformatic analysis [45] [46] |
| Validation | SYBR Green qPCR Master Mix (ServiceBio) | Gene expression validation [46] |

Comparative Analysis of Statistical Approaches

Evaluating Correction Methods for Genetic Studies

Different statistical approaches for addressing the winner's curse offer distinct advantages and limitations in the context of POI research. The table below summarizes key methodologies and their applications:

Table 3: Comparison of Statistical Methods for Overcoming Winner's Curse

| Method | Key Principle | Advantages | Limitations | Relevance to POI |
|---|---|---|---|---|
| Maximum Likelihood Estimation [43] | Conditions estimates on significance in initial study | Provides point estimates and confidence regions; greatly reduces bias | Complex computation; requires specification of genetic model | High - suitable for well-defined genetic models in POI |
| Sample Splitting [43] | Randomly divides data into discovery and estimation sets | Simple implementation; minimal technical expertise | Reduced power in both phases; higher standard errors | Moderate - useful for large cohorts with sufficient samples |
| Significance Threshold Adjustment [43] | Relaxes the significance threshold (larger α) in the initial test to increase power | Reduces ascertainment bias through higher power | Increases false positives; requires more stringent final thresholds | Low - limited by typically small POI cohort sizes |
| Bootstrapping/Resampling | Empirical estimation of bias through resampling | Non-parametric; makes minimal assumptions | Computationally intensive; may underestimate extreme biases | Moderate - valuable for complex genetic models |

Power Considerations in POI Study Design

The implementation of appropriate power calculations requires careful consideration of POI-specific research challenges. Family-based designs offer increased power for detecting rare variants but require specialized recruitment strategies [45]. Case-control association studies are more feasible but require larger sample sizes to achieve adequate power for modest genetic effects [43] [44].

Recent research has identified several promising genetic biomarkers for POI through advanced methodologies, including COX5A, UQCRFS1, LCK, RPS2, and EIF5A, which demonstrate consistent expression trends in validation studies [46]. These findings highlight the importance of oxidative phosphorylation pathways and DNA damage repair mechanisms in POI pathophysiology [46]. The convergence of these biological pathways through independent genetic studies strengthens their validity and provides promising targets for therapeutic development.

Implementation Framework for POI Research

Integrated Workflow for Robust Genetic Discovery

Building on the statistical principles and methodological considerations discussed, the following pathway diagram illustrates an integrated approach to POI genetic research that systematically addresses the winner's curse throughout the research process:

Future Directions and Recommendations

As POI genetic research advances, several key considerations will enhance the field's ability to overcome the winner's curse and produce more reliable findings:

Collaborative Consortia: Given the relative rarity of POI and the modest effect sizes of most genetic variants, multi-center collaborations are essential to achieve sample sizes sufficient for well-powered discovery and replication [45] [46].

Standardized Phenotyping: Implementation of consistent diagnostic criteria across research centers will improve cohort homogeneity and enhance statistical power [45] [46].

Advanced Statistical Methods: Incorporation of machine learning approaches such as random forest and Boruta algorithms can enhance feature selection and identify genuine genetic signals amidst multiple candidates [46].

Multi-Omics Integration: Combining genomic data with transcriptomic, epigenomic, and proteomic information will provide complementary evidence for genetic associations and enhance biological validation [47] [46].