Sequencing Platform Concordance: A Comprehensive Guide for Reliable Genomic Analysis in Biomedical Research

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for understanding, measuring, and optimizing concordance across next-generation sequencing (NGS) platforms. Moving from foundational principles to advanced validation strategies, we explore why sequencing results vary between platforms and methodologies, present practical approaches for concordance testing, address common troubleshooting scenarios, and offer comparative performance data for major commercial systems. With the growing importance of genomic data in clinical decision-making and drug development, this guide helps professionals ensure data reliability, improve reproducibility, and implement robust quality control measures for sequencing-based studies.

Why Sequencing Results Differ: Understanding the Fundamentals of Platform Discordance

In genomic analysis, concordance is a critical measure of consistency and reproducibility between experimental methods or platforms. Beyond simple agreement, it describes the probability that different tests will yield the same result for a specific genomic characteristic, whether that characteristic is a phenotypic trait in twin studies, a variant classification compared between laboratories, or a genomic alteration detected by different sequencing technologies. [1] For researchers in drug development and pharmaceutical research, understanding concordance is essential for validating biomarkers and companion diagnostics and for making critical decisions based on genomic data.

Experimental Approaches to Measuring Concordance

Measuring concordance requires carefully designed experiments that directly compare results across different platforms, methodologies, or sample types under controlled conditions.

Tissue vs. Circulating Tumor DNA (ctDNA) Concordance Study

Objective: To evaluate the concordance of genomic alterations identified in tumor tissue biopsies versus those detected in circulating cell-free DNA (cfDNA) from blood samples in patients with advanced solid tumors. [2]

Methodology: This retrospective study analyzed 28 patients with advanced cancers (50% lung cancer, 93% stage IV disease). Researchers performed next-generation sequencing on both tumor tissue samples and peripheral blood cfDNA samples using assays whose panels shared 65 genes. The median interval between paired sample collections was 89 days. Concordance was strictly defined as agreement between the two platforms on the presence or absence of identical genomic alterations in individual genes. [2]

Key Parameters:

- Patient Cohort: 28 patients with advanced solid tumors

- Gene Panel: 65 genes common to both tissue and cfDNA assays

- Statistical Measures: Overall concordance rate, sensitivity, specificity

- Analysis Focus: TP53, EGFR, KRAS, APC, and CDKN2A genes
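The gene-level definition of concordance above can be made concrete with a small sketch. It assumes each assay's results are reduced to a mapping from gene to the set of alterations reported, scores a gene as concordant when both assays report the same set (including the empty set), and, as an assumption for illustration, treats tissue as the reference assay when computing gene-level sensitivity and specificity. The function names, gene calls, and toy panel are hypothetical and are not data from the cited study.

```python
from dataclasses import dataclass

# Hypothetical input format: gene -> set of alteration labels reported by one assay.
Calls = dict[str, frozenset[str]]
NO_CALLS: frozenset[str] = frozenset()


@dataclass
class ConcordanceSummary:
    overall: float       # agreement across every gene in the shared panel
    altered_only: float  # agreement across genes altered in at least one assay
    sensitivity: float   # reference-positive genes that are also positive in the test assay
    specificity: float   # reference-negative genes that are also negative in the test assay


def _ratio(num: int, den: int) -> float:
    return num / den if den else float("nan")


def gene_level_concordance(panel: list[str], reference: Calls, test: Calls) -> ConcordanceSummary:
    concordant = altered = altered_concordant = 0
    tp = fn = tn = fp = 0
    for gene in panel:
        ref = reference.get(gene, NO_CALLS)
        tst = test.get(gene, NO_CALLS)
        # Concordant: identical alterations, or no alteration reported by either assay
        same = ref == tst
        concordant += int(same)
        if ref or tst:
            altered += 1
            altered_concordant += int(same)
        # Sensitivity/specificity use gene-level presence/absence, reference assay as truth
        if ref:
            tp += int(bool(tst))
            fn += int(not tst)
        else:
            fp += int(bool(tst))
            tn += int(not tst)
    return ConcordanceSummary(
        overall=_ratio(concordant, len(panel)),
        altered_only=_ratio(altered_concordant, altered),
        sensitivity=_ratio(tp, tp + fn),
        specificity=_ratio(tn, tn + fp),
    )


# Toy example (not study data): tissue is treated as the reference assay.
panel = ["TP53", "EGFR", "KRAS", "APC", "CDKN2A", "BRAF", "PIK3CA", "PTEN", "ERBB2", "MET"]
tissue = {"TP53": frozenset({"R273H"}), "EGFR": frozenset({"L858R"}), "KRAS": frozenset({"G12D"})}
cfdna = {"TP53": frozenset({"R273H"}), "APC": frozenset({"R1450*"})}
summary = gene_level_concordance(panel, tissue, cfdna)
print(summary)
```

In this toy panel, overall agreement is 70% because six genes are unaltered in both assays, while agreement among the four genes altered in at least one assay is only 25%, the same kind of inflation discussed for the tissue vs. cfDNA data.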

Multi-Platform Sequencing Performance Benchmark

Objective: To comprehensively benchmark the performance of multiple DNA sequencing platforms using standardized reference materials to assess reproducibility, accuracy, and variant calling consistency. [3]

Methodology: The Association of Biomolecular Resource Facilities (ABRF) Next-Generation Sequencing Study analyzed multiple sequencing platforms including Illumina HiSeq/NovaSeq, Ion Torrent S5/Proton, PacBio circular consensus sequencing, Oxford Nanopore PromethION/MinION, and BGISEQ-500/MGISEQ-2000. The study utilized human and bacterial reference DNA samples to evaluate platform performance across multiple parameters including genome coverage, error rates, mapping rates, and accuracy in detecting known insertion/deletion events. [3]

Comparative Performance Data Across Platforms

The following tables summarize key concordance metrics and performance data from published studies comparing different genomic analysis approaches.

Table 1: Tissue vs. cfDNA Concordance Metrics for Detecting Genomic Alterations [2]

| Metric | All Genes | Genes with Alterations | Notes |
|---|---|---|---|
| Overall Concordance | 91.9-93.9% | 11.8-17.1% | Includes both mutated and non-mutated genes |
| Sensitivity | 59.1% | - | For TP53, EGFR, KRAS, APC, CDKN2A |
| Specificity | 94.8% | - | For TP53, EGFR, KRAS, APC, CDKN2A |
| Mean Alterations per Patient | Tissue: 4.82; cfDNA: 2.96 | - | After filtering: Tissue: 3.21; cfDNA: 2.96 |

Table 2: Sequencing Platform Performance Comparison in ABRF Benchmarking Study [3]

| Platform Category | Most Consistent Genome Coverage | Lowest Error Rates | Best for Insertion/Deletion Detection | Best Performance in Repeat-Rich Regions |
|---|---|---|---|---|
| Short-Read | Illumina HiSeq 4000 and X10 | BGISEQ-500/MGISEQ-2000 | Illumina NovaSeq 6000 (2×250-bp chemistry) | - |
| Long-Read | - | - | - | PacBio CCS and Oxford Nanopore PromethION/MinION |

Table 3: Variant Classification Concordance Across Clinical Laboratories [4]

| Variant Category | Complete 5-Category Concordance | Clinically Meaningful Discordance | Post-Review Concordance |
|---|---|---|---|
| All Submitted Variants (n=158) | 54% (86/158) | 11% (17/158) | 84% (118/140) |
| Pathogenic (P) Variants | 79% stable | 21% discordant with VUS | Improved after consensus review |
| Likely Pathogenic (LP) Variants | 37% stable | 63% discordant with VUS | Improved after consensus review |

Analysis of Concordance Challenges and Limitations

The data reveal several critical challenges in achieving high concordance across genomic platforms. The stark difference between overall concordance (91.9-93.9%) and concordance for genes with actual alterations (11.8-17.1%) shows how including non-mutated genes, on which both assays agree by default, inflates perceived agreement. [2] The low agreement among altered genes indicates that over 50% of mutations detected by either tissue or cfDNA testing were not identified by the other method, suggesting that these approaches may play complementary roles in comprehensive genomic profiling.

Variant classification demonstrates similar challenges, with only 54% complete concordance across laboratories despite using standardized ACMG-AMP guidelines. [4] This discordance stems from differences in interpreting evidence codes, applying gene-specific guidelines, and accessing proprietary databases. The improvement to 84% concordance after consensus review demonstrates the value of data sharing and standardized interpretation frameworks.
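The comparison categories in the variant classification table can be illustrated with a small sketch that compares five-tier ACMG-AMP calls for the same variant from two laboratories. It counts an exact tier match as complete concordance and treats a disagreement that crosses the P/LP versus VUS/LB/B boundary as clinically meaningful. That boundary is a common convention but an assumption here, since the cited study's exact definition is not restated in this guide. The `Tier` enum, function names, and example calls are hypothetical.

```python
from collections import Counter
from enum import Enum


class Tier(Enum):
    P = "pathogenic"
    LP = "likely pathogenic"
    VUS = "uncertain significance"
    LB = "likely benign"
    B = "benign"


# Assumed grouping: P/LP drive clinical action, VUS/LB/B do not.
ACTIONABLE = {Tier.P, Tier.LP}


def compare_pair(a: Tier, b: Tier) -> str:
    """Classify the agreement between two laboratories' calls for one variant."""
    if a == b:
        return "complete"
    if (a in ACTIONABLE) != (b in ACTIONABLE):
        return "clinically_meaningful"
    return "minor"  # e.g. P vs LP, LB vs B, or VUS vs LB


def summarize(pairs: list[tuple[Tier, Tier]]) -> dict[str, float]:
    counts = Counter(compare_pair(a, b) for a, b in pairs)
    n = len(pairs)
    return {key: counts[key] / n for key in ("complete", "minor", "clinically_meaningful")}


# Hypothetical calls from two laboratories for four variants
calls = [(Tier.P, Tier.P), (Tier.LP, Tier.VUS), (Tier.LB, Tier.B), (Tier.P, Tier.LP)]
print(summarize(calls))
```

Studies with more than two laboratories per variant would need a rule for combining several calls (for example, requiring all to agree), which this pairwise sketch does not cover.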

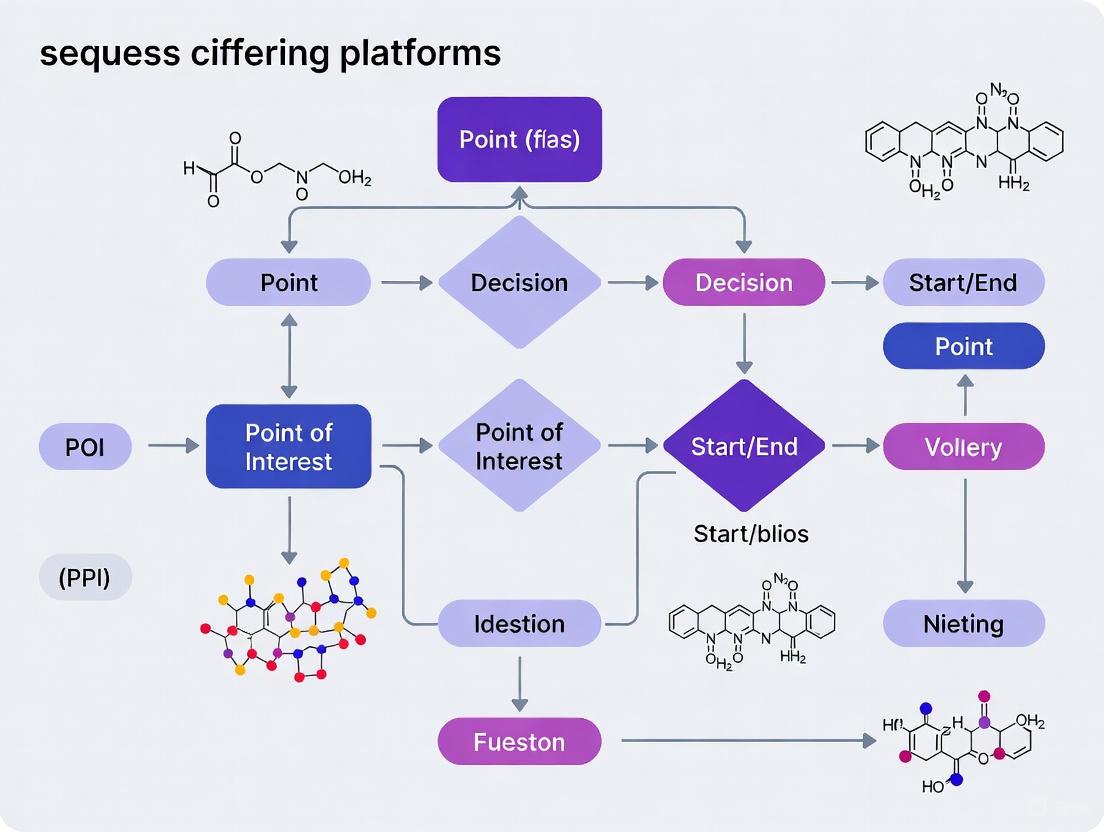

Visualizing Concordance Analysis Workflows

Figure 1: Generalized concordance analysis workflow for comparing genomic platforms.

Figure 2: Tissue versus liquid biopsy concordance study design showing key metrics.

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Platforms for Concordance Studies

| Reagent/Platform | Function | Application in Concordance Research |
|---|---|---|
| Next-Generation Sequencers (Illumina, Ion Torrent, BGISEQ) [3] | DNA/RNA sequencing | Platform comparison studies, reference material sequencing |
| Targeted Gene Panels (65-gene panel) [2] | Focused genomic analysis | Standardized comparison across platforms with common genes |
| Reference DNA Materials (NIST, Coriell) [3] | Benchmark standards | Platform performance assessment using characterized samples |
| cfDNA Extraction Kits | Isolation of circulating DNA | Liquid biopsy comparison studies |
| ACMG-AMP Classification Guidelines [4] | Variant interpretation framework | Standardizing pathogenicity assessment across laboratories |
| Bioinformatics Pipelines (GATK, MegaBOLT) [5] [6] | Data processing and variant calling | Analysis standardization across platforms |

Implications for Drug Development and POI Research

For drug development professionals, these concordance findings have significant implications. The complementary nature of tissue and liquid biopsy sequencing suggests that an optimal biomarker strategy should incorporate both methods where possible. [2] The demonstrated variability in variant classification highlights the importance of independently verifying potentially actionable mutations, particularly when therapeutic decisions depend on specific genomic alterations.

When designing pharmacogenomic or pharmacogenetic studies, researchers should weigh platform-specific strengths and limitations. Long-read platforms excel in repeat-rich regions and complex structural variants, while short-read platforms provide more consistent coverage and lower error rates for single-nucleotide variants. [3] The choice between tissue and liquid biopsy approaches should factor in tumor heterogeneity, cancer type, disease stage, and accessibility of biopsy sites.

As genomic technologies continue to evolve, ongoing concordance assessment remains essential for ensuring the reliability and reproducibility of data driving drug development decisions.

In pharmacogenomics and drug development, Whole Exome Sequencing (WES) and Whole Genome Sequencing (WGS) are critical for identifying genetic variants that influence drug response. However, technical variations between sequencing platforms can significantly impact data concordance, potentially leading to different biological interpretations. This guide objectively compares the performance of leading sequencing platforms and exome capture technologies, providing researchers with experimental data to inform their genomic studies in POI (Pharmacogenomics and Personalized Medicine) research.

Platform Performance Comparison

Whole Exome Sequencing Platforms

A 2025 study compared four commercially available WES platforms on the DNBSEQ-T7 sequencer, evaluating data quality, capture specificity, coverage uniformity, and variant detection accuracy [5].

Table 1: Comparison of Four Exome Capture Platforms on DNBSEQ-T7 [5]

| Platform (Manufacturer) | Capture Specificity | Coverage Uniformity | Variant Detection Accuracy | Technical Stability |
|---|---|---|---|---|
| TargetCap Core Exome Panel v3.0 (BOKE) | Comparable across platforms | Comparable across platforms | Comparable reproducibility | Superior on DNBSEQ-T7 |
| xGen Exome Hyb Panel v2 (IDT) | Comparable across platforms | Comparable across platforms | Comparable reproducibility | Superior on DNBSEQ-T7 |
| EXome Core Panel (Nad) | Comparable across platforms | Comparable across platforms | Comparable reproducibility | Superior on DNBSEQ-T7 |
| Twist Exome 2.0 (Twist) | Comparable across platforms | Comparable across platforms | Comparable reproducibility | Superior on DNBSEQ-T7 |

Whole Genome Sequencing Platforms

A comparative analysis in 2025 highlighted significant performance differences between the Illumina NovaSeq X Series and the Ultima Genomics UG 100 platform for WGS [7].

Table 2: WGS Platform Comparison: NovaSeq X Series vs. Ultima Genomics UG 100 [7]

| Performance Metric | Illumina NovaSeq X Series | Ultima Genomics UG 100 |
|---|---|---|
| Reference Benchmark | Full NIST v4.2.1 benchmark | Subset of NIST benchmark (UG "High-Confidence Region") |
| Genome Coverage | Comprehensive analysis of all genomic regions | Excludes 4.2% of the genome, including 2.3% of exome |
| SNV Errors | Baseline | 6× more errors |
| Indel Errors | Baseline | 22× more errors |
| Performance in GC-Rich Regions | Maintains high coverage | Significant coverage drop in mid-to-high GC-rich regions |
| Homopolymer Analysis | Maintains accuracy in homopolymers >10 bp | Indel accuracy decreases for homopolymers >10 bp; regions >12 bp excluded |

Key Experimental Protocols

WES Platform Comparison Methodology

The comparative assessment of the four WES platforms followed a rigorous, standardized workflow to ensure a fair comparison [5]:

- Sample Preparation: DNA samples from the well-characterized NA12878 cell line were used.

- Library Construction: A total of 72 libraries were prepared using the MGIEasy UDB Universal Library Prep Set on an automated sample preparation system. Each library was uniquely dual-indexed.

- Pre-capture Pooling & Hybridization:

  - Both 1-plex and 8-plex hybridizations were performed.

  - Two different enrichment protocols were tested: one using each manufacturer's respective reagents and protocol, and another using a uniform MGI enrichment reagent and workflow (MGIEasy Fast Hybridization and Wash Kit) for a more controlled comparison.

  - The probe hybridization step was standardized to a 1-hour incubation across all methods.

- Sequencing: The 16 captured DNA libraries (representing 72 samples) were pooled and sequenced on a single lane of a DNBSEQ-T7 sequencer using PE150 to a depth of >100x coverage.

- Bioinformatics Analysis: Data processing and variant calling were performed using MegaBOLT v2.3.0.0, following GATK best practices, with the hg19 reference build and a public variant dataset (dbSNP build 151) used to improve variant calling accuracy.
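The sequencing step above aims for more than 100× depth. A rough sketch of the arithmetic behind such targets follows: mean on-target depth is approximately read pairs × 2 × read length × on-target fraction × (1 - duplicate rate) ÷ target size. The ~35 Mb target size, 70% on-target fraction, and 5% duplicate rate in the example are illustrative assumptions rather than values reported by the study, and the formula ignores uneven coverage across targets.

```python
def mean_target_depth(read_pairs: float, read_length: int, target_bp: float,
                      on_target: float, duplicate_rate: float) -> float:
    """Approximate mean depth over the capture target for paired-end data."""
    usable_bases = read_pairs * 2 * read_length * on_target * (1 - duplicate_rate)
    return usable_bases / target_bp


def read_pairs_needed(depth: float, read_length: int, target_bp: float,
                      on_target: float, duplicate_rate: float) -> float:
    """Invert the formula above: read pairs per sample for a desired mean depth."""
    return depth * target_bp / (2 * read_length * on_target * (1 - duplicate_rate))


# Illustrative assumptions: ~35 Mb exome target, PE150, 70% on target, 5% duplicates.
pairs = read_pairs_needed(100, 150, 35e6, 0.70, 0.05)
print(f"{pairs / 1e6:.1f} M read pairs per sample for 100x")
```

Multiplying the per-sample figure by the number of pooled libraries gives a quick check of whether a single lane can plausibly deliver the target depth.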

WGS Platform Comparison Methodology

The benchmarking of the Illumina NovaSeq X Plus against the Ultima Genomics UG 100 was conducted as follows [7]:

- Data Generation:

  - Illumina Data: WGS data were generated on a NovaSeq X Plus using a NovaSeq X Series 10B Reagent Kit and analyzed with DRAGEN v4.3. The data were downsampled to 35x coverage.

  - Ultima Data: A publicly available dataset, generated on the UG 100 platform at 40x coverage and analyzed by Ultima using DeepVariant software, was used.

- Accuracy Benchmarking: Variant calling performance for both platforms was assessed against the full NIST v4.2.1 benchmark for the GIAB HG002 reference genome.

- Analysis of Challenging Regions: Performance was specifically evaluated in challenging genomic regions, including GC-rich sequences and homopolymers.
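The Illumina data above were downsampled to 35× before benchmarking. A minimal sketch of one way to do this follows: the keep fraction is the target depth divided by the current depth, and each read pair is kept or dropped based on a hash of its read name, so both mates share the same decision and the result is reproducible. The source does not say which tool or method was used; the 50× starting depth, the read names, and the SHA-256-based hash are assumptions for illustration.

```python
import hashlib


def keep_fraction(current_depth: float, target_depth: float) -> float:
    """Fraction of read pairs to keep to reach the target depth."""
    if target_depth >= current_depth:
        return 1.0
    return target_depth / current_depth


def keep_read(read_name: str, fraction: float, seed: int = 0) -> bool:
    """Deterministic per-template decision: both mates carry the same read name."""
    digest = hashlib.sha256(f"{seed}:{read_name}".encode()).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1)
    u = int.from_bytes(digest[:8], "big") / 2**64
    return u < fraction


fraction = keep_fraction(current_depth=50.0, target_depth=35.0)
names = [f"read_{i}" for i in range(100_000)]
kept = sum(keep_read(name, fraction) for name in names)
print(fraction, kept / len(names))
```

Hashing the name rather than drawing a fresh random number per record is what keeps paired mates together when they are processed separately.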

Visualizing Technical Variations

WES Platform Comparison Workflow

The following diagram illustrates the experimental workflow used to compare the four exome capture platforms, highlighting the points where technical variation can be introduced.

WGS Benchmarking and Variant Analysis

This diagram outlines the logic of the WGS benchmarking study, showing how differences in benchmarking methodology and genomic region performance contribute to observed technical variation.

The Scientist's Toolkit

Table 3: Key Research Reagents and Materials for Exome Sequencing Studies [5]

| Item | Function | Example in Featured Study |
|---|---|---|
| Reference DNA | Provides a well-characterized genome for benchmarking platform performance and accuracy. | HapMap-CEPH NA12878 DNA from Coriell Institute [5]. |
| Library Prep Kit | Fragments DNA, ligates adapters, and amplifies the library for sequencing. | MGIEasy UDB Universal Library Prep Set [5]. |
| Exome Capture Panels | Probe sets designed to hybridize and enrich for protein-coding regions of the genome. | TargetCap (BOKE), xGen (IDT), EXome (Nad), Twist Exome 2.0 [5]. |
| Hybridization Reagents | Chemical solutions that facilitate the binding of exome probes to genomic DNA libraries. | MGIEasy Fast Hybridization and Wash Kit (used in uniform protocol) [5]. |
| NIST Benchmark | A community-standard set of high-confidence variant calls for a reference genome, used to validate variant calling accuracy. | NIST v4.2.1 benchmark for GIAB HG002 [7]. |

Within genomics research, particularly in pharmacogenomics and the study of pharmacogenes of interest (POI), the accurate detection of variants is paramount. Sequencing technologies, however, are not error-free; the types and frequencies of errors they introduce are technology-dependent and can significantly affect downstream analysis and interpretation. Knowing whether a platform is prone to substitution errors (one base swapped for another) or indel errors (insertions or deletions of bases) is crucial for designing robust studies and correctly identifying true biological variants, especially in clinically relevant regions. This guide provides an objective comparison of these error profiles across major sequencing platforms, equipping researchers with the data needed to select the appropriate technology for their POI research and to critically evaluate sequencing results.

Next-generation sequencing (NGS) technologies have evolved through distinct generations, each with characteristic error profiles. Second-generation or short-read platforms, such as Illumina, use sequencing-by-synthesis and are generally characterized by low overall error rates but a predisposition for substitution errors [8] [9]. These errors are not random; they are often associated with specific sequence contexts, such as motifs ending in "GG" [8]. In contrast, third-generation or long-read technologies, exemplified by Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT), sequence single molecules and produce much longer reads. This comes at the cost of a higher raw error rate, which consists predominantly of indel errors [9] [10]. For PacBio, these errors are typically randomly distributed, whereas for ONT, indels have a strong tendency to occur within homopolymer regions (stretches of the same base) [10].
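The distinction between substitution and indel errors, and ONT's tendency to place indels in homopolymers, can be illustrated with a small sketch that walks a gapped pairwise alignment of a read against its reference and tallies each error type. It assumes the alignment is already given as two equal-length strings with `-` for gaps; real pipelines would derive this from CIGAR strings in aligned BAM files. The homopolymer rule (an indel touching a run of at least 3 identical reference bases) is a simplified, hypothetical threshold.

```python
from collections import Counter


def run_length(seq: str, j: int) -> int:
    """Length of the run of identical bases in `seq` containing index j (0 if out of range)."""
    if not 0 <= j < len(seq):
        return 0
    left = right = j
    while left > 0 and seq[left - 1] == seq[j]:
        left -= 1
    while right + 1 < len(seq) and seq[right + 1] == seq[j]:
        right += 1
    return right - left + 1


def classify_errors(ref_aln: str, read_aln: str, min_hp: int = 3) -> Counter:
    if len(ref_aln) != len(read_aln):
        raise ValueError("aligned strings must have equal length")
    ref_seq = ref_aln.replace("-", "")
    counts: Counter = Counter()
    ref_pos = 0  # index of the next base in the ungapped reference
    for r, q in zip(ref_aln, read_aln):
        if r == "-" and q == "-":
            continue
        if r == "-":  # insertion in the read, between ref_pos - 1 and ref_pos
            hp = max(run_length(ref_seq, ref_pos - 1), run_length(ref_seq, ref_pos))
            counts["insertion_hp" if hp >= min_hp else "insertion"] += 1
            continue
        if q == "-":  # deletion of reference base ref_pos
            hp = run_length(ref_seq, ref_pos)
            counts["deletion_hp" if hp >= min_hp else "deletion"] += 1
        elif r != q:
            counts["substitution"] += 1
        else:
            counts["match"] += 1
        ref_pos += 1
    return counts


# Toy alignment: one substitution, one deletion inside AAAA, one isolated insertion
print(classify_errors("ACGTCAAAAG-CT", "ACCTCAAA-GTCT"))
```

Aggregating these counts over many reads gives a crude profile of whether a platform's errors are dominated by substitutions or by homopolymer-associated indels.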

Table 1: Fundamental Characteristics of Major Sequencing Platforms and Their Dominant Error Types

| Platform (Generation) | Representative Technology | Typical Read Length | Dominant Error Type | Typical Raw Error Rate | Primary Strengths |
|---|---|---|---|---|---|
| Short-Read (2nd) | Illumina (SBS) | 36-300 bp [11] | Substitution [8] [9] | ~0.1-1% [9] [10] | High throughput, low cost per base, high raw accuracy |
| Long-Read (3rd) | PacBio (SMRT) | 10,000-25,000 bp [11] | Indel (random) [10] | ~10-15% [9] [10] | Long reads, random errors correctable via consensus |
| Long-Read (3rd) | Oxford Nanopore | 10,000-30,000 bp [11] | Indel (in homopolymers) [10] | ~10-15% [10] | Ultra-long reads, real-time sequencing, native base detection |

The underlying chemistry of each platform dictates its error profile. Illumina's sequencing-by-synthesis with reversible dye-terminators is highly accurate but can be affected by issues such as phasing and pre-phasing, and is sensitive to specific sequence contexts that lead to substitution errors [8]. PacBio's Single Molecule Real-Time (SMRT) sequencing detects nucleotide incorporations in real time, with errors largely being stochastic and introduced by the polymerase [11] [12]. Oxford Nanopore's technology measures changes in electrical current as DNA strands pass through a protein pore; the complex signal, particularly within homopolymer regions, makes the technology susceptible to indel errors [11] [10].

Quantitative Comparison of Error Rates

When selecting a sequencing platform for POI research, understanding the quantitative differences in error rates is as important as knowing the qualitative error profiles. The following table synthesizes experimental data from comparative studies to provide a clearer picture of performance.

Table 2: Comparative Error Rates and Performance in Genomic Contexts

| Platform / Metric | Substitution Error Rate | Indel Error Rate | Performance in Homopolymers | Performance in GC-Rich Regions |
|---|---|---|---|---|
| Illumina | Very Low (e.g., 0.0021-0.0042 errors/base in HiSeq data) [8] | Very Low [7] | Robust [7] | Maintains uniform coverage [7] |
| PacBio HiFi | Extremely Low (>99.9% accuracy after consensus) [13] [12] | Very Low after consensus [12] | High accuracy after consensus [12] | Good coverage |
| Oxford Nanopore | Moderate (improving with kits; >99% with Q20+) [13] [12] | Higher, especially in homopolymers [10] | Indel accuracy decreases with homopolymer length [7] [10] | Coverage can drop in high-GC regions [7] |
| Ultima UG 100 | Higher than Illumina (6x more SNV errors in one study) [7] | Significantly higher than Illumina (22x more indel errors in one study) [7] | Indel accuracy decreases for homopolymers >10 bp [7] | Significant coverage drop in mid-to-high GC regions [7] |

A key consideration for long-read technologies is that their high raw error rates can be mitigated. PacBio's HiFi reads, generated through Circular Consensus Sequencing (CCS), where the same molecule is sequenced multiple times, achieve exceptional accuracy (>99.9%) for both substitutions and indels [13] [12]. Similarly, Oxford Nanopore's duplex sequencing, which sequences both strands of a DNA molecule, can push accuracy beyond Q30 (>99.9%) [12]. However, the homopolymer bias for ONT can persist even after consensus correction [10]. For Illumina, while overall error rates are low, the persistent and context-specific nature of its substitution errors means they are not entirely solved by increased coverage and require specific bioinformatic awareness [8].
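Accuracy figures such as Q20 and Q30 are Phred-scaled: Q = -10 log10(p), where p is the per-base error probability, so Q20 corresponds to 99% and Q30 to 99.9% accuracy. The sketch below converts between the two and adds a deliberately simplified model of why random errors are correctable by consensus: if each pass over a molecule errs independently with probability p and the consensus takes a strict majority, the consensus errs only when more than half of the passes are wrong. Independence is an assumption that roughly fits stochastic errors and fails for systematic ones such as homopolymer indels, which is why those can persist after consensus. The model also ignores indels and pessimistically assumes all wrong passes agree, so it is illustrative rather than a predictor of real HiFi or duplex accuracy.

```python
import math


def phred_from_error(p: float) -> float:
    return -10 * math.log10(p)


def error_from_phred(q: float) -> float:
    return 10 ** (-q / 10)


def majority_consensus_error(p: float, passes: int) -> float:
    """P(more than half of `passes` independent reads are wrong), odd pass counts only.

    Pessimistic simplification: every wrong pass is assumed to report the same wrong base.
    """
    if passes % 2 == 0:
        raise ValueError("use an odd number of passes to avoid ties")
    need = passes // 2 + 1
    return sum(math.comb(passes, k) * p**k * (1 - p) ** (passes - k)
               for k in range(need, passes + 1))


# A 10% raw error rate (about Q10) under the independence assumption
for n in (1, 5, 9):
    err = majority_consensus_error(0.10, n)
    print(n, f"{err:.2e}", f"Q{phred_from_error(err):.0f}")
```

Under these assumptions a 10% raw error rate improves to roughly Q20 with 5 passes and roughly Q30 with 9, which conveys the direction of the effect even though real consensus callers are more sophisticated.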

Experimental Protocols for Error Profiling

Robust error profiling relies on standardized experimental designs and benchmarks. The following methodologies are commonly employed in the field to generate the comparative data discussed in this guide.

Whole-Genome Sequencing Benchmarking with Reference Materials

This protocol is the gold standard for comprehensively assessing a platform's variant-calling accuracy, including its error profile.

- Reference Sample: The Genome in a Bottle (GIAB) consortium provides well-characterized reference genomes, such as the HG002 sample, with high-confidence call sets for SNVs, indels, and structural variants [7].

- Sequencing and Analysis: The test platform is used to sequence the reference sample to a high coverage (e.g., 35x). The resulting reads are aligned to the reference genome, and variants are called using the platform's recommended bioinformatic pipeline (e.g., DRAGEN for Illumina, DeepVariant for Ultima data) [7].

- Error Calculation: The called variants are compared against the GIAB benchmark. False positives (variants called that are not in the benchmark) and false negatives (benchmark variants not called) are tallied separately for SNVs and indels. This provides a direct measure of the technology's substitution and indel error rates in a real-world application [7].

- Regional Analysis: Performance is further assessed in challenging genomic contexts, such as homopolymers of varying lengths and GC-rich regions, to identify technology-specific biases [7].
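The error-calculation step above can be sketched as a comparison of two variant sets keyed by (chromosome, position, ref, alt), restricted to the benchmark's confident regions, with TP/FP/FN tallied separately for SNVs and indels and summarized as precision, recall, and F1. This exact-match comparison is a simplification: dedicated benchmarking tools such as hap.py also reconcile equivalent variants written in different representations, which this sketch does not attempt. The variant records, region, and function names are hypothetical.

```python
from collections import defaultdict

Variant = tuple[str, int, str, str]  # (chrom, 1-based pos, ref, alt)


def variant_type(v: Variant) -> str:
    _, _, ref, alt = v
    return "SNV" if len(ref) == 1 and len(alt) == 1 else "INDEL"


def in_regions(v: Variant, regions: list[tuple[str, int, int]]) -> bool:
    chrom, pos, _, _ = v
    # Regions use 1-based, half-open [start, end) coordinates in this sketch
    return any(chrom == c and start <= pos < end for c, start, end in regions)


def benchmark(truth: set[Variant], calls: set[Variant],
              regions: list[tuple[str, int, int]]) -> dict[str, dict[str, float]]:
    truth = {v for v in truth if in_regions(v, regions)}
    calls = {v for v in calls if in_regions(v, regions)}
    tally = defaultdict(lambda: {"TP": 0, "FP": 0, "FN": 0})
    for v in truth & calls:
        tally[variant_type(v)]["TP"] += 1
    for v in calls - truth:   # called but absent from the benchmark
        tally[variant_type(v)]["FP"] += 1
    for v in truth - calls:   # in the benchmark but not called
        tally[variant_type(v)]["FN"] += 1
    out = {}
    for vtype, t in tally.items():
        precision = t["TP"] / (t["TP"] + t["FP"]) if t["TP"] + t["FP"] else float("nan")
        recall = t["TP"] / (t["TP"] + t["FN"]) if t["TP"] + t["FN"] else float("nan")
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        out[vtype] = {**t, "precision": precision, "recall": recall, "F1": f1}
    return out


truth_set = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"),
             ("chr1", 300, "AT", "A"), ("chr1", 400, "G", "GCC")}
call_set = {("chr1", 100, "A", "G"), ("chr1", 250, "T", "C"),
            ("chr1", 300, "AT", "A"), ("chr1", 900, "C", "A")}
confident = [("chr1", 1, 500)]
print(benchmark(truth_set, call_set, confident))
```

Restricting both sets to confident regions first matters: the call at chr1:900 lies outside the region and is therefore neither rewarded nor penalized.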

Amplicon Sequencing of Microbiome Mock Communities

This approach is widely used to evaluate sequencing accuracy for targeted applications like 16S rRNA gene sequencing.

- Standardized Sample: A mock microbial community with a known composition of DNA is used. This eliminates biological variability and provides a ground truth [13].

- Multi-Platform Sequencing: The same mock community sample is sequenced across multiple platforms (e.g., Illumina for V3-V4 region, PacBio and ONT for full-length 16S) [13].

- Bioinformatic Processing: Sequencing reads are processed through standardized pipelines (e.g., DADA2, Deblur) tailored to each platform to derive Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs). The error rate can be inferred from the rate of unique reads that diverge from the expected reference sequences [14].

- Diversity Assessment: The resulting microbial community profiles are compared to the known composition. Discrepancies, such as the inflation of diversity measures due to sequencing errors, provide an indirect measure of the platform's error rate and its impact on biological conclusions [13].
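The error-rate inference and diversity comparison above can be illustrated with a minimal sketch: each read is compared with every expected reference sequence, the closest one is taken as its true origin, mismatches are pooled into a per-base error rate, and the number of distinct sequences observed is compared with the number expected. It assumes reads and references have equal length and carry only substitutions (Hamming distance); real amplicon data, especially from long-read platforms, would need alignment to account for indels. The sequences are toy examples, not real 16S data.

```python
def hamming(a: str, b: str) -> int:
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def mock_community_error(reads: list[str], references: dict[str, str]) -> dict[str, float]:
    total_bases = mismatches = exact = 0
    assignments = {name: 0 for name in references}
    for read in reads:
        # Assign each read to the closest expected member of the mock community
        name, dist = min(((n, hamming(read, s)) for n, s in references.items()),
                         key=lambda item: item[1])
        assignments[name] += 1
        mismatches += dist
        total_bases += len(read)
        exact += int(dist == 0)
    summary = {
        "per_base_error": mismatches / total_bases,
        "error_free_reads": exact / len(reads),
        # Distinct sequences observed vs. expected: errors inflate apparent diversity
        "observed_unique_sequences": float(len(set(reads))),
        "expected_unique_sequences": float(len(references)),
    }
    summary.update({f"abundance_{n}": c / len(reads) for n, c in assignments.items()})
    return summary


references = {"taxonA": "ACGTACGTAC", "taxonB": "TTGTACCTAC"}
reads = ["ACGTACGTAC", "ACGTACGTAA", "TTGTACCTAC", "TTGTACCTAC"]
print(mock_community_error(reads, references))
```

A single erroneous read is enough to make three distinct sequences appear in a two-member community, which is the kind of diversity inflation that denoising pipelines such as DADA2 aim to remove.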

Visualization of a Hybrid Error Correction Workflow

Given that errors are technology-dependent, a common strategy to achieve high accuracy is hybrid error correction, which leverages the strengths of both short- and long-read technologies. The following diagram illustrates this multi-step computational process.

Diagram 1: Hybrid error correction workflow for long reads.

The workflow begins with two data inputs: high-accuracy short reads from a platform like Illumina and error-prone long reads from PacBio or ONT [9]. The short reads are used to construct a de Bruijn graph (DBG), a computational structure that represents all possible k-mers (short overlapping sequences) from the data. The long reads are then aligned to this graph. Indel errors in the long reads create "bubbles" or misalignments within the DBG. These are first corrected by finding an optimal path through the graph, often one that maximizes the minimum k-mer coverage, which represents the most confident sequence based on the short read data [9] [15]. Finally, substitution errors, which may have been present in the original long reads or introduced by the short reads during the DBG construction, are identified and corrected. This is typically done by analyzing the k-mer coverage profile along the read and applying a majority voting system to rectify bases that appear to be errors [9] [15]. The final output is a set of long reads that retain their length advantage while achieving accuracy comparable to short-read data.
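Building and traversing a full de Bruijn graph is beyond a short example, but the core idea that short-read k-mers act as trusted evidence for correcting a long read can be sketched with a k-mer count table standing in for the graph. The sketch below covers only the substitution-correction stage: for each base of the long read it tries the alternative bases and keeps one only if it increases the number of "solid" k-mers (seen at least `min_count` times in the short reads) overlapping that position, a simple form of k-mer voting. The values of `k` and `min_count`, the greedy left-to-right pass, and the function names are illustrative assumptions; tools such as LoRDEC implement the graph-path search described above and also handle indels, which this sketch does not.

```python
from collections import Counter


def kmer_counts(short_reads: list[str], k: int) -> Counter:
    """Count every k-mer in the (assumed accurate) short reads."""
    counts: Counter = Counter()
    for read in short_reads:
        for i in range(len(read) - k + 1):
            counts[read[i:i + k]] += 1
    return counts


def solid_support(seq: str, pos: int, k: int, counts: Counter, min_count: int) -> int:
    """Number of k-mers overlapping `pos` that occur at least `min_count` times."""
    first = max(0, pos - k + 1)
    last = min(pos, len(seq) - k)
    return sum(counts[seq[i:i + k]] >= min_count for i in range(first, last + 1))


def correct_substitutions(long_read: str, short_reads: list[str],
                          k: int = 5, min_count: int = 2) -> str:
    counts = kmer_counts(short_reads, k)
    seq = list(long_read)
    for pos in range(len(seq)):
        current = "".join(seq)
        best_base = seq[pos]
        best_support = solid_support(current, pos, k, counts, min_count)
        for base in "ACGT":
            if base == seq[pos]:
                continue
            candidate = current[:pos] + base + current[pos + 1:]
            support = solid_support(candidate, pos, k, counts, min_count)
            # Replace a base only on strict improvement in solid k-mer support
            if support > best_support:
                best_base, best_support = base, support
        seq[pos] = best_base
    return "".join(seq)


genome = "ATGCGTACCTGAAGTC"
short_reads = [genome, genome]        # toy "short reads" giving each k-mer a count of 2
long_read = "ATGCGTAGCTGAAGTC"        # one substitution error (C -> G at index 7)
print(correct_substitutions(long_read, short_reads))
```

In this toy case every 5-mer of the genome is unique, so restoring the C at index 7 makes all five overlapping k-mers solid again; in real genomes, repeats make the choice of `k` and the coverage threshold far more consequential.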

The Scientist's Toolkit: Essential Reagents and Software for Error Analysis

- GIAB Reference Materials: Genomic DNA from characterized cell lines (e.g., HG002) that provides a ground truth for benchmarking platform-specific error rates and validating entire sequencing workflows [7].

- ZymoBIOMICS Mock Microbial Communities: Defined mixes of microbial cells or DNA with known composition, essential for empirically measuring error rates in amplicon-based sequencing studies without biological ambiguity [13].

- SMRTbell Templates: The proprietary library structure for PacBio sequencing, which involves ligating hairpin adapters to double-stranded DNA to create a circular template essential for generating multi-pass HiFi reads [11] [12].

- Nanopore Native Barcoding Kits: Kits (e.g., SQK-NBD114.24) that allow for multiplexed sequencing and are integral to workflows that assess performance across multiple samples simultaneously [13].

- DRAGEN Secondary Analysis Platform: A comprehensive bioinformatic suite from Illumina used for primary and secondary analysis, including mapping and variant calling, which is critical for generating the data used in platform performance comparisons [7].

- De Bruijn Graph-Based Correction Tools: Software such as LoRDEC and ParLECH that implement the hybrid correction workflow. They are essential for researchers using long-read data who need to achieve high accuracy by leveraging complementary short-read datasets [9] [10].

- Emu Algorithm: A specialized bioinformatic tool designed for analyzing full-length 16S rRNA sequences from long-read platforms like ONT. It helps mitigate the impact of sequencing errors on taxonomic profiling by generating fewer false positives and negatives [13].

The landscape of sequencing technologies offers a clear trade-off: short-read platforms provide high base-level accuracy with a tendency for context-specific substitution errors, while long-read platforms offer unparalleled continuity and structural variant resolution at the cost of a higher, yet often correctable, indel error rate. For POI research, where the accurate identification of all variant types is critical for understanding drug response, the choice of technology must be informed by these distinct error profiles. Emerging platforms continue to push the boundaries of accuracy and cost. Looking forward, the field is moving towards a multi-platform approach, where the synergistic use of short and long-read data, combined with advanced hybrid correction algorithms, will provide the most comprehensive and accurate view of the genome, ultimately strengthening the foundation of pharmacogenomic discovery and personalized medicine.

The Impact of Library Preparation and Target Capture on Results

In genomics research, the accuracy and reliability of sequencing data are critically dependent on the initial steps of library preparation and target capture. These pre-sequencing workflows determine which portions of the genome are isolated, amplified, and prepared for sequencing, directly influencing downstream analytical outcomes [11]. In pharmacogenomics (PGx) and oncology research, particularly for studies of genes of interest (GOI) and patient outcomes investigation (POI), the choice of methodology can mean the difference between detecting a critical, actionable genetic variant and missing it entirely [16]. As sequencing technologies evolve, understanding how these preparatory steps affect concordance across platforms has become a fundamental requirement for rigorous research and reliable diagnostic applications.

This guide objectively compares the performance of various library preparation and target capture techniques, providing supporting experimental data to help researchers navigate this complex landscape. We focus on the practical implications for research aimed at achieving consistent results across the diverse sequencing platforms common in modern collaborative studies.

Core Principles and Technologies

The Workflow from Sample to Sequence

Library preparation and target capture are sequential processes that convert raw nucleic acids into a format compatible with sequencing instruments. The specific steps vary by application but share a common goal: to create a representative, unbiased library of DNA or cDNA fragments with the appropriate adapters for sequencing.

The process begins with Sample input, which can be genomic DNA, RNA, or cDNA. Fragmentation is performed to shear the nucleic acids into appropriately sized fragments. This is followed by End Repair to create blunt ends, Adapter Ligation where platform-specific sequencing adapters are added, and Size Selection to purify fragments of the desired length. A Pre-Capture PCR step may be used to amplify the library before Target Capture, where specific genomic regions of interest are enriched using hybridization probes. Finally, Post-Capture PCR amplifies the captured library before Sequencing [17] [5].

Target Enrichment Strategies

Targeted sequencing requires methods to isolate specific genomic regions from the entire genome. Two primary enrichment strategies are commonly employed:

- Amplicon-Based Enrichment: Uses PCR primers to directly amplify targeted regions. This method is efficient for small target sizes but can struggle with uniformity and GC-rich regions.

- Hybridization-Based Capture: Utilizes biotinylated RNA or DNA probes that hybridize to targeted regions, which are then pulled down with streptavidin beads. This approach, used in whole exome sequencing (WES) and custom panels, offers better uniformity and the ability to target larger regions [17].

Key Technical Considerations

Several technical factors directly impact the performance of library preparation and target capture:

- Coverage Uniformity: The consistency of sequencing depth across all targeted regions. Poor uniformity results in some regions being under-sequenced, potentially missing variants [17].

- Capture Specificity: The percentage of sequencing reads that map to the intended target regions versus off-target regions. Higher specificity makes sequencing more cost-effective [5].

- GC Bias: The tendency of certain methods to under-represent GC-rich or GC-poor regions, creating coverage gaps in important genomic areas [7].

- Duplicate Rate: The fraction of reads that are copies of the same original fragment. Duplicates arise largely from over-amplification during PCR steps; they reduce effective sequencing depth and can skew variant allele frequency measurements [18].
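Two of these metrics are straightforward to compute once reads are aligned. The sketch below marks read pairs as duplicates when they share the same chromosome, fragment start and end, and strand (a simplified version of the position-based logic used by duplicate-marking tools) and summarizes GC bias as mean depth per GC-content bin across target regions. The record layout, 10% bin width, and example values are hypothetical.

```python
from collections import defaultdict


def duplicate_rate(fragments: list[tuple[str, int, int, str]]) -> float:
    """fragments: (chrom, fragment_start, fragment_end, strand) for each read pair."""
    seen = set()
    duplicates = 0
    for frag in fragments:
        if frag in seen:
            duplicates += 1  # same coordinates as an earlier pair: likely a PCR duplicate
        else:
            seen.add(frag)
    return duplicates / len(fragments) if fragments else 0.0


def gc_bin(seq: str, n_bins: int = 10) -> int:
    """GC-content bin index, computed with integers to avoid float edge effects."""
    s = seq.upper()
    gc = s.count("G") + s.count("C")
    return min(gc * n_bins // len(s), n_bins - 1)


def depth_by_gc_bin(regions: list[tuple[str, float]], n_bins: int = 10) -> dict[str, float]:
    """regions: (target sequence, mean depth). Returns mean depth per GC-content bin."""
    sums: dict[int, float] = defaultdict(float)
    counts: dict[int, int] = defaultdict(int)
    for seq, depth in regions:
        b = gc_bin(seq, n_bins)
        sums[b] += depth
        counts[b] += 1
    return {f"{b * 100 // n_bins}-{(b + 1) * 100 // n_bins}%": sums[b] / counts[b]
            for b in sorted(sums)}


frags = [("chr1", 100, 350, "+"), ("chr1", 100, 350, "+"),
         ("chr1", 100, 350, "-"), ("chr2", 500, 700, "+")]
regions = [("ATATATATAT", 80.0), ("ATGCATGCAT", 100.0), ("GCATGCATGC", 95.0),
           ("GCGCGCGCGA", 40.0), ("GCGCGCGCGC", 30.0)]
print(duplicate_rate(frags))
print(depth_by_gc_bin(regions))
```

A flat depth profile across GC bins indicates little GC bias; a drop at the high-GC end, as in this toy data, is the pattern described for some platforms in the comparisons above.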

Experimental Comparisons and Performance Data

Comparative Study of Exome Capture Platforms

A comprehensive 2025 study evaluated four commercial exome capture platforms on the DNBSEQ-T7 sequencer, providing direct performance comparisons relevant to targeted sequencing applications [17] [5].

Table 1: Performance Metrics of Four Exome Capture Platforms on DNBSEQ-T7

| Platform | Target Coverage | Uniformity | Duplicate Rate | Specificity | Sensitivity |
|---|---|---|---|---|---|
| BOKE TargetCap v3.0 | 99.2% | 97.1% | 5.8% | 71.5% | 99.1% |
| IDT xGen Exome Hyb v2 | 99.4% | 97.5% | 5.2% | 72.8% | 99.3% |
| Nad EXome Core Panel | 98.9% | 96.8% | 6.3% | 70.1% | 98.8% |
| Twist Exome 2.0 | 99.5% | 97.8% | 4.9% | 73.5% | 99.5% |

Note: Performance metrics shown are for 1-plex hybridization with 1000 ng input DNA, assessed at 100× mean coverage. Target coverage = percentage of target bases with ≥20% mean coverage; Uniformity = percentage of bases with depth >0.2× mean; Specificity = percentage of reads on target; Sensitivity = variant detection accuracy against known standards [5].

The study found that all four platforms exhibited comparable reproducibility and superior technical stability on the DNBSEQ-T7 sequencer. The researchers also established a robust workflow for probe hybridization capture that demonstrated broad compatibility across all four commercial exome probe sets, enhancing interoperability regardless of probe brand [17]. This uniform performance is particularly valuable for multi-center studies where consistent results across laboratories are essential.
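The uniformity and specificity definitions in the table note translate directly into code. The sketch below is minimal and assumes per-base depths over the targets and read counts were already extracted by an upstream coverage step (not shown). It covers only these two definitions, not the study's full pipeline.

```python
from statistics import mean


def uniformity(depths, fraction: float = 0.2) -> float:
    """Percentage of target bases with depth above `fraction` x the mean depth."""
    threshold = fraction * mean(depths)
    return 100.0 * sum(d > threshold for d in depths) / len(depths)


def specificity(on_target_reads: int, total_reads: int) -> float:
    """Percentage of sequenced reads that map to the target regions."""
    return 100.0 * on_target_reads / total_reads


# Toy per-base depths (invented): one base sits below 0.2x the mean of 84x.
print(uniformity([100, 110, 90, 15, 105]))  # 80.0
print(specificity(735, 1000))  # 73.5
```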

Impact of Input Quality and Quantity

The quality of sequencing results is significantly influenced by the starting material. A 2025 study on H. pylori diagnosis from formalin-fixed, paraffin-embedded (FFPE) gastric biopsies demonstrated how input DNA quality affects target capture efficiency [19].

Table 2: Impact of Sample Quality on Target Capture Sequencing Performance

| PCR Ct Value | Average Sequencing Depth | Percentage of Reads Mapped to H. pylori | Successful Characterization Rate |
|---|---|---|---|
| <30.0 | >10× | 14 ± 4% | 100% |
| 30.0-32.9 | Variable (5-15×) | 8 ± 3% | 83.3% |
| >33.0 | <5× | 3 ± 1% | <50% |

Note: Data derived from target-enrichment sequencing of 30 FFPE gastric biopsy samples for H. pylori diagnosis. Characterization includes detection of resistance markers, virulence factors, and multilocus sequence typing profiles [19].

The research established a clear Ct value cutoff of approximately 32.9, beyond which samples were unlikely to achieve sufficient sequencing depth for complete characterization. This highlights the critical relationship between input DNA quality and the success of target capture approaches, particularly with suboptimal samples like FFPE tissue [19].
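A Ct-based gate like the one described can be written as a small triage function. The sketch below follows the strata of Table 2 and sends any value above 32.9 to the last stratum, matching the approximate cutoff in the text. Both that boundary handling and the action labels are illustrative assumptions, not validated acceptance criteria.

```python
def ct_triage(ct: float) -> str:
    """Suggest handling for an FFPE sample from its PCR Ct value.

    Strata follow Table 2; values above 32.9 fall in the last stratum (assumed
    boundary handling). Labels are illustrative, not validated criteria.
    """
    if ct < 30.0:
        return "proceed"  # 100% characterized in this stratum
    if ct <= 32.9:
        return "proceed, review depth"  # 83.3% characterized
    return "flag: unlikely to reach sufficient depth"  # <50% characterized


for ct in (27.4, 31.8, 34.2):
    print(ct, ct_triage(ct))
```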

Platform-Specific Performance in Challenging Genomic Regions

Different sequencing and capture technologies exhibit variable performance in genomically challenging regions. A comparative analysis of the Illumina NovaSeq X Series and Ultima Genomics UG 100 platform revealed significant differences in coverage of difficult sequences [7].

The UG 100 platform employed a "high-confidence region" (HCR) that excluded 4.2% of the genome, including problematic areas such as homopolymers longer than 12 base pairs and various repetitive sequences. These excluded regions contained approximately 450,000 variants, representing 2.3% of the exome and 1.0% of ClinVar variants. In contrast, the NovaSeq X Series maintained coverage across these challenging regions, resulting in 6× fewer SNV errors and 22× fewer indel errors when assessed against the complete NIST v4.2.1 benchmark [7].

This demonstrates how platform-specific limitations can effectively mask biologically relevant genomic regions, potentially missing pathogenic variants in clinically important genes such as B3GALT6 (linked to Ehlers-Danlos syndrome) and FMR1 (associated with fragile X syndrome) [7].
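To estimate how much of a benchmark a platform-specific exclusion mask removes, one can count the benchmark variants that fall inside the excluded intervals. The sketch below is minimal and handles a single chromosome. It assumes the mask is given as sorted, non-overlapping half-open intervals, the convention used by BED files.

```python
import bisect


def fraction_masked(positions, excluded) -> float:
    """Fraction of variant positions falling inside excluded intervals.

    excluded: sorted, non-overlapping half-open intervals [start, end) on a
    single chromosome, in the same coordinate system as `positions`.
    """
    starts = [start for start, _ in excluded]
    masked = 0
    for pos in positions:
        # Index of the last interval that starts at or before `pos`.
        i = bisect.bisect_right(starts, pos) - 1
        if i >= 0 and pos < excluded[i][1]:
            masked += 1
    return masked / len(positions)


# Invented example: two excluded blocks (e.g. a long homopolymer and a repeat).
print(fraction_masked([50, 150, 199, 200, 510, 600], [(100, 200), (500, 520)]))  # 0.5
```

Because the intervals are half-open, position 200 sits just outside the first block and is counted as retained.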

Specialized Applications and Protocols

Target Enrichment for Antimicrobial Resistance Detection

A 2025 study developed a targeted sequencing approach for H. pylori diagnosis and characterization directly from FFPE biopsies, demonstrating a specialized application of target capture technology [19].

Experimental Protocol:

- DNA Extraction: DNA was isolated from FFPE gastric biopsy sections

- Library Preparation: The Agilent SureSelect XT protocol was modified for implementation on the Magnis automated system

- Target Capture: RNA probes targeted key virulence genes (cagA, vacA), antibiotic resistance determinants (23S rRNA, 16S rRNA, gyrA, rpoB), and multilocus sequence typing (MLST) genes

- Sequencing: Prepared libraries were sequenced on the iSeq 100 platform

- Data Analysis: Sequences were compared to cultured H. pylori strains from the same patients

The method accurately detected mutations in 23S rRNA associated with macrolide resistance, mutations in the quinolone resistance-determining region of gyrA, and mutations conferring rifamycin resistance. The MLST profiles generated through this target-enrichment method were consistent with those obtained via Sanger sequencing, demonstrating excellent concordance between platforms [19].
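The MLST comparison reduces to checking allele numbers locus by locus for each patient isolate. The sketch below is minimal: the locus names follow the widely used seven-gene H. pylori scheme, and the allele numbers are invented. Loci one method failed to recover are left out rather than counted as discordant, which is an assumption about how missing data should be handled.

```python
def compare_mlst(profile_a: dict, profile_b: dict):
    """Compare allele numbers locus by locus for one isolate typed two ways.

    Loci missing from either profile (e.g. not recovered by capture) are left out
    of the comparison. Returns (matching, compared, discordant_loci).
    """
    shared = sorted(profile_a.keys() & profile_b.keys())
    discordant = [locus for locus in shared if profile_a[locus] != profile_b[locus]]
    return len(shared) - len(discordant), len(shared), discordant


# Locus names from the seven-gene H. pylori scheme; allele numbers are invented.
capture = {"atpA": 12, "efp": 7, "mutY": 3}
sanger = {"atpA": 12, "efp": 7, "mutY": 5, "ppa": 9}
print(compare_mlst(capture, sanger))  # (2, 3, ['mutY'])
```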

RNA-Seq Library Preparation Improvements

Advances in RNA-seq library preparation have significantly impacted data quality, particularly for challenging sample types. A 2025 validation study compared the Watchmaker Genomics (WMG) RNA-sequencing workflow with standard capture RNA-sequencing methods [18].

Table 3: Comparison of RNA-Seq Library Preparation Methods

| Performance Metric | Standard Capture Method | Watchmaker Genomics with Polaris Depletion | Improvement |
|---|---|---|---|
| Library Preparation Time | 16 hours | 4 hours | 75% reduction |
| Duplication Rate | 25-35% | 10-15% | ~60% reduction |
| Genes Detected | Baseline | 30% more genes | Significant increase |
| rRNA Depletion | Moderate | High | Marked improvement |
| Globin RNA in Whole Blood | High | Low | Significant reduction |

The Watchmaker workflow demonstrated substantial improvements in efficiency and data quality, with significantly reduced duplication rates and increased detection of genes across multiple sample types, including universal human reference RNA, whole blood, and FFPE samples [18]. This highlights how innovations in library preparation chemistry can dramatically enhance sequencing outcomes without changing the underlying sequencing technology.
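The Improvement column in Table 3 is a relative reduction. A quick arithmetic check is shown below. Using the midpoints of the reported duplication ranges is an assumption, since the study reports ranges rather than single values.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


def midpoint(low: float, high: float) -> float:
    return (low + high) / 2


print(percent_reduction(16, 4))  # 75.0 (library preparation time, hours)
print(round(percent_reduction(midpoint(25, 35), midpoint(10, 15)), 1))  # 58.3
```

A midpoint-based reduction of about 58% is consistent with the "~60%" reported for duplication.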

Long-Read Sequencing for Complex Pharmacogenes

Long-read sequencing (LRS) technologies address specific limitations of short-read approaches in characterizing complex genomic regions. A 2025 review highlighted the advantages of LRS for pharmacogenomics (PGx) research, particularly for genes with structural complexities that challenge short-read technologies [16].

Key Applications:

- CYP2D6: Resolving structural variants, copy number variations, and distinguishing from highly homologous pseudogenes (CYP2D7, CYP2D8)

- CYP2B6: Accurate characterization of structural variants (CYP2B6\*29, CYP2B6\*30) and repetitive sequences (SINEs)

- UGT2B17: Analysis of gene deletion polymorphisms and differentiation from homologous family members

- HLA genes: Comprehensive typing of highly polymorphic regions with complex structural variations

LRS platforms can perform accurate genotyping in analytically challenging pharmacogenes without specific DNA treatment, provide full phasing to resolve complex diplotypes, and decrease false-negative results in a single assay [16]. This capability is particularly valuable for premature ovarian insufficiency (POI) research, where accurately determining haplotype structure can be critical for understanding drug response phenotypes.
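Phasing matters because two heterozygous variants can lie on the same haplotype (cis) or on opposite haplotypes (trans). In cis, one haplotype carries both variants and the other carries neither. In trans, each haplotype carries one variant, which can imply a different diplotype. The sketch below is minimal and uses VCF-style phased genotype strings. It assumes both calls are biallelic and belong to the same phase block; unphased calls such as `0/1` cannot be resolved this way and are rejected.

```python
def in_trans(gt_a: str, gt_b: str) -> bool:
    """True if two phased heterozygous genotypes carry their ALT alleles on
    different haplotypes (trans); False if on the same haplotype (cis).

    Expects biallelic phased calls such as '0|1' or '1|0' from the same phase set.
    """
    a, b = gt_a.split("|"), gt_b.split("|")
    for alleles in (a, b):
        if sorted(alleles) != ["0", "1"]:
            raise ValueError("expected phased heterozygous genotypes like '0|1'")
    # Identical allele order means both ALT alleles share a haplotype (cis).
    return a != b


print(in_trans("0|1", "1|0"))  # True: one ALT allele per haplotype
print(in_trans("0|1", "0|1"))  # False: both ALT alleles on the second haplotype
```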

Essential Research Reagent Solutions

The following table details key reagents and their functions in library preparation and target capture workflows, based on the methodologies cited in the comparative studies.

Table 4: Essential Research Reagents for Library Preparation and Target Capture

| Reagent / Kit | Manufacturer | Primary Function | Application Notes |
|---|---|---|---|
| MGIEasy UDB Universal Library Prep Set | MGI | Library construction from fragmented DNA | Provides end repair, A-tailing, adapter ligation capabilities; enables unique dual indexing for multiplexing [5] |
| Agilent SureSelect XT | Agilent Technologies | Target enrichment using biotinylated RNA probes | Automated on Magnis system; customizable probe design for specific gene panels [19] |
| Twist Exome 2.0 | Twist Bioscience | Whole exome capture | Demonstrates high target coverage (99.5%) and low duplicate rates (4.9%) [17] |
| xGen Exome Hyb Panel v2 | Integrated DNA Technologies | Whole exome capture | Shows strong uniformity (97.5%) and specificity (72.8%) metrics [5] |
| Watchmaker RNA Library Prep with Polaris Depletion | Watchmaker Genomics | RNA-seq library preparation | Significantly reduces rRNA and globin reads; improves gene detection in FFPE and whole blood [18] |
| MGIEasy Fast Hybridization and Wash Kit | MGI | Post-capture processing | Enables uniform workflow across different probe capture platforms; 1-hour hybridization [17] |

Implications for Multi-Platform Research Concordance

The choice of library preparation and target capture methods directly impacts the concordance of research findings across different sequencing platforms—a critical consideration for multi-center studies and biomarker validation.

Factors Affecting Cross-Platform Consistency

Several technical factors influence how well results correlate across different sequencing platforms:

- Input DNA Quality: As demonstrated in the H. pylori study, sample quality (reflected by PCR Ct values) directly affects sequencing depth and characterization rates, potentially creating discrepancies between labs using different sample quality thresholds [19].

- Capture Probe Design: Differences in probe design and target boundaries can lead to varying coverage of key genomic regions. The exclusion of challenging regions by some platforms highlights how technically difficult areas can become sources of inter-platform discrepancy [7].

- Uniformity of Coverage: Variable coverage across targeted regions means that some areas may be under-represented in one platform but adequately sequenced in another, leading to inconsistent variant detection [17].

- GC Bias: Platforms demonstrate different sensitivities to GC-rich regions, potentially creating systematic gaps in coverage that affect gene expression quantification and variant detection in specific genomic contexts [7].

Strategies for Enhancing Concordance

Based on the comparative studies, several strategies can improve consistency across sequencing platforms:

- Standardize Input Quality Metrics: Implement uniform QC thresholds for input DNA (e.g., Ct value cutoffs) to ensure comparable starting material across platforms [19].

- Utilize Platform-Agnostic Capture Workflows: Employ library preparation methods that demonstrate broad compatibility across different capture platforms, such as the MGI enrichment protocol that performed uniformly well with four different exome capture panels [17].

- Account for Platform-Specific Limitations: Understand and document the specific genomic regions that may be under-represented on different platforms, particularly when comparing results across technologies [7].

- Validate Critical Findings Orthogonally: For clinically actionable variants or key research findings, consider confirmation using an alternative technology or platform to control for platform-specific artifacts [16].

Library preparation and target capture methodologies fundamentally shape sequencing outcomes, influencing data quality, variant detection capability, and ultimately, the concordance of research findings across platforms. The comparative data presented in this guide show that while different platforms and methods each have distinct performance characteristics, informed selection and standardization of these initial workflow steps can significantly enhance the reliability and reproducibility of genomic research.

For researchers investigating patient outcomes and genes of interest, careful consideration of these pre-sequencing factors is not merely technical optimization but a fundamental requirement for generating biologically meaningful and clinically actionable results. As sequencing technologies continue to evolve, ongoing benchmarking of library preparation and target capture methods will remain essential for advancing precision medicine and multi-platform research initiatives.

In the field of genomics, the translation of research findings into clinical practice represents a fundamental pathway for advancing personalized medicine. This process, however, depends critically on the analytical concordance between research-based sequencing methods and clinically validated diagnostic platforms. The eMERGE-PGx (Electronic Medical Records and Genomics Pharmacogenomics) Study directly addressed this need by conducting a large-scale comparison of next-generation sequencing (NGS) results from research laboratories with orthogonal clinical genotyping [20] [21]. This case study examines the design, implementation, and findings of this pivotal investigation, which provides crucial evidence for the reliability of NGS-derived pharmacogenetic data.

For researchers investigating complex conditions like Premature Ovarian Insufficiency (POI), understanding the concordance and limitations of different sequencing platforms is particularly relevant. While POI research often focuses on identifying genetic variants affecting ovarian function, the technical challenges in variant detection mirror those encountered in pharmacogenomics—including the need to accurately identify single nucleotide variants (SNVs), structural variants, and copy number variations (CNVs) across challenging genomic regions [16] [22]. The eMERGE-PGx findings therefore offer valuable methodological insights applicable across genomic research domains.

Experimental Design and Methodologies

The eMERGE-PGx study implemented a rigorous comparative design to evaluate concordance between research and clinical genotyping platforms. The study analyzed 4,077 samples across nine different combinations of research and clinical laboratories, creating a robust dataset for statistical analysis [20] [23]. A subset of 1,792 samples underwent retesting to facilitate detailed investigation of identified discrepancies [21].

Table 1: Key Experimental Parameters of the eMERGE-PGx Study

| Parameter | Specification | Significance |
|---|---|---|
| Research Sequencing Platform | PGRNseq | Custom NGS panel developed by Pharmacogenomics Research Network |
| Clinical Genotyping | Orthogonal targeted methods | CLIA-approved laboratory methods |
| Sample Size | 4,077 samples | Provides statistical power for concordance assessment |
| Retesting Subset | 1,792 samples | Enables root cause analysis of discrepancies |
| Laboratory Combinations | 9 research-clinical pairs | Assesses inter-laboratory variability |

Analytical Workflow

The experimental workflow followed a systematic process from sample processing to discrepancy resolution:

- Sample Processing: Each subject sample underwent parallel processing through research NGS (PGRNseq) and clinical genotyping platforms [20].

- Variant Calling: Research sequencing used NGS with comprehensive variant calling, while clinical laboratories employed targeted genotyping methods optimized for specific pharmacogenetic variants [20] [24].

- Comparison Analysis: Genotype results were systematically compared between platforms to identify concordant and discordant calls [23].

- Discrepancy Investigation: Local laboratory directors performed root cause analysis on genotype discrepancies using retesting data from the subset of 1,792 samples [21].

The following workflow diagram illustrates the experimental design and primary findings of the eMERGE-PGx concordance study:

Key Findings: Concordance Rates and Discrepancy Analysis

The eMERGE-PGx study demonstrated strong overall agreement between research NGS and clinical genotyping platforms. The analysis revealed an overall per-sample concordance rate of 0.972 (97.2%), indicating that the vast majority of samples showed identical genotype calls across both platforms [20] [23]. When examined at the variant level, concordance was even higher, with a per-variant concordance rate of 0.997 (99.7%) [21].

These high concordance rates provide substantial evidence for the analytical validity of research NGS for pharmacogenetic variant detection. The findings support the potential utility of research-generated genomic data for clinical decision-making, particularly for preemptive pharmacogenomics where research data might inform future prescribing decisions [24].
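The per-sample rate is lower than the per-variant rate because a single discordant call makes an entire sample discordant. The sketch below is minimal and shows both calculations. It assumes genotypes are stored as normalized strings (e.g. always `0/1`, never `1/0`) and compares only sample/variant pairs called on both platforms. The data layout is a hypothetical choice for illustration, not the study's format.

```python
def concordance(research: dict, clinical: dict):
    """Per-sample and per-variant concordance between two genotype call sets.

    Both inputs map sample_id -> {variant_id: genotype string}. Only sample/variant
    pairs called on both platforms are compared. A sample is concordant only if
    every compared variant matches.
    """
    samples_ok = samples_total = variants_ok = variants_total = 0
    for sample in research.keys() & clinical.keys():
        shared = research[sample].keys() & clinical[sample].keys()
        if not shared:
            continue
        matches = sum(research[sample][v] == clinical[sample][v] for v in shared)
        variants_ok += matches
        variants_total += len(shared)
        samples_total += 1
        samples_ok += int(matches == len(shared))
    return samples_ok / samples_total, variants_ok / variants_total


research = {"S1": {"v1": "0/1", "v2": "0/0"}, "S2": {"v1": "1/1", "v2": "0/1"}}
clinical = {"S1": {"v1": "0/1", "v2": "0/0"}, "S2": {"v1": "1/1", "v2": "1/1"}}
print(concordance(research, clinical))  # (0.5, 0.75)
```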

Discrepancy Classification and Root Causes

Despite high overall concordance, systematic investigation of discrepancies revealed distinct patterns in error sources:

Table 2: Classification and Root Causes of Genotype Discrepancies

| Platform | Primary Error Source | Percentage | Root Cause |
|---|---|---|---|
| Research NGS | Preanalytical Errors | Majority | Sample switching incidents during processing |
| Clinical Genotyping | Analytical Errors | 92.3% | Allele dropout due to rare variants interfering with primer hybridization |

The root cause analysis demonstrated that discrepancies attributed to research NGS predominantly resulted from sample switching (preanalytical errors) rather than technical limitations of the sequencing technology itself [20] [21]. In contrast, the vast majority (92.3%) of discrepancies attributed to clinical genotyping were caused by allele dropout—an analytical error occurring when rare genetic variants interfere with primer hybridization in targeted genotyping assays [21] [23].

This finding highlights a notable advantage of NGS approaches: their reduced susceptibility to allele dropout artifacts that can affect targeted genotyping methods reliant on specific primer binding. For research applications like POI investigation, where novel or rare variants may be particularly relevant, this characteristic of NGS provides important methodological benefits [16].
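The two error signatures look different in the data. A sample switch tends to produce mismatches across many unrelated variants. Allele dropout typically appears as an isolated call where NGS reports a heterozygote and the targeted assay reports a homozygote, because one allele failed to amplify. The sketch below is a hypothetical first-pass triage built on that contrast. The 0.3 mismatch threshold and the labels are arbitrary assumptions, and eMERGE-PGx itself resolved discrepancies by retesting and root cause review, not by this rule.

```python
def is_het(gt: str) -> bool:
    """True if a VCF-style genotype ('0/1', '1|0', ...) carries two different alleles."""
    alleles = gt.replace("|", "/").split("/")
    return len(set(alleles)) > 1


def triage_discordance(pairs, swap_threshold: float = 0.3) -> str:
    """Hypothetical first pass over one sample's paired calls (NGS, targeted assay).

    Assumed rules, not the eMERGE-PGx procedure:
      - many mismatches across unrelated variants suggest a sample switch;
      - a few mismatches where NGS is heterozygous but the targeted assay is
        homozygous fit allele dropout in the targeted assay.
    """
    mismatches = [(ngs, assay) for ngs, assay in pairs if ngs != assay]
    if not mismatches:
        return "concordant"
    if len(mismatches) / len(pairs) >= swap_threshold:
        return "possible sample switch"
    if all(is_het(ngs) and not is_het(assay) for ngs, assay in mismatches):
        return "possible allele dropout"
    return "unclassified; retest"


print(triage_discordance([("0/0", "0/0")] * 9 + [("0/1", "0/0")]))
```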

Technological Considerations for Genomic Research

Sequencing Platforms and Their Applications

The rapidly evolving landscape of sequencing technologies offers researchers multiple options for genomic investigations. Each platform presents distinct advantages and limitations for different research contexts:

Table 3: Sequencing Technology Comparison for Genetic Research

| Technology | Key Features | Advantages | Limitations |
|---|---|---|---|
| Short-Read NGS (PGRNseq) | High-throughput, short fragments | Cost-effective, established analysis pipelines | Limited in complex genomic regions |
| Long-Read Sequencing | Reads >10 kb, single molecule | Resolves complex variants, phased haplotypes | Higher cost, evolving analytical methods |
| Clinical Genotyping Arrays | Targeted variant detection | Rapid, cost-effective for known variants | Limited to predefined variants, no novel discovery |

The emergence of long-read sequencing (LRS) technologies represents a particularly significant advancement for investigating genetically complex conditions [16]. LRS platforms can span repetitive regions, resolve structural variants, and provide complete haplotype information—addressing key limitations of short-read NGS for challenging genomic loci [16] [24].

Application to POI and Reproductive Research

While the eMERGE-PGx study focused on pharmacogenes, its implications extend to POI research, which shares similar technical challenges. POI investigations require accurate variant detection in genes with complex architecture, including:

- Structural variants and copy number variations in genes involved in ovarian function

- Haplotype phasing for compound heterozygotes in autosomal genes

- Accurate genotyping in GC-rich or repetitive regions common in developmental genes

Long-read sequencing technologies show particular promise for POI research by enabling comprehensive assessment of genes with complex genomic landscapes that are difficult to resolve with short-read NGS or targeted arrays [16]. The technology's ability to detect hybrid genes, large rearrangements, and provide complete phasing makes it well-suited for investigating the genetic architecture of complex traits like POI.

Research Reagent Solutions for Genomic Studies

Table 4: Essential Research Reagents and Platforms for Genomic Concordance Studies

| Reagent/Platform | Function | Application in eMERGE-PGx |
|---|---|---|
| PGRNseq Panel | Targeted NGS capture | Research sequencing of pharmacogenes |
| Orthogonal Genotyping Assays | Clinical validation | CLIA-approved clinical genotyping |
| DamID-seq Protocols | Mapping chromatin interactions | Specialized genomic applications (unrelated to PGx) [25] |
| Long-Read Sequencers (PacBio, Nanopore) | Complex variant resolution | Emerging technology for challenging pharmacogenes [16] |
| Bioinformatic Pipelines | Variant calling, quality control | Data processing, concordance analysis |

Implications for Genomic Research and Clinical Translation

Quality Management Considerations

The eMERGE-PGx findings highlight critical areas for quality improvement in both research and clinical settings:

- Research laboratories should implement enhanced sample tracking and chain-of-custody protocols to minimize preanalytical errors such as sample switching [20].

- Clinical laboratories using targeted genotyping methods should develop strategies to address allele dropout, such as primer site evaluation for rare variants or supplemental testing for discordant results [21].

- Both settings benefit from regular concordance testing between platforms, particularly when implementing new technologies or assay designs.

Pathway for Clinical Implementation of Research Genomics

The demonstrated concordance between research NGS and clinical genotyping supports the potential for using research-generated genomic data in clinical care through carefully structured pathways.

The eMERGE-PGx study provides compelling evidence for the high concordance (97.2%) between research-based NGS and clinical genotyping platforms for pharmacogenetic variants. This demonstration of analytical validity supports the potential utility of research genomic data for clinical implementation, while also highlighting platform-specific limitations that require methodological attention.

For researchers investigating complex traits like Premature Ovarian Insufficiency, these findings underscore the importance of platform selection based on genomic context and the value of orthogonal validation for clinically relevant variants. The rapid advancement of sequencing technologies, particularly long-read platforms, promises enhanced capability for resolving complex variants in challenging genomic regions, further strengthening the potential for research findings to inform clinical practice.

As genomic medicine continues to evolve, ongoing assessment of platform concordance remains essential for ensuring the reliable translation of research discoveries into clinical applications that improve patient care across diverse medical domains, including reproductive medicine and pharmacotherapy.

Practical Approaches for Concordance Testing and Platform Comparison

In pharmacogenomics and microbiome research, next-generation sequencing (NGS) technologies have become indispensable tools for unlocking the genetic basis of drug response and microbial community dynamics. However, the rapid evolution of sequencing platforms, each with distinct technical characteristics, introduces significant challenges in ensuring data consistency and reliability across studies. Concordance studies have therefore emerged as critical methodologies for quantifying agreement between different sequencing technologies and analytical pipelines. This guide introduces a structured Four 'S' Framework—encompassing Samples, Sequencing, Standards, and Statistics—to design robust concordance studies that generate trustworthy, comparable data for pharmaceutical and clinical research applications.

The Critical Role of Concordance in Genomic Research

High concordance between sequencing platforms provides confidence in variant calls and taxonomic assignments, which is particularly crucial in clinical and drug development settings where erroneous data can directly impact patient outcomes. The eMERGE-PGx study, which compared research NGS with clinical genotyping, demonstrated that well-designed sequencing approaches can achieve exceptional agreement, with per-variant concordance rates of 99.7% across 67,900 pharmacogenetic variants [26]. Similarly, a comparative evaluation of sequencing platforms for soil microbiome profiling found that despite technological differences, Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) provided comparable assessments of bacterial diversity, ensuring clear clustering of samples based on biological origin rather than technical platform [27] [13].

However, significant discordance can arise from multiple sources. One study analyzing 15 exomes with five different bioinformatics pipelines revealed strikingly low concordance rates—only 57.4% for single-nucleotide variants (SNVs) and 26.8% for insertions and deletions (indels) across pipelines [28]. These findings underscore the necessity of rigorous concordance studies, especially for clinical applications where variant calling inaccuracies could affect medical interpretations.

The Four 'S' Framework for Concordance Studies

Samples: Strategic Selection and Preparation

The foundation of any robust concordance study lies in careful sample selection and preparation. This involves using well-characterized reference materials and implementing standardized processing protocols to minimize pre-analytical variability.

Table 1: Sample Selection for Sequencing Concordance Studies

| Sample Type | Description | Applications | Examples from Literature |
|---|---|---|---|
| Human Reference Standards | Well-characterized genomes from initiatives like Genome in a Bottle (GIAB) | Whole genome sequencing performance assessment | HG001-HG005 samples used in Sikun 2000 evaluation [29] |
| Microbial Community Standards | Defined mock communities with known composition | Microbiome profiling accuracy | ZymoBIOMICS Gut Microbiome Standard [27] [13] |
| Biological Replicates | Multiple samples from same source | Technical variability assessment | Three soil types with three replicates each [27] [13] |
| Multi-Generational Families | DNA from related individuals | Variant calling accuracy improvement | Family pedigrees in pipeline comparison study [28] |

Effective sample preparation follows standardized DNA extraction protocols using kits specifically validated for the sample type, such as the Quick-DNA Fecal/Soil Microbe Microprep kit for environmental samples [27] [13]. DNA quality and quantity should be rigorously assessed using fluorometric methods (e.g., Qubit Fluorometer) and electrophoretic quality control (e.g., Fragment Analyzer) to ensure input material consistency across platforms.

Sequencing: Platform Comparison and Experimental Design

The core of a concordance study involves parallel sequencing of the same samples across multiple platforms, with careful attention to platform-specific protocols and normalized sequencing depths.

Table 2: Sequencing Platform Performance Characteristics

| Platform | Read Type | Key Strengths | Key Limitations | Reported Accuracy |
|---|---|---|---|---|
| Illumina NovaSeq X | Short-read | High throughput, excellent SNV detection | Declining performance in homopolymers | Q30: 97.37% [29] |
| PacBio Sequel IIe | Long-read | Full-length 16S rRNA sequencing, high consensus accuracy | Lower throughput, requires CCS for high accuracy | >99.9% [27] [13] |
| Oxford Nanopore | Long-read | Real-time sequencing, long reads | Higher raw error rates | ~99.84% with R10.4.1 flow cell [27] [13] |
| Sikun 2000 | Short-read | Competitive SNV accuracy, low duplicate reads | Lower indel detection performance | Q30: 93.36% [29] |

For 16S rRNA microbiome studies, platform-specific library preparation is essential. PacBio uses the SMRTbell Prep Kit with barcoded universal primers targeting the full-length 16S rRNA gene, while ONT employs the Native Barcoding Kit with similar full-length targets [27] [13]. Illumina workflows typically target hypervariable regions (V4 or V3-V4) and are run on instruments such as the Illumina MiSeq or iSeq, which have demonstrated 99.5% SNP concordance for viral genomic sequencing [30].

To ensure fair comparisons, sequencing depth should be normalized across platforms (e.g., 10,000-35,000 reads per sample for microbiome studies [27] [13]), and the same DNA extracts should be used for all platform comparisons to eliminate extraction bias.
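One common way to normalize depth is rarefaction: each sample's reads are randomly subsampled, without replacement, to a common depth. The sketch below is minimal and works on a taxon-count table. The fixed seed exists only for reproducibility, and the cited studies may have normalized depth by a different method.

```python
import random
from collections import Counter


def rarefy(counts: dict, depth: int, seed: int = 0) -> Counter:
    """Randomly subsample a sample's taxon read counts to `depth` reads without replacement."""
    total = sum(counts.values())
    if depth > total:
        raise ValueError(f"depth {depth} exceeds the {total} reads available")
    # One list entry per read, labelled with its taxon; fine for ~10^4-10^5 reads.
    pool = [taxon for taxon, n in counts.items() for _ in range(n)]
    return Counter(random.Random(seed).sample(pool, depth))


# Invented counts: a deeply sequenced sample reduced to a 10,000-read target.
sample = {"Bacillus": 18_000, "Streptomyces": 12_000, "Pseudomonas": 5_000}
rarefied = rarefy(sample, 10_000)
```

Samples below the target depth raise an error instead of being padded, so they can be excluded or resequenced.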

Figure 1: Workflow for sequencing platform concordance studies

Standards: Bioinformatics Pipelines and Reference Materials

Standardized bioinformatics processing is crucial for meaningful platform comparisons. The same reference genomes and database versions should be used across all analyses to eliminate confounding factors.

For human genome studies, the Genome in a Bottle (GIAB) consortium provides high-confidence reference calls for benchmark regions within well-characterized genomes like HG002 [7]. For microbiome studies, curated databases such as SILVA or Greengenes provide reference sequences for taxonomic assignment.

Bioinformatics pipelines must be tailored to each platform's characteristics. For example:

- Illumina short reads: BWA alignment followed by GATK variant calling [28]

- PacBio long reads: Circular Consensus Sequencing (CCS) processing for high accuracy [27] [13]

- ONT long reads: Platform-specific basecalling (e.g., Dorado) followed by alignment

The eMERGE-PGx study demonstrated the importance of standardized processing, achieving 99.7% variant concordance when applying consistent quality filters across platforms [26]. It is also critical to assess performance in challenging genomic regions, such as homopolymers, segmental duplications, and GC-rich areas, which some platforms exclude from their "high-confidence regions" [7].

Statistics: Quantitative Concordance Assessment

Robust statistical measures are essential for quantifying agreement between platforms. The choice of metrics depends on the data type and research question.

Table 3: Statistical Measures for Concordance Assessment

| Metric | Formula/Calculation | Application | Interpretation |
|---|---|---|---|
| Percentage Agreement | (Concordant calls / Total calls) × 100 | Variant calling, taxonomic assignment | 0-100%, higher indicates better agreement |
| Jaccard Similarity | \|A ∩ B\| / \|A ∪ B\| | SNV/indel detection | 0-1, measures overlap between variant sets [29] |
| Cohen's Kappa | (P₀ - Pₑ) / (1 - Pₑ), where P₀ is observed and Pₑ chance-expected agreement | Categorical agreement (e.g., taxon presence) | -1 to 1, >0.75 indicates excellent agreement [31] |
| F-score | 2 × (Precision × Recall) / (Precision + Recall) | Variant calling accuracy | 0-1, balances precision and recall [29] |
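The four metrics in Table 3 are short enough to implement directly. The sketch below is minimal and takes paired call lists for agreement and kappa, variant sets for Jaccard, and true-positive/false-positive/false-negative counts against a truth set for the F-score. Treating two empty sets as identical in `jaccard` is an assumed convention.

```python
def percent_agreement(calls_a, calls_b) -> float:
    """(Concordant calls / total calls) x 100 for two paired call lists."""
    return 100.0 * sum(a == b for a, b in zip(calls_a, calls_b)) / len(calls_a)


def jaccard(set_a: set, set_b: set) -> float:
    """|A ∩ B| / |A ∪ B|; two empty sets are treated as identical."""
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 1.0


def cohens_kappa(calls_a, calls_b) -> float:
    """(P0 - Pe) / (1 - Pe), with Pe from each method's marginal frequencies."""
    n = len(calls_a)
    p0 = sum(a == b for a, b in zip(calls_a, calls_b)) / n
    pe = sum(
        (calls_a.count(c) / n) * (calls_b.count(c) / n)
        for c in set(calls_a) | set(calls_b)
    )
    if pe == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p0 - pe) / (1 - pe)


def f_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall against a truth set."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Invented presence (1) / absence (0) calls for eight taxa on two platforms.
platform_a = [1, 1, 0, 0, 1, 0, 1, 1]
platform_b = [1, 0, 0, 0, 1, 0, 1, 1]
print(percent_agreement(platform_a, platform_b))  # 87.5
print(cohens_kappa(platform_a, platform_b))  # 0.75
```

The example shows why kappa is reported alongside raw agreement. Here 87.5% raw agreement falls to a kappa of 0.75 once the 50% agreement expected by chance is removed.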

For microbiome studies, additional ecological metrics include:

- Alpha diversity: Richness and evenness within samples compared across platforms

- Beta diversity: Between-sample differences assessed via PERMANOVA

- Taxonomic composition: Relative abundance correlation at different taxonomic levels
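Alpha and beta diversity can be computed from the same count tables. The sketch below is minimal and covers the Shannon index, one common alpha-diversity measure, and Bray-Curtis dissimilarity, a common input to PERMANOVA (not shown). It assumes both profiles were already depth-normalized, because Bray-Curtis on raw counts is sensitive to unequal sequencing depth. The genus counts are invented.

```python
import math


def shannon(counts: dict) -> float:
    """Shannon index H' = -sum(p_i * ln p_i) over taxa with nonzero counts."""
    total = sum(counts.values())
    return -sum((n / total) * math.log(n / total) for n in counts.values() if n > 0)


def bray_curtis(a: dict, b: dict) -> float:
    """Bray-Curtis dissimilarity: 1 - 2 * sum(min(a_i, b_i)) / (sum(a) + sum(b))."""
    shared = sum(min(a.get(t, 0), b.get(t, 0)) for t in a.keys() | b.keys())
    return 1 - 2 * shared / (sum(a.values()) + sum(b.values()))


# Invented genus-level counts for one sample profiled on two platforms.
pacbio = {"Bacillus": 40, "Streptomyces": 35, "Pseudomonas": 25}
nanopore = {"Bacillus": 45, "Streptomyces": 30, "Pseudomonas": 20, "Nocardia": 5}
print(round(shannon(pacbio), 3), round(shannon(nanopore), 3))
print(round(bray_curtis(pacbio, nanopore), 3))
```

A Bray-Curtis value near 0 means the two platforms report nearly the same community. Values approaching 1 mean little overlap.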

The Sikun 2000 evaluation demonstrated how these metrics apply in practice, showing 92.42% SNV concordance with Illumina NovaSeq 6000, but lower indel concordance (66.63%) [29].

Figure 2: The Four 'S' Framework for concordance studies

Essential Reagents and Research Tools

Table 4: Essential Research Reagent Solutions for Concordance Studies

| Category | Specific Product/Kit | Function | Example Use Case |
|---|---|---|---|
| DNA Extraction | Quick-DNA Fecal/Soil Microbe Microprep Kit | High-quality DNA isolation from challenging samples | Soil microbiome DNA extraction [27] [13] |
| DNA Quantification | Qubit Fluorometer with HS DNA Kit | Accurate DNA concentration measurement | Pre-library preparation QC [27] [13] |
| Library Preparation | SMRTbell Prep Kit 3.0 | PacBio library construction for long-read sequencing | Full-length 16S rRNA sequencing [27] [13] |
| Library Preparation | Native Barcoding Kit 96 | ONT barcoded library preparation | Multiplexed full-length 16S sequencing [13] |
| Reference Materials | ZymoBIOMICS Gut Microbiome Standard | Mock community for process validation | DNA extraction and sequencing control [27] [13] |
| Reference Materials | GIAB Reference Standards | Human genome benchmarks | Platform performance validation [29] [7] |
| Quality Control | Fragment Analyzer | Size distribution and quality assessment | Post-amplification library QC [27] [13] |

Case Studies in Concordance Assessment

Microbiome Profiling Platform Comparison

A comprehensive 2025 evaluation compared PacBio, ONT, and Illumina for 16S rRNA-based soil microbiome profiling. Researchers analyzed three distinct soil types with three biological replicates each, applying standardized bioinformatics pipelines with normalized sequencing depths (10,000-35,000 reads per sample). ONT and PacBio provided comparable assessments of bacterial diversity, with PacBio slightly more efficient at detecting low-abundance taxa. Despite the sequencing errors inherent to ONT reads, the platform's results closely matched PacBio's, suggesting that these errors did not substantially affect the interpretation of well-represented taxa. Importantly, all technologies enabled clear clustering of samples by soil type except for the Illumina V4 region alone (p=0.79), highlighting the value of sequencing more of the 16S rRNA gene than a single variable region [27] [13].
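The summary above does not state how sequencing depths were normalized. One common approach is rarefaction, sketched below under that assumption: each sample's reads are subsampled without replacement to a shared depth, typically no higher than the smallest library in the comparison, so that diversity estimates are not driven by unequal sequencing effort. The function name and the fixed seed are illustrative choices, the seed simply making the subsample reproducible.

```python
import random


def rarefy(counts: dict[str, int], depth: int, seed: int = 0) -> dict[str, int]:
    """Subsample reads without replacement to a fixed depth.

    Samples with fewer than `depth` reads are rejected rather than padded.
    """
    total = sum(counts.values())
    if depth > total:
        raise ValueError(f"sample has {total} reads, fewer than depth {depth}")
    # Expand counts into one taxon label per read, then draw `depth` reads.
    pool = [taxon for taxon, c in counts.items() for _ in range(c)]
    rng = random.Random(seed)  # fixed seed keeps the subsample reproducible
    result = dict.fromkeys(counts, 0)
    for taxon in rng.sample(pool, depth):
        result[taxon] += 1
    return result


sample = {"Acidobacteria": 500, "Proteobacteria": 300, "Actinobacteria": 200}
print(rarefy(sample, 100))
```

Applying the same depth to every sample from every platform is what makes the downstream alpha- and beta-diversity comparisons like-for-like.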

Clinical Pharmacogenetics Validation

The eMERGE-PGx study provided a robust framework for clinical-genomic concordance assessment, comparing research NGS (PGRNseq) with orthogonal clinical genotyping across 4,077 subjects. The study achieved an overall per-sample concordance of 97.2% and per-variant concordance of 99.7%. Notably, investigation of discrepancies revealed that all research NGS errors were attributable to pre-analytical sample switches rather than analytical sequencing errors, while 92.3% of clinical genotyping discrepancies resulted from allele dropout due to rare variants interfering with primer hybridization [26]. This finding underscores the importance of examining all testing phases—pre-analytical, analytical, and post-analytical—in comprehensive concordance assessments.
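The gap between per-sample (97.2%) and per-variant (99.7%) concordance follows from how the two are counted: a single discordant genotype marks a whole sample as discordant but is only one call among many at the variant level. The sketch below assumes one plausible pair of definitions (a sample is concordant only if every shared call agrees; call-level concordance pools all shared genotype calls) and VCF-style genotype strings such as `0/1`; the exact definitions used in the eMERGE-PGx analysis may differ. It also returns the list of discordant calls, since that list, not the headline rate, is what supports a root-cause review of the kind described above.

```python
def normalize_gt(gt: str) -> tuple[str, ...]:
    """Treat '0/1', '1/0' and '0|1' as the same unphased genotype."""
    return tuple(sorted(gt.replace("|", "/").split("/")))


def concordance(research: dict, clinical: dict):
    """Compare {sample: {variant: genotype}} calls from two methods.

    Returns (per_sample, per_call, discordant): per_sample is the fraction of
    samples whose shared calls all agree, per_call is the fraction of all
    shared genotype calls that agree, and discordant lists each mismatch.
    """
    samples = sorted(set(research) & set(clinical))
    total = agree = clean_samples = 0
    discordant = []
    for s in samples:
        sample_ok = True
        for v in sorted(set(research[s]) & set(clinical[s])):
            total += 1
            if normalize_gt(research[s][v]) == normalize_gt(clinical[s][v]):
                agree += 1
            else:
                sample_ok = False
                discordant.append((s, v, research[s][v], clinical[s][v]))
        clean_samples += int(sample_ok)
    per_sample = clean_samples / len(samples) if samples else float("nan")
    per_call = agree / total if total else float("nan")
    return per_sample, per_call, discordant
```

A sample switch would typically appear as many discordant calls concentrated in one sample, whereas allele dropout tends to appear as heterozygous genotypes reported as homozygous by the affected assay.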

Emerging Platform Evaluation

The performance assessment of the Sikun 2000 platform exemplifies rigorous evaluation of new sequencing technologies. Researchers compared the platform with Illumina NovaSeq 6000 and NovaSeq X using five GIAB reference genomes. The Sikun 2000 demonstrated competitive SNV accuracy (F-score: 97.86% vs. 97.64% for NovaSeq 6000) but lower indel detection performance (F-score: 83.08% vs. 87.08%). The platform also showed a significantly lower duplication rate (1.93% vs. 18.53% for NovaSeq 6000), indicating more efficient data utilization [29]. Such comprehensive benchmarking provides valuable insights for researchers selecting platforms for specific applications.
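The practical meaning of the duplication-rate difference can be shown with simple arithmetic. The sketch assumes that duplicate removal is the only source of data loss and that the rate stays constant with depth. This is a simplification, since duplicate rates depend on library complexity and sequencing depth.

```python
def usable_yield(raw_gb: float, dup_rate_pct: float) -> float:
    """Data remaining after removing duplicate reads (simplified model)."""
    return raw_gb * (1 - dup_rate_pct / 100)


def raw_needed(target_gb: float, dup_rate_pct: float) -> float:
    """Raw output required to keep target_gb after deduplication."""
    return target_gb / (1 - dup_rate_pct / 100)


# Duplication rates reported for the Sikun 2000 comparison [29].
for name, dup in (("Sikun 2000", 1.93), ("NovaSeq 6000", 18.53)):
    print(f"{name}: {usable_yield(100, dup):.2f} Gb usable of 100 Gb raw; "
          f"{raw_needed(100, dup):.1f} Gb raw needed for 100 Gb usable")
```

Under these assumptions, 100 Gb of raw output leaves about 98 Gb after deduplication at 1.93% duplication, compared with about 81 Gb at 18.53%, so roughly 123 Gb of raw NovaSeq 6000 output would be needed to match 100 Gb of usable data.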

The Four 'S' Framework provides a systematic approach for designing sequencing concordance studies that generate reliable, comparable data across platforms. By addressing each of its components (Samples, Sequencing, Standards, and Statistics), researchers can overcome the technical variability inherent in different NGS technologies and produce robust findings suitable for clinical and pharmaceutical applications. As sequencing technologies continue to evolve, this framework offers an adaptable structure for validating new platforms and methodologies, ultimately strengthening the foundation of genomic science in drug development and personalized medicine.

Standard Reference Materials and Control Samples for Cross-Platform Validation

In precision oncology research, the accurate and reproducible identification of variants is paramount. Next-generation sequencing (NGS) has become a cornerstone technology for this purpose, yet a significant challenge remains: ensuring that results are consistent and comparable across different sequencing platforms and laboratory workflows [11]. Differences in chemistry, protocols, and data analysis can introduce biases, potentially impacting downstream clinical and research decisions. This is where Standard Reference Materials (SRMs) and well-characterized control samples become indispensable. They provide a fixed benchmark, or "ground truth," enabling researchers to validate the performance of their chosen platforms, harmonize data obtained from different sources, and build confidence in the resulting variant calls [32] [33]. This guide objectively compares the performance of several commercial exome capture platforms using a standardized reference, providing a framework for cross-platform validation in your research.

The Role of Standards in Validation

Definitions and Types

Certified Reference Materials (CRMs) are controls or standards used to check the quality and metrological traceability of products, to validate analytical measurement methods, or to calibrate instruments [32]. A key characteristic of CRMs is that they are accompanied by a certificate providing the value of a specified property, its associated uncertainty, and a statement of metrological traceability.

For genomic studies, this often translates to materials with a thoroughly characterized genome, where variants have been identified through multiple, orthogonal methods. The most robust materials are matrix reference materials, which are characterized for the composition of specified major, minor, or trace constituents and are prepared in a matrix that closely resembles the actual study samples [32]. For human genetics research, this typically means DNA extracted from human cell lines, such as the widely used NA12878, which was utilized in the comparative study discussed in this guide [5].

The Validation Workflow

The use of SRMs fits into a broader cross-platform validation workflow designed to assess concordance. The process begins with the selection of a well-characterized reference sample. This sample is processed using standardized library preparation methods before being split and subjected to targeted enrichment or sequencing on the platforms under investigation. Following sequencing, data is processed through a uniform bioinformatics pipeline, and key performance metrics are compared against the known "truth set" to evaluate accuracy, sensitivity, and specificity [5] [34]. This systematic approach isolates the variable of interest—the sequencing or capture platform—while controlling for other factors.
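The comparison against a truth set can be sketched as follows, assuming variants have already been normalized and are matched by exact position and alleles, and that confident regions are supplied as BED-style intervals (0-based, half-open). Calls outside the confident regions are excluded from both sets before counting. Precision (positive predictive value) is reported in place of specificity, as is common in variant benchmarking, because true-negative sites are not well defined for a variant call set. Names such as `Variant` and `benchmark` are hypothetical.

```python
from typing import NamedTuple


class Variant(NamedTuple):
    chrom: str
    pos: int  # 1-based, as in VCF
    ref: str
    alt: str


# chrom -> list of BED-style (start, end) intervals, 0-based and half-open.
Regions = dict[str, list[tuple[int, int]]]


def in_regions(v: Variant, regions: Regions) -> bool:
    # A 1-based position p lies in the 0-based interval [start, end) when start < p <= end.
    return any(start < v.pos <= end for start, end in regions.get(v.chrom, []))


def benchmark(truth: set[Variant], calls: set[Variant], regions: Regions) -> dict:
    """Count TP/FN/FP inside confident regions and derive sensitivity and precision."""
    t = {v for v in truth if in_regions(v, regions)}
    q = {v for v in calls if in_regions(v, regions)}
    tp, fn, fp = len(t & q), len(t - q), len(q - t)
    return {
        "TP": tp, "FN": fn, "FP": fp,
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
    }
```

Running the same function on each platform's calls, with one shared truth set and one shared set of confident regions, keeps the capture or sequencing platform as the only variable being compared.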

Experimental Comparison of Exome Capture Platforms

Experimental Design & Methodology

A 2025 study provides a robust model for cross-platform validation, focusing on four commercial Whole Exome Sequencing (WES) platforms on the DNBSEQ-T7 sequencer [5]. The study was designed to minimize variability and enable a direct comparison of platform performance.

- Reference Sample: The study used genomic DNA from the HapMap-CEPH NA12878 cell line, a well-characterized human genome used as an international standard [5].

- Library Preparation: A total of 72 libraries were prepared from the NA12878 sample using the MGIEasy UDB Universal Library Prep Set. Each library was uniquely dual-indexed to allow for multiplexing [5].

- Platforms Compared: The four exome capture platforms evaluated were:

- BOKE: TargetCap Core Exome Panel v3.0 (BOKE Bioscience)

- IDT: xGen Exome Hyb Panel v2 (Integrated DNA Technologies)

- Nad: EXome Core Panel (Nanodigmbio Biotechnology)

- Twist: Twist Exome 2.0 (Twist Bioscience) [5]

- Hybridization Methods: The experiment tested both 1-plex and 8-plex hybridization. Crucially, a subset of libraries was captured using a unified MGI enrichment protocol rather than the manufacturers' proprietary protocols, testing the impact of a standardized workflow [5].

- Sequencing and Analysis: All 72 captured libraries were pooled and sequenced on a single lane of a DNBSEQ-T7 (PE150). Data processing and variant calling were performed uniformly using the MegaBOLT pipeline, aligning to the hg19 reference genome and using dbSNP build 151 for variant annotation [5].
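Pooling 72 libraries on one lane relies on every library carrying a unique index pair. A minimal design check is sketched below, assuming each library's two index sequences are listed as strings. The generic `index1`/`index2` labels and the minimum Hamming distance of 3 (enough to correct a single sequencing error during demultiplexing) are illustrative assumptions, not MGI's published index specifications.

```python
from itertools import combinations


def hamming(a: str, b: str) -> int:
    if len(a) != len(b):
        raise ValueError("index sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def check_udi_design(index_pairs: dict[str, tuple[str, str]],
                     min_distance: int = 3) -> list[str]:
    """Return problems in a {library: (index1, index2)} design; empty means OK."""
    problems = []
    for slot, label in ((0, "index1"), (1, "index2")):
        first_user: dict[str, str] = {}
        for lib, pair in index_pairs.items():
            seq = pair[slot]
            if seq in first_user:
                # Unique dual indexing: no index sequence may be shared.
                problems.append(f"{label} {seq} reused by {first_user[seq]} and {lib}")
            else:
                first_user[seq] = lib
        # Near-identical indexes risk misassignment after a single sequencing error.
        for a, b in combinations(sorted(first_user), 2):
            d = hamming(a, b)
            if d < min_distance:
                problems.append(f"{label} {a} and {b} differ at only {d} position(s)")
    return problems


design = {"L1": ("ACGTACGT", "TTGGCCAA"), "L2": ("TGCATGCA", "AACCGGTT")}
print(check_udi_design(design))
```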

The following diagram illustrates this experimental workflow.

Key Performance Metrics for Comparison

When validating NGS platforms, several quantitative metrics are critical for assessing performance. The following table summarizes the core metrics that were evaluated in the comparative study.

Table 1: Key Performance Metrics for NGS Platform Validation

| Metric | Description | Importance in Validation |
|---|---|---|
| Capture Specificity | The percentage of sequencing reads that map to the intended target regions [5]. | Measures the efficiency of the probe capture system; high specificity reduces sequencing costs for a desired coverage. |
| Uniformity of Coverage | How evenly sequencing reads are distributed across all target regions (e.g., >90% of targets covered at 20x) [5]. | Ensures that all genomic regions of interest are sequenced adequately, minimizing "drop-outs." |
| Variant Detection Accuracy | The concordance of identified variants with a known truth set, measured by sensitivity and precision [5]. | Directly assesses the analytical performance of the entire workflow for the primary goal of variant calling. |
| Reproducibility | The consistency of results between technical replicates [5]. | Evaluates the technical stability and reliability of the platform. |
| GC Bias | The variation in coverage depth in regions with high or low GC content [5]. | Identifies sequence-dependent biases that can lead to inaccurate variant calls in certain genomic contexts. |
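Several of the Table 1 metrics can be computed from per-target summaries. The sketch below assumes on-target and total read counts, plus a per-target `(gc_fraction, mean_depth)` list, are already available; the 20x threshold follows the example in the table, while the ten-bin GC profile is an illustrative choice. In production pipelines, established tools such as Picard's CollectHsMetrics and CollectGcBiasMetrics report comparable quantities.

```python
from collections import defaultdict


def capture_specificity(on_target_reads: int, total_reads: int) -> float:
    """Percent of reads mapping to the intended target regions."""
    return 100.0 * on_target_reads / total_reads


def pct_targets_at_depth(target_depths: list[float], min_depth: float = 20) -> float:
    """Percent of targets whose mean depth is at least min_depth (e.g. 20x)."""
    return 100.0 * sum(d >= min_depth for d in target_depths) / len(target_depths)


def gc_bias_profile(targets: list[tuple[float, float]], n_bins: int = 10) -> dict[int, float]:
    """Mean depth per GC bin, normalized to the overall mean depth.

    targets: (gc_fraction, mean_depth) per target. Values near 1.0 in every
    bin indicate little GC bias; low values flag under-covered GC contexts.
    """
    overall = sum(depth for _, depth in targets) / len(targets)
    bins: dict[int, list[float]] = defaultdict(list)
    for gc, depth in targets:
        # Clamp gc == 1.0 into the top bin.
        bins[min(int(gc * n_bins), n_bins - 1)].append(depth)
    return {b: (sum(d) / len(d)) / overall for b, d in sorted(bins.items())}
```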

Comparative Performance Data

The study generated a wealth of data on the performance of the four platforms. The results below highlight the comparative performance of the BOKE, IDT, Nad, and Twist platforms when processed using the standardized MGI workflow.

Table 2: Comparative Performance of Exome Capture Platforms on DNBSEQ-T7

| Platform | Capture Specificity | Uniformity of Coverage (e.g., % of targets at >20x) | Variant Detection Sensitivity | Variant Detection Precision | Notes |
|---|---|---|---|---|---|
| BOKE | Reported as high and comparable across platforms [5] | Reported as high and comparable across platforms [5] | High and comparable accuracy reported for all platforms [5] | High and comparable accuracy reported for all platforms [5] | Performance was robust using the standardized protocol. |
| IDT | Reported as high and comparable across platforms [5] | Reported as high and comparable across platforms [5] | High and comparable accuracy reported for all platforms [5] | High and comparable accuracy reported for all platforms [5] | Performance was robust using the standardized protocol. |
| Nad | Reported as high and comparable across platforms [5] | Reported as high and comparable across platforms [5] | High and comparable accuracy reported for all platforms [5] | High and comparable accuracy reported for all platforms [5] | Performance was robust using the standardized protocol. |
| Twist | Reported as high and comparable across platforms [5] | Reported as high and comparable across platforms [5] | High and comparable accuracy reported for all platforms [5] | High and comparable accuracy reported for all platforms [5] | Performance was robust using the standardized protocol. |

A key finding was that all four platforms demonstrated comparable reproducibility, along with high technical stability and detection accuracy, on the DNBSEQ-T7 sequencer [5]. Furthermore, the use of a unified probe hybridization capture workflow (the MGI protocol) provided uniform, high performance across all four probe brands, enhancing broader compatibility and simplifying the process for labs using multiple platforms [5].

The Scientist's Toolkit: Essential Research Reagents

Successful cross-platform validation relies on a set of well-defined materials and reagents. The following table details the key components used in the featured experiment that can be adapted for similar studies.

Table 3: Essential Reagents and Materials for Cross-Platform NGS Validation

| Item | Function in Validation | Example from Study |
|---|---|---|
| Certified Reference DNA | Serves as the "ground truth" for assessing variant calling accuracy and reproducibility. | HapMap-CEPH NA12878 DNA [5]. |
| Commercial Exome Panels | The targeted capture probes being compared; different designs impact performance. | BOKE, IDT, Nad, and Twist Exome Panels [5]. |
| Uniform Library Prep Kit | Standardizes the initial steps of the workflow to isolate the variable (the capture platform). | MGIEasy UDB Universal Library Prep Set [5]. |
| Stable Instrument Platform | Provides the sequencing data; using one sequencer for the test removes instrument variability. | DNBSEQ-T7 Sequencer [5]. |
| Validated Bioinformatics Pipeline | Ensures consistent data processing and variant calling across all samples. | MegaBOLT pipeline with GATK best practices [5]. |