Standardizing Sperm Morphology Assessment: Development and Validation of a Novel Training Tool for Biomedical Research

Abstract

Sperm morphology assessment is a critical yet highly subjective component of male fertility evaluation, with significant variability undermining its diagnostic reliability. This article explores the development, application, and validation of a novel sperm morphology assessment standardization training tool, designed using machine learning principles of supervised learning and expert consensus to establish 'ground truth.' We examine the tool's foundational framework, methodological implementation for training researchers, strategies for optimizing user accuracy and diagnostic speed, and comparative performance against traditional training methods. For researchers, scientists, and drug development professionals, this resource addresses the pressing need for standardized, reproducible morphological analysis, which is essential for advancing reproductive toxicology studies, drug safety assessments, and clinical diagnostics.

The Critical Need for Standardization in Sperm Morphology Analysis

Sperm morphology assessment is a cornerstone of male fertility evaluation, widely recognized for its prognostic value in predicting reproductive outcomes both in natural conception and assisted reproductive technologies (ART) [1]. Despite its clinical importance, sperm morphology assessment remains one of the most challenging and subjective analyses performed in andrology laboratories [2]. The inherent variability in morphological classification stems from multiple factors, including technician training and experience, adherence to standardized protocols, staining methods, and the classification systems employed [2] [1]. This subjectivity poses significant challenges for clinical decision-making, research consistency, and quality assurance across laboratories.

The fundamental issue lies in the nature of morphological assessment itself. Unlike sperm concentration or motility, which can be partially automated using computer-assisted systems, morphology evaluation depends primarily on visual inspection and subjective judgment by laboratory personnel [2]. Without robust standardization protocols, this subjective test is prone to bias and human error, leading to inaccurate and highly variable results that compromise clinical utility [2] [3]. The problem is exacerbated by the lack of widely accepted, standardized training methods for morphologists, creating a cycle of variability that affects both diagnostic accuracy and treatment decisions [3].

Quantitative Evidence of Assessment Variability

Data on Pre- and Post-Training Accuracy

Recent research has quantified the dramatic impact of training and classification system complexity on assessment accuracy. The following table summarizes key findings from a systematic training study that evaluated novice morphologists across different classification systems:

Table 1: Accuracy of Sperm Morphology Assessment Across Classification Systems

| Classification System Complexity | Number of Categories | Untrained User Accuracy (%) | Trained User Accuracy (%) | Improvement with Training (percentage points) |
|---|---|---|---|---|
| Simple Binary | 2 | 81.0 ± 2.5 | 98.0 ± 0.43 | +17.0 |
| Location-Based | 5 | 68.0 ± 3.59 | 97.0 ± 0.58 | +29.0 |
| Standard Veterinary | 8 | 64.0 ± 3.5 | 96.0 ± 0.81 | +32.0 |
| Comprehensive Research | 25 | 53.0 ± 3.69 | 90.0 ± 1.38 | +37.0 |

The data reveal several critical patterns: untrained users demonstrate high variation (coefficient of variation = 0.28) with accuracy scores ranging from 19% to 77% on initial assessment [2]. Additionally, assessment accuracy inversely correlates with classification system complexity, with more complex systems yielding lower initial accuracy rates. However, structured training produces the most dramatic improvements for complex classification systems, highlighting the potential for standardized training protocols to enhance accuracy across all levels of morphological assessment.
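The improvement column in Table 1 is the difference, in percentage points, between trained and untrained mean accuracy. The following minimal Python sketch uses only the mean values reported in the table (the variable and function names are illustrative assumptions) to reproduce those differences and to check that untrained accuracy falls as the number of categories rises:

```python
# Mean accuracies (%) copied from Table 1; all names here are illustrative.
table_1 = [
    # (system, categories, untrained mean %, trained mean %)
    ("Simple Binary", 2, 81.0, 98.0),
    ("Location-Based", 5, 68.0, 97.0),
    ("Standard Veterinary", 8, 64.0, 96.0),
    ("Comprehensive Research", 25, 53.0, 90.0),
]

def improvement_points(untrained, trained):
    """Absolute gain in percentage points (not a relative percentage)."""
    return round(trained - untrained, 1)

improvements = {name: improvement_points(u, t) for name, _, u, t in table_1}

# Order rows by category count, then check that untrained accuracy
# strictly decreases from one system to the next.
untrained_by_complexity = [u for _, _, u, _ in sorted(table_1, key=lambda r: r[1])]
monotonic_decline = all(
    a > b for a, b in zip(untrained_by_complexity, untrained_by_complexity[1:])
)

for name, gain in improvements.items():
    print(f"{name}: +{gain} percentage points")
print("Untrained accuracy declines with complexity:", monotonic_decline)
```

Expressing the gain in percentage points avoids confusion with relative change: moving from 53% to 90% is a 37-point gain, but a relative increase of roughly 70%.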

Analysis of Inter-Operator Variability

The variability in morphology assessment extends beyond novice practitioners. Studies comparing expert morphologists have revealed significant discrepancies even among experienced personnel. When experts were asked to classify the same sperm images using a simple binary (normal/abnormal) system, they only reached consensus on 73% of the images [2]. This fundamental disagreement among trained professionals underscores the deep-rooted subjectivity in current assessment practices and emphasizes the need for standardized training tools that can establish consistent classification criteria across laboratories and practitioners.

Standardized Training Tool Protocol

Development of the Training Platform

The Sperm Morphology Assessment Standardisation Training Tool represents a novel approach to addressing variability through the application of machine learning principles to human training [2] [3]. The development protocol involves creating a robust database of pre-classified sperm images that serve as "ground truth" for training purposes, following this multi-stage workflow:

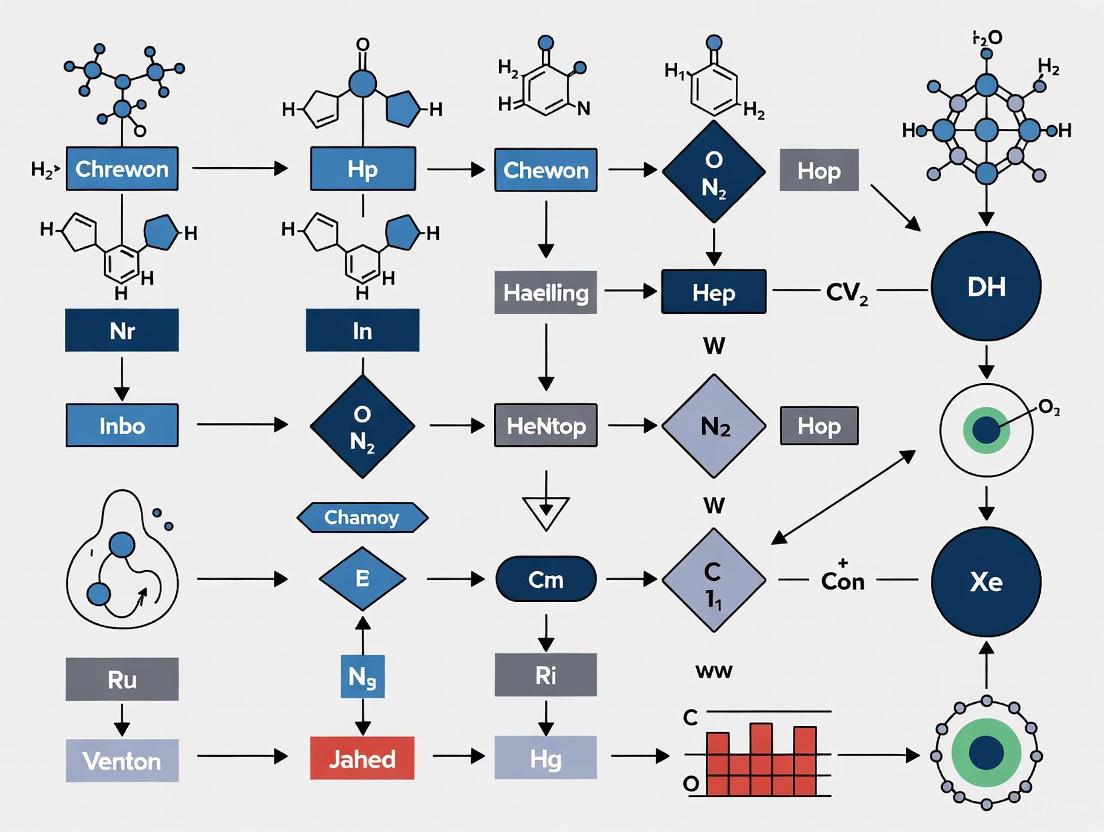

Diagram 1: Training Tool Development Workflow

The development process begins with comprehensive sample collection and high-resolution image acquisition using differential interference contrast (DIC) optics at 40× magnification [3]. A critical innovation in this protocol is the application of a machine learning algorithm to isolate individual sperm cells from field-of-view images, generating a dataset of 9,365 single-sperm images [3]. These images undergo independent classification by multiple expert morphologists, with only those achieving 100% consensus (4,821 images) incorporated into the final training database [2] [3]. This rigorous consensus approach ensures the establishment of reliable "ground truth" classifications that form the foundation for standardized training.
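The consensus rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the tool's actual implementation: the dictionary layout, image identifiers, and function names are hypothetical, and the example labels are made up. Only the final retention figure uses the reported counts.

```python
def unanimous_label(labels):
    """Return the shared label if every assessor agrees, otherwise None."""
    return labels[0] if len(set(labels)) == 1 else None

def build_ground_truth(classifications):
    """Keep only images on which all assessors gave the same label.

    `classifications` maps an image id to the list of labels assigned by
    each expert (a hypothetical structure, not the tool's real schema).
    """
    ground_truth = {}
    for image_id, labels in classifications.items():
        label = unanimous_label(labels)
        if label is not None:
            ground_truth[image_id] = label
    return ground_truth

# Toy example with made-up labels for four images.
example = {
    "img_001": ["normal", "normal", "normal"],
    "img_002": ["normal", "abnormal", "normal"],
    "img_003": ["abnormal", "abnormal", "abnormal"],
    "img_004": ["abnormal", "normal", "abnormal"],
}
ground_truth = build_ground_truth(example)
print(ground_truth)  # only img_001 and img_003 are retained

# Retention rate implied by the reported counts (4,821 of 9,365 images).
retention = 4821 / 9365
print(f"Retention rate: {retention:.1%}")
```

Requiring unanimity discards roughly half of the candidate images, trading dataset size for label reliability.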

Implementation for Morphologist Training

The training protocol implementation follows a structured framework that systematically develops morphological classification skills through progressive exposure to different categorization systems:

Table 2: Research Reagent Solutions for Morphology Assessment

| Reagent/Equipment | Specification | Function in Protocol |
|---|---|---|
| Microscope | Olympus BX53 with DIC objectives | High-resolution image acquisition |
| Camera | Olympus DP28 CMOS sensor | Digital image capture (8.9-megapixel) |
| Staining Method | Diff-Quik rapid stain | Sperm structure visualization |
| Mounting Medium | Cytoseal with refractive index 1.52 | Slide preparation for optimal clarity |
| Classification Database | 4,821 consensus-labeled images | Ground truth reference for training |
| Web Interface | Custom JavaScript application | Training delivery and accuracy tracking |

Diagram 2: Morphologist Training Implementation Protocol

The implementation protocol begins with an initial proficiency assessment across all classification systems to establish baseline performance [2]. Participants then engage in a structured four-week training program consisting of 14 sessions that systematically progress through classification systems of increasing complexity [2]. A critical component of this protocol is the instant feedback mechanism, which provides immediate correction and reinforcement for each sperm classification [3]. Performance is continuously monitored through accuracy and speed metrics. Studies report significant improvement in both: accuracy in the 25-category system rose from 82% to 90%, and assessment time fell from 7.0 seconds to 4.9 seconds per image [2].
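To make the feedback-and-tracking loop concrete, here is a minimal Python sketch of how a session might compare each answer against the consensus label, return immediate feedback, and accumulate accuracy and speed metrics. The class, its fields, and the feedback wording are assumptions for illustration; the source does not describe the tool's internal design.

```python
from dataclasses import dataclass, field

@dataclass
class TrainingSession:
    """Tracks accuracy and speed for one trainee (illustrative only)."""
    ground_truth: dict                            # image id -> consensus label
    results: list = field(default_factory=list)   # (correct, seconds) pairs

    def classify(self, image_id, trainee_label, seconds_taken):
        """Record an answer and return instant feedback for the trainee."""
        expected = self.ground_truth[image_id]
        correct = trainee_label == expected
        self.results.append((correct, seconds_taken))
        if correct:
            return "Correct"
        return f"Incorrect: consensus label is '{expected}'"

    def accuracy(self):
        """Percentage of correct classifications recorded so far."""
        return 100 * sum(c for c, _ in self.results) / len(self.results)

    def mean_seconds(self):
        """Average time spent per image so far."""
        return sum(s for _, s in self.results) / len(self.results)

# Made-up two-image session.
session = TrainingSession({"img_001": "normal", "img_003": "abnormal"})
print(session.classify("img_001", "normal", 6.0))
print(session.classify("img_003", "normal", 8.0))
print(session.accuracy(), session.mean_seconds())
```

Storing the correctness flag and the response time together lets the same record feed both metrics tracked during training.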

Impact on Clinical Practice and Research

Implications for Andrology Laboratory Quality Assurance

The variability in sperm morphology assessment has direct implications for clinical decision-making in reproductive medicine. Treatment pathways for infertile couples often depend on semen parameter thresholds, with significant clinical and financial consequences [4]. For instance, the decision between intrauterine insemination (IUI) and in vitro fertilization with intracytoplasmic sperm injection (IVF/ICSI) frequently relies on total motile sperm count and morphology assessments [4] [1]. Inaccurate morphology classification may lead to inappropriate treatment recommendations, and the cost difference between procedures is substantial: $1,275–$3,825 for three cycles of IUI versus $8,825–$26,476 for three cycles of IVF/ICSI [4].

Standardized training tools address this variability by implementing traceable quality control measures that transcend traditional external quality assessment programs. While existing programs like the German QuaDeGA and UK NEQAS provide limited samples infrequently due to expense and availability constraints, digital training tools enable continuous proficiency assessment and refinement [2]. This approach shifts laboratory quality assurance from periodic validation to ongoing competency development, potentially reducing inter-laboratory variation that currently compromises the comparability of clinical and research data [2] [1].

Integration with Emerging Technologies

The development of standardized morphology assessment protocols creates opportunities for integration with advanced sperm evaluation technologies. Computer-assisted sperm morphometry analysis (CASA-Morph) systems have emerged as potential solutions to assessment subjectivity, using multivariate statistical approaches to identify sperm subpopulations within ejaculates [5]. Fluorescence-based CASA-Morph systems can classify human sperm into distinct morphometric subpopulations (large-round 30.4%, small-round 46.6%, and large-elongated 22.9%) using clustering and discriminant procedures [5].

Standardized training tools complement these technological advances by establishing consistent classification criteria that can be validated across platforms. Furthermore, the expert-validated image databases developed for training purposes can serve as robust datasets for training machine learning algorithms, creating synergy between human expertise and artificial intelligence applications in sperm analysis [3]. This integrated approach represents the future of morphology assessment—combining the consistency of computational methods with the nuanced judgment of trained morphologists to achieve both standardization and comprehensive evaluation.

The high variability in manual sperm morphology assessment represents a significant challenge in both clinical andrology and reproductive research. Evidence demonstrates that structured training using standardized tools can dramatically improve assessment accuracy and reduce inter-operator variability, particularly for complex classification systems [2]. The implementation of these training protocols, based on machine learning principles of supervised learning and expert consensus, provides a pathway to greater consistency in sperm morphology evaluation [2] [3].

Future developments in this field will likely focus on expanding these standardization approaches to encompass different species, staining methods, and classification systems while integrating with emerging technologies like computer-assisted morphometry systems and artificial intelligence [5] [6]. As the field moves toward greater standardization, the fundamental goal remains ensuring that sperm morphology assessment fulfills its potential as a reliable, reproducible, and clinically valuable component of male fertility evaluation.

Sperm morphology assessment is a foundational analysis in male fertility evaluation, playing a crucial role in both clinical diagnostics and research settings in veterinary and human medicine [7]. Despite its importance, sperm morphology assessment has historically been a highly subjective test, prone to significant inter-observer variability due to the lack of standardized training protocols for morphologists [7] [3]. This variability introduces substantial challenges for research reproducibility and reliability, particularly in pharmaceutical development where consistent endpoints are essential for evaluating therapeutic efficacy. The absence of robust standardization, quality control (QC), and quality assurance (QA) protocols can lead to inaccurate and highly variable results, ultimately compromising data integrity in multi-center trials and fundamental research [1] [8]. Standardization training has long been recognized as a critical factor for ensuring reliable results across industrial and clinical applications, yet until recently, no widely accepted method existed to train or standardize morphologists performing these assessments [7]. This application note examines the consequences of this variability on research and drug development and presents a novel standardized training tool that addresses these critical limitations.

Impact of Variability on Data Integrity

Documented Variability in Morphology Assessment

The reproducibility of sperm morphology assessment has been significantly hampered by poor inter-laboratory consistency. Studies examining laboratory adherence to World Health Organization (WHO) standards have identified inherent subjectivity and lack of traceable standards as major contributors to result variation [7]. Evidence from a decade-long external quality assurance program revealed that before the publication of the WHO 5th edition manual, at least six different classification criteria were in simultaneous use across laboratories [9]. This methodological heterogeneity inevitably led to substantial differences in reported normal morphology values, complicating cross-study comparisons and meta-analyses.

Even with the introduction of more standardized guidelines, significant challenges persist. Following the release of the WHO 5th edition manual, which established a 4% lower reference limit for normal sperm forms, adoption was initially slow, taking over eight years for 90% of laboratories enrolled in one quality assurance program to implement the recommended protocols and interpretations [9]. Furthermore, once implemented, morphology results from WHO 5th edition users declined significantly over time, suggesting that laboratories were becoming progressively stricter in their identification of normal spermatozoa despite using the same classification criteria [9]. This temporal inconsistency highlights the profound impact of subjective interpretation even when standardized methodologies are nominally employed.

Consequences for Research and Development

The implications of this variability extend directly into the research and drug development domains. In preclinical studies evaluating potential therapeutic compounds for male infertility, inconsistent morphology assessment can obscure true treatment effects or generate false positive outcomes. The resulting data irreproducibility contributes to the high failure rates in drug development pipelines, particularly for fertility treatments where sperm parameters often serve as primary endpoints in early-phase trials.

Multi-center research studies face additional challenges when morphology assessment varies between sites. A comparative study on standardized semen evaluation methods highlighted that rigorous standardization of laboratory protocols and strict quality control are essential for meaningful comparison of data from multiple sites [10]. Without such standardization, therapeutic efficacy signals may be lost in the noise of methodological variability, potentially causing promising compounds to be abandoned or ineffective treatments to be pursued further.

Table 1: Quantifying the Impact of Standardized Training on Assessment Accuracy

| Classification System Complexity | Untrained User Accuracy (%) | Trained User Accuracy (%) | Improvement with Training (percentage points) |
|---|---|---|---|
| 2-category (normal/abnormal) | 81.0 ± 2.5 | 98.0 ± 0.43 | +17.0 |
| 5-category (location-based defects) | 68.0 ± 3.59 | 97.0 ± 0.58 | +29.0 |
| 8-category (cattle industry standard) | 64.0 ± 3.5 | 96.0 ± 0.81 | +32.0 |
| 25-category (comprehensive) | 53.0 ± 3.69 | 90.0 ± 1.38 | +37.0 |

Standardized Training Tool: Development and Validation

Theoretical Foundation and Development

To address the critical need for standardization in sperm morphology assessment, researchers developed a novel 'Sperm Morphology Assessment Standardisation Training Tool' based on machine learning principles [7] [3]. The development of this tool adopted a methodology similar to that used for creating supervised machine learning models, which require accurately labeled "ground truth" datasets to achieve high classification accuracy [3]. This approach recognized that human morphologists, like machine learning algorithms, cannot achieve optimal performance without training on robust, validated data.

The training tool was created through a multi-stage process. First, a comprehensive dataset of high-resolution ram sperm images was generated using differential interference contrast (DIC) optics at 40× magnification, yielding 3,600 field-of-view images from 72 rams [3]. These images were then cropped to individual sperm images using a novel machine-learning algorithm, producing 9,365 single-sperm images. Each image was classified by three experienced assessors according to a detailed 30-category system, with only images achieving 100% consensus among all experts (4,821 images) integrated into the final training tool [3]. This rigorous consensus approach established the "ground truth" essential for effective training, mirroring the validation standards required for medical imaging in machine learning applications.

Implementation and Validation

The resulting web-based training tool provides two key functionalities: (i) instant feedback to users on correct/incorrect labels for training purposes, and (ii) proficiency assessment capabilities [3]. This design enables self-paced, independent learning while maintaining objective evaluation against expert-validated standards. The tool's adaptability across different classification systems, microscope optics, and species enhances its utility across diverse research environments.

Validation studies demonstrated the tool's remarkable effectiveness. In Experiment 1, untrained users (n=22) displayed high variation (CV=0.28) and moderate accuracy across classification systems, ranging from 81.0% for simple 2-category assessments to just 53% for complex 25-category classifications [7]. A second cohort (n=16) exposed to the training tool's visual aid and video resources showed significantly improved first-test accuracy, achieving 94.9%, 92.9%, 90%, and 82.7% across 2-, 5-, 8-, and 25-category systems respectively (p<0.001) [7].

Experiment 2 evaluated repeated training over four weeks, revealing significant improvements in both accuracy (82% to 90%, p<0.001) and diagnostic speed (7.0±0.4s to 4.9±0.3s per image, p<0.001) [7]. Final accuracy rates reached 98%, 97%, 96%, and 90% across the 2-, 5-, 8-, and 25-category systems respectively, demonstrating that standardized training can achieve high accuracy even with complex classification schemes [7]. The reduction in time required for classification while simultaneously improving accuracy indicates enhanced diagnostic efficiency, a critical factor for high-throughput research settings.
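The coefficient of variation (CV) cited for untrained users is, by definition, a standard deviation divided by a mean. The sketch below shows the calculation on hypothetical accuracy scores. The scores are invented for illustration and are not study data, and the use of the sample standard deviation is an assumption, since the source does not state which estimator was used.

```python
import statistics

def coefficient_of_variation(scores):
    """CV = sample standard deviation divided by the mean."""
    return statistics.stdev(scores) / statistics.mean(scores)

# Hypothetical accuracy scores (%) for illustration; not study data.
untrained_scores = [40.0, 55.0, 62.0, 70.0, 48.0, 77.0]
trained_scores = [88.0, 90.0, 91.0, 89.0, 92.0, 90.0]

cv_untrained = coefficient_of_variation(untrained_scores)
cv_trained = coefficient_of_variation(trained_scores)
print(f"Untrained CV: {cv_untrained:.2f}")
print(f"Trained CV:   {cv_trained:.2f}")
```

Because the CV is unitless, it allows variation to be compared between cohorts whose mean accuracy differs.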

Figure 1: Development and Validation Workflow for the Sperm Morphology Assessment Standardisation Training Tool

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Reagents and Materials for Standardized Sperm Morphology Assessment

| Item | Specification | Research Application |
|---|---|---|
| Microscope Optics | Phase contrast or DIC objectives with high numerical apertures (0.75-0.95 NA) | High-resolution imaging of sperm ultrastructure without staining [3] |
| Staining Methods | Diff-Quik, Papanicolaou, or eosin-nigrosin stains | Cellular detail enhancement for morphological assessment [1] [11] |
| Classification Systems | 2-category to 30-category systems adaptable to species-specific requirements | Standardized abnormality categorization across research studies [7] [3] |
| Quality Control Materials | QC slides, reference images, standardized sampling chambers | Instrument calibration and proficiency testing [8] |
| Training Tool | Web-based interface with expert-validated image libraries | Standardized training and assessment of morphologists [7] [3] |
| Image Analysis Software | CASA systems or custom algorithms for automated assessment | Objective, high-throughput morphology analysis [12] [13] |

Experimental Protocols for Implementation

Protocol 1: Standardized Morphology Assessment Procedure

The following protocol outlines the standardized methodology for sperm morphology assessment, incorporating quality control measures essential for research reproducibility:

Sample Preparation: Collect semen samples in sterile containers after 2-7 days of sexual abstinence. Allow samples to liquefy at 37°C for 30-60 minutes. For viscous samples, add proteolytic enzymes (α-chymotrypsin or bromelain) and incubate for an additional 10 minutes at 37°C [1] [8].

Smear Preparation: Vortex the liquefied sample for 10 seconds. Place 10µL of well-mixed semen on a clean frosted slide. Use a second slide at a 45° angle to create a smooth, even smear. Prepare duplicates and air-dry completely before staining [1].

Staining Procedure: For Diff-Quik staining, immerse air-dried smears in fixative five times, then air-dry for 15 minutes. Immerse slides three times in Solution I for 10 seconds, drain excess, then immerse five times in Solution II for 10 seconds. Rinse briefly in sterile water and air-dry vertically. Apply mounting medium and coverslip [1].

Microscopy Assessment: Examine stained smears using a bright-field microscope with 100× objective and 10× eyepiece. Use immersion oil with a refractive index of 1.52 for optimal resolution. Incorporate an ocular micrometer for accurate sperm dimension measurement [1].

Morphology Classification: Assess at least 200 spermatozoa per sample across two replicates. Classify according to standardized categories (2-, 5-, 8-, or 25-category systems). Consider all borderline forms as abnormal. For WHO strict criteria, use ≥4% as the reference threshold for morphologically normal forms [1] [9].

Quality Control Implementation: Participate in internal and external quality assurance programs. Perform regular instrument calibration and technician proficiency testing. Maintain detailed records of all QC activities [8].
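The Morphology Classification step above reduces to a simple calculation: pool the replicate counts, compute the percentage of normal forms, and compare it with the 4% reference threshold. The Python sketch below illustrates this under two assumptions that the protocol text leaves open: that the 200-cell minimum applies to the pooled total, and that borderline forms have already been counted as abnormal. The function names and example counts are hypothetical.

```python
WHO_LOWER_REFERENCE_LIMIT = 4.0   # % normal forms, as cited in the protocol
MIN_SPERM_COUNTED = 200           # assumed to apply to the pooled replicates

def percent_normal(replicates):
    """Pool replicate counts and return the percentage of normal forms.

    `replicates` is a list of (normal_count, total_count) tuples.
    """
    normal = sum(n for n, _ in replicates)
    total = sum(t for _, t in replicates)
    if total < MIN_SPERM_COUNTED:
        raise ValueError(
            f"Only {total} sperm counted; need at least {MIN_SPERM_COUNTED}"
        )
    return 100 * normal / total

def meets_reference_limit(replicates):
    """True when pooled normal forms reach the 4% reference threshold."""
    return percent_normal(replicates) >= WHO_LOWER_REFERENCE_LIMIT

# Made-up counts: two replicates of 100 cells each.
sample = [(3, 100), (6, 100)]
print(percent_normal(sample))         # 4.5
print(meets_reference_limit(sample))  # True
```

Rejecting undersized counts in code mirrors the protocol's minimum-count requirement and prevents a percentage from being reported on too few cells.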

Protocol 2: Training Tool Implementation for Research Staff

This protocol details the implementation of the standardized training tool for research personnel:

Baseline Assessment: Have new morphologists complete an initial assessment using the training tool across multiple classification systems (2-, 5-, 8-, and 25-categories) to establish baseline accuracy and speed metrics [7].

Structured Training Program: Implement a four-week training program consisting of:

- Week 1: Intensive daily training sessions using the tool's training mode with immediate feedback

- Weeks 2-4: Twice-weekly reinforcement sessions with progressively more complex classification systems [7]

Proficiency Evaluation: Conduct final assessment after four weeks to document accuracy improvements. Establish minimum proficiency thresholds (e.g., >90% accuracy for 2-category system, >80% for 25-category system) for research personnel [7].

Ongoing Quality Assurance: Implement quarterly proficiency testing using the tool's assessment mode. Track longitudinal performance to identify drift in classification standards. Provide refresher training when accuracy declines below established thresholds [7] [9].
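The ongoing quality assurance step can be sketched as a threshold check over a morphologist's quarterly results. In this minimal Python illustration the quarterly scores are hypothetical, and the 80% value is taken from the example threshold given above for the 25-category system; a laboratory would substitute its own thresholds.

```python
def needs_refresher(quarterly_accuracy, threshold):
    """Return the quarters in which accuracy fell below the threshold."""
    return [q for q, acc in quarterly_accuracy.items() if acc < threshold]

def accuracy_drift(quarterly_accuracy):
    """Change in accuracy from the first to the last recorded quarter."""
    values = list(quarterly_accuracy.values())
    return values[-1] - values[0]

# Hypothetical 25-category results for one morphologist.
history = {"Q1": 90.0, "Q2": 88.5, "Q3": 84.0, "Q4": 79.0}
THRESHOLD_25_CATEGORY = 80.0  # example threshold from the protocol text

print(needs_refresher(history, THRESHOLD_25_CATEGORY))  # ['Q4']
print(accuracy_drift(history))                          # -11.0
```

Tracking the drift alongside the threshold check can reveal a steady decline before accuracy actually crosses the threshold.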

Figure 2: Training Progression and Outcomes for Morphology Assessment Standardization

Implications for Research and Drug Development

The implementation of standardized sperm morphology assessment protocols has far-reaching implications for research quality and therapeutic development. The significant variability in morphology assessment between laboratories and even within the same laboratory over time has profound consequences for multi-center trials and longitudinal studies [9]. In drug development, where sperm parameters often serve as key efficacy endpoints for fertility compounds, this variability can obscure true treatment effects or generate false positive outcomes.

The adoption of standardized training tools directly addresses these challenges by establishing consistent assessment criteria across research sites. The demonstrated improvement in classification accuracy from 53-81% to 90-98% across different categorization systems represents a substantial enhancement in data quality [7]. Furthermore, the reduction in assessment variation (coefficient of variation decreasing from 0.28 to less than 0.05 in trained users) significantly improves statistical power in research studies, potentially reducing the sample sizes required to detect meaningful treatment effects [7].

For pharmaceutical development targeting male infertility, standardized morphology assessment provides more reliable endpoints for evaluating therapeutic efficacy. This enhanced reliability can accelerate drug development by providing clearer go/no-go decisions based on robust morphological data. Additionally, the training tool's adaptability across species facilitates more effective translation between preclinical models and human clinical trials, addressing a critical bottleneck in fertility drug development.

The integration of these standardized approaches with emerging technologies such as computer-assisted sperm analysis (CASA) and artificial intelligence-based classification systems further enhances objectivity and throughput [12] [13]. As these automated systems continue to evolve, the standardized training tool provides a crucial reference point for validating automated classifications against expert consensus, ensuring that technological advancements maintain alignment with biological reality.

By addressing the fundamental issue of assessment variability, standardized training protocols strengthen the foundation of reproductive research and drug development, enabling more reliable conclusions, more efficient therapeutic development, and ultimately, more effective treatments for male factor infertility.

Sperm morphology assessment is a cornerstone of male fertility evaluation, yet it remains one of the most variable and subjective tests in reproductive science [7]. This variability stems primarily from two interconnected limitations: the lack of traceable standards for morphological classification and the reliance on traditional training methods that fail to ensure consistency between assessors [3] [7]. In clinical practice, these limitations directly impact diagnostic accuracy, treatment decisions, and ultimately patient outcomes. Without robust standardization, morphological assessment becomes vulnerable to human bias, making it difficult to reliably compare results across different laboratories or even between different morphologists within the same facility [3]. This application note examines these critical limitations through quantitative analysis and provides validated experimental protocols for implementing standardized training tools that address these fundamental challenges.

Quantitative Analysis of Current Limitations

The Impact of Classification System Complexity

Table 1: Accuracy and Variation Across Different Morphology Classification Systems

| Classification System | Number of Categories | Untrained User Accuracy (%) | Trained User Accuracy (%) | Coefficient of Variation (Untrained) |
|---|---|---|---|---|
| Normal/Abnormal | 2 | 81.0 ± 2.5 | 98.0 ± 0.43 | 0.28 |
| Location-Based | 5 | 68.0 ± 3.59 | 97.0 ± 0.58 | Not Reported |
| Australian Cattle Vets | 8 | 64.0 ± 3.5 | 96.0 ± 0.81 | Not Reported |
| Comprehensive Defect-Based | 25 | 53.0 ± 3.69 | 90.0 ± 1.38 | Not Reported |

Data adapted from Seymour et al. (2025) demonstrating that more complex classification systems intrinsically lead to lower accuracy and higher variability, particularly among untrained morphologists [7].

Efficacy of Standardized Training Intervention

Table 2: Training-Induced Improvements in Assessment Proficiency

| Proficiency Metric | Pre-Training Performance | Post-Training Performance | Improvement | P-Value |
|---|---|---|---|---|
| Overall Accuracy | 82.0 ± 1.05% | 90.0 ± 1.38% | +8.0 percentage points | <0.001 |
| Assessment Speed | 7.0 ± 0.4 seconds/sperm | 4.9 ± 0.3 seconds/sperm | -2.1 seconds/sperm | <0.001 |
| Inter-Assessor Variation | High (CV=0.28) | Significantly Reduced | Not Reported | <0.001 |

Data from Scientific Reports (2025) showing significant improvements in accuracy and efficiency following implementation of a standardized training tool over a four-week period [7].

Experimental Protocols for Validation Studies

Protocol 1: Establishing Expert Consensus for Ground Truth Classification

Purpose: To create a validated dataset of sperm images with expert-verified morphological classifications for use as "ground truth" in training and assessment [3].

Materials:

- Sperm samples from 72 rams (adaptable to human samples)

- Olympus BX53 microscope with DIC optics (40× magnification)

- Olympus DP28 camera (8.9-megapixel CMOS sensor)

- Custom machine-learning algorithm for single-sperm cropping

- Web interface for image classification and management

Methodology:

- Image Acquisition: Capture 50 fields of view (FOV) per sire, totaling 3,600 FOV images [3]

- Single-Sperm Isolation: Process FOV images through novel machine-learning algorithm to generate individual sperm images (resulting in 9,365 individual sperm images) [3]

- Expert Classification: Three experienced assessors independently classify each sperm image using a comprehensive 30-category classification system [3]

- Consensus Establishment: Retain only images with 100% consensus among all three assessors (4,821 images meeting this criterion) [3]

- Dataset Curation: Integrate consensus-verified images into a searchable database with capacity for multiple classification systems (2-category to 25-category systems) [3]

Validation Metrics:

- Inter-assessor agreement rate prior to consensus

- Percentage of images achieving 100% consensus (51.5% in validation study) [3]

- Classification consistency across different morphological categories
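The first two validation metrics above can be computed directly from the assessors' labels. The Python sketch below is an illustration only: the data layout and function names are assumptions, and the labels are made up. It reports the agreement rate for each pair of assessors and the share of images with full consensus.

```python
from itertools import combinations

def pairwise_agreement(labels_by_assessor):
    """Fraction of images on which each pair of assessors agrees.

    `labels_by_assessor` maps an assessor name to a list of labels, one
    per image and in the same image order (a hypothetical structure).
    """
    rates = {}
    for a, b in combinations(sorted(labels_by_assessor), 2):
        pairs = list(zip(labels_by_assessor[a], labels_by_assessor[b]))
        rates[(a, b)] = sum(x == y for x, y in pairs) / len(pairs)
    return rates

def full_consensus_rate(labels_by_assessor):
    """Fraction of images on which all assessors give the same label."""
    per_image = list(zip(*labels_by_assessor.values()))
    return sum(len(set(labels)) == 1 for labels in per_image) / len(per_image)

# Made-up labels from three assessors for four images.
labels = {
    "A": ["normal", "normal", "abnormal", "abnormal"],
    "B": ["normal", "abnormal", "abnormal", "abnormal"],
    "C": ["normal", "normal", "abnormal", "normal"],
}
print(pairwise_agreement(labels))
print(full_consensus_rate(labels))  # 0.5
```

Raw agreement rates do not correct for agreement expected by chance; a chance-corrected statistic such as Cohen's or Fleiss' kappa could be reported alongside them.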

Protocol 2: Evaluating Training Efficacy Across Classification Systems

Purpose: To quantify the effectiveness of standardized training tools in improving morphologist accuracy and reducing variation across different classification systems [7].

Materials:

- Web-based sperm morphology assessment training tool

- 38 novice morphologists (divided into two experimental cohorts)

- Validated image dataset from Protocol 1

- Statistical analysis software (R, SPSS, or equivalent)

Methodology:

- Baseline Assessment (Experiment 1):

  - Test novice morphologists (n=22) across four classification systems (2, 5, 8, and 25 categories) without prior training [7]

  - Record accuracy scores and time per classification

  - Analyze variation using coefficient of variation calculations

- Intervention Phase (Experiment 2):

  - Have participants train repeatedly with the tool over four weeks, tracking accuracy and time per image [7]

Outcome Measures:

- Calculate accuracy improvements for each classification system

- Measure reduction in time per classification

- Assess reduction in inter-assessor variation

- Statistical analysis using ANOVA with post-hoc testing for multiple comparisons [7]

Analysis:

- Compare pre- and post-training accuracy using paired t-tests

- Analyze time efficiency improvements using linear regression

- Calculate effect sizes for training interventions
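
As a rough illustration of the pre/post comparison listed above, the following Python sketch computes a paired t statistic and a paired-samples effect size (Cohen's d_z) using only the standard library. The scores are invented for the example, the function name is hypothetical, and the choice of d_z as the effect size is an assumption; the source does not state which effect size was used. A p-value would additionally require the t distribution, for example from a statistics package such as R or SPSS.

```python
import math
import statistics


def paired_t_and_effect_size(pre, post):
    """Return (t statistic, degrees of freedom, Cohen's d_z) for paired scores."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [after - before for before, after in zip(pre, post)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    n = len(diffs)
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    d_z = mean_diff / sd_diff
    return t_stat, n - 1, d_z


# Hypothetical accuracy scores (%) for five trainees before and after training.
pre_scores = [50.0, 55.0, 60.0, 48.0, 57.0]
post_scores = [80.0, 84.0, 88.0, 79.0, 85.0]

t_stat, dof, d_z = paired_t_and_effect_size(pre_scores, post_scores)
print(f"t = {t_stat:.2f}, df = {dof}, d_z = {d_z:.2f}")
```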

Figure 1: Experimental Design for Validating Training Tool Efficacy

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Sperm Morphology Standardization Research

| Item | Specifications | Research Function |
|---|---|---|
| Research Microscope | Olympus BX53 with DIC optics, 40× magnification, 0.95 NA objective | High-resolution image acquisition with superior optical clarity for morphological detail [3] |
| Imaging Camera | Olympus DP28, 8.9-megapixel CMOS sensor, 25 field number | Capture high-quality digital images suitable for detailed morphological analysis [3] |
| Classification Framework | 30-category comprehensive system (adaptable to 2-25 categories) | Provides flexible morphological classification adaptable to various clinical and research needs [3] |
| Web-Based Training Interface | Custom-developed platform with instant feedback capability | Enables standardized training and assessment with immediate corrective feedback [3] [7] |
| Expert-Validated Image Bank | 4,821 sperm images with 100% consensus classification | Serves as ground truth reference for training and proficiency testing [3] |
| Statistical Analysis Package | R, SPSS, or equivalent with ANOVA capabilities | Quantifies training efficacy and inter-assessor variation [7] |

Implementation Workflow for Standardized Training

Figure 2: Standardized Training Implementation Workflow

Discussion and Future Directions

The quantitative data presented in this application note demonstrate that the current limitations in sperm morphology assessment, specifically the lack of traceable standards and ineffective traditional training methods, can be effectively addressed through standardized tools built on expert consensus and iterative training protocols. The implementation of such systems shows statistically significant improvements in assessment accuracy (increasing from 82% to 90% overall) and efficiency (decreasing classification time from 7.0 to 4.9 seconds per sperm) [7].

Future development in this field should focus on expanding these standardization principles to automated sperm morphology analysis systems, which similarly require robust ground truth data for algorithm training [14]. Additionally, the adaptation of these training tools for human sperm morphology assessment represents a promising direction for clinical andrology, particularly given recent expert recommendations questioning the prognostic value of traditional morphology assessment without proper standardization [14].

The protocols and methodologies outlined herein provide a framework for laboratories and research institutions to implement standardized training programs that directly address the critical limitations of traceability and training efficacy in sperm morphology assessment. Through the adoption of these evidence-based approaches, the field can move toward greater consistency, reliability, and clinical utility of morphological evaluation in male fertility assessment.

In both machine learning and scientific training protocols, ground truth refers to verified, accurate data used as a benchmark for training, validation, and testing [15]. In the context of training human professionals, particularly in subjective assessment tasks, ground truth provides the "correct answer" against which trainee performance is measured and refined [7]. This establishes an objective standard in fields where assessment has traditionally been vulnerable to individual interpretation and bias.

The application of machine learning principles to human training represents a significant methodological advancement. Supervised learning, a subcategory of machine learning that uses labeled datasets to train algorithms, provides a powerful framework for standardizing human assessment skills [15]. This approach is particularly valuable in medical and biological fields such as sperm morphology assessment, where subjective evaluation has historically led to substantial inter-laboratory variation [7].

Core Principles and Definitions

Conceptual Framework of Ground Truth

Ground truth, or ground truth data, constitutes the gold standard of accurate information against which predictions or assessments are compared [15]. In machine learning parlance, it represents the expert-validated labels that enable models to learn correct patterns. When applied to human training, this concept translates to expert-validated reference standards against which trainees calibrate their assessments.

The importance of ground truth data stems from its role as the foundational reference point throughout the learning lifecycle. During the training phase, ground truth provides the correct answers for the learner to internalize. In the validation phase, a different sample of ground truth data allows for performance evaluation and adjustment. Finally, during the testing phase, previously unseen ground truth data assesses how well the learner can generalize their skills to new examples [15].
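
The three-phase use of ground truth described above can be sketched as a partition of the image bank into disjoint training, validation, and testing sets. This is a minimal Python sketch under assumptions: the 60/20/20 proportions, the seed, and the function name are illustrative choices, not values taken from the source.

```python
import random


def split_ground_truth(image_ids, train_frac=0.6, val_frac=0.2, seed=0):
    """Shuffle image ids and split them into disjoint train/validation/test lists."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)  # seeded so the split is reproducible
    n_train = round(len(ids) * train_frac)
    n_val = round(len(ids) * val_frac)
    train = ids[:n_train]
    validation = ids[n_train:n_train + n_val]
    test = ids[n_train + n_val:]  # never shown during training or validation
    return train, validation, test


# Hypothetical identifiers for 100 consensus-labeled images.
all_ids = [f"img_{i:03d}" for i in range(100)]
train, validation, test = split_ground_truth(all_ids)
print(len(train), len(validation), len(test))
```

Keeping the test images entirely unseen is what allows the final assessment to measure generalization rather than recall of specific images.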

Establishing Ground Truth Through Expert Consensus

In subjective domains like visual assessment, ground truth cannot be established by simple measurement. Instead, it requires expert consensus to create validated classifications. This methodology, known as "ground truthing," involves obtaining proper objective (provable) data for testing [16]. In medical imaging and morphological assessment, this typically involves multiple experts independently classifying each item, with final ground truth labels determined through their consensus [7] [15].

This approach directly addresses the challenge of subjectivity and ambiguity inherent in many assessment tasks [15]. When different experts might naturally interpret the same data differently, establishing a consensus-based ground truth creates a consistent standard that transcends individual judgment tendencies. This process is particularly crucial for sperm morphology assessment, where research has shown that even expert morphologists agreed on the normal/abnormal classification for only 73% of sperm images when working without a standardized reference [7].

Application to Sperm Morphology Assessment Training

Experimental Validation of the Training Approach

Recent research has validated the effectiveness of applying machine learning principles to sperm morphology training. A 2025 study utilized a bespoke 'Sperm Morphology Assessment Standardisation Training Tool' to train novice morphologists using machine learning principles and expert consensus labels ("ground truth") [7]. The study design consisted of two key experiments that demonstrated significant improvements in assessment accuracy and consistency.

The training approach addressed a critical gap in andrology laboratories: while previous efforts focused mainly on standardizing semen sample preparation methodologies, they largely neglected the standardization of training and re-training protocols for morphologists [7]. This omission was particularly problematic given that morphology assessment remains primarily subjective and therefore vulnerable to bias and human error without robust standardization protocols.

Quantitative Outcomes of Standardized Training

Table 1: Accuracy Improvement Across Classification System Complexity

| Classification System | Untrained Accuracy (%) | Trained Accuracy (%) | Final Accuracy After Protocol (%) |
|---|---|---|---|
| 2-category (normal/abnormal) | 81.0 ± 2.5 | 94.9 ± 0.66 | 98 ± 0.43 |
| 5-category (by defect location) | 68 ± 3.59 | 92.9 ± 0.81 | 97 ± 0.58 |
| 8-category (specific defect types) | 64 ± 3.5 | 90 ± 0.91 | 96 ± 0.81 |
| 25-category (individual defects) | 53 ± 3.69 | 82.7 ± 1.05 | 90 ± 1.38 |

Table 2: Impact of Training on Assessment Speed and Consistency

| Training Metric | Initial Performance | Final Performance | Improvement |
|---|---|---|---|
| Assessment Accuracy | 82 ± 1.05% | 90 ± 1.38% | +8 percentage points (p < 0.001) |
| Time per Image | 7.0 ± 0.4 seconds | 4.9 ± 0.3 seconds | -30% (p < 0.001) |
| Inter-User Variation | High (CV = 0.28) | Low (CV = 0.027-0.137) | Significant reduction (p < 0.001) |

The quantitative results demonstrated several key findings. First, without standardized training, novice morphologists showed high variation and lower accuracy, with scores ranging from 19% to 77% and a coefficient of variation (CV) of 0.28 [7]. Second, the complexity of the classification system significantly impacted performance, with simpler systems (2-category) yielding higher accuracy than more complex systems (25-category) both before and after training [7]. Third, training produced not only accuracy improvements but also significant gains in efficiency, with assessment speed improving by approximately 30% over the training period [7].
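
The variation and speed figures above rest on two simple calculations, shown here as a Python sketch. The individual scores are hypothetical (only the 19% and 77% extremes echo the reported range), and using the sample standard deviation for the coefficient of variation is an assumption, since the source does not say which form was used. The time and accuracy values are those reported in Table 2.

```python
import statistics


def coefficient_of_variation(scores):
    """Sample standard deviation divided by the mean (unitless)."""
    mean = statistics.mean(scores)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return statistics.stdev(scores) / mean


def relative_change(before, after):
    """Fractional change from `before` to `after` (negative means a decrease)."""
    return (after - before) / before


# Hypothetical untrained accuracy scores (%) for six novices.
untrained_scores = [19, 42, 55, 61, 70, 77]
cv_example = coefficient_of_variation(untrained_scores)
print(f"CV of the hypothetical scores: {cv_example:.2f}")

# Reported values: 7.0 s to 4.9 s per image, 82% to 90% accuracy [7].
time_change = relative_change(7.0, 4.9)
accuracy_gain_points = 90 - 82
print(f"time per image: {time_change:.0%}")
print(f"accuracy gain: {accuracy_gain_points} percentage points")
```

Note that the accuracy gain is expressed in percentage points (an absolute difference), whereas the time reduction is a relative change.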

Detailed Experimental Protocols

Protocol 1: Initial Accuracy Assessment Across Classification Systems

Purpose: To establish baseline assessment capabilities of novice morphologists across classification systems of varying complexity.

Materials:

- Sperm Morphology Assessment Standardisation Training Tool [7]

- Dataset of sperm images with expert-consensus ground truth labels [7]

- Phase contrast microscope or digital images

Procedure:

- Recruit novice morphologists (n=22) with minimal prior experience [7]

- Present each participant with a series of sperm images across multiple classification systems:

  - 2-category system: normal vs. abnormal

  - 5-category system: normal; head defect; midpiece defect; tail defect; cytoplasmic droplet

  - 8-category system: normal; cytoplasmic droplet; midpiece defect; loose heads and abnormal tails; pyriform head; knobbed acrosomes; vacuoles and teratoids; swollen acrosomes

  - 25-category system: all defects defined individually [7]

- Record accuracy scores for each participant across all classification systems

- Measure time taken per image classification

- Calculate variation between users using coefficient of variation

Quality Control: All images must have validated ground truth labels established through expert consensus to ensure benchmark reliability [7] [15].

Protocol 2: Extended Training Regimen

Purpose: To evaluate the effect of repeated training over an extended period on assessment accuracy and speed.

Materials:

- Sperm Morphology Assessment Standardisation Training Tool [7]

- Visual aid and training video [7]

- Dataset of sperm images with expert-consensus ground truth labels

- Performance tracking system

Procedure:

- Recruit novice morphologists (n=16) [7]

- Implement training protocol over four weeks with testing sessions distributed throughout

- Provide initial training using visual aids and instructional videos

- Conduct repeated assessment sessions (14 tests total) with immediate feedback on accuracy

- Schedule testing sessions to evaluate retention and improvement:

  - Tests 1-4: Day 1 (intensive training)

  - Tests 5-8: Week 1 follow-up

  - Tests 9-11: Week 2 consolidation

  - Tests 12-14: Weeks 3-4 proficiency confirmation [7]

- Record both accuracy and speed metrics for each session

- Compare performance across different classification systems

- Analyze learning curves and plateau points

Quality Control: Maintain consistent testing conditions throughout the training period. Use the same ground truth reference standard for all assessments to ensure consistency [7].
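
The 14-test schedule and the learning-curve analysis in this protocol can be sketched in Python as follows. The phase boundaries come from the schedule above; everything else is assumed for illustration: the accuracy values are invented, the function names are hypothetical, and the plateau rule (the first test after which no later test improves by at least one percentage point) is one simple heuristic among many, not the method used in the study.

```python
# Test numbers per phase, as listed in the protocol.
SCHEDULE = {
    "Day 1 (intensive training)": range(1, 5),
    "Week 1 follow-up": range(5, 9),
    "Week 2 consolidation": range(9, 12),
    "Weeks 3-4 proficiency confirmation": range(12, 15),
}


def phase_means(scores):
    """Average accuracy per phase; `scores` maps test number -> accuracy (%)."""
    return {
        phase: sum(scores[t] for t in tests) / len(tests)
        for phase, tests in SCHEDULE.items()
    }


def plateau_test(scores, min_gain=1.0):
    """First test after which no later test improves by at least `min_gain`."""
    tests = sorted(scores)
    for i, t in enumerate(tests):
        later = [scores[u] for u in tests[i + 1:]]
        if not later or max(later) - scores[t] < min_gain:
            return t
    return None  # only reached when `scores` is empty


# Hypothetical accuracy (%) for one trainee across the 14 tests.
accuracy = dict(zip(range(1, 15),
                    [55, 62, 68, 72, 76, 79, 82, 84, 86, 87, 88, 89, 89.5, 90]))

for phase, mean in phase_means(accuracy).items():
    print(f"{phase}: {mean:.2f}")
print("plateau reached at test", plateau_test(accuracy))
```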

Implementation Framework and Visualization

Workflow Diagram: Ground Truth Establishment and Application

Ground Truth Establishment and Training Workflow

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials and Their Functions

| Material/Resource | Function/Purpose | Implementation Example |
|---|---|---|
| Sperm Morphology Assessment Standardisation Training Tool | Digital platform for training and testing morphologists | Web-based application adaptable for multiple classification systems [7] |
| Expert-Validated Image Dataset | Provides ground truth reference standard | Sperm images classified through multi-expert consensus [7] [15] |
| Phase Contrast Microscopy | Essential for live sperm assessment without staining | Standard equipment for morphology assessment in veterinary and human medicine [7] |
| Quality Control (QC) Program | Ensures ongoing assessment reliability | External programs like German QuaDeGA or UK NEQAS [7] |
| Visual Aid and Training Video | Accelerates initial learning curve | Instructional materials demonstrating defect classification [7] |

Discussion and Best Practices

Addressing Implementation Challenges

The application of machine learning principles to human training presents several challenges that must be addressed for successful implementation. Inconsistent data labeling can introduce errors that compound throughout the training process [15]. This is particularly relevant when establishing the initial ground truth dataset, as even minor inconsistencies between experts can significantly impact trainee outcomes. Implementation requires careful standardization of labeling guidelines to ensure uniform annotation across the entire dataset.

The complexity of data presents another significant challenge, particularly in morphological assessment where multiple classification systems may be used simultaneously [15]. The research demonstrated that more complex classification systems (25-category) inherently resulted in lower accuracy rates even after extensive training, suggesting that balancing system complexity with practical utility is essential [7]. Additionally, scalability and cost considerations must be addressed, particularly when expert time is required for ground truth establishment [15].

Strategies for High-Quality Ground Truth Implementation

Several strategies can optimize ground truth quality and training effectiveness. First, defining clear objectives and data requirements ensures that the training protocol aligns with its intended application [15]. Second, developing comprehensive labeling strategies with standardized guidelines promotes consistency across annotators and over time. Third, verifying data consistency through statistical measures such as inter-annotator agreement (IAA) helps maintain quality standards [15].
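
One common inter-annotator agreement statistic for more than two raters is Fleiss' kappa. The Python sketch below implements the standard formula for a fixed number of raters per item; the source does not say which IAA statistic was used, so the choice of Fleiss' kappa, the function name, and the example labels are all assumptions made for illustration.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of per-item label lists (same rater count each)."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(labels) != n_raters for labels in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")

    category_totals = Counter()
    agreement_sum = 0.0
    for labels in ratings:
        counts = Counter(labels)
        category_totals.update(counts)
        # Proportion of agreeing rater pairs for this item.
        agreement_sum += (sum(c * c for c in counts.values()) - n_raters) / (
            n_raters * (n_raters - 1)
        )

    observed = agreement_sum / n_items
    expected = sum(
        (total / (n_items * n_raters)) ** 2 for total in category_totals.values()
    )
    if expected == 1.0:
        return float("nan")  # only one category ever used; kappa is undefined
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from three assessors.
perfect = [["normal"] * 3, ["abnormal"] * 3]
mixed = [["normal", "normal", "abnormal"], ["normal", "abnormal", "abnormal"]]
print(fleiss_kappa(perfect))
print(fleiss_kappa(mixed))
```

Kappa corrects raw agreement for the agreement expected by chance, which is why a dataset can show moderate percentage agreement and still have a kappa near zero or below.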

Crucially, implementation should address potential biases by ensuring diverse data collection and using multiple annotators for each data point [15]. Finally, organizations should recognize that ground truth data represents a dynamic asset that may require updates as real-world conditions evolve or assessment standards change [15]. In the context of sperm morphology, this might involve incorporating new defect classifications as research advances.

The application of machine learning principles, particularly the rigorous use of ground truth data, represents a transformative approach to standardizing subjective assessment tasks in scientific and medical fields. By adopting expert-consensus reference standards, structured training protocols, and continuous performance validation, organizations can significantly reduce inter-assessor variability while improving both accuracy and efficiency. The experimental results from sperm morphology assessment demonstrate that this approach can achieve accuracy rates of approximately 90% even for the most complex (25-category) classification system, and 96-98% for simpler systems, providing a robust framework that could be adapted across multiple domains where subjective assessment currently limits reproducibility and reliability.

Building a Better Training Tool: Architecture and Implementation

Sperm morphology analysis constitutes a critical diagnostic tool in male fertility assessment, with abnormal sperm morphology strongly correlated with reduced fertility rates and poor outcomes in assisted reproductive technologies [17]. Despite its clinical importance, manual sperm morphology assessment remains highly subjective, exhibiting significant inter-observer variability and reliance on operator expertise [18]. Studies report up to 40% disagreement between expert evaluators, with kappa values as low as 0.05–0.15 highlighting substantial diagnostic inconsistency even among trained technicians [17]. This variability stems from the inherent challenges of subjective biological assessment and the absence of standardized, validated training methodologies [3].

The emerging paradigm of supervised learning frameworks for morphologist training addresses these limitations by applying principles of machine learning validation to human education. Just as machine learning models require robust "ground truth" datasets for effective training, morphologists necessitate training on validated, consensus-classified sperm images to achieve accurate and reproducible assessments [3]. This approach recognizes that if machine learning algorithms demonstrate improved precision with consensus-validated training data, human classifiers similarly benefit from training on robustly validated morphological classifications [3]. By implementing supervised learning frameworks grounded in expert consensus, these systems offer a transformative methodology for standardizing sperm morphology assessment across laboratories and clinical settings.

Core Principles of Supervised Learning Frameworks

Foundation in Expert Consensus and Ground Truth Establishment

The cornerstone of effective supervised learning frameworks for morphologist training lies in establishing validated "ground truth" classifications through multi-expert consensus. This process addresses the fundamental challenge of subjective interpretation in biological assessments by creating a reference standard against which trainee performance can be objectively measured [3]. The framework developed by Seymour et al. exemplifies this approach, wherein images of spermatozoa were classified by three experienced assessors, with only those achieving 100% consensus across all labels integrated into the training tool [3] [19]. This rigorous validation process ensures that training materials reflect unequivocal morphological classifications, providing a definitive standard for trainee assessment.

The consensus-driven approach directly mitigates the human bias inherent in traditional morphology assessment. Research demonstrates that conventional training methods, such as side-by-side training with an experienced assessor or classroom-based instruction, yield inconsistent results with high intra- and inter-assessor variability [3]. One study noted that in 43% of instances, novices reversed their classification of the same sperm during secondary assessment, highlighting the instability of unstandardized training approaches [3]. By contrast, supervised frameworks grounded in expert consensus provide consistent, validated reference points that enable precise measurement of trainee accuracy and progression.

Adaptive Learning Architectures for Morphological Classification

Advanced supervised learning frameworks incorporate adaptive architectures capable of accommodating diverse morphological classification systems and species-specific assessment criteria. This flexibility represents a critical advancement over rigid training protocols, allowing the framework to be tailored to specific clinical or research requirements. The training tool developed by Seymour et al. exemplifies this principle through its comprehensive 30-category classification system, which can be readily adapted to simpler classification schemes such as the 5-category location-based system or the 8-category Australian Cattle Vets system [3]. This design ensures broad applicability across different clinical contexts and research settings.

The architectural flexibility extends to incorporating various microscope optics and imaging modalities. Differential interference contrast (DIC) microscopy, recognized as the professional gold standard for sperm morphology assessment in veterinary applications, provides superior visualization of subtle morphological features compared to bright-field microscopy [20]. Supervised learning frameworks can integrate images captured using multiple optical systems, training morphologists to recognize morphological features across different imaging conditions. This capability is particularly valuable for standardizing assessments across laboratories employing varied equipment configurations, enhancing the reproducibility of morphological evaluations in multi-center research and clinical networks.

Table 1: Core Components of Supervised Learning Frameworks for Morphologist Training

| Framework Component | Function | Implementation Example |
|---|---|---|
| Consensus-Classified Image Repository | Provides validated ground truth for training and assessment | 4,821 ram sperm images with 100% expert consensus [3] [19] |
| Multi-System Classification Support | Enables adaptation to various classification standards | 30-category system adaptable to WHO, David, or species-specific classifications [3] [18] |
| Real-Time Feedback Mechanism | Provides immediate correction and reinforcement | Web interface with instant correct/incorrect labeling feedback [3] [19] |
| Proficiency Assessment Module | Quantifies trainee competency and progress | Accuracy measurement against consensus classifications [3] |
| Optical System Variability | Accommodates different microscopy modalities | Support for DIC, phase contrast, and bright-field images [3] [20] |

Experimental Protocols and Implementation

Protocol for Ground Truth Dataset Development

Establishing a validated image dataset constitutes the foundational step in implementing a supervised learning framework for morphologist training. The following protocol outlines the standardized methodology for image acquisition, processing, and expert classification:

Sample Preparation and Image Acquisition: Collect semen samples from appropriate subjects (72 rams in the proof-of-concept study) following ethical guidelines. Prepare smears according to standardized protocols, such as those outlined in the WHO manual, using appropriate staining techniques (e.g., RAL Diagnostics staining kit) [18]. Capture images using high-resolution microscopy systems, such as an Olympus BX53 microscope with DIC optics at 40× magnification, coupled with high-sensitivity cameras (e.g., Olympus DP28 with 8.9-megapixel CMOS sensor) [3]. Acquire multiple fields of view per sample (50 FOV/ram) to ensure representative sampling of morphological diversity.

Image Processing and Single-Sperm Isolation: Process field-of-view images to isolate individual spermatozoa using machine learning algorithms specifically trained for sperm detection and cropping [3]. This critical step ensures that each training image contains only one sperm cell, eliminating potential confusion from overlapping or adjacent spermatozoa. The proof-of-concept study implemented a novel machine-learning algorithm that processed 3,600 FOV images to generate 9,365 individual sperm images [3] [19].

Multi-Expert Classification and Consensus Establishment: Engage multiple experienced assessors (minimum of three) to independently classify each sperm image according to a comprehensive classification system. Implement a structured process for reconciling classifications, such as the advanced annotation sheet system used in the SMD/MSS dataset development [18]. Establish ground truth by including only images with complete inter-expert consensus (51.5% of images in the proof-of-concept study achieved 100% consensus) [3]. Analyze inter-expert agreement using statistical measures such as Fisher's exact test to identify classifications requiring further review [18].

Protocol for Training Tool Implementation and Validation

Following ground truth dataset development, the subsequent protocol guides the implementation and validation of the interactive training tool:

Web Interface Development and Integration: Develop a web-based interface capable of presenting individual sperm images to trainees in a randomized, controlled sequence. Implement functionality for trainees to classify each sperm according to the designated morphological system. Incorporate instant feedback mechanisms that indicate correct/incorrect classifications immediately after each assessment, referencing the established ground truth [3] [19]. Design the interface to accommodate different classification systems by mapping the comprehensive ground truth classifications to simpler categorical systems as needed.
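
The three interface behaviors described above (randomized presentation, mapping detailed labels onto simpler systems, and instant feedback) can be sketched in Python. This is not the tool's actual implementation: the fine-grained label names, the mapping table, and all function names are hypothetical, and only the five target categories are taken from the 5-category system described in this article.

```python
import random

# Hypothetical fine-grained labels mapped onto the 5-category system.
FINE_TO_FIVE = {
    "normal": "normal",
    "pyriform head": "head defect",
    "knobbed acrosome": "head defect",
    "bent midpiece": "midpiece defect",
    "coiled tail": "tail defect",
    "proximal droplet": "cytoplasmic droplet",
}


def project(fine_label, system):
    """Map a fine-grained ground truth label onto a simpler system."""
    if system == "fine":
        return fine_label
    if system == "five":
        return FINE_TO_FIVE[fine_label]
    if system == "two":
        return "normal" if fine_label == "normal" else "abnormal"
    raise ValueError(f"unknown classification system: {system}")


def presentation_order(ground_truth, seed=None):
    """Return image ids in randomized order (seeded for reproducible sessions)."""
    ids = sorted(ground_truth)
    random.Random(seed).shuffle(ids)
    return ids


def feedback(image_id, answer, ground_truth, system):
    """Compare a trainee answer with the projected ground truth label."""
    expected = project(ground_truth[image_id], system)
    return {"correct": answer == expected, "expected": expected}


GROUND_TRUTH = {
    "img_001": "normal",
    "img_002": "pyriform head",
    "img_003": "coiled tail",
}

for image_id in presentation_order(GROUND_TRUTH, seed=42):
    print(image_id, feedback(image_id, "abnormal", GROUND_TRUTH, "two"))
```

Storing only the most detailed label and deriving the simpler systems from it means each image is classified by experts once, yet can serve every classification system the tool supports.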

Proficiency Assessment and Progressive Learning Modules: Implement assessment modules that evaluate trainee proficiency by measuring classification accuracy against the consensus ground truth. Structure training sessions to progressively introduce morphological categories of increasing complexity, beginning with broad distinctions (normal vs. abnormal) before advancing to specific defect classifications [3]. Incorporate spaced repetition algorithms to reinforce challenging morphological classifications, presenting misclassified sperm types with greater frequency until mastery is demonstrated.
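
One simple way to present misclassified sperm types more often is error-weighted sampling, sketched below in Python. This is a simplification rather than a full spaced-repetition scheduler (it does not model review intervals), and the boost factor, mastery threshold, minimum attempt count, and function names are all hypothetical parameters chosen for illustration.

```python
import random


def category_weights(history, boost=4.0, mastery=0.9, min_attempts=5):
    """Sampling weight per category from (n_correct, n_attempts) history."""
    weights = {}
    for category, (correct, attempts) in history.items():
        if attempts == 0:
            weights[category] = 1.0 + boost  # unseen: treat as fully unknown
            continue
        accuracy = correct / attempts
        if attempts >= min_attempts and accuracy >= mastery:
            weights[category] = 1.0  # mastered: baseline frequency only
        else:
            weights[category] = 1.0 + boost * (1.0 - accuracy)
    return weights


def next_category(history, rng):
    """Draw the category of the next image, favoring weak categories."""
    weights = category_weights(history)
    categories = list(weights)
    return rng.choices(categories, weights=[weights[c] for c in categories], k=1)[0]


# Hypothetical trainee history: category -> (correct answers, attempts).
history = {
    "normal": (19, 20),
    "pyriform head": (5, 10),
    "knobbed acrosome": (0, 0),
}
print(category_weights(history))
print(next_category(history, random.Random(0)))
```

Mastered categories keep a baseline weight rather than dropping to zero, so previously learned classifications continue to be checked occasionally.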

Validation Studies and Performance Benchmarking: Conduct rigorous validation studies to quantify training effectiveness by measuring improvements in classification accuracy before and after training intervention. Establish performance benchmarks for competency certification, such as minimum accuracy thresholds for each morphological category [3]. Compare the performance of tool-trained morphologists against both novice assessors and experienced experts to validate the tool's efficacy in achieving standardization across proficiency levels.
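
A per-category competency check of the kind described above might look like the following Python sketch. The 90% threshold is a placeholder, not a benchmark from the source, and the function names and example data are hypothetical.

```python
from collections import defaultdict


def per_category_accuracy(responses, ground_truth):
    """Accuracy per ground truth category; `responses` maps image id -> answer."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for image_id, answer in responses.items():
        truth = ground_truth[image_id]
        total[truth] += 1
        correct[truth] += int(answer == truth)
    return {category: correct[category] / total[category] for category in total}


def failing_categories(accuracy, threshold=0.9):
    """Categories whose accuracy falls below the (hypothetical) threshold."""
    return sorted(c for c, value in accuracy.items() if value < threshold)


# Hypothetical ground truth and trainee responses for four images.
truth = {
    "img_001": "normal",
    "img_002": "pyriform head",
    "img_003": "pyriform head",
    "img_004": "normal",
}
responses = {
    "img_001": "normal",
    "img_002": "normal",
    "img_003": "pyriform head",
    "img_004": "normal",
}

accuracy_by_category = per_category_accuracy(responses, truth)
print(accuracy_by_category)
print("needs retraining:", failing_categories(accuracy_by_category))
```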

Table 2: Key Research Reagents and Materials for Framework Implementation

| Reagent/Material | Specification | Research Function |
|---|---|---|
| Microscope System | Olympus BX53 with DIC optics, 40× objectives (NA 0.75-0.95) | High-resolution image acquisition for morphological analysis [3] |
| Imaging Camera | Olympus DP28 with 8.9-megapixel CMOS sensor, 25 field number | Capture of detailed sperm morphology images at 4000px resolution [3] |
| Staining Kit | RAL Diagnostics staining kit | Semen smear preparation for morphological assessment [18] |
| Sample Fixation | Buffered formal saline or Trumorph system (60°C, 6 kp pressure) | Preservation of sperm morphology for wet mount or fixed preparation [20] [21] |
| Annotation Software | Custom web interface or Roboflow for image labeling | Organization and management of classified sperm images [3] [21] |

Performance Metrics and Validation

Quantitative Assessment of Training Efficacy

Robust validation of supervised learning frameworks requires comprehensive quantitative assessment across multiple performance dimensions. The proof-of-concept training tool development reported that 51.5% of images (4,821 out of 9,365) achieved 100% consensus across three experienced assessors [3] [19]. This consensus rate indicates how often experienced assessors agree unanimously and provides a benchmark for trainee performance targets. Implementation of deep learning models enhanced with feature engineering techniques has demonstrated the potential of automated systems, with one framework achieving accuracy rates of 96.08% on the SMIDS dataset and 96.77% on the HuSHeM dataset, representing improvements of 8.08% and 10.41% respectively over baseline convolutional neural network performance [17].

The transition from conventional machine learning approaches to advanced deep learning architectures has yielded significant improvements in classification performance. Traditional methods utilizing handcrafted features and classifiers such as Support Vector Machines achieved accuracy rates ranging from 49% to 90% depending on the morphological features being assessed [22]. Contemporary approaches integrating attention mechanisms and deep feature engineering have elevated performance to expert-level accuracy, establishing new benchmarks for both automated systems and human trainees [17]. These quantitative metrics provide crucial validation of the supervised learning approach and establish performance targets for morphologist training.

Comparative Analysis of Traditional vs. Standardized Training

Comparative evaluation reveals substantial advantages of standardized supervised learning frameworks over conventional training methodologies. Traditional side-by-side training approaches suffer from significant limitations, including dependency on trainer availability, inconsistent training quality, and the inability to precisely quantify trainee progression [3]. Classroom-based training methods have demonstrated minimal efficacy, with one study reporting no significant improvement following training and noting that novices reversed their classification of the same sperm in 43% of instances during secondary assessment [3].

In contrast, supervised learning frameworks provide objective, quantifiable metrics of trainee proficiency through direct comparison against consensus ground truth. The immediate feedback mechanism enables rapid correction of misclassifications, accelerating the learning curve and reinforcing correct morphological assessments [3]. Furthermore, the self-paced nature of these frameworks accommodates variable learning rates among trainees, addressing a critical limitation of synchronous classroom instruction [3] [19]. This individualized approach combined with standardized validation against expert consensus represents a paradigm shift in morphologist training methodology.

Implementation Considerations and Future Directions

Integration with Clinical and Research Workflows

Successful implementation of supervised learning frameworks requires strategic integration with existing clinical and research workflows. The adaptability of these frameworks to various classification systems facilitates incorporation into diverse laboratory environments employing different assessment protocols [3]. For veterinary applications, integration with established standardization programs such as the University of Queensland Sperm Morphology Standardization Program (UQSMSP) ensures alignment with industry standards and certification requirements [20]. In clinical human fertility assessment, compatibility with WHO guidelines and Kruger strict criteria maintains diagnostic relevance and regulatory compliance [18].

The integration of these training frameworks with emerging automated sperm analysis systems presents a synergistic opportunity for comprehensive quality assurance. While deep learning-based automated classification systems achieve high accuracy rates, they remain dependent on high-quality training data and may struggle with rare morphological abnormalities [22] [17]. Supervised human training frameworks complement these systems by maintaining expert-level human assessment capabilities for quality control and complex edge cases. This integrated approach ensures robust morphological assessment while leveraging the efficiency advantages of automation for high-volume routine screening.

Technological Advancements and Framework Evolution

Future development of supervised learning frameworks will likely incorporate technological advancements to enhance training efficacy and accessibility. Integration of attention mechanism visualizations, such as Grad-CAM displays from deep learning models, could provide trainees with insights into which morphological features expert systems prioritize during classification [17]. Augmented reality interfaces may eventually enable overlay of guidance annotations during live microscopy sessions, bridging the gap between digital training and practical application.

The evolution of these frameworks will also address current limitations in morphological classification systems by incorporating emerging research on the functional implications of specific morphological defects. The distinction between compensable and uncompensable abnormalities, well-established in veterinary medicine, provides a clinically relevant framework for prioritizing morphological assessments based on fertility impact [20] [21]. Future training frameworks will likely integrate this functional dimension, enabling morphologists to not only identify morphological defects but also assess their potential clinical significance based on established fertility correlation data.

As these frameworks mature, their validation through multi-center studies will establish standardized proficiency benchmarks for morphologist certification. Centralized quality control programs, similar to the Australian UQSMSP model, which requires morphologists to perform competency checks on five samples annually and submit the results for analysis, will ensure ongoing standardization across laboratories and geographical regions [20]. This systematic approach to quality assurance, combined with technologically advanced training methodologies, represents the future of standardized sperm morphology assessment in both clinical and research contexts.

The development of a standardized training tool for sperm morphology assessment is critically dependent on two foundational pillars: the acquisition of high-resolution, consistent microscopic images and the creation of an expert-validated labeled dataset. The accuracy of any subsequent machine learning model or training system is a direct reflection of the quality of the data it learns from [23] [24]. This document details the application notes and protocols for creating a high-fidelity image database, framing the process within the specific research context of sperm morphology assessment standardization.

High-Resolution Image Acquisition Protocol

Objective

To establish a standardized methodology for acquiring high-quality, consistent, and reproducible digital images of spermatozoa for morphological analysis, ensuring optimal image quality for both expert labeling and computational processing.

Experimental Protocol

Materials and Equipment:

- Phase-contrast microscope with 100x oil immersion objective lens.

- Digital camera with a resolution of at least 5 megapixels.

- Calibrated micrometer slide.

- Standardized sperm stains (e.g., Papanicolaou, Diff-Quik).

- Consistent light source with adjustable intensity.

Procedure:

- Slide Preparation: Smear semen samples on pre-cleaned glass slides and stain using a standardized, validated staining protocol to ensure uniform contrast and coloration [25].

- Microscope Calibration: Prior to each imaging session, calibrate the microscope using a stage micrometer to ensure consistent spatial measurements across all acquired images.

- Image Quality Optimization: Optimize the image by minimizing noise and maximizing contrast. This involves:

  - Reducing Noise: Increase the number of photon registrations by adjusting the acquisition time. A longer exposure time reduces noise but requires the sample to remain perfectly still [26].

  - Spatial Resolution: Select an appropriate matrix size (e.g., 256x256 or 512x512 pixels, consistent with Table 1). A larger pixel size can reduce noise but compromises spatial resolution if it is too large [26].

  - Contrast: Ensure proper Köhler illumination and adjust the pulse height analyser (or similar settings) to reduce scattered radiation, thereby improving the contrast between the sperm and background [26].

- Image Capture: For each microscopic field, capture images in a lossless or minimally compressed format (e.g., TIFF). Record metadata including magnification, scale bar, stain type, and date of acquisition.

- Quality Control: Implement a routine quality control (QC) programme for the imaging equipment. This starts with an initial acceptance test and involves regular QC measurements to maintain long-term stability of performance [26].
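The calibration and metadata steps above can be illustrated with a minimal Python sketch. It assumes the operator has measured how many pixels a known stage-micrometer length spans; the function name `microns_per_pixel`, the `AcquisitionRecord` schema, and the example values are hypothetical and not part of any specific acquisition software.

```python
from dataclasses import dataclass, asdict


def microns_per_pixel(known_length_um: float, measured_pixels: float) -> float:
    """Return the spatial scale derived from a stage-micrometer measurement."""
    if known_length_um <= 0 or measured_pixels <= 0:
        raise ValueError("Both lengths must be positive.")
    return known_length_um / measured_pixels


@dataclass
class AcquisitionRecord:
    """Metadata stored alongside each captured image (hypothetical schema)."""
    filename: str
    magnification: str
    stain: str
    um_per_pixel: float
    acquired_on: str  # ISO date string


# Hypothetical calibration: a 10 µm micrometer division spans 125 pixels.
scale = microns_per_pixel(known_length_um=10.0, measured_pixels=125.0)

record = AcquisitionRecord(
    filename="field_0001.tiff",
    magnification="100x oil immersion",
    stain="Papanicolaou",
    um_per_pixel=scale,
    acquired_on="2024-01-15",
)
print(asdict(record))
```

Storing the scale with every image, rather than once per instrument, makes it possible to detect calibration drift between sessions.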

The following workflow diagram illustrates the sequential steps for high-resolution image acquisition.

Key Parameters for Standardization

Table 1: Quantitative Parameters for Image Acquisition Standardization.

| Parameter | Specification | Rationale |
|---|---|---|
| Magnification | 100x (Oil Immersion) | Essential for visualizing critical morphological details of the sperm head, neck, midpiece, and tail [27]. |
| Spatial Resolution | ≤ 0.1 µm/pixel | Ensures sufficient detail to assess strict Tygerberg criteria, including smoothness and shape of the sperm head [27] [25]. |
| Image Matrix | 256 x 256 pixels or 512 x 512 pixels | A compromise between image detail (resolution) and file size/processing requirements [26]. |
| File Format | TIFF | Lossless format preserves original image data without compression artifacts. |
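As an illustration, the specifications in Table 1 could be enforced automatically before an image enters the database. The sketch below is one possible approach, not a prescribed implementation; the function name `check_acquisition` and its arguments are assumptions introduced here.

```python
from typing import List, Tuple

# Limits taken from Table 1.
MAX_UM_PER_PIXEL = 0.1
ALLOWED_MATRICES = {(256, 256), (512, 512)}
REQUIRED_FORMAT = "TIFF"
REQUIRED_MAGNIFICATION = "100x"


def check_acquisition(
    um_per_pixel: float,
    matrix: Tuple[int, int],
    file_format: str,
    magnification: str,
) -> List[str]:
    """Return a list of deviations from Table 1 (empty if compliant)."""
    problems = []
    if um_per_pixel > MAX_UM_PER_PIXEL:
        problems.append(f"spatial resolution {um_per_pixel} µm/pixel exceeds 0.1")
    if matrix not in ALLOWED_MATRICES:
        problems.append(f"image matrix {matrix} is not 256x256 or 512x512")
    if file_format.upper() != REQUIRED_FORMAT:
        problems.append(f"file format {file_format} is not TIFF")
    if not magnification.lower().startswith(REQUIRED_MAGNIFICATION):
        problems.append(f"magnification {magnification} is not 100x")
    return problems


compliant = check_acquisition(0.08, (512, 512), "tiff", "100x (Oil Immersion)")
rejected = check_acquisition(0.15, (128, 128), "JPEG", "40x")
print(compliant)
print(rejected)
```

Returning a list of deviations rather than a single pass/fail flag lets the QC log record exactly which parameter was out of specification.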

Expert Consensus Labeling Protocol

Objective

To generate accurate, consistent, and unbiased labels for sperm morphology by leveraging a consensus-based approach among multiple expert annotators, thereby establishing a reliable ground-truth dataset.

Background and Importance

Consensus labeling is an annotation approach where multiple annotators independently label the same set of images, and the final annotation is derived from their collective agreement [28]. This method is crucial for several reasons:

- Reducing Labeling Errors: It mitigates the impact of individual human error or lapses in concentration by leveraging collective accuracy [23].

- Mitigating Bias: By incorporating diverse perspectives from annotators with different backgrounds, the risk of systematic bias influencing the dataset is diminished [23].

- Resolving Ambiguity: Sperm morphology can be subjective. Consensus forces annotators to discuss and reconcile differing interpretations, leading to a more accurate and representative dataset [28] [23].

Experimental Protocol

Materials and Equipment:

- Curated set of high-resolution sperm images.

- Collaborative annotation platform (e.g., Supervisely) or standardized digital labeling tool.

- Defined labeling guidelines based on Strict (Tygerberg) criteria [25].

Procedure:

- Selection of Annotators: Assemble a diverse group of 3-5 expert annotators, such as experienced andrologists or embryologists trained in strict sperm morphology assessment [23].

- Defining Consensus Rules: Establish clear guidelines prior to labeling. This includes:

  - Labeling Guidelines: A detailed document with visual examples of "normal" sperm morphology and the various abnormality types (head, neck, midpiece, tail) based on Strict (Tygerberg) criteria [27] [25].

  - Consensus Threshold: Define what constitutes agreement, for example, a simple majority vote or a requirement for unanimity on specific, challenging cases [28] [23].

- Independent Labeling Round: Each annotator independently labels the same set of images, classifying each spermatozoon as "normal" or specifying the type of abnormality present.

- Consensus Calculation and Report Generation: Use a labeling consensus tool to calculate a consensus score, which measures the agreement between different annotators (from 0% to 100%) [28]. Generate a detailed report highlighting images with low inter-annotator agreement.

- Adjudication and Finalization: For images where consensus is not met automatically, conduct an adjudication session where annotators discuss their reasoning. A lead expert makes the final call to establish the ground-truth label.
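The consensus and adjudication logic described in this procedure can be sketched in a few lines of Python. The example assumes a strict-majority rule (more than half of the annotators must agree); the function name `consensus_label` and the `threshold` parameter are hypothetical, and a laboratory requiring unanimity would set the threshold accordingly.

```python
from collections import Counter
from typing import List, Optional, Tuple


def consensus_label(
    labels: List[str], threshold: float = 0.5
) -> Tuple[Optional[str], float, bool]:
    """Return (label, agreement, needs_adjudication) for one image.

    A label is accepted only when the share of annotators choosing it
    is strictly greater than `threshold`; otherwise the image is flagged
    for the adjudication session.
    """
    if not labels:
        raise ValueError("At least one annotation is required.")
    top_label, top_count = Counter(labels).most_common(1)[0]
    agreement = top_count / len(labels)
    if agreement > threshold:
        return top_label, agreement, False
    return None, agreement, True


# Three hypothetical annotators, two of whom agree.
clear_case = consensus_label(["normal", "normal", "head"])
# No majority: every annotator chose a different class.
ambiguous_case = consensus_label(["normal", "head", "tail"])
# Requiring unanimity sends the first image to adjudication as well.
strict_case = consensus_label(["normal", "normal", "head"], threshold=0.99)

print(clear_case, ambiguous_case, strict_case)
```

Because ties never exceed the threshold, an even split between two classes is always routed to the lead expert rather than resolved arbitrarily.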

The following workflow diagram illustrates the iterative, collaborative process of expert consensus labeling.

Quantitative Metrics and Quality Control

Table 2: Metrics for Monitoring Labeling Quality and Consensus.

| Metric | Description | Target Value |
|---|---|---|
| Consensus Score | The percentage of similarity between annotations from a pair of annotators [28]. | > 90% for experienced annotators. |
| Inter-Annotator Agreement (IAA) | Statistical measure of agreement between all annotators (e.g., Fleiss' Kappa). | Kappa > 0.8 (indicating almost perfect agreement). |
| Adjudication Rate | Percentage of images requiring expert discussion to resolve labels. | Monitor for trends; a high rate may indicate unclear guidelines. |
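To make the first two metrics concrete, the following sketch computes a pairwise consensus score and Fleiss' kappa from raw labels. It is a plain-Python illustration under the assumption that every image is labeled by the same number of annotators; the function names and example data are hypothetical, and an annotation platform may compute its own consensus score differently.

```python
from collections import Counter
from typing import List


def pairwise_consensus(a: List[str], b: List[str]) -> float:
    """Percentage of images on which two annotators gave the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("Both annotators must label the same non-empty set.")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def fleiss_kappa(ratings: List[List[str]]) -> float:
    """Fleiss' kappa; `ratings[i]` holds all annotators' labels for image i."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    totals: Counter = Counter()
    per_item_agreement = []
    for row in ratings:
        if len(row) != n_raters:
            raise ValueError("Every image needs the same number of ratings.")
        counts = Counter(row)
        totals.update(counts)
        agreeing_pairs = sum(c * c for c in counts.values()) - n_raters
        per_item_agreement.append(agreeing_pairs / (n_raters * (n_raters - 1)))
    observed = sum(per_item_agreement) / n_items
    expected = sum((c / (n_items * n_raters)) ** 2 for c in totals.values())
    if expected == 1.0:  # only one category was ever used
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from three annotators on four images.
ratings = [
    ["normal", "normal", "normal"],
    ["head", "head", "head"],
    ["normal", "normal", "head"],
    ["tail", "tail", "tail"],
]
kappa = fleiss_kappa(ratings)
score = pairwise_consensus(
    ["normal", "head", "normal", "tail"],
    ["normal", "head", "head", "tail"],
)
print(round(kappa, 3), score)
```

In this small example a single disputed image out of four is enough to pull kappa below the 0.8 target, which shows how sensitive the metric is on small image sets.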

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sperm Morphology Image Database Creation.

| Item | Function/Application |
|---|---|
| Papanicolaou Stain | A standardized staining solution set used to provide consistent and detailed contrast to sperm cells, allowing for clear visualization of the acrosome, head, midpiece, and tail [25]. |
| Strict (Tygerberg) Criteria Guidelines | The definitive classification system used to define a morphologically "normal" spermatozoon, including precise measurements for head size and shape, and well-defined acrosome, neck, midpiece, and tail [27] [25]. |
| Calibrated Micrometer Slide | A precision tool for validating the spatial scale (µm/pixel) of the digital microscopy system, ensuring all morphological measurements are accurate and comparable across imaging sessions. |
| Collaborative Annotation Platform | Software that enables multiple experts to label the same images, calculates consensus metrics, and provides detailed reports on annotator agreement, which is vital for implementing the consensus labeling protocol [28]. |

The meticulous application of the protocols described herein for high-resolution image acquisition and expert consensus labeling is fundamental to building a robust image database. This database serves as the critical foundation for any subsequent initiative in sperm morphology assessment standardization, whether for the training of human professionals or the development of reliable, data-centric AI tools. Adherence to these detailed methodologies ensures the creation of a high-quality, reliable dataset that accurately reflects the strict criteria necessary for meaningful morphological analysis.

Sperm morphology assessment is a cornerstone of male fertility evaluation in both clinical andrology and veterinary medicine. Despite its diagnostic importance, it remains one of the most challenging and subjective tests to standardize due to inherent human bias and interpretation variability [7] [3]. This variability stems primarily from the lack of robust, standardized training protocols for morphologists, which compromises the reliability and reproducibility of results across different laboratories and practitioners [7] [22]. The fundamental challenge lies in establishing a traceable standard for both training and proficiency testing, a gap that becomes increasingly problematic as classification systems grow more complex.

The drive toward standardization has catalyzed the development of innovative training tools and automated classification systems. These advancements are founded on machine learning principles, particularly the concept of "ground truth" established through expert consensus, which provides a validated benchmark for both human training and algorithm development [7] [3]. This article explores the landscape of sperm morphology classification systems, from simple binary assessments to intricate multi-category frameworks, and provides detailed protocols for their implementation within standardization research.

Quantitative Analysis of Classification System Performance

The complexity of a classification system directly impacts morphologist accuracy, variability, and diagnostic speed. Research demonstrates a clear performance trade-off between system simplicity and diagnostic granularity.

Table 1: Performance Metrics of Untrained Novice Morphologists Across Classification Systems [7]

| Classification System | Number of Categories | Initial Accuracy (%) | Coefficient of Variation |
|---|---|---|---|
| Binary | 2 | 81.0 ± 2.5 | 0.28 |
| Location-Based | 5 | 68.0 ± 3.59 | Not Reported |
| Australian Cattle Vets | 8 | 64.0 ± 3.5 | Not Reported |
| Comprehensive Individual | 25 | 53.0 ± 3.69 | Not Reported |

Table 2: Performance of Trained Morphologists After Standardization Training [7]

| Classification System | Number of Categories | Final Accuracy (%) | Average Classification Speed (seconds/sperm) |
|---|---|---|---|
| Binary | 2 | 98.0 ± 0.43 | 4.9 ± 0.3 |
| Location-Based | 5 | 97.0 ± 0.58 | 4.9 ± 0.3 |
| Australian Cattle Vets | 8 | 96.0 ± 0.81 | 4.9 ± 0.3 |
| Comprehensive Individual | 25 | 90.0 ± 1.38 | 4.9 ± 0.3 |

Training significantly improves performance across all systems: mean accuracy rose from 53-81% among untrained novices (Table 1) to 90-98% after standardization training (Table 2). A structured training program using a sperm morphology assessment standardization tool also reduced the coefficient of variation among users and shortened classification time from 7.0 ± 0.4 seconds to 4.9 ± 0.3 seconds per sperm image [7]. The most substantial improvements occurred after the first intensive day of training, with results plateauing in subsequent weeks.
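The size of these effects can be worked out directly from the reported means. The short sketch below uses only the values in Tables 1 and 2 and the classification times quoted above; the helper names are hypothetical, and the coefficient of variation is shown on made-up per-user accuracies purely to illustrate the formula (standard deviation divided by mean).

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def coefficient_of_variation(values: List[float]) -> float:
    """Sample standard deviation divided by the mean."""
    return stdev(values) / mean(values)


def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (negative = decrease)."""
    return (after - before) / before


# Mean accuracies (%) before and after training, from Tables 1 and 2.
accuracy: Dict[str, Tuple[float, float]] = {
    "Binary": (81.0, 98.0),
    "Location-Based": (68.0, 97.0),
    "Australian Cattle Vets": (64.0, 96.0),
    "Comprehensive Individual": (53.0, 90.0),
}
gains = {name: after - before for name, (before, after) in accuracy.items()}

# Classification time fell from 7.0 to 4.9 seconds per sperm image.
time_change = relative_change(7.0, 4.9)

# Hypothetical per-user accuracies, used only to illustrate the CV formula.
example_cv = coefficient_of_variation([80.0, 90.0, 100.0])

print(gains)
print(f"time per image changed by {time_change:.0%}")
print(round(example_cv, 3))
```

On these figures, the absolute accuracy gain grows with system complexity (17 points for the binary system versus 37 for the 25-category system), and the time per image falls by about 30%.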

Hierarchical Classification Frameworks and Experimental Protocols

Classification systems for sperm morphology exist along a spectrum of complexity, each serving distinct diagnostic purposes. The simplest is the 2-category system (normal/abnormal), primarily used in the sheep industry for rapid screening [7]. The 5-category system classifies abnormalities based on their anatomical location (head, midpiece, tail, cytoplasmic droplet, normal) [7]. The 8-category system, used by Australian Cattle Veterinarians, includes normal sperm and seven abnormality classes: cytoplasmic droplet; midpiece defect; loose heads and abnormal tails; pyriform head; knobbed acrosomes; vacuoles and teratoids; and swollen acrosomes [7] [20]. More complex systems expand to 25-30 categories to capture subtle morphological variations for research purposes [7] [3].
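Because the simpler systems are coarser views of the same cells, a label from the 5-category system can always be collapsed to the 2-category system. The sketch below illustrates this and scores a hypothetical trainee against ground-truth labels at both levels; the function names and example labels are assumptions made for illustration, and no mapping between the 8- or 25-category systems and the simpler ones is implied.

```python
from typing import Callable, List

# Categories of the 5-category (location-based) system described above.
LOCATION_CATEGORIES = ("normal", "head", "midpiece", "tail", "cytoplasmic droplet")


def to_binary(label: str) -> str:
    """Collapse a 5-category label to the 2-category (normal/abnormal) system."""
    if label not in LOCATION_CATEGORIES:
        raise ValueError(f"Unknown location-based label: {label}")
    return "normal" if label == "normal" else "abnormal"


def accuracy(
    predicted: List[str],
    truth: List[str],
    mapper: Callable[[str], str] = lambda label: label,
) -> float:
    """Percentage of labels matching ground truth after applying `mapper`."""
    if len(predicted) != len(truth) or not truth:
        raise ValueError("Label lists must be non-empty and of equal length.")
    matches = sum(mapper(p) == mapper(t) for p, t in zip(predicted, truth))
    return 100.0 * matches / len(truth)


# Hypothetical ground truth and trainee answers for four sperm images.
truth = ["normal", "head", "tail", "midpiece"]
trainee = ["normal", "midpiece", "tail", "normal"]

five_category_accuracy = accuracy(trainee, truth)
binary_accuracy = accuracy(trainee, truth, mapper=to_binary)
print(five_category_accuracy, binary_accuracy)
```

The same answers score higher under the binary system because confusing one abnormality with another is no longer counted as an error, which is consistent with the accuracy trend across systems reported in the tables above.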

The following diagram illustrates the hierarchical relationship between these classification systems and their diagnostic applications:

Protocol for Implementing a Standardized Training Tool

Experiment 1: Baseline Assessment of Untrained Morphologists