Validating Linkage Algorithms for Fertility Registries: A Framework for Robust Data Integration in Reproductive Research

Abstract

This article provides a comprehensive framework for the validation of data linkage algorithms specifically tailored to fertility registries. It addresses the critical need for robust methods to combine disparate data sources—such as clinical IVF outcomes, genetic data, and long-term health records—to power advanced research and drug discovery. Covering foundational concepts, methodological choices, optimization strategies, and rigorous validation techniques, this guide equips researchers and drug development professionals with the tools to create high-quality, linked datasets. By ensuring the accuracy and reliability of these linkages, the scientific community can unlock deeper insights into reproductive medicine, improve patient outcomes, and accelerate the development of novel therapies, all while navigating the unique ethical and practical challenges of fertility data.

The Critical Role and Core Concepts of Data Linkage in Fertility Research

Why Data Linkage is a Game-Changer for Fertility and Drug Discovery

Data linkage, the process of connecting information from different sources about the same entity, is revolutionizing biomedical research. By creating a unified view from disparate datasets, it unlocks deeper insights into human health and disease. This is particularly transformative in the fields of fertility and drug discovery, where it enables large-scale, longitudinal studies that were previously impossible. The ability to generate robust real-world evidence hinges on the validation of the linkage algorithms themselves, ensuring that the connected data is both accurate and reliable.

The Critical Role of Data Linkage in Modern Research

Data linkage integrates records from multiple databases—such as electronic health records (EHRs), administrative claims, research registries, and genomics data—to create a comprehensive picture without collecting new information. It uses identifiers like names, dates of birth, or unique ID numbers to match records belonging to the same person or entity. [1]

The power of this approach is its ability to reveal insights invisible in isolated data sources. For example, England's linked electronic health records cover over 54 million people, creating one of the world's largest research resources. Similarly, the Western Australian (WA) Data Linkage System has connected over 150 million records from more than 50 datasets. [1] This is not merely a technical exercise; it is a foundational capability for generating real-world evidence. During the COVID-19 pandemic, researchers in England rapidly linked primary care records, hospital admissions, and death registries for 17 million adults, revealing critical ethnic disparities in outcomes that reshaped public health responses. [1]

However, the process is fraught with challenges. Linkage error is inevitable, manifesting as false matches (linking records from different people) or missed matches (failing to link records from the same person). The validation of linkage algorithms is therefore paramount, as unvalidated data can lead to misclassification bias and unmeasured confounding in research. [1] [2]

Data Linkage Methods and Performance Comparison

Choosing the right linkage method is crucial for data quality. The three primary approaches—deterministic, probabilistic, and machine learning (ML)-driven—each have distinct strengths, weaknesses, and performance characteristics, as summarized in the table below.

Table 1: Comparison of Primary Data Linkage Methods

| Method | Core Principle | Key Advantage | Key Limitation | Typical Application Context |

|---|---|---|---|---|

| Deterministic Linkage [1] | Requires exact agreement on specified identifiers (e.g., NHS number, date of birth). | High scalability and speed; simple rules enable quick processing of millions of records. | Inflexible; fails when identifiers contain errors or change over time (e.g., name changes, data entry errors). | Environments with reliable, high-quality unique identifiers. |

| Probabilistic Linkage [1] | Weights evidence across multiple fields to calculate a match probability; does not require perfect agreement. | Handles messy, real-world data effectively; more robust to errors and variations in identifiers. | Involves a fundamental trade-off between false matches and missed matches; requires careful threshold tuning. | The workhorse method for most large-scale linkage projects where perfect identifiers are unavailable. |

| ML-Driven Linkage [1] | Uses algorithms (e.g., gradient-boosting, neural networks) to learn optimal matching patterns directly from data. | Can capture complex, non-linear patterns in data; can reduce manual review burden by up to 70% via active learning. | Requires large amounts of training data; "black box" nature can reduce transparency. | Emerging applications for complex linkage tasks and improving efficiency. |

Every method trades missed matches against false matches. Good linkage algorithms typically achieve sensitivity and positive predictive value (PPV) exceeding 95%, but reaching these benchmarks requires careful tuning. For instance, setting a conservative threshold can result in fewer than 1% false matches but miss 40% of true matches. Conversely, a lower threshold can capture 90% of true matches but with a 30% false match rate. [1] Hierarchical deterministic matching, as used by the Canadian Institute for Health Information, employs a cascading approach that can capture 95% of true matches while maintaining false match rates below 0.1%. [1]
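
The cascading idea behind hierarchical deterministic matching can be sketched in a few lines. This is a minimal illustration only, not the Canadian Institute for Health Information's actual rules: the field names (`health_id`, `dob`, `surname`, `postcode`) and the order of the passes are assumptions made for the example.

```python
from typing import Optional

# Hypothetical match passes, ordered from strictest to loosest.
# Each pass lists the fields that must agree exactly.
PASSES = [
    ("health_id",),
    ("dob", "surname", "postcode"),
    ("dob", "surname"),
]


def cascade_match(record: dict, candidates: list[dict]) -> Optional[tuple[int, int]]:
    """Return (pass_number, candidate_index) for the first unambiguous exact match."""
    for pass_number, fields in enumerate(PASSES, start=1):
        hits = [
            i for i, cand in enumerate(candidates)
            # A missing value never counts as agreement.
            if all(record.get(f) and record.get(f) == cand.get(f) for f in fields)
        ]
        if len(hits) == 1:  # accept only a unique hit; otherwise try the next pass
            return pass_number, hits[0]
    return None


registry_row = {"health_id": None, "dob": "1985-03-12", "surname": "JANSEN", "postcode": "1012AB"}
birth_rows = [
    {"health_id": "A1", "dob": "1985-03-12", "surname": "JANSEN", "postcode": "1012AB"},
    {"health_id": "B2", "dob": "1985-03-12", "surname": "JANSEN", "postcode": "9999ZZ"},
]
result = cascade_match(registry_row, birth_rows)
print(result)
```

Here the registry row has no unique ID, so the first pass finds nothing and the second pass resolves the link. Requiring a unique hit at each pass is one conservative design choice that keeps false matches low at the cost of leaving ambiguous records unlinked.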

Data Linkage in Fertility Research and Registries

In fertility research, data linkage is key to understanding treatment outcomes, long-term health of mothers and children, and the effectiveness of policies. A systematic review highlighted a critical gap: there is a "paucity of literature on validation of routinely collected data from a fertility population." Of 19 included studies, only one validated a national fertility registry, and none fully adhered to recommended reporting guidelines for validation studies. [2] This underscores a significant quality challenge in the field.

Experimental Validation of Fertility Data Linkage

Objective: To validate a linkage algorithm between a fertility registry and another administrative database (e.g., a birth registry). The goal is to accurately identify children born from Assisted Reproductive Technology (ART) within the broader birth registry for long-term outcome studies. [2]

Methodology:

- Data Sources: A national ART registry (e.g., the Society for Assisted Reproductive Technology (SART) database) and a national birth registry.

- Linkage Algorithm: A probabilistic linkage algorithm is typically used. It compares records based on:

- Maternal identifiers: First and last name (using Jaro-Winkler similarity or other string comparators), date of birth.

- Paternal identifiers: First and last name.

- Event details: Date of conception (estimated from birth date and gestational age). [2]

- Validation ("Gold Standard"): A subset of linked and unlinked records is manually reviewed against medical charts to establish ground truth. [1] [2]

- Measures of Validity: The algorithm's performance is assessed by calculating:

- Sensitivity: The proportion of true ART births correctly identified by the algorithm.

- Specificity: The proportion of true non-ART births correctly excluded by the algorithm.

- Positive Predictive Value (PPV): The proportion of algorithm-identified ART births that are true ART births. [2]
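
The "date of conception" comparison in the methodology above can be sketched as simple date arithmetic. The sketch assumes gestational age is counted from the last menstrual period, so conception falls roughly 14 days later; the function names and the tolerance window are hypothetical and would need to be set per study.

```python
from datetime import date, timedelta


def estimate_conception_date(birth_date: date, gestational_age_weeks: float) -> date:
    """Estimate conception as birth date minus gestational age, plus 14 days.

    Assumes gestational age is measured from the last menstrual period,
    which precedes conception by about two weeks.
    """
    gestation = timedelta(days=round(gestational_age_weeks * 7))
    return birth_date - gestation + timedelta(days=14)


def within_window(date_a: date, date_b: date, tolerance_days: int = 14) -> bool:
    """True if two dates differ by no more than the (hypothetical) tolerance."""
    return abs((date_a - date_b).days) <= tolerance_days


estimated = estimate_conception_date(date(2021, 10, 1), 39)
print(estimated)
print(within_window(estimated, date(2021, 1, 20), tolerance_days=7))
```

A birth registry record whose estimated conception date falls within the window around a registry cycle's treatment date can then be treated as agreeing on the event-detail field.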

Table 2: Key Performance Metrics for Fertility Data Linkage Validation

| Metric | Definition | Interpretation in Fertility Linkage Context |

|---|---|---|

| Sensitivity [2] | True Positives / (True Positives + False Negatives) | Measures the ability to correctly find true ART-born children in the birth registry. A low value means many are missed. |

| Specificity [2] | True Negatives / (True Negatives + False Positives) | Measures the ability to correctly exclude children not conceived via ART. A low value means many children are incorrectly labeled as ART-conceived. |

| Positive Predictive Value (PPV) [2] | True Positives / (True Positives + False Positives) | The probability that a child identified by the algorithm as ART-conceived is truly ART-conceived. Critical for research accuracy. |

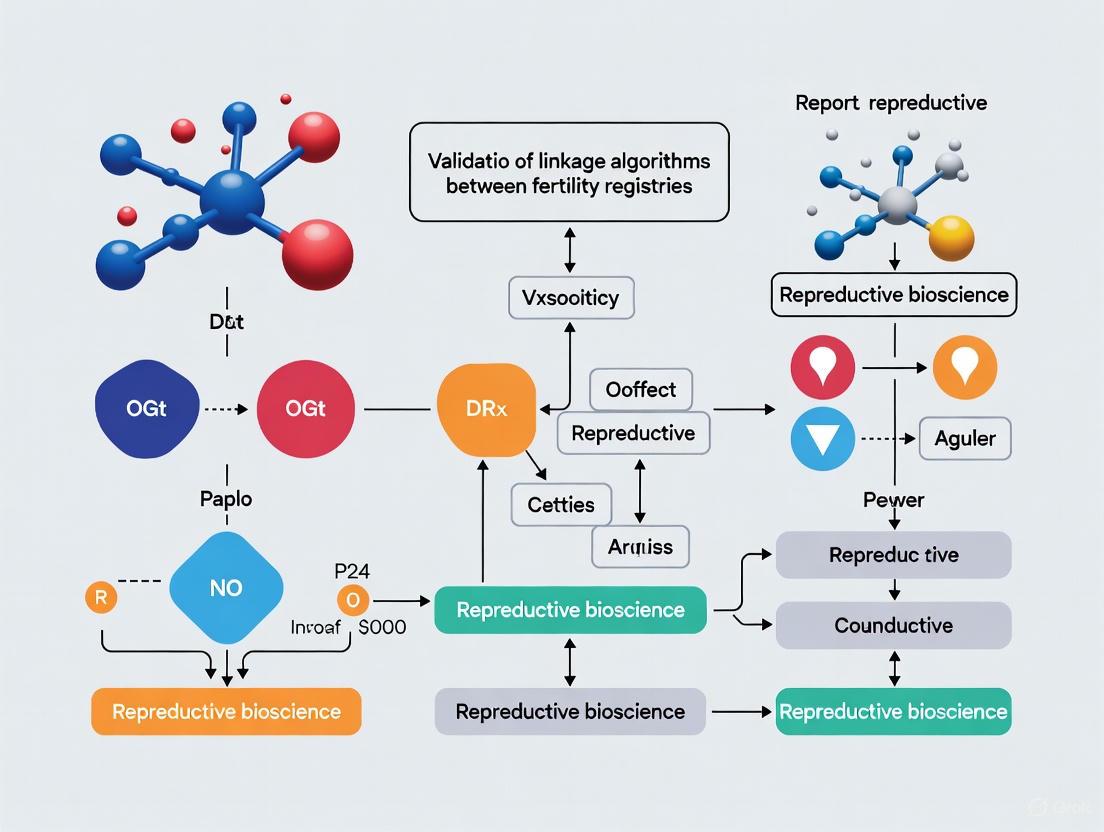

The following workflow diagram illustrates the typical process for validating a fertility data linkage algorithm:

Data Linkage as a Catalyst in AI-Driven Drug Discovery

In drug discovery, data linkage is accelerating innovation by creating rich, longitudinal datasets that train and validate AI models. A prominent application is clinical trial tokenization, a privacy-preserving linkage method that de-identifies and links trial participants to external data sources like EHRs, claims, and pharmacy records. [3]

Experimental Protocol: Clinical Trial Tokenization for Long-Term Follow-Up

Objective: To enable long-term safety and efficacy monitoring of a new cell or gene therapy for oncology beyond the initial trial period (often 10-15 years) without imposing excessive burden on patients and sites. [3]

Methodology:

- Trial Onset: During trial enrollment, collect patient identifiers and generate a unique, de-identified token for each participant using a secure, one-way hash function. [3]

- Data Partner Linking: The token (not identifiable information) is shared with data partners who hold real-world data (RWD) sources, such as:

- Electronic Health Records (EHRs)

- Insurance claims data

- Pharmacy records

- Mortality registries [3]

- Data Retrieval and Analysis: Data partners use the token to find matching records in their systems and return de-identified, longitudinal health data to the trial sponsor. This allows for the assessment of long-term outcomes like overall survival, disease progression, and healthcare utilization. [3]

- Validation: The tokenization and linkage process is validated by measuring the proportion of trial participants successfully matched to RWD sources and assessing the completeness and quality of the returned data. [3]
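
The token-generation step can be illustrated with Python's standard library. The source specifies only "a secure, one-way hash function"; the keyed HMAC-SHA256 construction, the normalization rules, and the choice of input fields below are assumptions for illustration, not a description of any vendor's tokenization product.

```python
import hashlib
import hmac
import unicodedata


def normalize(value: str) -> str:
    """Uppercase, strip accents and non-alphanumerics so trivial variants agree."""
    decomposed = unicodedata.normalize("NFKD", value)
    ascii_only = decomposed.encode("ascii", "ignore").decode("ascii")
    return "".join(ch for ch in ascii_only.upper() if ch.isalnum())


def make_token(first_name: str, last_name: str, dob_iso: str, secret_key: bytes) -> str:
    """Derive a de-identified token with a keyed one-way hash (HMAC-SHA256)."""
    message = "|".join([normalize(first_name), normalize(last_name), normalize(dob_iso)])
    return hmac.new(secret_key, message.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key for the example; a real key would be generated and stored securely.
SECRET_KEY = b"example-key-do-not-use"
token_a = make_token("Zoë", "O'Brien", "1984-07-02", SECRET_KEY)
token_b = make_token("zoe", "OBRIEN", "1984-07-02", SECRET_KEY)
print(token_a == token_b)
```

Because both parties normalize identifiers the same way and share the key, the trial site and the data partner derive the same token without exchanging names or dates of birth. Any uncorrected difference in the inputs (a typo, a changed surname) yields a different token, which is one reason match rates are part of the validation step.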

Table 3: Top Therapeutic Areas for Trial Tokenization and Representative Use Cases (2025)

| Therapeutic Area | Prevalence in Tokenization | Primary Linkage Use Cases |

|---|---|---|

| Psychiatric Disorders [3] | Top area | Mapping complex historical treatment pathways and therapy-switching patterns for conditions like schizophrenia and depression. |

| Screening & Diagnostics [3] | Second | Validating diagnostic test performance and assessing the long-term impact of early detection on health outcomes. |

| Oncology [3] | Third | Enabling 10-15 year follow-up for cell/gene therapies, linking to mortality records and EHRs for regulatory submissions. |

| Rare Diseases [3] | Emerging | Understanding disease progression and treatment durability; creating external control arms due to small patient populations. |

| Metabolic Disorders [3] | Emerging | Long-term treatment monitoring and uncovering unexpected drug effects in new disease areas (e.g., GLP-1 agonists and Alzheimer's risk). |

The following diagram outlines the tokenization and linkage process for a clinical trial, highlighting how privacy is maintained:

Successfully implementing data linkage requires a combination of specialized methods, software, and data resources.

Table 4: Essential Tools and Resources for Data Linkage Research

| Tool/Resource | Type | Primary Function | Relevance to Research |

|---|---|---|---|

| Deterministic Algorithm [1] | Method | Links records based on exact matches of identifiers. | Foundation for linkage in environments with high-quality, stable unique identifiers. |

| Probabilistic Algorithm (Fellegi-Sunter) [1] | Method | Calculates match probability using weights for different identifier agreements. | The standard statistical model for handling messy, real-world data where errors are present. |

| Jaro-Winkler Similarity [1] | Software Function | Measures string similarity, effective for detecting typos and minor spelling variations in names. | Critical for preprocessing and comparing text-based identifiers like patient names. |

| Expectation-Maximization (EM) Algorithm [1] | Software Function | Automatically learns optimal matching parameters (weights and thresholds) from the data itself. | Reduces the need for manual parameter setting, improving efficiency and objectivity. |

| SHAP (SHapley Additive exPlanations) [4] | Software Library | Explains the output of machine learning models, including those used for linkage or prediction. | Provides interpretability for black-box ML linkage models, crucial for validation and trust. |

| Real-World Data Partners [3] | Data Resource | Provide access to linked datasets from EHRs, claims, and other administrative sources. | Enable the practical application of tokenization and linkage for clinical trial follow-up and epidemiology. |
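
To make the Jaro-Winkler entry in the table concrete, the following is a compact pure-Python sketch of the standard definition (matching window, transposition count, and a prefix bonus over at most four characters with a scaling factor of 0.1). It is written for readability rather than speed; production linkage would more likely rely on a tested library implementation.

```python
def jaro(s1: str, s2: str) -> float:
    """Jaro similarity between two strings, in [0, 1]."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        lo = max(0, i - window)
        hi = min(len2, i + window + 1)
        for j in range(lo, hi):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Transpositions: matched characters that appear in a different order.
    seq1 = [c for c, m in zip(s1, matched1) if m]
    seq2 = [c for c, m in zip(s2, matched2) if m]
    transpositions = sum(a != b for a, b in zip(seq1, seq2)) // 2
    return (matches / len1 + matches / len2 + (matches - transpositions) / matches) / 3


def jaro_winkler(s1: str, s2: str, prefix_scale: float = 0.1) -> float:
    """Jaro similarity boosted for a shared prefix of up to four characters."""
    base = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return base + prefix * prefix_scale * (1 - base)


print(round(jaro_winkler("MARTHA", "MARHTA"), 4))
print(round(jaro_winkler("SMITH", "SMYTH"), 4))
```

Names should be standardized (case, punctuation, accents) before comparison, since the measure is case-sensitive as written.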

Data linkage is undeniably a game-changer, creating powerful, unified datasets that drive progress in both fertility research and drug discovery. In fertility, it enables the long-term follow-up of ART-born children and critical policy evaluation, though the field must prioritize the robust validation of its linkage algorithms. In drug discovery, privacy-preserving tokenization is becoming a foundational practice, accelerating evidence generation across therapeutic areas from oncology to psychiatry. The future of this field lies in the continued refinement of linkage methods, particularly ML-driven approaches, and a steadfast commitment to transparency and validation. This will ensure that the connected datasets used to answer science's most pressing questions are as accurate and reliable as possible.

Record linkage connects data from different sources to create comprehensive datasets, which is vital in fertility and Assisted Reproductive Technology research. By combining data from clinical registries, insurance claims, and birth records, researchers can study long-term outcomes and effectiveness on a large scale [5] [6]. The validity of this research depends entirely on the accuracy of the linkage process, making understanding its core components and potential errors essential [2] [7].

This guide defines the fundamental terms—records, identifiers, master keys, and linkage error—within fertility research. We compare linkage methods and present experimental data on their performance, providing a foundation for validating linkage algorithms in reproductive health studies.

Defining the Core Components

Records

In fertility research, a record is a collection of data pertaining to a single entity—typically a patient, cycle, or birth. These records are stored across diverse databases:

- Clinical Registries: Contain detailed treatment data, such as IVF cycle parameters, embryo quality, and pregnancy outcomes [2] [8]. For example, the Society for Assisted Reproductive Technology (SART) registry maintains records of ART cycles in the US [8].

- Administrative Databases: Include insurance claims data, which track procedures and diagnoses for billing purposes. The Clinformatics Data Mart (CDM) is one such database used to study insured IVF cycles [6].

- Vital Statistics: Birth records from systems like the US National Vital Statistics System capture birth weight, gestational age, and parental demographic information [9].

- Longitudinal Cohorts: Research datasets like the LISS panel or the Dutch register data follow individuals over time, collecting a wide range of health and social variables related to fertility [10].

Identifiers

Identifiers are the specific data variables used to determine if two records refer to the same individual or entity. The quality of identifiers determines the success of linkage [7].

Table: Common Identifiers in Fertility Record Linkage

| Identifier Category | Examples | Role in Linkage | Considerations in Fertility Context |

|---|---|---|---|

| Direct Identifiers | Full name, Social Security Number (SSN), exact date of birth [7]. | Provide high discriminatory power for exact matching. | Often protected for privacy; may not be available for research [7]. |

| Indirect Identifiers | Postal code, birth date (year/month), maternal age, parity, infant sex [11] [5] [9]. | Combined to create a quasi-unique profile for probabilistic linkage. | Crucial when direct identifiers are unavailable; subject to errors and changes over time. |

| Contextual Data | Infertility diagnosis, treatment type (IVF/ICSI), number of embryos transferred [8]. | Can help resolve ambiguities when other identifiers conflict. | Provides domain-specific validation but may have lower discriminatory power on its own. |

Master Keys

A master key (or linkage key) is a single, constructed identifier that combines information from several source identifiers to uniquely identify an individual across datasets [7]. In probabilistic linkage, this is a composite score, while deterministic linkage may use a constructed string.

- Probabilistic Key: A score calculated from the weighted agreement of multiple identifiers (e.g., date of birth, postal code, sex). The weights are based on the probability of agreement among true matches versus non-matches [5] [7].

- Deterministic Key: A string created by concatenating standardized values of identifiers (e.g., YYYYMMDD_OF_BIRTH_POSTCODE_LASTNAME). Records match if the strings match exactly [7].
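
A deterministic key of this shape might be built as follows. The standardization rules (uppercasing, stripping spaces and punctuation) and the underscore separator are assumptions for the sketch; the essential point is that both datasets must apply identical rules before the strings are compared.

```python
import re
from datetime import date


def deterministic_key(birth_date: date, postcode: str, last_name: str) -> str:
    """Concatenate standardized identifiers into one exact-match key."""
    dob_part = birth_date.strftime("%Y%m%d")
    postcode_part = re.sub(r"[^A-Z0-9]", "", postcode.upper())
    name_part = re.sub(r"[^A-Z]", "", last_name.upper())
    return f"{dob_part}_{postcode_part}_{name_part}"


key_a = deterministic_key(date(1986, 4, 9), "sw1a 1aa", "O'Neil")
key_b = deterministic_key(date(1986, 4, 9), "SW1A1AA", "ONEIL")
print(key_a, key_a == key_b)
```

Standardization absorbs formatting differences, but a genuine error such as a misspelled surname still produces a different key and therefore a missed match.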

Linkage Error

Linkage error occurs when the algorithm incorrectly classifies a record pair. It is a critical source of bias in research based on linked data [7].

- False Positives (False Matches): Records from different individuals are incorrectly linked. This can occur with common identifiers or data errors, potentially merging patient histories and corrupting study results.

- False Negatives (False Non-Matches): Records from the same individual are not linked. This is often caused by errors or changes in identifiers (e.g., misspelled names, changed addresses), leading to incomplete data and loss of statistical power.

Comparative Analysis of Linkage Methods

The two primary methodological frameworks for record linkage are deterministic and probabilistic. Their performance varies significantly based on data quality and the identifiers available.

Table: Comparison of Deterministic vs. Probabilistic Linkage Methods

| Feature | Deterministic Linkage | Probabilistic Linkage |

|---|---|---|

| Core Principle | Requires exact agreement on one or more identifiers [7]. | Uses statistical weights to handle partial agreement; a composite score determines match status [5] [7]. |

| Handling of Data Errors | Poor. A single character error in a key identifier prevents a match [7]. | Robust. Can tolerate minor errors and still classify a pair as a match [5]. |

| Typical Match Rate | Lower, due to strict exact-match requirements [7]. | Higher, due to ability to credit partial agreement [5]. |

| Complexity & Transparency | Simple rules, easy to implement and audit [7]. | Complex; requires estimating agreement probabilities and setting score thresholds [5] [7]. |

| Best Suited For | Scenarios with high-quality, standardized data and unique identifiers (e.g., SSN) [7]. | Scenarios with "real-world" data containing errors, or when only indirect identifiers are available [5] [7]. |
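
The composite score used in probabilistic linkage can be sketched with Fellegi-Sunter style agreement weights. All numbers below are made up for illustration: the m-probabilities (chance a field agrees among true matches), the u-probabilities (chance it agrees among non-matches), and the two thresholds are assumptions, and in practice they would be estimated from the data.

```python
import math

# Hypothetical parameters per field: (m, u)
# m = P(field agrees | true match), u = P(field agrees | non-match).
FIELD_PARAMS = {
    "dob": (0.97, 0.003),
    "postcode": (0.90, 0.01),
    "infant_sex": (0.99, 0.5),
}


def field_weight(m: float, u: float, agrees: bool) -> float:
    """Log2 likelihood ratio contributed by one field comparison."""
    return math.log2(m / u) if agrees else math.log2((1 - m) / (1 - u))


def match_score(agreement: dict[str, bool]) -> float:
    """Sum of field weights for one candidate record pair."""
    return sum(field_weight(*FIELD_PARAMS[f], agrees) for f, agrees in agreement.items())


def classify(score: float, lower: float = 0.0, upper: float = 8.0) -> str:
    """Three-way decision; pairs between the thresholds go to manual review."""
    if score >= upper:
        return "match"
    if score <= lower:
        return "non-match"
    return "review"


pair = {"dob": True, "postcode": False, "infant_sex": True}
score = match_score(pair)
print(round(score, 2), classify(score))
```

Note how a field with little discriminatory power (infant sex agrees by chance half the time) adds less than one point when it agrees, whereas agreement on date of birth adds more than eight. Moving the two thresholds is exactly the trade-off between false matches and missed matches.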

Experimental Data on Method Performance

A Dutch perinatal study provides quantitative evidence of how probabilistic linkage can be refined to handle identifier errors. Researchers introduced a "close agreement" category for variables like postal code and date of birth, which accounts for typical data entry mistakes (e.g., transposed digits) without requiring perfect matches [5].

Table: Impact of "Close Agreement" on Linkage Uncertainty [5]

| Linking Scenario | Number of Record Pairs in "Grey Area" (Uncertain Status) |

|---|---|

| Standard Probabilistic Linkage | Baseline (100%) |

| Probabilistic Linkage with "Close Agreement" | 5% of Baseline |

| Result | A 95% reduction in uncertain pairs, dramatically increasing the number of records that can be confidently classified as matches or non-matches. |

This demonstrates that enhanced probabilistic methods can significantly mitigate linkage error, a crucial consideration for the validity of fertility research.
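
One way a "close agreement" level could be implemented is as a three-way field comparison. The rule below, which treats a single swap of two neighbouring characters as "close", is an assumption chosen to illustrate the transposed-digits case; the Dutch study's exact rules are not reproduced here.

```python
def is_adjacent_transposition(a: str, b: str) -> bool:
    """True if b equals a with exactly one pair of neighbouring characters swapped."""
    if len(a) != len(b) or a == b:
        return False
    diffs = [i for i, (x, y) in enumerate(zip(a, b)) if x != y]
    return (
        len(diffs) == 2
        and diffs[1] == diffs[0] + 1
        and a[diffs[0]] == b[diffs[1]]
        and a[diffs[1]] == b[diffs[0]]
    )


def compare_field(a: str, b: str) -> str:
    """Classify a field comparison as 'agree', 'close', or 'disagree'."""
    if a == b:
        return "agree"
    if is_adjacent_transposition(a, b):
        return "close"
    return "disagree"


print(compare_field("19850312", "19850321"))  # transposed final digits
print(compare_field("1012AB", "2012AB"))      # a substituted character
```

In a probabilistic model the "close" level would receive its own weight, between full agreement and disagreement, so that a likely typing error no longer pushes a true match into the uncertain grey area.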

Experimental Protocols for Validation

Validating a linkage algorithm is essential before using the linked data for research. The gold standard involves comparing the algorithm's results to a manually verified, "true" set of matches.

Core Validation Protocol

A typical protocol involves creating a sample of record pairs where the true match status is known.

- Create a Gold Standard Sample: Manually review and verify the match status of a random sample of record pairs (e.g., 500-1000 pairs) from the total set of potential matches. This is the validation dataset [2].

- Run Linkage Algorithm: Apply the proposed linkage algorithm (deterministic or probabilistic) to the entire dataset, including the gold standard sample.

- Compare Against the Gold Standard: Compare the algorithm's classification against the manual verification for the gold standard sample.

- Calculate Performance Metrics:

- Sensitivity/Recall: Proportion of true matches correctly identified.

- Positive Predictive Value (PPV)/Precision: Proportion of algorithm-identified matches that are true matches.

- Specificity: Proportion of true non-matches correctly identified.

- False Positive Rate: Proportion of true non-matches incorrectly classified as matches.
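
The comparison step can be sketched as a tally over the gold standard sample. The data structures are assumptions for the example: `gold` maps each manually reviewed record pair to its true status, and `predicted_links` is the set of pairs the algorithm linked; the record IDs are invented.

```python
from collections import Counter


def validate(gold: dict[tuple[str, str], bool], predicted_links: set[tuple[str, str]]) -> dict[str, float]:
    """Tally the confusion matrix on the gold sample and derive the metrics."""
    counts = Counter()
    for pair, is_true_match in gold.items():
        predicted = pair in predicted_links
        if predicted and is_true_match:
            counts["tp"] += 1
        elif predicted:
            counts["fp"] += 1
        elif is_true_match:
            counts["fn"] += 1
        else:
            counts["tn"] += 1
    tp, fp, fn, tn = (counts[k] for k in ("tp", "fp", "fn", "tn"))

    def ratio(num: int, den: int) -> float:
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "ppv": ratio(tp, tp + fp),
        "specificity": ratio(tn, tn + fp),
        "false_positive_rate": ratio(fp, fp + tn),
    }


gold = {
    ("c1", "b1"): True, ("c2", "b2"): True, ("c3", "b3"): True,
    ("c4", "b9"): False, ("c5", "b8"): False, ("c6", "b7"): False, ("c7", "b6"): False,
}
predicted_links = {("c1", "b1"), ("c2", "b2"), ("c4", "b9")}
metrics = validate(gold, predicted_links)
print(metrics)
```

Only pairs in the gold sample are scored, so links the algorithm makes outside the reviewed sample do not affect the estimates. With a sample this small the figures are purely illustrative.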

Validation in Fertility Research Context

A systematic review highlighted a critical gap: validation is severely under-reported in fertility database research. Of 19 studies, only one validated a national fertility registry, and none fully adhered to recommended reporting guidelines [2]. This underscores the need for rigorous validation protocols specific to the domain. When linking a fertility registry to birth outcomes, key validation steps include:

- Check Linkage of Known Outcomes: For a sample of IVF cycles in the registry that resulted in a documented live birth, verify that the algorithm successfully links to the corresponding birth record.

- Inspect Unlinked Records: Manually review a sample of fertility treatment cycles that failed to link to any birth record to determine if they are true non-births or false negatives.

- Assess Implausibilities: Check for biologically or temporally implausible links (e.g., a birth record linked to an IVF cycle that occurred after the birth date).
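
The implausibility check lends itself to a simple automated screen. In the sketch below the field names and the plausible interval between embryo transfer and birth (20 to 44 weeks) are assumptions for illustration; a study would set its own limits with clinical input.

```python
from datetime import date, timedelta

# Hypothetical plausibility window between embryo transfer and a live birth.
MIN_GAP = timedelta(weeks=20)
MAX_GAP = timedelta(weeks=44)


def implausible_links(links: list[dict]) -> list[dict]:
    """Return links whose transfer-to-birth interval falls outside the window."""
    flagged = []
    for link in links:
        gap = link["birth_date"] - link["transfer_date"]
        if not (MIN_GAP <= gap <= MAX_GAP):
            flagged.append({**link, "gap_days": gap.days})
    return flagged


links = [
    {"cycle_id": "A", "transfer_date": date(2020, 1, 10), "birth_date": date(2020, 10, 1)},
    {"cycle_id": "B", "transfer_date": date(2021, 6, 1), "birth_date": date(2021, 3, 15)},
]
flagged = implausible_links(links)
print(flagged)
```

Cycle B is flagged because the linked birth precedes the transfer. Flagged links are candidates for manual review rather than automatic deletion, since the error may lie in a recorded date rather than in the link itself.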

Validation Workflow for Linkage Algorithms

The Scientist's Toolkit

Successful record linkage and validation require specific tools and data resources.

Table: Essential Research Reagents for Record Linkage Validation

| Tool / Resource | Function | Example in Fertility Research |

|---|---|---|

| Gold Standard Dataset | Serves as the ground truth for validating the accuracy of the linkage algorithm. | A manually verified sample of pairs from an IVF registry and a birth registry, where the true match status is known [2]. |

| Data Cleaning & Standardization Scripts | Prepare identifiers for comparison (e.g., convert to uppercase, remove punctuation, parse names). | Standardizing clinic names and addresses in a fertility registry before linking to an administrative database [7]. |

| Probabilistic Linkage Software (e.g., FRIL, LinkPlus) | Implements the Fellegi-Sunter model to calculate match weights and probabilities. | Used to link a national perinatal registry (LVR) to population and mortality registers in the Netherlands [5]. |

| Phonetic Encoding (e.g., Soundex) | Accounts for minor misspellings in names by converting them to a phonetic code. | Matching patient last names that might have typographical errors (e.g., "Smith" vs. "Smyth") [7]. |

| "Close Agreement" Logic | Defines rules for near-matches on key identifiers to reduce false negatives. | Defining a transposition in date of birth (e.g., "01/05" vs "05/01") or postal code as a "close" rather than a disagreement [5]. |

| Validation Metrics Calculator | A script or tool to compute sensitivity, PPV, and other performance metrics from the results. | Calculating the proportion of true IVF-birth matches correctly captured by the algorithm for a study [2]. |
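
As an illustration of the phonetic encoding entry in the table, here is a short sketch of the classic American Soundex code. It follows the usual rules (keep the first letter, encode consonants as digits, collapse repeated codes, let H and W pass without separating equal codes, pad to four characters) and is meant as a teaching example rather than a replacement for a maintained library.

```python
# Map consonants to Soundex digits; vowels, H, W and Y have no code.
CODES = {}
for letters, digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                       ("L", "4"), ("MN", "5"), ("R", "6")):
    for letter in letters:
        CODES[letter] = digit


def soundex(name: str) -> str:
    """Return the four-character Soundex code for a name ('' if no letters)."""
    letters = [ch for ch in name.upper() if ch.isalpha()]
    if not letters:
        return ""
    encoded = letters[0]
    previous = CODES.get(letters[0], "")
    for ch in letters[1:]:
        code = CODES.get(ch, "")
        if code and code != previous:
            encoded += code
        if ch not in "HW":  # H and W do not separate letters with equal codes
            previous = code
    return (encoded + "000")[:4]


print(soundex("Smith"), soundex("Smyth"))
```

Both spellings map to the same code, so a comparison on the encoded surname agrees even though the raw strings differ. Soundex was designed around English pronunciation, which is worth keeping in mind for registries with diverse populations.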

The integrity of fertility registry research that uses linked data is fundamentally dependent on the quality of the linkage process. While deterministic linkage offers simplicity, probabilistic methods are generally more robust to the errors common in real-world data, and refinements such as "close agreement" rules have been reported to reduce uncertain links by up to 95% [5].

Crucially, the field faces a significant validation gap [2]. Simply performing the linkage is insufficient. Researchers must rigorously validate their algorithms using gold standard samples and report standard metrics like sensitivity and PPV. Adopting advanced techniques like "close agreement" and thorough validation protocols is essential for producing reliable, actionable evidence to guide patients, clinicians, and policymakers in reproductive medicine.

The global expansion of Assisted Reproductive Technology has made the rigorous collection and validation of fertility data more critical than ever. With over 77,500 in vitro fertilisation cycles performed in the UK alone in 2023 and IVF births constituting approximately 3% of all UK births—roughly one child in every classroom—the imperative for robust data validation has never been greater [12]. These data form the foundation for clinical decision-making, policy development, and patient counseling, yet their accuracy is often compromised by systematic challenges in collection and linkage processes.

Routinely collected data, including administrative databases and registries, serve as excellent sources for reporting, quality assurance, and research. However, these data are subject to misclassification bias due to diagnostic inaccuracies or errors in data entry, necessitating comprehensive validation before use for clinical or research purposes [2]. A systematic review of validation studies among fertility populations revealed that of 19 studies included, only one validated a national fertility registry, and none reported their results according to recommended reporting guidelines for validation studies [13]. This validation gap represents a significant methodological challenge for researchers relying on these data sources for epidemiological studies and outcomes research.

This analysis examines the current fertility data landscape, focusing specifically on validation methodologies for linkage algorithms between fertility registries and other data sources. By comparing data sources, presenting validation frameworks, and identifying emerging technologies, we provide researchers with tools to navigate the complexities of fertility data infrastructure.

National and International Registry Frameworks

Table 1: Characteristics of Major Fertility Data Sources

| Data Source | Geographic Coverage | Key Metrics Reported | Validation Status | Primary Applications |

|---|---|---|---|---|

| HFEA (UK Fertility Registry) | United Kingdom | Pregnancy rates, birth rates, multiple birth rates, storage cycles | Preliminary data for 2020-2023 not yet validated; validation expected Winter 2025/26 [12] | National trend analysis, clinic performance monitoring, policy development |

| CDC ART Success Rates | United States | Clinic-specific success rates, live birth deliveries, patient characteristics | Data reported and verified annually by clinics [14] | Patient decision-making, clinic benchmarking, public health surveillance |

| International Committee for Monitoring Assisted Reproductive Technologies | Global | International trends, practice patterns, utilization rates | Relies on validation of contributing national registries; limited validation studies available [2] | Global trend analysis, cross-country comparisons, standards development |

National fertility registries provide invaluable population-level data but face significant validation challenges. The UK's Human Fertilisation and Embryology Authority has reported unprecedented growth in treatment cycles, with a 15% increase from 2019 to 2023, reaching nearly 99,000 cycles [12]. However, large-scale work to upgrade data submission systems has delayed validation of recent data, highlighting the vulnerability of even well-established registries to technical disruptions. Similarly, the U.S. CDC's ART Success Rates program provides clinic-specific data, but the systematic review by Bacal et al. found a general paucity of validation literature supporting such databases [2] [13].

Table 2: Data Quality Indicators Across Source Types

| Data Quality Dimension | Clinical Trial Data | Clinic-Specific Databases | National Registries | Patient-Self Reported Data |

|---|---|---|---|---|

| Completeness | High (protocol-driven) | Variable (clinic-dependent) | High (mandatory reporting) | Moderate to low (self-selection bias) |

| Accuracy | High (controlled collection) | Moderate (clinical workflow constraints) | Moderate (submission errors) | Variable (recall bias) |

| Timeliness | Low (follow-up requirements) | High (real-time entry) | Moderate (aggregation delays) | High (immediate entry) |

| Standardization | High (protocol-specific) | Variable (clinic-specific practices) | High (standardized fields) | Low (idiosyncratic reporting) |

| Linkage Potential | Moderate (ethical constraints) | High (complete patient data) | High (population coverage) | Low (identifier limitations) |

Clinical databases maintained by individual fertility clinics typically demonstrate higher accuracy for technical parameters like embryo quality and laboratory conditions but suffer from limited generalizability. National registries offer broader population coverage but often lack the granularity of clinic-specific databases. The emergence of digital fertility trackers introduces new data sources, with research indicating these tools are most frequently used alongside, but sometimes in place of, clinical care [15]. However, these digital tools may disrupt patient-provider relationships and pose risks when developed without a strong research or medical basis.

Validation Methodologies for Fertility Data Linkage

Experimental Protocols for Algorithm Validation

The validation of linkage algorithms between fertility registries and other data sources requires meticulous methodology. Based on the systematic review of validation practices, we propose a comprehensive framework incorporating four critical validation measures: sensitivity, specificity, positive predictive value, and negative predictive value [2]. Current literature reveals that sensitivity is the most commonly reported measure (12 of 19 studies), followed by specificity (9 studies), with only three studies reporting four or more validation measures [13].

The reference standard problem represents a fundamental methodological challenge. In the absence of a true gold standard, medical records often serve as the best available reference, though themselves subject to documentation errors [2]. The validation protocol should include:

- Sample Selection: Random sampling of records from the source fertility registry, stratified by key variables such as age, treatment type, and outcome status.

- Linkage Algorithm Application: Implementation of probabilistic or deterministic matching algorithms using common identifiers such as name, date of birth, and geographic location.

- Reference Standard Comparison: Manual verification of matched and unmatched records against the reference standard (e.g., medical records, vital statistics).

- Validation Metric Calculation: Computation of sensitivity, specificity, predictive values, and likelihood ratios with confidence intervals.

- Stratified Analysis: Assessment of algorithm performance across clinically relevant subgroups to identify potential bias.

This protocol addresses the critical finding that only five of 19 validation studies presented confidence intervals for their estimates, and just seven reported the prevalence of the validated variable in the target population [13].
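The metric calculation step of the protocol can be sketched as follows. This is a minimal illustration, not the method used in any of the cited studies: the function names are hypothetical, the counts are invented, and the Wilson score interval is one reasonable choice of confidence interval among several.

```python
import math
from typing import NamedTuple


class Estimate(NamedTuple):
    value: float
    lower: float
    upper: float


def wilson_interval(successes: int, total: int, z: float = 1.96) -> Estimate:
    """Proportion with a Wilson score interval (z = 1.96 gives roughly 95% coverage)."""
    if total == 0:
        return Estimate(float("nan"), float("nan"), float("nan"))
    p = successes / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return Estimate(p, max(0.0, centre - half), min(1.0, centre + half))


def validation_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, Estimate]:
    """Accuracy measures for linkage decisions judged against the reference standard."""
    return {
        "sensitivity": wilson_interval(tp, tp + fn),
        "specificity": wilson_interval(tn, tn + fp),
        "ppv": wilson_interval(tp, tp + fp),
        "npv": wilson_interval(tn, tn + fn),
        # Prevalence of true matches in the reviewed sample, reported alongside the rest.
        "prevalence": wilson_interval(tp + fn, tp + fp + fn + tn),
    }


# Hypothetical counts from a manually reviewed sample of 1,000 record pairs.
metrics = validation_metrics(tp=180, fp=5, fn=20, tn=795)
for name, est in metrics.items():
    print(f"{name}: {est.value:.3f} (95% CI {est.lower:.3f} to {est.upper:.3f})")
```

Running the same function within each stratum (for example by age group or treatment type) gives the stratified analysis described in the final protocol step.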

Visualization of Fertility Data Linkage Validation

Diagram: Fertility Data Validation Workflow. The workflow delineates the sequential validation process, highlighting both the structured approach required and the multiple points at which inaccuracies may be introduced. The manual verification step is the most resource-intensive component but is essential for establishing accuracy.

Emerging Technologies and Methodological Innovations

Metabolic Biomarkers and Non-Invasive Assessment

The quest for metabolic biomarkers of IVF outcomes represents a promising frontier for enhancing data collection in embryo assessment. Analysis of spent culture media offers a non-invasive strategy for evaluating embryo viability and implantation potential [16]. By profiling the consumption and secretion of low molecular weight metabolites, SCM analysis provides insights into embryonic metabolic activity and developmental competence.

Recent meta-analyses have identified seven metabolites positively associated and ten metabolites negatively associated with favorable IVF outcomes [16]. However, methodological challenges persist, including heterogeneous study designs, variable analytical methods, and inconsistent reporting of outcomes. The field requires standardized protocols, validated analytical methods, and transparent reporting before these approaches can be fully integrated into clinical data streams.

Artificial Intelligence and Data Architecture Solutions

Artificial intelligence applications in fertility face significant data reliability challenges, with industry leaders raising concerns about AI "hallucination", a phenomenon in which models generate inaccurate or false information [17]. The problem is particularly acute in fertility medicine, where 97% of healthcare data reportedly remains unstructured and untapped, and where many AI solutions rely on large language models trained on outdated or unverified public data.

Advanced database architectures, particularly graph databases, show promise for addressing these challenges by recognizing complex relationships between diverse data points such as hormonal levels, embryonic development, and patient demographics [17]. These systems, when combined with retrieval-augmented generation methods that supplement AI responses with verified, real-time data sources, may reduce hallucination risks while improving predictive capabilities for outcomes such as live birth rates.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Fertility Data Research

| Reagent/Platform | Function | Application in Validation Research | Technical Considerations |
|---|---|---|---|
| Graph Database Architecture | Enables recognition of complex relationships between diverse fertility data points | Facilitates accurate linkage algorithms; reduces AI hallucination risk [17] | Superior to relational databases for interconnected fertility data; requires specialized expertise |
| Retrieval-Augmented Generation | Supplements AI responses with verified, real-time data sources | Enhances reliability of AI-generated insights from fertility databases [17] | Mitigates hallucination risk; depends on quality of underlying data sources |
| Spent Culture Media Analysis | Non-invasive metabolic profiling of embryo viability | Provides objective biomarkers beyond morphological assessment [16] | Requires standardized protocols; analytical variability challenges reproducibility |
| Probabilistic Linkage Algorithms | Determines record matches using statistical probabilities | Enables linkage when exact identifiers are unavailable; accommodates data errors | Balance between sensitivity and specificity requires tuning to specific datasets |
| Digital Fertility Trackers | Collection of patient-generated health data | Captures real-world treatment adherence and outcomes [15] | Variable accuracy; potential to disrupt patient-provider relationships |

The fertility data landscape presents both extraordinary opportunities and significant methodological challenges. While national registries provide invaluable population-level insights, their validation remains inadequate, with only one of 19 studies validating a national fertility registry according to a systematic review [13]. The progression from clinical IVF cycles to long-term outcomes depends on robust linkage algorithms that can accurately connect fertility treatment data with subsequent maternal and child health outcomes.

Researchers navigating this landscape must prioritize validation methodologies, incorporating multiple measures of accuracy with appropriate confidence intervals. Emerging technologies, including metabolic biomarker profiling and AI-enhanced data architectures, offer promising approaches but require rigorous validation before clinical implementation. As fertility treatment continues to evolve—with freezing cycles now accounting for 45% of all embryo transfers in the UK [12]—the data infrastructure supporting this field must similarly advance through standardized protocols, transparent reporting, and multidisciplinary collaboration.

Linking fertility data presents a unique set of methodological challenges that distinguish it from other health data linkage domains. Fertility information encompasses exceptionally sensitive details including menstrual cycles, sexual activity, contraceptive use, pregnancy outcomes, and assisted reproductive technologies [18] [15]. The integration of this data into longitudinal population studies (LPS) and registry research offers tremendous potential for advancing reproductive science but introduces significant complexities regarding privacy, confidentiality, and ethical governance [19] [18]. This review examines the distinctive challenges in fertility data linkage through the lens of validation frameworks for linkage algorithms, focusing on the intersection of technical methodology and ethical imperatives in fertility registries research.

The femtech industry's rapid expansion, projected to exceed $50 billion, has accelerated both data availability and privacy concerns, with hundreds of reproductive tracking technologies now collecting intimate health data [18] [20]. Simultaneously, traditional clinical fertility data from in vitro fertilization (IVF) treatments and pregnancy outcomes continues to grow in volume and complexity [21] [6]. This article synthesizes current frameworks and validation methodologies for linking these diverse data sources while addressing the unique sensitivities inherent to reproductive health information.

Methodological Framework for Data Linkage

Structured Approach to Sensitive Data Integration

A robust four-stage framework for linking digital footprint data into longitudinal population studies provides a methodological foundation that can be specifically adapted for fertility data [19]. This structured approach addresses the end-to-end process from participant engagement to secure data access, with particular relevance to fertility information's sensitive nature.

Table: Four-Stage Framework for Fertility Data Linkage

| Stage | Core Objectives | Fertility-Specific Considerations |
|---|---|---|
| 1. Understand Participant Expectations | Assess acceptability, build trust, ensure transparency | Address heightened sensitivity of reproductive data; variable perceptions by data type (e.g., menstrual cycles vs. pregnancy outcomes) [19] |
| 2. Collect and Link Data | Establish technical linkage, ensure data quality | Navigate reliance on third-party platforms (e.g., fertility apps); implement opt-in consent models for intimate data [19] [18] |
| 3. Evaluate Data Properties | Assess completeness, accuracy, representativeness | Address measurement errors in self-tracked fertility metrics; identify biases in app-user populations [19] [15] |
| 4. Ensure Secure Ethical Access | Implement governance, control access | Utilize Trusted Research Environments (TREs); consider synthetic datasets for fertility information given legal vulnerabilities [19] [18] |

Participant-Centric Approaches

The initial framework stage emphasizes understanding participant expectations and acceptability, which proves particularly crucial for fertility data given its intimate nature. Research indicates that participant perceptions of data sensitivity vary significantly by data type, necessitating tailored consent approaches for different categories of fertility information [19]. For instance, studies within the Avon Longitudinal Study of Parents and Children (ALSPAC) revealed that participants perceived some data types as more sensitive (e.g., banking, GPS) than others (e.g., physical activity), suggesting fertility data may occupy a particularly high sensitivity category [19].

Maintaining participant trust requires transparent communication about data usage and giving participants control over their information. Recommendations for enhancing security with sensitive transaction data include allowing participants to choose whether to share retrospective data, future data, or both, an approach directly applicable to fertility tracking information [19]. The opt-in consent model predominates for digital footprint linkage, as exemplified by ALSPAC's supermarket loyalty card linkages, in which participants explicitly consent after being informed about data collection purposes [19].

Public engagement initiatives have demonstrated value in addressing uncertainties about data sensitivity. Science center exhibitions that facilitated interactive discussions about tracking mental health using digital footprint data highlight the importance of dismantling misconceptions about privacy and consent while emphasizing data's value for public good [19]. The same principle can be applied to fertility data: participant input can directly shape research design, as demonstrated by a Generation Scotland pilot in which an advisory group influenced technical and practical aspects of a loneliness app, including notification frequency and interface design [19].

Technical and Regulatory Challenges

Privacy Vulnerabilities and Regulatory Gaps

Fertility data exists within a complex regulatory landscape characterized by significant protection gaps, particularly for digitally-collected information. The Health Insurance Portability and Accountability Act (HIPAA) provides limited coverage for fertility tracking technologies, as most applications fall outside its jurisdiction because they aren't classified as "covered entities" like traditional healthcare providers [18] [20]. This regulatory gap has enabled widespread data sharing practices, with one analysis finding that 21 of 25 reviewed period tracking technologies shared data with third parties [18].

Table: Regulatory Frameworks Governing Fertility Data

| Regulatory Mechanism | Scope and Coverage | Key Limitations for Fertility Data |
|---|---|---|
| HIPAA (US) | Protects health information held by "covered entities" (healthcare providers, insurers) | Does not cover most fertility apps unless they interface directly with electronic health records [18] [20] |
| FTC Health Breach Notification Rule | Requires notification for unauthorized disclosures of health data | Does not prohibit third-party data sharing; only triggers after breaches occur [18] |
| GDPR (EU) | Special category protections for health data requiring explicit consent | Enforcement challenges; 78% of FemTech apps fail to obtain granular consent [20] |
| State Laws (e.g., Washington's My Health, My Data Act) | State-specific protections for health data not covered by HIPAA | Creates patchwork regulation; variable protections across jurisdictions [18] |

The post-Roe legal landscape has intensified privacy concerns for fertility data. Law enforcement agencies in states with abortion restrictions have successfully obtained reproductive health information through legal processes, including period tracker logs showing deleted pregnancy entries, location data placing users near abortion clinics, and search histories containing terms related to abortion access [20]. This evidentiary use creates unprecedented vulnerabilities for fertility data subjects.

Data Quality and Representativeness Issues

Beyond privacy concerns, fertility data linkage faces significant methodological challenges regarding data quality and representativeness. Digital fertility tracking technologies exhibit varying levels of accuracy, with only a select few receiving FDA clearance for contraceptive purposes [18]. For instance, Natural Cycles became the first app cleared by the FDA as a direct-to-consumer contraceptive in 2018, followed by Clue Birth Control in 2021, yet many applications operate without rigorous validation [18].

Measurement error represents another fundamental challenge, particularly for user-reported data in fertility applications. Research indicates that calendar-based apps frequently misestimate ovulation windows, which can produce inaccurate fertility predictions [15]. Additionally, algorithmic biases may disadvantage marginalized groups, with one 2024 study finding that some applications undercount ovulation days for women with polycystic ovary syndrome (PCOS), potentially leading to inaccurate contraceptive guidance [20].

Selection bias presents further complications, as users of digital fertility trackers represent demographic subgroups that may not reflect broader populations. Studies indicate fertility app users often differ in socioeconomic status, technological proficiency, and health engagement levels, potentially skewing research findings [15]. These representativeness challenges necessitate careful methodological adjustments during data linkage and analysis.

Validation Frameworks and Experimental Protocols

Algorithm Validation Methodologies

Validating linkage algorithms for fertility data requires robust methodological frameworks that address both technical accuracy and privacy preservation. The evaluation of a national commercial claims database for IVF data accuracy exemplifies a comprehensive validation approach, comparing key clinical events against national IVF registries to verify completeness and accuracy [6]. This methodology demonstrates how linked fertility data can be validated against established clinical benchmarks.

Machine learning approaches offer promising validation pathways for fertility data linkage while maintaining privacy standards. The development of machine learning models for predicting blastocyst yield in IVF cycles illustrates the application of algorithmic validation to fertility-specific outcomes [21]. This research employed three machine learning models—SVM, LightGBM, and XGBoost—which demonstrated comparable performance and outperformed traditional linear regression models (R²: 0.673–0.676 vs. 0.587, MAE: 0.793–0.809 vs. 0.943) [21]. The methodological rigor included feature selection analysis and internal validation with multiple performance metrics to assess robustness.

Diagram 1: Fertility Data Linkage Validation Workflow. This protocol illustrates the sequential process for validating linkage algorithms, incorporating privacy preservation through synthetic data generation and multiple validation metrics.

Experimental Protocols for Fertility Data Linkage

Research validating the accuracy of IVF data in a national commercial claims database exemplifies robust experimental design for fertility data linkage [6]. The study compared key clinical events including pregnancy rates, live births, and live birth types against national IVF registries, establishing a methodology for verifying linked fertility data quality. This approach enables policymakers considering IVF insurance mandates and employers evaluating coverage expansion to utilize claims data with confidence in its accuracy [6].

Machine learning validation protocols represent another experimental approach with particular relevance to fertility data. The development of diagnostic models for infertility and pregnancy loss demonstrates a structured methodology incorporating multiple machine learning algorithms and feature selection techniques [22]. This research employed five machine learning algorithms to develop models based on the most relevant clinical indicators, with results showing high diagnostic performance (AUC > 0.958, sensitivity > 86.52%, specificity > 91.23%) [22]. The protocol included rigorous internal validation and comparative performance assessment across multiple algorithms.

For digital fertility data specifically, experimental protocols must address the distinctive challenges of app-derived information. Research indicates that comprehensive evaluation should assess data completeness, measurement consistency against clinical standards, temporal alignment of data points, and representativeness of the resulting linked dataset [19] [15]. These protocols help mitigate the unique quality challenges presented by fertility tracking technologies.

Research Reagent Solutions

Table: Essential Research Reagents for Fertility Data Linkage

| Research Reagent | Function | Application Example |
|---|---|---|
| Trusted Research Environments (TREs) | Secure data analysis platforms preventing unauthorized data export | Enables analysis of sensitive fertility data without compromising confidentiality [19] |
| Synthetic Datasets | Artificially generated data preserving statistical properties of original data | Allows algorithm development and testing without exposing actual patient fertility information [19] |
| Differential Privacy Techniques | Mathematical framework for privacy preservation adding calibrated noise | Protects individual fertility records while maintaining dataset utility for analysis [20] |
| De-identification Tools | Algorithms removing direct identifiers from fertility data | Reduces re-identification risk for fertility app data and clinical records [18] |
| Data Use Agreements (DUAs) | Legal contracts governing appropriate data use and security requirements | Establishes permitted uses for linked fertility data and security obligations [19] |
| Federated Learning Systems | Distributed machine learning approach keeping data localized | Enables collaborative model training on fertility data across institutions without data sharing [20] |

The linkage of fertility data presents distinctive challenges stemming from the exceptional sensitivity of reproductive information, regulatory protection gaps, and methodological complexities in data quality and representativeness. A structured framework addressing participant expectations, technical linkage, data evaluation, and secure access provides a foundation for robust fertility data integration. Validation protocols must incorporate both technical accuracy measures and privacy preservation safeguards, utilizing emerging methodologies from machine learning and privacy-enhancing technologies. As fertility data sources continue to expand through both clinical documentation and digital tracking technologies, maintaining the delicate balance between research utility and individual privacy remains paramount. Future methodological development should focus on standardized validation metrics specific to fertility data, interoperable governance frameworks, and participant-centric approaches that empower individuals within the fertility data ecosystem.

Choosing and Implementing Linkage Methods: From Deterministic Rules to Machine Learning

In the evolving field of reproductive medicine, data linkage serves as a cornerstone for robust research and clinical insights. Linking fertility registries with other health databases enables researchers to track long-term outcomes, monitor treatment safety, and understand the broader implications of assisted reproductive technologies (ART). Within this context, deterministic linkage stands as a fundamental methodology that uses exact-match rules to combine records pertaining to the same individual across different datasets. This approach relies on predefined identifiers—such as national health numbers, dates of birth, and postcodes—that must agree perfectly for a match to be declared [1] [23]. For fertility research, where accurate longitudinal tracking is essential yet challenging, implementing validated linkage algorithms is paramount for generating reliable evidence.

The need for rigorous validation of fertility data linkage is underscored by a systematic review which revealed a significant gap in current practices. Among reviewed studies, only one had validated a national fertility registry, and none reported their results in accordance with recommended reporting guidelines for validation studies [2] [13]. This validation gap is particularly concerning given that stakeholders increasingly rely on these linked data for monitoring treatment outcomes and adverse events [2]. This guide examines how deterministic linkage is implemented and how it compares with probabilistic linkage, offering experimental data and methodological protocols to strengthen fertility registry research.

Understanding Deterministic Linkage Methodology

Core Principles and Mechanisms

Deterministic linkage operates on the principle of exact agreement between identifying variables across different datasets. Unlike probabilistic methods that calculate match probabilities, deterministic linkage employs categorical rules that must be satisfied completely for records to be linked. This method typically uses a combination of personal identifiers, with some implementations using a hierarchical approach that applies sequential matching rules of varying strictness [1] [23].

A prime example of this methodology can be seen in England's National Hospital Episode Statistics, which implements a three-step deterministic algorithm seeking exact agreement on NHS number, date of birth, postcode, and sex [1]. Similarly, the Canadian Institute for Health Information (CIHI) employs a sophisticated seven-step deterministic algorithm that begins with the most reliable identifiers and progressively relaxes matching criteria if initial steps fail [1]. This cascading approach successfully captures approximately 95% of true matches while maintaining false match rates below 0.1% [1].
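The cascading logic described above can be sketched as a list of match rules tried from strictest to most relaxed. This is an illustrative sketch only: the field names (`health_id`, `dob`, `postcode`, `sex`) and the rule ordering are assumptions, and it does not reproduce the actual Hospital Episode Statistics or CIHI algorithms.

```python
from typing import Optional

# Hypothetical match rules, ordered from strictest to most relaxed.
RULES = [
    ("health_id", "dob", "postcode", "sex"),
    ("health_id", "dob", "sex"),
    ("health_id", "dob"),
    ("health_id",),
]


def match_step(a: dict, b: dict) -> Optional[int]:
    """Return the 1-based step at which two records agree exactly, or None."""
    for step, fields in enumerate(RULES, start=1):
        # A missing value never counts as agreement.
        if all(a.get(f) is not None and a.get(f) == b.get(f) for f in fields):
            return step
    return None


def link(registry: list[dict], target: list[dict]) -> list[tuple[int, int, int]]:
    """Link each registry record to the target record that matches at the strictest step.

    Returns (registry_index, target_index, step) triples. The nested loop compares
    every pair, which is fine for a sketch but too slow for millions of records.
    """
    links = []
    for i, rec in enumerate(registry):
        best = None
        for j, cand in enumerate(target):
            step = match_step(rec, cand)
            if step is not None and (best is None or step < best[1]):
                best = (j, step)
        if best is not None:
            links.append((i, best[0], best[1]))
    return links


registry = [
    {"health_id": "A1", "dob": "1985-03-02", "postcode": "AB12CD", "sex": "F"},
    {"health_id": "B2", "dob": "1990-07-15", "postcode": "EF34GH", "sex": "F"},
    {"health_id": None, "dob": "1988-01-01", "postcode": "JK56LM", "sex": "F"},
]
hospital = [
    {"health_id": "A1", "dob": "1985-03-02", "postcode": "AB12CD", "sex": "F"},
    {"health_id": "B2", "dob": "1990-07-15", "postcode": "ZZ99ZZ", "sex": "F"},
]
links = link(registry, hospital)
print(links)
```

In this toy data the second patient has moved, so the record links only at the second step, and the third record cannot be linked because every rule here requires the health identifier. Recording the step at which each link was made allows later sensitivity analyses restricted to the strictest matches.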

Comparative Framework: Deterministic vs. Probabilistic Linkage

Table 1: Fundamental Characteristics of Data Linkage Methods

| Feature | Deterministic Linkage | Probabilistic Linkage |
|---|---|---|
| Matching Principle | Exact agreement on specified identifiers | Statistical likelihood of records belonging to same entity |
| Identifier Requirements | Relies on direct personal identifiers (e.g., unique IDs, date of birth) | Can utilize indirect and proxy identifiers (e.g., area of residence, treating hospital) |
| Error Handling | Limited flexibility; data entry errors cause missed matches | More tolerant of minor variations and missing data |
| Computational Complexity | Generally lower; uses simple comparison rules | Higher; requires calculation of match weights and probabilities |
| Typical Match Rate | Lower sensitivity when identifiers are incomplete or erroneous | Higher sensitivity but potentially lower specificity |
| Implementation Scale | Efficiently processes millions of records quickly | More computationally intensive, especially without blocking strategies |

Experimental Comparison: Performance in Healthcare Contexts

Experimental Protocol and Validation Framework

A rigorous comparative study provides valuable experimental data on the performance of deterministic versus probabilistic linkage methodologies. The study utilized electronic health records from the National Bowel Cancer Audit (NBOCA) and Hospital Episode Statistics (HES) databases for 10,566 bowel cancer patients undergoing emergency surgery within the English National Health Service [23]. This research offers a validated framework that can be adapted for fertility registry linkage.

The deterministic linkage protocol employed an eight-step sequential matching process using patient identifiers. The algorithm began with exact matches on all four primary identifiers (NHS number, sex, date of birth, and postcode), progressively relaxing criteria through subsequent steps until eventually matching on NHS number alone at the final stage [23]. This hierarchical approach represents current best practices in deterministic linkage implementation.

The probabilistic linkage protocol was implemented without personal information, using instead proxy identifiers (age at diagnosis for date of birth, Lower Super Output Area for postcode) and indirect identifiers (sex, date of surgery, surgical procedure, responsible surgeon, hospital trust, and others) [23]. For each identifier, the probabilistic approach calculated an m-probability (the probability that the identifier agrees when two records belong to the same patient, which reflects data quality) and a u-probability (the probability that it agrees by chance when they do not), and combined these into match weights for determining linkage.
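How m- and u-probabilities turn into match weights can be shown with a short sketch. The probabilities below are invented for illustration and are not the values estimated in the cited study; the base-2 logarithm and the assumption that fields are conditionally independent are the conventional choices in Fellegi-Sunter style linkage.

```python
import math

# Hypothetical parameters: field -> (m, u).
# m = P(field agrees | records truly match); u = P(field agrees | records do not match).
FIELD_PARAMS = {
    "sex": (0.99, 0.50),
    "age_at_diagnosis": (0.95, 0.02),
    "date_of_surgery": (0.90, 0.001),
    "hospital_trust": (0.97, 0.01),
}


def field_weight(m: float, u: float, agrees: bool) -> float:
    """Log2 likelihood ratio contributed by one field."""
    return math.log2(m / u) if agrees else math.log2((1 - m) / (1 - u))


def total_weight(agreement: dict[str, bool]) -> float:
    """Sum of field weights, assuming fields are conditionally independent."""
    return sum(field_weight(*FIELD_PARAMS[f], agrees) for f, agrees in agreement.items())


all_agree = {f: True for f in FIELD_PARAMS}
surgery_date_differs = {**all_agree, "date_of_surgery": False}

print(f"all fields agree:     {total_weight(all_agree):.2f}")
print(f"surgery date differs: {total_weight(surgery_date_differs):.2f}")
```

Agreement on a field that rarely agrees by chance (a small u, as with date of surgery) adds far more weight than agreement on sex, which agrees by chance about half the time. Pairs whose total weight exceeds a chosen threshold are declared links, and that threshold is what trades sensitivity against specificity.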

Quantitative Performance Results

Table 2: Experimental Results from Comparative Linkage Study

| Performance Metric | Deterministic Linkage | Probabilistic Linkage |
|---|---|---|
| Overall Match Rate | 82.8% | 81.4% |
| Systematic Bias | No systematic differences observed between linked and non-linked patients | No systematic differences observed between linked and non-linked patients |
| Regression Model Sensitivity | Not sensitive to linkage approach for mortality and length of stay outcomes | Not sensitive to linkage approach for mortality and length of stay outcomes |
| Data Security | Requires access to personal identifiers | Can be implemented without personal information |
| Implementation Context | Suitable within secure data environments with complete identifier data | Enables linkage by analysts outside highly secure environments |

The experimental results demonstrate that deterministic linkage achieved a slightly higher match rate (82.8% vs. 81.4%) without introducing systematic biases between linked and non-linked patient groups [23]. Importantly, regression models for key outcomes including mortality and hospital stay length were not sensitive to the linkage method, suggesting comparable validity for research purposes when implemented appropriately [23].
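One common way to look for systematic differences between linked and non-linked patients is the standardized difference in the proportion of each characteristic. The sketch below is an assumption about how such a check might be run, not the method reported in [23]; the proportions are invented, and the 0.1 cut-off is a widely used rule of thumb rather than a value taken from the source.

```python
import math


def standardized_difference(p_linked: float, p_unlinked: float) -> float:
    """Standardized difference between two proportions."""
    pooled_var = (p_linked * (1 - p_linked) + p_unlinked * (1 - p_unlinked)) / 2
    if pooled_var == 0:
        # Both proportions are 0 or 1: identical groups give 0, opposite groups are infinitely apart.
        if p_linked == p_unlinked:
            return 0.0
        return math.copysign(math.inf, p_linked - p_unlinked)
    return (p_linked - p_unlinked) / math.sqrt(pooled_var)


# Hypothetical proportions of each characteristic among linked and non-linked patients.
characteristics = {
    "female": (0.48, 0.47),
    "aged_75_plus": (0.41, 0.44),
    "most_deprived_quintile": (0.22, 0.31),
}

for name, (p_linked, p_unlinked) in characteristics.items():
    d = standardized_difference(p_linked, p_unlinked)
    flag = "check" if abs(d) > 0.1 else "ok"
    print(f"{name}: d = {d:+.3f} ({flag})")
```

A characteristic flagged by this check suggests that linkage success depends on that characteristic, which is the kind of bias that would carry through to analyses of the linked data.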

Implementation Protocol for Fertility Data

Workflow for Deterministic Linkage Implementation

Diagram: Sequential workflow for implementing deterministic linkage with fertility registry data.

Deterministic Linkage in Fertility Research Context

Implementing deterministic linkage for fertility registries presents specific challenges and considerations. Fertility treatments often involve multiple cycles over extended periods, requiring longitudinal tracking that can be compromised by changes in personal circumstances such as name changes, address moves, or other demographic shifts [2] [1]. These factors can reduce linkage sensitivity if not accounted for in the linkage methodology.

The systematic review of database validation in fertility populations found that current validation practices are insufficient, with only three of nineteen studies reporting four or more measures of validation, and just five studies presenting confidence intervals for their estimates [2]. This highlights the critical need for more rigorous validation protocols when implementing deterministic linkage for fertility data.

Research Reagent Solutions: Essential Components for Implementation

Table 3: Essential Components for Deterministic Linkage Implementation

| Component | Function | Implementation Considerations |
|---|---|---|
| Unique Patient Identifiers | Serves as primary anchor for exact matching | Fertility registries should collect standardized health system identifiers when available |
| Demographic Verifiers | Secondary validation fields (date of birth, sex) | Require standardized formats across source systems |
| Geographic Identifiers | Tertiary matching variables (postcode, area codes) | Subject to change over time; need periodic updating |
| Data Cleaning Tools | Preprocessing standardization of identifiers | Critical for handling typographical errors and format inconsistencies |
| Validation Framework | Assessment of linkage quality | Should measure sensitivity, specificity, and positive predictive value |
| Secure Data Environment | Protection of personal information | Essential for handling identifiable data required for deterministic approach |
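Because deterministic rules require exact agreement, the data cleaning component in the table largely decides how many true matches survive. The following is a minimal sketch of identifier standardization using only the Python standard library; the accepted date formats (day-first where ambiguous) and the sex codings are assumptions that would need to be checked against each source system.

```python
import re
from datetime import datetime
from typing import Optional

# Assumed source formats; ambiguous dates are read as day-first.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d-%m-%Y")

# Assumed codings for sex across source systems.
SEX_CODES = {"f": "F", "female": "F", "2": "F", "m": "M", "male": "M", "1": "M"}


def clean_postcode(raw: Optional[str]) -> Optional[str]:
    """Upper-case and drop spaces and punctuation so 'ab1 2cd' equals 'AB12CD'."""
    if not raw:
        return None
    cleaned = re.sub(r"[^A-Za-z0-9]", "", raw).upper()
    return cleaned or None


def clean_dob(raw: Optional[str]) -> Optional[str]:
    """Parse a date of birth in any assumed format and return ISO 8601, else None."""
    if not raw:
        return None
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean_sex(raw: Optional[str]) -> Optional[str]:
    """Map common codings to a single-letter code; unknown values become None."""
    if not raw:
        return None
    return SEX_CODES.get(raw.strip().lower())


print(clean_postcode(" ab1 2cd "), clean_dob("02/03/1985"), clean_sex("Female"))
```

Returning `None` for unparseable values, rather than a guessed value, keeps bad data from producing false exact matches; such records can then be routed to a more relaxed rule or to manual review.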

Discussion: Applications and Limitations in Fertility Context

Advantages for Fertility Registry Research

Deterministic linkage offers several distinct advantages for fertility research. The method provides high specificity with minimal false matches when using reliable unique identifiers [1] [23]. This precision is particularly valuable when studying rare adverse outcomes following ART treatments, where false positive links could significantly distort risk estimates.

The computational efficiency of deterministic linkage enables processing of large-scale fertility registry data with minimal resources [1]. This scalability facilitates the creation of comprehensive linked datasets for population-level fertility research, such as tracking long-term health outcomes for children born through ART or monitoring cross-generational effects of fertility treatments.

Limitations and Methodological Considerations

The primary limitation of deterministic linkage emerges when personal identifiers are missing, incomplete, or erroneous [1] [23]. In fertility research, this challenge is compounded by the longitudinal nature of treatment and follow-up, where patient information may change over time. Data from Nordic countries shows that deterministic linkage using personal identification numbers achieves exceptional accuracy (>99.5%), but this performance degrades rapidly when unique identifiers are unavailable or unreliable [1].

The systematic review of fertility database validation studies revealed additional methodological concerns, noting that pre-test prevalence (the prevalence of the variable in the target population) was reported in only seven of nineteen studies, with just four studies having prevalence estimates from the study population within a 2% range of the pre-test estimate [2]. Because positive and negative predictive values depend on prevalence, such discrepancies limit how far reported predictive values generalize to the target population and can bias estimates of fertility research outcomes.
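The reason prevalence reporting matters can be seen by recomputing predictive values at two prevalences with sensitivity and specificity held fixed. The figures below are hypothetical and chosen only to illustrate the size of the effect.

```python
def predictive_values(sensitivity: float, specificity: float, prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) implied by Bayes' theorem for a given prevalence."""
    tp = sensitivity * prevalence
    fp = (1 - specificity) * (1 - prevalence)
    fn = (1 - sensitivity) * prevalence
    tn = specificity * (1 - prevalence)
    return tp / (tp + fp), tn / (tn + fn)


# Hypothetical algorithm: 90% sensitivity, 95% specificity.
for prevalence in (0.02, 0.10):
    ppv, npv = predictive_values(0.90, 0.95, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV = {ppv:.3f}, NPV = {npv:.3f}")
```

With these inputs the positive predictive value is roughly 0.27 at 2% prevalence and roughly 0.67 at 10% prevalence, so a predictive value estimated in a validation sample cannot be transferred to a target population whose prevalence differs or is unreported.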

For researchers implementing deterministic linkage with fertility data, strategic application is essential. Deterministic methods are most appropriate when:

- High-quality unique identifiers are available across all datasets to be linked

- Data completeness is high for critical matching variables

- The research question requires maximal specificity over sensitivity

- Secure data environments are available for handling personal information

- Validation protocols are implemented to assess and report linkage quality

As fertility research increasingly relies on linked administrative data and registries, rigorous validation of linkage methodologies becomes paramount. Future work should develop and standardize validation frameworks specific to fertility data, addressing the current gaps in reporting and methodology identified in the systematic review [2]. By implementing robust deterministic linkage protocols with comprehensive validation, researchers can enhance the reliability of evidence generated from linked fertility data, ultimately supporting improved patient counseling, treatment protocols, and policy decisions in reproductive medicine.

Record linkage is a fundamental process for identifying and matching records that belong to the same entity across disparate data sources, a particularly crucial task in health informatics and registry research where unique identifiers are often unavailable across systems [24]. In the specific context of fertility registries research, accurately linking assisted reproductive technology (ART) data with birth records and other vital statistics is essential for monitoring maternal and child health outcomes, yet this task presents significant methodological challenges [25]. The two predominant methodological approaches for addressing this challenge are deterministic linkage and probabilistic linkage, each with distinct theoretical foundations and operational characteristics.

Deterministic record linkage (DRL) operates on exact or predefined agreement rules, where record pairs must match perfectly on all or a specified subset of identifying variables to be considered links [26]. While this approach benefits from simplicity and full automation capabilities, it suffers from significant limitations in handling real-world data quality issues such as typographical errors, missing values, and legitimate changes in identifying information over time [27]. The deterministic approach typically produces low false positive rates but at the expense of high missed match rates, particularly when data quality is poor or when linking variables contain errors [26].

Probabilistic record linkage (PRL), with the Fellegi-Sunter model as its theoretical foundation, introduces a more nuanced approach that calculates match probabilities based on the agreement and disagreement patterns across multiple identifying fields [24] [28]. This method accounts for the varying discriminating power of different matching variables and their values, offering greater flexibility in handling data imperfections commonly encountered in real-world registry data [27] [26]. The Fellegi-Sunter model functions as an unsupervised classification algorithm that assigns field-specific weights without requiring training data, making it particularly valuable for research applications where verified match status is unavailable [24].

The Fellegi-Sunter Model: Theoretical Framework

Core Parameters and Mathematical Foundation

The Fellegi-Sunter model operates on three fundamental parameters that collectively determine the probability that two records represent the same entity. These parameters enable the model to quantify the evidence for and against a match contained within the observed agreement patterns of record pairs [28].

The first parameter, lambda (λ), represents the prior probability that any two randomly selected records match, expressed as λ = Pr(Records match). This parameter varies significantly depending on the linkage context, including the total number of records, the prevalence of duplicate records, and the degree of overlap between datasets. Two datasets covering the same patient cohort would exhibit a high λ value, while entirely independent datasets would have a low λ [28].

The second parameter, the m probability, represents the probability of observing a specific agreement pattern given that the two records are truly a match: m = Pr(Observation | Records match). This parameter primarily reflects data quality and reliability. For date of birth, for instance, the m probability for exact agreement would be high (approximately 0.98), with the remaining 0.02 accounting for recording errors or changes in the recorded value [28].

The third parameter, the u probability, represents the probability of observing a specific agreement pattern given that the two records are not a match: u = Pr(Observation | Records do not match). This parameter primarily measures coincidence or the discriminating power of the variable. For high-cardinality fields like date of birth, the u probability is very low (approximately 0.0001), while for low-cardinality fields like sex, the u probability is much higher (approximately 0.5) [28].

The mathematical foundation of the Fellegi-Sunter model combines these parameters to calculate a match weight, which is then converted to a match probability. The match weight (M) is derived using the formula:

M = log₂(λ/(1-λ)) + log₂(m/u)

This formula can be extended to multiple independent fields, where the total match weight becomes the sum of the prior match weight and the partial match weights for each field [28] [29]. The match probability is then calculated as:

Pr(Match | Observation) = 2^M / (1 + 2^M)

This mathematical framework enables the Fellegi-Sunter model to make nuanced linkage decisions based on the cumulative evidence across multiple fields, properly accounting for both the quality and discriminating power of each identifier [28] [29].
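
The two formulas above can be checked with a few lines of Python. This is a minimal sketch rather than a reference implementation: the function names are illustrative, the prior of 0.001 is an assumed value, and the date-of-birth m and u values are the approximate figures quoted earlier in this section.

```python
import math

def match_weight(prior, field_probs):
    """Total match weight: prior weight plus one partial weight per field."""
    weight = math.log2(prior / (1 - prior))   # log2(lambda / (1 - lambda))
    for m, u in field_probs:
        weight += math.log2(m / u)            # partial match weight log2(m / u)
    return weight

def match_probability(weight):
    """Convert a match weight M to Pr(Match | Observation) = 2^M / (1 + 2^M)."""
    odds = 2.0 ** weight
    return odds / (1.0 + odds)

# Assumed prior (lambda); m and u for exact date-of-birth agreement as quoted above.
prior = 0.001
dob_exact = (0.98, 0.0001)

weight = match_weight(prior, [dob_exact])
print(f"match weight = {weight:.2f}, match probability = {match_probability(weight):.3f}")
```

With these inputs, a single exact date-of-birth agreement lifts a one-in-a-thousand prior to a match probability of roughly 0.91, which shows how much evidence a high-cardinality field contributes on its own.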

Workflow and Computational Process

The operationalization of the Fellegi-Sunter model follows a structured workflow that transforms raw record comparisons into match predictions. The process begins with the comparison of each record in one dataset with all potential matching records in the other dataset, though in practice, blocking methods are employed to reduce the computational burden by limiting comparisons to records that share common characteristics on blocking variables [30].
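
Blocking can be sketched as an index lookup: records in one file are grouped by a blocking key, and each record in the other file is compared only with the records in its own block. The record layout, the field name `maternal_dob`, and the choice of birth year as the blocking key are assumptions made for illustration.

```python
from collections import defaultdict

def blocked_pairs(records_a, records_b, block_key):
    """Yield only those (a, b) pairs whose records share a blocking key."""
    index = defaultdict(list)
    for rec_b in records_b:
        index[block_key(rec_b)].append(rec_b)
    for rec_a in records_a:
        # .get avoids creating empty blocks for keys absent from records_b
        for rec_b in index.get(block_key(rec_a), []):
            yield rec_a, rec_b

def birth_year(record):
    """Hypothetical blocking key: year of the maternal date of birth (ISO format)."""
    return record["maternal_dob"][:4]

art_cycles = [
    {"id": "A1", "maternal_dob": "1985-03-12"},
    {"id": "A2", "maternal_dob": "1990-07-01"},
]
birth_records = [
    {"id": "B1", "maternal_dob": "1985-03-12"},
    {"id": "B2", "maternal_dob": "1985-11-30"},
    {"id": "B3", "maternal_dob": "1991-01-05"},
]

candidate_pairs = list(blocked_pairs(art_cycles, birth_records, birth_year))
print(len(candidate_pairs), "candidate pairs instead of",
      len(art_cycles) * len(birth_records))
```

The trade-off is that a true match whose blocking variable is recorded incorrectly never reaches the comparison step, which is one reason to choose blocking variables with low error rates or to run several blocking passes.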

For each record pair comparison, the model evaluates the agreement pattern across predetermined matching variables and assigns a "comparison vector value" (γ) that encodes which specific agreement scenario is activated for each field [30]. These scenarios may include exact matches, fuzzy matches, or non-matches, with each scenario having associated m and u probabilities predetermined for the linkage project. The comparison vector effectively transforms qualitative agreement patterns into a quantitative representation that can be processed mathematically [30].

The model then looks up the partial match weights corresponding to each activated scenario in the comparison vector. The partial match weight for each field is calculated as log₂(m/u), representing the evidence contributed by that specific field's agreement pattern [30] [28]. The final match weight is computed by summing all partial match weights along with the prior match weight, which represents the baseline odds of a match before considering any field comparisons [28].

This computational process culminates in the conversion of the total match weight into a match probability, which provides an intuitive measure of similarity that researchers can use to classify record pairs as matches, non-matches, or potential matches requiring manual review [30] [28]. The entire process is illustrated in the following workflow diagram:

Figure 1: Fellegi-Sunter Model Computational Workflow
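
The workflow described in this section can be sketched end to end for a single record pair. Everything specific here is an assumption for illustration: the two fields, the scenario names, the m and u values attached to each scenario, the prefix rule standing in for a fuzzy comparator, the prior, and the classification thresholds.

```python
import math

# Hypothetical scenarios per field, each with assumed (m, u) probabilities.
SCENARIOS = {
    "dob": {"exact": (0.98, 0.0001), "differ": (0.02, 0.9999)},
    "surname": {"exact": (0.90, 0.001), "fuzzy": (0.08, 0.01), "differ": (0.02, 0.989)},
}

def comparison_vector(rec_a, rec_b):
    """Encode which agreement scenario is activated for each field."""
    gamma = {"dob": "exact" if rec_a["dob"] == rec_b["dob"] else "differ"}
    name_a, name_b = rec_a["surname"].lower(), rec_b["surname"].lower()
    if name_a == name_b:
        gamma["surname"] = "exact"
    elif name_a[:4] == name_b[:4]:   # crude stand-in for a fuzzy comparator
        gamma["surname"] = "fuzzy"
    else:
        gamma["surname"] = "differ"
    return gamma

def total_match_weight(gamma, prior):
    """Sum the prior match weight and the partial weight of each activated scenario."""
    weight = math.log2(prior / (1 - prior))
    for field, scenario in gamma.items():
        m, u = SCENARIOS[field][scenario]
        weight += math.log2(m / u)
    return weight

def classify(weight, lower=0.05, upper=0.95):
    """Convert the weight to a probability and apply assumed review thresholds."""
    probability = 2.0 ** weight / (1.0 + 2.0 ** weight)
    if probability >= upper:
        return "match", probability
    if probability <= lower:
        return "non-match", probability
    return "review", probability

rec_a = {"dob": "1985-03-12", "surname": "Harezlak"}
rec_b = {"dob": "1985-03-12", "surname": "Harezlack"}
gamma = comparison_vector(rec_a, rec_b)
print(gamma, classify(total_match_weight(gamma, prior=0.001)))
```

Pairs that fall between the two thresholds are the "potential matches" routed to manual review; where those thresholds sit is a project-level decision that should be reported alongside the linkage results.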

Experimental Comparisons and Performance Data

Methodological Protocols for Comparative Studies

Rigorous evaluation of record linkage methodologies requires carefully designed experiments that quantify performance across varying data conditions. One comprehensive simulation study created multiple datasets by systematically varying two critical factors: the frequency of registration errors and the discriminating power of the linking variables [26]. This approach generated a range of realistic linking scenarios, each consisting of four linking variables with specified possible values, underlying distributions, and proportions of incorrect values. The study compared three linkage strategies: deterministic "full" (requiring agreement on all variables), deterministic "N-1" (tolerating one disagreement), and probabilistic linkage using the Fellegi-Sunter model [26].
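
The two deterministic strategies compared in the simulation differ only in how many disagreements they tolerate, which a short sketch makes concrete. The four field names below are hypothetical and are not the variables used in the cited study; treating a missing value as a disagreement is likewise an assumption of this sketch.

```python
def count_agreements(rec_a, rec_b, fields):
    """Count fields on which both records hold the same non-missing value."""
    return sum(
        1 for field in fields
        if rec_a.get(field) is not None and rec_a.get(field) == rec_b.get(field)
    )

def deterministic_link(rec_a, rec_b, fields, tolerated_disagreements=0):
    """'Full' rule when tolerated_disagreements=0, 'N-1' rule when it is 1."""
    required = len(fields) - tolerated_disagreements
    return count_agreements(rec_a, rec_b, fields) >= required

FIELDS = ["maternal_dob", "infant_dob", "plurality", "zip"]   # hypothetical

rec_a = {"maternal_dob": "1985-03-12", "infant_dob": "2015-06-01",
         "plurality": 1, "zip": "02139"}
rec_b = {"maternal_dob": "1985-03-12", "infant_dob": "2015-06-01",
         "plurality": 1, "zip": "02140"}   # one disagreement, e.g. a move or typo

print("full:", deterministic_link(rec_a, rec_b, FIELDS))
print("N-1: ", deterministic_link(rec_a, rec_b, FIELDS, tolerated_disagreements=1))
```

Note that the N-1 rule treats every field as equally expendable, whereas a probabilistic model weights each disagreement by how informative that field is.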

In another investigation focused on healthcare data linkage, researchers evaluated the performance of probabilistic linkage in connecting ART information with vital records in Massachusetts [25]. This study employed Link Plus software without access to direct identifiers, using maternal and infant dates of birth and plurality as primary linking variables, with ancillary variables such as maternal ZIP code and gravidity helping to resolve duplicate matches. The probabilistic approach was validated against a reference standard created using enhanced probabilistic matching with additional clinical and demographic information [25].

A separate evaluation of hospital episode statistics in England implemented a probabilistic step to complement existing deterministic algorithms [27]. This study specified m probabilities for various identifiers based on preliminary analyses of agreement patterns in the reference standard dataset: date of birth components (day: 0.95, month: 0.94, year: 0.91), sex (0.9), NHS number (0.9), local ID within provider (0.62), and postcode (0.68). The u probabilities were estimated based on the chance of random agreement: sex (0.5), date components (day: 0.032, month: 0.083, year: 0.05), and identifying fields (NHS number: 0.00001, local ID: 0.00002, postcode: 0.00001) [27].
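
To see what these m and u probabilities imply, they can be converted into per-field weights. The values below are the ones quoted in the preceding paragraph; the dictionary keys are illustrative names, and the disagreement weight log₂((1−m)/(1−u)) is the usual binary-comparison form, which the passage above does not spell out and is therefore an assumption here.

```python
import math

# m and u probabilities as quoted above for the hospital episode statistics study.
M_PROB = {"dob_day": 0.95, "dob_month": 0.94, "dob_year": 0.91, "sex": 0.9,
          "nhs_number": 0.9, "local_id": 0.62, "postcode": 0.68}
U_PROB = {"dob_day": 0.032, "dob_month": 0.083, "dob_year": 0.05, "sex": 0.5,
          "nhs_number": 0.00001, "local_id": 0.00002, "postcode": 0.00001}

def agreement_weight(field):
    """Partial match weight when the field agrees: log2(m / u)."""
    return math.log2(M_PROB[field] / U_PROB[field])

def disagreement_weight(field):
    """Partial match weight when the field disagrees: log2((1 - m) / (1 - u))."""
    return math.log2((1 - M_PROB[field]) / (1 - U_PROB[field]))

for field in M_PROB:
    print(f"{field:<11} agree {agreement_weight(field):6.2f}   "
          f"disagree {disagreement_weight(field):6.2f}")
```

Agreement on NHS number contributes a weight of about 16.5, whereas agreement on sex contributes less than 1, reflecting the difference in discriminating power between the two identifiers.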

Quantitative Performance Results

Comparative studies consistently demonstrate the performance advantages of probabilistic linkage methods across diverse data conditions. The simulation study examining error rates and discriminating power found that the full deterministic strategy produced the lowest number of false positive links but at the expense of missing considerable numbers of matches, with the false nonlink rate directly dependent on the error rate of the linking variables [26]. The probabilistic strategy outperformed both deterministic approaches across all scenarios. A deterministic strategy matched probabilistic performance only when researchers correctly predetermined which disagreements to tolerate, information that probabilistic methods derive from the data themselves [26].

In the evaluation of hospital episode statistics linkage, the addition of a probabilistic step to the existing deterministic algorithm substantially reduced missed matches, with improvement observed over time (from 8.6% in 1998 to 0.4% in 2015) [27]. The study also identified important disparities in linkage accuracy, with missed matches more common for ethnic minorities, those living in areas of high socio-economic deprivation, foreign patients, and those with "no fixed abode." These systematic biases translated to biased estimates of readmission rates, which were reduced for nearly all patient groups with the enhanced probabilistic approach [27].

The Massachusetts ART linkage study demonstrated that probabilistic methods could achieve high linkage rates (87.8% of 6,139 deliveries) while correctly identifying 96.4% of matches previously obtained using deterministic linkage methods with direct identifiers [25]. This performance highlights the practical utility of probabilistic linkage for sensitive health applications where direct identifiers may be unavailable for privacy reasons.

Table 1: Comparative Performance of Linkage Methods Across Studies

| Study Context | Deterministic Approach Limitations | Probabilistic Approach Advantages | Key Performance Metrics |
|---|---|---|---|
| Simulation Study [26] | High false nonlink rates (330 of 4,000 matches missed in basic scenario) | Outperformed deterministic across all scenarios | Lower false links and false nonlinks across varying error rates and discriminating power |
| Hospital Episode Statistics [27] | Missed matches more common for vulnerable populations (ethnic minorities, high deprivation) | Reduced missed matches (8.6% to 0.4% over time) and reduced bias | More accurate readmission rate estimates across patient groups |
| ART-Vital Records Linkage [25] | Limited to exact matches on direct identifiers | High linkage rate without direct identifiers | 87.8% linkage rate, 96.4% concordance with deterministic using identifiers |

Advanced Enhancements to the Fellegi-Sunter Model

Recent methodological advancements have extended the capabilities of the Fellegi-Sunter model to address specific limitations. One significant enhancement incorporates frequency-based matching that accounts for the varying discriminating power of different field values [24]. The standard Fellegi-Sunter model assigns identical weights for agreements on common and rare values (e.g., "Smith" vs. "Harezlak" for last names), despite agreement on rare values providing stronger evidence for a match. Frequency-based matching adjusts weights so that rare values receive higher weights for agreement and common values receive lower weights, better reflecting their true discriminating power [24].
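
One simple way to express the idea is to replace the field-level u probability with the relative frequency of the specific value that agrees. The sketch below uses that formulation; it is a common simplification and not necessarily the adjustment used in [24], and the m value and the surname counts are assumed for illustration.

```python
import math
from collections import Counter

def value_specific_weights(values, m=0.95):
    """Agreement weight per value, using the value's relative frequency as u.

    Assumption: the chance that a non-matching record agrees on a given value
    is approximated by how often that value occurs in the file.
    """
    counts = Counter(values)
    total = len(values)
    return {value: math.log2(m / (count / total)) for value, count in counts.items()}

# Hypothetical surname distribution: two common names and one rare one.
surnames = ["Smith"] * 50 + ["Jones"] * 49 + ["Harezlak"]
weights = value_specific_weights(surnames)

for name, weight in sorted(weights.items(), key=lambda item: item[1]):
    print(f"{name:<9} agreement weight {weight:.2f}")
```

Under this formulation, agreement on "Harezlak" earns a much larger weight than agreement on "Smith", which is the behaviour the frequency-based extension is designed to produce.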

Another extension incorporates approximate field comparators into the weight calculation process, moving beyond simple binary agreement-disagreement patterns [31]. This enhancement allows for more nuanced similarity assessments using fuzzy matching algorithms for text fields and numeric similarity measures for continuous variables. In a case study using data from a large academic medical center, the approximate comparator extension misclassified 25% fewer record pairs than the standard Fellegi-Sunter method across different demographic field sets and matching cutoffs [31].
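
The following sketch shows one way an approximate comparator can feed into the weight calculation: a string similarity score scales the field weight between the full disagreement weight and the full agreement weight. The linear interpolation, the similarity floor of 0.7, and the use of Python's `difflib.SequenceMatcher` are assumptions chosen for illustration and should not be read as the method evaluated in [31].

```python
import math
from difflib import SequenceMatcher

def similarity(a, b):
    """Case-insensitive similarity in [0, 1] from the standard library."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def interpolated_weight(a, b, m, u, floor=0.7):
    """Scale the field weight between disagreement and agreement by similarity.

    At or below `floor` the pair is treated as a full disagreement; at
    similarity 1.0 it receives the full agreement weight log2(m / u).
    """
    agree = math.log2(m / u)
    disagree = math.log2((1 - m) / (1 - u))
    score = similarity(a, b)
    if score <= floor:
        return disagree
    return disagree + (agree - disagree) * (score - floor) / (1 - floor)

# Assumed m and u for a surname field.
for pair in [("Harezlak", "Harezlak"), ("Harezlak", "Harezlack"), ("Smith", "Jones")]:
    print(pair, round(interpolated_weight(*pair, m=0.9, u=0.01), 2))
```

Compared with a binary agree/disagree rule, a near-miss such as a single inserted letter retains most of the agreement weight instead of being penalized as a full disagreement.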

These methodological refinements demonstrate the continuing evolution of probabilistic linkage methods to address complex real-world data challenges while maintaining the theoretical rigor of the Fellegi-Sunter foundation.

Practical Implementation Guide

Research Reagent Solutions for Linkage Projects

Implementing a robust probabilistic linkage system requires both methodological expertise and appropriate technical components. The following table outlines essential "research reagents" for establishing a Fellegi-Sunter linkage framework:

Table 2: Essential Components for Fellegi-Sunter Implementation

| Component | Function | Implementation Considerations |

|---|---|---|

| Blocking Scheme | Reduces computational burden by limiting comparisons to record pairs sharing blocking variable values | Common blocking variables: date of birth components, geographic codes, name soundex codes [24] [30] |